Unnamed: 0 (int64, 0–832k) | id (float64, 2.49B–32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5–112) | repo_url (string, length 34–141) | action (string, 3 classes) | title (string, length 1–957) | labels (string, length 4–795) | body (string, length 1–259k) | index (string, 12 classes) | text_combine (string, length 96–259k) | label (string, 2 classes) | text (string, length 96–252k) | binary_label (int64, 0–1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

140,696 | 5,414,595,379 | IssuesEvent | 2017-03-01 19:28:24 | vmware/vic | https://api.github.com/repos/vmware/vic | closed | VIC container shim supervisor for containerd. | area/containerd kind/investigation priority/medium | As an engineer, I need to design and implement a POC shim layer for containerd in order to enable containerd to work VIC containers.

This is purely a research work that at best would have a prototype that can create and run VIC container from CLI. I would not expect containerd to be able to use it, however, these are just first steps.

Acceptance criteria

1. Prototype of runc analogue for VIC

2. List of missing things and problems which may arise on VIC side to accommodate containerd shim interface requirements. | 1.0 | VIC container shim supervisor for containerd. - As an engineer, I need to design and implement a POC shim layer for containerd in order to enable containerd to work VIC containers.

This is purely a research work that at best would have a prototype that can create and run VIC container from CLI. I would not expect containerd to be able to use it, however, these are just first steps.

Acceptance criteria

1. Prototype of runc analogue for VIC

2. List of missing things and problems which may arise on VIC side to accommodate containerd shim interface requirements. | priority | vic container shim supervisor for containerd as an engineer i need to design and implement a poc shim layer for containerd in order to enable containerd to work vic containers this is purely a research work that at best would have a prototype that can create and run vic container from cli i would not expect containerd to be able to use it however these are just first steps acceptance criteria prototype of runc analogue for vic list of missing things and problems which may arise on vic side to accommodate containerd shim interface requirements | 1 |

470,478 | 13,538,543,710 | IssuesEvent | 2020-09-16 12:15:47 | inverse-inc/packetfence | https://api.github.com/repos/inverse-inc/packetfence | opened | packetfence-pki: add support for SCEP | Priority: Medium Type: Feature / Enhancement | **Is your feature request related to a problem? Please describe.**

When you use `packetfence-pki` to generate RADIUS certificates for EAP-TLS, you may want to configure a [MDM](https://en.wikipedia.org/wiki/Mobile_device_management) software on nodes to automatically get a certificate from `packetfence-pki`.

**Describe the solution you'd like**

Be able to automatically get a client certificate from PacketFence PKI using SCEP protocol. Perhaps [ACME](https://en.wikipedia.org/wiki/Automated_Certificate_Management_Environment) can be used too.

| 1.0 | packetfence-pki: add support for SCEP - **Is your feature request related to a problem? Please describe.**

When you use `packetfence-pki` to generate RADIUS certificates for EAP-TLS, you may want to configure a [MDM](https://en.wikipedia.org/wiki/Mobile_device_management) software on nodes to automatically get a certificate from `packetfence-pki`.

**Describe the solution you'd like**

Be able to automatically get a client certificate from PacketFence PKI using SCEP protocol. Perhaps [ACME](https://en.wikipedia.org/wiki/Automated_Certificate_Management_Environment) can be used too.

| priority | packetfence pki add support for scep is your feature request related to a problem please describe when you use packetfence pki to generate radius certificates for eap tls you may want to configure a software on nodes to automatically get a certificate from packetfence pki describe the solution you d like be able to automatically get a client certificate from packetfence pki using scep protocol perhaps can be used too | 1 |

523,102 | 15,172,897,289 | IssuesEvent | 2021-02-13 11:34:23 | dnnsoftware/Dnn.Platform | https://api.github.com/repos/dnnsoftware/Dnn.Platform | closed | Please restore "Replace Page From a Template" functionality | Area: AE > PersonaBar Ext > Pages.Web Effort: High Priority: Medium Status: Ready for Development Type: Enhancement stale |

## Description of problem

In DNN 8 this was an option under the Pages admin menu. It allowed you to replace an existing page using a template. I think that this has disappeared in DNN 9. I wasn't able to find it in DNN 9.2.1.

## Description of solution

I'd like the old functionality to return

## Description of alternatives considered

Can always delete a page and re-create it from a template, but that doesn't include all of the possibilities available before. And, the old way was very easy to do.

## Additional context

Old functionality

## Screenshots

## Additional context

## Affected version

<!-- Check all that apply and add more if necessary -->

* [x] 9.2.2

* [x] 9.2.1

* [x] 9.2

* [x] 9.1.1

* [x] 9.1

* [x] 9.0

## Affected browser

It's not a browser issue.

* [ ] Chrome

* [ ] Firefox

* [ ] Safari

* [ ] Internet Explorer

* [ ] Edge

| 1.0 | Please restore "Replace Page From a Template" functionality -

## Description of problem

In DNN 8 this was an option under the Pages admin menu. It allowed you to replace an existing page using a template. I think that this has disappeared in DNN 9. I wasn't able to find it in DNN 9.2.1.

## Description of solution

I'd like the old functionality to return

## Description of alternatives considered

Can always delete a page and re-create it from a template, but that doesn't include all of the possibilities available before. And, the old way was very easy to do.

## Additional context

Old functionality

## Screenshots

## Additional context

## Affected version

<!-- Check all that apply and add more if necessary -->

* [x] 9.2.2

* [x] 9.2.1

* [x] 9.2

* [x] 9.1.1

* [x] 9.1

* [x] 9.0

## Affected browser

It's not a browser issue.

* [ ] Chrome

* [ ] Firefox

* [ ] Safari

* [ ] Internet Explorer

* [ ] Edge

| priority | please restore replace page from a template functionality description of problem in dnn this was an option under the pages admin menu it allowed you to replace an existing page using a template i think that this has disappeared in dnn i wasn t able to find it in dnn description of solution i d like the old functionality to return description of alternatives considered can always delete a page and re create it from a template but that doesn t include all of the possibilities available before and the old way was very easy to do additional context old functionality screenshots additional context affected version affected browser it s not a browser issue chrome firefox safari internet explorer edge | 1 |

389,041 | 11,496,582,174 | IssuesEvent | 2020-02-12 08:18:54 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | [Coverity CID :207985] Argument cannot be negative in subsys/net/lib/websocket/websocket.c | Coverity area: Networking bug priority: medium |

Static code scan issues found in file:

https://github.com/zephyrproject-rtos/zephyr/tree/a3e89e84a801d9bc048b0ee2177f0fb11d1a925a/subsys/net/lib/websocket/websocket.c#L789

Category: Memory - corruptions

Function: `websocket_recv_msg`

Component: Networking

CID: [207985](https://scan9.coverity.com/reports.htm#v29726/p12996/mergedDefectId=207985)

Details:

```

697 #else

698 ret = recv(ctx->real_sock, &ctx->tmp_buf[ctx->tmp_buf_pos],

699 ctx->tmp_buf_len - ctx->tmp_buf_pos,

700 timeout == K_NO_WAIT ? MSG_DONTWAIT : 0);

701 #endif /* CONFIG_NET_TEST */

702

>>> CID 207985: (REVERSE_NEGATIVE)

>>> You might be using variable "ret" before verifying that it is >= 0.

703 if (ret < 0) {

704 return -errno;

705 }

706

707 if (ret == 0) {

708 /* Socket closed */

783 ret = input_len;

784 #else

785 ret = recv(ctx->real_sock, ctx->tmp_buf, ctx->tmp_buf_len,

786 timeout == K_NO_WAIT ? MSG_DONTWAIT : 0);

787 #endif /* CONFIG_NET_TEST */

788

>>> CID 207985: (REVERSE_NEGATIVE)

>>> You might be using variable "ret" before verifying that it is >= 0.

789 if (ret < 0) {

790 return -errno;

791 }

792

793 if (ret == 0) {

794 return 0;

```

Please fix or provide comments in coverity using the link:

https://scan9.coverity.com/reports.htm#v32951/p12996.

Note: This issue was created automatically. Priority was set based on classification

of the file affected and the impact field in coverity. Assignees were set using the CODEOWNERS file.

| 1.0 | [Coverity CID :207985] Argument cannot be negative in subsys/net/lib/websocket/websocket.c -

Static code scan issues found in file:

https://github.com/zephyrproject-rtos/zephyr/tree/a3e89e84a801d9bc048b0ee2177f0fb11d1a925a/subsys/net/lib/websocket/websocket.c#L789

Category: Memory - corruptions

Function: `websocket_recv_msg`

Component: Networking

CID: [207985](https://scan9.coverity.com/reports.htm#v29726/p12996/mergedDefectId=207985)

Details:

```

697 #else

698 ret = recv(ctx->real_sock, &ctx->tmp_buf[ctx->tmp_buf_pos],

699 ctx->tmp_buf_len - ctx->tmp_buf_pos,

700 timeout == K_NO_WAIT ? MSG_DONTWAIT : 0);

701 #endif /* CONFIG_NET_TEST */

702

>>> CID 207985: (REVERSE_NEGATIVE)

>>> You might be using variable "ret" before verifying that it is >= 0.

703 if (ret < 0) {

704 return -errno;

705 }

706

707 if (ret == 0) {

708 /* Socket closed */

783 ret = input_len;

784 #else

785 ret = recv(ctx->real_sock, ctx->tmp_buf, ctx->tmp_buf_len,

786 timeout == K_NO_WAIT ? MSG_DONTWAIT : 0);

787 #endif /* CONFIG_NET_TEST */

788

>>> CID 207985: (REVERSE_NEGATIVE)

>>> You might be using variable "ret" before verifying that it is >= 0.

789 if (ret < 0) {

790 return -errno;

791 }

792

793 if (ret == 0) {

794 return 0;

```

Please fix or provide comments in coverity using the link:

https://scan9.coverity.com/reports.htm#v32951/p12996.

Note: This issue was created automatically. Priority was set based on classification

of the file affected and the impact field in coverity. Assignees were set using the CODEOWNERS file.

| priority | argument cannot be negative in subsys net lib websocket websocket c static code scan issues found in file category memory corruptions function websocket recv msg component networking cid details else ret recv ctx real sock ctx tmp buf ctx tmp buf len ctx tmp buf pos timeout k no wait msg dontwait endif config net test cid reverse negative you might be using variable ret before verifying that it is if ret return errno if ret socket closed ret input len else ret recv ctx real sock ctx tmp buf ctx tmp buf len timeout k no wait msg dontwait endif config net test cid reverse negative you might be using variable ret before verifying that it is if ret return errno if ret return please fix or provide comments in coverity using the link note this issue was created automatically priority was set based on classification of the file affected and the impact field in coverity assignees were set using the codeowners file | 1 |

88,467 | 3,778,043,988 | IssuesEvent | 2016-03-17 22:22:30 | Fermat-ORG/fermat-org | https://api.github.com/repos/Fermat-ORG/fermat-org | closed | Save the correct value for super layer | Priority: MEDIUM server | We thought that this was not serious, but it was, and a lot. If the value is "false" instead of `false`, then the client code thought it was an actual super layer, thus bubbling up many bugs, I temporarily fixed it by converting to `false` if the name was actually "false". | 1.0 | Save the correct value for super layer - We thought that this was not serious, but it was, and a lot. If the value is "false" instead of `false`, then the client code thought it was an actual super layer, thus bubbling up many bugs, I temporarily fixed it by converting to `false` if the name was actually "false". | priority | save the correct value for super layer we thought that this was not serious but it was and a lot if the value is false instead of false then the client code thought it was an actual super layer thus bubbling up many bugs i temporarily fixed it by converting to false if the name was actually false | 1 |

622,680 | 19,653,844,145 | IssuesEvent | 2022-01-10 10:22:47 | debops/debops | https://api.github.com/repos/debops/debops | closed | debops script doesn't seem to use proper exit statuses | bug priority: medium tag: DebOps script | ```

$ debops run bootstrap -l vmtest1 -e ansible_user=root

Executing Ansible playbooks:

bootstrap

ERROR! the playbook: bootstrap could not be found

$ echo $?

0

```

I'd have expected any exit value except 0 here... | 1.0 | debops script doesn't seem to use proper exit statuses - ```

$ debops run bootstrap -l vmtest1 -e ansible_user=root

Executing Ansible playbooks:

bootstrap

ERROR! the playbook: bootstrap could not be found

$ echo $?

0

```

I'd have expected any exit value except 0 here... | priority | debops script doesn t seem to use proper exit statuses debops run bootstrap l e ansible user root executing ansible playbooks bootstrap error the playbook bootstrap could not be found echo i d have expected any exit value except here | 1 |

3,501 | 2,538,569,580 | IssuesEvent | 2015-01-27 08:20:19 | newca12/gapt | https://api.github.com/repos/newca12/gapt | closed | structs should be trees | 1 star enhancement imported Priority-Medium | _From [fra...@gmail.com](https://code.google.com/u/108596877348066494139/) on February 01, 2011 11:03:51_

Since Structs are Trees, they should inherit the from them. This will allow prooftool (which displays Trees) to display them.

_Original issue: http://code.google.com/p/gapt/issues/detail?id=106_ | 1.0 | structs should be trees - _From [fra...@gmail.com](https://code.google.com/u/108596877348066494139/) on February 01, 2011 11:03:51_

Since Structs are Trees, they should inherit the from them. This will allow prooftool (which displays Trees) to display them.

_Original issue: http://code.google.com/p/gapt/issues/detail?id=106_ | priority | structs should be trees from on february since structs are trees they should inherit the from them this will allow prooftool which displays trees to display them original issue | 1 |

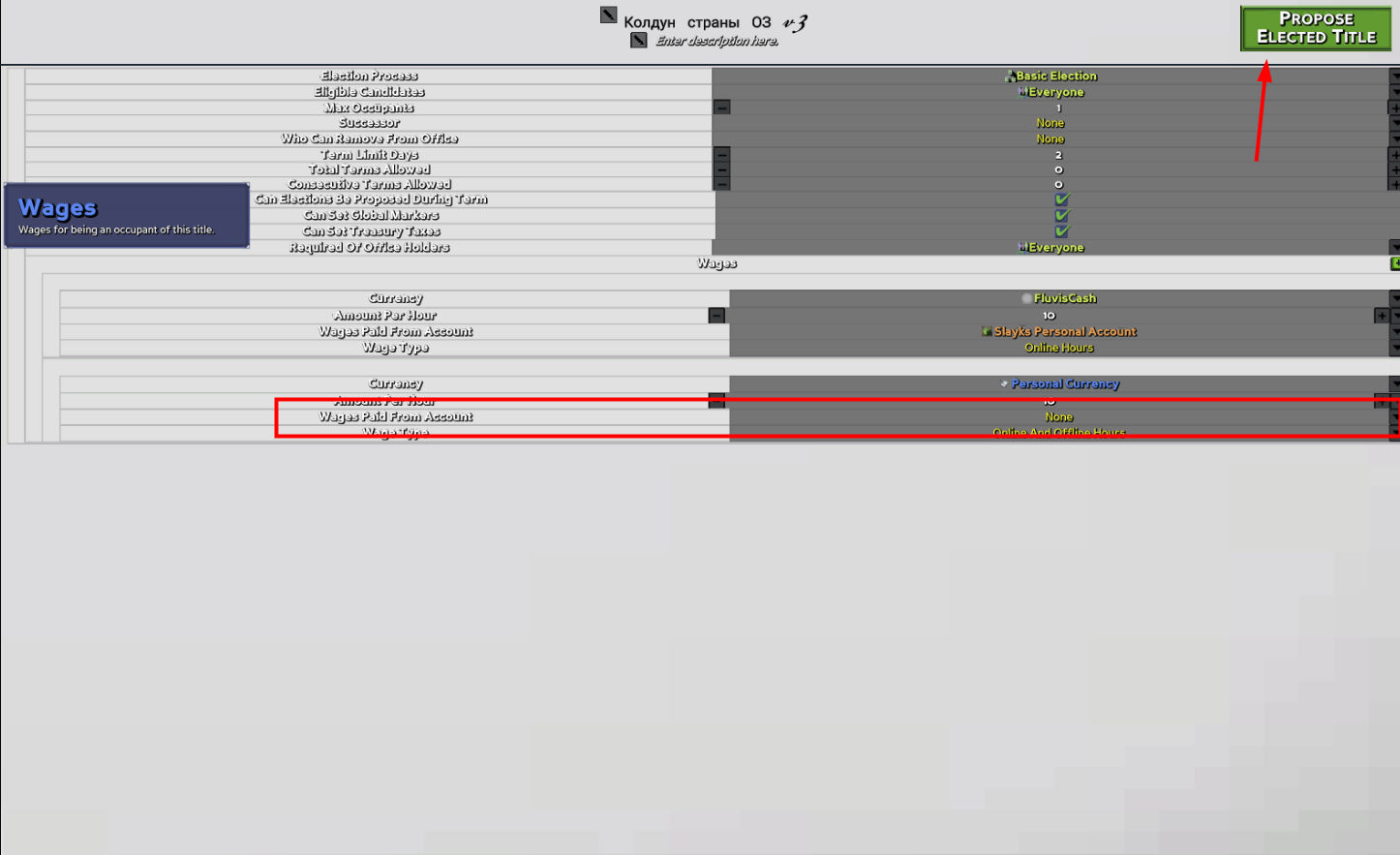

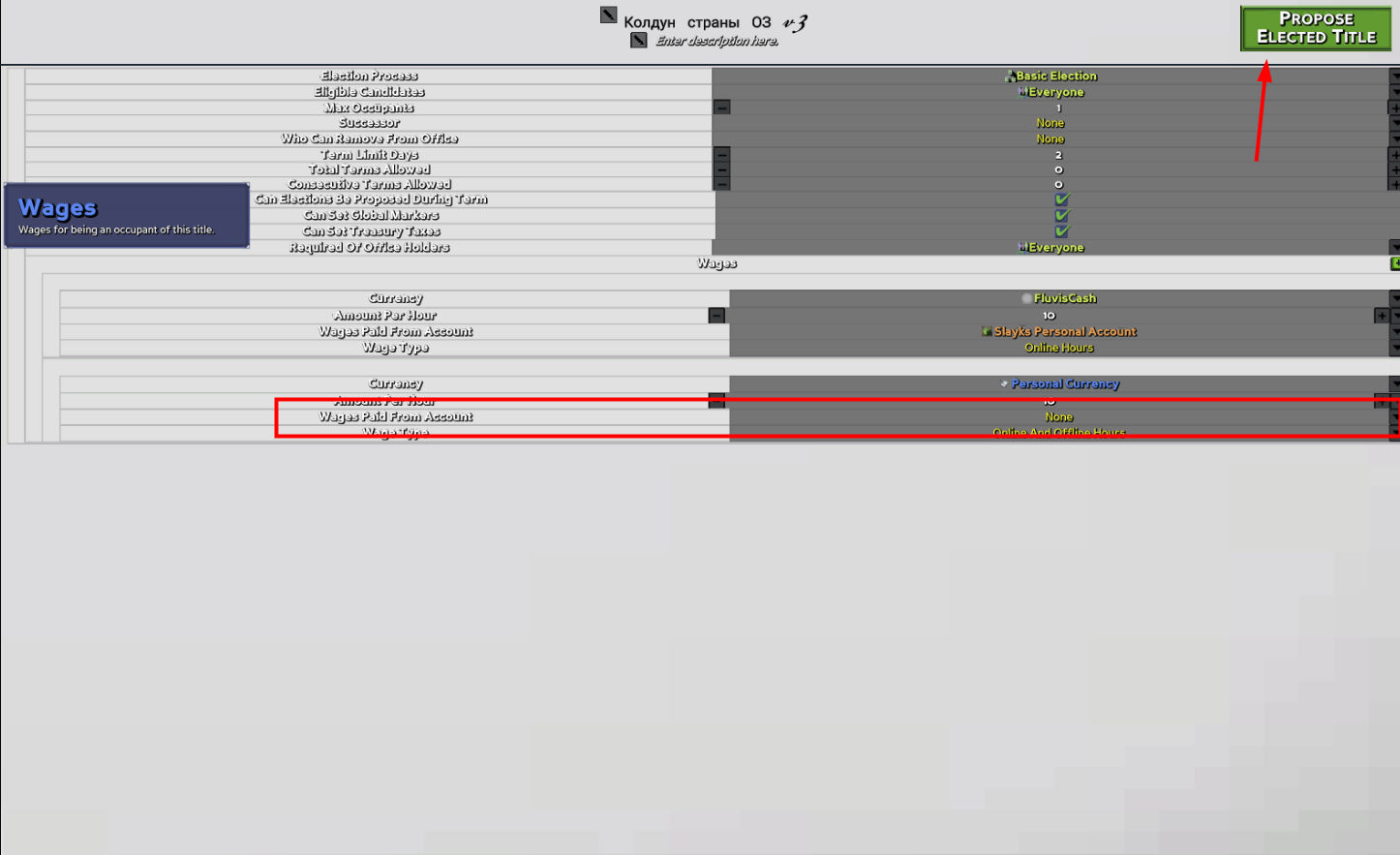

368,780 | 10,884,451,551 | IssuesEvent | 2019-11-18 08:18:54 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | [0.9.0 staging-1250] Civics: wages without source account | Fixed Medium Priority | Currently we can create Elected Title with Wages that doesn't have pointed source Account.

Wage payment process will fail | 1.0 | [0.9.0 staging-1250] Civics: wages without source account - Currently we can create Elected Title with Wages that doesn't have pointed source Account.

Wage payment process will fail | priority | civics wages without source account currently we can create elected title with wages that doesn t have pointed source account wage payment process will fail | 1 |

584,201 | 17,408,458,609 | IssuesEvent | 2021-08-03 09:11:41 | dataware-tools/dataware-tools | https://api.github.com/repos/dataware-tools/dataware-tools | closed | [Data-browser] Show formatted record info | kind/feature priority/medium wg/web-app | ## Purpose

- feature request

## Description

- Add function to format record information depend on pydtk.config.dtype

| 1.0 | [Data-browser] Show formatted record info - ## Purpose

- feature request

## Description

- Add function to format record information depend on pydtk.config.dtype

| priority | show formatted record info purpose feature request description add function to format record information depend on pydtk config dtype | 1 |

26,430 | 2,684,493,654 | IssuesEvent | 2015-03-29 01:36:45 | gtcasl/gpuocelot | https://api.github.com/repos/gtcasl/gpuocelot | closed | 2-Element Vectors of floats are broken in the llvm backend on 32-bit platforms | bug imported Priority-Medium | _From [SolusStu...@gmail.com](https://code.google.com/u/100974457117804684489/) on February 20, 2010 15:15:08_

What steps will reproduce the problem? See this bug report from llvm: http://hlvm.llvm.org/bugs/show_bug.cgi?id=3287 What is the expected output? What do you see instead? Loads to 2-element vectors of floats randomly produce nan values. What version of the product are you using? On what operating system? 32-bit platforms using LLVM.

_Original issue: http://code.google.com/p/gpuocelot/issues/detail?id=39_ | 1.0 | 2-Element Vectors of floats are broken in the llvm backend on 32-bit platforms - _From [SolusStu...@gmail.com](https://code.google.com/u/100974457117804684489/) on February 20, 2010 15:15:08_

What steps will reproduce the problem? See this bug report from llvm: http://hlvm.llvm.org/bugs/show_bug.cgi?id=3287 What is the expected output? What do you see instead? Loads to 2-element vectors of floats randomly produce nan values. What version of the product are you using? On what operating system? 32-bit platforms using LLVM.

_Original issue: http://code.google.com/p/gpuocelot/issues/detail?id=39_ | priority | element vectors of floats are broken in the llvm backend on bit platforms from on february what steps will reproduce the problem see this bug report from llvm what is the expected output what do you see instead loads to element vectors of floats randomly produce nan values what version of the product are you using on what operating system bit platforms using llvm original issue | 1 |

67,215 | 3,267,221,263 | IssuesEvent | 2015-10-23 01:27:10 | TheLens/elections | https://api.github.com/repos/TheLens/elections | closed | Change footer language on table | Bug Medium priority | Now says: View all candidate results

Change to: View all candidates

Similar on the hide text. Say: Show top candidates | 1.0 | Change footer language on table - Now says: View all candidate results

Change to: View all candidates

Similar on the hide text. Say: Show top candidates | priority | change footer language on table now says view all candidate results change to view all candidates similar on the hide text say show top candidates | 1 |

77,479 | 3,506,395,641 | IssuesEvent | 2016-01-08 06:26:49 | OregonCore/OregonCore | https://api.github.com/repos/OregonCore/OregonCore | closed | MessageChat logs spam (BB #524) | migrated Priority: Medium Type: Bug | This issue was migrated from bitbucket.

**Original Reporter:**

**Original Date:** 04.03.2014 05:40:23 GMT+0000

**Original Priority:** major

**Original Type:** bug

**Original State:** resolved

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/524

<hr>

SESSION: opcode CMSG_MESSAGECHAT (0x0095) has unprocessed tail data (read stop at 8 from 100)

SESSION: opcode CMSG_MESSAGECHAT (0x0095) has unprocessed tail data (read stop at 8 from 90)

04-03-14 06:32

SESSION: opcode CMSG_MESSAGECHAT (0x0095) has unprocessed tail data (read stop at 8 from 92)

SESSION: opcode CMSG_MESSAGECHAT (0x0095) has unprocessed tail data (read stop at 8 from 84)

SESSION: opcode CMSG_MESSAGECHAT (0x0095) has unprocessed tail data (read stop at 8 from 98)

SESSION: opcode CMSG_MESSAGECHAT (0x0095) has unprocessed tail data (read stop at 8 from 90)

SESSION: opcode CMSG_MESSAGECHAT (0x0095) has unprocessed tail data (read stop at 8 from 92)

04-03-14 06:32

SESSION: opcode CMSG_MESSAGECHAT (0x0095) has unprocessed tail data (read stop at 8 from 84)

SESSION: opcode CMSG_MESSAGECHAT (0x0095) has unprocessed tail data (read stop at 8 from 98)

SESSION: opcode CMSG_MESSAGECHAT (0x0095) has unprocessed tail data (read stop at 8 from 90) | 1.0 | MessageChat logs spam (BB #524) - This issue was migrated from bitbucket.

**Original Reporter:**

**Original Date:** 04.03.2014 05:40:23 GMT+0000

**Original Priority:** major

**Original Type:** bug

**Original State:** resolved

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/524

<hr>

SESSION: opcode CMSG_MESSAGECHAT (0x0095) has unprocessed tail data (read stop at 8 from 100)

SESSION: opcode CMSG_MESSAGECHAT (0x0095) has unprocessed tail data (read stop at 8 from 90)

04-03-14 06:32

SESSION: opcode CMSG_MESSAGECHAT (0x0095) has unprocessed tail data (read stop at 8 from 92)

SESSION: opcode CMSG_MESSAGECHAT (0x0095) has unprocessed tail data (read stop at 8 from 84)

SESSION: opcode CMSG_MESSAGECHAT (0x0095) has unprocessed tail data (read stop at 8 from 98)

SESSION: opcode CMSG_MESSAGECHAT (0x0095) has unprocessed tail data (read stop at 8 from 90)

SESSION: opcode CMSG_MESSAGECHAT (0x0095) has unprocessed tail data (read stop at 8 from 92)

04-03-14 06:32

SESSION: opcode CMSG_MESSAGECHAT (0x0095) has unprocessed tail data (read stop at 8 from 84)

SESSION: opcode CMSG_MESSAGECHAT (0x0095) has unprocessed tail data (read stop at 8 from 98)

SESSION: opcode CMSG_MESSAGECHAT (0x0095) has unprocessed tail data (read stop at 8 from 90) | priority | messagechat logs spam bb this issue was migrated from bitbucket original reporter original date gmt original priority major original type bug original state resolved direct link session opcode cmsg messagechat has unprocessed tail data read stop at from session opcode cmsg messagechat has unprocessed tail data read stop at from session opcode cmsg messagechat has unprocessed tail data read stop at from session opcode cmsg messagechat has unprocessed tail data read stop at from session opcode cmsg messagechat has unprocessed tail data read stop at from session opcode cmsg messagechat has unprocessed tail data read stop at from session opcode cmsg messagechat has unprocessed tail data read stop at from session opcode cmsg messagechat has unprocessed tail data read stop at from session opcode cmsg messagechat has unprocessed tail data read stop at from session opcode cmsg messagechat has unprocessed tail data read stop at from | 1 |

108,861 | 4,351,831,245 | IssuesEvent | 2016-08-01 02:09:28 | ssadedin/bpipe | https://api.github.com/repos/ssadedin/bpipe | closed | out of memory processing when operating on 500+ files | bug imported Priority-Medium | _From [henning....@gmail.com](https://code.google.com/u/107754961382921025555/) on 2014-05-27T18:02:08Z_

Running a simple bpipe script like

get_quality = {

exec """

qc.pl -q -s 0 -i ${input} &&

touch $output

"""

forward input

}

summarise = {

produce("summary.txt"){

exec """

summarize.py .

"""

}

}

Bpipe.run {

"%" * [ get_quality ] + summarise

}

operating on 500+ files I get OutOfMemory exception even though I set

: ${MAX_JAVA_MEM:="1024m"}

in bin/bpipe.

I know my example is poorly described, I just wanted to hear what you typically set MAX_JAVA_MEM too, if it is normal that bpipe requires a lot of memory like this?

...and btw, thanks for an awesome project we are getting more and more into bpipe here in my office!

_Original issue: http://code.google.com/p/bpipe/issues/detail?id=97_ | 1.0 | out of memory processing when operating on 500+ files - _From [henning....@gmail.com](https://code.google.com/u/107754961382921025555/) on 2014-05-27T18:02:08Z_

Running a simple bpipe script like

get_quality = {

exec """

qc.pl -q -s 0 -i ${input} &&

touch $output

"""

forward input

}

summarise = {

produce("summary.txt"){

exec """

summarize.py .

"""

}

}

Bpipe.run {

"%" * [ get_quality ] + summarise

}

operating on 500+ files I get OutOfMemory exception even though I set

: ${MAX_JAVA_MEM:="1024m"}

in bin/bpipe.

I know my example is poorly described, I just wanted to hear what you typically set MAX_JAVA_MEM too, if it is normal that bpipe requires a lot of memory like this?

...and btw, thanks for an awesome project we are getting more and more into bpipe here in my office!

_Original issue: http://code.google.com/p/bpipe/issues/detail?id=97_ | priority | out of memory processing when operating on files from on running a simple bpipe script like get quality exec qc pl q s i input touch output forward input summarise produce summary txt exec summarize py bpipe run summarise operating on files i get outofmemory exception even though i set max java mem in bin bpipe i know my example is poorly described i just wanted to hear what you typically set max java mem too if it is normal that bpipe requires a lot of memory like this and btw thanks for an awesome project we are getting more and more into bpipe here in my office original issue | 1 |

351,158 | 10,513,400,345 | IssuesEvent | 2019-09-27 20:29:10 | robotframework/SeleniumLibrary | https://api.github.com/repos/robotframework/SeleniumLibrary | closed | Use pabot to run acceptance test locally. | help wanted priority: medium task | The acceptance testing starts to take quite long time, in Travis and when running locally. Experiment how to run acceptance test parallel with a local machine. Changing Travis is not mandatory in this issue.

- [ ] Enhance the [atest/run.py](https://github.com/robotframework/SeleniumLibrary/blob/master/atest/run.py) to use `pabot` instead of `robot` as command line switch.

- [ ] It might be useful to expose `--processes` as argument too

- [x] Testing: Is simple http server enough for the load, can tests run in parallel, are test stable | 1.0 | Use pabot to run acceptance test locally. - The acceptance testing starts to take quite long time, in Travis and when running locally. Experiment how to run acceptance test parallel with a local machine. Changing Travis is not mandatory in this issue.

- [ ] Enhance the [atest/run.py](https://github.com/robotframework/SeleniumLibrary/blob/master/atest/run.py) to use `pabot` instead of `robot` as command line switch.

- [ ] It might be useful to expose `--processes` as argument too

- [x] Testing: Is simple http server enough for the load, can tests run in parallel, are test stable | priority | use pabot to run acceptance test locally the acceptance testing starts to take quite long time in travis and when running locally experiment how to run acceptance test parallel with a local machine changing travis is not mandatory in this issue enhance the to use pabot instead of robot as command line switch it might be useful to expose processes as argument too testing is simple http server enough for the load can tests run in parallel are test stable | 1 |

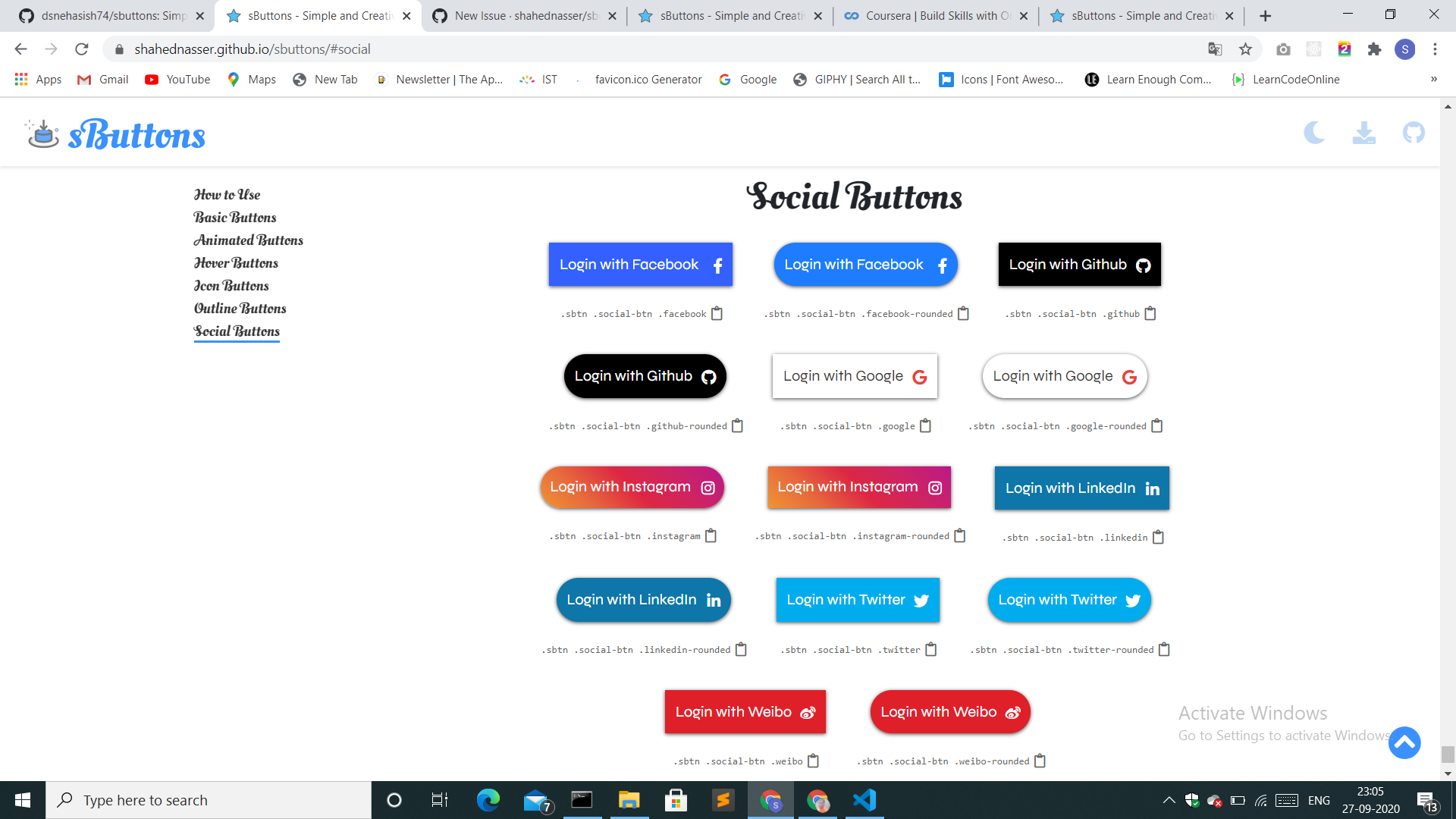

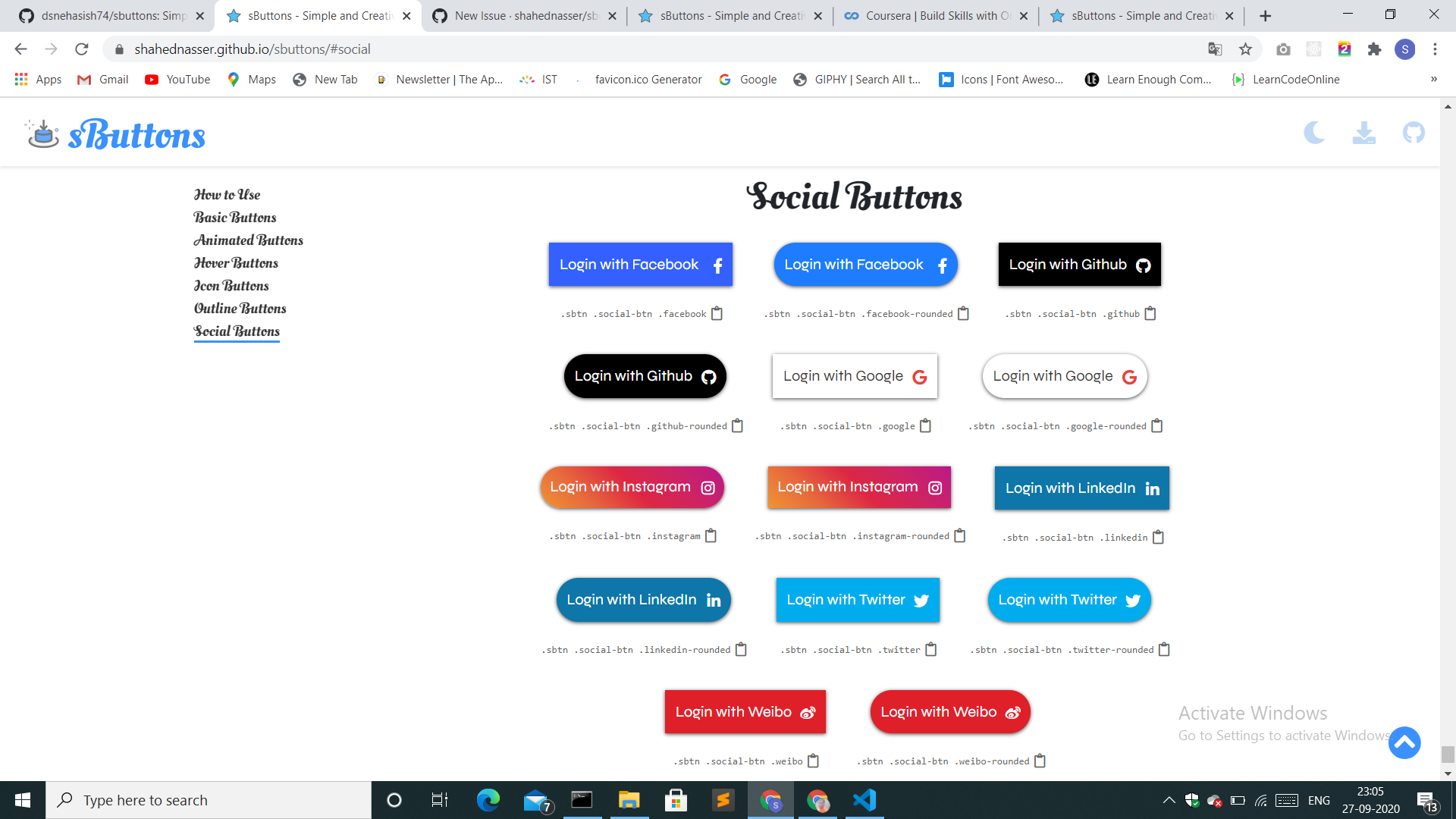

475,100 | 13,686,914,529 | IssuesEvent | 2020-09-30 09:21:49 | shahednasser/sbuttons | https://api.github.com/repos/shahednasser/sbuttons | closed | The squared social Buttons and Rounded social buttons should be separated for clear UI | Priority: Medium buttons enhancement | **Is your feature request related to a problem? Please describe.**

**Describe the solution you'd like**

**Additional notes**

| 1.0 | The squared social Buttons and Rounded social buttons should be separated for clear UI - **Is your feature request related to a problem? Please describe.**

**Describe the solution you'd like**

**Additional notes**

| priority | the squared social buttons and rounded social buttons should be separated for clear ui is your feature request related to a problem please describe describe the solution you d like additional notes | 1 |

189,120 | 6,794,363,960 | IssuesEvent | 2017-11-01 11:50:07 | dotkom/super-duper-fiesta | https://api.github.com/repos/dotkom/super-duper-fiesta | opened | Store passwordHash in localstorage and only send it when needed to backend | Package: Client Priority: Medium Status: Available Type: Enhancement | This way we don't have to force a reload after registration.

It's only used in two places:

- On initial connection

- When voting on anonymous issues

Fixing the initial connection case is a bit trickier. | 1.0 | Store passwordHash in localstorage and only send it when needed to backend - This way we don't have to force a reload after registration.

It's only used in two places:

- On initial connection

- When voting on anonymous issues

Fixing the initial connection case is a bit trickier. | priority | store passwordhash in localstorage and only send it when needed to backend this way we don t have to force a reload after registration it s only used in two places on initial connection when voting on anonymous issues fixing the initial connection case is a bit trickier | 1 |

52,543 | 3,023,833,576 | IssuesEvent | 2015-08-01 23:05:37 | WarGamesLabs/Jack | https://api.github.com/repos/WarGamesLabs/Jack | closed | Protocol: DMX512-A | auto-migrated Priority-Medium Type-Enhancement | ```

Add the DMX industrial lighting protocol.

Transceiver/physical layer? RS484?

```

Original issue reported on code.google.com by `ianles...@gmail.com` on 31 Mar 2009 at 2:38 | 1.0 | Protocol: DMX512-A - ```

Add the DMX industrial lighting protocol.

Transceiver/physical layer? RS484?

```

Original issue reported on code.google.com by `ianles...@gmail.com` on 31 Mar 2009 at 2:38 | priority | protocol a add the dmx industrial lighting protocol transceiver physical layer original issue reported on code google com by ianles gmail com on mar at | 1 |

614,105 | 19,142,383,989 | IssuesEvent | 2021-12-02 01:19:14 | FrequencyX4/Fate | https://api.github.com/repos/FrequencyX4/Fate | opened | Fix-perms command | Priority: Medium Category: Module | A command to re-setup server permissions so that role permissions are primarily in control rather than channel overwrites | 1.0 | Fix-perms command - A command to re-setup server permissions so that role permissions are primarily in control rather than channel overwrites | priority | fix perms command a command to re setup server permissions so that role permissions are primarily in control rather than channel overwrites | 1 |

314,670 | 9,601,303,077 | IssuesEvent | 2019-05-10 11:47:13 | wso2/product-is | https://api.github.com/repos/wso2/product-is | closed | Test OIDC Hybrid Flow | Complexity/Medium Component/OIDC Priority/High Severity/Major Type/Task | Aspects to check are,

1. Whether the code, token returned has correct expiry times (consider SP wise expiry times as well)

2. id_token generated honours claims configs | 1.0 | Test OIDC Hybrid Flow - Aspects to check are,

1. Whether the code, token returned has correct expiry times (consider SP wise expiry times as well)

2. id_token generated honours claims configs | priority | test oidc hybrid flow aspects to check are whether the code token returned has correct expiry times consider sp wise expiry times as well id token generated honours claims configs | 1 |

760,397 | 26,638,368,929 | IssuesEvent | 2023-01-25 00:44:22 | ualis0n/portifolio | https://api.github.com/repos/ualis0n/portifolio | closed | Adicionar os dados de contato | Priority: Medium Weight: 3 Type: Feature | ## Informações

- [ ] Linkedin

- [ ] Email

- [ ] Telefone (Whatsapp/Telegram)

- [ ] Github

| 1.0 | Adicionar os dados de contato - ## Informações

- [ ] Linkedin

- [ ] Email

- [ ] Telefone (Whatsapp/Telegram)

- [ ] Github

| priority | adicionar os dados de contato informações linkedin email telefone whatsapp telegram github | 1 |

435,441 | 12,535,594,011 | IssuesEvent | 2020-06-04 21:42:21 | onicagroup/runway | https://api.github.com/repos/onicagroup/runway | closed | [REQUEST] deployment/module.environments should be strict | breaking feature priority:medium status:in_progress | As show, this will break legacy uses of `environments`. This would need to be released in a major release or, made toggleable with a default of `false` until the next major release. It should be a top-level config option.

# Example

runway.yml

```yaml

deployments:

- modules:

- path: sampleapp.cfn

parameters:

key: val

environments:

example: 0000/us-east-1

regions:

- us-east-1

- us-west-2

```

## Expectation

- `DEPLOY_ENVIRONMENT=example, accountId=0000, region=us-east-1` deploy the module

- `DEPLOY_ENVIRONMENT=example, accountId=0000, region=us-west-2` always skip the module because the region does not match

- `DEPLOY_ENVIRONMENT=example, accountId=1111, region=us-east-1` always skip the module because the accountId does not match

- `DEPLOY_ENVIRONMENT=prod, accountId=0000, region=us-east-1` always skip the module because the environment is not defined

- `DEPLOY_ENVIRONMENT=prod, accountId=0000, region=us-west-2` always skip the module because the environment is not defined

If `environments` is not defined for a deployment/module, don't skip; let the module class determine if it should skip.

Each environment should still accept an explicit true or false that would enable/disable it for all regions in all accounts.

Each environment should be able to accept an accountId only or a region only. | 1.0 | [REQUEST] deployment/module.environments should be strict - As shown, this will break legacy uses of `environments`. This would need to be released in a major release, or made toggleable with a default of `false` until the next major release. It should be a top-level config option.

# Example

runway.yml

```yaml

deployments:

- modules:

- path: sampleapp.cfn

parameters:

key: val

environments:

example: 0000/us-east-1

regions:

- us-east-1

- us-west-2

```

## Expectation

- `DEPLOY_ENVIRONMENT=example, accountId=0000, region=us-east-1` deploy the module

- `DEPLOY_ENVIRONMENT=example, accountId=0000, region=us-west-2` always skip the module because the region does not match

- `DEPLOY_ENVIRONMENT=example, accountId=1111, region=us-east-1` always skip the module because the accountId does not match

- `DEPLOY_ENVIRONMENT=prod, accountId=0000, region=us-east-1` always skip the module because the environment is not defined

- `DEPLOY_ENVIRONMENT=prod, accountId=0000, region=us-west-2` always skip the module because the environment is not defined

If `environments` is not defined for a deployment/module, don't skip; let the module class determine if it should skip.

Each environment should still accept an explicit true or false that would enable/disable it for all regions in all accounts.

Each environment should be able to accept an accountId only or a region only. | priority | deployment module environments should be strict as show this will break legacy uses of environments this would need to be released in a major release or made toggleable with a default of false until the next major release it should be a top level config option example runway yml yaml deployments modules path sampleapp cfn parameters key val environments example us east regions us east us west expectation deploy environment example accountid region us east deploy the module deploy environment example accountid region us west always skip the module because the region does not match deploy environment example accountid region us east always skip the module because the accountid does not match deploy environment prod accountid region us east always skip the module because the environment is not defined deploy environment prod accountid region us west always skip the module because the environment is not defined if environments is not defined for an deployment module don t skip let the module class determine if it should skip each environment should still accept an explicit true or false that would enable disable it for all regions in all accounts each environment should be able to accept an accountid only or a region only | 1 |
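
The strict matching described in the runway `environments` request above can be sketched roughly as follows. This is only an illustration of the expectations listed in the issue, not runway's actual implementation: the function names `should_deploy` and `_matches` are hypothetical, and the rule that an all-digit value is treated as an account ID (and anything else as a region) is an assumption made for this sketch.

```python
from typing import Mapping, Optional, Union

# An environment entry is either an explicit bool or a string such as
# "0000/us-east-1", "0000" (account only), or "us-east-1" (region only).
EnvSpec = Union[bool, str]


def _matches(spec: str, account_id: str, region: str) -> bool:
    """Compare one string spec against the current account and region."""
    if "/" in spec:
        want_account, want_region = spec.split("/", 1)
        return want_account == account_id and want_region == region
    if spec.isdigit():
        # Assumption for this sketch: all-digit values are account IDs.
        return spec == account_id
    return spec == region


def should_deploy(
    environments: Optional[Mapping[str, EnvSpec]],
    deploy_environment: str,
    account_id: str,
    region: str,
) -> Optional[bool]:
    """Return True to deploy, False to skip, None to defer to the module class."""
    if environments is None:
        # `environments` not defined: let the module class decide.
        return None
    if deploy_environment not in environments:
        # Strict mode: an undefined environment is always skipped.
        return False
    spec = environments[deploy_environment]
    if isinstance(spec, bool):
        # Explicit true/false applies to all regions in all accounts.
        return spec
    return _matches(spec, account_id, region)
```

With `{"example": "0000/us-east-1"}`, only the `example` environment in account `0000` and region `us-east-1` deploys; the other four combinations from the issue are skipped.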

72,095 | 3,371,898,768 | IssuesEvent | 2015-11-23 21:07:33 | gsstudios/Dorimanx-SG2-I9100-Kernel | https://api.github.com/repos/gsstudios/Dorimanx-SG2-I9100-Kernel | opened | Root install doesn't work in stweaks | bug Lollipop Medium priority | The root installer in stweaks needs to be updated for lollipop just in case people want to re-root their device. | 1.0 | Root install doesn't work in stweaks - The root installer in stweaks needs to be updated for lollipop just in case people want to re-root their device. | priority | root install doesn t work in stweaks the root installer in stweaks needs to be updated for lollipop just in case people want to re root their device | 1 |

562,801 | 16,670,095,897 | IssuesEvent | 2021-06-07 09:45:54 | belivipro9x99/ctms-plus | https://api.github.com/repos/belivipro9x99/ctms-plus | closed | 🍰 Scrollbar thumb does not display properly when clamping | bug help wanted priority:medium | ## 🐞 Bug Report

---

### 📃 Description

Scrollbar thumb changes height massively when clamping on top and overflows when clamping on the bottom

### 🔬 Steps to Reproduce

1. Try scrolling on a non-overflowing scroll container

2. Observe the scrollbar glitching

### 🎯 Expected Behavior

Scrollbar should behave correctly when clamping

### 📷 Screenshots

| 1.0 | 🍰 Scrollbar thumb does not display properly when clamping - ## 🐞 Bug Report

---

### 📃 Description

Scrollbar thumb changes height massively when clamping on top and overflows when clamping on the bottom

### 🔬 Steps to Reproduce

1. Try scrolling on a non-overflowing scroll container

2. Observe the scrollbar glitching

### 🎯 Expected Behavior

Scrollbar should behave correctly when clamping

### 📷 Screenshots

| priority | 🍰 scrollbar thumb does not display properly when clamping 🐞 bug report 📃 description scrollbar thumb changes height massively when clamping on top and overflows when clamping on the bottom 🔬 steps to reproduce try scrolling on a non overflowing scroll container observe the scrollbar glitching 🎯 expected behavior scrollbar should behave correctly when clamping 📷 screenshots | 1 |

458,870 | 13,183,337,414 | IssuesEvent | 2020-08-12 17:19:58 | indianapublicmedia/indianapublicmedia-web | https://api.github.com/repos/indianapublicmedia/indianapublicmedia-web | closed | /journeyindiana/ map embed | enhancement medium priority | JI is to have an embedded map (Google or otherwise) of various stories, akin to the one from Weekly Special. Some technical research needed here. | 1.0 | /journeyindiana/ map embed - JI is to have an embedded map (Google or otherwise) of various stories, akin to the one from Weekly Special. Some technical research needed here. | priority | journeyindiana map embed ji is to have an embedded map google or otherwise of various stories akin to the one from weekly special some technical research needed here | 1 |

800,015 | 28,322,933,617 | IssuesEvent | 2023-04-11 03:49:47 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [CDCSDK] Multi Schema + DDL + Pause/Resume Connector + Nemesis + Colocation fails | kind/bug priority/medium area/cdcsdk | Jira Link: [DB-5979](https://yugabyte.atlassian.net/browse/DB-5979)

### Description

http://stress.dev.yugabyte.com/stress_test/88b85897-ee85-41bc-88e8-edff8ede1550

After a few iterations, data is not seen in the target

### Source connector version

latest

1.9.5.y.17

### Connector configuration

NA

### YugabyteDB version

2.17.4.0-b9

### Warning: Please confirm that this issue does not contain any sensitive information

- [X] I confirm this issue does not contain any sensitive information.

[DB-5979]: https://yugabyte.atlassian.net/browse/DB-5979?atlOrigin=eyJpIjoiNWRkNTljNzYxNjVmNDY3MDlhMDU5Y2ZhYzA5YTRkZjUiLCJwIjoiZ2l0aHViLWNvbS1KU1cifQ | 1.0 | [CDCSDK] Multi Schema + DDL + Pause/Resume Connector + Nemesis + Colocation fails - Jira Link: [DB-5979](https://yugabyte.atlassian.net/browse/DB-5979)

### Description

http://stress.dev.yugabyte.com/stress_test/88b85897-ee85-41bc-88e8-edff8ede1550

After a few iterations, data is not seen in the target

### Source connector version

latest

1.9.5.y.17

### Connector configuration

NA

### YugabyteDB version

2.17.4.0-b9

### Warning: Please confirm that this issue does not contain any sensitive information

- [X] I confirm this issue does not contain any sensitive information.

[DB-5979]: https://yugabyte.atlassian.net/browse/DB-5979?atlOrigin=eyJpIjoiNWRkNTljNzYxNjVmNDY3MDlhMDU5Y2ZhYzA5YTRkZjUiLCJwIjoiZ2l0aHViLWNvbS1KU1cifQ | priority | multi schema ddl pause resume connector nemesis colocation fails jira link description after few iterations data is not seen in target source connector version latest y connector configuration na yugabytedb version warning please confirm that this issue does not contain any sensitive information i confirm this issue does not contain any sensitive information | 1 |

675,952 | 23,112,652,317 | IssuesEvent | 2022-07-27 14:12:32 | codbex/codbex-kronos | https://api.github.com/repos/codbex/codbex-kronos | opened | [Core] Configure destination caching timeout | effort-medium core supportability priority-low | From xsk created by [dpanayotov](https://github.com/dpanayotov): SAP/xsk#1413

### Details

Currently if a destination is not found initially it will not be retried for 5 minutes. For development purposes this cache may be disabled

### Target

Allow destination cache to be configurable via environment variable

See https://github.com/SAP/cloud-sdk/issues/599 how to work around the inability to configure the library itself | 1.0 | [Core] Configure destination caching timeout - From xsk created by [dpanayotov](https://github.com/dpanayotov): SAP/xsk#1413

### Details

Currently if a destination is not found initially it will not be retried for 5 minutes. For development purposes this cache may be disabled

### Target

Allow destination cache to be configurable via environment variable

See https://github.com/SAP/cloud-sdk/issues/599 how to work around the inability to configure the library itself | priority | configure destination caching timeout from xsk created by sap xsk details currently if a destination is not found initially it will not be retried for minutes for development purposes this cache may be disabled target allow destination cache to be configurable via environment variable see how to work around the inability to configure the library itself | 1 |

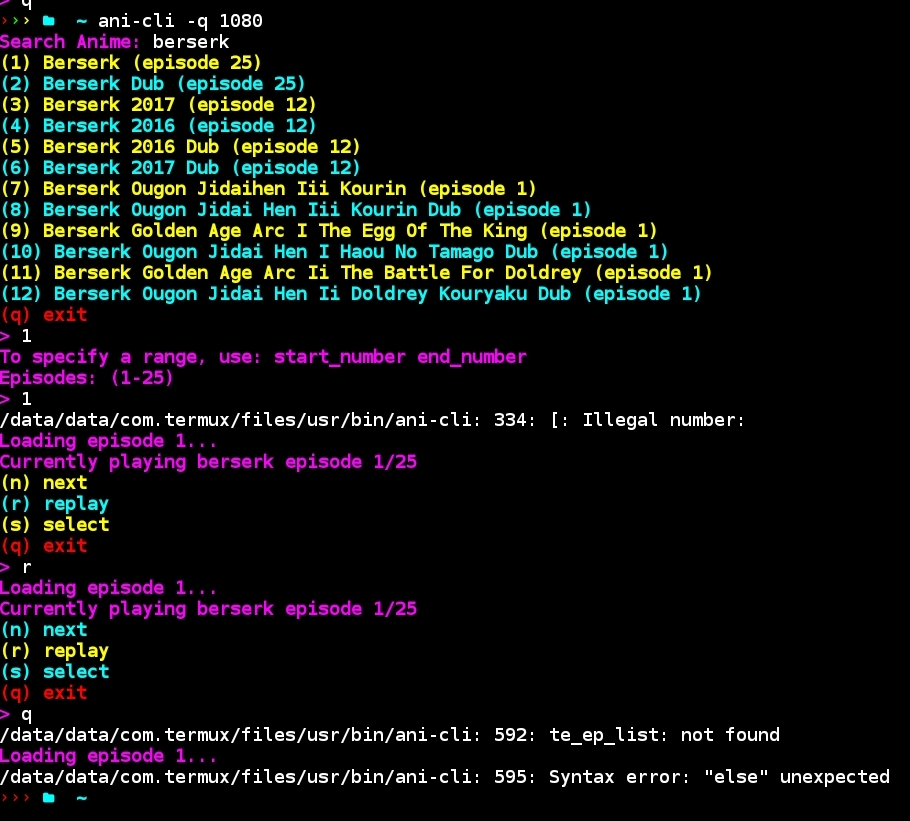

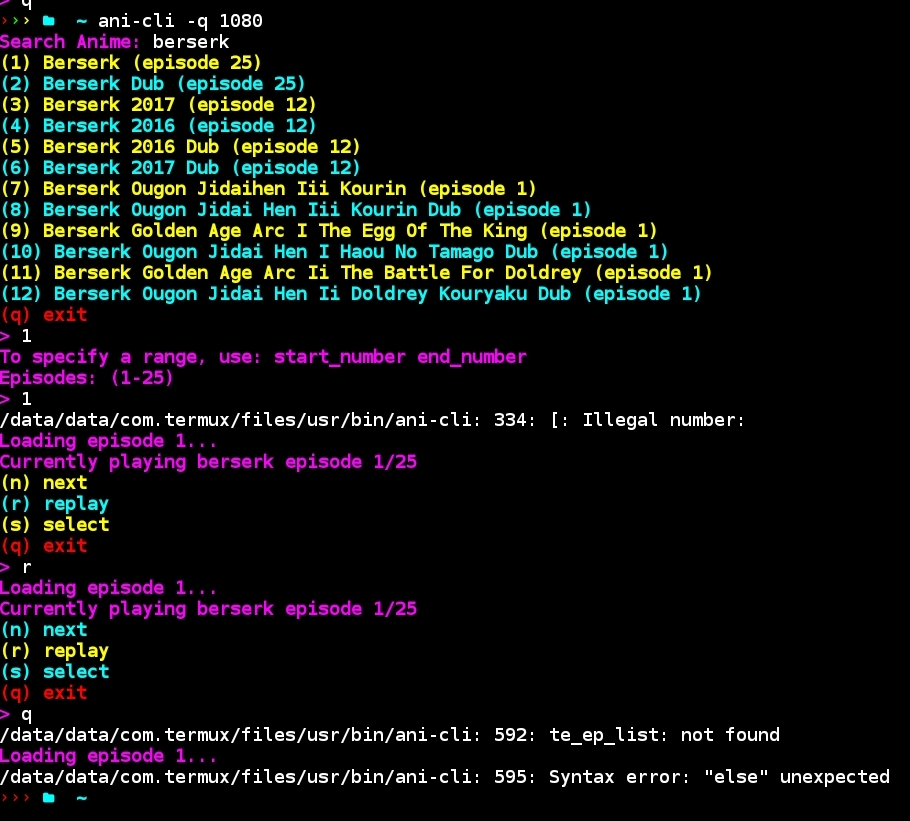

647,446 | 21,103,881,861 | IssuesEvent | 2022-04-04 16:47:14 | pystardust/ani-cli | https://api.github.com/repos/pystardust/ani-cli | closed | Termux errors when launching ep | type: bug priority 2: medium | **Metadata (please complete the following information)**

OS: Termux on Android

Application version:

0.118.0

Packages CPU architecture:

aarch64

Subscribed repositories:

# sources.list

deb https://grimler.se/termux-packages-24/ stable main

# x11-repo (sources.list.d/x11.list)

deb https://dl.kcubeterm.com/termux-x11 x11 main

Updatable packages:

All packages up to date

Android version:

9

Kernel build information:

Linux localhost 4.4.111-21737876 #1 SMP PREEMPT Thu Jul 15 19:28:19 KST 2021 aarch64 Android

Device manufacturer:

samsung

Device model:

SM-N950F

Shell: zsh

ani-cli: 2.0.2

Anime: Berserk

**Describe the bug**

A few errors are popping up with the latest update. Playing brings 1 error, and "q" pops up 2

When playing an ep

`ani-cli: 334: [: Illegal number:`

Note: The episode still played

When quitting

`ani-cli: 592: te_ep_list: not found`

`ani-cli: 595: Syntax error: "else" unexpected`

**Steps To Reproduce**

1. Run `ani-cli -q 1080`

2. Type in `Berserk`

3. Select `(1) Berserk (episode 25)` from the list

4. Choose episode 1

5. Go back to Termux, select `q` to quit

**Expected behavior**

Playing the episode and quitting should not bring up any errors

**Screenshots (if applicable; you can just drag the image onto github)**

**Additional context**

I cannot reproduce the 2nd issue when I quit Termux, it seemed random. I tried replaying my file like the steps in pic but it did not reoccur. | 1.0 | Termux errors when launching ep - **Metadata (please complete the following information)**

OS: Termux on Android

Application version:

0.118.0

Packages CPU architecture:

aarch64

Subscribed repositories:

# sources.list

deb https://grimler.se/termux-packages-24/ stable main

# x11-repo (sources.list.d/x11.list)

deb https://dl.kcubeterm.com/termux-x11 x11 main

Updatable packages:

All packages up to date

Android version:

9

Kernel build information:

Linux localhost 4.4.111-21737876 #1 SMP PREEMPT Thu Jul 15 19:28:19 KST 2021 aarch64 Android

Device manufacturer:

samsung

Device model:

SM-N950F

Shell: zsh

ani-cli: 2.0.2

Anime: Berserk

**Describe the bug**

A few errors are popping up with the latest update. Playing brings 1 error, and "q" pops up 2

When playing an ep

`ani-cli: 334: [: Illegal number:`

Note: The episode still played

When quitting

`ani-cli: 592: te_ep_list: not found`

`ani-cli: 595: Syntax error: "else" unexpected`

**Steps To Reproduce**

1. Run `ani-cli -q 1080`

2. Type in `Berserk`

3. Select `(1) Berserk (episode 25)` from the list

4. Choose episode 1

5. Go back to Termux, select `q` to quit

**Expected behavior**

Playing the episode and quitting should not bring up any errors

**Screenshots (if applicable; you can just drag the image onto github)**

**Additional context**

I cannot reproduce the 2nd issue when I quit Termux, it seemed random. I tried replaying my file like the steps in pic but it did not reoccur. | priority | termux errors when launching ep metadata please complete the following information os termux on android application version packages cpu architecture subscribed repositories sources list deb stable main repo sources list d list deb main updatable packages all packages up to date android version kernel build information linux localhost smp preempt thu jul kst android device manufacturer samsung device model sm shell zsh ani cli anime berserk describe the bug a few errors are popping up with the latest update playing brings error and q pops up when playing an ep ani cli illegal number note the episode still played when quitting ani cli te ep list not found ani cli syntax error else unexpected steps to reproduce run ani cli q type in berserk select berserk episode from the list choose episode go back to termux select q to quit expected behavior playing the episode and quitting should not bring up any errors screenshots if applicable you can just drag the image onto github additional context i cannot reproduce the issue when i quit termux it seemed random i tried replaying my file like the steps in pic but it did not reoccur | 1 |

278,167 | 8,637,584,534 | IssuesEvent | 2018-11-23 11:47:30 | MarcusWolschon/osmeditor4android | https://api.github.com/repos/MarcusWolschon/osmeditor4android | closed | Presets: Display in alphabetical order | Enhancement Medium Priority Usability | # Current state

- Presets (`Vorlagen`) are shown in **random** (?) order on the screen, see screenshot.

# Target state

- Presets (`Vorlagen`) are shown in **alphabetical** order on the screen.

- I think this will make it easier to find a preset.

- The alphabetical order should not be hardcoded so it can be dynamically applied when the system language is changed such as from English to German. | 1.0 | Presets: Display in alphabetical order - # Current state

- Presets (`Vorlagen`) are shown in **random** (?) order on the screen, see screenshot.

# Target state

- Presets (`Vorlagen`) are shown in **alphabetical** order on the screen.

- I think this will make it easier to find a preset.

- The alphabetical order should not be hardcoded so it can be dynamically applied when the system language is changed such as from English to German. | priority | presets display in alphabetical order current state presets vorlagen are show in random order on the screen see screenshot target state presets vorlagen are show in alphabetical order on the screen i think this will make it easier to find a preset the alphabetical order should not be hardcoded so it can be dynamically applied when the system language is changed such as from english to german | 1 |

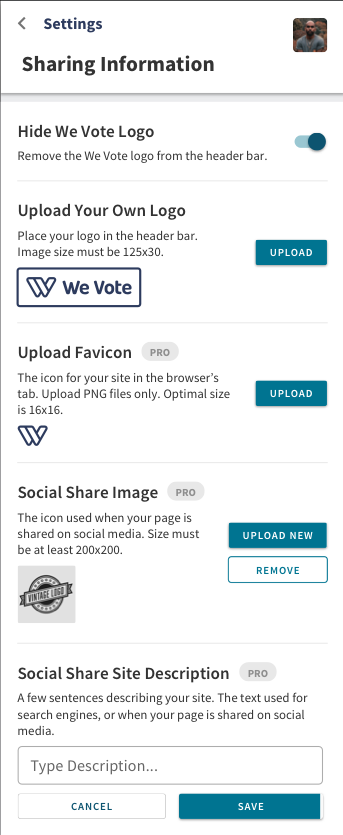

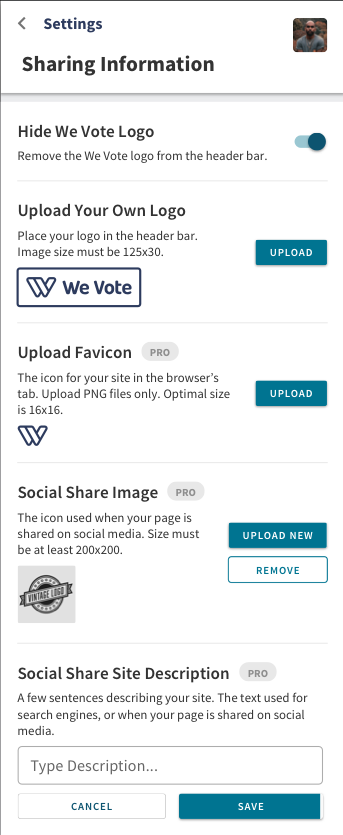

338,455 | 10,229,557,169 | IssuesEvent | 2019-08-17 13:47:24 | wevote/WebApp | https://api.github.com/repos/wevote/WebApp | closed | Settings-Sharing (Mobile): Configure social media sharing | Difficulty: Medium Priority: 1 | Upgrade the current http://localhost:3000/settings/sharing page to use the following layout in mobile mode:

| 1.0 | Settings-Sharing (Mobile): Configure social media sharing - Upgrade the current http://localhost:3000/settings/sharing page to use the following layout in mobile mode:

| priority | settings sharing mobile configure social media sharing upgrade the current page to use the following layout in mobile mode | 1 |

830,915 | 32,030,203,737 | IssuesEvent | 2023-09-22 11:47:34 | SkriptLang/Skript | https://api.github.com/repos/SkriptLang/Skript | closed | Data values not properly saved in database | bug priority: medium variables | There are problems when I try to use variables to store an item with a data value

Like storing a clown fish

`!set {test} to clownfish` 349:1

Close the server and restart after storage

`!give {test} to player`

What I got was a raw fish 349:0

Worse, it loses some NBT data

`set {test} to clownfish with nbt "{xbxy:""i35"",display:{Name:""fish""}}"`

When I restart the server, it will become

` {test} = raw fishwith with nbt "{display:{Name:""fish""}}"`

I also tested the enchanted golden apple, which will turn into a normal golden apple when rebooted

| 1.0 | Data values not properly saved in database - There are problems when I try to use variables to store an item with a data value

Like storing a clown fish

`!set {test} to clownfish` 349:1

Close the server and restart after storage

`!give {test} to player`

What I got was a raw fish 349:0

Worse, it loses some NBT data

`set {test} to clownfish with nbt "{xbxy:""i35"",display:{Name:""fish""}}"`

When I restart the server, it will become

` {test} = raw fishwith with nbt "{display:{Name:""fish""}}"`

I also tested the enchanted golden apple, which will turn into a normal golden apple when rebooted

| priority | data values not properly saved in database there are problems when i try to use variables to store an item with a data value like storing a clown fish set test to clownfish close the server and restart after storage give test to player what i got was a raw fish worse it loses some nbt data set test to clownfish with nbt xbxy display name fish when i restart the server it will become test raw fishwith with nbt display name fish i also tested the enchanted golden apple which will turn into a normal golden apple when rebooted | 1 |

698,088 | 23,965,060,976 | IssuesEvent | 2022-09-12 23:30:02 | returntocorp/semgrep | https://api.github.com/repos/returntocorp/semgrep | reopened | [RFC] Add minimum semgrep version needed to run rule | priority:medium rfc | Given the speed at which we are adding rules and functionality to semgrep, we have hit situations where we

publish rules that depend on features in a just-released semgrep, so running them on an older version

of semgrep causes a crash.

Proposed solution:

We can add an optional field to the rule_schema: `requires` that takes a semver string mentioning the minimum version

of semgrep needed to run or even parse the rule successfully.

As a first step, semgrep-cli can filter out rules that do not work with the currently running version of semgrep (and print info to the user on which rules failed to run and why)

Alternatives:

- Instead of a semver string we can even just have a minimum semgrep version needed to run a rule?

- We could also have rules that fail to parse/run correctly in semgrep-core be reported to semgrep-cli in the response json (semgrep-core will just skip running that rule) and semgrep-cli will let user know of bad rules

- This removes the need for rule writers to be aware of minimum semgrep version, removes need to update schema, but adds complexity to interface | 1.0 | [RFC] Add minimum semgrep version needed to run rule - Given the speed at which we are adding rules and functionality to semgrep, we have hit situations where we

publish rules that depend on features in a just-released semgrep, so running them on an older version

of semgrep causes a crash.

Proposed solution:

We can add an optional field to the rule_schema: `requires` that takes a semver string mentioning the minimum version

of semgrep needed to run or even parse the rule successfully.

As a first step, semgrep-cli can filter out rules that do not work with the currently running version of semgrep (and print info to the user on which rules failed to run and why)

Alternatives:

- Instead of a semver string we can even just have a minimum semgrep version needed to run a rule?

- We could also have rules that fail to parse/run correctly in semgrep-core be reported to semgrep-cli in the response json (semgrep-core will just skip running that rule) and semgrep-cli will let user know of bad rules

- This removes the need for rule writers to be aware of minimum semgrep version, removes need to update schema, but adds complexity to interface | priority | add minimum semgrep version needed to run rule with speed we are adding rules and functionality into semgrep we have hit situations when we publish rules that depend on features in just released semgrep so when run on a version of semgrep that is older causes a crash proposed solution we can add an optional field to the rule schema requires that takes a semver string mentioning the minimum version of semgrep needed to run or even parse the rule successfully semgrep cli can as a first step filter out rules that do not worth with the currently running version of semgrep and print out info to user on what rules failed to run and why alternatives instead of a semver string we can even just have a minimum semgrep version needed to run a rule we could also have rules that fail to parse run correctly in semgrep core be reported to semgrep cli in the response json semgrep core will just skip running that rule and semgrep cli will let user know of bad rules this removes the need for rule writers to be aware of minimum semgrep version removes need to update schema but adds complexity to interface | 1 |
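
The filtering step proposed in the semgrep RFC above might look something like the sketch below. It is not semgrep's implementation. It assumes the simpler alternative from the issue, where the optional `requires` field holds a plain minimum version such as `0.60.0` rather than a full semver range, and the helper names `parse_version` and `partition_rules` are hypothetical.

```python
from typing import Dict, List, Tuple

Rule = Dict[str, str]


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn "0.50.1" into (0, 50, 1) so versions compare numerically."""
    return tuple(int(part) for part in text.split("."))


def partition_rules(
    rules: List[Rule], current_version: str
) -> Tuple[List[Rule], List[Tuple[str, str]]]:
    """Split rules into runnable ones and (rule id, required version) skips."""
    current = parse_version(current_version)
    runnable: List[Rule] = []
    skipped: List[Tuple[str, str]] = []
    for rule in rules:
        required = rule.get("requires")  # optional field; absent means "any version"
        if required is not None and parse_version(required) > current:
            skipped.append((rule["id"], required))
        else:
            runnable.append(rule)
    return runnable, skipped
```

Comparing integer tuples rather than raw strings matters here: as strings, `"0.9.0"` sorts after `"0.50.1"`, which would wrongly skip a rule that an 0.50.1 install can run. The `skipped` list carries enough information to tell the user which rules were dropped and which version they need.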

127,657 | 5,037,954,940 | IssuesEvent | 2016-12-17 23:56:44 | RobotLocomotion/drake | https://api.github.com/repos/RobotLocomotion/drake | opened | Investigate bazel support for Xcode | configuration: bazel priority: medium type: installation and distribution | posted initially in #4485, but it deserves its own issue.

fwiw, just lost a few hours trying Tulsi with bazel to work with Xcode. Long story short, I think it's not going to support our workflow without some work. I made some local edits to the tulsi code to get fairly far along (https://github.com/RussTedrake/tulsi), but it is not able to understand the "@glib://" deps, which apparently comes in through pkg-config (which comes in through the thirdparty bazel support).

The local edits that I did make were needed for it to even consider cc_* as valid targets. It was only looking for e.g. ios_application. So it's clearly not being used for our workflow yet. | 1.0 | Investigate bazel support for Xcode - posted initially in #4485, but it deserves its own issue.

fwiw, just lost a few hours trying Tulsi with bazel to work with Xcode. Long story short, I think it's not going to support our workflow without some work. I made some local edits to the tulsi code to get fairly far along (https://github.com/RussTedrake/tulsi), but it is not able to understand the "@glib://" deps, which apparently comes in through pkg-config (which comes in through the thirdparty bazel support).

The local edits that I did make were needed for it to even consider cc_* as valid targets. It was only looking for e.g. ios_application. So it's clearly not being used for our workflow yet. | priority | investigate bazel support for xcode posted initially in but it deserves it s own issue fwiw just lost a few hours trying tulsi with bazel to work with xcode long story short i think it s not going to support our workflow without some work i made some local edits to the tulsi code to get fairly far along but it is not able to understand the glib deps which apparently comes in through pkg config which comes in through the thirdparty bazel support the local edits that i did make were needed for it to even consider cc as valid targets it was only looking for e g ios application so it s clearly not being used for our workflow yet | 1 |

196,183 | 6,925,491,402 | IssuesEvent | 2017-11-30 16:05:23 | googlei18n/noto-fonts | https://api.github.com/repos/googlei18n/noto-fonts | closed | Glyph correction needed for Sundanese Letter JA (1B8F) | Android Priority-Medium Script-Sundanese | http://unicode.org/cldr/trac/ticket/9344

[quote]

we've spotted mistake in the Glyph shown for Sundanese Letter JA (1B8F) in this document:

http://unicode.org/charts/PDF/U1B80.pdf

The mistake is at the top part of the glyph.

Currently it is displayed as Z shaped

In fact, the top part should be similar to the Sundanese Letter DA (1B93).

The font which implements this correction can be found in:

http://www.kairaga.com/2015/05/05/font-aksara-sunda-unicode-versi-2013-revisi.html

[/quote]

| 1.0 | Glyph correction needed for Sundanese Letter JA (1B8F) - http://unicode.org/cldr/trac/ticket/9344

[quote]

we've spotted mistake in the Glyph shown for Sundanese Letter JA (1B8F) in this document:

http://unicode.org/charts/PDF/U1B80.pdf

The mistake is at the top part of the glyph.

Currently it is displayed as Z shaped

In fact, the top part should be similar to the Sundanese Letter DA (1B93).

The font which implements this correction can be found in:

http://www.kairaga.com/2015/05/05/font-aksara-sunda-unicode-versi-2013-revisi.html

[/quote]

| priority | glyph correction needed for sundanese letter ja we ve spotted mistake in the glyph shown for sundanese letter ja in this document the mistake is at the top part of the glyph currently it is displayed as z shaped in fact the top part should be similar to the sundanese letter da the font which implements this correction can be found in | 1 |

498,156 | 14,401,945,423 | IssuesEvent | 2020-12-03 14:20:53 | tellor-io/telliot | https://api.github.com/repos/tellor-io/telliot | closed | Revisit the `indexes.json` file format to see if the format can be simplified or if can use an existing golang parser. | help wanted priority: medium type: research | @themandalore mentioned that other projects (Chainlink, etc.) use this format, so on the plus side people should already be familiar with it. Let's see if we can use an existing golang module to remove the need for a custom parser, and sync with some users of the miner to agree on the format.

@mikeghen maybe you can also comment?

```

"json(https://api.binance.com/api/v1/klines?symbol=BTCUSDT&interval=1d&limit=1).0.4",

```

could maybe be something like:

```

"URL": "https://api.binance.com/api/v1/klines?symbol=BTCUSDT&interval=1d&limit=1",

"type": "json",

"jsonPath":".0.4"

```

The format seems to be similar to https://goessner.net/articles/JsonPath/

| 1.0 | Revisit the `indexes.json` file format to see if the format can be simplified or if can use an existing golang parser. - @themandalore mentioned that other projects (Chainlink, etc.) use this format, so on the plus side people should already be familiar with it. Let's see if we can use an existing golang module to remove the need for a custom parser, and sync with some users of the miner to agree on the format.

@mikeghen maybe you can also comment?

```

"json(https://api.binance.com/api/v1/klines?symbol=BTCUSDT&interval=1d&limit=1).0.4",

```

could maybe be something like:

```

"URL": "https://api.binance.com/api/v1/klines?symbol=BTCUSDT&interval=1d&limit=1",

"type": "json",

"jsonPath":".0.4"

```

The format seems to be similar to https://goessner.net/articles/JsonPath/

| priority | revisit the indexes json file format to see if the format can be simplified or if can use an existing golang parser themandalore mentioned that this is the format that other projects use this format chainlink so on the plus side this should mean that people are familiar with this format lets see if can use some existing golang module to remove the need for a custom parser and sync with some user of the miner to agree on the format mikeghen maybe you can also comment json could maybe be something like url type json jsonpath the format seems to be similar to | 1 |
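
To make the two formats in the telliot issue above concrete, the sketch below converts a compact entry such as `json(<url>).0.4` into the proposed `URL` / `type` / `jsonPath` fields and then follows the dotted path through an already decoded response. It is written in Python for illustration only; the project itself is in Go, the helper names `parse_entry` and `extract` are hypothetical, and the path handling covers just the dotted index/key form shown in the issue, not full JSONPath.

```python
import re
from typing import Any, Dict

# type(URL) followed by zero or more ".segment" parts, e.g. json(http://x).0.4
_ENTRY = re.compile(r"^(?P<type>\w+)\((?P<url>.+)\)(?P<path>(?:\.[^.]+)*)$")


def parse_entry(entry: str) -> Dict[str, str]:
    """Split a compact entry into the structured fields proposed in the issue."""
    match = _ENTRY.match(entry)
    if match is None:
        raise ValueError(f"unrecognized entry: {entry!r}")
    return {
        "URL": match.group("url"),
        "type": match.group("type"),
        "jsonPath": match.group("path"),
    }


def extract(data: Any, json_path: str) -> Any:
    """Walk a dotted path: integer segments index lists, others are dict keys."""
    current = data
    for segment in json_path.split("."):
        if not segment:
            continue  # skip the empty piece before the leading dot
        if isinstance(current, list):
            current = current[int(segment)]
        else:
            current = current[segment]
    return current
```

For the Binance example, `.0.4` selects the first kline and then its fifth field. An existing JSONPath library could replace `extract` if the group settles on that syntax.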

127,144 | 5,019,434,392 | IssuesEvent | 2016-12-14 11:44:13 | swash99/ims | https://api.github.com/repos/swash99/ims | closed | Add search bar on inventory entry page | feature Medium Priority | Use case: During the day, employees might run into situations where they need to add a small note/reminder for a specific item, and that note will be taken into account when the inventory entry process is being performed or perhaps when the print preview results are passed on.

The solution I suggest is to add a search bar above the inventory entry table (similar to the one on Admin Tasks -> Items page). User will search for item name and add whatever information is needed in the 'Notes' column.

This solution is just a suggestion and if the requirement can be met in a better way, then you are free to pursue experimenting and implementing. | 1.0 | Add search bar on inventory entry page - Use case: During the day, employees might run into situations where they need to add a small note/reminder for a specific item, and that note will be taken into account when the inventory entry process is being performed or perhaps when the print preview results are passed on.

The solution I suggest is to add a search bar above the inventory entry table (similar to the one on Admin Tasks -> Items page). User will search for item name and add whatever information is needed in the 'Notes' column.

This solution is just a suggestion and if the requirement can be met in a better way, then you are free to pursue experimenting and implementing. | priority | add search bar on inventory entry page usecase during the day employee might run into situations where they need to add a small note reminder for a specific item and that note will be taken into account when inventory entry process is being performed or perhaps when the print preview results are passed on the solution i suggest is to add a search bar above the inventory entry table similar to the one on admin tasks items page user will search for item name and add whatever information is needed in the notes column this solution is just a suggestion and if the requirement can be met in a better way then you are free to pursue experimenting and implementing | 1 |

445,213 | 12,827,641,571 | IssuesEvent | 2020-07-06 18:54:04 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | opened | Website Release | Category: Web Priority: Medium Type: Feature | - [ ] production .env

- [ ] qa account and hosted worlds - cross browser

- [ ] set hw throttle

- [ ] 301 redirects

- [ ] cdn | 1.0 | Website Release - - [ ] production .env

- [ ] qa account and hosted worlds - cross browser

- [ ] set hw throttle

- [ ] 301 redirects

- [ ] cdn | priority | website release production env qa account and hosted worlds cross browser set hw throttle redirects cdn | 1 |

58,110 | 3,087,555,120 | IssuesEvent | 2015-08-25 12:41:47 | juju/docs | https://api.github.com/repos/juju/docs | closed | LXC caching needs docs | 1.22 in progress Medium Priority PR review | From Juju 1.22 onwards, LXC images are cached in the Juju environment

when they are retrieved to instantiate a new LXC container. This applies

to the local provider and all other cloud providers. This caching is

done independently of whether image cloning is enabled.

Note: Due to current upgrade limitations, image caching is currently not

available for machines upgraded to 1.22. Only machines deployed with

1.22 will cache the images.

In Juju 1.22, lxc-create is configured to fetch images from the Juju

state server. If no image is available, the state server will fetch the

image from http://cloud-images.ubuntu.com and then cache it. This means

that the retrieval of images from the external site is only done once

per *environment*, not once per new machine which is the default

behaviour of lxc. The next time lxc-create needs to fetch an image, it

comes directly from the Juju environment cache.

The 'cached-images' command can list and delete cached LXC images stored

in the Juju environment. The 'list' and 'delete' subcommands support

'--arch' and '--series' options to filter the result.

To see all cached images, run:

juju cached-images list

Or to see just the amd64 trusty images run:

juju cached-images list --series trusty --arch amd64

To delete the amd64 trusty cached images run:

juju cached-images delete --series trusty --arch amd64

Future development work will allow Juju to automatically download new

LXC images when they become available, but for now, the only way to update

a cached image is to remove the old one from the Juju environment. Juju

will also support KVM image caching in the future.

See 'juju cached-images list --help' and 'juju cached-images delete

--help' for more details.

| 1.0 | LXC caching needs docs - From Juju 1.22 onwards, LXC images are cached in the Juju environment

when they are retrieved to instantiate a new LXC container. This applies

to the local provider and all other cloud providers. This caching is

done independently of whether image cloning is enabled.

Note: Due to current upgrade limitations, image caching is currently not

available for machines upgraded to 1.22. Only machines deployed with

1.22 will cache the images.

In Juju 1.22, lxc-create is configured to fetch images from the Juju

state server. If no image is available, the state server will fetch the

image from http://cloud-images.ubuntu.com and then cache it. This means

that the retrieval of images from the external site is only done once

per *environment*, not once per new machine which is the default

behaviour of lxc. The next time lxc-create needs to fetch an image, it

comes directly from the Juju environment cache.

The 'cached-images' command can list and delete cached LXC images stored

in the Juju environment. The 'list' and 'delete' subcommands support

'--arch' and '--series' options to filter the result.

To see all cached images, run:

juju cached-images list

Or to see just the amd64 trusty images run:

juju cached-images list --series trusty --arch amd64

To delete the amd64 trusty cached images run:

juju cached-images delete --series trusty --arch amd64

Future development work will allow Juju to automatically download new

LXC images when they become available, but for now, the only way to update

a cached image is to remove the old one from the Juju environment. Juju

will also support KVM image caching in the future.

See 'juju cached-images list --help' and 'juju cached-images delete

--help' for more details.

| priority | lxc caching needs docs from juju onwards lxc images are cached in the juju environment when they are retrieved to instantiate a new lxc container this applies to the local provider and all other cloud providers this caching is done independently of whether image cloning is enabled note due to current upgrade limitations image caching is currently not available for machines upgraded to only machines deployed with will cache the images in juju lxc create is configured to fetch images from the juju state server if no image is available the state server will fetch the image from and then cache it this means that the retrieval of images from the external site is only done once per environment not once per new machine which is the default behaviour of lxc the next time lxc create needs to fetch an image it comes directly from the juju environment cache the cached images command can list and delete cached lxc images stored in the juju environment the list and delete subcommands support arch and series options to filter the result to see all cached images run juju cached images list or to see just the trusty images run juju cached images list series trusty arch to delete the trusty cached images run juju cache images delete series trusty arch future development work will allow juju to automatically download new lxc images when they becomes available but for now the only way update a cached image is to remove the old one from the juju environment juju will also support kvm image caching in the future see juju cached images list help and juju cached images delete help for more details | 1 |

25,976 | 2,684,074,897 | IssuesEvent | 2015-03-28 16:42:50 | ConEmu/old-issues | https://api.github.com/repos/ConEmu/old-issues | opened | In thumbnail mode - incorrect behavior of Shift+arrow keys | 1 star bug imported Priority-Medium | _From [jbak1...@gmail.com](https://code.google.com/u/111605209573957257873/) on May 14, 2012 01:44:18_

OS version: Win7 x86 ConEmu version: ConEmu .120513

Far version: Far Manager, version 2.1 (build 1807 bis27) x86 *Bug description* In thumbnail mode the arrow keys respect the grid layout: the right arrow moves to the next file, the left arrow moves to the previous one, and so on. That part works well.

However, arrow-key presses combined with Shift are apparently passed through to Far, which is very confusing.

It would be nice if Shift+Right selected the file under the cursor and moved the cursor to the next file, Shift+Down moved to the next row while selecting, and so on.

_Original issue: http://code.google.com/p/conemu-maximus5/issues/detail?id=542_ | 1.0 | In thumbnail mode - incorrect behavior of Shift+arrow keys - _From [jbak1...@gmail.com](https://code.google.com/u/111605209573957257873/) on May 14, 2012 01:44:18_

OS version: Win7 x86 ConEmu version: ConEmu .120513

Far version: Far Manager, version 2.1 (build 1807 bis27) x86 *Bug description* In thumbnail mode the arrow keys respect the grid layout: the right arrow moves to the next file, the left arrow moves to the previous one, and so on. That part works well.

However, arrow-key presses combined with Shift are apparently passed through to Far, which is very confusing.

It would be nice if Shift+Right selected the file under the cursor and moved the cursor to the next file, Shift+Down moved to the next row while selecting, and so on.

_Original issue: http://code.google.com/p/conemu-maximus5/issues/detail?id=542_ | priority | in thumbnail mode incorrect behavior of shift arrow keys from on may os version conemu version conemu far version far manager version build bug description in thumbnail mode the arrow keys respect the grid layout the right arrow moves to the next file the left arrow moves to the previous one and so on that part works well however arrow key presses combined with shift are apparently passed through to far which is very confusing it would be nice if shift right selected the file under the cursor and moved the cursor to the next file shift down moved to the next row while selecting and so on original issue | 1 |

681,454 | 23,311,725,909 | IssuesEvent | 2022-08-08 08:52:18 | wasmerio/wasmer | https://api.github.com/repos/wasmerio/wasmer | closed | The execution result of the program differs from that of the native program. | 🐞 bug priority-medium | <!-- Thanks for the bug report! -->

### Describe the bug

```

#include <dirent.h>

#include <stdio.h>

#include <errno.h>

int main(int argc, char **argv) {

    DIR *d;

    char *target = ".";

    if (argc == 2) {

        target = argv[1];

    }

    struct dirent *dir;

    d = opendir(target);

    if (d) {

        while ((dir = readdir(d)) != NULL) {

            printf("%s\n", dir->d_name);

        }

        printf("errno: %d\n", errno);

        closedir(d);

    }

    return 0;

}

```

This source code was first mentioned in wasmtime issue No. 2493.

My project files are as follows:

[wasmtime-2493.zip](https://github.com/wasmerio/wasmer/files/9159635/wasmtime-2493.zip)

```sh

echo "`wasmer -V` | `rustc -V` | `uname -m`"

wasmer 2.3.0 | rustc 1.62.0 (a8314ef7d 2022-06-27) | x86_64

```

### Steps to reproduce

```

wasmer run ls.wasm --dir=./testfolder -- testfolder | wc -l

```

### Expected behavior

When I compile the program natively with g++, it produces the following output:

```

203

```

### Actual behavior

```

201

```

| 1.0 | The execution result of the program differs from that of the native program. - <!-- Thanks for the bug report! -->

### Describe the bug

```

#include <dirent.h>

#include <stdio.h>

#include <errno.h>

int main(int argc, char **argv) {

    DIR *d;

    char *target = ".";

    if (argc == 2) {

        target = argv[1];

    }

    struct dirent *dir;

    d = opendir(target);

    if (d) {

        while ((dir = readdir(d)) != NULL) {

            printf("%s\n", dir->d_name);

        }

        printf("errno: %d\n", errno);

        closedir(d);

    }

    return 0;

}

```

This source code was first mentioned in wasmtime issue No. 2493.

My project files are as follows:

[wasmtime-2493.zip](https://github.com/wasmerio/wasmer/files/9159635/wasmtime-2493.zip)

```sh

echo "`wasmer -V` | `rustc -V` | `uname -m`"

wasmer 2.3.0 | rustc 1.62.0 (a8314ef7d 2022-06-27) | x86_64

```

### Steps to reproduce

```

wasmer run ls.wasm --dir=./testfolder -- testfolder | wc -l

```

### Expected behavior

When I compile the program natively with g++, it produces the following output:

```

203

```

### Actual behavior

```

201

```

| priority | the execution result of the program differs from that of the native program describe the bug include include include int main int argc char argv dir d char target if argc target argv struct dirent dir d opendir target if d while dir readdir d null printf s n dir d name printf errno d n errno closedir d return this is a source code first mentioned in wasmtime issues no my project files are as follows sh echo wasmer v rustc v uname m wasmer rustc steps to reproduce wasmer run ls wasm dir testfolder testfolder wc l expected behavior when i compile using g the program results in the following actual behavior | 1 |

184,440 | 6,713,274,248 | IssuesEvent | 2017-10-13 12:52:50 | nim-lang/Nim | https://api.github.com/repos/nim-lang/Nim | closed | [times.nim] Timezone offset gives no indication of +/- | Medium Priority Stdlib Times | ## Summary

The timezone offsets provided by `getLocalTime()` and `getGMTime()` give only a 'plain' hour, with no indication of whether the timezone is ahead of or behind UTC/GMT. This is contrary to the [documentation](http://nim-lang.org/docs/times.html#format,TimeInfo,string), which suggests that +/- should be present for `z`, `zz`, and `zzz`.

See also #3199.

## nim test code

``` nim

import times

echo getTzname()

echo getTime().getGMTime.format("zzz")

echo getTime().getLocalTime.format("zzz")

```

## Output, BST

http://www.timeanddate.com/time/zones/bst

| Code | Actual | Expected |

| --- | --- | --- |

| `getTzname()` | (nonDST: GMT, DST: BST) | (nonDST: GMT, DST: BST) |

| `getTime().getGMTime.format("zzz")` | 00:00 | +00:00 |

| `getTime().getLocalTime.format("zzz")` | 00:00 | +01:00 |

## Output, EDT

http://www.timeanddate.com/time/zones/edt

| Code | Actual | Expected |

| --- | --- | --- |

| `getTzname()` | (nonDST: EST, DST: EDT) | (nonDST: EST, DST: EDT) |

| `getTime().getGMTime.format("zzz")` | 00:00 | +00:00 |

| `getTime().getLocalTime.format("zzz")` | 05:00 | -04:00 |

| 1.0 | [times.nim] Timezone offset gives no indication of +/- - ## Summary

The timezone offsets provided by `getLocalTime()` and `getGMTime()` give only a 'plain' hour, with no indication of whether the timezone is ahead of or behind UTC/GMT. This is contrary to the [documentation](http://nim-lang.org/docs/times.html#format,TimeInfo,string), which suggests that +/- should be present for `z`, `zz`, and `zzz`.

See also #3199.

## nim test code

``` nim

import times

echo getTzname()

echo getTime().getGMTime.format("zzz")

echo getTime().getLocalTime.format("zzz")

```

## Output, BST

http://www.timeanddate.com/time/zones/bst

| Code | Actual | Expected |

| --- | --- | --- |

| `getTzname()` | (nonDST: GMT, DST: BST) | (nonDST: GMT, DST: BST) |

| `getTime().getGMTime.format("zzz")` | 00:00 | +00:00 |