Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1

value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3

values | title stringlengths 1 957 | labels stringlengths 4 795 | body stringlengths 1 259k | index stringclasses 12

values | text_combine stringlengths 96 259k | label stringclasses 2

values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

253,298 | 8,053,830,504 | IssuesEvent | 2018-08-02 01:26:36 | kjohnsen/MMAPPR2 | https://api.github.com/repos/kjohnsen/MMAPPR2 | closed | Does callSampleSpecificVariants eliminate variants that have low frequency in WT? | complexity-medium priority-medium | This would be a problem because one missorted mutant would get the causative mutation thrown out. | 1.0 | Does callSampleSpecificVariants eliminate variants that have low frequency in WT? - This would be a problem because one missorted mutant would get the causative mutation thrown out. | priority | does callsamplespecificvariants eliminate variants that have low frequency in wt this would be a problem because one missorted mutant would get the causative mutation thrown out | 1 |

405,920 | 11,884,405,607 | IssuesEvent | 2020-03-27 17:35:23 | visit-dav/visit | https://api.github.com/repos/visit-dav/visit | closed | Qt 5 enabled, plot List missing up/down arrows and x | bug impact medium likelihood medium priority reviewed | Our plot list, when expanded, has up/down arrows next to operators for moving them relative to other operators, and has an x button for deleting the operator.These are missing when VisIt is built with Qt 5.I've observed this on Windows, don't have access to linux at the moment to see if it occurs there, too.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. As such, not all

information was able to be captured in the transition. Below is

a complete record of the original redmine ticket.

Ticket number: 2795

Status: Pending

Project: VisIt

Tracker: Bug

Priority: High

Subject: Qt 5 enabled, plot List missing up/down arrows and x

Assigned to: -

Category: -

Target version: -

Author: Kathleen Biagas

Start: 03/29/2017

Due date:

% Done: 0%

Estimated time:

Created: 03/29/2017 04:28 pm

Updated: 04/04/2017 07:04 pm

Likelihood: 3 - Occasional

Severity: 3 - Major Irritation

Found in version: trunk

Impact:

Expected Use:

OS: All

Support Group: Any

Description:

Our plot list, when expanded, has up/down arrows next to operators for moving them relative to other operators, and has an x button for deleting the operator.These are missing when VisIt is built with Qt 5.I've observed this on Windows, don't have access to linux at the moment to see if it occurs there, too.

Comments:

| 1.0 | Qt 5 enabled, plot List missing up/down arrows and x - Our plot list, when expanded, has up/down arrows next to operators for moving them relative to other operators, and has an x button for deleting the operator.These are missing when VisIt is built with Qt 5.I've observed this on Windows, don't have access to linux at the moment to see if it occurs there, too.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. As such, not all

information was able to be captured in the transition. Below is

a complete record of the original redmine ticket.

Ticket number: 2795

Status: Pending

Project: VisIt

Tracker: Bug

Priority: High

Subject: Qt 5 enabled, plot List missing up/down arrows and x

Assigned to: -

Category: -

Target version: -

Author: Kathleen Biagas

Start: 03/29/2017

Due date:

% Done: 0%

Estimated time:

Created: 03/29/2017 04:28 pm

Updated: 04/04/2017 07:04 pm

Likelihood: 3 - Occasional

Severity: 3 - Major Irritation

Found in version: trunk

Impact:

Expected Use:

OS: All

Support Group: Any

Description:

Our plot list, when expanded, has up/down arrows next to operators for moving them relative to other operators, and has an x button for deleting the operator.These are missing when VisIt is built with Qt 5.I've observed this on Windows, don't have access to linux at the moment to see if it occurs there, too.

Comments:

| priority | qt enabled plot list missing up down arrows and x our plot list when expanded has up down arrows next to operators for moving them relative to other operators and has an x button for deleting the operator these are missing when visit is built with qt i ve observed this on windows don t have access to linux at the moment to see if it occurs there too redmine migration this ticket was migrated from redmine as such not all information was able to be captured in the transition below is a complete record of the original redmine ticket ticket number status pending project visit tracker bug priority high subject qt enabled plot list missing up down arrows and x assigned to category target version author kathleen biagas start due date done estimated time created pm updated pm likelihood occasional severity major irritation found in version trunk impact expected use os all support group any description our plot list when expanded has up down arrows next to operators for moving them relative to other operators and has an x button for deleting the operator these are missing when visit is built with qt i ve observed this on windows don t have access to linux at the moment to see if it occurs there too comments | 1 |

822,959 | 30,922,230,884 | IssuesEvent | 2023-08-06 03:16:27 | hphothong/smite | https://api.github.com/repos/hphothong/smite | closed | Team Balance toggle button can be turned off | bug good first issue medium priority | The toggle button for Team Balance should not be able to be turned off. When you select the same button as the current selection, nothing should happen.

Repro Steps:

1. Load the page

2. Select the BALANCED toggle filter

3. Select the BALANCED toggle filter again

4. Notice that the toggle button now shows no buttons as selected

AC:

- [ ] Given the BALANCED button is selected when selecting the BALANCED button again, then the BALANCED button should still be selected

- [ ] Given the BALANCED button is selected when selecting the UNBALANCED button, then the toggle button should switch to UNBALANCED

- [ ] Given the UNBALANCED button is selected when selecting the BALANCED button, then the toggle button should switch to BALANCED

- [ ] Given the UNBALANCED button is selected when selecting the UNBALANCED button, then the UNBALANCED button should still be selected | 1.0 | Team Balance toggle button can be turned off - The toggle button for Team Balance should not be able to be turned off. When you select the same button as the current selection, nothing should happen.

Repro Steps:

1. Load the page

2. Select the BALANCED toggle filter

3. Select the BALANCED toggle filter again

4. Notice that the toggle button now shows no buttons as selected

AC:

- [ ] Given the BALANCED button is selected when selecting the BALANCED button again, then the BALANCED button should still be selected

- [ ] Given the BALANCED button is selected when selecting the UNBALANCED button, then the toggle button should switch to UNBALANCED

- [ ] Given the UNBALANCED button is selected when selecting the BALANCED button, then the toggle button should switch to BALANCED

- [ ] Given the UNBALANCED button is selected when selecting the UNBALANCED button, then the UNBALANCED button should still be selected | priority | team balance toggle button can be turned off the toggle button for team balance should not be able to be turned off when you select the same button as the current selection nothing should happen repro steps load the page select the balanced toggle filter select the balanced toggle filter again notice that the toggle button now shows no buttons as selected ac given the balanced button is selected when selecting the balanced button again then the balanced button should still be selected given the balanced button is selected when selecting the unbalanced button then the toggle button should switch to unbalanced given the unbalanced button is selected when selecting the balanced button then the toggle button should switch to balanced given the unbalanced button is selected when selecting the unbalanced button then the unbalanced button should still be selected | 1 |

711,333 | 24,458,950,347 | IssuesEvent | 2022-10-07 09:22:00 | unikraft/unikraft | https://api.github.com/repos/unikraft/unikraft | opened | GDB Stub | kind/enhancement kind/project area/arch arch/x86_64 arch/arm arch/arm64 priority/medium | ### Feature request summary

Enable GDB introspection of running Unikraft instance.

Provides the interface to list threads, memory allocations, open files in Unikraft etc.

Requires a second serial connection to Unikraft

**Summary of objectives**

- [ ] Add a GDB stub server in Unikraft, that a GDB client can connect to to debug the running unikernel instance.

- [ ] Add support for hardware breakpoints.

**Depends on**: TODO

### Describe alternatives

_No response_

### Related architectures

_No response_

### Related platforms

_No response_

### Additional context

_No response_ | 1.0 | GDB Stub - ### Feature request summary

Enable GDB introspection of running Unikraft instance.

Provides the interface to list threads, memory allocations, open files in Unikraft etc.

Requires a second serial connection to Unikraft

**Summary of objectives**

- [ ] Add a GDB stub server in Unikraft, that a GDB client can connect to to debug the running unikernel instance.

- [ ] Add support for hardware breakpoints.

**Depends on**: TODO

### Describe alternatives

_No response_

### Related architectures

_No response_

### Related platforms

_No response_

### Additional context

_No response_ | priority | gdb stub feature request summary enable gdb introspection of running unikraft instance provides the interface to list threads memory allocations open files in unikraft etc requires a second serial connection to unikraft summary of objectives add a gdb stub server in unikraft that a gdb client can connect to to debug the running unikernel instance add support for hardware breakpoints depends on todo describe alternatives no response related architectures no response related platforms no response additional context no response | 1 |

39,526 | 2,856,236,478 | IssuesEvent | 2015-06-02 14:08:22 | aseprite/aseprite | https://api.github.com/repos/aseprite/aseprite | closed | Cannot create new frame if on a disabled layer | bug imported medium priority | _From [st...@sprixelsoft.com](https://code.google.com/u/105605591483612568850/) on June 22, 2013 22:45:18_

What steps will reproduce the problem? 1.disable current layer

2.attempt to create new frame

3.fail

_Original issue: http://code.google.com/p/aseprite/issues/detail?id=243_ | 1.0 | Cannot create new frame if on a disabled layer - _From [st...@sprixelsoft.com](https://code.google.com/u/105605591483612568850/) on June 22, 2013 22:45:18_

What steps will reproduce the problem? 1.disable current layer

2.attempt to create new frame

3.fail

_Original issue: http://code.google.com/p/aseprite/issues/detail?id=243_ | priority | cannot create new frame if on a disabled layer from on june what steps will reproduce the problem disable current layer attempt to create new frame fail original issue | 1 |

280,547 | 8,683,541,435 | IssuesEvent | 2018-12-02 19:03:46 | GammaStation/Gamma-Station | https://api.github.com/repos/GammaStation/Gamma-Station | closed | Двери клятые двери | Priority: Medium bug | <!--

ВАЖНО: Если ваш ишью является не репортом о баге, а предложением для чего-либо, то ОБЯЗАТЕЛЬНО добавьте в название тег [Proposal]

1. ОТВЕТЫ ОСТАВЛЯТЬ ПОД СООТВЕТСТВУЮЩИЕ ЗАГОЛОВКИ

(они в самом низу, после всех правил)

2. В ОДНОМ РЕПОРТЕ ДОЛЖНО БЫТЬ ОПИСАНИЕ ТОЛЬКО ОДНОЙ ПРОБЛЕМЫ

3. КОРРЕКТНОЕ НАЗВАНИЕ РЕПОРТА НЕ МЕНЕЕ ВАЖНО ЧЕМ ОПИСАНИЕ

-. Ниже описание каждого пункта.

1. Весь данный текст что уже написан до вас -

НЕ УДАЛЯТЬ И НЕ РЕДАКТИРОВАТЬ.

Если нечего написать в тот или иной пункт -

просто оставить пустым.

2. Не надо описывать пачку багов в одном репорте,

(!даже если там все описать можно парой слов!)

шанс что их исправят за раз, крайне мал.

А вот использовать на гите удобную функцию -

автозакрытия репорта при мерже пулл реквеста -

исправляющего данный репорт, будет невозможно.

3. Корректное и в меру подробное название репорта -

тоже очень важно! Чтобы даже не заходя в сам репорт -

было понятно что за проблема.

Плохой пример: "Ковер." - что мы должны понять из такого названия?

Хороший пример: "Некорректное отображение спрайтов ковра." -

а вот так уже будет понятно о чем репорт.

Это надо как минимум для того, чтобы вам же самим -

было видно, что репорта_нейм еще нет или наоборот,

уже есть, и это можно было понять не углубляясь в -

чтение каждого репорта внутри. Когда название не имеет конкретики, из -

которого нельзя понять о чем репорт, это также затрудняет функцию поиска.

-->

#### Подробное описание проблемы

Сломались доступы у бармена и библиотекаря, конкретно стеклянные

#### Что должно было произойти

Они должны открываться

#### Что произошло на самом деле

Они не открылись

#### Как повторить

Попробовать открыть за библиотекаря стеклянную дверь у него в офисе

#### Дополнительная информация:

Возможно где-то ещё я не проверял | 1.0 | Двери клятые двери - <!--

ВАЖНО: Если ваш ишью является не репортом о баге, а предложением для чего-либо, то ОБЯЗАТЕЛЬНО добавьте в название тег [Proposal]

1. ОТВЕТЫ ОСТАВЛЯТЬ ПОД СООТВЕТСТВУЮЩИЕ ЗАГОЛОВКИ

(они в самом низу, после всех правил)

2. В ОДНОМ РЕПОРТЕ ДОЛЖНО БЫТЬ ОПИСАНИЕ ТОЛЬКО ОДНОЙ ПРОБЛЕМЫ

3. КОРРЕКТНОЕ НАЗВАНИЕ РЕПОРТА НЕ МЕНЕЕ ВАЖНО ЧЕМ ОПИСАНИЕ

-. Ниже описание каждого пункта.

1. Весь данный текст что уже написан до вас -

НЕ УДАЛЯТЬ И НЕ РЕДАКТИРОВАТЬ.

Если нечего написать в тот или иной пункт -

просто оставить пустым.

2. Не надо описывать пачку багов в одном репорте,

(!даже если там все описать можно парой слов!)

шанс что их исправят за раз, крайне мал.

А вот использовать на гите удобную функцию -

автозакрытия репорта при мерже пулл реквеста -

исправляющего данный репорт, будет невозможно.

3. Корректное и в меру подробное название репорта -

тоже очень важно! Чтобы даже не заходя в сам репорт -

было понятно что за проблема.

Плохой пример: "Ковер." - что мы должны понять из такого названия?

Хороший пример: "Некорректное отображение спрайтов ковра." -

а вот так уже будет понятно о чем репорт.

Это надо как минимум для того, чтобы вам же самим -

было видно, что репорта_нейм еще нет или наоборот,

уже есть, и это можно было понять не углубляясь в -

чтение каждого репорта внутри. Когда название не имеет конкретики, из -

которого нельзя понять о чем репорт, это также затрудняет функцию поиска.

-->

#### Подробное описание проблемы

Сломались доступы у бармена и библиотекаря, конкретно стеклянные

#### Что должно было произойти

Они должны открываться

#### Что произошло на самом деле

Они не открылись

#### Как повторить

Попробовать открыть за библиотекаря стеклянную дверь у него в офисе

#### Дополнительная информация:

Возможно где-то ещё я не проверял | priority | двери клятые двери важно если ваш ишью является не репортом о баге а предложением для чего либо то обязательно добавьте в название тег ответы оставлять под соответствующие заголовки они в самом низу после всех правил в одном репорте должно быть описание только одной проблемы корректное название репорта не менее важно чем описание ниже описание каждого пункта весь данный текст что уже написан до вас не удалять и не редактировать если нечего написать в тот или иной пункт просто оставить пустым не надо описывать пачку багов в одном репорте даже если там все описать можно парой слов шанс что их исправят за раз крайне мал а вот использовать на гите удобную функцию автозакрытия репорта при мерже пулл реквеста исправляющего данный репорт будет невозможно корректное и в меру подробное название репорта тоже очень важно чтобы даже не заходя в сам репорт было понятно что за проблема плохой пример ковер что мы должны понять из такого названия хороший пример некорректное отображение спрайтов ковра а вот так уже будет понятно о чем репорт это надо как минимум для того чтобы вам же самим было видно что репорта нейм еще нет или наоборот уже есть и это можно было понять не углубляясь в чтение каждого репорта внутри когда название не имеет конкретики из которого нельзя понять о чем репорт это также затрудняет функцию поиска подробное описание проблемы сломались доступы у бармена и библиотекаря конкретно стеклянные что должно было произойти они должны открываться что произошло на самом деле они не открылись как повторить попробовать открыть за библиотекаря стеклянную дверь у него в офисе дополнительная информация возможно где то ещё я не проверял | 1 |

701,786 | 24,108,176,721 | IssuesEvent | 2022-09-20 09:10:41 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | Bluetooth: Controller: Syncing with devices with per. adv. int. < ~10ms eventually causes events from BT controller stop arriving | bug priority: medium area: Bluetooth platform: nRF area: Bluetooth Controller | **Describe the bug**

When using periodic adv. intervals <~10ms then sometimes BT Controller stops reporting any events, including sync termination, CTEs, scan events etc.

- What target platform are you using? nRF52833, Zephyr

- Is this a regression? Not checked.

**To Reproduce**

Have 10 tags sending per. adv. with 7.5ms interval with adv. train of 5 and CTE enabled.

I have not found replicatable way to reproduce, sometimes it's enough to just let all 10 tags sync and then wait a minute and it happens. Sometimes it's easier to reproduce by randomly resetting some tags. I have reproduced without CTE sampling enabled, however may or may not be easier to reproduce with it on. I have also reproduced with only 5-6 tags but I think it's easier with more.

Application:

[aoa_receiver_multiple_49915.zip](https://github.com/zephyrproject-rtos/zephyr/files/9488286/aoa_receiver_multiple_49915.zip)

**Expected behavior**

BT controller keeps reporting events.

**Impact**

BT Controller stops generating events, application will be in strange state.

**Logs and console output**

The application prints <wrn> main: Interval: 6 whenever a device sending per. adv. is seen, as can be seen it's stopped reporting at the end.

```

*** Booting Zephyr OS build zephyr-v3.1.0-4244-g1995d349db37 ***

Starting Connectionless Locator Demo

Bluetooth initialization...success

Scan callbacks register...success.

Periodic Advertising callbacks register...success.

Start scanning...success

[00:00:00.004,638] <inf> main: Creating Sync for tags in list

Waiting for periodic advertising...

Scan is running...

[00:00:00.328,277] <wrn> main: Interval: 6

[00:00:00.328,521] <dbg> main: scan_recv: Added tag from per adv list

[00:00:00.470,764] <wrn> main: Interval: 6

[00:00:00.470,977] <dbg> main: scan_recv: Added tag from per adv list

[00:00:00.498,992] <wrn> main: Interval: 6

[00:00:00.499,206] <dbg> main: scan_recv: Added tag from per adv list

Scan is running...

[00:00:00.709,167] <wrn> main: Interval: 6

(709) PER_ADV_SYNC[0]: [DEVICE]: 36:DC:42:90:4D:0E (random) synced, Interval 0x0006 (7 ms), PHY LE 2M

[00:00:00.710,174] <dbg> main: sync_cb: Removed tag from per adv list

[00:00:00.710,174] <inf> main: Creating Sync for tags in list

Enable receiving of CTE...

(710) success. CTE receive enabled.

[00:00:00.741,027] <wrn> main: Interval: 6

[00:00:00.741,241] <dbg> main: scan_recv: Added tag from per adv list

[00:00:00.762,207] <wrn> main: Interval: 6

[00:00:00.762,420] <dbg> main: scan_recv: Added tag from per adv list

[00:00:00.883,422] <wrn> main: Interval: 6

[00:00:00.883,666] <dbg> main: scan_recv: Added tag from per adv list

[00:00:00.972,381] <wrn> main: Interval: 6

(988) PER_ADV_SYNC[1]: [DEVICE]: 3B:66:BD:7F:64:67 (random) synced, Interval 0x0006 (7 ms), PHY LE 2M

[00:00:00.988,983] <dbg> main: sync_cb: Removed tag from per adv list

[00:00:00.989,013] <inf> main: Creating Sync for tags in list

Enable receiving of CTE...

(989) success. CTE receive enabled.

Running...

TAGS:sync...,sync...,33,sync...,2,sync...,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 4 Synced: 2 Num err: 0/35

[00:00:01.096,862] <wrn> main: Interval: 6

[00:00:01.097,106] <dbg> main: scan_recv: Added tag from per adv list

Scan is running...

[00:00:01.310,974] <wrn> main: Interval: 6

(1312) PER_ADV_SYNC[2]: [DEVICE]: 08:F7:34:24:2B:28 (random) synced, Interval 0x0006 (7 ms), PHY LE 2M

[00:00:01.312,622] <dbg> main: sync_cb: Removed tag from per adv list

[00:00:01.312,622] <inf> main: Creating Sync for tags in list

Enable receiving of CTE...

(1313) success. CTE receive enabled.

[00:00:01.730,895] <wrn> main: Interval: 6

Scan is running...

[00:00:01.944,915] <wrn> main: Interval: 6

[00:00:01.945,129] <dbg> main: scan_recv: Added tag from per adv list

Running...

TAGS:sync...,55,56,sync...,66,sync...,sync...,sync...,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 5 Synced: 3 Num err: 0/178

[00:00:02.179,016] <wrn> main: Interval: 6

[00:00:02.442,382] <wrn> main: Interval: 6

Scan is running...

[00:00:02.598,999] <wrn> main: Interval: 6

[00:00:02.862,396] <wrn> main: Interval: 6

Running...

TAGS:sync...,79,49,sync...,59,sync...,sync...,sync...,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 5 Synced: 3 Num err: 0/191

[00:00:03.018,981] <wrn> main: Interval: 6

[00:00:03.035,156] <wrn> main: Interval: 6

[00:00:03.035,369] <dbg> main: scan_recv: Added tag from per adv list

[00:00:03.197,052] <wrn> main: Interval: 6

(3197) PER_ADV_SYNC[3]: [DEVICE]: 28:B5:CF:0A:86:88 (random) synced, Interval 0x0006 (7 ms), PHY LE 2M

[00:00:03.198,150] <dbg> main: sync_cb: Removed tag from per adv list

[00:00:03.198,181] <inf> main: Creating Sync for tags in list

Enable receiving of CTE...

(3198) success. CTE receive enabled.

[00:00:03.268,554] <wrn> main: Interval: 6

(3276) PER_ADV_SYNC[4]: [DEVICE]: 1D:29:0E:6A:E8:65 (random) synced, Interval 0x0006 (7 ms), PHY LE 2M

[00:00:03.277,221] <dbg> main: sync_cb: Removed tag from per adv list

[00:00:03.277,221] <inf> main: Creating Sync for tags in list

Enable receiving of CTE...

(3277) success. CTE receive enabled.

Scan is running...

[00:00:03.613,586] <wrn> main: Interval: 6

(3621) PER_ADV_SYNC[5]: [DEVICE]: 17:50:92:34:97:F7 (random) synced, Interval 0x0006 (7 ms), PHY LE 2M

[00:00:03.622,253] <dbg> main: sync_cb: Removed tag from per adv list

[00:00:03.622,253] <inf> main: Creating Sync for tags in list

Enable receiving of CTE...

(3622) success. CTE receive enabled.

[00:00:03.912,384] <wrn> main: Interval: 6

Running...

TAGS:19,82,44,sync...,53,13,144,sync...,sync...,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 3 Synced: 6 Num err: 0/358

[00:00:04.050,933] <wrn> main: Interval: 40

[00:00:04.069,000] <wrn> main: Interval: 6

[00:00:04.453,491] <wrn> main: Interval: 6

[00:00:04.671,020] <wrn> main: Interval: 6

[00:00:04.948,516] <wrn> main: Interval: 6

Running...

TAGS:24,64,21,sync...,35,30,204,sync...,sync...,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 3 Synced: 6 Num err: 0/384

Scan is running...

[00:00:05.368,530] <wrn> main: Interval: 6

Running...

TAGS:24,56,30,sync...,32,35,196,sync...,sync...,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 3 Synced: 6 Num err: 0/381

[00:00:06.150,970] <wrn> main: Interval: 40

[00:00:06.185,180] <wrn> main: Interval: 6

(6207) PER_ADV_SYNC[6]: [DEVICE]: 2B:D6:79:55:8E:32 (random) synced, Interval 0x0006 (7 ms), PHY LE 2M

[00:00:06.207,916] <dbg> main: sync_cb: Removed tag from per adv list

[00:00:06.207,916] <inf> main: Creating Sync for tags in list

Enable receiving of CTE...

(6208) success. CTE receive enabled.

[00:00:06.418,548] <wrn> main: Interval: 6

[00:00:06.770,996] <wrn> main: Interval: 6

Scan is running...

Running...

TAGS:27,37,23,sync...,27,35,191,sync...,16,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 2 Synced: 7 Num err: 0/367

[00:00:07.191,009] <wrn> main: Interval: 6

[00:00:07.200,988] <wrn> main: Interval: 40

[00:00:07.654,937] <wrn> main: Interval: 6

[00:00:07.888,549] <wrn> main: Interval: 6

Running...

TAGS:27,43,36,sync...,37,44,170,sync...,20,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 2 Synced: 7 Num err: 0/380

[00:00:08.034,912] <wrn> main: Interval: 6

(8088) PER_ADV_SYNC[7]: [DEVICE]: 17:BB:4D:10:4D:39 (random) synced, Interval 0x0006 (7 ms), PHY LE 2M

[00:00:08.089,447] <dbg> main: sync_cb: Removed tag from per adv list

[00:00:08.089,447] <inf> main: Creating Sync for tags in list

Enable receiving of CTE...

(8090) success. CTE receive enabled.

Scan is running...

[00:00:08.867,034] <wrn> main: Interval: 6

Running...

TAGS:40,55,21,sync...,25,32,17,14,18,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 1 Synced: 8 Num err: 0/223

[00:00:09.287,017] <wrn> main: Interval: 6

[00:00:09.560,943] <wrn> main: Interval: 6

(9570) PER_ADV_SYNC[8]: [DEVICE]: 3A:9E:4A:1C:6F:D1 (random) synced, Interval 0x0006 (7 ms), PHY LE 2M

[00:00:09.570,434] <dbg> main: sync_cb: Removed tag from per adv list

[00:00:09.570,465] <inf> main: Creating Sync for tags in list

Enable receiving of CTE...

(9570) success. CTE receive enabled.

Scan is running...

[00:00:09.710,906] <wrn> main: Interval: 6

[00:00:09.783,935] <err> bt_scan: Prepare CTE conn IQ report failed -22

Running...

TAGS:33,53,30,15,31,38,18,21,21,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 9 Num err: 0/263

[00:00:10.134,857] <wrn> main: Interval: 6

Running...

TAGS:37,50,27,44,31,34,21,23,21,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 9 Num err: 0/289

[00:00:11.030,792] <wrn> main: Interval: 6

[00:00:11.038,604] <wrn> main: Interval: 6

Scan is running...

[00:00:12.008,056] <err> bt_scan: Prepare CTE conn IQ report failed -22

Running...

TAGS:39,49,22,43,34,40,11,18,17,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 9 Num err: 0/281

[00:00:12.049,133] <wrn> main: Interval: 6

[00:00:12.436,981] <wrn> main: Interval: 6

[00:00:12.445,037] <wrn> main: Interval: 6

Running...

TAGS:39,55,24,39,24,28,16,16,19,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 9 Num err: 0/262

[00:00:13.740,722] <err> bt_scan: Prepare CTE conn IQ report failed -22

Running...

TAGS:30,44,27,39,32,34,19,19,20,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 9 Num err: 0/277

[00:00:14.116,912] <wrn> main: Interval: 6

Scan is running...

Running...

TAGS:23,49,26,24,32,31,14,18,17,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 9 Num err: 0/244

[00:00:15.251,892] <wrn> main: Interval: 6

[00:00:15.586,822] <wrn> main: Interval: 6

[00:00:16.003,265] <wrn> main: Interval: 6

Running...

TAGS:28,46,23,29,32,33,18,19,20,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 9 Num err: 0/260

[00:00:16.054,779] <wrn> main: Interval: 6

[00:00:16.424,407] <wrn> main: Interval: 6

[00:00:16.424,652] <dbg> main: scan_recv: Added tag from per adv list

[00:00:16.435,058] <wrn> main: Interval: 6

Scan is running...

Running...

TAGS:23,52,20,39,28,34,12,13,14,sync...,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 1 Synced: 9 Num err: 0/235

[00:00:17.270,812] <wrn> main: Interval: 6

[00:00:17.900,756] <wrn> main: Interval: 6

Running...

TAGS:25,64,23,41,31,36,14,20,21,sync...,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 1 Synced: 9 Num err: 0/280

[00:00:18.734,436] <wrn> main: Interval: 6

(18773) PER_ADV_SYNC[9]: [DEVICE]: 2B:DA:D3:2E:AE:89 (random) synced, Interval 0x0006 (7 ms), PHY LE 2M

[00:00:18.774,322] <dbg> main: sync_cb: Removed tag from per adv list

[00:00:18.774,353] <inf> main: Creating Sync for tags in list

Enable receiving of CTE...

(18775) success. CTE receive enabled.

[00:00:18.961,120] <wrn> main: Interval: 40

Running...

TAGS:23,45,27,31,26,31,14,14,17,2,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 10 Num err: 0/237

Running...

----->After here no events arrive from controller, even powering of tags etc. won't generate sync term<-----

TAGS:0,0,0,0,0,0,0,0,0,0,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 10 Num err: 0/0

Running...

TAGS:0,0,0,0,0,0,0,0,0,0,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 10 Num err: 0/0

Running...

TAGS:0,0,0,0,0,0,0,0,0,0,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 10 Num err: 0/0

Running...

TAGS:0,0,0,0,0,0,0,0,0,0,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 10 Num err: 0/0

Running...

TAGS:0,0,0,0,0,0,0,0,0,0,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 10 Num err: 0/0

Running...

TAGS:0,0,0,0,0,0,0,0,0,0,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 10 Num err: 0/0

```

**Environment (please complete the following information):**

- OS: Windows

- Toolchain: Zephyr

- Commit SHA or Version used: 894423e9aed34a394b97c995c33549513b0ac4a5

| 1.0 | Bluetooth: Controller: Syncing with devices with per. adv. int. < ~10ms eventually causes events from BT controller stop arriving - **Describe the bug**

When using periodic adv. intervals <~10ms then sometimes BT Controller stops reporting any events, including sync termination, CTEs, scan events etc.

- What target platform are you using? nRF52833, Zephyr

- Is this a regression? Not checked.

**To Reproduce**

Have 10 tags sending per. adv. with 7.5ms interval with adv. train of 5 and CTE enabled.

I have not found replicatable way to reproduce, sometimes it's enough to just let all 10 tags sync and then wait a minute and it happens. Sometimes it's easier to reproduce by randomly resetting some tags. I have reproduced without CTE sampling enabled, however may or may not be easier to reproduce with it on. I have also reproduced with only 5-6 tags but I think it's easier with more.

Application:

[aoa_receiver_multiple_49915.zip](https://github.com/zephyrproject-rtos/zephyr/files/9488286/aoa_receiver_multiple_49915.zip)

**Expected behavior**

BT controller keeps reporting events.

**Impact**

BT Controller stops generating events, application will be in strange state.

**Logs and console output**

The application prints <wrn> main: Interval: 6 whenever a device sending per. adv. is seen, as can be seen it's stopped reporting at the end.

```

*** Booting Zephyr OS build zephyr-v3.1.0-4244-g1995d349db37 ***

Starting Connectionless Locator Demo

Bluetooth initialization...success

Scan callbacks register...success.

Periodic Advertising callbacks register...success.

Start scanning...success

[00:00:00.004,638] <inf> main: Creating Sync for tags in list

Waiting for periodic advertising...

Scan is running...

[00:00:00.328,277] <wrn> main: Interval: 6

[00:00:00.328,521] <dbg> main: scan_recv: Added tag from per adv list

[00:00:00.470,764] <wrn> main: Interval: 6

[00:00:00.470,977] <dbg> main: scan_recv: Added tag from per adv list

[00:00:00.498,992] <wrn> main: Interval: 6

[00:00:00.499,206] <dbg> main: scan_recv: Added tag from per adv list

Scan is running...

[00:00:00.709,167] <wrn> main: Interval: 6

(709) PER_ADV_SYNC[0]: [DEVICE]: 36:DC:42:90:4D:0E (random) synced, Interval 0x0006 (7 ms), PHY LE 2M

[00:00:00.710,174] <dbg> main: sync_cb: Removed tag from per adv list

[00:00:00.710,174] <inf> main: Creating Sync for tags in list

Enable receiving of CTE...

(710) success. CTE receive enabled.

[00:00:00.741,027] <wrn> main: Interval: 6

[00:00:00.741,241] <dbg> main: scan_recv: Added tag from per adv list

[00:00:00.762,207] <wrn> main: Interval: 6

[00:00:00.762,420] <dbg> main: scan_recv: Added tag from per adv list

[00:00:00.883,422] <wrn> main: Interval: 6

[00:00:00.883,666] <dbg> main: scan_recv: Added tag from per adv list

[00:00:00.972,381] <wrn> main: Interval: 6

(988) PER_ADV_SYNC[1]: [DEVICE]: 3B:66:BD:7F:64:67 (random) synced, Interval 0x0006 (7 ms), PHY LE 2M

[00:00:00.988,983] <dbg> main: sync_cb: Removed tag from per adv list

[00:00:00.989,013] <inf> main: Creating Sync for tags in list

Enable receiving of CTE...

(989) success. CTE receive enabled.

Running...

TAGS:sync...,sync...,33,sync...,2,sync...,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 4 Synced: 2 Num err: 0/35

[00:00:01.096,862] <wrn> main: Interval: 6

[00:00:01.097,106] <dbg> main: scan_recv: Added tag from per adv list

Scan is running...

[00:00:01.310,974] <wrn> main: Interval: 6

(1312) PER_ADV_SYNC[2]: [DEVICE]: 08:F7:34:24:2B:28 (random) synced, Interval 0x0006 (7 ms), PHY LE 2M

[00:00:01.312,622] <dbg> main: sync_cb: Removed tag from per adv list

[00:00:01.312,622] <inf> main: Creating Sync for tags in list

Enable receiving of CTE...

(1313) success. CTE receive enabled.

[00:00:01.730,895] <wrn> main: Interval: 6

Scan is running...

[00:00:01.944,915] <wrn> main: Interval: 6

[00:00:01.945,129] <dbg> main: scan_recv: Added tag from per adv list

Running...

TAGS:sync...,55,56,sync...,66,sync...,sync...,sync...,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 5 Synced: 3 Num err: 0/178

[00:00:02.179,016] <wrn> main: Interval: 6

[00:00:02.442,382] <wrn> main: Interval: 6

Scan is running...

[00:00:02.598,999] <wrn> main: Interval: 6

[00:00:02.862,396] <wrn> main: Interval: 6

Running...

TAGS:sync...,79,49,sync...,59,sync...,sync...,sync...,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 5 Synced: 3 Num err: 0/191

[00:00:03.018,981] <wrn> main: Interval: 6

[00:00:03.035,156] <wrn> main: Interval: 6

[00:00:03.035,369] <dbg> main: scan_recv: Added tag from per adv list

[00:00:03.197,052] <wrn> main: Interval: 6

(3197) PER_ADV_SYNC[3]: [DEVICE]: 28:B5:CF:0A:86:88 (random) synced, Interval 0x0006 (7 ms), PHY LE 2M

[00:00:03.198,150] <dbg> main: sync_cb: Removed tag from per adv list

[00:00:03.198,181] <inf> main: Creating Sync for tags in list

Enable receiving of CTE...

(3198) success. CTE receive enabled.

[00:00:03.268,554] <wrn> main: Interval: 6

(3276) PER_ADV_SYNC[4]: [DEVICE]: 1D:29:0E:6A:E8:65 (random) synced, Interval 0x0006 (7 ms), PHY LE 2M

[00:00:03.277,221] <dbg> main: sync_cb: Removed tag from per adv list

[00:00:03.277,221] <inf> main: Creating Sync for tags in list

Enable receiving of CTE...

(3277) success. CTE receive enabled.

Scan is running...

[00:00:03.613,586] <wrn> main: Interval: 6

(3621) PER_ADV_SYNC[5]: [DEVICE]: 17:50:92:34:97:F7 (random) synced, Interval 0x0006 (7 ms), PHY LE 2M

[00:00:03.622,253] <dbg> main: sync_cb: Removed tag from per adv list

[00:00:03.622,253] <inf> main: Creating Sync for tags in list

Enable receiving of CTE...

(3622) success. CTE receive enabled.

[00:00:03.912,384] <wrn> main: Interval: 6

Running...

TAGS:19,82,44,sync...,53,13,144,sync...,sync...,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 3 Synced: 6 Num err: 0/358

[00:00:04.050,933] <wrn> main: Interval: 40

[00:00:04.069,000] <wrn> main: Interval: 6

[00:00:04.453,491] <wrn> main: Interval: 6

[00:00:04.671,020] <wrn> main: Interval: 6

[00:00:04.948,516] <wrn> main: Interval: 6

Running...

TAGS:24,64,21,sync...,35,30,204,sync...,sync...,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 3 Synced: 6 Num err: 0/384

Scan is running...

[00:00:05.368,530] <wrn> main: Interval: 6

Running...

TAGS:24,56,30,sync...,32,35,196,sync...,sync...,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 3 Synced: 6 Num err: 0/381

[00:00:06.150,970] <wrn> main: Interval: 40

[00:00:06.185,180] <wrn> main: Interval: 6

(6207) PER_ADV_SYNC[6]: [DEVICE]: 2B:D6:79:55:8E:32 (random) synced, Interval 0x0006 (7 ms), PHY LE 2M

[00:00:06.207,916] <dbg> main: sync_cb: Removed tag from per adv list

[00:00:06.207,916] <inf> main: Creating Sync for tags in list

Enable receiving of CTE...

(6208) success. CTE receive enabled.

[00:00:06.418,548] <wrn> main: Interval: 6

[00:00:06.770,996] <wrn> main: Interval: 6

Scan is running...

Running...

TAGS:27,37,23,sync...,27,35,191,sync...,16,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 2 Synced: 7 Num err: 0/367

[00:00:07.191,009] <wrn> main: Interval: 6

[00:00:07.200,988] <wrn> main: Interval: 40

[00:00:07.654,937] <wrn> main: Interval: 6

[00:00:07.888,549] <wrn> main: Interval: 6

Running...

TAGS:27,43,36,sync...,37,44,170,sync...,20,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 2 Synced: 7 Num err: 0/380

[00:00:08.034,912] <wrn> main: Interval: 6

(8088) PER_ADV_SYNC[7]: [DEVICE]: 17:BB:4D:10:4D:39 (random) synced, Interval 0x0006 (7 ms), PHY LE 2M

[00:00:08.089,447] <dbg> main: sync_cb: Removed tag from per adv list

[00:00:08.089,447] <inf> main: Creating Sync for tags in list

Enable receiving of CTE...

(8090) success. CTE receive enabled.

Scan is running...

[00:00:08.867,034] <wrn> main: Interval: 6

Running...

TAGS:40,55,21,sync...,25,32,17,14,18,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 1 Synced: 8 Num err: 0/223

[00:00:09.287,017] <wrn> main: Interval: 6

[00:00:09.560,943] <wrn> main: Interval: 6

(9570) PER_ADV_SYNC[8]: [DEVICE]: 3A:9E:4A:1C:6F:D1 (random) synced, Interval 0x0006 (7 ms), PHY LE 2M

[00:00:09.570,434] <dbg> main: sync_cb: Removed tag from per adv list

[00:00:09.570,465] <inf> main: Creating Sync for tags in list

Enable receiving of CTE...

(9570) success. CTE receive enabled.

Scan is running...

[00:00:09.710,906] <wrn> main: Interval: 6

[00:00:09.783,935] <err> bt_scan: Prepare CTE conn IQ report failed -22

Running...

TAGS:33,53,30,15,31,38,18,21,21,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 9 Num err: 0/263

[00:00:10.134,857] <wrn> main: Interval: 6

Running...

TAGS:37,50,27,44,31,34,21,23,21,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 9 Num err: 0/289

[00:00:11.030,792] <wrn> main: Interval: 6

[00:00:11.038,604] <wrn> main: Interval: 6

Scan is running...

[00:00:12.008,056] <err> bt_scan: Prepare CTE conn IQ report failed -22

Running...

TAGS:39,49,22,43,34,40,11,18,17,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 9 Num err: 0/281

[00:00:12.049,133] <wrn> main: Interval: 6

[00:00:12.436,981] <wrn> main: Interval: 6

[00:00:12.445,037] <wrn> main: Interval: 6

Running...

TAGS:39,55,24,39,24,28,16,16,19,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 9 Num err: 0/262

[00:00:13.740,722] <err> bt_scan: Prepare CTE conn IQ report failed -22

Running...

TAGS:30,44,27,39,32,34,19,19,20,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 9 Num err: 0/277

[00:00:14.116,912] <wrn> main: Interval: 6

Scan is running...

Running...

TAGS:23,49,26,24,32,31,14,18,17,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 9 Num err: 0/244

[00:00:15.251,892] <wrn> main: Interval: 6

[00:00:15.586,822] <wrn> main: Interval: 6

[00:00:16.003,265] <wrn> main: Interval: 6

Running...

TAGS:28,46,23,29,32,33,18,19,20,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 9 Num err: 0/260

[00:00:16.054,779] <wrn> main: Interval: 6

[00:00:16.424,407] <wrn> main: Interval: 6

[00:00:16.424,652] <dbg> main: scan_recv: Added tag from per adv list

[00:00:16.435,058] <wrn> main: Interval: 6

Scan is running...

Running...

TAGS:23,52,20,39,28,34,12,13,14,sync...,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 1 Synced: 9 Num err: 0/235

[00:00:17.270,812] <wrn> main: Interval: 6

[00:00:17.900,756] <wrn> main: Interval: 6

Running...

TAGS:25,64,23,41,31,36,14,20,21,sync...,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 1 Synced: 9 Num err: 0/280

[00:00:18.734,436] <wrn> main: Interval: 6

(18773) PER_ADV_SYNC[9]: [DEVICE]: 2B:DA:D3:2E:AE:89 (random) synced, Interval 0x0006 (7 ms), PHY LE 2M

[00:00:18.774,322] <dbg> main: sync_cb: Removed tag from per adv list

[00:00:18.774,353] <inf> main: Creating Sync for tags in list

Enable receiving of CTE...

(18775) success. CTE receive enabled.

[00:00:18.961,120] <wrn> main: Interval: 40

Running...

TAGS:23,45,27,31,26,31,14,14,17,2,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 10 Num err: 0/237

Running...

----->After here no events arrive from controller, even powering of tags etc. won't generate sync term<-----

TAGS:0,0,0,0,0,0,0,0,0,0,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 10 Num err: 0/0

Running...

TAGS:0,0,0,0,0,0,0,0,0,0,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 10 Num err: 0/0

Running...

TAGS:0,0,0,0,0,0,0,0,0,0,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 10 Num err: 0/0

Running...

TAGS:0,0,0,0,0,0,0,0,0,0,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 10 Num err: 0/0

Running...

TAGS:0,0,0,0,0,0,0,0,0,0,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 10 Num err: 0/0

Running...

TAGS:0,0,0,0,0,0,0,0,0,0,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,-,

Queued: 0 Synced: 10 Num err: 0/0

```

**Environment (please complete the following information):**

- OS: Windows

- Toolchain: Zephyr

- Commit SHA or Version used: 894423e9aed34a394b97c995c33549513b0ac4a5

| priority | bluetooth controller syncing with devices with per adv int eventually causes events from bt controller stop arriving describe the bug when using periodic adv intervals then sometimes bt controller stops reporting any events including sync termination ctes scan events etc what target platform are you using zephyr is this a regression not checked to reproduce have tags sending per adv with interval with adv train of and cte enabled i have not found replicatable way to reproduce sometimes it s enough to just let all tags sync and then wait a minute and it happens sometimes it s easier to reproduce by randomly resetting some tags i have reproduced without cte sampling enabled however may or may not be easier to reproduce with it on i have also reproduced with only tags but i think it s easier with more application expected behavior bt controller keeps reporting events impact bt controller stops generating events application will be in strange state logs and console output the application prints main interval whenever a device sending per adv is seen as can be seen it s stopped reporting at the end booting zephyr os build zephyr starting connectionless locator demo bluetooth initialization success scan callbacks register success periodic advertising callbacks register success start scanning success main creating sync for tags in list waiting for periodic advertising scan is running main interval main scan recv added tag from per adv list main interval main scan recv added tag from per adv list main interval main scan recv added tag from per adv list scan is running main interval per adv sync dc random synced interval ms phy le main sync cb removed tag from per adv list main creating sync for tags in list enable receiving of cte success cte receive enabled main interval main scan recv added tag from per adv list main interval main scan recv added tag from per adv list main interval main scan recv added tag from per adv list main interval per adv sync bd random synced interval ms phy le main sync cb removed tag from per adv list main creating sync for tags in list enable receiving of cte success cte receive enabled running tags sync sync sync sync queued synced num err main interval main scan recv added tag from per adv list scan is running main interval per adv sync random synced interval ms phy le main sync cb removed tag from per adv list main creating sync for tags in list enable receiving of cte success cte receive enabled main interval scan is running main interval main scan recv added tag from per adv list running tags sync sync sync sync sync queued synced num err main interval main interval scan is running main interval main interval running tags sync sync sync sync sync queued synced num err main interval main interval main scan recv added tag from per adv list main interval per adv sync cf random synced interval ms phy le main sync cb removed tag from per adv list main creating sync for tags in list enable receiving of cte success cte receive enabled main interval per adv sync random synced interval ms phy le main sync cb removed tag from per adv list main creating sync for tags in list enable receiving of cte success cte receive enabled scan is running main interval per adv sync random synced interval ms phy le main sync cb removed tag from per adv list main creating sync for tags in list enable receiving of cte success cte receive enabled main interval running tags sync sync sync queued synced num err main interval main interval main interval main interval main interval running tags sync sync sync queued synced num err scan is running main interval running tags sync sync sync queued synced num err main interval main interval per adv sync random synced interval ms phy le main sync cb removed tag from per adv list main creating sync for tags in list enable receiving of cte success cte receive enabled main interval main interval scan is running running tags sync sync queued synced num err main interval main interval main interval main interval running tags sync sync queued synced num err main interval per adv sync bb random synced interval ms phy le main sync cb removed tag from per adv list main creating sync for tags in list enable receiving of cte success cte receive enabled scan is running main interval running tags sync queued synced num err main interval main interval per adv sync random synced interval ms phy le main sync cb removed tag from per adv list main creating sync for tags in list enable receiving of cte success cte receive enabled scan is running main interval bt scan prepare cte conn iq report failed running tags queued synced num err main interval running tags queued synced num err main interval main interval scan is running bt scan prepare cte conn iq report failed running tags queued synced num err main interval main interval main interval running tags queued synced num err bt scan prepare cte conn iq report failed running tags queued synced num err main interval scan is running running tags queued synced num err main interval main interval main interval running tags queued synced num err main interval main interval main scan recv added tag from per adv list main interval scan is running running tags sync queued synced num err main interval main interval running tags sync queued synced num err main interval per adv sync da ae random synced interval ms phy le main sync cb removed tag from per adv list main creating sync for tags in list enable receiving of cte success cte receive enabled main interval running tags queued synced num err running after here no events arrive from controller even powering of tags etc won t generate sync term tags queued synced num err running tags queued synced num err running tags queued synced num err running tags queued synced num err running tags queued synced num err running tags queued synced num err environment please complete the following information os windows toolchain zephyr commit sha or version used | 1 |

25,658 | 2,683,911,299 | IssuesEvent | 2015-03-28 13:15:27 | ConEmu/old-issues | https://api.github.com/repos/ConEmu/old-issues | closed | [ConEmu 2010.3.8] Biew - нет кейбара | 1 star bug imported Priority-Medium wontfix | _From [andrey.b...@gmail.com](https://code.google.com/u/117303310870772091797/) on March 17, 2010 14:45:25_

В biew (хекс едитор) не видно кейбара. Если отобразить реальную консоль, то

видно, что она километровой высоты. https://sourceforge.net/projects/beye/files/biew/6.1.0/biew-610-win32.zip/download

_Original issue: http://code.google.com/p/conemu-maximus5/issues/detail?id=209_ | 1.0 | [ConEmu 2010.3.8] Biew - нет кейбара - _From [andrey.b...@gmail.com](https://code.google.com/u/117303310870772091797/) on March 17, 2010 14:45:25_

В biew (хекс едитор) не видно кейбара. Если отобразить реальную консоль, то

видно, что она километровой высоты. https://sourceforge.net/projects/beye/files/biew/6.1.0/biew-610-win32.zip/download

_Original issue: http://code.google.com/p/conemu-maximus5/issues/detail?id=209_ | priority | biew нет кейбара from on march в biew хекс едитор не видно кейбара если отобразить реальную консоль то видно что она километровой высоты original issue | 1 |

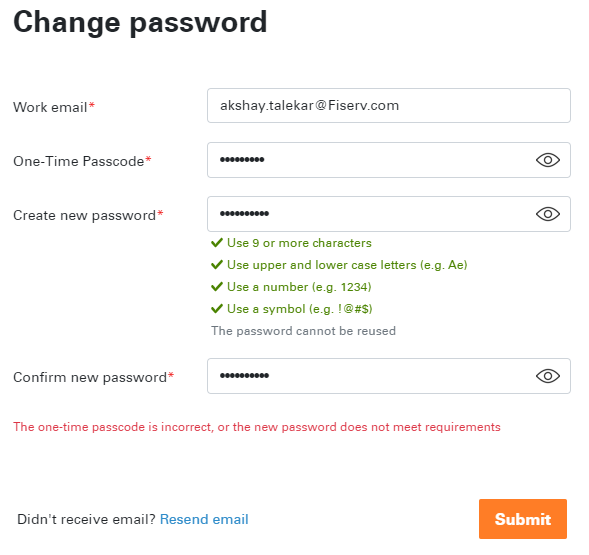

774,781 | 27,209,902,997 | IssuesEvent | 2023-02-20 15:45:18 | Fiserv/Support | https://api.github.com/repos/Fiserv/Support | closed | Unable to reset Developer Studio Password | bug Priority - Medium Severity - Low BankingHub Login/Signup | # Reporting new issue for Fiser Developer Studio

I'm unable to reset the Developer Studio account password. Whenever I enter the One-time passcode (OTP) received via email to reset the password, it throws incorrect OTP error all the time.

| 1.0 | Unable to reset Developer Studio Password - # Reporting new issue for Fiser Developer Studio

I'm unable to reset the Developer Studio account password. Whenever I enter the One-time passcode (OTP) received via email to reset the password, it throws incorrect OTP error all the time.

| priority | unable to reset developer studio password reporting new issue for fiser developer studio i m unable to reset the developer studio account password whenever i enter the one time passcode otp received via email to reset the password it throws incorrect otp error all the time | 1 |

671,257 | 22,751,261,574 | IssuesEvent | 2022-07-07 13:18:57 | status-im/status-desktop | https://api.github.com/repos/status-im/status-desktop | closed | The notification banner 'Secure your seed phrase Back up now' is still shown after back up the seed phrase | bug priority 2: medium E:Bugfixes Settings S:2 E:Settings | # Bug Report

## Steps to reproduce

1. Create a new account

2. Go to Settings and click 'Secure your seed phrase Back up now'

3. Go step by step and complete successfully the process of backup

4. Check the Setting page and click on the notification banner 'Secure your seed phrase Back up now'

#### Expected behavior

The notification banner 'Secure your seed phrase Back up now' is NOT shown after backing up the seed phrase because the user successfully backed up the seed phrase

#### Actual behavior

The notification banner 'Secure your seed phrase Back up now' is still shown after backing up the seed phrase

### Additional Information

https://user-images.githubusercontent.com/14942081/176036318-d3f0112a-99fe-46cc-a04e-1878c1530a0f.mov

Status desktop version: https://ci.status.im/job/status-desktop/job/branches/job/macos/job/master/2246/

Operating System: macOS Monterey 12.3 Beta (21E5227a)

| 1.0 | The notification banner 'Secure your seed phrase Back up now' is still shown after back up the seed phrase - # Bug Report

## Steps to reproduce

1. Create a new account

2. Go to Settings and click 'Secure your seed phrase Back up now'

3. Go step by step and complete successfully the process of backup

4. Check the Setting page and click on the notification banner 'Secure your seed phrase Back up now'

#### Expected behavior

The notification banner 'Secure your seed phrase Back up now' is NOT shown after backing up the seed phrase because the user successfully backed up the seed phrase

#### Actual behavior

The notification banner 'Secure your seed phrase Back up now' is still shown after backing up the seed phrase

### Additional Information

https://user-images.githubusercontent.com/14942081/176036318-d3f0112a-99fe-46cc-a04e-1878c1530a0f.mov

Status desktop version: https://ci.status.im/job/status-desktop/job/branches/job/macos/job/master/2246/

Operating System: macOS Monterey 12.3 Beta (21E5227a)

| priority | the notification banner secure your seed phrase back up now is still shown after back up the seed phrase bug report steps to reproduce create a new account go to settings and click secure your seed phrase back up now go step by step and complete successfully the process of backup check the setting page and click on the notification banner secure your seed phrase back up now expected behavior the notification banner secure your seed phrase back up now is not shown after backing up the seed phrase because the user successfully backed up the seed phrase actual behavior the notification banner secure your seed phrase back up now is still shown after backing up the seed phrase additional information status desktop version operating system macos monterey beta | 1 |

552,205 | 16,218,309,014 | IssuesEvent | 2021-05-06 00:07:01 | MycroftAI/hardware-mycroft-mark-II | https://api.github.com/repos/MycroftAI/hardware-mycroft-mark-II | opened | Mycroft seems slower to respond on the Mark II | Priority: Medium bug | **Describe the bug**

Have had it raised by community members that responses to queries sometimes seem very slow on the Mark II where they are not on other instances of Mycroft. It seems that this may be caused by the listener silence detection not working correctly.

By default Mycroft will listen for up to 10 seconds before sending this to an STT service for transcription. However as many utterances are much less than this, if there is a long enough period of silence, Mycroft will consider the utterance finished and send the audio earlier than that 10 seconds.

I'm seeing that sometimes the listener remains open for the whole 10 seconds, even if the user is not speaking. This makes it seem like the processing is taking a very long time but it's actually listening for too long.

I presume this is related to the silence detection and may differ based on the audio input being received.

If that's the case, consideration should be given to making whatever fixes it configurable so it can be defined in `mycroft.conf` or the Hardware Abstraction Layer and hence differ from device to device.

**To Reproduce**

Steps to reproduce the behavior:

1. Open the mycroft-cli-client

2. Speak to Mycroft

3. Watch when the mic is activated and stopped. | 1.0 | Mycroft seems slower to respond on the Mark II - **Describe the bug**

Have had it raised by community members that responses to queries sometimes seem very slow on the Mark II where they are not on other instances of Mycroft. It seems that this may be caused by the listener silence detection not working correctly.

By default Mycroft will listen for up to 10 seconds before sending this to an STT service for transcription. However as many utterances are much less than this, if there is a long enough period of silence, Mycroft will consider the utterance finished and send the audio earlier than that 10 seconds.

I'm seeing that sometimes the listener remains open for the whole 10 seconds, even if the user is not speaking. This makes it seem like the processing is taking a very long time but it's actually listening for too long.

I presume this is related to the silence detection and may differ based on the audio input being received.

If that's the case, consideration should be given to making whatever fixes it configurable so it can be defined in `mycroft.conf` or the Hardware Abstraction Layer and hence differ from device to device.

**To Reproduce**

Steps to reproduce the behavior:

1. Open the mycroft-cli-client

2. Speak to Mycroft

3. Watch when the mic is activated and stopped. | priority | mycroft seems slower to respond on the mark ii describe the bug have had it raised by community members that responses to queries sometimes seem very slow on the mark ii where they are not on other instances of mycroft it seems that this may be caused by the listener silence detection not working correctly by default mycroft will listen for up to seconds before sending this to an stt service for transcription however as many utterances are much less than this if there is a long enough period of silence mycroft will consider the utterance finished and send the audio earlier than that seconds i m seeing that sometimes the listener remains open for the whole seconds even if the user is not speaking this makes it seem like the processing is taking a very long time but it s actually listening for too long i presume this is related to the silence detection and may differ based on the audio input being received if that s the case consideration should be given to making whatever fixes it configurable so it can be defined in mycroft conf or the hardware abstraction layer and hence differ from device to device to reproduce steps to reproduce the behavior open the mycroft cli client speak to mycroft watch when the mic is activated and stopped | 1 |

325,501 | 9,931,876,005 | IssuesEvent | 2019-07-02 08:33:55 | Code-Poets/sheetstorm | https://api.github.com/repos/Code-Poets/sheetstorm | closed | Unify validator usage in models | chore priority medium refactor | Right now there is no structured way of validators' usage. Part of the validation is done by passing `validator`s to models' fields arguments, part of validation is done in `clean` method.

Should be done:

------------

- Single field related validation should be done via `validator` passed as argument. ie.

```python

email = models.EmailField(

CustomUserModelText.EMAIL_ADDRESS,

max_length=constants.EMAIL_MAX_LENGTH,

unique=True,

validators=[custom_validate_email_function]

)

```

- Validation related to more fields (or related to inter-dependency between fields) should be done in `clean()` method, ie.

```python

def clean(self) -> None:

super().clean()

if (

hasattr(self, "author")

and isinstance(self.work_hours, Decimal)

and self.author.report_set.get_report_work_hours_sum_for_date(self.date, self.pk) + self.work_hours > 24

):

raise ValidationError(

message=ReportValidationStrings.WORK_HOURS_SUM_FOR_GIVEN_DATE_FOR_SINGLE_AUTHOR_EXCEEDED.value

)

```

| 1.0 | Unify validator usage in models - Right now there is no structured way of validators' usage. Part of the validation is done by passing `validator`s to models' fields arguments, part of validation is done in `clean` method.

Should be done:

------------

- Single field related validation should be done via `validator` passed as argument. ie.

```python

email = models.EmailField(

CustomUserModelText.EMAIL_ADDRESS,

max_length=constants.EMAIL_MAX_LENGTH,

unique=True,

validators=[custom_validate_email_function]

)

```

- Validation related to more fields (or related to inter-dependency between fields) should be done in `clean()` method, ie.

```python

def clean(self) -> None:

super().clean()

if (

hasattr(self, "author")

and isinstance(self.work_hours, Decimal)

and self.author.report_set.get_report_work_hours_sum_for_date(self.date, self.pk) + self.work_hours > 24

):

raise ValidationError(

message=ReportValidationStrings.WORK_HOURS_SUM_FOR_GIVEN_DATE_FOR_SINGLE_AUTHOR_EXCEEDED.value

)

```

| priority | unify validator usage in models right now there is no structured way of validators usage part of the validation is done by passing validator s to models fields arguments part of validation is done in clean method should be done single field related validation should be done via validator passed as argument ie python email models emailfield customusermodeltext email address max length constants email max length unique true validators validation related to more fields or related to inter dependency between fields should be done in clean method ie python def clean self none super clean if hasattr self author and isinstance self work hours decimal and self author report set get report work hours sum for date self date self pk self work hours raise validationerror message reportvalidationstrings work hours sum for given date for single author exceeded value | 1 |

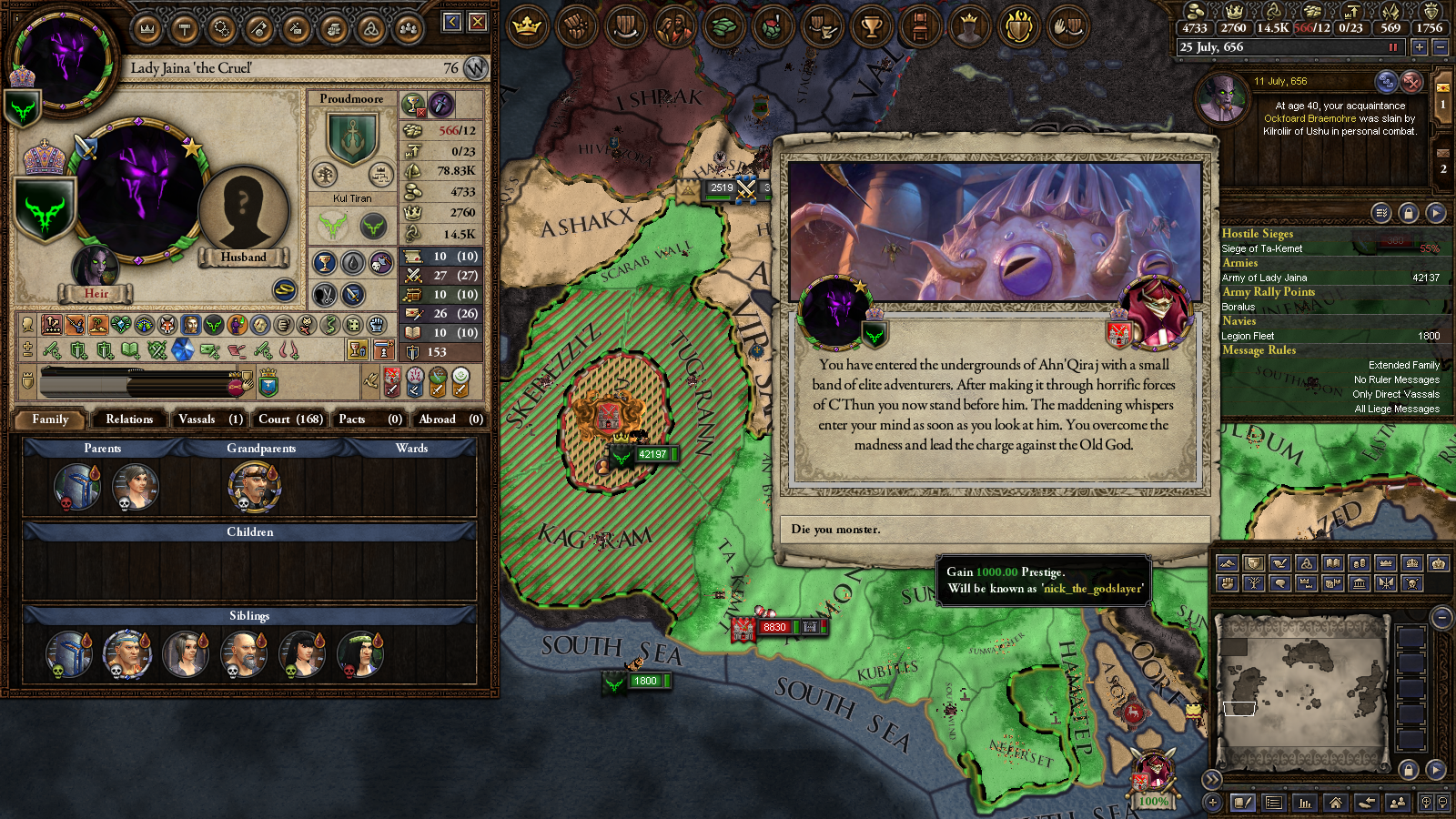

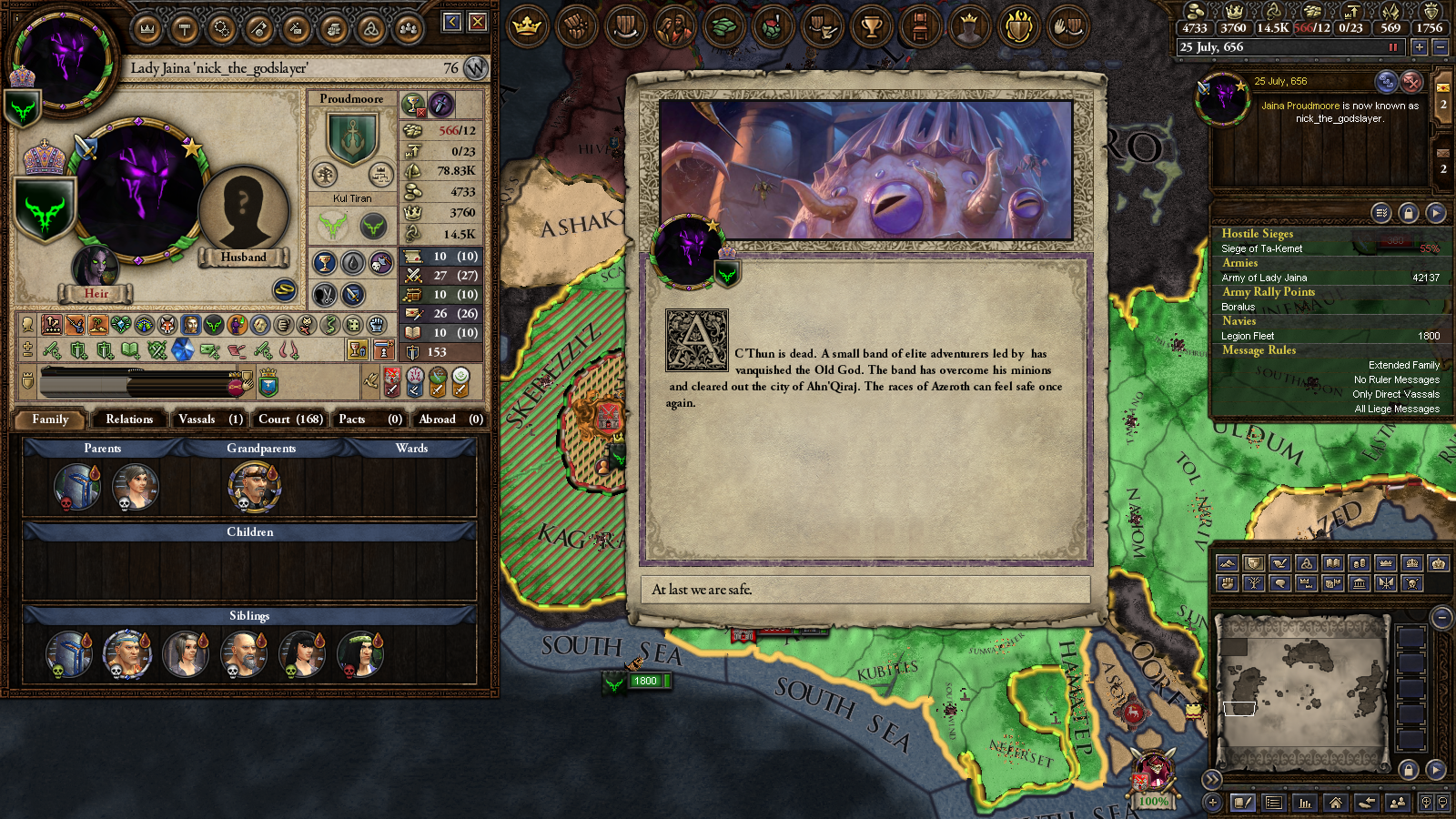

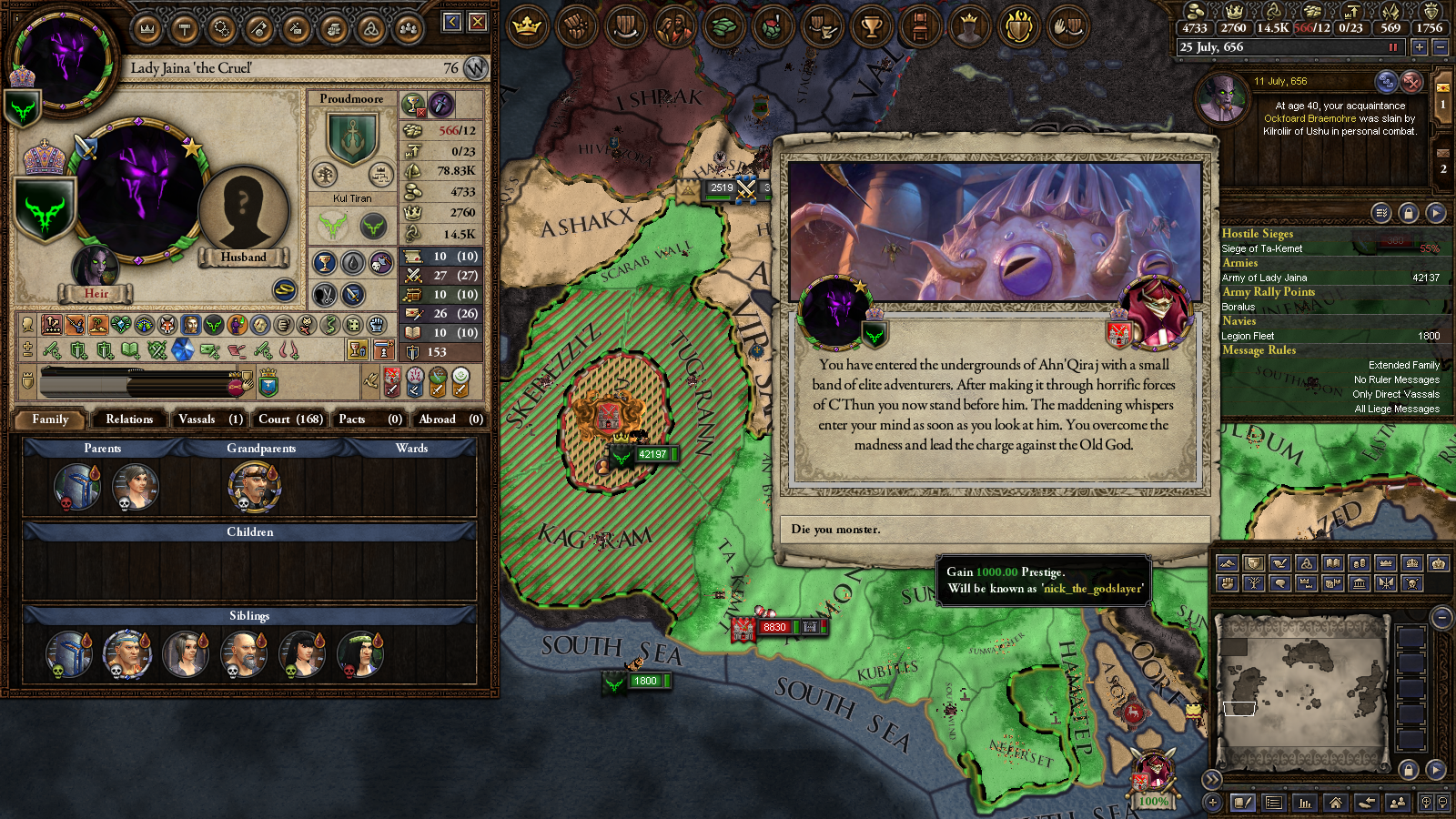

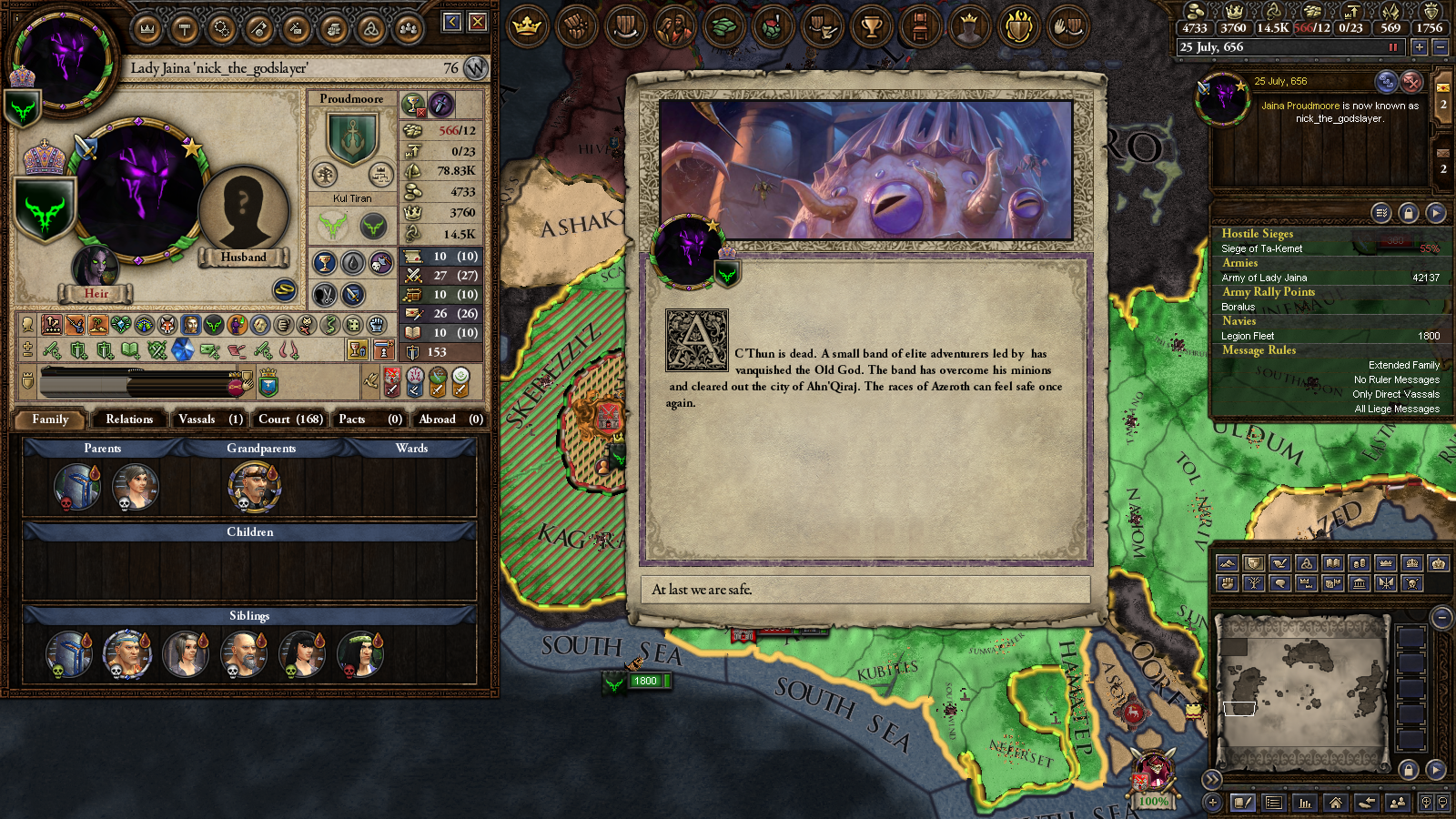

295,136 | 9,082,303,810 | IssuesEvent | 2019-02-17 11:03:37 | Warcraft-GoA-Development-Team/Warcraft-Guardians-of-Azeroth | https://api.github.com/repos/Warcraft-GoA-Development-Team/Warcraft-Guardians-of-Azeroth | closed | [LOCALIZATION] | nick_the_godslayer | :beetle: bug - localization :scroll: :grey_exclamation: priority medium | **Mod Version**

Master branch

**Please explain your issue in as much detail as possible:**

"The godslayer" nickname don't have localisation.

**Upload screenshots of the problem localization:**

<details>

<summary>Click to expand</summary>

</details> | 1.0 | [LOCALIZATION] | nick_the_godslayer - **Mod Version**

Master branch

**Please explain your issue in as much detail as possible:**

"The godslayer" nickname don't have localisation.

**Upload screenshots of the problem localization:**

<details>

<summary>Click to expand</summary>

</details> | priority | nick the godslayer mod version master branch please explain your issue in as much detail as possible the godslayer nickname don t have localisation upload screenshots of the problem localization click to expand | 1 |

268,100 | 8,403,275,112 | IssuesEvent | 2018-10-11 09:19:42 | ePADD/epadd | https://api.github.com/repos/ePADD/epadd | opened | Autocomplete for Attachments in Advanced Search not working | Bug Medium priority | v7 10Oct; Bush full

An attachment with filename "A letter" exists in Blobs folder. System gives "no results" when user type "A" in the File name field in Attachment section in Advanced search. | 1.0 | Autocomplete for Attachments in Advanced Search not working - v7 10Oct; Bush full

An attachment with filename "A letter" exists in Blobs folder. System gives "no results" when user type "A" in the File name field in Attachment section in Advanced search. | priority | autocomplete for attachments in advanced search not working bush full an attachment with filename a letter exists in blobs folder system gives no results when user type a in the file name field in attachment section in advanced search | 1 |

629,890 | 20,070,134,812 | IssuesEvent | 2022-02-04 05:05:26 | sonia-auv/octopus-telemetry | https://api.github.com/repos/sonia-auv/octopus-telemetry | closed | Depth Indicator (SONIA) | Priority: Medium Type: Feature | **Warning :** Before creating an issue or task, make sure that it does not already exists in the [issue tracker](../). Thank you.

## Context

Implémenter l'indicateur de profondeur

## Changes

<!-- Give a brief description of the components that need to change and how -->

## Comments

<!-- Add further comments if needed -->

| 1.0 | Depth Indicator (SONIA) - **Warning :** Before creating an issue or task, make sure that it does not already exists in the [issue tracker](../). Thank you.

## Context

Implémenter l'indicateur de profondeur

## Changes

<!-- Give a brief description of the components that need to change and how -->

## Comments

<!-- Add further comments if needed -->

| priority | depth indicator sonia warning before creating an issue or task make sure that it does not already exists in the thank you context implémenter l indicateur de profondeur changes comments | 1 |

26,949 | 2,689,104,720 | IssuesEvent | 2015-03-31 07:49:14 | adobe/brackets | https://api.github.com/repos/adobe/brackets | opened | Drag & drop text preference (dragDropText) doesn't take effect properly on files already open | F Editor medium priority | 1. Start with the preference off

2. Open a few files

3. Open the preferences file, turn `dragDropText` on, and save

4. Try to drag the selected text in any of the already open editors

Result:

- on Mac, dragging the selection does nothing

- on Win, dragging the selection appears to drag an image of the entire editor area

Expected:

Selection should be dragged normally, as seen if the files are closed & reopened or if Brackets is restarted. | 1.0 | Drag & drop text preference (dragDropText) doesn't take effect properly on files already open - 1. Start with the preference off

2. Open a few files

3. Open the preferences file, turn `dragDropText` on, and save

4. Try to drag the selected text in any of the already open editors

Result:

- on Mac, dragging the selection does nothing

- on Win, dragging the selection appears to drag an image of the entire editor area

Expected:

Selection should be dragged normally, as seen if the files are closed & reopened or if Brackets is restarted. | priority | drag drop text preference dragdroptext doesn t take effect properly on files already open start with the preference off open a few files open the preferences file turn dragdroptext on and save try to drag the selected text in any of the already open editors result on mac dragging the selection does nothing on win dragging the selection appears to drag an image of the entire editor area expected selection should be dragged normally as seen if the files are closed reopened or if brackets is restarted | 1 |

545,053 | 15,935,106,733 | IssuesEvent | 2021-04-14 09:27:42 | ansible-collections/amazon.aws | https://api.github.com/repos/ansible-collections/amazon.aws | closed | When using keyed_groups with tags, an empty value introduces a trailing underscore | bug has_pr inventory plugins priority/medium | <!--- Verify first that your issue is not already reported on GitHub -- I searched, didn't find anything -->

<!--- Also test if the latest release and devel branch are affected too -->

<!--- Complete *all* sections as described, this form is processed automatically -->

##### SUMMARY

I am using the `aws_ec2` inventory module to dynamically get inventory. I have configured it so that it creates groups of machines based on the tags that are added to the instance. With the old `ec2.py` inventory module I was using, a tag that did not have a value would result in a group named `tag_foo` where `foo` is the key name. Since the tag does not have a value, the tag simply includes the key and no value.

The `aws_ec2` inventory module has similar support when using `keyed_groups`. However, when a tag does not include a value, a trailing slash is appended to the group. In the example above, the name of the group ends up being: `tag_foo_`.

I feel that the trailing underscore is a bug and should not be appended if the tag's value is empty.

##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

<!--- Write the short name of the module, plugin, task or feature below, use your best guess if unsure -->

aws_ec2 inventory module

##### ANSIBLE VERSION

<!--- Paste verbatim output from "ansible --version" between quotes -->

```paste below

ansible 2.10.6

config file = /Users/rca/projects/ansible-playbooks/ansible.cfg

configured module search path = ['/Users/rca/.ansible/plugins/modules', '/usr/share/ansible/plugins/modules']

ansible python module location = /Users/rca/.local/share/virtualenvs/ansible-playbooks-NHzTLeaW/lib/python3.9/site-packages/ansible

executable location = /Users/rca/.local/share/virtualenvs/ansible-playbooks-NHzTLeaW/bin/ansible

python version = 3.9.2 (v3.9.2:1a79785e3e, Feb 19 2021, 09:06:10) [Clang 6.0 (clang-600.0.57)]

```

##### CONFIGURATION

<!--- Paste verbatim output from "ansible-config dump --only-changed" between quotes -->

```paste below

---

plugin: aws_ec2

cache: yes

regions:

- us-east-1

filters:

instance-state-name: running

hostnames:

- name: private-ip-address

prefix: tag:Name

keyed_groups:

- prefix: tag

key: tags

compose:

# Use the private IP address to connect to the host

# (note: this does not modify inventory_hostname, which is set via I(hostnames))

ansible_host: private_ip_address

```

##### OS / ENVIRONMENT

<!--- Provide all relevant information below, e.g. target OS versions, network device firmware, etc. -->

mac os 10.15

##### STEPS TO REPRODUCE

<!--- Describe exactly how to reproduce the problem, using a minimal test-case -->

create an ec2 instance and add a tag `foo` with no value. create the inventory and notice that the keyed group created for that tag has a trailing underscore.

<!--- Paste example playbooks or commands between quotes below -->

- clear out inventory cache before running this

```yaml

ansible-inventory --list | less

```

search for `tag_foo` and notice that it has a trailing slash

<!--- HINT: You can paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

<!--- Describe what you expected to happen when running the steps above -->

Expect to have a tag without a trailing slash.

##### ACTUAL RESULTS

<!--- Describe what actually happened. If possible run with extra verbosity (-vvvv) -->

The tag has a trailing slash:

```

"tag_foo_": {

"hosts": [

"machine-name_10.0.0.123"

]

},

```

<!--- Paste verbatim command output between quotes -->

```paste below

ansible tag_foo_ -m ping

```

that will ping the machine with that tag and confirm the group can be used with the trailing slash, but IMO the trailing slash should not be there.

Thank you! | 1.0 | When using keyed_groups with tags, an empty value introduces a trailing underscore - <!--- Verify first that your issue is not already reported on GitHub -- I searched, didn't find anything -->

<!--- Also test if the latest release and devel branch are affected too -->

<!--- Complete *all* sections as described, this form is processed automatically -->

##### SUMMARY

I am using the `aws_ec2` inventory module to dynamically get inventory. I have configured it so that it creates groups of machines based on the tags that are added to the instance. With the old `ec2.py` inventory module I was using, a tag that did not have a value would result in a group named `tag_foo` where `foo` is the key name. Since the tag does not have a value, the tag simply includes the key and no value.

The `aws_ec2` inventory module has similar support when using `keyed_groups`. However, when a tag does not include a value, a trailing slash is appended to the group. In the example above, the name of the group ends up being: `tag_foo_`.

I feel that the trailing underscore is a bug and should not be appended if the tag's value is empty.

##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

<!--- Write the short name of the module, plugin, task or feature below, use your best guess if unsure -->

aws_ec2 inventory module

##### ANSIBLE VERSION

<!--- Paste verbatim output from "ansible --version" between quotes -->

```paste below

ansible 2.10.6

config file = /Users/rca/projects/ansible-playbooks/ansible.cfg

configured module search path = ['/Users/rca/.ansible/plugins/modules', '/usr/share/ansible/plugins/modules']

ansible python module location = /Users/rca/.local/share/virtualenvs/ansible-playbooks-NHzTLeaW/lib/python3.9/site-packages/ansible

executable location = /Users/rca/.local/share/virtualenvs/ansible-playbooks-NHzTLeaW/bin/ansible

python version = 3.9.2 (v3.9.2:1a79785e3e, Feb 19 2021, 09:06:10) [Clang 6.0 (clang-600.0.57)]

```

##### CONFIGURATION

<!--- Paste verbatim output from "ansible-config dump --only-changed" between quotes -->

```paste below

---

plugin: aws_ec2

cache: yes

regions:

- us-east-1

filters:

instance-state-name: running

hostnames:

- name: private-ip-address

prefix: tag:Name

keyed_groups:

- prefix: tag

key: tags

compose:

# Use the private IP address to connect to the host

# (note: this does not modify inventory_hostname, which is set via I(hostnames))

ansible_host: private_ip_address

```

##### OS / ENVIRONMENT

<!--- Provide all relevant information below, e.g. target OS versions, network device firmware, etc. -->

mac os 10.15

##### STEPS TO REPRODUCE

<!--- Describe exactly how to reproduce the problem, using a minimal test-case -->

create an ec2 instance and add a tag `foo` with no value. create the inventory and notice that the keyed group created for that tag has a trailing underscore.

<!--- Paste example playbooks or commands between quotes below -->

- clear out inventory cache before running this

```yaml

ansible-inventory --list | less

```

search for `tag_foo` and notice that it has a trailing slash

<!--- HINT: You can paste gist.github.com links for larger files -->

##### EXPECTED RESULTS

<!--- Describe what you expected to happen when running the steps above -->

Expect to have a tag without a trailing slash.

##### ACTUAL RESULTS

<!--- Describe what actually happened. If possible run with extra verbosity (-vvvv) -->

The tag has a trailing slash:

```

"tag_foo_": {

"hosts": [

"machine-name_10.0.0.123"

]

},

```

<!--- Paste verbatim command output between quotes -->

```paste below

ansible tag_foo_ -m ping

```

that will ping the machine with that tag and confirm the group can be used with the trailing slash, but IMO the trailing slash should not be there.