Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

414,530 | 12,104,213,324 | IssuesEvent | 2020-04-20 19:48:09 | wri/gfw-mapbuilder | https://api.github.com/repos/wri/gfw-mapbuilder | opened | Language Dropdown styling/language | 4.x Upgrade medium priority | - [ ] Lang dropdown needs to match styling of PROD

- [ ] Lang dropdown needs to be language aware, having appropriate translations

| 1.0 | Language Dropdown styling/language - - [ ] Lang dropdown needs to match styling of PROD

- [ ] Lang dropdown needs to be language aware, having appropriate translations

| priority | language dropdown styling language lang dropdown needs to match styling of prod lang dropdown needs to be language aware having appropriate translations | 1 |

258,865 | 8,180,357,410 | IssuesEvent | 2018-08-28 19:12:35 | cms-ttbarAC/Analysis | https://api.github.com/repos/cms-ttbarAC/Analysis | opened | Lepton-Jet Cleaning Producer | Priority: Medium Project: Genesis Status: Open Type: Enhancement | Make a new producer that reads leptons and AK4 jets, applies the lepton-jet cleaning, and saves a new collection of AK4 (and updated MET).

Then, the `updateJetCollection()` from the JEC tools can be used to apply the JECs rather than doing it by hand. | 1.0 | Lepton-Jet Cleaning Producer - Make a new producer that reads leptons and AK4 jets, applies the lepton-jet cleaning, and saves a new collection of AK4 (and updated MET).

Then, the `updateJetCollection()` from the JEC tools can be used to apply the JECs rather than doing it by hand. | priority | lepton jet cleaning producer make a new producer that reads leptons and jets applies the lepton jet cleaning and saves a new collection of and updated met then the updatejetcollection from the jec tools can be used to apply the jecs rather than doing it by hand | 1 |

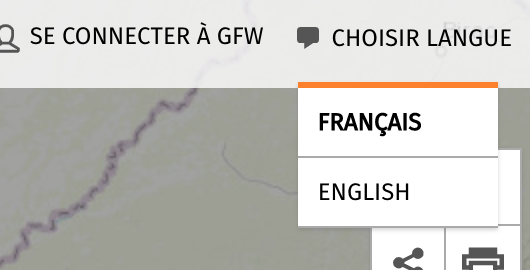

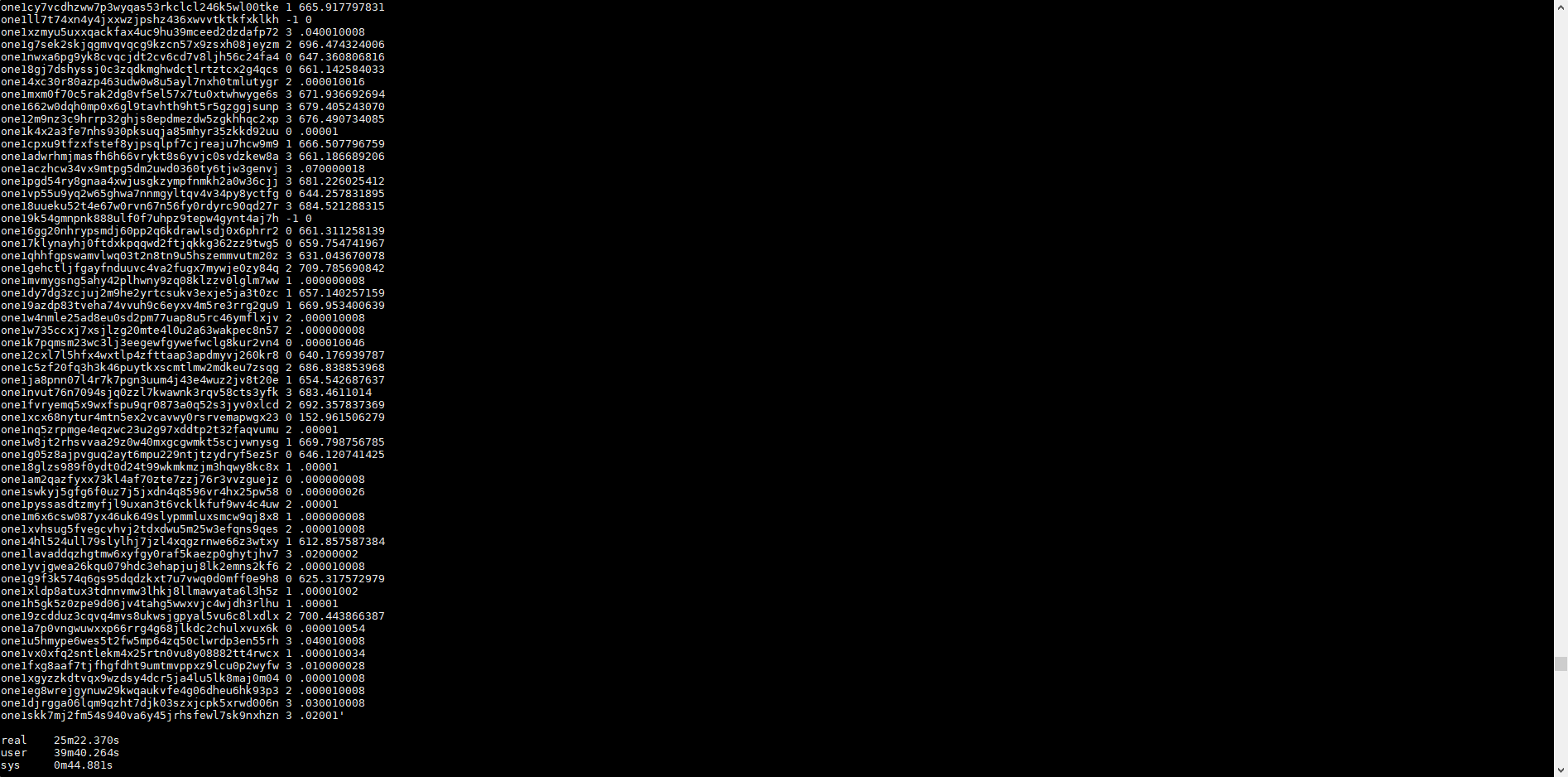

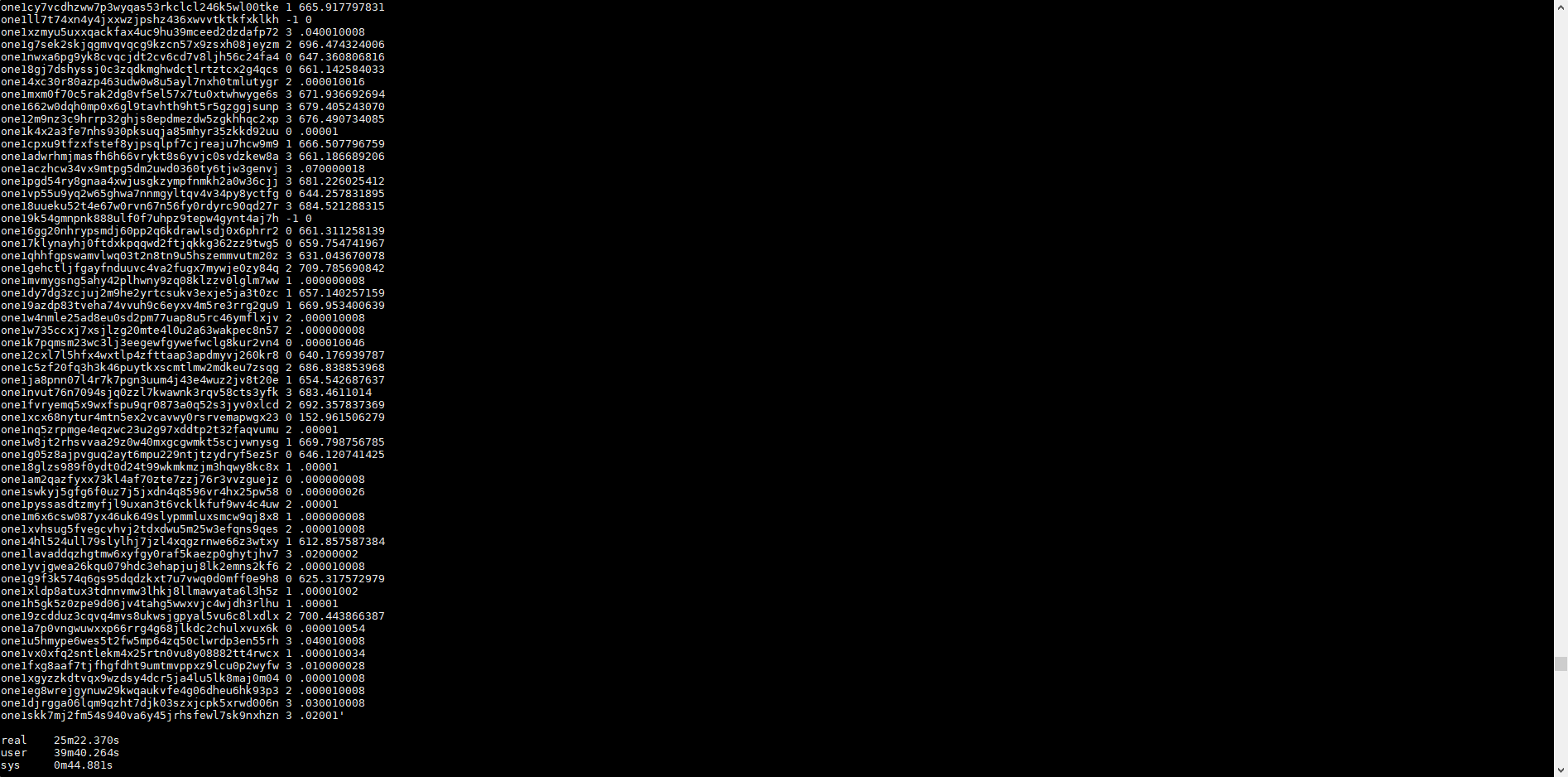

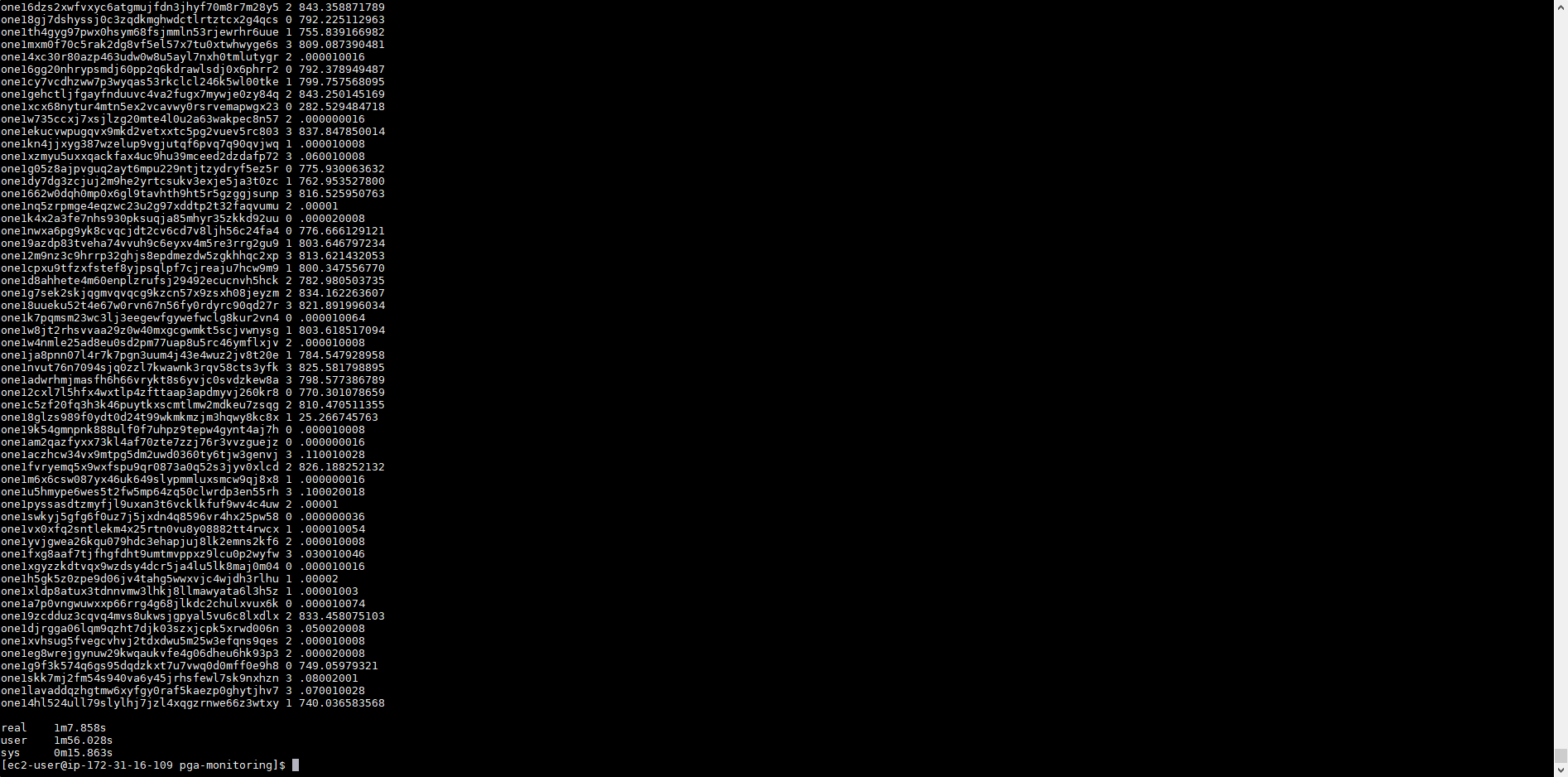

403,558 | 11,842,985,303 | IssuesEvent | 2020-03-24 00:47:38 | harmony-one/harmony | https://api.github.com/repos/harmony-one/harmony | closed | Current wallet check balance is resource-heavy and slow | bug medium priority | Not sure what change caused this, but the [current wallet binary](https://github.com/harmony-one/harmony/releases/tag/pangaea-20190910.0)'s balance check cannot be run in parallel very well. The time it takes to run [check.sh](https://github.com/harmony-one/harmony-ops/blob/master/monitoring/pga-monitoring/check.sh) is now 25 minutes and 22 seconds long.

In comparison, the [previous compiled binary](https://github.com/harmony-one/harmony/releases/tag/pangaea-20190903.0) runs the script at just about 1 minute.

This causes complete incapability to check balances with accuracy and overloads the system. Can be worked around by downgrading the binary and using custom wallet.ini.

| 1.0 | Current wallet check balance is resource-heavy and slow - Not sure what change caused this, but the [current wallet binary](https://github.com/harmony-one/harmony/releases/tag/pangaea-20190910.0)'s balance check cannot be run in parallel very well. The time it takes to run [check.sh](https://github.com/harmony-one/harmony-ops/blob/master/monitoring/pga-monitoring/check.sh) is now 25 minutes and 22 seconds long.

In comparison, the [previous compiled binary](https://github.com/harmony-one/harmony/releases/tag/pangaea-20190903.0) runs the script at just about 1 minute.

This causes complete incapability to check balances with accuracy and overloads the system. Can be worked around by downgrading the binary and using custom wallet.ini.

| priority | current wallet check balance is resource heavy and slow not sure what change caused this but the balance check cannot be run in parallel very well the time it takes to run is now minutes and seconds long in comparison the runs the script at just about minute this causes complete incapability to check balances with accuracy and overloads the system can be worked around by downgrading the binary and using custom wallet ini | 1 |

175,050 | 6,546,333,228 | IssuesEvent | 2017-09-04 09:50:43 | robertgruening/Munins-Archiv | https://api.github.com/repos/robertgruening/Munins-Archiv | opened | Clipboard | priority: medium urgency: medium | To speed up various workflows, the user interface should provide a clipboard that is available on every page. Elements such as storage locations or finds can be placed on the clipboard by mouse (drag and drop) or, where applicable, via the context menu. The elements are displayed as tiles and can be removed through the context menu or a delete "X" on the tile. The elements are applied by mouse as follows: the user drags an element selected in the clipboard onto a matching HTML control, which is then filled with the element's information.

Example:

The user is in the form for finds and creates a new entry. From the clipboard, the user selects a storage location, e.g. a box, and drags it with the mouse into the storage location text box of the find form. The system then enters the data path of the selected storage location into the text box.

The advantage is that, when creating and editing elements, the user does not have to retype recurring information by hand but can add or overwrite it with a simple gesture.

- [ ] Placing elements on the clipboard (storage locations, contexts, finds, find attributes and places)

- [ ] Removing elements from the clipboard

- [ ] Opening an element in its edit form via the context menu

- [ ] Inserting element information into the HTML controls intended for it

- [ ] Mouse control

- [ ] Context menu control

- [ ] An element can be on the clipboard only once

- [ ] Elements on the clipboard can be arranged freely by the user | 1.0 | Clipboard - To speed up various workflows, the user interface should provide a clipboard that is available on every page. Elements such as storage locations or finds can be placed on the clipboard by mouse (drag and drop) or, where applicable, via the context menu. The elements are displayed as tiles and can be removed through the context menu or a delete "X" on the tile. The elements are applied by mouse as follows: the user drags an element selected in the clipboard onto a matching HTML control, which is then filled with the element's information.

Example:

The user is in the form for finds and creates a new entry. From the clipboard, the user selects a storage location, e.g. a box, and drags it with the mouse into the storage location text box of the find form. The system then enters the data path of the selected storage location into the text box.

The advantage is that, when creating and editing elements, the user does not have to retype recurring information by hand but can add or overwrite it with a simple gesture.

- [ ] Placing elements on the clipboard (storage locations, contexts, finds, find attributes and places)

- [ ] Removing elements from the clipboard

- [ ] Opening an element in its edit form via the context menu

- [ ] Inserting element information into the HTML controls intended for it

- [ ] Mouse control

- [ ] Context menu control

- [ ] An element can be on the clipboard only once

- [ ] Elements on the clipboard can be arranged freely by the user | priority | clipboard to speed up various workflows the user interface should provide a clipboard that is available on every page elements such as storage locations or finds can be placed on the clipboard by mouse drag and drop or where applicable via the context menu the elements are displayed as tiles and can be removed through the context menu or a delete x on the tile the elements are applied by mouse as follows the user drags an element selected in the clipboard onto a matching html control which is then filled with the element s information example the user is in the form for finds and creates a new entry from the clipboard the user selects a storage location e g a box and drags it with the mouse into the storage location text box of the find form the system then enters the data path of the selected storage location into the text box the advantage is that when creating and editing elements the user does not have to retype recurring information by hand but can add or overwrite it with a simple gesture placing elements on the clipboard storage locations contexts finds find attributes and places removing elements from the clipboard opening an element in its edit form via the context menu inserting element information into the html controls intended for it mouse control context menu control an element can be on the clipboard only once elements on the clipboard can be arranged freely by the user | 1 |

708,703 | 24,350,723,382 | IssuesEvent | 2022-10-02 22:37:59 | thegrumpys/odop | https://api.github.com/repos/thegrumpys/odop | opened | Create release rollback procedure | Priority Medium | Create (and test in the staging system) a release rollback procedure.

In spite of diligent testing, there may come a time when there is a desire to (undo, retract, rollback) a recent release. Considering that there are various currently poorly understood consequences, for example impact to user designs saved by the code to be rolled back, the process needs to be tested and documented before using it on live data.

| 1.0 | Create release rollback procedure - Create (and test in the staging system) a release rollback procedure.

In spite of diligent testing, there may come a time when there is a desire to (undo, retract, rollback) a recent release. Considering that there are various currently poorly understood consequences, for example impact to user designs saved by the code to be rolled back, the process needs to be tested and documented before using it on live data.

| priority | create release rollback procedure create and test in the staging system a release rollback procedure in spite of diligent testing there may come a time when there is a desire to undo retract rollback a recent release considering that there are various currently poorly understood consequences for example impact to user designs saved by the code to be rolled back the process needs to be tested and documented before using it on live data | 1 |

89,692 | 3,798,606,883 | IssuesEvent | 2016-03-23 13:17:15 | WhitestormJS/whitestorm.js | https://api.github.com/repos/WhitestormJS/whitestorm.js | closed | [Improve] WHS.PointLight and WHS.SpotLight need to be updated. | E-easy enhancement Medium [priority] v0.1(Beta) | There are some properties like `exponent` and `decay` that need to be applied to these lights. | 1.0 | [Improve] WHS.PointLight and WHS.SpotLight need to be updated. - There are some properties like `exponent` and `decay` that need to be applied to these lights. | priority | whs pointlight and whs spotlight need to be updated there are some properties like exponent and decay that need to be applied to these lights | 1 |

482,156 | 13,901,774,546 | IssuesEvent | 2020-10-20 03:43:52 | momentum-mod/game | https://api.github.com/repos/momentum-mod/game | closed | Show Map Info crash if opened too quickly | Priority: Medium Size: Small Type: Bug | **Describe the bug**

If you open/close the Map Info dialog too quickly in the Map Selector, the game may crash.

**To Reproduce**

Steps to reproduce the behavior:

Open Map Selector

Right Click Map -> Show Map Info

Close Immediately, Repeat with any other map

Eventual Crash

If you have a video with the steps to recreate the bug, please post it here.

[Video](https://youtu.be/60Y9D1iNDXI)

**Expected behavior**

The game should not crash!

**Screenshots**

N/A

**Desktop/Branch (please complete the following information):**

- OS: Windows

- Branch: Latest develop branch

**Additional context**

N/A

| 1.0 | Show Map Info crash if opened too quickly - **Describe the bug**

If you open/close the Map Info dialog too quickly in the Map Selector, the game may crash.

**To Reproduce**

Steps to reproduce the behavior:

Open Map Selector

Right Click Map -> Show Map Info

Close Immediately, Repeat with any other map

Eventual Crash

If you have a video with the steps to recreate the bug, please post it here.

[Video](https://youtu.be/60Y9D1iNDXI)

**Expected behavior**

The game should not crash!

**Screenshots**

N/A

**Desktop/Branch (please complete the following information):**

- OS: Windows

- Branch: Latest develop branch

**Additional context**

N/A

| priority | show map info crash if opened too quickly describe the bug if you open close the map info dialog too quickly in the map selector the game may crash to reproduce steps to reproduce the behavior open map selector right click map show map info close immediately repeat with any other map eventual crash if you have a video with the steps to recreate the bug please post it here expected behavior the game should not crash screenshots n a desktop branch please complete the following information os windows branch latest develop branch additional context n a | 1 |

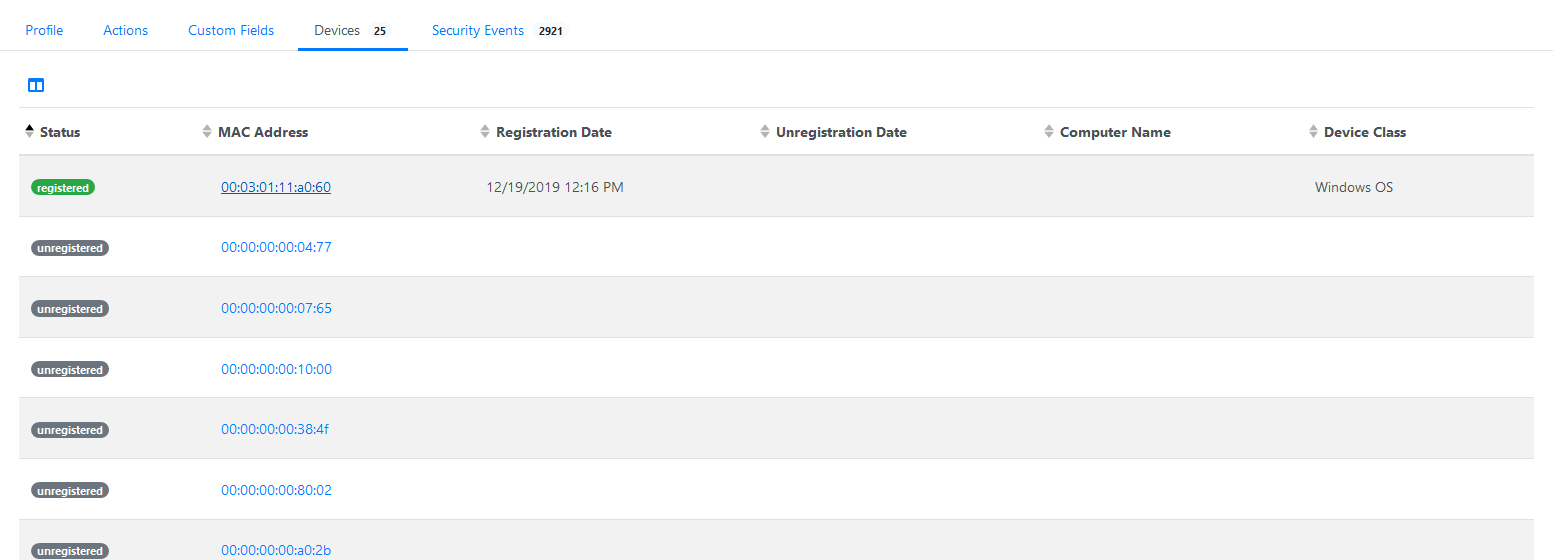

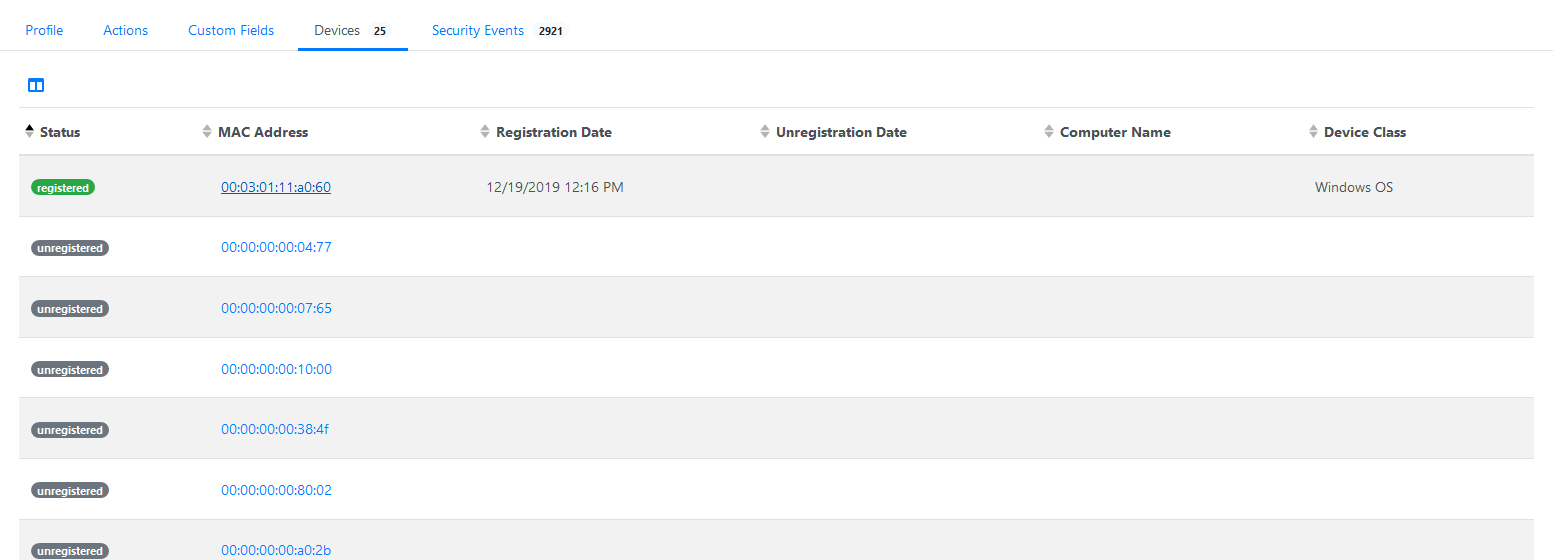

444,346 | 12,810,259,579 | IssuesEvent | 2020-07-03 18:02:05 | inverse-inc/packetfence | https://api.github.com/repos/inverse-inc/packetfence | closed | Web admin: users device list only 25 devices | Priority: Medium Type: Bug | **Describe the bug**

Users device list shows only 25 devices.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to 'Users' > `Username` > `Devices`

**Screenshots**

**Expected behavior**

All user devices should be listed.

| 1.0 | Web admin: users device list only 25 devices - **Describe the bug**

Users device list shows only 25 devices.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to 'Users' > `Username` > `Devices`

**Screenshots**

**Expected behavior**

All user devices should be listed.

| priority | web admin users device list only devices describe the bug users device list shows only devices to reproduce steps to reproduce the behavior go to users username devices screenshots expected behavior all user devices should be listed | 1 |

220,723 | 7,370,236,027 | IssuesEvent | 2018-03-13 07:35:09 | teamforus/research-and-development | https://api.github.com/repos/teamforus/research-and-development | closed | POC: Pay transaction fees for other contract | fill-template priority-medium proposal | ## poc

### Background / Context

**Goal/user story:**

**More:**

### Hypothesis:

### Method

*documentation/code*

### Result

*present findings*

### Recommendation

*write recommendation*

| 1.0 | POC: Pay transaction fees for other contract - ## poc

### Background / Context

**Goal/user story:**

**More:**

### Hypothesis:

### Method

*documentation/code*

### Result

*present findings*

### Recommendation

*write recommendation*

| priority | poc pay transaction fees for other contract poc background context goal user story more hypothesis method documentation code result present findings recommendation write recommendation | 1 |

341,613 | 10,300,001,538 | IssuesEvent | 2019-08-28 13:56:04 | pupil-labs/pupil | https://api.github.com/repos/pupil-labs/pupil | closed | Player: Improve "Invalid Recording" error message | enhancement priority:medium | Currently, one gets the same error message for different cases:

- User drops a video file instead of the recording folder (wrong usage, message: "No valid dir supplied")

- User drops a folder that does not contain (actual invalid recording)

- an `info.csv` (message: "Could not read info.csv file: Not a valid Pupil recording.")

- any video files (message: "Could not generate world timestamps from eye timestamps. This is an invalid recording.")

- `info.csv` does not contain `Recording Name` (message: "Could not read info.csv file: Not a valid Pupil recording.")

- Error during parsing `info.csv` (message: "Could not read info.csv file: Not a valid Pupil recording.") | 1.0 | Player: Improve "Invalid Recording" error message - Currently, one gets the same error message for different cases:

- User drops a video file instead of the recording folder (wrong usage, message: "No valid dir supplied")

- User drops a folder that does not contain (actual invalid recording)

- an `info.csv` (message: "Could not read info.csv file: Not a valid Pupil recording.")

- any video files (message: "Could not generate world timestamps from eye timestamps. This is an invalid recording.")

- `info.csv` does not contain `Recording Name` (message: "Could not read info.csv file: Not a valid Pupil recording.")

- Error during parsing `info.csv` (message: "Could not read info.csv file: Not a valid Pupil recording.") | priority | player improve invalid recording error message currently one gets the same error message for different cases user drops a video file instead of the recording folder wrong usage message no valid dir supplied user drops a folder that does not contain actual invalid recording an info csv message could not read info csv file not a valid pupil recording any video files message could not generate world timestamps from eye timestamps this is an invalid recording info csv does not contain recording name message could not read info csv file not a valid pupil recording error during parsing info csv message could not read info csv file not a valid pupil recording | 1 |
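The cases listed in this issue could each get their own message. The following is a minimal Python sketch of that idea, not Pupil Player's actual code: `check_recording`, `InvalidRecordingError`, the list of video suffixes, and the assumption that `info.csv` holds two-column key/value rows are all hypothetical and introduced here only for illustration.

```python
import csv
from pathlib import Path


class InvalidRecordingError(Exception):
    """Raised with a reason specific to what is wrong with the dropped path."""


def check_recording(path):
    """Return None if `path` looks like a recording folder, else raise with a specific reason."""
    rec = Path(path)

    # Wrong usage: a single file (for example a video) was dropped.
    if rec.is_file():
        raise InvalidRecordingError(
            f"'{rec.name}' is a file. Drop the recording folder that contains it instead."
        )
    if not rec.is_dir():
        raise InvalidRecordingError(f"'{rec}' does not exist or is not a folder.")

    # Actual invalid recording: info.csv is missing.
    info = rec / "info.csv"
    if not info.is_file():
        raise InvalidRecordingError("The folder does not contain an info.csv file.")

    # Assumption: info.csv is a list of key,value rows.
    try:
        with info.open(newline="", encoding="utf-8") as f:
            rows = {row[0]: row[1] for row in csv.reader(f) if len(row) >= 2}
    except (OSError, UnicodeDecodeError, csv.Error) as exc:
        raise InvalidRecordingError(f"info.csv could not be parsed: {exc}") from exc

    if "Recording Name" not in rows:
        raise InvalidRecordingError("info.csv has no 'Recording Name' entry.")

    # Assumption: these suffixes stand in for whatever video formats are supported.
    video_suffixes = {".mp4", ".mkv", ".avi", ".h264", ".mjpeg"}
    if not any(p.suffix.lower() in video_suffixes for p in rec.iterdir()):
        raise InvalidRecordingError("The folder does not contain any video files.")
```

Raising one exception type with distinct reasons keeps the calling code simple while still telling the user which of the cases applies.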

165,219 | 6,265,605,811 | IssuesEvent | 2017-07-16 18:53:18 | CS2103JUN2017-T1/main | https://api.github.com/repos/CS2103JUN2017-T1/main | closed | Implement feature to filter events | priority.medium type.enhancement type.epic | Filter by event type.

Filter by tags.

Filter by due date.

Other filters. | 1.0 | Implement feature to filter events - Filter by event type.

Filter by tags.

Filter by due date.

Other filters. | priority | implement feature to filter events filter by event type filter by tags filter by due date other filters | 1 |
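The filters named in this issue can be combined in one function. A minimal Python sketch follows, assuming a simple event record; the `Event` fields and the `filter_events` signature are hypothetical and do not come from the project.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class Event:
    name: str
    kind: str                                   # e.g. "deadline" or "meeting"
    tags: frozenset = field(default_factory=frozenset)
    due: Optional[date] = None


def filter_events(events, kind=None, tags=None, due_by=None):
    """Keep events that match every filter that was supplied (None means 'no filter')."""
    wanted_tags = set(tags or ())
    result = []
    for event in events:
        if kind is not None and event.kind != kind:
            continue
        # Every requested tag must be present on the event.
        if not wanted_tags <= set(event.tags):
            continue
        # Events without a due date never match a due-date filter.
        if due_by is not None and (event.due is None or event.due > due_by):
            continue
        result.append(event)
    return result
```

Treating each filter as optional lets further filters be added later as extra keyword arguments without changing existing callers.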

793,631 | 28,005,317,967 | IssuesEvent | 2023-03-27 14:55:58 | clt313/SuperballVR | https://api.github.com/repos/clt313/SuperballVR | opened | Fix loading screen MissingReferenceException | priority: medium bug | When loading a new scene, a MissingReferenceException pops up. We're trying to access an object that's already been deleted, so need to look into that to prevent the error. | 1.0 | Fix loading screen MissingReferenceException - When loading a new scene, a MissingReferenceException pops up. We're trying to access an object that's already been deleted, so need to look into that to prevent the error. | priority | fix loading screen missingreferenceexception when loading a new scene a missingreferenceexception pops up we re trying to access an object that s already been deleted so need to look into that to prevent the error | 1 |

28,186 | 2,700,368,083 | IssuesEvent | 2015-04-04 02:50:52 | cs2103jan2015-t15-4j/main | https://api.github.com/repos/cs2103jan2015-t15-4j/main | closed | A user can access help with one click | priority.medium type.story | ...so that I can explore more features of the software easily and manage my tasks better. | 1.0 | A user can access help with one click - ...so that I can explore more features of the software easily and manage my tasks better. | priority | a user can access help with one click so that i can explore more features of the software easily and manage my tasks better | 1 |

790,669 | 27,832,447,923 | IssuesEvent | 2023-03-20 06:40:02 | TimerTiTi/TiTi | https://api.github.com/repos/TimerTiTi/TiTi | opened | Refactoring of VC, VM, and Manager naming (3h) | refactor priority: medium | [Title] Refactoring of VC, VM, and Manager naming (3h)

[Content]

### Background

We are doing this because making the Manager, Controller, and ViewModel names consistent and removing unnecessary code should make it easier to show the relevant code to the Android developer and easier for them to understand it.

### Requirements

- [ ] Apply the VC and VM naming

- [ ] Rename RecordController → Records

- [ ] Rename DailyViewModel → DailyManager and refactor | 1.0 | Refactoring of VC, VM, and Manager naming (3h) - [Title] Refactoring of VC, VM, and Manager naming (3h)

[Content]

### Background

We are doing this because making the Manager, Controller, and ViewModel names consistent and removing unnecessary code should make it easier to show the relevant code to the Android developer and easier for them to understand it.

### Requirements

- [ ] Apply the VC and VM naming

- [ ] Rename RecordController → Records

- [ ] Rename DailyViewModel → DailyManager and refactor | priority | refactoring of vc vm and manager naming refactoring of vc vm and manager naming background we are doing this because making the manager controller and viewmodel names consistent and removing unnecessary code should make it easier to show the relevant code to the android developer and easier for them to understand it requirements apply the vc and vm naming rename recordcontroller → records rename dailyviewmodel → dailymanager and refactor | 1 |

277,563 | 8,629,660,598 | IssuesEvent | 2018-11-21 21:37:47 | Viq111/kvimd | https://api.github.com/repos/Viq111/kvimd | opened | [main] Add snapshotting | enhancement priority:medium | Snapshotting means:

- Lock and create a new HashDisk

- Lock and create a new ValuesDisk (in that order so we don't reference to the other ValuesDisk)

- Add a `EntriesSinceLastSnapshot() int` that counts how many entries there are since last snapshot | 1.0 | [main] Add snapshotting - Snapshotting means:

- Lock and create a new HashDisk

- Lock and create a new ValuesDisk (in that order so we don't reference to the other ValuesDisk)

- Add a `EntriesSinceLastSnapshot() int` that counts how many entries there are since last snapshot | priority | add snapshotting snapshotting means lock and create a new hashdisk lock and create a new valuesdisk in that order so we don t reference to the other valuesdisk add a entriessincelastsnapshot int that counts how many entries there are since last snapshot | 1 |
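The snapshotting steps in this issue can be illustrated with a toy in-memory model. This is a Python sketch only, not the project's implementation: the `Store` class, its single lock, and the dict/list stand-ins for HashDisk and ValuesDisk are assumptions made for illustration.

```python
import threading


class Store:
    """Toy stand-in for a store made of a hash index and an append-only values log."""

    def __init__(self):
        self._lock = threading.Lock()
        self._hash_disks = [{}]     # newest last; key -> (values disk index, offset)
        self._values_disks = [[]]   # newest last; append-only lists of values
        self._entries_since_snapshot = 0

    def set(self, key, value):
        with self._lock:
            values = self._values_disks[-1]
            values.append(value)
            self._hash_disks[-1][key] = (len(self._values_disks) - 1, len(values) - 1)
            self._entries_since_snapshot += 1

    def get(self, key):
        with self._lock:
            # Newer generations shadow older ones.
            for hash_disk in reversed(self._hash_disks):
                if key in hash_disk:
                    disk, offset = hash_disk[key]
                    return self._values_disks[disk][offset]
        raise KeyError(key)

    def snapshot(self):
        """Freeze the current generation and start a new one; return the frozen index."""
        with self._lock:
            # Rotate the hash index first, then the values log, so entries in the
            # frozen index only ever point into frozen values logs.
            self._hash_disks.append({})
            self._values_disks.append([])
            self._entries_since_snapshot = 0
            return len(self._hash_disks) - 2

    def entries_since_last_snapshot(self):
        with self._lock:
            return self._entries_since_snapshot
```

A caller could poll `entries_since_last_snapshot()` and trigger `snapshot()` once it passes a threshold; the threshold itself is left open here because the issue does not specify one.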

476,459 | 13,745,273,436 | IssuesEvent | 2020-10-06 02:23:00 | puddletag/puddletag | https://api.github.com/repos/puddletag/puddletag | closed | Swedish | Priority-Medium Type-Translation auto-migrated | ```

You should update your Translating page on sourceforge.net, instructions on how

to create my native ts file, don't work.

First issue is the step two, "cd puddletag-hg"... should be "cd

puddletag-hg/source".

At step three I'm lost. In terminal I get ImportError: No module named mutagen.

That's it, can't get any further.

Åke Engelbrektson

```

Original issue reported on code.google.com by `eso...@gmail.com` on 11 Jan 2015 at 6:39

| 1.0 | Swedish - ```

You should update your Translating page on sourceforge.net, instructions on how

to create my native ts file, don't work.

First issue is the step two, "cd puddletag-hg"... should be "cd

puddletag-hg/source".

At step three I'm lost. In terminal I get ImportError: No module named mutagen.

That's it, can't get any further.

Åke Engelbrektson

```

Original issue reported on code.google.com by `eso...@gmail.com` on 11 Jan 2015 at 6:39

| priority | swedish you should update your translating page on sourceforge net instructions on how to create my native ts file don t work first issue is the step two cd puddletag hg should be cd puddletag hg source at step three i m lost in terminal i get importerror no module named mutagen that s it can t get any further åke engelbrektson original issue reported on code google com by eso gmail com on jan at | 1 |

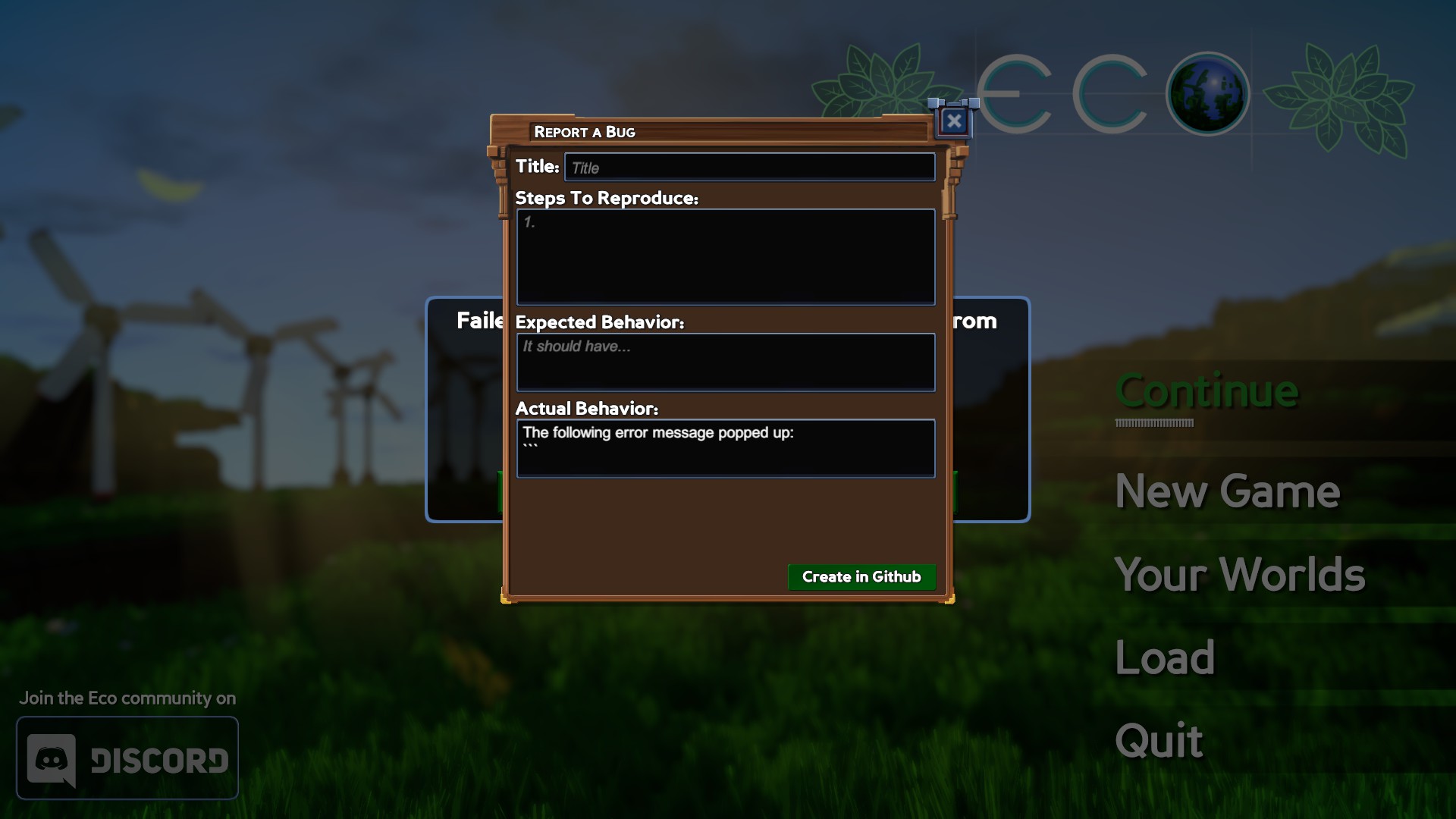

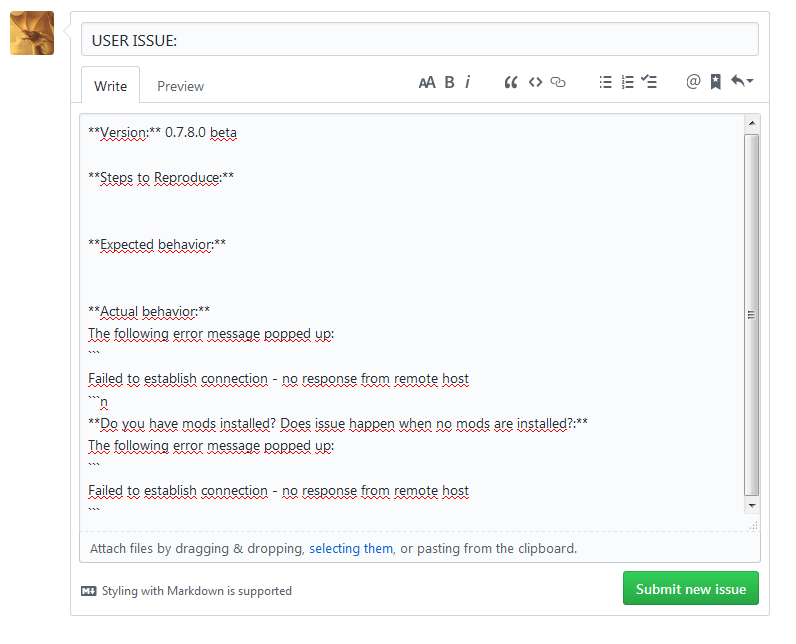

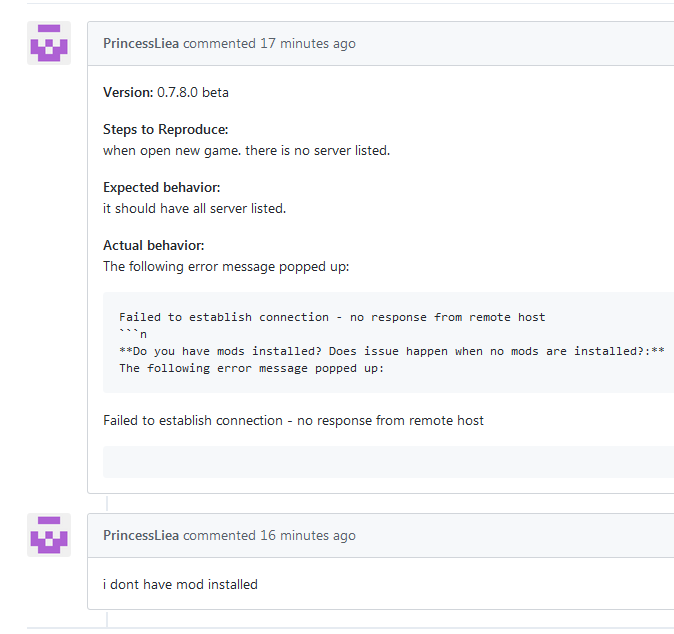

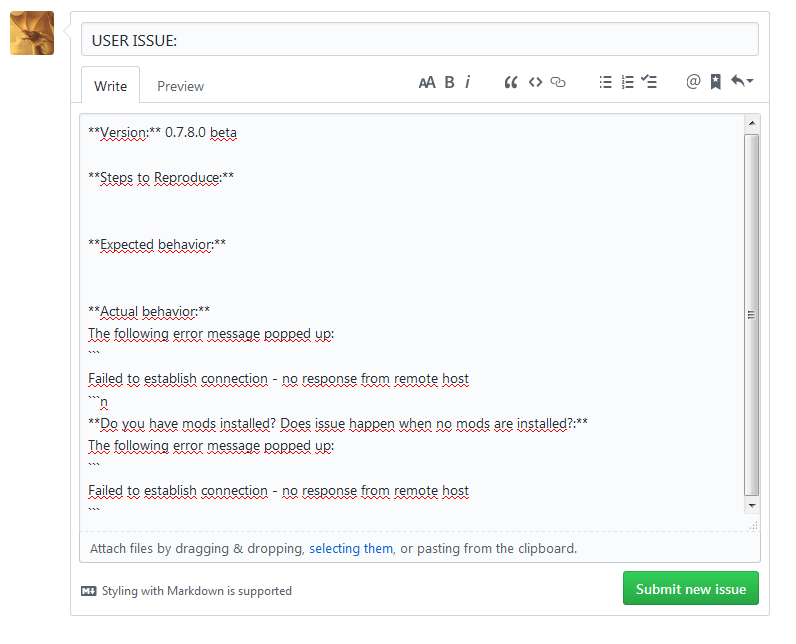

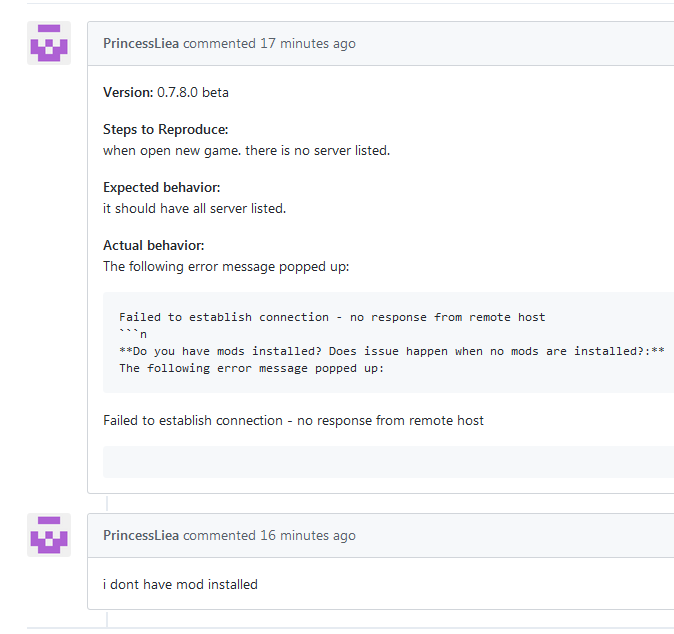

278,849 | 8,651,177,026 | IssuesEvent | 2018-11-27 01:51:48 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | "Report a bug" on "connect failed" error generate incorrect GH code. | Fixed Medium Priority outsource | **Version:** 0.7.8.0 beta

As far as I can see, the code generated for GH is incorrect; you can see the result in many issues.

e.g.

https://github.com/StrangeLoopGames/EcoIssues/issues/10010

| 1.0 | "Report a bug" on "connect failed" error generate incorrect GH code. - **Version:** 0.7.8.0 beta

As far as I can see, the code generated for GH is incorrect; you can see the result in many issues.

e.g.

https://github.com/StrangeLoopGames/EcoIssues/issues/10010

| priority | report a bug on connect failed error generate incorrect gh code version beta as far as i can see the code generated for gh is incorrect you can see the result in many issues e g | 1 |

589,634 | 17,754,969,454 | IssuesEvent | 2021-08-28 15:20:24 | dodona-edu/dodona | https://api.github.com/repos/dodona-edu/dodona | opened | Merging institutions sometimes fails | bug medium priority | Merging institution sometimes fails with this error:

```

An ActionView::Template::Error occurred while POST </nl/institutions/699/merge/?other_institution_id=30> was processed by institutions#do_merge

Exception

undefined method 'identifier_string' for nil:NilClass

Hostname

dodona

Backtrace

app/views/institutions/_merge_changes.html.erb:19

app/views/institutions/merge.html.erb:42

app/controllers/institutions_controller.rb:64:in `do_merge'

app/controllers/application_controller.rb:170:in `user_time_zone'

```

Some users might be in limbo due to this:

- users of institution 699 when merging into 30

- users of institution 350 when merging into 301 | 1.0 | Merging institutions sometimes fails - Merging institution sometimes fails with this error:

```

An ActionView::Template::Error occurred while POST </nl/institutions/699/merge/?other_institution_id=30> was processed by institutions#do_merge

Exception

undefined method 'identifier_string' for nil:NilClass

Hostname

dodona

Backtrace

app/views/institutions/_merge_changes.html.erb:19

app/views/institutions/merge.html.erb:42

app/controllers/institutions_controller.rb:64:in `do_merge'

app/controllers/application_controller.rb:170:in `user_time_zone'

```

Some users might be in limbo due to this:

- users of institution 699 when merging into 30

- users of institution 350 when merging into 301 | priority | merging institutions sometimes fails merging institution sometimes fails with this error an actionview template error occurred while post was processed by institutions do merge exception undefined method identifier string for nil nilclass hostname dodona backtrace app views institutions merge changes html erb app views institutions merge html erb app controllers institutions controller rb in do merge app controllers application controller rb in user time zone some users might be in limbo due to this users of institution when merging into users of institution when merging into | 1 |

122,303 | 4,833,557,145 | IssuesEvent | 2016-11-08 11:24:51 | arescentral/antares | https://api.github.com/repos/arescentral/antares | opened | Use system libraries when possible | Complexity:Medium OS:Linux Priority:Medium Type:Enhancement | Antares currently keeps vendored copies of most of its libraries in ``ext/``. If there are system-provided versions of those libraries, we should use those instead.

This is mainly of interest for Linux—historically, Antares does what it does because it was originally Mac-only, where the system doesn't provide the libraries we want and it's easiest if we build and link them statically ourselves. | 1.0 | Use system libraries when possible - Antares currently keeps vendored copies of most of its libraries in ``ext/``. If there are system-provided versions of those libraries, we should use those instead.

This is mainly of interest for Linux—historically, Antares does what it does because it was originally Mac-only, where the system doesn't provide the libraries we want and it's easiest if we build and link them statically ourselves. | priority | use system libraries when possible antares currently keeps vendored copies of most of its libraries in ext if there are system provided versions of those libraries we should use those instead this is mainly of interest for linux—historically antares does what it does because it was originally mac only where the system doesn t provide the libraries we want and it s easiest if we build and link them statically ourselves | 1 |

651,517 | 21,481,718,457 | IssuesEvent | 2022-04-26 18:25:32 | Samfundet/Samfundet | https://api.github.com/repos/Samfundet/Samfundet | closed | allow either lim_web or applicants to edit information | admission medium priority | As of now there is no way for us or applicants to edit applicant-information, this causes some issues every admission. We should add a way for us to easily edit this information, and look into the possibility of giving applicants the option further down the road. | 1.0 | allow either lim_web or applicants to edit information - As of now there is no way for us or applicants to edit applicant-information, this causes some issues every admission. We should add a way for us to easily edit this information, and look into the possibility of giving applicants the option further down the road. | priority | allow either lim web or applicants to edit information as of now there is no way for us or applicants to edit applicant information this causes some issues every admission we should add a way for us to easily edit this information and look into the possibility of giving applicants the option further down the road | 1 |

251,601 | 8,017,849,890 | IssuesEvent | 2018-07-25 17:11:43 | MARKETProtocol/dApp | https://api.github.com/repos/MARKETProtocol/dApp | closed | [Explorer] Implement Explorer UI/UX Mock Up | Bounty Attached Help Wanted Priority: Medium Status: Review Needed Type: Enhancement | ## Gitcoin Bounty Hunters

Please only consider picking up this issue if you feel confident that you are able to implement the designs with a high fidelity to the provided assets. Additionally, responsiveness of the implementation is very important and the design must translate well across, mobile, tablet, and large monitor resolutions.

## Before you `start work`

Please read our [contribution guidelines](https://docs.marketprotocol.io/#contributing) and if there is a bounty involved please also see [here](https://docs.marketprotocol.io/#gitcoin-and-bounties).

If you have ongoing work from other bounties with us where funding has not been released, please do not pick up a new issue. We would like to involve as many contributors as possible and parallelize the work flow as much as possible.

Please make sure to comment in the issue here immediately after starting work so we know your plans for implementation and a timeline.

Please also note that in order for work to be accepted, all code must be accompanied by test cases as well.

## Why Is this Needed?

*Summary:* Implements a key view for the MARKET dApp

## Description

Type: Feature

*Solution*

Summary: Using the .sketch file found [here](https://github.com/MARKETProtocol/assets/blob/master/MockUps/MARKET_Protocol-dApp.sketch) as a reference implement new design using react. The design can be found below.

## Definition of Done

- [ ] Using the provided assets, implement the new design for the MARKET dApp

- [ ] Update tests as needed

- [ ] Add new tests for all newly generated code

- [ ] For reference the existing implementation exists here http://dev.dapp.marketprotocol.io/contract/explorer

- [ ] Interact with @MARKETProtocol/core and expect a few revisions

Additional comments

• This is just the first of a few different pages for the dApp, so hunters who do a good job on this bounty will have more similar tasks coming their way | 1.0 | [Explorer] Implement Explorer UI/UX Mock Up - ## Gitcoin Bounty Hunters

Please only consider picking up this issue if you feel confident that you can implement the designs with high fidelity to the provided assets. Additionally, responsiveness of the implementation is very important, and the design must translate well across mobile, tablet, and large monitor resolutions.

## Before you `start work`

Please read our [contribution guidelines](https://docs.marketprotocol.io/#contributing) and if there is a bounty involved please also see [here](https://docs.marketprotocol.io/#gitcoin-and-bounties).

If you have ongoing work from other bounties with us where funding has not been released, please do not pick up a new issue. We would like to involve as many contributors as possible and parallelize the work flow as much as possible.

Please make sure to comment in the issue here immediately after starting work so we know your plans for implementation and a timeline.

Please also note that in order for work to be accepted, all code must be accompanied by test cases as well.

## Why Is this Needed?

*Summary:* Implements a key view for the MARKET dApp

## Description

Type: Feature

*Solution*

Summary: Using the .sketch file found [here](https://github.com/MARKETProtocol/assets/blob/master/MockUps/MARKET_Protocol-dApp.sketch) as a reference, implement the new design using React. The design can be found below.

## Definition of Done

- [ ] Using the provided assets, implement the new design for the MARKET dApp

- [ ] Update tests as needed

- [ ] Add new tests for all newly generated code

- [ ] For reference the existing implementation exists here http://dev.dapp.marketprotocol.io/contract/explorer

- [ ] Interact with @MARKETProtocol/core and expect a few revisions

Additional comments

• This is just the first of a few different pages for the dApp, so hunters who do a good job on this bounty will have more similar tasks coming their way | priority | implement explorer ui ux mock up gitcoin bounty hunters please only consider picking up this issue if you feel confident that you are able to implement the designs with a high fidelity to the provided assets additionally responsiveness of the implementation is very important and the design must translate well across mobile tablet and large monitor resolutions before you start work please read our and if there is a bounty involved please also see if you have ongoing work from other bounties with us where funding has not been released please do not pick up a new issue we would like to involve as many contributors as possible and parallelize the work flow as much as possible please make sure to comment in the issue here immediately after starting work so we know your plans for implementation and a timeline please also note that in order for work to be accepted all code must be accompanied by test cases as well why is this needed summary implements a key view for the market dapp description type feature solution summary using the sketch file found as a reference implement new design using react the design can be found below definition of done using the provided assets implement the new design for the market dapp update tests as needed add new tests for all newly generated code for reference the existing implementation exists here interact with marketprotocol core and expect a few revisions additional comments • this is just the first of a few different pages for the dapp so hunters who do a good job on this bounty will have more similar tasks coming their way | 1 |

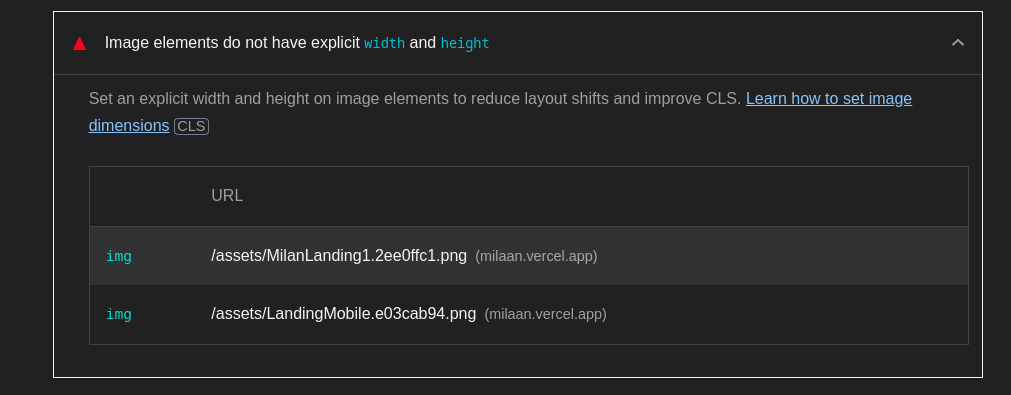

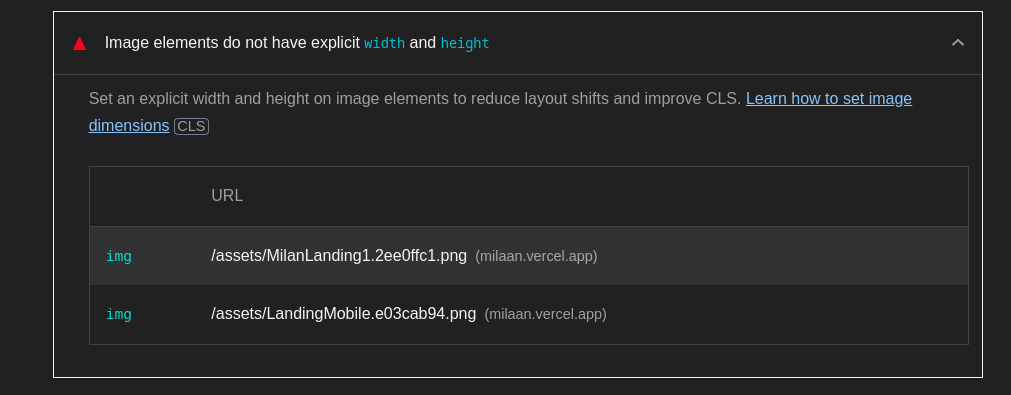

800,916 | 28,438,205,333 | IssuesEvent | 2023-04-15 15:22:34 | IAmTamal/Milan | https://api.github.com/repos/IAmTamal/Milan | opened | fix: add proper image size | ✨ goal: improvement 🟨 priority: medium 🛠 status : under development | ### What would you like to share?

- We need to add proper image size to improve SEO

images

- https://milaan.vercel.app/assets/MilanLanding1.2ee0ffc1.png

- https://milaan.vercel.app/assets/LandingMobile.e03cab94.png

### 🥦 Browser

Brave

### Checklist ✅

- [X] I checked and didn't find similar issue

- [X] I have read the [Contributing Guidelines](https://github.com/IAmTamal/Milan/blob/main/CONTRIBUTING.md)

- [X] I am willing to work on this issue (blank for no) | 1.0 | fix: add proper image size - ### What would you like to share?

- We need to add proper image size to improve SEO

images

- https://milaan.vercel.app/assets/MilanLanding1.2ee0ffc1.png

- https://milaan.vercel.app/assets/LandingMobile.e03cab94.png

### 🥦 Browser

Brave

### Checklist ✅

- [X] I checked and didn't find similar issue

- [X] I have read the [Contributing Guidelines](https://github.com/IAmTamal/Milan/blob/main/CONTRIBUTING.md)

- [X] I am willing to work on this issue (blank for no) | priority | fix add proper image size what would you like to share we need to add proper image size to improve seo images 🥦 browser brave checklist ✅ i checked and didn t find similar issue i have read the i am willing to work on this issue blank for no | 1 |

251,965 | 8,030,008,075 | IssuesEvent | 2018-07-27 18:02:07 | esteemapp/esteem-surfer | https://api.github.com/repos/esteemapp/esteem-surfer | closed | Older revisions of post | medium priority | Have possibility to see older revisions of the post with eSync.

https://github.com/google/diff-match-patch might be useful to show differences... | 1.0 | Older revisions of post - Have possibility to see older revisions of the post with eSync.

https://github.com/google/diff-match-patch might be useful to show differences... | priority | older revisions of post have possibility to see older revisions of the post with esync might be useful to show differences | 1 |

620,312 | 19,558,818,079 | IssuesEvent | 2022-01-03 13:33:39 | bounswe/2021SpringGroup1 | https://api.github.com/repos/bounswe/2021SpringGroup1 | closed | Adding comment related API functionality | Priority: Medium Status: In Progress Platform: Backend | This is not very crucial for now, but it looks quite straightforward so I will try to address this requirement now. We discussed specifications as:

- Returned comment objects will contain `id:<comment_id>`, `poster_id:<user_id>` ,`body:<str>`, `replies: [<comments>]`, `created_date: <date>` fields.

- Comments will be rendered in a special page containing only the post itself.

- Comments will be generated by entrypoint taking fields `post_id:<post_id>`, `body:<str>`, `replied_to:<comment_id>` | 1.0 | Adding comment related API functionality - This is not very crucial for now, but it looks quite straightforward so I will try to address this requirement now. We discussed specifications as:

- Returned comment objects will contain `id:<comment_id>`, `poster_id:<user_id>` ,`body:<str>`, `replies: [<comments>]`, `created_date: <date>` fields.

- Comments will be rendered in a special page containing only the post itself.

- Comments will be generated by entrypoint taking fields `post_id:<post_id>`, `body:<str>`, `replied_to:<comment_id>` | priority | adding comment related api functionality this is not very crucial for now but it looks quite straightforward so i will try to address this requirement now we discussed specifications as returned comment objects will contain id poster id body replies created date fields comments will be rendered in a special page containing only the post itself comments will be generated by entrypoint taking fields post id body replied to | 1 |
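
The comment shape described in this issue can be sketched as a small Python data structure. This is only an illustration under stated assumptions, not the project's implementation: the `Comment` class, the `build_comment_tree` helper, and the idea that comments are stored as flat rows carrying a `replied_to` reference are all hypothetical. Only the field names (`id`, `poster_id`, `body`, `replies`, `created_date`, `replied_to`) come from the issue text.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List


@dataclass
class Comment:
    """Hypothetical returned comment object, using the field names from the issue."""
    id: int
    poster_id: int
    body: str
    created_date: datetime
    replies: List["Comment"] = field(default_factory=list)


def build_comment_tree(rows: List[dict]) -> List[Comment]:
    """Nest flat rows (assumed storage format) into top-level comments with replies.

    Each row is a dict with the keys id, poster_id, body, created_date and
    replied_to, where replied_to is None for a top-level comment.
    """
    by_id: Dict[int, Comment] = {
        row["id"]: Comment(row["id"], row["poster_id"], row["body"], row["created_date"])
        for row in rows
    }
    roots: List[Comment] = []
    for row in rows:
        parent_id = row.get("replied_to")
        if parent_id is None:
            roots.append(by_id[row["id"]])
        else:
            # Attach the reply to the comment it answers.
            by_id[parent_id].replies.append(by_id[row["id"]])
    return roots
```

Keeping `replied_to` on the stored row and deriving `replies` at read time is one possible design; the issue itself does not say how replies are persisted.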

45,478 | 2,933,889,560 | IssuesEvent | 2015-06-30 03:12:25 | openpnp/openpnp | https://api.github.com/repos/openpnp/openpnp | closed | Program requires restart before recognizing new cameras. | bug Component-GUI imported Priority-Medium | _Original author: ja...@vonnieda.org (May 13, 2012 04:10:48)_

When a new camera is added through the GUI the camera panel and machine controls panel do not know about it until after a restart. This is because these panels only read the configuration when it's loaded. These classes will need to become PropertyChangeListeners for the machine.cameras list.

_Original issue: http://code.google.com/p/openpnp/issues/detail?id=10_ | 1.0 | Program requires restart before recognizing new cameras. - _Original author: ja...@vonnieda.org (May 13, 2012 04:10:48)_

When a new camera is added through the GUI the camera panel and machine controls panel do not know about it until after a restart. This is because these panels only read the configuration when it's loaded. These classes will need to become PropertyChangeListeners for the machine.cameras list.

_Original issue: http://code.google.com/p/openpnp/issues/detail?id=10_ | priority | program requires restart before recognizing new cameras original author ja vonnieda org may when a new camera is added through the gui the camera panel and machine controls panel do not know about it until after a restart this is because these panels only read the configuration when it s loaded these classes will need to become propertychangelisteners for the machine cameras list original issue | 1 |

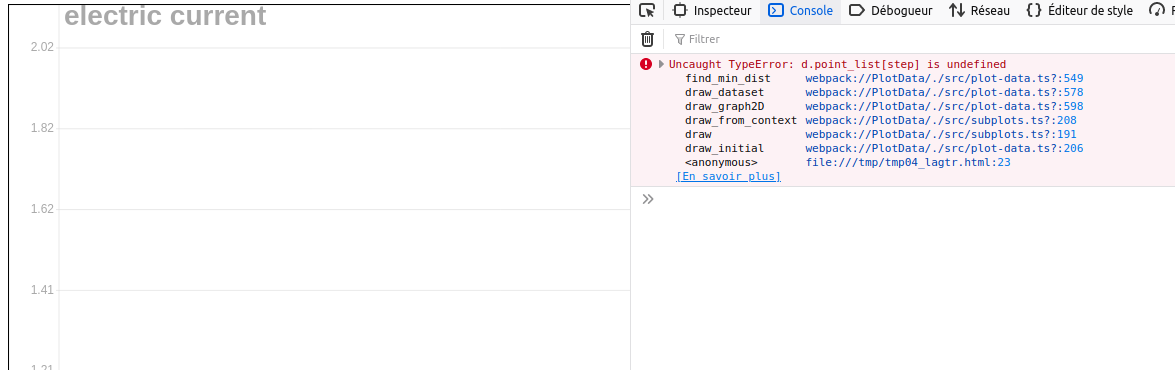

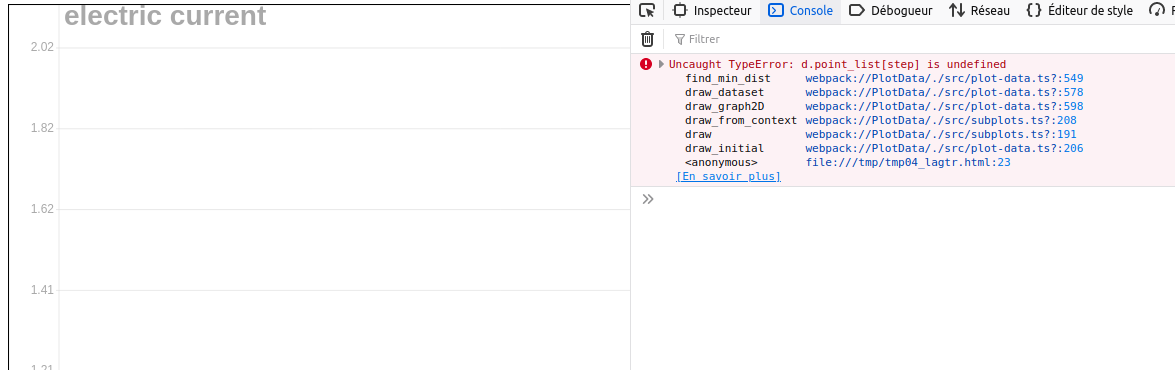

821,302 | 30,816,096,325 | IssuesEvent | 2023-08-01 13:34:09 | Dessia-tech/plot_data | https://api.github.com/repos/Dessia-tech/plot_data | closed | bug: single point dataset makes a bug | type: bug priority: medium | * **I'm submitting a ...**

- [x] bug report

- [ ] feature request

- [ ] support request => Please do not submit support request here, see note at the top of this template.

Having a single point dataset makes the plot crash:

Even if there is another dataset with more than 1 point.

```

import plot_data

from plot_data.colors import *

import numpy as np

elements1 = [{'time': 0, 'electric current': 1}]

dataset1 = plot_data.Dataset(elements=elements1, name='I1 = f(t)')

# The previous line instantiates a dataset with limited arguments but

# several customizations are available

point_style = plot_data.PointStyle(color_fill=RED, color_stroke=BLACK)

edge_style = plot_data.EdgeStyle(color_stroke=BLUE, dashline=[10, 5])

custom_dataset = plot_data.Dataset(elements=elements1, name='I = f(t)',

point_style=point_style,

edge_style=edge_style)

graph2d = plot_data.Graph2D(graphs=[dataset1],

x_variable='time', y_variable='electric current')

plot_data.plot_canvas(plot_data_object=graph2d, canvas_id='canvas',

debug_mode=True)

```

* **If the current behavior is a bug, please provide the steps to reproduce and if possible a minimal demo of the problem** Avoid reference to other packages

* **Please tell us about your environment:**

- python version: 0.10.8

| 1.0 | bug: single point dataset makes a bug - * **I'm submitting a ...**

- [x] bug report

- [ ] feature request

- [ ] support request => Please do not submit support request here, see note at the top of this template.

Having a single point dataset makes the plot crash:

Even if there is another dataset with more than 1 point.

```

import plot_data

from plot_data.colors import *

import numpy as np

elements1 = [{'time': 0, 'electric current': 1}]

dataset1 = plot_data.Dataset(elements=elements1, name='I1 = f(t)')

# The previous line instantiates a dataset with limited arguments but

# several customizations are available

point_style = plot_data.PointStyle(color_fill=RED, color_stroke=BLACK)

edge_style = plot_data.EdgeStyle(color_stroke=BLUE, dashline=[10, 5])

custom_dataset = plot_data.Dataset(elements=elements1, name='I = f(t)',

point_style=point_style,

edge_style=edge_style)

graph2d = plot_data.Graph2D(graphs=[dataset1],

x_variable='time', y_variable='electric current')

plot_data.plot_canvas(plot_data_object=graph2d, canvas_id='canvas',

debug_mode=True)

```

* **If the current behavior is a bug, please provide the steps to reproduce and if possible a minimal demo of the problem** Avoid reference to other packages

* **Please tell us about your environment:**

- python version: 0.10.8

| priority | bug single point dataset makes a bug i m submitting a bug report feature request support request please do not submit support request here see note at the top of this template having a single point dataset makes the plot crash even if there is another dataset with more than point import plot data from plot data colors import import numpy as np plot data dataset elements name f t the previous line instantiates a dataset with limited arguments but several customizations are available point style plot data pointstyle color fill red color stroke black edge style plot data edgestyle color stroke blue dashline custom dataset plot data dataset elements name i f t point style point style edge style edge style plot data graphs x variable time y variable electric current plot data plot canvas plot data object canvas id canvas debug mode true if the current behavior is a bug please provide the steps to reproduce and if possible a minimal demo of the problem avoid reference to other packages please tell us about your environment python version | 1 |

780,341 | 27,390,664,357 | IssuesEvent | 2023-02-28 16:04:00 | CDCgov/prime-reportstream | https://api.github.com/repos/CDCgov/prime-reportstream | closed | Elitemedical - Lab Data from SimpleReport - IL state not receiving negative test results | onboarding-ops Medium Priority Illinois | Problem Statement:

EliteMed Laboratories is concerned that their negative lab data is not going to IL State. After reviewing the data, it looks like the test results are showing as: not detected. Please see below the SimpleReport CSV Uploads being sent to ReportStream Below:

Acceptance Criteria: Ensure that all relevant negative lab test results are going to IL.

To Do: Assign Engineer to Investigate | 1.0 | Elitemedical - Lab Data from SimpleReport - IL state not receiving negative test results - Problem Statement:

EliteMed Laboratories is concerned that their negative lab data is not going to IL State. After reviewing the data, it looks like the test results are showing as: not detected. Please see below the SimpleReport CSV Uploads being sent to ReportStream Below:

Acceptance Criteria: Ensure that all relevant negative lab test results are going to IL.

To Do: Assign Engineer to Investigate | priority | elitemedical lab data from simplereport il state not receiving negative test results problem statement elitemed laboratories is concerned that their negative lab data is not going to il state after reviewing the data it looks like the test results are showing as not detected please see below the simplereport csv uploads being sent to reportstream below acceptance criteria ensure that all relevant negative lab test results are going to il to do assign engineer to investigate | 1 |

757,878 | 26,533,199,310 | IssuesEvent | 2023-01-19 13:59:14 | netlify/next-runtime | https://api.github.com/repos/netlify/next-runtime | closed | Edge runtime routes throw 500 error on trailing slash mismatch | priority: medium | If trailing slash is disabled, requesting an edge runtime route with a trailing slash will throw a 500 error. This is because the matcher pattern strictly matches just the route without the slash, meaning the site tries to serve the route from the origin, which will fail. The expected behaviour is to redirect to the canonical version.

The fix is the trailing slash fix as indicated in #1448, however this may need to happen before the edge router is implemented.

e2e test: `streaming-ssr` | 1.0 | Edge runtime routes throw 500 error on trailing slash mismatch - If trailing slash is disabled, requesting an edge runtime route with a trailing slash will throw a 500 error. This is because the matcher pattern strictly matches just the route without the slash, meaning the site tries to serve the route from the origin, which will fail. The expected behaviour is to redirect to the canonical version.

The fix is the trailing slash fix as indicated in #1448, however this may need to happen before the edge router is implemented.

e2e test: `streaming-ssr` | priority | edge runtime routes throw error on trailing slash mismatch if trailing slash in disabled requesting an edge runtime route with a trailing slash will throw a error this is because the matcher pattern strictly matches just the route without the slash meaning the site tries to serve the route from the origin which will fail the expected behaviour is to redirect to the canonical version the fix is the trailing slash fix as indicated in however this may need to happen before the edge router is implemented test streaming ssr | 1 |
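
The expected behaviour in this issue (redirect a non-canonical path instead of failing) can be illustrated with a short Python sketch. This is not the runtime's code, which is not written in Python; `canonical_redirect` and its `trailing_slash` parameter are hypothetical names that only model the rule described above.

```python
from typing import Optional


def canonical_redirect(path: str, trailing_slash: bool = False) -> Optional[str]:
    """Return the canonical path to redirect to, or None if path is already canonical.

    trailing_slash models the site-wide setting: False means routes are
    canonical without a trailing slash, True means with one.
    """
    if path == "/":
        # The root path is canonical under either setting.
        return None
    has_slash = path.endswith("/")
    if trailing_slash and not has_slash:
        return path + "/"
    if not trailing_slash and has_slash:
        # Strip trailing slashes, falling back to the root path.
        return path.rstrip("/") or "/"
    return None
```

With trailing slashes disabled, a request for `/blog/` maps to a redirect target of `/blog`, while `/blog` itself needs no redirect.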

551,643 | 16,177,755,409 | IssuesEvent | 2021-05-03 09:43:38 | sopra-fs21-group-4/server | https://api.github.com/repos/sopra-fs21-group-4/server | closed | Save/Update Lobby Settings for the Lobby Entity (espc. Time Limits) | medium priority removed task | - [x] Implement adaptSettings() in Game

- [x] Implement adaptSettings() in GameService

- [x] Implement adaptSettings() in GameController

- [ ] test

#37 Story 15 | 1.0 | Save/Update Lobby Settings for the Lobby Entity (espc. Time Limits) - - [x] Implement adaptSettings() in Game

- [x] Implement adaptSettings() in GameService

- [x] Implement adaptSettings() in GameController

- [ ] test

#37 Story 15 | priority | save update lobby settings for the lobby entity espc time limits implement adaptsettings in game implement adaptsettings in gameservice implement adaptsettings in gamecontroller test story | 1 |

596,274 | 18,101,467,788 | IssuesEvent | 2021-09-22 14:36:56 | gravityview/GravityView | https://api.github.com/repos/gravityview/GravityView | closed | Fix enqueued scripts and styles when embedded in ACF fields | Enhancement Compat: Plugin Difficulty: Medium Priority: Medium | > If the shortcode is in the standard content editor, the default css is included (table-view.css, gv-default.css). When we switch the page to use a page builder powered by ACF, the CSS is not included. We love the idea of the CSS only being included on pages where it's required but, if it's only checking "the_content()" this is going to be an issue. Is there any way around this other than manually enqueueing the files globally? | 1.0 | Fix enqueued scripts and styles when embedded in ACF fields - > If the shortcode is in the standard content editor, the default css is included (table-view.css, gv-default.css). When we switch the page to use a page builder powered by ACF, the CSS is not included. We love the idea of the CSS only being included on pages where it's required but, if it's only checking "the_content()" this is going to be an issue. Is there any way around this other than manually enqueueing the files globally? | priority | fix enqueued scripts and styles when embedded in acf fields if the shortcode is in the standard content editor the default css is included table view css gv default css when we switch the page to use a page builder powered by acf the css is not included we love the idea of the css only being included on pages where it s required but if it s only checking the content this is going to be an issue is there any way around this other than manually enqueueing the files globally | 1 |

779,817 | 27,367,344,948 | IssuesEvent | 2023-02-27 20:17:28 | DSpace/dspace-angular | https://api.github.com/repos/DSpace/dspace-angular | closed | In facets, vertically align checkbox with first word of option | bug help wanted usability component: Discovery good first issue medium priority Estimate TBD | **Describe the bug** DSpace 7.2?

As first reported in https://groups.google.com/g/dspace-community/c/GMO020isfZQ/m/1ISexkihAwAJ,

in facets, the check box for each option seems to be vertically centered on each option. This is fine for single lines but is confusing for multi-line options. For example, the ETD collection in the Iowa State University repository running DSpace 7.2, https://dr.lib.iastate.edu/collections/0830d32e-14e1-4a4f-bb8f-271a75ed35af?scope=0830d32e-14e1-4a4f-bb8f-271a75ed35af, offers a Department facet with the long option, Civil, Construction, and Environmental Engineering. I propose putting the check box inline with the first word of each option. I observe this in Firefox but not in Chrome or Safari. Attached are screenshots of each. [Note also that checkboxes in Safari overlap the text for some options.]

___________

Firefox

<img width="271" alt="Firefox_facet_checkbox" src="https://user-images.githubusercontent.com/13037168/176241621-f3fbe7ca-7aa1-45a8-8799-b823283cf379.png">

___________

Chrome

<img width="271" alt="Chrome_facet_checkbot" src="https://user-images.githubusercontent.com/13037168/176241670-c298117a-f9e6-4f01-8840-f8f7c0361688.png">

___________

Safari

<img width="271" alt="Safari_facet_checkbox" src="https://user-images.githubusercontent.com/13037168/176241700-d681b86d-bbd4-447f-9c14-94f4cf58948b.png">

___________

A clear and concise description of what the bug is. Include the version(s) of DSpace where you've seen this problem & what *web browser* you were using. Link to examples if they are public.

**To Reproduce**

Steps to reproduce the behavior:

1. Do this

2. Then this...

**Expected behavior**

A clear and concise description of what you expected to happen.

**Related work**

Link to any related tickets or PRs here.

| 1.0 | In facets, vertically align checkbox with first word of option - **Describe the bug** DSpace 7.2?

As first reported in https://groups.google.com/g/dspace-community/c/GMO020isfZQ/m/1ISexkihAwAJ,

in facets, the check box for each option seems to be vertically centered on each option. This is fine for single lines but is confusing for multi-line options. For example, the ETD collection in the Iowa State University repository running DSpace 7.2, https://dr.lib.iastate.edu/collections/0830d32e-14e1-4a4f-bb8f-271a75ed35af?scope=0830d32e-14e1-4a4f-bb8f-271a75ed35af, offers a Department facet with the long option, Civil, Construction, and Environmental Engineering. I propose putting the check box inline with the first word of each option. I observe this in Firefox but not in Chrome or Safari. Attached are screenshots of each. [Note also that checkboxes in Safari overlap the text for some options.]

___________

Firefox

<img width="271" alt="Firefox_facet_checkbox" src="https://user-images.githubusercontent.com/13037168/176241621-f3fbe7ca-7aa1-45a8-8799-b823283cf379.png">

___________

Chrome

<img width="271" alt="Chrome_facet_checkbot" src="https://user-images.githubusercontent.com/13037168/176241670-c298117a-f9e6-4f01-8840-f8f7c0361688.png">

___________

Safari

<img width="271" alt="Safari_facet_checkbox" src="https://user-images.githubusercontent.com/13037168/176241700-d681b86d-bbd4-447f-9c14-94f4cf58948b.png">

___________

A clear and concise description of what the bug is. Include the version(s) of DSpace where you've seen this problem & what *web browser* you were using. Link to examples if they are public.

**To Reproduce**

Steps to reproduce the behavior:

1. Do this

2. Then this...

**Expected behavior**

A clear and concise description of what you expected to happen.

**Related work**

Link to any related tickets or PRs here.

| priority | in facets vertically align checkbox with first word of option describe the bug dspace as first reported in in facets the check box for each option seems to be vertically centered on each option this is fine for single lines but is confusing for multi line options for example the etd collection in the iowa state university repository running dspace offers a department facet with the long option civil construction and environmental engineering i propose putting the check box inline with the first word of each option i observe this in firefox but not in chrome or safari attached are screenshots of each firefox img width alt firefox facet checkbox src chrome img width alt chrome facet checkbot src safari img width alt safari facet checkbox src a clear and concise description of what the bug is include the version s of dspace where you ve seen this problem what web browser you were using link to examples if they are public to reproduce steps to reproduce the behavior do this then this expected behavior a clear and concise description of what you expected to happen related work link to any related tickets or prs here | 1 |

761,004 | 26,663,184,445 | IssuesEvent | 2023-01-25 23:26:51 | NIAEFEUP/tts-be | https://api.github.com/repos/NIAEFEUP/tts-be | opened | Analysis Tool - Scheduled Caching | medium effort low priority | Currently, the caching of the statistics is made synchronously: when a user makes a GET request on the `/statistics/` endpoint, the current statistics are cached.

This is unreliable since it requires someone to make these requests for us to have a good chronological representation of the statistics over time.

An asynchronous implementation that caches the statistics 1x or 2x a day is needed. Suggestion:

- django-q

- cron

- celery

- (can't remember but I'm sure there are others :) ) | 1.0 | Analysis Tool - Scheduled Caching - Currently, the caching of the statistics is made synchronously: when a user makes a GET request on the `/statistics/` endpoint, the current statistics are cached.

This is unreliable since it requires someone to make these requests for us to have a good chronological representation of the statistics over time.

An asynchronous implementation that caches the statistics 1x or 2x a day is needed. Suggestion:

- django-q

- cron

- celery

- (can't remember but I'm sure there are others :) ) | priority | analysis tool scheduled caching currently the caching of the statistics is made synchronously when a user makes a get request on the statistics endpoint the current statistics are cached this is unreliable since it requires someone to make these requests for us to have a good chronological representation of the statistics over time an asynchronous implementation that caches the statistics or a day is needed suggestion django q cron celery can t remember but i m sure there are others | 1 |

716,303 | 24,627,795,931 | IssuesEvent | 2022-10-16 18:42:02 | AY2223S1-CS2103T-W16-3/tp | https://api.github.com/repos/AY2223S1-CS2103T-W16-3/tp | closed | Get /wn command restrictions to user inputs | priority.Medium type.Enhancement | There should be restrictions implemented for ward number inputs for patients. This restriction is subjective, but for our case we can set it to accept only **ONE** alphabet followed by numerical values.

Example inputs:

- D312

- T46

- F17 | 1.0 | Get /wn command restrictions to user inputs - There should be restrictions implemented for ward number inputs for patients. This restriction is subjective, but for our case we can set it to accept only **ONE** alphabet followed by numerical values.

Example inputs:

- D312

- T46

- F17 | priority | get wn command restrictions to user inputs there should be restrictions implemented for ward number inputs for patients this restriction is subjective but for our case we can set it to accept only one alphabet followed by numerical values example inputs | 1 |
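
The ward number restriction proposed in this issue (exactly one letter followed by digits) can be checked with a regular expression. The sketch below is in Python for illustration only; the function name `is_valid_ward_number` is hypothetical, and requiring at least one digit and accepting lowercase letters are assumptions the issue does not settle.

```python
import re

# Exactly one ASCII letter followed by one or more ASCII digits.
WARD_NUMBER_PATTERN = re.compile(r"[A-Za-z][0-9]+")


def is_valid_ward_number(value: str) -> bool:
    """Return True if value is one letter followed only by digits."""
    return WARD_NUMBER_PATTERN.fullmatch(value) is not None
```

The example inputs from the issue (`D312`, `T46`, `F17`) pass this check, while inputs with two leading letters or no digits are rejected.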

307,171 | 9,414,248,825 | IssuesEvent | 2019-04-10 09:42:08 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | USER ISSUE: steam tractor - no ability to turn on\off for sower and harvester | Medium Priority | **Version:** 0.7.5.0 beta staging-bad4361f

Only plough can be enabled\disabled by pressing 1.

Both other modules work everytime. | 1.0 | USER ISSUE: steam tractor - no ability to turn on\off for sower and harvester - **Version:** 0.7.5.0 beta staging-bad4361f

Only plough can be enabled\disabled by pressing 1.

Both other modules work everytime. | priority | user issue steam tractor no ability to turn on off for sower and harvester version beta staging only plough can be enabled disabled by pressing both other modules work everytime | 1 |

720,863 | 24,809,250,318 | IssuesEvent | 2022-10-25 08:08:17 | AY2223S1-CS2103-F13-1/tp | https://api.github.com/repos/AY2223S1-CS2103-F13-1/tp | closed | As a developer, I can protect my data | type.Story priority.Medium | ...so that others do not get access to my project information | 1.0 | As a developer, I can protect my data - ...so that others do not get access to my project information | priority | as a developer i can protect my data so that others do not get access to my project information | 1 |

813,272 | 30,450,918,648 | IssuesEvent | 2023-07-16 09:31:13 | sef-global/scholarx-backend | https://api.github.com/repos/sef-global/scholarx-backend | opened | Implement update my profile endpoint | backend priority: medium | **Description:**

This issue involves implementing an API to update the profile details. The endpoint should allow clients to make a PUT request to `{{baseUrl}}/api/me`.

sample response body:

```

{

"created_at": "2023-06-29T09:15:00Z",

"updated_at": "2023-06-30T16:45:00Z",

"primary_email": "mentor@example.com",

"contact_email": "mentor_contact@example.com",

"first_name": "John",

"last_name": "Doe",

"image_url": "https://example.com/mentor_profile_image.jpg",

"linkedin_url": "https://www.linkedin.com/in/johndoe",

"type": "DEFAULT",

"uuid": "12345678-1234-5678-1234-567812345678"

}

```

**Tasks:**

1. Create a controller for the `/controllers/profile` endpoint in the backend (create the route profile if not created).

2. Parse and validate the request body to ensure it matches the expected format.

3. Implement appropriate error handling and response status codes for different scenarios (e.g., validation errors, database errors).

5. Write unit tests to validate the functionality and correctness of the endpoint.

API documentation: https://documenter.getpostman.com/view/27421496/2s93m1a4ac#8744a3ee-970f-489a-853d-8b23fdee8de3

ER diagram: https://drive.google.com/file/d/11KMgdNu2mSAm0Ner8UsSPQpZJS8QNqYc/view

**Acceptance Criteria:**

- The profile API endpoint `/controllers/profile` is implemented and accessible via a PUT request.

- The request body is properly parsed and validated for the required format.

- Appropriate error handling is implemented, providing meaningful error messages and correct response status codes.

- Unit tests are written to validate the functionality and correctness of the endpoint.

**Additional Information:**

No

**Related Dependencies or References:**

No

| 1.0 | Implement update my profile endpoint - **Description:**

This issue involves implementing an API to update the profile details. The endpoint should allow clients to make a PUT request to `{{baseUrl}}/api/me`.

sample response body:

```

{

"created_at": "2023-06-29T09:15:00Z",

"updated_at": "2023-06-30T16:45:00Z",

"primary_email": "mentor@example.com",

"contact_email": "mentor_contact@example.com",

"first_name": "John",

"last_name": "Doe",

"image_url": "https://example.com/mentor_profile_image.jpg",

"linkedin_url": "https://www.linkedin.com/in/johndoe",

"type": "DEFAULT",

"uuid": "12345678-1234-5678-1234-567812345678"

}

```

**Tasks:**

1. Create a controller for the `/controllers/profile` endpoint in the backend (create the route profile if not created).

2. Parse and validate the request body to ensure it matches the expected format.

3. Implement appropriate error handling and response status codes for different scenarios (e.g., validation errors, database errors).

5. Write unit tests to validate the functionality and correctness of the endpoint.

API documentation: https://documenter.getpostman.com/view/27421496/2s93m1a4ac#8744a3ee-970f-489a-853d-8b23fdee8de3

ER diagram: https://drive.google.com/file/d/11KMgdNu2mSAm0Ner8UsSPQpZJS8QNqYc/view

**Acceptance Criteria:**

- The profile API endpoint `/controllers/profile` is implemented and accessible via a PUT request.

- The request body is properly parsed and validated for the required format.

- Appropriate error handling is implemented, providing meaningful error messages and correct response status codes.

- Unit tests are written to validate the functionality and correctness of the endpoint.

**Additional Information:**

No

**Related Dependencies or References:**

No

| priority | implement update my profile endpoint description this issue involves implementing an api to update the profile details the endpoint should allow clients to make a put request to baseurl api me sample response body created at updated at primary email mentor example com contact email mentor contact example com first name john last name doe image url linkedin url type default uuid tasks create a controller for the controllers profile endpoint in the backend create the route profile if not created parse and validate the request body to ensure it matches the expected format implement appropriate error handling and response status codes for different scenarios e g validation errors database errors write unit tests to validate the functionality and correctness of the endpoint api documentation er diagram acceptance criteria the profile api endpoint controllers profile is implemented and accessible via a put request the request body is properly parsed and validated for the required format appropriate error handling is implemented providing meaningful error messages and correct response status codes unit tests are written to validate the functionality and correctness of the endpoint additional information no related dependencies or references no | 1 |

504,353 | 14,617,081,791 | IssuesEvent | 2020-12-22 14:15:40 | ita-social-projects/horondi_client_fe | https://api.github.com/repos/ita-social-projects/horondi_client_fe | opened | ['Моделі' page] Font-size and font-family of headline and content of page don't meet the requirements | UI bug priority: medium severity: trivial | **Environment:** Windows 10 Home, Google Chrome Version 87.0.4280.88.

**Reproducible:** always.

**Build found:** "commit https://horondi-admin-staging.azurewebsites.net/models"

**Preconditions**

Go to https://horondi-admin-staging.azurewebsites.net

Log into Administrator page as Administrator: 'User'="admin2@gmail.com", 'Password'="qwertY123"

**Steps to reproduce**

1. Click on item 'Моделі'

2. Click on button of light/dark theme

**Actual result**

Headline 'Інформація про моделі' - font-size 24px, font-family Roboto, Helvetica

Content - font-size 12px, font-family Roboto, Helvetica

**Expected result**

Headline 'Інформація про моделі' - font-size 24px, font-family Montserrat

Content - font-size 16px, font-family Montserrat.

**User story and test case links**

User story #LVHRB-19

[Test case](https://jira.softserve.academy/browse/LVHRB-41)

**Labels to be added**

"Bug", Priority ("medium"), Severity ("trivial"), Type ("UI").

| 1.0 | ['Моделі' page] Font-size and font-family of headline and content of page don't meet the requirements - **Environment:** Windows 10 Home, Google Chrome Version 87.0.4280.88.

**Reproducible:** always.

**Build found:** "commit https://horondi-admin-staging.azurewebsites.net/models"

**Preconditions**

Go to https://horondi-admin-staging.azurewebsites.net

Log into Administrator page as Administrator: 'User'="admin2@gmail.com", 'Password'="qwertY123"

**Steps to reproduce**

1. Click on item 'Моделі'

2. Click on button of light/dark theme

**Actual result**

Headline 'Інформація про моделі' - font-size 24px, font-family Roboto, Helvetica

Content - font-size 12px, font-family Roboto, Helvetica

**Expected result**

Headline 'Інформація про моделі' - font-size 24px, font-family Montserrat

Content - font-size 16px, font-family Montserrat.

**User story and test case links**

User story #LVHRB-19

[Test case](https://jira.softserve.academy/browse/LVHRB-41)

**Labels to be added**

"Bug", Priority ("medium"), Severity ("trivial"), Type ("UI").

| priority | font size and font family of headline and content of page don t met the appropriates environment windows home google chrome version reproducible always build found commit preconditions go to log into administrator page as administrator user gmail com password steps to reproduce click on item моделі click on button of light dark theme actual result headline інформація про моделі font size font family roboto helvetica content font size font family roboto helvetica expected result headline інформація про моделі font size font family montserrat content font size font family montserrat user story and test case links user story lvhrb labels to be added bug priority medium severity trivial type ui | 1 |

370,667 | 10,935,283,409 | IssuesEvent | 2019-11-24 17:16:44 | UW-Macrostrat/burwell-processing | https://api.github.com/repos/UW-Macrostrat/burwell-processing | closed | Reno Junction, WY | Medium priority | ## Info

**Name:**

Geologic Map Of The Reno Junction 30' X 60' Quadrangle, Campbell, And Weston Counties, Wyoming

**Source URL:**

GIS Data: http://www.wsgs.wyo.gov/gis-files/mapseries/100k/bedrock/gis-2003-ms-62.zip

PDF: http://www.wsgs.wyo.gov/products/wsgs-2004-ms-62.pdf

**Estimated scale:**

large

**Number of bedrock polygons:**

**Lithology field:**

name

**Time field:**

age,early_id,late_id

**Stratigraphy name field:**

strat_name,hierarchy

**Description field:**

description

**Comments field:**

comments

**Comments:**

The coal mine polygon is not processed.

No line or point shapefiles.

## Todo:

- [x] Insert new source record

- [x] Add data to database

- [x] Process and add to homogenized tables

- [ ] Process and import lines

- [x] Match

- [x] Roll tiles

| 1.0 | Reno Junction, WY - ## Info

**Name:**

Geologic Map Of The Reno Junction 30' X 60' Quadrangle, Campbell, And Weston Counties, Wyoming

**Source URL:**

GIS Data: http://www.wsgs.wyo.gov/gis-files/mapseries/100k/bedrock/gis-2003-ms-62.zip

PDF: http://www.wsgs.wyo.gov/products/wsgs-2004-ms-62.pdf

**Estimated scale:**

large

**Number of bedrock polygons:**

**Lithology field:**

name

**Time field:**

age,early_id,late_id

**Stratigraphy name field:**

strat_name,hierarchy

**Description field:**

description

**Comments field:**

comments

**Comments:**

The coal mine polygon is not processed.

No line or point shapefiles.

## Todo:

- [x] Insert new source record

- [x] Add data to database

- [x] Process and add to homogenized tables

- [ ] Process and import lines

- [x] Match

- [x] Roll tiles

| priority | reno junction wy info name geologic map of the reno junction x quadrangle campbell and weston counties wyoming source url gis data pdf estimated scale large number of bedrock polygons lithology field name time field age early id late id stratigraphy name field strat name hierarchy description field description comments field comments comments coal mine polygon is not processed no lines or points shapefiles todo insert new source record add data to database process and add to homogenized tables process and import lines match roll tiles | 1 |

605,947 | 18,752,375,479 | IssuesEvent | 2021-11-05 05:04:10 | CMPUT301F21T20/HabitTracker | https://api.github.com/repos/CMPUT301F21T20/HabitTracker | closed | 1.5 Delete Habit | priority: medium Habits complexity: low | Story Point: As a doer, I want to delete a habit of mine.

Provide a method for doers to delete already created habits. Refer to UI mockups for design. Integration with Firestore is required.

Halfway Checkpoint: Backend implementation complete

Points: 2

Priority: Medium

Complexity: Low | 1.0 | 1.5 Delete Habit - Story Point: As a doer, I want to delete a habit of mine.

Provide a method for doers to delete already created habits. Refer to UI mockups for design. Integration with Firestore is required.

Halfway Checkpoint: Backend implementation complete

Points: 2

Priority: Medium

Complexity: Low | priority | delete habit story point as a doer i want to delete a habit of mine provide a method for doers to delete already created habits refer to ui mockups for design integration with firestore is required halfway checkpoint backend implemetation complete points priority medium complexity low | 1 |

16,468 | 2,615,116,738 | IssuesEvent | 2015-03-01 05:41:52 | chrsmith/google-api-java-client | https://api.github.com/repos/chrsmith/google-api-java-client | closed | Youtube | auto-migrated Priority-Medium Type-Sample | ```

Code required for fetching from youtube, the following using

google-api-java-client

1) Most Rated Videos

2) Most Viewed Videos

3) Searching a video

Regards,

Anees

```

Original issue reported on code.google.com by `aneesaha...@gmail.com` on 27 Sep 2010 at 5:02

* Merged into: #16 | 1.0 | Youtube - ```

Code required for fetching from youtube, the following using

google-api-java-client

1) Most Rated Videos

2) Most Viewed Videos

3) Searching a video

Regards,

Anees

```

Original issue reported on code.google.com by `aneesaha...@gmail.com` on 27 Sep 2010 at 5:02

* Merged into: #16 | priority | youtube code required for fetching from youtube the following using google api java client most rated videos most viewed videos searching a video regards anees original issue reported on code google com by aneesaha gmail com on sep at merged into | 1 |

35,967 | 2,794,222,704 | IssuesEvent | 2015-05-11 15:34:33 | CUL-DigitalServices/grasshopper-ui | https://api.github.com/repos/CUL-DigitalServices/grasshopper-ui | opened | Editing Module Event time - (Day and time) dropdown options don't display the time on interface | Medium Priority | - Editing Module Event - (Day and time) dropdown buttons cover the day and time view on the interface. This happens in Firefox (v25) on Windows 7 Professional.

- In Chrome (v42.0) the time is not displayed in the fields, and the day field should be widened so that the full name of the selected day is displayed.

- Screenshot attached for reference

| 1.0 | Editing Module Event time - (Day and time) dropdown options don't display the time on interface - - Editing Module Event - (Day and time) dropdown buttons cover the day and time view on the interface. This happens in Firefox (v25) on Windows 7 Professional.

- In Chrome (v42.0) the time is not displayed in the fields, and the day field should be widened so that the full name of the selected day is displayed.

- Screenshot attached for reference

| priority | editting module event time day and time dropdown options doesnt dislay the time on interface editing module event day and time drop down buttons covering the day and time view on interface this happens in firefox browser v os windows professional in chrome browser v time is not displayed in fields and day field length should be increased so that when day is selected it should display full text of the day attached screenshot for reference | 1 |

317,887 | 9,670,421,703 | IssuesEvent | 2019-05-21 19:52:43 | x-klanas/Wrath | https://api.github.com/repos/x-klanas/Wrath | closed | Start testing button | 2 points medium priority user story | As a player I want to have a button in the garage which, when pressed, turns on the vehicle's engine.

- [x] The button must be easily reachable by the player

- [x] When pressed, the testing timer starts and the engine turns on | 1.0 | Start testing button - As a player I want to have a button in the garage which, when pressed, turns on the vehicle's engine.

- [x] The button must be easily reachable by the player

- [x] When pressed, the testing timer starts and the engine turns on | priority | start testing button as a player i want to have a button in the garage which when pressed turn on the engine on the vehicle then button must be easily reachable by the player when pressed the testing timer starts and the engine turns on | 1 |

399,709 | 11,759,481,933 | IssuesEvent | 2020-03-13 17:21:46 | eric-bixby/auto-sort-bookmarks-webext | https://api.github.com/repos/eric-bixby/auto-sort-bookmarks-webext | closed | Sort by Last visited missing in new version | Priority: Medium Status: Pending Type: Bug | Hi

Any chance in getting option to sort by "Last Visited" returned in future version? Disappeared in 3.4

| 1.0 | Sort by Last visited missing in new version - Hi

Any chance in getting option to sort by "Last Visited" returned in future version? Disappeared in 3.4

| priority | sort by last visited missing in new version hi any chance in getting option to sort by last visited returned in future version disappeared in | 1 |

715,438 | 24,599,267,244 | IssuesEvent | 2022-10-14 11:01:04 | bounswe/bounswe2022group2 | https://api.github.com/repos/bounswe/bounswe2022group2 | closed | Research & Report for Backend Technologies (Backend Team) | priority-medium status-inprogress back-end | ### Issue Description

After our first meeting, the backend team for our project was decided as @codingAku, @mbatuhancelik and @hasancan-code. Our first task as the backend team is to research various technologies for implementing the backend and present our findings. Initial ideas include Node & Python FastAPI.

### Step Details

Steps that will be performed: