Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

742,417 | 25,853,459,248 | IssuesEvent | 2022-12-13 12:04:44 | dodona-edu/dodona | https://api.github.com/repos/dodona-edu/dodona | closed | Order activities by popularity | feature medium priority | Popularity of an exercise is definitely a strong indicator of interestingness of the exercise, so if possible we should surface that information in the table. I don't think we want to list the exact number of courses where an exercise is used, but maybe we can use an icon with different states: low, medium, high, featured. We will then have to pick sensible threshold values. I don't know what the best strategy is for fetching this information: calculate it on demand, store it in the DB or store it in cache. In addition, we could add a filter for it.

_Originally posted by @bmesuere in https://github.com/dodona-edu/dodona/pull/4203#pullrequestreview-1199499438_

| 1.0 | Order activities by popularity - Popularity of an exercise is definitely a strong indicator of interestingness of the exercise, so if possible we should surface that information in the table. I don't think we want to list the exact number of courses where an exercise is used, but maybe we can use an icon with different states: low, medium, high, featured. We will then have to pick sensible threshold values. I don't know what the best strategy is for fetching this information: calculate it on demand, store it in the DB or store it in cache. In addition, we could add a filter for it.

_Originally posted by @bmesuere in https://github.com/dodona-edu/dodona/pull/4203#pullrequestreview-1199499438_

| priority | order activities by popularity popularity of an exercise is definitely a strong indicator of interestingness of the exercise so if possible we should surface that information in the table i don t think we want to list the exact number of courses where an exercise is used but maybe we can use an icon with different states low medium high featured we will then have to pick sensible threshold values i don t know what the best strategy is for fetching this information calculate it on demand store it in the db or store it in cache in addition we could add a filter for it originally posted by bmesuere in | 1 |
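
The issue above proposes mapping a usage count to a small set of icon states with threshold values still to be chosen. A minimal sketch of that mapping in Python, for illustration only: the threshold numbers, the `popularity_state` name, and the idea that "featured" is set by hand rather than derived from a count are all assumptions, not something the issue specifies.

```python
from bisect import bisect_right

# Hypothetical thresholds on the number of courses that use an exercise.
# The issue leaves the real values open.
THRESHOLDS = [5, 20]
STATES = ["low", "medium", "high"]


def popularity_state(course_count: int, featured: bool = False) -> str:
    """Map a usage count to a coarse popularity state."""
    if featured:
        # Assumption: "featured" is an editorial flag, not a count bucket.
        return "featured"
    # bisect_right returns how many thresholds are <= course_count,
    # which is exactly the index of the matching state.
    return STATES[bisect_right(THRESHOLDS, course_count)]
```

Whether the state is computed on demand, stored, or cached (the open question in the issue) does not change this mapping; only where `course_count` comes from would differ.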

601,835 | 18,436,208,964 | IssuesEvent | 2021-10-14 13:18:51 | AY2122S1-CS2103T-F11-3/tp | https://api.github.com/repos/AY2122S1-CS2103T-F11-3/tp | closed | Update test cases for leave related functionality | priority.MEDIUM | Writing/Editing test cases for the add/remove leaves command. | 1.0 | Update test cases for leave related functionality - Writing/Editing test cases for the add/remove leaves command. | priority | update test cases for leave related functionality writing editing test cases for the add remove leaves command | 1 |

323,530 | 9,856,088,895 | IssuesEvent | 2019-06-19 21:05:50 | USGS-Astrogeology/PyHAT_Point_Spectra_GUI | https://api.github.com/repos/USGS-Astrogeology/PyHAT_Point_Spectra_GUI | opened | Fix how model coefficients are saved for local regression | Difficulty Intermediate Priority: Medium | Currently intercept and model name are incorrect. Intercept should be the actual intercept of the model, model name should convey the algorithm, unknown spectrum name, number of local spectra used, etc. | 1.0 | Fix how model coefficients are saved for local regression - Currently intercept and model name are incorrect. Intercept should be the actual intercept of the model, model name should convey the algorithm, unknown spectrum name, number of local spectra used, etc. | priority | fix how model coefficients are saved for local regression currently intercept and model name are incorrect intercept should be the actual intercept of the model model name should convey the algorithm unknown spectrum name number of local spectra used etc | 1 |

666,463 | 22,356,729,703 | IssuesEvent | 2022-06-15 16:16:56 | oslopride/oslopride.no | https://api.github.com/repos/oslopride/oslopride.no | closed | Events - Improve UI | priority-medium | Link to Figma:

https://www.figma.com/file/0iN4qC7XqR4UagR3o7VEHn/Oslo-Pride-%E2%80%93-Design-System-%26-Web?node-id=829%3A20951

Add the same event filter as Skeivtkulturkalender.

Changes to the event card itself. | 1.0 | Events - Improve UI - Link to Figma:

https://www.figma.com/file/0iN4qC7XqR4UagR3o7VEHn/Oslo-Pride-%E2%80%93-Design-System-%26-Web?node-id=829%3A20951

Add the same event filter as Skeivtkulturkalender.

Changes to the event card itself. | priority | events improve ui link to figma add the same event filter as skeivtkulturkalender changes to the event card itself | 1 |

351,248 | 10,514,571,364 | IssuesEvent | 2019-09-28 01:43:42 | AY1920S1-CS2113T-W17-4/main | https://api.github.com/repos/AY1920S1-CS2113T-W17-4/main | opened | As a Computing student, I can have a do-after task | priority.Medium type.Story | so that I know what tasks need to be done after completing a specific task. | 1.0 | As a Computing student, I can have a do-after task - so that I know what tasks need to be done after completing a specific task. | priority | as a computing student i can have a do after task so that i know what tasks need to be done after completing a specific task | 1 |

150,164 | 5,738,811,117 | IssuesEvent | 2017-04-23 08:40:53 | diamm/diamm | https://api.github.com/repos/diamm/diamm | closed | Image display order | Component: Metadata Priority: Medium Type: Support | Need to be able to edit image order number to adjust incorrect display order if necessary | 1.0 | Image display order - Need to be able to edit image order number to adjust incorrect display order if necessary | priority | image display order need to be able to edit image order number to adjust incorrect display order if necessary | 1 |

577,797 | 17,135,069,201 | IssuesEvent | 2021-07-13 00:08:02 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | net: ip: Assertion fails when tcp_send_data() with zero length packet | bug priority: medium | **Describe the bug**

When testing the TCP stack, an assertion fails as follows:

```

ASSERTION FAIL [frag] @ /zephyr/zephyrmaster/zephyr/subsys/net/buf.c:640

@ /zephyr/zephyrmaster/zephyr/lib/os/assert.c:45

E: >>> ZEPHYR FATAL ERROR 4: Kernel panic on CPU 0

E: Current thread: 0x3d87e0 (tcp_work)

E: Halting system

```

The flow of this failure is:

```tcp_work thread``` -> tcp_resend_data() -> tcp_send_data()

```

{

/* 1. send_data_total and unacked_len are both 0 in this case */

len = 0 = MIN3(conn->send_data_total - conn->unacked_len,

conn->send_win - conn->unacked_len,

conn_mss(conn));

/* 2. zero length packet (pkt->buffer=NULL) allocated */

pkt = tcp_pkt_alloc(conn, len=0);

ret = tcp_pkt_peek(pkt, conn->send_data, pos, len=0);

/* 3. zero length packet passed to tcp_out_ext() */

ret = tcp_out_ext(conn, PSH | ACK, pkt, conn->seq + conn->unacked_len);

}

```

-> tcp_out_ext() -> net_pkt_append_buffer(pkt, data->buffer=```NULL```) -> net_buf_frag_insert(..., buffer=```NULL```) -> _ASSERT_NO_MSG(frag) -> ```ASSERTION FAIL```

**To Solve**

Don't proceed if TCP resend length is zero.

```

len = MIN3(conn->send_data_total - conn->unacked_len,

conn->send_win - conn->unacked_len,

conn_mss(conn));

if (len == 0) {

goto out;

}

```

**Environment**

- OS: Linux

- Toolchain: Zephyr SDK

- Commit 89212a7fbf5fcd8e4d661c016344ae4bf2d46f53

| 1.0 | net: ip: Assertion fails when tcp_send_data() with zero length packet - **Describe the bug**

When testing the TCP stack, an assertion fails as follows:

```

ASSERTION FAIL [frag] @ /zephyr/zephyrmaster/zephyr/subsys/net/buf.c:640

@ /zephyr/zephyrmaster/zephyr/lib/os/assert.c:45

E: >>> ZEPHYR FATAL ERROR 4: Kernel panic on CPU 0

E: Current thread: 0x3d87e0 (tcp_work)

E: Halting system

```

The flow of this failure is:

```tcp_work thread``` -> tcp_resend_data() -> tcp_send_data()

```

{

/* 1. send_data_total and unacked_len are both 0 in this case */

len = 0 = MIN3(conn->send_data_total - conn->unacked_len,

conn->send_win - conn->unacked_len,

conn_mss(conn));

/* 2. zero length packet (pkt->buffer=NULL) allocated */

pkt = tcp_pkt_alloc(conn, len=0);

ret = tcp_pkt_peek(pkt, conn->send_data, pos, len=0);

/* 3. zero length packet passed to tcp_out_ext() */

ret = tcp_out_ext(conn, PSH | ACK, pkt, conn->seq + conn->unacked_len);

}

```

-> tcp_out_ext() -> net_pkt_append_buffer(pkt, data->buffer=```NULL```) -> net_buf_frag_insert(..., buffer=```NULL```) -> _ASSERT_NO_MSG(frag) -> ```ASSERTION FAIL```

**To Solve**

Don't proceed if TCP resend length is zero.

```

len = MIN3(conn->send_data_total - conn->unacked_len,

conn->send_win - conn->unacked_len,

conn_mss(conn));

if (len == 0) {

goto out;

}

```

**Environment**

- OS: Linux

- Toolchain: Zephyr SDK

- Commit 89212a7fbf5fcd8e4d661c016344ae4bf2d46f53

| priority | net ip assertion fails when tcp send data with zero length packet describe the bug when testing tcp stack assertion fails as following assertion fail zephyr zephyrmaster zephyr subsys net buf c zephyr zephyrmaster zephyr lib os assert c e zephyr fatal error kernel panic on cpu e current thread tcp work e halting system the flow of this failure is tcp work thread tcp resend data tcp send data send data totoal and unacked len are all in this case len conn send data total conn unacked len conn send win conn unacked len conn mss conn zero length packet pkt buffer null allocated pkt tcp pkt alloc conn len ret tcp pkt peek pkt conn send data pos len zero length packet passed to tcp out ext ret tcp out ext conn psh ack pkt conn seq conn unacked len tcp out ext net pkt append buffer pkt data buffer null net buf frag insert buffer null assert no msg frag assertion fail to solve don t proceed if tcp resend length is zero len conn send data total conn unacked len conn send win conn unacked len conn mss conn if len goto out environment os linux toolchain zephyr sdk commit | 1 |

796,217 | 28,102,406,553 | IssuesEvent | 2023-03-30 20:37:46 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [DocDB] Tablet splitting should be blocked on system_postgres.sequences_data | kind/enhancement area/docdb priority/medium 2.14 Backport Required 2.16 Backport Required | Jira Link: [DB-5577](https://yugabyte.atlassian.net/browse/DB-5577)

### Description

Tablet splitting can currently be applied to the `system_postgres.sequences_data` table. Although it is not a system catalog table, it is a metadata table, so, as with system catalog tables, tablet splitting should be disabled for it.

Reproduction:

```

./bin/yb-admin --master_addresses 127.0.0.1:7100 list_tablets ysql.system_postgres sequences_data

./bin/yb-admin --master_addresses 127.0.0.1:7100 split_tablet [tablet id]

```

[DB-5577]: https://yugabyte.atlassian.net/browse/DB-5577?atlOrigin=eyJpIjoiNWRkNTljNzYxNjVmNDY3MDlhMDU5Y2ZhYzA5YTRkZjUiLCJwIjoiZ2l0aHViLWNvbS1KU1cifQ | 1.0 | [DocDB] Tablet splitting should be blocked on system_postgres.sequences_data - Jira Link: [DB-5577](https://yugabyte.atlassian.net/browse/DB-5577)

### Description

Tablet splitting can currently be applied to the `system_postgres.sequences_data` table. Although it is not a system catalog table, it is a metadata table, so, as with system catalog tables, tablet splitting should be disabled for it.

Reproduction:

```

./bin/yb-admin --master_addresses 127.0.0.1:7100 list_tablets ysql.system_postgres sequences_data

./bin/yb-admin --master_addresses 127.0.0.1:7100 split_tablet [tablet id]

```

[DB-5577]: https://yugabyte.atlassian.net/browse/DB-5577?atlOrigin=eyJpIjoiNWRkNTljNzYxNjVmNDY3MDlhMDU5Y2ZhYzA5YTRkZjUiLCJwIjoiZ2l0aHViLWNvbS1KU1cifQ | priority | tablet splitting should be blocked on system postgres sequences data jira link description tablet splitting can currently be applied to the system postgres sequences data this is a metadata table even though it is not a system catalog table and therefore similar to system catalog tables tablet splitting should be disabled for it reproduction bin yb admin master addresses list tablets ysql system postgres sequences data bin yb admin master addresses split tablet | 1 |

690,192 | 23,648,910,081 | IssuesEvent | 2022-08-26 03:21:18 | Kong/gateway-operator | https://api.github.com/repos/Kong/gateway-operator | closed | Update dataplane env vars in controlplane when dataplane service changes | size/medium area/maintenance priority/medium | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Problem Statement

The `ControlPlane` deployments are pointed to the correct `DataPlane` service via two env vars: `CONTROLLER_PUBLISH_SERVICE` and `CONTROLLER_KONG_ADMIN_URL`. Both contain the address of the dataplane service to talk to. With https://github.com/Kong/gateway-operator/pull/91, when the `DataPlane` service is deleted, it is recreated automatically by the `dataplane-controller`; the problem is that the service name is not static, but generated through the `generateName` field. Hence, every time the `DataPlane` service is recreated by the `dataplane-controller`, the `controlplane-controller` should also update the `ControlPlane` deployment env vars.

### Proposed Solution

We should trigger an update on the `controlplane-controller` every time the `DataPlane` service changes. To do so, we can watch the `Dataplane` services in the `controlplane-controller` and trigger a reconciliation loop every time an event occurs.

### Additional information

_No response_

### Acceptance Criteria

- [ ] Every time the dataplane service is created, the dataplane service env vars are set in the controlplane deployment

- [ ] Every time the dataplane service is deleted, the dataplane service env vars are unset from the controlplane deployment | 1.0 | Update dataplane env vars in controlplane when dataplane service changes - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Problem Statement

The `ControlPlane` deployments are pointed to the correct `DataPlane` service via two env vars: `CONTROLLER_PUBLISH_SERVICE` and `CONTROLLER_KONG_ADMIN_URL`. Both contain the address of the dataplane service to talk to. With https://github.com/Kong/gateway-operator/pull/91, when the `DataPlane` service is deleted, it is recreated automatically by the `dataplane-controller`; the problem is that the service name is not static, but generated through the `generateName` field. Hence, every time the `DataPlane` service is recreated by the `dataplane-controller`, the `controlplane-controller` should also update the `ControlPlane` deployment env vars.

### Proposed Solution

We should trigger an update on the `controlplane-controller` every time the `DataPlane` service changes. To do so, we can watch the `Dataplane` services in the `controlplane-controller` and trigger a reconciliation loop every time an event occurs.

### Additional information

_No response_

### Acceptance Criteria

- [ ] Every time the dataplane service is created, the dataplane service env vars are set in the controlplane deployment

- [ ] Every time the dataplane service is deleted, the dataplane service env vars are unset from the controlplane deployment | priority | update dataplane env vars in controlplane when dataplane service changes is there an existing issue for this i have searched the existing issues problem statement the controlplane deployments are pointed to the correct dataplane service via different env vars controller publish service and controller kong admin url these vars contain the correct dataplane service address to talk to with when the dataplane service is deleted it is recreated automatically by the dataplane controller the problem is that the service name is not static but created through the generatename field hence every time the dataplane service is recreated by the dataplane controller the controlplane controller should also update the controlplane deployment env vars proposed solution we should trigger an update on the controlplane controller every time the dataplane service changes to do so we can watch the dataplane services in the controlplane controller and trigger a reconciliation loop every time an event occurs additional information no response acceptance criteria every time the dataplane service is created the dataplane service env vars are set in the controlplane deployment every time the dataplane service is deleted the dataplane service env vars are unset from the controlplane deployment | 1 |

267,345 | 8,388,015,029 | IssuesEvent | 2018-10-09 03:52:22 | ankidroid/Anki-Android | https://api.github.com/repos/ankidroid/Anki-Android | opened | Implement sampling strategy in analytics | Priority-Medium enhancement | We will most likely overwhelm the free tier in Google Analytics, but there's an easy answer: a sampling strategy.

Need to implement this in the upstream library and tune it here based on the usage we see | 1.0 | Implement sampling strategy in analytics - We will most likely overwhelm the free tier in Google Analytics, but there's an easy answer: a sampling strategy.

Need to implement this in the upstream library and tune it here based on the usage we see | priority | implement sampling strategy in analytics we will most likely overwhelm the free tier in google analytics but there s an easy answer a sampling strategy need to implement this in the upstream library and tune it here based on the usage we see | 1 |
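
One common way to sample analytics traffic is to decide per client, deterministically, whether that client reports at all. The Python sketch below illustrates that idea only; the `is_sampled` name, the use of a client ID string, and the percentage parameter are assumptions and do not describe the upstream library mentioned in the issue.

```python
import hashlib


def is_sampled(client_id: str, sample_percent: int) -> bool:
    """Return True if this client falls inside the sampled fraction.

    The decision is derived from a hash of the client ID, so a given
    client is consistently in or out for a given sample_percent.
    """
    digest = hashlib.sha256(client_id.encode("utf-8")).digest()
    # Map the first 8 bytes of the hash onto a bucket in [0, 100).
    bucket = int.from_bytes(digest[:8], "big") % 100
    return bucket < sample_percent
```

Tuning "based on the usage we see" would then amount to adjusting `sample_percent`; raising it only ever adds clients to the sample, because each client's bucket is fixed.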

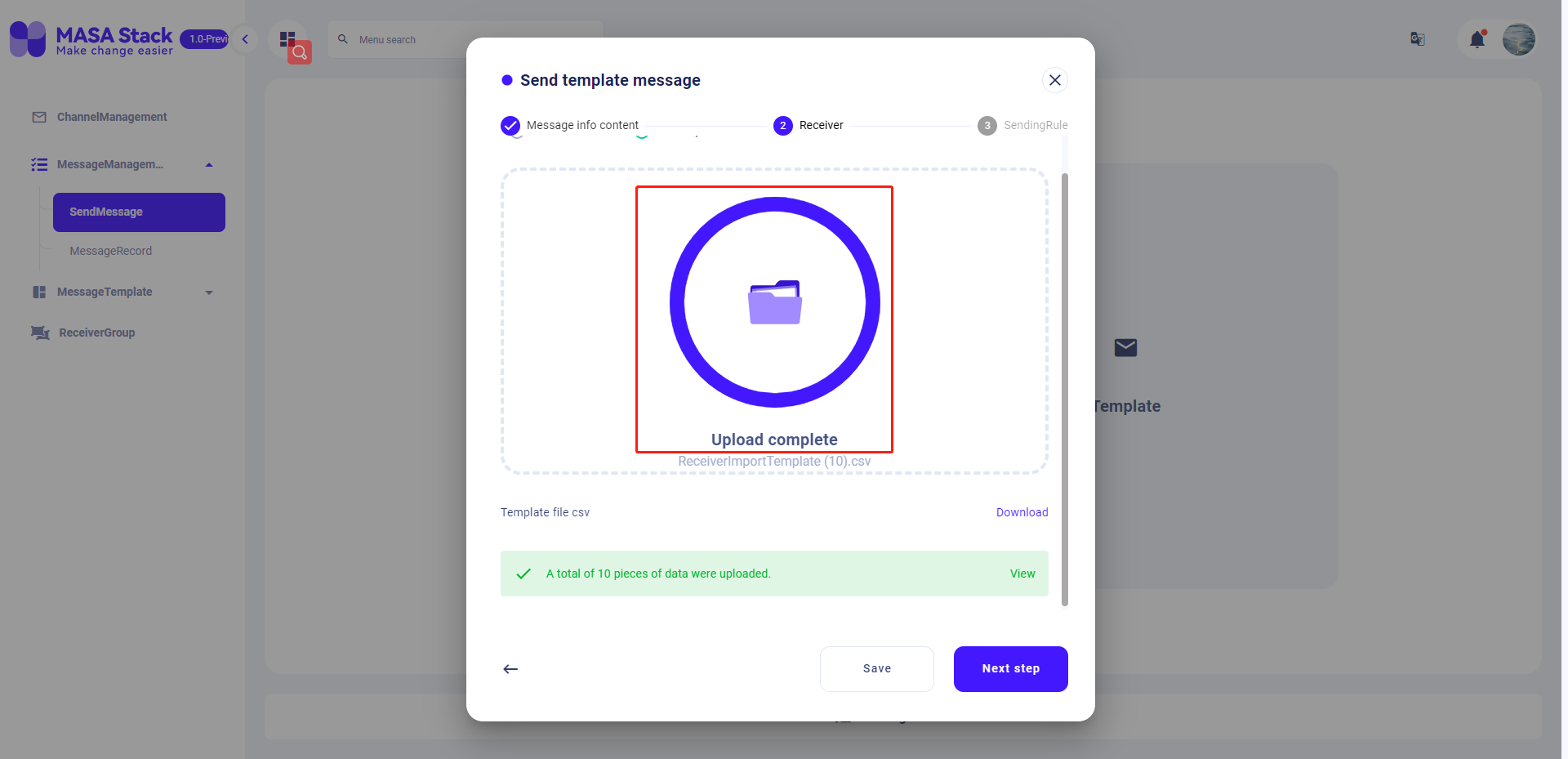

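794,887 | 28,053,515,452 | IssuesEvent | 2023-03-29 07:51:22 | masastack/MASA.MC | https://api.github.com/repos/masastack/MASA.MC | closed | Send messages and upload recipients in batches. After uploading files, select File Upload again. The upload process is displayed | type/bug status/resolved severity/medium site/staging priority/p2 | When sending a message and uploading recipients in bulk, selecting a file to upload again after a file has already been uploaded should show the upload progress.

| 1.0 | Send messages and upload recipients in batches. After uploading files, select File Upload again. The upload process is displayed - When sending a message and uploading recipients in bulk, selecting a file to upload again after a file has already been uploaded should show the upload progress.

| priority | send messages and upload recipients in batches after uploading files select file upload again the upload process is displayed when sending a message and uploading recipients in bulk selecting a file to upload again after a file has already been uploaded should show the upload progress | 1 |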

770,685 | 27,050,682,526 | IssuesEvent | 2023-02-13 13:02:48 | union-platform/union-app | https://api.github.com/repos/union-platform/union-app | closed | Rework functions to expect full data structure, not PK only | type: enhancement priority: medium ss: backend | # Clarification and motivation

<!--

Clarify what you want to be done and why.

-->

With the current implementation there are two problems:

1. It's easy to construct a wrong argument and only get an error at some later point.

2. A SQL exception for a non-existent foreign key is translated to a 500, but in some handlers we should treat it as a 404 because the provided PK is part of the URL.

# Acceptance criteria

<!--

Clarify how we can verify that the task is done.

-->

All functions, where possible, receive the full data structure as an argument and extract the PK in local scope. | 1.0 | Rework functions to expect full data structure, not PK only - # Clarification and motivation

<!--

Clarify what you want to be done and why.

-->

With the current implementation there are two problems:

1. It's easy to construct a wrong argument and only get an error at some later point.

2. A SQL exception for a non-existent foreign key is translated to a 500, but in some handlers we should treat it as a 404 because the provided PK is part of the URL.

# Acceptance criteria

<!--

Clarify how we can verify that the task is done.

-->

All functions, where possible, receive the full data structure as an argument and extract the PK in local scope. | priority | rework functions to expect full data structure not pk only clarification and motivation clarify what you want to be done and why with the current implementation there are two problems it s easy to construct a wrong argument and only get an error at some later point a sql exception for a non existent foreign key is translated to a but in some handlers we should treat it as a because the provided pk is part of the url acceptance criteria clarify how we can verify that the task is done all functions where possible receive the full data structure as an argument and extract the pk in local scope | 1 |

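670,723 | 22,701,391,129 | IssuesEvent | 2022-07-05 10:59:46 | FEeasy404/GameUs | https://api.github.com/repos/FEeasy404/GameUs | opened | Implement chat list markup | ✨new feature 🖐Priority: Medium | ## Description of the added feature

For the chat list and chat room, only the markup and styling are implemented. The list of chats with conversations currently in progress is displayed.

## To do

- [ ] Create the chat list component

- [ ] Implement the chat list page

## ETC

| 1.0 | Implement chat list markup - ## Description of the added feature

For the chat list and chat room, only the markup and styling are implemented. The list of chats with conversations currently in progress is displayed.

## To do

- [ ] Create the chat list component

- [ ] Implement the chat list page

## ETC

| priority | implement chat list markup description of the added feature for the chat list and chat room only the markup and styling are implemented the list of chats with conversations currently in progress is displayed to do create the chat list component implement the chat list page etc | 1 |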

756,317 | 26,466,441,706 | IssuesEvent | 2023-01-17 00:29:27 | cs-utulsa/Encrypted-Chat-Service | https://api.github.com/repos/cs-utulsa/Encrypted-Chat-Service | closed | Coding: GUI Working with Chat | coding Priority 2 Medium Effort | Issue exists to ensure all screens created in other issues work with the chat class and have all existing functionality included.

- [x] Properly commented

- [x] GUIs do not block use of functions

- [x] All functions available to user via the GUI

- [x] Add Boolean at top of chat to indicate GUI or console mode. Functionality for both must be put in place as part of this. | 1.0 | Coding: GUI Working with Chat - Issue exists to ensure all screens created in other issues work with the chat class and have all existing functionality included.

- [x] Properly commented

- [x] GUIs do not block use of functions

- [x] All functions available to user via the GUI

- [x] Add Boolean at top of chat to indicate GUI or console mode. Functionality for both must be put in place as part of this. | priority | coding gui working with chat issue exists to ensure all screens created in other issues work with the chat class and have all existing functionality included properly commented guis do not block use of functions all functions available to user via the gui add boolean at top of chat to indicate gui or console mode functionality for both must be put in place as part of this | 1 |

581,654 | 17,314,079,662 | IssuesEvent | 2021-07-27 01:54:41 | lewisjwilson/kmj | https://api.github.com/repos/lewisjwilson/kmj | opened | Fix percentage in FlashcardsComplete.kt | Medium Priority bug | Easy fix, percentage is showing to too many sf. Change to 1/2 s.f.

Also change text to `Accuracy ${value}`? | 1.0 | Fix percentage in FlashcardsComplete.kt - Easy fix, percentage is showing to too many sf. Change to 1/2 s.f.

Also change text to `Accuracy ${value}`? | priority | fix percentage in flashcardscomplete kt easy fix percentage is showing to too many sf change to s f also change text to accuracy value | 1 |
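
The fix requested above is rounding to a fixed number of significant figures rather than decimal places. The project itself is Kotlin; the Python sketch below only illustrates the arithmetic, and the `round_sig` helper is a hypothetical name, not code from the repository.

```python
import math


def round_sig(value: float, sig: int = 2) -> float:
    """Round value to `sig` significant figures."""
    if value == 0:
        return 0.0
    # Position of the leading digit: 66.7 -> 1, 0.012 -> -2, 100.0 -> 2.
    exponent = math.floor(math.log10(abs(value)))
    # Keep `sig` digits counted from the leading digit.
    return round(value, sig - 1 - exponent)
```

With two significant figures an accuracy of 66.6667 becomes 67.0; with one it becomes 70.0, which may be too coarse for a percentage, so two is likely the safer of the "1/2 s.f." options.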

84,276 | 3,662,593,620 | IssuesEvent | 2016-02-19 00:02:48 | sandialabs/slycat | https://api.github.com/repos/sandialabs/slycat | opened | Scientific Notation not supported in hyperchunk query language | bug Medium Priority PS Model | Filtering using either a slider or categorical buttons fails if the input data includes values using scientific notation. HQL doesn't handle the 'e-' portion of the number. | 1.0 | Scientific Notation not supported in hyperchunk query language - Filtering using either a slider or categorical buttons fails if the input data includes values using scientific notation. HQL doesn't handle the 'e-' portion of the number. | priority | scientific notation not supported in hyperchunk query language filtering using either a slider or categorical buttons fails if the input data includes values using scientific notation hql doesn t handle the e portion of the number | 1 |
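
The failure described above is a number tokenizer that stops at the exponent. As a hedged illustration in Python, a numeric pattern that accepts an optional signed exponent could look like the sketch below; the `NUMBER` pattern and `parse_number` helper are assumptions made for this example and are not Slycat's actual HQL grammar.

```python
import re

# Optional sign, digits with optional fraction (or a bare fraction),
# then an optional exponent such as e-3 or E+4.
NUMBER = re.compile(r"[-+]?(?:\d+\.?\d*|\.\d+)(?:[eE][-+]?\d+)?")


def parse_number(token: str) -> float:
    """Parse a numeric token, including scientific notation."""
    if NUMBER.fullmatch(token) is None:
        raise ValueError(f"not a number: {token!r}")
    return float(token)
```

A token such as `1.5e-3` is accepted whole, while a dangling exponent such as `1e-` is rejected instead of being split into a number and trailing characters.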

548,588 | 16,067,153,218 | IssuesEvent | 2021-04-23 21:11:32 | Qiskit/qiskit-terra | https://api.github.com/repos/Qiskit/qiskit-terra | closed | Incorrect deserialization result with ByType polymorphic field | bug priority: medium | # Information

- **Qiskit Terra version**: 0.10.0

- **Python version**: 3.7.3

- **Operating system**: Darwin Kernel Version 18.7.0

### What is the current behavior?

When using polymorphic field `qiskit.validation.fields.ByType` combined with multi-nested schemas leads to incorrect deserialization result. If you have a nested schema listed in `ByType` polymorphic field, it might not get correctly deserialized. An example is provided below.

### Steps to reproduce the problem

Consider the following setup:

```

from qiskit.validation import BaseSchema

from qiskit.validation.fields import ByType, Nested, Integer, String

class IntSchema(BaseSchema):

int_field = Integer(required=True)

class FirstNestedSchema(BaseSchema):

string = String(required=True)

class SecondNestedchema(BaseSchema):

nested_int = Nested(IntSchema)

class TestSchema(BaseSchema):

test = ByType([Nested(FirstNestedSchema), Nested(SecondNestedchema)])

```

And corresponding model bindings:

```

from qiskit.validation import BaseModel, bind_schema

@bind_schema(TestSchema)

class TestModel(BaseModel):

pass

@bind_schema(SecondNestedchema)

class TestSecondNested(BaseModel):

pass

@bind_schema(IntSchema)

class TestInt(BaseModel):

pass

```

The following test case will always fail:

```

from qiskit.test import QiskitTestCase

class Test(QiskitTestCase):

def test_schema(self):

int_model = TestInt(int_field=1)

second_nested = TestSecondNested(nested_int=int_model)

result = TestModel(test=second_nested).to_dict()

# result == {'test': {'nested_int': TestInt(int_field=1)}}

expected = {'test': {'nested_int': {'int_field': 1}}}

self.assertEqual(result, expected)

```

### What is the expected behavior?

When you create a dict from given schemas:

```

int_model = TestInt(int_field=1)

second_nested = TestSecondNested(nested_int=int_model)

result = TestModel(test=second_nested).to_dict()

```

`result` dict is supposed to match the following dict:

```

{'test': {'nested_int': {'int_field': 1}}}

```

Instead, `TestInt` will not get deserialized properly, and will result in:

```

{'test': {'nested_int': TestInt(int_field=1)}}

```

### Observations

Interestingly enough, the deserialization result depends on the **order of nested schemas** in the list of `ByType` fields.

For example, if we declare `TestSchema` as:

```

class TestSchema(BaseSchema):

test = ByType([Nested(SecondNestedchema), Nested(FirstNestedSchema)])

```

i.e. changing the order of nested schemas or swapping `FirstNestedSchema` and `SecondNestedchema` it will result in correct deserialized dict:

```

{'test': {'nested_int': {'int_field': 1}}}

```

¯\\\_(ツ)_/¯ | 1.0 | Incorrect deserialization result with ByType polymorphic field - # Information

- **Qiskit Terra version**: 0.10.0

- **Python version**: 3.7.3

- **Operating system**: Darwin Kernel Version 18.7.0

### What is the current behavior?

When using polymorphic field `qiskit.validation.fields.ByType` combined with multi-nested schemas leads to incorrect deserialization result. If you have a nested schema listed in `ByType` polymorphic field, it might not get correctly deserialized. An example is provided below.

### Steps to reproduce the problem

Consider the following setup:

```

from qiskit.validation import BaseSchema

from qiskit.validation.fields import ByType, Nested, Integer, String

class IntSchema(BaseSchema):

int_field = Integer(required=True)

class FirstNestedSchema(BaseSchema):

string = String(required=True)

class SecondNestedchema(BaseSchema):

nested_int = Nested(IntSchema)

class TestSchema(BaseSchema):

test = ByType([Nested(FirstNestedSchema), Nested(SecondNestedchema)])

```

And corresponding model bindings:

```

from qiskit.validation import BaseModel, bind_schema

@bind_schema(TestSchema)

class TestModel(BaseModel):

pass

@bind_schema(SecondNestedchema)

class TestSecondNested(BaseModel):

pass

@bind_schema(IntSchema)

class TestInt(BaseModel):

pass

```

The following test case will always fail:

```

from qiskit.test import QiskitTestCase

class Test(QiskitTestCase):

def test_schema(self):

int_model = TestInt(int_field=1)

second_nested = TestSecondNested(nested_int=int_model)

result = TestModel(test=second_nested).to_dict()

# result == {'test': {'nested_int': TestInt(int_field=1)}}

expected = {'test': {'nested_int': {'int_field': 1}}}

self.assertEqual(result, expected)

```

### What is the expected behavior?

When you create a dict from given schemas:

```

int_model = TestInt(int_field=1)

second_nested = TestSecondNested(nested_int=int_model)

result = TestModel(test=second_nested).to_dict()

```

`result` dict is supposed to match the following dict:

```

{'test': {'nested_int': {'int_field': 1}}}

```

Instead, `TestInt` will not get deserialized properly, and will result in:

```

{'test': {'nested_int': TestInt(int_field=1)}}

```

### Observations

Interestingly enough, the deserialization result depends on the **order of nested schemas** in the list of `ByType` fields.

For example, if we declare `TestSchema` as:

```

class TestSchema(BaseSchema):

test = ByType([Nested(SecondNestedchema), Nested(FirstNestedSchema)])

```

i.e. changing the order of nested schemas or swapping `FirstNestedSchema` and `SecondNestedchema` it will result in correct deserialized dict:

```

{'test': {'nested_int': {'int_field': 1}}}

```

¯\\\_(ツ)_/¯ | priority | incorrect deserialization result with bytype polymorphic field information qiskit terra version python version operating system darwin kernel version what is the current behavior when using polymorphic field qiskit validation fields bytype combined with multi nested schemas leads to incorrect deserialization result if you have a nested schema listed in bytype polymorphic field it might not get correctly deserialized an example is provided below steps to reproduce the problem consider the following setup from qiskit validation import baseschema from qiskit validation fields import bytype nested integer string class intschema baseschema int field integer required true class firstnestedschema baseschema string string required true class secondnestedchema baseschema nested int nested intschema class testschema baseschema test bytype and corresponding model bindings from qiskit validation import basemodel bind schema bind schema testschema class testmodel basemodel pass bind schema secondnestedchema class testsecondnested basemodel pass bind schema intschema class testint basemodel pass the following test case will always fail from qiskit test import qiskittestcase class test qiskittestcase def test schema self int model testint int field second nested testsecondnested nested int int model result testmodel test second nested to dict result test nested int testint int field expected test nested int int field self assertequal result expected what is the expected behavior when you create a dict from given schemas int model testint int field second nested testsecondnested nested int int model result testmodel test second nested to dict result dict is supposed to match the following dict test nested int int field instead testint will not get deserialized properly and will result in test nested int testint int field observations interestingly enough the deserialization result depends on the order of nested schemas in the list of bytype fields for 
example if we declare testschema as class testschema baseschema test bytype i e changing the order of nested schemas or swapping firstnestedschema and secondnestedchema it will result in correct deserialized dict test nested int int field ¯ ツ ¯ | 1 |

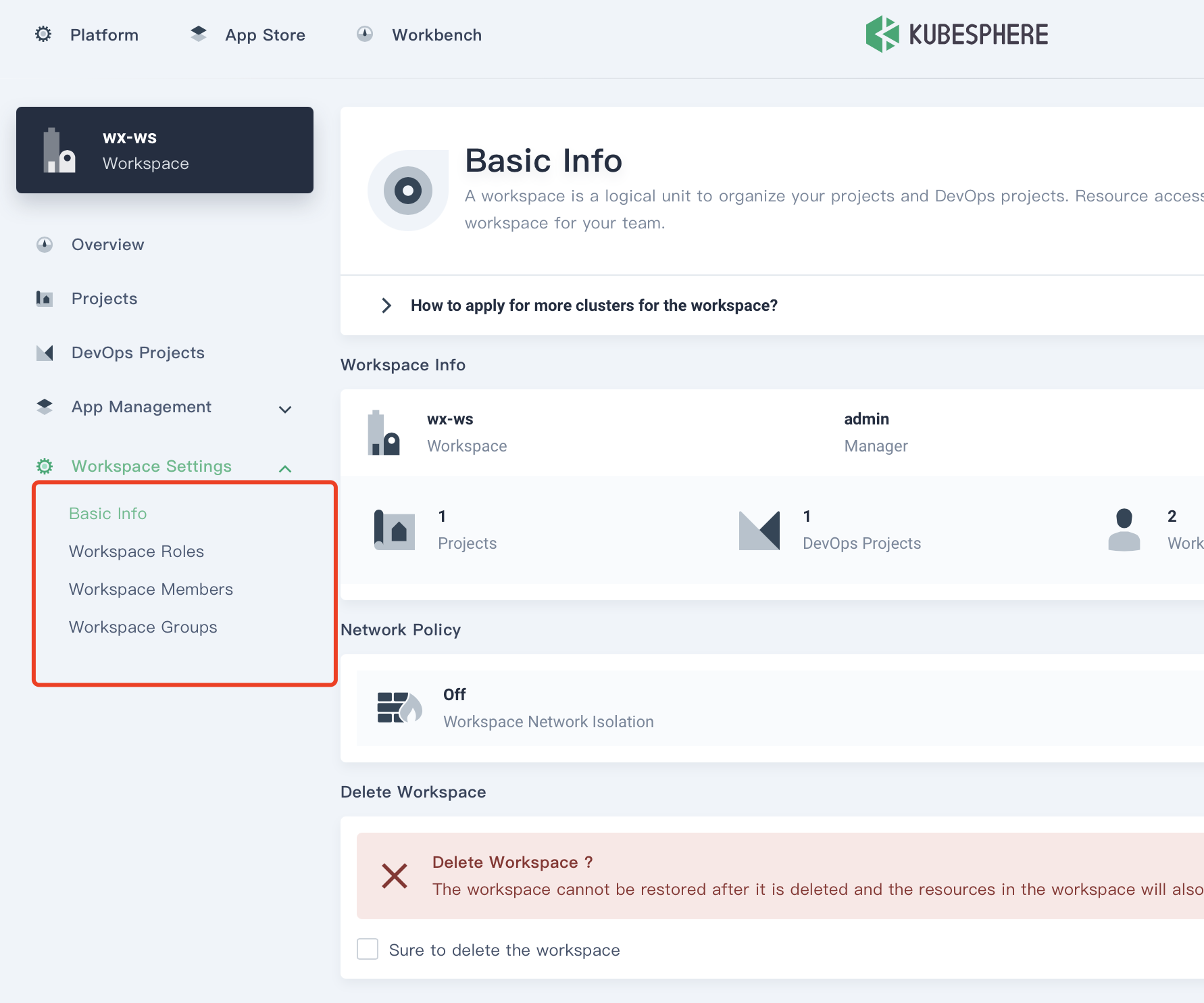

532,964 | 15,574,451,210 | IssuesEvent | 2021-03-17 09:51:55 | kubesphere/kubesphere | https://api.github.com/repos/kubesphere/kubesphere | closed | workspace admin can not view the workspace quota management. | kind/bug priority/medium | **Describe**

A user with global role `regular` or `Cluster Management` and workspace role `admin` can not view the workspace quota management.

**Environment**

kubespheredev/ks-console:latest

**Preset conditions**

A user with global role `regular` and workspace role `admin`

**Expected behavior**

A user with global role `regular` and workspace role `admin` can view the workspace quota management.

**Actual behavior**

/kind bug

/assign @wansir

/milestone 3.1.0

/priority medium

| 1.0 | workspace admin can not view the workspace quota management. - **Describe**

A user with global role `regular` or `Cluster Management` and workspace role `admin` can not view the workspace quota management.

**Environment**

kubespheredev/ks-console:latest

**Preset conditions**

A user with global role `regular` and workspace role `admin`

**Expected behavior**

A user with global role `regular` and workspace role `admin` can view the workspace quota management.

**Actual behavior**

/kind bug

/assign @wansir

/milestone 3.1.0

/priority medium

| priority | workspace admin can not view the workspace quota management describe a user with global role regular or cluster management and workspace role admin can not view the workspace quota management environment kubespheredev ks console latest preset conditions a user with global role regular and workspace role admin expected behavior a user with global role regular and workspace role admin can view the workspace quota management actual behavior kind bug assign wansir milestone priority medium | 1 |

80,097 | 3,550,700,507 | IssuesEvent | 2016-01-20 23:03:07 | ualbertalib/discovery | https://api.github.com/repos/ualbertalib/discovery | opened | Find more by this author link not providing results | bug Medium priority | Clicking on [Cordasco, Francesco, 1920-2001](https://www.library.ualberta.ca/catalog?f%5Bauthor_display%5D%5B%5D=Cordasco,+Francesco,+1920-2001) from [this record](https://www.library.ualberta.ca/catalog/466240) leads to the following screen:

NEOS shows 52 items for this author. | 1.0 | Find more by this author link not providing results - Clicking on [Cordasco, Francesco, 1920-2001](https://www.library.ualberta.ca/catalog?f%5Bauthor_display%5D%5B%5D=Cordasco,+Francesco,+1920-2001) from [this record](https://www.library.ualberta.ca/catalog/466240) leads to the following screen:

NEOS shows 52 items for this author. | priority | find more by this author link not providing results clicking on from leads to the following screen neos shows items for this author | 1 |

738,186 | 25,548,414,315 | IssuesEvent | 2022-11-29 21:02:07 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [YSQL] `yb_build.sh --no-tests reinitdb` fails | kind/bug area/ysql priority/medium status/awaiting-triage | Jira Link: [DB-4276](https://yugabyte.atlassian.net/browse/DB-4276)

### Description

Error message:

```

Standard output from external program {{ '/home/amartsin/code/yugabyte-db/build-support/run-test.sh' /home/amartsin/code/yugabyte-db/build/debug-clang15-dynamic-ninja/tests-pgwrapper/create_initial_sys_catalog_snapshot }} running in '/home/amartsin/code/yugabyte-db/build/debug-clang15-dynamic-ninja', saving stdout to {{ /home/amartsin/code/yugabyte-db/build/debug-clang15-dynamic-ninja/create_initial_sys_catalog_snapshot.out }}, stderr to {{ /home/amartsin/code/yugabyte-db/build/debug-clang15-dynamic-ninja/create_initial_sys_catalog_snapshot.err }}:

Test is running on host amartsin-laptop, arguments: /home/amartsin/code/yugabyte-db/build/debug-clang15-dynamic-ninja/tests-pgwrapper/create_initial_sys_catalog_snapshot

/home/amartsin/code/yugabyte-db/build-support/common-test-env.sh: line 912: /home/amartsin/code/yugabyte-db/build/debug-clang15-dynamic-ninja/bin/run-with-timeout: No such file or directory

(end of standard output)

```

Internally, the `initial_sys_catalog_snapshot` build target uses the `run-with-timeout` tool, which is considered part of the test infrastructure and is not built if `--no-tests` is specified. | 1.0 | [YSQL] `yb_build.sh --no-tests reinitdb` fails - Jira Link: [DB-4276](https://yugabyte.atlassian.net/browse/DB-4276)

### Description

Error message:

```

Standard output from external program {{ '/home/amartsin/code/yugabyte-db/build-support/run-test.sh' /home/amartsin/code/yugabyte-db/build/debug-clang15-dynamic-ninja/tests-pgwrapper/create_initial_sys_catalog_snapshot }} running in '/home/amartsin/code/yugabyte-db/build/debug-clang15-dynamic-ninja', saving stdout to {{ /home/amartsin/code/yugabyte-db/build/debug-clang15-dynamic-ninja/create_initial_sys_catalog_snapshot.out }}, stderr to {{ /home/amartsin/code/yugabyte-db/build/debug-clang15-dynamic-ninja/create_initial_sys_catalog_snapshot.err }}:

Test is running on host amartsin-laptop, arguments: /home/amartsin/code/yugabyte-db/build/debug-clang15-dynamic-ninja/tests-pgwrapper/create_initial_sys_catalog_snapshot

/home/amartsin/code/yugabyte-db/build-support/common-test-env.sh: line 912: /home/amartsin/code/yugabyte-db/build/debug-clang15-dynamic-ninja/bin/run-with-timeout: No such file or directory

(end of standard output)

```

Internally, the `initial_sys_catalog_snapshot` build target uses the `run-with-timeout` tool, which is considered part of the test infrastructure and is not built if `--no-tests` is specified. | priority | yb build sh no tests reinitdb fails jira link description error message standard output from external program home amartsin code yugabyte db build support run test sh home amartsin code yugabyte db build debug dynamic ninja tests pgwrapper create initial sys catalog snapshot running in home amartsin code yugabyte db build debug dynamic ninja saving stdout to home amartsin code yugabyte db build debug dynamic ninja create initial sys catalog snapshot out stderr to home amartsin code yugabyte db build debug dynamic ninja create initial sys catalog snapshot err test is running on host amartsin laptop arguments home amartsin code yugabyte db build debug dynamic ninja tests pgwrapper create initial sys catalog snapshot home amartsin code yugabyte db build support common test env sh line home amartsin code yugabyte db build debug dynamic ninja bin run with timeout no such file or directory end of standard output internally the initial sys catalog snapshot build target uses the run with timeout tool which is considered part of the test infrastructure and is not built if no tests is specified | 1 |

689,796 | 23,634,522,521 | IssuesEvent | 2022-08-25 12:16:02 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [CDCSDK] Stale entry in CDC Cache causes Stream Expiration. | priority/medium 2.12 Backport Required 2.14 Backport Required | Consider a cluster with 3 tservers (TS1, TS2, TS3) and a table with a single tablet. Today we maintain and track the stream active time in the cache. Initially the tablet LEADER is TS1, so TS1 has a cache entry for the tablet that tracks its active time. After some time TS2 becomes the tablet LEADER, so an entry is created in TS2's cache to track the tablet's active time. If TS1 becomes the LEADER again after the `cdc_intent_retention_ms` expiration time has elapsed, its existing cache entry is stale, so a call to GetChanges will find the stream expired.

| 1.0 | [CDCSDK] Stale entry in CDC Cache causes Stream Expiration. - Consider a cluster with 3 tservers (TS1, TS2, TS3) and a table with a single tablet. Today we maintain and track the stream active time in the cache. Initially the tablet LEADER is TS1, so TS1 has a cache entry for the tablet that tracks its active time. After some time TS2 becomes the tablet LEADER, so an entry is created in TS2's cache to track the tablet's active time. If TS1 becomes the LEADER again after the `cdc_intent_retention_ms` expiration time has elapsed, its existing cache entry is stale, so a call to GetChanges will find the stream expired.

| priority | stale entry in cdc cache causes stream expiration consider a cluster with tservers and a table with a single tablet today we maintain and track the stream active time in the cache during starting time tablet leader is so there is a cache entry for the tablet to track its active time after some time becomes the tablet leader so an entry will be created in s cache to track the active time of the tablet now after cdc intent retention ms expiration time becomes a leader but its existing cache entry is not in sync so if we call getchanges stream will expire | 1 |

459,142 | 13,187,092,154 | IssuesEvent | 2020-08-13 02:19:27 | Twin-Cities-Mutual-Aid/twin-cities-aid-distribution-locations | https://api.github.com/repos/Twin-Cities-Mutual-Aid/twin-cities-aid-distribution-locations | closed | Replacing the back end sheet with a copy | Priority: Medium Type: Discussion Type: Feature Request From TCMAP Type: Maintenance | The current owner of the Google Sheets document that is our backend...is someone who hasn't been part of this project for more than a month and hasn't answered emails. I'd like to do a "make a copy" on it so that one of our registered email addresses can become the owner, to remove the possibility that she cleans up her Drive and deletes the whole thing.

Here are things I know will need to change to make that happen:

- The public version of the data will need to draw from the new private sheet

- Everyone who has edit access will need to be given access to the new sheet/lose access to the old one so they don't update in the wrong place

- Pinned links/Slackbot auto-responses that spit out the link will need to be updated

@maxine and/or @mc-funk, what will need to be done in Twilio to make sure texts go to the new sheet?

Everyone else, what else am I missing? | 1.0 | Replacing the back end sheet with a copy - The current owner of the Google Sheets document that is our backend...is someone who hasn't been part of this project for more than a month and hasn't answered emails. I'd like to do a "make a copy" on it so that one of our registered email addresses can become the owner, to remove the possibility that she cleans up her Drive and deletes the whole thing.

Here are things I know will need to change to make that happen:

- The public version of the data will need to draw from the new private sheet

- Everyone who has edit access will need to be given access to the new sheet/lose access to the old one so they don't update in the wrong place

- Pinned links/Slackbot auto-responses that spit out the link will need to be updated

@maxine and/or @mc-funk, what will need to be done in Twilio to make sure texts go to the new sheet?

Everyone else, what else am I missing? | priority | replacing the back end sheet with a copy the current owner of the google sheets document that is our backend is someone who hasn t been part of this project for more than a month and hasn t answered emails i d like to do a make a copy on it so that one of our registered email addresses can become the owner to remove the possibility that she cleans up her drive and deletes the whole thing here are things i know will need to change to make that happen the public version of the data will need to draw from the new private sheet everyone who has edit access will need to be given access to the new sheet lose access to the old one so they don t update in the wrong place pinned links slackbot auto responses that spit out the link will need to be updated maxine and or mc funk what will need to be done in twilio to make sure texts go to the new sheet everyone else what else am i missing | 1 |

741,296 | 25,787,875,737 | IssuesEvent | 2022-12-09 22:44:25 | scs-lab/ChronoLog | https://api.github.com/repos/scs-lab/ChronoLog | opened | Add show Chronicle/Story to ClientAPI | medium priority | Add two methods to ClientAPI that would provide a list of chronicles and/or stories on the cluster that the client is authorized to access.

showChronicles() - returns a list of chronicle names

showStories(const string& chronicle_name) - returns a list of story names that belong to a chronicle given the chronicle name | 1.0 | Add show Chronicle/Story to ClientAPI - Add two methods to ClientAPI that would provide a list of chronicles and/or stories on the cluster that the client is authorized to access.

showChronicles() - returns a list of chronicle names

showStories(const string& chronicle_name) - returns a list of story names that belong to a chronicle given the chronicle name | priority | add show chronicle story to clientapi add two methods to clientapi that would provide a list of chronicles and or stories on the cluster that the client is authorized to access showchronicles returns a list of chronicle names showstories const string chronicle name returns a list of story names that belong to a chronicle given the chronicle name | 1 |

54,969 | 3,071,738,239 | IssuesEvent | 2015-08-19 13:48:40 | pavel-pimenov/flylinkdc-r5xx | https://api.github.com/repos/pavel-pimenov/flylinkdc-r5xx | closed | Errors in displaying the instantaneous speed right after a transfer starts | bug imported Priority-Medium | _From [infinitysky7](https://code.google.com/u/infinitysky7/) on November 25, 2010 13:14:06_

The current speed is displayed incorrectly and jumps around. The screenshot shows a 100 Mbit channel (12.5 MiB/s).

**Attachment:** [Speed.png](http://code.google.com/p/flylinkdc/issues/detail?id=230)

_Original issue: http://code.google.com/p/flylinkdc/issues/detail?id=230_ | 1.0 | Errors in displaying the instantaneous speed right after a transfer starts - _From [infinitysky7](https://code.google.com/u/infinitysky7/) on November 25, 2010 13:14:06_

The current speed is displayed incorrectly and jumps around. The screenshot shows a 100 Mbit channel (12.5 MiB/s).

**Attachment:** [Speed.png](http://code.google.com/p/flylinkdc/issues/detail?id=230)

_Original issue: http://code.google.com/p/flylinkdc/issues/detail?id=230_ | priority | errors in displaying the instantaneous speed right after a transfer starts from on november the current speed is displayed incorrectly and jumps around the screenshot shows a mbit channel mib s attachment original issue | 1 |

241,632 | 7,818,430,332 | IssuesEvent | 2018-06-13 12:18:52 | weglot/translate-laravel | https://api.github.com/repos/weglot/translate-laravel | closed | Update request tracking | priority: medium status: confirmed type: enhancement | <!-- This form is for bug reports and feature requests ONLY! -->

**Is this a BUG REPORT or FEATURE REQUEST?**: FEATURE REQUEST

**What happened**: Update request tracking with new one | 1.0 | Update request tracking - <!-- This form is for bug reports and feature requests ONLY! -->

**Is this a BUG REPORT or FEATURE REQUEST?**: FEATURE REQUEST

**What happened**: Update request tracking with new one | priority | update request tracking is this a bug report or feature request feature request what happened update request tracking with new one | 1 |

271,828 | 8,489,991,905 | IssuesEvent | 2018-10-26 22:02:29 | hydroshare/hydroshare | https://api.github.com/repos/hydroshare/hydroshare | closed | Scope - Create/Edit Resource workflow | (3) Large Effort Medium Priority enhancement | This issue will be a checklist of all of the separate functionality to develop for implementing the create/edit workflow updates found in the Scope work.

* [ ] #???? Ensure and enforce empty resources to be unable to be marked public

* [ ] #???? Open Resource in edit mode when a resource is created

* [ ] #???? Hide Ratings and comments in Edit mode

* [ ] #???? Include on-hover information boxes for each item on the resource page in edit mode. Make the information boxes editable in mezzanine interface.

* [ ] #???? Dynamic keyword suggestions based on existing keywords as user is typing. Setup a page that is editable through mezzanine for hydroshare common keywords.

* [ ] #???? sharing status rules revamp

This issue is derived from the Creating a Resource and Editing it section in https://docs.google.com/document/d/1td4-tb-cMOjeHZt0cJGE8xVSSlBL4vG3MUzkZtpL4r0

| 1.0 | Scope - Create/Edit Resource workflow - This issue will be a checklist of all of the separate functionality to develop for implementing the create/edit workflow updates found in the Scope work.

* [ ] #???? Ensure and enforce empty resources to be unable to be marked public

* [ ] #???? Open Resource in edit mode when a resource is created

* [ ] #???? Hide Ratings and comments in Edit mode

* [ ] #???? Include on-hover information boxes for each item on the resource page in edit mode. Make the information boxes editable in mezzanine interface.

* [ ] #???? Dynamic keyword suggestions based on existing keywords as user is typing. Setup a page that is editable through mezzanine for hydroshare common keywords.

* [ ] #???? sharing status rules revamp

This issue is derived from the Creating a Resource and Editing it section in https://docs.google.com/document/d/1td4-tb-cMOjeHZt0cJGE8xVSSlBL4vG3MUzkZtpL4r0

| priority | scope create edit resource workflow this issue will be a checklist of all of the separate functionality to develop for implementing the create edit workflow updates found in the scope work ensure and enforce empty resources to be unable to be marked public open resource in edit mode when a resource is created hide ratings and comments in edit mode include on hover information boxes for each item on the resource page in edit mode make the information boxes editable in mezzanine interface dynamic keyword suggestions based on existing keywords as user is typing setup a page that is editable through mezzanine for hydroshare common keywords sharing status rules revamp this issue is derived from the creating a resource and editing it section in | 1 |

522,646 | 15,164,501,648 | IssuesEvent | 2021-02-12 13:49:56 | erlang/otp | https://api.github.com/repos/erlang/otp | closed | ERL-1465: Child erl.exe process must be killed when erlsrv.exe is terminated | bug priority:medium team:VM |

Original reporter: `ilyan`

Affected version: `Not Specified`

Component: `Not Specified`

Migrated from: https://bugs.erlang.org/browse/ERL-1465

---

```

Killing erlsrv.exe (manually or 30 seconds after stopping the service) leaves erl.exe running.

The service can't be started until erl.exe is manually killed.

This link explains how to create child processes in the same job:

[https://stackoverflow.com/questions/24012773/c-winapi-how-to-kill-child-processes-when-the-calling-parent-process-is-for/24020820]

```

| 1.0 | ERL-1465: Child erl.exe process must be killed when erlsrv.exe is terminated -

Original reporter: `ilyan`

Affected version: `Not Specified`

Component: `Not Specified`

Migrated from: https://bugs.erlang.org/browse/ERL-1465

---

```

Killing erlsrv.exe (manually or 30 seconds after stopping the service) leaves erl.exe running.

The service can't be started until erl.exe is manually killed.

This link explains how to create child processes in the same job:

[https://stackoverflow.com/questions/24012773/c-winapi-how-to-kill-child-processes-when-the-calling-parent-process-is-for/24020820]

```

| priority | erl child erl exe process must be killed when erlsrv exe is terminated original reporter ilyan affected version not specified component not specified migrated from killing erlsrv exe manually or seconds after stopping the service leaves erl exe running the service can t be started until erl exe is manually killed this link explains how to create child processes in the same job | 1 |

790,082 | 27,815,055,400 | IssuesEvent | 2023-03-18 15:34:36 | WordPress/openverse | https://api.github.com/repos/WordPress/openverse | opened | Line heights are inconsistent | 🟨 priority: medium 🛠 goal: fix 🕹 aspect: interface 🧱 stack: frontend | ## Description

<!-- Concisely describe the bug. Compare your experience with what you expected to happen. -->

<!-- For example: "I clicked the 'submit' button and instead of seeing a thank you message, I saw a blank page." -->

Tailwind config parameter for setting custom line heights is called `lineHeight`. In our `tailwind.config` it is called `lineHeights` (with an extra s at the end). Because of this, all the named line heights (larger, large, normal, snug, tight) fall back on the Tailwind default values instead of using our custom values. This makes our line heights inconsistent.

We need to rename `lineHeights` to `lineHeight` in `tailwind.config` and update all the snapshots.

## Reproduction

<!-- Provide detailed steps to reproduce the bug. -->

Check the homepage h1 element. Instead of using our `tight` value of 1.2 for line height, it uses the Tailwind default of 1.25.

## Additional context

There is a `FIXME` comment about it in the code. We did not update it when developing the new header/footer/homepage to prevent a massive update in snapshots. Now, it should be useful to update before the `Core UI improvements` project. | 1.0 | Line heights are inconsistent - ## Description

<!-- Concisely describe the bug. Compare your experience with what you expected to happen. -->

<!-- For example: "I clicked the 'submit' button and instead of seeing a thank you message, I saw a blank page." -->

Tailwind config parameter for setting custom line heights is called `lineHeight`. In our `tailwind.config` it is called `lineHeights` (with an extra s at the end). Because of this, all the named line heights (larger, large, normal, snug, tight) fall back on the Tailwind default values instead of using our custom values. This makes our line heights inconsistent.

We need to rename `lineHeights` to `lineHeight` in `tailwind.config` and update all the snapshots.

## Reproduction

<!-- Provide detailed steps to reproduce the bug. -->

Check the homepage h1 element. Instead of using our `tight` value of 1.2 for line height, it uses the Tailwind default of 1.25.

## Additional context

There is a `FIXME` comment about it in the code. We did not update it when developing the new header/footer/homepage to prevent a massive update in snapshots. Now, it should be useful to update before the `Core UI improvements` project. | priority | line heights are inconsistent description tailwind config parameter for setting custom line heights is called lineheight in our tailwind config it is called lineheights with an extra s at the end because of this all the named line heights larger large normal snug tight fall back on the tailwind default values instead of using our custom values this makes our line heights inconsistent we need to rename lineheights to lineheight in tailwind config and update all the snapshots reproduction check the homepage element instead of using our tight value of for line height it uses the tailwind default of additional context there is a fixme comment about it in the code we did not update it when developing the new header footer homepage to prevent a massive update in snapshots now it should be useful to update before the core ui improvements project | 1 |
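
The rename described in the Openverse issue above can be sketched as a config excerpt. This is only an illustration: the `tight` value of 1.2 comes from the issue text, while the placement under `theme.extend` and the object name `config` are assumptions, and the other named line heights are left out because their values are not given.

```typescript
// Hypothetical excerpt of a Tailwind config object (in a real tailwind.config
// file this object would be the exported configuration).
// Only the `tight` value (1.2) is taken from the issue; placing the key under
// `theme.extend` is an assumption made for this sketch.
const config = {
  theme: {
    extend: {
      // The theme key is `lineHeight` (singular). According to the issue, the
      // misspelled `lineHeights` key was not picked up, so utilities such as
      // `leading-tight` kept the Tailwind default (1.25) instead of 1.2.
      lineHeight: {
        tight: "1.2",
      },
    },
  },
};
```

After a rename like this, any visual regression snapshots that depend on line height would presumably need to be regenerated, as the issue notes.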

426,419 | 12,372,216,126 | IssuesEvent | 2020-05-18 19:59:21 | Big-Brain-Crew/learn_ml | https://api.github.com/repos/Big-Brain-Crew/learn_ml | opened | Define streaming interface for inference results | feature medium priority | Create an interface to stream data from the Coral board to microcontrollers and other computers.

Need to be able to access:

* Raw sensor feeds

* Inference results

Additionally, there must be an interface on the master computer/microcontroller that makes it easy to parse the transmitted data. For example, a python package, Arduino Package, and UI. | 1.0 | Define streaming interface for inference results - Create an interface to stream data from the Coral board to microcontrollers and other computers.

Need to be able to access:

* Raw sensor feeds

* Inference results

Additionally, there must be an interface on the master computer/microcontroller that makes it easy to parse the transmitted data. For example, a python package, Arduino Package, and UI. | priority | define streaming interface for inference results create an interface to stream data from the coral board to microcontrollers and other computers need to be able to access raw sensor feeds inference results additionally there must be an interface on the master computer microcontroller that makes it easy to parse the transmitted data for example a python package arduino package and ui | 1 |

602,802 | 18,507,075,829 | IssuesEvent | 2021-10-19 20:00:58 | r-lib/styler | https://api.github.com/repos/r-lib/styler | closed | Other style guides | Priority: Medium Complexity: High Status: Unassigned Type: Meta | What would it take to support the following styles?

- @yihui's, e.g. in [tinytex](https://github.com/yihui/tinytex)

- e.g., use `=` for assignment, use `'` for strings

- @wlandau's in [drake](https://github.com/ropensci/drake)

- start argument list on the following line in a function declaration, no space between `)` and `{`

I'm sure there's a related postponed issue, but I haven't looked. | 1.0 | Other style guides - What would it take to support the following styles?

- @yihui's, e.g. in [tinytex](https://github.com/yihui/tinytex)

- e.g., use `=` for assignment, use `'` for strings

- @wlandau's in [drake](https://github.com/ropensci/drake)

- start argument list on the following line in a function declaration, no space between `)` and `{`

I'm sure there's a related postponed issue, but I haven't looked. | priority | other style guides what would it take to support the following styles yihui s e g in e g use for assignment use for strings wlandau s in start argument list on the following line in a function declaration no space between and i m sure there s a related postponed issue but i haven t looked | 1 |

461,962 | 13,239,023,415 | IssuesEvent | 2020-08-19 02:11:01 | pingcap/dumpling | https://api.github.com/repos/pingcap/dumpling | closed | dump column name as `--complete-insert` did in mydumper | difficulty/2-medium priority/P2 | ## Feature Request

**Is your feature request related to a problem? Please describe:**

<!-- A clear and concise description of what the problem is. Ex. I'm always frustrated when [...] -->

We may need to dump column names in some cases, such as:

- loading data into a table that has more columns than the dumped one

- the target and source tables having different column orders

**Describe the feature you'd like:**

<!-- A clear and concise description of what you want to happen. -->

Add a flag like mydumper's `--complete-insert` to support dumping column names (or make that the default behavior).

**Describe alternatives you've considered:**

<!-- A clear and concise description of any alternative solutions or features you've considered. -->

**Teachability, Documentation, Adoption, Optimization:**

<!-- If you can, explain some scenarios how users might use this, situations it would be helpful in. Any API designs, mockups, or diagrams are also helpful. --> | 1.0 | dump column name as `--complete-insert` did in mydumper - ## Feature Request

**Is your feature request related to a problem? Please describe:**

<!-- A clear and concise description of what the problem is. Ex. I'm always frustrated when [...] -->

We may need to dump column names in some cases, such as:

- loading data into a table that has more columns than the dumped one

- the target and source tables having different column orders

**Describe the feature you'd like:**

<!-- A clear and concise description of what you want to happen. -->

Add a flag like mydumper's `--complete-insert` to support dumping column names (or make that the default behavior).

**Describe alternatives you've considered:**

<!-- A clear and concise description of any alternative solutions or features you've considered. -->

**Teachability, Documentation, Adoption, Optimization:**

<!-- If you can, explain some scenarios how users might use this, situations it would be helpful in. Any API designs, mockups, or diagrams are also helpful. --> | priority | dump column name as complete insert did in mydumper feature request is your feature request related to a problem please describe we may need to dump columns name in some cases like will load data to a table with more columns than the dumped one different column orders between the target and source tables describe the feature you d like add a flag like complete insert in mydumper to support dump the columns name or make it as the default behavior describe alternatives you ve considered teachability documentation adoption optimization | 1 |
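
To illustrate the dumpling request above, here is a minimal sketch of the difference between a plain `INSERT` and one that names its columns. It is not dumpling's or mydumper's implementation: the helper names (`quoteIdent`, `literal`, `buildInsert`) and the `completeInsert` parameter are hypothetical, and the escaping covers only numbers, strings, and NULL in a MySQL-style dialect.

```typescript
// Quote an identifier MySQL-style: wrap in backticks and double any embedded backtick.
function quoteIdent(name: string): string {
  return "`" + name.replace(/`/g, "``") + "`";
}

// Render a value as a SQL literal. Only numbers, strings and NULL are handled here.
function literal(value: string | number | null): string {
  if (value === null) return "NULL";
  if (typeof value === "number") return String(value);
  // Double backslashes and single quotes so the string survives as a literal.
  return "'" + value.replace(/\\/g, "\\\\").replace(/'/g, "''") + "'";
}

// Build one INSERT statement. With completeInsert=true the column names are
// written out, so the statement no longer depends on the target table's column order.
function buildInsert(
  table: string,
  columns: string[],
  row: Array<string | number | null>,
  completeInsert: boolean
): string {
  const columnList = completeInsert
    ? " (" + columns.map(quoteIdent).join(",") + ")"
    : "";
  return (
    "INSERT INTO " + quoteIdent(table) + columnList +
    " VALUES (" + row.map(literal).join(",") + ");"
  );
}
```

With the column list present, the statement can be loaded into a table whose columns are ordered differently or that has extra columns with defaults, which are the two cases the request mentions.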

358,606 | 10,618,582,796 | IssuesEvent | 2019-10-13 06:00:02 | AY1920S1-CS2113T-W17-3/main | https://api.github.com/repos/AY1920S1-CS2113T-W17-3/main | closed | As an existing user, I can delete my investment account (bond) | priority.Medium type.Story | so that I can sell it before the maturity date.

| 1.0 | As an existing user, I can delete my investment account (bond) - so that I can sell it before the maturity date.

| priority | as an existing user i can delete my investment account bond so that i can sell it before the maturity date | 1 |

84,145 | 3,654,276,718 | IssuesEvent | 2016-02-17 11:44:48 | brunoais/javadude | https://api.github.com/repos/brunoais/javadude | closed | test trailing comma after properties & document error message from APT | auto-migrated Priority-Medium Project-Annotations Type-Task | ```

test trailing comma after properties & document error message from APT

```

Original issue reported on code.google.com by `scott%ja...@gtempaccount.com` on 23 Jul 2008 at 1:55 | 1.0 | test trailing comma after properties & document error message from APT - ```

test trailing comma after properties & document error message from APT

```

Original issue reported on code.google.com by `scott%ja...@gtempaccount.com` on 23 Jul 2008 at 1:55 | priority | test trailing comma after properties document error message from apt test trailing comma after properties document error message from apt original issue reported on code google com by scott ja gtempaccount com on jul at | 1 |

812,831 | 30,385,558,398 | IssuesEvent | 2023-07-13 00:08:26 | calcom/cal.com | https://api.github.com/repos/calcom/cal.com | closed | [CAL-1688] Inconsistent time availability slots on current day in the middle of availability | 🐛 bug Medium priority bookings | ### Issue Summary

Inconsistent availability slots when the current day is in the middle of an availability time interval.

### Steps to Reproduce

I will give an example on reproduction for my scenario, you can edit the times based on your current date/time. Right now, it is 15:16 local time for me on Sunday.

1. Set availability for 09:00 - 17:00 (with event length of 35 minutes) on Sunday, and any other day in the future just for reference.

2. Since my local time is 15:16, it will obviously only show me the availabilities in the future, in my case: [15:45, 16:20]

3. Then go to a day in the future and look at the availabilities: [..., 14:50, 15:25, 16:00, ...]

The problem is this inconsistency. The availability seems to be computed depending on the current time, which is not correct for a booking system. As someone who wants to have time slots throughout the day, you want them to be static and consistent. So in this example, I am expecting time slots to be [15:25, 16:00]. It's a bit difficult to explain, so let me know if that didn't make any sense.

<sub>[CAL-1688](https://linear.app/calcom/issue/CAL-1688/inconsistent-time-availability-slots-on-current-day-in-the-middle-of)</sub> | 1.0 | [CAL-1688] Inconsistent time availability slots on current day in the middle of availability - ### Issue Summary

Inconsistent availability slots when the current day is in the middle of an availability time interval.

### Steps to Reproduce

I will give an example on reproduction for my scenario, you can edit the times based on your current date/time. Right now, it is 15:16 local time for me on Sunday.

1. Set availability for 09:00 - 17:00 (with event length of 35 minutes) on Sunday, and any other day in the future just for reference.

2. Since my local time is 15:16, it will obviously only show me the availabilities in the future, in my case: [15:45, 16:20]

3. Then go to a day in the future and look at the availabilities: [..., 14:50, 15:25, 16:00, ...]

The problem is this inconsistency. The availability seems to be computed depending on the current time, which is not correct for a booking system. As someone who wants to have time slots throughout the day, you want them to be static and consistent. So in this example, I am expecting time slots to be [15:25, 16:00]. It's a bit difficult to explain, so let me know if that didn't make any sense.

<sub>[CAL-1688](https://linear.app/calcom/issue/CAL-1688/inconsistent-time-availability-slots-on-current-day-in-the-middle-of)</sub> | priority | inconsistent time availability slots on current day in the middle of availability issue summary inconsistent availability slots when the current day is in the middle of an availability time interval steps to reproduce i will give an example on reproduction for my scenario you can edit the times based on your current date time right now it is local time for me on sunday set availability for with event length of minutes on sunday and any other day in the future just for reference since my local time is it will obviously only show me the availabilities in the future in my case then go to a day in the future and look at the availabilities the problem is this inconsistency the availability seems to be computed depending on the current time which is not correct for a booking system as someone who wants to have time slots throughout the day you want them to be static and consistent so in this example i am expecting time slots to be it s a bit difficult to explain so let me know if that didn t make any sense | 1 |

2,072 | 2,523,069,026 | IssuesEvent | 2015-01-20 06:26:27 | dartsim/dart | https://api.github.com/repos/dartsim/dart | closed | const correctness violations | Comp: API Priority: Medium | I noticed that there are some issues with the way pointers are passed around by const member functions. In particular, it's very easy to violate const correctness without the user needing to use a const_cast. To illustrate this, consider the following example functions which are perfectly valid in the current master of DART, but which circumvent const correctness:

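```
// Both functions compile, yet each returns a non-const pointer
// derived from a const input without using a const_cast.
BodyNode* const_breaking(const BodyNode* const_node)
{
  return const_node->getChildBodyNode(0)->getParentBodyNode();
}

Skeleton* const_breaking(const Skeleton* const_skel)
{
  return const_skel->getBodyNode(0)->getSkeleton();
}
```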

These functions are able to return non-const versions of their const input without explicitly using a const_cast. I see this as a safety liability.

I have changed the API to offer overloaded nonconst/const versions of these getter functions. This does not seem to have broken any code (which is good, because any broken code would have been violating const-correctness), although I did have to overload SoftContactConstraint::selectCollidingPointMass(~) with a const and non-const version.
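
A minimal sketch of the overloading pattern described above, assuming C++14. The two classes are heavily simplified, hypothetical stand-ins that only borrow the names `BodyNode`, `Skeleton`, `getBodyNode` and `getSkeleton`; they are not DART's actual definitions, and `addBodyNode` is invented for the example.

```cpp
#include <cassert>
#include <cstddef>
#include <memory>
#include <type_traits>
#include <utility>
#include <vector>

class Skeleton;

// Simplified stand-in, not the real DART class.
class BodyNode {
public:
    explicit BodyNode(Skeleton* skeleton) : mSkeleton(skeleton) {}

    // Non-const access yields a mutable Skeleton...
    Skeleton* getSkeleton() { return mSkeleton; }
    // ...while const access can only yield a const Skeleton.
    const Skeleton* getSkeleton() const { return mSkeleton; }

private:
    Skeleton* mSkeleton;
};

// Simplified stand-in, not the real DART class.
class Skeleton {
public:
    // Hypothetical helper used only to populate the example.
    BodyNode* addBodyNode() {
        mBodyNodes.push_back(std::make_unique<BodyNode>(this));
        return mBodyNodes.back().get();
    }

    BodyNode* getBodyNode(std::size_t index) {
        assert(index < mBodyNodes.size());
        return mBodyNodes[index].get();
    }
    const BodyNode* getBodyNode(std::size_t index) const {
        assert(index < mBodyNodes.size());
        return mBodyNodes[index].get();
    }

private:
    std::vector<std::unique_ptr<BodyNode>> mBodyNodes;
};

// Starting from a const Skeleton, the chain now stays const, so a function
// like the Skeleton version of const_breaking would no longer compile.
static_assert(
    std::is_same<
        decltype(std::declval<const Skeleton&>().getBodyNode(0)->getSkeleton()),
        const Skeleton*>::value,
    "const access must not leak a non-const pointer");
```

The `static_assert` documents the intent at compile time: the only remaining way to get a mutable pointer out of a const object is an explicit `const_cast`.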

I also added getter functions that return the child BodyNode and parent BodyNode of a Joint, because I frequently find that useful.

The changes are all available in this commit: 0af26387ac1524b5076ddb30d0458efa7a29101e

(Note that the commit also contains my changes to the name mapping from #261) | 1.0 | const correctness violations - I noticed that there are some issues with the way pointers are passed around by const member functions. In particular, it's very easy to violate const correctness without the user needing to use a const_cast. To illustrate this, consider the following example functions which are perfectly valid in the current master of DART, but which circumvent const correctness:

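```
// Both functions compile, yet each returns a non-const pointer
// derived from a const input without using a const_cast.
BodyNode* const_breaking(const BodyNode* const_node)
{
  return const_node->getChildBodyNode(0)->getParentBodyNode();
}

Skeleton* const_breaking(const Skeleton* const_skel)
{
  return const_skel->getBodyNode(0)->getSkeleton();
}
```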

These functions are able to return non-const versions of their const input without explicitly using a const_cast. I see this as a safety liability.

I have changed the API to offer overloaded nonconst/const versions of these getter functions. This does not seem to have broken any code (which is good, because any broken code would have been violating const-correctness), although I did have to overload SoftContactConstraint::selectCollidingPointMass(~) with a const and non-const version.

I also added getter functions that return the child BodyNode and parent BodyNode of a Joint, because I frequently find that useful.

The changes are all available in this commit: 0af26387ac1524b5076ddb30d0458efa7a29101e

(Note that the commit also contains my changes to the name mapping from #261) | priority | const correctness violations i noticed that there are some issues with the way pointers are passed around by const member functions in particular it s very easy to violate const correctness without the user needing to use a const cast to illustrate this consider the following example functions which are perfectly valid in the current master of dart but which circumvent const correctness bodynode const breaking const bodynode const node return const node getchildbodynode getparentbodynode skeleton const breaking const skeleton const skel return const skel getbodynode getskeleton these functions are able to return non const versions of their const input without explicitly using a const cast i see this as a safety liability i have changed the api to offer overloaded nonconst const versions of these getter functions this does not seem to have broken any code which is good because any broken code would have been violating const correctness although i did have to overload softcontactconstraint selectcollidingpointmass with a const and non const version i also added getter functions that return the child bodynode and parent bodynode of a joint because i frequently find that useful the changes are all available in this commit note that the commit also contains my changes to the name mapping from | 1 |

825,874 | 31,476,115,132 | IssuesEvent | 2023-08-30 10:49:11 | vscentrum/vsc-software-stack | https://api.github.com/repos/vscentrum/vsc-software-stack | closed | Omnipose | difficulty: easy new priority: medium Python site:t1_ugent_hortense GPU sources-only | * link to support ticket: [#2023013160000892](https://otrsdict.ugent.be/otrs/index.pl?Action=AgentTicketZoom;TicketID=108644)

* website: https://omnipose.readthedocs.io/

* installation docs: https://omnipose.readthedocs.io/installation.html

* toolchain: `foss/2022a`

* easyblock to use: `PythonBundle`

* required dependencies:

* [ ] [cellpose-omni](https://github.com/kevinjohncutler/cellpose-omni)

* see https://github.com/kevinjohncutler/omnipose/blob/main/setup.py

* notes:

* ...

* effort: *(TBD)*

| 1.0 | Omnipose - * link to support ticket: [#2023013160000892](https://otrsdict.ugent.be/otrs/index.pl?Action=AgentTicketZoom;TicketID=108644)

* website: https://omnipose.readthedocs.io/

* installation docs: https://omnipose.readthedocs.io/installation.html

* toolchain: `foss/2022a`

* easyblock to use: `PythonBundle`

* required dependencies:

* [ ] [cellpose-omni](https://github.com/kevinjohncutler/cellpose-omni)

* see https://github.com/kevinjohncutler/omnipose/blob/main/setup.py

* notes:

* ...

* effort: *(TBD)*

| priority | omnipose link to support ticket website installation docs toolchain foss easyblock to use pythonbundle required dependencies see notes effort tbd | 1 |

595,443 | 18,067,037,865 | IssuesEvent | 2021-09-20 20:30:58 | azerothcore/azerothcore-wotlk | https://api.github.com/repos/azerothcore/azerothcore-wotlk | closed | [Blackrock Depths - Boss] Summoner's Tomb/ The Seven Event too fast | Priority-Medium Instance - Dungeon - Classic Confirmed 50-59 | ### What client do you play on?

enUS

### Faction

- [X] Alliance

- [X] Horde

### Content Phase:

- [ ] Generic

- [ ] 1-19

- [ ] 20-29

- [ ] 30-39

- [ ] 40-49

- [x] 50-59

### Current Behaviour

When you reach the Summoner's Tomb and start the event, the bosses should activate roughly every 45 seconds, but here they activate roughly every 20 seconds (I might be wrong, I never counted). Everyone across multiple runs (over 20) said that they activate faster than expected, so you end up fighting 3 bosses at once instead of 2 at most.

https://classic.wowhead.com/npc=9039/doomrel#drops;mode:normal

### Expected Blizzlike Behaviour

https://www.youtube.com/watch?v=wW84027e2JU&ab_channel=Chou-chiChang

At lower levels in BRD on Blizzlike WoW, you have time to kill the bosses one by one, while here they spawn so fast that at some point you face 3 at once, even with BiS level 49 gear and the faster kills that WotLK talents allow. Our spawn times are insane.

### Source

_No response_

### Steps to reproduce the problem

1. Enter BRD and get to the Summoner's Tomb (near the end of a full BRD run)

2. Start the event by talking to one of the bosses (straight ahead when you enter the door)

3. The bosses activate very quickly, roughly every 20 to 25 seconds, so at some point you have 3 bosses to handle at once.

### Extra Notes

Original Report: https://github.com/chromiecraft/chromiecraft/issues/1659

Triager notes:

https://www.youtube.com/watch?v=QoEwUz7Bdq8

24:41 Anger'rel

25:18 Seeth'rel (killed before next one activated)

25:40 Dope'rel (killed before next one activated)

26:03 Gloom'rel (killed before next one activated)

26:26 Vile'rel (killed before next one activated)

26:51 Hate'rel (killed before next one activated)

27:12 Doom'rel
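
The gaps between the activations listed above can be worked out with a small helper. This is only arithmetic on the video timestamps; the names `toSeconds` and `activationGaps` are hypothetical and nothing here is AzerothCore code.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Convert a mm:ss video timestamp to seconds.
int toSeconds(int minutes, int seconds)
{
    assert(seconds >= 0 && seconds < 60);
    return minutes * 60 + seconds;
}

// Return the difference, in seconds, between consecutive timestamps.
std::vector<int> activationGaps(const std::vector<int>& timestamps)
{
    std::vector<int> gaps;
    for (std::size_t i = 1; i < timestamps.size(); ++i) {
        gaps.push_back(timestamps[i] - timestamps[i - 1]);
    }
    return gaps;
}
```

Fed with the seven timestamps from 24:41 to 27:12, this gives gaps of 37, 22, 23, 23, 25 and 21 seconds.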

Right now, they spawn in 14-20 seconds on CC.

They should either spawn on a timer or, when one dies too quickly, the next one should activate.

https://wowpedia.fandom.com/wiki/The_Seven

`All of the mobs in the encounter are immune to curses. Also the event is entirely on a timer. After a set amount of seconds, the next mob will release and aggro the first player it sees.`

### AC rev. hash/commit

https://github.com/chromiecraft/azerothcore-wotlk/commit/595bb6adccbabc714469f3935541978283b8bdfb

### Operating system

Ubuntu 20.04

### Modules

- [mod-ah-bot](https://github.com/azerothcore/mod-ah-bot)

- [mod-cfbg](https://github.com/azerothcore/mod-cfbg)

- [mod-chromie-xp](https://github.com/azerothcore/mod-chromie-xp)

- [mod-desertion-warnings](https://github.com/azerothcore/mod-desertion-warnings)

- [mod-duel-reset](https://github.com/azerothcore/mod-duel-reset)

- [mod-eluna-lua-engine](https://github.com/azerothcore/mod-eluna-lua-engine)

- [mod-ip-tracker](https://github.com/azerothcore/mod-ip-tracker)

- [mod-low-level-arena](https://github.com/azerothcore/mod-low-level-arena)

- [mod-multi-client-check](https://github.com/azerothcore/mod-multi-client-check)

- [mod-pvp-titles](https://github.com/azerothcore/mod-pvp-titles)

- [mod-pvpstats-announcer](https://github.com/azerothcore/mod-pvpstats-announcer)

- [mod-queue-list-cache](https://github.com/azerothcore/mod-queue-list-cache)

- [mod-server-auto-shutdown](https://github.com/azerothcore/mod-server-auto-shutdown)

- [lua-carbon-copy](https://github.com/55Honey/Acore_CarbonCopy)

- [lua-custom-worldboss](https://github.com/55Honey/Acore_CustomWorldboss)

- [lua-level-up-reward](https://github.com/55Honey/Acore_LevelUpReward)

- [lua-recruit-a-friend](https://github.com/55Honey/Acore_RecruitAFriend)

- [lua-send-and-bind](https://github.com/55Honey/Acore_SendAndBind)

- [lua-temp-announcements](https://github.com/55Honey/Acore_TempAnnouncements)

- [lua-zonecheck](https://github.com/55Honey/acore_Zonecheck)

### Customizations

None

### Server

ChromieCraft

| 1.0 | [Blackrock Depths - Boss] Summoner's Tomb/ The Seven Event too fast - ### What client do you play on?

enUS

### Faction

- [X] Alliance

- [X] Horde

### Content Phase:

- [ ] Generic

- [ ] 1-19

- [ ] 20-29

- [ ] 30-39

- [ ] 40-49

- [x] 50-59

### Current Behaviour

When you reach the Summoner's Tomb and start the event, the bosses should activate roughly every 45 seconds, but here they activate roughly every 20 seconds (I might be wrong, I never counted). Everyone across multiple runs (over 20) said that they activate faster than expected, so you end up fighting 3 bosses at once instead of 2 at most.