Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 957) | labels (stringlengths, 4 to 795) | body (stringlengths, 1 to 259k) | index (stringclasses, 12 values) | text_combine (stringlengths, 96 to 259k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

827,682 | 31,792,319,095 | IssuesEvent | 2023-09-13 05:02:44 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [YSQL] BNL query on information_schema crashes in 2.16 | kind/enhancement area/ysql priority/medium | Jira Link: [DB-7018](https://yugabyte.atlassian.net/browse/DB-7018)

### Description

We see that the following query crashes in 2.16:

```

set yb_bnl_batch_size=1024;

SELECT bc.constraint_name as constraint_name, ac.column_name as column_name FROM information_schema.table_constraints bc, information_schema.key_column_usage ac WHERE bc.constraint_type = 'PRIMARY KEY' AND ac.table_name = bc.table_name AND ac.table_schema = bc.table_schema AND ac.constraint_name = bc.constraint_name AND bc.table_schema = 'public' AND bc.table_name = 'example_table' ORDER BY ac.ordinal_position ASC;

```

### Warning: Please confirm that this issue does not contain any sensitive information

- [X] I confirm this issue does not contain any sensitive information.

[DB-7018]: https://yugabyte.atlassian.net/browse/DB-7018?atlOrigin=eyJpIjoiNWRkNTljNzYxNjVmNDY3MDlhMDU5Y2ZhYzA5YTRkZjUiLCJwIjoiZ2l0aHViLWNvbS1KU1cifQ | 1.0 | [YSQL] BNL query on information_schema crashes in 2.16 - Jira Link: [DB-7018](https://yugabyte.atlassian.net/browse/DB-7018)

### Description

We see that the following query crashes in 2.16:

```

set yb_bnl_batch_size=1024;

SELECT bc.constraint_name as constraint_name, ac.column_name as column_name FROM information_schema.table_constraints bc, information_schema.key_column_usage ac WHERE bc.constraint_type = 'PRIMARY KEY' AND ac.table_name = bc.table_name AND ac.table_schema = bc.table_schema AND ac.constraint_name = bc.constraint_name AND bc.table_schema = 'public' AND bc.table_name = 'example_table' ORDER BY ac.ordinal_position ASC;

```

### Warning: Please confirm that this issue does not contain any sensitive information

- [X] I confirm this issue does not contain any sensitive information.

[DB-7018]: https://yugabyte.atlassian.net/browse/DB-7018?atlOrigin=eyJpIjoiNWRkNTljNzYxNjVmNDY3MDlhMDU5Y2ZhYzA5YTRkZjUiLCJwIjoiZ2l0aHViLWNvbS1KU1cifQ | priority | bnl query on information schema crashes in jira link description we see that the following query crashes in set yb bnl batch size select bc constraint name as constraint name ac column name as column name from information schema table constraints bc information schema key column usage ac where bc constraint type primary key and ac table name bc table name and ac table schema bc table schema and ac constraint name bc constraint name and bc table schema public and bc table name example table order by ac ordinal position asc warning please confirm that this issue does not contain any sensitive information i confirm this issue does not contain any sensitive information | 1 |

688,580 | 23,588,499,942 | IssuesEvent | 2022-08-23 13:31:50 | Kong/docs.konghq.com | https://api.github.com/repos/Kong/docs.konghq.com | closed | GW API Documentation: make the 1-1-1 relationship between GatewayClass, Gateway and KIC a constraint | priority/medium area/gateway-api | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Problem Statement

In the unmanaged Gateway API mode, ask users of Gateway API TP/beta to define only one `GatewayClass` and only one `Gateway` per KIC instance.

This is because the behavior for more than 1 `Gateway` resource in a GatewayClass per KIC instance is undefined today (#2559 captures the problem).

### Proposed Solution

Document KIC's Gateway API support in the following way:

In order to use KIC with Gateway API in unmanaged mode, create one `GatewayClass` and one `Gateway` resource for a KIC instance. Do not define more than one `Gateway` in a `GatewayClass`.
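
A minimal sketch of how this constraint could be checked before manifests are applied, assuming they are already loaded as Python dictionaries. The helper name `find_gateway_violations` and the sample resource names are hypothetical; only `kind`, `metadata.name`, and `spec.gatewayClassName` are taken from the Gateway API. It only checks the "one `Gateway` per `GatewayClass`" half of the rule.

```python
from collections import defaultdict


def find_gateway_violations(manifests):
    """Return {gatewayClassName: [gateway names]} for classes with more than one Gateway."""
    gateways_by_class = defaultdict(list)
    for manifest in manifests:
        if manifest.get("kind") != "Gateway":
            continue  # GatewayClass and other kinds are ignored here
        class_name = manifest.get("spec", {}).get("gatewayClassName")
        name = manifest.get("metadata", {}).get("name")
        gateways_by_class[class_name].append(name)
    # Keep only the classes that break the one-Gateway rule.
    return {cls: names for cls, names in gateways_by_class.items() if len(names) > 1}


# Hypothetical manifests, for illustration only.
manifests = [
    {"kind": "GatewayClass", "metadata": {"name": "kong"}},
    {"kind": "Gateway", "metadata": {"name": "gw-a"}, "spec": {"gatewayClassName": "kong"}},
    {"kind": "Gateway", "metadata": {"name": "gw-b"}, "spec": {"gatewayClassName": "kong"}},
]

violations = find_gateway_violations(manifests)
print(violations)
```

An empty result would mean every `GatewayClass` in the input has at most one `Gateway`.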

### Additional information

_No response_

### Acceptance Criteria

- [ ] A Gateway API documentation entry as described above exists. | 1.0 | GW API Documentation: make the 1-1-1 relationship between GatewayClass, Gateway and KIC a constraint - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Problem Statement

In the unmanaged Gateway API mode, ask users of Gateway API TP/beta to define only one `GatewayClass` and only one `Gateway` per KIC instance.

This is because the behavior for more than 1 `Gateway` resource in a GatewayClass per KIC instance is undefined today (#2559 captures the problem).

### Proposed Solution

Document KIC's Gateway API support in the following way:

In order to use KIC with Gateway API in unmanaged mode, create one `GatewayClass` and one `Gateway` resource for a KIC instance. Do not define more than one `Gateway` in a `GatewayClass`.

### Additional information

_No response_

### Acceptance Criteria

- [ ] A Gateway API documentation entry as described above exists. | priority | gw api documentation make the relationship between gatewayclass gateway and kic a constraint is there an existing issue for this i have searched the existing issues problem statement in the unmanaged gateway api mode ask users of gateway api tp beta to define only one gatewayclass and only one gateway per kic instance this is because the behavior for more than gateway resource in a gatewayclass per kic instance is undefined today captures the problem proposed solution document kic s gateway api support in the following way in order to use kic with gateway api in unmanaged mode create one gatewayclass and one gateway resource for a kic instance do not define more than one gateway in a gatewayclass additional information no response acceptance criteria a gateway api documentation entry as described above exists | 1 |

48,686 | 2,999,448,850 | IssuesEvent | 2015-07-23 19:05:08 | jayway/powermock | https://api.github.com/repos/jayway/powermock | opened | Investigate if mock policies fails when applied in a test suite | imported Milestone-Release1.5 Priority-Medium Type-Task | _From [johan.ha...@gmail.com](https://code.google.com/u/105676376875942041029/) on November 03, 2009 21:12:07_

From phatblat:

I have a few related test classes running great under the

PowerMockRunner but today I threw them together into a JUnit4 suite

and am finding that the Log4jMockPolicy I have applied to them is not

being honored. The tests still run and pass, the mock policy just

suppresses the following distracting errors:

log4j:ERROR A "org.apache.log4j.xml.DOMConfigurator" object is not

assignable to a "org.apache.log4j.spi.Configurator" variable.

log4j:ERROR The class "org.apache.log4j.spi.Configurator" was loaded

by

log4j:ERROR [org.powermock.core.classloader.MockClassLoader@11121f6]

whereas object of type

log4j:ERROR "org.apache.log4j.xml.DOMConfigurator" was loaded by

[sun.misc.Launcher$AppClassLoader@92e78c].

log4j:ERROR Could not instantiate configurator

[org.apache.log4j.xml.DOMConfigurator].

I thought this issue might have been related to the hierarchy of my

test class setup. I have an abstract base class which has all the mock

construction and PowerMock class-level annotations. The test

subclasses have no class-level annotations. I have experimented with

moving the @MockPolicy(Log4jMockPolicy.class) annotation to the

subclasses with no change in behavior, I still get the above errors

which means the policy is not being applied. If any of the other

PowerMock annotations were not being picked up the tests would

certainly fail.

Below is a summary of the classes involved (each defined in own file,

class body removed for brevity):

@RunWith(Suite.class)

@SuiteClasses( { HCUploaderManagerImplReconciliationTest.class,

HCUploaderManagerImplDupsInEnteredStateTest.class })

public class HCUploaderManagerTestSuite {}

@RunWith(PowerMockRunner.class)

@PrepareForTest(BusinessManagerImpl.class)

@SuppressStaticInitializationFor

("com.company.business.BusinessManagerImpl")

@MockPolicy(Log4jMockPolicy.class)

public class HCUploaderManagerImplAbstractTest {...}

public class HCUploaderManagerImplReconciliationTest extends

HCUploaderManagerImplAbstractTest {...}

public class HCUploaderManagerImplDupsInEnteredStateTest extends

HCUploaderManagerImplAbstractTest {...}

My question is whether I have set up this test suite incorrectly or

does PowerMock not currently support mock policies when run within a

test suite?

_Original issue: http://code.google.com/p/powermock/issues/detail?id=191_ | 1.0 | Investigate if mock policies fails when applied in a test suite - _From [johan.ha...@gmail.com](https://code.google.com/u/105676376875942041029/) on November 03, 2009 21:12:07_

From phatblat:

I have a few related test classes running great under the

PowerMockRunner but today I threw them together into a JUnit4 suite

and am finding that the Log4jMockPolicy I have applied to them is not

being honored. The tests still run and pass, the mock policy just

suppresses the following distracting errors:

log4j:ERROR A "org.apache.log4j.xml.DOMConfigurator" object is not

assignable to a "org.apache.log4j.spi.Configurator" variable.

log4j:ERROR The class "org.apache.log4j.spi.Configurator" was loaded

by

log4j:ERROR [org.powermock.core.classloader.MockClassLoader@11121f6]

whereas object of type

log4j:ERROR "org.apache.log4j.xml.DOMConfigurator" was loaded by

[sun.misc.Launcher$AppClassLoader@92e78c].

log4j:ERROR Could not instantiate configurator

[org.apache.log4j.xml.DOMConfigurator].

I thought this issue might have been related to the hierarchy of my

test class setup. I have an abstract base class which has all the mock

construction and PowerMock class-level annotations. The test

subclasses have no class-level annotations. I have experimented with

moving the @MockPolicy(Log4jMockPolicy.class) annotation to the

subclasses with no change in behavior, I still get the above errors

which means the policy is not being applied. If any of the other

PowerMock annotations were not being picked up the tests would

certainly fail.

Below is a summary of the classes involved (each defined in own file,

class body removed for brevity):

@RunWith(Suite.class)

@SuiteClasses( { HCUploaderManagerImplReconciliationTest.class,

HCUploaderManagerImplDupsInEnteredStateTest.class })

public class HCUploaderManagerTestSuite {}

@RunWith(PowerMockRunner.class)

@PrepareForTest(BusinessManagerImpl.class)

@SuppressStaticInitializationFor

("com.company.business.BusinessManagerImpl")

@MockPolicy(Log4jMockPolicy.class)

public class HCUploaderManagerImplAbstractTest {...}

public class HCUploaderManagerImplReconciliationTest extends

HCUploaderManagerImplAbstractTest {...}

public class HCUploaderManagerImplDupsInEnteredStateTest extends

HCUploaderManagerImplAbstractTest {...}

My question is whether I have set up this test suite incorrectly or

does PowerMock not currently support mock policies when run within a

test suite?

_Original issue: http://code.google.com/p/powermock/issues/detail?id=191_ | priority | investigate if mock policies fails when applied in a test suite from on november from phatblat i have a few related test classes running great under the powermockrunner but today i threw them together into a suite and am finding that the i have applied to them is not being honored the tests still run and pass the mock policy just suppresses the following distracting errors error a org apache xml domconfigurator object is not assignable to a org apache spi configurator variable error the class org apache spi configurator was loaded by error whereas object of type error org apache xml domconfigurator was loaded by error could not instantiate configurator i thought this issue might have been related to the hierarchy of my test class setup i have an abstract base class which has all the mock construction and powermock class level annotations the test subclasses have no class level annotations i have experimented with moving the mockpolicy class annotation to the subclasses with no change in behavior i still get the above errors which mean the policy is not being applied if any of the other powermock annotations were not being picked up the tests would certainly fail below is a summary of the classes involved each defined in own file class body removed for brevity runwith suite class suiteclasses hcuploadermanagerimplreconciliationtest class hcuploadermanagerimpldupsinenteredstatetest class public class hcuploadermanagertestsuite runwith powermockrunner class preparefortest businessmanagerimpl class suppressstaticinitializationfor com company business businessmanagerimpl mockpolicy class public class hcuploadermanagerimplabstracttest public class hcuploadermanagerimplreconciliationtest extends hcuploadermanagerimplabstracttest public class hcuploadermanagerimpldupsinenteredstatetest extends hcuploadermanagerimplabstracttest my question is whether i have set up this test suite 
incorrectly or does powermock not currently support mock policies when run within a test suite original issue | 1 |

778,604 | 27,322,095,978 | IssuesEvent | 2023-02-24 20:53:24 | authzed/spicedb-operator | https://api.github.com/repos/authzed/spicedb-operator | closed | Persistent customization strategy | priority/2 medium state/needs discussion | The operator creates kube resources on behalf of the users: deployments, services, serviceaccounts, rbac, etc, which may require some additional modification by the user:

- Adding extra labels or annotations to integrate with other tools (i.e. GKE workload identity)

- Directing workloads in specific ways (tolerations, nodeselectors, affinity/anti-affinity, topologySpreadConstraints, etc)

- Capacity planning (resource requests / limits)

- Other unforeseen future needs due to new SpiceDB features, tooling (HPA?), or the evolution of Kubernetes

All of these modifications are possible today by modifying operator-created resources after (or before!) they have been created. The operator uses Server Side Apply and will not touch fields it does not own. Users can query for which fields are owned by reading the fieldmanager metadata on a given resource.
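
As a rough sketch of reading that ownership data, assuming the resource has already been fetched and parsed into a Python dictionary: Kubernetes records owners under `metadata.managedFields`, with each entry carrying a `manager` name and a `fieldsV1` tree. The manager names and the sample resource below are hypothetical, and the `f:` keys are left exactly as stored rather than interpreted.

```python
def owned_fields(resource):
    """Map each field manager to the sorted list of field paths it owns."""
    owners = {}
    for entry in resource.get("metadata", {}).get("managedFields", []):
        paths = owners.setdefault(entry["manager"], set())
        _collect(entry.get("fieldsV1", {}), "", paths)
    return {manager: sorted(paths) for manager, paths in owners.items()}


def _collect(node, prefix, paths):
    """Walk a fieldsV1 tree and record the path of every leaf."""
    # A "." key marks the containing field itself, so it is not a child.
    children = {key: child for key, child in node.items() if key != "."}
    if not children:
        if prefix:
            paths.add(prefix)
        return
    for key, child in children.items():
        _collect(child, f"{prefix}.{key}" if prefix else key, paths)


# Hypothetical Deployment metadata, for illustration only.
resource = {
    "metadata": {
        "managedFields": [
            {
                "manager": "spicedb-operator",
                "operation": "Apply",
                "fieldsV1": {"f:spec": {"f:replicas": {}}},
            },
            {
                "manager": "platform-team",
                "operation": "Update",
                "fieldsV1": {"f:metadata": {"f:labels": {"f:team": {}}}},
            },
        ]
    }
}

owners = owned_fields(resource)
print(owners)
```

Fields listed under another manager are the ones the operator would leave alone when it applies its own configuration.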

But modifying the resources after creation makes git-ops workflows difficult. It would be nice if there were a way to persist such modifications in `SpiceDBCluster` or other native Kube resources.

There are some native methods for persisting this type of change, but only for specific fields of specific resources:

- resource requests can be added automatically via a [limitrange](https://kubernetes.io/docs/concepts/policy/limit-range/) on the namespace to set a default

- tolerations can be added with a [default toleration](https://kubernetes.io/docs/reference/access-authn-authz/admission-controllers/#podtolerationrestriction) setting for the namespace

- volumes and env vars could be injected with `PodPreset` (which is deprecated and no longer available)

With the background out of the way, this leaves some general approaches we could take:

1. **Add new fields** to `SpiceDBCluster` to cover any needs as they come up. This is the approach that most operators seem to take, but doing this for more than a couple of fields leads to huge schemas with dozens of options for customizing specific parts of downstream resources. I don't personally favor this approach - it seems at odds with the fieldmanager tracking that Kube introduced for SSA, and it brings things into the operator's scope that it doesn't actually have an opinion on (all such config is passed blindly to other resources).

2. **Admission Controllers**: this is the general form of the `PodPreset` solution, where external config can modify the resource before it is persisted. There are a couple of competing projects with no clear (to me) leader: [Kyverno](https://kyverno.io/docs/writing-policies/mutate/) and [Gatekeeper](https://open-policy-agent.github.io/gatekeeper/website/docs/mutation/) both support "mutation" policies that can inject arbitrary data into a resource on creation. This approach can be used with the operator today, but we have no example policies for users to lean on, and it requires installing and running one of these projects as well.

3. **Embed generic customizations**: instead of providing specific fields for specific customizations, we could provide a hook to allow users to provide arbitrary customizations. This could look like a single `kustomization: <configMapName>` field with Kustomize manifests (that the operator parses and applies, similar to [kubebuilder-declarative-pattern](https://github.com/kubernetes-sigs/kubebuilder-declarative-pattern/blob/master/docs/addon/walkthrough/README.md)), or it might look more like a Kyverno/Gatekeeper API but with a smaller, spicedb-operator focused scope.

| 1.0 | Persistent customization strategy - The operator creates kube resources on behalf of the users: deployments, services, serviceaccounts, rbac, etc, which may require some additional modification by the user:

- Adding extra labels or annotations to integrate with other tools (i.e. GKE workload identity)

- Directing workloads in specific ways (tolerations, nodeselectors, affinity/anti-affinity, topologySpreadConstraints, etc)

- Capacity planning (resource requests / limits)

- Other unforeseen future needs due to new SpiceDB features, tooling (HPA?), or the evolution of Kubernetes

All of these modifications are possible today by modifying operator-created resources after (or before!) they have been created. The operator uses Server Side Apply and will not touch fields it does not own. Users can query for which fields are owned by reading the fieldmanager metadata on a given resource.

But modifying the resources after creation makes git-ops workflows difficult. It would be nice if there were a way to persist such modifications in `SpiceDBCluster` or other native Kube resources.

There are some native methods for persisting this type of change, but only for specific fields of specific resources:

- resource requests can be added automatically via a [limitrange](https://kubernetes.io/docs/concepts/policy/limit-range/) on the namespace to set a default

- tolerations can be added with a [default toleration](https://kubernetes.io/docs/reference/access-authn-authz/admission-controllers/#podtolerationrestriction) setting for the namespace

- volumes and env vars could be injected with `PodPreset` (which is deprecated and no longer available)

With the background out of the way, this leaves some general approaches we could take:

1. **Add new fields** to `SpiceDBCluster` to cover any needs as they come up. This is the approach that most operators seem to take, but doing this for more than a couple of fields leads to huge schemas with dozens of options for customizing specific parts of downstream resources. I don't personally favor this approach - it seems at odds with the fieldmanager tracking that Kube introduced for SSA, and it brings things into the operator's scope that it doesn't actually have an opinion on (all such config is passed blindly to other resources).

2. **Admission Controllers**: this is the general form of the `PodPreset` solution, where external config can modify the resource before it is persisted. There are a couple of competing projects with no clear (to me) leader: [Kyverno](https://kyverno.io/docs/writing-policies/mutate/) and [Gatekeeper](https://open-policy-agent.github.io/gatekeeper/website/docs/mutation/) both support "mutation" policies that can inject arbitrary data into a resource on creation. This approach can be used with the operator today, but we have no example policies for users to lean on, and it requires installing and running one of these projects as well.

3. **Embed generic customizations**: instead of providing specific fields for specific customizations, we could provide a hook to allow users to provide arbitrary customizations. This could look like a single `kustomization: <configMapName>` field with Kustomize manifests (that the operator parses and applies, similar to [kubebuilder-declarative-pattern](https://github.com/kubernetes-sigs/kubebuilder-declarative-pattern/blob/master/docs/addon/walkthrough/README.md)), or it might look more like a Kyverno/Gatekeeper API but with a smaller, spicedb-operator focused scope.

| priority | persistent customization strategy the operator creates kube resources on behalf of the users deployments services serviceaccounts rbac etc which may require some additional modification by the user adding extra labels or annotations to integrate with other tools i e gke workload identity directing workloads in specific ways tolerations nodeselectors affinitty anti affinity topologyspreadconstraints etc capacity planning resource requests limits other unforseen future needs due to new spicedb features tooling hpa or the evolution of kubernetes all of these modifications are possible today by modifying operator created resources after or before they have been created the operator uses server side apply and will not touch fields it does not own users can query for which fields are owned by reading the fieldmanager metadata on a given resource but modifying the resources after creation makes git ops workflows difficult it would be nice if there was a way to persist such modifications in spicedbcluster or other native kube resources there are some native methods for persisting this type of change but only for specific fields of specific resources resource requests can be added automatically via a on the namespace to set a default tolerations can be added with a setting for the namespace volumes and env vars could be injected with podpreset which are deprecated and no longer available with the background out of the way this leaves some general approaches we could take add new fields to spicedbcluster to cover any needs as they come up this is the approach that most operators seem to take but doing this for more than a couple of fields leads to huge schemas with dozens of options for customizing specific parts of downstream resources i don t personally favor this approach it seems at odds with the fieldmanager tracking that kube introduced for ssa and it brings things into the operator s scope that it doesn t actually have an opinion on all such config is 
passed blindly to other resources admission controllers this is the general form of the podpreset solution where external config can modify the resource before it is persisted there are a couple of competing projects with no clear to me leader and both support mutation policies that can inject arbitrary data into a resource on creation this approach can be used with the operator today but we have no example policies for users to lean on and it requires installing and running one of these projects as well embed generic customizations instead of providing specific fields for specific customizations we could provide a hook to allow users to provide arbitrary customizations this could look like a single kustomization field with kustomize manifests that the operator parses and applies similar to or it might look more like a kyverno gatekeeper api but with a smaller spicedb operator focused scope | 1 |

33,308 | 2,763,834,780 | IssuesEvent | 2015-04-29 12:18:54 | handsontable/hot-table | https://api.github.com/repos/handsontable/hot-table | closed | Better keyboard arrow keys support for nested tables | Enhancement Priority: medium To review | There should be better support for navigating through nested tables via arrow keys. For now it's not possible to navigate between different tables without using the mouse. | 1.0 | Better keyboard arrow keys support for nested tables - There should be better support for navigating through nested tables via arrow keys. For now it's not possible to navigate between different tables without using the mouse. | priority | better keyboard arrow keys support for nested tables there should be better support for navigating through nested tables via arrow keys for now it s not possible to navigate between different tables without using the mouse | 1 |

408,587 | 11,949,561,943 | IssuesEvent | 2020-04-03 13:51:16 | AY1920S2-CS2103T-W12-3/main | https://api.github.com/repos/AY1920S2-CS2103T-W12-3/main | closed | As an organised student I can categorise my spending | priority.Medium type.Story | ... so that I know the proportions of my spending. | 1.0 | As an organised student I can categorise my spending - ... so that I know the proportions of my spending. | priority | as an organised student i can categorise my spending so that i know the proportions of my spending | 1 |

106,336 | 4,270,115,550 | IssuesEvent | 2016-07-13 05:10:14 | mmisw/orr-portal | https://api.github.com/repos/mmisw/orr-portal | opened | m2r: check and avoid triple duplications | bug m2r Priority-Medium | Since triples could be duplicated, upon removing one occurrence, make sure to remove all corresponding duplicates | 1.0 | m2r: check and avoid triple duplications - Since triples could be duplicated, upon removing one occurrence, make sure to remove all corresponding duplicates | priority | check and avoid triple duplications since triples could be duplicated upon removing one occurrence make sure to remove all corresponding duplicates | 1 |

40,723 | 2,868,938,429 | IssuesEvent | 2015-06-05 22:04:34 | dart-lang/pub | https://api.github.com/repos/dart-lang/pub | closed | Add pub commands for managing the uploader list | enhancement Fixed Priority-Medium | <a href="https://github.com/munificent"><img src="https://avatars.githubusercontent.com/u/46275?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [munificent](https://github.com/munificent)**

_Originally opened as dart-lang/sdk#7363_

----

The server has URLs for this, but there's no UI to hit those URLs. I'm thinking:

$ pub uploader[s]

Lists the uploaders for the current package and tells the user if they are in the list.

$ pub uploader[s] add <email>

Adds the given user to the uploader list, if this user has permission to do so.

$ pub uploader[s] remove <email>

Removes the given user from the uploader list, if this user has permission to do so. | 1.0 | Add pub commands for managing the uploader list - <a href="https://github.com/munificent"><img src="https://avatars.githubusercontent.com/u/46275?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [munificent](https://github.com/munificent)**

_Originally opened as dart-lang/sdk#7363_

----

The server has URLs for this, but there's no UI to hit those URLs. I'm thinking:

$ pub uploader[s]

Lists the uploaders for the current package and tells the user if they are in the list.

$ pub uploader[s] add <email>

Adds the given user to the uploader list, if this user has permission to do so.

$ pub uploader[s] remove <email>

Removes the given user from the uploader list, if this user has permission to do so. | priority | add pub commands for managing the uploader list issue by originally opened as dart lang sdk the server has urls for this but there s no ui to hit those urls i m thinking nbsp nbsp pub uploader lists the uploaders for the current package and tells the user if they are in the list nbsp nbsp pub uploader add lt email gt adds the given user to the uploader list if this user has permission to do so nbsp nbsp pub uploader remove lt email gt removes the given user from the uploader list if this user has permission to do so | 1 |

718,038 | 24,701,720,500 | IssuesEvent | 2022-10-19 15:44:27 | bounswe/bounswe2022group2 | https://api.github.com/repos/bounswe/bounswe2022group2 | closed | Revising the Diagrams based on Lecture Structure | priority-medium type-enhancement status-needreview diagrams | ### Issue Description

As we decided in our second meeting, I made changes under the "Lecture Structure" title of our requirements. Following these changes, the design diagrams should be updated to be consistent with our requirements. This issue corresponds to updating the diagrams according to the changes made in issue #341.

### Step Details

Steps that will be performed:

- [ ] Update diagrams according to newly updated Lecture Structure requirements

### Final Actions

After the necessary changes are determined, I will update all parts related to Lecture Structure in the diagrams accordingly.

### Deadline of the Issue

16.10.2022 - Sunday - 23:59

### Reviewer

Muhammed Enes Sürmeli

### Deadline for the Review

17.10.2022 - Monday - 23:59 | 1.0 | Revising the Diagrams based on Lecture Structure - ### Issue Description

As we decided in our second meeting, I made changes under the "Lecture Structure" title of our requirements. Following these changes, the design diagrams should be updated to be consistent with our requirements. This issue corresponds to updating the diagrams according to the changes made in issue #341.

### Step Details

Steps that will be performed:

- [ ] Update diagrams according to newly updated Lecture Structure requirements

### Final Actions

After the necessary changes are determined, I will update all parts related to Lecture Structure in the diagrams accordingly.

### Deadline of the Issue

16.10.2022 - Sunday - 23:59

### Reviewer

Muhammed Enes Sürmeli

### Deadline for the Review

17.10.2022 - Monday - 23:59 | priority | revising the diagrams based on lecture structure issue description as we decided in our second meeting i made changes under lecture structure title of our requirements following these changes design diagrams should be updated to be consistent with our requirements this issue corresponds to updating the diagrams according to changes made in issue step details steps that will be performed update diagrams according to newly updated lecture structure requirements final actions after necessary changes is determined i will update all parts related to lecture structure in the diagrams accordingly deadline of the issue sunday reviewer muhammed enes sürmeli deadline for the review monday | 1 |

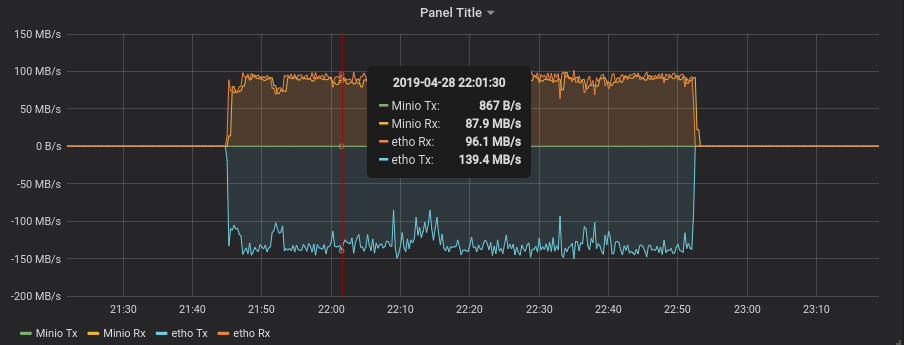

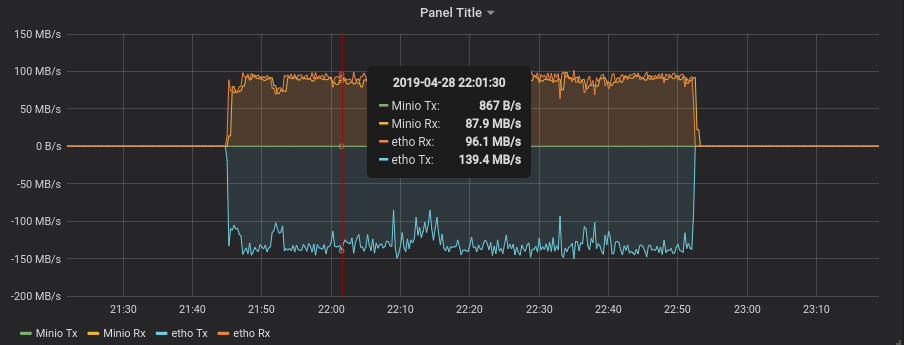

324,322 | 9,887,683,026 | IssuesEvent | 2019-06-25 09:44:18 | minio/minio | https://api.github.com/repos/minio/minio | closed | Expected behaviour of prometheus Metrics for network sent/received | community priority: medium triage | I have a dashboard which displays the `minio_network_sent_bytes_total` and `minio_network_received_bytes_total` overlayed with the same results from `node_exporter` for the host sent/received bytes.

We have a 4 node minio cluster and I am only graphing metrics from one of the nodes which is receiving the data. I would expect to see received bytes that match closely with the hosts received bytes, and transmit bytes that match closely with the hosts transmit bytes. However, it appears that only the receive matches while the transmit is close to 0.

**Is this a bug?**

There is definitely data being transmitted to the other nodes (Minio is replicating data between nodes).

**OR - will the transmit bytes only show when a client pulls data from minio?**

## Expected Behavior

Minio Sent/Received should be similar to the Hosts Sent/Received bytes

## Current Behavior

Minio Received matches Host Received but Minio Transmitted is almost 0

## Steps to Reproduce (for bugs)

Grafana dashboard showing Minio sent/received overlayed with `node_exporter` values of `node_network_transmit_bytes_total` and `node_network_received_bytes_total`
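
A simplified sketch of the comparison the dashboard is making, assuming each series is a list of `(timestamp_seconds, counter_value)` scrapes. The sample values are hypothetical, and `counter_rate` is an illustrative helper, not the exact algorithm behind the Prometheus `rate()` function (which also extrapolates to the window edges).

```python
def counter_rate(samples):
    """Average per-second increase over (timestamp_seconds, counter_value) samples.

    A drop in value is treated as a counter reset, so the new value is
    counted as the increase since the reset.
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    increase = 0.0
    for (_, prev), (_, cur) in zip(samples, samples[1:]):
        increase += cur - prev if cur >= prev else cur
    elapsed = samples[-1][0] - samples[0][0]
    if elapsed <= 0:
        raise ValueError("timestamps must be increasing")
    return increase / elapsed


# Hypothetical scrapes: MinIO's own sent counter vs. the host transmit counter.
minio_sent = [(0, 1_000), (15, 1_300), (30, 1_600)]
host_sent = [(0, 50_000), (15, 950_000), (30, 1_850_000)]

minio_rate = counter_rate(minio_sent)
host_rate = counter_rate(host_sent)
print(f"minio: {minio_rate} B/s, host: {host_rate} B/s, ratio: {minio_rate / host_rate:.6f}")
```

A ratio close to 0 for sent bytes and close to 1 for received bytes would correspond to the behaviour described here.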

## Context

I am just trying to accurately show Minio and Host network usage.

## Regression

n/a

| 1.0 | Expected behaviour of prometheus Metrics for network sent/received - I have a dashboard which displays the `minio_network_sent_bytes_total` and `minio_network_received_bytes_total` overlaid with the same results from `node_exporter` for the host sent/received bytes.

We have a 4-node Minio cluster, and I am only graphing metrics from one of the nodes, the one receiving the data. I would expect the received bytes to closely match the host's received bytes, and the transmitted bytes to closely match the host's transmitted bytes. However, only the received value matches, while the transmitted value is close to 0.

**Is this a bug?**

There is definitely data being transmitted to the other nodes (Minio is replicating data between nodes).

**OR - will the transmit bytes only show when a client pulls data from minio?**

## Expected Behavior

Minio Sent/Received should be similar to the host's Sent/Received bytes

## Current Behavior

Minio Received matches Host Received but Minio Transmitted is almost 0

## Steps to Reproduce (for bugs)

Grafana dashboard showing Minio sent/received overlaid with the `node_exporter` values `node_network_transmit_bytes_total` and `node_network_receive_bytes_total`

## Context

I am just trying to accurately show Minio and Host network usage.

## Regression

n/a

| priority | expected behaviour of prometheus metrics for network sent received i have a dashboard which displays the minio network sent bytes total and minio network received bytes total overlayed with the same results from node exporter for the host sent received bytes we have a node minio cluster and i am only graphing metrics from one of the nodes which is receiving the data i would expect to see received bytes that match closely with the hosts received bytes and transmit bytes that match closely with the hosts transmit bytes however it appears that only the receive matches while the transmit is close to is this a bug there is definitely data being transmitted to the other nodes minio is replicating data between nodes or will the transmit bytes only show when a client pulls data from minio expected behavior minio sent received should be similar to the hosts sent received bytes current behavior minio received matches host received but minio transmitted is almost steps to reproduce for bugs grafana dashboard showing minio sent received overlayed with node exporter values of node network transmit bytes total and node network received bytes total context i am just trying to accurately show minio and host network usage regression n a | 1 |

746,394 | 26,028,694,641 | IssuesEvent | 2022-12-21 18:48:02 | encorelab/ck-board | https://api.github.com/repos/encorelab/ck-board | closed | Comment Task Bugs in Workspace UI | bug medium priority | These bugs are related to tasks with a "comment at least once" requirement in the Workspace UI:

1. The average group progress bar value is incorrectly showing the current group's progress

2. Commenting on a post from the Workspace UI increments the number of comments by two or more in the Workspace UI

For example, this post has 2 comments and I comment one more time:

The pop-up correctly shows 3 comments.

<img width="421" alt="Screen Shot 2022-11-11 at 6 41 58 PM" src="https://user-images.githubusercontent.com/6416247/201444862-37abff5b-60ee-40e9-9840-7a9e65abdc37.png">

However, the Workspace UI incorrectly shows that there are 4 comments

<img width="689" alt="Screen Shot 2022-11-11 at 6 42 17 PM" src="https://user-images.githubusercontent.com/6416247/201444878-64fbf339-3d29-4f45-93e5-3ba35524311f.png">

| 1.0 | Comment Task Bugs in Workspace UI - These bugs are related to tasks with a "comment at least once" requirement in the Workspace UI:

1. The average group progress bar value is incorrectly showing the current group's progress

2. Commenting on a post from the Workspace UI increments the number of comments by two or more in the Workspace UI

For example, this post has 2 comments and I comment one more time:

The pop-up correctly shows 3 comments.

<img width="421" alt="Screen Shot 2022-11-11 at 6 41 58 PM" src="https://user-images.githubusercontent.com/6416247/201444862-37abff5b-60ee-40e9-9840-7a9e65abdc37.png">

However, the Workspace UI incorrectly shows that there are 4 comments

<img width="689" alt="Screen Shot 2022-11-11 at 6 42 17 PM" src="https://user-images.githubusercontent.com/6416247/201444878-64fbf339-3d29-4f45-93e5-3ba35524311f.png">

| priority | comment task bugs in workspace ui these bugs are related to tasks with a comment at least once requirement in the workspace ui the average group progress bar value is incorrectly showing the current group s progress commenting on a post from the workspace ui increments the number of comments by two or more in the workspace ui for example this post has comments and i comment one more time the pop up correctly shows comments img width alt screen shot at pm src however the workspace ui incorrectly shows that there are comments img width alt screen shot at pm src | 1 |

434,190 | 12,515,368,528 | IssuesEvent | 2020-06-03 07:32:41 | canonical-web-and-design/build.snapcraft.io | https://api.github.com/repos/canonical-web-and-design/build.snapcraft.io | closed | "All set up…" and progress bar briefly appear | Priority: Medium | **To reproduce:**

* After going through the first-time flow, add an additional repository.

* Add a snapcraft.yaml and register the snap name.

**What happens:**

* "All set up…" and the first-time flow progress bar briefly appear (~1-2s).

This is confusing. I'm shown progress for steps unrelated to my current task.

**What should happen:**

* Neither the "All set up…" message nor the first-time flow progress bar appear. | 1.0 | "All set up…" and progress bar briefly appear - **To reproduce:**

* After going through the first-time flow, add an additional repository.

* Add a snapcraft.yaml and register the snap name.

**What happens:**

* "All set up…" and the first-time flow progress bar briefly appear (~1-2s).

This is confusing. I'm shown progress for steps unrelated to my current task.

**What should happen:**

* Neither the "All set up…" message nor the first-time flow progress bar appear. | priority | all set up… and progress bar briefly appear to reproduce after going through the first time flow add an additional repository add a snapcraft yaml and register the snap name what happens all set up… and the first time flow progress bar briefly appear this is confusing i m shown progress for steps unrelated to my current task what should happen neither the all set up… message nor the first time flow progress bar appear | 1 |

502,601 | 14,562,727,459 | IssuesEvent | 2020-12-17 00:45:07 | nih-cfde/training-and-engagement | https://api.github.com/repos/nih-cfde/training-and-engagement | closed | Upload files to Cavatica using the command line uploader thing | Dec-2020 MediumPriority | This tutorial is the official tutorial but it has got some confusing parts: https://docs.cavatica.org/v1.0/docs/upload-via-the-command-line.

For other tutorials we need to be able to transfer fastq data files from AWS to Cavatica. Here are some steps:

Install java on AWS

Download the uploader onto AWS

Figure out how to use it

Related to simulated data issue #191 | 1.0 | Upload files to Cavatica using the command line uploader thing - This tutorial is the official tutorial but it has got some confusing parts: https://docs.cavatica.org/v1.0/docs/upload-via-the-command-line.

For other tutorials we need to be able to transfer fastq data files from AWS to Cavatica. Here are some steps:

Install java on AWS

Download the uploader onto AWS

Figure out how to use it

Related to simulated data issue #191 | priority | upload files to cavatica using the command line uploader thing this tutorial is the official tutorial but it has got some confusing parts for other tutorials we need to be able to transfer fastq data files from aws to cavatica here are some steps install java on aws download the uploader onto aws figure out how to use it related to simulated data issue | 1 |

4,343 | 2,550,445,376 | IssuesEvent | 2015-02-01 15:28:56 | olga-jane/prizm | https://api.github.com/repos/olga-jane/prizm | opened | Impossible to send release note with "empty" railcars | Coding MEDIUM priority Mill railcar | This feature is artefact of "railcar" implementation. It should be impossible to send release note when no pipes are in *release note* itself. | 1.0 | Impossible to send release note with "empty" railcars - This feature is artefact of "railcar" implementation. It should be impossible to send release note when no pipes are in *release note* itself. | priority | impossible to send release note with empty railcars this feature is artefact of railcar implementation it should be impossible to send release note when no pipes are in release note itself | 1 |

92,737 | 3,873,250,932 | IssuesEvent | 2016-04-11 16:18:57 | duckduckgo/p5-app-duckpan | https://api.github.com/repos/duckduckgo/p5-app-duckpan | opened | Incorrect detection of Instant Answer files | Bug Low-Hanging Fruit Priority: Medium | Some commands, such as `duckpan server`, will pick up any `.pm` files in `lib/DDG/(Goodie/Spice/...)` and treat them as Instant Answer files; making it incompatible with multi-file Instant Answers (see https://github.com/duckduckgo/zeroclickinfo-goodies/pull/1927#issuecomment-208041735). There is no issue if the Instant Answer is specified (see https://github.com/duckduckgo/zeroclickinfo-goodies/pull/1927#issuecomment-208042511 and https://github.com/duckduckgo/zeroclickinfo-goodies/pull/1927#issuecomment-208042609), as in `duckpan server MyIA`. | 1.0 | Incorrect detection of Instant Answer files - Some commands, such as `duckpan server`, will pick up any `.pm` files in `lib/DDG/(Goodie/Spice/...)` and treat them as Instant Answer files; making it incompatible with multi-file Instant Answers (see https://github.com/duckduckgo/zeroclickinfo-goodies/pull/1927#issuecomment-208041735). There is no issue if the Instant Answer is specified (see https://github.com/duckduckgo/zeroclickinfo-goodies/pull/1927#issuecomment-208042511 and https://github.com/duckduckgo/zeroclickinfo-goodies/pull/1927#issuecomment-208042609), as in `duckpan server MyIA`. | priority | incorrect detection of instant answer files some commands such as duckpan server will pick up any pm files in lib ddg goodie spice and treat them as instant answer files making it incompatible with multi file instant answers see there is no issue if the instant answer is specified see and as in duckpan server myia | 1 |

493,108 | 14,226,516,032 | IssuesEvent | 2020-11-17 23:10:32 | moonwards1/Moonwards-Virtual-Moon | https://api.github.com/repos/moonwards1/Moonwards-Virtual-Moon | closed | Make left clicking the mouse interact with interactables. | Department: Gameplay Department: UI/UX Priority: Medium Type: Feature | It feels awkward having the Interactables being interacted with by F when clickables get pressed by the mouse.

Keep the F key but add left mouse clicking as an action for "use". (use being the action for interacting.) | 1.0 | Make left clicking the mouse interact with interactables. - It feels awkward having the Interactables being interacted with by F when clickables get pressed by the mouse.

Keep the F key but add left mouse clicking as an action for "use". (use being the action for interacting.) | priority | make left clicking the mouse interact with interactables it feels awkward having the interactables being interacted with by f when clickables get pressed by the mouse keep the f key but add left mouse clicking as an action for use use being the action for interacting | 1 |

158,949 | 6,038,000,870 | IssuesEvent | 2017-06-09 20:12:30 | ngageoint/hootenanny | https://api.github.com/repos/ngageoint/hootenanny | closed | Create messaging mode for hoot command line | Category: Core Priority: Medium Status: New/Undefined Type: Task | Expose a mode where hoot takes messages on stdin and writes messages back to stdout. This will help simplify the interface between services & core.

| 1.0 | Create messaging mode for hoot command line - Expose a mode where hoot takes messages on stdin and writes messages back to stdout. This will help simplify the interface between services & core.

| priority | create messaging mode for hoot command line expose a mode where hoot takes messages on stdin and writes messages back to stdout this will help simplify the interface between services core | 1 |

187,254 | 6,750,475,093 | IssuesEvent | 2017-10-23 05:09:56 | opencurrents/opencurrents | https://api.github.com/repos/opencurrents/opencurrents | opened | record hours: Let admins track hours for volunteers | priority medium | Requested by Bike Austin when their volunteers don't submit hours or don't have an account. | 1.0 | record hours: Let admins track hours for volunteers - Requested by Bike Austin when their volunteers don't submit hours or don't have an account. | priority | record hours let admins track hours for volunteers requested by bike austin when their volunteers don t submit hours or don t have an account | 1 |

353,850 | 10,559,628,502 | IssuesEvent | 2019-10-04 12:05:43 | bounswe/bounswe2019group8 | https://api.github.com/repos/bounswe/bounswe2019group8 | opened | Design the database model | Backend Diagrams Effort: High Group work Planning Priority: Medium Project Plan Status: Available | **Actions:**

1. Develop the existing User model in the database. Specify the required fields for a User object.

2. Design models, collections and relations according to the upcoming milestone requirements.

3. Create a document explaining the results of **1** and **2**

**Deadline:** 12.10.2019 - 21.00

| 1.0 | Design the database model - **Actions:**

1. Develop the existing User model in the database. Specify the required fields for a User object.

2. Design models, collections and relations according to the upcoming milestone requirements.

3. Create a document explaining the results of **1** and **2**

**Deadline:** 12.10.2019 - 21.00

| priority | design the database model actions develop currently existing user model in database specify required fields for an user object design models collections and relations according to the upcoming milestone requirements create a document explaining the results of and deadline | 1 |

702,959 | 24,142,911,289 | IssuesEvent | 2022-09-21 16:08:50 | ufs-community/regional_workflow | https://api.github.com/repos/ufs-community/regional_workflow | closed | Clean up regional_workflow wiki and add/remove certain sections | enhancement Work in Progress medium priority | Update the regional_workflow wiki with any relevant changes. Decide whether some portions should be migrated to the ufs-srweather-app or whether they can be removed altogether (such as the FV3-LAM Workflow Setup and Execution section). | 1.0 | Clean up regional_workflow wiki and add/remove certain sections - Update the regional_workflow wiki with any relevant changes. Decide whether some portions should be migrated to the ufs-srweather-app or whether they can be removed altogether (such as the FV3-LAM Workflow Setup and Execution section). | priority | clean up regional workflow wiki and add remove certain sections update the regional workflow wiki with any relevant changes decide whether some portions should be migrated to the ufs srweather app or whether it can be removed all together such as the lam workflow setup and execution section | 1 |

237,542 | 7,761,465,844 | IssuesEvent | 2018-06-01 09:57:41 | Repair-DeskPOS/RepairDesk-Bugs | https://api.github.com/repos/Repair-DeskPOS/RepairDesk-Bugs | closed | Duplicate Barcodes | Medium Priority enhancement | Hi, just curious: is there any way for the system to prevent the same UPC or SKU from being used twice? I added two products with the same barcode; it would be nice to get a warning about duplicate codes. | 1.0 | Duplicate Barcodes - Hi, just curious: is there any way for the system to prevent the same UPC or SKU from being used twice? I added two products with the same barcode; it would be nice to get a warning about duplicate codes. | priority | duplicate barcodes hi just curious is there anyway for the system not to use the same upc or sku twice i added two products with the same barcode would be nice to get a warning etc of duplicate codes | 1 |
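
The duplicate-code warning requested in this row could be sketched roughly as follows. This is only an illustration under stated assumptions: the `Product` record, its `upc` and `sku` fields, and the `find_duplicate_codes` helper are hypothetical names and do not come from RepairDesk.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Product:
    # Hypothetical product record; the field names are assumptions.
    name: str
    upc: str
    sku: str


def find_duplicate_codes(products):
    """Return {code: [product names]} for every UPC or SKU used by more than one product."""
    seen = defaultdict(list)
    for product in products:
        # A set keeps a product from being counted twice when its UPC equals its SKU.
        for code in {product.upc, product.sku}:
            if code:  # skip empty codes
                seen[code].append(product.name)
    return {code: names for code, names in seen.items() if len(names) > 1}
```

A caller could run such a check before saving a new product and show a warning whenever the returned mapping is non-empty.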

657,444 | 21,794,202,542 | IssuesEvent | 2022-05-15 11:31:23 | stackturing/tekton-visualise | https://api.github.com/repos/stackturing/tekton-visualise | opened | Add basic deployment as a part of the CRD | area/dev stage/baseline priority/medium complexity/medium | Add a deployment with served blank webpage as a part of the CRD | 1.0 | Add basic deployment as a part of the CRD - Add a deployment with served blank webpage as a part of the CRD | priority | add basic deployment as a part of the crd add a deployment with served blank webpage as a part of the crd | 1 |

509,575 | 14,739,917,707 | IssuesEvent | 2021-01-07 08:10:41 | rubyforgood/casa | https://api.github.com/repos/rubyforgood/casa | closed | Add seeding for hearing types | :woman_judge: Court Reports Good First Issue Help Wanted Priority: Medium | **What type of user is this for? volunteer/supervisor/admin/all**

Developer

**Description**

Add seeding for hearing types.

Per #1000 we have added an interface for hearing types per organization, however there was a conversation in #928 regarding seeding the data for this which was not added to the PR.

| 1.0 | Add seeding for hearing types - **What type of user is this for? volunteer/supervisor/admin/all**

Developer

**Description**

Add seeding for hearing types.

Per #1000 we have added an interface for hearing types per organization, however there was a conversation in #928 regarding seeding the data for this which was not added to the PR.

| priority | add seeding for hearing types what type of user is this for volunteer supervisor admin all developer description add seeding for hearing types per we have added an interface for hearing types per organization however there was a conversation in regarding seeding the data for this which was not added to the pr | 1 |

470,414 | 13,537,053,875 | IssuesEvent | 2020-09-16 09:55:21 | buddyboss/buddyboss-platform | https://api.github.com/repos/buddyboss/buddyboss-platform | opened | BB REST API - Create the Lost Password Endpoint | feature: enhancement priority: medium | **Is your feature request related to a problem? Please describe.**

Could you please add a route in the REST API that lets a user reset their password when they lose it?

If that can help you, here is the code I used to use with BuddyPress :

[class-pf-lost-password-endpoints.php](https://github.com/buddyboss/buddyboss-platform/files/5231323/class-pf-lost-password-endpoints.txt)

**Describe the solution you'd like**

Create an endpoint to reset lost password

**Describe alternatives you've considered**

none

**Support ticket links**

none

| 1.0 | BB REST API - Create the Lost Password Endpoint - **Is your feature request related to a problem? Please describe.**

Could you please add a route in the REST API that lets a user reset their password when they lose it?

If that can help you, here is the code I used to use with BuddyPress :

[class-pf-lost-password-endpoints.php](https://github.com/buddyboss/buddyboss-platform/files/5231323/class-pf-lost-password-endpoints.txt)

**Describe the solution you'd like**

Create an endpoint to reset lost password

**Describe alternatives you've considered**

none

**Support ticket links**

none

| priority | bb rest api create the lost password endpoint is your feature request related to a problem please describe could you please add a route in the rest api which helps a user to reset his password when he lose it if that can help you here is the code i used to use with buddypress describe the solution you d like create an endpoint to reset lost password describe alternatives you ve considered none support ticket links none | 1 |

706,113 | 24,260,777,113 | IssuesEvent | 2022-09-27 22:26:56 | objectify/objectify | https://api.github.com/repos/objectify/objectify | closed | make the docs downloadable | Priority-Medium Type-Task | Original [issue 172](https://code.google.com/p/objectify-appengine/issues/detail?id=172) created by objectify on 2013-08-13T12:36:04.000Z:

Please include the v4 guide somewhere that can be downloaded. Thanks. :)

| 1.0 | make the docs downloadable - Original [issue 172](https://code.google.com/p/objectify-appengine/issues/detail?id=172) created by objectify on 2013-08-13T12:36:04.000Z:

Please include the v4 guide somewhere that can be downloaded. Thanks. :)

| priority | make the docs downloadable original created by objectify on pls include the guide somewhere than can be downloaded thanks | 1 |

58,045 | 3,087,110,070 | IssuesEvent | 2015-08-25 09:27:11 | pavel-pimenov/flylinkdc-r5xx | https://api.github.com/repos/pavel-pimenov/flylinkdc-r5xx | closed | Bug with /switch | bug imported Priority-Medium | _From [mnr...@gmail.com](https://code.google.com/u/114542743364409907977/) on October 15, 2013 13:10:57_

On startup, Fly sometimes swaps the positions of the chat and the user list by itself, even though the layout was "correct" when it was last closed.

This is clearly visible when launching with 500 hubs.

_Original issue: http://code.google.com/p/flylinkdc/issues/detail?id=1342_ | 1.0 | Bug with /switch - _From [mnr...@gmail.com](https://code.google.com/u/114542743364409907977/) on October 15, 2013 13:10:57_

On startup, Fly sometimes swaps the positions of the chat and the user list by itself, even though the layout was "correct" when it was last closed.

This is clearly visible when launching with 500 hubs.

_Original issue: http://code.google.com/p/flylinkdc/issues/detail?id=1342_ | priority | bug with switch from on october on startup fly sometimes swaps the positions of the chat and the user list by itself even though the layout was correct when it was last closed this is clearly visible when launching with hubs original issue | 1 |

1,955 | 2,522,175,050 | IssuesEvent | 2015-01-19 19:59:12 | couchbase/couchbase-lite-net | https://api.github.com/repos/couchbase/couchbase-lite-net | closed | [iOS] TestContinuousPushReplicationGoesIdle fails only in release mode | bug P4: minor priority-low size-medium | Appears to deadlock, causing the CountdownLatch timeout to fire. | 1.0 | [iOS] TestContinuousPushReplicationGoesIdle fails only in release mode - Appears to deadlock, causing the CountdownLatch timeout to fire. | priority | testcontinuouspushreplicationgoesidle fails only in release mode appears to deadlock causing the countdownlatch timeout to fire | 1 |

128,266 | 5,051,962,927 | IssuesEvent | 2016-12-20 23:48:39 | vanowm/MasterPasswordPlus | https://api.github.com/repos/vanowm/MasterPasswordPlus | closed | Replace pre-prompt with prompt. | auto-migrated enhancement Priority-Medium | ```

I would like to see "Click here or press any key to unlock" replaced with the

next step "Please enter the master password...etc...etc..."

My idea is to use only one screen with "enter password to unlock".

If used regularly, there are two passwords: startup and lock. Lock has an extra key (or

click).

To me it seems this is a mental hindrance, more steps than necessary.

```

Original issue reported on code.google.com by `flipyali...@gmail.com` on 16 Jul 2015 at 8:46

| 1.0 | Replace pre-prompt with prompt. - ```

I would like to see "Click here or press any key to unlock" replaced with the

next step "Please enter the master password...etc...etc..."

My idea is to use only one screen with "enter password to unlock".

If used regularly, there are two passwords: startup and lock. Lock has an extra key (or

click).

To me it seems this is a mental hindrance, more steps than necessary.

```

Original issue reported on code.google.com by `flipyali...@gmail.com` on 16 Jul 2015 at 8:46

| priority | replace pre prompt with prompt i would like to see click here or press any key to unlock replaced with the next step please enter the master password etc etc my idea is to use only one screen with enter password to unlock if used regularly there is two passwords startup lock lock has extra key or click to me it seems this is a mental hindrance more steps than necessary original issue reported on code google com by flipyali gmail com on jul at | 1 |

265,543 | 8,355,737,742 | IssuesEvent | 2018-10-02 16:27:41 | otrv4/pidgin-otrng | https://api.github.com/repos/otrv4/pidgin-otrng | opened | Fingerprint verification - the privacy status changes too early | bug medium priority | When doing manual fingerprint verification, you switch the drop down from "I have not" to "I have". At this point the plugin will switch the privacy status to "Private", print this to the conversation window, and print "trusted" in the otr4.fingerprints file. This all happens BEFORE the "authenticate" button has been pressed.

Correct behavior should be to not do anything until the "authenticate" button is pressed. | 1.0 | Fingerprint verification - the privacy status changes too early - When doing manual fingerprint verification, you switch the drop down from "I have not" to "I have". At this point the plugin will switch the privacy status to "Private", print this to the conversation window, and print "trusted" in the otr4.fingerprints file. This all happens BEFORE the "authenticate" button has been pressed.

Correct behavior should be to not do anything until the "authenticate" button is pressed. | priority | fingerprint verification the privacy status changes too early when doing manual fingerprint verification you switch the drop down from i have not to i have at this point the plugin will switch the privacy status to private print this to the conversation window and print trusted in the fingerprints file this all happens before the authenticate button has been pressed correct behavior should be to not do anything until the authenticate button is pressed | 1 |
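
The expected behavior described in this row, where nothing changes until the "authenticate" button is pressed, could be sketched as below. This is a hypothetical model only, not the plugin's actual code (the plugin itself is not written in Python): the `FingerprintDialog` class, its method names, and the "Unverified" status label are all assumptions made for illustration.

```python
class FingerprintDialog:
    """Hypothetical model of the manual verification dialog."""

    def __init__(self):
        self.trusted = False    # committed trust state (what would be written to disk)
        self._pending = False   # current drop-down selection, not yet committed

    def select(self, verified):
        # Changing the drop-down only records the choice; nothing is committed.
        self._pending = verified

    def authenticate(self):
        # Trust, and therefore the privacy status, changes only at this point.
        self.trusted = self._pending
        return self.privacy_status()

    def privacy_status(self):
        # "Unverified" is an assumed label for the not-yet-trusted state.
        return "Private" if self.trusted else "Unverified"
```

The design point is simply that the drop-down handler and the commit step are kept separate, so switching to "I have" and then cancelling leaves the stored trust state untouched.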

271,877 | 8,491,769,168 | IssuesEvent | 2018-10-27 16:18:25 | INET-Complexity/housing-model | https://api.github.com/repos/INET-Complexity/housing-model | closed | Averaging of time on market to consider different quality bands | enhancement high-priority medium-time | This is mostly for consistency with the averaging of sale prices that households look at when making their decisions. | 1.0 | Averaging of time on market to consider different quality bands - This is mostly for consistency with the averaging of sale prices that households look at when making their decisions. | priority | averaging of time on market to consider different quality bands this is mostly for consistency with the averaging of sale prices that household look at when making their decisions | 1 |

666,449 | 22,356,059,140 | IssuesEvent | 2022-06-15 15:44:26 | geosolutions-it/MapStore2 | https://api.github.com/repos/geosolutions-it/MapStore2 | opened | Review 2D/3D switch in the map viewer | enhancement Priority: Medium | ## Description

<!-- A few sentences describing new feature -->

<!-- screenshot, video, or link to mockup/prototype are welcome -->

The switch mechanism between 2D/3D in the map viewer is related to the mapType path inside the viewer url and this information is not persisted in the map config.

Some maps could contain layers that are only visible in a specific context, for example 3D Tiles layers that are available only in a 3D scene. In this case it would be nice to be able to save the map together with the map type currently in use.

- [ ] Find a solution to store this property in the state. Should we store it as a 2D/3D flag or be explicit about the map type in use (openlayers, leaflet, cesium)? In some cases we switch the 2D library based on the device

- [ ] Evaluate whether it makes sense to deprecate /viewer/:mapType/:mapId in favor of /viewer/:mapId, where the mapType is managed only via config or internal state

- [ ] Review whether the query params need some adjustment based on the previous improvement. In particular, whether we need to be explicit with a map_type param or guess the map type based on the query content

**What kind of improvement you want to add?** (check one with "x", remove the others)

- [x] Minor changes to existing features

## Other useful information

see https://github.com/geosolutions-it/MapStore2/pull/8320#pullrequestreview-1007647980

| 1.0 | Review 2D/3D switch in the map viewer - ## Description

<!-- A few sentences describing new feature -->

<!-- screenshot, video, or link to mockup/prototype are welcome -->

The switch mechanism between 2D/3D in the map viewer is related to the mapType path inside the viewer url and this information is not persisted in the map config.

Some maps could contain layers that are only visible in a specific context, for example 3D Tiles layers that are available only in a 3D scene. In this case it would be nice to be able to save the map together with the map type currently in use.

- [ ] Find a solution to store this property in the state. Should we store it as a 2D/3D flag or be explicit about the map type in use (openlayers, leaflet, cesium)? In some cases we switch the 2D library based on the device

- [ ] Evaluate whether it makes sense to deprecate /viewer/:mapType/:mapId in favor of /viewer/:mapId, where the mapType is managed only via config or internal state

- [ ] Review whether the query params need some adjustment based on the previous improvement. In particular, whether we need to be explicit with a map_type param or guess the map type based on the query content

**What kind of improvement you want to add?** (check one with "x", remove the others)

- [x] Minor changes to existing features

## Other useful information

see https://github.com/geosolutions-it/MapStore2/pull/8320#pullrequestreview-1007647980

| priority | review switch in the map viewer description the switch mechanism between in the map viewer is related to the maptype path inside the viewer url and this information is not persisted in the map config some map could contain layer only visible in a specific context for example the tiles layers that could be available only in a scene in this specific case it would be nice to be able to save the map with the information related to the current map type in use find a solution to store this property in the state should we store it as flag or be explicit with the map type in use oprnlayers leaflet cesium in some case we switch the library based on device evaluate if it makes sense to deprecate the viewer maptype mapid in favor of viewer mapid where the maptype is managed only via confing or internal state review if query params needs some adjusment based on previous improvement in particular if we need to be explicit with a map type param or guess the map type based on the query content what kind of improvement you want to add check one with x remove the others minor changes to existing features other useful information see | 1 |

195,874 | 6,919,452,162 | IssuesEvent | 2017-11-29 15:30:34 | uracreative/task-management | https://api.github.com/repos/uracreative/task-management | opened | Blog post: working in the open | Internal: Social Media Internal: Website Priority: Medium | Following the discussion and decisions during our work week regarding our way of working in the open, please proceed with a blog post explaining how our new approach about working in the open is now defined. Please also work with @AnXh3L0 on the visuals needed for the blog post.

Deadline: 20.12.2017 | 1.0 | Blog post: working in the open - Following the discussion and decisions during our work week regarding our way of working in the open, please proceed with a blog post explaining how our new approach about working in the open is now defined. Please also work with @AnXh3L0 on the visuals needed for the blog post.

Deadline: 20.12.2017 | priority | blog post working in the open following the discussion and decisions during our work week regarding our way of working in the open please proceed with a blog post explaining how our new approach about working in the open is now defined please also work with on the visuals needed for the blog post deadline | 1 |

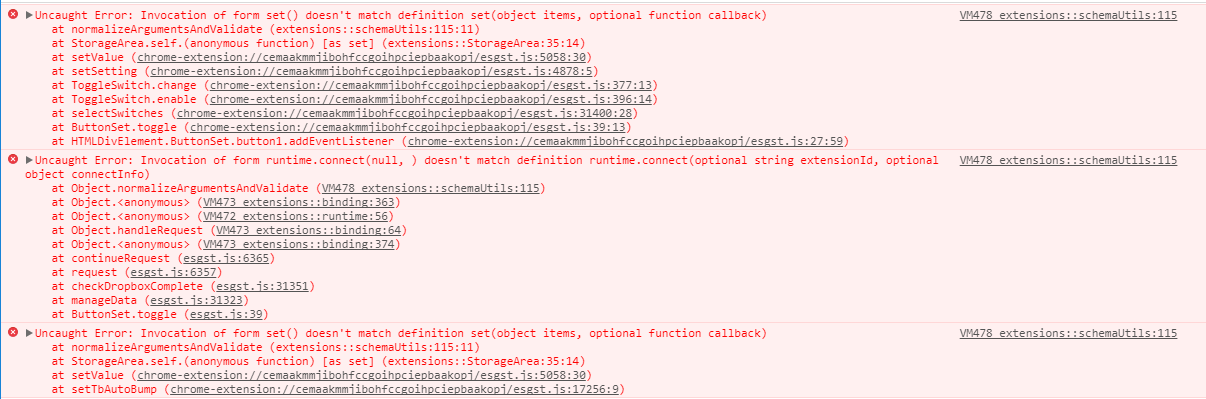

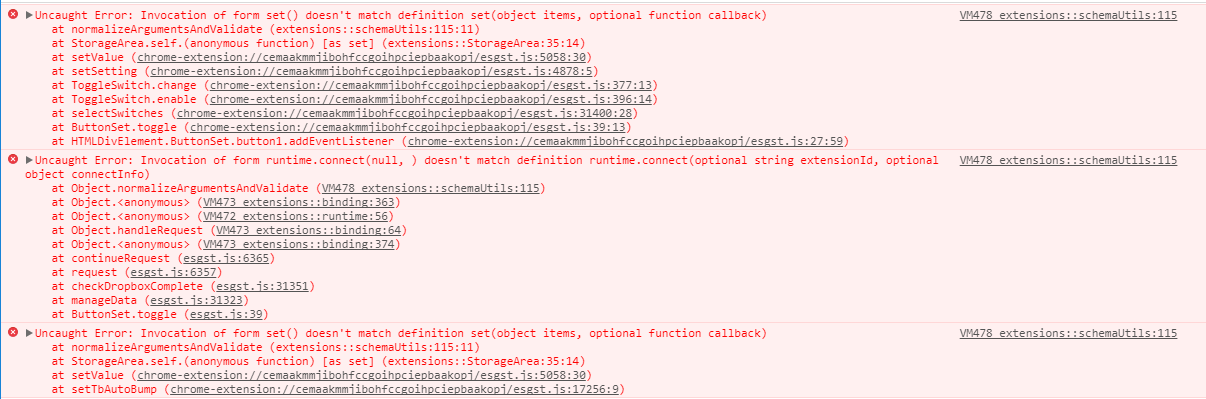

189,611 | 6,799,909,498 | IssuesEvent | 2017-11-02 12:08:10 | revilheart/ESGST | https://api.github.com/repos/revilheart/ESGST | closed | Exporting to dropbox - errors | Enhancement Medium Priority | I am not able to export all the settings to dropbox anymore. In the attached image, you can see three errors.

First is when I clicked on Select all.

The second is when I clicked on Export (Dropbox.)

Third is after a few minutes of importing.

| 1.0 | Exporting to dropbox - errors - I am not able to export all the settings to dropbox anymore. In the attached image, you can see three errors.

First is when I clicked on Select all.

The second is when I clicked on Export (Dropbox.)

Third is after a few minutes of importing.

| priority | exporting to dropbox errors i am not able to export all the settings to dropbox anymore in the attached image you can see three errors first is when i clicked on select all the second is when i clicked on export dropbox third is after a few minutes of importing | 1 |

193,811 | 6,888,215,500 | IssuesEvent | 2017-11-22 04:19:12 | vedantswain/vedantswain.github.io | https://api.github.com/repos/vedantswain/vedantswain.github.io | closed | Remove stupid staircase lists | enhancement priority: medium | They aren't responsive, and add little to progression. Consider replacing with unordered/ordered list. | 1.0 | Remove stupid staircase lists - They aren't responsive, and add little to progression. Consider replacing with unordered/ordered list. | priority | remove stupid staircase lists they aren t responsive and add little to progression consider replacing with unordered ordered list | 1 |

468,461 | 13,483,390,822 | IssuesEvent | 2020-09-11 03:48:12 | dmwm/WMCore | https://api.github.com/repos/dmwm/WMCore | closed | Use Rucio list_dataset_replicas with a list of DIDs instead of single DID calls | Enhancement Feature change Medium Priority Rucio Transition WMAgent WorkQueue | **Impact of the new feature**

WMCore in general

**Is your feature request related to a problem? Please describe.**

Now that Rucio supports listing dataset replicas in bulk, as reported in this issue:

https://github.com/rucio/rucio/issues/2459

made available through this client API `list_dataset_replicas_bulk`.

We should update the Rucio wrapper `getReplicaInfoForBlocks` method and make HTTP calls in bulk, instead of making single block HTTP calls.

**Describe the solution you'd like**

Once we have a list of blocks to list their replicas, use the `list_dataset_replicas_bulk` client API to retrieve replicas for all of them with a single HTTP call.

This change should be transparent, but we better tag Stefano once a solution gets implemented.

**Describe alternatives you've considered**

not touch anything and keep hitting the Rucio server with many "unneeded" calls.

**Additional context**

none

| 1.0 | Use Rucio list_dataset_replicas with a list of DIDs instead of single DID calls - **Impact of the new feature**

WMCore in general

**Is your feature request related to a problem? Please describe.**

Now that Rucio supports listing dataset replicas in bulk, as reported in this issue:

https://github.com/rucio/rucio/issues/2459

made available through this client API `list_dataset_replicas_bulk`.

We should update the Rucio wrapper `getReplicaInfoForBlocks` method and make HTTP calls in bulk, instead of making single block HTTP calls.

**Describe the solution you'd like**

Once we have a list of blocks to list their replicas, use the `list_dataset_replicas_bulk` client API to retrieve replicas for all of them with a single HTTP call.

This change should be transparent, but we better tag Stefano once a solution gets implemented.

**Describe alternatives you've considered**

not touch anything and keep hitting the Rucio server with many "unneeded" calls.

**Additional context**

none

| priority | use rucio list dataset replicas with a list of dids instead of single did calls impact of the new feature wmcore in general is your feature request related to a problem please describe now that rucio supports listing dataset replicas in bulk as reported in this issue made available through this client api list dataset replicas bulk we should update the rucio wrapper getreplicainfoforblocks method and make http calls in bulk instead of making single block http calls describe the solution you d like once we have a list of blocks to list their replicas use the list dataset replicas bulk client api to retrieve replicas for all of them with a single http call this change should be transparent but we better tag stefano once a solution gets implemented describe alternatives you ve considered not touch anything and keep hitting the rucio server with many unneeded calls additional context none | 1 |

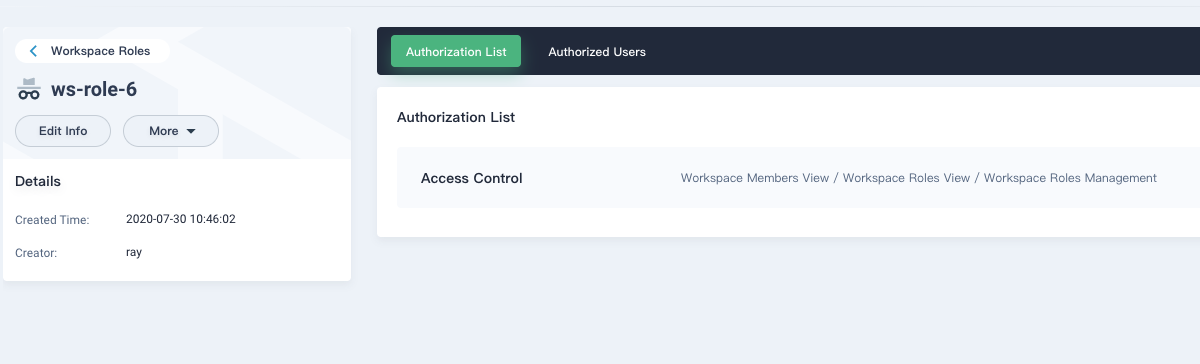

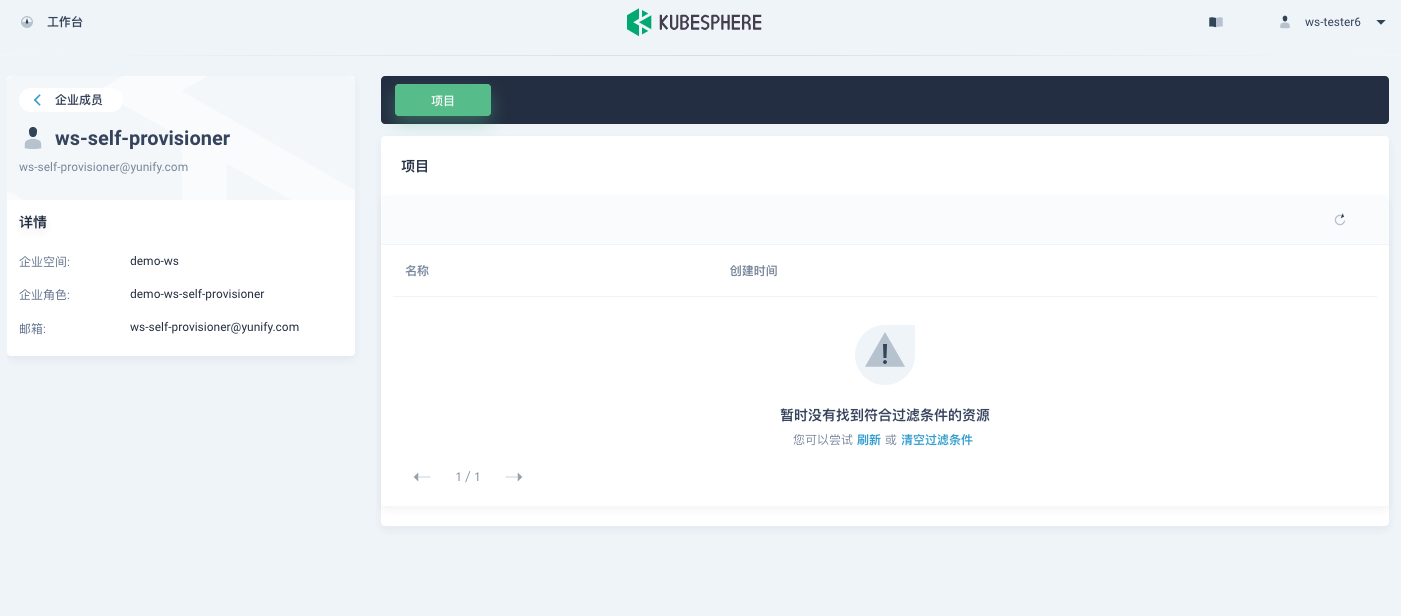

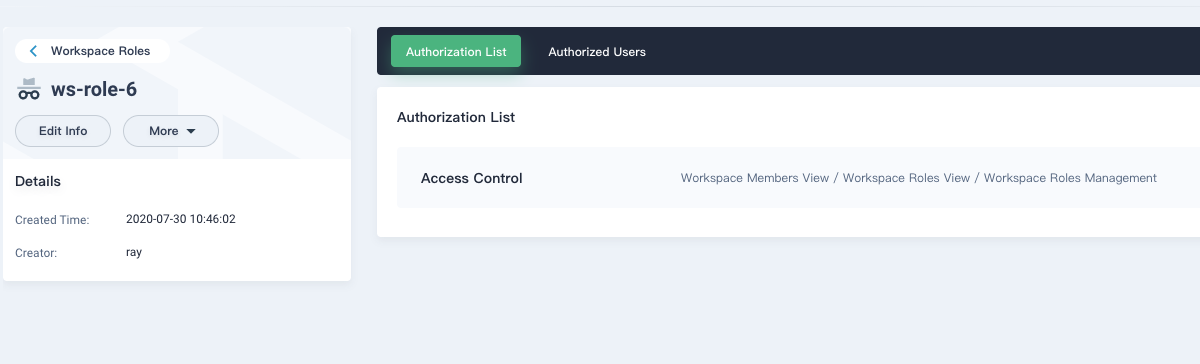

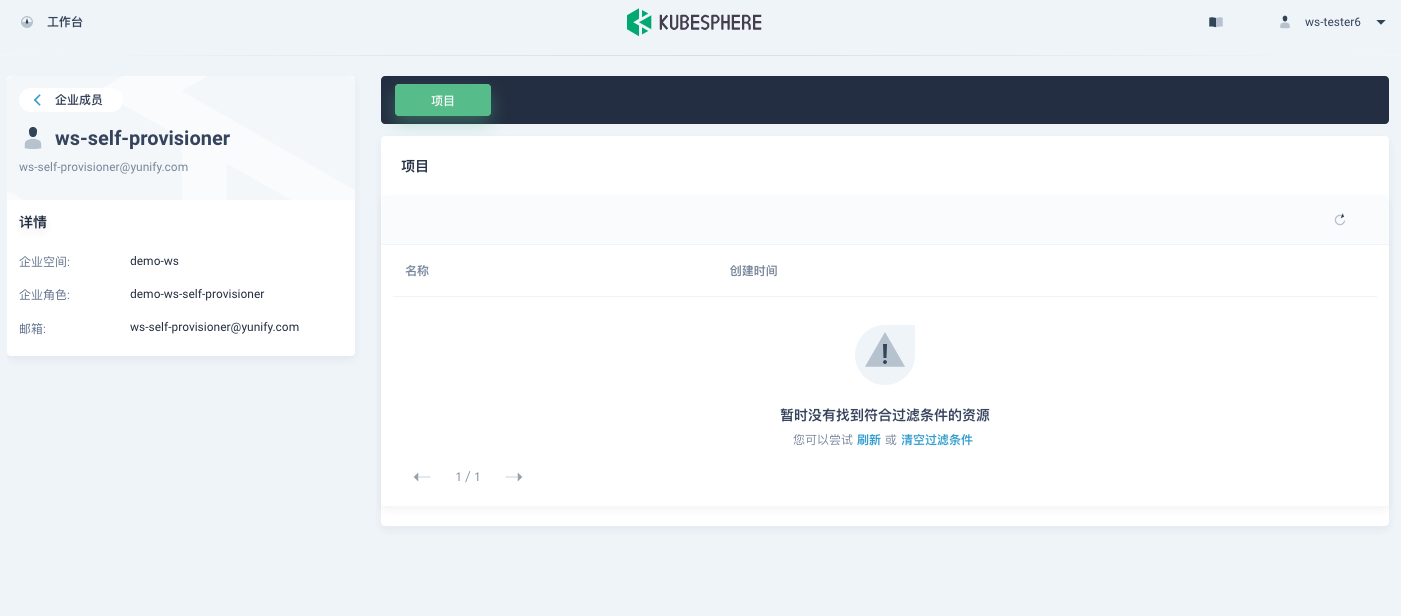

452,569 | 13,055,915,683 | IssuesEvent | 2020-07-30 03:06:35 | kubesphere/console | https://api.github.com/repos/kubesphere/console | opened | ws custom role missing info | area/console area/iam kind/bug priority/medium | **Describe the bug**

`ws-tester6` is the user with the custom role of Workspace Members View / Workspace Roles View / Workspace Roles Management. Use `ws-tester6` to log in and check the user `ws-self-provisioner` but found not project over there. Actually `ws-self-provisioner` is the admin of a project.

**Versions used(KubeSphere/Kubernetes)**

KubeSphere: 3.0.0-dev | 1.0 | ws custom role missing info - **Describe the bug**

`ws-tester6` is the user with the custom role of Workspace Members View / Workspace Roles View / Workspace Roles Management. Use `ws-tester6` to log in and check the user `ws-self-provisioner` but found not project over there. Actually `ws-self-provisioner` is the admin of a project.

**Versions used(KubeSphere/Kubernetes)**

KubeSphere: 3.0.0-dev | priority | ws custom role missing info describe the bug ws is the user with the custom role of workspace members view workspace roles view workspace roles management use ws to log in and check the user ws self provisioner but found not project over there actually ws self provisioner is the admin of a project versions used kubesphere kubernetes kubesphere dev | 1 |

45,881 | 2,941,817,117 | IssuesEvent | 2015-07-02 10:25:51 | google/google-api-dotnet-client | https://api.github.com/repos/google/google-api-dotnet-client | closed | Google Analytics in mvc | auto-migrated Component-Samples Priority-Medium | ```

public IList<int> GetStats()
{
    string scope = AnalyticsService.Scopes.AnalyticsReadonly.GetStringValue();

    //UPDATE this to match your developer account address. Note, you also need to add this address
    //as a user on your Google Analytics profile which you want to extract data from (this may take
    //up to 15 mins to recognise)
    //string client_id = "493694130884-m0jlcf6dabtnnjf5ji3jpfk5uh1m5ose@developer.gserviceaccount.com";
    string client_id = "372469488083-8imjoam8une71sevtmn3urtvulq9kcom.apps.googleusercontent.com";
    //836224293376-3524as8ngv6jia4l9qsf7dd4snr1utds@developer.gserviceaccount.com

    //UPDATE this to match the path to your certificate
    //string key_file = @"E:\8678acc035aa1965cbf36543dcf30f330c7549d2-privatekey.p12";
    string key_file = @"E:\7b6826181f30240ce74035b2d09d6c2869885b6c-privatekey.p12";
    //ddd39a925dad7f817b609931ddd91cbf03aa7e81-privatekey

    //string key_pass = "notasecret";
    string key_pass = "notasecret";

    AuthorizationServerDescription desc = GoogleAuthenticationServer.Description;
    X509Certificate2 key = new X509Certificate2(key_file, key_pass, X509KeyStorageFlags.Exportable);

    AssertionFlowClient client =
        new AssertionFlowClient(desc, key) { ServiceAccountId = client_id, Scope = scope };
    OAuth2Authenticator<AssertionFlowClient> auth =
        new OAuth2Authenticator<AssertionFlowClient>(client, AssertionFlowClient.GetState);

    AnalyticsService gas = new AnalyticsService(new BaseClientService.Initializer() { Authenticator = auth });

    //UPDATE the ga:nnnnnnnn string to match your profile Id from Google Analytics
    DataResource.GaResource.GetRequest r =
        gas.Data.Ga.Get("ga:88028792", "2014-01-01", "2014-07-31", "ga:visitors");
    r.Dimensions = "ga:pagePath";
    r.Sort = "-ga:visitors";
    r.MaxResults = 5;

    GaData d = r.Execute();

    IList<int> stats = new List<int>();
    for (int y = 0; y < d.Rows.Count; y++)
    {
        stats.Add(Convert.ToInt32(d.Rows[y][1]));
    }
    return stats;
}

This is giving error of the auth.token (server error 404)

```

Original issue reported on code.google.com by `rakhi001...@gmail.com` on 3 Jul 2014 at 10:09 | 1.0 | Google Analytics in mvc - ```

public IList<int> GetStats()
{
    string scope = AnalyticsService.Scopes.AnalyticsReadonly.GetStringValue();

    //UPDATE this to match your developer account address. Note, you also need to add this address
    //as a user on your Google Analytics profile which you want to extract data from (this may take
    //up to 15 mins to recognise)
    //string client_id = "493694130884-m0jlcf6dabtnnjf5ji3jpfk5uh1m5ose@developer.gserviceaccount.com";
    string client_id = "372469488083-8imjoam8une71sevtmn3urtvulq9kcom.apps.googleusercontent.com";
    //836224293376-3524as8ngv6jia4l9qsf7dd4snr1utds@developer.gserviceaccount.com

    //UPDATE this to match the path to your certificate
    //string key_file = @"E:\8678acc035aa1965cbf36543dcf30f330c7549d2-privatekey.p12";
    string key_file = @"E:\7b6826181f30240ce74035b2d09d6c2869885b6c-privatekey.p12";
    //ddd39a925dad7f817b609931ddd91cbf03aa7e81-privatekey

    //string key_pass = "notasecret";
    string key_pass = "notasecret";

    AuthorizationServerDescription desc = GoogleAuthenticationServer.Description;
    X509Certificate2 key = new X509Certificate2(key_file, key_pass, X509KeyStorageFlags.Exportable);

    AssertionFlowClient client =
        new AssertionFlowClient(desc, key) { ServiceAccountId = client_id, Scope = scope };
    OAuth2Authenticator<AssertionFlowClient> auth =
        new OAuth2Authenticator<AssertionFlowClient>(client, AssertionFlowClient.GetState);

    AnalyticsService gas = new AnalyticsService(new BaseClientService.Initializer() { Authenticator = auth });

    //UPDATE the ga:nnnnnnnn string to match your profile Id from Google Analytics
    DataResource.GaResource.GetRequest r =
        gas.Data.Ga.Get("ga:88028792", "2014-01-01", "2014-07-31", "ga:visitors");
    r.Dimensions = "ga:pagePath";
    r.Sort = "-ga:visitors";
    r.MaxResults = 5;

    GaData d = r.Execute();

    IList<int> stats = new List<int>();
    for (int y = 0; y < d.Rows.Count; y++)
    {
        stats.Add(Convert.ToInt32(d.Rows[y][1]));
    }
    return stats;
}

This is giving error of the auth.token (server error 404)

```

Original issue reported on code.google.com by `rakhi001...@gmail.com` on 3 Jul 2014 at 10:09 | priority | google analytics in mvc public ilist getstats string scope analyticsservice scopes analyticsreadonly getstringvalue update this to match your developer account address note you also need to add this address as a user on your google analytics profile which you want to extract data from this may take up to mins to recognise string client id developer gserviceaccount com string client id apps googleusercontent com developer gserviceaccount com update this to match the path to your certificate string key file e privatekey string key file e privatekey privatekey string key pass notasecret string key pass notasecret authorizationserverdescription desc googleauthenticationserver description key new key file key pass exportable assertionflowclient client new assertionflowclient desc key serviceaccountid client id scope scope auth new client assertionflowclient getstate analyticsservice gas new analyticsservice new baseclientservice initializer authenticator auth update the ga nnnnnnnn string to match your profile id from google analytics dataresource garesource getrequest r gas data ga get ga ga visitors r dimensions ga pagepath r sort ga visitors r maxresults gadata d r execute ilist stats new list for int y y d rows count y stats add convert d rows return stats this is giving error of the auth token server error original issue reported on code google com by gmail com on jul at | 1 |

598,510 | 18,246,649,371 | IssuesEvent | 2021-10-01 19:23:33 | fosscord/fosscord-server | https://api.github.com/repos/fosscord/fosscord-server | opened | Admin API/Controlled accounts route: PUT /users/ | enhancement route api medium priority admin dashboard | This route shall create a pre-prepared user with the details supplied in the JSON body. Request body shall follow the same format as the one returned by `GET /users/@me`.

Use restricted to server operators and account controllers. | 1.0 | Admin API/Controlled accounts route: PUT /users/ - This route shall create a pre-prepared user with the details supplied in the JSON body. Request body shall follow the same format as the one returned by `GET /users/@me`.

Use restricted to server operators and account controllers. | priority | admin api controlled accounts route put users this route shall create a pre prepared user with the details supplied in the json body request body shall follow the same format as the one returned by get users me use restricted to server operators and account controllers | 1 |