Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

142,869 | 5,478,171,421 | IssuesEvent | 2017-03-12 15:45:53 | benvenutti/hasm | https://api.github.com/repos/benvenutti/hasm | closed | Add clang 3.6 and 3.7 to Travis build. | priority: medium status: completed type: maintenance | Update Travis CI scripts to add support for the aforementioned compilers. | 1.0 | Add clang 3.6 and 3.7 to Travis build. - Update Travis CI scripts to add support for the aforementioned compilers. | priority | add clang and to travis build update travis ci scripts to add support for the aforementioned compilers | 1 |

520,912 | 15,097,241,781 | IssuesEvent | 2021-02-07 17:59:23 | noter-org/noter-client | https://api.github.com/repos/noter-org/noter-client | opened | Add ability for editing comments | priority:medium requires:server-change size:medium | Also show that the comment was edited (maybe if `modified_at` differs from `created_at`) | 1.0 | Add ability for editing comments - Also show that the comment was edited (maybe if `modified_at` differs from `created_at`) | priority | add ability for editing comments also show that the comment was edited maybe if modified at differs from created at | 1 |

660,847 | 22,033,266,365 | IssuesEvent | 2022-05-28 06:56:03 | momentum-mod/game | https://api.github.com/repos/momentum-mod/game | closed | State isn't correctly saved in slide triggers on saveloc | Type: Bug Priority: Medium Size: Small Where: Game | if saving inside of a Slide Trigger ([trigger_momentum_slide](https://docs.momentum-mod.org/entity/trigger_momentum_slide/)), it does not save the properties of you being inside of a Slide Trigger or any effect of the trigger, meaning on teleporting to that save, there is a short time where it does not think you are in... | 1.0 | State isn't correctly saved in slide triggers on saveloc - if saving inside of a Slide Trigger ([trigger_momentum_slide](https://docs.momentum-mod.org/entity/trigger_momentum_slide/)), it does not save the properties of you being inside of a Slide Trigger or any effect of the trigger, meaning on teleporting to that sav... | priority | state isn t correctly saved in slide triggers on saveloc if saving inside of a slide trigger it does not save the properties of you being inside of a slide trigger or any effect of the trigger meaning on teleporting to that save there is a short time where it does not think you are in one so friction takes ov... | 1 |

511,892 | 14,884,484,757 | IssuesEvent | 2021-01-20 14:38:34 | OC-DA-JAVA-PROJETS/P4_PARK_IT | https://api.github.com/repos/OC-DA-JAVA-PROJETS/P4_PARK_IT | closed | STORY#2 : 5%-discount for recurring users | enhancement medium priority | > As a user, I want to get a discount when I use the parking garage regularly.

In order to improve user retention, we decided to offer recurring users a 5% discount every time they come back to our parking lot.

### Description

1. When a user enters the parking garage, they are asked for their license plate num... | 1.0 | STORY#2 : 5%-discount for recurring users - > As a user, I want to get a discount when I use the parking garage regularly.

In order to improve user retention, we decided to offer recurring users a 5% discount every time they come back to our parking lot.

### Description

1. When a user enters the parking garage... | priority | story discount for recurring users as a user i want to get a discount when i use the parking garage regularly in order to improve user retention we decided to offer recurring users a discount every time they come back to our parking lot description when a user enters the parking garage... | 1 |

555,172 | 16,448,479,856 | IssuesEvent | 2021-05-20 23:32:29 | nilearn/nilearn | https://api.github.com/repos/nilearn/nilearn | closed | Axes Cutoff in Example 9.2.15.9 (plotting.plot_img_on_surf) | Bug effort: medium impact: medium priority: high | [`Example 9.2.15.9`](https://nilearn.github.io/auto_examples/01_plotting/plot_3d_map_to_surface_projection.html#plot-multiple-views-of-the-3d-volume-on-a-surface) features a quick plot showing multiple views of a volumetric stat map on an average surface.

- [`Example 9.2.15.9`](https://nilearn.github.io/auto_examples/01_plotting/plot_3d_map_to_surface_projection.html#plot-multiple-views-of-the-3d-volume-on-a-surface) features a quick plot showing multiple views of a volumetric stat map on an average surface.

... | priority | axes cutoff in example plotting plot img on surf features a quick plot showing multiple views of a volumetric stat map on an average surface however the brain is cutoff on both axes picture shown both in the online example and when i use it on nilearn within a jupyter notebook usin... | 1 |

214,119 | 7,266,890,287 | IssuesEvent | 2018-02-20 00:59:42 | ansible/awx | https://api.github.com/repos/ansible/awx | closed | installer inventory should allow 'cmd line'/'run time' over-rides for its playbook default values | component:installer priority:medium state:needs_info type:enhancement | ##### ISSUE TYPE

- Feature Idea

##### COMPONENT NAME

- Installer

##### SUMMARY

the hard coded values for secrets, passwords, ports, directories etc should only use defaults if nothing else has been passed as an argument

##### ENVIRONMENT

* AWX version: 1.0.2

* AWX install method: openshift, minishift, d... | 1.0 | installer inventory should allow 'cmd line'/'run time' over-rides for its playbook default values - ##### ISSUE TYPE

- Feature Idea

##### COMPONENT NAME

- Installer

##### SUMMARY

the hard coded values for secrets, passwords, ports, directories etc should only use defaults if nothing else has been passed as a... | priority | installer inventory should allow cmd line run time over rides for its playbook default values issue type feature idea component name installer summary the hard coded values for secrets passwords ports directories etc should only use defaults if nothing else has been passed as a... | 1 |

213,059 | 7,245,385,068 | IssuesEvent | 2018-02-14 17:54:47 | department-of-veterans-affairs/caseflow-efolder | https://api.github.com/repos/department-of-veterans-affairs/caseflow-efolder | opened | [FE] Make the copy to clipboard function work in react rewrite | bug-medium-priority eFolder Express v2 whiskey | In re-writing the efolder express UI in react, we left a few thing behind. One of those things was the ability to copy the veteran ID to the clipboard by clicking the button in the top right-hand side of the search and download progress pages (pictured below). This [was implemented](https://github.com/department-of-vet... | 1.0 | [FE] Make the copy to clipboard function work in react rewrite - In re-writing the efolder express UI in react, we left a few thing behind. One of those things was the ability to copy the veteran ID to the clipboard by clicking the button in the top right-hand side of the search and download progress pages (pictured be... | priority | make the copy to clipboard function work in react rewrite in re writing the efolder express ui in react we left a few thing behind one of those things was the ability to copy the veteran id to the clipboard by clicking the button in the top right hand side of the search and download progress pages pictured below... | 1 |

25,628 | 2,683,869,206 | IssuesEvent | 2015-03-28 12:07:38 | ConEmu/old-issues | https://api.github.com/repos/ConEmu/old-issues | closed | conemu 100213 (and earlier): reboot on Ctrl+Alt+Tab | 2–5 stars bug imported Priority-Medium | _From [yury.fin...@gmail.com](https://code.google.com/u/103818921530185261007/) on February 16, 2010 04:17:49_

OS version: Windows XP Home Edition SP2

FAR version: 2.0 build 1400

Ever since conemu started catching the detachment of the FAR window (via

Ctrl+Alt+Tab), on _one machine_ of mine an attempt to do this results in... | 1.0 | conemu 100213 (and earlier): reboot on Ctrl+Alt+Tab - _From [yury.fin...@gmail.com](https://code.google.com/u/103818921530185261007/) on February 16, 2010 04:17:49_

OS version: Windows XP Home Edition SP2

FAR version: 2.0 build 1400

Ever since conemu started catching the detachment of the FAR window (via

Ctrl+Alt+Tab), ... | priority | conemu and earlier reboot on ctrl alt tab from on february os version windows xp home edition far version build ever since conemu started catching the detachment of the far window via ctrl alt tab on one machine of mine an attempt to do this results in a reboot in the case... | 1 |

155,113 | 5,949,305,186 | IssuesEvent | 2017-05-26 13:58:08 | ciena-frost/ember-frost-core | https://api.github.com/repos/ciena-frost/ember-frost-core | closed | Move blueprint generators to a new `ember-cli-frost-blueprints` repo | enhancement Low priority Medium cost | We've got a way to integration test our blueprint generators now, but it's rather slow, so let's move that code into a separate repo so that tests on it don't get run unless the actual blueprints are changing. | 1.0 | Move blueprint generators to a new `ember-cli-frost-blueprints` repo - We've got a way to integration test our blueprint generators now, but it's rather slow, so let's move that code into a separate repo so that tests on it don't get run unless the actual blueprints are changing. | priority | move blueprint generators to a new ember cli frost blueprints repo we ve got a way to integration test our blueprint generators now but it s rather slow so let s move that code into a separate repo so that tests on it don t get run unless the actual blueprints are changing | 1 |

672,781 | 22,840,760,469 | IssuesEvent | 2022-07-12 21:34:06 | codeforbtv/green-up-app | https://api.github.com/repos/codeforbtv/green-up-app | closed | Failing to set a profile photo | Type: Bug Priority: Medium | **Describe the bug**

Failing to set a profile photo

**To Reproduce**

1. Got to Menu --> My Profile

2. Tap the person icon next to the name

3. Choose a photo from photo library

4. Save Profile

5. Go back to "My Profile": Photo doesn't show

**Expected behavior**

Saved photo shows up in the profile

**App V... | 1.0 | Failing to set a profile photo - **Describe the bug**

Failing to set a profile photo

**To Reproduce**

1. Got to Menu --> My Profile

2. Tap the person icon next to the name

3. Choose a photo from photo library

4. Save Profile

5. Go back to "My Profile": Photo doesn't show

**Expected behavior**

Saved photo s... | priority | failing to set a profile photo describe the bug failing to set a profile photo to reproduce got to menu my profile tap the person icon next to the name choose a photo from photo library save profile go back to my profile photo doesn t show expected behavior saved photo s... | 1 |

426,733 | 12,378,817,133 | IssuesEvent | 2020-05-19 11:23:05 | threefoldtech/3bot_wallet | https://api.github.com/repos/threefoldtech/3bot_wallet | closed | Stellar Staging - When not entering a message, validation is incorrect | priority_medium type_bug | 1) Restart the wallet

2) Send a transaction without message

Expected:

Works, message should be optional

Actual:

Sometimes it works, but after a restart it is mandatory again, with an incorrect error.

Restart the wallet

2) Send a transaction without message

Expected:

Works, message should be optional

Actual:

Sometimes it works, but after a restart it is mandatory again, with an incorrect error.

, I have to download whole file from start to requested

position (or whole file to end without on-demand feature).

* What is the expected output? What do you see instead?

Many file formats (m... | 1.0 | RemoteFileBuffer range requests feature - ```

* What steps will reproduce the problem?

When I need just part of file stored on remote system (FS using

RemoteFileBuffer), I have to download whole file from start to requested

position (or whole file to end without on-demand feature).

* What is the expected output? Wha... | priority | remotefilebuffer range requests feature what steps will reproduce the problem when i need just part of file stored on remote system fs using remotefilebuffer i have to download whole file from start to requested position or whole file to end without on demand feature what is the expected output wha... | 1 |

188,063 | 6,767,976,918 | IssuesEvent | 2017-10-26 06:57:47 | edenlabllc/ehealth.api | https://api.github.com/repos/edenlabllc/ehealth.api | closed | OTP SMS delivery metrics | epic/sms kind/user_story priority/medium status/wontfix | We should have a metrics for SMS delivery process

* Succesful/unsuccessful SMS submissions stats

* Undelivered SMS

* SMS delivery latency

- [ ] integration with life report to store counters

- [ ] new metrics on datadog

https://docs.google.com/spreadsheets/d/1X1gQEWQc02loG1OtNRZzzuN3NssLRoESIgpn-aRDMPQ/edit... | 1.0 | OTP SMS delivery metrics - We should have a metrics for SMS delivery process

* Succesful/unsuccessful SMS submissions stats

* Undelivered SMS

* SMS delivery latency

- [ ] integration with life report to store counters

- [ ] new metrics on datadog

https://docs.google.com/spreadsheets/d/1X1gQEWQc02loG1OtNRZzz... | priority | otp sms delivery metrics we should have a metrics for sms delivery process succesful unsuccessful sms submissions stats undelivered sms sms delivery latency integration with life report to store counters new metrics on datadog | 1 |

154,957 | 5,945,806,342 | IssuesEvent | 2017-05-26 00:17:31 | slackapi/node-slack-sdk | https://api.github.com/repos/slackapi/node-slack-sdk | closed | Confusing implications of "UNABLE_TO_RTM_START" | bug Priority—Medium | I think https://github.com/slackhq/node-slack-sdk/blob/master/lib/clients/rtm/client.js#L348 should be some event other than `UNABLE_TO_RTM_START`.

here: https://github.com/slackhq/node-slack-sdk/blob/master/lib/clients/rtm/client.js#L258, an `UNABLE_TO_RTM_START` message is published, but that doesn't mean anything d... | 1.0 | Confusing implications of "UNABLE_TO_RTM_START" - I think https://github.com/slackhq/node-slack-sdk/blob/master/lib/clients/rtm/client.js#L348 should be some event other than `UNABLE_TO_RTM_START`.

here: https://github.com/slackhq/node-slack-sdk/blob/master/lib/clients/rtm/client.js#L258, an `UNABLE_TO_RTM_START` mess... | priority | confusing implications of unable to rtm start i think should be some event other than unable to rtm start here an unable to rtm start message is published but that doesn t mean anything definitively if it happens to be an error it can t recover from disconnect is later published if autoreconnect ... | 1 |

88,076 | 3,771,312,560 | IssuesEvent | 2016-03-16 17:12:30 | cs2103jan2016-f13-1j/main | https://api.github.com/repos/cs2103jan2016-f13-1j/main | closed | A user can add task by entering flexible commands | priority.medium type.story | so that task can be added easily without caring too much about typos | 1.0 | A user can add task by entering flexible commands - so that task can be added easily without caring too much about typos | priority | a user can add task by entering flexible commands so that task can be added easily without caring too much about typos | 1 |

729,114 | 25,109,788,437 | IssuesEvent | 2022-11-08 19:30:07 | teogor/ceres | https://api.github.com/repos/teogor/ceres | closed | Implement `Toolbar` compatible with M3 Guidelines | @priority-medium @feature m3 | Implement `Toolbar` compatible with M3 Guidelines as follows:

- when content is at top the color should be the same as the background (alpha 5%)

- else the color should be lighter by 2 levels (alpha 11%) | 1.0 | Implement `Toolbar` compatible with M3 Guidelines - Implement `Toolbar` compatible with M3 Guidelines as follows:

- when content is at top the color should be the same as the background (alpha 5%)

- else the color should be lighter by 2 levels (alpha 11%) | priority | implement toolbar compatible with guidelines implement toolbar compatible with guidelines as follows when content is at top the color should be the same as the background alpha else the color should be lighter by levels alpha | 1 |

993 | 2,506,547,014 | IssuesEvent | 2015-01-12 11:39:00 | WeAreAthlon/silla.io | https://api.github.com/repos/WeAreAthlon/silla.io | opened | Create a database Filter object | feature medium priority | This will be used for setting a conditions on queries to the database. | 1.0 | Create a database Filter object - This will be used for setting a conditions on queries to the database. | priority | create a database filter object this will be used for setting a conditions on queries to the database | 1 |

587,475 | 17,617,060,168 | IssuesEvent | 2021-08-18 11:04:23 | nimblehq/nimble-medium-ios | https://api.github.com/repos/nimblehq/nimble-medium-ios | opened | As a user, I can create a new article from the home screen | type : feature category: integration priority : medium | ## Why

When the users logged in the application successfully, they can create a new article from the `Home` screen.

## Acceptance Criteria

- [ ] When the users tap on the create new article button in the top right navigation bar of the `Home` screen, navigate to the `New Article` screen.

- [ ] Once in the `New Ar... | 1.0 | As a user, I can create a new article from the home screen - ## Why

When the users logged in the application successfully, they can create a new article from the `Home` screen.

## Acceptance Criteria

- [ ] When the users tap on the create new article button in the top right navigation bar of the `Home` screen, nav... | priority | as a user i can create a new article from the home screen why when the users logged in the application successfully they can create a new article from the home screen acceptance criteria when the users tap on the create new article button in the top right navigation bar of the home screen navig... | 1 |

588,560 | 17,662,432,692 | IssuesEvent | 2021-08-21 19:48:00 | ZsgsDesign/NOJ | https://api.github.com/repos/ZsgsDesign/NOJ | opened | Native VSCode Theme Support | New Feature Priority 3 (Medium) | Now NOJ Editor only supports the built-in `Monach` themes.

We are going to support native VSCode themes in the next version.

Also, the next version would come with a brand new Language Service, including support for language configuration similar to VSCode and grammar analysis based on TextMate grammar. | 1.0 | Native VSCode Theme Support - Now NOJ Editor only supports the built-in `Monach` themes.

We are going to support native VSCode themes in the next version.

Also, the next version would come with a brand new Language Service, including support for language configuration similar to VSCode and grammar analysis based ... | priority | native vscode theme support now noj editor only supports the built in monach themes we are going to support native vscode themes in the next version also the next version would come with a brand new language service including support for language configuration similar to vscode and grammar analysis based ... | 1 |

428,864 | 12,418,375,502 | IssuesEvent | 2020-05-23 00:01:27 | rubyforgood/casa | https://api.github.com/repos/rubyforgood/casa | closed | Admin view/edit case page should include volunteer/s assigned to the case | :crown: Admin Priority: Medium Status: Available help wanted | Part of epic #4 (Admin Dashboard)

**What type of user is this for? [volunteer/supervisor/admin/all]**

This feature is for **admins** and should not change what non-admins can see or do.

**Where does/should this occur?**

Currently, [in the admin view/edit case view](https://casa-r4g-staging.herokuapp.com/casa_c... | 1.0 | Admin view/edit case page should include volunteer/s assigned to the case - Part of epic #4 (Admin Dashboard)

**What type of user is this for? [volunteer/supervisor/admin/all]**

This feature is for **admins** and should not change what non-admins can see or do.

**Where does/should this occur?**

Currently, [in ... | priority | admin view edit case page should include volunteer s assigned to the case part of epic admin dashboard what type of user is this for this feature is for admins and should not change what non admins can see or do where does should this occur currently admins only see case info ... | 1 |

565,436 | 16,761,265,247 | IssuesEvent | 2021-06-13 20:49:26 | peering-manager/peering-manager | https://api.github.com/repos/peering-manager/peering-manager | closed | Adding IX Peering Sessions manually from the AS page | priority: medium type: enhancement | ### Environment

* Python version: 3.9.0

* Peering Manager version: 7ba396768ebc (v1.2.1)

### Proposed Functionality

Make the IX Peering Sessions tab for an AS always visible and add an Add button to it to create IX Peering Sessions manually.

### Use Case

When a peer doesn't have any IX peering sessions yet ... | 1.0 | Adding IX Peering Sessions manually from the AS page - ### Environment

* Python version: 3.9.0

* Peering Manager version: 7ba396768ebc (v1.2.1)

### Proposed Functionality

Make the IX Peering Sessions tab for an AS always visible and add an Add button to it to create IX Peering Sessions manually.

### Use Case... | priority | adding ix peering sessions manually from the as page environment python version peering manager version proposed functionality make the ix peering sessions tab for an as always visible and add an add button to it to create ix peering sessions manually use case when a pee... | 1 |

404,481 | 11,858,131,290 | IssuesEvent | 2020-03-25 10:51:10 | cpeditor/cpeditor | https://api.github.com/repos/cpeditor/cpeditor | closed | Support `cf parse` and `cf race` | enhancement help wanted medium_priority | **Is your feature request related to a problem? Please describe.**

When we use "open contest option",we open multiple files say A,B,C..etc.But when we parse contest using competitive companion,the new tabs of those questions A,B,C gets created.

**Describe the solution you'd like**

My request is to add a feature so... | 1.0 | Support `cf parse` and `cf race` - **Is your feature request related to a problem? Please describe.**

When we use "open contest option",we open multiple files say A,B,C..etc.But when we parse contest using competitive companion,the new tabs of those questions A,B,C gets created.

**Describe the solution you'd like**... | priority | support cf parse and cf race is your feature request related to a problem please describe when we use open contest option we open multiple files say a b c etc but when we parse contest using competitive companion the new tabs of those questions a b c gets created describe the solution you d like ... | 1 |

831,659 | 32,057,366,124 | IssuesEvent | 2023-09-24 08:40:29 | Seprintour-Test/test | https://api.github.com/repos/Seprintour-Test/test | reopened | Update Documentation On The Ubiquity Readme | Time: <1 Hour Priority: 2 (Medium) Price: 25 USD | modify the documentation on the ubiquity readme

###### [ **[ View on Telegram ]** ](https://t.me/c/1975484291/223) | 1.0 | Update Documentation On The Ubiquity Readme - modify the documentation on the ubiquity readme

###### [ **[ View on Telegram ]** ](https://t.me/c/1975484291/223) | priority | update documentation on the ubiquity readme modify the documentation on the ubiquity readme | 1 |

732,964 | 25,282,486,842 | IssuesEvent | 2022-11-16 16:43:06 | Clan-Attack/Core | https://api.github.com/repos/Clan-Attack/Core | closed | [Enchant]: Extend IPlayer | Priority: Medium Type: Enchant | ### Is your feature request related to a problem?

- [X] Check this if your future request is related to a problem

### Please describe the problem

I needed to get a uuid and name from an `IPlayer`, no way to do so

### Describe the solution you'd like

Extent the `IPlayer` with

- `IPlayer#uuid`

- `IPlayer#name`

- ... | 1.0 | [Enchant]: Extend IPlayer - ### Is your feature request related to a problem?

- [X] Check this if your future request is related to a problem

### Please describe the problem

I needed to get a uuid and name from an `IPlayer`, no way to do so

### Describe the solution you'd like

Extent the `IPlayer` with

- `IPlayer... | priority | extend iplayer is your feature request related to a problem check this if your future request is related to a problem please describe the problem i needed to get a uuid and name from an iplayer no way to do so describe the solution you d like extent the iplayer with iplayer uuid ... | 1 |

796,372 | 28,108,554,407 | IssuesEvent | 2023-03-31 04:23:28 | WordPress/openverse | https://api.github.com/repos/WordPress/openverse | closed | Deployment workflow runs do not show in workflow run history | 🟨 priority: medium 🛠 goal: fix 🤖 aspect: dx 🧱 stack: mgmt | ## Description

<!-- Concisely describe the bug. Compare your experience with what you expected to happen. -->

<!-- For example: "I clicked the 'submit' button and instead of seeing a thank you message, I saw a blank page." -->

Workflow runs triggered by the `workflow_call` apparently do not show up in the workflow... | 1.0 | Deployment workflow runs do not show in workflow run history - ## Description

<!-- Concisely describe the bug. Compare your experience with what you expected to happen. -->

<!-- For example: "I clicked the 'submit' button and instead of seeing a thank you message, I saw a blank page." -->

Workflow runs triggered b... | priority | deployment workflow runs do not show in workflow run history description workflow runs triggered by the workflow call apparently do not show up in the workflow run history this means that staging deployments do not show up in the staging deployment workflow run histories due to their being dispatched v... | 1 |

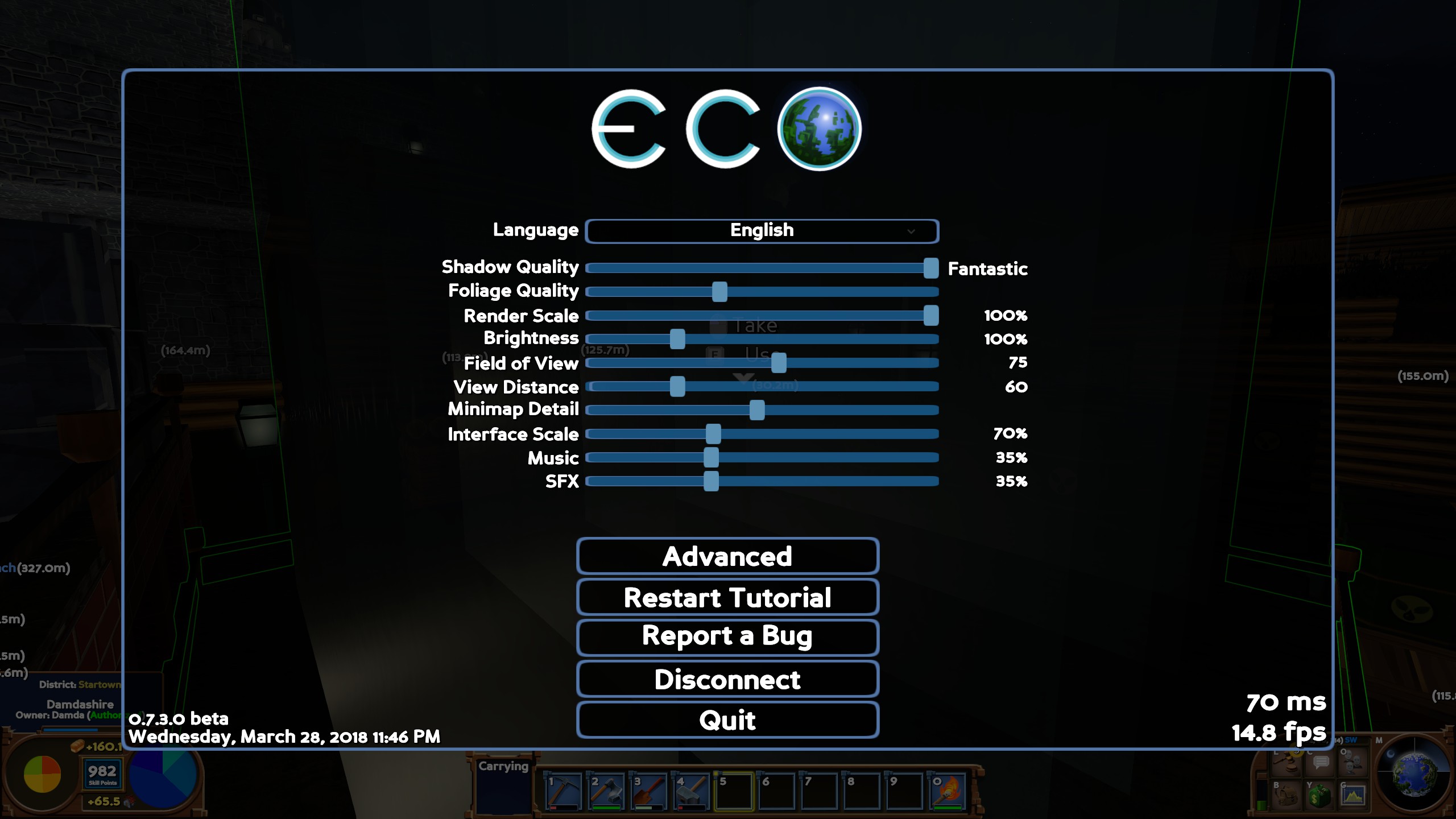

274,001 | 8,555,989,483 | IssuesEvent | 2018-11-08 11:43:25 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | reopened | [7.8.0 #79e33fd9] Placing down a workbench does not fulfill the tutorials requirement anymore | Medium Priority | I tried several times, it does not tick the objective:

<img width="1920" alt="workbench" src="https://user-images.githubusercontent.com/25908592/47251103-e330a400-d42e-11e8-94ec-0b18606e75a3.png">

| 1.0 | [7.8.0 #79e33fd9] Placing down a workbench does not fulfill the tutorials requirement anymore - I tried several times, it does not tick the objective:

<img width="1920" alt="workbench" src="https://user-images.githubusercontent.com/25908592/47251103-e330a400-d42e-11e8-94ec-0b18606e75a3.png">

| priority | placing down a workbench does not fulfill the tutorials requirement anymore i tried several times it does not tick the objective img width alt workbench src | 1 |

599,242 | 18,268,676,579 | IssuesEvent | 2021-10-04 11:30:18 | moducate/heimdall | https://api.github.com/repos/moducate/heimdall | reopened | Split REST/GraphQL endpoints into separate services | Priority: Medium Status: Available Type: Enhancement good first issue Hacktoberfest | Currently, the GraphQL endpoint (`/graphql`) is exposed on the same HTTP service as the REST endpoints (such as `/school`).

To promote more modularity, we should split up GraphQL and REST onto separate HTTP services (ports 1470 and 1471 respectively), with CLI commands to serve either: one of the services, or both a... | 1.0 | Split REST/GraphQL endpoints into separate services - Currently, the GraphQL endpoint (`/graphql`) is exposed on the same HTTP service as the REST endpoints (such as `/school`).

To promote more modularity, we should split up GraphQL and REST onto separate HTTP services (ports 1470 and 1471 respectively), with CLI co... | priority | split rest graphql endpoints into separate services currently the graphql endpoint graphql is exposed on the same http service as the rest endpoints such as school to promote more modularity we should split up graphql and rest onto separate http services ports and respectively with cli commands... | 1 |

30,744 | 2,725,078,287 | IssuesEvent | 2015-04-14 21:27:57 | IQSS/dataverse | https://api.github.com/repos/IQSS/dataverse | closed | Create new account: General ToU show incorrectly | Component: UX & Upgrade Priority: Medium Status: QA Type: Bug | - I think there are either typos or/and formatting issues

- I see many question marks. Don't think thats correct.

| 1.0 | Create new account: General ToU show incorrectly - - I think there are either typos or/and formatting issues

- I see many question marks. Don't think thats correct.

| priority | create new account general tou show incorrectly i think there are either typos or and formatting issues i see many question marks don t think thats correct | 1 |

25,448 | 2,683,802,491 | IssuesEvent | 2015-03-28 10:18:01 | ConEmu/old-issues | https://api.github.com/repos/ConEmu/old-issues | closed | ConEmu 090620a minimizing/restoring to the taskbar | 1 star bug imported Priority-Medium | _From [alexandr...@gmail.com](https://code.google.com/u/102266525303291005921/) on June 21, 2009 05:10:25_

OS version: Win7

FAR version: Far20b1001.x86.20090621

Bug description:

I launch Far (via ConEmu ); everything is fine; I click the icon in the taskbar, the window

minimizes, I click again - the window is restored, but C... | 1.0 | ConEmu 090620a minimizing/restoring to the taskbar - _From [alexandr...@gmail.com](https://code.google.com/u/102266525303291005921/) on June 21, 2009 05:10:25_

OS version: Win7

FAR version: Far20b1001.x86.20090621

Bug description:

I launch Far (via ConEmu ); everything is fine; I click the icon in the taskbar, the window

m... | priority | conemu minimizing restoring to the taskbar from on june os version far version bug description i launch far via conemu everything is fine i click the icon in the taskbar the window minimizes i click again the window is restored but conemu no longer responds to anything or rather r... | 1 |

29,607 | 2,716,628,253 | IssuesEvent | 2015-04-10 20:20:08 | CruxFramework/crux | https://api.github.com/repos/CruxFramework/crux | closed | [Smart-Faces] Create a Simple View Container | enhancement imported Milestone-M14-C4 Module-CruxSmartFaces Priority-Medium | _From [juli...@cruxframework.org](https://code.google.com/u/108392056359000771618/) on August 27, 2014 14:16:08_

Create a componente simple view container on crux-smart-faces

_Original issue: http://code.google.com/p/crux-framework/issues/detail?id=494_ | 1.0 | [Smart-Faces] Create a Simple View Container - _From [juli...@cruxframework.org](https://code.google.com/u/108392056359000771618/) on August 27, 2014 14:16:08_

Create a componente simple view container on crux-smart-faces

_Original issue: http://code.google.com/p/crux-framework/issues/detail?id=494_ | priority | create a simple view container from on august create a componente simple view container on crux smart faces original issue | 1 |

770,526 | 27,043,384,814 | IssuesEvent | 2023-02-13 07:54:22 | renovatebot/renovate | https://api.github.com/repos/renovatebot/renovate | closed | Rename `adoptium-java` datasource to `java-version` | priority-3-medium type:refactor status:ready | ### What would you like Renovate to be able to do?

We should do this to be in line with our other datasources.

- #20233

### If you have any ideas on how this should be implemented, please tell us here.

just rename and migrate

### Is this a feature you are interested in implementing yourself?

Maybe | 1.0 | Rename `adoptium-java` datasource to `java-version` - ### What would you like Renovate to be able to do?

We should do this to be in line with our other datasources.

- #20233

### If you have any ideas on how this should be implemented, please tell us here.

just rename and migrate

### Is this a feature you are inter... | priority | rename adoptium java datasource to java version what would you like renovate to be able to do we should do this to be in line with our other datasources if you have any ideas on how this should be implemented please tell us here just rename and migrate is this a feature you are intereste... | 1 |

17,051 | 2,615,129,766 | IssuesEvent | 2015-03-01 05:59:30 | chrsmith/google-api-java-client | https://api.github.com/repos/chrsmith/google-api-java-client | closed | GDrive sample for android | auto-migrated Priority-Medium Type-Sample | ```

Which Google API and version (e.g. Google Calendar Data API version 2)?

Google Drive api

What format (e.g. JSON, Atom)?

JSON

Java environment (e.g. Java 6, Android 2.3, App Engine)?

Android

External references, such as API reference guide?

Please provide any additional information below.

```

Original issue r... | 1.0 | GDrive sample for android - ```

Which Google API and version (e.g. Google Calendar Data API version 2)?

Google Drive api

What format (e.g. JSON, Atom)?

JSON

Java environment (e.g. Java 6, Android 2.3, App Engine)?

Android

External references, such as API reference guide?

Please provide any additional information b... | priority | gdrive sample for android which google api and version e g google calendar data api version google drive api what format e g json atom json java environment e g java android app engine android external references such as api reference guide please provide any additional information b... | 1 |

690,083 | 23,645,202,239 | IssuesEvent | 2022-08-25 21:15:09 | bcgov/cas-cif | https://api.github.com/repos/bcgov/cas-cif | closed | Record demo for the upcoming branch meeting | Task Backlog Refinement Medium Priority | #### Describe the task

The next scheduled Branch meeting is scheduled for July 26th. The presentation [slide deck](https://bcgov.sharepoint.com/:p:/t/00608-ScrumTeam/EV6WsJ49vrxMr1ZqMUV7nMYB68gvCWgv8E7NwGkEaQYurg?e=KdVHKc) is done. Pre-recorded demo is upon accomplishment.

#### Acceptance Criteria

- [x] Decide who a... | 1.0 | Record demo for the upcoming branch meeting - #### Describe the task

The next scheduled Branch meeting is scheduled for July 26th. The presentation [slide deck](https://bcgov.sharepoint.com/:p:/t/00608-ScrumTeam/EV6WsJ49vrxMr1ZqMUV7nMYB68gvCWgv8E7NwGkEaQYurg?e=KdVHKc) is done. Pre-recorded demo is upon accomplishment.... | priority | record demo for the upcoming branch meeting describe the task the next scheduled branch meeting is scheduled for july the presentation is done pre recorded demo is upon accomplishment acceptance criteria decide who and what to be recorded record and host in the ms teams folder link t... | 1 |

103,518 | 4,174,564,757 | IssuesEvent | 2016-06-21 14:25:42 | CascadesCarnivoreProject/Timelapse | https://api.github.com/repos/CascadesCarnivoreProject/Timelapse | opened | Bug: DialogDateRereadDatesFromImages doesn't update the dates | Medium Priority fix | To reproduce:

Update bug. Reread date and time from the images

1. Change some of the dates in the date field so that they differ from what had been read in

2. Select Re-read dates from images

3. The feedback says that the dates haven’t changed,

4. The changed dates in the field are not updated in either the datag... | 1.0 | Bug: DialogDateRereadDatesFromImages doesn't update the dates - To reproduce:

Update bug. Reread date and time from the images

1. Change some of the dates in the date field so that they differ from what had been read in

2. Select Re-read dates from images

3. The feedback says that the dates haven’t changed,

4. Th... | priority | bug dialogdaterereaddatesfromimages doesn t update the dates to reproduce update bug reread date and time from the images change some of the dates in the date field so that they differ from what had been read in select re read dates from images the feedback says that the dates haven’t changed th... | 1 |

25,668 | 2,683,918,822 | IssuesEvent | 2015-03-28 13:26:26 | ConEmu/old-issues | https://api.github.com/repos/ConEmu/old-issues | closed | Ellipses in the menu | 2–5 stars bug imported Priority-Medium | _From [yurivk...@gmail.com](https://code.google.com/u/109564459582005085765/) on April 06, 2010 23:12:34_

According to all the guidelines, a menu item should have an ellipsis if

additional information is requested before the command runs, and should

not have one if it is not.

**Attachment:** [0001-Fix-menu-ellipse... | 1.0 | Ellipses in the menu - _From [yurivk...@gmail.com](https://code.google.com/u/109564459582005085765/) on April 06, 2010 23:12:34_

According to all the guidelines, a menu item should have an ellipsis if

additional information is requested before the command runs, and should

not have one if it is not.

**Attachment:** [0... | priority | ellipses in the menu from on april according to all the guidelines a menu item should have an ellipsis if additional information is requested before the command runs and should not have one if it is not attachment original issue | 1 |

463,131 | 13,260,315,154 | IssuesEvent | 2020-08-20 18:00:32 | juju/js-libjuju | https://api.github.com/repos/juju/js-libjuju | closed | Can't get model from Charm store | Priority: Medium | As per our discussions on IRC today, it seems impossible to get a bundle staged via the getChanges API in the bundle facade.

Passing in the raw YAML to the same API call does work.

Whatever we pass in the bundleurl the response is "at least one application must be specified" | 1.0 | Can't get model from Charm store - As per our discussions on IRC today, it seems impossible to get a bundle staged via the getChanges API in the bundle facade.

Passing in the raw YAML to the same API call does work.

Whatever we pass in the bundleurl the response is "at least one application must be specified" | priority | can t get model from charm store as per our discussions on irc today it seems impossible to get a bundle staged via the getchanges api in the bundle facade passing in the raw yaml to the same api call does work whatever we pass in the bundleurl the response is at least one application must be specified | 1 |

80,330 | 3,560,934,653 | IssuesEvent | 2016-01-23 12:40:06 | ankidroid/Anki-Android | https://api.github.com/repos/ankidroid/Anki-Android | closed | Anki skips to next card without user direction | bug Priority-Medium waitingforfeedback | Originally reported on Google Code with ID 2001

```

What steps will reproduce the problem?

(unfortunately, this is hard to reproduce. Something odd must be going on in the background)

1. Turn off 'automatically display answer'

2. Open a deck

3. Wait an unspecified length of time (sometime 5min, sometimes 30 seconds,... | 1.0 | Anki skips to next card without user direction - Originally reported on Google Code with ID 2001

```

What steps will reproduce the problem?

(unfortunately, this is hard to reproduce. Something odd must be going on in the background)

1. Turn off 'automatically display answer'

2. Open a deck

3. Wait an unspecified len... | priority | anki skips to next card without user direction originally reported on google code with id what steps will reproduce the problem unfortunately this is hard to reproduce something odd must be going on in the background turn off automatically display answer open a deck wait an unspecified length... | 1 |

227,160 | 7,527,604,565 | IssuesEvent | 2018-04-13 17:40:00 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | excavator cant dump mud in to water | Medium Priority | why cant the excavator dump mud in to the water like you used to be able to do ? makes trying to level a area very hard | 1.0 | excavator cant dump mud in to water - why cant the excavator dump mud in to the water like you used to be able to do ? makes trying to level a area very hard | priority | excavator cant dump mud in to water why cant the excavator dump mud in to the water like you used to be able to do makes trying to level a area very hard | 1 |

47,374 | 2,978,350,715 | IssuesEvent | 2015-07-16 05:24:42 | adobe/brackets | https://api.github.com/repos/adobe/brackets | opened | [IQE]: Health Data Report File Stat metric for working set does not capture the preferences file count opened from debug menu in the w | IQE medium priority | Steps:

1) Launch Brackets

2) Open preferences file from debug menu

3) Open Health Data Report

Result : Health Data Report File Stat metric for working set does not capture the preferences file count opened from debug menu, only openedFileExt count increases | 1.0 | [IQE]: Health Data Report File Stat metric for working set does not capture the preferences file count opened from debug menu in the w - Steps:

1) Launch Brackets

2) Open preferences file from debug menu

3) Open Health Data Report

Result : Health Data Report File Stat metric for working set does not capture the p... | priority | health data report file stat metric for working set does not capture the preferences file count opened from debug menu in the w steps launch brackets open preferences file from debug menu open health data report result health data report file stat metric for working set does not capture the prefe... | 1 |

509,621 | 14,740,565,037 | IssuesEvent | 2021-01-07 09:17:30 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | opened | Extend display of bans | Category: Accounts Priority: Medium | Bans of users should additionally display the reason and a note of

"If you think this is in error, contact support@strangeloopgames.com"

| 1.0 | Extend display of bans - Bans of users should additionally display the reason and a note of

"If you think this is in error, contact support@strangeloopgames.com"

| priority | extend display of bans bans of users should additionally display the reason and a note of if you think this is in error contact support strangeloopgames com | 1 |

209,463 | 7,176,332,112 | IssuesEvent | 2018-01-31 09:41:43 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | Write Consistency Improvements | Priority: Medium Source: Internal Team: Core Type: Enhancement | - [x] Sync writes and sync backups should be consistent and synchronous from caller point of view.

- [x] Graceful shutdown should:

- [x] prevent receiving new operations

- [x] wait for in-flight operations to finish and send responses back to callers

https://hazelcast.atlassian.net/wiki/display/PM/Write+Cons... | 1.0 | Write Consistency Improvements - - [x] Sync writes and sync backups should be consistent and synchronous from caller point of view.

- [x] Graceful shutdown should:

- [x] prevent receiving new operations

- [x] wait for in-flight operations to finish and send responses back to callers

https://hazelcast.atlassi... | priority | write consistency improvements sync writes and sync backups should be consistent and synchronous from caller point of view graceful shutdown should prevent receiving new operations wait for in flight operations to finish and send responses back to callers | 1 |

751,011 | 26,227,781,226 | IssuesEvent | 2023-01-04 20:27:21 | KingSupernova31/RulesGuru | https://api.github.com/repos/KingSupernova31/RulesGuru | closed | Allow ORing of template fields | enhancement medium priority | Allow separate template rules to be ORed together such that a card must only satisfy at least one in order to be returned. (The most common usage of this would be for "instant or sorcery".)

The UI should probably accomplish this by having some sort of drag-able line or box that can connect rules. Perhaps allow the r... | 1.0 | Allow ORing of template fields - Allow separate template rules to be ORed together such that a card must only satisfy at least one in order to be returned. (The most common usage of this would be for "instant or sorcery".)

The UI should probably accomplish this by having some sort of drag-able line or box that can c... | priority | allow oring of template fields allow separate template rules to be ored together such that a card must only satisfy at least one in order to be returned the most common usage of this would be for instant or sorcery the ui should probably accomplish this by having some sort of drag able line or box that can c... | 1 |

32,536 | 2,755,726,815 | IssuesEvent | 2015-04-26 22:06:15 | IIsi50MHz/chromey-calculator | https://api.github.com/repos/IIsi50MHz/chromey-calculator | reopened | popout's input field not initially focused | auto-migrated bug Priority-Medium | ```

What steps will reproduce the problem?

Press the button or trigger it in some other way. It automatically takes

focus, requiring me to click in the text field every single time. Sure, it's

only one click, but it is still annoying.

What is the expected output?

What do you see instead?

What version of Chrome ... | 1.0 | popout's input field not initially focused - ```

What steps will reproduce the problem?

Press the button or trigger it in some other way. It automatically takes

focus, requiring me to click in the text field every single time. Sure, it's

only one click, but it is still annoying.

What is the expected output?

What... | priority | popout s input field not initially focused what steps will reproduce the problem press the button or trigger it in some other way it automatically takes focus requiring me to click in the text field every single time sure it s only one click but it is still annoying what is the expected output what... | 1 |

227,080 | 7,526,704,723 | IssuesEvent | 2018-04-13 14:46:47 | emoncms/MyHomeEnergyPlanner | https://api.github.com/repos/emoncms/MyHomeEnergyPlanner | closed | Merge 'roofs' and 'lofts' measures list. | For release Libraries Medium priority usability | When applying measures, sometimes need to change a 'loft' to a 'roof' and vice versa.

This is because sometimes works are planned by the householder that involve moving the insulation line from the ceiling to the rafters.

At the moment this option is locked out - you can't select a 'roof' measure when it was a ... | 1.0 | Merge 'roofs' and 'lofts' measures list. - When applying measures, sometimes need to change a 'loft' to a 'roof' and vice versa.

This is because sometimes works are planned by the householder that involve moving the insulation line from the ceiling to the rafters.

At the moment this option is locked out - you ... | priority | merge roofs and lofts measures list when applying measures sometimes need to change a loft to a roof and vice versa this is because sometimes works are planned by the householder that involve moving the insulation line from the ceiling to the rafters at the moment this option is locked out you ... | 1 |

782,249 | 27,491,295,441 | IssuesEvent | 2023-03-04 16:55:27 | aleksbobic/csx | https://api.github.com/repos/aleksbobic/csx | closed | UI Guide & Survey | enhancement priority:medium Complexity:medium | **Is your feature request related to a problem? Please describe.**

Users should have the option to click "show me around". This should guide them through the UI and provide them with an overview of basic features.

| 1.0 | UI Guide & Survey - **Is your feature request related to a problem? Please describe.**

Users should have the option to click "show me around". This should guide them through the UI and provide them with an overview of basic features.

| priority | ui guide survey is your feature request related to a problem please describe users should have the option to click show me around this should guide them through the ui and provide them with an overview of basic features | 1 |

351,957 | 10,525,704,273 | IssuesEvent | 2019-09-30 15:33:48 | forceworkbench/forceworkbench | https://api.github.com/repos/forceworkbench/forceworkbench | closed | Add UI support for HAVING in SOQL | Component-Query Priority-Medium Scheduled-Backlog enhancement imported | _Original author: ryan.bra...@gmail.com (February 06, 2010 04:46:02)_

New HAVING Clause

There is a new HAVING clause in SOQL that is similar to HAVING in SQL.

You can use a HAVING clause with a GROUP BY clause to filter the results

returned by aggregate functions, such as SUM(). A HAVING clause is similar

to a WHE... | 1.0 | Add UI support for HAVING in SOQL - _Original author: ryan.bra...@gmail.com (February 06, 2010 04:46:02)_

New HAVING Clause

There is a new HAVING clause in SOQL that is similar to HAVING in SQL.

You can use a HAVING clause with a GROUP BY clause to filter the results

returned by aggregate functions, such as SUM().... | priority | add ui support for having in soql original author ryan bra gmail com february new having clause there is a new having clause in soql that is similar to having in sql you can use a having clause with a group by clause to filter the results returned by aggregate functions such as sum a havi... | 1 |

340,442 | 10,272,435,853 | IssuesEvent | 2019-08-23 16:26:03 | 0xfr34ky/webeng-viergewinnt | https://api.github.com/repos/0xfr34ky/webeng-viergewinnt | closed | Color selection for the pieces | medium priority | Generate a set of color combinations.

Before the game, ask the players which color combination they want. | 1.0 | Color selection for the pieces - Generate a set of color combinations.

Before the game, ask the players which color combination they want. | priority | color selection for the pieces generate a set of color combinations before the game ask the players which color combination they want | 1 |

443,075 | 12,759,318,767 | IssuesEvent | 2020-06-29 05:29:07 | buddyboss/buddyboss-platform | https://api.github.com/repos/buddyboss/buddyboss-platform | closed | Documents: PDF document upload not working on Forum Discussions | bug component: document priority: medium | **Describe the bug**

Uploading PDF not working on Forum Discussions. But this is working on Profile document uploads

**To Reproduce**

Steps to reproduce the behavior:

1. Enable Documents on BuddyBoss Settings

2. allow Document uploads on Profile and Forums on Settings > Media

3. Go to a Forums, create a New Dis... | 1.0 | Documents: PDF document upload not working on Forum Discussions - **Describe the bug**

Uploading PDF not working on Forum Discussions. But this is working on Profile document uploads

**To Reproduce**

Steps to reproduce the behavior:

1. Enable Documents on BuddyBoss Settings

2. allow Document uploads on Profile a... | priority | documents pdf document upload not working on forum discussions describe the bug uploading pdf not working on forum discussions but this is working on profile document uploads to reproduce steps to reproduce the behavior enable documents on buddyboss settings allow document uploads on profile a... | 1 |

766,430 | 26,883,548,510 | IssuesEvent | 2023-02-05 22:45:37 | dcs-retribution/dcs-retribution | https://api.github.com/repos/dcs-retribution/dcs-retribution | closed | Adjustment slider for AI purchase behavior | Enhancement Good First Issue Priority Medium | ### Is your feature request related to a problem? Please describe.

Currently the AI purchase behavior regarding the ratio of air forces to ground forces is defined by a default ratio (I think it's 50/50). The user should have more control over this ratio.

### Describe the solution you'd like

Create an adjustment sli... | 1.0 | Adjustment slider for AI purchase behavior - ### Is your feature request related to a problem? Please describe.

Currently the AI purchase behavior regarding the ratio of air forces to ground forces is defined by a default ratio (I think it's 50/50). The user should have more control over this ratio.

### Describe the ... | priority | adjustment slider for ai purchase behavior is your feature request related to a problem please describe currently the ai purchase behavior regarding the ratio of air forces to ground forces is defined by a default ratio i think it s the user should have more control over this ratio describe the so... | 1 |

536,857 | 15,715,912,844 | IssuesEvent | 2021-03-28 04:10:04 | AY2021S2-CS2103T-T12-4/tp | https://api.github.com/repos/AY2021S2-CS2103T-T12-4/tp | closed | UI: remove menu bar? | priority.Medium type.Enhancement | I'm working on the demo slides and thought that since we are going for a simplistic no bs UI, should we remove the menu bar? Right now all it does is to provide an exit button and a help button. For exit, users can simply click the top right corner to close, for help users can simply type in help. Wonder what the rest ... | 1.0 | UI: remove menu bar? - I'm working on the demo slides and thought that since we are going for a simplistic no bs UI, should we remove the menu bar? Right now all it does is to provide an exit button and a help button. For exit, users can simply click the top right corner to close, for help users can simply type in help... | priority | ui remove menu bar i m working on the demo slides and thought that since we are going for a simplistic no bs ui should we remove the menu bar right now all it does is to provide an exit button and a help button for exit users can simply click the top right corner to close for help users can simply type in help... | 1 |

465,815 | 13,392,668,934 | IssuesEvent | 2020-09-03 02:01:36 | alanqchen/Bear-Blog-Engine | https://api.github.com/repos/alanqchen/Bear-Blog-Engine | opened | Add support for uploading images in the body of the post | Medium Priority backend enhancement frontend | Currently only uploading the feature image to the server is allowed. The only way to add an image is by using an URL. To change this, the following needs to be added:

- [ ] Add backend API endpoint to delete an image given the image name in the request URL

- [ ] Add parser in the frontend that goes compares the raw... | 1.0 | Add support for uploading images in the body of the post - Currently only uploading the feature image to the server is allowed. The only way to add an image is by using an URL. To change this, the following needs to be added:

- [ ] Add backend API endpoint to delete an image given the image name in the request URL

... | priority | add support for uploading images in the body of the post currently only uploading the feature image to the server is allowed the only way to add an image is by using an url to change this the following needs to be added add backend api endpoint to delete an image given the image name in the request url ... | 1 |

487,644 | 14,049,809,255 | IssuesEvent | 2020-11-02 10:48:11 | opencrvs/opencrvs-core | https://api.github.com/repos/opencrvs/opencrvs-core | closed | Workqueues appears briefly before 'Set new pin entry' flow | Priority: medium 👹Bug | **Describe the bug**

- Workqueues appear before 'Set new pin entry' flow

**To Reproduce**

Steps to reproduce the behaviour:

1. Create new user

2. Login as the user

3. Workqueue appears

4. Fraction of a second later the Set pin flow appears

**Expected behaviour**

Go directly to Pin entry on Login

**Screenshots**

..

| 1.0 | Workqueues appears briefly before 'Set new pin entry' flow - **Describe the bug**

- Workqueues appear before 'Set new pin entry' flow

**To Reproduce**

Steps to reproduce the behaviour:

1. Create new user

2. Login as the user

3. Workqueue appears

4. Fraction of a second later the Set pin flow appears

**Expected behavi... | priority | workqueues appears briefly before set new pin entry flow describe the bug workqueues appear before set new pin entry flow to reproduce steps to reproduce the behaviour create new user login as the user workqueue appears fraction of a second later the set pin flow appears expected behavi... | 1 |

3,358 | 2,537,767,328 | IssuesEvent | 2015-01-26 22:52:38 | newca12/gapt | https://api.github.com/repos/newca12/gapt | closed | add de-Bruijn indices | 1 star imported Milestone-Release2.0 Priority-Medium Type-Task | _From [shaoli...@gmail.com](https://code.google.com/u/113190107447576027220/) on December 22, 2009 13:56:05_

Add de-Bruijn (db) indices to Var as Option[Int]. None means the Var is

free. The following should be taken into account:

1) Abs should preserves its nominal form but will also place the index in

all the b... | 1.0 | add de-Bruijn indices - _From [shaoli...@gmail.com](https://code.google.com/u/113190107447576027220/) on December 22, 2009 13:56:05_

Add de-Bruijn (db) indices to Var as Option[Int]. None means the Var is

free. The following should be taken into account:

1) Abs should preserves its nominal form but will also place... | priority | add de bruijn indices from on december add de bruijn db indices to var as option none means the var is free the following should be taken into account abs should preserves its nominal form but will also place the index in all the bound variables in substitution beta reduction et... | 1 |

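584,053 | 17,404,938,810 | IssuesEvent | 2021-08-03 03:38:43 | f-lab-edu/conference-reservation | https://api.github.com/repos/f-lab-edu/conference-reservation | closed | [Company] Add exception handling when checking id and password | Priority: Medium Type: Feature/Function | - Addition / improvement item

The logic for the member ID duplicate check and the check performed on account withdrawal

needs common exception handling.

- Reason for addition / improvement

This is so that, when a boolean is returned after the Service processes the logic, an error raised during the insert and a value returned by the duplicate check

can be told apart. | 1.0 | [Company] Add exception handling when checking id and password - - Addition / improvement item

The logic for the member ID duplicate check and the check performed on account withdrawal

needs common exception handling.

- Reason for addition / improvement

This is so that, when a boolean is returned after the Service processes the logic, an error raised during the insert and a value returned by the duplicate check

can be told apart. | priority | add exception handling when checking id and password addition improvement item the logic for the member id duplicate check and the check performed on account withdrawal needs common exception handling reason for addition improvement this is so that when a boolean is returned after the service processes the logic an error raised during the insert and a value returned by the duplicate check can be told apart | 1 |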

132,266 | 5,173,985,041 | IssuesEvent | 2017-01-18 17:23:20 | ngageoint/hootenanny | https://api.github.com/repos/ngageoint/hootenanny | closed | User issues with MGCP / OSM Conflate | Category: Translation Priority: Medium Status: Defined Type: Bug | I have downloaded a planet.osm.pbf file and extracted a bbox using Osmosis. My next process was to filter the road and rail layers of my bbox:

**Rail**: osmosis --read-xml odesa.osm --way-key-value keyValueList="railway.tram,railway=light_rail,railway=rail" --used-node --write-xml rail.osm

**Road**: osmosis --read-x... | 1.0 | User issues with MGCP / OSM Conflate - I have downloaded a planet.osm.pbf file and extracted a bbox using Osmosis. My next process was to filter the road and rail layers of my bbox:

**Rail**: osmosis --read-xml odesa.osm --way-key-value keyValueList="railway.tram,railway=light_rail,railway=rail" --used-node --write-xm... | priority | user issues with mgcp osm conflate i have downloaded a planet osm pbf file and extracted a bbox using osmosis my next process was to filter the road and rail layers of my bbox rail osmosis read xml odesa osm way key value keyvaluelist railway tram railway light rail railway rail used node write xm... | 1 |

251,078 | 7,999,564,385 | IssuesEvent | 2018-07-22 03:33:22 | Marri/glowfic | https://api.github.com/repos/Marri/glowfic | opened | In replies#search, the 'condensed' checkbox resets to true after each search | 3. medium priority 7. easy type: bug | It should persist its previous value if a search has been performed, while also defaulting (otherwise) to true. | 1.0 | In replies#search, the 'condensed' checkbox resets to true after each search - It should persist its previous value if a search has been performed, while also defaulting (otherwise) to true. | priority | in replies search the condensed checkbox resets to true after each search it should persist its previous value if a search has been performed while also defaulting otherwise to true | 1 |

259,375 | 8,198,070,949 | IssuesEvent | 2018-08-31 15:14:42 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | tests/kernel/mem_pool/mem_pool_concept/testcase.yaml#kernel.memory_pool fails on nrf52840_pca10056, nrf52_pca10040 and nrf51_pca10028 | area: ARM bug nRF priority: medium | Execution log:

```

Running test suite mpool_concept

===================================================================

starting test - test_mpool_alloc_wait_prio

Assertion failed at /home/kspoorth/work/latest_zephyr/tests/kernel/mem_pool/mem_pool_concept/src/test_mpool_alloc_wait.c:24: tmpool_alloc_wait_tim... | 1.0 | tests/kernel/mem_pool/mem_pool_concept/testcase.yaml#kernel.memory_pool fails on nrf52840_pca10056, nrf52_pca10040 and nrf51_pca10028 - Execution log:

```

Running test suite mpool_concept

===================================================================

starting test - test_mpool_alloc_wait_prio

Assertion... | priority | tests kernel mem pool mem pool concept testcase yaml kernel memory pool fails on and execution log running test suite mpool concept starting test test mpool alloc wait prio assertion failed at home kspoorth work lates... | 1 |

88,706 | 3,783,890,475 | IssuesEvent | 2016-03-19 12:46:04 | VladSerdobintsev/zfcore | https://api.github.com/repos/VladSerdobintsev/zfcore | closed | Module Comments | auto-migrated Priority-Medium Type-Enhancement | ```

Add a page to the administrator section

with comments awaiting

administrator approval

```

Original issue reported on code.google.com by `AntonShe...@gmail.com` on 2 Aug 2012 at 10:10 | 1.0 | Module Comments - ```

Add a page to the administrator section

with comments awaiting

administrator approval

```

Original issue reported on code.google.com by `AntonShe...@gmail.com` on 2 Aug 2012 at 10:10 | priority | module comments add a page to the administrator section with comments awaiting administrator approval original issue reported on code google com by antonshe gmail com on aug at | 1 |

271,837 | 8,490,023,459 | IssuesEvent | 2018-10-26 22:10:58 | department-of-veterans-affairs/caseflow | https://api.github.com/repos/department-of-veterans-affairs/caseflow | opened | Investigate case list | bug-medium-priority case-search/list foxtrot | Jebby reported a case where there are only 3 cases associated with the Veteran ID in Caseflow ( showing in the Case list/search results) but may more in VACOLS. Can we investigate why Caseflow isn't showing all of the results?

but may more in VACOLS. Can we investigate why Caseflow isn't showing all of the results?

- Maps do not match: test-output/algorithms/wayjoiner/WayJoinerConflateOutput.osm test-files/algorithms/wayjoiner/WayJoinerConflateExpected.osm` | 1.0 | WayJoinerTest fails when quick tests are run sequentially, but passes when run in parallel. - I noticed this a while ago...

The specific test is `N4hoot13WayJoinerTestE::runConflateTest`

Error:

`src/test/cpp/hoot/core/algorithms/WayJoinerTest.cpp(152) - Maps do not match: test-output/algorithms/wayjoiner/WayJoine... | priority | wayjoinertest fails when quick tests are run sequentially but passes when run in parallel i noticed this a while ago the specific test is runconflatetest error src test cpp hoot core algorithms wayjoinertest cpp maps do not match test output algorithms wayjoiner wayjoinerconflateoutput osm tes... | 1 |

341,061 | 10,282,318,213 | IssuesEvent | 2019-08-26 10:48:11 | salesagility/SuiteCRM | https://api.github.com/repos/salesagility/SuiteCRM | closed | Upgrade packages empty lato font files | Bug Medium Priority | Same as the issues #5915 and #7331, the lato font files in the 7.11.x to 7.11.8 upgrade packages are empty. This is also the case with (at least) the upgrade package from 7.10.x to 7.10.20 and 7.10.x to 7.11.8.

The one from 7.8.x to 7.11.8 does contain the correct font files.

According to #7331 it should be fixed, ... | 1.0 | Upgrade packages empty lato font files - Same as the issues #5915 and #7331, the lato font files in the 7.11.x to 7.11.8 upgrade packages are empty. This is also the case with (at least) the upgrade package from 7.10.x to 7.10.20 and 7.10.x to 7.11.8.

The one from 7.8.x to 7.11.8 does contain the correct font files.

... | priority | upgrade packages empty lato font files same as the issues and the lato font files in the x to upgrade packages are empty this is also the case with at least the upgrade package from x to and x to the one from x to does contain the correct font files according to... | 1 |

771,820 | 27,093,868,400 | IssuesEvent | 2023-02-15 00:02:40 | docker-mailserver/docker-mailserver | https://api.github.com/repos/docker-mailserver/docker-mailserver | closed | [BUG] extended parameters not parsed main.cf | kind/bug meta/needs triage priority/medium | ### Miscellaneous first checks

- [X] I checked that all ports are open and not blocked by my ISP / hosting provider.

- [X] I know that SSL errors are likely the result of a wrong setup on the user side and not caused by DMS itself. I'm confident my setup is correct.

### Affected Component(s)

cant add mynetworks by u... | 1.0 | [BUG] extended parameters not parsed main.cf - ### Miscellaneous first checks

- [X] I checked that all ports are open and not blocked by my ISP / hosting provider.

- [X] I know that SSL errors are likely the result of a wrong setup on the user side and not caused by DMS itself. I'm confident my setup is correct.

### ... | priority | extended parameters not parsed main cf miscellaneous first checks i checked that all ports are open and not blocked by my isp hosting provider i know that ssl errors are likely the result of a wrong setup on the user side and not caused by dms itself i m confident my setup is correct affected... | 1 |

497,227 | 14,366,299,175 | IssuesEvent | 2020-12-01 04:01:35 | rich-iannone/pointblank | https://api.github.com/repos/rich-iannone/pointblank | closed | Add the `col_vals_increasing()` and `col_vals_decreasing()` functions | Difficulty: [3] Advanced Effort: [3] High Priority: [2] Medium Type: ★ Enhancement | These functions would validate for whether values are increasing or decreasing. Any NA/NULL values can be excluded with `na_pass`.

Whether to accept stationary values as passing should be an option. Further to this, a tolerance distance (for movement in the opposite direction) should be available. | 1.0 | Add the `col_vals_increasing()` and `col_vals_decreasing()` functions - These functions would validate for whether values are increasing or decreasing. Any NA/NULL values can be excluded with `na_pass`.

Whether to accept stationary values as passing should be an option. Further to this, a tolerance distance (for mov... | priority | add the col vals increasing and col vals decreasing functions these functions would validate for whether values are increasing or decreasing any na null values can be excluded with na pass whether to accept stationary values as passing should be an option further to this a tolerance distance for mov... | 1 |

23,991 | 2,665,345,662 | IssuesEvent | 2015-03-20 19:54:18 | jeffbryner/MozDef | https://api.github.com/repos/jeffbryner/MozDef | closed | Documentation for local accounts | category:doc category:question priority:medium | First, thanks for the help and the work.

I am trying to set up Mozdef on Debian and I'm going crazy with the installation process.

I managed to install everything with Docker, although the installation manual is not clear, at least to those who have never used Docker.

I have a question about documentation.

For... | 1.0 | Documentation for local accounts - First, thanks for the help and the work.

I am trying to set up Mozdef on Debian and I'm going crazy with the installation process.

I managed to install everything with Docker, although the installation manual is not clear, at least to those who have never used Docker.

I have a q... | priority | documentation for local accounts first thanks for the help and the work i am trying to set up mozdef on debian and i m going crazy with the installation process i managed to install everything with docker although the installation manual is not clear at least to those who have never used docker i have a q... | 1 |

80,571 | 3,567,778,416 | IssuesEvent | 2016-01-26 00:40:52 | RaymondEllis/simpled | https://api.github.com/repos/RaymondEllis/simpled | closed | Need better name | auto-migrated Priority-Medium Type-Task wontfix | ```

What steps will reproduce the problem?

1. Go to http://google.com/

2. Search for "SimpleD"

What is the expected output? What do you see instead?

You see other stuff. You should see this project.

```

Original issue reported on code.google.com by `raymonde...@gmail.com` on 29 Oct 2012 at 5:02 | 1.0 | Need better name - ```

What steps will reproduce the problem?

1. Go to http://google.com/

2. Search for "SimpleD"

What is the expected output? What do you see instead?

You see other stuff. You should see this project.

```

Original issue reported on code.google.com by `raymonde...@gmail.com` on 29 Oct 2012 at 5:02 | priority | need better name what steps will reproduce the problem go to search for simpled what is the expected output what do you see instead you see other stuff you should see this project original issue reported on code google com by raymonde gmail com on oct at | 1 |

539,012 | 15,782,142,737 | IssuesEvent | 2021-04-01 12:24:16 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | [Coverity CID: 220428] Out-of-bounds access in subsys/bluetooth/audio/vocs.c | Coverity bug priority: medium |

Static code scan issues found in file:

https://github.com/zephyrproject-rtos/zephyr/tree/169144afa1826511ee6ec3f53d590b2c0d39d3d4/subsys/bluetooth/audio/vocs.c#L325

Category: Memory - corruptions

Function: `bt_vocs_init`

Component: Bluetooth

CID: [220428](https://scan9.coverity.com/reports.htm#v29726/p12996/mergedDe... | 1.0 | [Coverity CID: 220428] Out-of-bounds access in subsys/bluetooth/audio/vocs.c -

Static code scan issues found in file:

https://github.com/zephyrproject-rtos/zephyr/tree/169144afa1826511ee6ec3f53d590b2c0d39d3d4/subsys/bluetooth/audio/vocs.c#L325

Category: Memory - corruptions

Function: `bt_vocs_init`

Component: Blueto... | priority | out of bounds access in subsys bluetooth audio vocs c static code scan issues found in file category memory corruptions function bt vocs init component bluetooth cid details also update the characteristic declaration always found at with the bt gatt chrc wr... | 1 |

214,704 | 7,276,066,497 | IssuesEvent | 2018-02-21 15:23:41 | ilestis/miscellany | https://api.github.com/repos/ilestis/miscellany | closed | Add a "New Entity" button in the view of each entity | feature good first issue medium priority | # Story

As a user, I want to quickly create a new entity of the same type of entity I am currently looking at.

# Work

1. Propose a change to the view crud interface of entities to add a button for "new entity"

2. Implement change

# Tests

* When viewing an entity, a "New EntityName" button is visible somewhere... | 1.0 | Add a "New Entity" button in the view of each entity - # Story

As a user, I want to quickly create a new entity of the same type of entity I am currently looking at.

# Work

1. Propose a change to the view crud interface of entities to add a button for "new entity"

2. Implement change

# Tests

* When viewing an... | priority | add a new entity button in the view of each entity story as a user i want to quickly create a new entity of the same type of entity i am currently looking at work propose a change to the view crud interface of entities to add a button for new entity implement change tests when viewing an... | 1 |

544,147 | 15,890,130,203 | IssuesEvent | 2021-04-10 14:19:18 | beta-team/beta-recsys | https://api.github.com/repos/beta-team/beta-recsys | closed | Use Modin to replace pandas | priority:medium status:confirmed type:enhancement | Use the latest Modin package to replace our pandas package. Modin can work in parallel, and it is supposed to be faster. It also requires no additional knowledge if you are already familiar with pandas.

"Modin uses Ray or Dask to provide an effortless way to speed up your pandas notebooks, scripts, and libraries. ... | 1.0 | Use Modin to replace pandas - Use the latest Modin package to replace our pandas package. The Modin can work in parallel, and it is supposed to faster. It also requires no additional knowledge if you are already familiar with pandas.

"Modin uses Ray or Dask to provide an effortless way to speed up your pandas noteb... | priority | use modin to replace pandas use the latest modin package to replace our pandas package the modin can work in parallel and it is supposed to faster it also requires no additional knowledge if you are already familiar with pandas modin uses ray or dask to provide an effortless way to speed up your pandas noteb... | 1 |

250,061 | 7,967,123,268 | IssuesEvent | 2018-07-15 10:03:36 | pixijs/pixi.js | https://api.github.com/repos/pixijs/pixi.js | closed | Graphics disappears when cached and multiple filters are applied | Domain: API Plugin: Filters Plugin: Graphics Plugin: cacheAsBitmap Priority: Medium Status: Needs Investigation Type: Bug Version: v4.x | I've noticed following behavior of _PIXI.Graphics_ object (checked on v4.4.4).

It disappears completely from scene when there are 3 conditions fulfilled:

1) it has at least one filter applied

2) one of its parent containers also has at least one filter applied

3) cacheAsBitmap is set to _true_

Removing any of th... | 1.0 | Graphics disappears when cached and multiple filters are applied - I've noticed following behavior of _PIXI.Graphics_ object (checked on v4.4.4).

It disappears completely from scene when there are 3 conditions fulfilled:

1) it has at least one filter applied

2) one of its parent containers also has at least one filt... | priority | graphics disappears when cached and multiple filters are applied i ve noticed following behavior of pixi graphics object checked on it disappears completely from scene when there are conditions fulfilled it has at least one filter applied one of its parent containers also has at least one filte... | 1 |

31,573 | 2,734,096,529 | IssuesEvent | 2015-04-17 17:45:47 | Esri/briefing-book | https://api.github.com/repos/Esri/briefing-book | closed | 2-D array height for each page inconsistent | bug Medium Priority | In the JSON there is a 2D array height for each page (BookConfigData.BookPages[2].height[n][m]). We want to interpret the numbers in the height array to be the heights of the items on that particular page but then noticed that in some cases, the number of elements in the "heights" array is not equal to the number of it... | 1.0 | 2-D array height for each page inconsistent - In the JSON there is a 2D array height for each page (BookConfigData.BookPages[2].height[n][m]). We want to interpret the numbers in the height array to be the heights of the items on that particular page but then noticed that in some cases, the number of elements in the "h... | priority | d array height for each page inconsistent in the json there is a array height for each page bookconfigdata bookpages height we want to interpret the numbers in the height array to be the heights of the items on that particular page but then noticed that in some cases the number of elements in the heights ... | 1 |

467,356 | 13,446,454,158 | IssuesEvent | 2020-09-08 12:59:18 | RobotLocomotion/drake | https://api.github.com/repos/RobotLocomotion/drake | opened | multibody: `ModelInstanceIndex` as an optional argument to the Jacobian methods. | priority: medium team: dynamics type: feature request | Working with Jacobians in a MBP that has multiple instances is a pain -- it's up the user to pull out the elements of the jacobian that they actually need. ([here is an example](https://colab.research.google.com/github/RussTedrake/manipulation/blob/master/pick.ipynb#scrollTo=ue9ofS7GHpXr))

It seems quite reasonable... | 1.0 | multibody: `ModelInstanceIndex` as an optional argument to the Jacobian methods. - Working with Jacobians in a MBP that has multiple instances is a pain -- it's up the user to pull out the elements of the jacobian that they actually need. ([here is an example](https://colab.research.google.com/github/RussTedrake/manip... | priority | multibody modelinstanceindex as an optional argument to the jacobian methods working with jacobians in a mbp that has multiple instances is a pain it s up the user to pull out the elements of the jacobian that they actually need it seems quite reasonable to support an optional argument for modelinst... | 1 |

483,674 | 13,928,107,130 | IssuesEvent | 2020-10-21 20:54:51 | Alluxio/alluxio | https://api.github.com/repos/Alluxio/alluxio | closed | Optimize the du -s command | machine-learning priority-medium type-feature | **Is your feature request related to a problem? Please describe.**

A clear and concise description of what the problem is. Ex. I'm always frustrated when [...]

It's hard for me to do `alluxio fs du -sh /` if there are large amounts of files under `/`.

For instance, I've got about 3.8 million files in my Aliyun OS... | 1.0 | Optimize the du -s command - **Is your feature request related to a problem? Please describe.**

A clear and concise description of what the problem is. Ex. I'm always frustrated when [...]

It's hard for me to do `alluxio fs du -sh /` if there are large amounts of files under `/`.