Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 957 | labels stringlengths 4 795 | body stringlengths 1 259k | index stringclasses 12 values | text_combine stringlengths 96 259k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

677,529 | 23,164,381,546 | IssuesEvent | 2022-07-29 22:02:41 | CursedMC/YummyQuiltHacks | https://api.github.com/repos/CursedMC/YummyQuiltHacks | opened | ASM API Reform | enhancement help wanted medium priority rfc | ## Abstract

Currently, the ASM API is janky. We need to rework the API so we can use `MixoutPlugin`s instead of registering `TransformEvent`s.

## Alternatives

* Use the current event-based API.

## Advantages

## Drawbacks | 1.0 | ASM API Reform - ## Abstract

Currently, the ASM API is janky. We need to rework the API so we can use `MixoutPlugin`s instead of registering `TransformEvent`s.

## Alternatives

* Use the current event-based API.

## Advantages

## Drawbacks | priority | asm api reform abstract currently the asm api is janky we need to rework the api so we can use mixoutplugin s instead of registering transformevent s alternatives use the current event based api advantages drawbacks | 1 |

686,281 | 23,484,985,518 | IssuesEvent | 2022-08-17 13:46:27 | onesoft-sudo/sudobot | https://api.github.com/repos/onesoft-sudo/sudobot | closed | Log uncaught errors via webhooks | feature priority:medium non-moderation chore error-handling | Log uncaught errors via webhooks, in a channel on the home discord server. | 1.0 | Log uncaught errors via webhooks - Log uncaught errors via webhooks, in a channel on the home discord server. | priority | log uncaught errors via webhooks log uncaught errors via webhooks in a channel on the home discord server | 1 |

283,566 | 8,719,951,517 | IssuesEvent | 2018-12-08 06:37:47 | aowen87/BAR | https://api.github.com/repos/aowen87/BAR | closed | new -v logic interferes with cli arg parsing | bug likelihood medium priority reviewed severity medium | visit nowin v 2.8.1 cli s <script>

will turn into:

visit nowin cli s <script> forceversion 2.8.1

The fact that "-forceversion 2.8.1" it is put on the end of the command line undermines argument parsing (especially for standard python module "setup.py") scripts

-----------------------REDMINE MIGRATION-----... | 1.0 | new -v logic interferes with cli arg parsing - visit nowin v 2.8.1 cli s <script>

will turn into:

visit nowin cli s <script> forceversion 2.8.1

The fact that "-forceversion 2.8.1" it is put on the end of the command line undermines argument parsing (especially for standard python module "setup.py") scripts

... | priority | new v logic interferes with cli arg parsing visit nowin v cli s will turn into visit nowin cli s forceversion the fact that forceversion it is put on the end of the command line undermines argument parsing especially for standard python module setup py scripts ... | 1 |

255,683 | 8,126,138,347 | IssuesEvent | 2018-08-17 00:15:25 | aowen87/BAR | https://api.github.com/repos/aowen87/BAR | closed | Move Radial Resample Operator into Geometry category?? | Expected Use: 3 - Occasional Feature Impact: 3 - Medium Priority: Normal | Should the Radial Resample operator be moved to the Geometry category???

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. As such, not all

information was able to be captured in the transition. Below is

a complete record of the original redmine ticket.

Ticket... | 1.0 | Move Radial Resample Operator into Geometry category?? - Should the Radial Resample operator be moved to the Geometry category???

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. As such, not all

information was able to be captured in the transition. Below is

... | priority | move radial resample operator into geometry category should the radial resample operator be moved to the geometry category redmine migration this ticket was migrated from redmine as such not all information was able to be captured in the transition below is ... | 1 |

468,019 | 13,460,074,401 | IssuesEvent | 2020-09-09 13:09:46 | silentium-labs/merlin-gql | https://api.github.com/repos/silentium-labs/merlin-gql | closed | GqlContext should allow for multiple roles | Priority: Medium Status: Pending Type: Enhancement | Currently the role property on the user field of the GqlContext only allows for one role, we should change that to multiple roles to give more flexibility | 1.0 | GqlContext should allow for multiple roles - Currently the role property on the user field of the GqlContext only allows for one role, we should change that to multiple roles to give more flexibility | priority | gqlcontext should allow for multiple roles currently the role property on the user field of the gqlcontext only allows for one role we should change that to multiple roles to give more flexibility | 1 |

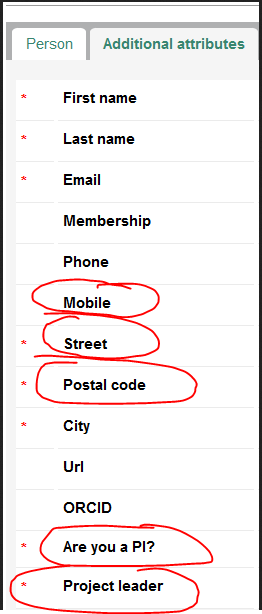

651,756 | 21,509,480,036 | IssuesEvent | 2022-04-28 01:45:48 | Flutter-Vision/FlutterVision | https://api.github.com/repos/Flutter-Vision/FlutterVision | closed | RadioButton clickable area is really small | Bug Front-end Medium Priority | FileName??

Test if the "Group Name" properties appear in the radio button components and create multiple radio buttons with the same group name. | 1.0 | RadioButton clickable area is really small - FileName??

Test if the "Group Name" properties appear in the radio button components and create multiple radio buttons with the same group name. | priority | radiobutton clickable area is really small filename test if the group name properties appear in the radio button components and create multiple radio buttons with the same group name | 1 |

708,896 | 24,359,954,481 | IssuesEvent | 2022-10-03 10:47:47 | CS3219-AY2223S1/cs3219-project-ay2223s1-g33 | https://api.github.com/repos/CS3219-AY2223S1/cs3219-project-ay2223s1-g33 | closed | [Collaboration UI] Display Question Test Case | Module/Front-End Status/Medium-Priority Type/Feature | ## Description

The UI should display the question, including the input, output and an explanation.

## Parent Task

- #104 | 1.0 | [Collaboration UI] Display Question Test Case - ## Description

The UI should display the question, including the input, output and an explanation.

## Parent Task

- #104 | priority | display question test case description the ui should display the question including the input output and an explanation parent task | 1 |

196,031 | 6,923,534,570 | IssuesEvent | 2017-11-30 09:24:43 | remkos/rads | https://api.github.com/repos/remkos/rads | closed | Add DAC-ERA | data Priority-Medium | http://www.aviso.altimetry.fr/en/data/products/auxiliary-products/atmospheric-corrections/description-atmospheric-corrections.html

Within the Climate Change Initiative (CCI) project (ESA), a specific DAC-ERA has been computed for climate applications using the ECMWF ERA-INTERIM reanalysis on the 1991-2014 period. This... | 1.0 | Add DAC-ERA - http://www.aviso.altimetry.fr/en/data/products/auxiliary-products/atmospheric-corrections/description-atmospheric-corrections.html

Within the Climate Change Initiative (CCI) project (ESA), a specific DAC-ERA has been computed for climate applications using the ECMWF ERA-INTERIM reanalysis on the 1991-201... | priority | add dac era within the climate change initiative cci project esa a specific dac era has been computed for climate applications using the ecmwf era interim reanalysis on the period this dac era is significantly improved for the first years of altimetry carrère et al major improvement of altimetry sea l... | 1 |

309,291 | 9,466,426,768 | IssuesEvent | 2019-04-18 04:28:33 | minio/minio | https://api.github.com/repos/minio/minio | closed | GCS: Return correct error message when wrong location is used in makeBucket | priority: medium | <!--- Provide a general summary of the issue in the Title above -->

if `us-west-1` is used for location, then the error sent to the client is `InvalidBucketName`, though the failure is because of invalid location. Correct error should be sent to the client.

## Expected Behavior

makeBucket should fail with Invalid ... | 1.0 | GCS: Return correct error message when wrong location is used in makeBucket - <!--- Provide a general summary of the issue in the Title above -->

if `us-west-1` is used for location, then the error sent to the client is `InvalidBucketName`, though the failure is because of invalid location. Correct error should be sen... | priority | gcs return correct error message when wrong location is used in makebucket if us west is used for location then the error sent to the client is invalidbucketname though the failure is because of invalid location correct error should be sent to the client expected behavior makebucket should fail w... | 1 |

50,819 | 3,007,001,604 | IssuesEvent | 2015-07-27 14:03:02 | Ombridride/minetest-minetestforfun-server | https://api.github.com/repos/Ombridride/minetest-minetestforfun-server | closed | Boat usebug | Modding@BugFix Priority@Medium | Many players use the boat to attack monster safely, we need to fix this ! (or reduce its easy utilisation...)

- Forbidden the left click when in a boat ?

- Forbidden put a boat in an another location than the water_source and river_source ?

- any other ideas ? | 1.0 | Boat usebug - Many players use the boat to attack monster safely, we need to fix this ! (or reduce its easy utilisation...)

- Forbidden the left click when in a boat ?

- Forbidden put a boat in an another location than the water_source and river_source ?

- any other ideas ? | priority | boat usebug many players use the boat to attack monster safely we need to fix this or reduce its easy utilisation forbidden the left click when in a boat forbidden put a boat in an another location than the water source and river source any other ideas | 1 |

716,793 | 24,648,504,550 | IssuesEvent | 2022-10-17 16:35:46 | PrefectHQ/prefect | https://api.github.com/repos/PrefectHQ/prefect | closed | The Value of Block is Not Updated After Clicking Save | bug ui priority:medium | ### First check

- [X] I added a descriptive title to this issue.

- [X] I used the GitHub search to find a similar issue and didn't find it.

- [X] I searched the Prefect documentation for this issue.

- [X] I checked that this issue is related to Prefect and not one of its dependencies.

### Bug summary

In the Docker C... | 1.0 | The Value of Block is Not Updated After Clicking Save - ### First check

- [X] I added a descriptive title to this issue.

- [X] I used the GitHub search to find a similar issue and didn't find it.

- [X] I searched the Prefect documentation for this issue.

- [X] I checked that this issue is related to Prefect and not on... | priority | the value of block is not updated after clicking save first check i added a descriptive title to this issue i used the github search to find a similar issue and didn t find it i searched the prefect documentation for this issue i checked that this issue is related to prefect and not one of its... | 1 |

221,563 | 7,389,797,781 | IssuesEvent | 2018-03-16 10:00:35 | salesagility/SuiteCRM | https://api.github.com/repos/salesagility/SuiteCRM | closed | Address fields (auto generated) not displaying help | Fix Proposed Medium Priority Resolved: Next Release bug | #### Issue

You set a help text into help for address fields and this help is not displayed when you go into edit view.

#### Expected Behavior

The help texts you wrote should be displayed

#### Actual Behavior

No help is displayed

#### Possible Fix

<!--- Not obligatory, but suggest a fix or reason for the bu... | 1.0 | Address fields (auto generated) not displaying help - #### Issue

You set a help text into help for address fields and this help is not displayed when you go into edit view.

#### Expected Behavior

The help texts you wrote should be displayed

#### Actual Behavior

No help is displayed

#### Possible Fix

<!--- ... | priority | address fields auto generated not displaying help issue you set a help text into help for address fields and this help is not displayed when you go into edit view expected behavior the help texts you wrote should be displayed actual behavior no help is displayed possible fix ... | 1 |

253,692 | 8,059,243,432 | IssuesEvent | 2018-08-02 21:13:14 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | opened | [deployer] Switch to a threadpool model instead of thread per target | enhancement priority: medium | Today we start a thread per deployment target. Given that the thread can get stuck as it connects over SSH and never recovers. A target may get stuck. Additionally, this means a pretty heavy use of threads for a system with a large number of threads and a large number of Engines.

We should switch to:

* Thread pool ... | 1.0 | [deployer] Switch to a threadpool model instead of thread per target - Today we start a thread per deployment target. Given that the thread can get stuck as it connects over SSH and never recovers. A target may get stuck. Additionally, this means a pretty heavy use of threads for a system with a large number of threads... | priority | switch to a threadpool model instead of thread per target today we start a thread per deployment target given that the thread can get stuck as it connects over ssh and never recovers a target may get stuck additionally this means a pretty heavy use of threads for a system with a large number of threads and a la... | 1 |

359,413 | 10,675,887,656 | IssuesEvent | 2019-10-21 12:42:21 | carbon-design-system/ibm-dotcom-library | https://api.github.com/repos/carbon-design-system/ibm-dotcom-library | opened | Create the Vanilla version of the Dotcom Shell | dev package: vanilla priority: medium | #### User Story

<!-- {{Provide a detailed description of the user's need here, but avoid any type of solutions}} -->

> As a `[user role below]`:

Developer

> I need to:

utilize a vanilla javascript version of the DotcomShell from the IBM.com library

> so that I can:

integrate the ibm.com page shell with masthead and f... | 1.0 | Create the Vanilla version of the Dotcom Shell - #### User Story

<!-- {{Provide a detailed description of the user's need here, but avoid any type of solutions}} -->

> As a `[user role below]`:

Developer

> I need to:

utilize a vanilla javascript version of the DotcomShell from the IBM.com library

> so that I can:

int... | priority | create the vanilla version of the dotcom shell user story as a developer i need to utilize a vanilla javascript version of the dotcomshell from the ibm com library so that i can integrate the ibm com page shell with masthead and footer in my application additional information should util... | 1 |

285,586 | 8,766,930,173 | IssuesEvent | 2018-12-17 18:12:22 | GrottoCenter/Grottocenter3 | https://api.github.com/repos/GrottoCenter/Grottocenter3 | closed | Display issues | Priority: Medium Type: Bug | - public entries count on homepage

- position or donate button

- hide option menu button on homepage header (on the right of the header) | 1.0 | Display issues - - public entries count on homepage

- position or donate button

- hide option menu button on homepage header (on the right of the header) | priority | display issues public entries count on homepage position or donate button hide option menu button on homepage header on the right of the header | 1 |

359,003 | 10,652,894,933 | IssuesEvent | 2019-10-17 13:29:55 | inverse-inc/packetfence | https://api.github.com/repos/inverse-inc/packetfence | opened | web admin: bad handle of "unit" fields | Priority: Medium Type: Bug | When you want to modify unit of a field on web admin, it's easy to set a wrong value.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to System configuration -> Main configuration -> Advanced

2. On "API token max expiration" field, click in the dropdown unit:

=> It displays all units (seconds, minutes, h... | 1.0 | web admin: bad handle of "unit" fields - When you want to modify unit of a field on web admin, it's easy to set a wrong value.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to System configuration -> Main configuration -> Advanced

2. On "API token max expiration" field, click in the dropdown unit:

=> I... | priority | web admin bad handle of unit fields when you want to modify unit of a field on web admin it s easy to set a wrong value to reproduce steps to reproduce the behavior go to system configuration main configuration advanced on api token max expiration field click in the dropdown unit i... | 1 |

686,825 | 23,505,746,240 | IssuesEvent | 2022-08-18 12:29:23 | apache/incubator-devlake | https://api.github.com/repos/apache/incubator-devlake | closed | [Doc][Metrics] Update the structure of 'Engineering Metrics' doc for better display | type/docs priority/medium | ## Documentation Scope

https://devlake.apache.org/docs/EngineeringMetrics

## Describe the Change

- [x] Change the table to small sections

- [x] Each section contains

- Metric definition/value/use case

- Queries

- Panel settings

- Screenshots

## Screenshots

reduce the download

size while making it possible to update it via the marketplace.

We should have 'standard rules' governing the 5s at which specific translated help

files can be released at a... | 1.0 | Move help files into add-ons - ```

Move all of the supported help files into add-ons

The English one should be included by default, which will (slightly) reduce the download

size while making it possible to update it via the marketplace.

We should have 'standard rules' governing the 5s at which specific translated ... | priority | move help files into add ons move all of the supported help files into add ons the english one should be included by default which will slightly reduce the download size while making it possible to update it via the marketplace we should have standard rules governing the at which specific translated h... | 1 |

200,102 | 6,998,107,853 | IssuesEvent | 2017-12-16 23:13:08 | Marri/glowfic | https://api.github.com/repos/Marri/glowfic | opened | Allow drag-drop into and out of board sections in boards#edit | 4. low priority 8. medium type: enhancement | Allow users to drag-drop posts between board sections (including "unsectioned") on the board editor UI. | 1.0 | Allow drag-drop into and out of board sections in boards#edit - Allow users to drag-drop posts between board sections (including "unsectioned") on the board editor UI. | priority | allow drag drop into and out of board sections in boards edit allow users to drag drop posts between board sections including unsectioned on the board editor ui | 1 |

784,077 | 27,557,057,106 | IssuesEvent | 2023-03-07 18:46:47 | eclipse/dirigible | https://api.github.com/repos/eclipse/dirigible | opened | [UI] Disable two finger horizontal swipe gesture | enhancement web-ide usability priority-high efforts-medium | **Describe the bug**

In modern browsers, when using touchpads, the two finger horizontal swipe gesture equals pressing the back button. This is brilliant for normal web pages but in web apps such as Dirigible, it's very intrusive.

**Expected behavior**

The navigational gestures should be disabled.

**Desktop:**

... | 1.0 | [UI] Disable two finger horizontal swipe gesture - **Describe the bug**

In modern browsers, when using touchpads, the two finger horizontal swipe gesture equals pressing the back button. This is brilliant for normal web pages but in web apps such as Dirigible, it's very intrusive.

**Expected behavior**

The navigat... | priority | disable two finger horizontal swipe gesture describe the bug in modern browsers when using touchpads the two finger horizontal swipe gesture equals pressing the back button this is brilliant for normal web pages but in web apps such as dirigible it s very intrusive expected behavior the navigation... | 1 |

538,822 | 15,778,976,479 | IssuesEvent | 2021-04-01 08:16:54 | knurling-rs/probe-run | https://api.github.com/repos/knurling-rs/probe-run | closed | backtrace can infinte-loop. | difficulty: medium priority: high status: needs info type: bug | I'm seeing this behavior with `-C force-frame-pointers=no`.

I think it's to be expected that backtracing doesn't work correctly with it, but I think at least this should be detected and fail with `error: the stack appears to be corrupted beyond this point` instead of looping forever.

If there's interest I can tr... | 1.0 | backtrace can infinte-loop. - I'm seeing this behavior with `-C force-frame-pointers=no`.

I think it's to be expected that backtracing doesn't work correctly with it, but I think at least this should be detected and fail with `error: the stack appears to be corrupted beyond this point` instead of looping forever.

... | priority | backtrace can infinte loop i m seeing this behavior with c force frame pointers no i think it s to be expected that backtracing doesn t work correctly with it but i think at least this should be detected and fail with error the stack appears to be corrupted beyond this point instead of looping forever ... | 1 |

252,759 | 8,041,443,460 | IssuesEvent | 2018-07-31 02:59:26 | dmwm/WMCore | https://api.github.com/repos/dmwm/WMCore | closed | Mapping OutputDataset to TaskName | Medium Priority | in case of step/task chain, what is the best way to get

{

"Task1": ["output1"],

"Task2":[].

...

}

type of dictionnary ? | 1.0 | Mapping OutputDataset to TaskName - in case of step/task chain, what is the best way to get

{

"Task1": ["output1"],

"Task2":[].

...

}

type of dictionnary ? | priority | mapping outputdataset to taskname in case of step task chain what is the best way to get type of dictionnary | 1 |

401,484 | 11,790,772,483 | IssuesEvent | 2020-03-17 19:38:25 | RobotLocomotion/drake | https://api.github.com/repos/RobotLocomotion/drake | closed | MultibodyPlant::DoCalcTimeDerivatives() throws (i.e., running in continuous mode) | priority: medium team: dynamics type: bug | An error message is output that the articulated body inertia is not valid. No existing inertial or kinematic parameter checks have triggered any problems before the change to the O(n) algorithm.

The issue appears to be that the tolerances are set to tight, as this comment indicates:

https://github.com/RobotLocom... | 1.0 | MultibodyPlant::DoCalcTimeDerivatives() throws (i.e., running in continuous mode) - An error message is output that the articulated body inertia is not valid. No existing inertial or kinematic parameter checks have triggered any problems before the change to the O(n) algorithm.

The issue appears to be that the tole... | priority | multibodyplant docalctimederivatives throws i e running in continuous mode an error message is output that the articulated body inertia is not valid no existing inertial or kinematic parameter checks have triggered any problems before the change to the o n algorithm the issue appears to be that the tole... | 1 |

61,272 | 3,143,488,950 | IssuesEvent | 2015-09-14 07:24:04 | SiCKRAGETV/sickrage-issues | https://api.github.com/repos/SiCKRAGETV/sickrage-issues | closed | [APP SUBMITTED]: 'NoneType' object has no attribute 'mount' | 1: Bug / issue 2: Medium Priority | ### INFO

Python Version: **2.7.9 (default, Apr 2 2015, 15:34:55) [GCC 4.9.2]**

Operating System: **Linux-3.19.0-26-generic-i686-with-Ubuntu-15.04-vivid**

Locale: UTF-8

Branch: **develop**

Commit: SiCKRAGETV/SickRage@f15adac877b6f4c7f434f734ecf6824e87675ddc

Link to Log: https://gist.github.com/e3f169680dace8d48a89

### ... | 1.0 | [APP SUBMITTED]: 'NoneType' object has no attribute 'mount' - ### INFO

Python Version: **2.7.9 (default, Apr 2 2015, 15:34:55) [GCC 4.9.2]**

Operating System: **Linux-3.19.0-26-generic-i686-with-Ubuntu-15.04-vivid**

Locale: UTF-8

Branch: **develop**

Commit: SiCKRAGETV/SickRage@f15adac877b6f4c7f434f734ecf6824e87675ddc

... | priority | nonetype object has no attribute mount info python version default apr operating system linux generic with ubuntu vivid locale utf branch develop commit sickragetv sickrage link to log error searchqueue manual unable to connect ... | 1 |

26,106 | 2,684,174,740 | IssuesEvent | 2015-03-28 18:37:04 | ConEmu/old-issues | https://api.github.com/repos/ConEmu/old-issues | closed | abd.exe (Android Debug Bridge) shell history bug | 2–5 stars bug imported Priority-Medium | _From [LazyRoy@gmail.com](https://code.google.com/u/LazyRoy@gmail.com/) on September 10, 2012 06:31:08_

Required information! OS version: WinXP SP3 x86 ConEmu version: 120904

Far version (if you are using Far Manager): 1.75 build 2619 x86

Если "abd shell" выполняется из cmd файла, то в нем перестает работать истор... | 1.0 | abd.exe (Android Debug Bridge) shell history bug - _From [LazyRoy@gmail.com](https://code.google.com/u/LazyRoy@gmail.com/) on September 10, 2012 06:31:08_

Required information! OS version: WinXP SP3 x86 ConEmu version: 120904

Far version (if you are using Far Manager): 1.75 build 2619 x86

Если "abd shell" выполняе... | priority | abd exe android debug bridge shell history bug from on september required information os version winxp conemu version far version if you are using far manager build если abd shell выполняется из cmd файла то в нем перестает работать история команд по стрелкам если за... | 1 |

479,067 | 13,790,882,120 | IssuesEvent | 2020-10-09 11:11:12 | Kreateer/automatic-file-sorter | https://api.github.com/repos/Kreateer/automatic-file-sorter | opened | Allow user to choose whether or not to continously move/copy files from src to dst | GUI difficulty: medium enhancement good first issue hacktoberfest optional-feature priority: low | # Goal

- Allow user to choose whether or not to continously move/copy files from chosen source to destination as they are added.

# Details

- When the user chooses this option, the program should run on a loop and move/copy and/or sort any files that are subsequently added into the source folder.

- This issue ... | 1.0 | Allow user to choose whether or not to continously move/copy files from src to dst - # Goal

- Allow user to choose whether or not to continously move/copy files from chosen source to destination as they are added.

# Details

- When the user chooses this option, the program should run on a loop and move/copy and... | priority | allow user to choose whether or not to continously move copy files from src to dst goal allow user to choose whether or not to continously move copy files from chosen source to destination as they are added details when the user chooses this option the program should run on a loop and move copy and... | 1 |

391,616 | 11,576,283,724 | IssuesEvent | 2020-02-21 11:34:45 | bounswe/bounswe2020group4 | https://api.github.com/repos/bounswe/bounswe2020group4 | opened | Licensing | Effort: Medium Priority: Low Status: Pending Type: Research | The license of the project needs to be decided.

Some of the popular licenses by comparison:

https://choosealicense.com/licenses/

How to add license to project:

https://stackoverflow.com/a/31666878/10091826 | 1.0 | Licensing - The license of the project needs to be decided.

Some of the popular licenses by comparison:

https://choosealicense.com/licenses/

How to add license to project:

https://stackoverflow.com/a/31666878/10091826 | priority | licensing the license of the project needs to be decided some of the popular licenses by comparison how to add license to project | 1 |

444,679 | 12,819,177,609 | IssuesEvent | 2020-07-06 01:01:16 | OpenMined/PyGridNetwork | https://api.github.com/repos/OpenMined/PyGridNetwork | closed | Add parameters parser APP | Good first issue :mortar_board: Priority: 3 - Medium :unamused: Severity: 3 - Medium :unamused: Type: Improvement :chart_with_upwards_trend: | ## Description

At this moment when an user set up the server, some parameters are hardcoded https://github.com/OpenMined/PyGridNetwork/blob/0a9e6197c3f4436887b3312b33bc9709f46f9873/gridnetwork/__init__.py#L78

A parameter parser `argparser` similar to what we had in PyGrid repository would help

## Are you interes... | 1.0 | Add parameters parser APP - ## Description

At this moment when an user set up the server, some parameters are hardcoded https://github.com/OpenMined/PyGridNetwork/blob/0a9e6197c3f4436887b3312b33bc9709f46f9873/gridnetwork/__init__.py#L78

A parameter parser `argparser` similar to what we had in PyGrid repository woul... | priority | add parameters parser app description at this moment when an user set up the server some parameters are hardcoded a parameter parser argparser similar to what we had in pygrid repository would help are you interested in working on this improvement yourself yes i am | 1 |

526,188 | 15,283,145,899 | IssuesEvent | 2021-02-23 10:28:40 | pupil-labs/pupil | https://api.github.com/repos/pupil-labs/pupil | closed | Test msgpack 0.6.0 compatibility | dependency: msgpack priority: medium | We use [check_code](https://github.com/pupil-labs/pupil/blob/master/pupil_src/shared_modules/file_methods.py#L23)

```

assert (

msgpack.version[1] == 5

), "msgpack out of date, please upgrade to version (0, 5, 6 ) or later."

```

which doesn't work when msgpack version is 0.6.0

we can use:

```

from dis... | 1.0 | Test msgpack 0.6.0 compatibility - We use [check_code](https://github.com/pupil-labs/pupil/blob/master/pupil_src/shared_modules/file_methods.py#L23)

```

assert (

msgpack.version[1] == 5

), "msgpack out of date, please upgrade to version (0, 5, 6 ) or later."

```

which doesn't work when msgpack version is 0.... | priority | test msgpack compatibility we use assert msgpack version msgpack out of date please upgrade to version or later which doesn t work when msgpack version is we can use from distutils version import looseversion strictversion assert strictversio... | 1 |

96,883 | 3,974,634,418 | IssuesEvent | 2016-05-04 23:06:44 | haganbt/pepp | https://api.github.com/repos/haganbt/pepp | closed | Tableau Exports - add ability to overload tableau copy for easy regeneration of custom workbooks | enhancement PRIORITY: Medium | It is typical to want to refresh a custom Tableau workbook repeatedly. To support this, we could overload the workbook export function by simply checking if a workbook with the same name as the config file exists. This was, any user can move a tableau workbook in to the source dir, and when the recipe is run, the custo... | 1.0 | Tableau Exports - add ability to overload tableau copy for easy regeneration of custom workbooks - It is typical to want to refresh a custom Tableau workbook repeatedly. To support this, we could overload the workbook export function by simply checking if a workbook with the same name as the config file exists. This wa... | priority | tableau exports add ability to overload tableau copy for easy regeneration of custom workbooks it is typical to want to refresh a custom tableau workbook repeatedly to support this we could overload the workbook export function by simply checking if a workbook with the same name as the config file exists this wa... | 1 |

484,418 | 13,939,585,218 | IssuesEvent | 2020-10-22 16:41:22 | interferences-at/mpop | https://api.github.com/repos/interferences-at/mpop | closed | Update all the English text for each multiple-question pages | QML difficulty: medium kiosk_central mpop_kiosk priority: high | The text are detailed in #84

There is QML model for the questions.

Ask @aalex in case of doubt about which question has which text.

`ModelQuestions.qml`

Also update min/max text for both languages. | 1.0 | Update all the English text for each multiple-question pages - The text are detailed in #84

There is QML model for the questions.

Ask @aalex in case of doubt about which question has which text.

`ModelQuestions.qml`

Also update min/max text for both languages. | priority | update all the english text for each multiple question pages the text are detailed in there is qml model for the questions ask aalex in case of doubt about which question has which text modelquestions qml also update min max text for both languages | 1 |

311,538 | 9,534,950,457 | IssuesEvent | 2019-04-30 04:25:53 | cuappdev/ithaca-transit-ios | https://api.github.com/repos/cuappdev/ithaca-transit-ios | opened | RouteOptions: Circle gets flattened in route diagram | Priority: Medium Type: Bug | Suspect it's a constraint issue; no idea though

<img src="https://user-images.githubusercontent.com/36868927/56940753-4b4afb80-6ade-11e9-9f44-e3019a44affb.png" width="300"> | 1.0 | RouteOptions: Circle gets flattened in route diagram - Suspect it's a constraint issue; no idea though

<img src="https://user-images.githubusercontent.com/36868927/56940753-4b4afb80-6ade-11e9-9f44-e3019a44affb.png" width="300"> | priority | routeoptions circle gets flattened in route diagram suspect it s a constraint issue no idea though | 1 |

258,664 | 8,178,772,260 | IssuesEvent | 2018-08-28 14:38:46 | AlexsLemonade/refinebio-frontend | https://api.github.com/repos/AlexsLemonade/refinebio-frontend | closed | 'Back to Results' Button Loses Search Position | interaction medium priority review | To reproduce:

View by 50 per page.

Scroll down to 50, click an item.

Press Back to Results Page

You are now at item 1 of 50.

This is going to be infuriating for building large data-sets. | 1.0 | 'Back to Results' Button Loses Search Position - To reproduce:

View by 50 per page.

Scroll down to 50, click an item.

Press Back to Results Page

You are now at item 1 of 50.

This is going to be infuriating for building large data-sets. | priority | back to results button loses search position to reproduce view by per page scroll down to click an item press back to results page you are now at item of this is going to be infuriating for building large data sets | 1 |

632,992 | 20,241,501,544 | IssuesEvent | 2022-02-14 09:43:23 | PoProstuMieciek/wikipedia-scraper | https://api.github.com/repos/PoProstuMieciek/wikipedia-scraper | closed | feat/prepare-images-table | priority: medium type: feat scope: database | **AC**

- [ ] primary key - subpage id

- [x] image etag string - reference to object store | 1.0 | feat/prepare-images-table - **AC**

- [ ] primary key - subpage id

- [x] image etag string - reference to object store | priority | feat prepare images table ac primary key subpage id image etag string reference to object store | 1 |

351,953 | 10,525,704,140 | IssuesEvent | 2019-09-30 15:33:47 | forceworkbench/forceworkbench | https://api.github.com/repos/forceworkbench/forceworkbench | closed | Add UI support for GROUP BY in SOQL | Component-Query Priority-Medium Scheduled-Backlog enhancement imported | _Original author: ryan.bra...@gmail.com (February 06, 2010 04:45:16)_

New GROUP BY Clause

Idea light bulb You asked for it! This enhancement is an idea from the

IdeaExchange.

Spring '10 introduces a new GROUP BY clause in SOQL that is similar to

GROUP BY in SQL. You can use GROUP BY with new aggregate function... | 1.0 | Add UI support for GROUP BY in SOQL - _Original author: ryan.bra...@gmail.com (February 06, 2010 04:45:16)_

New GROUP BY Clause

Idea light bulb You asked for it! This enhancement is an idea from the

IdeaExchange.

Spring '10 introduces a new GROUP BY clause in SOQL that is similar to

GROUP BY in SQL. You can us... | priority | add ui support for group by in soql original author ryan bra gmail com february new group by clause idea light bulb you asked for it this enhancement is an idea from the ideaexchange spring introduces a new group by clause in soql that is similar to group by in sql you can use group ... | 1 |

2,282 | 2,525,001,916 | IssuesEvent | 2015-01-20 21:32:15 | graybeal/ont | https://api.github.com/repos/graybeal/ont | closed | Process graphId parameter in direct registration capability | 1 star duplicate enhancement imported ooici portal Priority-Medium | _From [caru...@gmail.com](https://code.google.com/u/113886747689301365533/) on November 24, 2009 22:54:01_

What capability do you want added or improved? register an ontology such that the contents are added to a desired graph Where do you want this capability to be accessible? In the direct registration capability, i... | 1.0 | Process graphId parameter in direct registration capability - _From [caru...@gmail.com](https://code.google.com/u/113886747689301365533/) on November 24, 2009 22:54:01_

What capability do you want added or improved? register an ontology such that the contents are added to a desired graph Where do you want this capabil... | priority | process graphid parameter in direct registration capability from on november what capability do you want added or improved register an ontology such that the contents are added to a desired graph where do you want this capability to be accessible in the direct registration capability issue w... | 1 |

188,292 | 6,774,957,883 | IssuesEvent | 2017-10-27 12:36:01 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | Coverity issue seen with CID: 178235 | area: Networking bug priority: medium | Static code scan issues seen in File: /subsys/net/lib/dns/mdns_responder.c

Category: Null pointer dereferences

Function: send_response

Component: Networking

Please fix or provide comments to square it off in coverity in the link: https://scan9.coverity.com/reports.htm#v32951/p12996 | 1.0 | Coverity issue seen with CID: 178235 - Static code scan issues seen in File: /subsys/net/lib/dns/mdns_responder.c

Category: Null pointer dereferences

Function: send_response

Component: Networking

Please fix or provide comments to square it off in coverity in the link: https://scan9.coverity.com/reports.htm#v32951/p... | priority | coverity issue seen with cid static code scan issues seen in file subsys net lib dns mdns responder c category null pointer dereferences function send response component networking please fix or provide comments to square it off in coverity in the link | 1 |

28,198 | 2,700,417,072 | IssuesEvent | 2015-04-04 04:21:05 | NodineLegal/OpenLawOffice | https://api.github.com/repos/NodineLegal/OpenLawOffice | closed | Creating matters needs to check for possible duplicates | Priority : Medium Status : Confirmed Type : Enhancement | When creating a matter, possible duplicates need checked | 1.0 | Creating matters needs to check for possible duplicates - When creating a matter, possible duplicates need checked | priority | creating matters needs to check for possible duplicates when creating a matter possible duplicates need checked | 1 |

167,831 | 6,347,492,920 | IssuesEvent | 2017-07-28 07:13:49 | arquillian/smart-testing | https://api.github.com/repos/arquillian/smart-testing | closed | Ability to disable surefire provider using a flag | Component: Maven Priority: Medium Status: In Progress Type: Feature | ##### Issue Overview

There is no way of disabling `smart-testing-surefire-provider` other than commenting out / removing it from the `pom.xml`. We should implement simple flag switch so we can disable it in the same way as one can disable tests using `-DskipTests` or `-DskipITs`.

Our flag (e.g. `disableSmartTest... | 1.0 | Ability to disable surefire provider using a flag - ##### Issue Overview

There is no way of disabling `smart-testing-surefire-provider` other than commenting out / removing it from the `pom.xml`. We should implement simple flag switch so we can disable it in the same way as one can disable tests using `-DskipTests` ... | priority | ability to disable surefire provider using a flag issue overview there is no way of disabling smart testing surefire provider other than commenting out removing it from the pom xml we should implement simple flag switch so we can disable it in the same way as one can disable tests using dskiptests ... | 1 |

54,831 | 3,071,423,590 | IssuesEvent | 2015-08-19 12:01:49 | pavel-pimenov/flylinkdc-r5xx | https://api.github.com/repos/pavel-pimenov/flylinkdc-r5xx | opened | "Просмотреть список файлов" доработка подсветки папки с уже скачанными файлами | bug imported Priority-Medium | _From [kirill.B...@gmail.com](https://code.google.com/u/118374335061098442652/) on September 07, 2010 13:50:06_

Имеем:

Флай: r500beta17 x64

OS: Win7 x64

Описание:

При просмотре файл-листа через "Посмотреть список файлов", папки, содержащие уже скачанные файлы не подсвечиваются.

**Attachment:** [r500beta17_Fol... | 1.0 | "Просмотреть список файлов" доработка подсветки папки с уже скачанными файлами - _From [kirill.B...@gmail.com](https://code.google.com/u/118374335061098442652/) on September 07, 2010 13:50:06_

Имеем:

Флай: r500beta17 x64

OS: Win7 x64

Описание:

При просмотре файл-листа через "Посмотреть список файлов", папки, с... | priority | просмотреть список файлов доработка подсветки папки с уже скачанными файлами from on september имеем флай os описание при просмотре файл листа через посмотреть список файлов папки содержащие уже скачанные файлы не подсвечиваются attachment original issue | 1 |

793,062 | 27,982,099,226 | IssuesEvent | 2023-03-26 09:18:39 | 7-lin/Final_Project_BE | https://api.github.com/repos/7-lin/Final_Project_BE | closed | [feat] 회원가입 기능생성과 관련된 예외처리 | For : API For : Backend Priority : Medium Status : In Progress Type : Feature | ## Description(설명)

form 회원가입 기능과 관련된 예외처리 기능을 구현한다.

## Tasks(New feature)

- [ ] 필요한 dto 생성

- [ ] repository 코드 추가

- [ ] service 코드 추가

- [ ] 예외처리 및 handler 생성

- [ ] security 설정

| 1.0 | [feat] 회원가입 기능생성과 관련된 예외처리 - ## Description(설명)

form 회원가입 기능과 관련된 예외처리 기능을 구현한다.

## Tasks(New feature)

- [ ] 필요한 dto 생성

- [ ] repository 코드 추가

- [ ] service 코드 추가

- [ ] 예외처리 및 handler 생성

- [ ] security 설정

| priority | 회원가입 기능생성과 관련된 예외처리 description 설명 form 회원가입 기능과 관련된 예외처리 기능을 구현한다 tasks new feature 필요한 dto 생성 repository 코드 추가 service 코드 추가 예외처리 및 handler 생성 security 설정 | 1 |

619,833 | 19,536,607,129 | IssuesEvent | 2021-12-31 08:42:58 | bounswe/2021SpringGroup7 | https://api.github.com/repos/bounswe/2021SpringGroup7 | opened | CF-44 Subcomment and Pin Features for Comments | Status: In Progress Priority: Medium Frontend | User shall be able to

- Comment on comments

- Pin comments for his posts | 1.0 | CF-44 Subcomment and Pin Features for Comments - User shall be able to

- Comment on comments

- Pin comments for his posts | priority | cf subcomment and pin features for comments user shall be able to comment on comments pin comments for his posts | 1 |

771,834 | 27,094,526,114 | IssuesEvent | 2023-02-15 00:56:30 | kevslinger/bot-be-named | https://api.github.com/repos/kevslinger/bot-be-named | closed | Support for Threads : New commands | enhancement Priority: Medium | Discord Threads are very flexible and useful, and I see a strong usecase for adding support for them soon.

Most of the chan commands would end up having some sort of thread counterpart too. Off the top of my head...

- ~makethread

- ~renamethread

- ~threadcrab

- ~threadlion | 1.0 | Support for Threads : New commands - Discord Threads are very flexible and useful, and I see a strong usecase for adding support for them soon.

Most of the chan commands would end up having some sort of thread counterpart too. Off the top of my head...

- ~makethread

- ~renamethread

- ~threadcrab

- ~threadlion | priority | support for threads new commands discord threads are very flexible and useful and i see a strong usecase for adding support for them soon most of the chan commands would end up having some sort of thread counterpart too off the top of my head makethread renamethread threadcrab threadlion | 1 |

40,519 | 2,868,925,167 | IssuesEvent | 2015-06-05 21:59:48 | dart-lang/pub | https://api.github.com/repos/dart-lang/pub | closed | Request to host package js-interop | bug Fixed Priority-Medium | <a href="https://github.com/vsmenon"><img src="https://avatars.githubusercontent.com/u/2119553?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [vsmenon](https://github.com/vsmenon)**

_Originally opened as dart-lang/sdk#5413_

----

The js-interop package is located at:

https://github.com/dar... | 1.0 | Request to host package js-interop - <a href="https://github.com/vsmenon"><img src="https://avatars.githubusercontent.com/u/2119553?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [vsmenon](https://github.com/vsmenon)**

_Originally opened as dart-lang/sdk#5413_

----

The js-interop package i... | priority | request to host package js interop issue by originally opened as dart lang sdk the js interop package is located at | 1 |

504,033 | 14,612,101,240 | IssuesEvent | 2020-12-22 05:17:27 | goldeimer/goldeimer | https://api.github.com/repos/goldeimer/goldeimer | opened | Improve SEO & analytics features | priority medium type feature | ## Goal

Our SEO strategy yields room for improvement.

## How

- Identify low-hanging fruits.

- Pick 'em.

## Metric

Progression of ranking for relevant keyword(s) over time. Method of tracking tbd.

## Relevant keywords

Incomplete list of relevant terms:

```

nachhaltiges Klopapier

Klopapier Abo

... | 1.0 | Improve SEO & analytics features - ## Goal

Our SEO strategy yields room for improvement.

## How

- Identify low-hanging fruits.

- Pick 'em.

## Metric

Progression of ranking for relevant keyword(s) over time. Method of tracking tbd.

## Relevant keywords

Incomplete list of relevant terms:

```

nac... | priority | improve seo analytics features goal our seo strategy yields room for improvement how identify low hanging fruits pick em metric progression of ranking for relevant keyword s over time method of tracking tbd relevant keywords incomplete list of relevant terms nac... | 1 |

733,995 | 25,334,037,025 | IssuesEvent | 2022-11-18 15:24:31 | inverse-inc/packetfence | https://api.github.com/repos/inverse-inc/packetfence | closed | No logging on the connector client. | Type: Bug Priority: Medium | **Describe the bug**

There appears to be no logging created by the connector client on the server where the client is installed. This makes troubleshooting impossible as to why it is not functioning.

**To Reproduce**

Steps to reproduce the behaviour:

1. Installed the connector

2. Configure connector to point at... | 1.0 | No logging on the connector client. - **Describe the bug**

There appears to be no logging created by the connector client on the server where the client is installed. This makes troubleshooting impossible as to why it is not functioning.

**To Reproduce**

Steps to reproduce the behaviour:

1. Installed the connect... | priority | no logging on the connector client describe the bug there appears to be no logging created by the connector client on the server where the client is installed this makes troubleshooting impossible as to why it is not functioning to reproduce steps to reproduce the behaviour installed the connect... | 1 |

109,872 | 4,414,865,192 | IssuesEvent | 2016-08-13 18:14:12 | williewillus/Botania | https://api.github.com/repos/williewillus/Botania | closed | [1.10.2] Crash when breaking Botania "Special" flowers with Scythe from BoP | priority-medium | I'm running the latest 1.10.2 Botania build and whenever I attempt to break any "special" botania flower like the Pure Daisy with a Biomes' O Plenty Scythe I get this crash, http://pastebin.com/ECDDfdVx It is reproducible by multiple people running the All the Mods mpdpack, which you can find here, http://minecraft.cur... | 1.0 | [1.10.2] Crash when breaking Botania "Special" flowers with Scythe from BoP - I'm running the latest 1.10.2 Botania build and whenever I attempt to break any "special" botania flower like the Pure Daisy with a Biomes' O Plenty Scythe I get this crash, http://pastebin.com/ECDDfdVx It is reproducible by multiple people r... | priority | crash when breaking botania special flowers with scythe from bop i m running the latest botania build and whenever i attempt to break any special botania flower like the pure daisy with a biomes o plenty scythe i get this crash it is reproducible by multiple people running the all the mods mpdpack wh... | 1 |

231,912 | 7,644,793,283 | IssuesEvent | 2018-05-08 16:30:09 | department-of-veterans-affairs/caseflow | https://api.github.com/repos/department-of-veterans-affairs/caseflow | closed | Persist issue dispositions in the attorney check out flow | In-Progress bug-medium-priority caseflow-queue foxtrot | An attorney received a case that his judge asked him to make revisions on. So, he had already entered issue dispositions in his initial check out to the judge. Upon starting the check out flow again after he got the case back, all of those dispositions were cleared. These issue dispositions should persist so the attorn... | 1.0 | Persist issue dispositions in the attorney check out flow - An attorney received a case that his judge asked him to make revisions on. So, he had already entered issue dispositions in his initial check out to the judge. Upon starting the check out flow again after he got the case back, all of those dispositions were cl... | priority | persist issue dispositions in the attorney check out flow an attorney received a case that his judge asked him to make revisions on so he had already entered issue dispositions in his initial check out to the judge upon starting the check out flow again after he got the case back all of those dispositions were cl... | 1 |

433,122 | 12,501,911,225 | IssuesEvent | 2020-06-02 02:47:05 | buddyboss/buddyboss-platform | https://api.github.com/repos/buddyboss/buddyboss-platform | opened | Enhancement - Replace Comment System to Actvity Block | feature: enhancement priority: medium | **Describe the solution you'd like**

https://www.loom.com/share/ec71af7b2bcb4e1bba46ab234d87ee3c

| 1.0 | Enhancement - Replace Comment System to Actvity Block - **Describe the solution you'd like**

https://www.loom.com/share/ec71af7b2bcb4e1bba46ab234d87ee3c

| priority | enhancement replace comment system to actvity block describe the solution you d like | 1 |

52,979 | 3,032,281,985 | IssuesEvent | 2015-08-05 07:44:39 | clementine-player/Clementine | https://api.github.com/repos/clementine-player/Clementine | closed | All album in one flac isn't played | bug imported Priority-Medium | _From [armaty...@gmail.com](https://code.google.com/u/117692321962641050001/) on April 19, 2012 00:29:25_

What steps will reproduce the problem? 1.Start clementine via console.

2.Manually add one-album flac. file.

3.Be amazed by flood output in console as follows:

ERROR GstEnginePipeline:506 167 "gstfile... | 1.0 | All album in one flac isn't played - _From [armaty...@gmail.com](https://code.google.com/u/117692321962641050001/) on April 19, 2012 00:29:25_

What steps will reproduce the problem? 1.Start clementine via console.

2.Manually add one-album flac. file.

3.Be amazed by flood output in console as follows:

ERROR GstEngin... | priority | all album in one flac isn t played from on april what steps will reproduce the problem start clementine via console manually add one album flac file be amazed by flood output in console as follows error gstenginepipeline gstfilesrc c gst file src start gstpipel... | 1 |

804,802 | 29,502,460,839 | IssuesEvent | 2023-06-03 00:21:02 | oppia/oppia-android | https://api.github.com/repos/oppia/oppia-android | reopened | Language selection screen [Blocked: #20, #44] | Type: Improvement Priority: Nice-to-have Impact: Medium Issue: Needs Clarification Issue: Needs Break-down ibt enhancement Work: Medium | There needs to be a page where the user can view all languages that the Oppia app supports and select which on it should be displayed in. This should be a per-profile setting that sets the language for the app, but not for the whole system. It should be easy to navigate to this screen. It should also be possible for th... | 1.0 | Language selection screen [Blocked: #20, #44] - There needs to be a page where the user can view all languages that the Oppia app supports and select which on it should be displayed in. This should be a per-profile setting that sets the language for the app, but not for the whole system. It should be easy to navigate t... | priority | language selection screen there needs to be a page where the user can view all languages that the oppia app supports and select which on it should be displayed in this should be a per profile setting that sets the language for the app but not for the whole system it should be easy to navigate to this screen it ... | 1 |

447,797 | 12,893,373,498 | IssuesEvent | 2020-07-13 21:29:27 | DSpace/dspace-angular | https://api.github.com/repos/DSpace/dspace-angular | opened | Embargo an archived Item | medium priority | From release plan spreadsheet

Estimate from release plan: none

Expressing interest: none

No additional notes | 1.0 | Embargo an archived Item - From release plan spreadsheet

Estimate from release plan: none

Expressing interest: none

No additional notes | priority | embargo an archived item from release plan spreadsheet estimate from release plan none expressing interest none no additional notes | 1 |

56,658 | 3,080,706,159 | IssuesEvent | 2015-08-22 00:54:25 | Nava2/umple-issue-test | https://api.github.com/repos/Nava2/umple-issue-test | opened | Implement the unique keyword | attributes Component-SemanticsAndGen contribSought Diffic-Med imported Priority-Medium Type-ProjectUG ucosp unique | _From [TimothyCLethbridge](https://code.google.com/u/TimothyCLethbridge/) on June 24, 2011 10:13:22_

Implement the unique constraint

class X

{

unique String a;

}

Resulting in code similar to (a mixture of Umple and Java)

public class X

{

private static ArrayList<Object>() allAs = new ArrayList<Object... | 1.0 | Implement the unique keyword - _From [TimothyCLethbridge](https://code.google.com/u/TimothyCLethbridge/) on June 24, 2011 10:13:22_

Implement the unique constraint

class X

{

unique String a;

}

Resulting in code similar to (a mixture of Umple and Java)

public class X

{

private static ArrayList<Object>... | priority | implement the unique keyword from on june implement the unique constraint class x unique string a resulting in code similar to a mixture of umple and java public class x private static arraylist allas new arraylist add the following code injection before co... | 1 |

730,604 | 25,181,439,403 | IssuesEvent | 2022-11-11 14:02:26 | AY2223S1-CS2103T-T09-1/tp | https://api.github.com/repos/AY2223S1-CS2103T-T09-1/tp | closed | As a home-based business owner / reseller, I can store my transaction with suppliers / buyers | type.Story priority.Medium | so that I can track them electronically

| 1.0 | As a home-based business owner / reseller, I can store my transaction with suppliers / buyers - so that I can track them electronically

| priority | as a home based business owner reseller i can store my transaction with suppliers buyers so that i can track them electronically | 1 |

555,888 | 16,472,139,487 | IssuesEvent | 2021-05-23 16:21:48 | Team-uMigrate/umigrate | https://api.github.com/repos/Team-uMigrate/umigrate | opened | App: Sending messages in chat rooms | hard medium priority | Implement the ability to send messages between clients. When a user presses the send button, a new message object should be created in the DB (via the API) and it should show up on the recipient's device in real-time. | 1.0 | App: Sending messages in chat rooms - Implement the ability to send messages between clients. When a user presses the send button, a new message object should be created in the DB (via the API) and it should show up on the recipient's device in real-time. | priority | app sending messages in chat rooms implement the ability to send messages between clients when a user presses the send button a new message object should be created in the db via the api and it should show up on the recipient s device in real time | 1 |

720,677 | 24,801,232,050 | IssuesEvent | 2022-10-24 21:58:31 | Automattic/abacus | https://api.github.com/repos/Automattic/abacus | closed | Add absolute impact credible intervals | [!priority] medium [type] enhancement [section] experiment results [!team] explat [!milestone] current | What do we think about adding **absolute impact** credible intervals to the experiment results page like "# of users / month" and "$ in revenue / month"? This was originally suggested in this comment (pbmo2S-UZ-p2#comment-2110) and then analyzed with this method in this comment (pbxNRc-1qR-p2#comment-3353).

In addi... | 1.0 | Add absolute impact credible intervals - What do we think about adding **absolute impact** credible intervals to the experiment results page like "# of users / month" and "$ in revenue / month"? This was originally suggested in this comment (pbmo2S-UZ-p2#comment-2110) and then analyzed with this method in this comment ... | priority | add absolute impact credible intervals what do we think about adding absolute impact credible intervals to the experiment results page like of users month and in revenue month this was originally suggested in this comment uz comment and then analyzed with this method in this comment pbxnrc ... | 1 |

334,195 | 10,137,084,748 | IssuesEvent | 2019-08-02 14:29:56 | input-output-hk/cardano-wallet | https://api.github.com/repos/input-output-hk/cardano-wallet | closed | Implement `listTransactions` endpoint (no filtering) | PRIORITY[MEDIUM] | # Context

<!-- WHEN CREATED

What is the issue that we are seeing that is motivating this decision or change.

Give any elements that help understanding where this issue comes from. Leave no

room for suggestions or implicit deduction.

-->

The following endpoint is still missing but crucial for end-users:

h... | 1.0 | Implement `listTransactions` endpoint (no filtering) - # Context

<!-- WHEN CREATED

What is the issue that we are seeing that is motivating this decision or change.

Give any elements that help understanding where this issue comes from. Leave no

room for suggestions or implicit deduction.

-->

The following en... | priority | implement listtransactions endpoint no filtering context when created what is the issue that we are seeing that is motivating this decision or change give any elements that help understanding where this issue comes from leave no room for suggestions or implicit deduction the following en... | 1 |

75,477 | 3,462,770,692 | IssuesEvent | 2015-12-21 03:46:11 | pentoo/pentoo-historical | https://api.github.com/repos/pentoo/pentoo-historical | closed | Pentoo minimal version | auto-migrated Priority-Medium Type-Enhancement | ```

I just hate it when I can't use my own laptop during a pentest and have to use

a crappy windows host instead.

We need a minimal pentoo with msf, nmap, burp and a few others in order to have

a small footprint iso/vm to deploy quickly during pentests.

Here's an attempt of a minimal use flag for the pentoo meta-pa... | 1.0 | Pentoo minimal version - ```

I just hate it when I can't use my own laptop during a pentest and have to use

a crappy windows host instead.

We need a minimal pentoo with msf, nmap, burp and a few others in order to have

a small footprint iso/vm to deploy quickly during pentests.

Here's an attempt of a minimal use fl... | priority | pentoo minimal version i just hate it when i can t use my own laptop during a pentest and have to use a crappy windows host instead we need a minimal pentoo with msf nmap burp and a few others in order to have a small footprint iso vm to deploy quickly during pentests here s an attempt of a minimal use fl... | 1 |

30,082 | 2,722,219,245 | IssuesEvent | 2015-04-14 01:10:39 | CruxFramework/crux-smart-faces | https://api.github.com/repos/CruxFramework/crux-smart-faces | closed | Close buttons do not appear on Internet Explorer 8 | bug imported Milestone-M14-C4 Module-CruxWidgets Priority-Medium TargetVersion-5.3.0 | _From [flavia.jesus@triggolabs.com](https://code.google.com/u/flavia.jesus@triggolabs.com/) on March 25, 2015 17:43:06_

Several close buttons do not appear on Internet Explorer 8, for exemple DialogViewContainer component.

_Original issue: http://code.google.com/p/crux-framework/issues/detail?id=662_ | 1.0 | Close buttons do not appear on Internet Explorer 8 - _From [flavia.jesus@triggolabs.com](https://code.google.com/u/flavia.jesus@triggolabs.com/) on March 25, 2015 17:43:06_

Several close buttons do not appear on Internet Explorer 8, for exemple DialogViewContainer component.

_Original issue: http://code.google.com/p/... | priority | close buttons do not appear on internet explorer from on march several close buttons do not appear on internet explorer for exemple dialogviewcontainer component original issue | 1 |

145,188 | 5,560,081,040 | IssuesEvent | 2017-03-24 18:30:46 | CS2103JAN2017-W14-B4/main | https://api.github.com/repos/CS2103JAN2017-W14-B4/main | closed | As a power user I can map standard commands to my preferred shortcut commands | priority.medium type.story | so I can be familiar with my own modified commands | 1.0 | As a power user I can map standard commands to my preferred shortcut commands - so I can be familiar with my own modified commands | priority | as a power user i can map standard commands to my preferred shortcut commands so i can be familiar with my own modified commands | 1 |

126,497 | 4,996,537,166 | IssuesEvent | 2016-12-09 14:12:55 | softdevteam/krun | https://api.github.com/repos/softdevteam/krun | opened | Post-exec commands | bug medium priority (a clear improvement but not a blocker for publication) | The post-exec commands run directly after every benchmark has completed, and typically include commands to bring the network back up and scp the current data and logs to a different machine.

Because post-execs run *directly* after the benchmark is complete, the log that is tarred up and scp'd does not contain some ... | 1.0 | Post-exec commands - The post-exec commands run directly after every benchmark has completed, and typically include commands to bring the network back up and scp the current data and logs to a different machine.

Because post-execs run *directly* after the benchmark is complete, the log that is tarred up and scp'd d... | priority | post exec commands the post exec commands run directly after every benchmark has completed and typically include commands to bring the network back up and scp the current data and logs to a different machine because post execs run directly after the benchmark is complete the log that is tarred up and scp d d... | 1 |

623,435 | 19,667,726,106 | IssuesEvent | 2022-01-11 01:27:13 | cdklabs/construct-hub-webapp | https://api.github.com/repos/cdklabs/construct-hub-webapp | closed | Inconsistent display of constructor arguments between python and typescript references | effort/medium priority/p2 risk/medium stale | The `Python` and `TypeScript` experience differ when displaying the information needed to initialize a construct/class.

In Python, the initializer looks like so:

<img width="401" alt="Screen Shot 2021-06-20 at 6 38 01 PM" src="https://user-images.githubusercontent.com/1428812/122680200-c9a31400-d1f6-11eb-8540-62... | 1.0 | Inconsistent display of constructor arguments between python and typescript references - The `Python` and `TypeScript` experience differ when displaying the information needed to initialize a construct/class.

In Python, the initializer looks like so:

<img width="401" alt="Screen Shot 2021-06-20 at 6 38 01 PM" sr... | priority | inconsistent display of constructor arguments between python and typescript references the python and typescript experience differ when displaying the information needed to initialize a construct class in python the initializer looks like so img width alt screen shot at pm src n... | 1 |

55,366 | 3,073,043,198 | IssuesEvent | 2015-08-19 19:56:31 | RobotiumTech/robotium | https://api.github.com/repos/RobotiumTech/robotium | closed | Configurable TIMEOUT values | bug imported Priority-Medium | _From [glenview...@gmail.com](https://code.google.com/u/110087215095127878251/) on August 22, 2012 10:52:21_

It would be great if Robotium offered setTimeout(int timeout), setSmallTimeout(int smallTimeout) methods.

_Original issue: http://code.google.com/p/robotium/issues/detail?id=314_ | 1.0 | Configurable TIMEOUT values - _From [glenview...@gmail.com](https://code.google.com/u/110087215095127878251/) on August 22, 2012 10:52:21_

It would be great if Robotium offered setTimeout(int timeout), setSmallTimeout(int smallTimeout) methods.

_Original issue: http://code.google.com/p/robotium/issues/detail?id=314_ | priority | configurable timeout values from on august it would be great if robotium offered settimeout int timeout setsmalltimeout int smalltimeout methods original issue | 1 |

266,223 | 8,364,394,646 | IssuesEvent | 2018-10-03 22:50:49 | theAsmodai/metamod-r | https://api.github.com/repos/theAsmodai/metamod-r | closed | Crashing server with steam-Sven Coop when installing metamod-r | OS: Windows Priority: Medium Status: Pending Type: Bug Type: Help wanted | Please help, REHLDS is not working and not search game dll :( | 1.0 | Crashing server with steam-Sven Coop when installing metamod-r - Please help, REHLDS is not working and not search game dll :( | priority | crashing server with steam sven coop when installing metamod r please help rehlds is not working and not search game dll | 1 |

146,429 | 5,621,383,712 | IssuesEvent | 2017-04-04 09:48:46 | linux-audit/audit-userspace | https://api.github.com/repos/linux-audit/audit-userspace | opened | BUG: errormsg descriptions drifting or overloaded | bug priority/medium | A number of error message descriptions have drifted from the conditions that

caused them in audit_rule_fieldpair_data() including expansion of fields to be used by the user filter list, restriction to the exit list only and changing an

operat... | 1.0 | BUG: errormsg descriptions drifting or overloaded - A number of error message descriptions have drifted from the conditions that

caused them in audit_rule_fieldpair_data() including expansion of fields to be used by the user filter list, restr... | priority | bug errormsg descriptions drifting or overloaded a number of error message descriptions have drifted from the conditions that caused them in audit rule fieldpair data including expansion of fields to be used by the user filter list restr... | 1 |

455,140 | 13,112,435,684 | IssuesEvent | 2020-08-05 02:12:07 | Seamonster778778778788/SQBeyondPublic | https://api.github.com/repos/Seamonster778778778788/SQBeyondPublic | closed | Paid twice for quests | bug medium priority | I bought a fighter at spawn in order to do the 'leave spawn' quest, but I was informed by someone that I needed to click on the [step-by-step guide] button in the book first and had to buy another one. (IDK if this is correct)

I bought a fighter, followed the instructions the quest gave me, and when I completed it the... | 1.0 | Paid twice for quests - I bought a fighter at spawn in order to do the 'leave spawn' quest, but I was informed by someone that I needed to click on the [step-by-step guide] button in the book first and had to buy another one. (IDK if this is correct)

I bought a fighter, followed the instructions the quest gave me, and... | priority | paid twice for quests i bought a fighter at spawn in order to do the leave spawn quest but i was informed by someone that i needed to click on the button in the book first and had to buy another one idk if this is correct i bought a fighter followed the instructions the quest gave me and when i completed i... | 1 |

569,076 | 16,993,992,980 | IssuesEvent | 2021-07-01 02:22:29 | cjs8487/SS-Randomizer-Tracker | https://api.github.com/repos/cjs8487/SS-Randomizer-Tracker | closed | Dungeon Panel Rework | Medium Priority enhancement | Rework the Dungeon Panel in order to better use the space and include more features.

Additional Features:

- Icon/button to click to open the dungeons location list

Layout Tweaks:

- Entrance Rando

- Combine Small Keys and Boss Keys onto a single row

- Combine Entered and Required onto a second row

- Without... | 1.0 | Dungeon Panel Rework - Rework the Dungeon Panel in order to better use the space and include more features.

Additional Features:

- Icon/button to click to open the dungeons location list

Layout Tweaks:

- Entrance Rando

- Combine Small Keys and Boss Keys onto a single row

- Combine Entered and Required onto ... | priority | dungeon panel rework rework the dungeon panel in order to better use the space and include more features additional features icon button to click to open the dungeons location list layout tweaks entrance rando combine small keys and boss keys onto a single row combine entered and required onto ... | 1 |

752,103 | 26,273,286,205 | IssuesEvent | 2023-01-06 19:11:44 | minio/mc | https://api.github.com/repos/minio/mc | closed | `mc mirror` without `--overwrite` does not continue syncing other objects after one fails to synchronize | community priority: medium | ## Expected behavior

As the [docs](https://docs.min.io/minio/baremetal/reference/minio-mc/mc-mirror.html#mc.mirror.-overwrite) state and as one would expect using common sense:

> Without --overwrite, if an object already exists on the Destination, the mirror process fails to synchronize that object. mc mirror logs an... | 1.0 | `mc mirror` without `--overwrite` does not continue syncing other objects after one fails to synchronize - ## Expected behavior

As the [docs](https://docs.min.io/minio/baremetal/reference/minio-mc/mc-mirror.html#mc.mirror.-overwrite) state and as one would expect using common sense:

> Without --overwrite, if an objec... | priority | mc mirror without overwrite does not continue syncing other objects after one fails to synchronize expected behavior as the state and as one would expect using common sense without overwrite if an object already exists on the destination the mirror process fails to synchronize that object mc mi... | 1 |

231,711 | 7,642,345,598 | IssuesEvent | 2018-05-08 08:57:31 | strapi/strapi | https://api.github.com/repos/strapi/strapi | closed | ctx.session inside policies is always an empty object? | priority: medium status: need more informations 🤔 type: bug 🐛 | <!-- ⚠️ Before writing your issue make sure you are using:-->

<!-- Node 9.x.x -->

<!-- npm 5.x.x -->

<!-- The latest version of Strapi. -->

**Informations**

- **Node.js version**:

9.11.1

- **npm version**:

5.6.0

- **Strapi version**:

3.0.0-alpha.12.1.2

- **Database**:

MongoDB

- **Operatin... | 1.0 | ctx.session inside policies is always an empty object? - <!-- ⚠️ Before writing your issue make sure you are using:-->

<!-- Node 9.x.x -->

<!-- npm 5.x.x -->

<!-- The latest version of Strapi. -->

**Informations**

- **Node.js version**:

9.11.1

- **npm version**:

5.6.0

- **Strapi version**:

3.0.0-a... | priority | ctx session inside policies is always an empty object informations node js version npm version strapi version alpha database mongodb operating system windows what is the current behavior ctx session... | 1 |

597,771 | 18,171,381,237 | IssuesEvent | 2021-09-27 20:27:26 | CanberraOceanRacingClub/namadgi3 | https://api.github.com/repos/CanberraOceanRacingClub/namadgi3 | closed | Finalise arrangement for headsail double luff tracks | priority 2: Medium Working bee | Sam tells me:

* We have the foil installed

* We need our headsails measure (why?)

* Phil may have the fitting that is used to load the sails -- it needs to be returned to the boat

More investigation required.

@peterottesen might know more details. | 1.0 | Finalise arrangement for headsail double luff tracks - Sam tells me:

* We have the foil installed

* We need our headsails measure (why?)

* Phil may have the fitting that is used to load the sails -- it needs to be returned to the boat

More investigation required.

@peterottesen might know more details. | priority | finalise arrangement for headsail double luff tracks sam tells me we have the foil installed we need our headsails measure why phil may have the fitting that is used to load the sails it needs to be returned to the boat more investigation required peterottesen might know more details | 1 |

30,589 | 2,724,295,737 | IssuesEvent | 2015-04-14 17:02:24 | CruxFramework/crux-widgets | https://api.github.com/repos/CruxFramework/crux-widgets | closed | Crux report error when using Html Entities in Views | bug imported Priority-Medium | _From [moac...@gmail.com](https://code.google.com/u/116048952297984795716/) on July 09, 2013 15:43:57_

What steps will reproduce the problem? 1. Create a Div Element and put a Html Entity like " " What is the expected output? What do you see instead? The following error is reported:

The entity "nbsp" was referen... | 1.0 | Crux report error when using Html Entities in Views - _From [moac...@gmail.com](https://code.google.com/u/116048952297984795716/) on July 09, 2013 15:43:57_

What steps will reproduce the problem? 1. Create a Div Element and put a Html Entity like " " What is the expected output? What do you see instead? The follo... | priority | crux report error when using html entities in views from on july what steps will reproduce the problem create a div element and put a html entity like nbsp what is the expected output what do you see instead the following error is reported the entity nbsp was referenced but not declar... | 1 |

51,848 | 3,014,630,977 | IssuesEvent | 2015-07-29 15:39:32 | jpchanson/BeSeenium | https://api.github.com/repos/jpchanson/BeSeenium | opened | Feedback to web interface | Core functionality Medium Priority | Need some way of getting the output back onto the web interface, perhaps by dropping a flatfile that can be picked up with something on the web end (data enclosed in iframe for instance) | 1.0 | Feedback to web interface - Need some way of getting the output back onto the web interface, perhaps by dropping a flatfile that can be picked up with something on the web end (data enclosed in iframe for instance) | priority | feedback to web interface need some way of getting the output back onto the web interface perhaps by dropping a flatfile that can be picked up with something on the web end data enclosed in iframe for instance | 1 |

59,535 | 3,114,046,535 | IssuesEvent | 2015-09-03 05:34:08 | cs2103aug2015-t16-1j/main | https://api.github.com/repos/cs2103aug2015-t16-1j/main | opened | As a user, I can auto-save/auto-sync every certain time interval | priority.medium type.story | so that there will not be any info-loss accidentally

| 1.0 | As a user, I can auto-save/auto-sync every certain time interval - so that there will not be any info-loss accidentally

| priority | as a user i can auto save auto sync every certain time interval so that there will not be any info loss accidentally | 1 |

772,654 | 27,130,985,225 | IssuesEvent | 2023-02-16 09:46:22 | LuanRT/YouTube.js | https://api.github.com/repos/LuanRT/YouTube.js | closed | Add support for hashtag page | enhancement priority: medium | ### Describe your suggestion

add a getHashtag method to get info from a hashtag page (ex: https://www.youtube.com/hashtag/shorts )

### Other details

I tried to implement myself this but I do not know enough about protobuf:

`execute('/browse', { browseId: 'FEhashtag', params: 'protobuf hashtag'})`

### Check... | 1.0 | Add support for hashtag page - ### Describe your suggestion

add a getHashtag method to get info from a hashtag page (ex: https://www.youtube.com/hashtag/shorts )

### Other details

I tried to implement myself this but I do not know enough about protobuf:

`execute('/browse', { browseId: 'FEhashtag', params: 'pr... | priority | add support for hashtag page describe your suggestion add a gethashtag method to get info from a hashtag page ex other details i tried to implement myself this but i do not know enough about protobuf execute browse browseid fehashtag params protobuf hashtag checklist ... | 1 |

746,557 | 26,035,030,106 | IssuesEvent | 2022-12-22 03:25:02 | EthicalSoftwareCommunity/HippieVerse-Game | https://api.github.com/repos/EthicalSoftwareCommunity/HippieVerse-Game | closed | Add shield in HF | enhancement MEDIUM PRIORITY HF (HippieFall) | The player will pick up the shield during the fall.

Once activated, the shield will be available for Nth amount of time.

It gives resistance to any damage:

to collisions

to traps | 1.0 | Add shield in HF - The player will pick up the shield during the fall.

Once activated, the shield will be available for Nth amount of time.

It gives resistance to any damage:

to collisions

to traps | priority | add shield in hf the player will pick up the shield during the fall once activated the shield will be available for nth amount of time it gives resistance to any damage to collisions to traps | 1 |