Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 957 | labels stringlengths 4 795 | body stringlengths 1 259k | index stringclasses 12 values | text_combine stringlengths 96 259k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

212,896 | 7,243,823,068 | IssuesEvent | 2018-02-14 13:12:35 | inverse-inc/packetfence | https://api.github.com/repos/inverse-inc/packetfence | opened | Portal redirection: Destination URL should be ignored for portal detection | Priority: Medium Type: Bug | As experienced by @lzammit, he was redirected to `http://captive.apple.com/hotspot-detect.html` at the end of the portal.

He should instead have been redirected to the redirect URL value in the profile

Not sure if its v8 related or affects v7 as well. If v7 is affected I'll cherry-pick in maintenance | 1.0 | Portal redirection: Destination URL should be ignored for portal detection - As experienced by @lzammit, he was redirected to `http://captive.apple.com/hotspot-detect.html` at the end of the portal.

He should instead have been redirected to the redirect URL value in the profile

Not sure if its v8 related or affec... | priority | portal redirection destination url should be ignored for portal detection as experienced by lzammit he was redirected to at the end of the portal he should instead have been redirected to the redirect url value in the profile not sure if its related or affects as well if is affected i ll cherry pi... | 1 |

11,541 | 2,610,140,426 | IssuesEvent | 2015-02-26 18:44:16 | chrsmith/hedgewars | https://api.github.com/repos/chrsmith/hedgewars | closed | Allow resizing window | auto-migrated Priority-Medium Type-Enhancement | ```

I have a dual-monitor setup with my primary monitor on the right hand side.

The first window (where you choose single player vs network) correct shows only

on the primary monitor.

The actual game tries to span both monitors.

Under settings, I can choose only 2304x1024.

I'm on Ubuntu 10.10 / NVidia 6600 / TwinV... | 1.0 | Allow resizing window - ```

I have a dual-monitor setup with my primary monitor on the right hand side.

The first window (where you choose single player vs network) correct shows only

on the primary monitor.

The actual game tries to span both monitors.

Under settings, I can choose only 2304x1024.

I'm on Ubuntu 10.... | priority | allow resizing window i have a dual monitor setup with my primary monitor on the right hand side the first window where you choose single player vs network correct shows only on the primary monitor the actual game tries to span both monitors under settings i can choose only i m on ubuntu nvidi... | 1 |

793,343 | 27,991,644,591 | IssuesEvent | 2023-03-27 04:38:47 | WordPress/openverse | https://api.github.com/repos/WordPress/openverse | opened | Consider disabling exposed ports in CI to avoid port conflict flakiness | 🟨 priority: medium 🛠 goal: fix 🤖 aspect: dx 🧱 stack: mgmt | ## Problem

<!-- Describe a problem solved by this feature; or delete the section entirely. -->

CI jobs sometimes fail due to port conflicts.

cf #990 and #200

## Description

<!-- Describe the feature and how it solves the problem. -->

One way to avoid this is to not bind ports at all in CI. At a glance, I ... | 1.0 | Consider disabling exposed ports in CI to avoid port conflict flakiness - ## Problem

<!-- Describe a problem solved by this feature; or delete the section entirely. -->

CI jobs sometimes fail due to port conflicts.

cf #990 and #200

## Description

<!-- Describe the feature and how it solves the problem. -->... | priority | consider disabling exposed ports in ci to avoid port conflict flakiness problem ci jobs sometimes fail due to port conflicts cf and description one way to avoid this is to not bind ports at all in ci at a glance i don t think we need to bind ports at all in ci as we never make req... | 1 |

690,964 | 23,679,710,931 | IssuesEvent | 2022-08-28 16:08:03 | pokt-network/pocket | https://api.github.com/repos/pokt-network/pocket | opened | [Development] Documentation for local debugging | priority:medium infra tooling | ## Objective

Document how to use the `dlv` debugger in LocalNet.

## Origin Document

The `LocalNet` CLI and logging are useful, but we need to be able to step through the code in unit tests and in a dev environment

## Goals

- [ ] Anyone who spins up a V1 LocalNet should be able to easily use the debugger,... | 1.0 | [Development] Documentation for local debugging - ## Objective

Document how to use the `dlv` debugger in LocalNet.

## Origin Document

The `LocalNet` CLI and logging are useful, but we need to be able to step through the code in unit tests and in a dev environment

## Goals

- [ ] Anyone who spins up a V1 L... | priority | documentation for local debugging objective document how to use the dlv debugger in localnet origin document the localnet cli and logging are useful but we need to be able to step through the code in unit tests and in a dev environment goals anyone who spins up a localnet should ... | 1 |

657,834 | 21,869,490,858 | IssuesEvent | 2022-05-19 03:04:07 | pixley/TimelineBuilder | https://api.github.com/repos/pixley/TimelineBuilder | opened | Calendar of Harptos script | type: feature status: to do priority: medium | Create a Python script to implement the Calendar of Harptos, the main calendar for the Faerun of Forgotten Realms. | 1.0 | Calendar of Harptos script - Create a Python script to implement the Calendar of Harptos, the main calendar for the Faerun of Forgotten Realms. | priority | calendar of harptos script create a python script to implement the calendar of harptos the main calendar for the faerun of forgotten realms | 1 |

304,368 | 9,331,347,658 | IssuesEvent | 2019-03-28 09:33:09 | CS2103-AY1819S2-W10-1/main | https://api.github.com/repos/CS2103-AY1819S2-W10-1/main | closed | UI: Display current context | priority.Medium type.Enhancement | Right now, users only know which context they are in after executing a context-switching command, so displaying the context somewhere would help users orientate themselves. | 1.0 | UI: Display current context - Right now, users only know which context they are in after executing a context-switching command, so displaying the context somewhere would help users orientate themselves. | priority | ui display current context right now users only know which context they are in after executing a context switching command so displaying the context somewhere would help users orientate themselves | 1 |

5,324 | 2,574,225,165 | IssuesEvent | 2015-02-11 15:46:37 | dmwm/WMCore | https://api.github.com/repos/dmwm/WMCore | closed | PyCondorPlugin error | Medium Priority | Message: Unhandled exception while calling update method for plugin PyCondorPlugin

Failed to fetch ads from schedd | 1.0 | PyCondorPlugin error - Message: Unhandled exception while calling update method for plugin PyCondorPlugin

Failed to fetch ads from schedd | priority | pycondorplugin error message unhandled exception while calling update method for plugin pycondorplugin failed to fetch ads from schedd | 1 |

415,543 | 12,130,453,266 | IssuesEvent | 2020-04-23 01:36:53 | minio/mc | https://api.github.com/repos/minio/mc | closed | allow fetching individual object's public url | community priority: medium stale | ## Expected behavior

It is possible to fetch an object's public url without side-effects.

## Actual behavior

The only current way (from what I can tell) to get an individual object's public url is using the following command:

`mc share download --json --expire=1s $OBJECT | jq -r .url`

This is undesirable bec... | 1.0 | allow fetching individual object's public url - ## Expected behavior

It is possible to fetch an object's public url without side-effects.

## Actual behavior

The only current way (from what I can tell) to get an individual object's public url is using the following command:

`mc share download --json --expire=1... | priority | allow fetching individual object s public url expected behavior it is possible to fetch an object s public url without side effects actual behavior the only current way from what i can tell to get an individual object s public url is using the following command mc share download json expire ... | 1 |

150,043 | 5,733,138,889 | IssuesEvent | 2017-04-21 16:27:49 | knipferrc/plate | https://api.github.com/repos/knipferrc/plate | closed | Content flash unauthorized | Priority: Medium Type: Bug | If manually changing the URL to a page which you are not authorized you get a flash of unauthorized content. | 1.0 | Content flash unauthorized - If manually changing the URL to a page which you are not authorized you get a flash of unauthorized content. | priority | content flash unauthorized if manually changing the url to a page which you are not authorized you get a flash of unauthorized content | 1 |

676,399 | 23,124,241,495 | IssuesEvent | 2022-07-28 02:47:09 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [docdb] RegistrationTest.TestTabletReports is flaky some 3/1000 times | kind/bug area/docdb priority/medium | Jira Link: [DB-1366](https://yugabyte.atlassian.net/browse/DB-1366)

Follow up to #3327. Seems like there's a genuine logical bug in the test expectation.

| 1.0 | [docdb] RegistrationTest.TestTabletReports is flaky some 3/1000 times - Jira Link: [DB-1366](https://yugabyte.atlassian.net/browse/DB-1366)

Follow up to #3327. Seems like there's a genuine logical bug in the test expectation.

| priority | registrationtest testtabletreports is flaky some times jira link follow up to seems like there s a genuine logical bug in the test expectation | 1 |

25,887 | 2,684,026,415 | IssuesEvent | 2015-03-28 15:47:10 | ConEmu/old-issues | https://api.github.com/repos/ConEmu/old-issues | closed | bring window to top with putty in console mode | 1 star bug imported Priority-Medium | _From [mickem](https://code.google.com/u/mickem/) on October 28, 2011 22:45:21_

When using putty in console mode you cannot bring the window to top by clicking it.

_Original issue: http://code.google.com/p/conemu-maximus5/issues/detail?id=448_ | 1.0 | bring window to top with putty in console mode - _From [mickem](https://code.google.com/u/mickem/) on October 28, 2011 22:45:21_

When using putty in console mode you cannot bring the window to top by clicking it.

_Original issue: http://code.google.com/p/conemu-maximus5/issues/detail?id=448_ | priority | bring window to top with putty in console mode from on october when using putty in console mode you cannot bring the window to top by clicking it original issue | 1 |

540,047 | 15,798,817,460 | IssuesEvent | 2021-04-02 19:30:23 | itslupus/gamersnet | https://api.github.com/repos/itslupus/gamersnet | closed | Profile Game Ranking | medium priority user story | **Description**:

As a user, I want to be able to manage my game specific ranks

**Acceptance Criteria**:

* User profile displays the user's game ranks

* User can update the game ranks

**Dev Tasks**:

[Edit game ranks backend](https://github.com/itslupus/gamersnet/issues/48)

[UI to edit game ranks](https:... | 1.0 | Profile Game Ranking - **Description**:

As a user, I want to be able to manage my game specific ranks

**Acceptance Criteria**:

* User profile displays the user's game ranks

* User can update the game ranks

**Dev Tasks**:

[Edit game ranks backend](https://github.com/itslupus/gamersnet/issues/48)

[UI to ... | priority | profile game ranking description as a user i want to be able to manage my game specific ranks acceptance criteria user profile displays the user s game ranks user can update the game ranks dev tasks story points | 1 |

542,583 | 15,862,803,537 | IssuesEvent | 2021-04-08 12:04:33 | sopra-fs21-group-08/sopra-fs21-group08-client | https://api.github.com/repos/sopra-fs21-group-08/sopra-fs21-group08-client | closed | Create a pop up window with the game rules that can be accessed from the lobby of the game. | lobby medium priority task | Time estimate: 3h

This task is part of user story #25 | 1.0 | Create a pop up window with the game rules that can be accessed from the lobby of the game. - Time estimate: 3h

This task is part of user story #25 | priority | create a pop up window with the game rules that can be accessed from the lobby of the game time estimate this task is part of user story | 1 |

515,392 | 14,961,744,743 | IssuesEvent | 2021-01-27 08:16:28 | wp-media/wp-rocket | https://api.github.com/repos/wp-media/wp-rocket | opened | Add Missing Image Dimensions is not applied if only single attribute (width or height) is missing | module: media priority: medium severity: major type: bug | **Before submitting an issue please check that you’ve completed the following steps:**

- [ ] Made sure you’re on the latest version

- [ ] Used the search feature to ensure that the bug hasn’t been reported before

**Describe the bug**

When image is missing width or height, Add missing image dimensions is not wo... | 1.0 | Add Missing Image Dimensions is not applied if only single attribute (width or height) is missing - **Before submitting an issue please check that you’ve completed the following steps:**

- [ ] Made sure you’re on the latest version

- [ ] Used the search feature to ensure that the bug hasn’t been reported before

... | priority | add missing image dimensions is not applied if only single attribute width or height is missing before submitting an issue please check that you’ve completed the following steps made sure you’re on the latest version used the search feature to ensure that the bug hasn’t been reported before d... | 1 |

267,361 | 8,388,142,295 | IssuesEvent | 2018-10-09 04:42:05 | MagiCircles/BanGDream | https://api.github.com/repos/MagiCircles/BanGDream | closed | Replace Stamp Fields With Foreign Key To Stamp Assets | feature medium priority optimization | Pretty Self-Explanatory. Title would function the same as Stamp Translation, and each image would be displayed under their proper version.

Would reduce images uploaded in the future & duplication of inputted info, so good all around. Most difficult thing would probably be configuring it to work with our special even... | 1.0 | Replace Stamp Fields With Foreign Key To Stamp Assets - Pretty Self-Explanatory. Title would function the same as Stamp Translation, and each image would be displayed under their proper version.

Would reduce images uploaded in the future & duplication of inputted info, so good all around. Most difficult thing would ... | priority | replace stamp fields with foreign key to stamp assets pretty self explanatory title would function the same as stamp translation and each image would be displayed under their proper version would reduce images uploaded in the future duplication of inputted info so good all around most difficult thing would ... | 1 |

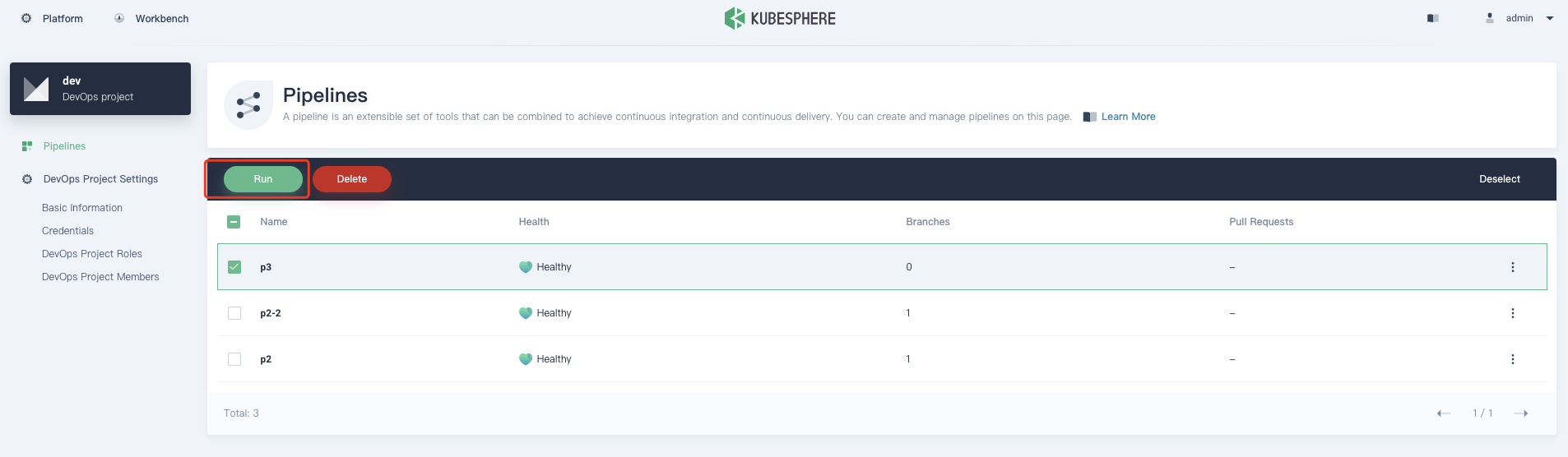

818,951 | 30,712,941,941 | IssuesEvent | 2023-07-27 11:04:56 | kubesphere/ks-devops | https://api.github.com/repos/kubesphere/ks-devops | closed | When executing a pipeline that requires input parameters, no input box pops up | kind/bug priority/medium | **Versions used**

KubeSphere: `v3.2.0`

How To Reproduce

Steps to reproduce the behavior:

1、Go to pipeline list

2、Select pipeline

3、Click on 'Run'

```

pipeline {

agent any

parameters... | 1.0 | When executing a pipeline that requires input parameters, no input box pops up - **Versions used**

KubeSphere: `v3.2.0`

How To Reproduce

Steps to reproduce the behavior:

1、Go to pipeline list

2、Select pipeline

3、Click on 'Run'

As a user, I want to see a timeline page | Priority: Medium feature front-end | ### **FRONT END**

To do:

# Template for the timeline page needs to be created and should have the following:

- Timeline page should have a navbar

- navbar should contain the <a href = "/timeline">LOGO<a/> on the top left and a "POST" button on the top right

- timeline page should have places for posted pictures ... | 1.0 | (Feature) As a user, I want to see a timeline page - ### **FRONT END**

To do:

# Template for the timeline page needs to be created and should have the following:

- Timeline page should have a navbar

- navbar should contain the <a href = "/timeline">LOGO<a/> on the top left and a "POST" button on the top right

- ... | priority | feature as a user i want to see a timeline page front end to do template for the timeline page needs to be created and should have the following timeline page should have a navbar navbar should contain the logo on the top left and a post button on the top right timeline page should hav... | 1 |

55,275 | 3,072,620,009 | IssuesEvent | 2015-08-19 17:50:27 | RobotiumTech/robotium | https://api.github.com/repos/RobotiumTech/robotium | closed | junit.framework.AssertionFailedError: Button with index 2131230847 is not available! | bug imported invalid Priority-Medium | _From [Surhum...@gmail.com](https://code.google.com/u/107563385414450670028/) on April 25, 2012 02:53:27_

What steps will reproduce the problem? 1.solo = new Solo(getInstrumentation(), getActivity());

2.solo.clickOnButton(dk.lector.ao.mobile.R.id.productButton);

3.solo.assertCurrentActivity("ProductSearch", ProductS... | 1.0 | junit.framework.AssertionFailedError: Button with index 2131230847 is not available! - _From [Surhum...@gmail.com](https://code.google.com/u/107563385414450670028/) on April 25, 2012 02:53:27_

What steps will reproduce the problem? 1.solo = new Solo(getInstrumentation(), getActivity());

2.solo.clickOnButton(dk.lecto... | priority | junit framework assertionfailederror button with index is not available from on april what steps will reproduce the problem solo new solo getinstrumentation getactivity solo clickonbutton dk lector ao mobile r id productbutton solo assertcurrentactivity productsearch prod... | 1 |

282,496 | 8,707,050,086 | IssuesEvent | 2018-12-06 06:06:05 | magda-io/magda | https://api.github.com/repos/magda-io/magda | closed | Indexer load queue doesn't backpressure | bug help wanted priority: medium refined | ### Problem description

When the indexer does a full reindex of the registry it's supposed to crawl through and keep up to 1000 datasets in a buffer, but crawling and ingestion should proceed at roughly the right rate because of akka backpressure.

However recent improvements to the crawl speed in the database have ... | 1.0 | Indexer load queue doesn't backpressure - ### Problem description

When the indexer does a full reindex of the registry it's supposed to crawl through and keep up to 1000 datasets in a buffer, but crawling and ingestion should proceed at roughly the right rate because of akka backpressure.

However recent improvement... | priority | indexer load queue doesn t backpressure problem description when the indexer does a full reindex of the registry it s supposed to crawl through and keep up to datasets in a buffer but crawling and ingestion should proceed at roughly the right rate because of akka backpressure however recent improvements t... | 1 |

136,811 | 5,289,019,720 | IssuesEvent | 2017-02-08 16:27:14 | jeveloper/jayrock | https://api.github.com/repos/jeveloper/jayrock | closed | Add Ext.Direct support | auto-migrated Priority-Medium Type-Enhancement | ```

- What new or enhanced feature are you proposing?

Ext.Direct requires a server-side stack that fits pretty well with what Jayrock

already provides. It has a lot of support within the Ext framework for

populating stores, and generally calling services.

See the spec here: http://www.sencha.com/products/js/direct.p... | 1.0 | Add Ext.Direct support - ```

- What new or enhanced feature are you proposing?

Ext.Direct requires a server-side stack that fits pretty well with what Jayrock

already provides. It has a lot of support within the Ext framework for

populating stores, and generally calling services.

See the spec here: http://www.sencha... | priority | add ext direct support what new or enhanced feature are you proposing ext direct requires a server side stack that fits pretty well with what jayrock already provides it has a lot of support within the ext framework for populating stores and generally calling services see the spec here what goal wo... | 1 |

187,230 | 6,750,243,586 | IssuesEvent | 2017-10-23 03:12:26 | cdierdorff/quiz | https://api.github.com/repos/cdierdorff/quiz | closed | User deletes quiz | good first issue medium priority | A user will be able to log on and view a list of all available quizzes. The user will have the option to navigate to a page listing his/her own quizzes and delete the quiz. A dialogue asking if the user is sure will be displayed as the action cannot be undone. | 1.0 | User deletes quiz - A user will be able to log on and view a list of all available quizzes. The user will have the option to navigate to a page listing his/her own quizzes and delete the quiz. A dialogue asking if the user is sure will be displayed as the action cannot be undone. | priority | user deletes quiz a user will be able to log on and view a list of all available quizzes the user will have the option to navigate to a page listing his her own quizzes and delete the quiz a dialogue asking if the user is sure will be displayed as the action cannot be undone | 1 |

174,051 | 6,536,038,654 | IssuesEvent | 2017-08-31 16:34:06 | geosolutions-it/MapStore2 | https://api.github.com/repos/geosolutions-it/MapStore2 | closed | Share link title in linkedin share is "GeoSolutions" and is not overridable | bug Priority: Medium Project: C040 | Share buttons trigger use the title "GeoSolutions" for the shared resources.

The share title is used only by Linkedin, as far as I know. This causes the link you are sharing on Linkedin contains the "GeoSolutions" as title.

We should get the title of the current page or map.

| 1.0 | Share link title in linkedin share is "GeoSolutions" and is not overridable - Share buttons trigger use the title "GeoSolutions" for the shared resources.

The share title is used only by Linkedin, as far as I know. This causes the link you are sharing on Linkedin contains the "GeoSolutions" as title.

We should get... | priority | share link title in linkedin share is geosolutions and is not overridable share buttons trigger use the title geosolutions for the shared resources the share title is used only by linkedin as far as i know this causes the link you are sharing on linkedin contains the geosolutions as title we should get... | 1 |

306,034 | 9,379,806,044 | IssuesEvent | 2019-04-04 15:39:04 | CanadianClimateDataPortal/Canadian-Climate-Data-Portal | https://api.github.com/repos/CanadianClimateDataPortal/Canadian-Climate-Data-Portal | opened | IDF curves - Bulk download | 3- Portal F.E- Download Priority - Medium WG- Data | - [ ] Possibility to download multiple or all data on a single station

- [ ] Possibility to download multiple station at the same time by selecting them

Possibility to go through the download section of the portal instead of the vizual mapping representation | 1.0 | IDF curves - Bulk download - - [ ] Possibility to download multiple or all data on a single station

- [ ] Possibility to download multiple station at the same time by selecting them

Possibility to go through the download section of the portal instead of the vizual mapping representation | priority | idf curves bulk download possibility to download multiple or all data on a single station possibility to download multiple station at the same time by selecting them possibility to go through the download section of the portal instead of the vizual mapping representation | 1 |

505,789 | 14,645,638,514 | IssuesEvent | 2020-12-26 09:08:56 | schemathesis/schemathesis | https://api.github.com/repos/schemathesis/schemathesis | closed | [BUG] Unsatisfiable errors in explicit example breaks the CLI execution | Difficulty: Medium Priority: Low Type: Bug | **Describe the bug**

If the schema provides examples that lead to generating data for unsatisfiable schemas, then the execution process stops.

Reported in gitter - I'll update this issue once I have more info

| 1.0 | [BUG] Unsatisfiable errors in explicit example breaks the CLI execution - **Describe the bug**

If the schema provides examples that lead to generating data for unsatisfiable schemas, then the execution process stops.

Reported in gitter - I'll update this issue once I have more info

| priority | unsatisfiable errors in explicit example breaks the cli execution describe the bug if the schema provides examples that lead to generating data for unsatisfiable schemas then the execution process stops reported in gitter i ll update this issue once i have more info | 1 |

708,897 | 24,359,954,653 | IssuesEvent | 2022-10-03 10:47:47 | CS3219-AY2223S1/cs3219-project-ay2223s1-g33 | https://api.github.com/repos/CS3219-AY2223S1/cs3219-project-ay2223s1-g33 | closed | [Collaboration UI] Display Question Bounds | Module/Front-End Status/Medium-Priority Type/Feature | ## Description

The UI should show the bounds of the question's input variable(s)

## Parent Task

- #104 | 1.0 | [Collaboration UI] Display Question Bounds - ## Description

The UI should show the bounds of the question's input variable(s)

## Parent Task

- #104 | priority | display question bounds description the ui should show the bounds of the question s input variable s parent task | 1 |

404,928 | 11,864,652,324 | IssuesEvent | 2020-03-25 22:09:33 | cds-snc/report-a-cybercrime | https://api.github.com/repos/cds-snc/report-a-cybercrime | closed | Tweak "They asked for" lines in email | medium priority | ## Summary

From Trello https://trello.com/c/gvgdJKYX

> The best I can tell, it looks like the victim selected personal info was affected on the form > What information did they ask for? SIN [Report reads: They asked for: sin] > What information did they obtain? nil [Report reads: They asked for: undefined].

>

>Co... | 1.0 | Tweak "They asked for" lines in email - ## Summary

From Trello https://trello.com/c/gvgdJKYX

> The best I can tell, it looks like the victim selected personal info was affected on the form > What information did they ask for? SIN [Report reads: They asked for: sin] > What information did they obtain? nil [Report re... | priority | tweak they asked for lines in email summary from trello the best i can tell it looks like the victim selected personal info was affected on the form what information did they ask for sin what information did they obtain nil confusing to analyst because the field looks like a duplica... | 1 |

795,966 | 28,094,325,935 | IssuesEvent | 2023-03-30 14:52:05 | KDT3-Final-6/final-project-BE | https://api.github.com/repos/KDT3-Final-6/final-project-BE | closed | feat: change the controller to accept the token | Type: Feature Status: In Progress Priority: Medium For: API For: Backend | ## Description

Change the controller to accept the token

## Tasks(Process)

- [x] Modify controller parameters

## References

| 1.0 | feat: change the controller to accept the token - ## Description

Change the controller to accept the token

## Tasks(Process)

- [x] Modify controller parameters

## References

| priority | feat change the controller to accept the token description change the controller to accept the token tasks process modify controller parameters references | 1 |

134,057 | 5,219,503,501 | IssuesEvent | 2017-01-26 19:16:13 | ualbertalib/HydraNorth | https://api.github.com/repos/ualbertalib/HydraNorth | closed | Create a good four-digit date field | hydranorth2 priority:medium size:TBD team:metadata | Replace the current year_created field with a well-though-out four-digit date field for use in faceting in the public interface and for reporting publication year in DOI and ARK. It should work well with all of our content types and sources (Dataverse etc.)

| 1.0 | Create a good four-digit date field - Replace the current year_created field with a well-though-out four-digit date field for use in faceting in the public interface and for reporting publication year in DOI and ARK. It should work well with all of our content types and sources (Dataverse etc.)

| priority | create a good four digit date field replace the current year created field with a well though out four digit date field for use in faceting in the public interface and for reporting publication year in doi and ark it should work well with all of our content types and sources dataverse etc | 1 |

157,191 | 5,996,385,255 | IssuesEvent | 2017-06-03 13:50:28 | Rsl1122/Plan-PlayerAnalytics | https://api.github.com/repos/Rsl1122/Plan-PlayerAnalytics | opened | ConcurrentModificationException during Command use save | Bug Priority: MEDIUM | **Plan Version:** 3.3.0

**Server Version:** 1.8.0

**Database Type:** Unknown

**Command Causing Issue:** None

**Description:**

ConcurrentModificationException during Command use save

(Command was issued during save)

**Steps to Reproduce:**

1. Issue command while commanduse is being saved

**Proposed Solu... | 1.0 | ConcurrentModificationException during Command use save - **Plan Version:** 3.3.0

**Server Version:** 1.8.0

**Database Type:** Unknown

**Command Causing Issue:** None

**Description:**

ConcurrentModificationException during Command use save

(Command was issued during save)

**Steps to Reproduce:**

1. Issue ... | priority | concurrentmodificationexception during command use save plan version server version database type unknown command causing issue none description concurrentmodificationexception during command use save command was issued during save steps to reproduce issue ... | 1 |

375,528 | 11,104,736,807 | IssuesEvent | 2019-12-17 08:20:59 | OpenSourceEconomics/soepy | https://api.github.com/repos/OpenSourceEconomics/soepy | opened | update numerical integration | pb package priority medium size medium | We made a lot of progress with improving the quality of numerical integration in the `construct_emax` over the last months. This needs to be incorporated in this package as well. | 1.0 | update numerical integration - We made a lot of progress with improving the quality of numerical integration in the `construct_emax` over the last months. This needs to be incorporated in this package as well. | priority | update numerical integration we made a lot of progress with improving the quality of numerical integration in the construct emax over the last months this needs to be incorporated in this package as well | 1 |

786,230 | 27,639,861,087 | IssuesEvent | 2023-03-10 17:08:01 | KinsonDigital/BranchValidator | https://api.github.com/repos/KinsonDigital/BranchValidator | closed | 🚧Setup dependabot | medium priority preview cicd | ### Complete The Item Below

- [X] I have updated the title without removing the 🚧 emoji.

### Description

Setup [dependabot](https://docs.github.com/en/code-security/dependabot) for the project.

Refer to the **Velaptor** project for the how to setup the **YAML** config tile.

The name of the file is [dependabot... | 1.0 | 🚧Setup dependabot - ### Complete The Item Below

- [X] I have updated the title without removing the 🚧 emoji.

### Description

Setup [dependabot](https://docs.github.com/en/code-security/dependabot) for the project.

Refer to the **Velaptor** project for the how to setup the **YAML** config tile.

The name of th... | priority | 🚧setup dependabot complete the item below i have updated the title without removing the 🚧 emoji description setup for the project refer to the velaptor project for the how to setup the yaml config tile the name of the file is acceptance criteria dependabot setup for... | 1 |

787,421 | 27,717,305,407 | IssuesEvent | 2023-03-14 17:48:13 | TykTechnologies/tyk | https://api.github.com/repos/TykTechnologies/tyk | closed | [TT-1955] UseCertificate field is used even if AuthToken is disable | bug Priority: Medium zendesk | **Branch/Environment/Version**

v2.9.3

**Describe the bug**

UseCertificate field is checked even if AuthToken is disabled

**Reproduction steps**

Steps to reproduce the behavior:

1. Create API with Auth token authentication mode and enable client certificate field.

2. Create Key for API

3. Update API Authen... | 1.0 | [TT-1955] UseCertificate field is used even if AuthToken is disable - **Branch/Environment/Version**

v2.9.3

**Describe the bug**

UseCertificate field is checked even if AuthToken is disabled

**Reproduction steps**

Steps to reproduce the behavior:

1. Create API with Auth token authentication mode and enable ... | priority | usecertificate field is used even if authtoken is disable branch environment version describe the bug usecertificate field is checked even if authtoken is disabled reproduction steps steps to reproduce the behavior create api with auth token authentication mode and enable client ce... | 1 |

257,970 | 8,149,305,705 | IssuesEvent | 2018-08-22 09:12:58 | Xceptance/neodymium-library | https://api.github.com/repos/Xceptance/neodymium-library | closed | Validate the value attribute using a regex | Medium Priority doneInDevelop feature | Add a condition that can validate the value attribute using a regex.

The Condition should be located in the SelenideAddons class. | 1.0 | Validate the value attribute using a regex - Add a condition that can validate the value attribute using a regex.

The Condition should be located in the SelenideAddons class. | priority | validate the value attribute using a regex add a condition that can validate the value attribute using a regex the condition should be located in the selenideaddons class | 1 |

462,456 | 13,247,719,078 | IssuesEvent | 2020-08-19 17:43:51 | radical-cybertools/radical.entk | https://api.github.com/repos/radical-cybertools/radical.entk | closed | unexpected keyboardinterrupt messages | layer:saga priority:medium topic:execution topic:resource topic:termination type:bug | My jobs gives some keyboardinterrupt messages after it runs as expected and finishes once walltime runs out. I didn't keyboard interrupt, it automatically quits due to walltime run out. This error is just cosmetic, doesn't affect me otherwise.

```

+ re.session.login1.eh22.018310.0003 (json)

+ pilot.0000 (profiles)

... | 1.0 | unexpected keyboardinterrupt messages - My jobs gives some keyboardinterrupt messages after it runs as expected and finishes once walltime runs out. I didn't keyboard interrupt, it automatically quits due to walltime run out. This error is just cosmetic, doesn't affect me otherwise.

```

+ re.session.login1.eh22.01831... | priority | unexpected keyboardinterrupt messages my jobs gives some keyboardinterrupt messages after it runs as expected and finishes once walltime runs out i didn t keyboard interrupt it automatically quits due to walltime run out this error is just cosmetic doesn t affect me otherwise re session json ... | 1 |

654,881 | 21,672,800,819 | IssuesEvent | 2022-05-08 08:16:00 | uriahf/rtichoke | https://api.github.com/repos/uriahf/rtichoke | opened | Fix range for decision curves | Difficulty: novice :suspect: Priority: medium | Limits for the y axis should be according to the prevalence with constant margins. | 1.0 | Fix range for decision curves - Limits for the y axis should be according to the prevalence with constant margins. | priority | fix range for decision curves limits for the y axis should be according to the prevalence with constant margins | 1 |

490,477 | 14,134,989,264 | IssuesEvent | 2020-11-10 00:35:31 | dnnsoftware/Dnn.Platform | https://api.github.com/repos/dnnsoftware/Dnn.Platform | closed | Export data tier application in Azure not possible (DNN database) | Area: Platform > Providers Effort: Medium Priority: Medium Status: Ready for Development Type: Maintenance stale | ## Current result

Try to make backup of the DNN database created as Azure SQL database.

1. In SQL Server Management Studio click on Export data tier application (generate backup, BACPAK file).

## Expected result

BACPAK generation can be done successfully.

## Error log

TITLE: Microsoft SQL Server Managemen... | 1.0 | Export data tier application in Azure not possible (DNN database) - ## Current result

Try to make backup of the DNN database created as Azure SQL database.

1. In SQL Server Management Studio click on Export data tier application (generate backup, BACPAK file).

## Expected result

BACPAK generation can be done su... | priority | export data tier application in azure not possible dnn database current result try to make backup of the dnn database created as azure sql database in sql server management studio click on export data tier application generate backup bacpak file expected result bacpak generation can be done su... | 1 |

258,925 | 8,180,901,512 | IssuesEvent | 2018-08-28 20:57:32 | VulcanForge/pvp-mode | https://api.github.com/repos/VulcanForge/pvp-mode | opened | Add the transformer exclusions annotation to the coremod | cleanup medium priority | Exclude:

* The coremod package

* The API packages | 1.0 | Add the transformer exclusions annotation to the coremod - Exclude:

* The coremod package

* The API packages | priority | add the transformer exclusions annotation to the coremod exclude the coremod package the api packages | 1 |

26,502 | 2,684,633,791 | IssuesEvent | 2015-03-29 06:08:12 | gtcasl/gpuocelot | https://api.github.com/repos/gtcasl/gpuocelot | opened | Error while installing in Arch Linux | bug imported Priority-Medium | _From [zaidho...@gmail.com](https://code.google.com/u/101209106559517423416/) on September 13, 2014 18:27:08_

What steps will reproduce the problem? After fixing the ptxgrammer issue I am getting an error with I think the boost libraries when I run the ./build.py --install command. I get Build Error. What is the expec... | 1.0 | Error while installing in Arch Linux - _From [zaidho...@gmail.com](https://code.google.com/u/101209106559517423416/) on September 13, 2014 18:27:08_

What steps will reproduce the problem? After fixing the ptxgrammer issue I am getting an error with I think the boost libraries when I run the ./build.py --install comman... | priority | error while installing in arch linux from on september what steps will reproduce the problem after fixing the ptxgrammer issue i am getting an error with i think the boost libraries when i run the build py install command i get build error what is the expected output what do you see instead ... | 1 |

328,411 | 9,994,475,692 | IssuesEvent | 2019-07-11 17:46:11 | minio/mc | https://api.github.com/repos/minio/mc | closed | do not edit user configuration files without notifying user/confirmation | community priority: medium | ## Expected behavior

don't edit user configuration files without explicit confirmation

## Actual behavior

adds

```

autoload -U +X bashcompinit && bashcompinit

complete -o nospace -C /home/atomi/go/bin/mc mc

```

to `.zshrc`

## Steps to reproduce the behavior

run mc

## mc version

- (paste output of... | 1.0 | do not edit user configuration files without notifying user/confirmation - ## Expected behavior

don't edit user configuration files without explicit confirmation

## Actual behavior

adds

```

autoload -U +X bashcompinit && bashcompinit

complete -o nospace -C /home/atomi/go/bin/mc mc

```

to `.zshrc`

## S... | priority | do not edit user configuration files without notifying user confirmation expected behavior don t edit user configuration files without explicit confirmation actual behavior adds autoload u x bashcompinit bashcompinit complete o nospace c home atomi go bin mc mc to zshrc s... | 1 |

92,174 | 3,868,680,929 | IssuesEvent | 2016-04-10 03:54:59 | HubTurbo/HubTurbo | https://api.github.com/repos/HubTurbo/HubTurbo | closed | User can add issues to a 'watch list' | feature-filters feature-panels priority.medium type.story | ... so that users can maintain a 'watch list' of selected issues.

User should be able to right click on an issue card and choose `add to new watch list`. This will create a new panel with the corresponding `id:repo#id` filter. User will be prompted to give a name for the panel.

After that, user should be able to ... | 1.0 | User can add issues to a 'watch list' - ... so that users can maintain a 'watch list' of selected issues.

User should be able to right click on an issue card and choose `add to new watch list`. This will create a new panel with the corresponding `id:repo#id` filter. User will be prompted to give a name for the panel... | priority | user can add issues to a watch list so that users can maintain a watch list of selected issues user should be able to right click on an issue card and choose add to new watch list this will create a new panel with the corresponding id repo id filter user will be prompted to give a name for the panel... | 1 |

198,691 | 6,975,786,474 | IssuesEvent | 2017-12-12 08:43:55 | webpack/webpack-cli | https://api.github.com/repos/webpack/webpack-cli | opened | [Init] Add keyboard functionality for interpretor lists | CLI Good First Contribution Priority: Medium | <!-- Before creating an issue please make sure you are using the latest version of webpack. -->

**Do you want to request a *feature* or report a *bug*?**

<!-- Please ask questions on StackOverflow or the webpack Gitter (https://gitter.im/webpack/webpack). Questions will be closed. -->

Bug (Usability)

**What is th... | 1.0 | [Init] Add keyboard functionality for interpretor lists - <!-- Before creating an issue please make sure you are using the latest version of webpack. -->

**Do you want to request a *feature* or report a *bug*?**

<!-- Please ask questions on StackOverflow or the webpack Gitter (https://gitter.im/webpack/webpack). Qu... | priority | add keyboard functionality for interpretor lists do you want to request a feature or report a bug bug usability what is the current behavior currently when you get a multiple choice list from the init generator there is no way to key through the options with arrow keys technically with... | 1 |

350,097 | 10,478,446,362 | IssuesEvent | 2019-09-24 00:04:24 | BCcampus/edehr | https://api.github.com/repos/BCcampus/edehr | closed | Add "back to assignments" link | Effort - Low Priority - Medium ~Feature | Give users an easier way to get back to where they came from.

Please add a `< Back to assignments` link to the right of the "new assignments button | 1.0 | Add "back to assignments" link - Give users an easier way to get back to where they came from.

Please add a `< Back to assignments` link to the right of the "new assignments button | priority | add back to assignments link give users an easier way to get back to where they came from please add a back to assignments link to the right of the new assignments button | 1 |

328,901 | 10,001,235,175 | IssuesEvent | 2019-07-12 15:09:50 | HabitRPG/habitica | https://api.github.com/repos/HabitRPG/habitica | closed | purchasing quest scrolls / bundles should have confirmation popup | good first issue priority: medium section: Quest Shop status: issue: in progress | Quest scroll purchases don't have a confirmation step.

When you buy other gem-purchasable items such as hatching potions, you see a confirmation dialog that tells you how many gems the total purchase will be and asks you to confirm:

.

**Update form header on desktop only**:

- [ ] form... | 1.0 | Minor updates to Enketo UI - This issue updates the UI for Enketo forms on mobile and desktop. These are small tweaks for now and we hope to come back and make additional changes in the future. [Design spec](https://docs.google.com/document/d/1bnbQ17I9fq1ZRbu2v9otaRpUXn_7eODe7YTYzsXQoDg/edit).

**Update form header o... | priority | minor updates to enketo ui this issue updates the ui for enketo forms on mobile and desktop these are small tweaks for now and we hope to come back and make additional changes in the future update form header on desktop only form title is bold in black title is left aligned from the left... | 1 |

517,282 | 14,998,668,474 | IssuesEvent | 2021-01-29 18:47:58 | JamieMason/syncpack | https://api.github.com/repos/JamieMason/syncpack | closed | Use workspace's packages version as source of truth in fix-mismatches | Priority: Medium Status: Awaiting Release Type: Feat | ## Description

When doing `fix-mismatches` it would be cool to sync dependencies version to use the local version, taken from the `package.json` version field instead of using the higher version found in the deps tree

<!--

Describe why this change is required, what problem it solves, and what

alternatives exist... | 1.0 | Use workspace's packages version as source of truth in fix-mismatches - ## Description

When doing `fix-mismatches` it would be cool to sync dependencies version to use the local version, taken from the `package.json` version field instead of using the higher version found in the deps tree

<!--

Describe why this ... | priority | use workspace s packages version as source of truth in fix mismatches description when doing fix mismatches it would be cool to sync dependencies version to use the local version taken from the package json version field instead of using the higher version found in the deps tree describe why this ... | 1 |

817,399 | 30,639,684,970 | IssuesEvent | 2023-07-24 20:45:35 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [YSQL] Unnecessary yb_lsm.h header | kind/enhancement area/ysql priority/medium status/awaiting-triage | Jira Link: [DB-7387](https://yugabyte.atlassian.net/browse/DB-7387)

### Description

All funcs in that header could be made static.

### Warning: Please confirm that this issue does not contain any sensitive information

- [X] I confirm this issue does not contain any sensitive information.

[DB-7387]: https://yugabyt... | 1.0 | [YSQL] Unnecessary yb_lsm.h header - Jira Link: [DB-7387](https://yugabyte.atlassian.net/browse/DB-7387)

### Description

All funcs in that header could be made static.

### Warning: Please confirm that this issue does not contain any sensitive information

- [X] I confirm this issue does not contain any sensitive inf... | priority | unnecessary yb lsm h header jira link description all funcs in that header could be made static warning please confirm that this issue does not contain any sensitive information i confirm this issue does not contain any sensitive information | 1 |

776,108 | 27,247,248,352 | IssuesEvent | 2023-02-22 03:47:10 | ansible-collections/azure | https://api.github.com/repos/ansible-collections/azure | closed | azure_rm_privatednszonelink - vNet subscription_id being overridden by value from profile | medium_priority new_featrue work in | <!--- Verify first that your issue is not already reported on GitHub -->

<!--- Also test if the latest release and devel branch are affected too -->

<!--- Complete *all* sections as described, this form is processed automatically -->

##### SUMMARY

<!--- Explain the problem briefly below -->

When executing module... | 1.0 | azure_rm_privatednszonelink - vNet subscription_id being overridden by value from profile - <!--- Verify first that your issue is not already reported on GitHub -->

<!--- Also test if the latest release and devel branch are affected too -->

<!--- Complete *all* sections as described, this form is processed automatica... | priority | azure rm privatednszonelink vnet subscription id being overridden by value from profile summary when executing module supplying entire resource id to the virtual network parameter seeing unexpected behavior ansible module seems to be reading the information provided to it correctly howeve... | 1 |

465,540 | 13,387,711,492 | IssuesEvent | 2020-09-02 16:21:43 | ansible/ansible-lint | https://api.github.com/repos/ansible/ansible-lint | opened | Add rule warning about use of run_once with strategy: free | priority/medium status/new | <!--- Verify first that your feature was not already discussed on GitHub -->

##### Summary

<!--- Describe the new feature/improvement briefly below -->

It would be useful for ansible-lint to have a rule that warns against the use of `run_once` with `strategy: free`. Ansible ignores `run_once` with the free strat... | 1.0 | Add rule warning about use of run_once with strategy: free - <!--- Verify first that your feature was not already discussed on GitHub -->

##### Summary

<!--- Describe the new feature/improvement briefly below -->

It would be useful for ansible-lint to have a rule that warns against the use of `run_once` with `st... | priority | add rule warning about use of run once with strategy free summary it would be useful for ansible lint to have a rule that warns against the use of run once with strategy free ansible ignores run once with the free strategy which means your tasks are run many times once for each valid invent... | 1 |

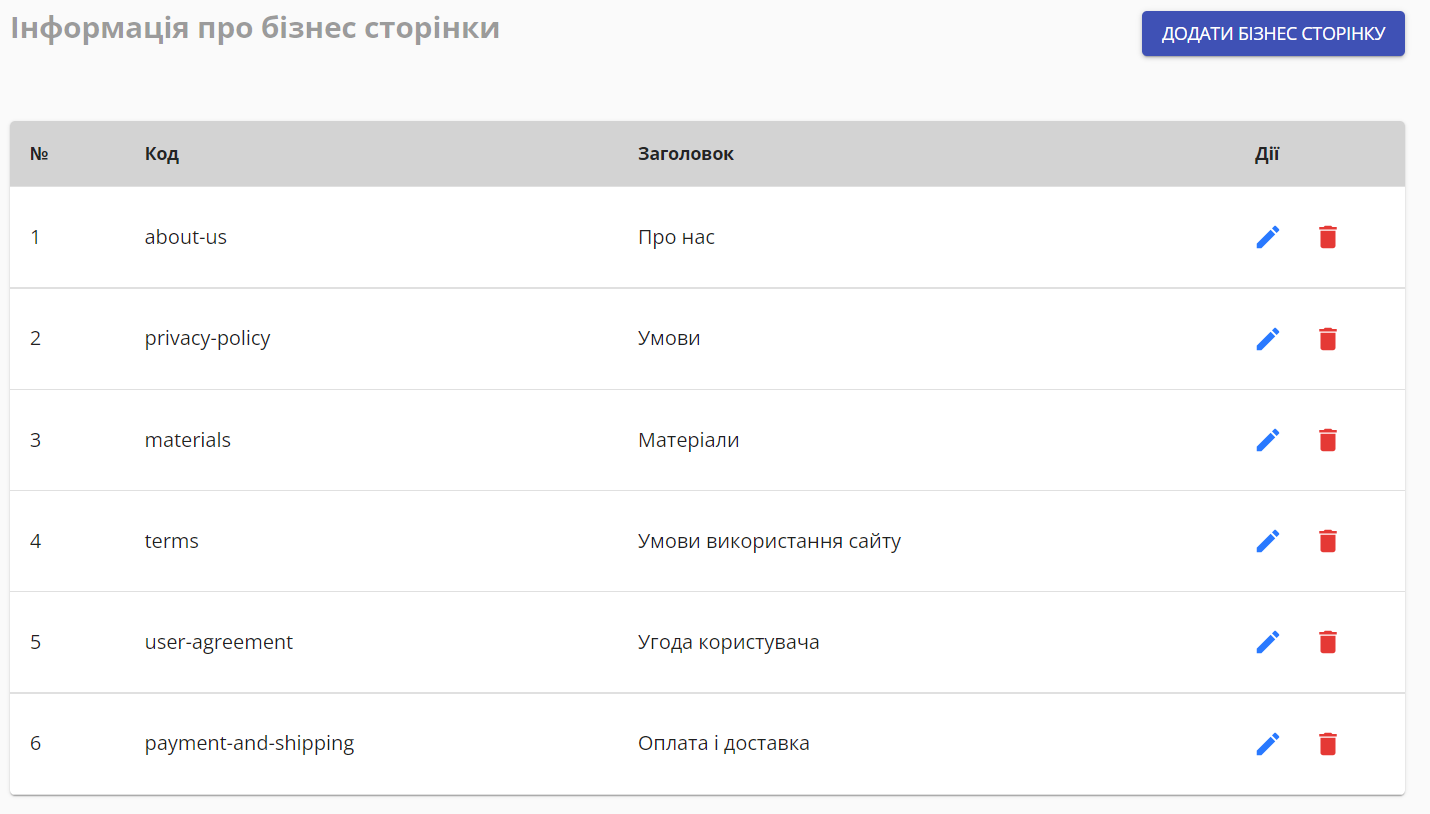

654,049 | 21,635,223,236 | IssuesEvent | 2022-05-05 13:41:19 | ita-social-projects/horondi_admin | https://api.github.com/repos/ita-social-projects/horondi_admin | closed | [Sidebar] Move links to materials, news, about us pages, etc from business pages to static pages as subfields. | priority: medium UI severity: major | Move links to materials, news, about us pages, etc from business pages to static pages as subfields.

- add lists (using markdown lists in note field or pressing enter in list)

- recognise links and email addresses (using regex? Lukas will supply the regex)

### add budget

see budget task

### add files

- via drag and drop (files a... | 1.0 | Task details - As a user I want to _add details to tasks_ to _remember what to do_.

### add notes (text)

- add lists (using markdown lists in note field or pressing enter in list)

- recognise links and email addresses (using regex? Lukas will supply the regex)

### add budget

see budget task

### add files

- via drag an... | priority | task details as a user i want to add details to tasks to remember what to do add notes text add lists using markdown lists in note field or pressing enter in list recognise links and email addresses using regex lukas will supply the regex add budget see budget task add files via drag an... | 1 |

826,094 | 31,552,182,959 | IssuesEvent | 2023-09-02 07:17:54 | vicholp/template-laravel | https://api.github.com/repos/vicholp/template-laravel | closed | Check outdated dependencies | priority: medium | Remove dependabot

```

composer outdated -D --no-dev -m -f json

```

```

npm outdated -j -l

```

| 1.0 | Check outdated dependencies - Remove dependabot

```

composer outdated -D --no-dev -m -f json

```

```

npm outdated -j -l

```

| priority | check outdated dependencies remove dependabot composer outdated d no dev m f json npm outdated j l | 1 |

606,900 | 18,770,094,622 | IssuesEvent | 2021-11-06 17:26:17 | AY2122S1-CS2113T-T12-3/tp | https://api.github.com/repos/AY2122S1-CS2113T-T12-3/tp | closed | [PE-D] Hard-to-test features related to datetime | priority.Medium | Since date-time is set as the current date when the user adds in new items, it is very hard to test when working with date-time in the past or in the future.

<!--session: 1635497070033-2edf8de8-fb5a-49df-be08-9aeb1415bdf6-->

<!--Version: Web v3.4.1-->

-------------

Labels: `severity.Medium` `type.FeatureFlaw`

origin... | 1.0 | [PE-D] Hard-to-test features related to datetime - Since date-time is set as the current date when the user adds in new items, it is very hard to test when working with date-time in the past or in the future.

<!--session: 1635497070033-2edf8de8-fb5a-49df-be08-9aeb1415bdf6-->

<!--Version: Web v3.4.1-->

-------------

... | priority | hard to test features related to datetime since date time is set as the current date when the user adds in new items it is very hard to test when working with date time in the past or in the future labels severity medium type featureflaw original ped | 1 |

561,725 | 16,622,136,739 | IssuesEvent | 2021-06-03 03:43:05 | rstudio/gt | https://api.github.com/repos/rstudio/gt | closed | Add support for side-by-side regression tables? | Difficulty: [2] Intermediate Effort: [2] Medium Priority: [2] Medium Type: ★ Enhancement | This is a phenomenal package and I'm a huge fan of the API for creating tables.

At least two other packages provide support for side-by-side regression tables ([**stargazer**](https://cran.r-project.org/web/packages/stargazer/index.html) and [**huxtable**](https://github.com/hughjonesd/huxtable)), but both have limi... | 1.0 | Add support for side-by-side regression tables? - This is a phenomenal package and I'm a huge fan of the API for creating tables.

At least two other packages provide support for side-by-side regression tables ([**stargazer**](https://cran.r-project.org/web/packages/stargazer/index.html) and [**huxtable**](https://gi... | priority | add support for side by side regression tables this is a phenomenal package and i m a huge fan of the api for creating tables at least two other packages provide support for side by side regression tables and but both have limitations stargazer only supports html and tex and doesn t play well with ... | 1 |

531,373 | 15,496,262,882 | IssuesEvent | 2021-03-11 02:21:16 | dtcenter/MET | https://api.github.com/repos/dtcenter/MET | closed | For Python embedding, support the grid attribute being defined as a grid specification string. | alert: NEED ACCOUNT KEY component: python interface priority: medium requestor: METplus Team type: enhancement | ## Describe the Enhancement ##

Python embedding currently requires a user to define all attributes about the grid that their data is on. Could we support numbered grids already known to MET, either via a new Python embedding-specific attribute (i.e. grid_number) or via the set_attr_grid command line argument like show... | 1.0 | For Python embedding, support the grid attribute being defined as a grid specification string. - ## Describe the Enhancement ##

Python embedding currently requires a user to define all attributes about the grid that their data is on. Could we support numbered grids already known to MET, either via a new Python embedd... | priority | for python embedding support the grid attribute being defined as a grid specification string describe the enhancement python embedding currently requires a user to define all attributes about the grid that their data is on could we support numbered grids already known to met either via a new python embedd... | 1 |

479,781 | 13,805,704,651 | IssuesEvent | 2020-10-11 14:46:44 | ayumi-cloud/oc2-security-module | https://api.github.com/repos/ayumi-cloud/oc2-security-module | opened | Move `Cloudflare` Crawlers into bot module | Add to Whitelist Firewall In-progress Priority: Medium | ### Enhancement idea

- [ ] Move `Cloudflare` Crawlers into bot module.

| 1.0 | Move `Cloudflare` Crawlers into bot module - ### Enhancement idea

- [ ] Move `Cloudflare` Crawlers into bot module.

| priority | move cloudflare crawlers into bot module enhancement idea move cloudflare crawlers into bot module | 1 |

518,770 | 15,034,446,064 | IssuesEvent | 2021-02-02 12:54:20 | gnosis/conditional-tokens-explorer | https://api.github.com/repos/gnosis/conditional-tokens-explorer | closed | Redeem positions: Display details on question and outcome names for Omen/Reality.eth positions. | Medium priority enhancement feature requested | Case 5 in the #546 | 1.0 | Redeem positions: Display details on question and outcome names for Omen/Reality.eth positions. - Case 5 in the #546 | priority | redeem positions display details on question and outcome names for omen reality eth positions case in the | 1 |

140,925 | 5,426,114,869 | IssuesEvent | 2017-03-03 09:05:29 | NostraliaWoW/mangoszero | https://api.github.com/repos/NostraliaWoW/mangoszero | closed | Zul'farrak event | Dungeon Priority - Medium System | Name - event after the stairs part, to engage the prisoners and have the goblin destroy the wall to allow access to final boss.

Summary - after finishing the stairs event and boss there I attempted to talk to goblin dude to get him to blow the wall, allowing access up to the final boss. He would only greet me, nothin... | 1.0 | Zul'farrak event - Name - event after the stairs part, to engage the prisoners and have the goblin destroy the wall to allow access to final boss.

Summary - after finishing the stairs event and boss there I attempted to talk to goblin dude to get him to blow the wall, allowing access up to the final boss. He would on... | priority | zul farrak event name event after the stairs part to engage the prisoners and have the goblin destroy the wall to allow access to final boss summary after finishing the stairs event and boss there i attempted to talk to goblin dude to get him to blow the wall allowing access up to the final boss he would on... | 1 |

34,262 | 2,776,595,235 | IssuesEvent | 2015-05-04 22:43:06 | umutafacan/bounswe2015group3 | https://api.github.com/repos/umutafacan/bounswe2015group3 | reopened | adding new use cases and creating use case diagram | auto-migrated Priority-Medium Type-Task | ```

add new use cases and create use case diagram

```

Original issue reported on code.google.com by `bayrakta...@gmail.com` on 14 Mar 2015 at 5:26 | 1.0 | adding new use cases and creating use case diagram - ```

add new use cases and create use case diagram

```

Original issue reported on code.google.com by `bayrakta...@gmail.com` on 14 Mar 2015 at 5:26 | priority | adding new use cases and creating use case diagram add new use cases and create use case diagram original issue reported on code google com by bayrakta gmail com on mar at | 1 |

821,602 | 30,828,069,464 | IssuesEvent | 2023-08-01 21:57:52 | Haidoe/arc | https://api.github.com/repos/Haidoe/arc | closed | Date selector should be centered on mobile screen | priority-medium style | ## Bug Report

**Reporter: ❗️**

@ksdhir

**Describe the bug: ❗️**

The date selector is left aligned on the mobile screen. Should be centered as displayed in the mockups.

**Steps to reproduce: ❗️**

1. Go to 'dashboard' of any production

2. Resize your browser to mobile screen.

3. Scroll to the Production Pr... | 1.0 | Date selector should be centered on mobile screen - ## Bug Report

**Reporter: ❗️**

@ksdhir

**Describe the bug: ❗️**

The date selector is left aligned on the mobile screen. Should be centered as displayed in the mockups.

**Steps to reproduce: ❗️**

1. Go to 'dashboard' of any production

2. Resize your brows... | priority | date selector should be centered on mobile screen bug report reporter ❗️ ksdhir describe the bug ❗️ the date selector is left aligned on the mobile screen should be centered as displayed in the mockups steps to reproduce ❗️ go to dashboard of any production resize your brows... | 1 |

420,069 | 12,232,724,143 | IssuesEvent | 2020-05-04 10:13:22 | osmontrouge/caresteouvert | https://api.github.com/repos/osmontrouge/caresteouvert | closed | Layout issues with longer translations in Missing + report dialogues | bug priority: medium | **Describe the bug**

A clear and concise description of what the bug is.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to https://www.caresteouvert.fr/@48.864702,2.332550,17.08/place/n682347923

2. Switch language to "Deutsch".

3. Click on "Fehlendes Geschäft melden"

4. See layout issues in both dialo... | 1.0 | Layout issues with longer translations in Missing + report dialogues - **Describe the bug**

A clear and concise description of what the bug is.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to https://www.caresteouvert.fr/@48.864702,2.332550,17.08/place/n682347923

2. Switch language to "Deutsch".

3. C... | priority | layout issues with longer translations in missing report dialogues describe the bug a clear and concise description of what the bug is to reproduce steps to reproduce the behavior go to switch language to deutsch click on fehlendes geschäft melden see layout issues in both dial... | 1 |

815,590 | 30,563,287,367 | IssuesEvent | 2023-07-20 15:54:55 | RobotLocomotion/drake | https://api.github.com/repos/RobotLocomotion/drake | closed | Mesh warnings from franka_description with recent Drake versions | type: bug priority: medium component: geometry perception | ### What happened?

In `drake/manipulation/models/franka_description/urdf` we provide some sample URDFs for the Franka Panda robot.

If one of those models is loaded into a scene that contains render engines (e.g., for camera simulation) then there are a log of warnings spammed to the console:

For example:

```

... | 1.0 | Mesh warnings from franka_description with recent Drake versions - ### What happened?

In `drake/manipulation/models/franka_description/urdf` we provide some sample URDFs for the Franka Panda robot.

If one of those models is loaded into a scene that contains render engines (e.g., for camera simulation) then there ... | priority | mesh warnings from franka description with recent drake versions what happened in drake manipulation models franka description urdf we provide some sample urdfs for the franka panda robot if one of those models is loaded into a scene that contains render engines e g for camera simulation then there ... | 1 |

202,369 | 7,047,328,141 | IssuesEvent | 2018-01-02 12:58:37 | Casumo/BarcelonaOffice | https://api.github.com/repos/Casumo/BarcelonaOffice | closed | Accomodate Dimitri Visnadi | Medium Priority | Dimitri is joining our team. He arrives the 29th of December and will stay at least for 6 weeks until he finds an apartment for him. | 1.0 | Accomodate Dimitri Visnadi - Dimitri is joining our team. He arrives the 29th of December and will stay at least for 6 weeks until he finds an apartment for him. | priority | accomodate dimitri visnadi dimitri is joining our team he arrives the of december and will stay at least for weeks until he finds an apartment for him | 1 |

498,025 | 14,399,041,415 | IssuesEvent | 2020-12-03 10:23:20 | buddyboss/buddyboss-platform | https://api.github.com/repos/buddyboss/buddyboss-platform | opened | Add Search box for photos and album page | component: media feature: enhancement priority: medium | **Is your feature request related to a problem? Please describe.**

Although we have the same functionality on the global photos page. Users can search for photos from the global photos page but not from Member Profile and Group photos page.

**Describe the solution you'd like**

Add Search box for photos and album p... | 1.0 | Add Search box for photos and album page - **Is your feature request related to a problem? Please describe.**

Although we have the same functionality on the global photos page. Users can search for photos from the global photos page but not from Member Profile and Group photos page.

**Describe the solution you'd li... | priority | add search box for photos and album page is your feature request related to a problem please describe although we have the same functionality on the global photos page users can search for photos from the global photos page but not from member profile and group photos page describe the solution you d li... | 1 |

91,873 | 3,863,516,300 | IssuesEvent | 2016-04-08 09:45:33 | iamxavier/elmah | https://api.github.com/repos/iamxavier/elmah | closed | elmah usage example with windows based project in framework 3.5 | auto-migrated Priority-Medium Type-Enhancement | ```

Please let me know Is it possible to use the Elmah in windows or console based

project? If yes please provide a sample code with app.config and required code

```

Original issue reported on code.google.com by `anjali.b...@gmail.com` on 10 Sep 2012 at 10:54 | 1.0 | elmah usage example with windows based project in framework 3.5 - ```

Please let me know Is it possible to use the Elmah in windows or console based

project? If yes please provide a sample code with app.config and required code

```

Original issue reported on code.google.com by `anjali.b...@gmail.com` on 10 Sep 2012 a... | priority | elmah usage example with windows based project in framework please let me know is it possible to use the elmah in windows or console based project if yes please provide a sample code with app config and required code original issue reported on code google com by anjali b gmail com on sep at ... | 1 |

235,025 | 7,733,878,929 | IssuesEvent | 2018-05-26 17:05:48 | vinitkumar/googlecl | https://api.github.com/repos/vinitkumar/googlecl | closed | GoogleCL is not available through Google's linux repositories | Priority-Medium bug imported | _From [jonasfa@gmail.com](https://code.google.com/u/jonasfa@gmail.com/) on June 20, 2010 06:13:14_

http://www.google.com/linuxrepositories/

_Original issue: http://code.google.com/p/googlecl/issues/detail?id=92_

| 1.0 | GoogleCL is not available through Google's linux repositories - _From [jonasfa@gmail.com](https://code.google.com/u/jonasfa@gmail.com/) on June 20, 2010 06:13:14_

http://www.google.com/linuxrepositories/

_Original issue: http://code.google.com/p/googlecl/issues/detail?id=92_

| priority | googlecl is not available through google s linux repositories from on june original issue | 1 |

85,423 | 3,690,560,953 | IssuesEvent | 2016-02-25 20:28:35 | BCGamer/website | https://api.github.com/repos/BCGamer/website | opened | New URI: /organize/ | enhancement medium priority | To be used by event organizers for reviewing/exporting critical data.

/organize/

-Requires event organizer group

-Show a list of all conventions

-Link to list view of all events for convention

/organize/<convention-slug>/

-Requires event organizer group

-Show a list of all events

-Display ... | 1.0 | New URI: /organize/ - To be used by event organizers for reviewing/exporting critical data.

/organize/

-Requires event organizer group

-Show a list of all conventions

-Link to list view of all events for convention

/organize/<convention-slug>/

-Requires event organizer group

-Show a list of al... | priority | new uri organize to be used by event organizers for reviewing exporting critical data organize requires event organizer group show a list of all conventions link to list view of all events for convention organize requires event organizer group show a list of all events di... | 1 |

364,187 | 10,760,246,099 | IssuesEvent | 2019-10-31 18:11:32 | Vhoyon/Vramework | https://api.github.com/repos/Vhoyon/Vramework | opened | Add default implementation to createRequest() method in AbstractCommandRouter | !Enhancement: Framework !Priority: Medium ~owner-interactions | https://github.com/Vhoyon/Vramework/blob/91877d3525fcda00bb2036b3089b69a1c9c43526/src/main/java/io/github/vhoyon/vramework/abstracts/AbstractCommandRouter.java#L66

https://github.com/Vhoyon/Discord-Bot/blob/3f21717dfbaf6456fd5bc26211ebd2ee1a664446/src/main/java/io/github/vhoyon/bot/app/CommandRouter.java#L47-L51

... | 1.0 | Add default implementation to createRequest() method in AbstractCommandRouter - https://github.com/Vhoyon/Vramework/blob/91877d3525fcda00bb2036b3089b69a1c9c43526/src/main/java/io/github/vhoyon/vramework/abstracts/AbstractCommandRouter.java#L66

https://github.com/Vhoyon/Discord-Bot/blob/3f21717dfbaf6456fd5bc26211ebd2... | priority | add default implementation to createrequest method in abstractcommandrouter the implementation in our bot is generic enough to be applied by every project by default while allowing the override if wanted porting this to our framework would let a first timer do less on initial setup also this wou... | 1 |

69,384 | 3,298,154,306 | IssuesEvent | 2015-11-02 13:09:40 | ox-it/ords | https://api.github.com/repos/ox-it/ords | opened | Changing a field name breaks saved queries involving that field | David Paine Priority-Medium | If a user changes a field name in the schema designer, any saved queries that mention this field break (as one would expect).

It would be nice if (dynamic) saved queries were updated when field names were changed.

I appreciate that there are plenty of other ways of breaking saved queries by editing the schema tha... | 1.0 | Changing a field name breaks saved queries involving that field - If a user changes a field name in the schema designer, any saved queries that mention this field break (as one would expect).

It would be nice if (dynamic) saved queries were updated when field names were changed.

I appreciate that there are plenty... | priority | changing a field name breaks saved queries involving that field if a user changes a field name in the schema designer any saved queries that mention this field break as one would expect it would be nice if dynamic saved queries were updated when field names were changed i appreciate that there are plenty... | 1 |

418,753 | 12,202,971,088 | IssuesEvent | 2020-04-30 09:49:08 | kenodressel/quarantine-hero | https://api.github.com/repos/kenodressel/quarantine-hero | reopened | Improve email-verification redirect logic | priority-medium | Currently, when attempting to verify your email address, you need to click the verification link in the email, which takes you to the google auth success page. It would be better UX however if the link would redirect you to the website again after successful verification, similar to the sign-in logic for the `notify-me... | 1.0 | Improve email-verification redirect logic - Currently, when attempting to verify your email address, you need to click the verification link in the email, which takes you to the google auth success page. It would be better UX however if the link would redirect you to the website again after successful verification, sim... | priority | improve email verification redirect logic currently when attempting to verify your email address you need to click the verification link in the email which takes you to the google auth success page it would be better ux however if the link would redirect you to the website again after successful verification sim... | 1 |

544,577 | 15,894,729,110 | IssuesEvent | 2021-04-11 11:21:14 | marcusolsson/grafana-json-datasource | https://api.github.com/repos/marcusolsson/grafana-json-datasource | closed | Params in "Params" is not updating when variables changes. | priority/medium type/enhancement | When using variables in the "Params" option and change the variable, the query doesn't change.

https://user-images.githubusercontent.com/39485579/112290812-df547c00-8c8f-11eb-9c18-00eb63027d2d.mp4

### Param in "Path" panel

on October 11, 2010 09:30:01_

We should somehow encourage the stated approval from authoritative bodies (IOOS, Qartoc, OGC, NMMO, NOAA, etc) of full ontologies or individual terms. Then the potential user of a term can determine how strong... | 1.0 | Supporting Authority Approval "Bumper Stickers" - _From [mike.e.b...@gmail.com](https://code.google.com/u/104806978225310943594/) on October 11, 2010 09:30:01_

We should somehow encourage the stated approval from authoritative bodies (IOOS, Qartoc, OGC, NMMO, NOAA, etc) of full ontologies or individual terms. Then the... | priority | supporting authority approval bumper stickers from on october we should somehow encourage the stated approval from authoritative bodies ioos qartoc ogc nmmo noaa etc of full ontologies or individual terms then the potential user of a term can determine how strong that term is within their ... | 1 |

314,973 | 9,605,248,244 | IssuesEvent | 2019-05-10 23:00:41 | umple/umple | https://api.github.com/repos/umple/umple | closed | Make the E G S T D A M icons in UmpleOnline context sensitive | Component-UmpleOnline Diffic-Med Priority-Medium ucosp | The 'icons' that allow the user to control the diagram type, whether text or diagrams are displayed, and whether attributes or methods are displayed are currently not sensitive to the current state.

A lighter shade should be shown for currently inactive states. So only one of the E, G or S icons should be in normal ... | 1.0 | Make the E G S T D A M icons in UmpleOnline context sensitive - The 'icons' that allow the user to control the diagram type, whether text or diagrams are displayed, and whether attributes or methods are displayed are currently not sensitive to the current state.

A lighter shade should be shown for currentl... | priority | make the e g s t d a m icons in umpleonline context sensitive the icons that allow the user to control the diagram type whether text or diagrams are displayed and whether attributes or methods are displayed are currently not sensitive to the current state a lighter shade should be shown for currentl... | 1 |

141,984 | 5,447,890,144 | IssuesEvent | 2017-03-07 14:42:03 | DOAJ/doaj | https://api.github.com/repos/DOAJ/doaj | closed | Add the URL shortener functionality to Share button on ToCs | medium priority ready for review tnm | As per https://github.com/DOAJ/doaj/issues/983#issuecomment-231721859, we should reproduce the URL shortener on ToCs too.

| 1.0 | Add the URL shortener functionality to Share button on ToCs - As per https://github.com/DOAJ/doaj/issues/983#issuecomment-231721859, we should reproduce the URL shortener on ToCs too.

| priority | add the url shortener functionality to share button on tocs as per we should reproduce the url shortener on tocs too | 1 |

282,244 | 8,704,739,205 | IssuesEvent | 2018-12-05 20:16:26 | Shymain/Monochrome | https://api.github.com/repos/Shymain/Monochrome | opened | Image Displaying | GUI New Feature Priority: Medium | Write code to display images in the top-left Image Panel. Support a background and foreground image being simultaneously loaded and de-coupled to each other. | 1.0 | Image Displaying - Write code to display images in the top-left Image Panel. Support a background and foreground image being simultaneously loaded and de-coupled to each other. | priority | image displaying write code to display images in the top left image panel support a background and foreground image being simultaneously loaded and de coupled to each other | 1 |

24,301 | 2,667,292,238 | IssuesEvent | 2015-03-22 13:25:24 | andresriancho/w3af | https://api.github.com/repos/andresriancho/w3af | closed | Vulnerability name titles - Assert that all names are in vulns.py | core improvement priority:medium | Assert that all names are in https://github.com/andresriancho/w3af/blob/master/w3af/core/data/constants/vulns.py , when during tests write the names that are NOT in the vulns.py in a text file, assert that the file is empty at the end of the whole test run | 1.0 | Vulnerability name titles - Assert that all names are in vulns.py - Assert that all names are in https://github.com/andresriancho/w3af/blob/master/w3af/core/data/constants/vulns.py , when during tests write the names that are NOT in the vulns.py in a text file, assert that the file is empty at the end of the whole test... | priority | vulnerability name titles assert that all names are in vulns py assert that all names are in when during tests write the names that are not in the vulns py in a text file assert that the file is empty at the end of the whole test run | 1 |

733,046 | 25,285,640,305 | IssuesEvent | 2022-11-16 19:03:27 | svthalia/concrexit | https://api.github.com/repos/svthalia/concrexit | closed | Calendar wrongly shows registered for an event. | priority: medium bug | ### Describe the bug

I was registered for an event, and then deregistered, however the calendar still shows "you are registered for this event". This includes the event being magenta. When going to the event page it does not show as registered.

### How to reproduce

Steps to reproduce the behaviour:

1. Register fo... | 1.0 | Calendar wrongly shows registered for an event. - ### Describe the bug

I was registered for an event, and then deregistered, however the calendar still shows "you are registered for this event". This includes the event being magenta. When going to the event page it does not show as registered.

### How to reproduce

... | priority | calendar wrongly shows registered for an event describe the bug i was registered for an event and then deregistered however the calendar still shows you are registered for this event this includes the event being magenta when going to the event page it does not show as registered how to reproduce ... | 1 |

25,137 | 2,676,643,921 | IssuesEvent | 2015-03-25 18:46:01 | dump247/udt-net | https://api.github.com/repos/dump247/udt-net | closed | Needs help on TcpClient and TcpListener | auto-migrated Priority-Medium Type-Enhancement | ```

As mentioned in ToDo list "Something similar to TcpClient and TcpListener", are

you working on this ? If yes when can we expect it ?

```

Original issue reported on code.google.com by `ppdevm...@gmail.com` on 27 Nov 2012 at 4:25 | 1.0 | Needs help on TcpClient and TcpListener - ```

As mentioned in ToDo list "Something similar to TcpClient and TcpListener", are

you working on this ? If yes when can we expect it ?

```

Original issue reported on code.google.com by `ppdevm...@gmail.com` on 27 Nov 2012 at 4:25 | priority | needs help on tcpclient and tcplistener as mentioned in todo list something similar to tcpclient and tcplistener are you working on this if yes when can we expect it original issue reported on code google com by ppdevm gmail com on nov at | 1 |