Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 957) | labels (string, length 4 to 795) | body (string, length 1 to 259k) | index (string, 12 classes) | text_combine (string, length 96 to 259k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

57,063 | 3,081,235,533 | IssuesEvent | 2015-08-22 14:24:24 | bitfighter/bitfighter | https://api.github.com/repos/bitfighter/bitfighter | closed | Quitting editor while uploading causes problems | 019 bug imported Priority-Medium | _From [watusim...@bitfighter.org](https://code.google.com/u/105427273526970468779/) on November 09, 2013 15:21:57_

Quitting the editor while uploading a level can cause a crash, in this particular instance, the thread was trying to save the level, when the level no longer existed because the editor was closed.

One ... | 1.0 | Quitting editor while uploading causes problems - _From [watusim...@bitfighter.org](https://code.google.com/u/105427273526970468779/) on November 09, 2013 15:21:57_

Quitting the editor while uploading a level can cause a crash, in this particular instance, the thread was trying to save the level, when the level no lon... | priority | quitting editor while uploading causes problems from on november quitting the editor while uploading a level can cause a crash in this particular instance the thread was trying to save the level when the level no longer existed because the editor was closed one possible solution might be for ... | 1 |

629,162 | 20,025,006,667 | IssuesEvent | 2022-02-01 20:16:19 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | tests: driver: clock: nrf: Several failures on nrf52dk_nrf52832 | bug priority: medium platform: nRF | **Describe the bug**

Several tests related to clock driver fails on nrf52dk_nrf52832. It looks like the board hangs after some initial commands and the test is terminated by a timeout. Following scenarios are failing:

drivers.clock.drivers.clock.clock_control_nrf5

drivers.clock.drivers.clock.clock_control_nrf5_lfclk... | 1.0 | tests: driver: clock: nrf: Several failures on nrf52dk_nrf52832 - **Describe the bug**

Several tests related to clock driver fails on nrf52dk_nrf52832. It looks like the board hangs after some initial commands and the test is terminated by a timeout. Following scenarios are failing:

drivers.clock.drivers.clock.cloc... | priority | tests driver clock nrf several failures on describe the bug several tests related to clock driver fails on it looks like the board hangs after some initial commands and the test is terminated by a timeout following scenarios are failing drivers clock drivers clock clock control drivers clock... | 1 |

7,184 | 2,598,259,943 | IssuesEvent | 2015-02-22 10:06:24 | HubTurbo/HubTurbo | https://api.github.com/repos/HubTurbo/HubTurbo | closed | Don't trigger a sync if HT gains focus due to a click on the project switcher | feature-projects priority.medium status.accepted type.enhancement | It's annoying when I want to switch projects but HT goes into a sync when I click on the project switcher drop down. | 1.0 | Don't trigger a sync if HT gains focus due to a click on the project switcher - It's annoying when I want to switch projects but HT goes into a sync when I click on the project switcher drop down. | priority | don t trigger a sync if ht gains focus due to a click on the project switcher it s annoying when i want to switch projects but ht goes into a sync when i click on the project switcher drop down | 1 |

296,821 | 9,126,351,784 | IssuesEvent | 2019-02-24 20:53:50 | pixijs/pixi.js | https://api.github.com/repos/pixijs/pixi.js | closed | Compressed textures integration | Difficulty: Medium Domain: API Priority: Medium Renderer: WebGL Resolution: Won't Fix Type: Feature Request Version: v5.x | I dont have enough experience to refactor the code properly.

".dds", ".pvr", ".pkm" formats, as in https://github.com/pixijs/pixi-compressed-textures

There is already working example in v4: http://pixijs.github.io/examples/#/textures/dds.js

We dont have to integrate chooser, just do the following:

1. refactor Compre... | 1.0 | Compressed textures integration - I dont have enough experience to refactor the code properly.

".dds", ".pvr", ".pkm" formats, as in https://github.com/pixijs/pixi-compressed-textures

There is already working example in v4: http://pixijs.github.io/examples/#/textures/dds.js

We dont have to integrate chooser, just do... | priority | compressed textures integration i dont have enough experience to refactor the code properly dds pvr pkm formats as in there is already working example in we dont have to integrate chooser just do the following refactor compressedimage add it to pixi gl core fix textureparser so it acce... | 1 |

273,437 | 8,530,713,365 | IssuesEvent | 2018-11-04 02:15:28 | robotframework/robotframework | https://api.github.com/repos/robotframework/robotframework | closed | Write Control Character does not work on Python 3 | beta 2 bug priority: medium | In telnetlib, control characters are no more 'char' type, but 'bytes' type.

So if you try to issue command:

Write Control Character 26 # CTRL-Z

then it gives the error: TypeError: can't concat str to bytes

| 1.0 | Write Control Character does not work on Python 3 - In telnetlib, control characters are no more 'char' type, but 'bytes' type.

So if you try to issue command:

Write Control Character 26 # CTRL-Z

then it gives the error: TypeError: can't concat str to bytes

| priority | write control character does not work on python in telnetlib control characters are no more char type but bytes type so if you try to issue command write control character ctrl z then it gives the error typeerror can t concat str to bytes | 1 |
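The `TypeError` in the Robot Framework report above can be reproduced without any Telnet connection. The following is a minimal Python 3 sketch, assuming the failing code builds the control sequence by concatenating onto a bytes prefix; the helper names `control_char` and `concat_fails_with_str` are hypothetical and are not part of Robot Framework or `telnetlib`.

```python
# Telnet "Interpret As Command" prefix; on Python 3 this is a bytes object.
IAC = b"\xff"


def control_char(code: int) -> bytes:
    """Return the single byte for a control character code (hypothetical helper)."""
    # bytes([26]) gives b"\x1a", whereas chr(26) would give a str.
    return bytes([code])


def concat_fails_with_str(code: int) -> bool:
    """Report whether bytes + str concatenation raises TypeError."""
    try:
        IAC + chr(code)  # str operand, the way Python 2 era code would build it
    except TypeError:
        return True
    return False
```

Building the character with `bytes([code])` keeps both operands as bytes, so `IAC + control_char(26)` concatenates cleanly. Whether the actual fix in the library took this form is not stated in the report.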

781,280 | 27,430,508,213 | IssuesEvent | 2023-03-02 00:42:36 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | Zephyr does not define minimal C++ language standard requirement and does not track it | bug priority: medium area: C++ | **Describe the bug**

Zephyr defines C11 as minimal supported standard for C language in [C language support - language standards](https://docs.zephyrproject.org/latest/develop/languages/c/index.html#language-standards).

Similar documentation article: [C++ language support](https://docs.zephyrproject.org/latest/develo... | 1.0 | Zephyr does not define minimal C++ language standard requirement and does not track it - **Describe the bug**

Zephyr defines C11 as minimal supported standard for C language in [C language support - language standards](https://docs.zephyrproject.org/latest/develop/languages/c/index.html#language-standards).

Similar d... | priority | zephyr does not define minimal c language standard requirement and does not track it describe the bug zephyr defines as minimal supported standard for c language in similar documentation article does not have language standard section roadmap also does not say a word about minimal requirement... | 1 |

339,804 | 10,262,515,504 | IssuesEvent | 2019-08-22 12:30:50 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | openocd unable to flash hello_world to cc26x2r1_launchxl | bug priority: medium | **Describe the bug**

west flash fails to write hello_world to the LaunchXL CC26X2R1, complaining that the debug regions are unpowered.

**To Reproduce**

follow the instructions here:

https://docs.zephyrproject.org/latest/boards/arm/cc26x2r1_launchxl/doc/index.html

Use a LAUNCHXL-CC26X2R1

**Expected behavior**

... | 1.0 | openocd unable to flash hello_world to cc26x2r1_launchxl - **Describe the bug**

west flash fails to write hello_world to the LaunchXL CC26X2R1, complaining that the debug regions are unpowered.

**To Reproduce**

follow the instructions here:

https://docs.zephyrproject.org/latest/boards/arm/cc26x2r1_launchxl/doc/i... | priority | openocd unable to flash hello world to launchxl describe the bug west flash fails to write hello world to the launchxl complaining that the debug regions are unpowered to reproduce follow the instructions here use a launchxl expected behavior west flash completes successfully and ... | 1 |

281,801 | 8,699,803,730 | IssuesEvent | 2018-12-05 06:13:55 | hotosm/tasking-manager | https://api.github.com/repos/hotosm/tasking-manager | closed | Task history lost on split | High Priority In Progress Medium Difficulty regression | Tiles are split 4 ways. In the old task manager the edit history and comments would propagate to the four child tiles after a split. In the new task manager this does not happen and all history and comments are lost. | 1.0 | Task history lost on split - Tiles are split 4 ways. In the old task manager the edit history and comments would propagate to the four child tiles after a split. In the new task manager this does not happen and all history and comments are lost. | priority | task history lost on split tiles are split ways in the old task manager the edit history and comments would propagate to the four child tiles after a split in the new task manager this does not happen and all history and comments are lost | 1 |
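The behavior requested in the tasking-manager report above (history and comments carried over to the four child tiles) can be sketched as follows. This is an illustration only: the `Task` class, its fields, and the child id scheme are assumptions made for the example and do not reflect the tasking-manager data model.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Task:
    """Hypothetical task record; field names are assumptions."""
    task_id: str
    history: List[str] = field(default_factory=list)
    comments: List[str] = field(default_factory=list)


def split_task(parent: Task) -> List[Task]:
    """Split a task four ways, copying history and comments to each child."""
    children = []
    for i in range(4):
        children.append(
            Task(
                task_id=f"{parent.task_id}-{i}",
                # Copy the lists so later edits to one child do not leak
                # into the parent or the sibling tiles.
                history=list(parent.history) + [f"split from {parent.task_id}"],
                comments=list(parent.comments),
            )
        )
    return children
```

Copying rather than sharing the lists is the design choice that matters here: each child starts with the parent's full record and then diverges independently.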

162,794 | 6,175,949,087 | IssuesEvent | 2017-07-01 08:22:42 | minio/minio-py | https://api.github.com/repos/minio/minio-py | closed | Add additional headers in all requests during multipart upload | priority: medium | About a month ago I've made a pull requests to add support for additional headers in get requests (https://github.com/minio/minio-py/pull/522). I'm using this headers in both, put and get methods, to enable SSE-C in S3 storage. Additional metadata was implemented before for `put_object` and `fput_object`.

But, as I... | 1.0 | Add additional headers in all requests during multipart upload - About a month ago I've made a pull requests to add support for additional headers in get requests (https://github.com/minio/minio-py/pull/522). I'm using this headers in both, put and get methods, to enable SSE-C in S3 storage. Additional metadata was imp... | priority | add additional headers in all requests during multipart upload about a month ago i ve made a pull requests to add support for additional headers in get requests i m using this headers in both put and get methods to enable sse c in storage additional metadata was implemented before for put object and fput o... | 1 |

415,542 | 12,130,411,509 | IssuesEvent | 2020-04-23 01:28:51 | minio/mc | https://api.github.com/repos/minio/mc | closed | Unable to copy a list of files to a remote directory | community priority: medium | ## Expected behavior

Minio copies all files to a new directory on minio

## Actual behavior

Minio fails to copy: "Object name contains unsupported characters."

## Steps to reproduce the behavior

```

$ mkdir test

$ echo test1 > test/file1

$ echo test1 > test/file2

$ echo test1 > test/file3

$ echo test1 > te... | 1.0 | Unable to copy a list of files to a remote directory - ## Expected behavior

Minio copies all files to a new directory on minio

## Actual behavior

Minio fails to copy: "Object name contains unsupported characters."

## Steps to reproduce the behavior

```

$ mkdir test

$ echo test1 > test/file1

$ echo test1 > ... | priority | unable to copy a list of files to a remote directory expected behavior minio copies all files to a new directory on minio actual behavior minio fails to copy object name contains unsupported characters steps to reproduce the behavior mkdir test echo test echo test ec... | 1 |

688,537 | 23,586,727,806 | IssuesEvent | 2022-08-23 12:16:07 | aleksbobic/csx | https://api.github.com/repos/aleksbobic/csx | opened | Provide selected nodes / components / clusters as advanced search nodes | enhancement priority:high Complexity:medium | It should be possible to use the selected node and component values to directly feed a search request form the "advanced search" | 1.0 | Provide selected nodes / components / clusters as advanced search nodes - It should be possible to use the selected node and component values to directly feed a search request form the "advanced search" | priority | provide selected nodes components clusters as advanced search nodes it should be possible to use the selected node and component values to directly feed a search request form the advanced search | 1 |

44,080 | 2,899,112,220 | IssuesEvent | 2015-06-17 09:17:09 | greenlion/PHP-SQL-Parser | https://api.github.com/repos/greenlion/PHP-SQL-Parser | closed | Parser breaks on nexted subqueries ending in )) | bug imported Priority-Medium | _From [smalys...@gmail.com](https://code.google.com/u/106185853731560372304/) on February 15, 2012 02:13:50_

If I try to parse a complex query like this:

SELECT * FROM contacts WHERE contacts.id IN (SELECT email_addr_bean_rel.bean_id FROM email_addr_bean_rel, email_addresses WHERE email_addresses.id = email_addr_be... | 1.0 | Parser breaks on nexted subqueries ending in )) - _From [smalys...@gmail.com](https://code.google.com/u/106185853731560372304/) on February 15, 2012 02:13:50_

If I try to parse a complex query like this:

SELECT * FROM contacts WHERE contacts.id IN (SELECT email_addr_bean_rel.bean_id FROM email_addr_bean_rel, email_... | priority | parser breaks on nexted subqueries ending in from on february if i try to parse a complex query like this select from contacts where contacts id in select email addr bean rel bean id from email addr bean rel email addresses where email addresses id email addr bean rel email address id a... | 1 |

661,006 | 22,038,246,500 | IssuesEvent | 2022-05-28 23:52:43 | LuanRT/google-this | https://api.github.com/repos/LuanRT/google-this | closed | Move to Google's private API (Image Search) | enhancement priority: medium | It seems like the google website uses an API to fetch image search results, the data is a bit messy but it's way faster than parsing raw html, has more metadata, returns up to 100 images, and allows more request options.

## Sample Code

This could be done by sending a post request to `https://www.google.com/_/Visu... | 1.0 | Move to Google's private API (Image Search) - It seems like the google website uses an API to fetch image search results, the data is a bit messy but it's way faster than parsing raw html, has more metadata, returns up to 100 images, and allows more request options.

## Sample Code

This could be done by sending a ... | priority | move to google s private api image search it seems like the google website uses an api to fetch image search results the data is a bit messy but it s way faster than parsing raw html has more metadata returns up to images and allows more request options sample code this could be done by sending a po... | 1 |

479,116 | 13,791,439,260 | IssuesEvent | 2020-10-09 12:09:02 | gnosis/conditional-tokens-explorer | https://api.github.com/repos/gnosis/conditional-tokens-explorer | closed | Positions list: filter by OWL returns no results | Medium priority QA Passed bug | Open Positions list and select OWL collateral in the filter

**Actual result:** filter by OWL returns no results

**Expected Result:** positions with OWL collateral are displayed in the grid

| 1.0 | Positions list: filter by OWL returns no results - Open Positions list and select OWL collateral in the filter

**Actual result:** filter by OWL returns no results

**Expected Result:** positions with OWL c... | priority | positions list filter by owl returns no results open positions list and select owl collateral in the filter actual result filter by owl returns no results expected result positions with owl collateral are displayed in the grid | 1 |

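188,042 | 6,767,690,266 | IssuesEvent | 2017-10-26 05:13:14 | RSPluto/Web-UI | https://api.github.com/repos/RSPluto/Web-UI | closed | Real-time monitoring -- area configuration: when adding a configuration, the prompt message is incorrect if the added base map is not an image file. | bug Fixed Medium Priority | Test steps:

1. Open the area configuration page.

2. While filling in the area information, choose a non-image file to upload.

Expected result:

2. When the added base map is a non-image file (e.g. a .doc file), the displayed prompt should clearly state that the added file's format is wrong.

For example: "Failed to add image; please check whether the file format is .png/.jpg...."

Actual result:

2. When the added base map is a non-image file, the displayed prompt is "Error: failed to load the image file, image compression failed, please use a different image". The format of the added file is not validated.

As shown in the screenshot:

file, the displayed prompt should clearly state that the added file's format is wrong.

For example: "Failed to add image; please check whether the file format is .png/.jpg...."

Actual result:

2. When the added base map is a non-image file, the displayed prompt is "Error: failed to load the image file, image compression failed, please use a different image". The format of the added file is not validated.

As shown in the screenshot:

file, the displayed prompt should clearly state that the added file's format is wrong. for example "failed to add image please check whether the file format is png jpg " actual result when the added base map is a non-image file, the displayed prompt is "error failed to load the image file, image compression failed, please use a different image". the format of the added file is not validated. as shown in the screenshot: | 1 |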

222,616 | 7,434,470,915 | IssuesEvent | 2018-03-26 11:09:08 | minio/minio-py | https://api.github.com/repos/minio/minio-py | reopened | Several documentation issues | priority: medium | There are several minio-py SDK documentation issues as listed below:

- [x] **`Missing`** optional arguments and/or their default values in command titles:

**`..., request_headers = None`**) in **get_object**, **get_partial_object**, **fget_object**

`..., copy_conditions`**`= None`**) in **copy_object**

`..., cont... | 1.0 | Several documentation issues - There are several minio-py SDK documentation issues as listed below:

- [x] **`Missing`** optional arguments and/or their default values in command titles:

**`..., request_headers = None`**) in **get_object**, **get_partial_object**, **fget_object**

`..., copy_conditions`**`= None`**)... | priority | several documentation issues there are several minio py sdk documentation issues as listed below missing optional arguments and or their default values in command titles request headers none in get object get partial object fget object copy conditions none i... | 1 |

585,627 | 17,512,790,739 | IssuesEvent | 2021-08-11 01:16:58 | airbytehq/airbyte | https://api.github.com/repos/airbytehq/airbyte | closed | Do not lose workspace on page refresh. | type/enhancement area/frontend priority/medium airbyte-cloud cloud-private-beta | ## Tell us about the problem you're trying to solve

In cloud when we refresh the page we end up back in the workspaces list page. We want to stay in the workspace. In the long term we want to add workspace slug to the URL https://github.com/airbytehq/airbyte/issues/5227, but that is a bigger change than we can make be... | 1.0 | Do not lose workspace on page refresh. - ## Tell us about the problem you're trying to solve

In cloud when we refresh the page we end up back in the workspaces list page. We want to stay in the workspace. In the long term we want to add workspace slug to the URL https://github.com/airbytehq/airbyte/issues/5227, but th... | priority | do not lose workspace on page refresh tell us about the problem you re trying to solve in cloud when we refresh the page we end up back in the workspaces list page we want to stay in the workspace in the long term we want to add workspace slug to the url but that is a bigger change than we can make before to... | 1 |

773,180 | 27,148,668,638 | IssuesEvent | 2023-02-16 22:23:13 | balena-os/balena-supervisor | https://api.github.com/repos/balena-os/balena-supervisor | closed | Allow toggling the public URL from the supervisor API | type/feature Medium Priority low-hanging fruit | This should be a simple call to the backend, to enable the public url on the device resource. Should also provide an endpoint to detail the state of the public url. | 1.0 | Allow toggling the public URL from the supervisor API - This should be a simple call to the backend, to enable the public url on the device resource. Should also provide an endpoint to detail the state of the public url. | priority | allow toggling the public url from the supervisor api this should be a simple call to the backend to enable the public url on the device resource should also provide an endpoint to detail the state of the public url | 1 |

418,729 | 12,202,844,163 | IssuesEvent | 2020-04-30 09:36:32 | SKefalidis/DangerousAdventures | https://api.github.com/repos/SKefalidis/DangerousAdventures | closed | [BUG] Cannot reset movement direction after hitting a wall. | bug medium priority | **Describe the bug**

The user cannot reset the direction of a monster (using r) if the monster has moved previously.

**To Reproduce**

Steps to reproduce the behavior:

1. Start turn

2. Select a direction and move to a wall

3. Select direction

4. Press R to reset

**Expected behavior**

The direction should be... | 1.0 | [BUG] Cannot reset movement direction after hitting a wall. - **Describe the bug**

The user cannot reset the direction of a monster (using r) if the monster has moved previously.

**To Reproduce**

Steps to reproduce the behavior:

1. Start turn

2. Select a direction and move to a wall

3. Select direction

4. Pres... | priority | cannot reset movement direction after hitting a wall describe the bug the user cannot reset the direction of a monster using r if the monster has moved previously to reproduce steps to reproduce the behavior start turn select a direction and move to a wall select direction press r ... | 1 |

6,022 | 2,582,412,051 | IssuesEvent | 2015-02-15 06:40:37 | dhowe/AdNauseam | https://api.github.com/repos/dhowe/AdNauseam | closed | Import/Export: Character encoding problem | Bug Needs-verification PRIORITY: Medium | Character encoding problem when exporting Ads

Environment: OS X 10.10.2, Firefox 35, ADP 2.6.7, ADN 1.11

To reproduce this on any Chinese website with EasyList China:

1. export Ads

2. clear Ads

3. import Ads

4. open AD vault and messed up Chinese characters will be seen

**

_Originally opened as dart-lang/sdk#3722_

----

If we had:

typedef bool Predicate<T>(T item);

int... | 1.0 | Matcher: extend a Predicate interface - <a href="https://github.com/seaneagan"><img src="https://avatars.githubusercontent.com/u/444270?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [seaneagan](https://github.com/seaneagan)**

_Originally opened as dart-lang/sdk#3722_

----

If we had:

type... | priority | matcher extend a predicate interface issue by originally opened as dart lang sdk if we had typedef bool predicate lt t gt t item interface matcher lt t gt extends predicate lt t gt instead of matcher matches thus using quot operator call quot then the following would work co... | 1 |

315,108 | 9,606,734,319 | IssuesEvent | 2019-05-11 13:08:10 | LifeMC/LifeSkript | https://api.github.com/repos/LifeMC/LifeSkript | closed | Add Timings support to Skript | enhancement feature request medium priority | **Is your feature request related to a problem? Please describe.**

Skript sometimes make lag, and all we see in timings report was the delay class.

**Describe the solution you'd like**

Add timings (v2) support to Skript (backport it), bensku's fork already includes this, for reference.

**Describe alternatives y... | 1.0 | Add Timings support to Skript - **Is your feature request related to a problem? Please describe.**

Skript sometimes make lag, and all we see in timings report was the delay class.

**Describe the solution you'd like**

Add timings (v2) support to Skript (backport it), bensku's fork already includes this, for referen... | priority | add timings support to skript is your feature request related to a problem please describe skript sometimes make lag and all we see in timings report was the delay class describe the solution you d like add timings support to skript backport it bensku s fork already includes this for referenc... | 1 |

207,815 | 7,134,081,260 | IssuesEvent | 2018-01-22 19:37:11 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Can't connect to my server. | Medium Priority | Issues happening on GreenLeaf Main.

[ClientUpdateException TargetInvocationException 01220331.zip](https://github.com/StrangeLoopGames/EcoIssues/files/1652857/ClientUpdateException.TargetInvocationException.01220331.zip)

Server is running 6.4.1 as well as the client, and I seem to be the only one having this issu... | 1.0 | Can't connect to my server. - Issues happening on GreenLeaf Main.

[ClientUpdateException TargetInvocationException 01220331.zip](https://github.com/StrangeLoopGames/EcoIssues/files/1652857/ClientUpdateException.TargetInvocationException.01220331.zip)

Server is running 6.4.1 as well as the client, and I seem to be... | priority | can t connect to my server issues happening on greenleaf main server is running as well as the client and i seem to be the only one having this issue on phlo account name on another note i wanted to ask you if modders will get a heads up before beta releases as the current update messed wi... | 1 |

319,886 | 9,761,854,142 | IssuesEvent | 2019-06-05 09:46:48 | canonical-web-and-design/vanilla-framework | https://api.github.com/repos/canonical-web-and-design/vanilla-framework | closed | Provide variables to override navigation pattern colours | Priority: Medium | ## Pattern to amend

p-navigation

## Context

Some colours are automatically generated within the p-navigation pattern from the colour variables passed in. There should be an option to override these generated colours with specific variables.

e.g. The background colour of hovered nav items is `background-colo... | 1.0 | Provide variables to override navigation pattern colours - ## Pattern to amend

p-navigation

## Context

Some colours are automatically generated within the p-navigation pattern from the colour variables passed in. There should be an option to override these generated colours with specific variables.

e.g. The... | priority | provide variables to override navigation pattern colours pattern to amend p navigation context some colours are automatically generated within the p navigation pattern from the colour variables passed in there should be an option to override these generated colours with specific variables e g the... | 1 |

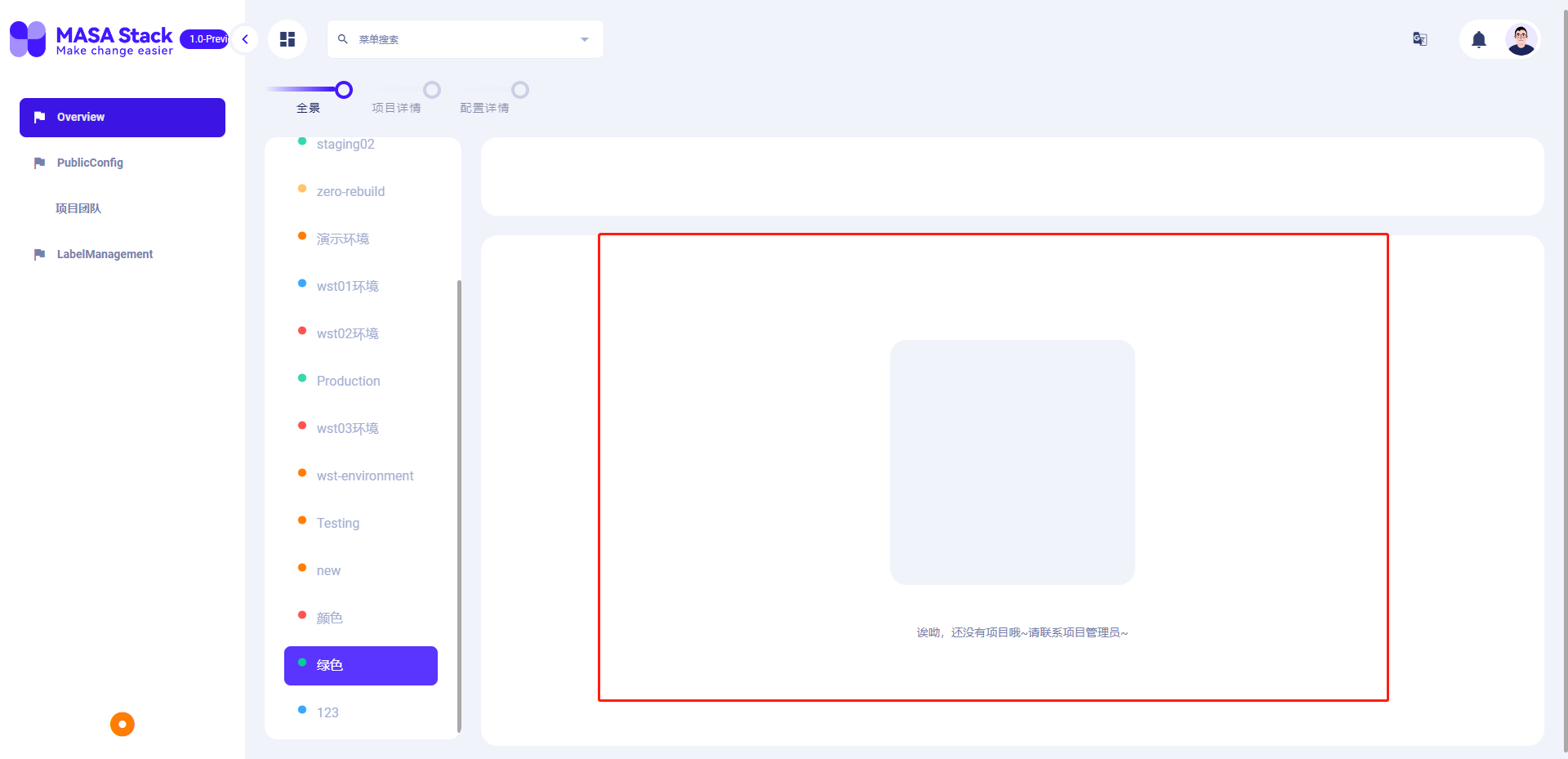

791,277 | 27,858,265,377 | IssuesEvent | 2023-03-21 02:11:50 | masastack/MASA.DCC | https://api.github.com/repos/masastack/MASA.DCC | reopened | When there are no projects and applications, the default page is not needed for the time being | type/ui status/resolved severity/medium site/staging priority/p3 | When there are no projects or applications, the default page is not needed for now. Once the UI design is done later, all projects will be updated to it together.

on March 02, 2012 01:19:23_

Centralize the per-type configuration in CiteReference. Rather than having separate default, required, icon, etc, put them in a single object indexed by type then other information. E.g.:

var configur... | 1.0 | Centralize CiteReference per-type configuration - _From [matthew.flaschen@gatech.edu](https://code.google.com/u/108647890027017428365/) on March 02, 2012 01:19:23_

Centralize the per-type configuration in CiteReference. Rather than having separate default, required, icon, etc, put them in a single object indexed by t... | priority | centralize citereference per type configuration from on march centralize the per type configuration in citereference rather than having separate default required icon etc put them in a single object indexed by type then other information e g var configuration web ... | 1 |

175,200 | 6,548,037,643 | IssuesEvent | 2017-09-04 18:21:45 | cms-gem-daq-project/vfatqc-python-scripts | https://api.github.com/repos/cms-gem-daq-project/vfatqc-python-scripts | opened | Bug Report: run_scans.py spawns additional processes | Priority: Medium Type: Bug | <!--- Provide a general summary of the issue in the Title above -->

## Brief summary of issue

<!--- Provide a description of the issue, including any other issues or pull requests it references -->

As shown: http://cmsonline.cern.ch/cms-elog/1007990

`run_scans.py` seems to have spawned 12 processes even though `c... | 1.0 | Bug Report: run_scans.py spawns additional processes - <!--- Provide a general summary of the issue in the Title above -->

## Brief summary of issue

<!--- Provide a description of the issue, including any other issues or pull requests it references -->

As shown: http://cmsonline.cern.ch/cms-elog/1007990

`run_scan... | priority | bug report run scans py spawns additional processes brief summary of issue as shown run scans py seems to have spawned processes even though chamber config has only one entry link this has been reported before and in mattermost investigating using top seems to show these processes are... | 1 |

587,305 | 17,612,622,881 | IssuesEvent | 2021-08-18 04:58:48 | buddyboss/buddyboss-platform | https://api.github.com/repos/buddyboss/buddyboss-platform | closed | vertical scroll bar disappears (and progressive load fails) when message list height > 660 px | bug priority: medium | **Describe the bug**

In Private Messaging, Progressively loads message history as long as .bp-messages-nav-panel is shorter than 660px height. However, on large monitors, the div (correctly) gets taller. But then the scrollbar disappears, and as a result, only the first 10 messages show. No progressive loading.

... | 1.0 | vertical scroll bar disappears (and progressive load fails) when message list height > 660 px - **Describe the bug**

In Private Messaging, Progressively loads message history as long as .bp-messages-nav-panel is shorter than 660px height. However, on large monitors, the div (correctly) gets taller. But then the scro... | priority | vertical scroll bar disappears and progressive load fails when message list height px describe the bug in private messaging progressively loads message history as long as bp messages nav panel is shorter than height however on large monitors the div correctly gets taller but then the scrollbar ... | 1 |

264,254 | 8,306,930,669 | IssuesEvent | 2018-09-23 01:13:14 | dirkwhoffmann/virtualc64 | https://api.github.com/repos/dirkwhoffmann/virtualc64 | opened | Test case VICII/split-tests/lightpen.prg fails | Priority-Medium bug | VirtualC64:

<img width="791" alt="lightpen" src="https://user-images.githubusercontent.com/12561945/45923162-30098380-bf32-11e8-89d4-89cb4d84a320.png">

VICE:

<img width="853" alt="lightpen_vice" src="https://user-images.githubusercontent.com/12561945/45923163-34ce3780-bf32-11e8-8157-445c0a3b7b8a.png">

| 1.0 | Test case VICII/split-tests/lightpen.prg fails - VirtualC64:

<img width="791" alt="lightpen" src="https://user-images.githubusercontent.com/12561945/45923162-30098380-bf32-11e8-89d4-89cb4d84a320.png">

VICE:

<img width="853" alt="lightpen_vice" src="https://user-images.githubusercontent.com/12561945/45923163-... | priority | test case vicii split tests lightpen prg fails img width alt lightpen src vice img width alt lightpen vice src | 1 |

47,198 | 2,974,598,080 | IssuesEvent | 2015-07-15 02:13:31 | Reimashi/jotai | https://api.github.com/repos/Reimashi/jotai | closed | CPU Temerature missing | auto-migrated Priority-Medium Type-Enhancement | ```

What is the expected output? What do you see instead?

I do not see CPU temperature, only use. Fir one of the HDs the temperature is

missing. I do not see any output of the graphics card.

What version of the product are you using? On what operating system?

Version is 0.3.2 Beta

Please provide any additional infor... | 1.0 | CPU Temerature missing - ```

What is the expected output? What do you see instead?

I do not see CPU temperature, only use. Fir one of the HDs the temperature is

missing. I do not see any output of the graphics card.

What version of the product are you using? On what operating system?

Version is 0.3.2 Beta

Please pro... | priority | cpu temerature missing what is the expected output what do you see instead i do not see cpu temperature only use fir one of the hds the temperature is missing i do not see any output of the graphics card what version of the product are you using on what operating system version is beta please pro... | 1 |

720,161 | 24,781,741,414 | IssuesEvent | 2022-10-24 06:04:39 | airqo-platform/AirQo-frontend | https://api.github.com/repos/airqo-platform/AirQo-frontend | closed | Redirect all AirQo domains to the default one (.net) | enhancement airqo-website feature-request priority-medium | **Is your feature request related to a problem? Please describe.**

AirQo has many domain names, and tracking the analytics for all of them could be hard to manage.

**Describe the solution you'd like**

Redirect all of them to the default domain name which is tracked. This will make it easy to get analytics of all our vis... | 1.0 | Redirect all AirQo domains to the default one (.net) - **Is your feature request related to a problem? Please describe.**

AirQo has many domain names, and tracking the analytics for all of them could be hard to manage.

**Describe the solution you'd like**

Redirect all of them to the default domain name which is tr... | priority | redirect all airqo domains to the default one net is your feature request related to a problem please describe airqo has many domain names and tracking the analytics for all of them could be hard to manage describe the solution you d like redirect all of them to the default domain name which is tr... | 1 |

772,363 | 27,118,423,390 | IssuesEvent | 2023-02-15 20:34:00 | NASA-NAVO/navo-workshop | https://api.github.com/repos/NASA-NAVO/navo-workshop | closed | Make a separate "environment" repo that pulls the notebook content | enhancement priority:medium | Following [this Binder Discourse thread](https://discourse.jupyter.org/t/how-to-reduce-mybinder-org-repository-startup-time/4956), we can make a separate repository specifying the environment, then use `nbgitpuller` to pull the content from our notebooks repository. This way, Binder sessions will start quickly, even if... | 1.0 | Make a separate "environment" repo that pulls the notebook content - Following [this Binder Discourse thread](https://discourse.jupyter.org/t/how-to-reduce-mybinder-org-repository-startup-time/4956), we can make a separate repository specifying the environment, then use `nbgitpuller` to pull the content from our notebo... | priority | make a separate environment repo that pulls the notebook content following we can make a separate repository specifying the environment then use nbgitpuller to pull the content from our notebooks repository this way binder sessions will start quickly even if we tweak notebooks just before a workshop f... | 1 |

119,714 | 4,774,982,492 | IssuesEvent | 2016-10-27 08:53:25 | MatchboxDorry/dorry-web | https://api.github.com/repos/MatchboxDorry/dorry-web | closed | [UI] test1-see the user head | censor: approved effort: 2 (medium) feature: view template flag: fixed priority: 2 (required) type: enhancement | **System:**

Mac mini, OS X El Capitan

**Browser:**

Chrome

**What I want to do**

I want to see the user head and name.

**Where I am**

services page

**What I have done**

I click 'services' button in menu and see the page of services.

**What I expect:**

I can see user head and name at the top right-ha... | 1.0 | [UI] test1-see the user head - **System:**

Mac mini, OS X El Capitan

**Browser:**

Chrome

**What I want to do**

I want to see the user head and name.

**Where I am**

services page

**What I have done**

I click 'services' button in menu and see the page of services.

**What I expect:**

I can see user he... | priority | see the user head system mac mini os x el capitan browser chrome what i want to do i want to see the user head and name where i am services page what i have done i click services button in menu and see the page of services what i expect i can see user head and ... | 1 |

660,362 | 21,963,331,413 | IssuesEvent | 2022-05-24 17:40:06 | QuiltMC/quiltflower | https://api.github.com/repos/QuiltMC/quiltflower | closed | `requires static` is decompiled as `requires` in module-info | bug Subsystem: Writing Priority: Medium | Example: `META-INF/versions/16/module-info.class` in https://github.com/Juuxel/unprotect/releases/download/1.1.0/unprotect-1.1.0.jar

The static vs normal `requires` info is available in the bytecode since JDK's javap can read it. (And produce a more accurate decompiled version of the module-info, ironically) | 1.0 | `requires static` is decompiled as `requires` in module-info - Example: `META-INF/versions/16/module-info.class` in https://github.com/Juuxel/unprotect/releases/download/1.1.0/unprotect-1.1.0.jar

The static vs normal `requires` info is available in the bytecode since JDK's javap can read it. (And produce a more accu... | priority | requires static is decompiled as requires in module info example meta inf versions module info class in the static vs normal requires info is available in the bytecode since jdk s javap can read it and produce a more accurate decompiled version of the module info ironically | 1 |

277,842 | 8,633,367,292 | IssuesEvent | 2018-11-22 13:40:14 | geosolutions-it/pyfulcrum | https://api.github.com/repos/geosolutions-it/pyfulcrum | closed | PyBackup filter/validation | Priority: Medium Task review | - [x] Option to possibly define a list of forms and users to be included in the backups. Records will be filtered according to this information in case it is defined.

- [x] Incoming (**filtered**) records need to be validated before starting the involved backups procedures. Payload nature and contents (as well as requ... | 1.0 | PyBackup filter/validation - - [x] Option to possibly define a list of forms and users to be included in the backups. Records will be filtered according to this information in case it is defined.

- [x] Incoming (**filtered**) records need to be validated before starting the involved backups procedures. Payload nature ... | priority | pybackup filter validation option to possibly define a list of forms and users to be included in the backups records will be filtered according to this information in case it is defined incoming filtered records need to be validated before starting the involved backups procedures payload nature and ... | 1 |

188,681 | 6,779,848,363 | IssuesEvent | 2017-10-29 06:03:47 | MyMICDS/MyMICDS-v2-Angular | https://api.github.com/repos/MyMICDS/MyMICDS-v2-Angular | closed | Edit button is there even when user isn't logged in | effort: easy priority: medium ui / ux work length: short | A great idea would be to keep the edit button, but have some sort of dialogue that says the user must create an account before customizing the homepage | 1.0 | Edit button is there even when user isn't logged in - A great idea would be to keep the edit button, but have some sort of dialogue that says the user must create an account before customizing the homepage | priority | edit button is there even when user isn t logged in a great idea would be to keep the edit button but have some sort of dialogue that says the user must create an account before customizing the homepage | 1 |

331,307 | 10,063,995,978 | IssuesEvent | 2019-07-23 07:36:32 | AbsaOSS/enceladus | https://api.github.com/repos/AbsaOSS/enceladus | closed | Add index sort direction and uniqueness constraints to migration framework | feature priority: medium | ## Background

* MongoDB compound indexes can have [a sort order](https://docs.mongodb.com/manual/core/index-compound/#index-ascending-and-descending).

Currently, the migration framework can create compound indexes, but only in ascending order.

* MongoDB indexes can have [a uniqueness constraint](https://do... | 1.0 | Add index sort direction and uniqueness constraints to migration framework - ## Background

* MongoDB compound indexes can have [a sort order](https://docs.mongodb.com/manual/core/index-compound/#index-ascending-and-descending).

Currently, the migration framework can create compound indexes, but only with ascend... | priority | add index sort direction and uniqueness constraints to migration framework background mongodb compound indexes can have currently the migration framework allows to create compound indexes but only with ascending order mongodb indexes can have currently the migration framework does not allow i... | 1 |
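
A minimal sketch of what the Enceladus row above asks for: an index description that carries a per-field sort direction and a uniqueness flag. The `IndexField` and `IndexSpec` names and the `dataset` collection are hypothetical and are not the Enceladus migration framework's API. The only convention taken from MongoDB is that 1 means ascending and -1 means descending in a key pattern.

```python
from dataclasses import dataclass

# MongoDB key-pattern convention: 1 = ascending, -1 = descending.
ASCENDING, DESCENDING = 1, -1


@dataclass(frozen=True)
class IndexField:
    """One field of a compound index (hypothetical helper type)."""
    name: str
    direction: int = ASCENDING


@dataclass(frozen=True)
class IndexSpec:
    """Hypothetical description of an index a migration step should create."""
    collection: str
    fields: tuple
    unique: bool = False

    def key_pattern(self):
        # Ordered (field, direction) pairs; order matters for compound indexes.
        return [(f.name, f.direction) for f in self.fields]

    def options(self):
        # Only emit the uniqueness option when it was requested.
        return {"unique": True} if self.unique else {}


# Example: ascending on name, descending on version, with a uniqueness constraint.
spec = IndexSpec(
    "dataset",
    (IndexField("name"), IndexField("version", DESCENDING)),
    unique=True,
)
```

Keeping the direction on each field, rather than on the index as a whole, is what lets one compound index mix ascending and descending keys.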

657,468 | 21,794,894,729 | IssuesEvent | 2022-05-15 13:31:40 | SELab-2/OSOC-6 | https://api.github.com/repos/SELab-2/OSOC-6 | opened | Communication list | enhancement priority:medium | This issue will track the creation of a communication list component. This component will be restricted to one student. | 1.0 | Communication list - This issue will track the creation of a communication list component. This component will be restricted to one student. | priority | communication list this issue will track the creation of a communication list component this component will be restricted to one student | 1 |

316,284 | 9,640,112,506 | IssuesEvent | 2019-05-16 14:52:00 | eJourn-al/eJournal | https://api.github.com/repos/eJourn-al/eJournal | closed | More intuitive adding of nodes in timeline | Priority: High Status: Review Needed Type: Enhancement Workload: Medium | **Describe the solution you'd like**

Adding nodes to format editor needs to be in a more intuitive way upon pressing the new node button.

Currently, a node is immediately added upon clicking the add node. We should have this work the same as adding a node to a journal by a student. | 1.0 | More intuitive adding of nodes in timeline - **Describe the solution you'd like**

Adding nodes to format editor needs to be in a more intuitive way upon pressing the new node button.

Currently, a node is immediately added upon clicking the add node. We should have this work the same as adding a node to a journal by... | priority | more intuitive adding of nodes in timeline describe the solution you d like adding nodes to format editor needs to be in a more intuitive way upon pressing the new node button currently a node is immediately added upon clicking the add node we should have this work the same as adding a node to a journal by... | 1 |

120,832 | 4,794,936,639 | IssuesEvent | 2016-10-31 22:42:00 | w3prog/GoodTravel | https://api.github.com/repos/w3prog/GoodTravel | closed | Create a mathematical model for selecting a route by given criteria. | Feature help wanted Priority: MEDIUM | We need to work out ways of calculating suitable places for specific requests

| 1.0 | Create a mathematical model for selecting a route by given criteria. - We need to work out ways of calculating suitable places for specific requests

| priority | create a mathematical model for selecting a route by given criteria we need to work out ways of calculating suitable places for specific requests | 1 |

405,439 | 11,873,254,919 | IssuesEvent | 2020-03-26 17:01:02 | JEvents/JEvents | https://api.github.com/repos/JEvents/JEvents | closed | uikit: Club Theme Options tab missing from JEvents configuration | Priority - Medium | As stated, there is no tab in the JEvents configuration for club layouts. | 1.0 | uikit: Club Theme Options tab missing from JEvents configuration - As stated, there is no tab in the JEvents configuration for club layouts. | priority | uikit club theme options tab missing from jevents configuration as stated there is no tab in the jevents configuration for club layouts | 1 |

587,713 | 17,629,815,288 | IssuesEvent | 2021-08-19 06:15:08 | nimblehq/nimble-medium-ios | https://api.github.com/repos/nimblehq/nimble-medium-ios | opened | As a user, I can mark an article as my favorite | type : feature category : backend priority : medium | ## Why

Once logged in, the users will be able to mark an article as their favorite.

## Acceptance Criteria

- [ ] Implement the API for marking an article as favorite via this [endpoint](https://github.com/gothinkster/realworld/tree/master/api#favorite-article).

- [ ] The endpoint takes the article's `slug` as the... | 1.0 | As a user, I can mark an article as my favorite - ## Why

Once logged in, the users will be able to mark an article as their favorite.

## Acceptance Criteria

- [ ] Implement the API for marking an article as favorite via this [endpoint](https://github.com/gothinkster/realworld/tree/master/api#favorite-article).

- ... | priority | as a user i can mark an article as my favorite why once logged in the users will be able to mark an article as their favorite acceptance criteria implement the api for marking an article as favorite via this the endpoint takes the article s slug as the query parameter in the request url ... | 1 |
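
The favorite-article call described in the row above can be sketched as a small request builder. This assumes the RealWorld convention of `POST /api/articles/:slug/favorite` with a token in the `Authorization` header. The base URL and the `build_favorite_request` helper are hypothetical, and the request is only constructed, never sent.

```python
from urllib.parse import quote
from urllib.request import Request


def build_favorite_request(base_url, slug, token):
    """Build (but do not send) the POST that marks an article as a favorite.

    Assumption: the slug goes into the URL path and the token is passed
    as "Authorization: Token <jwt>", as in the RealWorld API description.
    """
    # Percent-encode the slug so unusual characters cannot break the path.
    url = f"{base_url.rstrip('/')}/articles/{quote(slug, safe='')}/favorite"
    return Request(
        url,
        data=b"",  # the call carries no body in this sketch
        method="POST",
        headers={"Authorization": f"Token {token}"},
    )


# Hypothetical host and slug, for illustration only.
req = build_favorite_request("https://example.invalid/api/", "how-to-train", "jwt")
```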

47,138 | 2,974,566,581 | IssuesEvent | 2015-07-15 01:52:56 | MozillaHive/HiveCHI-rwm | https://api.github.com/repos/MozillaHive/HiveCHI-rwm | closed | Link to Registration page on Login page | Medium Priority | The registration page is accessible as /register. Put a button on the login page to link to it. | 1.0 | Link to Registration page on Login page - The registration page is accessible as /register. Put a button on the login page to link to it. | priority | link to registration page on login page the registration page is accessible as register put a button on the login page to link to it | 1 |

293,660 | 8,998,645,519 | IssuesEvent | 2019-02-02 23:58:34 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | Assert and printk not printed on RTT | area: Logging bug priority: medium | **Describe the bug**

Log messages are printed, but assert message is not printed out. As a result, it gives impression that device hanged, but does not give any clue why. You can see that it was an assertion, by opening debugger and stopping after the device hanged:

```

>> ninja debug

gdb> c

gdb> (CTRL + C)

```

... | 1.0 | Assert and printk not printed on RTT - **Describe the bug**

Log messages are printed, but assert message is not printed out. As a result, it gives impression that device hanged, but does not give any clue why. You can see that it was an assertion, by opening debugger and stopping after the device hanged:

```

>> ninj... | priority | assert and printk not printed on rtt describe the bug log messages are printed but assert message is not printed out as a result it gives impression that device hanged but does not give any clue why you can see that it was an assertion by opening debugger and stopping after the device hanged ninj... | 1 |

791,773 | 27,876,499,461 | IssuesEvent | 2023-03-21 16:20:25 | AY2223S2-CS2113-F13-2/tp | https://api.github.com/repos/AY2223S2-CS2113-F13-2/tp | opened | Add Wishlist feature | enhancement type.Story priority.Medium | Allows users to have a wishlist, which is a list of goods that the user would like to save for and buy.

Users will be able to add new goods to the wishlist.

Users can dedicate certain incomes to their wishlist, effectively saving up for the product.

Users will be able to see how much percentage of the good's price h... | 1.0 | Add Wishlist feature - Allows users to have a wishlist, which is a list of goods that the user would like to save for and buy.

Users will be able to add new goods to the wishlist.

Users can dedicate certain incomes to their wishlist, effectively saving up for the product.

Users will be able to see how much percentag... | priority | add wishlist feature allows users to have a wishlist which is a list of goods that the user would like to save for and buy users will be able to add new goods to the wishlist users can dedicate certain incomes to their wishlist effectively saving up for the product users will be able to see how much percentag... | 1 |

1,974 | 2,522,368,910 | IssuesEvent | 2015-01-19 21:32:52 | roberttdev/dactyl4 | https://api.github.com/repos/roberttdev/dactyl4 | closed | Being Ready for Data Entry and Clicking Clone on Another Point is Visually Uncertain | medium priority | When in data entry, if I have one point ready for data entry and then click clone on another point, the first point appears ready for DE while the second point appears ready for cloning.

Steps to reproduce:

1. Create a templated group

2. Click on the first point to do DE

3. It turns yellow

4. Click on the clon... | 1.0 | Being Ready for Data Entry and Clicking Clone on Another Point is Visually Uncertain - When in data entry, if I have one point ready for data entry and then click clone on another point, the first point appears ready for DE while the second point appears ready for cloning.

Steps to reproduce:

1. Create a template... | priority | being ready for data entry and clicking clone on another point is visually uncertain when in data entry if i have one point ready for data entry and then click clone on another point the first point appears ready for de while the second point appears ready for cloning steps to reproduce create a template... | 1 |

703,340 | 24,154,490,789 | IssuesEvent | 2022-09-22 06:17:23 | redhat-developer/odo | https://api.github.com/repos/redhat-developer/odo | reopened | `odo dev` not handling changes to the Devfile after removing a Binding with `odo remove binding` | kind/bug priority/Medium area/binding triage/ready | /kind bug

/area binding

## What versions of software are you using?

**Operating System:**

Fedora 36

**Output of `odo version`:**

odo v3.0.0-rc1 (897f5f3e0)

## How did you run odo exactly?

Reproduction steps:

1. New project, but you can use any other project: `odo init --name my-sample-go --devfile go... | 1.0 | `odo dev` not handling changes to the Devfile after removing a Binding with `odo remove binding` - /kind bug

/area binding

## What versions of software are you using?

**Operating System:**

Fedora 36

**Output of `odo version`:**

odo v3.0.0-rc1 (897f5f3e0)

## How did you run odo exactly?

Reproduction s... | priority | odo dev not handling changes to the devfile after removing a binding with odo remove binding kind bug area binding what versions of software are you using operating system fedora output of odo version odo how did you run odo exactly reproduction steps ne... | 1 |

38,599 | 2,849,190,795 | IssuesEvent | 2015-05-30 13:30:45 | joshdrummond/webpasswordsafe | https://api.github.com/repos/joshdrummond/webpasswordsafe | closed | Duo Security Multi-Factor Authentication Plugin | enhancement Milestone-Release1.4 Priority-Medium Type-Enhancement | Create an authentication plugin that supports Duo Security multi-factor authentication (https://www.duosecurity.com/) | 1.0 | Duo Security Multi-Factor Authentication Plugin - Create an authentication plugin that supports Duo Security multi-factor authentication (https://www.duosecurity.com/) | priority | duo security multi factor authentication plugin create an authentication plugin that supports duo security multi factor authentication | 1 |

795,219 | 28,066,164,530 | IssuesEvent | 2023-03-29 15:33:37 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [DocDB] Deadlock detector may overwrite wait-for information if received from multiple tablets | kind/bug area/docdb priority/medium | Jira Link: [DB-5459](https://yugabyte.atlassian.net/browse/DB-5459)

### Description

In case we have a transaction waiting at multiple tablets, deadlock detection may fail to detect the deadlock, since it currently stores only the latest update it received from any tablet. We can hit such a case if the user issues an ... | 1.0 | [DocDB] Deadlock detector may overwrite wait-for information if received from multiple tablets - Jira Link: [DB-5459](https://yugabyte.atlassian.net/browse/DB-5459)

### Description

In case we have a transaction waiting at multiple tablets, deadlock detection may fail to detect the deadlock, since it currently stores ... | priority | deadlock detector may overwrite wait for information if received from multiple tablets jira link description in case we have a transaction waiting at multiple tablets deadlock detection may fail to detect the deadlock since it currently stores only the latest update it received from any tablet we can ... | 1 |
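
The failure mode in the DocDB row above can be illustrated with a toy model. This is a sketch under stated assumptions, not YugabyteDB's detector: `LatestOnlyStore`, `PerTabletStore`, `has_cycle`, and the transaction and tablet names are all hypothetical. It shows that keeping only the latest wait-for report per waiting transaction can drop an edge that is needed to find a cycle, while keeping one report per (waiter, tablet) pair does not.

```python
from collections import defaultdict


class LatestOnlyStore:
    """Keeps only the most recent wait-for report per waiting transaction."""

    def __init__(self):
        self.blockers = {}

    def update(self, waiter, tablet, blockers):
        # A report from a second tablet overwrites the first one.
        self.blockers[waiter] = set(blockers)

    def edges(self, waiter):
        return self.blockers.get(waiter, set())


class PerTabletStore:
    """Keeps one wait-for report per (waiter, tablet) pair."""

    def __init__(self):
        self.blockers = defaultdict(dict)

    def update(self, waiter, tablet, blockers):
        self.blockers[waiter][tablet] = set(blockers)

    def edges(self, waiter):
        merged = set()
        for reported in self.blockers.get(waiter, {}).values():
            merged |= reported
        return merged


def has_cycle(store, start):
    """Depth-first search for a wait-for path that leads back to `start`."""
    seen, stack = set(), [start]
    while stack:
        txn = stack.pop()
        for blocker in store.edges(txn):
            if blocker == start:
                return True
            if blocker not in seen:
                seen.add(blocker)
                stack.append(blocker)
    return False


def scenario(store):
    # T1 waits on T2 at one tablet and on T3 at another; T2 waits on T1.
    store.update("T1", "tablet-A", ["T2"])
    store.update("T1", "tablet-B", ["T3"])
    store.update("T2", "tablet-C", ["T1"])
    return has_cycle(store, "T1")
```

In this toy scenario the T1 to T2 edge is lost when the report from `tablet-B` arrives, so the latest-only store misses the T1/T2 deadlock.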

48,288 | 2,997,019,201 | IssuesEvent | 2015-07-23 02:53:27 | theminted/lesswrong-migrated | https://api.github.com/repos/theminted/lesswrong-migrated | closed | add distinct viewable blogs for individual contributors | Contributions-Welcome imported Priority-Medium Type-Feature | _From [wjmo...@gmail.com](https://code.google.com/u/117567618910921056910/) on January 28, 2009 17:42:32_

Gerrit: I think this means filtered views of the post list, which can be by author, but may as well be

by a number of filter criteria, integrating the following point.

_Original issue: http://code.google.com/p/... | 1.0 | add distinct viewable blogs for individual contributors - _From [wjmo...@gmail.com](https://code.google.com/u/117567618910921056910/) on January 28, 2009 17:42:32_

Gerrit: I think this means filtered views of the post list, which can be by author, but may as well be

by a number of filter criteria, integrating the fo... | priority | add distinct viewable blogs for individual contributors from on january gerrit i think this means filtered views of the post list which can be by author but may as well be by a number of filter criteria integrating the following point original issue | 1 |

531,083 | 15,440,441,024 | IssuesEvent | 2021-03-08 03:19:25 | space-wizards/space-station-14 | https://api.github.com/repos/space-wizards/space-station-14 | opened | Add a disposable tag | Difficulty: 2 - Medium Priority: 4-wishlist Size: 2 - Small Type: Improvement | Just like recyclable or whatever it should be cleaner than just checking for storeable or IBody IMO. | 1.0 | Add a disposable tag - Just like recyclable or whatever it should be cleaner than just checking for storeable or IBody IMO. | priority | add a disposable tag just like recyclable or whatever it should be cleaner than just checking for storeable or ibody imo | 1 |

428,200 | 12,404,581,686 | IssuesEvent | 2020-05-21 15:46:44 | department-of-veterans-affairs/caseflow | https://api.github.com/repos/department-of-veterans-affairs/caseflow | closed | Set up logging for hearing dispositions | Priority: Medium Product: caseflow-hearings Stakeholder: BVA Team: Tango 💃 | ## Description

Record when dispositions were set on hearings and what they were

## Acceptance criteria

- [ ] Write query

- [ ] Set up graphs in Metabase

## Background/context/resources

Long-term goal: increase hearing capacity

Short-term goal: allow more hearings to be held during Covid-19 stay-at-home orders

## ... | 1.0 | Set up logging for hearing dispositions - ## Description

Record when dispositions were set on hearings and what they were

## Acceptance criteria

- [ ] Write query

- [ ] Set up graphs in Metabase

## Background/context/resources

Long-term goal: increase hearing capacity

Short-term goal: allow more hearings to be hel... | priority | set up logging for hearing dispositions description record when dispositions were set on hearings and what they were acceptance criteria write query set up graphs in metabase background context resources long term goal increase hearing capacity short term goal allow more hearings to be held du... | 1 |

671,493 | 22,763,450,768 | IssuesEvent | 2022-07-08 00:11:35 | DinoDevs/GladiatusCrazyAddon | https://api.github.com/repos/DinoDevs/GladiatusCrazyAddon | opened | Show mercenary max stats on tooltips | :bulb: feature request 📈 Medium priority | I think this is helpful comparing mercenaries:

Stats are the maximum values from mercenary's stats | 1.0 | Show mercenary max stats on tooltips - I think this is helpful comparing mercenaries:

Stats are the maximum values from mercenary's stats | priority | show mercenary max stats on tooltips i think this is helpful comparing mercenaries stats are the maximum values from mercenary s stats | 1 |

717,135 | 24,663,303,444 | IssuesEvent | 2022-10-18 08:26:26 | geosolutions-it/MapStore2 | https://api.github.com/repos/geosolutions-it/MapStore2 | opened | Point and Line layers make it difficult to use Identify | bug Priority: Medium 3D C040-COMUNE_GE-2022-CUSTOM-SUPPORT | ## Description

<!-- Add here a few sentences describing the bug. -->

In 3D mode it is quite difficult to obtain an Identify response when clicking on Line or Point WFS layers. The current logic makes it really difficult to understand where to click to obtain Identify information probably due to the camera orientation i... | 1.0 | Point and Line layers make it difficult to use Identify - ## Description

<!-- Add here a few sentences describing the bug. -->

In 3D mode it is quite difficult to obtain an Identify response when clicking on Line or Point WFS layers. The current logic makes it really difficult to understand where to click to obtain Ide... | priority | point and line layers make it difficult to use identify description in mode it is quite difficult to obtain an identify response when clicking on line or point wfs layers the current logic makes it really difficult to understand where to click to obtain identify information probably due to the camera orientat... | 1 |

409,610 | 11,965,386,945 | IssuesEvent | 2020-04-05 23:10:55 | poissonconsulting/shinyssdtools | https://api.github.com/repos/poissonconsulting/shinyssdtools | closed | Update and redeploy for ssdtools 0.1.1.9003 | Difficulty: 2 Intermediate Effort: 2 Medium Priority: 2 High Type: Refactor | Relevant changes

```

- Change computable (standard errors) to FALSE by default.

- Only give warning about standard errors if computable = TRUE.

- Replaced 'burrIII2' for 'llogis' in default set.

- Deprecated 'burrIII2' for 'llogis'.

- Provide warning message about change in default for ci argument in predict fu... | 1.0 | Update and redeploy for ssdtools 0.1.1.9003 - Relevant changes

```

- Change computable (standard errors) to FALSE by default.

- Only give warning about standard errors if computable = TRUE.

- Replaced 'burrIII2' for 'llogis' in default set.

- Deprecated 'burrIII2' for 'llogis'.

- Provide warning message about c... | priority | update and redeploy for ssdtools relevant changes change computable standard errors to false by default only give warning about standard errors if computable true replaced for llogis in default set deprecated for llogis provide warning message about change in default ... | 1 |

157,722 | 6,011,555,383 | IssuesEvent | 2017-06-06 15:25:53 | infolab-csail/WikipediaBase | https://api.github.com/repos/infolab-csail/WikipediaBase | opened | IMAGE-LIVE --> "local variable 'img_url' referenced before assignment" | bug priority/medium | Most of the time `IMAGE-LIVE` works fine, but not in this case:

```

$ telnet <host> 8023

(get "wikibase-term" "William \"Tiger\" Dunlop" (:CODE "IMAGE-LIVE") (:FILE "canmedaj01489-0107-a.gif" :MAX-WIDTH 400))

((:error UnboundLocalError :message "local variable 'img_url' referenced before assignment"))

```

When I ... | 1.0 | IMAGE-LIVE --> "local variable 'img_url' referenced before assignment" - Most of the time `IMAGE-LIVE` works fine, but not in this case:

```

$ telnet <host> 8023

(get "wikibase-term" "William \"Tiger\" Dunlop" (:CODE "IMAGE-LIVE") (:FILE "canmedaj01489-0107-a.gif" :MAX-WIDTH 400))

((:error UnboundLocalError :messag... | priority | image live local variable img url referenced before assignment most of the time image live works fine but not in this case telnet get wikibase term william tiger dunlop code image live file a gif max width error unboundlocalerror message local variable img ur... | 1 |
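
The `UnboundLocalError` quoted in the row above is what Python raises when a function reads a local name that no executed branch has assigned. The following is a minimal sketch of that pattern and one way to avoid it. It is not WikipediaBase's actual code: `pick_image_url`, `pick_image_url_safe`, and their arguments are hypothetical.

```python
def pick_image_url(candidates, wanted_file):
    """Hypothetical lookup that returns the URL whose file name matches."""
    for name, url in candidates:
        if name == wanted_file:
            img_url = url  # only assigned when some candidate matches
    # If nothing matched, img_url was never bound and this line raises
    # UnboundLocalError.
    return img_url


def pick_image_url_safe(candidates, wanted_file):
    """Same lookup, but the name is bound before the loop."""
    img_url = None
    for name, url in candidates:
        if name == wanted_file:
            img_url = url
    return img_url
```

Binding the name up front turns the crash into an explicit "no match" value that the caller can check.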

205,515 | 7,102,778,464 | IssuesEvent | 2018-01-16 00:23:37 | davide-romanini/comictagger | https://api.github.com/repos/davide-romanini/comictagger | closed | Will Not Save Tags | Priority-Medium bug imported | _From [kylekoco...@gmail.com](https://code.google.com/u/101735958907267326152/) on March 09, 2014 18:58:09_

What steps will reproduce the problem? 1. Searching for tags

2. Auto-tag

3. Auto search What is the expected output? What do you see instead? When I click save tags, it should save the tags. It worked for about... | 1.0 | Will Not Save Tags - _From [kylekoco...@gmail.com](https://code.google.com/u/101735958907267326152/) on March 09, 2014 18:58:09_

What steps will reproduce the problem? 1. Searching for tags

2. Auto-tag

3. Auto search What is the expected output? What do you see instead? When I click save tags, it should save the tags.... | priority | will not save tags from on march what steps will reproduce the problem searching for tags auto tag auto search what is the expected output what do you see instead when i click save tags it should save the tags it worked for about a dozen comics now it keeps giving me an error sayin... | 1 |

270,105 | 8,452,417,716 | IssuesEvent | 2018-10-20 03:30:38 | minio/minio | https://api.github.com/repos/minio/minio | closed | Unable to heal deleted files in distributed mode | priority: medium |

## Expected Behavior

In distributed mode, when a node is taken out of the cluster for maintenance or due to outage, the node can be returned to the cluster and healed using the Heal API.

After healing, cluster should be restored to 100% Green state.

## Current Behavior

If a file has been deleted from Minio ... | 1.0 | Unable to heal deleted files in distributed mode -

## Expected Behavior

In distributed mode, when a node is taken out of the cluster for maintenance or due to outage, the node can be returned to the cluster and healed using the Heal API.

After healing, cluster should be restored to 100% Green state.

## Current... | priority | unable to heal deleted files in distributed mode expected behavior in distributed mode when a node is taken out of the cluster for maintenance or due to outage the node can be returned to the cluster and healed using the heal api after healing cluster should be restored to green state current b... | 1 |

65,163 | 3,226,915,075 | IssuesEvent | 2015-10-10 18:25:23 | Sonarr/Sonarr | https://api.github.com/repos/Sonarr/Sonarr | closed | Blackhole doesn't treat previous grab as meeting cutoff | priority:medium suboptimal | Normally Sonarr relies on the queue to determine if the cutoff has been met by an item in the queue, but with blackhole it doesn't have a queue to track. In this scenario Sonarr should use its history to see if the cutoff has been met, until it knows otherwise (failed or completed). | 1.0 | Blackhole doesn't treat previous grab as meeting cutoff - Normally Sonarr relies on the queue to determine if the cutoff has been met by an item in the queue, but with blackhole it doesn't have a queue to track. In this scenario Sonarr should use its history to see if the cutoff has been met, until it kn... | priority | blackhole doesn t treat previous grab as meeting cutoff normally sonarr relies on the queue to determine if the cutoff has been met by an item in the queue but with blackhole it doesn t have a queue to track in this scenario sonarr should use its history to see if the cutoff has been met until it kn... | 1 |
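
The behaviour requested in the Sonarr row above can be sketched as a history check. This is an illustration under stated assumptions, not Sonarr's implementation: the event names, the `QUALITY_ORDER` ranking, and both helper functions are hypothetical.

```python
# Hypothetical quality ranking, lowest to highest.
QUALITY_ORDER = ["SDTV", "HDTV-720p", "WEBDL-1080p"]


def pending_grab_quality(history):
    """Return the quality of the last grab that is not yet resolved.

    `history` is a list of (event, quality) pairs, oldest first. A grab
    stays pending until a later "failed" or "imported" event resolves it.
    """
    pending = None
    for event, quality in history:
        if event == "grabbed":
            pending = quality
        elif event in ("failed", "imported"):
            pending = None
    return pending


def cutoff_met_by_history(history, cutoff):
    """True if an unresolved grab already meets the cutoff quality."""
    pending = pending_grab_quality(history)
    if pending is None:
        return False
    return QUALITY_ORDER.index(pending) >= QUALITY_ORDER.index(cutoff)
```

With no queue to consult, a grab recorded in history is treated as meeting the cutoff until a failure or import says otherwise.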

122,651 | 4,838,579,540 | IssuesEvent | 2016-11-09 04:24:49 | smirkspace/smirkspace | https://api.github.com/repos/smirkspace/smirkspace | opened | Add Blurbs | backend frontend medium priority | Add blurbs for each space. Before entering a space a user will enter a blurb of what they want to talk about. | 1.0 | Add Blurbs - Add blurbs for each space. Before entering a space a user will enter a blurb of what they want to talk about. | priority | add blurbs add blurbs for each space before entering a space a user will enter a blurb of what they want to talk about | 1 |

76,156 | 3,482,131,601 | IssuesEvent | 2015-12-29 21:00:10 | M-Zuber/MyHome | https://api.github.com/repos/M-Zuber/MyHome | closed | The dropdown in DataChartUI shows a category for both transaction types - even though it is only in one | bug Medium Priority | I am not sure what layer the code that causes the bug is on...

[EDIT]

See below as the issue is caused when entering a new transaction | 1.0 | The dropdown in DataChartUI shows a category for both transaction types - even though it is only in one - I am not sure what layer the code that causes the bug is on...

[EDIT]

See below as the issue is caused when entering a new transaction | priority | the dropdown in datachartui shows a category for both transaction types even though it is only in one i am not sure what layer the code that causes the bug is on see below as the issue is caused when entering a new transaction | 1 |

365,255 | 10,780,098,860 | IssuesEvent | 2019-11-04 12:11:07 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | When METRICS_DISTRIBUTED_DATASTRUCTURES is enabled, Cache statistics is not available on the metrics | Module: Diagnostics Module: ICache Module: Metrics Priority: Medium Source: Internal Source: Jet Team: Core | The reason is that the Cache service doesn't implement the `StatisticsAwareService` interface.

P.S. While implementing this, there is an opportunity to remove the cache specific statistics collection code from `com.hazelcast.internal.management.TimedMemberStateFactory#createMemState` | 1.0 | When METRICS_DISTRIBUTED_DATASTRUCTURES is enabled, Cache statistics is not available on the metrics - The reason is that the Cache service doesn't implement the `StatisticsAwareService` interface.

P.S. While implementing this, there is an opportunity to remove the cache specific statistics collection code from `com... | priority | when metrics distributed datastructures is enabled cache statistics is not available on the metrics the reason is that the cache service doesn t implement the statisticsawareservice interface p s while implementing this there is an opportunity to remove the cache specific statistics collection code from com... | 1 |

523,154 | 15,173,727,783 | IssuesEvent | 2021-02-13 15:25:15 | glotaran/pyglotaran | https://api.github.com/repos/glotaran/pyglotaran | closed | Store the parameters of each optimization step | Priority: Medium Status: In Progress Type: Enhancement | An optimization call will optimize a set of parameters given a model in a number of steps or iterations. The trajectory that each parameter follows in optimization space is very valuable information for modelling.

Which parameters were optimized the most, by how much.

Which parameters were larg... | 1.0 | Store the parameters of each optimization step - An optimization call will optimize a set of parameters given a model in a number of steps or iterations. The trajectory that each parameter follows in optimization space is very valuable information for modelling.

Which parameters were optimized t... | priority | store the parameters of each optimization step an optimization call will optimize a set of parameters given a model in a number of steps or iterations the trajectory that each parameter follows in optimization space is very valuable information for modelling which parameters were optimized t... | 1 |
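
The request in the pyglotaran row above (keep the parameter values from every optimization step) can be illustrated with a minimal sketch. It is not pyglotaran's implementation: the optimizer here is plain gradient descent on a toy objective, and the names `minimize_with_history`, `total_change`, and `toy_grad` are hypothetical and chosen only for this example.

```python
from typing import Callable, List, Sequence, Tuple


def minimize_with_history(
    grad: Callable[[Sequence[float]], List[float]],
    start: Sequence[float],
    step_size: float = 0.1,
    iterations: int = 50,
) -> Tuple[List[float], List[List[float]]]:
    """Plain gradient descent that stores the parameter vector after every step."""
    params = list(start)
    history = [list(params)]  # entry 0 is the starting point
    for _ in range(iterations):
        gradient = grad(params)
        params = [p - step_size * g for p, g in zip(params, gradient)]
        history.append(list(params))  # copy, so later steps do not overwrite it
    return params, history


def total_change(history: List[List[float]]) -> List[float]:
    """Distance each parameter moved between the first and the last recorded step."""
    first, last = history[0], history[-1]
    return [abs(b - a) for a, b in zip(first, last)]


def toy_grad(p: Sequence[float]) -> List[float]:
    """Gradient of the toy objective f(x, y) = (x - 3)^2 + (y + 1)^2."""
    return [2 * (p[0] - 3), 2 * (p[1] + 1)]


final, history = minimize_with_history(toy_grad, [0.0, 0.0])
print(total_change(history))  # per-parameter movement over the whole run
```

With the trajectory stored as a list of parameter vectors, the question of which parameters were optimized the most, and by how much, reduces to comparing entries of `history`.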

43,551 | 2,889,846,970 | IssuesEvent | 2015-06-13 20:26:05 | damonkohler/sl4a | https://api.github.com/repos/damonkohler/sl4a | opened | Add scipy and numpy modules to standard modules | auto-migrated Priority-Medium Type-Enhancement | _From @GoogleCodeExporter on May 31, 2015 11:25_

```

Is it possible to add numpy and scipy to the list of modules bundled with ASE.

They are not pure python modules and so cannot be added on the sd card.

```

Original issue reported on code.google.com by `mszar...@gmail.com` on 23 Mar 2010 at 11:33

_Copied from origi... | 1.0 | Add scipy and numpy modules to standard modules - _From @GoogleCodeExporter on May 31, 2015 11:25_

```

Is it possible to add numpy and scipy to the list of modules bundled with ASE.

They are not pure python modules and so cannot be added on the sd card.

```

Original issue reported on code.google.com by `mszar...@gmai... | priority | add scipy and numpy modules to standard modules from googlecodeexporter on may is it possible to add numpy and scipy to the list of modules bundled with ase they are not pure python modules and so cannot be added on the sd card original issue reported on code google com by mszar gmail com ... | 1 |

297,565 | 9,172,193,831 | IssuesEvent | 2019-03-04 05:59:35 | OpenPrinting/openprinting.github.io | https://api.github.com/repos/OpenPrinting/openprinting.github.io | closed | Add content to the 'Downloads' page | content migration difficulty/low help wanted priority/medium | Add content to the `Downloads` page located here: https://openprinting.github.io/downloads/

Depends on: #36 | 1.0 | Add content to the 'Downloads' page - Add content to the `Downloads` page located here: https://openprinting.github.io/downloads/

Depends on: #36 | priority | add content to the downloads page add content to the downloads page located here depends on | 1 |

94,241 | 3,923,495,856 | IssuesEvent | 2016-04-22 11:36:14 | haiwen/seafile | https://api.github.com/repos/haiwen/seafile | reopened | Move file, then upload with same name -> file already exists | priority-medium | Server Version: 5.0.2

If you move a file through the web interface to a subfolder and then upload a new file with exactly the same name to the old location, the message pops up that the file already exists.

How to reproduce:

1. Create a file called foo.txt in your library root `/foo.txt`

2. Open the web inter... | 1.0 | Move file, then upload with same name -> file already exists - Server Version: 5.0.2

If you move a file through the web interface to a subfolder and then upload a new file with exactly the same name to the old location, the message pops up that the file already exists.

How to reproduce:

1. Create a file called... | priority | move file then upload with same name file already exists server version if you move a file through the web interface to a subfolder and then upload a new file with exactly the same name to the old location the message pops up that the file already exists how to reproduce create a file called... | 1 |

39,335 | 2,853,636,325 | IssuesEvent | 2015-06-01 19:36:52 | Sistema-Integrado-Gestao-Academica/SiGA | https://api.github.com/repos/Sistema-Integrado-Gestao-Academica/SiGA | closed | Evaluation: Basic Structure | [Medium Priority] | As an **Administrator** I want to view the evaluation of the system's programs, so that I can track in real time each program's standing with respect to the **CAPES** evaluation.

--------------

* Build the basic structure to house the **Evaluation** for the N programs within the software.

* Each program... | 1.0 | Evaluation: Basic Structure - As an **Administrator** I want to view the evaluation of the system's programs, so that I can track in real time each program's standing with respect to the **CAPES** evaluation.

--------------

* Build the basic structure to house the **Evaluation** for the N programs within the so... | priority | evaluation basic structure as an administrator i want to view the evaluation of the system s programs so that i can track in real time each program s standing with respect to the capes evaluation build the basic structure to house the evaluation for the n programs within the so... | 1 |

75,371 | 3,461,844,657 | IssuesEvent | 2015-12-20 12:45:27 | PowerPointLabs/powerpointlabs | https://api.github.com/repos/PowerPointLabs/powerpointlabs | closed | Make drop-zone remember its position | Feature.ImagesLab Priority.Medium status.releaseCandidate type-enhancement | e.g. if I move it to my other window where I do the image search, I would like it to remain there (or appear at the same location) until I move it again. | 1.0 | Make drop-zone remember its position - e.g. if I move it to my other window where I do the image search, I would like it to remain there (or appear at the same location) until I move it again. | priority | make drop zone remember its position e g if i move it to my other window where i do the image search i would like it to remain there or appear at the same location until i move it again | 1 |

427,079 | 12,392,667,118 | IssuesEvent | 2020-05-20 14:20:04 | OaklandDevTeam/umbrella | https://api.github.com/repos/OaklandDevTeam/umbrella | closed | Endpoint to login/register Users | Priority: Medium back-end | Users should be able to register an account to use the application. Users should also be able to login to their respective accounts to use the application.

A REST endpoint must be created to register an account with the auth layer.

A REST endpoint must be created to login an account with the auth layer. | 1.0 | Endpoint to login/register Users - Users should be able to register an account to use the application. Users should also be able to login to their respective accounts to use the application.

A REST endpoint must be created to register an account with the auth layer.

A REST endpoint must be created to login an account... | priority | endpoint to login register users users should be able to register an account to use the application users should also be able to login to their respective accounts to use the application a rest endpoint must be created to register an account with the auth layer a rest endpoint must be created to login an account... | 1 |
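
The register and login behaviour asked for in the umbrella row above could be backed by logic along these lines. This is a sketch under stated assumptions, not the project's auth layer: the class name `AuthStore`, the in-memory dictionary, and the PBKDF2 settings are all hypothetical, and the HTTP routing around it is left out.

```python
import hashlib
import hmac
import os
from typing import Dict, Tuple


class AuthStore:
    """In-memory user store; a real service would persist this in a database."""

    def __init__(self) -> None:
        self._users: Dict[str, Tuple[bytes, bytes]] = {}

    @staticmethod
    def _hash(password: str, salt: bytes) -> bytes:
        # Salted PBKDF2 so that plain-text passwords are never stored.
        return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, 100_000)

    def register(self, username: str, password: str) -> bool:
        """Create an account; refuse empty fields and duplicate usernames."""
        if not username or not password or username in self._users:
            return False
        salt = os.urandom(16)
        self._users[username] = (salt, self._hash(password, salt))
        return True

    def login(self, username: str, password: str) -> bool:
        """Return True only when the username exists and the password matches."""
        record = self._users.get(username)
        if record is None:
            return False
        salt, expected = record
        # Constant-time comparison avoids leaking how many bytes matched.
        return hmac.compare_digest(expected, self._hash(password, salt))
```

A register endpoint would call `register` and report a conflict when it returns `False`; a login endpoint would call `login` and start a session only on `True`.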

651,770 | 21,509,833,762 | IssuesEvent | 2022-04-28 02:22:47 | pystardust/ani-cli | https://api.github.com/repos/pystardust/ani-cli | opened | Updates that occur during a loss of internet connectivity can cause the ani-cli script to be corrupted or, more likely, be void. | type: bug priority 2: medium | **Metadata (please complete the following information)**

Version: 2.1.5

OS: Arch lts kernel

Shell: fish

Anime: N/A

**Describe the bug**

Updates that occur during a loss of internet connectivity can cause the ani-cli script to be corrupted or, more likely, be void.

**Steps To Reproduce**

1. Run `ani-cli -U`... | 1.0 | Updates that occur during a loss of internet connectivity can cause the ani-cli script to be corrupted or, more likely, be void. - **Metadata (please complete the following information)**

Version: 2.1.5

OS: Arch lts kernel

Shell: fish

Anime: N/A

**Describe the bug**

Updates that occur during a loss of internet c... | priority | updates that occur during a loss of internet connectivity can cause the ani cli script to be corrupted or more likely be void metadata please complete the following information version os arch lts kernel shell fish anime n a describe the bug updates that occur during a loss of internet c... | 1 |
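
One common way to avoid the failure described in the ani-cli row above is to download to a temporary file and swap it in only after the download has succeeded. The sketch below illustrates that idea; it is not ani-cli's update code (ani-cli is a shell script), and `safe_update` and its `fetch` callback are hypothetical names used only here.

```python
import os
import shutil
import tempfile
from typing import Callable


def safe_update(target_path: str, fetch: Callable[[], bytes]) -> bool:
    """Replace target_path with fetched content only if the fetch succeeded.

    Returns True when the file was replaced and False when the old file was kept.
    """
    try:
        new_content = fetch()
    except OSError:
        return False  # network failure: keep the existing script
    if not new_content.strip():
        return False  # empty download: keep the existing script

    directory = os.path.dirname(os.path.abspath(target_path))
    # The temporary file lives next to the target so the rename stays on one filesystem.
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    try:
        with os.fdopen(fd, "wb") as tmp:
            tmp.write(new_content)
        if os.path.exists(target_path):
            shutil.copymode(target_path, tmp_path)  # keep the executable bit
        os.replace(tmp_path, target_path)  # swap in the new file in one step
    except OSError:
        if os.path.exists(tmp_path):
            os.remove(tmp_path)
        return False
    return True
```

Because the existing script is only touched by the final `os.replace`, a connection that drops mid-download leaves the installed copy as it was.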

819,044 | 30,717,790,495 | IssuesEvent | 2023-07-27 14:04:48 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [xCluster] Simplify the xcluster test helper functions | kind/enhancement area/docdb priority/medium | Jira Link: [DB-7394](https://yugabyte.atlassian.net/browse/DB-7394)

### Description

SetupUniverseReplication returns a list of tables in a weirdly sorted order. Each xcluster test has to parse and extract out producer and consumer tables from this list. Instead we can directly store the two lists in the base class.

... | 1.0 | [xCluster] Simplify the xcluster test helper functions - Jira Link: [DB-7394](https://yugabyte.atlassian.net/browse/DB-7394)

### Description

SetupUniverseReplication returns a list of tables in a weirdly sorted order. Each xcluster test has to parse and extract out producer and consumer tables from this list. Instead... | priority | simplify the xcluster test helper functions jira link description setupuniversereplication returns a list of tables in a weirdly sorted order each xcluster test has to parse and extract out producer and consumer tables from this list instead we can directly store the two lists in the base class ... | 1 |

655,156 | 21,678,924,161 | IssuesEvent | 2022-05-09 02:55:50 | TeamTwilight/twilightforest-fabric | https://api.github.com/repos/TeamTwilight/twilightforest-fabric | closed | no fog in spooky forest | bug Priority: Medium missing feature | ### Forge Version

0.11.7

### Twilight Forest Version

4.0.69

### Client Log

_No response_

### Crash Report (if applicable)

_No response_

### Steps to Reproduce

there is no pink or magenta fog in spooky forest

### What You Expected

there to be pink or magenta fog in the spooky forest like normal.

### What Hap... | 1.0 | no fog in spooky forest - ### Forge Version

0.11.7

### Twilight Forest Version

4.0.69

### Client Log

_No response_

### Crash Report (if applicable)

_No response_

### Steps to Reproduce

there is no pink or magenta fog in spooky forest

### What You Expected

there to be pink or magenta fog in the spooky forest ... | priority | no fog in spooky forest forge version twilight forest version client log no response crash report if applicable no response steps to reproduce there is no pink or magenta fog in spooky forest what you expected there to be pink or magenta fog in the spooky forest li... | 1 |

247,112 | 7,896,222,810 | IssuesEvent | 2018-06-29 07:48:07 | Creepsky/creepMiner | https://api.github.com/repos/Creepsky/creepMiner | closed | The pool calculated a different deadline + during validate the plotfile the Miner crashes with segmentation fault (1.8.2) + ignoring memory allocation | Priority: Medium Status: To check | ### Subject of the issue

Mining with POC2 plots causes this message:

"The pool calculated a different deadline for your nonce than your miner has!"

I tried to check the plotfile with CreepMiner. Every time I click the validate button in the webserver the CreepMiner crashes with the message Segmentation fault. I got... | 1.0 | The pool calculated a different deadline + during validate the plotfile the Miner crashes with segmentation fault (1.8.2) + ignoring memory allocation - ### Subject of the issue

Mining with POC2 plots causes this message:

"The pool calculated a different deadline for your nonce than your miner has!"