Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 1k) | labels (stringlengths, 4 to 1.38k) | body (stringlengths, 1 to 262k) | index (stringclasses, 16 values) | text_combine (stringlengths, 96 to 262k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

775,185 | 27,222,355,448 | IssuesEvent | 2023-02-21 06:55:14 | CodeforHawaii/HIERR | https://api.github.com/repos/CodeforHawaii/HIERR | opened | Add leaflet address widget | Medium Priority | It can't be assumed that the user knows their zip code.

This would add in a leaflet address widget that would zoom in to the location based on the user's street and house number | 1.0 | Add leaflet address widget - It can't be assumed that the user knows their zip code.

This would add in a leaflet address widget that would zoom in to the location based on the user's street and house number | priority | add leaflet address widget it can t be assumed that the user knows their zip code this would add in a leaflet address widget that would zoom in to the location based on the user s street and house number | 1 |

645,776 | 21,015,005,394 | IssuesEvent | 2022-03-30 10:09:52 | micronaut-projects/micronaut-core | https://api.github.com/repos/micronaut-projects/micronaut-core | closed | Native image builds do not respect Jackson directive in application.yml | type: improvement priority: low | ### Expected Behavior

In a java app, with a src/main/resources/application,yml of

`micronaut:

application:

name: mntest2

jackson:

property-naming-strategy: SNAKE_CASE`

the expectation is that the properties of any POJO are transposed to snake case.

`➜ mntest1 curl http://localhost:8080/v1/things/fred

... | 1.0 | Native image builds do not respect Jackson directive in application.yml - ### Expected Behavior

In a java app, with a src/main/resources/application,yml of

`micronaut:

application:

name: mntest2

jackson:

property-naming-strategy: SNAKE_CASE`

the expectation is that the properties of any POJO are transpo... | priority | native image builds do not respect jackson directive in application yml expected behavior in a java app with a src main resources application yml of micronaut application name jackson property naming strategy snake case the expectation is that the properties of any pojo are transposed to... | 1 |

732,374 | 25,257,388,371 | IssuesEvent | 2022-11-15 19:20:26 | dtcenter/METplotpy | https://api.github.com/repos/dtcenter/METplotpy | closed | Create the METplotpy v2.0.0-beta5 release | type: task priority: high alert: NEED ACCOUNT KEY METplotpy: General |

## Describe the Task ##

Create the beta5 release following these instructions:

https://metplus.readthedocs.io/en/develop/Release_Guide/metplotpy_development.html

### Time Estimate ###

*Estimate the amount of work required here.*

*Issues should represent approximately 1 to 3 days of work.*

### Sub-Issues #... | 1.0 | Create the METplotpy v2.0.0-beta5 release -

## Describe the Task ##

Create the beta5 release following these instructions:

https://metplus.readthedocs.io/en/develop/Release_Guide/metplotpy_development.html

### Time Estimate ###

*Estimate the amount of work required here.*

*Issues should represent approximate... | priority | create the metplotpy release describe the task create the release following these instructions time estimate estimate the amount of work required here issues should represent approximately to days of work sub issues consider breaking the task down into sub i... | 1 |

54,480 | 13,912,020,375 | IssuesEvent | 2020-10-20 18:14:25 | jgeraigery/LocalCatalogManager | https://api.github.com/repos/jgeraigery/LocalCatalogManager | opened | CVE-2019-16942 (High) detected in jackson-databind-2.8.5.jar | security vulnerability | ## CVE-2019-16942 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.5.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2019-16942 (High) detected in jackson-databind-2.8.5.jar - ## CVE-2019-16942 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.5.jar</b></p></summary>

<p>General... | non_priority | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file localcatalogmanager lcm server ... | 0 |

37,875 | 5,146,880,217 | IssuesEvent | 2017-01-13 03:39:30 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | Test failure: ReadAndWrite/OutputEncoding fail with "Assert+WrapperXunitException" | area-System.Console test bug test-run-desktop | Opened on behalf of @Jiayili1

The test `ReadAndWrite/OutputEncoding` has failed.

```

Assert+WrapperXunitException : File path: D:\A\_work\2\s\src\System.Console\tests\ReadAndWrite.cs. Line: 202\r

---- Assert.Equal() Failure\r

Expected: Byte[] []\r

Actual: Byte[] [239, 187, 191]

```

Stack Trace:

```

at Assert.W... | 2.0 | Test failure: ReadAndWrite/OutputEncoding fail with "Assert+WrapperXunitException" - Opened on behalf of @Jiayili1

The test `ReadAndWrite/OutputEncoding` has failed.

```

Assert+WrapperXunitException : File path: D:\A\_work\2\s\src\System.Console\tests\ReadAndWrite.cs. Line: 202\r

---- Assert.Equal() Failure\r

Expecte... | non_priority | test failure readandwrite outputencoding fail with assert wrapperxunitexception opened on behalf of the test readandwrite outputencoding has failed assert wrapperxunitexception file path d a work s src system console tests readandwrite cs line r assert equal failure r expected byte ... | 0 |

172,805 | 27,331,237,448 | IssuesEvent | 2023-02-25 17:00:41 | SpenceKonde/megaTinyCore | https://api.github.com/repos/SpenceKonde/megaTinyCore | closed | Suggestion: provide an alternative boards-hv.txt file | enhancement design decision noplans | Currently you have to locate and uncomment some 20 lines in the **boards.txt** file to activate the **UPDI/Reset Pin Function** menu option on the **Tools** menu.

As HV programmers are becoming available, more people will need these options. How about including an alternative version of the **boards.txt** file, call... | 1.0 | Suggestion: provide an alternative boards-hv.txt file - Currently you have to locate and uncomment some 20 lines in the **boards.txt** file to activate the **UPDI/Reset Pin Function** menu option on the **Tools** menu.

As HV programmers are becoming available, more people will need these options. How about including... | non_priority | suggestion provide an alternative boards hv txt file currently you have to locate and uncomment some lines in the boards txt file to activate the updi reset pin function menu option on the tools menu as hv programmers are becoming available more people will need these options how about including ... | 0 |

254,447 | 8,074,130,096 | IssuesEvent | 2018-08-06 21:47:54 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | [studio-ui] options on right click options of wcm-assets-folder dont work when using multi-path configuration | CI bug priority: high | ### Expected behavior

right click options should function correctly

### Actual behavior

selecting a right click menu option loads the preview with a 404 (undefined)

### Steps to reproduce the problem

* set up content so that you have multiple roots to use

```

/static-assets/images

/static-assets/images/fol... | 1.0 | [studio-ui] options on right click options of wcm-assets-folder dont work when using multi-path configuration - ### Expected behavior

right click options should function correctly

### Actual behavior

selecting a right click menu option loads the preview with a 404 (undefined)

### Steps to reproduce the proble... | priority | options on right click options of wcm assets folder dont work when using multi path configuration expected behavior right click options should function correctly actual behavior selecting a right click menu option loads the preview with a undefined steps to reproduce the problem set up ... | 1 |

552,661 | 16,246,321,870 | IssuesEvent | 2021-05-07 14:59:25 | ethereum/sourcify | https://api.github.com/repos/ethereum/sourcify | closed | Skip storing metadata under IPFS hash if not available | medium priority | In verification, after all sources and metadata are stored, the metadata additionally gets stored under the its IPFS hash. If this hash is not available, error is reported. As this additionally stored metadata is not used anywhere, this step can be skipped in this case, only perhaps logging a message on the server. | 1.0 | Skip storing metadata under IPFS hash if not available - In verification, after all sources and metadata are stored, the metadata additionally gets stored under the its IPFS hash. If this hash is not available, error is reported. As this additionally stored metadata is not used anywhere, this step can be skipped in thi... | priority | skip storing metadata under ipfs hash if not available in verification after all sources and metadata are stored the metadata additionally gets stored under the its ipfs hash if this hash is not available error is reported as this additionally stored metadata is not used anywhere this step can be skipped in thi... | 1 |

435,848 | 30,522,733,528 | IssuesEvent | 2023-07-19 09:10:06 | dana-team/hns-nqs-plugin | https://api.github.com/repos/dana-team/hns-nqs-plugin | closed | Add documentation for API | kind/documentation | The API needs to be documented and each field in the API needs to be briefly explained so it can be understood by outside readers

https://github.com/dana-team/hns-nodeQuotaSync-plugin/blob/46ca5f5b968b1d8bf34ce7b63d38aac77662b6a1/api/v1alpha1/nodequotaconfig_types.go#L28-L55

/kind documentation | 1.0 | Add documentation for API - The API needs to be documented and each field in the API needs to be briefly explained so it can be understood by outside readers

https://github.com/dana-team/hns-nodeQuotaSync-plugin/blob/46ca5f5b968b1d8bf34ce7b63d38aac77662b6a1/api/v1alpha1/nodequotaconfig_types.go#L28-L55

/kind docu... | non_priority | add documentation for api the api needs to be documented and each field in the api needs to be briefly explained so it can be understood by outside readers kind documentation | 0 |

472,405 | 13,623,855,640 | IssuesEvent | 2020-09-24 07:06:47 | incognitochain/incognito-chain | https://api.github.com/repos/incognitochain/incognito-chain | closed | [Test][Testnet] branch master-temp-B-deploy-consensus-v2-optimized | Priority: High | - [x] Transaction

-- Privacy V1

-- No-privacy

-- Batching verify

-- Withdraw reward

- [x] Brigde

-- Centralize

-- Decentralize

- [ ] Portal V2

- [ ] Staking

- [ ] pDex

| 1.0 | [Test][Testnet] branch master-temp-B-deploy-consensus-v2-optimized - - [x] Transaction

-- Privacy V1

-- No-privacy

-- Batching verify

-- Withdraw reward

- [x] Brigde

-- Centralize

-- Decentralize

- [ ] Portal V2

- [ ] Staking

- [ ] pDex

| priority | branch master temp b deploy consensus optimized transaction privacy no privacy batching verify withdraw reward brigde centralize decentralize portal staking pdex | 1 |

5,409 | 12,451,970,866 | IssuesEvent | 2020-05-27 11:27:02 | corona-warn-app/cwa-documentation | https://api.github.com/repos/corona-warn-app/cwa-documentation | closed | Use Corona-Warn-Server instead of Corona-Warn-App-Server | architecture documentation | ## Where to find the issue

Complete Project documentation.

Especially visible in figure_7.svg

## Describe the issue

Corona-Warn-App-Server is not unambiguous

Example figure 7 Is the corona Warn App Database on the server or on the phone? It should be on the server but why is it not called Corona Warn Server... | 1.0 | Use Corona-Warn-Server instead of Corona-Warn-App-Server - ## Where to find the issue

Complete Project documentation.

Especially visible in figure_7.svg

## Describe the issue

Corona-Warn-App-Server is not unambiguous

Example figure 7 Is the corona Warn App Database on the server or on the phone? It should b... | non_priority | use corona warn server instead of corona warn app server where to find the issue complete project documentation especially visible in figure svg describe the issue corona warn app server is not unambiguous example figure is the corona warn app database on the server or on the phone it should b... | 0 |

368,367 | 25,790,108,854 | IssuesEvent | 2022-12-10 02:33:42 | wesen/glazed | https://api.github.com/repos/wesen/glazed | reopened | doc: add example program | documentation | The example program should show how to:

- integrate the library with command line flag parsing

- register custom middlewares

| 1.0 | doc: add example program - The example program should show how to:

- integrate the library with command line flag parsing

- register custom middlewares

| non_priority | doc add example program the example program should show how to integrate the library with command line flag parsing register custom middlewares | 0 |

1,477 | 6,404,174,472 | IssuesEvent | 2017-08-07 01:23:43 | caskroom/homebrew-cask | https://api.github.com/repos/caskroom/homebrew-cask | closed | microsoft-office uninstall does not remove .app files from /Applications | awaiting maintainer feedback | #### General troubleshooting steps

- [X] I have checked the instructions for [reporting bugs](https://github.com/caskroom/homebrew-cask#reporting-bugs) (or [making requests](https://github.com/caskroom/homebrew-cask#requests)) before opening the issue.

- [X] None of the templates was appropriate for my issue, or ... | True | microsoft-office uninstall does not remove .app files from /Applications - #### General troubleshooting steps

- [X] I have checked the instructions for [reporting bugs](https://github.com/caskroom/homebrew-cask#reporting-bugs) (or [making requests](https://github.com/caskroom/homebrew-cask#requests)) before opening ... | non_priority | microsoft office uninstall does not remove app files from applications general troubleshooting steps i have checked the instructions for or before opening the issue none of the templates was appropriate for my issue or i’m not sure i ran brew update reset brew update and ret... | 0 |

434,868 | 30,473,189,939 | IssuesEvent | 2023-07-17 14:49:48 | Infisical/infisical | https://api.github.com/repos/Infisical/infisical | closed | Typo in Docs | 🐞 bug 📑 documentation good first issue | ### Describe the bug

There's a little typo that I encountered

### To Reproduce

Steps to reproduce the behavior:

1. Go to [here](https://infisical.com/docs/documentation/platform/token)

2. See the typo error

### Screenshots

2. See the typo error

### Screenshots

` or `__init__()` and not at the class level.

`LFSR` shows the arguments, but doesn't show the docstring.

` or `__init__()` and not at the class level.

`LFSR` shows the arguments, but doesn't show the docstring.

addressed it.

Th... | 1.0 | Root causing protonj "no current delivery" - ## Background

Two customer tickets reported that the ProtonJ error "no current delivery" appeared in the logs, but when it happened in the lower layer, it activated a code path in the upper layer that triggered a reliability issue;[ this-pr](https://github.com/Azure/azure... | non_priority | root causing protonj no current delivery background two customer tickets reported that the protonj error no current delivery appeared in the logs but when it happened in the lower layer it activated a code path in the upper layer that triggered a reliability issue addressed it this ticket is creat... | 0 |

209,865 | 7,180,654,065 | IssuesEvent | 2018-02-01 00:22:05 | INN/umbrella-energynewsnetwork | https://api.github.com/repos/INN/umbrella-energynewsnetwork | closed | MORI isn't saving things properly | bug priority: normal | The following URL

> 'posts-from-site-id-64/in-michigan-solar-growth-meets-uncertainty-with-end-of-net-metering'

where `posts-from-site-id-64` is the slug of a term in the region taxonomy

Might match one of these rules from `$wp_rewrite->wp_rewrite_rules()`:

```

'region/([^/]+)/feed/(feed|rdf|rss|rss2|ato... | 1.0 | MORI isn't saving things properly - The following URL

> 'posts-from-site-id-64/in-michigan-solar-growth-meets-uncertainty-with-end-of-net-metering'

where `posts-from-site-id-64` is the slug of a term in the region taxonomy

Might match one of these rules from `$wp_rewrite->wp_rewrite_rules()`:

```

'region... | priority | mori isn t saving things properly the following url posts from site id in michigan solar growth meets uncertainty with end of net metering where posts from site id is the slug of a term in the region taxonomy might match one of these rules from wp rewrite wp rewrite rules region ... | 1 |

6,160 | 2,584,002,073 | IssuesEvent | 2015-02-16 12:07:14 | TrinityCore/TrinityCore | https://api.github.com/repos/TrinityCore/TrinityCore | closed | [Bug]Tome of Mel'Thandris event missing | Comp-Database Feedback-FixOutdatedMissingWip Priority-Low Sub-Quests | How it should work:

1. Accept "The Howling Vale".

2. Use "Tome of Mel'Thandris".

3. "Velinde Starsong" will appear and say:

"The numbers of my companions dwindles, goddess, and my own power shall soon be insufficient to hold back the demons of Felwood."

"Goddess, grant me the power to overcome my enemies! Hear me,... | 1.0 | [Bug]Tome of Mel'Thandris event missing - How it should work:

1. Accept "The Howling Vale".

2. Use "Tome of Mel'Thandris".

3. "Velinde Starsong" will appear and say:

"The numbers of my companions dwindles, goddess, and my own power shall soon be insufficient to hold back the demons of Felwood."

"Goddess, grant me ... | priority | tome of mel thandris event missing how it should work accept the howling vale use tome of mel thandris velinde starsong will appear and say the numbers of my companions dwindles goddess and my own power shall soon be insufficient to hold back the demons of felwood goddess grant me the ... | 1 |

5,601 | 2,955,394,197 | IssuesEvent | 2015-07-08 02:32:25 | abargnesi/rantly | https://api.github.com/repos/abargnesi/rantly | opened | Make tests runnable. | documentation test | - [x] Update to latest minitest gem.

- [x] Use minitest spec-style testing to match *shoulda* appeal.

- [ ] Update README documentation. | 1.0 | Make tests runnable. - - [x] Update to latest minitest gem.

- [x] Use minitest spec-style testing to match *shoulda* appeal.

- [ ] Update README documentation. | non_priority | make tests runnable update to latest minitest gem use minitest spec style testing to match shoulda appeal update readme documentation | 0 |

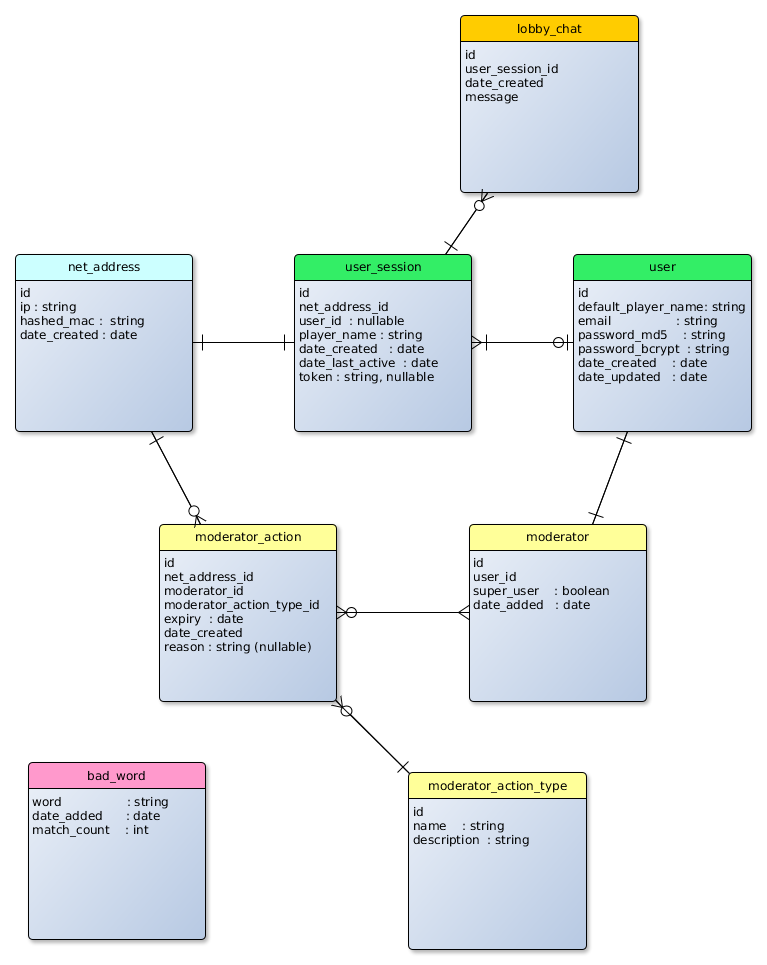

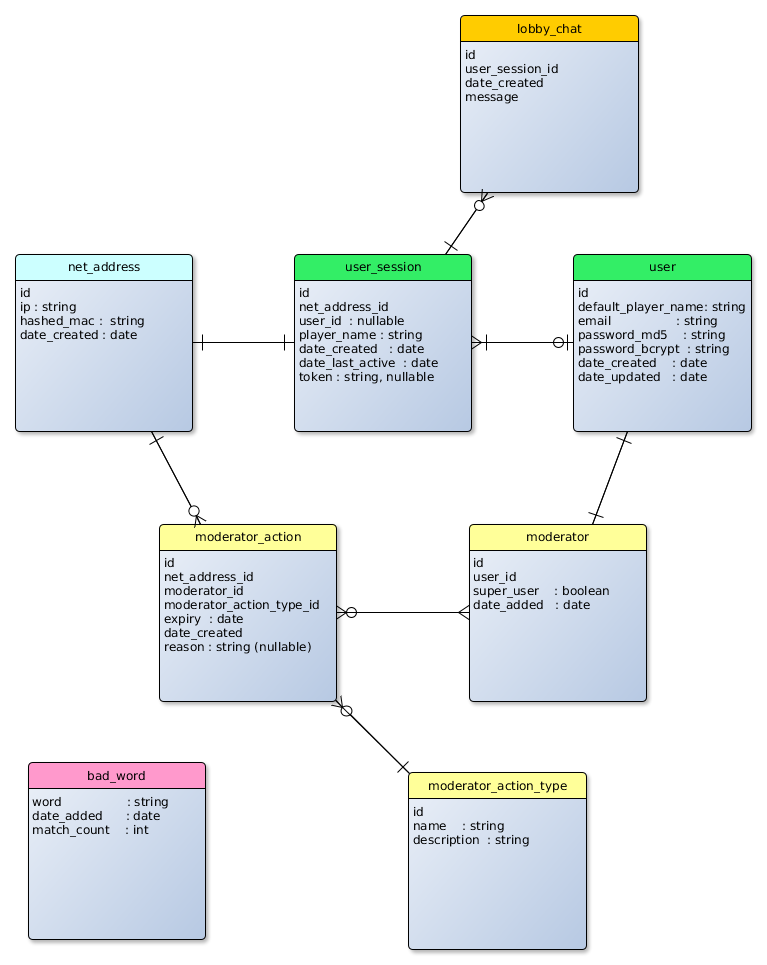

50,513 | 10,518,155,621 | IssuesEvent | 2019-09-29 08:46:52 | triplea-game/triplea | https://api.github.com/repos/triplea-game/triplea | closed | 'ta_users' Schema Update | Design Stale code discussion | Following up to a number of initiatives, I'd like to propose/discuss a schema update. An ER diagram is below:

A few items are forward looking and would be added in later iterations:

- chat_... | 1.0 | 'ta_users' Schema Update - Following up to a number of initiatives, I'd like to propose/discuss a schema update. An ER diagram is below:

A few items are forward looking and would be added in... | non_priority | ta users schema update following up to a number of initiatives i d like to propose discuss a schema update an er diagram is below a few items are forward looking and would be added in later iterations chat history table token column on user session match count on bad word otherwise of note... | 0 |

360,999 | 10,700,471,126 | IssuesEvent | 2019-10-24 00:07:54 | octobercms/october | https://api.github.com/repos/octobercms/october | closed | Pivot data support in deferred binding | Priority: High Status: Archived Status: Review Needed Type: Enhancement | Is there any way to create relation manager which has some pivot data and works with deffered binding?

Are there any chances for October to support this anytime soon?

| 1.0 | Pivot data support in deferred binding - Is there any way to create relation manager which has some pivot data and works with deffered binding?

Are there any chances for October to support this anytime soon?

| priority | pivot data support in deferred binding is there any way to create relation manager which has some pivot data and works with deffered binding are there any chances for october to support this anytime soon | 1 |

43,137 | 5,522,092,432 | IssuesEvent | 2017-03-19 20:44:19 | rust-lang/rust | https://api.github.com/repos/rust-lang/rust | closed | ICE on with #[repr(..)] for single-variant enum | E-needstest I-ICE | ``` rust

#[repr(u8)]
enum Foo {
    Foo(u8),
}

fn main() {
    match Foo::Foo(1) {
        _ => ()
    }
}

```

Same error on all nightly and stable:

```

error: internal compiler error: unexpected panic

note: the compiler unexpectedly panicked. this is a bug.

note: we would appreciate a bug report: https://github.com... | 1.0 | ICE on with #[repr(..)] for single-variant enum - ``` rust

#[repr(u8)]
enum Foo {
    Foo(u8),
}

fn main() {
    match Foo::Foo(1) {
        _ => ()
    }
}

```

Same error on all nightly and stable:

```

error: internal compiler error: unexpected panic

note: the compiler unexpectedly panicked. this is a bug.

note: w... | non_priority | ice on with for single variant enum rust enum foo foo fn main match foo foo same error on all nightly and stable error internal compiler error unexpected panic note the compiler unexpectedly panicked this is a bug note we would appreciate ... | 0 |

88,641 | 25,477,159,595 | IssuesEvent | 2022-11-25 15:34:37 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [GCI] [SB] A popup message is getting displayed while adding non-organizational user account in the below scenario | Bug P2 Study builder Process: Fixed Process: Tested QA Process: Tested dev | **Steps:**

1. Login to SB

2. Click on Manage admins icon

3. Click on Add admin button

4. Complete first name, last name , select the role and enter non-organizational user email

5. Now enter invalid number in phone number field

6. Click on Add button and Observe.

**AR:** A popup message is getting displayed... | 1.0 | [GCI] [SB] A popup message is getting displayed while adding non-organizational user account in the below scenario - **Steps:**

1. Login to SB

2. Click on Manage admins icon

3. Click on Add admin button

4. Complete first name, last name , select the role and enter non-organizational user email

5. Now enter invali... | non_priority | a popup message is getting displayed while adding non organizational user account in the below scenario steps login to sb click on manage admins icon click on add admin button complete first name last name select the role and enter non organizational user email now enter invalid numbe... | 0 |

95,260 | 19,683,848,028 | IssuesEvent | 2022-01-11 19:39:37 | Pokecube-Development/Pokecube-Issues-and-Wiki | https://api.github.com/repos/Pokecube-Development/Pokecube-Issues-and-Wiki | closed | New worldgen chunks without NPCs | Bug - Code Fixed | #### Issue Description:

When generating brand new chunks in the world (including ultraspace), NPCs are not spawning in villages or structures. NPCs which had been previously spawned in villages still remain. Affects all NPC types from wandering trainers to stationary ones like N.

#### What happens:

Moving to new c... | 1.0 | New worldgen chunks without NPCs - #### Issue Description:

When generating brand new chunks in the world (including ultraspace), NPCs are not spawning in villages or structures. NPCs which had been previously spawned in villages still remain. Affects all NPC types from wandering trainers to stationary ones like N.

... | non_priority | new worldgen chunks without npcs issue description when generating brand new chunks in the world including ultraspace npcs are not spawning in villages or structures npcs which had been previously spawned in villages still remain affects all npc types from wandering trainers to stationary ones like n ... | 0 |

256,355 | 19,409,321,279 | IssuesEvent | 2021-12-20 07:36:47 | glific/glific | https://api.github.com/repos/glific/glific | opened | View contact profile and history | documentation | @abhi1203 Assign this document to you for review. Let me know if you have feedback or suggestions.

**View contact profile and history**

https://glific.slab.com/posts/12-view-contact-profile-and-history-v9zuhety | 1.0 | View contact profile and history - @abhi1203 Assign this document to you for review. Let me know if you have feedback or suggestions.

**View contact profile and history**

https://glific.slab.com/posts/12-view-contact-profile-and-history-v9zuhety | non_priority | view contact profile and history assign this document to you for review let me know if you have feedback or suggestions view contact profile and history | 0 |

226,481 | 24,947,191,808 | IssuesEvent | 2022-11-01 01:59:08 | saif-khan1211/First_real_scan | https://api.github.com/repos/saif-khan1211/First_real_scan | closed | CVE-2022-40156 (High) detected in xstream-1.4.8.jar - autoclosed | security vulnerability | ## CVE-2022-40156 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xstream-1.4.8.jar</b></p></summary>

<p>XStream is a serialization library from Java objects to XML and back.</p>

<p>Pa... | True | CVE-2022-40156 (High) detected in xstream-1.4.8.jar - autoclosed - ## CVE-2022-40156 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xstream-1.4.8.jar</b></p></summary>

<p>XStream is a... | non_priority | cve high detected in xstream jar autoclosed cve high severity vulnerability vulnerable library xstream jar xstream is a serialization library from java objects to xml and back path to vulnerable library target libs provided xstream jar dependency hierarchy ... | 0 |

737,036 | 25,498,211,317 | IssuesEvent | 2022-11-27 23:05:04 | turgutcem/swe573 | https://api.github.com/repos/turgutcem/swe573 | opened | Develop User Recomendations | effort:medium priority:medium backend | Develop a generic user recomendation interface and implement this interface . | 1.0 | Develop User Recomendations - Develop a generic user recomendation interface and implement this interface . | priority | develop user recomendations develop a generic user recomendation interface and implement this interface | 1 |

567,767 | 16,891,711,744 | IssuesEvent | 2021-06-23 10:02:01 | googleapis/google-cloud-php | https://api.github.com/repos/googleapis/google-cloud-php | closed | Synthesis failed for analyticsdata | api: analyticsdata autosynth failure priority: p2 type: bug | Hello! Autosynth couldn't regenerate analyticsdata. :broken_heart:

Please investigate and fix this issue within 5 business days. While it remains broken,

this library cannot be updated with changes to the analyticsdata API, and the library grows

stale.

See https://github.com/googleapis/synthtool/blob/master/autosynt... | 1.0 | Synthesis failed for analyticsdata - Hello! Autosynth couldn't regenerate analyticsdata. :broken_heart:

Please investigate and fix this issue within 5 business days. While it remains broken,

this library cannot be updated with changes to the analyticsdata API, and the library grows

stale.

See https://github.com/goog... | priority | synthesis failed for analyticsdata hello autosynth couldn t regenerate analyticsdata broken heart please investigate and fix this issue within business days while it remains broken this library cannot be updated with changes to the analyticsdata api and the library grows stale see for trouble shooting ... | 1 |

585,226 | 17,483,157,202 | IssuesEvent | 2021-08-09 07:21:05 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | sniffies.com - Unable to get location | browser-firefox-mobile nsfw priority-normal severity-critical browser-focus-geckoview engine-gecko | <!-- @browser: Firefox Mobile 90.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 11; Mobile; rv:90.0) Gecko/90.0 Firefox/90.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/81320 -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: https://sniffies.com/

**Brows... | 1.0 | sniffies.com - Unable to get location - <!-- @browser: Firefox Mobile 90.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 11; Mobile; rv:90.0) Gecko/90.0 Firefox/90.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/81320 -->

<!-- @extra_labels: browser-focus-geckoview -->

... | priority | sniffies com unable to get location url browser version firefox mobile operating system android tested another browser no problem type site is not usable description page not loading correctly steps to reproduce it just doesn t work on this browser it says... | 1 |

642,565 | 20,907,317,690 | IssuesEvent | 2022-03-24 04:39:31 | mikezimm/drilldown7 | https://api.github.com/repos/mikezimm/drilldown7 | closed | Bug: Crash when using refiner Codigo in Espanol | High Priority complete | ## Location: /sites/SolutionTesting/SitePages/CH-Drilldown-2.aspx

Getting the 2 errors in the attached screenshot.

It doesn't seem to be a fetch error because it is fetching 146 items.

But is throwing both an undefined an null error.

Similar item to #79 and #80. #79 was resolved which was adding this column ... | 1.0 | Bug: Crash when using refiner Codigo in Espanol - ## Location: /sites/SolutionTesting/SitePages/CH-Drilldown-2.aspx

Getting the 2 errors in the attached screenshot.

It doesn't seem to be a fetch error because it is fetching 146 items.

But is throwing both an undefined an null error.

Similar item to #79 and #8... | priority | bug crash when using refiner codigo in espanol location sites solutiontesting sitepages ch drilldown aspx getting the errors in the attached screenshot it doesn t seem to be a fetch error because it is fetching items but is throwing both an undefined an null error similar item to and ... | 1 |

649,721 | 21,318,168,180 | IssuesEvent | 2022-04-16 16:51:03 | kubesphere/kubesphere | https://api.github.com/repos/kubesphere/kubesphere | closed | After service mesh of cluster is enabled, the application governance status of the application is wrong | kind/bug stale priority/medium | **Versions Used**

KubeSphere: `v3.2.1-rc.3`

**Precondition**

```

servicemesh:

enabled: false

```

**How To Reproduce**

Steps to reproduce the behavior:

1. Create application 'test' and select close of Application governance

2. After the application ‘test’ is successfully created

Gecko/73.0 Firefox/73.0 -->

<!-- @reported_with: -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/48755 -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://www.pontofrio.com.br/acessorioseinovacoe... | 1.0 | www.pontofrio.com.br - Some page elements are missing - <!-- @browser: Firefox Mobile 73.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:73.0) Gecko/73.0 Firefox/73.0 -->

<!-- @reported_with: -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/48755 -->

<!-- @extra_labels: browser-fenix -->

*... | priority | some page elements are missing url browser version firefox mobile operating system android tested another browser yes problem type site is not usable description site broken steps to reproduce browser configuration none from with ❤️ | 1 |

187,731 | 22,045,873,516 | IssuesEvent | 2022-05-30 01:35:25 | Nivaskumark/kernel_v4.1.15 | https://api.github.com/repos/Nivaskumark/kernel_v4.1.15 | closed | CVE-2017-16530 (Medium) detected in linuxlinux-4.6, linuxlinux-4.6 - autoclosed | security vulnerability | ## CVE-2017-16530 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>linuxlinux-4.6</b>, <b>linuxlinux-4.6</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><summary><img src='h... | True | CVE-2017-16530 (Medium) detected in linuxlinux-4.6, linuxlinux-4.6 - autoclosed - ## CVE-2017-16530 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>linuxlinux-4.6</b>, <b>linuxlinux... | non_priority | cve medium detected in linuxlinux linuxlinux autoclosed cve medium severity vulnerability vulnerable libraries linuxlinux linuxlinux vulnerability details the uas driver in the linux kernel before allows local users to cause a denial of servi... | 0 |

322,390 | 9,817,051,992 | IssuesEvent | 2019-06-13 15:51:31 | dice-group/Squirrel | https://api.github.com/repos/dice-group/Squirrel | closed | Improve seed file reader | component:frontier priority:high type:enhancement | ## Description

As a user, I would like to provide additional information for seed files, e.g., the type of a URI or some other data that could improve its processing. Therefore, the reader for the seed file should be improved to be able to process this additional information.

## Solution

* To keep the solution g... | 1.0 | Improve seed file reader - ## Description

As a user, I would like to provide additional information for seed files, e.g., the type of a URI or some other data that could improve its processing. Therefore, the reader for the seed file should be improved to be able to process this additional information.

## Solutio... | priority | improve seed file reader description as a user i would like to provide additional information for seed files e g the type of a uri or some other data that could improve its processing therefore the reader for the seed file should be improved to be able to process this additional information solutio... | 1 |

15,611 | 10,325,516,963 | IssuesEvent | 2019-09-01 18:00:38 | microsoft/BotBuilder-Samples | https://api.github.com/repos/microsoft/BotBuilder-Samples | closed | OAuthPrompt popup is not showing for login in MS-Teams. | Bot Services customer-replied-to customer-reported | I have used an OAuthPrompt card to log the user in for bot authentication. It works in the Emulator, but when I test in Teams the login pop-up does not appear.

sample code:

==========

return await stepContext.BeginDialogAsync(nameof(OAuthPrompt), null, cancellationToken);

Bot Framework version: 4.5.1

| 1.0 | OAuthPrompt popup is not showing for login in MS-Teams. - I have used an OAuthPrompt card to log the user in for bot authentication. It works in the Emulator, but when I test in Teams the login pop-up does not appear.

sample code:

==========

return await stepContext.BeginDialogAsync(nameof(OAuthPrompt), null, cancellat... | non_priority | oauthprompt popup is not showing for login in ms teams i have use oauthprompt card to login user for bot authentication it is working in emulator but when i test in teams then pop up is not showing to login sample code return await stepcontext begindialogasync nameof oauthprompt null cancellat... | 0 |

162,296 | 6,150,306,351 | IssuesEvent | 2017-06-27 22:11:49 | geoff-maddock/events-tracker | https://api.github.com/repos/geoff-maddock/events-tracker | closed | Search > Add tag summary as result | low priority | When a user searches for a term, if it matches a tag, display a tag summary

Include

- name

- button to follow (or unfollow)

- number of related events / entities? | 1.0 | Search > Add tag summary as result - When a user searches for a term, if it matches a tag, display a tag summary

Include

- name

- button to follow (or unfollow)

- number of related events / entities? | priority | search add tag summary as result when a user searches for a term if it matches a tag display a tag summary include name button to follow or unfollow number of related events entities | 1 |
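
The requested tag summary can be sketched as a small lookup. This is only an illustration under stated assumptions: the events-tracker data model is not shown in the record, so `TagSummary`, `tag_summary_for`, and the shape of the `tags` mapping are hypothetical, and Python is used purely for brevity.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Set


@dataclass
class TagSummary:
    name: str            # tag name shown in the summary
    followed: bool       # decides whether to show "follow" or "unfollow"
    related_events: int  # number of events carrying the tag


def tag_summary_for(term: str,
                    tags: Dict[str, List[str]],
                    followed_tags: Set[str]) -> Optional[TagSummary]:
    """Return a summary when the search term matches a tag name, else None.

    `tags` maps a tag name to the titles of its related events
    (a hypothetical shape chosen for this sketch).
    """
    key = term.strip().lower()
    for name, events in tags.items():
        if name.lower() == key:
            return TagSummary(name, name in followed_tags, len(events))
    return None
```

A search handler could call `tag_summary_for` before rendering the ordinary results and show the summary only when the return value is not `None`.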

451,501 | 32,030,631,594 | IssuesEvent | 2023-09-22 12:06:01 | swedenconnect/bankid-saml-idp | https://api.github.com/repos/swedenconnect/bankid-saml-idp | closed | Add documentation about how to join Sweden Connect federation | documentation | Add documentation about how to join Sweden Connect federation. | 1.0 | Add documentation about how to join Sweden Connect federation - Add documentation about how to join Sweden Connect federation. | non_priority | add documentation about how to join sweden connect federation add documentation about how to join sweden connect federation | 0 |

272,954 | 8,519,556,788 | IssuesEvent | 2018-11-01 15:00:15 | desktop/desktop | https://api.github.com/repos/desktop/desktop | closed | Aborting a new merge doesn't update the changed files list | bug priority-2 | ## Description

After aborting a merge, the status doesn't update. I continue to see all the conflicted files, etc. If I check `git status` on the command line, it reflects that I aborted the merge. If I switch away from the app and back, the changed files updates as I'd expect.

## Version

* GitHub Desktop: 1.5... | 1.0 | Aborting a new merge doesn't update the changed files list - ## Description

After aborting a merge, the status doesn't update. I continue to see all the conflicted files, etc. If I check `git status` on the command line, it reflects that I aborted the merge. If I switch away from the app and back, the changed files ... | priority | aborting a new merge doesn t update the changed files list description after aborting a merge the status doesn t update i continue to see all the conflicted files etc if i check git status on the command line it reflects that i aborted the merge if i switch away from the app and back the changed files ... | 1 |

157,183 | 5,996,375,119 | IssuesEvent | 2017-06-03 13:44:27 | TASVideos/BizHawk | https://api.github.com/repos/TASVideos/BizHawk | closed | Disconnect SNES Controllers | auto-migrated Core-BSNES Priority-Medium Type-Enhancement | ```

What steps will reproduce the problem?

1. Play Tiny Toon Adventures Wacky Sports Challenge

2. Select Start Game

3. You will need to push the A button on Player 2 to start playing

What is the expected output? What do you see instead?

I would like a way to turn off SNES Controllers that I don't want/need.

What vers... | 1.0 | Disconnect SNES Controllers - ```

What steps will reproduce the problem?

1. Play Tiny Toon Adventures Wacky Sports Challenge

2. Select Start Game

3. You will need to push the A button on Player 2 to start playing

What is the expected output? What do you see instead?

I would like a way to turn off SNES Controllers that... | priority | disconnect snes controllers what steps will reproduce the problem play tiny toon adventures wacky sports challenge select start game you will need to push the a button on player to start playing what is the expected output what do you see instead i would like a way to turn off snes controllers that... | 1 |

125,051 | 17,795,927,974 | IssuesEvent | 2021-08-31 22:13:07 | ghc-dev/Ashley-Campos | https://api.github.com/repos/ghc-dev/Ashley-Campos | opened | CVE-2017-1000048 (High) detected in qs-4.0.0.tgz | security vulnerability | ## CVE-2017-1000048 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>qs-4.0.0.tgz</b></p></summary>

<p>A querystring parser that supports nesting and arrays, with a depth limit</p>

<p>L... | True | CVE-2017-1000048 (High) detected in qs-4.0.0.tgz - ## CVE-2017-1000048 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>qs-4.0.0.tgz</b></p></summary>

<p>A querystring parser that suppo... | non_priority | cve high detected in qs tgz cve high severity vulnerability vulnerable library qs tgz a querystring parser that supports nesting and arrays with a depth limit library home page a href path to dependency file ashley campos package json path to vulnerable library ashl... | 0 |

16,609 | 2,615,119,834 | IssuesEvent | 2015-03-01 05:45:54 | chrsmith/google-api-java-client | https://api.github.com/repos/chrsmith/google-api-java-client | closed | Gzipped Request Content Unsupported by Some Servers | auto-migrated Component-HTTP Milestone-Version1.4.0 Priority-Medium Type-Enhancement | ```

Version of google-api-java-client (e.g. 1.3.1-alpha)?

1.4.0-SNAPSHOT

Java environment (e.g. Java 6, Android 2.3, App Engine 1.4.2)?

Java 6

Describe the problem.

The library currently gzips all content > 256 bytes. There is no guarantee

that all servers will accept gzipped content, nor any mechanism to negotiate... | 1.0 | Gzipped Request Content Unsupported by Some Servers - ```

Version of google-api-java-client (e.g. 1.3.1-alpha)?

1.4.0-SNAPSHOT

Java environment (e.g. Java 6, Android 2.3, App Engine 1.4.2)?

Java 6

Describe the problem.

The library currently gzips all content > 256 bytes. There is no guarantee

that all servers will ... | priority | gzipped request content unsupported by some servers version of google api java client e g alpha snapshot java environment e g java android app engine java describe the problem the library currently gzips all content bytes there is no guarantee that all servers will ac... | 1 |
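
The gzip concern in the record above can be illustrated with a short sketch. It is not the google-api-java-client API: `encode_body`, `GZIP_THRESHOLD`, and the `allow_gzip` flag are hypothetical names, and Python is used only for brevity. The idea is that compression above the 256-byte threshold mentioned in the report becomes something the caller can switch off for servers that reject gzipped request bodies.

```python
import gzip

# Threshold taken from the issue text (content > 256 bytes is gzipped).
GZIP_THRESHOLD = 256


def encode_body(body: bytes, allow_gzip: bool = True):
    """Return (payload, headers) for a request body.

    Hypothetical helper: compresses only when the caller allows it and
    the body is larger than GZIP_THRESHOLD bytes.
    """
    if allow_gzip and len(body) > GZIP_THRESHOLD:
        return gzip.compress(body), {"Content-Encoding": "gzip"}
    return body, {}
```

Passing `allow_gzip=False` sends the body unchanged, which is the kind of opt-out the report appears to be asking for.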

489,542 | 14,107,904,085 | IssuesEvent | 2020-11-06 16:58:19 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | samples: net: sockets: tcp: tcp2 server not accepting with ipv6 bsd sockets | area: Networking bug priority: medium | **Describe the bug**

Prior to 70dae094ba2dd3e1f02684a8d673d434966b8b76 it was possible to run an IPv6 TCP server using the default TCP implementation and the BSD sockets API over IEEE 802.15.4. However, since TCP2 became the default TCP implementation, it has not been possible (with the same configuration).

Further... | 1.0 | samples: net: sockets: tcp: tcp2 server not accepting with ipv6 bsd sockets - **Describe the bug**

Prior to 70dae094ba2dd3e1f02684a8d673d434966b8b76 it was possible to run an IPv6 TCP server using the default TCP implementation and the BSD sockets API over IEEE 802.15.4. However, since TCP2 became the default TCP impl... | priority | samples net sockets tcp server not accepting with bsd sockets describe the bug prior to it was possible to run an tcp server using the default tcp implementation and the bsd sockets api over ieee however since became the default tcp implementation it has not been possible with the same co... | 1 |

322,565 | 9,819,497,695 | IssuesEvent | 2019-06-13 22:13:38 | clearlinux/distribution | https://api.github.com/repos/clearlinux/distribution | closed | xdg-open (and other XDG tools) missing | bug medium priority | **Describe the bug**

```

$ xdg-open

bash: xdg-open: command not found

```

Many scripts assume the XDG tools to be present on any desktop system.

**To Reproduce**

Steps to reproduce the behavior:

1. Install the XFCE desktop

2. open `xfce-terminal`

3. open `xdg-open`

4. Try to close it

**Expected behavi... | 1.0 | xdg-open (and other XDG tools) missing - **Describe the bug**

```

$ xdg-open

bash: xdg-open: command not found

```

Many scripts assume the XDG tools to be present on any desktop system.

**To Reproduce**

Steps to reproduce the behavior:

1. Install the XFCE desktop

2. open `xfce-terminal`

3. open `xdg-open`... | priority | xdg open and other xdg tools missing describe the bug xdg open bash xdg open command not found many scripts assume the xdg tools to be present on any desktop system to reproduce steps to reproduce the behavior install the xfce desktop open xfce terminal open xdg open ... | 1 |

263,178 | 28,026,453,322 | IssuesEvent | 2023-03-28 09:26:42 | Dima2021/cargo-audit | https://api.github.com/repos/Dima2021/cargo-audit | closed | CVE-2020-25575 (High) detected in failure-0.1.8.crate - autoclosed | Mend: dependency security vulnerability | ## CVE-2020-25575 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>failure-0.1.8.crate</b></p></summary>

<p>Experimental error handling abstraction.</p>

<p>Library home page: <a href="h... | True | CVE-2020-25575 (High) detected in failure-0.1.8.crate - autoclosed - ## CVE-2020-25575 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>failure-0.1.8.crate</b></p></summary>

<p>Experime... | non_priority | cve high detected in failure crate autoclosed cve high severity vulnerability vulnerable library failure crate experimental error handling abstraction library home page a href dependency hierarchy rustsec crate root library cargo edit crate ... | 0 |

525,442 | 15,253,561,020 | IssuesEvent | 2021-02-20 08:16:57 | FaultyFunctions/Crochet | https://api.github.com/repos/FaultyFunctions/Crochet | closed | Crochet for Yarn 2.0 | Priority: High Status: In-Progress Type: Feature | - [x] File tags editing

- [x] File tags saving

- [x] File tags loading

- [x] Syntax highlighting for editor

- [x] Title validation

- [x] Prevent errors and fix loading if yarn file doesn't have Crochet specific, node-level metadata like position.

- [x] Header tags

- [x] File Tags don't get reset when opening new files | 1.0 | Crochet for Yarn 2.0 - - [x] File tags editing

- [x] File tags saving

- [x] File tags loading

- [x] Syntax highlighting for editor

- [x] Title validation

- [x] Prevent errors and fix loading if yarn file doesn't have Crochet specific, node-level metadata like position.

- [x] Header tags

- [x] File Tags don't get reset ... | priority | crochet for yarn file tags editing file tags saving file tags loading syntax highlighting for editor title validation prevent errors and fix loading if yarn file doesn t have crochet specific node level metadata like position header tags file tags don t get reset when opening new... | 1 |

55,170 | 13,535,947,597 | IssuesEvent | 2020-09-16 08:20:52 | googleapis/nodejs-iot | https://api.github.com/repos/googleapis/nodejs-iot | opened | The build failed | buildcop: issue priority: p1 type: bug | This test failed!

To configure my behavior, see [the Build Cop Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/master/packages/buildcop).

If I'm commenting on this issue too often, add the `buildcop: quiet` label and

I will stop commenting.

---

commit: cd7bf85083bade2f6522d5484fd7635b3834... | 1.0 | The build failed - This test failed!

To configure my behavior, see [the Build Cop Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/master/packages/buildcop).

If I'm commenting on this issue too often, add the `buildcop: quiet` label and

I will stop commenting.

---

commit: cd7bf85083bade2f6... | non_priority | the build failed this test failed to configure my behavior see if i m commenting on this issue too often add the buildcop quiet label and i will stop commenting commit buildurl status failed test output the expression evaluated to a falsy value assert ok devices includes gatewa... | 0 |

451,276 | 13,032,702,608 | IssuesEvent | 2020-07-28 05:00:53 | kubesphere/kubesphere | https://api.github.com/repos/kubesphere/kubesphere | closed | one email can be used for different user names | area/iam kind/bug kind/need-to-verify priority/high | **Describe the Bug**

I might accidentally create a new user with an email that is already used by another user, yet the new user is created successfully.

<img width="322" alt="Screen Shot 2020-07-24 at 8 50 51 PM" src="https://user-images.githubusercontent.com/28859385/88393041-9d68f280-cdef-11ea-9aee-32ae3bcb88fd.png">

<img w... | 1.0 | one email can be used for different user names - **Describe the Bug**

I might accidentally create a new user with an email that is already used by another user, yet the new user is created successfully.

<img width="322" alt="Screen Shot 2020-07-24 at 8 50 51 PM" src="https://user-images.githubusercontent.com/28859385/88393041-9... | priority | one email can be used for different user names describe the bug i might accidentally create a new user with the email that is already used by another user but it is successful created img width alt screen shot at pm src img width alt screen shot at pm src vers... | 1 |

41,032 | 21,394,439,071 | IssuesEvent | 2022-04-21 10:11:47 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | [APM] Add experimental probability setting for aggregations | Team:apm apm:performance v8.3.0 | with the `random_sampler` aggregation being available in ES (currently behind a feature flag), we can add a simple, experimental `probability` setting that takes any value between 0 and 1. In the future we can come up with something better, but to expose and test the feature a simple setting would be a good start.

W... | True | [APM] Add experimental probability setting for aggregations - with the `random_sampler` aggregation being available in ES (currently behind a feature flag), we can add a simple, experimental `probability` setting that takes any value between 0 and 1. In the future we can come up with something better, but to expose and... | non_priority | add experimental probability setting for aggregations with the random sampler aggregation being available in es currently behind a feature flag we can add a simple experimental probability setting that takes any value between and in the future we can come up with something better but to expose and tes... | 0 |

50,090 | 3,006,179,338 | IssuesEvent | 2015-07-27 08:41:25 | Itseez/opencv | https://api.github.com/repos/Itseez/opencv | opened | Error in Python Find Contours | affected: 2.4 auto-transferred bug category: python bindings priority: low | Transferred from http://code.opencv.org/issues/1440

```

|| kscottz - on 2011-10-20 16:25

|| Priority: Low

|| Affected: 2.4.3

|| Category: python bindings

|| Tracker: Bug

|| Difficulty: None

|| PR:

|| Platform: None / None

```

Error in Python Find Contours

-----------

```

I found a subtle bug in the cv.findContours m... | 1.0 | Error in Python Find Contours - Transferred from http://code.opencv.org/issues/1440

```

|| kscottz - on 2011-10-20 16:25

|| Priority: Low

|| Affected: 2.4.3

|| Category: python bindings

|| Tracker: Bug

|| Difficulty: None

|| PR:

|| Platform: None / None

```

Error in Python Find Contours

-----------

```

I found a sub... | priority | error in python find contours transferred from kscottz on priority low affected category python bindings tracker bug difficulty none pr platform none none error in python find contours i found a subtle bug in the cv findcontours method t... | 1 |

313,337 | 9,559,795,641 | IssuesEvent | 2019-05-03 17:44:14 | GOTO-OBS/goto-alert | https://api.github.com/repos/GOTO-OBS/goto-alert | opened | [WIP] Lessons from S190425z and S190426c | -effort 1 -priority 1 -type proposal GW_events database event_handler | So we've had two actual BNS/NSBH events that we tried to follow up with GOTO. For S190425z and S190426c I ended up doing a fair amount of database insertion myself, from the EMMA workshop in Baltimore. There were a few reasons for that:

* The sentinel failed a couple of times thanks to the way skymaps are stored in th... | 1.0 | [WIP] Lessons from S190425z and S190426c - So we've had two actual BNS/NSBH events that we tried to follow up with GOTO. For S190425z and S190426c I ended up doing a fair amount of database insertion myself, from the EMMA workshop in Baltimore. There were a few reasons for that:

* The sentinel failed a couple of times... | priority | lessons from and so we ve had two actual bns nsbh events that we tried to follow up with goto for and i ended up doing a fair amount of database insertion myself from the emma workshop in baltimore there were a few reasons for that the sentinel failed a couple of times thanks to the way skymaps are s... | 1 |

43,492 | 2,889,811,853 | IssuesEvent | 2015-06-13 19:42:39 | IMAGINARY/imaginary-web | https://api.github.com/repos/IMAGINARY/imaginary-web | opened | Translation of new Texts section | enhancement high priority | The new Texts section has many untranslated interface strings. | 1.0 | Translation of new Texts section - The new Texts section has many untranslated interface strings. | priority | translation of new texts section the new texts section has many untranslated interface strings | 1 |

354,188 | 10,563,508,231 | IssuesEvent | 2019-10-04 21:10:51 | wso2-cellery/sdk | https://api.github.com/repos/wso2-cellery/sdk | closed | Error while executing cellery test command | Priority/Highest Severity/Critical Type/Bug | Traceback (most recent call last):

File "/Library/Cellery/telepresence-0.101/bin/telepresence/telepresence/cli.py", line 136, in crash_reporting

yield

File "/Library/Cellery/telepresence-0.101/bin/telepresence/telepresence/main.py", line 66, in main

env, pod_info = get_remote_env(runner, ssh, remote_info)

... | 1.0 | Error while executing cellery test command - Traceback (most recent call last):

File "/Library/Cellery/telepresence-0.101/bin/telepresence/telepresence/cli.py", line 136, in crash_reporting

yield

File "/Library/Cellery/telepresence-0.101/bin/telepresence/telepresence/main.py", line 66, in main

env, pod_info... | priority | error while executing cellery test command traceback most recent call last file library cellery telepresence bin telepresence telepresence cli py line in crash reporting yield file library cellery telepresence bin telepresence telepresence main py line in main env pod info get ... | 1 |

317,088 | 23,663,509,847 | IssuesEvent | 2022-08-26 18:05:42 | awslabs/amazon-eks-ami | https://api.github.com/repos/awslabs/amazon-eks-ami | closed | Bring back EKS AMI versions in AWS documentation | documentation | I used to be able to visit https://docs.aws.amazon.com/eks/latest/userguide/eks-linux-ami-versions.html to get a list of valid AMI versions for EKS.

Now, the list is gone.

I have to visit https://github.com/awslabs/amazon-eks-ami/blob/master/CHANGELOG.md, do information stitching with trial and error to get the r... | 1.0 | Bring back EKS AMI versions in AWS documentation - I used to be able to visit https://docs.aws.amazon.com/eks/latest/userguide/eks-linux-ami-versions.html to get a list of valid AMI versions for EKS.

Now, the list is gone.

I have to visit https://github.com/awslabs/amazon-eks-ami/blob/master/CHANGELOG.md, do info... | non_priority | bring back eks ami versions in aws documentation i used to be able to visit to get a list of valid ami versions for eks now the list is gone i have to visit do information stitching with trial and error to get the right ami versions example what s the corresponding version for ami release to ... | 0 |

712,392 | 24,493,870,770 | IssuesEvent | 2022-10-10 06:43:41 | open-telemetry/opentelemetry-js-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-js-contrib | closed | hapi: support direct plugins with prefixed paths | bug up-for-grabs stale priority:p1 | <!--

Please answer these questions before submitting a bug report.

-->

### What version of OpenTelemetry are you using?

`@opentelemetry/instrumentation-hapi 0.27.0`

### What version of Node are you using?

`16.10.0`

### What did you do?

Passed plugin directly to `server.register` along with options:... | 1.0 | hapi: support direct plugins with prefixed paths - <!--

Please answer these questions before submitting a bug report.

-->

### What version of OpenTelemetry are you using?

`@opentelemetry/instrumentation-hapi 0.27.0`

### What version of Node are you using?

`16.10.0`

### What did you do?

Passed plugi... | priority | hapi support direct plugins with prefixed paths please answer these questions before submitting a bug report what version of opentelemetry are you using opentelemetry instrumentation hapi what version of node are you using what did you do passed plugin d... | 1 |

17,294 | 6,401,186,191 | IssuesEvent | 2017-08-05 18:28:10 | godotengine/godot | https://api.github.com/repos/godotengine/godot | closed | Building export templates (release & release_debug) X11 failed (core/rid.h) | bug platform:linux topic:buildsystem | *Bugsquad edit:* Current master HEAD.

Impossible to compile export templates for X11

scons platform=x11 -> works

but:

scons platform=x11 tools=no target=release_debug bits=64 -> failed

scons platform=x11 tools=no target=release bits=64 -> failed

```

scons platform=x11 tools=no target=release bits=... | 1.0 | Building export templates (release & release_debug) X11 failed (core/rid.h) - *Bugsquad edit:* Current master HEAD.

Impossible to compile export templates for X11

scons platform=x11 -> works

but:

scons platform=x11 tools=no target=release_debug bits=64 -> failed

scons platform=x11 tools=no target=releas... | non_priority | building export templates release release debug failed core rid h bugsquad edit current master head impossible to compile export templates for scons platform works but scons platform tools no target release debug bits failed scons platform tools no target release bits ... | 0 |

165,085 | 6,262,024,367 | IssuesEvent | 2017-07-15 06:05:33 | HabitRPG/habitica | https://api.github.com/repos/HabitRPG/habitica | closed | Add Reasons for Leaving Group/Kicking from Group | priority: minor status: issue: help welcome now | Need to add an optional text field so people can state why they're leaving a group or party leaders can send a message explaining why they're kicking someone out.

## <bountysource-plugin>

Want to back this issue? **[Post a bounty on it!](https://www.bountysource.com/issues/1419952-add-reasons-for-leaving-group-kicking... | 1.0 | Add Reasons for Leaving Group/Kicking from Group - Need to add an optional text field so people can state why they're leaving a group or party leaders can send a message explaining why they're kicking someone out.

## <bountysource-plugin>

Want to back this issue? **[Post a bounty on it!](https://www.bountysource.com/i... | priority | add reasons for leaving group kicking from group need to add an optional text field so people can state why they re leaving a group or party leaders can send a message explaining why they re kicking someone out want to back this issue we accept bounties via | 1 |

235,533 | 18,051,394,948 | IssuesEvent | 2021-09-19 20:10:25 | orcmid/nfoTools | https://api.github.com/repos/orcmid/nfoTools | opened | Triage nfoWare glossary project | task documentation pattern practice | There is a very old semantic exploration associated with this activity. It has to do with collaborative lexicography and is perhaps tied to the adbib upgrade as well. It certainly deals with networks of agreements.

There is also the matter of having a useful glossary for things nfoTool. | 1.0 | Triage nfoWare glossary project - There is a very old semantic exploration associated with this activity. It has to do with collaborative lexicography and is perhaps tied to the adbib upgrade as well. It certainly deals with networks of agreements.

There is also the matter of having an useful glossary for things n... | non_priority | triage nfoware glossary project there is a very old semantic exploration associated with this activity it has to do with collaborative lexicography and is perhaps tied to the adbib upgrade as well it certainly deals with networks of agreements there is also the matter of having an useful glossary for things n... | 0 |

38,678 | 15,772,613,332 | IssuesEvent | 2021-03-31 22:02:21 | dotnet/fsharp | https://api.github.com/repos/dotnet/fsharp | closed | Differentiate between signature and implementation files in Go to All results | Area-IDE Language Service Feature Request | When looking up `mknull` with Ctrl+T, the results look like this

There's no way to tell which results correspond to implementations and which to signatures. (And the order of `QuotationPickler... | 1.0 | Differentiate between signature and implementation files in Go to All results - When looking up `mknull` with Ctrl+T, the results look like this

There's no way to tell which results corresponde... | non_priority | differentiate between signature and implementation files in go to all results when looking up mknull with ctrl t the results look like this there s no way to tell which results corresponde to implementations and which to signatures and the order of quotationpickler mknull results is a bit weird wh... | 0 |

89,398 | 8,202,632,598 | IssuesEvent | 2018-09-02 12:01:06 | humera987/HumTestData | https://api.github.com/repos/humera987/HumTestData | opened | Humera_Test_Proj : api_v1_dashboard_count-projects_get_auth_invalid | Humera_Test_Proj | Project : Humera_Test_Proj

Job : Stg

Env : Stg

Region : FXLabs/US_WEST_1

Result : fail

Status Code : 500

Headers : {}

Endpoint : http://13.57.51.56/api/v1/dashboard/count-projects

Request :

Response :

I/O error on GET request for "http://13.57.51.56/api/v1/dashboard/count-projects": Conn... | 1.0 | Humera_Test_Proj : api_v1_dashboard_count-projects_get_auth_invalid - Project : Humera_Test_Proj

Job : Stg

Env : Stg

Region : FXLabs/US_WEST_1

Result : fail

Status Code : 500

Headers : {}

Endpoint : http://13.57.51.56/api/v1/dashboard/count-projects

Request :

Response :

I/O error on GET ... | non_priority | humera test proj api dashboard count projects get auth invalid project humera test proj job stg env stg region fxlabs us west result fail status code headers endpoint request response i o error on get request for connect to failed connec... | 0 |

385,326 | 11,418,648,255 | IssuesEvent | 2020-02-03 05:27:01 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | closed | Incorrect connection url in Analytics configuration | Priority/Low | **Description:**

Readme has incorrect connection url as tcp://localhost:7612/,tcp://localhost:7613/,tcp://localhost:7614/ and due to this it gets Number format exception.

It should be tcp://localhost:7612,tcp://localhost:7613,tcp://localhost:7614. Due to

https://github.com/wso2/carbon-apimgt/blob/v6.1.66/featur... | 1.0 | Incorrect connection url in Analytics configuration - **Description:**

Readme has incorrect connection url as tcp://localhost:7612/,tcp://localhost:7613/,tcp://localhost:7614/ and due to this it gets Number format exception.

It should be tcp://localhost:7612,tcp://localhost:7613,tcp://localhost:7614. Due to

htt... | priority | incorrect connection url in analytics configuration description readme has incorrect connection url as tcp localhost tcp localhost tcp localhost and due to this it gets number format exception it should be tcp localhost tcp localhost tcp localhost due to suggested labe... | 1 |
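
One plausible way a trailing slash leads to a number format error is sketched below. This is not the carbon-apimgt implementation (the linked source is truncated in the record); `parse_receiver_urls` is a hypothetical parser written in Python, where the analogous failure is a `ValueError` rather than Java's `NumberFormatException`.

```python
def parse_receiver_urls(urls: str):
    """Split a comma-separated 'tcp://host:port' list into (host, port) pairs.

    Hypothetical parser: it only shows why a trailing '/' can break a
    naive integer conversion of the port.
    """
    endpoints = []
    for url in urls.split(","):
        authority = url.strip().split("://", 1)[1]  # drop the 'tcp://' scheme
        host, port = authority.rsplit(":", 1)
        endpoints.append((host, int(port)))  # int("7612/") raises ValueError
    return endpoints
```

With the corrected value `tcp://localhost:7612,tcp://localhost:7613,tcp://localhost:7614` the port strings are plain digits and the conversion succeeds.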

562,026 | 16,636,625,340 | IssuesEvent | 2021-06-04 00:09:49 | kubernetes/website | https://api.github.com/repos/kubernetes/website | closed | Investigate “Persist Hugo resources Between Builds” plugin for Netlify | area/web-development lifecycle/rotten needs-triage priority/awaiting-more-evidence | **What would you like to be investigated**

Look into whether the [Persist Hugo resources Between Builds](https://github.com/cdeleeuwe/netlify-plugin-hugo-cache-resources) plugin for Netlify would speed up site builds; if so, is it worth employing for the site?

**Why is this needed**

The site build in Netlify takes... | 1.0 | Investigate “Persist Hugo resources Between Builds” plugin for Netlify - **What would you like to be investigated**

Look into whether the [Persist Hugo resources Between Builds](https://github.com/cdeleeuwe/netlify-plugin-hugo-cache-resources) plugin for Netlify would speed up site builds; if so, is it worth employing... | priority | investigate “persist hugo resources between builds” plugin for netlify what would you like to be investigated look into whether the plugin for netlify would speed up site builds if so is it worth employing for the site why is this needed the site build in netlify takes a while might speed it up... | 1 |

315,504 | 9,621,434,651 | IssuesEvent | 2019-05-14 10:37:42 | teambit/bit | https://api.github.com/repos/teambit/bit | opened | Bit overrides my readme file with an autogenerated file during export | area/export priority/critical type/bug | ## Expected Behavior

The readme file should not be changed.

## Actual Behavior

The readme file changed to a link file:

```

/* THIS IS A BIT-AUTO-GENERATED FILE. DO NOT EDIT THIS FILE DIRECTLY. */

module.exports = require('../../../../dist/my-comp/my-comp');

```

## Steps to Reproduce the Problem

```sh

bi... | 1.0 | Bit overrides my readme file with an autogenerated file during export - ## Expected Behavior

The readme file should not be changed.

## Actual Behavior

The readme file changed to a link file:

```

/* THIS IS A BIT-AUTO-GENERATED FILE. DO NOT EDIT THIS FILE DIRECTLY. */

module.exports = require('../../../../dist... | priority | bit overrides my readme file with an autogenerated file during export expected behavior the readme file should not be changed actual behavior the readme file changed to a link file this is a bit auto generated file do not edit this file directly module exports require dist... | 1 |

599,252 | 18,268,991,186 | IssuesEvent | 2021-10-04 11:52:36 | Matocolotoe/Skript-1.8 | https://api.github.com/repos/Matocolotoe/Skript-1.8 | closed | Problem of comparing ItemTypes | bug help wanted priority : high completed | With the code below, the `ItemType#isTypeOf` method does not work as expected, for example with nether warts:

```applescript

on click on nether wart:

broadcast "test"

```

Related code: https://github.com/Matocolotoe/Skript-1.8/blob/master/src/main/java/ch/njol/skript/events/EvtClick.java#L197

Th... | 1.0 | Problem of comparing ItemTypes - With the code below, the `ItemType#isTypeOf` method does not work as expected, for example with nether warts:

```applescript

on click on nether wart:

broadcast "test"

```

Related code: https://github.com/Matocolotoe/Skript-1.8/blob/master/src/main/java/ch/njol/skr... | priority | problem of comparing itemtypes for example using the code below the itemtype istypeof method does not work as expected with for example nether warts applescript on click on nether wart broadcast test related code the itemstack of o equals itemstack nether warts x while the itemstac... | 1 |

55,373 | 30,720,313,958 | IssuesEvent | 2023-07-27 15:29:27 | praetorian-inc/noseyparker | https://api.github.com/repos/praetorian-inc/noseyparker | opened | Rework input enumeration to make it possible to enumerate Git repositories in parallel | performance content discovery | Currently, the `scan` command runs in two main phases: input enumeration and content scanning. Each of these phases runs in parallel (but not concurrently; the input enumeration phase completes entirely before the content scanning phase begins).

However, within the input enumeration phase, when a Git repository i... | True | Rework input enumeration to make it possible to enumerate Git repositories in parallel - Currently, the `scan` command runs in two main phases: input enumeration and content scanning. Each of these phases runs in parallel (but not concurrently; the input enumeration phase completes entirely before the content scanning ... | non_priority | rework input enumeration to make it possible to enumerate git repositories in parallel currently the scan command runs in two main phases input enumeration and content scanning each of these phases runs in parallel but not concurrently the input enumeration phase completes entirely before the content scanning ... | 0 |

317,012 | 9,659,835,458 | IssuesEvent | 2019-05-20 14:16:58 | carbon-design-system/carbon-website | https://api.github.com/repos/carbon-design-system/carbon-website | closed | Improved component search and filter | inactive priority: low project: website type: dev :robot: wontfix | As a product designer I can discover and know I found the right component without knowing the component's name or clicking into each component's page.

User feedback:

- Feels like form elements should be next to each other

- She had no idea that they are alphabetical!!!!

- Q: How were you imagining that it woul... | 1.0 | Improved component search and filter - As a product designer I can discover and know I found the right component without knowing the component's name or clicking into each component's page.

User feedback:

- Feels like form elements should be next to each other

- She had no idea that they are alphabetical!!!!

-... | priority | improved component search and filter as a product designer i can discover and know i found the right component without knowing the component s name or clicking into each component s page user feedback feels like form elements should be next to each other she had no idea that they are alphabetical ... | 1 |

56,641 | 14,078,468,698 | IssuesEvent | 2020-11-04 13:36:57 | themagicalmammal/android_kernel_samsung_s5neolte | https://api.github.com/repos/themagicalmammal/android_kernel_samsung_s5neolte | opened | CVE-2019-9003 (High) detected in linuxv3.10 | security vulnerability | ## CVE-2019-9003 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv3.10</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/torva... | True | CVE-2019-9003 (High) detected in linuxv3.10 - ## CVE-2019-9003 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv3.10</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Libra... | non_priority | cve high detected in cve high severity vulnerability vulnerable library linux kernel source tree library home page a href found in head commit a href found in base branch cosmic experimental vulnerable source files android kernel sam... | 0 |

268,799 | 20,361,693,658 | IssuesEvent | 2022-02-20 19:30:05 | sqlalchemy/sqlalchemy | https://api.github.com/repos/sqlalchemy/sqlalchemy | closed | [docs] `make epub` results in error `TopLevelLookupException("Cant locate template for uri 'page.mako'")` | wontfix documentation use case | ### Describe the bug

I tried to build the documentation in `epub` format so that I could read the docs on my ebook reader, as I couldn't find a pre-built `epub` anywhere on https://www.sqlalchemy.org/.

Unfortunately, this fails with the error.

I tried `make html` to see if that's a general issue or only an i... | 1.0 | [docs] `make epub` results in error `TopLevelLookupException("Cant locate template for uri 'page.mako'")` - ### Describe the bug

I tried to build the documentation in `epub` format so that I could read the docs on my ebook reader, as I couldn't find a pre-built `epub` anywhere on https://www.sqlalchemy.org/.

Un... | non_priority | make epub results in error toplevellookupexception cant locate template for uri page mako describe the bug i try to build the documentation into epub format to be able to read the docs on my ebook reader as i couldn t find the pre built epub anywhere on the unfortunately this fails with the... | 0 |

54,056 | 13,247,072,056 | IssuesEvent | 2020-08-19 16:39:01 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | Implement to parse and store the syntax tree API | Area/BuildTools Points/3 Type/Task | **Description:**

Parse the ballerina files to build and give a syntax tree

| 1.0 | Implement to parse and store the syntax tree API - **Description:**

Parse the ballerina files to build and give a syntax tree

| non_priority | implement to parse and store the syntax tree api description parse the ballerina files to build and give a syntax tree | 0 |

31,247 | 11,893,280,814 | IssuesEvent | 2020-03-29 10:47:31 | nihalmurmu/automata | https://api.github.com/repos/nihalmurmu/automata | closed | WS-2016-0075 (Medium) detected in moment-2.8.4.min.js | security vulnerability | ## WS-2016-0075 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>moment-2.8.4.min.js</b></p></summary>

<p>Parse, validate, manipulate, and display dates</p>

<p>Library home page: <a h... | True | WS-2016-0075 (Medium) detected in moment-2.8.4.min.js - ## WS-2016-0075 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>moment-2.8.4.min.js</b></p></summary>

<p>Parse, validate, mani... | non_priority | ws medium detected in moment min js ws medium severity vulnerability vulnerable library moment min js parse validate manipulate and display dates library home page a href path to dependency file tmp ws scm automata node modules vis examples performance html pa... | 0 |

19,603 | 11,254,685,527 | IssuesEvent | 2020-01-12 01:59:41 | tktaofik/airnd-market | https://api.github.com/repos/tktaofik/airnd-market | closed | Bootstrap user service | user-service | - [x] start app with makefile

- [x] postgreSQL db

- [x] basic api routes

- [x] health endpoints

- [x] dockerfile

- [x] docker compose file

- [x] start app with docker-compose | 1.0 | Bootstrap user service - - [x] start app with makefile

- [x] postgreSQL db

- [x] basic api routes

- [x] health endpoints

- [x] dockerfile

- [x] docker compose file

- [x] start app with docker-compose | non_priority | bootstrap user service start app with makefile postgresql db basic api routes health endpoints dockerfile docker compose file start app with docker compose | 0 |

531,484 | 15,499,101,338 | IssuesEvent | 2021-03-11 07:29:02 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.youtube.com - see bug description | browser-firefox engine-gecko priority-critical | <!-- @browser: Firefox 88.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:88.0) Gecko/20100101 Firefox/88.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/68112 -->

**URL**: https://www.youtube.com/results?search_query=hello+darkness+my+... | 1.0 | www.youtube.com - see bug description - <!-- @browser: Firefox 88.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:88.0) Gecko/20100101 Firefox/88.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/68112 -->

**URL**: https://www.youtube.com... | priority | see bug description url browser version firefox operating system windows tested another browser yes chrome problem type something else description i can t use space in the searchfield when searching while a video runs space in the searchfield causes the vid... | 1 |

322,635 | 9,820,741,112 | IssuesEvent | 2019-06-14 04:10:42 | oh-my-fish/oh-my-fish | https://api.github.com/repos/oh-my-fish/oh-my-fish | closed | Installer script checksum is out of sync | installer priority: high | You forgot to update the installer checksum in the latest install script update.

The new hash should be

```

bbace7ef16956d87fd40bff91cd1992a90621e7931ac3055f16b7f6d679e8fff install

```

as @foxcpp mentioned too under the [commit](https://github.com/oh-my-fish/oh-my-fish/commit/a4b2f1cfaac12a614c491e3b8c7ea7e0b... | 1.0 | Installer script checksum is out of sync - You forgot to update the installer checksum in the latest install script update.

The new hash should be

```

bbace7ef16956d87fd40bff91cd1992a90621e7931ac3055f16b7f6d679e8fff install