Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 1k | labels stringlengths 4 1.38k | body stringlengths 1 262k | index stringclasses 16 values | text_combine stringlengths 96 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

604,401 | 18,683,861,383 | IssuesEvent | 2021-11-01 09:52:39 | ita-social-projects/TeachUA | https://api.github.com/repos/ita-social-projects/TeachUA | opened | [UI Mobile] The club's cover photo is not aligned on 'Club's card' page | bug UI Priority: Low | **Environment**: Samsung Edge S7

**Reproducible**: always

**Build found**: the last commit

**Steps to reproduce**

1. Log in to https://speak-ukrainian.org.ua/dev with valid data.

2. Click on the 'Menu' button for website navigation and choose 'Гуртки' tab

3. Choose any club showing on the 'Club list' page and... | 1.0 | [UI Mobile] The club's cover photo is not aligned on 'Club's card' page - **Environment**: Samsung Edge S7

**Reproducible**: always

**Build found**: the last commit

**Steps to reproduce**

1. Log in to https://speak-ukrainian.org.ua/dev with valid data.

2. Click on the 'Menu' button for website navigation and c... | priority | the club s cover photo is not aligned on club s card page environment samsung edge reproducible always build found the last commit steps to reproduce log in to with valid data click on the menu button for website navigation and choose гуртки tab choose any club showi... | 1 |

42,514 | 22,638,869,589 | IssuesEvent | 2022-06-30 22:18:59 | flutter/flutter | https://api.github.com/repos/flutter/flutter | closed | [Impeller] New gallery transition perf worst and 99th %-ile raster time regression on Android | engine severe: performance perf: speed P4 impeller | On the change to render BackdropFilter layers directly to DisplayListBuilder https://github.com/flutter/engine/pull/34337, worst and 99th %-ile frame rasterization times regressed on Moto G4:

https://flutter-flutter-perf.skia.org/e/?begin=1654156387&end=1656469887&keys=X5f6e343cfb7467cdc34ede6764f63458&requestType=0... | True | [Impeller] New gallery transition perf worst and 99th %-ile raster time regression on Android - On the change to render BackdropFilter layers directly to DisplayListBuilder https://github.com/flutter/engine/pull/34337, worst and 99th %-ile frame rasterization times regressed on Moto G4:

https://flutter-flutter-perf.... | non_priority | new gallery transition perf worst and ile raster time regression on android on the change to render backdropfilter layers directly to displaylistbuilder worst and ile frame rasterization times regressed on moto chinmaygarde bdero flar | 0 |

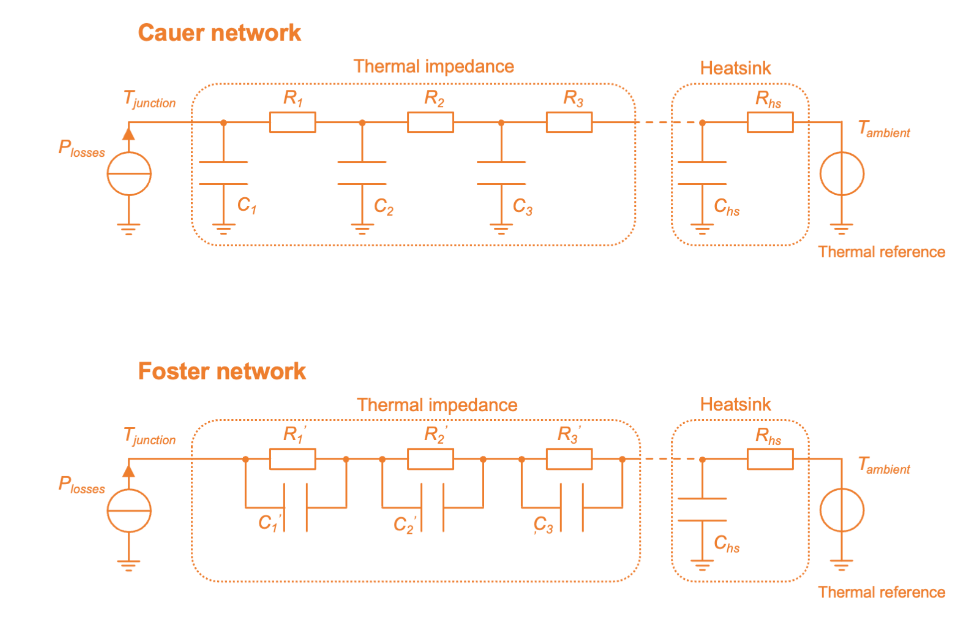

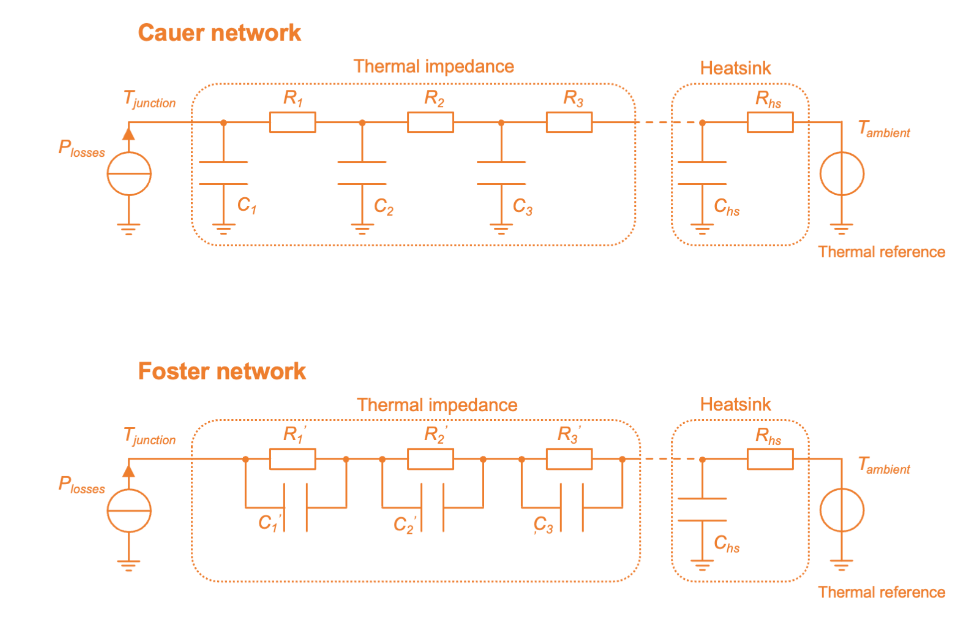

406,684 | 27,577,103,333 | IssuesEvent | 2023-03-08 13:49:40 | aesim-tech/simba-project | https://api.github.com/repos/aesim-tech/simba-project | opened | Fix Thermal Data documentation | documentation | **Is your feature request related to a problem? Please describe.**

There is an error in this picture on the Foster chain. It is impossible to connect a Foster network to a Cauer network (heat sink) as ... | 1.0 | Fix Thermal Data documentation - **Is your feature request related to a problem? Please describe.**

There is an error in this picture on the Foster chain. It is impossible to connect a Foster network ... | non_priority | fix thermal data documentation is your feature request related to a problem please describe there is an error in this picture on the foster chain it is impossible to connect a foster network to a cauer network heat sink as shown in the figure it is necessary to connect a cauer network with anoth... | 0 |

79,914 | 9,966,327,335 | IssuesEvent | 2019-07-08 10:53:44 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | closed | vscode-uri escapes colon in drive letter | *as-designed under-discussion uri | We are trying to use vscode-uri in the debug adapter, and one issue is that vscode-uri uri-encodes the colon after the drive letter in a windows file uri which causes issues downstream.

I see https://github.com/microsoft/vscode/issues/2990#issuecomment-204295374 but I'm worried that will just cause other issues by ... | 1.0 | vscode-uri escapes colon in drive letter - We are trying to use vscode-uri in the debug adapter, and one issue is that vscode-uri uri-encodes the colon after the drive letter in a windows file uri which causes issues downstream.

I see https://github.com/microsoft/vscode/issues/2990#issuecomment-204295374 but I'm wo... | non_priority | vscode uri escapes colon in drive letter we are trying to use vscode uri in the debug adapter and one issue is that vscode uri uri encodes the colon after the drive letter in a windows file uri which causes issues downstream i see but i m worried that will just cause other issues by not encoding other charact... | 0 |

95,648 | 3,954,704,922 | IssuesEvent | 2016-04-29 18:01:35 | ngageoint/hootenanny | https://api.github.com/repos/ngageoint/hootenanny | opened | Ingesting PortAuPrinceOsmPoiRoadBuilding.osm file results in cache error when exportrenderdb script is run | Category: Core Priority: Medium Status: Defined Type: Bug | 2016-04-29 13:59:21,370 ERROR JobExecutionManager:225 - Job with ID: 70c4bae4-666e-45e4-8cfd-595428e7dd8a failed: Failed to execute.Error running osm2ogr:

Relation element with ID: 11380 and type: Way did not exist in the element cache with size = 20000.

make: *** [step1] Error 255

2016-04-29 13:59:21,380 ERROR Ra... | 1.0 | Ingesting PortAuPrinceOsmPoiRoadBuilding.osm file results in cache error when exportrenderdb script is run - 2016-04-29 13:59:21,370 ERROR JobExecutionManager:225 - Job with ID: 70c4bae4-666e-45e4-8cfd-595428e7dd8a failed: Failed to execute.Error running osm2ogr:

Relation element with ID: 11380 and type: Way did not e... | priority | ingesting portauprinceosmpoiroadbuilding osm file results in cache error when exportrenderdb script is run error jobexecutionmanager job with id failed failed to execute error running relation element with id and type way did not exist in the element cache with size make ... | 1 |

121,651 | 17,661,987,133 | IssuesEvent | 2021-08-21 17:50:48 | ghc-dev/Dale-Park | https://api.github.com/repos/ghc-dev/Dale-Park | opened | CVE-2021-3533 (Low) detected in ansible-2.9.9.tar.gz | security vulnerability | ## CVE-2021-3533 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ansible-2.9.9.tar.gz</b></p></summary>

<p>Radically simple IT automation</p>

<p>Library home page: <a href="https://file... | True | CVE-2021-3533 (Low) detected in ansible-2.9.9.tar.gz - ## CVE-2021-3533 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ansible-2.9.9.tar.gz</b></p></summary>

<p>Radically simple IT aut... | non_priority | cve low detected in ansible tar gz cve low severity vulnerability vulnerable library ansible tar gz radically simple it automation library home page a href path to dependency file dale park requirements txt path to vulnerable library requirements txt dependency ... | 0 |

326,421 | 24,083,730,635 | IssuesEvent | 2022-09-19 09:02:59 | valory-xyz/open-aea | https://api.github.com/repos/valory-xyz/open-aea | closed | Docs Generator No longer generates ABC class docs. | bug documentation | The script is;

./scripts/generate_api_docs

The relevant libraries are;

pydoc-markdown

https://niklasrosenstein.github.io/pydoc-markdown/

Which uses;

https://niklasrosenstein.github.io/docspec/

I have been unable to identify anything within both of these packages which would allow us to generate the ABC... | 1.0 | Docs Generator No longer generates ABC class docs. - The script is;

./scripts/generate_api_docs

The relevant libraries are;

pydoc-markdown

https://niklasrosenstein.github.io/pydoc-markdown/

Which uses;

https://niklasrosenstein.github.io/docspec/

I have been unable to identify anything within both of th... | non_priority | docs generator no longer generates abc class docs the script is scripts generate api docs the relevant libraries are pydoc markdown which uses i have been unable to identify anything within both of these packages which would allow us to generate the abcs | 0 |

227,266 | 18,054,249,846 | IssuesEvent | 2021-09-20 05:20:31 | logicmoo/logicmoo_workspace | https://api.github.com/repos/logicmoo/logicmoo_workspace | opened | logicmoo.pfc.test.sanity_base.VERBATUMS_04 JUnit | Test_9999 logicmoo.pfc.test.sanity_base unit_test VERBATUMS_04 Failing | (cd /var/lib/jenkins/workspace/logicmoo_workspace/packs_sys/pfc/t/sanity_base ; timeout --foreground --preserve-status -s SIGKILL -k 10s 10s swipl -x /var/lib/jenkins/workspace/logicmoo_workspace/bin/lmoo-clif verbatums_04.pfc)

% ISSUE: https://github.com/logicmoo/logicmoo_workspace/issues/

% EDIT: https://github.co... | 3.0 | logicmoo.pfc.test.sanity_base.VERBATUMS_04 JUnit - (cd /var/lib/jenkins/workspace/logicmoo_workspace/packs_sys/pfc/t/sanity_base ; timeout --foreground --preserve-status -s SIGKILL -k 10s 10s swipl -x /var/lib/jenkins/workspace/logicmoo_workspace/bin/lmoo-clif verbatums_04.pfc)

% ISSUE: https://github.com/logicmoo/lo... | non_priority | logicmoo pfc test sanity base verbatums junit cd var lib jenkins workspace logicmoo workspace packs sys pfc t sanity base timeout foreground preserve status s sigkill k swipl x var lib jenkins workspace logicmoo workspace bin lmoo clif verbatums pfc issue edit jenkins issue ... | 0 |

34,783 | 14,520,870,808 | IssuesEvent | 2020-12-14 06:21:23 | MicrosoftDocs/dynamics-365-customer-engagement | https://api.github.com/repos/MicrosoftDocs/dynamics-365-customer-engagement | closed | Sample? | Channel Integration Framework Pri2 assigned-to-author dynamics-365-customerservice/svc waiting on customer | Any sample code on how to raise the onclicktoact event? I've tried every way I know how

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 829606f3-febb-1082-5233-fc754fe79bbe

* Version Independent ID: be5e2a6d-674d-c598-c667-6445917a4409

* Co... | 1.0 | Sample? - Any sample code on how to raise the onclicktoact event? I've tried every way I know how

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 829606f3-febb-1082-5233-fc754fe79bbe

* Version Independent ID: be5e2a6d-674d-c598-c667-6445917... | non_priority | sample any sample code on how to raise the onclicktoact event i ve tried every way i know how document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id febb version independent id content content source servic... | 0 |

619,135 | 19,517,167,784 | IssuesEvent | 2021-12-29 12:16:19 | slcommunity/til-back | https://api.github.com/repos/slcommunity/til-back | closed | FormatDatetime setting | Feature/Function Status:To Do Priority:High | ## Purpose

Define a format for the creation date instead of printing the full Date

## Task details

- [x] (YYYY-MM-DD)

## Notes

| 1.0 | FormatDatetime setting - ## Purpose

Define a format for the creation date instead of printing the full Date

## Task details

- [x] (YYYY-MM-DD)

## Notes

| priority | formatdatetime setting purpose define a format for the creation date instead of printing the full date task details yyyy mm dd notes | 1 |

40,624 | 5,243,298,867 | IssuesEvent | 2017-01-31 20:20:00 | verfriemelt-dot-org/sachsencacher | https://api.github.com/repos/verfriemelt-dot-org/sachsencacher | closed | List search poorly placed. | Design enhancement | We need to position the search bar better on the list pages.

The filtered search competes with the global search and is somewhat confusing there; the design needs to make clear what it is for. | 1.0 | List search poorly placed. - We need to position the search bar better on the list pages.

The filtered search competes with the global search and is somewhat confusing there; the design needs to make clear what it is for. | non_priority | list search poorly placed we need to position the search bar better on the list pages the filtered search competes with the global search and is somewhat confusing there the design needs to make clear what it is for | 0 |

380,140 | 11,254,273,024 | IssuesEvent | 2020-01-11 22:18:50 | python-discord/bot | https://api.github.com/repos/python-discord/bot | opened | PEP Command Fails on Empty Metadata | area: information priority: 3 - low type: bug | Currently, the PEP command assumes that if a metadata field is present in the PEP's summary table that the value is populated:

https://github.com/python-discord/bot/blob/74d990540a1072c1782fa7593d7d1abe3c165f49/bot/cogs/utils.py#L64-L72

However, this is not always the case, e.g. [PEP 249](https://www.python.org/d... | 1.0 | PEP Command Fails on Empty Metadata - Currently, the PEP command assumes that if a metadata field is present in the PEP's summary table that the value is populated:

https://github.com/python-discord/bot/blob/74d990540a1072c1782fa7593d7d1abe3c165f49/bot/cogs/utils.py#L64-L72

However, this is not always the case, e... | priority | pep command fails on empty metadata currently the pep command assumes that if a metadata field is present in the pep s summary table that the value is populated however this is not always the case e g because of this the embed field value is provided a null value causing an error to be... | 1 |

730,201 | 25,163,936,830 | IssuesEvent | 2022-11-10 19:03:59 | zowe/vscode-extension-for-zowe | https://api.github.com/repos/zowe/vscode-extension-for-zowe | closed | ZE: create all `.zowe` subfolders needed (e.g. `~/.zowe/settings`) | priority-high 22PI4 | We would still want to have the means of creating all other missing folders (`~/.zowe/settings`)

_Originally posted by @zFernand0 in https://github.com/zowe/vscode-extension-for-zowe/issues/1830#issuecomment-1161834205_ | 1.0 | ZE: create all `.zowe` subfolders needed (e.g. `~/.zowe/settings`) - We would still want to have the means of creating all other missing folders (`~/.zowe/settings`)

_Originally posted by @zFernand0 in https://github.com/zowe/vscode-extension-for-zowe/issues/1830#issuecomment-1161834205_ | priority | ze create all zowe subfolders needed e g zowe settings we would still want to have the means of creating all other missing folders zowe settings originally posted by in | 1 |

797,491 | 28,147,217,394 | IssuesEvent | 2023-04-02 16:17:30 | x13pixels/remedybg-issues | https://api.github.com/repos/x13pixels/remedybg-issues | closed | `char8_t` support | Type: Bug Priority: 6 (Medium) Component: Watch Window | Currently, RemedyBG displays a `char8_t` array as a `TBI []` type and this makes it hard to debug in certain cases (it doesn't seem to recognize `static` allocation of these in the global variables view). | 1.0 | `char8_t` support - Currently, RemedyBG displays a `char8_t` array as a `TBI []` type and this makes it hard to debug in certain cases (it doesn't seem to recognize `static` allocation of these in the global variables view). | priority | t support currently remedybg displays a t array as a tbi type and this makes it hard to debug in certain cases it doesn t seem to recognize static allocation of these in the global variables view | 1 |

95,646 | 10,884,649,787 | IssuesEvent | 2019-11-18 08:47:47 | emielvanseveren/hyperledger | https://api.github.com/repos/emielvanseveren/hyperledger | closed | Word template (project mgmt) | documentation | Het aanmaken van een

> mooi

template waar we de project analyse in kunnen steken. | 1.0 | Word template (project mgmt) - Het aanmaken van een

> mooi

template waar we de project analyse in kunnen steken. | non_priority | word template project mgmt het aanmaken van een mooi template waar we de project analyse in kunnen steken | 0 |

93,981 | 11,839,612,492 | IssuesEvent | 2020-03-23 17:26:03 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | closed | Predictive typing incorrectly performs selection when dot character is typed | *as-designed | Issue Type: <b>Bug</b>

When I start typing, "vue" the following predictive typing popup is display.

The problem is when I type ".", vscode incorrectly replaces the text "vue" with "VTTCue." which is not w... | 1.0 | Predictive typing incorrectly performs selection when dot character is typed - Issue Type: <b>Bug</b>

When I start typing, "vue" the following predictive typing popup is display.

The problem is when I typ... | non_priority | predictive typing incorrectly performs selection when dot character is typed issue type bug when i start typing vue the following predictive typing popup is display the problem is when i type vscode incorrectly replaces the text vue with vttcue which is not what i expect what i expe... | 0 |

477,427 | 13,762,153,615 | IssuesEvent | 2020-10-07 08:45:14 | HackYourFuture-CPH/chattie | https://api.github.com/repos/HackYourFuture-CPH/chattie | closed | When signing up, add the username to the database | High priority User story | There is a bug now, where the username is not added to the database | 1.0 | When signing up, add the username to the database - There is a bug now, where the username is not added to the database | priority | when signing up add the username to the database there is a bug now where the username is not added to the database | 1 |

48,086 | 2,990,153,033 | IssuesEvent | 2015-07-21 07:21:20 | jayway/rest-assured | https://api.github.com/repos/jayway/rest-assured | closed | Replace host:port to baseUri in log when path contains query | bug imported Priority-Medium | _From [twilig...@gmail.com](https://code.google.com/u/115210657126813255036/) on January 30, 2014 11:01:31_

What steps will reproduce the problem? given().log().all().expect().log().all().get(" http://ya.ru/bla/?param"); What is the expected output? What do you see instead? Expect in log:

Request method: GET

Requ... | 1.0 | Replace host:port to baseUri in log when path contains query - _From [twilig...@gmail.com](https://code.google.com/u/115210657126813255036/) on January 30, 2014 11:01:31_

What steps will reproduce the problem? given().log().all().expect().log().all().get(" http://ya.ru/bla/?param"); What is the expected output? What d... | priority | replace host port to baseuri in log when path contains query from on january what steps will reproduce the problem given log all expect log all get what is the expected output what do you see instead expect in log request method get request path request params qu... | 1 |

768,271 | 26,959,907,444 | IssuesEvent | 2023-02-08 17:22:20 | Automattic/woocommerce-payments | https://api.github.com/repos/Automattic/woocommerce-payments | opened | Split UPE: migrate saved SEPA tokens | type: bug priority: high | ### Describe the bug

<!-- A clear and concise description of what the bug is. Please be as descriptive as possible. -->

If a merchant switches from the "Legacy" UPE to the "Split UPE", saved SEPA tokens will not appear anymore.

We need to find a way to migrate the SEPA tokens to the new SEPA gateway.

| 1.0 | Split UPE: migrate saved SEPA tokens - ### Describe the bug

<!-- A clear and concise description of what the bug is. Please be as descriptive as possible. -->

If a merchant switches from the "Legacy" UPE to the "Split UPE", saved SEPA tokens will not appear anymore.

We need to find a way to migrate the SEPA tokens to ... | priority | split upe migrate saved sepa tokens describe the bug if a merchant switches from the legacy upe to the split upe saved sepa tokens will not appear anymore we need to find a way to migrate the sepa tokens to the new sepa gateway | 1 |

368,122 | 10,866,426,066 | IssuesEvent | 2019-11-14 21:13:27 | cassproject/cass-editor | https://api.github.com/repos/cassproject/cass-editor | closed | On attempt to export/publish some older frameworks get a TypeError on splice | Competency Framework High Priority bug | I was republishing the competency frameworks to fix the date formatting issue.

Several frameworks failed to publish/export.

If edit one of the frameworks (**Connecting Learning Theories to Your Teaching Strategies**), and then do a CE ASN JSON-LD export, I get the following error:

TypeError: f["schema:publisher"].... | 1.0 | On attempt to export/publish some older frameworks get a TypeError on splice - I was republishing the competency frameworks to fix the date formatting issue.

Several frameworks failed to publish/export.

If edit one of the frameworks (**Connecting Learning Theories to Your Teaching Strategies**), and then do a CE AS... | priority | on attempt to export publish some older frameworks get a typeerror on splice i was republishing the competency frameworks to fix the date formatting issue several frameworks failed to publish export if edit one of the frameworks connecting learning theories to your teaching strategies and then do a ce as... | 1 |

176,450 | 28,097,975,512 | IssuesEvent | 2023-03-30 17:10:24 | Enterprise-CMCS/eAPD | https://api.github.com/repos/Enterprise-CMCS/eAPD | closed | [Design Issue] Create Closing Notes and Error Messages for Alt Considerations and Risks and Conditions for Enhanced Funding | Design small | The designs are done for these pages with the MES expansion, but we need closing notes, annotations, and error message designs for them as they transition to dev work

### This task is done when…

- [ ] any acceptance criteria (not process oriented, requirements of feature)

- [x] designs are created, taking into consi... | 1.0 | [Design Issue] Create Closing Notes and Error Messages for Alt Considerations and Risks and Conditions for Enhanced Funding - The designs are done for these pages with the MES expansion, but we need closing notes, annotations, and error message designs for them as they transition to dev work

### This task is done whe... | non_priority | create closing notes and error messages for alt considerations and risks and conditions for enhanced funding the designs are done for these pages with the mes expansion but we need closing notes annotations and error message designs for them as they transition to dev work this task is done when… any a... | 0 |

476,946 | 13,752,818,441 | IssuesEvent | 2020-10-06 14:56:44 | AY2021S1-CS2103T-W11-2/tp | https://api.github.com/repos/AY2021S1-CS2103T-W11-2/tp | closed | Add Tasks | priority.Medium severity.Low type.Story | As a user, I am able to add my personal tasks or tasks related to CS1101S but are not currently specified by any categories so that all CS1101S related tasks are stored together for easier reference. Tasks includes todos, deadlines and events. | 1.0 | Add Tasks - As a user, I am able to add my personal tasks or tasks related to CS1101S but are not currently specified by any categories so that all CS1101S related tasks are stored together for easier reference. Tasks includes todos, deadlines and events. | priority | add tasks as a user i am able to add my personal tasks or tasks related to but are not currently specified by any categories so that all related tasks are stored together for easier reference tasks includes todos deadlines and events | 1 |

279,940 | 24,267,150,359 | IssuesEvent | 2022-09-28 07:22:39 | godotengine/godot | https://api.github.com/repos/godotengine/godot | closed | SubViewports doesn't handle custom inputs | bug needs testing topic:input topic:gui | ### Godot version

Godot Engine v4.0.beta1.mono.official.4ba934bf3

### System information

Windows 11, Vulkan API 1.2.0 - Using Vulkan Device #0: AMD - AMD Radeon RX 5700

### Issue description

Using the _Input method on a viewport does not work. Recreating the same situation in Godot 3.5, using .Input and a viewport... | 1.0 | SubViewports doesn't handle custom inputs - ### Godot version

Godot Engine v4.0.beta1.mono.official.4ba934bf3

### System information

Windows 11, Vulkan API 1.2.0 - Using Vulkan Device #0: AMD - AMD Radeon RX 5700

### Issue description

Using the _Input method on a viewport does not work. Recreating the same situati... | non_priority | subviewports doesn t handle custom inputs godot version godot engine mono official system information windows vulkan api using vulkan device amd amd radeon rx issue description using the input method on a viewport does not work recreating the same situation in godot ... | 0 |

656,742 | 21,774,071,895 | IssuesEvent | 2022-05-13 12:07:22 | metabase/metabase | https://api.github.com/repos/metabase/metabase | opened | Custom Expression aggregation in Metrics does not work | Type:Bug Priority:P1 .Correctness Administration/Metrics & Segments .Frontend .Regression | **Describe the bug**

Custom Expression aggregation in Metrics does not work.

Regression since 0.43.0

**To Reproduce**

1. Admin > Data Model > Metrics > Create > Sample > Orders

2. Click "View" > Custom Expression

1. The modal is too small

2. There is no formula validation, so incorrect things can be inpu... | 1.0 | Custom Expression aggregation in Metrics does not work - **Describe the bug**

Custom Expression aggregation in Metrics does not work.

Regression since 0.43.0

**To Reproduce**

1. Admin > Data Model > Metrics > Create > Sample > Orders

2. Click "View" > Custom Expression

1. The modal is too small

2. There ... | priority | custom expression aggregation in metrics does not work describe the bug custom expression aggregation in metrics does not work regression since to reproduce admin data model metrics create sample orders click view custom expression the modal is too small there i... | 1 |

130,304 | 27,642,403,633 | IssuesEvent | 2023-03-10 19:17:19 | ArctosDB/arctos | https://api.github.com/repos/ArctosDB/arctos | opened | Code Table Request - UCR: University of California Riverside Herbarium | Function-CodeTables | ## Instructions

This is a template to facilitate communication with the Arctos Code Table Committee. Submit a separate request for each relevant value. This form is appropriate for exploring how data may best be stored, for adding vocabulary, or for updating existing definitions.

Reviewing documentation before pr... | 1.0 | Code Table Request - UCR: University of California Riverside Herbarium - ## Instructions

This is a template to facilitate communication with the Arctos Code Table Committee. Submit a separate request for each relevant value. This form is appropriate for exploring how data may best be stored, for adding vocabulary, o... | non_priority | code table request ucr university of california riverside herbarium instructions this is a template to facilitate communication with the arctos code table committee submit a separate request for each relevant value this form is appropriate for exploring how data may best be stored for adding vocabulary o... | 0 |

305,972 | 9,379,097,375 | IssuesEvent | 2019-04-04 14:16:28 | OpenNebula/one | https://api.github.com/repos/OpenNebula/one | reopened | ZONE_NAME header gets stripped by passenger | Category: Drivers - Network Category: Sunstone Priority: High Sponsored Status: Accepted Type: Bug | **Description**

ZONE_NAME header gets stripped by passenger

**To Reproduce**

change zone using sunstone + passenger

**Expected behavior**

zone should be changed

**Details**

- Affected Component: Sunstone

<!--////////////////////////////////////////////-->

<!-- THIS SECTION IS FOR THE DEVELOPMENT TEAM ... | 1.0 | ZONE_NAME header gets stripped by passenger - **Description**

ZONE_NAME header gets stripped by passenger

**To Reproduce**

change zone using sunstone + passenger

**Expected behavior**

zone should be changed

**Details**

- Affected Component: Sunstone

<!--////////////////////////////////////////////-->

... | priority | zone name header gets stripped by passenger description zone name header gets stripped by passenger to reproduce change zone using sunstone passenger expected behavior zone should be changed details affected component sunstone progress status branch creat... | 1 |

14,625 | 3,412,316,260 | IssuesEvent | 2015-12-05 20:01:21 | radare/radare2 | https://api.github.com/repos/radare/radare2 | closed | ELF: no imported functions identification | bug file-format test-required | On this binary: https://github.com/ctfs/write-ups-2015/tree/master/nuit-du-hack-ctf-quals-2015/reverse/clark-kent r2 fails to correctly identify imported functions.

This is probably because the binary doesn't have section header information. | 1.0 | ELF: no imported functions identification - On this binary: https://github.com/ctfs/write-ups-2015/tree/master/nuit-du-hack-ctf-quals-2015/reverse/clark-kent r2 fails to correctly identify imported functions.

This is probably because the binary doesn't have section header information. | non_priority | elf no imported functions identification on this binary fails to correctly identify imported functions this is probably because the binary doesn t have section header information | 0 |

535,501 | 15,689,435,358 | IssuesEvent | 2021-03-25 15:40:29 | fecgov/fec-eregs | https://api.github.com/repos/fecgov/fec-eregs | opened | Load the 2021 11 CFR into e-regs | High priority Pairing opportunity Work: Back-end Work: Content | ### What we are after

Making sure the newest version (2021) of 11 CFR is parsed into e-regs

GPO links to different formats: will add link here when available

Content Team will put in changes in for the new regs so we know what to look for.

### Completion criteria

- [ ] Regs loaded onto dev

- [ ] Regs load... | 1.0 | Load the 2021 11 CFR into e-regs - ### What we are after

Making sure the newest version (2021) of 11 CFR is parsed into e-regs

GPO links to different formats: will add link here when available

Content Team will put in changes in for the new regs so we know what to look for.

### Completion criteria

- [ ] Re... | priority | load the cfr into e regs what we are after making sure the newest version of cfr is parsed into e regs gpo links to different formats will add link here when available content team will put in changes in for the new regs so we know what to look for completion criteria regs loaded ... | 1 |

42,122 | 9,162,056,792 | IssuesEvent | 2019-03-01 12:18:04 | mozilla-releng/firefox-infra-changelog | https://api.github.com/repos/mozilla-releng/firefox-infra-changelog | closed | W0622: Redefining built-in 'all' (redefined-builtin) in file 'client.py' | code-style | ```Python

client.py:30:8: W0622: Redefining built-in 'all' (redefined-builtin)

```

We should rename the variable to use something else than `all`.

Background: all() is a method that returns a bool True value when all elements in the given iterable are true.

| 1.0 | W0622: Redefining built-in 'all' (redefined-builtin) in file 'client.py' - ```Python

client.py:30:8: W0622: Redefining built-in 'all' (redefined-builtin)

```

We should rename the variable to use something else than `all`.

Background: all() is a method that returns a bool True value when all elements in the given ... | non_priority | redefining built in all redefined builtin in file client py python client py redefining built in all redefined builtin we should rename the variable to use something else than all background all is a method that returns a bool true value when all elements in the given iterable ... | 0 |

240,094 | 20,010,512,320 | IssuesEvent | 2022-02-01 05:28:33 | open-telemetry/opentelemetry-java-instrumentation | https://api.github.com/repos/open-telemetry/opentelemetry-java-instrumentation | opened | Run tests against more versions in muzzle range | enhancement area:tests | Currently tests are typically run against the initial (base) and latest version in the muzzle range.

The muzzle checks tell us that there are no API-incompatible changes in the versions in the middle that affect the instrumentation. But what muzzle can't tell us is if there were any behavior changes in the versions ... | 1.0 | Run tests against more versions in muzzle range - Currently tests are typically run against the initial (base) and latest version in the muzzle range.

The muzzle checks tell us that there are no API-incompatible changes in the versions in the middle that affect the instrumentation. But what muzzle can't tell us is i... | non_priority | run tests against more versions in muzzle range currently tests are typically run against the initial base and latest version in the muzzle range the muzzle checks tell us that there are no api incompatible changes in the versions in the middle that affect the instrumentation but what muzzle can t tell us is i... | 0 |

375,836 | 11,135,042,653 | IssuesEvent | 2019-12-20 13:27:08 | Rithari/OnePlusBot | https://api.github.com/repos/Rithari/OnePlusBot | opened | Rework the way modmail messages are being logged | enhancement low priority | **Is your feature request related to a problem? Please describe.**

Currently, when closing, each embed is replayed into the logging channel, which, because for ratelimiting reasons, is delayed. This mechanism causes the logging to take a significant amount.

**Describe the solution you'd like**

Logging mechanism sh... | 1.0 | Rework the way modmail messages are being logged - **Is your feature request related to a problem? Please describe.**

Currently, when closing, each embed is replayed into the logging channel, which, because for ratelimiting reasons, is delayed. This mechanism causes the logging to take a significant amount.

**Descr... | priority | rework the way modmail messages are being logged is your feature request related to a problem please describe currently when closing each embed is replayed into the logging channel which because for ratelimiting reasons is delayed this mechanism causes the logging to take a significant amount descr... | 1 |

173,733 | 6,530,405,298 | IssuesEvent | 2017-08-30 15:01:04 | axvr/Vivid.vim | https://api.github.com/repos/axvr/Vivid.vim | reopened | Modify plugin install location | enhancement priority | Automatic setting of plugin install location depending upon running Vim or Neovim. Allow user to set a custom location. | 1.0 | Modify plugin install location - Automatic setting of plugin install location depending upon running Vim or Neovim. Allow user to set a custom location. | priority | modify plugin install location automatic setting of plugin install location depending upon running vim or neovim allow user to set a custom location | 1 |

690,494 | 23,661,759,237 | IssuesEvent | 2022-08-26 16:15:23 | o3de/o3de | https://api.github.com/repos/o3de/o3de | closed | Lua Editor: Unable to connect to the O3DE Editor | feature/networking kind/bug sig/content triage/accepted priority/critical | **Describe the bug**

The Lua Editor cannot be connected to the O3DE Editor. On the first launch of the Lua Editor, the only available options in Target list are AssetProcessor and AssetBuilder. When the Lua Editor is restarted, these are no longer available and the Target list is empty.

Additionally, when the Lua Edi... | 1.0 | Lua Editor: Unable to connect to the O3DE Editor - **Describe the bug**

The Lua Editor cannot be connected to the O3DE Editor. On the first launch of the Lua Editor, the only available options in Target list are AssetProcessor and AssetBuilder. When the Lua Editor is restarted, these are no longer available and the Ta... | priority | lua editor unable to connect to the editor describe the bug the lua editor cannot be connected to the editor on the first launch of the lua editor the only available options in target list are assetprocessor and assetbuilder when the lua editor is restarted these are no longer available and the target l... | 1 |

101,546 | 21,709,364,207 | IssuesEvent | 2022-05-10 12:39:05 | appsmithorg/appsmith | https://api.github.com/repos/appsmithorg/appsmith | closed | [Bug]: Allow query/js confirmation modal to be dismissed by clicking outside | Bug UX Improvement Production Needs Triaging Query Execution BE Coders Pod medium Actions Pod | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Description

Currently, user has to click on `yes` or `no` buttons on the confirmation modal to close the modal - but clicking outside the modal should also be allowed when user wants to quickly dismiss the action and continu... | 1.0 | [Bug]: Allow query/js confirmation modal to be dismissed by clicking outside - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Description

Currently, user has to click on `yes` or `no` buttons on the confirmation modal to close the modal - but clicking outside the modal s... | non_priority | allow query js confirmation modal to be dismissed by clicking outside is there an existing issue for this i have searched the existing issues description currently user has to click on yes or no buttons on the confirmation modal to close the modal but clicking outside the modal should ... | 0 |

83,435 | 24,055,870,278 | IssuesEvent | 2022-09-16 16:48:49 | gradle/gradle | https://api.github.com/repos/gradle/gradle | closed | Improve 'dependency not found' error message to be less misleading about the cause of the problem | a:feature in:composite-builds stale | @adammurdoch commented on [Thu Jun 15 2017](https://github.com/gradle/composite-builds/issues/116)

The error messages that Gradle produces when a match for a given selector cannot be found all assume that the only place we look is in repositories defined by the project. For example, we have error messages that say thi... | 1.0 | Improve 'dependency not found' error message to be less misleading about the cause of the problem - @adammurdoch commented on [Thu Jun 15 2017](https://github.com/gradle/composite-builds/issues/116)

The error messages that Gradle produces when a match for a given selector cannot be found all assume that the only place... | non_priority | improve dependency not found error message to be less misleading about the cause of the problem adammurdoch commented on the error messages that gradle produces when a match for a given selector cannot be found all assume that the only place we look is in repositories defined by the project for example we h... | 0 |

119,575 | 15,574,721,597 | IssuesEvent | 2021-03-17 10:11:02 | OfficeDev/office-js | https://api.github.com/repos/OfficeDev/office-js | closed | In Excel Online, the automatically created worksheet of all new workbooks, have the same id | Area: Excel Resolution: by design Type: product bug | As described in [this Stack Overflow question](https://stackoverflow.com/questions/56502276/all-first-worksheets-of-files-created-in-excel-online-have-the-same-id), when I go to [office.com](https://www.office.com) and create a new Excel file, the worksheet that already exists in the new file, has always id `{00000000-... | 1.0 | In Excel Online, the automatically created worksheet of all new workbooks, have the same id - As described in [this Stack Overflow question](https://stackoverflow.com/questions/56502276/all-first-worksheets-of-files-created-in-excel-online-have-the-same-id), when I go to [office.com](https://www.office.com) and create ... | non_priority | in excel online the automatically created worksheet of all new workbooks have the same id as described in when i go to and create a new excel file the worksheet that already exists in the new file has always id if i manually create a new worksheet the worksheet has a normal looking rand... | 0 |

371,585 | 10,974,366,452 | IssuesEvent | 2019-11-29 08:58:13 | deepnetworkgmbh/deepnetworkgmbh.github.io | https://api.github.com/repos/deepnetworkgmbh/deepnetworkgmbh.github.io | closed | Garbled UI Elements on Chrome/Windows on first load. | bug high priority | **Repro steps**

- either ctrl-F5, or do a first time load of home page.

- Issue resolves after first slider action.

Expected Behavior:

- First time load acts normal.

Observe the following for few seconds:

63.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 6.0.1; Tablet; rv:63.0) Gecko/63.0 Firefox/63.0 -->

<!-- @reported_with: mobile-reporter -->

**URL**: https://www.ebay.com/itm/Bayer-Seresto-Flea-and-Tick-Collar-for-cat-8-Month-Protection-odorless/302831012146?hash=item4682228932:g:... | 1.0 | www.ebay.com - see bug description - <!-- @browser: Firefox Mobile (Tablet) 63.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 6.0.1; Tablet; rv:63.0) Gecko/63.0 Firefox/63.0 -->

<!-- @reported_with: mobile-reporter -->

**URL**: https://www.ebay.com/itm/Bayer-Seresto-Flea-and-Tick-Collar-for-cat-8-Month-Protection-odorles... | priority | see bug description url browser version firefox mobile tablet operating system android tested another browser yes problem type something else description while on ebay pages load until i click on a thumbnail to view an item when i do so the page gets an erro... | 1 |

73,238 | 19,602,695,559 | IssuesEvent | 2022-01-06 04:27:12 | carla-simulator/carla | https://api.github.com/repos/carla-simulator/carla | closed | Carla 0.9.9 Docker Image Build Error: Error 403 Forbidden | build system | When I'm trying to build the docker image for carla branch 0.9.9, after running this command

`docker build -t carla -f Carla.Dockerfile . --build-arg GIT_BRANCH=0.9.9`

The error below will show:

T... | 1.0 | Carla 0.9.9 Docker Image Build Error: Error 403 Forbidden - When I'm trying to build the docker image for carla branch 0.9.9, after running this command

`docker build -t carla -f Carla.Dockerfile . --build-arg GIT_BRANCH=0.9.9`

The error below will show:

> IsPublic IsSerial Name BaseType ... | 1.0 | Add-PnPFile can't write Values Modified & Created - ### Reporting an Issue or Missing Feature

When uploading a file with Add-PnPFile values for Modified & Created are ignored

### Expected behavior

> PS > $pubdate

> **Thursday, 19 November 2015 2:47:38 PM**

>

> PS > $pubdate.GetType()

> IsPublic IsSerial Na... | non_priority | add pnpfile can t write values modified created reporting an issue or missing feature when uploading a file with add pnpfile values for modified created are ignored expected behavior ps pubdate thursday november pm ps pubdate gettype ispublic isserial name ... | 0 |

192,134 | 15,339,730,145 | IssuesEvent | 2021-02-27 03:17:39 | UBC-MDS/picturepyfect | https://api.github.com/repos/UBC-MDS/picturepyfect | closed | README.md | documentation | Outline the package you would like to build in the README.md file. (This can be identical for both projects at this point in the project). In particular, your README.md should contain:

- a summary paragraph that describes the project at a high level

- a bulleted list of the functions (and datasets if applicable) ... | 1.0 | README.md - Outline the package you would like to build in the README.md file. (This can be identical for both projects at this point in the project). In particular, your README.md should contain:

- a summary paragraph that describes the project at a high level

- a bulleted list of the functions (and datasets if ... | non_priority | readme md outline the package you would like to build in the readme md file this can be identical for both projects at this point in the project in particular your readme md should contain a summary paragraph that describes the project at a high level a bulleted list of the functions and datasets if ... | 0 |

453,398 | 13,069,264,924 | IssuesEvent | 2020-07-31 06:05:53 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.youtube.com - site is not usable | browser-firefox-mobile engine-gecko priority-critical | <!-- @browser: Firefox Mobile 80.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:80.0) Gecko/80.0 Firefox/80.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/56046 -->

**URL**: https://www.youtube.com/

**Browser / Version**: Firefox Mobile 80.0

*... | 1.0 | www.youtube.com - site is not usable - <!-- @browser: Firefox Mobile 80.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:80.0) Gecko/80.0 Firefox/80.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/56046 -->

**URL**: https://www.youtube.com/

**Bro... | priority | site is not usable url browser version firefox mobile operating system android tested another browser yes chrome problem type site is not usable description browser unsupported steps to reproduce when i open youtube site the browser need me to open it in anot... | 1 |

244,065 | 7,870,331,392 | IssuesEvent | 2018-06-25 00:32:39 | openshift/origin | https://api.github.com/repos/openshift/origin | closed | Unable to get deployment logs for multi container pods | component/cli kind/bug lifecycle/rotten priority/P2 | When there are multiple containers in pod `oc logs` fails for deploymentconfigs for that pod and it is not possible to specify the container with -c parameter.

##### Version

oc v3.2.0.20

kubernetes v1.2.0-36-g4a3f9c5

##### Steps To Reproduce

1. oc logs dc/name_of_pod_with_multiple_containers -c name_of_container

#####... | 1.0 | Unable to get deployment logs for multi container pods - When there are multiple containers in pod `oc logs` fails for deploymentconfigs for that pod and it is not possible to specify the container with -c parameter.

##### Version

oc v3.2.0.20

kubernetes v1.2.0-36-g4a3f9c5

##### Steps To Reproduce

1. oc logs dc/name_o... | priority | unable to get deployment logs for multi container pods when there are multiple containers in pod oc logs fails for deploymentconfigs for that pod and it is not possible to specify the container with c parameter version oc kubernetes steps to reproduce oc logs dc name of pod with ... | 1 |

21,982 | 6,227,830,899 | IssuesEvent | 2017-07-10 21:38:47 | XceedBoucherS/TestImport5 | https://api.github.com/repos/XceedBoucherS/TestImport5 | closed | Problem with BusyIndicator | CodePlex | <b>janglinj[CodePlex]</b> <br />I'm having a problem where after the busy indicator is done it leaves some of my buttons with disabled appearance. They look disabled but they still work. Any chance of fixing that? See the attachment. The image on the left is before the busyindicator

was displayed. The image is after ... | 1.0 | Problem with BusyIndicator - <b>janglinj[CodePlex]</b> <br />I'm having a problem where after the busy indicator is done it leaves some of my buttons with disabled appearance. They look disabled but they still work. Any chance of fixing that? See the attachment. The image on the left is before the busyindicator

was d... | non_priority | problem with busyindicator janglinj i m having a problem where after the busy indicator is done it leaves some of my buttons with disabled appearance they look disabled but they still work any chance of fixing that see the attachment the image on the left is before the busyindicator was displayed the image... | 0 |

459,910 | 13,201,119,938 | IssuesEvent | 2020-08-14 09:31:20 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | uart_fifo_read can only read one character | bug platform: STM32 priority: low | **Describe the bug**

'''

int uart_fifo_init(void){

uint8_t c;

dev = device_get_binding("UART_2");

if (dev == NULL) {

printk("cannot find device\n");

return -1;

}

uart_irq_rx_disable(dev);

uart_irq_tx_disable(dev);

uart_irq_callback_set(dev, uart_fifo_callback);

/* Drain the fifo */

if (uart_i... | 1.0 | uart_fifo_read can only read one character - **Describe the bug**

'''

int uart_fifo_init(void){

uint8_t c;

dev = device_get_binding("UART_2");

if (dev == NULL) {

printk("cannot find device\n");

return -1;

}

uart_irq_rx_disable(dev);

uart_irq_tx_disable(dev);

uart_irq_callback_set(dev, uart_fifo_c... | priority | uart fifo read can only read one character describe the bug int uart fifo init void t c dev device get binding uart if dev null printk cannot find device n return uart irq rx disable dev uart irq tx disable dev uart irq callback set dev uart fifo callb... | 1 |

657,693 | 21,801,282,557 | IssuesEvent | 2022-05-16 05:42:03 | Automattic/woocommerce-payments | https://api.github.com/repos/Automattic/woocommerce-payments | closed | Display fee breakdown in order notes. | type: enhancement priority: high status: has pr component: order details size: medium component: countries currencies localization impact: high category: core | Splitted from: https://github.com/Automattic/woocommerce-payments/issues/2233

All transactions are subject to a base fee (determined by the merchant's country) plus a number of additional fees (like international card processing, FX fees etc).

We currently only show the total fee. We want to break this number down ... | 1.0 | Display fee breakdown in order notes. - Splitted from: https://github.com/Automattic/woocommerce-payments/issues/2233

All transactions are subject to a base fee (determined by the merchant's country) plus a number of additional fees (like international card processing, FX fees etc).

We currently only show the total... | priority | display fee breakdown in order notes splitted from all transactions are subject to a base fee determined by the merchant s country plus a number of additional fees like international card processing fx fees etc we currently only show the total fee we want to break this number down to make it clearer ho... | 1 |

284,658 | 21,464,216,290 | IssuesEvent | 2022-04-26 00:46:25 | makeworld-the-better-one/amfora | https://api.github.com/repos/makeworld-the-better-one/amfora | closed | flatpak package for Amfora | documentation enhancement help wanted | Would really like to have a flatpak package for Amfora (or a .deb package but I think flatpak would cover more ground). | 1.0 | flatpak package for Amfora - Would really like to have a flatpak package for Amfora (or a .deb package but I think flatpak would cover more ground). | non_priority | flatpak package for amfora would really like to have a flatpak package for amfora or a deb package but i think flatpak would cover more ground | 0 |

2,209 | 24,150,830,507 | IssuesEvent | 2022-09-22 00:24:57 | hackforla/ops | https://api.github.com/repos/hackforla/ops | opened | Create Glossary of AWS resources used in Incubator | size: 1pt role: Site Reliability Engineer feature: administrative | ### Overview

As a Dev Ops member, I would like to have a glossary or list of all active AWS resources used by Incubator to deploy its apps. These resources can be identified within the Incubator's code in ... | True | Create Glossary of AWS resources used in Incubator - ### Overview

As a Dev Ops member, I would like to have a glossary or list of all active AWS resources used by Incubator to deploy its apps. These resour... | non_priority | create glossary of aws resources used in incubator overview as a dev ops member i would like to have a glossary or list of all active aws resources used by incubator to deploy its apps these resources can be identified within the incubator s code in the confirmation as a github user when i ... | 0 |

692,393 | 23,732,745,443 | IssuesEvent | 2022-08-31 04:23:05 | wso2/api-manager | https://api.github.com/repos/wso2/api-manager | opened | Make MGW default cache expiry time as a configurable property from MGW level | Type/Bug Priority/Normal | ### Description

Currently, in MGW, when a token comes with a non integer "exp" value, the Oauth2 token cache is set to a default expiry time with 1 hour.

What the customer expect is, since we can configure cache expiry time in the micro-gw.conf file, regardless of the value of "exp" value of the token, it should be s... | 1.0 | Make MGW default cache expiry time as a configurable property from MGW level - ### Description

Currently, in MGW, when a token comes with a non integer "exp" value, the Oauth2 token cache is set to a default expiry time with 1 hour.

What the customer expect is, since we can configure cache expiry time in the micro-gw... | priority | make mgw default cache expiry time as a configurable property from mgw level description currently in mgw when a token comes with a non integer exp value the token cache is set to a default expiry time with hour what the customer expect is since we can configure cache expiry time in the micro gw conf... | 1 |

29,472 | 2,716,125,483 | IssuesEvent | 2015-04-10 17:11:00 | CruxFramework/crux | https://api.github.com/repos/CruxFramework/crux | closed | Hide or Show Slider controls | bug imported Milestone-M14-C3 Priority-Medium TargetVersion-5.1.1 | _From [samuel@cruxframework.org](https://code.google.com/u/samuel@cruxframework.org/) on June 11, 2014 15:05:39_

Add a method to implement this behavior

_Original issue: http://code.google.com/p/crux-framework/issues/detail?id=398_ | 1.0 | Hide or Show Slider controls - _From [samuel@cruxframework.org](https://code.google.com/u/samuel@cruxframework.org/) on June 11, 2014 15:05:39_

Add a method to implement this behavior

_Original issue: http://code.google.com/p/crux-framework/issues/detail?id=398_ | priority | hide or show slider controls from on june add a method to implement this behavior original issue | 1 |

132,772 | 12,517,553,229 | IssuesEvent | 2020-06-03 11:22:11 | abrt/abrt | https://api.github.com/repos/abrt/abrt | closed | update links of fedorahosted.org/abrt | documentation | Most of these files need to be updated.

https://github.com/search?utf8=%E2%9C%93&q=org%3Aabrt+%22fedorahosted.org%2Fabrt%22&type=Code

And we probably can update strings in translations. I.e. download updates from zanata, change url, upload translation and sources to zanata. | 1.0 | update links of fedorahosted.org/abrt - Most of these files need to be updated.

https://github.com/search?utf8=%E2%9C%93&q=org%3Aabrt+%22fedorahosted.org%2Fabrt%22&type=Code

And we probably can update strings in translations. I.e. download updates from zanata, change url, upload translation and sources to zanata. | non_priority | update links of fedorahosted org abrt most of these files need to be updated and we probably can update strings in translations i e download updates from zanata change url upload translation and sources to zanata | 0 |

252,900 | 27,271,379,909 | IssuesEvent | 2023-02-22 22:43:13 | snowflakedb/libsnowflakeclient | https://api.github.com/repos/snowflakedb/libsnowflakeclient | closed | util-linuxv2.36.2: 2 vulnerabilities (highest severity is: 5.5) | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>util-linuxv2.36.2</b></p></summary>

<p>

<p>The util-linux code repository.</p>

<p>Library home page: <a href=https://git.kernel.org/pub/scm/utils/util-linux/util-linu... | True | util-linuxv2.36.2: 2 vulnerabilities (highest severity is: 5.5) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>util-linuxv2.36.2</b></p></summary>

<p>

<p>The util-linux code repository.</p>

<p>Library home page:... | non_priority | util vulnerabilities highest severity is vulnerable library util the util linux code repository library home page a href vulnerable source files deps util linux tar util linux login utils chsh c deps util linux tar util linux login utils chfn... | 0 |

702,693 | 24,131,973,904 | IssuesEvent | 2022-09-21 08:13:12 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | barclays.co.uk - site is not usable | browser-firefox priority-normal engine-gecko | <!-- @browser: Firefox 104.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:104.0) Gecko/20100101 Firefox/104.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/111122 -->

**URL**: https://barclays.co.uk

**Browser / Version**: Firefox 104.0

**Opera... | 1.0 | barclays.co.uk - site is not usable - <!-- @browser: Firefox 104.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:104.0) Gecko/20100101 Firefox/104.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/111122 -->

**URL**: https://barclays.co.uk

**Brow... | priority | barclays co uk site is not usable url browser version firefox operating system windows tested another browser yes edge problem type site is not usable description unable to login steps to reproduce log in first page using card number log in and name works ok... | 1 |

180,224 | 6,647,422,911 | IssuesEvent | 2017-09-28 03:53:28 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | [studio-ui] Some items in various dialogs are not translated when the language is changed | enhancement Priority: Medium | Some items in various dialogs are not translated when the language is changed.

Using the website_editorial bp, change the language by clicking on your user name at the top right of Studio, then select **Settings**

Under **Profile**, change the language from **English** to **Spanish**, then click on the **Update Profi... | 1.0 | [studio-ui] Some items in various dialogs are not translated when the language is changed - Some items in various dialogs are not translated when the language is changed.

Using the website_editorial bp, change the language by clicking on your user name at the top right of Studio, then select **Settings**

Under **Prof... | priority | some items in various dialogs are not translated when the language is changed some items in various dialogs are not translated when the language is changed using the website editorial bp change the language by clicking on your user name at the top right of studio then select settings under profile cha... | 1 |

305,272 | 26,374,613,551 | IssuesEvent | 2023-01-12 00:35:55 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [BACKEND] [REMOTO] Engenheiro de Software Backend na [STONE] | BACK-END BANCO DE DADOS MYSQL SQL SERVER POSTGRESQL REMOTO GITHUB CI/CD APIs TESTES AUTOMATIZADOS DESENVOLVIMENTO WEB HELP WANTED Stale | <!--

==================================================

POR FAVOR, SÓ POSTE SE A VAGA FOR PARA SALVADOR E CIDADES VIZINHAS!

Use: "Desenvolvedor Front-end" ao invés de

"Front-End Developer" \o/

Exemplo: `[JAVASCRIPT] [MYSQL] [NODE.JS] Desenvolvedor Front-End na [NOME DA EMPRESA]`

========================... | 1.0 | [BACKEND] [REMOTO] Engenheiro de Software Backend na [STONE] - <!--

==================================================

POR FAVOR, SÓ POSTE SE A VAGA FOR PARA SALVADOR E CIDADES VIZINHAS!

Use: "Desenvolvedor Front-end" ao invés de

"Front-End Developer" \o/

Exemplo: `[JAVASCRIPT] [MYSQL] [NODE.JS] Desenvol... | non_priority | engenheiro de software backend na por favor só poste se a vaga for para salvador e cidades vizinhas use desenvolvedor front end ao invés de front end developer o exemplo desenvolvedor front end na ... | 0 |

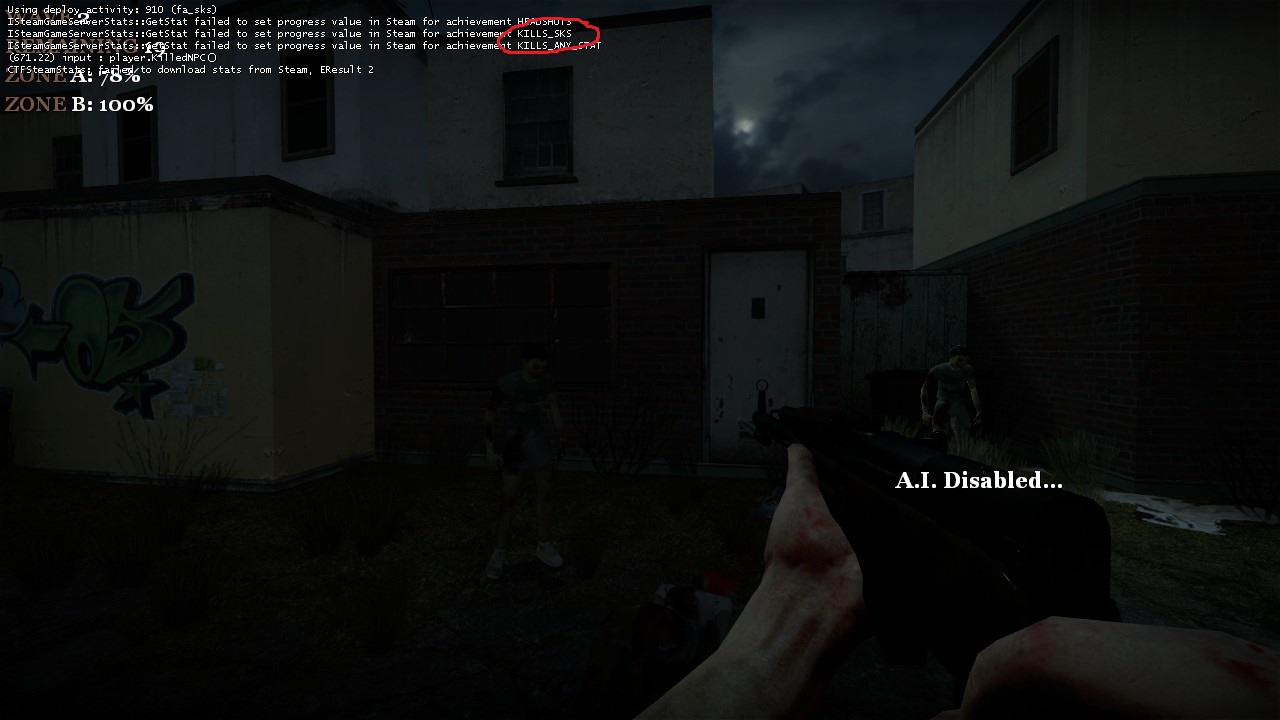

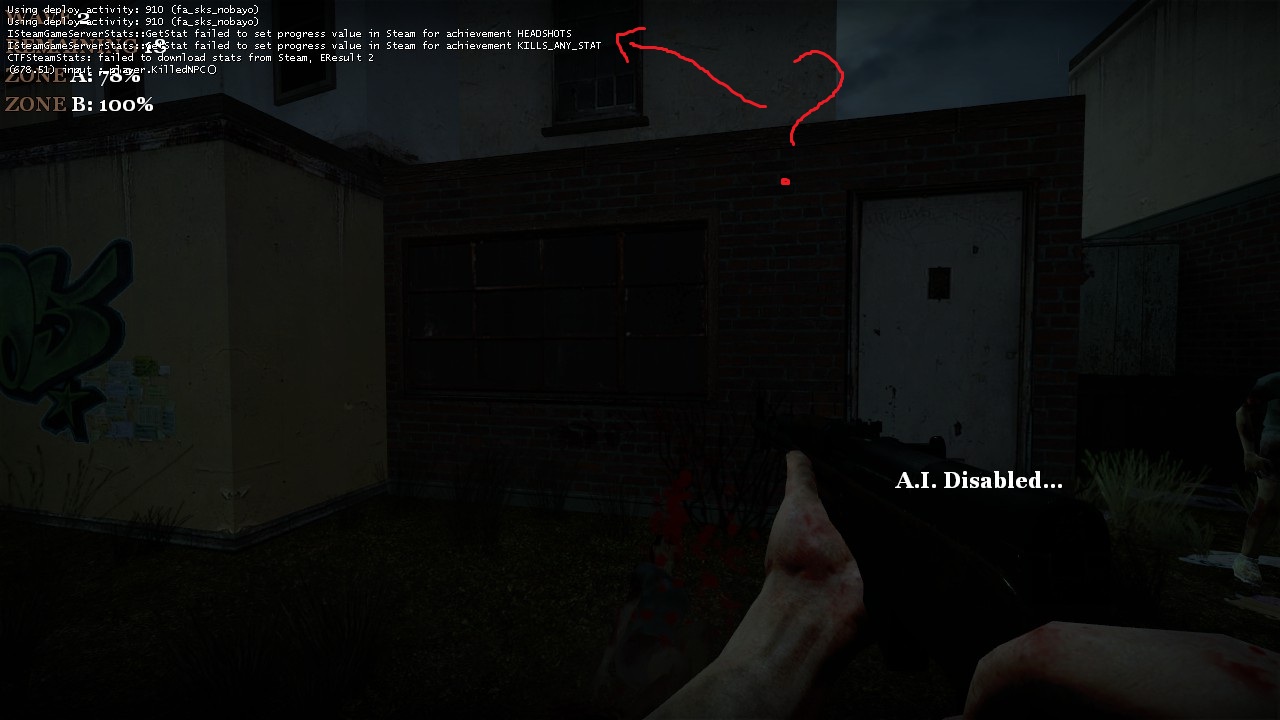

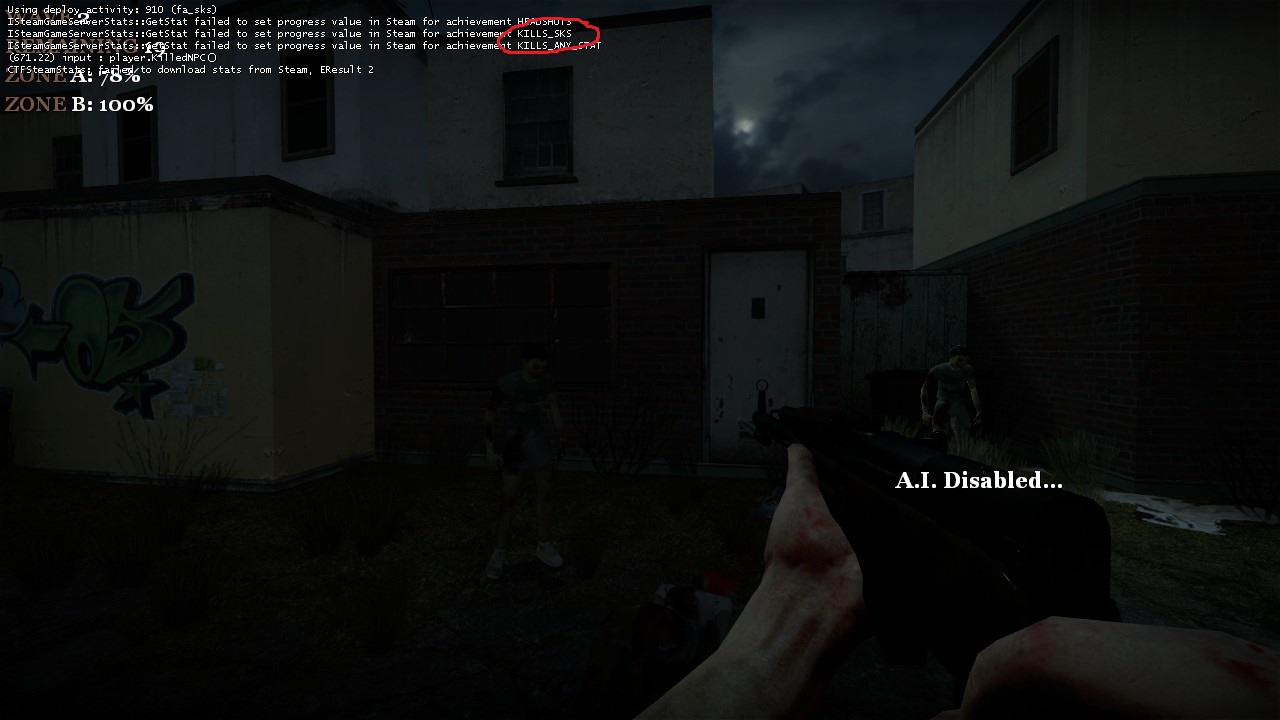

69,353 | 13,237,596,888 | IssuesEvent | 2020-08-18 22:02:00 | nmrih/source-game | https://api.github.com/repos/nmrih/source-game | closed | (public) kills with "new" variants of weapons don't count in achievement | Priority: Normal Status: Assigned Type: Code | killing with fa_sks:

killing with fa_sks_nobayo

same with fa_sako85_... | 1.0 | (public) kills with "new" variants of weapons don't count in achievement - killing with fa_sks:

killing with fa_sks_nobayo

**If a user has healthcare, we must include:**

- [ ] Form number

- [x] Enrollment Status... | 1.0 | [Design] Healthcare: Healthcare application status - ## Background

This should be done first.

See [Design plan](https://github.com/department-of-veterans-affairs/va.gov-team/blob/master/products/identity-personalization/my-va/2.0-redesign/product/LIH-outline-and-timeline.md)

**If a user has healthcare, we must i... | non_priority | healthcare healthcare application status background this should be done first see if a user has healthcare we must include form number enrollment status application date enrollment date learn more about your va health benefits if a user does not have hea... | 0 |

28,146 | 13,543,390,727 | IssuesEvent | 2020-09-16 18:53:08 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | .NET 5.0 Microbenchmarks Performance Study Report | Discussion Triaged area-Meta tenet-performance tenet-performance-benchmarks tracking | # Goals

The main goal of my study was to ensure that **we ship .NET 5.0 without any performance regressions** and validate whether in the near future we can **fully** rely on the regressions auto-filing bot written by @DrewScoggins.

My other goal was to get .NET Library Team members involved and keep on growing the... | True | .NET 5.0 Microbenchmarks Performance Study Report - # Goals

The main goal of my study was to ensure that **we ship .NET 5.0 without any performance regressions** and validate whether in the near future we can **fully** rely on the regressions auto-filing bot written by @DrewScoggins.

My other goal was to get .NET L... | non_priority | net microbenchmarks performance study report goals the main goal of my study was to ensure that we ship net without any performance regressions and validate whether in the near future we can fully rely on the regressions auto filing bot written by drewscoggins my other goal was to get net l... | 0 |

194,995 | 14,698,531,033 | IssuesEvent | 2021-01-04 06:40:32 | isontheline/pro.webssh.net | https://api.github.com/repos/isontheline/pro.webssh.net | closed | Fn keys : move access to a better place | enhancement testflight-fixed | **Describe the feature**

Fn keys on the navigation bar is not a good idea :/

Instead add them to :

* the virtual keyboard OR

* the top right floating menu OR

* the contextual menu | 1.0 | Fn keys : move access to a better place - **Describe the feature**

Fn keys on the navigation bar is not a good idea :/

Instead add them to :

* the virtual keyboard OR

* the top right floating menu OR

* the contextual menu | non_priority | fn keys move access to a better place describe the feature fn keys on the navigation bar is not a good idea instead add them to the virtual keyboard or the top right floating menu or the contextual menu | 0 |

212,210 | 7,229,359,057 | IssuesEvent | 2018-02-11 19:04:23 | Team-2502/RobotCode2018 | https://api.github.com/repos/Team-2502/RobotCode2018 | closed | PIDs are strange | PID bug calibration / tuning high priority ¯\_(ツ)_/¯ | When using PID for tuning wheel velocity, the velocities always oscillate at a value too small. For example, at the target speed of 1 RPM, the wheels actually go at ~.75 RPM (this proportion is not constant at different speeds). | 1.0 | PIDs are strange - When using PID for tuning wheel velocity, the velocities always oscillate at a value too small. For example, at the target speed of 1 RPM, the wheels actually go at ~.75 RPM (this proportion is not constant at different speeds). | priority | pids are strange when using pid for tuning wheel velocity the velocities always oscillate at a value too small for example at the target speed of rpm the wheels actually go at rpm this proportion is not constant at different speeds | 1 |

21,711 | 6,208,822,397 | IssuesEvent | 2017-07-07 01:23:08 | ahmedahamid/test | https://api.github.com/repos/ahmedahamid/test | closed | Cannot connect to TFS server anymore | bug CodePlexMigrationInitiated impact: High | Since you guys changed the user names to automatically lower-case the first letter (on the CodePlex site itself), I cannot connect to the TFS01. I have tried IDisposable (what I signed up as--which used to work), iDisposable (what is show as I login--JavaScript client changing this?), and idisposable (just a guess). I... | 1.0 | Cannot connect to TFS server anymore - Since you guys changed the user names to automatically lower-case the first letter (on the CodePlex site itself), I cannot connect to the TFS01. I have tried IDisposable (what I signed up as--which used to work), iDisposable (what is show as I login--JavaScript client changing thi... | non_priority | cannot connect to tfs server anymore since you guys changed the user names to automatically lower case the first letter on the codeplex site itself i cannot connect to the i have tried idisposable what i signed up as which used to work idisposable what is show as i login javascript client changing this ... | 0 |

536,012 | 15,702,653,712 | IssuesEvent | 2021-03-26 12:55:09 | HabitRPG/habitica-ios | https://api.github.com/repos/HabitRPG/habitica-ios | closed | Reminders go off on the day they're set up, not on the day scheduled | Help wanted Priority: medium Type: Bug | User report:

> When I set up an item in my todo list for a future date and set a reminder, the reminder goes off on the day I set up the todo instead of the date the item is scheduled.

>

>

> The following lines help us find and squash the Bug you encountered. Please do not delete/change them.

> iOS Version: 14... | 1.0 | Reminders go off on the day they're set up, not on the day scheduled - User report:

> When I set up an item in my todo list for a future date and set a reminder, the reminder goes off on the day I set up the todo instead of the date the item is scheduled.

>

>

> The following lines help us find and squash the Bu... | priority | reminders go off on the day they re set up not on the day scheduled user report when i set up an item in my todo list for a future date and set a reminder the reminder goes off on the day i set up the todo instead of the date the item is scheduled the following lines help us find and squash the bu... | 1 |

719,136 | 24,748,065,155 | IssuesEvent | 2022-10-21 11:27:25 | ooni/explorer | https://api.github.com/repos/ooni/explorer | closed | Search queries should be done client side | enhancement priority/medium | Currently when I access https://explorer.ooni.org/search, the queries are being performed server-side, instead these should be done client-side so that we don't block on rendering some content to the user.

This may also help solve the fact that while the search page is rendering it is not possible to toggle the sear... | 1.0 | Search queries should be done client side - Currently when I access https://explorer.ooni.org/search, the queries are being performed server-side, instead these should be done client-side so that we don't block on rendering some content to the user.

This may also help solve the fact that while the search page is ren... | priority | search queries should be done client side currently when i access the queries are being performed server side instead these should be done client side so that we don t block on rendering some content to the user this may also help solve the fact that while the search page is rendering it is not possible to tog... | 1 |

813,043 | 30,443,425,307 | IssuesEvent | 2023-07-15 11:04:45 | Counselllor/Counsellor-Web | https://api.github.com/repos/Counselllor/Counsellor-Web | closed | I will be creating landing page | gssoc23 assigned level3 priority: high | ## Issue

I will be creating the first page of the website

## Screenshot

Please assign me this task so that I can create the above mentioned page

I am in GSSOC'2... | 1.0 | I will be creating landing page - ## Issue

I will be creating the first page of the website

## Screenshot

Please assign me this task so that I can create the abov... | priority | i will be creating landing page issue i will be creating the first page of the website screenshot please assign me this task so that i can create the above mentioned page i am in gssoc | 1 |

418,313 | 12,196,187,681 | IssuesEvent | 2020-04-29 18:38:56 | googleapis/google-cloud-ruby | https://api.github.com/repos/googleapis/google-cloud-ruby | closed | NoMethodError (undefined method `shutdown?' for nil:NilClass) after stopping async_writer | api: logging priority: p2 type: bug |

#### Environment details

- OS: ubuntu

- Ruby version: 2.6.3

- Gem name and version: google-cloud-logging

#### Steps to reproduce

1. setup `async_writer` normally.

2. without logging anything, run `.stop!`

#### Code example

```ruby

logging = Google::Cloud::Logging.new #project id

async = logg... | 1.0 | NoMethodError (undefined method `shutdown?' for nil:NilClass) after stopping async_writer -

#### Environment details

- OS: ubuntu

- Ruby version: 2.6.3

- Gem name and version: google-cloud-logging

#### Steps to reproduce

1. setup `async_writer` normally.

2. without logging anything, run `.stop!`

... | priority | nomethoderror undefined method shutdown for nil nilclass after stopping async writer environment details os ubuntu ruby version gem name and version google cloud logging steps to reproduce setup async writer normally without logging anything run stop ... | 1 |

370,361 | 25,902,444,450 | IssuesEvent | 2022-12-15 07:21:40 | VisualGameData/VIGAD | https://api.github.com/repos/VisualGameData/VIGAD | closed | Update SRS accordingly | documentation Phase: Construction RUP: Project Management | # Issue description

*Describe your issue in detail here*

Our SRS should be up-to-date and be clearer, therefore we should be update ours.

Maybe get inspired here from : https://github.com/nilskre/CommonPlayground/blob/dev/docs/SoftwareRequirementsSpecification.md

How they did it.

So please update it accordin... | 1.0 | Update SRS accordingly - # Issue description

*Describe your issue in detail here*

Our SRS should be up-to-date and be clearer, therefore we should be update ours.

Maybe get inspired here from : https://github.com/nilskre/CommonPlayground/blob/dev/docs/SoftwareRequirementsSpecification.md

How they did it.

So ... | non_priority | update srs accordingly issue description describe your issue in detail here our srs should be up to date and be clearer therefore we should be update ours maybe get inspired here from how they did it so please update it accordingly definition of ready dor this issue can be worked on if ... | 0 |

21,816 | 3,756,895,313 | IssuesEvent | 2016-03-13 17:08:47 | gustafl/lexeme | https://api.github.com/repos/gustafl/lexeme | opened | Consider making lexical categories language-dependant | design future | I just learned that Japanese don't have prepositions but _postpositions_. In the very long run, we may have to accept that the nine lexical categories are not the same for every language out there. | 1.0 | Consider making lexical categories language-dependant - I just learned that Japanese don't have prepositions but _postpositions_. In the very long run, we may have to accept that the nine lexical categories are not the same for every language out there. | non_priority | consider making lexical categories language dependant i just learned that japanese don t have prepositions but postpositions in the very long run we may have to accept that the nine lexical categories are not the same for every language out there | 0 |

277,665 | 8,631,057,813 | IssuesEvent | 2018-11-22 05:52:08 | medic/medic-webapp | https://api.github.com/repos/medic/medic-webapp | closed | Reports incorrectly filtered if filter changes before search returns | Priority: 2 - Medium Status: 5 - Ready Type: Bug | If you use the form type filter in the Reports tab the results can include reports that should not be there.

To reproduce:

- Select an item from the form filter, wait for the results to be updated in the LHS

- Select a different item from the form filter **and deselect the item selected first before the results re... | 1.0 | Reports incorrectly filtered if filter changes before search returns - If you use the form type filter in the Reports tab the results can include reports that should not be there.

To reproduce:

- Select an item from the form filter, wait for the results to be updated in the LHS

- Select a different item from the f... | priority | reports incorrectly filtered if filter changes before search returns if you use the form type filter in the reports tab the results can include reports that should not be there to reproduce select an item from the form filter wait for the results to be updated in the lhs select a different item from the f... | 1 |

531,294 | 15,444,338,957 | IssuesEvent | 2021-03-08 10:16:39 | AY2021S2-CS2103T-T12-4/tp | https://api.github.com/repos/AY2021S2-CS2103T-T12-4/tp | closed | Bug: Response time adds multiple seconds | priority.High severity.High type.Bug | To recreate, open the close, do a few commands and close the app multiple times.

Sample of error:

{

"endpoints" : [ {

"method" : "GET",

"address" : "jakjdfl",

"data" : "somedata",

"headers" : [ ],

"tagged" : [ ],

"response" : {

"protocolVersion" : "",

"statusCode" : ""... | 1.0 | Bug: Response time adds multiple seconds - To recreate, open the close, do a few commands and close the app multiple times.

Sample of error:

{

"endpoints" : [ {

"method" : "GET",

"address" : "jakjdfl",

"data" : "somedata",

"headers" : [ ],

"tagged" : [ ],

"response" : {

"prot... | priority | bug response time adds multiple seconds to recreate open the close do a few commands and close the app multiple times sample of error endpoints method get address jakjdfl data somedata headers tagged response protocol... | 1 |

546,696 | 16,017,844,926 | IssuesEvent | 2021-04-20 18:21:06 | GoogleCloudPlatform/java-docs-samples | https://api.github.com/repos/GoogleCloudPlatform/java-docs-samples | closed | com.example.trace.TraceSampleIT: testCreateAndRegisterFullSampled failed | :rotating_light: api: cloudtrace flakybot: issue priority: p1 samples type: bug | This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/master/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: 0059c8f41748088dda645ab19f738ac53f007f74... | 1.0 | com.example.trace.TraceSampleIT: testCreateAndRegisterFullSampled failed - This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/master/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I w... | priority | com example trace tracesampleit testcreateandregisterfullsampled failed this test failed to configure my behavior see if i m commenting on this issue too often add the flakybot quiet label and i will stop commenting commit buildurl status failed test output java lang illegalstatee... | 1 |

404,428 | 11,857,328,929 | IssuesEvent | 2020-03-25 09:22:55 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.spectrum.net - site is not usable | browser-firefox-mobile engine-gecko form-v2-experiment ml-needsdiagnosis-false priority-normal | <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 10; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/50669 -->

<!-- @extra_labels: form-v2-experiment -->

**URL**: https://www.spectrum.net/lo... | 1.0 | www.spectrum.net - site is not usable - <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 10; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/50669 -->

<!-- @extra_labels: form-v2-experiment -... | priority | site is not usable url browser version firefox mobile operating system android tested another browser yes other problem type site is not usable description page not loading correctly steps to reproduce page will not load automatically view the screenshot ... | 1 |

805,179 | 29,510,327,902 | IssuesEvent | 2023-06-03 21:10:12 | f1lem0n/falscify | https://api.github.com/repos/f1lem0n/falscify | closed | Write an about section for the website | feature priority | *Please read the following instructions carefully.*

*Having detailed, yet not overcomplicated feedback will help us implement your idea efficiently.* 🚒

***Before suggesting a new feature, please search for a similar feature requests in the [issues section](https://github.com/f1lem0n/falscify/issues).*** 👀️

#... | 1.0 | Write an about section for the website - *Please read the following instructions carefully.*

*Having detailed, yet not overcomplicated feedback will help us implement your idea efficiently.* 🚒

***Before suggesting a new feature, please search for a similar feature requests in the [issues section](https://github... | priority | write an about section for the website please read the following instructions carefully having detailed yet not overcomplicated feedback will help us implement your idea efficiently 🚒 before suggesting a new feature please search for a similar feature requests in the 👀️ checklist ✅ ... | 1 |