Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 1k) | labels (stringlengths, 4 to 1.38k) | body (stringlengths, 1 to 262k) | index (stringclasses, 16 values) | text_combine (stringlengths, 96 to 262k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 252k) | binary_label (int64, 0 to 1)
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
289,063 | 8,854,501,838 | IssuesEvent | 2019-01-09 01:39:09 | Polymer/lit-element | https://api.github.com/repos/Polymer/lit-element | closed | [docs] Add info on testing | Area: docs Priority: Critical Status: Available Type: Maintenance | <!--

If you are asking a question rather than filing a bug, try one of these instead:

- StackOverflow (https://stackoverflow.com/questions/tagged/polymer)

- Polymer Slack Channel (https://bit.ly/polymerslack)

- Mailing List (https://groups.google.com/forum/#!forum/polymer-dev)

-->

<!-- Instructions For Filing a B... | 1.0 | [docs] Add info on testing - <!--

If you are asking a question rather than filing a bug, try one of these instead:

- StackOverflow (https://stackoverflow.com/questions/tagged/polymer)

- Polymer Slack Channel (https://bit.ly/polymerslack)

- Mailing List (https://groups.google.com/forum/#!forum/polymer-dev)

-->

<!-... | priority | add info on testing if you are asking a question rather than filing a bug try one of these instead stackoverflow polymer slack channel mailing list description live demo steps to reproduce example create my element append ... | 1 |

697,118 | 23,928,140,579 | IssuesEvent | 2022-09-10 05:27:24 | kubernetes-sigs/cluster-api-provider-vsphere | https://api.github.com/repos/kubernetes-sigs/cluster-api-provider-vsphere | closed | Binary exposes testing related flags | kind/bug priority/critical-urgent | /kind bug

**What steps did you take and what happened:**

Currently the binary exposes the following flags:

```bash

cluster-api-provider-vsphere on main [$?] via 🏎💨 v1.18.5

➜ ./binary --help

Usage of ./binary:

-add_dir_header

If true, adds the file directory to the header of the log messages

-als... | 1.0 | Binary exposes testing related flags - /kind bug

**What steps did you take and what happened:**

Currently the binary exposes the following flags:

```bash

cluster-api-provider-vsphere on main [$?] via 🏎💨 v1.18.5

➜ ./binary --help

Usage of ./binary:

-add_dir_header

If true, adds the file directory to... | priority | binary exposes testing related flags kind bug what steps did you take and what happened currently the binary exposes the following flags bash cluster api provider vsphere on main via 🏎💨 ➜ binary help usage of binary add dir header if true adds the file directory to the ... | 1 |

211,444 | 7,201,170,222 | IssuesEvent | 2018-02-05 21:36:31 | buttercup/buttercup-desktop | https://api.github.com/repos/buttercup/buttercup-desktop | closed | Use non-minified buttercup core library (speed issue) | Effort: Low Priority: High Status: Abandoned Type: Bug | The core currently has an issue with minification, in that the `buttercup-web.min.js` asset is much slower than the non-minified copy when it comes to crypto (locking/unlocking archives via `ArchiveManager`).

Issue: buttercup/buttercup-core#199

Use the non-minified copy for now. | 1.0 | Use non-minified buttercup core library (speed issue) - The core currently has an issue with minification, in that the `buttercup-web.min.js` asset is much slower than the non-minified copy when it comes to crypto (locking/unlocking archives via `ArchiveManager`).

Issue: buttercup/buttercup-core#199

Use the non-m... | priority | use non minified buttercup core library speed issue the core currently has an issue with minification in that the buttercup web min js asset is much slower than the non minified copy when it comes to crypto locking unlocking archives via archivemanager issue buttercup buttercup core use the non min... | 1 |

612,622 | 19,027,017,916 | IssuesEvent | 2021-11-24 05:45:49 | aitos-io/BoAT-X-Framework | https://api.github.com/repos/aitos-io/BoAT-X-Framework | closed | linux-default/src/port_crypto_default/boatplatform.c Compile failed | bug Severity/major Priority/P2 | when " SOFT_CRYPTO ?= CRYPTO_DEFAULT" ,linux-default/src/port_crypto_default/boatplatform.c Compile failed。

error: implicit declaration of function 'keccak_256' is invalid in C99 [-Werror,-Wimplicit-function-declaration] | 1.0 | linux-default/src/port_crypto_default/boatplatform.c Compile failed - when " SOFT_CRYPTO ?= CRYPTO_DEFAULT" ,linux-default/src/port_crypto_default/boatplatform.c Compile failed。

error: implicit declaration of function 'keccak_256' is invalid in C99 [-Werror,-Wimplicit-function-declaration] | priority | linux default src port crypto default boatplatform c compile failed when soft crypto crypto default ,linux default src port crypto default boatplatform c compile failed。 error implicit declaration of function keccak is invalid in | 1 |

264,745 | 8,319,194,918 | IssuesEvent | 2018-09-25 16:31:33 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.youtube.com - site is not usable | browser-firefox priority-critical | <!-- @browser: Firefox 63.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.3; Win64; x64; rv:63.0) Gecko/20100101 Firefox/63.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://www.youtube.com/watch?v=ME2sKsRYmoM&index=4&list=PL2mWImrXdC2sjH3ZO2LnC4pb3L4g1NBpk

**Browser / Version**: Firefox 63.0

**Operat... | 1.0 | www.youtube.com - site is not usable - <!-- @browser: Firefox 63.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.3; Win64; x64; rv:63.0) Gecko/20100101 Firefox/63.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://www.youtube.com/watch?v=ME2sKsRYmoM&index=4&list=PL2mWImrXdC2sjH3ZO2LnC4pb3L4g1NBpk

**Bro... | priority | site is not usable url browser version firefox operating system windows tested another browser no problem type site is not usable description i don t need this site but it s always the first to come out when i open youtube steps to reproduce i tried to chan... | 1 |

93,357 | 26,933,358,767 | IssuesEvent | 2023-02-07 18:30:23 | microsoft/fluentui | https://api.github.com/repos/microsoft/fluentui | opened | [Epic]: 1JS Visual Regression Tool feature suggestions | Area: Build System | ## Story:

- This issue will serve as the main hub for any 1JS VR tool feature requests that you may have. Please add a comment to this issue for any requests you may have and then update this issue.

### Feature Requests:

- [ ] Have VR results be integrated as blocking github checks.

- [ ] "Magnify" feature does n... | 1.0 | [Epic]: 1JS Visual Regression Tool feature suggestions - ## Story:

- This issue will serve as the main hub for any 1JS VR tool feature requests that you may have. Please add a comment to this issue for any requests you may have and then update this issue.

### Feature Requests:

- [ ] Have VR results be integrated ... | non_priority | visual regression tool feature suggestions story this issue will serve as the main hub for any vr tool feature requests that you may have please add a comment to this issue for any requests you may have and then update this issue feature requests have vr results be integrated as blocking... | 0 |

18,928 | 6,657,930,798 | IssuesEvent | 2017-09-30 12:50:05 | servo/servo | https://api.github.com/repos/servo/servo | closed | Can't build servo - "Failed to run custom build command for `harfbuzz" | A-build A-platform/shaping | This is the issue i'm getting. I've searched around, but not found anything.

Anyone got any ideas. Running latest version of OSX Yosemite. 10.10.1

```

/Users/kevin.cannon/.cargo/git/checkouts/gcc-rs-769a83e2edfc991e/servo/src/lib.rs:1:12: 1:18 warning: feature has been added to Rust, directive not necessary

/Users/ke... | 1.0 | Can't build servo - "Failed to run custom build command for `harfbuzz" - This is the issue i'm getting. I've searched around, but not found anything.

Anyone got any ideas. Running latest version of OSX Yosemite. 10.10.1

```

/Users/kevin.cannon/.cargo/git/checkouts/gcc-rs-769a83e2edfc991e/servo/src/lib.rs:1:12: 1:18 w... | non_priority | can t build servo failed to run custom build command for harfbuzz this is the issue i m getting i ve searched around but not found anything anyone got any ideas running latest version of osx yosemite users kevin cannon cargo git checkouts gcc rs servo src lib rs warning feature has... | 0 |

603,579 | 18,669,316,778 | IssuesEvent | 2021-10-30 11:57:12 | Corne2Plum3/fnf2osumania | https://api.github.com/repos/Corne2Plum3/fnf2osumania | closed | I can't hit the notes of a song | bug priority=1 | **Describe the bug**

When playing a song that I converted (Blocked by the Vs Dave mod) only the first notes are hittable for a strange reason..

**Error message**

No error message

**Screenshots:**

I will attach a video if I can

Update: no, I can't sorry, my pc lags af when trying to record

**Program version... | 1.0 | I can't hit the notes of a song - **Describe the bug**

When playing a song that I converted (Blocked by the Vs Dave mod) only the first notes are hittable for a strange reason..

**Error message**

No error message

**Screenshots:**

I will attach a video if I can

Update: no, I can't sorry, my pc lags af when try... | priority | i can t hit the notes of a song describe the bug when playing a song that i converted blocked by the vs dave mod only the first notes are hittable for a strange reason error message no error message screenshots i will attach a video if i can update no i can t sorry my pc lags af when try... | 1 |

623,374 | 19,666,271,172 | IssuesEvent | 2022-01-10 23:01:18 | South-Carolina-Language-Map/South-Carolina-Language-Map | https://api.github.com/repos/South-Carolina-Language-Map/South-Carolina-Language-Map | opened | Snackbar throws occasional errors, but updates go through | bug Low Priority | I don't think this is breaking anything, just throwing an error, because technically the snackbar is rendering as a child of a table element | 1.0 | Snackbar throws occasional errors, but updates go through - I don't think this is breaking anything, just throwing an error, because technically the snackbar is rendering as a child of a table element | priority | snackbar throws occasional errors but updates go through i don t think this is breaking anything just throwing an error because technically the snackbar is rendering as a child of a table element | 1 |

103,762 | 8,948,779,205 | IssuesEvent | 2019-01-25 04:10:08 | 67P/hyperchannel | https://api.github.com/repos/67P/hyperchannel | opened | Share pictures, files, code snippets, etc. via RS | feature remotestorage | This one needs multiple separate issues, because sharing a code snippet will need different UI and function calls than sharing an image file for example. Just adding this as a tracking issue, so we have it on the roadmap. | 1.0 | Share pictures, files, code snippets, etc. via RS - This one needs multiple separate issues, because sharing a code snippet will need different UI and function calls than sharing an image file for example. Just adding this as a tracking issue, so we have it on the roadmap. | non_priority | share pictures files code snippets etc via rs this one needs multiple separate issues because sharing a code snippet will need different ui and function calls than sharing an image file for example just adding this as a tracking issue so we have it on the roadmap | 0 |

748,901 | 26,142,589,547 | IssuesEvent | 2022-12-29 21:01:31 | ceph/ceph-csi | https://api.github.com/repos/ceph/ceph-csi | closed | Few E2E tests are getting skipped in devel branch | bug wontfix Priority-0 | > @Madhu-1 this keeps failing, maybe there is an issue with it?

I see a problem with tests in the devel branch here https://github.com/ceph/ceph-csi/blob/0f0957164ec882a91d11bf1c880e1c0777abfb01/e2e/rbd.go#L4592-L4610. You can see the ceph users are deleted. Now we are trying to run one more test, `pvcDelete... | 1.0 | Few E2E tests are getting skipped in devel branch - > @Madhu-1 this keeps failing, maybe there is an issue with it?

I see a problem with tests in the devel branch here https://github.com/ceph/ceph-csi/blob/0f0957164ec882a91d11bf1c880e1c0777abfb01/e2e/rbd.go#L4592-L4610. You can see the ceph users are deleted... | priority | few tests are getting skipped in devel branch madhu this keeps failing maybe there is an issue with it i see a problem with tests in the devel branch here you can see the ceph users are deleted now we are trying to run one more test pvcdeletewhenpoolnotfound which creates the pvc and deletes... | 1 |

15,566 | 3,475,711,754 | IssuesEvent | 2015-12-26 00:55:12 | nnnick/Chart.js | https://api.github.com/repos/nnnick/Chart.js | closed | Donut chart active segment mouse over cursor style not applyed | Needs test case | Hi,

i am using Donut chart ,i don't have any option to set like when we mouse over on active segment applying the cursor style to that current segment

I have saw in stackoverflow link: http://stackoverflow.com/questions/30741085/mouse-over-event-and-cursor-pointer-on-the-line-series

there mouse over in points in... | 1.0 | Donut chart active segment mouse over cursor style not applyed - Hi,

i am using Donut chart ,i don't have any option to set like when we mouse over on active segment applying the cursor style to that current segment

I have saw in stackoverflow link: http://stackoverflow.com/questions/30741085/mouse-over-event-and... | non_priority | donut chart active segment mouse over cursor style not applyed hi i am using donut chart i don t have any option to set like when we mouse over on active segment applying the cursor style to that current segment i have saw in stackoverflow link there mouse over in points in a line css style cursor getting... | 0 |

711,984 | 24,481,129,480 | IssuesEvent | 2022-10-08 21:11:55 | epicmaxco/vuestic-ui | https://api.github.com/repos/epicmaxco/vuestic-ui | closed | Bundlers tests should execute on any OS | good first issue LOW PRIORITY | * **What is the expected behavior?**

Bundlers tests should execute on any OS.

* **What is the current behavior?**

Bundlers tests `package.json` commands work only for Linux, for example:

`"build": "rm -rf ./dist && yarn build:vite && yarn build:vue-cli"`

Looks like the best way to resolve this is by writing ne... | 1.0 | Bundlers tests should execute on any OS - * **What is the expected behavior?**

Bundlers tests should execute on any OS.

* **What is the current behavior?**

Bundlers tests `package.json` commands work only for Linux, for example:

`"build": "rm -rf ./dist && yarn build:vite && yarn build:vue-cli"`

Looks like the... | priority | bundlers tests should execute on any os what is the expected behavior bundlers tests should execute on any os what is the current behavior bundlers tests package json commands work only for linux for example build rm rf dist yarn build vite yarn build vue cli looks like the... | 1 |

594,651 | 18,050,409,576 | IssuesEvent | 2021-09-19 16:44:53 | rstemmer/musicdb | https://api.github.com/repos/rstemmer/musicdb | opened | fuzzywuzzy library is now called thefuzz | Low Priority Back-End | [fuzzywuzzy](https://github.com/seatgeek/fuzzywuzzy) is now called [thefuzz](https://github.com/seatgeek/thefuzz).

This needs to be investigated.

- [ ] Is this library still maintained or is it just being buried?

- [ ] Updating the requirements

- [ ] Update `import` statements | 1.0 | fuzzywuzzy library is now called thefuzz - [fuzzywuzzy](https://github.com/seatgeek/fuzzywuzzy) is now called [thefuzz](https://github.com/seatgeek/thefuzz).

This needs to be investigated.

- [ ] Is this library still maintained or is it just being buried?

- [ ] Updating the requirements

- [ ] Update `import` s... | priority | fuzzywuzzy library is now called thefuzz is now called this needs to be investigated is this library still maintained or is it just being buried updating the requirements update import statements | 1 |

364,289 | 10,761,771,804 | IssuesEvent | 2019-10-31 21:34:37 | apifytech/apify-js | https://api.github.com/repos/apifytech/apify-js | closed | Apify.call and Apify.callTask should support passing ad hoc webhooks | enhancement low priority | It is in the client already | 1.0 | Apify.call and Apify.callTask should support passing ad hoc webhooks - It is in the client already | priority | apify call and apify calltask should support passing ad hoc webhooks it is in the client already | 1 |

100,758 | 16,490,391,912 | IssuesEvent | 2021-05-25 02:15:42 | jinuem/Parse-SDK-JS | https://api.github.com/repos/jinuem/Parse-SDK-JS | opened | WS-2019-0493 (High) detected in handlebars-4.1.2.tgz | security vulnerability | ## WS-2019-0493 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.1.2.tgz</b></p></summary>

<p>Handlebars provides the power necessary to let you build semantic templates ef... | True | WS-2019-0493 (High) detected in handlebars-4.1.2.tgz - ## WS-2019-0493 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.1.2.tgz</b></p></summary>

<p>Handlebars provides the... | non_priority | ws high detected in handlebars tgz ws high severity vulnerability vulnerable library handlebars tgz handlebars provides the power necessary to let you build semantic templates effectively with no frustration library home page a href path to dependency file parse sdk js... | 0 |

409,036 | 11,955,756,593 | IssuesEvent | 2020-04-04 06:39:40 | googleapis/google-cloud-dotnet | https://api.github.com/repos/googleapis/google-cloud-dotnet | closed | Synthesis failed for Google.Cloud.RecaptchaEnterprise.V1 | autosynth failure priority: p1 type: bug | Hello! Autosynth couldn't regenerate Google.Cloud.RecaptchaEnterprise.V1. :broken_heart:

Here's the output from running `synth.py`:

```

Cloning into 'working_repo'...

Switched to a new branch 'autosynth-Google.Cloud.RecaptchaEnterprise.V1'

Cloning into '/tmpfs/tmp/tmpsxx4dmx_/googleapis'...

Note: checking out '9d4a3a... | 1.0 | Synthesis failed for Google.Cloud.RecaptchaEnterprise.V1 - Hello! Autosynth couldn't regenerate Google.Cloud.RecaptchaEnterprise.V1. :broken_heart:

Here's the output from running `synth.py`:

```

Cloning into 'working_repo'...

Switched to a new branch 'autosynth-Google.Cloud.RecaptchaEnterprise.V1'

Cloning into '/tmpf... | priority | synthesis failed for google cloud recaptchaenterprise hello autosynth couldn t regenerate google cloud recaptchaenterprise broken heart here s the output from running synth py cloning into working repo switched to a new branch autosynth google cloud recaptchaenterprise cloning into tmpfs t... | 1 |

205,540 | 15,646,064,209 | IssuesEvent | 2021-03-23 00:05:15 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: tpce/c=5000/nodes=3 failed | C-test-failure O-roachtest O-robot branch-master | [(roachtest).tpce/c=5000/nodes=3 failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2712399&tab=buildLog) on [master@ec011620c7cf299fdbb898db692b36454defc4a2](https://github.com/cockroachdb/cockroach/commits/ec011620c7cf299fdbb898db692b36454defc4a2):

```

| cd798458a46f: Pull complete

| Digest: sha25... | 2.0 | roachtest: tpce/c=5000/nodes=3 failed - [(roachtest).tpce/c=5000/nodes=3 failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2712399&tab=buildLog) on [master@ec011620c7cf299fdbb898db692b36454defc4a2](https://github.com/cockroachdb/cockroach/commits/ec011620c7cf299fdbb898db692b36454defc4a2):

```

| cd79845... | non_priority | roachtest tpce c nodes failed on pull complete digest status downloaded newer image for cockroachdb tpc e latest error error kind db cause some dberror severity error parsed severity none code sqlstate xxuuu message relation charge is offline... | 0 |

11,275 | 14,074,388,014 | IssuesEvent | 2020-11-04 07:12:38 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Create Points Layer From Table algorithm does not work in processing modeler | Bug Feedback Modeller Processing | <!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS project alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS developer or support organisation and financially sponsoring a fix

Checklist before submitting

-... | 1.0 | Create Points Layer From Table algorithm does not work in processing modeler - <!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS project alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS developer or support or... | non_priority | create points layer from table algorithm does not work in processing modeler bug fixing and feature development is a community responsibility and not the responsibility of the qgis project alone if this bug report or feature request is high priority for you we suggest engaging a qgis developer or support or... | 0 |

631,128 | 20,145,346,445 | IssuesEvent | 2022-02-09 06:39:24 | DeFiCh/whale | https://api.github.com/repos/DeFiCh/whale | closed | Basic DEX PoolSwap & CompositeSwap Indexing | priority/urgent-now triage/accepted kind/feature area/module-database area/module-indexer | <!-- Please only use this template for submitting enhancement/feature requests -->

#### What would you like to be added:

Simplified indexing of PoolSwap and CompositeSwap to track volume and activity. For Volume and APR tracking only hence Add/Remove Pool DfTx is not required.

/triage accepted

/area module-in... | 1.0 | Basic DEX PoolSwap & CompositeSwap Indexing - <!-- Please only use this template for submitting enhancement/feature requests -->

#### What would you like to be added:

Simplified indexing of PoolSwap and CompositeSwap to track volume and activity. For Volume and APR tracking only hence Add/Remove Pool DfTx is not ... | priority | basic dex poolswap compositeswap indexing what would you like to be added simplified indexing of poolswap and compositeswap to track volume and activity for volume and apr tracking only hence add remove pool dftx is not required triage accepted area module indexer module database priority im... | 1 |

220,250 | 17,172,479,495 | IssuesEvent | 2021-07-15 07:14:01 | submariner-io/submariner-operator | https://api.github.com/repos/submariner-io/submariner-operator | opened | `subctl benchmark throughput` appears to be stuck | 0.10.0-testday enhancement | When running `subctl benchmark throughput` it appears as if it's stuck when it's running the test.

```bash

[root@483221d9c954 submariner]# subctl benchmark throughput --kubecontexts cluster1,cluster2

Performing throughput tests from Gateway pod on cluster "cluster1" to Gateway pod on cluster "cluster2"

... | 1.0 | `subctl benchmark throughput` appears to be stuck - When running `subctl benchmark throughput` it appears as if it's stuck when it's running the test.

```bash

[root@483221d9c954 submariner]# subctl benchmark throughput --kubecontexts cluster1,cluster2

Performing throughput tests from Gateway pod on cluster "cluste... | non_priority | subctl benchmark throughput appears to be stuck when running subctl benchmark throughput it appears as if it s stuck when it s running the test bash subctl benchmark throughput kubecontexts performing throughput tests from gateway pod on cluster to gateway pod on cluster ... | 0 |

137,262 | 5,301,186,726 | IssuesEvent | 2017-02-10 08:43:54 | Cadasta/cadasta-platform | https://api.github.com/repos/Cadasta/cadasta-platform | closed | API: unauthorizated users can edit organization | bug priority: high security | ### Steps to reproduce the error

__Anonymous User__

- Create an organization (name: 'Westeros')

- Run `http PUT https://platform-staging.cadasta.org/api/v1/organizations/westeros/ name='Westeros (The Capital)'`

OR

__Non-org member__

- Create an organization (name: 'Westeros')

- Run `http PUT https://platform... | 1.0 | API: unauthorizated users can edit organization - ### Steps to reproduce the error

__Anonymous User__

- Create an organization (name: 'Westeros')

- Run `http PUT https://platform-staging.cadasta.org/api/v1/organizations/westeros/ name='Westeros (The Capital)'`

OR

__Non-org member__

- Create an organization (n... | priority | api unauthorizated users can edit organization steps to reproduce the error anonymous user create an organization name westeros run http put name westeros the capital or non org member create an organization name westeros run http put name westeros the capital ... | 1 |

123,576 | 17,772,266,231 | IssuesEvent | 2021-08-30 14:54:48 | kapseliboi/farmOS-client | https://api.github.com/repos/kapseliboi/farmOS-client | opened | CVE-2021-28092 (High) detected in is-svg-3.0.0.tgz | security vulnerability | ## CVE-2021-28092 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>is-svg-3.0.0.tgz</b></p></summary>

<p>Check if a string or buffer is SVG</p>

<p>Library home page: <a href="https://re... | True | CVE-2021-28092 (High) detected in is-svg-3.0.0.tgz - ## CVE-2021-28092 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>is-svg-3.0.0.tgz</b></p></summary>

<p>Check if a string or buffer... | non_priority | cve high detected in is svg tgz cve high severity vulnerability vulnerable library is svg tgz check if a string or buffer is svg library home page a href path to dependency file farmos client package json path to vulnerable library farmos client node modules is svg p... | 0 |

265,892 | 8,360,136,505 | IssuesEvent | 2018-10-03 10:30:24 | lendingblock/aio-openapi | https://api.github.com/repos/lendingblock/aio-openapi | closed | Add search by column | high priority | We want to be able to search (postgres `ilike` style). Let's start with one column with intent to extend to multicolumn search | 1.0 | Add search by column - We want to be able to search (postgres `ilike` style). Let's start with one column with intent to extend to multicolumn search | priority | add search by column we want to be able to search postgres ilike style let s start with one column with intent to extend to multicolumn search | 1 |

82,329 | 7,837,774,550 | IssuesEvent | 2018-06-18 07:51:17 | brave/browser-laptop | https://api.github.com/repos/brave/browser-laptop | closed | Do not update model from private tabs | initiative/bat-ads initiative/bat-ads/ads-test release/blocking | Currently, private tabs show up in the model log. They shouldn't. cf. https://github.com/brave-intl/internal/issues/57 | 1.0 | Do not update model from private tabs - Currently, private tabs show up in the model log. They shouldn't. cf. https://github.com/brave-intl/internal/issues/57 | non_priority | do not update model from private tabs currently private tabs show up in the model log they shouldn t cf | 0 |

705,274 | 24,229,071,189 | IssuesEvent | 2022-09-26 16:36:01 | googleapis/release-please | https://api.github.com/repos/googleapis/release-please | closed | Missing proxy configuration for the graphql client when use proxy configuration | priority: p2 type: bug | Thanks for stopping by to let us know something could be better!

**PLEASE READ**: If you have a support contract with Google, please create an issue in the [support console](https://cloud.google.com/support/) instead of filing on GitHub. This will ensure a timely response.

1) Is this a client library issue or a p... | 1.0 | Missing proxy configuration for the graphql client when use proxy configuration - Thanks for stopping by to let us know something could be better!

**PLEASE READ**: If you have a support contract with Google, please create an issue in the [support console](https://cloud.google.com/support/) instead of filing on GitHu... | priority | missing proxy configuration for the graphql client when use proxy configuration thanks for stopping by to let us know something could be better please read if you have a support contract with google please create an issue in the instead of filing on github this will ensure a timely response is t... | 1 |

218,011 | 24,351,727,114 | IssuesEvent | 2022-10-03 01:13:46 | hygieia/hygieia-whitesource-collector | https://api.github.com/repos/hygieia/hygieia-whitesource-collector | opened | CVE-2022-42003 (Medium) detected in jackson-databind-2.8.11.3.jar | security vulnerability | ## CVE-2022-42003 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.11.3.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core str... | True | CVE-2022-42003 (Medium) detected in jackson-databind-2.8.11.3.jar - ## CVE-2022-42003 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.11.3.jar</b></p></summary>

... | non_priority | cve medium detected in jackson databind jar cve medium severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file pom xml path to vuln... | 0 |

124,362 | 12,229,417,604 | IssuesEvent | 2020-05-04 00:07:59 | drakessn/Kaggle_Learn | https://api.github.com/repos/drakessn/Kaggle_Learn | closed | Geospatial Analysis | Learning documentation | Create interactive maps, and discover patterns in geospatial data

- [x] Your First Map

- [x] Coordinate Reference Systems

- [ ] Interactive Maps

- [ ] Manipulating Geospatial Data

- [ ] Proximity Analysis | 1.0 | Geospatial Analysis - Create interactive maps, and discover patterns in geospatial data

- [x] Your First Map

- [x] Coordinate Reference Systems

- [ ] Interactive Maps

- [ ] Manipulating Geospatial Data

- [ ] Proximity Analysis | non_priority | geospatial analysis create interactive maps and discover patterns in geospatial data your first map coordinate reference systems interactive maps manipulating geospatial data proximity analysis | 0 |

600,579 | 18,345,450,156 | IssuesEvent | 2021-10-08 05:19:52 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | Kubelet is reporting OutOfCpu on previously running workloads after restart | kind/bug priority/critical-urgent sig/scheduling sig/node triage/accepted | <!-- Please use this template while reporting a bug and provide as much info as possible. Not doing so may result in your bug not being addressed in a timely manner. Thanks!

If the matter is security related, please disclose it privately via https://kubernetes.io/security/

-->

#### What happened:

Restart: A... | 1.0 | Kubelet is reporting OutOfCpu on previously running workloads after restart - <!-- Please use this template while reporting a bug and provide as much info as possible. Not doing so may result in your bug not being addressed in a timely manner. Thanks!

If the matter is security related, please disclose it privately v... | priority | kubelet is reporting outofcpu on previously running workloads after restart please use this template while reporting a bug and provide as much info as possible not doing so may result in your bug not being addressed in a timely manner thanks if the matter is security related please disclose it privately v... | 1 |

444,047 | 12,805,565,837 | IssuesEvent | 2020-07-03 07:47:14 | acl-org/acl-2020-virtual-conference | https://api.github.com/repos/acl-org/acl-2020-virtual-conference | closed | Sponsors & Exhibitor page | priority:high | **Number of Volunteers**: 3

TODO (Updated after #41)

- [x] Edit [sponsors.json](https://github.com/acl-org/acl-2020-virtual-conference-sitedata/blob/master/sponsors.json). The information below need to be provided by the sponsor. For demo purpose, just put some generic values.

- [x] change the logos (needs to up... | 1.0 | Sponsors & Exhibitor page - **Number of Volunteers**: 3

TODO (Updated after #41)

- [x] Edit [sponsors.json](https://github.com/acl-org/acl-2020-virtual-conference-sitedata/blob/master/sponsors.json). The information below need to be provided by the sponsor. For demo purpose, just put some generic values.

- [x] c... | priority | sponsors exhibitor page number of volunteers todo updated after edit the information below need to be provided by the sponsor for demo purpose just put some generic values change the logos needs to upload images to the folder static images add a channel field to recor... | 1 |

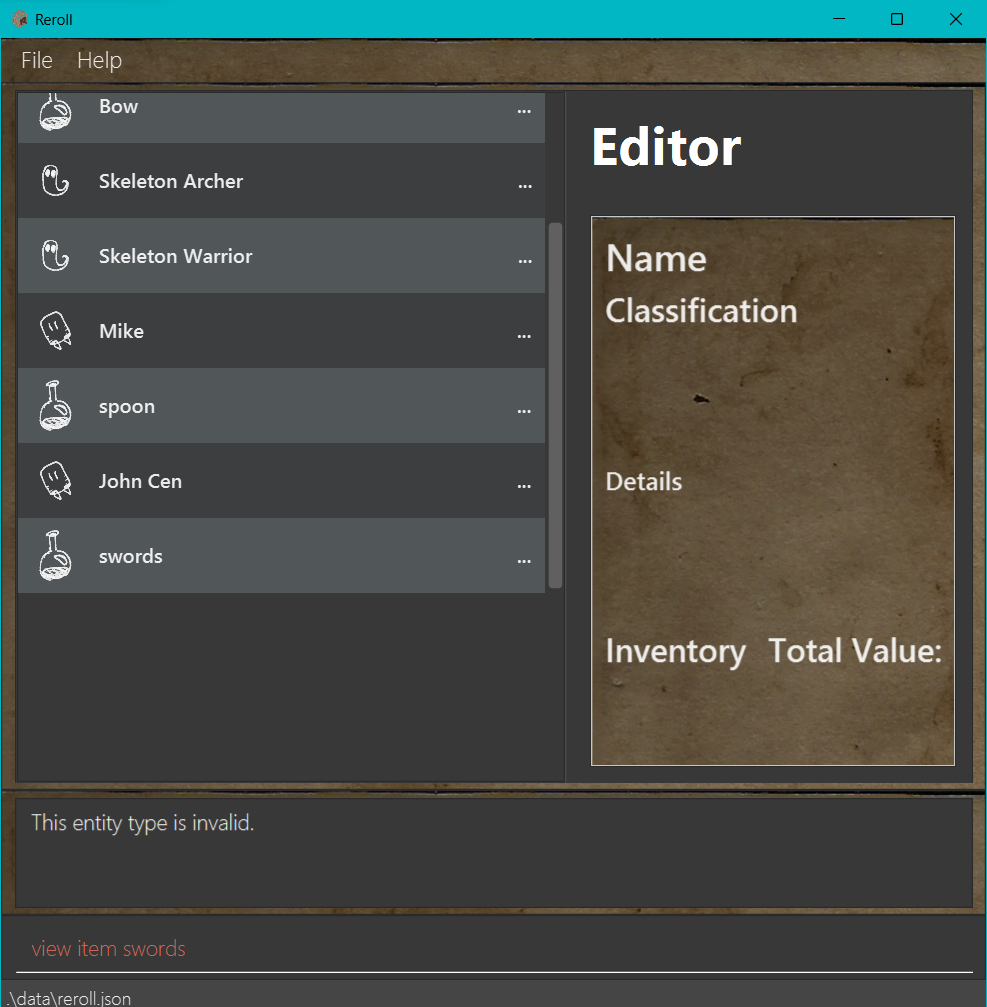

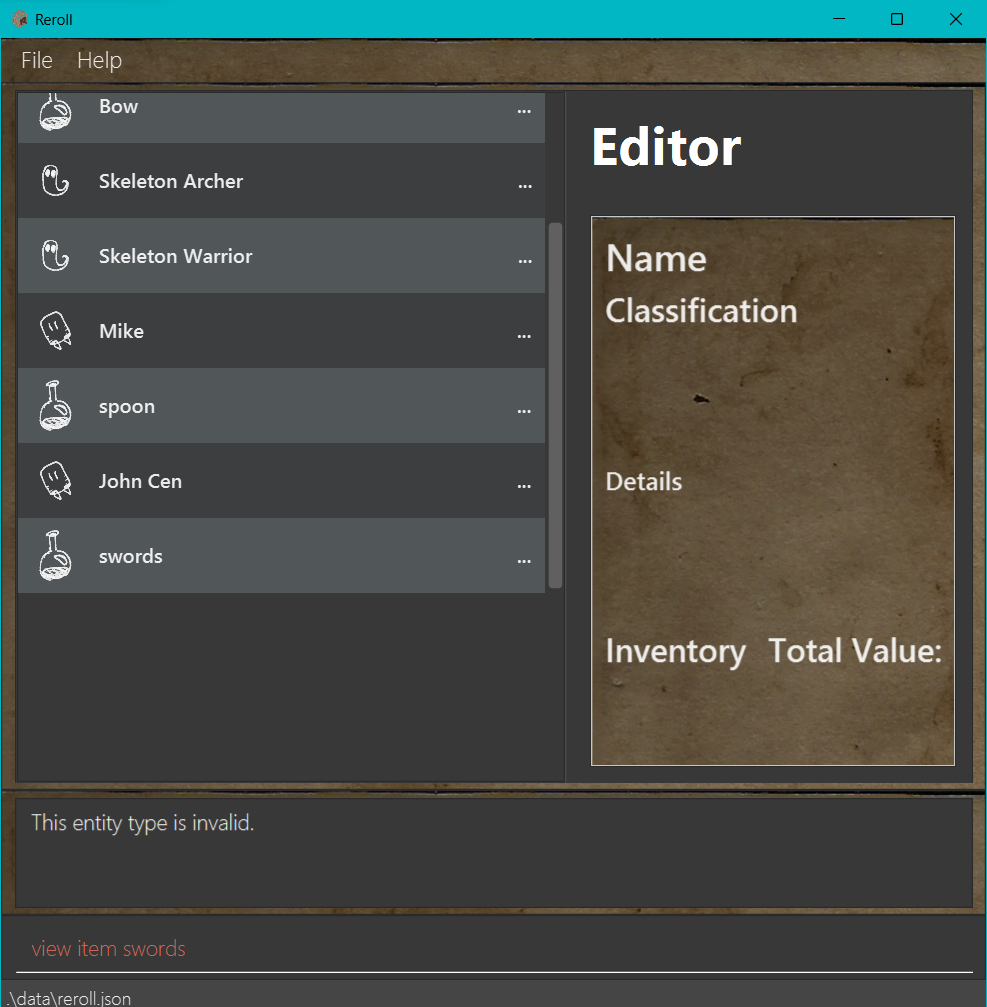

798,621 | 28,291,539,316 | IssuesEvent | 2023-04-09 09:10:10 | AY2223S2-CS2103T-T15-1/tp | https://api.github.com/repos/AY2223S2-CS2103T-T15-1/tp | closed | [PE-D][Tester F] Inappropriate error message by view feature while in view mode | type.Bug priority.Low |

Command sequence: view item swords -> view item swords

While the user guide doesn't contain information regarding the expected error message nor specify the need to use `back` `b` after entering the view ... | 1.0 | [PE-D][Tester F] Inappropriate error message by view feature while in view mode -

Command sequence: view item swords -> view item swords

While the user guide doesn't contain information regarding the expe... | priority | inappropriate error message by view feature while in view mode command sequence view item swords view item swords while the user guide doesn t contain information regarding the expected error message nor specify the need to use back b after entering the view mode the error message is still misleadi... | 1 |

15,967 | 3,996,047,901 | IssuesEvent | 2016-05-10 17:27:51 | snowplow/snowplow | https://api.github.com/repos/snowplow/snowplow | closed | Documentation capitalisation change for Python tracker | documentation | I think the code snippet on the Python tracker page should have `subject` capitalised.

https://github.com/snowplow/snowplow/wiki/Python-Tracker

```

from snowplow_tracker import Subject

s = Subject()

``` | 1.0 | Documentation capitalisation change for Python tracker - I think the code snippet on the Python tracker page should have `subject` capitalised.

https://github.com/snowplow/snowplow/wiki/Python-Tracker

```

from snowplow_tracker import Subject

s = Subject()

``` | non_priority | documentation capitalisation change for python tracker i think the code snippet on the python tracker page should have subject capitalised from snowplow tracker import subject s subject | 0 |

36,060 | 4,712,187,099 | IssuesEvent | 2016-10-14 15:58:21 | brave/browser-laptop | https://api.github.com/repos/brave/browser-laptop | opened | l10n of ledger advanced settings modal window | design feature/ledger l10n | **Describe the issue you encountered:** buttons on ledger advanced settings modal window are broken.

**Expected behavior:** Buttons should not word-wrap.

- Platform (Win7, 8, 10? macOS? Linux di... | 1.0 | l10n of ledger advanced settings modal window - **Describe the issue you encountered:** buttons on ledger advanced settings modal window are broken.

**Expected behavior:** Buttons should not word-w... | non_priority | of ledger advanced settings modal window describe the issue you encountered buttons on ledger advanced settings modal window are broken expected behavior buttons should not word wrap platform macos linux distro windows brave version any related issues ... | 0 |

31,272 | 11,903,303,730 | IssuesEvent | 2020-03-30 15:09:13 | istio/istio | https://api.github.com/repos/istio/istio | opened | TLS handshake errors connecting to Istiod with low TTL | area/security | istiod logs:

```

2020-03-30T15:06:05.093291Z info grpc: Server.Serve failed to complete security handshake from "10.28.1.200:53890": tls: failed to verify client's certificate: x509: certificate has expired or is not yet valid

```

istio-agent logs:

```

[Envoy (Epoch 0)] [2020-03-30 15:03:37.052][26][warn... | True | TLS handshake errors connecting to Istiod with low TTL - istiod logs:

```

2020-03-30T15:06:05.093291Z info grpc: Server.Serve failed to complete security handshake from "10.28.1.200:53890": tls: failed to verify client's certificate: x509: certificate has expired or is not yet valid

```

istio-agent logs:

... | non_priority | tls handshake errors connecting to istiod with low ttl istiod logs info grpc server serve failed to complete security handshake from tls failed to verify client s certificate certificate has expired or is not yet valid istio agent logs streamaggrega... | 0 |

254,190 | 8,071,026,813 | IssuesEvent | 2018-08-06 11:49:28 | FlowzPlatform/Sprint-User-Story-Board | https://api.github.com/repos/FlowzPlatform/Sprint-User-Story-Board | closed | Uploader-[feature]-Image Upload | Epic High Priority uploader - Images upload | ### SUMMARY: customer can upload his product images

----

### Prerequisite:

- R&D of HTML Folder upload

Ref : https://www.aurigma.com/docs/us8/uploading-folders-in-other-platforms-iuf.htm#setClientSide

-subscription module have add-ons

### User Story Description:

As a supplier, i want upload product image ... | 1.0 | Uploader-[feature]-Image Upload - ### SUMMARY: customer can upload his product images

----

### Prerequisite:

- R&D of HTML Folder upload

Ref : https://www.aurigma.com/docs/us8/uploading-folders-in-other-platforms-iuf.htm#setClientSide

-subscription module have add-ons

### User Story Description:

As a supp... | priority | uploader image upload summary customer can upload his product images prerequisite r d of html folder upload ref subscription module have add ons user story description as a supplier i want upload product image file product image will appear in product listing page ... | 1 |

498,283 | 14,405,043,106 | IssuesEvent | 2020-12-03 18:07:12 | timescale/promscale | https://api.github.com/repos/timescale/promscale | opened | Avoid possible loss of resolution when switching leaders | kind/enhancement priority/sev3 | The current leader-election for HA configuration is such that once the leader stops receiving the samples from prometheus instance for a period of the timeout, it gives up the advisory-lock and the non-leader then after receiving its samples from its corresponding Prometheus attempts and takes the lock. This means the ... | 1.0 | Avoid possible loss of resolution when switching leaders - The current leader-election for HA configuration is such that once the leader stops receiving the samples from prometheus instance for a period of the timeout, it gives up the advisory-lock and the non-leader then after receiving its samples from its correspond... | priority | avoid possible loss of resolution when switching leaders the current leader election for ha configuration is such that once the leader stops receiving the samples from prometheus instance for a period of the timeout it gives up the advisory lock and the non leader then after receiving its samples from its correspond... | 1 |

191,535 | 6,830,003,316 | IssuesEvent | 2017-11-09 03:43:42 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | services.gst.gov.in - site is not usable | browser-firefox-mobile priority-normal | <!-- @browser: Firefox Mobile 58.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 7.0; Mobile; rv:58.0) Gecko/58.0 Firefox/58.0 -->

<!-- @reported_with: mobile-reporter -->

**URL**: https://services.gst.gov.in/services/login

**Browser / Version**: Firefox Mobile 58.0

**Operating System**: Android 7.0

**Tested Another Brow... | 1.0 | services.gst.gov.in - site is not usable - <!-- @browser: Firefox Mobile 58.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 7.0; Mobile; rv:58.0) Gecko/58.0 Firefox/58.0 -->

<!-- @reported_with: mobile-reporter -->

**URL**: https://services.gst.gov.in/services/login

**Browser / Version**: Firefox Mobile 58.0

**Operating ... | priority | services gst gov in site is not usable url browser version firefox mobile operating system android tested another browser yes problem type site is not usable description the website works fine on all other modern browsers steps to reproduce this website works ... | 1 |

593,855 | 18,018,518,320 | IssuesEvent | 2021-09-16 16:22:09 | brave/qa-resources | https://api.github.com/repos/brave/qa-resources | closed | C94 1.31.x (Nightly) manual pass/regression check | OS/Desktop QA Completed - Win x64 priority/P1 OS/Windows | ### <img src="https://www.rebron.org/wordpress/wp-content/uploads/2019/06/release-1.png"> `Chromium 94 Nightly Manual Passes/Regression Check`

#### Release Date/Target:

* Release Date: **`ASAP`**

#### Summary:

QA needs to run through **`C94`** on Nightly before it's uplifted into the other channels (targeti... | 1.0 | C94 1.31.x (Nightly) manual pass/regression check - ### <img src="https://www.rebron.org/wordpress/wp-content/uploads/2019/06/release-1.png"> `Chromium 94 Nightly Manual Passes/Regression Check`

#### Release Date/Target:

* Release Date: **`ASAP`**

#### Summary:

QA needs to run through **`C94`** on Nightly b... | priority | x nightly manual pass regression check img src chromium nightly manual passes regression check release date target release date asap summary qa needs to run through on nightly before it s uplifted into the other channels targeting x manual p... | 1 |

107,716 | 4,314,369,732 | IssuesEvent | 2016-07-22 14:16:56 | citusdata/citus | https://api.github.com/repos/citusdata/citus | opened | PurgeConnection may segfault when re-raising error | 1-2 days priority:high | We recently made this change https://github.com/citusdata/citus/commit/16fc92bf6b95eed0ee7e7097572b4337a27f0573

However, there are various callers to ReportRemoteError that use it on connections that are not from the connection cache (e.g. COPY, master_modify_multiple_shards, DDL), and this will segfault if the conn... | 1.0 | PurgeConnection may segfault when re-raising error - We recently made this change https://github.com/citusdata/citus/commit/16fc92bf6b95eed0ee7e7097572b4337a27f0573

However, there are various callers to ReportRemoteError that use it on connections that are not from the connection cache (e.g. COPY, master_modify_mult... | priority | purgeconnection may segfault when re raising error we recently made this change however there are various callers to reportremoteerror that use it on connections that are not from the connection cache e g copy master modify multiple shards ddl and this will segfault if the connection cache hasn t been ini... | 1 |

100,708 | 12,551,696,798 | IssuesEvent | 2020-06-06 15:38:01 | mcneel/rhino.inside-revit | https://api.github.com/repos/mcneel/rhino.inside-revit | closed | Rhino.Inside Revit - Element - Delete Element | design enhancement ux | Would be possible to add true/ false input Toggle to` Delete Element` or make the second node.

Everyone always forgets to detach this node and this cause issue. With `Button` component this will be the perfect solution.

[//]: # (http://wowhead.com/)

[//]: # (cata-twinhead.twinstar.cz)

**Description:**

So I Just Broke Stormwind Royal Guards patroling Stormwind > Goldshire Area and they cleared almost all mobs behind goldshire till murlock islands tel... | 1.0 | Just Broke Stormwind Royal Guards patrol and they cleared almost all mobs behind goldshire till murlock lil islands [PATROL BUG] - [//]: # (REMBEMBER! Add links to things related to the bug using for example:)

[//]: # (http://wowhead.com/)

[//]: # (cata-twinhead.twinstar.cz)

**Description:**

So I Just Broke Sto... | priority | just broke stormwind royal guards patrol and they cleared almost all mobs behind goldshire till murlock lil islands rembember add links to things related to the bug using for example cata twinhead twinstar cz description so i just broke stormwind royal guards patroling stormwind... | 1 |

44,066 | 2,899,099,610 | IssuesEvent | 2015-06-17 09:12:43 | greenlion/PHP-SQL-Parser | https://api.github.com/repos/greenlion/PHP-SQL-Parser | closed | 1. please turn off debug print_r() 2. probbaly, some bug in parser triggers it | bug imported Priority-Medium | _From [rel...@gmail.com](https://code.google.com/u/113326933444776031366/) on June 19, 2011 15:31:59_

WHAT STEPS WILL REPRODUCE THE PROBLEM?

1. $sql = "SELECT SQL_CALC_FOUND_ROWS SmTable.*, MATCH (SmTable.fulltextsearch_keyword) AGAINST ('google googles') AS keyword_score FROM SmTable WHERE SmTable.status = 'A' AND... | 1.0 | 1. please turn off debug print_r() 2. probbaly, some bug in parser triggers it - _From [rel...@gmail.com](https://code.google.com/u/113326933444776031366/) on June 19, 2011 15:31:59_

WHAT STEPS WILL REPRODUCE THE PROBLEM?

1. $sql = "SELECT SQL_CALC_FOUND_ROWS SmTable.*, MATCH (SmTable.fulltextsearch_keyword) AGAINS... | priority | please turn off debug print r probbaly some bug in parser triggers it from on june what steps will reproduce the problem sql select sql calc found rows smtable match smtable fulltextsearch keyword against google googles as keyword score from smtable where smtable status ... | 1 |

674,601 | 23,058,837,469 | IssuesEvent | 2022-07-25 08:05:03 | Raid-Training-Initiative/RTIBot | https://api.github.com/repos/Raid-Training-Initiative/RTIBot | closed | Slash Command: /managetrainingrequest | feature high priority slash commands | **Details:**

* `/managetrainingrequest add <member> <wings> <comment>` adds a training request for the given `<member>`, with the `<wings>` being a comma-separated list of wings (use `8` for EoD strikes) and `<comment>` being a comment that shows up in the training request.

* `/managetrainingrequest remove <user>` re... | 1.0 | Slash Command: /managetrainingrequest - **Details:**

* `/managetrainingrequest add <member> <wings> <comment>` adds a training request for the given `<member>`, with the `<wings>` being a comma-separated list of wings (use `8` for EoD strikes) and `<comment>` being a comment that shows up in the training request.

* `... | priority | slash command managetrainingrequest details managetrainingrequest add adds a training request for the given with the being a comma separated list of wings use for eod strikes and being a comment that shows up in the training request managetrainingrequest remove removes a... | 1 |

116,452 | 9,852,871,337 | IssuesEvent | 2019-06-19 13:44:48 | kcigeospatial/Fred_Co_Land-Management | https://api.github.com/repos/kcigeospatial/Fred_Co_Land-Management | reopened | PreProd Mapping-Final Inspection Milestone | Ready for PreProd Env. Retest | Permit# 190619 (Non-Residential Tank) -MCoffman

Permit Only has Final Inspection left to be completed, but is still in the "Inspections" Milestone not "Final Inspection"; This issue has been found on multiple permit types

-MCoffman

Permit Only has Final Inspection left to be completed, but is still in the "Inspections" Milestone not "Final Inspection"; This issue has been found on multiple permit types

failure at 2022-10-21T08:48:49.667Z: <BadLogLines nodes=ip-172-31-11-31(1) example="ERROR 2022-10-21 07:07:09,233 [shard 0] rpc - server.cc:127 - kafka rpc protocol... | True | Failure in AccessControlListTestUpgrade.test_describe_acls - FAIL test: AccessControlListTestUpgrade.test_describe_acls.use_tls=True.use_sasl=False.enable_authz=False.authn_method=sasl.client_auth=True (1/46 runs)

failure at 2022-10-21T08:48:49.667Z: <BadLogLines nodes=ip-172-31-11-31(1) example="ERROR 2022-10-21 07... | non_priority | failure in accesscontrollisttestupgrade test describe acls fail test accesscontrollisttestupgrade test describe acls use tls true use sasl false enable authz false authn method sasl client auth true runs failure at in job | 0 |

543,535 | 15,883,452,096 | IssuesEvent | 2021-04-09 17:24:39 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | [bug] [tests] cant run tests locally without setting the ENV variables | high priority module: ci triage review triaged | After the PR https://github.com/pytorch/pytorch/pull/55522, **cant run tests locally without setting the ENV variables**

```

$ pytest test/test_ops.py

======================================================================= test session starts ========================================================================... | 1.0 | [bug] [tests] cant run tests locally without setting the ENV variables - After the PR https://github.com/pytorch/pytorch/pull/55522, **cant run tests locally without setting the ENV variables**

```

$ pytest test/test_ops.py

======================================================================= test session starts... | priority | cant run tests locally without setting the env variables after the pr cant run tests locally without setting the env variables pytest test test ops py test session starts ... | 1 |

768,191 | 26,957,690,570 | IssuesEvent | 2023-02-08 15:57:17 | biodiversitydata-se/biocollect | https://api.github.com/repos/biodiversitydata-se/biocollect | closed | When a new route is created and "sent in", give the person a note that "you have to wait" | 2-High priority | When a new point count route is created, we as admin repeatedly get several notes by mail that a route is created. Not just one note. This is because the person who created the route doesn't understand that the route is in the system and the (s)he needs to wait for an OK from us. They therefore submit the route several... | 1.0 | When a new route is created and "sent in", give the person a note that "you have to wait" - When a new point count route is created, we as admin repeatedly get several notes by mail that a route is created. Not just one note. This is because the person who created the route doesn't understand that the route is in the s... | priority | when a new route is created and sent in give the person a note that you have to wait when a new point count route is created we as admin repeatedly get several notes by mail that a route is created not just one note this is because the person who created the route doesn t understand that the route is in the s... | 1 |

160,577 | 6,100,322,915 | IssuesEvent | 2017-06-20 12:20:33 | javaee/glassfish | https://api.github.com/repos/javaee/glassfish | opened | Create a new test suite in CI pipeline for admin GUI tests | Component: admin_gui Priority: Critical | The task is to create a script and make necessary changes in the devtests to make the new test suite run on hudson. | 1.0 | Create a new test suite in CI pipeline for admin GUI tests - The task is to create a script and make necessary changes in the devtests to make the new test suite run on hudson. | priority | create a new test suite in ci pipeline for admin gui tests the task is to create a script and make necessary changes in the devtests to make the new test suite run on hudson | 1 |

24,540 | 6,551,638,719 | IssuesEvent | 2017-09-05 15:22:50 | rmap-project/rmap | https://api.github.com/repos/rmap-project/rmap | closed | Update the backend code to implement new user management approach | Code improvement and features Production-readiness User Management | Follow up work from issue #65

Relating to Workplan task 3.7.5 | 1.0 | Update the backend code to implement new user management approach - Follow up work from issue #65

Relating to Workplan task 3.7.5 | non_priority | update the backend code to implement new user management approach follow up work from issue relating to workplan task | 0 |

598,474 | 18,245,938,555 | IssuesEvent | 2021-10-01 18:24:03 | AXeL-dev/youtube-viewer | https://api.github.com/repos/AXeL-dev/youtube-viewer | closed | Refactor channel selection | refactor high priority | Switching between channels can be done through routes for each selection/channel instead of filtering videos cache state by the current selected channel. | 1.0 | Refactor channel selection - Switching between channels can be done through routes for each selection/channel instead of filtering videos cache state by the current selected channel. | priority | refactor channel selection switching between channels can be done through routes for each selection channel instead of filtering videos cache state by the current selected channel | 1 |

15,308 | 19,400,850,809 | IssuesEvent | 2021-12-19 06:13:31 | ethereum/EIPs | https://api.github.com/repos/ethereum/EIPs | closed | Add mission statement | type: Meta type: EIP1 (Process) stale | Presently the ethereum/EIPs project does not have a mission statement.

---

<strike>Recently something changed and now the majority of EIPs here have no path to become "final" standards. Pull request #1100 addresses that issue.</strike>

However, one of the EIP editors (the people with commit access here) mentio... | 1.0 | Add mission statement - Presently the ethereum/EIPs project does not have a mission statement.

---

<strike>Recently something changed and now the majority of EIPs here have no path to become "final" standards. Pull request #1100 addresses that issue.</strike>

However, one of the EIP editors (the people with co... | non_priority | add mission statement presently the ethereum eips project does not have a mission statement recently something changed and now the majority of eips here have no path to become final standards pull request addresses that issue however one of the eip editors the people with commit access here ... | 0 |

149,135 | 23,432,756,880 | IssuesEvent | 2022-08-15 05:53:55 | OneCoin-00/BRJM-iOS | https://api.github.com/repos/OneCoin-00/BRJM-iOS | closed | Button 및 TextField Extension 추가 | ✨ feature 🎨 design | ## ⭐️ Issues

## 📌 To Do

- [x] 버튼 클릭 효과 적용

- [x] 텍스트필드 하단 라인 및 삭제 버튼 추가 | 1.0 | Button 및 TextField Extension 추가 - ## ⭐️ Issues

## 📌 To Do

- [x] 버튼 클릭 효과 적용

- [x] 텍스트필드 하단 라인 및 삭제 버튼 추가 | non_priority | button 및 textfield extension 추가 ⭐️ issues 📌 to do 버튼 클릭 효과 적용 텍스트필드 하단 라인 및 삭제 버튼 추가 | 0 |

44,731 | 2,911,361,124 | IssuesEvent | 2015-06-22 09:03:17 | mintty/mintty | https://api.github.com/repos/mintty/mintty | closed | Italics support | auto-migrated Difficulty-Hard Priority-Low Type-Enhancement | ```

I would like to use italics in my vim syntax files, prompt, etc.

```

Original issue reported on code.google.com by `randy.ha...@gmail.com` on 10 Nov 2009 at 3:31 | 1.0 | Italics support - ```

I would like to use italics in my vim syntax files, prompt, etc.

```

Original issue reported on code.google.com by `randy.ha...@gmail.com` on 10 Nov 2009 at 3:31 | priority | italics support i would like to use italics in my vim syntax files prompt etc original issue reported on code google com by randy ha gmail com on nov at | 1 |

307,112 | 9,414,200,616 | IssuesEvent | 2019-04-10 09:35:36 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | "worldobject" ladders is not working | Low Priority | **Version:** 0.7.8.0 beta

e.g. blast furnace, crane, oil refinery, excavator, etc.

| 1.0 | "worldobject" ladders is not working - **Version:** 0.7.8.0 beta

e.g. blast furnace, crane, oil refinery, excavator, etc.

| priority | worldobject ladders is not working version beta e g blast furnace crane oil refinery excavator etc | 1 |

269,297 | 28,960,074,561 | IssuesEvent | 2023-05-10 01:13:04 | dpteam/RK3188_TABLET | https://api.github.com/repos/dpteam/RK3188_TABLET | reopened | CVE-2021-3483 (High) detected in linuxv3.0 | Mend: dependency security vulnerability | ## CVE-2021-3483 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv3.0</b></p></summary>

<p>

<p>miscellaneous core development</p>

<p>Library home page: <a href=https://git.kernel.... | True | CVE-2021-3483 (High) detected in linuxv3.0 - ## CVE-2021-3483 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv3.0</b></p></summary>

<p>

<p>miscellaneous core development</p>

<p>L... | non_priority | cve high detected in cve high severity vulnerability vulnerable library miscellaneous core development library home page a href found in head commit a href found in base branch master vulnerable source files drivers firewire nosy c ... | 0 |

211,942 | 23,856,833,485 | IssuesEvent | 2022-09-07 01:08:00 | HoangBachLeLe/TemplateRepository | https://api.github.com/repos/HoangBachLeLe/TemplateRepository | opened | CVE-2022-38751 (Medium) detected in snakeyaml-1.29.jar | security vulnerability | ## CVE-2022-38751 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>snakeyaml-1.29.jar</b></p></summary>

<p>YAML 1.1 parser and emitter for Java</p>

<p>Library home page: <a href="http... | True | CVE-2022-38751 (Medium) detected in snakeyaml-1.29.jar - ## CVE-2022-38751 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>snakeyaml-1.29.jar</b></p></summary>

<p>YAML 1.1 parser and... | non_priority | cve medium detected in snakeyaml jar cve medium severity vulnerability vulnerable library snakeyaml jar yaml parser and emitter for java library home page a href path to dependency file build gradle path to vulnerable library home wss scanner gradle caches modules... | 0 |

677,863 | 23,178,266,563 | IssuesEvent | 2022-07-31 18:50:21 | chaotic-aur/packages | https://api.github.com/repos/chaotic-aur/packages | closed | [Request] sqlcl | request:new-pkg priority:low | ### Link to the package(s) in the AUR

https://aur.archlinux.org/packages/sqlcl

### Utility this package has for you

Sqlcl is a command-line utility for Oracle, it is used for remotely connecting to oracle db.

I use it to manage Oracle DB which reside in docker container.

### Do you consider the package(s) to be us... | 1.0 | [Request] sqlcl - ### Link to the package(s) in the AUR

https://aur.archlinux.org/packages/sqlcl

### Utility this package has for you

Sqlcl is a command-line utility for Oracle, it is used for remotely connecting to oracle db.

I use it to manage Oracle DB which reside in docker container.

### Do you consider the p... | priority | sqlcl link to the package s in the aur utility this package has for you sqlcl is a command line utility for oracle it is used for remotely connecting to oracle db i use it to manage oracle db which reside in docker container do you consider the package s to be useful for every chaotic aur us... | 1 |

14,139 | 17,016,659,615 | IssuesEvent | 2021-07-02 13:01:23 | docker/compose-cli | https://api.github.com/repos/docker/compose-cli | closed | No warning provided for empty environment variables | compatibility |

**Description**

Use this docker-compose.yaml

```yaml

services:

web:

image: busybox

user: "$UID:$GID"

```

`docker-compose up`

In docker-compose v1 you get:

```

$ docker-compose up

WARNING: The UID variable is not set. Defaulting to a blank string.

WARNING: The GID variable is not set. De... | True | No warning provided for empty environment variables -

**Description**

Use this docker-compose.yaml

```yaml

services:

web:

image: busybox

user: "$UID:$GID"

```

`docker-compose up`

In docker-compose v1 you get:

```

$ docker-compose up

WARNING: The UID variable is not set. Defaulting to a b... | non_priority | no warning provided for empty environment variables description use this docker compose yaml yaml services web image busybox user uid gid docker compose up in docker compose you get docker compose up warning the uid variable is not set defaulting to a bl... | 0 |

270,149 | 23,494,004,595 | IssuesEvent | 2022-08-17 22:00:09 | pandas-dev/pandas | https://api.github.com/repos/pandas-dev/pandas | closed | WARN,TST check stacklevel for all warnings | Testing Warnings | Currently, the stacklevel is only checked for two classes of warnings:

https://github.com/pandas-dev/pandas/blob/14de3fd9ca4178bfce5dd681fa5d0925e057c04d/pandas/_testing/_warnings.py#L132-L134

it would be good to extend this to other (all?) classes of warnings, and fixing the parts of the codebase where this fail... | 1.0 | WARN,TST check stacklevel for all warnings - Currently, the stacklevel is only checked for two classes of warnings:

https://github.com/pandas-dev/pandas/blob/14de3fd9ca4178bfce5dd681fa5d0925e057c04d/pandas/_testing/_warnings.py#L132-L134

it would be good to extend this to other (all?) classes of warnings, and fix... | non_priority | warn tst check stacklevel for all warnings currently the stacklevel is only checked for two classes of warnings it would be good to extend this to other all classes of warnings and fixing the parts of the codebase where this fails | 0 |

722,688 | 24,871,800,812 | IssuesEvent | 2022-10-27 15:44:18 | windchime-yk/resources | https://api.github.com/repos/windchime-yk/resources | opened | `og-edge`によるOGP画像の動的配信 | Type: Feature Priority: High | Deno環境移植の`vercel/og`として[og-edge](https://github.com/ascorbic/og-edge)がリリースされている。

サンプルを見る限りシンプルなので、deno_blog系のOGP画像を配信する機能を追加したい。 | 1.0 | `og-edge`によるOGP画像の動的配信 - Deno環境移植の`vercel/og`として[og-edge](https://github.com/ascorbic/og-edge)がリリースされている。

サンプルを見る限りシンプルなので、deno_blog系のOGP画像を配信する機能を追加したい。 | priority | og edge によるogp画像の動的配信 deno環境移植の vercel og として サンプルを見る限りシンプルなので、deno blog系のogp画像を配信する機能を追加したい。 | 1 |

31,923 | 26,246,523,421 | IssuesEvent | 2023-01-05 15:40:15 | CDCgov/data-exchange-hl7 | https://api.github.com/repos/CDCgov/data-exchange-hl7 | closed | Create new Functions due to Renaming by OPS team | infrastructure waiting | App

- #414

Ops

- Functions: #371

- EventHubs: #343

Priority 1: Done 6 Dec 2022

Everything else: Scheduled for Thu 15 Dec

Create brand new functions due to renaming:

- [x] ocio-ede-dev-hl7-mmg-based-transformer (*)

- [x] ocio-ede-dev-hl7-cache-loader

- [x] extra config:

- [x] MMG_AT_TIME_TRIGGER = "0 ... | 1.0 | Create new Functions due to Renaming by OPS team - App

- #414

Ops

- Functions: #371

- EventHubs: #343

Priority 1: Done 6 Dec 2022

Everything else: Scheduled for Thu 15 Dec

Create brand new functions due to renaming:

- [x] ocio-ede-dev-hl7-mmg-based-transformer (*)

- [x] ocio-ede-dev-hl7-cache-loader

- [x] extr... | non_priority | create new functions due to renaming by ops team app ops functions eventhubs priority done dec everything else scheduled for thu dec create brand new functions due to renaming ocio ede dev mmg based transformer ocio ede dev cache loader extra config ... | 0 |

661,283 | 22,046,310,901 | IssuesEvent | 2022-05-30 02:23:34 | microsoft/Recognizers-Text | https://api.github.com/repos/microsoft/Recognizers-Text | closed | [NL DateTimeV2] Multiple issues from speech across date/time sub-types | bug Priority:P2 | 1- Space between the day and rd/th like “Ik ga 20 ste van de volgende maand terug” isn’t recognized as a date

2- The expression “Ik ga terug vier dagen gerekend vanaf gisteren” is resolved as four days from today, but replacing the text with the digit four is not detected “Ik ga terug 4 dagen gerekend vanaf gisteren”.... | 1.0 | [NL DateTimeV2] Multiple issues from speech across date/time sub-types - 1- Space between the day and rd/th like “Ik ga 20 ste van de volgende maand terug” isn’t recognized as a date

2- The expression “Ik ga terug vier dagen gerekend vanaf gisteren” is resolved as four days from today, but replacing the text with the ... | priority | multiple issues from speech across date time sub types space between the day and rd th like “ik ga ste van de volgende maand terug” isn’t recognized as a date the expression “ik ga terug vier dagen gerekend vanaf gisteren” is resolved as four days from today but replacing the text with the digit four is n... | 1 |

65,410 | 3,228,109,250 | IssuesEvent | 2015-10-11 20:12:14 | cylc/cylc | https://api.github.com/repos/cylc/cylc | opened | Dynamic family size? | priority low | There are some situations where it would be useful to determine the number of family members just before a family submits, e.g. a suite that spawns forecast jobs to model all of the storm systems currently active in some part of the world. **This can be done now by running a sub-suite that takes the number of family ... | 1.0 | Dynamic family size? - There are some situations where it would be useful to determine the number of family members just before a family submits, e.g. a suite that spawns forecast jobs to model all of the storm systems currently active in some part of the world. **This can be done now by running a sub-suite that take... | priority | dynamic family size there are some situations where it would be useful to determine the number of family members just before a family submits e g a suite that spawns forecast jobs to model all of the storm systems currently active in some part of the world this can be done now by running a sub suite that take... | 1 |

56,008 | 14,894,769,041 | IssuesEvent | 2021-01-21 08:06:32 | SasView/sasview | https://api.github.com/repos/SasView/sasview | closed | ESS_GUI: Model label on plot keeps being reset | Hackathon: Plotting SasView Bug Fixing defect | Noticed by User EmilyE on Mac. Verified by @smk78 on Windows. Using 5.0.2.

Matplotlib really, really, doesn't like long dataset names. If you display such a dataset the actual graph gets squashed up in favour of displaying the legend! Like this:

detected in multiple libraries | security vulnerability | ## CVE-2020-28500 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>lodash-4.17.4.tgz</b>, <b>lodash-4.12.0.tgz</b>, <b>lodash-4.17.11.tgz</b></p></summary>

<p>

<details><summary><b>... | True | CVE-2020-28500 (Medium) detected in multiple libraries - ## CVE-2020-28500 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>lodash-4.17.4.tgz</b>, <b>lodash-4.12.0.tgz</b>, <b>lodash... | non_priority | cve medium detected in multiple libraries cve medium severity vulnerability vulnerable libraries lodash tgz lodash tgz lodash tgz lodash tgz lodash modular utilities library home page a href path to dependency file kubernetes staging src io... | 0 |

10,948 | 8,228,550,434 | IssuesEvent | 2018-09-07 05:59:21 | snowplow/snowplow | https://api.github.com/repos/snowplow/snowplow | closed | Clojure Collector: update CORS configuration | bug security | Tomcat 8.0.53 changed their default CORS configuration, from [CVE-2018-8014](http://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-8014):

>The defaults settings for the CORS filter provided in Apache Tomcat 9.0.0.M1 to 9.0.8, 8.5.0 to 8.5.31, 8.0.0.RC1 to 8.0.52, 7.0.41 to 7.0.88 are insecure and enable 'supportsCr... | True | Clojure Collector: update CORS configuration - Tomcat 8.0.53 changed their default CORS configuration, from [CVE-2018-8014](http://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-8014):

>The defaults settings for the CORS filter provided in Apache Tomcat 9.0.0.M1 to 9.0.8, 8.5.0 to 8.5.31, 8.0.0.RC1 to 8.0.52, 7.0.4... | non_priority | clojure collector update cors configuration tomcat changed their default cors configuration from the defaults settings for the cors filter provided in apache tomcat to to to to are insecure and enable supportscredentials for all origins it is e... | 0 |

328,439 | 9,994,942,014 | IssuesEvent | 2019-07-11 18:57:20 | INN/largo | https://api.github.com/repos/INN/largo | closed | apply font-display:block to fontello | Estimate: < 2 Hours category: styles priority: low type: improvement | https://developers.google.com/web/updates/2016/02/font-display

> **block**

>

> block gives the font face a short block period (3s is recommended in most cases) and an infinite swap period. In other words, the browser draws "invisible" text at first if the font is not loaded, but swaps the font face in as soon as i... | 1.0 | apply font-display:block to fontello - https://developers.google.com/web/updates/2016/02/font-display

> **block**

>

> block gives the font face a short block period (3s is recommended in most cases) and an infinite swap period. In other words, the browser draws "invisible" text at first if the font is not loaded, ... | priority | apply font display block to fontello block block gives the font face a short block period is recommended in most cases and an infinite swap period in other words the browser draws invisible text at first if the font is not loaded but swaps the font face in as soon as it loads to do this the... | 1 |

19,468 | 4,413,792,715 | IssuesEvent | 2016-08-13 02:12:25 | PokemonGoMap/PokemonGo-Map | https://api.github.com/repos/PokemonGoMap/PokemonGo-Map | closed | Display more helpful error when account has not accepted ToS | backend documentation enhancement feature request minor | Hello, i get this error message for 20 minutes now :

2016-08-08 19:21:29,384 [ search_worker_7][ search][ ERROR] Exception in search_worker: sequence index must be integer, not 'str'

Traceback (most recent call last):

File "/Users/Musa/Documents/PokemonGo-Map/pogom/search.py", line 242, in search_worke... | 1.0 | Display more helpful error when account has not accepted ToS - Hello, i get this error message for 20 minutes now :

2016-08-08 19:21:29,384 [ search_worker_7][ search][ ERROR] Exception in search_worker: sequence index must be integer, not 'str'

Traceback (most recent call last):

File "/Users/Musa/Docu... | non_priority | display more helpful error when account has not accepted tos hello i get this error message for minutes now exception in search worker sequence index must be integer not str traceback most recent call last file users musa documents pokemongo map pogom search py line in sea... | 0 |

705,042 | 24,219,305,358 | IssuesEvent | 2022-09-26 09:29:53 | Co-Laon/claon-server | https://api.github.com/repos/Co-Laon/claon-server | closed | 검색 화면 구현 | enhancement priority: medium | ## Describe

검색어와 유사한 이용자와 암장을 검색 허용

## (Optional) Solution

Please describe your preferred solution

-

| 1.0 | 검색 화면 구현 - ## Describe

검색어와 유사한 이용자와 암장을 검색 허용

## (Optional) Solution

Please describe your preferred solution

-

| priority | 검색 화면 구현 describe 검색어와 유사한 이용자와 암장을 검색 허용 optional solution please describe your preferred solution | 1 |

343,357 | 10,328,720,611 | IssuesEvent | 2019-09-02 10:13:08 | webpack/schema-utils | https://api.github.com/repos/webpack/schema-utils | closed | improve output in some cases | priority: 5 (nice to have) semver: Minor type: Feature | <!--

Issues are so 🔥

If you remove or skip this template, you'll make the 🐼 sad and the mighty god

of Github will appear and pile-drive the close button from a great height

while making animal noises.

👉🏽 Need support, advice, or help? Don't open an issue!

Head to StackOverflow or https://gitte... | 1.0 | improve output in some cases - <!--

Issues are so 🔥

If you remove or skip this template, you'll make the 🐼 sad and the mighty god

of Github will appear and pile-drive the close button from a great height

while making animal noises.

👉🏽 Need support, advice, or help? Don't open an issue!

Head to... | priority | improve output in some cases issues are so 🔥 if you remove or skip this template you ll make the 🐼 sad and the mighty god of github will appear and pile drive the close button from a great height while making animal noises 👉🏽 need support advice or help don t open an issue head to... | 1 |

89,922 | 11,302,730,342 | IssuesEvent | 2020-01-17 18:22:58 | 18F/identity-style-guide | https://api.github.com/repos/18F/identity-style-guide | closed | Develop an initial content style guide page | skill: content design / strategy type: feature request | **Why:** By consistently practicing language in an intentional way, we can provide content that supports login.gov users’ needs and improve their experience on our sites and materials.

**Stories:**

- As a login.gov team member, I would like to use design.login.gov as a go-to reference when writing login materials... | 1.0 | Develop an initial content style guide page - **Why:** By consistently practicing language in an intentional way, we can provide content that supports login.gov users’ needs and improve their experience on our sites and materials.

**Stories:**

- As a login.gov team member, I would like to use design.login.gov as ... | non_priority | develop an initial content style guide page why by consistently practicing language in an intentional way we can provide content that supports login gov users’ needs and improve their experience on our sites and materials stories as a login gov team member i would like to use design login gov as ... | 0 |

822,796 | 30,885,220,174 | IssuesEvent | 2023-08-03 21:05:56 | letehaha/budget-tracker-fe | https://api.github.com/repos/letehaha/budget-tracker-fe | closed | Update monobank balances history not by calculating it from income/expense, but by just reading "balance" property | type::enhancement repo: backend priority-0-highest | Currently, each Monobank's account balance history is calculated based on transaction movements, and it is calculated manually. Since the bank already providing us info about the account's balance "after" the tx, we actually just need to update the balance history for that account by just setting the `balance` value. T... | 1.0 | Update monobank balances history not by calculating it from income/expense, but by just reading "balance" property - Currently, each Monobank's account balance history is calculated based on transaction movements, and it is calculated manually. Since the bank already providing us info about the account's balance "after... | priority | update monobank balances history not by calculating it from income expense but by just reading balance property currently each monobank s account balance history is calculated based on transaction movements and it is calculated manually since the bank already providing us info about the account s balance after... | 1 |

16,579 | 12,058,036,757 | IssuesEvent | 2020-04-15 16:46:49 | skypyproject/skypy | https://api.github.com/repos/skypyproject/skypy | closed | Twine warning: `long_description_content_type` missing | bug infrastructure v0.1 Hack | **Describe the bug**

When packaging for release, twine warns that `long_description_content_type` is missing.

**To Reproduce**

1. `python setup.py build sdist`

2. `twine check dist/*`

```

Checking dist/skypy-0.1rc1.tar.gz: PASSED, with warnings

warning: `long_description_content_type` missing. defaulting to... | 1.0 | Twine warning: `long_description_content_type` missing - **Describe the bug**

When packaging for release, twine warns that `long_description_content_type` is missing.

**To Reproduce**

1. `python setup.py build sdist`

2. `twine check dist/*`

```

Checking dist/skypy-0.1rc1.tar.gz: PASSED, with warnings

warning... | non_priority | twine warning long description content type missing describe the bug when packaging for release twine warns that long description content type is missing to reproduce python setup py build sdist twine check dist checking dist skypy tar gz passed with warnings warning ... | 0 |

181,462 | 21,658,680,215 | IssuesEvent | 2022-05-06 16:39:02 | doc-ai/snipe-it | https://api.github.com/repos/doc-ai/snipe-it | closed | CVE-2021-41183 (Medium) detected in jquery-ui-1.11.4.js, jquery-ui-1.11.4.min.js - autoclosed | security vulnerability | ## CVE-2021-41183 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jquery-ui-1.11.4.js</b>, <b>jquery-ui-1.11.4.min.js</b></p></summary>

<p>

<details><summary><b>jquery-ui-1.11.4.js... | True | CVE-2021-41183 (Medium) detected in jquery-ui-1.11.4.js, jquery-ui-1.11.4.min.js - autoclosed - ## CVE-2021-41183 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jquery-ui-1.11.4.js... | non_priority | cve medium detected in jquery ui js jquery ui min js autoclosed cve medium severity vulnerability vulnerable libraries jquery ui js jquery ui min js jquery ui js a curated set of user interface interactions effects widgets and themes built on ... | 0 |