Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 1k) | labels (stringlengths, 4 to 1.38k) | body (stringlengths, 1 to 262k) | index (stringclasses, 16 values) | text_combine (stringlengths, 96 to 262k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 252k) | binary_label (int64, 0 to 1)
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

109,472 | 13,777,745,700 | IssuesEvent | 2020-10-08 11:25:37 | CatalogueOfLife/clearinghouse-ui | https://api.github.com/repos/CatalogueOfLife/clearinghouse-ui | closed | Reduce size of source dataset in public tree | design tiny | When there is a single source dataset for a taxon in the public tree, the source is shown in the same font size as the name and it convolutes the tree of names:

<img width="1199" alt="Screenshot 2020-10-07 at 13 11 06" src="https://user-images.githubusercontent.com/327505/95323529-9d30ad00-089e-11eb-94b0-087936e3008... | 1.0 | Reduce size of source dataset in public tree - When there is a single source dataset for a taxon in the public tree, the source is shown in the same font size as the name and it convolutes the tree of names:

<img width="1199" alt="Screenshot 2020-10-07 at 13 11 06" src="https://user-images.githubusercontent.com/3275... | non_priority | reduce size of source dataset in public tree when there is a single source dataset for a taxon in the public tree the source is shown in the same font size as the name and it convolutes the tree of names img width alt screenshot at src use the smaller font size applied when there are mul... | 0 |

259,892 | 8,201,226,191 | IssuesEvent | 2018-09-01 15:10:37 | clearlydefined/website | https://api.github.com/repos/clearlydefined/website | closed | Details view | high-priority | Need a reusable component that shows the full detail of a definition. It should optionally allow editing of the values in definition in a way that is shareable with other components. That is, the detail component described here does not "own" the definition. This component will show up in two primary places/ways

1. ... | 1.0 | Details view - Need a reusable component that shows the full detail of a definition. It should optionally allow editing of the values in definition in a way that is shareable with other components. That is, the detail component described here does not "own" the definition. This component will show up in two primary pla... | priority | details view need a reusable component that shows the full detail of a definition it should optionally allow editing of the values in definition in a way that is shareable with other components that is the detail component described here does not own the definition this component will show up in two primary pla... | 1 |

19,711 | 10,418,375,554 | IssuesEvent | 2019-09-15 08:02:10 | tiarebalbi/tiarebalbi-website | https://api.github.com/repos/tiarebalbi/tiarebalbi-website | closed | WS-2018-0236 (Medium) detected in mem-1.1.0.tgz | security vulnerability | ## WS-2018-0236 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mem-1.1.0.tgz</b></p></summary>

<p>Memoize functions - An optimization used to speed up consecutive function calls by ... | True | WS-2018-0236 (Medium) detected in mem-1.1.0.tgz - ## WS-2018-0236 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mem-1.1.0.tgz</b></p></summary>

<p>Memoize functions - An optimizati... | non_priority | ws medium detected in mem tgz ws medium severity vulnerability vulnerable library mem tgz memoize functions an optimization used to speed up consecutive function calls by caching the result of calls with identical input library home page a href path to dependency file ... | 0 |

262,919 | 8,272,608,617 | IssuesEvent | 2018-09-16 22:04:08 | javaee/glassfish | https://api.github.com/repos/javaee/glassfish | closed | [Blocking] Unable to update a GF Server 3.0.1 full distribution to 3.0.1.1 from Support Repository - Package Violation Constraint | 3_1_1-scrubbed Component: packaging Priority: Minor Type: Bug | The problem is seen on a community installation of GlassFish Server 3.0.1 and the attempt to Update it through the Oracle Support repository and to version Oracle GF Server 3.0.1 patch1\. After installation, the system is configured as follows:

* cd <GlassFish_Installation>

* bin/pkg set-publisher -P -k $HOME/Orac... | 1.0 | [Blocking] Unable to update a GF Server 3.0.1 full distribution to 3.0.1.1 from Support Repository - Package Violation Constraint - The problem is seen on a community installation of GlassFish Server 3.0.1 and the attempt to Update it through the Oracle Support repository and to version Oracle GF Server 3.0.1 patch1\. ... | priority | unable to update a gf server full distribution to from support repository package violation constraint the problem is seen on a community installation of glassfish server and the attempt to update it through the oracle support repository and to version oracle gf server after installa... | 1 |

239,556 | 7,799,805,308 | IssuesEvent | 2018-06-09 00:42:41 | tine20/Tine-2.0-Open-Source-Groupware-and-CRM | https://api.github.com/repos/tine20/Tine-2.0-Open-Source-Groupware-and-CRM | closed | 0005528:

update content_sequence of container on record move | Feature Request Mantis Tinebase high priority | **Reported by pschuele on 3 Feb 2012 13:45**

update content_sequence of container on record move

both containers need to be updated

| 1.0 | 0005528:

update content_sequence of container on record move - **Reported by pschuele on 3 Feb 2012 13:45**

update content_sequence of container on record move

both containers need to be updated

| priority | update content sequence of container on record move reported by pschuele on feb update content sequence of container on record move both containers need to be updated | 1 |

116,785 | 4,706,562,710 | IssuesEvent | 2016-10-13 17:32:24 | google/grr | https://api.github.com/repos/google/grr | opened | MySQL datastore Warning: Duplicate entry | bug Priority-Low | When running the tests on mysql 5.7 (installed from xenial ubuntu), I get tons of warnings. Since this code hasn't changed in a while I suspect this is just MySQL being more noisy in the newer version.

Sean could you take a look when you get a chance? Might be we need to give some people guidance on how to disable t... | 1.0 | MySQL datastore Warning: Duplicate entry - When running the tests on mysql 5.7 (installed from xenial ubuntu), I get tons of warnings. Since this code hasn't changed in a while I suspect this is just MySQL being more noisy in the newer version.

Sean could you take a look when you get a chance? Might be we need to gi... | priority | mysql datastore warning duplicate entry when running the tests on mysql installed from xenial ubuntu i get tons of warnings since this code hasn t changed in a while i suspect this is just mysql being more noisy in the newer version sean could you take a look when you get a chance might be we need to gi... | 1 |

341,723 | 24,709,924,056 | IssuesEvent | 2022-10-19 23:06:23 | PinataCloud/Pinata-SDK | https://api.github.com/repos/PinataCloud/Pinata-SDK | closed | Question: Can I pre-calculate the the CID returned by pinFileToIPFS? | question documentation | I need to be able to pre-calculate the CID returned by `pinFileToIPFS` - is this possible? | 1.0 | Question: Can I pre-calculate the the CID returned by pinFileToIPFS? - I need to be able to pre-calculate the CID returned by `pinFileToIPFS` - is this possible? | non_priority | question can i pre calculate the the cid returned by pinfiletoipfs i need to be able to pre calculate the cid returned by pinfiletoipfs is this possible | 0 |

27,768 | 8,034,168,771 | IssuesEvent | 2018-07-29 15:32:04 | Krzmbrzl/SQDev | https://api.github.com/repos/Krzmbrzl/SQDev | closed | Error marker at wrong position | bug completed in Dev-Build | ```

if (isClass (configFile >> "CfgVehicles" >> _name)) {

}

_baseClass;

```

claims that there'd be a missing semicolon at `_name` whilst it is missing after the closing pranthesis (missing `then`) and at the closing curly bracket. | 1.0 | Error marker at wrong position - ```

if (isClass (configFile >> "CfgVehicles" >> _name)) {

}

_baseClass;

```

claims that there'd be a missing semicolon at `_name` whilst it is missing after the closing pranthesis (missing `then`) and at the closing curly bracket. | non_priority | error marker at wrong position if isclass configfile cfgvehicles name baseclass claims that there d be a missing semicolon at name whilst it is missing after the closing pranthesis missing then and at the closing curly bracket | 0 |

450,798 | 13,019,446,222 | IssuesEvent | 2020-07-26 22:40:25 | constellation-app/constellation | https://api.github.com/repos/constellation-app/constellation | opened | Copy/paste from Histogram | enhancement priority request user relevant ux | <!--

### Requirements

* Filling out the template is required. Any pull request that does not include

enough information to be reviewed in a timely manner may be closed at the

maintainers' discretion.

* Have you read Constellation's Code of Conduct? By filing an issue, you are

expected to comply with it, inclu... | 1.0 | Copy/paste from Histogram - <!--

### Requirements

* Filling out the template is required. Any pull request that does not include

enough information to be reviewed in a timely manner may be closed at the

maintainers' discretion.

* Have you read Constellation's Code of Conduct? By filing an issue, you are

expec... | priority | copy paste from histogram requirements filling out the template is required any pull request that does not include enough information to be reviewed in a timely manner may be closed at the maintainers discretion have you read constellation s code of conduct by filing an issue you are expec... | 1 |

505,603 | 14,642,386,529 | IssuesEvent | 2020-12-25 10:51:50 | buddyboss/buddyboss-platform | https://api.github.com/repos/buddyboss/buddyboss-platform | opened | Message Improvement | feature: enhancement priority: medium | Some code refactoring required to fix some performance-related core logic

- With the Message thread ajax, We sending all recipients lists for every thread. it'll be a little heavy with a large network

> We should send recipients with some limit like 10 and we also need to send total recipients with thread objects... | 1.0 | Message Improvement - Some code refactoring required to fix some performance-related core logic

- With the Message thread ajax, We sending all recipients lists for every thread. it'll be a little heavy with a large network

> We should send recipients with some limit like 10 and we also need to send total recipien... | priority | message improvement some code refactoring required to fix some performance related core logic with the message thread ajax we sending all recipients lists for every thread it ll be a little heavy with a large network we should send recipients with some limit like and we also need to send total recipient... | 1 |

94,221 | 27,148,962,122 | IssuesEvent | 2023-02-16 22:41:36 | dyninst/dyninst | https://api.github.com/repos/dyninst/dyninst | closed | Dockerfile spack.yaml property error | build | **Intention**

Building Dyninst in docker

**Bug & Reproduce**

```

$ docker build -t dyninst -f Dockerfile ../

[+] Building 196.3s (17/19)

=> [internal] load build definition from Dockerfile ... | 1.0 | Dockerfile spack.yaml property error - **Intention**

Building Dyninst in docker

**Bug & Reproduce**

```

$ docker build -t dyninst -f Dockerfile ../

[+] Building 196.3s (17/19)

=> [internal] load build d... | non_priority | dockerfile spack yaml property error intention building dyninst in docker bug reproduce docker build t dyninst f dockerfile building load build definition from d... | 0 |

258,732 | 8,179,346,429 | IssuesEvent | 2018-08-28 16:06:19 | melsicon/melsicon.de | https://api.github.com/repos/melsicon/melsicon.de | opened | Space around contact section | Priority: Low Type: Enhancement | ### **Type of Issue**

- [ ] Bug

- [X] Enhancement

- [ ] Documentation

- [ ] Maintenance

- [ ] Question

#### Priority

- [X] Low

- [ ] Medium

- [ ] High

- [ ] Critical

## **Description**

The space around the contact section is too cramped

## **Steps to reproduce**

Scroll to contact section

... | 1.0 | Space around contact section - ### **Type of Issue**

- [ ] Bug

- [X] Enhancement

- [ ] Documentation

- [ ] Maintenance

- [ ] Question

#### Priority

- [X] Low

- [ ] Medium

- [ ] High

- [ ] Critical

## **Description**

The space around the contact section is too cramped

## **Steps to reproduce**

... | priority | space around contact section type of issue bug enhancement documentation maintenance question priority low medium high critical description the space around the contact section is too cramped steps to reproduce scroll to contac... | 1 |

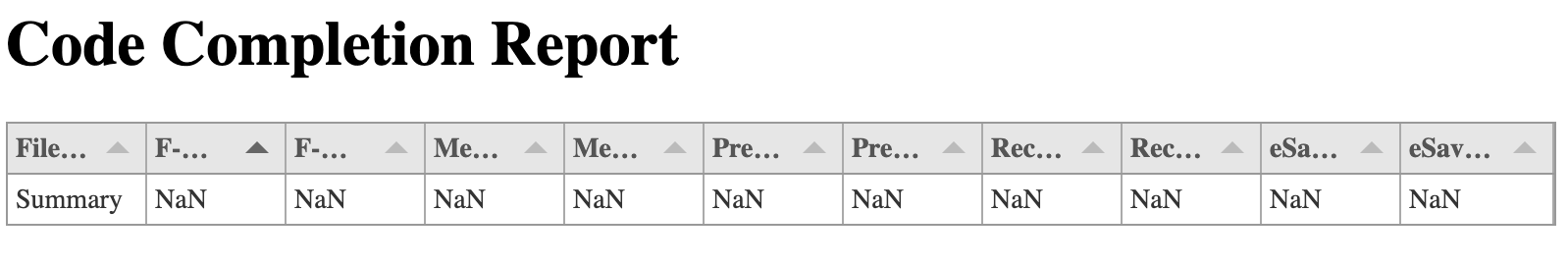

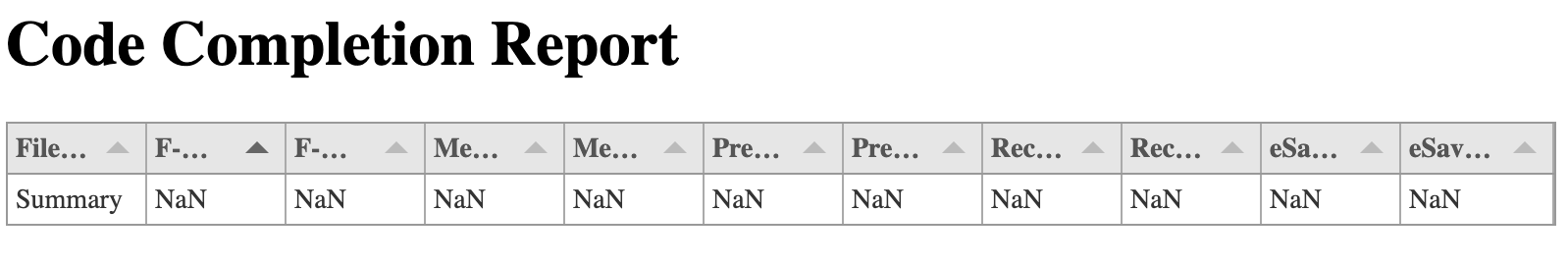

330,121 | 10,029,586,574 | IssuesEvent | 2019-07-17 14:12:53 | bibaev/code-completion-evaluation | https://api.github.com/repos/bibaev/code-completion-evaluation | closed | Add diagnostics to the report if something went wrong | effort: medium priority: low type: bug | Currently, if you run it with Docker turned off, you get this:

Meanwhile, two exceptions are dumped into the log. The problem is that the report currently has no information about which files we ran on, and that on som... | 1.0 | Add diagnostics to the report if something went wrong - Currently, if you run it with Docker turned off, you get this:

Meanwhile, two exceptions are dumped into the log. The problem is that the report currently has no infor... | priority | add diagnostics to the report if something went wrong currently if you run it with docker turned off you get this meanwhile two exceptions are dumped into the log the problem is that the report currently has no information about which files we ran on and that on some files nothing worked and instead there occurred ... | 1

9,360 | 11,411,253,941 | IssuesEvent | 2020-02-01 04:09:26 | ballerina-platform/ballerina-spec | https://api.github.com/repos/ballerina-platform/ballerina-spec | closed | New approach to identity for XML | enhancement incompatible | Two XML sequences are `===` if they have the same length and their constituent items are pairwise `===`. Two text XML items are `===` if they are `==.` Other types of XML item are `===` if they have the same storage location. | True | New approach to identity for XML - Two XML sequences are `===` if they have the same length and their constituent items are pairwise `===`. Two text XML items are `===` if they are `==.` Other types of XML item are `===` if they have the same storage location. | non_priority | new approach to identity for xml two xml sequences are if they have the same length and their constituent items are pairwise two text xml items are if they are other types of xml item are if they have the same storage location | 0 |

436,489 | 12,550,735,643 | IssuesEvent | 2020-06-06 12:15:51 | googleapis/elixir-google-api | https://api.github.com/repos/googleapis/elixir-google-api | opened | Synthesis failed for BigQueryConnection | autosynth failure priority: p1 type: bug | Hello! Autosynth couldn't regenerate BigQueryConnection. :broken_heart:

Here's the output from running `synth.py`:

```

2020-06-06 05:15:08,130 autosynth [INFO] > logs will be written to: /tmpfs/src/github/synthtool/logs/googleapis/elixir-google-api

2020-06-06 05:15:09,292 autosynth [DEBUG] > Running: git config --glo... | 1.0 | Synthesis failed for BigQueryConnection - Hello! Autosynth couldn't regenerate BigQueryConnection. :broken_heart:

Here's the output from running `synth.py`:

```

2020-06-06 05:15:08,130 autosynth [INFO] > logs will be written to: /tmpfs/src/github/synthtool/logs/googleapis/elixir-google-api

2020-06-06 05:15:09,292 aut... | priority | synthesis failed for bigqueryconnection hello autosynth couldn t regenerate bigqueryconnection broken heart here s the output from running synth py autosynth logs will be written to tmpfs src github synthtool logs googleapis elixir google api autosynth running git c... | 1 |

799,727 | 28,312,701,138 | IssuesEvent | 2023-04-10 16:49:52 | SparkDevNetwork/Rock | https://api.github.com/repos/SparkDevNetwork/Rock | closed | Slow Rock Startup | Status: Confirmed Priority: High Fixed in v15.0 Fixed in v14.2 | ### Please go through all the tasks below

- [ ] Check this box only after you have successfully completed both the above tasks

### Please provide a brief description of the problem. Please do not forget to attach the relevant screenshots from your side.

We're seeing significant performance issues in Rock V14 during ... | 1.0 | Slow Rock Startup - ### Please go through all the tasks below

- [ ] Check this box only after you have successfully completed both the above tasks

### Please provide a brief description of the problem. Please do not forget to attach the relevant screenshots from your side.

We're seeing significant performance issues... | priority | slow rock startup please go through all the tasks below check this box only after you have successfully completed both the above tasks please provide a brief description of the problem please do not forget to attach the relevant screenshots from your side we re seeing significant performance issues i... | 1 |

702,557 | 24,125,741,882 | IssuesEvent | 2022-09-21 00:03:58 | zulip/zulip | https://api.github.com/repos/zulip/zulip | closed | Allow custom profile fields to be shown in profile card | help wanted area: settings (admin/org) priority: high release goal area: popovers | At present, a user's profile card displays their name, local time, and role.

Organizations may find it useful to display additional fields there, such as pronouns (#21214), G... | 1.0 | Allow custom profile fields to be shown in profile card - At present, a user's profile card displays their name, local time, and role.

Organizations may find it useful to dis... | priority | allow custom profile fields to be shown in profile card at present a user s profile card displays their name local time and role organizations may find it useful to display additional fields there such as pronouns github username job title team etc and we should make it possible to do so ... | 1 |

273,791 | 23,786,103,489 | IssuesEvent | 2022-09-02 10:13:06 | WordPress/gutenberg | https://api.github.com/repos/WordPress/gutenberg | closed | Add automated tests for resizable editor functionality | Automated Testing Needs Dev [Status] Stale | **Is your feature request related to a problem? Please describe.**

The resizable editor work from PR https://github.com/WordPress/gutenberg/pull/19082 needs some form of automated testing.

**Describe the solution you'd like**

Ideally we should add e2e tests as discussed in [this thread](https://github.com/WordPr... | 1.0 | Add automated tests for resizable editor functionality - **Is your feature request related to a problem? Please describe.**

The resizable editor work from PR https://github.com/WordPress/gutenberg/pull/19082 needs some form of automated testing.

**Describe the solution you'd like**

Ideally we should add e2e test... | non_priority | add automated tests for resizable editor functionality is your feature request related to a problem please describe the resizable editor work from pr needs some form of automated testing describe the solution you d like ideally we should add tests as discussed in and perhaps unit tests for s... | 0 |

52,955 | 6,287,542,755 | IssuesEvent | 2017-07-19 15:09:37 | CLARIAH/wp5_mediasuite | https://api.github.com/repos/CLARIAH/wp5_mediasuite | closed | Connect user annotations (video) to user who added them | Effort: improvement Function: annotation (video) Importance: medium MS-Component-function ToDo: testing needed Work: functionality | This is for users to see which annotations they made (attached to their user, as logged in the surfConnex). | 1.0 | Connect user annotations (video) to user who added them - This is for users to see which annotations they made (attached to their user, as logged in the surfConnex). | non_priority | connect user annotations video to user who added them this is for users to see which annotations they made attached to their user as logged in the surfconnex | 0 |

131,187 | 10,684,066,983 | IssuesEvent | 2019-10-22 09:40:26 | elastic/elasticsearch | https://api.github.com/repos/elastic/elasticsearch | closed | CI Failures: AzureBlobStoreRepositoryTests.testIndicesDeletedFromRepository | :Distributed/Snapshot/Restore >test-failure | This test has failed periodically (but consistently):

https://groups.google.com/a/elastic.co/forum/#!searchin/build-elasticsearch/AzureBlobStoreRepositoryTests$20testIndicesDeletedFromRepository%7Csort:date

I have not been able to reproduce but (like #47380) it also looks like its coming from the Azure plugin:

... | 1.0 | CI Failures: AzureBlobStoreRepositoryTests.testIndicesDeletedFromRepository - This test has failed periodically (but consistently):

https://groups.google.com/a/elastic.co/forum/#!searchin/build-elasticsearch/AzureBlobStoreRepositoryTests$20testIndicesDeletedFromRepository%7Csort:date

I have not been able to repro... | non_priority | ci failures azureblobstorerepositorytests testindicesdeletedfromrepository this test has failed periodically but consistently i have not been able to reproduce but like it also looks like its coming from the azure plugin caused by com microsoft azure storage storageexception an ... | 0 |

652,038 | 21,518,837,534 | IssuesEvent | 2022-04-28 12:32:54 | HGustavs/LenaSYS | https://api.github.com/repos/HGustavs/LenaSYS | opened | Rescue the poop emoji! | High priority Group-1-2022 | Someone have removed the holy poop emoji! We need to bring it back! Its a staple for the diagram!

| 1.0 | Rescue the poop emoji! - Someone have removed the holy poop emoji! We need to bring it back! Its a staple for the diagram!

| priority | rescue the poop emoji someone have removed the holy poop emoji we need to bring it back its a staple for the diagram | 1 |

344,121 | 30,717,183,948 | IssuesEvent | 2023-07-27 13:44:44 | Nexters/o-escape-be | https://api.github.com/repos/Nexters/o-escape-be | closed | Write shop test code | 📃test | ### Issue type

- [x] Test

### Issue details

- Write unit tests for the shop

### Checklist

- [ ] Have you written tests for all logic based on the API specification?

- [ ] Does the code you wrote work as intended? | 1.0 | Write shop test code - ### Issue type

- [x] Test

### Issue details

- Write unit tests for the shop

### Checklist

- [ ] Have you written tests for all logic based on the API specification?

- [ ] Does the code you wrote work as intended? | non_priority | write shop test code issue type test issue details write unit tests for the shop checklist have you written tests for all logic based on the api specification does the code you wrote work as intended | 0

114,571 | 11,851,672,540 | IssuesEvent | 2020-03-24 18:30:10 | yarnpkg/berry | https://api.github.com/repos/yarnpkg/berry | closed | [Bug] Docs say deferred versions are stored in .yarn/releases; are actually stored in .yarn/versions | bug documentation | - [ ] I'd be willing to implement a fix

**Describe the bug**

Docs here: https://yarnpkg.com/features/release-workflow

Say that deferred versions (`yarn version -d`) are stored in .yarn/releases but it looks like they actually get stored in .yarn/versions.

I omitted the rest of the issue template because this ... | 1.0 | [Bug] Docs say deferred versions are stored in .yarn/releases; are actually stored in .yarn/versions - - [ ] I'd be willing to implement a fix

**Describe the bug**

Docs here: https://yarnpkg.com/features/release-workflow

Say that deferred versions (`yarn version -d`) are stored in .yarn/releases but it looks lik... | non_priority | docs say deferred versions are stored in yarn releases are actually stored in yarn versions i d be willing to implement a fix describe the bug docs here say that deferred versions yarn version d are stored in yarn releases but it looks like they actually get stored in yarn versions i... | 0 |

86,438 | 24,850,291,124 | IssuesEvent | 2022-10-26 19:25:34 | regen-network/regen-ledger | https://api.github.com/repos/regen-network/regen-ledger | closed | Set up completions for goreleaser | Type: Build | ## Summary

<!-- Short, concise description of the proposed feature -->

Ref https://github.com/regen-network/regen-ledger/pull/1546#issue-1408540078

https://carlosbecker.com/posts/golang-completions-cobra/

> users would have to enable completions manually. I think it’s nicer to enable the completions upon in... | 1.0 | Set up completions for goreleaser - ## Summary

<!-- Short, concise description of the proposed feature -->

Ref https://github.com/regen-network/regen-ledger/pull/1546#issue-1408540078

https://carlosbecker.com/posts/golang-completions-cobra/

> users would have to enable completions manually. I think it’s nic... | non_priority | set up completions for goreleaser summary ref users would have to enable completions manually i think it’s nicer to enable the completions upon installing the package and since i release my go projects with i can leverage it to handle this for me for admin use not ... | 0 |

772,922 | 27,141,683,159 | IssuesEvent | 2023-02-16 16:46:40 | googleapis/python-bigquery-sqlalchemy | https://api.github.com/repos/googleapis/python-bigquery-sqlalchemy | closed | tests.sqlalchemy_dialect_compliance.test_dialect_compliance.TimeTest_bigquery+bigquery: test_null_bound_comparison failed | type: bug priority: p1 flakybot: issue api: bigquery | Note: #694 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: 074321ddaa10001773e7e6044f4a0df1bb530331

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/82580611-18ba-4d10-8e25-a7bf752e1da1), [Sponge](http://sponge2/82580611-18ba-4d10-8... | 1.0 | tests.sqlalchemy_dialect_compliance.test_dialect_compliance.TimeTest_bigquery+bigquery: test_null_bound_comparison failed - Note: #694 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: 074321ddaa10001773e7e6044f4a0df1bb530331

buildURL: [Build Status](https://sou... | priority | tests sqlalchemy dialect compliance test dialect compliance timetest bigquery bigquery test null bound comparison failed note was also for this test but it was closed more than days ago so i didn t mark it flaky commit buildurl status failed test output traceback most recent call l... | 1 |

130,279 | 18,061,419,456 | IssuesEvent | 2021-09-20 14:20:58 | hoprnet/hoprnet-org | https://api.github.com/repos/hoprnet/hoprnet-org | closed | /token Fix detail in "Token supply distribution"-chart | workflow:new issue type:design | # Page

/token - Token supply distribution (M)

# Current behavior

in the Token supply distribution (M)-chart and there in the black overlay:

instead of for example "Month 16" it says there "16_Month 16", due to some field-error.

# Expected behavior

# Current behavior

in the Token supply distribution (M)-chart and there in the black overlay:

instead of for example "Month 16" it says there "16_Month 16", due to some field-error.

# Expected behavior

NOT NULL,

FirstName STRING(50),

LastName STRING(50),

Age INT64 NOT NULL,

FullName S... | 1.0 | spanner/spansql: generated columns - **Client**

spansql Version v1.13.0

**Environment**

windows

**Go Environment**

$ go version : go version go1.13 windows/amd64

$ go env

If you extract the following example

CREATE TABLE users (

Id STRING(20) NOT NULL,

FirstName STRING(50),

LastName STRING(50... | priority | spanner spansql generated columns client spansql version environment windows go environment go version go version windows go env if you extract the following example create table users id string not null firstname string lastname string age n... | 1 |

214,713 | 16,607,733,925 | IssuesEvent | 2021-06-02 07:07:43 | PlaceOS/drivers | https://api.github.com/repos/PlaceOS/drivers | closed | Stienel Driver | priority: high status: requires testing type: driver | **Driver Type**

Device

**Manufacturer**

Stienel

**Model/Service**

Model or Service

**Link to or Attach Device API or Protocol**

https://drive.google.com/drive/folders/1oLiUoWW5CSWyWPwTrDqQT-8SnBdPtc1N

**Describe any desired functionality**

- Control all aspects of device

**Additional context**

Add any other ... | 1.0 | Stienel Driver - **Driver Type**

Device

**Manufacturer**

Stienel

**Model/Service**

Model or Service

**Link to or Attach Device API or Protocol**

https://drive.google.com/drive/folders/1oLiUoWW5CSWyWPwTrDqQT-8SnBdPtc1N

**Describe any desired functionality**

- Control all aspects of device

**Additional context*... | non_priority | stienel driver driver type device manufacturer stienel model service model or service link to or attach device api or protocol describe any desired functionality control all aspects of device additional context add any other context about the driver request here | 0 |

755,746 | 26,438,359,228 | IssuesEvent | 2023-01-15 17:17:01 | xLPMG/EMailClient-GUI | https://api.github.com/repos/xLPMG/EMailClient-GUI | opened | pop3 folder scan does not work | bug low priority | MailReceiver.java:

`for (Folder folder : folders) {

System.out.println(folder.getFullName() + ": " + folder.getMessageCount());

}`

gibt nur `INBOX: -1` aus

| 1.0 | pop3 folder scan does not work - MailReceiver.java:

`for (Folder folder : folders) {

System.out.println(folder.getFullName() + ": " + folder.getMessageCount());

}`

gibt nur `INBOX: -1` aus

| priority | folder scan does not work mailreceiver java for folder folder folders system out println folder getfullname folder getmessagecount gibt nur inbox aus | 1 |

741,963 | 25,829,766,897 | IssuesEvent | 2022-12-12 15:20:06 | limesquid/favicon-thief | https://api.github.com/repos/limesquid/favicon-thief | closed | HTTP 403 - index page | Priority - Should have Type - Bug | - [x] brandbucket.com (quasi-fixed by a heuristic that guesses favicon URL)

- [ ] pixabay.com

- [ ] wa.me

- [ ] pexels.com

----

Found in https://github.com/limesquid/favicon-thief/pull/6 | 1.0 | HTTP 403 - index page - - [x] brandbucket.com (quasi-fixed by a heuristic that guesses favicon URL)

- [ ] pixabay.com

- [ ] wa.me

- [ ] pexels.com

----

Found in https://github.com/limesquid/favicon-thief/pull/6 | priority | http index page brandbucket com quasi fixed by a heuristic that guesses favicon url pixabay com wa me pexels com found in | 1 |

125,389 | 26,650,904,447 | IssuesEvent | 2023-01-25 13:37:26 | OudayAhmed/Assignment-1-DECIDE | https://api.github.com/repos/OudayAhmed/Assignment-1-DECIDE | opened | CMV-2 | code | Description: Implement a method for DECIDE() with PI and EPSILON as parameters.

There exists at least one set of three consecutive data points which form an angle such that:

angle < (PI−EPSILON)

or

angle > (PI+EPSILON)

The second of the three consecutive points is always the vertex of the angle. If either the f... | 1.0 | CMV-2 - Description: Implement a method for DECIDE() with PI and EPSILON as parameters.

There exists at least one set of three consecutive data points which form an angle such that:

angle < (PI−EPSILON)

or

angle > (PI+EPSILON)

The second of the three consecutive points is always the vertex of the angle. If eith... | non_priority | cmv description implement a method for decide with pi and epsilon as parameters there exists at least one set of three consecutive data points which form an angle such that angle pi−epsilon or angle pi epsilon the second of the three consecutive points is always the vertex of the angle if eith... | 0 |

93,626 | 15,895,508,843 | IssuesEvent | 2021-04-11 14:14:47 | rammatzkvosky/123 | https://api.github.com/repos/rammatzkvosky/123 | opened | CVE-2020-36189 (High) detected in jackson-databind-2.8.8.jar | security vulnerability | ## CVE-2020-36189 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.8.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2020-36189 (High) detected in jackson-databind-2.8.8.jar - ## CVE-2020-36189 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.8.jar</b></p></summary>

<p>General... | non_priority | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file pom xml path to vulnerable ... | 0 |

275,902 | 8,582,018,477 | IssuesEvent | 2018-11-13 16:00:43 | blackbaud/skyux2 | https://api.github.com/repos/blackbaud/skyux2 | closed | Set focus to first focusable element when modal opens | Priority: Low accessibility sky-modal | From @Blackbaud-MattGregg SPA Navigation guidelines.

By default, focus should be set to first focusable element when a modal opens. The developer should be able to override this if necessary.

Will then need to add documentation about how to handle focus when the first focusable element would cause the top of the ... | 1.0 | Set focus to first focusable element when modal opens - From @Blackbaud-MattGregg SPA Navigation guidelines.

By default, focus should be set to first focusable element when a modal opens. The developer should be able to override this if necessary.

Will then need to add documentation about how to handle focus when... | priority | set focus to first focusable element when modal opens from blackbaud mattgregg spa navigation guidelines by default focus should be set to first focusable element when a modal opens the developer should be able to override this if necessary will then need to add documentation about how to handle focus when... | 1 |

241,965 | 20,176,525,020 | IssuesEvent | 2022-02-10 14:57:11 | mennaelkashef/eShop | https://api.github.com/repos/mennaelkashef/eShop | opened | No description entered by the user. | Hello! RULE-GOT-APPLIED DOES-NOT-CONTAIN-STRING Rule-works-on-convert-to-bug test instabug | # :clipboard: Bug Details

>No description entered by the user.

key | value

--|--

Reported At | 2022-02-10 14:49:56 UTC

Email | imohamady@instabug.com

Categories | Report a bug

Tags | test, Hello!, RULE-GOT-APPLIED, DOES-NOT-CONTAIN-STRING, Rule-works-on-convert-to-bug, instabug

App Version | 1.1 (1)

Sess... | 1.0 | No description entered by the user. - # :clipboard: Bug Details

>No description entered by the user.

key | value

--|--

Reported At | 2022-02-10 14:49:56 UTC

Email | imohamady@instabug.com

Categories | Report a bug

Tags | test, Hello!, RULE-GOT-APPLIED, DOES-NOT-CONTAIN-STRING, Rule-works-on-convert-to-bug,... | non_priority | no description entered by the user clipboard bug details no description entered by the user key value reported at utc email imohamady instabug com categories report a bug tags test hello rule got applied does not contain string rule works on convert to bug instabu... | 0 |

347,299 | 31,154,858,651 | IssuesEvent | 2023-08-16 12:31:18 | epam/ketcher | https://api.github.com/repos/epam/ketcher | opened | Autotests: Manipulations with Bond Tool #1734 | Autotests | - Add tests to Bond Tool

- Plan to automate tests

| 1.0 | Autotests: Manipulations with Bond Tool #1734 - - Add tests to Bond Tool

- Plan to automate tests

| non_priority | autotests manipulations with bond tool add tests to bond tool plan to automate tests | 0 |

318,076 | 27,283,560,863 | IssuesEvent | 2023-02-23 11:51:56 | godotengine/godot | https://api.github.com/repos/godotengine/godot | closed | Godot 4 errors in exported debug file and gpu driver crash in editor after some time | bug topic:rendering needs testing topic:3d | ### Godot version

v4.0.dev.calinou [78f0d2d1d]

### System information

Win 10 64, AMD RX 480

### Issue description

While Testing "Godot 4.0 AAA Graphics? "The Junk Shop" after exporting the project to an debug exe some spam errors show up(some of them are known). I did disable the enviro effects and lowered some ot... | 1.0 | Godot 4 errors in exported debug file and gpu driver crash in editor after some time - ### Godot version

v4.0.dev.calinou [78f0d2d1d]

### System information

Win 10 64, AMD RX 480

### Issue description

While Testing "Godot 4.0 AAA Graphics? "The Junk Shop" after exporting the project to an debug exe some spam erro... | non_priority | godot errors in exported debug file and gpu driver crash in editor after some time godot version dev calinou system information win amd rx issue description while testing godot aaa graphics the junk shop after exporting the project to an debug exe some spam errors show up some... | 0 |

25,534 | 2,683,835,531 | IssuesEvent | 2015-03-28 11:13:58 | ConEmu/old-issues | https://api.github.com/repos/ConEmu/old-issues | closed | Maximus5 - 2009.09.23 - не работает с 4-мя ядрами ... | 2–5 stars bug imported Priority-Medium | _From [Aleksoid...@mail.ru](https://code.google.com/u/106652170189453997668/) on September 24, 2009 15:05:52_

Vista 7 x64, Far 2.0 build 1133 x86

Баг следующий - на 4-х ядерном проце(Phenom X4) ConEmu запускается всегда

только на двух ядрах(видно через диспетчер задач), более старые версии -

тоже самое.

И са... | 1.0 | Maximus5 - 2009.09.23 - не работает с 4-мя ядрами ... - _From [Aleksoid...@mail.ru](https://code.google.com/u/106652170189453997668/) on September 24, 2009 15:05:52_

Vista 7 x64, Far 2.0 build 1133 x86

Баг следующий - на 4-х ядерном проце(Phenom X4) ConEmu запускается всегда

только на двух ядрах(видно через диспе... | priority | не работает с мя ядрами from on september vista far build баг следующий на х ядерном проце phenom conemu запускается всегда только на двух ядрах видно через диспетчер задач более старые версии тоже самое и самое плохое в этом что любая программа... | 1 |

234,988 | 7,733,539,466 | IssuesEvent | 2018-05-26 13:07:33 | zetkin/www.zetk.in | https://api.github.com/repos/zetkin/www.zetk.in | closed | "Your bookings" not chronological | bug priority | The "your bookings" section of the dashboard does not render actions chronologically (see screenshot).

| 1.0 | "Your bookings" not chronological - The "your bookings" section of the dashboard does not render actions chronologically (see screenshot).

| priority | your bookings not chronological the your bookings section of the dashboard does not render actions chronologically see screenshot | 1 |

15,832 | 9,632,325,835 | IssuesEvent | 2019-05-15 15:56:37 | kimxogus/react-native-version-check | https://api.github.com/repos/kimxogus/react-native-version-check | closed | WS-2019-0047 (Medium) detected in tar-2.2.1.tgz | security vulnerability | ## WS-2019-0047 - Medium Severity Vulnerability

<details><summary><img src='https://www.whitesourcesoftware.com/wp-content/uploads/2018/10/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tar-2.2.1.tgz</b></p></summary>

<p>tar for node</p>

<p>Library home page: <a href="https://registry.npmjs.org... | True | WS-2019-0047 (Medium) detected in tar-2.2.1.tgz - ## WS-2019-0047 - Medium Severity Vulnerability

<details><summary><img src='https://www.whitesourcesoftware.com/wp-content/uploads/2018/10/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tar-2.2.1.tgz</b></p></summary>

<p>tar for node</p>

<p>Libr... | non_priority | ws medium detected in tar tgz ws medium severity vulnerability vulnerable library tar tgz tar for node library home page a href path to dependency file react native version check package json path to vulnerable library tmp git react native version check node module... | 0 |

149,064 | 19,563,439,156 | IssuesEvent | 2022-01-03 19:41:58 | Hugh-Cushing-Campaign/GoASQ | https://api.github.com/repos/Hugh-Cushing-Campaign/GoASQ | opened | CVE-2019-14806 (High) detected in Werkzeug-0.14.1-py2.py3-none-any.whl | security vulnerability | ## CVE-2019-14806 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Werkzeug-0.14.1-py2.py3-none-any.whl</b></p></summary>

<p>The comprehensive WSGI web application library.</p>

<p>Libra... | True | CVE-2019-14806 (High) detected in Werkzeug-0.14.1-py2.py3-none-any.whl - ## CVE-2019-14806 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Werkzeug-0.14.1-py2.py3-none-any.whl</b></p></... | non_priority | cve high detected in werkzeug none any whl cve high severity vulnerability vulnerable library werkzeug none any whl the comprehensive wsgi web application library library home page a href path to dependency file src requirements txt path to vulnerable librar... | 0 |

74,501 | 14,265,042,628 | IssuesEvent | 2020-11-20 16:33:30 | aws-samples/aws-secure-environment-accelerator | https://api.github.com/repos/aws-samples/aws-secure-environment-accelerator | opened | [BUG][Config Validation] z141-PBMM Accelerator Issue - VPC removal protections dropped | 1-Codebase 2-Bug/Issue 3-Planned | ## Short Problem Description

- while changes to a VPC configuration appear to still be blocked, one of my collegues removed (xx'd) out a VPC and the state machine allowed the change, executing and then failing when it could not remove the VPC

- ticket z002 (https://issues.amazon.com/issues/PBMM-83) was supposed to pr... | 1.0 | [BUG][Config Validation] z141-PBMM Accelerator Issue - VPC removal protections dropped - ## Short Problem Description

- while changes to a VPC configuration appear to still be blocked, one of my collegues removed (xx'd) out a VPC and the state machine allowed the change, executing and then failing when it could not re... | non_priority | pbmm accelerator issue vpc removal protections dropped short problem description while changes to a vpc configuration appear to still be blocked one of my collegues removed xx d out a vpc and the state machine allowed the change executing and then failing when it could not remove the vpc ticket ... | 0 |

30,988 | 5,892,424,859 | IssuesEvent | 2017-05-17 19:26:22 | ESMCI/cime | https://api.github.com/repos/ESMCI/cime | closed | Create clear and complete porting documentation | documentation ready | There is a new section on CIME internals that has been pushed to gh-pages that will help the porting documentation. The porting documentation needs to be significantly enhanced with examples to enable users to easily determine what needs to be done to port to their platformas. | 1.0 | Create clear and complete porting documentation - There is a new section on CIME internals that has been pushed to gh-pages that will help the porting documentation. The porting documentation needs to be significantly enhanced with examples to enable users to easily determine what needs to be done to port to their plat... | non_priority | create clear and complete porting documentation there is a new section on cime internals that has been pushed to gh pages that will help the porting documentation the porting documentation needs to be significantly enhanced with examples to enable users to easily determine what needs to be done to port to their plat... | 0 |

580,847 | 17,268,444,277 | IssuesEvent | 2021-07-22 16:25:13 | mentortechabc/play | https://api.github.com/repos/mentortechabc/play | closed | Починить тесты: | priority:high | * Тесты должны быть зелеными

* Убедиться, что тесты работают из другой тайм-зоны

* После этого перед коммитом теперь обязательно прогоняем тесты, коммитим только изменения с зелеными тестами | 1.0 | Починить тесты: - * Тесты должны быть зелеными

* Убедиться, что тесты работают из другой тайм-зоны

* После этого перед коммитом теперь обязательно прогоняем тесты, коммитим только изменения с зелеными тестами | priority | починить тесты тесты должны быть зелеными убедиться что тесты работают из другой тайм зоны после этого перед коммитом теперь обязательно прогоняем тесты коммитим только изменения с зелеными тестами | 1 |

356,561 | 25,176,213,221 | IssuesEvent | 2022-11-11 09:29:19 | sheshenk/pe | https://api.github.com/repos/sheshenk/pe | opened | Invalid markdown syntax under design considerations | severity.VeryLow type.DocumentationBug | In the developer guide, there is some markdown that failed to render. You could cut the whitespaces after the bolding tag to resolve this!

<!--session: 1668157724467-d46740b4-59fe-4087-b248-d4a34ba06500-... | 1.0 | Invalid markdown syntax under design considerations - In the developer guide, there is some markdown that failed to render. You could cut the whitespaces after the bolding tag to resolve this!

<!--sessio... | non_priority | invalid markdown syntax under design considerations in the developer guide there is some markdown that failed to render you could cut the whitespaces after the bolding tag to resolve this | 0 |

465,116 | 13,356,773,543 | IssuesEvent | 2020-08-31 08:43:14 | aau-network-security/haaukins | https://api.github.com/repos/aau-network-security/haaukins | closed | Integrate github actions into Haaukins project | enhancement low priority | **Is your feature request related to a problem? Please describe.**

Not exactly, will add ability to test and deploy all binaries with Github's CI.

- [x] Run test cases

- [x] Build binaries

- [ ] Release

- [ ] Deploy [could be] | 1.0 | Integrate github actions into Haaukins project - **Is your feature request related to a problem? Please describe.**

Not exactly, will add ability to test and deploy all binaries with Github's CI.

- [x] Run test cases

- [x] Build binaries

- [ ] Release

- [ ] Deploy [could be] | priority | integrate github actions into haaukins project is your feature request related to a problem please describe not exactly will add ability to test and deploy all binaries with github s ci run test cases build binaries release deploy | 1 |

798,175 | 28,238,724,619 | IssuesEvent | 2023-04-06 04:36:08 | Warcraft-GoA-Development-Team/Warcraft-Guardians-of-Azeroth-2 | https://api.github.com/repos/Warcraft-GoA-Development-Team/Warcraft-Guardians-of-Azeroth-2 | opened | Titanforged Reproduction | new feature :star: priority low :grey_exclamation: balance :balance_scale: cultural :mortar_board: racial:fish: | <!--

**DO NOT REMOVE PRE-EXISTING LINES**

------------------------------------------------------------------------------------------------------------

-->

## Titanforged should not reproduce biologically but rather be created.

- Assign infertile trait to all races with `flag = titanforged_class`

- Create constr... | 1.0 | Titanforged Reproduction - <!--

**DO NOT REMOVE PRE-EXISTING LINES**

------------------------------------------------------------------------------------------------------------

-->

## Titanforged should not reproduce biologically but rather be created.

- Assign infertile trait to all races with `flag = titanfor... | priority | titanforged reproduction do not remove pre existing lines titanforged should not reproduce biologically but rather be created assign infertile trait to all races with flag titanfor... | 1 |

196,062 | 22,409,808,498 | IssuesEvent | 2022-06-18 14:51:24 | EmpoHQ/empo.im | https://api.github.com/repos/EmpoHQ/empo.im | opened | CVE-2022-0155 (Medium) detected in follow-redirects-1.14.1.tgz | security vulnerability | ## CVE-2022-0155 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>follow-redirects-1.14.1.tgz</b></p></summary>

<p>HTTP and HTTPS modules that follow redirects.</p>

<p>Library home pa... | True | CVE-2022-0155 (Medium) detected in follow-redirects-1.14.1.tgz - ## CVE-2022-0155 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>follow-redirects-1.14.1.tgz</b></p></summary>

<p>HTT... | non_priority | cve medium detected in follow redirects tgz cve medium severity vulnerability vulnerable library follow redirects tgz http and https modules that follow redirects library home page a href path to dependency file package json path to vulnerable library node modules... | 0 |

528,834 | 15,375,386,463 | IssuesEvent | 2021-03-02 14:52:47 | mantidproject/mantid | https://api.github.com/repos/mantidproject/mantid | closed | Unify Instrument calibration - across tubes and other det types | Diffraction High Priority Stale | This issue was originally [TRAC 9303](http://trac.mantidproject.org/mantid/ticket/9303)

Hard to estimate without a review of what we have so far and what we are missing

---

Keywords: SSC,2014,All,Calibration

| 1.0 | Unify Instrument calibration - across tubes and other det types - This issue was originally [TRAC 9303](http://trac.mantidproject.org/mantid/ticket/9303)

Hard to estimate without a review of what we have so far and what we are missing

---

Keywords: SSC,2014,All,Calibration

| priority | unify instrument calibration across tubes and other det types this issue was originally hard to estimate without a review of what we have so far and what we are missing keywords ssc all calibration | 1 |

44,896 | 5,894,106,271 | IssuesEvent | 2017-05-18 00:22:43 | phetsims/circuit-construction-kit-dc | https://api.github.com/repos/phetsims/circuit-construction-kit-dc | closed | Controls for internal resistance of battery | design:general | In Java, the battery can have an internal resistance of up to 9 ohms. We have not yet discussed this feature, though I imagine it will be desirable for 1.0. Self-assigning to mock up a few options.

<img width="219" alt="screen shot 2017-03-23 at 4 29 14 pm" src="https://cloud.githubusercontent.com/assets/8419308/24273... | 1.0 | Controls for internal resistance of battery - In Java, the battery can have an internal resistance of up to 9 ohms. We have not yet discussed this feature, though I imagine it will be desirable for 1.0. Self-assigning to mock up a few options.

<img width="219" alt="screen shot 2017-03-23 at 4 29 14 pm" src="https://cl... | non_priority | controls for internal resistance of battery in java the battery can have an internal resistance of up to ohms we have not yet discussed this feature though i imagine it will be desirable for self assigning to mock up a few options img width alt screen shot at pm src img width ... | 0 |

405,623 | 11,879,878,584 | IssuesEvent | 2020-03-27 09:38:39 | AugurProject/augur | https://api.github.com/repos/AugurProject/augur | closed | Issues with market info on markets in reporting | Needed for V2 launch Priority: Medium | Images from build.

The Event expiration should be shown here with the arrow to expand to show more market details

Now when clicking the arrow you see two arrow. This shouldn't happen - only need the one ... | 1.0 | Issues with market info on markets in reporting - Images from build.

The Event expiration should be shown here with the arrow to expand to show more market details

Now when clicking the arrow you see two... | priority | issues with market info on markets in reporting images from build the event expiration should be shown here with the arrow to expand to show more market details now when clicking the arrow you see two arrow this shouldn t happen only need the one arrow here clicking the arrow shows the spacing on ... | 1 |

283,076 | 21,316,032,534 | IssuesEvent | 2022-04-16 09:38:04 | FTang21/pe | https://api.github.com/repos/FTang21/pe | opened | Missing the construction of Order object | severity.Low type.DocumentationBug |

Order object should be shown to be created. This current visualization implies that the Order object had previously already been created.

<!--session: 1650096032233-d65dfecb-8... | 1.0 | Missing the construction of Order object -

Order object should be shown to be created. This current visualization implies that the Order object had previously already been creat... | non_priority | missing the construction of order object order object should be shown to be created this current visualization implies that the order object had previously already been created | 0 |

59,093 | 8,328,697,744 | IssuesEvent | 2018-09-27 02:17:01 | roboll/helmfile | https://api.github.com/repos/roboll/helmfile | closed | Implement absent for deletion of charts | documentation | I would like to be able to define `absent` as part of a release in the helmfile. Upon `helmfile sync` all releases with `absent: true` will be deleted.

```

releases:

- name: super-magician

namespace: default

chart: stable/prometheus-cloudwatch-exporter

version: 0.2.0

values:

- promethe... | 1.0 | Implement absent for deletion of charts - I would like to be able to define `absent` as part of a release in the helmfile. Upon `helmfile sync` all releases with `absent: true` will be deleted.

```

releases:

- name: super-magician

namespace: default

chart: stable/prometheus-cloudwatch-exporter

ver... | non_priority | implement absent for deletion of charts i would like to be able to define absent as part of a release in the helmfile upon helmfile sync all releases with absent true will be deleted releases name super magician namespace default chart stable prometheus cloudwatch exporter ver... | 0 |

44,227 | 9,553,528,698 | IssuesEvent | 2019-05-02 19:30:12 | redhat-developer/vscode-java | https://api.github.com/repos/redhat-developer/vscode-java | closed | The quick fix label for generating getter and setter has an unnecessary ellipsis | bug code action | So if you have 2 fields without accessors, the quickfix to generate them has an ellipsis, yet no wizard is shown as you'd expect from the ellipsis.

<img width="378" alt="Screen Shot 2019-04-29 at 7 05 30 PM" src="https://user-images.githubusercontent.com/148698/56932428-c9dd7400-6ab1-11e9-86c2-5c2e5714ea88.png">

| 1.0 | The quick fix label for generating getter and setter has an unnecessary ellipsis - So if you have 2 fields without accessors, the quickfix to generate them has an ellipsis, yet no wizard is shown as you'd expect from the ellipsis.

<img width="378" alt="Screen Shot 2019-04-29 at 7 05 30 PM" src="https://user-images.git... | non_priority | the quick fix label for generating getter and setter has an unnecessary ellipsis so if you have fields without accessors the quickfix to generate them has an ellipsis yet no wizard is shown as you d expect from the ellipsis img width alt screen shot at pm src | 0 |

14,457 | 4,933,052,198 | IssuesEvent | 2016-11-28 15:24:39 | serde-rs/serde | https://api.github.com/repos/serde-rs/serde | opened | Support big arrays | codegen enhancement | Servo does this to support big arrays in one of their proc macros:

https://github.com/servo/heapsize/blob/44e86d6d48a09c9cbc30a122bc8725b188d017b2/derive/lib.rs#L36-L41

Let's do the same but only if the size of the array exceeds our biggest builtin impl.

Thanks @nox. | 1.0 | Support big arrays - Servo does this to support big arrays in one of their proc macros:

https://github.com/servo/heapsize/blob/44e86d6d48a09c9cbc30a122bc8725b188d017b2/derive/lib.rs#L36-L41

Let's do the same but only if the size of the array exceeds our biggest builtin impl.

Thanks @nox. | non_priority | support big arrays servo does this to support big arrays in one of their proc macros let s do the same but only if the size of the array exceeds our biggest builtin impl thanks nox | 0 |

6,473 | 23,220,380,941 | IssuesEvent | 2022-08-02 17:36:27 | angular/dev-infra | https://api.github.com/repos/angular/dev-infra | opened | Defer GitHub releases until after NPM packages are published | domain: release automation | Action item from [requiem/doc/postmortem475066](http://requiem/doc/postmortem475066).

In the `14.1.0-rc.0` release, the NPM publish operation failed several times. However the GitHub release was published before NPM failed, so each attempt published a GitHub release which isn't actually usable and confuses people wa... | 1.0 | Defer GitHub releases until after NPM packages are published - Action item from [requiem/doc/postmortem475066](http://requiem/doc/postmortem475066).

In the `14.1.0-rc.0` release, the NPM publish operation failed several times. However the GitHub release was published before NPM failed, so each attempt published a Gi... | non_priority | defer github releases until after npm packages are published action item from in the rc release the npm publish operation failed several times however the github release was published before npm failed so each attempt published a github release which isn t actually usable and confuses people watch... | 0 |

270,494 | 8,461,141,308 | IssuesEvent | 2018-10-22 20:53:59 | prometheus/prombench | https://api.github.com/repos/prometheus/prombench | opened | expose master and pr prometheus instances so we can run custom queries | help wanted priority: high | in most cases so far it would be usefull to run custom quiries

for example in https://github.com/prometheus/prometheus/pull/4768 and https://github.com/prometheus/prometheus/pull/4763 | 1.0 | expose master and pr prometheus instances so we can run custom queries - in most cases so far it would be usefull to run custom quiries

for example in https://github.com/prometheus/prometheus/pull/4768 and https://github.com/prometheus/prometheus/pull/4763 | priority | expose master and pr prometheus instances so we can run custom queries in most cases so far it would be usefull to run custom quiries for example in and | 1 |

437,601 | 30,604,993,301 | IssuesEvent | 2023-07-22 22:19:50 | Kitware/trame | https://api.github.com/repos/Kitware/trame | closed | Used by ... | documentation | The goal of this issue is to capture companies using trame that are willing to have their logo featured on our website.

Please provide:

- Short sentence on what you are using trame for.

- Which logo to use from your company (links).

- Sentence granting Kitware to feature your logo on our website as a user of t... | 1.0 | Used by ... - The goal of this issue is to capture companies using trame that are willing to have their logo featured on our website.

Please provide:

- Short sentence on what you are using trame for.

- Which logo to use from your company (links).

- Sentence granting Kitware to feature your logo on our website ... | non_priority | used by the goal of this issue is to capture companies using trame that are willing to have their logo featured on our website please provide short sentence on what you are using trame for which logo to use from your company links sentence granting kitware to feature your logo on our website ... | 0 |

411,343 | 12,017,132,890 | IssuesEvent | 2020-04-10 17:40:59 | Sarrasor/INNO-S20-SP | https://api.github.com/repos/Sarrasor/INNO-S20-SP | closed | US-TM-3. Client part | display feature high priority | As a technical manager, I want to utilize different file formats in my content, such as video, audio, images or rich-text, so that the knowledge can be conveyed in the most suitable form.

Add textual display feature on the client-side in the app | 1.0 | US-TM-3. Client part - As a technical manager, I want to utilize different file formats in my content, such as video, audio, images or rich-text, so that the knowledge can be conveyed in the most suitable form.

Add textual display feature on the client-side in the app | priority | us tm client part as a technical manager i want to utilize different file formats in my content such as video audio images or rich text so that the knowledge can be conveyed in the most suitable form add textual display feature on the client side in the app | 1 |

37,898 | 12,505,853,120 | IssuesEvent | 2020-06-02 11:30:49 | fieldenms/tg | https://api.github.com/repos/fieldenms/tg | closed | Entity Master: user-driven filtering to be applied to masters by default | Entity master P1 Pull request Security | ### Description

TG explicitly supports the concept of user-drivent filtering. And by providing the implementation and binding for contract `IFilter`, any EQL query can be automatically augmented to filter out the data that should not be accesible/visible to the current user.

Entity Centres apply user-driven filte... | True | Entity Master: user-driven filtering to be applied to masters by default - ### Description

TG explicitly supports the concept of user-drivent filtering. And by providing the implementation and binding for contract `IFilter`, any EQL query can be automatically augmented to filter out the data that should not be acces... | non_priority | entity master user driven filtering to be applied to masters by default description tg explicitly supports the concept of user drivent filtering and by providing the implementation and binding for contract ifilter any eql query can be automatically augmented to filter out the data that should not be acces... | 0 |

24,130 | 12,221,737,356 | IssuesEvent | 2020-05-02 09:36:51 | base33/Mimic | https://api.github.com/repos/base33/Mimic | opened | Implement Factory Activator and benchmark comparing to current activator | Performance-enhancement | Implement the factory activator to create new instances detailed here https://github.com/Dotnet-Boxed/Framework/blob/master/Source/Boxed.Mapping/Factory.cs

Create benchmark tests and create comparisons between current and new implementation | True | Implement Factory Activator and benchmark comparing to current activator - Implement the factory activator to create new instances detailed here https://github.com/Dotnet-Boxed/Framework/blob/master/Source/Boxed.Mapping/Factory.cs

Create benchmark tests and create comparisons between current and new implementation | non_priority | implement factory activator and benchmark comparing to current activator implement the factory activator to create new instances detailed here create benchmark tests and create comparisons between current and new implementation | 0 |

166,043 | 14,018,524,281 | IssuesEvent | 2020-10-29 16:56:44 | HenrikBengtsson/parallelly | https://api.github.com/repos/HenrikBengtsson/parallelly | closed | DOCS: example with availableCores() - 1 | documentation | Using `availableCores() - 1` or `0.4 * availableCores()` does not always work because we might end up with zero cores.

Instead we need to use `max(1, availableCores() - 1)`.

Add this to the help or one of the examples. | 1.0 | DOCS: example with availableCores() - 1 - Using `availableCores() - 1` or `0.4 * availableCores()` does not always work because we might end up with zero cores.

Instead we need to use `max(1, availableCores() - 1)`.

Add this to the help or one of the examples. | non_priority | docs example with availablecores using availablecores or availablecores does not always work because we might end up with zero cores instead we need to use max availablecores add this to the help or one of the examples | 0 |

265,263 | 28,262,388,503 | IssuesEvent | 2023-04-07 01:16:56 | hshivhare67/platform_device_renesas_kernel_v4.19.72 | https://api.github.com/repos/hshivhare67/platform_device_renesas_kernel_v4.19.72 | closed | CVE-2019-19054 (Medium) detected in linuxlinux-4.19.279 - autoclosed | Mend: dependency security vulnerability | ## CVE-2019-19054 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.279</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge... | True | CVE-2019-19054 (Medium) detected in linuxlinux-4.19.279 - autoclosed - ## CVE-2019-19054 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.279</b></p></summary>

<p>

<p>... | non_priority | cve medium detected in linuxlinux autoclosed cve medium severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch main vulnerable source files d... | 0 |

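555,202 | 16,449,477,351 | IssuesEvent | 2021-05-21 02:04:02 | mobigen/IRIS-BigData-Platform | https://api.github.com/repos/mobigen/IRIS-BigData-Platform | closed | Report/ Map - Display minimum/maximum values in the legend | #IBP Priority: P2 Status: Backlog Type: Suggestion | ## Feature Request ##

{Please provide a clear and concise description of what the problem is.}

Report/ Map - Display minimum/maximum values in the legend

## Description of the desired solution ##

{ Please provide a clear and concise description of the desired feature }

When displaying the legend on the map,

it would be nice if the actual minimum and maximum values of the heatmap could be displayed along with it.

<Current legend state>: the actual minimum/maximum values are not displayed

<img width="405" alt="Screenshot 2020-03-11 11 41 38 AM" src="https://user-images.githubusercon... | 1.0 | Report/ Map - Display minimum/maximum values in the legend - ## Feature Request ##

{Please provide a clear and concise description of what the problem is.}

Report/ Map - Display minimum/maximum values in the legend

## Description of the desired solution ##

{ Please provide a clear and concise description of the desired feature }

When displaying the legend on the map,

it would be nice if the actual minimum and maximum values of the heatmap could be displayed along with it.

<Current legend state>: the actual minimum/maximum values are not displayed

<img width="405" alt="Screenshot 2020-03-11 11 41 38 AM" src="htt... | priority | report map display minimum maximum values in the legend feature request please provide a clear and concise description of what the problem is report map display minimum maximum values in the legend description of the desired solution please provide a clear and concise description of the desired feature when displaying the legend on the map it would be nice if the actual minimum and maximum values of the heatmap could be displayed along with it the actual minimum maximum values are not displayed img width alt screenshot am src the actual minimum maximum values... | 1 |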

186,062 | 15,045,334,378 | IssuesEvent | 2021-02-03 05:12:18 | jsakaluk/dySEM | https://api.github.com/repos/jsakaluk/dySEM | opened | Add News | documentation | Can just briefly summarize what's good and the few exceptions to "stable" in the stable release | 1.0 | Add News - Can just briefly summarize what's good and the few exceptions to "stable" in the stable release | non_priority | add news can just briefly summarize what s good and the few exceptions to stable in the stable release | 0 |

221,406 | 24,622,653,666 | IssuesEvent | 2022-10-16 05:09:52 | paley777/spiunib | https://api.github.com/repos/paley777/spiunib | closed | jquery-1.11.0.min.js: 4 vulnerabilities (highest severity is: 6.1) | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.11.0.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/1.11.... | True | jquery-1.11.0.min.js: 4 vulnerabilities (highest severity is: 6.1) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.11.0.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library ho... | non_priority | jquery min js vulnerabilities highest severity is vulnerable library jquery min js javascript library for dom operations library home page a href path to dependency file vendor ckeditor ckeditor samples old jquery html path to vulnerable library vendor ckeditor ckeditor... | 0 |

30,213 | 14,477,464,454 | IssuesEvent | 2020-12-10 06:37:06 | seung-lab/cloud-volume | https://api.github.com/repos/seung-lab/cloud-volume | closed | pulling thousands of 2D brain slices | performance question | I’m trying pull thousands of two-dimensional brain image slices using CloudVolume, but it seems to be a prohibitively slow process. After eight hours, only 100 slices have been completed. Is there anyway of speeding them up?

_Note: The Bbox is [0, 0, 0],[33792, 25600, 13312]._

**Code:**

```

dir = "s3://private... | True | pulling thousands of 2D brain slices - I’m trying pull thousands of two-dimensional brain image slices using CloudVolume, but it seems to be a prohibitively slow process. After eight hours, only 100 slices have been completed. Is there anyway of speeding them up?

_Note: The Bbox is [0, 0, 0],[33792, 25600, 13312]._... | non_priority | pulling thousands of brain slices i’m trying pull thousands of two dimensional brain image slices using cloudvolume but it seems to be a prohibitively slow process after eight hours only slices have been completed is there anyway of speeding them up note the bbox is code dir ... | 0 |

770,564 | 27,045,256,058 | IssuesEvent | 2023-02-13 09:18:39 | Avaiga/taipy-studio-gui | https://api.github.com/repos/Avaiga/taipy-studio-gui | opened | Preview of Taipy GUI pages | ✨New feature 🟧 Priority: High | **What would that feature address**

Visual Studio Code comes with a preview facility when edition Markdown files.

It would be great to allow for previewing Taipy visual elements embedded into Markdown code.

***Description of the ideal solution***

While working on a Markdown file, pressing `Ctrl-Shift-V` bring... | 1.0 | Preview of Taipy GUI pages - **What would that feature address**

Visual Studio Code comes with a preview facility when edition Markdown files.

It would be great to allow for previewing Taipy visual elements embedded into Markdown code.

***Description of the ideal solution***

While working on a Markdown file, ... | priority | preview of taipy gui pages what would that feature address visual studio code comes with a preview facility when edition markdown files it would be great to allow for previewing taipy visual elements embedded into markdown code description of the ideal solution while working on a markdown file ... | 1 |

511,933 | 14,885,186,574 | IssuesEvent | 2021-01-20 15:26:36 | comic/grand-challenge.org | https://api.github.com/repos/comic/grand-challenge.org | closed | Use Paginated DataTables for the Image List view in a Reader Study | area/reader-studies estimate/hours priority/p2 | **Problem**

In order to view the cases in a reader study, I need to sequentially scroll through pages one by one. When a reader study contains hundreds of images this is not doable anymore.

**Proposed solution**

Archives allow the option of searching through the list of cases/ images and also to jump ahead severa... | 1.0 | Use Paginated DataTables for the Image List view in a Reader Study - **Problem**

In order to view the cases in a reader study, I need to sequentially scroll through pages one by one. When a reader study contains hundreds of images this is not doable anymore.

**Proposed solution**

Archives allow the option of sear... | priority | use paginated datatables for the image list view in a reader study problem in order to view the cases in a reader study i need to sequentially scroll through pages one by one when a reader study contains hundreds of images this is not doable anymore proposed solution archives allow the option of sear... | 1 |

4,072 | 10,552,476,500 | IssuesEvent | 2019-10-03 15:14:11 | dotnet/docs | https://api.github.com/repos/dotnet/docs | closed | Multuple IHostedService registration | :book: guide - .NET Microservices :books: Area - .NET Architecture Guide Source - Docs.ms | If i try to register two or more services, only one could work properly.

For example:

```

services.AddSingleton<IHostedService, ServiceA>();

services.AddSingleton<IHostedService, ServiceB>();

```

Implementations are simplest as possible:

```

public class ServiceA: IHostedService

{

public Task Star... | 1.0 | Multuple IHostedService registration - If i try to register two or more services, only one could work properly.

For example:

```

services.AddSingleton<IHostedService, ServiceA>();

services.AddSingleton<IHostedService, ServiceB>();

```

Implementations are simplest as possible:

```

public class ServiceA: IHostedS... | non_priority | multuple ihostedservice registration if i try to register two or more services only one could work properly for example services addsingleton services addsingleton implementations are simplest as possible public class servicea ihostedservice public task startasync canc... | 0 |

1,356 | 2,511,929,010 | IssuesEvent | 2015-01-14 12:41:14 | transientskp/tkp | https://api.github.com/repos/transientskp/tkp | opened | Quality Control: is the full FoV imaged? | priority normal | Is the full field of view imaged?

This has been raised as an interesting test, as if the full field of view (FOV) has not been imaged we may want to image the full dataset. The imaged FOV information can be estimated using the number of pixels and the size of the pixels. (nx and ny are the number of pixels in the x an... | 1.0 | Quality Control: is the full FoV imaged? - Is the full field of view imaged?

This has been raised as an interesting test, as if the full field of view (FOV) has not been imaged we may want to image the full dataset. The imaged FOV information can be estimated using the number of pixels and the size of the pixels. (nx ... | priority | quality control is the full fov imaged is the full field of view imaged this has been raised as an interesting test as if the full field of view fov has not been imaged we may want to image the full dataset the imaged fov information can be estimated using the number of pixels and the size of the pixels nx ... | 1 |

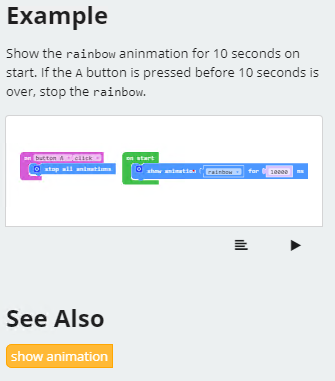

34,085 | 6,289,061,757 | IssuesEvent | 2017-07-19 18:22:25 | Microsoft/pxt | https://api.github.com/repos/Microsoft/pxt | closed | The reference of block "setAll" lack of "See Also" | bug cti team documentation | **Repro step:**

- Navigate to [https://makecode.adafruit.com/ ](url)

- Click "_?_" and choose "_Reference_"

- Choose "_light_" in "_See also_"

- Then click "_setAll_"

**Expected Result:**

**Actual... | 1.0 | The reference of block "setAll" lack of "See Also" - **Repro step:**

- Navigate to [https://makecode.adafruit.com/ ](url)

- Click "_?_" and choose "_Reference_"

- Choose "_light_" in "_See also_"

- Then click "_setAll_"

**Expected Result:**

detected in marked-0.3.19.js | security vulnerability | ## WS-2019-0027 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>marked-0.3.19.js</b></p></summary>

<p>A markdown parser built for speed</p>

<p>Library home page: <a href="https://cdn... | True | WS-2019-0027 (Medium) detected in marked-0.3.19.js - ## WS-2019-0027 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>marked-0.3.19.js</b></p></summary>

<p>A markdown parser built for... | non_priority | ws medium detected in marked js ws medium severity vulnerability vulnerable library marked js a markdown parser built for speed library home page a href path to dependency file tmp ws scm cwa website node modules marked www demo html path to vulnerable library cwa ... | 0 |

321,257 | 23,848,313,033 | IssuesEvent | 2022-09-06 15:37:17 | timescale/docs | https://api.github.com/repos/timescale/docs | closed | [Feedback] Page: /timescaledb/latest/how-to-guides/upgrades/upgrade-docker - documentation is out of date | bug documentation community | `docker inspect timescaledb --format='{{range .Mounts }}{{.Type}}{{end}}' `

doesnt work as "Mounts": [] is empty array when running

`docker inspect timescaledb`

I have run the command on both:

Docker version 20.10.12, build e91ed57 on Debian linux

Docker version 20.10.17, build 100c701 on windows 11

Havin... | 1.0 | [Feedback] Page: /timescaledb/latest/how-to-guides/upgrades/upgrade-docker - documentation is out of date - `docker inspect timescaledb --format='{{range .Mounts }}{{.Type}}{{end}}' `

doesnt work as "Mounts": [] is empty array when running

`docker inspect timescaledb`

I have run the command on both:

Docker ver... | non_priority | page timescaledb latest how to guides upgrades upgrade docker documentation is out of date docker inspect timescaledb format range mounts type end doesnt work as mounts is empty array when running docker inspect timescaledb i have run the command on both docker version ... | 0 |

629,475 | 20,034,264,180 | IssuesEvent | 2022-02-02 10:10:58 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | coap: blockwise: context current does not match total size after transfer is completed | bug priority: low area: Networking area: Networking Clients has-pr | **Describe the bug**

After downloading a filed using coap block transfer, I noticed that after receiving the last block `ctx->current` does not match ctx->total size.

Example:

File size: 300

Block size: 256

1. Request block 0.

block current = 0.

2. Receive block 0, 256 bytes

block-> current = 2... | 1.0 | coap: blockwise: context current does not match total size after transfer is completed - **Describe the bug**