| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

9,536

| 12,504,875,738

|

IssuesEvent

|

2020-06-02 09:44:20

|

prisma/prisma

|

https://api.github.com/repos/prisma/prisma

|

closed

|

Add a test case for project paths with spaces

|

process/candidate

|

Some user's project paths contain spaces. This broke client generation various times already, e.g. https://github.com/prisma/prisma/issues/1973 and https://github.com/prisma/prisma/issues/2612.

We should add at least one dedicated test for this so this won't break again for our users.

|

1.0

|

Add a test case for project paths with spaces - Some user's project paths contain spaces. This broke client generation various times already, e.g. https://github.com/prisma/prisma/issues/1973 and https://github.com/prisma/prisma/issues/2612.

We should add at least one dedicated test for this so this won't break again for our users.

|

process

|

add a test case for project paths with spaces some user s project paths contain spaces this broke client generation various times already e g and we should add at least one dedicated test for this so this won t break again for our users

| 1

|

21,982

| 30,474,010,994

|

IssuesEvent

|

2023-07-17 15:17:10

|

The-Data-Alchemists-Manipal/MindWave

|

https://api.github.com/repos/The-Data-Alchemists-Manipal/MindWave

|

closed

|

Thumbnailator

|

gssoc23 level2 image-processing

|

### Is your feature request related to a problem? Please describe.

Thumbnailator's fluent interface can be used to perform fairly complicated thumbnail processing task in one simple step.

### Describe the solution you'd like

creating JPEG thumbnails of image files in a directory, all resized to a maximum dimension of 640 pixels by 480 pixels while preserving the aspect ratio of the original image can be performed

### Describe alternatives you've considered

_No response_

### Additional context

Assign this issue to me under GSSOC'23

### Code of Conduct

- [X] I agree to follow this project's Code of Conduct

|

1.0

|

Thumbnailator - ### Is your feature request related to a problem? Please describe.

Thumbnailator's fluent interface can be used to perform fairly complicated thumbnail processing task in one simple step.

### Describe the solution you'd like

creating JPEG thumbnails of image files in a directory, all resized to a maximum dimension of 640 pixels by 480 pixels while preserving the aspect ratio of the original image can be performed

### Describe alternatives you've considered

_No response_

### Additional context

Assign this issue to me under GSSOC'23

### Code of Conduct

- [X] I agree to follow this project's Code of Conduct

|

process

|

thumbnailator is your feature request related to a problem please describe thumbnailator s fluent interface can be used to perform fairly complicated thumbnail processing task in one simple step describe the solution you d like creating jpeg thumbnails of image files in a directory all resized to a maximum dimension of pixels by pixels while preserving the aspect ratio of the original image can be performed describe alternatives you ve considered no response additional context assign this issue to me under gssoc code of conduct i agree to follow this project s code of conduct

| 1

|

124,378

| 17,772,541,543

|

IssuesEvent

|

2021-08-30 15:10:40

|

kapseliboi/evergreen

|

https://api.github.com/repos/kapseliboi/evergreen

|

opened

|

CVE-2019-8331 (Medium) detected in bootstrap-3.2.0.min.js

|

security vulnerability

|

## CVE-2019-8331 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bootstrap-3.2.0.min.js</b></p></summary>

<p>The most popular front-end framework for developing responsive, mobile first projects on the web.</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/twitter-bootstrap/3.2.0/js/bootstrap.min.js">https://cdnjs.cloudflare.com/ajax/libs/twitter-bootstrap/3.2.0/js/bootstrap.min.js</a></p>

<p>Path to dependency file: evergreen/services/node_modules/remarkable/demo/index.html</p>

<p>Path to vulnerable library: /services/node_modules/remarkable/demo/index.html</p>

<p>

Dependency Hierarchy:

- :x: **bootstrap-3.2.0.min.js** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/kapseliboi/evergreen/commit/13675096220f0e986aa94cafc5f57de6b38e38cd">13675096220f0e986aa94cafc5f57de6b38e38cd</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In Bootstrap before 3.4.1 and 4.3.x before 4.3.1, XSS is possible in the tooltip or popover data-template attribute.

<p>Publish Date: 2019-02-20

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-8331>CVE-2019-8331</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/twbs/bootstrap/pull/28236">https://github.com/twbs/bootstrap/pull/28236</a></p>

<p>Release Date: 2019-02-20</p>

<p>Fix Resolution: bootstrap - 3.4.1,4.3.1;bootstrap-sass - 3.4.1,4.3.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2019-8331 (Medium) detected in bootstrap-3.2.0.min.js - ## CVE-2019-8331 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bootstrap-3.2.0.min.js</b></p></summary>

<p>The most popular front-end framework for developing responsive, mobile first projects on the web.</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/twitter-bootstrap/3.2.0/js/bootstrap.min.js">https://cdnjs.cloudflare.com/ajax/libs/twitter-bootstrap/3.2.0/js/bootstrap.min.js</a></p>

<p>Path to dependency file: evergreen/services/node_modules/remarkable/demo/index.html</p>

<p>Path to vulnerable library: /services/node_modules/remarkable/demo/index.html</p>

<p>

Dependency Hierarchy:

- :x: **bootstrap-3.2.0.min.js** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/kapseliboi/evergreen/commit/13675096220f0e986aa94cafc5f57de6b38e38cd">13675096220f0e986aa94cafc5f57de6b38e38cd</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In Bootstrap before 3.4.1 and 4.3.x before 4.3.1, XSS is possible in the tooltip or popover data-template attribute.

<p>Publish Date: 2019-02-20

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-8331>CVE-2019-8331</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/twbs/bootstrap/pull/28236">https://github.com/twbs/bootstrap/pull/28236</a></p>

<p>Release Date: 2019-02-20</p>

<p>Fix Resolution: bootstrap - 3.4.1,4.3.1;bootstrap-sass - 3.4.1,4.3.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve medium detected in bootstrap min js cve medium severity vulnerability vulnerable library bootstrap min js the most popular front end framework for developing responsive mobile first projects on the web library home page a href path to dependency file evergreen services node modules remarkable demo index html path to vulnerable library services node modules remarkable demo index html dependency hierarchy x bootstrap min js vulnerable library found in head commit a href found in base branch master vulnerability details in bootstrap before and x before xss is possible in the tooltip or popover data template attribute publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope changed impact metrics confidentiality impact low integrity impact low availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution bootstrap bootstrap sass step up your open source security game with whitesource

| 0

|

281,037

| 8,689,924,593

|

IssuesEvent

|

2018-12-03 20:03:37

|

SpaceNetChallenge/utilities

|

https://api.github.com/repos/SpaceNetChallenge/utilities

|

closed

|

You might want a .gitignore file in this repo

|

Difficulty: Easy Priority: High Status: Unassigned Type: Maintenance

|

`.pyc` files don't need to be committed; they can be filtered out with .gitignore.

|

1.0

|

You might want a .gitignore file in this repo - `.pyc` files don't need to be committed; they can be filtered out with .gitignore.

|

non_process

|

you might want a gitignore file in this repo pyc files don t need to be committed they can be filtered out with gitignore

| 0

|

417,964

| 28,112,517,879

|

IssuesEvent

|

2023-03-31 08:19:31

|

venuslimm/ped

|

https://api.github.com/repos/venuslimm/ped

|

opened

|

Screenshot of find command on user guide does not match with the current UI

|

severity.VeryLow type.DocumentationBug

|

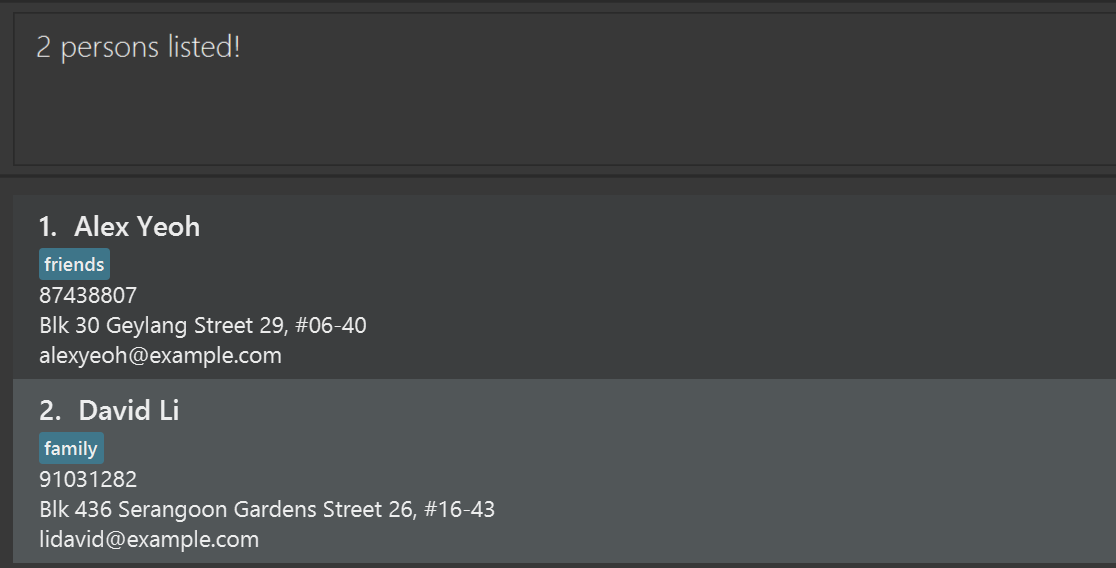

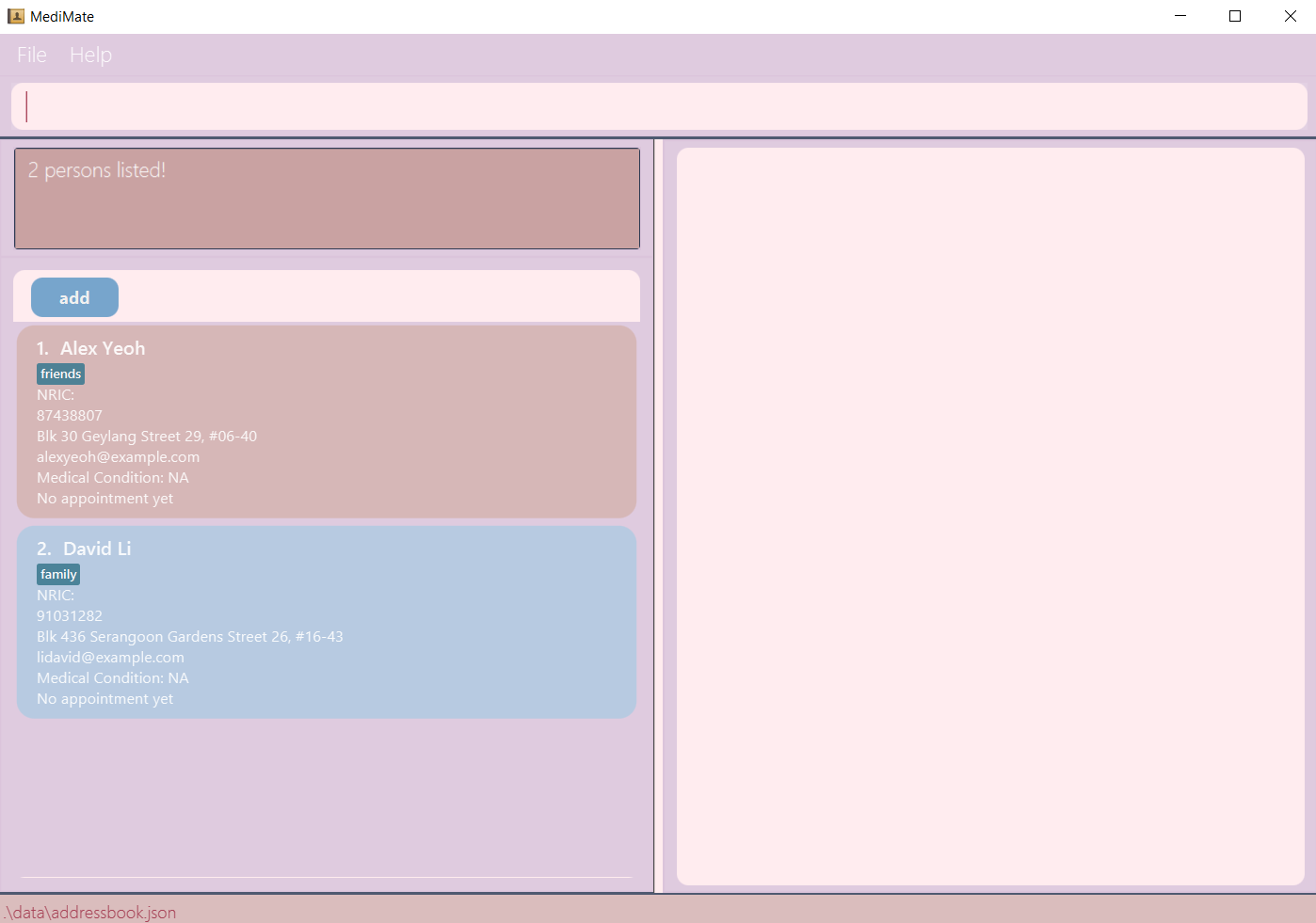

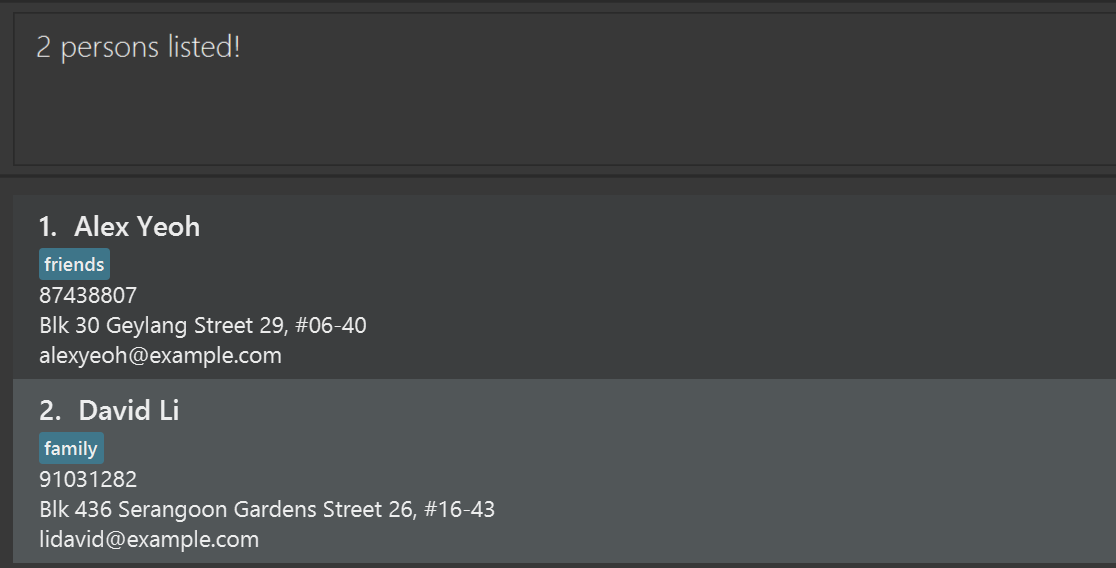

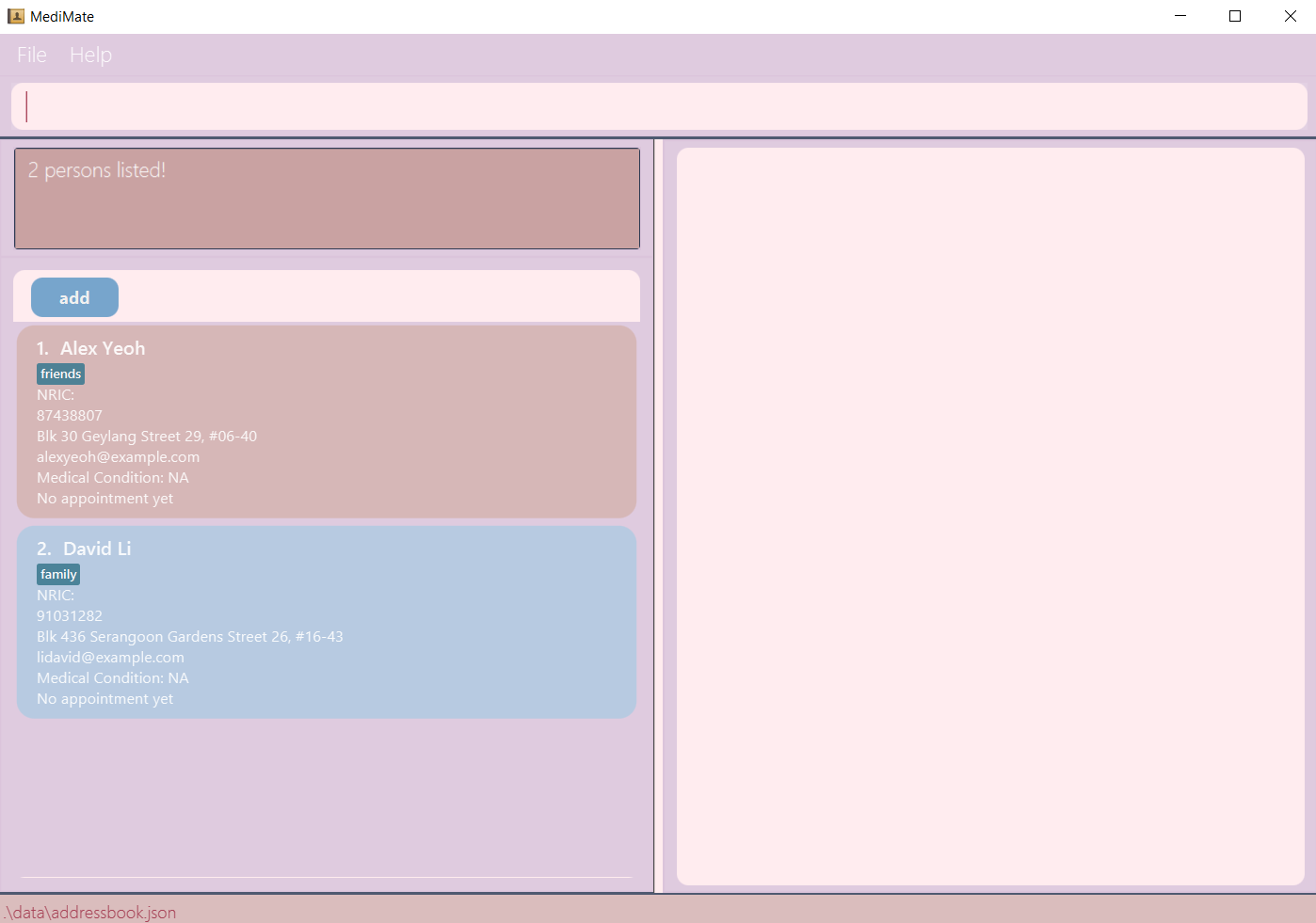

Screenshot on the user guide:

Actual UI:

<!--session: 1680242405607-d67b960d-7651-4494-be68-de1656255e7d-->

<!--Version: Web v3.4.7-->

|

1.0

|

Screenshot of find command on user guide does not match with the current UI - Screenshot on the user guide:

Actual UI:

<!--session: 1680242405607-d67b960d-7651-4494-be68-de1656255e7d-->

<!--Version: Web v3.4.7-->

|

non_process

|

screenshot of find command on user guide does not match with the current ui screenshot on the user guide actual ui

| 0

|

301,569

| 9,221,755,877

|

IssuesEvent

|

2019-03-11 20:48:46

|

RobotLocomotion/drake

|

https://api.github.com/repos/RobotLocomotion/drake

|

opened

|

ci: Turn off `xenial-valgrind-memcheck-weekly`

|

priority: medium team: kitware

|

See Slack convo:

https://drakedevelopers.slack.com/archives/C270MN28G/p1552336898014300?thread_ts=1552312717.012700&cid=C270MN28G

Summary: It's difficult to reproduce in Bionic, and not providing too much value as the Enabling team has moved primary development / usage to Bionic.

\cc @jwnimmer-tri

|

1.0

|

ci: Turn off `xenial-valgrind-memcheck-weekly` - See Slack convo:

https://drakedevelopers.slack.com/archives/C270MN28G/p1552336898014300?thread_ts=1552312717.012700&cid=C270MN28G

Summary: It's difficult to reproduce in Bionic, and not providing too much value as the Enabling team has moved primary development / usage to Bionic.

\cc @jwnimmer-tri

|

non_process

|

ci turn off xenial valgrind memcheck weekly see slack convo summary it s difficult to reproduce in bionic and not providing too much value as the enabling team has moved primary development usage to bionic cc jwnimmer tri

| 0

|

562

| 3,023,861,228

|

IssuesEvent

|

2015-08-01 23:56:31

|

HazyResearch/dd-genomics

|

https://api.github.com/repos/HazyResearch/dd-genomics

|

opened

|

Set up and document AWS pipeline for pre-processing

|

Preprocessing PRIORITY

|

This is / should be already documented in `HazyResearch/bazaar` but make sure we have some notes here / run this for everything today...

|

1.0

|

Set up and document AWS pipeline for pre-processing - This is / should be already documented in `HazyResearch/bazaar` but make sure we have some notes here / run this for everything today...

|

process

|

set up and document aws pipeline for pre processing this is should be already documented in hazyresearch bazaar but make sure we have some notes here run this for everything today

| 1

|

29,811

| 13,173,137,014

|

IssuesEvent

|

2020-08-11 19:44:13

|

thkl/hap-homematic

|

https://api.github.com/repos/thkl/hap-homematic

|

closed

|

Variablen mit mehr Services

|

DeviceService enhancement

|

Ist es Möglich im Bereich Variablen mehr Services unter zubringen. z.B. Luftfeuchtigkeit, Bewegungsmelder, Belegtmelder, Feuchtigkeitssensor etc.

|

1.0

|

Variablen mit mehr Services - Ist es Möglich im Bereich Variablen mehr Services unter zubringen. z.B. Luftfeuchtigkeit, Bewegungsmelder, Belegtmelder, Feuchtigkeitssensor etc.

|

non_process

|

variablen mit mehr services ist es möglich im bereich variablen mehr services unter zubringen z b luftfeuchtigkeit bewegungsmelder belegtmelder feuchtigkeitssensor etc

| 0

|

11,488

| 5,011,878,407

|

IssuesEvent

|

2016-12-13 09:32:43

|

LLNL/spack

|

https://api.github.com/repos/LLNL/spack

|

closed

|

SLEPc fails to configure with Spack's python

|

bug build-error package python

|

I just wiped my installation of Spack to re-install and check things and got the error `Symbol not found: __PyCodecInfo_GetIncrementalDecoder`:

```

==> './configure' '--prefix=/Users/davydden/spack/opt/spack/darwin-sierra-x86_64/clang-8.0.0-apple/slepc-3.7.3-rhcxmg2ntqe3v6epgljeseffnpa4gla2' '--with-arpack-dir=/Users/davydden/spack/opt/spack/darwin-sierra-x86_64/clang-8.0.0-apple/arpack-ng-3.4.0-g76ncwdpqcyx5lm5e65ydwaetbx5sulo/lib' '--with-arpack-flags=-lparpack,-larpack'

Traceback (most recent call last):

File "./configure", line 10, in <module>

execfile(os.path.join(os.path.dirname(__file__), 'config', 'configure.py'))

File "./config/configure.py", line 140, in <module>

import slepc, petsc, arpack, blzpack, trlan, feast, primme, blopex, sowing, lapack

File "/private/var/folders/5k/sqpp24tx3ylds4fgm13pfht00000gn/T/davydden/spack-stage/spack-stage-ZOF1pH/slepc-3.7.3/config/packages/petsc.py", line 22, in <module>

import package, os, sys, commands

File "/private/var/folders/5k/sqpp24tx3ylds4fgm13pfht00000gn/T/davydden/spack-stage/spack-stage-ZOF1pH/slepc-3.7.3/config/package.py", line 22, in <module>

import os, sys, commands, tempfile, shutil, urllib, urlparse, tarfile

File "/Users/davydden/spack/opt/spack/darwin-sierra-x86_64/clang-8.0.0-apple/python-2.7.12-6dtr7kw2sj5zu7z7v3ox3agrmpw5cndt/lib/python2.7/tempfile.py", line 32, in <module>

import io as _io

File "/Users/davydden/spack/opt/spack/darwin-sierra-x86_64/clang-8.0.0-apple/python-2.7.12-6dtr7kw2sj5zu7z7v3ox3agrmpw5cndt/lib/python2.7/io.py", line 51, in <module>

import _io

ImportError: dlopen(/Users/davydden/spack/opt/spack/darwin-sierra-x86_64/clang-8.0.0-apple/python-2.7.12-6dtr7kw2sj5zu7z7v3ox3agrmpw5cndt/lib/python2.7/lib-dynload/_io.so, 2): Symbol not found: __PyCodecInfo_GetIncrementalDecoder

Referenced from: /Users/davydden/spack/opt/spack/darwin-sierra-x86_64/clang-8.0.0-apple/python-2.7.12-6dtr7kw2sj5zu7z7v3ox3agrmpw5cndt/lib/python2.7/lib-dynload/_io.so

Expected in: flat namespace

in /Users/davydden/spack/opt/spack/darwin-sierra-x86_64/clang-8.0.0-apple/python-2.7.12-6dtr7kw2sj5zu7z7v3ox3agrmpw5cndt/lib/python2.7/lib-dynload/_io.so

```

Looking at the history of `python` package, i don't see what could have led to this.

For now will be using

```

python:

version: [2.7.10]

paths:

python@2.7.10: /usr

buildable: False

```

|

1.0

|

SLEPc fails to configure with Spack's python - I just wiped my installation of Spack to re-install and check things and got the error `Symbol not found: __PyCodecInfo_GetIncrementalDecoder`:

```

==> './configure' '--prefix=/Users/davydden/spack/opt/spack/darwin-sierra-x86_64/clang-8.0.0-apple/slepc-3.7.3-rhcxmg2ntqe3v6epgljeseffnpa4gla2' '--with-arpack-dir=/Users/davydden/spack/opt/spack/darwin-sierra-x86_64/clang-8.0.0-apple/arpack-ng-3.4.0-g76ncwdpqcyx5lm5e65ydwaetbx5sulo/lib' '--with-arpack-flags=-lparpack,-larpack'

Traceback (most recent call last):

File "./configure", line 10, in <module>

execfile(os.path.join(os.path.dirname(__file__), 'config', 'configure.py'))

File "./config/configure.py", line 140, in <module>

import slepc, petsc, arpack, blzpack, trlan, feast, primme, blopex, sowing, lapack

File "/private/var/folders/5k/sqpp24tx3ylds4fgm13pfht00000gn/T/davydden/spack-stage/spack-stage-ZOF1pH/slepc-3.7.3/config/packages/petsc.py", line 22, in <module>

import package, os, sys, commands

File "/private/var/folders/5k/sqpp24tx3ylds4fgm13pfht00000gn/T/davydden/spack-stage/spack-stage-ZOF1pH/slepc-3.7.3/config/package.py", line 22, in <module>

import os, sys, commands, tempfile, shutil, urllib, urlparse, tarfile

File "/Users/davydden/spack/opt/spack/darwin-sierra-x86_64/clang-8.0.0-apple/python-2.7.12-6dtr7kw2sj5zu7z7v3ox3agrmpw5cndt/lib/python2.7/tempfile.py", line 32, in <module>

import io as _io

File "/Users/davydden/spack/opt/spack/darwin-sierra-x86_64/clang-8.0.0-apple/python-2.7.12-6dtr7kw2sj5zu7z7v3ox3agrmpw5cndt/lib/python2.7/io.py", line 51, in <module>

import _io

ImportError: dlopen(/Users/davydden/spack/opt/spack/darwin-sierra-x86_64/clang-8.0.0-apple/python-2.7.12-6dtr7kw2sj5zu7z7v3ox3agrmpw5cndt/lib/python2.7/lib-dynload/_io.so, 2): Symbol not found: __PyCodecInfo_GetIncrementalDecoder

Referenced from: /Users/davydden/spack/opt/spack/darwin-sierra-x86_64/clang-8.0.0-apple/python-2.7.12-6dtr7kw2sj5zu7z7v3ox3agrmpw5cndt/lib/python2.7/lib-dynload/_io.so

Expected in: flat namespace

in /Users/davydden/spack/opt/spack/darwin-sierra-x86_64/clang-8.0.0-apple/python-2.7.12-6dtr7kw2sj5zu7z7v3ox3agrmpw5cndt/lib/python2.7/lib-dynload/_io.so

```

Looking at the history of `python` package, i don't see what could have led to this.

For now will be using

```

python:

version: [2.7.10]

paths:

python@2.7.10: /usr

buildable: False

```

|

non_process

|

slepc fails to configure with spack s python i just wiped my installation of spack to re install and check things and got the error symbol not found pycodecinfo getincrementaldecoder configure prefix users davydden spack opt spack darwin sierra clang apple slepc with arpack dir users davydden spack opt spack darwin sierra clang apple arpack ng lib with arpack flags lparpack larpack traceback most recent call last file configure line in execfile os path join os path dirname file config configure py file config configure py line in import slepc petsc arpack blzpack trlan feast primme blopex sowing lapack file private var folders t davydden spack stage spack stage slepc config packages petsc py line in import package os sys commands file private var folders t davydden spack stage spack stage slepc config package py line in import os sys commands tempfile shutil urllib urlparse tarfile file users davydden spack opt spack darwin sierra clang apple python lib tempfile py line in import io as io file users davydden spack opt spack darwin sierra clang apple python lib io py line in import io importerror dlopen users davydden spack opt spack darwin sierra clang apple python lib lib dynload io so symbol not found pycodecinfo getincrementaldecoder referenced from users davydden spack opt spack darwin sierra clang apple python lib lib dynload io so expected in flat namespace in users davydden spack opt spack darwin sierra clang apple python lib lib dynload io so looking at the history of python package i don t see what could have led to this for now will be using python version paths python usr buildable false

| 0

|

328,938

| 10,007,238,770

|

IssuesEvent

|

2019-07-14 08:56:47

|

answeropedia/answeropedia.org

|

https://api.github.com/repos/answeropedia/answeropedia.org

|

closed

|

Abolish short answer (extraction from common answer)

|

priority-critical

|

@gomzyakov

>Короткий ответ будем хранить в отдельном поле сущности, не пытаясь выцарапать из общего ответа (т.к. это иногд просто бессмыслено)

|

1.0

|

Abolish short answer (extraction from common answer) - @gomzyakov

>Короткий ответ будем хранить в отдельном поле сущности, не пытаясь выцарапать из общего ответа (т.к. это иногд просто бессмыслено)

|

non_process

|

abolish short answer extraction from common answer gomzyakov короткий ответ будем хранить в отдельном поле сущности не пытаясь выцарапать из общего ответа т к это иногд просто бессмыслено

| 0

|

13,297

| 22,574,830,062

|

IssuesEvent

|

2022-06-28 06:11:47

|

FederatedAI/KubeFATE

|

https://api.github.com/repos/FederatedAI/KubeFATE

|

closed

|

希望将fate-Serving的Ingress的api由networking.k8s.io/v1beta1升级到networking.k8s.io/v1,以适配kubernates-1.22以上版本

|

kind/requirement

|

**Is your feature request related to a problem? Please describe.**

最新版本的k8s安装cluster-serving时报错:https://github.com/FederatedAI/KubeFATE/issues/618

**Describe the solution you'd like**

支持最新的k8s,由networking.k8s.io/v1beta1升级到networking.k8s.io/v1

**Describe alternatives you've considered**

A clear and concise description of any alternative solutions or features you've considered.

**Additional context**

Add any other context or screenshots about the feature request here.

|

1.0

|

希望将fate-Serving的Ingress的api由networking.k8s.io/v1beta1升级到networking.k8s.io/v1,以适配kubernates-1.22以上版本 - **Is your feature request related to a problem? Please describe.**

最新版本的k8s安装cluster-serving时报错:https://github.com/FederatedAI/KubeFATE/issues/618

**Describe the solution you'd like**

支持最新的k8s,由networking.k8s.io/v1beta1升级到networking.k8s.io/v1

**Describe alternatives you've considered**

A clear and concise description of any alternative solutions or features you've considered.

**Additional context**

Add any other context or screenshots about the feature request here.

|

non_process

|

希望将fate serving的ingress的api由networking io io ,以适配kubernates is your feature request related to a problem please describe serving时报错: describe the solution you d like ,由networking io io describe alternatives you ve considered a clear and concise description of any alternative solutions or features you ve considered additional context add any other context or screenshots about the feature request here

| 0

|

2,358

| 5,165,992,743

|

IssuesEvent

|

2017-01-17 15:11:17

|

AllenFang/react-bootstrap-table

|

https://api.github.com/repos/AllenFang/react-bootstrap-table

|

closed

|

Clarity of `filterFormatted` in Documentation

|

inprocess

|

## Issue

The `filterFormatted` prop has an unclear description in the documentation. I spent hours trying to figure out why my select filter wouldn't work. It turns out I had included `filterFormatted` in the column (because of the [examples for the select filter](http://allenfang.github.io/react-bootstrap-table/example.html#column-filter)) without fully understanding what it did. Looking at the documentation it seemed like I just needed to include it in the column to enable filtering.

## Proposed fix

Make the documentation for `filterFormatted` clearer. To get started in the right direction, I'd suggest something as follows.

> When true, the column will filter using the value returned by the column's formatter. When false (default), the column will filter using the pre-formatted value.

Also consider changing the [filter examples](http://allenfang.github.io/react-bootstrap-table/example.html#column-filter) to not include `filterFormatted` and possibly include one extra example to showcase the use of `filterFormatted` explicitly.

Anyways, thanks to everyone who's worked on this component. It's extremely powerful and versatile.

|

1.0

|

Clarity of `filterFormatted` in Documentation - ## Issue

The `filterFormatted` prop has an unclear description in the documentation. I spent hours trying to figure out why my select filter wouldn't work. It turns out I had included `filterFormatted` in the column (because of the [examples for the select filter](http://allenfang.github.io/react-bootstrap-table/example.html#column-filter)) without fully understanding what it did. Looking at the documentation it seemed like I just needed to include it in the column to enable filtering.

## Proposed fix

Make the documentation for `filterFormatted` clearer. To get started in the right direction, I'd suggest something as follows.

> When true, the column will filter using the value returned by the column's formatter. When false (default), the column will filter using the pre-formatted value.

Also consider changing the [filter examples](http://allenfang.github.io/react-bootstrap-table/example.html#column-filter) to not include `filterFormatted` and possibly include one extra example to showcase the use of `filterFormatted` explicitly.

Anyways, thanks to everyone who's worked on this component. It's extremely powerful and versatile.

|

process

|

clarity of filterformatted in documentation issue the filterformatted prop has an unclear description in the documentation i spent hours trying to figure out why my select filter wouldn t work it turns out i had included filterformatted in the column because of the without fully understanding what it did looking at the documentation it seemed like i just needed to include it in the column to enable filtering proposed fix make the documentation for filterformatted clearer to get started in the right direction i d suggest something as follows when true the column will filter using the value returned by the column s formatter when false default the column will filter using the pre formatted value also consider changing the to not include filterformatted and possibly include one extra example to showcase the use of filterformatted explicitly anyways thanks to everyone who s worked on this component it s extremely powerful and versatile

| 1

|

334,497

| 10,142,069,030

|

IssuesEvent

|

2019-08-03 20:07:38

|

tensorwerk/hangar-py

|

https://api.github.com/repos/tensorwerk/hangar-py

|

closed

|

[BUG REPORT] Commit inside context manager throws RuntimeError

|

Bug: Priority 2 PR In Progress

|

**Describe the bug**

If we try to commit inside the context manager (before `__exit__()`), hangar throws RuntimeError saying `No changes made in the staging area. Cannot commit.`. We should allow the user to do commits inside the context manager IMO but probably with a warning about the performance hit

**Severity**

<!--- fill in the space between `[ ]` with and `x` (ie. `[x]`) --->

Select an option:

- [ ] Data Corruption / Loss of Any Kind

- [x] Unexpected Behavior, Exceptions or Error Thrown

- [ ] Performance Bottleneck

**To Reproduce**

```python

import numpy as np

from hangar import Repository

repo = Repository(path='myhangarrepo')

repo.init(user_name='Sherin Thomas', user_email='sherin@gmail.com', remove_old=True)

# generate data

data = []

for i in range(1000):

data.append(np.random.rand(28, 28))

data = np.array(data)

co = repo.checkout(write=True)

data_dset = co.datasets.init_dataset('mnist_data', prototype=data[0])

co.commit('datasets init')

co.close()

co = repo.checkout(write=True)

data_dset = co.datasets['mnist_data']

with data_dset:

for i in range(len(data)):

sample_name = str(i)

data_dset[sample_name] = data[i]

co.commit('dataset curation: stage 1') # this throws error

co.close()

```

**Expected behavior**

It should not break the program instead raise a warning about the performance hit

|

1.0

|

[BUG REPORT] Commit inside context manager throws RuntimeError - **Describe the bug**

If we try to commit inside the context manager (before `__exit__()`), hangar throws RuntimeError saying `No changes made in the staging area. Cannot commit.`. We should allow the user to do commits inside the context manager IMO but probably with a warning about the performance hit

**Severity**

<!--- fill in the space between `[ ]` with and `x` (ie. `[x]`) --->

Select an option:

- [ ] Data Corruption / Loss of Any Kind

- [x] Unexpected Behavior, Exceptions or Error Thrown

- [ ] Performance Bottleneck

**To Reproduce**

```python

import numpy as np

from hangar import Repository

repo = Repository(path='myhangarrepo')

repo.init(user_name='Sherin Thomas', user_email='sherin@gmail.com', remove_old=True)

# generate data

data = []

for i in range(1000):

data.append(np.random.rand(28, 28))

data = np.array(data)

co = repo.checkout(write=True)

data_dset = co.datasets.init_dataset('mnist_data', prototype=data[0])

co.commit('datasets init')

co.close()

co = repo.checkout(write=True)

data_dset = co.datasets['mnist_data']

with data_dset:

for i in range(len(data)):

sample_name = str(i)

data_dset[sample_name] = data[i]

co.commit('dataset curation: stage 1') # this throws error

co.close()

```

**Expected behavior**

It should not break the program instead raise a warning about the performance hit

|

non_process

|

commit inside context manager throws runtimeerror describe the bug if we try to commit inside the context manager before exit hangar throws runtimeerror saying no changes made in the staging area cannot commit we should allow the user to do commits inside the context manager imo but probably with a warning about the performance hit severity select an option data corruption loss of any kind unexpected behavior exceptions or error thrown performance bottleneck to reproduce python import numpy as np from hangar import repository repo repository path myhangarrepo repo init user name sherin thomas user email sherin gmail com remove old true generate data data for i in range data append np random rand data np array data co repo checkout write true data dset co datasets init dataset mnist data prototype data co commit datasets init co close co repo checkout write true data dset co datasets with data dset for i in range len data sample name str i data dset data co commit dataset curation stage this throws error co close expected behavior it should not break the program instead raise a warning about the performance hit

| 0

|

35

| 2,505,372,297

|

IssuesEvent

|

2015-01-11 12:42:06

|

Graylog2/graylog2-server

|

https://api.github.com/repos/Graylog2/graylog2-server

|

closed

|

Ship a better/higher default configuration for output_batch_size

|

processing

|

We see significantly improved/less CPU usage by Elasticsearch when setting output_batch_size = 1000 or higher even when dealing with lower loads (around 250 msg/s). The default configuration value of 25 seems to be quite low if not too low, it might be good to raise it a bit. It probably would be also useful to have the batch size adjust dynamically based on relevant metric values.
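
As a rough sketch of the dynamic adjustment idea (not how Graylog works today): the function name, the one-second flush interval, the smoothing step, and the upper bound are all assumptions; only the lower bound of 25 mirrors the current default mentioned above.

```python
def next_batch_size(current, msgs_per_sec, flush_interval_sec=1.0,
                    minimum=25, maximum=5000):
    """Return a batch size aimed at roughly one flush per interval."""
    target = int(msgs_per_sec * flush_interval_sec)
    # Move halfway toward the target to avoid oscillating on noisy metrics.
    proposed = (current + target) // 2
    return max(minimum, min(maximum, proposed))
```

Called periodically with the observed message rate, this would let the batch size drift upward under load and fall back toward the minimum when traffic is low.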

|

1.0

|

Ship a better/higher default configuration for output_batch_size - We see significantly improved/less CPU usage by Elasticsearch when setting output_batch_size = 1000 or higher even when dealing with lower loads (around 250 msg/s). The default configuration value of 25 seems to be quite low if not too low, it might be good to raise it a bit. It probably would be also useful to have the batch size adjust dynamically based on relevant metric values.

|

process

|

ship a better higher default configuration for output batch size we see significantly improved less cpu usage by elasticsearch when setting output batch size or higher even when dealing with lower loads around msg s the default configuration value of seems to be quite low if not too low it might be good to raise it a bit it probably would be also useful to have the batch size adjust dynamically based on relevant metric values

| 1

|

19,059

| 25,078,181,879

|

IssuesEvent

|

2022-11-07 17:01:06

|

MicrosoftDocs/azure-devops-docs

|

https://api.github.com/repos/MicrosoftDocs/azure-devops-docs

|

closed

|

templateContext not well explained / exampled

|

doc-enhancement devops/prod Pri1 devops-cicd-process/tech

|

https://learn.microsoft.com/en-us/azure/devops/pipelines/process/templates?view=azure-devops#use-templatecontext-to-pass-properties-to-templates

I’d never noticed this functionality before but I’m struggling to understand the use case. I don’t feel it’s hugely well explained as it really just shows an if switch.

I’ve done much the same type of thing as this example before with the if conditionals but it referenced a variable or parameters.

Why would I want to use templateContext over those methods of if-ing off a variable or parameter?

What advantage is it bringing to the table?

I saw the original blog post and again don’t feel it gave a good example. Can anyone give me more examples?

I posted this on Azure DevOps on Reddit and the excellent Ming pointed out you can use this on top of a variable template, but that still left me wondering why.

There’s clearly something I’m missing, as you’d not create this functionality without a need to fill.

thank you !

Much appreciated

---

#### Document Details

⚠ *Do not edit this section. It is required for learn.microsoft.com ➟ GitHub issue linking.*

* ID: 6724abea-bbdc-bf66-ed5e-3214fa6c3e66

* Version Independent ID: 4f8dab21-3f0e-da32-cc0e-1d85c13c0065

* Content: [Templates - Azure Pipelines](https://learn.microsoft.com/en-us/azure/devops/pipelines/process/templates?view=azure-devops)

* Content Source: [docs/pipelines/process/templates.md](https://github.com/MicrosoftDocs/azure-devops-docs/blob/main/docs/pipelines/process/templates.md)

* Product: **devops**

* Technology: **devops-cicd-process**

* GitHub Login: @juliakm

* Microsoft Alias: **jukullam**

|

1.0

|

templateContext not well explained / exampled - https://learn.microsoft.com/en-us/azure/devops/pipelines/process/templates?view=azure-devops#use-templatecontext-to-pass-properties-to-templates

I’d never noticed this functionality before but I’m struggling to understand the use case. I don’t feel it’s hugely well explained as it really just shows an if switch.

I’ve done much the same type of thing as this example before with the if conditionals but it referenced a variable or parameters.

Why would I want to use templateContext over those methods of if-ing off a variable or parameter?

What advantage is it bringing to the table?

I saw the original blog post and again don’t feel it gave a good example. Can anyone give me more examples?

I posted this on Azure DevOps on Reddit and the excellent Ming pointed out you can use this on top of a variable template, but that still left me wondering why.

There’s clearly something I’m missing, as you’d not create this functionality without a need to fill.

thank you !

Much appreciated

---

#### Document Details

⚠ *Do not edit this section. It is required for learn.microsoft.com ➟ GitHub issue linking.*

* ID: 6724abea-bbdc-bf66-ed5e-3214fa6c3e66

* Version Independent ID: 4f8dab21-3f0e-da32-cc0e-1d85c13c0065

* Content: [Templates - Azure Pipelines](https://learn.microsoft.com/en-us/azure/devops/pipelines/process/templates?view=azure-devops)

* Content Source: [docs/pipelines/process/templates.md](https://github.com/MicrosoftDocs/azure-devops-docs/blob/main/docs/pipelines/process/templates.md)

* Product: **devops**

* Technology: **devops-cicd-process**

* GitHub Login: @juliakm

* Microsoft Alias: **jukullam**

|

process

|

templatecontext not well explained exampled i’d never noticed this functionality before but i’m struggling to understand the use case i don’t feel it’s hugely well explained as it really just shows an if switch i’ve done much the same type of thing as this example before with the if conditionals but it referenced a variable or parameters why do i want to use templatecontext over those methods of if ing off a variable or parameter what advantage is it bringing to the table i seen the original blog post and again don’t feel it gave a good example can anyone give me more examples i posted this on azure devops on reddit and the excellent ming pointed out you can use this on top of a variable template but that still left me wondering why there’s clearly something i’m missing as you’d not create this functionality without a need to fill thank you much appreciated document details ⚠ do not edit this section it is required for learn microsoft com ➟ github issue linking id bbdc version independent id content content source product devops technology devops cicd process github login juliakm microsoft alias jukullam

| 1

|

214,324

| 7,268,889,980

|

IssuesEvent

|

2018-02-20 11:43:49

|

STEP-tw/battleship-phoenix

|

https://api.github.com/repos/STEP-tw/battleship-phoenix

|

closed

|

Game starts when both players are ready.

|

High Priority small

|

As a _player_

I want the _game to start_

So that I _can play_

**Additional Details**

1. Both players are ready (assumption).

**Acceptance Criteria**

- [x] Criteria 1

- Given _opponent is ready and_

- When _I'm ready_

- Then _the game should start with a game started message_

|

1.0

|

Game starts when both players are ready. - As a _player_

I want the _game to start_

So that I _can play_

**Additional Details**

1. Both players are ready (assumption).

**Acceptance Criteria**

- [x] Criteria 1

- Given _opponent is ready and_

- When _I'm ready_

- Then _the game should start with a game started message_

|

non_process

|

game starts when both players are ready as a player i want the game to start so that i can play additional details both players are ready assumption acceptance criteria criteria given opponent is ready and when i m ready then the game should start with a game started message

| 0

|

10,387

| 13,196,387,330

|

IssuesEvent

|

2020-08-13 20:32:59

|

MicrosoftDocs/azure-devops-docs

|

https://api.github.com/repos/MicrosoftDocs/azure-devops-docs

|

closed

|

Triggers are not scheduled in UTC

|

Pri2 devops-cicd-process/tech devops/prod doc-bug investigating

|

Triggers are scheduled in the org's timezone, not UTC.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 2ea2c851-bd1e-cddc-b4d0-e9f4112b8565

* Version Independent ID: 07c23fdd-14b5-985b-1c63-3f26f3a216ad

* Content: [Configure schedules to run pipelines - Azure Pipelines](https://docs.microsoft.com/en-us/azure/devops/pipelines/process/scheduled-triggers?view=azure-devops&tabs=yaml)

* Content Source: [docs/pipelines/process/scheduled-triggers.md](https://github.com/MicrosoftDocs/azure-devops-docs/blob/master/docs/pipelines/process/scheduled-triggers.md)

* Product: **devops**

* Technology: **devops-cicd-process**

* GitHub Login: @steved0x

* Microsoft Alias: **sdanie**

|

1.0

|

Triggers are not scheduled in UTC -

Triggers are scheduled in the org's timezone, not UTC.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 2ea2c851-bd1e-cddc-b4d0-e9f4112b8565

* Version Independent ID: 07c23fdd-14b5-985b-1c63-3f26f3a216ad

* Content: [Configure schedules to run pipelines - Azure Pipelines](https://docs.microsoft.com/en-us/azure/devops/pipelines/process/scheduled-triggers?view=azure-devops&tabs=yaml)

* Content Source: [docs/pipelines/process/scheduled-triggers.md](https://github.com/MicrosoftDocs/azure-devops-docs/blob/master/docs/pipelines/process/scheduled-triggers.md)

* Product: **devops**

* Technology: **devops-cicd-process**

* GitHub Login: @steved0x

* Microsoft Alias: **sdanie**

|

process

|

triggers are not scheduled in utc triggers are scheduled in the org s timezone not utc document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id cddc version independent id content content source product devops technology devops cicd process github login microsoft alias sdanie

| 1

|

4,182

| 7,114,540,309

|

IssuesEvent

|

2018-01-18 01:18:11

|

sysown/proxysql

|

https://api.github.com/repos/sysown/proxysql

|

closed

|

Extract modifiers from comment

|

GLOBAL MYSQL PROTOCOL QUERY PROCESSOR cxx_pa development enhancement

|

Application should be able to send instructions and modify the behavior of the proxy using key/value pairs inside a comment.

We need to define the list of variables.
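
To make the idea concrete, here is a minimal sketch of pulling key/value pairs out of a query comment. The `key=value; key=value` syntax and the example keys are assumptions, since the list of variables is not defined yet, and the proxy itself would not do this in Python.

```python
import re

# First /* ... */ comment in the query, possibly spanning lines.
_COMMENT = re.compile(r"/\*(.*?)\*/", re.DOTALL)
# key=value pairs; values end at whitespace or a semicolon.
_PAIR = re.compile(r"(\w+)\s*=\s*([^;\s]+)")


def extract_modifiers(query):
    """Return key/value pairs found in the first /* ... */ comment of a query."""
    match = _COMMENT.search(query)
    if match is None:
        return {}
    return dict(_PAIR.findall(match.group(1)))
```

For example, `extract_modifiers("SELECT 1 /* hostgroup=2; cache_ttl=500 */")` would yield both hypothetical keys as strings, leaving validation against the agreed variable list as a separate step.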

|

1.0

|

Extract modifiers from comment - Application should be able to send instructions and modify the behavior of the proxy using key/value pairs inside a comment.

We need to define the list of variables.

|

process

|

extract modifiers from comment application should be able to send instructions and modify the behavior of the proxy using key value pairs inside a comment we need to define the list of variables

| 1

|

15,976

| 20,188,183,795

|

IssuesEvent

|

2022-02-11 01:16:05

|

savitamittalmsft/WAS-SEC-TEST

|

https://api.github.com/repos/savitamittalmsft/WAS-SEC-TEST

|

opened

|

Scan container workloads for vulnerabilities

|

WARP-Import WAF FEB 2021 Security Performance and Scalability Capacity Management Processes Deployment & Testing Testing & Validation

|

<a href="https://docs.microsoft.com/azure/security-center/container-security">Scan container workloads for vulnerabilities</a>

<p><b>Why Consider This?</b></p>

To build secure containerized workloads, ensure the images that they're based on are free of known vulnerabilities. Shipping a known vulnerability in a container isn't significantly safer than on a VM.

<p><b>Context</b></p>

<p><span>Azure Security Center / Azure Defender is the Azure-native solution for securing containers. Azure Defender can protect virtual machines that are running Docker, Azure Kubernetes Service clusters, Azure Container Registry registries. Azure Defender is able to scan container images and identify security issues, or provide real-time threat detection for containerized environments.</span></p>

<p><b>Suggested Actions</b></p>

<p><span>Consider using Azure Defender for securing containerized workloads.</span></p>

<p><b>Learn More</b></p>

<p><a href="https://docs.microsoft.com/en-us/azure/security-center/container-security" target="_blank"><span>https://docs.microsoft.com/en-us/azure/security-center/container-security</span></a><span /></p>

|

1.0

|

Scan container workloads for vulnerabilities - <a href="https://docs.microsoft.com/azure/security-center/container-security">Scan container workloads for vulnerabilities</a>

<p><b>Why Consider This?</b></p>

To build secure containerized workloads, ensure the images that they're based on are free of known vulnerabilities. Shipping a known vulnerability in a container isn't significantly safer than on a VM.

<p><b>Context</b></p>

<p><span>Azure Security Center / Azure Defender is the Azure-native solution for securing containers. Azure Defender can protect virtual machines that are running Docker, Azure Kubernetes Service clusters, Azure Container Registry registries. Azure Defender is able to scan container images and identify security issues, or provide real-time threat detection for containerized environments.</span></p>

<p><b>Suggested Actions</b></p>

<p><span>Consider using Azure Defender for securing containerized workloads.</span></p>

<p><b>Learn More</b></p>

<p><a href="https://docs.microsoft.com/en-us/azure/security-center/container-security" target="_blank"><span>https://docs.microsoft.com/en-us/azure/security-center/container-security</span></a><span /></p>

|

process

|

scan container workloads for vulnerabilities why consider this to build secure containerized workloads ensure the images that they re based on are free of known vulnerabilities shipping a known vulnerability in a container isn t significantly safer than on a vm context azure security center azure defender is the azure native solution for securing containers azure defender can protect virtual machines that are running docker azure kubernetes service clusters azure container registry registries azure defender is able to scan container images and identify security issues or provide real time threat detection for containerized environments suggested actions consider using azure defender for securing containerized workloads learn more

| 1

|

82,383

| 10,279,473,812

|

IssuesEvent

|

2019-08-25 23:33:54

|

fga-desenho-2019-2/Wiki

|

https://api.github.com/repos/fga-desenho-2019-2/Wiki

|

opened

|

Payment API

|

Iniciativa extra Pesquisas documentation

|

## Issue description

Research payment APIs and check how they work and how they are priced

### Tasks:

- [ ] List payment APIs

- [ ] Describe how they work and how they are priced

|

1.0

|

Payment API - ## Issue description

Research payment APIs and check how they work and how they are priced

### Tasks:

- [ ] List payment APIs

- [ ] Describe how they work and how they are priced

|

non_process

|

payment api issue description research payment apis and check how they work and how they are priced tasks list payment apis describe how they work and how they are priced

| 0

|

15,874

| 20,049,635,950

|

IssuesEvent

|

2022-02-03 03:43:33

|

q191201771/lal

|

https://api.github.com/repos/q191201771/lal

|

closed

|

With hls enabled and use_memory_as_disk_flag turned on, memory keeps growing when cleanup_mode is 0 or 1

|

#Question #Need doc *In process *Waiting reply

|

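With hls enabled and use_memory_as_disk_flag turned on, the m3u8 and ts files are stored in memory

When cleanup_mode is 1 or 2, expired ts files are not continuously deleted, so memory keeps growing

Suggest noting this in the lalserver configuration file description in the manual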

|

1.0

|

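With hls enabled and use_memory_as_disk_flag turned on, memory keeps growing when cleanup_mode is 0 or 1 - With hls enabled and use_memory_as_disk_flag turned on, the m3u8 and ts files are stored in memory

When cleanup_mode is 1 or 2, expired ts files are not continuously deleted, so memory keeps growing

Suggest noting this in the lalserver configuration file description in the manual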

|

process

|

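enabling hls with use memory as disk flag causes memory to keep growing depending on cleanup mode with hls enabled and use memory as disk flag on ts files are stored in memory depending on cleanup mode expired ts files are not continuously deleted so memory keeps growing suggest noting this in the lalserver configuration file description in the manual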

| 1

|

60,357

| 7,333,143,774

|

IssuesEvent

|

2018-03-05 18:27:45

|

juliett-golf-hotel/web-app

|

https://api.github.com/repos/juliett-golf-hotel/web-app

|

opened

|

Add hero banner to home page

|

content design dev

|

- [ ] Add hero banner images for different screen sizes

- [ ] Add current temperature

- [ ] Add feels like temperature

- [ ] Add what the weather is (partly cloudy, hail, sun showers, etc)

- [ ] Add a greeting

|

1.0

|

Add hero banner to home page - - [ ] Add hero banner images for different screen sizes

- [ ] Add current temperature

- [ ] Add feels like temperature

- [ ] Add what the weather is (partly cloudy, hail, sun showers, etc)

- [ ] Add a greeting

|

non_process

|

add hero banner to home page add hero banner images for different screen sizes add current temperature add feels like temperature add what the weather is partly cloudy hail sun showers etc add a greeting

| 0

|

1,324

| 3,874,111,167

|

IssuesEvent

|

2016-04-11 19:20:28

|

neuropoly/spinalcordtoolbox

|

https://api.github.com/repos/neuropoly/spinalcordtoolbox

|

closed

|

IndexError for computing CSA

|

bug sct_process_segmentation

|

data:

dropbox simon/results/2015-12-14

~~~

sct_process_segmentation -i T2_seg.nii -p csa -t label/template/MNI-Poly-AMU_level.nii.gz -vert 1:1

Check parameters:

.. segmentation file: T2_seg.nii

Create temporary folder...

mkdir tmp.151214225906_621711/

Copying input data to tmp folder and convert to nii...

sct_convert -i /Users/julien/data/biospective/2015-12-14_sct2.1-2015-12-14/100-011_s2_T2/T2_seg.nii -o tmp.151214225906_621711/segmentation.nii.gz

Change orientation to RPI...

sct_image -i segmentation.nii.gz -setorient RPI -o segmentation_RPI.nii.gz

Open segmentation volume...

Get data dimensions...

56 x 288 x 288

Smooth centerline/segmentation...

.. Get center of mass of the centerline/segmentation...

.. Smoothing algo = hanning

.. Windows length = 50

Compute CSA...

Traceback (most recent call last):

File "/Users/julien/code/spinalcordtoolbox/bin/sct_process_segmentation", line 815, in <module>

main(sys.argv[1:])

File "/Users/julien/code/spinalcordtoolbox/bin/sct_process_segmentation", line 235, in main

compute_csa(fname_segmentation, verbose, remove_temp_files, step, smoothing_param, figure_fit, param.file_csa_volume, slices, vert_lev, fname_vertebral_labeling, algo_fitting = param.algo_fitting, type_window= param.type_window, window_length=param.window_length)

File "/Users/julien/code/spinalcordtoolbox/bin/sct_process_segmentation", line 487, in compute_csa

normal = normalize(np.array([x_centerline_deriv[iz-min_z_index], y_centerline_deriv[iz-min_z_index], z_centerline_deriv[iz-min_z_index]]))

IndexError: index 278 is out of bounds for axis 0 with size 278

~~~

|

1.0

|

IndexError for computing CSA - data:

dropbox simon/results/2015-12-14

~~~

sct_process_segmentation -i T2_seg.nii -p csa -t label/template/MNI-Poly-AMU_level.nii.gz -vert 1:1

Check parameters:

.. segmentation file: T2_seg.nii

Create temporary folder...

mkdir tmp.151214225906_621711/

Copying input data to tmp folder and convert to nii...

sct_convert -i /Users/julien/data/biospective/2015-12-14_sct2.1-2015-12-14/100-011_s2_T2/T2_seg.nii -o tmp.151214225906_621711/segmentation.nii.gz

Change orientation to RPI...

sct_image -i segmentation.nii.gz -setorient RPI -o segmentation_RPI.nii.gz

Open segmentation volume...

Get data dimensions...

56 x 288 x 288

Smooth centerline/segmentation...

.. Get center of mass of the centerline/segmentation...

.. Smoothing algo = hanning

.. Windows length = 50

Compute CSA...

Traceback (most recent call last):

File "/Users/julien/code/spinalcordtoolbox/bin/sct_process_segmentation", line 815, in <module>

main(sys.argv[1:])

File "/Users/julien/code/spinalcordtoolbox/bin/sct_process_segmentation", line 235, in main

compute_csa(fname_segmentation, verbose, remove_temp_files, step, smoothing_param, figure_fit, param.file_csa_volume, slices, vert_lev, fname_vertebral_labeling, algo_fitting = param.algo_fitting, type_window= param.type_window, window_length=param.window_length)

File "/Users/julien/code/spinalcordtoolbox/bin/sct_process_segmentation", line 487, in compute_csa

normal = normalize(np.array([x_centerline_deriv[iz-min_z_index], y_centerline_deriv[iz-min_z_index], z_centerline_deriv[iz-min_z_index]]))

IndexError: index 278 is out of bounds for axis 0 with size 278

~~~

|

process

|

indexerror for computing csa data dropbox simon results sct process segmentation i seg nii p csa t label template mni poly amu level nii gz vert check parameters segmentation file seg nii create temporary folder mkdir tmp copying input data to tmp folder and convert to nii sct convert i users julien data biospective seg nii o tmp segmentation nii gz change orientation to rpi sct image i segmentation nii gz setorient rpi o segmentation rpi nii gz open segmentation volume get data dimensions x x smooth centerline segmentation get center of mass of the centerline segmentation smoothing algo hanning windows length compute csa traceback most recent call last file users julien code spinalcordtoolbox bin sct process segmentation line in main sys argv file users julien code spinalcordtoolbox bin sct process segmentation line in main compute csa fname segmentation verbose remove temp files step smoothing param figure fit param file csa volume slices vert lev fname vertebral labeling algo fitting param algo fitting type window param type window window length param window length file users julien code spinalcordtoolbox bin sct process segmentation line in compute csa normal normalize np array y centerline deriv z centerline deriv indexerror index is out of bounds for axis with size

| 1

|

3,535

| 6,572,687,732

|

IssuesEvent

|

2017-09-11 04:25:42

|

zero-os/0-Disk

|

https://api.github.com/repos/zero-os/0-Disk

|

closed

|

add clusterID to ardb (failure) 0-log messages

|

process_wontfix type_feature

|

Currently only the address and db of the ardb storage server is given, but it would also be useful to give its clusterID alongside [ardb-storage-server-issues](https://github.com/zero-os/0-Disk/blob/master/docs/log.md#ardb-storage-server-issues) messages.

|

1.0

|

add clusterID to ardb (failure) 0-log messages - Currently only the address and db of the ardb storage server is given, but it would also be useful to give its clusterID alongside [ardb-storage-server-issues](https://github.com/zero-os/0-Disk/blob/master/docs/log.md#ardb-storage-server-issues) messages.

|

process

|

add clusterid to ardb failure log messages currently only the address and db of the ardb storage server is given but it would also be useful to give its clusterid alongside messages

| 1

|

17,763

| 23,691,657,953

|

IssuesEvent

|

2022-08-29 11:22:56

|

ankidroid/Anki-Android

|

https://api.github.com/repos/ankidroid/Anki-Android

|

opened

|

Make code coverage reporting stable

|

Dev Test process

|

`codecov` often reports random fluctuations in the coverage. This is likely because tests are nondeterministic, but may be a bug in codecov.

* Determine why this occurs (typically by finding the classes which fluctuate)

* Fix this, so we no longer get `codecov`-based CI failures on changes which should be no-ops

|

1.0

|

Make code coverage reporting stable - `codecov` often reports random fluctuations in the coverage. This is likely because tests are nondeterministic, but may be a bug in codecov.

* Determine why this occurs (typically by finding the classes which fluctuate)

* Fix this, so we no longer get `codecov`-based CI failures on changes which should be no-ops

|

process

|

make code coverage reporting stable codecov often reports random fluctuations in the coverage this is likely because tests are nondeterministic but may be a bug in codecov determine why this occurs typically by finding the classes which fluctuate fix this so we no longer get codecov based ci failures on changes which should be no ops

| 1

|

75,081

| 3,455,052,267

|

IssuesEvent

|

2015-12-17 18:26:21

|

ThoughtWorksInc/registrolivre

|

https://api.github.com/repos/ThoughtWorksInc/registrolivre

|

closed

|

Bug in the highlight of the company registration form

|

Bug Priority

|

Bug in the highlight of the company registration form.

When trying to add partners to the company, if one CPF is wrong the highlight appears on both forms even if the second CPF is correct.

|

1.0

|

Bug in the highlight of the company registration form - Bug in the highlight of the company registration form.

When trying to add partners to the company, if one CPF is wrong the highlight appears on both forms even if the second CPF is correct.

|

non_process

|

bug in the highlight of the company registration form bug in the highlight of the company registration form when trying to add partners to the company if one cpf is wrong the highlight appears on both forms even if the second cpf is correct

| 0

|

18,775

| 24,678,010,403

|

IssuesEvent

|

2022-10-18 18:41:02

|

hashgraph/hedera-mirror-node

|

https://api.github.com/repos/hashgraph/hedera-mirror-node

|

closed

|

Monitor slow to startup in Kubernetes

|

bug process monitor

|

### Description

The monitor now takes so long to start up that its liveness probe causes it to restart.

### Steps to reproduce

Run monitor on Kubernetes

### Additional context

_No response_

### Hedera network

other

### Version

main

### Operating system

_No response_

|

1.0

|

Monitor slow to startup in Kubernetes - ### Description

The monitor now takes so long to start up that its liveness probe causes it to restart.

### Steps to reproduce

Run monitor on Kubernetes

### Additional context

_No response_

### Hedera network

other

### Version

main

### Operating system

_No response_

|

process

|

monitor slow to startup in kubernetes description the monitor is now taking too long to startup that its liveness probe is causing it to restart steps to reproduce run monitor on kubernetes additional context no response hedera network other version main operating system no response

| 1

|

311,218

| 23,376,086,577

|

IssuesEvent

|

2022-08-11 03:19:10

|

singularity-data/risingwave-docs

|

https://api.github.com/repos/singularity-data/risingwave-docs

|

closed

|

Update the lookup join behavior

|

documentation

|

### Related code PR

PR: https://github.com/singularity-data/risingwave/pull/4207

Code issue: https://github.com/singularity-data/risingwave/issues/4044

### Which part(s) of the docs might be affected or should be updated? And how?

Add this code change (issue fixes) to Release Notes.

When using lookup joins (JOIN ... ON ...), RW now casts the data types if the data types of the two key columns differ, instead of throwing an error.

### Reference

_No response_

|

1.0

|

Update the lookup join behavior - ### Related code PR

PR: https://github.com/singularity-data/risingwave/pull/4207

Code issue: https://github.com/singularity-data/risingwave/issues/4044

### Which part(s) of the docs might be affected or should be updated? And how?

Add this code change (issue fixes) to Release Notes.

When using lookup joins (JOIN ... ON ...), RW now casts the data types if the data types of the two key columns differ, instead of throwing an error.

### Reference

_No response_

|

non_process

|

update the lookup join behavior related code pr pr code issue which part s of the docs might be affected or should be updated and how add this code change issue fixes to release notes when using lookup joins join on rw now will cast the data types if the data type of the two key columns are different instead of throwing an error reference no response

| 0

|

82,645

| 10,300,605,138

|

IssuesEvent

|

2019-08-28 16:14:13

|

ISibboI/graphrepresentations

|

https://api.github.com/repos/ISibboI/graphrepresentations

|

opened

|

Fix documentation asserts

|

documentation

|

Asserting that the node ids are output in order does not prove anything or help the user understand. It should be asserted that the data is at the correct place.

|

1.0

|

Fix documentation asserts - Asserting that the node ids are output in order does not prove anything or help the user understand. It should be asserted that the data is at the correct place.

|

non_process

|

fix documentation asserts asserting that the node ids are output in order does not prove anything or help the user understand it should be asserted that the data is at the correct place

| 0

|

11,315

| 14,134,856,491

|

IssuesEvent

|

2020-11-10 00:15:56

|

googleapis/python-speech

|

https://api.github.com/repos/googleapis/python-speech

|

closed

|

speech2srt.py is not working because of removal of submodules enums and types

|

api: speech type: process

|

I am following [this](https://www.youtube.com/watch?v=uBzp5xGSZ6o&ab_channel=GoogleCloudAPAC) to create speech to srt using Google APIs. Running the command `python3 speech2srt.py --storage_uri gs://subtitlingsc/en.wav` shows:

```

File "speech2srt.py", line 19, in <module>

from google.cloud.speech_v1 import enums

ImportError: cannot import name 'enums' from 'google.cloud.speech_v1'

```

I think this is due to removal of enums and types. Please do the needful and update those videos and [GitHub file](https://github.com/GoogleCloudPlatform/community/blob/master/tutorials/speech2srt/speech2srt.py)

|

1.0

|

speech2srt.py is not working because of removal of submodules enums and types - I am following [this](https://www.youtube.com/watch?v=uBzp5xGSZ6o&ab_channel=GoogleCloudAPAC) to create speech to srt using Google APIs. Running the command `python3 speech2srt.py --storage_uri gs://subtitlingsc/en.wav` shows:

```

File "speech2srt.py", line 19, in <module>

from google.cloud.speech_v1 import enums

ImportError: cannot import name 'enums' from 'google.cloud.speech_v1'

```

I think this is due to removal of enums and types. Please do the needful and update those videos and [GitHub file](https://github.com/GoogleCloudPlatform/community/blob/master/tutorials/speech2srt/speech2srt.py)

|

process

|

py is not working because of removal of submodules enums and types following for creating speech to srt using google apis when running the command py storage uri gs subtitlingsc en wav is showing that file py line in from google cloud speech import enums importerror cannot import name enums from google cloud speech i think this is due to removal of enums and types please do the needful and update those videos and

| 1

|

136,030

| 30,462,136,059

|

IssuesEvent

|

2023-07-17 07:47:25

|

FerretDB/FerretDB

|

https://api.github.com/repos/FerretDB/FerretDB

|

opened

|

Integration tests should report their progress

|

code/chore not ready

|

### What should be done?

The integration tests should print something meaningful to the console once in a while, like "running test 24/1032".

Right now they can take 10-30 minutes on a dev machine without printing a thing. The terminal looks like it hangs, there is no indication of progress. A natural reaction to that is `ctrl+C` (which doesn't allow the tests to complete).

It could be a good idea to disable this progress printing when running the tests from CI (to avoid some redundant logs in a non-interactive environment).
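
Something along these lines is what I have in mind, sketched in Python only for brevity. The function names, the reporting interval, and the use of a `CI` environment variable to detect CI are all assumptions, not existing code in the test runner.

```python
import os
import sys


def progress_line(done, total):
    """Format a progress message such as 'running test 24/1032'."""
    return f"running test {done}/{total}"


def should_report(done, total, every=50, env=None):
    """Report periodically and on the last test, but never under CI."""
    env = os.environ if env is None else env
    if env.get("CI"):  # assumption: the CI system sets a non-empty CI variable
        return False
    return done == total or done % every == 0


def report(done, total, stream=sys.stderr, **kwargs):
    """Print one progress line to the stream when reporting is due."""
    if should_report(done, total, **kwargs):
        print(progress_line(done, total), file=stream, flush=True)
```

Calling `report(done, total)` after each test finishes would keep an interactive terminal alive without adding noise to CI logs.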

### Where?

The integration tests runner maybe?

I haven't looked at the relevant test runner yet. :swe

### Definition of Done

- test runner is updated

|

1.0

|

Integration tests should report their progress - ### What should be done?

The integration tests should print something meaningful to the console once in a while, like "running test 24/1032".

Right now they can take 10-30 minutes on a dev machine without printing a thing. The terminal looks like it hangs, there is no indication of progress. A natural reaction to that is `ctrl+C` (which doesn't allow the tests to complete).

It could be a good idea to disable this progress printing when running the tests from CI (to avoid some redundant logs in a non-interactive environment).

### Where?

The integration tests runner maybe?

I haven't looked at the relevant test runner yet. :swe

### Definition of Done

- test runner is updated

|

non_process

|

integration tests should report their progress what should be done the integration tests should print something meaningful to the console once in a while like running test right now they can take minutes on a dev machine without printing a thing the terminal looks like it hangs there is no indication of progress a natural reaction to that is ctrl c which doesn t allow the tests to complete it could be a good idea to disable this progress printing when running the tests from ci to avoid some redundant logs in a non interactive environment where the integration tests runner maybe i haven t looked at the relevant test runner yet swe definition of done test runner is updated

| 0

|

11,304

| 14,107,274,324

|

IssuesEvent

|

2020-11-06 16:03:57

|

panther-labs/panther

|

https://api.github.com/repos/panther-labs/panther

|

opened

|

Invalid JSON in test crashes entire test suite

|

bug p1 team:data processing

|

### Describe the bug

Currently, when adding an invalid input as a test body, I expect to get back a structured response including a particular set of keys (like `ruleError` and `genericError`), stating that I have added invalid content.

Instead, the entire lambda fails, causes a runtime issue and AppSync doesn't return any structured data.

### Steps to reproduce

Steps to reproduce the behavior:

1. Create a New Rule

2. Add a test

3. Fill the body of the test with the content

```

{ "uuid": "123" }

{ "uuid": "123" }

```

4. Click "Run all"

### Expected behavior

There should be a structured API response with a specific key containing the error

### Environment

How are you deploying or using Panther?

- Panther version or commit: v1.12

- OS: Mac

- Browser: Chrome

### Additional context

If I have 20 tests and 1 of them has an invalid body, I will never get feedback for **any of the other 19 tests**, since the entire API response gets replaced by the runtime error.
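
The expected behavior could be sketched roughly as follows, assuming each test body is parsed on its own so that one bad body cannot abort the run. This is not Panther's implementation: `run_rule_tests`, the shape of the result dictionaries, and the way `genericError` and `ruleError` are filled in are all assumptions made for illustration. Only the two key names come from the report above.

```python
import json


def run_rule_tests(rule, tests):
    """Run every test and return one structured result per test.

    rule:  callable taking the parsed event and returning a truthy value on match
    tests: list of dicts with 'name' and 'body' (raw JSON text)
    """
    results = []
    for test in tests:
        result = {
            "name": test["name"],
            "passed": False,
            "genericError": None,  # assumed: problems with the test input itself
            "ruleError": None,     # assumed: exceptions raised by the rule
        }
        try:
            event = json.loads(test["body"])
        except json.JSONDecodeError as exc:
            # Invalid input is recorded for this test only; the loop continues.
            result["genericError"] = f"invalid JSON: {exc.msg}"
            results.append(result)
            continue
        try:
            result["passed"] = bool(rule(event))
        except Exception as exc:
            result["ruleError"] = repr(exc)
        results.append(result)
    return results
```

With this shape, the body from the reproduction steps (two JSON objects back to back) would yield a `genericError` for that one test, while the remaining tests still report their own results.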

### Screenshots

<img width="1287" alt="Screen Shot 2020-11-06 at 5 55 55 PM" src="https://user-images.githubusercontent.com/10436045/98387474-19630f80-205a-11eb-88ed-5c358783b789.png">

|

1.0

|

Invalid JSON in test crashes entire test suite -

### Describe the bug

Currently, when adding an invalid input as a test body, I expect to get back a structured response including a particular set of keys (like `ruleError` and `genericError`), stating that I have added invalid content.

Instead, the entire lambda fails, causes a runtime issue and AppSync doesn't return any structured data.

### Steps to reproduce

Steps to reproduce the behavior:

1. Create a New Rule

2. Add a test

3. Fill the body of the test with the content

```

{ "uuid": "123" }

{ "uuid": "123" }

```

4. Click "Run all"

### Expected behavior

There should be a structured API response with a specific key containing the error

### Environment

How are you deploying or using Panther?

- Panther version or commit: v1.12

- OS: Mac

- Browser: Chrome

### Additional context

If I have 20 tests and 1 of them has invalid body, I will never get feedback for **any of the other 19 tests**, since the entire API response gets replaced by the runtime error

### Screenshots

<img width="1287" alt="Screen Shot 2020-11-06 at 5 55 55 PM" src="https://user-images.githubusercontent.com/10436045/98387474-19630f80-205a-11eb-88ed-5c358783b789.png">

|

process

|

invalid json in test crashes entire test suite describe the bug currently when adding an invalid input as a test body i expect to get back a structured response including a particular set of keys like ruleerror and genericerror stating that i have added invalid content instead the entire lambda fails causes a runtime issue and appsync doesn t return any structured data steps to reproduce steps to reproduce the behavior create a new rule add a test fill the body of the test with the content uuid uuid click run all expected behavior there should be a structured api response with a specific key containing the error environment how are you deploying or using panther panther version or commit os mac browser chrome additional context if i have tests and of them has invalid body i will never get feedback for any of the other tests since the entire api response gets replaced by the runtime error screenshots img width alt screen shot at pm src

| 1

|

20,590

| 27,254,450,947

|

IssuesEvent

|

2023-02-22 10:27:51

|

python/cpython

|

https://api.github.com/repos/python/cpython

|

reopened

|

Deadlock when using fork whilst multiprocessing.resource_tracker._resource_tracker._lock is held

|

type-bug stdlib expert-multiprocessing

|

# Bug report

Given the following situation: You have a Process "a" with two threads ("aa", "ab"). aa is currently creating a shared memory segment and is holding `multiprocessing.resource_tracker._resource_tracker._lock` for that reason. Note that that lock is not acquired by user code, but deep inside the SharedMemory constructor.

ab now wants to create a second process using the `fork` method. Process b is created with one thread: bb. As per `man fork` the thread aa is not duplicated in process b.

If aa now finishes creating its SharedMemory it releases `multiprocessing.resource_tracker._resource_tracker._lock`. But that lock is only a `threading.Lock`, which means it is released only in process a. In process b it will not be released. If b now wants to create a SharedMemory object and therefore tries to acquire `multiprocessing.resource_tracker._resource_tracker._lock` this will take forever, as it will never be free in process b. `man fork` explicitly mentions that such situations are a potential source of issues.

As this is a race condition there is no minimal, reproducible example I can give.

# Possible solutions

One could replace this `threading.Lock` with a `multiprocessing.Lock`. Alternatively, one could replace that lock with a new one in the child process after a fork. I'm not sure about the intended behavior of the resource tracker in such situations.
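
The second option could be sketched with `os.register_at_fork`, which runs a callback in the child right after a fork. The `Tracker` class below is a hypothetical stand-in, not the real resource tracker, and whether swapping the lock is enough depends on whether the state it guards is still consistent in the child, which this sketch does not address.

```python
import os
import threading


class Tracker:
    """Hypothetical object that guards its state with a threading.Lock."""

    def __init__(self):
        self._lock = threading.Lock()
        # register_at_fork only exists on platforms that support fork().
        # Note: registering a bound method keeps this instance alive for
        # the lifetime of the process.
        if hasattr(os, "register_at_fork"):
            os.register_at_fork(after_in_child=self._reinit_lock)

    def _reinit_lock(self):
        # Only the forking thread exists in the child, so no thread there
        # can own the inherited lock. Replace it with a fresh, unlocked one.
        self._lock = threading.Lock()

    def do_work(self):
        # Would block forever in the child if the lock had been copied
        # while another thread in the parent was holding it.
        with self._lock:
            return os.getpid()
```

Replacing the lock keeps the per-process `threading.Lock` semantics and avoids the cost of a cross-process lock, at the price of assuming the guarded state needs no repair after the fork.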

# Your environment

<!-- Include as many relevant details as possible about the environment you experienced the bug in -->

- CPython versions tested on: 3.9, 3.10

- Operating system and architecture: Unix (Arch, Kernel 5.19.7-1)

<!--

You can freely edit this text. Remove any lines you believe are unnecessary.

-->

|

1.0

|

Deadlock when using fork whilst multiprocessing.resource_tracker._resource_tracker._lock is held - # Bug report

Given the following situation: You have a Process "a" with two threads ("aa", "ab"). aa is currently creating a shared memory segment and is holding `multiprocessing.resource_tracker._resource_tracker._lock` for that reason. Note that that lock is not acquired by user code, but deep inside the SharedMemory constructor.

ab now wants to create a second process using the `fork` method. Process b is created with one thread: bb. As per `man fork` the thread aa is not duplicated in process b.

If aa now finishes creating its SharedMemory it releases `multiprocessing.resource_tracker._resource_tracker._lock`. But that lock is only a `threading.Lock`, which means it is released only in process a. In process b it will not be released. If b now wants to create a SharedMemory object and therefore tries to acquire `multiprocessing.resource_tracker._resource_tracker._lock` this will take forever, as it will never be free in process b. `man fork` explicitly mentions that such situations are a potential source of issues.

As this is a race condition there is no minimal, reproducible example I can give.

# Possible solutions

One could replace this `threading.Lock` with a `multiprocessing.Lock`. Alternatively, one could replace that lock with a new one in the child process after a fork. I'm not sure about the intended behavior of the resource tracker in such situations.

# Your environment