| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

249,729

| 18,858,231,264

|

IssuesEvent

|

2021-11-12 09:31:53

|

fans2619/pe

|

https://api.github.com/repos/fans2619/pe

|

opened

|

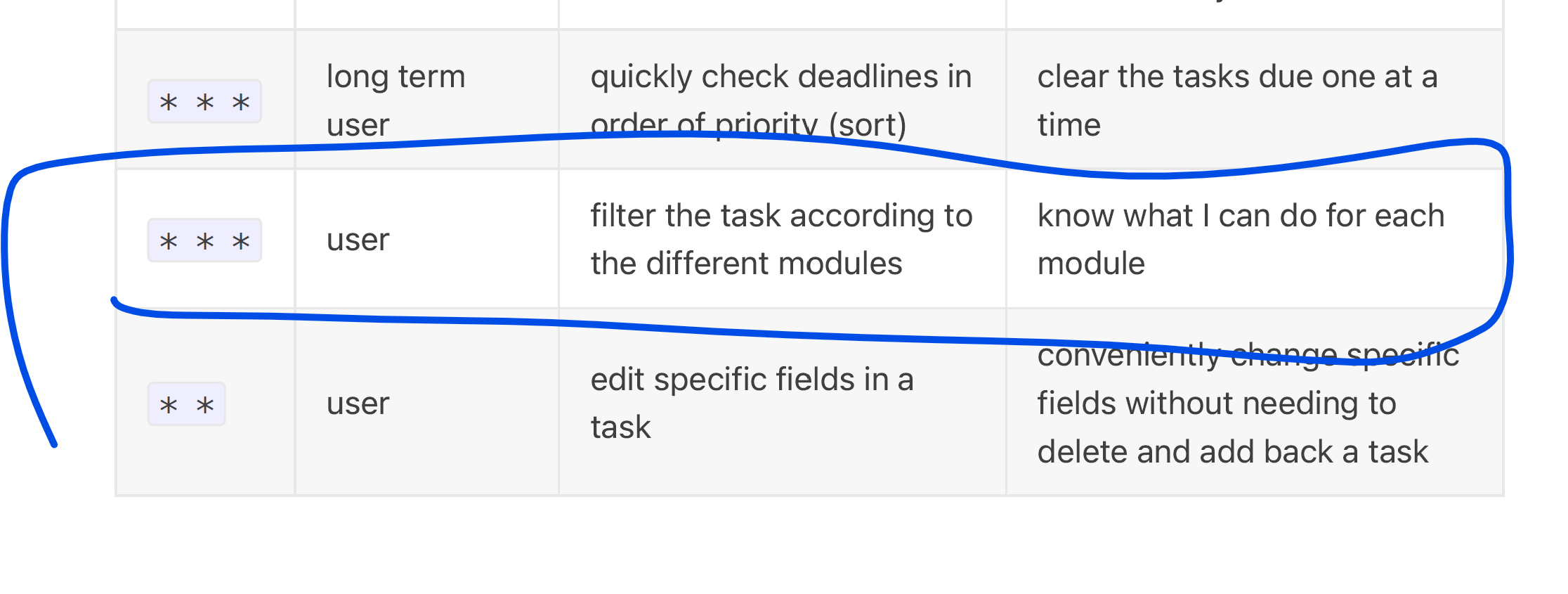

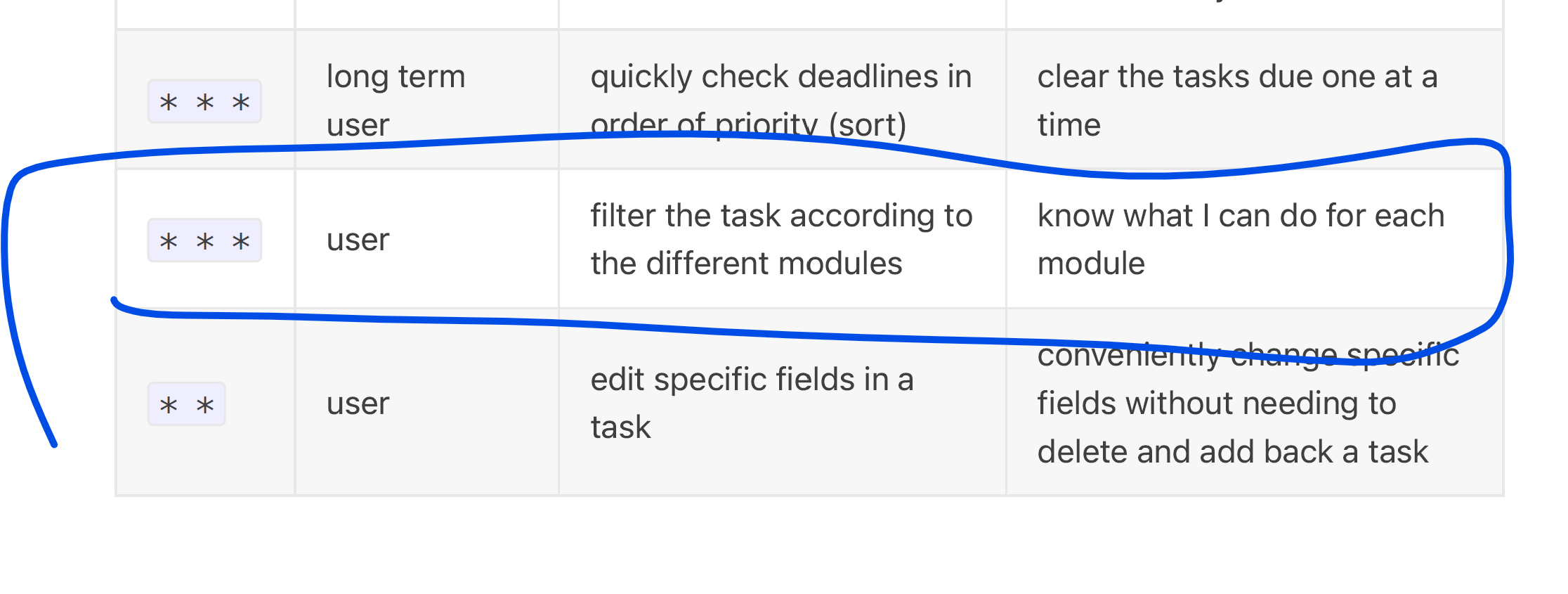

DG: missing important user stories

|

severity.Low type.DocumentationBug

|

The app can filter tasks by multiple criteria, but the user story only mentions module.

<!--session: 1636704000181-d38cfce8-c355-4989-aedc-b31601a51075-->

<!--Version: Web v3.4.1-->

|

1.0

|

DG: missing important user stories - The app can filter tasks by multiple criteria, but the user story only mentions module.

<!--session: 1636704000181-d38cfce8-c355-4989-aedc-b31601a51075-->

<!--Version: Web v3.4.1-->

|

non_process

|

dg missing important user stories the app can filter tasks by multiple criteria but the user story only mentions module

| 0

|

244,700

| 18,765,810,898

|

IssuesEvent

|

2021-11-05 23:54:16

|

dmyersturnbull/tyrannosaurus

|

https://api.github.com/repos/dmyersturnbull/tyrannosaurus

|

closed

|

README comparison table is broken

|

status: fixed ✓ kind: documentation kind: bug

|

See the screenshot, the README.md table has an error in it:

|

1.0

|

README comparison table is broken - See the screenshot, the README.md table has an error in it:

|

non_process

|

readme comparison table is broken see the screenshot the readme md table has an error in it

| 0

|

55,217

| 6,893,014,592

|

IssuesEvent

|

2017-11-23 00:16:01

|

Automattic/wp-calypso

|

https://api.github.com/repos/Automattic/wp-calypso

|

opened

|

Store Shipping Labels: Address modification notice has no icon

|

Design Store

|

The address modification notice might warrant a revisit since the notice component styling changes:

<img width="847" alt="screen shot 2017-11-22 at 4 04 29 pm" src="https://user-images.githubusercontent.com/63922/33154826-72037f04-cfa8-11e7-97ed-e5aecaba53ce.png">

|

1.0

|

Store Shipping Labels: Address modification notice has no icon - The address modification notice might warrant a revisit since the notice component styling changes:

<img width="847" alt="screen shot 2017-11-22 at 4 04 29 pm" src="https://user-images.githubusercontent.com/63922/33154826-72037f04-cfa8-11e7-97ed-e5aecaba53ce.png">

|

non_process

|

store shipping labels address modification notice has no icon the address modification notice might warrant a revisit since the notice component styling changes img width alt screen shot at pm src

| 0

|

12,650

| 15,023,214,267

|

IssuesEvent

|

2021-02-01 17:56:21

|

hashgraph/hedera-mirror-node

|

https://api.github.com/repos/hashgraph/hedera-mirror-node

|

closed

|

Integrate rosetta module into maven cycle

|

P3 enhancement process rosetta

|

**Problem**

The rosetta module current uses standalone go commands to build and test.

Given the rest of the modules can all be managed under maven for build and test we should incorporate rosetta also.

**Solution**

Integrate rosetta module into maven cycle [mvn-golang](https://github.com/raydac/mvn-golang) seems like a good starting option

**Alternatives**

**Additional Context**

|

1.0

|

Integrate rosetta module into maven cycle - **Problem**

The rosetta module current uses standalone go commands to build and test.

Given the rest of the modules can all be managed under maven for build and test we should incorporate rosetta also.

**Solution**

Integrate rosetta module into maven cycle [mvn-golang](https://github.com/raydac/mvn-golang) seems like a good starting option

**Alternatives**

**Additional Context**

|

process

|

integrate rosetta module into maven cycle problem the rosetta module current uses standalone go commands to build and test given the rest of the modules can all be managed under maven for build and test we should incorporate rosetta also solution integrate rosetta module into maven cycle seems like a good starting option alternatives additional context

| 1

|

732,999

| 25,284,246,193

|

IssuesEvent

|

2022-11-16 17:56:43

|

googleapis/nodejs-compute

|

https://api.github.com/repos/googleapis/nodejs-compute

|

closed

|

VMDisk Snapshot

|

priority: p3 type: feature request api: compute

|

Thanks for stopping by to let us know something could be better!

**PLEASE READ**: If you have a support contract with Google, please create an issue in the [support console](https://cloud.google.com/support/) instead of filing on GitHub. This will ensure a timely response.

**Is your feature request related to a problem? Please describe.**

I need to take manual backups on GCP and looking for ways to automate it

**Describe the solution you'd like**

Create VM Disk backups using nodejs.

**Describe alternatives you've considered**

**Additional context**

https://github.com/andrikosrikos/Google-Cloud-Functions/blob/master/Node.js/backupVMDiskSnapshot.js

|

1.0

|

VMDisk Snapshot - Thanks for stopping by to let us know something could be better!

**PLEASE READ**: If you have a support contract with Google, please create an issue in the [support console](https://cloud.google.com/support/) instead of filing on GitHub. This will ensure a timely response.

**Is your feature request related to a problem? Please describe.**

I need to take manual backups on GCP and looking for ways to automate it

**Describe the solution you'd like**

Create VM Disk backups using nodejs.

**Describe alternatives you've considered**

**Additional context**

https://github.com/andrikosrikos/Google-Cloud-Functions/blob/master/Node.js/backupVMDiskSnapshot.js

|

non_process

|

vmdisk snapshot thanks for stopping by to let us know something could be better please read if you have a support contract with google please create an issue in the instead of filing on github this will ensure a timely response is your feature request related to a problem please describe i need to take manual backups on gcp and looking for ways to automate it describe the solution you d like create vm disk backups using nodejs describe alternatives you ve considered additional context

| 0

|

357,072

| 25,176,319,810

|

IssuesEvent

|

2022-11-11 09:34:43

|

ichigh0st/pe

|

https://api.github.com/repos/ichigh0st/pe

|

opened

|

Proposed export list feature in DG has been implemented

|

type.DocumentationBug severity.VeryLow

|

No details provided by bug reporter.

<!--session: 1668153167875-9825e2e9-0581-4c44-b169-8f750bbdb01e-->

<!--Version: Web v3.4.4-->

|

1.0

|

Proposed export list feature in DG has been implemented - No details provided by bug reporter.

<!--session: 1668153167875-9825e2e9-0581-4c44-b169-8f750bbdb01e-->

<!--Version: Web v3.4.4-->

|

non_process

|

proposed export list feature in dg has been implemented no details provided by bug reporter

| 0

|

206,272

| 7,111,102,899

|

IssuesEvent

|

2018-01-17 13:09:15

|

rte-antares-rpackage/antaDraft

|

https://api.github.com/repos/rte-antares-rpackage/antaDraft

|

closed

|

[prod] NA au lieu de 0 pour plusieurs filières sur SPAIN

|

Priority 1

|

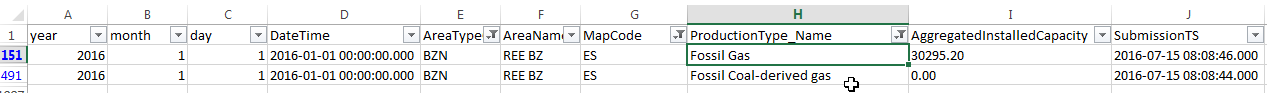

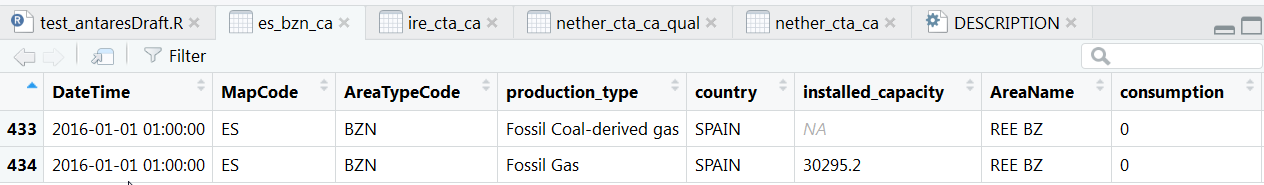

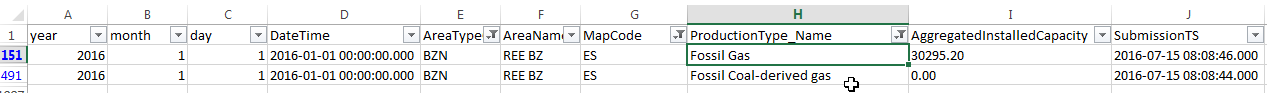

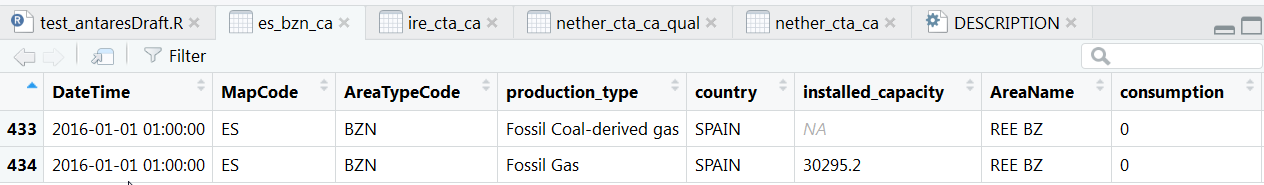

Ce que je constate sur le FTP

Ce que je constate sur RStudio

Le code pour retrouver l'anomalie :

Au lieu d'avoir un NA on devrait avoir un 0.

```R

perso_data_channel<-"D:\\Users\\jalazawa\\Documents\\2_ANTARES\\Dev\\packages\\transparency\\data_20180104\\B-PRODUCTION\\B01-Production_réalisée_par_filière\\2016"

perso_data_channel_capa2016<-"D:\\Users\\jalazawa\\Documents\\2_ANTARES\\Dev\\packages\\transparency\\data_20180104\\B-PRODUCTION\\B06-Capacité_installée_par_filière\\2016"

res2016<-anta_prod_channel(

production_dir =perso_data_channel,

capacity_dir = perso_data_channel_capa2016

)

res2016Valid<-augment_validation(res2016)

res2016Valid[ res2016Valid$DateTime=="2016-01-01 02:00:00" & res2016Valid$AreaTypeCode=="BZN" & res2016Valid$country=="SPAIN" & (res2016Valid$production_type=="Fossil Coal-derived gas" | res2016Valid$production_type=="Fossil Gas") ,] %>% View("es_bzn_ca")

```

|

1.0

|

[prod] NA au lieu de 0 pour plusieurs filières sur SPAIN - Ce que je constate sur le FTP

Ce que je constate sur RStudio

Le code pour retrouver l'anomalie :

Au lieu d'avoir un NA on devrait avoir un 0.

```R

perso_data_channel<-"D:\\Users\\jalazawa\\Documents\\2_ANTARES\\Dev\\packages\\transparency\\data_20180104\\B-PRODUCTION\\B01-Production_réalisée_par_filière\\2016"

perso_data_channel_capa2016<-"D:\\Users\\jalazawa\\Documents\\2_ANTARES\\Dev\\packages\\transparency\\data_20180104\\B-PRODUCTION\\B06-Capacité_installée_par_filière\\2016"

res2016<-anta_prod_channel(

production_dir =perso_data_channel,

capacity_dir = perso_data_channel_capa2016

)

res2016Valid<-augment_validation(res2016)

res2016Valid[ res2016Valid$DateTime=="2016-01-01 02:00:00" & res2016Valid$AreaTypeCode=="BZN" & res2016Valid$country=="SPAIN" & (res2016Valid$production_type=="Fossil Coal-derived gas" | res2016Valid$production_type=="Fossil Gas") ,] %>% View("es_bzn_ca")

```

|

non_process

|

na au lieu de pour plusieurs filières sur spain ce que je constate sur le ftp ce que je constate sur rstudio le code pour retrouver l anomalie au lieu d avoir un na on devrait avoir un r perso data channel d users jalazawa documents antares dev packages transparency data b production production réalisée par filière perso data channel d users jalazawa documents antares dev packages transparency data b production capacité installée par filière anta prod channel production dir perso data channel capacity dir perso data channel augment validation view es bzn ca

| 0

|

2,048

| 4,858,003,633

|

IssuesEvent

|

2016-11-12 22:20:15

|

CredentialTransparencyInitiative/vocabularies

|

https://api.github.com/repos/CredentialTransparencyInitiative/vocabularies

|

closed

|

Review ProcessProfile

|

Process Profile

|

Many properties within ProcessProfile probably belong with other entities - we need to review how ProcessProfile is used and determine which of its properties should be moved elsewhere.

Likely suspects so far:

- staffEvaluationMethod (move to Organization)

- staffSelectionCriteria (move to Organization)

|

1.0

|

Review ProcessProfile - Many properties within ProcessProfile probably belong with other entities - we need to review how ProcessProfile is used and determine which of its properties should be moved elsewhere.

Likely suspects so far:

- staffEvaluationMethod (move to Organization)

- staffSelectionCriteria (move to Organization)

|

process

|

review processprofile many properties within processprofile probably belong with other entities we need to review how processprofile is used and determine which of its properties should be moved elsewhere likely suspects so far staffevaluationmethod move to organization staffselectioncriteria move to organization

| 1

|

34,580

| 12,293,476,381

|

IssuesEvent

|

2020-05-10 19:10:54

|

heholek/better-onetab

|

https://api.github.com/repos/heholek/better-onetab

|

opened

|

CVE-2018-11693 (High) detected in opennms-opennms-source-22.0.1-1

|

security vulnerability

|

## CVE-2018-11693 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>opennmsopennms-source-22.0.1-1</b></p></summary>

<p>

<p>A Java based fault and performance management system</p>

<p>Library home page: <a href=https://sourceforge.net/projects/opennms/>https://sourceforge.net/projects/opennms/</a></p>

<p>Found in HEAD commit: <a href="https://github.com/heholek/better-onetab/commit/34ef2ec6547275b25ebbcfdab80f0894bbeac266">34ef2ec6547275b25ebbcfdab80f0894bbeac266</a></p>

</p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Library Source Files (64)</summary>

<p></p>

<p> * The source files were matched to this source library based on a best effort match. Source libraries are selected from a list of probable public libraries.</p>

<p>

- /better-onetab/node_modules/console-browserify/test/static/test-adapter.js

- /better-onetab/node_modules/nan/nan_callbacks_pre_12_inl.h

- /better-onetab/node_modules/node-sass/src/libsass/src/expand.hpp

- /better-onetab/node_modules/node-sass/src/sass_types/factory.cpp

- /better-onetab/node_modules/js-base64/.attic/test-moment/./yoshinoya.js

- /better-onetab/node_modules/node-sass/src/sass_types/boolean.cpp

- /better-onetab/node_modules/node-sass/src/sass_types/value.h

- /better-onetab/node_modules/node-sass/src/libsass/src/emitter.hpp

- /better-onetab/node_modules/nan/nan_converters_pre_43_inl.h

- /better-onetab/node_modules/node-sass/src/libsass/src/file.cpp

- /better-onetab/node_modules/nan/nan_persistent_12_inl.h

- /better-onetab/node_modules/node-sass/src/libsass/src/operation.hpp

- /better-onetab/node_modules/nan/nan_persistent_pre_12_inl.h

- /better-onetab/node_modules/node-sass/src/libsass/src/operators.hpp

- /better-onetab/node_modules/node-sass/src/libsass/src/constants.hpp

- /better-onetab/node_modules/node-sass/src/libsass/src/error_handling.hpp

- /better-onetab/node_modules/nan/nan_implementation_pre_12_inl.h

- /better-onetab/node_modules/js-base64/test/./dankogai.js

- /better-onetab/node_modules/node-sass/src/libsass/src/constants.cpp

- /better-onetab/node_modules/node-sass/src/sass_types/list.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/functions.hpp

- /better-onetab/node_modules/node-sass/src/libsass/src/util.cpp

- /better-onetab/node_modules/node-sass/src/custom_function_bridge.cpp

- /better-onetab/node_modules/node-sass/src/custom_importer_bridge.h

- /better-onetab/node_modules/node-sass/src/libsass/src/bind.cpp

- /better-onetab/node_modules/nan/nan_json.h

- /better-onetab/node_modules/node-sass/src/libsass/src/eval.hpp

- /better-onetab/node_modules/nan/nan_converters.h

- /better-onetab/node_modules/node-sass/src/libsass/src/backtrace.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/extend.cpp

- /better-onetab/node_modules/node-sass/src/sass_types/sass_value_wrapper.h

- /better-onetab/node_modules/node-sass/src/libsass/src/error_handling.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/emitter.cpp

- /better-onetab/node_modules/node-sass/src/sass_types/number.cpp

- /better-onetab/node_modules/node-sass/src/sass_types/color.h

- /better-onetab/node_modules/nan/nan_new.h

- /better-onetab/node_modules/node-sass/src/libsass/src/sass_values.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/ast.hpp

- /better-onetab/node_modules/node-sass/src/libsass/src/output.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/check_nesting.cpp

- /better-onetab/node_modules/node-sass/src/sass_types/null.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/ast_def_macros.hpp

- /better-onetab/node_modules/node-sass/src/libsass/src/cssize.hpp

- /better-onetab/node_modules/node-sass/src/libsass/src/ast.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/to_c.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/to_value.hpp

- /better-onetab/node_modules/node-sass/src/libsass/src/ast_fwd_decl.hpp

- /better-onetab/node_modules/nan/nan_callbacks.h

- /better-onetab/node_modules/node-sass/src/libsass/src/inspect.hpp

- /better-onetab/node_modules/node-sass/src/sass_types/color.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/values.cpp

- /better-onetab/node_modules/node-sass/src/sass_types/list.h

- /better-onetab/node_modules/node-sass/src/libsass/src/check_nesting.hpp

- /better-onetab/node_modules/nan/nan_define_own_property_helper.h

- /better-onetab/node_modules/js-base64/test/./es5.js

- /better-onetab/node_modules/node-sass/src/sass_types/map.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/to_value.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/context.cpp

- /better-onetab/node_modules/node-sass/src/sass_types/string.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/sass_context.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/prelexer.hpp

- /better-onetab/node_modules/node-sass/src/libsass/src/context.hpp

- /better-onetab/node_modules/node-sass/src/sass_types/boolean.h

- /better-onetab/node_modules/nan/nan_private.h

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was discovered in LibSass through 3.5.4. An out-of-bounds read of a memory region was found in the function Sass::Prelexer::skip_over_scopes which could be leveraged by an attacker to disclose information or manipulated to read from unmapped memory causing a denial of service.

<p>Publish Date: 2018-06-04

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-11693>CVE-2018-11693</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-11693">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-11693</a></p>

<p>Release Date: 2018-06-04</p>

<p>Fix Resolution: LibSass - 3.5.5</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2018-11693 (High) detected in opennms-opennms-source-22.0.1-1 - ## CVE-2018-11693 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>opennmsopennms-source-22.0.1-1</b></p></summary>

<p>

<p>A Java based fault and performance management system</p>

<p>Library home page: <a href=https://sourceforge.net/projects/opennms/>https://sourceforge.net/projects/opennms/</a></p>

<p>Found in HEAD commit: <a href="https://github.com/heholek/better-onetab/commit/34ef2ec6547275b25ebbcfdab80f0894bbeac266">34ef2ec6547275b25ebbcfdab80f0894bbeac266</a></p>

</p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Library Source Files (64)</summary>

<p></p>

<p> * The source files were matched to this source library based on a best effort match. Source libraries are selected from a list of probable public libraries.</p>

<p>

- /better-onetab/node_modules/console-browserify/test/static/test-adapter.js

- /better-onetab/node_modules/nan/nan_callbacks_pre_12_inl.h

- /better-onetab/node_modules/node-sass/src/libsass/src/expand.hpp

- /better-onetab/node_modules/node-sass/src/sass_types/factory.cpp

- /better-onetab/node_modules/js-base64/.attic/test-moment/./yoshinoya.js

- /better-onetab/node_modules/node-sass/src/sass_types/boolean.cpp

- /better-onetab/node_modules/node-sass/src/sass_types/value.h

- /better-onetab/node_modules/node-sass/src/libsass/src/emitter.hpp

- /better-onetab/node_modules/nan/nan_converters_pre_43_inl.h

- /better-onetab/node_modules/node-sass/src/libsass/src/file.cpp

- /better-onetab/node_modules/nan/nan_persistent_12_inl.h

- /better-onetab/node_modules/node-sass/src/libsass/src/operation.hpp

- /better-onetab/node_modules/nan/nan_persistent_pre_12_inl.h

- /better-onetab/node_modules/node-sass/src/libsass/src/operators.hpp

- /better-onetab/node_modules/node-sass/src/libsass/src/constants.hpp

- /better-onetab/node_modules/node-sass/src/libsass/src/error_handling.hpp

- /better-onetab/node_modules/nan/nan_implementation_pre_12_inl.h

- /better-onetab/node_modules/js-base64/test/./dankogai.js

- /better-onetab/node_modules/node-sass/src/libsass/src/constants.cpp

- /better-onetab/node_modules/node-sass/src/sass_types/list.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/functions.hpp

- /better-onetab/node_modules/node-sass/src/libsass/src/util.cpp

- /better-onetab/node_modules/node-sass/src/custom_function_bridge.cpp

- /better-onetab/node_modules/node-sass/src/custom_importer_bridge.h

- /better-onetab/node_modules/node-sass/src/libsass/src/bind.cpp

- /better-onetab/node_modules/nan/nan_json.h

- /better-onetab/node_modules/node-sass/src/libsass/src/eval.hpp

- /better-onetab/node_modules/nan/nan_converters.h

- /better-onetab/node_modules/node-sass/src/libsass/src/backtrace.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/extend.cpp

- /better-onetab/node_modules/node-sass/src/sass_types/sass_value_wrapper.h

- /better-onetab/node_modules/node-sass/src/libsass/src/error_handling.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/emitter.cpp

- /better-onetab/node_modules/node-sass/src/sass_types/number.cpp

- /better-onetab/node_modules/node-sass/src/sass_types/color.h

- /better-onetab/node_modules/nan/nan_new.h

- /better-onetab/node_modules/node-sass/src/libsass/src/sass_values.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/ast.hpp

- /better-onetab/node_modules/node-sass/src/libsass/src/output.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/check_nesting.cpp

- /better-onetab/node_modules/node-sass/src/sass_types/null.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/ast_def_macros.hpp

- /better-onetab/node_modules/node-sass/src/libsass/src/cssize.hpp

- /better-onetab/node_modules/node-sass/src/libsass/src/ast.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/to_c.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/to_value.hpp

- /better-onetab/node_modules/node-sass/src/libsass/src/ast_fwd_decl.hpp

- /better-onetab/node_modules/nan/nan_callbacks.h

- /better-onetab/node_modules/node-sass/src/libsass/src/inspect.hpp

- /better-onetab/node_modules/node-sass/src/sass_types/color.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/values.cpp

- /better-onetab/node_modules/node-sass/src/sass_types/list.h

- /better-onetab/node_modules/node-sass/src/libsass/src/check_nesting.hpp

- /better-onetab/node_modules/nan/nan_define_own_property_helper.h

- /better-onetab/node_modules/js-base64/test/./es5.js

- /better-onetab/node_modules/node-sass/src/sass_types/map.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/to_value.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/context.cpp

- /better-onetab/node_modules/node-sass/src/sass_types/string.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/sass_context.cpp

- /better-onetab/node_modules/node-sass/src/libsass/src/prelexer.hpp

- /better-onetab/node_modules/node-sass/src/libsass/src/context.hpp

- /better-onetab/node_modules/node-sass/src/sass_types/boolean.h

- /better-onetab/node_modules/nan/nan_private.h

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was discovered in LibSass through 3.5.4. An out-of-bounds read of a memory region was found in the function Sass::Prelexer::skip_over_scopes which could be leveraged by an attacker to disclose information or manipulated to read from unmapped memory causing a denial of service.

<p>Publish Date: 2018-06-04

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-11693>CVE-2018-11693</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-11693">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-11693</a></p>

<p>Release Date: 2018-06-04</p>

<p>Fix Resolution: LibSass - 3.5.5</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve high detected in opennms opennms source cve high severity vulnerability vulnerable library opennmsopennms source a java based fault and performance management system library home page a href found in head commit a href library source files the source files were matched to this source library based on a best effort match source libraries are selected from a list of probable public libraries better onetab node modules console browserify test static test adapter js better onetab node modules nan nan callbacks pre inl h better onetab node modules node sass src libsass src expand hpp better onetab node modules node sass src sass types factory cpp better onetab node modules js attic test moment yoshinoya js better onetab node modules node sass src sass types boolean cpp better onetab node modules node sass src sass types value h better onetab node modules node sass src libsass src emitter hpp better onetab node modules nan nan converters pre inl h better onetab node modules node sass src libsass src file cpp better onetab node modules nan nan persistent inl h better onetab node modules node sass src libsass src operation hpp better onetab node modules nan nan persistent pre inl h better onetab node modules node sass src libsass src operators hpp better onetab node modules node sass src libsass src constants hpp better onetab node modules node sass src libsass src error handling hpp better onetab node modules nan nan implementation pre inl h better onetab node modules js test dankogai js better onetab node modules node sass src libsass src constants cpp better onetab node modules node sass src sass types list cpp better onetab node modules node sass src libsass src functions hpp better onetab node modules node sass src libsass src util cpp better onetab node modules node sass src custom function bridge cpp better onetab node modules node sass src custom importer bridge h better onetab node modules node sass src libsass src bind cpp better onetab node modules nan nan 
json h better onetab node modules node sass src libsass src eval hpp better onetab node modules nan nan converters h better onetab node modules node sass src libsass src backtrace cpp better onetab node modules node sass src libsass src extend cpp better onetab node modules node sass src sass types sass value wrapper h better onetab node modules node sass src libsass src error handling cpp better onetab node modules node sass src libsass src emitter cpp better onetab node modules node sass src sass types number cpp better onetab node modules node sass src sass types color h better onetab node modules nan nan new h better onetab node modules node sass src libsass src sass values cpp better onetab node modules node sass src libsass src ast hpp better onetab node modules node sass src libsass src output cpp better onetab node modules node sass src libsass src check nesting cpp better onetab node modules node sass src sass types null cpp better onetab node modules node sass src libsass src ast def macros hpp better onetab node modules node sass src libsass src cssize hpp better onetab node modules node sass src libsass src ast cpp better onetab node modules node sass src libsass src to c cpp better onetab node modules node sass src libsass src to value hpp better onetab node modules node sass src libsass src ast fwd decl hpp better onetab node modules nan nan callbacks h better onetab node modules node sass src libsass src inspect hpp better onetab node modules node sass src sass types color cpp better onetab node modules node sass src libsass src values cpp better onetab node modules node sass src sass types list h better onetab node modules node sass src libsass src check nesting hpp better onetab node modules nan nan define own property helper h better onetab node modules js test js better onetab node modules node sass src sass types map cpp better onetab node modules node sass src libsass src to value cpp better onetab node modules node sass src libsass src context 
cpp better onetab node modules node sass src sass types string cpp better onetab node modules node sass src libsass src sass context cpp better onetab node modules node sass src libsass src prelexer hpp better onetab node modules node sass src libsass src context hpp better onetab node modules node sass src sass types boolean h better onetab node modules nan nan private h vulnerability details an issue was discovered in libsass through an out of bounds read of a memory region was found in the function sass prelexer skip over scopes which could be leveraged by an attacker to disclose information or manipulated to read from unmapped memory causing a denial of service publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope unchanged impact metrics confidentiality impact high integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution libsass step up your open source security game with whitesource

| 0

|

5,413

| 8,248,134,309

|

IssuesEvent

|

2018-09-11 17:31:35

|

emacs-ess/ESS

|

https://api.github.com/repos/emacs-ess/ESS

|

closed

|

Error with tracebug

|

bug:severe process:debug

|

Tracebug recently stopped working for me. I don't have time to investigate but I see these errors:

```

error in process filter: ess--dbg-find-buffer: No catch for tag: --cl-block-nil--, nil

error in process filter: No catch for tag: --cl-block-nil--, nil

```

Can anyone confirm?

|

1.0

|

Error with tracebug - Tracebug recently stopped working for me. I don't have time to investigate but I see these errors:

```

error in process filter: ess--dbg-find-buffer: No catch for tag: --cl-block-nil--, nil

error in process filter: No catch for tag: --cl-block-nil--, nil

```

Can anyone confirm?

|

process

|

error with tracebug tracebug recently stopped working for me i don t have time to investigate but i see these errors error in process filter ess dbg find buffer no catch for tag cl block nil nil error in process filter no catch for tag cl block nil nil can anyone confirm

| 1

|

247,730

| 20,987,903,712

|

IssuesEvent

|

2022-03-29 06:22:02

|

LimeChain/hashport-validator

|

https://api.github.com/repos/LimeChain/hashport-validator

|

closed

|

Interface and mock for gorm.DB

|

unit tests

|

Currently, we can't mock gorm.DB because it is a concrete struct. To make it mockable, we need to do the following:

- Create an interface for gorm.DB

- Create Mock which implements the new interface
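The two steps above can be sketched in a language-neutral way. The real change would be a Go interface wrapping gorm.DB; the Python version below only illustrates the pattern, and every name in it (`Database`, `MockDatabase`, `TransferRepository`, `create`, `first`) is hypothetical rather than taken from gorm or from this repository.

```python
from typing import Any, Dict, List, Optional, Protocol


class Database(Protocol):
    """Hypothetical interface: only the calls the calling code needs."""

    def create(self, record: Dict[str, Any]) -> None: ...

    def first(self, **conditions: Any) -> Optional[Dict[str, Any]]: ...


class MockDatabase:
    """In-memory stand-in that satisfies Database structurally."""

    def __init__(self) -> None:
        self.records: List[Dict[str, Any]] = []

    def create(self, record: Dict[str, Any]) -> None:
        # Store a copy so later mutations by the caller do not leak in.
        self.records.append(dict(record))

    def first(self, **conditions: Any) -> Optional[Dict[str, Any]]:
        # Return the first record matching every condition, or None.
        for record in self.records:
            if all(record.get(k) == v for k, v in conditions.items()):
                return record
        return None


class TransferRepository:
    """Hypothetical repository that depends on the interface, not the concrete type."""

    def __init__(self, db: Database) -> None:
        self.db = db

    def save(self, transaction_id: str, status: str) -> None:
        self.db.create({"transaction_id": transaction_id, "status": status})

    def status_of(self, transaction_id: str) -> Optional[str]:
        record = self.db.first(transaction_id=transaction_id)
        return record["status"] if record else None
```

Because the repository only sees the interface, a unit test can pass `MockDatabase()` where production code would pass the real database wrapper.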

|

1.0

|

Interface and mock for gorm.DB - Currently, we can't mock gorm.DB because it is a concrete struct. To make it mockable, we need to do the following:

- Create an interface for gorm.DB

- Create Mock which implements the new interface

|

non_process

|

interface and mock for gorm db currently we can t mock gorm db as it is concrete struct in order to do it we need to do the following create an interface for gorm db create mock which implements the new interface

| 0

|

175,103

| 21,300,770,930

|

IssuesEvent

|

2022-04-15 02:35:06

|

NakRex/virtual-library

|

https://api.github.com/repos/NakRex/virtual-library

|

opened

|

CVE-2021-43138 (High) detected in async-0.9.2.tgz, async-2.6.3.tgz

|

security vulnerability

|

## CVE-2021-43138 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>async-0.9.2.tgz</b>, <b>async-2.6.3.tgz</b></p></summary>

<p>

<details><summary><b>async-0.9.2.tgz</b></p></summary>

<p>Higher-order functions and common patterns for asynchronous code</p>

<p>Library home page: <a href="https://registry.npmjs.org/async/-/async-0.9.2.tgz">https://registry.npmjs.org/async/-/async-0.9.2.tgz</a></p>

<p>Path to dependency file: /frontend/package.json</p>

<p>Path to vulnerable library: /frontend/node_modules/jake/node_modules/async/package.json,/Backend/node_modules/async/package.json</p>

<p>

Dependency Hierarchy:

- ejs-3.1.6.tgz (Root Library)

- jake-10.8.2.tgz

- :x: **async-0.9.2.tgz** (Vulnerable Library)

</details>

<details><summary><b>async-2.6.3.tgz</b></p></summary>

<p>Higher-order functions and common patterns for asynchronous code</p>

<p>Library home page: <a href="https://registry.npmjs.org/async/-/async-2.6.3.tgz">https://registry.npmjs.org/async/-/async-2.6.3.tgz</a></p>

<p>Path to dependency file: /frontend/package.json</p>

<p>Path to vulnerable library: /frontend/node_modules/async/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-5.0.0.tgz (Root Library)

- webpack-dev-server-4.7.1.tgz

- portfinder-1.0.28.tgz

- :x: **async-2.6.3.tgz** (Vulnerable Library)

</details>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A vulnerability exists in Async through 3.2.1 (fixed in 3.2.2), which could let a malicious user obtain privileges via the mapValues() method.

<p>Publish Date: 2022-04-06

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-43138>CVE-2021-43138</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>
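The 7.8 base score can be reproduced from the metrics listed above. The sketch below assumes the CVSS v3.0 base-score equations and metric weights for the Scope: Unchanged case; the function and table names are illustrative and not part of any WhiteSource tooling.

```python
# CVSS v3.0 metric weights (Scope: Unchanged).
ATTACK_VECTOR = {"Network": 0.85, "Adjacent": 0.62, "Local": 0.55, "Physical": 0.2}
ATTACK_COMPLEXITY = {"Low": 0.77, "High": 0.44}
PRIVILEGES_REQUIRED = {"None": 0.85, "Low": 0.62, "High": 0.27}
USER_INTERACTION = {"None": 0.85, "Required": 0.62}
IMPACT = {"High": 0.56, "Low": 0.22, "None": 0.0}


def round_up(value: float) -> float:
    """Round up to one decimal place without floating-point surprises."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (scaled // 10000 + 1) / 10.0


def base_score(av: str, ac: str, pr: str, ui: str, c: str, i: str, a: str) -> float:
    """Base score for a vector whose scope is unchanged."""
    iss = 1 - (1 - IMPACT[c]) * (1 - IMPACT[i]) * (1 - IMPACT[a])
    impact = 6.42 * iss
    exploitability = (
        8.22
        * ATTACK_VECTOR[av]
        * ATTACK_COMPLEXITY[ac]
        * PRIVILEGES_REQUIRED[pr]
        * USER_INTERACTION[ui]
    )
    if impact <= 0:
        return 0.0
    return round_up(min(impact + exploitability, 10.0))


# Metrics reported for CVE-2021-43138 in this issue.
print(base_score("Local", "Low", "None", "Required", "High", "High", "High"))  # 7.8
```

The impact sub-score is about 5.87 and the exploitability sub-score about 1.83; their sum, about 7.71, rounds up to 7.8.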

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2021-43138">https://nvd.nist.gov/vuln/detail/CVE-2021-43138</a></p>

<p>Release Date: 2022-04-06</p>

<p>Fix Resolution: async - v3.2.2</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2021-43138 (High) detected in async-0.9.2.tgz, async-2.6.3.tgz - ## CVE-2021-43138 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>async-0.9.2.tgz</b>, <b>async-2.6.3.tgz</b></p></summary>

<p>

<details><summary><b>async-0.9.2.tgz</b></p></summary>

<p>Higher-order functions and common patterns for asynchronous code</p>

<p>Library home page: <a href="https://registry.npmjs.org/async/-/async-0.9.2.tgz">https://registry.npmjs.org/async/-/async-0.9.2.tgz</a></p>

<p>Path to dependency file: /frontend/package.json</p>

<p>Path to vulnerable library: /frontend/node_modules/jake/node_modules/async/package.json,/Backend/node_modules/async/package.json</p>

<p>

Dependency Hierarchy:

- ejs-3.1.6.tgz (Root Library)

- jake-10.8.2.tgz

- :x: **async-0.9.2.tgz** (Vulnerable Library)

</details>

<details><summary><b>async-2.6.3.tgz</b></p></summary>

<p>Higher-order functions and common patterns for asynchronous code</p>

<p>Library home page: <a href="https://registry.npmjs.org/async/-/async-2.6.3.tgz">https://registry.npmjs.org/async/-/async-2.6.3.tgz</a></p>

<p>Path to dependency file: /frontend/package.json</p>

<p>Path to vulnerable library: /frontend/node_modules/async/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-5.0.0.tgz (Root Library)

- webpack-dev-server-4.7.1.tgz

- portfinder-1.0.28.tgz

- :x: **async-2.6.3.tgz** (Vulnerable Library)

</details>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A vulnerability exists in Async through 3.2.1 (fixed in 3.2.2), which could let a malicious user obtain privileges via the mapValues() method.

<p>Publish Date: 2022-04-06

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-43138>CVE-2021-43138</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2021-43138">https://nvd.nist.gov/vuln/detail/CVE-2021-43138</a></p>

<p>Release Date: 2022-04-06</p>

<p>Fix Resolution: async - v3.2.2</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve high detected in async tgz async tgz cve high severity vulnerability vulnerable libraries async tgz async tgz async tgz higher order functions and common patterns for asynchronous code library home page a href path to dependency file frontend package json path to vulnerable library frontend node modules jake node modules async package json backend node modules async package json dependency hierarchy ejs tgz root library jake tgz x async tgz vulnerable library async tgz higher order functions and common patterns for asynchronous code library home page a href path to dependency file frontend package json path to vulnerable library frontend node modules async package json dependency hierarchy react scripts tgz root library webpack dev server tgz portfinder tgz x async tgz vulnerable library found in base branch main vulnerability details a vulnerability exists in async through fixed in which could let a malicious user obtain privileges via the mapvalues method publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required none user interaction required scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution async step up your open source security game with whitesource

| 0

|

308,163

| 9,430,987,546

|

IssuesEvent

|

2019-04-12 10:22:40

|

CS2103-AY1819S2-W14-2/main

|

https://api.github.com/repos/CS2103-AY1819S2-W14-2/main

|

closed

|

[QOL] Unused/outdated assets and temp folders/methods to be removed

|

priority.Medium severity.Medium status.Ongoing

|

- [x] Remove unused folders from application directory.

- [ ] Remove outdated methods

|

1.0

|

[QOL] Unused/outdated assets and temp folders/methods to be removed - - [x] Remove unused folders from application directory.

- [ ] Remove outdated methods

|

non_process

|

unused outdated assets and temp folders methods to be removed remove unused folders from application directory remove outdated methods

| 0

|

6,480

| 9,552,538,721

|

IssuesEvent

|

2019-05-02 16:53:45

|

hashicorp/packer

|

https://api.github.com/repos/hashicorp/packer

|

closed

|

post-processor vsphere-template add parameter for wait for ‘VMware vSphere to start’ bug/feature-request

|

bug post-processor/vsphere-template waiting-reply

|

Packer version: v1.2.1

Host platform: Packer running on Windows 10, ESXi/vCenter 6.5 and ESXi 6.0/vCenter 6.5.

This is a bug report that could be worked around with a simple new feature. The problem appeared in Terraform when trying to clone a VM from a template that Packer had successfully created using the "vmware-iso" builder on a [Remote vSphere Hypervisor](https://www.packer.io/docs/builders/vmware-iso.html#building-on-a-remote-vsphere-hypervisor) and the "vsphere-template" post-processor. See the [sample Packer template](https://gist.github.com/GMZwinge/8743b1be26a931d846c1b43aabb880fd).

Terraform was giving this error:

* vsphere_virtual_machine.vm: Resource 'data.vsphere_virtual_machine.template' not found for variable 'data.vsphere_virtual_machine.template.id'

This problem always happened on a test ESXi/vCenter 6.5 setup running on slower hardware, while it happened only some of the time on faster hardware running ESXi 6.0/vCenter 6.5. After some investigation, the root cause was identified: two entries, uuid.bios and vc.uuid, were missing from the .vmtx file. Backtracking to the Packer script revealed that sometime between the end of the “vmware-iso” builder and the start of the “vsphere-template” post-processor, those entries disappear from the .vmx file and reappear with different values some seconds later (about 10 seconds on the faster hardware and about 15 seconds on the slower hardware).

Looking at the vsphere-template post-processor code, I noticed a 10-second delay at the start of the “vsphere-template” post-processor. See https://github.com/hashicorp/packer/blob/ace5fb7622ed46b63831d43ecd6d05b58544cf25/post-processor/vsphere-template/post-processor.go#L105

The comments explaining the reason for this delay have slightly changed over time, but have always been vague about the reason for the delay:

Before Jul 10 2017 , https://github.com/hashicorp/packer/commit/3cc9f204acc289e9adbf70c3be087b5c2dd25b8a#diff-2d1af112f5b55ed31686536a6d1b4ac1

//We give a vSphere-ESXI 10s to sync

Jul 18 2017 , https://github.com/hashicorp/packer/commit/fa10616f57f1801713a70793cb2596967b6bbb32#diff-2d1af112f5b55ed31686536a6d1b4ac1:

// In some occasions when the VM is mark as template it loses its configuration if it's done immediately

// after the ESXi creates it. If vSphere is given a few seconds this behavior doesn't reappear.

Aug 14 2017 , https://github.com/hashicorp/packer/commit/81272d1427b5ce0c30fb79d55a1f7618921a8ad4#diff-2d1af112f5b55ed31686536a6d1b4ac1:

// In some occasions the VM state is powered on and if we immediately try to mark as template

// (after the ESXi creates it) it will fail. If vSphere is given a few seconds this behavior doesn't reappear.

I still don’t know what triggers the removal and addition of those uuids, but it seems clear that the reason for the delay is not fully understood. Turning the delay into a parameter could give a workaround for this issue and possibly future issues due to ESXi/vCenter doing things outside of Packer's knowledge.
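As a rough illustration of what a configurable wait could look like, here is a minimal sketch in Python (Packer itself is written in Go). It goes one step beyond a plain delay parameter by polling until uuid.bios and vc.uuid are present, up to a configurable timeout. Everything here is an assumption for illustration: `parse_vmx`, `wait_for_keys`, the `read_config` callable that fetches the .vmx contents, and the default timeout are hypothetical and are not Packer options.

```python
import time
from typing import Callable, Dict, Iterable

REQUIRED_KEYS = ("uuid.bios", "vc.uuid")


def parse_vmx(text: str) -> Dict[str, str]:
    """Parse 'key = "value"' lines from .vmx/.vmtx content."""
    entries: Dict[str, str] = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        entries[key.strip()] = value.strip().strip('"')
    return entries


def wait_for_keys(
    read_config: Callable[[], str],
    keys: Iterable[str] = REQUIRED_KEYS,
    timeout: float = 30.0,
    interval: float = 1.0,
) -> bool:
    """Poll the config until every key has a value or the timeout expires."""
    keys = tuple(keys)
    deadline = time.monotonic() + timeout
    while True:
        entries = parse_vmx(read_config())
        if all(entries.get(key) for key in keys):
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)
```

With a fixed delay, the slower host in this report would need a value above 15 seconds; polling with a timeout avoids having to guess that number per environment, at the cost of needing a way to read the file from the datastore.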

Thanks,

Georges

|

1.0

|

post-processor vsphere-template add parameter for wait for ‘VMware vSphere to start’ bug/feature-request - Packer version: v1.2.1

Host platform: Packer running on Windows 10, ESXi/vCenter 6.5 and ESXi 6.0/vCenter 6.5.

This is a bug report that could be worked around with a simple new feature. The problem appeared in Terraform when trying to clone a VM from a template that Packer had successfully created using the "vmware-iso" builder on a [Remote vSphere Hypervisor](https://www.packer.io/docs/builders/vmware-iso.html#building-on-a-remote-vsphere-hypervisor) and the "vsphere-template" post-processor. See the [sample Packer template](https://gist.github.com/GMZwinge/8743b1be26a931d846c1b43aabb880fd).

Terraform was giving this error:

* vsphere_virtual_machine.vm: Resource 'data.vsphere_virtual_machine.template' not found for variable 'data.vsphere_virtual_machine.template.id'

This problem always happened on a test ESXi/vCenter 6.5 setup running on slower hardware, while it happened only some of the time on faster hardware running ESXi 6.0/vCenter 6.5. After some investigation, the root cause was identified: two entries, uuid.bios and vc.uuid, were missing from the .vmtx file. Backtracking to the Packer script revealed that sometime between the end of the “vmware-iso” builder and the start of the “vsphere-template” post-processor, those entries disappear from the .vmx file and reappear with different values some seconds later (about 10 seconds on the faster hardware and about 15 seconds on the slower hardware).

Looking at the vsphere-template post-processor code, I noticed a 10-second delay at the start of the “vsphere-template” post-processor. See https://github.com/hashicorp/packer/blob/ace5fb7622ed46b63831d43ecd6d05b58544cf25/post-processor/vsphere-template/post-processor.go#L105

The comments explaining the reason for this delay have slightly changed over time, but have always been vague about the reason for the delay:

Before Jul 10 2017 , https://github.com/hashicorp/packer/commit/3cc9f204acc289e9adbf70c3be087b5c2dd25b8a#diff-2d1af112f5b55ed31686536a6d1b4ac1

//We give a vSphere-ESXI 10s to sync

Jul 18 2017 , https://github.com/hashicorp/packer/commit/fa10616f57f1801713a70793cb2596967b6bbb32#diff-2d1af112f5b55ed31686536a6d1b4ac1:

// In some occasions when the VM is mark as template it loses its configuration if it's done immediately

// after the ESXi creates it. If vSphere is given a few seconds this behavior doesn't reappear.

Aug 14 2017 , https://github.com/hashicorp/packer/commit/81272d1427b5ce0c30fb79d55a1f7618921a8ad4#diff-2d1af112f5b55ed31686536a6d1b4ac1:

// In some occasions the VM state is powered on and if we immediately try to mark as template

// (after the ESXi creates it) it will fail. If vSphere is given a few seconds this behavior doesn't reappear.

I still don’t know what triggers the removal and addition of those uuids, but it seems clear that the reason for the delay is not fully understood. Turning the delay into a parameter could give a workaround for this issue and possibly future issues due to ESXi/vCenter doing things outside of Packer's knowledge.

Thanks,

Georges

|

process

|

post processor vsphere template add parameter for wait for ‘vmware vsphere to start’ bug feature request packer version host platform packer running on windows esxi vcenter and esxi vcenter this is a bug report that could be worked around with the simple new feature the problem appeared in terraform trying to clone a vm from a template that had been successfully created by packer using the builders vmware iso on a and the post processors vsphere template see terraform was giving this error vsphere virtual machine vm resource data vsphere virtual machine template not found for variable data vsphere virtual machine template id this problem always happened on a test esxi vcenter running on a slower hardware while it did not happen all the time on a faster hardware running esxi vcenter after some investigation the root cause was identified as being two entries uuid bios and vc uuid that were missing from the vmtx file backtracking to the packer script revealed that sometime around the end of the builder “vmware iso” and the start of the post processor “vsphere template” those entries disappear from the vmx file and reappear with different values some seconds later about seconds on the faster hardware and about seconds on the slower hardware looking at the vsphere template post processor code i noticed a seconds delay when starting the post processor “vsphere template” see the comments explaining the reason for this delay have slightly changed over time but have always been vague about the reason for the delay before jul we give a vsphere esxi to sync jul in some occasions when the vm is mark as template it loses its configuration if it s done immediately after the esxi creates it if vsphere is given a few seconds this behavior doesn t reappear aug in some occasions the vm state is powered on and if we immediately try to mark as template after the esxi creates it it will fail if vsphere is given a few seconds this behavior doesn t reappear i still don’t know what 
triggers the removal and addition of those uuids but it seems clear that the reason for the delay is not fully understood turning the delay into a parameter could give a workaround for this issue and possibly future issues due to esxi vcenter doing things outside of packer s knowledge thanks georges

| 1

|

176,590

| 21,411,784,899

|

IssuesEvent

|

2022-04-22 06:58:28

|

AlexRogalskiy/java-patterns

|

https://api.github.com/repos/AlexRogalskiy/java-patterns

|

opened

|

CVE-2018-3721 (Medium) detected in multiple libraries

|

security vulnerability

|

## CVE-2018-3721 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>lodash-4.17.4.tgz</b>, <b>lodash-3.10.1.tgz</b>, <b>lodash-2.4.2.tgz</b></p></summary>

<p>

<details><summary><b>lodash-4.17.4.tgz</b></p></summary>

<p>Lodash modular utilities.</p>

<p>Library home page: <a href="https://registry.npmjs.org/lodash/-/lodash-4.17.4.tgz">https://registry.npmjs.org/lodash/-/lodash-4.17.4.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/gitbook-cli/node_modules/lodash/package.json</p>

<p>

Dependency Hierarchy:

- gitbook-cli-2.3.2.tgz (Root Library)

- :x: **lodash-4.17.4.tgz** (Vulnerable Library)

</details>

<details><summary><b>lodash-3.10.1.tgz</b></p></summary>

<p>The modern build of lodash modular utilities.</p>

<p>Library home page: <a href="https://registry.npmjs.org/lodash/-/lodash-3.10.1.tgz">https://registry.npmjs.org/lodash/-/lodash-3.10.1.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/jsdoctypeparser/node_modules/lodash/package.json,/node_modules/jscs/node_modules/lodash/package.json,/node_modules/xmlbuilder/node_modules/lodash/package.json,/node_modules/roadmarks/node_modules/lodash/package.json</p>

<p>

Dependency Hierarchy:

- jscs-3.0.7.tgz (Root Library)

- :x: **lodash-3.10.1.tgz** (Vulnerable Library)

</details>

<details><summary><b>lodash-2.4.2.tgz</b></p></summary>

<p>A utility library delivering consistency, customization, performance, & extras.</p>

<p>Library home page: <a href="https://registry.npmjs.org/lodash/-/lodash-2.4.2.tgz">https://registry.npmjs.org/lodash/-/lodash-2.4.2.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/dockerfile_lint/node_modules/lodash/package.json</p>

<p>

Dependency Hierarchy:

- dockerfile_lint-0.3.4.tgz (Root Library)

- :x: **lodash-2.4.2.tgz** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/AlexRogalskiy/java-patterns/commit/0e3f838823fb09cc237bb3fc8f2e2651a2d0f0e6">0e3f838823fb09cc237bb3fc8f2e2651a2d0f0e6</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

lodash node module before 4.17.5 suffers from a Modification of Assumed-Immutable Data (MAID) vulnerability via defaultsDeep, merge, and mergeWith functions, which allows a malicious user to modify the prototype of "Object" via __proto__, causing the addition or modification of an existing property that will exist on all objects.

<p>Publish Date: 2018-06-07

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-3721>CVE-2018-3721</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2018-3721">https://nvd.nist.gov/vuln/detail/CVE-2018-3721</a></p>

<p>Release Date: 2018-06-07</p>

<p>Fix Resolution: 4.17.5</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2018-3721 (Medium) detected in multiple libraries - ## CVE-2018-3721 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>lodash-4.17.4.tgz</b>, <b>lodash-3.10.1.tgz</b>, <b>lodash-2.4.2.tgz</b></p></summary>

<p>

<details><summary><b>lodash-4.17.4.tgz</b></p></summary>

<p>Lodash modular utilities.</p>

<p>Library home page: <a href="https://registry.npmjs.org/lodash/-/lodash-4.17.4.tgz">https://registry.npmjs.org/lodash/-/lodash-4.17.4.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/gitbook-cli/node_modules/lodash/package.json</p>

<p>

Dependency Hierarchy:

- gitbook-cli-2.3.2.tgz (Root Library)

- :x: **lodash-4.17.4.tgz** (Vulnerable Library)

</details>

<details><summary><b>lodash-3.10.1.tgz</b></p></summary>

<p>The modern build of lodash modular utilities.</p>

<p>Library home page: <a href="https://registry.npmjs.org/lodash/-/lodash-3.10.1.tgz">https://registry.npmjs.org/lodash/-/lodash-3.10.1.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/jsdoctypeparser/node_modules/lodash/package.json,/node_modules/jscs/node_modules/lodash/package.json,/node_modules/xmlbuilder/node_modules/lodash/package.json,/node_modules/roadmarks/node_modules/lodash/package.json</p>

<p>

Dependency Hierarchy:

- jscs-3.0.7.tgz (Root Library)

- :x: **lodash-3.10.1.tgz** (Vulnerable Library)

</details>

<details><summary><b>lodash-2.4.2.tgz</b></p></summary>

<p>A utility library delivering consistency, customization, performance, & extras.</p>

<p>Library home page: <a href="https://registry.npmjs.org/lodash/-/lodash-2.4.2.tgz">https://registry.npmjs.org/lodash/-/lodash-2.4.2.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/dockerfile_lint/node_modules/lodash/package.json</p>

<p>

Dependency Hierarchy:

- dockerfile_lint-0.3.4.tgz (Root Library)

- :x: **lodash-2.4.2.tgz** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/AlexRogalskiy/java-patterns/commit/0e3f838823fb09cc237bb3fc8f2e2651a2d0f0e6">0e3f838823fb09cc237bb3fc8f2e2651a2d0f0e6</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

lodash node module before 4.17.5 suffers from a Modification of Assumed-Immutable Data (MAID) vulnerability via defaultsDeep, merge, and mergeWith functions, which allows a malicious user to modify the prototype of "Object" via __proto__, causing the addition or modification of an existing property that will exist on all objects.

<p>Publish Date: 2018-06-07

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-3721>CVE-2018-3721</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2018-3721">https://nvd.nist.gov/vuln/detail/CVE-2018-3721</a></p>

<p>Release Date: 2018-06-07</p>

<p>Fix Resolution: 4.17.5</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve medium detected in multiple libraries cve medium severity vulnerability vulnerable libraries lodash tgz lodash tgz lodash tgz lodash tgz lodash modular utilities library home page a href path to dependency file package json path to vulnerable library node modules gitbook cli node modules lodash package json dependency hierarchy gitbook cli tgz root library x lodash tgz vulnerable library lodash tgz the modern build of lodash modular utilities library home page a href path to dependency file package json path to vulnerable library node modules jsdoctypeparser node modules lodash package json node modules jscs node modules lodash package json node modules xmlbuilder node modules lodash package json node modules roadmarks node modules lodash package json dependency hierarchy jscs tgz root library x lodash tgz vulnerable library lodash tgz a utility library delivering consistency customization performance extras library home page a href path to dependency file package json path to vulnerable library node modules dockerfile lint node modules lodash package json dependency hierarchy dockerfile lint tgz root library x lodash tgz vulnerable library found in head commit a href found in base branch master vulnerability details lodash node module before suffers from a modification of assumed immutable data maid vulnerability via defaultsdeep merge and mergewith functions which allows a malicious user to modify the prototype of object via proto causing the addition or modification of an existing property that will exist on all objects publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required low user interaction none scope unchanged impact metrics confidentiality impact none integrity impact high availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with 
whitesource

| 0

|

82,805

| 23,880,319,525

|

IssuesEvent

|

2022-09-08 00:19:59

|

cloudfoundry/korifi

|

https://api.github.com/repos/cloudfoundry/korifi

|

opened

|

[Feature]: When repushing an existing App, specifying any buildpack other than default errors

|

Build and Run Workloads

|

### Blockers/Dependencies

#1054

### Background

**As a** cf CLI user

**I want** to receive an error message when I select a buildpack other than default

**So that** I understand that I cannot select a specific buildpack (right now we silently ignore the buildpack request)

### Acceptance Criteria

**GIVEN** a korifi cluster

**WHEN** I run `cf push`

**AND** I wait for the push to succeed

**AND** I run `cf push -b paketo-buildpacks/go` for the same app

**THEN I** should see that the build fails with an error explaining that only the default buildpack is currently supported

---

**WHEN I** run `cf push`, `cf push -b default`, or `cf push -b null` for an existing App

**THEN I** should see that the build succeeds

**AND** that `cf app <AppName>` shows no buildpacks (i.e. default)
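The acceptance criteria above amount to a small validation rule. A minimal sketch follows, written in Python for illustration only (Korifi itself is a Go project); `validate_buildpack`, `UnsupportedBuildpackError`, and the error wording are hypothetical and not taken from the Korifi codebase.

```python
from typing import Optional

# Values that mean "use the default buildpack": no -b flag, `-b default`, `-b null`.
DEFAULT_BUILDPACK_VALUES = {None, "default", "null"}


class UnsupportedBuildpackError(ValueError):
    """Raised when a push requests a specific buildpack."""


def validate_buildpack(requested: Optional[str]) -> None:
    """Accept only the default buildpack, for both new and existing apps."""
    if requested not in DEFAULT_BUILDPACK_VALUES:
        raise UnsupportedBuildpackError(
            f"buildpack {requested!r} is not supported; "
            "only the default buildpack is currently supported"
        )
```

Running the same check on both the create path and the update path would keep the behavior consistent between a first push and a repush.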

### Dev Notes

This is a follow-up to #1171 to handle the update case. The original story only handled this problem when the App is first created.

|

1.0

|

[Feature]: When repushing an existing App, specifying any buildpack other than default errors - ### Blockers/Dependencies

#1054

### Background

**As a** cf CLI user

**I want** to receive an error message when I select a buildpack other than default

**So that** I understand that I cannot select a specific buildpack (right now we silently ignore the buildpack request)

### Acceptance Criteria

**GIVEN** a korifi cluster

**WHEN** I run `cf push`

**AND** I wait for the push to succeed

**AND** I run `cf push -b paketo-buildpacks/go` for the same app

**THEN I** should see that the build fails with an error explaining that only the default buildpack is currently supported

---

**WHEN I** run `cf push`, `cf push -b default`, or `cf push -b null` for an existing App

**THEN I** should see that the build succeeds

**AND** that `cf app <AppName>` shows no buildpacks (i.e. default)

### Dev Notes

This is a follow-up to #1171 to handle the update case. The original story only handled this problem when the App is first created.

|

non_process

|

when repushing an existing app specifying any buildpack other than default errors blockers dependencies background as a cf cli user i want to receive an error message when i select a buildpack other than default so that i have understand that i cannot select a specific buildpack right now we silently ignore the buildpack request acceptance criteria given a korifi cluster when i run cf push and i wait for the push to succeed and i run cf push b paketo buildpacks go for the same app then i should see that the build fails with an error explaining that only the default buildpack is currently supported when i run cf push cf push b default or cf push b null for an existing app then i should see that the build succeeds and that cf app shows no buildpacks i e default dev notes this is a follow up to to handle the update case the original story only handled this problem when the app is first created

| 0

|

162,482

| 25,545,016,158

|

IssuesEvent

|

2022-11-29 18:02:41

|

flutter/flutter

|

https://api.github.com/repos/flutter/flutter

|

closed

|

Update Snackbar to support Material 3

|

framework f: material design

|

As part of #91605, we need to migrate the `Snackbar` widget to [Material 3](https://m3.material.io/components/snackbar/overview):

<img width="357" alt="Screen Shot 2022-10-26 at 11 52 11 AM" src="https://user-images.githubusercontent.com/19588/198112489-048946c3-66a6-46e5-bef4-537782b4b2b6.png">

|

1.0

|

Update Snackbar to support Material 3 - As part of #91605, we need to migrate the `Snackbar` widget to [Material 3](https://m3.material.io/components/snackbar/overview):

<img width="357" alt="Screen Shot 2022-10-26 at 11 52 11 AM" src="https://user-images.githubusercontent.com/19588/198112489-048946c3-66a6-46e5-bef4-537782b4b2b6.png">

|

non_process

|

update snackbar to support material as part of we need to migrate the snackbar widget to img width alt screen shot at am src

| 0

|

218,941

| 7,332,821,003

|

IssuesEvent

|

2018-03-05 17:24:22

|

NCEAS/metacat

|

https://api.github.com/repos/NCEAS/metacat

|

closed

|

Problem in activating a new generated ldap account

|

Component: Bugzilla-Id Priority: Normal Status: Closed Tracker: Bug

|

---

Author Name: **Jing Tao** (Jing Tao)

Original Redmine Issue: 6473, https://projects.ecoinformatics.org/ecoinfo/issues/6473

Original Date: 2014-03-19

Original Assignee: Jing Tao

---

Zach Nelson reported an issue activating his account:

hash string Kv9aZuLOdu$tO3 doesn't match our record.

but the account was activated:

river:conf tao$ ldapsearch -x -h ldap.ecoinformatics.org -b o=unaffiliated,dc=ecoinformatics,dc=org uid=zach-nelson

1. extended LDIF

#

1. LDAPv3

1. base <o=unaffiliated,dc=ecoinformatics,dc=org> with scope subtree

1. filter: uid=zach-nelson

1. requesting: ALL

#

1. zach-nelson, unaffiliated, ecoinformatics.org

dn: uid=zach-nelson,o=unaffiliated,dc=ecoinformatics,dc=org

cn: Zachary nelson

sn: nelson

givenName: Zachary

mail: z.j.nelson2010@gmail.com

employeeNumber: Kv9aZuLOdu$tO3^X

uidNumber: 30056

gidNumber: 30056

loginShell: /sbin/nologin

homeDirectory: /dev/null

objectClass: top

objectClass: person

objectClass: organizationalPerson

objectClass: inetOrgPerson

objectClass: posixAccount

objectClass: shadowAccount

o: unaffiliated

gecos: Zachary nelson,,,

uid: zach-nelson

1. search result

search: 2

result: 0 Success

1. numResponses: 2

1. numEntries: 1

We need to figure out why the hash string didn't match and why the account was activated even though the hash string didn't match.

|

1.0

|

Problem in activating a new generated ldap account - ---

Author Name: **Jing Tao** (Jing Tao)

Original Redmine Issue: 6473, https://projects.ecoinformatics.org/ecoinfo/issues/6473

Original Date: 2014-03-19

Original Assignee: Jing Tao

---

Zach Nelson reported an issue activating his account:

hash string Kv9aZuLOdu$tO3 doesn't match our record.

but the account was activated:

river:conf tao$ ldapsearch -x -h ldap.ecoinformatics.org -b o=unaffiliated,dc=ecoinformatics,dc=org uid=zach-nelson

1. extended LDIF

#

1. LDAPv3

1. base <o=unaffiliated,dc=ecoinformatics,dc=org> with scope subtree

1. filter: uid=zach-nelson

1. requesting: ALL

#

1. zach-nelson, unaffiliated, ecoinformatics.org

dn: uid=zach-nelson,o=unaffiliated,dc=ecoinformatics,dc=org

cn: Zachary nelson

sn: nelson

givenName: Zachary

mail: z.j.nelson2010@gmail.com

employeeNumber: Kv9aZuLOdu$tO3^X

uidNumber: 30056

gidNumber: 30056

loginShell: /sbin/nologin

homeDirectory: /dev/null

objectClass: top

objectClass: person

objectClass: organizationalPerson

objectClass: inetOrgPerson

objectClass: posixAccount

objectClass: shadowAccount

o: unaffiliated

gecos: Zachary nelson,,,

uid: zach-nelson

1. search result

search: 2

result: 0 Success

1. numResponses: 2

1. numEntries: 1

We need to figure out why the hash string didn't match and why the account was activated even though the hash string didn't match.

|

non_process

|

problem in activating a new generated ldap account author name jing tao jing tao original redmine issue original date original assignee jing tao zach nelson reported there was an issue to activate his account hash string doesn t match our record but the account was activated river conf tao ldapsearch x h ldap ecoinformatics org b o unaffiliated dc ecoinformatics dc org uid zach nelson extended ldif base with scope subtree filter uid zach nelson requesting all zach nelson unaffiliated ecoinformatics org dn uid zach nelson o unaffiliated dc ecoinformatics dc org cn zachary nelson sn nelson givenname zachary mail z j gmail com employeenumber x uidnumber gidnumber loginshell sbin nologin homedirectory dev null objectclass top objectclass person objectclass organizationalperson objectclass inetorgperson objectclass posixaccount objectclass shadowaccount o unaffiliated gecos zachary nelson uid zach nelson search result search result success numresponses numentries we need figure out why the hash string didn t match and why the account was activated even though the hash string didn t match

| 0

|

3,197

| 6,261,735,509

|

IssuesEvent

|

2017-07-15 02:46:35

|

P0cL4bs/WiFi-Pumpkin

|

https://api.github.com/repos/P0cL4bs/WiFi-Pumpkin

|

closed

|

IOError: [Errno socket error] [Errno -2] Name or service not known

|

in process priority solved

|

LOG

After the IO error I don't get any more traffic.

When I visit an HTTPS page on the victim's device, it is redirected to HTTP and then:

Traceback (most recent call last):

File "/home/hargerao/Documents/Tools/WiFi-Pumpkin-master/core/servers/proxy/tcp/intercept.py", line 43, in run

self.main()

File "/home/hargerao/Documents/Tools/WiFi-Pumpkin-master/core/servers/proxy/tcp/intercept.py", line 94, in main

self.plugins[Active].filterPackets(pkt)

File "/home/hargerao/Documents/Tools/WiFi-Pumpkin-master/plugins/analyzers/image.py", line 40, in filterPackets

urlretrieve('http://{}{}'.format(http_layer.fields['Host'], http_layer.fields['Path']),file_name)

File "/usr/lib/python2.7/urllib.py", line 98, in urlretrieve

return opener.retrieve(url, filename, reporthook, data)

File "/usr/lib/python2.7/urllib.py", line 245, in retrieve

fp = self.open(url, data)

File "/usr/lib/python2.7/urllib.py", line 213, in open

return getattr(self, name)(url)

File "/usr/lib/python2.7/urllib.py", line 350, in open_http

h.endheaders(data)

File "/usr/lib/python2.7/httplib.py", line 1038, in endheaders

self._send_output(message_body)

File "/usr/lib/python2.7/httplib.py", line 882, in _send_output

self.send(msg)

File "/usr/lib/python2.7/httplib.py", line 844, in send

self.connect()

File "/usr/lib/python2.7/httplib.py", line 821, in connect

self.timeout, self.source_address)

File "/usr/lib/python2.7/socket.py", line 557, in create_connection

for res in getaddrinfo(host, port, 0, SOCK_STREAM):

IOError: [Errno socket error] [Errno -2] Name or service not known

That is when I get this error.

WiFi-Pumpkin runs fine with Sergioproxy or Pumpkinproxy. This only happens with SSLSTRIP+/Dns2Proxy.

Thank you
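For reference, the traceback ends in the `urlretrieve` call in `plugins/analyzers/image.py`, where the name lookup for the `Host` header fails and the `IOError` propagates up into `intercept.py`. A minimal sketch of one possible mitigation, not the project's actual fix, is to catch the error around the download so that a single unresolvable host is skipped. The helper name `safe_retrieve` and its `fetch` parameter are hypothetical, and the sketch targets Python 3's `urllib.request`, whereas the traceback comes from the Python 2.7 `urllib` module:

```python
from urllib.request import urlretrieve


def safe_retrieve(url, file_name, fetch=urlretrieve):
    """Download url to file_name.

    Returns True on success and False when the download fails with a
    network error such as a failed name lookup. `fetch` is injectable
    (hypothetical parameter) so the helper can be exercised offline.
    """
    try:
        fetch(url, file_name)
    except IOError as exc:
        # In Python 3, urllib.error.URLError is a subclass of OSError,
        # and IOError is an alias of OSError, so this also catches it.
        print("skipping {}: {}".format(url, exc))
        return False
    return True
```

A caller in the packet handler would then use `safe_retrieve(...)` in place of the bare `urlretrieve(...)` and carry on with the next packet when it returns `False`.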

|

1.0

|

IOError: [Errno socket error] [Errno -2] Name or service not known - LOG

After the IO error I don't get any more traffic.

When I visit an HTTPS page on the victim's device, it is redirected to HTTP and then:

Traceback (most recent call last):

File "/home/hargerao/Documents/Tools/WiFi-Pumpkin-master/core/servers/proxy/tcp/intercept.py", line 43, in run

self.main()

File "/home/hargerao/Documents/Tools/WiFi-Pumpkin-master/core/servers/proxy/tcp/intercept.py", line 94, in main

self.plugins[Active].filterPackets(pkt)

File "/home/hargerao/Documents/Tools/WiFi-Pumpkin-master/plugins/analyzers/image.py", line 40, in filterPackets

urlretrieve('http://{}{}'.format(http_layer.fields['Host'], http_layer.fields['Path']),file_name)

File "/usr/lib/python2.7/urllib.py", line 98, in urlretrieve

return opener.retrieve(url, filename, reporthook, data)

File "/usr/lib/python2.7/urllib.py", line 245, in retrieve

fp = self.open(url, data)

File "/usr/lib/python2.7/urllib.py", line 213, in open

return getattr(self, name)(url)

File "/usr/lib/python2.7/urllib.py", line 350, in open_http

h.endheaders(data)

File "/usr/lib/python2.7/httplib.py", line 1038, in endheaders

self._send_output(message_body)

File "/usr/lib/python2.7/httplib.py", line 882, in _send_output

self.send(msg)