Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

19,081

| 25,127,606,887

|

IssuesEvent

|

2022-11-09 12:58:17

|

inmanta/inmanta-core

|

https://api.github.com/repos/inmanta/inmanta-core

|

reopened

|

Enable timestamp on the log messages produced by the test cases

|

process tiny

|

In order to debug a test case that times out on a wait condition, it's handy to see the timestamps in the log messages. That way it is easier to see in which stage a lot of time is being lost.

Adding the timestamps can be achieved by providing the following option to pytest: `--log-format="%(asctime)s.%(msecs)03d %(levelname)s %(message)s"`.

If `pytest.ini` supports it, add it there.
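
A minimal sketch of what that format string produces, using Python's standard `logging` module directly rather than pytest itself. The `DATE_FORMAT` value and the `format_line` helper are assumptions made for illustration. pytest also reads `log_format` and `log_date_format` keys from its ini file, which is probably where these values would go.

```python
import logging

# Format string taken from the issue text above.
LOG_FORMAT = "%(asctime)s.%(msecs)03d %(levelname)s %(message)s"
# Assumption: a seconds-resolution date format, so that the
# milliseconds come only from the %(msecs)03d field.
DATE_FORMAT = "%H:%M:%S"


def format_line(message: str, level: int = logging.INFO) -> str:
    """Format one log record the way the option above would (hypothetical helper)."""
    formatter = logging.Formatter(fmt=LOG_FORMAT, datefmt=DATE_FORMAT)
    record = logging.LogRecord(
        name="example",
        level=level,
        pathname="example.py",
        lineno=0,
        msg=message,
        args=None,
        exc_info=None,
    )
    return formatter.format(record)


if __name__ == "__main__":
    # Prints something shaped like "HH:MM:SS.mmm INFO waiting for condition".
    print(format_line("waiting for condition"))
```

With a timestamp on every line, the gap between two consecutive messages shows directly which stage of a timed-out test consumed the time.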

|

1.0

|

Enable timestamp on the log messages produced by the test cases - In order to debug a test case that times out on a wait condition, it's handy to see the timestamps in the log messages. That way it is easier to see in which stage a lot of time is being lost.

Adding the timestamps can be achieved by providing the following option to pytest: `--log-format="%(asctime)s.%(msecs)03d %(levelname)s %(message)s"`.

If `pytest.ini` supports it, add it there.

|

process

|

enable timestamp on the log messages produced by the test cases in order to debug a test case that times out on a wait condition it s handy to see the timestamps in the log messages that way it easier to see in which stage a lot of time is being lost adding the timestamps can be achieved by providing the following options to pytest log format asctime s msecs levelname s message s if pytest ini supports it add it there

| 1

|

139,101

| 12,837,281,236

|

IssuesEvent

|

2020-07-07 15:33:10

|

rust-bio/rust-bio

|

https://api.github.com/repos/rust-bio/rust-bio

|

closed

|

Improve docs: `seq_analysis::gc`

|

documentation

|

* [ ] add at least one example

* [ ] describe time and/or memory complexity

|

1.0

|

Improve docs: `seq_analysis::gc` - * [ ] add at least one example

* [ ] describe time and/or memory complexity

|

non_process

|

improve docs seq analysis gc add at least one example describe time and or memory complexity

| 0

|

20,431

| 27,098,151,954

|

IssuesEvent

|

2023-02-15 05:56:49

|

anobaka/InsideWorld

|

https://api.github.com/repos/anobaka/InsideWorld

|

closed

|

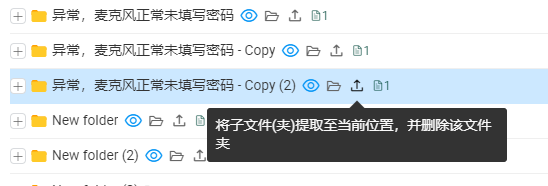

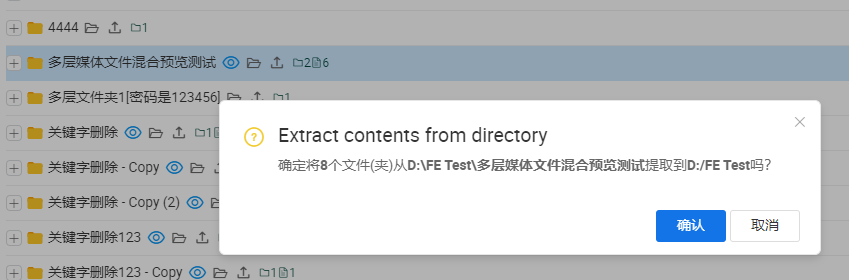

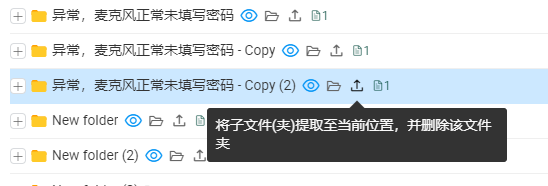

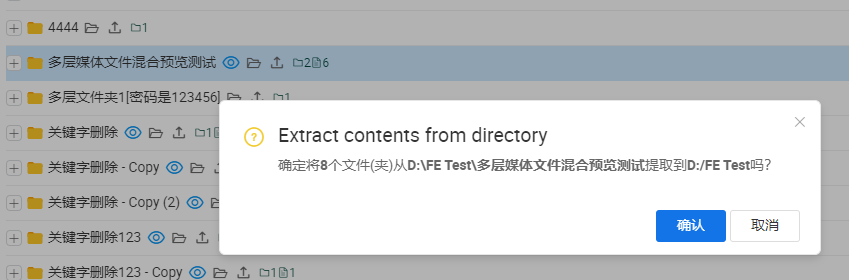

Improve some interactions in the file processor

|

enhancement file-processor

|

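+ [x] When the number of files listed above changes, buttons such as [Extract] can be clicked by mistake; the Extract button has been moved to the right of the file name, and a confirmation dialog has been added (Enter submits)

+ [x] File operation buttons should sit as close to the file name as possible

+ [x] Show a loading icon when the root directory changes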

|

1.0

|

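Improve some interactions in the file processor - + [x] When the number of files listed above changes, buttons such as [Extract] can be clicked by mistake; the Extract button has been moved to the right of the file name, and a confirmation dialog has been added (Enter submits)

+ [x] File operation buttons should sit as close to the file name as possible

+ [x] Show a loading icon when the root directory changes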

|

process

|

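improve some interactions in the file processor when the number of files listed above changes buttons such as extract can be clicked by mistake the extract button has been moved to the right of the file name and a confirmation dialog has been added enter submits file operation buttons should sit as close to the file name as possible show a loading icon when the root directory changes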

| 1

|

262,590

| 19,822,793,084

|

IssuesEvent

|

2022-01-20 00:44:08

|

vercel/next.js

|

https://api.github.com/repos/vercel/next.js

|

opened

|

Docs: Tailwind scaffolding in Next.js Projects for separation of concerns

|

template: documentation

|

### What is the improvement or update you wish to see?

I would like to see a template on how we should organize scaffolding of CSS files when using tailwind.

### Is there any context that might help us understand?

I know we can use global.css file for global variables, but what is the proper way of organizing CSS files for the sake of separation of concerns, route folders, and/or general organization?

Are there any gotchas/caveats to separating CSS files?

I checked the with-tailwind example, and unfortunately it only provides the simplest case: a global.css file (initial setup) and inline modifications.

And thanks! It really has been great getting to know Next.js btw. Still got loads to learn but having fun.

### Does the docs page already exist? Please link to it.

https://github.com/vercel/next.js/tree/canary/examples/with-tailwindcss

|

1.0

|

Docs: Tailwind scaffolding in Next.js Projects for separation of concerns - ### What is the improvement or update you wish to see?

I would like to see a template on how we should organize scaffolding of CSS files when using tailwind.

### Is there any context that might help us understand?

I know we can use global.css file for global variables, but what is the proper way of organizing CSS files for the sake of separation of concerns, route folders, and/or general organization?

Are there any gotchas/caveats to separating CSS files?

I checked the with-tailwind example, and unfortunately it only provides the simplest case: a global.css file (initial setup) and inline modifications.

And thanks! It really has been great getting to know Next.js btw. Still got loads to learn but having fun.

### Does the docs page already exist? Please link to it.

https://github.com/vercel/next.js/tree/canary/examples/with-tailwindcss

|

non_process

|

docs tailwind scaffolding in next js projects for separation of concerns what is the improvement or update you wish to see i would like to see a template on how we should organize scaffolding of css files when using tailwind is there any context that might help us understand i know we can use global css file for global variables but what is the proper way of organizing css files for the sake of separation of concerns route folders and or general organization are there any gotchas caveats to separating css files checked the with tailwind example and unfortunately it only provided the simplest example with global css file initial setup and inline modifications and thanks it really has been great getting to know next js btw still got loads to learn but having fun does the docs page already exist please link to it

| 0

|

284,561

| 21,444,711,111

|

IssuesEvent

|

2022-04-25 04:13:04

|

cengage/react-magma

|

https://api.github.com/repos/cengage/react-magma

|

closed

|

Documentation> Checkbox link> "Not Found" error message is appearing upon clicking the link

|

documentation

|

**Describe the bug**

"Not Found" error message is appearing upon clicking the link "Checkbox".

**To Reproduce**

Steps to reproduce the behavior:

1. Go to https://react-magma.cengage.com/version/2.5.7/api/radio/

2. Click on "Checkbox" link

3. Verify the error

**Expected behavior**

Appropriate page should appear upon clicking the link "Checkbox".

**Screenshots**

https://somup.com/c3nXXZZRQO

**Desktop:**

- OS: [Win 10]

- Browser [chrome]

- Version [Chrome version: Version 98.0.4758.81 (Official Build) (64-bit)]

|

1.0

|

Documentation> Checkbox link> "Not Found" error message is appearing upon clicking the link - **Describe the bug**

"Not Found" error message is appearing upon clicking the link "Checkbox".

**To Reproduce**

Steps to reproduce the behavior:

1. Go to https://react-magma.cengage.com/version/2.5.7/api/radio/

2. Click on "Checkbox" link

3. Verify the error

**Expected behavior**

Appropriate page should appear upon clicking the link "Checkbox".

**Screenshots**

https://somup.com/c3nXXZZRQO

**Desktop:**

- OS: [Win 10]

- Browser [chrome]

- Version [Chrome version: Version 98.0.4758.81 (Official Build) (64-bit)]

|

non_process

|

documentation checkbox link not found error message is appearing upon clicking the link describe the bug not found error message is appearing upon clicking the link checkbox to reproduce steps to reproduce the behavior go to click on checkbox link verify the error expected behavior appropriate page should appear upon clicking the link checkbox screenshots desktop os browser version

| 0

|

10,346

| 13,172,404,656

|

IssuesEvent

|

2020-08-11 18:22:41

|

openenclave/openenclave

|

https://api.github.com/repos/openenclave/openenclave

|

closed

|

Unable to distinguish API versions

|

attestation bug engineering process triaged

|

The master branch saw a change to the host-side quote verification library: `oe_verify_remote_report` now expects 5 arguments instead of the 3 it took in v0.7.0. We now check for `#if defined(OE_CLAIM_ID_VERSION)`, which isn't defined in v0.7.0, to tell the difference.

It would be nice if the version number were updated when the API changes but as far as I can tell, `OE_API_VERSION` is always the same (2). Perhaps it makes sense to add separate versioning for the host-side quote verification library too?

|

1.0

|

Unable to distinguish API versions - The master branch saw a change to the host-side quote verification library: `oe_verify_remote_report` now expects 5 arguments instead of the 3 it took in v0.7.0. We now check for `#if defined(OE_CLAIM_ID_VERSION)`, which isn't defined in v0.7.0, to tell the difference.

It would be nice if the version number were updated when the API changes but as far as I can tell, `OE_API_VERSION` is always the same (2). Perhaps it makes sense to add separate versioning for the host-side quote verification library too?

|

process

|

unable to distinguish api versions the master branch saw a change to the host side quote verification library oe verify remote report now expects arguments instead of arguments in we now check for if defined oe claim id version which isn t defined in to tell the difference it would be nice if the version number were updated when the api changes but as far as i can tell oe api version is always the same perhaps it makes sense to add separate versioning for the host side quote verification library too

| 1

|

10,265

| 13,112,556,253

|

IssuesEvent

|

2020-08-05 02:34:22

|

kubeflow/manifests

|

https://api.github.com/repos/kubeflow/manifests

|

closed

|

Owners file for kubeflow/metadata needs to be updated

|

area/metadata kind/process lifecycle/stale priority/p2

|

The OWNERS file for metadata needs to be updated; it looks like the current set of approvers is no longer up to date and responsive.

See #928

|

1.0

|

Owners file for kubeflow/metadata needs to be updated - The OWNERS file for metadata needs to be updated; it looks like the current set of approvers is no longer up to date and responsive.

See #928

|

process

|

owners file for kubeflow metadata needs to be updated the owners file for metadata needs to be updated it looks like the current set of approvers is no longer up to date and responsive see

| 1

|

10,826

| 13,609,564,470

|

IssuesEvent

|

2020-09-23 05:36:14

|

GoogleCloudPlatform/getting-started-java

|

https://api.github.com/repos/GoogleCloudPlatform/getting-started-java

|

closed

|

Dependency Dashboard

|

type: process

|

This issue contains a list of Renovate updates and their statuses.

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/org.apache.maven.plugins-maven-compiler-plugin-3.x -->chore(deps): update dependency org.apache.maven.plugins:maven-compiler-plugin to v3.8.1

- [ ] <!-- rebase-branch=renovate/javax.servlet-javax.servlet-api-4.x -->chore(deps): update dependency javax.servlet:javax.servlet-api to v4

---

- [ ] <!-- manual job -->Check this box to trigger a request for Renovate to run again on this repository

|

1.0

|

Dependency Dashboard - This issue contains a list of Renovate updates and their statuses.

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/org.apache.maven.plugins-maven-compiler-plugin-3.x -->chore(deps): update dependency org.apache.maven.plugins:maven-compiler-plugin to v3.8.1

- [ ] <!-- rebase-branch=renovate/javax.servlet-javax.servlet-api-4.x -->chore(deps): update dependency javax.servlet:javax.servlet-api to v4

---

- [ ] <!-- manual job -->Check this box to trigger a request for Renovate to run again on this repository

|

process

|

dependency dashboard this issue contains a list of renovate updates and their statuses open these updates have all been created already click a checkbox below to force a retry rebase of any chore deps update dependency org apache maven plugins maven compiler plugin to chore deps update dependency javax servlet javax servlet api to check this box to trigger a request for renovate to run again on this repository

| 1

|

355,314

| 25,175,898,206

|

IssuesEvent

|

2022-11-11 09:13:58

|

Devanshshah1309/pe

|

https://api.github.com/repos/Devanshshah1309/pe

|

opened

|

Link to FAQ Section not working

|

severity.Low type.DocumentationBug

|

The link to the FAQ section here is broken (or there's no link at all).

This prevents me from being able to go to the FAQ section if I'm only interested in that - it is not purely a cosmetic bug. It hinders my reading. Hence, low severity.

<!--session: 1668147090315-757e20e0-6c8f-46c9-a0a7-2575a40077ea-->

<!--Version: Web v3.4.4-->

|

1.0

|

Link to FAQ Section not working - The link to the FAQ section here is broken (or there's no link at all).

This prevents me from being able to go to the FAQ section if I'm only interested in that - it is not purely a cosmetic bug. It hinders my reading. Hence, low severity.

<!--session: 1668147090315-757e20e0-6c8f-46c9-a0a7-2575a40077ea-->

<!--Version: Web v3.4.4-->

|

non_process

|

link to faq section not working the link to the faq section here is broken or there s no link at all this prevents me from being able to go to the faq section if i m only interested in that it is not purely a cosmetic bug it hinders my reading hence low severity

| 0

|

11,531

| 14,403,749,597

|

IssuesEvent

|

2020-12-03 16:25:55

|

LOVDnl/LOVD3

|

https://api.github.com/repos/LOVDnl/LOVD3

|

opened

|

Curators and up can create variant submissions without classification.

|

bug minor cat: submission process

|

Curators and up can create variant submissions without classification, but only when a gene is selected. VOG entries still require at least the reported classification, while VOT entries do not. Submitters are always required to fill in the reported classification.

|

1.0

|

Curators and up can create variant submissions without classification. - Curators and up can create variant submissions without classification, but only when a gene is selected. VOG entries still require at least the reported classification, while VOT entries do not. Submitters are always required to fill in the reported classification.

|

process

|

curators and up can create variant submissions without classification curators and up can create variant submissions without classification but only when a gene is selected vog entries still require at least the reported classification while vot entries do not submitters are always required to fill in the reported classification

| 1

|

9,175

| 12,226,438,488

|

IssuesEvent

|

2020-05-03 10:55:40

|

labnote-ant/labnote

|

https://api.github.com/repos/labnote-ant/labnote

|

closed

|

Add description box

|

chemical-view process-view

|

The chemical view and the process view each need a description box where the user can enter details of the chemical or process.

|

1.0

|

Add description box - The chemical view and the process view each need a description box where the user can enter details of the chemical or process.

|

process

|

add description box in chemical view or process view it needs a description box where the user can input details of chemicals or process

| 1

|

14,960

| 18,445,033,755

|

IssuesEvent

|

2021-10-15 00:01:02

|

cloudfoundry/cf-k8s-api

|

https://api.github.com/repos/cloudfoundry/cf-k8s-api

|

closed

|

[Feature]: API Client can List Processes for an App via `GET /v3/apps/:guid/processes`

|

Processes

|

### Blockers/Dependencies

_No response_

### Background

**As a** client of the API Shim

**I want** to be able to list all Processes for my App

**So that** I can discover information about my Processes

The CF CLI hits this endpoint during `cf push` so that it can get a list of Process guids to make future API calls (such as fetching stats for a Process).

### Acceptance Criteria

## Scenarios

### Happy Path (App with Processes)

**GIVEN** I have a CFApp and CFProcesses are associated with it

**WHEN** I make the following API request:

```bash

curl "https://api-shim.example.org/v3/apps/<app-guid>/processes" \

-X GET \

-H "Authorization: bearer <placeholder-bearer-token>"

```

**THEN** I see a response that reflects the information on the CFProcesses

```json

HTTP/1.1 200 OK

Content-Type: application/json

{

"pagination": {

"total_results": 2,

"total_pages": 1,

"first": {

"href": "https://api-shim.example.org/v3/apps/ccc25a0f-c8f4-4b39-9f1b-de9f328d0ee5/processes?page=1&per_page=2"

},

"last": {

"href": "https://api-shim.example.org/v3/apps/ccc25a0f-c8f4-4b39-9f1b-de9f328d0ee5/processes?page=1&per_page=2"

},

"next": null,

"previous": null

},

"resources": [

{

"guid": "6a901b7c-9417-4dc1-8189-d3234aa0ab82",

"type": "web",

"command": "[PRIVATE DATA HIDDEN IN LISTS]",

"instances": 5,

"memory_in_mb": 256,

"disk_in_mb": 1024,

"health_check": {

"type": "port",

"data": {

"timeout": null,

"invocation_timeout": null

}

},

"relationships": {

"app": {

"data": {

"guid": "ccc25a0f-c8f4-4b39-9f1b-de9f328d0ee5"

}

}

},

"metadata": {

"labels": {},

"annotations": {}

},

"created_at": "2016-03-23T18:48:22Z",

"updated_at": "2016-03-23T18:48:42Z",

"links": {

"self": {

"href": "https://api-shim.example.org/v3/processes/6a901b7c-9417-4dc1-8189-d3234aa0ab82"

},

"scale": {

"href": "https://api-shim.example.org/v3/processes/6a901b7c-9417-4dc1-8189-d3234aa0ab82/actions/scale",

"method": "POST"

},

"app": {

"href": "https://api-shim.example.org/v3/apps/ccc25a0f-c8f4-4b39-9f1b-de9f328d0ee5"

},

"space": {

"href": "https://api-shim.example.org/v3/spaces/2f35885d-0c9d-4423-83ad-fd05066f8576"

},

"stats": {

"href": "https://api-shim.example.org/v3/processes/6a901b7c-9417-4dc1-8189-d3234aa0ab82/stats"

}

}

},

{

"guid": "3fccacd9-4b02-4b96-8d02-8e865865e9eb",

"type": "worker",

"command": "[PRIVATE DATA HIDDEN IN LISTS]",

"instances": 1,

"memory_in_mb": 256,

"disk_in_mb": 1024,

"health_check": {

"type": "process",

"data": {

"timeout": null,

"invocation_timeout": null

}

},

"relationships": {

"app": {

"data": {

"guid": "ccc25a0f-c8f4-4b39-9f1b-de9f328d0ee5"

}

}

},

"metadata": {

"labels": {},

"annotations": {}

},

"created_at": "2016-03-23T18:48:22Z",

"updated_at": "2016-03-23T18:48:42Z",

"links": {

"self": {

"href": "https://api-shim.example.org/v3/processes/3fccacd9-4b02-4b96-8d02-8e865865e9eb"

},

"scale": {

"href": "https://api-shim.example.org/v3/processes/3fccacd9-4b02-4b96-8d02-8e865865e9eb/actions/scale",

"method": "POST"

},

"app": {

"href": "https://api-shim.example.org/v3/apps/ccc25a0f-c8f4-4b39-9f1b-de9f328d0ee5"

},

"space": {

"href": "https://api-shim.example.org/v3/spaces/2f35885d-0c9d-4423-83ad-fd05066f8576"

},

"stats": {

"href": "https://api-shim.example.org/v3/processes/3fccacd9-4b02-4b96-8d02-8e865865e9eb/stats"

}

}

}

]

}

```

*Note*: we're omitting the `revision` key entirely. The `metadata` key will always contain empty hashes, as in other stories.

---

### App with No Processes

**GIVEN** I have a CFApp and **no** CFProcesses are associated with it

**WHEN** I make the following API request:

```bash

curl "https://api-shim.example.org/v3/apps/<app-guid>/processes" \

-X GET \

-H "Authorization: bearer <placeholder-bearer-token>"

```

**THEN** I get back a response with an empty resources array

```json

HTTP/1.1 200 OK

Content-Type: application/json

{

"pagination": {

"total_results": 0,

"total_pages": 1,

"first": {

"href": "https://api.bramble-quester.capi.land/v3/apps/ea2501a0-a579-40a8-8cc9-2da76cb1d72d/processes?page=1&per_page=50"

},

"last": {

"href": "https://api.bramble-quester.capi.land/v3/apps/ea2501a0-a579-40a8-8cc9-2da76cb1d72d/processes?page=1&per_page=50"

},

"next": null,

"previous": null

},

"resources": [

]

}

```

---

### App doesn't exist

**GIVEN** I do not have a CFApp with the guid below

**WHEN** I make the following API request:

```bash

curl "https://api-shim.example.org/v3/apps/<nonexistent-app-guid>/processes" \

-X GET \

-H "Authorization: bearer <placeholder-bearer-token>"

```

**THEN** I get back a 404 response

```json

HTTP/1.1 404 Not Found

{

"errors": [

{

"detail": "App not found",

"title": "CF-ResourceNotFound",

"code": 10010

}

]

}

```

### Dev Notes

* V3 API Docs: https://v3-apidocs.cloudfoundry.org/version/3.107.0/index.html#list-processes

* Pagination: Always return all results for now (as we have been doing on other stories)

* Query parameters: Ignore filter parameters for now. We can add them in later

* Be sure to add the necessary RBAC annotations in the new Process repository. Otherwise the app will error when deployed to a real cluster (but will work locally)
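
The pagination note above can be illustrated with a short sketch. It is written in Python purely for illustration and is not the project's implementation; `build_process_list`, its parameters, and the default `per_page` of 50 are hypothetical names and values chosen to mirror the example responses in this story. It assumes that "always return all results" means a single page whose `first` and `last` links are identical and whose `next` and `previous` are null.

```python
from typing import Any


def build_process_list(
    base_url: str,
    app_guid: str,
    processes: list[dict[str, Any]],
    per_page: int = 50,  # assumption: mirrors per_page=50 in the empty example
) -> dict[str, Any]:
    """Wrap every process of one app in a single-page list response."""
    # With all results on one page, first and last point at the same URL.
    page_url = (
        f"{base_url}/v3/apps/{app_guid}/processes?page=1&per_page={per_page}"
    )
    return {
        "pagination": {
            "total_results": len(processes),
            "total_pages": 1,
            "first": {"href": page_url},
            "last": {"href": page_url},
            "next": None,
            "previous": None,
        },
        # Copy the list so callers cannot mutate the response afterwards.
        "resources": list(processes),
    }
```

Called with an empty `processes` list, this yields the shape of the "App with No Processes" response: `total_results` of 0 and an empty `resources` array.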

|

1.0

|

[Feature]: API Client can List Processes for an App via `GET /v3/apps/:guid/processes` - ### Blockers/Dependencies

_No response_

### Background

**As a** client of the API Shim

**I want** to be able to list all Processes for my App

**So that** I can discover information about my Processes

The CF CLI hits this endpoint during `cf push` so that it can get a list of Process guids to make future API calls (such as fetching stats for a Process).

### Acceptance Criteria

## Scenarios

### Happy Path (App with Processes)

**GIVEN** I have a CFApp and CFProcesses are associated with it

**WHEN** I make the following API request:

```bash

curl "https://api-shim.example.org/v3/apps/<app-guid>/processes" \

-X GET \

-H "Authorization: bearer <placeholder-bearer-token>"

```

**THEN** I see a response that reflects the information on the CFProcesses

```json

HTTP/1.1 200 OK

Content-Type: application/json

{

"pagination": {

"total_results": 2,

"total_pages": 1,

"first": {

"href": "https://api-shim.example.org/v3/apps/ccc25a0f-c8f4-4b39-9f1b-de9f328d0ee5/processes?page=1&per_page=2"

},

"last": {

"href": "https://api-shim.example.org/v3/apps/ccc25a0f-c8f4-4b39-9f1b-de9f328d0ee5/processes?page=1&per_page=2"

},

"next": null,

"previous": null

},

"resources": [

{

"guid": "6a901b7c-9417-4dc1-8189-d3234aa0ab82",

"type": "web",

"command": "[PRIVATE DATA HIDDEN IN LISTS]",

"instances": 5,

"memory_in_mb": 256,

"disk_in_mb": 1024,

"health_check": {

"type": "port",

"data": {

"timeout": null,

"invocation_timeout": null

}

},

"relationships": {

"app": {

"data": {

"guid": "ccc25a0f-c8f4-4b39-9f1b-de9f328d0ee5"

}

}

},

"metadata": {

"labels": {},

"annotations": {}

},

"created_at": "2016-03-23T18:48:22Z",

"updated_at": "2016-03-23T18:48:42Z",

"links": {

"self": {

"href": "https://api-shim.example.org/v3/processes/6a901b7c-9417-4dc1-8189-d3234aa0ab82"

},

"scale": {

"href": "https://api-shim.example.org/v3/processes/6a901b7c-9417-4dc1-8189-d3234aa0ab82/actions/scale",

"method": "POST"

},

"app": {

"href": "https://api-shim.example.org/v3/apps/ccc25a0f-c8f4-4b39-9f1b-de9f328d0ee5"

},

"space": {

"href": "https://api-shim.example.org/v3/spaces/2f35885d-0c9d-4423-83ad-fd05066f8576"

},

"stats": {

"href": "https://api-shim.example.org/v3/processes/6a901b7c-9417-4dc1-8189-d3234aa0ab82/stats"

}

}

},

{

"guid": "3fccacd9-4b02-4b96-8d02-8e865865e9eb",

"type": "worker",

"command": "[PRIVATE DATA HIDDEN IN LISTS]",

"instances": 1,

"memory_in_mb": 256,

"disk_in_mb": 1024,

"health_check": {

"type": "process",

"data": {

"timeout": null,

"invocation_timeout": null

}

},

"relationships": {

"app": {

"data": {

"guid": "ccc25a0f-c8f4-4b39-9f1b-de9f328d0ee5"

}

}

},

"metadata": {

"labels": {},

"annotations": {}

},

"created_at": "2016-03-23T18:48:22Z",

"updated_at": "2016-03-23T18:48:42Z",

"links": {

"self": {

"href": "https://api-shim.example.org/v3/processes/3fccacd9-4b02-4b96-8d02-8e865865e9eb"

},

"scale": {

"href": "https://api-shim.example.org/v3/processes/3fccacd9-4b02-4b96-8d02-8e865865e9eb/actions/scale",

"method": "POST"

},

"app": {

"href": "https://api-shim.example.org/v3/apps/ccc25a0f-c8f4-4b39-9f1b-de9f328d0ee5"

},

"space": {

"href": "https://api-shim.example.org/v3/spaces/2f35885d-0c9d-4423-83ad-fd05066f8576"

},

"stats": {

"href": "https://api-shim.example.org/v3/processes/3fccacd9-4b02-4b96-8d02-8e865865e9eb/stats"

}

}

}

]

}

```

*Note*: we're omitting the `revision` key entirely. The `metadata` key will always contain empty hashes, as in other stories.

---

### App with No Processes

**GIVEN** I have a CFApp and **no** CFProcesses are associated with it

**WHEN** I make the following API request:

```bash

curl "https://api-shim.example.org/v3/apps/<app-guid>/processes" \

-X GET \

-H "Authorization: bearer <placeholder-bearer-token>"

```

**THEN** I get back a response with an empty resources array

```json

HTTP/1.1 200 OK

Content-Type: application/json

{

"pagination": {

"total_results": 0,

"total_pages": 1,

"first": {

"href": "https://api.bramble-quester.capi.land/v3/apps/ea2501a0-a579-40a8-8cc9-2da76cb1d72d/processes?page=1&per_page=50"

},

"last": {

"href": "https://api.bramble-quester.capi.land/v3/apps/ea2501a0-a579-40a8-8cc9-2da76cb1d72d/processes?page=1&per_page=50"

},

"next": null,

"previous": null

},

"resources": [

]

}

```

---

### App doesn't exist

**GIVEN** I do not have a CFApp with the guid below

**WHEN** I make the following API request:

```bash

curl "https://api-shim.example.org/v3/apps/<nonexistent-app-guid>/processes" \

-X GET \

-H "Authorization: bearer <placeholder-bearer-token>"

```

**THEN** I get back a 404 response

```json

HTTP/1.1 404 Not Found

{

"errors": [

{

"detail": "App not found",

"title": "CF-ResourceNotFound",

"code": 10010

}

]

}

```

### Dev Notes

* V3 API Docs: https://v3-apidocs.cloudfoundry.org/version/3.107.0/index.html#list-processes

* Pagination: Always return all results for now (as we have been doing on other stories)

* Query parameters: Ignore filter parameters for now. We can add them in later

* Be sure to add the necessary RBAC annotations in the new Process repository. Otherwise the app will error when deployed to a real cluster (but will work locally)

|

process

|

api client can list processes for an app via get apps guid processes blockers dependencies no response background as a client of the api shim i want to be able to list all processes for my app so that i can discover information about my processes the cf cli hits this endpoint during cf push so that it can get a list of process guids to make future api calls such as fetching stats for a process acceptance criteria scenarios happy path app with processes given i have a cfapp and cfprocesses are associated with it when i make the following api request bash curl x get h authorization bearer then i see a response that reflects the information on the cfprocesses json http ok content type application json pagination total results total pages first href last href next href previous null resources guid type web command instances memory in mb disk in mb health check type port data timeout null invocation timeout null relationships app data guid metadata labels annotations created at updated at links self href scale href method post app href space href stats href guid type worker command instances memory in mb disk in mb health check type process data timeout null invocation timeout null relationships app data guid metadata labels annotations created at updated at links self href scale href method post app href space href stats href note we re omitting the revision key entirely the metadata key will always contain empty hashes as in other stories app with no processes given i have a cfapp and no cfprocesses are associated with it when i make the following api request bash curl x get h authorization bearer then i get back a response with an empty resources array json http ok content type application json pagination total results total pages first href last href next null previous null resources app doesn t exist given i have do not have a cfapp with the guid below when i make the following api request bash curl x get h authorization bearer then i get back a response json http 
not found errors detail app not found title cf resourcenotfound code dev notes api docs pagination always return all results for now as we have been doing on other stories query parameters ignore filter parameters for now we can add them in later be sure to add the necessary rbac annotations in the new process repository otherwise the app will error when deployed to a real cluster but will work locally

| 1

|

271,136

| 29,299,168,536

|

IssuesEvent

|

2023-05-25 01:06:58

|

hshivhare67/kernel_v4.19.72_CVE-2023-0461

|

https://api.github.com/repos/hshivhare67/kernel_v4.19.72_CVE-2023-0461

|

opened

|

CVE-2023-33203 (Medium) detected in linuxlinux-4.19.282

|

Mend: dependency security vulnerability

|

## CVE-2023-33203 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.282</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux>https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux</a></p>

<p>Found in HEAD commit: <a href="https://github.com/hshivhare67/kernel_v4.19.72_CVE-2023-0461/commit/20984407a51d9f25ee9889e4b1304489f480d36e">20984407a51d9f25ee9889e4b1304489f480d36e</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/drivers/net/ethernet/qualcomm/emac/emac.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/drivers/net/ethernet/qualcomm/emac/emac.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

The Linux kernel before 6.2.9 has a race condition and resultant use-after-free in drivers/net/ethernet/qualcomm/emac/emac.c if a physically proximate attacker unplugs an emac based device.

<p>Publish Date: 2023-05-18

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2023-33203>CVE-2023-33203</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>
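
As a worked check of the 5.5 base score, the metrics listed above can be run through the CVSS v3 base-score equations for the scope-unchanged case. This is a sketch, not Mend's tooling: the numeric weights are the ones published in the CVSS v3 specification, the function names are hypothetical, and the rounding helper follows the integer-based Roundup described in CVSS v3.1, which gives the same result as the v3.0 rule for this vector.

```python
import math

# Metric weights from the CVSS v3 specification for the values in this report.
ATTACK_VECTOR_LOCAL = 0.55
ATTACK_COMPLEXITY_LOW = 0.77
PRIVILEGES_REQUIRED_NONE = 0.85
USER_INTERACTION_REQUIRED = 0.62
IMPACT_NONE = 0.0
IMPACT_HIGH = 0.56


def roundup(value: float) -> float:
    """Round up to one decimal place (integer-based form from CVSS v3.1)."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def base_score_scope_unchanged(
    av: float, ac: float, pr: float, ui: float, c: float, i: float, a: float
) -> float:
    """Base score for Scope: Unchanged (hypothetical helper name)."""
    impact_sub_score = 1 - (1 - c) * (1 - i) * (1 - a)
    impact = 6.42 * impact_sub_score
    exploitability = 8.22 * av * ac * pr * ui
    if impact <= 0:
        return 0.0
    return roundup(min(impact + exploitability, 10))


score = base_score_scope_unchanged(
    ATTACK_VECTOR_LOCAL,
    ATTACK_COMPLEXITY_LOW,
    PRIVILEGES_REQUIRED_NONE,
    USER_INTERACTION_REQUIRED,
    IMPACT_NONE,   # Confidentiality
    IMPACT_NONE,   # Integrity
    IMPACT_HIGH,   # Availability
)
print(score)  # 5.5
```

Here the impact term is about 3.60 and the exploitability term about 1.83; their sum, roughly 5.43, rounds up to 5.5.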

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.linuxkernelcves.com/cves/CVE-2023-33203">https://www.linuxkernelcves.com/cves/CVE-2023-33203</a></p>

<p>Release Date: 2023-05-18</p>

<p>Fix Resolution: v4.14.312,v4.19.280,v5.4.240,v5.10.177,v5.15.105,v6.1.22,v6.2.9</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2023-33203 (Medium) detected in linuxlinux-4.19.282 - ## CVE-2023-33203 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.282</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux>https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux</a></p>

<p>Found in HEAD commit: <a href="https://github.com/hshivhare67/kernel_v4.19.72_CVE-2023-0461/commit/20984407a51d9f25ee9889e4b1304489f480d36e">20984407a51d9f25ee9889e4b1304489f480d36e</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/drivers/net/ethernet/qualcomm/emac/emac.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/drivers/net/ethernet/qualcomm/emac/emac.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

The Linux kernel before 6.2.9 has a race condition and resultant use-after-free in drivers/net/ethernet/qualcomm/emac/emac.c if a physically proximate attacker unplugs an emac based device.

<p>Publish Date: 2023-05-18

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2023-33203>CVE-2023-33203</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.linuxkernelcves.com/cves/CVE-2023-33203">https://www.linuxkernelcves.com/cves/CVE-2023-33203</a></p>

<p>Release Date: 2023-05-18</p>

<p>Fix Resolution: v4.14.312,v4.19.280,v5.4.240,v5.10.177,v5.15.105,v6.1.22,v6.2.9</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve medium detected in linuxlinux cve medium severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch master vulnerable source files drivers net ethernet qualcomm emac emac c drivers net ethernet qualcomm emac emac c vulnerability details the linux kernel before has a race condition and resultant use after free in drivers net ethernet qualcomm emac emac c if a physically proximate attacker unplugs an emac based device publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required none user interaction required scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with mend

| 0

|

2,858

| 5,824,282,508

|

IssuesEvent

|

2017-05-07 11:31:07

|

QCoDeS/Qcodes

|

https://api.github.com/repos/QCoDeS/Qcodes

|

closed

|

Leaking sockets?

|

bug mulitprocessing p2

|

### Steps to reproduce

1. Run code for some time on Windows, including a possible shutdown and restart of the notebook and instrument communication.

### Expected behaviour

Things should keep working

### Actual behaviour

Notebook fails with socket related connection issues from Tornado and ZMQ.

```

  File "c:\users\triton2acq\anaconda3\envs\qcodes-master\lib\site-packages\jupyter_client\multikernelmanager.py", line 33, in wrapped
    r = method(*args, **kwargs)
  File "c:\users\triton2acq\anaconda3\envs\qcodes-master\lib\site-packages\jupyter_client\ioloop\manager.py", line 33, in wrapped
    socket = f(self, *args, **kwargs)
  File "c:\users\triton2acq\anaconda3\envs\qcodes-master\lib\site-packages\jupyter_client\connect.py", line 492, in connect_shell
    return self._create_connected_socket('shell', identity=identity)
  File "c:\users\triton2acq\anaconda3\envs\qcodes-master\lib\site-packages\jupyter_client\connect.py", line 476, in _create_connected_socket
    sock = self.context.socket(socket_type)
  File "c:\users\triton2acq\anaconda3\envs\qcodes-master\lib\site-packages\zmq\sugar\context.py", line 146, in socket
    s = self._socket_class(self, socket_type, **kwargs)
  File "zmq\backend\cython\socket.pyx", line 285, in zmq.backend.cython.socket.Socket.__cinit__ (zmq\backend\cython\socket.c:3861)
zmq.error.ZMQError: No buffer space available
OSError: [WinError 10055] An operation on a socket could not be performed because the system lacked sufficient buffer space or because a queue was full

```

Regular internet use (browsing, etc.) is flaky too.

We suspect that sockets are leaked and not cleaned up. This may be in the VISA driver of network instruments such as the ZNMB20 VNA.
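A minimal sketch of the kind of cleanup this suspicion points at, assuming the leak comes from sockets that are opened and never closed. The helper names `start_echo_server` and `query_instrument` are hypothetical, and the local echo server only stands in for a network instrument; this is not the QCoDeS or VISA driver code.

```python
import socket
import threading


def start_echo_server():
    """Start a throwaway local echo server (stand-in for a network instrument)."""
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
    server.listen(1)

    def serve_once():
        conn, _ = server.accept()
        with conn:  # the server side also closes its socket deterministically
            conn.sendall(conn.recv(1024))

    threading.Thread(target=serve_once, daemon=True).start()
    return server, server.getsockname()[1]


def query_instrument(port, message):
    """Send one message and read one reply, always releasing the socket."""
    # The context manager closes the socket even if sendall/recv raises,
    # so repeated calls do not accumulate open handles.
    with socket.create_connection(("127.0.0.1", port), timeout=5) as sock:
        sock.sendall(message)
        reply = sock.recv(1024)
    return reply, sock
```

Returning the socket object here is only for inspection: after the `with` block, `sock.fileno()` reports `-1`, which indicates the handle was released.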

### System

**operating system**

Windows

**qcodes branch**

master

**qcodes commit**

?

|

1.0

|

Leaking sockets? -

### Steps to reproduce

1. Run code for some time on Windows, including a possible shutdown and restart of the notebook and instrument communication.

### Expected behaviour

Things should keep working

### Actual behaviour

Notebook fails with socket related connection issues from Tornado and ZMQ.

```

  File "c:\users\triton2acq\anaconda3\envs\qcodes-master\lib\site-packages\jupyter_client\multikernelmanager.py", line 33, in wrapped
    r = method(*args, **kwargs)
  File "c:\users\triton2acq\anaconda3\envs\qcodes-master\lib\site-packages\jupyter_client\ioloop\manager.py", line 33, in wrapped
    socket = f(self, *args, **kwargs)
  File "c:\users\triton2acq\anaconda3\envs\qcodes-master\lib\site-packages\jupyter_client\connect.py", line 492, in connect_shell
    return self._create_connected_socket('shell', identity=identity)
  File "c:\users\triton2acq\anaconda3\envs\qcodes-master\lib\site-packages\jupyter_client\connect.py", line 476, in _create_connected_socket
    sock = self.context.socket(socket_type)
  File "c:\users\triton2acq\anaconda3\envs\qcodes-master\lib\site-packages\zmq\sugar\context.py", line 146, in socket
    s = self._socket_class(self, socket_type, **kwargs)
  File "zmq\backend\cython\socket.pyx", line 285, in zmq.backend.cython.socket.Socket.__cinit__ (zmq\backend\cython\socket.c:3861)
zmq.error.ZMQError: No buffer space available
OSError: [WinError 10055] An operation on a socket could not be performed because the system lacked sufficient buffer space or because a queue was full

```

Regular internet use (browsing, etc.) is flaky too.

We suspect that sockets are leaked and not cleaned up. This may be in the VISA driver of network instruments such as the ZNMB20 VNA.

### System

**operating system**

Windows

**qcodes branch**

master

**qcodes commit**

?

|

process

|

leaking sockets steps to reproduce run code for some time on windows including possible shutdown and restart of notebook and instrument communication expected behaviour things should keep working actual behaviour notebook fails with socket related connection issues from tornado and zmq file c users envs qcodes master lib site packages j upyter client multikernelmanager py line in wrapped r method args kwargs file c users envs qcodes master lib site packages j upyter client ioloop manager py line in wrapped socket f self args kwargs file c users envs qcodes master lib site packages j upyter client connect py line in connect shell return self create connected socket shell identity identity file c users envs qcodes master lib site packages j upyter client connect py line in create connected socket sock self context socket socket type file c users envs qcodes master lib site packages z mq sugar context py line in socket s self socket class self socket type kwargs file zmq backend cython socket pyx line in zmq backend cython sock et socket cinit zmq backend cython socket c zmq error zmqerror no buffer space available oserror an operation on a socket could not be performed because the system lacked sufficient buffer space or because a queue was full regular internet browsing etc is flaky too we suspect that sockets are leaked and not cleaned up this may be in the visa driver of network instruments such as vna system operating system windows qcodes branch master qcodes commit

| 1

|

117,932

| 25,216,777,579

|

IssuesEvent

|

2022-11-14 09:45:05

|

appsmithorg/appsmith

|

https://api.github.com/repos/appsmithorg/appsmith

|

closed

|

[Feature][Custom JS Lib Epic] Delete installed JS package on demand

|

JS Evaluation Task FE Coders Pod

|

## Summary

Remove installed package from application when user clicks on the delete JS package button.

|

1.0

|

[Feature][Custom JS Lib Epic] Delete installed JS package on demand - ## Summary

Remove installed package from application when user clicks on the delete JS package button.

|

non_process

|

delete installed js package on demand summary remove installed package from application when user clicks on the delete js package button

| 0

|

160,013

| 25,095,864,333

|

IssuesEvent

|

2022-11-08 10:07:13

|

metacpan/metacpan-web

|

https://api.github.com/repos/metacpan/metacpan-web

|

closed

|

multiple levels of indentation not handled

|

type:Bug design-2022-followup

|

Multiple levels of `=over 4` are not visible in the site redesign -- everything appears at the same level, so it's impossible to tell what content is meant to be nested.

example: https://metacpan.org/pod/Net::IDN::Encode -- there is a list of functions, and inside the function description is a list of options, but the options are shown at the same level as the functions themselves.

|

1.0

|

multiple levels of indentation not handled - Multiple levels of `=over 4` are not visible in the site redesign -- everything appears at the same level, so it's impossible to tell what content is meant to be nested.

example: https://metacpan.org/pod/Net::IDN::Encode -- there is a list of functions, and inside the function description is a list of options, but the options are shown at the same level as the functions themselves.

|

non_process

|

multiple levels of indentation not handled multiple levels of over are not visible in the site redesign everything appears at the same level so it s impossible to tell what content is meant to be nested example there is a list of functions and inside the function description is a list of options but the options are shown at the same level as the functions themselves

| 0

|

350,710

| 31,931,967,598

|

IssuesEvent

|

2023-09-19 08:03:21

|

Convergence-Project/step-backend

|

https://api.github.com/repos/Convergence-Project/step-backend

|

opened

|

[Week 2] (Workbook) Implement the board like feature

|

🎯test ✨feature

|

✏️Description

-

Please enter the work items

✅TODO

-

- [ ] Write test code

- [ ] Connect the controller to the frontend

🐾ETC

-

|

1.0

|

[Week 2] (Workbook) Implement the board like feature - ✏️Description

-

Please enter the work items

✅TODO

-

- [ ] Write test code

- [ ] Connect the controller to the frontend

🐾ETC

-

|

non_process

|

workbook implement the board like feature ✏️description please enter the work items ✅todo write test code connect the controller to the frontend 🐾etc

| 0

|

18,485

| 24,550,797,339

|

IssuesEvent

|

2022-10-12 12:27:50

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

[iOS] 'View consent' and 'View website' buttons are not getting displayed on the study overview screen

|

Bug P1 iOS Process: Fixed Process: Tested dev

|

Steps:

1. Sign up or sign in to the app

2. Click on any study to enroll

3. Click on the 'Participate' button

4. In the enrollment flow, click on the 'Cancel' button

5. Click on 'End task' or 'Discard result' and observe

AR: 'View consent' and 'View website' buttons are not getting displayed on the study overview screen

ER: 'View consent' and 'View website' buttons should get displayed on the study overview screen

|

2.0

|

[iOS] 'View consent' and 'View website' buttons are not getting displayed on the study overview screen - Steps:

1. Sign up or sign in to the app

2. Click on any study to enroll

3. Click on the 'Participate' button

4. In the enrollment flow, click on the 'Cancel' button

5. Click on 'End task' or 'Discard result' and observe

AR: 'View consent' and 'View website' buttons are not getting displayed on the study overview screen

ER: 'View consent' and 'View website' buttons should get displayed on the study overview screen

|

process

|

view consent and view website buttons are not getting displayed on the study overview screen steps sign up or sign in to the app click on any study to enroll click on the participate i enrollment flow click on the cancel button click on end task or click on discard result and observe ar view consent and view website buttons are not getting displayed on the study overview screen er view consent and view website buttons should get displayed on the study overview screen

| 1

|

14,604

| 17,703,628,988

|

IssuesEvent

|

2021-08-25 03:26:03

|

tdwg/dwc

|

https://api.github.com/repos/tdwg/dwc

|

closed

|

Change term - basisOfRecord

|

Term - change Class - Record-level non-normative Process - complete

|

## Term change

* Submitter: John Wieczorek

* Efficacy Justification (why is this change necessary?): completeness

* Demand Justification (if the change is semantic in nature, name at least two organizations that independently need this term): Result of recent public review

* Stability Justification (what concerns are there that this might affect existing implementations?): An addition, no effect on stability except to promote standardization on a ratified term.

* Implications for dwciri: namespace (does this change affect a dwciri term version)?: None

Current Term definition: https://dwc.tdwg.org/list/#dwc_basisOfRecord

Proposed attributes of the new term:

* Term name (in lowerCamelCase for properties, UpperCamelCase for classes): basisOfRecord

* Organized in Class (e.g., Occurrence, Event, Location, Taxon): None

* Definition of the term (normative): The specific nature of the data record.

* Usage comments (recommendations regarding content, etc., not normative): Recommended best practice is to use the standard label of one of the Darwin Core classes.

* Examples (not normative): `PreservedSpecimen`, `FossilSpecimen`, `LivingSpecimen`, `MaterialSample`, `Event`, `HumanObservation`, `MachineObservation`, `Taxon`, `Occurrence`, **`MaterialCitation`**

* Refines (identifier of the broader term this term refines; normative): None

* Replaces (identifier of the existing term that would be deprecated and replaced by this term; normative): **http://rs.tdwg.org/dwc/terms/version/basisOfRecord-2017-10-06**

* ABCD 2.06 (XPATH of the equivalent term in ABCD or EFG; not normative): DataSets/DataSet/Units/Unit/RecordBasis

This is to accommodate the addition of the MaterialCitation class.
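As an illustration of the usage comment, here is a minimal sketch of checking a `basisOfRecord` value against the labels given in the examples of this proposal. The names `RECOMMENDED_BASIS_OF_RECORD` and `is_recommended_basis_of_record` are hypothetical, and the set is assumed to contain only the example labels listed in this proposal, which are non-normative and may not cover every Darwin Core class.

```python
# Labels copied from the (non-normative) examples in this proposal,
# including the newly added MaterialCitation.
RECOMMENDED_BASIS_OF_RECORD = frozenset({
    "PreservedSpecimen",
    "FossilSpecimen",
    "LivingSpecimen",
    "MaterialSample",
    "Event",
    "HumanObservation",
    "MachineObservation",
    "Taxon",
    "Occurrence",
    "MaterialCitation",
})


def is_recommended_basis_of_record(value):
    """Return True if value exactly matches one of the example class labels.

    The comparison is case-sensitive on purpose: the recommendation is to use
    the standard label itself, so "materialcitation" is not accepted.
    """
    return value.strip() in RECOMMENDED_BASIS_OF_RECORD
```

A data publisher could run such a check before export to flag records whose `basisOfRecord` does not follow the recommended practice; whether to reject or merely warn is a separate design choice.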

|

1.0

|

Change term - basisOfRecord - ## Term change

* Submitter: John Wieczorek

* Efficacy Justification (why is this change necessary?): completeness

* Demand Justification (if the change is semantic in nature, name at least two organizations that independently need this term): Result of recent public review

* Stability Justification (what concerns are there that this might affect existing implementations?): An addition, no effect on stability except to promote standardization on a ratified term.

* Implications for dwciri: namespace (does this change affect a dwciri term version)?: None

Current Term definition: https://dwc.tdwg.org/list/#dwc_basisOfRecord

Proposed attributes of the new term:

* Term name (in lowerCamelCase for properties, UpperCamelCase for classes): basisOfRecord

* Organized in Class (e.g., Occurrence, Event, Location, Taxon): None

* Definition of the term (normative): The specific nature of the data record.

* Usage comments (recommendations regarding content, etc., not normative): Recommended best practice is to use the standard label of one of the Darwin Core classes.

* Examples (not normative): `PreservedSpecimen`, `FossilSpecimen`, `LivingSpecimen`, `MaterialSample`, `Event`, `HumanObservation`, `MachineObservation`, `Taxon`, `Occurrence`, **`MaterialCitation`**

* Refines (identifier of the broader term this term refines; normative): None

* Replaces (identifier of the existing term that would be deprecated and replaced by this term; normative): **http://rs.tdwg.org/dwc/terms/version/basisOfRecord-2017-10-06**

* ABCD 2.06 (XPATH of the equivalent term in ABCD or EFG; not normative): DataSets/DataSet/Units/Unit/RecordBasis

This is to accommodate the addition of the MaterialCitation class.

|

process

|

change term basisofrecord term change submitter john wieczorek efficacy justification why is this change necessary completeness demand justification if the change is semantic in nature name at least two organizations that independently need this term result of recent public review stability justification what concerns are there that this might affect existing implementations an addition no effect on stability except to promote standardization on a ratified term implications for dwciri namespace does this change affect a dwciri term version none current term definition proposed attributes of the new term term name in lowercamelcase for properties uppercamelcase for classes basisofrecord organized in class e g occurrence event location taxon none definition of the term normative the specific nature of the data record usage comments recommendations regarding content etc not normative recommended best practice is to use the standard label of one of the darwin core classes examples not normative preservedspecimen fossilspecimen livingspecimen materialsample event humanobservation machineobservation taxon occurrence materialcitation refines identifier of the broader term this term refines normative none replaces identifier of the existing term that would be deprecated and replaced by this term normative abcd xpath of the equivalent term in abcd or efg not normative datasets dataset units unit recordbasis this is to accommodate the addition of the materialcitation class

| 1

|

438,189

| 12,623,665,407

|

IssuesEvent

|

2020-06-14 00:30:23

|

hack4impact-uiuc/kids-save-ocean

|

https://api.github.com/repos/hack4impact-uiuc/kids-save-ocean

|

closed

|

Remove fake links

|

high priority

|

Feed:

- Saved projects

- My projects

- Updates

- Followers

- Following

Navbar:

- Notifications

- Current project

Also remove all hardcoded data and comment out featured projects from the homepage and feed.

|

1.0

|

Remove fake links - Feed:

- Saved projects

- My projects

- Updates

- Followers

- Following

Navbar:

- Notifications

- Current project

Also remove all hardcoded data and comment out featured projects from the homepage and feed.

|

non_process

|

remove fake links feed saved projects my projects updates followers following navbar notifications current project also remove all hardcoded data and comment out features projects from homepage and feed

| 0

|

336,706

| 10,195,758,226

|

IssuesEvent

|

2019-08-12 18:57:07

|

jenkins-x/jx

|

https://api.github.com/repos/jenkins-x/jx

|

opened

|

Add kaniko image version to version stream

|

area/tekton area/versions kind/enhancement priority/important-soon

|

We hardcode the default Kaniko image and version in the code currently. That's kinda silly when we've got this whole version stream thing here. =) So let's add a Kaniko version to the version stream, and then update the logic in the CRD translation to use that.

|

1.0

|

Add kaniko image version to version stream - We hardcode the default Kaniko image and version in the code currently. That's kinda silly when we've got this whole version stream thing here. =) So let's add a Kaniko version to the version stream, and then update the logic in the CRD translation to use that.

|

non_process

|

add kaniko image version to version stream we hardcode the default kaniko image and version in the code currently that s kinda silly when we ve got this whole version stream thing here so let s add a kaniko version to the version stream and then update the logic in the crd translation to use that

| 0

|

7,647

| 10,738,585,256

|

IssuesEvent

|

2019-10-29 15:00:40

|

openopps/openopps-platform

|

https://api.github.com/repos/openopps/openopps-platform

|

opened

|

Move USAJOBS data pull from Apply button to Next Steps "Continue"

|

Apply Process State Dept.

|

Who:

What:

Why:

Acceptance Criteria:

- Currently the USAJOBS one profile data is pulled for a student when they select "Apply"

- Change the data pull to when they click "Continue" on the Next Steps page

|

1.0

|

Move USAJOBS data pull from Apply button to Next Steps "Continue" - Who:

What:

Why:

Acceptance Criteria:

- Currently the USAJOBS one profile data is pulled for a student when they select "Apply"

- Change the data pull to when they click "Continue" on the Next Steps page

|

process

|

move usajobs data pull from apply button to next steps continue who what why acceptance criteria currently the usajobs one profile data is pulled for a student when they select apply change the data pull to when they click continue on the next steps page

| 1

|

129,603

| 12,414,793,498

|

IssuesEvent

|

2020-05-22 15:07:38

|

alpheios-project/alpheios-core

|

https://api.github.com/repos/alpheios-project/alpheios-core

|

closed

|

selective enabling of Alpheios on components

|

components documentation

|

For alpheios-project/components#129 we disabled Alpheios on the panel and popup. Sometimes we want to be able to enable it selectively. This requires some thought about the best way to do it, but, for example, the Ge'ez parser provides short definitions for its words in Latin. We should be able to enable Alpheios on those definitions in the popup.

One way to do this might be to look for the language code on the text that is displayed in a component and compare that to available languages to determine if Alpheios can be activated. But we might need even finer grained control of that.

Probably an issue for after refactoring of component state and data.

|

1.0

|

selective enabling of Alpheios on components - For alpheios-project/components#129 we disabled Alpheios on the panel and popup. Sometimes we want to be able to enable it selectively. This requires some thought about the best way to do it, but, for example, the Ge'ez parser provides short definitions for its words in Latin. We should be able to enable Alpheios on those definitions in the popup.

One way to do this might be to look for the language code on the text that is displayed in a component and compare that to available languages to determine if Alpheios can be activated. But we might need even finer grained control of that.

Probably an issue for after refactoring of component state and data.

|

non_process

|

selective enabling of alpheios on components for alpheios project components we disabled alpheios on the panel and popup sometimes we want to be able to enable it selectively this requires some thought about the best way to do it but for example the ge ez parser provides short definitions for its words in latin we should able to enable alpheios on those definitions in the popup one way to do this might be to look for the language code on the text that is displayed in a component and compare that to available languages to determine if alpheios can be activated but we might need even finer grained control of that probably an issue for after refactoring of component state and data

| 0

|

1,659

| 4,288,680,282

|

IssuesEvent

|

2016-07-17 16:31:09

|

log2timeline/plaso

|

https://api.github.com/repos/log2timeline/plaso

|

closed

|

Preprocessor not working for Windows

|

bug preprocessing

|

Preprocessor not working for Windows

```

2015-12-26 20:53:12,946 [INFO] (MainProcess) PID:4027 <interface> [PreProcess] Set attribute: sysregistry to /WINDOWS/system32/config

2015-12-26 20:53:12,951 [INFO] (MainProcess) PID:4027 <interface> [PreProcess] Set attribute: systemroot to /WINDOWS

2015-12-26 20:53:12,955 [INFO] (MainProcess) PID:4027 <interface> [PreProcess] Set attribute: windir to /WINDOWS

2015-12-26 20:53:12,985 [INFO] (MainProcess) PID:4027 <extraction_frontend> Parser filter expression changed to: win7

```

Should be:

```

2015-12-26 20:53:27,192 [INFO] (MainProcess) PID:4128 <interface> [PreProcess] Set attribute: sysregistry to \WINDOWS\system32\config

2015-12-26 20:53:27,196 [INFO] (MainProcess) PID:4128 <interface> [PreProcess] Set attribute: systemroot to \WINDOWS

2015-12-26 20:53:27,200 [INFO] (MainProcess) PID:4128 <interface> [PreProcess] Set attribute: windir to \WINDOWS

2015-12-26 20:53:27,338 [INFO] (MainProcess) PID:4128 <windows> [PreProcess] Set attribute: code_page to cp1252

2015-12-26 20:53:27,338 [INFO] (MainProcess) PID:4128 <windows> [PreProcess] Set attribute: hostname to TEST

2015-12-26 20:53:27,521 [INFO] (MainProcess) PID:4128 <windows> [PreProcess] Set attribute: programfiles to \Program Files

```

* [x] fix issue

* ~~introduces a complication for preg and requires http://codereview.appspot.com/284880043/~~

* ~~https://codereview.appspot.com/276600043/~~
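The difference between the two logs is the path separator in the preprocessing attributes. A minimal sketch of that normalization, assuming the values arrive with forward slashes, could look like the following. The helper name `to_windows_path` is hypothetical and this is not the actual plaso fix; it only uses the standard-library `ntpath` module, which applies Windows path rules on any platform.

```python
import ntpath


def to_windows_path(path):
    """Return path with Windows-style backslash separators.

    ntpath.normpath converts forward slashes to backslashes and collapses
    redundant separators, independent of the host operating system.
    """
    return ntpath.normpath(path)


# Example values taken from the log output above.
sysregistry = to_windows_path("/WINDOWS/system32/config")
systemroot = to_windows_path("/WINDOWS")
```

Using `ntpath` explicitly, rather than `os.path`, keeps the result the same when a Windows image is processed on a Linux or macOS host.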

|

1.0

|

Preprocessor not working for Windows - Preprocessor not working for Windows

```

2015-12-26 20:53:12,946 [INFO] (MainProcess) PID:4027 <interface> [PreProcess] Set attribute: sysregistry to /WINDOWS/system32/config

2015-12-26 20:53:12,951 [INFO] (MainProcess) PID:4027 <interface> [PreProcess] Set attribute: systemroot to /WINDOWS

2015-12-26 20:53:12,955 [INFO] (MainProcess) PID:4027 <interface> [PreProcess] Set attribute: windir to /WINDOWS

2015-12-26 20:53:12,985 [INFO] (MainProcess) PID:4027 <extraction_frontend> Parser filter expression changed to: win7

```

Should be:

```

2015-12-26 20:53:27,192 [INFO] (MainProcess) PID:4128 <interface> [PreProcess] Set attribute: sysregistry to \WINDOWS\system32\config

2015-12-26 20:53:27,196 [INFO] (MainProcess) PID:4128 <interface> [PreProcess] Set attribute: systemroot to \WINDOWS

2015-12-26 20:53:27,200 [INFO] (MainProcess) PID:4128 <interface> [PreProcess] Set attribute: windir to \WINDOWS

2015-12-26 20:53:27,338 [INFO] (MainProcess) PID:4128 <windows> [PreProcess] Set attribute: code_page to cp1252

2015-12-26 20:53:27,338 [INFO] (MainProcess) PID:4128 <windows> [PreProcess] Set attribute: hostname to TEST

2015-12-26 20:53:27,521 [INFO] (MainProcess) PID:4128 <windows> [PreProcess] Set attribute: programfiles to \Program Files

```

* [x] fix issue

* ~~introduces a complication for preg and requires http://codereview.appspot.com/284880043/~~

* ~~https://codereview.appspot.com/276600043/~~

|

process

|

preprocessor not working for windows preprocessor not working for windows mainprocess pid set attribute sysregistry to windows config mainprocess pid set attribute systemroot to windows mainprocess pid set attribute windir to windows mainprocess pid parser filter expression changed to should be mainprocess pid set attribute sysregistry to windows config mainprocess pid set attribute systemroot to windows mainprocess pid set attribute windir to windows mainprocess pid set attribute code page to mainprocess pid set attribute hostname to test mainprocess pid set attribute programfiles to program files fix issue introduces a complication for preg and requires

| 1

|

681,397

| 23,309,660,230

|

IssuesEvent

|

2022-08-08 06:58:49

|

oasis-engine/engine

|

https://api.github.com/repos/oasis-engine/engine

|

closed

|

Improve the text system

|

feature 2D high priority

|

Design: @GuoLei1990 , @singlecoder

PR: @singlecoder @cptbtptpbcptdtptp

PR reviewers: @GuoLei1990 , @cptbtptpbcptdtptp , @gz65555

|

1.0

|

Improve the text system - Design: @GuoLei1990 , @singlecoder

PR: @singlecoder @cptbtptpbcptdtptp

PR reviewers: @GuoLei1990 , @cptbtptpbcptdtptp , @gz65555

|

non_process

|

improve the text system design singlecoder pr singlecoder cptbtptpbcptdtptp pr reviewers cptbtptpbcptdtptp

| 0

|

9,376

| 12,374,399,803

|

IssuesEvent

|

2020-05-19 01:27:35

|

kubernetes/minikube

|

https://api.github.com/repos/kubernetes/minikube

|

closed

|

Change triage party meeting to Google Meet

|

kind/process priority/important-soon

|

For a better experience for most of the maintainers.

|

1.0

|

Change triage party meeting to Google Meet - For a better experience for most of the maintainers.

|

process

|

change triage party meeeting to google meet for better experience with most of the maintainers

| 1

|

3,052

| 6,044,561,726

|

IssuesEvent

|

2017-06-12 06:22:52

|

javabird25/long-hour-and-a-half

|

https://api.github.com/repos/javabird25/long-hour-and-a-half

|

closed

|

"THIS IS A BUG"

|

bug will be processed soon

|

When the character is peeing during a class without underwear and/or outerwear, "THIS IS A BUG" appears.

This happens because there is no special wear handling in the `ASK_TO_PEE` stage.

|

1.0

|

"THIS IS A BUG" - When character is peeing during a class without underwear or/and outerwear, "THIS IS A BUG" will appear.

This happens because there is no special wear handling in the `ASK_TO_PEE` stage.

|

process

|

this is a bug when character is peeing during a class without underwear or and outerwear this is a bug will appear because there is no special wear handling in ask to pee stage

| 1

|

12,114

| 14,740,543,303

|

IssuesEvent

|

2021-01-07 09:15:25

|

kdjstudios/SABillingGitlab

|

https://api.github.com/repos/kdjstudios/SABillingGitlab

|

closed

|

Bogus Email Address

|

anc-process anp-2 ant-enhancement has attachment

|

In GitLab by @kdjstudios on Nov 19, 2018, 16:01

Hello Team,

I just recently noticed one of the errors we are receiving: [SA_Billing_Error_Report_customers_update__NetSMTPFatalError__554_5.7.1_none_none.com_Recipient_address_rejected....msg](/uploads/67c74a6f96dfbf330c7c90eba35a7cc1/SA_Billing_Error_Report_customers_update__NetSMTPFatalError__554_5.7.1_none_none.com_Recipient_address_rejected....msg)

It would appear that we are having an issue with being able to send to "none@none.com"; which is completely valid. My thoughts on this would be the following to resolve this. I did a quick internal search and we have over 15 customers that use this address, and over 600 accounts that use the address. The email is not a required field correct? So we should be able to remove them.

1. Notify operations that on a certain date we will be removing all "bogus" email addresses from the system.

2. If any accounts are setup for email invoicing that have a bogus email address. Notify Operations to correct those accounts accordingly.

3. On that date remove all bogus email addresses.

4. Update the validation on all email address fields to check for bogus email addresses before allowing a save.

In turn, any accounts that were not updated to a valid email and are set up for email invoicing will then be displayed with an error to the site managers on the next billing cycle, right?
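A minimal sketch of the validation in step 4, written in Python purely for illustration (it is not taken from the SA Billing code base). The blocklist contents, the function names, and the assumption that an empty email is allowed are all hypothetical:

```python
# Hypothetical blocklists: only "none@none.com" comes from the report above;
# the real set of placeholder addresses would have to come from Operations.
BOGUS_EMAILS = {"none@none.com"}
BOGUS_DOMAINS = {"none.com"}


def is_bogus_email(address: str) -> bool:
    """Return True if the address matches a known placeholder."""
    normalized = address.strip().lower()
    if normalized in BOGUS_EMAILS:
        return True
    if "@" not in normalized:
        return False
    # Compare only the part after the last "@" against the domain blocklist.
    domain = normalized.rpartition("@")[2]
    return domain in BOGUS_DOMAINS


def validate_email_field(address: str) -> list:
    """Return a list of error messages; an empty list means the field may be saved."""
    if not address.strip():
        # Assumption: the email field is optional, so a blank value is fine.
        return []
    if is_bogus_email(address):
        return ["'%s' looks like a placeholder address; "
                "enter a real address or leave the field blank." % address.strip()]
    return []
```

With this shape, a save handler would call `validate_email_field` and refuse to save whenever the returned list is non-empty; the same `is_bogus_email` check could also drive the one-time cleanup in step 3.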

|

1.0

|

Bogus Email Address - In GitLab by @kdjstudios on Nov 19, 2018, 16:01

Hello Team,

I just recently noticed one of the errors we are receiving: [SA_Billing_Error_Report_customers_update__NetSMTPFatalError__554_5.7.1_none_none.com_Recipient_address_rejected....msg](/uploads/67c74a6f96dfbf330c7c90eba35a7cc1/SA_Billing_Error_Report_customers_update__NetSMTPFatalError__554_5.7.1_none_none.com_Recipient_address_rejected....msg)

It appears that we are unable to send to "none@none.com", and the rejection is completely valid. Here are my thoughts on how to resolve this. I did a quick internal search: over 15 customers and over 600 accounts use this address. The email is not a required field, correct? If so, we should be able to remove these addresses.

1. Notify operations that on a certain date we will be removing all "bogus" email addresses from the system.

2. If any accounts that are set up for email invoicing have a bogus email address, notify Operations to correct those accounts accordingly.

3. On that date remove all bogus email addresses.

4. Update the validation on all email address fields to check for bogus email addresses before allowing a save.

In turn, any accounts that were not updated to a valid email and are set up for email invoicing will then be displayed with an error to the site managers on the next billing cycle, right?

|

process

|

bogus email address in gitlab by kdjstudios on nov hello team i just recently noticed one of the errors we are receiving uploads sa billing error report customers update netsmtpfatalerror none none com recipient address rejected msg it would appear that we are having an issue with being able to send to none none com which is completely valid my thoughts on this would be the following to resolve this i did a quick internal search and we have over customers that use this address and over accounts that use the address the email is not a required field correct so we should be able to remove them notify operations that on a certain date we will be removing all bogus email addresses from the system if any accounts are setup for email invoicing that have a bogus email address notify operations to correct those accounts accordingly on that date remove all bogus email addresses update the validation on all email address fields to check for bogus email addresses before allowing to save this will then in turn any accounts that did not get updated to a valid email and are setup for email invoicing will be displayed with an error to the site managers on the next billing cycle right

| 1

|

193,146

| 22,216,072,041

|

IssuesEvent

|

2022-06-08 01:53:00

|

maddyCode23/linux-4.1.15

|

https://api.github.com/repos/maddyCode23/linux-4.1.15

|

reopened

|

CVE-2017-18552 (High) detected in linux-stable-rtv4.1.33

|

security vulnerability

|

## CVE-2017-18552 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home page: <a href=https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git>https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git</a></p>

<p>Found in HEAD commit: <a href="https://github.com/maddyCode23/linux-4.1.15/commit/f1f3d2b150be669390b32dfea28e773471bdd6e7">f1f3d2b150be669390b32dfea28e773471bdd6e7</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/net/rds/af_rds.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/net/rds/af_rds.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was discovered in net/rds/af_rds.c in the Linux kernel before 4.11. There is an out of bounds write and read in the function rds_recv_track_latency.

<p>Publish Date: 2019-08-19

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2017-18552>CVE-2017-18552</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2017-18552">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2017-18552</a></p>

<p>Release Date: 2019-08-19</p>

<p>Fix Resolution: v4.11-rc1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2017-18552 (High) detected in linux-stable-rtv4.1.33 - ## CVE-2017-18552 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home page: <a href=https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git>https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git</a></p>