| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

6,114

| 8,972,798,251

|

IssuesEvent

|

2019-01-29 19:15:28

|

material-components/material-components-ios

|

https://api.github.com/repos/material-components/material-components-ios

|

closed

|

[ActionSheet] Theme examples using Theming Extensions

|

[ActionSheet] type:Process

|

This was filed as an internal issue. If you are a Googler, please visit [b/123234713](http://b/123234713) for more details.

<!-- Auto-generated content below, do not modify -->

---

#### Internal data

- Associated internal bug: [b/123234713](http://b/123234713)

|

1.0

|

[ActionSheet] Theme examples using Theming Extensions - This was filed as an internal issue. If you are a Googler, please visit [b/123234713](http://b/123234713) for more details.

<!-- Auto-generated content below, do not modify -->

---

#### Internal data

- Associated internal bug: [b/123234713](http://b/123234713)

|

process

|

theme examples using theming extensions this was filed as an internal issue if you are a googler please visit for more details internal data associated internal bug

| 1

|

94,873

| 8,526,472,217

|

IssuesEvent

|

2018-11-02 16:20:59

|

SME-Issues/issues

|

https://api.github.com/repos/SME-Issues/issues

|

closed

|

Query Balance Tests Comprehension Partial - 01/11/2018 - 5004

|

NLP Api pulse_tests

|

**Query Balance Tests Comprehension Partial**

- Total: 11

- Passed: 6

- **Full Pass: 6 (55%)**

- Not Understood: 0

- Failed but Understood: 5 (45%)

|

1.0

|

Query Balance Tests Comprehension Partial - 01/11/2018 - 5004 - **Query Balance Tests Comprehension Partial**

- Total: 11

- Passed: 6

- **Full Pass: 6 (55%)**

- Not Understood: 0

- Failed but Understood: 5 (45%)

|

non_process

|

query balance tests comprehension partial query balance tests comprehension partial total passed full pass not understood failed but understood

| 0

|

8,321

| 11,487,748,330

|

IssuesEvent

|

2020-02-11 12:37:08

|

darktable-org/darktable

|

https://api.github.com/repos/darktable-org/darktable

|

closed

|

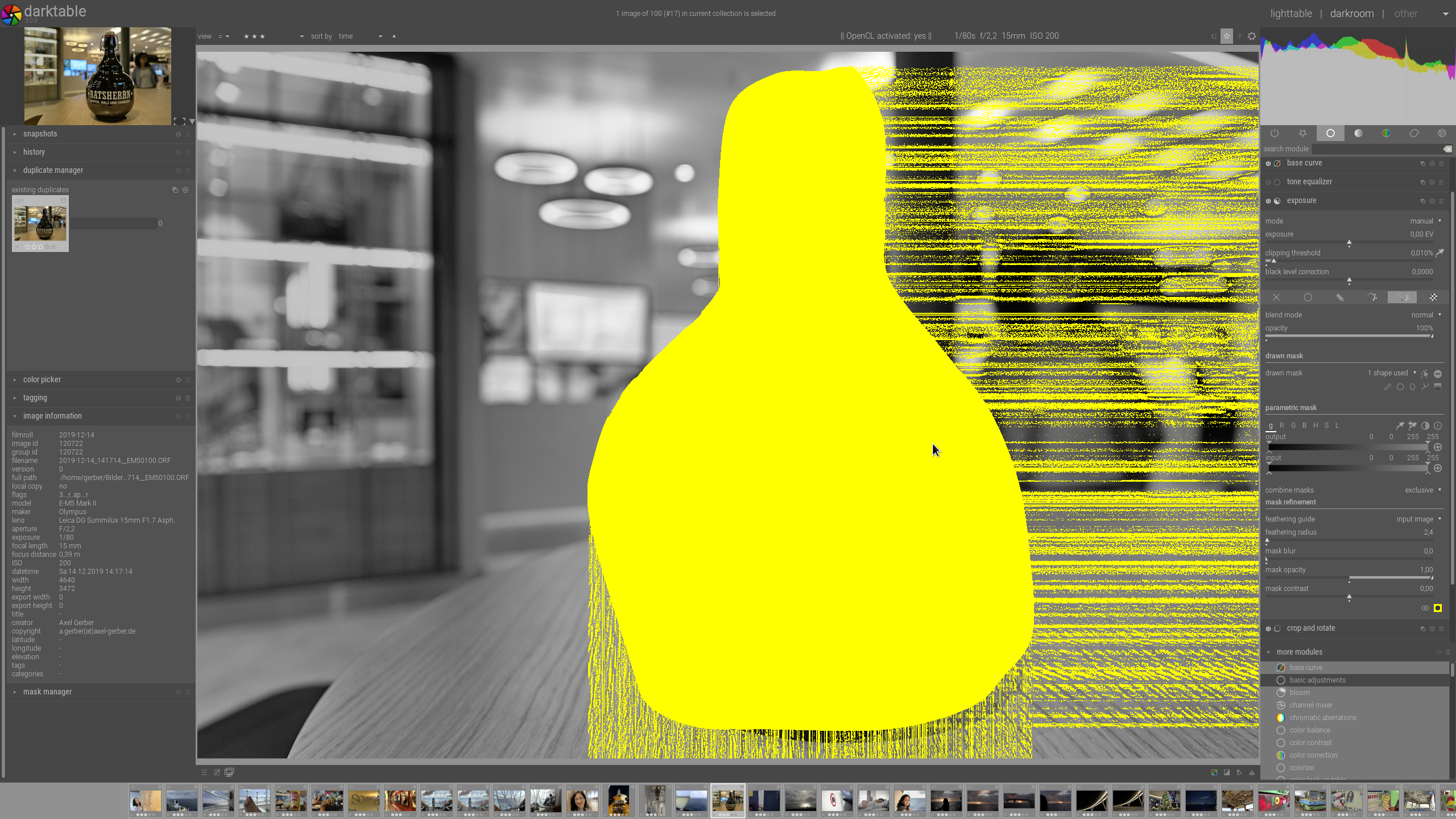

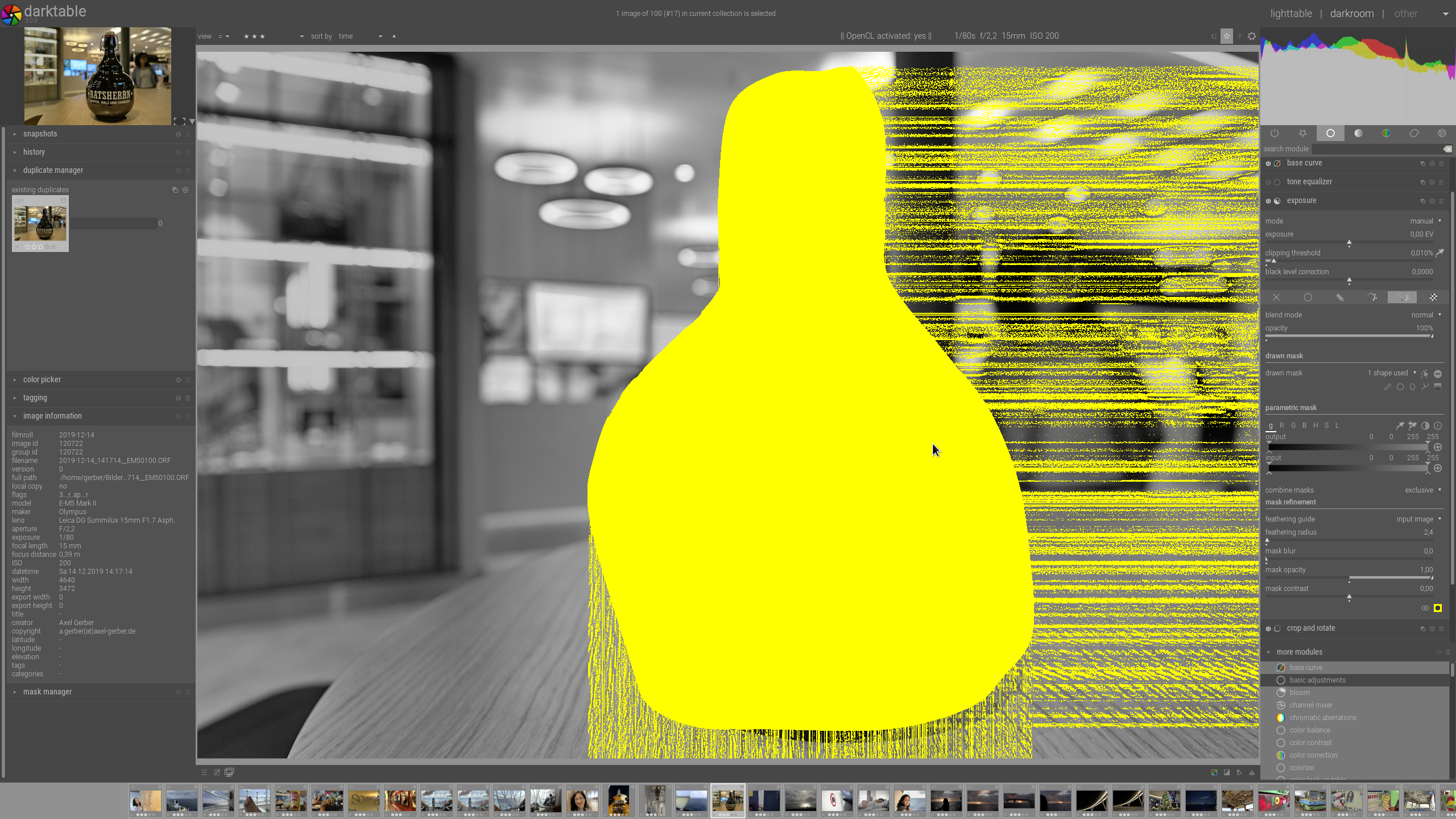

[mask refinement] feathering radius weird artifacts

|

bug: pending priority: high reproduce: confirmed scope: image processing understood: clear

|

**Describe the bug**

When mask opacity has been set to 1,00 in a drawn mask, feathering radius creates strange lines, and they are actually in the mask than

I know it makes no sense with a drawn mask only, but with drawn and parametric it does (typically an L-mask). The error also appears with drawn only, so we can concentrate on that.

**To Reproduce**

1. Go to any module, e.g. exposure

2. create a drawn mask

3. change opacity to 1,00

4. change feathering radius to e.g. 2,4

**Expected behavior**

Mask should be handled properly, as it is when mask opacity is not changed

**Screenshots**

**Platform (please complete the following information):**

- Darktable Version: **3.0.0** and **current master**

- OS: **Gentoo Linux**

- OpenCL activated or no? **on and off**

- Which graphics card and driver version **2x nvidia GTX1060; nvidia-drivers 440.44-r1**

**Additional context**

- Can you reproduce with another Darktable version? **Yes**

- Can you reproduce with a RAW or Jpeg or both? **RAW**

- Are the steps above reproduce with a fresh edit (removing history)? **Yes**

- Attach an XMP if this is necessary

[2019-12-14_124134__EM50100.ORF.xmp.tar.gz](https://github.com/darktable-org/darktable/files/4021953/2019-12-14_124134__EM50100.ORF.xmp.tar.gz)

- Did you compile Darktable yourself? If so which compiler was used, with what options? **x86_64-pc-linux-gnu-9.2.0 CMAKE_BUILD_TYPE="Release" CFLAGS="-march=native -O3 -mtune=native -pipe"**

- Is the issue still present using an empty/new config-dir **Yes**

|

1.0

|

[mask refinement] feathering radius weird artifacts - **Describe the bug**

When mask opacity has been set to 1,00 in a drawn mask, feathering radius creates strange lines, and they are actually in the mask than

I know it makes no sense with a drawn mask only, but with drawn and parametric it does (typically an L-mask). The error also appears with drawn only, so we can concentrate on that.

**To Reproduce**

1. Go to any module, e.g. exposure

2. create a drawn mask

3. change opacity to 1,00

4. change feathering radius to e.g. 2,4

**Expected behavior**

Mask should be handled properly, as it is when mask opacity is not changed

**Screenshots**

**Platform (please complete the following information):**

- Darktable Version: **3.0.0** and **current master**

- OS: **Gentoo Linux**

- OpenCL activated or no? **on and off**

- Which graphics card and driver version **2x nvidia GTX1060; nvidia-drivers 440.44-r1**

**Additional context**

- Can you reproduce with another Darktable version? **Yes**

- Can you reproduce with a RAW or Jpeg or both? **RAW**

- Are the steps above reproduce with a fresh edit (removing history)? **Yes**

- Attach an XMP if this is necessary

[2019-12-14_124134__EM50100.ORF.xmp.tar.gz](https://github.com/darktable-org/darktable/files/4021953/2019-12-14_124134__EM50100.ORF.xmp.tar.gz)

- Did you compile Darktable yourself? If so which compiler was used, with what options? **x86_64-pc-linux-gnu-9.2.0 CMAKE_BUILD_TYPE="Release" CFLAGS="-march=native -O3 -mtune=native -pipe"**

- Is the issue still present using an empty/new config-dir **Yes**

|

process

|

feathering radius weird artifacts describe the bug when mask opacity has been set to in a drawn mask feathering radius creates strange lines and they are actually in the mask than i know it makes no sense in a drawn mask only but drawn and parametric it does typically l mask just the error also appears with drawn only so we can concentrate on that to reproduce go to any module e g exposer create a drawn mask change opacity to change feathering radius to e g expected behavior mask should be handled propperly as it is when mask opacity is not changed screenshots platform please complete the following information darktable version and current master os gentoo linux opencl activated or no on and off which graphics card and driver version nvidia nvidia drivers additional context can you reproduce with another darktable version yes can you reproduce with a raw or jpeg or both raw are the steps above reproduce with a fresh edit removing history yes attach an xmp if this is necessary did you compile darktable yourself if so which compiler was used with what options pc linux gnu cmake build type release cflags march native mtune native pipe is the issue still present using an empty new config dir yes

| 1

|

431,161

| 12,475,906,727

|

IssuesEvent

|

2020-05-29 12:32:31

|

cilium/cilium

|

https://api.github.com/repos/cilium/cilium

|

closed

|

Cilium drops pod traffic that should be allowed by policy (due to CIDR / FQDN identity)

|

kind/bug kind/community-report kind/regression needs-backport/1.7 priority/release-blocker

|

## Summary

Cilium 1.7.3. Issue reported from the community during upgrade testing from v1.6.x.

Kernel 4.15.

Connectivity from pod to pod is rejected by policy despite the policy allowing that traffic.

## Symptoms

Cilium monitor reports drops for traffic that should be allowed by policy:

```

# cilium monitor --type=drop

Listening for events on 16 CPUs with 64x4096 of shared memory

Press Ctrl-C to quit

level=info msg="Initializing dissection cache..." subsys=monitor

xx drop (Policy denied) flow 0xb6b3b6b3 to endpoint 1585, identity 16777388->33623: 10.0.x.y:55454 -> 10.0.a.b:c tcp SYN

```

The source IP is `10.0.x.y`. The userspace view of the ipcache reports that this IP is mapped to a pod identity:

```

# cilium map get cilium_ipcache | grep 10.0.x.y

10.0.x.y/32 6464 0 10.0.x.y sync

```

BPF map dump fails due to lack of kernel support:

```

# cilium bpf ipcache list

error dumping contents of map: Unable to get next key from map with file descriptor 5: errno 524

```

The IP also appears in the identity list as a CIDR identity:

```

cilium-identity-list.md:16777388 cidr:10.0.x.y/32

```

Furthermore it is listed in `cilium fqdn cache list`:

```

cilium-fqdn-cache-list.md:3906 lookup foo.namespace.svc.cluster.local. 3600 2020-05-12T19:02:17.413Z 10.0.x.y

```

Cilium status reports that the ipcache-bpf-garbage-collection controller has recently run successfully on the node, so userspace should be in sync with the datapath:

```

ipcache-bpf-garbage-collection 1m47s ago never 0 no error

```

Endpoint was regenerated regularly per expected ipcache GC controller behaviour:

```

$ grep -e "Endpoint Log" -e "ipcache" cilium-debuginfo-20200512-180805.689+0000-UTC.md | grep -A 200 1585 | head -n+8

#### Endpoint Log 1585

2020-05-12T18:06:16Z OK waiting-to-regenerate Successfully regenerated endpoint program (Reason: datapath ipcache)

2020-05-12T18:06:13Z OK regenerating Regenerating endpoint: datapath ipcache

2020-05-12T18:06:13Z OK waiting-to-regenerate Triggering endpoint regeneration due to datapath ipcache

2020-05-12T18:01:12Z OK waiting-to-regenerate Successfully regenerated endpoint program (Reason: datapath ipcache)

2020-05-12T18:01:10Z OK regenerating Regenerating endpoint: datapath ipcache

2020-05-12T18:01:08Z OK waiting-to-regenerate Triggering endpoint regeneration due to datapath ipcache

2020-05-12T17:56:06Z OK ready Successfully regenerated endpoint program (Reason: datapath ipcache)

```

If we look more broadly at the regenerations occurring around the time of the ipcache-triggered regenerations, we also see other reasons and there is not a 1-1 correlation between triggers and moving the endpoint into "ready" state, for example:

```

2020-05-12T18:06:27Z OK waiting-to-regenerate Triggering endpoint regeneration due to one or more identities created or deleted

2020-05-12T18:06:16Z OK ready Successfully regenerated endpoint program (Reason: one or more identities created or deleted)

2020-05-12T18:06:16Z OK ready Completed endpoint regeneration with no pending regeneration requests

2020-05-12T18:06:16Z OK regenerating Regenerating endpoint: one or more identities created or deleted

2020-05-12T18:06:16Z OK waiting-to-regenerate Successfully regenerated endpoint program (Reason: datapath ipcache)

2020-05-12T18:06:16Z OK waiting-to-regenerate Triggering endpoint regeneration due to one or more identities created or deleted

2020-05-12T18:06:13Z OK regenerating Regenerating endpoint: datapath ipcache

2020-05-12T18:06:13Z OK waiting-to-regenerate Triggering endpoint regeneration due to datapath ipcache

2020-05-12T18:05:33Z OK ready Successfully regenerated endpoint program (Reason: one or more identities created or deleted)

```

|

1.0

|

Cilium drops pod traffic that should be allowed by policy (due to CIDR / FQDN identity) - ## Summary

Cilium 1.7.3. Issue reported from the community during upgrade testing from v1.6.x.

Kernel 4.15.

Connectivity from pod to pod is rejected by policy despite the policy allowing that traffic.

## Symptoms

Cilium monitor reports drops for traffic that should be allowed by policy:

```

# cilium monitor --type=drop

Listening for events on 16 CPUs with 64x4096 of shared memory

Press Ctrl-C to quit

level=info msg="Initializing dissection cache..." subsys=monitor

xx drop (Policy denied) flow 0xb6b3b6b3 to endpoint 1585, identity 16777388->33623: 10.0.x.y:55454 -> 10.0.a.b:c tcp SYN

```

The source IP is `10.0.x.y`. The userspace view of the ipcache reports that this IP is mapped to a pod identity:

```

# cilium map get cilium_ipcache | grep 10.0.x.y

10.0.x.y/32 6464 0 10.0.x.y sync

```

BPF map dump fails due to lack of kernel support:

```

# cilium bpf ipcache list

error dumping contents of map: Unable to get next key from map with file descriptor 5: errno 524

```

The IP also appears in the identity list as a CIDR identity:

```

cilium-identity-list.md:16777388 cidr:10.0.x.y/32

```

Furthermore it is listed in `cilium fqdn cache list`:

```

cilium-fqdn-cache-list.md:3906 lookup foo.namespace.svc.cluster.local. 3600 2020-05-12T19:02:17.413Z 10.0.x.y

```

Cilium status reports that the ipcache-bpf-garbage-collection controller has recently run successfully on the node, so userspace should be in sync with the datapath:

```

ipcache-bpf-garbage-collection 1m47s ago never 0 no error

```

Endpoint was regenerated regularly per expected ipcache GC controller behaviour:

```

$ grep -e "Endpoint Log" -e "ipcache" cilium-debuginfo-20200512-180805.689+0000-UTC.md | grep -A 200 1585 | head -n+8

#### Endpoint Log 1585

2020-05-12T18:06:16Z OK waiting-to-regenerate Successfully regenerated endpoint program (Reason: datapath ipcache)

2020-05-12T18:06:13Z OK regenerating Regenerating endpoint: datapath ipcache

2020-05-12T18:06:13Z OK waiting-to-regenerate Triggering endpoint regeneration due to datapath ipcache

2020-05-12T18:01:12Z OK waiting-to-regenerate Successfully regenerated endpoint program (Reason: datapath ipcache)

2020-05-12T18:01:10Z OK regenerating Regenerating endpoint: datapath ipcache

2020-05-12T18:01:08Z OK waiting-to-regenerate Triggering endpoint regeneration due to datapath ipcache

2020-05-12T17:56:06Z OK ready Successfully regenerated endpoint program (Reason: datapath ipcache)

```

If we look more broadly at the regenerations occurring around the time of the ipcache-triggered regenerations, we also see other reasons and there is not a 1-1 correlation between triggers and moving the endpoint into "ready" state, for example:

```

2020-05-12T18:06:27Z OK waiting-to-regenerate Triggering endpoint regeneration due to one or more identities created or deleted

2020-05-12T18:06:16Z OK ready Successfully regenerated endpoint program (Reason: one or more identities created or deleted)

2020-05-12T18:06:16Z OK ready Completed endpoint regeneration with no pending regeneration requests

2020-05-12T18:06:16Z OK regenerating Regenerating endpoint: one or more identities created or deleted

2020-05-12T18:06:16Z OK waiting-to-regenerate Successfully regenerated endpoint program (Reason: datapath ipcache)

2020-05-12T18:06:16Z OK waiting-to-regenerate Triggering endpoint regeneration due to one or more identities created or deleted

2020-05-12T18:06:13Z OK regenerating Regenerating endpoint: datapath ipcache

2020-05-12T18:06:13Z OK waiting-to-regenerate Triggering endpoint regeneration due to datapath ipcache

2020-05-12T18:05:33Z OK ready Successfully regenerated endpoint program (Reason: one or more identities created or deleted)

```

|

non_process

|

cilium drops pod traffic that should be allowed by policy due to cidr fqdn identity summary cilium issue reported from the community during upgrade testing from x kernel connectivity from pod to pod is rejected by policy despite the policy allowing that traffic symptoms cilium monitor reports drops for traffic that should be allowed by policy cilium monitor type drop listening for events on cpus with of shared memory press ctrl c to quit level info msg initializing dissection cache subsys monitor xx drop policy denied flow to endpoint identity x y a b c tcp syn source ip x y userspace report of ipcache reports that the ip is mapped to a pod identity cilium map get cilium ipcache grep x y x y x y sync bpf map dump fails due to lack of kernel support cilium bpf ipcache list error dumping contents of map unable to get next key from map with file descriptor errno the ip also appears in the identity list as a cidr identity cilium identity list md cidr x y furthermore it is listed in cilium fqdn cache list cilium fqdn cache list md lookup foo namespace svc cluster local x y cilium status reports that the ipcache bpf garbage collection controller has recently run successfully on the node so userspace should be in sync with the datapath ipcache bpf garbage collection ago never no error endpoint was regenerated regularly per expected ipcache gc controller behaviour grep e endpoint log e ipcache cilium debuginfo utc md grep a head n endpoint log ok waiting to regenerate successfully regenerated endpoint program reason datapath ipcache ok regenerating regenerating endpoint datapath ipcache ok waiting to regenerate triggering endpoint regeneration due to datapath ipcache ok waiting to regenerate successfully regenerated endpoint program reason datapath ipcache ok regenerating regenerating endpoint datapath ipcache ok waiting to regenerate triggering endpoint regeneration due to datapath ipcache ok ready successfully regenerated endpoint program reason datapath ipcache if we 
look more broadly at the regenerations occurring around the time of the ipcache triggered regenerations we also see other reasons and there is not a correlation between triggers and moving the endpoint into ready state for example ok waiting to regenerate triggering endpoint regeneration due to one or more identities created or deleted ok ready successfully regenerated endpoint program reason one or more identities created or deleted ok ready completed endpoint regeneration with no pending regeneration requests ok regenerating regenerating endpoint one or more identities created or deleted ok waiting to regenerate successfully regenerated endpoint program reason datapath ipcache ok waiting to regenerate triggering endpoint regeneration due to one or more identities created or deleted ok regenerating regenerating endpoint datapath ipcache ok waiting to regenerate triggering endpoint regeneration due to datapath ipcache ok ready successfully regenerated endpoint program reason one or more identities created or deleted

| 0

|

350,564

| 10,492,236,720

|

IssuesEvent

|

2019-09-25 12:54:40

|

conan-community/community

|

https://api.github.com/repos/conan-community/community

|

closed

|

[boost] building boost with python builds boost_numpy

|

complex: high priority: medium stage: queue type: bug

|

### Description of Problem, Request, or Question

building boost with python builds boost_numpy depending on if numpy happens to be installed on the building machine.

boost/1.69.0@conan/stable

|

1.0

|

[boost] building boost with python builds boost_numpy - ### Description of Problem, Request, or Question

building boost with python builds boost_numpy depending on if numpy happens to be installed on the building machine.

boost/1.69.0@conan/stable

|

non_process

|

building boost with python builds boost numpy description of problem request or question building boost with python builds boost numpy depending on if numpy happens to be installed on the building machine boost conan stable

| 0

|

220,624

| 24,565,341,057

|

IssuesEvent

|

2022-10-13 02:06:22

|

phunware/react-select

|

https://api.github.com/repos/phunware/react-select

|

opened

|

CVE-2022-37617 (Medium) detected in browserify-shim-3.8.14.tgz

|

security vulnerability

|

## CVE-2022-37617 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>browserify-shim-3.8.14.tgz</b></p></summary>

<p>Makes CommonJS-incompatible modules browserifyable.</p>

<p>Library home page: <a href="https://registry.npmjs.org/browserify-shim/-/browserify-shim-3.8.14.tgz">https://registry.npmjs.org/browserify-shim/-/browserify-shim-3.8.14.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/browserify-shim/package.json</p>

<p>

Dependency Hierarchy:

- react-component-gulp-tasks-0.7.7.tgz (Root Library)

- :x: **browserify-shim-3.8.14.tgz** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Prototype pollution vulnerability in function resolveShims in resolve-shims.js in thlorenz browserify-shim 3.8.15 via the k variable in resolve-shims.js.

<p>Publish Date: 2022-10-11

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-37617>CVE-2022-37617</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2022-37617 (Medium) detected in browserify-shim-3.8.14.tgz - ## CVE-2022-37617 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>browserify-shim-3.8.14.tgz</b></p></summary>

<p>Makes CommonJS-incompatible modules browserifyable.</p>

<p>Library home page: <a href="https://registry.npmjs.org/browserify-shim/-/browserify-shim-3.8.14.tgz">https://registry.npmjs.org/browserify-shim/-/browserify-shim-3.8.14.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/browserify-shim/package.json</p>

<p>

Dependency Hierarchy:

- react-component-gulp-tasks-0.7.7.tgz (Root Library)

- :x: **browserify-shim-3.8.14.tgz** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Prototype pollution vulnerability in function resolveShims in resolve-shims.js in thlorenz browserify-shim 3.8.15 via the k variable in resolve-shims.js.

<p>Publish Date: 2022-10-11

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-37617>CVE-2022-37617</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve medium detected in browserify shim tgz cve medium severity vulnerability vulnerable library browserify shim tgz makes commonjs incompatible modules browserifyable library home page a href path to dependency file package json path to vulnerable library node modules browserify shim package json dependency hierarchy react component gulp tasks tgz root library x browserify shim tgz vulnerable library vulnerability details prototype pollution vulnerability in function resolveshims in resolve shims js in thlorenz browserify shim via the k variable in resolve shims js publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required none user interaction required scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href step up your open source security game with mend

| 0

|

16,667

| 21,771,397,044

|

IssuesEvent

|

2022-05-13 09:25:34

|

camunda/feel-scala

|

https://api.github.com/repos/camunda/feel-scala

|

opened

|

Learning material for the FEEL language

|

type: documentation team/process-automation

|

## Which documentation is missing/incorrect?

* Learning material that leads the reader through exploring the FEEL language.

This could be inspired by other great language guides (https://doc.rust-lang.org/book/, http://www.learnyouahaskell.com/, etc)

Currently, the documentation is more reference-based:

* Getting Started: https://camunda.github.io/feel-scala/docs/reference

* What is FEEL: https://camunda.github.io/feel-scala/docs/reference/what-is-feel

Affected versions:

* 1.15

|

1.0

|

Learning material for the FEEL language - ## Which documentation is missing/incorrect?

* Learning material that leads the reader through exploring the FEEL language.

This could be inspired by other great language guides (https://doc.rust-lang.org/book/, http://www.learnyouahaskell.com/, etc)

Currently, the documentation is more reference-based:

* Getting Started: https://camunda.github.io/feel-scala/docs/reference

* What is FEEL: https://camunda.github.io/feel-scala/docs/reference/what-is-feel

Affected versions:

* 1.15

|

process

|

learning material for the feel language which documentation is missing incorrect learning material that leads the reader through exploring the feel language this could be inspired by other great language guides etc currently the documentation is more reference based getting started what is feel affected versions

| 1

|

297,838

| 9,182,295,528

|

IssuesEvent

|

2019-03-05 12:29:13

|

servicemesher/istio-official-translation

|

https://api.github.com/repos/servicemesher/istio-official-translation

|

closed

|

content/about/contribute/writing-a-new-topic/index.md

|

lang/zh pending priority/P0 sync/update version/1.1

|

File path: content/about/contribute/writing-a-new-topic/index.md

[Source](https://github.com/istio/istio.github.io/tree/master/content/about/contribute/writing-a-new-topic/index.md)

[URL](https://istio.io//about/contribute/writing-a-new-topic/index.htm)

```diff

diff --git a/content/about/contribute/writing-a-new-topic/index.md b/content/about/contribute/writing-a-new-topic/index.md

index 03836859..7e92ac78 100644

--- a/content/about/contribute/writing-a-new-topic/index.md

+++ b/content/about/contribute/writing-a-new-topic/index.md

@@ -65,11 +65,6 @@ is the best fit for your content:

</tr>

</table>

-### About blog posts

-

-The Istio blog is intended to contain authoritative posts regarding Istio and technologies or products related to

-Istio. We generally do not publish user or enthusiast posts about using Istio.

-

## Naming a topic

Choose a title for your topic that has the keywords you want search engines to find.

@@ -128,25 +123,21 @@ Within markdown, use the following sequence to add the image:

{{< text html >}}

{{</* image width="75%"

link="./myfile.svg"

- alt="Alternate text to display when the image can't be loaded"

+ alt="Alternate text to display when the image is not available"

title="A tooltip displayed when hovering over the image"

caption="A caption displayed under the image"

*/>}}

{{< /text >}}

-The `link` and `caption` values are required, all other values are optional.

-

-If the `title` value isn't supplied, it'll default to the same as `caption`. If the `alt` value is not supplied, it'll

+The `width`, `link` and `caption` values are always required. If the image is a PNG or JPG file, then the

+`ratio` value is required. If the `title` value isn't

+supplied, it'll default to the same as `caption`. If the `alt` value is not supplied, it'll

default to `title` or if that's not defined, to `caption`.

`width` represents the percentage of space used by the image

-relative to the surrounding text. If the value is not specified, it

-defaults to 100$.

+relative to the surrounding text.

-`ratio` represents the ratio of the image height compared to the image width. This

-value is calculated automatically for any local image content, but must be calculated

-manually when referencing external image content.

-In that case, `ratio` must be manually calculated using (image height / image width) * 100.

+For PNG and JPG images, `ratio` must be manually calculated using (image height / image width) * 100.

## Adding icons & emojis

@@ -442,22 +433,6 @@ will use when the user chooses to download the file. For example:

If you don't specify the `downloadas` attribute, then the download name is taken from the `url`

attribute instead.

-## Embedding boilerplate text

-

-You can embed common boilerplate text into any markdown output using the `boilerplate` sequence:

-

-{{< text markdown >}}

-{{</* boilerplate example */>}}

-{{< /text >}}

-

-which results in:

-

-{{< boilerplate example >}}

-

-You supply the name of a boilerplate file to insert at the current location. Available boilerplates are

-located in the `boilerplates` directory. Boilerplates are just

-normal markdown files.

-

## Using tabs

If you have some content to display in a variety of formats, it is convenient to use a tab set and display each

```

|

1.0

|

content/about/contribute/writing-a-new-topic/index.md - File path: content/about/contribute/writing-a-new-topic/index.md

[Source](https://github.com/istio/istio.github.io/tree/master/content/about/contribute/writing-a-new-topic/index.md)

[URL](https://istio.io//about/contribute/writing-a-new-topic/index.htm)

```diff

diff --git a/content/about/contribute/writing-a-new-topic/index.md b/content/about/contribute/writing-a-new-topic/index.md

index 03836859..7e92ac78 100644

--- a/content/about/contribute/writing-a-new-topic/index.md

+++ b/content/about/contribute/writing-a-new-topic/index.md

@@ -65,11 +65,6 @@ is the best fit for your content:

</tr>

</table>

-### About blog posts

-

-The Istio blog is intended to contain authoritative posts regarding Istio and technologies or products related to

-Istio. We generally do not publish user or enthusiast posts about using Istio.

-

## Naming a topic

Choose a title for your topic that has the keywords you want search engines to find.

@@ -128,25 +123,21 @@ Within markdown, use the following sequence to add the image:

{{< text html >}}

{{</* image width="75%"

link="./myfile.svg"

- alt="Alternate text to display when the image can't be loaded"

+ alt="Alternate text to display when the image is not available"

title="A tooltip displayed when hovering over the image"

caption="A caption displayed under the image"

*/>}}

{{< /text >}}

-The `link` and `caption` values are required, all other values are optional.

-

-If the `title` value isn't supplied, it'll default to the same as `caption`. If the `alt` value is not supplied, it'll

+The `width`, `link` and `caption` values are always required. If the image is a PNG or JPG file, then the

+`ratio` value is required. If the `title` value isn't

+supplied, it'll default to the same as `caption`. If the `alt` value is not supplied, it'll

default to `title` or if that's not defined, to `caption`.

`width` represents the percentage of space used by the image

-relative to the surrounding text. If the value is not specified, it

-defaults to 100$.

+relative to the surrounding text.

-`ratio` represents the ratio of the image height compared to the image width. This

-value is calculated automatically for any local image content, but must be calculated

-manually when referencing external image content.

-In that case, `ratio` must be manually calculated using (image height / image width) * 100.

+For PNG and JPG images, `ratio` must be manually calculated using (image height / image width) * 100.

## Adding icons & emojis

@@ -442,22 +433,6 @@ will use when the user chooses to download the file. For example:

If you don't specify the `downloadas` attribute, then the download name is taken from the `url`

attribute instead.

-## Embedding boilerplate text

-

-You can embed common boilerplate text into any markdown output using the `boilerplate` sequence:

-

-{{< text markdown >}}

-{{</* boilerplate example */>}}

-{{< /text >}}

-

-which results in:

-

-{{< boilerplate example >}}

-

-You supply the name of a boilerplate file to insert at the current location. Available boilerplates are

-located in the `boilerplates` directory. Boilerplates are just

-normal markdown files.

-

## Using tabs

If you have some content to display in a variety of formats, it is convenient to use a tab set and display each

```
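
The `ratio` rule stated in the diff above, (image height / image width) * 100, can be sketched as a small helper. This is only an illustration: the function name `image_ratio` and the 800x600 example size are assumptions, not part of the Istio documentation or tooling.

```python
def image_ratio(height_px: int, width_px: int) -> float:
    """Return (image height / image width) * 100, the formula quoted in the diff."""
    if width_px <= 0:
        raise ValueError("width_px must be positive")
    return height_px / width_px * 100


# Hypothetical PNG that is 800 pixels wide and 600 pixels high.
print(image_ratio(600, 800))  # 75.0
```

The resulting number is what would be supplied as the `ratio` value for a PNG or JPG image.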

|

non_process

|

content about contribute writing a new topic index md file path content about contribute writing a new topic index md diff diff git a content about contribute writing a new topic index md b content about contribute writing a new topic index md index a content about contribute writing a new topic index md b content about contribute writing a new topic index md is the best fit for your content about blog posts the istio blog is intended to contain authoritative posts regarding istio and technologies or products related to istio we generally do not publish user or enthusiast posts about using istio naming a topic choose a title for your topic that has the keywords you want search engines to find within markdown use the following sequence to add the image image width link myfile svg alt alternate text to display when the image can t be loaded alt alternate text to display when the image is not available title a tooltip displayed when hovering over the image caption a caption displayed under the image the link and caption values are required all other values are optional if the title value isn t supplied it ll default to the same as caption if the alt value is not supplied it ll the width link and caption values are always required if the image is a png or jpg file then the ratio value is required if the title value isn t supplied it ll default to the same as caption if the alt value is not supplied it ll default to title or if that s not defined to caption width represents the percentage of space used by the image relative to the surrounding text if the value is not specified it defaults to relative to the surrounding text ratio represents the ratio of the image height compared to the image width this value is calculated automatically for any local image content but must be calculated manually when referencing external image content in that case ratio must be manually calculated using image height image width for png and jpg images ratio must be manually calculated using 
image height image width adding icons emojis will use when the user chooses to download the file for example if you don t specify the downloadas attribute then the download name is taken from the url attribute instead embedding boilerplate text you can embed common boilerplate text into any markdown output using the boilerplate sequence which results in you supply the name of a boilerplate file to insert at the current location available boilerplates are located in the boilerplates directory boilerplates are just normal markdown files using tabs if you have some content to display in a variety of formats it is convenient to use a tab set and display each

| 0

|

143,301

| 21,995,887,603

|

IssuesEvent

|

2022-05-26 06:16:02

|

stores-cedcommerce/Anthony-Store-Design

|

https://api.github.com/repos/stores-cedcommerce/Anthony-Store-Design

|

opened

|

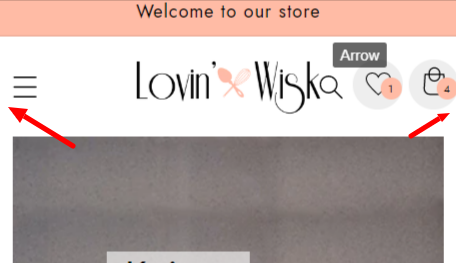

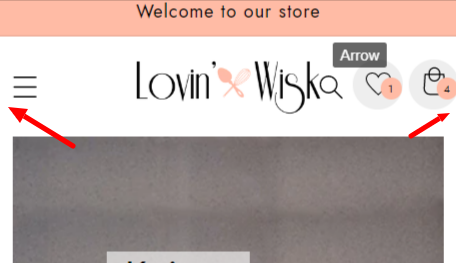

The spacing from the left and right side is not equal.

|

Header section Mobile Design / UI / UX

|

**Actual result:**

The spacing from the left and right side is not equal.

**Expected result:**

The spacing can be equal from the left and right side.

|

1.0

|

The spacing from the left and right side is not equal. - **Actual result:**

The spacing from the left and right side is not equal.

**Expected result:**

The spacing can be equal from the left and right side.

|

non_process

|

the spacing from the left and right side is not equal actual result the spacing from the left and right side is not equal expected result the spacing can be equal from the left and right side

| 0

|

722,814

| 24,874,771,351

|

IssuesEvent

|

2022-10-27 18:05:43

|

CarnegieLearningWeb/UpGrade

|

https://api.github.com/repos/CarnegieLearningWeb/UpGrade

|

closed

|

Batch import/export of experiment files

|

enhancement priority: low

|

It would be helpful to enable batch import/export of experiment json files to facilitate faster setup. Low priority though.

|

1.0

|

Batch import/export of experiment files - It would be helpful to enable batch import/export of experiment json files to facilitate faster setup. Low priority though.

|

non_process

|

batch import export of experiment files it would be helpful to enable batch import export of experiment json files to facilitate faster setup low priority though

| 0

|

433,244

| 30,320,197,013

|

IssuesEvent

|

2023-07-10 18:37:07

|

VerisimilitudeX/DNAnalyzer

|

https://api.github.com/repos/VerisimilitudeX/DNAnalyzer

|

closed

|

Design `Current Version` Documentation

|

documentation help wanted hacktoberfest-accepted no-issue-activity

|

**Is your feature request related to a problem? Please describe.**

We need a documentation file to display current version of the application. This will also be used with the CLI argument for version identification.

**Describe the solution you'd like**

Either some form of version control [like this](https://rebelsguidetopm.com/how-to-do-document-version-control/) or a basic file with the current version.
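
The "basic file with the current version" option could look roughly like the sketch below. It is written in Python purely for illustration; the file name `VERSION`, the program name `app`, and the helper names `read_version` and `build_parser` are all hypothetical and are not taken from the DNAnalyzer code base.

```python
import argparse
from pathlib import Path


def read_version(path: Path) -> str:
    """Return the version string stored on the first line of a plain-text file."""
    lines = path.read_text(encoding="utf-8").splitlines()
    if not lines or not lines[0].strip():
        raise ValueError(f"{path} does not contain a version string")
    return lines[0].strip()


def build_parser(version: str) -> argparse.ArgumentParser:
    """Build a CLI parser whose --version flag prints the given version and exits."""
    parser = argparse.ArgumentParser(prog="app")  # "app" is a placeholder name
    parser.add_argument("--version", action="version", version=f"%(prog)s {version}")
    return parser
```

With this layout the documentation and the CLI flag would both read the same file, so the version only has to be updated in one place.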

|

1.0

|

Design `Current Version` Documentation - **Is your feature request related to a problem? Please describe.**

We need a documentation file to display current version of the application. This will also be used with the CLI argument for version identification.

**Describe the solution you'd like**

Either some form of version control [like this](https://rebelsguidetopm.com/how-to-do-document-version-control/) or a basic file with the current version.

|

non_process

|

design current version documentation is your feature request related to a problem please describe we need a documentation file to display current version of the application this will also be used with the cli argument for version identification describe the solution you d like either some form of version control or a basic file with the current version

| 0

|

273,763

| 20,815,136,847

|

IssuesEvent

|

2022-03-18 09:26:03

|

rtsoft-gmbh/up2date-cpp

|

https://api.github.com/repos/rtsoft-gmbh/up2date-cpp

|

opened

|

Add docs for public api

|

documentation

|

Add usage comments for public api in ddi and dps modules

Maybe use docs-generator: https://github.com/pseudomuto/protoc-gen-doc ?

|

1.0

|

Add docs for public api - Add usage comments for public api in ddi and dps modules

Maybe use docs-generator: https://github.com/pseudomuto/protoc-gen-doc ?

|

non_process

|

add docs for public api add usage comments for public api in ddi and dps modules maybe use docs generator

| 0

|

179,871

| 6,630,774,789

|

IssuesEvent

|

2017-09-25 02:21:46

|

Citadel-Station-13/Citadel-Station-13

|

https://api.github.com/repos/Citadel-Station-13/Citadel-Station-13

|

closed

|

Examining breaks when a ` is present in your flavortext

|

Bug Priority: CRITICAL

|

Basically, if a ` (backtick, NOT an apostrophe) is present in the flavortext, your character will be entirely unable to be examined

|

1.0

|

Examining breaks when a ` is present in your flavortext - Basically, if a ` (backtick, NOT an apostrophe) is present in the flavortext, your character will be entirely unable to be examined

|

non_process

|

examining breaks when a is present in your flavortext basically if a backtick not an apostrophe is present in the flavortext your character will be entirely unable to be examined

| 0

|

17,189

| 22,769,985,518

|

IssuesEvent

|

2022-07-08 09:05:05

|

geneontology/go-ontology

|

https://api.github.com/repos/geneontology/go-ontology

|

closed

|

Obsoletion notice: GO:0036472 suppression by virus of host protein-protein interaction

|

multi-species process

|

Dear all,

The proposal has been made to obsolete GO:0036472 suppression by virus of host protein-protein interaction.

The reason for obsoletion is that this term represents a molecular function (GO:0140311 protein sequestering activity or other type of GO:0098772 molecular function regulator activity).

There is a single annotation to this term (disputed in P2GO). There are no mappings; this term is not present in any subsets.

You can comment on the ticket: https://github.com/geneontology/go-ontology/issues/23645

Thanks, Pascale

|

1.0

|

Obsoletion notice: GO:0036472 suppression by virus of host protein-protein interaction - Dear all,

The proposal has been made to obsolete GO:0036472 suppression by virus of host protein-protein interaction.

The reason for obsoletion is that this term represents a molecular function (GO:0140311 protein sequestering activity or other type of GO:0098772 molecular function regulator activity).

There is a single annotation to this term (disputed in P2GO). There are no mappings; this term is not present in any subsets.

You can comment on the ticket: https://github.com/geneontology/go-ontology/issues/23645

Thanks, Pascale

|

process

|

obsoletion notice go suppression by virus of host protein protein interaction dear all the proposal has been made to obsolete go suppression by virus of host protein protein interaction the reason for obsoletion is that this term represents a molecular function go protein sequestering activity or other type of go molecular function regulator activity there is a single annotation to this term disputed in there are no mappings this term is not present in any subsets you can comment on the ticket thanks pascale

| 1

|

602,748

| 18,502,810,594

|

IssuesEvent

|

2021-10-19 15:17:57

|

tinkerbell/hook

|

https://api.github.com/repos/tinkerbell/hook

|

closed

|

Push-based publish job is failing

|

kind/bug priority/important-soon

|

## Expected Behaviour

Current publish job is referencing a non-existing s3 bucket and does not have credentials, example failure: https://github.com/tinkerbell/hook/actions/runs/559080308

|

1.0

|

Push-based publish job is failing - ## Expected Behaviour

Current publish job is referencing a non-existing s3 bucket and does not have credentials, example failure: https://github.com/tinkerbell/hook/actions/runs/559080308

|

non_process

|

push based publish job is failing expected behaviour current publish job is referencing a non existing bucket and does not have credentials example failure

| 0

|

17,735

| 23,651,255,527

|

IssuesEvent

|

2022-08-26 06:53:53

|

openxla/stablehlo

|

https://api.github.com/repos/openxla/stablehlo

|

closed

|

Prefix includes with "stablehlo"

|

Process

|

At the moment, our includes are not prefixed with the project name, e.g. `#include dialect/ChloOps.h`. Now that StableHLO is starting to get used, we should change that to `#include stablehlo/dialect/ChloOps.h`.
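
A rename like this could be scripted. The sketch below is one possible approach in Python, under the assumption that the affected includes are written with double quotes and start with `dialect/`; the helper name `prefix_includes` and the regular expression are illustrative only and do not come from the StableHLO repository.

```python
import re

# Assumption: unprefixed includes look like  #include "dialect/Something.h"
INCLUDE_RE = re.compile(r'^(\s*#\s*include\s*")(dialect/[^"]+")', re.MULTILINE)


def prefix_includes(source: str, prefix: str = "stablehlo") -> str:
    """Insert `prefix/` in front of every quoted include that starts with dialect/."""
    return INCLUDE_RE.sub(lambda m: f"{m.group(1)}{prefix}/{m.group(2)}", source)


before = '#include "dialect/ChloOps.h"\n'
print(prefix_includes(before), end="")
```

Includes that already start with `stablehlo/` do not match the pattern, so running the helper twice leaves the file unchanged.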

|

1.0

|

Prefix includes with "stablehlo" - At the moment, our includes are not prefixed with the project name, e.g. `#include dialect/ChloOps.h`. Now that StableHLO is starting to get used, we should change that to `#include stablehlo/dialect/ChloOps.h`.

|

process

|

prefix includes with stablehlo at the moment our includes are not prefixed with the project name e g include dialect chloops h now that stablehlo is starting to get used we should change that to include stablehlo dialect chloops h

| 1

|

551,350

| 16,166,641,440

|

IssuesEvent

|

2021-05-01 16:29:06

|

sopra-fs21-group-13/Server

|

https://api.github.com/repos/sopra-fs21-group-13/Server

|

closed

|

Functionality to add members to a learnSet

|

high priority task

|

Duration: 6h

Add new dependencies between user and set

Create easy access to memberList and functionality to add members and remove them

#6

|

1.0

|

Functionality to add members to a learnSet - Duration: 6h

Add new dependencies between user and set

Create easy access to memberList and functionality to add members and remove them

#6

|

non_process

|

functionality to add members to a learnset duration add new dependencies between user and set create easy access to memberlist and functionality to add members and remove them

| 0

|

22,135

| 30,679,908,236

|

IssuesEvent

|

2023-07-26 08:28:32

|

MicrosoftDocs/azure-docs

|

https://api.github.com/repos/MicrosoftDocs/azure-docs

|

closed

|

Create Automation PowerShell runbook using managed identity

|

automation/svc triaged cxp product-question process-automation/subsvc Pri2

|

[Enter feedback here]

While creating an Automation PowerShell runbook using managed identities, this particular solution doesn't enable the system-assigned identity. As a result, there is no ObjectId associated with it, and the role assignment fails.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 8a8470c7-57d1-e2ec-cc70-a43c8dfc42d6

* Version Independent ID: 2da6432e-e642-10ae-199c-9ebb1e19a5d8

* Content: [Create PowerShell runbook using managed identity in Azure Automation](https://docs.microsoft.com/en-us/azure/automation/learn/powershell-runbook-managed-identity)

* Content Source: [articles/automation/learn/powershell-runbook-managed-identity.md](https://github.com/MicrosoftDocs/azure-docs/blob/master/articles/automation/learn/powershell-runbook-managed-identity.md)

* Service: **automation**

* Sub-service: **process-automation**

* GitHub Login: @MGoedtel

* Microsoft Alias: **magoedte**

|

1.0

|

Create Automation PowerShell runbook using managed identity -

[Enter feedback here]

While creating an Automation PowerShell runbook using managed identities, this particular solution doesn't enable the system-assigned identity. As a result, there is no ObjectId associated with it, and the role assignment fails.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 8a8470c7-57d1-e2ec-cc70-a43c8dfc42d6

* Version Independent ID: 2da6432e-e642-10ae-199c-9ebb1e19a5d8

* Content: [Create PowerShell runbook using managed identity in Azure Automation](https://docs.microsoft.com/en-us/azure/automation/learn/powershell-runbook-managed-identity)

* Content Source: [articles/automation/learn/powershell-runbook-managed-identity.md](https://github.com/MicrosoftDocs/azure-docs/blob/master/articles/automation/learn/powershell-runbook-managed-identity.md)

* Service: **automation**

* Sub-service: **process-automation**

* GitHub Login: @MGoedtel

* Microsoft Alias: **magoedte**

|

process

|

create automation powershell runbook using managed identity while creating automation powershell runbook using managed identities this particular solution doesn t enable the system assigned identity as a result of which there is no objectid associated and hence role assignment fails document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source service automation sub service process automation github login mgoedtel microsoft alias magoedte

| 1

|

17,787

| 23,714,837,929

|

IssuesEvent

|

2022-08-30 10:52:26

|

wp-media/wp-rocket

|

https://api.github.com/repos/wp-media/wp-rocket

|

closed

|

Use Action Scheduler for background processing

|

needs: testing module: preload module: database feature request tool: background process priority: medium status: blocked needs: r&d

|

Right now the primary issue with the Delicious Brains batch processing is that it stores everything in wp_options and tries to use admin-ajax to keep the queue going. The preloader is also badly designed, in my opinion, in that all URLs are stored as a single batch rather than individually.

The problem with that is that there is no way to detect the actual progress of the queue besides the simple options-based counter.

The latest Action Scheduler (https://actionscheduler.org) stores to a dedicated table and has integrated the Delicious Brains async class, but it does not rely on it 100%, and you can still process everything individually.
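
The difference between one opaque batch and individually tracked items can be sketched as follows. This is a generic Python illustration using an in-memory SQLite table, not Action Scheduler's or WP Rocket's actual API; the table name `actions` and all function names are hypothetical.

```python
import sqlite3


def create_queue(conn: sqlite3.Connection) -> None:
    """Create a dedicated table with one row per URL instead of one serialized batch."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS actions ("
        "id INTEGER PRIMARY KEY, url TEXT NOT NULL, "
        "status TEXT NOT NULL DEFAULT 'pending')"
    )


def enqueue(conn: sqlite3.Connection, urls: list[str]) -> None:
    """Store every URL as its own pending action."""
    conn.executemany("INSERT INTO actions (url) VALUES (?)", [(u,) for u in urls])


def process_next(conn: sqlite3.Connection, handler) -> bool:
    """Run the handler on the oldest pending action; return False when none are left."""
    row = conn.execute(
        "SELECT id, url FROM actions WHERE status = 'pending' ORDER BY id LIMIT 1"
    ).fetchone()
    if row is None:
        return False
    action_id, url = row
    handler(url)
    conn.execute("UPDATE actions SET status = 'complete' WHERE id = ?", (action_id,))
    return True


def progress(conn: sqlite3.Connection) -> tuple[int, int]:
    """Return (completed, total), which a single stored batch cannot report reliably."""
    done = conn.execute("SELECT COUNT(*) FROM actions WHERE status = 'complete'").fetchone()[0]
    total = conn.execute("SELECT COUNT(*) FROM actions").fetchone()[0]
    return done, total
```

Because each URL has its own row and status, a CLI or cron runner can call `process_next` in a loop and read `progress` at any time, without relying on AJAX callbacks to keep the queue alive.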

This overall would provide better scalability and performance in both shared hosting and more advanced setups/use cases.

I have had to write a plugin myself not too long ago that hooks into the WP HTTP API to abort the ajax callbacks from the preloader and force everything on the CLI/cron, and I would like to see that not be needed truthfully.

Thanks :)

|

1.0

|

Use Action Scheduler for background processing - Right now the primary issue with the Delicious Brains batch processing is that it stores everything in wp_options and tries to use admin-ajax to keep the queue going. The preloader is also badly designed, in my opinion, in that all URLs are stored as a single batch rather than individually.

The problem with that is that there is no way to detect the actual progress of the queue besides the simple options-based counter.

The latest Action Scheduler (https://actionscheduler.org) stores to a dedicated table and has integrated the Delicious Brains async class, but it does not rely on it 100%, and you can still process everything individually.

This overall would provide better scalability and performance in both shared hosting and more advanced setups/use cases.

I have had to write a plugin myself not too long ago that hooks into the WP HTTP API to abort the ajax callbacks from the preloader and force everything on the CLI/cron, and I would like to see that not be needed truthfully.

Thanks :)

|

process

|

use action scheduler for background processing right now the primary issue with the delicious brains batch processing is it stores everything in wp options and tries to use admin ajax to keep the queue going the preloader is also badly designed in my opinion such that all urls are stored as a single batch vs individual the problem with that is no way to detect for the actual progress of the queue beside the simple options based counter the latest action scheduler stores to a dedicated table and has integrated delicious brains async class but it does not rely on it and you can still process everything individually this overall would provide better scalability and performance in both shared hosting and more advanced setups use cases i have had to write a plugin myself not too long ago that hooks into the wp http api to abort the ajax callbacks from the preloader and force everything on the cli cron and i would like to see that not be needed truthfully thanks

| 1

|

620,079

| 19,548,168,647

|

IssuesEvent

|

2022-01-02 08:48:06

|

WA-WF-Bot/WA-WF-Bot-Public

|

https://api.github.com/repos/WA-WF-Bot/WA-WF-Bot-Public

|

opened

|

Change bug report option

|

priority-1

|

The command !bug should create the following message:

You can report bugs to the following mail:connect@toinbox.org

.Thank you for helping us make ToInbox better!

|

1.0

|

Change bug report option - The command !bug should create the following message:

You can report bugs to the following mail:connect@toinbox.org

.Thank you for helping us make ToInbox better!

|

non_process

|

change bug report option the command bug should create the following message you can report bugs to the following mail connect toinbox org thank you for helping us make toinbox better

| 0

|

23,528

| 3,834,753,711

|

IssuesEvent

|

2016-04-01 11:21:31

|

jOOQ/jOOQ

|

https://api.github.com/repos/jOOQ/jOOQ

|

closed

|

Meta Table.getKeys() returns an empty list containing "null", if a table has no primary key

|

C: Functionality P: Medium R: Fixed T: Defect

|

Related issue: #5179

|

1.0

|

Meta Table.getKeys() returns an empty list containing "null", if a table has no primary key - Related issue: #5179

|

non_process

|

meta table getkeys returns an empty list containing null if a table has no primary key related issue

| 0

|

22,933

| 7,247,259,294

|

IssuesEvent

|

2018-02-15 01:36:26

|

jaagr/polybar

|

https://api.github.com/repos/jaagr/polybar

|

closed

|

xpp/proto build error on openSUSE tumbleweed

|

build

|

Hello

When I try to build polybar, I get this error. I have tried playing around with different dependencies and versions of polybar, but it just will not go away.

Error:

```

[ 1%] Generating ../../../lib/xpp/include/xpp/proto/x.hpp

Traceback (most recent call last):

File "/home/james/mysuseconfig/polybar/lib/xpp/generators/cpp_client.py", line 3163, in <module>

from xcbgen.state import Module

File "/usr/lib/python3.6/site-packages/xcbgen/state.py", line 7, in <module>

from xcbgen import matcher

File "/usr/lib/python3.6/site-packages/xcbgen/matcher.py", line 12, in <module>

from xcbgen.xtypes import *

File "/usr/lib/python3.6/site-packages/xcbgen/xtypes.py", line 1201, in <module>

class EventStruct(Union):

File "/usr/lib/python3.6/site-packages/xcbgen/xtypes.py", line 1219, in EventStruct

out = __main__.output['eventstruct']

KeyError: 'eventstruct'

make[2]: *** [lib/xpp/CMakeFiles/xpp.dir/build.make:70: ../lib/xpp/include/xpp/proto/x.hpp] Error 1

make[2]: *** Deleting file '../lib/xpp/include/xpp/proto/x.hpp'

make[1]: *** [CMakeFiles/Makefile2:402: lib/xpp/CMakeFiles/xpp.dir/all] Error 2

make: *** [Makefile:130: all] Error 2

```

James

|

1.0

|

xpp/proto build error on openSUSE tumbleweed - Hello

When I try to build polybar, I get this error. I have tried playing around with different dependencies and versions of polybar, but it just will not go away.

Error:

```

[ 1%] Generating ../../../lib/xpp/include/xpp/proto/x.hpp

Traceback (most recent call last):

File "/home/james/mysuseconfig/polybar/lib/xpp/generators/cpp_client.py", line 3163, in <module>

from xcbgen.state import Module

File "/usr/lib/python3.6/site-packages/xcbgen/state.py", line 7, in <module>

from xcbgen import matcher

File "/usr/lib/python3.6/site-packages/xcbgen/matcher.py", line 12, in <module>

from xcbgen.xtypes import *

File "/usr/lib/python3.6/site-packages/xcbgen/xtypes.py", line 1201, in <module>

class EventStruct(Union):

File "/usr/lib/python3.6/site-packages/xcbgen/xtypes.py", line 1219, in EventStruct

out = __main__.output['eventstruct']

KeyError: 'eventstruct'

make[2]: *** [lib/xpp/CMakeFiles/xpp.dir/build.make:70: ../lib/xpp/include/xpp/proto/x.hpp] Error 1

make[2]: *** Deleting file '../lib/xpp/include/xpp/proto/x.hpp'

make[1]: *** [CMakeFiles/Makefile2:402: lib/xpp/CMakeFiles/xpp.dir/all] Error 2

make: *** [Makefile:130: all] Error 2

```

James

|

non_process

|

xpp proto build error on opensuse tumbleweed hello when i try to build polybar i get this error i have tried playing around with different dependencies and versions of polybar but it just will not go away error generating lib xpp include xpp proto x hpp traceback most recent call last file home james mysuseconfig polybar lib xpp generators cpp client py line in from xcbgen state import module file usr lib site packages xcbgen state py line in from xcbgen import matcher file usr lib site packages xcbgen matcher py line in from xcbgen xtypes import file usr lib site packages xcbgen xtypes py line in class eventstruct union file usr lib site packages xcbgen xtypes py line in eventstruct out main output keyerror eventstruct make error make deleting file lib xpp include xpp proto x hpp make error make error james

| 0

|

441,983

| 12,735,724,040

|

IssuesEvent

|

2020-06-25 15:45:14

|

graknlabs/grakn

|

https://api.github.com/repos/graknlabs/grakn

|

closed

|

Incorrect Graql behaviour in some scenarios (minor issues)

|

priority: low type: bug

|

## Description

A number of minor issues have been found while crafting BDD scenarios, where the actual behaviour of Graql does not match the expected behaviour.

## Environment

1. OS (where Grakn server runs): Mac OS 10

2. Grakn version: Grakn Core 1.7.2

3. Grakn client: client-java

## Scenarios

### Scenario: define attribute subtype throws if you try to override 'value'

#### expected behaviour

given

```

define

name sub attribute, value string;

```

then `define code-name sub name, value long;` should fail with an error message.

#### actual behaviour

It does not throw an error, and defines the type `code-name`. (fixed)

### Scenario: define rule with an attribute value set in `then` that doesn't match the attribute's type throws on commit

#### expected behaviour

given

```

define

name sub attribute, value string;

nickname sub name;

person has nickname;

```

then `define may-has-nickname-5 sub rule, when { $p has name "May"; }, then { $p has nickname 5; };` should throw an error on commit, saying that the rule infers an attribute value that is of the incorrect type.

#### actual behaviour

It defines the rule successfully. It then lets you insert a person with name "May". If you then try to `match $n isa name; get;` this query throws an error, saying that Long cannot be cast to String. (raise issue, do not fix- too hard)

### Scenario: define rule that infers an abstract relation throws on commit

#### expected behaviour

When you define a rule that infers an abstract relation, it should throw on commit.

#### actual behaviour

It doesn't. (raise issue + fix)

### Scenario: define rule that infers an abstract attribute value throws on commit

#### expected behaviour

When you define a rule that infers the value of an abstract attribute, it should throw on commit.

#### actual behaviour

It doesn't. (raise issue + fix)

### Scenario: define a subrule throws on commit

#### expected behaviour

When you define a rule to `sub` another rule, it should throw on commit (or on define)

#### actual behaviour

It lets you define the rule. The rule works and functions normally, as if it was defined normally with `sub rule`. (fixed in 2.0)

### Scenario: assign new supertype with existing data succeeds if the supertypes play the same roles

#### expected behaviour

Given

```

define

bird sub entity, plays flier;

pigeon sub bird;

flying sub relation, relates flier;

insert $p isa pigeon;

define

animal sub entity, plays flier;

```

then `define pigeon sub animal;` should be allowed because `animal` and `bird` both play the same roles and have no other differences.

#### actual behaviour

The following error is thrown:

`Cannot change the super type {bird} to {animal} because {bird} is connected to role {flier} which {animal} is not connected to.` - SUCCEEDS with no data - this should be put into a test! Raise an issue, but don't fix yet; it's complicated.

### Scenario: assign new supertype with existing data succeeds if the supertypes have the same attributes

Similar to the above scenario, but where the two parent types each have an attribute ownership and nothing else, and the attributes they own are the same attribute. - Same notes as above scenario.

### Scenario: write a variable in a 'define' throws

#### expected behaviour

We should throw an exception if the user writes a variable in a 'define'.

#### actual behaviour

We don't. - Raise issue in graql repo, and fix.

|

1.0

|

Incorrect Graql behaviour in some scenarios (minor issues) - ## Description

A number of minor issues have been found while crafting BDD scenarios, where the actual behaviour of Graql does not match the expected behaviour.

## Environment

1. OS (where Grakn server runs): Mac OS 10

2. Grakn version: Grakn Core 1.7.2

3. Grakn client: client-java

## Scenarios

### Scenario: define attribute subtype throws if you try to override 'value'

#### expected behaviour

given

```

define

name sub attribute, value string;

```

then `define code-name sub name, value long;` should fail with an error message.

#### actual behaviour

It does not throw an error, and defines the type `code-name`. (fixed)

### Scenario: define rule with an attribute value set in `then` that doesn't match the attribute's type throws on commit

#### expected behaviour

given

```

define

name sub attribute, value string;

nickname sub name;

person has nickname;

```

then `define may-has-nickname-5 sub rule, when { $p has name "May"; }, then { $p has nickname 5; };` should throw an error on commit, saying that the rule infers an attribute value that is of the incorrect type.

#### actual behaviour

It defines the rule successfully. It then lets you insert a person with name "May". If you then try to `match $n isa name; get;` this query throws an error, saying that Long cannot be cast to String. (raise issue, do not fix- too hard)

### Scenario: define rule that infers an abstract relation throws on commit

#### expected behaviour

When you define a rule that infers an abstract relation, it should throw on commit.

#### actual behaviour

It doesn't. (raise issue + fix)

### Scenario: define rule that infers an abstract attribute value throws on commit

#### expected behaviour

When you define a rule that infers the value of an abstract attribute, it should throw on commit.

#### actual behaviour

It doesn't. (raise issue + fix)

### Scenario: define a subrule throws on commit

#### expected behaviour

When you define a rule to `sub` another rule, it should throw on commit (or on define)

#### actual behaviour

It lets you define the rule. The rule works and functions normally, as if it was defined normally with `sub rule`. (fixed in 2.0)

### Scenario: assign new supertype with existing data succeeds if the supertypes play the same roles

#### expected behaviour

Given

```

define

bird sub entity, plays flier;

pigeon sub bird;

flying sub relation, relates flier;

insert $p isa pigeon;

define

animal sub entity, plays flier;

```

then `define pigeon sub animal;` should be allowed because `animal` and `bird` both play the same roles and have no other differences.

#### actual behaviour

The following error is thrown:

`Cannot change the super type {bird} to {animal} because {bird} is connected to role {flier} which {animal} is not connected to.` - SUCCEEDS with no data - this should be put into a test! Raise an issue, but don't fix yet; it's complicated.

### Scenario: assign new supertype with existing data succeeds if the supertypes have the same attributes

Similar to the above scenario, but where the two parent types each have an attribute ownership and nothing else, and the attributes they own are the same attribute. - Same notes as above scenario.

### Scenario: write a variable in a 'define' throws

#### expected behaviour

We should throw an exception if the user writes a variable in a 'define'.

#### actual behaviour

We don't. - Raise issue in graql repo, and fix.

|

non_process

|

incorrect graql behaviour in some scenarios minor issues description a number of minor issues have been found while crafting bdd scenarios where the actual behaviour of graql does not match the expected behaviour environment os where grakn server runs mac os grakn version grakn core grakn client client java scenarios scenario define attribute subtype throws if you try to override value expected behaviour given define name sub attribute value string then define code name sub name value long should fail with an error message actual behaviour it does not throw an error and defines the type code name fixed scenario define rule with an attribute value set in then that doesn t match the attribute s type throws on commit expected behaviour given define name sub attribute value string nickname sub name person has nickname then define may has nickname sub rule when p has name may then p has nickname should throw an error on commit saying that the rule infers an attribute value that is of the incorrect type actual behaviour it defines the rule successfully it then lets you insert a person with name may if you then try to match n isa name get this query throws an error saying that long cannot be cast to string raise issue do not fix too hard scenario define rule that infers an abstract relation throws on commit expected behaviour when you define a rule that infers an abstract relation it should throw on commit actual behaviour it doesn t raise issue fix scenario define rule that infers an abstract attribute value throws on commit expected behaviour when you define a rule that infers the value of an abstract attribute it should throw on commit actual behaviour it doesn t raise issue fix scenario define a subrule throws on commit expected behaviour when you define a rule to sub another rule it should throw on commit or on define actual behaviour it lets you define the rule the rule works and functions normally as if it was defined normally with sub rule fixed in scenario assign 
new supertype with existing data succeeds if the supertypes play the same roles expected behaviour given define bird sub entity plays flier pigeon sub bird flying sub relation relates flier insert p isa pigeon define animal sub entity plays flier then define pigeon sub animal should be allowed because animal and bird both play the same roles and have no other differences actual behaviour the following error is thrown cannot change the super type bird to animal because bird is connected to role flier which animal is not connected to succeeds with no data this should be put into a test raise an issue but don t fix yet it s complicated scenario assign new supertype with existing data succeeds if the supertypes have the same attributes similar to the above scenario but where the two parent types each have an attribute ownership and nothing else and the attributes they own are the same attribute same notes as above scenario scenario write a variable in a define throws expected behaviour we should throw an exception if the user writes a variable in a define actual behaviour we don t raise issue in graql repo and fix

| 0

|

306,701

| 23,169,770,066

|

IssuesEvent

|

2022-07-30 14:23:58

|

RippeR37/libbase-example-cmake

|

https://api.github.com/repos/RippeR37/libbase-example-cmake

|

closed

|

GLOG user guide is not visible in online documentation

|

bug documentation external on hold

|

When opening the documentation on GitHub Pages and navigating to the `Logging` page, the `GLOG's user guide` section is empty.

|

1.0

|

GLOG user guide is not visible in online documentation - When opening the documentation on GitHub Pages and navigating to the `Logging` page, the `GLOG's user guide` section is empty.

|

non_process

|

glog user guide is not visible in online documentation when opening the documentation on github pages then navigating to logging page the glog s user guide section is empty

| 0

|

50,149

| 13,187,348,673

|

IssuesEvent

|

2020-08-13 03:07:42

|

icecube-trac/tix3

|

https://api.github.com/repos/icecube-trac/tix3

|

closed

|

PFTriggerFilterMonitoring may flood the logging with "No filter mask present in frame at position ..." (Trac #205)

|

Migrated from Trac defect jeb + pnf

|

The filter + rate monitoring currently (plan-b) runs within the filter clients, so all events should include a filter mask.

In the future (plan-a) it will move into the server, where events may lack a filter mask if the filter mode isn't physics filtering.

PFTriggerFilterMonitoring will log a warning for each such event.

Possible solutions:

a) PFTriggerFilterMonitoring runs only on physics-filtered data, either by turning it into a conditional module that uses a PFFilterModeFilter (which still needs to be implemented) or by checking the run summary service for the current filter mode.

b) PFTriggerFilterMonitoring processes the filter mask only for physics-filtered data, by checking the run summary service for the current filter mode.
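
A rough sketch of option (b), only to illustrate the control flow. Everything named here is an assumption and not the actual JEB/PnF API: `FilterMode`, `monitor_frame`, the `"FilterMask"` key, and the plain dict standing in for a frame are all hypothetical.

```python
import logging
from enum import Enum

log = logging.getLogger("PFTriggerFilterMonitoring")


class FilterMode(Enum):
    """Hypothetical stand-in for the mode reported by the run summary service."""
    PHYSICS_FILTERING = "physics_filtering"
    OTHER = "other"


def monitor_frame(frame, filter_mode, pass_counts, position=0):
    """Update per-filter pass counts; return True if a filter mask was processed."""
    if filter_mode is not FilterMode.PHYSICS_FILTERING:
        # No filter mask is expected in this mode, so skip it without logging.
        return False
    mask = frame.get("FilterMask")  # hypothetical frame key
    if mask is None:
        # A missing mask is only unexpected while physics filtering is active.
        log.warning("No filter mask present in frame at position %d", position)
        return False
    for name, passed in mask.items():
        if passed:
            pass_counts[name] = pass_counts.get(name, 0) + 1
    return True
```

With this shape, the warning would be emitted only for frames that should have carried a mask, so non-physics runs would not flood the log.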

<details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/205, reported by tschmidt and owned by rfranke_</summary>

<p>

```json

{

"status": "closed",

"changetime": "2014-11-23T03:37:57",

"description": "the filter + rate monitoring currently (plan-b) runs within the filter clients - all events should include a filter mask.[[BR]]\nit will move into the server in future (plan-a), so that events may not include a filter mask, if the filter mode isn't physics filtering.[[BR]]\nPFTriggerFilterMonitoring will create a warn logging for such events...\n\nsolutions:[[BR]]\na) PFTriggerFilterMonitoring only runs on physics-filtered data changing it into a conditional module using a PFFilterModeFilter (that needs to be implemented) or checking the run summary service for the current filter mode[[BR]]\nb) PFTriggerFilterMonitoring only processes the filter mask for physics-filtered data checking the run summary service for the current filter mode\n",

"reporter": "tschmidt",

"cc": "",

"resolution": "fixed",

"_ts": "1416713877066511",

"component": "jeb + pnf",

"summary": "PFTriggerFilterMonitoring may flood the logging with \"No filter mask present in frame at position ...\"",

"priority": "normal",

"keywords": "trigger + filter rate monitoring, JEB, JEB server",

"time": "2010-04-14T18:20:20",

"milestone": "",

"owner": "rfranke",

"type": "defect"

}

```

</p>

</details>

|

1.0

|

PFTriggerFilterMonitoring may flood the logging with "No filter mask present in frame at position ..." (Trac #205) - The filter + rate monitoring currently (plan-b) runs within the filter clients, so all events should include a filter mask.

In the future (plan-a) it will move into the server, where events may lack a filter mask if the filter mode isn't physics filtering.

PFTriggerFilterMonitoring will log a warning for each such event.

Possible solutions:

a) PFTriggerFilterMonitoring runs only on physics-filtered data, either by turning it into a conditional module that uses a PFFilterModeFilter (which still needs to be implemented) or by checking the run summary service for the current filter mode.

b) PFTriggerFilterMonitoring processes the filter mask only for physics-filtered data, by checking the run summary service for the current filter mode.

<details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/205, reported by tschmidt and owned by rfranke_</summary>

<p>

```json

{

"status": "closed",

"changetime": "2014-11-23T03:37:57",

"description": "the filter + rate monitoring currently (plan-b) runs within the filter clients - all events should include a filter mask.[[BR]]\nit will move into the server in future (plan-a), so that events may not include a filter mask, if the filter mode isn't physics filtering.[[BR]]\nPFTriggerFilterMonitoring will create a warn logging for such events...\n\nsolutions:[[BR]]\na) PFTriggerFilterMonitoring only runs on physics-filtered data changing it into a conditional module using a PFFilterModeFilter (that needs to be implemented) or checking the run summary service for the current filter mode[[BR]]\nb) PFTriggerFilterMonitoring only processes the filter mask for physics-filtered data checking the run summary service for the current filter mode\n",

"reporter": "tschmidt",

"cc": "",

"resolution": "fixed",

"_ts": "1416713877066511",

"component": "jeb + pnf",

"summary": "PFTriggerFilterMonitoring may flood the logging with \"No filter mask present in frame at position ...\"",

"priority": "normal",

"keywords": "trigger + filter rate monitoring, JEB, JEB server",

"time": "2010-04-14T18:20:20",

"milestone": "",

"owner": "rfranke",

"type": "defect"

}

```

</p>

</details>

|

non_process

|