| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

12,808

| 15,184,789,425

|

IssuesEvent

|

2021-02-15 10:03:18

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

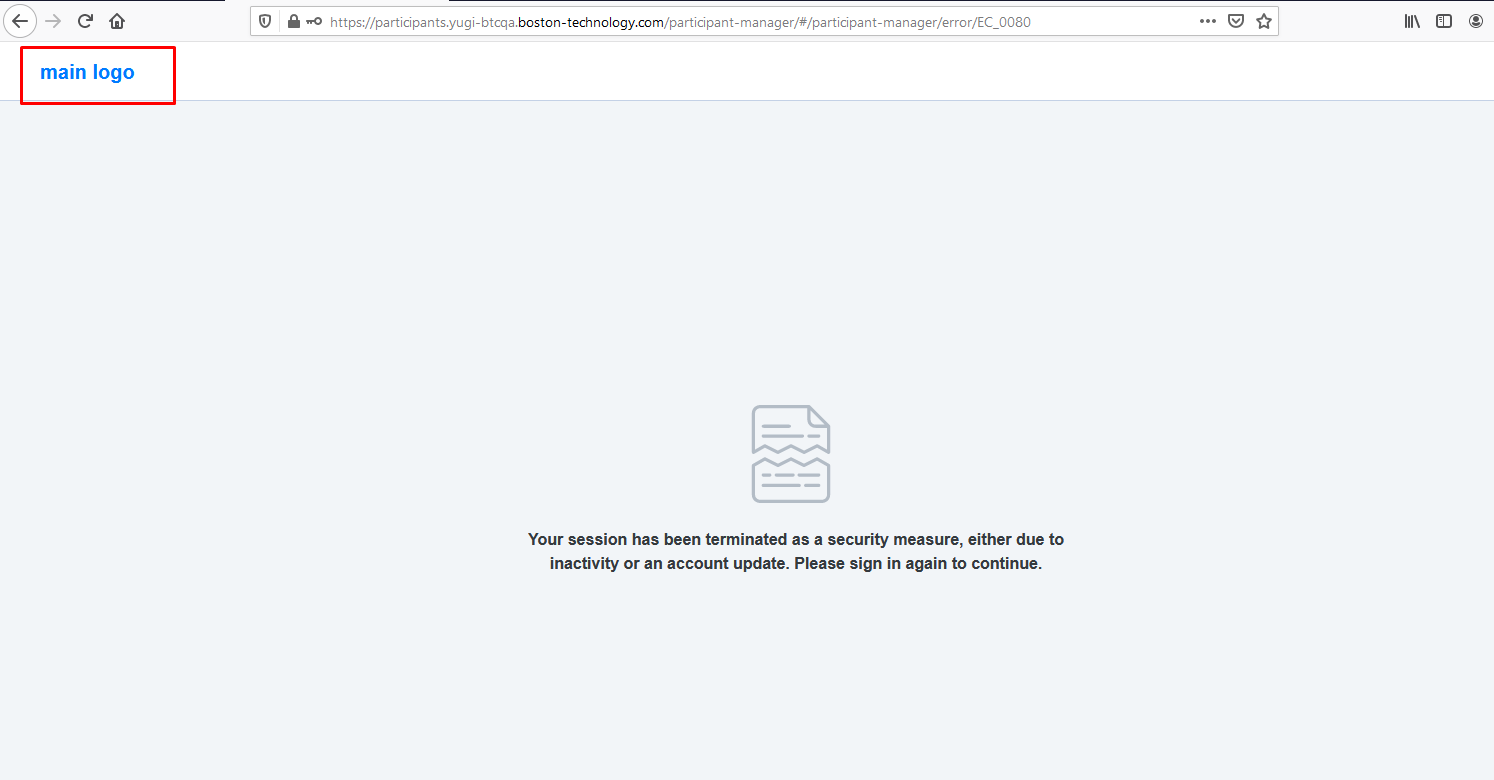

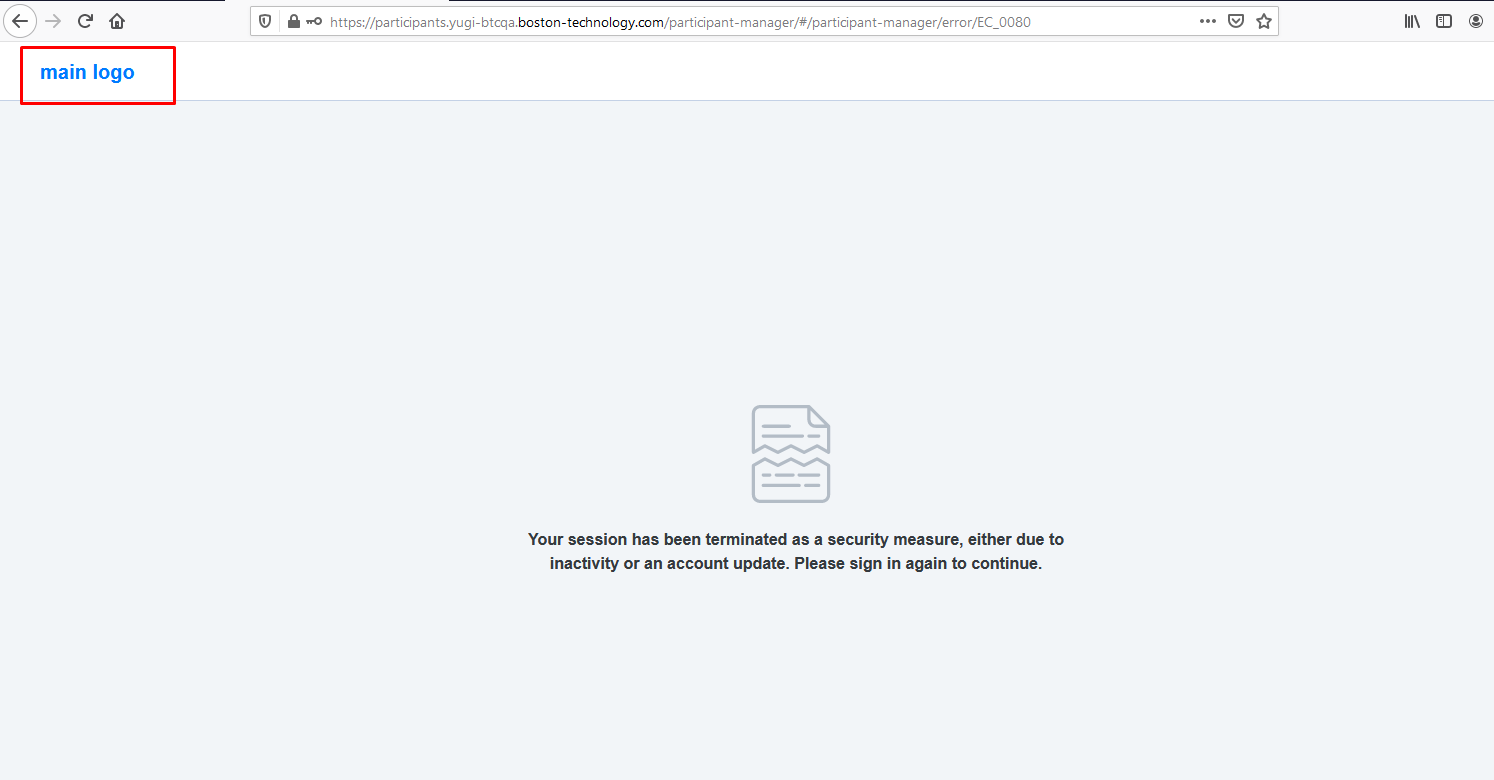

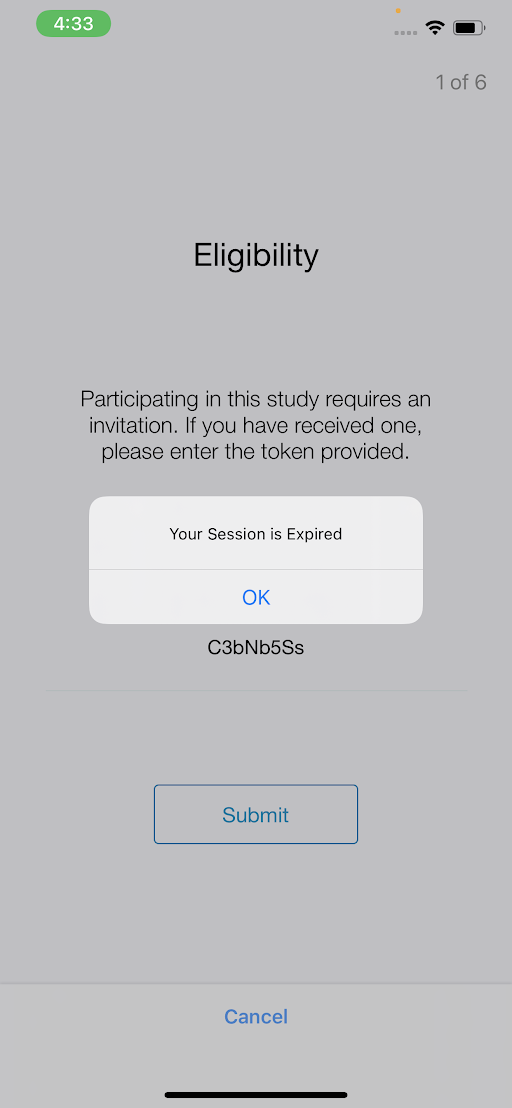

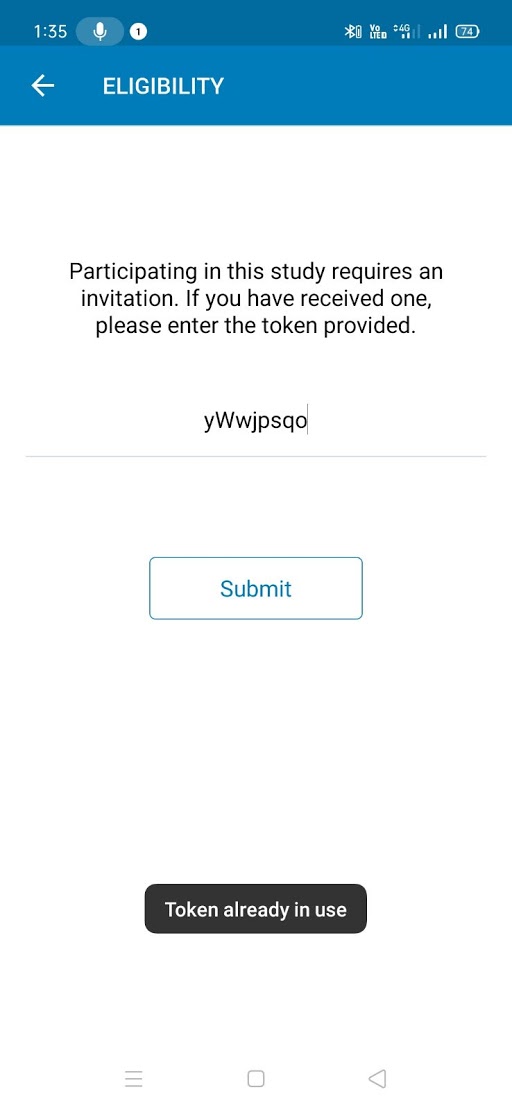

[PM] Need to fix the UI of logo in error page

|

Bug P2 Participant manager Process: Fixed Process: Tested QA Process: Tested dev

|

A/R:- Currently app is shown some text in place of customer logo

E/R:- Text need to be replaced with customer logo as per design

|

3.0

|

[PM] Need to fix the UI of logo in error page - A/R:- Currently app is shown some text in place of customer logo

E/R:- Text need to be replaced with customer logo as per design

|

process

|

need to fix the ui of logo in error page a r currently app is shown some text in place of customer logo e r text need to be replaced with customer logo as per design

| 1

|

7,633

| 10,731,782,968

|

IssuesEvent

|

2019-10-28 20:20:04

|

GroceriStar/food-datasets-csv-parser

|

https://api.github.com/repos/GroceriStar/food-datasets-csv-parser

|

closed

|

refactor parsers to use `mainWrapper`

|

in-process

|

**Is your feature request related to a problem? Please describe.**

with @sibasish14 latest changes in `mainWrapper` I think we should refactor all parsers to use it to reduce code duplication

**Describe the solution you'd like**

refactor all parsers to use `mainWrapper` fn in projects2.0

|

1.0

|

refactor parsers to use `mainWrapper` - **Is your feature request related to a problem? Please describe.**

with @sibasish14 latest changes in `mainWrapper` I think we should refactor all parsers to use it to reduce code duplication

**Describe the solution you'd like**

refactor all parsers to use `mainWrapper` fn in projects2.0

|

process

|

refactor parsers to use mainwrapper is your feature request related to a problem please describe with latest changes in mainwrapper i think we should refactor all parsers to use it to reduce code duplication describe the solution you d like refactor all parsers to use mainwrapper fn in

| 1

|

142,065

| 13,012,790,931

|

IssuesEvent

|

2020-07-25 07:51:51

|

sebrock/BananoNault

|

https://api.github.com/repos/sebrock/BananoNault

|

closed

|

Message to team members and RoE

|

documentation

|

## Thank you for joining the team for Project BananoNault (working title)

This **_sebrock/BananoNault_** is the Development/Test repo.

Production code will be in BananoCoin/Nault.

In this message I want to lay down some rules for the teamwork in this Project.

Please feel free to comment and discuss below. Eventually, we should all be happy with the way of working and guidelines around it, so it is critical that we have a common understanding and level of comfort.

## Rule #1: Have fun, collaborate, live and learn

Does not need much explanation other than:

- it´s okay to not know something

- nobody is perfect or knows all, we are all going to learn from this

- mistakes are allowed - talk openly about them for maximum team learning effect.

- when in doubt, ask someone to avoid mistakes, - there are no stupid questions

- respect each other

- have fun

- sleep well

- drink water

- don´t sweat it

## Project/activity Planning

Project planning is done in [BananoCoin/Nault](https://github.com/BananoCoin/Nault/projects) with the "**Projects**" function and [_Issues_](https://github.com/sebrock/BananoNault/issues) representing activities.

There are four Projects which represent the 4 main workstreams:

- Project Environment

- Functionality

- Visual Design

- i18n

Each activity is/has to be assigned to one or more Projects in order for it to show up in the Projects view.

There, each one is represented by a _Card_

## Projects in action

At the Project level you will see a [Kanban board](https://en.wikipedia.org/wiki/Kanban_board) with the typical columns representing the activity/issue resolution workflow.

Example: Visual Design

If you are beginning to work on one of the activities in the "To do" column, drag the card into the "In Progress" column and assign it to yourself, if it hasn´t already been assigned to you. You can also add another assignee if you are going to work on it together.

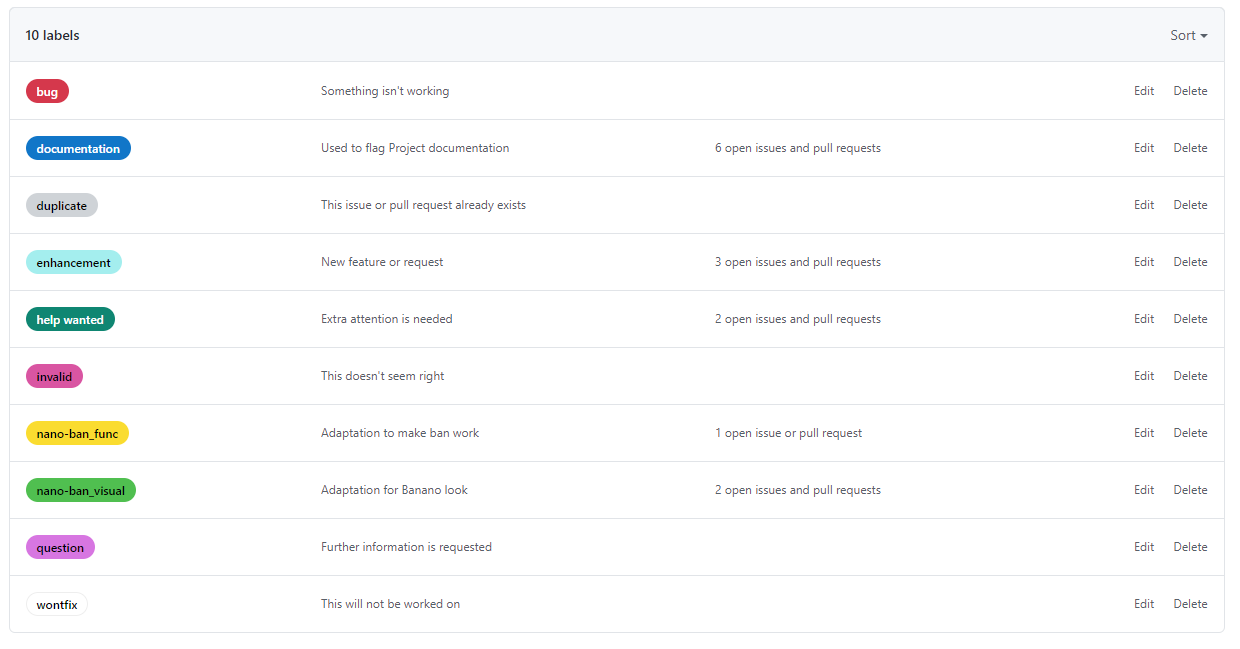

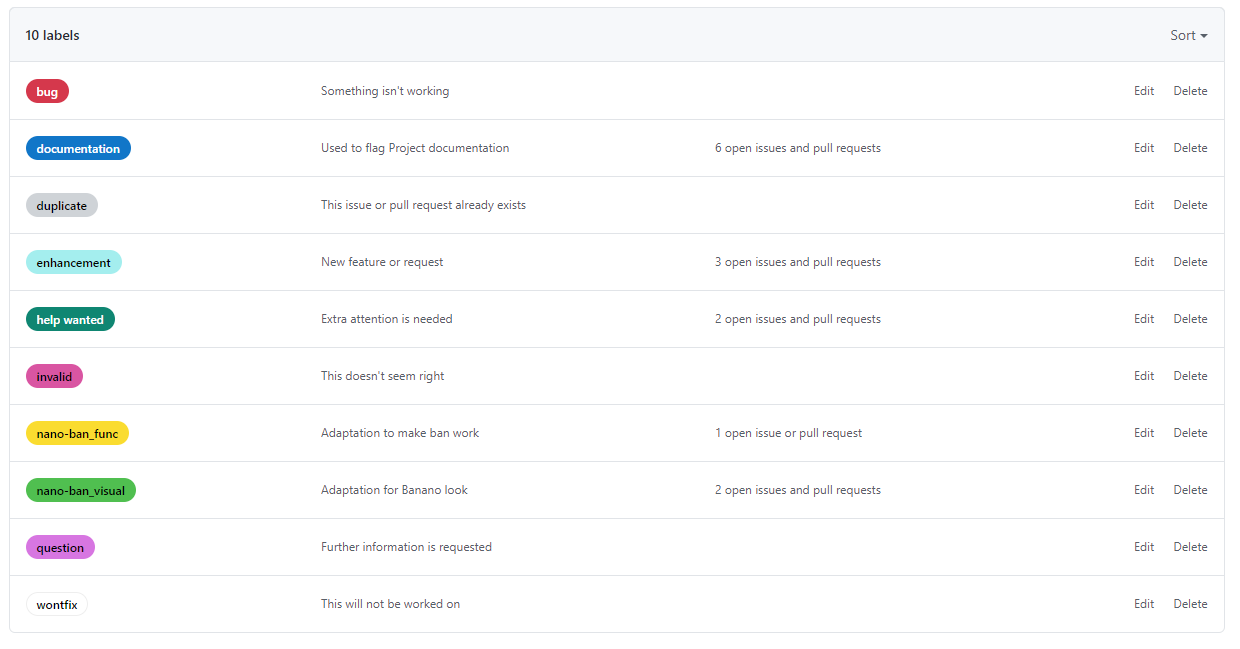

## Labels

There are a couple of standard/custom [_Labels_ ](https://github.com/BananoCoin/Nault/issues/labels )available, which should be used to categorize Issues.

Note that this enables us to use Issues not only to handle activity, but e.g. also documentation.

That means that Information worth while keeping as documentation or reference for the project or future developments can and should be put into an Issue and flagged with the _documentation_ label.

An example is the [Project Definition](https://github.com/BananoCoin/Nault/issues/21) or the [list of languages ](https://github.com/BananoCoin/Nault/issues/29 )for the i18n effort.

## Local development and testing

Each of you should be working in a local clone of the repo, using [Github Desktop](https://desktop.github.com/).

At a minimum, you will need the following installations (Also see [here](https://github.com/BananoCoin/Nault/issues/14))

- Node Package Manager: [Install NPM](https://www.npmjs.com/get-npm)

- Typescript `npm install -g typescript`

- Angular CLI: `npm install -g @angular/cli`

Each of you should be testing the things you are changing/creating locally.

You can compile and run the app locally by executing

`ng serve --open`

or

`npm run wallet:dev`

## Commit and PRs

Commits from your local repo to this (Dev/Test) repo should always be made to a separate branch at first.

Then create a Pull Request to merge with sebrock/BananoNault:master and assign a reviewer. That way we can be more confident to merge the changes with [Upstream](https://github.com/BananoCoin/Nault)

|

1.0

|

Message to team members and RoE - ## Thank you for joining the team for Project BananoNault (working title)

This **_sebrock/BananoNault_** is the Development/Test repo.

Production code will be in BananoCoin/Nault.

In this message I want to lay down some rules for the teamwork in this Project.

Please feel free to comment and discuss below. Eventually, we should all be happy with the way of working and guidelines around it, so it is critical that we have a common understanding and level of comfort.

## Rule #1: Have fun, collaborate, live and learn

Does not need much explanation other than:

- it´s okay to not know something

- nobody is perfect or knows all, we are all going to learn from this

- mistakes are allowed - talk openly about them for maximum team learning effect.

- when in doubt, ask someone to avoid mistakes, - there are no stupid questions

- respect each other

- have fun

- sleep well

- drink water

- don´t sweat it

## Project/activity Planning

Project planning is done in [BananoCoin/Nault](https://github.com/BananoCoin/Nault/projects) with the "**Projects**" function and [_Issues_](https://github.com/sebrock/BananoNault/issues) representing activities.

There are four Projects which represent the 4 main workstreams:

- Project Environment

- Functionality

- Visual Design

- i18n

Each activity is/has to be assigned to one or more Projects in order for it to show up in the Projects view.

There, each one is represented by a _Card_

## Projects in action

At the Project level you will see a [Kanban board](https://en.wikipedia.org/wiki/Kanban_board) with the typical columns representing the activity/issue resolution workflow.

Example: Visual Design

If you are beginning to work on one of the activities in the "To do" column, drag the card into the "In Progress" column and assign it to yourself, if it hasn´t already been assigned to you. You can also add another assignee if you are going to work on it together.

## Labels

There are a couple of standard/custom [_Labels_ ](https://github.com/BananoCoin/Nault/issues/labels )available, which should be used to categorize Issues.

Note that this enables us to use Issues not only to handle activity, but e.g. also documentation.

That means that Information worth while keeping as documentation or reference for the project or future developments can and should be put into an Issue and flagged with the _documentation_ label.

An example is the [Project Definition](https://github.com/BananoCoin/Nault/issues/21) or the [list of languages ](https://github.com/BananoCoin/Nault/issues/29 )for the i18n effort.

## Local development and testing

Each of you should be working in a local clone of the repo, using [Github Desktop](https://desktop.github.com/).

At a minimum, you will need the following installations (Also see [here](https://github.com/BananoCoin/Nault/issues/14))

- Node Package Manager: [Install NPM](https://www.npmjs.com/get-npm)

- Typescript `npm install -g typescript`

- Angular CLI: `npm install -g @angular/cli`

Each of you should be testing the things you are changing/creating locally.

You can compile and run the app locally by executing

`ng serve --open`

or

`npm run wallet:dev`

## Commit and PRs

Commits from your local repo to this (Dev/Test) repo should always be made to a separate branch at first.

Then create a Pull Request to merge with sebrock/BananoNault:master and assign a reviewer. That way we can be more confident to merge the changes with [Upstream](https://github.com/BananoCoin/Nault)

|

non_process

|

message to team members and roe thank you for joining the team for project bananonault working title this sebrock bananonault is the development test repo production code will be in bananocoin nault in this message i want to lay down some rules for the teamwork in this project please feel free to comment and discuss below eventually we should all be happy with the way of working and guidelines around it so it is critical that we have a common understanding and level of comfort rule have fun collaborate live and learn does not need much explanation other than it´s okay to not know something nobody is perfect or knows all we are all going to learn from this mistakes are allowed talk openly about them for maximum team learning effect when in doubt ask someone to avoid mistakes there are no stupid questions respect each other have fun sleep well drink water don´t sweat it project activity planning project planning is done in with the projects function and representing activities there are four projects which represent the main workstreams project environment functionality visual design each activity is has to be assigned to one or more projects in order for it to show up in the projects view there each one is represented by a card projects in action at the project level you will see a with the typical columns representing the activity issue resolution workflow example visual design if you are beginning to work on one of the activities in the to do column drag the card into the in progress column and assign it to yourself if it hasn´t already been assigned to you you can also add another assignee if you are going to work on it together labels there are a couple of standard custom available which should be used to categorize issues note that this enables us to use issues not only to handle activity but e g also documentation that means that information worth while keeping as documentation or reference for the project or future developments can and should be put into an 
issue and flagged with the documentation label an example is the or the for the effort local development and testing each of you should be working in a local clone of the repo using at a minimum you will need the following installations also see node package manager typescript npm install g typescript angular cli npm install g angular cli each of you should be testing the things you are changing creating locally you can compile and run the app locally by executing ng serve open or npm run wallet dev commit and prs commits from your local repo to this dev test repo should always be made to a separate branch at first then create a pull request to merge with sebrock bananonault master and assign a reviewer that way we can be more confident to merge the changes with

| 0

|

2,621

| 5,396,080,299

|

IssuesEvent

|

2017-02-27 10:35:48

|

jlm2017/jlm-video-subtitles

|

https://api.github.com/repos/jlm2017/jlm-video-subtitles

|

closed

|

[subtitles] [fr] #RDLS18 - CETA, OTAN, EUROPE, SECOURS CATHOLIQUE À CALAIS

|

Language: French Process: [4] Ready for review (2)

|

# Video title

#RDLS18 - CETA, OTAN, EUROPE, SECOURS CATHOLIQUE À CALAIS

# URL

https://www.youtube.com/watch?v=qhJVmuEtII8

# Youtube subtitles language

French

# Duration

30:34

# Subtitles URL

https://www.youtube.com/timedtext_editor?ui=hd&action_mde_edit_form=1&bl=vmp&ref=player&v=qhJVmuEtII8&tab=captions&lang=fr

|

1.0

|

[subtitles] [fr] #RDLS18 - CETA, OTAN, EUROPE, SECOURS CATHOLIQUE À CALAIS - # Video title

#RDLS18 - CETA, OTAN, EUROPE, SECOURS CATHOLIQUE À CALAIS

# URL

https://www.youtube.com/watch?v=qhJVmuEtII8

# Youtube subtitles language

French

# Duration

30:34

# Subtitles URL

https://www.youtube.com/timedtext_editor?ui=hd&action_mde_edit_form=1&bl=vmp&ref=player&v=qhJVmuEtII8&tab=captions&lang=fr

|

process

|

ceta otan europe secours catholique à calais video title ceta otan europe secours catholique à calais url youtube subtitles language french duration subtitles url

| 1

|

186,028

| 15,044,092,523

|

IssuesEvent

|

2021-02-03 02:10:26

|

facebookresearch/droidlet

|

https://api.github.com/repos/facebookresearch/droidlet

|

closed

|

Updates for autocomplete tool

|

documentation

|

## Type of Issue

The documentation should talk about -

1. datasets folder should be fetched and updated before starting with the app and perhaps point to it

2. The README of template tool should mention auto_complete is in it

3. Can I wipe out the `commands.txt` entirely ? I think this file should be blank / user should create from scratch

4. What is `args` in `python ~/droidlet/tools/data_processing/txt_to_JSON.py [args]` ?

5. I think step #1 and #4 should be run before running the backend and frontend ?

6. Nit to rename : `~/droidlet/tools/data_processing/txt_to_JSON.py` to something

7. We need to pretty print the dictionaries

8. The location for where to run `python ~/droidlet/tools/data_processing/txt_to_JSON.py` should be mentioned

9. Fix the path for `annotations_dir_path` in the script in #8

10. "point" inside "dance" doesn't work for autocomplete

11. Once we are over or under the index, either wipe out the command field or show a prompt saying "you are done annotating" . Right now, it keeps showing the last message and incrementing the count.

12. We should not track the files : `backend/command_dict_pairs.json` and `backend/commands.txt`.

13. when json validation fails, show a pop up.

14. Rename `autocomplete_annotation.txt` to `high_pri_commands.txt` in S3

Select the type of issue:

- [ ] Bug report (to report a bug)

- [ ] Feature request (to request an additional feature)

- [ ] Tracker (I am just using this as a tracker)

- [x] Documentation Ask

## Description

Detailed description of the requested feature/documentation ask/bug report.

## Current Behavior

Description of current behavior or functionality.

## Expected Behavior

Description of the expected behavior or functionality. In case of feature request, please add input and expected output.

## Steps to reproduce

Please add steps to reproduce the bug here along with the stack trace.

## Links to any relevant pastes or documents

Please post links to any relevant documents that might contain any extra information.

## Checklist

Use this checklist if you are using this issue as a tracker:

- [ ] Task 1

- [ ] Task 2

- [ ] Task 3 ...

|

1.0

|

Updates for autocomplete tool - ## Type of Issue

The documentation should talk about -

1. datasets folder should be fetched and updated before starting with the app and perhaps point to it

2. The README of template tool should mention auto_complete is in it

3. Can I wipe out the `commands.txt` entirely ? I think this file should be blank / user should create from scratch

4. What is `args` in `python ~/droidlet/tools/data_processing/txt_to_JSON.py [args]` ?

5. I think step #1 and #4 should be run before running the backend and frontend ?

6. Nit to rename : `~/droidlet/tools/data_processing/txt_to_JSON.py` to something

7. We need to pretty print the dictionaries

8. The location for where to run `python ~/droidlet/tools/data_processing/txt_to_JSON.py` should be mentioned

9. Fix the path for `annotations_dir_path` in the script in #8

10. "point" inside "dance" doesn't work for autocomplete

11. Once we are over or under the index, either wipe out the command field or show a prompt saying "you are done annotating" . Right now, it keeps showing the last message and incrementing the count.

12. We should not track the files : `backend/command_dict_pairs.json` and `backend/commands.txt`.

13. when json validation fails, show a pop up.

14. Rename `autocomplete_annotation.txt` to `high_pri_commands.txt` in S3

Select the type of issue:

- [ ] Bug report (to report a bug)

- [ ] Feature request (to request an additional feature)

- [ ] Tracker (I am just using this as a tracker)

- [x] Documentation Ask

## Description

Detailed description of the requested feature/documentation ask/bug report.

## Current Behavior

Description of current behavior or functionality.

## Expected Behavior

Description of the expected behavior or functionality. In case of feature request, please add input and expected output.

## Steps to reproduce

Please add steps to reproduce the bug here along with the stack trace.

## Links to any relevant pastes or documents

Please post links to any relevant documents that might contain any extra information.

## Checklist

Use this checklist if you are using this issue as a tracker:

- [ ] Task 1

- [ ] Task 2

- [ ] Task 3 ...

|

non_process

|

updates for autocomplete tool type of issue the documentation should talk about datasets folder should be fetched and updated before starting with the app and perhaps point to it the readme of template tool should mention auto complete is in it can i wipe out the commands txt entirely i think this file should be blank user should create from scratch what is args in python droidlet tools data processing txt to json py i think step and should be run before running the backend and frontend nit to rename droidlet tools data processing txt to json py to something we need to pretty print the dictionaries the location for where to run python droidlet tools data processing txt to json py should be mentioned fix the path for annotations dir path in the script in point inside dance doesn t work for autocomplete once we are over or under the index either wipe out the command field or show a prompt saying you are done annotating right now it keeps showing the last message and incrementing the count we should not track the files backend command dict pairs json and backend commands txt when json validation fails show a pop up rename autocomplete annotation txt to high pri commands txt in select the type of issue bug report to report a bug feature request to request an additional feature tracker i am just using this as a tracker documentation ask description detailed description of the requested feature documentation ask bug report current behavior description of current behavior or functionality expected behavior description of the expected behavior or functionality in case of feature request please add input and expected output steps to reproduce please add steps to reproduce the bug here along with the stack trace links to any relevant pastes or documents please post links to any relevant documents that might contain any extra information checklist use this checklist if you are using this issue as a tracker task task task

| 0

|

9,260

| 27,818,389,183

|

IssuesEvent

|

2023-03-18 23:47:43

|

hackforla/website

|

https://api.github.com/repos/hackforla/website

|

closed

|

GitHub Actions: Bot adding and removing "Status: Updated" and "To Update!" label

|

role: back end/devOps Complexity: Large Status: Updated Feature: Board/GitHub Maintenance automation size: 2pt

|

### Overview

There is a bug with the Github bot in which it both adds and removes the "Status: Updated" label. This should be fixed to avoid confusion and to make sure that the proper labels are being applied.

### Action Items

- [x] Please go through the wiki article on [Hack for LA's GitHub Actions](https://github.com/hackforla/website/wiki/Hack-for-LA's-GitHub-Actions)

- [x] Review the [add-label.js](https://github.com/hackforla/website/blob/2fe355b396ed7f785d382505b8c2ecafe09cb486/github-actions/add-update-label-weekly/add-label.js#L226) file, to understand how the bot adds and removes labels from the issue.

- [x] Understand why the Github action bot adds and removes the "Status: Updated" and the "To Update!" label.

- [x] Change the code logic to fix this error

### Checks

- [x] Test in your local environment that it works

### Resources/Instructions

Relevant files:

- https://github.com/hackforla/website/blob/2fe355b396ed7f785d382505b8c2ecafe09cb486/github-actions/add-update-label-weekly/add-label.js

- https://github.com/hackforla/website/blob/gh-pages/.github/workflows/add-update-label-weekly.yml

Relevant Issues:

Status: Updated Bug:

- #2497

- #2561

- #2397

- #3059

To Update! Bug:

- #2462

- #2317

Never done GitHub actions? [Start here!](https://docs.github.com/en/actions)

- [GitHub Complex Workflows doc](https://docs.github.com/en/actions/learn-github-actions/managing-complex-workflows)

- [GitHub Actions Workflow Directory](https://github.com/hackforla/website/tree/gh-pages/.github/workflows)

- [Events that trigger workflows](https://docs.github.com/en/actions/reference/events-that-trigger-workflows)

- [Workflow syntax for GitHub Actions](https://docs.github.com/en/actions/reference/workflow-syntax-for-github-actions)

- [actions/github-script](https://github.com/actions/github-script)

- [GitHub RESTAPI](https://docs.github.com/en/rest)

#### Architecture Notes

The idea behind the refactor is to organize our GitHub Actions so that developers can easily maintain and understand them. Currently, we want our GitHub Actions to be structured like so based on this [proposal](https://docs.google.com/spreadsheets/d/12NcZQoyGYlHlMQtJE2IM8xLYpHN75agb/edit#gid=1231634015):

- Schedules (military time)

- Schedule Friday 0700

- Schedule Thursday 1100

- Schedule Daily 1100

- Linters

- Lint SCSS

- PR Trigger

- Add Linked Issue Labels to Pull Request

- Add Pull Request Instructions

- Issue Trigger

- Add Missing Labels To Issues

- WR - PR Trigger

- WR Add Linked Issue Labels to Pull Request

- WR Add Pull Request Instructions

- WR - Issue Trigger

Actions with the same triggers (excluding linters, which will be their own category) will live in the same github action file. Scheduled actions will live in the same file if they trigger on the same schedule (i.e. all files that trigger everyday at 11am will live in one file, while files that trigger on Friday at 7am will be on a separate file).

That said, this structure is not set in stone. If any part of it feels strange, or you have questions, feel free to bring it up with the team so we can evolve this format!

|

1.0

|

GitHub Actions: Bot adding and removing "Status: Updated" and "To Update!" label - ### Overview

There is a bug with the Github bot in which it both adds and removes the "Status: Updated" label. This should be fixed to avoid confusion and to make sure that the proper labels are being applied.

### Action Items

- [x] Please go through the wiki article on [Hack for LA's GitHub Actions](https://github.com/hackforla/website/wiki/Hack-for-LA's-GitHub-Actions)

- [x] Review the [add-label.js](https://github.com/hackforla/website/blob/2fe355b396ed7f785d382505b8c2ecafe09cb486/github-actions/add-update-label-weekly/add-label.js#L226) file, to understand how the bot adds and removes labels from the issue.

- [x] Understand why the Github action bot adds and removes the "Status: Updated" and the "To Update!" label.

- [x] Change the code logic to fix this error

### Checks

- [x] Test in your local environment that it works

### Resources/Instructions

Relevant files:

- https://github.com/hackforla/website/blob/2fe355b396ed7f785d382505b8c2ecafe09cb486/github-actions/add-update-label-weekly/add-label.js

- https://github.com/hackforla/website/blob/gh-pages/.github/workflows/add-update-label-weekly.yml

Relevant Issues:

Status: Updated Bug:

- #2497

- #2561

- #2397

- #3059

To Update! Bug:

- #2462

- #2317

Never done GitHub actions? [Start here!](https://docs.github.com/en/actions)

- [GitHub Complex Workflows doc](https://docs.github.com/en/actions/learn-github-actions/managing-complex-workflows)

- [GitHub Actions Workflow Directory](https://github.com/hackforla/website/tree/gh-pages/.github/workflows)

- [Events that trigger workflows](https://docs.github.com/en/actions/reference/events-that-trigger-workflows)

- [Workflow syntax for GitHub Actions](https://docs.github.com/en/actions/reference/workflow-syntax-for-github-actions)

- [actions/github-script](https://github.com/actions/github-script)

- [GitHub RESTAPI](https://docs.github.com/en/rest)

#### Architecture Notes

The idea behind the refactor is to organize our GitHub Actions so that developers can easily maintain and understand them. Currently, we want our GitHub Actions to be structured like so based on this [proposal](https://docs.google.com/spreadsheets/d/12NcZQoyGYlHlMQtJE2IM8xLYpHN75agb/edit#gid=1231634015):

- Schedules (military time)

- Schedule Friday 0700

- Schedule Thursday 1100

- Schedule Daily 1100

- Linters

- Lint SCSS

- PR Trigger

- Add Linked Issue Labels to Pull Request

- Add Pull Request Instructions

- Issue Trigger

- Add Missing Labels To Issues

- WR - PR Trigger

- WR Add Linked Issue Labels to Pull Request

- WR Add Pull Request Instructions

- WR - Issue Trigger

Actions with the same triggers (excluding linters, which will be their own category) will live in the same github action file. Scheduled actions will live in the same file if they trigger on the same schedule (i.e. all files that trigger everyday at 11am will live in one file, while files that trigger on Friday at 7am will be on a separate file).

That said, this structure is not set in stone. If any part of it feels strange, or you have questions, feel free to bring it up with the team so we can evolve this format!

|

non_process

|

github actions bot adding and removing status updated and to update label overview there is a bug with the github bot in which it both adds and removes the status updated label this should be fixed to avoid confusion and to make sure that the proper labels are being applied action items please go through the wiki article on review the file to understand how the bot adds and removes labels from the issue understand why the github action bot adds and removes the status updated and the to update label change the code logic to fix this error checks test in your local environment that it works resources instructions relevant files relevant issues status updated bug to update bug never done github actions architecture notes the idea behind the refactor is to organize our github actions so that developers can easily maintain and understand them currently we want our github actions to be structured like so based on this schedules military time schedule friday schedule thursday schedule daily linters lint scss pr trigger add linked issue labels to pull request add pull request instructions issue trigger add missing labels to issues wr pr trigger wr add linked issue labels to pull request wr add pull request instructions wr issue trigger actions with the same triggers excluding linters which will be their own category will live in the same github action file scheduled actions will live in the same file if they trigger on the same schedule i e all files that trigger everyday at will live in one file while files that trigger on friday at will be on a separate file that said this structure is not set in stone if any part of it feels strange or you have questions feel free to bring it up with the team so we can evolve this format

| 0

|

38,210

| 8,697,523,238

|

IssuesEvent

|

2018-12-04 20:31:30

|

ReubenBond/dict4cn

|

https://api.github.com/repos/ReubenBond/dict4cn

|

closed

|

word not find

|

Priority-Medium Type-Defect auto-migrated

|

```

What steps will reproduce the problem?

1.

2.

3.

What is the expected output? What do you see instead?

What version of the product are you using? On what operating system?

Please provide any additional information below.

```

Original issue reported on code.google.com by `ja...@mt.com.tw` on 15 Oct 2012 at 9:21

|

1.0

|

word not find - ```

What steps will reproduce the problem?

1.

2.

3.

What is the expected output? What do you see instead?

What version of the product are you using? On what operating system?

Please provide any additional information below.

```

Original issue reported on code.google.com by `ja...@mt.com.tw` on 15 Oct 2012 at 9:21

|

non_process

|

word not find what steps will reproduce the problem what is the expected output what do you see instead what version of the product are you using on what operating system please provide any additional information below original issue reported on code google com by ja mt com tw on oct at

| 0

|

6,590

| 2,590,025,448

|

IssuesEvent

|

2015-02-18 16:30:17

|

learningequality/ka-lite

|

https://api.github.com/repos/learningequality/ka-lite

|

closed

|

No indication is given to the user that a video is not available to watch from the topic bar

|

0.13.x bug bash bug has PR high priority

|

Branch: develop

Expected Behavior: The sidebar should give some indication if content is not available to view, whether by greying out content in the sidebar or making it unclickable.

Current Behavior: Content in the sidebar looks identical regardless of whether it is available or not.

Steps to reproduce: Navigate to a topic that contains unavailable videos in the sidebar

Screenshot(s):

|

1.0

|

No indication is given to the user that a video is not available to watch from the topic bar - Branch: develop

Expected Behavior: The sidebar should give some indication if content is not available to view, whether by greying out content in the sidebar or making it unclickable.

Current Behavior: Content in the sidebar looks identical regardless of whether it is available or not.

Steps to reproduce: Navigate to a topic that contains unavailable videos in the sidebar

Screenshot(s):

|

non_process

|

no indication is given to the user that a video is not available to watch from the topic bar branch develop expected behavior the sidebar should give some indication if content is not available to view whether by greying out content in the sidebar or making it unclickable current behavior content in the sidebar looks identical regardless of whether it is available or not steps to reproduce navigate to a topic that contains unavailable videos in the sidebar screenshot s

| 0

|

8,545

| 11,717,634,427

|

IssuesEvent

|

2020-03-09 17:36:02

|

shirou/gopsutil

|

https://api.github.com/repos/shirou/gopsutil

|

closed

|

Crash at call process.Cmdline

|

os:windows package:process

|

**Describe the bug**

Calling process.Cmdline crashes the program. The crash is intermittent, but it always occurs eventually.

Details:

499:

.......................................................................................................................................................................could not get CommandLine: could not get win32Proc: empty

......could not get CommandLine: could not get win32Proc: empty

........................................................................................

500:

........................................................................................................................could not get CommandLine: could not get win32Proc: empty

.....................................................could not get CommandLine: could not get win32Proc: empty

.could not get CommandLine: could not get win32Proc: empty

...............................................................................Exception 0xc0000005 0x0 0xc00059a000 0x7ffa10a0dc12

PC=0x7ffa10a0dc12

syscall.Syscall(0x7ffa10a0e4a0, 0x2, 0xc00059a000, 0xc000598320, 0x0, 0x0, 0x0, 0x0)

D:/Go/src/runtime/syscall_windows.go:188 +0xfa

syscall.(*Proc).Call(0xc000004600, 0xc000598330, 0x2, 0x2, 0x449229, 0x0, 0x0, 0xc000175be8)

D:/Go/src/syscall/dll_windows.go:173 +0x1f0

github.com/go-ole/go-ole.CLSIDFromProgID(0x5562e9, 0x1a, 0x4e1496, 0xc000004580, 0xc000598310)

D:/Gopath/go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/com.go:120 +0xb1

github.com/go-ole/go-ole.ClassIDFrom(0x5562e9, 0x1a, 0x42d901, 0x663800, 0x54c6c0)

D:/Gopath/go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/utility.go:14 +0x40

github.com/go-ole/go-ole/oleutil.CreateObject(0x5562e9, 0x1a, 0x0, 0x1, 0x57b460)

D:/Gopath/go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/oleutil/oleutil.go:16 +0x40

github.com/StackExchange/wmi.(*Client).Query(0x644620, 0xc000578f00, 0x267, 0x514540, 0xc00049cec0, 0x0, 0x0, 0x0, 0x0, 0x0)

D:/Gopath/go/pkg/mod/github.com/!stack!exchange/wmi@v0.0.0-20190523213315-cbe66965904d/wmi.go:150 +0x2d3

github.com/StackExchange/wmi.Query(0xc000578f00, 0x267, 0x514540, 0xc00049cec0, 0x0, 0x0, 0x0, 0x0, 0x0)

D:/Gopath/go/pkg/mod/github.com/!stack!exchange/wmi@v0.0.0-20190523213315-cbe66965904d/wmi.go:76 +0x10c

github.com/shirou/gopsutil/internal/common.WMIQueryWithContext.func1(0xc0005759e0, 0xc000578f00, 0x267, 0x514540, 0xc00049cec0, 0x0, 0x0, 0x0)

D:/Gopath/go/pkg/mod/github.com/shirou/gopsutil@v2.20.2+incompatible/internal/common/common_windows.go:131 +0x81

created by github.com/shirou/gopsutil/internal/common.WMIQueryWithContext

D:/Gopath/go/pkg/mod/github.com/shirou/gopsutil@v2.20.2+incompatible/internal/common/common_windows.go:130 +0x122

goroutine 1 [select]:

github.com/shirou/gopsutil/internal/common.WMIQueryWithContext(0x579e20, 0xc0005758c0, 0xc000578f00, 0x267, 0x514540, 0xc00049cec0, 0x0, 0x0, 0x0, 0x0, ...)

D:/Gopath/go/pkg/mod/github.com/shirou/gopsutil@v2.20.2+incompatible/internal/common/common_windows.go:134 +0x1d4

github.com/shirou/gopsutil/process.GetWin32ProcWithContext(0x579de0, 0xc0000100b0, 0x75e0, 0x646680, 0x1313, 0x2, 0xc0000ec080, 0xc000036000)

D:/Gopath/go/pkg/mod/github.com/shirou/gopsutil@v2.20.2+incompatible/process/process_windows.go:248 +0x12b

github.com/shirou/gopsutil/process.(*Process).CmdlineWithContext(0xc00023a8f0, 0x579de0, 0xc0000100b0, 0x1, 0x0, 0x0, 0x0)

D:/Gopath/go/pkg/mod/github.com/shirou/gopsutil@v2.20.2+incompatible/process/process_windows.go:312 +0x4b

github.com/shirou/gopsutil/process.(*Process).Cmdline(...)

D:/Gopath/go/pkg/mod/github.com/shirou/gopsutil@v2.20.2+incompatible/process/process_windows.go:308

main.Test()

E:/Project/33.dfagent/test/b/t.go:25 +0xc4

main.main()

E:/Project/33.dfagent/test/b/t.go:43 +0xa8

rax 0x1

rbx 0x1

rcx 0x1bbfc58

rdi 0x1bbfce8

rsi 0xc00059a000

rbp 0x7ffa10bc2528

rsp 0x1bbfbe0

r8 0x0

r9 0x0

r10 0x1bbfc58

r11 0xc00059a000

r12 0x80040154

r13 0xffff8005ef3ca7b0

r14 0x0

r15 0x7ffa10c35860

rip 0x7ffa10a0dc12

rflags 0x10202

cs 0x33

fs 0x53

gs 0x2b

exit status 2

**To Reproduce**

```go
package main

import (
	"fmt"
	"time"

	"github.com/shirou/gopsutil/process"
)

func IgnoreError() {
	if err := recover(); err != nil {
		fmt.Println(err)
	}
}

func Test() {
	v, err := process.Processes()
	if err != nil {
		fmt.Println(err)
		return
	}
	for _, p := range v {
		cmd, err := p.Cmdline()
		if err != nil {
			fmt.Println(err)
			continue
		}
		//fmt.Printf("%+v\n", cmd)
		_ = cmd
		fmt.Printf(".")
		time.Sleep(time.Duration(10) * time.Millisecond)
	}
	fmt.Printf("\n")
}

func main() {
	var i int = 0
	for {
		fmt.Printf("%d:\n", i)
		Test()
		fmt.Printf("\n")
		i += 1
		time.Sleep(time.Duration(3) * time.Second)
	}
}
```

**Expected behavior**

don't crash

**Environment**

Microsoft Windows [Version 10.0.17134.829]

**Additional context**

[Cross-compiling? Paste the command you are using to cross-compile and the result of the corresponding `go env`]

|

1.0

|

Crash at call process.Cmdline - **Describe the bug**

Calling process.Cmdline crashes the program. The crash is intermittent, but it always occurs eventually.

Details:

499:

.......................................................................................................................................................................could not get CommandLine: could not get win32Proc: empty

......could not get CommandLine: could not get win32Proc: empty

........................................................................................

500:

........................................................................................................................could not get CommandLine: could not get win32Proc: empty

.....................................................could not get CommandLine: could not get win32Proc: empty

.could not get CommandLine: could not get win32Proc: empty

...............................................................................Exception 0xc0000005 0x0 0xc00059a000 0x7ffa10a0dc12

PC=0x7ffa10a0dc12

syscall.Syscall(0x7ffa10a0e4a0, 0x2, 0xc00059a000, 0xc000598320, 0x0, 0x0, 0x0, 0x0)

D:/Go/src/runtime/syscall_windows.go:188 +0xfa

syscall.(*Proc).Call(0xc000004600, 0xc000598330, 0x2, 0x2, 0x449229, 0x0, 0x0, 0xc000175be8)

D:/Go/src/syscall/dll_windows.go:173 +0x1f0

github.com/go-ole/go-ole.CLSIDFromProgID(0x5562e9, 0x1a, 0x4e1496, 0xc000004580, 0xc000598310)

D:/Gopath/go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/com.go:120 +0xb1

github.com/go-ole/go-ole.ClassIDFrom(0x5562e9, 0x1a, 0x42d901, 0x663800, 0x54c6c0)

D:/Gopath/go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/utility.go:14 +0x40

github.com/go-ole/go-ole/oleutil.CreateObject(0x5562e9, 0x1a, 0x0, 0x1, 0x57b460)

D:/Gopath/go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/oleutil/oleutil.go:16 +0x40

github.com/StackExchange/wmi.(*Client).Query(0x644620, 0xc000578f00, 0x267, 0x514540, 0xc00049cec0, 0x0, 0x0, 0x0, 0x0, 0x0)

D:/Gopath/go/pkg/mod/github.com/!stack!exchange/wmi@v0.0.0-20190523213315-cbe66965904d/wmi.go:150 +0x2d3

github.com/StackExchange/wmi.Query(0xc000578f00, 0x267, 0x514540, 0xc00049cec0, 0x0, 0x0, 0x0, 0x0, 0x0)

D:/Gopath/go/pkg/mod/github.com/!stack!exchange/wmi@v0.0.0-20190523213315-cbe66965904d/wmi.go:76 +0x10c

github.com/shirou/gopsutil/internal/common.WMIQueryWithContext.func1(0xc0005759e0, 0xc000578f00, 0x267, 0x514540, 0xc00049cec0, 0x0, 0x0, 0x0)

D:/Gopath/go/pkg/mod/github.com/shirou/gopsutil@v2.20.2+incompatible/internal/common/common_windows.go:131 +0x81

created by github.com/shirou/gopsutil/internal/common.WMIQueryWithContext

D:/Gopath/go/pkg/mod/github.com/shirou/gopsutil@v2.20.2+incompatible/internal/common/common_windows.go:130 +0x122

goroutine 1 [select]:

github.com/shirou/gopsutil/internal/common.WMIQueryWithContext(0x579e20, 0xc0005758c0, 0xc000578f00, 0x267, 0x514540, 0xc00049cec0, 0x0, 0x0, 0x0, 0x0, ...)

D:/Gopath/go/pkg/mod/github.com/shirou/gopsutil@v2.20.2+incompatible/internal/common/common_windows.go:134 +0x1d4

github.com/shirou/gopsutil/process.GetWin32ProcWithContext(0x579de0, 0xc0000100b0, 0x75e0, 0x646680, 0x1313, 0x2, 0xc0000ec080, 0xc000036000)

D:/Gopath/go/pkg/mod/github.com/shirou/gopsutil@v2.20.2+incompatible/process/process_windows.go:248 +0x12b

github.com/shirou/gopsutil/process.(*Process).CmdlineWithContext(0xc00023a8f0, 0x579de0, 0xc0000100b0, 0x1, 0x0, 0x0, 0x0)

D:/Gopath/go/pkg/mod/github.com/shirou/gopsutil@v2.20.2+incompatible/process/process_windows.go:312 +0x4b

github.com/shirou/gopsutil/process.(*Process).Cmdline(...)

D:/Gopath/go/pkg/mod/github.com/shirou/gopsutil@v2.20.2+incompatible/process/process_windows.go:308

main.Test()

E:/Project/33.dfagent/test/b/t.go:25 +0xc4

main.main()

E:/Project/33.dfagent/test/b/t.go:43 +0xa8

rax 0x1

rbx 0x1

rcx 0x1bbfc58

rdi 0x1bbfce8

rsi 0xc00059a000

rbp 0x7ffa10bc2528

rsp 0x1bbfbe0

r8 0x0

r9 0x0

r10 0x1bbfc58

r11 0xc00059a000

r12 0x80040154

r13 0xffff8005ef3ca7b0

r14 0x0

r15 0x7ffa10c35860

rip 0x7ffa10a0dc12

rflags 0x10202

cs 0x33

fs 0x53

gs 0x2b

exit status 2

**To Reproduce**

```go
package main

import (
	"fmt"
	"time"

	"github.com/shirou/gopsutil/process"
)

func IgnoreError() {
	if err := recover(); err != nil {
		fmt.Println(err)
	}
}

func Test() {
	v, err := process.Processes()
	if err != nil {
		fmt.Println(err)
		return
	}
	for _, p := range v {
		cmd, err := p.Cmdline()
		if err != nil {
			fmt.Println(err)
			continue
		}
		//fmt.Printf("%+v\n", cmd)
		_ = cmd
		fmt.Printf(".")
		time.Sleep(time.Duration(10) * time.Millisecond)
	}
	fmt.Printf("\n")
}

func main() {
	var i int = 0
	for {
		fmt.Printf("%d:\n", i)
		Test()
		fmt.Printf("\n")
		i += 1
		time.Sleep(time.Duration(3) * time.Second)
	}
}
```

**Expected behavior**

don't crash

**Environment**

Microsoft Windows [Version 10.0.17134.829]

**Additional context**

[Cross-compiling? Paste the command you are using to cross-compile and the result of the corresponding `go env`]

|

process

|

crash at call process cmdline describe the bug crash at call process cmdline occurs occasionally but must occur details could not get commandline could not get empty could not get commandline could not get empty could not get commandline could not get empty could not get commandline could not get empty could not get commandline could not get empty exception pc syscall syscall d go src runtime syscall windows go syscall proc call d go src syscall dll windows go github com go ole go ole clsidfromprogid d gopath go pkg mod github com go ole go ole com go github com go ole go ole classidfrom d gopath go pkg mod github com go ole go ole utility go github com go ole go ole oleutil createobject d gopath go pkg mod github com go ole go ole oleutil oleutil go github com stackexchange wmi client query d gopath go pkg mod github com stack exchange wmi wmi go github com stackexchange wmi query d gopath go pkg mod github com stack exchange wmi wmi go github com shirou gopsutil internal common wmiquerywithcontext d gopath go pkg mod github com shirou gopsutil incompatible internal common common windows go created by github com shirou gopsutil internal common wmiquerywithcontext d gopath go pkg mod github com shirou gopsutil incompatible internal common common windows go goroutine github com shirou gopsutil internal common wmiquerywithcontext d gopath go pkg mod github com shirou gopsutil incompatible internal common common windows go github com shirou gopsutil process d gopath go pkg mod github com shirou gopsutil incompatible process process windows go github com shirou gopsutil process process cmdlinewithcontext d gopath go pkg mod github com shirou gopsutil incompatible process process windows go github com shirou gopsutil process process cmdline d gopath go pkg mod github com shirou gopsutil incompatible process process windows go main test e project dfagent test b t go main main e project dfagent test b t go rax rbx rcx rdi rsi rbp rsp rip rflags cs fs gs exit status to 
reproduce go package main import fmt time github com shirou gopsutil process func ignoreerror if err recover err nil fmt println err func test v err process processes if err nil fmt println err return for p range v cmd err p cmdline if err nil fmt println err continue fmt printf v n cmd cmd fmt printf time sleep time duration time millisecond fmt printf n func main var i int for fmt printf d n i test fmt printf n i time sleep time duration time second expected behavior don t crash environment microsoft windows additional context

| 1

|

8,206

| 11,402,552,746

|

IssuesEvent

|

2020-01-31 03:42:06

|

scala/community-build

|

https://api.github.com/repos/scala/community-build

|

opened

|

`./narrow` shouldn't modify version-controlled files

|

process

|

think about having `narrow` not modify `projs.conf` directly; it's annoying to have git always thinking it's a change I should check in

perhaps `narrow` could write out a `projs-narrowed.conf` file that would be `.gitignore`d and would be used when present (with `projs.conf` used as a fallback)
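
roughly, the lookup could work like the sketch below. This is only an illustration in Python: `pick_conf` is a hypothetical helper, not something that exists in the community build, and only the two file names come from the text above.

```python
from pathlib import Path


def pick_conf(directory):
    """Return the narrowed config if present, else the checked-in one.

    Hypothetical helper for illustration only; the file names are the
    ones proposed in this issue.
    """
    narrowed = Path(directory) / "projs-narrowed.conf"
    if narrowed.is_file():
        # Written by `narrow` and ignored by git, so it never shows up as a change.
        return narrowed
    # Fallback: the version-controlled file, which `narrow` leaves untouched.
    return Path(directory) / "projs.conf"
```

with this shape, deleting `projs-narrowed.conf` is all it takes to get back to the full project list.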

|

1.0

|

`./narrow` shouldn't modify version-controlled files - think about having `narrow` not modify `projs.conf` directly; it's annoying to have git always thinking it's a change I should check in

perhaps `narrow` could write out a `projs-narrowed.conf` file that would be `.gitignore`d and would be used when present (with `projs.conf` used as a fallback)

|

process

|

narrow shouldn t modify version controlled files think about having narrow not modify projs conf directly it s annoying to have git always thinking it s a change i should check in perhaps narrow could write out a projs narrowed conf file that would be gitignore d and would be used when present with projs conf used as a fallback

| 1

|

317,144

| 23,665,838,688

|

IssuesEvent

|

2022-08-26 20:48:50

|

hackforla/peopledepot

|

https://api.github.com/repos/hackforla/peopledepot

|

opened

|

PeopleDepot: PM Agenda

|

documentation help wanted question

|

### Overview

We mainly need to onboard Eric Vennemeyer to PeopleDepot

### Action Items

- [ ] Onboard Eric Vennemeyer #27

- [ ] Discuss using the next month's time to make PeopleDepot locally usable for VRMS development

- [ ] Make milestones for PeopleDepot

### Resources

[Roadmap for the near future](https://github.com/hackforla/peopledepot/wiki/Quick-roadmap)

|

1.0

|

PeopleDepot: PM Agenda - ### Overview

We mainly need to onboard Eric Vennemeyer to PeopleDepot

### Action Items

- [ ] Onboard Eric Vennemeyer #27

- [ ] Discuss using the next month's time to make PeopleDepot locally usable for VRMS development

- [ ] Make milestones for PeopleDepot

### Resources

[Roadmap for the near future](https://github.com/hackforla/peopledepot/wiki/Quick-roadmap)

|

non_process

|

peopledepot pm agenda overview we mainly need to onboard eric vennemeyer to peopledepot action items onboard eric vennemeyer discuss using the next month s time to make peopledepot locally usable for vrms development make milestones for peopledepot resources

| 0

|

267,970

| 23,337,218,691

|

IssuesEvent

|

2022-08-09 11:03:12

|

enonic/app-contentstudio-plus

|

https://api.github.com/repos/enonic/app-contentstudio-plus

|

closed

|

Version Widget - version items are not loaded after opening the widget

|

bug Test is Failing

|

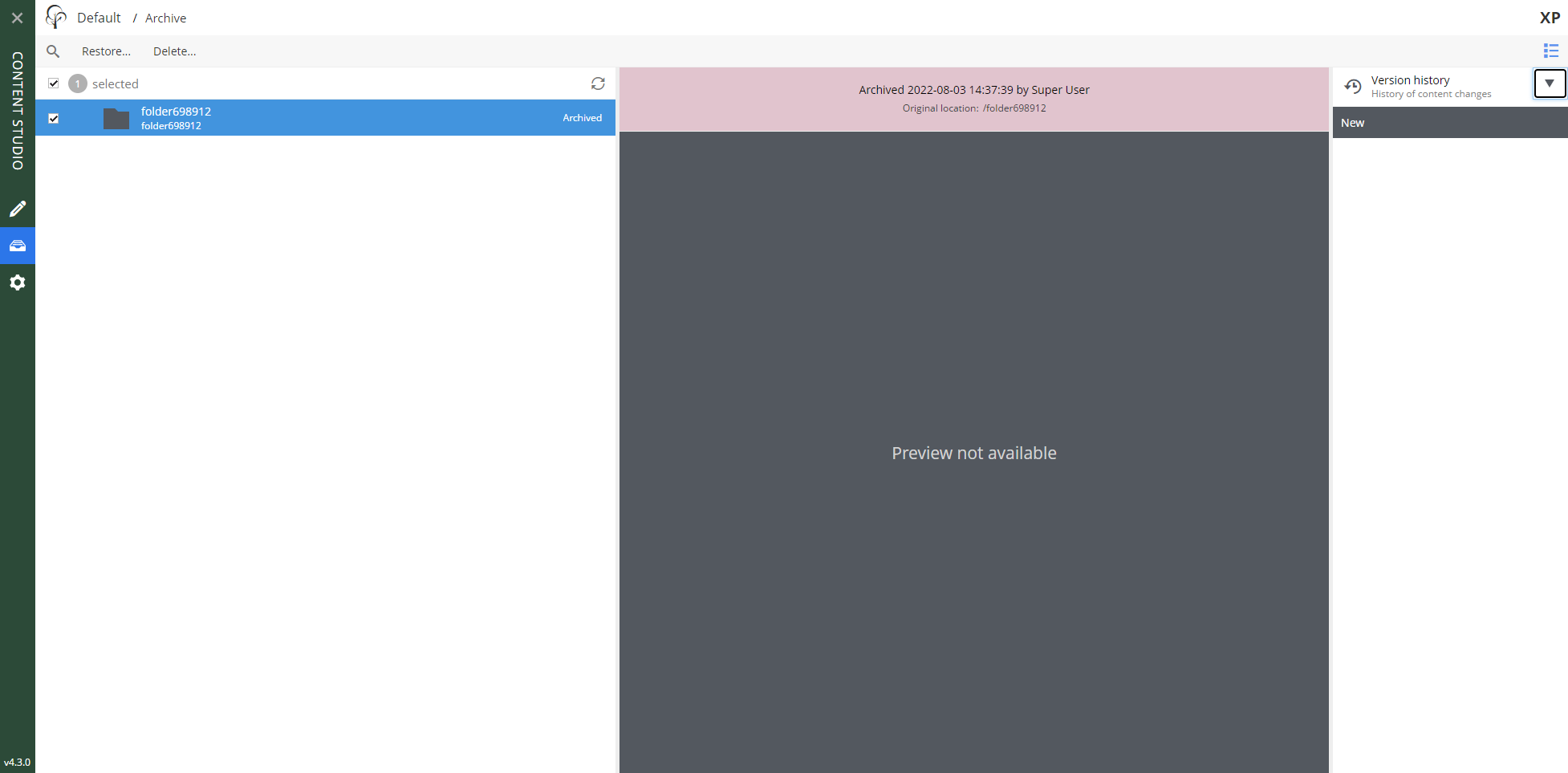

1. Log in, then open the `Archive` browse panel.

2. Select an existing folder, then open the versions widget.

**BUG**: version items are not loaded.

The items appear after refreshing the page in the browser.

|

1.0

|

Version Widget - version items are not loaded after opening the widget - 1. Log in, then open the `Archive` browse panel.

2. Select an existing folder, then open the versions widget.

**BUG**: version items are not loaded.

The items appear after refreshing the page in the browser.

|

non_process

|

version widget version items are not loaded after opening the widget do login then open archive browse panel select an exisiting folder then open versions widget bug version items are not loaded items appear after refreshing the page in browser

| 0

|

6,733

| 9,854,687,262

|

IssuesEvent

|

2019-06-19 17:28:32

|

googleapis/google-cloud-python

|

https://api.github.com/repos/googleapis/google-cloud-python

|

closed

|

Bigtable: 'test_create_instance_with_two_clusters' flakes modifying profile.

|

api: bigtable flaky testing type: process

|

Similar to #5928, but the failure occurs while re-modifying the instance's app profile.

From [this Kokoro failure](https://source.cloud.google.com/results/invocations/b5f7a8bc-d02c-45f8-b23d-31b94e4d0493/targets/cloud-devrel%2Fclient-libraries%2Fgoogle-cloud-python%2Fpresubmit%2Fbigtable/log):

```python

___________ TestInstanceAdminAPI.test_create_instance_w_two_clusters ___________

target = functools.partial(<bound method PollingFuture._done_or_raise of <google.api_core.operation.Operation object at 0x7f7c280cee80>>)

predicate = <function if_exception_type.<locals>.if_exception_type_predicate at 0x7f7c299b70d0>

sleep_generator = <generator object exponential_sleep_generator at 0x7f7c297d3a98>

deadline = 10, on_error = None

def retry_target(target, predicate, sleep_generator, deadline, on_error=None):

"""Call a function and retry if it fails.

This is the lowest-level retry helper. Generally, you'll use the

higher-level retry helper :class:`Retry`.

Args:

target(Callable): The function to call and retry. This must be a

nullary function - apply arguments with `functools.partial`.

predicate (Callable[Exception]): A callable used to determine if an

exception raised by the target should be considered retryable.

It should return True to retry or False otherwise.

sleep_generator (Iterable[float]): An infinite iterator that determines

how long to sleep between retries.

deadline (float): How long to keep retrying the target.

on_error (Callable): A function to call while processing a retryable

exception. Any error raised by this function will *not* be

caught.

Returns:

Any: the return value of the target function.

Raises:

google.api_core.RetryError: If the deadline is exceeded while retrying.

ValueError: If the sleep generator stops yielding values.

Exception: If the target raises a method that isn't retryable.

"""

if deadline is not None:

deadline_datetime = datetime_helpers.utcnow() + datetime.timedelta(

seconds=deadline

)

else:

deadline_datetime = None

last_exc = None

for sleep in sleep_generator:

try:

> return target()

../api_core/google/api_core/retry.py:179:

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

self = <google.api_core.operation.Operation object at 0x7f7c280cee80>

def _done_or_raise(self):

"""Check if the future is done and raise if it's not."""

if not self.done():

> raise _OperationNotComplete()

E google.api_core.future.polling._OperationNotComplete

../api_core/google/api_core/future/polling.py:81: _OperationNotComplete

The above exception was the direct cause of the following exception:

self = <google.api_core.operation.Operation object at 0x7f7c280cee80>

timeout = 10

def _blocking_poll(self, timeout=None):

"""Poll and wait for the Future to be resolved.

Args:

timeout (int):

How long (in seconds) to wait for the operation to complete.

If None, wait indefinitely.

"""

if self._result_set:

return

retry_ = self._retry.with_deadline(timeout)

try:

> retry_(self._done_or_raise)()

../api_core/google/api_core/future/polling.py:101:

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

args = (), kwargs = {}

target = functools.partial(<bound method PollingFuture._done_or_raise of <google.api_core.operation.Operation object at 0x7f7c280cee80>>)

sleep_generator = <generator object exponential_sleep_generator at 0x7f7c297d3a98>

@general_helpers.wraps(func)

def retry_wrapped_func(*args, **kwargs):

"""A wrapper that calls target function with retry."""

target = functools.partial(func, *args, **kwargs)

sleep_generator = exponential_sleep_generator(

self._initial, self._maximum, multiplier=self._multiplier

)

return retry_target(

target,

self._predicate,

sleep_generator,

self._deadline,

> on_error=on_error,

)

../api_core/google/api_core/retry.py:270:

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

target = functools.partial(<bound method PollingFuture._done_or_raise of <google.api_core.operation.Operation object at 0x7f7c280cee80>>)

predicate = <function if_exception_type.<locals>.if_exception_type_predicate at 0x7f7c299b70d0>

sleep_generator = <generator object exponential_sleep_generator at 0x7f7c297d3a98>

deadline = 10, on_error = None

def retry_target(target, predicate, sleep_generator, deadline, on_error=None):

"""Call a function and retry if it fails.

This is the lowest-level retry helper. Generally, you'll use the

higher-level retry helper :class:`Retry`.

Args:

target(Callable): The function to call and retry. This must be a

nullary function - apply arguments with `functools.partial`.

predicate (Callable[Exception]): A callable used to determine if an

exception raised by the target should be considered retryable.

It should return True to retry or False otherwise.

sleep_generator (Iterable[float]): An infinite iterator that determines

how long to sleep between retries.

deadline (float): How long to keep retrying the target.

on_error (Callable): A function to call while processing a retryable

exception. Any error raised by this function will *not* be

caught.

Returns:

Any: the return value of the target function.

Raises:

google.api_core.RetryError: If the deadline is exceeded while retrying.

ValueError: If the sleep generator stops yielding values.

Exception: If the target raises a method that isn't retryable.

"""

if deadline is not None:

deadline_datetime = datetime_helpers.utcnow() + datetime.timedelta(

seconds=deadline

)

else:

deadline_datetime = None

last_exc = None

for sleep in sleep_generator:

try:

return target()

# pylint: disable=broad-except

# This function explicitly must deal with broad exceptions.

except Exception as exc:

if not predicate(exc):

raise

last_exc = exc

if on_error is not None:

on_error(exc)

now = datetime_helpers.utcnow()

if deadline_datetime is not None and deadline_datetime < now:

six.raise_from(

exceptions.RetryError(

"Deadline of {:.1f}s exceeded while calling {}".format(

deadline, target

),

last_exc,

),

> last_exc,

)

../api_core/google/api_core/retry.py:199:

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

value = None, from_value = _OperationNotComplete()

> ???

E google.api_core.exceptions.RetryError: Deadline of 10.0s exceeded while calling functools.partial(<bound method PollingFuture._done_or_raise of <google.api_core.operation.Operation object at 0x7f7c280cee80>>), last exception:

<string>:3: RetryError

During handling of the above exception, another exception occurred:

self = <tests.system.TestInstanceAdminAPI testMethod=test_create_instance_w_two_clusters>

def test_create_instance_w_two_clusters(self):

from google.cloud.bigtable import enums

from google.cloud.bigtable.table import ClusterState

_PRODUCTION = enums.Instance.Type.PRODUCTION

ALT_INSTANCE_ID = "dif" + unique_resource_id("-")

instance = Config.CLIENT.instance(

ALT_INSTANCE_ID, instance_type=_PRODUCTION, labels=LABELS

)

ALT_CLUSTER_ID_1 = ALT_INSTANCE_ID + "-c1"

ALT_CLUSTER_ID_2 = ALT_INSTANCE_ID + "-c2"

LOCATION_ID_2 = "us-central1-f"

STORAGE_TYPE = enums.StorageType.HDD

cluster_1 = instance.cluster(

ALT_CLUSTER_ID_1,

location_id=LOCATION_ID,

serve_nodes=SERVE_NODES,

default_storage_type=STORAGE_TYPE,

)

cluster_2 = instance.cluster(

ALT_CLUSTER_ID_2,

location_id=LOCATION_ID_2,

serve_nodes=SERVE_NODES,

default_storage_type=STORAGE_TYPE,

)

operation = instance.create(clusters=[cluster_1, cluster_2])

# Make sure this instance gets deleted after the test case.

self.instances_to_delete.append(instance)

# We want to make sure the operation completes.

operation.result(timeout=10)

# Create a new instance instance and make sure it is the same.

instance_alt = Config.CLIENT.instance(ALT_INSTANCE_ID)

instance_alt.reload()

self.assertEqual(instance, instance_alt)

self.assertEqual(instance.display_name, instance_alt.display_name)

self.assertEqual(instance.type_, instance_alt.type_)

clusters, failed_locations = instance_alt.list_clusters()

self.assertEqual(failed_locations, [])

clusters.sort(key=lambda x: x.name)

alt_cluster_1, alt_cluster_2 = clusters

self.assertEqual(cluster_1.location_id, alt_cluster_1.location_id)

self.assertEqual(alt_cluster_1.state, enums.Cluster.State.READY)

self.assertEqual(cluster_1.serve_nodes, alt_cluster_1.serve_nodes)

self.assertEqual(

cluster_1.default_storage_type, alt_cluster_1.default_storage_type

)

self.assertEqual(cluster_2.location_id, alt_cluster_2.location_id)

self.assertEqual(alt_cluster_2.state, enums.Cluster.State.READY)

self.assertEqual(cluster_2.serve_nodes, alt_cluster_2.serve_nodes)

self.assertEqual(

cluster_2.default_storage_type, alt_cluster_2.default_storage_type

)

# Test list clusters in project via 'client.list_clusters'

clusters, failed_locations = Config.CLIENT.list_clusters()

self.assertFalse(failed_locations)

found = set([cluster.name for cluster in clusters])

self.assertTrue(

{alt_cluster_1.name, alt_cluster_2.name, Config.CLUSTER.name}.issubset(

found

)

)

temp_table_id = "test-get-cluster-states"

temp_table = instance.table(temp_table_id)

temp_table.create()

result = temp_table.get_cluster_states()

ReplicationState = enums.Table.ReplicationState

expected_results = [

ClusterState(ReplicationState.STATE_NOT_KNOWN),

ClusterState(ReplicationState.INITIALIZING),

ClusterState(ReplicationState.PLANNED_MAINTENANCE),

ClusterState(ReplicationState.UNPLANNED_MAINTENANCE),

ClusterState(ReplicationState.READY),

]

cluster_id_list = result.keys()

self.assertEqual(len(cluster_id_list), 2)

self.assertIn(ALT_CLUSTER_ID_1, cluster_id_list)

self.assertIn(ALT_CLUSTER_ID_2, cluster_id_list)

for clusterstate in result.values():

self.assertIn(clusterstate, expected_results)

# Test create app profile with multi_cluster_routing policy

app_profiles_to_delete = []

description = "routing policy-multy"

app_profile_id_1 = "app_profile_id_1"

routing = enums.RoutingPolicyType.ANY

self._test_create_app_profile_helper(

app_profile_id_1,

instance,

routing_policy_type=routing,

description=description,

ignore_warnings=True,

)

app_profiles_to_delete.append(app_profile_id_1)

# Test list app profiles

self._test_list_app_profiles_helper(instance, [app_profile_id_1])

# Test modify app profile app_profile_id_1

# routing policy to single cluster policy,

# cluster -> ALT_CLUSTER_ID_1,

# allow_transactional_writes -> disallowed

# modify description

description = "to routing policy-single"

routing = enums.RoutingPolicyType.SINGLE

self._test_modify_app_profile_helper(

app_profile_id_1,

instance,

routing_policy_type=routing,

description=description,

cluster_id=ALT_CLUSTER_ID_1,

allow_transactional_writes=False,

)

# Test modify app profile app_profile_id_1

# cluster -> ALT_CLUSTER_ID_2,

# allow_transactional_writes -> allowed

self._test_modify_app_profile_helper(

app_profile_id_1,

instance,

routing_policy_type=routing,

description=description,

cluster_id=ALT_CLUSTER_ID_2,

allow_transactional_writes=True,

ignore_warnings=True,

)

# Test create app profile with single cluster routing policy

description = "routing policy-single"

app_profile_id_2 = "app_profile_id_2"

routing = enums.RoutingPolicyType.SINGLE

self._test_create_app_profile_helper(

app_profile_id_2,

instance,

routing_policy_type=routing,

description=description,

cluster_id=ALT_CLUSTER_ID_2,

allow_transactional_writes=False,

)

app_profiles_to_delete.append(app_profile_id_2)

# Test list app profiles

self._test_list_app_profiles_helper(

instance, [app_profile_id_1, app_profile_id_2]

)

# Test modify app profile app_profile_id_2 to

# allow transactional writes

# Note: no need to set ``ignore_warnings`` to True

# since we are not restrictings anything with this modification.

self._test_modify_app_profile_helper(

app_profile_id_2,

instance,

routing_policy_type=routing,

description=description,

cluster_id=ALT_CLUSTER_ID_2,

> allow_transactional_writes=True,

)

tests/system.py:409:

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

tests/system.py:613: in _test_modify_app_profile_helper

operation.result(timeout=10)

../api_core/google/api_core/future/polling.py:122: in result

self._blocking_poll(timeout=timeout)

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

self = <google.api_core.operation.Operation object at 0x7f7c280cee80>

timeout = 10

def _blocking_poll(self, timeout=None):

"""Poll and wait for the Future to be resolved.

Args:

timeout (int):

How long (in seconds) to wait for the operation to complete.

If None, wait indefinitely.

"""

if self._result_set:

return

retry_ = self._retry.with_deadline(timeout)

try:

retry_(self._done_or_raise)()

except exceptions.RetryError:

raise concurrent.futures.TimeoutError(

> "Operation did not complete within the designated " "timeout."

)

E concurrent.futures._base.TimeoutError: Operation did not complete within the designated timeout.

../api_core/google/api_core/future/polling.py:104: TimeoutError

```
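
For readers skimming the traceback: the failure is a polling loop that gives up once its 10-second deadline has passed. A simplified, self-contained sketch of that pattern follows. It is not the `google.api_core` implementation; `poll_until_done`, its parameters, and the injectable `clock` and `sleep` are hypothetical names chosen for illustration.

```python
import time


def poll_until_done(is_done, deadline, initial=1.0, multiplier=2.0,
                    maximum=60.0, clock=time.monotonic, sleep=time.sleep):
    """Poll is_done() with exponential backoff until it returns True.

    Raises TimeoutError once `deadline` seconds have elapsed without
    is_done() succeeding. All names here are illustrative assumptions.
    """
    start = clock()
    delay = initial
    while True:
        if is_done():
            return
        if clock() - start >= deadline:
            raise TimeoutError(
                "Operation did not complete within {:.1f}s".format(deadline)
            )
        sleep(delay)
        # Grow the wait between polls, capped at `maximum`.
        delay = min(delay * multiplier, maximum)
```

In a loop like this, a larger `deadline` is the obvious knob if the app profile update simply needs more than 10 seconds, although this report does not establish whether that is the right fix for the flake.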

|

1.0

|

Bigtable: 'test_create_instance_with_two_clusters' flakes modifying profile. - Similar to #5928, but the failure occurs while re-modifying the instance's app profile.

From [this Kokoro failure](https://source.cloud.google.com/results/invocations/b5f7a8bc-d02c-45f8-b23d-31b94e4d0493/targets/cloud-devrel%2Fclient-libraries%2Fgoogle-cloud-python%2Fpresubmit%2Fbigtable/log):

```python

___________ TestInstanceAdminAPI.test_create_instance_w_two_clusters ___________

target = functools.partial(<bound method PollingFuture._done_or_raise of <google.api_core.operation.Operation object at 0x7f7c280cee80>>)

predicate = <function if_exception_type.<locals>.if_exception_type_predicate at 0x7f7c299b70d0>

sleep_generator = <generator object exponential_sleep_generator at 0x7f7c297d3a98>

deadline = 10, on_error = None

def retry_target(target, predicate, sleep_generator, deadline, on_error=None):

"""Call a function and retry if it fails.

This is the lowest-level retry helper. Generally, you'll use the

higher-level retry helper :class:`Retry`.

Args:

target(Callable): The function to call and retry. This must be a

nullary function - apply arguments with `functools.partial`.

predicate (Callable[Exception]): A callable used to determine if an

exception raised by the target should be considered retryable.

It should return True to retry or False otherwise.

sleep_generator (Iterable[float]): An infinite iterator that determines

how long to sleep between retries.

deadline (float): How long to keep retrying the target.

on_error (Callable): A function to call while processing a retryable

exception. Any error raised by this function will *not* be

caught.

Returns:

Any: the return value of the target function.

Raises:

google.api_core.RetryError: If the deadline is exceeded while retrying.

ValueError: If the sleep generator stops yielding values.

Exception: If the target raises a method that isn't retryable.

"""

if deadline is not None:

deadline_datetime = datetime_helpers.utcnow() + datetime.timedelta(

seconds=deadline

)

else:

deadline_datetime = None

last_exc = None

for sleep in sleep_generator:

try:

> return target()

../api_core/google/api_core/retry.py:179:

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

self = <google.api_core.operation.Operation object at 0x7f7c280cee80>

def _done_or_raise(self):

"""Check if the future is done and raise if it's not."""

if not self.done():

> raise _OperationNotComplete()

E google.api_core.future.polling._OperationNotComplete

../api_core/google/api_core/future/polling.py:81: _OperationNotComplete

The above exception was the direct cause of the following exception:

self = <google.api_core.operation.Operation object at 0x7f7c280cee80>

timeout = 10

def _blocking_poll(self, timeout=None):

"""Poll and wait for the Future to be resolved.

Args:

timeout (int):

How long (in seconds) to wait for the operation to complete.

If None, wait indefinitely.

"""

if self._result_set:

return

retry_ = self._retry.with_deadline(timeout)

try:

> retry_(self._done_or_raise)()

../api_core/google/api_core/future/polling.py:101:

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

args = (), kwargs = {}

target = functools.partial(<bound method PollingFuture._done_or_raise of <google.api_core.operation.Operation object at 0x7f7c280cee80>>)

sleep_generator = <generator object exponential_sleep_generator at 0x7f7c297d3a98>

@general_helpers.wraps(func)

def retry_wrapped_func(*args, **kwargs):

"""A wrapper that calls target function with retry."""

target = functools.partial(func, *args, **kwargs)

sleep_generator = exponential_sleep_generator(

self._initial, self._maximum, multiplier=self._multiplier

)

return retry_target(

target,

self._predicate,

sleep_generator,

self._deadline,

> on_error=on_error,

)

../api_core/google/api_core/retry.py:270:

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

target = functools.partial(<bound method PollingFuture._done_or_raise of <google.api_core.operation.Operation object at 0x7f7c280cee80>>)

predicate = <function if_exception_type.<locals>.if_exception_type_predicate at 0x7f7c299b70d0>

sleep_generator = <generator object exponential_sleep_generator at 0x7f7c297d3a98>

deadline = 10, on_error = None

def retry_target(target, predicate, sleep_generator, deadline, on_error=None):

"""Call a function and retry if it fails.

This is the lowest-level retry helper. Generally, you'll use the

higher-level retry helper :class:`Retry`.

Args:

target(Callable): The function to call and retry. This must be a

nullary function - apply arguments with `functools.partial`.

predicate (Callable[Exception]): A callable used to determine if an

exception raised by the target should be considered retryable.

It should return True to retry or False otherwise.

sleep_generator (Iterable[float]): An infinite iterator that determines

how long to sleep between retries.

deadline (float): How long to keep retrying the target.

on_error (Callable): A function to call while processing a retryable

exception. Any error raised by this function will *not* be

caught.

Returns:

Any: the return value of the target function.

Raises:

google.api_core.RetryError: If the deadline is exceeded while retrying.

ValueError: If the sleep generator stops yielding values.

Exception: If the target raises a method that isn't retryable.

"""

if deadline is not None:

deadline_datetime = datetime_helpers.utcnow() + datetime.timedelta(

seconds=deadline

)

else:

deadline_datetime = None

last_exc = None

for sleep in sleep_generator:

try:

return target()

# pylint: disable=broad-except

# This function explicitly must deal with broad exceptions.

except Exception as exc:

if not predicate(exc):

raise

last_exc = exc

if on_error is not None:

on_error(exc)

now = datetime_helpers.utcnow()

if deadline_datetime is not None and deadline_datetime < now:

six.raise_from(

exceptions.RetryError(

"Deadline of {:.1f}s exceeded while calling {}".format(

deadline, target

),

last_exc,

),

> last_exc,

)

../api_core/google/api_core/retry.py:199:

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

value = None, from_value = _OperationNotComplete()

> ???

E google.api_core.exceptions.RetryError: Deadline of 10.0s exceeded while calling functools.partial(<bound method PollingFuture._done_or_raise of <google.api_core.operation.Operation object at 0x7f7c280cee80>>), last exception:

<string>:3: RetryError

During handling of the above exception, another exception occurred:

self = <tests.system.TestInstanceAdminAPI testMethod=test_create_instance_w_two_clusters>

def test_create_instance_w_two_clusters(self):

from google.cloud.bigtable import enums

from google.cloud.bigtable.table import ClusterState

_PRODUCTION = enums.Instance.Type.PRODUCTION

ALT_INSTANCE_ID = "dif" + unique_resource_id("-")

instance = Config.CLIENT.instance(

ALT_INSTANCE_ID, instance_type=_PRODUCTION, labels=LABELS

)

ALT_CLUSTER_ID_1 = ALT_INSTANCE_ID + "-c1"

ALT_CLUSTER_ID_2 = ALT_INSTANCE_ID + "-c2"

LOCATION_ID_2 = "us-central1-f"

STORAGE_TYPE = enums.StorageType.HDD

cluster_1 = instance.cluster(

ALT_CLUSTER_ID_1,

location_id=LOCATION_ID,

serve_nodes=SERVE_NODES,

default_storage_type=STORAGE_TYPE,

)

cluster_2 = instance.cluster(

ALT_CLUSTER_ID_2,

location_id=LOCATION_ID_2,

serve_nodes=SERVE_NODES,

default_storage_type=STORAGE_TYPE,

)

operation = instance.create(clusters=[cluster_1, cluster_2])

# Make sure this instance gets deleted after the test case.

self.instances_to_delete.append(instance)

# We want to make sure the operation completes.

operation.result(timeout=10)

# Create a new instance instance and make sure it is the same.

instance_alt = Config.CLIENT.instance(ALT_INSTANCE_ID)

instance_alt.reload()

self.assertEqual(instance, instance_alt)

self.assertEqual(instance.display_name, instance_alt.display_name)

self.assertEqual(instance.type_, instance_alt.type_)

clusters, failed_locations = instance_alt.list_clusters()

self.assertEqual(failed_locations, [])

clusters.sort(key=lambda x: x.name)

alt_cluster_1, alt_cluster_2 = clusters

self.assertEqual(cluster_1.location_id, alt_cluster_1.location_id)

self.assertEqual(alt_cluster_1.state, enums.Cluster.State.READY)

self.assertEqual(cluster_1.serve_nodes, alt_cluster_1.serve_nodes)

self.assertEqual(

cluster_1.default_storage_type, alt_cluster_1.default_storage_type

)

self.assertEqual(cluster_2.location_id, alt_cluster_2.location_id)

self.assertEqual(alt_cluster_2.state, enums.Cluster.State.READY)

self.assertEqual(cluster_2.serve_nodes, alt_cluster_2.serve_nodes)

self.assertEqual(

cluster_2.default_storage_type, alt_cluster_2.default_storage_type

)

# Test list clusters in project via 'client.list_clusters'

clusters, failed_locations = Config.CLIENT.list_clusters()

self.assertFalse(failed_locations)

found = set([cluster.name for cluster in clusters])

self.assertTrue(

{alt_cluster_1.name, alt_cluster_2.name, Config.CLUSTER.name}.issubset(

found

)

)

temp_table_id = "test-get-cluster-states"

temp_table = instance.table(temp_table_id)

temp_table.create()

result = temp_table.get_cluster_states()

ReplicationState = enums.Table.ReplicationState

expected_results = [

ClusterState(ReplicationState.STATE_NOT_KNOWN),

ClusterState(ReplicationState.INITIALIZING),

ClusterState(ReplicationState.PLANNED_MAINTENANCE),

ClusterState(ReplicationState.UNPLANNED_MAINTENANCE),

ClusterState(ReplicationState.READY),

]

cluster_id_list = result.keys()

self.assertEqual(len(cluster_id_list), 2)

self.assertIn(ALT_CLUSTER_ID_1, cluster_id_list)

self.assertIn(ALT_CLUSTER_ID_2, cluster_id_list)

for clusterstate in result.values():

self.assertIn(clusterstate, expected_results)

# Test create app profile with multi_cluster_routing policy

app_profiles_to_delete = []

description = "routing policy-multy"

app_profile_id_1 = "app_profile_id_1"

routing = enums.RoutingPolicyType.ANY

self._test_create_app_profile_helper(

app_profile_id_1,

instance,

routing_policy_type=routing,

description=description,

ignore_warnings=True,

)

app_profiles_to_delete.append(app_profile_id_1)

# Test list app profiles

self._test_list_app_profiles_helper(instance, [app_profile_id_1])

# Test modify app profile app_profile_id_1

# routing policy to single cluster policy,

# cluster -> ALT_CLUSTER_ID_1,