| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

7,214

| 10,346,996,816

|

IssuesEvent

|

2019-09-04 16:22:05

|

qri-io/desktop

|

https://api.github.com/repos/qri-io/desktop

|

closed

|

ensure auto-update is running

|

chore main process

|

qri-io/frontend successfully ran auto-update in the background. We should make sure the same functionality is in place for Desktop

|

1.0

|

ensure auto-update is running - qri-io/frontend successfully ran auto-update in the background. We should make sure the same functionality is in place for Desktop

|

process

|

ensure auto update is running qri io frontend successfully ran auto update in the background we should make sure the same functionality is in place for desktop

| 1

|

21,228

| 6,132,434,420

|

IssuesEvent

|

2017-06-25 02:08:23

|

ganeti/ganeti

|

https://api.github.com/repos/ganeti/ganeti

|

opened

|

Misnamed option in NEWS file

|

imported_from_google_code Status:Fixed

|

Originally reported of Google Code with ID 1177.

```

In the NEWS file:

"- RPC security got enhanced by using different client SSL certificates

for each node. In this context 'gnt-cluster renew-crypto' got a new

option '--renew-node-certificates'"

But the option is actually "--new-node-certificates"

```

Originally added on 2016-05-06 14:04:58 +0000 UTC.

|

1.0

|

Misnamed option in NEWS file - Originally reported of Google Code with ID 1177.

```

In the NEWS file:

"- RPC security got enhanced by using different client SSL certificates

for each node. In this context 'gnt-cluster renew-crypto' got a new

option '--renew-node-certificates'"

But the option is actually "--new-node-certificates"

```

Originally added on 2016-05-06 14:04:58 +0000 UTC.

|

non_process

|

misnamed option in news file originally reported of google code with id in the news file rpc security got enhanced by using different client ssl certificates for each node in this context gnt cluster renew crypto got a new option renew node certificates but the option is actually new node certificates originally added on utc

| 0

|

4,248

| 7,187,159,249

|

IssuesEvent

|

2018-02-02 03:18:15

|

Great-Hill-Corporation/quickBlocks

|

https://api.github.com/repos/Great-Hill-Corporation/quickBlocks

|

closed

|

cacheMan does not correct the file when finding a reversal

|

monitors-cacheMan status-inprocess type-bug

|

The cacheMan -f mode does not actually correct the cache. It should, if it finds a reversal, simply truncate the remainder of the cache because once there's a reveral all bets are off.

If there's a reversal, there may be very large jumps later in the data (there's a reversal because the data is messed up).

Suggestion: the first reversal is teh location of a total truncate at that point.

|

1.0

|

cacheMan does not correct the file when finding a reversal - The cacheMan -f mode does not actually correct the cache. It should, if it finds a reversal, simply truncate the remainder of the cache because once there's a reveral all bets are off.

If there's a reversal, there may be very large jumps later in the data (there's a reversal because the data is messed up).

Suggestion: the first reversal is teh location of a total truncate at that point.

|

process

|

cacheman does not correct the file when finding a reversal the cacheman f mode does not actually correct the cache it should if it finds a reversal simply truncate the remainder of the cache because once there s a reveral all bets are off if there s a reversal there may be very large jumps later in the data there s a reversal because the data is messed up suggestion the first reversal is teh location of a total truncate at that point

| 1

|

16,095

| 20,264,412,972

|

IssuesEvent

|

2022-02-15 10:39:04

|

bazelbuild/bazel

|

https://api.github.com/repos/bazelbuild/bazel

|

closed

|

jni_md.h not found on linux_mips64 and linux_riscv64

|

type: support / not a bug (process) untriaged team-OSS

|

### Description of the problem / feature request:

The header `jni_md.h` cannot be found on some architectures when compiling due to these 2 lines.

https://github.com/bazelbuild/bazel/blob/eeec121668e6307c21e1a9698a96237988269dba/tools/jdk/BUILD.tools#L109-L110

|

1.0

|

jni_md.h not found on linux_mips64 and linux_riscv64 - ### Description of the problem / feature request:

The header `jni_md.h` cannot be found on some architectures when compiling due to these 2 lines.

https://github.com/bazelbuild/bazel/blob/eeec121668e6307c21e1a9698a96237988269dba/tools/jdk/BUILD.tools#L109-L110

|

process

|

jni md h not found on linux and linux description of the problem feature request the header jni md h cannot be found on some architectures when compiling due to these lines

| 1

|

10,208

| 13,067,104,008

|

IssuesEvent

|

2020-07-30 23:24:49

|

googleapis/proto-plus-python

|

https://api.github.com/repos/googleapis/proto-plus-python

|

closed

|

Automated publish to PyPI is broken

|

type: process

|

https://app.circleci.com/pipelines/github/googleapis/proto-plus-python/132/workflows/41232c5b-ce36-4a48-9fc5-5eb3943dceb7/jobs/955/steps

```

#!/bin/bash -eo pipefail

openssl aes-256-cbc -d \

-in .circleci/.pypirc.enc \

-out ~/.pypirc \

-k "${PYPIRC_ENCRYPTION_KEY}"

*** WARNING : deprecated key derivation used.

Using -iter or -pbkdf2 would be better.

bad decrypt

140293096486016:error:06065064:digital envelope routines:EVP_DecryptFinal_ex:bad decrypt:../crypto/evp/evp_enc.c:570:

Exited with code exit status 1

```

|

1.0

|

Automated publish to PyPI is broken - https://app.circleci.com/pipelines/github/googleapis/proto-plus-python/132/workflows/41232c5b-ce36-4a48-9fc5-5eb3943dceb7/jobs/955/steps

```

#!/bin/bash -eo pipefail

openssl aes-256-cbc -d \

-in .circleci/.pypirc.enc \

-out ~/.pypirc \

-k "${PYPIRC_ENCRYPTION_KEY}"

*** WARNING : deprecated key derivation used.

Using -iter or -pbkdf2 would be better.

bad decrypt

140293096486016:error:06065064:digital envelope routines:EVP_DecryptFinal_ex:bad decrypt:../crypto/evp/evp_enc.c:570:

Exited with code exit status 1

```

|

process

|

automated publish to pypi is broken bin bash eo pipefail openssl aes cbc d in circleci pypirc enc out pypirc k pypirc encryption key warning deprecated key derivation used using iter or would be better bad decrypt error digital envelope routines evp decryptfinal ex bad decrypt crypto evp evp enc c exited with code exit status

| 1

|

342,756

| 24,755,812,731

|

IssuesEvent

|

2022-10-21 17:36:43

|

Py-Contributors/AudioBook

|

https://api.github.com/repos/Py-Contributors/AudioBook

|

opened

|

extends the current documentation for readthedocs

|

documentation enhancement help wanted good first issue hacktoberfest-accepted

|

**Is your feature request related to a problem? Please describe.**

Current Documentation is not sufficient. we have to extend it.

**Plan for Docs**

will use Sphinx[¶](https://docs.readthedocs.io/en/stable/intro/getting-started-with-sphinx.html#getting-started-with-sphinx) for documentation and write proper multipage documentation.

This issue is open to assigning.

Reference:-

https://pypdf2.readthedocs.io/en/latest/

https://flask.palletsprojects.com/en/2.2.x/

|

1.0

|

extends the current documentation for readthedocs - **Is your feature request related to a problem? Please describe.**

Current Documentation is not sufficient. we have to extend it.

**Plan for Docs**

will use Sphinx[¶](https://docs.readthedocs.io/en/stable/intro/getting-started-with-sphinx.html#getting-started-with-sphinx) for documentation and write proper multipage documentation.

This issue is open to assigning.

Reference:-

https://pypdf2.readthedocs.io/en/latest/

https://flask.palletsprojects.com/en/2.2.x/

|

non_process

|

extends the current documentation for readthedocs is your feature request related to a problem please describe current documentation is not sufficient we have to extend it plan for docs will use sphinx for documentation and write proper multipage documentation this issue is open to assigning reference

| 0

|

21,582

| 14,656,962,668

|

IssuesEvent

|

2020-12-28 14:34:42

|

airyhq/airy

|

https://api.github.com/repos/airyhq/airy

|

closed

|

Import core go libraries from anywhere

|

fix infrastructure

|

We need to make our go libraries importable from anywhere.

|

1.0

|

Import core go libraries from anywhere - We need to make our go libraries importable from anywhere.

|

non_process

|

import core go libraries from anywhere we need to make our go libraries importable from anywhere

| 0

|

14,220

| 17,141,349,176

|

IssuesEvent

|

2021-07-13 09:57:40

|

2i2c-org/team-compass

|

https://api.github.com/repos/2i2c-org/team-compass

|

opened

|

Finalize the titles that we use describe roles in a given hub

|

:label: administration :label: team-process prio: med type: enhancement

|

# Summary

In a few issues now, we've run into ambiguity / multiple kinds of terminology for how we describe roles in a hub. We should just nail this down and agree upon one description so that we can remain consistent.

# Proposed titles

## On the community side

- **Hub Community**: The group of people that we are (or might be) serving with a hub. **We use this word instead of "client" or "customer"**.

- My rationale here is that we are "in between" a SaaS platform and a consultancy, with a strong community+pro-social focus. As such, neither "customer" or "client" feel right to me. This is a stab at something that aligns more with the mission focus of 2i2c.

- **Community Representative**: The point of contact with Hub Engineers, and the main interface with a hub's community

- **Community Admin Team**: The group of community members with "Administrative privileges" on a hub. They are expected to do most hub administration via the JupyterHub UI. This group must have at least one member (usually the Community Rep).

## On the 2i2c side

- **Hub Engineer**: A member of the @2i2c-org/tech-team that performs more complex dev/ops for a hub

# Actions

- [ ] Decide whether "Hub Community" is an acceptable drop-in for "customer" or "client"

- [ ] Agree on the other major roles and what we call them

- [ ] Agree that we're not missing any important roles

- [ ] Write these up in our Team Compass

- [ ] Update respective documents (e.g. the Managed Hub Service doc)

# Related issues

- Some discussion of this in https://github.com/2i2c-org/team-compass/issues/147

|

1.0

|

Finalize the titles that we use describe roles in a given hub - # Summary

In a few issues now, we've run into ambiguity / multiple kinds of terminology for how we describe roles in a hub. We should just nail this down and agree upon one description so that we can remain consistent.

# Proposed titles

## On the community side

- **Hub Community**: The group of people that we are (or might be) serving with a hub. **We use this word instead of "client" or "customer"**.

- My rationale here is that we are "in between" a SaaS platform and a consultancy, with a strong community+pro-social focus. As such, neither "customer" or "client" feel right to me. This is a stab at something that aligns more with the mission focus of 2i2c.

- **Community Representative**: The point of contact with Hub Engineers, and the main interface with a hub's community

- **Community Admin Team**: The group of community members with "Administrative privileges" on a hub. They are expected to do most hub administration via the JupyterHub UI. This group must have at least one member (usually the Community Rep).

## On the 2i2c side

- **Hub Engineer**: A member of the @2i2c-org/tech-team that performs more complex dev/ops for a hub

# Actions

- [ ] Decide whether "Hub Community" is an acceptable drop-in for "customer" or "client"

- [ ] Agree on the other major roles and what we call them

- [ ] Agree that we're not missing any important roles

- [ ] Write these up in our Team Compass

- [ ] Update respective documents (e.g. the Managed Hub Service doc)

# Related issues

- Some discussion of this in https://github.com/2i2c-org/team-compass/issues/147

|

process

|

finalize the titles that we use describe roles in a given hub summary in a few issues now we ve run into ambiguity multiple kinds of terminology for how we describe roles in a hub we should just nail this down and agree upon one description so that we can remain consistent proposed titles on the community side hub community the group of people that we are or might be serving with a hub we use this word instead of client or customer my rationale here is that we are in between a saas platform and a consultancy with a strong community pro social focus as such neither customer or client feel right to me this is a stab at something that aligns more with the mission focus of community representative the point of contact with hub engineers and the main interface with a hub s community community admin team the group of community members with administrative privileges on a hub they are expected to do most hub administration via the jupyterhub ui this group must have at least one member usually the community rep on the side hub engineer a member of the org tech team that performs more complex dev ops for a hub actions decide whether hub community is an acceptable drop in for customer or client agree on the other major roles and what we call them agree that we re not missing any important roles write these up in our team compass update respective documents e g the managed hub service doc related issues some discussion of this in

| 1

|

802,563

| 28,967,034,537

|

IssuesEvent

|

2023-05-10 08:37:48

|

ahmedkaludi/accelerated-mobile-pages

|

https://api.github.com/repos/ahmedkaludi/accelerated-mobile-pages

|

closed

|

PHP error after the recent update 1.0.84

|

bug [Priority: HIGH] Ready for Review

|

**_Error notice_**

` PHP Notice: Function WP_Scripts::localize was called <strong>incorrectly</strong>. The <code>$l10n</code> parameter must be an array. To pass arbitrary data to scripts, use the <code>wp_add_inline_script()</code> function instead. Please see <a href="https://wordpress.org/documentation/article/debugging-in-wordpress/">Debugging in WordPress</a> for more information. (This message was added in version 5.7.0.) in C:\xampp\htdocs\wordpress\wp-includes\functions.php on line 5865

`

Reference ticket: https://wordpress.org/support/topic/function-wp_scriptslocalize-was-called-incorrectly-the-l10n-parameter-must-b-2/

|

1.0

|

PHP error after the recent update 1.0.84 - **_Error notice_**

` PHP Notice: Function WP_Scripts::localize was called <strong>incorrectly</strong>. The <code>$l10n</code> parameter must be an array. To pass arbitrary data to scripts, use the <code>wp_add_inline_script()</code> function instead. Please see <a href="https://wordpress.org/documentation/article/debugging-in-wordpress/">Debugging in WordPress</a> for more information. (This message was added in version 5.7.0.) in C:\xampp\htdocs\wordpress\wp-includes\functions.php on line 5865

`

Reference ticket: https://wordpress.org/support/topic/function-wp_scriptslocalize-was-called-incorrectly-the-l10n-parameter-must-b-2/

|

non_process

|

php error after the recent update error notice php notice function wp scripts localize was called incorrectly the parameter must be an array to pass arbitrary data to scripts use the wp add inline script function instead please see for more information this message was added in version in c xampp htdocs wordpress wp includes functions php on line reference ticket

| 0

|

15,479

| 19,688,035,840

|

IssuesEvent

|

2022-01-12 01:34:50

|

dtcenter/MET

|

https://api.github.com/repos/dtcenter/MET

|

closed

|

ioda2nc fails if the same input file is given with -iodafile option

|

type: bug priority: high alert: NEED MORE DEFINITION component: CI/CD reporting: DTC NCAR Base requestor: METplus Team required: FOR OFFICIAL RELEASE MET: PreProcessing Tools (Point)

|

*Replace italics below with details for this issue.*

## Describe the Problem ##

ioda2nc fails if the same input file is given with -iodafile option

### Expected Behavior ###

It should work without errors.

### Environment ###

Describe your runtime environment:

*1. Machine: (Linux Workstation)*

*2. OS: (RedHat Linux)*

*3. Software version number(s): 10.1.0 and 11.0 beta*

### To Reproduce ###

Describe the steps to reproduce the behavior:

*1. Go to seneca*

*2. run the following command*

/usr/local/met/bin/ioda2nc -v 2 /d1/projects/METplus/METplus_Data/development/feature_1203_ioda2nc/met_test/new/ioda \

/ioda.NC001007.2020031012.nc ioda.NC001007.2020031012.summary.nc \

-iodafile /d1/projects/METplus/METplus_Data/development/feature_1203_ioda2nc/met_test/new/ioda/ioda.NC001007.2020031012.nc

*3. See error*

terminate called after throwing an instance of 'netCDF::exceptions::NcEdge'

what(): NetCDF: Start+count exceeds dimension bound

file: ncVar.cpp line:958

Aborted

*4. run the following command*

export METPLUS_ELEVATION_RANGE_DICT="elevation_range = {beg = -1000;end = 100000;}"

export METPLUS_LEVEL_RANGE_DICT=""

export METPLUS_MASK_DICT=""

export METPLUS_MESSAGE_TYPE=""

export METPLUS_MESSAGE_TYPE_GROUP_MAP=""

export METPLUS_MESSAGE_TYPE_MAP=""

export METPLUS_METADATA_MAP=""

export METPLUS_MET_CONFIG_OVERRIDES=""

export METPLUS_MISSING_THRESH=""

export METPLUS_OBS_NAME_MAP="obs_name_map = [{ key = \"wind_direction\"; val = \"WDIR\"; }, { key = \"wind_speed\"; val = \"WIND\"; }];"

export METPLUS_OBS_VAR=""

export METPLUS_OBS_WINDOW_DICT="obs_window = {beg = -5400;end = 5400;}"

export METPLUS_QUALITY_MARK_THRESH="quality_mark_thresh = 0;"

export METPLUS_STATION_ID=""

export METPLUS_TIME_SUMMARY_DICT="time_summary = {flag = TRUE;raw_data = TRUE;beg = \"000000\";end = \"235959\";step = 300;width = 600;grib_code = [];obs_var = [\"WIND\"];type = [\"min\", \"max\", \"range\", \"mean\", \"stdev\", \"median\", \"p80\"];vld_freq = 0;vld_thresh = 0.0;}"

export MET_TMP_DIR="/d1/personal/mccabe/out2/tmp"

/usr/local/met/bin/ioda2nc -v 2 /d1/projects/METplus/METplus_Data/development/feature_1203_ioda2nc/met_test/new/ioda \

/ioda.NC001007.2020031012.nc ioda.NC001007.2020031012.summary.nc \

-config /d1/personal/mccabe/METplus/parm/met_config/IODA2NCConfig_wrapped \

-iodafile /d1/projects/METplus/METplus_Data/development/feature_1203_ioda2nc/met_test/new/ioda/ioda.NC001007.2020031012.nc

*5. See error*

ERROR :

ERROR : get_obs_data_float() -> WDIR@ObsValue does not exist!

ERROR :

*Post relevant sample data following these instructions:*

*https://dtcenter.org/community-code/model-evaluation-tools-met/met-help-desk#ftp*

### Relevant Deadlines ###

*List relevant project deadlines here or state NONE.*

### Funding Source ###

2702691

## Define the Metadata ##

### Assignee ###

- [ ] Select **engineer(s)** or **no engineer** required

- [x] Select **scientist(s)** or **no scientist** required: no scientist

### Labels ###

- [x] Select **component(s)**

- [x] Select **priority**

- [x] Select **requestor(s)**

### Projects and Milestone ###

- [ ] Select **Organization** level **Project** for support of the current coordinated release

- [ ] Select **Repository** level **Project** for development toward the next official release or add **alert: NEED PROJECT ASSIGNMENT** label

- [ ] Select **Milestone** as the next bugfix version

## Define Related Issue(s) ##

Consider the impact to the other METplus components.

- [ ] [METplus](https://github.com/dtcenter/METplus/issues/new/choose), [MET](https://github.com/dtcenter/MET/issues/new/choose), [METdatadb](https://github.com/dtcenter/METdatadb/issues/new/choose), [METviewer](https://github.com/dtcenter/METviewer/issues/new/choose), [METexpress](https://github.com/dtcenter/METexpress/issues/new/choose), [METcalcpy](https://github.com/dtcenter/METcalcpy/issues/new/choose), [METplotpy](https://github.com/dtcenter/METplotpy/issues/new/choose)

## Bugfix Checklist ##

See the [METplus Workflow](https://metplus.readthedocs.io/en/latest/Contributors_Guide/github_workflow.html) for details.

- [ ] Complete the issue definition above, including the **Time Estimate** and **Funding Source**.

- [ ] Fork this repository or create a branch of **main_\<Version>**.

Branch name: `bugfix_<Issue Number>_main_<Version>_<Description>`

- [ ] Fix the bug and test your changes.

- [ ] Add/update log messages for easier debugging.

- [ ] Add/update unit tests.

- [ ] Add/update documentation.

- [ ] Push local changes to GitHub.

- [ ] Submit a pull request to merge into **main_\<Version>**.

Pull request: `bugfix <Issue Number> main_<Version> <Description>`

- [ ] Define the pull request metadata, as permissions allow.

Select: **Reviewer(s)** and **Linked issues**

Select: **Organization** level software support **Project** for the current coordinated release

Select: **Milestone** as the next bugfix version

- [ ] Iterate until the reviewer(s) accept and merge your changes.

- [ ] Delete your fork or branch.

- [ ] Complete the steps above to fix the bug on the **develop** branch.

Branch name: `bugfix_<Issue Number>_develop_<Description>`

Pull request: `bugfix <Issue Number> develop <Description>`

Select: **Reviewer(s)** and **Linked issues**

Select: **Repository** level development cycle **Project** for the next official release

Select: **Milestone** as the next official version

- [ ] Close this issue.

|

1.0

|

ioda2nc fails if the same input file is given with -iodafile option - *Replace italics below with details for this issue.*

## Describe the Problem ##

ioda2nc fails if the same input file is given with -iodafile option

### Expected Behavior ###

It should work without errors.

### Environment ###

Describe your runtime environment:

*1. Machine: (Linux Workstation)*

*2. OS: (RedHat Linux)*

*3. Software version number(s): 10.1.0 and 11.0 beta*

### To Reproduce ###

Describe the steps to reproduce the behavior:

*1. Go to seneca*

*2. run the following command*

/usr/local/met/bin/ioda2nc -v 2 /d1/projects/METplus/METplus_Data/development/feature_1203_ioda2nc/met_test/new/ioda \

/ioda.NC001007.2020031012.nc ioda.NC001007.2020031012.summary.nc \

-iodafile /d1/projects/METplus/METplus_Data/development/feature_1203_ioda2nc/met_test/new/ioda/ioda.NC001007.2020031012.nc

*3. See error*

terminate called after throwing an instance of 'netCDF::exceptions::NcEdge'

what(): NetCDF: Start+count exceeds dimension bound

file: ncVar.cpp line:958

Aborted

*4. run the following command*

export METPLUS_ELEVATION_RANGE_DICT="elevation_range = {beg = -1000;end = 100000;}"

export METPLUS_LEVEL_RANGE_DICT=""

export METPLUS_MASK_DICT=""

export METPLUS_MESSAGE_TYPE=""

export METPLUS_MESSAGE_TYPE_GROUP_MAP=""

export METPLUS_MESSAGE_TYPE_MAP=""

export METPLUS_METADATA_MAP=""

export METPLUS_MET_CONFIG_OVERRIDES=""

export METPLUS_MISSING_THRESH=""

export METPLUS_OBS_NAME_MAP="obs_name_map = [{ key = \"wind_direction\"; val = \"WDIR\"; }, { key = \"wind_speed\"; val = \"WIND\"; }];"

export METPLUS_OBS_VAR=""

export METPLUS_OBS_WINDOW_DICT="obs_window = {beg = -5400;end = 5400;}"

export METPLUS_QUALITY_MARK_THRESH="quality_mark_thresh = 0;"

export METPLUS_STATION_ID=""

export METPLUS_TIME_SUMMARY_DICT="time_summary = {flag = TRUE;raw_data = TRUE;beg = \"000000\";end = \"235959\";step = 300;width = 600;grib_code = [];obs_var = [\"WIND\"];type = [\"min\", \"max\", \"range\", \"mean\", \"stdev\", \"median\", \"p80\"];vld_freq = 0;vld_thresh = 0.0;}"

export MET_TMP_DIR="/d1/personal/mccabe/out2/tmp"

/usr/local/met/bin/ioda2nc -v 2 /d1/projects/METplus/METplus_Data/development/feature_1203_ioda2nc/met_test/new/ioda \

/ioda.NC001007.2020031012.nc ioda.NC001007.2020031012.summary.nc \

-config /d1/personal/mccabe/METplus/parm/met_config/IODA2NCConfig_wrapped \

-iodafile /d1/projects/METplus/METplus_Data/development/feature_1203_ioda2nc/met_test/new/ioda/ioda.NC001007.2020031012.nc

*5. See error*

ERROR :

ERROR : get_obs_data_float() -> WDIR@ObsValue does not exist!

ERROR :

*Post relevant sample data following these instructions:*

*https://dtcenter.org/community-code/model-evaluation-tools-met/met-help-desk#ftp*

### Relevant Deadlines ###

*List relevant project deadlines here or state NONE.*

### Funding Source ###

2702691

## Define the Metadata ##

### Assignee ###

- [ ] Select **engineer(s)** or **no engineer** required

- [x] Select **scientist(s)** or **no scientist** required: no scientist

### Labels ###

- [x] Select **component(s)**

- [x] Select **priority**

- [x] Select **requestor(s)**

### Projects and Milestone ###

- [ ] Select **Organization** level **Project** for support of the current coordinated release

- [ ] Select **Repository** level **Project** for development toward the next official release or add **alert: NEED PROJECT ASSIGNMENT** label

- [ ] Select **Milestone** as the next bugfix version

## Define Related Issue(s) ##

Consider the impact to the other METplus components.

- [ ] [METplus](https://github.com/dtcenter/METplus/issues/new/choose), [MET](https://github.com/dtcenter/MET/issues/new/choose), [METdatadb](https://github.com/dtcenter/METdatadb/issues/new/choose), [METviewer](https://github.com/dtcenter/METviewer/issues/new/choose), [METexpress](https://github.com/dtcenter/METexpress/issues/new/choose), [METcalcpy](https://github.com/dtcenter/METcalcpy/issues/new/choose), [METplotpy](https://github.com/dtcenter/METplotpy/issues/new/choose)

## Bugfix Checklist ##

See the [METplus Workflow](https://metplus.readthedocs.io/en/latest/Contributors_Guide/github_workflow.html) for details.

- [ ] Complete the issue definition above, including the **Time Estimate** and **Funding Source**.

- [ ] Fork this repository or create a branch of **main_\<Version>**.

Branch name: `bugfix_<Issue Number>_main_<Version>_<Description>`

- [ ] Fix the bug and test your changes.

- [ ] Add/update log messages for easier debugging.

- [ ] Add/update unit tests.

- [ ] Add/update documentation.

- [ ] Push local changes to GitHub.

- [ ] Submit a pull request to merge into **main_\<Version>**.

Pull request: `bugfix <Issue Number> main_<Version> <Description>`

- [ ] Define the pull request metadata, as permissions allow.

Select: **Reviewer(s)** and **Linked issues**

Select: **Organization** level software support **Project** for the current coordinated release

Select: **Milestone** as the next bugfix version

- [ ] Iterate until the reviewer(s) accept and merge your changes.

- [ ] Delete your fork or branch.

- [ ] Complete the steps above to fix the bug on the **develop** branch.

Branch name: `bugfix_<Issue Number>_develop_<Description>`

Pull request: `bugfix <Issue Number> develop <Description>`

Select: **Reviewer(s)** and **Linked issues**

Select: **Repository** level development cycle **Project** for the next official release

Select: **Milestone** as the next official version

- [ ] Close this issue.

|

process

|

fails if the same input file is given with iodafile option replace italics below with details for this issue describe the problem fails if the same input file is given with iodafile option expected behavior it should work without errors environment describe your runtime environment machine linux workstation os redhat linux software version number s and beta to reproduce describe the steps to reproduce the behavior go to seneca run the following command usr local met bin v projects metplus metplus data development feature met test new ioda ioda nc ioda summary nc iodafile projects metplus metplus data development feature met test new ioda ioda nc see error terminate called after throwing an instance of netcdf exceptions ncedge what netcdf start count exceeds dimension bound file ncvar cpp line aborted run the following command export metplus elevation range dict elevation range beg end export metplus level range dict export metplus mask dict export metplus message type export metplus message type group map export metplus message type map export metplus metadata map export metplus met config overrides export metplus missing thresh export metplus obs name map obs name map export metplus obs var export metplus obs window dict obs window beg end export metplus quality mark thresh quality mark thresh export metplus station id export metplus time summary dict time summary flag true raw data true beg end step width grib code obs var type vld freq vld thresh export met tmp dir personal mccabe tmp usr local met bin v projects metplus metplus data development feature met test new ioda ioda nc ioda summary nc config personal mccabe metplus parm met config wrapped iodafile projects metplus metplus data development feature met test new ioda ioda nc see error error error get obs data float wdir obsvalue does not exist error post relevant sample data following these instructions relevant deadlines list relevant project deadlines here or state none funding source define the 
metadata assignee select engineer s or no engineer required select scientist s or no scientist required no scientist labels select component s select priority select requestor s projects and milestone select organization level project for support of the current coordinated release select repository level project for development toward the next official release or add alert need project assignment label select milestone as the next bugfix version define related issue s consider the impact to the other metplus components bugfix checklist see the for details complete the issue definition above including the time estimate and funding source fork this repository or create a branch of main branch name bugfix main fix the bug and test your changes add update log messages for easier debugging add update unit tests add update documentation push local changes to github submit a pull request to merge into main pull request bugfix main define the pull request metadata as permissions allow select reviewer s and linked issues select organization level software support project for the current coordinated release select milestone as the next bugfix version iterate until the reviewer s accept and merge your changes delete your fork or branch complete the steps above to fix the bug on the develop branch branch name bugfix develop pull request bugfix develop select reviewer s and linked issues select repository level development cycle project for the next official release select milestone as the next official version close this issue

| 1

|

21,910

| 30,440,085,688

|

IssuesEvent

|

2023-07-15 01:22:53

|

metabase/metabase

|

https://api.github.com/repos/metabase/metabase

|

closed

|

[MLv2] Medium-Priority Bug Fixes

|

.Epic .metabase-lib .Team/QueryProcessor :hammer_and_wrench:

|

```[tasklist]

### Tasks

- [x] #29734

- [x] #29702

- [x] #29746

- [x] #29764

- [x] #29902

- [x] #29745

- [x] #29770

- [x] #29988

- [x] #29747

- [x] #29898

- [x] #29964

- [x] #29944

- [x] #29947

- [ ] #30568

- [ ] #29895

- [ ] #29908

- [ ] #30280

- [ ] #29748

- [ ] #29897

- [ ] #29904

- [ ] #29909

- [ ] #29910

- [ ] #29935

- [ ] #29936

- [ ] #29938

- [ ] #29941

- [ ] #29942

- [ ] #29946

- [ ] #29948

- [ ] #29950

- [ ] #29953

- [ ] #29958

- [ ] #30385

- [ ] https://github.com/metabase/metabase/issues/30397

- [ ] #30401

- [ ] https://github.com/metabase/metabase/issues/30857

- [ ] https://github.com/metabase/metabase/issues/30858

- [ ] https://github.com/metabase/metabase/issues/30948

- [ ] https://github.com/metabase/metabase/issues/30949

- [ ] https://github.com/metabase/metabase/issues/30950

- [ ] https://github.com/metabase/metabase/issues/30957

- [ ] #29949

- [ ] https://github.com/metabase/metabase/issues/31053

- [ ] https://github.com/metabase/metabase/issues/31223

- [ ] https://github.com/metabase/metabase/issues/31268

- [ ] https://github.com/metabase/metabase/issues/31365

- [ ] https://github.com/metabase/metabase/issues/31366

- [ ] https://github.com/metabase/metabase/issues/31368

- [ ] #31521

- [ ] https://github.com/metabase/metabase/issues/31624

- [ ] https://github.com/metabase/metabase/issues/31741

- [ ] https://github.com/metabase/metabase/issues/31769

- [ ] https://github.com/metabase/metabase/issues/31775

- [ ] https://github.com/metabase/metabase/issues/31858

- [ ] https://github.com/metabase/metabase/issues/32049

- [ ] https://github.com/metabase/metabase/issues/32063

```

|

1.0

|

[MLv2] Medium-Priority Bug Fixes - ```[tasklist]

### Tasks

- [x] #29734

- [x] #29702

- [x] #29746

- [x] #29764

- [x] #29902

- [x] #29745

- [x] #29770

- [x] #29988

- [x] #29747

- [x] #29898

- [x] #29964

- [x] #29944

- [x] #29947

- [ ] #30568

- [ ] #29895

- [ ] #29908

- [ ] #30280

- [ ] #29748

- [ ] #29897

- [ ] #29904

- [ ] #29909

- [ ] #29910

- [ ] #29935

- [ ] #29936

- [ ] #29938

- [ ] #29941

- [ ] #29942

- [ ] #29946

- [ ] #29948

- [ ] #29950

- [ ] #29953

- [ ] #29958

- [ ] #30385

- [ ] https://github.com/metabase/metabase/issues/30397

- [ ] #30401

- [ ] https://github.com/metabase/metabase/issues/30857

- [ ] https://github.com/metabase/metabase/issues/30858

- [ ] https://github.com/metabase/metabase/issues/30948

- [ ] https://github.com/metabase/metabase/issues/30949

- [ ] https://github.com/metabase/metabase/issues/30950

- [ ] https://github.com/metabase/metabase/issues/30957

- [ ] #29949

- [ ] https://github.com/metabase/metabase/issues/31053

- [ ] https://github.com/metabase/metabase/issues/31223

- [ ] https://github.com/metabase/metabase/issues/31268

- [ ] https://github.com/metabase/metabase/issues/31365

- [ ] https://github.com/metabase/metabase/issues/31366

- [ ] https://github.com/metabase/metabase/issues/31368

- [ ] #31521

- [ ] https://github.com/metabase/metabase/issues/31624

- [ ] https://github.com/metabase/metabase/issues/31741

- [ ] https://github.com/metabase/metabase/issues/31769

- [ ] https://github.com/metabase/metabase/issues/31775

- [ ] https://github.com/metabase/metabase/issues/31858

- [ ] https://github.com/metabase/metabase/issues/32049

- [ ] https://github.com/metabase/metabase/issues/32063

```

|

process

|

medium priority bug fixes tasks

| 1

|

112,816

| 17,102,345,872

|

IssuesEvent

|

2021-07-09 13:06:09

|

turkdevops/codecov-action

|

https://api.github.com/repos/turkdevops/codecov-action

|

closed

|

CVE-2021-23362 (Medium) detected in hosted-git-info-2.8.8.tgz - autoclosed

|

security vulnerability

|

## CVE-2021-23362 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>hosted-git-info-2.8.8.tgz</b></p></summary>

<p>Provides metadata and conversions from repository urls for Github, Bitbucket and Gitlab</p>

<p>Library home page: <a href="https://registry.npmjs.org/hosted-git-info/-/hosted-git-info-2.8.8.tgz">https://registry.npmjs.org/hosted-git-info/-/hosted-git-info-2.8.8.tgz</a></p>

<p>Path to dependency file: codecov-action/package.json</p>

<p>Path to vulnerable library: codecov-action/node_modules/hosted-git-info/package.json</p>

<p>

Dependency Hierarchy:

- jest-26.6.3.tgz (Root Library)

- core-26.6.3.tgz

- jest-resolve-26.6.2.tgz

- read-pkg-up-7.0.1.tgz

- read-pkg-5.2.0.tgz

- normalize-package-data-2.5.0.tgz

- :x: **hosted-git-info-2.8.8.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/turkdevops/codecov-action/commit/55e35bb57ce02b251f09ff66d3ac34c5a60291ea">55e35bb57ce02b251f09ff66d3ac34c5a60291ea</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The package hosted-git-info before 3.0.8 is vulnerable to Regular Expression Denial of Service (ReDoS) via regular expression shortcutMatch in the fromUrl function in index.js. The affected regular expression exhibits polynomial worst-case time complexity.

<p>Publish Date: 2021-03-23

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-23362>CVE-2021-23362</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/advisories/GHSA-43f8-2h32-f4cj">https://github.com/advisories/GHSA-43f8-2h32-f4cj</a></p>

<p>Release Date: 2021-03-23</p>

<p>Fix Resolution: hosted-git-info - 2.8.9,3.0.8</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2021-23362 (Medium) detected in hosted-git-info-2.8.8.tgz - autoclosed - ## CVE-2021-23362 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>hosted-git-info-2.8.8.tgz</b></p></summary>

<p>Provides metadata and conversions from repository urls for Github, Bitbucket and Gitlab</p>

<p>Library home page: <a href="https://registry.npmjs.org/hosted-git-info/-/hosted-git-info-2.8.8.tgz">https://registry.npmjs.org/hosted-git-info/-/hosted-git-info-2.8.8.tgz</a></p>

<p>Path to dependency file: codecov-action/package.json</p>

<p>Path to vulnerable library: codecov-action/node_modules/hosted-git-info/package.json</p>

<p>

Dependency Hierarchy:

- jest-26.6.3.tgz (Root Library)

- core-26.6.3.tgz

- jest-resolve-26.6.2.tgz

- read-pkg-up-7.0.1.tgz

- read-pkg-5.2.0.tgz

- normalize-package-data-2.5.0.tgz

- :x: **hosted-git-info-2.8.8.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/turkdevops/codecov-action/commit/55e35bb57ce02b251f09ff66d3ac34c5a60291ea">55e35bb57ce02b251f09ff66d3ac34c5a60291ea</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The package hosted-git-info before 3.0.8 is vulnerable to Regular Expression Denial of Service (ReDoS) via regular expression shortcutMatch in the fromUrl function in index.js. The affected regular expression exhibits polynomial worst-case time complexity.

<p>Publish Date: 2021-03-23

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-23362>CVE-2021-23362</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/advisories/GHSA-43f8-2h32-f4cj">https://github.com/advisories/GHSA-43f8-2h32-f4cj</a></p>

<p>Release Date: 2021-03-23</p>

<p>Fix Resolution: hosted-git-info - 2.8.9,3.0.8</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve medium detected in hosted git info tgz autoclosed cve medium severity vulnerability vulnerable library hosted git info tgz provides metadata and conversions from repository urls for github bitbucket and gitlab library home page a href path to dependency file codecov action package json path to vulnerable library codecov action node modules hosted git info package json dependency hierarchy jest tgz root library core tgz jest resolve tgz read pkg up tgz read pkg tgz normalize package data tgz x hosted git info tgz vulnerable library found in head commit a href found in base branch master vulnerability details the package hosted git info before are vulnerable to regular expression denial of service redos via regular expression shortcutmatch in the fromurl function in index js the affected regular expression exhibits polynomial worst case time complexity publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact low for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution hosted git info step up your open source security game with whitesource

| 0

|

16,114

| 20,376,965,342

|

IssuesEvent

|

2022-02-21 16:33:08

|

prisma/prisma

|

https://api.github.com/repos/prisma/prisma

|

opened

|

Query Engine tests with sharded MongoDB connection strings

|

process/candidate topic: internal team/migrations team/client

|

MongoDB connection strings are not URIs. It would be great to have Rust-level tests to see if sharded strings work correctly with the Query Engine. The URI format is this:

```

mongodb://user:password@srv1.rgyl0.mongodb.net:27017,srv2.rgyl0.mongodb.net:27017,srv3.rgyl0.mongodb.net:27017/database?ssl=true&authSource=admin&retryWrites=true&w=majority

```

So we should have a few replicas, and test with a connection string that lists them like in the example.
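
A minimal sketch of what such a Rust-level check could look like, assuming a hand-written splitter rather than the Query Engine's real parser. The names `MongoTarget` and `parse_mongo_hosts` are hypothetical and used only for illustration. It shows that the comma-separated host list has to be split manually, because a generic URI parser expects a single host in the authority part. IPv6 literals and percent-encoded credentials are not handled here.

```rust
/// Hosts and database extracted from a multi-host MongoDB connection string.
/// Hypothetical type for illustration; not part of the Query Engine.
#[derive(Debug, PartialEq)]
struct MongoTarget {
    hosts: Vec<(String, u16)>,
    database: Option<String>,
}

/// Hypothetical helper: splits the host list of a `mongodb://` string by hand.
fn parse_mongo_hosts(conn: &str) -> Result<MongoTarget, String> {
    let rest = conn
        .strip_prefix("mongodb://")
        .ok_or_else(|| "expected mongodb:// scheme".to_string())?;

    // Drop the query string (ssl, authSource, ...); it is not needed here.
    let rest = rest.split('?').next().unwrap_or(rest);

    // Separate "credentials@host1,host2,..." from the database name.
    let (authority, database) = match rest.split_once('/') {
        Some((a, db)) if !db.is_empty() => (a, Some(db.to_string())),
        Some((a, _)) => (a, None),
        None => (rest, None),
    };

    // Credentials, if present, end at the last '@'.
    let host_list = match authority.rsplit_once('@') {
        Some((_, h)) => h,
        None => authority,
    };

    let mut hosts = Vec::new();
    for entry in host_list.split(',') {
        let (host, port) = match entry.rsplit_once(':') {
            Some((h, p)) => {
                let port = p
                    .parse::<u16>()
                    .map_err(|e| format!("bad port in {entry:?}: {e}"))?;
                (h, port)
            }
            // 27017 is MongoDB's default port.
            None => (entry, 27017),
        };
        if host.is_empty() {
            return Err(format!("empty host in {entry:?}"));
        }
        hosts.push((host.to_string(), port));
    }

    Ok(MongoTarget { hosts, database })
}

fn main() {
    let conn = "mongodb://user:password@srv1.rgyl0.mongodb.net:27017,\
srv2.rgyl0.mongodb.net:27017,srv3.rgyl0.mongodb.net:27017/database\
?ssl=true&authSource=admin&retryWrites=true&w=majority";
    match parse_mongo_hosts(conn) {
        Ok(target) => println!("{target:?}"),
        Err(err) => eprintln!("parse error: {err}"),
    }
}
```

A real test would presumably pass the same string to the Query Engine's connector and assert that all listed hosts are reachable; the sketch only covers the string-splitting part.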

|

1.0

|

Query Engine tests with sharded MongoDB connection strings - MongoDB connection strings are not URIs. It would be great to have Rust-level tests to see if sharded strings work correctly with the Query Engine. The URI format is this:

```

mongodb://user:password@srv1.rgyl0.mongodb.net:27017,srv2.rgyl0.mongodb.net:27017,srv3.rgyl0.mongodb.net:27017/database?ssl=true&authSource=admin&retryWrites=true&w=majority

```

So we should have a few replicas, and test with a connection string that lists them like in the example.

|

process

|

query engine tests with sharded mongodb connection strings mongodb connection strings are not uris it would be great to have rust level tests to see if sharded strings work correctly with the query engine the uri format is this mongodb user password mongodb net mongodb net mongodb net database ssl true authsource admin retrywrites true w majority so we should have a few replicas and test with a connection string that lists them like in the example

| 1

|

785,119

| 27,599,080,620

|

IssuesEvent

|

2023-03-09 08:50:04

|

AY2223S2-CS2113-T15-4/tp

|

https://api.github.com/repos/AY2223S2-CS2113-T15-4/tp

|

opened

|

Delete flashcards

|

type.Epic priority.High

|

As a user, I can delete any of my cards so that I am not asked to review a card again once I am confident I have truly memorised it.

|

1.0

|

Delete flashcards - As a user, I can delete any of my cards so that I am not asked to review a card again once I am confident I have truly memorised it.

|

non_process

|

delete flashcards as a user i can delete any if my cards so that i don’t get asked to review that card later on in case i am confident i have truly memorised the card

| 0

|

3,783

| 6,760,953,854

|

IssuesEvent

|

2017-10-24 22:44:58

|

aspnet/IISIntegration

|

https://api.github.com/repos/aspnet/IISIntegration

|

closed

|

React to ANCM changes for NativeMethods pInvoke layer.

|

bug in-process

|

Parallel issue to https://github.com/aspnet/KestrelHttpServer/blob/dev/src/Kestrel.Core/Internal/Http/HttpProtocol.FeatureCollection.cs#L97-L101. With Pan's changes ( to the native ANCM and recognition that we need to expose more pInvoke methods, we will need to react to these appropriately.

|

1.0

|

React to ANCM changes for NativeMethods pInvoke layer. - Parallel issue to https://github.com/aspnet/KestrelHttpServer/blob/dev/src/Kestrel.Core/Internal/Http/HttpProtocol.FeatureCollection.cs#L97-L101. With Pan's changes ( to the native ANCM and recognition that we need to expose more pInvoke methods, we will need to react to these appropriately.

|

process

|

react to ancm changes for nativemethods pinvoke layer parallel issue to with pan s changes to the native ancm and recognition that we need to expose more pinvoke methods we will need to react to these appropriately

| 1

|

186,029

| 15,044,100,855

|

IssuesEvent

|

2021-02-03 02:11:31

|

walterimaican/nightlight

|

https://api.github.com/repos/walterimaican/nightlight

|

opened

|

Documentation: Stale

|

documentation

|

Some of the screenshots used in the README.md are now inconsistent with the current state of the project. These images should be updated when possible.

|

1.0

|

Documentation: Stale - Some of the screenshots used in the README.md are now inconsistent with the current state of the project. These images should be updated when possible.

|

non_process

|

documentation stale some of the screenshots used in the readme md are now inconsistent with the current state of the project these images should be updated when possible

| 0

|

4,208

| 7,166,652,311

|

IssuesEvent

|

2018-01-29 17:54:37

|

itsyouonline/identityserver

|

https://api.github.com/repos/itsyouonline/identityserver

|

reopened

|

Cloudflare returns error 504 on rare occasions

|

process_wontfix type_bug

|

Very rarely, Cloudflare will return a 504 when calling any URL, even if other requests go through just fine. The 504 is not generated on our site, as observed from the logs (tracing an `/error504` page load, no earlier requests gave a 504 for that user). This can cause pages to render empty, translations to not load, etc.

|

1.0

|

Cloudflare returns error 504 on rare occasions - Very rarely, Cloudflare will return a 504 when calling any URL, even if other requests go through just fine. The 504 is not generated on our site, as observed from the logs (tracing an `/error504` page load, no earlier requests gave a 504 for that user). This can cause pages to render empty, translations to not load, etc.

|

process

|

cloudflare returns error on rare occasions very rarely cloudlfare will return a when calling any url even if others go through just fine the is not generated on our site as observed from the logs tracing an page load no earlier requests gave a for that users this can cause pages to render empty translations to not load etc

| 1

|

656,733

| 21,773,565,908

|

IssuesEvent

|

2022-05-13 11:35:17

|

awslabs/aws-lambda-powertools-typescript

|

https://api.github.com/repos/awslabs/aws-lambda-powertools-typescript

|

opened

|

Feature (all): evaluate migration to Middy 3.x

|

enhancement dependencies utility:all triage priority:low

|

## Description of the feature request

**Problem statement**

Middy, the dependency that we use to vend middleware, has [recently released](https://github.com/middyjs/middy/releases/tag/3.0.0) a new major version (v3.x). This version drops support for Node.js 12.x and introduces some breaking changes.

Given that Middy, as a whole, has a large surface and we are only using `@middy/core`, we need to investigate whether there's a path to upgrade to the newer version while continuing to support Node.js 12.x in Powertools.

**Additional context**

Running the e2e tests on a branch that upgraded `@middy/core` to 3.0.1 seems to show that the newer release is compatible with Powertools on both versions 12 and 14 of Node ([see run results](https://github.com/awslabs/aws-lambda-powertools-typescript/actions/runs/2318810802)).

At the same time, the unit tests for that same branch are failing for all utilities, specifically in the sections that relate to the middleware implementations. The error message (see details below) seems to hint at incompatibilities between the new bundling of Middy, which now supports both CJS and ESM, and our project's configuration, since the errors are related to the imports and the tests don't run at all.

<details>

```sh

FAIL AWS Lambda Powertools utility: TRACER tests/unit/middy.test.ts

● Test suite failed to run

Jest encountered an unexpected token

Jest failed to parse a file. This happens e.g. when your code or its dependencies use non-standard JavaScript syntax, or when Jest is not configured to support such syntax.

Out of the box Jest supports Babel, which will be used to transform your files into valid JS based on your Babel configuration.

By default "node_modules" folder is ignored by transformers.

Here's what you can do:

• If you are trying to use ECMAScript Modules, see https://jestjs.io/docs/ecmascript-modules for how to enable it.

• If you are trying to use TypeScript, see https://jestjs.io/docs/getting-started#using-typescript

• To have some of your "node_modules" files transformed, you can specify a custom "transformIgnorePatterns" in your config.

• If you need a custom transformation specify a "transform" option in your config.

• If you simply want to mock your non-JS modules (e.g. binary assets) you can stub them out with the "moduleNameMapper" config option.

You'll find more details and examples of these config options in the docs:

https://jestjs.io/docs/configuration

For information about custom transformations, see:

https://jestjs.io/docs/code-transformation

Details:

/home/ec2-user/aws-lambda-powertools-typescript/node_modules/@middy/core/index.js:1

({"Object.<anonymous>":function(module,exports,require,__dirname,__filename,jest){import{EventEmitter}from"events";const defaultLambdaHandler=()=>{};const defaultPlugin={timeoutEarlyInMillis:5,timeoutEarlyResponse:()=>{throw new Error("Timeout")}};const middy=(lambdaHandler=defaultLambdaHandler,plugin={})=>{if(typeof lambdaHandler!=="function"){plugin=lambdaHandler;lambdaHandler=defaultLambdaHandler}plugin={...defaultPlugin,...plugin};plugin.timeoutEarly=plugin.timeoutEarlyInMillis>0;plugin.beforePrefetch?.();const beforeMiddlewares=[];const afterMiddlewares=[];const onErrorMiddlewares=[];const middy1=(event={},context={})=>{plugin.requestStart?.();const request={event,context,response:undefined,error:undefined,internal:plugin.internal??{}};return runRequest(request,[...beforeMiddlewares],lambdaHandler,[...afterMiddlewares],[...onErrorMiddlewares],plugin)};middy1.use=middlewares=>{if(!Array.isArray(middlewares)){middlewares=[middlewares]}for(const middleware of middlewares){const{before,after,onError}=middleware;if(!before&&!after&&!onError){throw new Error('Middleware must be an object containing at least one key among "before", "after", "onError"')}if(before)middy1.before(before);if(after)middy1.after(after);if(onError)middy1.onError(onError)}return middy1};middy1.before=beforeMiddleware=>{beforeMiddlewares.push(beforeMiddleware);return middy1};middy1.after=afterMiddleware=>{afterMiddlewares.unshift(afterMiddleware);return middy1};middy1.onError=onErrorMiddleware=>{onErrorMiddlewares.unshift(onErrorMiddleware);return middy1};middy1.handler=replaceLambdaHandler=>{lambdaHandler=replaceLambdaHandler;return middy1};return middy1};const runRequest=async(request,beforeMiddlewares,lambdaHandler,afterMiddlewares,onErrorMiddlewares,plugin)=>{const timeoutEarly=plugin.timeoutEarly&&request.context.getRemainingTimeInMillis;try{await runMiddlewares(request,beforeMiddlewares,plugin);if(request.response===undefined){plugin.beforeHandler?.();const handlerAbort=new 
AbortController;let timeoutAbort;if(timeoutEarly)timeoutAbort=new AbortController;request.response=await Promise.race([lambdaHandler(request.event,request.context,{signal:handlerAbort.signal}),timeoutEarly?setTimeoutPromise(request.context.getRemainingTimeInMillis()-plugin.timeoutEarlyInMillis,{signal:timeoutAbort.signal}).then(()=>{handlerAbort.abort();return plugin.timeoutEarlyResponse()}):Promise.race([])]);if(timeoutEarly)timeoutAbort.abort();plugin.afterHandler?.();await runMiddlewares(request,afterMiddlewares,plugin)}}catch(e){request.response=undefined;request.error=e;try{await runMiddlewares(request,onErrorMiddlewares,plugin)}catch(e){e.originalError=request.error;request.error=e;throw request.error}if(request.response===undefined)throw request.error}finally{await plugin.requestEnd?.(request)}return request.response};const runMiddlewares=async(request,middlewares,plugin)=>{for(const nextMiddleware of middlewares){plugin.beforeMiddleware?.(nextMiddleware.name);const res=await nextMiddleware(request);plugin.afterMiddleware?.(nextMiddleware.name);if(res!==undefined){request.response=res;return}}};const polyfillAbortController=()=>{if(process.version<"v15.0.0"){let _toStringTag;let _toStringTag1=Symbol.toStringTag;class AbortSignal{toString(){return"[object AbortSignal]"}get[_toStringTag1](){return"AbortSignal"}removeEventListener(name,handler){this.eventEmitter.removeListener(name,handler)}addEventListener(name,handler){this.eventEmitter.on(name,handler)}dispatchEvent(type){const event={type,target:this};const handlerName=`on${type}`;if(typeof this[handlerName]==="function")this[handlerName](event);this.eventEmitter.emit(type,event)}constructor(){this.eventEmitter=new EventEmitter;this.onabort=null;this.aborted=false}}return _toStringTag=Symbol.toStringTag,class AbortController{abort(){if(this.signal.aborted)return;this.signal.aborted=true;this.signal.dispatchEvent("abort")}toString(){return"[object 
AbortController]"}get[_toStringTag](){return"AbortController"}constructor(){this.signal=new AbortSignal}}}else{return AbortController}};global.AbortController=polyfillAbortController();const polyfillSetTimeoutPromise=()=>{return(ms,{signal})=>{if(signal.aborted){return Promise.reject(new Error("Aborted","AbortError"))}return new Promise((resolve,reject)=>{const abortHandler=()=>{clearTimeout(timeout);reject(new Error("Aborted","AbortError"))};const timeout=setTimeout(()=>{resolve();signal.removeEventListener("abort",abortHandler)},ms);signal.addEventListener("abort",abortHandler)})}};const setTimeoutPromise=polyfillSetTimeoutPromise();export default middy

^^^^^^

SyntaxError: Cannot use import statement outside a module

6 |

7 | import { captureLambdaHandler } from '../../src/middleware/middy';

> 8 | import middy from '@middy/core';

| ^

9 | import { Tracer } from './../../src';

10 | import type { Context, Handler } from 'aws-lambda/handler';

11 | import { Segment, setContextMissingStrategy, Subsegment } from 'aws-xray-sdk-core';

at Runtime.createScriptFromCode (../../node_modules/jest-runtime/build/index.js:1728:14)

at Object.<anonymous> (tests/unit/middy.test.ts:8:1)

```

</details>

**Code examples**

N/A

**Benefits for you and the wider AWS community**

<!-- What are the benefits of your proposed feature? -->

**Describe alternatives you've considered**

Not upgrading to 3.x and staying on version 2.5.x

**Additional context**

<!-- Add any other context or screenshots about the feature request here. -->

### Related issues, RFCs

#861

|

1.0

|

Feature (all): evaluate migration to Middy 3.x - ## Description of the feature request

**Problem statement**

Middy, the dependency that we use to vend middleware, has [recently released](https://github.com/middyjs/middy/releases/tag/3.0.0) a new major version (v3.x). This version drops support for Node.js 12.x and introduces some breaking changes.

Given that Middy, as a whole, has a large surface and we are only using `@middy/core`, we need to investigate whether there's a path to upgrade to the newer version while continuing to support Node.js 12.x in Powertools.

**Additional context**

Running the e2e tests on a branch that upgraded `@middy/core` to 3.0.1 seems to show that the newer release is compatible with Powertools on both versions 12 and 14 of Node ([see run results](https://github.com/awslabs/aws-lambda-powertools-typescript/actions/runs/2318810802)).

At the same time, the unit tests for that same branch are failing for all utilities, specifically in the sections that relate to the middleware implementations. The error message (see details below) seems to hint at incompatibilities between the new bundling of Middy, which now supports both CJS and ESM, and our project's configuration, since the errors are related to the imports and the tests don't run at all.

<details>

```sh

FAIL AWS Lambda Powertools utility: TRACER tests/unit/middy.test.ts

● Test suite failed to run

Jest encountered an unexpected token

Jest failed to parse a file. This happens e.g. when your code or its dependencies use non-standard JavaScript syntax, or when Jest is not configured to support such syntax.

Out of the box Jest supports Babel, which will be used to transform your files into valid JS based on your Babel configuration.

By default "node_modules" folder is ignored by transformers.

Here's what you can do:

• If you are trying to use ECMAScript Modules, see https://jestjs.io/docs/ecmascript-modules for how to enable it.

• If you are trying to use TypeScript, see https://jestjs.io/docs/getting-started#using-typescript

• To have some of your "node_modules" files transformed, you can specify a custom "transformIgnorePatterns" in your config.

• If you need a custom transformation specify a "transform" option in your config.

• If you simply want to mock your non-JS modules (e.g. binary assets) you can stub them out with the "moduleNameMapper" config option.

You'll find more details and examples of these config options in the docs:

https://jestjs.io/docs/configuration

For information about custom transformations, see:

https://jestjs.io/docs/code-transformation

Details:

/home/ec2-user/aws-lambda-powertools-typescript/node_modules/@middy/core/index.js:1

({"Object.<anonymous>":function(module,exports,require,__dirname,__filename,jest){import{EventEmitter}from"events";const defaultLambdaHandler=()=>{};const defaultPlugin={timeoutEarlyInMillis:5,timeoutEarlyResponse:()=>{throw new Error("Timeout")}};const middy=(lambdaHandler=defaultLambdaHandler,plugin={})=>{if(typeof lambdaHandler!=="function"){plugin=lambdaHandler;lambdaHandler=defaultLambdaHandler}plugin={...defaultPlugin,...plugin};plugin.timeoutEarly=plugin.timeoutEarlyInMillis>0;plugin.beforePrefetch?.();const beforeMiddlewares=[];const afterMiddlewares=[];const onErrorMiddlewares=[];const middy1=(event={},context={})=>{plugin.requestStart?.();const request={event,context,response:undefined,error:undefined,internal:plugin.internal??{}};return runRequest(request,[...beforeMiddlewares],lambdaHandler,[...afterMiddlewares],[...onErrorMiddlewares],plugin)};middy1.use=middlewares=>{if(!Array.isArray(middlewares)){middlewares=[middlewares]}for(const middleware of middlewares){const{before,after,onError}=middleware;if(!before&&!after&&!onError){throw new Error('Middleware must be an object containing at least one key among "before", "after", "onError"')}if(before)middy1.before(before);if(after)middy1.after(after);if(onError)middy1.onError(onError)}return middy1};middy1.before=beforeMiddleware=>{beforeMiddlewares.push(beforeMiddleware);return middy1};middy1.after=afterMiddleware=>{afterMiddlewares.unshift(afterMiddleware);return middy1};middy1.onError=onErrorMiddleware=>{onErrorMiddlewares.unshift(onErrorMiddleware);return middy1};middy1.handler=replaceLambdaHandler=>{lambdaHandler=replaceLambdaHandler;return middy1};return middy1};const runRequest=async(request,beforeMiddlewares,lambdaHandler,afterMiddlewares,onErrorMiddlewares,plugin)=>{const timeoutEarly=plugin.timeoutEarly&&request.context.getRemainingTimeInMillis;try{await runMiddlewares(request,beforeMiddlewares,plugin);if(request.response===undefined){plugin.beforeHandler?.();const handlerAbort=new 
AbortController;let timeoutAbort;if(timeoutEarly)timeoutAbort=new AbortController;request.response=await Promise.race([lambdaHandler(request.event,request.context,{signal:handlerAbort.signal}),timeoutEarly?setTimeoutPromise(request.context.getRemainingTimeInMillis()-plugin.timeoutEarlyInMillis,{signal:timeoutAbort.signal}).then(()=>{handlerAbort.abort();return plugin.timeoutEarlyResponse()}):Promise.race([])]);if(timeoutEarly)timeoutAbort.abort();plugin.afterHandler?.();await runMiddlewares(request,afterMiddlewares,plugin)}}catch(e){request.response=undefined;request.error=e;try{await runMiddlewares(request,onErrorMiddlewares,plugin)}catch(e){e.originalError=request.error;request.error=e;throw request.error}if(request.response===undefined)throw request.error}finally{await plugin.requestEnd?.(request)}return request.response};const runMiddlewares=async(request,middlewares,plugin)=>{for(const nextMiddleware of middlewares){plugin.beforeMiddleware?.(nextMiddleware.name);const res=await nextMiddleware(request);plugin.afterMiddleware?.(nextMiddleware.name);if(res!==undefined){request.response=res;return}}};const polyfillAbortController=()=>{if(process.version<"v15.0.0"){let _toStringTag;let _toStringTag1=Symbol.toStringTag;class AbortSignal{toString(){return"[object AbortSignal]"}get[_toStringTag1](){return"AbortSignal"}removeEventListener(name,handler){this.eventEmitter.removeListener(name,handler)}addEventListener(name,handler){this.eventEmitter.on(name,handler)}dispatchEvent(type){const event={type,target:this};const handlerName=`on${type}`;if(typeof this[handlerName]==="function")this[handlerName](event);this.eventEmitter.emit(type,event)}constructor(){this.eventEmitter=new EventEmitter;this.onabort=null;this.aborted=false}}return _toStringTag=Symbol.toStringTag,class AbortController{abort(){if(this.signal.aborted)return;this.signal.aborted=true;this.signal.dispatchEvent("abort")}toString(){return"[object 
AbortController]"}get[_toStringTag](){return"AbortController"}constructor(){this.signal=new AbortSignal}}}else{return AbortController}};global.AbortController=polyfillAbortController();const polyfillSetTimeoutPromise=()=>{return(ms,{signal})=>{if(signal.aborted){return Promise.reject(new Error("Aborted","AbortError"))}return new Promise((resolve,reject)=>{const abortHandler=()=>{clearTimeout(timeout);reject(new Error("Aborted","AbortError"))};const timeout=setTimeout(()=>{resolve();signal.removeEventListener("abort",abortHandler)},ms);signal.addEventListener("abort",abortHandler)})}};const setTimeoutPromise=polyfillSetTimeoutPromise();export default middy

^^^^^^

SyntaxError: Cannot use import statement outside a module

6 |

7 | import { captureLambdaHandler } from '../../src/middleware/middy';

> 8 | import middy from '@middy/core';

| ^

9 | import { Tracer } from './../../src';

10 | import type { Context, Handler } from 'aws-lambda/handler';

11 | import { Segment, setContextMissingStrategy, Subsegment } from 'aws-xray-sdk-core';

at Runtime.createScriptFromCode (../../node_modules/jest-runtime/build/index.js:1728:14)

at Object.<anonymous> (tests/unit/middy.test.ts:8:1)

```

</details>
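
One possible direction, sketched here under assumptions rather than verified against this repository: the error message itself suggests a custom `transformIgnorePatterns`, so Jest could be told to stop ignoring `@middy/core` inside `node_modules`. The helper name `buildTransformIgnorePattern` and the `jestConfig` object below are hypothetical, and the sketch assumes a transformer able to compile ESM JavaScript (for example `babel-jest`, or `ts-jest` with `allowJs`) is already configured.

```typescript
// Hypothetical helper (not part of Jest or middy): builds a single
// `transformIgnorePatterns` entry that keeps ignoring node_modules,
// except for the listed packages that ship ESM and need transforming.
const buildTransformIgnorePattern = (esmPackages: string[]): string => {
  // Escape regex metacharacters in package names before joining them.
  const escaped = esmPackages.map((name) =>
    name.replace(/[.*+?^${}()|[\]\\]/g, '\\$&')
  );
  // Negative lookahead: match "node_modules/" only when it is NOT
  // immediately followed by one of the exempted packages.
  return `node_modules/(?!(${escaped.join('|')})/)`;
};

// Assumed shape of the relevant part of a Jest config; only
// `transformIgnorePatterns` is a real Jest option used here.
const jestConfig: { transformIgnorePatterns: string[] } = {
  transformIgnorePatterns: [buildTransformIgnorePattern(['@middy/core'])],
};

// Mirrors how Jest uses the option: a file is skipped by transformers
// when its path matches any of the configured patterns.
const isIgnoredByJest = (filePath: string): boolean =>
  jestConfig.transformIgnorePatterns.some((pattern) =>
    new RegExp(pattern).test(filePath)
  );
```

With this pattern, a path such as `node_modules/@middy/core/index.js` is no longer skipped, while other dependencies stay ignored. Whether this is enough on its own depends on the transformer setup, which is not shown in this issue.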

**Code examples**

N/A

**Benefits for you and the wider AWS community**

<!-- What are the benefits of your proposed feature? -->

**Describe alternatives you've considered**

Not upgrading to 3.x and staying on version 2.5.x

**Additional context**

<!-- Add any other context or screenshots about the feature request here. -->

### Related issues, RFCs

#861

|

non_process

|

feature all evaluate migration to middy x description of the feature request problem statement middy the dependency that we use to vend middleware has a new major version v x this version drops support for node js version x and introduces some breaking changes given that middy as a whole has a large surface and we are only using middy core we need to investigate on whether there s a path to upgrade to the newer version while still continuing to support node js x in powertools additional context running the tests on a branch that upgraded middy core to seems to show that the newer release is compatible with powertools on both versions and of node at the same time the unit tests for that same branch are failing for all utilities and specifically in the section that relates the middleware implementations the error message see detail below seems to hint at incompatibilities between the new bundling of middy that now supports both cjs and esm bundling and our project s configuration since the errors are related to the imports and the tests don t run at all sh fail aws lambda powertools utility tracer tests unit middy test ts ● test suite failed to run jest encountered an unexpected token jest failed to parse a file this happens e g when your code or its dependencies use non standard javascript syntax or when jest is not configured to support such syntax out of the box jest supports babel which will be used to transform your files into valid js based on your babel configuration by default node modules folder is ignored by transformers here s what you can do • if you are trying to use ecmascript modules see for how to enable it • if you are trying to use typescript see • to have some of your node modules files transformed you can specify a custom transformignorepatterns in your config • if you need a custom transformation specify a transform option in your config • if you simply want to mock your non js modules e g binary assets you can stub them out with the 
modulenamemapper config option you ll find more details and examples of these config options in the docs for information about custom transformations see details home user aws lambda powertools typescript node modules middy core index js object function module exports require dirname filename jest import eventemitter from events const defaultlambdahandler const defaultplugin timeoutearlyinmillis timeoutearlyresponse throw new error timeout const middy lambdahandler defaultlambdahandler plugin if typeof lambdahandler function plugin lambdahandler lambdahandler defaultlambdahandler plugin defaultplugin plugin plugin timeoutearly plugin timeoutearlyinmillis plugin beforeprefetch const beforemiddlewares const aftermiddlewares const onerrormiddlewares const event context plugin requeststart const request event context response undefined error undefined internal plugin internal return runrequest request lambdahandler plugin use middlewares if array isarray middlewares middlewares for const middleware of middlewares const before after onerror middleware if before after onerror throw new error middleware must be an object containing at least one key among before after onerror if before before before if after after after if onerror onerror onerror return before beforemiddleware beforemiddlewares push beforemiddleware return after aftermiddleware aftermiddlewares unshift aftermiddleware return onerror onerrormiddleware onerrormiddlewares unshift onerrormiddleware return handler replacelambdahandler lambdahandler replacelambdahandler return return const runrequest async request beforemiddlewares lambdahandler aftermiddlewares onerrormiddlewares plugin const timeoutearly plugin timeoutearly request context getremainingtimeinmillis try await runmiddlewares request beforemiddlewares plugin if request response undefined plugin beforehandler const handlerabort new abortcontroller let timeoutabort if timeoutearly timeoutabort new abortcontroller request response await promise race 
if timeoutearly timeoutabort abort plugin afterhandler await runmiddlewares request aftermiddlewares plugin catch e request response undefined request error e try await runmiddlewares request onerrormiddlewares plugin catch e e originalerror request error request error e throw request error if request response undefined throw request error finally await plugin requestend request return request response const runmiddlewares async request middlewares plugin for const nextmiddleware of middlewares plugin beforemiddleware nextmiddleware name const res await nextmiddleware request plugin aftermiddleware nextmiddleware name if res undefined request response res return const polyfillabortcontroller if process version return ms signal if signal aborted return promise reject new error aborted aborterror return new promise resolve reject const aborthandler cleartimeout timeout reject new error aborted aborterror const timeout settimeout resolve signal removeeventlistener abort aborthandler ms signal addeventlistener abort aborthandler const settimeoutpromise polyfillsettimeoutpromise export default middy syntaxerror cannot use import statement outside a module import capturelambdahandler from src middleware middy import middy from middy core import tracer from src import type context handler from aws lambda handler import segment setcontextmissingstrategy subsegment from aws xray sdk core at runtime createscriptfromcode node modules jest runtime build index js at object tests unit middy test ts code examples n a benefits for you and the wider aws community describe alternatives you ve considered not upgrading to x and staying on version x additional context related issues rfcs

| 0

|

2,776

| 5,713,195,463

|

IssuesEvent

|

2017-04-19 07:00:09

|

g8os/grid

|

https://api.github.com/repos/g8os/grid

|

closed

|

AYS service for grid controller

|

process_wontfix type_feature

|

relates to : https://github.com/g8os/grid/issues/66

We need a service that deploys and configures the grid controller.

actions:

- install:

- reserve a pair of disks and create a btrfs raid1 on them for replication.

- create container with the [grid flist](https://hub.gig.tech/maxux/grid.flist)

- start AYS Server

- start Grid API server

|

1.0

|

AYS service for grid controller - relates to : https://github.com/g8os/grid/issues/66

We need a service that deploys and configures the grid controller.

actions:

- install:

- reserve a pair of disks and create a btrfs raid1 on them for replication.

- create container with the [grid flist](https://hub.gig.tech/maxux/grid.flist)

- start AYS Server

- start Grid API server

|

process

|

ays service for grid controller relates to we need a service that deploy and configure the grid controller actions install reserve a pair disk of disk create btrfs on them for replication create container with the start ays server start grid api server

| 1

|

59,041

| 14,365,891,805

|

IssuesEvent

|

2020-12-01 02:57:02

|

NixOS/nixpkgs

|

https://api.github.com/repos/NixOS/nixpkgs

|

closed

|

Vulnerability roundup 93: postgresql-9.6.17: 1 advisory [7.3]

|

1.severity: security

|

[search](https://search.nix.gsc.io/?q=postgresql&i=fosho&repos=NixOS-nixpkgs), [files](https://github.com/NixOS/nixpkgs/search?utf8=%E2%9C%93&q=postgresql+in%3Apath&type=Code)

* [ ] [CVE-2020-10733](https://nvd.nist.gov/vuln/detail/CVE-2020-10733) CVSSv3=7.3 (nixos-20.03)

Scanned versions: nixos-20.03: 0d0660fde3b.

Cc @danbst

Cc @globin

Cc @ocharles

Cc @thoughtpolice

|

True

|

Vulnerability roundup 93: postgresql-9.6.17: 1 advisory [7.3] - [search](https://search.nix.gsc.io/?q=postgresql&i=fosho&repos=NixOS-nixpkgs), [files](https://github.com/NixOS/nixpkgs/search?utf8=%E2%9C%93&q=postgresql+in%3Apath&type=Code)

* [ ] [CVE-2020-10733](https://nvd.nist.gov/vuln/detail/CVE-2020-10733) CVSSv3=7.3 (nixos-20.03)

Scanned versions: nixos-20.03: 0d0660fde3b.

Cc @danbst

Cc @globin

Cc @ocharles

Cc @thoughtpolice

|

non_process

|

vulnerability roundup postgresql advisory nixos scanned versions nixos cc danbst cc globin cc ocharles cc thoughtpolice

| 0

|

15,317

| 19,424,845,342

|

IssuesEvent

|

2021-12-21 03:08:50

|

qgis/QGIS

|

https://api.github.com/repos/qgis/QGIS

|

closed

|

GDAL Translate not respecting -a_srs for TFW files

|

Feedback Processing Bug

|

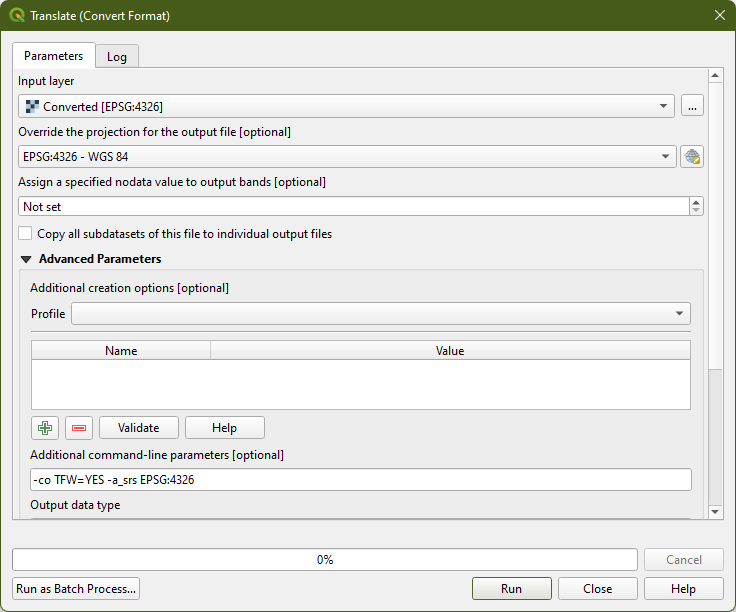

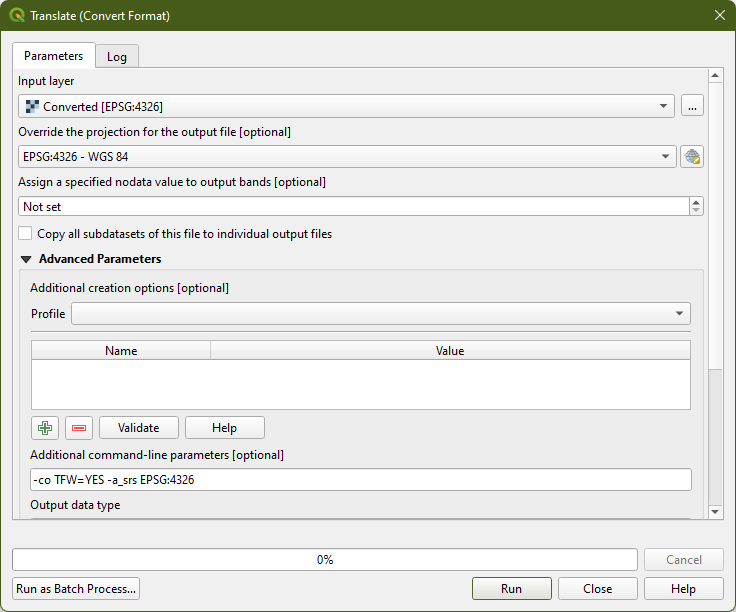

### What is the bug or the crash?

Choose Translate, set a different SRS as the override AND/OR ``-a_srs EPSG:4326`` in Additional command-line parameters, and the generated TFW will have coordinates in the layer's original SRS.

### Steps to reproduce the issue

1. Run Translate from Processing Toolbox

2. Set Override CRS to EPSG:4326