Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

417,909

| 12,189,832,614

|

IssuesEvent

|

2020-04-29 08:15:02

|

zoot-hq/zoot

|

https://api.github.com/repos/zoot-hq/zoot

|

closed

|

user-page

|

good first issue high priority

|

- accessed via navbar 2nd button icon

- features username, email, and password (ONLY MOCK PASSWORD "****") and a way to update each item in the DB

- contact button (opens email editor, subject line "User Contact"

- logout button

- delete button (purges user's UN, email, and password from DB)

- delete button must feature a popup confirming user wants to delete their account

please refer to wireframes for more details

|

1.0

|

user-page - - accessed via navbar 2nd button icon

- features username, email, and password (ONLY MOCK PASSWORD "****") and a way to update each item in the DB

- contact button (opens email editor, subject line "User Contact"

- logout button

- delete button (purges user's UN, email, and password from DB)

- delete button must feature a popup confirming user wants to delete their account

please refer to wireframes for more details

|

non_process

|

user page accessed via navbar button icon features username email and password only mock password and a way to update each item in the db contact button opens email editor subject line user contact logout button delete button purges user s un email and password from db delete button must feature a popup confirming user wants to delete their account please refer to wireframes for more details

| 0

|

103,941

| 16,613,270,015

|

IssuesEvent

|

2021-06-02 13:58:48

|

rammatzkvosky/789

|

https://api.github.com/repos/rammatzkvosky/789

|

opened

|

CVE-2018-20225 (High) detected in pip-19.1.1-py2.py3-none-any.whl, pip-19.3.1-py2.py3-none-any.whl

|

security vulnerability

|

## CVE-2018-20225 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>pip-19.1.1-py2.py3-none-any.whl</b>, <b>pip-19.3.1-py2.py3-none-any.whl</b></p></summary>

<p>

<details><summary><b>pip-19.1.1-py2.py3-none-any.whl</b></p></summary>

<p>The PyPA recommended tool for installing Python packages.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/5c/e0/be401c003291b56efc55aeba6a80ab790d3d4cece2778288d65323009420/pip-19.1.1-py2.py3-none-any.whl">https://files.pythonhosted.org/packages/5c/e0/be401c003291b56efc55aeba6a80ab790d3d4cece2778288d65323009420/pip-19.1.1-py2.py3-none-any.whl</a></p>

<p>Path to vulnerable library: canner/.poetry/lib/poetry/_vendor/py2.7/virtualenv_support/pip-19.1.1-py2.py3-none-any.whl</p>

<p>

Dependency Hierarchy:

- :x: **pip-19.1.1-py2.py3-none-any.whl** (Vulnerable Library)

</details>

<details><summary><b>pip-19.3.1-py2.py3-none-any.whl</b></p></summary>

<p>The PyPA recommended tool for installing Python packages.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/00/b6/9cfa56b4081ad13874b0c6f96af8ce16cfbc1cb06bedf8e9164ce5551ec1/pip-19.3.1-py2.py3-none-any.whl">https://files.pythonhosted.org/packages/00/b6/9cfa56b4081ad13874b0c6f96af8ce16cfbc1cb06bedf8e9164ce5551ec1/pip-19.3.1-py2.py3-none-any.whl</a></p>

<p>Path to vulnerable library: canner/.poetry/lib/poetry/_vendor/py2.7/virtualenv_support/pip-19.3.1-py2.py3-none-any.whl</p>

<p>

Dependency Hierarchy:

- :x: **pip-19.3.1-py2.py3-none-any.whl** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/rammatzkvosky/789/commit/a94ab1af7b954e06163acb325d4b035831f88835">a94ab1af7b954e06163acb325d4b035831f88835</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

** DISPUTED ** An issue was discovered in pip (all versions) because it installs the version with the highest version number, even if the user had intended to obtain a private package from a private index. This only affects use of the --extra-index-url option, and exploitation requires that the package does not already exist in the public index (and thus the attacker can put the package there with an arbitrary version number). NOTE: it has been reported that this is intended functionality and the user is responsible for using --extra-index-url securely.

<p>Publish Date: 2020-05-08

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-20225>CVE-2018-20225</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Python","packageName":"pip","packageVersion":"19.1.1","packageFilePaths":[],"isTransitiveDependency":false,"dependencyTree":"pip:19.1.1","isMinimumFixVersionAvailable":false},{"packageType":"Python","packageName":"pip","packageVersion":"19.3.1","packageFilePaths":[],"isTransitiveDependency":false,"dependencyTree":"pip:19.3.1","isMinimumFixVersionAvailable":false}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2018-20225","vulnerabilityDetails":"** DISPUTED ** An issue was discovered in pip (all versions) because it installs the version with the highest version number, even if the user had intended to obtain a private package from a private index. This only affects use of the --extra-index-url option, and exploitation requires that the package does not already exist in the public index (and thus the attacker can put the package there with an arbitrary version number). NOTE: it has been reported that this is intended functionality and the user is responsible for using --extra-index-url securely.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-20225","cvss3Severity":"high","cvss3Score":"7.8","cvss3Metrics":{"A":"High","AC":"Low","PR":"None","S":"Unchanged","C":"High","UI":"Required","AV":"Local","I":"High"},"extraData":{}}</REMEDIATE> -->

|

True

|

CVE-2018-20225 (High) detected in pip-19.1.1-py2.py3-none-any.whl, pip-19.3.1-py2.py3-none-any.whl - ## CVE-2018-20225 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>pip-19.1.1-py2.py3-none-any.whl</b>, <b>pip-19.3.1-py2.py3-none-any.whl</b></p></summary>

<p>

<details><summary><b>pip-19.1.1-py2.py3-none-any.whl</b></p></summary>

<p>The PyPA recommended tool for installing Python packages.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/5c/e0/be401c003291b56efc55aeba6a80ab790d3d4cece2778288d65323009420/pip-19.1.1-py2.py3-none-any.whl">https://files.pythonhosted.org/packages/5c/e0/be401c003291b56efc55aeba6a80ab790d3d4cece2778288d65323009420/pip-19.1.1-py2.py3-none-any.whl</a></p>

<p>Path to vulnerable library: canner/.poetry/lib/poetry/_vendor/py2.7/virtualenv_support/pip-19.1.1-py2.py3-none-any.whl</p>

<p>

Dependency Hierarchy:

- :x: **pip-19.1.1-py2.py3-none-any.whl** (Vulnerable Library)

</details>

<details><summary><b>pip-19.3.1-py2.py3-none-any.whl</b></p></summary>

<p>The PyPA recommended tool for installing Python packages.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/00/b6/9cfa56b4081ad13874b0c6f96af8ce16cfbc1cb06bedf8e9164ce5551ec1/pip-19.3.1-py2.py3-none-any.whl">https://files.pythonhosted.org/packages/00/b6/9cfa56b4081ad13874b0c6f96af8ce16cfbc1cb06bedf8e9164ce5551ec1/pip-19.3.1-py2.py3-none-any.whl</a></p>

<p>Path to vulnerable library: canner/.poetry/lib/poetry/_vendor/py2.7/virtualenv_support/pip-19.3.1-py2.py3-none-any.whl</p>

<p>

Dependency Hierarchy:

- :x: **pip-19.3.1-py2.py3-none-any.whl** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/rammatzkvosky/789/commit/a94ab1af7b954e06163acb325d4b035831f88835">a94ab1af7b954e06163acb325d4b035831f88835</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

** DISPUTED ** An issue was discovered in pip (all versions) because it installs the version with the highest version number, even if the user had intended to obtain a private package from a private index. This only affects use of the --extra-index-url option, and exploitation requires that the package does not already exist in the public index (and thus the attacker can put the package there with an arbitrary version number). NOTE: it has been reported that this is intended functionality and the user is responsible for using --extra-index-url securely.

<p>Publish Date: 2020-05-08

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-20225>CVE-2018-20225</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Python","packageName":"pip","packageVersion":"19.1.1","packageFilePaths":[],"isTransitiveDependency":false,"dependencyTree":"pip:19.1.1","isMinimumFixVersionAvailable":false},{"packageType":"Python","packageName":"pip","packageVersion":"19.3.1","packageFilePaths":[],"isTransitiveDependency":false,"dependencyTree":"pip:19.3.1","isMinimumFixVersionAvailable":false}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2018-20225","vulnerabilityDetails":"** DISPUTED ** An issue was discovered in pip (all versions) because it installs the version with the highest version number, even if the user had intended to obtain a private package from a private index. This only affects use of the --extra-index-url option, and exploitation requires that the package does not already exist in the public index (and thus the attacker can put the package there with an arbitrary version number). NOTE: it has been reported that this is intended functionality and the user is responsible for using --extra-index-url securely.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-20225","cvss3Severity":"high","cvss3Score":"7.8","cvss3Metrics":{"A":"High","AC":"Low","PR":"None","S":"Unchanged","C":"High","UI":"Required","AV":"Local","I":"High"},"extraData":{}}</REMEDIATE> -->

|

non_process

|

cve high detected in pip none any whl pip none any whl cve high severity vulnerability vulnerable libraries pip none any whl pip none any whl pip none any whl the pypa recommended tool for installing python packages library home page a href path to vulnerable library canner poetry lib poetry vendor virtualenv support pip none any whl dependency hierarchy x pip none any whl vulnerable library pip none any whl the pypa recommended tool for installing python packages library home page a href path to vulnerable library canner poetry lib poetry vendor virtualenv support pip none any whl dependency hierarchy x pip none any whl vulnerable library found in head commit a href found in base branch master vulnerability details disputed an issue was discovered in pip all versions because it installs the version with the highest version number even if the user had intended to obtain a private package from a private index this only affects use of the extra index url option and exploitation requires that the package does not already exist in the public index and thus the attacker can put the package there with an arbitrary version number note it has been reported that this is intended functionality and the user is responsible for using extra index url securely publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required none user interaction required scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href isopenpronvulnerability true ispackagebased true isdefaultbranch true packages istransitivedependency false dependencytree pip isminimumfixversionavailable false packagetype python packagename pip packageversion packagefilepaths istransitivedependency false dependencytree pip isminimumfixversionavailable false basebranches vulnerabilityidentifier cve vulnerabilitydetails disputed an issue was 
discovered in pip all versions because it installs the version with the highest version number even if the user had intended to obtain a private package from a private index this only affects use of the extra index url option and exploitation requires that the package does not already exist in the public index and thus the attacker can put the package there with an arbitrary version number note it has been reported that this is intended functionality and the user is responsible for using extra index url securely vulnerabilityurl

| 0

|

424,523

| 12,312,460,612

|

IssuesEvent

|

2020-05-12 13:58:04

|

DataDog/dd-trace-dotnet

|

https://api.github.com/repos/DataDog/dd-trace-dotnet

|

closed

|

Setting LoaderOptimization reg key prevents IIS from starting

|

area:integrations/aspnet priority:high type:bug

|

**Describe the bug**

With APM >= 1.12 you can no longer set the `LoaderOptimization` registry key to work around #475/#592 because you get error

```

Failed to initialize the AppDomain:/LM/W3SVC/1/ROOT

Exception: System.InvalidOperationException

Message: The configuration system has already been initialized.

StackTrace: at System.Configuration.ConfigurationManager.SetConfigurationSystem(IInternalConfigSystem configSystem, Boolean initComplete)

at System.Web.Configuration.HttpConfigurationSystem.EnsureInit(IConfigMapPath configMapPath, Boolean listenToFileChanges, Boolean initComplete)

at System.Web.Hosting.HostingEnvironment.Initialize(ApplicationManager appManager, IApplicationHost appHost, IConfigMapPathFactory configMapPathFactory, HostingEnvironmentParameters hostingParameters, PolicyLevel policyLevel, Exception appDomainCreationException)

at System.Web.Hosting.HostingEnvironment.Initialize(ApplicationManager appManager, IApplicationHost appHost, IConfigMapPathFactory configMapPathFactory, HostingEnvironmentParameters hostingParameters, PolicyLevel policyLevel)

at System.Web.Hosting.HostingEnvironment.Initialize(ApplicationManager appManager, IApplicationHost appHost, IConfigMapPathFactory configMapPathFactory, HostingEnvironmentParameters hostingParameters, PolicyLevel policyLevel)

at System.Web.Hosting.ApplicationManager.CreateAppDomainWithHostingEnvironment(String appId, IApplicationHost appHost, HostingEnvironmentParameters hostingParameters)

at System.Web.Hosting.ApplicationManager.CreateAppDomainWithHostingEnvironmentAndReportErrors(String appId, IApplicationHost appHost, HostingEnvironmentParameters hostingParameters)

```

**To Reproduce**

1. Install IIS with ASP.Net

1. Install APM/DD Agent

1. Set `HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\.NETFramework\LoaderOptimization` to `1`

1. Access http://localhost

You'll get a 500 server error. Check app event log for details

**Expected behavior**

The web app starts

**Runtime environment (please complete the following information):**

- Instrumentation mode: MSI

- Tracer version: 1.13.2

- OS: Windows 2016

- CLR: Default (4.6.2)

**Additional context**

As an exercise I manually added `Datadog.Trace.AspNet` to the GAC and installed the module into IIS the normal way. Disabling the profiler allowed me to get ASP.Net traces. Attempts to have profiling enabled but the aspnet integration disabled (by adding `DD_DISABLED_INTEGRATIONS=AspNet` to the WAS environment reg key) failed.

|

1.0

|

Setting LoaderOptimization reg key prevents IIS from starting - **Describe the bug**

With APM >= 1.12 you can no longer set the `LoaderOptimization` registry key to work around #475/#592 because you get error

```

Failed to initialize the AppDomain:/LM/W3SVC/1/ROOT

Exception: System.InvalidOperationException

Message: The configuration system has already been initialized.

StackTrace: at System.Configuration.ConfigurationManager.SetConfigurationSystem(IInternalConfigSystem configSystem, Boolean initComplete)

at System.Web.Configuration.HttpConfigurationSystem.EnsureInit(IConfigMapPath configMapPath, Boolean listenToFileChanges, Boolean initComplete)

at System.Web.Hosting.HostingEnvironment.Initialize(ApplicationManager appManager, IApplicationHost appHost, IConfigMapPathFactory configMapPathFactory, HostingEnvironmentParameters hostingParameters, PolicyLevel policyLevel, Exception appDomainCreationException)

at System.Web.Hosting.HostingEnvironment.Initialize(ApplicationManager appManager, IApplicationHost appHost, IConfigMapPathFactory configMapPathFactory, HostingEnvironmentParameters hostingParameters, PolicyLevel policyLevel)

at System.Web.Hosting.HostingEnvironment.Initialize(ApplicationManager appManager, IApplicationHost appHost, IConfigMapPathFactory configMapPathFactory, HostingEnvironmentParameters hostingParameters, PolicyLevel policyLevel)

at System.Web.Hosting.ApplicationManager.CreateAppDomainWithHostingEnvironment(String appId, IApplicationHost appHost, HostingEnvironmentParameters hostingParameters)

at System.Web.Hosting.ApplicationManager.CreateAppDomainWithHostingEnvironmentAndReportErrors(String appId, IApplicationHost appHost, HostingEnvironmentParameters hostingParameters)

```

**To Reproduce**

1. Install IIS with ASP.Net

1. Install APM/DD Agent

1. Set `HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\.NETFramework\LoaderOptimization` to `1`

1. Access http://localhost

You'll get a 500 server error. Check app event log for details

**Expected behavior**

The web app starts

**Runtime environment (please complete the following information):**

- Instrumentation mode: MSI

- Tracer version: 1.13.2

- OS: Windows 2016

- CLR: Default (4.6.2)

**Additional context**

As an exercise I manually added `Datadog.Trace.AspNet` to the GAC and installed the module into IIS the normal way. Disabling the profiler allowed me to get ASP.Net traces. Attempts to have profiling enabled but the aspnet integration disabled (by adding `DD_DISABLED_INTEGRATIONS=AspNet` to the WAS environment reg key) failed.

|

non_process

|

setting loaderoptimization reg key prevents iis from starting describe the bug with apm you can no longer set the loaderoptimization registry key to work around because you get error failed to initialize the appdomain lm root exception system invalidoperationexception message the configuration system has already been initialized stacktrace at system configuration configurationmanager setconfigurationsystem iinternalconfigsystem configsystem boolean initcomplete at system web configuration httpconfigurationsystem ensureinit iconfigmappath configmappath boolean listentofilechanges boolean initcomplete at system web hosting hostingenvironment initialize applicationmanager appmanager iapplicationhost apphost iconfigmappathfactory configmappathfactory hostingenvironmentparameters hostingparameters policylevel policylevel exception appdomaincreationexception at system web hosting hostingenvironment initialize applicationmanager appmanager iapplicationhost apphost iconfigmappathfactory configmappathfactory hostingenvironmentparameters hostingparameters policylevel policylevel at system web hosting hostingenvironment initialize applicationmanager appmanager iapplicationhost apphost iconfigmappathfactory configmappathfactory hostingenvironmentparameters hostingparameters policylevel policylevel at system web hosting applicationmanager createappdomainwithhostingenvironment string appid iapplicationhost apphost hostingenvironmentparameters hostingparameters at system web hosting applicationmanager createappdomainwithhostingenvironmentandreporterrors string appid iapplicationhost apphost hostingenvironmentparameters hostingparameters to reproduce install iis with asp net install apm dd agent set hkey local machine software microsoft netframework loaderoptimization to access you ll get a server error check app event log for details expected behavior the web app starts runtime environment please complete the following information instrumentation mode msi tracer version os 
windows clr default additional context as an exercise i manually added datadog trace aspnet to the gac and installed the module into iis the normal way disabling the profiler allowed me to get asp net traces attempts to have profiling enabled but the aspnet integration disabled by adding dd disabled integrations aspnet to the was environment reg key failed

| 0

|

14,774

| 18,049,787,085

|

IssuesEvent

|

2021-09-19 14:44:54

|

shirou/gopsutil

|

https://api.github.com/repos/shirou/gopsutil

|

closed

|

Does not compile for Arm Windows due to go-ole

|

os:windows package:process package:cpu

|

**Describe the bug**

Compiling for Windows Arm fails due to indirect dependency on go-ole, which appears to be win32-only.

**To Reproduce**

```go

package main

import (

"fmt"

"os"

"github.com/shirou/gopsutil/process"

)

func main() {

currentPid := os.Getpid()

myself, _ := process.NewProcess(int32(currentPid))

_, err := myself.CPUPercent()

if err != nil {

fmt.Printf("CPU Percent: %s\n", err)

}

}

```

```bash

$ GOOS=windows GOARCH=arm go build .

go: downloading github.com/shirou/gopsutil v2.20.7+incompatible

go: extracting github.com/shirou/gopsutil v2.20.7+incompatible

go: downloading github.com/StackExchange/wmi v0.0.0-20190523213315-cbe66965904d

go: downloading golang.org/x/sys v0.0.0-20200808120158-1030fc2bf1d9

go: extracting github.com/StackExchange/wmi v0.0.0-20190523213315-cbe66965904d

go: downloading github.com/go-ole/go-ole v1.2.4

go: extracting github.com/go-ole/go-ole v1.2.4

go: extracting golang.org/x/sys v0.0.0-20200808120158-1030fc2bf1d9

go: finding github.com/shirou/gopsutil v2.20.7+incompatible

go: finding golang.org/x/sys v0.0.0-20200808120158-1030fc2bf1d9

go: finding github.com/StackExchange/wmi v0.0.0-20190523213315-cbe66965904d

go: finding github.com/go-ole/go-ole v1.2.4

# github.com/go-ole/go-ole

../go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/com.go:238:21: undefined: VARIANT

../go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/com.go:247:22: undefined: VARIANT

../go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/connect.go:79:72: undefined: VARIANT

../go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/connect.go:90:76: undefined: VARIANT

../go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/connect.go:105:69: undefined: VARIANT

../go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/connect.go:115:73: undefined: VARIANT

../go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/connect.go:129:69: undefined: VARIANT

../go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/connect.go:139:73: undefined: VARIANT

../go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/connect.go:173:84: undefined: VARIANT

../go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/idispatch.go:26:90: undefined: VARIANT

../go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/idispatch.go:26:90: too many errors

```

**Expected behavior**

A clean compilation

**Environment (please complete the following information):**

I'm cross-compiling, as I don't have a straightforward way to test the build on Arm directly, and our toolchain builds all cross-compile on Linux at the moment.

- [ ] Windows: [paste the result of `ver`]

- [x] Linux:

```

"Ubuntu 20.04.1 LTS"

Linux myhostname 5.4.0-42-generic #46-Ubuntu SMP Fri Jul 10 00:24:02 UTC 2020 x86_64 x86_64 x86_64 GNU/Linux

```

- [x] Mac OS: [paste the result of `sw_vers` and `uname -a`

```

ProductName: Mac OS X

ProductVersion: 10.15.6

BuildVersion: 19G73

Darwin myhostname.local 19.6.0 Darwin Kernel Version 19.6.0: Sun Jul 5 00:43:10 PDT 2020; root:xnu-6153.141.1~9/RELEASE_X86_64 x86_64

```

- [ ] FreeBSD: [paste the result of `freebsd-version -k -r -u` and `uname -a`]

- [ ] OpenBSD: [paste the result of `uname -a`]

**Additional context**

[Cross-compiling? Paste the command you are using to cross-compile and the result of the corresponding `go env`]

Linux `go env`

```

GO111MODULE=""

GOARCH="amd64"

GOBIN=""

GOCACHE="/home/simon/.cache/go-build"

GOENV="/home/simon/.config/go/env"

GOEXE=""

GOFLAGS=""

GOHOSTARCH="amd64"

GOHOSTOS="linux"

GONOPROXY=""

GONOSUMDB=""

GOOS="linux"

GOPATH="/home/simon/go"

GOPRIVATE=""

GOPROXY="https://proxy.golang.org,direct"

GOROOT="/usr/lib/go-1.13"

GOSUMDB="sum.golang.org"

GOTMPDIR=""

GOTOOLDIR="/usr/lib/go-1.13/pkg/tool/linux_amd64"

GCCGO="gccgo"

AR="ar"

CC="gcc"

CXX="g++"

CGO_ENABLED="1"

GOMOD="/home/simon/gopsutil-mvp/go.mod"

CGO_CFLAGS="-g -O2"

CGO_CPPFLAGS=""

CGO_CXXFLAGS="-g -O2"

CGO_FFLAGS="-g -O2"

CGO_LDFLAGS="-g -O2"

PKG_CONFIG="pkg-config"

GOGCCFLAGS="-fPIC -m64 -pthread -fmessage-length=0 -fdebug-prefix-map=/tmp/go-build453655880=/tmp/go-build -gno-record-gcc-switches"

```

Mac `go env`:

```

GO111MODULE=""

GOARCH="amd64"

GOBIN=""

GOCACHE="/Users/sfraser/Library/Caches/go-build"

GOENV="/Users/sfraser/Library/Application Support/go/env"

GOEXE=""

GOFLAGS=""

GOHOSTARCH="amd64"

GOHOSTOS="darwin"

GOINSECURE=""

GONOPROXY=""

GONOSUMDB=""

GOOS="darwin"

GOPATH="/Users/sfraser/gosrc"

GOPRIVATE=""

GOPROXY="https://proxy.golang.org,direct"

GOROOT="/usr/local/Cellar/go/1.14.6/libexec"

GOSUMDB="sum.golang.org"

GOTMPDIR=""

GOTOOLDIR="/usr/local/Cellar/go/1.14.6/libexec/pkg/tool/darwin_amd64"

GCCGO="gccgo"

AR="ar"

CC="clang"

CXX="clang++"

CGO_ENABLED="1"

GOMOD="/Users/sfraser/github/srfraser/gopsutil-mvp/go.mod"

CGO_CFLAGS="-g -O2"

CGO_CPPFLAGS=""

CGO_CXXFLAGS="-g -O2"

CGO_FFLAGS="-g -O2"

CGO_LDFLAGS="-g -O2"

PKG_CONFIG="pkg-config"

GOGCCFLAGS="-fPIC -m64 -pthread -fno-caret-diagnostics -Qunused-arguments -fmessage-length=0 -fdebug-prefix-map=/var/folders/g8/rv_wx46d5w10phdnqyw2fvt80000gn/T/go-build183372748=/tmp/go-build -gno-record-gcc-switches -fno-common"

```

|

1.0

|

Does not compile for Arm Windows due to go-ole - **Describe the bug**

Compiling for Windows Arm fails due to indirect dependency on go-ole, which appears to be win32-only.

**To Reproduce**

```go

package main

import (

"fmt"

"os"

"github.com/shirou/gopsutil/process"

)

func main() {

currentPid := os.Getpid()

myself, _ := process.NewProcess(int32(currentPid))

_, err := myself.CPUPercent()

if err != nil {

fmt.Printf("CPU Percent: %s\n", err)

}

}

```

```bash

$ GOOS=windows GOARCH=arm go build .

go: downloading github.com/shirou/gopsutil v2.20.7+incompatible

go: extracting github.com/shirou/gopsutil v2.20.7+incompatible

go: downloading github.com/StackExchange/wmi v0.0.0-20190523213315-cbe66965904d

go: downloading golang.org/x/sys v0.0.0-20200808120158-1030fc2bf1d9

go: extracting github.com/StackExchange/wmi v0.0.0-20190523213315-cbe66965904d

go: downloading github.com/go-ole/go-ole v1.2.4

go: extracting github.com/go-ole/go-ole v1.2.4

go: extracting golang.org/x/sys v0.0.0-20200808120158-1030fc2bf1d9

go: finding github.com/shirou/gopsutil v2.20.7+incompatible

go: finding golang.org/x/sys v0.0.0-20200808120158-1030fc2bf1d9

go: finding github.com/StackExchange/wmi v0.0.0-20190523213315-cbe66965904d

go: finding github.com/go-ole/go-ole v1.2.4

# github.com/go-ole/go-ole

../go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/com.go:238:21: undefined: VARIANT

../go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/com.go:247:22: undefined: VARIANT

../go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/connect.go:79:72: undefined: VARIANT

../go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/connect.go:90:76: undefined: VARIANT

../go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/connect.go:105:69: undefined: VARIANT

../go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/connect.go:115:73: undefined: VARIANT

../go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/connect.go:129:69: undefined: VARIANT

../go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/connect.go:139:73: undefined: VARIANT

../go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/connect.go:173:84: undefined: VARIANT

../go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/idispatch.go:26:90: undefined: VARIANT

../go/pkg/mod/github.com/go-ole/go-ole@v1.2.4/idispatch.go:26:90: too many errors

```

**Expected behavior**

A clean compilation

**Environment (please complete the following information):**

I'm cross-compiling, as I don't have a straightforward way to test the build on Arm directly, and our toolchain builds all cross-compile on Linux at the moment.

- [ ] Windows: [paste the result of `ver`]

- [x] Linux:

```

"Ubuntu 20.04.1 LTS"

Linux myhostname 5.4.0-42-generic #46-Ubuntu SMP Fri Jul 10 00:24:02 UTC 2020 x86_64 x86_64 x86_64 GNU/Linux

```

- [x] Mac OS: [paste the result of `sw_vers` and `uname -a`

```

ProductName: Mac OS X

ProductVersion: 10.15.6

BuildVersion: 19G73

Darwin myhostname.local 19.6.0 Darwin Kernel Version 19.6.0: Sun Jul 5 00:43:10 PDT 2020; root:xnu-6153.141.1~9/RELEASE_X86_64 x86_64

```

- [ ] FreeBSD: [paste the result of `freebsd-version -k -r -u` and `uname -a`]

- [ ] OpenBSD: [paste the result of `uname -a`]

**Additional context**

[Cross-compiling? Paste the command you are using to cross-compile and the result of the corresponding `go env`]

Linux `go env`

```

GO111MODULE=""

GOARCH="amd64"

GOBIN=""

GOCACHE="/home/simon/.cache/go-build"

GOENV="/home/simon/.config/go/env"

GOEXE=""

GOFLAGS=""

GOHOSTARCH="amd64"

GOHOSTOS="linux"

GONOPROXY=""

GONOSUMDB=""

GOOS="linux"

GOPATH="/home/simon/go"

GOPRIVATE=""

GOPROXY="https://proxy.golang.org,direct"

GOROOT="/usr/lib/go-1.13"

GOSUMDB="sum.golang.org"

GOTMPDIR=""

GOTOOLDIR="/usr/lib/go-1.13/pkg/tool/linux_amd64"

GCCGO="gccgo"

AR="ar"

CC="gcc"

CXX="g++"

CGO_ENABLED="1"

GOMOD="/home/simon/gopsutil-mvp/go.mod"

CGO_CFLAGS="-g -O2"

CGO_CPPFLAGS=""

CGO_CXXFLAGS="-g -O2"

CGO_FFLAGS="-g -O2"

CGO_LDFLAGS="-g -O2"

PKG_CONFIG="pkg-config"

GOGCCFLAGS="-fPIC -m64 -pthread -fmessage-length=0 -fdebug-prefix-map=/tmp/go-build453655880=/tmp/go-build -gno-record-gcc-switches"

```

Mac `go env`:

```

GO111MODULE=""

GOARCH="amd64"

GOBIN=""

GOCACHE="/Users/sfraser/Library/Caches/go-build"

GOENV="/Users/sfraser/Library/Application Support/go/env"

GOEXE=""

GOFLAGS=""

GOHOSTARCH="amd64"

GOHOSTOS="darwin"

GOINSECURE=""

GONOPROXY=""

GONOSUMDB=""

GOOS="darwin"

GOPATH="/Users/sfraser/gosrc"

GOPRIVATE=""

GOPROXY="https://proxy.golang.org,direct"

GOROOT="/usr/local/Cellar/go/1.14.6/libexec"

GOSUMDB="sum.golang.org"

GOTMPDIR=""

GOTOOLDIR="/usr/local/Cellar/go/1.14.6/libexec/pkg/tool/darwin_amd64"

GCCGO="gccgo"

AR="ar"

CC="clang"

CXX="clang++"

CGO_ENABLED="1"

GOMOD="/Users/sfraser/github/srfraser/gopsutil-mvp/go.mod"

CGO_CFLAGS="-g -O2"

CGO_CPPFLAGS=""

CGO_CXXFLAGS="-g -O2"

CGO_FFLAGS="-g -O2"

CGO_LDFLAGS="-g -O2"

PKG_CONFIG="pkg-config"

GOGCCFLAGS="-fPIC -m64 -pthread -fno-caret-diagnostics -Qunused-arguments -fmessage-length=0 -fdebug-prefix-map=/var/folders/g8/rv_wx46d5w10phdnqyw2fvt80000gn/T/go-build183372748=/tmp/go-build -gno-record-gcc-switches -fno-common"

```

|

process

|

does not compile for arm windows due to go ole describe the bug compiling for windows arm fails due to indirect dependency on go ole which appears to be only to reproduce go package main import fmt os github com shirou gopsutil process func main currentpid os getpid myself process newprocess currentpid err myself cpupercent if err nil fmt printf cpu percent s n err bash goos windows goarch arm go build go downloading github com shirou gopsutil incompatible go extracting github com shirou gopsutil incompatible go downloading github com stackexchange wmi go downloading golang org x sys go extracting github com stackexchange wmi go downloading github com go ole go ole go extracting github com go ole go ole go extracting golang org x sys go finding github com shirou gopsutil incompatible go finding golang org x sys go finding github com stackexchange wmi go finding github com go ole go ole github com go ole go ole go pkg mod github com go ole go ole com go undefined variant go pkg mod github com go ole go ole com go undefined variant go pkg mod github com go ole go ole connect go undefined variant go pkg mod github com go ole go ole connect go undefined variant go pkg mod github com go ole go ole connect go undefined variant go pkg mod github com go ole go ole connect go undefined variant go pkg mod github com go ole go ole connect go undefined variant go pkg mod github com go ole go ole connect go undefined variant go pkg mod github com go ole go ole connect go undefined variant go pkg mod github com go ole go ole idispatch go undefined variant go pkg mod github com go ole go ole idispatch go too many errors expected behavior a clean compilation environment please complete the following information i m cross compiling as i don t have a straightforward way to test the build on arm directly and our toolchain builds all cross compile on linux at the moment windows linux ubuntu lts linux myhostname generic ubuntu smp fri jul utc gnu linux mac os paste the result of sw 
vers and uname a productname mac os x productversion buildversion darwin myhostname local darwin kernel version sun jul pdt root xnu release freebsd openbsd additional context linux go env goarch gobin gocache home simon cache go build goenv home simon config go env goexe goflags gohostarch gohostos linux gonoproxy gonosumdb goos linux gopath home simon go goprivate goproxy goroot usr lib go gosumdb sum golang org gotmpdir gotooldir usr lib go pkg tool linux gccgo gccgo ar ar cc gcc cxx g cgo enabled gomod home simon gopsutil mvp go mod cgo cflags g cgo cppflags cgo cxxflags g cgo fflags g cgo ldflags g pkg config pkg config gogccflags fpic pthread fmessage length fdebug prefix map tmp go tmp go build gno record gcc switches mac go env goarch gobin gocache users sfraser library caches go build goenv users sfraser library application support go env goexe goflags gohostarch gohostos darwin goinsecure gonoproxy gonosumdb goos darwin gopath users sfraser gosrc goprivate goproxy goroot usr local cellar go libexec gosumdb sum golang org gotmpdir gotooldir usr local cellar go libexec pkg tool darwin gccgo gccgo ar ar cc clang cxx clang cgo enabled gomod users sfraser github srfraser gopsutil mvp go mod cgo cflags g cgo cppflags cgo cxxflags g cgo fflags g cgo ldflags g pkg config pkg config gogccflags fpic pthread fno caret diagnostics qunused arguments fmessage length fdebug prefix map var folders rv t go tmp go build gno record gcc switches fno common

| 1

|

636,564

| 20,603,098,564

|

IssuesEvent

|

2022-03-06 15:21:32

|

chrisreddington/CV

|

https://api.github.com/repos/chrisreddington/CV

|

closed

|

Refactor skills section

|

Type/Enhancement Community/HelpWanted Community/GoodFirstIssue Priority/Critical Size/Large

|

- [x] Create skills data section

- [x] Separate skills into its own partial

|

1.0

|

Refactor skills section - - [x] Create skills data section

- [x] Separate skills into its own partial

|

non_process

|

refactor skills section create skills data section separate skills into its own partial

| 0

|

9,400

| 12,399,017,255

|

IssuesEvent

|

2020-05-21 03:45:22

|

googleapis/nodejs-spanner

|

https://api.github.com/repos/googleapis/nodejs-spanner

|

opened

|

Run the system tests against the Cloud Spanner Emulator

|

api: spanner type: process

|

We want to run the system tests against the [Cloud Spanner Emulator](https://cloud.google.com/spanner/docs/emulator) on presubmits. Reasons for doing so are summarized nicely [here](https://github.com/googleapis/google-cloud-php/pull/2930#issuecomment-622212337).

|

1.0

|

Run the system tests against the Cloud Spanner Emulator - We want to run the system tests against the [Cloud Spanner Emulator](https://cloud.google.com/spanner/docs/emulator) on presubmits. Reasons for doing so are summarized nicely [here](https://github.com/googleapis/google-cloud-php/pull/2930#issuecomment-622212337).

|

process

|

run the system tests against the cloud spanner emulator we want to run the system tests against the on presubmits reasons for doing so are summarized nicely

| 1

|

248,941

| 26,867,478,162

|

IssuesEvent

|

2023-02-04 03:10:58

|

kxxt/kxxt-website

|

https://api.github.com/repos/kxxt/kxxt-website

|

closed

|

CVE-2022-25881 (Medium) detected in http-cache-semantics-4.1.0.tgz - autoclosed

|

security vulnerability

|

## CVE-2022-25881 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>http-cache-semantics-4.1.0.tgz</b></p></summary>

<p>Parses Cache-Control and other headers. Helps building correct HTTP caches and proxies</p>

<p>Library home page: <a href="https://registry.npmjs.org/http-cache-semantics/-/http-cache-semantics-4.1.0.tgz">https://registry.npmjs.org/http-cache-semantics/-/http-cache-semantics-4.1.0.tgz</a></p>

<p>

Dependency Hierarchy:

- gatsby-5.3.3.tgz (Root Library)

- got-11.8.6.tgz

- cacheable-request-7.0.2.tgz

- :x: **http-cache-semantics-4.1.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/kxxt/kxxt-website/commit/37f8543da5164a1a7ef318756aa0eac1c5e89a09">37f8543da5164a1a7ef318756aa0eac1c5e89a09</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

This affects versions of the package http-cache-semantics before 4.1.1. The issue can be exploited via malicious request header values sent to a server, when that server reads the cache policy from the request using this library.

<p>Publish Date: 2023-01-31

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-25881>CVE-2022-25881</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.cve.org/CVERecord?id=CVE-2022-25881">https://www.cve.org/CVERecord?id=CVE-2022-25881</a></p>

<p>Release Date: 2023-01-31</p>

<p>Fix Resolution: http-cache-semantics - 4.1.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2022-25881 (Medium) detected in http-cache-semantics-4.1.0.tgz - autoclosed - ## CVE-2022-25881 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>http-cache-semantics-4.1.0.tgz</b></p></summary>

<p>Parses Cache-Control and other headers. Helps building correct HTTP caches and proxies</p>

<p>Library home page: <a href="https://registry.npmjs.org/http-cache-semantics/-/http-cache-semantics-4.1.0.tgz">https://registry.npmjs.org/http-cache-semantics/-/http-cache-semantics-4.1.0.tgz</a></p>

<p>

Dependency Hierarchy:

- gatsby-5.3.3.tgz (Root Library)

- got-11.8.6.tgz

- cacheable-request-7.0.2.tgz

- :x: **http-cache-semantics-4.1.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/kxxt/kxxt-website/commit/37f8543da5164a1a7ef318756aa0eac1c5e89a09">37f8543da5164a1a7ef318756aa0eac1c5e89a09</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

This affects versions of the package http-cache-semantics before 4.1.1. The issue can be exploited via malicious request header values sent to a server, when that server reads the cache policy from the request using this library.

<p>Publish Date: 2023-01-31

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-25881>CVE-2022-25881</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.cve.org/CVERecord?id=CVE-2022-25881">https://www.cve.org/CVERecord?id=CVE-2022-25881</a></p>

<p>Release Date: 2023-01-31</p>

<p>Fix Resolution: http-cache-semantics - 4.1.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve medium detected in http cache semantics tgz autoclosed cve medium severity vulnerability vulnerable library http cache semantics tgz parses cache control and other headers helps building correct http caches and proxies library home page a href dependency hierarchy gatsby tgz root library got tgz cacheable request tgz x http cache semantics tgz vulnerable library found in head commit a href found in base branch master vulnerability details this affects versions of the package http cache semantics before the issue can be exploited via malicious request header values sent to a server when that server reads the cache policy from the request using this library publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact low for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution http cache semantics step up your open source security game with mend

| 0

|

28,573

| 13,751,717,266

|

IssuesEvent

|

2020-10-06 13:41:39

|

cartographer-project/cartographer

|

https://api.github.com/repos/cartographer-project/cartographer

|

closed

|

Cached precomputation grids of map are problematic for RAM efficiency in pure localization mode

|

performance

|

_[This is a ticket to explain the motivation behind 2 upcoming PRs.]_

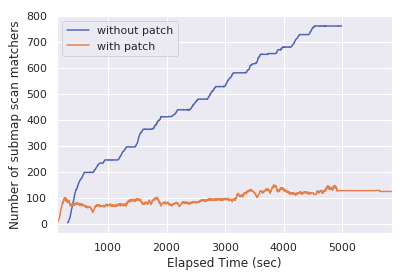

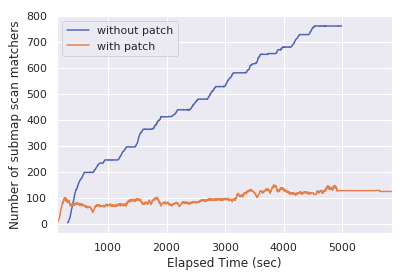

Construction of branch-and-bound submap scan matchers used for constraint search is dispatched dynamically, i.e. only when needed for individual submaps. They are kept afterwards. This is efficient because the precomputed grids at different resolutions only need to be computed once.

However, in localization mode this means that we construct and collect more and more of those precomputed grids - proportional to the explored area and bounded by the max. number of submaps in the frozen map. (driving over the same submaps multiple times doesn’t add new ones).

What I did to tackle this:

* metric for counting these matchers #1738

* garbage collection of matchers that aren't needed anymore #1745

The garbage collector has been saving us a large chunk of memory during 24/7 operation in large maps since we deployed it at Magazino last year.

Here's a comparison of two simulation runs we did, where the robot explores the whole area of a large map in 2D localization mode.

(The time axes don't match, but they roughly correspond to the explored area in both cases.)
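
The caching behaviour described above, plus the garbage collection step, can be sketched roughly as follows. This is a minimal Python illustration and not Cartographer's actual C++ implementation: `MatcherCache`, `build`, and `collect_garbage` are hypothetical names, and the policy for deciding which submaps are still needed is left to the caller.

```python
from typing import Callable, Dict, Hashable, Iterable


class MatcherCache:
    """Builds scan matchers lazily and drops the ones no longer needed."""

    def __init__(self, build: Callable[[Hashable], object]) -> None:
        # Hypothetical factory: submap id -> matcher with precomputed grids.
        self._build = build
        self._matchers: Dict[Hashable, object] = {}

    def get(self, submap_id: Hashable) -> object:
        # Construct the matcher only on first use, then keep it.
        if submap_id not in self._matchers:
            self._matchers[submap_id] = self._build(submap_id)
        return self._matchers[submap_id]

    def collect_garbage(self, still_needed: Iterable[Hashable]) -> int:
        # Drop every cached matcher whose submap is not in `still_needed`
        # and report how many were removed.
        keep = set(still_needed)
        stale = [sid for sid in self._matchers if sid not in keep]
        for sid in stale:
            del self._matchers[sid]
        return len(stale)

    def __len__(self) -> int:
        # Number of live matchers; the quantity a metric would report.
        return len(self._matchers)
```

Without the `collect_garbage` call, `len(cache)` only grows as more submaps are visited, which matches the memory growth described in this ticket.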

|

True

|

Cached precomputation grids of map are problematic for RAM efficiency in pure localization mode - _[This is a ticket to explain the motivation behind 2 upcoming PRs.]_

Construction of branch-and-bound submap scan matchers used for constraint search is dispatched dynamically, i.e. only when needed for individual submaps. They are kept afterwards. This is efficient because the precomputed grids at different resolutions only need to be computed once.

However, in localization mode this means that we construct and collect more and more of those precomputed grids - proportional to the explored area and bounded by the max. number of submaps in the frozen map. (driving over the same submaps multiple times doesn’t add new ones).

What I did to tackle this:

* metric for counting these matchers #1738

* garbage collection of matchers that aren't needed anymore #1745

The garbage collector has been saving us a large chunk of memory during 24/7 operation in large maps since we deployed it at Magazino last year.

Here's a comparison of two simulation runs we did, where the robot explores the whole area of a large map in 2D localization mode.

(The time axes don't match, but they roughly correspond to the explored area in both cases.)

|

non_process

|

cached precomputation grids of map are problematic for ram efficiency in pure localization mode construction of branch and bound submap scan matchers used for constraint search is dispatched dynamically i e only when needed for individual submaps they are kept afterwards this is efficient because the precomputed grids at different resolutions only need to be computed once however in localization mode this means that we construct and collect more and more of those precomputed grids proportional to the explored area and bounded by the max number of submaps in the frozen map driving over the same submaps multiple times doesn’t add new ones what i did to tackle this metric for counting these matchers garbage collection of matchers that aren t needed anymore the garbage collector has been saving us a large chunk of memory during operation in large maps since we deployed it at magazino last year here s a comparison of two simulation runs we did where the robot explores the whole area of a large map in localization mode time axis don t match but roughly corresponds to explored area in both cases

| 0

|

14,800

| 18,090,403,465

|

IssuesEvent

|

2021-09-22 00:36:16

|

yandali-damian/LIM015-social-network

|

https://api.github.com/repos/yandali-damian/LIM015-social-network

|

closed

|

HU-001

|

Process

|

As a new user, I need to create an account with a (valid) email address and password in order to log in.

- Prototyping

> - [x] Color definition

> - [x] Image selection

> - [x] Typeface

> - [x] Positioning

> - [x] Size

- Task

> - [x] Lay out the first view

> - [x] Log-in and sign-up buttons

> - [x] Inputs for logging in with email and password

> - [x] Inputs for signing up: name, email, password, and confirm password

> - [x] Radio buttons for sex

> - [ ] Login function

> - [ ] Interaction of the log-in button with the home view

> - [ ] Data registration function

> - [x] Interaction of the sign-up button with the sign-up view

> - [ ] Add avatar

|

1.0

|

HU-001 - As a new user, I need to create an account with a (valid) email address and password in order to log in.

- Prototyping

> - [x] Color definition

> - [x] Image selection

> - [x] Typeface

> - [x] Positioning

> - [x] Size

- Task

> - [x] Lay out the first view

> - [x] Log-in and sign-up buttons

> - [x] Inputs for logging in with email and password

> - [x] Inputs for signing up: name, email, password, and confirm password

> - [x] Radio buttons for sex

> - [ ] Login function

> - [ ] Interaction of the log-in button with the home view

> - [ ] Data registration function

> - [x] Interaction of the sign-up button with the sign-up view

> - [ ] Add avatar

|

process

|

hu as a new user i need to create an account with a valid email address and password in order to log in prototyping color definition image selection typeface positioning size task lay out the first view log in and sign up buttons inputs for logging in with email and password inputs for signing up name email password and confirm password radio buttons for sex login function interaction of the log in button with the home view data registration function interaction of the sign up button with the sign up view add avatar

| 1

|

192,366

| 6,849,084,129

|

IssuesEvent

|

2017-11-13 20:48:21

|

minio/minio-dotnet

|

https://api.github.com/repos/minio/minio-dotnet

|

closed

|

Implement RemoveObjects API to remove objects in batches

|

priority: medium

|

Refer to #467 of Minio-Java. Content-MD5 needs to be set for multi-object deletes.
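
For reference, a Content-MD5 header value is the base64 encoding of the raw MD5 digest of the request body (RFC 1864). The Python sketch below only illustrates that computation; it is not the minio-dotnet implementation, and the helper name `content_md5` and the object keys in the payload are made up for the example.

```python
import base64
import hashlib


def content_md5(body: bytes) -> str:
    """Return the Content-MD5 header value for a request body."""
    digest = hashlib.md5(body).digest()  # raw 16-byte digest, not the hex string
    return base64.b64encode(digest).decode("ascii")


# Illustrative multi-object delete payload; the object keys are made up.
delete_body = (
    b"<Delete>"
    b"<Object><Key>a.txt</Key></Object>"
    b"<Object><Key>b.txt</Key></Object>"
    b"</Delete>"
)
headers = {"Content-MD5": content_md5(delete_body)}
```

The digest has to be computed over the exact bytes that are sent, so the header should be derived after the XML body is serialized.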

|

1.0

|

Implement RemoveObjects API to remove objects in batches - Refer to #467 of Minio-Java. Content-MD5 needs to be set for multi-object deletes.

|

non_process

|

implement removeobjects api to remove objects in batches refer of minio java content needs to be set for multi object deletes

| 0

|

17,730

| 23,637,557,968

|

IssuesEvent

|

2022-08-25 14:24:22

|

threefoldtech/tfgrid_dashboard

|

https://api.github.com/repos/threefoldtech/tfgrid_dashboard

|

closed

|

'More Details' of DAO proposal systematically gives spinning screens

|

process_wontfix type_bug

|

When I click on 'More Details' in a DAO proposal, I get a screen with a permanently spinning wheel (on both qa and testnet, and for all proposals made).

<img width="1512" alt="Screenshot 2022-07-28 at 16 29 32" src="https://user-images.githubusercontent.com/30384423/181556861-28b54a3d-501a-4464-9cdc-485e8fe56ca4.png">

|

1.0

|

'More Details' of DAO proposal systematically gives spinning screens - When I click on 'More Details' in a DAO proposal, I get a screen with a permanently spinning wheel (on both qa and testnet, and for all proposals made).

<img width="1512" alt="Screenshot 2022-07-28 at 16 29 32" src="https://user-images.githubusercontent.com/30384423/181556861-28b54a3d-501a-4464-9cdc-485e8fe56ca4.png">

|

process

|

more details of dao proposal systematically gives spinning screens i click on more details in a dao proposal i get a screen with permanent spinning wheel both qa and testnet and for all proposals made img width alt screenshot at src

| 1

|

454,963

| 13,109,790,942

|

IssuesEvent

|

2020-08-04 19:22:35

|

carbon-design-system/carbon-addons-iot-react

|

https://api.github.com/repos/carbon-design-system/carbon-addons-iot-react

|

opened

|

[Dashboard cards] Need "pop out" expand icon in card header

|

offering: health offering: predict status: needs priority :inbox_tray: status: needs triage :mag: type: enhancement :bulb:

|

<!--

Use this template if you want to request a new feature, or a change to an

existing feature.

If you'd like to request an entirely new component, please use the component request template instead.

If you are reporting a bug or problem, please use the bug template instead.

-->

### Summary

Need "expand" option in dashboard card header.

**Additional context**

Health & Predict are following the pattern that Monitor set for expanding cards to show a larger view of the data, but it looks like the expand button isn't available in the IoT cards. We need to expand a value card first, but also need this option on chart cards.

Click on the expand icon in the upper right

to open a dialog that has additional info

### Specific timeline issues / requests

Do you want this work within a specific time period? Is it related to an

upcoming release?

Health needs this for our October 2020 release.

Lack of this button blocks creation of the dialog that is invoked when the user clicks the button.

### Available extra resources

What resources do you have to assist this effort?

The Predict team hard-coded this into their chart cards, copying the Monitor team, and should hopefully be able to contribute it back.

|

1.0

|

[Dashboard cards] Need "pop out" expand icon in card header - <!--

Use this template if you want to request a new feature, or a change to an

existing feature.

If you'd like to request an entirely new component, please use the component request template instead.

If you are reporting a bug or problem, please use the bug template instead.

-->

### Summary

Need "expand" option in dashboard card header.

**Additional context**

Health & Predict are following the pattern that Monitor set for expanding cards to show a larger view of the data, but it looks like the expand button isn't available in the IoT cards. We need to expand a value card first, but also need this option on chart cards.

Click on the expand icon in the upper right

to open a dialog that has additional info

### Specific timeline issues / requests

Do you want this work within a specific time period? Is it related to an

upcoming release?

Health needs this for our October 2020 release.

Lack of this button blocks creation of the dialog that is invoked when the user clicks the button.

### Available extra resources

What resources do you have to assist this effort?

The Predict team hard-coded this into their chart cards, copying the Monitor team, and should hopefully be able to contribute it back.

|

non_process

|

need pop out expand icon in card header use this template if you want to request a new feature or a change to an existing feature if you d like to request an entirely new component please use the component request template instead if you are reporting a bug or problem please use the bug template instead summary need expand option in dashboard card header additional context health predict are following the pattern that monitor set for expanding cards to show a larger view of the data but it looks like the expand button isn t available in the iot cards we need to expand a value card first but also need this option on chart cards click on the expand icon in the upper right to open a dialog that has additional info specific timeline issues requests do you want this work within a specific time period is it related to an upcoming release health needs this for our october release lack of this button blocks creation of the dialog that is invoked when the user clicks the button available extra resources what resources do you have to assist this effort the predict team hard coded this into their chart cards copying the monitor team and should hopefully be able to contribute it back

| 0

|

348,429

| 10,442,325,301

|

IssuesEvent

|

2019-09-18 12:51:57

|

input-output-hk/jormungandr

|

https://api.github.com/repos/input-output-hk/jormungandr

|

closed

|

Chain head storage tag not kept up to date

|

Priority - High bug

|

Currently, there is a tag in storage for the main branch, but it is only ever set to the block0's hash.

The tag:

https://github.com/input-output-hk/jormungandr/blob/646b07116e2f40be66aee51df5e353c56b4fdd9e/jormungandr/src/blockchain/chain.rs#L106

Expected behavior: The tag should be kept up to date when the chain tip changes.

comments from discussion with @NicolasDP about it:

> Every time the tip is updated it should update the storage tag for this too.

> It’s only done for the block0 hash.
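
The expected behaviour can be sketched as below. This is a toy Python model rather than the jormungandr Rust code: `Storage`, `Chain`, `update_tip`, and the tag name `"HEAD"` are all hypothetical stand-ins chosen for illustration.

```python
MAIN_BRANCH_TAG = "HEAD"  # hypothetical tag name for the main branch


class Storage:
    """Toy in-memory stand-in for block storage with named tags."""

    def __init__(self):
        self.tags = {}

    def put_tag(self, name, block_hash):
        self.tags[name] = block_hash

    def get_tag(self, name):
        return self.tags.get(name)


class Chain:
    def __init__(self, storage, block0_hash):
        self.storage = storage
        self.tip = block0_hash
        # Reported behaviour: the tag is written here and nowhere else.
        self.storage.put_tag(MAIN_BRANCH_TAG, block0_hash)

    def update_tip(self, new_hash):
        self.tip = new_hash
        # Expected behaviour: move the tag every time the tip changes.
        self.storage.put_tag(MAIN_BRANCH_TAG, new_hash)
```

Writing the tag inside the same method that changes the tip keeps the two from drifting apart.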

|

1.0

|

Chain head storage tag not kept up to date - Currently, there is a tag in storage for the main branch, but it is only ever set to the block0's hash.

The tag:

https://github.com/input-output-hk/jormungandr/blob/646b07116e2f40be66aee51df5e353c56b4fdd9e/jormungandr/src/blockchain/chain.rs#L106

Expected behavior: The tag should be kept up to date when the chain tip changes.

comments from discussion with @NicolasDP about it:

> Every time the tip is updated it should update the storage tag for this too.

> It’s only done for the block0 hash.

|

non_process

|

chain head storage tag not kept up to date currently there is a tag in storage for the main branch but it is only ever set to the s hash the tag expected behavior the tag to be kept up to date when the chain tip changes comments from discussion with nicolasdp about it every time the tip is updated it should update the storage tag for this too it’s only done for the hash

| 0

|

74,646

| 20,259,789,910

|

IssuesEvent

|

2022-02-15 05:42:08

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

opened

|

[SB] Eligibility section can not be marked as complete in the below scenario

|

Bug P1 Study builder

|

**Steps:**

1. Edit the study.

2. Navigate to eligibility section.

3. In token validation > Remove the instruction text and then click on save button.

4. Now navigate to eligibility test > add eligibility test questions.

5. Click on mark as complete button and Observe.

**AR:** Eligibility section can not be marked as complete in the above scenario.

**ER:** Eligibility section should be marked as complete in the above scenario.

|

1.0

|

[SB] Eligibility section can not be marked as complete in the below scenario - **Steps:**

1. Edit the study.

2. Navigate to eligibility section.

3. In token validation > Remove the instruction text and then click on save button.

4. Now navigate to eligibility test > add eligibility test questions.

5. Click on mark as complete button and Observe.

**AR:** Eligibility section can not be marked as complete in the above scenario.

**ER:** Eligibility section should be marked as complete in the above scenario.

|

non_process

|

eligibility section can not be marked as complete in the below scenario steps edit the study navigate to eligibility section in token validation remove the instruction text and then click on save button now navigate to eligibility test add eligibility test questions click on mark as complete button and observe ar eligibility section can not be marked as complete in the above scenario er eligibility section should be marked as complete in the above scenario

| 0

|

2,464

| 5,242,913,515

|

IssuesEvent

|

2017-01-31 19:18:35

|

opentrials/opentrials

|

https://api.github.com/repos/opentrials/opentrials

|

closed

|

Introduce `database.identifiers` table

|

API Processors refactoring

|

# Overview

For now we handle identifiers in the `database.records.identifiers` jsonb dict, and it's the most important part of our deduplication system. We have the following problems with it:

- can't have several identifiers from one source (because it's a dict) [#299]

- must search using a query like `identifiers @> '{"nct": "NCT124123423"}'` (we need key+value) because of the way Postgres GIN indexes work

- can't make it an array because we need `source_id` keys

Introducing a normalized `database.identifiers` table will solve these problems and open up other possibilities for working with identifiers.
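
As a rough illustration of the normalized layout, here is a minimal Python sketch that uses an in-memory SQLite database as a stand-in for Postgres. The column names (`record_id`, `source_id`, `identifier`), the index name, and every sample row other than the `NCT124123423` value are assumptions made for the example, not the schema that was actually adopted.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE identifiers (
        record_id  TEXT NOT NULL,
        source_id  TEXT NOT NULL,
        identifier TEXT NOT NULL,
        PRIMARY KEY (record_id, source_id, identifier)
    )
    """
)
# A plain index on the value allows lookup without knowing the source key.
conn.execute("CREATE INDEX identifiers_value_idx ON identifiers (identifier)")

conn.executemany(
    "INSERT INTO identifiers VALUES (?, ?, ?)",
    [
        ("rec-1", "nct", "NCT124123423"),
        ("rec-1", "nct", "NCT-EXAMPLE-2"),  # second identifier, same source
        ("rec-2", "isrctn", "ISRCTN-EXAMPLE"),
        ("rec-2", "nct", "NCT124123423"),
    ],
)


def find_records(identifier: str) -> list:
    """Return the ids of all records that carry the given identifier value."""
    rows = conn.execute(
        "SELECT DISTINCT record_id FROM identifiers "
        "WHERE identifier = ? ORDER BY record_id",
        (identifier,),
    ).fetchall()
    return [row[0] for row in rows]
```

With one row per identifier, a record can hold several identifiers from the same source, and the lookup no longer needs the key+value pair that the jsonb containment query requires.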

|

1.0

|

Introduce `database.identifiers` table - # Overview

For now we handle identifiers in the `database.records.identifiers` jsonb dict, and it's the most important part of our deduplication system. We have the following problems with it:

- can't have several identifiers from one source (because it's a dict) [#299]

- must search using a query like `identifiers @> '{"nct": "NCT124123423"}'` (we need key+value) because of the way Postgres GIN indexes work

- can't make it an array because we need `source_id` keys

Introducing a normalized `database.identifiers` table will solve these problems and open up other possibilities for working with identifiers.

|

process

|

introduce database identifiers table overview for now we handle identifiers in database records identifiers jsonb dict and it s the most important part of our deduplication system we have following problems with it can t have a few identifiers from one source because it s dict should search using query like identifiers nct we need key value because of the way how postgres gin indexes works can t make it array because we need source id keys introducing normalized database identifiers table will solve this problems and open some other possibilities to work with identifiers

| 1

|

20,068

| 26,557,884,178

|

IssuesEvent

|

2023-01-20 13:36:12

|

NixOS/nix

|

https://api.github.com/repos/NixOS/nix

|

closed

|

Pull request checklist

|

developer-experience process

|

**Is your feature request related to a problem? Please describe.**

Checklists help with guiding a process to completion. They ensure quality and make their users confident, because they won't forget certain aspects of the process.

**Describe the solution you'd like**

Add a pull request checklist to the pull request template and/or "maintainer handbook" (the manual or `maintainers/*.md`)

<!--

Some items:

- [ ] is the idea good? has it been discussed by the Nix team?

- [ ] unit tests

- [ ] functional tests (`tests/**.sh`)

- [ ] documentation in the manual

- [ ] documentation in the code (if necessary; ideally code is already clear)

- [ ] documentation in the commit message (why was this change made? for future reference when maintaining the code)

- [ ] documentation in the changelog (to announce features and fixes to existing users who might have to do something to finally solve their problem, and to summarize the development history)

-->

**Describe alternatives you've considered**

- Keep forgetting to do certain things leading to

- bloat

- regressions

- forgotten documentation

- uncertainty as to why changes to the code were made

- PRs getting slowed by delays, reminders and more delays

Have a GitHub Action that posts the checklist to each PR. This is a bit more robust as contributors may mess with the checklist.

**Additional context**

- I forgot to ask for documentation in #7314.

**Priorities**

Add :+1: to [issues you find important](https://github.com/NixOS/nix/issues?q=is%3Aissue+is%3Aopen+sort%3Areactions-%2B1-desc).

|

1.0

|

Pull request checklist - **Is your feature request related to a problem? Please describe.**

Checklists help with guiding a process to completion. They ensure quality and make their users confident, because they won't forget certain aspects of the process.

**Describe the solution you'd like**

Add a pull request checklist to the pull request template and/or the "maintainer handbook" (the manual or `maintainers/*.md`).

<!--

Some items:

- [ ] is the idea good? has it been discussed by the Nix team?

- [ ] unit tests

- [ ] functional tests (`tests/**.sh`)

- [ ] documentation in the manual

- [ ] documentation in the code (if necessary; ideally code is already clear)

- [ ] documentation in the commit message (why was this change made? for future reference when maintaining the code)

- [ ] documentation in the changelog (to announce features and fixes to existing users who might have to do something to finally solve their problem, and to summarize the development history)

-->

**Describe alternatives you've considered**

- Keep forgetting to do certain things, leading to:

- bloat

- regressions

- forgotten documentation

- uncertainty as to why changes to the code were made

- PRs getting slowed by delays, reminders and more delays

Have a GitHub Action that posts the checklist to each PR. This is a bit more robust, as contributors may mess with the checklist.

**Additional context**

- I forgot to ask for documentation in #7314.

**Priorities**

Add :+1: to [issues you find important](https://github.com/NixOS/nix/issues?q=is%3Aissue+is%3Aopen+sort%3Areactions-%2B1-desc).

|

process

|

pull request checklist is your feature request related to a problem please describe checklists help with guiding a process to completion they ensure quality and make their users confident because they won t forget certain aspects of the process describe the solution you d like add a pull requests checklist to the pull request template and or maintainer handbook the manual or maintainers md some items is the idea good has it been discussed by the nix team unit tests functional tests tests sh documentation in the manual documentation in the code if necessary ideally code is already clear documentation in the commit message why was this change made for future reference when maintaining the code documentation in the changelog to announce features and fixes to existing users who might have to do something to finally solve their problem and to summarize the development history describe alternatives you ve considered keep forgetting to do certain things leading to bloat regressions forgotten documentation uncertainty as to why changes to the code were made prs getting slowed by delays reminders and more delays have a github action that posts the checklist to each pr this is a bit more robust as contributors may mess with the checklist additional context i forgot to ask for documentation in priorities add to

| 1

|

3,439

| 6,537,316,309

|

IssuesEvent

|

2017-08-31 21:51:39

|

pburns96/Revature-VenderBender

|

https://api.github.com/repos/pburns96/Revature-VenderBender

|

closed

|

As a customer, I can view CDs

|

High Priority Work In Process

|

Requirements:

I can sort CDs by: Year, Artist, Title, Price.

I can navigate to the pages of results.

|

1.0

|

As a customer, I can view CDs - Requirements:

I can sort CDs by: Year, Artist, Title, Price.

I can navigate to the pages of results.

|

process

|

as a customer i can view cds requirements i can sort cds by year artist title price i can navigate to the pages of results

| 1

|

98,938

| 12,379,601,540

|

IssuesEvent

|

2020-05-19 12:46:19

|

spotify/backstage

|

https://api.github.com/repos/spotify/backstage

|

opened

|

Tabs (primary level navigation)

|

component design storybook

|

## 🗒 General

Hi! Would love if someone could help us build our primary level navigation, which comes in the form of tabs! ✨Please add this to our [Storybook](http://storybook.backstage.io/) as well.

<!--- Write a nice note to the community requesting the creation of a new component! -->

<!--- Include an image of your component. Bonus points for a GIF! -->

## 💻 Usage

Horizontal tabs are the first level of navigation within a plugin or entity page on Backstage. First-level navigation should be broad, and we recommend keeping the number of first-level tabs to fewer than 8. If there are more tabs, users can view the additional ones by clicking the caret icon at the far right of the tab area.

We recommend that you follow the [usage](https://material.io/components/tabs#usage) and [interaction](https://material-ui.com/components/tabs/) guidelines for tabs that Material has listed.

<!--- Tell us what the point of this component/pattern is! How does it help? How should it work? Any rules? -->

## 📐 Specs

<!--- Include images that detail the redlines for your component.-->

<!--- Once we get our Figma workspace set up, we'll be posting the Figma files rather than doing specs by hand.-->

## 🔮 Future

We're currently brainstorming secondary and tertiary level navigation as well! We'll keep you all posted! 🎉

<!-- Any upcoming, exciting functionality for this component in the future? List that out here. -->

|

1.0

|

Tabs (primary level navigation) - ## 🗒 General

Hi! Would love if someone could help us build our primary level navigation, which comes in the form of tabs! ✨Please add this to our [Storybook](http://storybook.backstage.io/) as well.

<!--- Write a nice note to the community requesting the creation of a new component! -->

<!--- Include an image of your component. Bonus points for a GIF! -->

## 💻 Usage

Horizontal tabs are the first level of navigation within a plugin or entity page on Backstage. First-level navigation should be broad, and we recommend keeping the number of first-level tabs to fewer than 8. If there are more tabs, users can view the additional ones by clicking the caret icon at the far right of the tab area.

We recommend that you follow the [usage](https://material.io/components/tabs#usage) and [interaction](https://material-ui.com/components/tabs/) guidelines for tabs that Material has listed.

<!--- Tell us what the point of this component/pattern is! How does it help? How should it work? Any rules? -->

## 📐 Specs

<!--- Include images that detail the redlines for your component.-->

<!--- Once we get our Figma workspace set up, we'll be posting the Figma files rather than doing specs by hand.-->

## 🔮 Future

We're currently brainstorming secondary and tertiary level navigation as well! We'll keep you all posted! 🎉

<!-- Any upcoming, exciting functionality for this component in the future? List that out here. -->

|

non_process

|

tabs primary level navigation 🗒 general hi would love if someone could help us build our primary level navigation which comes in the form of tabs ✨please add this to our as well 💻 usage horizontal tabs are the first level of navigation within a plugin or entity page on backstage first level navigation should be broader and we recommend keeping the number of first level tabs to less than however if there are more tabs we have designed a way for users to view additional tabs by clicking on the caret icon at the very right of the tab area we recommend that you follow the and guidelines for tabs that material has listed 📐 specs 🔮 future we re currently brainstorming secondary and tertiary level navigation as well we ll keep you all posted 🎉

| 0

|

37,316

| 15,240,861,768

|

IssuesEvent

|

2021-02-19 07:28:11

|

Azure/azure-cli

|

https://api.github.com/repos/Azure/azure-cli

|

reopened

|

Can subnet of kubernetes cluster be used for cosmos db subnet?

|

AKS Cosmos Service Attention

|

Can subnet of kubernetes cluster be used for cosmos db subnet?

Or do I need to create a new second subnet?

[Enter feedback here]

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: ceafa346-5ac6-9a5b-b36b-fb6a91449ae0

* Version Independent ID: 12dc1540-3a61-aece-0ef0-0d4c192d930e

* Content: [az cosmosdb network-rule](https://docs.microsoft.com/en-us/cli/azure/cosmosdb/network-rule?view=azure-cli-latest)

* Content Source: [latest/docs-ref-autogen/cosmosdb/network-rule.yml](https://github.com/MicrosoftDocs/azure-docs-cli/blob/master/latest/docs-ref-autogen/cosmosdb/network-rule.yml)

* Service: **cosmos-db**

* GitHub Login: @rloutlaw

* Microsoft Alias: **routlaw**

|

1.0

|

Can subnet of kubernetes cluster be used for cosmos db subnet? - Can subnet of kubernetes cluster be used for cosmos db subnet?

Or do I need to create a new second subnet?

[Enter feedback here]

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: ceafa346-5ac6-9a5b-b36b-fb6a91449ae0

* Version Independent ID: 12dc1540-3a61-aece-0ef0-0d4c192d930e