Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

17,181

| 22,762,361,086

|

IssuesEvent

|

2022-07-07 22:40:23

|

Carlosmtp/DomuzSGI

|

https://api.github.com/repos/Carlosmtp/DomuzSGI

|

closed

|

Añadir columnas en los indicadores de procesos

|

Enhancement High Process Management Reports Management

|

- [x] Crear columna goal en indicadores de procesos

- [x] Enviar los perodic_reports en la funcion para consultar los procesos

- [x] Crear la tabla process-periodic_records con campos de fecha:date, eficiencia:float e id del proceso al que hace referencia

|

1.0

|

Añadir columnas en los indicadores de procesos - - [x] Crear columna goal en indicadores de procesos

- [x] Enviar los perodic_reports en la funcion para consultar los procesos

- [x] Crear la tabla process-periodic_records con campos de fecha:date, eficiencia:float e id del proceso al que hace referencia

|

process

|

añadir columnas en los indicadores de procesos crear columna goal en indicadores de procesos enviar los perodic reports en la funcion para consultar los procesos crear la tabla process periodic records con campos de fecha date eficiencia float e id del proceso al que hace referencia

| 1

|

1,657

| 4,287,664,497

|

IssuesEvent

|

2016-07-16 22:32:35

|

pwittchen/NetworkEvents

|

https://api.github.com/repos/pwittchen/NetworkEvents

|

closed

|

Release v. 2.1.4

|

release process

|

**Initial release notes**:

- changed implementation of the `OnlineChecker` in `OnlineCheckerImpl` class. Now it pings remote host.

- added `android.permission.INTERNET` to `AndroidManifest.xml`

- added back `NetworkHelper` class with static method `boolean isConnectedToWiFiOrMobileNetwork(context)`

- updated sample apps

**Things to do**:

- [x] update documentation in `README.md`

- [x] bump library version to 2.1.4

- [x] upload Archives to Maven Central Repository

- [x] close and release artifact on Nexus

- [x] update gh-pages

- [x] update `CHANGELOG.md` after Maven Sync

- [x] bump library version in `README.md` after Maven Sync

- [x] write deprecation note in `README.md`

- [x] create new GitHub release

**Important note**:

After this release NetworkEvents library will be **deprecated and no longer maintained** in favor of [ReactiveNetwork](https://github.com/pwittchen/ReactiveNetwork) project.

|

1.0

|

Release v. 2.1.4 - **Initial release notes**:

- changed implementation of the `OnlineChecker` in `OnlineCheckerImpl` class. Now it pings remote host.

- added `android.permission.INTERNET` to `AndroidManifest.xml`

- added back `NetworkHelper` class with static method `boolean isConnectedToWiFiOrMobileNetwork(context)`

- updated sample apps

**Things to do**:

- [x] update documentation in `README.md`

- [x] bump library version to 2.1.4

- [x] upload Archives to Maven Central Repository

- [x] close and release artifact on Nexus

- [x] update gh-pages

- [x] update `CHANGELOG.md` after Maven Sync

- [x] bump library version in `README.md` after Maven Sync

- [x] write deprecation note in `README.md`

- [x] create new GitHub release

**Important note**:

After this release NetworkEvents library will be **deprecated and no longer maintained** in favor of [ReactiveNetwork](https://github.com/pwittchen/ReactiveNetwork) project.

|

process

|

release v initial release notes changed implementation of the onlinechecker in onlinecheckerimpl class now it pings remote host added android permission internet to androidmanifest xml added back networkhelper class with static method boolean isconnectedtowifiormobilenetwork context updated sample apps things to do update documentation in readme md bump library version to upload archives to maven central repository close and release artifact on nexus update gh pages update changelog md after maven sync bump library version in readme md after maven sync write deprecation note in readme md create new github release important note after this release networkevents library will be deprecated and no longer maintained in favor of project

| 1

|

17,123

| 22,638,917,381

|

IssuesEvent

|

2022-06-30 22:23:21

|

open-telemetry/opentelemetry-collector-contrib

|

https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib

|

opened

|

[processor/transform] Add option to define TQL with declarative syntax

|

priority:p2 comp: transformprocessor

|

**Is your feature request related to a problem? Please describe.**

Currently the only way to interact with the Telemetry Query Language is to use the language's SQL-style syntax:

```yaml

set(attribute["test"], "pass") where attribute["syntax style"] == "SQL"

```

This works great for the transform processor since it is a new component with no new users and no existing patterns, but for other components this may be a barrier of entry.

With the TQL being moved to is own package (#11751) it needs to be as accessible as possible. If existing components want to take advantage of the language, it will be more natural to use a declarative syntax instead of the SQL syntax.

**Describe the solution you'd like**

The TQL package should be able to interpret the SQL-like syntax that it can today AND the ability to interpret a declarative syntax. At least to start, I do not think it should allow both types of input at the same time; it should be one or the other.

All outputs and functionality of the package should remain the same. The only change should be its ability to interpret a new type of input.

**Additional context**

[A declarative syntax for the telemetry query language has already been discussed in the collector's processing doc](https://github.com/open-telemetry/opentelemetry-collector/blob/main/docs/processing.md#declarative-configuration)

|

1.0

|

[processor/transform] Add option to define TQL with declarative syntax - **Is your feature request related to a problem? Please describe.**

Currently the only way to interact with the Telemetry Query Language is to use the language's SQL-style syntax:

```yaml

set(attribute["test"], "pass") where attribute["syntax style"] == "SQL"

```

This works great for the transform processor since it is a new component with no new users and no existing patterns, but for other components this may be a barrier of entry.

With the TQL being moved to is own package (#11751) it needs to be as accessible as possible. If existing components want to take advantage of the language, it will be more natural to use a declarative syntax instead of the SQL syntax.

**Describe the solution you'd like**

The TQL package should be able to interpret the SQL-like syntax that it can today AND the ability to interpret a declarative syntax. At least to start, I do not think it should allow both types of input at the same time; it should be one or the other.

All outputs and functionality of the package should remain the same. The only change should be its ability to interpret a new type of input.

**Additional context**

[A declarative syntax for the telemetry query language has already been discussed in the collector's processing doc](https://github.com/open-telemetry/opentelemetry-collector/blob/main/docs/processing.md#declarative-configuration)

|

process

|

add option to define tql with declarative syntax is your feature request related to a problem please describe currently the only way to interact with the telemetry query language is to use the language s sql style syntax yaml set attribute pass where attribute sql this works great for the transform processor since it is a new component with no new users and no existing patterns but for other components this may be a barrier of entry with the tql being moved to is own package it needs to be as accessible as possible if existing components want to take advantage of the language it will be more natural to use a declarative syntax instead of the sql syntax describe the solution you d like the tql package should be able to interpret the sql like syntax that it can today and the ability to interpret a declarative syntax at least to start i do not think it should allow both types of input at the same time it should be one or the other all outputs and functionality of the package should remain the same the only change should be its ability to interpret a new type of input additional context

| 1

|

44,519

| 12,223,146,245

|

IssuesEvent

|

2020-05-02 16:19:17

|

scipy/scipy

|

https://api.github.com/repos/scipy/scipy

|

closed

|

ValueError 'k exceeds matrix dimensions' for sparse.diagonal() when 0 in sparse.shape

|

defect good first issue scipy.sparse

|

When a sparse matrix has a 0 in its shape, such as `(0, 0)`, `(0, 1)` or `(1, 0)`, calling `diagonal()` fails. This differs to `np.diag` on the equivalent dense array, which succeeds, returning an empty array.

The best behaviour here seems like it'd be open for debate, but it's unfortunate that the default `diagonal()` method doesn't work on every square sparse matrix. It can require adding special cases/conditionals around `.diagonal()` calls, such as https://github.com/stellargraph/stellargraph/pull/1378.

#### Reproducing code example:

Minimal:

```python

import scipy.sparse as sps

import numpy as np

m = sps.csr_matrix((0, 0))

print(np.diag(m.todense()).shape) # (0,)

m.diagonal() # ValueError: k exceeds matrix dimensions

```

"Complete" tests:

```python

import scipy.sparse as sps

# check all the sparse matrix classes

classes = [

sps.bsr_matrix,

sps.coo_matrix,

sps.csc_matrix,

sps.csr_matrix,

sps.dia_matrix,

sps.dok_matrix,

sps.lil_matrix,

]

for cls in classes:

try:

cls((0,0)).diagonal()

except Exception as e:

msg = e

else:

msg = None

print(f"{cls.__name__}: {msg!r}")

# For completeness, non-(0, 0) empty matrices

m = sps.csr_matrix((1, 0))

m.diagonal() # ValueError: k exceeds matrix dimensions

m = sps.csr_matrix((0, 1))

m.diagonal() # ValueError: k exceeds matrix dimensions

```

#### Error message:

Minimal example:

```

(0,)

---------------------------------------------------------------------------

ValueError Traceback (most recent call last)

<ipython-input-44-106e34b7d64c> in <module>

2 print(np.diag(m.todense()).shape)

3

----> 4 m.diagonal()

~/.pyenv/versions/3.6.9/lib/python3.6/site-packages/scipy/sparse/compressed.py in diagonal(self, k)

531 rows, cols = self.shape

532 if k <= -rows or k >= cols:

--> 533 raise ValueError("k exceeds matrix dimensions")

534 fn = getattr(_sparsetools, self.format + "_diagonal")

535 y = np.empty(min(rows + min(k, 0), cols - max(k, 0)),

ValueError: k exceeds matrix dimensions

```

Output of the loop in the "complete" example:

```

bsr_matrix: ValueError('k exceeds matrix dimensions',)

coo_matrix: ValueError('k exceeds matrix dimensions',)

csc_matrix: ValueError('k exceeds matrix dimensions',)

csr_matrix: ValueError('k exceeds matrix dimensions',)

dia_matrix: ValueError('k exceeds matrix dimensions',)

dok_matrix: ValueError('k exceeds matrix dimensions',)

lil_matrix: ValueError('k exceeds matrix dimensions',)

```

#### Scipy/Numpy/Python version information:

```

1.4.1 1.17.4 sys.version_info(major=3, minor=6, micro=9, releaselevel='final', serial=0)

```

|

1.0

|

ValueError 'k exceeds matrix dimensions' for sparse.diagonal() when 0 in sparse.shape - When a sparse matrix has a 0 in its shape, such as `(0, 0)`, `(0, 1)` or `(1, 0)`, calling `diagonal()` fails. This differs to `np.diag` on the equivalent dense array, which succeeds, returning an empty array.

The best behaviour here seems like it'd be open for debate, but it's unfortunate that the default `diagonal()` method doesn't work on every square sparse matrix. It can require adding special cases/conditionals around `.diagonal()` calls, such as https://github.com/stellargraph/stellargraph/pull/1378.

#### Reproducing code example:

Minimal:

```python

import scipy.sparse as sps

import numpy as np

m = sps.csr_matrix((0, 0))

print(np.diag(m.todense()).shape) # (0,)

m.diagonal() # ValueError: k exceeds matrix dimensions

```

"Complete" tests:

```python

import scipy.sparse as sps

# check all the sparse matrix classes

classes = [

sps.bsr_matrix,

sps.coo_matrix,

sps.csc_matrix,

sps.csr_matrix,

sps.dia_matrix,

sps.dok_matrix,

sps.lil_matrix,

]

for cls in classes:

try:

cls((0,0)).diagonal()

except Exception as e:

msg = e

else:

msg = None

print(f"{cls.__name__}: {msg!r}")

# For completeness, non-(0, 0) empty matrices

m = sps.csr_matrix((1, 0))

m.diagonal() # ValueError: k exceeds matrix dimensions

m = sps.csr_matrix((0, 1))

m.diagonal() # ValueError: k exceeds matrix dimensions

```

#### Error message:

Minimal example:

```

(0,)

---------------------------------------------------------------------------

ValueError Traceback (most recent call last)

<ipython-input-44-106e34b7d64c> in <module>

2 print(np.diag(m.todense()).shape)

3

----> 4 m.diagonal()

~/.pyenv/versions/3.6.9/lib/python3.6/site-packages/scipy/sparse/compressed.py in diagonal(self, k)

531 rows, cols = self.shape

532 if k <= -rows or k >= cols:

--> 533 raise ValueError("k exceeds matrix dimensions")

534 fn = getattr(_sparsetools, self.format + "_diagonal")

535 y = np.empty(min(rows + min(k, 0), cols - max(k, 0)),

ValueError: k exceeds matrix dimensions

```

Output of the loop in the "complete" example:

```

bsr_matrix: ValueError('k exceeds matrix dimensions',)

coo_matrix: ValueError('k exceeds matrix dimensions',)

csc_matrix: ValueError('k exceeds matrix dimensions',)

csr_matrix: ValueError('k exceeds matrix dimensions',)

dia_matrix: ValueError('k exceeds matrix dimensions',)

dok_matrix: ValueError('k exceeds matrix dimensions',)

lil_matrix: ValueError('k exceeds matrix dimensions',)

```

#### Scipy/Numpy/Python version information:

```

1.4.1 1.17.4 sys.version_info(major=3, minor=6, micro=9, releaselevel='final', serial=0)

```

|

non_process

|

valueerror k exceeds matrix dimensions for sparse diagonal when in sparse shape when a sparse matrix has a in its shape such as or calling diagonal fails this differs to np diag on the equivalent dense array which succeeds returning an empty array the best behaviour here seems like it d be open for debate but it s unfortunate that the default diagonal method doesn t work on every square sparse matrix it can require adding special cases conditionals around diagonal calls such as reproducing code example minimal python import scipy sparse as sps import numpy as np m sps csr matrix print np diag m todense shape m diagonal valueerror k exceeds matrix dimensions complete tests python import scipy sparse as sps check all the sparse matrix classes classes sps bsr matrix sps coo matrix sps csc matrix sps csr matrix sps dia matrix sps dok matrix sps lil matrix for cls in classes try cls diagonal except exception as e msg e else msg none print f cls name msg r for completeness non empty matrices m sps csr matrix m diagonal valueerror k exceeds matrix dimensions m sps csr matrix m diagonal valueerror k exceeds matrix dimensions error message minimal example valueerror traceback most recent call last in print np diag m todense shape m diagonal pyenv versions lib site packages scipy sparse compressed py in diagonal self k rows cols self shape if k cols raise valueerror k exceeds matrix dimensions fn getattr sparsetools self format diagonal y np empty min rows min k cols max k valueerror k exceeds matrix dimensions output of the loop in the complete example bsr matrix valueerror k exceeds matrix dimensions coo matrix valueerror k exceeds matrix dimensions csc matrix valueerror k exceeds matrix dimensions csr matrix valueerror k exceeds matrix dimensions dia matrix valueerror k exceeds matrix dimensions dok matrix valueerror k exceeds matrix dimensions lil matrix valueerror k exceeds matrix dimensions scipy numpy python version information sys version info major minor micro 
releaselevel final serial

| 0

|

276,800

| 24,021,054,494

|

IssuesEvent

|

2022-09-15 07:41:43

|

chshersh/tool-sync

|

https://api.github.com/repos/chshersh/tool-sync

|

opened

|

Create 'AssetError' and test 'select_asset' function

|

good first issue test refactoring

|

This function selects an asset to download based on config:

https://github.com/chshersh/tool-sync/blob/b79eeb91cfdc3a122e6693d503117f68ff1fb44e/src/model/tool.rs#L72-L86

Currently, it returns `Result<Asset, String>` but the goal is to return a custom type for error: `Result<Asset, AssetError`.

The idea is to replace `String` a custom constructor and add tests for these cases.

- [ ] Create a new enum with two constructors

- [ ] Implement a `display()` function for this enum

- [ ] Replace `String` with enum

- [ ] Write tests

|

1.0

|

Create 'AssetError' and test 'select_asset' function - This function selects an asset to download based on config:

https://github.com/chshersh/tool-sync/blob/b79eeb91cfdc3a122e6693d503117f68ff1fb44e/src/model/tool.rs#L72-L86

Currently, it returns `Result<Asset, String>` but the goal is to return a custom type for error: `Result<Asset, AssetError`.

The idea is to replace `String` a custom constructor and add tests for these cases.

- [ ] Create a new enum with two constructors

- [ ] Implement a `display()` function for this enum

- [ ] Replace `String` with enum

- [ ] Write tests

|

non_process

|

create asseterror and test select asset function this function selects an asset to download based on config currently it returns result but the goal is to return a custom type for error result asset asseterror the idea is to replace string a custom constructor and add tests for these cases create a new enum with two constructors implement a display function for this enum replace string with enum write tests

| 0

|

367,242

| 25,728,661,215

|

IssuesEvent

|

2022-12-07 18:28:14

|

Fiserv/Support

|

https://api.github.com/repos/Fiserv/Support

|

closed

|

Documentation section not rendering in Dev Studio

|

bug documentation Severity - High Priority - High BankingHub

|

# Reporting new issue for BankingHub tenant

Documentation section of Banking Hub is not displaying in Dev instance of Developer Studio.

https://dev-developerstudio.fiserv.com/product/BankingHub

Please refer the screenshot below.

cc: @rahravin @bobburghardt

|

1.0

|

Documentation section not rendering in Dev Studio - # Reporting new issue for BankingHub tenant

Documentation section of Banking Hub is not displaying in Dev instance of Developer Studio.

https://dev-developerstudio.fiserv.com/product/BankingHub

Please refer the screenshot below.

cc: @rahravin @bobburghardt

|

non_process

|

documentation section not rendering in dev studio reporting new issue for bankinghub tenant documentation section of banking hub is not displaying in dev instance of developer studio please refer the screenshot below cc rahravin bobburghardt

| 0

|

16,931

| 5,310,799,515

|

IssuesEvent

|

2017-02-12 22:59:54

|

oppia/oppia

|

https://api.github.com/repos/oppia/oppia

|

opened

|

ImageClickInput: Allow learners to respond with a set/sequence of points

|

loc: full-stack owner: @tjiang11 TODO: code TODO: tech (instructions) type: feature (minor)

|

Currently, learners can only respond with one point in an ImageClickInput, it would be nice to allow learners to respond with a set of points ("Pick all the fruits") and a sequence of points ("Pick the items in order of density"). This could be done as an entirely new widget or integrated with the existing ImageClickInput interaction.

Originally part of #531

|

1.0

|

ImageClickInput: Allow learners to respond with a set/sequence of points - Currently, learners can only respond with one point in an ImageClickInput, it would be nice to allow learners to respond with a set of points ("Pick all the fruits") and a sequence of points ("Pick the items in order of density"). This could be done as an entirely new widget or integrated with the existing ImageClickInput interaction.

Originally part of #531

|

non_process

|

imageclickinput allow learners to respond with a set sequence of points currently learners can only respond with one point in an imageclickinput it would be nice to allow learners to respond with a set of points pick all the fruits and a sequence of points pick the items in order of density this could be done as an entirely new widget or integrated with the existing imageclickinput interaction originally part of

| 0

|

180,713

| 30,554,442,667

|

IssuesEvent

|

2023-07-20 10:41:25

|

DeveloperAcademy-POSTECH/MC3-Team11-BeyondThe3F

|

https://api.github.com/repos/DeveloperAcademy-POSTECH/MC3-Team11-BeyondThe3F

|

closed

|

[Design] Component 재활용성 수정

|

Design

|

## 이슈

- 기존 컴포넌트의 중복을 조금 줄이기 위해 재사용 가능한 코드로 수정

## To - Do

- [ ] 중복 컴포턴트 제거

- [ ] 컬러, 텍스트 의존성 주입

|

1.0

|

[Design] Component 재활용성 수정 - ## 이슈

- 기존 컴포넌트의 중복을 조금 줄이기 위해 재사용 가능한 코드로 수정

## To - Do

- [ ] 중복 컴포턴트 제거

- [ ] 컬러, 텍스트 의존성 주입

|

non_process

|

component 재활용성 수정 이슈 기존 컴포넌트의 중복을 조금 줄이기 위해 재사용 가능한 코드로 수정 to do 중복 컴포턴트 제거 컬러 텍스트 의존성 주입

| 0

|

353,734

| 25,133,750,669

|

IssuesEvent

|

2022-11-09 16:56:32

|

extratone/WindowsIowa

|

https://api.github.com/repos/extratone/WindowsIowa

|

opened

|

Windows Iowa Theme for Blink Shell

|

documentation

|

```js

t.prefs_.set('color-palette-overrides',["#050387", "#ff2320", "#00ff00", "#f5ff6f", "#2934b3", "#1f0022", "#c4f7f9", "#fff3e4", "#000051", "#ff1f1e", "#00ff00", "#f5ff6f", "#6869f3", "#ed0073", "#93fdff", "#ffffff"]);

t.prefs_.set('foreground-color', "#ffffff");

t.prefs_.set('background-color', "#00006f");

t.prefs_.set('cursor-color', "#ffffff");

```

|

1.0

|

Windows Iowa Theme for Blink Shell -

```js

t.prefs_.set('color-palette-overrides',["#050387", "#ff2320", "#00ff00", "#f5ff6f", "#2934b3", "#1f0022", "#c4f7f9", "#fff3e4", "#000051", "#ff1f1e", "#00ff00", "#f5ff6f", "#6869f3", "#ed0073", "#93fdff", "#ffffff"]);

t.prefs_.set('foreground-color', "#ffffff");

t.prefs_.set('background-color', "#00006f");

t.prefs_.set('cursor-color', "#ffffff");

```

|

non_process

|

windows iowa theme for blink shell js t prefs set color palette overrides t prefs set foreground color ffffff t prefs set background color t prefs set cursor color ffffff

| 0

|

9,929

| 12,966,752,642

|

IssuesEvent

|

2020-07-21 01:25:15

|

googleapis/java-spanner

|

https://api.github.com/repos/googleapis/java-spanner

|

reopened

|

Update Dependencies (Renovate Bot)

|

api: spanner type: process

|

This [master issue](https://renovatebot.com/blog/master-issue) contains a list of Renovate updates and their statuses.

## Closed/Ignored

These updates were closed unmerged and will not be recreated unless you click a checkbox below.

- [ ] <!-- recreate-branch=renovate/org.apache.commons-commons-lang3-3.x -->[deps: update dependency org.apache.commons:commons-lang3 to v3.11](../pull/356)

---

<details><summary>Advanced</summary>

- [ ] <!-- manual job -->Check this box to trigger a request for Renovate to run again on this repository

</details>

|

1.0

|

Update Dependencies (Renovate Bot) - This [master issue](https://renovatebot.com/blog/master-issue) contains a list of Renovate updates and their statuses.

## Closed/Ignored

These updates were closed unmerged and will not be recreated unless you click a checkbox below.

- [ ] <!-- recreate-branch=renovate/org.apache.commons-commons-lang3-3.x -->[deps: update dependency org.apache.commons:commons-lang3 to v3.11](../pull/356)

---

<details><summary>Advanced</summary>

- [ ] <!-- manual job -->Check this box to trigger a request for Renovate to run again on this repository

</details>

|

process

|

update dependencies renovate bot this contains a list of renovate updates and their statuses closed ignored these updates were closed unmerged and will not be recreated unless you click a checkbox below pull advanced check this box to trigger a request for renovate to run again on this repository

| 1

|

2,393

| 2,611,713,688

|

IssuesEvent

|

2015-02-27 08:25:46

|

dambileh/Abblar

|

https://api.github.com/repos/dambileh/Abblar

|

closed

|

As an app I need organisation category screen

|

Design Ready

|

This will simply list different categories of messages (channels) that the user can subscribe to. We probably need some sort of a list with a checkbox next to each item.

Just to give you an idea, take Telkom as an example, it could have "Fault report", "Promotions" and etc categories

|

1.0

|

As an app I need organisation category screen - This will simply list different categories of messages (channels) that the user can subscribe to. We probably need some sort of a list with a checkbox next to each item.

Just to give you an idea, take Telkom as an example, it could have "Fault report", "Promotions" and etc categories

|

non_process

|

as an app i need organisation category screen this will simply list different categories of messages channels that the user can subscribe to we probably need some sort of a list with a checkbox next to each item just to give you an idea take telkom as an example it could have fault report promotions and etc categories

| 0

|

177,873

| 13,750,962,699

|

IssuesEvent

|

2020-10-06 12:46:27

|

enthought/traitsui

|

https://api.github.com/repos/enthought/traitsui

|

closed

|

Occasional error from QAbstractItemModel events upon test tear down

|

component: test suite

|

The following error occurs once from this travis build (https://travis-ci.org/github/enthought/traitsui/jobs/706873557) :

```

======================================================================

FAIL: test_run (test_all_examples.TestExample) (file_path='/home/travis/build/enthought/traitsui/examples/demo/Advanced/ListStrEditor_demo.py')

----------------------------------------------------------------------

Traceback (most recent call last):

File "/home/travis/build/enthought/traitsui/integrationtests/test_all_examples.py", line 293, in test_run

run_file(file_path)

AttributeError: 'NoneType' object has no attribute 'auto_add'

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/home/travis/build/enthought/traitsui/integrationtests/test_all_examples.py", line 300, in test_run

file_path, exc, message

AssertionError: Executing /home/travis/build/enthought/traitsui/examples/demo/Advanced/ListStrEditor_demo.py failed with exception 'NoneType' object has no attribute 'auto_add'

Traceback (most recent call last):

File "/home/travis/build/enthought/traitsui/integrationtests/test_all_examples.py", line 293, in test_run

run_file(file_path)

File "/home/travis/build/enthought/traitsui/integrationtests/test_all_examples.py", line 277, in run_file

exec(content, globals)

File "<string>", line 50, in <module>

File "/home/travis/build/enthought/traitsui/integrationtests/test_all_examples.py", line 238, in replaced_configure_traits

process_cascade_events()

File "/home/travis/.edm/envs/traitsui-test-3.6-pyqt/lib/python3.6/contextlib.py", line 88, in __exit__

next(self.gen)

File "/home/travis/.edm/envs/traitsui-test-3.6-pyqt/lib/python3.6/site-packages/traitsui/tests/_tools.py", line 67, in store_exceptions_on_all_threads

raise exceptions[0]

File "/home/travis/.edm/envs/traitsui-test-3.6-pyqt/lib/python3.6/site-packages/traitsui/qt4/list_str_model.py", line 53, in rowCount

if editor.factory.auto_add:

AttributeError: 'NoneType' object has no attribute 'auto_add'

```

This is similar to the ones seen in https://github.com/enthought/traitsui/issues/854 for `ImageEnumEditor` and the one worked around in https://github.com/enthought/traitsui/pull/897 for `TabularEditor`.

The fix for #854 was to replace/unset the instance of `QAbstractItemModel` from the view widget in the editor's dispose. See https://github.com/enthought/traitsui/pull/974

|

1.0

|

Occasional error from QAbstractItemModel events upon test tear down - The following error occurs once from this travis build (https://travis-ci.org/github/enthought/traitsui/jobs/706873557) :

```

======================================================================

FAIL: test_run (test_all_examples.TestExample) (file_path='/home/travis/build/enthought/traitsui/examples/demo/Advanced/ListStrEditor_demo.py')

----------------------------------------------------------------------

Traceback (most recent call last):

File "/home/travis/build/enthought/traitsui/integrationtests/test_all_examples.py", line 293, in test_run

run_file(file_path)

AttributeError: 'NoneType' object has no attribute 'auto_add'

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/home/travis/build/enthought/traitsui/integrationtests/test_all_examples.py", line 300, in test_run

file_path, exc, message

AssertionError: Executing /home/travis/build/enthought/traitsui/examples/demo/Advanced/ListStrEditor_demo.py failed with exception 'NoneType' object has no attribute 'auto_add'

Traceback (most recent call last):

File "/home/travis/build/enthought/traitsui/integrationtests/test_all_examples.py", line 293, in test_run

run_file(file_path)

File "/home/travis/build/enthought/traitsui/integrationtests/test_all_examples.py", line 277, in run_file

exec(content, globals)

File "<string>", line 50, in <module>

File "/home/travis/build/enthought/traitsui/integrationtests/test_all_examples.py", line 238, in replaced_configure_traits

process_cascade_events()

File "/home/travis/.edm/envs/traitsui-test-3.6-pyqt/lib/python3.6/contextlib.py", line 88, in __exit__

next(self.gen)

File "/home/travis/.edm/envs/traitsui-test-3.6-pyqt/lib/python3.6/site-packages/traitsui/tests/_tools.py", line 67, in store_exceptions_on_all_threads

raise exceptions[0]

File "/home/travis/.edm/envs/traitsui-test-3.6-pyqt/lib/python3.6/site-packages/traitsui/qt4/list_str_model.py", line 53, in rowCount

if editor.factory.auto_add:

AttributeError: 'NoneType' object has no attribute 'auto_add'

```

This is similar to the ones seen in https://github.com/enthought/traitsui/issues/854 for `ImageEnumEditor` and the one worked around in https://github.com/enthought/traitsui/pull/897 for `TabularEditor`.

The fix for #854 was to replace/unset the instance of `QAbstractItemModel` from the view widget in the editor's dispose. See https://github.com/enthought/traitsui/pull/974

|

non_process

|

occasional error from qabstractitemmodel events upon test tear down the following error occurs once from this travis build fail test run test all examples testexample file path home travis build enthought traitsui examples demo advanced liststreditor demo py traceback most recent call last file home travis build enthought traitsui integrationtests test all examples py line in test run run file file path attributeerror nonetype object has no attribute auto add during handling of the above exception another exception occurred traceback most recent call last file home travis build enthought traitsui integrationtests test all examples py line in test run file path exc message assertionerror executing home travis build enthought traitsui examples demo advanced liststreditor demo py failed with exception nonetype object has no attribute auto add traceback most recent call last file home travis build enthought traitsui integrationtests test all examples py line in test run run file file path file home travis build enthought traitsui integrationtests test all examples py line in run file exec content globals file line in file home travis build enthought traitsui integrationtests test all examples py line in replaced configure traits process cascade events file home travis edm envs traitsui test pyqt lib contextlib py line in exit next self gen file home travis edm envs traitsui test pyqt lib site packages traitsui tests tools py line in store exceptions on all threads raise exceptions file home travis edm envs traitsui test pyqt lib site packages traitsui list str model py line in rowcount if editor factory auto add attributeerror nonetype object has no attribute auto add this is similar to the ones seen in for imageenumeditor and the one worked around in for tabulareditor the fix for was to replace unset the instance of qabstractitemmodel from the view widget in the editor s dispose see

| 0

|

107,181

| 13,440,491,581

|

IssuesEvent

|

2020-09-08 01:05:35

|

logseq/logseq

|

https://api.github.com/repos/logseq/logseq

|

closed

|

The UI design of connectedpapers is very beautiful; logseq could take it as a reference

|

design

|

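The UI design of connectedpapers is very beautiful, and logseq could take it as a reference. Roam Research's UI is modeled on Workflowy's, but connectedpapers' interface design, such as its animation effects, page layout, and metaphors, is also very attractive.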

https://www.connectedpapers.com/main/f4b5b7a08649811db655bc0fbc4d33b608617bab/MoFeCoNi/graph

|

1.0

|

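The UI design of connectedpapers is very beautiful; logseq could take it as a reference - The UI design of connectedpapers is very beautiful, and logseq could take it as a reference. Roam Research's UI is modeled on Workflowy's, but connectedpapers' interface design, such as its animation effects, page layout, and metaphors, is also very attractive.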

https://www.connectedpapers.com/main/f4b5b7a08649811db655bc0fbc4d33b608617bab/MoFeCoNi/graph

|

non_process

|

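the ui design of connectedpapers is very beautiful logseq could take it as a reference the ui design of connectedpapers is very beautiful and logseq could take it as a reference roam research s ui is modeled on workflowy s but connectedpapers interface design such as its animation effects page layout and metaphors is also very attractive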

| 0

|

14,441

| 17,498,017,914

|

IssuesEvent

|

2021-08-10 05:07:53

|

dotnet/runtime

|

https://api.github.com/repos/dotnet/runtime

|

closed

|

Test failure System.ServiceProcess.Tests.ServiceControllerTests.Stop_FalseArg_WithDependentServices_ThrowsInvalidOperationException

|

arch-x86 area-System.ServiceProcess os-windows

|

Run: [runtime-libraries-coreclr outerloop 20210615.3](https://dev.azure.com/dnceng/public/_build/results?buildId=1187626&view=ms.vss-test-web.build-test-results-tab&runId=35678872&resultId=104107&paneView=debug)

Failed test:

```

net6.0-windows-Release-x86-CoreCLR_release-Windows.7.Amd64.Open

- System.ServiceProcess.Tests.ServiceControllerTests.Stop_FalseArg_WithDependentServices_ThrowsInvalidOperationException

```

**Error message:**

```

Assert.Throws() Failure

Expected: typeof(System.InvalidOperationException)

Actual: (No exception was thrown)

Stack trace

at System.ServiceProcess.Tests.ServiceControllerTests.Stop_FalseArg_WithDependentServices_ThrowsInvalidOperationException() in /_/src/libraries/System.ServiceProcess.ServiceController/tests/ServiceControllerTests.netcoreapp.cs:line 16

```

|

1.0

|

Test failure System.ServiceProcess.Tests.ServiceControllerTests.Stop_FalseArg_WithDependentServices_ThrowsInvalidOperationException - Run: [runtime-libraries-coreclr outerloop 20210615.3](https://dev.azure.com/dnceng/public/_build/results?buildId=1187626&view=ms.vss-test-web.build-test-results-tab&runId=35678872&resultId=104107&paneView=debug)

Failed test:

```

net6.0-windows-Release-x86-CoreCLR_release-Windows.7.Amd64.Open

- System.ServiceProcess.Tests.ServiceControllerTests.Stop_FalseArg_WithDependentServices_ThrowsInvalidOperationException

```

**Error message:**

```

Assert.Throws() Failure

Expected: typeof(System.InvalidOperationException)

Actual: (No exception was thrown)

Stack trace

at System.ServiceProcess.Tests.ServiceControllerTests.Stop_FalseArg_WithDependentServices_ThrowsInvalidOperationException() in /_/src/libraries/System.ServiceProcess.ServiceController/tests/ServiceControllerTests.netcoreapp.cs:line 16

```

|

process

|

test failure system serviceprocess tests servicecontrollertests stop falsearg withdependentservices throwsinvalidoperationexception run failed test windows release coreclr release windows open system serviceprocess tests servicecontrollertests stop falsearg withdependentservices throwsinvalidoperationexception error message assert throws failure expected typeof system invalidoperationexception actual no exception was thrown stack trace at system serviceprocess tests servicecontrollertests stop falsearg withdependentservices throwsinvalidoperationexception in src libraries system serviceprocess servicecontroller tests servicecontrollertests netcoreapp cs line

| 1

|

111,835

| 4,489,039,409

|

IssuesEvent

|

2016-08-30 09:33:23

|

Victoire/victoire

|

https://api.github.com/repos/Victoire/victoire

|

opened

|

It's not possible to edit a widget list with a DQL query inside

|

bug Priority : Medium

|

Error 500 when I click on the widget (edition)

|

1.0

|

It's not possible to edit a widget list with a DQL query inside - Error 500 when I click on the widget (edition)

|

non_process

|

it s not possible to edit a widget list with a dql query inside error when i click on the widget edition

| 0

|

66,965

| 8,059,810,153

|

IssuesEvent

|

2018-08-02 23:55:33

|

kbase/narrative

|

https://api.github.com/repos/kbase/narrative

|

closed

|

Graphic design tweaks round 1

|

enhancement graphic design minor

|

These are mostly minor, and this is not a comprehensive list. But some of the consistency issues should be fixed.

Design Throughout

- Separator lines are too pale, they should be #CECECE

- for all the lists (the data list, the apps & methods list), remove the separator at the very top

Header

- put spacing or even divider between account icon and narrative buttons

- make the buttons round if we are mostly going for round buttons

- after saving the narrative, make the title non-italic (italic for unsaved only)

Left Panel

- Make the tabs color #2196F3

- Make inactive tab text color #BBDEFB

- Make active tab indicator #10CE34

Data Panel

- The “Add Data” button, change colors to #E53935 on hover

Data Slideout

- the “add” buttons should be consistent (in example data, the button is persistent, in other tabs, they appear on hover)

- icons need to be the same as the icons in the data panel

- filter options and searching need to be consistent through all the tabs

- import, need to fix the tabs (should not show the first level of tabs when showing the secondary level of tabs, see the mockups)

Working/Notebook Area

- bottom buttons are too subtle, maybe try blue #2196F3 (with opacity still 0.5)

- the markdown cells shouldn’t have that top panel styling; it can be a simple horizontal line. There's sort of a mix of styles going on in general.

|

1.0

|

Graphic design tweaks round 1 - These are mostly minor, and this is not a comprehensive list. But some of the consistency issues should be fixed.

Design Throughout

- Separator lines are too pale, they should be #CECECE

- for all the lists (the data list, the apps & methods list), remove the separator at the very top

Header

- put spacing or even divider between account icon and narrative buttons

- make the buttons round if we are mostly going for round buttons

- after saving the narrative, make the title non-italic (italic for unsaved only)

Left Panel

- Make the tabs color #2196F3

- Make inactive tab text color #BBDEFB

- Make active tab indicator #10CE34

Data Panel

- The “Add Data” button, change colors to #E53935 on hover

Data Slideout

- the “add” buttons should be consistent (in example data, the button is persistent, in other tabs, they appear on hover)

- icons need to be the same as the icons in the data panel

- filter options and searching need to be consistent through all the tabs

- import, need to fix the tabs (should not show the first level of tabs when showing the secondary level of tabs, see the mockups)

Working/Notebook Area

- bottom buttons are too subtle, maybe try blue #2196F3 (with opacity still 0.5)

- the markdown cells shouldn’t have that top panel styling; it can be a simple horizontal line. There's sort of a mix of styles going on in general.

|

non_process

|

graphic design tweaks round these are mostly minor and is not a comprehensive list but some of the consistency issues should be fixed design throughout separator lines are too pale they should be cecece for all the lists the data list the apps methods list remove the separator at the very top header put spacing or even divider between account icon and narrative buttons make the buttons round if we are mostly going for round buttons after saving the narrative make the title non italic italic for unsaved only left panel make the tabs color make inactive tab text color bbdefb make active tab indicator data panel the “add data” button change colors to on hover data slideout the “add” buttons should be consistent in example data the button is persistent in other tabs they appear on hover icons need to be the same as the icons in the data panel filter options and searching needs to be consistent through all the tabs import need to fix the tabs should not show the first level of tabs when showing the secondary level of tabs see the mockups working notebook area bottom buttons are too subtle maybe try blue with opacity still the markdown cells shouldn’t have that top panel styling like that it can be a simple horizontal line there s sort of mix of styles going on in general

| 0

|

13,800

| 16,554,004,781

|

IssuesEvent

|

2021-05-28 11:56:00

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

[PM] Need to increase detectable area for Password Criteria icon (?) in change Password screen

|

Bug P2 Participant manager Process: Fixed Process: Tested QA Process: Tested dev

|

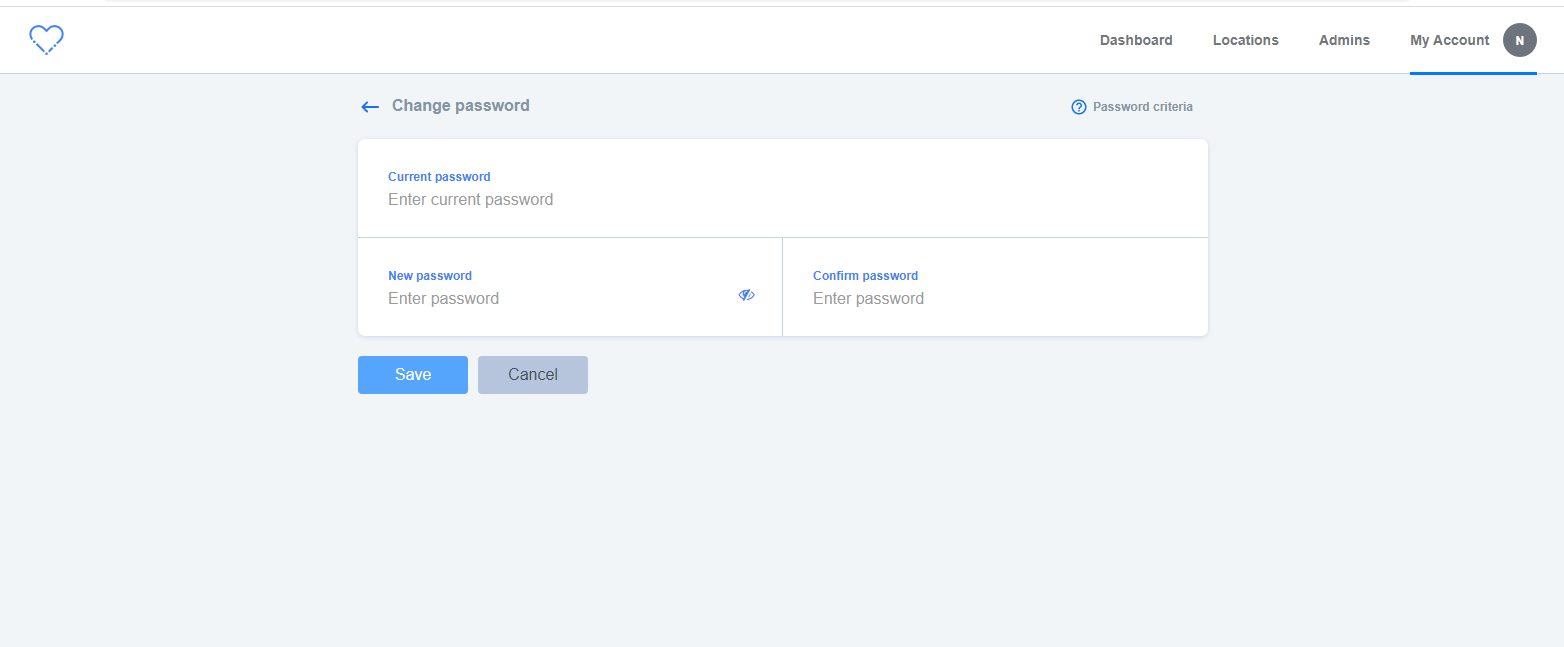

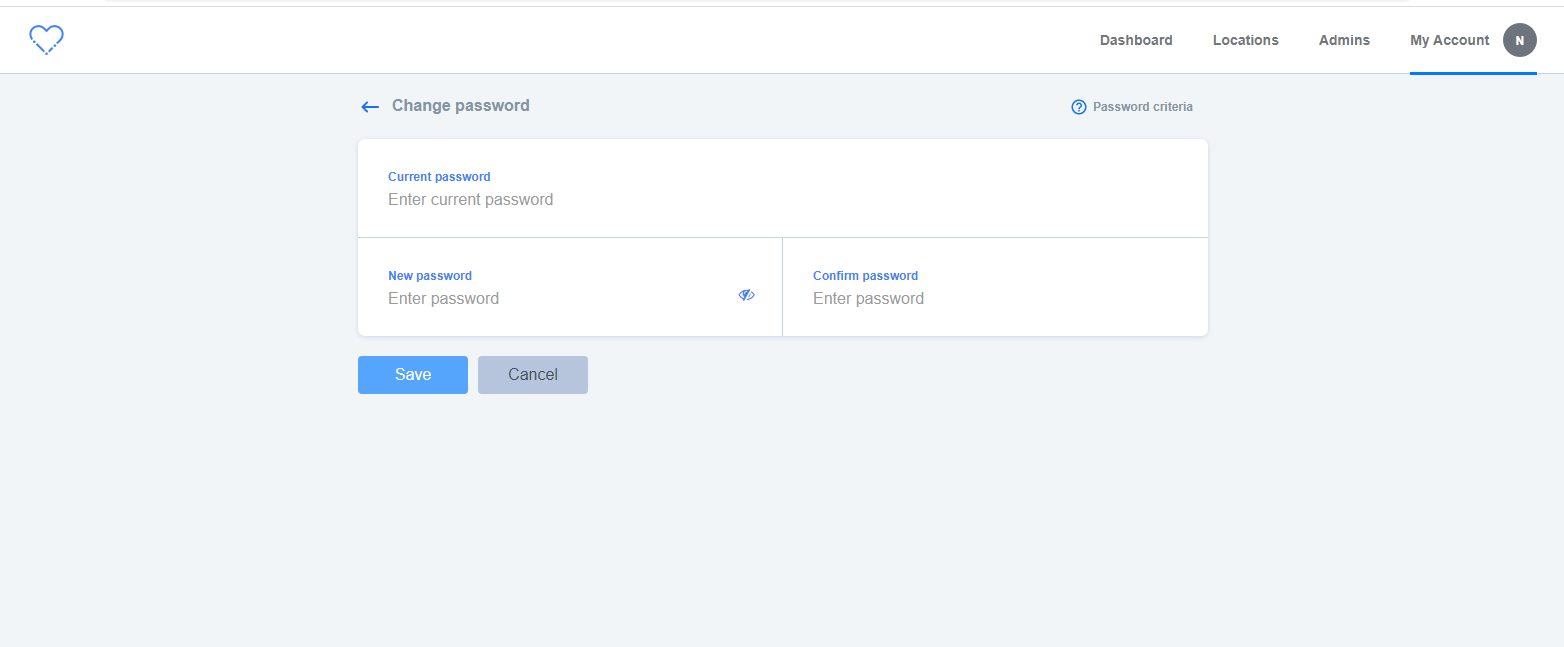

Steps:

1. Login into Participant manager

2. Navigate to My Account section

3. Click on Change Password link

4. Verify the password criteria icon in change password screen

**A/R**:- The detectable area for the Password Criteria icon is too small.

**E/R**:- The detectable area for the Password Criteria icon should be increased for a better user experience.

**Note**:- Need to fix the above issue wherever Password criteria icon appears.

|

3.0

|

[PM] Need to increase detectable area for Password Criteria icon (?) in change Password screen - Steps:

1. Login into Participant manager

2. Navigate to My Account section

3. Click on Change Password link

4. Verify the password criteria icon in change password screen

**A/R**:- The detectable area for the Password Criteria icon is too small.

**E/R**:- The detectable area for the Password Criteria icon should be increased for a better user experience.

**Note**:- Need to fix the above issue wherever Password criteria icon appears.

|

process

|

need to increase detectable area for password criteria icon in change password screen steps login into participant manager navigate to my account section click on change password link verify the password criteria icon in change password screen a r detectable area for password criteria icon is very less e r detectable area should be increase for password criteria icon for better user experience note need to fix the above issue wherever password criteria icon appears

| 1

|

6,768

| 9,905,872,942

|

IssuesEvent

|

2019-06-27 12:41:16

|

spring-projects/spring-hateoas

|

https://api.github.com/repos/spring-projects/spring-hateoas

|

closed

|

Switch to Spring's ServerWebExchangeContextFilter.

|

in: core process: in progress stack: webflux

|

This was completed on #983 and merged to `master`. But due to instabilities with Spring Framework upstream and Spring Data downstream, the effort was moved off to a branch to delay until we're all ready to accept it.

|

1.0

|

Switch to Spring's ServerWebExchangeContextFilter. - This was completed on #983 and merged to `master`. But due to instabilities with Spring Framework upstream and Spring Data downstream, the effort was moved off to a branch to delay until we're all ready to accept it.

|

process

|

switch to spring s serverwebexchangecontextfilter this was completed on and merged to master but due to instabilities with spring framework upstream and spring data downstream the effort was moved off to a branch to delay until we re all ready to accept it

| 1

|

5,324

| 8,139,530,661

|

IssuesEvent

|

2018-08-20 18:01:11

|

jfmcbrayer/brutaldon

|

https://api.github.com/repos/jfmcbrayer/brutaldon

|

closed

|

Multi-account support

|

enhancement inprocess

|

This would require a Django login and registration for brutaldon. And cross-account actions would require quite a bit of refactoring. But otherwise not hard.

|

1.0

|

Multi-account support - This would require a Django login and registration for brutaldon. And cross-account actions would require quite a bit of refactoring. But otherwise not hard.

|

process

|

multi account support this would require a django login and registration for brutaldon and cross account actions would require quite a bit of refactoring but otherwise not hard

| 1

|

4,507

| 7,350,301,481

|

IssuesEvent

|

2018-03-08 13:56:04

|

shobrook/BitVision

|

https://api.github.com/repos/shobrook/BitVision

|

closed

|

Instead of using Bitstamp price data, use data averaged across multiple exchanges

|

medium priority preprocessing

|

We want to avoid exchange-specific biases.
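
A minimal sketch of the averaging idea, assuming the price data is available as one timestamp-to-price mapping per exchange. The function name `average_across_exchanges`, the exchange names other than Bitstamp, and the sample prices are all hypothetical and only illustrate the approach; this is not the project's actual preprocessing code.

```python
from statistics import mean


def average_across_exchanges(prices_by_exchange):
    """Average the price per timestamp over every exchange that reported one.

    prices_by_exchange maps an exchange name to a {timestamp: price} dict.
    Timestamps missing from an exchange are simply left out of that average.
    """
    # Collect every timestamp seen on any exchange.
    timestamps = set()
    for series in prices_by_exchange.values():
        timestamps.update(series)

    averaged = {}
    for ts in sorted(timestamps):
        quotes = [
            series[ts]
            for series in prices_by_exchange.values()
            if ts in series
        ]
        averaged[ts] = mean(quotes)
    return averaged


# Hypothetical sample data, for illustration only.
sample = {
    "bitstamp": {"2018-03-01": 10900.0, "2018-03-02": 11000.0},
    "exchange_b": {"2018-03-01": 11100.0, "2018-03-02": 11200.0},
    "exchange_c": {"2018-03-01": 11000.0},
}
averaged = average_across_exchanges(sample)
```

An unweighted mean treats every exchange equally; weighting by traded volume would be another option if volume data is available.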

|

1.0

|

Instead of using Bitstamp price data, use data averaged across multiple exchanges - We want to avoid exchange-specific biases.

|

process

|

instead of using bitstamp price data use data averaged across multiple exchanges we want to avoid exchange specific biases

| 1

|

5,499

| 8,364,474,082

|

IssuesEvent

|

2018-10-03 23:13:34

|

w3c/w3process

|

https://api.github.com/repos/w3c/w3process

|

closed

|

Written notification?

|

AC-review Process2019Candidate

|

In section 3.6 Resignation from a group, it says "On written notification from an Advisory Committee representative...". Does the notification from SysBot (when an AC rep unhooks someone from the WG/IG) qualify as "written notification"?

|

1.0

|

Written notification? - In section 3.6 Resignation from a group, it says "On written notification from an Advisory Committee representative...". Does the notification from SysBot (when an AC rep unhooks someone from the WG/IG) qualify as "written notification"?

|

process

|

written notification in section resignation from a group it says on written notification from an advisory committee representative does the notification from sysbot when an ac rep unhooks someone from the wg ig qualify as written notification

| 1

|

11,969

| 14,730,443,029

|

IssuesEvent

|

2021-01-06 13:13:24

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

To change term 'user' to 'admin' in the error and message codes of PM, also in PM emails

|

Feature request P1 Participant manager Process: Release 2 Process: Tested QA Process: Tested dev

|

Replace the word user with admin in the error and message codes of PM.

Also, we need to replace them in the emails of PM.

|

3.0

|

To change term 'user' to 'admin' in the error and message codes of PM, also in PM emails - Replace the word user with admin in the error and message codes of PM.

Also, we need to replace them in the emails of PM.

|

process

|

to change term user to admin in the error and message codes of pm also in pm emails replace the word user with admin in the error and message codes of pm also we need to replace them in the emails of pm

| 1

|

517,612

| 15,017,110,680

|

IssuesEvent

|

2021-02-01 10:25:46

|

webcompat/web-bugs

|

https://api.github.com/repos/webcompat/web-bugs

|

closed

|

xnxx.com - site is not usable

|

browser-focus-geckoview engine-gecko ml-needsdiagnosis-false priority-important

|

<!-- @browser: Firefox Mobile 84.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:84.0) Gecko/84.0 Firefox/84.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/66445 -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: http://xnxx.com

**Browser / Version**: Firefox Mobile 84.0

**Operating System**: Android 9

**Tested Another Browser**: Yes Other

**Problem type**: Site is not usable

**Description**: Browser unsupported

**Steps to Reproduce**:

Videos don't display and the site cannot be opened

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_

|

1.0

|

xnxx.com - site is not usable - <!-- @browser: Firefox Mobile 84.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:84.0) Gecko/84.0 Firefox/84.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/66445 -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: http://xnxx.com

**Browser / Version**: Firefox Mobile 84.0

**Operating System**: Android 9

**Tested Another Browser**: Yes Other

**Problem type**: Site is not usable

**Description**: Browser unsupported

**Steps to Reproduce**:

Videos don't display and the site cannot be opened

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_

|

non_process

|

xnxx com site is not usable url browser version firefox mobile operating system android tested another browser yes other problem type site is not usable description browser unsupported steps to reproduce videos doesn t display and unable to open site browser configuration none from with ❤️

| 0

|

196,157

| 22,441,002,875

|

IssuesEvent

|

2022-06-21 01:16:58

|

JMD60260/fetchmeaband

|

https://api.github.com/repos/JMD60260/fetchmeaband

|

opened

|

CVE-2022-32209 (Medium) detected in rails-html-sanitizer-1.3.0.gem

|

security vulnerability

|

## CVE-2022-32209 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>rails-html-sanitizer-1.3.0.gem</b></p></summary>

<p>HTML sanitization for Rails applications</p>

<p>Library home page: <a href="https://rubygems.org/gems/rails-html-sanitizer-1.3.0.gem">https://rubygems.org/gems/rails-html-sanitizer-1.3.0.gem</a></p>

<p>

Dependency Hierarchy:

- coffee-rails-4.2.2.gem (Root Library)

- railties-5.2.3.gem

- actionpack-5.2.3.gem

- :x: **rails-html-sanitizer-1.3.0.gem** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/JMD60260/fetchmeaband/commit/430b5f2947d45ada69dc047ea870d3c988006344">430b5f2947d45ada69dc047ea870d3c988006344</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A possible XSS vulnerability has been discovered in rails-html-sanitizer before 1.4.3. This allows an attacker to inject content if the application developer has overridden the sanitizer's allowed tags to allow both `select` and `style` elements. Code is only impacted if allowed tags are being overridden. This may be done via application configuration.

<p>Publish Date: 2022-06-02

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-32209>CVE-2022-32209</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://hackerone.com/reports/1530898">https://hackerone.com/reports/1530898</a></p>

<p>Release Date: 2022-06-02</p>

<p>Fix Resolution: rails-html-sanitizer - 1.4.3</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2022-32209 (Medium) detected in rails-html-sanitizer-1.3.0.gem - ## CVE-2022-32209 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>rails-html-sanitizer-1.3.0.gem</b></p></summary>

<p>HTML sanitization for Rails applications</p>

<p>Library home page: <a href="https://rubygems.org/gems/rails-html-sanitizer-1.3.0.gem">https://rubygems.org/gems/rails-html-sanitizer-1.3.0.gem</a></p>

<p>

Dependency Hierarchy:

- coffee-rails-4.2.2.gem (Root Library)

- railties-5.2.3.gem

- actionpack-5.2.3.gem

- :x: **rails-html-sanitizer-1.3.0.gem** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/JMD60260/fetchmeaband/commit/430b5f2947d45ada69dc047ea870d3c988006344">430b5f2947d45ada69dc047ea870d3c988006344</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A possible XSS vulnerability has been discovered in rails-html-sanitizer before 1.4.3. This allows an attacker to inject content if the application developer has overridden the sanitizer's allowed tags to allow both `select` and `style` elements. Code is only impacted if allowed tags are being overridden. This may be done via application configuration.

<p>Publish Date: 2022-06-02

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-32209>CVE-2022-32209</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://hackerone.com/reports/1530898">https://hackerone.com/reports/1530898</a></p>

<p>Release Date: 2022-06-02</p>

<p>Fix Resolution: rails-html-sanitizer - 1.4.3</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve medium detected in rails html sanitizer gem cve medium severity vulnerability vulnerable library rails html sanitizer gem html sanitization for rails applications library home page a href dependency hierarchy coffee rails gem root library railties gem actionpack gem x rails html sanitizer gem vulnerable library found in head commit a href found in base branch master vulnerability details a possible xss vulnerability has been discovered in rails html sanitizer before this allows an attacker to inject content if the application developer has overridden the sanitizer s allowed tags to allow both select and style elements code is only impacted if allowed tags are being overridden this may be done via application configuration publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope changed impact metrics confidentiality impact low integrity impact low availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution rails html sanitizer step up your open source security game with mend

| 0

|

53,045

| 13,260,844,386

|

IssuesEvent

|

2020-08-20 18:51:25

|

icecube-trac/tix4

|

https://api.github.com/repos/icecube-trac/tix4

|

closed

|

boost port needs to fail, if it can't find python "devel" parts (Trac #625)

|

Migrated from Trac defect tools/ports

|

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/625">https://code.icecube.wisc.edu/projects/icecube/ticket/625</a>, reported by nega and owned by nega</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2014-10-22T17:41:41",

"_ts": "1413999701734819",

"description": "",

"reporter": "nega",

"cc": "",

"resolution": "wontfix",

"time": "2011-04-28T20:34:33",

"component": "tools/ports",

"summary": "boost port needs to fail, if it can't find python \"devel\" parts",

"priority": "minor",

"keywords": "",

"milestone": "",

"owner": "nega",

"type": "defect"

}

```

</p>

</details>

|

1.0

|

boost port needs to fail, if it can't find python "devel" parts (Trac #625) -

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/625">https://code.icecube.wisc.edu/projects/icecube/ticket/625</a>, reported by nega and owned by nega</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2014-10-22T17:41:41",

"_ts": "1413999701734819",

"description": "",

"reporter": "nega",

"cc": "",

"resolution": "wontfix",

"time": "2011-04-28T20:34:33",

"component": "tools/ports",

"summary": "boost port needs to fail, if it can't find python \"devel\" parts",

"priority": "minor",

"keywords": "",

"milestone": "",

"owner": "nega",

"type": "defect"

}

```

</p>

</details>

|

non_process

|

boost port needs to fail if it can t find python devel parts trac migrated from json status closed changetime ts description reporter nega cc resolution wontfix time component tools ports summary boost port needs to fail if it can t find python devel parts priority minor keywords milestone owner nega type defect

| 0

|

35,931

| 6,510,826,645

|

IssuesEvent

|

2017-08-25 06:40:58

|

magenta-aps/mora

|

https://api.github.com/repos/magenta-aps/mora

|

opened

|

Documentation of the authentication mechanism

|

documentation

|

We need to add a section about the authentication mechanism to the README file

|

1.0

|

Documentation of the authentication mechanism - We need to add a section about the authentication mechanism to the README file

|

non_process

|

documentation of the authentication mechanism we need to add a section about the authentication mechanism to the readme file

| 0

|

21,953

| 30,452,539,832

|

IssuesEvent

|

2023-07-16 13:31:00

|

h4sh5/pypi-auto-scanner

|

https://api.github.com/repos/h4sh5/pypi-auto-scanner

|

opened

|

etm-dgraham 5.1.13 has 4 GuardDog issues

|

guarddog exec-base64 silent-process-execution

|

https://pypi.org/project/etm-dgraham

https://inspector.pypi.io/project/etm-dgraham

```json
{

"dependency": "etm-dgraham",

"version": "5.1.13",

"result": {

"issues": 4,

"errors": {},

"results": {

"exec-base64": [

{

"location": "etm-dgraham-5.1.13/bump.py:121",

"code": " check_output(f\"git commit -a -m '{tmsg}'\")",

"message": "This package contains a call to the `eval` function with a `base64` encoded string as argument.\nThis is a common method used to hide a malicious payload in a module as static analysis will not decode the\nstring.\n"

},

{

"location": "etm-dgraham-5.1.13/bump.py:123",

"code": " check_output(f\"git tag -a -f '{new_version}' -m '{version_info}'\")",

"message": "This package contains a call to the `eval` function with a `base64` encoded string as argument.\nThis is a common method used to hide a malicious payload in a module as static analysis will not decode the\nstring.\n"

},

{

"location": "etm-dgraham-5.1.13/bump.py:128",

"code": " check_output(f\"git commit -a --amend -m '{tmsg}'\")",

"message": "This package contains a call to the `eval` function with a `base64` encoded string as argument.\nThis is a common method used to hide a malicious payload in a module as static analysis will not decode the\nstring.\n"

}

],

"silent-process-execution": [

{

"location": "etm-dgraham-5.1.13/etm/view.py:1558",

"code": " pid = subprocess.Popen(parts, stdin=subprocess.DEVNULL, stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL).pid",

"message": "This package is silently executing an external binary, redirecting stdout, stderr and stdin to /dev/null"

}

]

},

"path": "/tmp/tmpajgh5bnl/etm-dgraham"

}

}
```
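
For context, the `silent-process-execution` finding in the report refers to starting an external program with all three standard streams redirected to the null device. A minimal sketch of that pattern follows; the helper name `run_silently` and the child command are hypothetical and are not taken from `etm/view.py`.

```python
import subprocess
import sys


def run_silently(parts):
    """Start a child process whose stdin, stdout and stderr go to the null device."""
    return subprocess.Popen(
        parts,
        stdin=subprocess.DEVNULL,
        stdout=subprocess.DEVNULL,
        stderr=subprocess.DEVNULL,
    )


# Hypothetical child command: its print output is discarded, so nothing
# appears in the parent's terminal.
proc = run_silently([sys.executable, "-c", "print('hidden')"])
exit_code = proc.wait()
```

The pattern is often legitimate (for example, launching a viewer in the background), which is why a finding like this usually needs a manual look at the flagged line.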

|

1.0

|

etm-dgraham 5.1.13 has 4 GuardDog issues - https://pypi.org/project/etm-dgraham

https://inspector.pypi.io/project/etm-dgraham

```json
{

"dependency": "etm-dgraham",

"version": "5.1.13",

"result": {

"issues": 4,

"errors": {},

"results": {

"exec-base64": [

{

"location": "etm-dgraham-5.1.13/bump.py:121",

"code": " check_output(f\"git commit -a -m '{tmsg}'\")",

"message": "This package contains a call to the `eval` function with a `base64` encoded string as argument.\nThis is a common method used to hide a malicious payload in a module as static analysis will not decode the\nstring.\n"

},

{

"location": "etm-dgraham-5.1.13/bump.py:123",

"code": " check_output(f\"git tag -a -f '{new_version}' -m '{version_info}'\")",

"message": "This package contains a call to the `eval` function with a `base64` encoded string as argument.\nThis is a common method used to hide a malicious payload in a module as static analysis will not decode the\nstring.\n"

},

{

"location": "etm-dgraham-5.1.13/bump.py:128",

"code": " check_output(f\"git commit -a --amend -m '{tmsg}'\")",

"message": "This package contains a call to the `eval` function with a `base64` encoded string as argument.\nThis is a common method used to hide a malicious payload in a module as static analysis will not decode the\nstring.\n"

}

],

"silent-process-execution": [

{

"location": "etm-dgraham-5.1.13/etm/view.py:1558",

"code": " pid = subprocess.Popen(parts, stdin=subprocess.DEVNULL, stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL).pid",

"message": "This package is silently executing an external binary, redirecting stdout, stderr and stdin to /dev/null"

}

]

},

"path": "/tmp/tmpajgh5bnl/etm-dgraham"

}

}
```

|

process

|

etm dgraham has guarddog issues dependency etm dgraham version result issues errors results exec location etm dgraham bump py code check output f git commit a m tmsg message this package contains a call to the eval function with a encoded string as argument nthis is a common method used to hide a malicious payload in a module as static analysis will not decode the nstring n location etm dgraham bump py code check output f git tag a f new version m version info message this package contains a call to the eval function with a encoded string as argument nthis is a common method used to hide a malicious payload in a module as static analysis will not decode the nstring n location etm dgraham bump py code check output f git commit a amend m tmsg message this package contains a call to the eval function with a encoded string as argument nthis is a common method used to hide a malicious payload in a module as static analysis will not decode the nstring n silent process execution location etm dgraham etm view py code pid subprocess popen parts stdin subprocess devnull stdout subprocess devnull stderr subprocess devnull pid message this package is silently executing an external binary redirecting stdout stderr and stdin to dev null path tmp etm dgraham

| 1

|

90,023

| 8,224,943,689

|

IssuesEvent

|

2018-09-06 14:57:14

|

magento-engcom/msi

|

https://api.github.com/repos/magento-engcom/msi

|

closed

|

[Configuration-Catalog-Products-Configurable product] Configurable Product created with text swatch attribute configuration and Default Source assigned by Admin user

|

MFTF (Functional Test Coverage)

|

1. Login to backend as admin

2. Go to Catalog -> Categories

3. Select Default Category on Categories Tree

4. Click button "Add Subcategory"

5. set "Enable Category" to "Yes"

6. set "Include in Menu" to "Yes"

7. Fill in "Category Name" = "Category 1"

8. Click button "Save"

9. Success message "You saved the category." appears

10. Verify that Category 1 appeared in Categories tree as subcategory of Default category

11. Login to backend as admin

12. Go to Stores -> Manage Sources

13. Click button "Add New Source"

14. Fill in New Source data in General tab (Name, Code, etc.):

* name = Test Source 1

* code = test_source_1

15. Set your New Source "Is Enabled" = Yes

16. Fill all fields with data of your New Source in Contact Info tab

17. Fill in address data of your New Source in Address Data tab:

* Country: United States

* State/Province: California (CA)

* City: Culver City

* Street: 6161 West Centinela Avenue

* Postcode: 90230

18. In "Carriers" tab, set "Use global Shipping configuration" to Yes

19. Click button "Save & close"

20. Confirmation message "The Source has been saved" appears

21. Verify that data in all tabs is correct

22. Login to backend as admin

23. Go to Catalog -> Products

24. Click button "Add Configurable Product"

25. Set "Enable Product" to "Yes"

26. Fill Name = "Configurable Product 1"

27. Set Price = "10"

28. Set Quantity = "100"

29. Set Weight = "1"

30. Select Category = "Category 1"

31. In "Product in Websites" tab select "Main Website"

32. Click button "Create Configuration"

33. On page "Step 1" - click button "Create New Attribute"

34. Fill in "Default label" - "Text swatch attribute"

35. Select in "Catalog Input Type for Store Owner" = Text Swatch

36. Add two text swatches with labels - "Text 1" and "Text 2"

37. Click button "Save attribute"

38. Select "Text swatch attribute" from Attributes grid

39. Click button "Next"

40. On page "Step 2" click on "Select all"

41. Click button "Next"

42. On page "Step 3" select "Apply single quantity to each SKUs"

43. Click button "Assign Sources"

44. In Assign Sources grid select "Default Source" and click "Done"

45. Set Quantity = 100

46. Click button "Next"

47. On page "Step 4" click button "Generate Products"

48. Click button "Save"

49. Success message "You saved the product." appears

50. Verify that created variation products are present on "Configuration" tab

51. Go to "Home page"

52. Open "Category 1" page

53. Verify "Configurable Product 1" is present

54. Verify that "Name" and "Price" are correct

55. Open "Configurable Product 1" page

56. Verify that "Name" and "SKU" are correct

57. Verify that text swatch options "Text 1" and "Text 2" are present

----

HipTest Original ID: 1432167

|

1.0

|

[Configuration-Catalog-Products-Configurable product] Configurable Product created with text swatch attribute configuration and Default Source assigned by Admin user - 1. Login to backend as admin

2. Go to Catalog -> Categories

3. Select Default Category on Categories Tree

4. Click button "Add Subcategory"

5. Set "Enable Category" to "Yes"

6. Set "Include in Menu" to "Yes"

7. Fill in "Category Name" = "Category 1"

8. Click button "Save"

9. Success message "You saved the category." appears

10. Verify that Category 1 appeared in Categories tree as subcategory of Default category

11. Login to backend as admin

12. Go to Stores -> Manage Sources

13. Click button "Add New Source"

14. Fill in New Source data in General tab (Name, Code, etc.):

* name = Test Source 1

* code = test_source_1

15. Set your New Source "Is Enabled" = Yes

16. Fill all fields with data of your New Source in Contact Info tab

17. Fill in address data of your New Source in Address Data tab:

* Country: United States

* State/Province: California (CA)

* City: Culver City

* Street: 6161 West Centinela Avenue

* Postcode: 90230

18. In "Carriers" tab, set "Use global Shipping configuration" to Yes

19. Click button "Save & close"

20. Confirmation message "The Source has been saved" appears

21. Verify that data in all tabs is correct

22. Login to backend as admin

23. Go to Catalog -> Products

24. Click button "Add Configurable Product"

25. Set "Enable Product" to "Yes"

26. Fill Name = "Configurable Product 1"

27. Set Price = "10"

28. Set Quantity = "100"

29. Set Weight = "1"

30. Select Category = "Category 1"

31. In "Product in Websites" tab select "Main Website"

32. Click button "Create Configuration"

33. On page "Step 1" - click button "Create New Attribute"

34. Fill in "Default label" - "Text swatch attribute"

35. Select in "Catalog Input Type for Store Owner" = Text Swatch

36. Add two text swatches with labels - "Text 1" and "Text 2"

37. Click button "Save attribute"

38. Select "Text swatch attribute" from Attributes grid

39. Click button "Next"

40. On page "Step 2" click on "Select all"

41. Click button "Next"

42. On page "Step 3" select "Apply single quantity to each SKUs"

43. Click button "Assign Sources"

44. In Assign Sources grid select "Default Source" and click "Done"

45. Set Quantity = 100

46. Click button "Next"

47. On page "Step 4" click button "Generate Products"

48. Click button "Save"

49. Success message "You saved the product." appears

50. Verify that created variation products are present on "Configuration" tab

51. Go to "Home page"

52. Open "Category 1" page

53. Verify "Configurable Product 1" is present

54. Verify that "Name" and "Price" are correct

55. Open "Configurable Product 1" page

56. Verify that "Name" and "SKU" are correct

57. Verify that text swatch options "Text 1" and "Text 2" are present

----

HipTest Original ID: 1432167

|

non_process

|