| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

28,757

| 5,348,389,306

|

IssuesEvent

|

2017-02-18 04:23:27

|

amitdholiya/vqmod

|

https://api.github.com/repos/amitdholiya/vqmod

|

reopened

|

Empty vqcache on addon domain

|

auto-migrated Priority-Medium Type-Defect

|

```

What steps will reproduce the problem?

1.vqmod is installed correctly

2.file permissions are all 777 on /vqmod and contents

3.cache and log are empty

What is the expected output? What do you see instead?

I expect my mods to be generating cache files, they are not.

vQmod Version:2.5.1

Server Operating System:linux

Please provide any additional information below.

root site works perfectly, about 30 vqmods installed and working great.

same mods, same configuration on addon domain (public_html/www.mydomain2/) mods

do not work. cache does not generate. thanks for your help

```

Original issue reported on code.google.com by `dale.jac...@gmail.com` on 28 Nov 2014 at 5:43

|

1.0

|

Empty vqcache on addon domain - ```

What steps will reproduce the problem?

1.vqmod is installed correctly

2.file permissions are all 777 on /vqmod and contents

3.cache and log are empty

What is the expected output? What do you see instead?

I expect my mods to be generating cache files, they are not.

vQmod Version:2.5.1

Server Operating System:linux

Please provide any additional information below.

root site works perfectly, about 30 vqmods installed and working great.

same mods, same configuration on addon domain (public_html/www.mydomain2/) mods

do not work. cache does not generate. thanks for your help

```

Original issue reported on code.google.com by `dale.jac...@gmail.com` on 28 Nov 2014 at 5:43

|

non_process

|

empty vqcache on addon domain what steps will reproduce the problem vqmod is installed correctly file permissions are all on vqmod and contents cache and log are empty what is the expected output what do you see instead i expect my mods to be generating cache files they are not vqmod version server operating system linux please provide any additional information below root site works perfectly about vqmods installed and working great same mods same configuration on addon domain public html mods do not work cache does not generate thanks for your help original issue reported on code google com by dale jac gmail com on nov at

| 0

|

301,584

| 9,222,039,308

|

IssuesEvent

|

2019-03-11 21:34:58

|

lbryio/chainquery

|

https://api.github.com/repos/lbryio/chainquery

|

opened

|

Abandoned claims not updated

|

priority: high type: bug

|

Abandoned claims are not showing up as spent. This should happen as part of the mempool processing also.

https://baremetal.chainquery.lbry.io/api/sql?query=SELECT%20*%20FROM%20claim%20where%20name=%22test-announce-03%22

|

1.0

|

Abandoned claims not updated - Abandoned claims are not showing up as spent. This should happen as part of the mempool processing also.

https://baremetal.chainquery.lbry.io/api/sql?query=SELECT%20*%20FROM%20claim%20where%20name=%22test-announce-03%22

|

non_process

|

abandoned claims not updated abandoned claims are not showing up as spent this should happen as part of the mempool processing also

| 0

|

38,127

| 12,528,267,184

|

IssuesEvent

|

2020-06-04 09:17:58

|

ckauhaus/nixpkgs

|

https://api.github.com/repos/ckauhaus/nixpkgs

|

opened

|

Vulnerability roundup 4: balsa-2.5.6: 1 advisory

|

1.severity: security

|

[search](https://search.nix.gsc.io/?q=balsa&i=fosho&repos=NixOS-nixpkgs), [files](https://github.com/NixOS/nixpkgs/search?utf8=%E2%9C%93&q=balsa+in%3Apath&type=Code)

* [ ] [CVE-2020-13645](https://nvd.nist.gov/vuln/detail/CVE-2020-13645) CVSSv3=6.5 (nixos-19.03)

Scanned versions: nixos-19.03: 34c7eb7545d. May contain false positives.

|

True

|

Vulnerability roundup 4: balsa-2.5.6: 1 advisory - [search](https://search.nix.gsc.io/?q=balsa&i=fosho&repos=NixOS-nixpkgs), [files](https://github.com/NixOS/nixpkgs/search?utf8=%E2%9C%93&q=balsa+in%3Apath&type=Code)

* [ ] [CVE-2020-13645](https://nvd.nist.gov/vuln/detail/CVE-2020-13645) CVSSv3=6.5 (nixos-19.03)

Scanned versions: nixos-19.03: 34c7eb7545d. May contain false positives.

|

non_process

|

vulnerability roundup balsa advisory nixos scanned versions nixos may contain false positives

| 0

|

679,951

| 23,251,241,311

|

IssuesEvent

|

2022-08-04 04:07:15

|

bigbinary/org_incineration

|

https://api.github.com/repos/bigbinary/org_incineration

|

closed

|

Add `ActionText::EncryptedRichText` under ignored rails models

|

high priority

|

After upgrading rails getting the following error in incineration service. We should add `ActionText::EncryptedRichText` in skipped models under ignored rails models category.

<img width="1126" alt="Screenshot 2022-08-03 at 10 16 00 PM" src="https://user-images.githubusercontent.com/47141466/182663869-fb290955-438b-47ff-943d-9540b8a7335c.png">

cc: @unnitallman

|

1.0

|

Add `ActionText::EncryptedRichText` under ignored rails models - After upgrading rails getting the following error in incineration service. We should add `ActionText::EncryptedRichText` in skipped models under ignored rails models category.

<img width="1126" alt="Screenshot 2022-08-03 at 10 16 00 PM" src="https://user-images.githubusercontent.com/47141466/182663869-fb290955-438b-47ff-943d-9540b8a7335c.png">

cc: @unnitallman

|

non_process

|

add actiontext encryptedrichtext under ignored rails models after upgrading rails getting the following error in incineration service we should add actiontext encryptedrichtext in skipped models under ignored rails models category img width alt screenshot at pm src cc unnitallman

| 0

|

153,373

| 13,503,977,376

|

IssuesEvent

|

2020-09-13 15:55:53

|

dankamongmen/notcurses

|

https://api.github.com/repos/dankamongmen/notcurses

|

opened

|

Multiselector ought have same functionality as selector

|

documentation enhancement

|

An `ncselector` can add and remove items at runtime, and can control the widget through `_nextitem()` and `_previtem()`. `ncmultiselector` ought have these same capabilities, along with `ncmultiselector_toggle_selected()`.

|

1.0

|

Multiselector ought have same functionality as selector - An `ncselector` can add and remove items at runtime, and can control the widget through `_nextitem()` and `_previtem()`. `ncmultiselector` ought have these same capabilities, along with `ncmultiselector_toggle_selected()`.

|

non_process

|

multiselector ought have same functionality as selector an ncselector can add and remove items at runtime and can control the widget through nextitem and previtem ncmultiselector ought have these same capabilities along with ncmultiselector toggle selected

| 0

|

16,357

| 21,035,735,262

|

IssuesEvent

|

2022-03-31 07:39:40

|

elastic/beats

|

https://api.github.com/repos/elastic/beats

|

closed

|

Dissect: ignore_failure doesn't work as expected

|

Filebeat libbeat :Processors Team:Elastic-Agent-Data-Plane

|

Filebeat 7.13.0

The `ignore_failure` setting in the `dissect` processor doesn't seem to work as expected and behaves as if it were `true`.

```yaml
filebeat.inputs:
  - type: stdin

output.console:
  pretty: true

processors:
  - add_tags:
      tags: ['before_dissects']
      target: "tags"
  - dissect:
      tokenizer: "%{word1} %{word2}"
      field: "message"
      target_prefix: "dissect"
      ignore_failure: false
  - dissect:
      tokenizer: "%{word1} %{word2}"
      field: "message"
      target_prefix: "dissect"
      ignore_failure: false
  - add_tags:
      tags: ['after_dissects']
      target: "tags"
```

When the first `dissect` processor fails, the processor _should_ log an error, preventing execution of other processors if `ignore_failure` is `false` (according to https://www.elastic.co/guide/en/beats/filebeat/current/dissect.html).

This is not what we see here:

```

{

"@timestamp": "2021-06-01T07:54:13.072Z",

"@metadata": {

"beat": "filebeat",

"type": "_doc",

"version": "7.13.0"

},

"tags": [

"before_dissects",

"after_dissects"

],

"log": {

"offset": 0,

"file": {

"path": ""

},

"flags": [

"dissect_parsing_error",

"dissect_parsing_error"

]

},

"message": "abc",

"input": {

"type": "stdin"

},

"ecs": {

"version": "1.8.0"

},

"host": {

"name": "fred.home"

},

"agent": {

"ephemeral_id": "a18dc3ee-7a5f-440b-a062-870194fb3cf3",

"id": "fce66f38-e7a3-4bae-aad8-40df579ee1d4",

"name": "fred.home",

"type": "filebeat",

"version": "7.13.0",

"hostname": "fred.home"

}

}

```

The two `dissect` processors fail and all the processors are executed nonetheless.

With `ignore_failure` set to `false` (the default) I would not expect the other processors to be executed and the event to be sent to the output.

|

1.0

|

Dissect: ignore_failure doesn't work as expected - Filebeat 7.13.0

The `ignore_failure` setting in the `dissect` processor doesn't seem to work as expected and behaves as if it were `true`.

```yaml
filebeat.inputs:
  - type: stdin

output.console:
  pretty: true

processors:
  - add_tags:
      tags: ['before_dissects']
      target: "tags"
  - dissect:
      tokenizer: "%{word1} %{word2}"
      field: "message"
      target_prefix: "dissect"
      ignore_failure: false
  - dissect:
      tokenizer: "%{word1} %{word2}"
      field: "message"
      target_prefix: "dissect"
      ignore_failure: false
  - add_tags:
      tags: ['after_dissects']
      target: "tags"
```

When the first `dissect` processor fails, the processor _should_ log an error, preventing execution of other processors if `ignore_failure` is `false` (according to https://www.elastic.co/guide/en/beats/filebeat/current/dissect.html).

This is not what we see here:

```

{

"@timestamp": "2021-06-01T07:54:13.072Z",

"@metadata": {

"beat": "filebeat",

"type": "_doc",

"version": "7.13.0"

},

"tags": [

"before_dissects",

"after_dissects"

],

"log": {

"offset": 0,

"file": {

"path": ""

},

"flags": [

"dissect_parsing_error",

"dissect_parsing_error"

]

},

"message": "abc",

"input": {

"type": "stdin"

},

"ecs": {

"version": "1.8.0"

},

"host": {

"name": "fred.home"

},

"agent": {

"ephemeral_id": "a18dc3ee-7a5f-440b-a062-870194fb3cf3",

"id": "fce66f38-e7a3-4bae-aad8-40df579ee1d4",

"name": "fred.home",

"type": "filebeat",

"version": "7.13.0",

"hostname": "fred.home"

}

}

```

The two `dissect` processors fail and all the processors are executed nonetheless.

With `ignore_failure` set to `false` (the default) I would not expect the other processors to be executed and the event to be sent to the output.

|

process

|

dissect ignore failure doesn t work as expected filebeat the ignore failure setting in the dissect processor doesn t seem to work as expected and behaves as if it were true yaml filebeat inputs type stdin output console pretty true processors add tags tags target tags dissect tokenizer field message target prefix dissect ignore failure false dissect tokenizer field message target prefix dissect ignore failure false add tags tags target tags when the first dissect processor fails the processor should log an error preventing execution of other processors if ignore failure is false according to this is not what we see here timestamp metadata beat filebeat type doc version tags before dissects after dissects log offset file path flags dissect parsing error dissect parsing error message abc input type stdin ecs version host name fred home agent ephemeral id id name fred home type filebeat version hostname fred home the two dissect processors fail and all the processors are executed nonetheless with ignore failure set to false the default i would not expect the other processors to be executed and the event to be sent to the output

| 1

|

21,196

| 28,213,989,094

|

IssuesEvent

|

2023-04-05 07:33:47

|

darktable-org/darktable

|

https://api.github.com/repos/darktable-org/darktable

|

closed

|

Since a recent master, some images make darktable crashes when opening them on darkroom

|

priority: high scope: image processing bug: pending

|

**Describe the bug/issue**

I launch again, after nearly 2 weeks, darktable (and so update master), yesterday. I then discover that darktable crashes, when opening all my images of my recent filmrolls (on what I test) when I open them on darkroom.

I've check that some older images opened without any crashes. I so test to clone images that crashes, copy/paste history of good images and can open duplicates. I tested on original and same issue: no more crashes. Then I tried to remove history from lighttable for some images that crashes but again: a crash.

But images that crashes open correctly when I go back to older master I used (nearly 300 commits behind).

**To Reproduce**

1. Use XMP provided below on an image

[XMP-with-crash.zip](https://github.com/darktable-org/darktable/files/11141630/XMP-with-crash.zip)

2. Open image on darkroom

3. See loading image toast message

4. Then darktable should crash

**Expected behavior**

No crash on those images, like I can have with an older master on march.

**Which commit introduced the error**

Git bisect gives such result:

```

177b1e4f9c7c7d50b402c803b64381950fb1de1c is the first bad commit

commit 177b1e4f9c7c7d50b402c803b64381950fb1de1c

Author: Pascal Obry <pascal@obry.net>

Date: Wed Mar 22 08:29:45 2023 +0100

Use preset's multi_name as module label.

In an attempt to give better control to the actual module label

used (avoiding also long preset names possibly hard to read and

ellipsize) the module label is now using the preset multi_name

if set. If not set, the preset name is used as before.

```

So should be for you @TurboGit. As I know that sometimes git bisect is not precise, I'm sure regarding all tests, compile I've made that this is from commits merged on march 25th (same day that one had been merged). On that day, main updates are PR from @ralfbrown and @jenshannoschwalm.

**Platform**

_Please fill as much information as possible in the list given below. Please state "unknown" where you do not know the answer and remove any sections that are not applicable _

* darktable version : recent master (since at least last master of march 25th

|

1.0

|

Since a recent master, some images make darktable crashes when opening them on darkroom - **Describe the bug/issue**

I launch again, after nearly 2 weeks, darktable (and so update master), yesterday. I then discover that darktable crashes, when opening all my images of my recent filmrolls (on what I test) when I open them on darkroom.

I've check that some older images opened without any crashes. I so test to clone images that crashes, copy/paste history of good images and can open duplicates. I tested on original and same issue: no more crashes. Then I tried to remove history from lighttable for some images that crashes but again: a crash.

But images that crashes open correctly when I go back to older master I used (nearly 300 commits behind).

**To Reproduce**

1. Use XMP provided below on an image

[XMP-with-crash.zip](https://github.com/darktable-org/darktable/files/11141630/XMP-with-crash.zip)

2. Open image on darkroom

3. See loading image toast message

4. Then darktable should crash

**Expected behavior**

No crash on those images, like I can have with an older master on march.

**Which commit introduced the error**

Git bisect gives such result:

```

177b1e4f9c7c7d50b402c803b64381950fb1de1c is the first bad commit

commit 177b1e4f9c7c7d50b402c803b64381950fb1de1c

Author: Pascal Obry <pascal@obry.net>

Date: Wed Mar 22 08:29:45 2023 +0100

Use preset's multi_name as module label.

In an attempt to give better control to the actual module label

used (avoiding also long preset names possibly hard to read and

ellipsize) the module label is now using the preset multi_name

if set. If not set, the preset name is used as before.

```

So should be for you @TurboGit. As I know that sometimes git bisect is not precise, I'm sure regarding all tests, compile I've made that this is from commits merged on march 25th (same day that one had been merged). On that day, main updates are PR from @ralfbrown and @jenshannoschwalm.

**Platform**

_Please fill as much information as possible in the list given below. Please state "unknown" where you do not know the answer and remove any sections that are not applicable _

* darktable version : recent master (since at least last master of march 25th

|

process

|

since a recent master some images make darktable crashes when opening them on darkroom describe the bug issue i launch again after nearly weeks darktable and so update master yesterday i then discover that darktable crashes when opening all my images of my recent filmrolls on what i test when i open them on darkroom i ve check that some older images opened without any crashes i so test to clone images that crashes copy paste history of good images and can open duplicates i tested on original and same issue no more crashes then i tried to remove history from lighttable for some images that crashes but again a crash but images that crashes open correctly when i go back to older master i used nearly commits behind to reproduce use xmp provided below on an image open image on darkroom see loading image toast message then darktable should crash expected behavior no crash on those images like i can have with an older master on march which commit introduced the error git bisect gives such result is the first bad commit commit author pascal obry date wed mar use preset s multi name as module label in an attempt to give better control to the actual module label used avoiding also long preset names possibly hard to read and ellipsize the module label is now using the preset multi name if set if not set the preset name is used as before so should be for you turbogit as i know that sometimes git bisect is not precise i m sure regarding all tests compile i ve made that this is from commits merged on march same day that one had been merged on that day main updates are pr from ralfbrown and jenshannoschwalm platform please fill as much information as possible in the list given below please state unknown where you do not know the answer and remove any sections that are not applicable darktable version recent master since at least last master of march

| 1

|

7,147

| 10,291,590,155

|

IssuesEvent

|

2019-08-27 12:50:09

|

heim-rs/heim

|

https://api.github.com/repos/heim-rs/heim

|

closed

|

Use darwin_libproc crate for macOS process routines

|

A-process C-enhancement C-good-first-issue O-macos

|

`process::Process::exe` for macOS should use the `darwin_libproc::pid_path` from the `darwin-libproc` crate instead of bundled bindings.

Also, git dependency should be changed to a published version.

|

1.0

|

Use darwin_libproc crate for macOS process routines - `process::Process::exe` for macOS should use the `darwin_libproc::pid_path` from the `darwin-libproc` crate instead of bundled bindings.

Also, git dependency should be changed to a published version.

|

process

|

use darwin libproc crate for macos process routines process process exe for macos should use the darwin libproc pid path from the darwin libproc crate instead of bundled bindings also git dependency should be changed to a published version

| 1

|

8,556

| 11,731,041,236

|

IssuesEvent

|

2020-03-10 22:53:01

|

gearboxworks/gearbox

|

https://api.github.com/repos/gearboxworks/gearbox

|

closed

|

Set up Docker build process using Actions

|

Task process-docker process-github process-workflow

|

This includes consolidating duplicated logic into one place such as `Makefile`.

Also renaming [github.com/gearboxworks/docker-gearbox](https://github.com/gearboxworks/docker-gearbox) to [github.com/gearboxworks/docker-base](https://github.com/gearboxworks/docker-base)

It *might* include moving to [Github Package repository](https://github.com/features/packages) from Docker Hub *if* it is a lot easier to build and deploy to Github.com via. DockerHub.com.

|

3.0

|

Set up Docker build process using Actions - This includes consolidating duplicated logic into one place such as `Makefile`.

Also renaming [github.com/gearboxworks/docker-gearbox](https://github.com/gearboxworks/docker-gearbox) to [github.com/gearboxworks/docker-base](https://github.com/gearboxworks/docker-base)

It *might* include moving to [Github Package repository](https://github.com/features/packages) from Docker Hub *if* it is a lot easier to build and deploy to Github.com via. DockerHub.com.

|

process

|

set up docker build process using actions this includes consolidating duplicated logic into one place such as makefile also renaming to it might include moving to from docker hub if it is a lot easier to build and deploy to github com via dockerhub com

| 1

|

31,604

| 7,416,395,729

|

IssuesEvent

|

2018-03-22 00:59:48

|

Microsoft/ChakraCore

|

https://api.github.com/repos/Microsoft/ChakraCore

|

closed

|

Move WinRTDate code from DateUtilities.cpp

|

Codebase Quality Task

|

lib/common/common/DateUtilities.cpp has some WinRTDate utilities that seems not used in ChakraCore. Consider move them.

|

1.0

|

Move WinRTDate code from DateUtilities.cpp - lib/common/common/DateUtilities.cpp has some WinRTDate utilities that seems not used in ChakraCore. Consider move them.

|

non_process

|

move winrtdate code from dateutilities cpp lib common common dateutilities cpp has some winrtdate utilities that seems not used in chakracore consider move them

| 0

|

119,997

| 15,688,896,190

|

IssuesEvent

|

2021-03-25 15:08:05

|

MetaMask/metamask-extension

|

https://api.github.com/repos/MetaMask/metamask-extension

|

opened

|

Update copy of "Password" to "Device Passcode"

|

N00-needsDesign T01-enhancement ux-enhancement

|

**Problem**

There is a lot of user confusion with user's passwords. Many users assume:

1) The password will be the same across devices (both extension and mobile)

2) MetaMask is able to recover their password if it is lost.

These are standard patterns for "Passwords" across web2. Typically to unlock a device, the unlock code is called "Pin", "Pin code", "Passcode" etc. And it is often not retrievable in the same way that a password is.

**Solution**

To match user's mental model we should use familiar language. We should update the terminology across extension and mobile from "password" to "device passcode" or "device pin" (although "pin" may be associated with numbers only)

|

1.0

|

Update copy of "Password" to "Device Passcode" - **Problem**

There is a lot of user confusion with user's passwords. Many users assume:

1) The password will be the same across devices (both extension and mobile)

2) MetaMask is able to recover their password if it is lost.

These are standard patterns for "Passwords" across web2. Typically to unlock a device, the unlock code is called "Pin", "Pin code", "Passcode" etc. And it is often not retrievable in the same way that a password is.

**Solution**

To match user's mental model we should use familiar language. We should update the terminology across extension and mobile from "password" to "device passcode" or "device pin" (although "pin" may be associated with numbers only)

|

non_process

|

update copy of password to device passcode problem there is a lot of user confusion with user s passwords many users assume the password will be the same across devices both extension and mobile metamask is able to recover their password if it is lost these are standard patterns for passwords across typically to unlock a device the unlock code is called pin pin code passcode etc and it is often not retrievable in the same way that a password is solution to match user s mental model we should use familiar language we should update the terminology across extension and mobile from password to device passcode or device pin although pin may be associated with numbers only

| 0

|

2,449

| 5,226,483,990

|

IssuesEvent

|

2017-01-27 21:31:58

|

nodejs/node

|

https://api.github.com/repos/nodejs/node

|

opened

|

fix flaky equential/test-child-process-pass-fd on fedora 24

|

child_process test

|

Example failure:

https://ci.nodejs.org/job/node-test-commit-linux/7552/nodes=fedora24/console

```console

duration_ms: 1.113

severity: fail

stack: |-

events.js:161

throw er; // Unhandled 'error' event

^

Error: spawn /home/iojs/build/workspace/node-test-commit-linux/nodes/fedora24/out/Release/node EAGAIN

at exports._errnoException (util.js:1023:11)

at Process.ChildProcess._handle.onexit (internal/child_process.js:193:32)

at onErrorNT (internal/child_process.js:359:16)

at _combinedTickCallback (internal/process/next_tick.js:74:11)

at process._tickCallback (internal/process/next_tick.js:98:9)

at Module.runMain (module.js:607:11)

at run (bootstrap_node.js:418:7)

at startup (bootstrap_node.js:139:9)

at bootstrap_node.js:533:3

events.js:161

throw er; // Unhandled 'error' event

^

Error: channel closed

at process.target.send (internal/child_process.js:553:16)

at Socket.socketConnected (/home/iojs/build/workspace/node-test-commit-linux/nodes/fedora24/test/sequential/test-child-process-pass-fd.js:39:15)

at Object.onceWrapper (events.js:291:19)

at emitNone (events.js:86:13)

at Socket.emit (events.js:186:7)

at TCPConnectWrap.afterConnect [as oncomplete] (net.js:1077:10)

events.js:161

throw er; // Unhandled 'error' event

^

Error: channel closed

at process.target.send (internal/child_process.js:553:16)

at Socket.socketConnected (/home/iojs/build/workspace/node-test-commit-linux/nodes/fedora24/test/sequential/test-child-process-pass-fd.js:39:15)

at Object.onceWrapper (events.js:291:19)

at emitNone (events.js:86:13)

at Socket.emit (events.js:186:7)

at TCPConnectWrap.afterConnect [as oncomplete] (net.js:1077:10)

events.js:161

throw er; // Unhandled 'error' event

^

Error: channel closed

at process.target.send (internal/child_process.js:553:16)

at Socket.socketConnected (/home/iojs/build/workspace/node-test-commit-linux/nodes/fedora24/test/sequential/test-child-process-pass-fd.js:39:15)

at Object.onceWrapper (events.js:291:19)

at emitNone (events.js:86:13)

at Socket.emit (events.js:186:7)

at TCPConnectWrap.afterConnect [as oncomplete] (net.js:1077:10)

events.js:161

throw er; // Unhandled 'error' event

^

Error: channel closed

at process.target.send (internal/child_process.js:553:16)

at Socket.socketConnected (/home/iojs/build/workspace/node-test-commit-linux/nodes/fedora24/test/sequential/test-child-process-pass-fd.js:39:15)

at Object.onceWrapper (events.js:291:19)

at emitNone (events.js:86:13)

at Socket.emit (events.js:186:7)

at TCPConnectWrap.afterConnect [as oncomplete] (net.js:1077:10)

events.js:161

throw er; // Unhandled 'error' event

^

Error: channel closed

at process.target.send (internal/child_process.js:553:16)

at Socket.socketConnected (/home/iojs/build/workspace/node-test-commit-linux/nodes/fedora24/test/sequential/test-child-process-pass-fd.js:39:15)

at Object.onceWrapper (events.js:291:19)

at emitNone (events.js:86:13)

at Socket.emit (events.js:186:7)

at TCPConnectWrap.afterConnect [as oncomplete] (net.js:1077:10)

events.js:161

throw er; // Unhandled 'error' event

^

Error: channel closed

at process.target.send (internal/child_process.js:553:16)

at Socket.socketConnected (/home/iojs/build/workspace/node-test-commit-linux/nodes/fedora24/test/sequential/test-child-process-pass-fd.js:39:15)

at Object.onceWrapper (events.js:291:19)

at emitNone (events.js:86:13)

at Socket.emit (events.js:186:7)

at TCPConnectWrap.afterConnect [as oncomplete] (net.js:1077:10)

events.js:161

throw er; // Unhandled 'error' event

^

Error: channel closed

at process.target.send (internal/child_process.js:553:16)

at Socket.socketConnected (/home/iojs/build/workspace/node-test-commit-linux/nodes/fedora24/test/sequential/test-child-process-pass-fd.js:39:15)

at Object.onceWrapper (events.js:291:19)

at emitNone (events.js:86:13)

at Socket.emit (events.js:186:7)

at TCPConnectWrap.afterConnect [as oncomplete] (net.js:1077:10)

...

```

/cc @santigimeno

|

1.0

|

fix flaky equential/test-child-process-pass-fd on fedora 24 - Example failure:

https://ci.nodejs.org/job/node-test-commit-linux/7552/nodes=fedora24/console

```console

duration_ms: 1.113

severity: fail

stack: |-

events.js:161

throw er; // Unhandled 'error' event

^

Error: spawn /home/iojs/build/workspace/node-test-commit-linux/nodes/fedora24/out/Release/node EAGAIN

at exports._errnoException (util.js:1023:11)

at Process.ChildProcess._handle.onexit (internal/child_process.js:193:32)

at onErrorNT (internal/child_process.js:359:16)

at _combinedTickCallback (internal/process/next_tick.js:74:11)

at process._tickCallback (internal/process/next_tick.js:98:9)

at Module.runMain (module.js:607:11)

at run (bootstrap_node.js:418:7)

at startup (bootstrap_node.js:139:9)

at bootstrap_node.js:533:3

events.js:161

throw er; // Unhandled 'error' event

^

Error: channel closed

at process.target.send (internal/child_process.js:553:16)

at Socket.socketConnected (/home/iojs/build/workspace/node-test-commit-linux/nodes/fedora24/test/sequential/test-child-process-pass-fd.js:39:15)

at Object.onceWrapper (events.js:291:19)

at emitNone (events.js:86:13)

at Socket.emit (events.js:186:7)

at TCPConnectWrap.afterConnect [as oncomplete] (net.js:1077:10)

events.js:161

throw er; // Unhandled 'error' event

^

Error: channel closed

at process.target.send (internal/child_process.js:553:16)

at Socket.socketConnected (/home/iojs/build/workspace/node-test-commit-linux/nodes/fedora24/test/sequential/test-child-process-pass-fd.js:39:15)

at Object.onceWrapper (events.js:291:19)

at emitNone (events.js:86:13)

at Socket.emit (events.js:186:7)

at TCPConnectWrap.afterConnect [as oncomplete] (net.js:1077:10)

events.js:161

throw er; // Unhandled 'error' event

^

Error: channel closed

at process.target.send (internal/child_process.js:553:16)

at Socket.socketConnected (/home/iojs/build/workspace/node-test-commit-linux/nodes/fedora24/test/sequential/test-child-process-pass-fd.js:39:15)

at Object.onceWrapper (events.js:291:19)

at emitNone (events.js:86:13)

at Socket.emit (events.js:186:7)

at TCPConnectWrap.afterConnect [as oncomplete] (net.js:1077:10)

events.js:161

throw er; // Unhandled 'error' event

^

Error: channel closed

at process.target.send (internal/child_process.js:553:16)

at Socket.socketConnected (/home/iojs/build/workspace/node-test-commit-linux/nodes/fedora24/test/sequential/test-child-process-pass-fd.js:39:15)

at Object.onceWrapper (events.js:291:19)

at emitNone (events.js:86:13)

at Socket.emit (events.js:186:7)

at TCPConnectWrap.afterConnect [as oncomplete] (net.js:1077:10)

events.js:161

throw er; // Unhandled 'error' event

^

Error: channel closed

at process.target.send (internal/child_process.js:553:16)

at Socket.socketConnected (/home/iojs/build/workspace/node-test-commit-linux/nodes/fedora24/test/sequential/test-child-process-pass-fd.js:39:15)

at Object.onceWrapper (events.js:291:19)

at emitNone (events.js:86:13)

at Socket.emit (events.js:186:7)

at TCPConnectWrap.afterConnect [as oncomplete] (net.js:1077:10)

events.js:161

throw er; // Unhandled 'error' event

^

Error: channel closed

at process.target.send (internal/child_process.js:553:16)

at Socket.socketConnected (/home/iojs/build/workspace/node-test-commit-linux/nodes/fedora24/test/sequential/test-child-process-pass-fd.js:39:15)

at Object.onceWrapper (events.js:291:19)

at emitNone (events.js:86:13)

at Socket.emit (events.js:186:7)

at TCPConnectWrap.afterConnect [as oncomplete] (net.js:1077:10)

events.js:161

throw er; // Unhandled 'error' event

^

Error: channel closed

at process.target.send (internal/child_process.js:553:16)

at Socket.socketConnected (/home/iojs/build/workspace/node-test-commit-linux/nodes/fedora24/test/sequential/test-child-process-pass-fd.js:39:15)

at Object.onceWrapper (events.js:291:19)

at emitNone (events.js:86:13)

at Socket.emit (events.js:186:7)

at TCPConnectWrap.afterConnect [as oncomplete] (net.js:1077:10)

...

```

/cc @santigimeno

|

process

|

fix flaky equential test child process pass fd on fedora example failure console duration ms severity fail stack events js throw er unhandled error event error spawn home iojs build workspace node test commit linux nodes out release node eagain at exports errnoexception util js at process childprocess handle onexit internal child process js at onerrornt internal child process js at combinedtickcallback internal process next tick js at process tickcallback internal process next tick js at module runmain module js at run bootstrap node js at startup bootstrap node js at bootstrap node js events js throw er unhandled error event error channel closed at process target send internal child process js at socket socketconnected home iojs build workspace node test commit linux nodes test sequential test child process pass fd js at object oncewrapper events js at emitnone events js at socket emit events js at tcpconnectwrap afterconnect net js events js throw er unhandled error event error channel closed at process target send internal child process js at socket socketconnected home iojs build workspace node test commit linux nodes test sequential test child process pass fd js at object oncewrapper events js at emitnone events js at socket emit events js at tcpconnectwrap afterconnect net js events js throw er unhandled error event error channel closed at process target send internal child process js at socket socketconnected home iojs build workspace node test commit linux nodes test sequential test child process pass fd js at object oncewrapper events js at emitnone events js at socket emit events js at tcpconnectwrap afterconnect net js events js throw er unhandled error event error channel closed at process target send internal child process js at socket socketconnected home iojs build workspace node test commit linux nodes test sequential test child process pass fd js at object oncewrapper events js at emitnone events js at socket emit events js at tcpconnectwrap 
afterconnect net js events js throw er unhandled error event error channel closed at process target send internal child process js at socket socketconnected home iojs build workspace node test commit linux nodes test sequential test child process pass fd js at object oncewrapper events js at emitnone events js at socket emit events js at tcpconnectwrap afterconnect net js events js throw er unhandled error event error channel closed at process target send internal child process js at socket socketconnected home iojs build workspace node test commit linux nodes test sequential test child process pass fd js at object oncewrapper events js at emitnone events js at socket emit events js at tcpconnectwrap afterconnect net js events js throw er unhandled error event error channel closed at process target send internal child process js at socket socketconnected home iojs build workspace node test commit linux nodes test sequential test child process pass fd js at object oncewrapper events js at emitnone events js at socket emit events js at tcpconnectwrap afterconnect net js cc santigimeno

| 1

|

3,407

| 6,520,469,720

|

IssuesEvent

|

2017-08-28 16:37:32

|

w3c/w3process

|

https://api.github.com/repos/w3c/w3process

|

closed

|

can the AB/TAG chair ask for a special election in *advance* of a known upcoming vacancy?

|

Process2018Candidate

|

[Section 2.5.3 Advisory Board and Technical Architecture Group Vacated Seats](https://w3c.github.io/w3process/#AB-TAG-vacated) says:

> When an elected seat on either the AB or TAG is vacated, the seat is filled at the next regularly scheduled election for the group unless the group Chair requests that W3C hold an election before then (for instance, due to the group's workload). The group Chair should not request an exceptional election if the next regularly scheduled election is fewer than three months away.

It seems to me that, since some vacancies are known in advance of when they occur, it ought to be possible for the Chair to ask for the election when an upcoming vacancy is known even if the seat is not yet vacant (much like regular elections are held before the terms expire), so that the vacant seat can be filled from the result of a special election more quickly.

It's not clear to me whether the above text allows this. I tend to think it should be clarified so that it does allow it.

|

1.0

|

can the AB/TAG chair ask for a special election in *advance* of a known upcoming vacancy? - [Section 2.5.3 Advisory Board and Technical Architecture Group Vacated Seats](https://w3c.github.io/w3process/#AB-TAG-vacated) says:

> When an elected seat on either the AB or TAG is vacated, the seat is filled at the next regularly scheduled election for the group unless the group Chair requests that W3C hold an election before then (for instance, due to the group's workload). The group Chair should not request an exceptional election if the next regularly scheduled election is fewer than three months away.

It seems to me that, since some vacancies are known in advance of when they occur, it ought to be possible for the Chair to ask for the election when an upcoming vacancy is known even if the seat is not yet vacant (much like regular elections are held before the terms expire), so that the vacant seat can be filled from the result of a special election more quickly.

It's not clear to me whether the above text allows this. I tend to think it should be clarified so that it does allow it.

|

process

|

can the ab tag chair ask for a special election in advance of a known upcoming vacancy says when an elected seat on either the ab or tag is vacated the seat is filled at the next regularly scheduled election for the group unless the group chair requests that hold an election before then for instance due to the group s workload the group chair should not request an exceptional election if the next regularly scheduled election is fewer than three months away it seems to me that since some vacancies are known in advance of when they occur it ought to be possible for the chair to ask for the election when an upcoming vacancy is known even if the seat is not yet vacant much like regular elections are held before the terms expire so that the vacant seat can be filled from the result of a special election more quickly it s not clear to me whether the above text allows this i tend to think it should be clarified so that it does allow it

| 1

|

37,385

| 12,477,454,139

|

IssuesEvent

|

2020-05-29 14:59:13

|

LibrIT/passhport

|

https://api.github.com/repos/LibrIT/passhport

|

closed

|

CVE-2019-8331 (Medium) detected in bootstrap-3.3.2.min.js, bootstrap-3.3.7.min.js

|

New security vulnerability

|

## CVE-2019-8331 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>bootstrap-3.3.2.min.js</b>, <b>bootstrap-3.3.7.min.js</b></p></summary>

<p>

<details><summary><b>bootstrap-3.3.2.min.js</b></p></summary>

<p>The most popular front-end framework for developing responsive, mobile first projects on the web.</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/twitter-bootstrap/3.3.2/js/bootstrap.min.js">https://cdnjs.cloudflare.com/ajax/libs/twitter-bootstrap/3.3.2/js/bootstrap.min.js</a></p>

<p>Path to dependency file: /tmp/ws-scm/passhport/passhweb/app/static/bower_components/bootstrap-daterangepicker/website/index.html</p>

<p>Path to vulnerable library: /passhport/passhweb/app/static/bower_components/bootstrap-daterangepicker/website/index.html,/passhport/passhweb/app/static/bower_components/bootstrap-daterangepicker/demo.html</p>

<p>

Dependency Hierarchy:

- :x: **bootstrap-3.3.2.min.js** (Vulnerable Library)

</details>

<details><summary><b>bootstrap-3.3.7.min.js</b></p></summary>

<p>The most popular front-end framework for developing responsive, mobile first projects on the web.</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/twitter-bootstrap/3.3.7/js/bootstrap.min.js">https://cdnjs.cloudflare.com/ajax/libs/twitter-bootstrap/3.3.7/js/bootstrap.min.js</a></p>

<p>Path to dependency file: /tmp/ws-scm/passhport/passhweb/app/static/bower_components/bootstrap-colorpicker/index.html</p>

<p>Path to vulnerable library: /passhport/passhweb/app/static/bower_components/bootstrap-colorpicker/index.html</p>

<p>

Dependency Hierarchy:

- :x: **bootstrap-3.3.7.min.js** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/LibrIT/passhport/commit/280394daf60b8887c5eebccaca5e3c390a11b1f2">280394daf60b8887c5eebccaca5e3c390a11b1f2</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In Bootstrap before 3.4.1 and 4.3.x before 4.3.1, XSS is possible in the tooltip or popover data-template attribute.

<p>Publish Date: 2019-02-20

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-8331>CVE-2019-8331</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/twbs/bootstrap/pull/28236">https://github.com/twbs/bootstrap/pull/28236</a></p>

<p>Release Date: 2019-02-20</p>

<p>Fix Resolution: bootstrap - 3.4.1,4.3.1;bootstrap-sass - 3.4.1,4.3.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2019-8331 (Medium) detected in bootstrap-3.3.2.min.js, bootstrap-3.3.7.min.js - ## CVE-2019-8331 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>bootstrap-3.3.2.min.js</b>, <b>bootstrap-3.3.7.min.js</b></p></summary>

<p>

<details><summary><b>bootstrap-3.3.2.min.js</b></p></summary>

<p>The most popular front-end framework for developing responsive, mobile first projects on the web.</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/twitter-bootstrap/3.3.2/js/bootstrap.min.js">https://cdnjs.cloudflare.com/ajax/libs/twitter-bootstrap/3.3.2/js/bootstrap.min.js</a></p>

<p>Path to dependency file: /tmp/ws-scm/passhport/passhweb/app/static/bower_components/bootstrap-daterangepicker/website/index.html</p>

<p>Path to vulnerable library: /passhport/passhweb/app/static/bower_components/bootstrap-daterangepicker/website/index.html,/passhport/passhweb/app/static/bower_components/bootstrap-daterangepicker/demo.html</p>

<p>

Dependency Hierarchy:

- :x: **bootstrap-3.3.2.min.js** (Vulnerable Library)

</details>

<details><summary><b>bootstrap-3.3.7.min.js</b></p></summary>

<p>The most popular front-end framework for developing responsive, mobile first projects on the web.</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/twitter-bootstrap/3.3.7/js/bootstrap.min.js">https://cdnjs.cloudflare.com/ajax/libs/twitter-bootstrap/3.3.7/js/bootstrap.min.js</a></p>

<p>Path to dependency file: /tmp/ws-scm/passhport/passhweb/app/static/bower_components/bootstrap-colorpicker/index.html</p>

<p>Path to vulnerable library: /passhport/passhweb/app/static/bower_components/bootstrap-colorpicker/index.html</p>

<p>

Dependency Hierarchy:

- :x: **bootstrap-3.3.7.min.js** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/LibrIT/passhport/commit/280394daf60b8887c5eebccaca5e3c390a11b1f2">280394daf60b8887c5eebccaca5e3c390a11b1f2</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In Bootstrap before 3.4.1 and 4.3.x before 4.3.1, XSS is possible in the tooltip or popover data-template attribute.

<p>Publish Date: 2019-02-20

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-8331>CVE-2019-8331</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/twbs/bootstrap/pull/28236">https://github.com/twbs/bootstrap/pull/28236</a></p>

<p>Release Date: 2019-02-20</p>

<p>Fix Resolution: bootstrap - 3.4.1,4.3.1;bootstrap-sass - 3.4.1,4.3.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve medium detected in bootstrap min js bootstrap min js cve medium severity vulnerability vulnerable libraries bootstrap min js bootstrap min js bootstrap min js the most popular front end framework for developing responsive mobile first projects on the web library home page a href path to dependency file tmp ws scm passhport passhweb app static bower components bootstrap daterangepicker website index html path to vulnerable library passhport passhweb app static bower components bootstrap daterangepicker website index html passhport passhweb app static bower components bootstrap daterangepicker demo html dependency hierarchy x bootstrap min js vulnerable library bootstrap min js the most popular front end framework for developing responsive mobile first projects on the web library home page a href path to dependency file tmp ws scm passhport passhweb app static bower components bootstrap colorpicker index html path to vulnerable library passhport passhweb app static bower components bootstrap colorpicker index html dependency hierarchy x bootstrap min js vulnerable library found in head commit a href vulnerability details in bootstrap before and x before xss is possible in the tooltip or popover data template attribute publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope changed impact metrics confidentiality impact low integrity impact low availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution bootstrap bootstrap sass step up your open source security game with whitesource

| 0

|

2,968

| 5,960,738,887

|

IssuesEvent

|

2017-05-29 14:55:51

|

orbardugo/Hahot-Hameshulash

|

https://api.github.com/repos/orbardugo/Hahot-Hameshulash

|

closed

|

Add Queries

|

in process priorty 1 Ruben

|

Todo: Add Queries - drugs, alcohol, criminal record, external contact, occupation, religion by Ruben

|

1.0

|

Add Queries - Todo: Add Queries - drugs, alcohol, criminal record, external contact, occupation, religion by Ruben

|

process

|

add queries todo add queries drugs alcohol criminal record external contact occupateon religion by ruben

| 1

|

92,474

| 11,648,497,684

|

IssuesEvent

|

2020-03-01 21:03:48

|

adavijit/BlogMan

|

https://api.github.com/repos/adavijit/BlogMan

|

opened

|

Create wireframe for a particular book

|

design gssoc20 medium

|

BlogMan is all about sharing knowledge. It will have a books suggestions section.

Create a simple wireframe for the page dedicated to a particular book. It will display:

name of book, author, pic, stars, genre, description.

add to favorite button for book

anything that comes to your mind and you think is needed on this page can be added.

|

1.0

|

Create wireframe for a particular book - BlogMan is all about sharing knowledge. It will have a books suggestions section.

Create a simple wireframe for the page dedicated to a particular book. It will display:

name of book, author, pic, stars, genre, description.

add to favorite button for book

anything that comes to your mind and you think is needed on this page can be added.

|

non_process

|

create wireframe for a particular book blogman is all about sharing knowledge it will have a books suggestions section create a simple wireframe for the page dedicated to a particular book it will display name of book author pic stars genre description add to favorite button for book anything that comes to your mind and you think is needed on this page can be added

| 0

|

18,698

| 24,595,507,019

|

IssuesEvent

|

2022-10-14 07:58:59

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

[FHIR] Unable to submit the responses for all the activities

|

Bug Blocker P0 Process: Fixed Process: Tested dev

|

Unable to submit the responses for all the activities

**AR:** The activity will be in 'Resume' status if the participant clicks the Done button on the 'Activity completed' screen

**ER:** The participant should be able to submit the response successfully, and the activity should be in 'Completed' status

|

2.0

|

[FHIR] Unable to submit the responses for all the activities - Unable to submit the responses for all the activities

**AR:** The activity will be in 'Resume' status if the participant clicks the Done button on the 'Activity completed' screen

**ER:** The participant should be able to submit the response successfully, and the activity should be in 'Completed' status

|

process

|

unable to submit the responses for all the activities unable to submit the responses for all the activities ar the activity will be in resume status if the participant clicks the done button on the activity completed screen er participant should be submitted the response successfully and activity should be in completed status

| 1

|

12,188

| 14,742,251,162

|

IssuesEvent

|

2021-01-07 11:58:14

|

kdjstudios/SABillingGitlab

|

https://api.github.com/repos/kdjstudios/SABillingGitlab

|

closed

|

Site 056 Changes made to Client Account (UI Logging and Tracking)

|

anc-ops anp-1.5 ant-enhancement ant-parent/primary ant-support grt-ui processes

|

In GitLab by @kdjstudios on Apr 2, 2019, 11:23

**Submitted by:** Rich Montano <richard.montano@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2019-04-02-23193/conversation

**Server:** Internal

**Client/Site:** 056

**Account:** MX4082

**Issue:**

It has come to my attention that Santa Rosa's account MX4082,

MED-Project, has had its Monthly Base Rate and Monthly Portal Access

turned off since the 2/1/2019 invoice at the very least. To my knowledge

this is not a change made by anyone in Santa Rosa. We need to know how

this happened and if it can be discerned, who made the change.

|

1.0

|

Site 056 Changes made to Client Account (UI Logging and Tracking) - In GitLab by @kdjstudios on Apr 2, 2019, 11:23

**Submitted by:** Rich Montano <richard.montano@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2019-04-02-23193/conversation

**Server:** Internal

**Client/Site:** 056

**Account:** MX4082

**Issue:**

It has come to my attention that Santa Rosa's account MX4082,

MED-Project, has had its Monthly Base Rate and Monthly Portal Access

turned off since the 2/1/2019 invoice at the very least. To my knowledge

this is not a change made by anyone in Santa Rosa. We need to know how

this happened and if it can be discerned, who made the change.

|

process

|

site changes made to client account ui logging and tracking in gitlab by kdjstudios on apr submitted by rich montano helpdesk server internal client site account issue it has come to my attention that santa rosa s account med project has had it s monthly base rate and monthly portal access turned off since the invoice at the very least to my knowledge this is not a change made by anyone in santa rosa we need to know how this happened and if it can be discerned who made the change

| 1

|

87,147

| 15,756,002,513

|

IssuesEvent

|

2021-03-31 02:45:28

|

turkdevops/nexus-iq-chrome-extension

|

https://api.github.com/repos/turkdevops/nexus-iq-chrome-extension

|

opened

|

CVE-2011-4969 (Medium) detected in jquery-1.3.2.min.js

|

security vulnerability

|

## CVE-2011-4969 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.3.2.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/1.3.2/jquery.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/1.3.2/jquery.min.js</a></p>

<p>Path to dependency file: nexus-iq-chrome-extension/release/1.8.1/src/Scripts/lib/jquery-ui-1.12.1/node_modules/underscore.string/test/test_standalone.html</p>

<p>Path to vulnerable library: nexus-iq-chrome-extension/release/1.8.1/src/Scripts/lib/jquery-ui-1.12.1/node_modules/underscore.string/test/test_underscore/vendor/jquery.js,nexus-iq-chrome-extension/src/Scripts/lib/jquery-ui-1.12.1/node_modules/underscore.string/test/test_underscore/vendor/jquery.js</p>

<p>

Dependency Hierarchy:

- :x: **jquery-1.3.2.min.js** (Vulnerable Library)

<p>Found in base branch: <b>fixVersionHistory-gh</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Cross-site scripting (XSS) vulnerability in jQuery before 1.6.3, when using location.hash to select elements, allows remote attackers to inject arbitrary web script or HTML via a crafted tag.

<p>Publish Date: 2013-03-08

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2011-4969>CVE-2011-4969</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>4.3</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2011-4969">https://nvd.nist.gov/vuln/detail/CVE-2011-4969</a></p>

<p>Release Date: 2013-03-08</p>

<p>Fix Resolution: 1.6.3</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2011-4969 (Medium) detected in jquery-1.3.2.min.js - ## CVE-2011-4969 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.3.2.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/1.3.2/jquery.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/1.3.2/jquery.min.js</a></p>

<p>Path to dependency file: nexus-iq-chrome-extension/release/1.8.1/src/Scripts/lib/jquery-ui-1.12.1/node_modules/underscore.string/test/test_standalone.html</p>

<p>Path to vulnerable library: nexus-iq-chrome-extension/release/1.8.1/src/Scripts/lib/jquery-ui-1.12.1/node_modules/underscore.string/test/test_underscore/vendor/jquery.js,nexus-iq-chrome-extension/src/Scripts/lib/jquery-ui-1.12.1/node_modules/underscore.string/test/test_underscore/vendor/jquery.js</p>

<p>

Dependency Hierarchy:

- :x: **jquery-1.3.2.min.js** (Vulnerable Library)

<p>Found in base branch: <b>fixVersionHistory-gh</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Cross-site scripting (XSS) vulnerability in jQuery before 1.6.3, when using location.hash to select elements, allows remote attackers to inject arbitrary web script or HTML via a crafted tag.

<p>Publish Date: 2013-03-08

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2011-4969>CVE-2011-4969</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>4.3</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2011-4969">https://nvd.nist.gov/vuln/detail/CVE-2011-4969</a></p>

<p>Release Date: 2013-03-08</p>

<p>Fix Resolution: 1.6.3</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve medium detected in jquery min js cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to dependency file nexus iq chrome extension release src scripts lib jquery ui node modules underscore string test test standalone html path to vulnerable library nexus iq chrome extension release src scripts lib jquery ui node modules underscore string test test underscore vendor jquery js nexus iq chrome extension src scripts lib jquery ui node modules underscore string test test underscore vendor jquery js dependency hierarchy x jquery min js vulnerable library found in base branch fixversionhistory gh vulnerability details cross site scripting xss vulnerability in jquery before when using location hash to select elements allows remote attackers to inject arbitrary web script or html via a crafted tag publish date url a href cvss score details base score metrics not available suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource

| 0

|

5,961

| 8,784,243,238

|

IssuesEvent

|

2018-12-20 09:16:56

|

linnovate/root

|

https://api.github.com/repos/linnovate/root

|

closed

|

cant delete doc template

|

Process bug

|

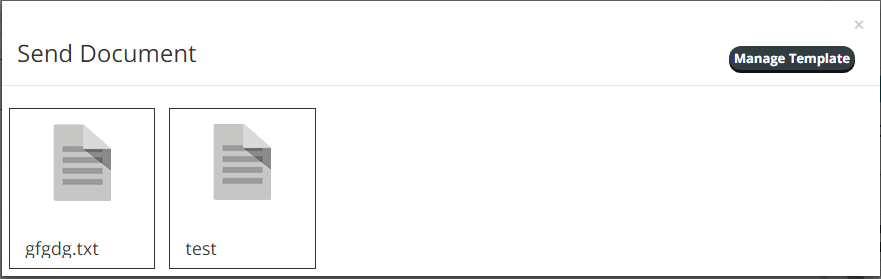

After deleting a document template, it still shows up when choosing a file from a template in Documents.

Here, I deleted the gfgdg.txt template, but it still shows up in Documents.

|

1.0

|

cant delete doc template - After deleting a document template, it still shows up when choosing a file from a template in Documents.

Here, I deleted the gfgdg.txt template, but it still shows up in Documents.

|

process

|

cant delete doc template after deleting a document template it still shows up when choosing a file from template in documents here i deleted the gfgdg txt template but it still shows up in documents

| 1

|

22,250

| 30,801,982,816

|

IssuesEvent

|

2023-08-01 02:40:19

|

h4sh5/pypi-auto-scanner

|

https://api.github.com/repos/h4sh5/pypi-auto-scanner

|

opened

|

pih 1.47212 has 2 GuardDog issues

|

guarddog typosquatting silent-process-execution

|

https://pypi.org/project/pih

https://inspector.pypi.io/project/pih

```{

"dependency": "pih",

"version": "1.47212",

"result": {

"issues": 2,

"errors": {},

"results": {

"typosquatting": "This package closely ressembles the following package names, and might be a typosquatting attempt: pid, pip",

"silent-process-execution": [

{

"location": "pih-1.47212/pih/tools.py:774",

"code": " result = subprocess.run(command, stdin=subprocess.DEVNULL, stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL)",

"message": "This package is silently executing an external binary, redirecting stdout, stderr and stdin to /dev/null"

}

]

},

"path": "/tmp/tmpe_03uveq/pih"

}

}```

|

1.0

|

pih 1.47212 has 2 GuardDog issues - https://pypi.org/project/pih

https://inspector.pypi.io/project/pih

```{

"dependency": "pih",

"version": "1.47212",

"result": {

"issues": 2,

"errors": {},

"results": {

"typosquatting": "This package closely ressembles the following package names, and might be a typosquatting attempt: pid, pip",

"silent-process-execution": [

{

"location": "pih-1.47212/pih/tools.py:774",

"code": " result = subprocess.run(command, stdin=subprocess.DEVNULL, stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL)",

"message": "This package is silently executing an external binary, redirecting stdout, stderr and stdin to /dev/null"

}

]

},

"path": "/tmp/tmpe_03uveq/pih"

}

}```

|

process

|

pih has guarddog issues dependency pih version result issues errors results typosquatting this package closely ressembles the following package names and might be a typosquatting attempt pid pip silent process execution location pih pih tools py code result subprocess run command stdin subprocess devnull stdout subprocess devnull stderr subprocess devnull message this package is silently executing an external binary redirecting stdout stderr and stdin to dev null path tmp tmpe pih

| 1

|

60,904

| 3,135,544,543

|

IssuesEvent

|

2015-09-10 15:41:34

|

ceylon/ceylon-ide-eclipse

|

https://api.github.com/repos/ceylon/ceylon-ide-eclipse

|

closed

|

NPE when saving in the structured comparator.

|

bug high priority

|

```

java.lang.NullPointerException

at com.redhat.ceylon.eclipse.code.outline.CeylonStructureCreator.buildCompareTree(CeylonStructureCreator.java:102)

at com.redhat.ceylon.eclipse.code.outline.CeylonStructureCreator.createStructureComparator(CeylonStructureCreator.java:87)

at org.eclipse.compare.structuremergeviewer.StructureCreator.internalCreateStructure(StructureCreator.java:121)

at org.eclipse.compare.structuremergeviewer.StructureCreator.access$0(StructureCreator.java:109)

at org.eclipse.compare.structuremergeviewer.StructureCreator$1.run(StructureCreator.java:96)

at org.eclipse.swt.custom.BusyIndicator.showWhile(BusyIndicator.java:70)

at org.eclipse.compare.internal.Utilities.runInUIThread(Utilities.java:859)

at org.eclipse.compare.structuremergeviewer.StructureCreator.createStructure(StructureCreator.java:102)

at org.eclipse.compare.structuremergeviewer.StructureDiffViewer$StructureInfo.createStructure(StructureDiffViewer.java:155)

at org.eclipse.compare.structuremergeviewer.StructureDiffViewer$StructureInfo.refresh(StructureDiffViewer.java:133)

at org.eclipse.compare.structuremergeviewer.StructureDiffViewer$StructureInfo.setInput(StructureDiffViewer.java:104)

at org.eclipse.compare.structuremergeviewer.StructureDiffViewer.compareInputChanged(StructureDiffViewer.java:347)

at org.eclipse.compare.structuremergeviewer.StructureDiffViewer$2.run(StructureDiffViewer.java:74)

at org.eclipse.compare.structuremergeviewer.StructureDiffViewer$6.run(StructureDiffViewer.java:322)

at org.eclipse.swt.custom.BusyIndicator.showWhile(BusyIndicator.java:70)

at org.eclipse.compare.structuremergeviewer.StructureDiffViewer.compareInputChanged(StructureDiffViewer.java:319)

at org.eclipse.compare.structuremergeviewer.StructureDiffViewer$5.compareInputChanged(StructureDiffViewer.java:213)

at org.eclipse.compare.structuremergeviewer.DiffNode.fireChange(DiffNode.java:137)

at org.eclipse.egit.ui.internal.NotifiableDiffNode.fireChange(NotifiableDiffNode.java:26)

at org.eclipse.egit.ui.internal.GitCompareFileRevisionEditorInput.fireInputChange(GitCompareFileRevisionEditorInput.java:420)

at org.eclipse.egit.ui.internal.GitCompareFileRevisionEditorInput$InternalResourceSaveableComparison.fireInputChange(GitCompareFileRevisionEditorInput.java:604)

at org.eclipse.team.internal.ui.synchronize.LocalResourceSaveableComparison.performSave(LocalResourceSaveableComparison.java:143)

at org.eclipse.team.ui.mapping.SaveableComparison.doSave(SaveableComparison.java:49)

at org.eclipse.ui.Saveable.doSave(Saveable.java:216)

at org.eclipse.ui.internal.SaveableHelper.doSaveModel(SaveableHelper.java:355)

at org.eclipse.ui.internal.SaveableHelper$3.run(SaveableHelper.java:199)

at org.eclipse.ui.internal.SaveableHelper$5.run(SaveableHelper.java:283)

at org.eclipse.jface.operation.ModalContext.runInCurrentThread(ModalContext.java:466)

at org.eclipse.jface.operation.ModalContext.run(ModalContext.java:374)

at org.eclipse.ui.internal.WorkbenchWindow$13.run(WorkbenchWindow.java:2157)

at org.eclipse.swt.custom.BusyIndicator.showWhile(BusyIndicator.java:70)

at org.eclipse.ui.internal.WorkbenchWindow.run(WorkbenchWindow.java:2153)

at org.eclipse.ui.internal.SaveableHelper.runProgressMonitorOperation(SaveableHelper.java:291)

at org.eclipse.ui.internal.SaveableHelper.runProgressMonitorOperation(SaveableHelper.java:269)

at org.eclipse.ui.internal.SaveableHelper.saveModels(SaveableHelper.java:211)

at org.eclipse.ui.internal.SaveableHelper.savePart(SaveableHelper.java:146)

at org.eclipse.ui.internal.WorkbenchPage.saveSaveable(WorkbenchPage.java:3915)

at org.eclipse.ui.internal.WorkbenchPage.saveEditor(WorkbenchPage.java:3929)

at org.eclipse.ui.internal.handlers.SaveHandler.execute(SaveHandler.java:54)

```

|

1.0

|

NPE when the saving in the structured comparator. - ```

java.lang.NullPointerException

at com.redhat.ceylon.eclipse.code.outline.CeylonStructureCreator.buildCompareTree(CeylonStructureCreator.java:102)

at com.redhat.ceylon.eclipse.code.outline.CeylonStructureCreator.createStructureComparator(CeylonStructureCreator.java:87)

at org.eclipse.compare.structuremergeviewer.StructureCreator.internalCreateStructure(StructureCreator.java:121)

at org.eclipse.compare.structuremergeviewer.StructureCreator.access$0(StructureCreator.java:109)

at org.eclipse.compare.structuremergeviewer.StructureCreator$1.run(StructureCreator.java:96)

at org.eclipse.swt.custom.BusyIndicator.showWhile(BusyIndicator.java:70)

at org.eclipse.compare.internal.Utilities.runInUIThread(Utilities.java:859)

at org.eclipse.compare.structuremergeviewer.StructureCreator.createStructure(StructureCreator.java:102)

at org.eclipse.compare.structuremergeviewer.StructureDiffViewer$StructureInfo.createStructure(StructureDiffViewer.java:155)

at org.eclipse.compare.structuremergeviewer.StructureDiffViewer$StructureInfo.refresh(StructureDiffViewer.java:133)

at org.eclipse.compare.structuremergeviewer.StructureDiffViewer$StructureInfo.setInput(StructureDiffViewer.java:104)

at org.eclipse.compare.structuremergeviewer.StructureDiffViewer.compareInputChanged(StructureDiffViewer.java:347)

at org.eclipse.compare.structuremergeviewer.StructureDiffViewer$2.run(StructureDiffViewer.java:74)

at org.eclipse.compare.structuremergeviewer.StructureDiffViewer$6.run(StructureDiffViewer.java:322)

at org.eclipse.swt.custom.BusyIndicator.showWhile(BusyIndicator.java:70)

at org.eclipse.compare.structuremergeviewer.StructureDiffViewer.compareInputChanged(StructureDiffViewer.java:319)

at org.eclipse.compare.structuremergeviewer.StructureDiffViewer$5.compareInputChanged(StructureDiffViewer.java:213)

at org.eclipse.compare.structuremergeviewer.DiffNode.fireChange(DiffNode.java:137)

at org.eclipse.egit.ui.internal.NotifiableDiffNode.fireChange(NotifiableDiffNode.java:26)

at org.eclipse.egit.ui.internal.GitCompareFileRevisionEditorInput.fireInputChange(GitCompareFileRevisionEditorInput.java:420)

at org.eclipse.egit.ui.internal.GitCompareFileRevisionEditorInput$InternalResourceSaveableComparison.fireInputChange(GitCompareFileRevisionEditorInput.java:604)

at org.eclipse.team.internal.ui.synchronize.LocalResourceSaveableComparison.performSave(LocalResourceSaveableComparison.java:143)

at org.eclipse.team.ui.mapping.SaveableComparison.doSave(SaveableComparison.java:49)

at org.eclipse.ui.Saveable.doSave(Saveable.java:216)

at org.eclipse.ui.internal.SaveableHelper.doSaveModel(SaveableHelper.java:355)

at org.eclipse.ui.internal.SaveableHelper$3.run(SaveableHelper.java:199)

at org.eclipse.ui.internal.SaveableHelper$5.run(SaveableHelper.java:283)

at org.eclipse.jface.operation.ModalContext.runInCurrentThread(ModalContext.java:466)

at org.eclipse.jface.operation.ModalContext.run(ModalContext.java:374)

at org.eclipse.ui.internal.WorkbenchWindow$13.run(WorkbenchWindow.java:2157)

at org.eclipse.swt.custom.BusyIndicator.showWhile(BusyIndicator.java:70)

at org.eclipse.ui.internal.WorkbenchWindow.run(WorkbenchWindow.java:2153)

at org.eclipse.ui.internal.SaveableHelper.runProgressMonitorOperation(SaveableHelper.java:291)

at org.eclipse.ui.internal.SaveableHelper.runProgressMonitorOperation(SaveableHelper.java:269)

at org.eclipse.ui.internal.SaveableHelper.saveModels(SaveableHelper.java:211)

at org.eclipse.ui.internal.SaveableHelper.savePart(SaveableHelper.java:146)

at org.eclipse.ui.internal.WorkbenchPage.saveSaveable(WorkbenchPage.java:3915)

at org.eclipse.ui.internal.WorkbenchPage.saveEditor(WorkbenchPage.java:3929)

at org.eclipse.ui.internal.handlers.SaveHandler.execute(SaveHandler.java:54)

```

|

non_process

|