Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

248,179 | 7,928,251,009 | IssuesEvent | 2018-07-06 10:55:27 | python/mypy | https://api.github.com/repos/python/mypy | closed | allow overloads to be distinguished by contained type of a generic | false-positive feature priority-1-normal topic-overloads | This is a follow-up to the discussion beginning at https://github.com/python/typing/issues/253#issuecomment-235652393

Mypy's choice to not distinguish overload signatures by contained types rules out some natural API choices, e.g.

```

@overload

def map(ids: List[int]) -> Dict[int, MyObj]: ...

@overload

def ... | 1.0 | allow overloads to be distinguished by contained type of a generic - This is a follow-up to the discussion beginning at https://github.com/python/typing/issues/253#issuecomment-235652393

Mypy's choice to not distinguish overload signatures by contained types rules out some natural API choices, e.g.

```

@overload... | non_process | allow overloads to be distinguished by contained type of a generic this is a follow up to the discussion beginning at mypy s choice to not distinguish overload signatures by contained types rules out some natural api choices e g overload def map ids list dict overload def map ids li... | 0 |
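
The overload pattern the issue above asks about can be sketched as follows. This is an illustration only: the function name `lookup`, the second overload, and the `MyObj` stub are assumptions, since the original snippet is truncated, and whether a given mypy version accepts the two signatures is exactly what the issue discusses.

```python
from typing import Dict, List, overload


class MyObj:
    """Hypothetical stand-in for the issue's MyObj type."""

    def __init__(self, key):
        self.key = key


# The two declared signatures differ only in the element type of the list.
@overload
def lookup(ids: List[int]) -> Dict[int, MyObj]: ...
@overload
def lookup(ids: List[str]) -> Dict[str, MyObj]: ...


def lookup(ids):
    # Single runtime implementation shared by both overloads (assumed behavior).
    return {i: MyObj(i) for i in ids}
```

At runtime only the last definition is used; the `@overload` stubs exist purely for the type checker, so the example runs the same way regardless of how the checker rules on the signatures.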

285,492 | 31,154,691,209 | IssuesEvent | 2023-08-16 12:25:17 | Trinadh465/linux-4.1.15_CVE-2018-5873 | https://api.github.com/repos/Trinadh465/linux-4.1.15_CVE-2018-5873 | opened | CVE-2022-3564 (High) detected in linuxlinux-4.1.52 | Mend: dependency security vulnerability | ## CVE-2022-3564 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.1.52</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kern... | True | CVE-2022-3564 (High) detected in linuxlinux-4.1.52 - ## CVE-2022-3564 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.1.52</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p... | non_process | cve high detected in linuxlinux cve high severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch main vulnerable source files net bluetooth co... | 0 |

43,667 | 23,326,840,960 | IssuesEvent | 2022-08-08 22:18:00 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Regressions in System.Tests.Perf_UInt64 | area-System.Runtime tenet-performance tenet-performance-benchmarks refs/heads/main RunKind=micro Windows 10.0.19041 Regression CoreClr arm64 | ### Run Information

Architecture | arm64

-- | --

OS | Windows 10.0.19041

Baseline | [b92de6bf0351280cd36221f3232b2964a4e61e88](https://github.com/dotnet/runtime/commit/b92de6bf0351280cd36221f3232b2964a4e61e88)

Compare | [d4a9ade2dfbee1ef532e7793ea9c330c51b5c028](https://github.com/dotnet/runtime/commit/d4a9ade2d... | True | Regressions in System.Tests.Perf_UInt64 - ### Run Information

Architecture | arm64

-- | --

OS | Windows 10.0.19041

Baseline | [b92de6bf0351280cd36221f3232b2964a4e61e88](https://github.com/dotnet/runtime/commit/b92de6bf0351280cd36221f3232b2964a4e61e88)

Compare | [d4a9ade2dfbee1ef532e7793ea9c330c51b5c028](https://... | non_process | regressions in system tests perf run information architecture os windows baseline compare diff regressions in system collections containskeyfalse lt string string gt benchmark baseline test test base test quality edge detector baseline ir ... | 0 |

363,973 | 25,476,838,130 | IssuesEvent | 2022-11-25 15:16:00 | rune004/mkdocs | https://api.github.com/repos/rune004/mkdocs | opened | Upload Ansible Documentation | documentation | Upload all Ansible Documentation needed for deployment via a Ubuntu controller. | 1.0 | Upload Ansible Documentation - Upload all Ansible Documentation needed for deployment via a Ubuntu controller. | non_process | upload ansible documentation upload all ansible documentation needed for deployment via a ubuntu controller | 0 |

5,600 | 8,460,166,563 | IssuesEvent | 2018-10-22 18:02:40 | aspnet/IISIntegration | https://api.github.com/repos/aspnet/IISIntegration | closed | ANCM TCP packet loss | investigate out-of-process | I have a setup when multiple sites (asp.net core, asp.net, python) are hosted in IIS together with [Shibboleth Service Provider](https://wiki.shibboleth.net/confluence/display/SP3/Home) which acts before request reaches the actual site code and passes authenticated user information to applications via request headers. ... | 1.0 | ANCM TCP packet loss - I have a setup when multiple sites (asp.net core, asp.net, python) are hosted in IIS together with [Shibboleth Service Provider](https://wiki.shibboleth.net/confluence/display/SP3/Home) which acts before request reaches the actual site code and passes authenticated user information to application... | process | ancm tcp packet loss i have a setup when multiple sites asp net core asp net python are hosted in iis together with which acts before request reaches the actual site code and passes authenticated user information to applications via request headers it works fine until one of the headers get bigger over kb... | 1 |

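15,018 | 18,732,508,482 | IssuesEvent | 2021-11-04 00:20:37 | woowacourse/prolog | https://api.github.com/repos/woowacourse/prolog | closed | Onboard new members. | process | ## New member onboarding

- Run all the existing acceptance tests to understand the existing features

- Mentors set aside a day to help with local development environment setup, including running the acceptance tests

- If any acceptance tests need improvement or are lacking, improve them and open a PR (mentors will review)

## Checklist

- [x] 바다

- [x] 수리

- [x] 코다 | 1.0 | Onboard new members. - ## New member onboarding

- Run all the existing acceptance tests to understand the existing features

- Mentors set aside a day to help with local development environment setup, including running the acceptance tests

- If any acceptance tests need improvement or are lacking, improve them and open a PR (mentors will review)

## Checklist

- [x] 바다

- [x] 수리

- [x] 코다 | process | onboard new members new member onboarding run all the existing acceptance tests to understand the existing features mentors set aside a day to help with local development environment setup including running the acceptance tests if any acceptance tests need improvement or are lacking improve them and open a pr mentors will review checklist 바다 수리 코다 | 1 |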

159,697 | 20,085,894,240 | IssuesEvent | 2022-02-05 01:08:15 | AkshayMukkavilli/Tensorflow | https://api.github.com/repos/AkshayMukkavilli/Tensorflow | opened | CVE-2021-41221 (High) detected in tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl | security vulnerability | ## CVE-2021-41221 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learning... | True | CVE-2021-41221 (High) detected in tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl - ## CVE-2021-41221 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.13.1-cp27-cp27mu-m... | non_process | cve high detected in tensorflow whl cve high severity vulnerability vulnerable library tensorflow whl tensorflow is an open source machine learning framework for everyone library home page a href path to dependency file tensorflow src requirements ... | 0 |

10,723 | 4,817,936,749 | IssuesEvent | 2016-11-04 15:05:07 | uProxy/uproxy | https://api.github.com/repos/uProxy/uproxy | closed | choose an API documentation generator | C:BuildProcess C:Code-Cleanup P3 T:Obsolete | Right now, we have a mish-mash of comment styles. The style guide specifies C++-style comments but we have tons of C-style comments, too, some of them with JSDoc-style annotations. At minimum, let's:

1. investigate API documentation generators for TypeScript (maybe JS generators are fine)

2. investigate what code compl... | 1.0 | choose an API documentation generator - Right now, we have a mish-mash of comment styles. The style guide specifies C++-style comments but we have tons of C-style comments, too, some of them with JSDoc-style annotations. At minimum, let's:

1. investigate API documentation generators for TypeScript (maybe JS generators ... | non_process | choose an api documentation generator right now we have a mish mash of comment styles the style guide specifies c style comments but we have tons of c style comments too some of them with jsdoc style annotations at minimum let s investigate api documentation generators for typescript maybe js generators ... | 0 |

338,294 | 10,227,138,006 | IssuesEvent | 2019-08-16 19:51:49 | onaio/reveal-frontend | https://api.github.com/repos/onaio/reveal-frontend | closed | Debug Focus Area Profile Page | Priority: High bug has pr | This page seems to have a number of problems that we need to investigate:

- [x] The map sometimes loads, sometimes does not load and sometimes loads then starts reloading

- [x] The list of focus areas on this page should be filtered by the focus area (jurisdiction) id. This is not the case

- Some of the queries s... | 1.0 | Debug Focus Area Profile Page - This page seems to have a number of problems that we need to investigate:

- [x] The map sometimes loads, sometimes does not load and sometimes loads then starts reloading

- [x] The list of focus areas on this page should be filtered by the focus area (jurisdiction) id. This is not t... | non_process | debug focus area profile page this page seems to have a number of problems that we need to investigate the map sometimes loads sometimes does not load and sometimes loads then starts reloading the list of focus areas on this page should be filtered by the focus area jurisdiction id this is not the c... | 0 |

143,599 | 11,570,438,180 | IssuesEvent | 2020-02-20 19:30:55 | CBICA/CaPTk | https://api.github.com/repos/CBICA/CaPTk | closed | CaPTk 1.7.6 GUI Failure | Testathon-Feb-2020 wontfix | **Describe the bug**

Failed to load CaPTk GUI from cluster. Crashes immediately upon execution of 'captk' in CL. Have tried to unload captk before loading the new version as well.

**To Reproduce**

Steps to reproduce the behavior:

1. On the cluster

2. "Module load captk/1.7.6"

3. "captk"

4. See error

**Expec... | 1.0 | CaPTk 1.7.6 GUI Failure - **Describe the bug**

Failed to load CaPTk GUI from cluster. Crashes immediately upon execution of 'captk' in CL. Have tried to unload captk before loading the new version as well.

**To Reproduce**

Steps to reproduce the behavior:

1. On the cluster

2. "Module load captk/1.7.6"

3. "captk... | non_process | captk gui failure describe the bug failed to load captk gui from cluster crashes immediately upon execution of captk in cl have tried to unload captk before loading the new version as well to reproduce steps to reproduce the behavior on the cluster module load captk captk... | 0 |

3,831 | 6,802,428,844 | IssuesEvent | 2017-11-02 20:10:31 | gratipay/inside.gratipay.com | https://api.github.com/repos/gratipay/inside.gratipay.com | closed | Clarify terms around notification | Governance & Process | Reticketed from https://github.com/gratipay/inside.gratipay.com/issues/204 via https://github.com/gratipay/gratipay.com/pull/4117#issuecomment-262336157.

>> Regarding the “without notice to you” provisions in §11.4 and §12.1, I would **very** much like to be informed of it, just as under §17.2.

>

>I think these cl... | 1.0 | Clarify terms around notification - Reticketed from https://github.com/gratipay/inside.gratipay.com/issues/204 via https://github.com/gratipay/gratipay.com/pull/4117#issuecomment-262336157.

>> Regarding the “without notice to you” provisions in §11.4 and §12.1, I would **very** much like to be informed of it, just a... | process | clarify terms around notification reticketed from via regarding the “without notice to you” provisions in § and § i would very much like to be informed of it just as under § i think these clauses mean without prior notice to you we certainly notify people of things after the fact bu... | 1 |

227,401 | 17,381,920,919 | IssuesEvent | 2021-07-31 22:24:55 | moja-global/FLINT-UI | https://api.github.com/repos/moja-global/FLINT-UI | closed | Reference Vue 2 style guide in README | documentation good first issue | The Vue docs are amazing. Let's make sure our UI developers know we follow them by adding a section to our README on coding style and providing a link:

~https://v3.vuejs.org/style-guide/~

EDIT: see comment from @waridrox | 1.0 | Reference Vue 2 style guide in README - The Vue docs are amazing. Let's make sure our UI developers know we follow them by adding a section to our README on coding style and providing a link:

~https://v3.vuejs.org/style-guide/~

EDIT: see comment from @waridrox | non_process | reference vue style guide in readme the vue docs are amazing let s make sure our ui developers know we follow them by adding a section to our readme on coding style and providing a link edit see comment from waridrox | 0 |

253,536 | 19,122,574,283 | IssuesEvent | 2021-12-01 01:15:01 | gingerchicken/como-client | https://api.github.com/repos/gingerchicken/como-client | opened | Update README to new Java | documentation | I am pretty sure Java 17 is now required to build the mod, maybe update the README to say this. | 1.0 | Update README to new Java - I am pretty sure Java 17 is now required to build the mod, maybe update the README to say this. | non_process | update readme to new java i am pretty sure java is now required to build the mod maybe update the readme to say this | 0 |

651,117 | 21,465,876,185 | IssuesEvent | 2022-04-26 03:37:00 | ballerina-platform/ballerina-extended-library | https://api.github.com/repos/ballerina-platform/ballerina-extended-library | opened | [Improvement]: Provide pagination support for List and Query operations in Salesforce connector | Priority/High Type/Improvement Team/Connector Component/Connector | ### Connector Name

module/salesforce (Salesforce)

### Suggested improvement

Pagination of Salesforce responses is not handled internally in the Salesforce connector. In the current approach, users can call the `getQueryResult` operation to get only the first page. They then have to call the `getNextQueryResult` op... | 1.0 | [Improvement]: Provide pagination support for List and Query operations in Salesforce connector - ### Connector Name

module/salesforce (Salesforce)

### Suggested improvement

The pagination of Salesforce responses are not handled internally in the Salesforce connector. In the current approach users can call the `get... | non_process | provide pagination support for list and query operations in salesforce connector connector name module salesforce salesforce suggested improvement the pagination of salesforce responses are not handled internally in the salesforce connector in the current approach users can call the getqueryresult ... | 0 |
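
The improvement requested above amounts to hiding the page-by-page calls behind a single iterator. A rough Python sketch of that idea follows; it is not Ballerina connector code. `FakeClient`, `get_query_result`, `get_next_query_result`, and the `records` / `next_records_url` fields are assumed names, modelled loosely on the two operations the issue mentions.

```python
from typing import Dict, Iterator, List, Optional


class FakeClient:
    """Hypothetical in-memory client that serves results one page at a time."""

    def __init__(self, pages: List[List[dict]]):
        self._pages = pages

    def _page(self, index: int) -> Dict:
        has_more = index + 1 < len(self._pages)
        return {
            "records": self._pages[index],
            # Assumed convention: None means there is no further page.
            "next_records_url": str(index + 1) if has_more else None,
        }

    def get_query_result(self, query: str) -> Dict:
        # Returns the first page only, like the operation described in the issue.
        return self._page(0)

    def get_next_query_result(self, next_records_url: str) -> Dict:
        return self._page(int(next_records_url))


def iter_records(client: FakeClient, query: str) -> Iterator[dict]:
    """Yield every record, fetching follow-up pages only when needed."""
    page = client.get_query_result(query)
    while True:
        yield from page["records"]
        next_url: Optional[str] = page["next_records_url"]
        if next_url is None:
            break
        page = client.get_next_query_result(next_url)
```

With a wrapper of this shape, callers loop over `iter_records(client, query)` and never see the paging calls; pages are fetched lazily as the loop advances.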

210,268 | 7,187,423,728 | IssuesEvent | 2018-02-02 05:10:35 | wso2/testgrid | https://api.github.com/repos/wso2/testgrid | opened | TestGrid dev dashboard in apps.wso2 is pointing to the wrong URL | Priority/Highest Severity/Critical Type/Bug | **Description:**

TestGrid dev dashboard in apps.wso2 is pointing to the wrong URL (`https://testgrid-live-dev.private.wso2.com/testgrid/dashboard/api/acs`). This should be (`https://testgrid-live-dev.private.wso2.com/testgrid/dashboard/`) | 1.0 | TestGrid dev dashboard in apps.wso2 is pointing to the wrong URL - **Description:**

TestGrid dev dashboard in apps.wso2 is pointing to the wrong URL (`https://testgrid-live-dev.private.wso2.com/testgrid/dashboard/api/acs`). This should be (`https://testgrid-live-dev.private.wso2.com/testgrid/dashboard/`) | non_process | testgrid dev dashboard in apps is pointing to the wrong url description testgrid dev dashboard in apps is pointing to the wrong url this should be | 0 |

82,494 | 7,842,843,588 | IssuesEvent | 2018-06-19 02:01:42 | DynamoRIO/dynamorio | https://api.github.com/repos/DynamoRIO/dynamorio | closed | tool.drcachesim.TLB-threads failing non-deterministically on Travis | Bug-AppFail Component-Tests | It terminates early but at 90s it may be a timeout (though there's no timeout message).

https://api.travis-ci.org/jobs/174603662/log.txt?deansi=true

```

211:

211: Finished computing current solution distance in mode 0.

211: Mode changed to 0.

211:

211: Started iteration 10 of the computatio... | 1.0 | tool.drcachesim.TLB-threads failing non-deterministically on Travis - It terminates early but at 90s it may be a timeout (though there's no timeout message).

https://api.travis-ci.org/jobs/174603662/log.txt?deansi=true

```

211:

211: Finished computing current solution distance in mode 0.

211: Mode ... | non_process | tool drcachesim tlb threads failing non deterministically on travis it terminates early but at it may be a timeout though there s no timeout message finished computing current solution distance in mode mode changed to started iteration of the computation ... | 0 |

576,964 | 17,100,067,530 | IssuesEvent | 2021-07-09 09:56:34 | PlaceOS/driver | https://api.github.com/repos/PlaceOS/driver | closed | Refactor build process | priority: high type: enhancement | Drivers are currently compiled via setting an environment variable that points to the driver's entry point and compiling a common `build.cr`.

The most intuitive process would be...

- `require "placeos-driver"` like any other library, and write your driver code below.

- Point the compiler at the file the driver was... | 1.0 | Refactor build process - Drivers are currently compiled via setting an environment variable that points to the driver's entry point and compiling a common `build.cr`.

The most intuitive process would be...

- `require "placeos-driver"` like any other library, and write your driver code below.

- Point the compiler a... | non_process | refactor build process drivers are currently compiled via setting an environment variable that points to the driver s entry point and compiling a common build cr the most intuitive process would be require placeos driver like any other library and write your driver code below point the compiler a... | 0 |

46,534 | 6,021,258,811 | IssuesEvent | 2017-06-07 18:17:25 | 18F/omb-eregs | https://api.github.com/repos/18F/omb-eregs | closed | Review user research to date and create/make public a synthesized documentation of it | design | verbs

- [X] read through existing research documentation and notes

- [X] write up a synthesis doc (user archetypes)

- [x] have team (esp. Micah and Nicole) review and add comments/clarifications

- [x] publish on GitHub wiki | 1.0 | Review user research to date and create/make public a synthesized documentation of it - verbs

- [X] read through existing research documentation and notes

- [X] write up a synthesis doc (user archetypes)

- [x] have team (esp. Micah and Nicole) review and add comments/clarifications

- [x] publish on GitHub wiki | non_process | review user research to date and create make public a synthesized documentation of it verbs read through existing research documentation and notes write up a synthesis doc user archetypes have team esp micah and nicole review and add comments clarifications publish on github wiki | 0 |

2,763 | 5,695,995,600 | IssuesEvent | 2017-04-16 05:59:56 | AllenFang/react-bootstrap-table | https://api.github.com/repos/AllenFang/react-bootstrap-table | closed | Autocomplete input field in insert Modal | duplicate enhancement inprocess | I would like to have an input text field in my Insert Modal with autocomplete functionality (given a set of values). I don't really want to implement my custom Modal - the perfect solution would be if the file Editor.js had an additional case for type 'autocomplete' or something similar.What I mean by autocomplete is s... | 1.0 | Autocomplete input field in insert Modal - I would like to have an input text field in my Insert Modal with autocomplete functionality (given a set of values). I don't really want to implement my custom Modal - the perfect solution would be if the file Editor.js had an additional case for type 'autocomplete' or somethi... | process | autocomplete input field in insert modal i would like to have an input text field in my insert modal with autocomplete functionality given a set of values i don t really want to implement my custom modal the perfect solution would be if the file editor js had an additional case for type autocomplete or somethi... | 1 |

431,245 | 30,224,462,884 | IssuesEvent | 2023-07-05 22:29:24 | rancher-sandbox/rancher-desktop | https://api.github.com/repos/rancher-sandbox/rancher-desktop | closed | Document information from original Upgrade Responder client epic in forked Upgrade Responder repo | kind/documentation-devhelp | In [the original Upgrade Responder client epic](https://github.com/rancher-sandbox/rancher-desktop/issues/3925) there is a lot of information about how Upgrade Responder works. We need to document this properly so that anybody looking to learn about it doesn't have to figure out how it should be from reading a long iss... | 1.0 | Document information from original Upgrade Responder client epic in forked Upgrade Responder repo - In [the original Upgrade Responder client epic](https://github.com/rancher-sandbox/rancher-desktop/issues/3925) there is a lot of information about how Upgrade Responder works. We need to document this properly so that a... | non_process | document information from original upgrade responder client epic in forked upgrade responder repo in there is a lot of information about how upgrade responder works we need to document this properly so that anybody looking to learn about it doesn t have to figure out how it should be from reading a long issue | 0 |

48,606 | 10,264,289,065 | IssuesEvent | 2019-08-22 16:01:27 | cityofaustin/techstack | https://api.github.com/repos/cityofaustin/techstack | reopened | Footer copy changes | Copy; not code Janis Alpha Team: Dev | **Acceptance criteria**

- [ ] In English, lowercase "project site"

- [ ] In English and Spanish, "austintexas.gov" should all be lowercase. (We only capitalize a URL if it begins a sentence.)

- [ ] In Spanish, sentence case for the following sentence: "Encuentre más información en nuestro sitio del proyecto"

- [ ] In S... | 1.0 | Footer copy changes - **Acceptance criteria**

- [ ] In English, lowercase "project site"

- [ ] In English and Spanish, "austintexas.gov" should all be lowercase. (We only capitalize a URL if it begins a sentence.)

- [ ] In Spanish, sentence case for the following sentence: "Encuentre más información en nuestro sitio de... | non_process | footer copy changes acceptance criteria in english lowercase project site in english and spanish austintexas gov should all be lowercase we only capitalize a url if it begins a sentence in spanish sentence case for the following sentence encuentre más información en nuestro sitio del proy... | 0 |

9,812 | 12,824,241,624 | IssuesEvent | 2020-07-06 13:10:53 | keep-network/keep-core | https://api.github.com/repos/keep-network/keep-core | opened | Stake delegation system tests | process & client team | Having top-ups implemented we need to execute system tests covering all possible stake delegation scenarios.

Depending on the progress on #1898, tests should be performed using KEEP token dashboard or directly against smart contracts.

- [ ] Grantee can delegate a stake

- [ ] Managed grantee can delegate a stake

-... | 1.0 | Stake delegation system tests - Having top-ups implemented we need to execute system tests covering all possible stake delegation scenarios.

Depending on the progress on #1898, tests should be performed using KEEP token dashboard or directly against smart contracts.

- [ ] Grantee can delegate a stake

- [ ] Managed... | process | stake delegation system tests having top ups implemented we need to execute system tests covering all possible stake delegation scenarios depending on the progress on tests should be performed using keep token dashboard or directly against smart contracts grantee can delegate a stake managed grante... | 1 |

15,992 | 4,003,910,388 | IssuesEvent | 2016-05-12 03:36:31 | abstratt/textuml | https://api.github.com/repos/abstratt/textuml | closed | Provide documentation for the collection/grouping operations in TextUML | documentation | See abstratt/cloudfier#85. | 1.0 | Provide documentation for the collection/grouping operations in TextUML - See abstratt/cloudfier#85. | non_process | provide documentation for the collection grouping operations in textuml see abstratt cloudfier | 0 |

8,378 | 7,372,892,294 | IssuesEvent | 2018-03-13 15:50:41 | OpenLiberty/open-liberty | https://api.github.com/repos/OpenLiberty/open-liberty | closed | When building lib.index.cache, preserve original jar names | springboot team:OSGi Infrastructure | The lib.index.cache currently renames the jars to sha-1 hashes of their contents. This is a little confusing when the jar names appear in error message. Preserve the original jar names in a folder named after the hash. | 1.0 | When building lib.index.cache, preserve original jar names - The lib.index.cache currently renames the jars to sha-1 hashes of their contents. This is a little confusing when the jar names appear in error message. Preserve the original jar names in a folder named after the hash. | non_process | when building lib index cache preserve original jar names the lib index cache currently renames the jars to sha hashes of their contents this is a little confusing when the jar names appear in error message preserve the original jar names in a folder named after the hash | 0 |
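
The layout proposed in the issue above (a folder named after the content hash, holding the jar under its original name) can be sketched in Python as follows. This is a hedged illustration, not Open Liberty code: the function name `cache_jar` and the exact directory layout are assumptions.

```python
import hashlib
import shutil
from pathlib import Path


def cache_jar(jar_path: Path, cache_dir: Path) -> Path:
    """Copy a jar to <cache_dir>/<sha1-of-contents>/<original-name>."""
    # Hash the jar contents so identical jars map to the same folder.
    digest = hashlib.sha1(jar_path.read_bytes()).hexdigest()
    target_dir = cache_dir / digest
    target_dir.mkdir(parents=True, exist_ok=True)
    # Keep the original file name so it shows up readably in error messages.
    target = target_dir / jar_path.name
    if not target.exists():
        shutil.copyfile(jar_path, target)
    return target
```

The hash still provides content-based deduplication, while any path that surfaces in a log ends in the recognizable jar name.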

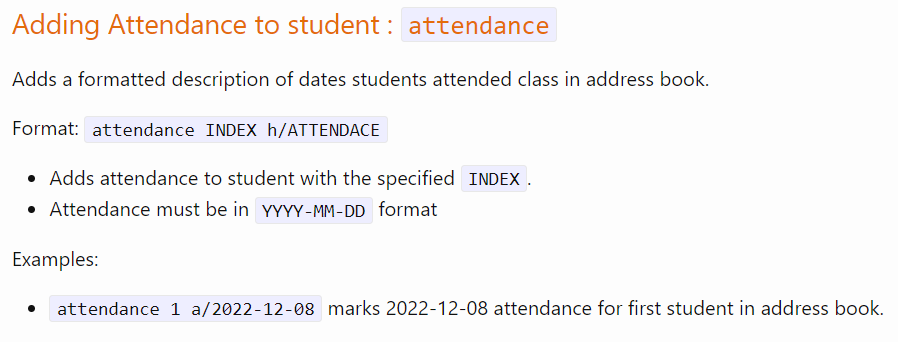

346,525 | 24,886,957,300 | IssuesEvent | 2022-10-28 08:37:03 | Yongbeom-Kim/ped | https://api.github.com/repos/Yongbeom-Kim/ped | opened | Inconsistency in attendance command documentation | severity.Low type.DocumentationBug | The documentation for attendance is not updated, as seen below:

<!--session: 1666944979154-bbc9b0eb-75d6-4d6c-be94-8f557b314ad1-->

<!--Version: Web v3.4.4--> | 1.0 | Inconsistency in attendance command documentation - The documentation for attendance is not updated, as seen below:

<!--session: 1666944979154-bbc9b0eb-75d6-4d6c-be94-8f557b314ad1-->

<!--Version: Web... | non_process | inconsistency in attendance command documentation the documentation for attendance is not updated as seen below | 0 |

16,835 | 22,068,051,199 | IssuesEvent | 2022-05-31 06:40:16 | googleapis/google-cloud-dotnet | https://api.github.com/repos/googleapis/google-cloud-dotnet | opened | Translation snippet tests are failing | type: process | (This is probably just a matter of changing expectations again.) | 1.0 | Translation snippet tests are failing - (This is probably just a matter of changing expectations again.) | process | translation snippet tests are failing this is probably just a matter of changing expectations again | 1 |

64,266 | 8,721,361,429 | IssuesEvent | 2018-12-08 22:14:26 | facebook/create-react-app | https://api.github.com/repos/facebook/create-react-app | closed | Unclear default code splitting in cra v2 | tag: documentation | <!--

PLEASE READ THE FIRST SECTION :-)

-->

### Is this a bug report?

If very unclear documentation is a bug then yes.

A newly created and built app with `Create react app v2` creates three js chunks by default. But there is not a single line in neither index.js nor in App.js that does code splitting accor... | 1.0 | Unclear default code splitting in cra v2 - <!--

PLEASE READ THE FIRST SECTION :-)

-->

### Is this a bug report?

If very unclear documentation is a bug then yes.

A newly created and built app with `Create react app v2` creates three js chunks by default. But there is not a single line in neither index.js n... | non_process | unclear default code splitting in cra please read the first section is this a bug report if very unclear documentation is a bug then yes a newly created and built app with create react app creates three js chunks by default but there is not a single line in neither index js nor... | 0 |

42,396 | 11,013,587,047 | IssuesEvent | 2019-12-04 20:48:08 | cliffparnitzky/CheckedEmail | https://api.github.com/repos/cliffparnitzky/CheckedEmail | closed | After completing the registration module, the mail address in the backend will not be displayed | Defect | I use the extension to check the email address during member registration (front-end module).

So far this works wonderfully. Unfortunately, the email address entered in the front end during registration is not displayed in the back end. It is, however, stored correctly in the tl_member table.

C... | 1.0 | After completing the registration module, the mail address in the backend will not be displayed - I use the extension to check the email address during member registration (front-end module).

So far this works wonderfully. Unfortunately, the email address entered in the front end during registration... | non_process | after completing the registration module the mail address in the backend will not be displayed i use the extension to check the email address during member registration front end module so far this works wonderfully unfortunately the email address entered in the front end during registration... | 0 |

21,029 | 27,969,934,531 | IssuesEvent | 2023-03-25 00:19:49 | darktable-org/darktable | https://api.github.com/repos/darktable-org/darktable | closed | Raster masks segfault if ROI size is changed | understood: unclear scope: image processing no-issue-activity | 1. Define a crop in crop or crop and rotate or perspective modules,

2. Define a parametric mask in some module,

3. Re-use that mask as a raster mask later in pipe,

4. Disable the cropping module,

5. Witness :

```

Thread 14 "worker res 0" received signal SIGSEGV, Segmentation fault.

[Switching to Thread 0x7fffb3... | 1.0 | Raster masks segfault if ROI size is changed - 1. Define a crop in crop or crop and rotate or perspective modules,

2. Define a parametric mask in some module,

3. Re-use that mask as a raster mask later in pipe,

4. Disable the cropping module,

5. Witness :

```

Thread 14 "worker res 0" received signal SIGSEGV, Seg... | process | raster masks segfault if roi size is changed define a crop in crop or crop and rotate or perspective modules define a parametric mask in some module re use that mask as a raster mask later in pipe disable the cropping module witness thread worker res received signal sigsegv segm... | 1 |

8,799 | 11,908,254,855 | IssuesEvent | 2020-03-31 00:25:15 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | processing doesn't output a shapefile's encoding | Bug Feedback Processing | Author Name: **Tobias Wendorff** (Tobias Wendorff)

Original Redmine Issue: [20556](https://issues.qgis.org/issues/20556)

Affected QGIS version: 3.5(master)

Redmine category:processing/core

---

The tools in "processing", like "split vector layer" don't write any information about encoding of shapefiles.

Postprocessin... | 1.0 | processing doesn't output a shapefile's encoding - Author Name: **Tobias Wendorff** (Tobias Wendorff)

Original Redmine Issue: [20556](https://issues.qgis.org/issues/20556)

Affected QGIS version: 3.5(master)

Redmine category:processing/core

---

The tools in "processing", like "split vector layer" don't write any infor... | process | processing doesn t output a shapefile s encoding author name tobias wendorff tobias wendorff original redmine issue affected qgis version master redmine category processing core the tools in processing like split vector layer don t write any information about encoding of shapefiles post... | 1 |

1,441 | 4,007,034,570 | IssuesEvent | 2016-05-12 16:43:53 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | Intermittent failures in test-process-exec-argv | process test | * **Version**: 6.0 (built from source)

* **Platform**: Seen on s390 but believe could occur on any

* **Subsystem**: process

We see this test fail intermittently (about 0.4% of the time on one of our machines) with:

<PRE>

680 - test-process-exec-argv.js

not ok 680 test-process-exec-argv.js

# undefined:1

#

#

... | 1.0 | Intermittent failures in test-process-exec-argv - * **Version**: 6.0 (built from source)

* **Platform**: Seen on s390 but believe could occur on any

* **Subsystem**: process

We see this test fail intermittently (about 0.4% of the time on one of our machines) with:

<PRE>

680 - test-process-exec-argv.js

not ok 6... | process | intermittent failures in test process exec argv version built from source platform seen on but believe could occur on any subsystem process we see this test fail intermittently about of the time on one of our machines with test process exec argv js not ok test pro... | 1 |

71,911 | 18,925,034,242 | IssuesEvent | 2021-11-17 08:37:09 | firoorg/firo | https://api.github.com/repos/firoorg/firo | closed | 'make cov' is broken | build-system Priority 2 | ## Actual behaviour

```

zcoin-builder@ac9b1304543f:~/zcoin$ make cov

/usr/bin/lcov --gcov-tool=/usr/bin/gcov -c -i -d /home/zcoin-builder/zcoin/src -o baseline.info

Capturing coverage data from /home/zcoin-builder/zcoin/src

Found gcov version: 4.8.4

Scanning /home/zcoin-builder/zcoin/src for .gcno files ...

Foun... | 1.0 | 'make cov' is broken - ## Actual behaviour

```

zcoin-builder@ac9b1304543f:~/zcoin$ make cov

/usr/bin/lcov --gcov-tool=/usr/bin/gcov -c -i -d /home/zcoin-builder/zcoin/src -o baseline.info

Capturing coverage data from /home/zcoin-builder/zcoin/src

Found gcov version: 4.8.4

Scanning /home/zcoin-builder/zcoin/src fo... | non_process | make cov is broken actual behaviour zcoin builder zcoin make cov usr bin lcov gcov tool usr bin gcov c i d home zcoin builder zcoin src o baseline info capturing coverage data from home zcoin builder zcoin src found gcov version scanning home zcoin builder zcoin src for gcno fil... | 0 |

9,634 | 12,598,493,856 | IssuesEvent | 2020-06-11 03:04:18 | googleapis/java-spanner | https://api.github.com/repos/googleapis/java-spanner | opened | SpannerRetryHelperTest.testExceptionWithRetryInfo test failure | type: process | In #251 (after moving to GitHub actions), we consistently get this test failure on Java 8 Windows.

```

[ERROR] Failures:

[ERROR] SpannerRetryHelperTest.testExceptionWithRetryInfo:218 expected to be true

[INFO]

[ERROR] Tests run: 3581, Failures: 1, Errors: 0, Skipped: 0

```

I will merge in #251 to cleanup... | 1.0 | SpannerRetryHelperTest.testExceptionWithRetryInfo test failure - In #251 (after moving to GitHub actions), we consistently get this test failure on Java 8 Windows.

```

[ERROR] Failures:

[ERROR] SpannerRetryHelperTest.testExceptionWithRetryInfo:218 expected to be true

[INFO]

[ERROR] Tests run: 3581, Failures:... | process | spannerretryhelpertest testexceptionwithretryinfo test failure in after moving to github actions we consistently get this test failure on java windows failures spannerretryhelpertest testexceptionwithretryinfo expected to be true tests run failures errors skipped ... | 1 |

285,343 | 21,514,745,822 | IssuesEvent | 2022-04-28 08:51:41 | SciTools/iris | https://api.github.com/repos/SciTools/iris | closed | Iris API param not rendering | Type: Documentation | ## 📚 Documentation

<!-- See https://scitools-iris.readthedocs.io/en/latest/ -->

<!-- Describe the issue or provide a suggestion for improving the Iris documentation -->

Whilst reviewing the dsocs for another task I noticed there are a few occurrences of `:param:` that is not rendering correctly due to alignment a... | 1.0 | Iris API param not rendering - ## 📚 Documentation

<!-- See https://scitools-iris.readthedocs.io/en/latest/ -->

<!-- Describe the issue or provide a suggestion for improving the Iris documentation -->

Whilst reviewing the dsocs for another task I noticed there are a few occurrences of `:param:` that is not renderi... | non_process | iris api param not rendering 📚 documentation whilst reviewing the dsocs for another task i noticed there are a few occurrences of param that is not rendering correctly due to alignment and maybe other syntax issues there maybe other occurrences too not dug any further | 0 |

9,904 | 12,908,536,151 | IssuesEvent | 2020-07-15 07:37:02 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Get-AutomationConnection does not support | Pri2 automation/svc cxp process-automation/subsvc product-question triaged |

[Enter feedback here]

Get-AutomationConnection does not support in PS 7.0 Az model.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 8dcc44c4-9f64-6b0c-e045-0e5e3c567970

* Version Independent ID: 6456a6aa-bdeb-651c-0c83-a84d4541f73... | 1.0 | Get-AutomationConnection does not support -

[Enter feedback here]

Get-AutomationConnection does not support in PS 7.0 Az model.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 8dcc44c4-9f64-6b0c-e045-0e5e3c567970

* Version Indepen... | process | get automationconnection does not support get automationconnection does not support in ps az model document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id bdeb content conten... | 1 |

65,374 | 14,724,298,341 | IssuesEvent | 2021-01-06 02:15:23 | jamestiotio/orientation2020 | https://api.github.com/repos/jamestiotio/orientation2020 | opened | WS-2020-0208 (Medium) detected in highlight.js-9.18.5.tgz | security vulnerability | ## WS-2020-0208 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>highlight.js-9.18.5.tgz</b></p></summary>

<p>Syntax highlighting with language autodetection.</p>

<p>Library home page... | True | WS-2020-0208 (Medium) detected in highlight.js-9.18.5.tgz - ## WS-2020-0208 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>highlight.js-9.18.5.tgz</b></p></summary>

<p>Syntax highli... | non_process | ws medium detected in highlight js tgz ws medium severity vulnerability vulnerable library highlight js tgz syntax highlighting with language autodetection library home page a href path to dependency file package json path to vulnerable library node modules high... | 0 |

22,615 | 31,841,521,961 | IssuesEvent | 2023-09-14 16:39:39 | darktable-org/darktable | https://api.github.com/repos/darktable-org/darktable | closed | Rotation and perspective : crop not properly taken into account | priority: high reproduce: confirmed scope: image processing bug: pending | With the manual guides (manually draw - pencil - or manually define - square guide) and using the "largest area" mode the crop is not properly done with leaving the module:

All ok when doing the fit action:

... | 1.0 | Rotation and perspective : crop not properly taken into account - With the manual guides (manually draw - pencil - or manually define - square guide) and using the "largest area" mode the crop is not properly done with leaving the module:

All ok when doing the fit action:

.SecretValueText

with

$secret = (Get-AzKeyVaultSecret -VaultName $VaultName -Name "SendGridAPIKey").SecretValue

$ssPtr = [System.Runtime.InteropServ... | 1.0 | SecretValueText has been removed -

SecretValueText has been removed from PSKeyVaultSecret as of Az 5.0.0. I had to replace

$secret = (Get-AzKeyVaultSecret -VaultName $VaultName -Name "SendGridAPIKey").SecretValueText

with

$secret = (Get-AzKeyVaultSecret -VaultName $VaultName -Name "SendGridAPIKey").SecretValue

$... | process | secretvaluetext has been removed secretvaluetext has been removed from pskeyvaultsecret as of az i had to replace secret get azkeyvaultsecret vaultname vaultname name sendgridapikey secretvaluetext with secret get azkeyvaultsecret vaultname vaultname name sendgridapikey secretvalue ... | 1 |

246,393 | 7,895,189,336 | IssuesEvent | 2018-06-29 01:39:10 | aowen87/BAR | https://api.github.com/repos/aowen87/BAR | closed | Viewer windows pop-up automatically | Likelihood: 3 - Occasional OS: Windows 7 Priority: Normal Severity: 3 - Major Irritation Support Group: Any bug version: 2.10.0 | While in Jose Milovich's office he showed me how Viewer windows will automatically pop-up when using VisIt on Windows 7 (Enterprise, 64-bit). From my observation, it seem to happen when he used the scroll wheel on his mouse. He said it also happens on his Windows box at home (not sure if he uses a mouse with the scroll... | 1.0 | Viewer windows pop-up automatically - While in Jose Milovich's office he showed me how Viewer windows will automatically pop-up when using VisIt on Windows 7 (Enterprise, 64-bit). From my observation, it seem to happen when he used the scroll wheel on his mouse. He said it also happens on his Windows box at home (not ... | non_process | viewer windows pop up automatically while in jose milovich s office he showed me how viewer windows will automatically pop up when using visit on windows enterprise bit from my observation it seem to happen when he used the scroll wheel on his mouse he said it also happens on his windows box at home not s... | 0 |

15,689 | 3,331,669,171 | IssuesEvent | 2015-11-11 16:45:55 | owncloud/core | https://api.github.com/repos/owncloud/core | closed | Use product name in "save to ownCloud" button | design enhancement needs info sev4-low sharing | currently when a theme is used that changes the product name the button to save a public share still says "ownCloud" | 1.0 | Use product name in "save to ownCloud" button - currently when a theme is used that changes the product name the button to save a public share still says "ownCloud" | non_process | use product name in save to owncloud button currently when a theme is used that changes the product name the button to save a public share still says owncloud | 0 |

10,317 | 13,159,912,372 | IssuesEvent | 2020-08-10 16:38:23 | cncf/cnf-conformance | https://api.github.com/repos/cncf/cnf-conformance | closed | [Process] Add "good first issues" to issues and documentation | 2 pts process sprint13 | ### [Process] Add "good first issues" to issues and documentation

Tasks:

- [x] Brainstorm on good first issues for a new contributor

- [x] Triage first set of issues

- [x] add new issues to Github

- [x] add "good first issues" label to issues

- [x] Add comment suggesting updates as needed for:

- [x] the ... | 1.0 | [Process] Add "good first issues" to issues and documentation - ### [Process] Add "good first issues" to issues and documentation

Tasks:

- [x] Brainstorm on good first issues for a new contributor

- [x] Triage first set of issues

- [x] add new issues to Github

- [x] add "good first issues" label to issues

-... | process | add good first issues to issues and documentation add good first issues to issues and documentation tasks brainstorm on good first issues for a new contributor triage first set of issues add new issues to github add good first issues label to issues add comment suggestin... | 1 |

19,764 | 26,139,113,375 | IssuesEvent | 2022-12-29 15:52:33 | MicrosoftDocs/windows-dev-docs | https://api.github.com/repos/MicrosoftDocs/windows-dev-docs | closed | Is there a code somewhere | uwp/prod processes-and-threading/tech Pri2 | Things on this page get confusing mostly with the names of each product. I'm not sure what this article seems to consider the service project to be. It is contained in an app to run in proc but I think the containing app is referred to as the service which it's not. Making it worse the article describes getting the pac... | 1.0 | Is there a code somewhere - Things on this page get confusing mostly with the names of each product. I'm not sure what this article seems to consider the service project to be. It is contained in an app to run in proc but I think the containing app is referred to as the service which it's not. Making it worse the artic... | process | is there a code somewhere things on this page get confusing mostly with the names of each product i m not sure what this article seems to consider the service project to be it is contained in an app to run in proc but i think the containing app is referred to as the service which it s not making it worse the artic... | 1 |

9,262 | 12,294,715,001 | IssuesEvent | 2020-05-11 01:15:56 | allinurl/goaccess | https://api.github.com/repos/allinurl/goaccess | closed | On-disk storage crash in GoAccess | bug duplicate log-processing on-disk waiting reply | ```

[root@sites ~]# goaccess -f /var/log/httpd/access_log -o /Sites/usdsites/bryan/report.html --real-time-html --log-format=COMBINED --ws-url=sites-mgmt.sandiego.edu --keep-db-files --load-from-disk

Unable to open fifo out: No such device or address.

Accepted: 168 10.80.20.81

Active: 1

CLOSE

Active: 0

Accepted:... | 1.0 | On-disk storage crash in GoAccess - ```

[root@sites ~]# goaccess -f /var/log/httpd/access_log -o /Sites/usdsites/bryan/report.html --real-time-html --log-format=COMBINED --ws-url=sites-mgmt.sandiego.edu --keep-db-files --load-from-disk

Unable to open fifo out: No such device or address.

Accepted: 168 10.80.20.81

Ac... | process | on disk storage crash in goaccess goaccess f var log httpd access log o sites usdsites bryan report html real time html log format combined ws url sites mgmt sandiego edu keep db files load from disk unable to open fifo out no such device or address accepted active close act... | 1 |

17,568 | 2,615,147,331 | IssuesEvent | 2015-03-01 06:23:34 | chrsmith/html5rocks | https://api.github.com/repos/chrsmith/html5rocks | closed | Including lovelywebapp on the front page | auto-migrated lovelywebapp Milestone-6 MovedFrom4 Priority-P1 Type-Feature | ```

What steps will reproduce the problem?

1.

2.

3.

What is the expected output? What do you see instead?

Please use labels and text to provide additional information.

```

Original issue reported on code.google.com by `v...@google.com` on 24 Sep 2010 at 5:29 | 1.0 | Including lovelywebapp on the front page - ```

What steps will reproduce the problem?

1.

2.

3.

What is the expected output? What do you see instead?

Please use labels and text to provide additional information.

```

Original issue reported on code.google.com by `v...@google.com` on 24 Sep 2010 at 5:29 | non_process | including lovelywebapp on the front page what steps will reproduce the problem what is the expected output what do you see instead please use labels and text to provide additional information original issue reported on code google com by v google com on sep at | 0 |

16,516 | 21,527,181,569 | IssuesEvent | 2022-04-28 19:43:17 | oasis-tcs/csaf | https://api.github.com/repos/oasis-tcs/csaf | opened | Register `signature` and `hash` as link rel types for ROLIE | csaf 2.0 external oasis_tc_process | In the ROLIE feed, we suggest to explicitly mention the signature files and hash files. We should register those link rel types. | 1.0 | Register `signature` and `hash` as link rel types for ROLIE - In the ROLIE feed, we suggest to explicitly mention the signature files and hash files. We should register those link rel types. | process | register signature and hash as link rel types for rolie in the rolie feed we suggest to explicitly mention the signature files and hash files we should register those link rel types | 1 |

20,195 | 26,768,721,412 | IssuesEvent | 2023-01-31 12:37:14 | corona-warn-app/cwa-wishlist | https://api.github.com/repos/corona-warn-app/cwa-wishlist | closed | Allow pasting of TeleTAN | enhancement mirrored-to-jira Test/Share process | ## Current implementation

When entering a Tele Tan it is not possible to past a TAN copied from somewhere.

## Suggested Enhancement

Use prompt to paste copied TeleTAN into entry field.

## Expected Benefits

This will reduce human error and increase convenience.

---

Internal Tracking ID: EXPOSUREAPP-... | 1.0 | Allow pasting of TeleTAN - ## Current implementation

When entering a Tele Tan it is not possible to past a TAN copied from somewhere.

## Suggested Enhancement

Use prompt to paste copied TeleTAN into entry field.

## Expected Benefits

This will reduce human error and increase convenience.

---

Interna... | process | allow pasting of teletan current implementation when entering a tele tan it is not possible to past a tan copied from somewhere suggested enhancement use prompt to paste copied teletan into entry field expected benefits this will reduce human error and increase convenience interna... | 1 |

2,742 | 5,635,499,708 | IssuesEvent | 2017-04-06 00:58:48 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Named aggregations in BigQuery can't have spaces/special characters | Bug Database/BigQuery Limitation Priority/P1 Query Processor | Trying to name an aggregation "hello world" results in this error:

> Invalid field name "hello world". Fields must contain only letters, numbers, and underscores, start with a letter or underscore, and be at most 128 characters long. | 1.0 | Named aggregations in BigQuery can't have spaces/special characters - Trying to name an aggregation "hello world" results in this error:

> Invalid field name "hello world". Fields must contain only letters, numbers, and underscores, start with a letter or underscore, and be at most 128 characters long. | process | named aggregations in bigquery can t have spaces special characters trying to name an aggregation hello world results in this error invalid field name hello world fields must contain only letters numbers and underscores start with a letter or underscore and be at most characters long | 1 |

2,347 | 5,154,920,832 | IssuesEvent | 2017-01-15 05:46:21 | rubberduck-vba/Rubberduck | https://api.github.com/repos/rubberduck-vba/Rubberduck | closed | Undeclared variables don't have annotations | bug parse-tree-processing | Undeclared variables are "declared" on-the-spot in the resolver's "identifier references" pass, so that when an identifier can't be resolved it's assumed to be a local variable, and so subsequent references to that identifier in the procedure scope will resolve to that newly created `Declaration`.

As a result, this ... | 1.0 | Undeclared variables don't have annotations - Undeclared variables are "declared" on-the-spot in the resolver's "identifier references" pass, so that when an identifier can't be resolved it's assumed to be a local variable, and so subsequent references to that identifier in the procedure scope will resolve to that newl... | process | undeclared variables don t have annotations undeclared variables are declared on the spot in the resolver s identifier references pass so that when an identifier can t be resolved it s assumed to be a local variable and so subsequent references to that identifier in the procedure scope will resolve to that newl... | 1 |

158,177 | 20,009,258,961 | IssuesEvent | 2022-02-01 02:55:48 | snowdensb/sonar-xanitizer | https://api.github.com/repos/snowdensb/sonar-xanitizer | opened | CVE-2022-23437 (High) detected in xercesImpl-2.11.0.jar | security vulnerability | ## CVE-2022-23437 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xercesImpl-2.11.0.jar</b></p></summary>

<p></p>

<p>Library home page: <a href="http://archive.apache.org/dist/drill/dr... | True | CVE-2022-23437 (High) detected in xercesImpl-2.11.0.jar - ## CVE-2022-23437 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xercesImpl-2.11.0.jar</b></p></summary>

<p></p>

<p>Library h... | non_process | cve high detected in xercesimpl jar cve high severity vulnerability vulnerable library xercesimpl jar library home page a href path to vulnerable library src test resources webgoat web inf lib xercesimpl jar dependency hierarchy x xercesimpl j... | 0 |

263,739 | 19,974,672,078 | IssuesEvent | 2022-01-29 00:15:47 | carbonplan/cmip6-downscaling | https://api.github.com/repos/carbonplan/cmip6-downscaling | opened | Use tutorial: How to override configuration variables | documentation | ### Intro

The `cmip6_downscaling` python package uses the [donfig](https://donfig.readthedocs.io/en/latest/) package for configuration. The config schema as well as defaults are laid out in [config.py](https://github.com/carbonplan/cmip6-downscaling/blob/main/cmip6_downscaling/config.py). These defaults can be overwri... | 1.0 | Use tutorial: How to override configuration variables - ### Intro

The `cmip6_downscaling` python package uses the [donfig](https://donfig.readthedocs.io/en/latest/) package for configuration. The config schema as well as defaults are laid out in [config.py](https://github.com/carbonplan/cmip6-downscaling/blob/main/cmi... | non_process | use tutorial how to override configuration variables intro the downscaling python package uses the package for configuration the config schema as well as defaults are laid out in these defaults can be overwritten by setting or using the yaml configuration donfig allows ya... | 0 |

181,493 | 14,045,106,434 | IssuesEvent | 2020-11-02 00:14:07 | Elle624/FitLit-Refactor | https://api.github.com/repos/Elle624/FitLit-Refactor | closed | Add Chai Spy for domUpdate | Priority 1 js testing | As a developer, I should be able to test if functions are being called in domUpdate object | 1.0 | Add Chai Spy for domUpdate - As a developer, I should be able to test if functions are being called in domUpdate object | non_process | add chai spy for domupdate as a developer i should be able to test if functions are being called in domupdate object | 0 |

344,561 | 24,818,839,568 | IssuesEvent | 2022-10-25 14:57:43 | joshuadavidthomas/dotfiles | https://api.github.com/repos/joshuadavidthomas/dotfiles | opened | Add better documentation about what the installation script does | documentation | Should include applications installed | 1.0 | Add better documentation about what the installation script does - Should include applications installed | non_process | add better documentation about what the installation script does should include applications installed | 0 |

247,522 | 20,984,019,236 | IssuesEvent | 2022-03-28 23:42:28 | rstudio/rstudio | https://api.github.com/repos/rstudio/rstudio | closed | git diff (Review Changes) window fails to load | bug electron test | For Electron. It looks like it's trying to query `window.desktopInfo`, but that object isn't populated in windows other than the main window. | 1.0 | git diff (Review Changes) window fails to load - For Electron. It looks like it's trying to query `window.desktopInfo`, but that object isn't populated in windows other than the main window. | non_process | git diff review changes window fails to load for electron it looks like it s trying to query window desktopinfo but that object isn t populated in windows other than the main window | 0 |

805,232 | 29,513,207,081 | IssuesEvent | 2023-06-04 07:04:50 | slynch8/10x | https://api.github.com/repos/slynch8/10x | opened | updating subscription requires a restart | bug Priority 2 | Potential minor bug if you designed 10x to pick up new subscription details right after updating them without the need to restart 10x:

My first cancelled subscription expired today and after starting 10x I got a window where I could put new subscription details.

I put the new subscription details and clicked ok.

Aft... | 1.0 | updating subscription requires a restart - Potential minor bug if you designed 10x to pick up new subscription details right after updating them without the need to restart 10x:

My first cancelled subscription expired today and after starting 10x I got a window where I could put new subscription details.

I put the ne... | non_process | updating subscription requires a restart potential minor bug if you designed to pick up new subscription details right after updating them without the need to restart my first cancelled subscription expired today and after starting i got a window where i could put new subscription details i put the new subs... | 0 |

21,649 | 30,083,638,391 | IssuesEvent | 2023-06-29 06:51:23 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | GO:0039644 'suppression by virus of host NF-kappaB cascade' missing parent | multi-species process | GO:0039644 'suppression by virus of host NF-kappaB cascade'

is not under modulation/perturbation of host signaling

<img width="780" alt="image" src="https://github.com/geneontology/go-ontology/assets/4782928/8e134ee0-1969-4964-8b7c-1f6d1761e7a2">

| 1.0 | GO:0039644 'suppression by virus of host NF-kappaB cascade' missing parent - GO:0039644 'suppression by virus of host NF-kappaB cascade'

is not under modulation/perturbation of host signaling

<img width="780" alt="image" src="https://github.com/geneontology/go-ontology/assets/4782928/8e134ee0-1969-4964-8b7c-1f6d17... | process | go suppression by virus of host nf kappab cascade missing parent go suppression by virus of host nf kappab cascade is not under modulation perturbation of host signaling img width alt image src | 1 |

39,840 | 5,252,293,475 | IssuesEvent | 2017-02-02 03:39:34 | semperfiwebdesign/all-in-one-seo-pack | https://api.github.com/repos/semperfiwebdesign/all-in-one-seo-pack | closed | Social Meta Module - Upload image is not working and is causing an error in console | Bug Needs Testing Priority - High | Reported here - https://wordpress.org/support/topic/upload-image-is-not-working/

AIOSEOP->Social Meta->Upload image is not working and is causing an error in console:

aioseop_module.js?ver=2.3.11.3:27 Uncaught TypeError: Cannot read property 'frames' of undefined

at HTMLInputElement.<anonymous> (aioseop_modu... | 1.0 | Social Meta Module - Upload image is not working and is causing an error in console - Reported here - https://wordpress.org/support/topic/upload-image-is-not-working/

AIOSEOP->Social Meta->Upload image is not working and is causing an error in console:

aioseop_module.js?ver=2.3.11.3:27 Uncaught TypeError: Cannot ... | non_process | social meta module upload image is not working and is causing an error in console reported here aioseop social meta upload image is not working and is causing an error in console aioseop module js ver uncaught typeerror cannot read property frames of undefined at htmlinputelement a... | 0 |

5,898 | 8,712,869,239 | IssuesEvent | 2018-12-06 23:52:58 | googleapis/nodejs-resource | https://api.github.com/repos/googleapis/nodejs-resource | closed | Resource is not a constructor error | type: process | Thanks for stopping by to let us know something could be better!

Please run down the following list and make sure you've tried the usual "quick

fixes":

- Search the issues already opened: https://github.com/googleapis/nodejs-resource/issues

- Search the issues on our "catch-all" repository: https://github.c... | 1.0 | Resource is not a constructor error - Thanks for stopping by to let us know something could be better!

Please run down the following list and make sure you've tried the usual "quick

fixes":

- Search the issues already opened: https://github.com/googleapis/nodejs-resource/issues

- Search the issues on our "c... | process | resource is not a constructor error thanks for stopping by to let us know something could be better please run down the following list and make sure you ve tried the usual quick fixes search the issues already opened search the issues on our catch all repository search stackoverflow ... | 1 |

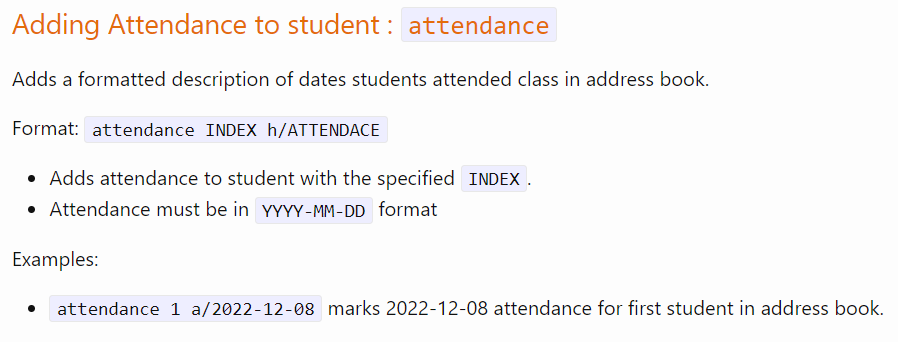

233,863 | 19,073,952,526 | IssuesEvent | 2021-11-27 12:18:51 | JukeBot-Org/JukeBot | https://api.github.com/repos/JukeBot-Org/JukeBot | opened | !queue fails to account for a queue with >=10 tracks | type: bug branch: testing priority: low | If there is more than 10 tracks in a guild's queue when invoking the `!guild` command, the queue view shifts over. As expected, of course, but not as intended.

| 1.0 | !queue fails to account for a queue with >=10 tracks - If there is more than 10 tracks in a guild's queue when invoking the `!guild` command, the queue view shifts over. As expected, of course, but not as intended.

detected in avandroid-10.0.0_r33 | Mend: dependency security vulnerability | ## CVE-2020-0113 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>avandroid-10.0.0_r33</b></p></summary>

<p>

<p>Library home page: <a href=https://android.googlesource.com/platform/fr... | True | CVE-2020-0113 (Medium) detected in avandroid-10.0.0_r33 - ## CVE-2020-0113 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>avandroid-10.0.0_r33</b></p></summary>

<p>

<p>Library home ... | non_process | cve medium detected in avandroid cve medium severity vulnerability vulnerable library avandroid library home page a href found in head commit a href found in base branch main vulnerable source files services camera libcameraservic... | 0 |

17,450 | 23,269,180,506 | IssuesEvent | 2022-08-04 20:42:17 | googleapis/google-cloud-ruby | https://api.github.com/repos/googleapis/google-cloud-ruby | closed | Solvable failures in the nightly samples tests | type: process samples | See https://source.cloud.google.com/results/invocations/7741f5cc-ca8e-4043-b484-4e7dae441929/log

* kms: appears to be due to the samples defining toplevel methods that are interfering with the clients. We should probably rename the sample methods (e.g. by appending `_sample` to them).

* monitoring: appears to be du... | 1.0 | Solvable failures in the nightly samples tests - See https://source.cloud.google.com/results/invocations/7741f5cc-ca8e-4043-b484-4e7dae441929/log

* kms: appears to be due to the samples defining toplevel methods that are interfering with the clients. We should probably rename the sample methods (e.g. by appending `_... | process | solvable failures in the nightly samples tests see kms appears to be due to the samples defining toplevel methods that are interfering with the clients we should probably rename the sample methods e g by appending sample to them monitoring appears to be due to changes in the responses we probably... | 1 |

215,507 | 16,675,840,311 | IssuesEvent | 2021-06-07 16:04:14 | sass/libsass | https://api.github.com/repos/sass/libsass | closed | url() should be able to contain an exclamation mark | Bug - Confirmed Compatibility - P3 Dart Backport Done Dev - Test Written | The [CSS spec](https://drafts.csswg.org/css-syntax-3/#url-token-diagram) says that a `url()` can contain `!`, so Sass should allow that too. Note that we don't allow *everything* that CSS does, because we want to be able to parse `url($foo)` as a URL taking a variable, but `!` is unlikely to be used in a SassScript exp... | 1.0 | url() should be able to contain an exclamation mark - The [CSS spec](https://drafts.csswg.org/css-syntax-3/#url-token-diagram) says that a `url()` can contain `!`, so Sass should allow that too. Note that we don't allow *everything* that CSS does, because we want to be able to parse `url($foo)` as a URL taking a variab... | non_process | url should be able to contain an exclamation mark the says that a url can contain so sass should allow that too note that we don t allow everything that css does because we want to be able to parse url foo as a url taking a variable but is unlikely to be used in a sassscript expression ... | 0 |

18,332 | 24,450,529,156 | IssuesEvent | 2022-10-06 22:23:20 | microsoft/react-native-windows | https://api.github.com/repos/microsoft/react-native-windows | closed | 0.70 Release Status | enhancement Area: Release Process | ## Checklist

**Before Preview**

- [x] Draft GitHub release notes from commit log (chiaramooney)

- [x] Promote canary build to preview using [wiki instructions](https://github.com/microsoft/react-native-windows/wiki/How-to-promote-a-release) (chiaramooney)

- [x] Push build to stable branch (chiaramooney)

- [x] ... | 1.0 | 0.70 Release Status - ## Checklist

**Before Preview**

- [x] Draft GitHub release notes from commit log (chiaramooney)

- [x] Promote canary build to preview using [wiki instructions](https://github.com/microsoft/react-native-windows/wiki/How-to-promote-a-release) (chiaramooney)

- [x] Push build to stable branch (c... | process | release status checklist before preview draft github release notes from commit log chiaramooney promote canary build to preview using chiaramooney push build to stable branch chiaramooney enable ci schedule for new branch of update with an entry for ci v... | 1 |

35,236 | 6,424,123,274 | IssuesEvent | 2017-08-09 12:51:02 | RobertLucian/GoPiGo3 | https://api.github.com/repos/RobertLucian/GoPiGo3 | opened | `DistanceSensor.read_mm` giving false readings | bug documentation | The `read_mm` method of the `DistanceSensor` class seems to be returning values up to `3000` instead of going up to `8000`.

Also, when the sensor is out of range, the method doesn't return `8190` as the documentation says. | 1.0 | `DistanceSensor.read_mm` giving false readings - The `read_mm` method of the `DistanceSensor` class seems to be returning values up to `3000` instead of going up to `8000`.

Also, when the sensor is out of range, the method doesn't return `8190` as the documentation says. | non_process | distancesensor read mm giving false readings the read mm method of the distancesensor class seems to be returning values up to instead of going up to also when the sensor is out of range the method doesn t return as the documentation says | 0 |

21,827 | 30,318,214,467 | IssuesEvent | 2023-07-10 17:07:09 | tdwg/dwc | https://api.github.com/repos/tdwg/dwc | closed | Move terms from Occurrence to MaterialSample | Term - change Class - Occurrence Class - MaterialSample Process - need templated change request non-normative Task Group - Material Sample | Was

https://code.google.com/p/darwincore/issues/detail?id=236 and

https://code.google.com/p/darwincore/issues/detail?id=239 and

https://code.google.com/p/darwincore/issues/detail?id=241

When the MaterialSample proposal was ratified, no consideration had been given to which existing terms might be organized in this n... | 1.0 | Move terms from Occurrence to MaterialSample - Was

https://code.google.com/p/darwincore/issues/detail?id=236 and

https://code.google.com/p/darwincore/issues/detail?id=239 and

https://code.google.com/p/darwincore/issues/detail?id=241

When the MaterialSample proposal was ratified, no consideration had been given to wh... | process | move terms from occurrence to materialsample was and and when the materialsample proposal was ratified no consideration had been given to which existing terms might be organized in this new class three terms preparations associatedsequences and disposition make sense to belong in this class term... | 1 |

148,405 | 11,851,639,125 | IssuesEvent | 2020-03-24 18:26:42 | hashgraph/hedera-mirror-node | https://api.github.com/repos/hashgraph/hedera-mirror-node | closed | REST API integration tests for all parameters | P2 enhancement rest test | **Problem**

We keep having regressions in the REST API because we don't have good test coverage. We have an integration test framework but it is not filled out with specs for all URLs and combinations of parameters.

In addition - the way we set up integration test data, execute integration tests, and compare results, i... | 1.0 | REST API integration tests for all parameters - **Problem**

We keep having regressions in the REST API because we don't have good test coverage. We have an integration test framework but it is not filled out with specs for all URLs and combinations of parameters.

In addition - the way we set up integration test data, e... | non_process | rest api integration tests for all parameters problem we keep having regressions in the rest api because we don t have good test coverage we have an integration test framework but it is not filled out with specs for all urls and combinations of parameters in addition the way we set up integration test data e... | 0 |

284,001 | 30,913,580,599 | IssuesEvent | 2023-08-05 02:18:11 | hshivhare67/kernel_v4.19.72 | https://api.github.com/repos/hshivhare67/kernel_v4.19.72 | reopened | CVE-2020-24490 (Medium) detected in linuxlinux-4.19.282 | Mend: dependency security vulnerability | ## CVE-2020-24490 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.282</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge... | True | CVE-2020-24490 (Medium) detected in linuxlinux-4.19.282 - ## CVE-2020-24490 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.282</b></p></summary>

<p>

<p>The Linux Ker... | non_process | cve medium detected in linuxlinux cve medium severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch master vulnerable source files net bluetoot... | 0 |

10,056 | 13,044,161,745 | IssuesEvent | 2020-07-29 03:47:25 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `SubDateDatetimeDecimal` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `SubDateDatetimeDecimal` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @sticnarf

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query... | 2.0 | UCP: Migrate scalar function `SubDateDatetimeDecimal` from TiDB -

## Description

Port the scalar function `SubDateDatetimeDecimal` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @sticnarf

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB... | process | ucp migrate scalar function subdatedatetimedecimal from tidb description port the scalar function subdatedatetimedecimal from tidb to coprocessor score mentor s sticnarf recommended skills rust programming learning materials already implemented expressions ported from tidb ... | 1 |

11,093 | 13,936,770,098 | IssuesEvent | 2020-10-22 13:21:52 | prisma/prisma-engines | https://api.github.com/repos/prisma/prisma-engines | opened | Write a script to extract the `UserFacingError` metadata for all user facing errors and return it as JSON | kind/docs process/candidate team/engines topic: error | The script should use the `UserFacingError` trait to get information about each user facing error and put that information in a JSON file for other tools to generate readable docs from it.

The only awkward bit is that we need a central list of all the errors in the user-facing-errors crate. Exhaustiveness can be enforc... | 1.0 | Write a script to extract the `UserFacingError` metadata for all user facing errors and return it as JSON - The script should use the `UserFacingError` trait to get information about each user facing error and put that information in a JSON file for other tools to generate readable docs from it.

The only awkward bit is... | process | write a script to extract the userfacingerror metadata for all user facing errors and return it as json the script should use the userfacingerror trait to get information about each user facing error and put that information in a json file for other tools to generate readable docs from it the only awkward bit is... | 1 |

1,868 | 4,697,449,435 | IssuesEvent | 2016-10-12 09:24:13 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | degrading performance after using child_process | child_process confirmed-bug lts-watch-v4.x os x performance | After running a child process ( using exec or spawn ) I have found that the performance of my node.js application decreases by a factor of 10. Below is a contrived example and output.

```

var exec = require('child_process').exec;

function runExpensiveOperation(times) {

while(times > 0) {

console.time('ex... | 1.0 | degrading performance after using child_process - After running a child process ( using exec or spawn ) I have found that the performance of my node.js application decreases by a factor of 10. Below is a contrived example and output.

```

var exec = require('child_process').exec;

function runExpensiveOperation(ti... | process | degrading performance after using child process after running a child process using exec or spawn i have found that the performance of my node js application decreases by a factor of below is a contrived example and output var exec require child process exec function runexpensiveoperation tim... | 1 |

37,841 | 18,792,476,583 | IssuesEvent | 2021-11-08 18:16:21 | rust-lang/rustup | https://api.github.com/repos/rust-lang/rustup | reopened | Modify `rustup-init.sh` to support retrying the download of `rustup-init` | enhancement help wanted E-easy performance E-mentor | <!-- Thanks for filing an 🙋 enhancement request 😄! -->

**Describe the problem you are trying to solve**

<!-- A clear and concise description of the problem this enhancement request is trying to solve. -->

I am a newbie trying to install rustup. It is having difficulty downloading required packages from st... | True | Modify `rustup-init.sh` to support retrying the download of `rustup-init` - <!-- Thanks for filing an 🙋 enhancement request 😄! -->

**Describe the problem you are trying to solve**

<!-- A clear and concise description of the problem this enhancement request is trying to solve. -->

I am a newbie trying to instal... | non_process | modify rustup init sh to support retrying the download of rustup init describe the problem you are trying to solve i am a newbie trying to install rustup it is having difficulties on downloading required packages from static rust lang org because the connection constantly drops randomly it tries ... | 0 |

47,581 | 25,081,770,866 | IssuesEvent | 2022-11-07 19:59:34 | benwbrum/fromthepage | https://api.github.com/repos/benwbrum/fromthepage | closed | user visit screen performance | performance | The admin-side screen displaying a list of user visits has terrible performance because we are fetching the deeds for each visit, but there is no index on `deeds.visit_id` so the DB has to do a full table scan, multiple times per screen. | True | user visit screen performance - The admin-side screen displaying a list of user visits has terrible performance because we are fetching the deeds for each visit, but there is no index on `deeds.visit_id` so the DB has to do a full table scan, multiple times per screen. | non_process | user visit screen performance the admin side screen displaying a list of user visits has terrible performance because we are fetching the deeds for each visit but there is no index on deeds visit id so the db has to do a full table scan multiple times per screen | 0 |

12,365 | 14,892,885,511 | IssuesEvent | 2021-01-21 03:59:30 | microsoft/react-native-windows | https://api.github.com/repos/microsoft/react-native-windows | closed | Automatic Release Note Creation Failed in 0.64-stable Branch | Area: Release Process bug | The task to create automated release notes failed for the first publish of the 0.64-stable branch. Beachball did correctly push Git tags, but the changelog creation script doesn't seem to locally find a new local tag without release notes. https://dev.azure.com/ms/react-native-windows/_build/results?buildId=125090&view... | 1.0 | Automatic Release Note Creation Failed in 0.64-stable Branch - The task to create automated release notes failed for the first publish of the 0.64-stable branch. Beachball did correctly push Git tags, but the changelog creation script doesn't seem to locally find a new local tag without release notes. https://dev.azure... | process | automatic release note creation failed in stable branch the task to create automated release notes failed for the first publish of the stable branch beachball did correctly push git tags but the changelog creation script doesn t seem to locally find a new local tag without release notes | 1 |