Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

72,500 | 8,744,661,889 | IssuesEvent | 2018-12-12 23:03:19 | openopps/OpenOppsTasks | https://api.github.com/repos/openopps/OpenOppsTasks | closed | Design for Editing location | Design | Location will be edited on Open Opportunities and will not come from USAJOBS.

Need design for allowing the Open Opps user to edit location in their Open Opps profile.

Date Needed: This will go into sprint 4 of the current release. Sprint planning will occur on 11/1 | 1.0 | Design for Editing location - Location will be edited on Open Opportunities and will not come from USAJOBS.

Need design for allowing the Open Opps user to edit location in their Open Opps profile.

Date Needed: This will go into sprint 4 of the current release. Sprint planning will occur on 11/1 | non_process | design for editing location location will be edited on open opportunities and will not come from usajobs need design for allowing the open opps user to edit location in their open opps profile date needed this will go into sprint of the current release sprint planning will occur on | 0 |

20,101 | 10,456,226,183 | IssuesEvent | 2019-09-20 00:08:27 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Mislabeled section | Pri1 cxp doc-bug security-fundamentals/subsvc security/svc triaged | I believe the section labeled "Server-side encryption using service-managed keys in customer-controlled hardware" should read "Server-side encryption using customer-managed keys in customer-controlled hardware". In the data encryption models section, there is no mention of supporting service-managed keys in customer-c... | True | Mislabeled section - I believe the section labeled "Server-side encryption using service-managed keys in customer-controlled hardware" should read "Server-side encryption using customer-managed keys in customer-controlled hardware". In the data encryption models section, there is no mention of supporting service-manag... | non_process | mislabeled section i believe the section labeled server side encryption using service managed keys in customer controlled hardware should read server side encryption using customer managed keys in customer controlled hardware in the data encryption models section there is no mention of supporting service manag... | 0 |

21,688 | 30,184,711,334 | IssuesEvent | 2023-07-04 11:16:00 | Seddryck/Tseesecake | https://api.github.com/repos/Seddryck/Tseesecake | opened | Processor to transform projections to slicers for aggregations | new-feature query-processor | If you're defining an `AggregationProjection`, any other projection should be part of the slicers. We should allow people to not define them as slicers, just as projection and copy them to slicers with a processor. | 1.0 | Processor to transform projections to slicers for aggregations - If you're defining an `AggregationProjection`, any other projection should be part of the slicers. We should allow people to not define them as slicers, just as projection and copy them to slicers with a processor. | process | processor to transform projections to slicers for aggregations if you re defining an aggregationprojection any other projection should be part of the slicers we should allow people to not define them as slicers just as projection and copy them to slicers with a processor | 1 |

14,907 | 18,293,393,528 | IssuesEvent | 2021-10-05 17:42:25 | microsoft/react-native-windows | https://api.github.com/repos/microsoft/react-native-windows | closed | Update react-native-platform-override for new nightly version pattern | must-have enhancement Area: Release Process Integration Follow-up | RN nightlies now encode more than just the commit hash into the prerelease segment. E.g. 0.0.0-2d2de744b-20210929-2246.

We need to update integration tooling to properly extract just the commit hash from this. | 1.0 | Update react-native-platform-override for new nightly version pattern - RN nightlies now encode more than just the commit hash into the prerelease segment. E.g. 0.0.0-2d2de744b-20210929-2246.

We need to update integration tooling to properly extract just the commit hash from this. | process | update react native platform override for new nightly version pattern rn nightlies now encode more than just the commit hash into the prerelease segment e g we need to update integration tooling to properly extract just the commit hash from this | 1 |

17,566 | 23,378,245,169 | IssuesEvent | 2022-08-11 06:50:33 | Battle-s/battle-school-backend | https://api.github.com/repos/Battle-s/battle-school-backend | closed | [FEAT] JPA (jpa.show-sql setting) | feature :computer: processing :hourglass_flowing_sand: | ## Description

> Add a setting to view the SQL that JPA generates.

## Related discussion

> I'll add the relevant setting and send a PR, so please review it.

 | 1.0 | [FEAT] JPA (jpa.show-sql setting) - ## Description

> Add a setting to view the SQL that JPA generates.

## Related discussion

> I'll add the relevant setting and send a PR, so please review it.

 | process | jpa jpa show sql setting description add a setting to view the sql that jpa generates related discussion i ll add the relevant setting and send a pr so please review it | 1 |

134,575 | 12,619,445,259 | IssuesEvent | 2020-06-13 00:39:08 | MYE2020-A2-GRUPO-04/Archivos-Boveda_de_seguridad | https://api.github.com/repos/MYE2020-A2-GRUPO-04/Archivos-Boveda_de_seguridad | closed | Project demonstration | documentation | Create a demonstration video or animation of the project in operation | 1.0 | Project demonstration - Create a demonstration video or animation of the project in operation | non_process | project demonstration create a demonstration video or animation of the project in operation | 0 |

19,174 | 25,282,120,965 | IssuesEvent | 2022-11-16 16:28:43 | ncbo/bioportal-project | https://api.github.com/repos/ncbo/bioportal-project | closed | AIO: latest submission failed to process - status "Uploaded, Error Rdf" | ontology processing problem | Received report from an end user on the support list that the latest version of their [AIO ontology](https://bioportal.bioontology.org/ontologies/AIO) failed to process (submission ID 4). The summary page on BioPortal is showing statuses of "Uploaded, Error Rdf".

Production log file indicates that the OWL API wasn't... | 1.0 | AIO: latest submission failed to process - status "Uploaded, Error Rdf" - Received report from an end user on the support list that the latest version of their [AIO ontology](https://bioportal.bioontology.org/ontologies/AIO) failed to process (submission ID 4). The summary page on BioPortal is showing statuses of "Uplo... | process | aio latest submission failed to process status uploaded error rdf received report from an end user on the support list that the latest version of their failed to process submission id the summary page on bioportal is showing statuses of uploaded error rdf production log file indicates that the o... | 1 |

35,678 | 4,695,799,363 | IssuesEvent | 2016-10-12 00:33:50 | WordPress/twentyseventeen | https://api.github.com/repos/WordPress/twentyseventeen | closed | Setting a baseline | design | As in Twenty Sixteen, I think it's helpful **setting a baseline**. Some elements are already aligned vertically, but there isn't consistency all over the theme. | 1.0 | Setting a baseline - As in Twenty Sixteen, I think it's helpful **setting a baseline**. Some elements are already aligned vertically, but there isn't consistency all over the theme. | non_process | setting a baseline as in twenty sixteen i think it s helpful setting a baseline some elements are already aligned vertically but there isn t consistency all over the theme | 0 |

12,189 | 4,385,788,468 | IssuesEvent | 2016-08-08 10:14:10 | AquariaOSE/Aquaria | https://api.github.com/repos/AquariaOSE/Aquaria | opened | Rework Cmake files | enhancement non-code | Current CMakeLists.txt is long and messy and not suitable for generating proper project files one can work with.

Should be split into smaller files, into proper sub-projects, and reference header files properly.

| 1.0 | Rework Cmake files - Current CMakeLists.txt is long and messy and not suitable for generating proper project files one can work with.

Should be split into smaller files, into proper sub-projects, and reference header files properly.

| non_process | rework cmake files current cmakelists txt is long and messy and not suitable for generating proper project files one can work with should be split into smaller files into proper sub projects and reference header files properly | 0 |

66,533 | 27,498,435,486 | IssuesEvent | 2023-03-05 12:22:42 | APIs-guru/openapi-directory | https://api.github.com/repos/APIs-guru/openapi-directory | closed | Add "Amadeus Airport On-Time Performance" API | add API needs-servicename | **Format**: OpenAPI 2.0 (fka Swagger)

**Official**: YES

**Url**: https://raw.githubusercontent.com/amadeus4dev/amadeus-open-api-specification/main/spec/json/AirportOnTimePerformance_v1_swagger_specification.json

**Name**: Amadeus Airport On-Time Performance

**Category**: transport

**Logo**: https://developers.amad... | 1.0 | Add "Amadeus Airport On-Time Performance" API - **Format**: OpenAPI 2.0 (fka Swagger)

**Official**: YES

**Url**: https://raw.githubusercontent.com/amadeus4dev/amadeus-open-api-specification/main/spec/json/AirportOnTimePerformance_v1_swagger_specification.json

**Name**: Amadeus Airport On-Time Performance

**Category... | non_process | add amadeus airport on time performance api format openapi fka swagger official yes url name amadeus airport on time performance category transport logo | 0 |

777,963 | 27,299,160,133 | IssuesEvent | 2023-02-23 23:27:46 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | Should handle incorrect decimal values specified for custom networks | priority/P3 QA/Yes release-notes/exclude closed/not-actionable feature/web3/wallet OS/Desktop front-end-change | <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REOPENED AFTER SUFFICIENT INFO IS PROV... | 1.0 | Should handle incorrect decimal values specified for custom networks - <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET T... | non_process | should handle incorrect decimal values specified for custom networks have you searched for similar issues before submitting this issue please check the open issues and add a note before logging a new issue please use the template below to provide information about the issue insufficient info will get t... | 0 |

406,542 | 11,899,423,534 | IssuesEvent | 2020-03-30 08:59:06 | osmontrouge/caresteouvert | https://api.github.com/repos/osmontrouge/caresteouvert | closed | Display bug when no layer is selected | bug priority: medium | Nothing should be displayed when no layer is selected, which is not the case:

https://www.caresteouvert.fr/police=false&pharmacy=false&post_office=false&food=false&bakery=false&shop=false&bank=false&fuel=false&funeral_directors=false@-17.545042,-149.575043,14.90 | 1.0 | Display bug when no layer is selected - Nothing should be displayed when no layer is selected, which is not the case:

https://www.caresteouvert.fr/police=false&pharmacy=false&post_office=false&food=false&bakery=false&shop=false&bank=false&fuel=false&funeral_directors=false@-17.5... | non_process | display bug when no layer is selected nothing should be displayed when no layer is selected which is not the case | 0 |

96,862 | 16,168,287,934 | IssuesEvent | 2021-05-01 23:51:33 | gabriel-milan/uptime-bot | https://api.github.com/repos/gabriel-milan/uptime-bot | opened | CVE-2021-27290 (High) detected in ssri-7.1.0.tgz, ssri-6.0.1.tgz | security vulnerability | ## CVE-2021-27290 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>ssri-7.1.0.tgz</b>, <b>ssri-6.0.1.tgz</b></p></summary>

<p>

<details><summary><b>ssri-7.1.0.tgz</b></p></summary>

<... | True | CVE-2021-27290 (High) detected in ssri-7.1.0.tgz, ssri-6.0.1.tgz - ## CVE-2021-27290 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>ssri-7.1.0.tgz</b>, <b>ssri-6.0.1.tgz</b></p></sum... | non_process | cve high detected in ssri tgz ssri tgz cve high severity vulnerability vulnerable libraries ssri tgz ssri tgz ssri tgz standard subresource integrity library parses serializes generates and verifies integrity metadata according to the sri spe... | 0 |

3,006 | 6,007,772,524 | IssuesEvent | 2017-06-06 05:08:39 | TEAMMATES/teammates | https://api.github.com/repos/TEAMMATES/teammates | closed | Development Workflow: Explicitly state that contributors should not create multiple PRs for a single issue | a-Docs a-Process c.DevOps d.FirstTimers | Contributors should not be opening multiple PRs for the same issue (e.g. Closing a PR and creating a new one). Doing this makes it difficult to keep track of past reviews/comments on the PR. We should consider stating this explicitly in our development workflow document. | 1.0 | Development Workflow: Explicitly state that contributors should not create multiple PRs for a single issue - Contributors should not be opening multiple PRs for the same issue (e.g. Closing a PR and creating a new one). Doing this makes it difficult to keep track of past reviews/comments on the PR. We should consider s... | process | development workflow explicitly state that contributors should not create multiple prs for a single issue contributors should not be opening multiple prs for the same issue e g closing a pr and creating a new one doing this makes it difficult to keep track of past reviews comments on the pr we should consider s... | 1 |

16,641 | 6,258,775,103 | IssuesEvent | 2017-07-14 16:22:28 | gap-system/gap | https://api.github.com/repos/gap-system/gap | closed | Unconditionally using compiler warning flags causes gcc 4.3.4 compile failure | build system | On a supercomputer I am allowed to use compilation stops:

````

DEBRECEN[service0] ~/bin/gap (0)$ make

C src/ariths.c => obj/ariths.lo

cc1: error: unrecognized command line option "-Wno-implicit-fallthrough"

cc1: error: unrecognized command line option "-Wno-unknown-warning-option"

make: *** [obj/ariths.l... | 1.0 | Unconditionally using compiler warning flags causes gcc 4.3.4 compile failure - On a supercomputer I am allowed to use compilation stops:

````

DEBRECEN[service0] ~/bin/gap (0)$ make

C src/ariths.c => obj/ariths.lo

cc1: error: unrecognized command line option "-Wno-implicit-fallthrough"

cc1: error: unrecog... | non_process | unconditionally using compiler warning flags causes gcc compile failure on a supercomputer i am allowed to use compilation stops debrecen bin gap make c src ariths c obj ariths lo error unrecognized command line option wno implicit fallthrough error unrecognized command... | 0 |

1,880 | 4,711,655,398 | IssuesEvent | 2016-10-14 14:28:53 | CERNDocumentServer/cds | https://api.github.com/repos/CERNDocumentServer/cds | opened | webhooks: AVC tasks refactoring | avc_processing in progress | Update code structure based on newest Invenio-Webhooks approach. | 1.0 | webhooks: AVC tasks refactoring - Update code structure based on newest Invenio-Webhooks approach. | process | webhooks avc tasks refactoring update code structure based on newest invenio webhooks approach | 1 |

98,696 | 30,055,922,218 | IssuesEvent | 2023-06-28 06:47:04 | apache/camel-k | https://api.github.com/repos/apache/camel-k | closed | Use maven distribution available in the operator image | kind/bug area/builder | During the docker build of the operator image, the maven distribution is downloaded inside the folder `/usr/share/maven/wrapper/dists/` (using maven wrapper).

When the builder is generated the content of the folder is never added to the builder image (see `https://github.com/apache/camel-k/blob/main/pkg/controller/c... | 1.0 | Use maven distribution available in the operator image - During the docker build of the operator image, the maven distribution is downloaded inside the folder `/usr/share/maven/wrapper/dists/` (using maven wrapper).

When the builder is generated the content of the folder is never added to the builder image (see `htt... | non_process | use maven distribution available in the operator image during the docker build of the operator image the maven distribution is downloaded inside the folder usr share maven wrapper dists using maven wrapper when the builder is generated the content of the folder is never added to the builder image see c... | 0 |

110,224 | 4,423,766,184 | IssuesEvent | 2016-08-16 09:48:53 | Optiboot/optiboot | https://api.github.com/repos/Optiboot/optiboot | opened | Better error handling for bad LED values? | Maintainability Priority-Low Type-Enhancement | It would be nice if the error message for a bad LED value distinguished between a bad setting and LED being "undefined" (as can happen when adding a new CPU type, if you forget to modify pin_defs.h)

(also, it would be nice if there was more internal documentation on how the LED assignments actually work!)

| 1.0 | Better error handling for bad LED values? - It would be nice if the error message for a bad LED value distinguished between a bad setting and LED being "undefined" (as can happen when adding a new CPU type, if you forget to modify pin_defs.h)

(also, it would be nice if there was more internal documentation on how th... | non_process | better error handling for bad led values it would be nice if the error message for a bad led value distinguished between a bad setting and led being undefined as can happen when adding a new cpu type if you forget to modify pin defs h also it would be nice if there was more internal documentation on how th... | 0 |

213,254 | 16,507,538,838 | IssuesEvent | 2021-05-25 21:23:18 | ansible/awx | https://api.github.com/repos/ansible/awx | closed | [ui_next] Favicon is missing | component:ui priority:high state:needs_test type:bug | ##### ISSUE TYPE

- Bug Report

##### SUMMARY

No favicon!

##### ENVIRONMENT

* AWX version: b338da40c534d1b2809b5426b61f13eff7770434

* AWX install method: `npm install`

##### ADDITIONAL INFORMATION

217708.69 tps ( 61.1 allocs/op, 13.1 tasks/op, 43542 insns/op, 0 errors)

219495.02 tps ( 61.1 allocs/op, 13.1 tasks/op, 43538 insns/op, 0 errors)

2... | True | performance regression after extending statement scope guard - Bad commit: c42a91ec72

perf-simple-query --smp 1

before:

216489.88 tps ( 61.1 allocs/op, 13.1 tasks/op, 43558 insns/op, 0 errors)

217708.69 tps ( 61.1 allocs/op, 13.1 tasks/op, 43542 insns/op, 0 errors)

219495.02 tps ( 61.1 a... | non_process | performance regression after extending statement scope guard bad commit perf simple query smp before tps allocs op tasks op insns op errors tps allocs op tasks op insns op errors tps allocs op tasks op insns op ... | 0 |

11,451 | 4,227,187,211 | IssuesEvent | 2016-07-03 00:59:46 | btkelly/gnag | https://api.github.com/repos/btkelly/gnag | opened | Improve log output when violation detector creation fails due to invalid config | code difficulty-easy enhancement | Example:

```

:app:gnagCheck FAILED

FAILURE: Build failed with an exception.

* What went wrong:

Execution failed for task ':app:gnagCheck'.

> Unable to create a Checker: configLocation {/Users/stkent/dev/detroit_labs/apps/herbie-android/app/config/checkstyle.xml}, classpath {null}.

* Try:

Run with --info... | 1.0 | Improve log output when violation detector creation fails due to invalid config - Example:

```

:app:gnagCheck FAILED

FAILURE: Build failed with an exception.

* What went wrong:

Execution failed for task ':app:gnagCheck'.

> Unable to create a Checker: configLocation {/Users/stkent/dev/detroit_labs/apps/herbi... | non_process | improve log output when violation detector creation fails due to invalid config example app gnagcheck failed failure build failed with an exception what went wrong execution failed for task app gnagcheck unable to create a checker configlocation users stkent dev detroit labs apps herbi... | 0 |

278,163 | 30,702,219,930 | IssuesEvent | 2023-07-27 01:12:28 | Nivaskumark/kernel_v4.1.15 | https://api.github.com/repos/Nivaskumark/kernel_v4.1.15 | closed | CVE-2016-3135 (High) detected in linuxlinux-4.6 - autoclosed | Mend: dependency security vulnerability | ## CVE-2016-3135 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.6</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kernel.... | True | CVE-2016-3135 (High) detected in linuxlinux-4.6 - autoclosed - ## CVE-2016-3135 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.6</b></p></summary>

<p>

<p>The Linux Kernel... | non_process | cve high detected in linuxlinux autoclosed cve high severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch master vulnerable source files net net... | 0 |

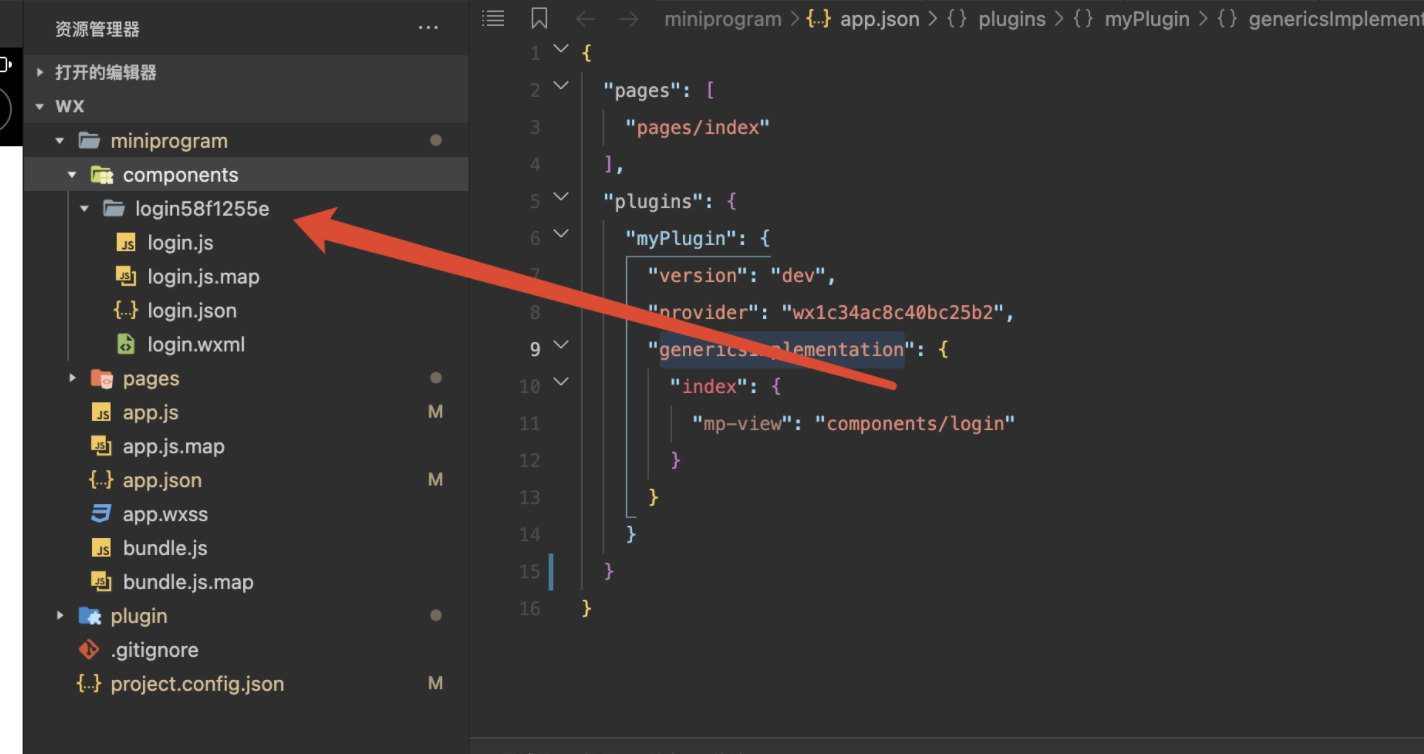

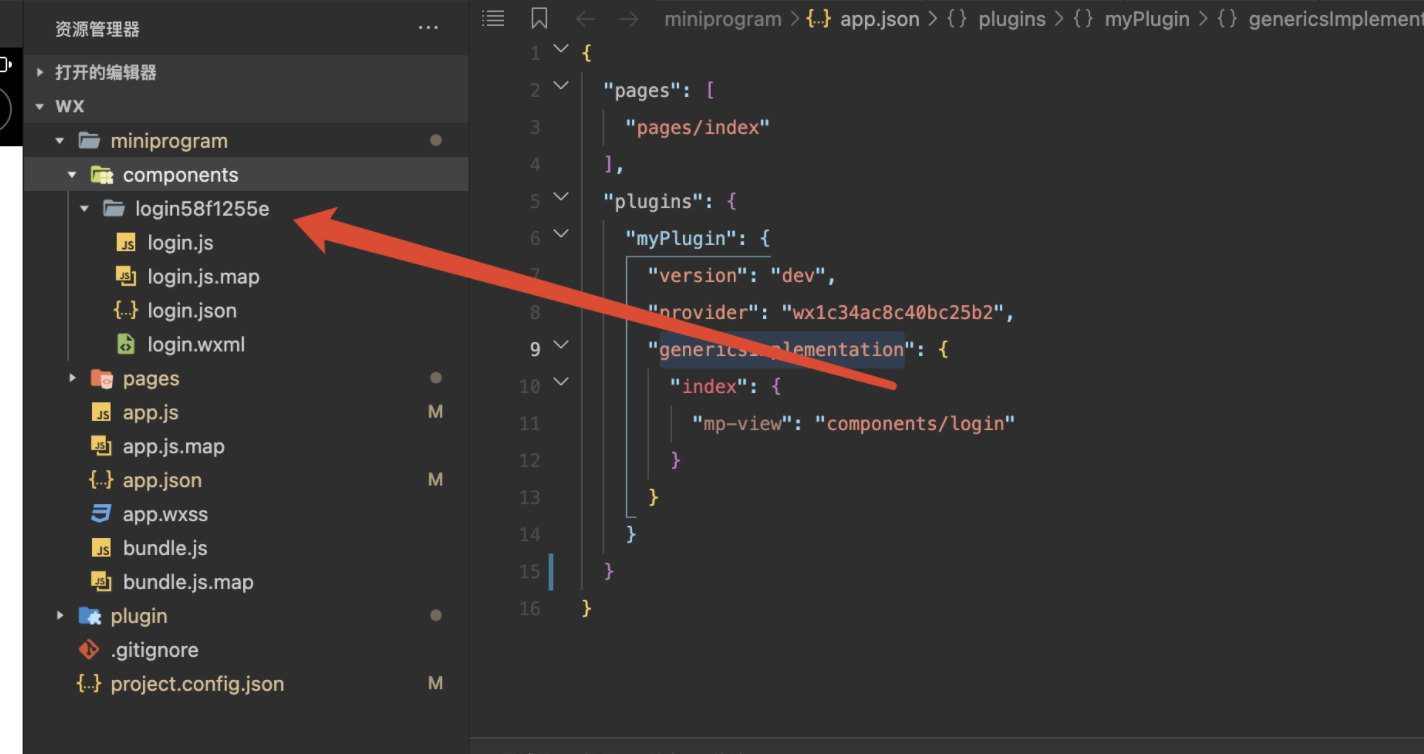

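14,336 | 17,367,136,918 | IssuesEvent | 2021-07-30 08:53:41 | didi/mpx | https://api.github.com/repos/didi/mpx | closed | [Bug report] Referencing a main-package component from a mini program plugin reports that it cannot be found | processing | **Problem description**

1. Conditions that trigger the problem

Referencing a main-package component from a WeChat mini program plugin reports that the file cannot be found

2. Expected behavior

Files from the main package can be referenced in the mini program plugin

3. Actual behavior

The page shows an error, as in the screenshot below: the path in app.json does not match the path after bundling

**Minimal reproduction demo**

For the GitHub address, please [click here](https://github.com/nianxiongd... | 1.0 | [Bug report] Referencing a main-package component from a mini program plugin reports that it cannot be found - **Problem description**

1. Conditions that trigger the problem

Referencing a main-package component from a WeChat mini program plugin reports that the file cannot be found

2. Expected behavior

Files from the main package can be referenced in the mini program plugin

3. Actual behavior

The page shows an error, as in the screenshot below: the path in app.json does not match the path after bundling

**Minimal reproduction demo**

For the GitHub address, please... | process | referencing a main package component from a mini program plugin reports that it cannot be found problem description conditions that trigger the problem referencing a main package component from a wechat mini program plugin reports that the file cannot be found expected behavior files from the main package can be referenced in the mini program plugin actual behavior the page shows an error as in the screenshot below the path in app json does not match the path after bundling minimal reproduction demo for the github address please | 1 |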

152,870 | 5,871,407,452 | IssuesEvent | 2017-05-15 08:38:11 | salesagility/SuiteCRM | https://api.github.com/repos/salesagility/SuiteCRM | closed | Project Templates: Project from Project Title - language | bug Fix Proposed Low Priority Resolved: Next Release | <!--- Provide a general summary of the issue in the **Title** above -->

<!--- Before you open an issue, please check if a similar issue already exists or has been closed before. --->

#### Issue

<!--- Provide a more detailed introduction to the issue itself, and why you consider it to be a bug -->

Punctuation chan... | 1.0 | Project Templates: Project from Project Title - language - <!--- Provide a general summary of the issue in the **Title** above -->

<!--- Before you open an issue, please check if a similar issue already exists or has been closed before. --->

#### Issue

<!--- Provide a more detailed introduction to the issue itself... | non_process | project templates project from project title language issue punctuation change required to modules am projecttemplates language en us lang php see it would make the sentence exactly the same as in modules am tasktemplates language en us lang php located here this will help trans... | 0 |

6,676 | 9,792,662,942 | IssuesEvent | 2019-06-10 17:58:47 | googleapis/google-cloud-cpp-spanner | https://api.github.com/repos/googleapis/google-cloud-cpp-spanner | closed | Add a CI build using MemorySanitizer. | type: process | A build with MemorySanitizer will discover problems that neither AddressSanitizer nor UndefinedBehaviorSanitizer cover. But it requires compiling `libc++` from source and I do not know how to do that.

| 1.0 | Add a CI build using MemorySanitizer. - A build with MemorySanitizer will discover problems that neither AddressSanitizer nor UndefinedBehaviorSanitizer cover. But it requires compiling `libc++` from source and I do not know how to do that.

| process | add a ci build using memorysanitizer a build with memorysanitizer will discover problems that neither addresssanitizer nor undefinedbehaviorsanitizer cover but it requires compiling libc from source and i do not know how to do that | 1 |

1,908 | 4,734,069,829 | IssuesEvent | 2016-10-19 13:16:21 | AllenFang/react-bootstrap-table | https://api.github.com/repos/AllenFang/react-bootstrap-table | reopened | Do you know why filters do not work with dynamically generated columns? | inprocess | Do you know why filters do not work with dynamically generated columns? I followed the steps as in https://github.com/AllenFang/react-bootstrap-table/issues/450 , the dynamic columns work great.

Its only filters that do not work(Example numeric filter). It displays the filter controls , but does not do the filter... | 1.0 | Do you know why filters do not work with dynamically generated columns? - Do you know why filters do not work with dynamically generated columns? I followed the steps as in https://github.com/AllenFang/react-bootstrap-table/issues/450 , the dynamic columns work great.

Its only filters that do not work(Example num... | process | do you know why filters do not work with dynamically generated columns do you know why filters do not work with dynamically generated columns i followed the steps as in the dynamic columns work great its only filters that do not work example numeric filter it displays the filter controls but does not... | 1 |

5,633 | 8,484,729,530 | IssuesEvent | 2018-10-26 04:19:25 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | spawnSync segfaults when given throwing toString | child_process | <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the template below as you're able.

Version: output of `node -v`

P... | 1.0 | spawnSync segfaults when given throwing toString - <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the template below... | process | spawnsync segfaults when given throwing tostring thank you for reporting an issue this issue tracker is for bugs and issues found within node js core if you require more general support please file an issue on our help repo please fill in as much of the template below as you re able version ... | 1 |

2,588 | 5,345,496,274 | IssuesEvent | 2017-02-17 17:05:41 | sysown/proxysql | https://api.github.com/repos/sysown/proxysql | closed | "use <schema>" query missing in stats_mysql_query_digest | bug CONNECTION POOL QUERY PROCESSOR | I wrote a web interface that creates rules from all queries listed in stats_mysql_query_digest.

I've just discovered that all kind of queries listed, but not the "use table_name" query - therefor it was missing in my auto created rules. Since I create a deny all at the end it run into that rule and got denied.

Creat... | 1.0 | "use <schema>" query missing in stats_mysql_query_digest - I wrote a web interface that creates rules from all queries listed in stats_mysql_query_digest.

I've just discovered that all kind of queries listed, but not the "use table_name" query - therefor it was missing in my auto created rules. Since I create a deny ... | process | use query missing in stats mysql query digest i wrote a web interface that creates rules from all queries listed in stats mysql query digest i ve just discovered that all kind of queries listed but not the use table name query therefor it was missing in my auto created rules since i create a deny all at ... | 1 |

1,484 | 2,514,743,684 | IssuesEvent | 2015-01-15 14:09:36 | olga-jane/prizm | https://api.github.com/repos/olga-jane/prizm | closed | can the spool length take negative value | bug bug - crash/performance/leak Coding Construction HIGH priority Incoming inspection | Scenario:

1. Open "Катушки"

2. Click "Редактировать"

3. Click "Ok" or "Close" in error dialog

4. Set the value of spool length in -1

5. Click "Save" button

Result:

An unhandled exception of type 'System.NullReferenceException' occurred in Prizm.Data.dll

Additional information: Object reference not set to an i... | 1.0 | can the spool length take negative value - Scenario:

1. Open "Катушки"

2. Click "Редактировать"

3. Click "Ok" or "Close" in error dialog

4. Set the value of spool length in -1

5. Click "Save" button

Result:

An unhandled exception of type 'System.NullReferenceException' occurred in Prizm.Data.dll

Additional in... | non_process | can the spool length take negative value scenario open катушки click редактировать click ok or close in error dialog set the value of spool length in click save button result an unhandled exception of type system nullreferenceexception occurred in prizm data dll additional in... | 0 |

154,603 | 24,326,961,671 | IssuesEvent | 2022-09-30 15:34:57 | carbon-design-system/carbon-for-ibm-dotcom | https://api.github.com/repos/carbon-design-system/carbon-for-ibm-dotcom | closed | [Figma] Card section carousel | design design kit stretch project | Add the Card section carousel component to Figma kit. Remember we are only building variants for `xlg`, `md`, and `sm`.

[Design spec](https://ibm.ent.box.com/folder/126468155543?s=wyj5uboppri7nc3gn4v1f7ft80qc5eir)

[Website](https://www.ibm.com/standards/carbon/components/card-section-carousel)

**Must haves:**

- [ ] ... | 2.0 | [Figma] Card section carousel - Add the Card section carousel component to Figma kit. Remember we are only building variants for `xlg`, `md`, and `sm`.

[Design spec](https://ibm.ent.box.com/folder/126468155543?s=wyj5uboppri7nc3gn4v1f7ft80qc5eir)

[Website](https://www.ibm.com/standards/carbon/components/card-section-c... | non_process | card section carousel add the card section carousel component to figma kit remember we are only building variants for xlg md and sm must haves use card basic in the component use the carousel control from carousel use link with icon resources com compo... | 0 |

22,506 | 31,558,942,349 | IssuesEvent | 2023-09-03 02:06:28 | tdwg/hc | https://api.github.com/repos/tdwg/hc | opened | New Term - siteNestingDescription | Term - add normative Process - under public review Class - Event | ## New term

* Submitter: Humboldt Extension Task Group

* Efficacy Justification (why is this term necessary?): Part of a package of terms in support of biological inventory data.

* Demand Justification (name at least two organizations that independently need this term): The Humboldt Extension Task Group proposing ... | 1.0 | New Term - siteNestingDescription - ## New term

* Submitter: Humboldt Extension Task Group

* Efficacy Justification (why is this term necessary?): Part of a package of terms in support of biological inventory data.

* Demand Justification (name at least two organizations that independently need this term): The Humb... | process | new term sitenestingdescription new term submitter humboldt extension task group efficacy justification why is this term necessary part of a package of terms in support of biological inventory data demand justification name at least two organizations that independently need this term the humb... | 1 |

397,831 | 27,179,153,965 | IssuesEvent | 2023-02-18 11:56:15 | swarnarkadas/ichat_app | https://api.github.com/repos/swarnarkadas/ichat_app | closed | Make User interface attractive | documentation enhancement good first issue JWOC Easy | Upgrade the user interface & make it as attractive as possible. You can use any CSS framework **except Bootstrap**. | 1.0 | Make User interface attractive - Upgrade the user interface & make it as attractive as possible. You can use any CSS framework **except Bootstrap**. | non_process | make user interface attractive upgrade the user interface make it as attractive as possible you can use any css framework except bootstrap | 0 |

424,561 | 29,144,656,677 | IssuesEvent | 2023-05-18 01:01:20 | jrsteensen/OpenHornet | https://api.github.com/repos/jrsteensen/OpenHornet | opened | Generate MFG Files: OH4A7A1-1 - ASSY, COMM PANEL | Type: Documentation "Category: MCAD Priority: Normal" | Generate the manufacturing files for Generate MFG Files: OH4A7A1-1 - ASSY, COMM PANEL.

__Check off each item in issue as you complete it.__

### File generation

- [OH Wiki HOWTO Link](https://github.com/jrsteensen/OpenHornet/wiki/HOWTO:-Generating-Fusion360-Manufacturing-Files)

- [ ] Generate SVG files (if required.)... | 1.0 | Generate MFG Files: OH4A7A1-1 - ASSY, COMM PANEL - Generate the manufacturing files for Generate MFG Files: OH4A7A1-1 - ASSY, COMM PANEL.

__Check off each item in issue as you complete it.__

### File generation

- [OH Wiki HOWTO Link](https://github.com/jrsteensen/OpenHornet/wiki/HOWTO:-Generating-Fusion360-Manufactu... | non_process | generate mfg files assy comm panel generate the manufacturing files for generate mfg files assy comm panel check off each item in issue as you complete it file generation generate svg files if required generate files if required generate step files if required ... | 0 |

81,268 | 15,610,851,707 | IssuesEvent | 2021-03-19 13:40:50 | AlexRogalskiy/stylegrams | https://api.github.com/repos/AlexRogalskiy/stylegrams | opened | CVE-2021-27290 (High) detected in ssri-6.0.1.tgz | security vulnerability | ## CVE-2021-27290 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ssri-6.0.1.tgz</b></p></summary>

<p>Standard Subresource Integrity library -- parses, serializes, generates, and veri... | True | CVE-2021-27290 (High) detected in ssri-6.0.1.tgz - ## CVE-2021-27290 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ssri-6.0.1.tgz</b></p></summary>

<p>Standard Subresource Integrity ... | non_process | cve high detected in ssri tgz cve high severity vulnerability vulnerable library ssri tgz standard subresource integrity library parses serializes generates and verifies integrity metadata according to the sri spec library home page a href path to dependency file... | 0 |

104,880 | 9,011,965,830 | IssuesEvent | 2019-02-05 15:51:10 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | opened | Failing test in master-blocking: Dynamic PV/Inline-volume subPath should support creating multiple subpath from same volumes | kind/failing-test | <!-- Please only use this template for submitting reports about failing tests in Kubernetes CI jobs -->

**Which jobs are failing**:

- https://testgrid.k8s.io/sig-release-master-blocking#gce-cos-master-slow

**Which test(s) are failing**:

`subPath should support creating multiple subpath from same volumes`, for b... | 1.0 | Failing test in master-blocking: Dynamic PV/Inline-volume subPath should support creating multiple subpath from same volumes - <!-- Please only use this template for submitting reports about failing tests in Kubernetes CI jobs -->

**Which jobs are failing**:

- https://testgrid.k8s.io/sig-release-master-blocking#gce... | non_process | failing test in master blocking dynamic pv inline volume subpath should support creating multiple subpath from same volumes which jobs are failing which test s are failing subpath should support creating multiple subpath from same volumes for both in tree and csi volumes and multiple dri... | 0 |

19,168 | 25,268,342,022 | IssuesEvent | 2022-11-16 07:15:37 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | Test CockroachDB 22.2 in prisma/prisma and prisma/prisma-engines | process/candidate topic: internal kind/tech team/schema team/client | Prompted by a notification about SCRAM authentication becoming the default (https://prisma-company.slack.com/files/USLACKBOT/F04AZJYMAHK/scram_password_authentication_is_now_the_default_in_cockroachdb). | 1.0 | Test CockroachDB 22.2 in prisma/prisma and prisma/prisma-engines - Prompted by a notification about SCRAM authentication becoming the default (https://prisma-company.slack.com/files/USLACKBOT/F04AZJYMAHK/scram_password_authentication_is_now_the_default_in_cockroachdb). | process | test cockroachdb in prisma prisma and prisma prisma engines prompted by a notification about scram authentication becoming the default | 1 |

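6,513 | 9,599,015,306 | IssuesEvent | 2019-05-10 04:29:23 | furukawa-laboratory/workout_report_2019 | https://api.github.com/repos/furukawa-laboratory/workout_report_2019 | closed | [Beginner / Regular] Learn basic preprocessing with sklearn | preprocessing training | ## Learn basic preprocessing with sklearn

Here we follow the site "Data Preprocessing, Analysis & Visualization – Python Machine Learning" to learn the basics of preprocessing. Specifically, we use Python's sklearn library to implement basic operations such as rescaling and normalizing data by retyping the code by hand, and check how it behaves. We also use PCA to visualize datasets such as iris that ship with sklearn, as well as the UCI repository.

## Work period

04/23 to 04/25 (planned)

## Skills to acquire

- Using the sklearn and numpy libraries... | 1.0 | [Beginner / Regular] Learn basic preprocessing with sklearn - ## Learn basic preprocessing with sklearn

Here we follow the site "Data Preprocessing, Analysis & Visualization – Python Machine Learning" to learn the basics of preprocessing. Specifically, we use Python's sklearn library to implement basic operations such as rescaling and normalizing data by retyping the code by hand, and check how it behaves. We also use PCA to visualize datasets such as iris that ship with sklearn, as well as the UCI repository.

## Work period

04/23 to 04/25 (planned)

##... | process | learn basic preprocessing with sklearn learn basic preprocessing with sklearn here we follow the site data preprocessing analysis visualization – python machine learning to learn the basics of preprocessing specifically we use python s sklearn library to implement basic operations such as rescaling and normalizing data by retyping the code by hand and check how it behaves we also use pca to visualize datasets such as iris that ship with sklearn as well as the uci repository work period planned skills to acquire ... | 1 |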

4,314 | 7,203,329,737 | IssuesEvent | 2018-02-06 08:49:19 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | [FEATURE][processing] Extract by attribute can extract for null/notnull values | Automatic new feature Processing | Original commit: https://github.com/qgis/QGIS/commit/24ffa15ecf1aa20ef389fad1f0aaf6f235b712da by nyalldawson

Adds support for filtering where an attribute value is null or not null | 1.0 | [FEATURE][processing] Extract by attribute can extract for null/notnull values - Original commit: https://github.com/qgis/QGIS/commit/24ffa15ecf1aa20ef389fad1f0aaf6f235b712da by nyalldawson

Adds support for filtering where an attribute value is null or not null | process | extract by attribute can extract for null notnull values original commit by nyalldawson adds support for filtering where an attribute value is null or not null | 1 |

11,248 | 14,016,238,384 | IssuesEvent | 2020-10-29 14:17:49 | googleapis/java-bigtable-hbase | https://api.github.com/repos/googleapis/java-bigtable-hbase | closed | chore: Tasks to be performed before next release | api: bigtable type: process | As per #2467, We have marked snapshot operation as unsupported with this library. We need to make sure of the following before next release cut:

- [ ] add backup support

- [ ] add a breaking change note in the release notes | 1.0 | chore: Tasks to be performed before next release - As per #2467, We have marked snapshot operation as unsupported with this library. We need to make sure of the following before next release cut:

- [ ] add backup support

- [ ] add a breaking change note in the release notes | process | chore tasks to be performed before next release as per we have marked snapshot operation as unsupported with this library we need to make sure of the following before next release cut add backup support add a breaking change note in the release notes | 1 |

306,747 | 26,492,476,958 | IssuesEvent | 2023-01-18 00:35:35 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | reopened | Fix jax_numpy_statistical.test_jax_numpy_var | JAX Frontend Sub Task Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/3941620207/jobs/6744286919" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/3941620207/jobs/6744297694" rel="noopener nore... | 1.0 | Fix jax_numpy_statistical.test_jax_numpy_var - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/3941620207/jobs/6744286919" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs... | non_process | fix jax numpy statistical test jax numpy var tensorflow img src torch img src numpy img src jax img src failed ivy tests test ivy test frontends test jax test jax numpy statistical py test jax numpy var e assertionerror the return with a tenso... | 0 |

19,609 | 25,961,536,339 | IssuesEvent | 2022-12-18 23:49:59 | streamnative/pulsar-spark | https://api.github.com/repos/streamnative/pulsar-spark | closed | [BUG] (Spark 2.4.5) Pulsar receiver requires DataFrame creation before readStream/writeStream methods can be used | type/bug compute/data-processing | **Describe the bug**

The spark-submit of a spark job written with the connector fails if a DataFrame is not created prior to calling readStream and writeStream.

**To Reproduce**

Steps to reproduce the behavior:

1. Write a pyspark program that streams data from pulsar using `readStream` and writes somewhere using... | 1.0 | [BUG] (Spark 2.4.5) Pulsar receiver requires DataFrame creation before readStream/writeStream methods can be used - **Describe the bug**

The spark-submit of a spark job written with the connector fails if a DataFrame is not created prior to calling readStream and writeStream.

**To Reproduce**

Steps to reproduce t... | process | spark pulsar receiver requires dataframe creation before readstream writestream methods can be used describe the bug the spark submit of a spark job written with the connector fails if a dataframe is not created prior to calling readstream and writestream to reproduce steps to reproduce the b... | 1 |

307,857 | 9,423,229,635 | IssuesEvent | 2019-04-11 11:19:52 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.google.com - see bug description | browser-firefox priority-critical | <!-- @browser: Firefox 67.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.1; WOW64; rv:67.0) Gecko/20100101 Firefox/67.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://www.google.com/sorry/index?continue=https://www.google.com/search%3Fclient%3Dfirefox-b-d%26q%3Dsacda%2Bmeaning&q=EgQpTky-GOirnOUFIhkA8... | 1.0 | www.google.com - see bug description - <!-- @browser: Firefox 67.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.1; WOW64; rv:67.0) Gecko/20100101 Firefox/67.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://www.google.com/sorry/index?continue=https://www.google.com/search%3Fclient%3Dfirefox-b-d%26q%3D... | non_process | see bug description url browser version firefox operating system windows tested another browser no problem type something else description it keeps asking me if i am a robot steps to reproduce browser configuration mixed active content blocked ... | 0 |

12,822 | 9,793,521,658 | IssuesEvent | 2019-06-10 20:10:27 | terraform-providers/terraform-provider-aws | https://api.github.com/repos/terraform-providers/terraform-provider-aws | closed | Allow tags nested in aws_redshift_parameter_group resource | enhancement service/redshift | _This issue was originally opened by @ntkawasaki as hashicorp/terraform#21620. It was migrated here as a result of the [provider split](https://www.hashicorp.com/blog/upcoming-provider-changes-in-terraform-0-10/). The original body of the issue is below._

<hr>

### Current Terraform Version

```

Terraform v0.12.0... | 1.0 | Allow tags nested in aws_redshift_parameter_group resource - _This issue was originally opened by @ntkawasaki as hashicorp/terraform#21620. It was migrated here as a result of the [provider split](https://www.hashicorp.com/blog/upcoming-provider-changes-in-terraform-0-10/). The original body of the issue is below._

... | non_process | allow tags nested in aws redshift parameter group resource this issue was originally opened by ntkawasaki as hashicorp terraform it was migrated here as a result of the the original body of the issue is below current terraform version terraform use case in order ... | 0 |

622,263 | 19,619,257,922 | IssuesEvent | 2022-01-07 02:45:51 | SystemsGenetics/GEMmaker | https://api.github.com/repos/SystemsGenetics/GEMmaker | opened | dev branch: Kallisto only Workflow that can process paired files | bug needs fix high priority | This issue pertains to the dev branch which currently contains the new dsl2 branch.

Only Kallisto can process paired end reads.

Demonstration:

1) Clone copy of GEMmaker to get the demo directory. Switch to dev branch

```

git clone git@github.com:SystemsGenetics/GEMmaker.git

cd GEMmaker

git checkout dev

```

... | 1.0 | dev branch: Kallisto only Workflow that can process paired files - This issue pertains to the dev branch which currently contains the new dsl2 branch.

Only Kallisto can process paired end reads.

Demonstration:

1) Clone copy of GEMmaker to get the demo directory. Switch to dev branch

```

git clone git@github.co... | non_process | dev branch kallisto only workflow that can process paired files this issue pertains to the dev branch witch currently contains the new branch only kallisto can process paired end reads demonstration clone copy of gemmaker to get the demo directory switch to dev branch git clone git github com s... | 0 |

10,985 | 13,783,538,827 | IssuesEvent | 2020-10-08 19:23:07 | googleapis/proto-plus-python | https://api.github.com/repos/googleapis/proto-plus-python | closed | Include tests with PyPi packages? | type: process | The PyPi packages are missing the tests directory, unless this is intentional could they be included? | 1.0 | Include tests with PyPi packages? - The PyPi packages are missing the tests directory, unless this is intentional could they be included? | process | include tests with pypi packages the pypi packages are missing the tests directory unless this is intentional could they be included | 1 |

17,041 | 22,420,243,764 | IssuesEvent | 2022-06-20 01:42:26 | lynnandtonic/nestflix.fun | https://api.github.com/repos/lynnandtonic/nestflix.fun | closed | Add Astronaut Dolphin Detective from Mr. Pickles | suggested title in process | Please add as much of the following info as you can:

Title: Astronaut Dolphin Detective

Type (film/tv show): TV Show

Film or show in which it appears: Mr. Pickles

Is the parent film/show streaming anywhere? HBO Max

About when in the parent film/show does it appear? Several Episodes (Pilot, The Cheeseman, A.D.D.)

... | 1.0 | Add Astronaut Dolphin Detective from Mr. Pickles - Please add as much of the following info as you can:

Title: Astronaut Dolphin Detective

Type (film/tv show): TV Show

Film or show in which it appears: Mr. Pickles

Is the parent film/show streaming anywhere? HBO Max

About when in the parent film/show does it appear... | process | add astronaut dolphin detective from mr pickles please add as much of the following info as you can title astronaut dolphin detective type film tv show tv show film or show in which it appears mr pickles is the parent film show streaming anywhere hbo max about when in the parent film show does it appear... | 1 |

3,924 | 6,845,444,706 | IssuesEvent | 2017-11-13 08:16:47 | symfony/symfony | https://api.github.com/repos/symfony/symfony | closed | setPty(true) sends input before the process is ready | Bug Process Status: Needs Review Status: Waiting feedback Unconfirmed | | Q | A

| ---------------- | -----

| Bug report? | yes

| Feature request? | no

| BC Break report? | no

| RFC? | no

| Symfony version | 2.7.*

Hi I'm using Lumen 5.1 in a Ubuntu 14.04.3 LTS, PHP 5.6 Laravel Homestead Virtual Box which comes with Symfony Process 2.7.*. Here is a ... | 1.0 | setPty(true) sends input before the process is ready - | Q | A

| ---------------- | -----

| Bug report? | yes

| Feature request? | no

| BC Break report? | no

| RFC? | no

| Symfony version | 2.7.*

Hi I'm using Lumen 5.1 in a Ubuntu 14.04.3 LTS, PHP 5.6 Laravel Homestead Virtual ... | process | setpty true sends input before the process is ready q a bug report yes feature request no bc break report no rfc no symfony version hi i m using lumen in a ubuntu lts php laravel homestead virtual bo... | 1 |

7,768 | 10,889,617,253 | IssuesEvent | 2019-11-18 18:38:10 | microsoft/ptvsd | https://api.github.com/repos/microsoft/ptvsd | closed | Fork/exec creates two connections from pydevd to adapter | Bug area:Multiprocessing platform: Linux platform: Mac | This is causing `test_subprocess` to fail on Python 2.7, and is also the root cause of Flask test failures.

The problem is that on 2.7, `subprocess.Popen` uses fork+exec - and pydevd detours both. So first `fork()` creates a child process, and pydevd is spun up in that process and connects to the adapter. Then the c... | 1.0 | Fork/exec creates two connections from pydevd to adapter - This is causing `test_subprocess` to fail on Python 2.7, and is also the root cause of Flask test failures.

The problem is that on 2.7, `subprocess.Popen` uses fork+exec - and pydevd detours both. So first `fork()` creates a child process, and pydevd is spun... | process | fork exec creates two connections from pydevd to adapter this is causing test subprocess to fail on python and is also the root cause of flask test failures the problem is that on subprocess popen uses fork exec and pydevd detours both so first fork creates a child process and pydevd is spun... | 1 |

183,137 | 31,161,949,463 | IssuesEvent | 2023-08-16 16:38:24 | multisig-labs/app.gogopool.com | https://api.github.com/repos/multisig-labs/app.gogopool.com | opened | FE(Navbar): Coming soon tag for GoGoPass | enhancement high priority design | For GoGoPass Navlink, we don't want it to be clickable and take you to a page. Right now, there isn't anything to show for that and it just goes to the 404 image.

Instead, let's have it just chill in the navbar as a non-clickable link item with the coming soon tag, like in the Figma comp here:

https://www.figma.com/fil... | 1.0 | FE(Navbar): Coming soon tag for GoGoPass - For GoGoPass Navlink, we don't want it to be clickable and take you to a page. Right now, there isn't anything to show for that and it just goes to the 404 image.

Instead, let's have it just chill in the navbar as a non-clickable link item with the coming soon tag, like in the ... | non_process | fe navbar coming soon tag for gogopass for gogopass navlink we don t want it to be clickable and take you to a page right now there isn t anything to show for that and it just goes to the image instead lets have it just chill in the navbar as a non clickable link item with the coming soon tag like in the fi... | 0 |

3,459 | 6,544,192,346 | IssuesEvent | 2017-09-03 13:00:52 | allinurl/goaccess | https://api.github.com/repos/allinurl/goaccess | closed | bingbot/2.0 under Windows OS | log-processing question | Could it be that bingbot/2.0 appears under Windows OS?

`Mozilla/5.0 (iPhone; CPU iPhone OS 7_0 like Mac OS X) AppleWebKit/537.51.1 (KHTML, like Gecko) Version/7.0 Mobile/11A465 Safari/9537.53 (compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm)`

| 1.0 | bingbot/2.0 under Windows OS - Could it be that bingbot/2.0 appears under Windows OS?

`Mozilla/5.0 (iPhone; CPU iPhone OS 7_0 like Mac OS X) AppleWebKit/537.51.1 (KHTML, like Gecko) Version/7.0 Mobile/11A465 Safari/9537.53 (compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm)`

| process | bingbot under windows os could it be that bingbot appears under windows os mozilla iphone cpu iphone os like mac os x applewebkit khtml like gecko version mobile safari compatible bingbot | 1 |

37,359 | 6,601,392,143 | IssuesEvent | 2017-09-18 00:00:15 | MilSpouseCoders/milspousecoders | https://api.github.com/repos/MilSpouseCoders/milspousecoders | closed | Instructions for adding a remote don't work | documentation | I followed the instructions to add the main repo as a remote, and it didn't work. It said I didn't have permissions.

Using https://github.com/MilSpouseCoders/milspousecoders.git instead of the link listed worked (this comes from clone and download button). | 1.0 | Instructions for adding a remote don't work - I followed the instructions to add the main repo as a remote, and it didn't work. It said I didn't have permissions.

Using https://github.com/MilSpouseCoders/milspousecoders.git instead of the link listed worked (this comes from clone and download button). | non_process | instructions for adding a remote don t work i followed the instructions to add the main repo as a remote and it didn t work it said i didn t have permissions using instead of the link listed worked this comes from clone and download button | 0 |

9,805 | 3,321,099,199 | IssuesEvent | 2015-11-09 06:03:54 | patacrep/patacrep | https://api.github.com/repos/patacrep/patacrep | closed | Requirements.txt seems deprecated | documentation enhancement | For development, the [`python3 setup.py develop`](https://github.com/patacrep/patacrep/blob/master/README.rst#for-developement) command takes care of installing the dependencies automatically.

We should therefore update the README and potentially remove the `Requirements.txt` file (unless there... | 1.0 | Requirements.txt seems deprecated - For development, the [`python3 setup.py develop`](https://github.com/patacrep/patacrep/blob/master/README.rst#for-developement) command takes care of installing the dependencies automatically.

We should therefore update the README and potentially remove the fi... | non_process | requirements txt seems deprecated for development the command takes care of installing the dependencies automatically we should therefore update the readme and potentially remove the requirements txt file unless there are dependencies that are only useful for dev which is not ... | 0 |

16,753 | 21,921,717,689 | IssuesEvent | 2022-05-22 17:18:11 | huutho77/CNPMNC_ThayAi | https://api.github.com/repos/huutho77/CNPMNC_ThayAi | closed | [API] Coding Feature Login | dev/thnguyen processing | - Validate Input value

- Validation Input Value

- Generate AccessToken and RefreshToken | 1.0 | [API] Coding Feature Login - - Validate Input value

- Validation Input Value

- Generate AccessToken and RefreshToken | process | coding feature login validate input value validation input value generate accesstoken and refreshtoken | 1 |

5,828 | 8,664,710,411 | IssuesEvent | 2018-11-28 21:00:27 | lightningWhite/weatherLearning | https://api.github.com/repos/lightningWhite/weatherLearning | closed | Normalize the data | dataProcessing | We will need to normalize the data. Will we do this to the difference columns we created? | 1.0 | Normalize the data - We will need to normalize the data. Will we do this to the difference columns we created? | process | normalize the data we will need to normalize the data will we do this to the difference columns we created | 1 |

10,275 | 13,128,635,833 | IssuesEvent | 2020-08-06 12:38:47 | keep-network/keep-core | https://api.github.com/repos/keep-network/keep-core | closed | Stake delegation system tests | process & client team | Having top-ups implemented we need to execute system tests covering all possible stake delegation scenarios.

Depending on the progress on #1898, tests should be performed using KEEP token dashboard or directly against smart contracts.

- [x] Grantee can delegate a stake

- [x] Managed grantee can delegate a stake

-... | 1.0 | Stake delegation system tests - Having top-ups implemented we need to execute system tests covering all possible stake delegation scenarios.

Depending on the progress on #1898, tests should be performed using KEEP token dashboard or directly against smart contracts.

- [x] Grantee can delegate a stake

- [x] Managed... | process | stake delegation system tests having top ups implemented we need to execute system tests covering all possible stake delegation scenarios depending on the progress on tests should be performed using keep token dashboard or directly against smart contracts grantee can delegate a stake managed grante... | 1 |

311,009 | 26,761,271,215 | IssuesEvent | 2023-01-31 07:07:10 | bazelbuild/intellij | https://api.github.com/repos/bazelbuild/intellij | closed | NPE when parsing Blaze XML output for exceptions without a message | type: bug topic: testing | It looks like when running tests, if you raise an exception without a message (i.e. `throw new NullPointerException()`) it results in test xml output like so:

```xml

<error type='java.lang.NullPointerException'><![CDATA[java.lang.NullPointerException"

at com.example.MyClass.myMethod(MyClass.java:55)

at ...

... | 1.0 | NPE when parsing Blaze XML output for exceptions without a message - It looks like when running tests, if you raise an exception without a message (i.e. `throw new NullPointerException()`) it results in test xml output like so:

```xml

<error type='java.lang.NullPointerException'><![CDATA[java.lang.NullPointerExcept... | non_process | npe when parsing blaze xml output for exceptions without a message it looks like when running tests if you raise an exception without a message i e throw new nullpointerexception it results in test xml output like so xml cdata java lang nullpointerexception at com example myclass mymethod mycl... | 0 |
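
The failure mode described in the record above (an `<error>` element that carries a `type` attribute but no message) can be illustrated with a small sketch. This is not the plugin's actual parser: the sample XML, the `summarize_errors` helper, and the choice of Python's standard `xml.etree.ElementTree` are all assumptions made for illustration, modeled only on the snippet quoted in the issue.

```python
import xml.etree.ElementTree as ET

# Hypothetical test XML modeled on the snippet quoted in the issue:
# the <error> element has a `type` attribute but no `message` attribute.
SAMPLE_XML = """<testsuite name="example">
  <testcase name="myTest" classname="com.example.MyClassTest">
    <error type="java.lang.NullPointerException"><![CDATA[java.lang.NullPointerException
    at com.example.MyClass.myMethod(MyClass.java:55)]]></error>
  </testcase>
</testsuite>"""


def summarize_errors(xml_text):
    """Return (type, message, first body line) for every <error> element."""
    root = ET.fromstring(xml_text)
    results = []
    for error in root.iter("error"):
        error_type = error.get("type", "")
        # `message` is optional; passing a default avoids handing None
        # to later code that expects a string.
        message = error.get("message", "")
        body = (error.text or "").strip()
        first_line = body.splitlines()[0] if body else ""
        results.append((error_type, message, first_line))
    return results
```

Treating the missing attribute as an empty string is one possible design choice; a real parser might instead fall back to the first line of the element body.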

218,018 | 7,330,168,912 | IssuesEvent | 2018-03-05 08:59:29 | ssu-411/project | https://api.github.com/repos/ssu-411/project | closed | Create Main Page UI | feature request priority/P2 | This page shows most rated books and list of books which were specified in the left sidebar. | 1.0 | Create Main Page UI - This page shows most rated books and list of books which were specified in the left sidebar. | non_process | create main page ui this page shows most rated books and list of books which were specified in the left sidebar | 0 |

31,006 | 8,638,759,756 | IssuesEvent | 2018-11-23 15:50:28 | allinurl/goaccess | https://api.github.com/repos/allinurl/goaccess | closed | Ubuntu package with GeoIP missing | build change debian-repo | Hi,

I installed the goaccess package on Ubuntu 18.04, but this does not seem to have GeoIP enabled:

goaccess: unrecognized option '--geoip-database'

Is there a version with GeoIP available?

Thx in advance

Jan | 1.0 | Ubuntu package with GeoIP missing - Hi,

I installed the goaccess package on Ubuntu 18.04, but this does not seem to have GeoIP enabled:

goaccess: unrecognized option '--geoip-database'

Is there a version with GeoIP available?

Thx in advance

Jan | non_process | ubuntu package with geoip missing hi i installed the goaccess package on ubuntu but this does not seem to have geoip enabled goaccess unrecognized option geoip database is there a version with geoip avaliable thx in advance jan | 0 |

340,798 | 24,671,399,189 | IssuesEvent | 2022-10-18 14:00:33 | nightsailor/online-school | https://api.github.com/repos/nightsailor/online-school | closed | [DOCS] broken links | documentation good first issue hacktoberfest hacktoberfest2022 | ### Description

Hi,

Links to "code of conduct" and "contributing" are broken in the README file : need to change `main` into `master` 😉

example :

- in the README : `https://github.com/nightsailor/online-school/blob/main/CODE_OF_CONDUCT.md `

- in the repo : ` https://github.com/nightsailor/online-school/blob/m... | 1.0 | [DOCS] broken links - ### Description

Hi,

Links to "code of conduct" and "contributing" are broken in the README file : need to change `main` into `master` 😉

example :

- in the README : `https://github.com/nightsailor/online-school/blob/main/CODE_OF_CONDUCT.md `

- in the repo : ` https://github.com/nightsailo... | non_process | broken links description hi links to code of conduct and contributing are broken in the readme file need to change main into master 😉 example in the readme in the repo i can do it if you want to screenshots no response additional information no respon... | 0 |

89,526 | 25,827,144,739 | IssuesEvent | 2022-12-12 13:44:45 | ChristinaKs/WebServicesTermProject | https://api.github.com/repos/ChristinaKs/WebServicesTermProject | closed | Filtering processes for games | Build03 | references; #14

- [x] /games/ -- GameName, MPA Rating, Platform, GameStudioId

- [x] /games/{game}/reviews -- posOrNeg, GameId | 1.0 | Filtering processes for games - references; #14

- [x] /games/ -- GameName, MPA Rating, Platform, GameStudioId

- [x] /games/{game}/reviews -- posOrNeg, GameId | non_process | filtering processes for games references games gamename mpa rating platform gamestudioid games game reviews posorneg gameid | 0 |

88,972 | 11,184,747,798 | IssuesEvent | 2019-12-31 19:58:52 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | closed | Facility Locator Usability and Accessibility Improvements | 508/Accessibility Epic design frontend vsa-facilities | This epic to house the identified usability and accessibility issues that we can address in the short term to deliver high impact changes for our users | 1.0 | Facility Locator Usability and Accessibility Improvements - This epic to house the identified usability and accessibility issues that we can address in the short term to deliver high impact changes for our users | non_process | facility locator usability and accessibility improvements this epic to house the identified usability and accessibility issues that we can address in the short term to deliver high impact changes for our users | 0 |

4,263 | 7,189,091,785 | IssuesEvent | 2018-02-02 12:44:18 | Great-Hill-Corporation/quickBlocks | https://api.github.com/repos/Great-Hill-Corporation/quickBlocks | closed | Store 'badTrans' flag for ultra-high weight trace transactions | apps-blockScrape status-inprocess type-enhancement | Store 'badTrans' flag in transactions and cache traces for bad transactions (all traces for both in error and not in error)

Also -- pull out those ultra high weight traces into a separate file so we only ever have to endure that pain once. | 1.0 | Store 'badTrans' flag for ultra-high weight trace transactions - Store 'badTrans' flag in transactions and cache traces for bad transactions (all traces for both in error and not in error)

Also -- pull out those ultra high weight traces into a separate file so we only ever have to endure that pain once. | process | store badtrans flag for ultra high weight trace transactions store badtrans flag in transactions and cache traces for bad transactions all traces for both in error and not in error also pull out those ultra high weight traces into a separate file so we only every have to endure that pain once | 1 |

15,047 | 18,762,675,522 | IssuesEvent | 2021-11-05 18:28:16 | ORNL-AMO/AMO-Tools-Suite | https://api.github.com/repos/ORNL-AMO/AMO-Tools-Suite | closed | Condensing Economizer | Needs Verification Process Heating Calculator | Issue overview

--------------

Add condensing economizer math. Will send excel via teams | 1.0 | Condensing Economizer - Issue overview

--------------

Add condensing economizer math. Will send excel via teams | process | condensing economizer issue overview add condensing economizer math will send excel via teams | 1 |

38,550 | 6,676,793,611 | IssuesEvent | 2017-10-05 07:49:46 | SavandBros/badge | https://api.github.com/repos/SavandBros/badge | closed | Github issues/pull-requests template files | documentation in progress | Should be in .github/ hidden dir.

I don't like to have all of them in the root directory.

| 1.0 | Github issues/pull-requests template files - Should be in .github/ hidden dir.

I don't like to have all of them in the root directory.

| non_process | github issues pull requests template files should be in github hidden dir i don t like to have all of them in the root directory | 0 |

20,813 | 27,574,940,949 | IssuesEvent | 2023-03-08 12:17:54 | scikit-learn/scikit-learn | https://api.github.com/repos/scikit-learn/scikit-learn | closed | OneHotEncoder `drop_idx_` attribute description in presence of infrequent categories | Bug module:preprocessing | ### Describe the issue linked to the documentation

### Issue summary

In the OneHotEncoder documentation both for [v1.2](https://scikit-learn.org/stable/modules/generated/sklearn.preprocessing.OneHotEncoder.html#sklearn.preprocessing.OneHotEncoder) and [v1.1](https://scikit-learn.org/1.1/modules/generated/sklearn.... | 1.0 | OneHotEncoder `drop_idx_` attribute description in presence of infrequent categories - ### Describe the issue linked to the documentation

### Issue summary

In the OneHotEncoder documentation both for [v1.2](https://scikit-learn.org/stable/modules/generated/sklearn.preprocessing.OneHotEncoder.html#sklearn.preproce... | process | onehotencoder drop idx attribute description in presence of infrequent categories describe the issue linked to the documentation issue summary in the onehotencoder documentation both for and the description of attribute drop idx in presence of infrequent categories reads as follows i... | 1 |

210,973 | 16,413,753,383 | IssuesEvent | 2021-05-19 01:54:12 | django-cms/django-cms | https://api.github.com/repos/django-cms/django-cms | closed | TypeError: argument of type 'WindowsPath' is not iterable | component: documentation easy pickings | I get this error when I try to install django-cms manually

http://docs.django-cms.org/en/latest/how_to/install.html

I use Django 3.0 and django-cms-3.7.4

| 1.0 | TypeError: argument of type 'WindowsPath' is not iterable - I get this error when I try to install django-cms manually

http://docs.django-cms.org/en/latest/how_to/install.html

I use Django 3.0 and django-cms-3.7.4

hasn't created any README file yet or is not using Docs Builder | 1.0 | vtex-apps/store-theme-robots has no documentation yet - [vtex-apps/store-theme-robots](https://github.com/vtex-apps/store-theme-robots) hasn't created any README file yet or is not using Docs Builder | non_process | vtex apps store theme robots has no documentation yet hasn t created any readme file yet or is not using docs builder | 0 |

23,828 | 12,128,906,463 | IssuesEvent | 2020-04-22 21:23:45 | tidyverse/tidyr | https://api.github.com/repos/tidyverse/tidyr | closed | nest() / unnest() in 1.0.0 significantly slower | feature performance :racing_car: trees :evergreen_tree: vctrs ↗️ | It appears the new implementation of `nest()` and `unnest()` has resulted in a dramatic slowdown, compared to the previous version of `tidyR`

Perhaps the problem is related to size preallocation?

In that case, `num_rows` needs to be large to observe the slowdown. See code snippet below.

```r

num_rows <- 100... | True | nest() / unnest() in 1.0.0 significantly slower - It appears the new implementation of `nest()` and `unnest()` has resulted in a dramatic slowdown, compared to the previous version of `tidyR`

Perhaps the problem is related to size preallocation?

In that case, `num_rows` needs to be large to observe the slowdown. ... | non_process | nest unnest in significantly slower it appears the new implementation of nest and unnest has resulted in a dramatic slowdown compared to the previous version of tidyr perhaps the problem is related to size preallocation in that case num rows needs to be large to observe the slowdown ... | 0 |

6,553 | 9,646,071,090 | IssuesEvent | 2019-05-17 10:14:29 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | [move-meta] file in output\temp not found // refs to ditamaps with relative paths unstable | bug preprocess priority/medium stale |

Hi all,

we are using DITA to build documents with more than 1000 pages and about 8 levels of chapter depth.

Changes to these documents are summarised in additional smaller documents which points to the corresponding positions, which are to be changed, in the main documents using maprefs.

Since we changed the... | 1.0 | [move-meta] file in output\temp not found // refs to ditamaps with relative paths unstable -

Hi all,

we are using DITA to build documents with more than 1000 pages and about 8 levels of chapter depth.

Changes to these documents are summarised in additional smaller documents which points to the corresponding p... | process | file in output temp not found refs to ditamaps with relative paths unstable hi all we are using dita to build documents with more than pages and about levels of chapter depth changes to these documents are summarised in additional smaller documents which points to the corresponding positions whi... | 1 |

51,222 | 12,691,250,281 | IssuesEvent | 2020-06-21 16:06:24 | supercollider/supercollider | https://api.github.com/repos/supercollider/supercollider | closed | consider using vcpkg on Windows | comp: appveyor comp: build os: Windows waiting for consensus waiting for information waiting for testing | Per discussion below, let's use this thread to discuss possible switch to using vcpkg for dependencies on Windows. Relevant portion of the comment from @brianlheim to follow

------------

### vcpkg

vcpkg is definitely worth another look based on what i'm seeing and what you said here. i searched around for w... | 1.0 | consider using vcpkg on Windows - Per discussion below, let's use this thread to discuss possible switch to using vcpkg for dependencies on Windows. Relevant portion of the comment from @brianlheim to follow

------------

### vcpkg

vcpkg is definitely worth another look based on what i'm seeing and what you ... | non_process | consider using vcpkg on windows per discussion below let s use this thread to discuss possible switch to using vcpkg for dependencies on windows relevant portion of the comment from brianlheim to follow vcpkg vcpkg is definitely worth another look based on what i m seeing and what you ... | 0 |

3,703 | 3,519,560,903 | IssuesEvent | 2016-01-12 17:17:00 | goblint/analyzer | https://api.github.com/repos/goblint/analyzer | opened | use automated builds for docker | usability | The [voglerr/goblint](https://hub.docker.com/r/voglerr/goblint/) image was created manually - its latest commit is from June 2014...

We should use [automated builds](https://docs.docker.com/docker-hub/builds/) to keep this up to date. | True | use automated builds for docker - The [voglerr/goblint](https://hub.docker.com/r/voglerr/goblint/) image was created manually - its latest commit is from June 2014...

We should use [automated builds](https://docs.docker.com/docker-hub/builds/) to keep this up to date. | non_process | use automated builds for docker the image was created manually its latest commit is from june we should use to keep this up to date | 0 |

451,657 | 13,039,791,609 | IssuesEvent | 2020-07-28 17:21:30 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | We need a difficulty setting that disables specialty point requirements for crafting. | Category: Balance Priority: Low | Without this, single player is either impossible or endless, and at the very least it would mean requiring people who play single player to leave their server on always over-night when not playing, which would obviously be ridiculous.

Adding this to the difficulty options is also important for small groups or even ... | 1.0 | We need a difficulty setting that disables specialty point requirements for crafting. - Without this, single player is either impossible or endless, and at the very least it would mean requiring people who play single player to leave their server on always over-night when not playing, which would obviously be ridiculou... | non_process | we need a difficulty setting that disables specialty point requirements for crafting without this single player is either impossible or endless and at the very least it would mean requiring people who play single player to leave their server on always over night when not playing which would obviously be ridiculou... | 0 |

50,212 | 6,336,400,008 | IssuesEvent | 2017-07-26 21:00:34 | devtools-html/debugger.html | https://api.github.com/repos/devtools-html/debugger.html | opened | [SourceSearch] Improve not-found UI | design | Fixes #3450

Currently, no results looks like this:

<img width="544" alt="image" src="https://user-images.githubusercontent.com/55994/28643402-949cb45a-720a-11e7-9afd-11e838e796ed.png">

I'm proposing we:

- [ ] remove the error-red color since there's not necessarily anything wrong here

- [ ] remove the sad ... | 1.0 | [SourceSearch] Improve not-found UI - Fixes #3450

Currently, no results looks like this:

<img width="544" alt="image" src="https://user-images.githubusercontent.com/55994/28643402-949cb45a-720a-11e7-9afd-11e838e796ed.png">

I'm proposing we:

- [ ] remove the error-red color since there's not necessarily anyt... | non_process | improve not found ui fixes currently no results looks like this img width alt image src i m proposing we remove the error red color since there s not necessarily anything wrong here remove the sad smileys because the mood should be neutral change message to the less redunda... | 0 |

8,347 | 11,498,954,271 | IssuesEvent | 2020-02-12 13:04:04 | prisma/prisma2 | https://api.github.com/repos/prisma/prisma2 | opened | Create a small Buildkite Slack Bot to notify the author of a commit if the CI status is broken | process/candidate topic: internal | i.e: As a developer I want to get a notification in Slack when my commit breaks the CI. I would like it to give me the URL to the commit. | 1.0 | Create a small Buildkite Slack Bot to notify the author of a commit if the CI status is broken - i.e: As a developer I want to get a notification in Slack when my commit breaks the CI. I would like it to give me the URL to the commit. | process | create a small buildkite slack bot to notify the author of a commit if the ci status is broken i e as a developer i want to get a notification in slack when my commit breaks the ci i would like it to give me the url to the commit | 1 |

49,853 | 26,362,371,522 | IssuesEvent | 2023-01-11 14:17:34 | treeverse/lakeFS | https://api.github.com/repos/treeverse/lakeFS | closed | UploadObject ifAbsent fails with "not found" / 500 ISE when it should succeed | bug area/cataloger area/API performance team/versioning-engine | # What

lakeFS fails "upload if absent" when it should succeed.

# How to reproduce

Either look at lakeFSFS in #4947 tests failing to mkdir, or apply [these patches]((https://github.com/treeverse/lakeFS/files/10374445/lakectl.if-absent.patch.gz)) and then try to upload to a non-existent path:

```sh

❯ echo foo ... | True | UploadObject ifAbsent fails with "not found" / 500 ISE when it should succeed - # What

lakeFS fails "upload if absent" when it should succeed.

# How to reproduce

Either look at lakeFSFS in #4947 tests failing to mkdir, or apply [these patches]((https://github.com/treeverse/lakeFS/files/10374445/lakectl.if-abse... | non_process | uploadobject ifabsent fails with not found ise when it should succeed what lakefs fails upload if absent when it should succeed how to reproduce either look at lakefsfs in tests failing to mkdir or apply and then try to upload to a non existent path sh ❯ echo foo go run cmd la... | 0 |

13,299 | 15,772,659,058 | IssuesEvent | 2021-03-31 22:06:11 | CivicActions/accessibility | https://api.github.com/repos/CivicActions/accessibility | opened | Research how to encourage participation in async retrospective | process | Provide options/opportunities for more participants to engage in async retrospective. | 1.0 | Research how to encourage participation in async retrospective - Provide options/opportunities for more participants to engage in async retrospective. | process | research how to encourage participation in async retrospective provide options opportunities for more participants to engage in async retrospective | 1 |

3,673 | 6,706,513,777 | IssuesEvent | 2017-10-12 07:24:45 | nuclio/nuclio | https://api.github.com/repos/nuclio/nuclio | closed | Invocation duration should be supported in Python, as close to the function as possible | area/processor priority/medium | The Golang runtime measures function runtime here:

https://github.com/nuclio/nuclio/blob/master/pkg/processor/runtime/golang/runtime.go#L115