| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

21,385 | 29,202,230,368 | IssuesEvent | 2023-05-21 00:37:18 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [Hibrido / ] Test Analyst (Híbrido - Belo Horizonte) na Coodesh | SALVADOR TESTE REQUISITOS CYPRESS PROCESSOS INOVAÇÃO GITHUB CI UMA QUALIDADE TESTES DE SOFTWARE METODOLOGIAS ÁGEIS HIBRIDO AUTOMAÇÃO DE TESTES TESTES MANUAIS ALOCADO Stale | ## Descrição da vaga:

Esta é uma vaga de um parceiro da plataforma Coodesh, ao candidatar-se você terá acesso as informações completas sobre a empresa e benefícios.

Fique atento ao redirecionamento que vai te levar para uma url [https://coodesh.com](https://coodesh.com/vagas/test-analyst-hibrido-belo-horizonte-1... | 1.0 | [Hibrido / ] Test Analyst (Híbrido - Belo Horizonte) na Coodesh - ## Descrição da vaga:

Esta é uma vaga de um parceiro da plataforma Coodesh, ao candidatar-se você terá acesso as informações completas sobre a empresa e benefícios.

Fique atento ao redirecionamento que vai te levar para uma url [https://coodesh.co... | process | test analyst híbrido belo horizonte na coodesh descrição da vaga esta é uma vaga de um parceiro da plataforma coodesh ao candidatar se você terá acesso as informações completas sobre a empresa e benefícios fique atento ao redirecionamento que vai te levar para uma url com o pop up personalizado... | 1 |

543 | 3,004,255,184 | IssuesEvent | 2015-07-25 19:01:27 | e-government-ua/i | https://api.github.com/repos/e-government-ua/i | closed | На бэке централа добавить в сущности Document поле "oSignData" и доработать сервисы для работы с ним | hi priority In process of testing test | 1) Добавить в сущность Document - стринговое обязательное поле oSignData.

Если ЕЩЕ НЕ реализована задача: https://github.com/e-government-ua/i/issues/588, то:

2) При использовании сервиса setDocumentFile - сохранять в это поле значение "{}"

3) При использовании сервиса setDocument - сохранять в это поле значение "{}... | 1.0 | На бэке централа добавить в сущности Document поле "oSignData" и доработать сервисы для работы с ним - 1) Добавить в сущность Document - стринговое обязательное поле oSignData.

Если ЕЩЕ НЕ реализована задача: https://github.com/e-government-ua/i/issues/588, то:

2) При использовании сервиса setDocumentFile - сохранять... | process | на бэке централа добавить в сущности document поле osigndata и доработать сервисы для работы с ним добавить в сущность document стринговое обязательное поле osigndata если еще не реализована задача то при использовании сервиса setdocumentfile сохранять в это поле значение при использовании... | 1 |

12,782 | 15,164,805,116 | IssuesEvent | 2021-02-12 14:14:55 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | [Native Types] Ensure that fields of unsupported types aren't dropped | kind/feature process/candidate team/client team/migrations topic: introspection topic: migrate topic: native database types | ## Problem

It is difficult to maintain the schema and using the client for applications dependent on types that are unsupported by Prisma.

More specifically, whenever introspecting the database, columns of unsupported types are commented out. In these situations, using migrate to update the database schema again late... | 1.0 | [Native Types] Ensure that fields of unsupported types aren't dropped - ## Problem

It is difficult to maintain the schema and using the client for applications dependent on types that are unsupported by Prisma.

More specifically, whenever introspecting the database, columns of unsupported types are commented out. In ... | process | ensure that fields of unsupported types aren t dropped problem it is difficult to maintain the schema and using the client for applications dependent on types that are unsupported by prisma more specifically whenever introspecting the database columns of unsupported types are commented out in these situati... | 1 |

8,792 | 11,908,187,285 | IssuesEvent | 2020-03-31 00:13:31 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | [macOS] When attempting to merge two shape files Processing dialog goes behind the main QGIS window | Bug MacOS Processing | I've made a video of my screen:

https://www.dropbox.com/s/t0zzi10xvcb9i5h/merge%20in%20qgis%20not%20working.mov?dl=0

basically once I choose two vector layers to merge, from **Vector->Data Management Tools-> Merge Vector Layers**, the dialogue window shuts and there is no way to complete the process. There are no e... | 1.0 | [macOS] When attempting to merge two shape files Processing dialog goes behind the main QGIS window - I've made a video of my screen:

https://www.dropbox.com/s/t0zzi10xvcb9i5h/merge%20in%20qgis%20not%20working.mov?dl=0

basically once I choose two vector layers to merge, from **Vector->Data Management Tools-> Merge ... | process | when attempting to merge two shape files processing dialog goes behind the main qgis window i ve made a video of my screen basically once i choose two vector layers to merge from vector data management tools merge vector layers the dialogue window shuts and there is no way to complete the process t... | 1 |

245,533 | 20,775,601,501 | IssuesEvent | 2022-03-16 10:11:01 | bats-core/bats-core | https://api.github.com/repos/bats-core/bats-core | closed | ensure unofficial bash script mode test fails when run out of the tree | Type: Bug Priority: High Status: Unconfirmend Component: Self Test Suite Waiting for Contributor Feedback | I am trying to pass the source tree tests out of place, running an installed bats binary.

This is the failing test from the bats.bats file.

```

@test 'ensure compatibility with unofficial Bash strict mode' {

local expected='ok 1 unofficial Bash strict mode conditions met'

# Run Bats under `set -u` to catch a... | 1.0 | ensure unofficial bash script mode test fails when run out of the tree - I am trying to pass the source tree tests out of place, running an installed bats binary.

This is the failing test from the bats.bats file.

```

@test 'ensure compatibility with unofficial Bash strict mode' {

local expected='ok 1 unofficial B... | non_process | ensure unofficial bash script mode test fails when run out of the tree i am trying to pass the source tree tests out of place running an installed bats binary this is the failing test from the bats bats file test ensure compatibility with unofficial bash strict mode local expected ok unofficial b... | 0 |

65,478 | 19,536,596,890 | IssuesEvent | 2021-12-31 08:41:14 | GoldenSoftwareLtd/gedemin | https://api.github.com/repos/GoldenSoftwareLtd/gedemin | closed | Ошибка при печати накладных | Priority-Low Type-Defect Depot | Originally reported on Google Code with ID 1541

```

УНП организации хранится в текстовом поле, но если внести туда текст, то

при распечатке накладных (в складе) возникает ошибка ‘is a not valid

floating point value’

```

Reported by `danilchyk` on 2009-09-02 12:47:40

| 1.0 | Ошибка при печати накладных - Originally reported on Google Code with ID 1541

```

УНП организации хранится в текстовом поле, но если внести туда текст, то

при распечатке накладных (в складе) возникает ошибка ‘is a not valid

floating point value’

```

Reported by `danilchyk` on 2009-09-02 12:47:40

| non_process | ошибка при печати накладных originally reported on google code with id унп организации хранится в текстовом поле но если внести туда текст то при распечатке накладных в складе возникает ошибка ‘is a not valid floating point value’ reported by danilchyk on | 0 |

20,513 | 27,170,972,374 | IssuesEvent | 2023-02-17 19:23:29 | darkside-princeton/sipm-analysis | https://api.github.com/repos/darkside-princeton/sipm-analysis | closed | Pulse processing for scintillation data | pre-processing | Reproduce the tasks done with `script/root_scintillation.py` using the new framework.

1. Have one script under `sipm/exe/` that handles pulse information without pulse shape analysis. Add necessary methods in the classes under `sipm/recon/`.

2. Include more information to analyze and save, including baseline, charg... | 1.0 | Pulse processing for scintillation data - Reproduce the tasks done with `script/root_scintillation.py` using the new framework.

1. Have one script under `sipm/exe/` that handles pulse information without pulse shape analysis. Add necessary methods in the classes under `sipm/recon/`.

2. Include more information to a... | process | pulse processing for scintillation data reproduce the tasks done with script root scintillation py using the new framework have one script under sipm exe that handles pulse information without pulse shape analysis add necessary methods in the classes under sipm recon include more information to a... | 1 |

8,651 | 11,790,603,910 | IssuesEvent | 2020-03-17 19:17:59 | metabase/metabase | https://api.github.com/repos/metabase/metabase | opened | H2 losing column type info for date_trunc results | .Backend .Correctness Database/H2 Priority:P2 Querying/Processor Type:Bug | The query `SELECT DATE_TRUNC('day', CREATED_AT) FROM ORDERS` fails against the test database on **H2**, on current `master` (37dfebf), with a `NullPointerException`.

The NPE is caused by [this call to `getColumnClassName`](https://github.com/metabase/metabase/blob/37dfebf0589f82da4aefafabaa5da1559b81fd68/src/metabas... | 1.0 | H2 losing column type info for date_trunc results - The query `SELECT DATE_TRUNC('day', CREATED_AT) FROM ORDERS` fails against the test database on **H2**, on current `master` (37dfebf), with a `NullPointerException`.

The NPE is caused by [this call to `getColumnClassName`](https://github.com/metabase/metabase/blob/... | process | losing column type info for date trunc results the query select date trunc day created at from orders fails against the test database on on current master with a nullpointerexception the npe is caused by returning nil the patch below handles the nil value but the root problem ... | 1 |

436,664 | 12,551,310,964 | IssuesEvent | 2020-06-06 14:19:39 | googleapis/nodejs-monitoring-dashboards | https://api.github.com/repos/googleapis/nodejs-monitoring-dashboards | closed | Synthesis failed for nodejs-monitoring-dashboards | api: monitoring autosynth failure priority: p1 type: bug | Hello! Autosynth couldn't regenerate nodejs-monitoring-dashboards. :broken_heart:

Here's the output from running `synth.py`:

```

b'googleapis.\n2020-06-05 04:34:38,854 synthtool [DEBUG] > Using precloned repo /home/kbuilder/.cache/synthtool/googleapis\nDEBUG:synthtool:Using precloned repo /home/kbuilder/.cache/syntht... | 1.0 | Synthesis failed for nodejs-monitoring-dashboards - Hello! Autosynth couldn't regenerate nodejs-monitoring-dashboards. :broken_heart:

Here's the output from running `synth.py`:

```

b'googleapis.\n2020-06-05 04:34:38,854 synthtool [DEBUG] > Using precloned repo /home/kbuilder/.cache/synthtool/googleapis\nDEBUG:synthto... | non_process | synthesis failed for nodejs monitoring dashboards hello autosynth couldn t regenerate nodejs monitoring dashboards broken heart here s the output from running synth py b googleapis synthtool using precloned repo home kbuilder cache synthtool googleapis ndebug synthtool using preclone... | 0 |

84 | 2,533,338,628 | IssuesEvent | 2015-01-23 22:33:16 | GsDevKit/Seaside31 | https://api.github.com/repos/GsDevKit/Seaside31 | closed | WAGemStoneRunSeasideGems should be able to handle multiple named servers | in process | Perhaps it already can ... in which case the webServer script should be updated to provide info about the registered servers and their status ... also let's add multi-port registration for fastcgi support ... | 1.0 | WAGemStoneRunSeasideGems should be able to handle multiple named servers - Perhaps it already can ... in which case the webServer script should be updated to provide info about the registered servers and their status ... also let's add multi-port registration for fastcgi support ... | process | wagemstonerunseasidegems should be able to handle multiple named servers perhaps it already can in which case the webserver script should be updated to provide info about the registered servers and their status also let s add multi port registration for fastcgi support | 1 |

16,276 | 20,884,553,889 | IssuesEvent | 2022-03-23 02:34:49 | lynnandtonic/nestflix.fun | https://api.github.com/repos/lynnandtonic/nestflix.fun | closed | Add A Carrot | suggested title in process | Please add as much of the following info as you can:

Title: A Carrot

Type (film/tv show): Film

Film or show in which it appears: South Park (https://www.imdb.com/title/tt0705968/ Season 06 Episode 15)

Is the parent film/show streaming anywhere? Amazon Prime in the UK.

About when in the parent film/show d... | 1.0 | Add A Carrot - Please add as much of the following info as you can:

Title: A Carrot

Type (film/tv show): Film

Film or show in which it appears: South Park (https://www.imdb.com/title/tt0705968/ Season 06 Episode 15)

Is the parent film/show streaming anywhere? Amazon Prime in the UK.

About when in the par... | process | add a carrot please add as much of the following info as you can title a carrot type film tv show film film or show in which it appears south park season episode is the parent film show streaming anywhere amazon prime in the uk about when in the parent film show does it appear minute... | 1 |

156,079 | 5,964,051,687 | IssuesEvent | 2017-05-30 07:45:45 | karmaradio/karma | https://api.github.com/repos/karmaradio/karma | opened | All CAPS for specific words in labels | enhancement priority-4 | Consistent sentence-case is being applied across app.

Some exceptions need to be handled. eg: acronyms like IBAN, SWIFT and VAT:

Presume we can just manually relevant labels?

| 1.0 | All CAPS for specific words in labels - Consistent sentence-case is being applied across app.

Some exceptions need to be handled. eg: acronyms like IBAN, SWIFT and VAT:

Presume we can just manually relevant labels?

extension for incorrectly classified source files (ie, currently .bin -> 'application/octet-stream' for image files with older/nonstandard 'image/jpeg' mimetype in original exif info).

Related to #108. As reviewed and discussed with @DiegoPi... | 1.0 | Add alternate mimetype mappings - ## What's needed?

Add alternate mimetype mapping for standard (correct) extension for incorrectly classified source files (ie, currently .bin -> 'application/octet-stream' for image files with older/nonstandard 'image/jpeg' mimetype in original exif info).

Related to #108. As re... | process | add alternate mimetype mappings what s needed add alternate mimetype mapping for standard correct extension for incorrectly classified source files ie currently bin application octet stream for image files with older nonstandard image jpeg mimetype in original exif info related to as revi... | 1 |

307,386 | 26,528,055,585 | IssuesEvent | 2023-01-19 10:17:16 | apache/shardingsphere | https://api.github.com/repos/apache/shardingsphere | closed | Refactor the distribution for metrics E2E | in: test feature: agent | now, the proxy distribution contains the agent in default. it's useless for the assembly process of matrics E2E.

this should be refactored as followings :

1. delete the assembly file for matrics E2E

2. add proxy distribution into matrics docker image

3. copy the matrics config into docker image

4. test by docker i... | 1.0 | Refactor the distribution for metrics E2E - now, the proxy distribution contains the agent in default. it's useless for the assembly process of matrics E2E.

this should be refactored as followings :

1. delete the assembly file for matrics E2E

2. add proxy distribution into matrics docker image

3. copy the matrics ... | non_process | refactor the distribution for metrics now the proxy distribution contains the agent in default it s useless for the assembly process of matrics this should be refactored as followings delete the assembly file for matrics add proxy distribution into matrics docker image copy the matrics config... | 0 |

18,931 | 24,886,911,806 | IssuesEvent | 2022-10-28 08:34:53 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | Error: Error in migration engine. Reason: [migration-engine\cli\src/main.rs:102:23] Error opening datamodel file in `C:\Users\shubham.halder\Documents\blogr-nextjs-prisma\prisma\schema.prisma`: Access is denied. (os error 5) | kind/bug process/candidate topic: windows tech/engines/migration engine topic: error reporting team/schema | <!-- If required, please update the title to be clear and descriptive -->

Command: `prisma db push`

Version: `4.5.0`

Binary Version: `0362da9eebca54d94c8ef5edd3b2e90af99ba452`

Report: https://prisma-errors.netlify.app/report/14389

OS: `x64 win32 10.0.19044`

| 1.0 | Error: Error in migration engine. Reason: [migration-engine\cli\src/main.rs:102:23] Error opening datamodel file in `C:\Users\shubham.halder\Documents\blogr-nextjs-prisma\prisma\schema.prisma`: Access is denied. (os error 5) - <!-- If required, please update the title to be clear and descriptive -->

Command: `prism... | process | error error in migration engine reason error opening datamodel file in c users shubham halder documents blogr nextjs prisma prisma schema prisma access is denied os error command prisma db push version binary version report os | 1 |

9,272 | 12,301,542,882 | IssuesEvent | 2020-05-11 15:33:43 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | opened | r.cost in modeler does not accept output temporary files | Bug Processing | **Describe the bug**

Attached model fail to start running if output is set as temporary file

[test_r_cost.zip](https://github.com/qgis/QGIS/files/4610546/test_r_cost.zip)

**How to Reproduce**

1. create or use a fake raster as input

2. create or use a fake input point layer

3. run model with the above input a... | 1.0 | r.cost in modeler does not accept output temporary files - **Describe the bug**

Attached model fail to start running if output is set as temporary file

[test_r_cost.zip](https://github.com/qgis/QGIS/files/4610546/test_r_cost.zip)

**How to Reproduce**

1. create or use a fake raster as input

2. create or use a ... | process | r cost in modeler does not accept output temporary files describe the bug attached model fail to start running if output is set as temporary file how to reproduce create or use a fake raster as input create or use a fake input point layer run model with the above input as in the follow... | 1 |

20,790 | 27,533,118,411 | IssuesEvent | 2023-03-07 00:11:21 | aolabNeuro/analyze | https://api.github.com/repos/aolabNeuro/analyze | opened | precondition.downsample() | enhancement preprocessing | Current implementation of downsample uses averaging and upsamples data to lcm to prevent data loss. This means every time cursor data is downsampled from 25kHz to 120, it is upsampled first to 75kHz. Not efficient for memory handling and computation.

Todo: optimize downsampling function for our use cases in future.... | 1.0 | precondition.downsample() - Current implementation of downsample uses averaging and upsamples data to lcm to prevent data loss. This means every time cursor data is downsampled from 25kHz to 120, it is upsampled first to 75kHz. Not efficient for memory handling and computation.

Todo: optimize downsampling function ... | process | precondition downsample current implementation of downsample uses averaging and upsamples data to lcm to prevent data loss this means every time cursor data is downsampled from to it is upsampled first to not efficient for memory handling and computation todo optimize downsampling function for our us... | 1 |
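
The arithmetic in the record above (25 kHz data passing through 75 kHz on its way to 120 Hz) can be checked with a short sketch. The function name `resampling_plan` is hypothetical and is not part of the aolab `analyze` package; the sketch only assumes that the intermediate rate is the least common multiple of the source and target rates, as the issue text describes.

```python
from math import gcd


def resampling_plan(source_hz: int, target_hz: int) -> tuple:
    """Return (intermediate_hz, up_factor, down_factor) for LCM-based resampling.

    Hypothetical helper for illustration only: it reproduces the arithmetic
    described in the issue, not the library's actual implementation.
    """
    # Least common multiple of the two rates, computed via the gcd.
    intermediate_hz = source_hz * target_hz // gcd(source_hz, target_hz)
    # Factor by which the data grows before averaging.
    up_factor = intermediate_hz // source_hz
    # Number of intermediate samples averaged into each output sample.
    down_factor = intermediate_hz // target_hz
    return intermediate_hz, up_factor, down_factor


print(resampling_plan(25_000, 120))  # (75000, 3, 625)
```

Under that assumption the 25 kHz signal is held at three times its original size before being averaged in blocks of 625, which is the memory cost the issue points at.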

5,599 | 8,460,074,984 | IssuesEvent | 2018-10-22 17:45:49 | aspnet/IISIntegration | https://api.github.com/repos/aspnet/IISIntegration | closed | ANCM V2 - net stop was /y Issue | in-process | 'net stop was /y' causes 'Failed to gracefully shutdown application' warnings being logged to the Application event log.

Is this a known issue? | 1.0 | ANCM V2 - net stop was /y Issue - 'net stop was /y' causes 'Failed to gracefully shutdown application' warnings being logged to the Application event log.

Is this a known issue? | process | ancm net stop was y issue net stop was y causes failed to gracefully shutdown application warnings being logged to the application event log is this a known issue | 1 |

13,475 | 15,983,888,932 | IssuesEvent | 2021-04-18 11:02:20 | brucemiller/LaTeXML | https://api.github.com/repos/brucemiller/LaTeXML | closed | Theorem title in Jats-XML | bug postprocessing schema | When i convert a theorem to JATS-XML the title is not appearing in the XML.

For a theorem environment defined like

**test.tex**

```tex

\documentclass{article}

\newtheorem{theorem}{Theorem}[section]

\begin{document}

\begin{theorem}

Let f be a function.

\end{theorem}

\end{document}

```

I get with `latex... | 1.0 | Theorem title in Jats-XML - When i convert a theorem to JATS-XML the title is not appearing in the XML.

For a theorem environment defined like

**test.tex**

```tex

\documentclass{article}

\newtheorem{theorem}{Theorem}[section]

\begin{document}

\begin{theorem}

Let f be a function.

\end{theorem}

\end{docum... | process | theorem title in jats xml when i convert a theorem to jats xml the title is not appearing in the xml for a theorem environment defined like test tex tex documentclass article newtheorem theorem theorem begin document begin theorem let f be a function end theorem end document ... | 1 |

7,063 | 5,831,982,290 | IssuesEvent | 2017-05-08 20:41:31 | dotnet/roslyn | https://api.github.com/repos/dotnet/roslyn | closed | Update use of HashAlgorithm to reduce allocations | Area-Analyzers Bug Tenet-Performance | **Version Used**: 15.1

PerfView is showing substantial overhead for the creation of a temporary array in `HashAlgorithm.ComputeHash(Stream)`, originating from Microsoft.CodeAnalysis and Microsoft.CodeAnalysis.Workspaces. On my machine, this accounted for more than 3 seconds of time while Roslyn.sln was opening. Code... | True | Update use of HashAlgorithm to reduce allocations - **Version Used**: 15.1

PerfView is showing substantial overhead for the creation of a temporary array in `HashAlgorithm.ComputeHash(Stream)`, originating from Microsoft.CodeAnalysis and Microsoft.CodeAnalysis.Workspaces. On my machine, this accounted for more than ... | non_process | update use of hashalgorithm to reduce allocations version used perfview is showing substantial overhead for the creation of a temporary array in hashalgorithm computehash stream originating from microsoft codeanalysis and microsoft codeanalysis workspaces on my machine this accounted for more than ... | 0 |

14,398 | 17,410,358,685 | IssuesEvent | 2021-08-03 11:31:12 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Please consider not throwing an exception when Process.Kill() is called for a process that exited previously | area-System.Diagnostics.Process | This is inspired by an earlier issue https://github.com/dotnet/runtime/issues/16848 If I want to wait for a running process to exit in some short period of time and terminate it if it fails to exits on itself then I'm likely to write code like this:

if(!process.WaitForExit(5000))

{

process.Kill();

... | 1.0 | Please consider not throwing an exception when Process.Kill() is called for a process that exited previously - This is inspired by an earlier issue https://github.com/dotnet/runtime/issues/16848 If I want to wait for a running process to exit in some short period of time and terminate it if it fails to exits on itself ... | process | please consider not throwing an exception when process kill is called for a process that exited previously this is inspired by an earlier issue if i want to wait for a running process to exit in some short period of time and terminate it if it fails to exits on itself then i m likely to write code like this ... | 1 |

258,662 | 19,568,050,721 | IssuesEvent | 2022-01-04 05:24:30 | hrushikeshrv/mjxgui | https://api.github.com/repos/hrushikeshrv/mjxgui | closed | Add anchors to headings in docs | documentation enhancement good first issue hacktoberfest | We need to add anchor tags to all headings in the documentation, and change the font family to a monospace font for certain headings in the API section. | 1.0 | Add anchors to headings in docs - We need to add anchor tags to all headings in the documentation, and change the font family to a monospace font for certain headings in the API section. | non_process | add anchors to headings in docs we need to add anchor tags to all headings in the documentation and change the font family to a monospace font for certain headings in the api section | 0 |

262,088 | 27,850,888,736 | IssuesEvent | 2023-03-20 18:36:12 | jgeraigery/dynatrace-service-broker | https://api.github.com/repos/jgeraigery/dynatrace-service-broker | opened | json-simple-1.1.1.jar: 1 vulnerabilities (highest severity is: 5.5) | Mend: dependency security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>json-simple-1.1.1.jar</b></p></summary>

<p></p>

<p>Path to dependency file: /pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/junit/junit/4... | True | json-simple-1.1.1.jar: 1 vulnerabilities (highest severity is: 5.5) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>json-simple-1.1.1.jar</b></p></summary>

<p></p>

<p>Path to dependency file: /pom.xml</p>

<p>Path... | non_process | json simple jar vulnerabilities highest severity is vulnerable library json simple jar path to dependency file pom xml path to vulnerable library home wss scanner repository junit junit junit jar found in head commit a href vulnerabilities cve ... | 0 |

399,307 | 27,236,161,737 | IssuesEvent | 2023-02-21 16:28:11 | mindsdb/mindsdb | https://api.github.com/repos/mindsdb/mindsdb | closed | [Docs] Add a community tutorial link to the `Using MindsDB via Mongo API -> Machine Learning Examples -> Regression` page | help wanted good first issue documentation first-timers-only | ## Instructions :page_facing_up:

Here are the step-by-step instructions:

1. Go to the `/docs/using-mongo-api/regression.mdx` file.

2. Go to the end of this file and add another item to the list, as follows:

```

- [Tutorial to Predict the Energy Usage using MindsDB and MongoDB](https://dev.to/dohrisalim/tut... | 1.0 | [Docs] Add a community tutorial link to the `Using MindsDB via Mongo API -> Machine Learning Examples -> Regression` page - ## Instructions :page_facing_up:

Here are the step-by-step instructions:

1. Go to the `/docs/using-mongo-api/regression.mdx` file.

2. Go to the end of this file and add another item to th... | non_process | add a community tutorial link to the using mindsdb via mongo api machine learning examples regression page instructions page facing up here are the step by step instructions go to the docs using mongo api regression mdx file go to the end of this file and add another item to the lis... | 0 |

1,448 | 4,020,060,313 | IssuesEvent | 2016-05-16 17:03:28 | emergence-lab/emergence-lab | https://api.github.com/repos/emergence-lab/emergence-lab | closed | Run Process on Sample | backend bug process | Using the Run Process action from the sample detail page gives an error. | 1.0 | Run Process on Sample - Using the Run Process action from the sample detail page gives an error. | process | run process on sample using the run process action from the sample detail page gives an error | 1 |

2,576 | 5,332,395,989 | IssuesEvent | 2017-02-15 21:58:18 | MikePopoloski/slang | https://api.github.com/repos/MikePopoloski/slang | closed | Make macro stringification more robust | area-lexing area-preprocessor cleanup medium | Implemented in Lexer::stringify. It's not always clear what kind of spacing should be in the resulting string; the standard doesn't have much to say about it. Probably we should take a look at what existing Verilog compilers do and try to match them. | 1.0 | Make macro stringification more robust - Implemented in Lexer::stringify. It's not always clear what kind of spacing should be in the resulting string; the standard doesn't have much to say about it. Probably we should take a look at what existing Verilog compilers do and try to match them. | process | make macro stringification more robust implemented in lexer stringify it s not always clear what kind of spacing should be in the resulting string the standard doesn t have much to say about it probably we should take a look at what existing verilog compilers do and try to match them | 1 |

17,337 | 23,155,452,350 | IssuesEvent | 2022-07-29 12:35:51 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | [processing] Add FORCE_RASTER (Fix #48921) and IMAGE_COMPRESSION parameters to printlayouttopdf, atlaslayouttopdf and atlaslayouttomultiplepdf algorithms (Request in QGIS) | Processing Alg 3.28 | ### Request for documentation

From pull request QGIS/qgis#49122

Author: @agiudiceandrea

QGIS version: 3.28

**[processing] Add FORCE_RASTER (Fix #48921) and IMAGE_COMPRESSION parameters to printlayouttopdf, atlaslayouttopdf and atlaslayouttomultiplepdf algorithms**

### PR Description:

## Description

Adds the `FORCE... | 1.0 | [processing] Add FORCE_RASTER (Fix #48921) and IMAGE_COMPRESSION parameters to printlayouttopdf, atlaslayouttopdf and atlaslayouttomultiplepdf algorithms (Request in QGIS) - ### Request for documentation

From pull request QGIS/qgis#49122

Author: @agiudiceandrea

QGIS version: 3.28

**[processing] Add FORCE_RASTER (Fix #... | process | add force raster fix and image compression parameters to printlayouttopdf atlaslayouttopdf and atlaslayouttomultiplepdf algorithms request in qgis request for documentation from pull request qgis qgis author agiudiceandrea qgis version add force raster fix and image compression parame... | 1 |

47,861 | 13,066,295,012 | IssuesEvent | 2020-07-30 21:23:50 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | closed | ipdf - docs are out of date and incomplete (Trac #1298) | Migrated from Trac combo simulation defect | rst docs are light, and barely gloss over IPDF usage. links are dead.

doxygen docs are incomplete (several "Comming soon"'s)

doxygen docs are out of date (refer to the old Makefile build system, "plan to use the icetray unit system")

Migrated from https://code.icecube.wisc.edu/ticket/1298

```json

{

"status": "clo... | 1.0 | ipdf - docs are out of date and incomplete (Trac #1298) - rst docs are light, and barely gloss over IPDF usage. links are dead.

doxygen docs are incomplete (several "Comming soon"'s)

doxygen docs are out of date (refer to the old Makefile build system, "plan to use the icetray unit system")

Migrated from https://code... | non_process | ipdf docs are out of date and incomplete trac rst docs are light and barely gloss over ipdf usage links are dead doxygen docs are incomplete several comming soon s doxygen docs are out of date refer to the old makefile build system plan to use the icetray unit system migrated from json ... | 0 |

1,978 | 4,108,651,261 | IssuesEvent | 2016-06-06 16:48:48 | bcgov/aspb-planning | https://api.github.com/repos/bcgov/aspb-planning | closed | Prepare Retrospective presentation | New Service Deliver Bi-Modal TS Governance TS Roadmap / Integration | describe the Product/Service Design efforts from October to March, with timeline, significant events, lessons learned. | 1.0 | Prepare Retrospective presentation - describe the Product/Service Design efforts from October to March, with timeline, significant events, lessons learned. | non_process | prepare retrospective presentation describe the product service design efforts from october to march with timeline significant events lessons learned | 0 |

193,943 | 15,392,951,848 | IssuesEvent | 2021-03-03 16:10:50 | udistrital/financiera_documentacion | https://api.github.com/repos/udistrital/financiera_documentacion | opened | Reconciliations Training | Documentation | A working session was held with the engineer in charge on March 1 and the corresponding minutes were drawn up; they are recorded at the following link

https://docs.google.com/document/d/1QYmdSUPeNIgsqQqjTMlFd5WOyWc0Il4uPcmaQwy7OOc/edit?usp=sharing | 1.0 | Reconciliations Training - A working session was held with the engineer in charge on March 1 and the corresponding minutes were drawn up; they are recorded at the following link

https://docs.google.com/document/d/1QYmdSUPeNIgsqQqjTMlFd5WOyWc0Il4uPcmaQwy7OOc/edit?usp=sharing | non_process | reconciliations training a working session was held with the engineer in charge on march and the corresponding minutes were drawn up they are recorded at the following link | 0 |

326,854 | 9,961,656,177 | IssuesEvent | 2019-07-07 07:15:14 | dhis2/d2-ui | https://api.github.com/repos/dhis2/d2-ui | closed | Use runtime configurable DHIS 2 server for examples | priority:low stale usability wontfix | Since developers (us included) will be running the examples locally, we can't rely on any external servers for the examples. It would be best to let the user choose what DHIS 2 server to use at run time, including entering username and password for basic auth.

| 1.0 | Use runtime configurable DHIS 2 server for examples - Since developers (us included) will be running the examples locally, we can't rely on any external servers for the examples. It would be best to let the user choose what DHIS 2 server to use at run time, including entering username and password for basic auth.

| non_process | use runtime configurable dhis server for examples since developers us included will be running the examples locally we can t rely on any external servers for the examples it would be best to let the user choose what dhis server to use at run time including entering username and password for basic auth | 0 |

747,088 | 26,073,029,277 | IssuesEvent | 2022-12-24 04:01:36 | pilot-light/pilotlight | https://api.github.com/repos/pilot-light/pilotlight | closed | [FEATURE]: Add Clipping/Scissoring to Drawing API | priority: Normal type: feature system: draw backend: All | ## Description

Add clipping & scissoring to the drawing API. One pull request per OS.

Progress:

* [x] Windows

* [x] Linux

* [x] MacOS | 1.0 | [FEATURE]: Add Clipping/Scissoring to Drawing API - ## Description

Add clipping & scissoring to the drawing API. One pull request per OS.

Progress:

* [x] Windows

* [x] Linux

* [x] MacOS | non_process | add clipping scissoring to drawing api description add clipping scissoring to the drawing api one pull request per os progress windows linux macos | 0 |

169,929 | 26,876,106,284 | IssuesEvent | 2023-02-05 02:54:56 | patternfly/patternfly-elements | https://api.github.com/repos/patternfly/patternfly-elements | closed | [fix] pfe-tabs | vertically align tab text | good first issue design system needs: prioritization fix | If tabs have text which is stacking to two lines, currently the text is top-aligned.

The tab text should be vertically aligned:

The tab text should be vertically aligned:

| 1.0 | X-NICSE-IDNUMBER not required - Same issue as in this issue: https://github.com/hexonet/whmcs-ispapi-registrar/issues/200

Client can register a .se domain without entering the ID number. Would be great if that field could be required :-) | process | x nicse idnumber not required same issue as in this issue client can register a se domain without entering the id number would be great if that field could be required | 1 |

209,196 | 16,178,132,429 | IssuesEvent | 2021-05-03 10:17:15 | kubewarden/docs | https://api.github.com/repos/kubewarden/docs | closed | Update the architecture docs: talk about OCI registries | documentation | The charts on the page have been updated to show also the involvement of OCI registry. The text should be updated to reflect that. | 1.0 | Update the architecture docs: talk about OCI registries - The charts on the page have been updated to show also the involvement of OCI registry. The text should be updated to reflect that. | non_process | update the architecture docs talk about oci registries the charts on the page have been updated to show also the involvement of oci registry the text should be updated to reflect that | 0 |

50,362 | 21,082,032,046 | IssuesEvent | 2022-04-03 03:04:14 | openstreetmap/operations | https://api.github.com/repos/openstreetmap/operations | reopened | Wiki pages with many Wikimedia Commons images often return HTTP 504 error | service:wiki | Over the past couple weeks, I’ve noticed that any wiki page with many images from Wikimedia Commons (a couple dozen or more?) returns a 504 Gateway Timeout error the first time you try to access it but returns the expected response if you retry the request shortly after. I’m not sure if the problem is on our end or Wik... | 1.0 | Wiki pages with many Wikimedia Commons images often return HTTP 504 error - Over the past couple weeks, I’ve noticed that any wiki page with many images from Wikimedia Commons (a couple dozen or more?) returns a 504 Gateway Timeout error the first time you try to access it but returns the expected response if you retry... | non_process | wiki pages with many wikimedia commons images often return http error over the past couple weeks i’ve noticed that any wiki page with many images from wikimedia commons a couple dozen or more returns a gateway timeout error the first time you try to access it but returns the expected response if you retry the... | 0 |

8,678 | 11,810,629,390 | IssuesEvent | 2020-03-19 16:47:48 | MHRA/products | https://api.github.com/repos/MHRA/products | opened | Sane error handling & routing | EPIC - Auto Batch Process :oncoming_automobile: Enhancement 💫 STORY :book: | ## User want

As a _user_

I want _to see sensible errors_

So that _I understand what's going wrong_

## Acceptance Criteria

- [ ] Rewrite our routing so that we can implement a rejection handler for both JSON & XML requests;

- [ ] Implement custom Rejections where it makes sense to give the user greater visib... | 1.0 | Sane error handling & routing - ## User want

As a _user_

I want _to see sensible errors_

So that _I understand what's going wrong_

## Acceptance Criteria

- [ ] Rewrite our routing so that we can implement a rejection handler for both JSON & XML requests;

- [ ] Implement custom Rejections where it makes sens... | process | sane error handling routing user want as a user i want to see sensible errors so that i understand what s going wrong acceptance criteria rewrite our routing so that we can implement a rejection handler for both json xml requests implement custom rejections where it makes sense to... | 1 |

152,787 | 5,868,557,878 | IssuesEvent | 2017-05-14 13:51:04 | DV8FromTheWorld/JDA | https://api.github.com/repos/DV8FromTheWorld/JDA | opened | Build 194 is not working | bug priority | When JDA tries to chunk members of a guild or apply guild sync the startup sequence breaks as websocket messages are not sent.

This breaks client login and all bots that are in guild requiring member chunking.

This can be seen by only having 2 log messages from JDA:

```

[13:32:40] [Info] [JDA]: Login Successful!

... | 1.0 | Build 194 is not working - When JDA tries to chunk members of a guild or apply guild sync the startup sequence breaks as websocket messages are not sent.

This breaks client login and all bots that are in guild requiring member chunking.

This can be seen by only having 2 log messages from JDA:

```

[13:32:40] [Info... | non_process | build is not working when jda tries to chunk members of a guild or apply guild sync the startup sequence breaks as websocket messages are not sent this breaks client login and all bots that are in guild requiring member chunking this can be seen by only having log messages from jda login succe... | 0 |

19,361 | 25,491,529,376 | IssuesEvent | 2022-11-27 05:31:42 | hsmusic/hsmusic-wiki | https://api.github.com/repos/hsmusic/hsmusic-wiki | closed | Rereleased tracks shouldn't count for total duration by an artist | type: bug (user-facing) scope: data processing thing: artists | Good example: https://hsmusic.wiki/preview-en/artist/joseph-aylsworth/

Technically not a "bug" but definitely not as it should be.

Thanks for the catch, Niklink! | 1.0 | Rereleased tracks shouldn't count for total duration by an artist - Good example: https://hsmusic.wiki/preview-en/artist/joseph-aylsworth/

Technically not a "bug" but definitely not as it should be.

Thanks for the catch, Niklink! | process | rereleased tracks shouldn t count for total duration by an artist good example technically not a bug but definitely not as it should be thanks for the catch niklink | 1 |

43,615 | 7,055,784,029 | IssuesEvent | 2018-01-04 09:54:33 | chartjs/chartjs-plugin-datalabels | https://api.github.com/repos/chartjs/chartjs-plugin-datalabels | closed | Apply datalabels to specific datasets | documentation question resolved | Hi, thanks for this awesome plugin in chartjs. I've read the docs but can't seems to find it even in the samples. I have this mixed chart with bar graphs and line graph, what I want is to add the datalabels in specific datasets and not to the whole chart. Can you give me an example as I have no idea on what to do. BTW,... | 1.0 | Apply datalabels to specific datasets - Hi, thanks for this awesome plugin in chartjs. I've read the docs but can't seems to find it even in the samples. I have this mixed chart with bar graphs and line graph, what I want is to add the datalabels in specific datasets and not to the whole chart. Can you give me an examp... | non_process | apply datalabels to specific datasets hi thanks for this awesome plugin in chartjs i ve read the docs but can t seems to find it even in the samples i have this mixed chart with bar graphs and line graph what i want is to add the datalabels in specific datasets and not to the whole chart can you give me an examp... | 0 |

17,028 | 22,406,802,246 | IssuesEvent | 2022-06-18 04:41:50 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | Update example configuration for metrics transform processor | bug good first issue proc: metricstransformprocessor | The configuration on https://github.com/open-telemetry/opentelemetry-collector-contrib/blob/main/processor/metricstransformprocessor/README.md#configuration appears to be missing `metricstransform:` before ` transforms:`. This is confusing to customers.

| 1.0 | Update example configuration for metrics transform processor - The configuration on https://github.com/open-telemetry/opentelemetry-collector-contrib/blob/main/processor/metricstransformprocessor/README.md#configuration appears to be missing `metricstransform:` before ` transforms:`. This is confusing to customers.

... | process | update example configuration for metrics transform processor the configuration on appears to be missing metricstransform before transforms this is confusing to customers | 1 |

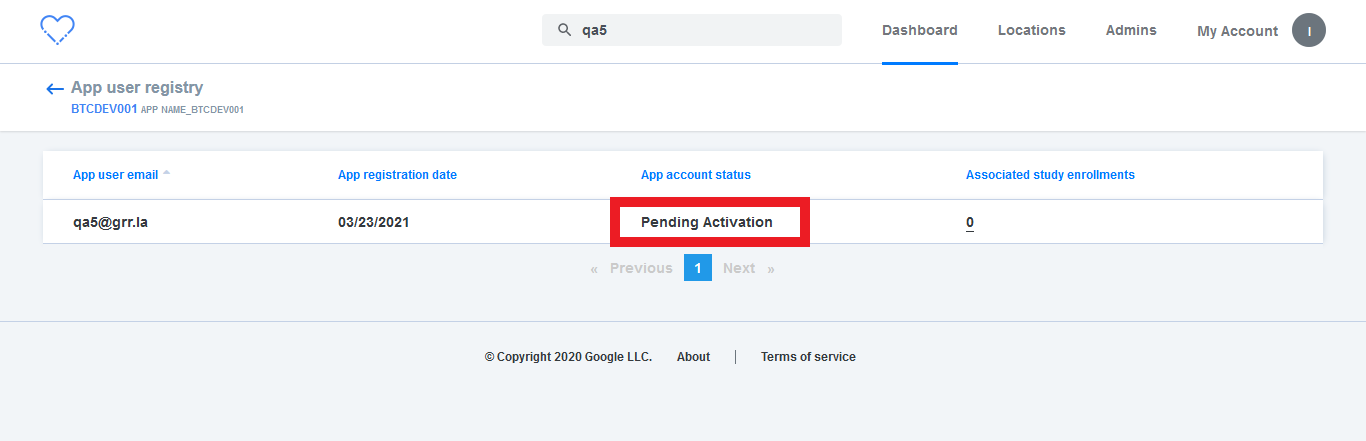

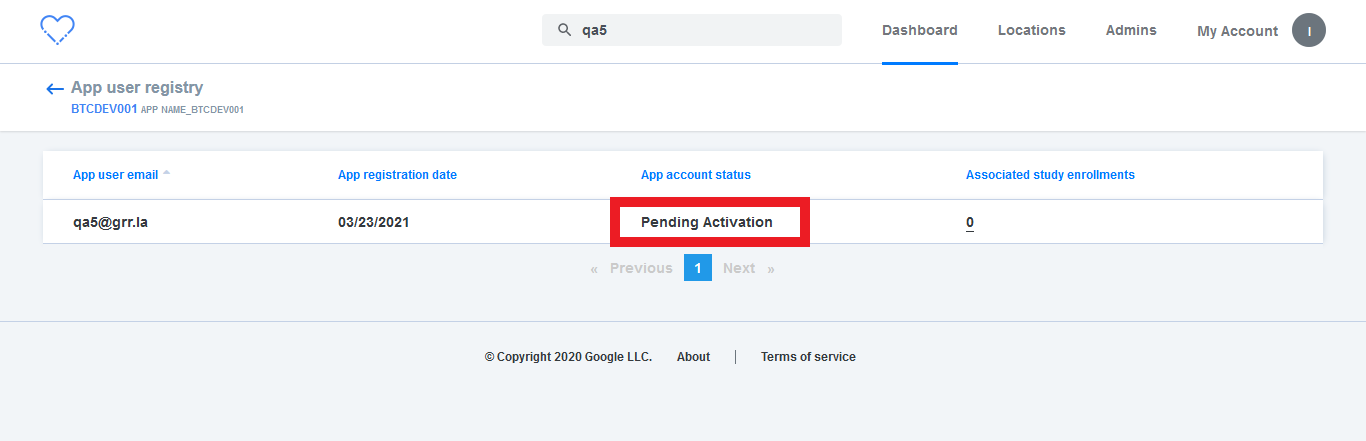

13,405 | 15,878,143,743 | IssuesEvent | 2021-04-09 10:35:15 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PM] App participant registry > Text change | Bug P2 Participant manager Process: Fixed Process: Tested QA Process: Tested dev | App participant registry > Text change > Status 'Pending Activation' should be 'Pending activation'

| 3.0 | [PM] App participant registry > Text change - App participant registry > Text change > Status 'Pending Activation' should be 'Pending activation'

| process | app participant registry text change app participant registry text change status pending activation should be pending activation | 1 |

18,101 | 24,126,989,809 | IssuesEvent | 2022-09-21 02:04:25 | bitPogo/kmock | https://api.github.com/repos/bitPogo/kmock | closed | Decouple BuildIns from Names | enhancement kmock-processor | ## Description

Currently BuildIns are resolved by their name. Overloaded variants should be resolved nevertheless even if no BuildIns are set. However this is not the case at the moment. | 1.0 | Decouple BuildIns from Names - ## Description

Currently BuildIns are resolved by their name. Overloaded variants should be resolved nevertheless even if no BuildIns are set. However this is not the case at the moment. | process | decouple buildins from names description currently buildins are resolved by their name overloaded variants should be resolved nevertheless even if no buildins are set however this is not the case at the moment | 1 |

21,127 | 28,094,719,907 | IssuesEvent | 2023-03-30 15:04:52 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | `queueMicrotask()` is called before `process.nextTick()` in ESM | process | ### Version

v20.0.0-pre

### Platform

Linux deokjinkim-MS-7885 5.15.0-67-generic #74-Ubuntu SMP Wed Feb 22 14:14:39 UTC 2023 x86_64 x86_64 x86_64 GNU/Linux

### Subsystem

process

### What steps will reproduce the bug?

When I run example of ESM in document, actual result is different from expected res... | 1.0 | `queueMicrotask()` is called before `process.nextTick()` in ESM - ### Version

v20.0.0-pre

### Platform

Linux deokjinkim-MS-7885 5.15.0-67-generic #74-Ubuntu SMP Wed Feb 22 14:14:39 UTC 2023 x86_64 x86_64 x86_64 GNU/Linux

### Subsystem

process

### What steps will reproduce the bug?

When I run exampl... | process | queuemicrotask is called before process nexttick in esm version pre platform linux deokjinkim ms generic ubuntu smp wed feb utc gnu linux subsystem process what steps will reproduce the bug when i run example of esm in document ac... | 1 |

29,023 | 11,706,182,749 | IssuesEvent | 2020-03-07 20:27:25 | vlaship/hadoop-wc | https://api.github.com/repos/vlaship/hadoop-wc | opened | CVE-2018-14718 (High) detected in jackson-databind-2.9.5.jar | security vulnerability | ## CVE-2018-14718 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.5.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2018-14718 (High) detected in jackson-databind-2.9.5.jar - ## CVE-2018-14718 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.5.jar</b></p></summary>

<p>General... | non_process | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to vulnerable library root gradle caches modules... | 0 |

381,514 | 26,456,841,598 | IssuesEvent | 2023-01-16 14:54:41 | CryptoBlades/cryptoblades | https://api.github.com/repos/CryptoBlades/cryptoblades | closed | [Feature] - Website Team Image Updates | documentation enhancement | ### Prerequisites

- [X] I checked to make sure that this feature has not already been filed

- [X] I'm reporting this information to the correct repository

- [X] I understand enough about this issue to complete a comprehensive document

### Describe the feature and its requirements

Please remove David Diebels from the... | 1.0 | [Feature] - Website Team Image Updates - ### Prerequisites

- [X] I checked to make sure that this feature has not already been filed

- [X] I'm reporting this information to the correct repository

- [X] I understand enough about this issue to complete a comprehensive document

### Describe the feature and its requireme... | non_process | website team image updates prerequisites i checked to make sure that this feature has not already been filed i m reporting this information to the correct repository i understand enough about this issue to complete a comprehensive document describe the feature and its requirements please re... | 0 |

12,871 | 5,257,900,935 | IssuesEvent | 2017-02-02 21:48:38 | quicklisp/quicklisp-projects | https://api.github.com/repos/quicklisp/quicklisp-projects | closed | Please Add Lichat-TCP-Server | canbuild | This is a simple, threaded, TCP-based server for the Lichat Protocol.

Author: Nicolas Hafner

Source: https://github.com/Shirakumo/lichat-tcp-server.git

Documentation: https://shirakumo.github.io/lichat-tcp-server/ | 1.0 | Please Add Lichat-TCP-Server - This is a simple, threaded, TCP-based server for the Lichat Protocol.

Author: Nicolas Hafner

Source: https://github.com/Shirakumo/lichat-tcp-server.git

Documentation: https://shirakumo.github.io/lichat-tcp-server/ | non_process | please add lichat tcp server this is a simple threaded tcp based server for the lichat protocol author nicolas hafner source documentation | 0 |

26,466 | 7,840,649,544 | IssuesEvent | 2018-06-18 17:01:14 | wps-2017-2018-apcs/whs | https://api.github.com/repos/wps-2017-2018-apcs/whs | closed | ¡BROKEN BUILD! | broken-build | By removing `public enum State {BOMB, FLAG, DEFAULT};` from Tile.java, the build is now broken, as Minesweeper.java relies on them. Use git status to see what other files you must commit to fix the build. | 1.0 | ¡BROKEN BUILD! - By removing `public enum State {BOMB, FLAG, DEFAULT};` from Tile.java, the build is now broken, as Minesweeper.java relies on them. Use git status to see what other files you must commit to fix the build. | non_process | ¡broken build by removing public enum state bomb flag default from tile java the build is now broken as minesweeper java relies on them use git status to see what other files you must commit to fix the build | 0 |

118,546 | 25,332,789,847 | IssuesEvent | 2022-11-18 14:32:05 | arduino/arduino-serial-plotter-webapp | https://api.github.com/repos/arduino/arduino-serial-plotter-webapp | closed | Do a display range adjustable X-axis, Add repeatable options when displaying the same contents | type: enhancement topic: code | When I use the Serial Plotter, I want to extend the display range of the horizontal axis time so that the latest data acquisition and the previous data acquisition can be displayed on the same graph.

Second, when the x-coordinate data is a cyclic change in a range, I want the x-coordinate data to be displayed within... | 1.0 | Do a display range adjustable X-axis, Add repeatable options when displaying the same contents - When I use the Serial Plotter, I want to extend the display range of the horizontal axis time so that the latest data acquisition and the previous data acquisition can be displayed on the same graph.

Second, when the x-c... | non_process | do a display range adjustable x axis add repeatable options when displaying the same contents when i use the serial plotter i want to extend the display range of the horizontal axis time so that the latest data acquisition and the previous data acquisition can be displayed on the same graph second when the x c... | 0 |

363,274 | 25,415,852,046 | IssuesEvent | 2022-11-22 23:56:17 | opensim-org/opensim-core | https://api.github.com/repos/opensim-org/opensim-core | closed | Update Confluence wiki based on V&V paper guidelines | Documentation | The V&V paper provides guidelines for what reasonable errors in simulations are. We should update the Confluence wiki to reflect these new guidelines.

| 1.0 | Update Confluence wiki based on V&V paper guidelines - The V&V paper provides guidelines for what reasonable errors in simulations are. We should update the Confluence wiki to reflect these new guidelines.

| non_process | update confluence wiki based on v v paper guidelines the v v paper provides guidelines for what reasonable errors in simulations are we should update the confluence wiki to reflect these new guidelines | 0 |

6,134 | 8,998,465,460 | IssuesEvent | 2019-02-02 22:03:16 | leg2015/Aagos | https://api.github.com/repos/leg2015/Aagos | closed | Fix Data node overlap entry | Data Tracking Data visualization data processing | Currently, data for each overlap section is added as `1_overlap` etc, but in python a variable can't start with a number so I have to go in and manually change all column headers. Come up with a versatile, streamlined way to overcome this issue. | 1.0 | Fix Data node overlap entry - Currently, data for each overlap section is added as `1_overlap` etc, but in python a variable can't start with a number so I have to go in and manually change all column headers. Come up with a versatile, streamlined way to overcome this issue. | process | fix data node overlap entry currently data for each overlap section is added as overlap etc but in python a variable can t start with a number so i have to go in and manually change all column headers come up with a versatile streamlined way to overcome this issue | 1 |

29,768 | 13,169,588,216 | IssuesEvent | 2020-08-11 13:58:19 | dockstore/dockstore | https://api.github.com/repos/dockstore/dockstore | closed | Improve addUserToDockstoreWorkflows performance | enhancement review web-service | **Is your feature request related to a problem? Please describe.**

The [endpoint](https://github.com/dockstore/dockstore/blob/2caf914821431764f13228fad1539bf0d1588656/dockstore-webservice/src/main/java/io/dockstore/webservice/resources/UserResource.java#L805) to discover a user's workflows is inefficient and has the po... | 1.0 | Improve addUserToDockstoreWorkflows performance - **Is your feature request related to a problem? Please describe.**

The [endpoint](https://github.com/dockstore/dockstore/blob/2caf914821431764f13228fad1539bf0d1588656/dockstore-webservice/src/main/java/io/dockstore/webservice/resources/UserResource.java#L805) to discove... | non_process | improve addusertodockstoreworkflows performance is your feature request related to a problem please describe the to discover a user s workflows is inefficient and has the potential to consume a lot of memory the current implementation fetches all source control orgs the user belongs to except hosted... | 0 |

10,209 | 13,067,981,162 | IssuesEvent | 2020-07-31 02:10:53 | kevinhenneigh/ECommerceSite | https://api.github.com/repos/kevinhenneigh/ECommerceSite | closed | Add CI Pipeline | developer process | Add continuous integration pipeline that will check to make sure code in a pull request compiles successfully | 1.0 | Add CI Pipeline - Add continuous integration pipeline that will check to make sure code in a pull request compiles successfully | process | add ci pipeline add continuous integration pipeline that will check to make sure code in a pull request compiles successfully | 1 |

32,001 | 6,676,598,252 | IssuesEvent | 2017-10-05 06:47:38 | proarc/proarc | https://api.github.com/repos/proarc/proarc | closed | Required fields - monograph supplement | auto-migrated Priority-Low Type-Defect | ```

Please fix:

1. type of resource - value names are in English

2. subject - name - field is missing

3. identifier - type - values oclc and sysno are missing

```

Original issue reported on code.google.com by `daneck...@knav.cz` on 25 Jun 2014 at 3:20

| 1.0 | Required fields - monograph supplement - ```

Please fix:

1. type of resource - value names are in English

2. subject - name - field is missing

3. identifier - type - values oclc and sysno are missing

```

Original issue reported on code.google.com by `daneck...@knav.cz` on 25 Jun 2014 at 3:20

| non_process | required fields monograph supplement please fix type of resource value names are in english subject name field is missing identifier type values oclc and sysno are missing original issue reported on code google com by daneck knav cz on jun at | 0 |

154,411 | 5,918,603,865 | IssuesEvent | 2017-05-22 15:43:04 | ZooeyMiller/anna-freud-hackday-makatalk | https://api.github.com/repos/ZooeyMiller/anna-freud-hackday-makatalk | opened | user story: as a clinician, I would like to give service users the ability to replay makaton answer options | priority-1 user-story | so that they are able to play again the ones they missed or didn't fully understand | 1.0 | user story: as a clinician, I would like to give service users the ability to replay makaton answer options - so that they are able to play again the ones they missed or didn't fully understand | non_process | user story as a clinician i would like to give service users the ability to replay makaton answer options so that they are able to play again the ones they missed or didn t fully understand | 0 |

14,704 | 17,874,742,050 | IssuesEvent | 2021-09-07 00:31:40 | lynnandtonic/nestflix.fun | https://api.github.com/repos/lynnandtonic/nestflix.fun | closed | Add Mister Parker's Cul-De-Sac | suggested title in process | Please add as much of the following info as you can:

Title: Mister Parker's Cul-De-Sac

Type (film/tv show): TV Show

Film or show in which it appears: _Legends of Tomorrow_, Season 5, Episode 6 "Mister Parker's Cul-De-Sac"

Is the parent film/show streaming anywhere? [Netflix](https://www.netflix.com/search?q... | 1.0 | Add Mister Parker's Cul-De-Sac - Please add as much of the following info as you can:

Title: Mister Parker's Cul-De-Sac

Type (film/tv show): TV Show

Film or show in which it appears: _Legends of Tomorrow_, Season 5, Episode 6 "Mister Parker's Cul-De-Sac"

Is the parent film/show streaming anywhere? [Netflix]... | process | add mister parker s cul de sac please add as much of the following info as you can title mister parker s cul de sac type film tv show tv show film or show in which it appears legends of tomorrow season episode mister parker s cul de sac is the parent film show streaming anywhere ... | 1 |

48,333 | 13,325,321,539 | IssuesEvent | 2020-08-27 09:47:14 | solidify/fitbit-api-demo | https://api.github.com/repos/solidify/fitbit-api-demo | opened | CVE-2018-15756 (High) detected in spring-web-4.3.2.RELEASE.jar | security vulnerability | ## CVE-2018-15756 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-web-4.3.2.RELEASE.jar</b></p></summary>

<p>Spring Web</p>

<p>Library home page: <a href="https://github.com/spr... | True | CVE-2018-15756 (High) detected in spring-web-4.3.2.RELEASE.jar - ## CVE-2018-15756 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-web-4.3.2.RELEASE.jar</b></p></summary>

<p>Spr... | non_process | cve high detected in spring web release jar cve high severity vulnerability vulnerable library spring web release jar spring web library home page a href path to dependency file tmp ws scm fitbit api demo pom xml path to vulnerable library home wss scanner repos... | 0 |

21,046 | 27,992,170,789 | IssuesEvent | 2023-03-27 05:17:29 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | [attributeprocessor] Support applying an action conditionally on the existence of another attribute | Stale processor/attributes closed as inactive | **Is your feature request related to a problem? Please describe.**

We would like to be able to apply an insert/update/upsert action only if another specified attribute exists.

**Describe the solution you'd like**

Implement this change in the AttrProc whose logic is shared by both the Attribute Processor and Resour... | 1.0 | [attributeprocessor] Support applying an action conditionally on the existence of another attribute - **Is your feature request related to a problem? Please describe.**

We would like to be able to apply an insert/update/upsert action only if another specified attribute exists.

**Describe the solution you'd like**

... | process | support applying an action conditionally on the existence of another attribute is your feature request related to a problem please describe we would like to be able to apply an insert update upsert action only if another specified attribute exists describe the solution you d like implement this chan... | 1 |

256,479 | 27,561,678,457 | IssuesEvent | 2023-03-07 22:39:34 | samqws-marketing/amzn-ion-hive-serde | https://api.github.com/repos/samqws-marketing/amzn-ion-hive-serde | closed | CVE-2019-14540 (High) detected in jackson-databind-2.6.5.jar - autoclosed | Mend: dependency security vulnerability | ## CVE-2019-14540 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.6.5.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2019-14540 (High) detected in jackson-databind-2.6.5.jar - autoclosed - ## CVE-2019-14540 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.6.5.jar</b></p></summary... | non_process | cve high detected in jackson databind jar autoclosed cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file integration test ... | 0 |

348,503 | 10,443,491,598 | IssuesEvent | 2019-09-18 14:57:32 | alphagov/govuk-frontend | https://api.github.com/repos/alphagov/govuk-frontend | closed | Investigate flakey banner test [TIMEBOX: 1 hour] | Effort: hours Priority: low | Maybe to do with cookies being left around, I'm not sure but it is skipped for now: https://github.com/alphagov/govuk-frontend/pull/1383/commits/c44602d578a8a4a8933028823d1516d5ad6a99f0#diff-bac8f4ef40104d619366dd4794cacd26L32 | 1.0 | Investigate flakey banner test [TIMEBOX: 1 hour] - Maybe to do with cookies being left around, I'm not sure but it is skipped for now: https://github.com/alphagov/govuk-frontend/pull/1383/commits/c44602d578a8a4a8933028823d1516d5ad6a99f0#diff-bac8f4ef40104d619366dd4794cacd26L32 | non_process | investigate flakey banner test maybe to do with cookies being left around i m not sure but it is skipped for now | 0 |

9,342 | 12,343,255,265 | IssuesEvent | 2020-05-15 03:24:05 | google/mtail | https://api.github.com/repos/google/mtail | closed | Release builds for power pc users | process |

Hi,

is it fine to open a pull request to enable also release builds for ppc users?

It`s just a one-line change in https://github.com/google/mtail/blob/master/Makefile#L143.

Best,

Tobias | 1.0 | Release builds for power pc users -

Hi,

is it fine to open a pull request to enable also release builds for ppc users?

It`s just a one-line change in https://github.com/google/mtail/blob/master/Makefile#L143.

Best,

Tobias | process | release builds for power pc users hi is it fine to open a pull request to enable also release builds for ppc users it s just a one line change in best tobias | 1 |

10,360 | 13,183,319,726 | IssuesEvent | 2020-08-12 17:17:58 | department-of-veterans-affairs/notification-api | https://api.github.com/repos/department-of-veterans-affairs/notification-api | opened | Internal DNS entry | Needs Prioritization Process Task | Filip to add details

This story depends on the Infra - need the Load Balancer | 1.0 | Internal DNS entry - Filip to add details

This story depends on the Infra - need the Load Balancer | process | internal dns entry filip to add details this story depends on the infra need the load balancer | 1 |

5,002 | 7,836,109,850 | IssuesEvent | 2018-06-17 15:28:41 | GoogleCloudPlatform/google-cloud-cpp | https://api.github.com/repos/GoogleCloudPlatform/google-cloud-cpp | closed | Troubleshoot flaky polling_policy_test | bigtable priority: p0 type: process | The policy test is failing from time to time:

```

44/60 Test #44: polling_policy_test .........................................***Failed 0.41 sec

Running main() from gtest_main.cc

[==========] Running 3 tests from 1 test case.

[----------] Global test environment set-up.

[----------] 3 tests from GenericPoll... | 1.0 | Troubleshoot flaky polling_policy_test - The policy test is failing from time to time:

```

44/60 Test #44: polling_policy_test .........................................***Failed 0.41 sec

Running main() from gtest_main.cc

[==========] Running 3 tests from 1 test case.

[----------] Global test environment set-u... | process | troubleshoot flaky polling policy test the policy test is failing from time to time test polling policy test failed sec running main from gtest main cc running tests from test case global test environment set up tests from generic... | 1 |

287,310 | 21,649,387,103 | IssuesEvent | 2022-05-06 07:43:13 | baobabsoluciones/cornflow | https://api.github.com/repos/baobabsoluciones/cornflow | opened | Update all documentation with core library and move documentation inside monorepo | documentation | The documentation should be on the main folder not on cornflow-server and should have the documentation of cornflow-core as well. | 1.0 | Update all documentation with core library and move documentation inside monorepo - The documentation should be on the main folder not on cornflow-server and should have the documentation of cornflow-core as well. | non_process | update all documentation with core library and move documentation inside monorepo the documentation should be on the main folder not on cornflow server and should have the documentation of cornflow core as well | 0 |

22,471 | 31,387,985,186 | IssuesEvent | 2023-08-26 01:56:54 | Warzone2100/map-submission | https://api.github.com/repos/Warzone2100/map-submission | opened | [MAP]: Crop_Circles | map unprocessed | ### Upload Map

[10c-Crop_Circles.zip](https://github.com/Warzone2100/map-submission/files/12444374/10c-Crop_Circles.zip)

### Authorship

Mine: I am the author of this map

### Map Description (optional)

```text

Concept of various siding and flanking, reverse and surprise potential offensives. While bases are all g... | 1.0 | [MAP]: Crop_Circles - ### Upload Map

[10c-Crop_Circles.zip](https://github.com/Warzone2100/map-submission/files/12444374/10c-Crop_Circles.zip)

### Authorship

Mine: I am the author of this map

### Map Description (optional)

```text

Concept of various siding and flanking, reverse and surprise potential offensives.... | process | crop circles upload map authorship mine i am the author of this map map description optional text concept of various siding and flanking reverse and surprise potential offensives while bases are all grouped in a same box area it will ask a single determined and powerful action from... | 1 |

7,274 | 10,428,326,762 | IssuesEvent | 2019-09-16 22:13:20 | DO-CV/sara | https://api.github.com/repos/DO-CV/sara | closed | [MAINT] Improve unit testing of Gaussian pyramid computation. | ImageProcessing easy | See if we can clean the code as well.

| 1.0 | [MAINT] Improve unit testing of Gaussian pyramid computation. - See if we can clean the code as well.

| process | improve unit testing of gaussian pyramid computation see if we can clean the code as well | 1 |

56,045 | 6,499,508,223 | IssuesEvent | 2017-08-22 21:51:00 | openid/OpenYOLO-Android | https://api.github.com/repos/openid/OpenYOLO-Android | closed | Test app: do not allow save credential until all mandatory fields populated | bug testapp | You can press the "save credential" button in the test app when mandatory fields are not set:

This results in a crash, due to the RequireViolation thrown from the Credential builder.... | 1.0 | Test app: do not allow save credential until all mandatory fields populated - You can press the "save credential" button in the test app when mandatory fields are not set:

This resul... | non_process | test app do not allow save credential until all mandatory fields populated you can press the save credential button in the test app when mandatory fields are not set this results in a crash due to the requireviolation thrown from the credential builder either catch and handle this gracefully or dis... | 0 |

12,462 | 14,937,001,038 | IssuesEvent | 2021-01-25 14:06:47 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [Android] Study Activities > Remove 50% completion pop-up | Android Bug P2 Process: Tested dev | Steps:

1. Login and enroll into a study

2. Complete 50% of the study activities

3. Observe the pop-up

Actual: 50% completion pop-up is displayed

Expected: Remove 50% completion pop-up | 1.0 | [Android] Study Activities > Remove 50% completion pop-up - Steps:

1. Login and enroll into a study

2. Complete 50% of the study activities

3. Observe the pop-up

Actual: 50% completion pop-up is displayed

Expected: Remove 50% completion pop-up | process | study activities remove completion pop up steps login and enroll into a study complete of the study activities observe the pop up actual completion pop up is displayed expected remove completion pop up | 1 |

20,375 | 27,029,508,200 | IssuesEvent | 2023-02-12 02:00:06 | lizhihao6/get-daily-arxiv-noti | https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti | opened | New submissions for Fri, 10 Feb 23 | event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB | ## Keyword: events

### Optimized Hybrid Focal Margin Loss for Crack Segmentation

- **Authors:** Jiajie Chen

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Image and Video Processing (eess.IV)

- **Arxiv link:** https://arxiv.org/abs/2302.04395

- **Pdf link:** https://arxiv.org/pdf/2302.04395

- **A... | 2.0 | New submissions for Fri, 10 Feb 23 - ## Keyword: events

### Optimized Hybrid Focal Margin Loss for Crack Segmentation

- **Authors:** Jiajie Chen

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Image and Video Processing (eess.IV)

- **Arxiv link:** https://arxiv.org/abs/2302.04395

- **Pdf link:** ht... | process | new submissions for fri feb keyword events optimized hybrid focal margin loss for crack segmentation authors jiajie chen subjects computer vision and pattern recognition cs cv image and video processing eess iv arxiv link pdf link abstract many loss functi... | 1 |

859 | 2,517,803,847 | IssuesEvent | 2015-01-16 17:17:06 | mozilla/webmaker-app | https://api.github.com/repos/mozilla/webmaker-app | closed | Redesign blog template | design: needs visual design | We'd like to try a first-run experience that involves pre-populating the main screen with some "ready-made" apps. The first three we were thinking of trying are:

* store (vendor)

* blog

* survey

| 2.0 | Redesign blog template - We'd like to try a first-run experience that involves pre-populating the main screen with some "ready-made" apps. The first three we were thinking of trying are:

* store (vendor)

* blog

* survey

| non_process | redesign blog template we d like to try a first run experience that involves pre populating the main screen with some ready made apps the first three we were thinking of trying are store vendor blog survey | 0 |

346,866 | 24,887,308,436 | IssuesEvent | 2022-10-28 08:53:40 | seanmanik/ped | https://api.github.com/repos/seanmanik/ped | opened | Lack of documentation for interview | type.DocumentationBug severity.Low | Currently, the summary of CinternS talks about helping users with their internship applications, but does not mention much about how interviews play a part in this context.

Users might thus be confused by the availability of the interview command, as well as the availability of an interview tag.

The User Guide is wel... | 1.0 | Lack of documentation for interview - Currently, the summary of CinternS talks about helping users with their internship applications, but does not mention much about how interviews play a part in this context.

Users might thus be confused by the availability of the interview command, as well as the availability of an... | non_process | lack of documentation for interview currently the summary of cinterns talks about helping users with their internship applications but does not mention much about how interviews play a part in this context users might thus be confused by the availability of the interview command as well as the availability of an... | 0 |

20,980 | 27,843,541,850 | IssuesEvent | 2023-03-20 14:13:22 | UnitTestBot/UTBotJava | https://api.github.com/repos/UnitTestBot/UTBotJava | opened | `IllegalArgumentException`s in Instrumented process for `MockReturnObjectExample` | ctg-bug comp-instrumented-process | **Description**

There are error messages in the `utbot-engine-current.log`.

```java

InstrumentedProcessError: RdFault: InvocationPhase:

IllegalArgumentException: signature=calculateFromArray()I

expecting this, but provided argument list is empty

at org.utbot.instrumentation.instrumentation.InvokeInstrumenta... | 1.0 | `IllegalArgumentException`s in Instrumented process for `MockReturnObjectExample` - **Description**

There are error messages in the `utbot-engine-current.log`.

```java

InstrumentedProcessError: RdFault: InvocationPhase:

IllegalArgumentException: signature=calculateFromArray()I

expecting this, but provided arg... | process | illegalargumentexception s in instrumented process for mockreturnobjectexample description there are error messages in the utbot engine current log java instrumentedprocesserror rdfault invocationphase illegalargumentexception signature calculatefromarray i expecting this but provided arg... | 1 |

13,005 | 8,066,791,709 | IssuesEvent | 2018-08-04 20:17:26 | rixed/ramen | https://api.github.com/repos/rixed/ramen | opened | Improve quantile performance | performance | - Reservoir sampling to avoid collecting more than 10 times the required resolution;

- Selection of the requested element rather than sorting;

- Compute several results out of a single percentile operation. | True | Improve quantile performance - - Reservoir sampling to avoid collecting more than 10 times the required resolution;

- Selection of the requested element rather than sorting;

- Compute several results out of a single percentile operation. | non_process | improve quantile performance reservoir sampling to avoid collecting more than times the required resolution selection of the requested element rather than sorting compute several results out of a single percentile operation | 0 |

324,969 | 27,835,485,207 | IssuesEvent | 2023-03-20 09:10:49 | rhinstaller/kickstart-tests | https://api.github.com/repos/rhinstaller/kickstart-tests | opened | rhel9 flake: "Anaconda.Modules.Security:gi.repository.GLib.GError: g-io-error-quark: Timeout was reached (24)" | test flake | 03-19-2023

```

02:21:27,841 WARNING org.fedoraproject.Anaconda.Boss:DEBUG:anaconda.modules.boss.module_manager.start_modules:org.fedoraproject.Anaconda.Addons.Kdump is available.

02:21:27,842 WARNING org.fedoraproject.Anaconda.Addons.Kdump:DEBUG:anaconda.modules.common.base.base:Start the loop.

02:21:51,638 EMERG k... | 1.0 | rhel9 flake: "Anaconda.Modules.Security:gi.repository.GLib.GError: g-io-error-quark: Timeout was reached (24)" - 03-19-2023

```

02:21:27,841 WARNING org.fedoraproject.Anaconda.Boss:DEBUG:anaconda.modules.boss.module_manager.start_modules:org.fedoraproject.Anaconda.Addons.Kdump is available.

02:21:27,842 WARNING org.... | non_process | flake anaconda modules security gi repository glib gerror g io error quark timeout was reached warning org fedoraproject anaconda boss debug anaconda modules boss module manager start modules org fedoraproject anaconda addons kdump is available warning org fedoraproject anacon... | 0 |

20,423 | 27,086,366,830 | IssuesEvent | 2023-02-14 17:16:35 | esmero/strawberry_runners | https://api.github.com/repos/esmero/strawberry_runners | closed | Fix Binary detection | bug enhancement Post processor Plugins | # What?

For the new TEXT processor I used a very naive Binary Detection way (mb_detect) which funny enough does not work the same in PHP 7+, 8.0 and 8.1

I'm changing this to a `pregmatch` using `//u` as detection. This will work! | 1.0 | Fix Binary detection - # What?

For the new TEXT processor I used a very naive Binary Detection way (mb_detect) which funny enough does not work the same in PHP 7+, 8.0 and 8.1

I'm changing this to a `pregmatch` using `//u` as detection. This will work! | process | fix binary detection what for the new text processor i used a very naive binary detection way mb detect which funny enough does not work the same in php and i m changing this to a pregmatch using u as detection this will work | 1 |