| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

17,715 | 23,616,802,795 | IssuesEvent | 2022-08-24 16:33:50 | celo-org/celo-monorepo | https://api.github.com/repos/celo-org/celo-monorepo | closed | Draft Core-Contracts 8 release notes. | release-process Component: Identity ASv2 | Draft release notes for [Core Contracts Release 8](https://github.com/celo-org/celo-monorepo/releases/tag/core-contracts.v8.pre-audit) (pre-release). In particular the following sections:

- [x] "Key updates in this release" (blurb with description of changes)

- [x] "Specific Version Updates" (table with contract ver... | 1.0 | Draft Core-Contracts 8 release notes. - Draft release notes for [Core Contracts Release 8](https://github.com/celo-org/celo-monorepo/releases/tag/core-contracts.v8.pre-audit) (pre-release). In particular the following sections:

- [x] "Key updates in this release" (blurb with description of changes)

- [x] "Specific V... | process | draft core contracts release notes draft release notes for pre release in particular the following sections key updates in this release blurb with description of changes specific version updates table with contract versions you can use as a template i will add the hacken i... | 1 |

110,875 | 16,995,012,752 | IssuesEvent | 2021-07-01 04:41:18 | avallete/yt-playlists-delete-enhancer | https://api.github.com/repos/avallete/yt-playlists-delete-enhancer | closed | CVE-2018-20821 (Medium) detected in opennmsopennms-source-26.0.0-1, node-sass-4.14.1.tgz | no-issue-activity security vulnerability | ## CVE-2018-20821 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>opennmsopennms-source-26.0.0-1</b>, <b>node-sass-4.14.1.tgz</b></p></summary>

<p>

<details><summary><b>node-sass-4... | True | CVE-2018-20821 (Medium) detected in opennmsopennms-source-26.0.0-1, node-sass-4.14.1.tgz - ## CVE-2018-20821 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>opennmsopennms-source-26... | non_process | cve medium detected in opennmsopennms source node sass tgz cve medium severity vulnerability vulnerable libraries opennmsopennms source node sass tgz node sass tgz wrapper around libsass library home page a href path to dependency file ... | 0 |

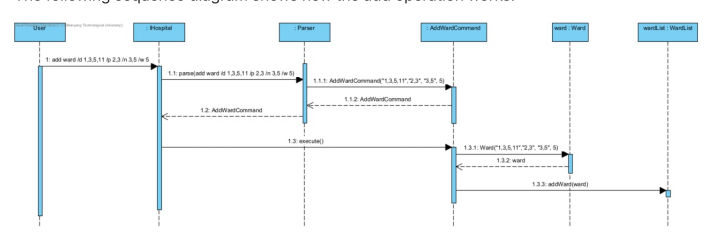

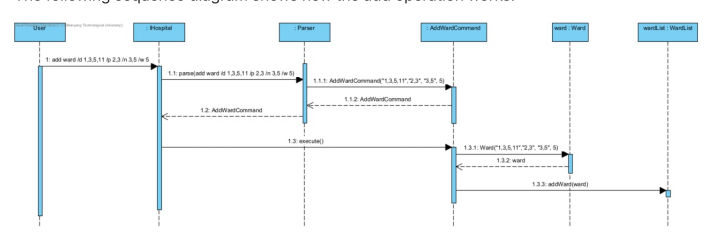

282,826 | 21,315,965,065 | IssuesEvent | 2022-04-16 09:23:44 | johnmcmonigle/pe | https://api.github.com/repos/johnmcmonigle/pe | opened | 'Add Ward' sequence diagram needs calling arrows touching the tops of activation bars, and returning arrows touching the bottoms | severity.Low type.DocumentationBug |

Many of the calling and returning arrows point to the middle of activation bars

<!--session: 1650094551158-c98fb3d6-71d0-4d9b-987f-11636894934a-->

<!--Version: Web v3.4.2--> | 1.0 | 'Add Ward' sequence diagram needs calling arrows touching the tops of activation bars, and returning arrows touching the bottoms -

Many of the calling and returning arrows point to the middle of activat... | non_process | add ward sequence diagram needs calling arrows touching the tops of activation bars and returning arrows touching the bottoms many of the calling and returning arrows point to the middle of activation bars | 0 |

93,905 | 15,946,437,611 | IssuesEvent | 2021-04-15 01:04:05 | jgeraigery/core | https://api.github.com/repos/jgeraigery/core | opened | CVE-2018-10237 (Medium) detected in guava-18.0.jar | security vulnerability | ## CVE-2018-10237 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>guava-18.0.jar</b></p></summary>

<p>Guava is a suite of core and expanded libraries that include

utility classes... | True | CVE-2018-10237 (Medium) detected in guava-18.0.jar - ## CVE-2018-10237 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>guava-18.0.jar</b></p></summary>

<p>Guava is a suite of core an... | non_process | cve medium detected in guava jar cve medium severity vulnerability vulnerable library guava jar guava is a suite of core and expanded libraries that include utility classes google s collections io classes and much much more guava has only one code dependency javax... | 0 |

11,693 | 3,218,596,110 | IssuesEvent | 2015-10-08 02:51:16 | eris-ltd/eris-cli | https://api.github.com/repos/eris-ltd/eris-cli | opened | tests should randomize ports | area/test suite | thus no need to stop all running containers when testing ... then start them back up again | 1.0 | tests should randomize ports - thus no need to stop all running containers when testing ... then start them back up again | non_process | tests should randomize ports thus no need to stop all running containers when testing then start them back up again | 0 |
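
The idea in the row above, letting each test pick its own port so that running containers do not have to be stopped first, could be sketched roughly as follows. This is a minimal Python illustration, not code from eris-cli; the helper name `pick_free_port` is hypothetical, and it assumes that asking the operating system for port 0 is an acceptable way to obtain an unused port.

```python
import socket


def pick_free_port(host: str = "127.0.0.1") -> int:
    """Ask the OS for a currently unused TCP port (hypothetical helper)."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        # Binding to port 0 lets the kernel choose any free ephemeral port.
        sock.bind((host, 0))
        # getsockname() returns (host, port) for an IPv4 socket.
        return sock.getsockname()[1]
```

One caveat with this approach: the port is released when the socket closes, so another process could in principle claim it before the test binds it again. For a test suite that is usually an acceptable trade-off.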

52,868 | 27,808,822,399 | IssuesEvent | 2023-03-17 23:37:50 | enso-org/enso | https://api.github.com/repos/enso-org/enso | closed | Consider parallelization of ZIO's setup during startup | p-medium x-chore --low-performance -language-server | The current implementation of Language Server makes [heavy usage](https://github.com/enso-org/enso/blob/develop/engine/language-server/src/main/scala/org/enso/languageserver/boot/MainModule.scala#L107) of ZIO.

As per profiling data

of ZIO.

As per profiling data

| enhancement type: process | - [x] Implement CLI for creating a forked pull request (PR)

### Description

The user should be able to create a PR in a fork from either a non-git or git directory.

For non-git directory specified: all of the files in the directory get added on top of the upstream repository

For a git directory specified: all of ... | 1.0 | Implement CLI for creating a forked pull request (PR) - - [x] Implement CLI for creating a forked pull request (PR)

### Description

The user should be able to create a PR in a fork from either a non-git or git directory.

For non-git directory specified: all of the files in the directory get added on top of the ups... | process | implement cli for creating a forked pull request pr implement cli for creating a forked pull request pr description the user should be able to create a pr in a fork from either a non git or git directory for non git directory specified all of the files in the directory get added on top of the upstr... | 1 |

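6,233 | 9,180,956,179 | IssuesEvent | 2019-03-05 09:04:08 | kmycode/sangokukmy | https://api.github.com/repos/kmycode/sangokukmy | closed | Peasant revolt | enhancement func-oldkmy process-pending | In 三国志NET KMY Version, I am planning to implement peasant revolts. They will be almost the same as what existed before.

The foreign tribes ( #10 ) are somewhat too powerful, so I think it is fine for peasant revolts to stay low-key.

## Aim and purpose

Allow peasant revolts to be triggered by a spy's scheming. Before a war, players must plan the defense of their own country at the same time as an invasion of another country, creating an atmosphere in which the war has already begun before it is declared and no one can easily let their guard down.

## Trigger conditions

* A spy is carrying out incitement

* Popular loyalty is zero

* No officers are present in the city

## What it enables

* In a city where a spy ( #12 ) has been planted and incitement is carried out every turn, ... | 1.0 | Peasant revolt - In 三国志NET KMY Version, I am planning to implement peasant revolts. They will be almost the same as what existed before.

The foreign tribes ( #10 ) are somewhat too powerful, so I think it is fine for peasant revolts to stay low-key.

## Aim and purpose

Allow peasant revolts to be triggered by a spy's scheming. Before a war, players must plan the defense of their own country at the same time as an invasion of another country, creating an atmosphere in which the war has already begun before it is declared and no one can easily let their guard down.

## Trigger conditions

* A spy is carrying out incitement

* Popular loyalty is zero

* No officers are present in the city

## What it enables

* In a city where a spy ( #12 ) has been planted and incitement is carried out every turn... | process | peasant revolt in 三国志net kmy version i am planning to implement peasant revolts they will be almost the same as what existed before the foreign tribes are somewhat too powerful so i think it is fine for peasant revolts to stay low key aim and purpose allow peasant revolts to be triggered by a spy s scheming before a war players must plan the defense of their own country at the same time as an invasion of another country creating an atmosphere in which the war has already begun before it is declared and no one can easily let their guard down trigger conditions a spy is carrying out incitement popular loyalty is zero no officers are present in the city what it enables in a city where a spy has been planted and incitement is carried out every turn ... | 1 |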

16,065 | 20,205,911,749 | IssuesEvent | 2022-02-11 20:20:05 | createwithrani/superlist | https://api.github.com/repos/createwithrani/superlist | opened | Have a Main and Develop branch | Process | With #29 adding automated deployment from GitHub to SVN, should we have a `main` branch that is `stable` and a `development` branch that is where we merge new features and prepare for new releases?

The advantage of such a setup is that we can then use [WordPress Plugin Readme/Assets Update action](https://github.co... | 1.0 | Have a Main and Develop branch - With #29 adding automated deployment from GitHub to SVN, should we have a `main` branch that is `stable` and a `development` branch that is where we merge new features and prepare for new releases?

The advantage of such a setup is that we can then use [WordPress Plugin Readme/Assets... | process | have a main and develop branch with adding automated deployment from github to svn should we have a main branch that is stable and a development branch that is where we merge new features and prepare for new releases the advantage of such a setup is that we can then use to update just readme files ... | 1 |

19,946 | 26,419,644,632 | IssuesEvent | 2023-01-13 19:05:36 | ORNL-AMO/AMO-Tools-Desktop | https://api.github.com/repos/ORNL-AMO/AMO-Tools-Desktop | opened | Higher Heating Value Calculators | Process Heating Intern To Do | Turn New Gas Fuel and New Solid/Liquid fuel modals into stand alone calcs | 1.0 | Higher Heating Value Calculators - Turn New Gas Fuel and New Solid/Liquid fuel modals into stand alone calcs | process | higher heating value calculators turn new gas fuel and new solid liquid fuel modals into stand alone calcs | 1 |

14,010 | 16,815,860,171 | IssuesEvent | 2021-06-17 07:17:14 | didi/mpx | https://api.github.com/repos/didi/mpx | closed | [Bug report] 无法集成 thread-loader | processing | ```

{

test: /\.mpx$/,

use: [

{

loader: 'thread-loader'

},

MpxWebpackPlugin.loader(currentMpxLoaderConf)

]

}

```

```

Module build failed (from ./node_modules/thread-loader/dist/cjs.js):

Thread Loader (Worker 0)

Cannot read propert... | 1.0 | [Bug report] 无法集成 thread-loader - ```

{

test: /\.mpx$/,

use: [

{

loader: 'thread-loader'

},

MpxWebpackPlugin.loader(currentMpxLoaderConf)

]

}

```

```

Module build failed (from ./node_modules/thread-loader/dist/cjs.js):

Thread Load... | process | 无法集成 thread loader test mpx use loader thread loader mpxwebpackplugin loader currentmpxloaderconf module build failed from node modules thread loader dist cjs js thread loader worker ... | 1 |

20,681 | 27,352,924,987 | IssuesEvent | 2023-02-27 10:50:19 | camunda/issues | https://api.github.com/repos/camunda/issues | opened | Process Instance Modification | component:operate component:zeebe component:zeebe-process-automation public feature-parity version:8.1 riskClass:medium | ### Value Proposition Statement

Repair process instances that ended up in the wrong state by repeating or skipping steps. Move running flow nodes, add new or cancel existing ones in a process instance easily via our UI.

### User Problem

- During execution process instances can end up in the wrong state. Curre... | 1.0 | Process Instance Modification - ### Value Proposition Statement

Repair process instances that ended up in the wrong state by repeating or skipping steps. Move running flow nodes, add new or cancel existing ones in a process instance easily via our UI.

### User Problem

- During execution process instances can ... | process | process instance modification value proposition statement repair process instances that ended up in the wrong state by repeating or skipping steps move running flow nodes add new or cancel existing ones in a process instance easily via our ui user problem during execution process instances can ... | 1 |

29,085 | 23,707,318,039 | IssuesEvent | 2022-08-30 03:21:53 | UBCSailbot/.github | https://api.github.com/repos/UBCSailbot/.github | opened | Create PR template | infrastructure | ### Purpose

Guidelines for what to include in a PR

### Changes

- Write PR template file

- Look at the second resource to see if there is anything that we could do to improve our issues templates

### Resources

- https://embeddedartistry.com/blog/2017/08/04/a-github-pull-request-template-for-your-projects/

- h... | 1.0 | Create PR template - ### Purpose

Guidelines for what to include in a PR

### Changes

- Write PR template file

- Look at the second resource to see if there is anything that we could do to improve our issues templates

### Resources

- https://embeddedartistry.com/blog/2017/08/04/a-github-pull-request-template-fo... | non_process | create pr template purpose guidelines for what to include in a pr changes write pr template file look at the second resource to see if there is anything that we could do to improve our issues templates resources | 0 |

14,349 | 9,084,639,972 | IssuesEvent | 2019-02-18 04:47:03 | OctopusDeploy/Issues | https://api.github.com/repos/OctopusDeploy/Issues | opened | Cant tell what type of deployment target a given machine is | area/usability kind/enhancement | # Prerequisites

- [x ] I have searched [open](https://github.com/OctopusDeploy/Issues/issues) and [closed](https://github.com/OctopusDeploy/Issues/issues?utf8=%E2%9C%93&q=is%3Aissue+is%3Aclosed) issues to make sure it isn't already requested

- [x] I have written a descriptive issue title

- [x] I have linked the or... | True | Cant tell what type of deployment target a given machine is - # Prerequisites

- [x ] I have searched [open](https://github.com/OctopusDeploy/Issues/issues) and [closed](https://github.com/OctopusDeploy/Issues/issues?utf8=%E2%9C%93&q=is%3Aissue+is%3Aclosed) issues to make sure it isn't already requested

- [x] I have... | non_process | cant tell what type of deployment target a given machine is prerequisites i have searched and issues to make sure it isn t already requested i have written a descriptive issue title i have linked the original source of this feature request i have tagged the issue appropriately area ... | 0 |

22,084 | 30,606,733,796 | IssuesEvent | 2023-07-23 04:57:52 | hashgraph/hedera-json-rpc-relay | https://api.github.com/repos/hashgraph/hedera-json-rpc-relay | opened | Separate http and ws metrics | enhancement P2 process | ### Problem

Currently, the http and ws servers both utilize the relay and other business logic.

However, since the registries are created in the classes it's not clear the metrics are intended for the ws server when it's running

### Solution

Separate metrics flags or make sure there's a different layer for calls origi... | 1.0 | Separate http and ws metrics - ### Problem

Currently, the http and ws servers both utilize the relay and other business logic.

However, since the registries are created in the classes it's not clear the metrics are intended for the ws server when it's running

### Solution

Separate metrics flags or make sure there's a ... | process | separate http and ws metrics problem currently the http and ws server both utilize the relay and other busines logic however since the registries are created in the classes it s not clear the metrics are intended for the ws server when it s running solution separate metrics flags or make sure there s a ... | 1 |

338,024 | 30,277,400,647 | IssuesEvent | 2023-07-07 21:09:19 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | pkg/sql/schemachanger/schemachanger_test: TestValidateMixedVersionElements_drop_column_with_partial_index failed | C-test-failure O-robot branch-master T-sql-foundations | pkg/sql/schemachanger/schemachanger_test.TestValidateMixedVersionElements_drop_column_with_partial_index [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Ci_TestsAwsLinuxArm64_UnitTests/10812861?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Ci_TestsAw... | 1.0 | pkg/sql/schemachanger/schemachanger_test: TestValidateMixedVersionElements_drop_column_with_partial_index failed - pkg/sql/schemachanger/schemachanger_test.TestValidateMixedVersionElements_drop_column_with_partial_index [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Ci_TestsAwsLinuxArm64_UnitTes... | non_process | pkg sql schemachanger schemachanger test testvalidatemixedversionelements drop column with partial index failed pkg sql schemachanger schemachanger test testvalidatemixedversionelements drop column with partial index with on master run testvalidatemixedversionelements drop column with parti... | 0 |

787,020 | 27,701,928,353 | IssuesEvent | 2023-03-14 08:40:57 | hoangnguyen92dn/survey-flutter-ic | https://api.github.com/repos/hoangnguyen92dn/survey-flutter-ic | closed | [UI] As a user, I can sign in with email and password | type: feature priority: high @0.1.0 epic: authentication | ## Why

Users must be authenticated before taking or viewing any surveys. Our surveys have access restrictions. Some surveys are targeted to a specific group of users only for a more accurate survey result.

The mobile app should allow users to authenticate with their email and password.

## Acceptance Criteria

... | 1.0 | [UI] As a user, I can sign in with email and password - ## Why

Users must be authenticated before taking or viewing any surveys. Our surveys have access restrictions. Some surveys are targeted to a specific group of users only for a more accurate survey result.

The mobile app should allow users to authenticate wi... | non_process | as a user i can sign in with email and password why users must be authenticated before taking or viewing any surveys our surveys have access restrictions some surveys are targeted to a specific group of users only for a more accurate survey result the mobile app should allow users to authenticate with ... | 0 |

118,245 | 15,262,598,455 | IssuesEvent | 2021-02-22 00:04:39 | PyTorchLightning/pytorch-lightning | https://api.github.com/repos/PyTorchLightning/pytorch-lightning | closed | on_{validation,test}_epoch_end functions should have an outputs parameter | API / design duplicate enhancement help wanted | ## 🚀 Feature

https://github.com/PyTorchLightning/pytorch-lightning/blob/3b0e4e0b2bc5b62bba09df5976e1460774ae7337/pytorch_lightning/core/hooks.py#L255

https://github.com/PyTorchLightning/pytorch-lightning/blob/3b0e4e0b2bc5b62bba09df5976e1460774ae7337/pytorch_lightning/core/hooks.py#L267

Should have an `outputs... | 1.0 | on_{validation,test}_epoch_end functions should have an outputs parameter - ## 🚀 Feature

https://github.com/PyTorchLightning/pytorch-lightning/blob/3b0e4e0b2bc5b62bba09df5976e1460774ae7337/pytorch_lightning/core/hooks.py#L255

https://github.com/PyTorchLightning/pytorch-lightning/blob/3b0e4e0b2bc5b62bba09df5976e1... | non_process | on validation test epoch end functions should have an outputs parameter 🚀 feature should have an outputs parameter as | 0 |

21,709 | 30,209,012,197 | IssuesEvent | 2023-07-05 11:29:18 | camunda/issues | https://api.github.com/repos/camunda/issues | closed | Catch errors without errorCode | component:desktopModeler component:operate component:optimize component:webModeler component:zeebe-process-automation public kind:epic feature-parity version:8.2 |

### Value Proposition Statement

Error catch events without error codes enable users to model a specific response for a known escalation code, and a general response for unknown escalation codes.

### User Problem

Currently, I can only model Error catch events for known error codes. However, if a BPMN Error with a... | 1.0 | Catch errors without errorCode -

### Value Proposition Statement

Error catch events without error codes enable users to model a specific response for a known escalation code, and a general response for unknown escalation codes.

### User Problem

Currently, I can only model Error catch events for known error codes... | process | catch errors without errorcode value proposition statement error catch events without error codes enable users to model a specific response for a known escalation code and a general response for unknown escalation codes user problem currently i can only model error catch events for known error codes... | 1 |

206,677 | 15,767,814,368 | IssuesEvent | 2021-03-31 16:30:27 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | ccl/importccl: TestProtectedTimestampsDuringImportInto failed | C-test-failure O-robot branch-master | [(ccl/importccl).TestProtectedTimestampsDuringImportInto failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2833638&tab=buildLog) on [master@c6125c3c5f4e416382c19adfaebe6c2190977190](https://github.com/cockroachdb/cockroach/commits/c6125c3c5f4e416382c19adfaebe6c2190977190):

```

=== RUN TestProtectedTimest... | 1.0 | ccl/importccl: TestProtectedTimestampsDuringImportInto failed - [(ccl/importccl).TestProtectedTimestampsDuringImportInto failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2833638&tab=buildLog) on [master@c6125c3c5f4e416382c19adfaebe6c2190977190](https://github.com/cockroachdb/cockroach/commits/c6125c3c5f4e4... | non_process | ccl importccl testprotectedtimestampsduringimportinto failed on run testprotectedtimestampsduringimportinto test log scope go test logs captured to go src github com cockroachdb cockroach artifacts test log scope go use show logs to present logs inline found liveness ... | 0 |

98,631 | 16,387,781,418 | IssuesEvent | 2021-05-17 12:47:06 | fitzinbox/Exomiser | https://api.github.com/repos/fitzinbox/Exomiser | opened | CVE-2020-36184 (High) detected in jackson-databind-2.9.8.jar | security vulnerability | ## CVE-2020-36184 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.8.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2020-36184 (High) detected in jackson-databind-2.9.8.jar - ## CVE-2020-36184 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.8.jar</b></p></summary>

<p>General... | non_process | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file exomiser exomiser data genome p... | 0 |

2,814 | 5,738,746,685 | IssuesEvent | 2017-04-23 07:58:13 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | Keydef containing uplevels | bug P2 preprocess/keyref | In my DITA Map I define a key to an image:

`<keydef keys="arch_diagram" href="../../introduction/images/arch_diagram" format="png"/>`

With the keyref:

`<image keyref="arch_diagram"/>`

It's not expanded in 2.3.3 or 2.4.6 but is in 1.8.

Other keyrefs to keys without uplevels and all other images are impacted.

... | 1.0 | Keydef containing uplevels - In my DITA Map I define a key to an image:

`<keydef keys="arch_diagram" href="../../introduction/images/arch_diagram" format="png"/>`

With the keyref:

`<image keyref="arch_diagram"/>`

It's not expanded in 2.3.3 or 2.4.6 but is in 1.8.

Other keyrefs to keys without uplevels and all ... | process | keydef containing uplevels in my dita map i define a key to an image with the keyref it s not expanded in or but is in other keyrefs to keys without uplevels and all other images are impacted also i have the same issue as except the conref has also uplevels | 1 |

35,208 | 30,841,392,211 | IssuesEvent | 2023-08-02 10:54:00 | woowacourse-teams/2023-zipgo | https://api.github.com/repos/woowacourse-teams/2023-zipgo | closed | HTTPS + domain connection | 🌍 Infrastructure 🕋 Backend 🧚🏻♀️ Support | ### 🧚🏻♀️ Describe

Connect HTTPS and the domain

### ✅ Tasks

- [x] Connect the domain and https using nginx + certbot

- [x] nginx

- [x] Domain connection

- [x] https connection

### 🕖 Estimated time required

- 3 hours

- Doable by the end of today at the latest

### 🙋🏻 More

[Applied!](https://zipgo.pet) | 1.0 | HTTPS + domain connection - ### 🧚🏻♀️ Describe

Connect HTTPS and the domain

### ✅ Tasks

- [x] Connect the domain and https using nginx + certbot

- [x] nginx

- [x] Domain connection

- [x] https connection

### 🕖 Estimated time required

- 3 hours

- Doable by the end of today at the latest

### 🙋🏻 More

[Applied!](https://zipgo.pet) | non_process | https domain connection 🧚🏻♀️ describe connect https and the domain ✅ tasks connect the domain and https using nginx certbot nginx domain connection https connection 🕖 estimated time required doable by the end of today at the latest 🙋🏻 more | 0 |

2,396 | 5,191,905,518 | IssuesEvent | 2017-01-22 01:37:01 | mitchellh/packer | https://api.github.com/repos/mitchellh/packer | closed | Packer crash with vagrant post-processor | crash post-processor/vagrant | https://gist.github.com/cbednarski/26e40b91a1dba233cc78

No crash when I remove the use of the vagrant post processor.

| 1.0 | Packer crash with vagrant post-processor - https://gist.github.com/cbednarski/26e40b91a1dba233cc78

No crash when I remove the use of the vagrant post processor.

| process | packer crash with vagrant post processor no crash when i remove the use of the vagrant post processor | 1 |

753,760 | 26,360,642,490 | IssuesEvent | 2023-01-11 13:08:55 | OpenNebula/one | https://api.github.com/repos/OpenNebula/one | closed | Update VM templates after renaming the components referenced | Type: Backlog Category: Core & System Type: Feature Sponsored Category: API Priority: Low | **Description**

After issuing a rename API Call, like `one.image.rename`, the object new name doesn't appear updated on the VM Templates that reference it.

**Use case**

The calls could issue the name update for consistency purposes. Even though the operation remains fully functional due to the object ID being use... | 1.0 | Update VM templates after renaming the components referenced - **Description**

After issuing a rename API Call, like `one.image.rename`, the object new name doesn't appear updated on the VM Templates that reference it.

**Use case**

The calls could issue the name update for consistency purposes. Even though the op... | non_process | update vm templates after renaming the components referenced description after issuing a rename api call like one image rename the object new name doesn t appear updated on the vm templates that reference it use case the calls could issue the name update for consistency purposes even though the op... | 0 |

39,728 | 20,170,913,819 | IssuesEvent | 2022-02-10 10:19:14 | milessabin/shapeless | https://api.github.com/repos/milessabin/shapeless | closed | performance issue with combined `Length` and `ToSizedHList` implicit derivation | Performance | There seems to be a performance problem with the combined implicit derivation of `Length` and `ToSizedHList`. When `n` grows, the compilation time becomes quickly unpractical. I reproduced the problem with shapeless 2.3.7 and scala 2.12.14/2.13.6 .

```scala

val n = Nat(100)

val f = Fill[n.N, String]

def ... | True | performance issue with combined `Length` and `ToSizedHList` implicit derivation - There seems to be a performance problem with the combined implicit derivation of `Length` and `ToSizedHList`. When `n` grows, the compilation time becomes quickly unpractical. I reproduced the problem with shapeless 2.3.7 and scala 2.12.1... | non_process | performance issue with combined length and tosizedhlist implicit derivation there seems to be a performance problem with the combined implicit derivation of length and tosizedhlist when n grows the compilation time becomes quickly unpractical i reproduced the problem with shapeless and scala ... | 0 |

22,305 | 30,859,670,789 | IssuesEvent | 2023-08-03 01:08:02 | emily-writes-poems/emily-writes-poems-processing | https://api.github.com/repos/emily-writes-poems/emily-writes-poems-processing | closed | editing poems in existing collections | script migration processing | being able to add/remove poems from collections. currently the collection poems list is stored as 2 arrays in Mongo (poem_ids and poem_titles)

easiest way is probably to pass around an array/list of poem ids and then revise the poem_titles array with a query from main poems coll? | 1.0 | editing poems in existing collections - being able to add/remove poems from collections. currently the collection poems list is stored as 2 arrays in Mongo (poem_ids and poem_titles)

easiest way is probably to pass around an array/list of poem ids and then revise the poem_titles array with a query from main poems co... | process | editing poems in existing collections being able to add remove poems from collections currently the collection poems list is stored as arrays in mongo poem ids and poem titles easiest way is probably to pass around an array list of poem ids and then revise the poem titles array with a query from main poems co... | 1 |
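
The approach suggested in the row above, passing around only the list of poem ids and then rebuilding the `poem_titles` array from the main poems collection, might look something like the sketch below. It is written under stated assumptions and is not the project's actual code: `rebuild_poem_titles` and `titles_by_id` are hypothetical names, and the dictionary stands in for whatever a query against the main poems collection would return.

```python
def rebuild_poem_titles(poem_ids, titles_by_id):
    """Return titles in the same order as poem_ids (hypothetical helper).

    titles_by_id stands in for the result of a lookup against the main
    poems collection, keyed by poem id.
    """
    # Fail loudly if the edited id list references a poem that does not exist.
    missing = [pid for pid in poem_ids if pid not in titles_by_id]
    if missing:
        raise KeyError(f"unknown poem ids: {missing}")
    # Keep the two arrays aligned by deriving titles from the id order.
    return [titles_by_id[pid] for pid in poem_ids]
```

Deriving the titles from the ids on every edit keeps the two arrays from drifting apart, at the cost of one extra lookup per change.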

205,762 | 15,686,436,957 | IssuesEvent | 2021-03-25 12:31:38 | Slimefun/Slimefun4 | https://api.github.com/repos/Slimefun/Slimefun4 | opened | Automated Ignition Chamber dupe items | 🎯 Needs testing 🐞 Bug Report |

## :round_pushpin: Description (REQUIRED)

When the Automated Ignition Chamber is broken, items from it are doubled

## :bookmark_tabs: Steps to reproduce the Issue (REQUIRED)

https://youtu.be/6hAKlnGN41A

## :bulb: Expected behavior (REQUIRED)

Items do not multiply

## :compass: Environment (REQUIRED)

![ima... | 1.0 | Automated Ignition Chamber dupe items -

## :round_pushpin: Description (REQUIRED)

When the Automated Ignition Chamber is broken, items from it are doubled

## :bookmark_tabs: Steps to reproduce the Issue (REQUIRED)

https://youtu.be/6hAKlnGN41A

## :bulb: Expected behavior (REQUIRED)

Items do not multiply

##... | non_process | automated ignition chamber dupe items round pushpin description required when the automated ignition chamber is broken items from it are doubled bookmark tabs steps to reproduce the issue required bulb expected behavior required items do not multiply compass environment req... | 0 |

296,674 | 9,125,136,293 | IssuesEvent | 2019-02-24 11:00:46 | python/mypy | https://api.github.com/repos/python/mypy | closed | Wrong type inferred for union containing restricted type variable | bug false-positive priority-1-normal topic-union-types | ```

from typing import Generic, TypeVar, Union

T = TypeVar('T')

class G(Generic[T]): pass

class A(object): pass

class B(object): pass

g_a = None # type: G[A]

g_b = None # type: G[B]

AB = TypeVar('AB', A, B)

def f(x):

# type: (Union[G[AB],AB]) -> G[AB]

pass

f(A())

f(B())

f(g_a)

f(g_b) # E... | 1.0 | Wrong type inferred for union containing restricted type variable - ```

from typing import Generic, TypeVar, Union

T = TypeVar('T')

class G(Generic[T]): pass

class A(object): pass

class B(object): pass

g_a = None # type: G[A]

g_b = None # type: G[B]

AB = TypeVar('AB', A, B)

def f(x):

# type: (Union[... | non_process | wrong type inferred for union containing restricted type variable from typing import generic typevar union t typevar t class g generic pass class a object pass class b object pass g a none type g g b none type g ab typevar ab a b def f x type union ab ... | 0 |

3,169 | 6,224,106,515 | IssuesEvent | 2017-07-10 13:36:07 | dzhw/zofar | https://api.github.com/repos/dzhw/zofar | opened | Monitoring-Bridge | category: technical.processes prio: 9999 status: discussion type: backlog.item | Forwarding of metric data. Due to the separation from HIS-IT: consider setting up a

virtualization of our own metrics!

engl. tba | 1.0 | Monitoring-Bridge - Forwarding of metric data. Due to the separation from HIS-IT: consider setting up a

virtualization of our own metrics!

engl. tba | process | monitoring bridge forwarding of metric data due to the separation from his it consider setting up a virtualization of our own metrics engl tba | 1 |

7,953 | 11,137,562,938 | IssuesEvent | 2019-12-20 19:42:22 | openopps/openopps-platform | https://api.github.com/repos/openopps/openopps-platform | closed | Display education in sorted order on application review page | Apply Process Requirements Ready State Dept. | Who: Student applicants

What: Display education data by sort preference on the application review

Why: to allow applicants to review the application correctly

Acceptance Criteria:

- The education data will now have a sort order (either default from USAJOBS or resorted in open opps). Display the education data in the ... | 1.0 | Display education in sorted order on application review page - Who: Student applicants

What: Display education data by sort preference on the application review

Why: to allow applicants to review the application correctly

Acceptance Criteria:

- The education data will now have a sort order (either default from USAJOBS... | process | display education in sorted order on application review page who student applicants what display education data by sort preference on the application review why to allow applicants to review the application correctly acceptance criteria the education data will now have a sort order either default from usajobs... | 1 |

1,046 | 3,513,113,129 | IssuesEvent | 2016-01-11 08:33:04 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | Error in process._tickCallback | process | After upgrading from node v0.12.7 to v4.2.3 our application crashes every few hours or after 1 or 2 days with the following uncaught exception:

```

TypeError: Cannot read property 'callback' of undefined

at process._tickCallback (node.js:341:26)

```

Could this be a bug in node.js?

The code in src/node.... | 1.0 | Error in process._tickCallback - After upgrading from node v0.12.7 to v4.2.3 our application crashes every few hours or after 1 or 2 days with the following uncaught exception:

```

TypeError: Cannot read property 'callback' of undefined

at process._tickCallback (node.js:341:26)

```

Could this be a bug in n... | process | error in process tickcallback after upgrading from node to our application crashes every few hours or after or days with the following uncaught exception typeerror cannot read property callback of undefined at process tickcallback node js could this be a bug in node js... | 1 |

21,604 | 30,005,553,287 | IssuesEvent | 2023-06-26 12:13:31 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | processor: add resource_attributes group in metadata.yaml files | enhancement processor/k8sattributes processor/resourcedetection cmd/mdatagen | ### Component(s)

k8sattributesprocessor, resourcedetectionprocessor

### Describe the issue you're reporting

The group `resource_attributes` was introduced in the following [PR](https://github.com/open-telemetry/opentelemetry-collector-contrib/pull/21664).

As of today, no processors use the `resource_attribu... | 2.0 | processor: add resource_attributes group in metadata.yaml files - ### Component(s)

k8sattributesprocessor, resourcedetectionprocessor

### Describe the issue you're reporting

The group `resource_attributes` was introduced in the following [PR](https://github.com/open-telemetry/opentelemetry-collector-contrib/pu... | process | processor add resource attributes group in metadata yaml files component s resourcedetectionprocessor describe the issue you re reporting the group resource attributes was introduced in the following as of today no processors use the resource attributes group and its generated config... | 1 |

370,922 | 10,958,539,906 | IssuesEvent | 2019-11-27 09:36:39 | krzychu124/Cities-Skylines-Traffic-Manager-President-Edition | https://api.github.com/repos/krzychu124/Cities-Skylines-Traffic-Manager-President-Edition | opened | Speed limits window too constrained on QHD resolution | BUG SPEED LIMITS UI confirmed low priority | On this resolution:

The speed limits panel is too constrained and can't be dragged further towards the bottom-right than shown:

The speed limits panel is too constrained and can't be dragged further towards the bottom-right than shown:

, [Sponge](http://sponge2/ee1c078c-b3c3-4ce6-9... | 1.0 | tests.system.test_bucket: test_bucket_list_blobs_paginated_w_offset failed - Note: #969 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: f9462179f4a4b08eea7471a5ffb4aa5071fc5a5e

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/ee1c07... | non_process | tests system test bucket test bucket list blobs paginated w offset failed note was also for this test but it was closed more than days ago so i didn t mark it flaky commit buildurl status failed test output storage client listable bucket name gcp systest listable file data... | 0 |

16,094 | 20,263,434,083 | IssuesEvent | 2022-02-15 09:48:47 | quark-engine/quark-engine | https://api.github.com/repos/quark-engine/quark-engine | closed | Update README to indicate the compatible Rizin versions | work-in-progress issue-processing-state-04 | **Is your feature request related to a problem? Please describe.**

An API change in Rizin v0.3.0 has led the Rizin-based analysis to fail. To avoid users experiencing errors similar to #305, we need to indicate this restriction before fixing it.

**Describe the solution you'd like.**

Indicate the compatible Ri... | 1.0 | Update README to indicate the compatible Rizin versions - **Is your feature request related to a problem? Please describe.**

An API change in Rizin v0.3.0 has led the Rizin-based analysis to fail. To avoid users experiencing errors similar to #305, we need to indicate this restriction before fixing it.

**Describ... | process | update readme to indicate the compatible rizin versions is your feature request related to a problem please describe an api change in rizin has led the rizin based analysis to fail to avoid users experiencing errors similar to we need to indicate this restriction before fixing it describe t... | 1 |

17,762 | 23,691,256,724 | IssuesEvent | 2022-08-29 11:01:28 | pyanodon/pybugreports | https://api.github.com/repos/pyanodon/pybugreports | closed | Inserter mode gets stomped excessively | bug mod:pycoalprocessing | ### Mod source

PyAE Beta

### Which mod are you having an issue with?

- [ ] pyalienlife

- [ ] pyalternativeenergy

- [X] pycoalprocessing

- [ ] pyfusionenergy

- [ ] pyhightech

- [ ] pyindustry

- [ ] pypetroleumhandling

- [ ] pypostprocessing

- [ ] pyrawores

### Operating system

>=Windows 10

### What kind of issue i... | 1.0 | Inserter mode gets stomped excessively - ### Mod source

PyAE Beta

### Which mod are you having an issue with?

- [ ] pyalienlife

- [ ] pyalternativeenergy

- [X] pycoalprocessing

- [ ] pyfusionenergy

- [ ] pyhightech

- [ ] pyindustry

- [ ] pypetroleumhandling

- [ ] pypostprocessing

- [ ] pyrawores

### Operating syste... | process | inserter mode gets stomped excessively mod source pyae beta which mod are you having an issue with pyalienlife pyalternativeenergy pycoalprocessing pyfusionenergy pyhightech pyindustry pypetroleumhandling pypostprocessing pyrawores operating system windows ... | 1 |

5,736 | 8,580,069,955 | IssuesEvent | 2018-11-13 10:53:42 | threefoldtech/rivine | https://api.github.com/repos/threefoldtech/rivine | closed | Hierarchical Deterministic wallets | process_duplicate type_feature type_story | https://github.com/bitcoin/bips/blob/master/bip-0032.mediawiki

Our deterministic wallet typically consists of a single "chain" of keypairs. The fact that there is only one chain means that sharing a wallet happens on an all-or-nothing basis. However, in some cases one only wants some (public) keys to be shared and recov... | 1.0 | Hierarchical Deterministic wallets - https://github.com/bitcoin/bips/blob/master/bip-0032.mediawiki

Our deterministic wallet typically consists of a single "chain" of keypairs. The fact that there is only one chain means that sharing a wallet happens on an all-or-nothing basis. However, in some cases one only wants som... | process | hierarchical deterministic wallets our deterministic wallet typically consists of a single chain of keypairs the fact that there is only one chain means that sharing a wallet happens on an all or nothing basis however in some cases one only wants some public keys to be shared and recoverable in the example ... | 1 |
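
To make the linked BIP-32 idea concrete, here is a minimal sketch of deriving a master key and hardened child keys from one seed, so that separate sub-chains can be shared independently. It follows the BIP-32 scheme for secp256k1 private keys only. Whether Rivine would use this curve or this exact scheme is an assumption, and the function names `master_from_seed` and `hardened_child` are hypothetical.

```python
import hashlib
import hmac

# Order of the secp256k1 group, as used by BIP-32.
SECP256K1_ORDER = 0xFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFEBAAEDCE6AF48A03BBFD25E8CD0364141
HARDENED_OFFSET = 0x80000000


def master_from_seed(seed: bytes):
    """Return (private_key, chain_code) for the root of the key tree."""
    digest = hmac.new(b"Bitcoin seed", seed, hashlib.sha512).digest()
    return int.from_bytes(digest[:32], "big"), digest[32:]


def hardened_child(parent_key: int, chain_code: bytes, index: int):
    """Derive hardened child number `index` from a parent private key.

    The rare invalid cases described in BIP-32 (left half >= group order,
    or a resulting key of zero) are not handled in this sketch.
    """
    child_number = index | HARDENED_OFFSET
    data = b"\x00" + parent_key.to_bytes(32, "big") + child_number.to_bytes(4, "big")
    digest = hmac.new(chain_code, data, hashlib.sha512).digest()
    child_key = (int.from_bytes(digest[:32], "big") + parent_key) % SECP256K1_ORDER
    return child_key, digest[32:]


if __name__ == "__main__":
    root_key, root_chain = master_from_seed(bytes.fromhex("000102030405060708090a0b0c0d0e0f"))
    # Two independent sub-chains, e.g. one per account that may be shared separately.
    account_0 = hardened_child(root_key, root_chain, 0)
    account_1 = hardened_child(root_key, root_chain, 1)
    print(account_0 != account_1)
```

Hardened derivation is used here because it needs no elliptic-curve arithmetic. Sharing only public keys of a sub-chain, as the issue describes, would additionally require non-hardened derivation with curve point operations.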

17,323 | 23,142,780,670 | IssuesEvent | 2022-07-28 20:17:46 | USGS-R/drb-do-ml | https://api.github.com/repos/USGS-R/drb-do-ml | closed | Check correlation between temperature and DO predictions | process-guidance | We are interested to see the correlation between model DO predictions and input air temperature in the Baseline model. | 1.0 | Check correlation between temperature and DO predictions - We are interested to see the correlation between model DO predictions and input air temperature in the Baseline model. | process | check correlation between temperature and do predictions we are interested to see the correlation between model do predictions and input air temperature in the baseline model | 1 |

18,803 | 10,231,609,955 | IssuesEvent | 2019-08-18 11:05:58 | TryGhost/Ghost | https://api.github.com/repos/TryGhost/Ghost | closed | Delete all content triggers a 504 gateway timeout | admin-api api help wanted performance server stale | ### Issue Summary

Using the Delete all content feature in Labs times out with a collection exceeding 3400 stories. This is not a limit I have explored, but the current situation I am in.

### To Reproduce

Import 3400+ stories in to ghost, try to delete all content.

I had to batch this import as the 5.5Mb fil... | True | Delete all content triggers a 504 gateway timeout - ### Issue Summary

Using the Delete all content feature in Labs times out with a collection exceeding 3400 stories. This is not a limit I have explored, but the current situation I am in.

### To Reproduce

Import 3400+ stories in to ghost, try to delete all con... | non_process | delete all content triggers a gateway timeout issue summary using the delete all content feature in labs times out with a collection exceeding stories this is not a limit i have explored but the current situation i am in to reproduce import stories in to ghost try to delete all content ... | 0 |

218,208 | 16,976,709,180 | IssuesEvent | 2021-06-30 00:45:10 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | closed | VS Code Live Server - June Iteration Plans | feature-request live-server on-testplan | <!-- ⚠️⚠️ Do Not Delete This! feature_request_template ⚠️⚠️ -->

<!-- Please read our Rules of Conduct: https://opensource.microsoft.com/codeofconduct/ -->

<!-- Please search existing issues to avoid creating duplicates. -->

<!-- Describe the feature you'd like. -->

This tracks the upcoming work on the Live Serv... | 1.0 | VS Code Live Server - June Iteration Plans - <!-- ⚠️⚠️ Do Not Delete This! feature_request_template ⚠️⚠️ -->

<!-- Please read our Rules of Conduct: https://opensource.microsoft.com/codeofconduct/ -->

<!-- Please search existing issues to avoid creating duplicates. -->

<!-- Describe the feature you'd like. -->

T... | non_process | vs code live server june iteration plans this tracks the upcoming work on the live server which is described in some of these may be deferred to the july iteration see backlog bug fixes external links on browser are broken relative file links in sub folders are not workin... | 0 |

7,404 | 10,523,658,825 | IssuesEvent | 2019-09-30 11:34:10 | teleporthq/teleport-code-generators | https://api.github.com/repos/teleporthq/teleport-code-generators | closed | HTML formatting utility | enhancement post-processors | We're relying on `prettier` for formatting html chunks (which are represented as strings). However, perf tests (#64) showed that prettier is running very slow when the UIDL gets bigger (ex: 2000 nodes).

We have two ways of approaching this:

### Simple post-processor function that formats html tags

This should ru... | 1.0 | HTML formatting utility - We're relying on `prettier` for formatting html chunks (which are represented as strings). However, perf tests (#64) showed that prettier is running very slow when the UIDL gets bigger (ex: 2000 nodes).

We have two ways of approaching this:

### Simple post-processor function that formats... | process | html formatting utility we re relying on prettier for formatting html chunks which are represented as strings however perf tests showed that prettier is running very slow when the uidl gets bigger ex nodes we have two ways of approaching this simple post processor function that formats htm... | 1 |

26,913 | 2,688,753,225 | IssuesEvent | 2015-03-31 03:36:52 | ChrisMahlke/nominate-2 | https://api.github.com/repos/ChrisMahlke/nominate-2 | closed | My Profile tab is not turning blue on Refresh | MediumPriority | After fixing the “My Profile” section to receive full points, the red text and border does not change until I click on another tab. This should change to blue automatically after reaching the desired score. I really appreciate the “Refresh” button, but it would be nice if it happened automatically.

| 1.0 | My Profile tab is not turning blue on Refresh - After fixing the “My Profile” section to receive full points, the red text and border does not change until I click on another tab. This should change to blue automatically after reaching the desired score. I really appreciate the “Refresh” button, but it would be nice if... | non_process | my profile tab is not turning blue on refresh after fixing the “my profile” section to receive full points the red text and border does not change until i click on another tab this should change to blue automatically after reaching the desired score i really appreciate the “refresh” button but it would be nice if... | 0 |

10,158 | 13,044,162,640 | IssuesEvent | 2020-07-29 03:47:34 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `AesEncryptIV` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `AesEncryptIV` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @lonng

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rpn_expr... | 2.0 | UCP: Migrate scalar function `AesEncryptIV` from TiDB -

## Description

Port the scalar function `AesEncryptIV` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @lonng

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/... | process | ucp migrate scalar function aesencryptiv from tidb description port the scalar function aesencryptiv from tidb to coprocessor score mentor s lonng recommended skills rust programming learning materials already implemented expressions ported from tidb | 1 |

4,683 | 7,522,205,207 | IssuesEvent | 2018-04-12 19:39:28 | rubberduck-vba/Rubberduck | https://api.github.com/repos/rubberduck-vba/Rubberduck | closed | Members of implicit default object property are not resolved | bug navigation parse-tree-preprocessing | The default member of Workbooks is `[_Default]`, which is roughly equivalent to `Item`. Both `[_Default]` and `Item` both return a `Workbook` object, so RD should be able to resolve the `Workbook` members.

But, when the default member call is implicit, RD fails to resolve the `Workbook` properties

```vb

Debug.... | 1.0 | Members of implicit default object property are not resolved - The default member of Workbooks is `[_Default]`, which is roughly equivalent to `Item`. Both `[_Default]` and `Item` both return a `Workbook` object, so RD should be able to resolve the `Workbook` members.

But, when the default member call is implicit, R... | process | members of implicit default object property are not resolved the default member of workbooks is which is roughly equivalent to item both and item both return a workbook object so rd should be able to resolve the workbook members but when the default member call is implicit rd fails to resolve... | 1 |

516,271 | 14,978,503,001 | IssuesEvent | 2021-01-28 10:55:12 | nextcloud/mail | https://api.github.com/repos/nextcloud/mail | closed | Can not access mail account in nextcloud after password change on imap server | 4. to release bug priority:medium | ### Expected behavior

There should be an option to set the new password.

### Actual behavior

Mail account is not shown anymore in nextcloud. The cronjob gives "OCA\Mail\Exception\ServiceException: IMAP errorMail server denied authentication." Can not access the mail account, so I even can not delete it.

###... | 1.0 | Can not access mail account in nextcloud after password change on imap server - ### Expected behavior

There should be an option to set the new password.

### Actual behavior

Mail account is not shown anymore in nextcloud. The cronjob gives "OCA\Mail\Exception\ServiceException: IMAP errorMail server denied authent... | non_process | can not access mail account in nextcloud after password change on imap server expected behavior there should be an option to set the new password actual behavior mail account is not shown anymore in nextcloud the cronjob gives oca mail exception serviceexception imap errormail server denied authent... | 0 |

8,643 | 7,349,176,814 | IssuesEvent | 2018-03-08 09:45:59 | vector-im/riot-android | https://api.github.com/repos/vector-im/riot-android | closed | Embedded images must be restricted to mxc: URIs | P1 bug security | Right now img tags are allowed arbitrary linking, which is a security issue! | True | Embedded images must be restricted to mxc: URIs - Right now img tags are allowed arbitrary linking, which is a security issue! | non_process | embedded images must be restricted to mxc uris right now img tags are allowed arbitrary linking which is a security issue | 0 |
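
The restriction requested above can be sketched as a simple scheme check. This is an illustration in Python rather than the Android client's actual code, and the helper name `is_allowed_image_source` is hypothetical.

```python
from urllib.parse import urlparse


def is_allowed_image_source(url: str) -> bool:
    """Accept only Matrix content URIs of the form mxc://<server>/<media-id>."""
    parsed = urlparse(url)
    has_server = bool(parsed.netloc)
    has_media_id = bool(parsed.path.strip("/"))
    return parsed.scheme == "mxc" and has_server and has_media_id


if __name__ == "__main__":
    for candidate in ("mxc://example.org/abc123", "https://tracker.example/pixel.png"):
        print(candidate, is_allowed_image_source(candidate))
```

A renderer would drop or neutralise any `img` tag whose `src` fails this check before displaying the message.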

9,722 | 12,717,208,300 | IssuesEvent | 2020-06-24 04:24:47 | kubeflow/pipelines | https://api.github.com/repos/kubeflow/pipelines | closed | Jest Snapshot Test didn't well handle time string | area/frontend kind/process lifecycle/stale needs investigation priority/p2 status/triaged | In our code we use Date.toLocaleDateString, which depends on the local time format, so it may generate different snapshots. | 1.0 | Jest Snapshot Test didn't well handle time string - In our code we use Date.toLocaleDateString, which depends on the local time format, so it may generate different snapshots. | process | jest snapshot test didn t well handle time string in our code we use date tolocaledatestring which depends on the local time format so it may generate different snapshots | 1 |

18,401 | 4,266,248,177 | IssuesEvent | 2016-07-12 14:02:31 | morepath/morepath | https://api.github.com/repos/morepath/morepath | closed | update the morepath example applications to follow cookiecutter-style setup | documentation entry level help wanted | Now that we have a cookiecutter setup we should update the example applications to follow a similar setup, with install instructions in README.txt. The sample applications right now use buildout and depend on development versions of Morepath, but that's not really useful anymore. We should also retire any example appli... | 1.0 | update the morepath example applications to follow cookiecutter-style setup - Now that we have a cookiecutter setup we should update the example applications to follow a similar setup, with install instructions in README.txt. The sample applications right now use buildout and depend on development versions of Morepath,... | non_process | update the morepath example applications to follow cookiecutter style setup now that we have a cookiecutter setup we should update the example applications to follow a similar setup with install instructions in readme txt the sample applications right now use buildout and depend on development versions of morepath ... | 0 |

88 | 2,534,358,013 | IssuesEvent | 2015-01-24 21:44:41 | rhattersley/docbook2asciidoc | https://api.github.com/repos/rhattersley/docbook2asciidoc | opened | Remove formatting from subtitle | pre-process | ```xml

<subtitle>Version 1.7.2 <emphasis role="bold">DRAFT</emphasis>, 28 March,

2014</subtitle>

```

converts to:

```

Version 1.7.2 **DRAFT**, 28 March, 2014

```

which renders the "*" characters literally. | 1.0 | Remove formatting from subtitle - ```xml

<subtitle>Version 1.7.2 <emphasis role="bold">DRAFT</emphasis>, 28 March,

2014</subtitle>

```

converts to:

```

Version 1.7.2 **DRAFT**, 28 March, 2014

```

which renders the "*" characters literally. | process | remove formatting from subtitle xml version draft march converts to version draft march which renders the characters literally | 1 |
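
One possible pre-processing step for the case above is to flatten inline markup inside `<subtitle>` to plain text before conversion. The sketch below uses only the Python standard library. The function name `flatten_subtitle` is hypothetical and this is not the converter's actual code.

```python
import xml.etree.ElementTree as ET


def flatten_subtitle(xml_text: str) -> str:
    """Return the subtitle's text with inline elements such as <emphasis> removed."""
    element = ET.fromstring(xml_text)
    # itertext() yields the text of the element and all of its descendants in order.
    raw_text = "".join(element.itertext())
    # Collapse line breaks and repeated spaces left over from the source layout.
    return " ".join(raw_text.split())


if __name__ == "__main__":
    sample = (
        '<subtitle>Version 1.7.2 <emphasis role="bold">DRAFT</emphasis>, 28 March,\n'
        "2014</subtitle>"
    )
    print(flatten_subtitle(sample))
```

With the formatting removed, no `**` markers are emitted, so nothing is rendered literally in the subtitle.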

11,889 | 14,682,445,293 | IssuesEvent | 2020-12-31 16:27:46 | darktable-org/darktable | https://api.github.com/repos/darktable-org/darktable | closed | fix to #6734 breaks pipe with multi-instanciated modules | bug: pending difficulty: average priority: high scope: image processing | **Describe the bug**

<!-- A clear and concise description of what the bug is. -->

The issue #6734 has been fixed in darktable 3.4 but the fix introduced a new issue when there are multiple instances of the same module in the development pipe. The photos is more or less scrambled and you can't access anymore to the ex... | 1.0 | fix to #6734 breaks pipe with multi-instanciated modules - **Describe the bug**

<!-- A clear and concise description of what the bug is. -->

The issue #6734 has been fixed in darktable 3.4 but the fix introduced a new issue when there are multiple instances of the same module in the development pipe. The photos is mo... | process | fix to breaks pipe with multi instanciated modules describe the bug the issue has been fixed in darktable but the fix introduced a new issue when there are multiple instances of the same module in the development pipe the photos is more or less scrambled and you can t access anymore to the extra in... | 1 |

53,648 | 13,262,047,358 | IssuesEvent | 2020-08-20 21:00:31 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | closed | [ppc] clang 3.8 errors (Trac #1809) | Migrated from Trac combo simulation defect | These errors were found compiling with clang 3.8:

```text

In file included from /scratch/dschultz/icetray_profiling/tmptua13z/src/ppc/private/ppc/ocl/ppc.cxx:696:

/scratch/dschultz/icetray_profiling/tmptua13z/src/ppc/private/ppc/ocl/f2k.cxx:22:7: error: no member named 'w' in 'cl_float4'

p.n.w=type>0?-int(type):-128;... | 1.0 | [ppc] clang 3.8 errors (Trac #1809) - These errors were found compiling with clang 3.8:

```text

In file included from /scratch/dschultz/icetray_profiling/tmptua13z/src/ppc/private/ppc/ocl/ppc.cxx:696:

/scratch/dschultz/icetray_profiling/tmptua13z/src/ppc/private/ppc/ocl/f2k.cxx:22:7: error: no member named 'w' in 'cl... | non_process | clang errors trac these errors were found compiling with clang text in file included from scratch dschultz icetray profiling src ppc private ppc ocl ppc cxx scratch dschultz icetray profiling src ppc private ppc ocl cxx error no member named w in cl p n w type int type ... | 0 |

91,939 | 18,755,813,031 | IssuesEvent | 2021-11-05 10:35:12 | julia-vscode/julia-vscode | https://api.github.com/repos/julia-vscode/julia-vscode | closed | entering new line clears the in-line evaluation popup cell | bug area-code-execution | MWE.

type

```

rand()

```

press ALT+ENTER. A popup appears.

Then press ENTER to start a new line and write whatever other command. The popup to the right of `rand` has disappeared.

This isn't nice for interactively writing scripts. | 1.0 | entering new line clears the in-line evaluation popup cell - MWE.

type

```

rand()

```

press ALT+ENTER. A popup appears.

Then press ENTER to start a new line and write whatever other command. The popup to the right of `rand` has disappeared.

This isn't nice for interactively writing scripts. | non_process | entering new line clears the in line evaluation popup cell mwe type rand press alt enter a popup appears then press enter to start a new line and write whatever other command the popup to the right of rand has disappeared this isn t nice for interactively writing scripts | 0 |

228,362 | 18,172,119,282 | IssuesEvent | 2021-09-27 21:19:03 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | opened | Test: Outline button in Jupyter notebooks | testplan-item | Refs: https://github.com/microsoft/vscode-jupyter/issues/7305

- [ ] anyOS

- [ ] anyOS

Complexity: 1

Author: @IanMatthewHuff

---

File bugs on the Jupyter repo here: https://github.com/microsoft/vscode-jupyter/issues

Jupyter notebook users have been continually asking for Table of Contents control in th... | 1.0 | Test: Outline button in Jupyter notebooks - Refs: https://github.com/microsoft/vscode-jupyter/issues/7305

- [ ] anyOS

- [ ] anyOS

Complexity: 1

Author: @IanMatthewHuff

---

File bugs on the Jupyter repo here: https://github.com/microsoft/vscode-jupyter/issues

Jupyter notebook users have been continual... | non_process | test outline button in jupyter notebooks refs anyos anyos complexity author ianmatthewhuff file bugs on the jupyter repo here jupyter notebook users have been continually asking for table of contents control in their notebooks and this functionality is currently provided by... | 0 |

12,807 | 15,184,213,153 | IssuesEvent | 2021-02-15 09:15:53 | topcoder-platform/community-app | https://api.github.com/repos/topcoder-platform/community-app | opened | Member skills are not getting updated when a member wins a challenge | BE P1 ShapeupProcess challenge- recommender-tool member-skill-extractor | The member skill extractor is not updating the members skills when a member is placed in a challenge.

Example:

member: tester1234

challenge: https://www.topcoder-dev.com/challenges/cfc3f821-64e4-4585-8fdc-744928d2bc9f

the member_skills_history table is not updated with the skills for the this member

cc @laksh... | 1.0 | Member skills are not getting updated when a member wins a challenge - The member skill extractor is not updating the members skills when a member is placed in a challenge.

Example:

member: tester1234

challenge: https://www.topcoder-dev.com/challenges/cfc3f821-64e4-4585-8fdc-744928d2bc9f

the member_skills_history ... | process | member skills are not getting updated when a member wins a challenge the member skill extractor is not updating the members skills when a member is placed in a challenge example member challenge the member skills history table is not updated with the skills for the this member cc lakshmiathreya | 1 |

14,127 | 17,020,220,057 | IssuesEvent | 2021-07-02 17:43:18 | darktable-org/darktable | https://api.github.com/repos/darktable-org/darktable | closed | Perspective module: cannot set the automatic cropping value until some perspective correction is set | bug: pending scope: image processing | If I try to set automatic cropping to some value which is not off before changing the rotation, lens shift, etc. values, I get an error message on the image "automatic cropping failed".

| 1.0 | Perspective module: cannot set the automatic cropping value until some perspective correction is set - If I try to set automatic cropping to some value which is not off before changing the rotation, lens shift, etc. values, I get an error message on the image "automatic cropping failed".

| process | perspective module cannot set the automatic cropping value until some perspective correction is set if i try to set automatic cropping to some value which is not off before changing the rotation lens shift etc values i get an error message on the image automatic cropping failed | 1 |

1,754 | 4,460,688,074 | IssuesEvent | 2016-08-24 00:46:52 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | StandardOutput and StandardError receive an extra line | System.Diagnostics.Process | On Linux, compared to other languages, say Python, .NET is appending (somehow) an extra line on redirected StandardOutput and StandardError.

For example, if I execute the following script:

```bash

#!/bin/sh

exit 42

```

In Python (e.g., subprocess.check_output) I receive, as I would expect, an empty string. ... | 1.0 | StandardOutput and StandardError receive an extra line - On Linux, compared to other languages, say Python, .NET is appending (somehow) an extra line on redirected StandardOutput and StandardError.

For example, if I execute the following script:

```bash

#!/bin/sh

exit 42

```

In Python (e.g., subprocess.chec... | process | standardoutput and standarderror receive an extra line on linux compared to other languages say python net is appending somehow an extra line on redirected standardoutput and standarderror for example if i execute the following script bash bin sh exit in python e g subprocess check... | 1 |
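
The Python side of the comparison above can be reproduced with a short sketch. It assumes a POSIX `sh` is available and uses `subprocess.run` rather than `subprocess.check_output`, because `check_output` raises `CalledProcessError` when the exit status is non-zero. The helper name `run_script` is hypothetical.

```python
import subprocess


def run_script(script: str):
    """Run a shell snippet and return (exit_code, stdout, stderr) as text."""
    completed = subprocess.run(
        ["sh", "-c", script],
        capture_output=True,
        text=True,
    )
    return completed.returncode, completed.stdout, completed.stderr


if __name__ == "__main__":
    exit_code, stdout_text, stderr_text = run_script("exit 42")
    # repr() makes it visible whether any extra newline was captured.
    print(exit_code, repr(stdout_text), repr(stderr_text))
```

Printing the `repr` of each stream is a quick way to compare against what the .NET `Process` class reports for the same script.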

75,349 | 9,850,986,273 | IssuesEvent | 2019-06-19 09:24:59 | mlr-org/mlr | https://api.github.com/repos/mlr-org/mlr | opened | `mRMRe::mrmr` differs from `praznik::mrmr` | type-documentation | I don't think we can do anything about this but it is important to know.

Note that the absolute values are not of interest here but only the ranking of the features.

``` r

suppressPackageStartupMessages(library(mlr))

library(magrittr)

bh.task = dropFeatures(bh.task, "chas")

fv_mrmr = generateFilterValuesD... | 1.0 | `mRMRe::mrmr` differs from `praznik::mrmr` - I don't think we can do anything about this but it is important to know.

Note that the absolute values are not of interest here but only the ranking of the features.

``` r

suppressPackageStartupMessages(library(mlr))

library(magrittr)

bh.task = dropFeatures(bh.tas... | non_process | mrmre mrmr differs from praznik mrmr i don t think we can do anything about this but it is important to know note that the absolute values are not of interest here but only the ranking of the features r suppresspackagestartupmessages library mlr library magrittr bh task dropfeatures bh tas... | 0 |

273,983 | 20,821,837,286 | IssuesEvent | 2022-03-18 16:07:35 | sjefferson99/Boatman-pico-uart-hub | https://api.github.com/repos/sjefferson99/Boatman-pico-uart-hub | closed | Improve documentation in bmserial module | documentation | The bmserial module should document that this is for the serial comms including a definition of the data structure. Functions should be boatman module agnostic and parse data to be passed on to python modules for the appropriate boatman module. | 1.0 | Improve documentation in bmserial module - The bmserial module should document that this is for the serial comms including a definition of the data structure. Functions should be boatman module agnostic and parse data to be passed on to python modules for the appropriate boatman module. | non_process | improve documentation in bmserial module the bmserial module should document that this is for the serial comms including a definition of the data structure functions should be boatman module agnostic and parse data to be passed on to python modules for the appropriate boatman module | 0 |

34,681 | 7,853,763,386 | IssuesEvent | 2018-06-20 18:29:10 | kobotoolbox/kpi | https://api.github.com/repos/kobotoolbox/kpi | closed | Opening tags moves project details up | bug coded low priority ui | Minor one, but irritating - after opening tags editor, the project details (name, date, etc.) move up by few pixels; plus the amount of whitespace between name and tags editor is too big:

<img width="1005" alt="screen shot 2018-06-09 at 15 40 47" src="https://user-images.githubusercontent.com/2521888/41192318-24bf0e... | 1.0 | Opening tags moves project details up - Minor one, but irritating - after opening tags editor, the project details (name, date, etc.) move up by few pixels; plus the amount of whitespace between name and tags editor is too big:

<img width="1005" alt="screen shot 2018-06-09 at 15 40 47" src="https://user-images.githu... | non_process | opening tags moves project details up minor one but irritating after opening tags editor the project details name date etc move up by few pixels plus the amount of whitespace between name and tags editor is too big img width alt screen shot at src img width alt screen shot... | 0 |

5,835 | 8,666,148,624 | IssuesEvent | 2018-11-29 02:36:36 | w3c/w3process | https://api.github.com/repos/w3c/w3process | opened | TAG appointment should be via IETF style nomcom | Process2020Candidate | The TAG is currently elected. We could move to an IETF-style NomCom appointment. This ensures the right people for the role are selected and a balanced TAG can be achieved. Comments are welcome.

- The NomCom consists of a random set of volunteers who meet a set criteria - e.g. have chaired a group or published a dra... | 1.0 | TAG appointment should be via IETF style nomcom - The TAG is currently elected. We could move to an IETF-style NomCom appointment. This ensures the right people for the role are selected and a balanced TAG can be achieved. Comments are welcome.

- The NomCom consists of a random set of volunteers who meet a set crite... | process | tag appointment should be via ietf style nomcom the tag is currently elected we could move to an ietf style nomcom appointment this ensures the right people for the role are selected and a balanced tag can be achieved comments are welcome the nomcom consists of a random set of volunteers who meet a set crite... | 1 |

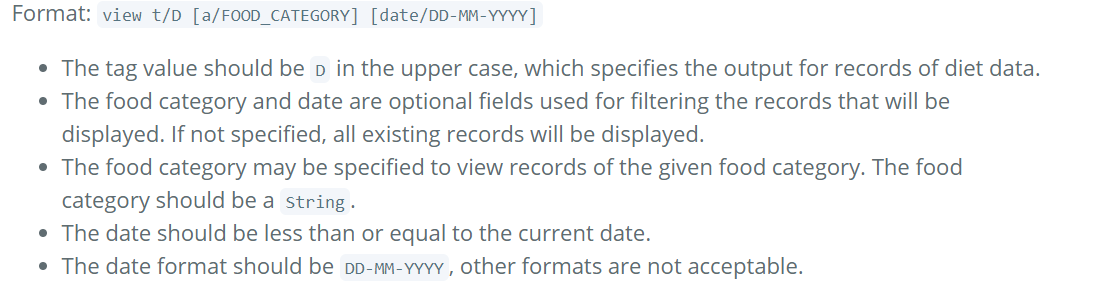

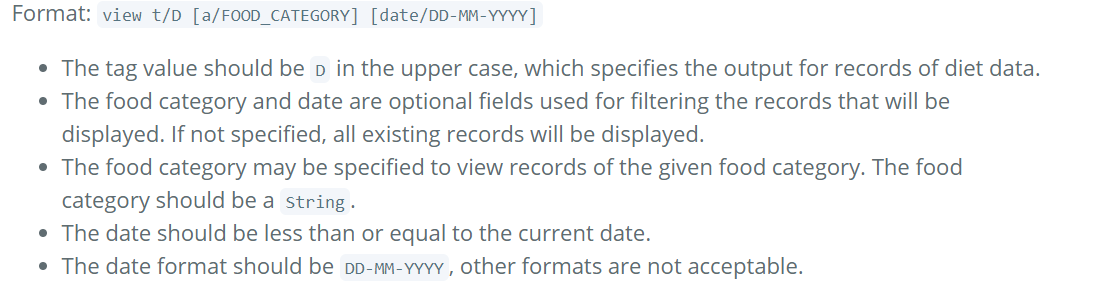

201,384 | 15,802,252,754 | IssuesEvent | 2021-04-03 08:49:38 | LJ-37/ped | https://api.github.com/repos/LJ-37/ped | opened | Typo in UG | severity.Medium type.DocumentationBug | Tag for view food category should be f/ but is written as a/ in the UG under view diet data.

<!--session: 1617437380999-4a5bfdbc-3d33-43fd-9397-daa368b5b349--> | 1.0 | Typo in UG - Tag for view food category should be f/ but is written as a/ in the UG under view diet data.

<!--session: 1617437380999-4a5bfdbc-3d33-43fd-9397-daa368b5b349--> | non_process | typo in ug tag for view food category should be f but is written as a in the ug under view diet data | 0 |

15,296 | 2,850,599,479 | IssuesEvent | 2015-05-31 18:21:31 | damonkohler/sl4a | https://api.github.com/repos/damonkohler/sl4a | opened | SL4A Force Close on droid.startActivityIntent(chooserIntent) | auto-migrated Priority-Medium Type-Defect | _From @GoogleCodeExporter on May 31, 2015 11:30_

```

What device(s) are you experiencing the problem on?

Samsung Vibrant (SGH-T959)

What firmware version are you running on the device?

2.2

What steps will reproduce the problem?

1. Run the attached Python script on an Android device with SL4Ar4

-or-

1. Make an intent... | 1.0 | SL4A Force Close on droid.startActivityIntent(chooserIntent) - _From @GoogleCodeExporter on May 31, 2015 11:30_

```

What device(s) are you experiencing the problem on?

Samsung Vibrant (SGH-T959)

What firmware version are you running on the device?

2.2

What steps will reproduce the problem?

1. Run the attached Python... | non_process | force close on droid startactivityintent chooserintent from googlecodeexporter on may what device s are you experiencing the problem on samsung vibrant sgh what firmware version are you running on the device what steps will reproduce the problem run the attached python script on a... | 0 |

10,455 | 13,234,960,625 | IssuesEvent | 2020-08-18 17:11:26 | googleapis/repo-automation-bots | https://api.github.com/repos/googleapis/repo-automation-bots | closed | bug: refactor for octokit/webhooks | type: process | We either should refactor release-please to not use octokit/webhooks or add it to ignore modules

See #816 | 1.0 | bug: refactor for octokit/webhooks - We either should refactor release-please to not use octokit/webhooks or add it to ignore modules

See #816 | process | bug refactor for octokit webhooks we either should refactor release please to not use octokit webhooks or add it to ignore modules see | 1 |

340,736 | 24,668,635,804 | IssuesEvent | 2022-10-18 12:16:52 | mantidproject/mantid | https://api.github.com/repos/mantidproject/mantid | opened | dev-docs pages not removed on site update | Documentation ISIS Team: Core | Found here: https://github.com/mantidproject/mantid/issues/34357#issuecomment-1248311915

**Describe the bug**

A dev docs file was renamed. The new page exists on the dev docs website, but so does the old.

Old https://developer.mantidproject.org/Testing/SANSGUI/SANSGUITests.html

New https://developer.mantidproje... | 1.0 | dev-docs pages not removed on site update - Found here: https://github.com/mantidproject/mantid/issues/34357#issuecomment-1248311915

**Describe the bug**

A dev docs file was renamed. The new page exists on the dev docs website, but so does the old.

Old https://developer.mantidproject.org/Testing/SANSGUI/SANSGUIT... | non_process | dev docs pages not removed on site update found here describe the bug a dev docs file was renamed the new page exists on the dev docs website but so does the old old new expected behavior if a file is removed or renamed from the dev docs or the user docs the old page is removed from... | 0 |

1,681 | 2,658,826,471 | IssuesEvent | 2015-03-18 17:34:09 | phetsims/pendulum-lab | https://api.github.com/repos/phetsims/pendulum-lab | opened | Missing assets/*-screenshot.png | code review | Noticed during code review #56. Not sure who should be assigned for this. | 1.0 | Missing assets/*-screenshot.png - Noticed during code review #56. Not sure who should be assigned for this. | non_process | missing assets screenshot png noticed during code review not sure who should be assigned for this | 0 |

202,660 | 15,837,159,305 | IssuesEvent | 2021-04-06 20:22:37 | HARDTECHIO/dhoa-front | https://api.github.com/repos/HARDTECHIO/dhoa-front | closed | Aplicar Componentes do Template/MENU | documentation enhancement | ## Tarefas

- [x] Criar Template Menu;

- [x] Criar NavBar com os componentes com Bootstrap; | 1.0 | Aplicar Componentes do Template/MENU - ## Tarefas

- [x] Criar Template Menu;

- [x] Criar NavBar com os componentes com Bootstrap; | non_process | aplicar componentes do template menu tarefas criar template menu criar navbar com os componentes com bootstrap | 0 |

136,853 | 12,736,517,785 | IssuesEvent | 2020-06-25 17:03:23 | ualberta-smr/LibCompPlugin | https://api.github.com/repos/ualberta-smr/LibCompPlugin | closed | Finalize Paper | documentation | As I work on enhancements to LibComp, stay on track and continue to update the paper after each task.

Working on paper will take longer for the user study as I will have to analyze the data and re-write the entire section so I set up some time specifically for this task.

Goal: A good publishable paper for a too... | 1.0 | Finalize Paper - As I work on enhancements to LibComp, stay on track and continue to update the paper after each task.

Working on paper will take longer for the user study as I will have to analyze the data and re-write the entire section so I set up some time specifically for this task.

Goal: A good publishab... | non_process | finalize paper as i work on enhancements to libcomp stay on track and continue to update the paper after each task working on paper will take longer for the user study as i will have to analyze the data and re write the entire section so i set up some time specifically for this task goal a good publishab... | 0 |

131,172 | 18,244,879,937 | IssuesEvent | 2021-10-01 17:01:11 | protocol/nft-website | https://api.github.com/repos/protocol/nft-website | closed | [CONTENT] Write section summary content | help wanted P2 kind/enhancement dif/medium effort/hours topic/design-content | Write brief, TLDR-style summary pages for each content section:

- [ ] `/concepts/`

- [ ] `/tutorial/`

- [ ] `/how-to/`

- [ ] `/reference/`

Each of these already appears as a `README.md` in their respective directories, just without any page body content.

When done, do two things to make them appear:

- [ ... | 1.0 | [CONTENT] Write section summary content - Write brief, TLDR-style summary pages for each content section:

- [ ] `/concepts/`

- [ ] `/tutorial/`

- [ ] `/how-to/`

- [ ] `/reference/`

Each of these already appears as a `README.md` in their respective directories, just without any page body content.

When done, ... | non_process | write section summary content write brief tldr style summary pages for each content section concepts tutorial how to reference each of these already appears as a readme md in their respective directories just without any page body content when done do two things to... | 0 |

29,696 | 8,392,143,480 | IssuesEvent | 2018-10-09 16:46:22 | trilinos/Trilinos | https://api.github.com/repos/trilinos/Trilinos | opened | PyTrilinos: Standardize Configuration Macros | PyTrilinos build | @trilinos/pytrilinos

## Expectations

Configuration macros for PyTrilinos should be consistently named and distinct from other packages.

## Current Behavior

Some configuration macros are not distinct (`HAVE_EPETRA`), some _are_ distinct (`HAVE_PYTRILINOS_AZTECOO`), and some are redundant (`HAVE_PYTRILINOS_EPETR... | 1.0 | PyTrilinos: Standardize Configuration Macros - @trilinos/pytrilinos

## Expectations

Configuration macros for PyTrilinos should be consistently named and distinct from other packages.

## Current Behavior

Some configuration macros are not distinct (`HAVE_EPETRA`), some _are_ distinct (`HAVE_PYTRILINOS_AZTECOO`),... | non_process | pytrilinos standardize configuration macros trilinos pytrilinos expectations configuration macros for pytrilinos should be consistently named and distinct from other packages current behavior some configuration macros are not distinct have epetra some are distinct have pytrilinos aztecoo ... | 0 |

15,051 | 18,762,895,012 | IssuesEvent | 2021-11-05 18:46:18 | GoogleCloudPlatform/ai-platform-samples | https://api.github.com/repos/GoogleCloudPlatform/ai-platform-samples | closed | Python 3.5 CI builds are failing | type: process | Python 3.5 CI builds are failing with this error:

`FileNotFoundError: [Errno 2] No such file or directory: '/tmpfs/src/envs/python3.5/venv'`

[Example failed PR build](https://github.com/GoogleCloudPlatform/ai-platform-samples/pull/514) | 1.0 | Python 3.5 CI builds are failing - Python 3.5 CI builds are failing with this error:

`FileNotFoundError: [Errno 2] No such file or directory: '/tmpfs/src/envs/python3.5/venv'`

[Example failed PR build](https://github.com/GoogleCloudPlatform/ai-platform-samples/pull/514) | process | python ci builds are failing python ci builds are failing with this error filenotfounderror no such file or directory tmpfs src envs venv | 1 |

7,248 | 9,527,289,699 | IssuesEvent | 2019-04-29 02:59:04 | Lothrazar/Cyclic | https://api.github.com/repos/Lothrazar/Cyclic | closed | Garden Scythe Harvesting Bugs | bug: gameplay mod compatibility | Minecraft version & Mod Version:

- Forge v14.23.5.2796-1.12.2

- Cyclic v1.17.11

Single player or Server:

- Probably Both

Describe problem (what you were doing / what happened):

- There are multiple bugs that I noticed when harvesting modded crops using the garden scythe. (See below for id's)

1. The Red O... | True | Garden Scythe Harvesting Bugs - Minecraft version & Mod Version:

- Forge v14.23.5.2796-1.12.2

- Cyclic v1.17.11

Single player or Server:

- Probably Both

Describe problem (what you were doing / what happened):

- There are multiple bugs that I noticed when harvesting modded crops using the garden scythe. (See... | non_process | garden scythe harvesting bugs minecraft version mod version forge cyclic single player or server probably both describe problem what you were doing what happened there are multiple bugs that i noticed when harvesting modded crops using the garden scythe see below for... | 0 |

233,521 | 25,765,525,473 | IssuesEvent | 2022-12-09 01:17:21 | jasonjberry/CDM | https://api.github.com/repos/jasonjberry/CDM | opened | CVE-2022-23491 (Medium) detected in certifi-2019.6.16-py2.py3-none-any.whl | security vulnerability | ## CVE-2022-23491 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>certifi-2019.6.16-py2.py3-none-any.whl</b></p></summary>

<p>Python package for providing Mozilla's CA Bundle.</p>

<p... | True | CVE-2022-23491 (Medium) detected in certifi-2019.6.16-py2.py3-none-any.whl - ## CVE-2022-23491 - Medium Severity Vulnerability