Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1

value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3

values | title stringlengths 1 744 | labels stringlengths 4 574 | body stringlengths 9 211k | index stringclasses 10

values | text_combine stringlengths 96 211k | label stringclasses 2

values | text stringlengths 96 188k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

21,756 | 30,275,659,981 | IssuesEvent | 2023-07-07 19:22:10 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | closed | Installing auto replies will force the pty host to spawn | bug terminal-process | Repro:

1. Open VS Code

2. Close all terminal

3. Exit VS Code

4. Open VS Code (a terminal is not restored)

5. Set:

```

"terminal.integrated.autoReplies": {

"Terminate batch job (Y/N)": "Y\r"

}

```

6. Open process explorer, 🐛 the pty host process should not be in the process tree | 1.0 | Installing auto replies will force the pty host to spawn - Repro:

1. Open VS Code

2. Close all terminal

3. Exit VS Code

4. Open VS Code (a terminal is not restored)

5. Set:

```

"terminal.integrated.autoReplies": {

"Terminate batch job (Y/N)": "Y\r"

}

```

6. Open process explorer, 🐛 ... | process | installing auto replies will force the pty host to spawn repro open vs code close all terminal exit vs code open vs code a terminal is not restored set terminal integrated autoreplies terminate batch job y n y r open process explorer 🐛 ... | 1 |

30,697 | 11,842,026,060 | IssuesEvent | 2020-03-23 22:02:55 | Mohib-hub/karate | https://api.github.com/repos/Mohib-hub/karate | opened | CVE-2019-9515 (High) detected in netty-codec-http2-4.1.32.Final.jar | security vulnerability | ## CVE-2019-9515 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>netty-codec-http2-4.1.32.Final.jar</b></p></summary>

<p>Netty is an asynchronous event-driven network application frame... | True | CVE-2019-9515 (High) detected in netty-codec-http2-4.1.32.Final.jar - ## CVE-2019-9515 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>netty-codec-http2-4.1.32.Final.jar</b></p></summar... | non_process | cve high detected in netty codec final jar cve high severity vulnerability vulnerable library netty codec final jar netty is an asynchronous event driven network application framework for rapid development of maintainable high performance protocol servers and clients... | 0 |

50,150 | 6,062,207,110 | IssuesEvent | 2017-06-14 08:51:47 | mattbearman/lime | https://api.github.com/repos/mattbearman/lime | opened | test | question test | ## [View in BugMuncher Control Panel](https://app.bugmuncher.com/websites/a53d1139bceee3b3730c237aff8217d824ad1ccc/feedback/990b6796b1670dde25216fef582f1425c20f1d7b) ##

## Details ##

**Submitted:** June 14, 2017 08:51

**Category:** Question

**Sender Email:**

**Website:** BugMuncher

**URL:** https://www.bugmuncher... | 1.0 | test - ## [View in BugMuncher Control Panel](https://app.bugmuncher.com/websites/a53d1139bceee3b3730c237aff8217d824ad1ccc/feedback/990b6796b1670dde25216fef582f1425c20f1d7b) ##

## Details ##

**Submitted:** June 14, 2017 08:51

**Category:** Question

**Sender Email:**

**Website:** BugMuncher

**URL:** https://www.bug... | non_process | test details submitted june category question sender email website bugmuncher url operating system linux browser firefox browser size x user agent mozilla ubuntu linux rv gecko firefox description ... | 0 |

20,491 | 27,146,979,281 | IssuesEvent | 2023-02-16 20:49:08 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Outdated instruction for using output from different stage | devops/prod doc-bug Pri1 devops-cicd-process/tech | This instruction in [Use outputs in a different stage](https://docs.microsoft.com/en-us/azure/devops/pipelines/process/variables?view=azure-devops&tabs=yaml%2Cbatch#use-outputs-in-a-different-stage) does not seem to work:

> At the stage level, the format for referencing variables from a different stage is dependenci... | 1.0 | Outdated instruction for using output from different stage - This instruction in [Use outputs in a different stage](https://docs.microsoft.com/en-us/azure/devops/pipelines/process/variables?view=azure-devops&tabs=yaml%2Cbatch#use-outputs-in-a-different-stage) does not seem to work:

> At the stage level, the format f... | process | outdated instruction for using output from different stage this instruction in does not seem to work at the stage level the format for referencing variables from a different stage is dependencies stage outputs the only way i could get it to work is to use stagedependencies at stage level too y... | 1 |

802,918 | 29,058,501,341 | IssuesEvent | 2023-05-15 01:52:53 | certbot/certbot | https://api.github.com/repos/certbot/certbot | closed | Delay deployment of certificates to mitigate client’s clock issues | feature request area: cert management area: install priority: unplanned needs-update | According to [this study](https://research.google/pubs/pub46359/) there is a non-negligible number of clients who have certificate errors because of their misconfigured clock. The study gives the numbers of 6.7% of clients whose the clock is more than 24h late and 0.05% whose the clock is more than 24h ahead (see part ... | 1.0 | Delay deployment of certificates to mitigate client’s clock issues - According to [this study](https://research.google/pubs/pub46359/) there is a non-negligible number of clients who have certificate errors because of their misconfigured clock. The study gives the numbers of 6.7% of clients whose the clock is more than... | non_process | delay deployment of certificates to mitigate client’s clock issues according to there is a non negligible number of clients who have certificate errors because of their misconfigured clock the study gives the numbers of of clients whose the clock is more than late and whose the clock is more than a... | 0 |

104,852 | 11,424,315,085 | IssuesEvent | 2020-02-03 17:29:23 | broadinstitute/gatk | https://api.github.com/repos/broadinstitute/gatk | closed | Inaccurate Definition NON_REF in gvcf files | Documentation Vanilla | The issue is that the vcf header NON_REF "Represents any possible alternative allele at this location" but it should instead be described "any allele that is neither the reference nor the observed alt alleles"

User Report:

Hi,

There is a bug in how you define <NON_REF> in gvcf files.

From your vcf header definition:... | 1.0 | Inaccurate Definition NON_REF in gvcf files - The issue is that the vcf header NON_REF "Represents any possible alternative allele at this location" but it should instead be described "any allele that is neither the reference nor the observed alt alleles"

User Report:

Hi,

There is a bug in how you define <NON_REF> in... | non_process | inaccurate definition non ref in gvcf files the issue is that the vcf header non ref represents any possible alternative allele at this location but it should instead be described any allele that is neither the reference nor the observed alt alleles user report hi there is a bug in how you define in gvcf fi... | 0 |

66,372 | 3,253,072,265 | IssuesEvent | 2015-10-19 17:29:21 | NuGet/Home | https://api.github.com/repos/NuGet/Home | closed | %temp%\NuGet has almost 4GB of files | 2 - Working Area:CommandLine Area:VS.Client Priority:2 Type:Bug | It seems that NuGet puts some temporary files there with extracted packages and never cleans up. It's worth noting that this isn't a year's worth of cache or anything - all the timestamps are from a 3-day period.

I believe that NuGet should clean these files/folders up because they are apparently each used only once... | 1.0 | %temp%\NuGet has almost 4GB of files - It seems that NuGet puts some temporary files there with extracted packages and never cleans up. It's worth noting that this isn't a year's worth of cache or anything - all the timestamps are from a 3-day period.

I believe that NuGet should clean these files/folders up because ... | non_process | temp nuget has almost of files it seems that nuget puts some temporary files there with extracted packages and never cleans up it s worth noting that this isn t a year s worth of cache or anything all the timestamps are from a day period i believe that nuget should clean these files folders up because th... | 0 |

467,946 | 13,458,875,828 | IssuesEvent | 2020-09-09 11:21:55 | knime-mpicbg/knime-scripting | https://api.github.com/repos/knime-mpicbg/knime-scripting | closed | open in python should support linux | Python feature request medium priority | linux is reported as unsupported platform.

is there any reason, why?

| 1.0 | open in python should support linux - linux is reported as unsupported platform.

is there any reason, why?

| non_process | open in python should support linux linux is reported as unsupported platform is there any reason why | 0 |

3,252 | 6,329,937,690 | IssuesEvent | 2017-07-26 05:30:11 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | Shouldn't the default SIGINT handler trigger process.on('exit')? | process | Maybe it's a bug, maybe it's by design. Documentation doesn't mention anything about whether or not the "exit" event should fire, but it's a bit silly I have to write:

``` js

process.on('SIGINT', function () {

process.exit(somecode); // now the "exit" event will fire

});

```

Just because the built-in handler won't ... | 1.0 | Shouldn't the default SIGINT handler trigger process.on('exit')? - Maybe it's a bug, maybe it's by design. Documentation doesn't mention anything about whether or not the "exit" event should fire, but it's a bit silly I have to write:

``` js

process.on('SIGINT', function () {

process.exit(somecode); // now the "exit... | process | shouldn t the default sigint handler trigger process on exit maybe it s a bug maybe it s by design documentation doesn t mention anything about whether or not the exit event should fire but it s a bit silly i have to write js process on sigint function process exit somecode now the exit... | 1 |

14,663 | 17,786,556,680 | IssuesEvent | 2021-08-31 11:48:39 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | Parcel V2 sandbox issue | type: support / not a bug (process) untriaged team-Local-Exec |

### Description of the problem / feature request:

Bazel sandbox on Linux is causing problems with parcel V2 RC

### Bugs: what's the simplest, easiest way to reproduce this bug? Please provide a minimal example if possible.

I trying to run parcel V2 similiar to

the example for parcel V1 at

https://github.c... | 1.0 | Parcel V2 sandbox issue -

### Description of the problem / feature request:

Bazel sandbox on Linux is causing problems with parcel V2 RC

### Bugs: what's the simplest, easiest way to reproduce this bug? Please provide a minimal example if possible.

I trying to run parcel V2 similiar to

the example for parc... | process | parcel sandbox issue description of the problem feature request bazel sandbox on linux is causing problems with parcel rc bugs what s the simplest easiest way to reproduce this bug please provide a minimal example if possible i trying to run parcel similiar to the example for parcel ... | 1 |

218,804 | 16,771,612,406 | IssuesEvent | 2021-06-14 15:24:00 | prometheus-operator/prometheus-operator | https://api.github.com/repos/prometheus-operator/prometheus-operator | closed | Unable to add a new service to be monitored with kube-prometheus | good first issue hacktoberfest help wanted kind/documentation stale | **What did you do?**

Created a new servicemonitor

```

apiVersion: monitoring.coreos.com/v1

kind: ServiceMonitor

metadata:

name: sanguine-butterfly-traefik-dashboard

labels:

app: sanguine-butterfly-traefik

spec:

selector:

matchLabels:

app: sanguine-butterfly-traefik

endpoints:

- p... | 1.0 | Unable to add a new service to be monitored with kube-prometheus - **What did you do?**

Created a new servicemonitor

```

apiVersion: monitoring.coreos.com/v1

kind: ServiceMonitor

metadata:

name: sanguine-butterfly-traefik-dashboard

labels:

app: sanguine-butterfly-traefik

spec:

selector:

match... | non_process | unable to add a new service to be monitored with kube prometheus what did you do created a new servicemonitor apiversion monitoring coreos com kind servicemonitor metadata name sanguine butterfly traefik dashboard labels app sanguine butterfly traefik spec selector matchl... | 0 |

12,091 | 14,740,073,316 | IssuesEvent | 2021-01-07 08:28:12 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Stockton - SA Billing - Late Fee Account List | anc-process anp-important ant-bug has attachment | In GitLab by @kdjstudios on Oct 3, 2018, 11:07

[Stockton.xlsx](/uploads/89f6e5bba4c020ae8abbd4f57234017f/Stockton.xlsx)

HD: http://www.servicedesk.answernet.com/profiles/ticket/2018-10-03-95123/conversation | 1.0 | Stockton - SA Billing - Late Fee Account List - In GitLab by @kdjstudios on Oct 3, 2018, 11:07

[Stockton.xlsx](/uploads/89f6e5bba4c020ae8abbd4f57234017f/Stockton.xlsx)

HD: http://www.servicedesk.answernet.com/profiles/ticket/2018-10-03-95123/conversation | process | stockton sa billing late fee account list in gitlab by kdjstudios on oct uploads stockton xlsx hd | 1 |

15,189 | 18,957,799,059 | IssuesEvent | 2021-11-18 22:42:09 | slynch8/10x | https://api.github.com/repos/slynch8/10x | opened | support complex statements in preprocessor | bug Priority 3 preprocessor | such as:

#define TEST (1 == (1 ? 1 : 0))

#if TEST | 1.0 | support complex statements in preprocessor - such as:

#define TEST (1 == (1 ? 1 : 0))

#if TEST | process | support complex statements in preprocessor such as define test if test | 1 |

13,693 | 16,449,700,554 | IssuesEvent | 2021-05-21 02:37:33 | pycaret/pycaret | https://api.github.com/repos/pycaret/pycaret | closed | Anomaly Detection Methods | anomaly_detection classification enhancement no-issue-activity preprocessing regression | Hi there, I have a question about anomaly detection.

Is it only supporting "pca" as "outlier_methods" now? Because I find that class Outlier could send different methods including pca, knn and iso, but now is hard coded with pca, and there is not any method parameter could be used when I call setup().

Is there an... | 1.0 | Anomaly Detection Methods - Hi there, I have a question about anomaly detection.

Is it only supporting "pca" as "outlier_methods" now? Because I find that class Outlier could send different methods including pca, knn and iso, but now is hard coded with pca, and there is not any method parameter could be used when I ... | process | anomaly detection methods hi there i have a question about anomaly detection is it only supporting pca as outlier methods now because i find that class outlier could send different methods including pca knn and iso but now is hard coded with pca and there is not any method parameter could be used when i ... | 1 |

17,755 | 23,670,999,946 | IssuesEvent | 2022-08-27 10:53:59 | googleapis/python-ndb | https://api.github.com/repos/googleapis/python-ndb | closed | Dependency Dashboard | type: process api: datastore | This issue lists Renovate updates and detected dependencies. Read the [Dependency Dashboard](https://docs.renovatebot.com/key-concepts/dashboard/) docs to learn more.

## Edited/Blocked

These updates have been manually edited so Renovate will no longer make changes. To discard all commits and start over, click on a ch... | 1.0 | Dependency Dashboard - This issue lists Renovate updates and detected dependencies. Read the [Dependency Dashboard](https://docs.renovatebot.com/key-concepts/dashboard/) docs to learn more.

## Edited/Blocked

These updates have been manually edited so Renovate will no longer make changes. To discard all commits and st... | process | dependency dashboard this issue lists renovate updates and detected dependencies read the docs to learn more edited blocked these updates have been manually edited so renovate will no longer make changes to discard all commits and start over click on a checkbox pull ignored or blocked... | 1 |

21,700 | 30,195,267,106 | IssuesEvent | 2023-07-04 20:06:12 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Processing output as shapefile is missing CPG | Processing Bug | ### What is the bug or the crash?

~~Model~~ Processing output as shapefile is missing CPG and PRJ.

### Steps to reproduce the issue

1. Run model [test.zip](https://github.com/qgis/QGIS/files/9819717/test.zip) with shapefile output

with shapefile output

1. [x] To reduce the cognitive overload we should provide a dashboard with only a general overview. It should be easy for some one without internal knowledge of ze... | 1.0 | Split grafana dashboard into two - **Description**

This came up as part of [the game day](https://confluence.camunda.com/pages/viewpage.action?spaceKey=ZEEBE&title=2022-03-23+Game+Day)

1. [x] To reduce the cognitive overload we should provide a dashboard with only a general overview. It should be easy for some o... | process | split grafana dashboard into two description this came up as part of to reduce the cognitive overload we should provide a dashboard with only a general overview it should be easy for some one without internal knowledge of zeebe to understand the overall health of the system for example a suppor... | 1 |

303,764 | 9,310,365,369 | IssuesEvent | 2019-03-25 18:35:46 | mozilla/addons-frontend | https://api.github.com/repos/mozilla/addons-frontend | closed | Improve handling of needsRestart hint with mozAddonManager | priority: p4 project: amo triaged | Steps to reproduce:

1. Search for a "requires restart" add-on on your device using AMO-dev i.e. https://addons.allizom.org/en-US/android/addon/stylish/?src=hp-dl-featured

2. Tap the add-on installation button

Expected results:

A popup notifying the user that the browser needs to be restarted to apply changes is... | 1.0 | Improve handling of needsRestart hint with mozAddonManager - Steps to reproduce:

1. Search for a "requires restart" add-on on your device using AMO-dev i.e. https://addons.allizom.org/en-US/android/addon/stylish/?src=hp-dl-featured

2. Tap the add-on installation button

Expected results:

A popup notifying the us... | non_process | improve handling of needsrestart hint with mozaddonmanager steps to reproduce search for a requires restart add on on your device using amo dev i e tap the add on installation button expected results a popup notifying the user that the browser needs to be restarted to apply changes is displayed ... | 0 |

434,691 | 30,462,525,096 | IssuesEvent | 2023-07-17 08:04:09 | vijayk3327/LWC | https://api.github.com/repos/vijayk3327/LWC | opened | Create record dynamically Using LWC Apex Framework based on database.insert in Salesforce | documentation question | In this post we are going to learn about [How to Insert record Uses of Apex Framework on standard object ](https://www.w3web.net/lwc-apex-framework-salesforce/)in Salesforce LWC.

Apex is a strongly typed, object-oriented programming language that allows developers to execute flow and transaction control statements o... | 1.0 | Create record dynamically Using LWC Apex Framework based on database.insert in Salesforce - In this post we are going to learn about [How to Insert record Uses of Apex Framework on standard object ](https://www.w3web.net/lwc-apex-framework-salesforce/)in Salesforce LWC.

Apex is a strongly typed, object-oriented prog... | non_process | create record dynamically using lwc apex framework based on database insert in salesforce in this post we are going to learn about salesforce lwc apex is a strongly typed object oriented programming language that allows developers to execute flow and transaction control statements on salesforce servers in co... | 0 |

9,522 | 12,499,706,721 | IssuesEvent | 2020-06-01 20:40:58 | googleapis/google-cloud-cpp | https://api.github.com/repos/googleapis/google-cloud-cpp | closed | Ask DevRel to stop scanning old repo | api: spanner type: process | Ask the DevRel tooling folks to stop scanning the old repository, we will get duplicate samples otherwise.

| 1.0 | Ask DevRel to stop scanning old repo - Ask the DevRel tooling folks to stop scanning the old repository, we will get duplicate samples otherwise.

| process | ask devrel to stop scanning old repo ask the devrel tooling folks to stop scanning the old repository we will get duplicate samples otherwise | 1 |

11,415 | 14,242,922,495 | IssuesEvent | 2020-11-19 03:02:36 | aodn/imos-toolbox | https://api.github.com/repos/aodn/imos-toolbox | opened | Loading several CTD profiles may crash the session | Type:bug Unit:Processing Unit:Profile | There was a report at workshop day that loading a lot of CTD profiles in one toolbox session may slow down or crash the session.

- [ ] Collect some 20+ CTD files with(out) a proper database.

- [ ] Investigate the loading of 20+ CTD dataset profiles and try to reproduce the crash.

- [ ] monitor memory resources/req... | 1.0 | Loading several CTD profiles may crash the session - There was a report at workshop day that loading a lot of CTD profiles in one toolbox session may slow down or crash the session.

- [ ] Collect some 20+ CTD files with(out) a proper database.

- [ ] Investigate the loading of 20+ CTD dataset profiles and try to rep... | process | loading several ctd profiles may crash the session there was a report at workshop day that loading a lot of ctd profiles in one toolbox session may slow down or crash the session collect some ctd files with out a proper database investigate the loading of ctd dataset profiles and try to reproduce... | 1 |

79,588 | 15,586,186,758 | IssuesEvent | 2021-03-18 01:22:11 | ziednov007/JavaSpring | https://api.github.com/repos/ziednov007/JavaSpring | opened | CVE-2020-36183 (High) detected in jackson-databind-2.9.6.jar | security vulnerability | ## CVE-2020-36183 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.6.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2020-36183 (High) detected in jackson-databind-2.9.6.jar - ## CVE-2020-36183 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.6.jar</b></p></summary>

<p>General... | non_process | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file javaspring app build gradle p... | 0 |

7,072 | 5,841,698,326 | IssuesEvent | 2017-05-10 02:08:01 | tensorflow/models | https://api.github.com/repos/tensorflow/models | closed | [slim] performance reduce when train cifarnet with multi-gpu | stat:awaiting tensorflower type:bug/performance | I want to train cifarnet on single machine with 4 gpus, but the performance reduces comparing with training with only one gpu.

## [slim] Train cifarnet using the default script slim/scripts/train_cifarnet_on_cifar10.sh

When using the default script the speed is as follow:

```

INFO:tensorflow:global step 13900: loss =... | True | [slim] performance reduce when train cifarnet with multi-gpu - I want to train cifarnet on single machine with 4 gpus, but the performance reduces comparing with training with only one gpu.

## [slim] Train cifarnet using the default script slim/scripts/train_cifarnet_on_cifar10.sh

When using the default script the spe... | non_process | performance reduce when train cifarnet with multi gpu i want to train cifarnet on single machine with gpus but the performance reduces comparing with training with only one gpu train cifarnet using the default script slim scripts train cifarnet on sh when using the default script the speed is as follow ... | 0 |

3,144 | 6,198,743,808 | IssuesEvent | 2017-07-05 19:54:32 | hashicorp/packer | https://api.github.com/repos/hashicorp/packer | closed | Question: export virtualbox and qemu box while building vagrant box | post-processor/vagrant question | Hello,

is there any way to export a virtualbox image and a qemu image while building the vagrant box or do I need to open the vagrant box after the build manually to get the images?

My goal is that I have the following Output:

> a virtualbox image

> a qemu image

> a virtualbox vagrant box

> a qemu/libvirt vag... | 1.0 | Question: export virtualbox and qemu box while building vagrant box - Hello,

is there any way to export a virtualbox image and a qemu image while building the vagrant box or do I need to open the vagrant box after the build manually to get the images?

My goal is that I have the following Output:

> a virtualbox i... | process | question export virtualbox and qemu box while building vagrant box hello is there any way to export a virtualbox image and a qemu image while building the vagrant box or do i need to open the vagrant box after the build manually to get the images my goal is that i have the following output a virtualbox i... | 1 |

11,814 | 14,630,498,796 | IssuesEvent | 2020-12-23 17:50:32 | KalikaKay/Author-Classification-Project | https://api.github.com/repos/KalikaKay/Author-Classification-Project | closed | Extract Authors | preprocessing | Extract the authors from the text.

Include them in a separate field or somethin. | 1.0 | Extract Authors - Extract the authors from the text.

Include them in a separate field or somethin. | process | extract authors extract the authors from the text include them in a separate field or somethin | 1 |

578 | 3,054,613,624 | IssuesEvent | 2015-08-13 04:47:19 | e-government-ua/i | https://api.github.com/repos/e-government-ua/i | closed | Отображать в списке заявок ( на дашборде чиновника) название услуг. | bug hi priority In process of testing test |

Сейчас отображается id б-процесса активити.

Необходимо отображать полное название услуги. | 1.0 | Отображать в списке заявок ( на дашборде чиновника) название услуг. -

Сейчас отображается id б-процесса активити.

Необходимо отображать полное название услуги. | process | отображать в списке заявок на дашборде чиновника название услуг сейчас отображается id б процесса активити необходимо отображать полное название услуги | 1 |

2,221 | 5,071,297,862 | IssuesEvent | 2016-12-26 12:20:51 | jlm2017/jlm-video-subtitles | https://api.github.com/repos/jlm2017/jlm-video-subtitles | opened | [Subtitles] [FR] MÉLENCHON : Réunion publique au Lamentin en Martinique | Language: French Process: Someone is working on this issue Process: [1] Writing in progress | # Video title

MÉLENCHON : Réunion publique au Lamentin en Martinique

# URL

https://www.youtube.com/watch?v=4vhQIa0KAtw

# Youtube subtitles language

Français

# Duration

1:21:49

# Subtitles URL

https://www.youtube.com/timedtext_editor?lang=fr&ui=hd&action_mde_edit_form=1&tab=captions&v=4vhQIa0KAtw&bl=vmp... | 2.0 | [Subtitles] [FR] MÉLENCHON : Réunion publique au Lamentin en Martinique - # Video title

MÉLENCHON : Réunion publique au Lamentin en Martinique

# URL

https://www.youtube.com/watch?v=4vhQIa0KAtw

# Youtube subtitles language

Français

# Duration

1:21:49

# Subtitles URL

https://www.youtube.com/timedtext_edi... | process | mélenchon réunion publique au lamentin en martinique video title mélenchon réunion publique au lamentin en martinique url youtube subtitles language français duration subtitles url | 1 |

17,299 | 23,115,478,178 | IssuesEvent | 2022-07-27 16:16:36 | GoogleCloudPlatform/professional-services-data-validator | https://api.github.com/repos/GoogleCloudPlatform/professional-services-data-validator | closed | Implement uniform logging | type: process priority: p2 good first issue | DVT uses print statements and logging simultaneously. For example, we usually [print logs when verbose is enabled](https://github.com/GoogleCloudPlatform/professional-services-data-validator/blob/develop/data_validation/data_validation.py#L277), but in some cases we use the [python logging](https://github.com/GoogleClo... | 1.0 | Implement uniform logging - DVT uses print statements and logging simultaneously. For example, we usually [print logs when verbose is enabled](https://github.com/GoogleCloudPlatform/professional-services-data-validator/blob/develop/data_validation/data_validation.py#L277), but in some cases we use the [python logging]... | process | implement uniform logging dvt uses print statements and logging simultaneously for example we usually but in some cases we use the we should standardize to only use the and categorize logs by level debug info warning etc | 1 |

190,875 | 22,171,610,521 | IssuesEvent | 2022-06-06 01:47:24 | KDWSS/dd-trace-java | https://api.github.com/repos/KDWSS/dd-trace-java | opened | CVE-2022-31023 (Medium) detected in play_2.12-2.6.25.jar, play_2.12-2.6.20.jar | security vulnerability | ## CVE-2022-31023 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>play_2.12-2.6.25.jar</b>, <b>play_2.12-2.6.20.jar</b></p></summary>

<p>

<details><summary><b>play_2.12-2.6.25.jar<... | True | CVE-2022-31023 (Medium) detected in play_2.12-2.6.25.jar, play_2.12-2.6.20.jar - ## CVE-2022-31023 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>play_2.12-2.6.25.jar</b>, <b>play_... | non_process | cve medium detected in play jar play jar cve medium severity vulnerability vulnerable libraries play jar play jar play jar play path to dependency file dd smoke tests play play gradle path to vulnerable library c... | 0 |

172,945 | 21,088,929,433 | IssuesEvent | 2022-04-04 01:00:32 | temporalio/subscription-workflow-project-template-java | https://api.github.com/repos/temporalio/subscription-workflow-project-template-java | opened | logback-classic-1.2.3.jar: 1 vulnerabilities (highest severity is: 6.6) | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>logback-classic-1.2.3.jar</b></p></summary>

<p></p>

<p>Path to dependency file: /build.gradle</p>

<p>Path to vulnerable library: /home/wss-scanner/.gradle/caches/modu... | True | logback-classic-1.2.3.jar: 1 vulnerabilities (highest severity is: 6.6) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>logback-classic-1.2.3.jar</b></p></summary>

<p></p>

<p>Path to dependency file: /build.gradl... | non_process | logback classic jar vulnerabilities highest severity is vulnerable library logback classic jar path to dependency file build gradle path to vulnerable library home wss scanner gradle caches modules files ch qos logback logback core logback core jar ... | 0 |

412,756 | 27,871,212,639 | IssuesEvent | 2023-03-21 13:35:31 | rooch-network/moveos | https://api.github.com/repos/rooch-network/moveos | opened | [CodeStyle] Define Error code style | documentation | Two Option:

* The first letter is E, and the rest are all capitalized.

* The first letter is E, and the following words use camel case. | 1.0 | [CodeStyle] Define Error code style - Two Option:

* The first letter is E, and the rest are all capitalized.

* The first letter is E, and the following words use camel case. | non_process | define error code style two option the first letter is e and the rest are all capitalized the first letter is e and the following words use camel case | 0 |

220,624 | 7,369,144,717 | IssuesEvent | 2018-03-13 00:59:16 | nco/nco | https://api.github.com/repos/nco/nco | opened | Find cause of core dumps with complex netCDF4 group hierarchies | high priority | NCO is unable to print all the contents of some netCDF4 files. This [file](http://dust.ess.uci.edu/tmp/s5p.nc) illustrates the problem:

```

zender@skyglow:~$ ncks -m s5p.nc > ~/foo 2>&1

*** Error in `ncks': free(): invalid next size (fast): 0x0000000000d67c00 ***

======= Backtrace: =========

/usr/lib64/libc.so.6... | 1.0 | Find cause of core dumps with complex netCDF4 group hierarchies - NCO is unable to print all the contents of some netCDF4 files. This [file](http://dust.ess.uci.edu/tmp/s5p.nc) illustrates the problem:

```

zender@skyglow:~$ ncks -m s5p.nc > ~/foo 2>&1

*** Error in `ncks': free(): invalid next size (fast): 0x000000... | non_process | find cause of core dumps with complex group hierarchies nco is unable to print all the contents of some files this illustrates the problem zender skyglow ncks m nc foo error in ncks free invalid next size fast backtrace usr libc so ... | 0 |

137,168 | 11,101,666,108 | IssuesEvent | 2019-12-16 21:56:36 | linkerd/linkerd2 | https://api.github.com/repos/linkerd/linkerd2 | closed | Add tests for namespace button and filter functionality to dashboard unit tests | area/test area/web priority/P1 | Followup from #3667. Add a test for the namespace select button, and for filter functionality in both namespace selection and filter-able `Table` components, to JS Unit tests.

Also see [comment](https://github.com/linkerd/linkerd2/pull/3667#discussion_r342723228) from @grampelberg on possible filter Regex refactori... | 1.0 | Add tests for namespace button and filter functionality to dashboard unit tests - Followup from #3667. Add a test for the namespace select button, and for filter functionality in both namespace selection and filter-able `Table` components, to JS Unit tests.

Also see [comment](https://github.com/linkerd/linkerd2/pul... | non_process | add tests for namespace button and filter functionality to dashboard unit tests followup from add a test for the namespace select button and for filter functionality in both namespace selection and filter able table components to js unit tests also see from grampelberg on possible filter regex refac... | 0 |

14,099 | 16,988,821,354 | IssuesEvent | 2021-06-30 17:33:15 | googleapis/python-api-core | https://api.github.com/repos/googleapis/python-api-core | closed | Undeprecate IAM factory helpers | type: process | In addition to deprecating legacy role assignments, fd47fda5e3f5eca63522c8d81cffa22bc2a29ab6 (https://github.com/googleapis/google-cloud-python/pull/9869) deprecated the `Policy.user`, `Policy.service_account`, `Policy.group`, `Policy.domain`, `Policy.all_users`, and `Policy.authenticated_users` entity factory helpers.... | 1.0 | Undeprecate IAM factory helpers - In addition to deprecating legacy role assignments, fd47fda5e3f5eca63522c8d81cffa22bc2a29ab6 (https://github.com/googleapis/google-cloud-python/pull/9869) deprecated the `Policy.user`, `Policy.service_account`, `Policy.group`, `Policy.domain`, `Policy.all_users`, and `Policy.authentica... | process | undeprecate iam factory helpers in addition to deprecating legacy role assignments deprecated the policy user policy service account policy group policy domain policy all users and policy authenticated users entity factory helpers istm that those helpers should not be deprecated they hi... | 1 |

67,310 | 20,961,604,486 | IssuesEvent | 2022-03-27 21:47:57 | abedmaatalla/sipdroid | https://api.github.com/repos/abedmaatalla/sipdroid | closed | Doesn't allow changing Google account when creating PBX | Priority-Medium Type-Defect auto-migrated | ```

When clicking "New PBX linked to my Google Voice" it automatically selects one

of the Google accounts on my phone, but not the one with Google voice. There is

no option to change it. There needs to be an option to select the proper

account.

Is there a workaround in the meantime?

```

Original issue reported o... | 1.0 | Doesn't allow changing Google account when creating PBX - ```

When clicking "New PBX linked to my Google Voice" it automatically selects one

of the Google accounts on my phone, but not the one with Google voice. There is

no option to change it. There needs to be an option to select the proper

account.

Is there a w... | non_process | doesn t allow changing google account when creating pbx when clicking new pbx linked to my google voice it automatically selects one of the google accounts on my phone but not the one with google voice there is no option to change it there needs to be an option to select the proper account is there a w... | 0 |

142,228 | 19,083,641,661 | IssuesEvent | 2021-11-29 01:01:16 | renfei/renfei-java-sdk | https://api.github.com/repos/renfei/renfei-java-sdk | opened | CVE-2021-39153 (High) detected in xstream-1.4.17.jar | security vulnerability | ## CVE-2021-39153 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xstream-1.4.17.jar</b></p></summary>

<p></p>

<p>Library home page: <a href="http://x-stream.github.io">http://x-stream... | True | CVE-2021-39153 (High) detected in xstream-1.4.17.jar - ## CVE-2021-39153 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xstream-1.4.17.jar</b></p></summary>

<p></p>

<p>Library home pa... | non_process | cve high detected in xstream jar cve high severity vulnerability vulnerable library xstream jar library home page a href path to dependency file renfei java sdk pom xml path to vulnerable library itory com thoughtworks xstream xstream xstream jar dep... | 0 |

9,801 | 12,814,767,165 | IssuesEvent | 2020-07-04 20:55:10 | percybolmer/workflow | https://api.github.com/repos/percybolmer/workflow | closed | Change PropertyMap to work correctly | bug help wanted processor | Remove Requierd, Available fields

Instead add

AddProperty(name, desc string, req bool)

SetProperty(name string, Value int{})

GetProperty(name string)

Update ValidateProperties so that it instead checks

If all Requierd properties is not nil | 1.0 | Change PropertyMap to work correctly - Remove Requierd, Available fields

Instead add

AddProperty(name, desc string, req bool)

SetProperty(name string, Value int{})

GetProperty(name string)

Update ValidateProperties so that it instead checks

If all Requierd properties is not nil | process | change propertymap to work correctly remove requierd available fields instead add addproperty name desc string req bool setproperty name string value int getproperty name string update validateproperties so that it instead checks if all requierd properties is not nil | 1 |

10,767 | 13,562,078,851 | IssuesEvent | 2020-09-18 06:08:20 | DevExpress/testcafe-hammerhead | https://api.github.com/repos/DevExpress/testcafe-hammerhead | closed | Script fails to load when accessing an website with TestCafe: error: (failed net::ERR_CONTENT_DECODING_FAILED) | AREA: server SYSTEM: resource processing TYPE: bug | ### What is your Test Scenario?

A javaScript file fails to load when accessing the website with TestCafe.

The same javaScript file is loading correctly when accessing the website manually

Steps to reproduce it:

- For TestCafe - Run the attached code

- Manually: it cannot be reproduced.

### What is the Current... | 1.0 | Script fails to load when accessing an website with TestCafe: error: (failed net::ERR_CONTENT_DECODING_FAILED) - ### What is your Test Scenario?

A javaScript file fails to load when accessing the website with TestCafe.

The same javaScript file is loading correctly when accessing the website manually

Steps to repro... | process | script fails to load when accessing an website with testcafe error failed net err content decoding failed what is your test scenario a javascript file fails to load when accessing the website with testcafe the same javascript file is loading correctly when accessing the website manually steps to repro... | 1 |

1,535 | 4,149,817,174 | IssuesEvent | 2016-06-15 15:30:16 | symfony/symfony | https://api.github.com/repos/symfony/symfony | closed | (Process) Passing a new environment variable is problematic on Debian distros | Process | *Use case*: You want to use `Process` to launch a subprocess. The sub-process requires an extra environment variable (eg `CHILDVAR=somevalue`), but any other environment variables (such as `PATH`, `TMPDIR`, `SSH_AUTH_SOCK`, ad nauseum) should be passed-through without modification. In the contract for `Process::__const... | 1.0 | (Process) Passing a new environment variable is problematic on Debian distros - *Use case*: You want to use `Process` to launch a subprocess. The sub-process requires an extra environment variable (eg `CHILDVAR=somevalue`), but any other environment variables (such as `PATH`, `TMPDIR`, `SSH_AUTH_SOCK`, ad nauseum) shou... | process | process passing a new environment variable is problematic on debian distros use case you want to use process to launch a subprocess the sub process requires an extra environment variable eg childvar somevalue but any other environment variables such as path tmpdir ssh auth sock ad nauseum shou... | 1 |

239,128 | 19,822,583,938 | IssuesEvent | 2022-01-20 00:21:55 | JHS-Viking-Robotics/FRC-2022 | https://api.github.com/repos/JHS-Viking-Robotics/FRC-2022 | closed | Hopper: refine commands and update button bindings | Category: Hopper Type: Refactor Priority: In Testing | The commands for the Hopper subsystem, as well as the safety override mode, need to be polished up and bound to buttons on the driver controller. The following need to be set up:

- Unload sequence - Go up on button hold, expel balls for 2 seconds on release (Y button?)

- Load - Go down and run intake (A button?)

-... | 1.0 | Hopper: refine commands and update button bindings - The commands for the Hopper subsystem, as well as the safety override mode, need to be polished up and bound to buttons on the driver controller. The following need to be set up:

- Unload sequence - Go up on button hold, expel balls for 2 seconds on release (Y but... | non_process | hopper refine commands and update button bindings the commands for the hopper subsystem as well as the safety override mode need to be polished up and bound to buttons on the driver controller the following need to be set up unload sequence go up on button hold expel balls for seconds on release y but... | 0 |

20,500 | 27,165,861,619 | IssuesEvent | 2023-02-17 15:17:35 | SpikeInterface/spikeinterface | https://api.github.com/repos/SpikeInterface/spikeinterface | closed | error during whitenning | bug preprocessing | Hello,

I was trying to add whitenning in my preprocess chain, but I got the following error:

Traceback (most recent call last):

File "<string>", line 1, in <module>

File "C:\Users\juventin\AppData\Local\Programs\Python\Python310\lib\multiprocessing\spawn.py", line 116, in spawn_main

exitcode = _main(fd,... | 1.0 | error during whitenning - Hello,

I was trying to add whitenning in my preprocess chain, but I got the following error:

Traceback (most recent call last):

File "<string>", line 1, in <module>

File "C:\Users\juventin\AppData\Local\Programs\Python\Python310\lib\multiprocessing\spawn.py", line 116, in spawn_main... | process | error during whitenning hello i was trying to add whitenning in my preprocess chain but i got the following error traceback most recent call last file line in file c users juventin appdata local programs python lib multiprocessing spawn py line in spawn main exitcode main f... | 1 |

17,583 | 23,392,568,091 | IssuesEvent | 2022-08-11 19:22:40 | apache/arrow-rs | https://api.github.com/repos/apache/arrow-rs | closed | Fix 1.63.0 Clippy Lints | enhancement development-process help wanted | **Is your feature request related to a problem or challenge? Please describe what you are trying to do.**

<!--

A clear and concise description of what the problem is. Ex. I'm always frustrated when [...]

(This section helps Arrow developers understand the context and *why* for this feature, in addition to the *wha... | 1.0 | Fix 1.63.0 Clippy Lints - **Is your feature request related to a problem or challenge? Please describe what you are trying to do.**

<!--

A clear and concise description of what the problem is. Ex. I'm always frustrated when [...]

(This section helps Arrow developers understand the context and *why* for this feature... | process | fix clippy lints is your feature request related to a problem or challenge please describe what you are trying to do a clear and concise description of what the problem is ex i m always frustrated when this section helps arrow developers understand the context and why for this feature in ... | 1 |

475,097 | 13,686,870,748 | IssuesEvent | 2020-09-30 09:18:15 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | opened | [0.9.0 develop-68] Update information for Fill Types tooltips | Category: UI Priority: Low |

you can describe what needs to be done to place a specific Fill type | 1.0 | [0.9.0 develop-68] Update information for Fill Types tooltips -

you can describe what needs to be done to place a specific Fill type | non_process | update information for fill types tooltips you can describe what needs to be done to place a specific fill type | 0 |

179,675 | 30,284,528,098 | IssuesEvent | 2023-07-08 13:56:04 | DO-NOTTO-DO/iOS-NOTTODO | https://api.github.com/repos/DO-NOTTO-DO/iOS-NOTTODO | closed | [Fix] 생성/수정 뷰 날짜 선택 UI 및 스와이프 화면전환 시 날짜 Text 수정 | Fix Design 윤서 | ## 🍏 Issue

<!-- 이슈에 대해 간략하게 설명해주세요 -->

- 토글을 열었을 때 선택된 날짜 유지 되도록 수정

- 스와이프에서 낫투두 수정 날짜 텍스트 수정

- 낫투두 수정 navigationTitle 수정

## 📝 To-do

<!-- 진행할 작업에 대해 적어주세요 -->

- [ ] 토글을 열었을 때 선택된 날짜 유지 되도록 수정

- [ ] 스와이프에서 낫투두 수정 날짜 텍스트 수정

- [ ] 낫투두 수정 navigationTitle 수정

| 1.0 | [Fix] 생성/수정 뷰 날짜 선택 UI 및 스와이프 화면전환 시 날짜 Text 수정 - ## 🍏 Issue

<!-- 이슈에 대해 간략하게 설명해주세요 -->

- 토글을 열었을 때 선택된 날짜 유지 되도록 수정

- 스와이프에서 낫투두 수정 날짜 텍스트 수정

- 낫투두 수정 navigationTitle 수정

## 📝 To-do

<!-- 진행할 작업에 대해 적어주세요 -->

- [ ] 토글을 열었을 때 선택된 날짜 유지 되도록 수정

- [ ] 스와이프에서 낫투두 수정 날짜 텍스트 수정

- [ ] 낫투두 수정 navigationTitle 수정

| non_process | 생성 수정 뷰 날짜 선택 ui 및 스와이프 화면전환 시 날짜 text 수정 🍏 issue 토글을 열었을 때 선택된 날짜 유지 되도록 수정 스와이프에서 낫투두 수정 날짜 텍스트 수정 낫투두 수정 navigationtitle 수정 📝 to do 토글을 열었을 때 선택된 날짜 유지 되도록 수정 스와이프에서 낫투두 수정 날짜 텍스트 수정 낫투두 수정 navigationtitle 수정 | 0 |

13,229 | 15,701,997,536 | IssuesEvent | 2021-03-26 12:00:42 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | CRS issue with GRASS raster tools | Bug Feedback Processing | Dear developer team

I found a strange issue regarding the CRS of raster output data in GRASS tools. I explain that bug on an often reproduced case:

I am processing raster data (10x10m)for Austria in EPSG:31287 (MGI /Austria Lambert) and all input data as well as the project itself have this CRS properly defined. Wh... | 1.0 | CRS issue with GRASS raster tools - Dear developer team

I found a strange issue regarding the CRS of raster output data in GRASS tools. I explain that bug on an often reproduced case:

I am processing raster data (10x10m)for Austria in EPSG:31287 (MGI /Austria Lambert) and all input data as well as the project itsel... | process | crs issue with grass raster tools dear developer team i found a strange issue regarding the crs of raster output data in grass tools i explain that bug on an often reproduced case i am processing raster data for austria in epsg mgi austria lambert and all input data as well as the project itself have th... | 1 |

218,538 | 24,376,045,267 | IssuesEvent | 2022-10-04 01:03:11 | BrianMcDonaldWS/atlas | https://api.github.com/repos/BrianMcDonaldWS/atlas | opened | CVE-2022-42003 (Medium) detected in jackson-databind-2.10.2.jar | security vulnerability | ## CVE-2022-42003 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.10.2.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core strea... | True | CVE-2022-42003 (Medium) detected in jackson-databind-2.10.2.jar - ## CVE-2022-42003 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.10.2.jar</b></p></summary>

<p>G... | non_process | cve medium detected in jackson databind jar cve medium severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to vulnerable library cache com fasterxml jac... | 0 |

408,349 | 11,945,462,309 | IssuesEvent | 2020-04-03 05:51:49 | Western-Health-Covid19-Collaboration/wh_covid19_app | https://api.github.com/repos/Western-Health-Covid19-Collaboration/wh_covid19_app | closed | Build out well designed Disclaimer & Conditions of use screen | #2 Priority Ready for Dev enhancement | The Disclaimer & Conditions of use screen needs to present a disclaimer. It is accessible from two places:

1. When the user first starts the app it is the first thing they see

2. You can access it again from a button in the information screen

**We need to build build out a detailed version of the disclaimer & c... | 1.0 | Build out well designed Disclaimer & Conditions of use screen - The Disclaimer & Conditions of use screen needs to present a disclaimer. It is accessible from two places:

1. When the user first starts the app it is the first thing they see

2. You can access it again from a button in the information screen

**We ... | non_process | build out well designed disclaimer conditions of use screen the disclaimer conditions of use screen needs to present a disclaimer it is accessible from two places when the user first starts the app it is the first thing they see you can access it again from a button in the information screen we ... | 0 |

205,234 | 7,096,041,732 | IssuesEvent | 2018-01-14 00:21:01 | twsswt/bug_buddy_jira_plugin | https://api.github.com/repos/twsswt/bug_buddy_jira_plugin | closed | Allow selection of multiple recommendation methods using command line arguments | feature enhancement priority:med | for example --method=skills

--method=word-frequency

--method=hybrid | 1.0 | Allow selection of multiple recommendation methods using command line arguments - for example --method=skills

--method=word-frequency

--method=hybrid | non_process | allow selection of multiple recommendation methods using command line arguments for example method skills method word frequency method hybrid | 0 |

26,920 | 12,497,944,555 | IssuesEvent | 2020-06-01 17:23:21 | Azure/azure-cli | https://api.github.com/repos/Azure/azure-cli | closed | Linux Runtime 'DOTNETCORE|3.1' is not supported. | Service Attention Web Apps |

## Describe the bug

**Command Name**

`az webapp create`

**Errors:**

```

Linux Runtime 'DOTNETCORE|3.1' is not supported.Please invoke 'list-runtimes' to cross check

```

## To Reproduce:

I have been working in azure portal Cloud shell and trying to create a Web App from command line

az group create --... | 1.0 | Linux Runtime 'DOTNETCORE|3.1' is not supported. -

## Describe the bug

**Command Name**

`az webapp create`

**Errors:**

```

Linux Runtime 'DOTNETCORE|3.1' is not supported.Please invoke 'list-runtimes' to cross check

```

## To Reproduce:

I have been working in azure portal Cloud shell and trying to cre... | non_process | linux runtime dotnetcore is not supported describe the bug command name az webapp create errors linux runtime dotnetcore is not supported please invoke list runtimes to cross check to reproduce i have been working in azure portal cloud shell and trying to cre... | 0 |

69,815 | 3,315,419,046 | IssuesEvent | 2015-11-06 11:57:28 | Woseseltops/signbank | https://api.github.com/repos/Woseseltops/signbank | closed | Create more space for field labels | bug top priority | When switching language to Dutch before logging in, the labels for the input fields become too large to fit in front of those fields:

(This is likely to cause problems elsewhere as well)

| 1.0 | Create more space for field labels - When switching language to Dutch before logging in, the labels for the input fields become too large to fit in front of those fields:

(This is likely to cause problems e... | non_process | create more space for field labels when switching language to dutch before logging in the labels for the input fields become too large to fit in front of those fields this is likely to cause problems elsewhere as well | 0 |

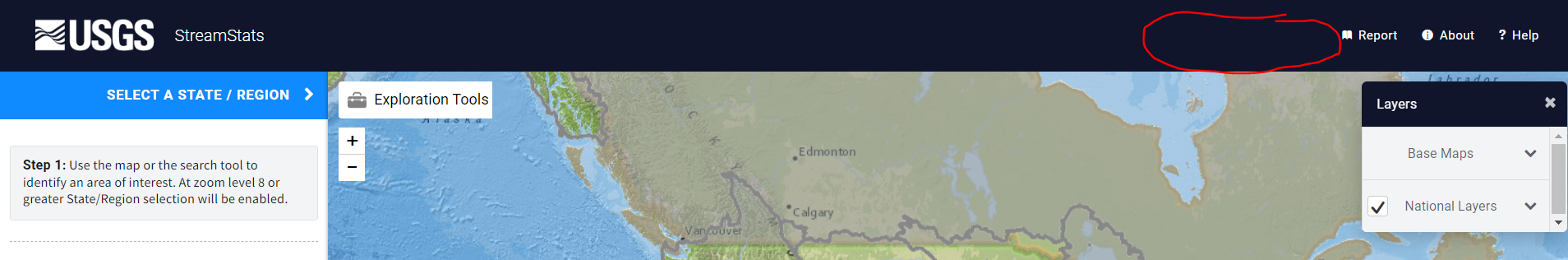

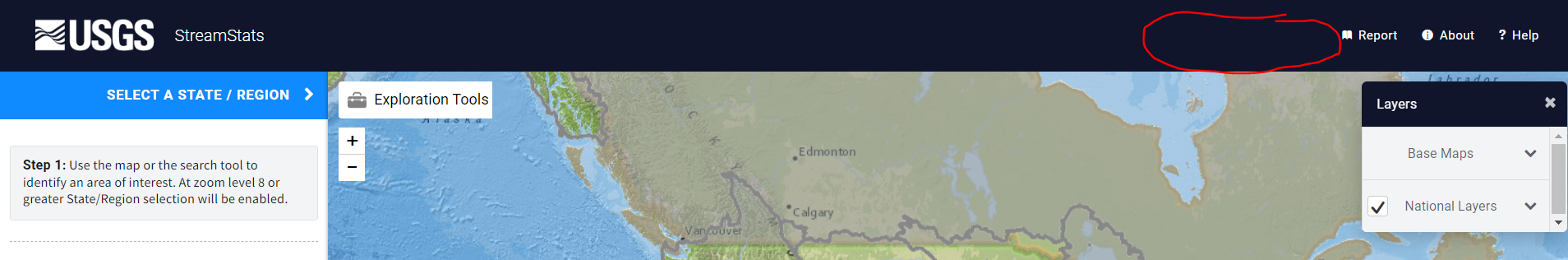

20,245 | 26,862,607,810 | IssuesEvent | 2023-02-03 19:53:46 | USGS-WiM/StreamStats | https://api.github.com/repos/USGS-WiM/StreamStats | closed | BP: Create modal | Batch Processor | Part of #1455

- [x] Add a new button at the top right of the navigation bar called "Batch Processor" with an icon that makes sense (gear?)

- [x] When the user clicks the button, open a new modal

- [x] ... | 1.0 | BP: Create modal - Part of #1455

- [x] Add a new button at the top right of the navigation bar called "Batch Processor" with an icon that makes sense (gear?)

- [x] When the user clicks the button, open ... | process | bp create modal part of add a new button at the top right of the navigation bar called batch processor with an icon that makes sense gear when the user clicks the button open a new modal the modal title should be streamstats batch processor the modal should have tabs called ... | 1 |

10,264 | 13,112,057,988 | IssuesEvent | 2020-08-05 00:59:12 | tokio-rs/tokio | https://api.github.com/repos/tokio-rs/tokio | closed | Document how to remotely kill child process | A-tokio C-maintenance E-easy E-help-wanted M-process T-docs | Add an example that looks roughly like this to the documentation.

```rust

let (send, recv) = oneshot::channel::<()>();

tokio::select! {

_ = &mut child => {},

_ = recv => {

child.kill();

}

}

```

See more [here](https://github.com/tokio-rs/tokio/pull/2512#issuecomment-663954076). | 1.0 | Document how to remotely kill child process - Add an example that looks roughly like this to the documentation.

```rust

let (send, recv) = oneshot::channel::<()>();

tokio::select! {

_ = &mut child => {},

_ = recv => {

child.kill();

}

}

```

See more [here](https://github.com/tokio-rs/to... | process | document how to remotely kill child process add an example that looks roughly like this to the documentation rust let send recv oneshot channel tokio select mut child recv child kill see more | 1 |

18,820 | 24,719,056,622 | IssuesEvent | 2022-10-20 09:16:39 | PyCQA/pylint | https://api.github.com/repos/PyCQA/pylint | closed | Crash with `-j 2` when linting empty file | Blocker 🙅 Regression Crash 💥 Needs investigation 🔬 topic-multiprocessing macOS | ### Bug description

Noticed this crash while linting an empty file with `-j 2`. It might be OS dependent as so far I can only reproduce it on MacOS. Linux seems fine.

Bisected the issue to https://github.com/PyCQA/pylint/pull/7284.

In particular this line:

https://github.com/PyCQA/pylint/blob/77e8ae4f3902697babb1... | 1.0 | Crash with `-j 2` when linting empty file - ### Bug description

Noticed this crash while linting an empty file with `-j 2`. It might be OS dependent as so far I can only reproduce it on MacOS. Linux seems fine.

Bisected the issue to https://github.com/PyCQA/pylint/pull/7284.

In particular this line:

https://githu... | process | crash with j when linting empty file bug description noticed this crash while linting an empty file with j it might be os dependent as so far i can only reproduce it on macos linux seems fine bisected the issue to in particular this line cc daogilvie danielnoord configuration ... | 1 |

139,341 | 5,367,681,649 | IssuesEvent | 2017-02-22 05:35:07 | Cadasta/cadasta-platform | https://api.github.com/repos/Cadasta/cadasta-platform | closed | Tab key is not enabled for right drop-down menu in Organization page | bug First Contribution Friendly priority: low | ### Steps to reproduce the error

1. Go to Organizations.

2. Select an organization from the list

3. Move between the components using tab key

### Actual behavior

Right drop down menu is no... | 1.0 | Tab key is not enabled for right drop-down menu in Organization page - ### Steps to reproduce the error

1. Go to Organizations.

2. Select an organization from the list

3. Move between the components using tab key

... | 1.0 | [API Proposal]: Add a property or method that can be used to check if there is an error in the process - ### Background and motivation

Add a property or method that can be used to check if there is an error in the process

https://github.com/Mr0N/ExampleProcessStart/blob/master/ExampleProcessStart/Program.cs

### API ... | process | add a property or method that can be used to check if there is an error in the process background and motivation add a property or method that can be used to check if there is an error in the process api proposal csharp static class info public static void checkexception this process pro... | 1 |

59,606 | 14,427,007,190 | IssuesEvent | 2020-12-06 01:18:59 | sourcegraph/sourcegraph | https://api.github.com/repos/sourcegraph/sourcegraph | opened | Move GitHub secrets to Vault | RFC-249-2 team/security | In order to use Vault as a single source of truth for secrets, we want to update our GitHub actions to fetch its secrets from Vault. We should be able to use [this HashiCorp provided action](https://github.com/marketplace/actions/vault-secrets) for implementation. | True | Move GitHub secrets to Vault - In order to use Vault as a single source of truth for secrets, we want to update our GitHub actions to fetch its secrets from Vault. We should be able to use [this HashiCorp provided action](https://github.com/marketplace/actions/vault-secrets) for implementation. | non_process | move github secrets to vault in order to use vault as a single source of truth for secrets we want to update our github actions to fetch its secrets from vault we should be able to use for implementation | 0 |

34,094 | 7,343,249,313 | IssuesEvent | 2018-03-07 10:42:13 | proarc/proarc | https://api.github.com/repos/proarc/proarc | closed | Nápovědní bubliny - číslo periodika | Priority-Low Release-3.4.1 Type-Defect auto-migrated | ```

Prosíme opravit:

1. proč je u title info - type "hlavní název bez type"?

2. title - změnit název titulu periodika na "název čísla periodika"

3. subtitle - změnit podnázev titulu periodika na "podnázev čísla

periodika"

4. name part - změnit nutno na "pokud je to možné"

5. role - role term - přidat odkaz na slovník... | 1.0 | Nápovědní bubliny - číslo periodika - ```

Prosíme opravit:

1. proč je u title info - type "hlavní název bez type"?

2. title - změnit název titulu periodika na "název čísla periodika"

3. subtitle - změnit podnázev titulu periodika na "podnázev čísla

periodika"

4. name part - změnit nutno na "pokud je to možné"

5. role... | non_process | nápovědní bubliny číslo periodika prosíme opravit proč je u title info type hlavní název bez type title změnit název titulu periodika na název čísla periodika subtitle změnit podnázev titulu periodika na podnázev čísla periodika name part změnit nutno na pokud je to možné role... | 0 |

369 | 2,813,816,462 | IssuesEvent | 2015-05-18 16:33:35 | arduino/Arduino | https://api.github.com/repos/arduino/Arduino | closed | Bug: splitting define causes java regex error and arduino won't compile | Component: Preprocessor | This doesn't compile:

#define SERIAL_BANNER "\

1) Scan for sensors\n\

2) Change sensor I2C address\n\

3) Show readings for all sensors\n\

"

It causes this:

Exception in thread "Thread-6" java.lang.StackOverflowError

at java.util.regex.Pattern$Branch.match(Pattern.java:4114)

at java.util.regex.Pattern$Gro... | 1.0 | Bug: splitting define causes java regex error and arduino won't compile - This doesn't compile:

#define SERIAL_BANNER "\

1) Scan for sensors\n\

2) Change sensor I2C address\n\

3) Show readings for all sensors\n\

"

It causes this:

Exception in thread "Thread-6" java.lang.StackOverflowError

at java.util.rege... | process | bug splitting define causes java regex error and arduino won t compile this doesn t compile define serial banner scan for sensors n change sensor address n show readings for all sensors n it causes this exception in thread thread java lang stackoverflowerror at java util regex ... | 1 |

70,268 | 3,321,810,885 | IssuesEvent | 2015-11-09 11:03:38 | mantidproject/mantid | https://api.github.com/repos/mantidproject/mantid | closed | Move Mantid Third Party to Visual Studio 2015 on Windows | Component: Framework Priority: High | Visual Studio 2015 community edition will allow us to take advantage of newer C++ features.

To do:

- [x] Build scripts for all third party dependencies

- [x] Add binaries to repository using Git LFS

- [x] Reorganise CMake and allow it to download dependencies

- [x] Changes to only use Python release li... | 1.0 | Move Mantid Third Party to Visual Studio 2015 on Windows - Visual Studio 2015 community edition will allow us to take advantage of newer C++ features.

To do:

- [x] Build scripts for all third party dependencies

- [x] Add binaries to repository using Git LFS

- [x] Reorganise CMake and allow it to download ... | non_process | move mantid third party to visual studio on windows visual studio community edition will allow us to take advantage of newer c features to do build scripts for all third party dependencies add binaries to repository using git lfs reorganise cmake and allow it to download dependencies... | 0 |

19,734 | 26,084,921,680 | IssuesEvent | 2022-12-26 00:35:19 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [REMOTO] [PRESENCIAL] [ESTÁGIO] Estágio RPA na [X-TESTING] | SALVADOR TESTE AUTOMATIZADO REMOTO DOCUMENTAÇÃO HELP WANTED feature_request AUTOMAÇÃO DE PROCESSOS Stale | <!--

==================================================

POR FAVOR, SÓ POSTE SE A VAGA FOR PARA SALVADOR E CIDADES VIZINHAS!

Use: "Desenvolvedor Front-end" ao invés de

"Front-End Developer" \o/

Exemplo: `[JAVASCRIPT] [MYSQL] [NODE.JS] Desenvolvedor Front-End na [NOME DA EMPRESA]`

========================... | 1.0 | [REMOTO] [PRESENCIAL] [ESTÁGIO] Estágio RPA na [X-TESTING] - <!--

==================================================

POR FAVOR, SÓ POSTE SE A VAGA FOR PARA SALVADOR E CIDADES VIZINHAS!

Use: "Desenvolvedor Front-end" ao invés de

"Front-End Developer" \o/

Exemplo: `[JAVASCRIPT] [MYSQL] [NODE.JS] Desenvolve... | process | estágio rpa na por favor só poste se a vaga for para salvador e cidades vizinhas use desenvolvedor front end ao invés de front end developer o exemplo desenvolvedor front end na ... | 1 |

184,577 | 21,784,913,778 | IssuesEvent | 2022-05-14 01:47:10 | n-devs/freebitco.in-mobile | https://api.github.com/repos/n-devs/freebitco.in-mobile | closed | WS-2019-0291 (High) detected in handlebars-4.1.2.tgz - autoclosed | security vulnerability | ## WS-2019-0291 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.1.2.tgz</b></p></summary>

<p>Handlebars provides the power necessary to let you build semantic templates ef... | True | WS-2019-0291 (High) detected in handlebars-4.1.2.tgz - autoclosed - ## WS-2019-0291 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.1.2.tgz</b></p></summary>

<p>Handlebars... | non_process | ws high detected in handlebars tgz autoclosed ws high severity vulnerability vulnerable library handlebars tgz handlebars provides the power necessary to let you build semantic templates effectively with no frustration library home page a href path to dependency file ... | 0 |

158,723 | 24,887,101,746 | IssuesEvent | 2022-10-28 08:43:50 | knaw-huc/di-website | https://api.github.com/repos/knaw-huc/di-website | opened | Improve responsiveness of Twitter feed on News page | design | The Twitter feed takes quite a bit of time to load and render. Long enough that an impatient person might think the page isn't working. Can we add something like 'Page Loading..' placeholder before div with Twitter's widget.js loads? Or some way to pre-fetch, cache, and then render the cached content? I think this is t... | 1.0 | Improve responsiveness of Twitter feed on News page - The Twitter feed takes quite a bit of time to load and render. Long enough that an impatient person might think the page isn't working. Can we add something like 'Page Loading..' placeholder before div with Twitter's widget.js loads? Or some way to pre-fetch, cache,... | non_process | improve responsiveness of twitter feed on news page the twitter feed takes quite a bit of time to load and render long enough that an impatient person might think the page isn t working can we add something like page loading placeholder before div with twitter s widget js loads or some way to pre fetch cache ... | 0 |

267,908 | 28,537,304,017 | IssuesEvent | 2023-04-20 01:06:36 | ChoeMinji/redis-6.2.3 | https://api.github.com/repos/ChoeMinji/redis-6.2.3 | closed | CVE-2021-32761 (High) detected in redis6.2.6, redis6.2.6 - autoclosed | Mend: dependency security vulnerability | ## CVE-2021-32761 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>redis6.2.6</b>, <b>redis6.2.6</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><summary><img src='https://whi... | True | CVE-2021-32761 (High) detected in redis6.2.6, redis6.2.6 - autoclosed - ## CVE-2021-32761 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>redis6.2.6</b>, <b>redis6.2.6</b></p></summar... | non_process | cve high detected in autoclosed cve high severity vulnerability vulnerable libraries vulnerability details redis is an in memory database that persists on disk a vulnerability involving out of bounds read and integer overflow to buffer ove... | 0 |

785,716 | 27,623,555,678 | IssuesEvent | 2023-03-10 03:36:52 | coral-xyz/backpack | https://api.github.com/repos/coral-xyz/backpack | closed | add mnemonic hot wallet after creating a ledger only account | priority 1 polish | Right now, if you create an account with a ledger only, you can't later create a mnemonic hot wallet. | 1.0 | add mnemonic hot wallet after creating a ledger only account - Right now, if you create an account with a ledger only, you can't later create a mnemonic hot wallet. | non_process | add mnemonic hot wallet after creating a ledger only account right now if you create an account with a ledger only you can t later create a mnemonic hot wallet | 0 |

2,764 | 5,695,998,584 | IssuesEvent | 2017-04-16 06:01:37 | AllenFang/react-bootstrap-table | https://api.github.com/repos/AllenFang/react-bootstrap-table | closed | Support Conjunction (&&) as Search Mode | help wanted inprocess | Currently, two very different search modes are supported:

1. A very exclusive one, that accepts only rows with the exact `searchText` contained as-is in any of their cells. (default)

2. A very inclusive one, that accepts all rows that contain at least one of the whitespace-separated terms in the `searchText`. (`multi... | 1.0 | Support Conjunction (&&) as Search Mode - Currently, two very different search modes are supported:

1. A very exclusive one, that accepts only rows with the exact `searchText` contained as-is in any of their cells. (default)

2. A very inclusive one, that accepts all rows that contain at least one of the whitespace-se... | process | support conjunction as search mode currently two very different search modes are supported a very exclusive one that accepts only rows with the exact searchtext contained as is in any of their cells default a very inclusive one that accepts all rows that contain at least one of the whitespace se... | 1 |

6,832 | 9,975,295,233 | IssuesEvent | 2019-07-09 12:46:41 | varietywalls/variety | https://api.github.com/repos/varietywalls/variety | opened | Run Black on all files, add black linting to CI (once we configure it) | dev process | @jlu5 I intend to adopt using Black for Variety (https://github.com/python/black) and run it on the whole codebase fairly soon. This will fix the current issue of way-too-inconsistent styling and too many long lines, and will also make it easier for external developers to contribute.

What is your state, do you have ... | 1.0 | Run Black on all files, add black linting to CI (once we configure it) - @jlu5 I intend to adopt using Black for Variety (https://github.com/python/black) and run it on the whole codebase fairly soon. This will fix the current issue of way-too-inconsistent styling and too many long lines, and will also make it easier f... | process | run black on all files add black linting to ci once we configure it i intend to adopt using black for variety and run it on the whole codebase fairly soon this will fix the current issue of way too inconsistent styling and too many long lines and will also make it easier for external developers to contribu... | 1 |

65,858 | 27,259,916,691 | IssuesEvent | 2023-02-22 14:14:44 | red-hat-storage/ocs-ci | https://api.github.com/repos/red-hat-storage/ocs-ci | closed | verify_provider_topology failure | Managed Services | Post-installation check verify_provider_topology failed due to type mismatch:

osd_count == size / 4

TypeError: unsupported operand type(s) for /: 'str' and 'int'

The reason is that the size in the provider config file is a string, not an integer. | 1.0 | verify_provider_topology failure - Post-installation check verify_provider_topology failed due to type mismatch:

osd_count == size / 4

TypeError: unsupported operand type(s) for /: 'str' and 'int'

The reason is that the size in the provider config file is a string, not an integer. | non_process | verify provider topology failure post installation check verify provider topology failed due to type mismatch osd count size typeerror unsupported operand type s for str and int the reason is that the size in the provider config file is a string not an integer | 0 |

724,687 | 24,939,132,977 | IssuesEvent | 2022-10-31 17:24:27 | 0xC0ncord/TURRPG2 | https://api.github.com/repos/0xC0ncord/TURRPG2 | opened | Rework magic weapon enchanting for Adrenaline Masters | type:enhancement priority:p2 | Instead of randomly getting a modifier, Adrenaline masters select a desired modifier and must fill up some sort of gauge in order to receive the enchantment. | 1.0 | Rework magic weapon enchanting for Adrenaline Masters - Instead of randomly getting a modifier, Adrenaline masters select a desired modifier and must fill up some sort of gauge in order to receive the enchantment. | non_process | rework magic weapon enchanting for adrenaline masters instead of randomly getting a modifier adrenaline masters select a desired modifier and must fill up some sort of gauge in order to receive the enchantment | 0 |

358,926 | 10,651,882,351 | IssuesEvent | 2019-10-17 11:24:06 | kubernetes/website | https://api.github.com/repos/kubernetes/website | closed | Issue with k8s.io/docs/tutorials/kubernetes-basics/deploy-app/deploy-interactive/ | kind/cleanup language/en priority/important-longterm | **This is a Bug Report**

<!-- Thanks for filing an issue! Before submitting, please fill in the following information. -->

<!-- See https://kubernetes.io/docs/contribute/start/ for guidance on writing an actionable issue description. -->

<!--Required Information-->

**Problem:**

kubectl run kubernetes-bootca... | 1.0 | Issue with k8s.io/docs/tutorials/kubernetes-basics/deploy-app/deploy-interactive/ - **This is a Bug Report**

<!-- Thanks for filing an issue! Before submitting, please fill in the following information. -->

<!-- See https://kubernetes.io/docs/contribute/start/ for guidance on writing an actionable issue description... | non_process | issue with io docs tutorials kubernetes basics deploy app deploy interactive this is a bug report problem kubectl run kubernetes bootcamp image gcr io google samples kubernetes bootcamp port kubectl run generator deployment apps is deprecated and will be removed in a future ... | 0 |

16,186 | 20,626,546,089 | IssuesEvent | 2022-03-07 23:21:41 | allinurl/goaccess | https://api.github.com/repos/allinurl/goaccess | closed | Add option for on-disk database size limit | log-processing on-disk | Hello!

We are using configuration with database, load-from-disk and keep-db-files. In some cases database size can grow up to 8G or more. Is there any possibility to set maximum database size? So we can keep disk usage under control without removing goaccess database files and losing all of previously stored data. I... | 1.0 | Add option for on-disk database size limit - Hello!

We are using configuration with database, load-from-disk and keep-db-files. In some cases database size can grow up to 8G or more. Is there any possibility to set maximum database size? So we can keep disk usage under control without removing goaccess database file... | process | add option for on disk database size limit hello we are using configuration with database load from disk and keep db files in some cases database size can grow up to or more is there any possibility to set maximum database size so we can keep disk usage under control without removing goaccess database files... | 1 |

10,711 | 13,507,722,953 | IssuesEvent | 2020-09-14 06:32:06 | symfony/symfony-docs | https://api.github.com/repos/symfony/symfony-docs | closed | [Process] allow setting options esp. "create_new_console" to detach a s… | Process | | Q | A

| ------------ | ---

| Feature PR | symfony/symfony#37519

| PR author(s) | @andrei0x309

| Merged in | 5.2-dev | 1.0 | [Process] allow setting options esp. "create_new_console" to detach a s… - | Q | A

| ------------ | ---

| Feature PR | symfony/symfony#37519

| PR author(s) | @andrei0x309

| Merged in | 5.2-dev | process | allow setting options esp create new console to detach a s… q a feature pr symfony symfony pr author s merged in dev | 1 |

7,587 | 10,698,268,271 | IssuesEvent | 2019-10-23 18:19:18 | processing/processing-docs | https://api.github.com/repos/processing/processing-docs | closed | subString should be substring in Handbook | processing handbook | ### Issue description

On page 143 of the second edition of the handbook (but first printing?), chapter 11, under "Syntax introduced" the String.substring() function is incorrectly capitalized as String.subString(). It is correct in the rest of that chapter.

### URL(s) of affected page(s)

### Proposed fix

C... | 1.0 | subString should be substring in Handbook - ### Issue description

On page 143 of the second edition of the handbook (but first printing?), chapter 11, under "Syntax introduced" the String.substring() function is incorrectly capitalized as String.subString(). It is correct in the rest of that chapter.

### URL(s) of... | process | substring should be substring in handbook issue description on page of the second edition of the handbook but first printing chapter under syntax introduced the string substring function is incorrectly capitalized as string substring it is correct in the rest of that chapter url s of af... | 1 |

15,344 | 19,491,831,742 | IssuesEvent | 2021-12-27 08:05:05 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | SAGA provider: "Duplicate algorithm name" warnings | Feedback Processing Bug | ### What is the bug or the crash?

Starting QGIS 3.21.0-master (8498c55), installed using the OSGeo4W Network Installer on Windows 10, a long list of warnings appears in the Processing tab of the Log Messages panel:

```

2021-10-09T11:01:46 WARNING Duplicate algorithm name bioclimaticvariables for provider ... | 1.0 | SAGA provider: "Duplicate algorithm name" warnings - ### What is the bug or the crash?

Starting QGIS 3.21.0-master (8498c55), installed using the OSGeo4W Network Installer on Windows 10, a long list of warnings appears in the Processing tab of the Log Messages panel:

```