Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

20,227 | 26,825,787,177 | IssuesEvent | 2023-02-02 12:47:25 | apache/arrow-rs | https://api.github.com/repos/apache/arrow-rs | closed | Archery Failures with Latest Miniz Oxide | bug development-process | **Describe the bug**

<!--

A clear and concise description of what the bug is.

-->

We are seeing archery failures in CI that appear to occur in miniz_oxide.

It could be correlation, but 0.6.3 was recently released and might be responsible for these failures, more investigation is needed

**To Reproduce**

<!-... | 1.0 | Archery Failures with Latest Miniz Oxide - **Describe the bug**

<!--

A clear and concise description of what the bug is.

-->

We are seeing archery failures in CI that appear to occur in miniz_oxide.

It could be correlation, but 0.6.3 was recently released and might be responsible for these failures, more inves... | process | archery failures with latest miniz oxide describe the bug a clear and concise description of what the bug is we are seeing archery failures in ci that appear to occur in miniz oxide it could be correlation but was recently released and might be responsible for these failures more inves... | 1 |

52,469 | 12,971,424,359 | IssuesEvent | 2020-07-21 10:54:26 | enthought/traits | https://api.github.com/repos/enthought/traits | opened | Traits 6.1.1 release | component: build | I'm making a placeholder issue for the Traits 6.1.1 release, so that it can be added to the appropriate sprint.

There are currently 10 closed and one open PR labelled as needing backport to 6.1. The 10 closed PRs are backported in #1251.

I'm not making a checklist as an issue this time around; instead, see the wi... | 1.0 | Traits 6.1.1 release - I'm making a placeholder issue for the Traits 6.1.1 release, so that it can be added to the appropriate sprint.

There are currently 10 closed and one open PR labelled as needing backport to 6.1. The 10 closed PRs are backported in #1251.

I'm not making a checklist as an issue this time arou... | non_process | traits release i m making a placeholder issue for the traits release so that it can be added to the appropriate sprint there are currently closed and one open pr labelled as needing backport to the closed prs are backported in i m not making a checklist as an issue this time around i... | 0 |

10,922 | 13,724,687,356 | IssuesEvent | 2020-10-03 15:16:02 | MobileOrg/mobileorg | https://api.github.com/repos/MobileOrg/mobileorg | closed | Switch SwiftyDropbox dependency to Swift Package Manager | development process | Carthage has a problem right now with Xcode 12 support (due to Apple Silicon support, aka `arm64` for macOS targets) and it breaks the compilation for all packages (check the [#3019](https://github.com/Carthage/Carthage/issues/3019)). They are working on a solution - XCFramework support but it will take a while.

On t... | 1.0 | Switch SwiftyDropbox dependency to Swift Package Manager - Carthage has a problem right now with Xcode 12 support (due to Apple Silicon support, aka `arm64` for macOS targets) and it breaks the compilation for all packages (check the [#3019](https://github.com/Carthage/Carthage/issues/3019)). They are working on a solut... | process | switch swiftydropbox dependency to swift package manager carthage has a problem right now with xcode support due to apple silicon support aka for macos targets and it breaks the compilation for all packages check the they are working on a solution xcframework support but it will take a while on the... | 1 |

131,454 | 18,288,721,411 | IssuesEvent | 2021-10-05 13:11:52 | carbon-design-system/carbon-for-ibm-dotcom | https://api.github.com/repos/carbon-design-system/carbon-for-ibm-dotcom | closed | [Video card] React: Change video card to display video title as Card headline, not card copy | Feature request package: react dev priority: medium Needs design approval | #### User Story

<!-- {{Provide a detailed description of the user's need here, but avoid any type of solutions}} -->

> As a `[user role below]`:

Carbon for ibm.com developer

> I need to:

create/change the `video card`

> so that I can:

provide the ibm.com adopter developers components they can use to build ibm.com web... | 1.0 | [Video card] React: Change video card to display video title as Card headline, not card copy - #### User Story

<!-- {{Provide a detailed description of the user's need here, but avoid any type of solutions}} -->

> As a `[user role below]`:

Carbon for ibm.com developer

> I need to:

create/change the `video card`

> so ... | non_process | react change video card to display video title as card headline not card copy user story as a carbon for ibm com developer i need to create change the video card so that i can provide the ibm com adopter developers components they can use to build ibm com web pages additional informa... | 0 |

104 | 2,539,972,607 | IssuesEvent | 2015-01-27 18:38:40 | tinkerpop/tinkerpop3 | https://api.github.com/repos/tinkerpop/tinkerpop3 | closed | Throw exceptions when things don't make sense | enhancement process | From our earlier discussion in IM:

```

gremlin> g.V().has(label, "person").values("age").fold().submit(g.compute())

==>[29, 27, 32, 35, 32]

gremlin> g.V().has(label, "person").values("age").fold().map {it.get().mean()}.submit(g.compute())

==>29.0

==>27.0

gremlin> g.V().has(label, "person").values("age").fold()... | 1.0 | Throw exceptions when things don't make sense - From our earlier discussion in IM:

```

gremlin> g.V().has(label, "person").values("age").fold().submit(g.compute())

==>[29, 27, 32, 35, 32]

gremlin> g.V().has(label, "person").values("age").fold().map {it.get().mean()}.submit(g.compute())

==>29.0

==>27.0

gremlin>... | process | throw exceptions when things don t make sense from our earlier discussion in im gremlin g v has label person values age fold submit g compute gremlin g v has label person values age fold map it get mean submit g compute gremlin g v has label pe... | 1 |

80,264 | 7,743,412,850 | IssuesEvent | 2018-05-29 12:46:19 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | opened | Test com.hazelcast.client.topic.Issue9766Test.serverRestartWhenReliableTopicListenerRegistered failed | Type: Test-Failure | During a PR run for maintenance-3.x branch, the following test failure occurred:

```

Regression

com.hazelcast.client.topic.Issue9766Test.serverRestartWhenReliableTopicListenerRegistered

Failing for the past 1 build (Since Unstable#15809 )

Took 2 min 4 sec.

Error Message

CountDownLatch failed to complete within ... | 1.0 | Test com.hazelcast.client.topic.Issue9766Test.serverRestartWhenReliableTopicListenerRegistered failed - During a PR run for maintenance-3.x branch, the following test failure occurred:

```

Regression

com.hazelcast.client.topic.Issue9766Test.serverRestartWhenReliableTopicListenerRegistered

Failing for the past 1 bu... | non_process | test com hazelcast client topic serverrestartwhenreliabletopiclistenerregistered failed during a pr run for maintenance x branch the following test failure occurred regression com hazelcast client topic serverrestartwhenreliabletopiclistenerregistered failing for the past build since unstable ... | 0 |

9,633 | 7,770,041,681 | IssuesEvent | 2018-06-04 07:26:54 | pluck-cms/pluck | https://api.github.com/repos/pluck-cms/pluck | closed | File upload vuln pluck4.7.7 | Security bug | An issue was discovered in Pluck before 4.7.7. Remote PHP code execution is possible.

Do you have an email? I will send the details to it. | True | File upload vuln pluck4.7.7 - An issue was discovered in Pluck before 4.7.7. Remote PHP code execution is possible.

Do you have an email? I will send the details to it. | non_process | file upload vuln an issue was discovered in pluck before remote php code execution is possible do you have an email i will send the details to it | 0 |

22,234 | 30,784,648,204 | IssuesEvent | 2023-07-31 12:29:04 | keras-team/keras-cv | https://api.github.com/repos/keras-team/keras-cv | closed | Add augment_bounding_boxes support to RandomTranslation layer | contribution-welcome preprocessing | The augment_bounding_boxes should be implemented for RandomTranslation Layer in keras_cv. The PR should contain implementation, test scripts and a demo script to verify implementation.

Example code for implementing augment_bounding_boxes() can be found here

- https://github.com/keras-team/keras-cv/blob/master/ker... | 1.0 | Add augment_bounding_boxes support to RandomTranslation layer - The augment_bounding_boxes should be implemented for RandomTranslation Layer in keras_cv. The PR should contain implementation, test scripts and a demo script to verify implementation.

Example code for implementing augment_bounding_boxes() can be found ... | process | add augment bounding boxes support to randomtranslation layer the augment bounding boxes should be implemented for randomtranslation layer in keras cv the pr should contain implementation test scripts and a demo script to verify implementation example code for implementing augment bounding boxes can be found ... | 1 |

13,204 | 15,649,103,891 | IssuesEvent | 2021-03-23 07:01:29 | kubernetes/minikube | https://api.github.com/repos/kubernetes/minikube | closed | Upgrade csi-hostpath-driver addon to v1.6.0 | area/addons kind/process priority/backlog | VolumeSnapshot is upgraded to GA by https://github.com/kubernetes/minikube/pull/10654.

But the current csi-hostpath-driver addon is not the latest version (it uses an rc image version).

I'll send a PR to upgrade the csi-hostpath-driver addon to v1.6.0 (latest).

/area addons | 1.0 | Upgrade csi-hostpath-driver addon to v1.6.0 - VolumeSnapshot is upgraded to GA by https://github.com/kubernetes/minikube/pull/10654.

But the current csi-hostpath-driver addon is not the latest version (it uses an rc image version).

I'll send a PR to upgrade the csi-hostpath-driver addon to v1.6.0 (latest).

/area addons | process | upgrade csi hostpath driver addon to volumesnapshot is upgraded to ga by but current csi hostpath driver addon is not latest version it s using rc image version i ll send pr to upgrade csi hostpath driver addon to latest area addons | 1 |

158,929 | 20,035,850,502 | IssuesEvent | 2022-02-02 11:48:22 | kapseliboi/watch-rtp-play | https://api.github.com/repos/kapseliboi/watch-rtp-play | opened | CVE-2021-3795 (High) detected in semver-regex-3.1.2.tgz, semver-regex-1.0.0.tgz | security vulnerability | ## CVE-2021-3795 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>semver-regex-3.1.2.tgz</b>, <b>semver-regex-1.0.0.tgz</b></p></summary>

<p>

<details><summary><b>semver-regex-3.1.2.t... | True | CVE-2021-3795 (High) detected in semver-regex-3.1.2.tgz, semver-regex-1.0.0.tgz - ## CVE-2021-3795 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>semver-regex-3.1.2.tgz</b>, <b>semve... | non_process | cve high detected in semver regex tgz semver regex tgz cve high severity vulnerability vulnerable libraries semver regex tgz semver regex tgz semver regex tgz regular expression for matching semver versions library home page a href path to ... | 0 |

18,489 | 24,550,905,465 | IssuesEvent | 2022-10-12 12:32:32 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PM] [Angular Upgrade] My account > Change password > Password criteria > UI of password criteria message should be as per the design document | Bug P1 Participant manager Process: Fixed Process: Tested dev | My account > Change password > Password criteria > UI of password criteria message should be as per the design document

**Note:** Issue needs to be fixed wherever password criteria message is available

**AR:**

when on page | Bug Design | Reproduce:

1. Go to https://dao.hypha.earth/demoxdaox/support

2. Check button colour

Expected behaviour:

The button should be active colour (blue) because I am on the page

Experienced behaviour:

The button is white (not active)

<img width="1089" alt="image" src="https://user-images.githubusercontent.com/... | 1.0 | Support Page: Link Button is not active (blue) when on page - Reproduce:

1. Go to https://dao.hypha.earth/demoxdaox/support

2. Check button colour

Expected behaviour:

The button should be active colour (blue) because I am on the page

Experienced behaviour:

The button is white (not active)

<img width="108... | non_process | support page link button is not active blue when on page reproduce go to check button colour expected behaviour the button should be active colour blue because i am on the page experienced behaviour the button is white not active img width alt image src | 0 |

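12,408 | 14,916,969,026 | IssuesEvent | 2021-01-22 19:04:19 | yuta252/startlens_frontend_user | https://api.github.com/repos/yuta252/startlens_frontend_user | closed | Introduce i18n internationalization support (react-intl) | dev process | ## Introduction

Introduce react-intl on the frontend to support internationalization.

## Changes

- Place translation files under the locales folder (en.ts, js.ts)

- In App.tsx, create a chooseLocaleData function that reads the Redux state to get the language setting and switches the language file accordingly

- Change the design of constant.ts so that it also references the language files

- On each page, use the <FormattedMessage /> component and the intl.formatMessage function to reference ids in the language files.

## References

- [react-intlを使... | 1.0 | Introduce i18n internationalization support (react-intl) - ## Introduction

Introduce react-intl on the frontend to support internationalization.

## Changes

- Place translation files under the locales folder (en.ts, js.ts)

- In App.tsx, create a chooseLocaleData function that reads the Redux state to get the language setting and switches the language file accordingly

- Change the design of constant.ts so that it also references the language files

- On each page, use the <FormattedMessage /> component and the intl.formatMessage function to reference ids in the language files.

... | process | introduce internationalization support react intl introduction introduce react intl on the frontend to support internationalization changes place translation files under the locales folder en ts js ts in app tsx create a chooselocaledata function that reads the redux state to get the language setting and switches the language file accordingly change the design of constant ts so that it also references the language files on each page use the component and the intl formatmessage function to reference ids in the language files references ... | 1 |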

214,112 | 16,547,352,024 | IssuesEvent | 2021-05-28 02:46:10 | octokit/webhooks.js | https://api.github.com/repos/octokit/webhooks.js | closed | docs: add reference to `@octokit/webhooks-definitions` for event payload types | documentation maintenance | I'm trying to use this package only for the `verify` and `sign` functions and want to handle the webhook events in my own web server.

Is there currently a way to use the types for the plain payload as I would be receiving it from GitHub? As I understand it, the only directly exported type is `EmitterEventMap` which ... | 1.0 | docs: add reference to `@octokit/webhooks-definitions` for event payload types - I'm trying to use this package only for the `verify` and `sign` functions and want to handle the webhook events in my own web server.

Is there currently a way to use the types for the plain payload as I would be receiving it from GitHub... | non_process | docs add reference to octokit webhooks definitions for event payload types i m trying to use this package only for the verify and sign functions and want to handle the webhook events in my own web server is there currently a way to use the types for the plain payload as i would be receiving it from github... | 0 |

3,224 | 6,283,245,061 | IssuesEvent | 2017-07-19 02:33:23 | gaocegege/Processing.R | https://api.github.com/repos/gaocegege/Processing.R | closed | libraryImport video example: can't define movieEvent hook | community/processing priority/p1 size/no-idea status/claimed type/bug | I've tried using the `importLibrary()` function to create a second library example using the Processing Video library ("video"), and specifically its Loop.pde demo sketch.

I was successful -- video plays in a loop in Processing.R -- however I ran into a problem redefining the Video library's movieEvent function hook... | 1.0 | libraryImport video example: can't define movieEvent hook - I've tried using the `importLibrary()` function to create a second library example using the Processing Video library ("video"), and specifically its Loop.pde demo sketch.

I was successful -- video plays in a loop in Processing.R -- however I ran into a pro... | process | libraryimport video example can t define movieevent hook i ve tried using the importlibrary function to create a second library example using the processing video library video and specifically its loop pde demo sketch i was successful video plays in a loop in processing r however i ran into a pro... | 1 |

3,867 | 6,808,645,832 | IssuesEvent | 2017-11-04 06:08:06 | Great-Hill-Corporation/quickBlocks | https://api.github.com/repos/Great-Hill-Corporation/quickBlocks | reopened | opening the miniTransaction file takes more than a minute. Needless to say, this is not good for demos | apps-miniBlocks status-inprocess type-enhancement | From https://github.com/Great-Hill-Corporation/ethslurp/issues/117 | 1.0 | opening the miniTransaction file takes more than a minute. Needless to say, this is not good for demos - From https://github.com/Great-Hill-Corporation/ethslurp/issues/117 | process | opening the minitransaction file takes more than a minute needless to say this is not good for demos from | 1 |

244,514 | 18,760,346,626 | IssuesEvent | 2021-11-05 15:45:20 | lanl/scico | https://api.github.com/repos/lanl/scico | closed | Todo in docs Style Guide | documentation | Todo note (with reference to coding conventions) removed from Overview subsection of Style Guide section of docs in branch `brendt/docs-edits`:

Briefly explain which components are taken from each convention (see above) to avoid ambiguity in cases in which they differ. | 1.0 | Todo in docs Style Guide - Todo note (with reference to coding conventions) removed from Overview subsection of Style Guide section of docs in branch `brendt/docs-edits`:

Briefly explain which components are taken from each convention (see above) to avoid ambiguity in cases in which they differ. | non_process | todo in docs style guide todo note with reference to coding conventions removed from overview subsection of style guide section of docs in branch brendt docs edits briefly explain which components are taken from each convention see above to avoid ambiguity in cases in which they differ | 0 |

236,904 | 26,072,299,432 | IssuesEvent | 2022-12-24 01:15:15 | nexmo-community/node-passwordless-login | https://api.github.com/repos/nexmo-community/node-passwordless-login | closed | body-parser-1.18.3.tgz: 1 vulnerabilities (highest severity is: 7.5) - autoclosed | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>body-parser-1.18.3.tgz</b></p></summary>

<p></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/qs/package.json</p>

<p>

... | True | body-parser-1.18.3.tgz: 1 vulnerabilities (highest severity is: 7.5) - autoclosed - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>body-parser-1.18.3.tgz</b></p></summary>

<p></p>

<p>Path to dependency file: /pack... | non_process | body parser tgz vulnerabilities highest severity is autoclosed vulnerable library body parser tgz path to dependency file package json path to vulnerable library node modules qs package json found in head commit a href vulnerabilities cve severity c... | 0 |

351,584 | 25,032,979,480 | IssuesEvent | 2022-11-04 13:54:27 | unoplatform/uno | https://api.github.com/repos/unoplatform/uno | closed | [Documentation] Using Adaptive triggers | hacktoberfest difficulty/starter kind/documentation | ## What would you like clarification on:

Using Adaptive Triggers in Uno Platform apps.

Most documentation should match UWP, but in case of Uno the order of Adaptive Trigger states matters (e.g. in Uno Platform the state triggers are evaluated in order and the first one matching will be applied), which should be p... | 1.0 | [Documentation] Using Adaptive triggers - ## What would you like clarification on:

Using Adaptive Triggers in Uno Platform apps.

Most documentation should match UWP, but in case of Uno the order of Adaptive Trigger states matters (e.g. in Uno Platform the state triggers are evaluated in order and the first one ma... | non_process | using adaptive triggers what would you like clarification on using adaptive triggers in uno platform apps most documentation should match uwp but in case of uno the order of adaptive trigger states matters e g in uno platform the state triggers are evaluated in order and the first one matching will be... | 0 |

13,322 | 15,786,597,617 | IssuesEvent | 2021-04-01 17:58:52 | hasura/ask-me-anything | https://api.github.com/repos/hasura/ask-me-anything | closed | When using `hasura console` and making metadata changes, how will it determine which file to modify? | processing-for-shortvid question | Per @scriptonist "CLI is aware of the expected metadata structure and parses the raw metadata (from the server) to determine the files to edit." | 1.0 | When using `hasura console` and making metadata changes, how will it determine which file to modify? - Per @scriptonist "CLI is aware of the expected metadata structure and parses the raw metadata (from the server) to determine the files to edit." | process | when using hasura console and making metadata changes how will it determine which file to modify per scriptonist cli is aware of the expected metadata structure and parses the raw metadata from the server to determine the files to edit | 1 |

13,534 | 16,066,954,985 | IssuesEvent | 2021-04-23 20:49:43 | googleapis/python-bigquery | https://api.github.com/repos/googleapis/python-bigquery | closed | add test session to nox without installing any "extras" | api: bigquery type: process | https://github.com/googleapis/python-bigquery/pull/613 is making me a bit nervous that we might accidentally introduce a required dependency that we thought was optional. It wouldn't be the first time this has happened (https://github.com/googleapis/python-bigquery/issues/549), so I'd like at least a unit test session ... | 1.0 | add test session to nox without installing any "extras" - https://github.com/googleapis/python-bigquery/pull/613 is making me a bit nervous that we might accidentally introduce a required dependency that we thought was optional. It wouldn't be the first time this has happened (https://github.com/googleapis/python-bigqu... | process | add test session to nox without installing any extras is making me a bit nervous that we might accidentally introduce a required dependency that we thought was optional it wouldn t be the first time this has happened so i d like at least a unit test session that runs without any extras | 1 |

21,522 | 29,805,473,076 | IssuesEvent | 2023-06-16 11:18:54 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | [CI] BigQuery intermittent (but frequent) test failures | Type:Bug Priority:P1 Database/BigQuery .CI & Tests .Backend flaky-test-fix .Team/QueryProcessor :hammer_and_wrench: | Example of a failed run:

https://github.com/metabase/metabase/actions/runs/4304721614/jobs/7506170453#step:3:471

This failure happens **a lot** and the key thing seems to be: `Failed to create :bigquery-cloud-sdk 'test-data' test database`

```

ERROR in metabase-enterprise.sandbox.query-processor.middleware.row-... | 1.0 | [CI] BigQuery intermittent (but frequent) test failures - Example of a failed run:

https://github.com/metabase/metabase/actions/runs/4304721614/jobs/7506170453#step:3:471

This failure happens **a lot** and the key thing seems to be: `Failed to create :bigquery-cloud-sdk 'test-data' test database`

```

ERROR in m... | process | bigquery intermittent but frequent test failures example of a failed run this failure happens a lot and the key thing seems to be failed to create bigquery cloud sdk test data test database error in metabase enterprise sandbox query processor middleware row level restrictions test pivot q... | 1 |

10,439 | 13,220,669,107 | IssuesEvent | 2020-08-17 12:50:17 | timberio/vector | https://api.github.com/repos/timberio/vector | closed | Implement remap arithmetic | domain: processing type: enhancement | The remap mapping syntax needs support for basic numerical arithmetic (+, -, *, /, %) as well as boolean comparison operators (>, >=, ==, !=, <, <=). This would allow expressions such as `.foo = .foo + .bar` as well as conditional expressions `.foo = .bar > 10`, which can later be used as `if` statement arguments `.foo... | 1.0 | Implement remap arithmetic - The remap mapping syntax needs support for basic numerical arithmetic (+, -, *, /, %) as well as boolean comparison operators (>, >=, ==, !=, <, <=). This would allow expressions such as `.foo = .foo + .bar` as well as conditional expressions `.foo = .bar > 10`, which can later be used as `... | process | implement remap arithmetic the remap mapping syntax needs support for basic numerical arithmetic as well as boolean comparison operators which can later be used as if statement arguments foo if foo foo else bar | 1 |

18,326 | 24,445,469,261 | IssuesEvent | 2022-10-06 17:34:09 | bondaleksey/credit-card-fraud-detection | https://api.github.com/repos/bondaleksey/credit-card-fraud-detection | opened | Generate data | work plan data preprocessing | - Setup a Spark cluster with 3 data nodes in Yandex Cloud (YC)

- Generate a 100GB sample of simulated data

- Upload all the generated data to the cluster in the Hadoop Distributed File System (HDFS). | 1.0 | Generate data - - Setup a Spark cluster with 3 data nodes in Yandex Cloud (YC)

- Generate a 100GB sample of simulated data

- Upload all the generated data to the cluster in the Hadoop Distributed File System (HDFS). | process | generate data setup a spark cluster with data nodes in yandex cloud yc generate a sample of simulated data upload all the generated data to the cluster in the hadoop distributed file system hdfs | 1 |

149,781 | 11,914,943,915 | IssuesEvent | 2020-03-31 14:18:26 | VNG-Realisatie/gemma-zaken | https://api.github.com/repos/VNG-Realisatie/gemma-zaken | closed | Deleting an application in the AC does not cascade to the ZRC | API Test Platform bug | # Bug

If I delete an `Applicatie` via the AC API, the cached versions of that application in the other components should also be deleted. For the ZRC and DRC, however, this is not the case: the cached application remains there even though it no longer exists in the AC.

| 1.0 | Deleting an application in the AC does not cascade to the ZRC - # Bug

If I delete an `Applicatie` via the AC API, the cached versions of that application in the other components should also be deleted. For the ZRC and DRC, however, this is not the case: the cached application remains... | non_process | deleting an application in the ac does not cascade to the zrc bug if i delete an applicatie via the ac api the cached versions of that application in the other components should also be deleted for the zrc and drc however this is not the case the cached application remains... | 0 |

15,889 | 20,075,036,694 | IssuesEvent | 2022-02-04 11:43:35 | climatepolicyradar/navigator | https://api.github.com/repos/climatepolicyradar/navigator | opened | Order passages into natural reading order | Document processing | Text will need to be extracted in natural reading order of the document. Since the scope of the alpha will be restricted to English language documents, this means that the order will be assumed to be top to bottom / left to right. Where a document contains a multi-column layout, text should be extracted in each column ... | 1.0 | Order passages into natural reading order - Text will need to be extracted in natural reading order of the document. Since the scope of the alpha will be restricted to English language documents, this means that the order will be assumed to be top to bottom / left to right. Where a document contains a multi-column layo... | process | order passages into natural reading order text will need to be extracted in natural reading order of the document since the scope of the alpha will be restricted to english language documents this means that the order will be assumed to be top to bottom left to right where a document contains a multi column layo... | 1 |

10,799 | 13,609,287,116 | IssuesEvent | 2020-09-23 04:50:02 | googleapis/java-logging-logback | https://api.github.com/repos/googleapis/java-logging-logback | closed | Dependency Dashboard | api: logging type: process | This issue contains a list of Renovate updates and their statuses.

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/logging.version -->deps: update dependency com.google.cloud:google-cloud-logging to v1.102.0

- [ ] <!-- re... | 1.0 | Dependency Dashboard - This issue contains a list of Renovate updates and their statuses.

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/logging.version -->deps: update dependency com.google.cloud:google-cloud-logging to ... | process | dependency dashboard this issue contains a list of renovate updates and their statuses open these updates have all been created already click a checkbox below to force a retry rebase of any deps update dependency com google cloud google cloud logging to chore deps update dependency com g... | 1 |

9,803 | 3,072,559,904 | IssuesEvent | 2015-08-19 17:32:49 | liqd/adhocracy3.mercator | https://api.github.com/repos/liqd/adhocracy3.mercator | closed | htmlhint sometimes tries to check deleted files in commit hook | tests | In the `check_code` commit hook I sometimes get the following error:

```

Warning: Unable to read "src/meinberlin/meinberlin/build/js/Packages/MeinBerlin/Burgerhaushalt/Process/Phase.html" file (Error code: ENOENT). Use --force to continue.

```

The file was created and removed but never added to git (maybe stage... | 1.0 | htmlhint sometimes tries to check deleted files in commit hook - In the `check_code` commit hook I sometimes get the following error:

```

Warning: Unable to read "src/meinberlin/meinberlin/build/js/Packages/MeinBerlin/Burgerhaushalt/Process/Phase.html" file (Error code: ENOENT). Use --force to continue.

```

The... | non_process | htmlhint sometimes tries to check deleted files in commit hook in the check code commit hook i sometimes get the following error warning unable to read src meinberlin meinberlin build js packages meinberlin burgerhaushalt process phase html file error code enoent use force to continue the... | 0 |

744,989 | 25,964,425,455 | IssuesEvent | 2022-12-19 04:53:03 | Sunbird-cQube/community | https://api.github.com/repos/Sunbird-cQube/community | closed | Code refactoring for Emission Data Storage | Backlog Size-Medium Tech-Priority-P1 Emission Data Storage | - [ ] **01. Code Structure**

The code structure is not consistent with sunbird standards.

I expected a basic organisation of the code into ingestion, processing, storage, metrics, query etc folders.

Please review this in detail with Anand P.

_Update_

Folder sructure is organised. Require inputs and then review.... | 1.0 | Code refactoring for Emission Data Storage - - [ ] **01. Code Structure**

The code structure is not consistent with sunbird standards.

I expected a basic organisation of the code into ingestion, processing, storage, metrics, query etc folders.

Please review this in detail with Anand P.

_Update_

Folder structure ... | non_process | code refactoring for emission data storage code structure the code structure is not consistent with sunbird standards i expected a basic organisation of the code into ingestion processing storage metrics query etc folders please review this in detail with anand p update folder structure is ... | 0 |

9,615 | 12,553,259,626 | IssuesEvent | 2020-06-06 21:15:17 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Bug: Positional parameters are not supported. | Priority:P3 Querying/Native Querying/Processor Type:Bug | `?` marks in a custom query is somehow interpreted as positional parameters.

Minimal required SQL

```sql

#standardSQL

-- ? Removing this comment will resolve the error

select id

from tupac_sightings.sightings

where timestamp_seconds(timestamp) between {{start_date}} and {{end_date}}

```

Error message: `Pos... | 1.0 | Bug: Positional parameters are not supported. - `?` marks in a custom query is somehow interpreted as positional parameters.

Minimal required SQL

```sql

#standardSQL

-- ? Removing this comment will resolve the error

select id

from tupac_sightings.sightings

where timestamp_seconds(timestamp) between {{start_dat... | process | bug positional parameters are not supported marks in a custom query is somehow interpreted as positional parameters minimal required sql sql standardsql removing this comment will resolve the error select id from tupac sightings sightings where timestamp seconds timestamp between start dat... | 1 |

24,582 | 6,555,087,396 | IssuesEvent | 2017-09-06 08:57:32 | mozilla/addons-frontend | https://api.github.com/repos/mozilla/addons-frontend | closed | Clean up the server tests | component: code quality triaged | The server tests are starting to get clunky - it would be great if we could ditch them and move as much code to unit tests as possible.

- CSP tests, we could look at passing the config to the middleware and check the output of the middleware directly (saving a whole request/response) setup via supertest.

- SRI tests, we ... | 1.0 | Clean up the server tests - The server tests are starting to get clunky - it would be great if we could ditch them and move as much code to unit tests as possible.

- CSP tests, we could look at passing the config to the middleware and check the output of the middleware directly (saving a whole request/response) setup via... | non_process | clean up the server tests the server tests are starting to get clunky it would be great if we could ditch them and move as much code to unit tests as possible csp tests we could look at passing the config to the middleware and check the output of the middleware directly saving a whole request response setup via... | 0 |

18,160 | 24,194,224,191 | IssuesEvent | 2022-09-23 21:10:16 | GSA/EDX | https://api.github.com/repos/GSA/EDX | closed | Web records & DLP | process digital council collaboration DLP | ## Summary

Ensure general practices for web records management are included in DLP.

## Additional context and links

[NARA Guidance on Managing Web Records](https://www.archives.gov/records-mgmt/policy/managing-web-records-index.html)

## Checklist

List below the specific actions to be taken

- [x] Meet ... | 1.0 | Web records & DLP - ## Summary

Ensure general practices for web records management are included in DLP.

## Additional context and links

[NARA Guidance on Managing Web Records](https://www.archives.gov/records-mgmt/policy/managing-web-records-index.html)

## Checklist

List below the specific actions to be ... | process | web records dlp summary ensure general practices for web records management are included in dlp additional context and links checklist list below the specific actions to be taken meet with robert smudde s team gsa s records officer update dlp as needed to incorporate recor... | 1 |

5,345 | 8,177,764,447 | IssuesEvent | 2018-08-28 11:51:33 | allinurl/goaccess | https://api.github.com/repos/allinurl/goaccess | closed | Older data wiped | log-processing on-disk question | Sequence of events:

1. built goaccess

2. run it against current access.log (rotated daily). Static html produced and fine

3. saw it was good, so run it against the old gzipped logs. Again html was fine and older data visible

4. cron execution against current access.log: html generated and older data trashed, I see ... | 1.0 | Older data wiped - Sequence of events:

1. built goaccess

2. run it against current access.log (rotated daily). Static html produced and fine

3. saw it was good, so run it against the old gzipped logs. Again html was fine and older data visible

4. cron execution against current access.log: html generated and older d... | process | older data wiped sequence of events built goaccess run it against current access log rotated daily static html produced and fine saw it was good so run it against the old gzipped logs again html was fine and older data visible cron execution against current access log html generated and older d... | 1 |

108,235 | 23,584,168,724 | IssuesEvent | 2022-08-23 10:07:46 | Regalis11/Barotrauma | https://api.github.com/repos/Regalis11/Barotrauma | closed | Commands done in submarine test mode carries over to singleplayer without having to enable cheat | Bug Code Unstable | **Steps To Reproduce**

1. Load up sub editor test mode

2. Use commands that aren't game mode specific like debugdraw and lighting

3. Hit Esc and Main Menu

4. Load up singleplayer

5. Notice that the commands are still in use but you can't even disable them as you don't have cheats enabled

**Version**

0.18.6.0

Branch: b... | 1.0 | Commands done in submarine test mode carries over to singleplayer without having to enable cheat - **Steps To Reproduce**

1. Load up sub editor test mode

2. Use commands that aren't game mode specific like debugdraw and lighting

3. Hit Esc and Main Menu

4. Load up singleplayer

5. Notice that the commands are still in u... | non_process | commands done in submarine test mode carries over to singleplayer without having to enable cheat steps to reproduce load up sub editor test mode use commands that aren t game mode specific like debugdraw and lighting hit esc and main menu load up singleplayer notice that the commands are still in u... | 0 |

254,812 | 21,877,903,127 | IssuesEvent | 2022-05-19 11:57:12 | mennaelkashef/eShop | https://api.github.com/repos/mennaelkashef/eShop | opened | Test comment | Hello! RULE-GOT-APPLIED DOES-NOT-CONTAIN-STRING Rule-works-on-convert-to-bug test instabug ARW | # :clipboard: Bug Details

>Test comment

key | value

--|--

Reported At | 2022-05-19 11:41:21 UTC

Email | a@test.com

Categories | Report a bug

Tags | test, Hello!, RULE-GOT-APPLIED, DOES-NOT-CONTAIN-STRING, Rule-works-on-convert-to-bug, instabug, ARW

App Version | 1.1 (1)

Session Duration | 384

Device | G... | 1.0 | Test comment - # :clipboard: Bug Details

>Test comment

key | value

--|--

Reported At | 2022-05-19 11:41:21 UTC

Email | a@test.com

Categories | Report a bug

Tags | test, Hello!, RULE-GOT-APPLIED, DOES-NOT-CONTAIN-STRING, Rule-works-on-convert-to-bug, instabug, ARW

App Version | 1.1 (1)

Session Duration | ... | non_process | test comment clipboard bug details test comment key value reported at utc email a test com categories report a bug tags test hello rule got applied does not contain string rule works on convert to bug instabug arw app version session duration devic... | 0 |

184,693 | 32,033,900,702 | IssuesEvent | 2023-09-22 14:06:42 | CDCgov/prime-reportstream | https://api.github.com/repos/CDCgov/prime-reportstream | opened | User testing new website | design experience | ## User story

As a ReportStream designer/researcher, I want to conduct usability testing of the ReportStream website's redesign so that we can incorporate user feedback into the ongoing content/design development of the website.

## Background & context

Audrey is conducting the user test starting 09/22 and I will be ... | 1.0 | User testing new website - ## User story

As a ReportStream designer/researcher, I want to conduct usability testing of the ReportStream website's redesign so that we can incorporate user feedback into the ongoing content/design development of the website.

## Background & context

Audrey is conducting the user test st... | non_process | user testing new website user story as a reportstream designer researcher i want to conduct usability testing of the reportstream website s redesign so that we can incorporate user feedback into the ongoing content design development of the website background context audrey is conducting the user test st... | 0 |

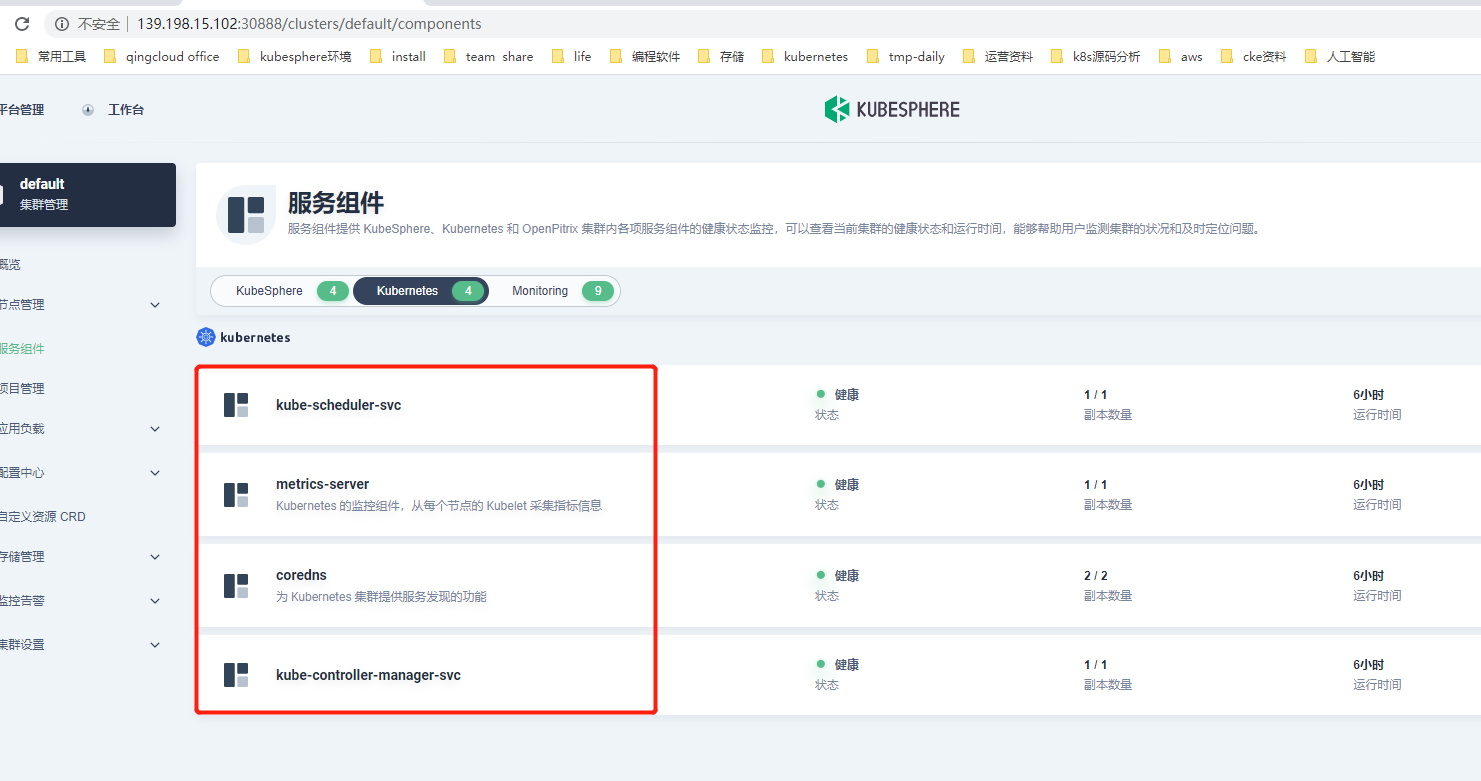

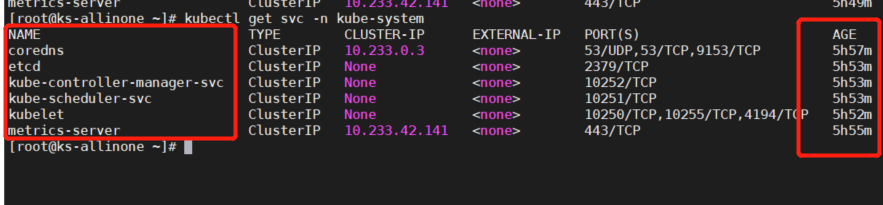

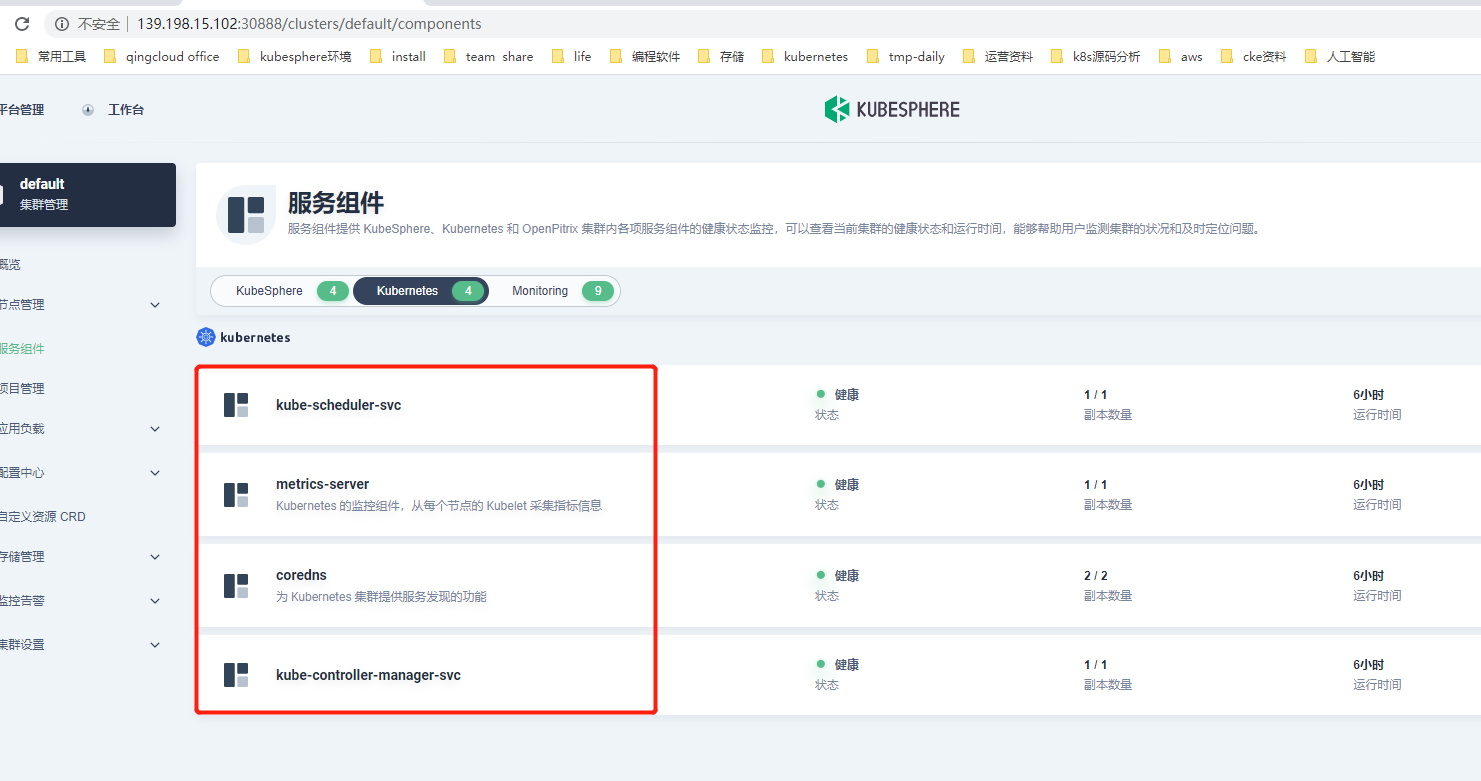

454,499 | 13,102,395,902 | IssuesEvent | 2020-08-04 06:36:51 | kubesphere/kubesphere | https://api.github.com/repos/kubesphere/kubesphere | closed | Kubernetes component validation is inconsistent | area/console kind/bug kind/need-to-verify priority/low | * There are fewer names and different times

* 1、UI show:

* 2、Terminal show:

* k8sv1.17.8+... | 1.0 | Kubernetes component validation is inconsistent - * There are fewer names and different times

* 1、UI show:

* 2、Terminal show:

to get redis-server cmdline in centos | os:linux package:process | It is not meet expectation when I use process.CmdlineSlice() to get redis-server cmdline in centos, as followings:

ps -ef|grep redis-server

```

root 4386 1 0 Sep26 ? 04:53:24 redis-server 192.168.0.1:6379

```

ll /proc/4386

```

lrwxrwxrwx 1 root root 0 Nov 9 19:20 exe -> /usr/bin/redis-server

... | 1.0 | It is not meet expectation when I use process.CmdlineSlice() to get redis-server cmdline in centos - It is not meet expectation when I use process.CmdlineSlice() to get redis-server cmdline in centos, as followings:

ps -ef|grep redis-server

```

root 4386 1 0 Sep26 ? 04:53:24 redis-server 192.168.0... | process | it is not meet expectation when i use process cmdlineslice to get redis server cmdline in centos it is not meet expectation when i use process cmdlineslice to get redis server cmdline in centos as followings ps ef grep redis server root redis server ll ... | 1 |

257,362 | 19,515,195,518 | IssuesEvent | 2021-12-29 09:02:03 | blockstack/docs | https://api.github.com/repos/blockstack/docs | closed | Add click to expand image in lightbox to docs site | enhancement documentation stale | Many of our more detailed diagrams display very small on the documentation site. It would be good if a user could click to expand them into a full-browser lightbox for more comfortable viewing. | 1.0 | Add click to expand image in lightbox to docs site - Many of our more detailed diagrams display very small on the documentation site. It would be good if a user could click to expand them into a full-browser lightbox for more comfortable viewing. | non_process | add click to expand image in lightbox to docs site many of our more detailed diagrams display very small on the documentation site it would be good if a user could click to expand them into a full browser lightbox for more comfortable viewing | 0 |

319,623 | 23,782,055,802 | IssuesEvent | 2022-09-02 06:24:49 | SenseNet/sensenet | https://api.github.com/repos/SenseNet/sensenet | closed | Docs site technology, build and architecture know-how | documentation | Get familiar with the technology and the possibilities, e.g. how the menu works, how do we change the structure. | 1.0 | Docs site technology, build and architecture know-how - Get familiar with the technology and the possibilities, e.g. how the menu works, how do we change the structure. | non_process | docs site technology build and architecture know how get familiar with the technology and the possibilities e g how the menu works how do we change the structure | 0 |

150,404 | 13,346,709,663 | IssuesEvent | 2020-08-29 09:31:23 | nbQA-dev/nbQA | https://api.github.com/repos/nbQA-dev/nbQA | closed | DOC note that reading from stdin won't work | bug documentation | E.g. putting a breakpoint in a test and then running `nbqa pytest` won't work | 1.0 | DOC note that reading from stdin won't work - E.g. putting a breakpoint in a test and then running `nbqa pytest` won't work | non_process | doc note that reading from stdin won t work e g putting a breakpoint in a test and then running nbqa pytest won t work | 0 |

779,403 | 27,351,580,226 | IssuesEvent | 2023-02-27 09:56:31 | sebastien-d-me/SebBlog | https://api.github.com/repos/sebastien-d-me/SebBlog | opened | Preview page of an article | Priority: Medium Statut: Not started Type : Front-end | #### Description:

Creating an article preview page.

------------

###### Estimated time: 2 day(s)

###### Difficulty: ⭐⭐

| 1.0 | Preview page of an article - #### Description:

Creating an article preview page.

------------

###### Estimated time: 2 day(s)

###### Difficulty: ⭐⭐

| non_process | preview page of an article description creating an article preview page estimated time day s difficulty ⭐⭐ | 0 |

67,154 | 3,266,812,880 | IssuesEvent | 2015-10-22 22:29:49 | YetiForceCompany/YetiForceCRM | https://api.github.com/repos/YetiForceCompany/YetiForceCRM | closed | Fe.Req:Document Preview | Label::Core Priority::#2 Normal Type::Discussion Type::Enhancement | Document to be previewed without to be download every time.

Even image crop or rotation would be great.

Yetiforce! | 1.0 | Fe.Req:Document Preview - Document to be previewed without to be download every time.

Even image crop or rotation would be great.

Yetiforce! | non_process | fe req document preview document to be previewed without to be download every time even image crop or rotation would be great yetiforce | 0 |

3,085 | 6,101,120,878 | IssuesEvent | 2017-06-20 14:02:32 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | Messages are not received via the process.on('message') event while debugging. | child_process debugger | <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the template below as you're able.

Version: 6.11.0

Platform: 64-b... | 1.0 | Messages are not received via the process.on('message') event while debugging. - <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in... | process | messages are not received via the process on message event while debugging thank you for reporting an issue this issue tracker is for bugs and issues found within node js core if you require more general support please file an issue on our help repo please fill in as much of the template belo... | 1 |

19,509 | 25,824,519,963 | IssuesEvent | 2022-12-12 12:00:09 | digitalmethodsinitiative/4cat | https://api.github.com/repos/digitalmethodsinitiative/4cat | closed | Replace Hatebase lexicons with Davidson et al.'s lexicon | processors dependencies | https://github.com/t-davidson/hate-speech-and-offensive-language/tree/master/lexicons - it's based on Hatebase but refined through snowballing real-world social media data. And the license is MIT, whereas Hatebase is ambiguously licensed and I'm not 100% sure we can really embed it in 4CAT. | 1.0 | Replace Hatebase lexicons with Davidson et al.'s lexicon - https://github.com/t-davidson/hate-speech-and-offensive-language/tree/master/lexicons - it's based on Hatebase but refined through snowballing real-world social media data. And the license is MIT, whereas Hatebase is ambiguously licensed and I'm not 100% sure w... | process | replace hatebase lexicons with davidson et al s lexicon it s based on hatebase but refined through snowballing real world social media data and the license is mit whereas hatebase is ambiguously licensed and i m not sure we can really embed it in | 1 |

19,198 | 25,328,765,011 | IssuesEvent | 2022-11-18 11:29:15 | threefoldtech/js-sdk | https://api.github.com/repos/threefoldtech/js-sdk | closed | A 3bot name of a failed deployment is not reusable | process_wontfix type_bug | ### Description

When creating a 3bot and the deployment fails, the name chosen first is not reusable.

I suggest to initiate the identity first with a short time to live and it is confirmed by the 3bot itself.

| 1.0 | A 3bot name of a failed deployment is not reusable - ### Description

When creating a 3bot and the deployment fails, the name chosen first is not reusable.

I suggest to initiate the identity first with a short time to live and it is confirmed by the 3bot itself.

| process | a name of a failed deployment is not reusable description when creating a and the deployment fails the name chosen first is not reusable i suggest to initiate the identity first with a short time to live and it is confirmed by the itself | 1 |

41,373 | 12,832,000,916 | IssuesEvent | 2020-07-07 06:48:14 | rvvergara/next-js-basic | https://api.github.com/repos/rvvergara/next-js-basic | closed | CVE-2015-9251 (Medium) detected in jquery-1.7.1.min.js | security vulnerability | ## CVE-2015-9251 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.7.1.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="htt... | True | CVE-2015-9251 (Medium) detected in jquery-1.7.1.min.js - ## CVE-2015-9251 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.7.1.min.js</b></p></summary>

<p>JavaScript library ... | non_process | cve medium detected in jquery min js cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to dependency file tmp ws scm next js basic node modules vm browserify example run index html p... | 0 |

336,126 | 10,171,772,978 | IssuesEvent | 2019-08-08 09:09:33 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | fs/nvs: nvs_init can hang if no nvs_ate available | area: File System area: Flash bug priority: medium | **Describe the bug**

While running on a simulated flash file system, nvs_init() failed to return. Inspection with debugger shows that it stuck in an infinite loop.

**To Reproduce**

Calling nvs_init() on flash file system with no "free" sectors.

**Expected behavior**

If no free space available, return an error... | 1.0 | fs/nvs: nvs_init can hang if no nvs_ate available - **Describe the bug**

While running on a simulated flash file system, nvs_init() failed to return. Inspection with debugger shows that it stuck in an infinite loop.

**To Reproduce**

Calling nvs_init() on flash file system with no "free" sectors.

**Expected beh... | non_process | fs nvs nvs init can hang if no nvs ate available describe the bug while running on a simulated flash file system nvs init failed to return inspection with debugger shows that it stuck in an infinite loop to reproduce calling nvs init on flash file system with no free sectors expected beh... | 0 |

176,963 | 6,570,695,789 | IssuesEvent | 2017-09-10 02:54:40 | Mountainview-WebDesign/lifestonechurch | https://api.github.com/repos/Mountainview-WebDesign/lifestonechurch | closed | lg page to go up ASAP | priority | @agarrharr

Let's get LG info up ASAP. I'd love to be able to link TOMORROW'S email. Sorry you're getting this info so late.

LET'S KEEP SAME FORMAT THAT YOU HAD BEFORE, NOT LIKE I HAVE IT HERE.

Also, Clements are new leaders so i'll get you their bio soon. THEIR PIC IS ATTACHED.

![19145986_10209421580597313_175... | 1.0 | lg page to go up ASAP - @agarrharr

Let's get LG info up ASAP. I'd love to be able to link TOMORROW'S email. Sorry you're getting this info so late.

LET'S KEEP SAME FORMAT THAT YOU HAD BEFORE, NOT LIKE I HAVE IT HERE.

Also, Clements are new leaders so i'll get you their bio soon. THEIR PIC IS ATTACHED.

![191459... | non_process | lg page to go up asap agarrharr let s get lg info up asap i d love to be able to link tomorrow s email sorry you re getting this info so late let s keep same format that you had before not like i have it here also clements are new leaders so i ll get you their bio soon their pic is attached ... | 0 |

156,605 | 19,901,883,139 | IssuesEvent | 2022-01-25 08:51:30 | kedacore/external-scaler-azure-cosmos-db | https://api.github.com/repos/kedacore/external-scaler-azure-cosmos-db | opened | CVE-2018-8292 (High) detected in system.net.http.4.3.0.nupkg | security vulnerability | ## CVE-2018-8292 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>system.net.http.4.3.0.nupkg</b></p></summary>

<p>Provides a programming interface for modern HTTP applications, includi... | True | CVE-2018-8292 (High) detected in system.net.http.4.3.0.nupkg - ## CVE-2018-8292 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>system.net.http.4.3.0.nupkg</b></p></summary>

<p>Provide... | non_process | cve high detected in system net http nupkg cve high severity vulnerability vulnerable library system net http nupkg provides a programming interface for modern http applications including http client components that library home page a href path to dependency file ... | 0 |

14,203 | 17,101,215,533 | IssuesEvent | 2021-07-09 11:31:47 | Joystream/hydra | https://api.github.com/repos/Joystream/hydra | closed | Escape `0x00` in mappings | hydra-processor medium-prio-feature |

I was also wondering about this 0x00 byte problem (perhaps you noticed it on query-node channel). Is there an easy way for hydra to hook into String fields setters or perhaps preInsert / preUpdate and remove all \u0000 characters? We can also prepare all strings before saving them on the mappings side, but it's easy ... | 1.0 | Escape `0x00` in mappings -

I was also wondering about this 0x00 byte problem (perhaps you noticed it on query-node channel). Is there an easy way for hydra to hook into String fields setters or perhaps preInsert / preUpdate and remove all \u0000 characters? We can also prepare all strings before saving them on the m... | process | escape in mappings i was also wondering about this byte problem perhaps you noticed it on query node channel is there an easy way for hydra to hook into string fields setters or perhaps preinsert preupdate and remove all characters we can also prepare all strings before saving them on the mappings si... | 1 |
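
The request above is to remove all `\u0000` characters from string fields before they are saved. A minimal sketch of that sanitising step follows, written in Python for illustration only; Hydra mappings are not written in Python, and `strip_nul` and `strip_nul_fields` are hypothetical names rather than Hydra APIs. Where such a step would hook in (field setters, preInsert, preUpdate, or the mappings themselves) is left open, as it is in the report.

```python
from typing import Any, Dict


def strip_nul(value: str) -> str:
    """Return the string with every U+0000 character removed."""
    return value.replace("\u0000", "")


def strip_nul_fields(record: Dict[str, Any]) -> Dict[str, Any]:
    """Apply strip_nul to each top-level string value; leave others as-is."""
    return {
        key: strip_nul(value) if isinstance(value, str) else value
        for key, value in record.items()
    }
```

This sketch only touches top-level string values; nested structures would need a recursive variant.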

618,080 | 19,424,067,364 | IssuesEvent | 2021-12-21 01:31:26 | justalemon/LemonUI | https://api.github.com/repos/justalemon/LemonUI | closed | RageMP Support | type: feature request status: acknowledged priority: p2 medium | Should be fairly simple to implement; Would fork and implement it myself if I had the time... Mainly recommending this due to RageMP's poor extendibility in the NativeUI Department (Think it actually uses the NativeUI implementation to begin with).

RAGE.Game.Invoker.Invoke<T>( Hash , params[] );

RAGE.Game.Invoker.... | 1.0 | RageMP Support - Should be fairly simple to implement; Would fork and implement it myself if I had the time... Mainly recommending this due to RageMP's poor extendibility in the NativeUI Department (Think it actually uses the NativeUI implementation to begin with).

RAGE.Game.Invoker.Invoke<T>( Hash , params[] );

R... | non_process | ragemp support should be fairly simple to implement would fork and implement it myself if i had the time mainly recommending this due to ragemp s poor extendibility in the nativeui department think it actually uses the nativeui implementation to begin with rage game invoker invoke hash params rage... | 0 |

186,649 | 14,402,840,773 | IssuesEvent | 2020-12-03 15:21:53 | rice-solar-physics/pydrad | https://api.github.com/repos/rice-solar-physics/pydrad | closed | Improve test coverage | test | Should add some very basic unit tests that are run on every PR and merge just to make sure we aren't merging broken code. | 1.0 | Improve test coverage - Should add some very basic unit tests that are run on every PR and merge just to make sure we aren't merging broken code. | non_process | improve test coverage should add some very basic unit tests that are run on every pr and merge just to make sure we aren t merging broken code | 0 |

7,419 | 10,542,780,542 | IssuesEvent | 2019-10-02 13:50:14 | elastic/beats | https://api.github.com/repos/elastic/beats | closed | Javascript Processor panic | :Processors bug | * Winlogbeat: 7.3.0

The javascript processors panics with a nil pointer exception. This seems to happen if the data the processor is looking for does not exists or is in the wrong format.

```

runtime error: invalid memory address or nil pointer dereference [recovered]

panic: Panic at 0: runtime erro... | 1.0 | Javascript Processor panic - * Winlogbeat: 7.3.0

The javascript processors panics with a nil pointer exception. This seems to happen if the data the processor is looking for does not exists or is in the wrong format.

```

runtime error: invalid memory address or nil pointer dereference [recovered]

pa... | process | javascript processor panic winlogbeat the javascript processors panics with a nil pointer exception this seems to happen if the data the processor is looking for does not exists or is in the wrong format runtime error invalid memory address or nil pointer dereference panic panic... | 1 |

278,875 | 8,651,471,687 | IssuesEvent | 2018-11-27 03:19:01 | majorazero/Agility | https://api.github.com/repos/majorazero/Agility | closed | UX/UI Submitting invalid submissions should prompt the user something instead of being irresponsive | High Priority | Making a task of invalid date seems to trigger this, but current date also does the same thing. | 1.0 | UX/UI Submitting invalid submissions should prompt the user something instead of being irresponsive - Making a task of invalid date seems to trigger this, but current date also does the same thing. | non_process | ux ui submitting invalid submissions should prompt the user something instead of being irresponsive making a task of invalid date seems to trigger this but current date also does the same thing | 0 |

8,533 | 11,705,728,863 | IssuesEvent | 2020-03-07 17:38:29 | Ghost-chu/QuickShop-Reremake | https://api.github.com/repos/Ghost-chu/QuickShop-Reremake | closed | [BUG] Server - Crashing // MYSQL | In Process Performance Issue Waiting For Reply | **Describe the bug**

Server gets hung up attempting to... From what I can assume, pull/place data in the mysql db.

**To Reproduce**

Steps to reproduce the behavior:

1. Have mysql enabled? I'd imagine that's all it takes.

**Expected behavior**

The plugin to access the mysql db, clean without causing the server... | 1.0 | [BUG] Server - Crashing // MYSQL - **Describe the bug**

Server gets hung up attempting to... From what I can assume, pull/place data in the mysql db.

**To Reproduce**

Steps to reproduce the behavior:

1. Have mysql enabled? I'd imagine that's all it takes.

**Expected behavior**

The plugin to access the mysql d... | process | server crashing mysql describe the bug server gets hung up attempting to from what i can assume pull place data in the mysql db to reproduce steps to reproduce the behavior have mysql enabled i d imagine that s all it takes expected behavior the plugin to access the mysql db c... | 1 |

62,094 | 15,162,163,155 | IssuesEvent | 2021-02-12 10:11:24 | spatial-model-editor/spatial-model-editor | https://api.github.com/repos/spatial-model-editor/spatial-model-editor | closed | migrate from QCustomPlot to qwt | GUI build system | QCustomPlot is a nicer library but the GPL license is an issue: should replace with LGPL-licensed qwt library | 1.0 | migrate from QCustomPlot to qwt - QCustomPlot is a nicer library but the GPL license is an issue: should replace with LGPL-licensed qwt library | non_process | migrate from qcustomplot to qwt qcustomplot is a nicer library but the gpl license is an issue should replace with lgpl licensed qwt library | 0 |

11,010 | 13,795,555,297 | IssuesEvent | 2020-10-09 18:13:32 | unicode-org/icu4x | https://api.github.com/repos/unicode-org/icu4x | closed | Multi-layered directory structure | C-process T-task | In #18 we decided to make a top-level `/components` directory. I like this, but I'm also thinking that it might be good to have an extra layer of abstraction. We've come across several types of components so far:

1. Core i18n components: Locale, PluralRules, NumberFormat, etc.

2. Non-i18n utilities: FixedDecimal,... | 1.0 | Multi-layered directory structure - In #18 we decided to make a top-level `/components` directory. I like this, but I'm also thinking that it might be good to have an extra layer of abstraction. We've come across several types of components so far:

1. Core i18n components: Locale, PluralRules, NumberFormat, etc.

... | process | multi layered directory structure in we decided to make a top level components directory i like this but i m also thinking that it might be good to have an extra layer of abstraction we ve come across several types of components so far core components locale pluralrules numberformat etc n... | 1 |

128,562 | 27,285,521,469 | IssuesEvent | 2023-02-23 13:14:54 | jakubiec/event-storming-to-code | https://api.github.com/repos/jakubiec/event-storming-to-code | opened | Make invariant scenarios more realistic | presentation code | The AlreadyRegistered scenario is a naive simplification and was correctly spotted that in the real word uniqueness, checks are done a layer above.

Think of a different way to showcase invariants | 1.0 | Make invariant scenarios more realistic - The AlreadyRegistered scenario is a naive simplification and was correctly spotted that in the real word uniqueness, checks are done a layer above.

Think of a different way to showcase invariants | non_process | make invariant scenarios more realistic the alreadyregistered scenario is a naive simplification and was correctly spotted that in the real word uniqueness checks are done a layer above think of a different way to showcase invariants | 0 |

7,835 | 11,011,712,511 | IssuesEvent | 2019-12-04 16:47:31 | 90301/TextReplace | https://api.github.com/repos/90301/TextReplace | closed | Multi-File, Multi Line Block Replace | Log Processor Pre-Processor | Need a list of files, then we can have something like a Log Processor program that can be run on all those files. Exporting a list of files to a file and loading them in may also be helpful

- [x] Get Files In Folder Request / Output them to text for log parser

- [x] Use Files as input File List (Cyberia Pre Process... | 2.0 | Multi-File, Multi Line Block Replace - Need a list of files, then we can have something like a Log Processor program that can be run on all those files. Exporting a list of files to a file and loading them in may also be helpful

- [x] Get Files In Folder Request / Output them to text for log parser

- [x] Use Files ... | process | multi file multi line block replace need a list of files then we can have something like a log processor program that can be run on all those files exporting a list of files to a file and loading them in may also be helpful get files in folder request output them to text for log parser use files as i... | 1 |

83,225 | 15,699,634,715 | IssuesEvent | 2021-03-26 08:46:41 | LalithK90/wisdom-institute | https://api.github.com/repos/LalithK90/wisdom-institute | opened | CVE-2020-13934 (High) detected in tomcat-embed-core-9.0.30.jar | security vulnerability | ## CVE-2020-13934 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tomcat-embed-core-9.0.30.jar</b></p></summary>

<p>Core Tomcat implementation</p>

<p>Library home page: <a href="https:... | True | CVE-2020-13934 (High) detected in tomcat-embed-core-9.0.30.jar - ## CVE-2020-13934 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tomcat-embed-core-9.0.30.jar</b></p></summary>

<p>Cor... | non_process | cve high detected in tomcat embed core jar cve high severity vulnerability vulnerable library tomcat embed core jar core tomcat implementation library home page a href path to dependency file wisdom institute build gradle path to vulnerable library home wss scanner ... | 0 |

12,199 | 14,742,481,914 | IssuesEvent | 2021-01-07 12:22:15 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | opened | FW: Billing Cycle Notification for Toronto, Canada | anc-process anp-2 ant-enhancement ant-parent/primary pl-foran | In GitLab by @kdjstudios on Apr 25, 2019, 08:48

**Submitted by:** "Richard Soltoff" <richard.soltoff@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2019-04-25-31659

**Server:** Internal

**Client/Site:** All

**Account:** NA

**Issue:**

Can you explain to me what this email is t... | 1.0 | FW: Billing Cycle Notification for Toronto, Canada - In GitLab by @kdjstudios on Apr 25, 2019, 08:48

**Submitted by:** "Richard Soltoff" <richard.soltoff@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2019-04-25-31659

**Server:** Internal

**Client/Site:** All

**Account:** NA

**I... | process | fw billing cycle notification for toronto canada in gitlab by kdjstudios on apr submitted by richard soltoff helpdesk server internal client site all account na issue can you explain to me what this email is telling me i do not that they have finalized th... | 1 |

6,775 | 9,914,164,406 | IssuesEvent | 2019-06-28 13:45:47 | material-components/material-components-ios | https://api.github.com/repos/material-components/material-components-ios | closed | [Tabs] Internal issue: b/135608374 | [Tabs] type:Process | This was filed as an internal issue. If you are a Googler, please visit [b/135608374](http://b/135608374) for more details.

<!-- Auto-generated content below, do not modify -->

---

#### Internal data

- Associated internal bug: [b/135608374](http://b/135608374)

- Blocked by: https://github.com/material-components/mate... | 1.0 | [Tabs] Internal issue: b/135608374 - This was filed as an internal issue. If you are a Googler, please visit [b/135608374](http://b/135608374) for more details.

<!-- Auto-generated content below, do not modify -->

---

#### Internal data

- Associated internal bug: [b/135608374](http://b/135608374)

- Blocked by: https:... | process | internal issue b this was filed as an internal issue if you are a googler please visit for more details internal data associated internal bug blocked by blocked by blocked by | 1 |

651,088 | 21,465,036,909 | IssuesEvent | 2022-04-26 02:12:12 | weaveworks/eksctl | https://api.github.com/repos/weaveworks/eksctl | closed | [Bug] remoteNodegroups contains failed stacks that are not nodegroups | kind/bug priority/backlog stale | ### What happened?

>$ eksctl nodegroup create failingnodegroup

which fails due to aws internal, then run it again

>$ eksctl nodegroup create ...

and eksctl says that nodegroup is existing

>1 existing nodegroup(s) (failingnodegroup)

but only the failed (rollbacked) stack exists and the nodegroup does n... | 1.0 | [Bug] remoteNodegroups contains failed stacks that are not nodegroups - ### What happened?

>$ eksctl nodegroup create failingnodegroup

which fails due to aws internal, then run it again

>$ eksctl nodegroup create ...

and eksctl says that nodegroup is existing

>1 existing nodegroup(s) (failingnodegroup)

... | non_process | remotenodegroups contains failed stacks that are not nodegroups what happened eksctl nodegroup create failingnodegroup which fails due to aws internal then run it again eksctl nodegroup create and eksctl says that nodegroup is existing existing nodegroup s failingnodegroup bu... | 0 |

11,587 | 14,445,787,726 | IssuesEvent | 2020-12-07 23:44:02 | googleapis/release-please | https://api.github.com/repos/googleapis/release-please | closed | Improve test coverage for Go releaser | type: process | We should increase the test coverage for our mono-repo go logic, we especially need some additional tests for the submodule releaser class.

See: https://github.com/googleapis/release-please/pull/617 | 1.0 | Improve test coverage for Go releaser - We should increase the test coverage for our mono-repo go logic, we especially need some additional tests for the submodule releaser class.

See: https://github.com/googleapis/release-please/pull/617 | process | improve test coverage for go releaser we should increase the test coverage for our mono repo go logic we especially need some additional tests for the submodule releaser class see | 1 |

87,853 | 15,790,335,224 | IssuesEvent | 2021-04-02 01:10:27 | YauheniPo/Elements_Test_Framework | https://api.github.com/repos/YauheniPo/Elements_Test_Framework | closed | CVE-2019-16943 (High) detected in jackson-databind-2.9.8.jar - autoclosed | security vulnerability | ## CVE-2019-16943 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.8.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2019-16943 (High) detected in jackson-databind-2.9.8.jar - autoclosed - ## CVE-2019-16943 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.8.jar</b></p></summary... | non_process | cve high detected in jackson databind jar autoclosed cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file elements test fram... | 0 |

4,469 | 3,869,946,973 | IssuesEvent | 2016-04-10 22:01:47 | lionheart/openradar-mirror | https://api.github.com/repos/lionheart/openradar-mirror | opened | 23141927: Xcode-beta (7B85): When renaming, reformat automatically | classification:ui/usability reproducible:always status:open | #### Description

Xcode-beta (7B85): When renaming, reformat automatically

Summary:

When renaming via the refactoring feature or via “Edit All In Scope”, reformat the affected code automatically

Steps to Reproduce:

1. Change a method signature via Edit > Refactor > Rename…

2. Check the preview

3. If there has been a ... | True | 23141927: Xcode-beta (7B85): When renaming, reformat automatically - #### Description

Xcode-beta (7B85): When renaming, reformat automatically

Summary:

When renaming via the refactoring feature or via “Edit All In Scope”, reformat the affected code automatically

Steps to Reproduce:

1. Change a method signature via E... | non_process | xcode beta when renaming reformat automatically description xcode beta when renaming reformat automatically summary when renaming via the refactoring feature or via “edit all in scope” reformat the affected code automatically steps to reproduce change a method signature via edit refacto... | 0 |

6,910 | 10,060,299,331 | IssuesEvent | 2019-07-22 18:29:00 | googleapis/google-cloud-python | https://api.github.com/repos/googleapis/google-cloud-python | closed | BigQuery: 'test_bigquery_magic_w_maximum_bytes_billed_invalid' test uses real client. | api: bigquery testing type: process | The *unit* test fails if there are no valid credentials in the environment:

```python

______________ test_bigquery_magic_w_maximum_bytes_billed_invalid ______________

@pytest.mark.usefixtures("ipython_interactive")

def test_bigquery_magic_w_maximum_bytes_billed_invalid():

ip = IPython.get_ipyth... | 1.0 | BigQuery: 'test_bigquery_magic_w_maximum_bytes_billed_invalid' test uses real client. - The *unit* test fails if there are no valid credentials in the environment:

```python

______________ test_bigquery_magic_w_maximum_bytes_billed_invalid ______________

@pytest.mark.usefixtures("ipython_interactive")

d... | process | bigquery test bigquery magic w maximum bytes billed invalid test uses real client the unit test fails if there are no valid credentials in the environment python test bigquery magic w maximum bytes billed invalid pytest mark usefixtures ipython interactive d... | 1 |

137,213 | 5,299,968,981 | IssuesEvent | 2017-02-10 02:20:42 | OperationCode/operationcode | https://api.github.com/repos/OperationCode/operationcode | closed | Donation by text | Priority: Medium Type: Feature | When `opcode` is texted to `x number` (provisioned by Twilio or similar) user makes a donation to scholarship fund.

| 1.0 | Donation by text - When `opcode` is texted to `x number` (provisioned by Twilio or similar) user makes a donation to scholarship fund.

| non_process | donation by text when opcode is texted to x number provisioned by twilio or similar user makes a donation to scholarship fund | 0 |

71,954 | 13,767,221,818 | IssuesEvent | 2020-10-07 15:28:23 | finos/alloy | https://api.github.com/repos/finos/alloy | closed | FINOS Code Scans, Checks, Validation - SDLC Code | Alloy SDLC Code_Readiness Go Live Readiness Checklist | This task can't start until https://github.com/finos/alloy/issues/235 is completed.

## SDLC

- [x] FINOS Security Vulnerability Check

- [x] FINOS Legal/License Scans

- [x] Apply FINOS Project Blueprint | 1.0 | FINOS Code Scans, Checks, Validation - SDLC Code - This task can't start until https://github.com/finos/alloy/issues/235 is completed.

## SDLC

- [x] FINOS Security Vulnerability Check

- [x] FINOS Legal/License Scans

- [x] Apply FINOS Project Blueprint | non_process | finos code scans checks validation sdlc code this task can t start until is completed sdlc finos security vulnerability check finos legal license scans apply finos project blueprint | 0 |

7,278 | 10,431,736,378 | IssuesEvent | 2019-09-17 09:43:16 | ESMValGroup/ESMValCore | https://api.github.com/repos/ESMValGroup/ESMValCore | opened | Give user access to OBS data in different frequencies | enhancement preprocessor | Problem description:

Observational datasets at high frequencies (daily or hourly) are computationally expensive to work with. Not all diagnostics make use of this high time resolution and the necessary preprocessing in these diagnostics is time (and energy) consuming and often leads to memory issues (e.g. #51). Giv... | 1.0 | Give user access to OBS data in different frequencies - Problem description:

Observational datasets at high frequencies (daily or hourly) are computationally expensive to work with. Not all diagnostics make use of this high time resolution and the necessary preprocessing in these diagnostics is time (and energy) co... | process | give user access to obs data in different frequencies problem description observational datasets at high frequencies daily or hourly are computationally expensive to work with not all diagnostics make use of this high time resolution and the necessary preprocessing in these diagnostics is time and energy co... | 1 |

13,841 | 16,602,367,561 | IssuesEvent | 2021-06-01 21:28:15 | CodeForPhilly/paws-data-pipeline | https://api.github.com/repos/CodeForPhilly/paws-data-pipeline | closed | Handle even longer Execute runs, give better UX | API Async processes UX | When we did #227 , the Execute Match run time was < 60 minutes. As we've added more features, it's now taking just under three hours (on a pretty fast machine). This hits two timeouts:

- 30 minute login refresh

- 60 minute nginx request timer

If the user were to keep the tab in the foreground and hit the refresh... | 1.0 | Handle even longer Execute runs, give better UX - When we did #227 , the Execute Match run time was < 60 minutes. As we've added more features, it's now taking just under three hours (on a pretty fast machine). This hits two timeouts:

- 30 minute login refresh

- 60 minute nginx request timer

If the user were to... | process | handle even longer execute runs give better ux when we did the execute match run time was minutes as we ve added more features it s now taking just under three hours on a pretty fast machine this hits two timeouts minute login refresh minute nginx request timer if the user were to keep... | 1 |

16,672 | 21,776,241,278 | IssuesEvent | 2022-05-13 14:04:05 | camunda/zeebe | https://api.github.com/repos/camunda/zeebe | closed | Deprecate Cancel Job command | kind/toil scope/broker team/process-automation area/maintainability | **Description**