Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

75,921 | 15,495,711,666 | IssuesEvent | 2021-03-11 01:21:20 | nicktombeur/naturalcolors | https://api.github.com/repos/nicktombeur/naturalcolors | opened | CVE-2020-36181 (High) detected in jackson-databind-2.9.9.jar | security vulnerability | ## CVE-2020-36181 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.9.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2020-36181 (High) detected in jackson-databind-2.9.9.jar - ## CVE-2020-36181 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.9.jar</b></p></summary>

<p>General... | non_process | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file naturalcolors back end pom xml ... | 0 |

11,032 | 13,838,643,370 | IssuesEvent | 2020-10-14 06:38:19 | amor71/LiuAlgoTrader | https://api.github.com/repos/amor71/LiuAlgoTrader | closed | Expose additional configuration options from config to System Variable or TOML File parameters | in-process | Please expose the following.

Right now I see some of these available in config file. would be good to have a full control over these parameter by strategy.

1. Risk

2. Set Portfolio value

3. Number of minutes before market close for closing positions

4. Stoploss

| 1.0 | Expose additional configuration options from config to System Variable or TOML File parameters - Please expose the following.

Right now I see some of these available in config file. would be good to have a full control over these parameter by strategy.

1. Risk

2. Set Portfolio value

3. Number of minutes before... | process | expose additional configuration options from config to system variable or toml file parameters please expose the following right now i see some of these available in config file would be good to have a full control over these parameter by strategy risk set portfolio value number of minutes before... | 1 |

320,455 | 23,811,217,304 | IssuesEvent | 2022-09-04 19:45:30 | MudBlazor/MudBlazor | https://api.github.com/repos/MudBlazor/MudBlazor | closed | Menu: press goes through to component below on mobile | question won't fix has workaround answered needs documentation | ### Bug type

Component

### Component name

MudMenu

### What happened?

with the link

open dev tools (f12) and select mobile view - select ipad air

open menu

press menu 1

the sort item from the table below will open

-this only happen on touch devices

### Expected behavior

sort menu will not open

### Reprod... | 1.0 | Menu: press goes through to component below on mobile - ### Bug type

Component

### Component name

MudMenu

### What happened?

with the link

open dev tools (f12) and select mobile view - select ipad air

open menu

press menu 1

the sort item from the table below will open

-this only happen on touch devices

##... | non_process | menu press goes through to component below on mobile bug type component component name mudmenu what happened with the link open dev tools and select mobile view select ipad air open menu press menu the sort item from the table below will open this only happen on touch devices ... | 0 |

279,437 | 21,160,379,524 | IssuesEvent | 2022-04-07 08:49:56 | bounswe/bounswe2022group6 | https://api.github.com/repos/bounswe/bounswe2022group6 | closed | Class Diagram: Creating a Class for Account | Type: Documentation Priority: High State: In Progess | Fields and methods of the Account class need to be specified on the LucidChart diagram.

@iremmer is the one responsible for this task.

@berfinsimsekk is the reviewer of this task.

Deadline: 04.04.2022 Sunday 23.59 | 1.0 | Class Diagram: Creating a Class for Account - Fields and methods of the Account class need to be specified on the LucidChart diagram.

@iremmer is the one responsible for this task.

@berfinsimsekk is the reviewer of this task.

Deadline: 04.04.2022 Sunday 23.59 | non_process | class diagram creating a class for account fields and methods of the account class need to be specified on the lucidchart diagram iremmer is the one responsible for this task berfinsimsekk is the reviewer of this task deadline sunday | 0 |

35,410 | 7,734,484,043 | IssuesEvent | 2018-05-27 01:53:59 | bridgedotnet/Bridge | https://api.github.com/repos/bridgedotnet/Bridge | closed | StartsWith method does not compare strings correctly | defect premium | Check sample:

### Steps To Reproduce

https://deck.net/3c0a66eae87385cde73a43f5cc5a433b

https://dotnetfiddle.net/Lg7R3U

```csharp

public class Program

{

public static void Main()

{

Console.WriteLine("V".StartsWith("v", StringComparison.InvariantCultureIgnoreCase));

}

}

```

### Ex... | 1.0 | StartsWith method does not compare strings correctly - Check sample:

### Steps To Reproduce

https://deck.net/3c0a66eae87385cde73a43f5cc5a433b

https://dotnetfiddle.net/Lg7R3U

```csharp

public class Program

{

public static void Main()

{

Console.WriteLine("V".StartsWith("v", StringComparison... | non_process | startswith method does not compare strings correctly check sample steps to reproduce csharp public class program public static void main console writeline v startswith v stringcomparison invariantcultureignorecase expected result t... | 0 |

8,108 | 20,968,779,721 | IssuesEvent | 2022-03-28 09:23:00 | MicrosoftDocs/architecture-center | https://api.github.com/repos/MicrosoftDocs/architecture-center | closed | Error regarding blob name | cxp triaged product-question architecture-center/svc Pri2 azure-guide/subsvc |

[I want to read a csv file located in the blob storage. However, I am getting error that specified blob does not exist. I am confused what should be the blob name. I gave LOCALFILENAME as my csv file name seen in the blob container]

---

#### Document Details

⚠ *Do not edit this section. It is required for do... | 1.0 | Error regarding blob name -

[I want to read a csv file located in the blob storage. However, I am getting error that specified blob does not exist. I am confused what should be the blob name. I gave LOCALFILENAME as my csv file name seen in the blob container]

---

#### Document Details

⚠ *Do not edit this se... | non_process | error regarding blob name document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source service architecture center sub service azure guide ... | 0 |

19,187 | 25,309,047,625 | IssuesEvent | 2022-11-17 16:05:33 | TUM-Dev/NavigaTUM | https://api.github.com/repos/TUM-Dev/NavigaTUM | closed | [General] Hochschule für Politik | webform delete-after-processing general | Bitte auch die Hochschule für Politik direkt bei der Übersicht zum Stammgelände anzeigen. Sonst sieht es echt toll aus, danke! | 1.0 | [General] Hochschule für Politik - Bitte auch die Hochschule für Politik direkt bei der Übersicht zum Stammgelände anzeigen. Sonst sieht es echt toll aus, danke! | process | hochschule für politik bitte auch die hochschule für politik direkt bei der übersicht zum stammgelände anzeigen sonst sieht es echt toll aus danke | 1 |

1,647 | 4,270,987,583 | IssuesEvent | 2016-07-13 09:24:38 | withanage/mpt | https://api.github.com/repos/withanage/mpt | closed | post processer script | post process | Sollte in post-processor eingebaut werden, damit die interviews speaker geschnitten werden

(\<p>)(\s*)(\w+)(:) | 1.0 | post processer script - Sollte in post-processor eingebaut werden, damit die interviews speaker geschnitten werden

(\<p>)(\s*)(\w+)(:) | process | post processer script sollte in post processor eingebaut werden damit die interviews speaker geschnitten werden s w | 1 |

685 | 3,172,211,051 | IssuesEvent | 2015-09-23 06:18:10 | e-government-ua/i | https://api.github.com/repos/e-government-ua/i | closed | На главном дашборде скрывать для Киева два элемента расширенного фильтра "Область и Город" | active hi priority In process of testing question test version | https://test.kiev.igov.org.ua

а потом сделать видимым, для остальных т.к. сейчас это было временно скрыто | 1.0 | На главном дашборде скрывать для Киева два элемента расширенного фильтра "Область и Город" - https://test.kiev.igov.org.ua

а потом сделать видимым, для остальных т.к. сейчас это было временно скрыто | process | на главном дашборде скрывать для киева два элемента расширенного фильтра область и город а потом сделать видимым для остальных т к сейчас это было временно скрыто | 1 |

9,473 | 12,467,568,638 | IssuesEvent | 2020-05-28 17:17:31 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | The latest resource checks aren't documented | Pri1 devops-cicd-process/tech devops/prod | Currently only the manual approval and evaluate artifact checks are mentioned in the documentation. There isn't any information on the others that are available now. It looks like more are in the works, which is awesome! But I'm looking forward to finding out more information on the existing ones in the meantime.

--... | 1.0 | The latest resource checks aren't documented - Currently only the manual approval and evaluate artifact checks are mentioned in the documentation. There isn't any information on the others that are available now. It looks like more are in the works, which is awesome! But I'm looking forward to finding out more informat... | process | the latest resource checks aren t documented currently only the manual approval and evaluate artifact checks are mentioned in the documentation there isn t any information on the others that are available now it looks like more are in the works which is awesome but i m looking forward to finding out more informat... | 1 |

13,391 | 15,865,917,077 | IssuesEvent | 2021-04-08 15:12:25 | COPIM/open-book-collective | https://api.github.com/repos/COPIM/open-book-collective | opened | Alerts about new publications | membership management (pillar 4) not needed organisational process userstory | As a librarian or institutional decision-maker visiting an open access initiative’s profile page ...

...I want, for OABPs, alerts about new publications by specific publishers, or in specific topic areas ...

... so that I can inform faculty members of new content

As a scholar...

I want to receive alerts abo... | 1.0 | Alerts about new publications - As a librarian or institutional decision-maker visiting an open access initiative’s profile page ...

...I want, for OABPs, alerts about new publications by specific publishers, or in specific topic areas ...

... so that I can inform faculty members of new content

As a scholar...... | process | alerts about new publications as a librarian or institutional decision maker visiting an open access initiative’s profile page i want for oabps alerts about new publications by specific publishers or in specific topic areas so that i can inform faculty members of new content as a scholar ... | 1 |

250,288 | 27,066,433,877 | IssuesEvent | 2023-02-14 01:03:22 | DevOps-PM-PGDip-2022-2023/easybuggy4django.old | https://api.github.com/repos/DevOps-PM-PGDip-2022-2023/easybuggy4django.old | opened | CVE-2021-33430 (Medium) detected in numpy-1.14.2.zip | security vulnerability | ## CVE-2021-33430 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>numpy-1.14.2.zip</b></p></summary>

<p>NumPy is the fundamental package for array computing with Python.</p>

<p>Libra... | True | CVE-2021-33430 (Medium) detected in numpy-1.14.2.zip - ## CVE-2021-33430 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>numpy-1.14.2.zip</b></p></summary>

<p>NumPy is the fundamenta... | non_process | cve medium detected in numpy zip cve medium severity vulnerability vulnerable library numpy zip numpy is the fundamental package for array computing with python library home page a href dependency hierarchy x numpy zip vulnerable library found in b... | 0 |

39,366 | 5,231,995,116 | IssuesEvent | 2017-01-30 07:05:24 | c2corg/v6_api | https://api.github.com/repos/c2corg/v6_api | closed | Associated images sorting | fixed and ready for testing Images | As for now, it seems that the images listed in the a document's ``associations`` attribute are id-sorted. It would make more sense to **have them chronologically sorted using the EXIF date** info if available.

This is especially useful for outings to make sure associated images are shown in the order they have been ... | 1.0 | Associated images sorting - As for now, it seems that the images listed in the a document's ``associations`` attribute are id-sorted. It would make more sense to **have them chronologically sorted using the EXIF date** info if available.

This is especially useful for outings to make sure associated images are shown ... | non_process | associated images sorting as for now it seems that the images listed in the a document s associations attribute are id sorted it would make more sense to have them chronologically sorted using the exif date info if available this is especially useful for outings to make sure associated images are shown ... | 0 |

518,670 | 15,032,193,082 | IssuesEvent | 2021-02-02 09:52:51 | eventespresso/barista | https://api.github.com/repos/eventespresso/barista | closed | Flatten Apollo Cache | C: data systems 🗑 D: Packages 📦 P3: med priority 😐 S:1 new 👶🏻 T: enhancement ✨ | Please forgive me for any misconceptions in the following description.

Currently our entities are stored in the Apollo cache using the query keys as accessors. As the complexity of our entity relations increases we need to add additional cache stores because of the differing query keys. This creates a lot of complic... | 1.0 | Flatten Apollo Cache - Please forgive me for any misconceptions in the following description.

Currently our entities are stored in the Apollo cache using the query keys as accessors. As the complexity of our entity relations increases we need to add additional cache stores because of the differing query keys. This c... | non_process | flatten apollo cache please forgive me for any misconceptions in the following description currently our entities are stored in the apollo cache using the query keys as accessors as the complexity of our entity relations increases we need to add additional cache stores because of the differing query keys this c... | 0 |

4,817 | 7,704,323,019 | IssuesEvent | 2018-05-21 11:49:25 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | Childprocess behaving differently with exec than spawn | child_process | <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the template below as you're able.

Version: 8.11.2 LTS

Platform: ... | 1.0 | Childprocess behaving differently with exec than spawn - <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the template... | process | childprocess behaving differently with exec than spawn thank you for reporting an issue this issue tracker is for bugs and issues found within node js core if you require more general support please file an issue on our help repo please fill in as much of the template below as you re able ver... | 1 |

10,144 | 13,044,162,528 | IssuesEvent | 2020-07-29 03:47:33 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `Lock` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `Lock` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @andylokandy

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rpn_expr) ... | 2.0 | UCP: Migrate scalar function `Lock` from TiDB -

## Description

Port the scalar function `Lock` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @andylokandy

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/... | process | ucp migrate scalar function lock from tidb description port the scalar function lock from tidb to coprocessor score mentor s andylokandy recommended skills rust programming learning materials already implemented expressions ported from tidb | 1 |

17,183 | 22,764,702,522 | IssuesEvent | 2022-07-08 02:16:53 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | System.ServiceProcess: Backport MS Docs documentation to triple slash | documentation area-System.ServiceProcess | We are working on a [new documentation process plan](https://github.com/dotnet/runtime/issues/44969#issuecomment-788536998), in which the main objective is to make triple slash comments the source of truth for documentation, instead of MS Docs: We want developers/maintainers to have an easier time maintaining the docum... | 1.0 | System.ServiceProcess: Backport MS Docs documentation to triple slash - We are working on a [new documentation process plan](https://github.com/dotnet/runtime/issues/44969#issuecomment-788536998), in which the main objective is to make triple slash comments the source of truth for documentation, instead of MS Docs: We ... | process | system serviceprocess backport ms docs documentation to triple slash we are working on a in which the main objective is to make triple slash comments the source of truth for documentation instead of ms docs we want developers maintainers to have an easier time maintaining the documentation for their apis y... | 1 |

18,322 | 24,440,356,620 | IssuesEvent | 2022-10-06 14:13:33 | nextflow-io/nextflow | https://api.github.com/repos/nextflow-io/nextflow | opened | Processes as operator closures | lang/operators lang/processes | Based on this discussion: https://github.com/nextflow-io/nextflow/discussions/2521#discussioncomment-2082904

Processes and operators are functionally equivalent, in that they transform input channels into output channels. The different is that operators execute native Groovy code while processes execute bash scripts... | 1.0 | Processes as operator closures - Based on this discussion: https://github.com/nextflow-io/nextflow/discussions/2521#discussioncomment-2082904

Processes and operators are functionally equivalent, in that they transform input channels into output channels. The different is that operators execute native Groovy code whi... | process | processes as operator closures based on this discussion processes and operators are functionally equivalent in that they transform input channels into output channels the different is that operators execute native groovy code while processes execute bash scripts ultimately a process is just syntax sugar for ... | 1 |

158,827 | 24,902,232,278 | IssuesEvent | 2022-10-28 22:32:44 | Office-of-Digital-Services/California-State-Web-Template-Website | https://api.github.com/repos/Office-of-Digital-Services/California-State-Web-Template-Website | closed | Create new Visual Design landing page that will combine content from Typography, Color Schemes, and Icons pages | eng design P1 | Combine content from typography, color schemes and icons pages into one Visual Design page. For icons section make some intro text and the link to separate icons page.

Add "On this page" page navigation with anchors to Typography, Color Schemes and Icons sections | 1.0 | Create new Visual Design landing page that will combine content from Typography, Color Schemes, and Icons pages - Combine content from typography, color schemes and icons pages into one Visual Design page. For icons section make some intro text and the link to separate icons page.

Add "On this page" page navigation... | non_process | create new visual design landing page that will combine content from typography color schemes and icons pages combine content from typography color schemes and icons pages into one visual design page for icons section make some intro text and the link to separate icons page add on this page page navigation... | 0 |

316,575 | 27,167,215,591 | IssuesEvent | 2023-02-17 16:14:27 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | reopened | Fix creation_ops.test_torch_tensor | PyTorch Frontend Sub Task Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4013142181/jobs/6892176242" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/4013142181/jobs/6892176242" rel="noopener ... | 1.0 | Fix creation_ops.test_torch_tensor - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4013142181/jobs/6892176242" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/40131... | non_process | fix creation ops test torch tensor tensorflow img src torch img src numpy img src jax img src failed ivy tests test ivy test frontends test torch test creation ops py test torch tensor e assertionerror e falsifying examp... | 0 |

60,527 | 17,023,448,599 | IssuesEvent | 2021-07-03 02:05:09 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Coastline Error checker unavailable | Component: utils Priority: major Resolution: invalid Type: defect | **[Submitted to the original trac issue database at 9.35am, Monday, 27th July 2009]**

The coastline error checker[1] has been offline since June 2009. Not only does this hinder fixing of coastline errors, but since it generates the shapefiles used for the Mapnik caostline data it means that the Mapnik layer is outdate... | 1.0 | Coastline Error checker unavailable - **[Submitted to the original trac issue database at 9.35am, Monday, 27th July 2009]**

The coastline error checker[1] has been offline since June 2009. Not only does this hinder fixing of coastline errors, but since it generates the shapefiles used for the Mapnik caostline data it ... | non_process | coastline error checker unavailable the coastline error checker has been offline since june not only does this hinder fixing of coastline errors but since it generates the shapefiles used for the mapnik caostline data it means that the mapnik layer is outdated | 0 |

16,943 | 4,107,649,385 | IssuesEvent | 2016-06-06 13:46:25 | apinf/api-umbrella-dashboard | https://api.github.com/repos/apinf/api-umbrella-dashboard | closed | 'Edit API backend' view in 'Suomi' language with partly translated text strings | API Backend bug Documentation Viewer in progress | Steps:

1. Visit https://nightly.apinf.io

2. Change language to 'Suomi'

3. Login

4. Go to dashboard

5. Add API Backend

6. Go to Manage API Backends

7. Click on 'Edit'

8. Go to 'Documentation'

Findings:

- Title text strings for input fields shown in English language

- Title text for 'Brow... | 1.0 | 'Edit API backend' view in 'Suomi' language with partly translated text strings - Steps:

1. Visit https://nightly.apinf.io

2. Change language to 'Suomi'

3. Login

4. Go to dashboard

5. Add API Backend

6. Go to Manage API Backends

7. Click on 'Edit'

8. Go to 'Documentation'

Findings:

- Tit... | non_process | edit api backend view in suomi language with partly translated text strings steps visit change language to suomi login go to dashboard add api backend go to manage api backends click on edit go to documentation findings title text strings for inp... | 0 |

5,671 | 8,556,325,892 | IssuesEvent | 2018-11-08 12:50:14 | kiwicom/orbit-components | https://api.github.com/repos/kiwicom/orbit-components | closed | <Radio /> pass JSX to the label | Enhancement Processing | **Is your feature request related to a problem? Please describe.**

I would like to be able to pass JSX to the label of the Radio component so that I can nest another component inside the label. See the screenshot below for an example use case.

**Describe the solution you'd like**

To be able to pass JSX as a prop t... | 1.0 | <Radio /> pass JSX to the label - **Is your feature request related to a problem? Please describe.**

I would like to be able to pass JSX to the label of the Radio component so that I can nest another component inside the label. See the screenshot below for an example use case.

**Describe the solution you'd like**

... | process | pass jsx to the label is your feature request related to a problem please describe i would like to be able to pass jsx to the label of the radio component so that i can nest another component inside the label see the screenshot below for an example use case describe the solution you d like to be ab... | 1 |

15,956 | 20,173,302,597 | IssuesEvent | 2022-02-10 12:24:48 | ooi-data/CE01ISSM-MFD35-04-ADCPTM000-recovered_inst-adcpt_m_wvs_recovered | https://api.github.com/repos/ooi-data/CE01ISSM-MFD35-04-ADCPTM000-recovered_inst-adcpt_m_wvs_recovered | opened | 🛑 Processing failed: ZeroDivisionError | process | ## Overview

`ZeroDivisionError` found in `processing_task` task during run ended on 2022-02-10T12:24:47.545345.

## Details

Flow name: `CE01ISSM-MFD35-04-ADCPTM000-recovered_inst-adcpt_m_wvs_recovered`

Task name: `processing_task`

Error type: `ZeroDivisionError`

Error message: division by zero

<details>

<summary>Tr... | 1.0 | 🛑 Processing failed: ZeroDivisionError - ## Overview

`ZeroDivisionError` found in `processing_task` task during run ended on 2022-02-10T12:24:47.545345.

## Details

Flow name: `CE01ISSM-MFD35-04-ADCPTM000-recovered_inst-adcpt_m_wvs_recovered`

Task name: `processing_task`

Error type: `ZeroDivisionError`

Error message... | process | 🛑 processing failed zerodivisionerror overview zerodivisionerror found in processing task task during run ended on details flow name recovered inst adcpt m wvs recovered task name processing task error type zerodivisionerror error message division by zero traceback ... | 1 |

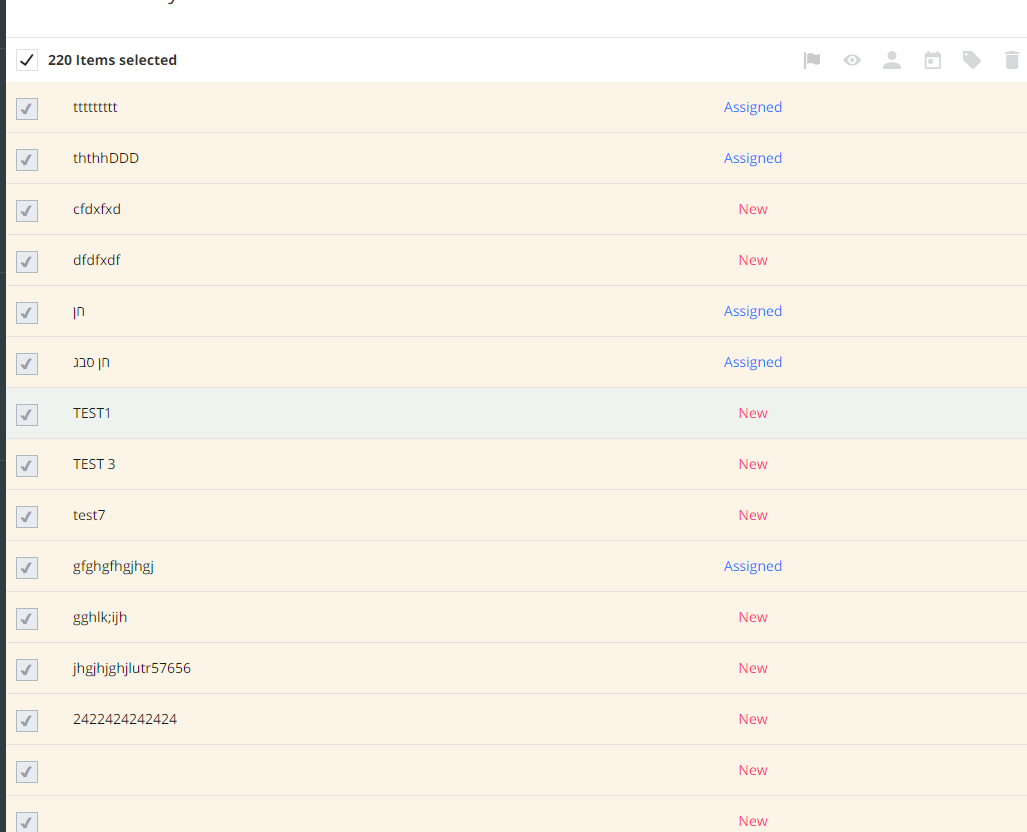

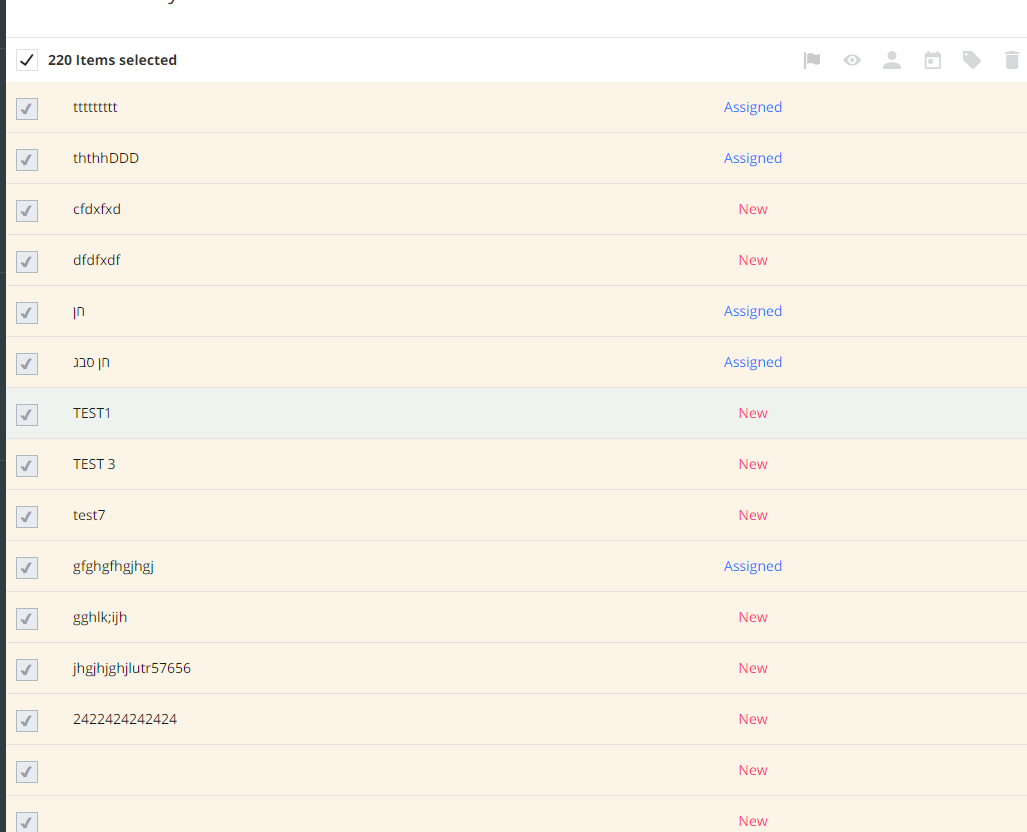

6,013 | 8,822,090,178 | IssuesEvent | 2019-01-02 07:33:49 | linnovate/root | https://api.github.com/repos/linnovate/root | closed | multiple selection my tasks bug | 2.0.7 Fixed Process bug | click on ICU

click on watched tasks twice

click on a multiple selection option on a task

it selects every task on the list and you cant un-select it after

| 1.0 | multiple selection my tasks bug - click on ICU

click on watched tasks twice

click on a multiple selection option on a task

it selects every task on the list and you cant un-select it after

| process | multiple selection my tasks bug click on icu click on watched tasks twice click on a multiple selection option on a task it selects every task on the list and you cant un select it after | 1 |

34,979 | 7,497,166,764 | IssuesEvent | 2018-04-08 17:00:56 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | [Bug?] Example of Bland's Rule for optimize.linprog (simplex) cycling/preventing termination | defect scipy.optimize | Forcing Bland's Rule with the "bland":True options seems to prevent the code example below from terminating, while the purpose of Bland's Rule is to make sure it terminates. The problem is solved quickly when not forcing Bland's Rule, giving an optimal value of -6.044533469014448.

By "not terminating" i mean that it... | 1.0 | [Bug?] Example of Bland's Rule for optimize.linprog (simplex) cycling/preventing termination - Forcing Bland's Rule with the "bland":True options seems to prevent the code example below from terminating, while the purpose of Bland's Rule is to make sure it terminates. The problem is solved quickly when not forcing Blan... | non_process | example of bland s rule for optimize linprog simplex cycling preventing termination forcing bland s rule with the bland true options seems to prevent the code example below from terminating while the purpose of bland s rule is to make sure it terminates the problem is solved quickly when not forcing bland s r... | 0 |

15,815 | 20,014,061,371 | IssuesEvent | 2022-02-01 10:12:09 | gradle/gradle | https://api.github.com/repos/gradle/gradle | closed | Wrong code gets generated when using MapStruct decorators with incremental compilation in Gradle 7.3 | @execution in:annotation-processing | I've ran into a problem with using MapStruct's annotation processor with incremental compilation after upgrading to Gradle 7.3, I have also created a MapStruct issue here: https://github.com/mapstruct/mapstruct/issues/2682

### Expected Behavior

Expected the generated `PersonaMapperImpl.java` after incremental com... | 1.0 | Wrong code gets generated when using MapStruct decorators with incremental compilation in Gradle 7.3 - I've ran into a problem with using MapStruct's annotation processor with incremental compilation after upgrading to Gradle 7.3, I have also created a MapStruct issue here: https://github.com/mapstruct/mapstruct/issues... | process | wrong code gets generated when using mapstruct decorators with incremental compilation in gradle i ve ran into a problem with using mapstruct s annotation processor with incremental compilation after upgrading to gradle i have also created a mapstruct issue here expected behavior expected the ge... | 1 |

10,347 | 13,172,726,999 | IssuesEvent | 2020-08-11 18:58:06 | hashgraph/hedera-mirror-node | https://api.github.com/repos/hashgraph/hedera-mirror-node | closed | Kubernetes: Continuous Deployment from master | P2 enhancement process | **Problem**

We should continuously deploy master to a development environment Kubernetes cluster.

**Solution**

- Implement #625

- Use FluxCD

**Alternatives**

**Additional Context**

| 1.0 | Kubernetes: Continuous Deployment from master - **Problem**

We should continuously deploy master to a development environment Kubernetes cluster.

**Solution**

- Implement #625

- Use FluxCD

**Alternatives**

**Additional Context**

| process | kubernetes continuous deployment from master problem we should continuously deploy master to a development environment kubernetes cluster solution implement use fluxcd alternatives additional context | 1 |

22,126 | 30,671,535,739 | IssuesEvent | 2023-07-25 23:08:46 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | opilot 1.0.0.dev0 has 2 GuardDog issues | guarddog exec-base64 silent-process-execution | https://pypi.org/project/opilot

https://inspector.pypi.io/project/opilot

```{

"dependency": "opilot",

"version": "1.0.0.dev0",

"result": {

"issues": 2,

"errors": {},

"results": {

"silent-process-execution": [

{

"location": "opilot-1.0.0.dev0/sky/skylet/log_lib.py:219",

... | 1.0 | opilot 1.0.0.dev0 has 2 GuardDog issues - https://pypi.org/project/opilot

https://inspector.pypi.io/project/opilot

```{

"dependency": "opilot",

"version": "1.0.0.dev0",

"result": {

"issues": 2,

"errors": {},

"results": {

"silent-process-execution": [

{

"location": "opilot-1.0.0... | process | opilot has guarddog issues dependency opilot version result issues errors results silent process execution location opilot sky skylet log lib py code subprocess pope... | 1 |

22,613 | 31,837,252,653 | IssuesEvent | 2023-09-14 14:12:48 | h4sh5/npm-auto-scanner | https://api.github.com/repos/h4sh5/npm-auto-scanner | opened | @plone/volto 16.24.0 has 29 guarddog issues | npm-install-script shady-links npm-silent-process-execution | ```{"npm-install-script":[{"code":" \"postinstall\": \"make patches\",","location":"package/package.json:36","message":"The package.json has a script automatically running when the package is installed"},{"code":" \"prepare\": \"husky install\",","location":"package/package.json:41","message":"The package.json ha... | 1.0 | @plone/volto 16.24.0 has 29 guarddog issues - ```{"npm-install-script":[{"code":" \"postinstall\": \"make patches\",","location":"package/package.json:36","message":"The package.json has a script automatically running when the package is installed"},{"code":" \"prepare\": \"husky install\",","location":"package/p... | process | plone volto has guarddog issues npm install script npm silent process execution n t t tdetached true n t t tstdio ignore n t t unref location package src addons volto test addon node modules update notifier update notifier js message this package is silently executing anot... | 1 |

114,211 | 17,195,798,287 | IssuesEvent | 2021-07-16 17:09:39 | harrinry/carbon | https://api.github.com/repos/harrinry/carbon | opened | CVE-2020-28500 (Medium) detected in lodash-4.17.15.tgz | security vulnerability | ## CVE-2020-28500 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-4.17.15.tgz</b></p></summary>

<p>Lodash modular utilities.</p>

<p>Library home page: <a href="https://registr... | True | CVE-2020-28500 (Medium) detected in lodash-4.17.15.tgz - ## CVE-2020-28500 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-4.17.15.tgz</b></p></summary>

<p>Lodash modular util... | non_process | cve medium detected in lodash tgz cve medium severity vulnerability vulnerable library lodash tgz lodash modular utilities library home page a href path to dependency file carbon package json path to vulnerable library carbon node modules commitlint ensure node mod... | 0 |

18,324 | 24,443,109,370 | IssuesEvent | 2022-10-06 15:49:35 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | [processor/transform]: Allow transformation of Trace attribute keys | good first issue priority:p2 processor/transform pkg/ottl | **Is your feature request related to a problem? Please describe.**

I want to able to rename Trace attribute keys with the transform processor.

**Describe the solution you'd like**

```yaml

transform/key_rename:

traces:

queries:

- replace_all_patterns(attributes.keys, "^prefix_+", "prefix.")

... | 1.0 | [processor/transform]: Allow transformation of Trace attribute keys - **Is your feature request related to a problem? Please describe.**

I want to able to rename Trace attribute keys with the transform processor.

**Describe the solution you'd like**

```yaml

transform/key_rename:

traces:

queries:

... | process | allow transformation of trace attribute keys is your feature request related to a problem please describe i want to able to rename trace attribute keys with the transform processor describe the solution you d like yaml transform key rename traces queries replace all p... | 1 |

16,959 | 22,320,861,669 | IssuesEvent | 2022-06-14 06:12:58 | hashgraph/hedera-json-rpc-relay | https://api.github.com/repos/hashgraph/hedera-json-rpc-relay | closed | Add acceptance test support for eth_getTransactionReceipt | enhancement P2 process | ### Problem

The current acceptance tests implemented in #119 were not able to include `eth_getTransactionReceipt` since it kept returning

```

err: {

"type": "PrecheckStatusError",

"message": "transaction 0.0.2@1654029652.985219400 failed precheck with status INVALID_ACCOUNT_ID",

"stack":... | 1.0 | Add acceptance test support for eth_getTransactionReceipt - ### Problem

The current acceptance tests implemented in #119 were not able to include `eth_getTransactionReceipt` since it kept returning

```

err: {

"type": "PrecheckStatusError",

"message": "transaction 0.0.2@1654029652.985219400 fail... | process | add acceptance test support for eth gettransactionreceipt problem the current acceptance tests implemented in were not able to include eth gettransactionreceipt since it kept returning err type precheckstatuserror message transaction failed precheck with st... | 1 |

11,486 | 13,482,196,140 | IssuesEvent | 2020-09-11 00:56:50 | enigma-dev/enigma-dev | https://api.github.com/repos/enigma-dev/enigma-dev | closed | Show Debug Message | Compatibility Editor | It seems 8 years ago polygonz (7a00fb0fba7c4c1cf767e6929810bc89edf66604) implemented `show_debug_message` in the regular terminal I/O instead of the Widget Systems. Need to test GM8.1 and GMSv1.4 to see what the behavior is. User reported that ENIGMA's only shows the message in debug mode, while GMSv1.4 will show it in... | True | Show Debug Message - It seems 8 years ago polygonz (7a00fb0fba7c4c1cf767e6929810bc89edf66604) implemented `show_debug_message` in the regular terminal I/O instead of the Widget Systems. Need to test GM8.1 and GMSv1.4 to see what the behavior is. User reported that ENIGMA's only shows the message in debug mode, while GM... | non_process | show debug message it seems years ago polygonz implemented show debug message in the regular terminal i o instead of the widget systems need to test and to see what the behavior is user reported that enigma s only shows the message in debug mode while will show it in run and debug mode | 0 |

96,144 | 3,964,995,554 | IssuesEvent | 2016-05-03 05:26:25 | firelab/windninja | https://api.github.com/repos/firelab/windninja | closed | UCAR NAM is down | component:wx priority:low severity:high | On there end, even using the web interface I get:

`Message missing required fields: cdmHash` | 1.0 | UCAR NAM is down - On there end, even using the web interface I get:

`Message missing required fields: cdmHash` | non_process | ucar nam is down on there end even using the web interface i get message missing required fields cdmhash | 0 |

5,133 | 7,918,567,851 | IssuesEvent | 2018-07-04 13:43:37 | emacs-ess/ESS | https://api.github.com/repos/emacs-ess/ESS | closed | org-babel-R output broken by recent ESS commit | literate:org process:eval | Recent commit 74d5fbbcde8c2aea5d87c82875e37c134d1af4cb ("Improve on + + replacement and offset detection") has broken org-babel-R output.

In particular consider the following example:

```

#+BEGIN_SRC R :session :results output

print("hello")

#+END_SRC

```

Before the commit, org-babel successfully prints:

``... | 1.0 | org-babel-R output broken by recent ESS commit - Recent commit 74d5fbbcde8c2aea5d87c82875e37c134d1af4cb ("Improve on + + replacement and offset detection") has broken org-babel-R output.

In particular consider the following example:

```

#+BEGIN_SRC R :session :results output

print("hello")

#+END_SRC

```

Befo... | process | org babel r output broken by recent ess commit recent commit improve on replacement and offset detection has broken org babel r output in particular consider the following example begin src r session results output print hello end src before the commit org babel successfully p... | 1 |

667 | 3,137,196,236 | IssuesEvent | 2015-09-11 00:14:48 | MozillaFoundation/plan | https://api.github.com/repos/MozillaFoundation/plan | closed | PROCESS: Figure out and commit to quality-ensuring process | process | Given the stakes of these pages, it is highly desirable to establish a checklist and run through it often. I suspect we'll need two checklists, one for new pages, and one for changes to pages.

## For New Flows (e.g. new forms, new donation flows, new payment processors)

- [ ] Dev: GA tracking in place with the r... | 1.0 | PROCESS: Figure out and commit to quality-ensuring process - Given the stakes of these pages, it is highly desirable to establish a checklist and run through it often. I suspect we'll need two checklists, one for new pages, and one for changes to pages.

## For New Flows (e.g. new forms, new donation flows, new paym... | process | process figure out and commit to quality ensuring process given the stakes of these pages it is highly desirable to establish a checklist and run through it often i suspect we ll need two checklists one for new pages and one for changes to pages for new flows e g new forms new donation flows new paym... | 1 |

4,823 | 24,857,939,086 | IssuesEvent | 2022-10-27 05:15:40 | aws/aws-sam-cli | https://api.github.com/repos/aws/aws-sam-cli | closed | Bug: sam invoke local - Docker 404 Client Error: Not Found | type/question blocked/more-info-needed maintainer/need-followup | ### Description:

I'm having a hard time trying to locally invoking lambda code with hello-world templates

### Steps to reproduce:

`sam init -r python3.8`

`1`

`1`

`N`

`sam-app-py38`

`cd sam-app-py38`

`sam build --debug`

2022-10-19 19:05:12,888 | Telemetry endpoint conf... | True | Bug: sam invoke local - Docker 404 Client Error: Not Found - ### Description:

I'm having a hard time trying to locally invoking lambda code with hello-world templates

### Steps to reproduce:

`sam init -r python3.8`

`1`

`1`

`N`

`sam-app-py38`

`cd sam-app-py38`

`sam build --debug`

... | non_process | bug sam invoke local docker client error not found description i m having a hard time trying to locally invoking lambda code with hello world templates steps to reproduce sam init r n sam app cd sam app sam build debug ... | 0 |

263,647 | 28,047,903,624 | IssuesEvent | 2023-03-29 01:34:42 | kapseliboi/vaadin-date-picker | https://api.github.com/repos/kapseliboi/vaadin-date-picker | closed | CVE-2021-23440 (High) detected in set-value-2.0.1.tgz - autoclosed | Mend: dependency security vulnerability | ## CVE-2021-23440 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>set-value-2.0.1.tgz</b></p></summary>

<p>Create nested values and any intermediaries using dot notation (`'a.b.c'`) pa... | True | CVE-2021-23440 (High) detected in set-value-2.0.1.tgz - autoclosed - ## CVE-2021-23440 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>set-value-2.0.1.tgz</b></p></summary>

<p>Create n... | non_process | cve high detected in set value tgz autoclosed cve high severity vulnerability vulnerable library set value tgz create nested values and any intermediaries using dot notation a b c paths library home page a href path to dependency file package json path to vu... | 0 |

122,644 | 16,195,130,073 | IssuesEvent | 2021-05-04 13:45:05 | devmount/third-stats | https://api.github.com/repos/devmount/third-stats | closed | Empty chart (tooltip only) when only one single data point on abscissa | design enhancement ux | **Describe the bug**

If only one entry on x-axis exists (e.g. only one year in the years chart), the chart appears empty. The data point exists, but is only visible on mouseover via tooltip.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to stats page

2. Set date range to one year only (e.g. 2020-01-01 ... | 1.0 | Empty chart (tooltip only) when only one single data point on abscissa - **Describe the bug**

If only one entry on x-axis exists (e.g. only one year in the years chart), the chart appears empty. The data point exists, but is only visible on mouseover via tooltip.

**To Reproduce**

Steps to reproduce the behavior:

... | non_process | empty chart tooltip only when only one single data point on abscissa describe the bug if only one entry on x axis exists e g only one year in the years chart the chart appears empty the data point exists but is only visible on mouseover via tooltip to reproduce steps to reproduce the behavior ... | 0 |

13,393 | 15,866,628,738 | IssuesEvent | 2021-04-08 15:57:17 | digitalmethodsinitiative/4cat | https://api.github.com/repos/digitalmethodsinitiative/4cat | closed | Greentext processor | (mostly) back-end enhancement processors | A processor, which extracts greentexts (to then split into sentences, or something?) | 1.0 | Greentext processor - A processor, which extracts greentexts (to then split into sentences, or something?) | process | greentext processor a processor which extracts greentexts to then split into sentences or something | 1 |

423,720 | 12,301,099,287 | IssuesEvent | 2020-05-11 14:55:51 | Olyno/oden | https://api.github.com/repos/Olyno/oden | opened | [module: fs] - RoadMap | feature module: fs priority: high | Here is the roadmap for the ``fs`` module.

* - [ ] fs.access(path[, mode], callback)

* - [ ] fs.accessSync(path[, mode])

* - [ ] fs.appendFile(path, data[, options], callback)

* - [ ] fs.appendFileSync(path, data[, options])

* - [ ] fs.chmod(path, mode, callback)

* - [ ] fs.chmodSync(path, mode)

* - [ ]... | 1.0 | [module: fs] - RoadMap - Here is the roadmap for the ``fs`` module.

* - [ ] fs.access(path[, mode], callback)

* - [ ] fs.accessSync(path[, mode])

* - [ ] fs.appendFile(path, data[, options], callback)

* - [ ] fs.appendFileSync(path, data[, options])

* - [ ] fs.chmod(path, mode, callback)

* - [ ] fs.chmod... | non_process | roadmap here is the roadmap for the fs module fs access path callback fs accesssync path fs appendfile path data callback fs appendfilesync path data fs chmod path mode callback fs chmodsync path mode fs chown path uid gid callbac... | 0 |

17,506 | 23,317,597,479 | IssuesEvent | 2022-08-08 13:47:28 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | Prisma error: Explicit many to many relationship when updating model | bug/2-confirmed kind/bug process/candidate topic: mysql team/client topic: referentialIntegrity priority/high size/unknown | ### Bug description

I am following the official doc to implement an explicit many to many relationship with an intermediate table that holds additional fields.

The error occurs when I want to **update an existing user object**:

```ts

const user = await prisma.user.create({

data: {

email: "user@exmapl... | 1.0 | Prisma error: Explicit many to many relationship when updating model - ### Bug description

I am following the official doc to implement an explicit many to many relationship with an intermediate table that holds additional fields.

The error occurs when I want to **update an existing user object**:

```ts

cons... | process | prisma error explicit many to many relationship when updating model bug description i am following the official doc to implement an explicit many to many relationship with an intermediate table that holds additional fields the error occurs when i want to update an existing user object ts cons... | 1 |

20,638 | 27,316,804,393 | IssuesEvent | 2023-02-24 16:18:57 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | docs: don't use the term "main pipeline" | devops/prod doc-bug Pri1 devops-cicd-process/tech | On the [template types and usages](https://docs.microsoft.com/en-us/azure/devops/pipelines/process/templates?view=azure-devops#use-other-repositories) page, the docs state:

> You may also use `@self` to refer to the repository where the main pipeline was found.

This comes right after the following Note:

> If ... | 1.0 | docs: don't use the term "main pipeline" - On the [template types and usages](https://docs.microsoft.com/en-us/azure/devops/pipelines/process/templates?view=azure-devops#use-other-repositories) page, the docs state:

> You may also use `@self` to refer to the repository where the main pipeline was found.

This com... | process | docs don t use the term main pipeline on the page the docs state you may also use self to refer to the repository where the main pipeline was found this comes right after the following note if no ref is specified the pipeline will default to using refs heads main at this point it s a... | 1 |

13,151 | 15,572,845,066 | IssuesEvent | 2021-03-17 07:43:47 | bitpal/bitpal_umbrella | https://api.github.com/repos/bitpal/bitpal_umbrella | opened | Admin page | Payment processor | An admin login could give you access to things like:

* View node status

* Give others access to a store

* Restart server or stop/start different parts | 1.0 | Admin page - An admin login could give you access to things like:

* View node status

* Give others access to a store

* Restart server or stop/start different parts | process | admin page an admin login could give you access to things like view node status give others access to a store restart server or stop start different parts | 1 |

15,429 | 19,619,637,352 | IssuesEvent | 2022-01-07 03:34:44 | factor/factor | https://api.github.com/repos/factor/factor | closed | sigset_t definition that works and doesn't crash with posix_spawn interface | unix process-launcher cross-platform-difference libc aliens maybe-close-this c-types | I've tried to research this issue and after many days of debugging I give up but am surely overlooking something obvious.

## What doesn't work?

Any definition for `sigset_t` that I can think of. in POSIX, `sigset_t` should be either an integer or a C object, but shouldn't itself be a pointer type until it needs t... | 1.0 | sigset_t definition that works and doesn't crash with posix_spawn interface - I've tried to research this issue and after many days of debugging I give up but am surely overlooking something obvious.

## What doesn't work?

Any definition for `sigset_t` that I can think of. in POSIX, `sigset_t` should be either an ... | process | sigset t definition that works and doesn t crash with posix spawn interface i ve tried to research this issue and after many days of debugging i give up but am surely overlooking something obvious what doesn t work any definition for sigset t that i can think of in posix sigset t should be either an ... | 1 |

9,008 | 12,121,699,022 | IssuesEvent | 2020-04-22 09:43:33 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Unexpected behaviour of "Merge Selected Feature" in case of error in "Merge Feature Attribute" | Bug Feedback Processing | Working with two consecutive linestrings (linestrings sharing one point) if the "Merge Feature Attribute" raise an exception for example due to a violation of a postgres trigger in one of the attribute fields of the new merged linestring, the two selected lines are deleted definitively even if one stop editing without ... | 1.0 | Unexpected behaviour of "Merge Selected Feature" in case of error in "Merge Feature Attribute" - Working with two consecutive linestrings (linestrings sharing one point) if the "Merge Feature Attribute" raise an exception for example due to a violation of a postgres trigger in one of the attribute fields of the new mer... | process | unexpected behaviour of merge selected feature in case of error in merge feature attribute working with two consecutive linestrings linestrings sharing one point if the merge feature attribute raise an exception for example due to a violation of a postgres trigger in one of the attribute fields of the new mer... | 1 |

1,054 | 3,520,800,224 | IssuesEvent | 2016-01-12 22:22:39 | matz-e/lobster | https://api.github.com/repos/matz-e/lobster | closed | Allow to specify per workflow resource requirements | enhancement processing | Current Lobster usage involves one contract, normally: we tell the pool what resources to request and place no other requirements on tasks but the number of cores they use. With more heterogenous projects involving different workflows, it would be better to specify the resources per workflow, and optionally let WQ lowe... | 1.0 | Allow to specify per workflow resource requirements - Current Lobster usage involves one contract, normally: we tell the pool what resources to request and place no other requirements on tasks but the number of cores they use. With more heterogenous projects involving different workflows, it would be better to specify ... | process | allow to specify per workflow resource requirements current lobster usage involves one contract normally we tell the pool what resources to request and place no other requirements on tasks but the number of cores they use with more heterogenous projects involving different workflows it would be better to specify ... | 1 |

391,600 | 11,576,125,807 | IssuesEvent | 2020-02-21 11:13:02 | luna/enso | https://api.github.com/repos/luna/enso | opened | Recent Projects List | Category: Backend Change: Non-Breaking Difficulty: Core Contributor Priority: Medium Type: Enhancement | ### Summary

We need the ability to list a user's recent projects to them to enable easy opening of projects. This task deals with implementing this functionality.

### Value

The IDE will be able to list recent projects.

### Specification

- [ ] Work out how to store information about recent projects persistent... | 1.0 | Recent Projects List - ### Summary

We need the ability to list a user's recent projects to them to enable easy opening of projects. This task deals with implementing this functionality.

### Value

The IDE will be able to list recent projects.

### Specification

- [ ] Work out how to store information about rec... | non_process | recent projects list summary we need the ability to list a user s recent projects to them to enable easy opening of projects this task deals with implementing this functionality value the ide will be able to list recent projects specification work out how to store information about recen... | 0 |

20,168 | 10,616,786,274 | IssuesEvent | 2019-10-12 14:24:33 | AGarlicMonkey/Clove | https://api.github.com/repos/AGarlicMonkey/Clove | opened | TextSystem creates new textures and sprites each frame | ECS UI performance rendering | The TextSystem creates new sprites / textures each frame before sending the data to the renderer.

We should probably create these when the font class is created and store them in some kind of map to be accessed when needed | True | TextSystem creates new textures and sprites each frame - The TextSystem creates new sprites / textures each frame before sending the data to the renderer.

We should probably create these when the font class is created and store them in some kind of map to be accessed when needed | non_process | textsystem creates new textures and sprites each frame the textsystem creates new sprites textures each frame before sending the data to the renderer we should probably create these when the font class is created and store them in some kind of map to be accessed when needed | 0 |

8,123 | 11,303,528,883 | IssuesEvent | 2020-01-17 20:21:10 | material-components/material-components-ios | https://api.github.com/repos/material-components/material-components-ios | closed | [TextControls] Break up TextControls subspecs/targets | [TextControls] type:Process | The various text controls (input chip view, text area, and text field) should exist in their own Cocoapods subspecs/Bazel targets.

Acceptance criteria:

The blaze target for text controls has been broken up. The various text controls are no longer all in the same target.

This was filed as an internal issue. If yo... | 1.0 | [TextControls] Break up TextControls subspecs/targets - The various text controls (input chip view, text area, and text field) should exist in their own Cocoapods subspecs/Bazel targets.

Acceptance criteria:

The blaze target for text controls has been broken up. The various text controls are no longer all in the sa... | process | break up textcontrols subspecs targets the various text controls input chip view text area and text field should exist in their own cocoapods subspecs bazel targets acceptance criteria the blaze target for text controls has been broken up the various text controls are no longer all in the same target ... | 1 |

15,607 | 19,729,899,226 | IssuesEvent | 2022-01-14 00:37:42 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | `process.exit()` in ESM results in exit code 13 | process esm | ### Version

v16.13.0

### Platform

_No response_

### Subsystem

_No response_

### What steps will reproduce the bug?

```sh

echo "process.exit()" > test.mjs

node ./test.mjs # this exits with code 13

echo "process.exit()" > test.cjs

node ./test.cjs # this exits with code 0

```

### How often does it reproduce? ... | 1.0 | `process.exit()` in ESM results in exit code 13 - ### Version

v16.13.0

### Platform

_No response_

### Subsystem

_No response_

### What steps will reproduce the bug?

```sh

echo "process.exit()" > test.mjs

node ./test.mjs # this exits with code 13

echo "process.exit()" > test.cjs

node ./test.cjs # this exits w... | process | process exit in esm results in exit code version platform no response subsystem no response what steps will reproduce the bug sh echo process exit test mjs node test mjs this exits with code echo process exit test cjs node test cjs this exits with c... | 1 |

137,586 | 12,759,813,955 | IssuesEvent | 2020-06-29 06:46:28 | alpaka-group/alpaka-workshop-slides | https://api.github.com/repos/alpaka-group/alpaka-workshop-slides | closed | Decide on license | documentation question | Before we upload the slides and the cheat sheet we should decide on a license for this repository. My apologies for being late with this but I haven't thought of this until now. Thoughts? @bussmann @psychocoderHPC @sbastrakov | 1.0 | Decide on license - Before we upload the slides and the cheat sheet we should decide on a license for this repository. My apologies for being late with this but I haven't thought of this until now. Thoughts? @bussmann @psychocoderHPC @sbastrakov | non_process | decide on license before we upload the slides and the cheat sheet we should decide on a license for this repository my apologies for being late with this but i haven t thought of this until now thoughts bussmann psychocoderhpc sbastrakov | 0 |

135,179 | 18,672,449,801 | IssuesEvent | 2021-10-31 00:22:06 | odpi/egeria | https://api.github.com/repos/odpi/egeria | closed | securedProperties Field Retrieved Through REST API | security no-issue-activity | The `securedProperties` field on org.odpi.openmetadata.frameworks.connectors.properties.ConnectionProperties.java comes back via the REST API.

The documentation for this field says:

> securedProperties - Protected properties for secure log on by connector to back end server. These

> are protected properties tha... | True | securedProperties Field Retrieved Through REST API - The `securedProperties` field on org.odpi.openmetadata.frameworks.connectors.properties.ConnectionProperties.java comes back via the REST API.

The documentation for this field says:

> securedProperties - Protected properties for secure log on by connector to ba... | non_process | securedproperties field retrieved through rest api the securedproperties field on org odpi openmetadata frameworks connectors properties connectionproperties java comes back via the rest api the documentation for this field says securedproperties protected properties for secure log on by connector to ba... | 0 |

21,093 | 28,045,023,763 | IssuesEvent | 2023-03-28 21:54:14 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | [MLv2] Implement function to return orderable columns | .Backend .metabase-lib .Team/QueryProcessor :hammer_and_wrench: | We need a function to power this UI

<img width="275" alt="image" src="https://user-images.githubusercontent.com/1455846/228049850-5a49d1b7-c356-40fc-837d-6349f8a54e9e.png">

| 1.0 | [MLv2] Implement function to return orderable columns - We need a function to power this UI

<img width="275" alt="image" src="https://user-images.githubusercontent.com/1455846/228049850-5a49d1b7-c356-40fc-837d-6349f8a54e9e.png">

| process | implement function to return orderable columns we need a function to power this ui img width alt image src | 1 |

235,284 | 7,736,173,873 | IssuesEvent | 2018-05-27 23:28:22 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | USER ISSUE: after bleeding_staging updated, i cant connect to my server | High Priority | **Version:** 0.7.5.0 beta staging-77a8710c

**Steps to Reproduce:**

(after update from previous bleeding version)

Launch server.

Try to connect to it.

**Expected behavior:**

Connected and playing.

**Actual behavior:**

Server crash, client deadlocked on rotating gear screen.

Savefile.

https://www.dropbo... | 1.0 | USER ISSUE: after bleeding_staging updated, i cant connect to my server - **Version:** 0.7.5.0 beta staging-77a8710c

**Steps to Reproduce:**

(after update from previous bleeding version)

Launch server.

Try to connect to it.

**Expected behavior:**

Connected and playing.

**Actual behavior:**

Server crash, c... | non_process | user issue after bleeding staging updated i cant connect to my server version beta staging steps to reproduce after update from previous bleeding version launch server try to connect to it expected behavior connected and playing actual behavior server crash client d... | 0 |

227,934 | 25,135,398,485 | IssuesEvent | 2022-11-09 18:09:54 | lukebrogan-mend/NuGetGallery | https://api.github.com/repos/lukebrogan-mend/NuGetGallery | opened | moment-2.18.1.js: 3 vulnerabilities (highest severity is: 7.5) | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>moment-2.18.1.js</b></p></summary>

<p>Parse, validate, manipulate, and display dates</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/moment.... | True | moment-2.18.1.js: 3 vulnerabilities (highest severity is: 7.5) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>moment-2.18.1.js</b></p></summary>

<p>Parse, validate, manipulate, and display dates</p>

<p>Library h... | non_process | moment js vulnerabilities highest severity is vulnerable library moment js parse validate manipulate and display dates library home page a href path to vulnerable library scripts gallery moment js vulnerabilities cve severity cvss dependency ... | 0 |

8,034 | 11,210,801,551 | IssuesEvent | 2020-01-06 14:05:46 | kubeflow/kfctl | https://api.github.com/repos/kubeflow/kfctl | closed | Make kubeflow/kfctl source of truth for kfctl development; turn down kfctl in kubeflow/kubeflow | area/kfctl effort/5-days kind/process priority/p0 | Opening this issue to track migrating development of kfctl from kubeflow/kubeflow to kubeflow/kfctl

@kkasravi What are the next steps here? Are we waiting on E2E tests to be migrated to kubeflow/kfctl?

/assign @kkasravi | 1.0 | Make kubeflow/kfctl source of truth for kfctl development; turn down kfctl in kubeflow/kubeflow - Opening this issue to track migrating development of kfctl from kubeflow/kubeflow to kubeflow/kfctl

@kkasravi What are the next steps here? Are we waiting on E2E tests to be migrated to kubeflow/kfctl?

/assign @kkasr... | process | make kubeflow kfctl source of truth for kfctl development turn down kfctl in kubeflow kubeflow opening this issue to track migrating development of kfctl from kubeflow kubeflow to kubeflow kfctl kkasravi what are the next steps here are we waiting on tests to be migrated to kubeflow kfctl assign kkasrav... | 1 |

18,070 | 24,084,415,240 | IssuesEvent | 2022-09-19 09:36:56 | streamnative/flink | https://api.github.com/repos/streamnative/flink | closed | [SQL Connector] Consumer cannot consume from Pulsar topics written by SQL connector with avro schema | compute/data-processing | in writeToExplicitTableAndReadWithJsonSchemaUsingPulsarConsumer test case, we have a

```

org.apache.pulsar.client.api.PulsarClientException$IncompatibleSchemaException: Failed to subscribe whAmv with 1 partitions

{"errorMsg":"Topic does not have schema to check","reqId":2453711855417924595, "remote":"localhost/127.0.... | 1.0 | [SQL Connector] Consumer cannot consume from Pulsar topics written by SQL connector with avro schema - in writeToExplicitTableAndReadWithJsonSchemaUsingPulsarConsumer test case, we have a

```

org.apache.pulsar.client.api.PulsarClientException$IncompatibleSchemaException: Failed to subscribe whAmv with 1 partitions

{... | process | consumer cannot consume from pulsar topics written by sql connector with avro schema in writetoexplicittableandreadwithjsonschemausingpulsarconsumer test case we have a org apache pulsar client api pulsarclientexception incompatibleschemaexception failed to subscribe whamv with partitions errormsg to... | 1 |

12,351 | 9,633,772,766 | IssuesEvent | 2019-05-15 19:30:48 | aws/aws-cli | https://api.github.com/repos/aws/aws-cli | closed | aws rds-data execute-sql fails, urging to use ExecuteStatement API | closing-soon-if-no-response response-requested service-api | I first got this error when I was using AWS SDK in Node, but then I tried replicating it with the AWS CLI.

I got the same result:

```

An error occurred (BadRequestException) when calling the ExecuteSql operation: This API is deprecated and not available. Use ExecuteStatement API instead

```

I know **Data API*... | 1.0 | aws rds-data execute-sql fails, urging to use ExecuteStatement API - I first got this error when I was using AWS SDK in Node, but then I tried replicating it with the AWS CLI.

I got the same result:

```

An error occurred (BadRequestException) when calling the ExecuteSql operation: This API is deprecated and not... | non_process | aws rds data execute sql fails urging to use executestatement api i first got this error when i was using aws sdk in node but then i tried replicating it with the aws cli i got the same result an error occurred badrequestexception when calling the executesql operation this api is deprecated and not... | 0 |

250,706 | 27,111,222,334 | IssuesEvent | 2023-02-15 15:26:35 | EliyaC/NodeGoat | https://api.github.com/repos/EliyaC/NodeGoat | closed | WS-2018-0103 (Medium) detected in stringstream-0.0.5.tgz - autoclosed | security vulnerability | ## WS-2018-0103 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>stringstream-0.0.5.tgz</b></p></summary>

<p>Encode and decode streams into string streams</p>

<p>Library home page: <a... | True | WS-2018-0103 (Medium) detected in stringstream-0.0.5.tgz - autoclosed - ## WS-2018-0103 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>stringstream-0.0.5.tgz</b></p></summary>

<p>En... | non_process | ws medium detected in stringstream tgz autoclosed ws medium severity vulnerability vulnerable library stringstream tgz encode and decode streams into string streams library home page a href path to dependency file package json path to vulnerable library node modu... | 0 |

16,648 | 21,712,325,087 | IssuesEvent | 2022-05-10 14:48:24 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | dbgenerated function cause Null constraint error in MySQL | bug/2-confirmed kind/bug process/candidate topic: mysql team/client topic: dbgenerated topic: uuid size/s | ### Bug description

Some dbgenerated functions work and others produce errors.

The error is not on the database side, but on Prisma Client side.

### How to reproduce

✅ no error:

```prisma

model A {

id Bytes @id @default(dbgenerated("(uuid_to_bin(uuid()))")) @db.Binary(16)

}

```

❌ error:

```prisma

... | 1.0 | dbgenerated function cause Null constraint error in MySQL - ### Bug description

Some dbgenerated functions work and others produce errors.

The error is not on the database side, but on Prisma Client side.

### How to reproduce

✅ no error:

```prisma

model A {

id Bytes @id @default(dbgenerated("(uuid_to_bin... | process | dbgenerated function cause null constraint error in mysql bug description some dbgenerated functions work and others produce errors the error is not on the database side but on prisma client side how to reproduce ✅ no error prisma model a id bytes id default dbgenerated uuid to bin... | 1 |

20,490 | 27,146,979,126 | IssuesEvent | 2023-02-16 20:49:08 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Docs on how to reference output variables from a job which has a matrix strategy | doc-bug Pri1 azure-devops-pipelines/svc azure-devops-pipelines-process/subsvc | In the "Use output variables from tasks" section, you could potentially add information about how to reference jobs that have a strategy matrix associated with them. What happens to their job names? You do you reference them in the `dependencies.JOB...` line?

I currently have a job called "Build" with matrix entrie... | 1.0 | Docs on how to reference output variables from a job which has a matrix strategy - In the "Use output variables from tasks" section, you could potentially add information about how to reference jobs that have a strategy matrix associated with them. What happens to their job names? You do you reference them in the `depe... | process | docs on how to reference output variables from a job which has a matrix strategy in the use output variables from tasks section you could potentially add information about how to reference jobs that have a strategy matrix associated with them what happens to their job names you do you reference them in the depe... | 1 |

21,465 | 29,501,363,060 | IssuesEvent | 2023-06-02 22:18:28 | mrdoob/three.js | https://api.github.com/repos/mrdoob/three.js | closed | CopyShader opacity does not work as expected | Suggestion Examples Post-processing | ##### Description of the problem

In examples/js/shaders/CopyShader.js, there is an issue with the fragment shader:

```

"void main() {",

" vec4 texel = texture2D( tDiffuse, vUv );",

" gl_FragColor = opacity * texel;",

"}"

```

Because `texel` is multiplied by `opacity` directly, all... | 1.0 | CopyShader opacity does not work as expected - ##### Description of the problem

In examples/js/shaders/CopyShader.js, there is an issue with the fragment shader:

```

"void main() {",

" vec4 texel = texture2D( tDiffuse, vUv );",

" gl_FragColor = opacity * texel;",

"}"

```

Because `... | process | copyshader opacity does not work as expected description of the problem in examples js shaders copyshader js there is an issue with the fragment shader void main texel tdiffuse vuv gl fragcolor opacity texel because texel is m... | 1 |

98,358 | 11,071,886,691 | IssuesEvent | 2019-12-12 09:12:03 | beeping-io/beeping | https://api.github.com/repos/beeping-io/beeping | closed | Check Review Section | documentation | Explain how to Pull request the changes:

- Commit

- Push

- Pull request | 1.0 | Check Review Section - Explain how to Pull request the changes:

- Commit

- Push

- Pull request | non_process | check review section explain how to pull request the changes commit push pull request | 0 |

41,753 | 10,591,932,794 | IssuesEvent | 2019-10-09 12:03:21 | vector-im/riot-web | https://api.github.com/repos/vector-im/riot-web | opened | Incorrect warnings on registration with no IS configured | bug defect phase:2 privacy privacy-sprint | Outdated warning text in the registration flow saying you can't reset password.

TODO: Add screenshot | 1.0 | Incorrect warnings on registration with no IS configured - Outdated warning text in the registration flow saying you can't reset password.

TODO: Add screenshot | non_process | incorrect warnings on registration with no is configured outdated warning text in the registration flow saying you can t reset password todo add screenshot | 0 |

21,939 | 30,446,798,942 | IssuesEvent | 2023-07-15 19:28:36 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | pyutils 0.0.1b2 has 2 GuardDog issues | guarddog typosquatting silent-process-execution | https://pypi.org/project/pyutils

https://inspector.pypi.io/project/pyutils

```{

"dependency": "pyutils",

"version": "0.0.1b2",

"result": {

"issues": 2,

"errors": {},

"results": {

"typosquatting": "This package closely ressembles the following package names, and might be a typosquatting attempt: ... | 1.0 | pyutils 0.0.1b2 has 2 GuardDog issues - https://pypi.org/project/pyutils

https://inspector.pypi.io/project/pyutils

```{

"dependency": "pyutils",

"version": "0.0.1b2",

"result": {

"issues": 2,

"errors": {},

"results": {

"typosquatting": "This package closely ressembles the following package names... | process | pyutils has guarddog issues dependency pyutils version result issues errors results typosquatting this package closely ressembles the following package names and might be a typosquatting attempt python utils pytils silen... | 1 |

739 | 3,214,324,979 | IssuesEvent | 2015-10-07 00:50:41 | broadinstitute/hellbender | https://api.github.com/repos/broadinstitute/hellbender | closed | Profile and optimize the ReadsPreprocessingPipeline | Dataflow DataflowPreprocessingPipeline profiling | Should probably be started only after the tests in https://github.com/broadinstitute/hellbender/issues/695 are in place. | 1.0 | Profile and optimize the ReadsPreprocessingPipeline - Should probably be started only after the tests in https://github.com/broadinstitute/hellbender/issues/695 are in place. | process | profile and optimize the readspreprocessingpipeline should probably be started only after the tests in are in place | 1 |

487,213 | 14,020,742,995 | IssuesEvent | 2020-10-29 20:09:20 | AY2021S1-CS2103T-W16-3/tp | https://api.github.com/repos/AY2021S1-CS2103T-W16-3/tp | closed | Disable scrolling of tabs by arrow keys | priority.medium :2nd_place_medal: type.bug :bug: | In order to get the user guide tab to be right aligned, its behaviour had to be modified. This does not work too well when scrolling tabs with arrow keys as the user guide tab is disabled when it is not selected. As such, the scrolling never reaches the user guide tab. | 1.0 | Disable scrolling of tabs by arrow keys - In order to get the user guide tab to be right aligned, its behaviour had to be modified. This does not work too well when scrolling tabs with arrow keys as the user guide tab is disabled when it is not selected. As such, the scrolling never reaches the user guide tab. | non_process | disable scrolling of tabs by arrow keys in order to get the user guide tab to be right aligned its behaviour had to be modified this does not work too well when scrolling tabs with arrow keys as the user guide tab is disabled when it is not selected as such the scrolling never reaches the user guide tab | 0 |

20,448 | 27,106,635,008 | IssuesEvent | 2023-02-15 12:36:26 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | Obsoletion: abortive mitotic cell cycle GO:0033277 | cell cycle and DNA processes obsoletion in progress ready | Please taxon restrict

abortive mitotic cell cycle GO:0033277

There are only 2 EXP annotation based on:

http://europepmc.org/article/MED/23509158

entitled

"Megakaryocyte-specific deletion of the protein-tyrosine phosphatases Shp1 and Shp2 causes abnormal megakaryocyte development, platelet production, and func... | 1.0 | Obsoletion: abortive mitotic cell cycle GO:0033277 - Please taxon restrict

abortive mitotic cell cycle GO:0033277

There are only 2 EXP annotation based on:

http://europepmc.org/article/MED/23509158

entitled