Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 1 744 | labels stringlengths 4 574 | body stringlengths 9 211k | index stringclasses 10 values | text_combine stringlengths 96 211k | label stringclasses 2 values | text stringlengths 96 188k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

73,934 | 8,953,797,155 | IssuesEvent | 2019-01-25 20:36:09 | phetsims/energy-forms-and-changes | https://api.github.com/repos/phetsims/energy-forms-and-changes | closed | Behavior of fan on low energy rate transfer | design:general sim:legacy-bug type:question | I was just playing with the fan and noticed that when the energy rate of transfer is very low it sort of stops, then starts, then stops, as the actual energy chunks arrive. The wheel in constrast moves at a constant, but slower rate. I think it would be more physically accurate to make the fan more like the wheel -- a ... | 1.0 | Behavior of fan on low energy rate transfer - I was just playing with the fan and noticed that when the energy rate of transfer is very low it sort of stops, then starts, then stops, as the actual energy chunks arrive. The wheel in constrast moves at a constant, but slower rate. I think it would be more physically accu... | non_process | behavior of fan on low energy rate transfer i was just playing with the fan and noticed that when the energy rate of transfer is very low it sort of stops then starts then stops as the actual energy chunks arrive the wheel in constrast moves at a constant but slower rate i think it would be more physically accu... | 0 |

130,424 | 5,115,770,653 | IssuesEvent | 2017-01-06 22:58:52 | phetsims/chipper | https://api.github.com/repos/phetsims/chipper | closed | Add an eslint rule to check for uses of native constructors | priority:4-low | `image` and `text` are native to HTML5, and both have constructors that match Image and Text nodes of scenery. Because of this, it is easy to forget to include Image and Text in modules, and the resulting error is difficult to recognize if one is not familiar with it.

@andrewadare suggested that we include a linting ... | 1.0 | Add an eslint rule to check for uses of native constructors - `image` and `text` are native to HTML5, and both have constructors that match Image and Text nodes of scenery. Because of this, it is easy to forget to include Image and Text in modules, and the resulting error is difficult to recognize if one is not famili... | non_process | add an eslint rule to check for uses of native constructors image and text are native to and both have constructors that match image and text nodes of scenery because of this it is easy to forget to include image and text in modules and the resulting error is difficult to recognize if one is not familiar w... | 0 |

1,215 | 2,534,698,414 | IssuesEvent | 2015-01-25 07:27:53 | driftyco/ionic | https://api.github.com/repos/driftyco/ionic | closed | Clear input X | css design feature | Hi Ioniconians,

As always love the work you guys do.

I had a fanastic and simple feature request. Can you extend <input> to include a "clear-icon" attribute to add a little x in the input field that when tapped clears the input?

Very simple, I even might be able to build it myself. But I thought if you guys ar... | 1.0 | Clear input X - Hi Ioniconians,

As always love the work you guys do.

I had a fanastic and simple feature request. Can you extend <input> to include a "clear-icon" attribute to add a little x in the input field that when tapped clears the input?

Very simple, I even might be able to build it myself. But I thoug... | non_process | clear input x hi ioniconians as always love the work you guys do i had a fanastic and simple feature request can you extend to include a clear icon attribute to add a little x in the input field that when tapped clears the input very simple i even might be able to build it myself but i thought if ... | 0 |

21,770 | 30,287,428,262 | IssuesEvent | 2023-07-08 21:34:06 | winter-telescope/mirar | https://api.github.com/repos/winter-telescope/mirar | closed | Nan/zero in swarp | enhancement nearfuture processors | To quote @virajkaram:

"Swarp sets sets masked pixels to zero when it resamples, but the other processors only masks nans. This affects the subtractions"

Right now we mask zeros when loading raw images. Maybe we should try making this self-contained/do such things in the swarp processor. | 1.0 | Nan/zero in swarp - To quote @virajkaram:

"Swarp sets sets masked pixels to zero when it resamples, but the other processors only masks nans. This affects the subtractions"

Right now we mask zeros when loading raw images. Maybe we should try making this self-contained/do such things in the swarp processor. | process | nan zero in swarp to quote virajkaram swarp sets sets masked pixels to zero when it resamples but the other processors only masks nans this affects the subtractions right now we mask zeros when loading raw images maybe we should try making this self contained do such things in the swarp processor | 1 |

511 | 2,981,826,050 | IssuesEvent | 2015-07-17 06:02:38 | e-government-ua/i | https://api.github.com/repos/e-government-ua/i | closed | On the central back end (wf-central), extend the getDocumentFile service so that access can be obtained not only by the document owner but also by anyone it has been shared with | hi priority In process of testing test version | In addition to the check for a matching nID_Subject

Implement an alternative check using the logic of the service:

getDocumentAccessByHandler (take the sample code from there)

and the passed optional parameters:

sCode_DocumentAccess

nID_DocumentOperator_SubjectOrgan

nID_DocumentType

sPass

- If the call did not throw an exception, then... | 1.0 | On the central back end (wf-central), extend the getDocumentFile service so that access can be obtained not only by the document owner but also by anyone it has been shared with - In addition to the check for a matching nID_Subject

Implement an alternative check using the logic of the service:

getDocumentAccessByHandler (take the sample code from there)

and the p... | 1.0 | process | on the central back end wf central extend the getdocumentfile service so that access can be obtained not only by the document owner but also by anyone it has been shared with in addition to the check for a matching nid subject implement an alternative check using the logic of the service getdocumentaccessbyhandler take the sample code from there and the p... | 1

11,618 | 14,481,529,463 | IssuesEvent | 2020-12-10 12:46:07 | e4exp/paper_manager_abstract | https://api.github.com/repos/e4exp/paper_manager_abstract | opened | Structure-Aware Procedural Text Generation from an Image Sequence | 2020 Natural Language Processing Recipe Generation Tree Structure _read_later | * 2020

* http://www.lsta.media.kyoto-u.ac.jp/mori/research/public/nishimura-IEEE20.pdf

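* Commentary: https://medium.com/sinicx/ieee-access%E3%81%AB%E8%AB%96%E6%96%87%E3%81%8C%E6%8E%A1%E9%8C%B2%E3%81%95%E3%82%8C%E3%81%BE%E3%81%97%E3%81%9F-6fb4f6e35384

Combining materials to create new value is an important activity in our society.

Procedural texts, covering everything from everyday cooking to the manufacture of industrial products, describe th... | 1.0 | Structure-Aware Procedural Text Generation from an Image Sequence - * 2020

* http://www.lsta.media.kyoto-u.ac.jp/mori/research/public/nishimura-IEEE20.pdf

* Commentary: https://medium.com/sinicx/ieee-access%E3%81%AB%E8%AB%96%E6%96%87%E3%81%8C%E6%8E%A1%E9%8C%B2%E3%81%95%E3%82%8C%E3%81%BE%E3%81%97%E3%81%9F-6fb4f6e35384

Combining... | 1.0 | process | structure aware procedural text generation from an image sequence commentary combining materials to create new value is an important activity in our society procedural texts covering everything from everyday cooking to the manufacture of industrial products describe how to carry out these activities and allow readers to reproduce their steps as pointed out in previous work on natural language understanding one important property of procedural text is its context dependence namely the operations that combine materials which can be represented as graphs or tree structures in this paper explicitly introducing such context dependence image... | 1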

19,213 | 25,347,066,660 | IssuesEvent | 2022-11-19 10:21:00 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | GO:0007035 ! vacuolar acidification | Other term-related request low priority AspGD-CGD parent relationship query cellular processes | compare:

[Term]

id: GO:0007035

name: vacuolar acidification

namespace: biological_process

def: "Any process that reduces the pH of the vacuole, measured by the concentration of the hydrogen ion." [GOC:jid]

is_a: GO:0051452 ! intracellular pH reduction

intersection_of: GO:0045851 ! pH reduction

intersection_of: occurs_... | 1.0 | GO:0007035 ! vacuolar acidification - compare:

[Term]

id: GO:0007035

name: vacuolar acidification

namespace: biological_process

def: "Any process that reduces the pH of the vacuole, measured by the concentration of the hydrogen ion." [GOC:jid]

is_a: GO:0051452 ! intracellular pH reduction

intersection_of: GO:0045851 !... | process | go vacuolar acidification compare id go name vacuolar acidification namespace biological process def any process that reduces the ph of the vacuole measured by the concentration of the hydrogen ion is a go intracellular ph reduction intersection of go ph reduction intersection of occurs... | 1 |

525,453 | 15,253,739,017 | IssuesEvent | 2021-02-20 09:02:16 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | closed | Need to remove un-used analytics usage | APIM-ANALYTICS Priority/Normal Type/Improvement | - Need to remove SP based analytics usage

- this includes handlers and other attributes added to the synapse configs | 1.0 | Need to remove un-used analytics usage - - Need to remove SP based analytics usage

- this includes handlers and other attributes added to the synapse configs | non_process | need to remove un used analytics usage need to remove sp based analytics usage this includes handlers and other attributes added to the synapse configs | 0 |

6,361 | 9,416,160,818 | IssuesEvent | 2019-04-10 14:08:50 | AmpersandTarski/Ampersand | https://api.github.com/repos/AmpersandTarski/Ampersand | closed | stack install fails on MacBook | OSX priority:normal software process | #### Version of ampersand that was used

This problem occurred on commit c0239e76195cbf7692b5bb7e15dbcdb7aafd60cf, on the development branch of the github Ampersand repository.

#### What I expected

Since the purpose of stack is to build in platform independent ways, I expected "stack install" to build Ampersand fo... | 1.0 | stack install fails on MacBook - #### Version of ampersand that was used

This problem occurred on commit c0239e76195cbf7692b5bb7e15dbcdb7aafd60cf, on the development branch of the github Ampersand repository.

#### What I expected

Since the purpose of stack is to build in platform independent ways, I expected "sta... | process | stack install fails on macbook version of ampersand that was used this problem occurred on commit on the development branch of the github ampersand repository what i expected since the purpose of stack is to build in platform independent ways i expected stack install to build ampersand for me ... | 1 |

10,580 | 13,389,473,781 | IssuesEvent | 2020-09-02 18:56:34 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | Re-Introspection does not output list of fields that `cuid()` was applied to | 2.5 bug/2-confirmed kind/bug process/candidate team/typescript topic: re-introspection |

_Originally posted by @alan345 in https://github.com/prisma/upgrade/issues/59#issuecomment-675741019_ | 1.0 | Re-Introspection does not output list of fields that `cuid()` was applied to -

_Originally posted by @alan345 in https://github.com/prisma/upgrade/issues/59#issuecomment-675741019_ | process | re introspection does not output list of fields that cuid was applied to originally posted by in | 1 |

18,223 | 24,284,461,192 | IssuesEvent | 2022-09-28 20:33:47 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | opened | Mono Bionic arm64 CI Failure: System.Diagnostics.Tests.ProcessStartInfoTests, not a valid Base-64 string | arch-arm64 area-System.Diagnostics.Process area-CoreLib-mono test-failure | First saw this in a release/7.0 backport PR: https://github.com/dotnet/runtime/pull/76052

Please help determine if a fix needs to get backported to 7.0.

- Queue: Build Linux_bionic arm64 Release AllSubsets_Mono

- Tests: System.Diagnostics.Process.Tests

- Job results: https://dev.azure.com/dnceng-public/public/_... | 1.0 | Mono Bionic arm64 CI Failure: System.Diagnostics.Tests.ProcessStartInfoTests, not a valid Base-64 string - First saw this in a release/7.0 backport PR: https://github.com/dotnet/runtime/pull/76052

Please help determine if a fix needs to get backported to 7.0.

- Queue: Build Linux_bionic arm64 Release AllSubsets_M... | process | mono bionic ci failure system diagnostics tests processstartinfotests not a valid base string first saw this in a release backport pr please help determine if a fix needs to get backported to queue build linux bionic release allsubsets mono tests system diagnostics process tests jo... | 1 |

9,352 | 12,365,680,822 | IssuesEvent | 2020-05-18 09:12:59 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | Prisma Client | process/candidate | ## Bug description

Originally found by @rmatei in https://github.com/prisma/studio/issues/416

## How to reproduce

Use this schema:

```

model Area {

id String @default(cuid()) @id

name String

blocks Block[]

score Float?

createdAt DateTime @default(now())

updatedAt Date... | 1.0 | Prisma Client - ## Bug description

Originally found by @rmatei in https://github.com/prisma/studio/issues/416

## How to reproduce

Use this schema:

```

model Area {

id String @default(cuid()) @id

name String

blocks Block[]

score Float?

createdAt DateTime @default(now())

... | process | prisma client bug description originally found by rmatei in how to reproduce use this schema model area id string default cuid id name string blocks block score float createdat datetime default now updatedat datetime default now updat... | 1 |

235,889 | 25,962,072,546 | IssuesEvent | 2022-12-19 01:03:29 | michaeldotson/auth-app | https://api.github.com/repos/michaeldotson/auth-app | opened | CVE-2022-23516 (High) detected in loofah-2.2.3.gem | security vulnerability | ## CVE-2022-23516 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>loofah-2.2.3.gem</b></p></summary>

<p>Loofah is a general library for manipulating and transforming HTML/XML

documents... | True | CVE-2022-23516 (High) detected in loofah-2.2.3.gem - ## CVE-2022-23516 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>loofah-2.2.3.gem</b></p></summary>

<p>Loofah is a general library... | non_process | cve high detected in loofah gem cve high severity vulnerability vulnerable library loofah gem loofah is a general library for manipulating and transforming html xml documents and fragments it s built on top of nokogiri and so it s fast and has a nice api loofah excels at h... | 0 |

240,880 | 20,099,339,247 | IssuesEvent | 2022-02-07 00:38:20 | ADA-ANU/Request-Access-Console | https://api.github.com/repos/ADA-ANU/Request-Access-Console | opened | UAT Case 8 - Requester submits request for a dataset and then goes back to request access to a sibling dataset but as part of a new request | User Acceptance Test Case | Pre-requisites: UAT Case 2 has been completed with ANU Poll 35 (for example)

ex. User requests access to ANU Poll 35 (https://dataverse5-test.ada.edu.au/dataset.xhtml?persistentId=doi:10.26193/ZFGFNE)

Steps.

1. User navigates in Dataverse to a 'sibling dataset' of ANU Poll 35 (ex. ANU Poll 2017: Housing https://d... | 1.0 | UAT Case 8 - Requester submits request for a dataset and then goes back to request access to a sibling dataset but as part of a new request - Pre-requisites: UAT Case 2 has been completed with ANU Poll 35 (for example)

ex. User requests access to ANU Poll 35 (https://dataverse5-test.ada.edu.au/dataset.xhtml?persistent... | non_process | uat case requester submits request for a dataset and then goes back to request access to a sibling dataset but as part of a new request pre requisites uat case has been completed with anu poll for example ex user requests access to anu poll steps user navigates in dataverse to a sibling da... | 0 |

4,108 | 2,715,314,417 | IssuesEvent | 2015-04-10 12:15:04 | macaw-movies/macaw-movies | https://api.github.com/repos/macaw-movies/macaw-movies | closed | Orphans slots not triggered when deleting last people/tag | DatabaseManager Design IMPORTANT | since 85011f26a8b1fcff6df16d6ce4dfb32ac15daf6d I realized that the `askForOrphan***Deletion` slots are not triggered anymore...

Is it due to the singleton design or the cascades ?

To be investigated. | 1.0 | Orphans slots not triggered when deleting last people/tag - since 85011f26a8b1fcff6df16d6ce4dfb32ac15daf6d I realized that the `askForOrphan***Deletion` slots are not triggered anymore...

Is it due to the singleton design or the cascades ?

To be investigated. | non_process | orphans slots not triggered when deleting last people tag since i realized that the askfororphan deletion slots are not triggered anymore is it due to the singleton design or the cascades to be investigated | 0 |

9,816 | 12,825,917,395 | IssuesEvent | 2020-07-06 15:41:27 | deepset-ai/haystack | https://api.github.com/repos/deepset-ai/haystack | closed | PDF Support | preprocessing question | Is there any way to directly work with PDF documents?

For now, every time I need to work with PDF files, I have to convert it into a text file and then use it.

```

import PyPDF2

article=[]

pdfFileObj = open('data/article/doc.pdf', 'rb')

pdfReader = PyPDF2.PdfFileReader(pdfFileObj)

for page in range(pdfReader... | 1.0 | PDF Support - Is there any way to directly work with PDF documents?

For now, every time I need to work with PDF files, I have to convert it into a text file and then use it.

```

import PyPDF2

article=[]

pdfFileObj = open('data/article/doc.pdf', 'rb')

pdfReader = PyPDF2.PdfFileReader(pdfFileObj)

for page in r... | process | pdf support is there any way to directly work with pdf documents for now every time i need to work with pdf files i have to convert it into a text file and then use it import article pdffileobj open data article doc pdf rb pdfreader pdffilereader pdffileobj for page in range pdfrea... | 1 |

2,958 | 5,955,837,953 | IssuesEvent | 2017-05-28 11:00:47 | eranhd/Anti-Drug-Jerusalem | https://api.github.com/repos/eranhd/Anti-Drug-Jerusalem | closed | Finish work on cold and hot spots and save their locations in the database | in process | Need to finish the work related to the hot and cold spots along the route | 1.0 | Finish work on cold and hot spots and save their locations in the database - Need to finish the work related to the hot and cold spots along the route | process | finish work on cold and hot spots and save their locations in the database need to finish the work related to the hot and cold spots along the route | 1 |

641,311 | 20,823,790,961 | IssuesEvent | 2022-03-18 18:10:44 | rathena/rathena | https://api.github.com/repos/rathena/rathena | closed | 4th Class Windhawk can attack normally when riding the wolf? | status:confirmed component:core priority:low mode:renewal type:bug | <!-- NOTE: Anything within these brackets will be hidden on the preview of the Issue. -->

* **rAthena Hash**:

latest

<!-- Please specify the rAthena [GitHub hash](https://help.github.com/articles/autolinked-references-and-urls/#commit-shas) on which you encountered this issue.

How to get your GitHub Hash:

1. c... | 1.0 | 4th Class Windhawk can attack normally when riding the wolf? - <!-- NOTE: Anything within these brackets will be hidden on the preview of the Issue. -->

* **rAthena Hash**:

latest

<!-- Please specify the rAthena [GitHub hash](https://help.github.com/articles/autolinked-references-and-urls/#commit-shas) on which y... | non_process | class windhawk can attack normally when riding the wolf rathena hash latest please specify the rathena on which you encountered this issue how to get your github hash cd your rathena directory git rev parse short head copy the resulting hash client date ... | 0 |

467,680 | 13,452,608,656 | IssuesEvent | 2020-09-08 22:35:55 | googleapis/java-bigtable | https://api.github.com/repos/googleapis/java-bigtable | closed | RowCells are not actually serializeable | api: bigtable priority: p2 type: bug | RowCells might contain UnmodifiableLazyStringList for values, which are not actually serializable.

We need to add custom hooks to RowCell to fix serialization.

The primary issue is that labels are a UnmodifiableLazyStringList, which is not serializeable | 1.0 | RowCells are not actually serializeable - RowCells might contain UnmodifiableLazyStringList for values, which are not actually serializable.

We need to add custom hooks to RowCell to fix serialization.

The primary issue is that labels are a UnmodifiableLazyStringList, which is not serializeable | non_process | rowcells are not actually serializeable rowcells might contain unmodifiablelazystringlist for values which are not actually serializable we need to add custom hooks to rowcell to fix serialization the primary issue is that labels are a unmodifiablelazystringlist which is not serializeable | 0 |

8,067 | 11,244,094,156 | IssuesEvent | 2020-01-10 05:55:35 | towavephone/GatsbyBlog | https://api.github.com/repos/towavephone/GatsbyBlog | opened | CSS世界强大文本处理能力 | /CSS-world-text-processing/ Gitalk | /CSS-world-text-processing/line-height 的另外一个朋友 font-size 第 5 章介绍过 line-height 和 vertical-align 的好朋友关系,实际上,font-size 也和 line-height 是好朋友,同样也无处不在,并且纸面上 line-height… | 1.0 | CSS世界强大文本处理能力 - /CSS-world-text-processing/line-height 的另外一个朋友 font-size 第 5 章介绍过 line-height 和 vertical-align 的好朋友关系,实际上,font-size 也和 line-height 是好朋友,同样也无处不在,并且纸面上 line-height… | process | css世界强大文本处理能力 css world text processing line height 的另外一个朋友 font size 第 章介绍过 line height 和 vertical align 的好朋友关系,实际上,font size 也和 line height 是好朋友,同样也无处不在,并且纸面上 line height… | 1 |

16,657 | 21,726,709,025 | IssuesEvent | 2022-05-11 08:20:42 | 2i2c-org/infrastructure | https://api.github.com/repos/2i2c-org/infrastructure | closed | Create a hub operation and support workflow | type: enhancement :label: team-process | # Summary

In https://github.com/2i2c-org/team-compass/pull/73 we are adding some new team workflow structure so that we can better keep track of our priorities and daily tasks.

However, this workflow is focused more around "development" of new things, rather than operation and maintenance of pre-existing things. ... | 1.0 | Create a hub operation and support workflow - # Summary

In https://github.com/2i2c-org/team-compass/pull/73 we are adding some new team workflow structure so that we can better keep track of our priorities and daily tasks.

However, this workflow is focused more around "development" of new things, rather than ope... | process | create a hub operation and support workflow summary in we are adding some new team workflow structure so that we can better keep track of our priorities and daily tasks however this workflow is focused more around development of new things rather than operation and maintenance of pre existing things ... | 1 |

185,917 | 15,037,240,018 | IssuesEvent | 2021-02-02 16:07:29 | hoangvvo/benzene | https://api.github.com/repos/hoangvvo/benzene | opened | Add TypeScript docs and fix TypeScript generic and interface errors | bug documentation | `@benzene/core` includes TypeScript generic for typing `TExtra` and `TContext`. We need to add a documentation for it.

Also, it seems that our WebSocket interface does not work as intended. | 1.0 | Add TypeScript docs and fix TypeScript generic and interface errors - `@benzene/core` includes TypeScript generic for typing `TExtra` and `TContext`. We need to add a documentation for it.

Also, it seems that our WebSocket interface does not work as intended. | non_process | add typescript docs and fix typescript generic and interface errors benzene core includes typescript generic for typing textra and tcontext we need to add a documentation for it also it seems that our websocket interface does not work as intended | 0 |

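21,137 | 28,106,592,785 | IssuesEvent | 2023-03-31 01:35:17 | polarismesh/polaris | https://api.github.com/repos/polarismesh/polaris | closed | When a service is given an alias, the service and namespace in the pulled routing rules are still the old ones, which causes errors when the routing rules are processed. | bug question in processed | When a service is given an alias, the service and namespace in the pulled routing rules are still the old ones. This may cause errors when the routing rules are processed. | 1.0 | When a service is given an alias, the service and namespace in the pulled routing rules are still the old ones, which causes errors when the routing rules are processed. - When a service is given an alias, the service and namespace in the pulled routing rules are still the old ones. This may cause errors when the routing rules are processed. | process | when a service is given an alias the service and namespace in the pulled routing rules are still the old ones which causes errors when the routing rules are processed when a service is given an alias the service and namespace in the pulled routing rules are still the old ones this may cause errors when the routing rules are processed | 1 |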

10,111 | 13,044,162,206 | IssuesEvent | 2020-07-29 03:47:30 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `SysDateWithoutFsp` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `SysDateWithoutFsp` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @breeswish

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src... | 2.0 | UCP: Migrate scalar function `SysDateWithoutFsp` from TiDB -

## Description

Port the scalar function `SysDateWithoutFsp` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @breeswish

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https... | process | ucp migrate scalar function sysdatewithoutfsp from tidb description port the scalar function sysdatewithoutfsp from tidb to coprocessor score mentor s breeswish recommended skills rust programming learning materials already implemented expressions ported from tidb ... | 1 |

24,560 | 12,127,923,108 | IssuesEvent | 2020-04-22 19:34:50 | cityofaustin/atd-data-tech | https://api.github.com/repos/cityofaustin/atd-data-tech | opened | VZE | Bug | Table View breaks with invalid date | Impact: 3-Minor Need: 2-Should Have Product: Vision Zero Crash Data System Service: Dev Type: Bug Report Workgroup: VZ | `Error! GraphQL error: invalid input syntax for type date: "Invalid date"`

To reproduce, click on a date field and delete until the field is blank. See screengrab:

In order to fix this i... | 1.0 | VZE | Bug | Table View breaks with invalid date - `Error! GraphQL error: invalid input syntax for type date: "Invalid date"`

To reproduce, click on a date field and delete until the field is blank. See screengrab:

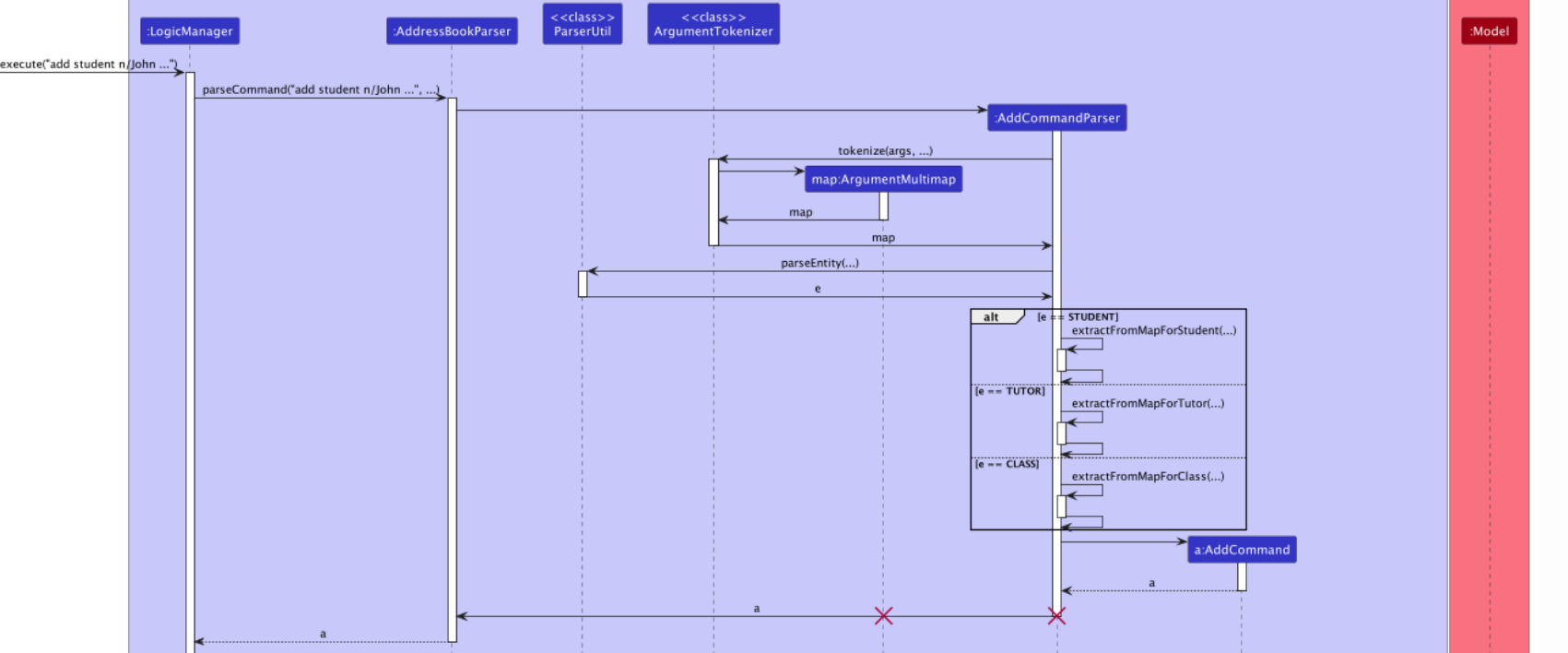

As could be seen here, the return arrows from AddCommandParser should be dashed instead of being a straight line.

<!--session: 1668154291493-0efd108c-1b9d-4f24-95ff-abca5c20b8a9-->

<!--Version: Web v... | 1.0 | Incorrect arrows used in sequence diagrams -

As could be seen here, the return arrows from AddCommandParser should be dashed instead of being a straight line.

<!--session: 1668154291493-0efd108c-1b9d... | non_process | incorrect arrows used in sequence diagrams as could be seen here the return arrows from addcommandparser should be dashed instead of being a straight line | 0 |

252,420 | 21,577,100,096 | IssuesEvent | 2022-05-02 14:46:58 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Test failure: GetAsyncWithRedirect_SetCookieContainer_CorrectCookiesSent | area-System.Net.Http test-run-core | Test type: `System.Net.Http.Functional.Tests.SocketsHttpHandlerTest_Cookies_Http2`

Failures 3/12-9/6 (incl. PRs):

Day | Run | OS

-- | -- | --

7/29 | Official run | Centos.8.Amd64.Open

8/28 | Official run | Debian.10.Amd64.Open

9/4 | Official run | Fedora.34.Amd64.Open

Failure:

```

System.ObjectDisposedEx... | 1.0 | Test failure: GetAsyncWithRedirect_SetCookieContainer_CorrectCookiesSent - Test type: `System.Net.Http.Functional.Tests.SocketsHttpHandlerTest_Cookies_Http2`

Failures 3/12-9/6 (incl. PRs):

Day | Run | OS

-- | -- | --

7/29 | Official run | Centos.8.Amd64.Open

8/28 | Official run | Debian.10.Amd64.Open

9/4 | Of... | non_process | test failure getasyncwithredirect setcookiecontainer correctcookiessent test type system net http functional tests socketshttphandlertest cookies failures incl prs day run os official run centos open official run debian open official run fed... | 0 |

153,916 | 24,208,954,774 | IssuesEvent | 2022-09-25 16:24:42 | rojo-rbx/rojo | https://api.github.com/repos/rojo-rbx/rojo | opened | Rojo Headless Plugin API Proposal | type: enhancement scope: plugin status: needs design size: large | Exposing a headless API into `_G` will be very valuable to the Rojo ecosystem. Users can make companion plugins, and we can even utilize it ourselves for one-time plugin injections for `rojo open`. See issues #321 and #305.

Those are use cases and requests, and we've established there is demand and utility. Now, this ... | 1.0 | Rojo Headless Plugin API Proposal - Exposing a headless API into `_G` will be very valuable to the Rojo ecosystem. Users can make companion plugins, and we can even utilize it ourselves for one-time plugin injections for `rojo open`. See issues #321 and #305.

Those are use cases and requests, and we've established the... | non_process | rojo headless plugin api proposal exposing a headless api into g will be very valuable to the rojo ecosystem users can make companion plugins and we can even utilize it ourselves for one time plugin injections for rojo open see issues and those are use cases and requests and we ve established there i... | 0 |

80,123 | 9,981,749,491 | IssuesEvent | 2019-07-10 08:16:41 | greatnewcls/KNLWKKGOTE62XGFSVAZIMVUH | https://api.github.com/repos/greatnewcls/KNLWKKGOTE62XGFSVAZIMVUH | reopened | OhTBA7UorvsXriCHJ1ogOZIn+EBZunm3OMi+INKHrNB/BJJ7Po50QnBrsVd4c5Mtbi6tQ9kJEUaJcKK3MfNaUtRF6bVVKJD7DzW2uteTdaDX+WdDbs230ekWhxUEN2Ay5lviI/jSRMFXSJrojzRjzNRlvpYAD245YbVSjAZnbnI= | design | XOAOyNWqA53110ic+qsENQ73Dk2nvbvC3eehH+hse81R4M1cUQHqdoQNXBz0Qe5PVRhtwGvN9ZK6jEiG/mp/hYurSCH0pBJ4SBEDxbzXcz+TeLv9GyZmkFWRPV0A61e78NaLxsUXJsDOXm8pDHElRQfTVp6cagKb/46Kmts4XTaWU8by9pOW6UHCr6PBsIyIJzBt6Xm03J64a1hWETGNE8X4z31LvsEtRU9ta/JmU92EoCeQihBwwpi7wEIYK3H7WmL6btRaW4Jyt3hhxe+flIrVSZ5nahEiwGFvQFr6/b2oJvLS9gs2CuR1oMdyK8Sk... | 1.0 | OhTBA7UorvsXriCHJ1ogOZIn+EBZunm3OMi+INKHrNB/BJJ7Po50QnBrsVd4c5Mtbi6tQ9kJEUaJcKK3MfNaUtRF6bVVKJD7DzW2uteTdaDX+WdDbs230ekWhxUEN2Ay5lviI/jSRMFXSJrojzRjzNRlvpYAD245YbVSjAZnbnI= - XOAOyNWqA53110ic+qsENQ73Dk2nvbvC3eehH+hse81R4M1cUQHqdoQNXBz0Qe5PVRhtwGvN9ZK6jEiG/mp/hYurSCH0pBJ4SBEDxbzXcz+TeLv9GyZmkFWRPV0A61e78NaLxsUXJsDOXm8pD... | non_process | inkhrnb mp uygmtbxrbo h wf u jrqkio | 0 |

13,843 | 10,054,777,823 | IssuesEvent | 2019-07-22 03:14:45 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Microsoft Form Recognizer boundingBox values | Pri3 cognitive-services/svc cxp forms-recognizer/subsvc product-question triaged | We are looking to build on the Forms Recognizer platform and was wondering if someone could explain the boundingBox values. For example, could these values be used to programmatically draw a box around the extracted value to show the user where the value was pulled from? Looks like the first value lines up to the x c... | 1.0 | Microsoft Form Recognizer boundingBox values - We are looking to build on the Forms Recognizer platform and was wondering if someone could explain the boundingBox values. For example, could these values be used to programmatically draw a box around the extracted value to show the user where the value was pulled from? ... | non_process | microsoft form recognizer boundingbox values we are looking to build on the forms recognizer platform and was wondering if someone could explain the boundingbox values for example could these values be used to programmatically draw a box around the extracted value to show the user where the value was pulled from ... | 0 |

204,399 | 15,898,077,988 | IssuesEvent | 2021-04-11 23:49:53 | sympy/sympy | https://api.github.com/repos/sympy/sympy | closed | Pass coverage_doctest.py | Documentation Enhancement Valid imported | bc.. This may have been mentioned before, but I'm creating an issue specific for it.

I think there should soon be documentation in the code for most, if not

all, of the classes and functions, even if it is only a single line. This

can be useful for both the generated API documentation, and through

interactive help (i.... | 1.0 | Pass coverage_doctest.py - bc.. This may have been mentioned before, but I'm creating an issue specific for it.

I think there should soon be documentation in the code for most, if not

all, of the classes and functions, even if it is only a single line. This

can be useful for both the generated API documentation, and t... | non_process | pass coverage doctest py bc this may have been mentioned before but i m creating an issue specific for it i think there should soon be documentation in the code for most if not all of the classes and functions even if it is only a single line this can be useful for both the generated api documentation and t... | 0 |

2,273 | 3,369,025,197 | IssuesEvent | 2015-11-23 07:12:38 | owncloud/core | https://api.github.com/repos/owncloud/core | closed | high system load after upgrade to 8.2.1 | bug performance | After upgrading from 8.2.0 to 8.2.1 our server has very high system load, there are many apache processes and sometimes mysql and php as well. The system load is near 100% at all times, and the web interface is very unresponsive.

The upgrade itself finished OK, I just got the usual warnings that some apps had been ... | True | high system load after upgrade to 8.2.1 - After upgrading from 8.2.0 to 8.2.1 our server has very high system load, there are many apache processes and sometimes mysql and php as well. The system load is near 100% at all times, and the web interface is very unresponsive.

The upgrade itself finished OK, I just got t... | non_process | high system load after upgrade to after upgrading from to our server has very high system load there are many apache processes and sometimes mysql and php as well the system load is near at all times and the web interface is very unresponsive the upgrade itself finished ok i just got the... | 0 |

38,719 | 19,523,393,276 | IssuesEvent | 2021-12-30 00:09:30 | facebookexperimental/Recoil | https://api.github.com/repos/facebookexperimental/Recoil | closed | double renders with react-beautiful-dnd | performance | I am familiar with https://github.com/facebookexperimental/Recoil/issues/307 rendering components with recoil twice on initialization.

But I am also getting double renders when I use https://github.com/atlassian/react-beautiful-dnd

Here is my test case [1]

https://github.com/jedierikb/recoil-beautiful-dnd

&... | True | double renders with react-beautiful-dnd - I am familiar with https://github.com/facebookexperimental/Recoil/issues/307 rendering components with recoil twice on initialization.

But I am also getting double renders when I use https://github.com/atlassian/react-beautiful-dnd

Here is my test case [1]

https://githu... | non_process | double renders with react beautiful dnd i am familiar with rendering components with recoil twice on initialization but i am also getting double renders when i use here is my test case here is the line you can toggle to switch between userecoilstate and usestate in the console you wi... | 0 |

726,289 | 24,993,871,453 | IssuesEvent | 2022-11-02 21:27:46 | OpenEnergyDashboard/OED | https://api.github.com/repos/OpenEnergyDashboard/OED | closed | Date ranges and what maps display | t-enhancement p-high-priority | Maps should honor the date ranges selected in the line graphic. This one is only really valuable once the circles are not a simple 4 weeks. Maybe we should consider the average over the time range of the line graph? Maybe some options as in compare for predefined time ranges?

Note the code currently uses the bar val... | 1.0 | Date ranges and what maps display - Maps should honor the date ranges selected in the line graphic. This one is only really valuable once the circles are not a simple 4 weeks. Maybe we should consider the average over the time range of the line graph? Maybe some options as in compare for predefined time ranges?

Note... | non_process | date ranges and what maps display maps should honor the date ranges selected in the line graphic this one is only really valuable once the circles are not a simple weeks maybe we should consider the average over the time range of the line graph maybe some options as in compare for predefined time ranges note... | 0 |

17,313 | 23,137,976,921 | IssuesEvent | 2022-07-28 15:44:06 | scikit-learn/scikit-learn | https://api.github.com/repos/scikit-learn/scikit-learn | closed | Add handle_missing and handle_unknown options to OrdinalEncoder | New Feature module:preprocessing Needs Decision - Close | [category_encoders.ordinal.OrdinalEncoder](http://contrib.scikit-learn.org/category_encoders/ordinal.html) in [scikit-learn-contrib/category_encoders](http://contrib.scikit-learn.org/category_encoders/) has 2 really useful options:

1. `handle_unknown`, options are ‘error’, ‘return_nan’ and ‘value’, defaults to ‘valu... | 1.0 | Add handle_missing and handle_unknown options to OrdinalEncoder - [category_encoders.ordinal.OrdinalEncoder](http://contrib.scikit-learn.org/category_encoders/ordinal.html) in [scikit-learn-contrib/category_encoders](http://contrib.scikit-learn.org/category_encoders/) has 2 really useful options:

1. `handle_unknown`... | process | add handle missing and handle unknown options to ordinalencoder in has really useful options handle unknown options are ‘error’ ‘return nan’ and ‘value’ defaults to ‘value’ which will impute the category handle missing options are ‘error’ ‘return nan’ and ‘value default to ‘value’... | 1 |

1,486 | 4,059,083,344 | IssuesEvent | 2016-05-25 08:14:53 | e-government-ua/iBP | https://api.github.com/repos/e-government-ua/iBP | closed | Дніпропетровська область - Внесення до Державного земельного кадастру відомостей (змін до них) про земельну ділянку | In process of testing in work | Розкрити/створити послугу на наступні міста Дніпропетровської області:

- [ ] Жовті Води

- [x] Марганець

- [ ] Новомосковськ

- [ ] Орджонікідзе

- [ ] Павлоград

- [ ] Першотравенськ

- [ ] Синельникове

- [ ] Тернівка

- [ ] Васильківський р-н

- [ ] Верхньодніпровський р-н

- [ ] Криворізький р-н

- [ ]... | 1.0 | Дніпропетровська область - Внесення до Державного земельного кадастру відомостей (змін до них) про земельну ділянку - Розкрити/створити послугу на наступні міста Дніпропетровської області:

- [ ] Жовті Води

- [x] Марганець

- [ ] Новомосковськ

- [ ] Орджонікідзе

- [ ] Павлоград

- [ ] Першотравенськ

- [ ] Син... | process | дніпропетровська область внесення до державного земельного кадастру відомостей змін до них про земельну ділянку розкрити створити послугу на наступні міста дніпропетровської області жовті води марганець новомосковськ орджонікідзе павлоград першотравенськ синельникове ... | 1 |

184,010 | 14,267,109,353 | IssuesEvent | 2020-11-20 19:53:07 | AlaskaAirlines/WC-Generator | https://api.github.com/repos/AlaskaAirlines/WC-Generator | opened | generator: visual regression testing | Type: Feature Type: Testing help wanted | ## Is your feature request related to a problem? Please describe.

As features are added to each component, we are doing a healthy standard of unit testing, but we are not testing for visual regressions.

## Describe the solution you'd like

What I would like to see is that when we run tests that a visual regres... | 1.0 | generator: visual regression testing - ## Is your feature request related to a problem? Please describe.

As features are added to each component, we are doing a healthy standard of unit testing, but we are not testing for visual regressions.

## Describe the solution you'd like

What I would like to see is that... | non_process | generator visual regression testing is your feature request related to a problem please describe as features are added to each component we are doing a healthy standard of unit testing but we are not testing for visual regressions describe the solution you d like what i would like to see is that... | 0 |

64,580 | 8,745,072,574 | IssuesEvent | 2018-12-13 00:54:50 | flyve-mdm/web-mdm-dashboard | https://api.github.com/repos/flyve-mdm/web-mdm-dashboard | closed | Documentation on the CI fails | bug ci documentation | Hi, @Naylin15

Documentation on the CI fails:

https://circleci.com/gh/flyve-mdm/web-mdm-dashboard/6112?utm_campaign=vcs-integration-link&utm_medium=referral&utm_source=github-build-link

```console

$ yarn jsdoc src -r -d docs -t ./jsdoc_theme

$ /root/flyve/node_modules/.bin/jsdoc src -r -d docs -t ./jsdoc_the... | 1.0 | Documentation on the CI fails - Hi, @Naylin15

Documentation on the CI fails:

https://circleci.com/gh/flyve-mdm/web-mdm-dashboard/6112?utm_campaign=vcs-integration-link&utm_medium=referral&utm_source=github-build-link

```console

$ yarn jsdoc src -r -d docs -t ./jsdoc_theme

$ /root/flyve/node_modules/.bin/jsd... | non_process | documentation on the ci fails hi documentation on the ci fails console yarn jsdoc src r d docs t jsdoc theme root flyve node modules bin jsdoc src r d docs t jsdoc theme | 0 |

31,713 | 13,618,289,813 | IssuesEvent | 2020-09-23 18:19:30 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | The input requirements for a custom Form Recognizer model are the same as the general Form Recognizer input requirements | Pri2 assigned-to-author cognitive-services/svc doc-idea forms-recognizer/subsvc triaged | The input requirements for a custom model are [here](https://docs.microsoft.com/en-us/azure/cognitive-services/form-recognizer/overview#custom-model)

), and as follows:

```

Form Recognizer works on input documents that meet these requirements:

Format must be JPG, PNG, PDF (text or scanned), or TIFF. Text-em... | 1.0 | The input requirements for a custom Form Recognizer model are the same as the general Form Recognizer input requirements - The input requirements for a custom model are [here](https://docs.microsoft.com/en-us/azure/cognitive-services/form-recognizer/overview#custom-model)

), and as follows:

```

Form Recognizer wor... | non_process | the input requirements for a custom form recognizer model are the same as the general form recognizer input requirements the input requirements for a custom model are and as follows form recognizer works on input documents that meet these requirements format must be jpg png pdf text or sca... | 0 |

727,800 | 25,046,850,948 | IssuesEvent | 2022-11-05 11:13:01 | darktable-org/darktable | https://api.github.com/repos/darktable-org/darktable | closed | enabling/disabling snapshot comparison alters | priority: high scope: UI bug: pending | **Describe the bug/issue**

When I turn snapshot comparison on or off, the modules displayed below the histogram jump to a module, _sharpen_, that I do not use, and do not even have in my presets.

**To Reproduce**

1. Open any image.

2. Take a snapshot.

3. Enable snapshot display.

4. Observe the module list cha... | 1.0 | enabling/disabling snapshot comparison alters - **Describe the bug/issue**

When I turn snapshot comparison on or off, the modules displayed below the histogram jump to a module, _sharpen_, that I do not use, and do not even have in my presets.

**To Reproduce**

1. Open any image.

2. Take a snapshot.

3. Enable ... | non_process | enabling disabling snapshot comparison alters describe the bug issue when i turn snapshot comparison on or off the modules displayed below the histogram jump to a module sharpen that i do not use and do not even have in my presets to reproduce open any image take a snapshot enable ... | 0 |

2,145 | 4,996,646,439 | IssuesEvent | 2016-12-09 14:33:24 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | Close and exit event triggering on launching child process | child_process question windows | <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the template below as you're able.

Version: output of `node -v`

P... | 1.0 | Close and exit event triggering on launching child process - <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the tem... | process | close and exit event triggering on launching child process thank you for reporting an issue this issue tracker is for bugs and issues found within node js core if you require more general support please file an issue on our help repo please fill in as much of the template below as you re able ... | 1 |

122,608 | 10,227,579,486 | IssuesEvent | 2019-08-16 21:14:54 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | [UI] It takes long time to show the pipeline config if there are many branches | [zube]: To Test area/pipeline team/ui | UI ticket for https://github.com/rancher/rancher/issues/17231

When users Edit pipeline configs in UI, or view/edit pipeline file by yaml, UI talks to the following APIs to fetch the data:

1. `/v3/project/<id>/pipelines/<id>/branches` to get all branches.

2. `/v3/project/<id>/pipelines/<id>/yaml?branch=<name>` to g... | 1.0 | [UI] It takes long time to show the pipeline config if there are many branches - UI ticket for https://github.com/rancher/rancher/issues/17231

When users Edit pipeline configs in UI, or view/edit pipeline file by yaml, UI talks to the following APIs to fetch the data:

1. `/v3/project/<id>/pipelines/<id>/branches` t... | non_process | it takes long time to show the pipeline config if there are many branches ui ticket for when users edit pipeline configs in ui or view edit pipeline file by yaml ui talks to the following apis to fetch the data project pipelines branches to get all branches project pipelines yaml b... | 0 |

14,554 | 17,672,835,103 | IssuesEvent | 2021-08-23 08:38:01 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | Rescale raster algorithm for Processing (Request in QGIS) | Processing Alg 3.16 | ### Request for documentation

From pull request QGIS/qgis#37671

Author: @alexbruy

QGIS version: 3.16

**Rescale raster algorithm for Processing**

### PR Description:

## Description

Add Rescale raster algorithm to change raster value range preserving shape of the raster's histogram. Useful when rasters from different ... | 1.0 | Rescale raster algorithm for Processing (Request in QGIS) - ### Request for documentation

From pull request QGIS/qgis#37671

Author: @alexbruy

QGIS version: 3.16

**Rescale raster algorithm for Processing**

### PR Description:

## Description

Add Rescale raster algorithm to change raster value range preserving shape of... | process | rescale raster algorithm for processing request in qgis request for documentation from pull request qgis qgis author alexbruy qgis version rescale raster algorithm for processing pr description description add rescale raster algorithm to change raster value range preserving shape of the ... | 1 |

50,284 | 6,077,454,139 | IssuesEvent | 2017-06-16 04:02:44 | Kademi/kademi-dev | https://api.github.com/repos/Kademi/kademi-dev | closed | Editing data series record with incorrect profile causes common message | Ready to Test - Dev Ready to Test QA |

Should be like on creation

BTW, Couldnot locate profile or organisation: vladqas0031 - error ... | 2.0 | Editing data series record with incorrect profile causes common message -

Should be like on creation

, so it could be made via OS-specific traits. | 1.0 | Process disk IO counters for Linux - It seems that there is no way to fetch disk IO counters per process in all platforms supported (`psutil` claims that it is possible for Linux, BSD, Windows and AIX), so it could be made via OS-specific traits. | process | process disk io counters for linux it seems that there is no way to fetch disk io counters per process in all platforms supported psutil claims that it is possible for linux bsd windows and aix so it could be made via os specific traits | 1 |

5,423 | 3,219,122,984 | IssuesEvent | 2015-10-08 07:56:29 | OpenUserJs/OpenUserJS.org | https://api.github.com/repos/OpenUserJs/OpenUserJS.org | closed | Modify `.../scriptStorage.js` in `sendMeta` to trim up .meta.js route text to barebone keys/blocks | CODE enhancement | @sizzlemctwizzle [wrote](/OpenUserJs/OpenUserJS.org/issues/718#issuecomment-135142897):

> Although trimming it down in the future to `@name`, `@namespace`, `@version` should be a goal.

Followup from #718

Let's give some time to migrate any scripts that may need/want to use the JSON route **and** some time to we... | 1.0 | Modify `.../scriptStorage.js` in `sendMeta` to trim up .meta.js route text to barebone keys/blocks - @sizzlemctwizzle [wrote](/OpenUserJs/OpenUserJS.org/issues/718#issuecomment-135142897):

> Although trimming it down in the future to `@name`, `@namespace`, `@version` should be a goal.

Followup from #718

Let's g... | non_process | modify scriptstorage js in sendmeta to trim up meta js route text to barebone keys blocks sizzlemctwizzle openuserjs openuserjs org issues issuecomment although trimming it down in the future to name namespace version should be a goal followup from let s give some time to m... | 0 |

9,118 | 12,195,570,239 | IssuesEvent | 2020-04-29 17:35:39 | pacificclimate/climate-explorer-data-prep | https://api.github.com/repos/pacificclimate/climate-explorer-data-prep | closed | Incorrectly calculated frost free day data | process new data update existing data | Frost free day data (`ffd`) was calculated from frost day (`fdETCCDI`) data via (365 - `fdETCCDI`), which is correct (or at least the approximation we've decided to use for now) for annual data.

Unfortunately, monthly and seasonal data was also calculated this way, and it is _quite_ incorrect. It's not being used f... | 1.0 | Incorrectly calculated frost free day data - Frost free day data (`ffd`) was calculated from frost day (`fdETCCDI`) data via (365 - `fdETCCDI`), which is correct (or at least the approximation we've decided to use for now) for annual data.

Unfortunately, monthly and seasonal data was also calculated this way, and i... | process | incorrectly calculated frost free day data frost free day data ffd was calculated from frost day fdetccdi data via fdetccdi which is correct or at least the approximation we ve decided to use for now for annual data unfortunately monthly and seasonal data was also calculated this way and it ... | 1 |

680,071 | 23,256,498,442 | IssuesEvent | 2022-08-04 09:43:30 | yalla-coop/chiltern-website | https://api.github.com/repos/yalla-coop/chiltern-website | opened | Research tab changes | priority-3 | **Is your feature / client request related to a problem? Please describe.**

A clear and concise description of what the problem is.

1. If we don't upload a report to download, the link to download a report still appears but obviously doesn't go anywhere

2. We can't show the research items in other areas of the s... | 1.0 | Research tab changes - **Is your feature / client request related to a problem? Please describe.**

A clear and concise description of what the problem is.

1. If we don't upload a report to download, the link to download a report still appears but obviously doesn't go anywhere

2. We can't show the research items ... | non_process | research tab changes is your feature client request related to a problem please describe a clear and concise description of what the problem is if we don t upload a report to download the link to download a report still appears but obviously doesn t go anywhere we can t show the research items ... | 0 |

24,709 | 2,672,278,970 | IssuesEvent | 2015-03-24 13:20:55 | FWAJL/FieldWorkAssistantMVC | https://api.github.com/repos/FWAJL/FieldWorkAssistantMVC | closed | Prevent the same PM to log at the same time | priority:very low status:ready to start | When the same PM logs at the same time, it can create inconsistencies in the data. Therefore, we need to prevent that to happen.

Using a log table, we verify that a PM is not currently logged in to authorized the PM to log in.

Tasks to do:

- create table user_log: check with the lead dev for the table definitio... | 1.0 | Prevent the same PM to log at the same time - When the same PM logs at the same time, it can create inconsistencies in the data. Therefore, we need to prevent that to happen.

Using a log table, we verify that a PM is not currently logged in to authorized the PM to log in.

Tasks to do:

- create table user_log: c... | non_process | prevent the same pm to log at the same time when the same pm logs at the same time it can create inconsistencies in the data therefore we need to prevent that to happen using a log table we verify that a pm is not currently logged in to authorized the pm to log in tasks to do create table user log c... | 0 |

88,780 | 15,820,470,536 | IssuesEvent | 2021-04-05 19:01:22 | dmyers87/tika | https://api.github.com/repos/dmyers87/tika | opened | CVE-2020-36184 (Medium) detected in jackson-databind-2.9.9.2.jar | security vulnerability | ## CVE-2020-36184 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.9.2.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core stre... | True | CVE-2020-36184 (Medium) detected in jackson-databind-2.9.9.2.jar - ## CVE-2020-36184 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.9.2.jar</b></p></summary>

<p... | non_process | cve medium detected in jackson databind jar cve medium severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file tika tika parsers pom x... | 0 |

14,294 | 17,266,484,626 | IssuesEvent | 2021-07-22 14:21:28 | googleapis/node-gtoken | https://api.github.com/repos/googleapis/node-gtoken | opened | Move away from /v4 token endpoint to https://oauth2.googleapis.com/token | type: process | > Nit: we're trying to centralize the different token exchange endpoints to 'https://oauth2.googleapis.com/token', even though this is a mock, it might be good for new code to just show that one, unless you think it would be confusing to have different endpoints in the code if elsewhere you're using the one you have he... | 1.0 | Move away from /v4 token endpoint to https://oauth2.googleapis.com/token - > Nit: we're trying to centralize the different token exchange endpoints to 'https://oauth2.googleapis.com/token', even though this is a mock, it might be good for new code to just show that one, unless you think it would be confusing to have di... | process | move away from token endpoint to nit we re trying to centralize the different token exchange endpoints to even though this is a mock it might be good for new code to just show that one unless you think it would be confusing to have different endpoints in the code if elsewhere you re using the one you hav... | 1 |

200,365 | 15,797,903,122 | IssuesEvent | 2021-04-02 17:37:25 | BASIN-3D/basin3d | https://api.github.com/repos/BASIN-3D/basin3d | opened | Setup Github Pages | documentation | Setup Github Pages to host Sphinx documentation.

- Create new branch `gh-pages`

- Generate documentation in `gh-pages`

- Point generated documentation to `gh-pages` and appropriate folder (`/root`?)

- Make sure to create empty .nojekyll file

Look at [NGEET](https://github.com/NGEET/ngt-archive/tree/gh-pages) f... | 1.0 | Setup Github Pages - Setup Github Pages to host Sphinx documentation.

- Create new branch `gh-pages`

- Generate documentation in `gh-pages`

- Point generated documentation to `gh-pages` and appropriate folder (`/root`?)

- Make sure to create empty .nojekyll file

Look at [NGEET](https://github.com/NGEET/ngt-arc... | non_process | setup github pages setup github pages to host sphinx documentation create new branch gh pages generate documentation in gh pages point generated documentation to gh pages and appropriate folder root make sure to create empty nojekyll file look at for reference | 0 |

1,973 | 4,803,517,037 | IssuesEvent | 2016-11-02 10:23:06 | CERNDocumentServer/cds | https://api.github.com/repos/CERNDocumentServer/cds | closed | deposit: SSE channel endpoint | avc_processing enhancement review | Add a new endpoint on each deposit that corresponds to the SSE channel, where clients should subscribe in order to receive messages about this particular deposit.

| 1.0 | deposit: SSE channel endpoint - Add a new endpoint on each deposit that corresponds to the SSE channel, where clients should subscribe in order to receive messages about this particular deposit.

| process | deposit sse channel endpoint add a new endpoint on each deposit that corresponds to the sse channel where clients should subscribe in order to receive messages about this particular deposit | 1 |

7,281 | 10,433,144,883 | IssuesEvent | 2019-09-17 12:54:49 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Processing GDAL algorithms does not handle correctly WFS input layers | Bug Processing | Author Name: **Giovanni Manghi** (@gioman)

Original Redmine Issue: [21848](https://issues.qgis.org/issues/21848)

Affected QGIS version: 3.6.1

Redmine category:processing/ogr

Assignee: Giovanni Manghi

---

the command is not created the right way, it must be something along this lines:

ogr2ogr -f PostgreSQL PG:"dbnam... | 1.0 | Processing GDAL algorithms does not handle correctly WFS input layers - Author Name: **Giovanni Manghi** (@gioman)

Original Redmine Issue: [21848](https://issues.qgis.org/issues/21848)

Affected QGIS version: 3.6.1

Redmine category:processing/ogr

Assignee: Giovanni Manghi

---

the command is not created the right way, i... | process | processing gdal algorithms does not handle correctly wfs input layers author name giovanni manghi gioman original redmine issue affected qgis version redmine category processing ogr assignee giovanni manghi the command is not created the right way it must be something along this lines ... | 1 |

18,493 | 24,550,963,679 | IssuesEvent | 2022-10-12 12:35:02 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [iOS] [Offline indicator] Enrollment flow > Offline error message should get displayed when participant clicks on the 'Next' button on the signature screen | Bug P1 iOS Process: Fixed Process: Tested dev | Steps:

1. Signup or sign in to the app

2. CLick on the Study

3. Turn off the internet

4. Complete all the steps in the enrollment flow

5. On the signature screen, click on Next and observe

AR: Screen is continuously loading

ER: Offline error message should get displayed when participants click on the Next butt... | 2.0 | [iOS] [Offline indicator] Enrollment flow > Offline error message should get displayed when participant clicks on the 'Next' button on the signature screen - Steps:

1. Signup or sign in to the app

2. CLick on the Study

3. Turn off the internet

4. Complete all the steps in the enrollment flow

5. On the signature sc... | process | enrollment flow offline error message should get displayed when participant clicks on the next button on the signature screen steps signup or sign in to the app click on the study turn off the internet complete all the steps in the enrollment flow on the signature screen click on next an... | 1 |

1,625 | 4,238,602,261 | IssuesEvent | 2016-07-06 05:04:28 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | doc: invalid process.hrtime documentation | doc process | The current documentation for `process.hrtime()` does not include information about the optional arguments. See https://nodejs.org/dist/latest-v6.x/docs/api/process.html#process_process_hrtime

| 1.0 | doc: invalid process.hrtime documentation - The current documentation for `process.hrtime()` does not include information about the optional arguments. See https://nodejs.org/dist/latest-v6.x/docs/api/process.html#process_process_hrtime

| process | doc invalid process hrtime documentation the current documentation for process hrtime does not include information about the optional arguments see | 1 |

46,935 | 5,841,872,156 | IssuesEvent | 2017-05-10 03:00:40 | easydigitaldownloads/easy-digital-downloads | https://api.github.com/repos/easydigitaldownloads/easy-digital-downloads | closed | Complete purchase button text is not flexible with translations | Enhancement Has PR Needs Testing | ```

function edd_get_checkout_button_purchase_label() {

$label = edd_get_option( 'checkout_label', '' );

if ( edd_get_cart_total() ) {

$complete_purchase = ! empty( $label ) ? $label : __( 'Purchase', 'easy-digital-downloads' );

} else {

$complete_purchase = ! empty( $label ) ? $label : __( 'Free Downloa... | 1.0 | Complete purchase button text is not flexible with translations - ```

function edd_get_checkout_button_purchase_label() {

$label = edd_get_option( 'checkout_label', '' );

if ( edd_get_cart_total() ) {

$complete_purchase = ! empty( $label ) ? $label : __( 'Purchase', 'easy-digital-downloads' );

} else {

$... | non_process | complete purchase button text is not flexible with translations function edd get checkout button purchase label label edd get option checkout label if edd get cart total complete purchase empty label label purchase easy digital downloads else ... | 0 |

12,242 | 14,743,865,256 | IssuesEvent | 2021-01-07 14:31:30 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Download Button - Always downloading last uploaded file regardless of Billing cycle. | anc-process anp-2.5 ant-bug has attachment | In GitLab by @kdjstudios on Dec 5, 2019, 11:24

This is to address the concern found in http://gitlab.aavaz.biz/AnswerNet/SABilling/issues/1589#note_52500

While development, we found that according to the current functionality, download action always gets the last uploaded file for the site for it's every billing cycl... | 1.0 | Download Button - Always downloading last uploaded file regardless of Billing cycle. - In GitLab by @kdjstudios on Dec 5, 2019, 11:24

This is to address the concern found in http://gitlab.aavaz.biz/AnswerNet/SABilling/issues/1589#note_52500

While development, we found that according to the current functionality, down... | process | download button always downloading last uploaded file regardless of billing cycle in gitlab by kdjstudios on dec this is to address the concern found in while development we found that according to the current functionality download action always gets the last uploaded file for the site for it s e... | 1 |

7,848 | 11,018,246,253 | IssuesEvent | 2019-12-05 10:08:15 | prisma/prisma2 | https://api.github.com/repos/prisma/prisma2 | closed | `prisma2 lift save` creates two new `.db` files for SQLite | bug/2-confirmed kind/bug process/candidate | I have this Prisma schema in a new project:

```prisma

generator photon {

provider = "photonjs"

}

datasource db {

provider = "sqlite"

url = "file:dev.db"

}

model User {

id String @default(cuid()) @id

email String @unique

name String?

}

```

This is the file structure:

```

... | 1.0 | `prisma2 lift save` creates two new `.db` files for SQLite - I have this Prisma schema in a new project:

```prisma

generator photon {

provider = "photonjs"

}

datasource db {

provider = "sqlite"

url = "file:dev.db"

}

model User {

id String @default(cuid()) @id

email String @unique

... | process | lift save creates two new db files for sqlite i have this prisma schema in a new project prisma generator photon provider photonjs datasource db provider sqlite url file dev db model user id string default cuid id email string unique name ... | 1 |

85,156 | 24,525,251,577 | IssuesEvent | 2022-10-11 12:41:25 | cds-snc/platform-forms-client | https://api.github.com/repos/cds-snc/platform-forms-client | closed | Form Builder - Save progress functionality | Epic form-builder | [Co-design mock-ups](https://miro.com/app/board/uXjVOgSVHE8=/)

### User stories

As a form builder I can:

- Download my form as a JSON file

- Upload my form as a JSON file

### Acceptance criteria:

- [ ] Wireframes for the feature

- [ ] Implement functionality | 1.0 | Form Builder - Save progress functionality - [Co-design mock-ups](https://miro.com/app/board/uXjVOgSVHE8=/)

### User stories

As a form builder I can:

- Download my form as a JSON file

- Upload my form as a JSON file

### Acceptance criteria:

- [ ] Wireframes for the feature

- [ ] Implement functionality | non_process | form builder save progress functionality user stories as a form builder i can download my form as a json file upload my form as a json file acceptance criteria wireframes for the feature implement functionality | 0 |

51,469 | 10,679,887,486 | IssuesEvent | 2019-10-21 20:12:24 | RebusFoundation/reader-api | https://api.github.com/repos/RebusFoundation/reader-api | closed | More calls to `debug` | refactor / code quality | Many parts of the server use the[ `debug`](https://www.npmjs.com/package/debug) module to log what's happening in detail when the `debug` env variable is set. This has proven _very_ useful. It would be worthwhile to go through the code and add debug calls to those modules that don't have it. | 1.0 | More calls to `debug` - Many parts of the server use the[ `debug`](https://www.npmjs.com/package/debug) module to log what's happening in detail when the `debug` env variable is set. This has proven _very_ useful. It would be worthwhile to go through the code and add debug calls to those modules that don't have it. | non_process | more calls to debug many parts of the server use the module to log what s happening in detail when the debug env variable is set this has proven very useful it would be worthwhile to go through the code and add debug calls to those modules that don t have it | 0 |

291,943 | 25,186,992,818 | IssuesEvent | 2022-11-11 19:05:25 | statsmodels/statsmodels | https://api.github.com/repos/statsmodels/statsmodels | opened | ENH/TST/SUMM check all get_prediction methods outside tsa | type-enh type-test | (more general than #8519 specific for checking get_prediction across models)

based on the doc index the following classes outside tsa have a `get_prediction` method

so far I mainly checked discrete models, GLMResults and new models BetaModel and OrderedModel

OLS as original implementation

I have not recently ch... | 1.0 | ENH/TST/SUMM check all get_prediction methods outside tsa - (more general than #8519 specific for checking get_prediction across models)

based on the doc index the following classes outside tsa have a `get_prediction` method

so far I mainly checked discrete models, GLMResults and new models BetaModel and OrderedM... | non_process | enh tst summ check all get prediction methods outside tsa more general than specific for checking get prediction across models based on the doc index the following classes outside tsa have a get prediction method so far i mainly checked discrete models glmresults and new models betamodel and orderedmode... | 0 |

7,864 | 11,042,363,885 | IssuesEvent | 2019-12-09 09:00:25 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | [needs-docs][processing] Avoid field collision via optional prefix in overlay algorithms (#10092) | 3.8 Automatic new feature Easy Processing Alg | Original commit: https://github.com/qgis/QGIS/commit/a88898656782ce971e6ce45b6f9487e67de9154f by web-flow

Makes for a more predictable collision avoidance, which

can be neccessary for some models. | 1.0 | [needs-docs][processing] Avoid field collision via optional prefix in overlay algorithms (#10092) - Original commit: https://github.com/qgis/QGIS/commit/a88898656782ce971e6ce45b6f9487e67de9154f by web-flow

Makes for a more predictable collision avoidance, which

can be neccessary for some models. | process | avoid field collision via optional prefix in overlay algorithms original commit by web flow makes for a more predictable collision avoidance which can be neccessary for some models | 1 |

184,649 | 14,289,809,582 | IssuesEvent | 2020-11-23 19:51:46 | github-vet/rangeclosure-findings | https://api.github.com/repos/github-vet/rangeclosure-findings | closed | qingqibing/etcd: clientv3/client_test.go; 28 LoC | fresh small test |

Found a possible issue in [qingqibing/etcd](https://www.github.com/qingqibing/etcd) at [clientv3/client_test.go](https://github.com/qingqibing/etcd/blob/0526f461e1d35f13a85836674951cb12c6bee187/clientv3/client_test.go#L101-L128)

The below snippet of Go code triggered static analysis which searches for goroutines and/... | 1.0 | qingqibing/etcd: clientv3/client_test.go; 28 LoC -

Found a possible issue in [qingqibing/etcd](https://www.github.com/qingqibing/etcd) at [clientv3/client_test.go](https://github.com/qingqibing/etcd/blob/0526f461e1d35f13a85836674951cb12c6bee187/clientv3/client_test.go#L101-L128)

The below snippet of Go code triggered... | non_process | qingqibing etcd client test go loc found a possible issue in at the below snippet of go code triggered static analysis which searches for goroutines and or defer statements which capture loop variables click here to show the line s of go which triggered the analyzer go for i cfg ... | 0 |

154,807 | 13,577,691,357 | IssuesEvent | 2020-09-20 03:02:17 | rwilliams659/beercation | https://api.github.com/repos/rwilliams659/beercation | opened | README | documentation | Make README:

- Description

- Setup/Installation instructions

- Contributor info

- Gifs / screenshots | 1.0 | README - Make README:

- Description

- Setup/Installation instructions

- Contributor info

- Gifs / screenshots | non_process | readme make readme description setup installation instructions contributor info gifs screenshots | 0 |

112,631 | 11,774,055,805 | IssuesEvent | 2020-03-16 08:43:41 | BHoM/BHoM_Engine | https://api.github.com/repos/BHoM/BHoM_Engine | closed | Structure_Engine: Add description documentation on an area of the engine | good first issue type:documentation | Add Description, Input and Output attributes to all methods in the Structure_Engine | 1.0 | Structure_Engine: Add description documentation on an area of the engine - Add Description, Input and Output attributes to all methods in the Structure_Engine | non_process | structure engine add description documentation on an area of the engine add description input and output attributes to all methods in the structure engine | 0 |

3,846 | 6,378,829,617 | IssuesEvent | 2017-08-02 13:35:45 | syndesisio/syndesis-ui | https://api.github.com/repos/syndesisio/syndesis-ui | closed | Basic Filter: Changes persisted in-memory prior to saving | bug sprint requirement | Important bug related to persistence in the API vs in-memory store, as pointed out in issue #623.

How to reproduce:

1. Make changes to an integration's basic filter rule.

2. Do not save changes to the integration.

3. Begin to create a new integration and add the basic filter as a step.

4. Fields will be pre-popu... | 1.0 | Basic Filter: Changes persisted in-memory prior to saving - Important bug related to persistence in the API vs in-memory store, as pointed out in issue #623.

How to reproduce:

1. Make changes to an integration's basic filter rule.

2. Do not save changes to the integration.

3. Begin to create a new integration and... | non_process | basic filter changes persisted in memory prior to saving important bug related to persistence in the api vs in memory store as pointed out in issue how to reproduce make changes to an integration s basic filter rule do not save changes to the integration begin to create a new integration and a... | 0 |

234,453 | 7,721,298,507 | IssuesEvent | 2018-05-24 04:27:01 | cilium/cilium | https://api.github.com/repos/cilium/cilium | closed | Add description file metricsmap/doc.go | priority/medium | As a followup to #4211 PR, we need to add a file pkg/maps/metricsmap/doc.go which describes the package, in a similar way to pkg/maps/lxcmap/doc.go? This shows up here:

https://godoc.org/github.com/cilium/cilium

(Not every package does this today, but we should try to improve this over time) | 1.0 | Add description file metricsmap/doc.go - As a followup to #4211 PR, we need to add a file pkg/maps/metricsmap/doc.go which describes the package, in a similar way to pkg/maps/lxcmap/doc.go? This shows up here:

https://godoc.org/github.com/cilium/cilium

(Not every package does this today, but we should try to impr... | non_process | add description file metricsmap doc go as a followup to pr we need to add a file pkg maps metricsmap doc go which describes the package in a similar way to pkg maps lxcmap doc go this shows up here not every package does this today but we should try to improve this over time | 0 |

434,157 | 12,515,090,749 | IssuesEvent | 2020-06-03 06:59:22 | wso2/product-microgateway | https://api.github.com/repos/wso2/product-microgateway | closed | Improvement to support multiple token issuers with Claim Mappings | Priority/Normal Type/New Feature | ### Describe your problem(s)

When JWT retrieved from Multiple Identity providers Gateway should able to validate the JWT and map the Relevant Claims

### Describe your solution

1. Retrieve the Issuer from Given JWT.

2. Retrieve the issuer details from the config file

3. Validate the JWT signature against that.

4... | 1.0 | Improvement to support multiple token issuers with Claim Mappings - ### Describe your problem(s)

When JWT retrieved from Multiple Identity providers Gateway should able to validate the JWT and map the Relevant Claims

### Describe your solution

1. Retrieve the Issuer from Given JWT.

2. Retrieve the issuer details ... | non_process | improvement to support multiple token issuers with claim mappings describe your problem s when jwt retrieved from multiple identity providers gateway should able to validate the jwt and map the relevant claims describe your solution retrieve the issuer from given jwt retrieve the issuer details ... | 0 |

335,998 | 30,112,689,505 | IssuesEvent | 2023-06-30 09:01:20 | matrixpower1004/fastcampus-baseball | https://api.github.com/repos/matrixpower1004/fastcampus-baseball | closed | 통합 테스트 | test | - [x] 통합 테스트 목록 작성

- [x] 선수 등록 테스트

- [x] teamId로 선수 목록 보기 테스트

- [x] 퇴출 선수 등록 테스트

- [x] 퇴출 선수 목록 보기 테스트

- [x] 야구장 조회 테스트

- [x] 야구장 등록 테스트

- [x] 팀 조회 테스트

- [x] 팀 등록 테스트 | 1.0 | 통합 테스트 - - [x] 통합 테스트 목록 작성

- [x] 선수 등록 테스트

- [x] teamId로 선수 목록 보기 테스트

- [x] 퇴출 선수 등록 테스트

- [x] 퇴출 선수 목록 보기 테스트

- [x] 야구장 조회 테스트

- [x] 야구장 등록 테스트

- [x] 팀 조회 테스트

- [x] 팀 등록 테스트 | non_process | 통합 테스트 통합 테스트 목록 작성 선수 등록 테스트 teamid로 선수 목록 보기 테스트 퇴출 선수 등록 테스트 퇴출 선수 목록 보기 테스트 야구장 조회 테스트 야구장 등록 테스트 팀 조회 테스트 팀 등록 테스트 | 0 |

10,758 | 13,549,206,252 | IssuesEvent | 2020-09-17 07:51:29 | timberio/vector | https://api.github.com/repos/timberio/vector | closed | New `uuid_v4` remap function | domain: mapping domain: processing type: feature | The `uuid_v4` remap function generates a random ID using the [UUID v4 algorithm](https://en.wikipedia.org/wiki/Universally_unique_identifier#Version_4_(random)).

## Example

```

.id = uuid_v4()

```

Would result in

```js

{

"id": "fb49a0ec-d60c-4d20-9264-3b4cfe272106"

}

``` | 1.0 | New `uuid_v4` remap function - The `uuid_v4` remap function generates a random ID using the [UUID v4 algorithm](https://en.wikipedia.org/wiki/Universally_unique_identifier#Version_4_(random)).

## Example

```

.id = uuid_v4()

```

Would result in

```js

{

"id": "fb49a0ec-d60c-4d20-9264-3b4cfe272106"

}

`... | process | new uuid remap function the uuid remap function generates a random id using the example id uuid would result in js id | 1 |

423,357 | 28,505,831,744 | IssuesEvent | 2023-04-18 21:20:15 | nextauthjs/next-auth | https://api.github.com/repos/nextauthjs/next-auth | opened | Using `getServerSession` with the Advanced initialization | documentation triage | ### What is the improvement or update you wish to see?