Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

22 | 2,496,263,003 | IssuesEvent | 2015-01-06 18:14:42 | vivo-isf/vivo-isf-ontology | https://api.github.com/repos/vivo-isf/vivo-isf-ontology | closed | Cell Potency | biological_process imported | _From [rgar...@eagle-i.org](https://code.google.com/u/111247205719752845822/) on March 25, 2013 08:50:35_

\<b>**** Use the form below to request a new term ****</b>

\<b>**** Scroll down to see a term request example ****</b>

\<b>Please indicate the label for the proposed term:</b>

Cell Potency

\<b>P... | 1.0 | Cell Potency - _From [rgar...@eagle-i.org](https://code.google.com/u/111247205719752845822/) on March 25, 2013 08:50:35_

\<b>**** Use the form below to request a new term ****</b>

\<b>**** Scroll down to see a term request example ****</b>

\<b>Please indicate the label for the proposed term:</b>

Cell Potency&#... | process | cell potency from on march use the form below to request a new term scroll down to see a term request example please indicate the label for the proposed term cell potency please provide a textual definition with source the differentiation potential... | 1 |

21,390 | 29,202,231,792 | IssuesEvent | 2023-05-21 00:37:38 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [Remoto] Site Reliability Engineer (SRE) na Coodesh | SALVADOR PJ BANCO DE DADOS BIG DATA REDES PLENO POSTGRESQL KUBERNETES DEVOPS AWS REQUISITOS LINUX REMOTO PROCESSOS GITHUB AZURE SEGURANÇA UMA ANALYTICS ESPANHOL SISTEMAS OPERACIONAIS MACHINE LEARNING NEGÓCIOS GCP INTELIGÊNCIA ARTIFICIAL OPENSHIFT ARQUITETURA DE DADOS MONITORAMENTO SRE Stale | ## Descrição da vaga:

This is an opening from a partner of the Coodesh platform; by applying you will get access to the full information about the company and its benefits.

Fique atento ao redirecionamento que vai te levar para uma url [https://coodesh.com](https://coodesh.com/vagas/site-reliability-engineer-sre-1806054... | 1.0 | [Remoto] Site Reliability Engineer (SRE) na Coodesh - ## Descrição da vaga:

This is an opening from a partner of the Coodesh platform; by applying you will get access to the full information about the company and its benefits.

Fique atento ao redirecionamento que vai te levar para uma url [https://coodesh.com](https://c... | process | site reliability engineer sre na coodesh descrição da vaga esta é uma vaga de um parceiro da plataforma coodesh ao candidatar se você terá acesso as informações completas sobre a empresa e benefícios fique atento ao redirecionamento que vai te levar para uma url com o pop up personalizado de cand... | 1 |

2,169 | 5,019,525,145 | IssuesEvent | 2016-12-14 12:03:39 | openvstorage/framework | https://api.github.com/repos/openvstorage/framework | closed | Config gone in config manager of abm arakoon | process_wontfix | **On all our CI test environments** we noticed the following exception:

```

[EXCEPTION] Error during execution of <alba_health_check.AlbaHealthCheck object at 0x7fd74ab24510>.run

Traceback (most recent call last):

File "/opt/OpenvStorage/ovs/lib/healthcheck.py", line 35, in <module>

class HealthCheckControll... | 1.0 | Config gone in config manager of abm arakoon - **On all our CI test environments** we noticed the following exception:

```

[EXCEPTION] Error during execution of <alba_health_check.AlbaHealthCheck object at 0x7fd74ab24510>.run

Traceback (most recent call last):

File "/opt/OpenvStorage/ovs/lib/healthcheck.py", line... | process | config gone in config manager of abm arakoon on all our ci test environments we noticed the following exception error during execution of run traceback most recent call last file opt openvstorage ovs lib healthcheck py line in class healthcheckcontroller object file opt open... | 1 |

19,914 | 26,375,608,737 | IssuesEvent | 2023-01-12 02:00:07 | lizhihao6/get-daily-arxiv-noti | https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti | opened | New submissions for Thu, 12 Jan 23 | event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB | ## Keyword: events

### Adapting to Skew: Imputing Spatiotemporal Urban Data with 3D Partial Convolutions and Biased Masking

- **Authors:** Bin Han, Bill Howe

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Artificial Intelligence (cs.AI); Computers and Society (cs.CY)

- **Arxiv link:** https://arxi... | 2.0 | New submissions for Thu, 12 Jan 23 - ## Keyword: events

### Adapting to Skew: Imputing Spatiotemporal Urban Data with 3D Partial Convolutions and Biased Masking

- **Authors:** Bin Han, Bill Howe

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Artificial Intelligence (cs.AI); Computers and Society (c... | process | new submissions for thu jan keyword events adapting to skew imputing spatiotemporal urban data with partial convolutions and biased masking authors bin han bill howe subjects computer vision and pattern recognition cs cv artificial intelligence cs ai computers and society cs c... | 1 |

21,705 | 3,548,146,128 | IssuesEvent | 2016-01-20 13:14:15 | briandonahue/FluxJpeg.Core | https://api.github.com/repos/briandonahue/FluxJpeg.Core | opened | Encoding a 8-bit Grayscale jpg fails (colorspace conversion bug) | Resubmit | auto-migrated Priority-High Type-Defect | _From @GoogleCodeExporter on January 20, 2016 12:59_

```

What steps will reproduce the problem?

1. Try to encode a 8-bit Grayscale jpg

What is the expected output? What do you see instead?

Expected: Encoded image.

Actual: IndexOutOfException on Encoding (Line 261, "CompressTo"-method).

What version of the product ar... | 1.0 | Encoding a 8-bit Grayscale jpg fails (colorspace conversion bug) | Resubmit - _From @GoogleCodeExporter on January 20, 2016 12:59_

```

What steps will reproduce the problem?

1. Try to encode a 8-bit Grayscale jpg

What is the expected output? What do you see instead?

Expected: Encoded image.

Actual: IndexOutOfExceptio... | non_process | encoding a bit grayscale jpg fails colorspace conversion bug resubmit from googlecodeexporter on january what steps will reproduce the problem try to encode a bit grayscale jpg what is the expected output what do you see instead expected encoded image actual indexoutofexception on e... | 0 |

585,791 | 17,534,282,915 | IssuesEvent | 2021-08-12 03:37:55 | shoepro/server | https://api.github.com/repos/shoepro/server | opened | [Feat]: Create user model CRUD logic | Priority: Middle 2.0h Feature Server | ### ISSUE

- Group: `Server`

- Type: `Feat`

- Time: `2.0h`

- Priority: `Middle`

### TODO

1. [ ] Create user repository file

2. [ ] Create CRUD logic based on API docs | 1.0 | [Feat]: Create user model CRUD logic - ### ISSUE

- Group: `Server`

- Type: `Feat`

- Time: `2.0h`

- Priority: `Middle`

### TODO

1. [ ] Create user repository file

2. [ ] Create CRUD logic based on API docs | non_process | create user model crud logic issue group server type feat time priority middle todo create user repository file create crud logic based on api docs | 0 |

209,623 | 23,730,711,153 | IssuesEvent | 2022-08-31 01:16:09 | benlazarine/atmosphere-v2-apiary-docs | https://api.github.com/repos/benlazarine/atmosphere-v2-apiary-docs | opened | CVE-2020-11023 (Medium) detected in jquery-1.8.1.min.js | security vulnerability | ## CVE-2020-11023 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.8.1.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="ht... | True | CVE-2020-11023 (Medium) detected in jquery-1.8.1.min.js - ## CVE-2020-11023 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.8.1.min.js</b></p></summary>

<p>JavaScript librar... | non_process | cve medium detected in jquery min js cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to dependency file node modules redeyed examples browser index html path to vulnerable library ... | 0 |

3,231 | 6,289,280,093 | IssuesEvent | 2017-07-19 18:51:20 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | [S.D.Process] CloseMainWindow_NotStarted_ThrowsInvalidOperationException does not throw | area-System.Diagnostics.Process | (Test case will be added soon, creating issue so that I can disable that in the PR)

I believe we should be able to throw even on UAP since we can detect that process is not started. On the other hand we might need to be consistent with non throwing behavior because MainWindowHandle is not supported

```

ERROR: ... | 1.0 | [S.D.Process] CloseMainWindow_NotStarted_ThrowsInvalidOperationException does not throw - (Test case will be added soon, creating issue so that I can disable that in the PR)

I believe we should be able to throw even on UAP since we can detect that process is not started. On the other hand we might need to be consist... | process | closemainwindow notstarted throwsinvalidoperationexception does not throw test case will be added soon creating issue so that i can disable that in the pr i believe we should be able to throw even on uap since we can detect that process is not started on the other hand we might need to be consistent with non... | 1 |

19,586 | 25,922,149,238 | IssuesEvent | 2022-12-15 23:17:38 | nion-software/nionswift | https://api.github.com/repos/nion-software/nionswift | closed | Processing > Rebin should work on 1D data | type - enhancement level - easy f - processing | Unfortunately this will most likely require a separate menu item for now; the ability of a computation to make its UI depend on the rank of the data is not possible at the moment.

See also

- #274 | 1.0 | Processing > Rebin should work on 1D data - Unfortunately this will most likely require a separate menu item for now; the ability of a computation to make its UI depend on the rank of the data is not possible at the moment.

See also

- #274 | process | processing rebin should work on data unfortunately this will most likely require a separate menu item for now the ability of a computation to make its ui depend on the rank of the data is not possible at the moment see also | 1 |

28,506 | 5,510,585,062 | IssuesEvent | 2017-03-17 00:30:27 | joshcummingsdesign/grizzly-wp | https://api.github.com/repos/joshcummingsdesign/grizzly-wp | closed | Match Cloudways Server | documentation enhancement pipeline | Local and dev should match Cloudways as closely as possible (PHP and Apache configuration, database config, etc.) | 1.0 | Match Cloudways Server - Local and dev should match Cloudways as closely as possible (PHP and Apache configuration, database config, etc.) | non_process | match cloudways server local and dev should match cloudways as closely as possible php and apache configuration database config etc | 0 |

19,745 | 26,107,931,275 | IssuesEvent | 2022-12-27 15:32:39 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | [processor/logstransformprocessor] Processor hangs waiting for logs that were filtered out | bug priority:p2 processor/logstransform | ### What happened?

## Description

When using the `logstransformprocessor` to filter logs, the [loop](https://github.com/open-telemetry/opentelemetry-collector-contrib/blob/main/processor/logstransformprocessor/processor.go#L145) in `processLogs` will eventually hang waiting for the filtered out logs. `processLogs` ... | 1.0 | [processor/logstransformprocessor] Processor hangs waiting for logs that were filtered out - ### What happened?

## Description

When using the `logstransformprocessor` to filter logs, the [loop](https://github.com/open-telemetry/opentelemetry-collector-contrib/blob/main/processor/logstransformprocessor/processor.go#... | process | processor hangs waiting for logs that were filtered out what happened description when using the logstransformprocessor to filter logs the in processlogs will eventually hang waiting for the filtered out logs processlogs is blocking so the pipeline is blocked there steps to reproduc... | 1 |

49,079 | 13,440,480,427 | IssuesEvent | 2020-09-08 01:03:27 | jgeraigery/crnk-framework | https://api.github.com/repos/jgeraigery/crnk-framework | opened | CVE-2020-24616 (High) detected in jackson-databind-2.9.10.jar | security vulnerability | ## CVE-2020-24616 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.10.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streami... | True | CVE-2020-24616 (High) detected in jackson-databind-2.9.10.jar - ## CVE-2020-24616 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.10.jar</b></p></summary>

<p>Gener... | non_process | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to vulnerable library root gradle caches modules... | 0 |

328,358 | 28,116,372,598 | IssuesEvent | 2023-03-31 11:04:16 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | sql: TestQueryCache failed | C-test-failure O-robot branch-release-23.1 | sql.TestQueryCache [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/9357134?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/9357134?buildTab=artifacts#/) on release-23.1 @ [7e72aae900c3ff4b44f1643c2d7ba55fbb2c... | 1.0 | sql: TestQueryCache failed - sql.TestQueryCache [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/9357134?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/9357134?buildTab=artifacts#/) on release-23.1 @ [7e72aae... | non_process | sql testquerycache failed sql testquerycache with on release goroutine lock github com cockroachdb cockroach pkg kv kvserver pkg kv kvserver replica raft go kvserver replica tick github com cockroachdb cockroach pkg kv kvserver pkg kv kvserver replica raft go kvserver ... | 0 |

274,293 | 29,992,824,381 | IssuesEvent | 2023-06-26 01:06:42 | panasalap/linux-4.19.72_1 | https://api.github.com/repos/panasalap/linux-4.19.72_1 | opened | CVE-2023-35788 (High) detected in linux-yoctov5.4.51 | Mend: dependency security vulnerability | ## CVE-2023-35788 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yoctov5.4.51</b></p></summary>

<p>

<p>Yocto Linux Embedded kernel</p>

<p>Library home page: <a href=https://git.... | True | CVE-2023-35788 (High) detected in linux-yoctov5.4.51 - ## CVE-2023-35788 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yoctov5.4.51</b></p></summary>

<p>

<p>Yocto Linux Embedde... | non_process | cve high detected in linux cve high severity vulnerability vulnerable library linux yocto linux embedded kernel library home page a href found in base branch master vulnerable source files net sched cls flower c net sched cls flowe... | 0 |

9,048 | 12,130,108,048 | IssuesEvent | 2020-04-23 00:30:41 | GoogleCloudPlatform/python-docs-samples | https://api.github.com/repos/GoogleCloudPlatform/python-docs-samples | closed | remove gcp-devrel-py-tools from appengine/standard/blobstore/gcs/requirements-test.txt | priority: p2 remove-gcp-devrel-py-tools type: process | remove gcp-devrel-py-tools from appengine/standard/blobstore/gcs/requirements-test.txt | 1.0 | remove gcp-devrel-py-tools from appengine/standard/blobstore/gcs/requirements-test.txt - remove gcp-devrel-py-tools from appengine/standard/blobstore/gcs/requirements-test.txt | process | remove gcp devrel py tools from appengine standard blobstore gcs requirements test txt remove gcp devrel py tools from appengine standard blobstore gcs requirements test txt | 1 |

151,455 | 13,402,291,899 | IssuesEvent | 2020-09-03 18:43:20 | dogsheep/dogsheep-beta | https://api.github.com/repos/dogsheep/dogsheep-beta | reopened | Mechanism for defining custom display of results | documentation enhancement | Part of #3 - in particular I want to make sure my photos are displayed with a thumbnail. | 1.0 | Mechanism for defining custom display of results - Part of #3 - in particular I want to make sure my photos are displayed with a thumbnail. | non_process | mechanism for defining custom display of results part of in particular i want to make sure my photos are displayed with a thumbnail | 0 |

590,377 | 17,777,061,915 | IssuesEvent | 2021-08-30 20:42:19 | SkriptLang/Skript | https://api.github.com/repos/SkriptLang/Skript | closed | If the hex color tag is set to a variable, it does not work. | bug priority: low completed | ### Skript/Server Version

[Skript] Skript's aliases can be found here: https://github.com/SkriptLang/skript-aliases

[Skript] Skript's documentation can be found here: https://skriptlang.github.io/Skript

[Skript] Server Version: git-Paper-127 (MC: 1.17.1)

[Skript] Skript Version: 2.6-beta2-nightly-5a6633f

[Skript] ... | 1.0 | If the hex color tag is set to a variable, it does not work. - ### Skript/Server Version

[Skript] Skript's aliases can be found here: https://github.com/SkriptLang/skript-aliases

[Skript] Skript's documentation can be found here: https://skriptlang.github.io/Skript

[Skript] Server Version: git-Paper-127 (MC: 1.17.1)... | non_process | if the hex color tag is set to a variable it does not work skript server version skript s aliases can be found here skript s documentation can be found here server version git paper mc skript version nightly installed skript addons bug description if the hex colo... | 0 |

14,896 | 18,291,214,867 | IssuesEvent | 2021-10-05 15:26:05 | kcp-dev/kcp | https://api.github.com/repos/kcp-dev/kcp | opened | Create a first sketch of APIs / CRDs for resources like `Workspace`, `WorkspaceShard`, `APIBindings`, etc ... | in-process | Create a first sketch of APIs / CRDs for resources like `Workspace`, `WorkspaceShard`, `APIBindings`, etc ... | 1.0 | Create a first sketch of APIs / CRDs for resources like `Workspace`, `WorkspaceShard`, `APIBindings`, etc ... - Create a first sketch of APIs / CRDs for resources like `Workspace`, `WorkspaceShard`, `APIBindings`, etc ... | process | create a first sketch of apis crds for resources like workspace workspaceshard apibindings etc create a first sketch of apis crds for resources like workspace workspaceshard apibindings etc | 1 |

12,320 | 14,879,445,838 | IssuesEvent | 2021-01-20 07:42:02 | lutraconsulting/qgis-crayfish-plugin | https://api.github.com/repos/lutraconsulting/qgis-crayfish-plugin | closed | Create contour from color ramp | enhancement processing | This feature was available in crayfish 2.x: the color ramp from the active dataset can be used for the contouring option. | 1.0 | Create contour from color ramp - This feature was available in crayfish 2.x: the color ramp from the active dataset can be used for the contouring option. | process | create contour from color ramp this feature was available in crayfish x the color ramp from the active dataset can be used for the contouring option | 1 |

7,788 | 10,928,178,315 | IssuesEvent | 2019-11-22 18:25:43 | googleapis/google-cloud-python | https://api.github.com/repos/googleapis/google-cloud-python | opened | PubSub: Add more system tests covering various RBAC-related scenarios | api: pubsub testing type: process | A [regression](https://github.com/googleapis/google-cloud-python/issues/9339) introduced not so long ago could have been prevented if we had more RBAC-related system tests. A system test covering the fix was added in #9507.

Since the PubSub backend defines several different [user roles](https://cloud.google.com/pubs... | 1.0 | PubSub: Add more system tests covering various RBAC-related scenarios - A [regression](https://github.com/googleapis/google-cloud-python/issues/9339) introduced not so long ago could have been prevented if we had more RBAC-related system tests. A system test covering the fix was added in #9507.

Since the PubSub back... | process | pubsub add more system tests covering various rbac related scenarios a introduced not so long ago could have been prevented if we had more rbac related system tests a system test covering the fix was added in since the pubsub backend defines several different system tests must be added to cover at le... | 1 |

268,807 | 20,361,840,345 | IssuesEvent | 2022-02-20 19:56:46 | MiguelDuba/git_flow_practice | https://api.github.com/repos/MiguelDuba/git_flow_practice | closed | Un commit que no sigue la convención de código o arreglo a realizar | documentation | La convención del mensaje del último commit no es la esperada:

`FIX1: Arreglo pagina arroz con coco`

Remember that it must have the following format: `<Identificador de la corrección>: <Comentario>`

To correct the commit message, run the commands `git commit --amend` and `git push -f`

Este issue es... | 1.0 | Un commit que no sigue la convención de código o arreglo a realizar - La convención del mensaje del último commit no es la esperada:

`FIX1: Arreglo pagina arroz con coco`

Remember that it must have the following format: `<Identificador de la corrección>: <Comentario>`

Para realizar la corrección del mensaje de commit ej... | non_process | un commit que no sigue la convención de código o arreglo a realizar la convención del mensaje del último commit no es la esperada arreglo pagina arroz con coco recuerde que debe tener el siguiente formato para realizar la corrección del mensaje de commit ejecute los comandos git commit amend y gi... | 0 |

12,595 | 14,992,981,034 | IssuesEvent | 2021-01-29 10:38:33 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PM] Default study thumbnail image is not displayed in sites and studies tab | Bug P2 Process: Fixed Process: Tested dev Unknown backend | Steps:

1. Login to SB

2. Don't upload any thumbnail image i.e let be alternate image

3. Launch the study

4. Observe the study in PM

Actual: Default study thumbnail image is not displayed in sites and studies tab

Expected: Default study thumbnail image should be displayed in sites and studies tab

![Screensh... | 2.0 | [PM] Default study thumbnail image is not displayed in sites and studies tab - Steps:

1. Login to SB

2. Don't upload any thumbnail image i.e let be alternate image

3. Launch the study

4. Observe the study in PM

Actual: Default study thumbnail image is not displayed in sites and studies tab

Expected: Default s... | process | default study thumbnail image is not displayed in sites and studies tab steps login to sb don t upload any thumbnail image i e let be alternate image launch the study observe the study in pm actual default study thumbnail image is not displayed in sites and studies tab expected default stud... | 1 |

7,556 | 18,241,771,012 | IssuesEvent | 2021-10-01 13:43:36 | dfds/backstage | https://api.github.com/repos/dfds/backstage | closed | Distributed Systems Design - Workshop | Architecture | - [x] Meet with LearnSome

- [x] Draft ppt

- [x] Draft learnsome profile

- [x] Get feedback on LearnSome profile + PPT from Jakob F, Jan W & Martin O

- [x] Demo | 1.0 | Distributed Systems Design - Workshop - - [x] Meet with LearnSome

- [x] Draft ppt

- [x] Draft learnsome profile

- [x] Get feedback on LearnSome profile + PPT from Jakob F, Jan W & Martin O

- [x] Demo | non_process | distributed systems design workshop meet with learnsome draft ppt draft learnsome profile get feedback on learnsome profile ppt from jakob f jan w martin o demo | 0 |

3,217 | 6,277,231,688 | IssuesEvent | 2017-07-18 11:40:24 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | Test: System.Diagnostics.Tests.ProcessTests/TestExitTime failed with "TestExitTime is incorrect" | area-System.Diagnostics.Process os-linux test-run-core | Opened on behalf of @Jiayili1

The test `System.Diagnostics.Tests.ProcessTests/TestExitTime` has failed.

TestExitTime is incorrect. TimeBeforeStart 7/13/17 12:51:12 AM Ticks=636355038728165136, ExitTime=7/13/17 12:51:12 AM, Ticks=636355038727555168, ExitTimeUniversal 7/13/17 12:51:12 AM Ticks=636355038727555168, NowUn... | 1.0 | Test: System.Diagnostics.Tests.ProcessTests/TestExitTime failed with "TestExitTime is incorrect" - Opened on behalf of @Jiayili1

The test `System.Diagnostics.Tests.ProcessTests/TestExitTime` has failed.

TestExitTime is incorrect. TimeBeforeStart 7/13/17 12:51:12 AM Ticks=636355038728165136, ExitTime=7/13/17 12:51:12 ... | process | test system diagnostics tests processtests testexittime failed with testexittime is incorrect opened on behalf of the test system diagnostics tests processtests testexittime has failed testexittime is incorrect timebeforestart am ticks exittime am ticks exittimeuniversal ... | 1 |
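
The failure message in the row above reports an `ExitTime` whose tick count is smaller than `TimeBeforeStart`. As a rough illustration of how large that gap is, the two tick values quoted in the message can be subtracted directly. This is only a sketch: the variable names are made up for the example, and it assumes the usual .NET convention that one tick is 100 nanoseconds.

```javascript
// Tick values copied from the failure message; BigInt keeps all 18 digits exact.
const timeBeforeStartTicks = 636355038728165136n;
const exitTimeTicks = 636355038727555168n;

// Assumption: .NET ticks are 100 ns each, so 10,000 ticks make one millisecond.
const TICKS_PER_MILLISECOND = 10000;

// A positive gap means the reported exit time precedes the recorded start time.
const gapTicks = timeBeforeStartTicks - exitTimeTicks;
const gapMs = Number(gapTicks) / TICKS_PER_MILLISECOND;

console.log(`ExitTime precedes TimeBeforeStart by ${gapTicks} ticks (about ${gapMs} ms)`);
```

Under that assumption the reported exit time falls a few tens of milliseconds before the recorded start time, which is the inconsistency the test flags.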

5,000 | 7,834,687,700 | IssuesEvent | 2018-06-16 17:11:49 | StrikeNP/trac_test | https://api.github.com/repos/StrikeNP/trac_test | closed | Add useful plots and get rid of useless plots on plotgen (Trac #824) | Migrated from Trac bmg2@uwm.edu post_processing task | Plotgen could be improved by adding more useful panels to it. However, that also increases the amount of space that each plotgen ```.maff``` files takes up, and we don't want to go over the attachment limit for Trac. In order to make room for new plots, useless panels should be taken off plotgen. An example of a use... | 1.0 | Add useful plots and get rid of useless plots on plotgen (Trac #824) - Plotgen could be improved by adding more useful panels to it. However, that also increases the amount of space that each plotgen ```.maff``` files takes up, and we don't want to go over the attachment limit for Trac. In order to make room for new ... | process | add useful plots and get rid of useless plots on plotgen trac plotgen could be improved by adding more useful panels to it however that also increases the amount of space that each plotgen maff files takes up and we don t want to go over the attachment limit for trac in order to make room for new pl... | 1 |

591,010 | 17,792,961,447 | IssuesEvent | 2021-08-31 18:26:05 | lowRISC/opentitan | https://api.github.com/repos/lowRISC/opentitan | closed | [rv_dm] Current life cycle gating incomplete | Priority:P0 Type:Bug | The current rv_dm life cycle gating does not gate on the input of the JTAG->DMI interface.

This means certain jtag functionality, such as ID, bypass etc are still available even though the DMI interface is no longer useable.

This makes it a bit more difficult for DV to verify, so we should probably consider updati... | 1.0 | [rv_dm] Current life cycle gating incomplete - The current rv_dm life cycle gating does not gate on the input of the JTAG->DMI interface.

This means certain jtag functionality, such as ID, bypass etc are still available even though the DMI interface is no longer useable.

This makes it a bit more difficult for DV t... | non_process | current life cycle gating incomplete the current rv dm life cycle gating does not gate on the input of the jtag dmi interface this means certain jtag functionality such as id bypass etc are still available even though the dmi interface is no longer useable this makes it a bit more difficult for dv to veri... | 0 |

8,801 | 11,908,262,183 | IssuesEvent | 2020-03-31 00:26:32 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Processing algorithms are not able to add the output layer to an exiting GPKG or SQLite container without completely overwrite it | Bug Processing | Author Name: **Andrea Giudiceandrea** (@agiudiceandrea)

Original Redmine Issue: [20026](https://issues.qgis.org/issues/20026)

Affected QGIS version: 3.4.0

Redmine category:processing/core

---

Core processing algorithms are not able to add the output layer to an exiting SQLite (maybe others?) container file without co... | 1.0 | Processing algorithms are not able to add the output layer to an exiting GPKG or SQLite container without completely overwrite it - Author Name: **Andrea Giudiceandrea** (@agiudiceandrea)

Original Redmine Issue: [20026](https://issues.qgis.org/issues/20026)

Affected QGIS version: 3.4.0

Redmine category:processing/core

... | process | processing algorithms are not able to add the output layer to an exiting gpkg or sqlite container without completely overwrite it author name andrea giudiceandrea agiudiceandrea original redmine issue affected qgis version redmine category processing core core processing algorithms are not a... | 1 |

40,271 | 2,868,312,639 | IssuesEvent | 2015-06-05 18:05:47 | openshift/origin | https://api.github.com/repos/openshift/origin | closed | Console: refresh is sometimes needed after starting build | component/web kind/bug priority/P2 | Sometimes when you trigger a build from the console, the UI doesn't show the build and you have to refresh in order to see it. | 1.0 | Console: refresh is sometimes needed after starting build - Sometimes when you trigger a build from the console, the UI doesn't show the build and you have to refresh in order to see it. | non_process | console refresh is sometimes needed after starting build sometimes when you trigger a build from the console the ui doesn t show the build and you have to refresh in order to see it | 0 |

296,420 | 9,115,465,861 | IssuesEvent | 2019-02-22 05:07:54 | WeAreDevs/material-clicker | https://api.github.com/repos/WeAreDevs/material-clicker | opened | Achievements | enhancement priority: low | When doing certain things, you can trigger an achievement. I propose an API Something similar to promises.

```js

registerAchievement({

id: 'win-universe',

name: 'Win the Universe',

description: 'Example to show how cool the world is.'

}, (award) => {

// add event handles and stuff to detect if t... | 1.0 | Achievements - When doing certain things, you can trigger an achievement. I propose an API Something similar to promises.

```js

registerAchievement({

id: 'win-universe',

name: 'Win the Universe',

description: 'Example to show how cool the world is.'

}, (award) => {

// add event handles and stuff... | non_process | achievements when doing certain things you can trigger an achievement i propose an api something similar to promises js registerachievement id win universe name win the universe description example to show how cool the world is award add event handles and stuff... | 0 |
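
The `registerAchievement` proposal in the row above is truncated, so the following is only a minimal sketch of one way such a promise-like API could behave. The registry `Map`, the `awarded` flag, and the click counter are all hypothetical and do not come from the material-clicker code base.

```javascript
// Hypothetical in-memory registry keyed by achievement id.
const achievements = new Map();

// Registers an achievement and hands an `award` callback to `setup`,
// much like a Promise executor receives `resolve`.
function registerAchievement(meta, setup) {
  const entry = { ...meta, awarded: false };
  achievements.set(meta.id, entry);
  const award = () => {
    if (entry.awarded) return false; // award at most once
    entry.awarded = true;
    return true;
  };
  setup(award);
  return entry;
}

// Usage: award the achievement on the third click (the threshold is made up).
let clicks = 0;
let onClick = () => {};
registerAchievement(
  {
    id: 'win-universe',
    name: 'Win the Universe',
    description: 'Example to show how cool the world is.',
  },
  (award) => {
    onClick = () => {
      clicks += 1;
      if (clicks >= 3) award();
    };
  }
);
```

Passing `award` into the setup callback keeps the detection logic next to the achievement definition, which seems to be the intent of the "similar to promises" remark.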

77,176 | 9,982,611,364 | IssuesEvent | 2019-07-10 10:15:19 | tomusdrw/rust-web3 | https://api.github.com/repos/tomusdrw/rust-web3 | closed | [Question] Regarding Example | documentation | Hello, I am wondering if perhaps the readme for example is missing something?

I have cloned the project and followed the guide from `example/readme.md`. That is

```

ganache-cli -m "hamster coin cup brief quote trick stove draft hobby strong caught unable"

cargo run --example contract

```

Everything appears ... | 1.0 | [Question] Regarding Example - Hello, I am wondering if perhaps the readme for the example is missing something?

I have cloned the project and followed the guide from `example/readme.md`. That is

```

ganache-cli -m "hamster coin cup brief quote trick stove draft hobby strong caught unable"

cargo run --example contr... | non_process | regarding example hello i am wondering if perhaps the readme for example is missing something i have cloned the project and followed the guide from example readme md that is ganache cli m hamster coin cup brief quote trick stove draft hobby strong caught unable cargo run example contract ... | 0 |

22,686 | 31,987,992,167 | IssuesEvent | 2023-09-21 02:00:08 | lizhihao6/get-daily-arxiv-noti | https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti | opened | New submissions for Thu, 21 Sep 23 | event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB | ## Keyword: events

There is no result

## Keyword: event camera

There is no result

## Keyword: events camera

There is no result

## Keyword: white balance

There is no result

## Keyword: color contrast

There is no result

## Keyword: AWB

There is no result

## Keyword: ISP

### GL-Fusion: Global-Local Fusion Network fo... | 2.0 | New submissions for Thu, 21 Sep 23 - ## Keyword: events

There is no result

## Keyword: event camera

There is no result

## Keyword: events camera

There is no result

## Keyword: white balance

There is no result

## Keyword: color contrast

There is no result

## Keyword: AWB

There is no result

## Keyword: ISP

### GL-F... | process | new submissions for thu sep keyword events there is no result keyword event camera there is no result keyword events camera there is no result keyword white balance there is no result keyword color contrast there is no result keyword awb there is no result keyword isp gl fus... | 1 |

62,773 | 12,240,559,054 | IssuesEvent | 2020-05-05 00:44:36 | tinymce/tinymce | https://api.github.com/repos/tinymce/tinymce | closed | deleting in lists does not work properly if <br> tag is removed with "code" or "paste" plugins | plugin: code status: verified type: bug | If you type out a list in an editor:

```

1. Test

2. Test

3. Test

4. Test

5. Test

```

Then use the "_code_" plugin to view the source and click OK to apply the changes. If you then press the **delete** button (at least 3 times) from the end of "_1. Test_", it will pull the next sibling element up too soon... and if you k... | 1.0 | deleting in lists does not work properly if <br> tag is removed with "code" or "paste" plugins - If you type out a list in an editor:

```

1. Test

2. Test

3. Test

4. Test

5. Test

```

Then use the "_code_" plugin to view the source and click OK to apply the changes. If you then press the **delete** button (at least 3... | non_process | deleting in lists does not work properally if tag is removed with code or paste plugins if you type out a list in an editor test test test test test then using the code plugin to view the source and click ok to apply changes then start pressing the delete button at least ti... | 0 |

60,102 | 3,120,764,896 | IssuesEvent | 2015-09-05 01:40:28 | framingeinstein/issues-test | https://api.github.com/repos/framingeinstein/issues-test | closed | SPK-83: Breadcrumbs: Structure is not correct on About Speakman & Support | priority:normal resolution:fixed type:enhancement | Hi Sam,

Creating a ticket for this issue we found last week. Please notice that the structure isn't correct for About Speakman & Support. It seems like Blog is a parent.

Thanks,

Jessica | 1.0 | SPK-83: Breadcrumbs: Structure is not correct on About Speakman & Support - Hi Sam,

Creating a ticket for this issue we found last week. Please notice that the structure isn't correct for About Speakman & Support. It seems like Blog is a parent.

Thanks,

Jessica | non_process | spk breadcrumbs structure is not correct on about speakman support hi sam creating a ticket for this issue we found last week please notice that the structure isn t correct for about speakman support it seems like blog is a parent thanks jessica | 0 |

9,942 | 12,975,530,779 | IssuesEvent | 2020-07-21 17:09:23 | tc39/proposal-promise-any | https://api.github.com/repos/tc39/proposal-promise-any | closed | Advance to stage 4 | process | Criteria taken from [the TC39 process document](https://tc39.github.io/process-document/) minus those from previous stages:

> - [x] [Test262](https://github.com/tc39/test262) acceptance tests have been written for mainline usage scenarios, and merged

- [x] `Promise.any`: https://github.com/tc39/test262/tree/maste... | 1.0 | Advance to stage 4 - Criteria taken from [the TC39 process document](https://tc39.github.io/process-document/) minus those from previous stages:

> - [x] [Test262](https://github.com/tc39/test262) acceptance tests have been written for mainline usage scenarios, and merged

- [x] `Promise.any`: https://github.com/tc... | process | advance to stage criteria taken from minus those from previous stages acceptance tests have been written for mainline usage scenarios and merged promise any aggregateerror two compatible implementations which pass the acceptance tests bug tickets to track ... | 1 |

9,645 | 12,605,062,111 | IssuesEvent | 2020-06-11 15:53:41 | ORNL-AMO/AMO-Tools-Desktop | https://api.github.com/repos/ORNL-AMO/AMO-Tools-Desktop | opened | Cooling Tower Form Input Ordering | Process Cooling | Feedback from ORNL testing:

**Order of Input fields**

1. Group 1: can we use this order: Water Flow Rate, Cooling Load, then Annual Operating Hours? Typically, water flow rate is the easiest to get from operators, while cooling load and operating hours are a little harder.

2. Group 2: can we have Cycles of Concentration an... | 1.0 | Cooling Tower Form Input Ordering - Feedback from ORNL testing:

**Order of Input fields**

1. Group 1: can we use this order: Water Flow Rate, Cooling Load, then Annual Operating Hours? Typically, water flow rate is the easiest to get from operators, while cooling load and operating hours are a little harder.

2. Group 2: ca... | process | cooling tower form input ordering feedback from ornl testing order of input fields group can we do this order water flow rate cooling load then annual operating hours typically water flow rate is the easiest to get and operators and cooling load and operating hours are a little harder group ca... | 1 |

13,994 | 16,766,075,086 | IssuesEvent | 2021-06-14 08:58:05 | ESMValGroup/ESMValCore | https://api.github.com/repos/ESMValGroup/ESMValCore | opened | Masked values when regridding datasets with coord `circular=False` | cmor preprocessor | **Describe the bug**

At the BSC, it was reported that when regridding dataset MCM-UA-1-0, a white line with masked values appeared for lon > 355.

After some debugging it turns out that when the longitudes are not circular and do not span fully from [0,360], those masked values can appear after regridding.

This d... | 1.0 | Masked values when regridding datasets with coord `circular=False` - **Describe the bug**

At the BSC, it was reported that when regridding dataset MCM-UA-1-0, a white line with masked values appeared for lon > 355.

After some debugging it turns out that when the longitudes are not circular and do not span fully from... | process | masked values when regridding datasets with coord circular false describe the bug at the bsc it was reported that when regridding dataset mcm ua a white line with masked values appeared for lon after some debugging it turns out that when the longitudes are not circular and do not span fully from ... | 1 |
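
The effect described in this issue can be illustrated with a minimal sketch in plain Python. It is not the regridding code used by ESMValCore or any other library; the helper `interp_lon`, the coarse grid, and the values are all hypothetical. It only shows why a longitude axis that stops short of 360 and is not treated as circular leaves target points past the last source longitude without a value.

```python
def interp_lon(target, lons, values, circular):
    """Linearly interpolate `values` given at sorted `lons` (degrees) to `target`.

    Hypothetical helper: `target` is assumed to lie in [lons[0], lons[0] + 360].
    """
    pts = list(zip(lons, values))
    if circular:
        # Treat the axis as periodic: repeat the first point one full turn
        # later so the gap between the last longitude and 360 is bridged.
        pts.append((lons[0] + 360.0, values[0]))
    for (x0, y0), (x1, y1) in zip(pts, pts[1:]):
        if x0 <= target <= x1:
            w = (target - x0) / (x1 - x0)
            return y0 + w * (y1 - y0)
    # Outside the source coverage: None stands in for a masked value.
    return None


# Hypothetical source grid whose last longitude is well short of 360.
lons = [0.0, 90.0, 180.0, 270.0]
values = [10.0, 20.0, 30.0, 40.0]

gap_value = interp_lon(315.0, lons, values, circular=False)
wrapped_value = interp_lon(315.0, lons, values, circular=True)
```

With `circular=False` the target at 315 degrees falls outside every source interval and comes back as `None`, the analogue of the masked strip; with `circular=True` it is interpolated between the last and the first source points.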

83,051 | 3,621,349,284 | IssuesEvent | 2016-02-08 23:41:08 | isolver/OpenHandWrite | https://api.github.com/repos/isolver/OpenHandWrite | closed | Loading source data file fails if .mwp file with same name exists in same folder. | bug MarkWrite Priority 1 | If both a source file, like input.txyp, and a markwrite project file, like input.mwp, are in the same folder, trying to load input.txyp fails because _detectAssociatedSegmentTagsFile() function is reading 'input.mwp' as a possible segment tags file and crashing because it is a binary file. | 1.0 | Loading source data file fails if .mwp file with same name exists in same folder. - If both a source file, like input.txyp, and a markwrite project file, like input.mwp, are in the same folder, trying to load input.txyp fails because _detectAssociatedSegmentTagsFile() function is reading 'input.mwp' as a possible segme... | non_process | loading source data file fails if mwp file with same name exists in same folder if both a source file like input txyp and a markwrite project file like input mwp are in the same folder trying to load input txyp fails because detectassociatedsegmenttagsfile function is reading input mwp as a possible segme... | 0 |

426,688 | 29,579,935,762 | IssuesEvent | 2023-06-07 04:27:29 | jupyterlab/jupyterlab-desktop | https://api.github.com/repos/jupyterlab/jupyterlab-desktop | closed | venv selection fails | bug documentation | ## Description

Upon selecting an environment and choosing a venv python one.

I am getting this error.

## Reproduce

Create a virtual environment with venv and then select it.

| 1.0 | venv selection fails - ## Description

Upon selecting an environment and choosing a venv python one.

I am getting this error.

## Reproduce

Create a virtual environment with venv and then select it.

... | non_process | venv selection fails description upon selecting an environment and choosing a venv python one i am getting this error reproduce create a virtual environment with venv and then select it | 0 |

2,577 | 5,332,789,030 | IssuesEvent | 2017-02-15 23:03:23 | csstree/stylelint-validator | https://api.github.com/repos/csstree/stylelint-validator | closed | sass interpolation | enhancement preprocessors | Slightly related to #4, I've found problems in our code coming from [sass interpolation](http://sass-lang.com/documentation/file.SASS_REFERENCE.html#interpolation_)

```

.navbar-static-top .dropdown .dropdown-menu {

max-height: calc(100vh - #{$navbar-height});

overflow-y: auto;

}

```

```

>> theme/boost... | 1.0 | sass interpolation - Slightly related to #4, I've found problems in our code coming from [sass interpolation](http://sass-lang.com/documentation/file.SASS_REFERENCE.html#interpolation_)

```

.navbar-static-top .dropdown .dropdown-menu {

max-height: calc(100vh - #{$navbar-height});

overflow-y: auto;

}

```

... | process | sass interpolation slightly related to i ve found our codese problems coming from navbar static top dropdown dropdown menu max height calc navbar height overflow y auto theme boost scss moodle undo scss ✖ can t parse value calc navbar he... | 1 |

141,889 | 12,991,206,642 | IssuesEvent | 2020-07-23 02:47:06 | KuChainNetwork/Project-Decalogue | https://api.github.com/repos/KuChainNetwork/Project-Decalogue | closed | [DOC] Features for easier trading | documentation uncorrelated |

**Type of Proposal**

- [✓] Feature Request (e.g. functionality)

- [ ] Economic Model

- [ ] Underlying Technology (e.g. performance)

- [ ] Application Development

- [ ] Others

**Background**

Ease of use for traders, especially those who are new to KuCoin, based on my personal exp... | 1.0 | [DOC] Features for easier trading -

**Type of Proposal**

- [✓] Feature Request (e.g. functionality)

- [ ] Economic Model

- [ ] Underlying Technology (e.g. performance)

- [ ] Application Development

- [ ] Others

**Background**

Ease of use for traders, especially those who are new... | non_process | features for easier trading type of proposal feature request e g functionality economic model underlying technology e g performance application development others background ease of use for traders especially those who are new to kucoin based on... | 0 |

7,912 | 11,092,325,985 | IssuesEvent | 2019-12-15 18:14:27 | Jeffail/benthos | https://api.github.com/repos/Jeffail/benthos | closed | Add new `sync_response` processor | annoying enhancement processors | It would be useful to have a `sync_response` processor that would enable more customized behaviour for pipelines containing responses. This is going to be simple to implement but we need to add extra care that it won't introduce race conditions (post-buffer alterations). | 1.0 | Add new `sync_response` processor - It would be useful to have a `sync_response` processor that would enable more customized behaviour for pipelines containing responses. This is going to be simple to implement but we need to add extra care that it won't introduce race conditions (post-buffer alterations). | process | add new sync response processor it would be useful to have a sync response processor that would enable more customized behaviour for pipelines containing responses this is going to be simple to implement but we need to add extra care that it won t introduce race conditions post buffer alterations | 1 |

29,800 | 2,717,434,445 | IssuesEvent | 2015-04-11 08:29:55 | codenameone/CodenameOne | https://api.github.com/repos/codenameone/CodenameOne | closed | RFE: line separator component | Priority-Medium Type-Enhancement | Original [issue 264](https://code.google.com/p/codenameone/issues/detail?id=264) created by codenameone on 2012-07-15T22:33:51.000Z:

<b>What steps will reproduce the problem?</b>

1. (Line) Separator Component in Designer

<b>What is the expected output? What do you see instead?</b>

I would like to have a Separator C... | 1.0 | RFE: line separator component - Original [issue 264](https://code.google.com/p/codenameone/issues/detail?id=264) created by codenameone on 2012-07-15T22:33:51.000Z:

<b>What steps will reproduce the problem?</b>

1. (Line) Separator Component in Designer

<b>What is the expected output? What do you see instead?</b>

I ... | non_process | rfe line seperator component original created by codenameone on what steps will reproduce the problem line seperator component in designer what is the expected output what do you see instead i would like to have a seperator component in the designer that can seperate for example a li... | 0 |

57,908 | 16,136,922,287 | IssuesEvent | 2021-04-29 13:01:38 | primefaces/primefaces | https://api.github.com/repos/primefaces/primefaces | opened | Tree Selection does not fulfil all accessibility criteria (WCAG) | defect | **Issue Description**

PrimeFaces' Tree Selection component does not fulfil the WCAG accessibility criterion [4.1.1](https://www.w3.org/WAI/WCAG21/Understanding/parsing.html) as it renders syntactically incorrect HTML code. The problem seems to be that in complex trees which include components like checkboxes the incl... | 1.0 | Tree Selection does not fulfil all accessibility criteria (WCAG) - **Issue Description**

PrimeFaces' Tree Selection component does not fulfil the WCAG accessibility criterion [4.1.1](https://www.w3.org/WAI/WCAG21/Understanding/parsing.html) as it renders syntactically incorrect HTML code. The problem seems to be that... | non_process | tree selection does not fulfil all accessibility criteria wcag issue description primefaces tree selection component does nut fulfil the wcag accessibility criterion as it renders syntactically incorrect html code the problems seems to be that in complex trees which include components like checkboxes the... | 0 |

12,816 | 15,190,289,263 | IssuesEvent | 2021-02-15 17:41:23 | panther-labs/panther | https://api.github.com/repos/panther-labs/panther | closed | If user deletes the IAM role, deleting an s3 source fails | bug p0 team:data processing | ### Describe the bug

A clear and concise description of what the bug is.

### Steps to reproduce

1. Onboard an s3 source, enable Panther to manage bucket notifications

2. Finish source onboarding

3. Delete the IAM role

4. Delete source - Removing bucket notifications will fail because the role is absent and ... | 1.0 | If user deletes the IAM role, deleting an s3 source fails - ### Describe the bug

A clear and concise description of what the bug is.

### Steps to reproduce

1. Onboard an s3 source, enable Panther to manage bucket notifications

2. Finish source onboarding

3. Delete the IAM role

4. Delete source - Removing bu... | process | if user deletes the iam role deleting an source fails describe the bug a clear and concise description of what the bug is steps to reproduce onboard an source enable panther to manage bucket notifications finish source onboarding delete the iam role delete source removing buck... | 1 |

508,291 | 14,697,981,439 | IssuesEvent | 2021-01-04 05:14:35 | boomerang-io/roadmap | https://api.github.com/repos/boomerang-io/roadmap | opened | No network flag security context for Custom Task or All Tasks | enhancement priority: high revision needed | **Is your request related to a problem? Please describe.**

The ability to set low level kubernetes security contexts such as the No Network flag.

**Describe the solution you'd like**

Be able to define specific low level security contexts at either the Custom Task level or the System wide level

**Describe the benefits... | 1.0 | No network flag security context for Custom Task or All Tasks - **Is your request related to a problem? Please describe.**

The ability to set low level kubernetes security contexts such as the No Network flag.

**Describe the solution you'd like**

Be able to define specific low level security contexts at either the Cus... | non_process | no network flag security context for custom task or all tasks is your request related to a problem please describe the ability to set low level kubernetes security contexts such as the no network flag describe the solution you d like be able to define specific low level security contexts at either the cus... | 0 |

104,863 | 4,226,242,267 | IssuesEvent | 2016-07-02 10:03:11 | FAC-GM/app | https://api.github.com/repos/FAC-GM/app | closed | Re-designing the home page + login page | priority-5 UI | Re-designing the home page + login page according to PO wireframes.

| 1.0 | Re-designing the home page + login page - Re-designing the home page + login page according to PO wireframes.

| non_process | re designing the home page login page re designing the home page login page according to po wireframes | 0 |

11,027 | 13,822,892,707 | IssuesEvent | 2020-10-13 06:06:15 | NixOS/nixpkgs | https://api.github.com/repos/NixOS/nixpkgs | closed | 20.09 Release notes | 0.kind: enhancement 6.topic: release process | This thread is for any release-worthy notes which may not have made their way into https://github.com/NixOS/nixpkgs/blob/master/nixos/doc/manual/release-notes/rl-2009.xml yet.

Please leave a summary and any relevant links to the items. I will try and go through them before the release to ensure the notes are in... | 1.0 | 20.09 Release notes - This thread is for any release-worthy notes which may not have made their way into https://github.com/NixOS/nixpkgs/blob/master/nixos/doc/manual/release-notes/rl-2009.xml yet.

Please leave a summary and any relevant links to the items. I will try and go through them before the release to e... | process | release notes this thread is for any release worthy notes which may have not have made their way into yet please leave a summary and any relevant links to the items i will try and go through them before the release to ensure the notes are in order list to make items easier to track was removed ... | 1 |

954 | 3,418,291,273 | IssuesEvent | 2015-12-08 01:05:40 | wekan/wekan | https://api.github.com/repos/wekan/wekan | opened | Release v0.10 | Meta:Release-process | Hi,

I've released the [first release candidate](https://github.com/wekan/wekan/releases/tag/v0.10.0-rc1) of Wekan v0.10. The release link contains the Sandstorm `spk`, the Nodejs `tar.gz` application, and the Docker image.

You can [read the release notes here](https://github.com/wekan/wekan/blob/master/History.md... | 1.0 | Release v0.10 - Hi,

I've released the [first release candidate](https://github.com/wekan/wekan/releases/tag/v0.10.0-rc1) of Wekan v0.10. The release link contains the Sandstorm `spk`, the Nodejs `tar.gz` application, and the Docker image.

You can [read the release notes here](https://github.com/wekan/wekan/blob/m... | process | release hi i ve released the of wekan the release link contains the sandstorm spk the nodejs tar gz application and the docker image you can this release includes some contributions by alexanders fisle floatinghotpot fuzzywuzzie mnutt ndarilek sircmpwn and xavierpriour t... | 1 |

12,729 | 15,099,298,986 | IssuesEvent | 2021-02-08 02:07:53 | scikit-learn/scikit-learn | https://api.github.com/repos/scikit-learn/scikit-learn | closed | Allowing drop='first' and handle_unknown='ignore' in OneHotEncoding | module:preprocessing | When using OneHotEncoding it is a common feature to drop a column in order to prevent multi-collinearity. This is done with the drop='first' flag.

On the other hand, when cross validating a model, it can happen that not every category appears on train split. In this setting, it is usual to just assign 0 to all colu... | 1.0 | Allowing drop='first' and handle_unknown='ignore' in OneHotEncoding - When using OneHotEncoding it is a common feature to drop a column in order to prevent multi-collinearity. This is done with the drop='first' flag.

On the other hand, when cross validating a model, it can happen that not every category appears on ... | process | allowing drop first and handle unknown ignore in onehotencoding when using onehotencoding it is a common feature to drop a column in order to prevent multi collinearity this is done with the drop first flag on the other hand when cross validating a model it can happen that not every category appears on ... | 1 |
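
The interaction between the two options discussed in this issue can be sketched in plain Python. This is not scikit-learn's implementation; the helper `one_hot` and the category names are hypothetical and only mimic the semantics described above (drop the first indicator column, encode an unseen category as all zeros).

```python
def one_hot(value, categories, drop_first=False, ignore_unknown=False):
    """Encode `value` as a list of 0/1 indicators over `categories`.

    Hypothetical helper that mimics the two options discussed above.
    """
    # With drop_first, the first category has no indicator column of its own.
    kept = categories[1:] if drop_first else categories
    if value not in categories:
        if not ignore_unknown:
            raise ValueError(f"unknown category: {value!r}")
        # Unknown category: every remaining indicator column is 0.
        return [0] * len(kept)
    return [1 if value == c else 0 for c in kept]


# Categories assumed to have been seen in the training split.
categories = ["blue", "green", "red"]

baseline = one_hot("blue", categories, drop_first=True, ignore_unknown=True)
unseen = one_hot("purple", categories, drop_first=True, ignore_unknown=True)
known = one_hot("red", categories, drop_first=True, ignore_unknown=True)
```

In this sketch the dropped category (`"blue"`) and the unseen category (`"purple"`) both encode to all zeros, so the two become indistinguishable downstream. That ambiguity is the point to keep in mind when combining the two options.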

84,981 | 3,683,069,368 | IssuesEvent | 2016-02-24 12:32:31 | wp-property/wp-property | https://api.github.com/repos/wp-property/wp-property | opened | Can't delete last item in Meta and Terms section | priority/high type/bug | There should be at least one.

User notices from wordpress - https://wordpress.org/support/topic/cant-properly-remove-community-features-terms?replies=4#post-8070870

 | 1.0 | Can't delete last item in Meta and Terms section - There should be at least one.

User notices from wordpress - https://wordpress.org/support/topic/cant-properly-remove-community-features-terms?replies=4#post-8070870

| non_process | can t delete lats item in meta and terms section there should be at least one user notices from wordpress | 0 |

100,320 | 12,514,843,239 | IssuesEvent | 2020-06-03 06:24:37 | Altinn/altinn-studio | https://api.github.com/repos/Altinn/altinn-studio | closed | Hide yellow banner | area/dashboard kind/user-story solution/studio/designer | ## Description

Hide the yellow stuff on dashboard since the content is no longer relevant.

## Screenshots

## Considerations

Only comment out so it's easy to put back.

## Acceptance criteria

Yellow ... | 1.0 | Hide yellow banner - ## Description

Hide the yellow stuff on dashboard since the content is no longer relevant.

## Screenshots

## Considerations

Only comment out so it's easy to put back.

## Accepta... | non_process | hide yellow banner description hide the yellow stuff on dashboard since the content is no longer relevant screenshots considerations only comment out so it s easy to put back acceptance criteria yellow banner is gone tasks remove banner comment out test | 0 |

23,804 | 3,870,942,345 | IssuesEvent | 2016-04-11 07:36:40 | geetsisbac/WCVVENIXYFVIRBXH3BYTI6TE | https://api.github.com/repos/geetsisbac/WCVVENIXYFVIRBXH3BYTI6TE | closed | a45kw6vEeeNoXIqYQ5fZIz2ddDWg8Fu3Q/+yx96hgmve51lCYgVUw3DGJEDqhgYRKT2yNP5qAzdKY+FOApdtsgcXjZs6pCF86t3nDfpDORFAf2ou+TQ/Qhw8M9aOL2xy6CV6OG3YoO2YBef6MXt59ZMS8UO8uxt00syItffwpNo= | design | EThWldcBapO7q/nWLiBxYLEPPp+7IglaA3AugKF557F2XQgSL7HlAKiZE6FGSRYfkYLuM9cde1RUA++Y9+ciA6dL4Qs9J/cUTvozU+JnVdJv18cJ4QZYK1tSo8lLAVM0YkCFjJ4Tm5fXmQS87OZodfLNWQ5u6aRksAgg/v67u0FTH0rvTFckM/OEDyg8ZuSYSTHZHvQo3M+K77qdG+1dbmZEJId+1uAIQH5CLmxQTt3OBTaIPCPEJF9U4yBEZEkMwpn9oUrxCEajy+Al0+ZwISJlX3LBYab/YClVa+HiJKjJyeIH3Wwy/K37b6aVTIZL... | 1.0 | a45kw6vEeeNoXIqYQ5fZIz2ddDWg8Fu3Q/+yx96hgmve51lCYgVUw3DGJEDqhgYRKT2yNP5qAzdKY+FOApdtsgcXjZs6pCF86t3nDfpDORFAf2ou+TQ/Qhw8M9aOL2xy6CV6OG3YoO2YBef6MXt59ZMS8UO8uxt00syItffwpNo= - EThWldcBapO7q/nWLiBxYLEPPp+7IglaA3AugKF557F2XQgSL7HlAKiZE6FGSRYfkYLuM9cde1RUA++Y9+ciA6dL4Qs9J/cUTvozU+JnVdJv18cJ4QZYK1tSo8lLAVM0YkCFjJ4Tm5fXmQS87... | non_process | tq nwlibxyleppp cutvozu yclva p hspa | 0 |

109,331 | 9,378,327,358 | IssuesEvent | 2019-04-04 12:38:58 | kcigeospatial/Fred_Co_Land-Management | https://api.github.com/repos/kcigeospatial/Fred_Co_Land-Management | closed | Planning-verification letters | Ready For Retest | verification letters should not go to finalized before the condition requiring letter to be sent is approved.

ME | 1.0 | Planning-verification letters - verification letters should not go to finalized before the condition requiring letter to be sent is approved.

ME | non_process | planning verification letters verification letters should not go to finalized before the condition requiring letter to be sent is approved me | 0 |

72,528 | 3,386,806,491 | IssuesEvent | 2015-11-27 21:33:23 | arcualberta/TAPoR | https://api.github.com/repos/arcualberta/TAPoR | closed | New tags not returning tools | high priority | After adding new tags, the tag doesn't return the tool as a search result when clicked as a link or when searched from the search bar. | 1.0 | New tags not returning tools - After adding new tags, the tag doesn't return the tool as a search result when clicked as a link or when searched from the search bar. | non_process | new tags not returning tools after adding new tags the tag doesn t return the tool as a search result when clicked as a link or when searched from the search bar | 0 |

15,841 | 20,028,187,313 | IssuesEvent | 2022-02-02 00:26:45 | googleapis/java-translate | https://api.github.com/repos/googleapis/java-translate | closed | com.example.translate.BatchTranslateTextWithGlossaryTests: testBatchTranslateTextWithGlossary failed | priority: p2 type: process api: translate flakybot: issue flakybot: flaky | Note: #567 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: b7ad2372edd50a38d703efb8305d7c5e71145409

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/7385ee88-4195-4405-a11e-8d439d404617), [Sponge](http://sponge2/7385ee88-4195-4405-a... | 1.0 | com.example.translate.BatchTranslateTextWithGlossaryTests: testBatchTranslateTextWithGlossary failed - Note: #567 was also for this test, but it was closed more than 10 days ago. So, I didn't mark it flaky.

----

commit: b7ad2372edd50a38d703efb8305d7c5e71145409

buildURL: [Build Status](https://source.cloud.google.com/... | process | com example translate batchtranslatetextwithglossarytests testbatchtranslatetextwithglossary failed note was also for this test but it was closed more than days ago so i didn t mark it flaky commit buildurl status failed test output java util concurrent executionexception com googl... | 1 |

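332,191 | 24,338,204,765 | IssuesEvent | 2022-10-01 10:57:21 | Crequency/KitX | https://api.github.com/repos/Crequency/KitX | closed | About the plugin implementation | documentation | As I understand it, plugins are compiled into managed DLLs and then functions with predefined names are called, is that right?

It looks like a plugin is imported directly as an IIdentityInterface object via GetExportedValues, then a controller is started and the plugin information is sent to the dashboard as JSON?

Does that mean every plugin has a corresponding loader?

If so, how are languages that cannot be compiled into a managed DLL supported? How is interaction between plugins, such as calling custom functions by name, implemented?

(I am not very familiar with C#, and I could not find the corresponding functionality in the source code) | 1.0 | About the plugin implementation - As I understand it, plugins are compiled into managed DLLs and then functions with predefined names are called, is that right?

It looks like a plugin is imported directly as an IIdentityInterface object via GetExportedValues, then a controller is started and the plugin information is sent to the dashboard as JSON?

Does that mean every plugin has a corresponding loader?

If so, how are languages that cannot be compiled into a managed DLL supported? How is interaction between plugins, such as calling custom functions by name, implemented?

(I am not very familiar with C#, and I could not find the corresponding functionality in the source code) | non_process | about the plugin implementation as i understand it plugins are compiled into managed dlls and then functions with predefined names are called is that right it looks like a plugin is imported directly as an iidentityinterface object via getexportedvalues then a controller is started and the plugin information is sent to the dashboard as json does that mean every plugin has a corresponding loader if so how are languages that cannot be compiled into a managed dll supported how is interaction between plugins such as calling custom functions by name implemented i am not very familiar with csharp and i could not find the corresponding functionality in the source code | 0 |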

195,436 | 22,339,631,699 | IssuesEvent | 2022-06-14 22:33:24 | vincenzodistasio97/events-manager-io | https://api.github.com/repos/vincenzodistasio97/events-manager-io | closed | CVE-2019-10752 (High) detected in sequelize-4.37.6.tgz - autoclosed | security vulnerability | ## CVE-2019-10752 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>sequelize-4.37.6.tgz</b></p></summary>

<p>Multi dialect ORM for Node.JS</p>

<p>Library home page: <a href="https://reg... | True | CVE-2019-10752 (High) detected in sequelize-4.37.6.tgz - autoclosed - ## CVE-2019-10752 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>sequelize-4.37.6.tgz</b></p></summary>

<p>Multi ... | non_process | cve high detected in sequelize tgz autoclosed cve high severity vulnerability vulnerable library sequelize tgz multi dialect orm for node js library home page a href path to dependency file package json path to vulnerable library node modules sequelize package js... | 0 |

19,799 | 3,497,607,435 | IssuesEvent | 2016-01-06 02:24:51 | meren/anvio | https://api.github.com/repos/meren/anvio | opened | Switching to cherrypy: user management re-design. | design enhancement server | OK. This is a big change.

We have been having some random issues with the server performance as we were using the default backend from within bottle (`wsgiref`; the single-threaded backend).

I think in the long run it is much better to rely on a multithreaded backend like cherrypy (see the reasoning [here](http:/... | 1.0 | Switching to cherrypy: user management re-design. - OK. This is a big change.

We have been having some random issues with the server performance as we were using the default backend from within bottle (`wsgiref`; the single-threaded backend).

I think in the long run it is much better to rely on a multithreaded ba... | non_process | switching to cherrypy user management re design ok this is a big change we have been having some random issues with the server performance as we were using the default backend from within bottle wsgiref the single threaded backend i think in the long run it is much better to rely on a multithreaded ba... | 0 |

2,698 | 5,541,396,341 | IssuesEvent | 2017-03-22 12:45:51 | mathiasbynens/es-regexp-dotall-flag | https://api.github.com/repos/mathiasbynens/es-regexp-dotall-flag | closed | Advance to stage 3 | process | Criteria taken from [the TC39 process document](https://tc39.github.io/process-document/) minus those from previous stages:

> - [x] Complete spec text

https://github.com/mathiasbynens/es-regexp-singleline-flag/blob/master/spec.html

https://mathiasbynens.github.io/es-regexp-singleline-flag/

> - [ ] Designated reviewe... | 1.0 | Advance to stage 3 - Criteria taken from [the TC39 process document](https://tc39.github.io/process-document/) minus those from previous stages:

> - [x] Complete spec text

https://github.com/mathiasbynens/es-regexp-singleline-flag/blob/master/spec.html

https://mathiasbynens.github.io/es-regexp-singleline-flag/

> - [... | process | advance to stage criteria taken from minus those from previous stages complete spec text designated reviewers have signed off on the current spec text todo the ecmascript editor has signed off on the current spec text todo | 1 |

9,848 | 25,384,553,893 | IssuesEvent | 2022-11-21 20:38:07 | backend-br/vagas | https://api.github.com/repos/backend-br/vagas | closed | [REMOTE] Back-end developer - DB1 Group | CLT PJ Sênior PHP TDD Remoto MySQL DDD SOLID Hexagonal architecture Clean architecture | ## Our company

DB1 Global Software is a DB1 Group company and a software house specializing in software development for major market players.

At DGS, as we call it here, we work on digital transformation aligned with delivering value to our clients. Our purpose is to... | 2.0 | [REMOTE] Back-end developer - DB1 Group - ## Our company

DB1 Global Software is a DB1 Group company and a software house specializing in software development for major market players.

At DGS, as we call it here, we work on digital transformation aligned with delivering value to our... | non_process | back end developer group our company global software is a group company and a software house specializing in software development for major market players at dgs as we call it here we work on digital transformation aligned with delivering value to our clients... | 0 |

222,463 | 24,708,940,783 | IssuesEvent | 2022-10-19 21:51:46 | lukebrogan-mend/bag-of-holding | https://api.github.com/repos/lukebrogan-mend/bag-of-holding | closed | CVE-2020-8203 (High) detected in lodash-1.0.2.tgz, lodash-2.4.2.tgz - autoclosed | security vulnerability | ## CVE-2020-8203 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>lodash-1.0.2.tgz</b>, <b>lodash-2.4.2.tgz</b></p></summary>

<p>

<details><summary><b>lodash-1.0.2.tgz</b></p></summar... | True | CVE-2020-8203 (High) detected in lodash-1.0.2.tgz, lodash-2.4.2.tgz - autoclosed - ## CVE-2020-8203 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>lodash-1.0.2.tgz</b>, <b>lodash-2.4... | non_process | cve high detected in lodash tgz lodash tgz autoclosed cve high severity vulnerability vulnerable libraries lodash tgz lodash tgz lodash tgz a utility library delivering consistency customization performance and extras library home page a ... | 0 |

14,695 | 17,858,600,265 | IssuesEvent | 2021-09-05 14:22:27 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | how get absolute path in .bzl? | P4 type: support / not a bug (process) team-Rules-CPP | ### Description of the problem / feature request:

> problem

### Feature requests: what underlying problem are you trying to solve with this feature?

> show "src/main/tools/process-wrapper-legacy.cc:80: execvp(toolchain/darwin_arm64e/libtool.sh, ...): No such file or directory " when i use cxx_builtin_include_d... | 1.0 | how get absolute path in .bzl? - ### Description of the problem / feature request:

> problem

### Feature requests: what underlying problem are you trying to solve with this feature?

> show "src/main/tools/process-wrapper-legacy.cc:80: execvp(toolchain/darwin_arm64e/libtool.sh, ...): No such file or directory "... | process | how get absolute path in bzl description of the problem feature request problem feature requests what underlying problem are you trying to solve with this feature show src main tools process wrapper legacy cc execvp toolchain darwin libtool sh no such file or directory when ... | 1 |

747,688 | 26,095,637,804 | IssuesEvent | 2022-12-26 19:06:08 | bounswe/bounswe2022group2 | https://api.github.com/repos/bounswe/bounswe2022group2 | closed | Frontend: Create Event Component | priority-medium status-inprogress feature front-end | ### Issue Description

Events are one of the key features of our application. A user can create or participate in events for a particular learning space once they have joined that learning space.

Thus, there will be a component to create an event for the learning space in general learning space page. In our sixth ... | 1.0 | Frontend: Create Event Component - ### Issue Description

Events are one of the key features of our application. A user can create or participate in events for a particular learning space once they have joined that learning space.

Thus, there will be a component to create an event for the learning space in general... | non_process | frontend create event component issue description events are one of the key features of our application a user can create or participate in events for a particular learning space when they are joined to that learning space thus there will be a component to create an event for the learning space in general... | 0 |

507,321 | 14,679,965,562 | IssuesEvent | 2020-12-31 08:38:10 | k8smeetup/website-tasks | https://api.github.com/repos/k8smeetup/website-tasks | opened | /docs/reference/glossary/kube-apiserver.md | lang/zh priority/P0 sync/update version/master welcome | Source File: [/docs/reference/glossary/kube-apiserver.md](https://github.com/kubernetes/website/blob/master/content/en/docs/reference/glossary/kube-apiserver.md)

Diff command reference:

```bash

# View the differences between the original document and the translated document

git diff --no-index -- content/en/docs/reference/glossary/kube-apiserver.md content/zh/docs/reference/glossary/kube-ap... | 1.0 | /docs/reference/glossary/kube-apiserver.md - Source File: [/docs/reference/glossary/kube-apiserver.md](https://github.com/kubernetes/website/blob/master/content/en/docs/reference/glossary/kube-apiserver.md)

Diff command reference:

```bash

# View the differences between the original document and the translated document

git diff --no-index -- content/en/docs/reference/glossary/kube-apiserver.... | non_process | docs reference glossary kube apiserver md source file diff command reference bash view the differences between the original document and the translated document git diff no index content en docs reference glossary kube apiserver md content zh docs reference glossary kube apiserver md view differences in the original document across branches git diff release master content en docs reference glossary kube ... | 0 |

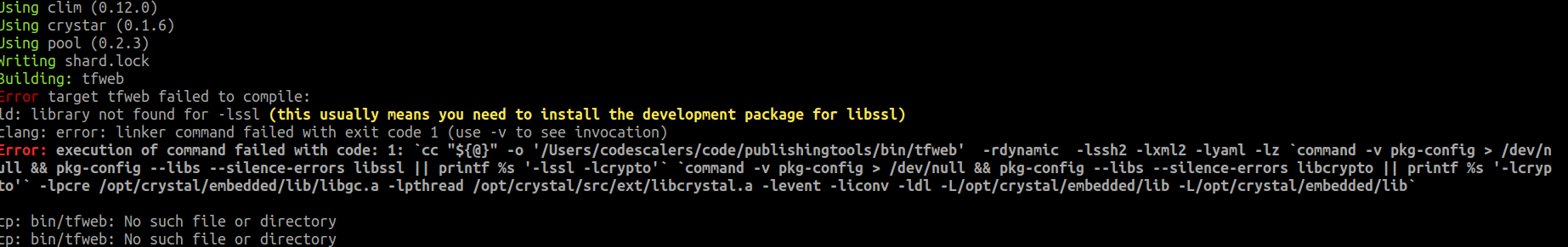

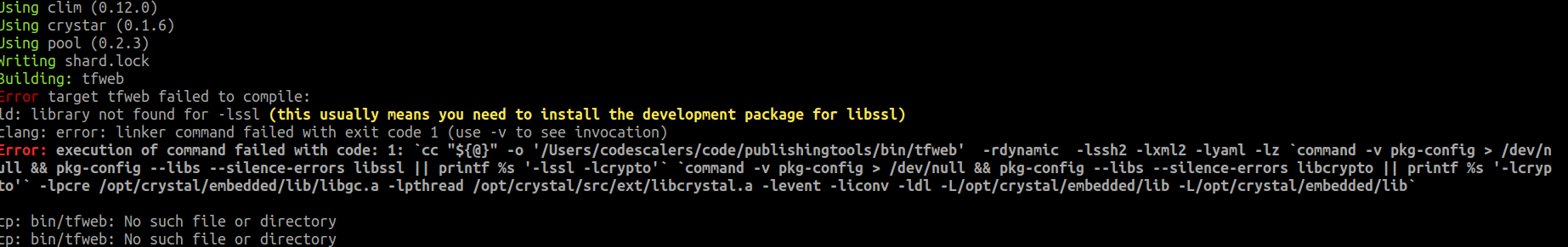

9,985 | 13,033,759,303 | IssuesEvent | 2020-07-28 07:33:35 | crystaluniverse/publishingtools | https://api.github.com/repos/crystaluniverse/publishingtools | closed | error when build tool with crystal 0.34.0 | process_wontfix | ```

codescalerssMBP:publishingtools codescalers$ bash build.sh

```

```

codescalerssMBP:publishingtools codescalers$ crystal --version

Crystal 0.34.0 (2020-04-06)

LLVM: 6.0.1

Default target: x86_64... | 1.0 | error when build tool with crystal 0.34.0 - ```

codescalerssMBP:publishingtools codescalers$ bash build.sh

```

```

codescalerssMBP:publishingtools codescalers$ crystal --version

Crystal 0.34.0 (2020-0... | process | error when build tool with crystal codescalerssmbp publishingtools codescalers bash build sh codescalerssmbp publishingtools codescalers crystal version crystal llvm default target apple macosx | 1 |

217,752 | 7,327,925,368 | IssuesEvent | 2018-03-04 15:43:30 | openSUSE/docbookrx | https://api.github.com/repos/openSUSE/docbookrx | closed | Add Appendix as a supported document type | High Priority enhancement | Appendix is missing from the supported document types:

`/lib/docbookrx/docbook_visitor.rb`

This is required as some of SUSE's larger books have appendix documents and do not just use the tag internal to a document. The document itself is an appendix and is linked from a book entry.

```Ruby

DOCUMENT_NAMES = ... | 1.0 | Add Appendix as a supported document type - Appendix is missing from the supported document types:

`/lib/docbookrx/docbook_visitor.rb`

This is required as some of SUSE's larger books have appendix documents and do not just use the tag internal to a document. The document itself is an appendix and is linked from a b... | non_process | add appendix as a supported document type appendix is missing from the supported document types lib docbookrx docbook visitor rb this is required as some of suse s larger books have appendix documents and do not just use the tag internal to a document the document itself is an appendix and is linked from a b... | 0 |

207,957 | 23,529,511,376 | IssuesEvent | 2022-08-19 14:04:19 | MatBenfield/news | https://api.github.com/repos/MatBenfield/news | closed | [SecurityWeek] Evasive 'DarkTortilla' Crypter Delivers RATs, Targeted Malware | SecurityWeek Stale |

**Secureworks security researchers have analyzed ‘DarkTortilla’, a .NET-based crypter used to deliver both popular malware and targeted payloads.**

[read more](https://www.securityweek.com/evasive-darktortilla-crypter-delivers-rats-targeted-malware)

<https://www.securityweek.com/evasive-darktortilla-crypter-deliv... | True | [SecurityWeek] Evasive 'DarkTortilla' Crypter Delivers RATs, Targeted Malware -

**Secureworks security researchers have analyzed ‘DarkTortilla’, a .NET-based crypter used to deliver both popular malware and targeted payloads.**

[read more](https://www.securityweek.com/evasive-darktortilla-crypter-delivers-rats-targe... | non_process | evasive darktortilla crypter delivers rats targeted malware secureworks security researchers have analyzed ‘darktortilla’ a net based crypter used to deliver both popular malware and targeted payloads | 0 |

15,880 | 20,070,548,980 | IssuesEvent | 2022-02-04 05:56:40 | swig/swig | https://api.github.com/repos/swig/swig | opened | -DFOO works different to a real compiler preprocessor | preprocessor | In a C/C++ compiler, `-DFOO` on the command line sets `FOO` to `1`.

In SWIG it sets `FOO` to an empty value.

(In both SWIG and compilers, `#define FOO` in a file sets `FOO` to an empty value.)

Reproducer (same result with 4.0.2 and git master):

```

$ cat emptydefine.i

#define EMPTY2

[EMPTY1] [EMPTY2]

$... | 1.0 | -DFOO works different to a real compiler preprocessor - In a C/C++ compiler, `-DFOO` on the command line sets `FOO` to `1`.

In SWIG it sets `FOO` to an empty value.

(In both SWIG and compilers, `#define FOO` in a file sets `FOO` to an empty value.)

Reproducer (same result with 4.0.2 and git master):

```

$ ... | process | dfoo works different to a real compiler preprocessor in a c c compiler dfoo on the command line sets foo to in swig it sets foo to an empty value in both swig and compilers define foo in a file sets foo to an empty value reproducer same result with and git master ... | 1 |

3,061 | 6,047,932,293 | IssuesEvent | 2017-06-12 15:25:01 | openvstorage/framework | https://api.github.com/repos/openvstorage/framework | reopened | claim asd failed but gui alert is claimed | process_moved | Unable to claim an ASD, but the GUI shows it as claimed.

After refreshing the page, the ASD is still available to claim.

Error in the logging:

```

Jun 12 07:20:53 NY1SRV0019 celery[13127]: 2017-06-12 07:20:53 1... | 1.0 | claim asd failed but gui alert is claimed - Unable to claim an asd but gui shows claimed.

After refreshing the page, the ASD is still available to claim.

Error in the logging:

```

Jun 12 07:20:53 NY1... | process | claim asd failed but gui alert is claimed unable to claim an asd but gui shows claimed after refreshing the page the asds is still available to claim error in the logging jun celery celery celery redirected warning exte... | 1 |

58 | 2,518,635,051 | IssuesEvent | 2015-01-17 00:06:33 | MozillaFoundation/plan | https://api.github.com/repos/MozillaFoundation/plan | closed | ENGINEERING: Create reference app using stack from engineering handbook; communicate to team | p1 process | We need a reference application we can all refer to when suggesting changes to our stack.

Phase: Build / Ship

Owner: @simonwex

Decision: @simonwex

Lead design: n/a

Lead dev: @Pomax

Quality: @jbuck

Dev: @alicoding | 1.0 | ENGINEERING: Create reference app using stack from engineering handbook; communicate to team - We need a reference application we can all refer to when suggesting changes to our stack.

Phase: Build / Ship

Owner: @simonwex

Decision: @simonwex

Lead design: n/a

Lead dev: @Pomax

Quality: @jbuck

Dev: @alicoding | process | engineering create reference app using stack from engineering handbook communicate to team we need a reference application we can all refer to when suggesting changes to our stack phase build ship owner simonwex decision simonwex lead design n a lead dev pomax quality jbuck dev alicoding | 1 |

3,724 | 6,732,910,472 | IssuesEvent | 2017-10-18 13:16:11 | lockedata/rcms | https://api.github.com/repos/lockedata/rcms | opened | Manage registration | attendee odoo processes | ## Detailed task

- Edit your information

- Get a refund on your ticket

## Assessing the task

Try to perform the task. Use Google and the system documentation to help; part of what we're trying to assess is how easy it is for people to work out how to do tasks.

Use a 👍 (`:+1:`) reaction to this task if you were ... | 1.0 | Manage registration - ## Detailed task

- Edit your information

- Get a refund on your ticket

## Assessing the task

Try to perform the task. Use Google and the system documentation to help; part of what we're trying to assess is how easy it is for people to work out how to do tasks.