Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

354,932 | 25,175,238,220 | IssuesEvent | 2022-11-11 08:38:57 | garfield-oo7/pe | https://api.github.com/repos/garfield-oo7/pe | opened | view, parameter issue | severity.Low type.DocumentationBug | In the user guide it is mentioned that the command view takes in one parameter index, and index must be a positive integer, however when you try to enter the number 0, which can be considered a positive integer, it throws an error saying that please enter a positive integer.

I think it will be nice if you can specify... | 1.0 | view, parameter issue - In the user guide it is mentioned that the command view takes in one parameter index, and index must be a positive integer, however when you try to enter the number 0, which can be considered a positive integer, it throws an error saying that please enter a positive integer.

I think it will b... | non_process | view parameter issue in the user guide it is mentioned that the command view takes in one parameter index and index must be a positive integer however when you try to enter the number which can be considered a positive integer it throws an error saying that please enter a positive integer i think it will b... | 0 |
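The report above turns on what "positive integer" means for the `view` index: strictly speaking, 0 is not positive, so rejecting it matches the user guide. A minimal sketch of such a check follows, assuming a 1-based index; the function name `parse_index` and the error wording are hypothetical and are not taken from the application in question.

```python
def parse_index(raw: str) -> int:
    """Parse a 1-based index, rejecting zero and negative numbers.

    Hypothetical helper for illustration only; not the project's actual parser.
    """
    value = int(raw.strip())  # raises ValueError for non-numeric input
    if value < 1:
        # Zero is not a positive integer, so it is rejected along with negatives.
        raise ValueError("index must be a positive integer (1, 2, 3, ...)")
    return value


print(parse_index("3"))  # 3
```

Spelling out the accepted range (1, 2, 3, ...) in the error message is one way to address the ambiguity the reporter ran into.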

6,175 | 9,084,139,203 | IssuesEvent | 2019-02-18 01:56:37 | googleapis/google-cloud-cpp | https://api.github.com/repos/googleapis/google-cloud-cpp | closed | Flaky test: InstanceAdminIntegrationTest.* | priority: p2 type: process | The integration tests fail from time to time, but they run fine a second time. It must be some kind of race condition, but as they execute against the emulator it is a more subtle one than the typical "used the same resources from two simultaneous builds".

The latest failure was in:

https://travis-ci.org/GoogleCl... | 1.0 | Flaky test: InstanceAdminIntegrationTest.* - The integration tests fail from time to time, but they run fine a second time. It must be some kind of race condition, but as they execute against the emulator it is a more subtle one than the typical "used the same resources from two simultaneous builds".

The latest fail... | process | flaky test instanceadminintegrationtest the integration tests fail from time to time but they run fine a second time it must be some kind of race condition but as they execute against the emulator it is a more subtle one than the typical used the same resources from two simultaneous builds the latest fail... | 1 |

5,295 | 7,107,028,087 | IssuesEvent | 2018-01-16 18:31:43 | microsoftgraph/msgraph-sdk-dotnet | https://api.github.com/repos/microsoftgraph/msgraph-sdk-dotnet | closed | Values larger than Int32.Max are truncated when sent to Excel sheets | service bug under investigation | I'm using the Microsoft Graph API to modify cells in Excel spreadsheets. When writing integer values larger than Int32.Max to the spreadsheet via the API, the upper 32 bits of the values appear to be truncated, and only the lower 32 bits seem to be written to the spreadsheet.

Below are the values that I have tested.... | 1.0 | Values larger than Int32.Max are truncated when sent to Excel sheets - I'm using the Microsoft Graph API to modify cells in Excel spreadsheets. When writing integer values larger than Int32.Max to the spreadsheet via the API, the upper 32 bits of the values appear to be truncated, and only the lower 32 bits seem to be ... | non_process | values larger than max are truncated when sent to excel sheets i m using the microsoft graph api to modify cells in excel spreadsheets when writing integer values larger than max to the spreadsheet via the api the upper bits of the values appear to be truncated and only the lower bits seem to be written to... | 0 |
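The symptom described in this report (only the lower 32 bits of a larger integer surviving) can be reproduced in isolation. The sketch below is an illustration only, not code from the Microsoft Graph SDK or service; the helper name `truncate_to_int32` is hypothetical, and it assumes the surviving bits are reinterpreted as a signed 32-bit value.

```python
INT32_MAX = 2**31 - 1


def truncate_to_int32(value: int) -> int:
    """Keep the lower 32 bits of value and reinterpret them as signed 32-bit."""
    low = value & 0xFFFFFFFF  # discard everything above bit 31
    return low - 2**32 if low >= 2**31 else low


print(truncate_to_int32(INT32_MAX))      # 2147483647 (fits, unchanged)
print(truncate_to_int32(INT32_MAX + 1))  # -2147483648 (wraps around)
print(truncate_to_int32(2**32 + 5))      # 5 (upper bits lost)
```

Comparing the values read back from the spreadsheet against this helper would be one way to confirm whether the truncation really is a plain 32-bit cut.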

652 | 3,120,796,281 | IssuesEvent | 2015-09-05 02:14:14 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | [Converge] child_process argument type checking | child_process | Continuing from https://github.com/nodejs/node-convergence-archive/issues/22, I don't believe this has been done yet, @jasnell can you confirm please? That thread seems to have enough agreement to pull these changes in. Marking on the 4.0.0 milestone.

> These commits add argument type checking to methods within chil... | 1.0 | [Converge] child_process argument type checking - Continuing from https://github.com/nodejs/node-convergence-archive/issues/22, I don't believe this has been done yet, @jasnell can you confirm please? That thread seems to have enough agreement to pull these changes in. Marking on the 4.0.0 milestone.

> These commits... | process | child process argument type checking continuing from i don t believe this has been done yet jasnell can you confirm please that thread seems to have enough agreement to pull these changes in marking on the milestone these commits add argument type checking to methods within child process this nee... | 1 |

134,125 | 29,832,673,700 | IssuesEvent | 2023-06-18 13:11:43 | llvm/llvm-project | https://api.github.com/repos/llvm/llvm-project | closed | clang 3.5 and 3.4 crash in clang::CodeGen::CodeGenTypes::ConvertType(clang::QualType) | c++ clang:codegen bugzilla worksforme | | | |

| --- | --- |

| Bugzilla Link | [19532](https://llvm.org/bz19532) |

| Version | trunk |

| OS | Linux |

| Reporter | LLVM Bugzilla Contributor |

| CC | @DougGregor,@rnk |

## Extended Description

Compiling https://github.com/beniz/libcmaes with clang 3.4 and clang 3.5 on Linux and on Mac OSX does inevitably cra... | 1.0 | clang 3.5 and 3.4 crash in clang::CodeGen::CodeGenTypes::ConvertType(clang::QualType) - | | |

| --- | --- |

| Bugzilla Link | [19532](https://llvm.org/bz19532) |

| Version | trunk |

| OS | Linux |

| Reporter | LLVM Bugzilla Contributor |

| CC | @DougGregor,@rnk |

## Extended Description

Compiling https://github.com... | non_process | clang and crash in clang codegen codegentypes converttype clang qualtype bugzilla link version trunk os linux reporter llvm bugzilla contributor cc douggregor rnk extended description compiling with clang and clang on linux and on m... | 0 |

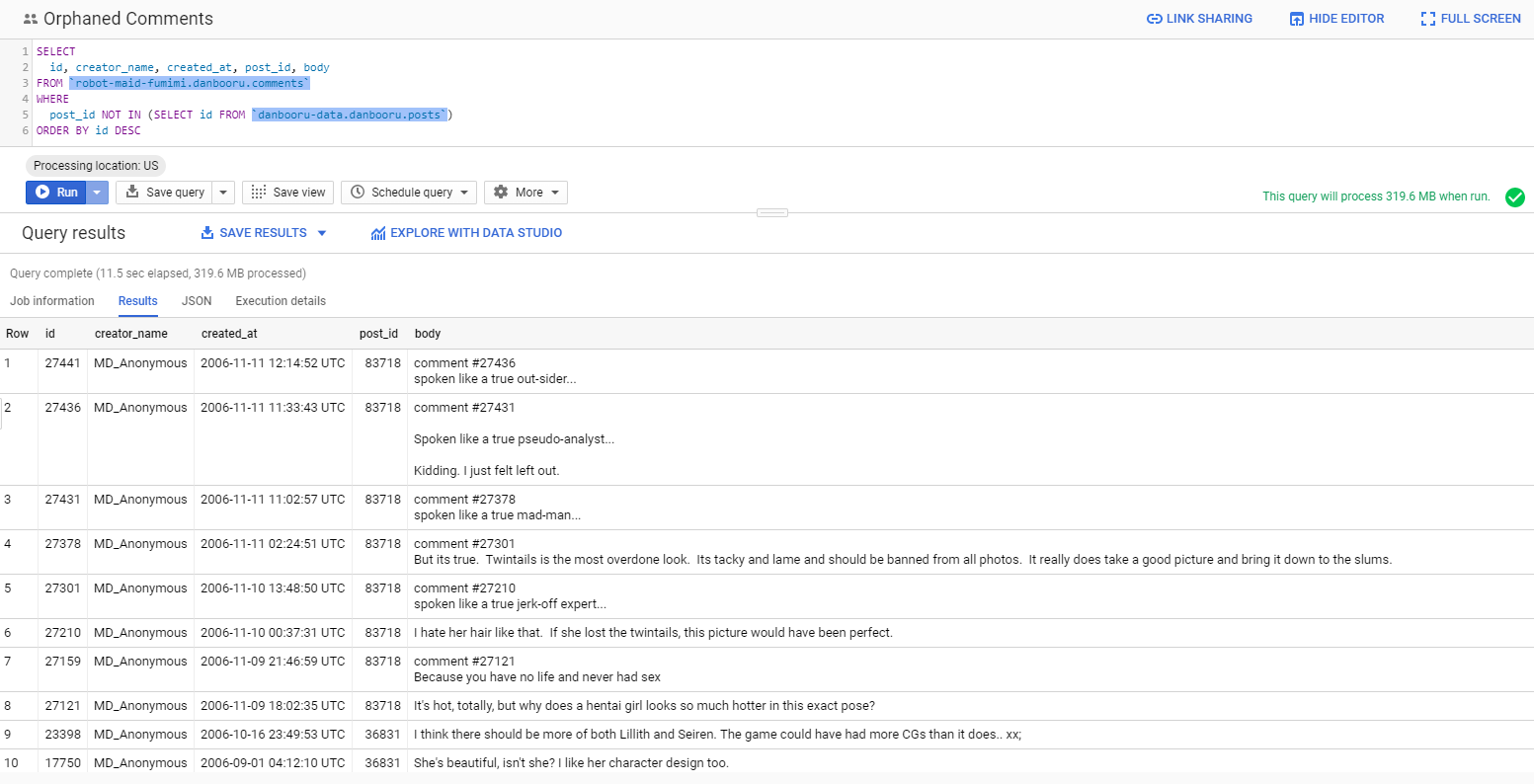

349,541 | 10,470,820,365 | IssuesEvent | 2019-09-23 05:44:57 | r888888888/danbooru | https://api.github.com/repos/r888888888/danbooru | closed | Orphaned comments | Bug Low Priority | There are 37 comments with invalid post ids:

Since these are all old low-quality comments belonging to expunged posts, they should be expunged too. | 1.0 | Orphaned comments - There are 37 comments with invalid post ids:

Since these are all old low-quality comments belonging to expunged posts, they should be expunged too. | non_process | orphaned comments there are comments with invalid post ids since these are all old low quality comments belonging to expunged posts they should be expunged too | 0 |
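Orphaned rows like the ones described above are usually found with an anti-join: select the comments whose post id has no matching post. The sketch below shows the idea against an in-memory SQLite database; the table and column names (`posts`, `comments`, `post_id`) are assumptions for illustration and may not match the real schema.

```python
import sqlite3

# Build a tiny in-memory database with hypothetical tables and sample rows.
conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE posts (id INTEGER PRIMARY KEY);
    CREATE TABLE comments (id INTEGER PRIMARY KEY, post_id INTEGER);
    INSERT INTO posts (id) VALUES (1), (2);
    INSERT INTO comments (id, post_id) VALUES (1, 1), (2, 2), (3, 99), (4, 100);
    """
)

# Anti-join: keep comments for which the LEFT JOIN found no post.
orphaned = [
    row[0]
    for row in conn.execute(
        """
        SELECT c.id
        FROM comments AS c
        LEFT JOIN posts AS p ON p.id = c.post_id
        WHERE p.id IS NULL
        ORDER BY c.id
        """
    )
]
print(orphaned)  # [3, 4]
```

The same `WHERE p.id IS NULL` condition could drive a `DELETE` once the list of orphaned ids has been reviewed.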

69,077 | 17,567,202,094 | IssuesEvent | 2021-08-14 00:47:20 | processing/processing-android | https://api.github.com/repos/processing/processing-android | opened | Query renderer from sketch's code | mode building | The renderer is needed during the sketch build process because selecting the correct template to build a watch face project [depends](https://github.com/processing/processing-android/blob/master/mode/src/processing/mode/android/AndroidBuild.java#L424) on whether the sketch uses the default renderer or the OpenGL render... | 1.0 | Query renderer from sketch's code - The renderer is needed during the sketch build process because selecting the correct template to build a watch face project [depends](https://github.com/processing/processing-android/blob/master/mode/src/processing/mode/android/AndroidBuild.java#L424) on whether the sketch uses the d... | non_process | query renderer from sketch s code the renderer is needed during the sketch build process because selecting the correct template to build a watch face project on whether the sketch uses the default renderer or the opengl renderer there was in an early version of the preprocessor in processing that allowe... | 0 |

189,382 | 22,047,028,824 | IssuesEvent | 2022-05-30 03:44:41 | panasalap/linux-4.1.15 | https://api.github.com/repos/panasalap/linux-4.1.15 | closed | CVE-2017-16525 (Medium) detected in linux179e72b561d3d331c850e1a5779688d7a7de5246 - autoclosed | security vulnerability | ## CVE-2017-16525 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux179e72b561d3d331c850e1a5779688d7a7de5246</b></p></summary>

<p>

<p>Linux kernel stable tree mirror</p>

<p>Librar... | True | CVE-2017-16525 (Medium) detected in linux179e72b561d3d331c850e1a5779688d7a7de5246 - autoclosed - ## CVE-2017-16525 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux179e72b561d3d33... | non_process | cve medium detected in autoclosed cve medium severity vulnerability vulnerable library linux kernel stable tree mirror library home page a href found in head commit a href found in base branch master vulnerable source files vu... | 0 |

187,374 | 15,097,310,233 | IssuesEvent | 2021-02-07 18:14:47 | NFIBrokerage/slipstream | https://api.github.com/repos/NFIBrokerage/slipstream | closed | example of a repeater-style client | t:Documentation | some clients simply re-broadcast a message after joining a topic

I've been calling them a Repeater in the Haste implementation

it probably makes sense to solidify this with an example+tutorial | 1.0 | example of a repeater-style client - some clients simply re-broadcast a message after joining a topic

I've been calling them a Repeater in the Haste implementation

it probably makes sense to solidify this with an example+tutorial | non_process | example of a repeater style client some clients simply re broadcast a message after joining a topic i ve been calling them a repeater in the haste implementation it probably makes sense to solidify this with an example tutorial | 0 |

14,845 | 18,239,434,307 | IssuesEvent | 2021-10-01 11:03:33 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | [feature] [mesh] Mesh Calculator | User Manual Automatic new feature Processing Alg 3.6 Mesh | Original commit: https://github.com/qgis/QGIS/commit/cd9a84e11c2649f3af9b4cee08652bdfcd340134 by PeterPetrik

Similarly to raster calculator, mesh calculator can take dataset groups from current mesh layer and

combine them with various arithmetic/logical operators to new dataset group. | 1.0 | [feature] [mesh] Mesh Calculator - Original commit: https://github.com/qgis/QGIS/commit/cd9a84e11c2649f3af9b4cee08652bdfcd340134 by PeterPetrik

Similarly to raster calculator, mesh calculator can take dataset groups from current mesh layer and

combine them with various arithmetic/logical operators to new dataset group... | process | mesh calculator original commit by peterpetrik similarly to raster calculator mesh calculator can take dataset groups from current mesh layer and combine them with various arithmetic logical operators to new dataset group | 1 |

210,017 | 16,079,712,672 | IssuesEvent | 2021-04-26 00:50:59 | backend-br/vagas | https://api.github.com/repos/backend-br/vagas | closed | [Porto Alegre] Junior Back-End Developer @ Grupo Dream Work | AWS Express Git Junior MySQL PJ Rest Stale Testes automatizados startup | ## Job description

**We are recruiting for a technology startup based in Porto Alegre:**

**We are looking for a proactive, passionate person with a strong desire to work! The main skills required for the role are Node.js, Express.js, REST APIs, and MySQL, along with an inquisitive profile... | 1.0 | [Porto Alegre] Junior Back-End Developer @ Grupo Dream Work - ## Job description

**We are recruiting for a technology startup based in Porto Alegre:**

**We are looking for a proactive, passionate person with a strong desire to work! The main skills required for the role are Node.j... | non_process | junior back end developer grupo dream work job description we are recruiting for a technology startup based in porto alegre we are looking for a proactive passionate person with a strong desire to work the main skills required for the role are node js express js... | 0 |

10,648 | 13,446,738,036 | IssuesEvent | 2020-09-08 13:22:31 | symfony/symfony | https://api.github.com/repos/symfony/symfony | closed | Process Component add support for option "create_new_console" | Feature Process | **Description**

I saw that PHP has added in version 7.4.4 the option "create_new_console" for the function _proc-open.php_ (https://www.php.net/manual/en/function.proc-open.php), "create_new_console" can totally change the behavior of _proc-open.php_ on windows so it may be important for some users.

This is the... | 1.0 | Process Component add support for option "create_new_console" - **Description**

I saw that PHP has added in version 7.4.4 the option "create_new_console" for the function _proc-open.php_ (https://www.php.net/manual/en/function.proc-open.php), "create_new_console" can totally change the behavior of _proc-open.php_ ... | process | process component add support for option create new console description i saw that php has added in version the option create new console for the function proc open php create new console can totally change the behavior of proc open php on windows so it may be important for some users ... | 1 |

5,243 | 8,038,843,255 | IssuesEvent | 2018-07-30 16:30:06 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | opened | Improve deploy process of releases | process: release process: tests type: chore | ### Current behavior:

- Need more visibility on if there are failing tests in 'production' env.

### Desired behavior:

<!-- A clear and concise description of what you want to happen -->

| 2.0 | Improve deploy process of releases - ### Current behavior:

- Need more visibility on if there are failing tests in 'production' env.

### Desired behavior:

<!-- A clear and concise description of what you want to happen -->

| process | improve deploy process of releases current behavior need more visibility on if there are failing tests in production env desired behavior | 1 |

16,686 | 21,791,068,428 | IssuesEvent | 2022-05-14 22:53:25 | lynnandtonic/nestflix.fun | https://api.github.com/repos/lynnandtonic/nestflix.fun | closed | Add Way Too Complicated | suggested title in process | Please add as much of the following info as you can:

Title: Way Too Complicated

Type (film/tv show): TV show

Film or show in which it appears: Dinosaurs (Disney)

Is the parent film/show streaming anywhere? Disney+ I think

About when in the parent film/show does it appear? S02E04

Actual footage of the ... | 1.0 | Add Way Too Complicated - Please add as much of the following info as you can:

Title: Way Too Complicated

Type (film/tv show): TV show

Film or show in which it appears: Dinosaurs (Disney)

Is the parent film/show streaming anywhere? Disney+ I think

About when in the parent film/show does it appear? S02E04... | process | add way too complicated please add as much of the following info as you can title way too complicated type film tv show tv show film or show in which it appears dinosaurs disney is the parent film show streaming anywhere disney i think about when in the parent film show does it appear a... | 1 |

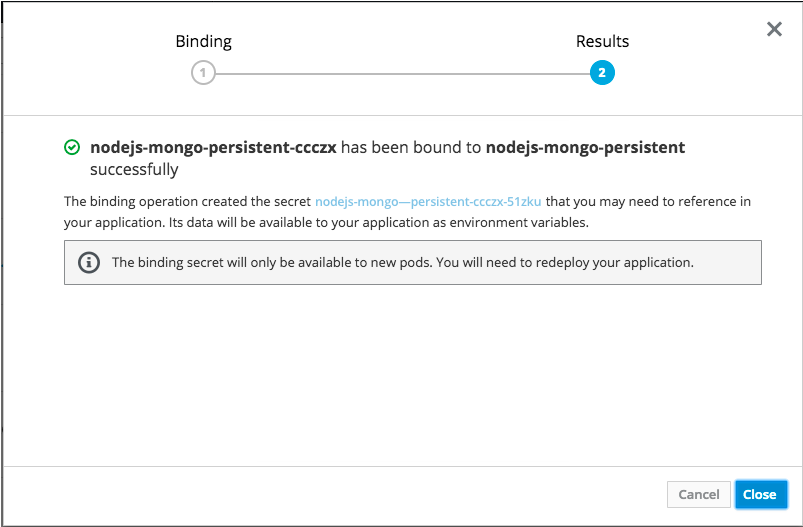

171,667 | 6,492,970,033 | IssuesEvent | 2017-08-21 15:14:52 | openshift/origin-web-console | https://api.github.com/repos/openshift/origin-web-console | closed | Secret in binding confirmation should be a link | area/usability kind/bug priority/P3 | The secret named in the results step of the bind wizard should be a link to that secret, like in the mockup below.

| 1.0 | Secret in binding confirmation should be a link - The secret named in the results step of the bind wizard should be a link to that secret, like in the mockup below.

| non_process | secret in binding confirmation should be a link the secret named in the results step of the bind wizard should be a link to that secret like in the mockup below | 0 |

77,134 | 9,543,752,438 | IssuesEvent | 2019-05-01 11:36:48 | med-material/BakingTrayTaskVR | https://api.github.com/repos/med-material/BakingTrayTaskVR | opened | Improve Visuals | design | - [ ] The scene currently doesn't have nice lighting, and lack more or less shadows completely.

- [ ] The current model does not look like something eatable, so it would be nice to have a proper cookie model instead. | 1.0 | Improve Visuals - - [ ] The scene currently doesn't have nice lighting, and lack more or less shadows completely.

- [ ] The current model does not look like something eatable, so it would be nice to have a proper cookie model instead. | non_process | improve visuals the scene currently doesn t have nice lighting and lack more or less shadows completely the current model does not look like something eatable so it would be nice to have a proper cookie model instead | 0 |

398,202 | 27,188,034,597 | IssuesEvent | 2023-02-19 13:09:19 | aiken-lang/aiken | https://api.github.com/repos/aiken-lang/aiken | opened | Documentation gaps | need for documentation help welcomed | - [ ] Extend the documentation on constants in the user manual.

Cover what types can be used as constants and give examples.

- [ ] An end-to-end minting/burning policy example would be beneficial.

We only have spending validator. Ideally, we could show an example of a minting policy that is parameteriz... | 1.0 | Documentation gaps - - [ ] Extend the documentation on constants in the user manual.

Cover what types can be used as constants and give examples.

- [ ] An end-to-end minting/burning policy example would be beneficial.

We only have spending validator. Ideally, we could show an example of a minting polic... | non_process | documentation gaps extend the documentation on constants in the user manual cover what types can be used as constants and give examples an end to end minting burning policy example would be beneficial we only have spending validator ideally we could show an example of a minting policy th... | 0 |

607,863 | 18,792,839,744 | IssuesEvent | 2021-11-08 18:37:47 | azerothcore/azerothcore-wotlk | https://api.github.com/repos/azerothcore/azerothcore-wotlk | opened | Server crash | ChromieCraft Generic Priority-Critical | ```

Thread 3 "worldserver" received signal SIGSEGV, Segmentation fault.

[Switching to Thread 0x14c7833fe700 (LWP 20404)]

0x000000000155a2fe in Acore::Abort (file=0x16325b7 "/root/env/chromiecraft/azerothcore-wotlk/src/server/worldserver/Main.cpp", line=646, function=0x1891455 "Handler") at /root/env/chromiecraft/a... | 1.0 | Server crash - ```

Thread 3 "worldserver" received signal SIGSEGV, Segmentation fault.

[Switching to Thread 0x14c7833fe700 (LWP 20404)]

0x000000000155a2fe in Acore::Abort (file=0x16325b7 "/root/env/chromiecraft/azerothcore-wotlk/src/server/worldserver/Main.cpp", line=646, function=0x1891455 "Handler") at /root/env... | non_process | server crash thread worldserver received signal sigsegv segmentation fault in acore abort file root env chromiecraft azerothcore wotlk src server worldserver main cpp line function handler at root env chromiecraft azerothcore wotlk src common debugging errors cpp crash... | 0 |

21,787 | 30,296,854,005 | IssuesEvent | 2023-07-09 23:48:33 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | dbretina 2.2.10 has 1 GuardDog issues | guarddog silent-process-execution | https://pypi.org/project/dbretina

https://inspector.pypi.io/project/dbretina

```{

"dependency": "dbretina",

"version": "2.2.10",

"result": {

"issues": 1,

"errors": {},

"results": {

"silent-process-execution": [

{

"location": "kSpider2/ks_filter.py/kSpider2/ks_filter.py:15",

... | 1.0 | dbretina 2.2.10 has 1 GuardDog issues - https://pypi.org/project/dbretina

https://inspector.pypi.io/project/dbretina

```{

"dependency": "dbretina",

"version": "2.2.10",

"result": {

"issues": 1,

"errors": {},

"results": {

"silent-process-execution": [

{

"location": "kSpider2/ks_... | process | dbretina has guarddog issues dependency dbretina version result issues errors results silent process execution location ks filter py ks filter py code subprocess run stdin su... | 1 |

7,076 | 10,224,829,959 | IssuesEvent | 2019-08-16 13:46:12 | Ultimate-Hosts-Blacklist/whitelist | https://api.github.com/repos/Ultimate-Hosts-Blacklist/whitelist | closed | ytmnd.com | whitelisting process | *@maxgoldberg commented on Aug 12, 2019, 2:39 AM UTC:*

**Domains or links**

ytmnd.com, *.ytmnd.com

**More Information**

How did you discover your web site or domain was listed here?

1. Installed pi-hole and was no longer able to access site.

**Have you requested removal from other sources?**

Not necessary, this ap... | 1.0 | ytmnd.com - *@maxgoldberg commented on Aug 12, 2019, 2:39 AM UTC:*

**Domains or links**

ytmnd.com, *.ytmnd.com

**More Information**

How did you discover your web site or domain was listed here?

1. Installed pi-hole and was no longer able to access site.

**Have you requested removal from other sources?**

Not necess... | process | ytmnd com maxgoldberg commented on aug am utc domains or links ytmnd com ytmnd com more information how did you discover your web site or domain was listed here installed pi hole and was no longer able to access site have you requested removal from other sources not necessary ... | 1 |

255,521 | 19,306,203,927 | IssuesEvent | 2021-12-13 11:47:51 | o3de/o3de | https://api.github.com/repos/o3de/o3de | opened | Update physx docs to mention the new LY_PHYSX_PROFILE_USE_CHECKED_LIBS macro for cmake configuration | feature/physics kind/documentation needs-triage sig/core priority/minor | This change lives in development branch at the moment, once it hits release "docs/user-guide/interactivity/physics/debugging/" page needs to be modified to include that users can use the macro `LY_PHYSX_PROFILE_USE_CHECKED_LIBS ` in cmake configuration to make o3de profile configuration use the checked version of PhysX... | 1.0 | Update physx docs to mention the new LY_PHYSX_PROFILE_USE_CHECKED_LIBS macro for cmake configuration - This change lives in development branch at the moment, once it hits release "docs/user-guide/interactivity/physics/debugging/" page needs to be modified to include that users can use the macro `LY_PHYSX_PROFILE_USE_CH... | non_process | update physx docs to mention the new ly physx profile use checked libs macro for cmake configuration this change lives in development branch at the moment once it hits release docs user guide interactivity physics debugging page needs to be modified to include that users can use the macro ly physx profile use ch... | 0 |

11,301 | 14,105,771,126 | IssuesEvent | 2020-11-06 14:00:00 | paul-buerkner/brms | https://api.github.com/repos/paul-buerkner/brms | closed | Estimate and plot survival functions | feature post-processing | I realized that in survival regression it is pretty common to compute and plot the survival functions for different conditions. Using the internal structure of `marginal_effects` this should be relatively straightforward to implement. | 1.0 | Estimate and plot survival functions - I realized that in survival regression it is pretty common to compute and plot the survival functions for different conditions. Using the internal structure of `marginal_effects` this should be relatively straightforward to implement. | process | estimate and plot survival functions i realized that in survival regression it is pretty common to compute and plot the survival functions for different conditions using the internal structure of marginal effects this should be relatively straightforward to implement | 1 |

1,508 | 4,102,792,593 | IssuesEvent | 2016-06-04 07:25:58 | DevExpress/testcafe-hammerhead | https://api.github.com/repos/DevExpress/testcafe-hammerhead | opened | The href of a link processed incorrectly in an iframe without src if it is set from the main window | !IMPORTANT! AREA: client SYSTEM: URL processing TYPE: bug | There is an iframe

```html

<iframe id="iframe"></iframe>

```

If I create a link inside the iframe in the following way:

```js

// This code executes in the context of the top window

var iframe = document.getElementById('iframe');

var body = iframe.contentDocument.body;

body.innerHTML = '<a href="somePage.ht... | 1.0 | The href of a link processed incorrectly in an iframe without src if it is set from the main window - There is an iframe

```html

<iframe id="iframe"></iframe>

```

If I create a link inside the iframe in the following way:

```js

// This code executes in the context of the top window

var iframe = document.getEl... | process | the href of a link processed incorrectly in an iframe without src if it is set from the main window there is an iframe html if i create a link inside the iframe in the following way js this code executes in the context of the top window var iframe document getelementbyid iframe var b... | 1 |

5,907 | 8,725,552,096 | IssuesEvent | 2018-12-10 09:44:27 | linnovate/root | https://api.github.com/repos/linnovate/root | opened | discussion deletion bug | 2.0.6 Process bug | When deleting a discussion, it doesn't get deleted until moving to another tab (tasks, projects, etc.) or refreshing the page, and all the changes before doing so are saved and remain after deletion. | 1.0 | discussion deletion bug - When deleting a discussion, it doesn't get deleted until moving to another tab (tasks, projects, etc.) or refreshing the page, and all the changes before doing so are saved and remain after deletion. | process | discussion deletion bug when deleting a discussion it doesnt get deleted until moving to another tab tasks projects etc or refreshing the page and all the changes before doing so are saved and remain after deletion | 1 |

764,266 | 26,792,443,832 | IssuesEvent | 2023-02-01 09:30:23 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.reddit.com - see bug description | browser-firefox priority-critical os-linux engine-gecko | <!-- @browser: Firefox 109.0 -->

<!-- @ua_header: Mozilla/5.0 (X11; Linux x86_64; rv:109.0) Gecko/20100101 Firefox/109.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/117501 -->

**URL**: https://www.reddit.com/

**Browser / Version**: Firefox 109.0

**Operating Syst... | 1.0 | www.reddit.com - see bug description - <!-- @browser: Firefox 109.0 -->

<!-- @ua_header: Mozilla/5.0 (X11; Linux x86_64; rv:109.0) Gecko/20100101 Firefox/109.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/117501 -->

**URL**: https://www.reddit.com/

**Browser / Ve... | non_process | see bug description url browser version firefox operating system linux tested another browser yes edge problem type something else description cannot use scrollbar after click on a post steps to reproduce after click on a post and open it appear two scrollbar... | 0 |

290,321 | 21,875,768,264 | IssuesEvent | 2022-05-19 09:59:14 | appsmithorg/appsmith | https://api.github.com/repos/appsmithorg/appsmith | closed | [Docs] #12531 [Bug]-[1200]:Unable to select previously selected option from dropdown if value outside the options is set - unless other option is selected at-least once | Documentation User Education Pod | > TODO

- [ ] Evaluate if this task is needed. If not add the "Skip Docs" label on the parent ticket

- [ ] Fill these fields

- [ ] Prepare first draft

- [ ] Add label: "Ready for Docs Team"

Field | Details

-----|-----

**POD** | App Viewers Pod

**Parent Ticket** | #12531

Engineer |

Release Date |

Live Date |... | 1.0 | [Docs] #12531 [Bug]-[1200]:Unable to select previously selected option from dropdown if value outside the options is set - unless other option is selected at-least once - > TODO

- [ ] Evaluate if this task is needed. If not add the "Skip Docs" label on the parent ticket

- [ ] Fill these fields

- [ ] Prepare first ... | non_process | unable to select previously selected option from dropdown if value outside the options is set unless other option is selected at least once todo evaluate if this task is needed if not add the skip docs label on the parent ticket fill these fields prepare first draft add label r... | 0 |

19,266 | 25,455,925,088 | IssuesEvent | 2022-11-24 14:11:22 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [IDP] [PM] Last name is getting displayed in the phone number field | Bug Blocker P0 Participant manager Process: Fixed Process: Tested QA Process: Tested dev | **Steps:**

1. Login to PM

2. Click on 'Admins' tab

3. Edit admin in the list

4. Update phone number and Verify

**AR:** Last name is getting displayed in the phone number field

**ER:** Updated phone number should get displayed | 3.0 | [IDP] [PM] Last name is getting displayed in the phone number field - **Steps:**

1. Login to PM

2. Click on 'Admins' tab

3. Edit admin in the list

4. Update phone number and Verify

**AR:** Last name is getting displayed in the phone number field

**ER:** Updated phone number should get displayed | process | last name is getting displayed in the phone number field steps login to pm click on admins tab edit admin in the list update phone number and verify ar last name is getting displayed in the phone number field er updated phone number should get displayed | 1 |

2,054 | 4,862,864,124 | IssuesEvent | 2016-11-14 13:53:31 | openvstorage/framework | https://api.github.com/repos/openvstorage/framework | closed | Upgrade framework does not continue after `read timeout` | priority_critical process_wontfix type_bug | As seen in this error, upgrade framework stops after it cannot reach 1 sdm node. It should skip it:

```

2016-09-21 17:21:26 04800 +0200 - stor-202.be-gen8-1 - 10809/140546189371200 - lib/update - 0 - INFO - +++ Starting framework update +++

2016-09-21 17:21:26 04900 +0200 - stor-202.be-gen8-1 - 10809/140546189371200 -... | 1.0 | Upgrade framework does not continue after `read timeout` - As seen in this error, upgrade framework stops after it cannot reach 1 sdm node. It should skip it:

```

2016-09-21 17:21:26 04800 +0200 - stor-202.be-gen8-1 - 10809/140546189371200 - lib/update - 0 - INFO - +++ Starting framework update +++

2016-09-21 17:21:26... | process | upgrade framework does not continue after read timeout as seen in this error upgrade framework stops after it cannot reach sdm node it should skip it stor be lib update info starting framework update stor be lib update ... | 1 |

42,949 | 23,052,000,950 | IssuesEvent | 2022-07-24 19:20:28 | maxbachmann/RapidFuzz | https://api.github.com/repos/maxbachmann/RapidFuzz | closed | implement string_metric.levenshtein_editops using Hirschbergs algorithm | performance | [Hirschbergs algorithm](https://en.wikipedia.org/wiki/Hirschberg%27s_algorithm) could be used to split the alignment calculation into multiple subproblems which are solved using the current algorithm. This would significantly reduce the memory consumption for long sequences. However it should be checked starting at whi... | True | implement string_metric.levenshtein_editops using Hirschbergs algorithm - [Hirschbergs algorithm](https://en.wikipedia.org/wiki/Hirschberg%27s_algorithm) could be used to split the alignment calculation into multiple subproblems which are solved using the current algorithm. This would significantly reduce the memory co... | non_process | implement string metric levenshtein editops using hirschbergs algorithm could be used to split the alignment calculation into multiple subproblems which are solved using the current algorithm this would significantly reduce the memory consumption for long sequences however it should be checked starting at which... | 0 |

19,188 | 25,309,963,595 | IssuesEvent | 2022-11-17 16:42:00 | ESMValGroup/ESMValCore | https://api.github.com/repos/ESMValGroup/ESMValCore | opened | Generalized mask: apply a mask built from a dataset to all other datasets | enhancement preprocessor | Hi @alistairsellar @eleanorgb @ehogan - this is a follow-up to the discussion we had at the MO during the ESMValTool workshop (my apologies for the slightly tardy time to open this, been busy with the release that is now done).

Let me try and recap in broad lines what the requirements of the functionality are, but ... | 1.0 | Generalized mask: apply a mask built from a dataset to all other datasets - Hi @alistairsellar @eleanorgb @ehogan - this is a follow-up to the discussion we had at the MO during the ESMValTool workshop (my apologies for the slightly tardy time to open this, been busy with the release that is now done).

Let me try an... | process | generalized mask apply a mask built from a dataset to all other datasets hi alistairsellar eleanorgb ehogan this is a follow up to the discussion we had at the mo during the esmvaltool workshop my apologies for the slightly tardy time to open this been busy with the release that is now done let me try an... | 1 |

307,183 | 26,518,571,817 | IssuesEvent | 2023-01-18 23:21:05 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | DISABLED test_cond_dynamic_shapes (torch._dynamo.testing.make_test_cls_with_patches.<locals>.DummyTestClass) | module: flaky-tests skipped module: unknown | Platforms: linux

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/failure/test_cond_dynamic_shapes) and the most recent trunk [workflow logs](https://github.com/pytorch/pytorch/runs/10713308472).

Over the past 72 hours, it has flakily failed in 2 workflow(s).

**Debugg... | 1.0 | DISABLED test_cond_dynamic_shapes (torch._dynamo.testing.make_test_cls_with_patches.<locals>.DummyTestClass) - Platforms: linux

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/failure/test_cond_dynamic_shapes) and the most recent trunk [workflow logs](https://github.co... | non_process | disabled test cond dynamic shapes torch dynamo testing make test cls with patches dummytestclass platforms linux this test was disabled because it is failing in ci see and the most recent trunk over the past hours it has flakily failed in workflow s debugging instructions after clicking on... | 0 |

13,365 | 15,831,158,465 | IssuesEvent | 2021-04-06 13:20:41 | ESE-Peasy/PosturePerfection | https://api.github.com/repos/ESE-Peasy/PosturePerfection | closed | Incorrect arrow indicated when passing the north line | bug post-processing | # Goal

The arrow is drawn in the incorrect direction if you set your ideal pose on one side of the north line, and then move into a posture which is on the other side

# Plan

1. Revise the logic to calculate angles

1. Determine why the incorrect direction is being indicated

1. Fix it 😄 | 1.0 | Incorrect arrow indicated when passing the north line - # Goal

The arrow is drawn in the incorrect direction if you set your ideal pose on one side of the north line, and then move into a posture which is on the other side

# Plan

1. Revise the logic to calculate angles

1. Determine why the incorrect direction... | process | incorrect arrow indicated when passing the north line goal the arrow is drawn in the incorrect direction if you set your ideal pose on one side of the north line and then move into a posture which is on the other side plan revise the logic to calculate angles determine why the incorrect direction... | 1 |
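
A common cause of this kind of symptom is comparing raw angles across the 0/360 wrap at north. The sketch below is an assumption about the cause, not the project's actual code: it supposes angles are measured in degrees clockwise from north, and `signed_angle_diff` is a hypothetical helper name.

```python
def signed_angle_diff(current_deg, ideal_deg):
    """Smallest signed rotation from ideal to current, in the range (-180, 180].

    A positive result means the current pose is clockwise of the ideal pose,
    even when the two angles sit on opposite sides of north (0/360).
    """
    diff = (current_deg - ideal_deg) % 360.0  # always in [0, 360)
    if diff > 180.0:
        diff -= 360.0                         # take the shorter way round
    return diff
```

With an ideal pose at 350 degrees and a current pose at 10 degrees, a plain subtraction gives -340 and points the arrow the wrong way, while the wrapped difference gives +20.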

5,903 | 8,722,366,751 | IssuesEvent | 2018-12-09 11:47:28 | kerubistan/kerub | https://api.github.com/repos/kerubistan/kerub | opened | measure and track cpu temperature information | component:data processing enhancement priority: normal | probably lm-sensors, or proc filesystem, or whatever is available on the OS | 1.0 | measure and track cpu temperature information - probably lm-sensors, or proc filesystem, or whatever is available on the OS | process | measure and track cpu temperature information probably lm sensors or proc filesystem or whatever is available on the os | 1 |

70,841 | 3,344,097,617 | IssuesEvent | 2015-11-16 00:21:35 | oakesville/mythling | https://api.github.com/repos/oakesville/mythling | closed | Back Button Problems with EPG Activity | priority:medium type:usability | In EPG Activity, Close Calendar, Search and Details Dialog on Back Button (see comment below from TQ). Probably should use same javascript interaction from FireTV EPG. | 1.0 | Back Button Problems with EPG Activity - In EPG Activity, Close Calendar, Search and Details Dialog on Back Button (see comment below from TQ). Probably should use same javascript interaction from FireTV EPG. | non_process | back button problems with epg activity in epg activity close calendar search and details dialog on back button see comment below from tq probably should use same javascript interaction from firetv epg | 0 |

13,636 | 16,255,155,182 | IssuesEvent | 2021-05-08 03:07:28 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | Coprocessor support ENUM / SET | difficulty/medium sig/coprocessor type/enhancement | ## Development Task

Currently TiKV Coprocessor does not support ENUM / SET data types, so that expressions related to these data types cannot be pushed down, which greatly affects performance in some scenarios. We have met this case several times.

This task is to add ENUM / SET data type support to the TiKV Copro... | 1.0 | Coprocessor support ENUM / SET - ## Development Task

Currently TiKV Coprocessor does not support ENUM / SET data types, so that expressions related to these data types cannot be pushed down, which greatly affects performance in some scenarios. We have met this case several times.

This task is to add ENUM / SET da... | process | coprocessor support enum set development task currently tikv coprocessor does not support enum set data types so that expressions related to these data types cannot be pushed down which greatly affects performance in some scenarios we have met this case several times this task is to add enum set da... | 1 |

21,469 | 29,503,167,125 | IssuesEvent | 2023-06-03 02:03:37 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | closed | Capture test artifacts (movies and screenshots) when running AppVeyor tests | process: tests CI: appveyor type: chore stale | Because trying to update kitchensink fails (Chrome 81 browser)

- https://www.appveyor.com/docs/packaging-artifacts/

- failing build https://github.com/cypress-io/cypress/pull/7282

```

Running: examples\assertions.spec.js (3 of 19)

Assertions

Implicit A... | 1.0 | Capture test artifacts (movies and screenshots) when running AppVeyor tests - Because trying to update kitchensink fails (Chrome 81 browser)

- https://www.appveyor.com/docs/packaging-artifacts/

- failing build https://github.com/cypress-io/cypress/pull/7282

```

Running: examples\assertions.spec.js ... | process | capture test artifacts movies and screenshots when running appveyor tests because trying to update kitchensink fails chrome browser failing build running examples assertions spec js of assertions implicit assertions √ ... | 1 |

13,319 | 15,786,563,581 | IssuesEvent | 2021-04-01 17:55:58 | hasura/ask-me-anything | https://api.github.com/repos/hasura/ask-me-anything | closed | What was your (Adron's) favorite database ... ? | processing-for-shortvid question | I have opinions about all sorts of things, and that being the case, I have multiple answers for this question! | 1.0 | What was your (Adron's) favorite database ... ? - I have opinions about all sorts of things, and that being the case, I have multiple answers for this question! | process | what was your adron s favorite database i have opinions about all sorts of things and that being the case i have multiple answers for this question | 1 |

10,364 | 13,186,015,272 | IssuesEvent | 2020-08-12 22:50:43 | googleapis/nodejs-speech | https://api.github.com/repos/googleapis/nodejs-speech | closed | Streaming wav file doesn't know the sample rate in advance | api: speech type: process | The example for streaming a wav file through speech to text requires the programmer to set the sampleRateHertz in advance. But different wav files can have different sample rates. A program would have to read in the wav file before piping to recognizeStream in order to figure out the sampleRateHertz ... | 1.0 | Streaming wav file doesn't know the sample rate in advance - The example for streaming a wav file through speech to text requires the programmer to set the sampleRateHertz in advance. But different wav files can have different sample rates. A program would have to read in the wav file before piping t... | process | streaming wav file doesn t know the sample rate in advance the example for streaming a wav file through speech to text requires the programmer to set the sampleratehertz in advance but different wav files can have different sample rates a program would have to read in the wav file before piping t... | 1 |
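
The sample rate is stored in the WAV header, so it can be read before any audio is streamed. The sketch below uses Python's standard `wave` module rather than Node.js and does not touch the Speech client at all. The helper names `wav_sample_rate` and `demo_wav_bytes` are hypothetical, and `wave` only handles uncompressed PCM files.

```python
import io
import wave


def wav_sample_rate(source):
    """Return the sample rate in Hz from a WAV header.

    `source` may be a file path or a binary file-like object.
    """
    with wave.open(source, "rb") as wav_file:
        return wav_file.getframerate()


def demo_wav_bytes(rate_hz):
    """Build a tiny mono 16-bit WAV in memory, for illustration only."""
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav_file:
        wav_file.setnchannels(1)
        wav_file.setsampwidth(2)               # 2 bytes per sample = 16-bit
        wav_file.setframerate(rate_hz)
        wav_file.writeframes(b"\x00\x00" * 8)  # eight silent frames
    return buffer.getvalue()
```

The value returned by `wav_sample_rate` could then be passed as the sample rate setting when the streaming request is configured.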

585,359 | 17,485,855,359 | IssuesEvent | 2021-08-09 10:57:36 | slynch8/10x | https://api.github.com/repos/slynch8/10x | opened | Disable adding folders if folder sync is true | feature Priority 3 | Can be confusing if you add files or folders to a sync'd folder, as the ones that have been added will disappear when re-opening. Need to disallow adding stuff to a sync'd folder, and maybe disable setting sync to true if there are manually added files/folders. | 1.0 | Disable adding folders if folder sync is true - Can be confusing if you add files or folders to a sync'd folder, as the ones that have been added will disappear when re-opening. Need to disallow adding stuff to a sync'd folder, and maybe disable setting sync to true if there are manually added files/folders. | non_process | disable adding folders if folder sync is true can be confusing if you add files or folders to a sync d folder as the ones that have been added will disappear when re opening need to disallow adding stuff to a sync d folder and maybe disable setting sync to true if there are manually added files folders | 0 |

19,464 | 25,758,413,222 | IssuesEvent | 2022-12-08 18:15:51 | mdsreq-fga-unb/2022.2-Receitalista | https://api.github.com/repos/mdsreq-fga-unb/2022.2-Receitalista | closed | Software Development Process | Processo | - [x] in none of the activities is a deliverable/product stated, only what will be done. Fix this.

- [x] none of the activities is placed within the life cycle that will be used.

- [x] in which activity, for example, will the product backlog be created?

- [x] in which activity, for example, will the sprint backlog be created?

... | 1.0 | Software Development Process - - [x] in none of the activities is a deliverable/product stated, only what will be done. Fix this.

- [x] none of the activities is placed within the life cycle that will be used.

- [x] in which activity, for example, will the product backlog be created?

- [x] in which activity, for... | process | software development process in none of the activities is a deliverable product stated only what will be done fix this none of the activities is placed within the life cycle that will be used in which activity for example will the product backlog be created in which activity for example... | 1 |

14,253 | 17,189,038,873 | IssuesEvent | 2021-07-16 08:18:15 | googleapis/python-bigquery | https://api.github.com/repos/googleapis/python-bigquery | closed | don't pass `read_session` to BQ Storage API `rows` from `to_dataframe` and `to_arrow` | api: bigquery type: process | https://github.com/googleapis/python-bigquery-storage/pull/228 makes `read_session` optional and quietly deprecates it.

If a new enough `google-cloud-bigquery-storage` client is installed, I'd like to avoid passing the `read_session` to `rows`, so that we can more loudly deprecate it (https://github.com/googleapis/p... | 1.0 | don't pass `read_session` to BQ Storage API `rows` from `to_dataframe` and `to_arrow` - https://github.com/googleapis/python-bigquery-storage/pull/228 makes `read_session` optional and quietly deprecates it.

If a new enough `google-cloud-bigquery-storage` client is installed, I'd like to avoid passing the `read_sess... | process | don t pass read session to bq storage api rows from to dataframe and to arrow makes read session optional and quietly deprecates it if a new enough google cloud bigquery storage client is installed i d like to avoid passing the read session to rows so that we can more loudly deprecate it | 1 |

5,849 | 8,674,507,911 | IssuesEvent | 2018-11-30 07:54:01 | facebook/osquery | https://api.github.com/repos/facebook/osquery | closed | osquery can't take over audit | process auditing | # Bug report

### What operating system and version are you using?

```

# cat /etc/*-release

CentOS Linux release 7.3.1611 (Core)

NAME="CentOS Linux"

VERSION="7 (Core)"

ID="centos"

ID_LIKE="rhel fedora"

VERSION_ID="7"

PRETTY_NAME="CentOS Linux 7 (Core)"

ANSI_COLOR="0;31"

CPE_NAME="cpe:/o:centos:centos:7"

... | 1.0 | osquery can't take over audit - # Bug report

### What operating system and version are you using?

```

# cat /etc/*-release

CentOS Linux release 7.3.1611 (Core)

NAME="CentOS Linux"

VERSION="7 (Core)"

ID="centos"

ID_LIKE="rhel fedora"

VERSION_ID="7"

PRETTY_NAME="CentOS Linux 7 (Core)"

ANSI_COLOR="0;31"

CP... | process | osquery can t take over audit bug report what operating system and version are you using cat etc release centos linux release core name centos linux version core id centos id like rhel fedora version id pretty name centos linux core ansi color cpe na... | 1 |

17,724 | 23,625,621,372 | IssuesEvent | 2022-08-25 03:22:29 | MPMG-DCC-UFMG/C01 | https://api.github.com/repos/MPMG-DCC-UFMG/C01 | closed | Problem displaying dynamic collection steps | [1] Bug [2] Alta Prioridade [E] Externa [0] Desenvolvimento [3] Processamento Dinâmico | ## Expected Behavior

The parameters entered in the dynamic collection steps should be displayed as specified in the collection's configuration file.

## Current Behavior

Some steps do not display the previously entered parameters, as if they were not specified in the configuration file. However... | 1.0 | Problem displaying dynamic collection steps - ## Expected Behavior

The parameters entered in the dynamic collection steps should be displayed as specified in the collection's configuration file.

## Current Behavior

Some steps do not display the previously entered parameters, as if they were not... | process | problem displaying dynamic collection steps expected behavior the parameters entered in the dynamic collection steps should be displayed as specified in the collection s configuration file current behavior some steps do not display the previously entered parameters as if they were not... | 1 |

394,579 | 27,033,762,652 | IssuesEvent | 2023-02-12 14:27:13 | hasadna/TreeBase | https://api.github.com/repos/hasadna/TreeBase | closed | Open an email address for the project: trees@hasadna.org.il | documentation Admin | Is there a cost? The email will be forwarded to me anyway. This is for the sake of a better-looking landing page | 1.0 | Open an email address for the project: trees@hasadna.org.il - Is there a cost? The email will be forwarded to me anyway. This is for the sake of a better-looking landing page | non_process | open an email address for the project trees hasadna org il is there a cost the email will be forwarded to me anyway this is for the sake of a better looking landing page | 0 |

6,063 | 8,901,155,078 | IssuesEvent | 2019-01-17 01:01:01 | rubberduck-vba/Rubberduck | https://api.github.com/repos/rubberduck-vba/Rubberduck | closed | Handle Procedure Attributes | difficulty-03-duck enhancement module-and-procedure-attributes parse-tree-processing | There are a number of opened issues that have been around since 0.x:

- [x] #52 toggle default instance attribute

- [x] #53 toggle default member attribute

- [x] #54 edit procedure description attribute

- [x] #55 toggle iterator getter attribute

2.0 is going to have to make them happen. I'm tagging with [code-parsing],... | 1.0 | Handle Procedure Attributes - There are a number of opened issues that have been around since 0.x:

- [x] #52 toggle default instance attribute

- [x] #53 toggle default member attribute

- [x] #54 edit procedure description attribute

- [x] #55 toggle iterator getter attribute

2.0 is going to have to make them happen. I'... | process | handle procedure attributes there are a number of opened issues that have been around since x toggle default instance attribute toggle default member attribute edit procedure description attribute toggle iterator getter attribute is going to have to make them happen i m tagging wi... | 1 |

8,332 | 11,493,161,101 | IssuesEvent | 2020-02-11 22:27:13 | ORNL-AMO/AMO-Tools-Desktop | https://api.github.com/repos/ORNL-AMO/AMO-Tools-Desktop | opened | Remove old acronyms | Process Heating | I think this is mostly an issue with PHAST - in Report - Energy Used, System Setup - Design Energy Use & System Setup - Metered Energy

Except when referring to the older software (the home page and the about page), the old acronyms should not be used

Old name New name

PSAT PA

PHAST ... | 1.0 | Remove old acronyms - I think this is mostly an issue with PHAST - in Report - Energy Used, System Setup - Design Energy Use & System Setup - Metered Energy

Except when referring to the older software (the home page and the about page), the old acronyms should not be used

Old name New name

PSAT ... | process | remove old acronyms i think this is mostly an issue with phast in report energy used system setup design energy use system setup metered energy except when referring to the older software the home page and the about page the old acronyms should not be used old name new name psat ... | 1 |

8,093 | 11,271,315,781 | IssuesEvent | 2020-01-14 12:48:26 | spring-projects/spring-hateoas | https://api.github.com/repos/spring-projects/spring-hateoas | closed | Refine factory methods and nullability checks for RepresentationModel types | in: core in: mediatypes process: in progress type: bug | Sonar issued us a curious warning which made us look into the code why this warning was issued. Sonar reported a resource's content should be checked against null because the third constructor in EntityModel has a `@Nullable` annotation for content. However, directly below that, there's an `Assert.notNull` for content... | 1.0 | Refine factory methods and nullability checks for RepresentationModel types - Sonar issued us a curious warning which made us look into the code why this warning was issued. Sonar reported a resource's content should be checked against null because the third constructor in EntityModel has a `@Nullable` annotation for ... | process | refine factory methods and nullability checks for representationmodel types sonar issued us a curious warning which made us look into the code why this warning was issued sonar reported a resource s content should be checked against null because the third constructor in entitymodel has a nullable annotation for ... | 1 |

523,332 | 15,178,376,288 | IssuesEvent | 2021-02-14 15:13:27 | TrinityCore/TrinityCore | https://api.github.com/repos/TrinityCore/TrinityCore | closed | Core/SpawnGroup: Phase 2: SPAWN_FLAG_NO_RESPAWN | Comp-Core Priority-FutureFeatureRequest | **Description:**

For example we want to temp spawn creature only once without allowing it to respawn. Currently to achieve it we need to spawn SpawnGroup and despawn it after creature is killed. Each time for each creature.

Or we need to change respawn time to prevent respawn. But it's something evil

**Expected ... | 1.0 | Core/SpawnGroup: Phase 2: SPAWN_FLAG_NO_RESPAWN - **Description:**

For example we want to temp spawn creature only once without allowing it to respawn. Currently to achieve it we need to spawn SpawnGroup and despawn it after creature is killed. Each time for each creature.

Or we need to change respawn time to preve... | non_process | core spawngroup phase spawn flag no respawn description for example we want to temp spawn creature only once without allowing it to respawn currently to achieve it we need to spawn spawngroup and despawn it after creature is killed each time for each creature or we need to change respawn time to preve... | 0 |

39,921 | 6,782,240,883 | IssuesEvent | 2017-10-30 06:59:58 | cyberFund/cyber-search | https://api.github.com/repos/cyberFund/cyber-search | closed | Bitcoin multisig transaction | discussion documentation improvement | A Bitcoin transaction output can contain multiple addresses.

Define a model for this.

See https://blockchain.info/tx/56214420a7c4dcc4832944298d169a75e93acf9721f00656b2ee0e4d194f9970 | 1.0 | Bitcoin multisig transaction - A Bitcoin transaction output can contain multiple addresses.

Define a model for this.

See https://blockchain.info/tx/56214420a7c4dcc4832944298d169a75e93acf9721f00656b2ee0e4d194f9970 | non_process | bitcoin multisig transaction a bitcoin transaction output can contain multiple addresses define a model for this see | 0 |

19,719 | 26,073,816,742 | IssuesEvent | 2022-12-24 07:03:51 | pyanodon/pybugreports | https://api.github.com/repos/pyanodon/pybugreports | closed | pypostprocessing now incompatible with True Nukes | postprocess-fail compatibility | ### Mod source

PyAE Beta

### Which mod are you having an issue with?

- [ ] pyalienlife

- [ ] pyalternativeenergy

- [ ] pycoalprocessing

- [ ] pyfusionenergy

- [ ] pyhightech

- [ ] pyindustry

- [ ] pypetroleumhandling

- [X] pypostprocessing

- [ ] pyrawores

### Operating system

>=Windows 10

### What kind of issue i... | 1.0 | pypostprocessing now incompatible with True Nukes - ### Mod source

PyAE Beta

### Which mod are you having an issue with?

- [ ] pyalienlife

- [ ] pyalternativeenergy

- [ ] pycoalprocessing

- [ ] pyfusionenergy

- [ ] pyhightech

- [ ] pyindustry

- [ ] pypetroleumhandling

- [X] pypostprocessing

- [ ] pyrawores

### Oper... | process | pypostprocessing now incompatible with true nukes mod source pyae beta which mod are you having an issue with pyalienlife pyalternativeenergy pycoalprocessing pyfusionenergy pyhightech pyindustry pypetroleumhandling pypostprocessing pyrawores operating system wi... | 1 |

15,271 | 19,250,569,633 | IssuesEvent | 2021-12-09 04:22:54 | elastic/beats | https://api.github.com/repos/elastic/beats | closed | Filebeat decode_cef parser error with \n\r chars in message | bug Filebeat :Processors Team:Security-External Integrations | The Filebeat decode_cef function does not skip newline characters if they are present in the message field.

e.g.

`event.original: "CEF:0|Trend Micro|Deep Security Manager|12.0.393|771|Contact by Unrecognized Client|6|src=10.41.128.199 suser=System msg=A connection to Deep Security Manager was initiated by a client not iden... | 1.0 | Filebeat decode_cef parser error with \n\r chars in message - The Filebeat decode_cef function does not skip newline characters if they are present in the message field.

e.g.

`event.original: "CEF:0|Trend Micro|Deep Security Manager|12.0.393|771|Contact by Unrecognized Client|6|src=10.41.128.199 suser=System msg=A connecti... | process | filebeat decode cef parser error with n r chars in message the filebeat decode cef function does not skip newline characters if they are present in the message field e g event original cef trend micro deep security manager contact by unrecognized client src suser system msg a connection to deep ... | 1 |

245,846 | 20,799,081,366 | IssuesEvent | 2022-03-17 12:15:00 | Uuvana-Studios/longvinter-windows-client | https://api.github.com/repos/Uuvana-Studios/longvinter-windows-client | closed | Can't place new tent anywhere | bug Not Tested | Receiving a "too close to base" error no matter how far away from the original base.

I shared a house code and now I can't place down my own house :( | 1.0 | Can't place new tent anywhere - Receiving a "too close to base" error no matter how far away from the original base.

I shared a house code and now I can't place down my own house :( | non_process | can t place new tent anywhere receiving a too close to base error no matter how far away from the original base i shared a house code and now i can t place down my own house | 0 |

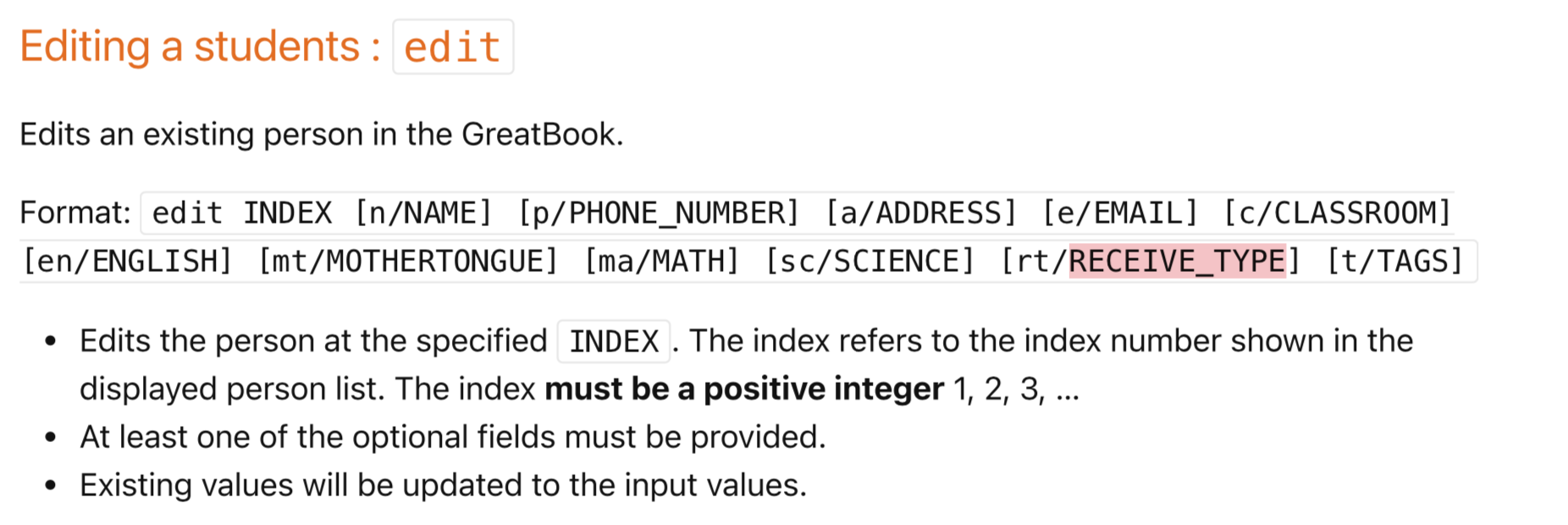

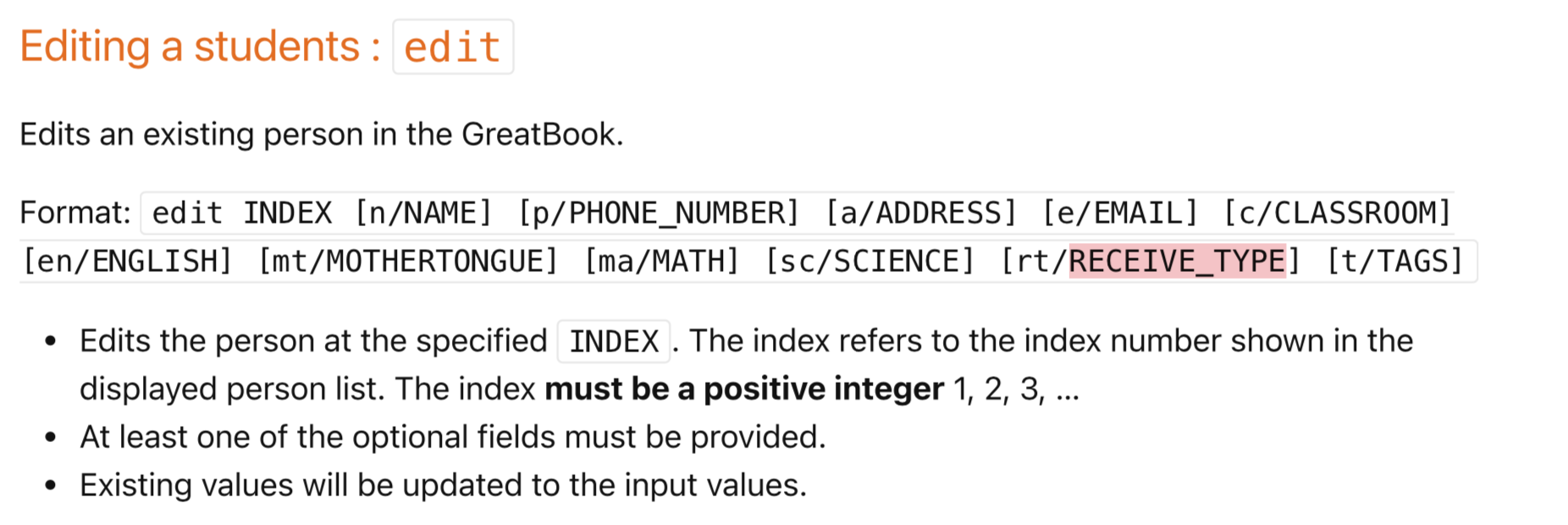

283,407 | 21,316,554,320 | IssuesEvent | 2022-04-16 11:33:14 | jr-mojito/pe | https://api.github.com/repos/jr-mojito/pe | opened | Option field for [rt/RECEIVE_TYPE] found on UG but not available for Edit function | severity.Low type.DocumentationBug | Option field for [rt/RECEIVE_TYPE] found on UG but not available for Edit function

<!--session: 1650108417268-dccfef75-89da-4d4a-87bc-8b7c9ca8a778-->

<!--Version: Web v3.4.... | 1.0 | Option field for [rt/RECEIVE_TYPE] found on UG but not available for Edit function - Option field for [rt/RECEIVE_TYPE] found on UG but not available for Edit function

<!--... | non_process | option field for found on ug but not available for edit function option field for found on ug but not available for edit function | 0 |

4,708 | 7,547,918,735 | IssuesEvent | 2018-04-18 09:30:26 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | Very slow child_process.spawn stdout stream performance on macOS | child_process | The `child_process.spawn` stdout stream seems to be taking ~4x longer than other "similar" methods of I/O streaming with similarly sized data streams.

Running on Node.js v4.2.1, Mac OS X 10.11, Macbook Air 1.7GHz

As a baseline, the following program, where `file` is a 472MB gzip file, and `stdout` is set to `ignore` ... | 1.0 | Very slow child_process.spawn stdout stream performance on macOS - The `child_process.spawn` stdout stream seems to be taking ~4x longer than other "similar" methods of I/O streaming with similarly sized data streams.

Running on Node.js v4.2.1, Mac OS X 10.11, Macbook Air 1.7GHz

As a baseline, the following program, ... | process | very slow child process spawn stdout stream performance on macos the child process spawn stdout stream seems to be taking longer than other similar methods of i o streaming with similarly sized data streams running on node js mac os x macbook air as a baseline the following program where ... | 1 |

230,421 | 17,616,036,155 | IssuesEvent | 2021-08-18 09:49:10 | rism-digital/verovio | https://api.github.com/repos/rism-digital/verovio | closed | Possible improvements to the test suite output page | enhancement documentation / test suite low priority | In the new test suite it would be handy to be able to copy and paste a link to view and reference the exact test. | 1.0 | Possible improvements to the test suite output page - In the new test suite it would be handy to be able to copy and paste a link to view and reference the exact test. | non_process | possible improvements to the test suite output page in the new test suite it would be handy to be able to copy and paste a link to view and reference the exact test | 0 |

2,335 | 5,142,772,284 | IssuesEvent | 2017-01-12 14:19:05 | jimbrown75/Permit-Vision-Enhancements | https://api.github.com/repos/jimbrown75/Permit-Vision-Enhancements | opened | Provide facility for guest signature for Permit Requester | Could Fix Low Priority Process Related | If a new and short term (often one time only) contractor comes to Site they are not a system user. They can use the Guest Signature as a Permit Holder, but they will often have to define work scope too as Permit Requester, however in current build the Permit Requester must be a system user.

T&S have raised this as ... | 1.0 | Provide facility for guest signature for Permit Requester - If a new and short term (often one time only) contractor comes to Site they are not a system user. They can use the Guest Signature as a Permit Holder, but they will often have to define work scope too as Permit Requester, however in current build the Permit R... | process | provide facility for guest signature for permit requester if a new and short term often one time only contractor comes to site they are not a system user they can use the guest signature as a permit holder but they will often have to define work scope too as permit requester however in current build the permit r... | 1 |

5,620 | 8,476,917,445 | IssuesEvent | 2018-10-25 00:08:15 | easy-software-ufal/annotations_repos | https://api.github.com/repos/easy-software-ufal/annotations_repos | opened | elastic/elasticsearch-net "DataMember" attribute is not applied when deserializing searching query | ADA C# test wrong processing | Issue: `https://github.com/elastic/elasticsearch-net/issues/3107`

PR: `https://github.com/elastic/elasticsearch-net/commit/57c44dba240e48f2110a61e59fc91bdd662e810d` | 1.0 | elastic/elasticsearch-net "DataMember" attribute is not applied when deserializing searching query - Issue: `https://github.com/elastic/elasticsearch-net/issues/3107`

PR: `https://github.com/elastic/elasticsearch-net/commit/57c44dba240e48f2110a61e59fc91bdd662e810d` | process | elastic elasticsearch net datamember attribute is not applied when deserializing searching query issue pr | 1 |

79,722 | 10,138,129,389 | IssuesEvent | 2019-08-02 17:04:52 | YugaByte/yugabyte-db | https://api.github.com/repos/YugaByte/yugabyte-db | opened | Doc support for index attributes in JSONB columns | area/documentation | Answer by @rkarthik007 here, but GitHub issue was never filed by the user:

https://stackoverflow.com/questions/56610522/does-yugabyte-s-sql-support-index-attributes-inside-a-jsonb-column?rq=1

| 1.0 | Doc support for index attributes in JSONB columns - Answer by @rkarthik007 here, but GitHub issue was never filed by the user:

https://stackoverflow.com/questions/56610522/does-yugabyte-s-sql-support-index-attributes-inside-a-jsonb-column?rq=1

| non_process | doc support for index attributes in jsonb columns answer by here but github issue was never filed by the user | 0 |

339,453 | 30,448,651,602 | IssuesEvent | 2023-07-16 02:03:01 | ContinualAI/avalanche | https://api.github.com/repos/ContinualAI/avalanche | opened | Creation of a new envirnment failed for python 3.8 | test Continuous integration | Here are the differences between the last working environment and the new one that I tried to run:

```

9c9,10

< - aiohttp=3.7.4.post0=py38h497a2fe_1

---

> - aiohttp=3.8.4=py38h01eb140_1

> - aiosignal=1.3.1=pyhd8ed1ab_0

12c13

< - async-timeout=3.0.1=py_1000

---

> - async-timeout=4.0.2=pyhd8ed1ab_0

18,20c19,21... | 1.0 | Creation of a new envirnment failed for python 3.8 - Here are the differences between the last working environment and the new one that I tried to run:

```

9c9,10

< - aiohttp=3.7.4.post0=py38h497a2fe_1

---

> - aiohttp=3.8.4=py38h01eb140_1

> - aiosignal=1.3.1=pyhd8ed1ab_0

12c13

< - async-timeout=3.0.1=py_1000

-... | non_process | creation of a new envirnment failed for python here are the differences between the last working environment and the new one that i tried to run aiohttp aiohttp aiosignal async timeout py async timeout ... | 0 |

262,161 | 22,819,948,882 | IssuesEvent | 2022-07-12 00:29:56 | zeek/zeek | https://api.github.com/repos/zeek/zeek | closed | The `scripts.base.utils.dir` btest is unstable | Type: Bug :bug: Area: CI/Testing | ... at least on my system:

```

$ while true; do btest ./scripts/base/utils/dir.test || break; done

all 1 tests successful

all 1 tests successful

all 1 tests successful

all 1 tests successful

all 1 tests successful

all 1 tests successful

all 1 tests successful

[ 0%] scripts.base.utils.dir ... failed

1 of 1 t... | 1.0 | The `scripts.base.utils.dir` btest is unstable - ... at least on my system:

```

$ while true; do btest ./scripts/base/utils/dir.test || break; done

all 1 tests successful

all 1 tests successful

all 1 tests successful

all 1 tests successful

all 1 tests successful

all 1 tests successful

all 1 tests successful

[... | non_process | the scripts base utils dir btest is unstable at least on my system while true do btest scripts base utils dir test break done all tests successful all tests successful all tests successful all tests successful all tests successful all tests successful all tests successful ... | 0 |

785,244 | 27,605,838,498 | IssuesEvent | 2023-03-09 12:57:23 | jenkins-x/jx | https://api.github.com/repos/jenkins-x/jx | closed | Link to user in Jira in changelog | kind/enhancement priority/important-longterm area/issue-tracker | ### Summary

### Steps to reproduce the behavior

Create a release for a project with Jira as the issue tracker

### Expected behavior

Links to assignees for related issues in the changelog leads to the user in Jira.

### Actual behavior

When a changelog is generated for a project with Jira as the issue ... | 1.0 | Link to user in Jira in changelog - ### Summary

### Steps to reproduce the behavior

Create a release for a project with Jira as the issue tracker

### Expected behavior

Links to assignees for related issues in the changelog leads to the user in Jira.

### Actual behavior

When a changelog is generated f... | non_process | link to user in jira in changelog summary steps to reproduce the behavior create a release for a project with jira as the issue tracker expected behavior links to assignees for related issues in the changelog leads to the user in jira actual behavior when a changelog is generated f... | 0 |

266,446 | 8,367,761,904 | IssuesEvent | 2018-10-04 13:10:50 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | Need to scroll a lot horizontally to see the full xml message when debugging | Component/Composer Component/Debugger Imported Priority/High Type/Improvement | <a href="https://github.com/dilinisg"><img src="https://avatars2.githubusercontent.com/u/1845370?v=4" align="left" width="96" height="96" hspace="10"></img></a> **Issue by [dilinisg](https://github.com/dilinisg)**

_Tuesday Aug 29, 2017 at 09:32 GMT_

_Originally opened as https://github.com/ballerina-lang/composer/issue... | 1.0 | Need to scroll a lot horizontally to see the full xml message when debugging - <a href="https://github.com/dilinisg"><img src="https://avatars2.githubusercontent.com/u/1845370?v=4" align="left" width="96" height="96" hspace="10"></img></a> **Issue by [dilinisg](https://github.com/dilinisg)**

_Tuesday Aug 29, 2017 at 09... | non_process | need to scroll a lot horizontally to see the full xml message when debugging issue by tuesday aug at gmt originally opened as release need to scroll a lot horizontally to see the full xml message when there are multiple namespaces | 0 |

1,715 | 4,364,870,660 | IssuesEvent | 2016-08-03 08:43:06 | openvstorage/framework | https://api.github.com/repos/openvstorage/framework | closed | Nginx already configured before setup | process_wontfix type_bug | When you install the packages of openvstorage on a node, the nginx configuration is already in place before the setup is ran.

We noticed this when trying to access the GUI before the installation is ran or when you try to access the GUI on a EXTRA node. | 1.0 | Nginx already configured before setup - When you install the packages of openvstorage on a node, the nginx configuration is already in place before the setup is ran.

We noticed this when trying to access the GUI before the installation is ran or when you try to access the GUI on a EXTRA node. | process | nginx already configured before setup when you install the packages of openvstorage on a node the nginx configuration is already in place before the setup is ran we noticed this when trying to access the gui before the installation is ran or when you try to access the gui on a extra node | 1 |

5,300 | 8,121,816,604 | IssuesEvent | 2018-08-16 09:26:09 | openvstorage/arakoon | https://api.github.com/repos/openvstorage/arakoon | closed | Arakoon_exc.Exception(4, "Not_found") | process_wontfix | If a key doesn't exist, `arakoon --get ` will throw an exception. For example,

[root@node-03 ~]# arakoon -config /opt/OpenvStorage/config/arakoon_config.ini --get ovs/framework/scheduling/celery

Uncaught exception:

Arakoon_exc.Exception(4, "Not_found")

Raised at file "src/core/lwt.ml", line 805, characte... | 1.0 | Arakoon_exc.Exception(4, "Not_found") - If a key doesn't exist, `arakoon --get ` will throw an exception. For example,

[root@node-03 ~]# arakoon -config /opt/OpenvStorage/config/arakoon_config.ini --get ovs/framework/scheduling/celery

Uncaught exception:

Arakoon_exc.Exception(4, "Not_found")

Raised at fi... | process | arakoon exc exception not found if a key doesn t exist arakoon get will throw an exception for example arakoon config opt openvstorage config arakoon config ini get ovs framework scheduling celery uncaught exception arakoon exc exception not found raised at file src core lw... | 1 |

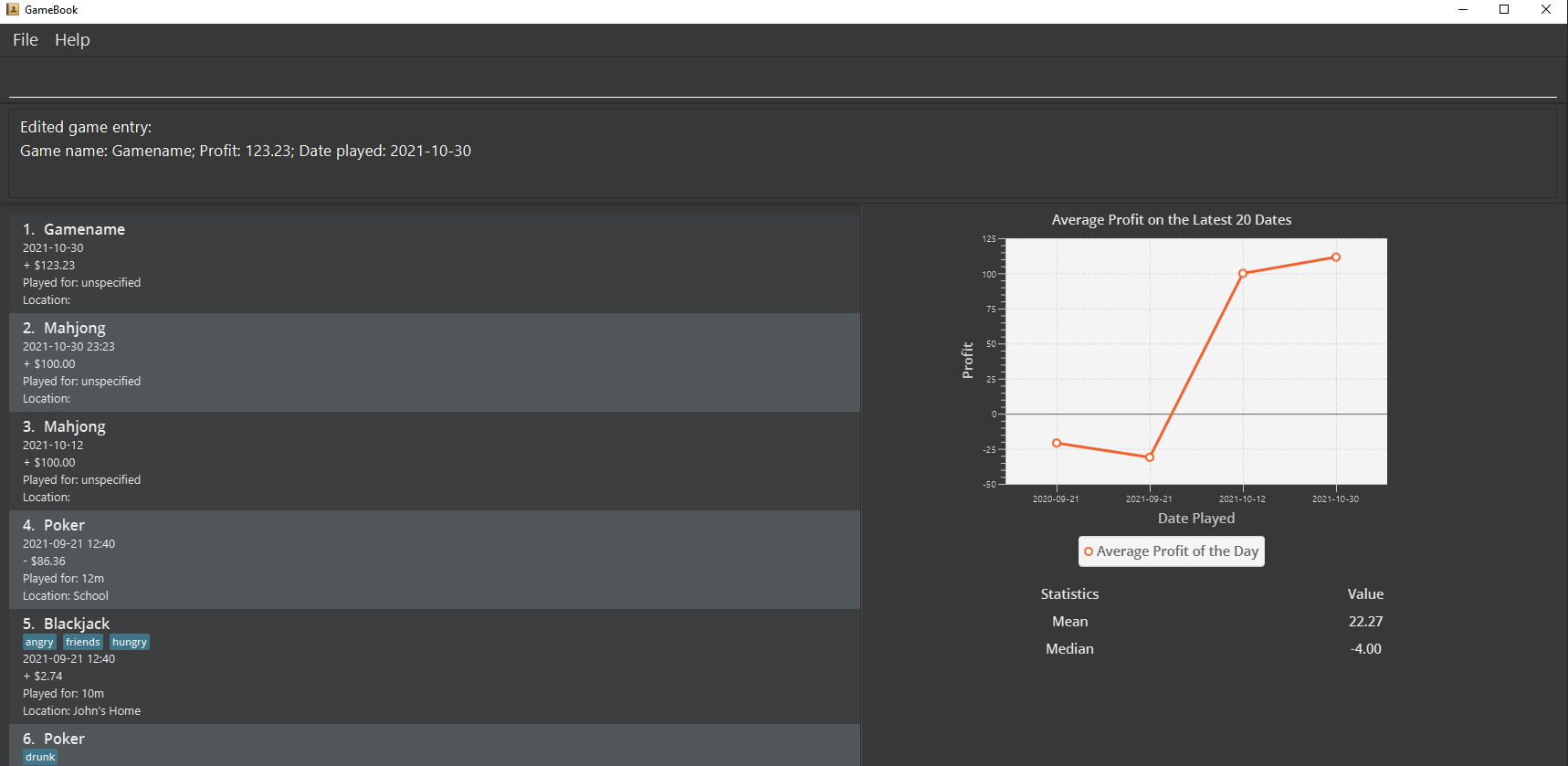

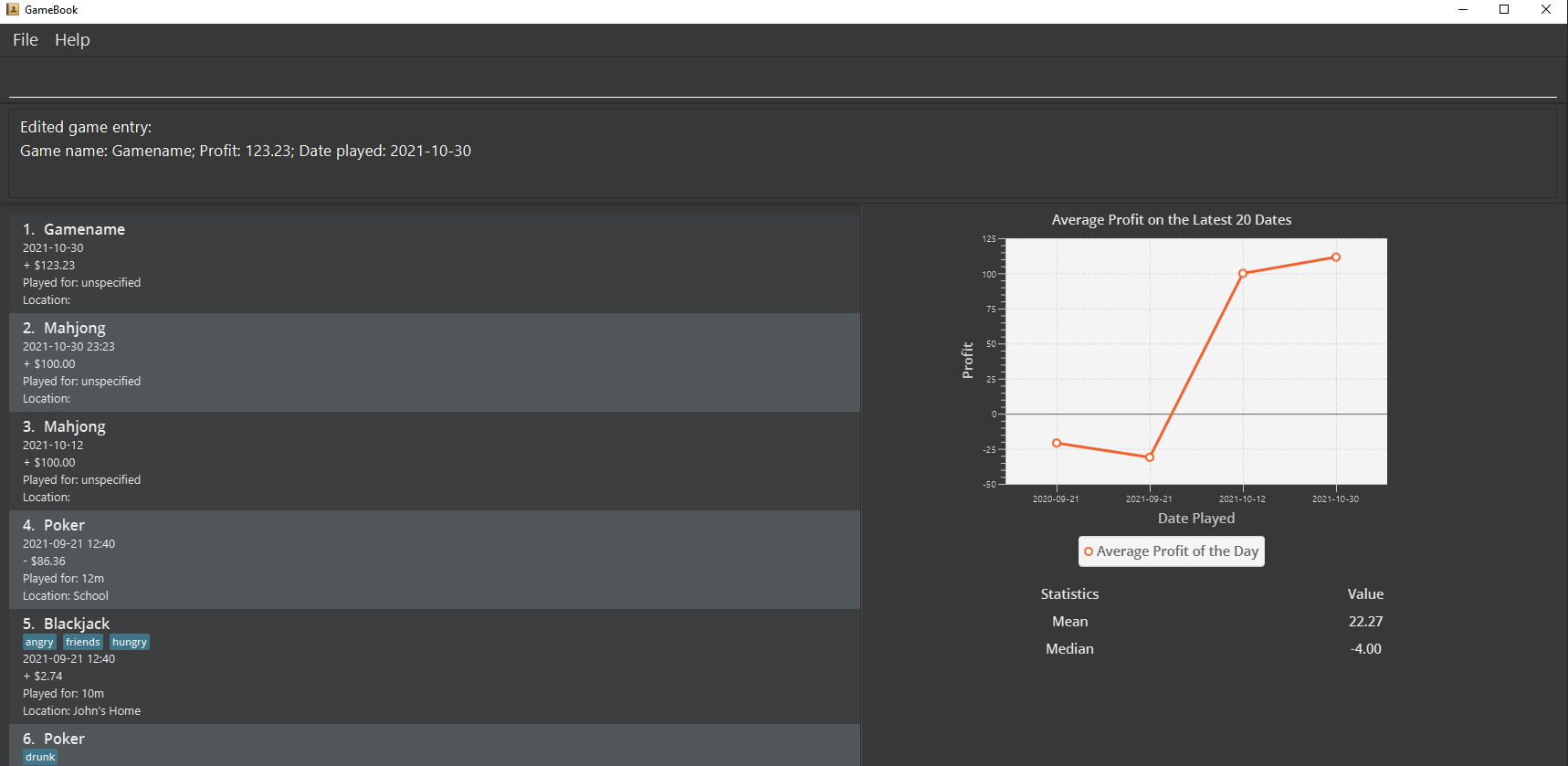

605,782 | 18,740,514,884 | IssuesEvent | 2021-11-04 13:06:55 | AY2122S1-CS2103T-W13-3/tp | https://api.github.com/repos/AY2122S1-CS2103T-W13-3/tp | closed | Sort feature does not work when list is edited | bug priority.Medium | Before (game 1 has date being `2021-10-30`):

After (edited previous game 1 to have date `2020-10-30`):

:

After (edited previous game 1 to have date `2020-10-30`):

)

> [1] "TCGA.BH.A0DZ.01" "TCGA.AN.A046.01" "TCGA.BH.A0DG.01" "TCGA.BH.A1F8.01" "TCGA.A7.A13D.01" "TCGA.A8.A0A6.01"

> head(rownames(mtx.cn))

> [1] "TCGA.05.4244.01" "TCGA.05.4249.01" "TCGA.05.4250.01" "TCGA.05.4382.01" "TCGA.05.4384.01" "TCGA.05.4389.01"

> length(intersect(rownames(mtx.cn), rowna... | 1.0 | TCGAbrca copy number and mut patient ids do not intersect - > head(rownames(mtx.mu))

> [1] "TCGA.BH.A0DZ.01" "TCGA.AN.A046.01" "TCGA.BH.A0DG.01" "TCGA.BH.A1F8.01" "TCGA.A7.A13D.01" "TCGA.A8.A0A6.01"

> head(rownames(mtx.cn))

> [1] "TCGA.05.4244.01" "TCGA.05.4249.01" "TCGA.05.4250.01" "TCGA.05.4382.01" "TCGA.05.4384.01" ... | non_process | tcgabrca copy number and mut patient ids do not intersect head rownames mtx mu tcga bh tcga an tcga bh tcga bh tcga tcga head rownames mtx cn tcga tcga tcga tcga tcga tcga length intersect rownames mtx cn rowna... | 0 |

3,955 | 6,892,352,935 | IssuesEvent | 2017-11-22 20:40:28 | PWRFLcreative/Lightwork-Mapper | https://api.github.com/repos/PWRFLcreative/Lightwork-Mapper | opened | Try using image sequence for capture | Processing | Store sequence of images, instead of raw stream (sync problems) or recorded video. (performance problems) | 1.0 | Try using image sequence for capture - Store sequence of images, instead of raw stream (sync problems) or recorded video. (performance problems) | process | try using image sequence for capture store sequence of images instead of raw stream sync problems or recorded video performance problems | 1 |

15,635 | 19,805,527,312 | IssuesEvent | 2022-01-19 06:04:21 | redwoodjs/redwood | https://api.github.com/repos/redwoodjs/redwood | closed | `rw-test-app` improvements | triage/processing | cc @jtoar / @dthyresson / @thedavidprice

I noticed while working on #3515 that the generated `jest` tests and `storybook` stories are broken.

For example, the generated `BlogPost.stories.tsx`, and `BlogPost.test.tsx` do not have the proper mock passed into them (see screenshot).

.

!... | process | rw test app improvements cc jtoar dthyresson thedavidprice i noticed while working on that the generated jest tests and storybook stories are broken for example the generated blogpost stories tsx and blogpost test tsx do not have the proper mock passed into them see screenshot ... | 1 |

3,122 | 6,153,851,397 | IssuesEvent | 2017-06-28 11:04:20 | openvstorage/volumedriver | https://api.github.com/repos/openvstorage/volumedriver | closed | Observation: halted volumes after volume move to node with full root partition | process_wontfix | The move from volumes failed due to a full root partition on the destination node. All of the volumes got in halted state (on the destination node). The MDS failed.

```

root@stor-04:~# df -h

Filesystem Size Used Avail Use% Mounted on

udev 126G 0 12... | 1.0 | Observation: halted volumes after volume move to node with full root partition - The move from volumes failed due to a full root partition on the destination node. All of the volumes got in halted state (on the destination node). The MDS failed.

```

root@stor-04:~# df -h

Filesystem Size ... | process | observation halted volumes after volume move to node with full root partition the move from volumes failed due to a full root partition on the destination node all of the volumes got in halted state on the destination node the mds failed root stor df h filesystem size ... | 1 |

21,963 | 6,227,689,392 | IssuesEvent | 2017-07-10 21:19:07 | XceedBoucherS/TestImport5 | https://api.github.com/repos/XceedBoucherS/TestImport5 | closed | TimePicker is not fully culture aware | CodePlex | <b>tobias[CodePlex]</b> <br />Binding to a DateTime handles the DateTime in a incorrect way.

nbsp

I have attached a sample project where the problem is visible (I don't know if this problem can be replicated on a English Windows system):

Sample DateTime: 3. June 2011 (the problem occurs only, if the day is lower inc... | 1.0 | TimePicker is not fully culture aware - <b>tobias[CodePlex]</b> <br />Binding to a DateTime handles the DateTime in a incorrect way.

nbsp

I have attached a sample project where the problem is visible (I don't know if this problem can be replicated on a English Windows system):

Sample DateTime: 3. June 2011 (the prob... | non_process | timepicker is not fully culture aware tobias binding to a datetime handles the datetime in a incorrect way nbsp i have attached a sample project where the problem is visible i don t know if this problem can be replicated on a english windows system sample datetime june the problem occurs only if th... | 0 |

40,038 | 5,267,113,556 | IssuesEvent | 2017-02-04 19:20:59 | paperjs/paper.js | https://api.github.com/repos/paperjs/paper.js | closed | Optimize boolean operations when there are no crossings. | cat: boolean-operations status: needs-tests type: improvemnet | When there are no crossings, the result can already be known ahead of `tracePaths()`, probably leading to a massive speed-up:

- intersect: return `null`

- unite: return a compound path with both operands

- subtract: return the first operand

- exclude: same as unite.

- divide: no change needed, since it redirects to the... | 1.0 | Optimize boolean operations when there are no crossings. - When there are no crossings, the result can already be known ahead of `tracePaths()`, probably leading to a massive speed-up:

- intersect: return `null`

- unite: return a compound path with both operands

- subtract: return the first operand

- exclude: same as u... | non_process | optimize boolean operations when there are no crossings when there are no crossings the result can already be known ahead of tracepaths probably leading to a massive speed up intersect return null unite return a compound path with both operands subtract return the first operand exclude same as u... | 0 |

22,718 | 32,040,067,371 | IssuesEvent | 2023-09-22 18:31:22 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | procpath 1.8.1 has 2 GuardDog issues | guarddog exec-base64 silent-process-execution | https://pypi.org/project/procpath

https://inspector.pypi.io/project/procpath

```{

"dependency": "procpath",

"version": "1.8.1",

"result": {

"issues": 2,

"errors": {},

"results": {

"exec-base64": [

{

"location": "Procpath-1.8.1/procpath/utility.py:27",

"code": " env... | 1.0 | procpath 1.8.1 has 2 GuardDog issues - https://pypi.org/project/procpath

https://inspector.pypi.io/project/procpath

```{

"dependency": "procpath",

"version": "1.8.1",

"result": {

"issues": 2,

"errors": {},

"results": {

"exec-base64": [

{

"location": "Procpath-1.8.1/procpath/uti... | process | procpath has guarddog issues dependency procpath version result issues errors results exec location procpath procpath utility py code env subprocess check output n join sc... | 1 |

4,055 | 6,988,621,710 | IssuesEvent | 2017-12-14 13:38:11 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | process._tickDomainCallback is not a function using 9.3.0 | process | * **Version**: 9.3.0

* **Platform**: Windows 10 64 bit

* **Subsystem**: process

<!-- Enter your issue details below this comment. -->

Using 9.3.0 only I'm seeing `process._tickDomainCallback is not a function`, (we're using [deasync](https://github.com/abbr/deasync/blob/60fbfa97f26a79ccf918d1d3188ac3a7e0d685ff/... | 1.0 | process._tickDomainCallback is not a function using 9.3.0 - * **Version**: 9.3.0

* **Platform**: Windows 10 64 bit

* **Subsystem**: process