Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 1 744 | labels stringlengths 4 574 | body stringlengths 9 211k | index stringclasses 10 values | text_combine stringlengths 96 211k | label stringclasses 2 values | text stringlengths 96 188k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

163,100 | 12,703,900,301 | IssuesEvent | 2020-06-22 23:38:57 | M0nica/ambition-fund-website | https://api.github.com/repos/M0nica/ambition-fund-website | closed | [Increase Test Coverage] Sign Up Form | test-coverage | - jest/react-testing-library unit tests should be added to the signup component to confirm that the form fields appear and the form is functional. The request/request URL for the form service should be mocked. | 1.0 | [Increase Test Coverage] Sign Up Form - - jest/react-testing-library unit tests should be added to the signup component to confirm that the form fields appear and the form is functional. The request/request URL for the form service should be mocked. | non_process | sign up form jest react testing library unit tests should be added to the signup component to confirm that the form fields appear and the form is functional the request request url for the form service should be mocked | 0 |

12,283 | 5,183,379,350 | IssuesEvent | 2017-01-20 00:36:20 | dotnet/coreclr | https://api.github.com/repos/dotnet/coreclr | closed | Win Arm64 and Arm32 crossgen fails due to JIT asserts | area-CodeGen blocking-pipeline-build | "\r\nhttps://devdiv.visualstudio.com/DefaultCollection/DevDiv/_build?_a=summary&buildId=525330&tab=details (arm)\r\n \r\n```\r\nAssert failure(PID 6352 [0x000018d0], Thread: 7604 [0x1db4]): Assertion failed '(block->bbFlags & BBF_FINALLY_TARGET) != 0' in 'System.Reflection.LoaderAllocatorScout:Finalize():this' (IL size 63)\r\n \r\n File: e:\\a\\_work\\35\\s\\src\\jit\\flowgraph.cpp Line: 11661\r\n Image: E:\\A\\_work\\35\\s\\bin\\Product\\Windows_NT.arm.Checked\\x86\\crossgen.exe\r\n```\r\n \r\nhttps://devdiv.visualstudio.com/DefaultCollection/DevDiv/_build?_a=summary&buildId=525329&tab=details (arm64)\r\n \r\n```\r\nAssert failure(PID 780 [0x0000030c], Thread: 5808 [0x16b0]): Assertion failed '(lastConsumedNode == nullptr) || (node->gtUseNum == -1) || (node->gtUseNum > lastConsumedNode->gtUseNum)' in 'System.Globalization.HebrewCalendar:GetDatePart(long,int):int:this' (IL size 467)\r\n \r\n File: e:\\a\\_work\\12\\s\\src\\jit\\codegenlinear.cpp Line: 1101\r\n Image: E:\\A\\_work\\12\\s\\bin\\Product\\Windows_NT.arm64.Checked\\x64\\crossgen.exe\r\n```\r\n\r\nAlso reported in today's (1/18) build - https://devdiv.visualstudio.com/DevDiv/_build?buildId=526476." | 1.0 | Win Arm64 and Arm32 crossgen fails due to JIT asserts -

https://devdiv.visualstudio.com/DefaultCollection/DevDiv/_build?_a=summary&buildId=525330&tab=details (arm)

```

Assert failure(PID 6352 [0x000018d0], Thread: 7604 [0x1db4]): Assertion failed '(block->bbFlags & BBF_FINALLY_TARGET) != 0' in 'System.Reflection... | non_process | win and crossgen fails due to jit asserts arm assert failure pid thread assertion failed block bbflags bbf finally target in system reflection loaderallocatorscout finalize this il size file e a work s src jit flowgraph cpp line image e a work... | 0 |

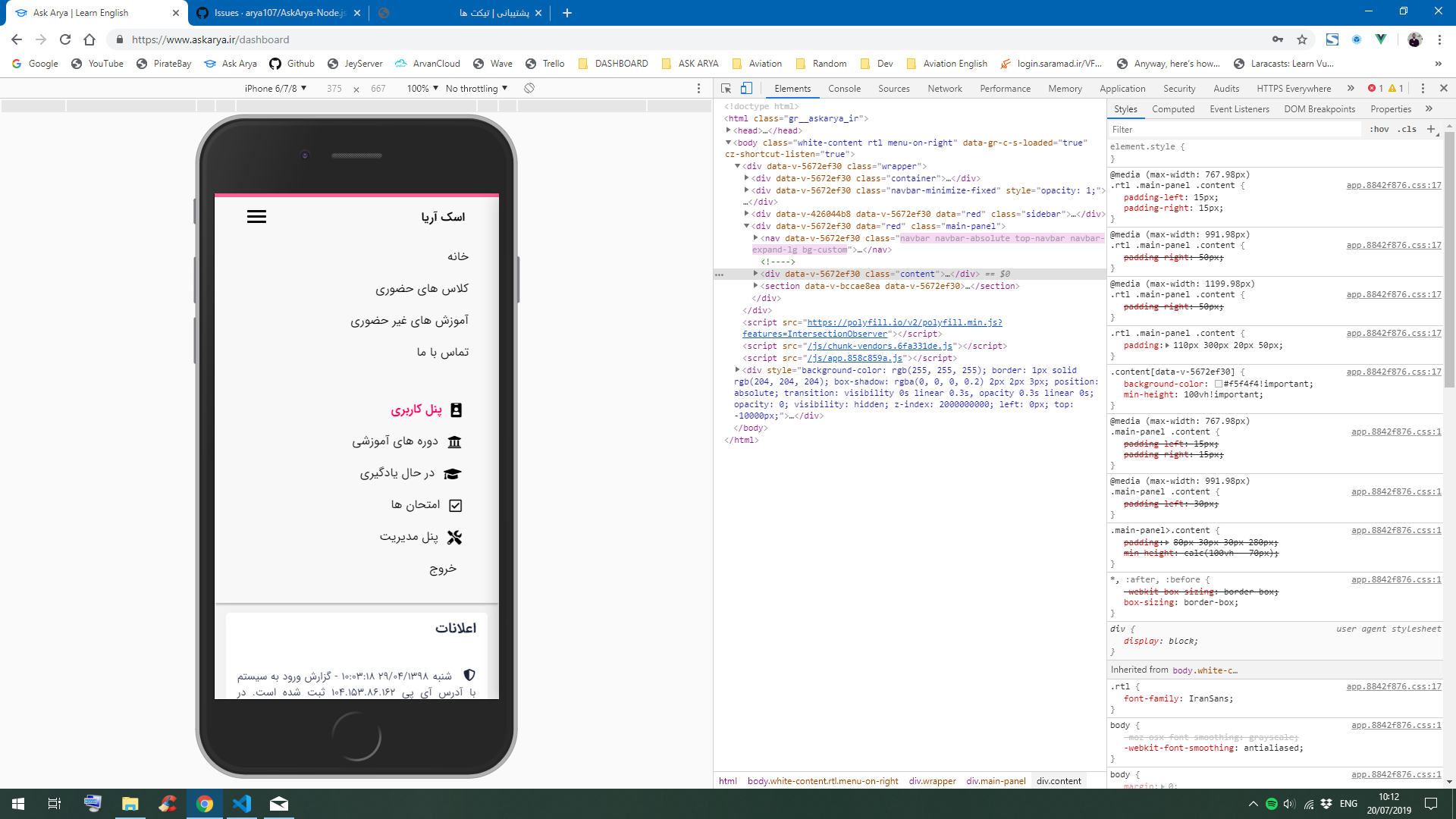

332,548 | 10,097,781,883 | IssuesEvent | 2019-07-28 09:25:52 | arya107/AskArya-Node.js-Vue.js | https://api.github.com/repos/arya107/AskArya-Node.js-Vue.js | closed | Auto Close Functionality Doesn't Work Work on Dashboard Navbar | Priority Fix Required | When a link is clicked, the page changes but the Navbar doesn't close like the Frontend Navbar.

| 1.0 | Auto Close Functionality Doesn't Work Work on Dashboard Navbar - When a link is clicked, the page changes but the Navbar doesn't close like the Frontend Navbar.

| non_process | auto close functionality doesn t work work on dashboard navbar when a link is clicked the page changes but the navbar doesn t close like the frontend navbar | 0 |

204 | 2,612,696,005 | IssuesEvent | 2015-02-27 16:09:36 | Graylog2/graylog2-server | https://api.github.com/repos/Graylog2/graylog2-server | closed | Correlation of messages | processing | Right now graylog2 has the streams where you could define rules to match messages, the thing is that you need to know the specifics of what you are looking for.

There's on feature that I think would make graylog2 really powerful, the ability to correlate messages, example:

Alert01: If field "user" has the same va... | 1.0 | Correlation of messages - Right now graylog2 has the streams where you could define rules to match messages, the thing is that you need to know the specifics of what you are looking for.

There's on feature that I think would make graylog2 really powerful, the ability to correlate messages, example:

Alert01: If fi... | process | correlation of messages right now has the streams where you could define rules to match messages the thing is that you need to know the specifics of what you are looking for there s on feature that i think would make really powerful the ability to correlate messages example if field user has the s... | 1 |

7,853 | 11,027,681,369 | IssuesEvent | 2019-12-06 09:59:02 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | race condition affecting the TilesXYZ MBTiles export plugin | Bug Processing | [i already reported the bug to original author (https://github.com/lutraconsulting/qgis-xyz-tiles/issues/30), but because the plugin is in the meanwhile a default component of QGIS and the issue could perhaps familiar to the developers of the main application, i try to report it here as well.]

your TilesXYZ MBTile... | 1.0 | race condition affecting the TilesXYZ MBTiles export plugin - [i already reported the bug to original author (https://github.com/lutraconsulting/qgis-xyz-tiles/issues/30), but because the plugin is in the meanwhile a default component of QGIS and the issue could perhaps familiar to the developers of the main applicatio... | process | race condition affecting the tilesxyz mbtiles export plugin your tilesxyz mbtiles export plugin qgis python plugins processing algs qgis tilesxyz py included in qgis for linux seems to be plagued by race conditions when it becomes executed in multiple threads the issue doesn t appear in case of the... | 1 |

24,952 | 6,608,877,143 | IssuesEvent | 2017-09-19 12:46:07 | joomla/joomla-cms | https://api.github.com/repos/joomla/joomla-cms | closed | Duplicate article when change category on frontend with custom field | No Code Attached Yet | ### Steps to reproduce the issue

1. Edit any article on frontend

2. Make sure that article has custom field

3. Change category

4. Hit save

5. Go to articles management on backend and see the result

### Expected result

- Article Category is changed

### Actual result

- Article is duplicated with new categor... | 1.0 | Duplicate article when change category on frontend with custom field - ### Steps to reproduce the issue

1. Edit any article on frontend

2. Make sure that article has custom field

3. Change category

4. Hit save

5. Go to articles management on backend and see the result

### Expected result

- Article Category is... | non_process | duplicate article when change category on frontend with custom field steps to reproduce the issue edit any article on frontend make sure that article has custom field change category hit save go to articles management on backend and see the result expected result article category is... | 0 |

57,883 | 8,212,567,639 | IssuesEvent | 2018-09-04 16:43:30 | mrdoob/three.js | https://api.github.com/repos/mrdoob/three.js | closed | Documentation: Broken link in InstancedBufferGeometry doc page | Bug Documentation | ##### Description of the problem

The bottom of the doc page of [InstancedBufferGeometry](https://threejs.org/docs/index.html#api/en/core/InstancedBufferGeometry) has a link to the source [src/en/core/InstancedBufferGeometry.js](https://github.com/mrdoob/three.js/blob/master/src/en/core/InstancedBufferGeometry.js) wh... | 1.0 | Documentation: Broken link in InstancedBufferGeometry doc page - ##### Description of the problem

The bottom of the doc page of [InstancedBufferGeometry](https://threejs.org/docs/index.html#api/en/core/InstancedBufferGeometry) has a link to the source [src/en/core/InstancedBufferGeometry.js](https://github.com/mrdoo... | non_process | documentation broken link in instancedbuffergeometry doc page description of the problem the bottom of the doc page of has a link to the source which is broken three js version browser opera chrome and maybe all others os all of them ... | 0 |

49,579 | 13,454,372,727 | IssuesEvent | 2020-09-09 03:31:37 | ErezDasa/RB2 | https://api.github.com/repos/ErezDasa/RB2 | opened | CVE-2020-14060 (High) detected in jackson-databind-2.9.9.jar | security vulnerability | ## CVE-2020-14060 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.9.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2020-14060 (High) detected in jackson-databind-2.9.9.jar - ## CVE-2020-14060 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.9.jar</b></p></summary>

<p>General... | non_process | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file tmp ws scm infra github pom ... | 0 |

35,605 | 7,787,795,432 | IssuesEvent | 2018-06-07 00:29:48 | google/sanitizers | https://api.github.com/repos/google/sanitizers | closed | Wrong line number in MSan report | Priority-Medium ProjectMemorySanitizer Status-Accepted Type-Defect | Originally reported on Google Code with ID 49

```

https://code.google.com/p/chromium/issues/detail?id=338382

This chromium bug mentions a report in S32A_Opaque_BlitRow32_SSE2 with wrong line number

in the top frame.

Please make a reduced test case out of it.

```

Reported by `eugenis@google.com` on 2014-02-20 10:42:... | 1.0 | Wrong line number in MSan report - Originally reported on Google Code with ID 49

```

https://code.google.com/p/chromium/issues/detail?id=338382

This chromium bug mentions a report in S32A_Opaque_BlitRow32_SSE2 with wrong line number

in the top frame.

Please make a reduced test case out of it.

```

Reported by `eugen... | non_process | wrong line number in msan report originally reported on google code with id this chromium bug mentions a report in opaque with wrong line number in the top frame please make a reduced test case out of it reported by eugenis google com on | 0 |

5,336 | 8,154,988,163 | IssuesEvent | 2018-08-23 06:29:47 | zeebe-io/zeebe | https://api.github.com/repos/zeebe-io/zeebe | opened | Concurrent reprocessing of WorkflowInstanceStreamProcessor and DeploymentProcessor is broken | broker bug stream processor | ## Scenario

* deploy workflow

* start workflow instance

* stop broker

* purge snapshots

* start broker

* reprocess is happening in parallel

* reprocess workflow instance events

* reprocess deployment events

## Problem

Since deployment are not yet deployed again (not added to the Workflow... | 1.0 | Concurrent reprocessing of WorkflowInstanceStreamProcessor and DeploymentProcessor is broken - ## Scenario

* deploy workflow

* start workflow instance

* stop broker

* purge snapshots

* start broker

* reprocess is happening in parallel

* reprocess workflow instance events

* reprocess deploym... | process | concurrent reprocessing of workflowinstancestreamprocessor and deploymentprocessor is broken scenario deploy workflow start workflow instance stop broker purge snapshots start broker reprocess is happening in parallel reprocess workflow instance events reprocess deploym... | 1 |

18,860 | 24,781,222,765 | IssuesEvent | 2022-10-24 05:17:41 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | Error in migration engine: We should only be setting a changed default if there was one on the previous schema and in the next with the same enum. | kind/bug process/candidate tech/engines/datamodel topic: error reporting team/schema topic: postgresql | <!-- If required, please update the title to be clear and descriptive -->

Command: `prisma migrate dev`

Version: `4.5.0`

Binary Version: `0362da9eebca54d94c8ef5edd3b2e90af99ba452`

Report: https://prisma-errors.netlify.app/report/14387

OS: `arm64 darwin 21.6.0`

Rust Stacktrace:

```

Starting migration engine... | 1.0 | Error in migration engine: We should only be setting a changed default if there was one on the previous schema and in the next with the same enum. - <!-- If required, please update the title to be clear and descriptive -->

Command: `prisma migrate dev`

Version: `4.5.0`

Binary Version: `0362da9eebca54d94c8ef5edd3b... | process | error in migration engine we should only be setting a changed default if there was one on the previous schema and in the next with the same enum command prisma migrate dev version binary version report os darwin rust stacktrace starting migration engine rpc server... | 1 |

610,653 | 18,912,957,200 | IssuesEvent | 2021-11-16 15:46:23 | mozilla/addons-server | https://api.github.com/repos/mozilla/addons-server | closed | Add an admin for ReviewActionReasonLog | component: reviewer tools priority: p3 | A feature request is to be able to change the reason assigned to a review action if the original reason selected was incorrect. Possibly the simplest way to address this is to add an admin for `ReviewActionReasonLog` which will allow a user to edit the `reason`. I did a quick test of this, and it seems to be doable, al... | 1.0 | Add an admin for ReviewActionReasonLog - A feature request is to be able to change the reason assigned to a review action if the original reason selected was incorrect. Possibly the simplest way to address this is to add an admin for `ReviewActionReasonLog` which will allow a user to edit the `reason`. I did a quick te... | non_process | add an admin for reviewactionreasonlog a feature request is to be able to change the reason assigned to a review action if the original reason selected was incorrect possibly the simplest way to address this is to add an admin for reviewactionreasonlog which will allow a user to edit the reason i did a quick te... | 0 |

12,184 | 14,742,116,400 | IssuesEvent | 2021-01-07 11:43:48 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Add Keith Mayer | anc-process anp-1 ant-support | In GitLab by @tim.traylor on Mar 14, 2019, 09:06

Hi, Can we please add Keith Mayer (keith.mayer@answernet.com) to this Gitlab? Thx | 1.0 | Add Keith Mayer - In GitLab by @tim.traylor on Mar 14, 2019, 09:06

Hi, Can we please add Keith Mayer (keith.mayer@answernet.com) to this Gitlab? Thx | process | add keith mayer in gitlab by tim traylor on mar hi can we please add keith mayer keith mayer answernet com to this gitlab thx | 1 |

230,607 | 25,482,735,099 | IssuesEvent | 2022-11-26 01:21:38 | Nivaskumark/kernel_v4.1.15 | https://api.github.com/repos/Nivaskumark/kernel_v4.1.15 | reopened | CVE-2017-17855 (High) detected in linuxlinux-4.6 | security vulnerability | ## CVE-2017-17855 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.6</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kernel... | True | CVE-2017-17855 (High) detected in linuxlinux-4.6 - ## CVE-2017-17855 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.6</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Lib... | non_process | cve high detected in linuxlinux cve high severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch master vulnerable source files kernel bpf verifier ... | 0 |

80,799 | 3,574,631,841 | IssuesEvent | 2016-01-27 12:48:26 | leeensminger/OED_Wetlands | https://api.github.com/repos/leeensminger/OED_Wetlands | closed | Mitigation Summary grid does not display edits. | bug - high priority | After editing and saving the Mitigation Summary panel, edits are not displayed within the grid, and values return to zero. This feature had previously been working as designed.

Reproduce:

1. In Projects tab, enable editing. Double click in the Mitigation Summary panel to open the form.

2. Enter a value for MITI... | 1.0 | Mitigation Summary grid does not display edits. - After editing and saving the Mitigation Summary panel, edits are not displayed within the grid, and values return to zero. This feature had previously been working as designed.

Reproduce:

1. In Projects tab, enable editing. Double click in the Mitigation Summary ... | non_process | mitigation summary grid does not display edits after editing and saving the mitigation summary panel edits are not displayed within the grid and values return to zero this feature had previously been working as designed reproduce in projects tab enable editing double click in the mitigation summary ... | 0 |

21,720 | 30,221,394,258 | IssuesEvent | 2023-07-05 19:42:50 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | Obsolete positive regulation by symbiont of host inflammatory response | multi-species process | Single protein annotated, see https://github.com/geneontology/go-ontology/issues/25685 | 1.0 | Obsolete positive regulation by symbiont of host inflammatory response - Single protein annotated, see https://github.com/geneontology/go-ontology/issues/25685 | process | obsolete positive regulation by symbiont of host inflammatory response single protein annotated see | 1 |

465,721 | 13,390,937,494 | IssuesEvent | 2020-09-02 21:28:45 | edgi-govdata-archiving/Environmental-Enforcement-Watch | https://api.github.com/repos/edgi-govdata-archiving/Environmental-Enforcement-Watch | closed | Develop Sunrise notebook | [priority-★★☆] | See: https://github.com/edgi-govdata-archiving/EEW_Planning/issues/169

To do:

- [x] Create new repo: https://github.com/edgi-govdata-archiving/ECHO-Sunrise

- [x] Start this from existing Cross Program notebook

- [x] Constrain to MA

- [ ] Test relevant metrics (density, violations/facility, non-compliance rate, p... | 1.0 | Develop Sunrise notebook - See: https://github.com/edgi-govdata-archiving/EEW_Planning/issues/169

To do:

- [x] Create new repo: https://github.com/edgi-govdata-archiving/ECHO-Sunrise

- [x] Start this from existing Cross Program notebook

- [x] Constrain to MA

- [ ] Test relevant metrics (density, violations/facil... | non_process | develop sunrise notebook see to do create new repo start this from existing cross program notebook constrain to ma test relevant metrics density violations facility non compliance rate penalties facility etc test additional geographies ma house municipalities | 0 |

16,544 | 21,568,598,905 | IssuesEvent | 2022-05-02 04:17:55 | lynnandtonic/nestflix.fun | https://api.github.com/repos/lynnandtonic/nestflix.fun | closed | Add Gina Tracker, FBI | suggested title in process | Please add as much of the following info as you can:

Title: Gina Tracker, FBI

Type (film/tv show): Crime Show

Film or show in which it appears: Central Park, Season 2 Episode 1 ([Apple TV+ link](https://tv.apple.com/us/episode/central-dark/umc.cmc.3n9rqp0k7hw231v87h614ndii))

Is the parent film/show streamin... | 1.0 | Add Gina Tracker, FBI - Please add as much of the following info as you can:

Title: Gina Tracker, FBI

Type (film/tv show): Crime Show

Film or show in which it appears: Central Park, Season 2 Episode 1 ([Apple TV+ link](https://tv.apple.com/us/episode/central-dark/umc.cmc.3n9rqp0k7hw231v87h614ndii))

Is the p... | process | add gina tracker fbi please add as much of the following info as you can title gina tracker fbi type film tv show crime show film or show in which it appears central park season episode is the parent film show streaming anywhere apple tv about when in the parent film show does it ap... | 1 |

102,380 | 11,296,779,785 | IssuesEvent | 2020-01-17 03:12:20 | vuetifyjs/vuetify | https://api.github.com/repos/vuetifyjs/vuetify | closed | [Bug Report] V-Autocomplete selects wrong item | T: documentation | ### Environment

**Vuetify Version:** 2.1.6

**Vue Version:** 2.5.22

**Browsers:** Firefox 70.0

**OS:** Windows 10

### Steps to reproduce

1. Type 1009 into the autocomplete to search

2. Select the entry with 1009 in it

3. The entry with 6 is selected

### Expected Behavior

1. Search for entry 1009

2. Select... | 1.0 | [Bug Report] V-Autocomplete selects wrong item - ### Environment

**Vuetify Version:** 2.1.6

**Vue Version:** 2.5.22

**Browsers:** Firefox 70.0

**OS:** Windows 10

### Steps to reproduce

1. Type 1009 into the autocomplete to search

2. Select the entry with 1009 in it

3. The entry with 6 is selected

### Expec... | non_process | v autocomplete selects wrong item environment vuetify version vue version browsers firefox os windows steps to reproduce type into the autocomplete to search select the entry with in it the entry with is selected expected behavior sea... | 0 |

10,381 | 7,174,588,366 | IssuesEvent | 2018-01-31 00:24:16 | Beep6581/RawTherapee | https://api.github.com/repos/Beep6581/RawTherapee | closed | Improve time to start rt | Performance enhancement | This issue is about to improve the time to start rt.

First patch will follow soon. | True | Improve time to start rt - This issue is about to improve the time to start rt.

First patch will follow soon. | non_process | improve time to start rt this issue is about to improve the time to start rt first patch will follow soon | 0 |

123,527 | 17,772,253,525 | IssuesEvent | 2021-08-30 14:54:03 | kapseliboi/ac-web | https://api.github.com/repos/kapseliboi/ac-web | opened | CVE-2019-20920 (High) detected in handlebars-4.4.5.tgz | security vulnerability | ## CVE-2019-20920 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.4.5.tgz</b></p></summary>

<p>Handlebars provides the power necessary to let you build semantic templates ... | True | CVE-2019-20920 (High) detected in handlebars-4.4.5.tgz - ## CVE-2019-20920 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.4.5.tgz</b></p></summary>

<p>Handlebars provides... | non_process | cve high detected in handlebars tgz cve high severity vulnerability vulnerable library handlebars tgz handlebars provides the power necessary to let you build semantic templates effectively with no frustration library home page a href path to dependency file ac web pack... | 0 |

4,237 | 7,187,096,583 | IssuesEvent | 2018-02-02 02:54:54 | Great-Hill-Corporation/quickBlocks | https://api.github.com/repos/Great-Hill-Corporation/quickBlocks | closed | Modeling for database samples and tokenomics | monitors-all status-inprocess type-enhancement | This is a first pass, but I think I can do pretty much everything I want to do with this. Is this what you were looking for? Does it make sense?

- A monitor is a list of Ethereum addresses

- Each address can participate as either the ‘to’ or the ‘from’ address in a transaction.

- A transaction either sends ether f... | 1.0 | Modeling for database samples and tokenomics - This is a first pass, but I think I can do pretty much everything I want to do with this. Is this what you were looking for? Does it make sense?

- A monitor is a list of Ethereum addresses

- Each address can participate as either the ‘to’ or the ‘from’ address in a tra... | process | modeling for database samples and tokenomics this is a first pass but i think i can do pretty much everything i want to do with this is this what you were looking for does it make sense a monitor is a list of ethereum addresses each address can participate as either the ‘to’ or the ‘from’ address in a tra... | 1 |

7,295 | 10,441,693,583 | IssuesEvent | 2019-09-18 11:26:23 | Altinn/altinn-studio | https://api.github.com/repos/Altinn/altinn-studio | closed | Create BPMN Engine | analysis process user-story | ## Description

App Backend need to process the Process Defined in BPMN for the app.

This processing should be made as a separate "component" and not be a a integrated part of the app backend.

This to make it reusable

## Considerations

- The process engine should not have any dependency on other Altinn Studio... | 1.0 | Create BPMN Engine - ## Description

App Backend need to process the Process Defined in BPMN for the app.

This processing should be made as a separate "component" and not be a a integrated part of the app backend.

This to make it reusable

## Considerations

- The process engine should not have any dependency o... | process | create bpmn engine description app backend need to process the process defined in bpmn for the app this processing should be made as a separate component and not be a a integrated part of the app backend this to make it reusable considerations the process engine should not have any dependency o... | 1 |

360,159 | 10,684,895,596 | IssuesEvent | 2019-10-22 11:32:10 | official-antistasi-community/A3-Antistasi | https://api.github.com/repos/official-antistasi-community/A3-Antistasi | closed | Fix "Flag capture" exploit | Priority bug | When a person is taking the flag on (for example) a captured outposts he can do it several times whilst effectively in the animation and therefore exploit the system and get sweet money from it.

If I'm correct the function being called is mrkWin.

My idea, change it so the function can only be called once every 2 ... | 1.0 | Fix "Flag capture" exploit - When a person is taking the flag on (for example) a captured outposts he can do it several times whilst effectively in the animation and therefore exploit the system and get sweet money from it.

If I'm correct the function being called is mrkWin.

My idea, change it so the function can... | non_process | fix flag capture exploit when a person is taking the flag on for example a captured outposts he can do it several times whilst effectively in the animation and therefore exploit the system and get sweet money from it if i m correct the function being called is mrkwin my idea change it so the function can... | 0 |

51,912 | 13,211,336,615 | IssuesEvent | 2020-08-15 22:24:11 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | opened | [clsim] run the python tests (Trac #1252) | Incomplete Migration Migrated from Trac combo simulation defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1252">https://code.icecube.wisc.edu/projects/icecube/ticket/1252</a>, reported by david.schultzand owned by claudio.kopper</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-02-13T14:11:45",

... | 1.0 | [clsim] run the python tests (Trac #1252) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1252">https://code.icecube.wisc.edu/projects/icecube/ticket/1252</a>, reported by david.schultzand owned by claudio.kopper</em></summary>

<p>

```json

{

"status": "closed"... | non_process | run the python tests trac migrated from json status closed changetime ts description clsim has python tests under resources tests but cmake doesn t know about them perhaps add test scripts resources tests py in the right place ... | 0 |

298,870 | 25,862,596,865 | IssuesEvent | 2022-12-13 18:05:03 | quarkusio/quarkus | https://api.github.com/repos/quarkusio/quarkus | closed | Rename `quarkus.test.native-image-profile` to reflect its use for integration tests | area/testing area/housekeeping | ### Description

Since specific native image testing has been replaced by integration testing, which may or may not be a native image, this property name should reflect its new usage.

### Implementation ideas

A name such as `quarkus.test.integration-profile` or similar would better reflect its current usage. | 1.0 | Rename `quarkus.test.native-image-profile` to reflect its use for integration tests - ### Description

Since specific native image testing has been replaced by integration testing, which may or may not be a native image, this property name should reflect its new usage.

### Implementation ideas

A name such as `quarkus... | non_process | rename quarkus test native image profile to reflect its use for integration tests description since specific native image testing has been replaced by integration testing which may or may not be a native image this property name should reflect its new usage implementation ideas a name such as quarkus... | 0 |

221,537 | 7,389,578,159 | IssuesEvent | 2018-03-16 09:14:49 | Wozza365/GameDevelopment | https://api.github.com/repos/Wozza365/GameDevelopment | opened | Key Must Trigger Door to Open When Key is Pressed Within Area | enhancement high priority | This work should only be a small enhancement of existing door work.

The door must open when player presses button within the trigger area.

Must trigger unlocking sound and remove key.

Should transition the key into the keyhole and then twist it as sound is played.

| 1.0 | Key Must Trigger Door to Open When Key is Pressed Within Area - This work should only be a small enhancement of existing door work.

The door must open when player presses button within the trigger area.

Must trigger unlocking sound and remove key.

Should transition the key into the keyhole and then twist it as sound... | non_process | key must trigger door to open when key is pressed within area this work should only be a small enhancement of existing door work the door must open when player presses button within the trigger area must trigger unlocking sound and remove key should transition the key into the keyhole and then twist it as sound... | 0 |

9,195 | 12,230,722,159 | IssuesEvent | 2020-05-04 05:51:59 | zotero/zotero | https://api.github.com/repos/zotero/zotero | opened | Multi-section bibliographies | Word Processor Integration | As noted in https://github.com/citation-style-language/documentation/issues/71 this is a frequently requested feature, which require a both a technical and GUI solution in CSL/citeproc clients.

Since the GUI is likely to inform the technical implementation, I wanted to discuss it separately in more detail.

(1) If... | 1.0 | Multi-section bibliographies - As noted in https://github.com/citation-style-language/documentation/issues/71 this is a frequently requested feature, which require a both a technical and GUI solution in CSL/citeproc clients.

Since the GUI is likely to inform the technical implementation, I wanted to discuss it separ... | process | multi section bibliographies as noted in this is a frequently requested feature which require a both a technical and gui solution in csl citeproc clients since the gui is likely to inform the technical implementation i wanted to discuss it separately in more detail if we were to do this i think we sho... | 1 |

10,764 | 13,551,960,105 | IssuesEvent | 2020-09-17 11:55:03 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Object Detail on field with very large integer fails | Database/Postgres Priority:P2 Querying/Processor Type:Bug | **Describe the bug**

Object Detail on field with large (#5816) `bigint`/`bigserial` fails on Postgres, but works on MariaDB.

**To Reproduce**

1. Create table and sync:

```

CREATE TABLE sampledata.x5816 (

id bigserial NOT NULL,

biggie bigint NULL,

CONSTRAINT idx_pk PRIMARY KEY (id)

);

INSERT INTO sampleda... | 1.0 | Object Detail on field with very large integer fails - **Describe the bug**

Object Detail on field with large (#5816) `bigint`/`bigserial` fails on Postgres, but works on MariaDB.

**To Reproduce**

1. Create table and sync:

```

CREATE TABLE sampledata.x5816 (

id bigserial NOT NULL,

biggie bigint NULL,

CONST... | process | object detail on field with very large integer fails describe the bug object detail on field with large bigint bigserial fails on postgres but works on mariadb to reproduce create table and sync create table sampledata id bigserial not null biggie bigint null constraint i... | 1 |

20,600 | 27,265,745,060 | IssuesEvent | 2023-02-22 17:53:29 | tikv/tikv | https://api.github.com/repos/tikv/tikv | opened | suspended-time should be passed through to TiDB to avoid confusion | type/bug sig/coprocessor | ## Bug Report

in PR #9257, we introduced the suspended time in the tracker. However this information is included in process time in TiDB side, which misleads the investigation. As slow process time typically means low IO or CPU resources.

It should be exposed as suspended time itself in TiDB.

<!-- Thanks for your ... | 1.0 | suspended-time should be passed through to TiDB to avoid confusion - ## Bug Report

in PR #9257, we introduced the suspended time in the tracker. However this information is included in process time in TiDB side, which misleads the investigation. As slow process time typically means low IO or CPU resources.

It should... | process | suspended time should be passed through to tidb to avoid confusion bug report in pr we introduced the suspended time in the tracker however this information is included in process time in tidb side which misleads the investigation as slow process time typically means low io or cpu resources it should be... | 1 |

355,941 | 25,176,068,512 | IssuesEvent | 2022-11-11 09:22:26 | jwdavis0200/pe | https://api.github.com/repos/jwdavis0200/pe | opened | Images used in the UG for features examples is redundant/ineffective for value adding. | severity.Low type.DocumentationBug | The Features in the UG were explained with many examples and result-of-command lists but fails to show the difference between the before-command state of the list and the after-command state of the list, hence is not useful to the user to learn about how the commands change the state of the HealthContact lists.

May ev... | 1.0 | Images used in the UG for features examples is redundant/ineffective for value adding. - The Features in the UG were explained with many examples and result-of-command lists but fails to show the difference between the before-command state of the list and the after-command state of the list, hence is not useful to the ... | non_process | images used in the ug for features examples is redundant ineffective for value adding the features in the ug were explained with many examples and result of command lists but fails to show the difference between the before command state of the list and the after command state of the list hence is not useful to the ... | 0 |

62,651 | 14,656,554,120 | IssuesEvent | 2020-12-28 13:41:03 | fu1771695yongxie/Rocket.Chat | https://api.github.com/repos/fu1771695yongxie/Rocket.Chat | opened | CVE-2020-7661 (High) detected in url-regex-5.0.0.tgz, url-regex-3.2.0.tgz | security vulnerability | ## CVE-2020-7661 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>url-regex-5.0.0.tgz</b>, <b>url-regex-3.2.0.tgz</b></p></summary>

<p>

<details><summary><b>url-regex-5.0.0.tgz</b></p... | True | CVE-2020-7661 (High) detected in url-regex-5.0.0.tgz, url-regex-3.2.0.tgz - ## CVE-2020-7661 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>url-regex-5.0.0.tgz</b>, <b>url-regex-3.2.... | non_process | cve high detected in url regex tgz url regex tgz cve high severity vulnerability vulnerable libraries url regex tgz url regex tgz url regex tgz regular expression for matching urls library home page a href path to dependency file rocket ch... | 0 |

652,330 | 21,528,497,419 | IssuesEvent | 2022-04-28 21:06:21 | kubernetes/minikube | https://api.github.com/repos/kubernetes/minikube | closed | GUI: Add cluster status to tray menu | priority/important-soon kind/gui | Cluster status should be added to the tray context menu so the user knows the status of the cluster without having to open the GUI. | 1.0 | GUI: Add cluster status to tray menu - Cluster status should be added to the tray context menu so the user knows the status of the cluster without having to open the GUI. | non_process | gui add cluster status to tray menu cluster status should be added to the tray context menu so the user knows the status of the cluster without having to open the gui | 0 |

83,844 | 16,376,858,628 | IssuesEvent | 2021-05-16 09:34:33 | qutip/qutip | https://api.github.com/repos/qutip/qutip | closed | ffmpeg command from User Guide gives an error | code good first issue unitaryhack | When I run `ffmpeg -r 20 -b 1800 -i bloch_%01d.png bloch.mp4`

from the User Guide's [Generating Images for Animation

](http://qutip.org/docs/4.1/guide/guide-bloch.html#generating-images-for-animation) section I get the following error:

```

Option b (video bitrate (please use -b:v)) cannot be applied to input url ... | 1.0 | ffmpeg command from User Guide gives an error - When I run `ffmpeg -r 20 -b 1800 -i bloch_%01d.png bloch.mp4`

from the User Guide's [Generating Images for Animation

](http://qutip.org/docs/4.1/guide/guide-bloch.html#generating-images-for-animation) section I get the following error:

```

Option b (video bitrate (p... | non_process | ffmpeg command from user guide gives an error when i run ffmpeg r b i bloch png bloch from the user guide s generating images for animation section i get the following error option b video bitrate please use b v cannot be applied to input url png you are trying to apply an inp... | 0 |
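
The error text quoted in this report suggests two things: the bitrate flag should be spelled `-b:v`, and it should sit among the output options (after `-i`) rather than before the input. A minimal sketch of a command builder that follows that ordering is given below. The function name `build_ffmpeg_command` and its defaults are hypothetical, the file names are taken from the report, and this is not necessarily the change the project adopted.

```python
def build_ffmpeg_command(pattern="bloch_%01d.png", output="bloch.mp4",
                         framerate=20, bitrate="1800"):
    """Return an ffmpeg argument list with each option next to the file it applies to.

    Hypothetical helper for illustration only; it builds the command and does not run it.
    """
    return [
        "ffmpeg",
        "-r", str(framerate),  # input option: frame rate of the image sequence
        "-i", pattern,         # the input image pattern
        "-b:v", str(bitrate),  # output option: video bitrate, placed after -i
        output,                # the output file comes last
    ]


if __name__ == "__main__":
    print(" ".join(build_ffmpeg_command()))
```

The resulting list can be passed to `subprocess.run` on a machine where `ffmpeg` is installed.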

13,088 | 15,436,475,898 | IssuesEvent | 2021-03-07 13:11:55 | ismail-yilmaz/upp-components | https://api.github.com/repos/ismail-yilmaz/upp-components | closed | PtyProcess: Winpty should be made into a U++ plugin and statically linked. | PtyProcess Terminal enhancement | I have already managed to compile and **statically** link winpty library with stock Upp and the bundled clang compiler with very little wrestling . Not to mention that winpty has MIT license.. This means we can simply provide the library with the PtyProcess package, and preferably make it the default backend, for easy ... | 1.0 | PtyProcess: Winpty should be made into a U++ plugin and statically linked. - I have already managed to compile and **statically** link winpty library with stock Upp and the bundled clang compiler with very little wrestling . Not to mention that winpty has MIT license.. This means we can simply provide the library with ... | process | ptyprocess winpty should be made into a u plugin and statically linked i have already managed to compile and statically link winpty library with stock upp and the bundled clang compiler with very little wrestling not to mention that winpty has mit license this means we can simply provide the library with ... | 1 |

201,364 | 22,948,589,997 | IssuesEvent | 2022-07-19 04:22:06 | snowdensb/wildfly | https://api.github.com/repos/snowdensb/wildfly | opened | CVE-2015-2575 (Medium) detected in mysql-connector-java-5.1.15.jar | security vulnerability | ## CVE-2015-2575 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mysql-connector-java-5.1.15.jar</b></p></summary>

<p>MySQL JDBC Type 4 driver</p>

<p>Library home page: <a href="http... | True | CVE-2015-2575 (Medium) detected in mysql-connector-java-5.1.15.jar - ## CVE-2015-2575 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mysql-connector-java-5.1.15.jar</b></p></summary>... | non_process | cve medium detected in mysql connector java jar cve medium severity vulnerability vulnerable library mysql connector java jar mysql jdbc type driver library home page a href path to vulnerable library testsuite integration smoke src test resources mysql connector jav... | 0 |

15,322 | 19,433,139,004 | IssuesEvent | 2021-12-21 14:15:59 | threefoldtech/tfchain | https://api.github.com/repos/threefoldtech/tfchain | closed | Staking: increase GRANDPA delay | process_wontfix | The current GRANDPA delay is only 2 blocks. This is workable for AURA, but since staking uses BABE, which might incur bigger forks, we will need to increase this delay. Failure to do so might result in unrecoverable forks (since a chain with Finalized block B1, and a different chain with finalized block B2, which are ... | 1.0 | Staking: increase GRANDPA delay - The current GRANDPA delay is only 2 blocks. This is workable for AURA, but since staking uses BABE, which might incur bigger forks, we will need to increase this delay. Failure to do so might result in unrecoverable forks (since a chain with Finalized block B1, and a different chain w... | process | staking increase grandpa delay the current grandpa delay is only blocks this is workable for aura but since staking uses babe which might incur bigger forks we will need to increase this delay failure to do so might result in unrecoverable forks since a chain with finalized block and a different chain wi... | 1 |

258,360 | 27,563,923,197 | IssuesEvent | 2023-03-08 01:16:16 | Abhi347/vid-to-speech-api-json | https://api.github.com/repos/Abhi347/vid-to-speech-api-json | opened | CVE-2017-20165 (High) detected in debug-2.6.8.tgz | Mend: dependency security vulnerability | ## CVE-2017-20165 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>debug-2.6.8.tgz</b></p></summary>

<p>small debugging utility</p>

<p>Library home page: <a href="https://registry.npmjs... | True | CVE-2017-20165 (High) detected in debug-2.6.8.tgz - ## CVE-2017-20165 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>debug-2.6.8.tgz</b></p></summary>

<p>small debugging utility</p>

<... | non_process | cve high detected in debug tgz cve high severity vulnerability vulnerable library debug tgz small debugging utility library home page a href path to dependency file package json path to vulnerable library node modules grpc node modules debug package json depend... | 0 |

323,627 | 27,741,238,394 | IssuesEvent | 2023-03-15 14:25:10 | ntop/ntopng | https://api.github.com/repos/ntop/ntopng | closed | modification of max char length in Client Server column - IPv6 address length cut and replaced with … - for better search possibility | Ready to Test | We suggest the possibility of changing the width of the Client or Server column width to accommodate the length of IPv6 addresses. So they don't get cut and are searchable by browser search function.

| 1.0 | modification of max char length in Client Server column - IPv6 address length cut and replaced with … - for better search possibility - We suggest the possibility of changing the width of the Client or Server column width to accommodate the length of IPv6 addresses. So they don't get cut and are searchable by browser ... | non_process | modification of max char length in client server column address length cut and replaced with … for better search possibility we suggest the possibility of changing the width of the client or server column width to accommodate the length of addresses so they don t get cut and are searchable by browser search... | 0 |

18,966 | 24,931,236,007 | IssuesEvent | 2022-10-31 11:48:45 | deepset-ai/haystack | https://api.github.com/repos/deepset-ai/haystack | closed | `PDFToTextOCRConverter.convert()` write temp files into the package folder | type:bug topic:preprocessing journey:first steps topic:indexing | **Describe the bug**

- `PDFToTextOCRConverter.convert()` write temp files into the package folder: https://github.com/deepset-ai/haystack/blob/5ca96357ff526bf11aefa7fe4aa24fd11135cd0c/haystack/nodes/file_converter/pdf.py#L255

- This breaks the node in some contexts, i.e. conda on Windows, non-editable install.

- See... | 1.0 | `PDFToTextOCRConverter.convert()` write temp files into the package folder - **Describe the bug**

- `PDFToTextOCRConverter.convert()` write temp files into the package folder: https://github.com/deepset-ai/haystack/blob/5ca96357ff526bf11aefa7fe4aa24fd11135cd0c/haystack/nodes/file_converter/pdf.py#L255

- This breaks t... | process | pdftotextocrconverter convert write temp files into the package folder describe the bug pdftotextocrconverter convert write temp files into the package folder this breaks the node in some contexts i e conda on windows non editable install see error message error haystack no... | 1 |
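
The report above describes temporary files being written next to the installed package, which can fail when that folder is not writable. One common alternative is a system temporary directory. The sketch below is generic and is not Haystack's code or its actual fix: `process_pages` and `handle_file` are hypothetical names, and the page data is assumed to be raw bytes.

```python
import tempfile
from pathlib import Path


def process_pages(page_images, handle_file):
    """Write each page to a throwaway directory, process it, then clean up.

    page_images: iterable of bytes objects (assumed input shape).
    handle_file: callable that receives the Path of each temporary file.
    """
    results = []
    # The directory lives under the system temp location, not the package folder,
    # and is removed automatically when the with-block exits.
    with tempfile.TemporaryDirectory() as tmp_dir:
        for index, data in enumerate(page_images):
            path = Path(tmp_dir) / f"page_{index}.png"
            path.write_bytes(data)
            results.append(handle_file(path))
    return results


if __name__ == "__main__":
    sizes = process_pages([b"abc", b"de"], lambda p: p.stat().st_size)
    print(sizes)
```

Anything the callback needs from a file has to be extracted before the block exits, since the paths no longer exist afterwards.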

3,715 | 6,732,609,371 | IssuesEvent | 2017-10-18 12:12:12 | lockedata/rcms | https://api.github.com/repos/lockedata/rcms | opened | Manage attendees | conference team odoo processes | ## Detailed task

- Monitor sales

- Modify a registration e.g. issue a refund

- Send an email to attendees

## Assessing the task

Try to perform the task. Use Google and the system documentation to help - part of what we're trying to assess is how easy it is for people to work out how to do tasks.

Use a 👍 (`:+1:`... | 1.0 | Manage attendees - ## Detailed task

- Monitor sales

- Modify a registration e.g. issue a refund

- Send an email to attendees

## Assessing the task

Try to perform the task. Use Google and the system documentation to help - part of what we're trying to assess is how easy it is for people to work out how to do tasks.

... | process | manage attendees detailed task monitor sales modify a registration e g issue a refund send an email to attendees assessing the task try to perform the task use google and the system documentation to help part of what we re trying to assess is how easy it is for people to work out how to do tasks ... | 1 |

128,283 | 17,470,026,949 | IssuesEvent | 2021-08-07 01:08:37 | RecordReplay/devtools | https://api.github.com/repos/RecordReplay/devtools | closed | July Onboarding Refresh | design-complete | Here's a link to the Figma file: https://www.figma.com/file/SSr1ljyzF0lCXTL7BX1Whh/Replay-Design-Doc?node-id=5210%3A12083

**Here are the steps people will walk through, in order:**

1. Big bold welcome screen

2. Download links

3. Nicer Google signin

4. Create your first Replay screen

5. Glitch demo with circle... | 1.0 | July Onboarding Refresh - Here's a link to the Figma file: https://www.figma.com/file/SSr1ljyzF0lCXTL7BX1Whh/Replay-Design-Doc?node-id=5210%3A12083

**Here are the steps people will walk through, in order:**

1. Big bold welcome screen

2. Download links

3. Nicer Google signin

4. Create your first Replay screen

... | non_process | july onboarding refresh here s a link to the figma file here are the steps people will walk through in order big bold welcome screen download links nicer google signin create your first replay screen glitch demo with circles console logs in dev from a priority standpoint let... | 0 |

428,997 | 30,019,840,309 | IssuesEvent | 2023-06-26 22:01:54 | VEMULA-MOUNITHA/ProjectBoard0501 | https://api.github.com/repos/VEMULA-MOUNITHA/ProjectBoard0501 | opened | Final Document: | documentation | Need to complete all the project related works and need to verify that the website is working properly or not. | 1.0 | Final Document: - Need to complete all the project related works and need to verify that the website is working properly or not. | non_process | final document need to complete all the project related works and need to verify that the website is working properly or not | 0 |

499,999 | 14,484,054,062 | IssuesEvent | 2020-12-10 15:52:47 | ccmbioinfo/ST2020 | https://api.github.com/repos/ccmbioinfo/ST2020 | closed | Epic: /api/groups | backend priority:high | Create a new file `groups.py` for these endpoints

- [x] **GET /api/groups** (list_groups)

Requires authentication. Returns a list of all group codes and display names, e.g. `[{ "group_code": "ACH", "group_name": "Alberta" }]`

- [x] **GET /api/groups/:group_code** (get_group)

Returns `{ "group_code": "", "gr... | 1.0 | Epic: /api/groups - Create a new file `groups.py` for these endpoints

- [x] **GET /api/groups** (list_groups)

Requires authentication. Returns a list of all group codes and display names, e.g. `[{ "group_code": "ACH", "group_name": "Alberta" }]`

- [x] **GET /api/groups/:group_code** (get_group)

Returns `{ "... | non_process | epic api groups create a new file groups py for these endpoints get api groups list groups requires authentication returns a list of all group codes and display names e g get api groups group code get group returns group code group name users whe... | 0 |

19,396 | 25,539,287,900 | IssuesEvent | 2022-11-29 14:16:30 | ESMValGroup/ESMValCore | https://api.github.com/repos/ESMValGroup/ESMValCore | closed | Preprocessor `multimodel_statistics` fails when data have no horizontal dimension | bug preprocessor | **Describe the bug**

Hi all, I'm developing some tests for the multimodel statistics using real data (#856), and I'm coming across this bug:

Running multimodel statistics with a list of cubes with **no horizontal dimension**, will result in

`iris.exceptions.CoordinateNotFoundError: 'Expected to find exactly 1 dep... | 1.0 | Preprocessor `multimodel_statistics` fails when data have no horizontal dimension - **Describe the bug**

Hi all, I'm developing some tests for the multimodel statistics using real data (#856), and I'm coming across this bug:

Running multimodel statistics with a list of cubes with **no horizontal dimension**, will ... | process | preprocessor multimodel statistics fails when data have no horizontal dimension describe the bug hi all i m developing some tests for the multimodel statistics using real data and i m coming across this bug running multimodel statistics with a list of cubes with no horizontal dimension will re... | 1 |

20,479 | 27,138,053,950 | IssuesEvent | 2023-02-16 14:33:51 | benthosdev/benthos | https://api.github.com/repos/benthosdev/benthos | closed | Mapping processors are missing or skipped in tracers | bug processors | When we replace mapping with bloblang we could see its corresponding entry in tracers. So I am guessing the issue is only with the mapping processor. | 1.0 | Mapping processors are missing or skipped in tracers - When we replace mapping with bloblang we could see its corresponding entry in tracers. So I am guessing the issue is only with the mapping processor. | process | mapping processors are missing or skipped in tracers when we replace mapping with bloblang we could see its corresponding entry in tracers so i am guessing the issue is only with the mapping processor | 1 |

446,411 | 31,474,466,300 | IssuesEvent | 2023-08-30 09:43:56 | PocketRelay/Website | https://api.github.com/repos/PocketRelay/Website | opened | Update docker config example | documentation enhancement | ## Description

Current docker example doesn't show how to easily include config variables. This should be updated to include volume binding examples so that its easier to use (Including docker compose) | 1.0 | Update docker config example - ## Description

Current docker example doesn't show how to easily include config variables. This should be updated to include volume binding examples so that its easier to use (Including docker compose) | non_process | update docker config example description current docker example doesn t show how to easily include config variables this should be updated to include volume binding examples so that its easier to use including docker compose | 0 |

560,520 | 16,598,730,723 | IssuesEvent | 2021-06-01 16:21:40 | wp-media/wp-rocket | https://api.github.com/repos/wp-media/wp-rocket | closed | Remove "Image Optimization" menu when WP_ROCKET_WHITE_LABEL_ACCOUNT is used | Module: dashboard community effort: [XS] good first issue priority: low type: enhancement | **Describe the solution you'd like**

The customer wants to be able to disable Imagify ads in WP Rocket. We could add this to the WP_ROCKET_WHITE_LABEL_ACCOUNT constant.

**Additional context**

From productboard:

https://wp-media.productboard.com/insights/shared-inbox/notes/9688386 | 1.0 | Remove "Image Optimization" menu when WP_ROCKET_WHITE_LABEL_ACCOUNT is used - **Describe the solution you'd like**

The customer wants to be able to disable Imagify ads in WP Rocket. We could add this to the WP_ROCKET_WHITE_LABEL_ACCOUNT constant.

**Additional context**

From productboard:

https://wp-media.productb... | non_process | remove image optimization menu when wp rocket white label account is used describe the solution you d like the customer wants to be able to disable imagify ads in wp rocket we could add this to the wp rocket white label account constant additional context from productboard | 0 |

298,144 | 9,196,330,488 | IssuesEvent | 2019-03-07 06:39:38 | wso2/product-is | https://api.github.com/repos/wso2/product-is | closed | [Doc] Update the CorrettoJDK 8 and AdoptOpenJDK 8 compatibility with WSO2 IS 5.7 | 5.7.0 Priority/Highest Resolution/Done Severity/Critical Type/Docs | **Background**

Update the [Tested Operating Systems and JDKs](https://docs.wso2.com/display/compatibility/Tested+Operating+Systems+and+JDKs) to indicate the compatibility of CorrettoJDK 8 and AdoptOpenJDK 8 with WSO2 Identity Server 5.7. | 1.0 | [Doc] Update the CorrettoJDK 8 and AdoptOpenJDK 8 compatibility with WSO2 IS 5.7 - **Background**

Update the [Tested Operating Systems and JDKs](https://docs.wso2.com/display/compatibility/Tested+Operating+Systems+and+JDKs) to indicate the compatibility of CorrettoJDK 8 and AdoptOpenJDK 8 with WSO2 Identity Server 5... | non_process | update the correttojdk and adoptopenjdk compatibility with is background update the to indicate the compatibility of correttojdk and adoptopenjdk with identity server | 0 |

92,396 | 18,848,274,772 | IssuesEvent | 2021-11-11 17:20:19 | sourcegraph/sourcegraph | https://api.github.com/repos/sourcegraph/sourcegraph | closed | executors: Write docs for experimental auto-indexer configuration | team/code-intelligence server-side auto-index-on-prem | We currently have no user-facing docs on how to deploy, configure, or use auto-indexers. | 1.0 | executors: Write docs for experimental auto-indexer configuration - We currently have no user-facing docs on how to deploy, configure, or use auto-indexers. | non_process | executors write docs for experimental auto indexer configuration we currently have no user facing docs on how to deploy configure or use auto indexers | 0 |

74,133 | 24,962,771,900 | IssuesEvent | 2022-11-01 16:49:07 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | closed | Wrong transformation for transformPatternsTrivialPredicates when DISTINCT predicate operand is NULL | T: Defect C: Functionality P: Medium E: Professional Edition E: Enterprise Edition | The `QOM.IsDistinctFrom` and `QOM.IsNotDistinctFrom` predicates extend `CompareCondition`, which is the only check done for `Settings.transformPatternsTrivialPredicates` to decide whether a condition (e.g. `a = null`) is reduced to a `nullCondition()`.

This means the following wrong transformation is made:

```sql... | 1.0 | Wrong transformation for transformPatternsTrivialPredicates when DISTINCT predicate operand is NULL - The `QOM.IsDistinctFrom` and `QOM.IsNotDistinctFrom` predicates extend `CompareCondition`, which is the only check done for `Settings.transformPatternsTrivialPredicates` to decide whether a condition (e.g. `a = null`) ... | non_process | wrong transformation for transformpatternstrivialpredicates when distinct predicate operand is null the qom isdistinctfrom and qom isnotdistinctfrom predicates extend comparecondition which is the only check done for settings transformpatternstrivialpredicates to decide whether a condition e g a null ... | 0 |

420,549 | 28,289,766,526 | IssuesEvent | 2023-04-09 03:29:00 | abluenautilus/SeasideModularVCV | https://api.github.com/repos/abluenautilus/SeasideModularVCV | closed | Some scales left out | documentation Proteus | Documentation mentioned a new scale was added but this did not show up in the latest release. | 1.0 | Some scales left out - Documentation mentioned a new scale was added but this did not show up in the latest release. | non_process | some scales left out documentation mentioned a new scale was added but this did not show up in the latest release | 0 |

1,755 | 4,460,997,489 | IssuesEvent | 2016-08-24 02:37:38 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | System.Diagnostics.ProcessThread.StartAddress reports incorrect address on Linux | 3 - Ready For Review System.Diagnostics.Process X-Plat | We get the value of `ProcessThread.StartAddress` by looking at `/proc/[pid]/task/[tid]/stat`, parsing out the `startstack` field from that file. However, this gives the address of the *stack*, not the ["...address of the function that the operating system called that started this thread."](https://msdn.microsoft.com/e... | 1.0 | System.Diagnostics.ProcessThread.StartAddress reports incorrect address on Linux - We get the value of `ProcessThread.StartAddress` by looking at `/proc/[pid]/task/[tid]/stat`, parsing out the `startstack` field from that file. However, this gives the address of the *stack*, not the ["...address of the function that t... | process | system diagnostics processthread startaddress reports incorrect address on linux we get the value of processthread startaddress by looking at proc task stat parsing out the startstack field from that file however this gives the address of the stack not the it doesn t look to me like the value... | 1 |
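
For reference, the `startstack` value this report mentions is field 28 of the `stat` line documented in proc(5). The sketch below shows one way to pull it out of such a line. It is written in Python for illustration and is not the corefx implementation; `parse_startstack` is a hypothetical name. The one subtlety is that field 2 (`comm`) is wrapped in parentheses and may itself contain spaces or parentheses, so the split starts after the last `)`.

```python
def parse_startstack(stat_line):
    """Return field 28 (startstack) from the text of a /proc/[pid]/stat line."""
    # Field 2 (comm) may contain spaces and ')' characters,
    # so everything up to the LAST ')' is skipped before splitting.
    rest = stat_line[stat_line.rindex(")") + 2:].split()
    # rest[0] is field 3 (state), so field N sits at index N - 3.
    return int(rest[28 - 3])


if __name__ == "__main__":
    # Works on Linux only; other platforms have no /proc/self/stat.
    with open("/proc/self/stat") as handle:
        print(hex(parse_startstack(handle.read())))
```

As the report points out, this number is the address of the bottom of the stack, so it cannot stand in for a thread's start routine address.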

111,744 | 14,142,315,604 | IssuesEvent | 2020-11-10 13:54:58 | patternfly/patternfly-org | https://api.github.com/repos/patternfly/patternfly-org | closed | UX writing style guide: Add CCS terms & conventions to "Terminology" | Content PF4 design Guidelines UX writing style guide | Link CCS terms and conventions in PatternFly's terminology page. Specify that it's a resource for Red Hat-specific terms.

Link to CCS page: https://redhat-documentation.github.io/supplementary-style-guide/#introduction

Link to PF terminology: https://www.patternfly.org/v4/ux-writing/terminology | 1.0 | UX writing style guide: Add CCS terms & conventions to "Terminology" - Link CCS terms and conventions in PatternFly's terminology page. Specify that it's a resource for Red Hat-specific terms.

Link to CCS page: https://redhat-documentation.github.io/supplementary-style-guide/#introduction

Link to PF terminology: ... | non_process | ux writing style guide add ccs terms conventions to terminology link ccs terms and conventions in patternfly s terminology page specify that it s a resource for red hat specific terms link to ccs page link to pf terminology | 0 |

347,868 | 31,281,486,058 | IssuesEvent | 2023-08-22 09:51:57 | elastic/elasticsearch | https://api.github.com/repos/elastic/elasticsearch | opened | [CI] RestEsqlIT testWarningHeadersOnFailedConversions failing | :Search/Search >test-failure | **Build scan:**

https://gradle-enterprise.elastic.co/s/3iejlt6s5z2gq/tests/:x-pack:plugin:esql:qa:server:single-node:javaRestTest/org.elasticsearch.xpack.esql.qa.single_node.RestEsqlIT/testWarningHeadersOnFailedConversions

**Reproduction line:**

```

./gradlew ':x-pack:plugin:esql:qa:server:single-node:javaRestTest' --... | 1.0 | [CI] RestEsqlIT testWarningHeadersOnFailedConversions failing - **Build scan:**

https://gradle-enterprise.elastic.co/s/3iejlt6s5z2gq/tests/:x-pack:plugin:esql:qa:server:single-node:javaRestTest/org.elasticsearch.xpack.esql.qa.single_node.RestEsqlIT/testWarningHeadersOnFailedConversions

**Reproduction line:**

```

./gra... | non_process | restesqlit testwarningheadersonfailedconversions failing build scan reproduction line gradlew x pack plugin esql qa server single node javaresttest tests org elasticsearch xpack esql qa single node restesqlit testwarningheadersonfailedconversions dtests seed dtests locale id id dtests... | 0 |

21,918 | 30,444,992,706 | IssuesEvent | 2023-07-15 14:48:44 | bitfocus/companion-module-requests | https://api.github.com/repos/bitfocus/companion-module-requests | opened | Lightkey OSC with feedback | NOT YET PROCESSED | - [ ] **I have researched the list of existing Companion modules and requests and have determined this has not yet been requested**

The name of the device, hardware, or software you would like to control:

Lightkey

What you would like to be able to make it do from Companion:

Control the lightkey sw through OSC o... | 1.0 | Lightkey OSC with feedback - - [ ] **I have researched the list of existing Companion modules and requests and have determined this has not yet been requested**

The name of the device, hardware, or software you would like to control:

Lightkey

What you would like to be able to make it do from Companion:

Control ... | process | lightkey osc with feedback i have researched the list of existing companion modules and requests and have determined this has not yet been requested the name of the device hardware or software you would like to control lightkey what you would like to be able to make it do from companion control th... | 1 |

6,461 | 9,546,580,744 | IssuesEvent | 2019-05-01 20:21:57 | openopps/openopps-platform | https://api.github.com/repos/openopps/openopps-platform | closed | Department of State: Right Rail of Submission Confirmation Page | Apply Process Requirements Ready State Dept. | Who: Student

What: Notification that the user now has a USAJOBS profile and what they can do about it.

Why: As a student I want to be informed that I now have a USAJOBS profile and information about federal hiring.

A/C

- There will be a box in the right rail "You now have a USAJOBS profile"

- There will be cont... | 1.0 | Department of State: Right Rail of Submission Confirmation Page - Who: Student

What: Notification that the user now has a USAJOBS profile and what they can do about it.

Why: As a student I want to be informed that I now have a USAJOBS profile and information about federal hiring.

A/C

- There will be a box in the... | process | department of state right rail of submission confirmation page who student what notification that the user now has a usajobs profile and what they can do about it why as a student i want to be informed that i now have a usajobs profile and information about federal hiring a c there will be a box in the... | 1 |

6,837 | 9,979,468,918 | IssuesEvent | 2019-07-09 23:00:31 | osquery/foundation | https://api.github.com/repos/osquery/foundation | closed | Proposal: Require squash commits for osquery | process proposal | I propose we require squash commits for osquery.

My interest comes from working in large monorepos, and allowing people to iterate with a lot of little commits. These days, github has a toggles. We currently allow rebase and squash. I propose we only allow squash.

Please vote, thumbs up or thumbs down. Or discus... | 1.0 | Proposal: Require squash commits for osquery - I propose we require squash commits for osquery.

My interest comes from working in large monorepos, and allowing people to iterate with a lot of little commits. These days, github has a toggles. We currently allow rebase and squash. I propose we only allow squash.

P... | process | proposal require squash commits for osquery i propose we require squash commits for osquery my interest comes from working in large monorepos and allowing people to iterate with a lot of little commits these days github has a toggles we currently allow rebase and squash i propose we only allow squash p... | 1 |

15,638 | 19,822,613,467 | IssuesEvent | 2022-01-20 00:25:12 | Jeffail/benthos | https://api.github.com/repos/Jeffail/benthos | closed | Include metadata with specific prefixes as headers to http_client output request | enhancement processors inputs outputs effort: lower | I would like to provide a list of prefixes and all metadata with these prefixes to be included as headers in the http_client request. | 1.0 | Include metadata with specific prefixes as headers to http_client output request - I would like to provide a list of prefixes and all metadata with these prefixes to be included as headers in the http_client request. | process | include metadata with specific prefixes as headers to http client output request i would like to provide a list of prefixes and all metadata with these prefixes to be included as headers in the http client request | 1 |

398,723 | 11,742,255,693 | IssuesEvent | 2020-03-12 00:08:23 | grpc/grpc | https://api.github.com/repos/grpc/grpc | closed | Skip SSL Verification? | area/security kind/question lang/go priority/P2 | I'm re-implementing a Go app (in rust) that uses grpc-go which uses the tls.Config{InsecureSkipVerify: true} option in the Go TLS library.

example usage:

https://github.com/grpc/grpc-go/blob/4abb3622b0fb97df222670b189869b44d0c2454d/credentials/credentials_test.go#L178

I'm using the rust wrapper for the C impleme... | 1.0 | Skip SSL Verification? - I'm re-implementing a Go app (in rust) that uses grpc-go which uses the tls.Config{InsecureSkipVerify: true} option in the Go TLS library.

example usage:

https://github.com/grpc/grpc-go/blob/4abb3622b0fb97df222670b189869b44d0c2454d/credentials/credentials_test.go#L178

I'm using the rust ... | non_process | skip ssl verification i m re implementing a go app in rust that uses grpc go which uses the tls config insecureskipverify true option in the go tls library example usage i m using the rust wrapper for the c implementation for grpc and its come down to the sslcredentialsoptions is there a way to get t... | 0 |

20,978 | 27,831,412,902 | IssuesEvent | 2023-03-20 05:24:29 | serai-dex/serai | https://api.github.com/repos/serai-dex/serai | opened | Consider using a coordinated model for signature shares | cryptography processor | Since FROST isn't robust, any signer failing it a fault. Accordingly, the likelihood of failure doesn't fall under a coordinated model.

Under Seraphis, Monero's future transaction protocol, any multisig member will be able to independently produce membership proofs. This include a membership proof of [1 ..= 127, act... | 1.0 | Consider using a coordinated model for signature shares - Since FROST isn't robust, any signer failing it a fault. Accordingly, the likelihood of failure doesn't fall under a coordinated model.

Under Seraphis, Monero's future transaction protocol, any multisig member will be able to independently produce membership ... | process | consider using a coordinated model for signature shares since frost isn t robust any signer failing it a fault accordingly the likelihood of failure doesn t fall under a coordinated model under seraphis monero s future transaction protocol any multisig member will be able to independently produce membership ... | 1 |

20,533 | 27,190,744,727 | IssuesEvent | 2023-02-19 19:18:03 | NationalSecurityAgency/ghidra | https://api.github.com/repos/NationalSecurityAgency/ghidra | closed | Bug: 65C02 TRB/TSB instructions incorrectly analysed | Type: Bug Feature: Processor/6502 Status: Internal | **Describe the bug**

On the 65C02 processor core, the TRB and TSB instructions are not implemented correctly.

The decompiler incorrectly suggests that they have no effect -- yet they should write the updated value (with bits cleared/set respectively) back to OP1. This is documented at http://www.6502.org/tutorials/... | 1.0 | Bug: 65C02 TRB/TSB instructions incorrectly analysed - **Describe the bug**

On the 65C02 processor core, the TRB and TSB instructions are not implemented correctly.

The decompiler incorrectly suggests that they have no effect -- yet they should write the updated value (with bits cleared/set respectively) back to OP... | process | bug trb tsb instructions incorrectly analysed describe the bug on the processor core the trb and tsb instructions are not implemented correctly the decompiler incorrectly suggests that they have no effect yet they should write the updated value with bits cleared set respectively back to this is... | 1 |

16,445 | 4,053,937,855 | IssuesEvent | 2016-05-24 10:23:38 | ES-DOC/esdoc-docs | https://api.github.com/repos/ES-DOC/esdoc-docs | opened | External review for ScenarioMIP experiment documentation | CMIP6 Documentation | Review the ScenarioMIP experiments with the PIs. | 1.0 | External review for ScenarioMIP experiment documentation - Review the ScenarioMIP experiments with the PIs. | non_process | external review for scenariomip experiment documentation review the scenariomip experiments with the pis | 0 |

167,778 | 13,042,165,206 | IssuesEvent | 2020-07-28 21:51:34 | thefrontside/bigtest | https://api.github.com/repos/thefrontside/bigtest | opened | Should we dispose of each test's JavaScript context before the next lane is run? | @bigtest/agent question | We currently have two JavaScript context's at play when running tests: The `Agent` JS context, and the `Harness` JS context. The `Agent` JS context is initialized once when the agent itself is connected to the orchestrator, whereas the `Harness` JS context is created once _per test_ by clearing the contents of the test... | 1.0 | Should we dispose of each test's JavaScript context before the next lane is run? - We currently have two JavaScript context's at play when running tests: The `Agent` JS context, and the `Harness` JS context. The `Agent` JS context is initialized once when the agent itself is connected to the orchestrator, whereas the `... | non_process | should we dispose of each test s javascript context before the next lane is run we currently have two javascript context s at play when running tests the agent js context and the harness js context the agent js context is initialized once when the agent itself is connected to the orchestrator whereas the ... | 0 |

12,333 | 14,882,568,540 | IssuesEvent | 2021-01-20 12:05:33 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [Android] Unable to submit location based questionnaires | Android Bug P1 Process: Tested dev | Unable to submit the location based questionnaires in android | 1.0 | [Android] Unable to submit location based questionnaires - Unable to submit the location based questionnaires in android | process | unable to submit location based questionnaires unable to submit the location based questionnaires in android | 1 |

19,137 | 25,196,148,199 | IssuesEvent | 2022-11-12 14:42:11 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | Need better design for "skip" properties | priority/medium preprocess enhancement | ## Description

Many of our Ant targets in the preprocess code have "skip" properties that allow you to skip running part of our build pipeline. These are considered internal properties and subject to change, but available to advanced users who understand the code. There are several problems:

* They are not docume... | 1.0 | Need better design for "skip" properties - ## Description

Many of our Ant targets in the preprocess code have "skip" properties that allow you to skip running part of our build pipeline. These are considered internal properties and subject to change, but available to advanced users who understand the code. There are... | process | need better design for skip properties description many of our ant targets in the preprocess code have skip properties that allow you to skip running part of our build pipeline these are considered internal properties and subject to change but available to advanced users who understand the code there are... | 1 |

8,363 | 11,518,467,649 | IssuesEvent | 2020-02-14 10:34:19 | prisma/specs | https://api.github.com/repos/prisma/specs | opened | CLI spec: some commands are missing | area/cli kind/spec process/candidate | I found that the CLI spec at https://github.com/prisma/specs/blob/master/cli/README.md

Is missing the following commands

- `validate`

- `studio`

- `lift save|up|down`

Note: these commands can take the --schema argument. | 1.0 | CLI spec: some commands are missing - I found that the CLI spec at https://github.com/prisma/specs/blob/master/cli/README.md

Is missing the following commands

- `validate`

- `studio`

- `lift save|up|down`

Note: these commands can take the --schema argument. | process | cli spec some commands are missing i found that the cli spec at is missing the following commands validate studio lift save up down note these commands can take the schema argument | 1 |

15,818 | 20,014,751,954 | IssuesEvent | 2022-02-01 10:53:00 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | Drop support for Ubuntu 14.04 / 16.04 / CentOS 7 on Bazel@HEAD | P1 type: process team-OSS | Considering that Ubuntu 16.04 LTS is EOL now, it might be reasonable to drop support for Ubuntu 14.04 / 16.04 / CentOS 7 on Bazel's main branch.

One reason to discontinue support for outdated platforms, is that Bazel@HEAD cannot be built on modern platforms, like Fedora 34 rawhide (x86_64) that switched to `gcc` 11.... | 1.0 | Drop support for Ubuntu 14.04 / 16.04 / CentOS 7 on Bazel@HEAD - Considering that Ubuntu 16.04 LTS is EOL now, it might be reasonable to drop support for Ubuntu 14.04 / 16.04 / CentOS 7 on Bazel's main branch.

One reason to discontinue support for outdated platforms, is that Bazel@HEAD cannot be built on modern plat... | process | drop support for ubuntu centos on bazel head considering that ubuntu lts is eol now it might be reasonable to drop support for ubuntu centos on bazel s main branch one reason to discontinue support for outdated platforms is that bazel head cannot be built on modern platforms lik... | 1 |

1,227 | 3,758,446,632 | IssuesEvent | 2016-03-14 08:58:48 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | opened | Keyref resolution fails if input path contains ../ | bug preprocess/keyref | 2.2.3 and `develop`.

If you do e.g. `dita -i ../docsrc/userguide.ditamap -f pdf`, keyref resolution fails:

```

[keyref] file:/tmp/docsrc/index.dita:6:55: [DOTJ047I][INFO] Unable to find key definition for key reference "release" in root scope. The href attribute may be used as fallback if it exists

```

If yo... | 1.0 | Keyref resolution fails if input path contains ../ - 2.2.3 and `develop`.

If you do e.g. `dita -i ../docsrc/userguide.ditamap -f pdf`, keyref resolution fails:

```

[keyref] file:/tmp/docsrc/index.dita:6:55: [DOTJ047I][INFO] Unable to find key definition for key reference "release" in root scope. The href attribu... | process | keyref resolution fails if input path contains and develop if you do e g dita i docsrc userguide ditamap f pdf keyref resolution fails file tmp docsrc index dita unable to find key definition for key reference release in root scope the href attribute may be used as fall... | 1 |

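8,487 | 11,645,741,734 | IssuesEvent | 2020-03-01 03:56:52 | SE-Garden/tms-webserver | https://api.github.com/repos/SE-Garden/tms-webserver | opened | Rework points in the existing source | kind:共通機能 kind:機能 process:CQ | ## Overview

Re-review the existing source, using master as the baseline, to find the points that need rework.

Any parts found to need fixing are fixed and handled via a PullRequest.

## Goal

Re-review of the source and rework

## Deliverables

Source

## Related Issue

None | 1.0 | Rework points in the existing source - ## Overview

Re-review the existing source, using master as the baseline, to find the points that need rework.

Any parts found to need fixing are fixed and handled via a PullRequest.

## Goal

Re-review of the source and rework

## Deliverables

Source

## Related Issue

None | process | rework points in the existing source overview re review the existing source using master as the baseline to find the points that need rework any parts found to need fixing are fixed and handled via a pullrequest goal re review of the source and rework deliverables source related issue none | 1 |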

15,657 | 3,331,318,506 | IssuesEvent | 2015-11-11 15:23:37 | Marginal/EDMarketConnector | https://api.github.com/repos/Marginal/EDMarketConnector | closed | The special modules aren't displayed into the data sent to Eddn | working as designed | example: there's no prismatic shields into the data that arrive to eddn | 1.0 | The special modules aren't displayed into the data sent to Eddn - example: there's no prismatic shields into the data that arrive to eddn | non_process | the special modules aren t displayed into the data sent to eddn example there s no prismatic shields into the data that arrive to eddn | 0 |

33,252 | 15,834,426,857 | IssuesEvent | 2021-04-06 16:46:19 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | Create index pattern page is slow if cluster is busy | Feature:Kibana Management Team:KibanaApp performance | **Kibana version:** 6.4

**Elasticsearch version:** 6.4