Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

127,635 | 17,346,584,361 | IssuesEvent | 2021-07-29 00:16:17 | CDCgov/prime-reportstream | https://api.github.com/repos/CDCgov/prime-reportstream | opened | Loading spinner component | Design front-end website | ## Problem statement

As we start working with new test types or advanced filtering, users may experience a beat or two of load time. Create a reusable loading spinner/indicator that can work across RS.

## What you need to know

- As we're moving to React ... is there anything built in there that might be helpful with... | 1.0 | Loading spinner component - ## Problem statement

As we start working with new test types or advanced filtering, users may experience a beat or two of load time. Create a reusable loading spinner/indicator that can work across RS.

## What you need to know

- As we're moving to React ... is there anything built in ther... | non_process | loading spinner component problem statement as we start working with new test types or advanced filtering users may experience a beat or two of load time create a reusable loading spinner indicator that can work across rs what you need to know as we re moving to react is there anything built in ther... | 0 |

180,112 | 13,921,274,554 | IssuesEvent | 2020-10-21 11:42:09 | oasisprotocol/oasis-core | https://api.github.com/repos/oasisprotocol/oasis-core | closed | Runtime upgrade E2E test should wait for old node expiration | c:bug c:testing | Currently [the runtime upgrade E2E test](https://github.com/oasisprotocol/oasis-core/blob/e79b4979c20447f4b47ba4e7a35ae67e74647a0e/go/oasis-test-runner/scenario/e2e/runtime/runtime_upgrade.go) shuts down the old compute nodes after upgrade and starts the client, assuming that the new compute nodes will process the tran... | 1.0 | Runtime upgrade E2E test should wait for old node expiration - Currently [the runtime upgrade E2E test](https://github.com/oasisprotocol/oasis-core/blob/e79b4979c20447f4b47ba4e7a35ae67e74647a0e/go/oasis-test-runner/scenario/e2e/runtime/runtime_upgrade.go) shuts down the old compute nodes after upgrade and starts the cl... | non_process | runtime upgrade test should wait for old node expiration currently shuts down the old compute nodes after upgrade and starts the client assuming that the new compute nodes will process the transactions the problem is that the committee scheduler can still schedule one of the old nodes making the test f... | 0 |

2,248 | 5,088,648,204 | IssuesEvent | 2016-12-31 23:59:51 | sw4j-org/tool-jpa-processor | https://api.github.com/repos/sw4j-org/tool-jpa-processor | opened | Handle @MapsId Annotation | annotation processor task | Handle the `@MapsId` annotation for a property or field.

See [JSR 338: Java Persistence API, Version 2.1](http://download.oracle.com/otn-pub/jcp/persistence-2_1-fr-eval-spec/JavaPersistence.pdf)

- 11.1.39 MapsId Annotation

| 1.0 | Handle @MapsId Annotation - Handle the `@MapsId` annotation for a property or field.

See [JSR 338: Java Persistence API, Version 2.1](http://download.oracle.com/otn-pub/jcp/persistence-2_1-fr-eval-spec/JavaPersistence.pdf)

- 11.1.39 MapsId Annotation

| process | handle mapsid annotation handle the mapsid annotation for a property or field see mapsid annotation | 1 |

72,597 | 9,601,242,190 | IssuesEvent | 2019-05-10 11:36:23 | gama-platform/gama | https://api.github.com/repos/gama-platform/gama | opened | Several items missing from categories in the online doc | > Bug Affects Usability Concerns Documentation Concerns GAML OS All Priority Critical Version Git | **Describe the bug**

Some items are completely missing from the online, incl. types defined in plugins (like `emotion` in `simple_bdi`) or in the core, statements defined in the plugins, etc.

| 1.0 | Several items missing from categories in the online doc - **Describe the bug**

Some items are completely missing from the online, incl. types defined in plugins (like `emotion` in `simple_bdi`) or in the core, statements defined in the plugins, etc.

| non_process | several items missing from categories in the online doc describe the bug some items are completely missing from the online incl types defined in plugins like emotion in simple bdi or in the core statements defined in the plugins etc | 0 |

17,066 | 22,502,946,725 | IssuesEvent | 2022-06-23 13:22:12 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | Avoid renaming non-DITA resources used in branch filtering with dvrResourceSuffix or dvrResourcePrefix specified | priority/medium preprocess/filtering enhancement preprocess/branch-filtering | Let's say I have in the DITA Map a portion like this:

<topicref href="topics/introduction.dita">

<ditavalref href="test.ditaval">

<ditavalmeta>

<dvrResourceSuffix>stuff</dvrResourceSuffix>

</ditavalmeta>

</ditavalref>

<topicref h... | 2.0 | Avoid renaming non-DITA resources used in branch filtering with dvrResourceSuffix or dvrResourcePrefix specified - Let's say I have in the DITA Map a portion like this:

<topicref href="topics/introduction.dita">

<ditavalref href="test.ditaval">

<ditavalmeta>

<dvrResourc... | process | avoid renaming non dita resources used in branch filtering with dvrresourcesuffix or dvrresourceprefix specified let s say i have in the dita map a portion like this stuff right now even with lates... | 1 |

893 | 3,355,307,589 | IssuesEvent | 2015-11-18 15:58:55 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | investigate potentially flaky test on centos | child_process test | https://ci.nodejs.org/job/node-test-commit-linux/1215/nodes=centos5-32/console

```

not ok 61 test-child-process-spawnsync-input.js

#

#assert.js:89

# throw new assert.AssertionError({

# ^

#AssertionError: <Buffer > deepEqual <Buffer 74 68 69 73 20 69 73 20 73 74 64 6f 75 74 0a>

# at Object.<anonymous> (/h... | 1.0 | investigate potentially flaky test on centos - https://ci.nodejs.org/job/node-test-commit-linux/1215/nodes=centos5-32/console

```

not ok 61 test-child-process-spawnsync-input.js

#

#assert.js:89

# throw new assert.AssertionError({

# ^

#AssertionError: <Buffer > deepEqual <Buffer 74 68 69 73 20 69 73 20 73 74 ... | process | investigate potentially flaky test on centos not ok test child process spawnsync input js assert js throw new assert assertionerror assertionerror deepequal at object home iojs build workspace node test commit linux nodes test parallel test child process spawnsync i... | 1 |

50,458 | 26,653,562,996 | IssuesEvent | 2023-01-25 15:18:24 | getsentry/sentry-javascript | https://api.github.com/repos/getsentry/sentry-javascript | closed | Transactions/spans started regardless of tracing enablement | Type: Improvement Type: Breaking Feature: Performance Package: tracing Status: Backlog | _Note that in the description below, "tracing being disabled" does NOT mean having `tracesSampleRate` set to `0`, but rather having neither `tracesSampleRate` nor `tracesSampler` defined in `Sentry.init()._

Right now, if tracing is disabled, our SDKs correctly do not send transactions to Sentry, by [forcing the samp... | True | Transactions/spans started regardless of tracing enablement - _Note that in the description below, "tracing being disabled" does NOT mean having `tracesSampleRate` set to `0`, but rather having neither `tracesSampleRate` nor `tracesSampler` defined in `Sentry.init()._

Right now, if tracing is disabled, our SDKs corr... | non_process | transactions spans started regardless of tracing enablement note that in the description below tracing being disabled does not mean having tracessamplerate set to but rather having neither tracessamplerate nor tracessampler defined in sentry init right now if tracing is disabled our sdks corr... | 0 |

176,753 | 28,149,138,135 | IssuesEvent | 2023-04-02 20:44:02 | bounswe/bounswe2023group1 | https://api.github.com/repos/bounswe/bounswe2023group1 | closed | Creating and Documentation of High Level Mock-ups | Priority/High Status/In Progress Type/Design | We are creating mockups for each of the the four user groups we have. The work will involve writing the mockup texts, creating mock UI's and updating the requirements based on newly discovered needs(#88). A common template for the mock UI's will be created in #72. These issues track the progress of the four mockups:

... | 1.0 | Creating and Documentation of High Level Mock-ups - We are creating mockups for each of the the four user groups we have. The work will involve writing the mockup texts, creating mock UI's and updating the requirements based on newly discovered needs(#88). A common template for the mock UI's will be created in #72. The... | non_process | creating and documentation of high level mock ups we are creating mockups for each of the the four user groups we have the work will involve writing the mockup texts creating mock ui s and updating the requirements based on newly discovered needs a common template for the mock ui s will be created in these... | 0 |

22,382 | 31,142,283,889 | IssuesEvent | 2023-08-16 01:44:06 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | closed | Flaky test: net_stubbing absolute path | process: flaky test topic: flake ❄️ stage: fire watch priority: low topic: net_stubbing.cy.ts stale | ### Link to dashboard or CircleCI failure

https://dashboard.cypress.io/projects/ypt4pf/runs/38102/test-results/06201ab2-edbe-4cc8-a295-40de3145e25b

### Link to failing test in GitHub

https://github.com/cypress-io/cypress/blob/develop/packages/driver/cypress/e2e/commands/net_stubbing.cy.ts#L1859

### Analysis... | 1.0 | Flaky test: net_stubbing absolute path - ### Link to dashboard or CircleCI failure

https://dashboard.cypress.io/projects/ypt4pf/runs/38102/test-results/06201ab2-edbe-4cc8-a295-40de3145e25b

### Link to failing test in GitHub

https://github.com/cypress-io/cypress/blob/develop/packages/driver/cypress/e2e/commands... | process | flaky test net stubbing absolute path link to dashboard or circleci failure link to failing test in github analysis img width alt screen shot at pm src cypress version other search for this issue number in the codebase to find the test ... | 1 |

71,218 | 15,185,661,336 | IssuesEvent | 2021-02-15 11:14:45 | Neko7sora/github-slideshow | https://api.github.com/repos/Neko7sora/github-slideshow | opened | CVE-2011-4969 (Medium) detected in jquery-1.4.4.min.js | security vulnerability | ## CVE-2011-4969 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.4.4.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="htt... | True | CVE-2011-4969 (Medium) detected in jquery-1.4.4.min.js - ## CVE-2011-4969 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.4.4.min.js</b></p></summary>

<p>JavaScript library ... | non_process | cve medium detected in jquery min js cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to dependency file github slideshow assets fonts droid serif web fonts droidserif bolditalic macro... | 0 |

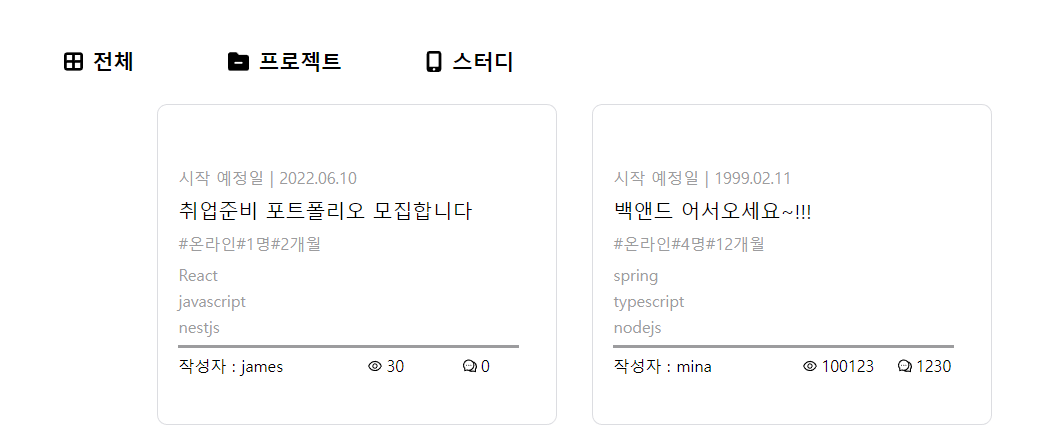

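302,060 | 22,785,994,597 | IssuesEvent | 2022-07-09 08:44:25 | h-dt/hola-clone | https://api.github.com/repos/h-dt/hola-clone | closed | [bug,docs] Front home screen | documentation help wanted status: in progress question | 

Home screen

In the area where you select All / Project / Study:

For Project and Study, each set of data can be fetched separately.

For All, is the data returned as Project + Study, or as a single combined dataset?

Please check and let me know. Thank you!

| 1.0 | [bug,docs] Front home screen - 

Home screen

In the area where you select All / Project / Study:

For Project and Study, each set of data can be fetched separately.

For All, is the data returned as Project + Study, or as a single combined dataset?

Please check and let me know. Thank you!

| non_process | front home screen home screen in the area where you select all project study for project and study each set of data can be fetched separately for all is the data returned as project study or as a single combined dataset please check and let me know thank you | 0 |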

1,829 | 4,613,629,721 | IssuesEvent | 2016-09-25 04:10:12 | EBrown8534/StackExchangeStatisticsExplorer | https://api.github.com/repos/EBrown8534/StackExchangeStatisticsExplorer | opened | Site-to-Site Comparison Graphs | enhancement in process | We should have line graphs that show comparisons between all sites selected in the site-to-site comparison for various metrics. (Need a list of metrics?) | 1.0 | Site-to-Site Comparison Graphs - We should have line graphs that show comparisons between all sites selected in the site-to-site comparison for various metrics. (Need a list of metrics?) | process | site to site comparison graphs we should have line graphs that show comparisons between all sites selected in the site to site comparison for various metrics need a list of metrics | 1 |

6,648 | 9,769,806,538 | IssuesEvent | 2019-06-06 09:26:07 | stoyicker/test-accessors | https://api.github.com/repos/stoyicker/test-accessors | closed | Add a fallback to Field#modifiers field to fetch the Field#accessFlags field instead | bug processor-java | Android's implementation of Field doesn't have a modifiers field, which means that static setters in instrumented tests fail. | 1.0 | Add a fallback to Field#modifiers field to fetch the Field#accessFlags field instead - Android's implementation of Field doesn't have a modifiers field, which means that static setters in instrumented tests fail. | process | add a fallback to field modifiers field to fetch the field accessflags field instead android s implementation of field doesn t have a modifiers field which means that static setters in instrumented tests fail | 1 |

1,870 | 4,697,583,912 | IssuesEvent | 2016-10-12 09:50:52 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | Spawned process don't trigger close or exit event | child_process | Hi,

When i spawn a process and write in his stdin he will not trigger "close" or "exit" event.

```javascript

emuObject.currentProcess = spawn(externalCommands[cmd], cmdArgs, {cwd:emuObject.cd});

emuObject.stdin.pipe(emuObject.currentProcess.stdin);

emuObject.currentProcess.stdout.on('data', function(dat... | 1.0 | Spawned process don't trigger close or exit event - Hi,

When i spawn a process and write in his stdin he will not trigger "close" or "exit" event.

```javascript

emuObject.currentProcess = spawn(externalCommands[cmd], cmdArgs, {cwd:emuObject.cd});

emuObject.stdin.pipe(emuObject.currentProcess.stdin);

emu... | process | spawned process don t trigger close or exit event hi when i spawn a process and write in his stdin he will not trigger close or exit event javascript emuobject currentprocess spawn externalcommands cmdargs cwd emuobject cd emuobject stdin pipe emuobject currentprocess stdin emuobje... | 1 |

4,085 | 7,042,947,844 | IssuesEvent | 2017-12-30 20:35:33 | eve-savvy/eve-mail | https://api.github.com/repos/eve-savvy/eve-mail | closed | Authorization window stays open after successful authorization | bug help wanted renderer process | ### Issue

When an auth request opens the browser to allow for a user to authenticate a character the browser remains open on the authorization request after the user has successfully authenticated.

### Expectation

The user should either be notified that they can close the external browser window, or the extern... | 1.0 | Authorization window stays open after successful authorization - ### Issue

When an auth request opens the browser to allow for a user to authenticate a character the browser remains open on the authorization request after the user has successfully authenticated.

### Expectation

The user should either be notifi... | process | authorization window stays open after successful authorization issue when an auth request opens the browser to allow for a user to authenticate a character the browser remains open on the authorization request after the user has successfully authenticated expectation the user should either be notifi... | 1 |

112,172 | 9,556,120,890 | IssuesEvent | 2019-05-03 07:15:30 | kowainik/co-log | https://api.github.com/repos/kowainik/co-log | opened | [RFC] Laws property-based tests for `LogAction` | question tests | It would be really nice to have tests for the logging functionality, especially property-based testing. However, it's not really clear how to test it. It would be really nice though to have property-based tests for laws for every data type. However, in order to do this, we need to implement the following two pieces:

... | 1.0 | [RFC] Laws property-based tests for `LogAction` - It would be really nice to have tests for the logging functionality, especially property-based testing. However, it's not really clear how to test it. It would be really nice though to have property-based tests for laws for every data type. However, in order to do this,... | non_process | laws property based tests for logaction it would be really nice to have tests for the logging functionality especially property based testing however it s not really clear how to test it it would be really nice though to have property based tests for laws for every data type however in order to do this we ... | 0 |

164,676 | 6,246,009,401 | IssuesEvent | 2017-07-13 02:04:20 | jstanden/cerb | https://api.github.com/repos/jstanden/cerb | closed | [Refactor] Remove joins on message worklists (ticket, address) | priority-support refactor | The full list of millions of messages is causing issues in some environments. | 1.0 | [Refactor] Remove joins on message worklists (ticket, address) - The full list of millions of messages is causing issues in some environments. | non_process | remove joins on message worklists ticket address the full list of millions of messages is causing issues in some environments | 0 |

140,201 | 12,889,161,748 | IssuesEvent | 2020-07-13 14:08:02 | saltstack/salt | https://api.github.com/repos/saltstack/salt | closed | [DOCS] Missing docs for "requires" requisite in Salt Cloud maps | Bug Documentation Magnesium P3 Severity: Low | **Description**

Received report on Slack:

> In salt-cloud source I found that it supports `requires` directive (in the map file), although I cannot find any documentation for it. It seems to be a way to define that one VM requires another to be created (which is what I need), but I don't know how to use that direct... | 1.0 | [DOCS] Missing docs for "requires" requisite in Salt Cloud maps - **Description**

Received report on Slack:

> In salt-cloud source I found that it supports `requires` directive (in the map file), although I cannot find any documentation for it. It seems to be a way to define that one VM requires another to be creat... | non_process | missing docs for requires requisite in salt cloud maps description received report on slack in salt cloud source i found that it supports requires directive in the map file although i cannot find any documentation for it it seems to be a way to define that one vm requires another to be created w... | 0 |

335,664 | 10,164,851,899 | IssuesEvent | 2019-08-07 12:41:51 | pmem/issues | https://api.github.com/repos/pmem/issues | closed | Test: vmmalloc_init/TEST17: SETUP (all/pmem/nondebug/memcheck) fails | Exposure: Low OS: Linux Priority: 3 medium State: To be verified Type: Bug | <!--

Before creating new issue, ensure that similar issue wasn't already created

* Search: https://github.com/pmem/issues/issues

Note that if you do not provide enough information to reproduce the issue, we may not be able to take action on your report.

Remember this is just a minimal template. You can extend i... | 1.0 | Test: vmmalloc_init/TEST17: SETUP (all/pmem/nondebug/memcheck) fails - <!--

Before creating new issue, ensure that similar issue wasn't already created

* Search: https://github.com/pmem/issues/issues

Note that if you do not provide enough information to reproduce the issue, we may not be able to take action on y... | non_process | test vmmalloc init setup all pmem nondebug memcheck fails before creating new issue ensure that similar issue wasn t already created search note that if you do not provide enough information to reproduce the issue we may not be able to take action on your report remember this is just a mini... | 0 |

622,047 | 19,605,382,754 | IssuesEvent | 2022-01-06 08:48:15 | parallel-finance/parallel | https://api.github.com/repos/parallel-finance/parallel | closed | Switch from cDOT-project to cDOT-lease | high priority | ## Solutions

1. reserve token while minting cDOT to user, users need to come to us to unreserve (only work for cDOT-project it seems)

2. dont mint cDOT after contribution, issue cDOT after winning (via on_idle + childtrie)

3. dont mint cDOT after contribution, issue cDOT after winning (via offchain-client) | 1.0 | Switch from cDOT-project to cDOT-lease - ## Solutions

1. reserve token while minting cDOT to user, users need to come to us to unreserve (only work for cDOT-project it seems)

2. dont mint cDOT after contribution, issue cDOT after winning (via on_idle + childtrie)

3. dont mint cDOT after contribution, issue cDOT af... | non_process | switch from cdot project to cdot lease solutions reserve token while minting cdot to user users need to come to us to unreserve only work for cdot project it seems dont mint cdot after contribution issue cdot after winning via on idle childtrie dont mint cdot after contribution issue cdot af... | 0 |

162,938 | 12,698,122,211 | IssuesEvent | 2020-06-22 12:58:25 | radareorg/radare2 | https://api.github.com/repos/radareorg/radare2 | closed | add hexdump metadata(Cd) to data sections | enhancement test-required | After analysis (aaa), we can use `Cd 1` for all addresses in data sections (only where there's no other metadata) so that it will be easy in visual mode to know when you are in data sections. Something like what IDA does.

| 1.0 | add hexdump metadata(Cd) to data sections - After analysis (aaa), we can use `Cd 1` for all addresses in data sections (only where there's no other metadata) so that it will be easy in visual mode to know when you are in data sections. Something like what IDA does.

| non_process | add hexdump metadata cd to data sections after analysis aaa we can use cd for all addresses in data sections only where there s no other metadata so that it will be easy in visual mode to know when you are in data sections something like what ida does | 0 |

11,864 | 14,665,727,051 | IssuesEvent | 2020-12-29 14:51:05 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | DITA-OT does not rewrite xrefs to topic elements correctly during preprocessing when chunk = "to-content" is specified | bug preprocess preprocess/chunking priority/medium stale | When chunk = "to-content" is specified, DITA-OT creates a ditabase document during preprocessing that contains all of the topics that are being chunked. If the topics' IDs contain any duplicates, then unique IDs are generated to make all of the topic IDs unique in the ditabase document. However, xrefs to topic elemen... | 2.0 | DITA-OT does not rewrite xrefs to topic elements correctly during preprocessing when chunk = "to-content" is specified - When chunk = "to-content" is specified, DITA-OT creates a ditabase document during preprocessing that contains all of the topics that are being chunked. If the topics' IDs contain any duplicates, th... | process | dita ot does not rewrite xrefs to topic elements correctly during preprocessing when chunk to content is specified when chunk to content is specified dita ot creates a ditabase document during preprocessing that contains all of the topics that are being chunked if the topics ids contain any duplicates th... | 1 |

257,720 | 19,529,922,343 | IssuesEvent | 2021-12-30 14:54:30 | cython/cython | https://api.github.com/repos/cython/cython | closed | [BUG] Namespace is not inserted if a cppclass is renamed | R: worksforme Documentation | **Describe the bug**

When renaming a `cppclass` (`cdef cppclass CppObject "Object"`), namespace is not inserted into the compiled code.

Though everythins is okay if namespace is already provided in the 'renaming string', like `cdef cppclass CppObject "nspace::Object"`.

I am not sure if it is a bug, but this moment ... | 1.0 | [BUG] Namespace is not inserted if a cppclass is renamed - **Describe the bug**

When renaming a `cppclass` (`cdef cppclass CppObject "Object"`), namespace is not inserted into the compiled code.

Though everythins is okay if namespace is already provided in the 'renaming string', like `cdef cppclass CppObject "nspace... | non_process | namespace is not inserted if a cppclass is renamed describe the bug when renaming a cppclass cdef cppclass cppobject object namespace is not inserted into the compiled code though everythins is okay if namespace is already provided in the renaming string like cdef cppclass cppobject nspace ob... | 0 |

100,432 | 12,521,706,360 | IssuesEvent | 2020-06-03 17:51:49 | cr-ste-justine/clin-project | https://api.github.com/repos/cr-ste-justine/clin-project | opened | Add more selectable columns to the patient list | Clin Clin Design Front-end | Add the following columns to the “Afficher” (Display) option:

- MRN

- RAMQ, Family ID

- Family

- Specimen ID

- Study

** These columns will be unchecked by default **

| 1.0 | Add more selectable columns to the patient list - Add the following columns to the “Afficher” (Display) option:

- MRN

- RAMQ, Family ID

- Family

- Specimen ID

- Study

** These columns will be ... | non_process | add more selectable columns to the patient list add the following columns to the “afficher” display option mrn ramq family id family specimen id study these columns will be unchecked by default | 0 |

41,373 | 10,707,566,679 | IssuesEvent | 2019-10-24 17:45:28 | alan-turing-institute/sktime | https://api.github.com/repos/alan-turing-institute/sktime | opened | Compile manylinux wheels | build / ci help wanted | Currently, we only get wheels for specific linux versions which are not accepted by PyPI. We need to set up our CI to build wheels following the [manylinux](https://github.com/pypa/manylinux) convention. | 1.0 | Compile manylinux wheels - Currently, we only get wheels for specific linux versions which are not accepted by PyPI. We need to set up our CI to build wheels following the [manylinux](https://github.com/pypa/manylinux) convention. | non_process | compile manylinux wheels currently we only get wheels for specific linux versions which are not accepted by pypi we need to set up our ci to build wheels following the convention | 0 |

64,229 | 18,286,202,977 | IssuesEvent | 2021-10-05 10:33:07 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | opened | Room settings save button can be incorrectly disabled even though there are changes | T-Defect | ### Steps to reproduce

1. Open Room Settings.

2. Edit the Room Name field. The Save button gets enabled.

3. Edit the Room Topic field. The Save button is still enabled.

4. Revert the edit to the Room Topic field. The Save button now incorrectly gets disabled.

### What happened?

### What did you expect?

The Sav... | 1.0 | Room settings save button can be incorrectly disabled even though there are changes - ### Steps to reproduce

1. Open Room Settings.

2. Edit the Room Name field. The Save button gets enabled.

3. Edit the Room Topic field. The Save button is still enabled.

4. Revert the edit to the Room Topic field. The Save button n... | non_process | room settings save button can be incorrectly disabled even though there are changes steps to reproduce open room settings edit the room name field the save button gets enabled edit the room topic field the save button is still enabled revert the edit to the room topic field the save button n... | 0 |

4,785 | 7,661,273,284 | IssuesEvent | 2018-05-11 13:44:51 | SharePoint/PnP-PowerShell | https://api.github.com/repos/SharePoint/PnP-PowerShell | closed | Add-PnPFile can't write Values Modified & Created | Needs investigation To be processed | ### Reporting an Issue or Missing Feature

When uploading a file with Add-PnPFile values for Modified & Created are ignored

### Expected behavior

> PS > $pubdate

> **Thursday, 19 November 2015 2:47:38 PM**

>

> PS > $pubdate.GetType()

> IsPublic IsSerial Name BaseType ... | 1.0 | Add-PnPFile can't write Values Modified & Created - ### Reporting an Issue or Missing Feature

When uploading a file with Add-PnPFile values for Modified & Created are ignored

### Expected behavior

> PS > $pubdate

> **Thursday, 19 November 2015 2:47:38 PM**

>

> PS > $pubdate.GetType()

> IsPublic IsSerial Na... | process | add pnpfile can t write values modified created reporting an issue or missing feature when uploading a file with add pnpfile values for modified created are ignored expected behavior ps pubdate thursday november pm ps pubdate gettype ispublic isserial name ... | 1 |

22,252 | 30,802,546,347 | IssuesEvent | 2023-08-01 03:27:04 | h4sh5/npm-auto-scanner | https://api.github.com/repos/h4sh5/npm-auto-scanner | opened | zip_achive_bp 1.999.0 has 2 guarddog issues | npm-install-script npm-silent-process-execution | ```{"npm-install-script":[{"code":" \"postinstall\": \"node preinstall.js\",","location":"package/package.json:7","message":"The package.json has a script automatically running when the package is installed"}],"npm-silent-process-execution":[{"code":"const child = spawn('node', ['index.js'], {\n detached: true,\n ... | 1.0 | zip_achive_bp 1.999.0 has 2 guarddog issues - ```{"npm-install-script":[{"code":" \"postinstall\": \"node preinstall.js\",","location":"package/package.json:7","message":"The package.json has a script automatically running when the package is installed"}],"npm-silent-process-execution":[{"code":"const child = spawn(... | process | zip achive bp has guarddog issues npm install script npm silent process execution n detached true n stdio ignore n location package preinstall js message this package is silently executing another executable | 1 |

21,173 | 28,144,306,237 | IssuesEvent | 2023-04-02 09:59:40 | bitfocus/companion-module-requests | https://api.github.com/repos/bitfocus/companion-module-requests | opened | CP750 Remote control | NOT YET PROCESSED | The name of the device, hardware, or software you would like to control:

Dolby CP750

What you would like to be able to make it do from Companion:

Remote control of format and fader, and feedback, as already existing on companion module for CP650 and CP950

Direct links or attachments to the ethernet control prot... | 1.0 | CP750 Remote control - The name of the device, hardware, or software you would like to control:

Dolby CP750

What you would like to be able to make it do from Companion:

Remote control of format and fader, and feedback, as already existing on companion module for CP650 and CP950

Direct links or attachments to th... | process | remote control the name of the device hardware or software you would like to control dolby what you would like to be able to make it do from companion remote control of format and fader and feedback as already existing on companion module for and direct links or attachments to the ethernet contr... | 1 |

18,602 | 24,576,114,471 | IssuesEvent | 2022-10-13 12:29:20 | aiidateam/aiida-core | https://api.github.com/repos/aiidateam/aiida-core | closed | `WithSerialize.serialize` calls the serializer even if the provided value is `None` | type/bug priority/important topic/engine topic/processes | This leads to problems with the `to_aiida_type` serializer, which will raise a `ValueError` when it receives `None`. Now it can be discussed whether `to_aiida_type` should simply register `None` and simply return the value when it receives `None` instead of excepting. However, does it ever make sense for a serializer o... | 1.0 | `WithSerialize.serialize` calls the serializer even if the provided value is `None` - This leads to problems with the `to_aiida_type` serializer, which will raise a `ValueError` when it receives `None`. Now it can be discussed whether `to_aiida_type` should simply register `None` and simply return the value when it rec... | process | withserialize serialize calls the serializer even if the provided value is none this leads to problems with the to aiida type serializer which will raise a valueerror when it receives none now it can be discussed whether to aiida type should simply register none and simply return the value when it rec... | 1 |

11,028 | 13,836,093,253 | IssuesEvent | 2020-10-14 00:11:29 | googleapis/gapic-generator-typescript | https://api.github.com/repos/googleapis/gapic-generator-typescript | closed | gts@3 issues related to generated code | type: process | I have a release candidate of `gts@3` sitting here:

https://www.npmjs.com/package/gts/v/3.0.0-alpha.1

Still working on a changelog, but it's mostly upgrading all the dependencies. I have a PR out on all the repos, and I'm seeing runs like this:

https://github.com/googleapis/nodejs-vision/pull/823/checks?check_run... | 1.0 | gts@3 issues related to generated code - I have a release candidate of `gts@3` sitting here:

https://www.npmjs.com/package/gts/v/3.0.0-alpha.1

Still working on a changelog, but it's mostly upgrading all the dependencies. I have a PR out on all the repos, and I'm seeing runs like this:

https://github.com/googleapi... | process | gts issues related to generated code i have a release candidate of gts sitting here still working on a changelog but it s mostly upgrading all the dependencies i have a pr out on all the repos and i m seeing runs like this the pattern looks to match home runner work nodejs vision nodej... | 1 |

1,895 | 11,042,642,182 | IssuesEvent | 2019-12-09 09:35:16 | DevExpress/testcafe | https://api.github.com/repos/DevExpress/testcafe | opened | We should give a capability to switch to invisible iframe | AREA: client SYSTEM: automations TYPE: enhancement support center | Reasons:

0) some sites use invisible iframes to perform some util logic (for example see [a Studio issue](https://github.com/DevExpress/testcafe-studio/issues/2881))

1) we have actions that don't check element's visibility before execution (`pressKey, navigateTo` etc.)

2) we have even one action with selector that do... | 1.0 | We should give a capability to switch to invisible iframe - Reasons:

0) some sites use invisible iframes to perform some util logic (for example see [a Studio issue](https://github.com/DevExpress/testcafe-studio/issues/2881))

1) we have actions that don't check element's visibility before execution (`pressKey, navigat... | non_process | we should give a capability to switch to invisible iframe reasons some sites use invisible iframes to perform some util logic for example see we have actions that don t check element s visibility before execution presskey navigateto etc we have even one action with selector that doesn t check e... | 0 |

160,203 | 12,505,817,712 | IssuesEvent | 2020-06-02 11:26:50 | aliasrobotics/RVD | https://api.github.com/repos/aliasrobotics/RVD | opened | subprocess call with shell=True identified, security issue., /opt/ros_melodic_ws/src/ros/rosbash/test/test_scripts.py:55 | bandit bug static analysis testing triage | ```yaml

{

"id": 1,

"title": "subprocess call with shell=True identified, security issue., /opt/ros_melodic_ws/src/ros/rosbash/test/test_scripts.py:55",

"type": "bug",

"description": "HIGH confidence of HIGH severity bug. subprocess call with shell=True identified, security issue. at /opt/ros_melodic_ws/... | 1.0 | subprocess call with shell=True identified, security issue., /opt/ros_melodic_ws/src/ros/rosbash/test/test_scripts.py:55 - ```yaml

{

"id": 1,

"title": "subprocess call with shell=True identified, security issue., /opt/ros_melodic_ws/src/ros/rosbash/test/test_scripts.py:55",

"type": "bug",

"description":... | non_process | subprocess call with shell true identified security issue opt ros melodic ws src ros rosbash test test scripts py yaml id title subprocess call with shell true identified security issue opt ros melodic ws src ros rosbash test test scripts py type bug description ... | 0 |

11,170 | 13,957,694,718 | IssuesEvent | 2020-10-24 08:11:20 | alexanderkotsev/geoportal | https://api.github.com/repos/alexanderkotsev/geoportal | opened | CY: Geoportal Process for Data Service Linking (Workshop Ispra January 2019) | CY - Cyprus Geoportal Harvesting process | Dear Angelo,

we are trying to sort out things due to the upcoming workshop in Ispra.

A word file is attached including some questions regarding the document provided by Robert Tomas "Geoportal workflow for establishing links between data sets and network services".

Your help will be greatly appreciated.

... | 1.0 | CY: Geoportal Process for Data Service Linking (Workshop Ispra January 2019) - Dear Angelo,

we are trying to sort out things due to the upcoming workshop in Ispra.

A word file is attached including some questions regarding the document provided by Robert Tomas "Geoportal workflow for establishing links between ... | process | cy geoportal process for data service linking workshop ispra january dear angelo we are trying to sort out things due to the upcoming workshop in ispra a word file is attached including some questions regarding the document provided by robert tomas quot geoportal workflow for establishing links between dat... | 1 |

87,979 | 17,404,830,991 | IssuesEvent | 2021-08-03 03:22:56 | CATcher-org/CATcher | https://api.github.com/repos/CATcher-org/CATcher | closed | Representing AuthComponent state using ADT | aspect-CodeQuality category.Enhancement difficulty.Hard p.Low | # Overview

Algebraic Data Types (ADTs) can be viewed as enum with data. Currently, application state in the AuthComponent is represented by a collection of booleans (isAuthenticating, isOutdatedVersion, etc) which does not appropriately and intuitively translate into the state of the application.

State is determine... | 1.0 | Representing AuthComponent state using ADT - # Overview

Algebraic Data Types (ADTs) can be viewed as enum with data. Currently, application state in the AuthComponent is represented by a collection of booleans (isAuthenticating, isOutdatedVersion, etc) which does not appropriately and intuitively translate into the... | non_process | representing authcomponent state using adt overview algebraic data types adts can be viewed as enum with data currently application state in the authcomponent is represented by a collection of booleans isauthenticating isoutdatedversion etc which does not appropriately and intuitively translate into the... | 0 |
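
The idea of replacing a set of booleans with one ADT can be sketched as follows. This is an illustration only: it is written in Python rather than the project's own language, and the variant names and fields (`Authenticating`, `OutdatedVersion`, `Authenticated`, `latest_version`, `username`) are assumptions loosely based on the booleans named in the issue, not CATcher's actual code.

```python
from dataclasses import dataclass
from typing import Union


@dataclass(frozen=True)
class Authenticating:
    """Login is in progress; this variant carries no data."""


@dataclass(frozen=True)
class OutdatedVersion:
    """The app must be updated; carries the version to upgrade to."""
    latest_version: str


@dataclass(frozen=True)
class Authenticated:
    """Login succeeded; carries the user name."""
    username: str


# The state is exactly one of these variants at any time.
AuthState = Union[Authenticating, OutdatedVersion, Authenticated]


def describe(state: AuthState) -> str:
    """Map each variant to a message; no boolean combinations to reason about."""
    if isinstance(state, Authenticating):
        return "authenticating"
    if isinstance(state, OutdatedVersion):
        return f"please update to {state.latest_version}"
    if isinstance(state, Authenticated):
        return f"welcome {state.username}"
    raise TypeError(f"unknown state: {state!r}")
```

Because each variant holds only the data that is meaningful in that state, contradictory combinations such as "authenticating and outdated at once" cannot be represented.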

18,871 | 24,800,463,446 | IssuesEvent | 2022-10-24 21:11:44 | googleapis/google-cloud-go | https://api.github.com/repos/googleapis/google-cloud-go | closed | chore(ci): move linting presubmit from kokoro into GitHub Actions | type: process | We should move the Go linting tasks from kokoro into a GitHub Action for easier debugging, speed of execution (and thus quicker feedback), and ease of maintenance. | 1.0 | chore(ci): move linting presubmit from kokoro into GitHub Actions - We should move the Go linting tasks from kokoro into a GitHub Action for easier debugging, speed of execution (and thus quicker feedback), and ease of maintenance. | process | chore ci move linting presubmit from kokoro into github actions we should move the go linting tasks from kokoro into a github action for easier debugging speed of execution and thus quicker feedback and ease of maintenance | 1 |

17,773 | 23,701,399,786 | IssuesEvent | 2022-08-29 19:21:54 | openxla/stablehlo | https://api.github.com/repos/openxla/stablehlo | opened | Improve the interpreter testing using fuzzing and cross-validation. | Interpreter Process | ### Request description

The idea here is to use a fuzzer to generate StableHLO programs, run them and validate the results against another implementation (e.g. a compiler maybe).

### Additional context

_No response_ | 1.0 | Improve the interpreter testing using fuzzing and cross-validation. - ### Request description

The idea here is to use a fuzzer to generate StableHLO programs, run them and validate the results against another implementation (e.g. a compiler maybe).

### Additional context

_No response_ | process | improve the interpreter testing using fuzzing and cross validation request description the idea here is to use a fuzzer to generate stablehlo programs run them and validate the results against another implementation e g a compiler maybe additional context no response | 1 |

207,814 | 15,837,375,828 | IssuesEvent | 2021-04-06 20:41:46 | microsoft/vscode-python | https://api.github.com/repos/microsoft/vscode-python | closed | pytest test discovery tries to import conftest.py from other projects | area-testing triage type-bug | Originally posted in #12538, I thought maybe it was the same problem, but I'm not sure.

My project structure is not the most common one, but not so unusual either, I think.

```

<project-root>/

|----src/

| |----<package>/

| | |----__init__.py

| | |----...

|----tests/

| |----__init__.py

| ... | 1.0 | pytest test discovery tries to import conftest.py from other projects - Originally posted in #12538, I thought maybe it was the same problem, but I'm not sure.

My project structure is not the most common one, but not so unusual either, I think.

```

<project-root>/

|----src/

| |----<package>/

| | |----... | non_process | pytest test discovery tries to import conftest py from other projects originally posted in i thought maybe it was the same problem but i m not sure my project structure is not the most common one but not so unusual either i think src init py ... | 0 |

7,297 | 10,442,770,175 | IssuesEvent | 2019-09-18 13:42:33 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Where is "Quick Create"? | Pri2 assigned-to-author automation/svc process-automation/subsvc product-question triaged | Create Runbook section, step 3.

Click the Add a runbook button found at the top of the list. On the Add Runbook page, select Quick Create.

I don't see the "Add a runbook" or "Quick Create" option anywhere.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue link... | 1.0 | Where is "Quick Create"? - Create Runbook section, step 3.

Click the Add a runbook button found at the top of the list. On the Add Runbook page, select Quick Create.

I don't see the "Add a runbook" or "Quick Create" option anywhere.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.micros... | process | where is quick create create runbook section step click the add a runbook button found at the top of the list on the add runbook page select quick create i don t see the add a runbook or quick create option anywhere document details ⚠ do not edit this section it is required for docs micros... | 1 |

14,246 | 3,814,364,940 | IssuesEvent | 2016-03-28 12:52:09 | lintool/warcbase | https://api.github.com/repos/lintool/warcbase | closed | Deprecate loadWarc and loadArc in favour of loadArchives | documentation | Since we now have `loadArchives`, which detects ARC or WARC input and processes appropriately, I think we could go ahead and replace `loadArc` and `loadWarc` with the new function in all the documentation. I recommend we leave the original functions in Warcbase, though. | 1.0 | Deprecate loadWarc and loadArc in favour of loadArchives - Since we now have `loadArchives`, which detects ARC or WARC input and processes appropriately, I think we could go ahead and replace `loadArc` and `loadWarc` with the new function in all the documentation. I recommend we leave the original functions in Warcbase,... | non_process | deprecate loadwarc and loadarc in favour of loadarchives since we now have loadarchives which detects arc or warc input and processes appropriately i think we could go ahead and replace loadarc and loadwarc with the new function in all the documentation i recommend we leave the original functions in warcbase ... | 0 |

7,171 | 10,533,573,800 | IssuesEvent | 2019-10-01 13:19:24 | girder/viime | https://api.github.com/repos/girder/viime | opened | Discuss testing strategy | type: requirements/docs | I've avoided writing tests to this point because things were very fluid and a large number of unit tests would simply have slowed us down and added no value.

I believe we're at a point now where a more explicit testing strategy is needed. I don't think we should strive for % coverage, but we could probably identify... | 1.0 | Discuss testing strategy - I've avoided writing tests to this point because things were very fluid and a large number of unit tests would simply have slowed us down and added no value.

I believe we're at a point now where a more explicit testing strategy is needed. I don't think we should strive for % coverage, but... | non_process | discuss testing strategy i ve avoided writing tests to this point because things were very fluid and a large number of unit tests would simply have slowed us down and added no value i believe we re at a point now where a more explicit testing strategy is needed i don t think we should strive for coverage but... | 0 |

16,496 | 21,472,562,816 | IssuesEvent | 2022-04-26 10:51:03 | camunda/zeebe | https://api.github.com/repos/camunda/zeebe | closed | IndexOutOfBounds if output collection of multi-instance is modified | kind/bug severity/high area/ux team/process-automation | **Describe the bug**

I deployed a BPMN process with a parallel multi-instance embedded subprocess. The multi-instance subprocess defines an output collection to collect the results of the iterations. If the output collection is modified during the iteration (e.g. the size of the output collection is reduced) then mu... | 1.0 | IndexOutOfBounds if output collection of multi-instance is modified - **Describe the bug**

I deployed a BPMN process with a parallel multi-instance embedded subprocess. The multi-instance subprocess defines an output collection to collect the results of the iterations. If the output collection is modified during the... | process | indexoutofbounds if output collection of multi instance is modified describe the bug i deployed a bpmn process with a parallel multi instance embedded subprocess the multi instance subprocess defines an output collection to collect the results of the iterations if the output collection is modified during the... | 1 |

49,470 | 12,344,624,212 | IssuesEvent | 2020-05-15 07:24:16 | angular/angular-cli | https://api.github.com/repos/angular/angular-cli | closed | Server bundles are minified by default | comp: devkit/build-angular | <!--🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅

Oh hi there! 😄

To expedite issue processing please search open and closed issues before submitting a new one.

Existing issues often contain information about workarounds, resolution, or progress updates.

🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅... | 1.0 | Server bundles are minified by default - <!--🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅

Oh hi there! 😄

To expedite issue processing please search open and closed issues before submitting a new one.

Existing issues often contain information about workarounds, resolution, or progress updates.

... | non_process | server bundles are minified by default 🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅🔅 oh hi there 😄 to expedite issue processing please search open and closed issues before submitting a new one existing issues often contain information about workarounds resolution or progress updates ... | 0 |

446,757 | 12,878,268,103 | IssuesEvent | 2020-07-11 15:40:14 | DevAdventCalendar/DevAdventCalendar | https://api.github.com/repos/DevAdventCalendar/DevAdventCalendar | closed | Fix Sonarcloud bugs | bug good first issue medium priority | **To Reproduce**

Steps to reproduce the behavior:

1. Go to https://sonarcloud.io/project/issues?id=DevAdventCalendar_DevAdventCalendar&resolved=false&types=BUG

**Current behavior**

There are many bugs and vulnerabilities

**Expected behavior**

There are no bugs (besides this from /libs or /wwwroot folder)

| 1.0 | Fix Sonarcloud bugs - **To Reproduce**

Steps to reproduce the behavior:

1. Go to https://sonarcloud.io/project/issues?id=DevAdventCalendar_DevAdventCalendar&resolved=false&types=BUG

**Current behavior**

There are many bugs and vulnerabilities

**Expected behavior**

There are no bugs (besides this from /libs or... | non_process | fix sonarcloud bugs to reproduce steps to reproduce the behavior go to current behavior there are many bugs and vulnerabilities expected behavior there are no bugs besides this from libs or wwwroot folder | 0 |

14,221 | 17,141,466,631 | IssuesEvent | 2021-07-13 10:04:43 | ESMValGroup/ESMValCore | https://api.github.com/repos/ESMValGroup/ESMValCore | closed | Preprocessor chain 'climate_statistics' and 'multimodel_statistics' fails as time dimension is not present | bug preprocessor | The construction of a preprocessor that computes first `climate_statistics` and then `multimodel_statistics` fails with a missing `time` coordinate error from the latter.

This is reasonable as for individual model `climate_statistics` the time axis is replaced with the one corresponding to requested operation (eithe... | 1.0 | Preprocessor chain 'climate_statistics' and 'multimodel_statistics' fails as time dimension is not present - The construction of a preprocessor that computes first `climate_statistics` and then `multimodel_statistics` fails with a missing `time` coordinate error from the latter.

This is reasonable as for individual ... | process | preprocessor chain climate statistics and multimodel statistics fails as time dimension is not present the construction of a preprocessor that computes first climate statistics and then multimodel statistics fails with a missing time coordinate error from the latter this is reasonable as for individual ... | 1 |

36,731 | 8,101,776,142 | IssuesEvent | 2018-08-12 17:29:36 | MDAnalysis/mdanalysis | https://api.github.com/repos/MDAnalysis/mdanalysis | opened | Residue.chi1_angle() selection is incomplete | Component-Core Component-Selections Difficulty-easy Format-PDB GSOC Starter defect | **Expected behavior**

`Residue.chi1_angle()` https://github.com/MDAnalysis/mdanalysis/blob/388346d38370d833b48152f61405a18942d25251/package/MDAnalysis/core/topologyattrs.py#L614 should find all chi1 angles for all residues for standard PDB files from the Protein Databank.

**Actual behavior**

@hfmull noticed... | 1.0 | Residue.chi1_angle() selection is incomplete - **Expected behavior**

`Residue.chi1_angle()` https://github.com/MDAnalysis/mdanalysis/blob/388346d38370d833b48152f61405a18942d25251/package/MDAnalysis/core/topologyattrs.py#L614 should find all chi1 angles for all residues for standard PDB files from the Protein Databa... | non_process | residue angle selection is incomplete expected behavior residue angle should find all angles for all residues for standard pdb files from the protein databank actual behavior hfmull noticed in that the standard selection is incomplete he implemented a custom selection in the new... | 0 |

184,222 | 14,281,890,637 | IssuesEvent | 2020-11-23 08:48:11 | enonic/lib-admin-ui | https://api.github.com/repos/enonic/lib-admin-ui | opened | Option Set - set's label is not displayed after reopening the content. | Bug Test is Failing | 1. Select `Features` and open new wizard for `Option Set`

The label is configured in this content type

```

<option-set name="radioOptionSet">

<label>Single selection</label>

```

2. Fill in the name input, then select `Option2` in `Single selection` and save it

when cgroup cpu.shares is default or set high | expert-multiprocessing | I have a user running some proprietary code that is misbehaving in the multiprocessing.map() function.

In the HTCondor scheduler, each job is assigned a cpu.shares number of 100 times the number of CPUS requested for the job.

When submitting a 40-worker pool, the pstree looks very orderly when the CPU request to ... | 1.0 | Pool spawns way too many subprocesses in map() when cgroup cpu.shares is default or set high - I have a user running some proprietary code that is misbehaving in the multiprocessing.map() function.

In the HTCondor scheduler, each job is assigned a cpu.shares number of 100 times the number of CPUS requested for the j... | process | pool spawns way too many subprocesses in map when cgroup cpu shares is default or set high i have a user running some proprietary code that is misbehaving in the multiprocessing map function in the htcondor scheduler each job is assigned a cpu shares number of times the number of cpus requested for the job... | 1 |

20,578 | 3,828,011,188 | IssuesEvent | 2016-03-31 02:20:29 | jecrockett/gametime | https://api.github.com/repos/jecrockett/gametime | closed | Testing strategy | before eval testing | * Discuss approach to testing.

* What will we test?

* What will we use for testing?

* What will we omit? | 1.0 | Testing strategy - * Discuss approach to testing.

* What will we test?

* What will we use for testing?

* What will we omit? | non_process | testing strategy discuss approach to testing what will we test what will we use for testing what will we omit | 0 |

22,660 | 31,895,945,931 | IssuesEvent | 2023-09-18 01:42:40 | tdwg/dwc | https://api.github.com/repos/tdwg/dwc | closed | Change term - basisOfRecord | Term - change Class - Record-level non-normative Task Group - Material Sample Process - complete | ## Term change

* Submitter: [Material Sample Task Group](https://www.tdwg.org/community/osr/material-sample/)

* Efficacy Justification (why is this change necessary?): It would be understood that MaterialEntity would be an informal superclass to `dwc:MaterialSample`, `dwc:PreservedSpecimen`, `dwc:LivingSpecimen`, `... | 1.0 | Change term - basisOfRecord - ## Term change

* Submitter: [Material Sample Task Group](https://www.tdwg.org/community/osr/material-sample/)

* Efficacy Justification (why is this change necessary?): It would be understood that MaterialEntity would be an informal superclass to `dwc:MaterialSample`, `dwc:PreservedSpec... | process | change term basisofrecord term change submitter efficacy justification why is this change necessary it would be understood that materialentity would be an informal superclass to dwc materialsample dwc preservedspecimen dwc livingspecimen dwc fossilspecimen examples involving the use ... | 1 |

102,004 | 12,736,977,637 | IssuesEvent | 2020-06-25 17:53:59 | department-of-veterans-affairs/caseflow | https://api.github.com/repos/department-of-veterans-affairs/caseflow | opened | Discovery Research Study: Intake Generative Research | Design: size-squirrel 🐿 Product: caseflow-intake Team: Foxtrot 🦊 Type: design 💅 | ## User story

As an Intake user, I need a way to share my needs and ideas in order to improve the product.

## Problem statement

<!-- Describe the problem the design, writing, or research is intended to solve. -->

Currently, there is no clear way for Intake users to relay issues they are facing with the product outsid... | 2.0 | Discovery Research Study: Intake Generative Research - ## User story

As an Intake user, I need a way to share my needs and ideas in order to improve the product.

## Problem statement

<!-- Describe the problem the design, writing, or research is intended to solve. -->

Currently, there is no clear way for Intake users ... | non_process | discovery research study intake generative research user story as an intake user i need a way to share my needs and ideas in order to improve the product problem statement currently there is no clear way for intake users to relay issues they are facing with the product outside of support tickets and mor... | 0 |

4,068 | 7,001,509,536 | IssuesEvent | 2017-12-18 10:29:58 | aiidateam/aiida_core | https://api.github.com/repos/aiidateam/aiida_core | closed | Array of calculations | coding-day/done topic/JobCalculationAndProcess type/accepted feature | Originally reported by: **Giovanni Pizzi (Bitbucket: [pizzi](https://bitbucket.org/pizzi), GitHub: [giovannipizzi](https://github.com/giovannipizzi))**

----------------------------------------

Due to policies of some clusters, sometimes only few jobs can be submitted, and they require to run with a script similar to ... | 1.0 | Array of calculations - Originally reported by: **Giovanni Pizzi (Bitbucket: [pizzi](https://bitbucket.org/pizzi), GitHub: [giovannipizzi](https://github.com/giovannipizzi))**

----------------------------------------

Due to policies of some clusters, sometimes only few jobs can be submitted, and they require to run w... | process | array of calculations originally reported by giovanni pizzi bitbucket github due to policies of some clusters sometimes only few jobs can be submitted and they require to run with a script similar to the following mpirun np exec mpirun np exe... | 1 |

57,412 | 7,055,698,520 | IssuesEvent | 2018-01-04 09:32:57 | drupaltransylvania/drupal-camp | https://api.github.com/repos/drupaltransylvania/drupal-camp | opened | Create article design | design | As a front-end developer

I want to have designed the article page

So that I can implement it.

**Acceptance criteria**

The article page will have a title and WYSIWYG body field that can contain the following elements:

- H1, H2, H3

- Bold, Italic, Underline

- Tables

Please make a smaller header for this type... | 1.0 | Create article design - As a front-end developer

I want to have designed the article page

So that I can implement it.

**Acceptance criteria**

The article page will have a title and WYSIWYG body field that can contain the following elements:

- H1, H2, H3

- Bold, Italic, Underline

- Tables

Please make a smal... | non_process | create article design as a front end developer i want to have designed the article page so that i can implement it acceptance criteria the article page will have a title and wysiwyg body field that can contain the following elements bold italic underline tables please make a smaller... | 0 |

29,557 | 13,133,439,053 | IssuesEvent | 2020-08-06 20:55:25 | terraform-providers/terraform-provider-aws | https://api.github.com/repos/terraform-providers/terraform-provider-aws | closed | Secrets manager policy validation fails for principals that are just created | bug service/iam service/secretsmanager | <!---

Please note the following potential times when an issue might be in Terraform core:

* [Configuration Language](https://www.terraform.io/docs/configuration/index.html) or resource ordering issues

* [State](https://www.terraform.io/docs/state/index.html) and [State Backend](https://www.terraform.io/docs/backen... | 2.0 | Secrets manager policy validation fails for principals that are just created - <!---

Please note the following potential times when an issue might be in Terraform core:

* [Configuration Language](https://www.terraform.io/docs/configuration/index.html) or resource ordering issues

* [State](https://www.terraform.io/... | non_process | secrets manager policy validation fails for principals that are just created please note the following potential times when an issue might be in terraform core or resource ordering issues and issues issues issues spans resources across multiple providers if you are runn... | 0 |

51,780 | 13,648,272,252 | IssuesEvent | 2020-09-26 08:17:59 | srivatsamarichi/tailspin-spacegame | https://api.github.com/repos/srivatsamarichi/tailspin-spacegame | closed | CVE-2018-11694 (High) detected in node-sass0bd48bbad6fccb0da16d3bdf76ad541f5f45ec70, node-sass-4.12.0.tgz | bug security vulnerability | ## CVE-2018-11694 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>node-sass0bd48bbad6fccb0da16d3bdf76ad541f5f45ec70</b>, <b>node-sass-4.12.0.tgz</b></p></summary>

<p>

<details><summa... | True | CVE-2018-11694 (High) detected in node-sass0bd48bbad6fccb0da16d3bdf76ad541f5f45ec70, node-sass-4.12.0.tgz - ## CVE-2018-11694 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>node-sass... | non_process | cve high detected in node node sass tgz cve high severity vulnerability vulnerable libraries node node sass tgz node sass tgz wrapper around libsass library home page a href path to dependency file tailspin spacegame package json path to vulnera... | 0 |

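393,393 | 26,990,019,460 | IssuesEvent | 2023-02-09 19:05:16 | Mingadinga/2023-Design-Pattern | https://api.github.com/repos/Mingadinga/2023-Design-Pattern | opened | Adapter Pattern | documentation | ## Core intent

Converts the interface of a class into another interface that the client requires. This lets classes that could not otherwise be used together, because their interfaces are incompatible, work together.

## When to apply

Use the adapter pattern when the client already works against an existing interface and you want to use a new, incompatible interface through that existing (target) interface.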
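
A minimal Python sketch of the situation described above. All class and method names (`LegacyPrinter`, `ModernRenderer`, `RendererAdapter`, `print_text`, `render`) are hypothetical and chosen only for illustration; they do not come from the repository.

```python
class LegacyPrinter:
    """Target: the interface the client already depends on (hypothetical)."""

    def print_text(self, text: str) -> str:
        return f"legacy:{text}"


class ModernRenderer:
    """Adaptee: a new class whose interface the client cannot call directly."""

    def render(self, payload: dict) -> str:
        return f"modern:{payload['body']}"


class RendererAdapter(LegacyPrinter):
    """Adapter: exposes the target interface and delegates to the adaptee."""

    def __init__(self, adaptee: ModernRenderer) -> None:
        self._adaptee = adaptee

    def print_text(self, text: str) -> str:
        # Translate the target-style call into the adaptee's call shape.
        return self._adaptee.render({"body": text})


def client(printer: LegacyPrinter, text: str) -> str:
    """Client code that only knows the target interface."""
    return printer.print_text(text)
```

The client function is unchanged whether it receives a `LegacyPrinter` or a `RendererAdapter`; only the adapter knows about the adaptee's interface.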

## Structure of the solution and the role of each element

is to be used as such, use the adapter pattern.

## Structure of the solution and the role of each element

;

console.log('stdio', child.stdio);

```

test.js

```js

var count = 0;

setInterval(function () {

i... | 1.0 | Child Process: fork stdio option doesn't support the String variant that spawn does - Version: v7.4.0

Platform: Windows 64 bit

The following code forks a script but all its stdio objects are null

index.js

```js

var child = childProcess.fork('./userapp/test.js', [], {

stdio: 'pipe'

});

console.log('stdio'... | process | child process fork stdio option doesn t support the string variant that spawn does version platform windows bit the following code forks a script but all it s stdio objects are null index js js var child childprocess fork userapp test js stdio pipe console log stdio c... | 1 |

259,198 | 27,621,719,688 | IssuesEvent | 2023-03-10 01:06:32 | mittell/ruby-practice | https://api.github.com/repos/mittell/ruby-practice | opened | turbo-rails-1.3.3.gem: 1 vulnerabilities (highest severity is: 7.5) | Mend: dependency security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>turbo-rails-1.3.3.gem</b></p></summary>

<p></p>

<p>Path to dependency file: /rails-intro/Gemfile.lock</p>

<p>Path to vulnerable library: /home/wss-scanner/.gem/ruby/2... | True | turbo-rails-1.3.3.gem: 1 vulnerabilities (highest severity is: 7.5) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>turbo-rails-1.3.3.gem</b></p></summary>

<p></p>

<p>Path to dependency file: /rails-intro/Gemfile... | non_process | turbo rails gem vulnerabilities highest severity is vulnerable library turbo rails gem path to dependency file rails intro gemfile lock path to vulnerable library home wss scanner gem ruby cache rack gem vulnerabilities cve severity cvss d... | 0 |

465 | 2,772,885,815 | IssuesEvent | 2015-05-03 03:44:01 | nealian/cse325_project6 | https://api.github.com/repos/nealian/cse325_project6 | opened | Implement the rest of the required functionality | requirement | Namely `fs_create`, `fs_delete`, `fs_read`, `fs_write`, `fs_get_filesize`, `fs_lseek`, and `fs_truncate`. Divide into sub-tasks as you see fit. | 1.0 | Implement the rest of the required functionality - Namely `fs_create`, `fs_delete`, `fs_read`, `fs_write`, `fs_get_filesize`, `fs_lseek`, and `fs_truncate`. Divide into sub-tasks as you see fit. | non_process | implement the rest of the required functionality namely fs create fs delete fs read fs write fs get filesize fs lseek and fs truncate divide into sub tasks as you see fit | 0 |

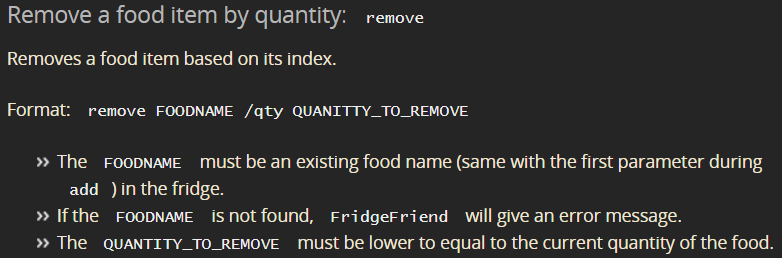

201,258 | 15,802,147,940 | IssuesEvent | 2021-04-03 08:17:59 | tzexern/ped | https://api.github.com/repos/tzexern/ped | opened | Minor Grammar Mistake under Remove Feature | severity.VeryLow type.DocumentationBug |

Under remove, notes for `QUANTITY_TO_REMOVE`, is `...must be lower to equal...` supposed to be `... lower or equal...`?

<!--session: 1617437377510-3c93c0e6-8609-4fd0-9f26-7eb717a196bb--> | 1.0 | Minor Grammar Mistake under Remove Feature -

Under remove, notes for `QUANTITY_TO_REMOVE`, is `...must be lower to equal...` supposed to be `... lower or equal...`?

<!--session: 1617437377510-3c93c0e6-860... | non_process | minor grammar mistake under remove feature under remove notes for quantity to remove is must be lower to equal supposed to be lower or equal | 0 |

1,695 | 4,346,120,150 | IssuesEvent | 2016-07-29 15:00:44 | OpenBitcoinPrivacyProject/wallet-ratings | https://api.github.com/repos/OpenBitcoinPrivacyProject/wallet-ratings | opened | Revisit criterion for number of clicks to perform first backup | criteria easy-to-process | OBPPV3-CR61 is:

> Number of clicks to create the first wallet backup

This is currently only used as a criterion for OBPPV3-CM56:

> Use eternal backups

Under this attack:

> Users may reuse non-ECDH addresses due to the fear of losing funds if avoiding reuse increases the risk that wallet backups will become une... | 1.0 | Revisit criterion for number of clicks to perform first backup - OBPPV3-CR61 is:

> Number of clicks to create the first wallet backup

This is currently only used as a criterion for OBPPV3-CM56:

> Use eternal backups

Under this attack:

> Users may reuse non-ECDH addresses due to the fear of losing funds if avoi... | process | revisit criterion for number of clicks to perform first backup is number of clicks to create the first wallet backup this is currently only used as a criterion for use eternal backups under this attack users may reuse non ecdh addresses due to the fear of losing funds if avoiding reuse incre... | 1 |

724,871 | 24,943,911,819 | IssuesEvent | 2022-10-31 21:32:21 | GQDeltex/ft_transcendence | https://api.github.com/repos/GQDeltex/ft_transcendence | closed | implement to join a public channel | frontend priority | We can now change the channel from private to public, but there is no way to see on the frontend if you are in that channel right now, or if its only displayed, because its a public channel.

Implement some kind of optical "change color" and remove "clickable" from shown public channels in channelList. | 1.0 | implement to join a public channel - We can now change the channel from private to public, but there is no way to see on the frontend if you are in that channel right now, or if its only displayed, because its a public channel.

Implement some kind of optical "change color" and remove "clickable" from shown public ch... | non_process | implement to join a public channel we can now change the channel from private to public but there is no way to see on the frontend if you are in that channel right now or if its only displayed because its a public channel implement some kind of optical change color and remove clickable from shown public ch... | 0 |

18,488 | 24,550,903,457 | IssuesEvent | 2022-10-12 12:32:27 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PM] [Angular Upgrade] Dashboard > Sites > UI issue | Bug P2 Participant manager Process: Fixed Process: Tested QA Process: Tested dev | UI issue is observed in the 'Sites' screen i.e., Alignment is not proper

**AR:**

**ER:**

**ER:**

. | 1.0 | Dragdrop shadow should be using an overlay - Might apply to anything using the dragdrophelper but ideally it only works in the 1 viewport (relative to the mouse). | non_process | dragdrop shadow should be using an overlay might apply to anything using the dragdrophelper but ideally it only works in the viewport relative to the mouse | 0 |

10,568 | 13,368,987,894 | IssuesEvent | 2020-09-01 08:10:36 | didi/mpx | https://api.github.com/repos/didi/mpx | closed | After initializing the project, npm run watch reports an error and stays stuck at building... | processing | ### Environment

- OS: macOS 10.14.6

- Node version: v12.16.2

- @mpxjs/core: 2.5.11

### Steps

1. Read the "Installation and usage" section of the Introduction chapter in the docs

2. npm i -g @mpxjs/cli

3. mpx init mpx-project (options: wx+no+yes+yes+yes+yes+no+no+mpx-project+A mpx project+'xcp'+touristappid)

4. cd mpx-project

5. npm I

6. npm run watch

### Description

I have always developed WeChat mini programs natively, and today I wanted to try mpx... | 1.0 | After initializing the project, npm run watch reports an error and stays stuck at building... - ### Environment

- OS: macOS 10.14.6

- Node version: v12.16.2

- @mpxjs/core: 2.5.11

### Steps

1. Read the "Installation and usage" section of the Introduction chapter in the docs

2. npm i -g @mpxjs/cli

3. mpx init mpx-project (options: wx+no+yes+yes+yes+yes+no+no+mpx-project+A mpx project+'xcp'+touristappid)

4. cd mpx-project

5. npm I

6. npm run ... | process | after initializing the project npm run watch reports an error and stays stuck at building environment os macos node version mpxjs core steps read the installation and usage section of the introduction chapter in the docs npm i g mpxjs cli mpx init mpx project options wx no yes yes yes yes no no mpx project a mpx project xcp touristappid cd mpx project npm i npm run watch ... | 1 |

578,610 | 17,148,958,588 | IssuesEvent | 2021-07-13 17:50:37 | Couchers-org/couchers | https://api.github.com/repos/Couchers-org/couchers | closed | gRPC interceptors don't work with gRPC-Web Devtools | bug frontend priority: low | This means that the unauthenticated error handler doesn't run correctly, so for example you won't be kicked out if the backend suddenly jails you or logs you out (e.g. due to ToS version updating).

This works correctly in the prod build though because we disable the dev tools there.

See also https://github.com/gr... | 1.0 | gRPC interceptors don't work with gRPC-Web Devtools - This means that the unauthenticated error handler doesn't run correctly, so for example you won't be kicked out if the backend suddenly jails you or logs you out (e.g. due to ToS version updating).

This works correctly in the prod build though because we disable ... | non_process | grpc interceptors don t work with grpc web devtools this means that the unauthenticated error handler doesn t run correctly so for example you won t be kicked out if the backend suddenly jails you or logs you out e g due to tos version updating this works correctly in the prod build though because we disable ... | 0 |

87,208 | 25,068,155,419 | IssuesEvent | 2022-11-07 09:59:40 | gitpod-io/gitpod | https://api.github.com/repos/gitpod-io/gitpod | closed | Webhooks for GitLab projects are disabled on Unauthorized Errors | type: bug git provider: gitlab feature: prebuilds feature: teams and projects | **Issue**

We've learned now that GitLab webhooks are disabled automatically if the receiver (Gitpod) is responding with status codes other than 2xx. The rules for [failing webhooks](https://docs.gitlab.com/ee/user/project/integrations/webhooks.html#failing-webhooks) are basically:

* on 5xx responses -> disable temp... | 1.0 | Webhooks for GitLab projects are disabled on Unauthorized Errors - **Issue**

We've learned now that GitLab webhooks are disabled automatically if the receiver (Gitpod) is responding with status codes other than 2xx. The rules for [failing webhooks](https://docs.gitlab.com/ee/user/project/integrations/webhooks.html#f... | non_process | webhooks for gitlab projects are disabled on unauthorized errors issue we ve learned now that gitlab webhooks are disabled automatically if the receiver gitpod is responding with status codes other than the rules for are basically on responses disable temporarily on responses disable ... | 0 |

12,783 | 15,165,440,156 | IssuesEvent | 2021-02-12 15:04:07 | endlessm/azafea | https://api.github.com/repos/endlessm/azafea | closed | Add a composite index on ping country + created_at | endless event processors enhancement | @ramcq tried to run the following query:

```sql

SELECT DISTINCT p.country,

(SELECT count(pq1.id)

FROM ping_v1 pq1

WHERE pq1.country = p.country

AND pq1.created_at >= '2019-01-01'::date

AND pq1.created_at < '2019-04-01':... | 1.0 | Add a composite index on ping country + created_at - @ramcq tried to run the following query:

```sql

SELECT DISTINCT p.country,

(SELECT count(pq1.id)

FROM ping_v1 pq1

WHERE pq1.country = p.country

AND pq1.created_at >= '2019-01-01'::date

... | process | add a composite index on ping country created at ramcq tried to run the following query sql select distinct p country select count id from ping where country p country and created at date ... | 1 |

148,086 | 5,658,731,927 | IssuesEvent | 2017-04-10 10:56:03 | research-resource/research_resource | https://api.github.com/repos/research-resource/research_resource | closed | Alert on consent page to confirm saliva kit has been requested | priority-2 T2h | As a user, I want to be reassured that my request for a saliva sample kit has been acknowledged and that the kit is on the way to me

What is the easiest way to do this?

Ties in with #18 - so when the user enters their address and clicks to submit the request, a message would show with 'Thank you for requesting your... | 1.0 | Alert on consent page to confirm saliva kit has been requested - As a user, I want to be reassured that my request for a saliva sample kit has been acknowledged and that the kit is on the way to me

What is the easiest way to do this?

Ties in with #18 - so when the user enters their address and clicks to submit the ... | non_process | alert on consent page to confirm saliva kit has been requested as a user i want to be reassured that my request for a saliva sample kit has been acknowledged and that the kit is on the way to me what is the easiest way to do this ties in with so when the user enters their address and clicks to submit the r... | 0 |

7,182 | 10,323,014,259 | IssuesEvent | 2019-08-31 17:27:30 | AlbaHoo/cheeger.blog | https://api.github.com/repos/AlbaHoo/cheeger.blog | opened | Image processing with AWS lambda & S3 buckets | Gitalk Image processing with AWS lambda & S3 buckets | https://cheeger.com/develop/2018/03/17/Image-process-with-aws-lambda.html

Image processing with AWS lambda & S3 buckets | 1.0 | Image processing with AWS lambda & S3 buckets - https://cheeger.com/develop/2018/03/17/Image-process-with-aws-lambda.html

Image processing with AWS lambda & S3 buckets | process | image processing with aws lambda buckets image processing with aws lambda buckets | 1 |

20,968 | 27,819,131,581 | IssuesEvent | 2023-03-19 02:06:51 | cse442-at-ub/project_s23-cinco | https://api.github.com/repos/cse442-at-ub/project_s23-cinco | closed | Set up demo for App's session authorization | Processing Task Sprint 2 | *Test Cases*

Local host with xampp apache running

*Test 1*

1) After having the php file in the htdocs of the xampp folder on your pc and with xampp running apache, put in the local host url into your browser. Similar to what appears in the image right below in step 2

2) Inspect the page and go to the application secti... | 1.0 | Set up demo for App's session authorization - *Test Cases*

Local host with xampp apache running

*Test 1*

1) After having the php file in the htdocs of the xampp folder on your pc and with xampp running apache, put in the local host url into your browser. Similar to what appears in the image right below in step 2

2) In... | process | set up demo for app s session authorization test cases local host with xampp apache running test after having the php file in the htdocs of the xampp folder on your pc and with xampp running apache put in the local host url into your browser similar to what appears in the image right below in step in... | 1 |

18,041 | 24,051,694,534 | IssuesEvent | 2022-09-16 13:22:03 | calaldees/KaraKara | https://api.github.com/repos/calaldees/KaraKara | closed | Ensure every track has a title and some attachments | feature processmedia2 | Right now the code assumes every track has `track.tags.title`, because the title is referenced in a ton of places and adding is-null checks in every place is a mess. But then if a track slips through without a title, things crash :(

Similar for attachments - we assume every track has `video`, `preview`, and `image` ... | 1.0 | Ensure every track has a title and some attachments - Right now the code assumes every track has `track.tags.title`, because the title is referenced in a ton of places and adding is-null checks in every place is a mess. But then if a track slips through without a title, things crash :(

Similar for attachments - we a... | process | ensure every track has a title and some attachments right now the code assumes every track has track tags title because the title is referenced in a ton of places and adding is null checks in every place is a mess but then if a track slips through without a title things crash similar for attachments we a... | 1 |

46,177 | 18,982,971,994 | IssuesEvent | 2021-11-21 08:01:57 | Azure/azure-powershell | https://api.github.com/repos/Azure/azure-powershell | closed | Set-AzPublicIpAddress does not work with IPs created from Public IP Prefix | Service Attention question Network - Virtual Network customer-reported needs-author-feedback no-recent-activity | <!--

- Make sure you are able to reproduce this issue on the latest released version of Az