Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (string, length 19) | repo (string, lengths 7 to 112) | repo_url (string, lengths 36 to 141) | action (stringclasses, 3 values) | title (string, lengths 1 to 744) | labels (string, lengths 4 to 574) | body (string, lengths 9 to 211k) | index (stringclasses, 10 values) | text_combine (string, lengths 96 to 211k) | label (stringclasses, 2 values) | text (string, lengths 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

13,702 | 16,457,249,058 | IssuesEvent | 2021-05-21 14:07:36 | cncf/tag-security | https://api.github.com/repos/cncf/tag-security | closed | write up more detail on how we organize security reviewer teams | assessment-process inactive | @JustinCappos @justincormack @lumjjb and I met and discussed how to organize teams for the security audits.

Here are notes which may only make sense to the people who were present -- capturing them here in advance of PR with better explanation

| area-System.Linq tenet-performance in-pr | Hi! I noticed that the [`System.Linq.Enumerable.Chunk`](https://docs.microsoft.com/en-us/dotnet/api/system.linq.enumerable.chunk) operator is [currently](https://github.com/dotnet/runtime/blob/0d96247e828d153e738d0a2067ecbe330d7d58ab/src/libraries/System.Linq/src/System/Linq/Chunk.cs) (.NET 7) implemented in a way that... | True | The Enumerable.Chunk can leak memory (.NET 7) - Hi! I noticed that the [`System.Linq.Enumerable.Chunk`](https://docs.microsoft.com/en-us/dotnet/api/system.linq.enumerable.chunk) operator is [currently](https://github.com/dotnet/runtime/blob/0d96247e828d153e738d0a2067ecbe330d7d58ab/src/libraries/System.Linq/src/System/L... | non_process | the enumerable chunk can leak memory net hi i noticed that the operator is net implemented in a way that could potentially result in delaying the garbage collection of some objects the issue emerges in case the processing of each element contained in each tsource chunk involves allocating a... | 0 |

64,352 | 15,874,744,416 | IssuesEvent | 2021-04-09 05:44:27 | open-telemetry/opentelemetry-python | https://api.github.com/repos/open-telemetry/opentelemetry-python | closed | Delete old/renamed opentelemetry-ext-* packages on PyPI | backlog build & infra | We renamed instrumentation and exporter PyPI packages from `opentelemetry-ext-*` to `opentelemetry-instrumentation-*` and `opentelemetry-exporter-*`. Now there are bunch of deprecated packages stuck at version `0.11b0` or earlier that need to be deleted from PyPI.

However, deleting them outright can break user build... | 1.0 | Delete old/renamed opentelemetry-ext-* packages on PyPI - We renamed instrumentation and exporter PyPI packages from `opentelemetry-ext-*` to `opentelemetry-instrumentation-*` and `opentelemetry-exporter-*`. Now there are bunch of deprecated packages stuck at version `0.11b0` or earlier that need to be deleted from PyP... | non_process | delete old renamed opentelemetry ext packages on pypi we renamed instrumentation and exporter pypi packages from opentelemetry ext to opentelemetry instrumentation and opentelemetry exporter now there are bunch of deprecated packages stuck at version or earlier that need to be deleted from pypi ... | 0 |

37,257 | 18,245,438,004 | IssuesEvent | 2021-10-01 17:44:06 | firebase/firebase-ios-sdk | https://api.github.com/repos/firebase/firebase-ios-sdk | closed | Catalyst 15.0 with Swift Package Manager: No such module 'FirebasePerformance' | api: performance Catalyst | <!-- DO NOT DELETE

validate_template=true

template_path=.github/ISSUE_TEMPLATE/bug_report.md

-->

### [REQUIRED] Step 1: Describe your environment

* Xcode version: Version 13.0 beta 5 (13A5212g)

* Firebase SDK version: 8.8.0 (also tried 8.6.1)

* Installation method: `Swift Package Manager`

* Firebase... | True | Catalyst 15.0 with Swift Package Manager: No such module 'FirebasePerformance' - <!-- DO NOT DELETE

validate_template=true

template_path=.github/ISSUE_TEMPLATE/bug_report.md

-->

### [REQUIRED] Step 1: Describe your environment

* Xcode version: Version 13.0 beta 5 (13A5212g)

* Firebase SDK version: 8.8.0 (... | non_process | catalyst with swift package manager no such module firebaseperformance do not delete validate template true template path github issue template bug report md step describe your environment xcode version version beta firebase sdk version also tried ... | 0 |

20,832 | 27,592,380,962 | IssuesEvent | 2023-03-09 02:00:08 | lizhihao6/get-daily-arxiv-noti | https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti | opened | New submissions for Thu, 9 Mar 23 | event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB | ## Keyword: events

### Intermediate and Future Frame Prediction of Geostationary Satellite Imagery With Warp and Refine Network

- **Authors:** Minseok Seo, Yeji Choi, Hyungon Ry, Heesun Park, Hyungkun Bae, Hyesook Lee, Wanseok Seo

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** htt... | 2.0 | New submissions for Thu, 9 Mar 23 - ## Keyword: events

### Intermediate and Future Frame Prediction of Geostationary Satellite Imagery With Warp and Refine Network

- **Authors:** Minseok Seo, Yeji Choi, Hyungon Ry, Heesun Park, Hyungkun Bae, Hyesook Lee, Wanseok Seo

- **Subjects:** Computer Vision and Pattern Recog... | process | new submissions for thu mar keyword events intermediate and future frame prediction of geostationary satellite imagery with warp and refine network authors minseok seo yeji choi hyungon ry heesun park hyungkun bae hyesook lee wanseok seo subjects computer vision and pattern recogn... | 1 |

15,506 | 19,703,264,856 | IssuesEvent | 2022-01-12 18:52:11 | googleapis/java-analytics-admin | https://api.github.com/repos/googleapis/java-analytics-admin | opened | Your .repo-metadata.json file has a problem 🤒 | type: process repo-metadata: lint | You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* release_level must be equal to one of the allowed values in .repo-metadata.json

* api_shortname 'analytics-admin' invalid in .repo-metadata.json

☝️ Once you correct these problems, you can close this issue.

Reach out to **go/github-automat... | 1.0 | Your .repo-metadata.json file has a problem 🤒 - You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* release_level must be equal to one of the allowed values in .repo-metadata.json

* api_shortname 'analytics-admin' invalid in .repo-metadata.json

☝️ Once you correct these problems, you can cl... | process | your repo metadata json file has a problem 🤒 you have a problem with your repo metadata json file result of scan 📈 release level must be equal to one of the allowed values in repo metadata json api shortname analytics admin invalid in repo metadata json ☝️ once you correct these problems you can cl... | 1 |

720,895 | 24,810,240,431 | IssuesEvent | 2022-10-25 08:51:23 | spidernet-io/spiderpool | https://api.github.com/repos/spidernet-io/spiderpool | closed | Edit subnet is rejected | issue/not-assign priority/important-soon kind/bug | Describe the version

version about:

spiderpool

- v0.2.2

**Describe the bug**

A Subnet, without any IP assigned to the IPPool,But it is not possible to change the size of the ips

**Output of the failure**

```

# Please edit the object below. Lines beginning with a '#' will be ignored,

# and an empty file wil... | 1.0 | Edit subnet is rejected - Describe the version

version about:

spiderpool

- v0.2.2

**Describe the bug**

A Subnet, without any IP assigned to the IPPool,But it is not possible to change the size of the ips

**Output of the failure**

```

# Please edit the object below. Lines beginning with a '#' will be ignored... | non_process | edit subnet is rejected describe the version version about spiderpool describe the bug a subnet without any ip assigned to the ippool,but it is not possible to change the size of the ips output of the failure please edit the object below lines beginning with a will be ignored ... | 0 |

7,800 | 10,959,312,910 | IssuesEvent | 2019-11-27 11:07:58 | Altinn/altinn-studio | https://api.github.com/repos/Altinn/altinn-studio | closed | Implement solution for (flexible) process | Epic area/process status/draft team/nusse | **Goal for MVP2:** Know how to solve this

**Goal for MVP3:** Solve this for MVP - including update workflow to support one-step submit (not two step like in current workflow) | 1.0 | Implement solution for (flexible) process - **Goal for MVP2:** Know how to solve this

**Goal for MVP3:** Solve this for MVP - including update workflow to support one-step submit (not two step like in current workflow) | process | implement solution for flexible process goal for know how to solve this goal for solve this for mvp including update workflow to support one step submit not two step like in current workflow | 1 |

7,371 | 10,512,645,033 | IssuesEvent | 2019-09-27 18:26:50 | openopps/openopps-platform | https://api.github.com/repos/openopps/openopps-platform | closed | Applicant Status: Completed | Apply Process Requirements Ready State Dept. | Who: Student

What: Status on Dashboard

Why: As a student I would like to know what my status is as an applicant

Statuses:

**Not complete** - when a student is a primary selection and the Hiring Manger does not mark them complete before closing the internship.

**Completed** - when a student is an alternate or pri... | 1.0 | Applicant Status: Completed - Who: Student

What: Status on Dashboard

Why: As a student I would like to know what my status is as an applicant

Statuses:

**Not complete** - when a student is a primary selection and the Hiring Manger does not mark them complete before closing the internship.

**Completed** - when a ... | process | applicant status completed who student what status on dashboard why as a student i would like to know what my status is as an applicant statuses not complete when a student is a primary selection and the hiring manger does not mark them complete before closing the internship completed when a ... | 1 |

120,494 | 17,644,204,886 | IssuesEvent | 2021-08-20 01:57:00 | logbie/HyperGAN | https://api.github.com/repos/logbie/HyperGAN | opened | CVE-2021-29616 (High) detected in tensorflow_gpu-2.1.0-cp36-cp36m-manylinux2010_x86_64.whl | security vulnerability | ## CVE-2021-29616 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow_gpu-2.1.0-cp36-cp36m-manylinux2010_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine lea... | True | CVE-2021-29616 (High) detected in tensorflow_gpu-2.1.0-cp36-cp36m-manylinux2010_x86_64.whl - ## CVE-2021-29616 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow_gpu-2.1.0-cp36-... | non_process | cve high detected in tensorflow gpu whl cve high severity vulnerability vulnerable library tensorflow gpu whl tensorflow is an open source machine learning framework for everyone library home page a href path to dependency file hypergan requirements... | 0 |

16,699 | 21,797,984,938 | IssuesEvent | 2022-05-15 22:26:48 | TheUltimateC0der/listrr.pro | https://api.github.com/repos/TheUltimateC0der/listrr.pro | closed | Create Lists that must meet all Genre selected | feature-request processing:server-side | For example, Crime and Thriller to get Crime Thrillers | 1.0 | Create Lists that must meet all Genre selected - For example, Crime and Thriller to get Crime Thrillers | process | create lists that must meet all genre selected for example crime and thriller to get crime thrillers | 1 |

228,435 | 18,232,491,512 | IssuesEvent | 2021-10-01 00:00:46 | ImagingDataCommons/IDC-WebApp | https://api.github.com/repos/ImagingDataCommons/IDC-WebApp | closed | Page selection buttons are jumping around (again) | bug merged:dev testing needed testing passed In Progress | This is what I see in the test tier:

| 2.0 | Page selection buttons are jumping around (again) - This is what I see in the test tier:

| non_process | page selection buttons are jumping around again this is what i see in the test tier | 0 |

50,707 | 10,549,209,124 | IssuesEvent | 2019-10-03 08:10:55 | CleverRaven/Cataclysm-DDA | https://api.github.com/repos/CleverRaven/Cataclysm-DDA | closed | Reduce scope of sunlight shadowcasting. | Code: Performance Good First Issue [C++] | **Is your feature request related to a problem? Please describe.**

During active play, recalculating sunlight levels in outdoor areas consumes a significant amount of processing time.

**Describe the solution you'd like**

On map shift or on initial sunlight propogation, we can track which horizontal map slices ... | 1.0 | Reduce scope of sunlight shadowcasting. - **Is your feature request related to a problem? Please describe.**

During active play, recalculating sunlight levels in outdoor areas consumes a significant amount of processing time.

**Describe the solution you'd like**

On map shift or on initial sunlight propogation,... | non_process | reduce scope of sunlight shadowcasting is your feature request related to a problem please describe during active play recalculating sunlight levels in outdoor areas consumes a significant amount of processing time describe the solution you d like on map shift or on initial sunlight propogation ... | 0 |

92,708 | 3,872,978,201 | IssuesEvent | 2016-04-11 15:29:39 | cs2103jan2016-W14-2J/main | https://api.github.com/repos/cs2103jan2016-W14-2J/main | closed | Parser to enhance Deadline parsing | Priority.medium Type.Enhancement | etc: meet Hannah by 7pm by the beach. (if 7pm has passed, set the date to the following day)

etc. finish revising chapter 1 in 2 hours time.

| 1.0 | Parser to enhance Deadline parsing - etc: meet Hannah by 7pm by the beach. (if 7pm has passed, set the date to the following day)

etc. finish revising chapter 1 in 2 hours time.

| non_process | parser to enhance deadline parsing etc meet hannah by by the beach if has passed set the date to the following day etc finish revising chapter in hours time | 0 |

9,585 | 12,536,739,608 | IssuesEvent | 2020-06-05 01:05:41 | googleapis/gapic-showcase | https://api.github.com/repos/googleapis/gapic-showcase | closed | chore: enable typescript-smoke-test once proto3_optional support is added | process | Disabled the `typescript-smoke-test` job in #380. Once gapic-generator-typescript implements proto3_optional support, we can enable it again (if we want to). | 1.0 | chore: enable typescript-smoke-test once proto3_optional support is added - Disabled the `typescript-smoke-test` job in #380. Once gapic-generator-typescript implements proto3_optional support, we can enable it again (if we want to). | process | chore enable typescript smoke test once optional support is added disabled the typescript smoke test job in once gapic generator typescript implements optional support we can enable it again if we want to | 1 |

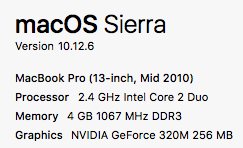

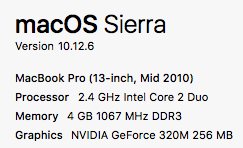

676,061 | 23,115,103,540 | IssuesEvent | 2022-07-27 15:58:18 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [docdb] Cluster fails on macOS Sierra / Intel Core 2 Duo due to missing SSE4.2 instruction set | kind/enhancement area/docdb priority/medium | Jira Link: [DB-1798](https://yugabyte.atlassian.net/browse/DB-1798)

$ uname -v

Darwin Kernel Version 16.7.0: Fri Apr 27 17:59:46 PDT 2018; root:xnu-3789.73.13~1/RELEASE_X8... | 1.0 | [docdb] Cluster fails on macOS Sierra / Intel Core 2 Duo due to missing SSE4.2 instruction set - Jira Link: [DB-1798](https://yugabyte.atlassian.net/browse/DB-1798)

$ uname... | non_process | cluster fails on macos sierra intel core duo due to missing instruction set jira link uname v darwin kernel version fri apr pdt root xnu release bin yb ctl num shards per tserver create cat tmp yugabyte local cluster node disk tserver e... | 0 |

15,440 | 19,656,047,763 | IssuesEvent | 2022-01-10 12:37:57 | plazi/community | https://api.github.com/repos/plazi/community | closed | A GENERIC CLASSIFICATION OF THE THELYPTERIDACEAE by Fawcett to be finished | process request | This is the file name for the fern book 2021_ThelypteridaceaeFerns_OACopy3.pdf

FF8EFF8EFFE99B4B602B7D49FFA7FFBB

thanks for looking into this - quiet a bit has already been done

tx donat

| 1.0 | A GENERIC CLASSIFICATION OF THE THELYPTERIDACEAE by Fawcett to be finished - This is the file name for the fern book 2021_ThelypteridaceaeFerns_OACopy3.pdf

FF8EFF8EFFE99B4B602B7D49FFA7FFBB

thanks for looking into this - quiet a bit has already been done

tx donat

| process | a generic classification of the thelypteridaceae by fawcett to be finished this is the file name for the fern book thelypteridaceaeferns pdf thanks for looking into this quiet a bit has already been done tx donat | 1 |

7,002 | 10,275,927,802 | IssuesEvent | 2019-08-24 12:46:54 | nsubstitute/NSubstitute.Analyzers | https://api.github.com/repos/nsubstitute/NSubstitute.Analyzers | closed | Remove code duplication for checking if given symbol belongs to NSubstitute | Non functional requirement | There is a lot of code duplication in the codebase related to checks if a given symbol/node is part of NSubstitute. Those checks should be extracted to some sort of helper class/service so as they can be easily reusable without need of copying the code all over the places

| 1.0 | Remove code duplication for checking if given symbol belongs to NSubstitute - There is a lot of code duplication in the codebase related to checks if a given symbol/node is part of NSubstitute. Those checks should be extracted to some sort of helper class/service so as they can be easily reusable without need of copyin... | non_process | remove code duplication for checking if given symbol belongs to nsubstitute there is a lot of code duplication in the codebase related to checks if a given symbol node is part of nsubstitute those checks should be extracted to some sort of helper class service so as they can be easily reusable without need of copyin... | 0 |

355,126 | 25,175,642,188 | IssuesEvent | 2022-11-11 09:00:44 | Yuvaraj0702/pe | https://api.github.com/repos/Yuvaraj0702/pe | opened | FAQ sections does not have many FAQ's | type.DocumentationBug severity.VeryLow | Description: There is only one bug in the FAQ sections while there may be many more frequently asked questions regarding compatibility and file storage etc.

Image of bug:

Reason for bug level:

These are... | 1.0 | FAQ sections does not have many FAQ's - Description: There is only one bug in the FAQ sections while there may be many more frequently asked questions regarding compatibility and file storage etc.

Image of bug:

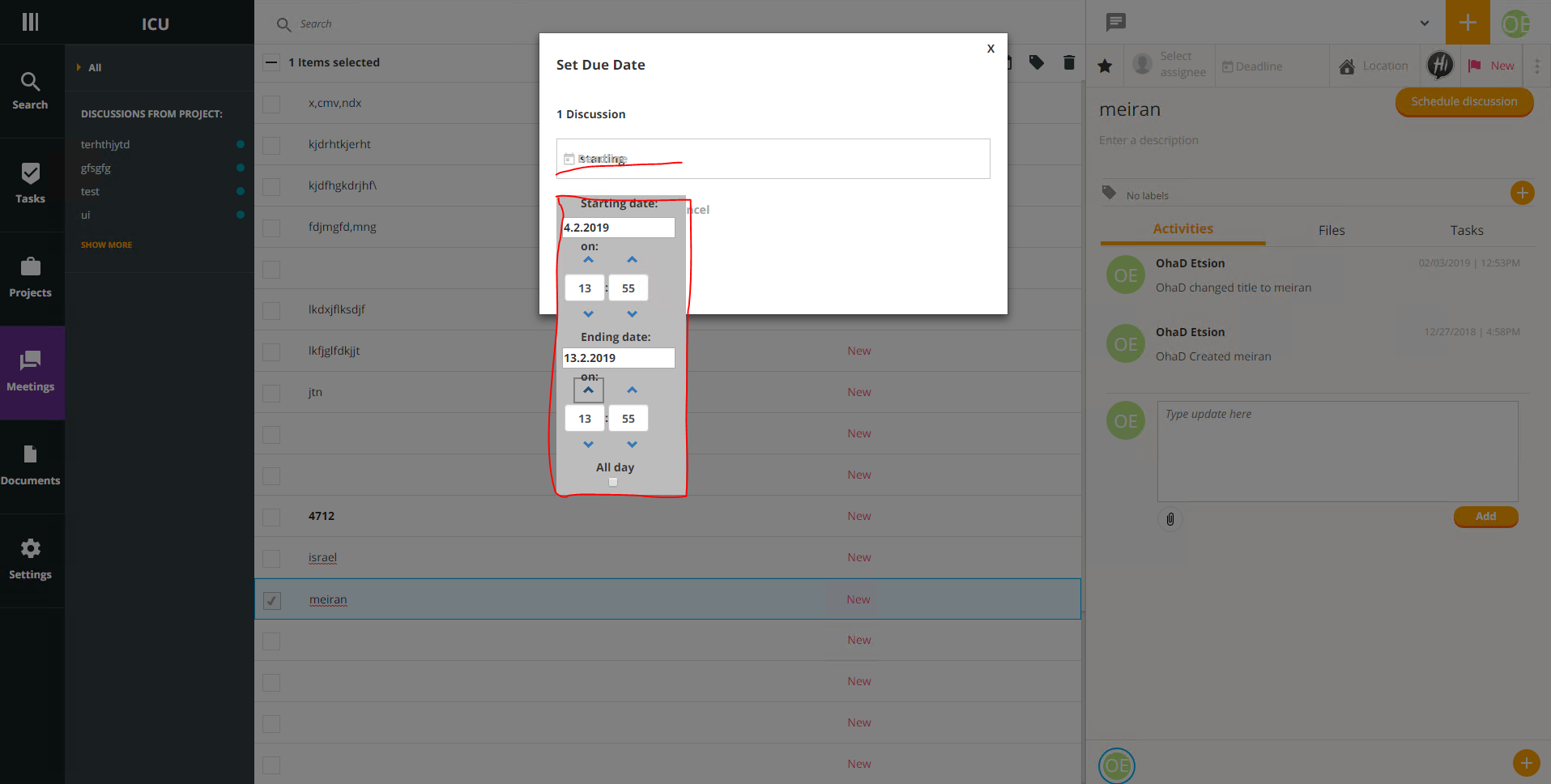

| 1.0 | Meetings: multiple select date error - go to meetings

open new meeting

click on multiple select

click on date filed

fill the fields Starting date and Ending date

click enter

the dates is not update

| process | meetings multiple select date error go to meetings open new meeting click on multiple select click on date filed fill the fields starting date and ending date click enter the dates is not update | 1 |

22,594 | 31,818,256,917 | IssuesEvent | 2023-09-13 22:37:15 | assimp/assimp | https://api.github.com/repos/assimp/assimp | closed | Bug: memory delete error. | Bug Postprocessing | error is occured when the postProcessing with ForceGenerateNormals

post option is

```

PostProcessSteps ppSteps = PostProcessSteps.None;

ppSteps |= PostProcessPreset.TargetRealTimeQuality;

ppSteps |= PostProcessSteps.GenerateSmoothNormals;

ppSteps |= PostProcessSteps.ForceGenerateNormals;

```

File:... | 1.0 | Bug: memory delete error. - error is occured when the postProcessing with ForceGenerateNormals

post option is

```

PostProcessSteps ppSteps = PostProcessSteps.None;

ppSteps |= PostProcessPreset.TargetRealTimeQuality;

ppSteps |= PostProcessSteps.GenerateSmoothNormals;

ppSteps |= PostProcessSteps.ForceGene... | process | bug memory delete error error is occured when the postprocessing with forcegeneratenormals post option is postprocesssteps ppsteps postprocesssteps none ppsteps postprocesspreset targetrealtimequality ppsteps postprocesssteps generatesmoothnormals ppsteps postprocesssteps forcegene... | 1 |

18,484 | 24,550,777,785 | IssuesEvent | 2022-10-12 12:27:00 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PM] [Angular Upgrade] My account > Change password > New password > Password view icon should get displayed | Bug P1 Participant manager Process: Fixed Process: Tested dev | My account > Change password > New password > Password view icon should get displayed

**Note:** Issue needs to be fixed wherever password view icon is getting displayed

**AR:**

**ER:**

detected in http-proxy-1.17.0.tgz | security vulnerability | ## WS-2020-0091 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>http-proxy-1.17.0.tgz</b></p></summary>

<p>HTTP proxying for the masses</p>

<p>Library home page: <a href="https://regis... | True | WS-2020-0091 (High) detected in http-proxy-1.17.0.tgz - ## WS-2020-0091 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>http-proxy-1.17.0.tgz</b></p></summary>

<p>HTTP proxying for the... | non_process | ws high detected in http proxy tgz ws high severity vulnerability vulnerable library http proxy tgz http proxying for the masses library home page a href path to dependency file choosealicense com assets vendor clipboard package json path to vulnerable library choos... | 0 |

14,899 | 18,291,533,875 | IssuesEvent | 2021-10-05 15:44:08 | googleapis/python-bigquery-pandas | https://api.github.com/repos/googleapis/python-bigquery-pandas | closed | Dependency Dashboard | type: process api: bigquery | This issue provides visibility into Renovate updates and their statuses. [Learn more](https://docs.renovatebot.com/key-concepts/dashboard/)

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/pandas-1.x -->[chore(deps): update... | 1.0 | Dependency Dashboard - This issue provides visibility into Renovate updates and their statuses. [Learn more](https://docs.renovatebot.com/key-concepts/dashboard/)

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/pandas-1.x ... | process | dependency dashboard this issue provides visibility into renovate updates and their statuses open these updates have all been created already click a checkbox below to force a retry rebase of any pull pull pull click on this checkbox to rebase all open pr... | 1 |

19,406 | 25,546,054,690 | IssuesEvent | 2022-11-29 18:54:23 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | [processor/cumulativetodelta] Output delta histograms include min and max when they shouldnt | bug help wanted priority:p2 processor/cumulativetodelta | ### What happened?

## Description

The cumulativetodelta processor retains the min and max from the cumulative histograms when they shouldn't. Its semantically wrong to include cumulative min and max on a resulting delta.

## Steps to Reproduce

Its quite clear in the [source code](https://github.com/open-telem... | 1.0 | [processor/cumulativetodelta] Output delta histograms include min and max when they shouldnt - ### What happened?

## Description

The cumulativetodelta processor retains the min and max from the cumulative histograms when they shouldn't. Its semantically wrong to include cumulative min and max on a resulting delta. ... | process | output delta histograms include min and max when they shouldnt what happened description the cumulativetodelta processor retains the min and max from the cumulative histograms when they shouldn t its semantically wrong to include cumulative min and max on a resulting delta steps to reproduce ... | 1 |

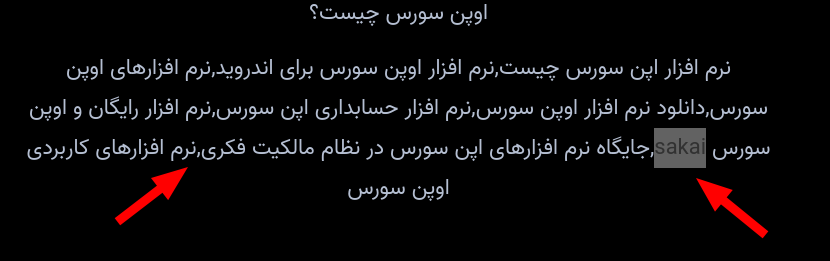

222,541 | 17,081,368,035 | IssuesEvent | 2021-07-08 05:55:57 | POSSF/POSSF | https://api.github.com/repos/POSSF/POSSF | closed | Typo & translate | documentation | 

The first page has numerous problems, including spelling mistakes and more.

The goals, sponsors, and organizers of the conference are also not specifically, or at least clearly, visible. | 1.0 | Typo & translate - 

The first page has numerous problems, including spelling mistakes and more.

The goals, sponsors, and organizers of the conference are also not specifically, or at least clearly, visible. | non_process | typo translate the first page has numerous problems including spelling mistakes and more the goals sponsors and organizers of the conference are also not specifically or at least clearly visible | 0 |

8,280 | 11,438,356,710 | IssuesEvent | 2020-02-05 03:12:55 | GoogleContainerTools/kpt | https://api.github.com/repos/GoogleContainerTools/kpt | closed | Setup domain to work with the go module alias' | process | Need to be able to `go get kpt.dev` and `go get lib.kpt.dev` -- requires configuring the domain to respond with the correct go headers

See: https://golang.org/cmd/go/#hdr-Remote_import_paths

Also see:

https://github.com/GoogleCloudPlatform/govanityurls

| 1.0 | Setup domain to work with the go module alias' - Need to be able to `go get kpt.dev` and `go get lib.kpt.dev` -- requires configuring the domain to respond with the correct go headers

See: https://golang.org/cmd/go/#hdr-Remote_import_paths

Also see:

https://github.com/GoogleCloudPlatform/govanityurls

| process | setup domain to work with the go module alias need to be able to go get kpt dev and go get lib kpt dev requires configuring the domain to respond with the correct go headers see also see | 1 |

440,940 | 12,706,526,986 | IssuesEvent | 2020-06-23 07:21:24 | AGROFIMS/hagrofims | https://api.github.com/repos/AGROFIMS/hagrofims | opened | Add more soil info in Site description | medium priority site information | Add a section to give info about soil tests done prior to the experiment start, in Site description. | 1.0 | Add more soil info in Site description - Add a section to give info about soil tests done prior to the experiment start, in Site description. | non_process | add more soil info in site description add a section to give info about soil tests done prior to the experiment start in site description | 0 |

25,359 | 18,538,771,420 | IssuesEvent | 2021-10-21 14:09:20 | pythonitalia/pycon | https://api.github.com/repos/pythonitalia/pycon | closed | Add a shared local .env file in the repo | infrastructure | So who wants to work on this project doesn't need to know what to put in a .env file | 1.0 | Add a shared local .env file in the repo - So who wants to work on this project doesn't need to know what to put in a .env file | non_process | add a shared local env file in the repo so who wants to work on this project doesn t need to know what to put in a env file | 0 |

230,922 | 18,724,840,948 | IssuesEvent | 2021-11-03 15:20:00 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | Failing test: X-Pack Case API Integration Tests.x-pack/test/case_api_integration/spaces_only/tests/trial/cases/push_case·ts - cases spaces only enabled: trial push_case "after each" hook for "should push a case in space1" | loe:hours failed-test impact:low Team: SecuritySolution | A test failed on a tracked branch

```

Error: ECONNREFUSED: Connection refused

at Test.assert (/dev/shm/workspace/parallel/11/kibana/node_modules/supertest/lib/test.js:165:15)

at assert (/dev/shm/workspace/parallel/11/kibana/node_modules/supertest/lib/test.js:131:12)

at /dev/shm/workspace/parallel/11/kibana... | 1.0 | Failing test: X-Pack Case API Integration Tests.x-pack/test/case_api_integration/spaces_only/tests/trial/cases/push_case·ts - cases spaces only enabled: trial push_case "after each" hook for "should push a case in space1" - A test failed on a tracked branch

```

Error: ECONNREFUSED: Connection refused

at Test.asser... | non_process | failing test x pack case api integration tests x pack test case api integration spaces only tests trial cases push case·ts cases spaces only enabled trial push case after each hook for should push a case in a test failed on a tracked branch error econnrefused connection refused at test assert d... | 0 |

21,814 | 30,316,587,015 | IssuesEvent | 2023-07-10 15:58:25 | tdwg/dwc | https://api.github.com/repos/tdwg/dwc | closed | Change term - measurementUnit: use at least one SI example | Term - change Class - MeasurementOrFact non-normative Process - complete | ## Term change

* Submitter: @damianooldoni @peterdesmet

* Efficacy Justification (why is this change necessary?): The comments for `measurementUnit` state: "Recommended best practice is to use the International System of Units (SI)." However, none of the examples (`mm`, `C`, `km`, `ha`) are one of the 7 base [SI un... | 1.0 | Change term - measurementUnit: use at least one SI example - ## Term change

* Submitter: @damianooldoni @peterdesmet

* Efficacy Justification (why is this change necessary?): The comments for `measurementUnit` state: "Recommended best practice is to use the International System of Units (SI)." However, none of the ... | process | change term measurementunit use at least one si example term change submitter damianooldoni peterdesmet efficacy justification why is this change necessary the comments for measurementunit state recommended best practice is to use the international system of units si however none of the ... | 1 |

17,386 | 23,202,597,633 | IssuesEvent | 2022-08-01 23:41:20 | UMEP-dev/SuPy | https://api.github.com/repos/UMEP-dev/SuPy | closed | Timestep issue when running SuPy with 1min forcing data | pre-processing | When running SUEWS with input data at 60s resolution, the model timestep is set to 60s, and the output is set to 60s, the resulting output is given for every 30s. Same happens when running the model for a 5min timestep with input data at 5min resolution. The results are then given every 2.5min. The results of these hal... | 1.0 | Timestep issue when running SuPy with 1min forcing data - When running SUEWS with input data at 60s resolution, the model timestep is set to 60s, and the output is set to 60s, the resulting output is given for every 30s. Same happens when running the model for a 5min timestep with input data at 5min resolution. The res... | process | timestep issue when running supy with forcing data when running suews with input data at resolution the model timestep is set to and the output is set to the resulting output is given for every same happens when running the model for a timestep with input data at resolution the results are then giv... | 1 |

16,099 | 20,271,295,266 | IssuesEvent | 2022-02-15 16:25:02 | NationalSecurityAgency/ghidra | https://api.github.com/repos/NationalSecurityAgency/ghidra | closed | Question on 16-bit x86: STOSD.REP ES:EDI | Feature: Processor/x86 | The bytes ``66 f2 67 ab`` disassemble to STOSD.REP ES:EDI on 16-bit x86. The resulting Pcode mentions the registers EDI, EAX, ECX. Is this a bug? | 1.0 | Question on 16-bit x86: STOSD.REP ES:EDI - The bytes ``66 f2 67 ab`` disassemble to STOSD.REP ES:EDI on 16-bit x86. The resulting Pcode mentions the registers EDI, EAX, ECX. Is this a bug? | process | question on bit stosd rep es edi the bytes ab disassemble to stosd rep es edi on bit the resulting pcode mentions the registers edi eax ecx is this a bug | 1 |

67,188 | 27,748,517,924 | IssuesEvent | 2023-03-15 18:48:08 | BCDevOps/developer-experience | https://api.github.com/repos/BCDevOps/developer-experience | closed | SDN - Fix and add static KLAB2 STOR dhcpd include to GitHub repo | *team/ DXC* tech/automation *team/ ops and shared services* NSXT/SDN | **Describe the issue**

During the course of an emergency RFC involving DNS issues needing us to update dhcpd.conf on all our Openshift clusters, it was discovered that the unique situation of KLAB2 STOR network is not persistent, and thus when a refresh of KLAB's dhcpd.conf configuration takes place from regular IDIR ... | 1.0 | SDN - Fix and add static KLAB2 STOR dhcpd include to GitHub repo - **Describe the issue**

During the course of an emergency RFC involving DNS issues needing us to update dhcpd.conf on all our Openshift clusters, it was discovered that the unique situation of KLAB2 STOR network is not persistent, and thus when a refresh... | non_process | sdn fix and add static stor dhcpd include to github repo describe the issue during the course of an emergency rfc involving dns issues needing us to update dhcpd conf on all our openshift clusters it was discovered that the unique situation of stor network is not persistent and thus when a refresh of klab... | 0 |

10,782 | 13,608,980,336 | IssuesEvent | 2020-09-23 03:55:33 | googleapis/java-securitycenter | https://api.github.com/repos/googleapis/java-securitycenter | closed | Dependency Dashboard | api: securitycenter type: process | This issue contains a list of Renovate updates and their statuses.

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/org.apache.maven.plugins-maven-project-info-reports-plugin-3.x -->build(deps): update dependency org.apache... | 1.0 | Dependency Dashboard - This issue contains a list of Renovate updates and their statuses.

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/org.apache.maven.plugins-maven-project-info-reports-plugin-3.x -->build(deps): updat... | process | dependency dashboard this issue contains a list of renovate updates and their statuses open these updates have all been created already click a checkbox below to force a retry rebase of any build deps update dependency org apache maven plugins maven project info reports plugin to chore de... | 1 |

1,433 | 3,996,531,667 | IssuesEvent | 2016-05-10 19:05:35 | kerubistan/kerub | https://api.github.com/repos/kerubistan/kerub | opened | planner: learn from mistakes | component:data processing enhancement priority: normal | The planner should somehow keep track of the steps that passed and failed in the past. This information could be used to deprioritize steps that failed recently or often. | 1.0 | planner: learn from mistakes - The planner should somehow keep track of the steps that passed and failed in the past. This information could be used to deprioritize steps that failed recently or often. | process | planner learn from mistakes the planner should somehow keep track of the steps that passed and failed in the past this information could be used to deprioritize steps that failed recently or often | 1 |

148,570 | 13,239,857,280 | IssuesEvent | 2020-08-19 04:46:49 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | [Android] Release Notes for Android 1.12.x Release | OS/Android QA/No documentation :writing_hand: release-notes/exclude | - Added Sync v2. ([#10203](https://github.com/brave/brave-browser/issues/10203))

- Enabled the "prefetch-privacy-changes" flag by default under brave://flags. ([#8319](https://github.com/brave/brave-browser/issues/8319))

- Added support for state level ads delivery. ([#9200](https://github.com/brave/brave-browser/... | 1.0 | [Android] Release Notes for Android 1.12.x Release - - Added Sync v2. ([#10203](https://github.com/brave/brave-browser/issues/10203))

- Enabled the "prefetch-privacy-changes" flag by default under brave://flags. ([#8319](https://github.com/brave/brave-browser/issues/8319))

- Added support for state level ads deliv... | non_process | release notes for android x release added sync enabled the prefetch privacy changes flag by default under brave flags added support for state level ads delivery added the date of installation to the stats ping added farbling for webgl api when fingerprinting blo... | 0 |

4,256 | 7,189,055,998 | IssuesEvent | 2018-02-02 12:35:28 | Great-Hill-Corporation/quickBlocks | https://api.github.com/repos/Great-Hill-Corporation/quickBlocks | closed | chifra improvements | apps-chifra status-inprocess type-enhancement | Chifra should allow for hitting enter on the names and if empty then name the contracts simply by dao_1, dao-2 etc. Also first block should be non-empty, but if second is empty it should ask if you want to use same block for all--or better, allow to specify block on command line and if present don't do anything. | 1.0 | chifra improvements - Chifra should allow for hitting enter on the names and if empty then name the contracts simply by dao_1, dao-2 etc. Also first block should be non-empty, but if second is empty it should ask if you want to use same block for all--or better, allow to specify block on command line and if present don... | process | chifra improvements chifra should allow for hitting enter on the names and if empty then name the contracts simply by dao dao etc also first block should be non empty but if second is empty it should ask if you want to use same block for all or better allow to specify block on command line and if present don... | 1 |

4,756 | 5,258,750,651 | IssuesEvent | 2017-02-03 00:30:52 | gahansen/Albany | https://api.github.com/repos/gahansen/Albany | opened | Implement Teko preconditioner for matrix-free GMRES + Schwarz | Infrastructure LCM | This is needed to improve convergence for matrix-free GMRES + Schwarz (= monolithic Schwarz), and will require changes in Piro. I will need some help from Trilinos experts, e.g., @rppawlo , on how to hook this up, as it is nontrivial. | 1.0 | Implement Teko preconditioner for matrix-free GMRES + Schwarz - This is needed to improve convergence for matrix-free GMRES + Schwarz (= monolithic Schwarz), and will require changes in Piro. I will need some help from Trilinos experts, e.g., @rppawlo , on how to hook this up, as it is nontrivial. | non_process | implement teko preconditioner for matrix free gmres schwarz this is needed to improve convergence for matrix free gmres schwarz monolithic schwarz and will require changes in piro i will need some help from trilinos experts e g rppawlo on how to hook this up as it is nontrivial | 0 |

846 | 2,517,129,666 | IssuesEvent | 2015-01-16 12:01:27 | GoogleChrome/webrtc | https://api.github.com/repos/GoogleChrome/webrtc | opened | Make sure all manual-test/* files pass grunt | bug enhancement manual-test | Currently the manual-test folder is excluded from lint checking etc. Need to make sure all files adhere to the guidelines. | 1.0 | Make sure all manual-test/* files pass grunt - Currently the manual-test folder is excluded from lint checking etc. Need to make sure all files adhere to the guidelines. | non_process | make sure all manual test files pass grunt currently the manual test folder is excluded from lint checking etc need to make sure all files adhere to the guidelines | 0 |

18,612 | 24,579,236,192 | IssuesEvent | 2022-10-13 14:29:25 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [Consent API] [iOS] Study resources screen > Participants should be able to view and download the data-sharing consent screenshot, in the mobile app | Bug P0 iOS Process: Fixed Process: Tested dev | **Description:**

Pre-condition: The study should be created in SB by enabling data sharing permission

**Steps:**

1. Install the mobile app

3. Sign in / Sign up

4. Enroll to the study

5. Navigate to 'Study Resources' screen and Verify

**AR:** Participants are not able to view and download the data-sharing co... | 2.0 | [Consent API] [iOS] Study resources screen > Participants should be able to view and download the data-sharing consent screenshot, in the mobile app - **Description:**

Pre-condition: The study should be created in SB by enabling data sharing permission

**Steps:**

1. Install the mobile app

3. Sign in / Sign up

4.... | process | study resources screen participants should be able to view and download the data sharing consent screenshot in the mobile app description pre condition the study should be created in sb by enabling data sharing permission steps install the mobile app sign in sign up enroll to the s... | 1 |

64,953 | 14,703,898,074 | IssuesEvent | 2021-01-04 15:42:24 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | [SECURITY SOLUTION] Timeline updates may not be saved | Feature:Timeline Team: SecuritySolution Team:Threat Hunting bug fixed impact:high v7.11.0 | Kibana version:

7.9.0

Elasticsearch version:

7.9.0

Describe the bug:

When you update a Timeline and then immediately close it or navigate to a different page, the Timeline might not be saved.

1. Navigate to Security > Timelines

2. Create a new timeline

3. Add a title

4. Change the order of Timeline colum... | True | [SECURITY SOLUTION] Timeline updates may not be saved - Kibana version:

7.9.0

Elasticsearch version:

7.9.0

Describe the bug:

When you update a Timeline and then immediately close it or navigate to a different page, the Timeline might not be saved.

1. Navigate to Security > Timelines

2. Create a new timelin... | non_process | timeline updates may not be saved kibana version elasticsearch version describe the bug when you update a timeline and then immediately close it or navigate to a different page the timeline might not be saved navigate to security timelines create a new timeline add a title ... | 0 |

13,137 | 15,556,871,970 | IssuesEvent | 2021-03-16 08:25:12 | ethereumclassic/ECIPs | https://api.github.com/repos/ethereumclassic/ECIPs | closed | Kevin's ECIP Editor Permissions | meta:1 governance meta:3 process | It looks like @developerkevin has ECIP Editor permissions, but is not listed in the ECIP-1000. Can we get that updated? | 1.0 | Kevin's ECIP Editor Permissions - It looks like @developerkevin has ECIP Editor permissions, but is not listed in the ECIP-1000. Can we get that updated? | process | kevin s ecip editor permissions it looks like developerkevin has ecip editor permissions but is not listed in the ecip can we get that updated | 1 |

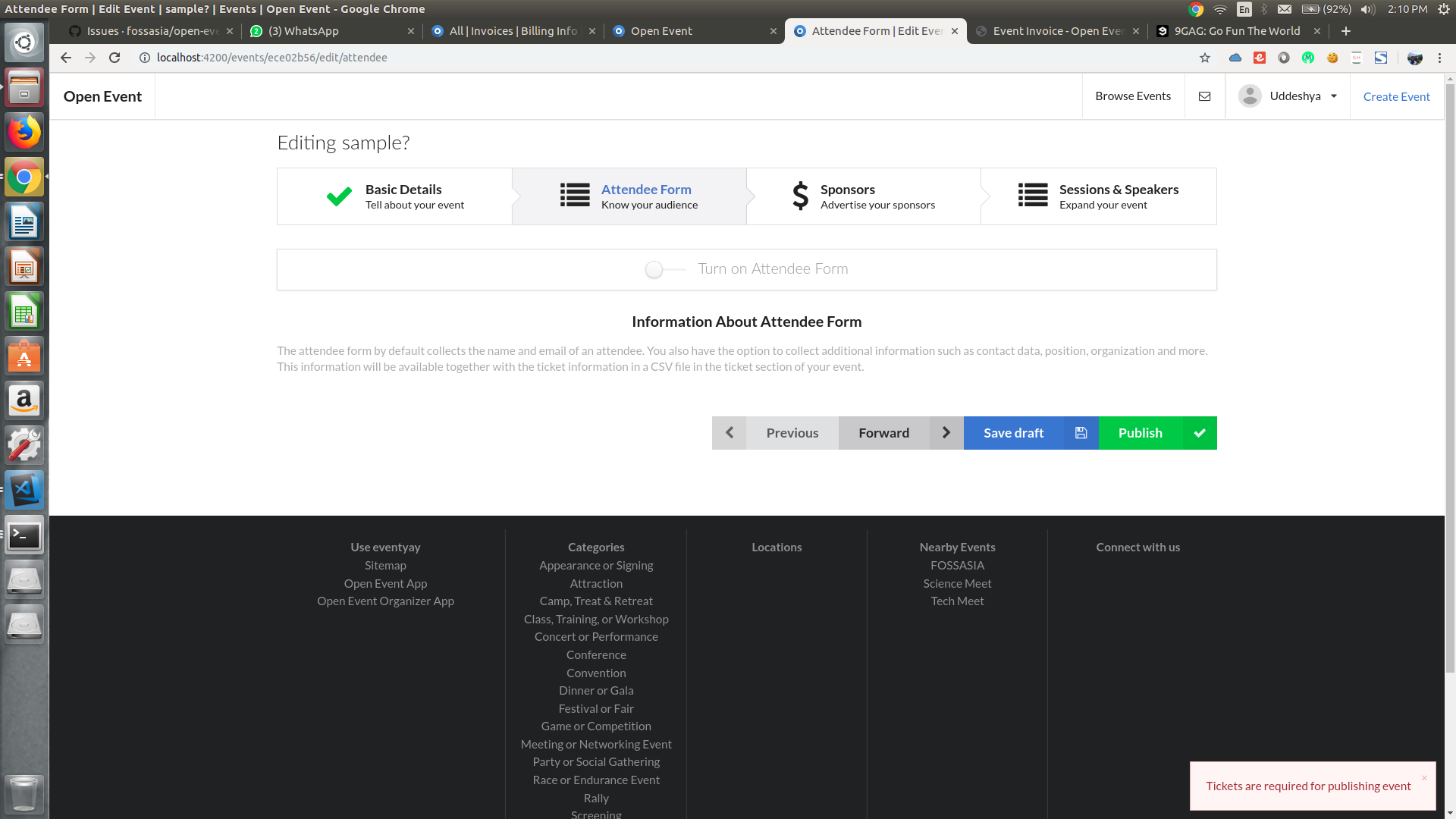

122,537 | 10,226,478,689 | IssuesEvent | 2019-08-16 17:56:28 | fossasia/open-event-frontend | https://api.github.com/repos/fossasia/open-event-frontend | closed | Tickets are required for publishing event error even when tickets are present | bug weekly-testing | **Describe the bug**

<!-- A clear and concise description of what the bug is. -->

"Tickets are required for publishing event" is popping up even when tickets are present.

Error:

Tickets :

to yoshi-nodejs team. | type: process | Andrew is onboarding this week as a new Agendaless contractor for Node.js.

| 1.0 | Add Andrew Zammit (zamnuts) to yoshi-nodejs team. - Andrew is onboarding this week as a new Agendaless contractor for Node.js.

| process | add andrew zammit zamnuts to yoshi nodejs team andrew is onboarding this week as a new agendaless contractor for node js | 1 |

15,786 | 19,976,925,113 | IssuesEvent | 2022-01-29 08:20:19 | tushushu/ulist | https://api.github.com/repos/tushushu/ulist | closed | Implement apply method | data processing | Implement `apply` method like this:

```Python

>>> import ulist as ul

>>> arr = ul.arange(3)

>>> arr.apply(lambda x: x < 1)

UltraFastList([True, False, False])

``` | 1.0 | Implement apply method - Implement `apply` method like this:

```Python

>>> import ulist as ul

>>> arr = ul.arange(3)

>>> arr.apply(lambda x: x < 1)

UltraFastList([True, False, False])

``` | process | implement apply method implement apply method like this python import ulist as ul arr ul arange arr apply lambda x x ultrafastlist | 1 |
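
The requested `apply` behaviour in the row above can be pinned down with a plain-Python reference. This is a sketch of the intended semantics only, assuming `apply` maps a callable over every element and returns a new sequence; the free function `apply` below is a stand-in, and nothing here reflects how ulist implements the method internally.

```python
from typing import Callable, List, TypeVar

T = TypeVar("T")
U = TypeVar("U")


def apply(values: List[T], fn: Callable[[T], U]) -> List[U]:
    """Reference semantics: call fn on each element, keep the order."""
    return [fn(x) for x in values]


# Mirrors the example from the issue, with a plain list instead of ul.arange(3).
print(apply(list(range(3)), lambda x: x < 1))
```

A reference like this could serve as the oracle in a unit test for the real method.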

62,968 | 17,274,223,953 | IssuesEvent | 2021-07-23 02:24:24 | milvus-io/milvus-insight | https://api.github.com/repos/milvus-io/milvus-insight | opened | Auto id set true is not working when create collection | defect | **Describe the bug:**

Auto id set true is not working when create collection

**Steps to reproduce:**

1. create collection

2. set autoid true

3. it's always return false

**Milvus-insight version:**

latest

**Milvus version:**

| 1.0 | Auto id set true is not working when create collection - **Describe the bug:**

Auto id set true is not working when create collection

**Steps to reproduce:**

1. create collection

2. set autoid true

3. it's always return false

**Milvus-insight version:**

latest

**Milvus version:**

| non_process | auto id set true is not working when create collection describe the bug auto id set true is not working when create collection steps to reproduce create collection set autoid true it s always return false milvus insight version latest milvus version | 0 |

3,556 | 6,588,148,777 | IssuesEvent | 2017-09-14 01:07:49 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | child_process: fork() with shell is impossible? | child_process good first contribution windows | * **Version**: from v4.x up to v9.0

* **Platform**: Windows 7 x64

* **Subsystem**: child_process

Currently, [the doc](https://github.com/nodejs/node/blob/c02dcc7b5983b2925016194002b9bc9a1e9da6c4/doc/api/child_process.md#child_processforkmodulepath-args-options) says nothing if `fork()` is executed with shell, als... | 1.0 | child_process: fork() with shell is impossible? - * **Version**: from v4.x up to v9.0

* **Platform**: Windows 7 x64

* **Subsystem**: child_process

Currently, [the doc](https://github.com/nodejs/node/blob/c02dcc7b5983b2925016194002b9bc9a1e9da6c4/doc/api/child_process.md#child_processforkmodulepath-args-options) sa... | process | child process fork with shell is impossible version from x up to platform windows subsystem child process currently says nothing if fork is executed with shell also no shell option is mentioned however fork is based upon spawn and almost all the options a... | 1 |

290,551 | 21,884,903,960 | IssuesEvent | 2022-05-19 17:36:00 | hashicorp/terraform | https://api.github.com/repos/hashicorp/terraform | closed | Missing feature documentation: precondition and postcondition check blocks | bug documentation confirmed | <!--

Hi there,

Thank you for opening an issue. Please note that we try to keep the Terraform issue tracker reserved for bug reports and feature requests. For general usage questions, please see: https://www.terraform.io/community.html.

For feature requests concerning Terraform Cloud/Enterprise, please contact tf... | 1.0 | Missing feature documentation: precondition and postcondition check blocks - <!--

Hi there,

Thank you for opening an issue. Please note that we try to keep the Terraform issue tracker reserved for bug reports and feature requests. For general usage questions, please see: https://www.terraform.io/community.html.

... | non_process | missing feature documentation precondition and postcondition check blocks hi there thank you for opening an issue please note that we try to keep the terraform issue tracker reserved for bug reports and feature requests for general usage questions please see for feature requests concerning terrafo... | 0 |

15,854 | 20,032,969,290 | IssuesEvent | 2022-02-02 08:50:34 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | migrate diff: Provide a reliable way to detect empty diffs / migrations | process/candidate kind/improvement team/migrations topic: diff | ## Problem

Diff returns the difference between two schemas. While often, you assume that there is a difference, and you are interested in the content, an obvious extension of this functionality is to detect _whether two schemas are the same_.

Analogous to text diffs like `git diff`, `migrated diff` can tell us if... | 1.0 | migrate diff: Provide a reliable way to detect empty diffs / migrations - ## Problem

Diff returns the difference between two schemas. While often, you assume that there is a difference, and you are interested in the content, an obvious extension of this functionality is to detect _whether two schemas are the same_.

... | process | migrate diff provide a reliable way to detect empty diffs migrations problem diff returns the difference between two schemas while often you assume that there is a difference and you are interested in the content an obvious extension of this functionality is to detect whether two schemas are the same ... | 1 |

8,122 | 11,303,388,620 | IssuesEvent | 2020-01-17 19:59:24 | nlpie/mtap | https://api.github.com/repos/nlpie/mtap | opened | Race condition for event cleanup in multithreaded pipeline | area/framework/processing kind/bug lang/python | The done callback where event cleanup is done is set after the finished state and result are set on the task.

This potentially leads to a race condition:

```

Thread 1 submit -> wait for finished -> finished -> multiprocess returns, close pipeline client

Thread 2 work -> finished

Thread 3 ... | 1.0 | Race condition for event cleanup in multithreaded pipeline - The done callback where event cleanup is done is set after the finished state and result are set on the task.

This potentially leads to a race condition:

```

Thread 1 submit -> wait for finished -> finished -> multiprocess returns, close pipeline clien... | process | race condition for event cleanup in multithreaded pipeline the done callback where event cleanup is done is set after the finished state and result are set on the task this potentially leads to a race condition thread submit wait for finished finished multiprocess returns close pipeline clien... | 1 |
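
The ordering problem described in the row above can be sketched in a few lines. The `Task` class below is hypothetical and is not the mtap API; it only illustrates one possible fix under the assumption that cleanup callbacks can be run before the finished state is signalled, so that a waiter cannot close the client while cleanup is still pending.

```python
import threading


class Task:
    """Hypothetical task wrapper used only to illustrate the ordering."""

    def __init__(self):
        self._finished = threading.Event()
        self._callbacks = []
        self.result = None

    def add_done_callback(self, fn):
        # Register cleanup before the work is started.
        self._callbacks.append(fn)

    def finish(self, result):
        self.result = result
        # Run cleanup first, then wake any waiter.
        for fn in self._callbacks:
            fn(self)
        self._finished.set()

    def wait(self, timeout=None):
        return self._finished.wait(timeout)


def run_demo():
    order = []
    task = Task()
    task.add_done_callback(lambda t: order.append("cleanup"))
    worker = threading.Thread(target=task.finish, args=("ok",))
    worker.start()
    task.wait()
    # Only reached after cleanup ran, because the event is set last.
    order.append("close client")
    worker.join()
    return order


print(run_demo())
```

Signalling the event last is the design choice that matters here: setting it before the callbacks run would reopen the window in which the submitting thread returns early.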

61,405 | 7,467,250,759 | IssuesEvent | 2018-04-02 14:37:25 | EvictionLab/eviction-maps | https://api.github.com/repos/EvictionLab/eviction-maps | closed | Flag Maryland and 99th percentile data | design needed feature high priority | Need to implement a way to flag high eviction rates (in the 99th percentile), and also flag when a user is looking at Maryland.

I'm thinking we could have a dismissible (but not auto dismissible) toast message that shows when they activate a location in the 99th percentile, OR when the map bounds are within a Maryla... | 1.0 | Flag Maryland and 99th percentile data - Need to implement a way to flag high eviction rates (in the 99th percentile), and also flag when a user is looking at Maryland.

I'm thinking we could have a dismissible (but not auto dismissible) toast message that shows when they activate a location in the 99th percentile, O... | non_process | flag maryland and percentile data need to implement a way to flag high eviction rates in the percentile and also flag when a user is looking at maryland i m thinking we could have a dismissible but not auto dismissible toast message that shows when they activate a location in the percentile or when th... | 0 |

13,829 | 16,592,427,193 | IssuesEvent | 2021-06-01 09:20:05 | hashicorp/packer-plugin-vagrant | https://api.github.com/repos/hashicorp/packer-plugin-vagrant | opened | [vagrant-cloud post-processor] Add option to overwrite existing version | enhancement post-processor/vagrant-cloud | _This issue was originally opened by @adriananeci as hashicorp/packer#9492. It was migrated here as a result of the [Packer plugin split](https://github.com/hashicorp/packer/issues/8610#issuecomment-770034737). The original body of the issue is below._

<hr>

#### Feature Description

When using vagrant-cloud post-proc... | 1.0 | [vagrant-cloud post-processor] Add option to overwrite existing version - _This issue was originally opened by @adriananeci as hashicorp/packer#9492. It was migrated here as a result of the [Packer plugin split](https://github.com/hashicorp/packer/issues/8610#issuecomment-770034737). The original body of the issue is b... | process | add option to overwrite existing version this issue was originally opened by adriananeci as hashicorp packer it was migrated here as a result of the the original body of the issue is below feature description when using vagrant cloud post processor to upload a freshly generated box into vagrant... | 1 |

17,817 | 23,741,281,646 | IssuesEvent | 2022-08-31 12:39:46 | km4ack/patmenu2 | https://api.github.com/repos/km4ack/patmenu2 | closed | echo statement incorrect. Should read "VARA HF" | in process | This [line](https://github.com/km4ack/patmenu2/blob/master/start-vara-hf#L101) is incorrect. It should read "VARA HF" | 1.0 | echo statement incorrect. Should read "VARA HF" - This [line](https://github.com/km4ack/patmenu2/blob/master/start-vara-hf#L101) is incorrect. It should read "VARA HF" | process | echo statement incorrect should read vara hf this is incorrect it should read vara hf | 1 |

5,206 | 7,977,681,616 | IssuesEvent | 2018-07-17 15:57:51 | harvard-lil/h2o | https://api.github.com/repos/harvard-lil/h2o | closed | Transition from old H2O to new H2O | Process | - [x] Identify and inform all known faculty authors of transition to new H2O

- [x] Make old H2O read-only

- [x] Modify old H2O template to inform users to contact us if they have not already been contacted about casebook transition

- [x] Get 'final' database dump from old H2O

- [x] Migrate selected data to new H2O ... | 1.0 | Transition from old H2O to new H2O - - [x] Identify and inform all known faculty authors of transition to new H2O

- [x] Make old H2O read-only

- [x] Modify old H2O template to inform users to contact us if they have not already been contacted about casebook transition

- [x] Get 'final' database dump from old H2O

- ... | process | transition from old to new identify and inform all known faculty authors of transition to new make old read only modify old template to inform users to contact us if they have not already been contacted about casebook transition get final database dump from old migrate selected d... | 1 |

22,200 | 30,757,975,141 | IssuesEvent | 2023-07-29 10:03:12 | bitfocus/companion-module-requests | https://api.github.com/repos/bitfocus/companion-module-requests | opened | AVGear AVG-IP410 | NOT YET PROCESSED | - [ ] **I have researched the list of existing Companion modules and requests and have determined this has not yet been requested**

The name of the device, hardware, or software you would like to control:

AVGear AVG-IP410

What you would like to be able to make it do from Companion:

Control of power outputs with... | 1.0 | AVGear AVG-IP410 - - [ ] **I have researched the list of existing Companion modules and requests and have determined this has not yet been requested**

The name of the device, hardware, or software you would like to control:

AVGear AVG-IP410

What you would like to be able to make it do from Companion:

Control of... | process | avgear avg i have researched the list of existing companion modules and requests and have determined this has not yet been requested the name of the device hardware or software you would like to control avgear avg what you would like to be able to make it do from companion control of power out... | 1 |

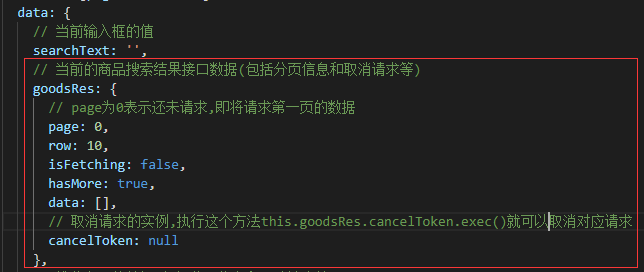

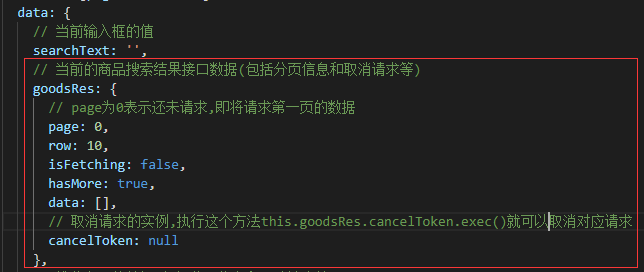

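7,401 | 10,523,139,736 | IssuesEvent | 2019-09-30 10:16:49 | didi/mpx | https://api.github.com/repos/didi/mpx | closed | cancelToken.exec() method causes the catch statement to run only after the next request is sent; expected it to run before the next request is sent | processing | **Scenario:**

Pressing the search button runs a product search and displays the resulting product list.

To avoid a situation where the previous search request has not yet returned, the previous search is cancelled before a new search starts.

**Key code and steps:**

_Definition of the object that stores the product search results:_

_Key steps:_

method causes the catch statement to run only after the next request is sent; expected it to run before the next request is sent - **Scenario:**

Pressing the search button runs a product search and displays the resulting product list.

To avoid a situation where the previous search request has not yet returned, the previous search is cancelled before a new search starts.

**Key code and steps:**

_Definition of the object that stores the product search results:_

_Key steps:_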

on August 22, 2014 11:48:20_

What capability do you want added or improved?

I want to improve the display of the ontology and metadata (see also issue #146 and issue #327 ), particularly values shown within the table. First prior... | 1.0 | Nicer LOD-friendly display of content on ontology page - _From [jbgrayb...@mindspring.com](https://code.google.com/u/106719590578390745654/) on August 22, 2014 11:48:20_

What capability do you want added or improved?

I want to improve the display of the ontology and metadata (see also issue #146 and issue #327 )... | non_process | nicer lod friendly display of content on ontology page from on august what capability do you want added or improved i want to improve the display of the ontology and metadata see also issue and issue particularly values shown within the table first priority is to provide auto recog... | 0 |

172,157 | 21,040,448,283 | IssuesEvent | 2022-03-31 11:50:40 | Tim-sandbox/WebGoat | https://api.github.com/repos/Tim-sandbox/WebGoat | opened | WS-2022-0107 (High) detected in spring-beans-5.3.4.jar | security vulnerability | ## WS-2022-0107 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-beans-5.3.4.jar</b></p></summary>

<p>Spring Beans</p>

<p>Library home page: <a href="https://github.com/spring-pr... | True | WS-2022-0107 (High) detected in spring-beans-5.3.4.jar - ## WS-2022-0107 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-beans-5.3.4.jar</b></p></summary>

<p>Spring Beans</p>

<p... | non_process | ws high detected in spring beans jar ws high severity vulnerability vulnerable library spring beans jar spring beans library home page a href path to dependency file webgoat integration tests pom xml path to vulnerable library home wss scanner repository org spr... | 0 |

537 | 3,000,558,782 | IssuesEvent | 2015-07-24 02:59:28 | HazyResearch/dd-genomics | https://api.github.com/repos/HazyResearch/dd-genomics | closed | Switch to faster extractors? | Candidate extraction Preprocessing | We are currently using the `tsv_extractor`. Should we switch to e.g. `plpy` or `piggy` extractor?

@netj any comments on what best practices are for this right now?

Also, more minor: right now we pre-convert postgres arrays to strings (`sentences` -> `sentences_input`) is this actually saving any time? Would be ... | 1.0 | Switch to faster extractors? - We are currently using the `tsv_extractor`. Should we switch to e.g. `plpy` or `piggy` extractor?

@netj any comments on what best practices are for this right now?

Also, more minor: right now we pre-convert postgres arrays to strings (`sentences` -> `sentences_input`) is this actua... | process | switch to faster extractors we are currently using the tsv extractor should we switch to e g plpy or piggy extractor netj any comments on what best practices are for this right now also more minor right now we pre convert postgres arrays to strings sentences sentences input is this actua... | 1 |

295,018 | 9,064,548,389 | IssuesEvent | 2019-02-14 01:32:41 | OpenRTM/OpenRTM-aist-Java | https://api.github.com/repos/OpenRTM/OpenRTM-aist-Java | closed | Add the way to fill checkbox in pull request template | enhancement priority : Low | **Is your feature request related to a problem? Please describe.**

The pull request template has checkboxes,

but it does not describe how to fill them in, so it is unclear how to check them.

**Describe the solution you'd like**

Add a description of how to check the boxes to the template.

**Describe alternatives you've considered**

Add a checkbox with a sample check filled in to the template.

**Additional context**

None | 1.0 | Add the way to fill checkbox in pull request template - **Is your feature request related to a problem? Please describe.**

The pull request template has checkboxes,

but it does not describe how to fill them in, so it is unclear how to check them.

**Describe the solution you'd like**

Add a description of how to check the boxes to the template.

**Describe alternatives you've considered**

A checkbox with a sample check filled in... | non_process | add the way to fill checkbox in pull request template is your feature request related to a problem please describe the pull request template has checkboxes but it does not describe how to fill them in so it is unclear how to check them describe the solution you d like add a description of how to check the boxes to the template describe alternatives you ve considered a checkbox with a sample check filled in... | 0 |

208,963 | 23,671,334,807 | IssuesEvent | 2022-08-27 12:00:59 | Soontao/miniflux | https://api.github.com/repos/Soontao/miniflux | reopened | github.com/mitchellh/go-server-timing-v1.0.0: 12 vulnerabilities (highest severity is: 7.5) | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>github.com/mitchellh/go-server-timing-v1.0.0</b></p></summary>

<p></p>

<p>

<p>Found in HEAD commit: <a href="https://github.com/Soontao/miniflux/commit/63bbc979c4f3... | True | github.com/mitchellh/go-server-timing-v1.0.0: 12 vulnerabilities (highest severity is: 7.5) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>github.com/mitchellh/go-server-timing-v1.0.0</b></p></summary>

<p></p>

<... | non_process | github com mitchellh go server timing vulnerabilities highest severity is vulnerable library github com mitchellh go server timing found in head commit a href vulnerabilities cve severity cvss dependency type fixed in remediation available ... | 0 |

3,219 | 6,278,429,939 | IssuesEvent | 2017-07-18 14:22:04 | syndesisio/syndesis-ui | https://api.github.com/repos/syndesisio/syndesis-ui | closed | yarn warnings - do we need to fix it? | bug dev process | ```bash

yarn

yarn install v0.21.3

[1/5] Resolving packages...

[2/5] Fetching packages...

# -------------------------------------------- guessing this one is ok

warning fsevents@1.1.1: The platform "linux" is incompatible with this module.

info "fsevents@1.1.1" is an optional dependency and failed compatibility c... | 1.0 | yarn warnings - do we need to fix it? - ```bash

yarn

yarn install v0.21.3

[1/5] Resolving packages...

[2/5] Fetching packages...

# -------------------------------------------- guessing this one is ok

warning fsevents@1.1.1: The platform "linux" is incompatible with this module.

info "fsevents@1.1.1" is an option... | process | yarn warnings do we need to fix it bash yarn yarn install resolving packages fetching packages guessing this one is ok warning fsevents the platform linux is incompatible with this module info fsevents is an optional depende... | 1 |

24,535 | 4,006,832,501 | IssuesEvent | 2016-05-12 16:04:12 | Supmenow/sup-issues | https://api.github.com/repos/Supmenow/sup-issues | closed | My friends disappear when I hide | defect | When I click show it takes time for them to come back.

Friends are not in sync with the hide button. I can sometimes see friends in dark mode and not in friends mode. | 1.0 | My friends disappear when I hide - When I click show it takes time for them to come back.

Friends are not in sync with the hide button. I can sometimes see friends in dark mode and not in friends mode. | non_process | my friends disappear when i hide when i click show it takes time for them to come back friends are not in sync with the hide button i can sometimes see friends in dark mode and not in friends mode | 0 |

2,848 | 5,809,457,475 | IssuesEvent | 2017-05-04 13:28:10 | P0cL4bs/WiFi-Pumpkin | https://api.github.com/repos/P0cL4bs/WiFi-Pumpkin | closed | 2 wireless cards for WiFi-Pumpkin [Kali Linux] | enhancement in process | Running WiFi-Pumpkin in Kali, the problem is the known 2 card error: it doesn't want to use my internal card as an internet connection provider. The program that I found that manages to do exactly this is called mitmAP, but it's missing some key features. Can its connection setup be added to WiFi-Pumpkin?

| 1.0 | 2 wireless cards for WiFi-Pumpkin [Kali Linux] - Running WiFi-Pumpkin in Kali, the problem is the known 2 card error: it doesn't want to use my internal card as an internet connection provider. The program that I found that manages to do exactly this is called mitmAP, but it's missing some key features. Can its connection ... | process | wireless cards for wifi pumpkin running wifi pumpkin in kali the problem is the known card error it doesn t want to use my internal card as an internet connection provider the program that i found that manages to do exactly this is called mitmap but it s missing some key features can its connection setup be ad... | 1 |

17,867 | 5,522,722,210 | IssuesEvent | 2017-03-20 01:42:13 | wallabyjs/public | https://api.github.com/repos/wallabyjs/public | closed | Wallaby UI elements not showing up in VSCode (e.g. code coverage indicators, footer test icon, etc.) | VS Code | ### Issue description or question

Wallaby UI elements are not showing up in VSCode (e.g. code coverage indicators, footer test icon, etc.). I can see in the VScode wallaby output that the tests are in fact running, but I just see no indication that they are in the vscode IDE.

I can also successfully navigate to the... | 1.0 | Wallaby UI elements not showing up in VSCode (e.g. code coverage indicators, footer test icon, etc.) - ### Issue description or question

Wallaby UI elements are not showing up in VSCode (e.g. code coverage indicators, footer test icon, etc.). I can see in the VScode wallaby output that the tests are in fact running, b... | non_process | wallaby ui elements not showing up in vscode e g code coverage indicators footer test icon etc issue description or question wallaby ui elements are not showing up in vscode e g code coverage indicators footer test icon etc i can see in the vscode wallaby output that the tests are in fact running b... | 0 |

145,391 | 5,575,047,132 | IssuesEvent | 2017-03-28 00:17:03 | SIMRacingApps/SIMRacingApps | https://api.github.com/repos/SIMRacingApps/SIMRacingApps | closed | Teamspeak 3.1.3 Issue | enhancement high priority | Teamspeak app no longer works with today's Teamspeak update 3.1.3. I get the following error:

`20170324144302.759: WARNING: TeamSpeak: _getClientList(): returned error 1796, currently not possible: com.SIMRacingApps.TeamSpeak._getClientList(TeamSpeak.java:320)[TeamSpeak.Listener]` | 1.0 | Teamspeak 3.1.3 Issue - Teamspeak app no longer works with today's Teamspeak update 3.1.3. I get the following error:

`20170324144302.759: WARNING: TeamSpeak: _getClientList(): returned error 1796, currently not possible: com.SIMRacingApps.TeamSpeak._getClientList(TeamSpeak.java:320)[TeamSpeak.Listener]` | non_process | teamspeak issue teamspeak app no longer works with today s teamspeak update i get the following error warning teamspeak getclientlist returned error currently not possible com simracingapps teamspeak getclientlist teamspeak java | 0 |

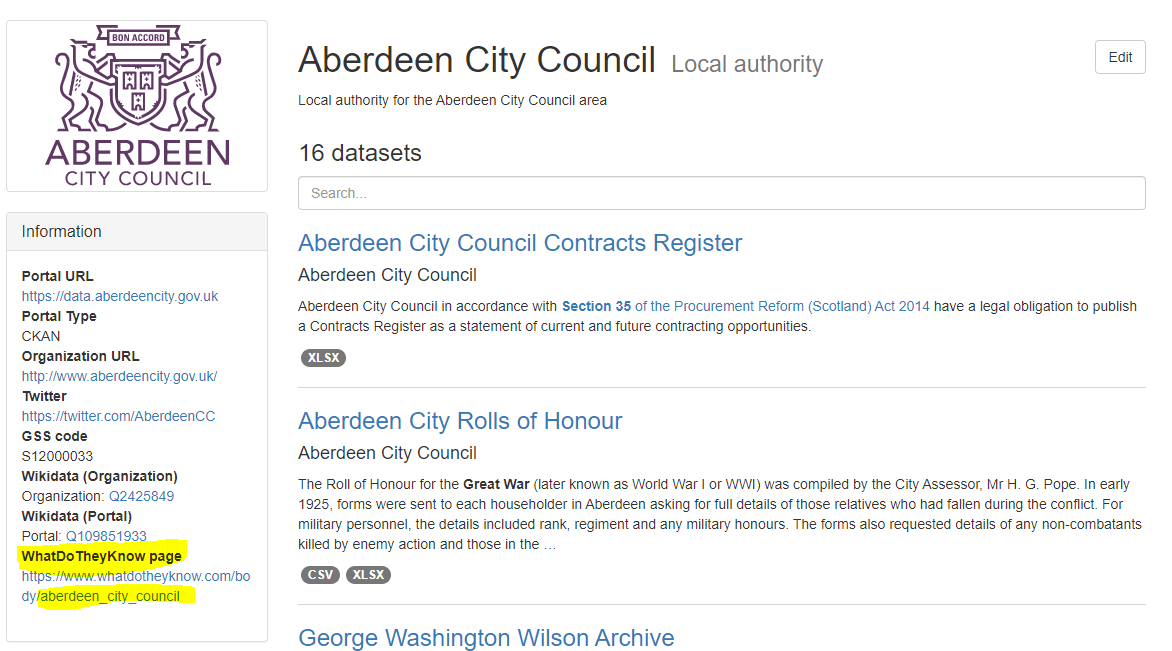

19,449 | 25,730,480,296 | IssuesEvent | 2022-12-07 19:53:59 | OpenDataScotland/the_od_bods | https://api.github.com/repos/OpenDataScotland/the_od_bods | closed | Populate WhatDoTheyKnow IDs | good first issue data processing |

We added a new field to orgs for WhatDoTheyKnow IDs so we can link to their list of FOI requests published on https://www.whatdotheyknow.com/. However, not all orgs have this value populated. This could... | 1.0 | Populate WhatDoTheyKnow IDs -

We added a new field to orgs for WhatDoTheyKnow IDs so we can link to their list of FOI requests published on https://www.whatdotheyknow.com/. However, not all orgs have th... | process | populate whatdotheyknow ids we added a new field to orgs for whatdotheyknow ids so we can link to to their list of foi requests published on however not all orgs have this value populated this could be a good exercise for someone to go through all the orgs in opendata scot and populate them accordingly ... | 1 |

7,052 | 10,210,877,807 | IssuesEvent | 2019-08-14 15:39:01 | neuropoly/spinalcordtoolbox | https://api.github.com/repos/neuropoly/spinalcordtoolbox | closed | Wrong slice information on QC output | bug good first issue sct_process_segmentation | The QC plot of `sct_process_segmentation` gives the wrong slice number.

To reproduce the issue, run:

~~~

sct_download_data -d sct_testing_data

cd sct_testing_data/data/t2

sct_process_segmentation -i t2_seg_manual.nii.gz -z 2:5 -perslice 1 -qc qc-test

~~~

The output QC looks like this:

| 1.0 | Setup Entity Framework - Use the following tutorial for reference to configure Entity Framework.

[Tutorial: Get started with EF Core in an ASP.NET MVC web app](https://docs.microsoft.com/en-us/aspnet/core/data/ef-mvc/intro?view=aspnetcore-3.1) | process | setup entity framework use the following tutorial for reference to configure entity framework | 1 |

10,848 | 13,628,209,609 | IssuesEvent | 2020-09-24 13:37:59 | Open-EO/openeo-processes | https://api.github.com/repos/Open-EO/openeo-processes | closed | Establish proposal procedure | chore help wanted new process question | With new processes coming in, it's sometimes not easy to decide whether to make them core and release them as part of the "stable contract" or not. Currently an example is #192, which seems like a useful process, but alternatives are also discussed. Nevertheless, an openEO partner wants such a process and thus it's goo... | 1.0 | Establish proposal procedure - With new processes coming in, it's sometimes not easy to decide whether to make them core and release them as part of the "stable contract" or not. Currently an example is #192, which seems like a useful process, but alternatives are also discussed. Nevertheless, an openEO partner wants s... | process | establish proposal procedure with new processes coming in it s sometimes not easy to decide whether to make them core and release them as part of the stable contract or not currently an example is which seems like a useful process but alternatives are also discussed nevertheless an openeo partner wants suc... | 1 |

9,484 | 12,477,988,790 | IssuesEvent | 2020-05-29 15:51:32 | Devnilson/fisima | https://api.github.com/repos/Devnilson/fisima | closed | Add editorconfig | process | # Editor configuration, see https://editorconfig.org/

root = true

[*]

charset = utf-8

indent_style = space

indent_size = 2

insert_final_newline = true

trim_trailing_whitespace = true

[*.md]

max_line_length = off

trim_trailing_whitespace = false | 1.0 | Add editorconfig - # Editor configuration, see https://editorconfig.org/

root = true

[*]

charset = utf-8

indent_style = space

indent_size = 2

insert_final_newline = true

trim_trailing_whitespace = true

[*.md]

max_line_length = off

trim_trailing_whitespace = false | process | add editorconfig editor configuration see root true charset utf indent style space indent size insert final newline true trim trailing whitespace true max line length off trim trailing whitespace false | 1 |

169,050 | 20,828,013,700 | IssuesEvent | 2022-03-19 01:18:06 | RG4421/modular | https://api.github.com/repos/RG4421/modular | opened | CVE-2021-44906 (Medium) detected in minimist-1.2.5.tgz | security vulnerability | ## CVE-2021-44906 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>minimist-1.2.5.tgz</b></p></summary>

<p>parse argument options</p>

<p>Library home page: <a href="https://registry.n... | True | CVE-2021-44906 (Medium) detected in minimist-1.2.5.tgz - ## CVE-2021-44906 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>minimist-1.2.5.tgz</b></p></summary>

<p>parse argument opti... | non_process | cve medium detected in minimist tgz cve medium severity vulnerability vulnerable library minimist tgz parse argument options library home page a href path to dependency file package json path to vulnerable library node modules minimist dependency hierarchy ... | 0 |

105,380 | 11,449,874,492 | IssuesEvent | 2020-02-06 08:23:20 | Azure/azure-cli | https://api.github.com/repos/Azure/azure-cli | closed | Shouldn't the CLI extensions have an Install section? | Documentation Extensions | Hi guys!

Reading the documentation, it's not clear that a "manual" installation is needed before using the commands. I therefore miss a section where the install command is shown, something like:

**To enable the extension run:**

`az extension add --name subscription`

What are your thoughts on this?

Also ... | 1.0 | Shouldn't the CLI extensions have an Install section? - Hi guys!

Reading the documentation, it's not clear that a "manual" installation is needed before using the commands. I therefore miss a section where the install command is shown, something like:

**To enable the extension run:**

`az extension add --name su... | non_process | shouldn t the cli extensions have an install section hi guys reading the documentation it s not clear that a manual installation is needed before using the commands therefore i miss a section where the install command is shown something like to enable the extension run az extension add name su... | 0 |

648,753 | 21,193,316,492 | IssuesEvent | 2022-04-08 20:12:50 | googleapis/python-bigtable | https://api.github.com/repos/googleapis/python-bigtable | closed | tests.system.test_instance_admin: test_cluster_create failed | api: bigtable type: bug priority: p2 flakybot: issue flakybot: flaky | This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: b9ecfa97281ae21dcf233e60c70cacc701f12c32

b... | 1.0 | tests.system.test_instance_admin: test_cluster_create failed - This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop comme... | non_process | tests system test instance admin test cluster create failed this test failed to configure my behavior see if i m commenting on this issue too often add the flakybot quiet label and i will stop commenting commit buildurl status failed test output target functools partial predi... | 0 |

5,881 | 32,007,379,014 | IssuesEvent | 2023-09-21 15:37:02 | centerofci/mathesar-website | https://api.github.com/repos/centerofci/mathesar-website | closed | Add Survey Banner to Website | restricted: maintainers status: draft type: enhancement work: frontend work: product | To gather feedback and understand our users' needs, we're introducing a user survey. The goal is to embed a survey banner on the Mathesar website to facilitate feedback collection, allowing us to improve our product based on user insights.

- The banner should be fixed at the top of the website.

- It should be visi... | True | Add Survey Banner to Website - To gather feedback and understand our users' needs, we're introducing a user survey. The goal is to embed a survey banner on the Mathesar website to facilitate feedback collection, allowing us to improve our product based on user insights.

- The banner should be fixed at the top of the... | non_process | add survey banner to website to gather feedback and understand our users needs we re introducing a user survey the goal is to embed a survey banner on the mathesar website to facilitate feedback collection allowing us to improve our product based on user insights the banner should be fixed at the top of the... | 0 |

135,191 | 30,259,811,387 | IssuesEvent | 2023-07-07 07:18:25 | JuliaLang/julia | https://api.github.com/repos/JuliaLang/julia | opened | `Assertion `!ctx.ssavalue_assigned.at(ssaidx_0based)' failed.` when testing some packages | regression codegen | When testing the packages

"LsqFit"

"Polyhedra"

"PowerModelsRestoration"

"SinusoidalRegressions"

"EasyFit

with an assert build they error with:

```

julia: /source/src/codegen.cpp:5042: void emit_ssaval_assign(jl_codectx_t&, ssize_t, jl_value_t*): Assertion `!ctx.ssavalue_assigned.at(ssaidx_0based)' fa... | 1.0 | `Assertion `!ctx.ssavalue_assigned.at(ssaidx_0based)' failed.` when testing some packages - When testing the packages

"LsqFit"

"Polyhedra"

"PowerModelsRestoration"

"SinusoidalRegressions"

"EasyFit

with an assert build they error with:

```

julia: /source/src/codegen.cpp:5042: void emit_ssaval_assign(jl... | non_process | ssertion ctx ssavalue assigned at ssaidx failed when testing some packages when testing the packages lsqfit polyhedra powermodelsrestoration sinusoidalregressions easyfit with an assert build they error with julia source src codegen cpp void emit ssaval assign jl codectx... | 0 |

10,908 | 13,686,525,475 | IssuesEvent | 2020-09-30 08:49:38 | prisma/prisma-engines | https://api.github.com/repos/prisma/prisma-engines | closed | All field attributes except relation should work with native types | engines/data model parser process/candidate team/engines | related https://github.com/prisma/prisma-engines/issues/1160

They all error if they are being used with arguments. | 1.0 | All field attributes except relation should work with native types - related https://github.com/prisma/prisma-engines/issues/1160

They all error if they are being used with arguments. | process | all field attributes except relation should work with native types related they all error if they are being used with arguments | 1 |