| Unnamed: 0 (int64) | id (float64) | type (string) | created_at (string) | repo (string) | repo_url (string) | action (string) | title (string) | labels (string) | body (string) | index (string) | text_combine (string) | label (string) | text (string) | binary_label (int64) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

12,030 | 14,738,593,766 | IssuesEvent | 2021-01-07 05:12:23 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Terminated Accounts Generating Holiday Charge | anc-ops anc-process anp-important ant-bug ant-support | In GitLab by @kdjstudios on Jun 25, 2018, 15:23

**Submitted by:** "Tobey McInally" <tobey.mcinally@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-06-25-26343/conversation

**Server:** Internal (Both)

**Client/Site:** Allentown

**Account:** 4411

**Issue:**

I noticed in Al... | 1.0 | Terminated Accounts Generating Holiday Charge - In GitLab by @kdjstudios on Jun 25, 2018, 15:23

**Submitted by:** "Tobey McInally" <tobey.mcinally@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-06-25-26343/conversation

**Server:** Internal (Both)

**Client/Site:** Allentown

... | process | terminated accounts generating holiday charge in gitlab by kdjstudios on jun submitted by tobey mcinally helpdesk server internal both client site allentown account issue i noticed in allentown’s billing that our terminated accounts have drafted holiday cha... | 1 |

253,256 | 21,671,684,103 | IssuesEvent | 2022-05-08 03:24:51 | ossf/scorecard-action | https://api.github.com/repos/ossf/scorecard-action | opened | Failed to run e2e test-organization-ls/scorecard-action-private-repo-tests | e2e automated-tests | Repo: https://github.com/test-organization-ls/scorecard-action-private-repo-tests/tree/main \n Run: https://github.com/test-organization-ls/scorecard-action-private-repo-tests/actions/runs/2288360610 \n Workflow name: Scorecards-golang \n Workflow file: https://github.com/test-organization-ls/scorecard-action-private-r... | 1.0 | Failed to run e2e test-organization-ls/scorecard-action-private-repo-tests - Repo: https://github.com/test-organization-ls/scorecard-action-private-repo-tests/tree/main \n Run: https://github.com/test-organization-ls/scorecard-action-private-repo-tests/actions/runs/2288360610 \n Workflow name: Scorecards-golang \n Work... | non_process | failed to run test organization ls scorecard action private repo tests repo n run n workflow name scorecards golang n workflow file n trigger schedule n branch main n date sun may utc | 0 |

522,182 | 15,158,119,009 | IssuesEvent | 2021-02-12 00:22:35 | NOAA-GSL/MATS | https://api.github.com/repos/NOAA-GSL/MATS | closed | The python JSON encoder can't handle NaNs | Priority: Medium Project: MATS Status: Closed Type: Bug | ---

Author Name: **molly.b.smith** (@mollybsmith-noaa)

Original Redmine Issue: 60863, https://vlab.ncep.noaa.gov/redmine/issues/60863

Original Date: 2019-03-01

Original Assignee: molly.b.smith

---

Python's JSON module dies if you ask it to JSONify a numpy NaN, for some reason, which kills the whole python query scr... | 1.0 | The python JSON encoder can't handle NaNs - ---

Author Name: **molly.b.smith** (@mollybsmith-noaa)

Original Redmine Issue: 60863, https://vlab.ncep.noaa.gov/redmine/issues/60863

Original Date: 2019-03-01

Original Assignee: molly.b.smith

---

Python's JSON module dies if you ask it to JSONify a numpy NaN, for some re... | non_process | the python json encoder can t handle nans author name molly b smith mollybsmith noaa original redmine issue original date original assignee molly b smith python s json module dies if you ask it to jsonify a numpy nan for some reason which kills the whole python query script in mete... | 0 |
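
The report in the row above is truncated, so the exact failure it describes cannot be recovered here. As a rough sketch of the standard-library behaviour it touches on: `json.dumps` writes the non-standard token `NaN` by default and raises `ValueError` when called with `allow_nan=False`. The helper `nan_to_none` is hypothetical (it is not taken from MATS) and simply swaps NaN floats for `None` before encoding.

```python
import json
import math


def nan_to_none(value):
    """Recursively replace float NaN with None so the result is strict JSON.

    Hypothetical helper for illustration only; not part of MATS.
    """
    if isinstance(value, float) and math.isnan(value):
        return None
    if isinstance(value, dict):
        return {key: nan_to_none(item) for key, item in value.items()}
    if isinstance(value, (list, tuple)):
        return [nan_to_none(item) for item in value]
    return value


data = {"stats": [1.0, float("nan"), 3.5]}

# Default behaviour: NaN is written as the bare token NaN, which strict
# JSON parsers reject.
lenient = json.dumps(data)

# Strict behaviour: allow_nan=False refuses to encode NaN at all.
try:
    json.dumps(data, allow_nan=False)
    strict_error = None
except ValueError as exc:
    strict_error = exc

# After cleaning, strict encoding succeeds and NaN becomes null.
cleaned = json.dumps(nan_to_none(data), allow_nan=False)
```

The sketch only checks Python `float` instances. NumPy scalar types that do not subclass `float` would likely need converting first, which is an assumption to verify against the actual data.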

34,541 | 12,292,565,931 | IssuesEvent | 2020-05-10 15:12:21 | GHPReporter/GHPReporter.github.io | https://api.github.com/repos/GHPReporter/GHPReporter.github.io | opened | CVE-2018-14042 (Medium) detected in bootstrap-3.3.6.min.js, bootstrap-3.3.6.js | security vulnerability | ## CVE-2018-14042 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>bootstrap-3.3.6.min.js</b>, <b>bootstrap-3.3.6.js</b></p></summary>

<p>

<details><summary><b>bootstrap-3.3.6.min.j... | True | CVE-2018-14042 (Medium) detected in bootstrap-3.3.6.min.js, bootstrap-3.3.6.js - ## CVE-2018-14042 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>bootstrap-3.3.6.min.js</b>, <b>boo... | non_process | cve medium detected in bootstrap min js bootstrap js cve medium severity vulnerability vulnerable libraries bootstrap min js bootstrap js bootstrap min js the most popular front end framework for developing responsive mobile first projects on the ... | 0 |

1,828 | 4,613,605,535 | IssuesEvent | 2016-09-25 03:46:23 | EBrown8534/StackExchangeStatisticsExplorer | https://api.github.com/repos/EBrown8534/StackExchangeStatisticsExplorer | closed | Add Site Comparisons | enhancement in process | Add a page where two (perhaps more) sites can be compared across all metrics side-by-side. | 1.0 | Add Site Comparisons - Add a page where two (perhaps more) sites can be compared across all metrics side-by-side. | process | add site comparisons add a page where two perhaps more sites can be compared across all metrics side by side | 1 |

9,576 | 12,530,567,632 | IssuesEvent | 2020-06-04 13:17:18 | GoogleCloudPlatform/dotnet-docs-samples | https://api.github.com/repos/GoogleCloudPlatform/dotnet-docs-samples | closed | Bigtable: TestListTables is timing out in CI | api: bigtable priority: p1 type: process | After 10 minutes.

I've skipped it in #1001 but it should be looked at.

@billyjacobson assigning to you because you have commited to the Bigtable samples more recently. | 1.0 | Bigtable: TestListTables is timing out in CI - After 10 minutes.

I've skipped it in #1001 but it should be looked at.

@billyjacobson assigning to you because you have commited to the Bigtable samples more recently. | process | bigtable testlisttables is timing out in ci after minutes i ve skipped it in but it should be looked at billyjacobson assigning to you because you have commited to the bigtable samples more recently | 1 |

5,514 | 8,379,150,914 | IssuesEvent | 2018-10-06 21:44:00 | carloseduardov8/Viajato | https://api.github.com/repos/carloseduardov8/Viajato | closed | Fix class diagram | Priority:Very High Process:Create/Update Class Diagram | Updates the class diagram to reflect the new entity model built in JHipster, removing some elements | 1.0 | Fix class diagram - Updates the class diagram to reflect the new entity model built in JHipster, removing some elements | process | fix class diagram updates the class diagram to reflect the new entity model built in jhipster removing some elements | 1 |

15,141 | 18,893,982,709 | IssuesEvent | 2021-11-15 15:57:21 | beer-garden/beer-garden | https://api.github.com/repos/beer-garden/beer-garden | closed | Remedy release process deficiencies | release process | Two specific issues were encountered while preparing the last release:

1. Beer-garden's `setup.py` has dependency versions that are out of sync with the `requirements.{txt,in}` files, with the potential to introduce difficult to assess bugs in the RPM creation.

2. The version of `wrapt` that's current on PyPI will ... | 1.0 | Remedy release process deficiencies - Two specific issues were encountered while preparing the last release:

1. Beer-garden's `setup.py` has dependency versions that are out of sync with the `requirements.{txt,in}` files, with the potential to introduce difficult to assess bugs in the RPM creation.

2. The version o... | process | remedy release process deficiencies two specific issues were encountered while preparing the last release beer garden s setup py has dependency versions that are out of sync with the requirements txt in files with the potential to introduce difficult to assess bugs in the rpm creation the version o... | 1 |

2,058 | 4,864,884,220 | IssuesEvent | 2016-11-14 19:15:55 | Sage-Bionetworks/Genie | https://api.github.com/repos/Sage-Bionetworks/Genie | opened | patients missing sample data, wrong oncotree codes | data processing MSK pending release | MSK uploaded new files to correct. Not sure whether patients were removed or sample info added. | 1.0 | patients missing sample data, wrong oncotree codes - MSK uploaded new files to correct. Not sure whether patients were removed or sample info added. | process | patients missing sample data wrong oncotree codes msk uploaded new files to correct not sure whether patients were removed or sample info added | 1 |

251,343 | 18,947,379,958 | IssuesEvent | 2021-11-18 11:40:31 | amosproj/amos2021ws05-fin-prod-port-quick-check | https://api.github.com/repos/amosproj/amos2021ws05-fin-prod-port-quick-check | closed | Use Case Diagram | est. size: 1 type: documentation type: infrastructure real size: 1 priority: low | ## User story

1. As a developer

2. I need a use case diagram

3. So that I know the different types of users, their interactions between each other and set of actions

## Acceptance criteria

* All known users are illustrated

* All known actions are illustrated

* Their interactions are illustrated

## Definitio... | 1.0 | Use Case Diagram - ## User story

1. As a developer

2. I need a use case diagram

3. So that I know the different types of users, their interactions between each other and set of actions

## Acceptance criteria

* All known users are illustrated

* All known actions are illustrated

* Their interactions are illustra... | non_process | use case diagram user story as a developer i need a use case diagram so that i know the different types of users their interactions between each other and set of actions acceptance criteria all known users are illustrated all known actions are illustrated their interactions are illustra... | 0 |

73,230 | 7,329,713,086 | IssuesEvent | 2018-03-05 06:49:04 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | Edit Ingress page allows for namespace to be changed. | area/ui kind/bug status/resolved status/to-test version/2.0 | **Rancher versions: Server build from master - Mar 2

**Steps to Reproduce:**

Create an Ingress.

Edit Ingress .

In the Ingress edit page , user is allowed to change namespace.

This should not be allowed.

<img width="1188" alt="screen shot 2018-03-02 at 10 24 32 pm" src="https://user-images.githubusercontent.c... | 1.0 | Edit Ingress page allows for namespace to be changed. - **Rancher versions: Server build from master - Mar 2

**Steps to Reproduce:**

Create an Ingress.

Edit Ingress .

In the Ingress edit page , user is allowed to change namespace.

This should not be allowed.

<img width="1188" alt="screen shot 2018-03-02 at 1... | non_process | edit ingress page allows for namespace to be changed rancher versions server build from master mar steps to reproduce create an ingress edit ingress in the ingress edit page user is allowed to change namespace this should not be allowed img width alt screen shot at pm ... | 0 |

19,587 | 25,922,221,557 | IssuesEvent | 2022-12-15 23:23:32 | microsoft/cadl | https://api.github.com/repos/microsoft/cadl | closed | Variable interpolation Cannot work When input a parameter by --arg in command | bug :pushpin: WS: Process Tools & Automation Required for DPG 1.0 | ## How to reproduce

1. Download the [cadl project](https://github.com/Azure/azure-rest-api-specs/tree/dw/cadl-bug/specification/cognitiveservices/OpenAI.Inference) I used.

2. `npm install`

3. `npx cadl compile . --emit @azure-tools/cadl-java --arg "java-repo-folder=/tmp/java"`

## Result

The codes are generated i... | 1.0 | Variable interpolation Cannot work When input a parameter by --arg in command - ## How to reproduce

1. Download the [cadl project](https://github.com/Azure/azure-rest-api-specs/tree/dw/cadl-bug/specification/cognitiveservices/OpenAI.Inference) I used.

2. `npm install`

3. `npx cadl compile . --emit @azure-tools/cadl-... | process | variable interpolation cannot work when input a parameter by arg in command how to reproduce download the i used npm install npx cadl compile emit azure tools cadl java arg java repo folder tmp java result the codes are generated in cwd azure sdk for java expected... | 1 |

13,268 | 15,732,093,895 | IssuesEvent | 2021-03-29 17:52:32 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | opened | parentage: `chromosome segregation` | cell cycle and DNA processes | It seems that `chromosome separation (GO:0051304)` has these SubClass Of relationships:

* is_a `cell cycle process`

* 'part of' some 'chromosome segregation'

While `chromosome segregation` only has this SubClass Of relationship:

* is_a `cellular process`

I would think that `chromosome segregation` should also... | 1.0 | parentage: `chromosome segregation` - It seems that `chromosome separation (GO:0051304)` has these SubClass Of relationships:

* is_a `cell cycle process`

* 'part of' some 'chromosome segregation'

While `chromosome segregation` only has this SubClass Of relationship:

* is_a `cellular process`

I would think tha... | process | parentage chromosome segregation it seems that chromosome separation go has these subclass of relationships is a cell cycle process part of some chromosome segregation while chromosome segregation only has this subclass of relationship is a cellular process i would think that chr... | 1 |

8,989 | 12,100,880,405 | IssuesEvent | 2020-04-20 14:26:09 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | SourceValidationError should be pretty printed | bug/2-confirmed kind/bug process/candidate team/engines |

## Bug description

```

Error: Error: Error in datamodel: ErrorCollection { errors: [SourceValidationError { message: "The URL for datasource `db` must start with the protocol `sqlite://`.", source: "db", span: Span { start: 55, end: 73 } }] }

```

## How to reproduce

<!--

Steps to reproduce the behavior:

... | 1.0 | SourceValidationError should be pretty printed -

## Bug description

```

Error: Error: Error in datamodel: ErrorCollection { errors: [SourceValidationError { message: "The URL for datasource `db` must start with the protocol `sqlite://`.", source: "db", span: Span { start: 55, end: 73 } }] }

```

## How to repr... | process | sourcevalidationerror should be pretty printed bug description error error error in datamodel errorcollection errors how to reproduce steps to reproduce the behavior go to change run see error prisma schema prisma file prisma ... | 1 |

213,502 | 24,003,555,243 | IssuesEvent | 2022-09-14 13:19:00 | MatBenfield/news | https://api.github.com/repos/MatBenfield/news | closed | [SecurityWeek] Today: 2022 CISO Forum Virtual Event | SecurityWeek Stale |

[read more](https://www.securityweek.com/today-2022-ciso-forum-virtual-event)

<https://www.securityweek.com/today-2022-ciso-forum-virtual-event>

| True | [SecurityWeek] Today: 2022 CISO Forum Virtual Event -

[read more](https://www.securityweek.com/today-2022-ciso-forum-virtual-event)

<https://www.securityweek.com/today-2022-ciso-forum-virtual-event>

| non_process | today ciso forum virtual event sites default files features ciso forum header jpg | 0 |

22,181 | 30,732,505,871 | IssuesEvent | 2023-07-28 03:47:57 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | roblox-pyc 1.25.110 has 2 GuardDog issues | guarddog silent-process-execution | https://pypi.org/project/roblox-pyc

https://inspector.pypi.io/project/roblox-pyc

```{

"dependency": "roblox-pyc",

"version": "1.25.110",

"result": {

"issues": 2,

"errors": {},

"results": {

"silent-process-execution": [

{

"location": "roblox-pyc-1.25.110/robloxpyc/installationma... | 1.0 | roblox-pyc 1.25.110 has 2 GuardDog issues - https://pypi.org/project/roblox-pyc

https://inspector.pypi.io/project/roblox-pyc

```{

"dependency": "roblox-pyc",

"version": "1.25.110",

"result": {

"issues": 2,

"errors": {},

"results": {

"silent-process-execution": [

{

"location": "... | process | roblox pyc has guarddog issues dependency roblox pyc version result issues errors results silent process execution location roblox pyc robloxpyc installationmanager py code su... | 1 |

264,756 | 23,137,192,412 | IssuesEvent | 2022-07-28 15:09:33 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | pkg/sql/sqlitelogictest/tests/local/local_test: TestSqlLiteLogic_testindexview10slt_good_5_test failed | C-test-failure O-robot branch-master | pkg/sql/sqlitelogictest/tests/local/local_test.TestSqlLiteLogic_testindexview10slt_good_5_test [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_SQLiteLogicTestsBazel/5889610?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_SQLiteLogic... | 1.0 | pkg/sql/sqlitelogictest/tests/local/local_test: TestSqlLiteLogic_testindexview10slt_good_5_test failed - pkg/sql/sqlitelogictest/tests/local/local_test.TestSqlLiteLogic_testindexview10slt_good_5_test [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_SQLiteLogicTestsBazel/5889610?buildTab=... | non_process | pkg sql sqlitelogictest tests local local test testsqllitelogic good test failed pkg sql sqlitelogictest tests local local test testsqllitelogic good test with on master run testsqllitelogic good test test log scope go test logs captured to artifacts tmp tmp logtests... | 0 |

9,455 | 12,438,295,840 | IssuesEvent | 2020-05-26 08:10:15 | aiidateam/aiida-core | https://api.github.com/repos/aiidateam/aiida-core | closed | Add support for type hint parsing in automatic process spec determination for process functions | priority/nice-to-have topic/processes type/accepted feature type/duplicate | Now that we support only Python versions that have type hinting for function signatures, we could maybe inspect those hints to improve the automatically generated process spec to set the `valid_type` based on it. This will improve for example the output of `verdi plugin list` with the description of process functions w... | 1.0 | Add support for type hint parsing in automatic process spec determination for process functions - Now that we support only Python versions that have type hinting for function signatures, we could maybe inspect those hints to improve the automatically generated process spec to set the `valid_type` based on it. This will... | process | add support for type hint parsing in automatic process spec determination for process functions now that we support only python versions that have type hinting for function signatures we could maybe inspect those hints to improve the automatically generated process spec to set the valid type based on it this will... | 1 |
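
The idea in the row above, reading type hints off a function signature, can be sketched with the standard library alone. This is not AiiDA's process-spec code; `valid_types_from_hints` and the sample function `add` are hypothetical names used only to show how annotations might be collected per parameter.

```python
import inspect
import typing


def valid_types_from_hints(func):
    """Map each parameter name to its annotated type, or None if unannotated.

    Hypothetical helper; AiiDA's real process-spec builder is not shown here.
    """
    hints = typing.get_type_hints(func)
    signature = inspect.signature(func)
    # Only parameters are kept; the 'return' hint is deliberately ignored.
    return {name: hints.get(name) for name in signature.parameters}


def add(x: int, y: float, label=None) -> float:
    return x + y


spec = valid_types_from_hints(add)
```

A real implementation would presumably feed each collected type into the port's `valid_type`, and would have to decide what to do with unannotated parameters such as `label`.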

10,677 | 13,462,434,851 | IssuesEvent | 2020-09-09 16:04:03 | tdwg/dwc | https://api.github.com/repos/tdwg/dwc | closed | DD-MM-YYYY and MM-DD-YYYY in eventDate | Class - Event Docs - Text Guide Format - Text Process - dismissed answered | dwc guidance documents should explicitly recommend against these commonly human-used forms since it is impossible for some dates to distinguish between the U.S. usage MM-DD-YYYY and the European usage DD-MM-YYYY. A casual misreading of ISO8601 might lead one to (erroneously) believe that these are compliant with ISO86... | 1.0 | DD-MM-YYYY and MM-DD-YYYY in eventDate - dwc guidance documents should explicitly recommend against these commonly human-used forms since it is impossible for some dates to distinguish between the U.S. usage MM-DD-YYYY and the European usage DD-MM-YYYY. A casual misreading of ISO8601 might lead one to (erroneously) be... | process | dd mm yyyy and mm dd yyyy in eventdate dwc guidance documents should explicitly recommend against these commonly human used forms since it is impossible for some dates to distinguish between the u s usage mm dd yyyy and the european usage dd mm yyyy a casual misreading of might lead one to erroneously believe ... | 1 |
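
The ambiguity described in the row above is easy to show with Python's standard `datetime` module. This is a small sketch with an arbitrary example date, not Darwin Core tooling: the same string yields two different dates depending on which convention the reader assumes, while the year-first ISO 8601 form has a single reading.

```python
from datetime import date, datetime

raw = "03-04-2020"

# The same string read under the two conventions named above.
as_us = datetime.strptime(raw, "%m-%d-%Y").date()        # month first
as_european = datetime.strptime(raw, "%d-%m-%Y").date()  # day first

# ISO 8601 calendar dates are year-first, so there is only one reading.
as_iso = date.fromisoformat("2020-04-03")
```

Here `as_us` is 4 March 2020 and `as_european` is 3 April 2020. Only strings whose day is greater than 12 can be told apart without outside knowledge.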

214,008 | 16,544,379,250 | IssuesEvent | 2021-05-27 21:25:02 | xuhanz/DailyDose | https://api.github.com/repos/xuhanz/DailyDose | opened | Developer Guideline Issues | documentation | Add more instructions regarding the format of newly added tests so they can fit in with existing tests. | 1.0 | Developer Guideline Issues - Add more instructions regarding the format of newly added tests so they can fit in with existing tests. | non_process | developer guideline issues add more instructions regarding the format of newly added tests so they can fit in with existing tests | 0 |

291,971 | 21,945,031,403 | IssuesEvent | 2022-05-23 22:56:21 | getditto/docs | https://api.github.com/repos/getditto/docs | closed | Problem: Missing write transaction section in concepts | documentation | # Problem

Ditto has long had support for write transactions across collections. However the documentation doesn't have a `Concepts > Write Transaction` section

# Solution

Please create a section called `Concepts > Write Transactions` and include necessary snippets. | 1.0 | Problem: Missing write transaction section in concepts - # Problem

Ditto has long had support for write transactions across collections. However the documentation doesn't have a `Concepts > Write Transaction` section

# Solution

Please create a section called `Concepts > Write Transactions` and include necessar... | non_process | problem missing write transaction section in concepts problem ditto has long had support for write transactions across collections however the documentation doesn t have a concepts write transaction section solution please create a section called concepts write transactions and include necessar... | 0 |

11,496 | 14,368,809,579 | IssuesEvent | 2020-12-01 08:56:13 | panther-labs/panther | https://api.github.com/repos/panther-labs/panther | closed | Introduce Custom Logs apis | p1 story team:data processing | ### Description

Introduce the BE components for supporting Custom Logs

### Acceptance Criteria

All Custom Logs-related code is in OSS. | 1.0 | Introduce Custom Logs apis - ### Description

Introduce the BE components for supporting Custom Logs

### Acceptance Criteria

All Custom Logs-related code is in OSS. | process | introduce custom logs apis description introduce the be components for supporting custom logs acceptance criteria all custom logs related code is in oss | 1 |

11,708 | 14,545,565,701 | IssuesEvent | 2020-12-15 19:50:56 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | multi-job output variable named Foo.Bar | Pri1 devops-cicd-process/tech devops/prod doc-enhancement stale-issue | It is explained in this section how to grab a variable output from one job, in another job: https://docs.microsoft.com/en-us/azure/devops/pipelines/process/variables?view=azure-devops&tabs=yaml%2Cbatch#set-a-multi-job-output-variable

However the variable name is simply "myOutputVar" in your example, and you access it ... | 1.0 | multi-job output variable named Foo.Bar - It is explained in this section how to grab a variable output from one job, in another job: https://docs.microsoft.com/en-us/azure/devops/pipelines/process/variables?view=azure-devops&tabs=yaml%2Cbatch#set-a-multi-job-output-variable

However the variable name is simply "myOutp... | process | multi job output variable named foo bar it is explained in this section how to grab a variable output from one job in another job however the variable name is simply myoutputvar in your example and you access it like this myvarfromjoba however in my case the variable i need to access is set b... | 1 |

2,522 | 5,287,965,563 | IssuesEvent | 2017-02-08 13:58:16 | Hurence/logisland | https://api.github.com/repos/Hurence/logisland | opened | add possibility to use setRouting in putElasticSearch processor | enhancement processor | You can use this to specify a string that will be used to gather document having the same routing in the same shard in order to optimize perfs. | 1.0 | add possibility to use setRouting in putElasticSearch processor - You can use this to specify a string that will be used to gather document having the same routing in the same shard in order to optimize perfs. | process | add possibility to use setrouting in putelasticsearch processor you can use this to specify a string that will be used to gather document having the same routing in the same shard in order to optimize perfs | 1 |

19,669 | 26,029,287,789 | IssuesEvent | 2022-12-21 19:23:53 | googleapis/google-cloud-go | https://api.github.com/repos/googleapis/google-cloud-go | closed | all: broken genbot CI build | type: process | The `gapicgen/genbot` build is currently broken ([log](https://fusion2.corp.google.com/invocations/43586277-80b3-42da-8e5d-96c85d496309/targets/cloud-devrel%2Fclient-libraries%2Fgo%2Fgapicgen%2Fgenbot/log)) and may continue to encounter the type of error shown below until we have dropped support for older versions of G... | 1.0 | all: broken genbot CI build - The `gapicgen/genbot` build is currently broken ([log](https://fusion2.corp.google.com/invocations/43586277-80b3-42da-8e5d-96c85d496309/targets/cloud-devrel%2Fclient-libraries%2Fgo%2Fgapicgen%2Fgenbot/log)) and may continue to encounter the type of error shown below until we have dropped s... | process | all broken genbot ci build the gapicgen genbot build is currently broken and may continue to encounter the type of error shown below until we have dropped support for older versions of go until then we should consider including the go version flags suggested below in our builds as needed ... | 1 |

6,386 | 9,460,102,452 | IssuesEvent | 2019-04-17 10:05:16 | googleapis/google-cloud-dotnet | https://api.github.com/repos/googleapis/google-cloud-dotnet | closed | Consider making Spanner libraries v2 only target netstandard2.0 | api: spanner type: process | This is similar to #2959, but for Spanner.

Currently we have the following targeting:

- Spanner.Data: netstandard1.5;netstandard2.0;net45

- Spanner.Common.V1: netstandard1.5;net45

- Spanner.V1: netstandard1.5;netstandard2.0;net45

- Spanner.Admin.Database.V1: netstandard1.5;net45

- Spanner.Admin.Instance.V1: n... | 1.0 | Consider making Spanner libraries v2 only target netstandard2.0 - This is similar to #2959, but for Spanner.

Currently we have the following targeting:

- Spanner.Data: netstandard1.5;netstandard2.0;net45

- Spanner.Common.V1: netstandard1.5;net45

- Spanner.V1: netstandard1.5;netstandard2.0;net45

- Spanner.Admin... | process | consider making spanner libraries only target this is similar to but for spanner currently we have the following targeting spanner data spanner common spanner spanner admin database spanner admin instance proposal make all of these... | 1 |

13,214 | 15,685,888,834 | IssuesEvent | 2021-03-25 11:48:59 | threefoldtech/js-sdk | https://api.github.com/repos/threefoldtech/js-sdk | closed | Extension of storage nodes systematically leads to timeouts | process_wontfix type_bug | I extended my VDC with storage capacity. I see the amount taken away from my balance quite immediately, but the VDC payment screen keeps on spinning, and gets a timeout. I had this a few times (and no successful try), so I imagine there is an issue.

, so I imagine there is an issue.

when the maximum length of the execution trace is... | 1.0 | Optimize range checker execution trace finalization / length check - Currently, the 8-bit range check table is built twice when the execution trace is finalized.

1. It is built during the [call to `RangeChecker::trace_len`](https://github.com/maticnetwork/miden/blob/78f4b7d228aca6d0949ac37dc9854d6662a2ee8a/processor... | process | optimize range checker execution trace finalization length check currently the bit range check table is built twice when the execution trace is finalized it is built during the when the maximum length of the execution trace is being computed in finalize trace it is built during the before t... | 1 |

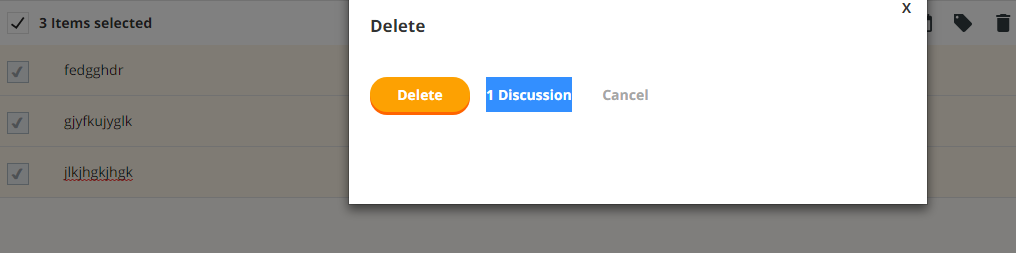

6,002 | 8,808,924,815 | IssuesEvent | 2018-12-27 16:55:17 | linnovate/root | https://api.github.com/repos/linnovate/root | closed | multiple selection in every entity select everything bug | 2.0.6 Fixed Process bug critical | when you press "select everything" in an entity, and try to update , it selects only the first entity in the list, and only updates that one

after opening atleast 60 entities, it only selects a few out of ... | 1.0 | multiple selection in every entity select everything bug - when you press "select everything" in an entity, and try to update , it selects only the first entity in the list, and only updates that one

after... | process | multiple selection in every entity select everything bug when you press select everything in an entity and try to update it selects only the first entity in the list and only updates that one after opening atleast entities it only selects a few out of the list instead of all of them | 1 |

18,497 | 24,551,078,990 | IssuesEvent | 2022-10-12 12:39:48 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [iOS] [Offline indicator] Enrollment flow > the following offline error message should get displayed when user is offline in the following scenario | Bug P1 iOS Process: Fixed Process: Tested dev | Steps:

1. Sign up or sign in to the mobile app

2. Click on the study

3. Click on the Participate

4. Turn off the data and observe

AR: Offline error message is not getting displayed

ER: 'You are offline, You can still use this section but may miss out on the latest content updates' error message should get displ... | 2.0 | [iOS] [Offline indicator] Enrollment flow > the following offline error message should get displayed when user is offline in the following scenario - Steps:

1. Sign up or sign in to the mobile app

2. Click on the study

3. Click on the Participate

4. Turn off the data and observe

AR: Offline error message is not ... | process | enrollment flow the following offline error message should get displayed when user is offline in the following scenario steps sign up or sign in to the mobile app click on the study click on the participate turn off the data and observe ar offline error message is not getting displayed er ... | 1 |

16,488 | 21,445,564,491 | IssuesEvent | 2022-04-25 05:45:54 | zotero/zotero | https://api.github.com/repos/zotero/zotero | opened | Classic citation dialog: Add all selected items to Multiple Sources pane | Papercuts Word Processor Integration Bug | https://forums.zotero.org/discussion/96671/citing-multiple-papers

Not that we want to do more to the classic dialog, but this seems like a trivial fix to a clear bug (albeit one that has probably existed for a decade), and it will help people until we have a new citation dialog. | 1.0 | Classic citation dialog: Add all selected items to Multiple Sources pane - https://forums.zotero.org/discussion/96671/citing-multiple-papers

Not that we want to do more to the classic dialog, but this seems like a trivial fix to a clear bug (albeit one that has probably existed for a decade), and it will help people... | process | classic citation dialog add all selected items to multiple sources pane not that we want to do more to the classic dialog but this seems like a trivial fix to a clear bug albeit one that has probably existed for a decade and it will help people until we have a new citation dialog | 1 |

14,993 | 18,674,461,890 | IssuesEvent | 2021-10-31 10:09:55 | googleapis/python-bigquery | https://api.github.com/repos/googleapis/python-bigquery | opened | Bump minimum openetelemetry version to support type checks | type: process | See the following comment for details: https://github.com/googleapis/python-bigquery/pull/1036#discussion_r739787329

If confirmed, we should bump to at least `opentelemetry-*==1.1.0`, and adjust our OpenTelemetry logic to the changes in the library API. | 1.0 | Bump minimum openetelemetry version to support type checks - See the following comment for details: https://github.com/googleapis/python-bigquery/pull/1036#discussion_r739787329

If confirmed, we should bump to at least `opentelemetry-*==1.1.0`, and adjust our OpenTelemetry logic to the changes in the library API. | process | bump minimum openetelemetry version to support type checks see the following comment for details if confirmed we should bump to at least opentelemetry and adjust our opentelemetry logic to the changes in the library api | 1 |

16,676 | 21,780,412,332 | IssuesEvent | 2022-05-13 18:13:23 | GoogleCloudPlatform/emblem | https://api.github.com/repos/GoogleCloudPlatform/emblem | closed | Proposal: Release Process | type: process persona: maintainer | This issue is created to propose a release process. I want to get some early agreement here, then will convert to a PR documenting the process in more detail.

* Commits will follow [Conventional Commit messages](https://www.conventionalcommits.org/)

* We will try [conventional-commit-lint bot](https://github.com/g... | 1.0 | Proposal: Release Process - This issue is created to propose a release process. I want to get some early agreement here, then will convert to a PR documenting the process in more detail.

* Commits will follow [Conventional Commit messages](https://www.conventionalcommits.org/)

* We will try [conventional-commit-li... | process | proposal release process this issue is created to propose a release process i want to get some early agreement here then will convert to a pr documenting the process in more detail commits will follow we will try to see if it helps us follow this practice versioning will follow semver org ... | 1 |

63,244 | 6,830,489,374 | IssuesEvent | 2017-11-09 07:02:18 | hypermodules/hyperamp | https://api.github.com/repos/hypermodules/hyperamp | closed | Fix calling t.end() twice. | testing | We don't remove event emitter listeners when we end the test or something in the artwork cache which causes inconsistent test failures when t.end gets called twice.
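A generic sketch of how a listener that is never removed can end a test twice. This is not hyperamp's code: `TinyEmitter`, `runTest`, and the `cleanUp` flag are hypothetical names, and `endCalls` only stands in for calls to `t.end()`.

```typescript
type Listener = () => void;

// Minimal hand-rolled emitter so the sketch does not depend on Node's `events` module.
class TinyEmitter {
  private listeners: Listener[] = [];

  on(listener: Listener): void {
    this.listeners.push(listener);
  }

  off(listener: Listener): void {
    this.listeners = this.listeners.filter((l) => l !== listener);
  }

  emit(): void {
    // Iterate over a copy so listeners may detach themselves while we loop.
    for (const listener of [...this.listeners]) {
      listener();
    }
  }
}

// Simulates one test that finishes when the emitter fires.
// Returns how many times the stand-in for t.end() ran after two emits.
function runTest(emitter: TinyEmitter, cleanUp: boolean): number {
  let endCalls = 0;
  const onDone = (): void => {
    if (cleanUp) {
      emitter.off(onDone); // detach before ending the test
    }
    endCalls += 1; // stands in for t.end()
  };
  emitter.on(onDone);
  emitter.emit(); // the event the test is waiting for
  emitter.emit(); // a later emit that the test no longer cares about
  return endCalls;
}

const leakyEndCalls = runTest(new TinyEmitter(), false);
const cleanEndCalls = runTest(new TinyEmitter(), true);
```

With the listener left attached, the second emit reaches the same callback again; detaching it first keeps the end call to one.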

https://github.com/hypermodules/hyperamp/blob/master/main/lib/artwork-cache/test.js | 1.0 | Fix calling t.end() twice. - We don't remove event emitter listeners when we end the test or something in the artwork cache which causes inconsistent test failures when t.end gets called twice.

https://github.com/hypermodules/hyperamp/blob/master/main/lib/artwork-cache/test.js | non_process | fix calling t end twice we don t remove event emitter listeners when we end the test or something in the artwork cache which causes inconsistent test failures when t end gets called twice | 0 |

536,460 | 15,709,369,451 | IssuesEvent | 2021-03-26 22:22:01 | sopra-fs21-group-16/mth-client | https://api.github.com/repos/sopra-fs21-group-16/mth-client | opened | Create a visual clue to inform user of rejection of activity (swiping) | medium priority task | Create a visual clue, such that if a user swipes a suggested activity to the left, the user gets feedback that indicates that the user has discarded the suggested activity #3. | 1.0 | Create a visual clue to inform user of rejection of activity (swiping) - Create a visual clue, such that if a user swipes a suggested activity to the left, the user gets feedback that indicates that the user has discarded the suggested activity #3. | non_process | create a visual clue to inform user of rejection of activity swiping create a visual clue such that if a user swipes a suggested activity to the left the user gets feedback that indicates that the user has discarded the suggested activity | 0 |

52,085 | 6,218,381,489 | IssuesEvent | 2017-07-09 00:54:03 | ProjectSidewalk/SidewalkWebpage | https://api.github.com/repos/ProjectSidewalk/SidewalkWebpage | opened | Can't close label context menus by clicking on the label | Priority: Low Relaunch Testing | You open the context menu by clicking on a label; the mouse-down does nothing, then the mouse-up opens the menu. When trying to close the menu by clicking on the label, the menu closes on mouse down, then opens back up again on mouse-up. Either

1. Users should be able to close the context menu by clicking on the label... | 1.0 | Can't close label context menus by clicking on the label - You open the context menu by clicking on a label; the mouse-down does nothing, then the mouse-up opens the menu. When trying to close the menu by clicking on the label, the menu closes on mouse down, then opens back up again on mouse-up. Either

1. Users should... | non_process | can t close label context menus by clicking on the label you open the context menu by clicking on a label the mouse down does nothing then the mouse up opens the menu when trying to close the menu by clicking on the label the menu closes on mouse down then opens back up again on mouse up either users should... | 0 |

15,120 | 18,852,210,389 | IssuesEvent | 2021-11-11 22:38:17 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Failed queries do not show SQL that was executed | .Proposal Querying/Processor | ### Feature requests and proposals

log every sql executed

I cannot see the sql being executed when it takes too long in question query builder. The sql being executed is the key to debug.

- Your browser and the version: Chrome

- Your operating system: OS X

- Your databases: MySQL

- Metabase version: 0.32.0

... | 1.0 | Failed queries do not show SQL that was executed - ### Feature requests and proposals

log every sql executed

I cannot see the sql being executed when it takes too long in question query builder. The sql being executed is the key to debug.

- Your browser and the version: Chrome

- Your operating system: OS X

- ... | process | failed queries do not show sql that was executed feature requests and proposals log every sql executed i cannot see the sql being executed when it takes too long in question query builder the sql being executed is the key to debug your browser and the version chrome your operating system os x ... | 1 |

6,699 | 9,814,731,140 | IssuesEvent | 2019-06-13 10:53:44 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Batch processing error in r.mapcalc.simple | Bug Processing | Author Name: **Daria Svidzinska** (@darsvid)

Original Redmine Issue: [22008](https://issues.qgis.org/issues/22008)

Affected QGIS version: 3.6.2

Redmine category:processing/core

---

I'm trying to perform batch processing for multiple rasters using a conditional expression in r.mapcalc.simple, GRASS 7.6.1.

The expre... | 1.0 | Batch processing error in r.mapcalc.simple - Author Name: **Daria Svidzinska** (@darsvid)

Original Redmine Issue: [22008](https://issues.qgis.org/issues/22008)

Affected QGIS version: 3.6.2

Redmine category:processing/core

---

I'm trying to perform batch processing for multiple rasters using a conditional expression i... | process | batch processing error in r mapcalc simple author name daria svidzinska darsvid original redmine issue affected qgis version redmine category processing core i m trying to perform batch processing for multiple rasters using a conditional expression in r mapcalc simple grass the ex... | 1 |

19,010 | 25,010,562,762 | IssuesEvent | 2022-11-03 14:58:37 | hashgraph/hedera-mirror-node | https://api.github.com/repos/hashgraph/hedera-mirror-node | closed | DNS resolution error on Apple M1 | bug process | ### Description

```

Unable to load io.netty.resolver.dns.macos.MacOSDnsServerAddressStreamProvider,fallback to system defaults. This may result in incorrect DNS resolutions on MacOS.

java.lang.ClassNotFoundException: io.netty.resolver.dns.macos.MacOSDnsServerAddressStreamProvider

```

### Steps to reproduce

Run gr... | 1.0 | DNS resolution error on Apple M1 - ### Description

```

Unable to load io.netty.resolver.dns.macos.MacOSDnsServerAddressStreamProvider,fallback to system defaults. This may result in incorrect DNS resolutions on MacOS.

java.lang.ClassNotFoundException: io.netty.resolver.dns.macos.MacOSDnsServerAddressStreamProvider

... | process | dns resolution error on apple description unable to load io netty resolver dns macos macosdnsserveraddressstreamprovider fallback to system defaults this may result in incorrect dns resolutions on macos java lang classnotfoundexception io netty resolver dns macos macosdnsserveraddressstreamprovider ... | 1 |

15,349 | 8,852,554,632 | IssuesEvent | 2019-01-08 18:41:23 | webhintio/hint | https://api.github.com/repos/webhintio/hint | reopened | Add rule for best practices for preload-prefetch-and-priorities | area:hint hint-category:performance | Addy Osmani published an interesting article about Chrome's [preload prefetch and priorities](https://medium.com/reloading/preload-prefetch-and-priorities-in-chrome-776165961bbf)

We should:

* [ ] Investigate how it works in Edge and Firefox.

* [ ] See if we can extrapolate a general best practices based on some pa... | True | Add rule for best practices for preload-prefetch-and-priorities - Addy Osmani published an interesting article about Chrome's [preload prefetch and priorities](https://medium.com/reloading/preload-prefetch-and-priorities-in-chrome-776165961bbf)

We should:

* [ ] Investigate how it works in Edge and Firefox.

* [ ] S... | non_process | add rule for best practices for preload prefetch and priorities addy osmani published an interesting article about chrome s we should investigate how it works in edge and firefox see if we can extrapolate a general best practices based on some patterns | 0 |

349,569 | 31,813,135,322 | IssuesEvent | 2023-09-13 18:20:09 | golang/go | https://api.github.com/repos/golang/go | closed | os/exec: TestCommand fails on Windows when `NoDefaultCurrentDirectoryInExePath` is set in the environment | Testing OS-Windows NeedsInvestigation | In investigating the data race reported in https://go.dev/cl/527337, I looked at the existing tests that cover the call to `lookExtensions` in `exec.Cmd.Start` on Windows.

I found several test cases in `TestCommand` that look suspicious: they expect `exec.LookPath` to always resolve a bare filename relative to the c... | 1.0 | os/exec: TestCommand fails on Windows when `NoDefaultCurrentDirectoryInExePath` is set in the environment - In investigating the data race reported in https://go.dev/cl/527337, I looked at the existing tests that cover the call to `lookExtensions` in `exec.Cmd.Start` on Windows.

I found several test cases in `TestCo... | non_process | os exec testcommand fails on windows when nodefaultcurrentdirectoryinexepath is set in the environment in investigating the data race reported in i looked at the existing tests that cover the call to lookextensions in exec cmd start on windows i found several test cases in testcommand that look suspici... | 0 |

16,486 | 21,444,061,565 | IssuesEvent | 2022-04-25 03:02:50 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Multipart to Singlepart feature could not be written | Feedback stale Processing Bug | ### What is the bug or the crash?

After the update to QGIS 3.24.0 Tisler, the 'Multipart to Singleparts' yields errors stating the features could not be written. As a result, no data was written to the result set (memory layer called Single_parts).

In the previous version of QGIS, running Multipart to Singleparts o... | 1.0 | Multipart to Singlepart feature could not be written - ### What is the bug or the crash?

After the update to QGIS 3.24.0 Tisler, the 'Multipart to Singleparts' yields errors stating the features could not be written. As a result, no data was written to the result set (memory layer called Single_parts).

In the previ... | process | multipart to singlepart feature could not be written what is the bug or the crash after the update to qgis tisler the multipart to singleparts yields errors stating the features could not be written as a result no data was written to the result set memory layer called single parts in the previo... | 1 |

5,020 | 7,845,548,341 | IssuesEvent | 2018-06-19 13:16:02 | AffiliateWP/AffiliateWP | https://api.github.com/repos/AffiliateWP/AffiliateWP | opened | This error is showing up on the referrals table | add-on batch-processing bug has HS to-notify | A customer is experiencing the following error:

>Fatal error: Uncaught Error: Call to a member function type() on boolean in /home/s793/html/wp-content/plugins/AffiliateWP-2.2.3/includes/admin/referrals/class-list-table.php:251 Stack trace: #0 /home/s793/html/wp-admin/includes/class-wp-list-table.php(1254): AffWP_Re... | 1.0 | This error is showing up on the referrals table - A customer is experiencing the following error:

>Fatal error: Uncaught Error: Call to a member function type() on boolean in /home/s793/html/wp-content/plugins/AffiliateWP-2.2.3/includes/admin/referrals/class-list-table.php:251 Stack trace: #0 /home/s793/html/wp-admi... | process | this error is showing up on the referrals table a customer is experiencing the following error fatal error uncaught error call to a member function type on boolean in home html wp content plugins affiliatewp includes admin referrals class list table php stack trace home html wp admin includ... | 1 |

16,206 | 20,732,470,540 | IssuesEvent | 2022-03-14 10:41:33 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | `'then' in PrimsaPromise` returns false | bug/2-confirmed kind/bug process/candidate topic: prisma-client tech/typescript team/client topic: fluent api | ### Bug description

Some libraries use `'then' in x` as part of their detection for promise-like/thenable objects.

The way prisma client builds its fluent APIs with proxies causes this to fail.
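A minimal sketch of the general mechanism, assuming the lazy object is a `Proxy` that only defines a `get` trap. The names below are hypothetical and nothing here is taken from Prisma's source. The `in` operator goes through the `has` trap, so without one it falls back to the (empty) target object.

```typescript
// Hypothetical thenable used by both variants; it resolves with a fixed value.
const fakeThen = (resolve: (value: number) => void): void => resolve(42);

// Variant A: only a `get` trap. Reading `.then` works, but `in` consults the
// empty target object, so the `in` check reports false.
const lazyWithoutHas: object = new Proxy({}, {
  get(_target, prop) {
    return prop === "then" ? fakeThen : undefined;
  },
});

// Variant B: the same `get` trap plus a `has` trap, so `in` also sees `then`.
const lazyWithHas: object = new Proxy({}, {
  get(_target, prop) {
    return prop === "then" ? fakeThen : undefined;
  },
  has(_target, prop) {
    return prop === "then";
  },
});

const thenInWithoutHas = "then" in lazyWithoutHas;
const thenInWithHas = "then" in lazyWithHas;
```

Libraries that detect thenables with `typeof x.then === 'function'` would accept both variants; only the `in`-based check distinguishes them.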

### How to reproduce

`console.log('then' in prisma.someModel.findUnique({ where: { id: 1 } }))`

`console.log(type... | 1.0 | `'then' in PrimsaPromise` returns false - ### Bug description

Some libraries use `'then' in x` as part of their detection for promise-like/thenable objects.

The way prisma client builds its fluent APIs with proxies causes this to fail.

### How to reproduce

`console.log('then' in prisma.someModel.findUnique(... | process | then in primsapromise returns false bug description some libraries use then in x as part of their detection for promise like thenable objects the way prisma client builds its fluent apis with proxies causes this to fail how to reproduce console log then in prisma somemodel findunique ... | 1 |

2,962 | 5,959,741,134 | IssuesEvent | 2017-05-29 12:05:05 | itsyouonline/identityserver | https://api.github.com/repos/itsyouonline/identityserver | closed | GET request does not work for acquiring a JWT | process_wontfix type_bug | According to the docs, to acquire a JWT, one should do a GET request like:

`curl -H "Authorization: token OAUTH-TOKEN" https://itsyou.online/v1/oauth/jwt?scope=user:memberof:org1`

This, however, didn't work. Only the POST request did.

```

data = {'aud': 'aliorg', 'scope': 'user:email:main,user:memberof:aliorg'}... | 1.0 | GET request does not work for acquiring a JWT - According to the docs, to acquire a JWT, one should do a GET request like:

`curl -H "Authorization: token OAUTH-TOKEN" https://itsyou.online/v1/oauth/jwt?scope=user:memberof:org1`

This, however, didn't work. Only the POST request did.

```

data = {'aud': 'aliorg', ... | process | get request does not work for acquiring a jwt according to the docs to acquire a jwt one should do a get request like curl h authorization token oauth token this however didn t work only the post request did data aud aliorg scope user email main user memberof aliorg headers ... | 1 |

53,054 | 13,260,851,432 | IssuesEvent | 2020-08-20 18:52:11 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | closed | icetray/trunk/resources/docs/i3frame.rst clean up (Trac #636) | IceTray Migrated from Trac defect | needs some serious help

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/636">https://code.icecube.wisc.edu/projects/icecube/ticket/636</a>, reported by anonymous and owned by troy</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2014-11-23T03:... | 1.0 | icetray/trunk/resources/docs/i3frame.rst clean up (Trac #636) - needs some serious help

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/636">https://code.icecube.wisc.edu/projects/icecube/ticket/636</a>, reported by anonymous and owned by troy</em></summary>

<p>

```... | non_process | icetray trunk resources docs rst clean up trac needs some serious help migrated from json status closed changetime ts description needs some serious help reporter anonymous cc resolution fixed time ... | 0 |

227,103 | 7,526,993,566 | IssuesEvent | 2018-04-13 15:35:57 | googleapis/nodejs-pubsub | https://api.github.com/repos/googleapis/nodejs-pubsub | closed | Duplicate messages exactly every 15 minutes | priority: p2 status: blocked type: bug | #### Environment details

- OS: Kubernetes Engine

- Node.js version: 6.3.0

- npm version: –

- @google-cloud/pubsub version: 0.16.2

#### Steps to reproduce

This is most likely related to the discussion in https://github.com/googleapis/nodejs-pubsub/issues/2#issuecomment-356423284 (and following commen... | 1.0 | Duplicate messages exactly every 15 minutes - #### Environment details

- OS: Kubernetes Engine

- Node.js version: 6.3.0

- npm version: –

- @google-cloud/pubsub version: 0.16.2

#### Steps to reproduce

This is most likely related to the discussion in https://github.com/googleapis/nodejs-pubsub/issues/... | non_process | duplicate messages exactly every minutes environment details os kubernetes engine node js version npm version – google cloud pubsub version steps to reproduce this is most likely related to the discussion in and following comments in that thread however si... | 0 |

4,713 | 7,551,185,302 | IssuesEvent | 2018-04-18 19:17:19 | nodejs/node | https://api.github.com/repos/nodejs/node | opened | test-child-process-exec-kill-throws fails intermittently on multiple platforms | child_process | <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the template below as you're able.

Version: output of `node -v`

P... | 1.0 | test-child-process-exec-kill-throws fails intermittently on multiple platforms - <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in... | process | test child process exec kill throws fails intermittently on multiple platforms thank you for reporting an issue this issue tracker is for bugs and issues found within node js core if you require more general support please file an issue on our help repo please fill in as much of the template belo... | 1 |

16,410 | 21,191,417,539 | IssuesEvent | 2022-04-08 17:52:51 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | closed | fix flaky session_spec in server-e2e-tests-electron | process: flaky test stage: icebox | ### Current behavior

example failure: https://app.circleci.com/pipelines/github/cypress-io/cypress/22975/workflows/4a639267-6eda-4e78-84b6-5c1f3426f04e/jobs/845803/parallel-runs/7

### Desired behavior

n/a

### Test code to reproduce

n/a

### Cypress Version

*

### Other

_No response_ | 1.0 | fix flaky session_spec in server-e2e-tests-electron - ### Current behavior

example failure: https://app.circleci.com/pipelines/github/cypress-io/cypress/22975/workflows/4a639267-6eda-4e78-84b6-5c1f3426f04e/jobs/845803/parallel-runs/7

### Desired behavior

n/a

### Test code to reproduce

n/a

### Cypres... | process | fix flaky session spec in server tests electron current behavior example failure desired behavior n a test code to reproduce n a cypress version other no response | 1 |

332,124 | 29,185,751,683 | IssuesEvent | 2023-05-19 15:17:23 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | reopened | Fix jax_lax_operators.test_jax_lax_reshape | JAX Frontend Sub Task Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4570376767/jobs/8067642702" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/4570376767/jobs/8067642702" rel="noopener ... | 1.0 | Fix jax_lax_operators.test_jax_lax_reshape - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4570376767/jobs/8067642702" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/ru... | non_process | fix jax lax operators test jax lax reshape tensorflow img src torch img src numpy img src jax img src | 0 |

19,646 | 26,006,136,126 | IssuesEvent | 2022-12-20 19:34:07 | GSA/EDX | https://api.github.com/repos/GSA/EDX | closed | Onboarding GSA Website Manager - vltp.gsa.gov | process Proposed Next Projects | Welcome to the GSA.

As a Website Manager, responsibilities include:

* A number of conditions, described 21st Century IDEA

### Resources and trainings

* Digital.gov links

### How to get help

Contact EDX

### Information about the Website Inventory

https://touchpoints.app.cloud.gov/admin/websites/... | 1.0 | Onboarding GSA Website Manager - vltp.gsa.gov - Welcome to the GSA.

As a Website Manager, responsibilities include:

* A number of conditions, described 21st Century IDEA

### Resources and trainings

* Digital.gov links

### How to get help

Contact EDX

### Information about the Website Inventory

h... | process | onboarding gsa website manager vltp gsa gov welcome to the gsa as a website manager responsibilities include a number of conditions described century idea resources and trainings digital gov links how to get help contact edx information about the website inventory | 1 |

13,261 | 15,729,686,275 | IssuesEvent | 2021-03-29 15:06:42 | gfx-rs/naga | https://api.github.com/repos/gfx-rs/naga | closed | Validation function for the IR | area: processing help wanted kind: feature | The `Module` struct that our front-ends produce generally has some level of type-level encoding of the constraints, however many properties can only be checked at run-time. One big area of validation is type checking:

- builtins have the expected types

- constructors are using consistent values, e.g. #43

- typ... | 1.0 | Validation function for the IR - The `Module` struct that our front-ends produce generally has some level of type-level encoding of the constraints, however many properties can only be checked at run-time. One big area of validation is type checking:

- builtins have the expected types

- constructors are using con... | process | validation function for the ir the module struct that our front ends produce generally has some level of type level encoding of the constraints however many properties can only be checked at run time one big area of validation is type checking builtins have the expected types constructors are using con... | 1 |

6,732 | 9,854,683,236 | IssuesEvent | 2019-06-19 17:27:56 | googleapis/google-cloud-python | https://api.github.com/repos/googleapis/google-cloud-python | reopened | Bigtable: 'test_create_instance_w_two_clusters' flakes with '504 Deadline Exceeded' | api: bigtable flaky testing type: process | /cc @sduskis, @vikas-jamdar

From:

- https://circleci.com/gh/GoogleCloudPlatform/google-cloud-python/7977

- https://circleci.com/gh/GoogleCloudPlatform/google-cloud-python/7978

```python

___________ TestInstanceAdminAPI.test_create_instance_w_two_clusters ___________

self = <tests.system.TestInstanceAdmin... | 1.0 | Bigtable: 'test_create_instance_w_two_clusters' flakes with '504 Deadline Exceeded' - /cc @sduskis, @vikas-jamdar

From:

- https://circleci.com/gh/GoogleCloudPlatform/google-cloud-python/7977

- https://circleci.com/gh/GoogleCloudPlatform/google-cloud-python/7978

```python

___________ TestInstanceAdminAPI.tes... | process | bigtable test create instance w two clusters flakes with deadline exceeded cc sduskis vikas jamdar from python testinstanceadminapi test create instance w two clusters self def test create instance w two clusters self from google clou... | 1 |

2,191 | 5,037,520,038 | IssuesEvent | 2016-12-17 18:23:11 | paulkornikov/Pragonas | https://api.github.com/repos/paulkornikov/Pragonas | closed | Performance of trace detail | a-enhancement processus t-performance workload III | Create a query that retrieves only the detail of a single trace, instead of fetching all traces and then selecting one.

Modify the web API by querying the id directly in the remote on the client. | 1.0 | Performance of trace detail - Create a query that retrieves only the detail of a single trace, instead of fetching all traces and then selecting one.

Modify the web API by querying the id directly in the remote on the client. | process | performance of trace detail create a query that retrieves only the detail of a single trace instead of fetching all traces and then selecting one modify the web api by querying the id directly in the remote on the client | 1 |

86,174 | 24,776,969,887 | IssuesEvent | 2022-10-23 21:06:00 | microsoft/azure-pipelines-tasks | https://api.github.com/repos/microsoft/azure-pipelines-tasks | closed | MSBuildV1 task delays to find target solutions as part of *.sln pattern | bug Area: ABTT stale Task: MSBuild | ## Required Information

Entering this information will route you directly to the right team and expedite traction.

**Question, Bug, or Feature?**

*Type*: Bug

**Enter Task Name**: MSBuildV1

## Environment

- Server - Azure Pipelines or TFS on-premises?

- If using Azure Pipelines, provide the ac... | 1.0 | MSBuildV1 task delays to find target solutions as part of *.sln pattern - ## Required Information

Entering this information will route you directly to the right team and expedite traction.

**Question, Bug, or Feature?**

*Type*: Bug

**Enter Task Name**: MSBuildV1

## Environment

- Server - Azure Pipelines... | non_process | task delays to find target solutions as part of sln pattern required information entering this information will route you directly to the right team and expedite traction question bug or feature type bug enter task name environment server azure pipelines or tfs on premi... | 0 |

20,981 | 27,844,686,947 | IssuesEvent | 2023-03-20 14:49:25 | camunda/issues | https://api.github.com/repos/camunda/issues | opened | Process Instance Version Migration | component:operate component:zeebe component:zeebe-process-automation public feature-parity potential:8.3 | ### Value Proposition Statement

Migrate running Process Instances between different versions of process definitions.

### User Problem

Migration itself:

- Our Operators have a new version of a workflow and want to move all the running instances from the old workflows to this new version because the other workflow ... | 1.0 | Process Instance Version Migration - ### Value Proposition Statement

Migrate running Process Instances between different versions of process definitions.

### User Problem

Migration itself:

- Our Operators have a new version of a workflow and want to move all the running instances from the old workflows to this ne... | process | process instance version migration value proposition statement migrate running process instances between different versions of process definitions user problem migration itself our operators have a new version of a workflow and want to move all the running instances from the old workflows to this ne... | 1 |

3,917 | 6,840,610,920 | IssuesEvent | 2017-11-11 01:56:33 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | When joining in a way that returns columns with same name, columns are suffixed and missing metadata | Limitation Query Processor | e.g. `name` and `name_2`. I believe this is done automatically by Clojure JDBC.

- Since we don't know the source of `name_2` the correct Metadata doesn't get returned along with it.

- It would be better to give it a name like `<table_name>.name`.
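The naming idea in the second bullet could be sketched roughly as follows. This is only an illustration of the `<table_name>.name` scheme, not Metabase code: `SourceColumn`, `qualifyDuplicateNames`, and the sample table names are all hypothetical.

```typescript
// A result column together with the table it came from (hypothetical shape).
interface SourceColumn {
  table: string;
  name: string;
}

// Returns display names in which any column name that occurs more than once
// is prefixed with its table name; unique names are left untouched.
function qualifyDuplicateNames(columns: SourceColumn[]): string[] {
  // Null-prototype object so column names like "toString" are counted safely.
  const counts: Record<string, number> = Object.create(null);
  for (const column of columns) {
    counts[column.name] = (counts[column.name] ?? 0) + 1;
  }
  return columns.map((column) =>
    (counts[column.name] ?? 0) > 1
      ? `${column.table}.${column.name}`
      : column.name
  );
}

const qualified = qualifyDuplicateNames([
  { table: "venues", name: "name" },
  { table: "categories", name: "name" },
  { table: "venues", name: "price" },
]);
```

Because the table travels with each column, a qualified name also keeps the link back to the source needed to look up the right metadata, which a bare `name_2` suffix does not.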

See also #1447.

| 1.0 | When joining in a way that returns columns with same name, columns are suffixed and missing metadata - e.g. `name` and `name_2`. I believe this is done automatically by Clojure JDBC.

- Since we don't know the source of `name_2` the correct Metadata doesn't get returned along with it.

- It would be better to give i... | process | when joining in a way that returns columns with same name columns are suffixed and missing metadata e g name and name i believe this is done automatically by clojure jdbc since we don t know the source of name the correct metadata doesn t get returned along with it it would be better to give i... | 1 |

15,151 | 18,907,831,440 | IssuesEvent | 2021-11-16 10:57:57 | sillsdev/silnlp | https://api.github.com/repos/sillsdev/silnlp | opened | Improve the error message when a parent model folder is missing. (Translate) | enhancement good first issue pipeline 6: infer pipeline 3: preprocess pipeline 4: train pipeline 5: test | When a parent model folder is missing then the config file for that parent folder cannot be found. That leads to an error like this:

```

FileNotFoundError: [Errno 2] No such file or directory: '/home/david/gutenberg/MT/experiments/BT-Swahili/en-swh-8/config.yml'

```

I'm running a child model where the folder name... | 1.0 | Improve the error message when a parent model folder is missing. (Translate) - When a parent model folder is missing then the config file for that parent folder cannot be found. That leads to an error like this:

```

FileNotFoundError: [Errno 2] No such file or directory: '/home/david/gutenberg/MT/experiments/BT-Swahi... | process | improve the error message when a parent model folder is missing translate when a parent model folder is missing then the config file for that parent folder cannot be found that leads to an error like this filenotfounderror no such file or directory home david gutenberg mt experiments bt swahili en sw... | 1 |

15,219 | 19,086,515,394 | IssuesEvent | 2021-11-29 07:01:10 | varabyte/kobweb | https://api.github.com/repos/varabyte/kobweb | closed | Break up WebModifiers.kt | process | The file is getting unwieldy.

Maybe something like

WebModifiers.color.kt

WebModifiers.size.kt

WebModifiers.input.kt

etc. | 1.0 | Break up WebModifiers.kt - The file is getting unwieldy.

Maybe something like

WebModifiers.color.kt

WebModifiers.size.kt

WebModifiers.input.kt

etc. | process | break up webmodifiers kt the file is getting unwieldy maybe something like webmodifiers color kt webmodifiers size kt webmodifiers input kt etc | 1 |

211,776 | 16,457,816,897 | IssuesEvent | 2021-05-21 14:44:55 | composer/composer | https://api.github.com/repos/composer/composer | closed | No lock file found message is not intuitive | Documentation | Couple of times, I got a question about "No lock file found" warning in Composer 2. Newbies that never or rarely worked with Composer (or simply do not use it day-to-day) are asking why should they use `composer update` over `composer install` when no lock file is present. Their questions are mainly due to situations w... | 1.0 | No lock file found message is not intuitive - Couple of times, I got a question about "No lock file found" warning in Composer 2. Newbies that never or rarely worked with Composer (or simply do not use it day-to-day) are asking why should they use `composer update` over `composer install` when no lock file is present. ... | non_process | no lock file found message is not intuitive couple of times i got a question about no lock file found warning in composer newbies that never or rarely worked with composer or simply do not use it day to day are asking why should they use composer update over composer install when no lock file is present ... | 0 |

600,025 | 18,288,041,443 | IssuesEvent | 2021-10-05 12:33:48 | UCREL/pymusas | https://api.github.com/repos/UCREL/pymusas | opened | Python 3.10 | enhancement low priority | When Conda releases Python version 3.10 we shall test against version 3.10 of Python. | 1.0 | Python 3.10 - When Conda releases Python version 3.10 we shall test against version 3.10 of Python. | non_process | python when conda releases python version we shall test against version of python | 0 |

22,409 | 31,142,292,680 | IssuesEvent | 2023-08-16 01:44:48 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | closed | Flaky test: must call #initializeConfig before accessing config | OS: linux process: flaky test topic: flake ❄️ stage: flake stale | ### Link to dashboard or CircleCI failure

https://app.circleci.com/pipelines/github/cypress-io/cypress/41402/workflows/68bc48c2-74e7-4588-97c3-fbc1b0bde495/jobs/1714672

### Link to failing test in GitHub

https://github.com/cypress-io/cypress/blob/develop/packages/server/test/integration/cypress_spec.js#L1661

... | 1.0 | Flaky test: must call #initializeConfig before accessing config - ### Link to dashboard or CircleCI failure

https://app.circleci.com/pipelines/github/cypress-io/cypress/41402/workflows/68bc48c2-74e7-4588-97c3-fbc1b0bde495/jobs/1714672

### Link to failing test in GitHub

https://github.com/cypress-io/cypress/blo... | process | flaky test must call initializeconfig before accessing config link to dashboard or circleci failure link to failing test in github analysis img width alt screen shot at pm src cypress version other search for this issue number in the c... | 1 |

602,316 | 18,466,684,859 | IssuesEvent | 2021-10-17 01:54:50 | space-wizards/space-station-14 | https://api.github.com/repos/space-wizards/space-station-14 | closed | Can't unbuckle mob from chair after pulling | Type: Bug Priority: 2-Before Release Difficulty: 2-Medium | <!-- To automatically tag this issue, add the uppercase label(s) surrounded by brackets below, for example: [LABEL] -->

## Description

1. Spawn Urist and a chair

2. Drag Urist onto the chair and pull it for some time

3. You are no longer able to unbuckle Urist from the chair

<!-- Explain your issue in detail, including... | 1.0 | Can't unbuckle mob from chair after pulling - <!-- To automatically tag this issue, add the uppercase label(s) surrounded by brackets below, for example: [LABEL] -->

## Description

1. Spawn Urist and a chair

2. Drag Urist onto the chair and pull it for some time

3. You are no longer able to unbuckle Urist from the chair

... | non_process | can t unbuckle mob from chair after pulling description spawn urist and a chair drag urist on a chair and pull it for some time you are not able unbuckle urist from chair anymore screenshots additional context | 0 |

136,071 | 18,722,296,364 | IssuesEvent | 2021-11-03 13:09:12 | KDWSS/dd-trace-java | https://api.github.com/repos/KDWSS/dd-trace-java | opened | CVE-2014-7810 (Medium) detected in multiple libraries | security vulnerability | ## CVE-2014-7810 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>tomcat-jasper-7.0.20.jar</b>, <b>tomcat-el-api-7.0.20.jar</b>, <b>tomcat-el-api-8.0.14.jar</b>, <b>tomcat-embed-jasp... | True | CVE-2014-7810 (Medium) detected in multiple libraries - ## CVE-2014-7810 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>tomcat-jasper-7.0.20.jar</b>, <b>tomcat-el-api-7.0.20.jar</b... | non_process | cve medium detected in multiple libraries cve medium severity vulnerability vulnerable libraries tomcat jasper jar tomcat el api jar tomcat el api jar tomcat embed jasper jar tomcat jasper jar tomcats jsp parser path to dependency file dd t... | 0 |

5,876 | 2,796,615,715 | IssuesEvent | 2015-05-12 08:45:49 | mysociety/popolo-viewer-sinatra | https://api.github.com/repos/mysociety/popolo-viewer-sinatra | opened | Make the Country List page look nicer | 1 - Desired design | At the moment http://data.everypolitician.org/ is a separate homepage with a list of countries. Ideally we'll consolidate the sites into one (requires #120), but we'll always need _something_ to show which countries we have, and a non-zoomable map on its own isn't enough.

Unless or until we resolve the wider issue,... | 1.0 | Make the Country List page look nicer - At the moment http://data.everypolitician.org/ is a separate homepage with a list of countries. Ideally we'll consolidate the sites into one (requires #120), but we'll always need _something_ to show which countries we have, and a non-zoomable map on its own isn't enough.

Unl... | non_process | make the country list page look nicer at the moment is a separate homepage with a list of countries ideally we ll consolidate the sites into one requires but we ll always need something to show which countries we have and a non zoomable map on its own isn t enough unless or until we resolve the wider... | 0 |

11,401 | 14,235,750,911 | IssuesEvent | 2020-11-18 15:11:34 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | How to set the cwd when a genrule's cmd is executed | team-Rules-Server type: support / not a bug (process) untriaged | Hi,

My project depends on an external git repository and I add it to the WORKSPACE file using `new_git_repository`. This external git repository has a python module and I need to execute it with `python -m module_name` to generate some C++ source and header files for the main repository. I use genrule with a `cmd = "p... | 1.0 | How to set the cwd when a genrule's cmd is executed - Hi,

My project depends on an external git repository and I add it to the WORKSPACE file using `new_git_repository`. This external git repository has a python module and I need to execute it with `python -m module_name` to generate some C++ source and header files f... | process | how to set the cwd when a genrule s cmd is executed hi my project depends on an external git repository and i add it to the workspace file using new git repository this external git repository has a python module and i need to execute it with python m module name to generate some c source and header files f... | 1 |

121,075 | 15,836,738,557 | IssuesEvent | 2021-04-06 19:46:12 | microsoft/WSL | https://api.github.com/repos/microsoft/WSL | closed | Calling `explorer.exe /directory` from within an Ubuntu instance opens the explorer but on an empty view and not on the specified directory | bydesign | Using Windows Insider 10.0.18932.1000 with Ubuntu 18.04, doing this from the Ubuntu command line

$ explorer.exe /etc

opens a File Explorer view which states "This folder is empty".

While doing

$ cd /etc; explorer.exe .

correctly opens a File Explorer view listing the directory contents.

They should be equivalent c... | 1.0 | Calling `explorer.exe /directory` from within an Ubuntu instance opens the explorer but on an empty view and not on the specified directory - Using Windows Insider 10.0.18932.1000 with Ubuntu 18.04, doing this from the Ubuntu command line

$ explorer.exe /etc

opens a File Explorer view which states 'This folder is e... | non_process | calling explorer exe directory from within an ubuntu instance opens the explorer but on an empty view and not on the specified directory using windows insider with ubuntu doing this from the ubuntu command line explorer exe etc opens a file explorer view which states this folder is empty w... | 0 |

250,916 | 7,992,563,954 | IssuesEvent | 2018-07-20 02:17:35 | magda-io/magda | https://api.github.com/repos/magda-io/magda | closed | Launceston have changed the URL of their data.json page. | priority: showstopper | ### Problem description

It's now at "https://data-launceston.opendata.arcgis.com/data.json". We need to update this in our config. | 1.0 | Launceston have changed the URL of their data.json page. - ### Problem description

It's now at "https://data-launceston.opendata.arcgis.com/data.json". We need to update this in our config. | non_process | launceston have changed the url of their data json page problem description it s now at we need to update this in our config | 0 |

755,327 | 26,425,108,339 | IssuesEvent | 2023-01-14 03:49:11 | yogstation13/Yogstation | https://api.github.com/repos/yogstation13/Yogstation | closed | You can't light other people's cigarrettes with things that aren't lighters | Bug Issue - Confirmed Issue - Low priority | ## Reproduction:

Try to light someone else's cigarette with a welding tool or laser

Cry when you just shoot them or hit them with it instead | 1.0 | You can't light other people's cigarrettes with things that aren't lighters - ## Reproduction:

Try to light someone else's cigarette with a welding tool or laser

Cry when you just shoot them or hit them with it instead | non_process | you can t light other people s cigarrettes with things that aren t lighters reproduction try to light someone else s cigarrette with a welding tool or laser cry when you just shoot them or hit them with it instead | 0 |

38,868 | 8,556,473,593 | IssuesEvent | 2018-11-08 13:17:36 | joomla/joomla-cms | https://api.github.com/repos/joomla/joomla-cms | closed | [4.0] com_redirect bulk import | J4 Issue No Code Attached Yet | It is not possible to open the bulk import modal from the toolbar in com_redirect due to a javascript error

```

Uncaught TypeError: Cannot read property 'open' of null

at HTMLButtonElement.onclick (index.php?option=com_redirect&view=links:279)

``` | 1.0 | [4.0] com_redirect bulk import - It is not possible to open the bulk import modal from the toolbar in com_redirect due to a javascript error

```

Uncaught TypeError: Cannot read property 'open' of null

at HTMLButtonElement.onclick (index.php?option=com_redirect&view=links:279)

``` | non_process | com redirect bulk import it is not possible to open the bulk import modal from the toolbar in com redirect due to a javascript error uncaught typeerror cannot read property open of null at htmlbuttonelement onclick index php option com redirect view links | 0 |

14,597 | 17,703,567,913 | IssuesEvent | 2021-08-25 03:17:50 | tdwg/dwc | https://api.github.com/repos/tdwg/dwc | closed | Change term - coordinateUncertaintyInMeters | Term - change Class - Location non-normative Process - complete | ## Change term

* Submitter: https://github.com/RicardoOrtizG

* Justification (why is this change necessary?): For completeness

* Proponents (who needs this change): Everyone

Current Term definition: https://dwc.tdwg.org/terms/#dwc:coordinateUncertaintynMeters

Proposed new attributes of the term:

* Term na... | 1.0 | Change term - coordinateUncertaintyInMeters - ## Change term

* Submitter: https://github.com/RicardoOrtizG

* Justification (why is this change necessary?): For completeness

* Proponents (who needs this change): Everyone

Current Term definition: https://dwc.tdwg.org/terms/#dwc:coordinateUncertaintynMeters

Pro... | process | change term coordinateuncertaintyinmeters change term submitter justification why is this change necessary for completeness proponents who needs this change everyone current term definition proposed new attributes of the term term name in lowercamelcase coordinateuncertaint... | 1 |

14,198 | 17,099,225,637 | IssuesEvent | 2021-07-09 08:51:48 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | Error: Error in migration engine. Reason: [libs/sql-schema-describer/src/walkers.rs:214:27] index out of bounds: the len is 0 but the index is 0 | bug/1-repro-available kind/bug process/candidate team/migrations | <!-- If required, please update the title to be clear and descriptive -->

Command: `prisma migrate save --experimental`

Version: `2.10.0`

Binary Version: `af1ae11a423edfb5d24092a85be11fa77c5e499c`

Report: https://prisma-errors.netlify.app/report/13412

OS: `x64 darwin 20.5.0`

JS Stacktrace:

```

Error: Error... | 1.0 | Error: Error in migration engine. Reason: [libs/sql-schema-describer/src/walkers.rs:214:27] index out of bounds: the len is 0 but the index is 0 - <!-- If required, please update the title to be clear and descriptive -->

Command: `prisma migrate save --experimental`

Version: `2.10.0`