Unnamed: 0 (int64, 0–832k) | id (float64, 2.49B–32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7–112) | repo_url (string, length 36–141) | action (string, 3 classes) | title (string, length 1–744) | labels (string, length 4–574) | body (string, length 9–211k) | index (string, 10 classes) | text_combine (string, length 96–211k) | label (string, 2 classes) | text (string, length 96–188k) | binary_label (int64, 0–1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

181,110 | 30,624,704,345 | IssuesEvent | 2023-07-24 10:37:48 | readthedocs/readthedocs.org | https://api.github.com/repos/readthedocs/readthedocs.org | closed | Filter by branch on project dashboard | Feature Needed: design decision | ## Details

Would be nice if there is a way to only show builds from certain branches. This is especially useful if a project has PR builder activated, which sometimes results in the build log full of PR builds, so it takes many clicks to find the last run of, say, `latest`.

.

Originally reported in #3447 | 1.0 | process: support ergonomic piping of stdio of one child process to another - Currently it is impossible to pass in `ChildStd{in,out,err}` into `tokio::process::Command::std{in,out,err}` since they cannot be automatically converted to `std::process::Stdio` (namely since they do not support `IntoRaw{Fd,Handle}`).

Orig... | process | process support ergonomic piping of stdio of one child process to another currently it is impossible to pass in childstd in out err into tokio process command std in out err since they cannot be automatically converted to std process stdio namely since they do not support intoraw fd handle orig... | 1 |

243,393 | 26,278,078,681 | IssuesEvent | 2023-01-07 01:55:24 | gavarasana/cra-test | https://api.github.com/repos/gavarasana/cra-test | opened | CVE-2021-23382 (High) detected in postcss-8.2.1.tgz | security vulnerability | ## CVE-2021-23382 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>postcss-8.2.1.tgz</b></p></summary>

<p>Tool for transforming styles with JS plugins</p>

<p>Library home page: <a href=... | True | CVE-2021-23382 (High) detected in postcss-8.2.1.tgz - ## CVE-2021-23382 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>postcss-8.2.1.tgz</b></p></summary>

<p>Tool for transforming sty... | non_process | cve high detected in postcss tgz cve high severity vulnerability vulnerable library postcss tgz tool for transforming styles with js plugins library home page a href path to dependency file package json path to vulnerable library node modules postcss safe parser no... | 0 |

117,674 | 11,953,462,230 | IssuesEvent | 2020-04-03 20:57:46 | JabRef/jabref | https://api.github.com/repos/JabRef/jabref | closed | How to install the 5.0 release aside the dev Snapshot? | documentation | I have the 5.1 dev-snapshot installed and wanted to install the 5.0 release side by side. This does not seem possible. The 4.1 release installed fine alongside the 5.0 dev builds. Is there a way to have both versions installed? 5.1dev and 5.0 ?

| 1.0 | How to install the 5.0 release aside the dev Snapshot? - I have the 5.1 dev-snapshot installed and wanted to install the 5.0 release side by side. This does not seem possible. The 4.1 release installed fine alongside the 5.0 dev builds. Is there a way to have both versions installed? 5.1dev and 5.0 ?

| non_process | how to install the release aside the dev snapshot i have the dev snapshot installed and wanted to install the release side by side this does not seem possible the release installed fine alongside the dev builds is there a way to have both versions installed and | 0 |

3,666 | 6,542,111,225 | IssuesEvent | 2017-09-02 00:44:37 | Polymer/polymer | https://api.github.com/repos/Polymer/polymer | closed | <dom-if> interferes with justify-content: space-between | 1.x-2.x compatibility css p1 | <!--

If you are asking a question rather than filing a bug, try one of these instead:

- StackOverflow (http://stackoverflow.com/questions/tagged/polymer)

- Polymer Slack Channel (https://bit.ly/polymerslack)

- Mailing List (https://groups.google.com/forum/#!forum/polymer-dev)

-->

<!-- Instructions For Filing a Bu... | True | <dom-if> interferes with justify-content: space-between - <!--

If you are asking a question rather than filing a bug, try one of these instead:

- StackOverflow (http://stackoverflow.com/questions/tagged/polymer)

- Polymer Slack Channel (https://bit.ly/polymerslack)

- Mailing List (https://groups.google.com/forum/#!... | non_process | interferes with justify content space between if you are asking a question rather than filing a bug try one of these instead stackoverflow polymer slack channel mailing list description using in source code causes element to be put in resulting html regardless of... | 0 |

201,412 | 15,802,274,928 | IssuesEvent | 2021-04-03 08:56:25 | zikunz/ped | https://api.github.com/repos/zikunz/ped | opened | [UG] Potential improvement | severity.VeryLow type.DocumentationBug | Could spell out NUS SoC.

Could use "schedules" rather than "schedule"

Could include the welcome page screenshot (which is the same after typing help command)

<!--session: 1617437737885-9be6f5d3-b32d-4bb6-af87-1ab07f2a7014--> | 1.0 | [UG] Potential improvement - Could spell out NUS SoC.

Could use "schedules" rather than "schedule"

Could include the welcome page screenshot (which is the same after typing help command)

<!--session: 1617437737885-9be6f5d3-b32d-4bb6-af87-1ab07f2a7014--> | non_process | potential improvement could spell out nus soc could use schedules rather than schedule could include the welcome page screenshot which is the same after typing help command | 0 |

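406,554 | 27,570,947,737 | IssuesEvent | 2023-03-08 09:14:43 | lmw7414/fastcampus-project-board-admin | https://api.github.com/repos/lmw7414/fastcampus-project-board-admin | closed | [Admin] Getting started with the Spring Boot project | documentation | Based on what was outlined in #1, set up the project skeleton with the elements the admin service is likely to need, and configure the development environment.

## Reference

- https://start.spring.io/ | 1.0 | [Admin] Getting started with the Spring Boot project - Based on what was outlined in #1, set up the project skeleton with the elements the admin service is likely to need, and configure the development environment.

## Reference

- https://start.spring.io/ | non_process | getting started with the spring boot project based on what was outlined in set up the project skeleton with the elements the admin service is likely to need and configure the development environment reference | 0 |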

66,623 | 7,008,730,606 | IssuesEvent | 2017-12-19 16:36:00 | geosolutions-it/MapStore2 | https://api.github.com/repos/geosolutions-it/MapStore2 | closed | Openlayers moves marker when click on vector data | bug In Test Priority: Blocker Project: C040 Tested | ### Description

Clicking on map with Openlayers moves the marker to the first coordinate of the clicked feature. This is due to the changes for annotations.

### In case of Bug (otherwise remove this paragraph)

*Browser Affected*

any

*Steps to reproduce*

- Open a map with openlayers

- Add a shape file ... | 2.0 | Openlayers moves marker when click on vector data - ### Description

Clicking on map with Openlayers moves the marker to the first coordinate of the clicked feature. This is due to the changes for annotations.

### In case of Bug (otherwise remove this paragraph)

*Browser Affected*

any

*Steps to reproduce*

... | non_process | openlayers moves marker when click on vector data description clicking on map with openlayers moves the marker to the first coordinate of the clicked feature this is due to the changes for annotations in case of bug otherwise remove this paragraph browser affected any steps to reproduce ... | 0 |

510,543 | 14,792,632,377 | IssuesEvent | 2021-01-12 14:58:40 | containrrr/watchtower | https://api.github.com/repos/containrrr/watchtower | opened | In v1.1.6, Notifications Fail with Insecure (HTTP) Gotify URL | Priority: Medium Status: Available Type: Bug | **Describe the bug**

On v1.1.6, Gotify notifications fail when configured with HTTP URL.

**To Reproduce**

Steps to reproduce the behavior:

1. Configure Watchtower to send notifications to insecure (HTTP) Gotify URL.

2. Trigger notification

3. Watchtower attempts to send via HTTPS & it fails.

**Expected behav... | 1.0 | In v1.1.6, Notifications Fail with Insecure (HTTP) Gotify URL - **Describe the bug**

On v1.1.6, Gotify notifications fail when configured with HTTP URL.

**To Reproduce**

Steps to reproduce the behavior:

1. Configure Watchtower to send notifications to insecure (HTTP) Gotify URL.

2. Trigger notification

3. Watch... | non_process | in notifications fail with insecure http gotify url describe the bug on gotify notifications fail when configured with http url to reproduce steps to reproduce the behavior configure watchtower to send notifications to insecure http gotify url trigger notification watchto... | 0 |

186,075 | 15,045,645,658 | IssuesEvent | 2021-02-03 05:53:33 | apache/buildstream | https://api.github.com/repos/apache/buildstream | closed | message format in user configuration is undocumented | bug documentation | [See original issue on GitLab](https://gitlab.com/BuildStream/buildstream/-/issues/510)

In GitLab by [[Gitlab user @tristanvb]](https://gitlab.com/tristanvb) on Jul 25, 2018, 13:32

## Summary

[//]: # (Summarize the bug encountered concisely)

While inspecting #509, we noticed that the `message-format` configuration i... | 1.0 | message format in user configuration is undocumented - [See original issue on GitLab](https://gitlab.com/BuildStream/buildstream/-/issues/510)

In GitLab by [[Gitlab user @tristanvb]](https://gitlab.com/tristanvb) on Jul 25, 2018, 13:32

## Summary

[//]: # (Summarize the bug encountered concisely)

While inspecting #50... | non_process | message format in user configuration is undocumented in gitlab by on jul summary summarize the bug encountered concisely while inspecting we noticed that the message format configuration is not properly documented we need to explain how this works in the and ensure that we ... | 0 |

12,120 | 14,740,711,311 | IssuesEvent | 2021-01-07 09:30:53 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Keener - Error Email - Accept invitation/Edit | anc-process anp-1 ant-bug has attachment | In GitLab by @kdjstudios on Nov 29, 2018, 08:45

**Submitted by:** Kyle

**Helpdesk:** NA

**Server:** External

**Client/Site:** Keener

**Account:** NA

**Issue:**

We received this error email about 10 times over the course of two hours this morning.

[SA_Billing_Error_Report_invitations_edit__ActionControllerActi... | 1.0 | Keener - Error Email - Accept invitation/Edit - In GitLab by @kdjstudios on Nov 29, 2018, 08:45

**Submitted by:** Kyle

**Helpdesk:** NA

**Server:** External

**Client/Site:** Keener

**Account:** NA

**Issue:**

We received this error email about 10 times over the course of two hours this morning.

[SA_Billing_Err... | process | keener error email accept invitation edit in gitlab by kdjstudios on nov submitted by kyle helpdesk na server external client site keener account na issue we received this error email about times over the course of two hours this morning uploads sa billin... | 1 |

43,873 | 5,575,578,056 | IssuesEvent | 2017-03-28 02:37:54 | infiniteautomation/ma-core-public | https://api.github.com/repos/infiniteautomation/ma-core-public | closed | Event Detector - Missing Definition Handling | Enhancement Ready for Testing | Ensure that the existing event detector types match the legacy types for JSON import and also reading from the DB.

Here is some code to perform checks at point init startup:

See commit: edc42f0e3eb128b7feff29c1373db066082f87ce | 1.0 | Event Detector - Missing Definition Handling - Ensure that the existing event detector types match the legacy types for JSON import and also reading from the DB.

Here is some code to perform checks at point init startup:

See commit: edc42f0e3eb128b7feff29c1373db066082f87ce | non_process | event detector missing definition handling ensure that the existing event detector types match the legacy types for json import and also reading from the db here is some code to perform checks at point init startup see commit | 0 |

10,947 | 13,756,384,392 | IssuesEvent | 2020-10-06 19:51:14 | paul-buerkner/brms | https://api.github.com/repos/paul-buerkner/brms | closed | Use extract_draws to get predictions of specified smooth terms | feature post-processing | Hi Paul,

This is in reference to this issue. https://discourse.mc-stan.org/t/calculate-the-first-derivative-and-its-posterior-distribution-of-an-estimated-spline-trend/10577

I thought I had resolved it, however, it was pointed out by the original author of the paper whose study I was trying to replicate in a bayesia... | 1.0 | Use extract_draws to get predictions of specified smooth terms - Hi Paul,

This is in reference to this issue. https://discourse.mc-stan.org/t/calculate-the-first-derivative-and-its-posterior-distribution-of-an-estimated-spline-trend/10577

I thought I had resolved it, however, it was pointed out by the original autho... | process | use extract draws to get predictions of specified smooth terms hi paul this is in reference to this issue i thought i had resolved it however it was pointed out by the original author of the paper whose study i was trying to replicate in a bayesian inference that i need to work at the level of smooths ... | 1 |

3,878 | 6,726,596,822 | IssuesEvent | 2017-10-17 10:26:39 | yarnpkg/yarn | https://api.github.com/repos/yarnpkg/yarn | closed | Support --registry flag from CLI commands | bug-configuration cat-compatibility cat-enhancement good first issue help wanted triaged | <!-- *Before creating an issue please make sure you are using the latest version of yarn.* -->

**Do you want to request a _feature_ or report a _bug_?**

yes, feature

**What is the current behavior?**

Only global config is available for setting registry

**What is the expected behavior?**

yarn add/install --registr... | True | Support --registry flag from CLI commands - <!-- *Before creating an issue please make sure you are using the latest version of yarn.* -->

**Do you want to request a _feature_ or report a _bug_?**

yes, feature

**What is the current behavior?**

Only global config is available for setting registry

**What is the expe... | non_process | support registry flag from cli commands do you want to request a feature or report a bug yes feature what is the current behavior only global config is available for setting registry what is the expected behavior yarn add install registry works please mention your node js yarn an... | 0 |

43,568 | 5,544,127,598 | IssuesEvent | 2017-03-22 18:24:53 | chamilo/chamilo-lms | https://api.github.com/repos/chamilo/chamilo-lms | closed | Session - List by category | Bug Requires testing | ### Expected behavior / Resultado esperado / Résultat attendu

When I click the session-count link on the session categories list page, it should list the sessions that belong to that category.

### Actual behavior / Resultado real / Résultat réel

The list shows up empty.

###... | 1.0 | Session - List by category - ### Expected behavior / Resultado esperado / Résultat attendu

When I click the session-count link on the session categories list page, it should list the sessions that belong to that category.

### Actual behavior / Resultado real / Résultat réel

M... | non_process | session list by category expected behavior resultado esperado résultat attendu when i click the session count link on the session categories list page it should list the sessions that belong to that category actual behavior resultado real résultat réel m... | 0 |

221,005 | 24,590,398,680 | IssuesEvent | 2022-10-14 01:13:40 | faizulho/vue-profile-website | https://api.github.com/repos/faizulho/vue-profile-website | opened | CVE-2022-37601 (High) detected in loader-utils-0.2.17.tgz, loader-utils-1.4.0.tgz | security vulnerability | ## CVE-2022-37601 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>loader-utils-0.2.17.tgz</b>, <b>loader-utils-1.4.0.tgz</b></p></summary>

<p>

<details><summary><b>loader-utils-0.2.1... | True | CVE-2022-37601 (High) detected in loader-utils-0.2.17.tgz, loader-utils-1.4.0.tgz - ## CVE-2022-37601 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>loader-utils-0.2.17.tgz</b>, <b>l... | non_process | cve high detected in loader utils tgz loader utils tgz cve high severity vulnerability vulnerable libraries loader utils tgz loader utils tgz loader utils tgz utils for webpack loaders library home page a href path to dependency file pack... | 0 |

10,579 | 13,389,371,511 | IssuesEvent | 2020-09-02 18:46:40 | jgraley/inferno-cpp2v | https://api.github.com/repos/jgraley/inferno-cpp2v | closed | Make variable ordering match | Constraint Processing | In the `SimpleSolver`, I think the way variables are determined is giving a breadth-first ordering relative to the program tree. But everything else, and in particular the `DecidedCompare()` recursive walk is in depth-first. I think with a recursive function we can get those variables to appear in the same order. This ... | 1.0 | Make variable ordering match - In the `SimpleSolver`, I think the way variables are determined is giving a breadth-first ordering relative to the program tree. But everything else, and in particular the `DecidedCompare()` recursive walk is in depth-first. I think with a recursive function we can get those variables to ... | process | make variable ordering match in the simplesolver i think the way variables are determined is giving a breadth first ordering relative to the program tree but everything else and in particular the decidedcompare recursive walk is in depth first i think with a recursive function we can get those variables to ... | 1 |

5,166 | 7,940,822,784 | IssuesEvent | 2018-07-10 00:40:49 | brucemiller/LaTeXML | https://api.github.com/repos/brucemiller/LaTeXML | closed | Incorrect references to tables, figures and equations | bug postprocessing | Affects XML and HTML document collections (i.e. split into multiple documents) generated with LaTeXML 0.8.2 using latexml LaTeX -> XML followed by latexmlpost XML -> collection of XML/HTML documents with splitnaming set to labelrelative and urlstyle set to server.

href attributes of hyperlinks (HTML)/refs (XML) to t... | 1.0 | Incorrect references to tables, figures and equations - Affects XML and HTML document collections (i.e. split into multiple documents) generated with LaTeXML 0.8.2 using latexml LaTeX -> XML followed by latexmlpost XML -> collection of XML/HTML documents with splitnaming set to labelrelative and urlstyle set to server.... | process | incorrect references to tables figures and equations affects xml and html document collections i e split into multiple documents generated with latexml using latexml latex xml followed by latexmlpost xml collection of xml html documents with splitnaming set to labelrelative and urlstyle set to server ... | 1 |

34,593 | 9,417,936,590 | IssuesEvent | 2019-04-10 17:57:46 | eclipse/openj9 | https://api.github.com/repos/eclipse/openj9 | closed | Regenerate test jobs to Add BUILD_TYPE | comp:build | I have created views with regexes to help sort the new separated jobs.

Please regenerate all the Test jobs to include the build type suffix

1. Nightly

1. OMR

1. Personal

1. Release

ex. Test-sanity.functional-JDK8-linux_x86-64_cmprssptrs_Nightly

Related #5182

cc @llxia | 1.0 | Regenerate test jobs to Add BUILD_TYPE - I have created views with regexes to help sort the new separated jobs.

Please regenerate all the Test jobs to include the build type suffix

1. Nightly

1. OMR

1. Personal

1. Release

ex. Test-sanity.functional-JDK8-linux_x86-64_cmprssptrs_Nightly

Related #5182

cc @llx... | non_process | regenerate test jobs to add build type i have created views with regexes to help sort the new separated jobs please regenerate all the test jobs to include the build type suffix nightly omr personal release ex test sanity functional linux cmprssptrs nightly related cc llxia | 0 |

10,883 | 13,653,763,297 | IssuesEvent | 2020-09-27 14:18:35 | raxod502/straight.el | https://api.github.com/repos/raxod502/straight.el | closed | "Process failed" error is very poor | error handling external command messaging process buffer ux | Currently when a subprocess invocation fails unexpectedly, you get

```

(error "Failed to run \"git\"; see buffer *straight-process*")

```

which is pretty bad. Firstly we should show part of the output from the subprocess in the minibuffer. Secondly we should just pop to the `*straight-process*` buffer automatic... | 1.0 | "Process failed" error is very poor - Currently when a subprocess invocation fails unexpectedly, you get

```

(error "Failed to run \"git\"; see buffer *straight-process*")

```

which is pretty bad. Firstly we should show part of the output from the subprocess in the minibuffer. Secondly we should just pop to the... | process | process failed error is very poor currently when a subprocess invocation fails unexpectedly you get error failed to run git see buffer straight process which is pretty bad firstly we should show part of the output from the subprocess in the minibuffer secondly we should just pop to the... | 1 |

277,813 | 24,104,922,956 | IssuesEvent | 2022-09-20 06:32:06 | jajm/koha-staff-interface-redesign | https://api.github.com/repos/jajm/koha-staff-interface-redesign | closed | Menu link in the top bar needs to be white on small screens | type: bug status: needs testing | When looking at the new GUI on a small screen, the menu icon has now green link text, which has no good contrast. It should be white as the other entries there on bigger screens.

(search bar issue will be filed separately)

Viewed story

2.) Clicked on Reply button

3.) Entered comment title: "0x7f - POT"

4.) Selected "Plain Old Text" from the Drop-Down list

5.) Clicked on 'Preview' button.

6.) G... | 1.0 | Server Error when preview comment having char < 0x1f, or char==0x7f - _From @marty-b on March 29, 2015 21:28_

Tried to submit a comment to: https://dev.soylentnews.org/article.pl?sid=15/03/29/1622202

1.) Viewed story

2.) Clicked on Reply button

3.) Entered comment title: "0x7f - POT"

4.) Selected "Plain Old Te... | non_process | server error when preview comment having char or char from marty b on march tried to submit a comment to viewed story clicked on reply button entered comment title pot selected plain old text from the drop down list clicked on preview button got ... | 0 |

1,814 | 4,561,746,316 | IssuesEvent | 2016-09-14 12:52:38 | openvstorage/framework | https://api.github.com/repos/openvstorage/framework | closed | Rescan not possible if a volumedriver disk is broken | process_duplicate type_bug | When losing a write role and trying to replace it we get this problem:

```

Traceback (most recent call last):

File "/usr/lib/python2.7/dist-packages/celery/app/trace.py", line 240, in trace_task

R = retval = fun(*args, **kwargs)

File "/usr/lib/python2.7/dist-packages/celery/app/trace.py", line 438, in __p... | 1.0 | Rescan not possible if a volumedriver disk is broken - When losing a write role and trying to replace it we get this problem:

```

Traceback (most recent call last):

File "/usr/lib/python2.7/dist-packages/celery/app/trace.py", line 240, in trace_task

R = retval = fun(*args, **kwargs)

File "/usr/lib/python2... | process | rescan not possible if a volumedriver disk is broken when losing a write role and trying to replace it we get this problem traceback most recent call last file usr lib dist packages celery app trace py line in trace task r retval fun args kwargs file usr lib dist packag... | 1 |

7,662 | 10,755,810,461 | IssuesEvent | 2019-10-31 09:55:17 | linnovate/root | https://api.github.com/repos/linnovate/root | opened | tasks from meetings inheritance | 2.0.8 Process bug | go to meetings

open meeting add watcher and give him permissions

go to tasks tab and open task from meetings

result : the mission did not receive inheritance from the discussion

| 1.0 | tasks from meetings inheritance - go to meetings

open meeting add watcher and give him permissions

go to tasks tab and open task from meetings

result : the mission did not receive inheritance from the discussion

| process | tasks from meetings inheritance go to meetings open meeting add watcher and give him permissions go to tasks tab and open task from meetings result the mission did not receive inheritance from the discussion | 1 |

11,348 | 14,170,012,457 | IssuesEvent | 2020-11-12 14:00:30 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Process tests do not return for 5 minutes | area-System.Diagnostics.Process untriaged | If you run the System.Diagnostics.Process tests locally on Windows, after the tests themselves complete there will be a 5 minute delay before `dotnet build /t:test` returns. this happens after this point:

```

=== TEST EXECUTION SUMMARY ===

System.Diagnostics.Process.Tests Total: 302, Errors: 0, Failed: 0, Sk... | 1.0 | Process tests do not return for 5 minutes - If you run the System.Diagnostics.Process tests locally on Windows, after the tests themselves complete there will be a 5 minute delay before `dotnet build /t:test` returns. this happens after this point:

```

=== TEST EXECUTION SUMMARY ===

System.Diagnostics.Process... | process | process tests do not return for minutes if you run the system diagnostics process tests locally on windows after the tests themselves complete there will be a minute delay before dotnet build t test returns this happens after this point test execution summary system diagnostics process... | 1 |

11,068 | 13,903,782,551 | IssuesEvent | 2020-10-20 07:44:23 | googleapis/nodejs-error-reporting | https://api.github.com/repos/googleapis/nodejs-error-reporting | opened | chore: add api-logging team to codeowners | api: clouderrorreporting lang: nodejs priority: p2 type: process | - removed "stackdriver" brand

- added api-logging team to codeowners

| 1.0 | chore: add api-logging team to codeowners - - removed "stackdriver" brand

- added api-logging team to codeowners

| process | chore add api logging team to codeowners removed stackdriver brand added api logging team to codeowners | 1 |

18,700 | 24,595,906,558 | IssuesEvent | 2022-10-14 08:18:51 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [Big query] Form steps > Response records are not getting created in the views | Bug Blocker P0 Response datastore Process: Fixed Process: Tested dev | AR: Form steps > Response records are not getting created in the views

ER: Form steps > Response records should get created in the views and response tables

[Note: Issue should be fixed for both instruction step and form step questions]

| 2.0 | [Big query] Form steps > Response records are not getting created in the views - AR: Form steps > Response records are not getting created in the views

ER: Form steps > Response records should get created in the views and response tables

[Note: Issue should be fixed for both instruction step and form step questions... | process | form steps response records are not getting created in the views ar form steps response records are not getting created in the views er form steps response records should get created in the views and response tables | 1 |

19,038 | 25,042,549,729 | IssuesEvent | 2022-11-04 22:56:45 | USGS-WiM/StreamStats | https://api.github.com/repos/USGS-WiM/StreamStats | opened | BP: Add user instructions | Batch Processor | Part of #1455

We should provide some user instructions on the form.

We may also want to provide content to the StreamStats team about the BP on the Help > User Manual and Help > FAQ sections. | 1.0 | BP: Add user instructions - Part of #1455

We should provide some user instructions on the form.

We may also want to provide content to the StreamStats team about the BP on the Help > User Manual and Help > FAQ sections. | process | bp add user instructions part of we should provide some user instructions on the form we may also want to provide content to the streamstats team about the bp on the help user manual and help faq sections | 1 |

6,393 | 9,476,263,116 | IssuesEvent | 2019-04-19 14:31:05 | cityofaustin/techstack | https://api.github.com/repos/cityofaustin/techstack | closed | Form to lead user to right content CG (process page?) | Content Type: Process Page Size: L Team: Content | Deliverable in Community Garden project scope doc

https://docs.google.com/document/d/1NVNrS0FIfef1G5dxM8-u7Bvs7F_j3S5gQJjkcavpmWI/edit#

-development process tbd

- [x] Copy drafted | 1.0 | Form to lead user to right content CG (process page?) - Deliverable in Community Garden project scope doc

https://docs.google.com/document/d/1NVNrS0FIfef1G5dxM8-u7Bvs7F_j3S5gQJjkcavpmWI/edit#

-development process tbd

- [x] Copy drafted | process | form to lead user to right content cg process page deliverable in community garden project scope doc development process tbd copy drafted | 1 |

18,701 | 24,596,180,673 | IssuesEvent | 2022-10-14 08:32:05 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | Error: Error in migration engine. Reason: [migration-engine\connectors\sql-migration-connector\src\sql_renderer\mysql_renderer.rs:522:97] internal error: entered unreachable code | bug/1-unconfirmed kind/bug process/candidate topic: error reporting team/schema | <!-- If required, please update the title to be clear and descriptive -->

Command: `prisma db push`

Version: `4.4.0`

Binary Version: `f352a33b70356f46311da8b00d83386dd9f145d6`

Report: https://prisma-errors.netlify.app/report/14362

OS: `x64 win32 10.0.22000`

| 1.0 | Error: Error in migration engine. Reason: [migration-engine\connectors\sql-migration-connector\src\sql_renderer\mysql_renderer.rs:522:97] internal error: entered unreachable code - <!-- If required, please update the title to be clear and descriptive -->

Command: `prisma db push`

Version: `4.4.0`

Binary Version: ... | process | error error in migration engine reason internal error entered unreachable code command prisma db push version binary version report os | 1 |

392,967 | 11,598,348,805 | IssuesEvent | 2020-02-24 22:53:22 | dmwm/WMCore | https://api.github.com/repos/dmwm/WMCore | closed | Locking data from request manager in the view of dynamo | High Priority New Feature Unified Porting WMStats | Opening this GH issue for starting the thread that could lead to a more stable system.

Dynamo (@yiiyama) needs to know the data that should not be touched, in the eyes of Dataops. Unified is taking care of this for the moment and in the way it is implemented right now, I think this would be best as a view in reqmgr2.

Al... | 1.0 | Locking data from request manager in the view of dynamo - Opening this GH issue for starting the thread that could lead to a more stable system.

Dynamo (@yiiyama) needs to know the data that should not be touched, in the eyes of Dataops. Unified is taking care of this for the moment and in the way it is implemented right... | non_process | locking data from request manager in the view of dynamo opening this gh issue for starting the thread that could lead to a more stable system dynamo yiiyama needs to know the data that should not be touched in the eyes of dataops unified is taking care of this for the moment and in the way it is implemented right... | 0 |

173 | 2,736,253,850 | IssuesEvent | 2015-04-19 08:06:08 | tjhancocks/Nova | https://api.github.com/repos/tjhancocks/Nova | closed | x86 Interrupt Request Handlers | core architecture cpu enhancement kernel | Implement the 16 Interrupt Requests that allow for hardware originating interrupts | 1.0 | x86 Interrupt Request Handlers - Implement the 16 Interrupt Requests that allow for hardware originating interrupts | non_process | interrupt request handlers implement the interrupt requests that allow for hardware originating interrupts | 0 |

27,130 | 2,690,529,558 | IssuesEvent | 2015-03-31 16:33:29 | IQSS/dataverse | https://api.github.com/repos/IQSS/dataverse | closed | File Download: Download term of access popup now has a third button, validation. | Component: File Upload & Handling Priority: Critical Status: QA Type: Bug |

The third button always allows downloading, regardless of accepting terms. This seems like a developer's debug code was checked in by mistake. | 1.0 | File Download: Download term of access popup now has a third button, validation. -

The third button always allows downloading, regardless of accepting terms. This seems like a developer's debug code was checked in by mistake. | non_process | file download download term of access popup now has a third button validation the third button always allows downloading regardless of accepting terms this seems like a developer s debug code was checked in by mistake | 0 |

3,030 | 6,034,398,525 | IssuesEvent | 2017-06-09 10:59:34 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | investigate flaky test-benchmark-child-process on Windows | benchmark child_process test windows | * **Version**: 8.0.0-pre

* **Platform**: win2008r2

* **Subsystem**: test

<!-- Enter your issue details below this comment. -->

https://ci.nodejs.org/job/node-test-binary-windows/8189/RUN_SUBSET=3,VS_VERSION=vs2015-x86,label=win2008r2/console

```console

not ok 356 sequential/test-benchmark-child-process

-... | 1.0 | investigate flaky test-benchmark-child-process on Windows - * **Version**: 8.0.0-pre

* **Platform**: win2008r2

* **Subsystem**: test

<!-- Enter your issue details below this comment. -->

https://ci.nodejs.org/job/node-test-binary-windows/8189/RUN_SUBSET=3,VS_VERSION=vs2015-x86,label=win2008r2/console

```cons... | process | investigate flaky test benchmark child process on windows version pre platform subsystem test console not ok sequential test benchmark child process duration ms severity fail stack timeout refack adding ref | 1 |

131,986 | 10,726,990,340 | IssuesEvent | 2019-10-28 10:36:55 | neuromation/cookiecutter-neuro-project | https://api.github.com/repos/neuromation/cookiecutter-neuro-project | opened | Add functionality to skip project generation | tests | add pytest parameter `--project-path=...` to skip long project generation and necessity to do `make setup`.

Need to facilitate debugging other tests than `test_make_setup`. | 1.0 | Add functionality to skip project generation - add pytest parameter `--project-path=...` to skip long project generation and necessity to do `make setup`.

Need to facilitate debugging other tests than `test_make_setup`. | non_process | add functionality to skip project generation add pytest parameter project path to skip long project generation and necessity to do make setup need to facilitate debugging other tests than test make setup | 0 |

327,879 | 9,982,750,873 | IssuesEvent | 2019-07-10 10:37:07 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.dealnews.com - desktop site instead of mobile site | browser-fenix engine-gecko priority-normal | <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://www.dealnews.com/

**Browser / Version**: Firefox Mobile 68.0

**Operating System**: Android

**Tested Another Brow... | 1.0 | www.dealnews.com - desktop site instead of mobile site - <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://www.dealnews.com/

**Browser / Version**: Firefox Mobile... | non_process | desktop site instead of mobile site url browser version firefox mobile operating system android tested another browser no problem type desktop site instead of mobile site description looks like the desktop site unsure but scrolling is terrible steps to reproduc... | 0 |

335,515 | 10,154,585,849 | IssuesEvent | 2019-08-06 08:23:52 | Code-Poets/sheetstorm | https://api.github.com/repos/Code-Poets/sheetstorm | closed | Separate the days with bold horizontal lines in xlsx | feature priority medium | Should be done:

------------

- separate the days with bold lines in xlsx

-

-

-

| 1.0 | Separate the days with bold horizontal lines in xlsx - Should be done:

------------

- separate the days with bold lines in xlsx

-

-

-

| non_process | separate the days with bold horizontal lines in xlsx should be done separate the days with bold lines in xlsx | 0 |

13,030 | 15,382,163,747 | IssuesEvent | 2021-03-03 00:04:01 | retaildevcrews/ngsa | https://api.github.com/repos/retaildevcrews/ngsa | closed | NGSA - Survey - M2 - Sprint 2 | EngPrac Process | ### How well was the backlog maintained

- [ ] We did not use a backlog.

- [ ] We created a backlog, but did not maintain it.

- [ ] Our backlog was loosely defined for the project.

- [ ] Our backlog was organized into well-defined work items.

- [x] Our backlog was organized into well-defined work items and was ac... | 1.0 | NGSA - Survey - M2 - Sprint 2 - ### How well was the backlog maintained

- [ ] We did not use a backlog.

- [ ] We created a backlog, but did not maintain it.

- [ ] Our backlog was loosely defined for the project.

- [ ] Our backlog was organized into well-defined work items.

- [x] Our backlog was organized into we... | process | ngsa survey sprint how well was the backlog maintained we did not use a backlog we created a backlog but did not maintain it our backlog was loosely defined for the project our backlog was organized into well defined work items our backlog was organized into well defined ... | 1 |

650 | 3,114,988,491 | IssuesEvent | 2015-09-03 12:18:10 | alex/django-filter | https://api.github.com/repos/alex/django-filter | opened | Handle `RemovedInDjango110Warning` Notices | Testing/Process | Running the test suite for `py34-django-latest` we get a number of `RemovedInDjango110Warning` notices...

```

py34-django-latest runtests: commands[0] | ./runtests.py

Creating test database for alias 'default'...

......s...../Users/carlton/Documents/Django-Stack/django-filter/django_filters/filterset.py:104: Remo... | 1.0 | Handle `RemovedInDjango110Warning` Notices - Running the test suite for `py34-django-latest` we get a number of `RemovedInDjango110Warning` notices...

```

py34-django-latest runtests: commands[0] | ./runtests.py

Creating test database for alias 'default'...

......s...../Users/carlton/Documents/Django-Stack/django... | process | handle notices running the test suite for django latest we get a number of notices django latest runtests commands runtests py creating test database for alias default s users carlton documents django stack django filter django filters filterset py get field by ... | 1 |

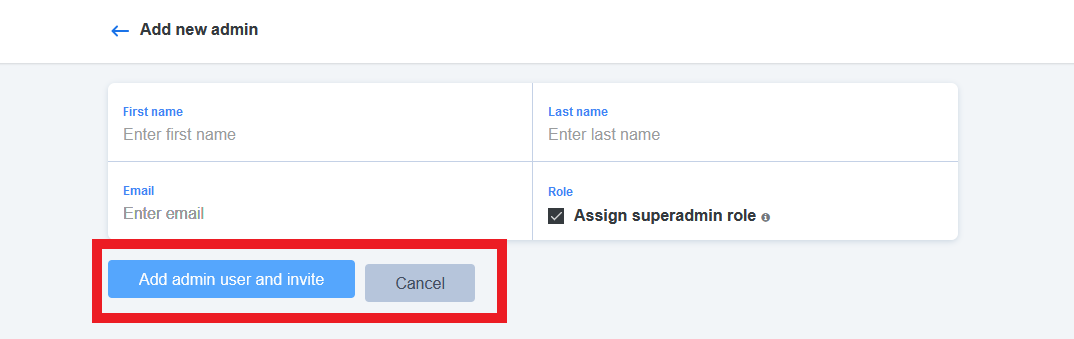

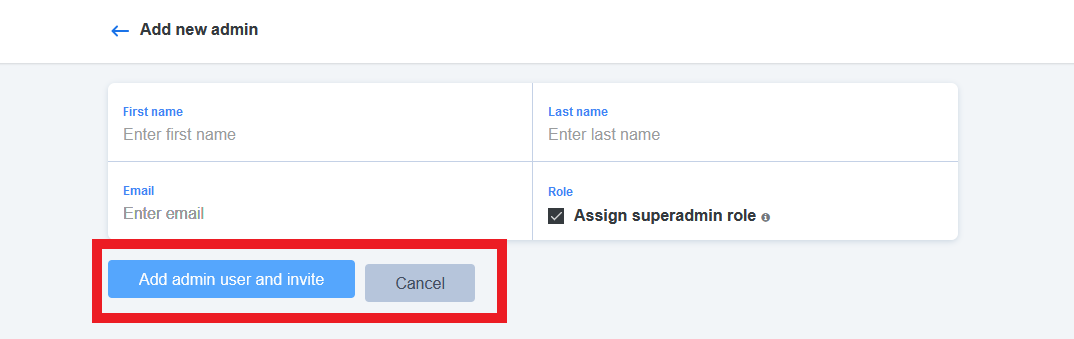

14,486 | 17,602,253,021 | IssuesEvent | 2021-08-17 13:15:02 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PM] Admins > Add new admin screen > UI issue | Bug P2 Participant manager Process: Fixed Process: Tested QA Process: Tested dev | Admins > Add new admin screen > Buttons are not aligned properly

| 3.0 | [PM] Admins > Add new admin screen > UI issue - Admins > Add new admin screen > Buttons are not aligned properly

| process | admins add new admin screen ui issue admins add new admin screen buttons are not aligned properly | 1 |

3,066 | 6,051,274,672 | IssuesEvent | 2017-06-12 23:24:04 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | test: investigate flaky test-child-process-stdio-big-write-end | arm child_process test | <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the template below as you're able.

Version: output of `node -v`

P... | 1.0 | test: investigate flaky test-child-process-stdio-big-write-end - <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the ... | process | test investigate flaky test child process stdio big write end thank you for reporting an issue this issue tracker is for bugs and issues found within node js core if you require more general support please file an issue on our help repo please fill in as much of the template below as you re able... | 1 |

751,852 | 26,261,435,809 | IssuesEvent | 2023-01-06 08:16:19 | WavesHQ/bridge | https://api.github.com/repos/WavesHQ/bridge | closed | `Contract` - shouldn't be able to send ether along with ERC20 token | needs/area needs/triage kind/bug needs/priority | <!--

Please use this template while reporting a bug and provide as much info as possible.

If the matter is security related, please disclose it privately via security@defichain.com

-->

#### What happened:

If users send ETH along with ERC20 token, those ETH will be unaccounted for. Admin will have to manually r... | 1.0 | `Contract` - shouldn't be able to send ether along with ERC20 token - <!--

Please use this template while reporting a bug and provide as much info as possible.

If the matter is security related, please disclose it privately via security@defichain.com

-->

#### What happened:

If users send ETH along with ERC20 t... | non_process | contract shouldn t be able to send ether along with token please use this template while reporting a bug and provide as much info as possible if the matter is security related please disclose it privately via security defichain com what happened if users send eth along with token th... | 0 |

14,467 | 17,571,238,762 | IssuesEvent | 2021-08-14 18:43:02 | elastic/beats | https://api.github.com/repos/elastic/beats | closed | Add glob or recursive option for processor `drop_fields` | enhancement libbeat :Processors Team:Integrations Stalled | **Describe the enhancement:**

Add glob or recursive option for processor `drop_fields` which would be able to check the entire document for the field to remove.

**Describe a specific use case for the enhancement or feature:**

The processor works only with statically defined list of the fields and also only with fi... | 1.0 | Add glob or recursive option for processor `drop_fields` - **Describe the enhancement:**

Add glob or recursive option for processor `drop_fields` which would be able to check the entire document for the field to remove.

**Describe a specific use case for the enhancement or feature:**

The processor works only with ... | process | add glob or recursive option for processor drop fields describe the enhancement add glob or recursive option for processor drop fields which would be able to check the entire document for the field to remove describe a specific use case for the enhancement or feature the processor works only with ... | 1 |

336,766 | 24,512,367,572 | IssuesEvent | 2022-10-10 23:24:07 | based-kwl/SemesterPlanner-client | https://api.github.com/repos/based-kwl/SemesterPlanner-client | closed | User story backlog | documentation | Create User Stories Backlog (You should create user stories for all requirements. Before the beginning

of each Sprint you will plan the user stories to be completed and estimate their user story points

using planning poker):

- [x] Backlog

- [x] plan user stories for Sprint 3 | 1.0 | User story backlog - Create User Stories Backlog (You should create user stories for all requirements. Before the beginning

of each Sprint you will plan the user stories to be completed and estimate their user story points

using planning poker):

- [x] Backlog

- [x] plan user stories for Sprint 3 | non_process | user story backlog create user stories backlog you should create user stories for all requirements before the beginning of each sprint you will plan the user stories to be completed and estimate their user story points using planning poker backlog plan user stories for sprint | 0 |

16,564 | 21,577,493,411 | IssuesEvent | 2022-05-02 15:08:19 | camunda/zeebe | https://api.github.com/repos/camunda/zeebe | opened | IllegalStateException when writing decision evaluation event | kind/bug area/reliability team/process-automation | **Describe the bug**

<!-- A clear and concise description of what the bug is. -->

When trying to write the decision evaluation event an `IllegalArgumentException` is thrown. This is because when searching for decision by decision requirements key multiple results with the same decision id are returned:

```java

... | 1.0 | IllegalStateException when writing decision evaluation event - **Describe the bug**

<!-- A clear and concise description of what the bug is. -->

When trying to write the decision evaluation event an `IllegalArgumentException` is thrown. This is because when searching for decision by decision requirements key multip... | process | illegalstateexception when writing decision evaluation event describe the bug when trying to write the decision evaluation event an illegalargumentexception is thrown this is because when searching for decision by decision requirements key multiple results with the same decision id are returned ja... | 1 |

88,842 | 8,179,119,982 | IssuesEvent | 2018-08-28 15:35:28 | celery/celery | https://api.github.com/repos/celery/celery | closed | Unable to save pickled objects with couchbase as result backend | Component: Canvas Component: Couchbase Results Backend Issue Type: Bug Status: Has Testcase ✔ | Hi it seems like when I attempt to process groups of chords, the couchbase result backend is consistently failing to unlock the chord when reading from the db:

`celery.chord_unlock[e3139ae5-a67d-4f0c-8c54-73b1e19433d2] retry: Retry in 1s: ValueFormatError()`

This behavior does not occur with the redis result back... | 1.0 | Unable to save pickled objects with couchbase as result backend - Hi it seems like when I attempt to process groups of chords, the couchbase result backend is consistently failing to unlock the chord when reading from the db:

`celery.chord_unlock[e3139ae5-a67d-4f0c-8c54-73b1e19433d2] retry: Retry in 1s: ValueFormatE... | non_process | unable to save pickled objects with couchbase as result backend hi it seems like when i attempt to process groups of chords the couchbase result backend is consistently failing to unlock the chord when reading from the db celery chord unlock retry retry in valueformaterror this behavior does not occu... | 0 |

3,433 | 6,533,097,499 | IssuesEvent | 2017-08-31 03:48:32 | rubberduck-vba/Rubberduck | https://api.github.com/repos/rubberduck-vba/Rubberduck | closed | MSForms control members trigger 'Member does not exist on interface' inspection | bug false-positive parse-tree-processing | I am getting the following errors:

> Member 'Clear' is not declared on the interface for type 'ListBox'.

> Member 'SetFocus' is not declared on the interface for type 'TextBox'.

These are clearly valid members because I can see them in the VBA code completion, they compile, and they function. You can clear a lis... | 1.0 | MSForms control members trigger 'Member does not exist on interface' inspection - I am getting the following errors:

> Member 'Clear' is not declared on the interface for type 'ListBox'.

> Member 'SetFocus' is not declared on the interface for type 'TextBox'.

These are clearly valid members because I can see the... | process | msforms control members trigger member does not exist on interface inspection i am getting the following errors member clear is not declared on the interface for type listbox member setfocus is not declared on the interface for type textbox these are clearly valid members because i can see the... | 1 |

13,931 | 16,686,711,269 | IssuesEvent | 2021-06-08 08:47:19 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Canceling Union with same layer kills QGIS | Bug Crash/Data Corruption Processing | <!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS project alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS developer or support organisation and financially sponsoring a fix

Checklist before submitting

-... | 1.0 | Canceling Union with same layer kills QGIS - <!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS project alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS developer or support organisation and financially sponsor... | process | canceling union with same layer kills qgis bug fixing and feature development is a community responsibility and not the responsibility of the qgis project alone if this bug report or feature request is high priority for you we suggest engaging a qgis developer or support organisation and financially sponsor... | 1 |

9,720 | 12,717,197,692 | IssuesEvent | 2020-06-24 04:22:36 | kubeflow/pipelines | https://api.github.com/repos/kubeflow/pipelines | closed | Define Kubeflow Pipelines' Stable Requirements using Kubeflow Community's Process (as defined in Application Requirements Template) | kind/process lifecycle/stale status/triaged | ### What steps did you take:

[A clear and concise description of what the bug is.]

### What happened:

### What did you expect to happen:

### Environment:

<!-- Please fill in those that seem relevant. -->

How did you deploy Kubeflow Pipelines (KFP)?

<!-- If you are not sure, here's [an introduction of all... | 1.0 | Define Kubeflow Pipelines' Stable Requirements using Kubeflow Community's Process (as defined in Application Requirements Template) - ### What steps did you take:

[A clear and concise description of what the bug is.]

### What happened:

### What did you expect to happen:

### Environment:

<!-- Please fill in t... | process | define kubeflow pipelines stable requirements using kubeflow community s process as defined in application requirements template what steps did you take what happened what did you expect to happen environment how did you deploy kubeflow pipelines kfp kfp version ... | 1 |

11,534 | 30,833,227,849 | IssuesEvent | 2023-08-02 04:43:15 | Koniverse/SubWallet-Extension | https://api.github.com/repos/Koniverse/SubWallet-Extension | closed | Not showing staking record on account using different stash and controller account | enhancement extension architecture | Current staking feature does not show staking data in case the controller account is different from the stash account. More update later. Expected to be resolved after architecture update | 1.0 | Not showing staking record on account using different stash and controller account - Current staking feature does not show staking data in case the controller account is different from the stash account. More update later. Expected to be resolved after architecture update | non_process | not showing staking record on account using different stash and controller account current staking feature does not show staking data in case the controller account is different from the stash account more update later expected to be resolved after architecture update | 0 |

64,507 | 14,666,140,062 | IssuesEvent | 2020-12-29 15:41:13 | jgeraigery/experian-java | https://api.github.com/repos/jgeraigery/experian-java | opened | CVE-2020-9547 (High) detected in jackson-databind-2.9.2.jar | security vulnerability | ## CVE-2020-9547 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.2.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming... | True | CVE-2020-9547 (High) detected in jackson-databind-2.9.2.jar - ## CVE-2020-9547 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.2.jar</b></p></summary>

<p>General d... | non_process | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file experian java mavenworkspace bi... | 0 |

379 | 2,823,565,061 | IssuesEvent | 2015-05-21 09:36:33 | austundag/testing | https://api.github.com/repos/austundag/testing | closed | Only the allergies from the logon VistA instance show up | enhancement in process | It appears that currently only allergies from one VistA instance (PANAROMA and KODAK) shows up in eHMP. From eHMP presentation allergies from both instances should show up although they are identical. Investigation is needed to understand the reason and change settings if there is any. | 1.0 | Only the allergies from the logon VistA instance show up - It appears that currently only allergies from one VistA instance (PANAROMA and KODAK) shows up in eHMP. From eHMP presentation allergies from both instances should show up although they are identical. Investigation is needed to understand the reason and change... | process | only the allergies from the logon vista instance show up it appears that currently only allergies from one vista instance panaroma and kodak shows up in ehmp from ehmp presentation allergies from both instances should show up although they are identical investigation is needed to understand the reason and change... | 1 |

5,465 | 8,328,747,091 | IssuesEvent | 2018-09-27 02:32:33 | uccser/verto | https://api.github.com/repos/uccser/verto | closed | Add blockquote tag | processor implementation | The standard Markdown blockquote tag is limited in formatting (can be improved by CSS), but it would be nice to have a Verto tag to allow finer editing, especially for automatic use with [Bootstrap 4](https://getbootstrap.com/docs/4.1/content/typography/#blockquotes). Possibly could look like:

```markdown

{blockquo... | 1.0 | Add blockquote tag - The standard Markdown blockquote tag is limited in formatting (can be improved by CSS), but it would be nice to have a Verto tag to allow finer editing, especially for automatic use with [Bootstrap 4](https://getbootstrap.com/docs/4.1/content/typography/#blockquotes). Possibly could look like:

`... | process | add blockquote tag the standard markdown blockquote tag is limited in formatting can be improved by css but it would be nice to have a verto tag to allow finer editing especially for automatic use with possibly could look like markdown blockquote first and foremost we believe that speed is more ... | 1 |

212,171 | 16,430,824,876 | IssuesEvent | 2021-05-20 01:09:16 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | closed | runner-ct: cy.screenshot wrong for very long viewports | component testing stage: review/qa | <!-- 👋 Use the template below to report a bug. Fill in as much info as possible.

Have a question? Start a new discussion 👉 https://github.com/cypress-io/cypress/discussions

As an open source project with a small maintainer team, it may take some time for your issue to be addressed. Please be patient and we wil... | 1.0 | runner-ct: cy.screenshot wrong for very long viewports - <!-- 👋 Use the template below to report a bug. Fill in as much info as possible.

Have a question? Start a new discussion 👉 https://github.com/cypress-io/cypress/discussions

As an open source project with a small maintainer team, it may take some time for... | non_process | runner ct cy screenshot wrong for very long viewports 👋 use the template below to report a bug fill in as much info as possible have a question start a new discussion 👉 as an open source project with a small maintainer team it may take some time for your issue to be addressed please be patient a... | 0 |

202,653 | 15,294,133,202 | IssuesEvent | 2021-02-24 01:47:58 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | closed | Unexpected collapse/expand selfhost test provider behavior | polish testing | Testing #117305

When I expand/collapse test sections (i.e. just clicking on the icon/expansion symbol below), I don't expect the view to change to that file.

I would expect to be brought to that file... | 1.0 | Unexpected collapse/expand selfhost test provider behavior - Testing #117305

When I expand/collapse test sections (i.e. just clicking on the icon/expansion symbol below), I don't expect the view to change to that file.

document describes a scenario of fresh ideas changing the course of a WG. This can be problematic, but it can also demonstrate that a group has adapted its needs organically. A healthy WG will work on real implemen... | 1.0 | is limiting continuous incubation prohibiting organic growth? - The [Continuous Incubation](https://github.com/w3c/wg-effectiveness/blob/master/continuous_incubation.md) document describes a scenario of fresh ideas changing the course of a WG. This can be problematic, but it can also demonstrate that a group has adapte... | process | is limiting continuous incubation prohibiting organic growth the document describes a scenario of fresh ideas changing the course of a wg this can be problematic but it can also demonstrate that a group has adapted its needs organically a healthy wg will work on real implementations as it develops and may nee... | 1 |

6,004 | 8,808,939,439 | IssuesEvent | 2018-12-27 16:59:31 | linnovate/root | https://api.github.com/repos/linnovate/root | closed | plus menu, creating a project | 2.0.6 Fixed Process bug | when trying to create a project using the plus menu, instead of creating a project at the end of the list, it instead jumps to the first project on the list | 1.0 | plus menu, creating a project - when trying to create a project using the plus menu, instead of creating a project at the end of the list, it instead jumps to the first project on the list | process | plus menu creating a project when trying to create a project using the plus menu instead of creating a project at the end of the list it instead jumps to the first project on the list | 1 |

540,620 | 15,814,785,401 | IssuesEvent | 2021-04-05 10:02:47 | AY2021S2-CS2113-F10-1/tp | https://api.github.com/repos/AY2021S2-CS2113-F10-1/tp | closed | [PE-D] filter feature exceptions | priority.High severity.High type.Bug | If the filter feature is only able to take in certain values for filter type <value> (eg 1-5 room) in certain format, the right error message should be prompt to the user instead of just take in the value user typed and return no result. I key in filter type 4room and no result came out for the find. Maybe can try to u... | 1.0 | [PE-D] filter feature exceptions - If the filter feature is only able to take in certain values for filter type <value> (eg 1-5 room) in certain format, the right error message should be prompt to the user instead of just take in the value user typed and return no result. I key in filter type 4room and no result came o... | non_process | filter feature exceptions if the filter feature is only able to take in certain values for filter type eg room in certain format the right error message should be prompt to the user instead of just take in the value user typed and return no result i key in filter type and no result came out for the find... | 0 |

272,504 | 8,514,247,028 | IssuesEvent | 2018-10-31 18:02:16 | zulip/zulip | https://api.github.com/repos/zulip/zulip | opened | Add badge to guest avatars. | area: misc priority: high | It's a bit of a security issue for guests and members to look the same in the Zulip UI, since for social engineering reasons.

https://github.com/zulip/zulip/commit/deb29749c2c3c5bfae7108d0bdbc0a83f7771070 adds the necessary piping for the frontend to know whether a user is a guest or not. Two things remaining are:

... | 1.0 | Add badge to guest avatars. - It's a bit of a security issue for guests and members to look the same in the Zulip UI, since for social engineering reasons.

https://github.com/zulip/zulip/commit/deb29749c2c3c5bfae7108d0bdbc0a83f7771070 adds the necessary piping for the frontend to know whether a user is a guest or n... | non_process | add badge to guest avatars it s a bit of a security issue for guests and members to look the same in the zulip ui since for social engineering reasons adds the necessary piping for the frontend to know whether a user is a guest or not two things remaining are add css to make the avatar look like this ... | 0 |

14,353 | 17,375,115,781 | IssuesEvent | 2021-07-30 19:44:01 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Clarify when template parameters are actually parsed | Pri2 devops-cicd-process/tech devops/prod doc-enhancement | The documentation doesn't state when template parameters are actually replace in the template. I always assumed they would be replace compile time (since inside the template the parameter expression (`${{`) is used) and for this type of expression the documentation states they are replaced compile time.

But recently... | 1.0 | Clarify when template parameters are actually parsed - The documentation doesn't state when template parameters are actually replace in the template. I always assumed they would be replace compile time (since inside the template the parameter expression (`${{`) is used) and for this type of expression the documentation... | process | clarify when template parameters are actually parsed the documentation doesn t state when template parameters are actually replace in the template i always assumed they would be replace compile time since inside the template the parameter expression is used and for this type of expression the documentation... | 1 |

20,567 | 27,228,360,909 | IssuesEvent | 2023-02-21 11:20:02 | corona-warn-app/cwa-wishlist | https://api.github.com/repos/corona-warn-app/cwa-wishlist | closed | Version 3.0: Do not show how long the app needs to be installed before a SRS warning can be issued | enhancement mirrored-to-jira Test/Share process SRS | ## Upcoming Implementation

If the user just installed the app and tries to warn others with a SRS test, the app will show that a warning can not yet be issued and that the app needs to be installed for at least x days/hours.

## Suggested change to the upcoming implementation

I **strongly** suggest to not show... | 1.0 | Version 3.0: Do not show how long the app needs to be installed before a SRS warning can be issued - ## Upcoming Implementation

If the user just installed the app and tries to warn others with a SRS test, the app will show that a warning can not yet be issued and that the app needs to be installed for at least x day... | process | version do not show how long the app needs to be installed before a srs warning can be issued upcoming implementation if the user just installed the app and tries to warn others with a srs test the app will show that a warning can not yet be issued and that the app needs to be installed for at least x day... | 1 |

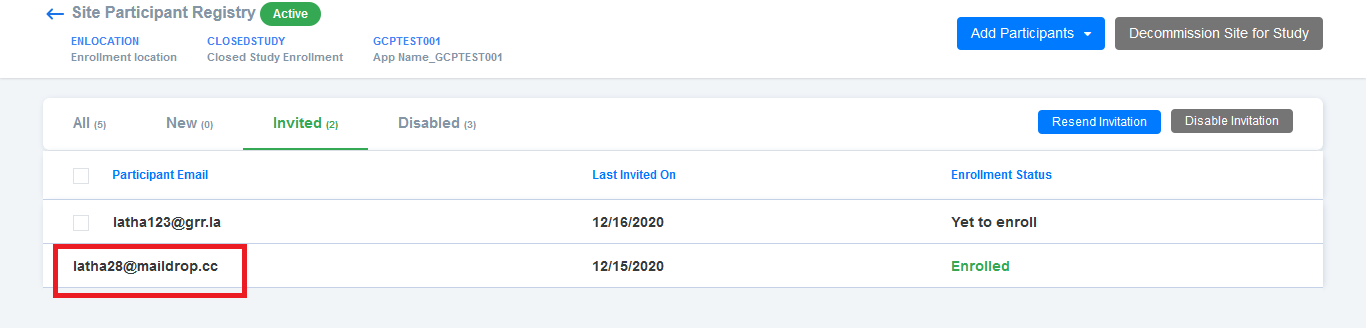

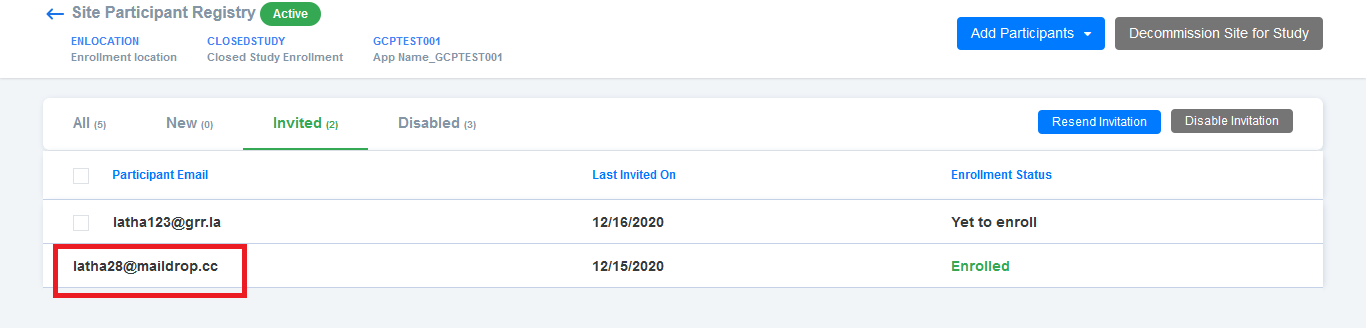

11,739 | 14,581,659,206 | IssuesEvent | 2020-12-18 11:04:05 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | Site participant registry > Invited tab > Alignment issue | Bug P2 Participant manager Process: Fixed Process: Tested QA Process: Tested dev | Site participant registry > Invited tab > Enrolled participants > Alignment should be same as invision screen

| 3.0 | Site participant registry > Invited tab > Alignment issue - Site participant registry > Invited tab > Enrolled participants > Alignment should be same as invision screen

| process | site participant registry invited tab alignment issue site participant registry invited tab enrolled participants alignment should be same as invision screen | 1 |

481,385 | 13,885,114,114 | IssuesEvent | 2020-10-18 18:41:07 | webkom/lego | https://api.github.com/repos/webkom/lego | closed | CompanyInterest submission validation error | priority:high | So the interest form is broken in prod, so our updated changes won't work (as the normal form does not work).

.

| type: code-report | ```

210 if (ret == sz && !b_data(&trash))

211 next = htx_remove_blk(htx, blk);

212 else

CID 1445802 (#1 of 1): Unused value (UNUSED_VALUE)returned_pointer: Assigning value from htx_replace_blk_value(htx, blk, v, ... | 1.0 | src/flt_http_comp.c: unused value suspected by coverity (new finding) - ```

210 if (ret == sz && !b_data(&trash))

211 next = htx_remove_blk(htx, blk);

212 else

CID 1445802 (#1 of 1): Unused value (UNUSED_VALUE)re... | non_process | src flt http comp c unused value suspected by coverity new finding if ret sz b data trash next htx remove blk htx blk else cid of unused value unused value returned point... | 0 |

753,433 | 26,347,137,870 | IssuesEvent | 2023-01-10 23:26:10 | helpmebot/helpmebot | https://api.github.com/repos/helpmebot/helpmebot | opened | Phabricator task lookup | priority/low type/feature migrated | ```

!phab T566

[Task T566] Phabricator task lookup | Open | @stwalkerster | #helpmebot

``` | 1.0 | Phabricator task lookup - ```

!phab T566

[Task T566] Phabricator task lookup | Open | @stwalkerster | #helpmebot

``` | non_process | phabricator task lookup phab phabricator task lookup open stwalkerster helpmebot | 0 |

27,546 | 6,885,284,722 | IssuesEvent | 2017-11-21 15:40:57 | zeebe-io/zeebe | https://api.github.com/repos/zeebe-io/zeebe | opened | Map fails to remove bucket on merging overflow buckets | bug code ready zb-map | During our stability tests we discovered an bug which is triggered during merging of overflow buckets. We also assume that this can trigger other problems (see second stack trace).

```

java.lang.IllegalArgumentException: No bucket in buffer 0 on offset 4

at io.zeebe.map.BucketBufferArray.removeBucket(BucketBuffer... | 1.0 | Map fails to remove bucket on merging overflow buckets - During our stability tests we discovered an bug which is triggered during merging of overflow buckets. We also assume that this can trigger other problems (see second stack trace).

```

java.lang.IllegalArgumentException: No bucket in buffer 0 on offset 4

at... | non_process | map fails to remove bucket on merging overflow buckets during our stability tests we discovered an bug which is triggered during merging of overflow buckets we also assume that this can trigger other problems see second stack trace java lang illegalargumentexception no bucket in buffer on offset at... | 0 |

18,272 | 24,350,854,201 | IssuesEvent | 2022-10-02 23:02:41 | mmattDonk/AI-TTS-Donations | https://api.github.com/repos/mmattDonk/AI-TTS-Donations | closed | make a server client | feature_request Low Priority @solrock/processor @solrock/frontend @solrock/backend | serve the audio via an overlay, maybe a new repo for this? it would be really niche.

its really meant for the IT nerds for streamers to be able to set it up by themselves and have the streamer just input 1 overlay into their OBS. | 1.0 | make a server client - serve the audio via an overlay, maybe a new repo for this? it would be really niche.

its really meant for the IT nerds for streamers to be able to set it up by themselves and have the streamer just input 1 overlay into their OBS. | process | make a server client serve the audio via an overlay maybe a new repo for this it would be really niche its really meant for the it nerds for streamers to be able to set it up by themselves and have the streamer just input overlay into their obs | 1 |

33,722 | 9,201,535,932 | IssuesEvent | 2019-03-07 19:53:37 | ArctosDB/arctos | https://api.github.com/repos/ArctosDB/arctos | closed | Shipping Label report not working from Arctos | CF Report Builder Function-Transactions Priority-Critical | @dustymc

Dusty - shipping label report printout is not working from loans. We just tried for loan MVZ.Herp.2016.8268.Herp and we are getting this error:

An error occurred while processing this page!

The report CFR file /usr/local/httpd/htdocs/wwwarctos/Reports/templates/ship_label.cfr could not be found.

| 1.0 | Shipping Label report not working from Arctos - @dustymc

Dusty - shipping label report printout is not working from loans. We just tried for loan MVZ.Herp.2016.8268.Herp and we are getting this error:

An error occurred while processing this page!

The report CFR file /usr/local/httpd/htdocs/wwwarctos/Reports/template... | non_process | shipping label report not working from arctos dustymc dusty shipping label report printout is not working from loans we just tried for loan mvz herp herp and we are getting this error an error occurred while processing this page the report cfr file usr local httpd htdocs wwwarctos reports templates ship... | 0 |

559,841 | 16,578,534,645 | IssuesEvent | 2021-05-31 08:35:36 | saltudelft/libsa4py | https://api.github.com/repos/saltudelft/libsa4py | opened | Add line numbers for functions | Priority: Medium enhancement | Line and column numbers should be added to the final JSONOutput in the field `fn_ln`. | 1.0 | Add line numbers for functions - Line and column numbers should be added to the final JSONOutput in the field `fn_ln`. | non_process | add line numbers for functions line and column numbers should be added to the final jsonoutput in the field fn ln | 0 |

120,303 | 4,787,802,191 | IssuesEvent | 2016-10-30 06:55:02 | dhowe/AdNauseam | https://api.github.com/repos/dhowe/AdNauseam | closed | DNT whitelist checkbox not updating correctly | PRIORITY: Low | to recreate:

1) go to settings page, enable Hiding and Clicking

2) enable both DNT checkboxes

3) disable Hiding and Clicking

4) go to whitelist and notice the DNT list is still checked

go to settings page, enable Hiding and Clicking

2) enable both DNT checkboxes

3) disable Hiding and Clicking

4) go to whitelist and notice the DNT list is still checked

,[Public](https://angular-presentation.firebaseapp.com/create-first-app/imports) | 1.0 | maybe link to

native phone apps => NativeScript,

VR scenes => ???

and show how the imports change? - maybe link to

native phone apps => NativeScript,

VR scenes => ???

and show how the imports change?

Author: Will

Slide: [Local](http://localhost:4200/create-first-app/imports),[Public](https://angular-presentation.... | non_process | maybe link to native phone apps nativescript vr scenes and show how the imports change maybe link to native phone apps nativescript vr scenes and show how the imports change author will slide | 0 |

126,031 | 4,971,653,623 | IssuesEvent | 2016-12-05 19:19:20 | SIU-CS/BarGame-Production | https://api.github.com/repos/SIU-CS/BarGame-Production | closed | Navigation | Priority-Medium Product Backlog | I want to be able to easily navigate from quests to the shop to players (using a menu system).

| 1.0 | Navigation - I want to be able to easily navigate from quests to the shop to players (using a menu system).

| non_process | navigation i want to be able to easily navigate from quests to the shop to players using a menu system | 0 |

7,642 | 25,336,566,658 | IssuesEvent | 2022-11-18 17:20:51 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | closed | [DocDB][ASAN Unit Test Failure][2.8] YbAdminSnapshotScheduleTest.CleanupDeletedTablets | kind/bug area/docdb priority/medium qa_automation | Jira Link: [DB-3357](https://yugabyte.atlassian.net/browse/DB-3357)

### Description

On 2.8.9.0-b14 YbAdminSnapshotScheduleTest.CleanupDeletedTablets unit test is failing with a heap-use-after-free:

```

[m-1] ==30154==ERROR: AddressSanitizer: heap-use-after-free on address 0x61300024c9e0 at pc 0x7f1f279945f2 bp 0x7f... | 1.0 | [DocDB][ASAN Unit Test Failure][2.8] YbAdminSnapshotScheduleTest.CleanupDeletedTablets - Jira Link: [DB-3357](https://yugabyte.atlassian.net/browse/DB-3357)

### Description

On 2.8.9.0-b14 YbAdminSnapshotScheduleTest.CleanupDeletedTablets unit test is failing with a heap-use-after-free:

```

[m-1] ==30154==ERROR: Add... | non_process | ybadminsnapshotscheduletest cleanupdeletedtablets jira link description on ybadminsnapshotscheduletest cleanupdeletedtablets unit test is failing with a heap use after free error addresssanitizer heap use after free on address at pc bp sp read of size at thread ... | 0 |

360,621 | 25,299,957,111 | IssuesEvent | 2022-11-17 09:57:51 | IgniteUI/igniteui-theming | https://api.github.com/repos/IgniteUI/igniteui-theming | closed | [SassDoc] - Some items are not documented | :book: documentation :white_check_mark: status: resolved | Palettes are not documented, while others items are not made private. | 1.0 | [SassDoc] - Some items are not documented - Palettes are not documented, while others items are not made private. | non_process | some items are not documented palettes are not documented while others items are not made private | 0 |

18,252 | 24,334,813,214 | IssuesEvent | 2022-10-01 00:56:35 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | [processor/probabilisticsampler] add more mterics to probabilistic sampler | enhancement priority:p2 processor/probabilisticsampler | **Is your feature request related to a problem? Please describe.**

wish to expose more metrics to observe probabilistic processor stats.

**Describe the solution you'd like**

following https://github.com/open-telemetry/opentelemetry-collector-contrib/blob/main/processor/tailsamplingprocessor/metrics.go#L47

I'd l... | 1.0 | [processor/probabilisticsampler] add more mterics to probabilistic sampler - **Is your feature request related to a problem? Please describe.**

wish to expose more metrics to observe probabilistic processor stats.

**Describe the solution you'd like**

following https://github.com/open-telemetry/opentelemetry-collec... | process | add more mterics to probabilistic sampler is your feature request related to a problem please describe wish to expose more metrics to observe probabilistic processor stats describe the solution you d like following i d like to add those pls assign it to me p | 1 |

738,140 | 25,546,925,693 | IssuesEvent | 2022-11-29 19:39:44 | zowe/imperative | https://api.github.com/repos/zowe/imperative | closed | Plugin Management Facility should not imply multiple uninstall | bug plugins priority-low | _From @zFernand0 on June 28, 2018 14:18_

Looking at the help of the uninstall command

```

USAGE

-----

"test_cli\TestCLI.ts" plugins uninstall [plugin...] [options]

```

It makes you think that you can uninstall multiple plugins with one single command like:

`$ ts-node "test_cli\TestCLI.ts"... | 1.0 | Plugin Management Facility should not imply multiple uninstall - _From @zFernand0 on June 28, 2018 14:18_

Looking at the help of the uninstall command

```

USAGE

-----

"test_cli\TestCLI.ts" plugins uninstall [plugin...] [options]

```

It makes you think that you can uninstall multiple plugin... | non_process | plugin management facility should not imply multiple uninstall from on june looking at the help of the uninstall command usage test cli testcli ts plugins uninstall it makes you think that you can uninstall multiple plugins with one single command like ... | 0 |

26,323 | 5,243,675,737 | IssuesEvent | 2017-01-31 21:21:35 | PeterCamilleri/mysh | https://api.github.com/repos/PeterCamilleri/mysh | closed | Add docs for helper methods. | documentation enhancement | The Mysh module has several helper methods for action authors. These deserve a section in the mysh readme file.

These include:

- Mysh.parse_args(input)

- Mysh.input.readline(parms)

- String#preprocess(evaluator=$mysh_exec_host)

- MNV[] and MNV[]=

- mysh(command_string)

- Mysh.run(args)

| 1.0 | Add docs for helper methods. - The Mysh module has several helper methods for action authors. These deserve a section in the mysh readme file.

These include:

- Mysh.parse_args(input)

- Mysh.input.readline(parms)

- String#preprocess(evaluator=$mysh_exec_host)

- MNV[] and MNV[]=

- mysh(command_string)

- M... | non_process | add docs for helper methods the mysh module has several helper methods for action authors these deserve a section in the mysh readme file these include mysh parse args input mysh input readline parms string preprocess evaluator mysh exec host mnv and mnv mysh command string mys... | 0 |