| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 distinct value) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 distinct values) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 distinct values) | text_combine (string, length 96 to 211k) | label (string, 2 distinct values) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

70,645 | 7,194,973,813 | IssuesEvent | 2018-02-04 12:16:24 | OAButton/discussion | https://api.github.com/repos/OAButton/discussion | closed | test all the stats from tools | 1. Admin 1. Bookmarklet 1. Chatbot 1. DeliverOA 1. EmbedOA 1. Institutional 1. Plugin 1. Website 2. Enhancement 3. Blocked: Test | Make sure that:

- [x] uses from the frontpage show

- [x] /bulk uses show

- [ ] we can see uses from iframe embedoa

- [ ] we can see uses from different installs of /embedoa

- [x] we can see uses from illiad deliveroa

- [x] we can see uses from chatbot

- [x] need to test on new plugins ( bookmarklet / plugin / ... | 1.0 | test all the stats from tools - Make sure that:

- [x] uses from the frontpage show

- [x] /bulk uses show

- [ ] we can see uses from iframe embedoa

- [ ] we can see uses from different installs of /embedoa

- [x] we can see uses from illiad deliveroa

- [x] we can see uses from chatbot

- [x] need to test on new p... | non_process | test all the stats from tools make sure that uses from the frontpage show bulk uses show we can see uses from iframe embedoa we can see uses from different installs of embedoa we can see uses from illiad deliveroa we can see uses from chatbot need to test on new plugins bookm... | 0 |

20,048 | 6,808,623,095 | IssuesEvent | 2017-11-04 05:44:22 | Great-Hill-Corporation/quickBlocks | https://api.github.com/repos/Great-Hill-Corporation/quickBlocks | reopened | Release names | build-general status-inprocess type-info type-project | Releases are named after trolley line stops coming out of Philadelphia. Here are the names:

<img width="627" alt="screen shot 2017-08-25 at 11 25 44 pm" src="https://user-images.githubusercontent.com/5417918/29738274-dc7bdb44-89ec-11e7-8285-f55787132309.png">

| 1.0 | Release names - Releases are named after trolley line stops coming out of Philadelphia. Here are the names:

<img width="627" alt="screen shot 2017-08-25 at 11 25 44 pm" src="https://user-images.githubusercontent.com/5417918/29738274-dc7bdb44-89ec-11e7-8285-f55787132309.png">

| non_process | release names releases are named after trolley line stops coming out of philadelphia here are the names img width alt screen shot at pm src | 0 |

3,345 | 6,480,466,727 | IssuesEvent | 2017-08-18 13:29:57 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | child_process.on("error") does not function as expected in Ubuntu | child_process | Not working with:

* **Version**: 8.2.1

* **Platform**: Ubuntu (linux) 16.04

Works as expected with:

* **Version**: 10.0 16251

* **Platform**: Windows

The example in the [documentation](https://nodejs.org/api/child_process.html#child_process_child_process_spawn_command_args_options) cites the following workfl... | 1.0 | child_process.on("error") does not function as expected in Ubuntu - Not working with:

* **Version**: 8.2.1

* **Platform**: Ubuntu (linux) 16.04

Works as expected with:

* **Version**: 10.0 16251

* **Platform**: Windows

The example in the [documentation](https://nodejs.org/api/child_process.html#child_process_... | process | child process on error does not function as expected in ubuntu not working with version platform ubuntu linux works as expected with version platform windows the example in the cites the following workflow const spawn require child pro... | 1 |

758,452 | 26,555,833,729 | IssuesEvent | 2023-01-20 11:56:41 | saudalnasser/starlux | https://api.github.com/repos/saudalnasser/starlux | opened | feat: hot reload | type: feature priority: medium | ## Problem

there's no way to deploy commands automatically on changes during development

## Solution(s)

a way to look for changes in specific commands and deploy them accordingly to the specified guild

| 1.0 | feat: hot reload - ## Problem

there's no way to deploy commands automatically on changes during development

## Solution(s)

a way to look for changes in specific commands and deploy them accordingly to the specified guild

| non_process | feat hot reload problem there s no way to deploy commands automatically on changes during development solution s a way to look for changes in specific commands and deploy them accordingly to the specified guild | 0 |

183,461 | 6,688,901,299 | IssuesEvent | 2017-10-08 19:51:02 | qutebrowser/qutebrowser | https://api.github.com/repos/qutebrowser/qutebrowser | closed | Have a way to reset bindings to their default | component: completion component: config priority: 1 - middle | After doing `:unbind H`, there's no way to set it to its default without looking it up.

I can see two solutions:

- Something like `:unbind --default H` should exist (`:config-unset` isn't really a good match for bindings).

- The completion should show the default after `:bind H` (similar to what the `:set` compl... | 1.0 | Have a way to reset bindings to their default - After doing `:unbind H`, there's no way to set it to its default without looking it up.

I can see two solutions:

- Something like `:unbind --default H` should exist (`:config-unset` isn't really a good match for bindings).

- The completion should show the default a... | non_process | have a way to reset bindings to their default after doing unbind h there s no way to set it to its default without looking it up i can see two solutions something like unbind default h should exist config unset isn t really a good match for bindings the completion should show the default a... | 0 |

446,431 | 31,476,099,592 | IssuesEvent | 2023-08-30 10:48:31 | onOffice-Web-Org/oo-wp-plugin | https://api.github.com/repos/onOffice-Web-Org/oo-wp-plugin | closed | Sort property lists | needs documentation in review feature | ### Discussed in https://github.com/onOffice-Web-Org/oo-wp-plugin/discussions/507

<div type='discussions-op-text'>

<sup>Originally posted by **fredericalpers** April 25, 2023</sup>

### Current state

At the moment the created property lists can not be sorted. With a large number of lists, it is difficult to fi... | 1.0 | Sort property lists - ### Discussed in https://github.com/onOffice-Web-Org/oo-wp-plugin/discussions/507

<div type='discussions-op-text'>

<sup>Originally posted by **fredericalpers** April 25, 2023</sup>

### Current state

At the moment the created property lists can not be sorted. With a large number of lists,... | non_process | sort property lists discussed in originally posted by fredericalpers april current state at the moment the created property lists can not be sorted with a large number of lists it is difficult to find the correct one desired state the ones marked in the screenshot should ... | 0 |

782,900 | 27,511,003,187 | IssuesEvent | 2023-03-06 08:49:05 | AY2223S2-CS2103T-T17-3/tp | https://api.github.com/repos/AY2223S2-CS2103T-T17-3/tp | closed | Delete tag | type.Story priority.High | As a user, I can delete a tag from a person so that I can update the information over time. | 1.0 | Delete tag - As a user, I can delete a tag from a person so that I can update the information over time. | non_process | delete tag as a user i can delete a tag from a person so that i can update the information over time | 0 |

390,949 | 11,566,119,511 | IssuesEvent | 2020-02-20 11:51:35 | AugurProject/augur | https://api.github.com/repos/AugurProject/augur | closed | Buy participation tokens > My REP balance deducts after about a minute but my participation tokens don't show up here | Bug Needed for V2 launch Priority: High | **Read to end of ticket**

Here it should show 11

it is updating in the total PT tokens purchased but not showing that I own them

it is updating in the total PT token... | non_process | buy participation tokens my rep balance deducts after about a minute but my participation tokens don t show up here read to end of ticket here it should show it is updating in the total pt tokens purchased but not showing that i own them after pushing time forward a week it s now working correct... | 0 |

16,356 | 21,035,673,021 | IssuesEvent | 2022-03-31 07:36:06 | linuxdeepin/developer-center | https://api.github.com/repos/linuxdeepin/developer-center | closed | Transparent background misplaced | bug | interface style bug | porting other | delay processing | <!--Please keep one issue focus on one bug, If you have multiple issues to report, try open multiple issues to report each of them.-->

## Describe the bug

<!--A clear and concise description of what the bug is.-->

The transparent background in some applications is not in the correct place.

## To Reproduce

<!--We... | 1.0 | Transparent background misplaced - <!--Please keep one issue focus on one bug, If you have multiple issues to report, try open multiple issues to report each of them.-->

## Describe the bug

<!--A clear and concise description of what the bug is.-->

The transparent background in some applications is not in the corr... | process | transparent background misplaced describe the bug the transparent background in some applications is not in the correct place to reproduce steps to reproduce the behavior open notification panel in arch manjaro see the result or open deepin terminal make sure you have enabled ... | 1 |

14,000 | 16,772,297,253 | IssuesEvent | 2021-06-14 16:08:57 | deepset-ai/haystack | https://api.github.com/repos/deepset-ai/haystack | closed | Preprocessing of json files | topic:preprocessing type:bug | **Question**

Why does the preprocessing of a large JSON file show only 20% progress?

**Additional context**

I have a set of 20 files to train on. When I train on them individually, the preprocessing step shows 100%, but when I train on a combined JSON file it only shows 20%, as though only 20% of the dataset has been preproce... | 1.0 | Preprocessing of json files - **Question**

Why does the preprocessing of a large JSON file show only 20% progress?

**Additional context**

I have a set of 20 files to train on. When I train on them individually, the preprocessing step shows 100%, but when I train on a combined JSON file it only shows 20%, as though only 20% of... | process | preprocessing of json files question why does the preprocessing of a large json file show only progress additional context i have a set of files to train on when i train on them individually the preprocessing step shows but when i train on a combined json file it only shows as though only of the d... | 1 |

22,031 | 30,545,641,576 | IssuesEvent | 2023-07-20 03:29:23 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | roblox-pyc 1.18.53 has 2 GuardDog issues | guarddog silent-process-execution | https://pypi.org/project/roblox-pyc

https://inspector.pypi.io/project/roblox-pyc

```{

"dependency": "roblox-pyc",

"version": "1.18.53",

"result": {

"issues": 2,

"errors": {},

"results": {

"silent-process-execution": [

{

"location": "roblox-pyc-1.18.53/src/robloxpy.py:121",

... | 1.0 | roblox-pyc 1.18.53 has 2 GuardDog issues - https://pypi.org/project/roblox-pyc

https://inspector.pypi.io/project/roblox-pyc

```{

"dependency": "roblox-pyc",

"version": "1.18.53",

"result": {

"issues": 2,

"errors": {},

"results": {

"silent-process-execution": [

{

"location": "ro... | process | roblox pyc has guarddog issues dependency roblox pyc version result issues errors results silent process execution location roblox pyc src robloxpy py code subprocess call ... | 1 |

16,689 | 21,791,068,486 | IssuesEvent | 2022-05-14 22:53:26 | lynnandtonic/nestflix.fun | https://api.github.com/repos/lynnandtonic/nestflix.fun | closed | Add Tonight with Katherine Newbury from Late Night | suggested title in process | Title: Tonight with Katherine Newbury

Type (film/tv show): tv show

Film or show in which it appears: Late Night (2019)

Is the parent film/show streaming anywhere? Amazon

About when in the parent film/show does it appear? Throughout

Actual footage of the film/show can be seen (yes/no)? yes

| 1.0 | Add Tonight with Katherine Newbury from Late Night - Title: Tonight with Katherine Newbury

Type (film/tv show): tv show

Film or show in which it appears: Late Night (2019)

Is the parent film/show streaming anywhere? Amazon

About when in the parent film/show does it appear? Throughout

Actual footage of th... | process | add tonight with katherine newbury from late night title tonight with katherine newbury type film tv show tv show film or show in which it appears late night is the parent film show streaming anywhere amazon about when in the parent film show does it appear throughout actual footage of the f... | 1 |

435,365 | 30,496,200,434 | IssuesEvent | 2023-07-18 11:00:16 | CliMA/Oceananigans.jl | https://api.github.com/repos/CliMA/Oceananigans.jl | opened | Docs don't build for tagged releases | testing 🧪 documentation 📜 | See, e.g., recent v0.85.

Possibly something related with buildkite settings. E.g. for v0.84.1 the tag triggered [a buildkite run](https://buildkite.com/clima/oceananigans/builds/12019)

<img width="1215" alt="Screenshot 2023-07-18 at 1 57 24 pm" src="https://github.com/CliMA/Oceananigans.jl/assets/7112768/f0b0f59a... | 1.0 | Docs don't build for tagged releases - See, e.g., recent v0.85.

Possibly something related with buildkite settings. E.g. for v0.84.1 the tag triggered [a buildkite run](https://buildkite.com/clima/oceananigans/builds/12019)

<img width="1215" alt="Screenshot 2023-07-18 at 1 57 24 pm" src="https://github.com/CliMA/... | non_process | docs don t build for tagged releases see e g recent possibly something related with buildkite settings e g for the tag triggered img width alt screenshot at pm src img width alt screenshot at pm src | 0 |

216,616 | 7,310,173,459 | IssuesEvent | 2018-02-28 14:17:14 | ballerina-platform/ballerina-message-broker | https://api.github.com/repos/ballerina-platform/ballerina-message-broker | closed | JMS local transaction test cases | Complexity/Moderate Module/broker-core Priority/High Type/Task | **Description:**

Write JMS local transaction test cases for both queue and topic | 1.0 | JMS local transaction test cases - **Description:**

Write JMS local transaction test cases for both queue and topic | non_process | jms local transaction test cases description write jms local transaction test cases for both queue and topic | 0 |

14,646 | 17,774,387,421 | IssuesEvent | 2021-08-30 17:15:11 | cagov/design-system | https://api.github.com/repos/cagov/design-system | closed | In statewide header official tagline show only "State of California" in mobile | Proposal - Review process CA Design System | In statewide header make "Official website of the State of California" to show only "State of California" in mobile. Figma Example is below:

<img width="768" alt="Screen Shot 2021-08-19 at 4 29 39 PM" src="https://user-images.githubusercontent.com/31669748/130156877-cd6bf128-8232-4331-8555-2aa75657836b.png">

| 1.0 | In statewide header official tagline show only "State of California" in mobile - In statewide header make "Official website of the State of California" to show only "State of California" in mobile. Figma Example is below:

<img width="768" alt="Screen Shot 2021-08-19 at 4 29 39 PM" src="https://user-images.githubuserco... | process | in statewide header official tagline show only state of california in mobile in statewide header make official website of the state of california to show only state of california in mobile figma example is below img width alt screen shot at pm src | 1 |

64,164 | 8,714,210,517 | IssuesEvent | 2018-12-07 06:53:34 | PegaSysEng/pantheon | https://api.github.com/repos/PegaSysEng/pantheon | closed | Document debug_metrics | doc next release documentation | ### Requirements

* Add debug_metrics to JSON-RPC API as added by PR #313

* Add conceptual content about why and how to use debug_metrics

* Include specific use-cases or examples

| 1.0 | Document debug_metrics - ### Requirements

* Add debug_metrics to JSON-RPC API as added by PR #313

* Add conceptual content about why and how to use debug_metrics

* Include specific use-cases or examples

| non_process | document debug metrics requirements add debug metrics to json rpc api as added by pr add conceptual content about why and how to use debug metrics include specific use cases or examples | 0 |

19,499 | 25,809,137,132 | IssuesEvent | 2022-12-11 17:37:19 | anitsh/til | https://api.github.com/repos/anitsh/til | opened | Theory of Constraints (TOC) | principle process | A process improvement methodology that emphasizes the importance of identifying the "system constraint" or bottleneck.

By leveraging this constraint, organizations can achieve their financial goals while delivering on-time-in-full (OTIF) to customers, avoiding stock-outs in the supply chain, reducing lead time, etc... | 1.0 | Theory of Constraints (TOC) - A process improvement methodology that emphasizes the importance of identifying the "system constraint" or bottleneck.

By leveraging this constraint, organizations can achieve their financial goals while delivering on-time-in-full (OTIF) to customers, avoiding stock-outs in the supply ... | process | theory of constraints toc a process improvement methodology that emphasizes the importance of identifying the system constraint or bottleneck by leveraging this constraint organizations can achieve their financial goals while delivering on time in full otif to customers avoiding stock outs in the supply ... | 1 |

4,338 | 7,244,583,027 | IssuesEvent | 2018-02-14 15:33:10 | hardvolk/foodie-journal | https://api.github.com/repos/hardvolk/foodie-journal | closed | Research the functionality of the Yelp API and how to integrate it into the program | In process | Research | 1.0 | Research the functionality of the Yelp API and how to integrate it into the program - Research | process | research the functionality of the yelp api and how to integrate it into the program research | 1 |

524,389 | 15,213,004,807 | IssuesEvent | 2021-02-17 11:10:55 | HSLdevcom/bultti | https://api.github.com/repos/HSLdevcom/bultti | closed | Browsing pre-inspection reports | Epic Priority 1 | Actor

HSL admin, HSL editor (upper and lower level), HSL viewer, operator editor, operator viewer

Precondition

The pre-inspection has been completed

Outcome

Browsing reports / viewing the required information

Frequency of use

A few times per operator per timetable period

1

Käyttäjälle näyt... | 1.0 | Browsing pre-inspection reports - Actor

HSL admin, HSL editor (upper and lower level), HSL viewer, operator editor, operator viewer

Precondition

The pre-inspection has been completed

Outcome

Browsing reports / viewing the required information

Frequency of use

A few times per operator per ... | non_process | browsing pre inspection reports actor hsl admin hsl editor upper and lower level hsl viewer operator editor operator viewer precondition the pre inspection has been completed outcome browsing reports viewing the required information frequency of use a few times per operator per ... | 0 |

21,311 | 28,505,193,651 | IssuesEvent | 2023-04-18 20:44:37 | TUM-Dev/NavigaTUM | https://api.github.com/repos/TUM-Dev/NavigaTUM | closed | [Entry] [0502.EG.221]: | entry webform delete-after-processing | There's a WC hidden inside this Treppenhaus. I couldn't find the WC number, but the one thing I know from my observation is that there are no gender markers for it. LG, DiversiTUM | 1.0 | [Entry] [0502.EG.221]: - There's a WC hidden inside this Treppenhaus. I couldn't find the WC number, but the one thing I know from my observation is that there are no gender markers for it. LG, DiversiTUM | process | there s a wc hidden inside this treppenhaus i couldn t find the wc number but the one thing i know from my observation is that there are no gender markers for it lg diversitum | 1 |

49,173 | 6,015,995,909 | IssuesEvent | 2017-06-07 04:59:36 | Microsoft/vsts-tasks | https://api.github.com/repos/Microsoft/vsts-tasks | closed | [DeployVisualStudioTestAgent] Problem with username having more than 8 characters | Area: Test | Hello,

I've tried to deploy the test agent with Machine's admin username: trinh.pham and got the error as below

```

2017-05-22T05:17:40.6870427Z RemoteDeployerSource Verbose: 1221675 : [RemoteDeployer][22:May:17:12:17:38:8037; 6772, 5](LGVN14143-W10:5985-9005d6f4-9e7f-441d-81be-38f36e1922be)Finished retrying 1 out... | 1.0 | [DeployVisualStudioTestAgent] Problem with username having more than 8 characters - Hello,

I've tried to deploy the test agent with Machine's admin username: trinh.pham and got the error as below

```

2017-05-22T05:17:40.6870427Z RemoteDeployerSource Verbose: 1221675 : [RemoteDeployer][22:May:17:12:17:38:8037; 6772... | non_process | problem with username having more than characters hello i ve tried to deploy the test agent with machine s admin username trinh pham and got the error as below remotedeployersource verbose finished retrying out of times for exception system management automation... | 0 |

8,397 | 11,567,076,146 | IssuesEvent | 2020-02-20 13:42:30 | GetTerminus/terminus-ui | https://api.github.com/repos/GetTerminus/terminus-ui | closed | Demo: Add new abbreviation pipe to pipes demo page | Focus: consumer Goal: Process Improvement Type: chore | - [ ] Add simple example to existing pipes demos page. | 1.0 | Demo: Add new abbreviation pipe to pipes demo page - - [ ] Add simple example to existing pipes demos page. | process | demo add new abbreviation pipe to pipes demo page add simple example to existing pipes demos page | 1 |

9,823 | 12,827,602,697 | IssuesEvent | 2020-07-06 18:49:20 | googleapis/code-suggester | https://api.github.com/repos/googleapis/code-suggester | opened | Create a README for users | type: process | Create README.md so users know what commands are available and how to install

- [ ] describe the CLI API

- [ ] describe the framework-core library

- [ ] describe how to install the package

- [ ] link to any external docs | 1.0 | Create a README for users - Create README.md so users know what commands are available and how to install

- [ ] describe the CLI API

- [ ] describe the framework-core library

- [ ] describe how to install the package

- [ ] link to any external docs | process | create a readme for users create readme md so users know what commands are available and how to install describe the cli api describe the framework core library describe how to install the package link to any external docs | 1 |

483,431 | 13,924,694,991 | IssuesEvent | 2020-10-21 15:52:21 | GoogleCloudPlatform/python-docs-samples | https://api.github.com/repos/GoogleCloudPlatform/python-docs-samples | closed | IAP special path should be query parameter | api: iap priority: p2 samples type: feature request | ## In which file did you encounter the issue?

https://github.com/GoogleCloudPlatform/python-docs-samples/blob/master/appengine/standard/iap/js/poll.js#L49

### Did you change the file? If so, how?

No

## Describe the issue

Current sample code uses special url path to initiate session refresh:

```js

iapSe... | 1.0 | IAP special path should be query parameter - ## In which file did you encounter the issue?

https://github.com/GoogleCloudPlatform/python-docs-samples/blob/master/appengine/standard/iap/js/poll.js#L49

### Did you change the file? If so, how?

No

## Describe the issue

Current sample code uses special url pa... | non_process | iap special path should be query parameter in which file did you encounter the issue did you change the file if so how no describe the issue current sample code uses special url path to initiate session refresh js iapsessionrefreshwindow window open gcp iap do session refresh... | 0 |

15,549 | 19,703,502,451 | IssuesEvent | 2022-01-12 19:07:56 | googleapis/java-network-security | https://api.github.com/repos/googleapis/java-network-security | opened | Your .repo-metadata.json file has a problem 🤒 | type: process repo-metadata: lint | You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* release_level must be equal to one of the allowed values in .repo-metadata.json

* api_shortname 'network-security' invalid in .repo-metadata.json

☝️ Once you correct these problems, you can close this issue.

Reach out to **go/github-automa... | 1.0 | Your .repo-metadata.json file has a problem 🤒 - You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* release_level must be equal to one of the allowed values in .repo-metadata.json

* api_shortname 'network-security' invalid in .repo-metadata.json

☝️ Once you correct these problems, you can c... | process | your repo metadata json file has a problem 🤒 you have a problem with your repo metadata json file result of scan 📈 release level must be equal to one of the allowed values in repo metadata json api shortname network security invalid in repo metadata json ☝️ once you correct these problems you can c... | 1 |

282,459 | 8,706,680,100 | IssuesEvent | 2018-12-06 04:03:29 | magda-io/magda | https://api.github.com/repos/magda-io/magda | opened | Search results help button text is not accessible (critical) | priority: high | ### Problem description

Intopia issue 24 - At the end of the Open Data Quality section, after the star rating, each search results listing provides a help button, a question mark in a circle. This is announced as “graphic help link”. Hovering over the button reveals a tooltip with important information for the user.

... | 1.0 | Search results help button text is not accessible (critical) - ### Problem description

Intopia issue 24 - At the end of the Open Data Quality section, after the star rating, each search results listing provides a help button, a question mark in a circle. This is announced as “graphic help link”. Hovering over the butt... | non_process | search results help button text is not accessible critical problem description intopia issue at the end of the open data quality section after the star rating each search results listing provides a help button a question mark in a circle this is announced as “graphic help link” hovering over the butto... | 0 |

298,439 | 22,499,118,965 | IssuesEvent | 2022-06-23 10:09:14 | MauriceNino/dashdot | https://api.github.com/repos/MauriceNino/dashdot | opened | [Feature] Allow integrations to dash. | type: documentation type: feature | ### Description of the feature

Dash. handles a lot of the overhead when querying system information through docker and displays all that in some charts. Other projects (like https://github.com/ajnart/homarr/) may want to simply include those, instead of going through the hassle of re-implementing everything.

### ... | 1.0 | [Feature] Allow integrations to dash. - ### Description of the feature

Dash. handles a lot of the overhead when querying system information through docker and displays all that in some charts. Other projects (like https://github.com/ajnart/homarr/) may want to simply include those, instead of going through the hassle ... | non_process | allow integrations to dash description of the feature dash handles a lot of the overhead when querying system information through docker and displays all that in some charts other projects like may want to simply include those instead of going through the hassle of re implementing everything a... | 0 |

10,014 | 13,043,884,184 | IssuesEvent | 2020-07-29 02:56:51 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `WeekWithMode` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `WeekWithMode` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @breeswish

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rpn_... | 2.0 | UCP: Migrate scalar function `WeekWithMode` from TiDB -

## Description

Port the scalar function `WeekWithMode` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @breeswish

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.... | process | ucp migrate scalar function weekwithmode from tidb description port the scalar function weekwithmode from tidb to coprocessor score mentor s breeswish recommended skills rust programming learning materials already implemented expressions ported from tidb | 1 |

131,941 | 28,057,607,917 | IssuesEvent | 2023-03-29 10:24:22 | TheCoderAdi/GamingBeast | https://api.github.com/repos/TheCoderAdi/GamingBeast | opened | More Games | enhancement hackcodex2023 | It's fun to have more games on a website, so feel free to create mini games in JavaScript.

If you create more games, don't add them to the navigation bar directly.

Instead, you can create a new button in the navigation bar, such as "More Games", and add your games there.

## Work

- [ ] Add games | 1.0 | More Games - It's fun to have more games on a website, so feel free to create mini games in JavaScript.

If you create more games, don't add them to the navigation bar directly.

Instead, you can create a new button in the navigation bar, such as "More Games", and add your games there.

## Work

- [ ] Add games | non_process | more games it s fun to have more games on a website so feel free to create mini games in javascript if you create more games don t add them to the navigation bar directly instead you can create a new button in the navigation bar such as more games and add your games there work add games | 0 |

782,304 | 27,492,779,896 | IssuesEvent | 2023-03-04 20:39:37 | azerothcore/azerothcore-wotlk | https://api.github.com/repos/azerothcore/azerothcore-wotlk | closed | Core/Script: Halls of Reflection | Instance - Dungeon - Northrend 80 Priority-Medium | <!-- IF YOU DO NOT FILL THIS OUT, WE WILL CLOSE YOUR ISSUE! -->

<!-- IF YOU DO NOT FILL THIS OUT, WE WILL CLOSE YOUR ISSUE! -->

<!-- IF YOU DO NOT FILL THIS OUT, WE WILL CLOSE YOUR ISSUE! -->

<!-- IF YOU DO NOT FILL THIS OUT, WE WILL CLOSE YOUR ISSUE! -->

<!-- IF YOU DO NOT FILL THIS OUT, WE WILL CLOSE YOUR ISSUE! ... | 1.0 | Core/Script: Halls of Reflection - <!-- IF YOU DO NOT FILL THIS OUT, WE WILL CLOSE YOUR ISSUE! -->

<!-- IF YOU DO NOT FILL THIS OUT, WE WILL CLOSE YOUR ISSUE! -->

<!-- IF YOU DO NOT FILL THIS OUT, WE WILL CLOSE YOUR ISSUE! -->

<!-- IF YOU DO NOT FILL THIS OUT, WE WILL CLOSE YOUR ISSUE! -->

<!-- IF YOU DO NOT FILL T... | non_process | core script halls of reflection description a wrong script in the halls of reflection current behaviour tell us what happens when you enter the dungeon triggered the script and it is not correct the sound and text don t match the text was from the opposite faction exampl... | 0 |

191 | 2,596,535,631 | IssuesEvent | 2015-02-20 21:23:43 | sensiasoft/sensorhub | https://api.github.com/repos/sensiasoft/sensorhub | opened | Janino based processing | enhancement processing | What about support for processing using simple java algorithms compiled in real-time by Janino? | 1.0 | Janino based processing - What about support for processing using simple java algorithms compiled in real-time by Janino? | process | janino based processing what about support for processing using simple java algorithms compiled in real time by janino | 1 |

19,432 | 25,600,933,439 | IssuesEvent | 2022-12-01 20:07:13 | dtcenter/MET | https://api.github.com/repos/dtcenter/MET | opened | Enhance the logic for determining quality flags for time summaries of point observations. | type: enhancement priority: medium alert: NEED ACCOUNT KEY alert: NEED PROJECT ASSIGNMENT requestor: METplus Team MET: PreProcessing Tools (Point) | ## Describe the Enhancement ##

While documenting MET's handling of quality flags for dtcenter/MET#2278, @JohnHalleyGotway found that that handling could be improved when computing time summaries of point observations. This step is performed by calling common library code from the point pre-processing tools, including ... | 1.0 | Enhance the logic for determining quality flags for time summaries of point observations. - ## Describe the Enhancement ##

While documenting MET's handling of quality flags for dtcenter/MET#2278, @JohnHalleyGotway found that that handling could be improved when computing time summaries of point observations. This step... | process | enhance the logic for determining quality flags for time summaries of point observations describe the enhancement while documenting met s handling of quality flags for dtcenter met johnhalleygotway found that that handling could be improved when computing time summaries of point observations this step is... | 1 |

173,792 | 14,438,099,867 | IssuesEvent | 2020-12-07 12:32:51 | pi-top/pi-top-Python-SDK | https://api.github.com/repos/pi-top/pi-top-Python-SDK | closed | Update top image in README | documentation | Should be a more accurate representation of all of the things that the SDK is useful for. | 1.0 | Update top image in README - Should be a more accurate representation of all of the things that the SDK is useful for. | non_process | update top image in readme should be a more accurate representation of all of the things that the sdk is useful for | 0 |

8,561 | 11,734,226,532 | IssuesEvent | 2020-03-11 08:57:12 | labuladong/fucking-algorithm | https://api.github.com/repos/labuladong/fucking-algorithm | closed | Any chance of English translation? | enhancement processing | Hi,

I've read a few pages using Google Translator, and the translation didn't sound perfect. Still, I managed to read this page https://labuladong.gitbook.io/algo/shu-ju-jie-gou-xi-lie/xue-xi-shu-ju-jie-gou-he-suan-fa-de-gao-xiao-fang-fa and your explanation of the basic DS is very good.

I want to read the whole boo... | 1.0 | Any chance of English translation? - Hi,

I've few pages using Google Translator, and the translation didn't sound perfect. Still I managed to read this page https://labuladong.gitbook.io/algo/shu-ju-jie-gou-xi-lie/xue-xi-shu-ju-jie-gou-he-suan-fa-de-gao-xiao-fang-fa and you're explanation about the basic DS is very ... | process | any chance of english translation hi i ve few pages using google translator and the translation didn t sound perfect still i managed to read this page and you re explanation about the basic ds is very good i want to read the whole book but i wonder if you or someone is planning for english translatio... | 1 |

22,400 | 31,142,289,992 | IssuesEvent | 2023-08-16 01:44:34 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | closed | Flaky test: AssertionError: Timed out retrying after 4000ms: expected '<h1.pt-20px.font-medium.text-center.text-32px.text-body-gray-900>' to contain 'Choose a Browser' | OS: linux process: flaky test topic: flake ❄️ stage: flake stale | ### Link to dashboard or CircleCI failure

https://app.circleci.com/pipelines/github/cypress-io/cypress/41465/workflows/5d7f4386-729e-4aba-aeb6-3541a4a9c5b0/jobs/1716910

### Link to failing test in GitHub

https://github.com/cypress-io/cypress/blob/develop/packages/launchpad/cypress/e2e/choose-a-browser.cy.ts#L2... | 1.0 | Flaky test: AssertionError: Timed out retrying after 4000ms: expected '<h1.pt-20px.font-medium.text-center.text-32px.text-body-gray-900>' to contain 'Choose a Browser' - ### Link to dashboard or CircleCI failure

https://app.circleci.com/pipelines/github/cypress-io/cypress/41465/workflows/5d7f4386-729e-4aba-aeb6-3541... | process | flaky test assertionerror timed out retrying after expected to contain choose a browser link to dashboard or circleci failure link to failing test in github analysis img width alt screen shot at pm src cypress version other se... | 1 |

4,404 | 2,852,839,402 | IssuesEvent | 2015-06-01 15:32:08 | scikit-learn/scikit-learn | https://api.github.com/repos/scikit-learn/scikit-learn | closed | Pipeline.named_steps not documented | Documentation Easy | ``Pipeline.named_steps`` is not documented, and there is no example of using either ``named_steps`` or ``steps`` to access any attributes of the estimator.

That question comes up pretty frequently.

``named_steps`` is weird as it is initialized in ``__init__`` and doesn't have a trailing underscore.

Maybe the real fi... | 1.0 | Pipeline.named_steps not documented - ``Pipeline.named_steps`` is not documented, and there is no example of using either ``named_steps`` or ``steps`` to access any attributes of the estimator.

That question comes up pretty frequently.

``named_steps`` is weird as it is initialized in ``__init__`` and doesn't have a t... | non_process | pipeline named steps not documented pipeline named steps is not documented and there is no example of using either named steps or steps to access any attributes of the estimator that question comes up pretty frequently named steps is weird as it is initialized in init and doesn t have a t... | 0 |

2,667 | 5,446,928,891 | IssuesEvent | 2017-03-07 12:04:53 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | child_process exec '<()' unexpected token | child_process question | <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the template below as you're able.

Version: output of `node -v`

P... | 1.0 | child_process exec '<()' unexpected token - <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the template below as you... | process | child process exec unexpected token thank you for reporting an issue this issue tracker is for bugs and issues found within node js core if you require more general support please file an issue on our help repo please fill in as much of the template below as you re able version output ... | 1 |

1,136 | 3,626,388,581 | IssuesEvent | 2016-02-10 00:33:23 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | Failed test: test-child-process-fork-regr-gh-2847 | arm child_process test windows | Looks like this test is starting to fail in our windows environment: [fail 1](https://ci.nodejs.org/job/node-test-binary-windows/186/RUN_SUBSET=2,VS_VERSION=vs2013,label=win2008r2/tapTestReport/test.tap-11/), [fail 2](https://ci.nodejs.org/job/node-test-binary-windows/185/RUN_SUBSET=2,VS_VERSION=vs2013,label=win2008r2/... | 1.0 | Failed test: test-child-process-fork-regr-gh-2847 - Looks like this test is starting to fail in our windows environment: [fail 1](https://ci.nodejs.org/job/node-test-binary-windows/186/RUN_SUBSET=2,VS_VERSION=vs2013,label=win2008r2/tapTestReport/test.tap-11/), [fail 2](https://ci.nodejs.org/job/node-test-binary-windows... | process | failed test test child process fork regr gh looks like this test is starting to fail in our windows environment not ok test child process fork regr gh js events js throw er unhandled error event error read econnreset at exports errnoexception util js at ... | 1 |

17,304 | 23,122,081,495 | IssuesEvent | 2022-07-27 22:58:09 | CTSRD-CHERI/cheribsd | https://api.github.com/repos/CTSRD-CHERI/cheribsd | closed | vmspaces need identifiers | coprocesses | We need a way to refer to vmspaces in `ps`, `procstat`, etc output. We might also want to be able to name them (uniquely?). | 1.0 | vmspaces need identifiers - We need a way to refer to vmspaces in `ps`, `procstat`, etc output. We might also want to be able to name them (uniquely?). | process | vmspaces need identifiers we need a way to refer to vmspaces in ps procstat etc output we might also want to be able to name them uniquely | 1 |

50,972 | 26,863,107,957 | IssuesEvent | 2023-02-03 20:22:56 | bevyengine/bevy | https://api.github.com/repos/bevyengine/bevy | closed | Low level Component Reflection utilities for Animation | C-Enhancement A-ECS C-Performance A-Animation A-Reflection | ## What problem does this solve or what need does it fill?

For property based animation (i.e. "animate anything"), we need lower level Reflect utilities than `ReflectComponent` for performant writes of the animated properties. For these systems we will likely have on hand a ComponentId, Entity (and thus EntityLocation... | True | Low level Component Reflection utilities for Animation - ## What problem does this solve or what need does it fill?

For property based animation (i.e. "animate anything"), we need lower level Reflect utilities than `ReflectComponent` for performant writes of the animated properties. For these systems we will likely ha... | non_process | low level component reflection utiliites for animation what problem does this solve or what need does it fill for property based animation i e animate anything we need lower level reflect utilities than reflectcomponent for performant writes of the animated properties for these systems we will likely ha... | 0 |

621,864 | 19,598,132,737 | IssuesEvent | 2022-01-05 20:36:43 | gsbelarus/check-and-cash | https://api.github.com/repos/gsbelarus/check-and-cash | closed | Special meal returns | POSitive:Check Priority-Normal Severity - Critical | Finish implementing returns of dishes issued on credit and as special meals.

Update the stock balances and fill in the amounts correctly | 1.0 | Special meal returns - Finish implementing returns of dishes issued on credit and as special meals.

Update the stock balances and fill in the amounts correctly | non_process | special meal returns finish implementing returns of dishes issued on credit and as special meals update the stock balances and fill in the amounts correctly | 0 |

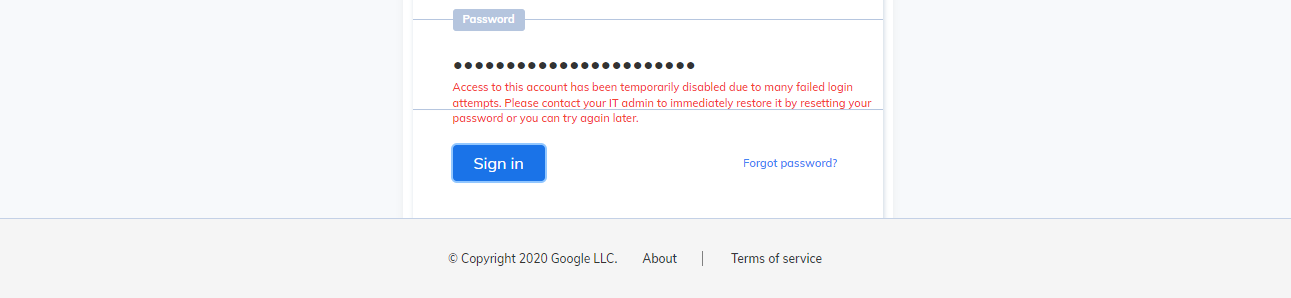

19,287 | 25,466,265,480 | IssuesEvent | 2022-11-25 04:55:27 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [GCI] [PM] Issue related to error message which is getting displayed for organizational users credentials in the sign in screen | Bug P1 Participant manager Process: Fixed Process: Tested QA Process: Tested dev | UI of Error message is getting displayed differently for organizational user credentials in the sign in screen

**AR:**

**ER:** UI of Error message should get displayed similar for organization... | 3.0 | [GCI] [PM] Issue related to error message which is getting displayed for organizational users credentials in the sign in screen - UI of Error message is getting displayed differently for organizational user credentials in the sign in screen

**AR:**

| Discussion Enhancement help wanted Priority: Low Related to: Code Refactoring | We need to rename all files and classes because they are being described wrongly.

For example:

`OutputExpr`, should be `OutputStmt`, however, `ArithmeticExpr` should probably be kept the same. We need to discuss it.

Any thoughts @haskellcamargo, @ryukinix, @rafaelcn ? | 1.0 | Rename expression files (and classes) - We need to rename all files and classes because they are being described wrongly.

For example:

`OutputExpr`, should be `OutputStmt`, however, `ArithmeticExpr` should probably be kept the same. We need to discuss it.

Any thoughts @haskellcamargo, @ryukinix, @rafaelcn ? | non_process | rename expression files and classes we need to rename all files and classes because they are being described wrongly for examply outputexpr should be outputstmt however arithmeticexpr should probably be kept the same we need to discuss it any thoughts haskellcamargo ryukinix rafaelcn | 0 |

81,945 | 23,622,691,251 | IssuesEvent | 2022-08-24 22:31:47 | expo/expo | https://api.github.com/repos/expo/expo | closed | [expo-dev-menu] is broken for landscape orientation | Issue accepted Development Builds | ### Summary

`expo-dev-menu` is broken for projects that have `"orientation": "landscape"` in `app.json`.

`expo-dev-menu` is hardcoded for `portrait` orientation and is not usable in landscape(button on initial screen is not pressable)

Affects both Expo Go and app with `expo-dev-client`

### Managed or bare wor... | 1.0 | [expo-dev-menu] is broken for landscape orientation - ### Summary

`expo-dev-menu` is broken for projects that have `"orientation": "landscape"` in `app.json`.

`expo-dev-menu` is hardcoded for `portrait` orientation and is not usable in landscape(button on initial screen is not pressable)

Affects both Expo Go and... | non_process | is broken for landscape orientation summary expo dev menu is broken for projects that have orientation landscape in app json expo dev menu is hardcoded for portrait orientation and is not usable in landscape button on initial screen is not pressable affects both expo go and app with exp... | 0 |

6,364 | 9,416,767,728 | IssuesEvent | 2019-04-10 15:18:25 | material-components/material-components-ios | https://api.github.com/repos/material-components/material-components-ios | closed | [Buttons] Promote Theming Extensions to Ready | [Buttons] type:Process | This was filed as an internal issue. If you are a Googler, please visit [b/124516065](http://b/124516065) for more details.

<!-- Auto-generated content below, do not modify -->

---

#### Internal data

- Associated internal bug: [b/124516065](http://b/124516065)

- Blocked by: https://github.com/material-components/mate... | 1.0 | [Buttons] Promote Theming Extensions to Ready - This was filed as an internal issue. If you are a Googler, please visit [b/124516065](http://b/124516065) for more details.

<!-- Auto-generated content below, do not modify -->

---

#### Internal data

- Associated internal bug: [b/124516065](http://b/124516065)

- Blocked... | process | promote theming extensions to ready this was filed as an internal issue if you are a googler please visit for more details internal data associated internal bug blocked by | 1 |

365,487 | 25,538,393,343 | IssuesEvent | 2022-11-29 13:41:59 | cwinland/FastMoq | https://api.github.com/repos/cwinland/FastMoq | closed | Stack overflow when creating a mock | bug documentation Fixed | The mock system will attempt to create mocks for all inner mock constructors.

When InnerMockResolution is true (default), this can cause an endless loop.

To fix this, constructors with the same Type are removed if a valid default value is not provided. Meaning, if the mock is created and parameters are passed to i... | 1.0 | Stack overflow when creating a mock - The mock system will attempt to create mocks for all inner mock constructors.

When InnerMockResolution is true (default), this can cause an endless loop.

To fix this, constructors with the same Type are removed if a valid default value is not provided. Meaning, if the mock is ... | non_process | stack overflow when creating a mock the mock system will attempt to create mocks for all inner mock constructors when innermockresolution is true default this can cause an endless loop to fix this constructors with the same type are removed if a valid default value is not provided meaning if the mock is ... | 0 |

8,637 | 11,787,245,207 | IssuesEvent | 2020-03-17 13:44:05 | cncf/sig-security | https://api.github.com/repos/cncf/sig-security | closed | [Suggestion] Add recommendations for tooling in security assessments | assessment-process suggestion | Description: During the security assessments, there are some steps, like taking the self-assessment (that usually comes in a google doc form for collaboration), and make it into a markdown format for the repo.

Impact: This will help lead security reviewers in performing security assessments

Scope: After discussi... | 1.0 | [Suggestion] Add recommendations for tooling in security assessments - Description: During the security assessments, there are some steps, like taking the self-assessment (that usually comes in a google doc form for collaboration), and make it into a markdown format for the repo.

Impact: This will help lead securit... | process | add recommendations for tooling in security assessments description during the security assessments there are some steps like taking the self assessment that usually comes in a google doc form for collaboration and make it into a markdown format for the repo impact this will help lead security reviewers... | 1 |

8,445 | 11,614,077,314 | IssuesEvent | 2020-02-26 11:56:02 | MHRA/products | https://api.github.com/repos/MHRA/products | closed | Add Health Endpoint to Stub Document Manager API | EPIC - Auto Batch Process :oncoming_automobile: HIGH PRIORITY :arrow_double_up: | Further to conversations with Dilu, we need to add a health endpoint.

**Acceptance criteria:**

- [x] Should be accessible at `/health`

- [x] Should require authentication

- [x] Should return OK with no contents | 1.0 | Add Health Endpoint to Stub Document Manager API - Further to conversations with Dilu, we need to add a health endpoint.

**Acceptance criteria:**

- [x] Should be accessible at `/health`

- [x] Should require authentication

- [x] Should return OK with no contents | process | add health endpoint to stub document manager api further to conversations with dilu we need to add a health endpoint acceptance criteria should be accessible at health should require authentication should return ok with no contents | 1 |
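The three acceptance criteria above can be sketched as a small, framework-free request handler. This is only an illustration under stated assumptions: the function name `handle_request`, the bearer-token scheme, and the `valid_tokens` set are hypothetical and are not taken from the MHRA codebase, which may authenticate differently.

```python
from http import HTTPStatus
from typing import Dict, Set, Tuple


def handle_request(path: str, headers: Dict[str, str], valid_tokens: Set[str]) -> Tuple[int, bytes]:
    """Return (status code, body) for a stub API request.

    Assumption: authentication is a bearer token in the Authorization header.
    """
    # Criterion 1: the endpoint is accessible at /health
    if path != "/health":
        return HTTPStatus.NOT_FOUND.value, b""

    # Criterion 2: the endpoint requires authentication
    scheme, _, token = headers.get("Authorization", "").partition(" ")
    if scheme != "Bearer" or token not in valid_tokens:
        return HTTPStatus.UNAUTHORIZED.value, b""

    # Criterion 3: respond OK with no contents
    return HTTPStatus.OK.value, b""


tokens = {"example-token"}  # hypothetical credential store
print(handle_request("/health", {"Authorization": "Bearer example-token"}, tokens))
```

Keeping the check as a pure function makes each criterion easy to assert on before wiring it into a real HTTP server.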

67,297 | 16,869,946,743 | IssuesEvent | 2021-06-22 02:09:47 | opencv/opencv | https://api.github.com/repos/opencv/opencv | closed | OpenCV 4.5.2 videoio not compatible with ffmpeg 4.4 | category: build/install incomplete | <!--

If you have a question rather than reporting a bug please go to https://forum.opencv.org where you get much faster responses.

If you need further assistance please read [How To Contribute](https://github.com/opencv/opencv/wiki/How_to_contribute).

This is a template helping you to create an issue which can be ... | 1.0 | OpenCV 4.5.2 videoio not compatible with ffmpeg 4.4 - <!--

If you have a question rather than reporting a bug please go to https://forum.opencv.org where you get much faster responses.

If you need further assistance please read [How To Contribute](https://github.com/opencv/opencv/wiki/How_to_contribute).

This is a... | non_process | opencv videoio not compatible with ffmpeg if you have a question rather than reporting a bug please go to where you get much faster responses if you need further assistance please read this is a template helping you to create an issue which can be processed as quickly as possible this is t... | 0 |

6,259 | 9,218,158,630 | IssuesEvent | 2019-03-11 12:43:05 | Open-EO/openeo-api | https://api.github.com/repos/Open-EO/openeo-api | opened | parameters as array to remove parameter_order | process discovery | Currently, the parameters are stored as an object. As JSON objects have no order, we added parameter_order for programming languages without named parameters. parameter_order on the other hand is only required for 2+ parameters and needs special handling in many implementations. Therefore the idea came up to remove parame... | 1.0 | parameters as array to remove parameter_order - Currently, the parameters are stored as an object. As JSON objects have no order, we added parameter_order for programming languages without named parameters. parameter_order on the other hand is only required for 2+ parameters and needs special handling in many implementati... | process | parameters as array to remove parameter order currently the parameters are stored as object as json objects have no order we added parameter order for programming languages without names parameters parameter order on the other hand is only required for parameters and needs special handling in many implementati... | 1 |
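The proposal in this issue can be illustrated with a minimal sketch that converts an object-style parameter definition plus `parameter_order` into a single ordered array. The process id, the parameter names, and the `name` field inside each array entry are assumptions made for illustration; the actual openEO schema may differ.

```python
import json

# Hypothetical process description in the current style: parameters are an
# object, so a separate parameter_order list is needed to recover the order.
process_as_object = {
    "id": "example_process",
    "parameters": {
        "data": {"description": "Input data"},
        "factor": {"description": "Scaling factor"},
    },
    "parameter_order": ["data", "factor"],
}


def to_parameter_array(process):
    """Return a copy of the process with parameters as an ordered array."""
    params = process["parameters"]
    # Fall back to the object's key order when parameter_order is absent
    order = process.get("parameter_order", list(params))
    converted = {
        key: value
        for key, value in process.items()
        if key not in ("parameters", "parameter_order")
    }
    # Each entry carries its own name, so the array position encodes the order
    converted["parameters"] = [{"name": name, **params[name]} for name in order]
    return converted


def positional_names(process):
    """List parameter names in positional order for an array-style process."""
    return [param["name"] for param in process["parameters"]]


process_as_array = to_parameter_array(process_as_object)
print(json.dumps(process_as_array, indent=2))
```

With the array form, a client for a language without named parameters can read the positions directly, and no separate ordering field has to be kept in sync.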

166,516 | 6,305,932,550 | IssuesEvent | 2017-07-21 19:38:27 | fedarko/MetagenomeScope | https://api.github.com/repos/fedarko/MetagenomeScope | closed | Add AGP file export functionality for finished paths | highpriorityfeature | This has been on the docket for a while. Gonna take care of it soon.

See [the AGP specification](https://www.ncbi.nlm.nih.gov/assembly/agp/AGP_Specification/) for details. I guess we'd export node labels for GML input files and node IDs for other input files.

The main thing I'm unsure about is whether or not to p... | 1.0 | Add AGP file export functionality for finished paths - This has been on the docket for a while. Gonna take care of it soon.

See [the AGP specification](https://www.ncbi.nlm.nih.gov/assembly/agp/AGP_Specification/) for details. I guess we'd export node labels for GML input files and node IDs for other input files.

... | non_process | add agp file export functionality for finished paths this has been on the docket for a while gonna take care of it soon see for details i guess we d export node labels for gml input files and node ids for other input files the main thing i m unsure about is whether or not to prefix node ids with anythin... | 0 |

268,401 | 8,406,533,362 | IssuesEvent | 2018-10-11 18:13:15 | GCE-NEIIST/GCE-NEIIST-webapp | https://api.github.com/repos/GCE-NEIIST/GCE-NEIIST-webapp | closed | GCE-Thesis: adding semester field | Category: Coding Priority: High | A student might want to navigate and check older master theses. Adding a field to the entity thesis called "Semester" might help us differentiate between them.

Example:

title: "A title",

...

semester:"2017-2018-1S"

Given this differentiation, we can check for trends and provide a more valuable service to stu... | 1.0 | GCE-Thesis: adding semester field - A student might want to navigate and check older master theses. Adding a field to the entity thesis called "Semester" might help us differentiate between them.

Example:

title: "A title",

...

semester:"2017-2018-1S"

Given this differentiation, we can check for trends and pr... | non_process | gce thesis adding semester field a student might want to navigate and check older master theses adding a field to the entity thesis called semester might help us differentiate between them example title a title semester given this differentiation we can check for trends and provide a... | 0 |

8,065 | 11,235,819,848 | IssuesEvent | 2020-01-09 09:12:28 | Altinn/altinn-studio | https://api.github.com/repos/Altinn/altinn-studio | closed | Create Custom PEP for Process API | area/authorization area/process kind/user-story solution/app-backend team/steam | ## Description

As decided in #2521 we need to use the current action step for a current task to decide if a user is authorized to move a process forward.

A standard PEP (created in #2554) will not be able to do that, so we would need to either add this generically to the PEP or create custom code for it

## ... | 1.0 | Create Custom PEP for Process API - ## Description

As decided in #2521 we need to use the current action step for a current task to decide if a user is authorized to move a process forward.

A standard PEP (Created in #2554) will not be able to do that so we would need to either add this create this generic in pep... | process | create custom pep for process api description as decided in we need to use the current action step for a current task to decide if a user is authorized to move a process forward a standard pep created in will not be able to do that so we would need to either add this create this generic in pep or cr... | 1 |

5,484 | 8,358,679,619 | IssuesEvent | 2018-10-03 04:30:05 | googleapis/nodejs-spanner | https://api.github.com/repos/googleapis/nodejs-spanner | closed | deep-extend@0.4.2 security vulnerability | status: blocked type: process | #### Environment details

- OS: macOS High Sierra v10.13.3

- Node.js version: v8.9.4

- npm version: v5.6.0

- `@google-cloud/spanner` version: 1.5.0

#### Steps to reproduce

https://nodesecurity.io/advisories/612

path to package:

`@google-cloud/spanner@1.5.0 > google-gax@0.16.1 > grpc@1.12.4 > node... | 1.0 | deep-extend@0.4.2 security vulnerability - #### Environment details

- OS: macOS High Sierra v10.13.3

- Node.js version: v8.9.4

- npm version: v5.6.0

- `@google-cloud/spanner` version: 1.5.0

#### Steps to reproduce

https://nodesecurity.io/advisories/612

path to package:

`@google-cloud/spanner@1.5... | process | deep extend security vulnerability environment details os macos high sierra node js version npm version google cloud spanner version steps to reproduce path to package google cloud spanner google gax grpc node ... | 1 |

765,287 | 26,840,623,472 | IssuesEvent | 2023-02-03 00:00:11 | grpc/grpc | https://api.github.com/repos/grpc/grpc | closed | gRPC python (version 1.51.1) lead to exit django process with exit code 1 | kind/bug lang/Python priority/P2 disposition/requires reporter action | <!--

PLEASE DO NOT POST A QUESTION HERE.

This form is for bug reports and feature requests ONLY!

For general questions and troubleshooting, please ask/look for answers at StackOverflow, with "grpc" tag: https://stackoverflow.com/questions/tagged/grpc

For questions that specifically need to be answered by gRPC t... | 1.0 | gRPC python (version 1.51.1) lead to exit django process with exit code 1 - <!--

PLEASE DO NOT POST A QUESTION HERE.

This form is for bug reports and feature requests ONLY!

For general questions and troubleshooting, please ask/look for answers at StackOverflow, with "grpc" tag: https://stackoverflow.com/questions/... | non_process | grpc python version lead to exit django process with exit code please do not post a question here this form is for bug reports and feature requests only for general questions and troubleshooting please ask look for answers at stackoverflow with grpc tag for questions that specifically ... | 0 |

19,130 | 25,184,385,065 | IssuesEvent | 2022-11-11 16:29:04 | bradmartin333/batter | https://api.github.com/repos/bradmartin333/batter | closed | focus score | processing | - [ ] rewrite RedFocus algorithm

- [ ] add score to hue display

- [ ] add diagnostic into to ROI render | 1.0 | focus score - - [ ] rewrite RedFocus algorithm

- [ ] add score to hue display

- [ ] add diagnostic into to ROI render | process | focus score rewrite redfocus algorithm add score to hue display add diagnostic into to roi render | 1 |

505,354 | 14,632,033,476 | IssuesEvent | 2020-12-23 21:18:48 | cBioPortal/datahub | https://api.github.com/repos/cBioPortal/datahub | reopened | TRACERx - McGranahan et al. Clonal neoantigens elicit T cell immunoreactivity and sensitivity to immune checkpoint blockade. Science. 2016. | immunotherapy missing data/access limitation new public study not curatable priority wontfix | https://www.ncbi.nlm.nih.gov/pubmed/26940869

- [ ] [create a issue on datahub](https://github.com/cBioPortal/datahub/issues/new) before curating a study (one issue per study) and copy this checklist to the issue tracker

- [ ] List information of the dataset/paper in the issue, e.g. pmid, paper link, suppl file link... | 1.0 | TRACERx - McGranahan et al. Clonal neoantigens elicit T cell immunoreactivity and sensitivity to immune checkpoint blockade. Science. 2016. - https://www.ncbi.nlm.nih.gov/pubmed/26940869

- [ ] [create a issue on datahub](https://github.com/cBioPortal/datahub/issues/new) before curating a study (one issue per study) ... | non_process | tracerx mcgranahan et al clonal neoantigens elicit t cell immunoreactivity and sensitivity to immune checkpoint blockade science before curating a study one issue per study and copy this checklist to the issue tracker list information of the dataset paper in the issue e g pmid paper lin... | 0 |

10,743 | 13,538,554,707 | IssuesEvent | 2020-09-16 12:16:46 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | Implement a `findFirst` API | kind/feature process/candidate team/engines team/product team/typescript | ## Problem

`findOne` is strict in that it requires a unique constraint (@unique, @id) to find a unique entry. That, however, doesn't allow for more flexible kinds of queries which should also usually return a single result.

## Suggested solution

`findFirst`, doing essentially a `findMany` + `take: 1` under the hoo... | 1.0 | Implement a `findFirst` API - ## Problem

`findOne` is strict in that it requires a unique constraint (@unique, @id) to find a unique entry. That, however, doesn't allow for more flexible kinds of queries which should also usually return a single result.

## Suggested solution

`findFirst`, doing essentially a `findM... | process | implement a findfirst api problem findone is strict in that it requires a unique constraint unique id to find a unique entry that however doesn t allow for more flexible kinds of queries which should also usually return a single result suggested solution findfirst doing essentially a findm... | 1 |
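The suggested semantics (a `findMany` limited to one result) can be sketched over an in-memory list. This is not Prisma's implementation or API: `find_many`, `find_first`, and the `where` predicate are hypothetical Python stand-ins that only show the intended behaviour, including returning nothing when no record matches.

```python
from typing import Any, Callable, Dict, List, Optional

Record = Dict[str, Any]


def find_many(records: List[Record], where: Callable[[Record], bool],
              take: Optional[int] = None) -> List[Record]:
    """Return all matching records, optionally limited to the first `take`."""
    matches = [record for record in records if where(record)]
    return matches if take is None else matches[:take]


def find_first(records: List[Record], where: Callable[[Record], bool]) -> Optional[Record]:
    """Return the first matching record, or None; no unique field is required."""
    results = find_many(records, where, take=1)
    return results[0] if results else None


users = [
    {"id": 1, "role": "admin"},
    {"id": 2, "role": "user"},
    {"id": 3, "role": "admin"},
]

first_admin = find_first(users, lambda user: user["role"] == "admin")
print(first_admin)
```

Unlike a lookup that demands a unique constraint, the filter here can be any condition, which is the flexibility the issue asks for.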

501,101 | 14,521,195,831 | IssuesEvent | 2020-12-14 06:57:28 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | accounts.firefox.com - design is broken | browser-fenix engine-gecko ml-needsdiagnosis-false ml-probability-high priority-normal | <!-- @browser: Firefox Mobile 84.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 10; Mobile; rv:84.0) Gecko/84.0 Firefox/84.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/63580 -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://accounts.fir... | 1.0 | accounts.firefox.com - design is broken - <!-- @browser: Firefox Mobile 84.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 10; Mobile; rv:84.0) Gecko/84.0 Firefox/84.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/63580 -->

<!-- @extra_labels: browse... | non_process | accounts firefox com design is broken url browser version firefox mobile operating system android tested another browser no problem type design is broken description items not fully visible steps to reproduce need to zoom in and zoom out to see forms ... | 0 |

299,689 | 9,205,762,516 | IssuesEvent | 2019-03-08 11:34:15 | qissue-bot/QGIS | https://api.github.com/repos/qissue-bot/QGIS | closed | For PostGIS layers save style files in the database | Category: Vectors Component: Easy fix? Component: Pull Request or Patch supplied Component: Resolution Priority: Low Project: QGIS Application Status: Closed Tracker: Feature request | ---

Author Name: **Redmine Admin** (Redmine Admin)

Original Redmine Issue: 1059, https://issues.qgis.org/issues/1059

Original Assignee: nobody -

---

It would be, I think, a good idea to have the option to store the style data in the database. I reckon the comment field/area would be a good place to store it becaus... | 1.0 | For PostGIS layers save style files in the database - ---

Author Name: **Redmine Admin** (Redmine Admin)

Original Redmine Issue: 1059, https://issues.qgis.org/issues/1059

Original Assignee: nobody -

---

It would be, I think, a good idea to have the option to store the style data in the database. I reckon the comme... | non_process | for postgis layers save style files in the database author name redmine admin redmine admin original redmine issue original assignee nobody it would be i think a good idea to have the option to store the style data in the database i reckon the comment field area would be a good place t... | 0 |

14,331 | 17,364,448,468 | IssuesEvent | 2021-07-30 04:16:55 | googleapis/python-spanner | https://api.github.com/repos/googleapis/python-spanner | closed | samples.samples.quickstart_test: test_quickstart failed | api: spanner flakybot: flaky flakybot: issue samples type: process | This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/master/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: 3132587453f7bd0be72ebc393626b5c8b1bab982... | 1.0 | samples.samples.quickstart_test: test_quickstart failed - This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/master/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenti... | process | samples samples quickstart test test quickstart failed this test failed to configure my behavior see if i m commenting on this issue too often add the flakybot quiet label and i will stop commenting commit buildurl status failed test output args parent projects python docs sa... | 1 |

241,276 | 18,440,695,042 | IssuesEvent | 2021-10-14 17:42:54 | AlgebraStudentCollab/START-HERE | https://api.github.com/repos/AlgebraStudentCollab/START-HERE | opened | Improve START-HERE and all READMEs | documentation | Goals

-Help newcomers figure out what this repo is about and how to use it

-Read me with: etiquette, tutorials (git, setup of things...etc)

-How to fork

-How to ask questions (issues etc..)

But for every repo:

-What is this repo about? (ex. Java 2 Swing bla bla)

-How to fork this ex... | 1.0 | Improve START-HERE and all READMEs - Goals

-Help newcomers figure out what this repo is about and how to use it

-Read me with: etiquette, tutorials (git, setup of things...etc)

-How to fork

-How to ask questions (issues etc..)

But for every repo:

-What is this repo about? (ex. Java 2 Swi... | non_process | improve start here and all readmes goals help newcomers figure out what this repo is about and how to use it read me with etiquette tutorials git setup of things etc how to fork how to ask questions issues etc but for every repo what is this repo about ex java swi... | 0 |

2,665 | 5,438,842,691 | IssuesEvent | 2017-03-06 11:39:15 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | An interesting feature process.hrtime | process question | Version: v7.7.1

Platform: Linux admin 4.9.0-0.bpo.1-amd64 #1 SMP Debian 4.9.2-2~bpo8+1 (2017-01-26) x86_64 GNU/Linux

Subsystem

Catching manual profiling functions with the help of designs

function profile(func) {

var wrapper = function () {

var start = process.hrtime(), diff;

var re... | 1.0 | An interesting feature process.hrtime - Version: v7.7.1

Platform: Linux admin 4.9.0-0.bpo.1-amd64 #1 SMP Debian 4.9.2-2~bpo8+1 (2017-01-26) x86_64 GNU/Linux

Subsystem

Catching manual profiling functions with the help of designs

function profile(func) {

var wrapper = function () {

var start =... | process | an interesting feature process hrtime version platform linux admin bpo smp debian gnu linux subsystem catching manual profiling functions with the help of designs function profile func var wrapper function var start process hrtime ... | 1 |
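
The wrapper pattern quoted in this issue (record a start time, call the wrapped function, compute the difference) can be illustrated as follows. This is a minimal sketch in Python rather than Node.js, so it swaps `process.hrtime()` for `time.perf_counter_ns()`; the attribute name `timings_ns` is an assumption made for illustration and does not come from the issue, whose own code is truncated above.

```python
import functools
import time


def profile(func):
    """Wrap func so that every call records its elapsed time in nanoseconds."""

    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter_ns()  # monotonic, high-resolution clock
        try:
            return func(*args, **kwargs)
        finally:
            # Record the duration even when func raises.
            wrapper.timings_ns.append(time.perf_counter_ns() - start)

    # Hypothetical attribute: one entry per call, in call order.
    wrapper.timings_ns = []
    return wrapper
```

Keeping the measurements on the wrapper itself avoids global state, at the cost of one list per profiled function.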

3,722 | 6,732,899,033 | IssuesEvent | 2017-10-18 13:13:55 | lockedata/rcms | https://api.github.com/repos/lockedata/rcms | opened | Register | attendee odoo processes | ## Detailed task

Buy a ticket for the conference

## Assessing the task

Try to perform the task. Use google and the system documentation to help - part of what we're trying to assess how easy it is for people to work out how to do tasks.

Use a 👍 (`:+1:`) reaction to this task if you were able to perform the tas... | 1.0 | Register - ## Detailed task

Buy a ticket for the conference

## Assessing the task

Try to perform the task. Use google and the system documentation to help - part of what we're trying to assess how easy it is for people to work out how to do tasks.

Use a 👍 (`:+1:`) reaction to this task if you were able to perf... | process | register detailed task buy a ticket for the conference assessing the task try to perform the task use google and the system documentation to help part of what we re trying to assess how easy it is for people to work out how to do tasks use a 👍 reaction to this task if you were able to perf... | 1 |

124,406 | 10,310,761,379 | IssuesEvent | 2019-08-29 15:47:34 | ansible/awx | https://api.github.com/repos/ansible/awx | closed | CLI: awx users create does not indicate correct required arguments | component:cli priority:medium state:needs_test type:bug | ##### ISSUE TYPE

- Bug Report

##### SUMMARY

<!-- Briefly describe the problem. -->

Help text only indicates need username, also some strange formatting

##### STEPS TO REPRODUCE

<!-- Please describe exactly how to reproduce the problem. -->

1) Have valid token or username/password in environment

2) `awx use... | 1.0 | CLI: awx users create does not indicate correct required arguments - ##### ISSUE TYPE

- Bug Report

##### SUMMARY

<!-- Briefly describe the problem. -->

Help text only indicates need username, also some strange formatting

##### STEPS TO REPRODUCE

<!-- Please describe exactly how to reproduce the problem. -->

... | non_process | cli awx users create does not indicate correct required arguments issue type bug report summary help text only indicates need username also some strange formatting steps to reproduce have valid token or username password in environment awx users create help expe... | 0 |

123,035 | 10,244,935,793 | IssuesEvent | 2019-08-20 11:41:33 | kyma-project/kyma | https://api.github.com/repos/kyma-project/kyma | closed | Runtimes and central connector service integration tests | area/application-connector enhancement quality/testability | Provide an integration test for the runtimes and central connector service. Current approach allows running tests during the deployment in the desired environment together with runtime provisioner tests. Extend existing tests to cover the following scenario:

- runtime is provisioned

- connectivity-certs-controller is ... | 1.0 | Runtimes and central connector service integration tests - Provide an integration test for the runtimes and central connector service. Current approach allows running tests during the deployment in the desired environment together with runtime provisioner tests. Extend existing tests to cover the following scenario:

-... | non_process | runtimes and central connector service integration tests provide an integration test for the runtimes and central connector service current approach allows running tests during the deployment in the desired environment together with runtime provisioner tests extend existing tests to cover the following scenario ... | 0 |

325,871 | 9,937,040,905 | IssuesEvent | 2019-07-02 20:45:36 | mlr-org/paradox | https://api.github.com/repos/mlr-org/paradox | closed | Function to print x values | Priority: Medium Type: Enhancement | Function values are stored in named lists.

To transform them to a single string you could use `paste(names(x), x, sep = "=" ,collapse=",")`

This is problematic for

* Long values

* x values that cannot be converted to a character. These should not exist, because complex types are just created by transformation. ... | 1.0 | Function to print x values - Function values are stored in named lists.

To transform them to a single string you could use `paste(names(x), x, sep = "=" ,collapse=",")`

This is problematic for

* Long values

* x values that cannot be converted to a character. These should not exist, because complex types are jus... | non_process | function to print x values function values are stored in named lists to transform them to a single string you could use paste names x x sep collapse this is problematic for long values x values that can not be transferred to a character these should not exists because complex types are jus... | 0 |
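
The two problems named in this issue (long values, and values with no short text form) can be sketched as below. This is an illustrative Python analogue of the `paste(names(x), x, sep = "=", collapse = ",")` idea, not the package's implementation; the function name `format_values`, the `max_len` cutoff, and the `<TypeName>` fallback are all assumptions.

```python
def format_values(values, max_len=20):
    """Render a mapping of named values as 'name=value' pairs joined by commas."""
    parts = []
    for name, value in values.items():
        if isinstance(value, (bool, int, float, str)):
            text = str(value)
        else:
            # Assumed fallback: show only the type name for complex values.
            text = "<" + type(value).__name__ + ">"
        if len(text) > max_len:
            # Truncate long values so one entry cannot dominate the output.
            text = text[: max_len - 3] + "..."
        parts.append(name + "=" + text)
    return ",".join(parts)
```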

2,004 | 4,726,832,332 | IssuesEvent | 2016-10-18 11:35:44 | AdguardTeam/AdguardForAndroid | https://api.github.com/repos/AdguardTeam/AdguardForAndroid | closed | Bug with Updato app when Adguard enabled. | Bug Compatibility | Updato app (https://play.google.com/store/apps/details?id=samsungupdate.com&hl=en) can't update device because of Adguard. After entering device model and choosing update and download options app redirects to page, which says that we can't proceed it because of Adblocker.

Samsung-N910P

Android 6.0.1

Ver. 2.7.220

... | True | Bug with Updato app when Adguard enabled. - Updato app (https://play.google.com/store/apps/details?id=samsungupdate.com&hl=en) can't update device because of Adguard. After entering device model and choosing update and download options app redirects to page, which says that we can't proceed it because of Adblocker.

... | non_process | bug with updato app when adguard enabled updato app can t update device because of adguard after entering device model and choosing update and download options app redirects to page which says that we can t proceed it because of adblocker samsung android ver logs video guide ... | 0 |

493,196 | 14,227,955,533 | IssuesEvent | 2020-11-18 02:33:47 | aiidateam/aiida-core | https://api.github.com/repos/aiidateam/aiida-core | closed | Update `verdi import` | good first issue priority/quality-of-life topic/verdi type/refactoring | As mentioned [here](https://github.com/aiidateam/aiida_core/pull/2820#pullrequestreview-235910419):

Suggestions to update `verdi import` code (by @ltalirz):

- [ ] archives should simply be processed one by one (there is no need to create and validate the full list of archives before you even start. e.g. `rm file1 f... | 1.0 | Update `verdi import` - As mentioned [here](https://github.com/aiidateam/aiida_core/pull/2820#pullrequestreview-235910419):

Suggestions to update `verdi import` code (by @ltalirz):

- [ ] archives should simply be processed one by one (there is no need to create and validate the full list of archives before you even... | non_process | update verdi import as mentioned suggestions to update verdi import code by ltalirz archives should simply be processed one by one there is no need to create and validate the full list of archives before you even start e g rm also will start removing files and only stop when it encounte... | 0 |
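
The first suggestion in this issue, processing archives one by one and stopping at the first failure instead of validating the whole list up front, might look roughly like this. It is a sketch under stated assumptions: `process_one_by_one` and the `import_archive` callable are hypothetical names and are not part of the AiiDA API.

```python
def process_one_by_one(archives, import_archive):
    """Import archives in order and stop at the first failure.

    Returns (imported, failed): the archives imported so far and the archive
    that failed, or None if every archive was imported.
    """
    imported = []
    for archive in archives:
        try:
            import_archive(archive)  # hypothetical callable supplied by the caller
        except Exception:
            # Broad catch for illustration only; real code would catch
            # the specific errors the importer is known to raise.
            return imported, archive
        imported.append(archive)
    return imported, None
```

Archives that come after the failing one are never touched, which matches the `rm`-style behaviour the issue describes.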

8,114 | 11,302,311,845 | IssuesEvent | 2020-01-17 17:22:11 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | regulating the host phagocytosis machinery | multi-species process | <img width="1059" alt="Screenshot 2019-10-09 at 20 09 23" src="https://user-images.githubusercontent.com/7359272/66512395-bad30700-ead0-11e9-868a-f34a9222d3f9.png">

These are the terms related to modulation of host phagocytosis by symbiont

GO:0052190 modulation by symbiont of host phagocytosis

Any process in ... | 1.0 | regulating the host phagocytosis machinery - <img width="1059" alt="Screenshot 2019-10-09 at 20 09 23" src="https://user-images.githubusercontent.com/7359272/66512395-bad30700-ead0-11e9-868a-f34a9222d3f9.png">

These are the terms related to modulation of host phagocytosis by symbiont

GO:0052190 modulation by s... | process | regulating the host phagocytosis machinery img width alt screenshot at src these are the terms related to modulation of host phagocytosis by symbiont go modulation by symbiont of host phagocytosis any process in which an organism modulates the frequency rate or extent of phagocytosi... | 1 |

18,023 | 24,032,791,877 | IssuesEvent | 2022-09-15 16:19:21 | googleapis/java-gke-multi-cloud | https://api.github.com/repos/googleapis/java-gke-multi-cloud | opened | Your .repo-metadata.json file has a problem 🤒 | type: process repo-metadata: lint | You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* api_shortname 'gke-multi-cloud' invalid in .repo-metadata.json

☝️ Once you address these problems, you can close this issue.

### Need help?

* [Schema definition](https://github.com/googleapis/repo-automation-bots/blob/main/packages/repo-me... | 1.0 | Your .repo-metadata.json file has a problem 🤒 - You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* api_shortname 'gke-multi-cloud' invalid in .repo-metadata.json

☝️ Once you address these problems, you can close this issue.

### Need help?

* [Schema definition](https://github.com/googleapi... | process | your repo metadata json file has a problem 🤒 you have a problem with your repo metadata json file result of scan 📈 api shortname gke multi cloud invalid in repo metadata json ☝️ once you address these problems you can close this issue need help lists valid options for each field fo... | 1 |

501,062 | 14,520,305,187 | IssuesEvent | 2020-12-14 05:10:00 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | reopened | portal.xero.com - site is not usable | browser-firefox engine-gecko priority-important | <!-- @browser: Firefox 84.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:84.0) Gecko/20100101 Firefox/84.0 -->

<!-- @reported_with: unknown -->

**URL**: https://portal.xero.com/Agreement/Sign/febb6fe2-46b9-4e0d-93b4-b2f6270734dd

**Browser / Version**: Firefox 84.0

**Operating System**: Windows 10... | 1.0 | portal.xero.com - site is not usable - <!-- @browser: Firefox 84.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:84.0) Gecko/20100101 Firefox/84.0 -->

<!-- @reported_with: unknown -->