Unnamed: 0 (int64, 0-832k) | id (float64, 2.49B-32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19-19) | repo (stringlengths, 7-112) | repo_url (stringlengths, 36-141) | action (stringclasses, 3 values) | title (stringlengths, 1-744) | labels (stringlengths, 4-574) | body (stringlengths, 9-211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96-211k) | label (stringclasses, 2 values) | text (stringlengths, 96-188k) | binary_label (int64, 0-1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

386,352 | 26,679,072,596 | IssuesEvent | 2023-01-26 16:17:40 | arbor-sim/arbor | https://api.github.com/repos/arbor-sim/arbor | opened | Units and behavior of diffusive particles | documentation clarification needed | In the [documentation](https://docs.arbor-sim.org/en/latest/fileformat/nmodl.html#units), `mmol/L` is mentioned as the unit for `ion X diffusive concentration`, but it is not stated what it refers to (e.g., to point mechanisms, as it is stated for other quantities on the same documentation page). More importantly, the ... | 1.0 | Units and behavior of diffusive particles - In the [documentation](https://docs.arbor-sim.org/en/latest/fileformat/nmodl.html#units), `mmol/L` is mentioned as the unit for `ion X diffusive concentration`, but it is not stated what it refers to (e.g., to point mechanisms, as it is stated for other quantities on the same... | non_process | units and behavior of diffusive particles in the mmol l is mentioned as the unit for ion x diffusive concentration but it is not stated what it refers to e g to point mechanisms as it is stated for other quantities on the same documentation page more importantly the unit of the amount of diffusive par... | 0 |

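13,083 | 15,429,487,470 | IssuesEvent | 2021-03-06 03:51:26 | bigwolftime/gitmentCommentsPlugin | https://api.github.com/repos/bigwolftime/gitmentCommentsPlugin | opened | Operating system process scheduling | -xblog-system-process-dispatcher- | https://index1024.gitee.io/xblog/system-process-dispatcher/

I. Scheduling metrics

Turnaround time

One metric for measuring the performance of a scheduling algorithm is turnaround time, defined as:

T(turnaround time) = T(completion time) - T(task arrival time)

The average turnaround time over multiple tasks is defined as:

T(average turnaround time) = (T1(turnaround time of task 1) + T2(turnaround time of task 2) + ...) / n, where n is the number of tasks

Response time

I. Scheduling policies on a single CPU

1. First In First Out (FIFO)

This uses a queue: whichever task arrives first runs first. Its characteristics: simple logic, easy to implement

Assumption 1: there are ... | 1.0 | Operating system process scheduling - https://index1024.gitee.io/xblog/system-process-dispatcher/

I. Scheduling metrics

Turnaround time

One metric for measuring the performance of a scheduling algorithm is turnaround time, defined as:

T(turnaround time) = T(completion time) - T(task arrival time)

The average turnaround time over multiple tasks is defined as:

T(average turnaround time) = (T1(turnaround time of task 1) + T2(turnaround time of task 2) + ...) / n, where n is the number of tasks

Response time

I. Scheduling policies on a single CPU

1. First In First Out (FIFO)

This uses a queue: whichever task arrives first runs first. Its characteristics: simple logic, easy to... | process | operating system process scheduling i scheduling metrics turnaround time one metric for measuring the performance of a scheduling algorithm is turnaround time defined as t turnaround time t completion time t task arrival time the average turnaround time over multiple tasks is defined as t average turnaround time n n is the number of tasks response time i scheduling policies on a single cpu first in first out fifo this uses a queue whichever task arrives first runs first its characteristics simple logic easy to implement there are three tasks a b c that arrive at the system almost simultaneously the queue order is a b c suppose the execution time of each task is then the th task to run... | 1 |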

10,765 | 13,561,164,255 | IssuesEvent | 2020-09-18 03:46:32 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | MaxWorkingSet supported platforms | area-System.Diagnostics.Process blocking-release | https://github.com/dotnet/runtime/blob/3130ac980c97c0664eddb04411f8f765a9f908d8/src/libraries/System.Diagnostics.Process/ref/System.Diagnostics.Process.cs#L45

The code specifies supported platforms as Windows only.

However, the [documentation](https://github.com/dotnet/docs/blob/3588fdadac34bee137fe519d50c9a187d7... | 1.0 | MaxWorkingSet supported platforms - https://github.com/dotnet/runtime/blob/3130ac980c97c0664eddb04411f8f765a9f908d8/src/libraries/System.Diagnostics.Process/ref/System.Diagnostics.Process.cs#L45

The code specifies supported platforms as Windows only.

However, the [documentation](https://github.com/dotnet/docs/blo... | process | maxworkingset supported platforms the code specifies supported platforms as windows only however the mentions it throws in linux only which means both windows and macos are supported i think macos should be missing in either the documentation or the supported platforms attribute | 1 |

13,847 | 16,610,098,629 | IssuesEvent | 2021-06-02 10:23:17 | sanger/General-Backlog-Items | https://api.github.com/repos/sanger/General-Backlog-Items | closed | GPL-730 [Improvement] Standardize deployment command(s) (Duplicate of hot reload) | Deployment process improvement | Some projects (Limber, Labwhere) require an extra flag for deployment named ```full_deploy=true```, while other projects don't need it. This is because these projects have an issue that prevents stopping and starting all puma workers on deployment.

If we want to use full_deploy=true mandatorily, then we s... | 1.0 | GPL-730 [Improvement] Standardize deployment command(s) (Duplicate of hot reload) - Some projects (Limber, Labwhere) require an extra flag for deployment named ```full_deploy=true```, while other projects don't need it. This is because these projects have an issue that prevents stopping and starting all pu... | process | gpl standardize deployment command s duplicate of hot reload some projects limber labwhere require an extra flag for deployment named full deploy true while other projects don t need it this is because these projects have an issue that prevents stopping and starting all puma workers on ... | 1 |

500,328 | 14,496,250,986 | IssuesEvent | 2020-12-11 12:29:42 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | [0.9.2 develop-109] Avatar doesn't update hair, beard and clothes during creation or editing. | Category: Gameplay Priority: High Type: Regression | After pressing save, however, the options selected during creation are applied. Steps to reproduce:

- Start creating an avatar and select clothes, hair, and beard other than the defaults. The avatar is not updated:

- press save a... | 1.0 | [0.9.2 develop-109] Avatar doesn't update hair, beard and clothes during creation or editing. - After pressing save, however, the options selected during creation are applied. Steps to reproduce:

- Start creating an avatar and select clothes, hair, and beard other than the defaults. The avatar is not updated:

`](#replyviewtemplate-context-options).

| 1.0 | Document response variety view - From the hapi doc:

- `variety` - a string indicating the type of `source` with available values:

- `'view'` - a view generated with [`reply.view()`](#replyviewtemplate-context-options).

| non_process | document response variety view from the hapi doc variety a string indicating the type of source with available values view a view generated with replyviewtemplate context options | 0 |

7,123 | 10,268,937,922 | IssuesEvent | 2019-08-23 07:49:24 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | error at the end of Processing/GDAL warp logs/runs | Bug Processing | On 3.8.1 Processing when running "warp" from GDAL the output is created as expected, but if the output is a temp layer then the log always ends with an error. In this sense, this bug is very similar to:

https://github.com/qgis/QGIS/issues/29346 | 1.0 | error at the end of Processing/GDAL warp logs/runs - On 3.8.1 Processing when running "warp" from GDAL the output is created as expected, but if the output is a temp layer then the log always ends with an error. In this sense, this bug is very similar to:

https://github.com/qgis/QGIS/issues/29346 | process | error at the end of processing gdal warp logs runs on processing when running warp from gdal the output is created as expected but if the output is a temp layer then the log always ends with an error in this sense this bug is very similar to | 1 |

4,408 | 7,299,004,297 | IssuesEvent | 2018-02-26 18:43:47 | UKHomeOffice/dq-aws-transition | https://api.github.com/repos/UKHomeOffice/dq-aws-transition | closed | Create Prod RDS SQL Server MDS instance in Data Ingest Subnet | DQ Data Ingest Production S4 Processing | Create Prod RDS SQL Server MDS instance in Data Ingest Subnet and migrate required MDS views/tables.

- [x] Create Prod RDS SQL Server 2012 R2 instance in Data Ingest Subnet

- [x] Create MDS Database

- [x] Create mds_user User

- [x] Create mdm schema

- [x] Create working tables from each MD View in MDS database i... | 1.0 | Create Prod RDS SQL Server MDS instance in Data Ingest Subnet - Create Prod RDS SQL Server MDS instance in Data Ingest Subnet and migrate required MDS views/tables.

- [x] Create Prod RDS SQL Server 2012 R2 instance in Data Ingest Subnet

- [x] Create MDS Database

- [x] Create mds_user User

- [x] Create mdm schema

... | process | create prod rds sql server mds instance in data ingest subnet create prod rds sql server mds instance in data ingest subnet and migrate required mds views tables create prod rds sql server instance in data ingest subnet create mds database create mds user user create mdm schema create ... | 1 |

100,173 | 12,507,453,430 | IssuesEvent | 2020-06-02 14:09:02 | eclipse/codewind | https://api.github.com/repos/eclipse/codewind | closed | Include social feed on our landing page | area/design area/docs area/website kind/enhancement | <!-- Please fill out the following form to suggest an enhancement. If some fields do not apply to your situation, feel free to skip them.-->

**Codewind version:** 0.4

MacOS

**Che version:**

**IDE extension version:**

**IDE version:**

**Kubernetes cluster:**

**Description of the enhancement:**

We have a nu... | 1.0 | Include social feed on our landing page - <!-- Please fill out the following form to suggest an enhancement. If some fields do not apply to your situation, feel free to skip them.-->

**Codewind version:** 0.4

MacOS

**Che version:**

**IDE extension version:**

**IDE version:**

**Kubernetes cluster:**

**Descr... | non_process | include social feed on our landing page codewind version macos che version ide extension version ide version kubernetes cluster description of the enhancement we have a number of social feeds which are linked from our main landing page but would like to see a live fe... | 0 |

158,876 | 13,750,584,981 | IssuesEvent | 2020-10-06 12:15:35 | competitive-programming-tools/competitive-problems-tools | https://api.github.com/repos/competitive-programming-tools/competitive-problems-tools | opened | Create Heatmap | documentation management | ## Create Heatmap

### What it is:

The heatmap is useful for planning around the availability of the group members during the project.

### Description:

<!-- Describe issue briefly, inserting information necessary for the understanding of the future developer. -->

### Acceptance crite... | 1.0 | Create Heatmap - ## Create Heatmap

### What it is:

The heatmap is useful for planning around the availability of the group members during the project.

### Description:

<!-- Describe issue briefly, inserting information necessary for the understanding of the future developer. -->

### ... | non_process | create heatmap create heatmap what it is the heatmap is useful for planning around the availability of the group members during the project description acceptance criteria criteria criteria tasks task task | 0 |

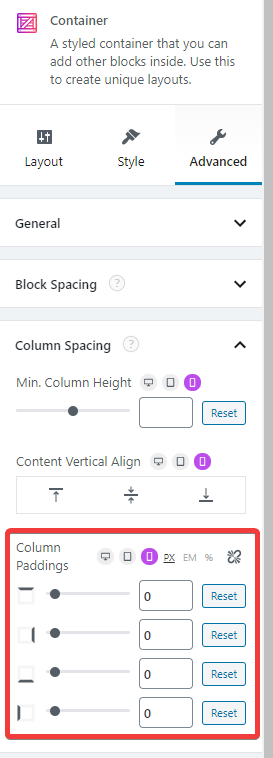

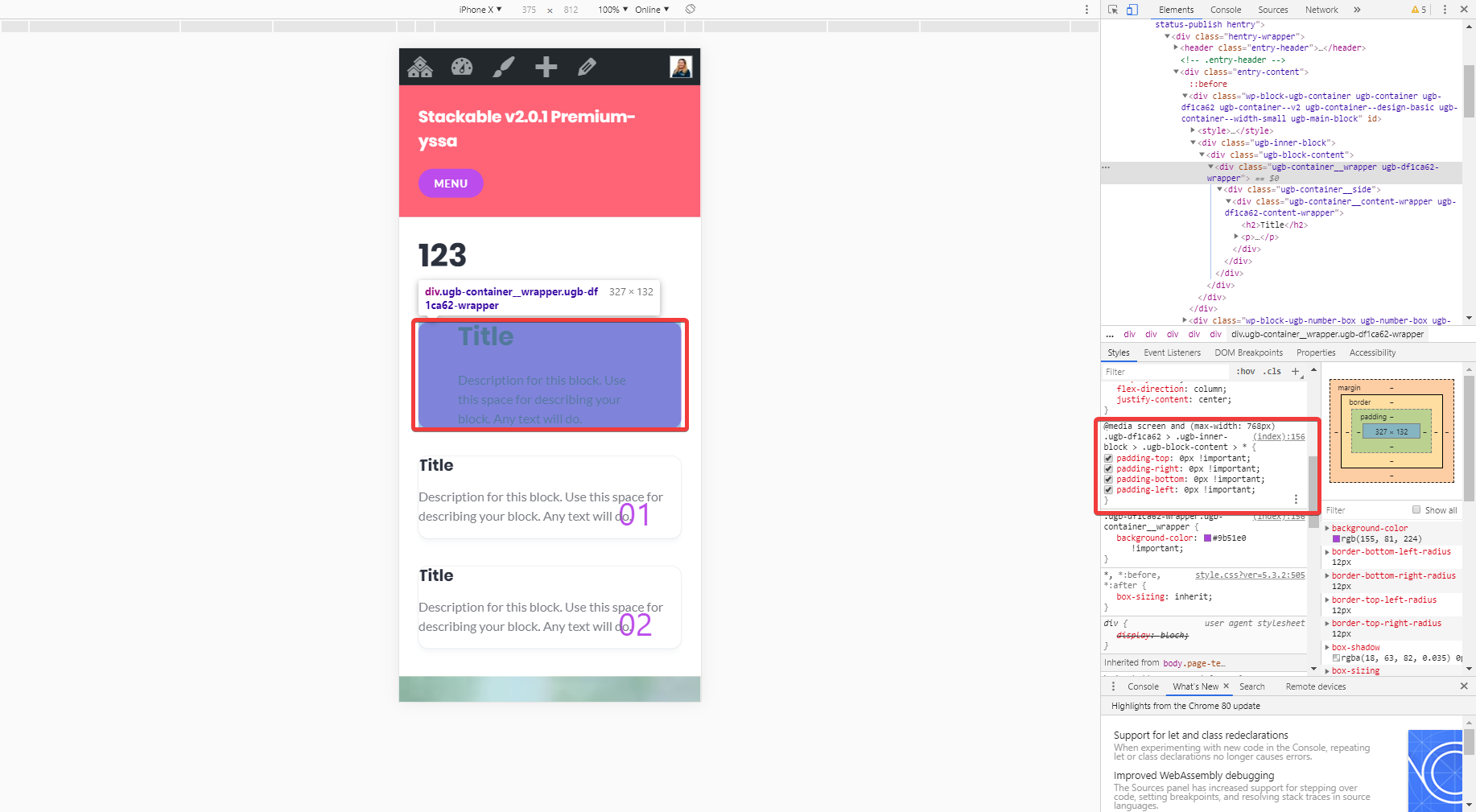

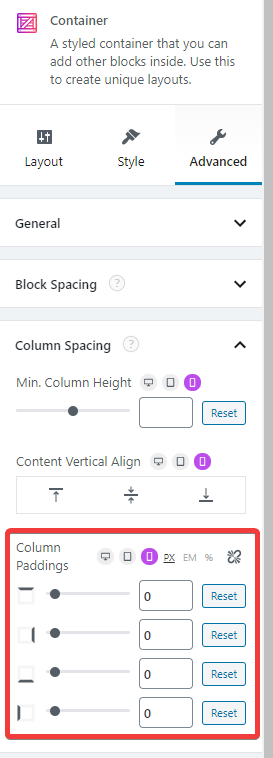

445,480 | 12,831,513,255 | IssuesEvent | 2020-07-07 05:34:44 | gambitph/Stackable | https://api.github.com/repos/gambitph/Stackable | closed | Container Column Paddings (right and left) are not working | [block] container bug high priority | Container Column Paddings (right and left) are not working

Backend

Frontend

| 1.0 | Container Column Paddings (right and left) are not working - Container Column Paddings (right and left) are not working

Backend

Frontend

`

- [ ] Use modal for a popup | 1.0 | Update UI/UX practice - - [ ] Remove `useClickOutside`

- [ ] Change data connection to remove `useEffect()`

- [ ] Use modal for a popup | process | update ui ux practice remove useclickoutside change data connection to remove useeffect use modal for a popup | 1 |

136,632 | 12,728,608,429 | IssuesEvent | 2020-06-25 03:09:43 | vmware/govmomi | https://api.github.com/repos/vmware/govmomi | closed | Query on SetRootCAs | documentation/examples | Hi,

Is there a way to create a cert pool and assign it to the TLS config of the client directly instead of using SetRootCAs() function exposed by the soap client? | 1.0 | Query on SetRootCAs - Hi,

Is there a way to create a cert pool and assign it to the TLS config of the client directly instead of using SetRootCAs() function exposed by the soap client? | non_process | query on setrootcas hi is there a way to create a cert pool and assign it to the tls config of the client directly instead of using setrootcas function exposed by the soap client | 0 |

5,692 | 8,560,921,996 | IssuesEvent | 2018-11-09 03:47:58 | thewca/wca-regulations | https://api.github.com/repos/thewca/wca-regulations | closed | Proposal: Require a second scramble checker for possible WRs | 2019 controversial process proposal | It seems that we can't fix the mis-scrambling issue in general, but it keeps happening for high-profile cubers who are obviously fairly likely to set a WR (cf. Jeff Park).

I'd like to propose the following limited scramble checker policy:

--------

If a competitor has **an official PR single better than the WR ... | 1.0 | Proposal: Require a second scramble checker for possible WRs - It seems that we can't fix the mis-scrambling issue in general, but it keeps happening for high-profile cubers who are obviously fairly likely to set a WR (cf. Jeff Park).

I'd like to propose the following limited scramble checker policy:

--------

... | process | proposal require a second scramble checker for possible wrs it seems that we can t fix the mis scrambling issue in general but it keeps happening for high profile cubers who are obviously fairly likely to set a wr cf jeff park i d like to propose the following limited scramble checker policy ... | 1 |

5,055 | 7,860,930,833 | IssuesEvent | 2018-06-21 21:44:34 | GoogleCloudPlatform/google-cloud-python | https://api.github.com/repos/GoogleCloudPlatform/google-cloud-python | opened | Regen / release vision, texttospeech, iot | api: iot api: text to speech api: vision type: process | Now that the gapic-generator [fix for shared modules is merged](https://github.com/googleapis/gapic-generator/pull/2063). The recent releases of those API libraries all contain the re-introduced module overwrites for shared modules (#5473). | 1.0 | Regen / release vision, texttospeech, iot - Now that the gapic-generator [fix for shared modules is merged](https://github.com/googleapis/gapic-generator/pull/2063). The recent releases of those API libraries all contain the re-introduced module overwrites for shared modules (#5473). | process | regen release vision texttospeech iot now that the gapic generator the recent releases of those api libraries all contain the re introduced module overwrites for shared modules | 1 |

9,446 | 12,426,847,797 | IssuesEvent | 2020-05-24 23:33:05 | aliceingovernment/aliceingovernment.com | https://api.github.com/repos/aliceingovernment/aliceingovernment.com | closed | tian/ plan necessary steps for reshaping AiG to focus on universities | in-process | 1. Only accept emails ending in @university.edu for each university separately (e.g. MIT accepts @mit.edu ONLY, UNAM accepts @unam.mx ONLY)

use spreadsheet shared with elf

2. DONE---Change copy on /home and /vote

3. Email existent voters and say dynamics are changing

4. Save existent voters with the... | 1.0 | tian/ plan necessary steps for reshaping AiG to focus on universities - 1. Only accept emails ending in @university.edu for each university separately (e.g. MIT accepts @mit.edu ONLY, UNAM accepts @unam.mx ONLY)

use spreadsheet shared with elf

2. DONE---Change copy on /home and /vote

3. Email existent ... | process | tian plan necessary steps for reshaping aig to focus on universities only accept emails ending in university edu for each university separately e g mit accepts mit edu only unam accepts unam mx only use spreadsheet shared with elf done change copy on home and vote email existent ... | 1 |

4,830 | 7,725,908,136 | IssuesEvent | 2018-05-24 19:28:54 | kaching-hq/Privacy-and-Security | https://api.github.com/repos/kaching-hq/Privacy-and-Security | opened | Prepare a "Data Privacy Guide" for our clients | Processes | - [ ] Present an overview of our security measures

- [ ] Present guidelines on supporting data subject rights (with our apps)

- [ ] Guide readers through setting up secure comms with us

- [ ] Share contact information for data protection purposes | 1.0 | Prepare a "Data Privacy Guide" for our clients - - [ ] Present an overview of our security measures

- [ ] Present guidelines on supporting data subject rights (with our apps)

- [ ] Guide readers through setting up secure comms with us

- [ ] Share contact information for data protection purposes | process | prepare a data privacy guide for our clients present an overview of our security measures present guidelines on supporting data subject rights with our apps guide readers through setting up secure comms with us share contact information for data protection purposes | 1 |

413,394 | 12,066,565,446 | IssuesEvent | 2020-04-16 11:59:54 | hotosm/tasking-manager | https://api.github.com/repos/hotosm/tasking-manager | closed | Notification Page: alignment issues | Component: Frontend Priority: Medium Status: Needs implementation | Noticed a few text and icon alignment issues. Also unsure of the list icon next to the notification type in text. Can we just have the notification type as `Mention, Validation, System, Task Comment` instead of a `notification` word appended to each type?

| 1.0 | Add basic markdown formatting to Swapbot descriptions - Because this looks terrible and i'm spoiled by @cryptonaut420 including it everywhere

| non_process | add basic markdown formatting to swapbot descriptions because this looks terrible and i m spoiled by including it everywhere | 0 |

305,623 | 9,372,070,635 | IssuesEvent | 2019-04-03 16:46:45 | mozilla/addons-code-manager | https://api.github.com/repos/mozilla/addons-code-manager | opened | Update the content of the `Index` component | priority: mvp priority: p3 | The content of the `Index` component was useful to quickly get access to add-ons/versions but those links won't work in stage or prod and we should start relying on different -dev data to maybe catch issues.

I am not sure what we could add instead, maybe [this gif](http://bestanimations.com/Site/Construction/under-c... | 2.0 | Update the content of the `Index` component - The content of the `Index` component was useful to quickly get access to add-ons/versions but those links won't work in stage or prod and we should start relying on different -dev data to maybe catch issues.

I am not sure what we could add instead, maybe [this gif](http:... | non_process | update the content of the index component the content of the index component was useful to quickly get access to add ons versions but those links won t work in stage or prod and we should start relying on different dev data to maybe catch issues i am not sure what we could add instead maybe | 0 |

294,612 | 9,037,597,293 | IssuesEvent | 2019-02-09 12:20:19 | Tribler/tribler | https://api.github.com/repos/Tribler/tribler | reopened | IPv8/Dispersy dual stack peer explosion | _Top Priority_ bug | Tribler takes a whole core for nearly 100% after running for days (167h of CPU of htop equal 75% avg. load):

:

![screenshot from 2019-01-26... | non_process | dispersy dual stack peer explosion tribler takes a whole core for nearly after running for days of cpu of htop equal avg load the swp is getting full maxed out box but that does not boost user space cpu usage running mostly idle for days continuously on an ubuntu lts box n... | 0 |

241,990 | 26,257,016,500 | IssuesEvent | 2023-01-06 02:16:19 | ams0/openhack-containers | https://api.github.com/repos/ams0/openhack-containers | closed | CVE-2020-9283 (High) detected in github.com/golang/crypto-v0.0.0-20190308221718-c2843e01d9a2 - autoclosed | security vulnerability | ## CVE-2020-9283 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>github.com/golang/crypto-v0.0.0-20190308221718-c2843e01d9a2</b></p></summary>

<p>[mirror] Go supplementary cryptography... | True | CVE-2020-9283 (High) detected in github.com/golang/crypto-v0.0.0-20190308221718-c2843e01d9a2 - autoclosed - ## CVE-2020-9283 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>github.com/g... | non_process | cve high detected in github com golang crypto autoclosed cve high severity vulnerability vulnerable library github com golang crypto go supplementary cryptography libraries library home page a href dependency hierarchy github com denisenkom go mssqldb ... | 0 |

4,409 | 7,299,072,051 | IssuesEvent | 2018-02-26 18:57:00 | ncbo/bioportal-project | https://api.github.com/repos/ncbo/bioportal-project | reopened | GO ontology is not getting new submissions. | ontology processing problem | Last submission of GO ontology is dated back to 01/22/2016; however, this ontology should be updating more frequently than that.

GO ontology also missing description. | 1.0 | GO ontology is not getting new submissions. - Last submission of GO ontology is dated back to 01/22/2016; however, this ontology should be updating more frequently than that.

GO ontology also missing description. | process | go ontology is not getting new submissions last submission of go ontology is dated back to however this ontology should be updating more frequently than that go ontology also missing description | 1 |

6,789 | 9,921,858,639 | IssuesEvent | 2019-06-30 22:03:59 | GroceriStar/fetch-constants | https://api.github.com/repos/GroceriStar/fetch-constants | closed | #### [Groceristar][Favorites][methods] | in-process |

By using names on methods from this [page](https://groceristar.github.io/documentation/docs/groceristar-website-methods-list/favorite-router/favorite-router.html)

In order to make it better, we'll create a set of constants, each for a different method.

Example:

*Get All ingredients with status favorite*

will ... | 1.0 | #### [Groceristar][Favorites][methods] -

By using names on methods from this [page](https://groceristar.github.io/documentation/docs/groceristar-website-methods-list/favorite-router/favorite-router.html)

In order to make it better, we'll create a set of constants, each for a different method.

Example:

*Get All... | process | by using names on methods from this in order to make it better we ll create a set of constants each for a different method example get all ingredients with status favorite will became export const get all favorite ingredients get all favorite ingredients | 1 |

133,908 | 29,669,414,853 | IssuesEvent | 2023-06-11 08:04:42 | dtcxzyw/llvm-ci | https://api.github.com/repos/dtcxzyw/llvm-ci | closed | Regressions Report [rv64gc-O3-thinlto] May 3rd 2023, 10:18:25 am | regression codegen reasonable | ## Metadata

+ Workflow URL: https://github.com/dtcxzyw/llvm-ci/actions/runs/4870636533

## Change Logs

from 583d492c630655dc0cd57ad167dec03e6c5d211c to c68e92d941723702810093161be4834f3ca68372

[c68e92d941723702810093161be4834f3ca68372](https://github.com/llvm/llvm-project/commit/c68e92d941723702810093161be4834f3ca68372... | 1.0 | Regressions Report [rv64gc-O3-thinlto] May 3rd 2023, 10:18:25 am - ## Metadata

+ Workflow URL: https://github.com/dtcxzyw/llvm-ci/actions/runs/4870636533

## Change Logs

from 583d492c630655dc0cd57ad167dec03e6c5d211c to c68e92d941723702810093161be4834f3ca68372

[c68e92d941723702810093161be4834f3ca68372](https://github.co... | non_process | regressions report may am metadata workflow url change logs from to fix msvc quot not all control paths return a value quot warning nfc use underlying object to rule out stack access regressions time name baseline current baseline time current time ratio ... | 0 |

20,729 | 27,429,259,641 | IssuesEvent | 2023-03-01 23:12:07 | wandb/wandb | https://api.github.com/repos/wandb/wandb | closed | Wandb only terminates one process when using DDP. | bug multiprocessing | **Describe the bug**

Each time I run a sweep (one or multiple runs per agent), some processes are left running (these hog 100% the processor they're assigned to and eat up RAM). The data from GPUs is not cleared after finishing a sweep, leading to `CUDA out of memory` error. Afterwards I need to kill all processes... | 1.0 | Wandb only terminates one process when using DDP. - **Describe the bug**

Each time I run a sweep (one or multiple runs per agent), some processes are left running (these hog 100% the processor they're assigned to and eat up RAM). The data from GPUs is not cleared after finishing a sweep, leading to `CUDA out of mem... | process | wandb only terminates one process when using ddp describe the bug each time i run a sweep one or multiple runs per agent some processes are left running these hog the processor they re assigned to and eat up ram the data from gpus is not cleared after finishing a sweep leading to cuda out of memor... | 1 |

21,925 | 30,446,558,352 | IssuesEvent | 2023-07-15 18:48:21 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | pyutils 0.0.1b18 has 2 GuardDog issues | guarddog typosquatting silent-process-execution | https://pypi.org/project/pyutils

https://inspector.pypi.io/project/pyutils

```{

"dependency": "pyutils",

"version": "0.0.1b18",

"result": {

"issues": 2,

"errors": {},

"results": {

"typosquatting": "This package closely ressembles the following package names, and might be a typosquatting attempt:... | 1.0 | pyutils 0.0.1b18 has 2 GuardDog issues - https://pypi.org/project/pyutils

https://inspector.pypi.io/project/pyutils

```{

"dependency": "pyutils",

"version": "0.0.1b18",

"result": {

"issues": 2,

"errors": {},

"results": {

"typosquatting": "This package closely ressembles the following package nam... | process | pyutils has guarddog issues dependency pyutils version result issues errors results typosquatting this package closely ressembles the following package names and might be a typosquatting attempt pytils python utils silen... | 1 |

3,430 | 6,529,654,407 | IssuesEvent | 2017-08-30 12:33:51 | DynareTeam/dynare | https://api.github.com/repos/DynareTeam/dynare | closed | Allow writing steady_state_model-block into LaTeX-document | enhancement preprocessor | This would make debugging a lot easier | 1.0 | Allow writing steady_state_model-block into LaTeX-document - This would make debugging a lot easier | process | allow writing steady state model block into latex document this would make debugging a lot easier | 1 |

183,321 | 14,938,358,410 | IssuesEvent | 2021-01-25 15:41:03 | IBM/FHIR | https://api.github.com/repos/IBM/FHIR | closed | Document validation of resource types in fhir-server-config.json with whole-system search | documentation search | **Is your feature request related to a problem? Please describe.**

We need to document what must be specified in fhir-server-config.json in order for whole-system search to be allowed.

**Describe the solution you'd like**

Documentation should include an explanation of what is checked and example configurations.

... | 1.0 | Document validation of resource types in fhir-server-config.json with whole-system search - **Is your feature request related to a problem? Please describe.**

We need to document what must be specified in fhir-server-config.json in order for whole-system search to be allowed.

**Describe the solution you'd like**

D... | non_process | document validation of resource types in fhir server config json with whole system search is your feature request related to a problem please describe we need to document what must be specified in fhir server config json in order for whole system search to be allowed describe the solution you d like d... | 0 |

3,781 | 4,045,754,288 | IssuesEvent | 2016-05-22 07:35:23 | minetest/minetest | https://api.github.com/repos/minetest/minetest | closed | Laggy interactions | Performance | While playing Minetest, I have experienced frequent problems when interacting with the world. This is especially apparent when moving items around in the inventory screens. Note that this happens both in singleplayer and multiplayer.

I think it would make the engine much more polished if this lag did not happen. Doe... | True | Laggy interactions - While playing Minetest, I have experienced frequent problems when interacting with the world. This is especially apparent when moving items around in the inventory screens. Note that this happens both in singleplayer and multiplayer.

I think it would make the engine much more polished if this la... | non_process | laggy interactions while playing minetest i have experienced frequent problems when interacting with the world this is especially apparent when moving items around in the inventory screens note that this happens both in singleplayer and multiplayer i think it would make the engine much more polished if this la... | 0 |

18,034 | 24,044,358,285 | IssuesEvent | 2022-09-16 06:51:09 | zammad/zammad | https://api.github.com/repos/zammad/zammad | closed | Zammad ignores CSS white-space: pre-wrap; and displays multiline text in a single line | bug verified mail processing | <!--

Hi there - thanks for filing an issue. Please ensure the following things before creating an issue - thank you! 🤓

Since november 15th we handle all requests, except real bugs, at our community board.

Full explanation: https://community.zammad.org/t/major-change-regarding-github-issues-community-board/21

P... | 1.0 | Zammad ignores CSS white-space: pre-wrap; and displays multiline text in a single line - <!--

Hi there - thanks for filing an issue. Please ensure the following things before creating an issue - thank you! 🤓

Since november 15th we handle all requests, except real bugs, at our community board.

Full explanation: ht... | process | zammad ignores css white space pre wrap and displays multiline text in a single line hi there thanks for filing an issue please ensure the following things before creating an issue thank you 🤓 since november we handle all requests except real bugs at our community board full explanation ... | 1 |

20,492 | 27,146,979,408 | IssuesEvent | 2023-02-16 20:49:09 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Confusing/Contradictory statements in runtime expression syntax | devops/prod doc-bug Pri2 devops-cicd-process/tech | In the subsection, _What Syntax Should I Use_ under _Understand Variable Syntax_, you state the following:

> Choose a runtime expression if you are working with [conditions](https://docs.microsoft.com/en-us/azure/devops/pipelines/process/conditions?view=azure-devops) and [expressions](https://docs.microsoft.com/en-u... | 1.0 | Confusing/Contradictory statements in runtime expression syntax - In the subsection, _What Syntax Should I Use_ under _Understand Variable Syntax_, you state the following:

> Choose a runtime expression if you are working with [conditions](https://docs.microsoft.com/en-us/azure/devops/pipelines/process/conditions?vi... | process | confusing contradictory statements in runtime expression syntax in the subsection what syntax should i use under understand variable syntax you state the following choose a runtime expression if you are working with and the exception to this is if you have a pipeline where it will cause a proble... | 1 |

13,525 | 16,058,312,411 | IssuesEvent | 2021-04-23 08:53:31 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | Error: [libs/sql-schema-describer/src/sqlite.rs:452:76] get name | bug/1-repro-available kind/bug process/candidate team/migrations | <!-- If required, please update the title to be clear and descriptive -->

Command: `prisma introspect`

Version: `2.20.1`

Binary Version: `60ba6551f29b17d7d6ce479e5733c70d9c00860e`

Report: https://prisma-errors.netlify.app/report/13207

OS: `arm64 darwin 20.2.0`

JS Stacktrace:

```

Error: [libs/sql-schema-des... | 1.0 | Error: [libs/sql-schema-describer/src/sqlite.rs:452:76] get name - <!-- If required, please update the title to be clear and descriptive -->

Command: `prisma introspect`

Version: `2.20.1`

Binary Version: `60ba6551f29b17d7d6ce479e5733c70d9c00860e`

Report: https://prisma-errors.netlify.app/report/13207

OS: `arm6... | process | error get name command prisma introspect version binary version report os darwin js stacktrace error get name at childprocess users jonnyparris dev duvnab node modules prisma build index js at childprocess emit node events at chil... | 1 |

298,685 | 25,847,263,156 | IssuesEvent | 2022-12-13 07:45:06 | dart-lang/co19 | https://api.github.com/repos/dart-lang/co19 | closed | _FROM_DEFERRED_LIBRARY location | bad-test | With https://dart-review.googlesource.com/c/sdk/+/274141 we changed the location on which `XYZ_FROM_DEFERRED_LIBRARY` is reported. By doing this I broke following tests:

```

co19/Language/Expressions/Constants/static_constant_t06

co19/Language/Expressions/Constants/static_constant_t07

co19_2/Language/Expressions/Co... | 1.0 | _FROM_DEFERRED_LIBRARY location - With https://dart-review.googlesource.com/c/sdk/+/274141 we changed the location on which `XYZ_FROM_DEFERRED_LIBRARY` is reported. By doing this I broke following tests:

```

co19/Language/Expressions/Constants/static_constant_t06

co19/Language/Expressions/Constants/static_constant_t... | non_process | from deferred library location with we changed the location on which xyz from deferred library is reported by doing this i broke following tests language expressions constants static constant language expressions constants static constant language expressions constants static constant ... | 0 |

2,597 | 5,356,196,634 | IssuesEvent | 2017-02-20 15:03:59 | AllenFang/react-bootstrap-table | https://api.github.com/repos/AllenFang/react-bootstrap-table | closed | Default value not working for select filter in remote mode | help wanted inprocess | I have a table with two enum columns, And I added a filter on them (the table is in remote mode).

Here is a piece of my code :

```

<TableHeaderColumn dataField="customerType" dataSort

filter={{ type: 'SelectFilter', options: zipObj(props.customerTypes, props.customerTypes),

defaultValue: filterDefaults.cust... | 1.0 | Default value not working for select filter in remote mode - I have a table with two enum columns, And I added a filter on them (the table is in remote mode).

Here is a piece of my code :

```

<TableHeaderColumn dataField="customerType" dataSort

filter={{ type: 'SelectFilter', options: zipObj(props.customerType... | process | default value not working for select filter in remote mode i have a table with two enum columns and i added a filter on them the table is in remote mode here is a piece of my code tableheadercolumn datafield customertype datasort filter type selectfilter options zipobj props customertype... | 1 |

372,072 | 11,008,762,735 | IssuesEvent | 2019-12-04 11:10:30 | coder3101/cp-editor2 | https://api.github.com/repos/coder3101/cp-editor2 | closed | Open .cpp file with CP editor | enhancement medium_priority windows | **Is your feature request related to a problem? Please describe.**

If this feature was added, there would be a solution to the issue regarding the crash of the editor when it opens the file explorer. This bug doesn't allow some users to save/open files directly from the program and is completely explained here: https:... | 1.0 | Open .cpp file with CP editor - **Is your feature request related to a problem? Please describe.**

If this feature was added, there would be a solution to the issue regarding the crash of the editor when it opens the file explorer. This bug doesn't allow some users to save/open files directly from the program and is c... | non_process | open cpp file with cp editor is your feature request related to a problem please describe if this feature was added there would be a solution to the issue regarding the crash of the editor when it opens the file explorer this bug doesn t allow some users to save open files directly from the program and is c... | 0 |

5,109 | 7,885,991,474 | IssuesEvent | 2018-06-27 14:03:49 | cptechinc/soft-dpluso | https://api.github.com/repos/cptechinc/soft-dpluso | closed | Rename Processwire configs | Processwire | In Processwire go into templates, choose a template

then under advanced there's an area that says rename template, then you can change the name of the template. Rename the following templates

customer-config -> config

actions-config -> config-useractions

dplus-config -> config-dplus

interfax-config -> confi... | 1.0 | Rename Processwire configs - In Processwire go into templates, choose a template

then under advanced there's an area that says rename template, then you can change the name of the template. Rename the following templates

customer-config -> config

actions-config -> config-useractions

dplus-config -> config-dpl... | process | rename processwire configs in processwire go into templates choose a template then under advanced there s an area that says rename template then you can change the name of the template rename the following templates customer config config actions config config useractions dplus config config dpl... | 1 |

194,057 | 15,395,935,475 | IssuesEvent | 2021-03-03 19:55:17 | UuuNyaa/blender_mmd_uuunyaa_tools | https://api.github.com/repos/UuuNyaa/blender_mmd_uuunyaa_tools | opened | Write a wiki on how to add asset issues | documentation | 1. create a issue to https://github.com/UuuNyaa/blender_mmd_assets/issues

2. download the issue (eg. 15) in debug mode on Python Console

```python

import mmd_uuunyaa_tools.debug

mmd_uuunyaa_tools.debug.debug_assets(15)

bpy.ops.mmd_uuunyaa_tools.reload_asset_jsons()

```

in debug mode on Python Console

```python

import mmd_uuunyaa_tools.debug

mmd_uuunyaa_tools.debug.debug_assets(15)

bpy.ops.mmd_uuunyaa_tools.reload_asset_jsons()

```

... | non_process | write a wiki on how to add asset issues create a issue to download the issue eg in debug mode on python console python import mmd uuunyaa tools debug mmd uuunyaa tools debug debug assets bpy ops mmd uuunyaa tools reload asset jsons do debug delete debug asset pytho... | 0 |

17,726 | 23,626,378,477 | IssuesEvent | 2022-08-25 04:37:25 | prisma/prisma | https://api.github.com/repos/prisma/prisma | reopened | Migrate dev fails on migration with unique fields | bug/1-unconfirmed kind/bug process/candidate team/schema topic: shadow database topic: prisma migrate dev | ### Bug description

Hello, guys!

I have very strange issue. I tried too many solutions for resolve, but have no results.

First, I create init schema and apply some migrations after it. All works fine.

In my next task I need to add unique fields to one of my models.

Ok, it seems easy and I made it. Migration r... | 1.0 | Migrate dev fails on migration with unique fields - ### Bug description

Hello, guys!

I have very strange issue. I tried too many solutions for resolve, but have no results.

First, I create init schema and apply some migrations after it. All works fine.

In my next task I need to add unique fields to one of my m... | process | migrate dev fails on migration with unique fields bug description hello guys i have very strange issue i tried too many solutions for resolve but have no results first i create init schema and apply some migrations after it all works fine in my next task i need to add unique fields to one of my m... | 1 |

19,236 | 25,387,544,156 | IssuesEvent | 2022-11-21 23:32:11 | AMDResearch/omnitrace | https://api.github.com/repos/AMDResearch/omnitrace | closed | Intermediate sampling flushing | enhancement sampling process sampling configuration | Right now, there is no way to limit the amount of sampling data stored in memory beyond setting `OMNITRACE_SAMPLING_DURATION`. Need to add a way to occasionally flush the data stored in memory and an option to configure it. | 1.0 | Intermediate sampling flushing - Right now, there is no way to limit the amount of sampling data stored in memory beyond setting `OMNITRACE_SAMPLING_DURATION`. Need to add a way to occasionally flush the data stored in memory and an option to configure it. | process | intermediate sampling flushing right now there is no way to limit the amount of sampling data stored in memory beyond setting omnitrace sampling duration need to add a way to occasionally flush the data stored in memory and an option to configure it | 1 |

57,185 | 7,043,611,273 | IssuesEvent | 2017-12-31 09:33:55 | Jo-ravar/Tutorials | https://api.github.com/repos/Jo-ravar/Tutorials | reopened | [UI] Design a logo | Design | ### Issue Description

- Come up with your own logo designs and add screenshot here.

| 1.0 | [UI] Design a logo - ### Issue Description

- Come up with your own logo designs and add screenshot here.

| non_process | design a logo issue description come up with your own logo designs and add screenshot here | 0 |

20,686 | 27,357,198,374 | IssuesEvent | 2023-02-27 13:40:14 | camunda/issues | https://api.github.com/repos/camunda/issues | opened | Improved visual feedback for Process Instance Modification | component:operate component:zeebe component:zeebe-process-automation public kind:epic potential:8.2 | ### Value Proposition Statement

Visual feedback after applying Process Instance Modification

### User Problem

**First problem:**

Currently, when I click "Apply" in the summary of modifications in Process Instance Modification, I get the toast notification:

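## 10. Design Patterns

To write a business software system, a developer must fully understand how the business operates.

As a result, developers often end up with the most intimate knowledge of a company's business processes.

When we create a Mammal class, all mammals share certain behaviors and characteristics, so this Mammal class can be used to create any number of classes such as Dog or Cat.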

이렇... | 1.0 | 10장 디자인 패턴 - [Link](https://github.com/fkdl0048/BookReview/blob/main/The_Object-Oriented_Thought_Process/Chapter10.md)

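## 10. Design Patterns

To write a business software system, a developer must fully understand how the business operates.

As a result, developers often end up with the most intimate knowledge of a company's business processes.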

우리가 포유류 클래스를 만들 때, 모든 포유류가 특정 행위와 특성을 공유하기 때문에 이 포유류 클래스를 사용하면 개나 고양이 등의 클래스들을 수없이 만... | process | 디자인 패턴 디자인 패턴 업무용 소프트웨어 시스템을 작성하려면 개발자는 사업방식을 완전히 이해해야 한다 결과적으로 개발자는 종종 회사의 업무 과정에 대해 가장 친밀한 지식을 갖게 된다 우리가 포유류 클래스를 만들 때 모든 포유류가 특정 행위와 특성을 공유하기 때문에 이 포유류 클래스를 사용하면 개나 고양이 등의 클래스들을 수없이 만들 수 있다 이렇게 하다 보면 개 고양이 다람쥐 및 기타 포유류를 연구할 때 효과적인데 이는 패턴 을 발견할 수 있기 때문이다 이런 패턴을 기반으로 특정 동물을 살... | 1 |

7,424 | 10,543,359,796 | IssuesEvent | 2019-10-02 14:51:01 | fablabbcn/fablabs.io | https://api.github.com/repos/fablabbcn/fablabs.io | opened | Planned Labs Appear as active labs | Approval Process bug | **Describe the bug**

When fab labs are approved under the

> planned status of the lab

there is supposed to be placed under this category on the frontend, the problem is that there are appearing as working labs, rather than having some tag or sign that said - this is a planned lab- on previous versions of this ... | 1.0 | Planned Labs Appear as active labs - **Describe the bug**

When fab labs are approved under the

> planned status of the lab

there is supposed to be placed under this category on the frontend, the problem is that there are appearing as working labs, rather than having some tag or sign that said - this is a plann... | process | planned labs appear as active labs describe the bug when fab labs are approved under the planned status of the lab there is supposed to be placed under this category on the frontend the problem is that there are appearing as working labs rather than having some tag or sign that said this is a plann... | 1 |

491,682 | 14,169,054,790 | IssuesEvent | 2020-11-12 12:40:52 | mozilla/addons-server | https://api.github.com/repos/mozilla/addons-server | closed | Add confirmation page when changing a promoted group in the django admin | component: subscription priority: p3 state: pull request ready | With the new subscription system, changing the group of a promoted add-on might have implications.

For groups that require payment, we should change a few things in Stripe (like cancelling the subscription when we remove an add-on from a promoted group).

This issue is about adding a confirmation ~~page~~ message ... | 1.0 | Add confirmation page when changing a promoted group in the django admin - With the new subscription system, changing the group of a promoted add-on might have implications.

For groups that require payment, we should change a few things in Stripe (like cancelling the subscription when we remove an add-on from a prom... | non_process | add confirmation page when changing a promoted group in the django admin with the new subscription system changing the group of a promoted add on might have implications for groups that require payment we should change a few things in stripe like cancelling the subscription when we remove an add on from a prom... | 0 |

75,596 | 14,495,503,592 | IssuesEvent | 2020-12-11 11:17:06 | hzi-braunschweig/SORMAS-Project | https://api.github.com/repos/hzi-braunschweig/SORMAS-Project | closed | Prevent Blind SQL Injection [3] | Code Quality change important | ### Description

Using the https://pen1.sormas-oegd.de:443/sormas-rest/cases/push REST endpoint to create a case

with an invalid UUID allows to make a SQL injection in the function

```

de.symeda.sormas.backend.contact.ContactFacadeEjb.getNonSourceCaseCountForDashboard

public int getNonSourceCaseCountForDashboa... | 1.0 | Prevent Blind SQL Injection [3] - ### Description

Using the https://pen1.sormas-oegd.de:443/sormas-rest/cases/push REST endpoint to create a case

with an invalid UUID allows to make a SQL injection in the function

```

de.symeda.sormas.backend.contact.ContactFacadeEjb.getNonSourceCaseCountForDashboard

public i... | non_process | prevent blind sql injection description using the rest endpoint to create a case with an invalid uuid allows to make a sql injection in the function de symeda sormas backend contact contactfacadeejb getnonsourcecasecountfordashboard public int getnonsourcecasecountfordashboard list caseuuids ... | 0 |

111,149 | 17,016,145,575 | IssuesEvent | 2021-07-02 12:20:34 | anyulled/react-boilerplate | https://api.github.com/repos/anyulled/react-boilerplate | opened | WS-2021-0153 (High) detected in ejs-2.7.4.tgz | security vulnerability | ## WS-2021-0153 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ejs-2.7.4.tgz</b></p></summary>

<p>Embedded JavaScript templates</p>

<p>Library home page: <a href="https://registry.npm... | True | WS-2021-0153 (High) detected in ejs-2.7.4.tgz - ## WS-2021-0153 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ejs-2.7.4.tgz</b></p></summary>

<p>Embedded JavaScript templates</p>

<p>... | non_process | ws high detected in ejs tgz ws high severity vulnerability vulnerable library ejs tgz embedded javascript templates library home page a href path to dependency file react boilerplate package json path to vulnerable library react boilerplate node modules ejs depen... | 0 |

50,217 | 3,006,255,819 | IssuesEvent | 2015-07-27 09:12:34 | Itseez/opencv | https://api.github.com/repos/Itseez/opencv | opened | hough circle detection not symmetric, misses obvious circle | auto-transferred bug category: imgproc category: video priority: normal | Transferred from http://code.opencv.org/issues/2461

```

|| Walter Blume on 2012-10-19 17:20

|| Priority: Normal

|| Affected: None

|| Category: imgproc, video

|| Tracker: Bug

|| Difficulty: None

|| PR: None

|| Platform: None / None

```

hough circle detection not symmetric, misses obvious circle

-----------

```

For som... | 1.0 | hough circle detection not symmetric, misses obvious circle - Transferred from http://code.opencv.org/issues/2461

```

|| Walter Blume on 2012-10-19 17:20

|| Priority: Normal

|| Affected: None

|| Category: imgproc, video

|| Tracker: Bug

|| Difficulty: None

|| PR: None

|| Platform: None / None

```

hough circle detectio... | non_process | hough circle detection not symmetric misses obvious circle transferred from walter blume on priority normal affected none category imgproc video tracker bug difficulty none pr none platform none none hough circle detection not symmetric misses obvious circle ... | 0 |

4,554 | 7,388,056,864 | IssuesEvent | 2018-03-16 00:15:19 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | reopened | ProcessThreadTests.TestStartTimeProperty test failure in CI on Ubuntu | area-System.Diagnostics.Process test-run-core | https://ci.dot.net/job/dotnet_corefx/job/master/job/ubuntu14.04_debug/654/consoleText

```

System.Diagnostics.Tests.ProcessThreadTests.TestStartTimeProperty [FAIL]

System.ComponentModel.Win32Exception : Unable to retrieve the specified information about the process or thread. It may have exited or may be pri... | 1.0 | ProcessThreadTests.TestStartTimeProperty test failure in CI on Ubuntu - https://ci.dot.net/job/dotnet_corefx/job/master/job/ubuntu14.04_debug/654/consoleText

```

System.Diagnostics.Tests.ProcessThreadTests.TestStartTimeProperty [FAIL]

System.ComponentModel.Win32Exception : Unable to retrieve the specified in... | process | processthreadtests teststarttimeproperty test failure in ci on ubuntu system diagnostics tests processthreadtests teststarttimeproperty system componentmodel unable to retrieve the specified information about the process or thread it may have exited or may be privileged stack trace ... | 1 |

275,501 | 23,919,167,261 | IssuesEvent | 2022-09-09 15:12:46 | blockframes/blockframes | https://api.github.com/repos/blockframes/blockframes | closed | E2E - Add to bucket | App - Catalog :earth_africa: Type - e2e test Dev - Test / Quality Assurance July clean up | ### Description

We want to test that users are able to add titles to their bucket with corresponding terms.

### Context

BDD based on these contracts & related films https://docs.google.com/spreadsheets/d/1KHKdN9HAzDUfbKaoSi6_OHxCjMsMd-vQh0VL_qKSKls/edit#gid=0

MovieId `bR4fTHmDDuOSPrNaz39J` should be added as a t... | 2.0 | E2E - Add to bucket - ### Description

We want to test that users are able to add titles to their bucket with corresponding terms.

### Context

BDD based on these contracts & related films https://docs.google.com/spreadsheets/d/1KHKdN9HAzDUfbKaoSi6_OHxCjMsMd-vQh0VL_qKSKls/edit#gid=0

MovieId `bR4fTHmDDuOSPrNaz39J` ... | non_process | add to bucket description we want to test that users are able to add titles to their bucket with corresponding terms context bdd based on these contracts related films movieid should be added as a title from pulsar content orgid scenario connect with marketplace user g... | 0 |

13,519 | 16,057,043,674 | IssuesEvent | 2021-04-23 07:11:23 | ropensci/software-review-meta | https://api.github.com/repos/ropensci/software-review-meta | closed | Standard testing environment | automation process | We should set up a docker image for a standard testing environment. Our stack has a lot of system dependencies, so maybe we should use rocker/ropensci, with goodpractice installed. | 1.0 | Standard testing environment - We should set up a docker image for a standard testing environment. Our stack has a lot of system dependencies, so maybe we should use rocker/ropensci, with goodpractice installed. | process | standard testing environment we should set up a docker image for a standard testing environment our stack has a lot of system dependencies so maybe we should use rocker ropensci with goodpractice installed | 1 |

12,833 | 15,213,874,661 | IssuesEvent | 2021-02-17 12:26:20 | trilinos/Trilinos | https://api.github.com/repos/trilinos/Trilinos | closed | Create Trilinos GitHub Issue Lifecycle Model | CLOSED_DUE_TO_INACTIVITY MARKED_FOR_CLOSURE process improvement | Recent activity with GitHub issues suggests we need an explicit issue lifecycle model to communicate expectations for clients, users and developers.

This issue results from a query by @mwglass. Others who might be interested are: @jwillenbring @bmpersc and @bartlettroscoe

Also, I have added a new label "process impr... | 1.0 | Create Trilinos GitHub Issue Lifecycle Model - Recent activity with GitHub issues suggests we need an explicit issue lifecycle model to communicate expectations for clients, users and developers.

This issue results from a query by @mwglass. Others who might be interested are: @jwillenbring @bmpersc and @bartlettroscoe... | process | create trilinos github issue lifecycle model recent activity with github issues suggests we need an explicit issue lifecycle model to communicate expectations for clients users and developers this issue results from a query by mwglass others who might be interested are jwillenbring bmpersc and bartlettroscoe... | 1 |

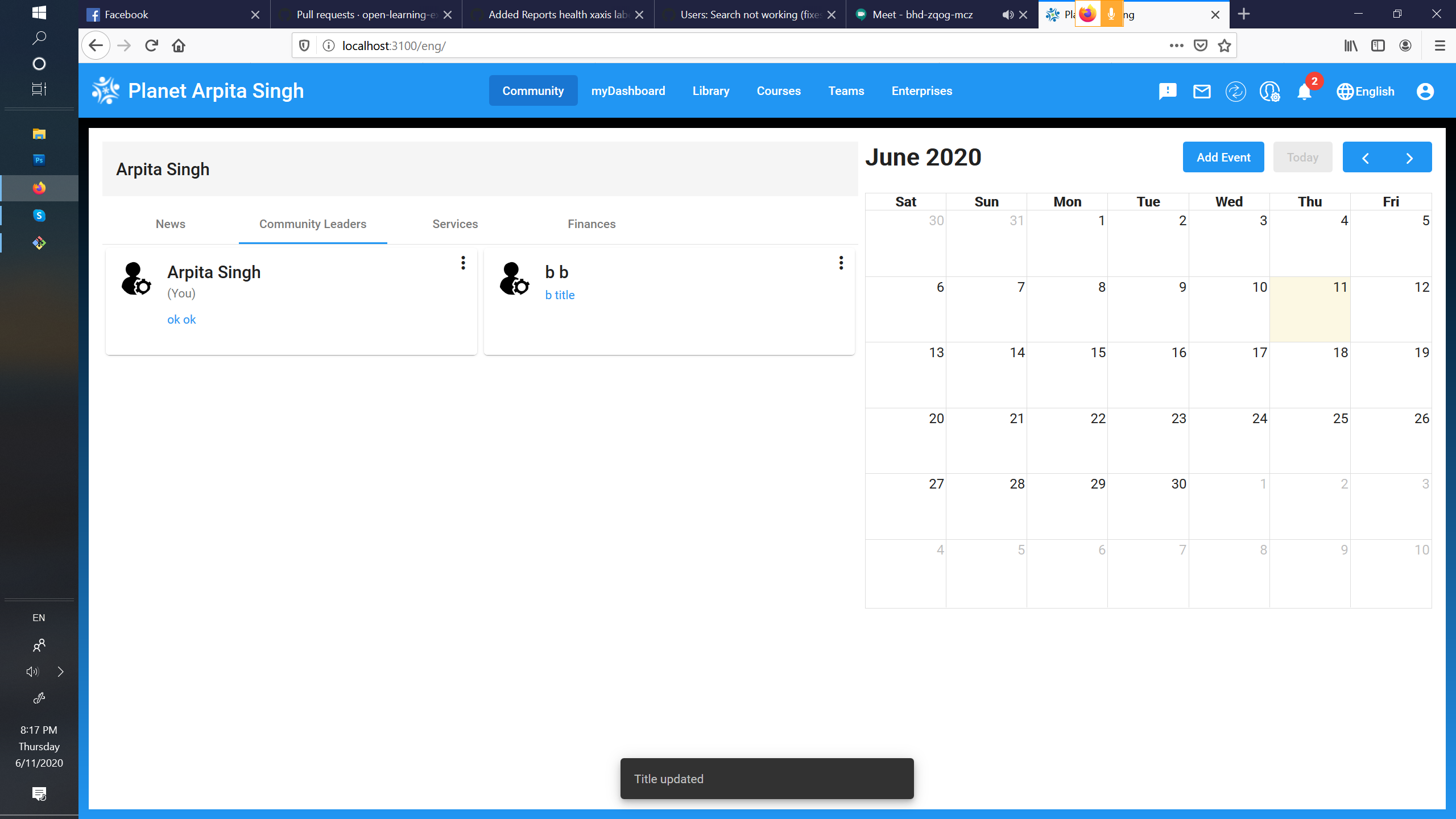

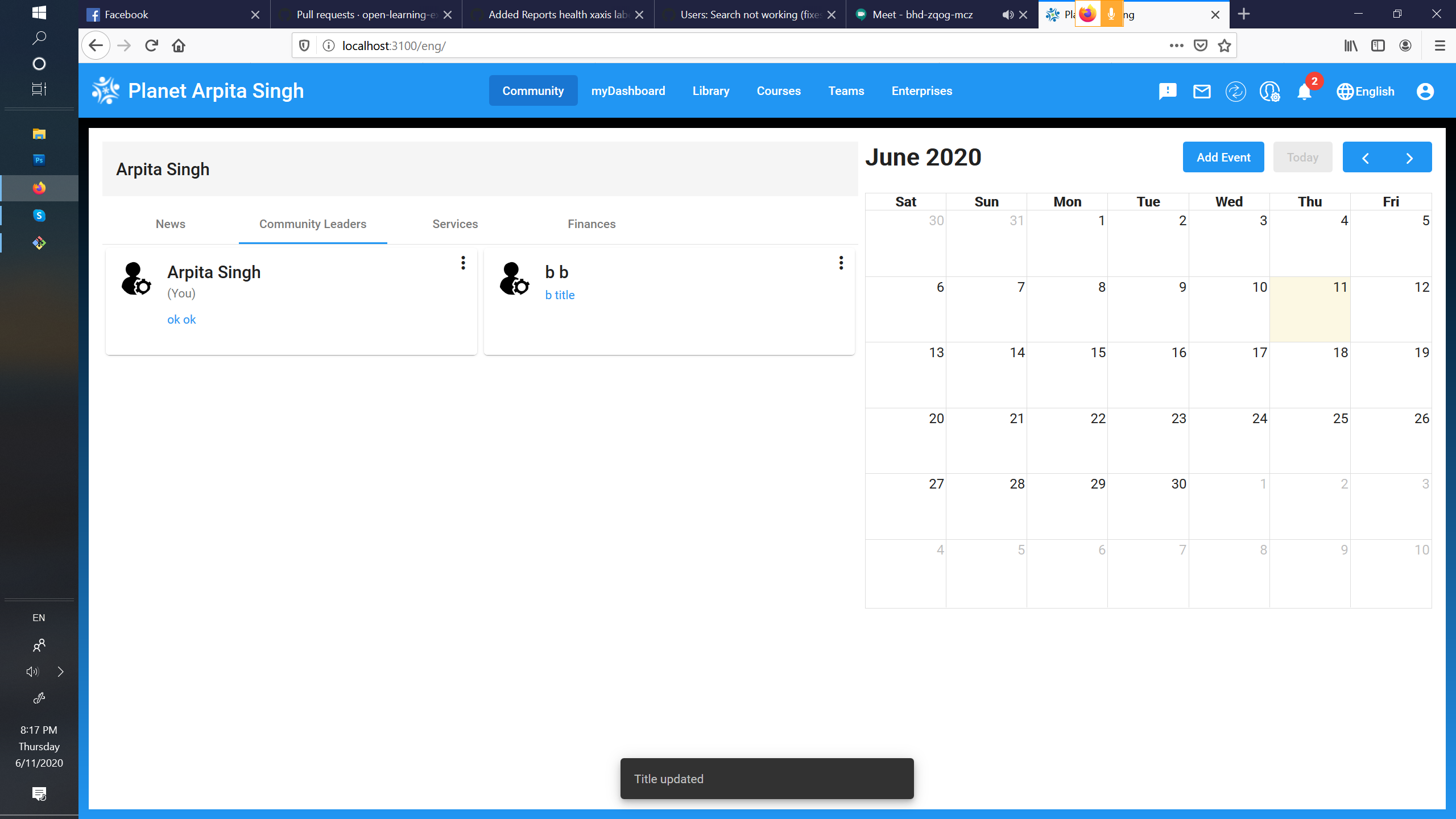

443,386 | 12,793,680,151 | IssuesEvent | 2020-07-02 04:49:33 | open-learning-exchange/planet | https://api.github.com/repos/open-learning-exchange/planet | closed | Community leaders: Add title shows "Updated title" success message | low priority | - go to community leaders

- add title to members

- message shown as "Title Updated"

It should show "Title added".

| 1.0 | Community leaders: Add title shows "Updated title" success message - - go to community leaders

- add title to members

- message shown as "Title Updated"

It should show "Title added".

| non_process | community leaders add title shows updated title success message go to community leaders add title to members message shown as title updated it should show title added | 0 |

68,717 | 7,108,101,620 | IssuesEvent | 2018-01-16 22:28:42 | Azure/azure-cli | https://api.github.com/repos/Azure/azure-cli | closed | [Test] `check_style` and `run_tests` do not work on Python 2. | Test bug | Related to https://github.com/Azure/azure-cli/issues/3883

When I run these scripts I get:

```

Traceback (most recent call last):

File "C:\Users\trpresco\Documents\github\env2\Scripts\check_style-script.py", line 11, in <module>

load_entry_point('azure-cli-dev-tools', 'console_scripts', 'check_style')()

... | 1.0 | [Test] `check_style` and `run_tests` do not work on Python 2. - Related to https://github.com/Azure/azure-cli/issues/3883

When I run these scripts I get:

```

Traceback (most recent call last):

File "C:\Users\trpresco\Documents\github\env2\Scripts\check_style-script.py", line 11, in <module>

load_entry_poin... | non_process | check style and run tests do not work on python related to when i run these scripts i get traceback most recent call last file c users trpresco documents github scripts check style script py line in load entry point azure cli dev tools console scripts check style ... | 0 |

958 | 3,419,126,066 | IssuesEvent | 2015-12-08 07:55:50 | e-government-ua/iBP | https://api.github.com/repos/e-government-ua/iBP | closed | Dnipropetrovsk HolovAPU - Excerpt copy from the 1:500 topographic plan of the city | In process of testing | Agreed with GlavAPU

The applicant submits an application addressed to the head of GlavAPU, along with details of the plot for which copies are needed.

GlavAPU processes the request and sends the ordered copy to the applicant by email.

Grounds for refusal:

1. No information available

2. The plot is outside the city limits

3. The information is classified (strategic facilities)... | 1.0 | Dnipropetrovsk HolovAPU - Excerpt copy from the 1:500 topographic plan of the city - Agreed with GlavAPU

The applicant submits an application addressed to the head of GlavAPU, along with details of the plot for which copies are needed.

GlavAPU processes the request and sends the ordered copy to the applicant by email.

Grounds for refusal:

1. No information available

2. Уч... | process | дніпропетровськ головапу викопіювання з топографічного плану міста согласовано с главапу заявитель подает заявление на имя начальника главапу и данные об участке на который нужны копии главапу обрабатывает и возвращает заявителю на почту заказанную копию варианты отказа отсутствие информации учас... | 1 |

236,308 | 18,092,471,553 | IssuesEvent | 2021-09-22 04:24:02 | girlscript/winter-of-contributing | https://api.github.com/repos/girlscript/winter-of-contributing | closed | ML 1.5 : Probability Distributions (Gaussian, Standard, Poisson) (D) | documentation GWOC21 ML Assigned | Welcome to 'ML' Team, good to see you here

This issue will help readers in acquiring all the knowledge that one needs to know about **Probability Distributions (Gaussian, Standard, Poisson)**.

To get assigned to this issue, add your **Batch Numbers** mentioned in the spreadsheet of "Machine Learning", the approa... | 1.0 | ML 1.5 : Probability Distributions (Gaussian, Standard, Poisson) (D) - Welcome to 'ML' Team, good to see you here

This issue will help readers in acquiring all the knowledge that one needs to know about **Probability Distributions (Gaussian, Standard, Poisson)**.

To get assigned to this issue, add your **Batch N... | non_process | ml probability distributions gaussian standard poisson d welcome to ml team good to see you here this issue will helps readers in acquiring all the knowledge that one needs to know about probability distributions gaussian standard poisson to get assigned to this issue add your batch n... | 0 |

547 | 3,005,961,466 | IssuesEvent | 2015-07-27 06:51:33 | e-government-ua/i | https://api.github.com/repos/e-government-ua/i | closed | Implement the "Звіт" (Report) section on the dashboard | hi priority In process of testing test | When the "Сформувати файл" (Generate file) button is clicked, offer to save the file using the existing service:

https://test.region.igov.org.ua/wf-region/service/rest/file/download_bp_timing?sID_BP_Name=lviv_mvk-1&sDateAt=2015-06-28&sDateTo=2015-07-01

It was written for the task https://github.com/e-government-ua/i/issues/313, and its API... | 1.0 | Implement the "Звіт" (Report) section on the dashboard - When the "Сформувати файл" (Generate file) button is clicked, offer to save the file using the existing service:

https://test.region.igov.org.ua/wf-region/service/rest/file/download_bp_timing?sID_BP_Name=lviv_mvk-1&sDateAt=2015-06-28&sDateTo=2015-07-01

Который написан по задаче: https://github.com/... | process | на дашборде реализовать раздел звіт при нажатии кнопки сформувати файл предлагать сохранять файл используя готовій сервис который написан по задаче и апи которого описано на нашей вики фактически нужно будет просто туда подставлять параметры из полей интерфейса условия вкладку отобра... | 1 |

325,664 | 24,056,194,651 | IssuesEvent | 2022-09-16 17:08:46 | squidfunk/mkdocs-material | https://api.github.com/repos/squidfunk/mkdocs-material | closed | Image link for hiding the sidebar is broken in the documentations | documentation | ### Contribution guidelines

- [X] I've read the [contribution guidelines](https://github.com/squidfunk/mkdocs-material/blob/master/CONTRIBUTING.md) and wholeheartedly agree

### I've found a bug and checked that ...

- [X] ... the problem doesn't occur with the `mkdocs` or `readthedocs` themes

- [ ] ... the problem pe... | 1.0 | Image link for hiding the sidebar is broken in the documentations - ### Contribution guidelines

- [X] I've read the [contribution guidelines](https://github.com/squidfunk/mkdocs-material/blob/master/CONTRIBUTING.md) and wholeheartedly agree

### I've found a bug and checked that ...

- [X] ... the problem doesn't occu... | non_process | image link for hiding the sidebar is broken in the documentations contribution guidelines i ve read the and wholeheartedly agree i ve found a bug and checked that the problem doesn t occur with the mkdocs or readthedocs themes the problem persists when all overrides are remo... | 0 |

227,332 | 18,056,822,754 | IssuesEvent | 2021-09-20 09:17:52 | Xaymar/obs-StreamFX | https://api.github.com/repos/Xaymar/obs-StreamFX | closed | New Unified logging causes crash on start under Linux/Gentoo | type:bug status:help-wanted status:testing-required | ### Operating System

Linux (like Arch Linux)

### OBS Studio Version?

27.0

### StreamFX Version

0.11.0a3

### OBS Studio Log

https://gist.github.com/WPettersson/1175b9225fc3154edb87b3094f8a6b36

### OBS Studio Crash Log

https://gist.github.com/WPettersson/9b7e1ba34ba8a94e969f1c119e00097d

This includes me runni... | 1.0 | New Unified logging causes crash on start under Linux/Gentoo - ### Operating System

Linux (like Arch Linux)

### OBS Studio Version?

27.0

### StreamFX Version

0.11.0a3

### OBS Studio Log

https://gist.github.com/WPettersson/1175b9225fc3154edb87b3094f8a6b36

### OBS Studio Crash Log

https://gist.github.com/WPetter... | non_process | new unified logging causes crash on start under linux gentoo operating system linux like arch linux obs studio version streamfx version obs studio log obs studio crash log this includes me running it through gdb to get a backtrace showing that something with logging w... | 0 |

1,282 | 3,814,199,427 | IssuesEvent | 2016-03-28 11:37:02 | pelias/api | https://api.github.com/repos/pelias/api | closed | [ngram branch] query weighting | experiment processed question | @hkrishna I'm opening this ticket to track the admin weighting feature we discussed and so I have a place to put some test cases.

the gist of this is that on the *ngram* branch the part of the query which gave higher scores to queries which matched an admin value and scripts seem to have less effect than it used to. | 1.0 | [ngram branch] query weighting - @hkrishna I'm opening this ticket to track the admin weighting feature we discussed and so I have a place to put some test cases.

the gist of this is that on the *ngram* branch the part of the query which gave higher scores to queries which matched an admin value and scripts seem to ... | process | query weighting hkrishna i m opening this ticket to track the admin weighting feature we discussed and so i have a place to put some test cases the gist of this is that on the ngram branch the part of the query which gave higher scores to queries which matched an admin value and scripts seem to have less eff... | 1 |

20,466 | 27,128,970,160 | IssuesEvent | 2023-02-16 08:21:22 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | Verifying bazel releases installation | P3 type: process team-OSS stale | The bazel release has a sig file that can be used to verify the sh file, but usually this sig file is used to verify the sha256 file. Either choose to verify the shasum or not remove it from use.

| 1.0 | Verifying bazel releases installation - The bazel release has a sig file that can be used to verify the sh file, but usually this sig file is used to verify the sha256 file. Either choose to verify the shasum or not remove it from use.

| process | verifying bazel releases installation the bazel release has a sig file that is can be used to verify the sh file but usually this sig file is used to verify the file either choose to verify the shasum or not remove it from use | 1 |

14,473 | 17,595,872,239 | IssuesEvent | 2021-08-17 04:59:19 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Model with "Drop field(s)" processing algorithm is not working with QGIS LTR 3.16.9 because of "native:" prefix | Feedback Processing Bug Modeller | ### What is the bug or the crash?

Model with `Drop field(s)` processing algorithm is not working with QGIS LTR 3.16.9 because of `native:` prefix.

### Steps to reproduce the issue

[test_models.zip](https://github.com/qgis/QGIS/files/6975919/test_models.zip) contains two models with the `Drop field(s)` processing algo... | 1.0 | Model with "Drop field(s)" processing algorithm is not working with QGIS LTR 3.16.9 because of "native:" prefix - ### What is the bug or the crash?

Model with `Drop field(s)` processing algorithm is not working with QGIS LTR 3.16.9 because of `native:` prefix.

### Steps to reproduce the issue

[test_models.zip](https:... | process | model with drop field s processing algorithm is not working with qgis ltr because of native prefix what is the bug or the crash model with drop field s processing algorithm is not working with qgis ltr because of native prefix steps to reproduce the issue contains two models wi... | 1 |

176,070 | 28,023,443,310 | IssuesEvent | 2023-03-28 07:32:42 | dotnet/winforms | https://api.github.com/repos/dotnet/winforms | opened | Bindingsource not assigned | area: VS designer untriaged | ### Environment

Visual Studio 2022 - 17.5.3

### .NET version

7.0

### Did this work in a previous version of Visual Studio and/or previous .NET release?

Yes

### Issue description

Assigning a class as data source to a binding source doesn't seem to work within the designer. The data source class can be selected b... | 1.0 | Bindingsource not assigned - ### Environment

Visual Studio 2022 - 17.5.3

### .NET version

7.0

### Did this work in a previous version of Visual Studio and/or previous .NET release?

Yes

### Issue description

Assigning a class as data source to a binding source doesn't seem to work within the designer. The data s... | non_process | bindingsource not assigned environment visual studio net version did this work in a previous version of visual studio and or previous net release yes issue description assigning a class as data source to a binding source doesn t seem to work within the designer the data sourc... | 0 |

1,061 | 3,535,167,043 | IssuesEvent | 2016-01-16 08:59:05 | t3kt/vjzual2 | https://api.github.com/repos/t3kt/vjzual2 | closed | reversable transform module | enhancement video processing | for example rotate +32 and -32 and composite the outputs.

also for translate x/y.

it doesn't really make sense for scale, but maybe include it anyway | 1.0 | reversable transform module - for example rotate +32 and -32 and composite the outputs.

also for translate x/y.

it doesn't really make sense for scale, but maybe include it anyway | process | reversable transform module for example rotate and and composite the outputs also for translate x y it doesn t really make sense for scale but maybe include it anyway | 1 |

20,826 | 27,581,532,861 | IssuesEvent | 2023-03-08 16:31:38 | symfony/symfony | https://api.github.com/repos/symfony/symfony | closed | Can the process component be mocked in version 6? | Bug Process Status: Needs Review | ### Symfony version(s) affected

6.0

### Description

I was working with version 5.4. I had the following class returning a Process object https://github.com/nmeri17/suphle/blob/3a522da6818064f6dbbe4b3571c7a8ea6a2d7898/src/Server/VendorBin.php#L46

In my tests, that method was being mocked, thus phpunit returns a mo... | 1.0 | Can the process component be mocked in version 6? - ### Symfony version(s) affected

6.0

### Description

I was working with version 5.4. I had the following class returning a Process object https://github.com/nmeri17/suphle/blob/3a522da6818064f6dbbe4b3571c7a8ea6a2d7898/src/Server/VendorBin.php#L46

In my tests, th... | process | can the process component be mocked in version symfony version s affected description i was working with version i had the following class returning a process object in my tests that method was being mocked thus phpunit returns a mock process object all was fine until i upgraded to ve... | 1 |

626,482 | 19,824,708,333 | IssuesEvent | 2022-01-20 04:13:03 | phetsims/number-play | https://api.github.com/repos/phetsims/number-play | opened | Improve AccordionBox usages | priority:2-high | From meeting with @jonathanolson today - he gave some suggestions for how to better define their size and not resize in case of a content size change.

I will also use this issue to remove a lot of duplicated code - there is currently OnesAccordionBox, ObjectAccordionBox, and CompareAccordionBox, and with all of the... | 1.0 | Improve AccordionBox usages - From meeting with @jonathanolson today - he gave some suggestions for how to better define their size and not resize in case of a content size change.

I will also use this issue to remove a lot of duplicated code - there is currently OnesAccordionBox, ObjectAccordionBox, and CompareAcc... | non_process | improve accordionbox usages from meeting with jonathanolson today he gave some suggestions for how to better define their size and not resize in case of a content size change i will also use this issue to remove a lot of duplicated code there is currently onesaccordionbox objectaccordionbox and compareacc... | 0 |

354,286 | 25,158,742,416 | IssuesEvent | 2022-11-10 15:19:55 | GTMNERR/swmp-quarter-report | https://api.github.com/repos/GTMNERR/swmp-quarter-report | closed | Section 5.3.1 Total Nitrogen Last Sentence | documentation | Currently reads: This was especially true at Pine Island which only has data in January and February (Figure 5.5 (a) and the remaining sites are typically missing the March data.

Since, Pine Island had additional data added in September and October. I suggest maybe changing the sentence to:

This was especially t... | 1.0 | Section 5.3.1 Total Nitrogen Last Sentence - Currently reads: This was especially true at Pine Island which only has data in January and February (Figure 5.5 (a) and the remaining sites are typically missing the March data.

Since, Pine Island had additional data added in September and October. I suggest maybe changi... | non_process | section total nitrogen last sentence currently reads this was especially true at pine island which only has data in january and february figure a and the remaining sites are typically missing the march data since pine island had additional data added in september and october i suggest maybe changi... | 0 |

15,131 | 18,873,759,589 | IssuesEvent | 2021-11-13 17:01:09 | AmpersandTarski/Ampersand | https://api.github.com/repos/AmpersandTarski/Ampersand | closed | Support for build with Docker container | software process | I've found this post: https://docs.haskellstack.org/en/stable/docker_integration/

I think it is very promising for us.

Unfortunately no support for Windows yet, but there's a fix coming very soon I guess: https://github.com/commercialhaskell/stack/issues/2421 and https://github.com/commercialhaskell/stack/pull/5315

Inte... | 1.0 | Support for build with Docker container - I've found this post: https://docs.haskellstack.org/en/stable/docker_integration/

I think it is very promising for us.

Unfortunately no support for Windows yet, but there's a fix coming very soon I guess: https://github.com/commercialhaskell/stack/issues/2421 and https://github.com... | process | support for build with docker container i ve found this post i think it is very promising for us unfortunately no support for windows yet but there s a fix coming very soon i guess and integration of stack with docker can be specified configured in stack yaml yaml docker integration see dock... | 1 |

94,942 | 11,941,984,433 | IssuesEvent | 2020-04-02 19:26:22 | MozillaFoundation/Design | https://api.github.com/repos/MozillaFoundation/Design | closed | Update /brand with latest MozFest assets | design | Once the new style guide https://github.com/MozillaFoundation/Design/issues/483 is done we will need to update our /brand page.

[need to add more info]

cc @sabrinang | 1.0 | Update /brand with latest MozFest assets - Once the new style guide https://github.com/MozillaFoundation/Design/issues/483 is done we will need to update our /brand page.

[need to add more info]

cc @sabrinang | non_process | update brand with latest mozfest assets once the new style guide is done we will need to update our brand page cc sabrinang | 0 |

16,233 | 20,780,645,960 | IssuesEvent | 2022-03-16 14:29:51 | FOLIO-FSE/folio_migration_tools | https://api.github.com/repos/FOLIO-FSE/folio_migration_tools | closed | Library configuration file: make holdingsMergeCriteria options more intuitive | simplify_migration_process | Example of current:

`"holdingsMergeCriteria": "lb",

`

Could we name the options something in clear text (e.g. "location_and_callnumber")? Would make it easier to read and work with the config file. | 1.0 | Library configuration file: make holdingsMergeCriteria options more intuitive - Example of current:

`"holdingsMergeCriteria": "lb",

`

Could we name the options something in clear text (e.g. "location_and_callnumber")? Would make it easier to read and work with the config file. | process | library configuration file make holdingsmergecriteria options more intuitive example of current holdingsmergecriteria lb could we name the options something in clear text e g location and callnumber would make it easier to read and work with the config file | 1 |

75,108 | 20,628,063,450 | IssuesEvent | 2022-03-08 01:48:19 | Rust-for-Linux/linux | https://api.github.com/repos/Rust-for-Linux/linux | opened | Makefile: a grep regular expression in the rust/Makefile may have problem | • kbuild | https://github.com/Rust-for-Linux/linux/blob/28a9bd2ebd821ab0f412f1d661c62801dbd2f632/rust/Makefile#L273-L274

When I learn the process of rust-bindgen, I find this expression confusing to me. Does the second part of this regular expression have a redundant "$"? If the answer is a yes, should I open a pr for it? Thanks... | 1.0 | Makefile: a grep regular expression in the rust/Makefile may have problem - https://github.com/Rust-for-Linux/linux/blob/28a9bd2ebd821ab0f412f1d661c62801dbd2f632/rust/Makefile#L273-L274

When I learn the process of rust-bindgen, I find this expression confusing to me. Does the second part of this regular expression hav... | non_process | makefile a grep regular expression in the rust makefile may have problem when i learn the process of rust bindgen i find this expression confusing to me does the second part of this regular expression have a redundant if the answer is a yes should i open a pr for it thanks for your advice | 0 |

17,263 | 23,043,962,336 | IssuesEvent | 2022-07-23 15:49:11 | andrewzah/openbook | https://api.github.com/repos/andrewzah/openbook | opened | [metadata] support multiple album references | enhancement rust-preprocessor lilypond | * [ ] update yaml parser to check for array values - models.rs

* [ ] display this information somehow in the song render | 1.0 | [metadata] support multiple album references - * [ ] update yaml parser to check for array values - models.rs

* [ ] display this information somehow in the song render | process | support multiple album references update yaml parser to check for array values models rs display this information somehow in the song render | 1 |

20,498 | 3,368,357,569 | IssuesEvent | 2015-11-22 22:15:06 | libkml/libkml | https://api.github.com/repos/libkml/libkml | closed | examples/java issues | auto-migrated Priority-Medium Type-Defect | ```

Makefile.am is missing CreateFolder.java

run.sh refers to non-existent ParseKml.java

```

Original issue reported on code.google.com by `kml.b...@gmail.com` on 17 Jun 2009 at 3:23 | 1.0 | examples/java issues - ```

Makefile.am is missing CreateFolder.java

run.sh refers to non-existent ParseKml.java

```

Original issue reported on code.google.com by `kml.b...@gmail.com` on 17 Jun 2009 at 3:23 | non_process | examples java issues makefile am is missing createfolder java run sh refers to non existent parsekml java original issue reported on code google com by kml b gmail com on jun at | 0 |

184,137 | 14,970,125,699 | IssuesEvent | 2021-01-27 19:08:58 | LibreTexts/metalc | https://api.github.com/repos/LibreTexts/metalc | closed | Create some examples of installing custom software in the libretexts construction guide | documentation good first issue medium priority | Our current policy is that we will install packages system-wide for users if they are available in the standard Ubuntu 18.04 APT repos or in the conda forge channel for conda packages (see the FAQ: https://jupyter.libretexts.org/hub/faq#how-can-i-install-custom-packages). We need one or more pages in the Libretexts con... | 1.0 | Create some examples of installing custom software in the libretexts construction guide - Our current policy is that we will install packages system-wide for users if they are available in the standard Ubuntu 18.04 APT repos or in the conda forge channel for conda packages (see the FAQ: https://jupyter.libretexts.org/h... | non_process | create some examples of installing custom software in the libretexts construction guide our current policy is that we will install packages system wide for users if they are available in the standard ubuntu apt repos or in the conda forge channel for conda packages see the faq we need one or more pages in the... | 0 |

13,061 | 15,394,677,568 | IssuesEvent | 2021-03-03 18:13:03 | googleapis/env-tests-logging | https://api.github.com/repos/googleapis/env-tests-logging | closed | Generalize library to be used by other languages | priority: p2 type: process | Hello, as I'm stepping through this test module for Go, starting a list of things that need to be generalized: