Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

15,001 | 18,682,582,620 | IssuesEvent | 2021-11-01 08:15:12 | Ghost-chu/QuickShop-Reremake | https://api.github.com/repos/Ghost-chu/QuickShop-Reremake | closed | [BUG] SPANISH LANG BROKEN | Bug Priority:Major In Process | ### Description

I updated to 5.0.0.0 and restarted my server, and now all signs have changed language to English (Spanish was the default before).

I also set Spanish manually, but the translations are broken: some translated words are now in English.

EXAMPLE:

for Jenkins is outdated, has no maintainers, and is receiving bug reports when users follow the instructions on that site.

The Jenkins governance board meeting Feb 6, 2023 approved ... | 1.0 | Redirect Chinese pages to English pages and shutdown the Chinese site - ### Describe your use-case which is not covered by existing documentation.

The [Chinese language web site](https://www.jenkins.io/zh/) for Jenkins is outdated, has no maintainers, and is receiving bug reports when users follow the instructions on ... | non_process | redirect chinese pages to english pages and shutdown the chinese site describe your use case which is not covered by existing documentation the for jenkins is outdated has no maintainers and is receiving bug reports when users follow the instructions on that site the jenkins governance board meeting f... | 0 |

26,389 | 5,247,012,745 | IssuesEvent | 2017-02-01 11:31:27 | UTNkar/moore | https://api.github.com/repos/UTNkar/moore | closed | Add testing instructions | documentation enhancement | ### Description

Currently nobody knows how to test the system. This is BAD programming practice. Please provide more documentation.

### Steps to Reproduce

1. Look at `README.md`

2. See `#testing`

**Expected behavior:** Find instructions how to run tests

**Actual behavior:** Nothing is shown

| 1.0 | Add testing instructions - ### Description

Currently nobody knows how to test the system. This is BAD programming practice. Please provide more documentation.

### Steps to Reproduce

1. Look at `README.md`

2. See `#testing`

**Expected behavior:** Find instructions how to run tests

**Actual behavior:** Not... | non_process | add testing instructions description currently nobody knows how to test the system this is bad programming practice please provide more documentatino steps to reproduce look at readme md see testing expected behavior find instructions how to run tests actual behavior not... | 0 |

9,062 | 12,136,755,519 | IssuesEvent | 2020-04-23 14:48:42 | MHRA/products | https://api.github.com/repos/MHRA/products | closed | Unauthorised request returns wrong error code | BUG :bug: EPIC - Auto Batch Process :oncoming_automobile: | **Describe the bug**

An unauthorized request to the document-index-updater returns a 500 (internal server error) but should return a 401 (unauthorized). This makes it more difficult for clients to diagnose possible issues with requests, as it surfaces like an issue on the document-index-updater side.

**To Reproduce... | 1.0 | Unauthorised request returns wrong error code - **Describe the bug**

An unauthorized request to the document-index-updater returns a 500 (internal server error) but should return a 401 (unauthorized). This makes it more difficult for clients to diagnose possible issues with requests, as it surfaces like an issue on th... | process | unauthorised request returns wrong error code describe the bug an unauthorized request to the document index updater returns a internal server error but should return a unauthorized this makes it more difficult for clients to diagnose possible issues with requests as it surfaces like an issue on the do... | 1 |
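
The status-code mapping this report asks for can be sketched as follows. This is a minimal illustration only: `UnauthorizedError` and `status_for` are hypothetical names, not part of the document-index-updater code.

```python
class UnauthorizedError(Exception):
    """Hypothetical error raised when credentials are missing or invalid."""


def status_for(error):
    """Map an exception to the HTTP status code the client should see."""
    # Authentication failures are reported as 401 so that clients can tell
    # them apart from genuine server-side faults.
    if isinstance(error, UnauthorizedError):
        return 401
    # Anything unexpected is still surfaced as an internal server error.
    return 500


print(status_for(UnauthorizedError("missing token")))
print(status_for(RuntimeError("index unavailable")))
```

Keeping the mapping in one place means a new error type only needs one extra branch.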

2,202 | 5,040,769,837 | IssuesEvent | 2016-12-19 07:34:51 | jlm2017/jlm-video-subtitles | https://api.github.com/repos/jlm2017/jlm-video-subtitles | opened | [subtitles] [eng] « La cause du terrorisme est dans la guerre et l'argent » - Jean-Luc Mélenchon au Parlement européen | Language: English Process: [1] Writing in progress | # Video title

« La cause du terrorisme est dans la guerre et l'argent » - Jean-Luc Mélenchon au Parlement européen

# URL

https://www.youtube.com/watch?v=A3KM2oOi-Jc&t=5s

# Youtube subtitle language

Anglais

# Duration

3:26

# URL subtitles

https://www.youtube.com/timedtext_editor?tab=captions&r... | 1.0 | [subtitles] [eng] « La cause du terrorisme est dans la guerre et l'argent » - Jean-Luc Mélenchon au Parlement européen - # Video title

« La cause du terrorisme est dans la guerre et l'argent » - Jean-Luc Mélenchon au Parlement européen

# URL

https://www.youtube.com/watch?v=A3KM2oOi-Jc&t=5s

# Youtube subtitl... | process | « la cause du terrorisme est dans la guerre et l argent » jean luc mélenchon au parlement européen video title « la cause du terrorisme est dans la guerre et l argent » jean luc mélenchon au parlement européen url youtube subtitle language anglais duration url subtitles... | 1 |

139,591 | 12,875,823,377 | IssuesEvent | 2020-07-11 00:55:40 | mimiframework/Mimi.jl | https://api.github.com/repos/mimiframework/Mimi.jl | closed | finish v1.0.0 docs | documentation | Documentation required for release of v0.10.0 (and v1.0.0) marked with **TODO**

**1. Home** - a single file

**2. Tutorials** - header

- Tutorials Intro

- Tutorial 1: Install Mimi

- Tutorial 2: Run an Existing Model

- Tutorial 3: Modify an Existing Model

- Tutorial 4: Create a Model

- Tutorial 5: Se... | 1.0 | finish v1.0.0 docs - Documentation required for release of v0.10.0 (and v1.0.0) marked with **TODO**

**1. Home** - a single file

**2. Tutorials** - header

- Tutorials Intro

- Tutorial 1: Install Mimi

- Tutorial 2: Run an Existing Model

- Tutorial 3: Modify an Existing Model

- Tutorial 4: Create a Mode... | non_process | finish docs documentation required for release of and marked with todo home a single file tutorials header tutorials intro tutorial install mimi tutorial run an existing model tutorial modify an existing model tutorial create a model ... | 0 |

144,135 | 19,274,740,386 | IssuesEvent | 2021-12-10 10:28:37 | symfony/symfony | https://api.github.com/repos/symfony/symfony | closed | [Security][RFC] Manual reset of login throttle | Security RFC | ### Description

I can see a use case where login throttling is set to longer periods (e.g., 10 minutes), and you want to give support staff the ability to manually reset a fat-fingered user's login throttle instead of telling the person to make a sandwich and wait.

Perhaps something like a ``LoginThrottlingResetLis... | True | [Security][RFC] Manual reset of login throttle - ### Description

I can see a use case where login throttling is set to longer periods (e.g., 10 minutes), and you want to give support staff the ability to manually reset a fat-fingered user's login throttle instead of telling the person to make a sandwich and wait.

P... | non_process | manual reset of login throttle description i can see a use case where login throttling is set to longer periods e g minutes and you want to give support staff the ability to manually reset a fat fingered user s login throttle instead of telling the person to make a sandwich and wait perhaps somethi... | 0 |

54,963 | 3,071,728,040 | IssuesEvent | 2015-08-19 13:45:49 | pavel-pimenov/flylinkdc-r5xx | https://api.github.com/repos/pavel-pimenov/flylinkdc-r5xx | opened | Error in the chat link display module | bug Component-UI imported Priority-Medium Usability | _From [dimitrij...@gmail.com](https://code.google.com/u/117085084104156933070/) on November 20, 2010 02:32:35_

When inserting a magnet link to the file

Аватар_-_Avatar_(Extended_Collector's_Cut)_2009_1080p_BDRip_x264@L4.1_DTS5.1_rus_eng.mkv (see screenshot-258.png)

in the chat, only the part of the file name before ' is displayed as a link, the symbo... | 1.0 | Error in the chat link display module - _From [dimitrij...@gmail.com](https://code.google.com/u/117085084104156933070/) on November 20, 2010 02:32:35_

When inserting a magnet link to the file

Аватар_-_Avatar_(Extended_Collector's_Cut)_2009_1080p_BDRip_x264@L4.1_DTS5.1_rus_eng.mkv (see screenshot-258.png)

in the chat, only the pa... | non_process | error in the chat link display module from on november when inserting a magnet link to the file аватар avatar extended collector s cut bdrip rus eng mkv see screenshot png in the chat only the part of the file name before is displayed as a link the symbol is replaced with see screenshot png ... | 0 |

10,243 | 13,099,405,920 | IssuesEvent | 2020-08-03 21:31:59 | googleapis/java-pubsublite | https://api.github.com/repos/googleapis/java-pubsublite | closed | Enable `clirr` to unblock Maven releases | api: pubsublite type: process | See https://github.com/googleapis/java-pubsublite/issues/131#issuecomment-665941171. We need to re-enable `clirr` to publish new releases of the library on Maven. Release is stuck at 0.1.7.

https://mvnrepository.com/artifact/com.google.cloud/google-cloud-pubsublite

Here's an example `clirr-ignored-differences.xm... | 1.0 | Enable `clirr` to unblock Maven releases - See https://github.com/googleapis/java-pubsublite/issues/131#issuecomment-665941171. We need to re-enable `clirr` to publish new releases of the library on Maven. Release is stuck at 0.1.7.

https://mvnrepository.com/artifact/com.google.cloud/google-cloud-pubsublite

Here... | process | enable clirr to unblock maven releases see we need to re enable clirr to publish new releases of the library on maven release is stuck at here s an example clirr ignored differences xml that ignores protos changes | 1 |

1,223 | 3,755,495,222 | IssuesEvent | 2016-03-12 18:05:36 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | doc: Event: 'unhandledRejection' code example not borked | doc good first contribution process | https://nodejs.org/api/process.html#process_event_unhandledrejection

```js

somePromise.then((res) => {

return reportToUser(JSON.parse(res)); // note the typo

}); // no `.catch` or `.then`

```

Someone [made off](https://github.com/jasnell/node/commit/f950904650a33ad9cdb0ecf6eb6cf1df335c14ca) with the typo. Whe... | 1.0 | doc: Event: 'unhandledRejection' code example not borked - https://nodejs.org/api/process.html#process_event_unhandledrejection

```js

somePromise.then((res) => {

return reportToUser(JSON.parse(res)); // note the typo

}); // no `.catch` or `.then`

```

Someone [made off](https://github.com/jasnell/node/commit/f... | process | doc event unhandledrejection code example not borked js somepromise then res return reporttouser json parse res note the typo no catch or then someone with the typo when this is put back together i d suggest updating to something like js note the typo ... | 1 |

7,286 | 10,435,660,321 | IssuesEvent | 2019-09-17 17:47:39 | usgs/libcomcat | https://api.github.com/repos/usgs/libcomcat | closed | Add libcomcat-code-version to UserAgent for all url requests | process | This will allow web guys to track how many search requests are coming from libcomcat as opposed to manual or other searches. | 1.0 | Add libcomcat-code-version to UserAgent for all url requests - This will allow web guys to track how many search requests are coming from libcomcat as opposed to manual or other searches. | process | add libcomcat code version to useragent for all url requests this will allow web guys to track how many search requests are coming from libcomcat as opposed to manual or other searches | 1 |
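
A minimal sketch of the idea, using only the Python standard library. The version string, the header format, and the `build_request` helper are assumptions for illustration; they are not taken from libcomcat itself.

```python
from urllib.request import Request

# Hypothetical placeholder; a real client would read its installed version.
LIBCOMCAT_VERSION = "0.0.0"


def build_request(url, version=LIBCOMCAT_VERSION):
    """Build a request whose User-Agent identifies the client and version."""
    return Request(url, headers={"User-Agent": "libcomcat v%s" % version})


req = build_request("https://example.org/search?format=geojson")
# urllib stores header names capitalized, hence "User-agent" here.
print(req.get_header("User-agent"))
```

Passing the request to `urllib.request.urlopen` would then send the header with every search, which is what lets server logs separate these requests from others.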

580,945 | 17,270,453,873 | IssuesEvent | 2021-07-22 19:04:49 | GaloisInc/saw-script | https://api.github.com/repos/GaloisInc/saw-script | closed | NULL-checking a pointer argument yields "Proof failed." | error-messages maybe-fixed priority | Assume a function that checks whether its pointer argument is NULL:

``` c

uint8_t test(uint8_t* a) {

return a ? 0 : 1;

}

```

Now take a SAW script that proves it always returns 0 with a non-null argument:

```

llvm_verify m "test" [] do {

llvm_ptr "a" (llvm_array 1 (llvm_int 8));

a <- llvm_var "*a" (llvm_... | 1.0 | NULL-checking a pointer argument yields "Proof failed." - Assume a function that checks whether its pointer argument is NULL:

``` c

uint8_t test(uint8_t* a) {

return a ? 0 : 1;

}

```

Now take a SAW script that proves it always returns 0 with a non-null argument:

```

llvm_verify m "test" [] do {

llvm_ptr "a" ... | non_process | null checking a pointer argument yields proof failed assume a function that checks whether its pointer argument is null c t test t a return a now take a saw script that proves it always returns with a non null argument llvm verify m test do llvm ptr a llvm arr... | 0 |

8,347 | 11,737,405,079 | IssuesEvent | 2020-03-11 14:35:06 | OpenDRR/opendrr-api | https://api.github.com/repos/OpenDRR/opendrr-api | opened | Look into ways to sync PostGIS with Elasticsearch | Requirement | We need to synchronize PostGIS with Elasticsearch. This needs to be done when data is updated in PostGIS. Maybe we look at LogStash? | 1.0 | Look into ways to sync PostGIS with Elasticsearch - We need to synchronize PostGIS with Elasticsearch. This needs to be done when data is updated in PostGIS. Maybe we look at LogStash? | non_process | look into ways to sync postgis with elasticsearch we need to synchronize postgis with elasticsearch this needs to be done when data is updated in postgis maybe we look at logstash | 0 |

17,948 | 23,940,604,326 | IssuesEvent | 2022-09-11 21:06:13 | GregTechCEu/gt-ideas | https://api.github.com/repos/GregTechCEu/gt-ideas | opened | Air Distillation Overhaul | processing chain | ## Details

Currently, air distillation in gregtech simply involves cooling some air and distilling it to get some stuff. However I think it can be made more painful. This will outline a more realistic air separation process.

## Products

Main Product: Nitrogen, Oxygen, Argon

Side Product(s): Carbon Dioxide, Water

... | 1.0 | Air Distillation Overhaul - ## Details

Currently, air distillation in gregtech simply involves cooling some air and distilling it to get some stuff. However I think it can be made more painful. This will outline a more realistic air separation process.

## Products

Main Product: Nitrogen, Oxygen, Argon

Side Produc... | process | air distillation overhaul details currently air distillation in gregtech simply involves cooling some air and distilling it to get some stuff however i think it can be made more painful this will outline a more realistic air separation process products main product nitrogen oxygen argon side produc... | 1 |

172,145 | 14,350,742,017 | IssuesEvent | 2020-11-29 22:13:39 | RoyMagnussen/CottonBox-Data-Centric | https://api.github.com/repos/RoyMagnussen/CottonBox-Data-Centric | closed | [CHANGE REQUEST] User Stories | documentation | #### Describe the change wanted

Add User Stories to the project to provide people with information about what users of E-Commerce sites would like to see implemented.

#### Proposed solutions

- Add a `User Stories` section within the `UX` section of the `README` file and provide the user stories there. | 1.0 | [CHANGE REQUEST] User Stories - #### Describe the change wanted

Add User Stories to the project to provide people with information about what users of E-Commerce sites would like to see implemented.

#### Proposed solutions

- Add a `User Stories` section within the `UX` section of the `README` file and provide ... | non_process | user stories describe the change wanted add user stories to the project to provide people with information about what users of e commerce sites would like to see implemented proposed solutions add a user stories section within the ux section of the readme file and provide the user storie... | 0 |

12,801 | 15,181,037,028 | IssuesEvent | 2021-02-15 02:10:11 | Geonovum/disgeo-arch | https://api.github.com/repos/Geonovum/disgeo-arch | closed | 4.2 Functional layers in the setup: capabilities, functions, and components? | Cosmetisch In Behandeling In behandeling - voorstel processen e.d. Processen Functies Componenten | The paragraph above Figure 7 refers to capabilities, clusters of functions, and components. It is unclear how these terms relate to one another, or whether they are synonyms. Choose one of the three and use it consistently in the rest of the document. | 2.0 | 4.2 Functional layers in the setup: capabilities, functions, and components? - The paragraph above Figure 7 refers to capabilities, clusters of functions, and components. It is unclear how these terms relate to one another, or whether they are synonyms. Choose one of the three and use it consistently in ... | process | functional layers in the setup capabilities functions and components the paragraph above figure refers to capabilities clusters of functions and components it is unclear how these terms relate to one another or whether they are synonyms choose one of the three and use it consistently in ... | 1 |

84,674 | 16,534,420,531 | IssuesEvent | 2021-05-27 10:04:33 | CiviWiki/OpenCiviWiki | https://api.github.com/repos/CiviWiki/OpenCiviWiki | closed | Remove features related to legislation | code quality dependencies enhancement good first issue help wanted mentoring | The legislation feature is currently U.S.-centric, making this project less relevant in other countries. We rely on third-party data sources for information on legislation, which adds a maintenance burden that this project cannot bear. Furthermore, we cannot support adding legislation for other political forms.

Remo... | 1.0 | Remove features related to legislation - The legislation feature is currently U.S.-centric, making this project less relevant in other countries. We rely on third-party data sources for information on legislation, which adds a maintenance burden that this project cannot bear. Furthermore, we cannot support adding legis... | non_process | remove features related to legislation the legislation feature is currently u s centric making this project less relevant in other countries we rely on third party data sources for information on legislation which adds a maintenance burden that this project cannot bear furthermore we cannot support adding legis... | 0 |

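9,971 | 13,017,474,818 | IssuesEvent | 2020-07-26 12:40:47 | chanmakotoo/memories | https://api.github.com/repos/chanmakotoo/memories | opened | Processing types | Processing | | Type | Description |

|--|--|

| char | A character or Unicode symbol, enclosed in '' |

| int | Integer |

| float | Floating-point number |

| boolean | True or false |

| byte | Can store values from -128 to 127 |

| String | Character string | | 1.0 | Processing types - | Type | Description |

|--|--|

| char | A character or Unicode symbol, enclosed in '' |

| int | Integer |

| float | Floating-point number |

| boolean | True or false |

| byte | Can store values from -128 to 127 |

| String | Character string | | process | processing types type description char a character or unicode symbol enclosed in int integer float floating point number boolean true or false byte string character string | 1 |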

12,592 | 14,992,153,556 | IssuesEvent | 2021-01-29 09:27:56 | AcademySoftwareFoundation/OpenCue | https://api.github.com/repos/AcademySoftwareFoundation/OpenCue | closed | docker files need to pin max pip version for python2 | process | **Describe the process**

The latest version of `pip` (21.0) and `setuptools` (45) has just dropped python 2 support.

As a result building the sandbox environment fails:

```

docker-compose --project-directory . -f sandbox/docker-compose.yml build

```

```

Step 8/25 : RUN python -m pip install --upgrade setupt... | 1.0 | docker files need to pin max pip version for python2 - **Describe the process**

The latest version of `pip` (21.0) and `setuptools` (45) has just dropped python 2 support.

As a result building the sandbox environment fails:

```

docker-compose --project-directory . -f sandbox/docker-compose.yml build

```

```

... | process | docker files need to pin max pip version for describe the process the latest version of pip and setuptools has just dropped python support as a result building the sandbox environment fails docker compose project directory f sandbox docker compose yml build step ... | 1 |
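
The pinning this report describes can be sketched as a small helper that picks version caps from the interpreter's major version. The function name `bootstrap_requirements` is hypothetical; the caps themselves follow from the report's statement that `pip` 21.0 and `setuptools` 45 dropped Python 2 support.

```python
import sys


def bootstrap_requirements(version_info=sys.version_info):
    """Return pip requirement specifiers suited to the given interpreter."""
    if version_info[0] == 2:
        # Stay below the first releases that no longer run on Python 2.
        return ["pip<21", "setuptools<45"]
    # Python 3 interpreters can take the latest releases.
    return ["pip", "setuptools"]


print(bootstrap_requirements((2, 7, 18)))
print(bootstrap_requirements((3, 9, 0)))
```

In a Dockerfile the same effect would come from quoting the capped specifiers in the upgrade command, for example `python -m pip install --upgrade "pip<21" "setuptools<45"`.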

58,177 | 16,413,709,299 | IssuesEvent | 2021-05-19 01:47:22 | DependencyTrack/dependency-track | https://api.github.com/repos/DependencyTrack/dependency-track | closed | [Project] Unable to add PURL attribute containing "@" to project | defect p2 | ### Current Behavior:

DT Version : 4.2.2

Upload PURL attribute to a project using the web UI.

Attribute used: `pkg:npm/%40angular/animation@12.3.1`

Server will respond with a status code of 400 and response body: `[{"message":"The Package URL (purl) must be a valid URI and conform to https://github.com/package-ur... | 1.0 | [Project] Unable to add PURL attribute containing "@" to project - ### Current Behavior:

DT Version : 4.2.2

Upload PURL attribute to a project using the web UI.

Attribute used: `pkg:npm/%40angular/animation@12.3.1`

Server will respond with a status code of 400 and response body: `[{"message":"The Package URL (pur... | non_process | unable to add purl attribute containing to project current behavior dt version upload purl attribute to a project using the web ui attribute used pkg npm animation server will respond with a status code of and response body steps to reproduce see above expe... | 0 |
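
For reference, the attribute in this report percent-encodes the `@` of the npm scope as `%40`, while the unencoded `@` separates the version. A minimal sketch of splitting such a value with the Python standard library follows; `split_purl` is a hypothetical helper that assumes a version is present and that there are no qualifiers or subpath, and it says nothing about how Dependency-Track validates input.

```python
from urllib.parse import unquote


def split_purl(purl):
    """Split a simple Package URL into (type, namespace, name, version)."""
    scheme, _, rest = purl.partition(":")
    if scheme != "pkg":
        raise ValueError("not a Package URL: %r" % purl)
    # The last unencoded "@" separates the version from the package path.
    path, _, version = rest.rpartition("@")
    # Percent-decoding turns "%40angular" back into "@angular".
    parts = [unquote(part) for part in path.split("/")]
    pkg_type, name = parts[0], parts[-1]
    namespace = "/".join(parts[1:-1])
    return pkg_type, namespace, name, version


print(split_purl("pkg:npm/%40angular/animation@12.3.1"))
```

Decoding happens only after the split, so an encoded `@` in the namespace is never mistaken for the version separator.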

164,130 | 12,780,222,793 | IssuesEvent | 2020-07-01 00:02:50 | etcd-io/etcd | https://api.github.com/repos/etcd-io/etcd | closed | Improve etcd upgrade/downgrade policy and tests | area/doc area/feature area/testing stale | We don't have enough coverage on upgrades (none for downgrades). Only test case is upgrade from latest release to master branch https://github.com/coreos/etcd/blob/master/e2e/etcd_release_upgrade_test.go where we stop/restart with new versions of etcd (master branch) in CI.

- Clearly document compatibilities between... | 1.0 | Improve etcd upgrade/downgrade policy and tests - We don't have enough coverage on upgrades (none for downgrades). Only test case is upgrade from latest release to master branch https://github.com/coreos/etcd/blob/master/e2e/etcd_release_upgrade_test.go where we stop/restart with new versions of etcd (master branch) in... | non_process | improve etcd upgrade downgrade policy and tests we don t have enough coverage on upgrades none for downgrades only test case is upgrade from latest release to master branch where we stop restart with new versions of etcd master branch in ci clearly document compatibilities between different versions e... | 0 |

20,581 | 27,242,270,008 | IssuesEvent | 2023-02-21 21:37:54 | googleapis/google-cloud-node | https://api.github.com/repos/googleapis/google-cloud-node | closed | Website seems to be from archived repo | type: process | This website https://googleapis.dev/nodejs/run/latest/ points at https://github.com/googleapis/nodejs-run/tree/main/samples which is an archived repository. | 1.0 | Website seems to be from archived repo - This website https://googleapis.dev/nodejs/run/latest/ points at https://github.com/googleapis/nodejs-run/tree/main/samples which is an archived repository. | process | website seems to be from archived repo this website points at which is an archived repository | 1 |

2,119 | 4,955,812,068 | IssuesEvent | 2016-12-01 21:31:22 | demidovakatya/imaginaryfriend | https://api.github.com/repos/demidovakatya/imaginaryfriend | closed | Puctuation != words | enhancement text processing | The bot should not memorize `Молодец)`. Words need to be cleaned of punctuation. | 1.0 | Puctuation != words - The bot should not memorize `Молодец)`. Words need to be cleaned of punctuation. | process | puctuation words the bot should not memorize молодец words need to be cleaned of punctuation | 1 |
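
One way to clean a word such as `Молодец)` before it is stored is to strip punctuation from both ends. This is a minimal sketch, not the bot's actual code; `clean_word` is a hypothetical name, and `string.punctuation` covers ASCII punctuation only.

```python
import string


def clean_word(word):
    """Remove leading and trailing ASCII punctuation from a single word."""
    return word.strip(string.punctuation)


print(clean_word("Молодец)"))
```

Stripping only the ends keeps punctuation inside a word, such as the hyphen in a compound, intact.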

796,165 | 28,100,604,642 | IssuesEvent | 2023-03-30 19:10:02 | cloudflare/cloudflared | https://api.github.com/repos/cloudflare/cloudflared | closed | 💡 Getting authenticated Tunnel user | Type: Feature Request Priority: Normal | **Describe the feature you'd like**

After authenticating a Tunnel user through Zero Trust, it'd be interesting to get the email of the currently authenticated user, maybe as another column of the output from `cloudflared tunnel info XPTO`

| 1.0 | 💡 Getting authenticated Tunnel user - **Describe the feature you'd like**

After authenticating a Tunnel user through Zero Trust, it'd be interesting to get the email of the currently authenticated user, maybe as another column of the output from `cloudflared tunnel info XPTO`

| non_process | 💡 getting authenticated tunnel user describe the feature you d like after authenticating a tunnel user through zero trust i d be interesting to get the current authenticated user email maybe as another column of the output from cloudflared tunnel info xpto | 0 |

80,069 | 15,343,903,087 | IssuesEvent | 2021-02-27 22:26:07 | fwcd/kotlin-language-server | https://api.github.com/repos/fwcd/kotlin-language-server | opened | Store rendered descriptor previews in symbol index | code completion enhancement index | _(extracted from #268)_

Just like with normal completions, completions for non-imported symbols (i.e. those from the index) should include rendered descriptor previews. | 1.0 | Store rendered descriptor previews in symbol index - _(extracted from #268)_

Just like with normal completions, completions for non-imported symbols (i.e. those from the index) should include rendered descriptor previews. | non_process | store rendered descriptor previews in symbol index extracted from just like with normal completions completions for non imported symbols i e those from the index should include rendered descriptor previews | 0 |

304,083 | 9,320,852,714 | IssuesEvent | 2019-03-27 01:09:44 | kubeflow/pipelines | https://api.github.com/repos/kubeflow/pipelines | closed | Tensorboard not showing historical AUC / Accuracy | priority/p1 | When launching Tensorboard after running the TFX notebook samples, the graphs are not showing the AUC / Accuracy at each execution step of the training algorithm. Only the final result is displayed. | 1.0 | Tensorboard not showing historical AUC / Accuracy - When launching Tensorboard after running the TFX notebook samples, the graphs are not showing the AUC / Accuracy at each execution step of the training algorithm. Only the final result is displayed. | non_process | tensorboard not showing historical auc accuracy when launching tensorboard after running the tfx notebook samples the graphs are not showing the auc accuracy at each execution step of the training algorithm only the final result is displayed | 0 |

13,630 | 16,240,394,902 | IssuesEvent | 2021-05-07 08:50:08 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | Error: [libs/sql-schema-describer/src/getters.rs:21:14] called `Result::unwrap()` on an `Err` value: "Getting is_identity from Resultrow ResultRow { columns: [\"table_name\", \"column_name\", \"formatted_type\", \"numeric_precision\", \"numeric_scale\", \"numeric_precision_radix\", \"datetime_precision\", \"data_type\"... | bug/1-repro-available kind/bug process/candidate team/migrations | <!-- If required, please update the title to be clear and descriptive -->

Command: `prisma introspect`

Version: `2.21.2`

Binary Version: `e421996c87d5f3c8f7eeadd502d4ad402c89464d`

Report: https://prisma-errors.netlify.app/report/13280

OS: `x64 darwin 20.3.0`

JS Stacktrace:

```

Error: [libs/sql-schema-descr... | 1.0 | Error: [libs/sql-schema-describer/src/getters.rs:21:14] called `Result::unwrap()` on an `Err` value: "Getting is_identity from Resultrow ResultRow { columns: [\"table_name\", \"column_name\", \"formatted_type\", \"numeric_precision\", \"numeric_scale\", \"numeric_precision_radix\", \"datetime_precision\", \"data_type\"... | process | error called result unwrap on an err value getting is identity from resultrow resultrow columns values as string failed command prisma introspect version binary version report os darwin js stacktrace error called result unwrap on an ... | 1 |

84,082 | 15,720,829,459 | IssuesEvent | 2021-03-29 01:20:39 | LalithK90/processManagement | https://api.github.com/repos/LalithK90/processManagement | opened | CVE-2021-25122 (High) detected in tomcat-embed-core-9.0.30.jar | security vulnerability | ## CVE-2021-25122 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tomcat-embed-core-9.0.30.jar</b></p></summary>

<p>Core Tomcat implementation</p>

<p>Library home page: <a href="https:... | True | CVE-2021-25122 (High) detected in tomcat-embed-core-9.0.30.jar - ## CVE-2021-25122 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tomcat-embed-core-9.0.30.jar</b></p></summary>

<p>Cor... | non_process | cve high detected in tomcat embed core jar cve high severity vulnerability vulnerable library tomcat embed core jar core tomcat implementation library home page a href path to dependency file processmanagement build gradle path to vulnerable library home wss scanner... | 0 |

13,234 | 15,705,959,939 | IssuesEvent | 2021-03-26 16:48:10 | emacs-ess/ESS | https://api.github.com/repos/emacs-ess/ESS | closed | C-c C-c on script opens R in new frame, but puts script in new frame's bufffer | process:windows | Hello,

When I attempt to run R, opening it by `ess-eval-region-or-function-or-paragraph-and-step VIS` on an R script in its own buffer and frame, it creates a second frame and opens R in that frame, as expected by my use of `(setq inferior-ess-own-frame t)` in .init.el file in .emacs.d However, it then proceeds to ret... | 1.0 | C-c C-c on script opens R in new frame, but puts script in new frame's bufffer - Hello,

When I attempt to run R, opening it by `ess-eval-region-or-function-or-paragraph-and-step VIS` on an R script in its own buffer and frame, it creates a second frame and opens R in that frame, as expected by my use of `(setq inferi... | process | c c c c on script opens r in new frame but puts script in new frame s bufffer hello when i attempt to run r opening it by ess eval region or function or paragraph and step vis on an r script in its own buffer and frame it creates a second frame and opens r in that frame as expected by my use of setq inferi... | 1 |

10,592 | 13,400,944,135 | IssuesEvent | 2020-09-03 16:32:17 | jgraley/inferno-cpp2v | https://api.github.com/repos/jgraley/inferno-cpp2v | closed | Early-out on trivial problems | Constraint Processing bug | The case of for example `NotMatch(master_coupled_identifier)` causes a trivial `AndRuleEngine` problem. `my_agents` is empty, and root agent is a master boundary agent. It's wasteful to bother the conjectures etc. Also, CSP generation goes wrong: zero constraints should mean zero variables, but we expect one of the (ze... | 1.0 | Early-out on trivial problems - The case of for example `NotMatch(master_coupled_identifier)` causes a trivial `AndRuleEngine` problem. `my_agents` is empty, and root agent is a master boundary agent. It's wasteful to bother the conjectures etc. Also, CSP generation goes wrong: zero constraints should mean zero variabl... | process | early out on trivial problems the case of for example notmatch master coupled identifier causes a trivial andruleengine problem my agents is empty and root agent is a master boundary agent it s wasteful to bother the conjectures etc also csp generation goes wrong zero constraints should mean zero variabl... | 1 |

16,771 | 4,086,787,003 | IssuesEvent | 2016-06-01 07:27:48 | mantidproject/mantid | https://api.github.com/repos/mantidproject/mantid | closed | Add minimizers documentation with comparison of performance | Component: Fitting Priority: High Quality: Documentation | Add short documentation for minimizers and tables with summary and details on performance (accuracy and run time) for all minimizers, except FABADA.

Use the test problems from the NIST benchmark, and if they're ready in time some of the test problems from CUTEst. Organize the tables in three blocks, following the NI... | 1.0 | Add minimizers documentation with comparison of performance - Add short documentation for minimizers and tables with summary and details on performance (accuracy and run time) for all minimizers, except FABADA.

Use the test problems from the NIST benchmark, and if they're ready in time some of the test problems from... | non_process | add minimizers documentation with comparison of performance add short documentation for minimizers and tables with summary and details on performance accuracy and run time for all minimizers except fabada use the test problems from the nist benchmark and if they re ready in time some of the test problems from... | 0 |

35,509 | 7,756,333,957 | IssuesEvent | 2018-05-31 13:19:37 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | opened | Do not use InternalVisitListener for ORACLE12C dialect | C: Functionality P: Medium T: Defect | There is an `InternalVisitListener` that collects some column aliases from the `SELECT` clause and makes them available to the `ORDER BY` clause in the same scope, in case `OFFSET` pagination needs to be emulated (see #2080). This is currently being done for:

- DB2

- Oracle

- SQL Data Warehouse

- SQL Server 2008

... | 1.0 | Do not use InternalVisitListener for ORACLE12C dialect - There is an `InternalVisitListener` that collects some column aliases from the `SELECT` clause and makes them available to the `ORDER BY` clause in the same scope, in case `OFFSET` pagination needs to be emulated (see #2080). This is currently being done for:

... | non_process | do not use internalvisitlistener for dialect there is an internalvisitlistener that collects some column aliases from the select clause and makes them available to the order by clause in the same scope in case offset pagination needs to be emulated see this is currently being done for orac... | 0 |

12,852 | 15,238,460,741 | IssuesEvent | 2021-02-19 01:59:06 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Vector Feature Density | Feature Request Feedback Processing stale | Author Name: **Alejandro Pareja** (Alejandro Pareja)

Original Redmine Issue: [21249](https://issues.qgis.org/issues/21249)

Redmine category:processing/qgis

---

I suggest a tool for the density of vector features (points, lines or polygons) within a specified distance of each grid cell in a raster. Similar to ArcGIS ... | 1.0 | Vector Feature Density - Author Name: **Alejandro Pareja** (Alejandro Pareja)

Original Redmine Issue: [21249](https://issues.qgis.org/issues/21249)

Redmine category:processing/qgis

---

I suggest a tool for the density of vector features (points, lines or polygons) within a specified distance of each grid cell in a r... | process | vector feature density author name alejandro pareja alejandro pareja original redmine issue redmine category processing qgis i suggest a tool for the density of vector features points lines or polygons within a specified distance of each grid cell in a raster similar to arcgis line density and ... | 1 |

21,501 | 29,668,994,493 | IssuesEvent | 2023-06-11 06:49:59 | turt2live/matrix-media-repo | https://api.github.com/repos/turt2live/matrix-media-repo | opened | Refactoring checklist | enhancement media import release-blocker media export url previews multi-process datastores files antispam resource waste spec compliance performance transfer admin api gdpr | * [ ] Move URL previews to pipeline system

* [ ] Move imports/exports to pipeline system

* [ ] Move actionable admin APIs to pipeline system (transfer, purge, etc)

* [ ] Move remaining admin APIs to new database accessor

* [ ] Make plugins work again

* [ ] Delete dead code

* [ ] Integration tests??? | 1.0 | Refactoring checklist - * [ ] Move URL previews to pipeline system

* [ ] Move imports/exports to pipeline system

* [ ] Move actionable admin APIs to pipeline system (transfer, purge, etc)

* [ ] Move remaining admin APIs to new database accessor

* [ ] Make plugins work again

* [ ] Delete dead code

* [ ] Integratio... | process | refactoring checklist move url previews to pipeline system move imports exports to pipeline system move actionable admin apis to pipeline system transfer purge etc move remaining admin apis to new database accessor make plugins work again delete dead code integration tests | 1 |

14,649 | 17,774,844,813 | IssuesEvent | 2021-08-30 17:50:05 | open-telemetry/opentelemetry-collector | https://api.github.com/repos/open-telemetry/opentelemetry-collector | closed | Add way to communicate hash calculated by probabilistic sampler | area:processor | Related to https://github.com/open-telemetry/opentelemetry-collector/pull/469#discussion_r364000874

The idea is to make the probabilistic sampler capable of communicate the hash it calculated for sampled traces.

/cc @SergeyKanzhelev | 1.0 | Add way to communicate hash calculated by probabilistic sampler - Related to https://github.com/open-telemetry/opentelemetry-collector/pull/469#discussion_r364000874

The idea is to make the probabilistic sampler capable of communicate the hash it calculated for sampled traces.

/cc @SergeyKanzhelev | process | add way to communicate hash calculated by probabilistic sampler related to the idea is to make the probabilistic sampler capable of communicate the hash it calculated for sampled traces cc sergeykanzhelev | 1 |

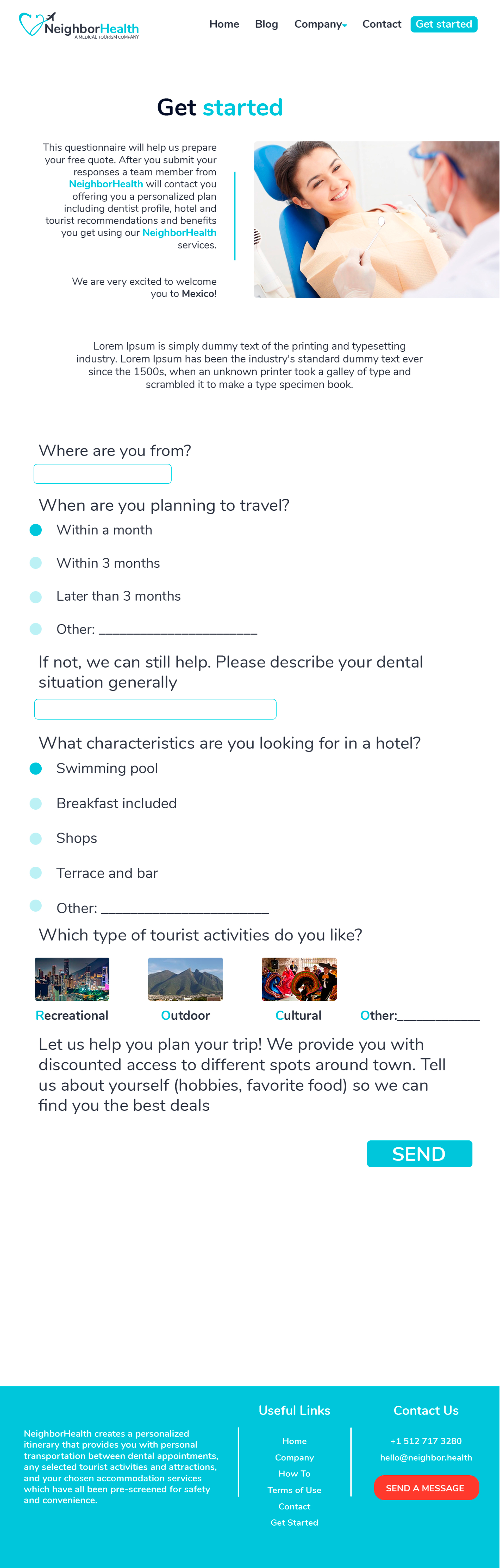

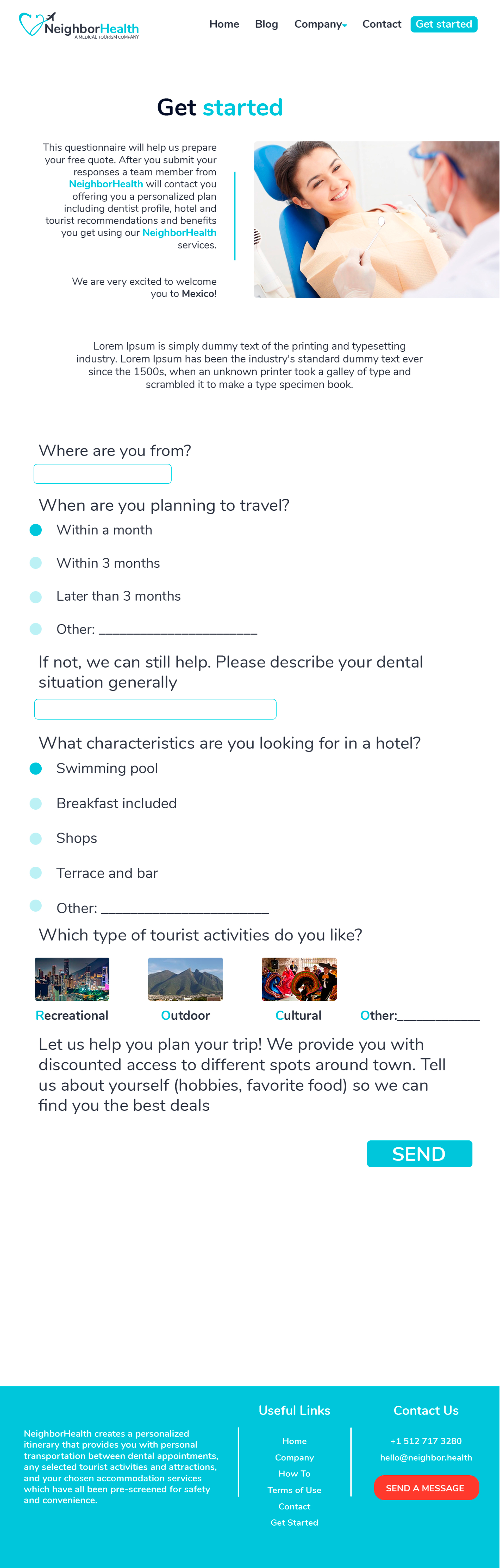

255,585 | 8,125,746,590 | IssuesEvent | 2018-08-16 22:08:12 | RobRuizR/NeighborHealth | https://api.github.com/repos/RobRuizR/NeighborHealth | closed | Form to collect customer information | high priority | General information about the customer's travel preferences

| 1.0 | Form to collect customer information - General information about the customer's travel preferences

| non_process | form to collect customer information general information about the customer s travel preferences | 0 |

11,957 | 14,726,009,043 | IssuesEvent | 2021-01-06 06:00:06 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | opened | Payment Due Reminders | anc-process anp-2 ant-enhancement pl-wish list | In GitLab by @kdjstudios on Oct 24, 2016, 24:51

Based on last invoice date and net terms, send a payment due reminder the day following the due date. | 1.0 | Payment Due Reminders - In GitLab by @kdjstudios on Oct 24, 2016, 24:51

Based on last invoice date and net terms, send a payment due reminder the day following the due date. | process | payment due reminders in gitlab by kdjstudios on oct based on last invoice date and net terms send a payment due reminder the day following the due date | 1 |

27,269 | 4,957,309,603 | IssuesEvent | 2016-12-02 03:40:02 | TNGSB/eWallet | https://api.github.com/repos/TNGSB/eWallet | closed | eWallet_MobileApp_Android (Registration) #09 | Defect - High (Sev-2) | [Defect_Mobile App #09.xlsx](https://github.com/TNGSB/eWallet/files/565973/Defect_Mobile.App.09.xlsx)

Test Description : To validate error message displayed when user input more than 14 characters for "Phone" field

Expected Result : "System should not allow input more than 14 characters and stop input when user re... | 1.0 | eWallet_MobileApp_Android (Registration) #09 - [Defect_Mobile App #09.xlsx](https://github.com/TNGSB/eWallet/files/565973/Defect_Mobile.App.09.xlsx)

Test Description : To validate error message displayed when user input more than 14 characters for "Phone" field

Expected Result : "System should not allow input more... | non_process | ewallet mobileapp android registration test description to validate error message displayed when user input more than characters for phone field expected result system should not allow input more than characters and stop input when user reach characters if user to insert more than charac... | 0 |

19,893 | 26,340,346,767 | IssuesEvent | 2023-01-10 17:11:17 | temporalio/sdk-typescript | https://api.github.com/repos/temporalio/sdk-typescript | closed | Set up publish from CI | CICD processes | Need to first consider:

- When to publish packages (on merge to main?)

- Which version to bump: patch, minor, major?

- Auto generate changelogs: https://github.com/lerna/lerna-changelog | 1.0 | Set up publish from CI - Need to first consider:

- When to publish packages (on merge to main?)

- Which version to bump: patch, minor, major?

- Auto generate changelogs: https://github.com/lerna/lerna-changelog | process | set up publish from ci need to first consider when to publish packages on merge to main which version to bump patch minor major auto generate changelogs | 1 |

22,069 | 30,593,286,362 | IssuesEvent | 2023-07-21 19:08:15 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Need a list of accepted build source type | devops/prod doc-bug Pri2 devops-cicd-process/tech | If you have an external CI build system that produces artifacts, you can consume artifacts with a builds resource. A builds resource can be any external CI systems like Jenkins, TeamCity, CircleCI, and so on.

resources: # types: pipelines | builds | repositories | containers | packages

builds:

- build: ... | 1.0 | Need a list of accepted build source type - If you have an external CI build system that produces artifacts, you can consume artifacts with a builds resource. A builds resource can be any external CI systems like Jenkins, TeamCity, CircleCI, and so on.

resources: # types: pipelines | builds | repositories | c... | process | need a list of accepted build source type if you have an external ci build system that produces artifacts you can consume artifacts with a builds resource a builds resource can be any external ci systems like jenkins teamcity circleci and so on resources types pipelines builds repositories c... | 1 |

14,762 | 18,041,451,796 | IssuesEvent | 2021-09-18 05:23:39 | ooi-data/CE01ISSM-MFD35-02-PRESFA000-telemetered-presf_abc_dcl_tide_measurement | https://api.github.com/repos/ooi-data/CE01ISSM-MFD35-02-PRESFA000-telemetered-presf_abc_dcl_tide_measurement | opened | 🛑 Processing failed: ResponseParserError | process | ## Overview

`ResponseParserError` found in `processing_task` task during run ended on 2021-09-18T05:23:38.716592.

## Details

Flow name: `CE01ISSM-MFD35-02-PRESFA000-telemetered-presf_abc_dcl_tide_measurement`

Task name: `processing_task`

Error type: `ResponseParserError`

Error message: Unable to parse response (no e... | 1.0 | 🛑 Processing failed: ResponseParserError - ## Overview

`ResponseParserError` found in `processing_task` task during run ended on 2021-09-18T05:23:38.716592.

## Details

Flow name: `CE01ISSM-MFD35-02-PRESFA000-telemetered-presf_abc_dcl_tide_measurement`

Task name: `processing_task`

Error type: `ResponseParserError`

E... | process | 🛑 processing failed responseparsererror overview responseparsererror found in processing task task during run ended on details flow name telemetered presf abc dcl tide measurement task name processing task error type responseparsererror error message unable to parse respo... | 1 |

9,852 | 12,838,976,956 | IssuesEvent | 2020-07-07 18:27:41 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | [Introspection] Pick up order of columns in database into schema | kind/improvement process/candidate topic: introspection | For https://github.com/prisma/prisma/issues/2554 @carmenberndt showed that it is possibly to get the order of the table columns from a table with the right SQL queries, https://github.com/prisma/prisma-engines/pull/872 even implements this already in good parts.

We should now change our Introspection implemented to ... | 1.0 | [Introspection] Pick up order of columns in database into schema - For https://github.com/prisma/prisma/issues/2554 @carmenberndt showed that it is possibly to get the order of the table columns from a table with the right SQL queries, https://github.com/prisma/prisma-engines/pull/872 even implements this already in go... | process | pick up order of columns in database into schema for carmenberndt showed that it is possibly to get the order of the table columns from a table with the right sql queries even implements this already in good parts we should now change our introspection implemented to indeed do this related internal di... | 1 |

121,697 | 17,662,089,865 | IssuesEvent | 2021-08-21 18:16:51 | ghc-dev/Carolyn-Maldonado | https://api.github.com/repos/ghc-dev/Carolyn-Maldonado | closed | CVE-2020-14365 (High) detected in ansible-2.9.9.tar.gz - autoclosed | security vulnerability | ## CVE-2020-14365 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ansible-2.9.9.tar.gz</b></p></summary>

<p>Radically simple IT automation</p>

<p>Library home page: <a href="https://fi... | True | CVE-2020-14365 (High) detected in ansible-2.9.9.tar.gz - autoclosed - ## CVE-2020-14365 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ansible-2.9.9.tar.gz</b></p></summary>

<p>Radica... | non_process | cve high detected in ansible tar gz autoclosed cve high severity vulnerability vulnerable library ansible tar gz radically simple it automation library home page a href path to dependency file carolyn maldonado requirements txt path to vulnerable library requireme... | 0 |

320,268 | 27,430,221,534 | IssuesEvent | 2023-03-02 00:19:57 | MPMG-DCC-UFMG/F01 | https://api.github.com/repos/MPMG-DCC-UFMG/F01 | closed | Generalization test for the tag Receitas - Dados de Receitas - Jeceaba | generalization test development | DoD: Run the generalization test of the validator for the tag Receitas - Dados de Receitas for the municipality of Jeceaba. | 1.0 | Generalization test for the tag Receitas - Dados de Receitas - Jeceaba - DoD: Run the generalization test of the validator for the tag Receitas - Dados de Receitas for the municipality of Jeceaba. | non_process | generalization test for the tag receitas dados de receitas jeceaba dod run the generalization test of the validator for the tag receitas dados de receitas for the municipality of jeceaba | 0 |

180,790 | 21,625,825,526 | IssuesEvent | 2022-05-05 01:54:43 | mgh3326/que_bang | https://api.github.com/repos/mgh3326/que_bang | closed | CVE-2021-35517 (High) detected in commons-compress-1.20.jar - autoclosed | security vulnerability | ## CVE-2021-35517 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>commons-compress-1.20.jar</b></p></summary>

<p>Apache Commons Compress software defines an API for working with

compre... | True | CVE-2021-35517 (High) detected in commons-compress-1.20.jar - autoclosed - ## CVE-2021-35517 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>commons-compress-1.20.jar</b></p></summary>

... | non_process | cve high detected in commons compress jar autoclosed cve high severity vulnerability vulnerable library commons compress jar apache commons compress software defines an api for working with compression and archive formats these include gzip lzma xz snappy traditional ... | 0 |

287,959 | 31,856,846,147 | IssuesEvent | 2023-09-15 08:06:21 | nidhi7598/linux-4.19.72_CVE-2022-3564 | https://api.github.com/repos/nidhi7598/linux-4.19.72_CVE-2022-3564 | closed | CVE-2023-1252 (High) detected in linuxlinux-4.19.294 - autoclosed | Mend: dependency security vulnerability | ## CVE-2023-1252 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.294</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.ke... | True | CVE-2023-1252 (High) detected in linuxlinux-4.19.294 - autoclosed - ## CVE-2023-1252 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.294</b></p></summary>

<p>

<p>The Li... | non_process | cve high detected in linuxlinux autoclosed cve high severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch main vulnerable source files ... | 0 |

610,984 | 18,941,629,382 | IssuesEvent | 2021-11-18 04:05:07 | zgzgorg/iam-backend | https://api.github.com/repos/zgzgorg/iam-backend | opened | implement groups list | help wanted Priority:P2 Type:feature | As a user, we would like to know what groups are in this org.

We should have an API to show a list of groups

Outcome:

1. develop a API to show a list of group | 1.0 | implement groups list - As a user, we would like to know what groups are in this org.

We should have an API to show a list of groups

Outcome:

1. develop a API to show a list of group | non_process | implement groups list as a user we would like to know what groups are in this org we should have an api to show a list of groups outcome develop a api to show a list of group | 0 |

79,951 | 7,734,133,664 | IssuesEvent | 2018-05-26 20:25:53 | python/mypy | https://api.github.com/repos/python/mypy | closed | Use incremental mode in python evaluation test cases | priority-1-normal topic-incremental topic-tests | The Python evaluation test cases (which don't actually run the code if there are type errors...) use full stubs for `builtins` and `typing`. Using incremental mode would likely speed them up significantly, as processing stubs probably takes the majority of time. These tests are the long pole in the full test suite, so ... | 1.0 | Use incremental mode in python evaluation test cases - The Python evaluation test cases (which don't actually run the code if there are type errors...) use full stubs for `builtins` and `typing`. Using incremental mode would likely speed them up significantly, as processing stubs probably takes the majority of time. Th... | non_process | use incremental mode in python evaluation test cases the python evaluation test cases which don t actually run the code if there are type errors use full stubs for builtins and typing using incremental mode would likely speed them up significantly as processing stubs probably takes the majority of time th... | 0 |

11,678 | 14,536,465,453 | IssuesEvent | 2020-12-15 07:40:39 | aiidateam/aiida-core | https://api.github.com/repos/aiidateam/aiida-core | closed | Running a process function will create `Runner` without persister causing any submission after that to fail | priority/critical-blocking topic/engine topic/persistence topic/processes type/bug | When a process function is run, a runner is obtained through `Manager.get_runner`, but it passes `with_persistence=False`:

https://github.com/aiidateam/aiida-core/blob/073639aeb6d844acece2fda70aeefdca9d9005fc/aiida/engine/processes/functions.py#L116

In principle this would be fine since a process function runs in o... | 1.0 | Running a process function will create `Runner` without persister causing any submission after that to fail - When a process function is run, a runner is obtained through `Manager.get_runner`, but it passes `with_persistence=False`:

https://github.com/aiidateam/aiida-core/blob/073639aeb6d844acece2fda70aeefdca9d9005fc/... | process | running a process function will create runner without persister causing any submission after that to fail when a process function is run a runner is obtained through manager get runner but it passes with persistence false in principle this would be fine since a process function runs in one go and so do... | 1 |

35,199 | 30,832,741,200 | IssuesEvent | 2023-08-02 04:02:43 | dotnet/aspnetcore | https://api.github.com/repos/dotnet/aspnetcore | closed | Rename Microsoft.AspNetCore.Testing | area-infrastructure | As per https://github.com/dotnet/extensions/issues/4057#issuecomment-1660927215, https://github.com/dotnet/aspnetcore/blob/main/src/Testing/src/Microsoft.AspNetCore.Testing.csproj is used for internal purposes, and being published to the BAR it causes a clash with the project coming out of dotnet/extensions (e.g., http... | 1.0 | Rename Microsoft.AspNetCore.Testing - As per https://github.com/dotnet/extensions/issues/4057#issuecomment-1660927215, https://github.com/dotnet/aspnetcore/blob/main/src/Testing/src/Microsoft.AspNetCore.Testing.csproj is used for internal purposes, and being published to the BAR it causes a clash with the project comin... | non_process | rename microsoft aspnetcore testing as per is used for internal purposes and being published to the bar it causes a clash with the project coming out of dotnet extensions e g the project should be renamed or should not be published to the bar cc joperezr tratcher wtgodbe | 0 |

636,981 | 20,616,246,819 | IssuesEvent | 2022-03-07 13:31:30 | canonical-web-and-design/ubuntu.com | https://api.github.com/repos/canonical-web-and-design/ubuntu.com | closed | Ubuntu Core image download thank-you page points to incorrect installation instructions | Priority: Medium | When I download an Ubuntu Core 20 image from ubuntu.com, I get redirected to a 'thank you' page using the following URL: https://ubuntu.com/download/raspberry-pi/thank-you?version=20&architecture=core-20-arm64+raspi The page tries to be helpful and offers pointers to the installation instructions. However, those instr... | 1.0 | Ubuntu Core image download thank-you page points to incorrect installation instructions - When I download an Ubuntu Core 20 image from ubuntu.com, I get redirected to a 'thank you' page using the following URL: https://ubuntu.com/download/raspberry-pi/thank-you?version=20&architecture=core-20-arm64+raspi The page trie... | non_process | ubuntu core image download thank you page points to incorrect installation instructions when i download an ubuntu core image from ubuntu com i get redirected to a thank you page using the following url the page tries to be helpful and offers pointers to the installation instructions however those instrutio... | 0 |

1,138 | 3,626,850,499 | IssuesEvent | 2016-02-10 04:00:36 | worldspawn/mascis | https://api.github.com/repos/worldspawn/mascis | opened | Support Contains function for IEnumerables | enhancement linq-expression-parser postgres-language-processor t-sql-language-processor | - [ ] Linq Expression Parser

- [ ] T-Sql Language Processor

- [ ] Postgres Language Processor | 2.0 | Support Contains function for IEnumerables - - [ ] Linq Expression Parser

- [ ] T-Sql Language Processor

- [ ] Postgres Language Processor | process | support contains function for ienumerables linq expression parser t sql language processor postgres language processor | 1 |

63,043 | 7,680,263,219 | IssuesEvent | 2018-05-16 00:32:18 | ParabolInc/action | https://api.github.com/repos/ParabolInc/action | opened | Improve Congratulations Modal on Upgrade to Pro | design | The current modal congratulates you for giving us money, which isn't really a cause for celebration:

Instead, we should have it be more like a video game & tell you what features you've unlocked.

Acc... | 1.0 | Improve Congratulations Modal on Upgrade to Pro - The current modal congratulates you for giving us money, which isn't really a cause for celebration:

Instead, we should have it be more like a video gam... | non_process | improve congratulations modal on upgrade to pro the current modal congratulates you for giving us money which isn t really a cause for celebration instead we should have it be more like a video game tell you what features you ve unlocked acceptance criteria congratulations modal designed ... | 0 |

20,647 | 27,323,800,190 | IssuesEvent | 2023-02-24 22:52:28 | MPMG-DCC-UFMG/C01 | https://api.github.com/repos/MPMG-DCC-UFMG/C01 | closed | Delete the `form-parser` module from the system | [0] Desenvolvimento [2] Média Prioridade [1] Aprimoramento [3] Processamento Dinâmico | ## Expected Behavior

The `form-parser` module should be removed from the code.

## Current Behavior

Currently, this module is not used by any feature of the system. In earlier versions, `form-parser` was used together with the module for injection into forms, which was removed from the system prin... | 1.0 | Delete the `form-parser` module from the system - ## Expected Behavior

The `form-parser` module should be removed from the code.

## Current Behavior

Currently, this module is not used by any feature of the system. In earlier versions, `form-parser` was used together with the module for injection into for... | process | delete the form parser module from the system expected behavior the form parser module should be removed from the code current behavior currently this module is not used by any feature of the system in earlier versions the form parser was used together with the module for injection into for... | 1 |

10,966 | 13,769,699,611 | IssuesEvent | 2020-10-07 19:02:05 | googleapis/python-pubsub | https://api.github.com/repos/googleapis/python-pubsub | closed | PubSub: emulator breaks under heavy load from Python | api: pubsub type: process | Hi.

It seems that when pubsub emulator is under high-load with python client (I haven't tested other clients, maybe it has the same issue), the pubsub emulator fails miserably even though all messages are under 10MB (they have ~500KB). Is there a way how to solve this from the Python client side?

I tried even the... | 1.0 | PubSub: emulator breaks under heavy load from Python - Hi.

It seems that when pubsub emulator is under high-load with python client (I haven't tested other clients, maybe it has the same issue), the pubsub emulator fails miserably even though all messages are under 10MB (they have ~500KB). Is there a way how to solv... | process | pubsub emulator breaks under heavy load from python hi it seems that when pubsub emulator is under high load with python client i haven t tested other clients maybe it has the same issue the pubsub emulator fails miserably even though all messages are under they have is there a way how to solve this ... | 1 |

15,733 | 11,688,280,324 | IssuesEvent | 2020-03-05 14:17:47 | danmermel/cryptario | https://api.github.com/repos/danmermel/cryptario | closed | rationalise lambda deployment | infrastructure | There seems to be a lot of repetition in this... we can turn it into a more streamlined script.

- The `./deploy.sh` should have an array of clue types (anagram, container etc).

- It should loop through this list, performing the tasks currently in `./<cluetype>/prepare.sh`

- then it should deploy the built zip and ... | 1.0 | rationalise lambda deployment - There seems to be a lot of repetition in this... we can turn it into a more streamlined script.

- The `./deploy.sh` should have an array of clue types (anagram, container etc).

- It should loop through this list, performing the tasks currently in `./<cluetype>/prepare.sh`

- then it ... | non_process | rationalise lambda deployment there seems to be a lot of repetition in this we can turn it into a more streamlined script the deploy sh should have an array of clue types anagram container etc it should loop through this list performing the tasks currently in prepare sh then it should de... | 0 |

14,583 | 17,703,499,590 | IssuesEvent | 2021-08-25 03:09:20 | tdwg/dwc | https://api.github.com/repos/tdwg/dwc | closed | Change term - verbatimLocality | Term - change Class - Location non-normative Process - complete | ## Change term

* Submitter: Kari Lintulaakso

* Justification (why is this change necessary?): mapping to ABCD term is incorrect

* Proponents (who needs this change): Kari Lintulaakso (Stan Blum agrees)

Current Term definition: https://dwc.tdwg.org/terms/#dwc:verbatimLocality

Proposed new attributes of th... | 1.0 | Change term - verbatimLocality - ## Change term

* Submitter: Kari Lintulaakso

* Justification (why is this change necessary?): mapping to ABCD term is incorrect

* Proponents (who needs this change): Kari Lintulaakso (Stan Blum agrees)

Current Term definition: https://dwc.tdwg.org/terms/#dwc:verbatimLocality... | process | change term verbatimlocality change term submitter kari lintulaakso justification why is this change necessary mapping to abcd term is incorrect proponents who needs this change kari lintulaakso stan blum agrees current term definition proposed new attributes of the term t... | 1 |

6,414 | 9,499,383,927 | IssuesEvent | 2019-04-24 06:13:19 | kiwicom/orbit-components | https://api.github.com/repos/kiwicom/orbit-components | closed | Create <Popover /> component | Enhancement Processing | ## Description

A popover can be used as a container for additional content that can be displayed on top of the page.

## Visual style

Zeplin: https://zpl.io/agrBvoM

### Additional information

- Pop... | 1.0 | Create <Popover /> component - ## Description

A popover can be used as a container for additional content that can be displayed on top of the page.

## Visual style

Zeplin: https://zpl.io/agrBvoM

##... | process | create component description a popover can be used as a container for additional content that can be displayed on top of the page visual style zeplin additional information popover can be used with different types of trigger for example button buttonlink tag or textlink ... | 1 |

19,131 | 25,185,682,926 | IssuesEvent | 2022-11-11 17:42:43 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | [spanmetricsprocessor] Ability to either statefully route or aggregate span metrics across collectors | enhancement Stale processor/spanmetrics | **Is your feature request related to a problem? Please describe.**

The span metrics processor along with its examples provide documentation on how we can generate RED metrics and expose it against a prometheus endpoint that can be shipped to Prometheus like systems via remote write exporter. Based on high level experi... | 1.0 | [spanmetricsprocessor] Ability to either statefully route or aggregate span metrics across collectors - **Is your feature request related to a problem? Please describe.**

The span metrics processor along with its examples provide documentation on how we can generate RED metrics and expose it against a prometheus endpo... | process | ability to either statefully route or aggregate span metrics across collectors is your feature request related to a problem please describe the span metrics processor along with its examples provide documentation on how we can generate red metrics and expose it against a prometheus endpoint that can be shipp... | 1 |

251,754 | 21,521,962,922 | IssuesEvent | 2022-04-28 14:54:26 | damccorm/test-migration-target | https://api.github.com/repos/damccorm/test-migration-target | opened | beam_PreCommit_Python_Cron failing on test_create_uses_coder_for_pickling | test-failures test P2 | https://ci-beam.apache.org/job/beam_PreCommit_Python_Cron/5219/

Imported from Jira [BEAM-13769](https://issues.apache.org/jira/browse/BEAM-13769)

Reported by: kileys. | 2.0 | beam_PreCommit_Python_Cron failing on test_create_uses_coder_for_pickling - https://ci-beam.apache.org/job/beam_PreCommit_Python_Cron/5219/

Imported from Jira [BEAM-13769](https://issues.apache.org/jira/browse/BEAM-13769)

Reported by: kileys. | non_process | beam precommit python cron failing on test create uses coder for pickling imported from jira reported by kileys | 0 |

11,122 | 13,957,685,989 | IssuesEvent | 2020-10-24 08:08:48 | alexanderkotsev/geoportal | https://api.github.com/repos/alexanderkotsev/geoportal | opened | BE: Harvester in processing state | BE - Belgium Geoportal Harvesting process | Dear,

Just like a couple of weeks ago the harvester for the Flemish/Belgian portal stays in processing state since last Friday.

Thx

Bart | 1.0 | BE: Harvester in processing state - Dear,

Just like a couple of weeks ago the harvester for the Flemish/Belgian portal stays in processing state since last Friday.

Thx

Bart | process | be harvester in processing state dear just like a couple of weeks ago the harvester for the flemish belgian portal stays in processing state since last friday thx bart | 1 |

196,765 | 14,889,079,752 | IssuesEvent | 2021-01-20 20:51:32 | Oldes/Rebol-issues | https://api.github.com/repos/Oldes/Rebol-issues | closed | remove-each does not work on all series, could do so, though, and also on gobs, maps | CC.resolved Oldes.resolved Test.written Type.wish | _Submitted by:_ **meijeru**

``` rebol

is there a compelling reason why remove-each only works on block!, paren! and any-string! ??

```

I can see sensible application on the other types in series!:

``` rebol

- binary! vector! image!

- any-path! is perhaps a bit more doubtful

```

``` rebol

why not gob! and map! also?... | 1.0 | remove-each does not work on all series, could do so, though, and also on gobs, maps - _Submitted by:_ **meijeru**

``` rebol

is there a compelling reason why remove-each only works on block!, paren! and any-string! ??

```

I can see sensible application on the other types in series!:

``` rebol

- binary! vector! image... | non_process | remove each does not work on all series could do so though and also on gobs maps submitted by meijeru rebol is there a compelling reason why remove each only works on block paren and any string i can see sensible application on the other types in series rebol binary vector image... | 0 |

22,284 | 30,834,304,800 | IssuesEvent | 2023-08-02 06:00:04 | Open-EO/openeo-processes | https://api.github.com/repos/Open-EO/openeo-processes | opened | medoid process | new process | PS: this is a draft, needs some work, but have to get started somewhere, improvements welcome

**medoid**

## Context

In compositing methods, from a set of spectral band values, users want to select the one that is the least dissimilar to all other values. This is exactly what medoid does. The concept can also be... | 1.0 | medoid process - PS: this is a draft, needs some work, but have to get started somewhere, improvements welcome

**medoid**

## Context

In compositing methods, from a set of spectral band values, users want to select the one that is the least dissimilar to all other values. This is exactly what medoid does. The co... | process | medoid process ps this is a draft needs some work but have to get started somewhere improvements welcome medoid context in compositing methods from a set of spectral band values users want to select the one that is the least dissimilar to all other values this is exactly what medoid does the co... | 1 |

13,364 | 15,830,907,974 | IssuesEvent | 2021-04-06 13:04:04 | googleapis/python-os-config | https://api.github.com/repos/googleapis/python-os-config | closed | Release as production/stable | api: osconfig type: process | Package name: **google-cloud-os-config**

Current release: **beta**

Proposed release: **GA**

## Instructions

Check the lists below, adding tests / documentation as required. Once all the "required" boxes are ticked, please create a release and close this issue.

:calendar: **DO NOT RELEASE BEFORE 2020-0... | 1.0 | Release as production/stable - Package name: **google-cloud-os-config**

Current release: **beta**

Proposed release: **GA**

## Instructions

Check the lists below, adding tests / documentation as required. Once all the "required" boxes are ticked, please create a release and close this issue.

:calendar: ... | process | release as production stable package name google cloud os config current release beta proposed release ga instructions check the lists below adding tests documentation as required once all the required boxes are ticked please create a release and close this issue calendar ... | 1 |

182,429 | 30,848,075,173 | IssuesEvent | 2023-08-02 14:54:13 | CDCgov/prime-reportstream | https://api.github.com/repos/CDCgov/prime-reportstream | opened | Consolidating Figma file so that it is clear what is ready for dev | design experience | ## User story

As a designer, I want to create a single source of truth for engineers so that it's clear what's ready for development.

## Background & context

_A brief description of why we are doing this task, what user needs it meets, notes on the

approach if appropriate, and any useful context that would inform t... | 1.0 | Consolidating Figma file so that it is clear what is ready for dev - ## User story

As a designer, I want to create a single source of truth for engineers so that it's clear what's ready for development.

## Background & context

_A brief description of why we are doing this task, what user needs it meets, notes on th... | non_process | consolidating figma file so that it is clear what is ready for dev user story as a designer i want to create a single source of truth for engineers so that it s clear what s ready for development background context a brief description of why we are doing this task what user needs it meets notes on th... | 0 |

19,830 | 26,221,042,364 | IssuesEvent | 2023-01-04 14:52:15 | xcesco/kripton | https://api.github.com/repos/xcesco/kripton | closed | Add annotation processor parameter to generate date on schema files | enhancement orm module annotation-processor module | The default behavior till now, when a schema file is generated is to include the generation date. This implies that at every generation, the schema file is updated. To avoid an unnecessary commit, a new parameter to the preprocessor needs to be defined. | 1.0 | Add annotation processor parameter to generate date on schema files - The default behavior till now, when a schema file is generated is to include the generation date. This implies that at every generation, the schema file is updated. To avoid an unnecessary commit, a new parameter to the preprocessor needs to be defined. | process | add annotation processor parameter to generate date on schema files the default behavior till now when a schema file is generated is to include the generation date this implies that at every generation the schema file is updated to avoid an unnecessary commit a new parameter to the preprocessor needs to be defined | 1 |

17,541 | 23,351,823,105 | IssuesEvent | 2022-08-10 01:23:10 | googleapis/google-cloud-ruby | https://api.github.com/repos/googleapis/google-cloud-ruby | closed | Warning: a recent release failed | type: process | The following release PRs may have failed:

* #19003 - The release job is 'autorelease: pending', but expected 'autorelease: published'.

* #19002 - The release job is 'autorelease: pending', but expected 'autorelease: published'.

* #19001 - The release job is 'autorelease: pending', but expected 'autorelease: published... | 1.0 | Warning: a recent release failed - The following release PRs may have failed:

* #19003 - The release job is 'autorelease: pending', but expected 'autorelease: published'.

* #19002 - The release job is 'autorelease: pending', but expected 'autorelease: published'.

* #19001 - The release job is 'autorelease: pending', b... | process | warning a recent release failed the following release prs may have failed the release job is autorelease pending but expected autorelease published the release job is autorelease pending but expected autorelease published the release job is autorelease pending but expected ... | 1 |

12,803 | 15,181,372,817 | IssuesEvent | 2021-02-15 03:19:35 | esmero/strawberryfield | https://api.github.com/repos/esmero/strawberryfield | closed | Edge case, strange situation, and ADO without a SBF field / a fix. | Digital Preservation Events and Subscriber JSON Postprocessors Symfony Services Typed Data and Search bug | # What?

Ok, this is super strange and requires more research, how it happened, why it happened.

@alliomeria during RC1 testing and content preparation managed to create an ADO without any SBF data. Not even the field itself. It is practically impossible since the SBF (field_descriptive_metadata) is required. The co... | 1.0 | Edge case, strange situation, and ADO without a SBF field / a fix. - # What?

Ok, this is super strange and requires more research, how it happened, why it happened.

@alliomeria during RC1 testing and content preparation managed to create an ADO without any SBF data. Not even the field itself. It is practically impo... | process | edge case strange situation and ado without a sbf field a fix what ok this is super strange and requires more research how it happened why it happened alliomeria during testing and content preparation managed to create an ado without any sbf data not even the field itself it is practically imposs... | 1 |

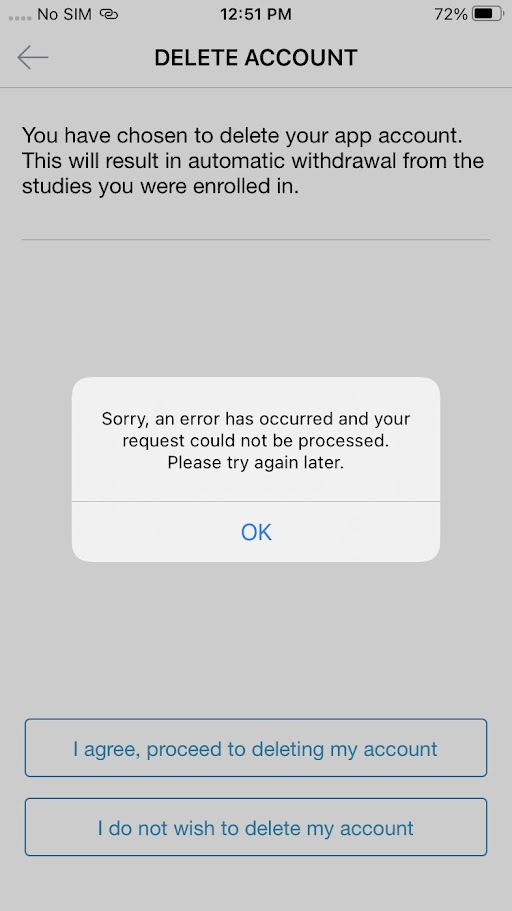

18,565 | 24,555,784,233 | IssuesEvent | 2022-10-12 15:45:45 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [Mobile apps] Not able to delete the participant account > Error message is getting displayed | Bug Blocker P0 iOS Android Process: Fixed Process: Tested QA Process: Tested dev | AR: Not able to delete the participant account > Error message is getting displayed

ER: Participants should be able to delete their app account

| 3.0 | [Mobile apps] Not able to delete the participant account > Error message is getting displayed - AR: Not able to delete the participant account > Error message is getting displayed

ER: Participants should be able to delete their app account

detected in sails-1.5.2.tgz - autoclosed | security vulnerability | ## CVE-2021-44908 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>sails-1.5.2.tgz</b></p></summary>

<p>API-driven framework for building realtime apps, using MVC conventions (based on ... | True | CVE-2021-44908 (High) detected in sails-1.5.2.tgz - autoclosed - ## CVE-2021-44908 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>sails-1.5.2.tgz</b></p></summary>

<p>API-driven frame... | non_process | cve high detected in sails tgz autoclosed cve high severity vulnerability vulnerable library sails tgz api driven framework for building realtime apps using mvc conventions based on express and socket io library home page a href path to dependency file application... | 0 |

2,797 | 5,728,382,364 | IssuesEvent | 2017-04-21 00:43:19 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | System.Diagnostics.Tests.ProcessTests.TestEnableRaiseEvents failed in CI with "System.ComponentModel.Win32Exception" | area-System.Diagnostics.Process blocking-clean-ci test-run-core | Failed test: System.Diagnostics.Tests.ProcessTests.TestEnableRaiseEvents

Configuration: OuterLoop_Fedora23_release (build#147)

Detail: https://ci.dot.net/job/dotnet_corefx/job/master/job/outerloop_fedora23_release/147/consoleText

~~~

System.Diagnostics.Tests.ProcessTests.TestEnableRaiseEvents(enable: True) [F... | 1.0 | System.Diagnostics.Tests.ProcessTests.TestEnableRaiseEvents failed in CI with "System.ComponentModel.Win32Exception" - Failed test: System.Diagnostics.Tests.ProcessTests.TestEnableRaiseEvents

Configuration: OuterLoop_Fedora23_release (build#147)

Detail: https://ci.dot.net/job/dotnet_corefx/job/master/job/outerloo... | process | system diagnostics tests processtests testenableraiseevents failed in ci with system componentmodel failed test system diagnostics tests processtests testenableraiseevents configuration outerloop release build detail system diagnostics tests processtests testenableraiseevents enable true... | 1 |

15,153 | 5,071,987,968 | IssuesEvent | 2016-12-26 18:04:53 | exercism/xjava | https://api.github.com/repos/exercism/xjava | closed | raindrops: make test failures easier to troubleshoot | code good first patch | [`raindrops`](https://github.com/exercism/xjava/blob/master/exercises/raindrops/src/test/java/RaindropsTest.java) uses the JUnit [`Parameterized`](https://github.com/junit-team/junit4/wiki/parameterized-tests) test runner. This was done to make the test more compact (and once you learn how the mechanism works, easier ... | 1.0 | raindrops: make test failures easier to troubleshoot - [`raindrops`](https://github.com/exercism/xjava/blob/master/exercises/raindrops/src/test/java/RaindropsTest.java) uses the JUnit [`Parameterized`](https://github.com/junit-team/junit4/wiki/parameterized-tests) test runner. This was done to make the test more compa... | non_process | raindrops make test failures easier to troubleshoot uses the junit test runner this was done to make the test more compact and once you learn how the mechanism works easier to read however when a test fails the error message does not indicate which value failed this makes it really difficult to ... | 0 |