Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

7,951

| 11,137,559,603

|

IssuesEvent

|

2019-12-20 19:41:48

|

openopps/openopps-platform

|

https://api.github.com/repos/openopps/openopps-platform

|

closed

|

Application review page: work experience should in order selected

|

Apply Process Approved Requirements Ready State Dept.

|

Who: applicants

What: reviewing their work experience should display in order selected

Why: to be in line with the resume and USAJOBS

Acceptance Criteria:

Student application - review page: When a student applies, the work experience on the experience page should display in the order selected in Open Opps.

- the default order will be the sorted order from USAJOBS however the order can be changed in Open Opps. Whatever is saved in Open Opps is the order the review page should display

- the order saved should also be what is sent to ATP

|

1.0

|

Application review page: work experience should in order selected - Who: applicants

What: reviewing their work experience should display in order selected

Why: to be in line with the resume and USAJOBS

Acceptance Criteria:

Student application - review page: When a student applies, the work experience on the experience page should display in the order selected in Open Opps.

- the default order will be the sorted order from USAJOBS however the order can be changed in Open Opps. Whatever is saved in Open Opps is the order the review page should display

- the order saved should also be what is sent to ATP

|

process

|

application review page work experience should in order selected who applicants what reviewing their work experience should display in order selected why to be in line with the resume and usajobs acceptance criteria student application review page when a student applies the work experience on the experience page should display in the order selected in open opps the default order will be the sorted order from usajobs however the order can be changed in open opps whatever is saved in open opps is the order the review page should display the order saved should also be what is sent to atp

| 1

|

506,702

| 14,671,508,155

|

IssuesEvent

|

2020-12-30 08:21:06

|

TeamSTEP/project-witch-one

|

https://api.github.com/repos/TeamSTEP/project-witch-one

|

opened

|

[Task] Setup map editing environment

|

High Priority Hoon Kim add feature

|

# Task Summary

The team has decided to use Tiled as the main map editing tool and completely separate the game scene logic from the level design. This means that the Tiled map editor must be configured to allow the map designer to easily work with the tool.

## Subtasks

This ticket will be considered as finished when the following items are fulfilled:

- [ ] add object types

- [ ] configure terrain brushes for all the tilesets excluding ones we can't*

- [ ] add object templates

- [ ] set up automapping rules for all the cliff faces

- [ ] write documentation about using Tiled for this project

(*The house tiles (both interiors and the exteriors) are only a single tile high while the player character sprite is 2 tiles high. So we should not touch the house tileset until this problem is solved)

## Difficulty

3/10

## Estimated Implementation Time

> Note: the task start date is considered to be the day the ticket was opened or the next day when the dependent task was closed

- **Optimistic**: 1 week

- **Normal**: 10 days

- **Pessimistic**: 2 weeks

|

1.0

|

[Task] Setup map editing environment - # Task Summary

The team has decided to use Tiled as the main map editing tool and completely separate the game scene logic from the level design. This means that the Tiled map editor must be configured to allow the map designer to easily work with the tool.

## Subtasks

This ticket will be considered as finished when the following items are fulfilled:

- [ ] add object types

- [ ] configure terrain brushes for all the tilesets excluding ones we can't*

- [ ] add object templates

- [ ] set up automapping rules for all the cliff faces

- [ ] write documentation about using Tiled for this project

(*The house tiles (both interiors and the exteriors) are only a single tile high while the player character sprite is 2 tiles high. So we should not touch the house tileset until this problem is solved)

## Difficulty

3/10

## Estimated Implementation Time

> Note: the task start date is considered to be the day the ticket was opened or the next day when the dependent task was closed

- **Optimistic**: 1 week

- **Normal**: 10 days

- **Pessimistic**: 2 weeks

|

non_process

|

setup map editing environment task summary the team has decided to use tiled as the main map editing tool and completely separate the game scene logic from the level design this means that the tiled map editor must be configured to allow the map designer to easily work with the tool subtasks this ticket will be considered as finished when the following items are fulfilled add object types configure terrain brushes for all the tilesets excluding ones we can t add object templates set up automapping rules for all the cliff faces write documentation about using tiled for this project the house tiles both interiors and the exteriors are only a single tile high while the player character sprite is tiles high so we should not touch the house tileset until this problem is solved difficulty estimated implementation time note the task start date is considered to be the day the ticket was opened or the next day when the dependent task was closed optimistic week normal days pessimistic weeks

| 0

|

3,109

| 2,607,984,253

|

IssuesEvent

|

2015-02-26 00:50:59

|

chrsmithdemos/zen-coding

|

https://api.github.com/repos/chrsmithdemos/zen-coding

|

opened

|

Custom Abbreviations

|

auto-migrated Priority-Medium Type-Defect

|

```

I use the Komodo edit extension for zen coding. I was wondering would it be

possible to set custom abbreviations with a custom out put?

```

-----

Original issue reported on code.google.com by `jordan.r...@kasacapital.com` on 9 Aug 2011 at 8:10

|

1.0

|

Custom Abbreviations - ```

I use the Komodo edit extension for zen coding. I was wondering would it be

possible to set custom abbreviations with a custom out put?

```

-----

Original issue reported on code.google.com by `jordan.r...@kasacapital.com` on 9 Aug 2011 at 8:10

|

non_process

|

custom abbreviations i use the komodo edit extension for zen coding i was wondering would it be possible to set custom abbreviations with a custom out put original issue reported on code google com by jordan r kasacapital com on aug at

| 0

|

14,747

| 18,018,110,222

|

IssuesEvent

|

2021-09-16 15:56:32

|

googleapis/gapic-showcase

|

https://api.github.com/repos/googleapis/gapic-showcase

|

closed

|

Dependency Dashboard

|

priority: p2 type: process

|

This issue provides visibility into Renovate updates and their statuses. [Learn more](https://docs.renovatebot.com/key-concepts/dashboard/)

## Awaiting Schedule

These updates are awaiting their schedule. Click on a checkbox to get an update now.

- [ ] <!-- unschedule-branch=renovate/com_google_googleapis-digest -->chore(deps): update com_google_googleapis commit hash to 48d9fb8

- [ ] <!-- unschedule-branch=renovate/google.golang.org-genproto-digest -->fix(deps): update google.golang.org/genproto commit hash to 3192f97

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/google.golang.org-api-0.x -->[fix(deps): update module google.golang.org/api to v0.57.0](../pull/876)

---

- [ ] <!-- manual job -->Check this box to trigger a request for Renovate to run again on this repository

|

1.0

|

Dependency Dashboard - This issue provides visibility into Renovate updates and their statuses. [Learn more](https://docs.renovatebot.com/key-concepts/dashboard/)

## Awaiting Schedule

These updates are awaiting their schedule. Click on a checkbox to get an update now.

- [ ] <!-- unschedule-branch=renovate/com_google_googleapis-digest -->chore(deps): update com_google_googleapis commit hash to 48d9fb8

- [ ] <!-- unschedule-branch=renovate/google.golang.org-genproto-digest -->fix(deps): update google.golang.org/genproto commit hash to 3192f97

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/google.golang.org-api-0.x -->[fix(deps): update module google.golang.org/api to v0.57.0](../pull/876)

---

- [ ] <!-- manual job -->Check this box to trigger a request for Renovate to run again on this repository

|

process

|

dependency dashboard this issue provides visibility into renovate updates and their statuses awaiting schedule these updates are awaiting their schedule click on a checkbox to get an update now chore deps update com google googleapis commit hash to fix deps update google golang org genproto commit hash to open these updates have all been created already click a checkbox below to force a retry rebase of any pull check this box to trigger a request for renovate to run again on this repository

| 1

|

63,810

| 3,201,034,420

|

IssuesEvent

|

2015-10-02 02:06:03

|

cs2103aug2015-f10-4j/main

|

https://api.github.com/repos/cs2103aug2015-f10-4j/main

|

closed

|

A user should be able to duplicate recurring tasks/events with 1 command

|

component.parser priority.high type.story

|

so that the user does not have to re-enter all the details for recurring tasks/events

|

1.0

|

A user should be able to duplicate recurring tasks/events with 1 command - so that the user does not have to re-enter all the details for recurring tasks/events

|

non_process

|

a user should be able to duplicate recurring tasks events with command so that the user does not have to re enter all the details for recurring tasks events

| 0

|

19,674

| 26,031,336,144

|

IssuesEvent

|

2022-12-21 21:38:41

|

MicrosoftDocs/azure-devops-docs

|

https://api.github.com/repos/MicrosoftDocs/azure-devops-docs

|

closed

|

Passing Between Stages Is Not correct

|

doc-enhancement devops/prod Pri2 devops-cicd-process/tech

|

The example for passing a variable from a deployment task across stages is incorrect as is. However, even with the proper syntax it still does not work. Please update the example with a working way to pass vars between a deployment job and a stage

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 5aeeaace-1c5b-a51b-e41f-f25b806155b8

* Version Independent ID: fd7ff690-b2e4-41c7-a342-e528b911c6e1

* Content: [Deployment jobs - Azure Pipelines](https://docs.microsoft.com/en-us/azure/devops/pipelines/process/deployment-jobs?view=azure-devops#support-for-output-variables)

* Content Source: [docs/pipelines/process/deployment-jobs.md](https://github.com/MicrosoftDocs/azure-devops-docs/blob/master/docs/pipelines/process/deployment-jobs.md)

* Product: **devops**

* Technology: **devops-cicd-process**

* GitHub Login: @juliakm

* Microsoft Alias: **jukullam**

|

1.0

|

Passing Between Stages Is Not correct -

The example for passing a variable from a deployment task across stages is incorrect as is. However, even with the proper syntax it still does not work. Please update the example with a working way to pass vars between a deployment job and a stage

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 5aeeaace-1c5b-a51b-e41f-f25b806155b8

* Version Independent ID: fd7ff690-b2e4-41c7-a342-e528b911c6e1

* Content: [Deployment jobs - Azure Pipelines](https://docs.microsoft.com/en-us/azure/devops/pipelines/process/deployment-jobs?view=azure-devops#support-for-output-variables)

* Content Source: [docs/pipelines/process/deployment-jobs.md](https://github.com/MicrosoftDocs/azure-devops-docs/blob/master/docs/pipelines/process/deployment-jobs.md)

* Product: **devops**

* Technology: **devops-cicd-process**

* GitHub Login: @juliakm

* Microsoft Alias: **jukullam**

|

process

|

passing between stages is not correct the example for passing a variable from a deployment task across stages is incorrect as is however even with the proper syntax it still does not work please update the example with a working way to pass vars between a deployment job and a stage document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source product devops technology devops cicd process github login juliakm microsoft alias jukullam

| 1

|

127,308

| 12,312,049,223

|

IssuesEvent

|

2020-05-12 13:23:17

|

HEPData/hepdata_lib

|

https://api.github.com/repos/HEPData/hepdata_lib

|

opened

|

readthedocs: Module index not available anymore

|

bug documentation

|

See https://hepdata-lib.readthedocs.io/en/latest/source/hepdata_lib.html

The module index does not build anymore

|

1.0

|

readthedocs: Module index not available anymore - See https://hepdata-lib.readthedocs.io/en/latest/source/hepdata_lib.html

The module index does not build anymore

|

non_process

|

readthedocs module index not available anymore see the module index does not build anymore

| 0

|

68,803

| 17,405,651,400

|

IssuesEvent

|

2021-08-03 05:17:07

|

aws/aws-sam-cli

|

https://api.github.com/repos/aws/aws-sam-cli

|

closed

|

How is the name of the image and tag generated?

|

area/build area/deploy type/question

|

### Description:

1. `sam build` generates an image that seems to be tagged with the name of the function. I would like to override this my choice. I tried setting `Properties.ImageUri` in `template.yaml`. I also used `--parameter-overrides ImageUri=myimage` in `sam build` command. But, in `.aws-sam/build/template.yaml` these changes are not taking effect.

2. `sam local invoke function -e events/event.json` seems to build yet another image with tag as `rapid-1.18.1`. What is this tag? How do I override it? Why is a new image generated at all?

3. `sam deploy --guided` allows me to provide the image repository URI. However, the image to be pushed uses a tag that is not recognizable. How do I override it? Why not use the one that was built and invoked locally as the default?

### Steps to reproduce:

<!-- Provide detailed steps to replicate the bug, including steps from third party tools (CDK, etc.) -->

1. Use any project created with `sam init` and package type as `Image`; the simpler the better. Run `sam build` and note the image in the `Successfully tagged` message.

2. Invoke the recently built image with `sam local invoke functionName -e events/event.json`. A new image is built.

3. Deploy with `sam deploy --guided`; the image to be pushed uses yet another tag name.

### Observed result:

<!-- Please provide command output with `--debug` flag set.-->

### Expected result:

<!-- Describe what you expected.-->

1. Ability to provide image names.

2. Use same tag for local testing and deployment.

### Additional environment details (Ex: Windows, Mac, Amazon Linux etc)

1. OS: Mac

2. If using SAM CLI, `sam --version`: `SAM CLI, version 1.18.1`

3. AWS region: `ap-south-1`

`Add --debug flag to any SAM CLI commands you are running`

|

1.0

|

How is the name of the image and tag generated? - ### Description:

1. `sam build` generates an image that seems to be tagged with the name of the function. I would like to override this my choice. I tried setting `Properties.ImageUri` in `template.yaml`. I also used `--parameter-overrides ImageUri=myimage` in `sam build` command. But, in `.aws-sam/build/template.yaml` these changes are not taking effect.

2. `sam local invoke function -e events/event.json` seems to build yet another image with tag as `rapid-1.18.1`. What is this tag? How do I override it? Why is a new image generated at all?

3. `sam deploy --guided` allows me to provide the image repository URI. However, the image to be pushed uses a tag that is not recognizable. How do I override it? Why not use the one that was built and invoked locally as the default?

### Steps to reproduce:

<!-- Provide detailed steps to replicate the bug, including steps from third party tools (CDK, etc.) -->

1. Use any project created with `sam init` and package type as `Image`; the simpler the better. Run `sam build` and note the image in the `Successfully tagged` message.

2. Invoke the recently built image with `sam local invoke functionName -e events/event.json`. A new image is built.

3. Deploy with `sam deploy --guided`; the image to be pushed uses yet another tag name.

### Observed result:

<!-- Please provide command output with `--debug` flag set.-->

### Expected result:

<!-- Describe what you expected.-->

1. Ability to provide image names.

2. Use same tag for local testing and deployment.

### Additional environment details (Ex: Windows, Mac, Amazon Linux etc)

1. OS: Mac

2. If using SAM CLI, `sam --version`: `SAM CLI, version 1.18.1`

3. AWS region: `ap-south-1`

`Add --debug flag to any SAM CLI commands you are running`

|

non_process

|

how is the name of the image and tag generated description sam build generates an image that seems to be tagged with the name of the function i would like to override this my choice i tried setting properties imageuri in template yaml i also used parameter overrides imageuri myimage in sam build command but in aws sam build template yaml these changes are not taking effect sam local invoke function e events event json seems to build yet another image with tag as rapid what is this tag how do i override it why is a new image generated at all sam deploy guided allows me to provide the image repository uri however the image to be pushed uses a tag that is not recognizable how do i override it why not use the one that was built and invoked locally as the default steps to reproduce use any project created with sam init and package type as image the simpler the better run sam build and note the image in the successfully tagged message invoke the recently built image with sam local invoke functionname e events event json a new image is built deploy with sam deploy guided the image to be pushed uses yet another tag name observed result expected result ability to provide image names use same tag for local testing and deployment additional environment details ex windows mac amazon linux etc os mac if using sam cli sam version sam cli version aws region ap south add debug flag to any sam cli commands you are running

| 0

|

11,740

| 14,581,817,109

|

IssuesEvent

|

2020-12-18 11:19:32

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

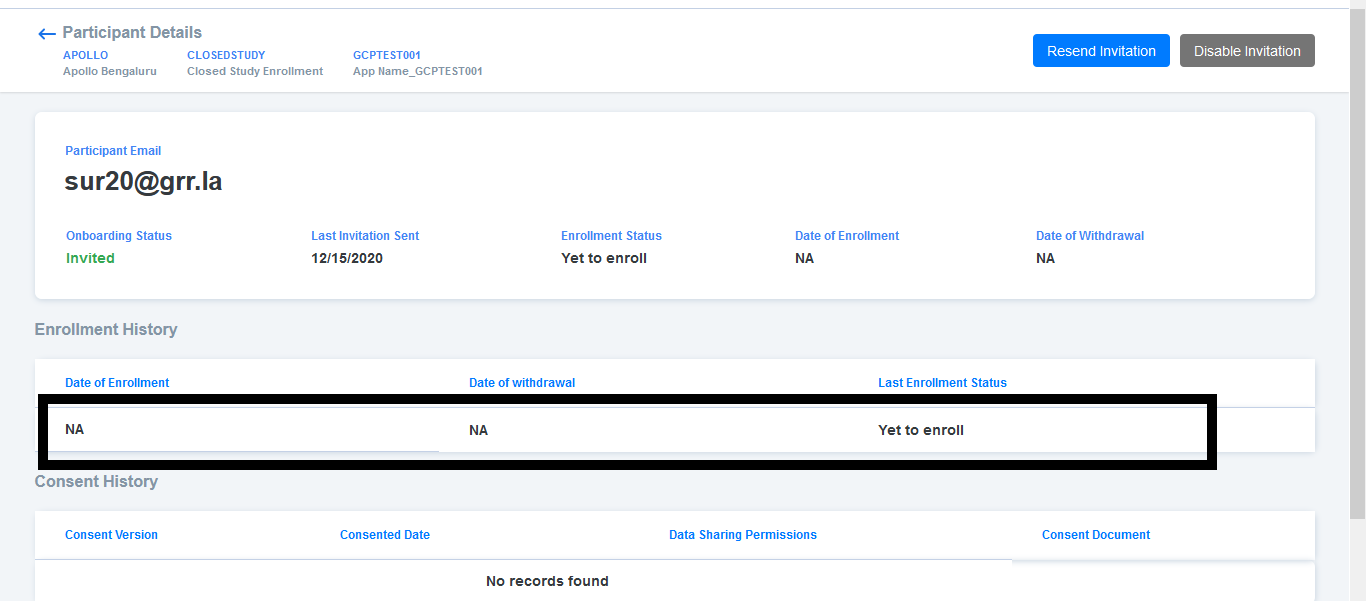

Participant registry >Enrollment history > 'Yet to enroll' status is displaying

|

Bug P2 Participant datastore Process: Fixed Process: Tested QA Process: Tested dev

|

Steps

1. Add/Invite a Participant to a Closed study

2. Navigate to Participant details page and observe Enrollment history

AR : History is displaying with 'Yet to enroll'

ER : 'No records found' text should be displayed when there are no records present in Enrollment history

|

3.0

|

Participant registry >Enrollment history > 'Yet to enroll' status is displaying - Steps

1. Add/Invite a Participant to a Closed study

2. Navigate to Participant details page and observe Enrollment history

AR : History is displaying with 'Yet to enroll'

ER : 'No records found' text should be displayed when there are no records present in Enrollment history

|

process

|

participant registry enrollment history yet to enroll status is displaying steps add invite a participant to a closed study navigate to participant details page and observe enrollment history ar history is displaying with yet to enroll er no records found text should be displayed when there are no records present in enrollment history

| 1

|

15,494

| 19,703,230,306

|

IssuesEvent

|

2022-01-12 18:49:52

|

googleapis/cloud-profiler-nodejs

|

https://api.github.com/repos/googleapis/cloud-profiler-nodejs

|

opened

|

Your .repo-metadata.json file has a problem 🤒

|

type: process repo-metadata: lint

|

You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* api_shortname 'profiler' invalid in .repo-metadata.json

☝️ Once you correct these problems, you can close this issue.

Reach out to **go/github-automation** if you have any questions.

|

1.0

|

Your .repo-metadata.json file has a problem 🤒 - You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* api_shortname 'profiler' invalid in .repo-metadata.json

☝️ Once you correct these problems, you can close this issue.

Reach out to **go/github-automation** if you have any questions.

|

process

|

your repo metadata json file has a problem 🤒 you have a problem with your repo metadata json file result of scan 📈 api shortname profiler invalid in repo metadata json ☝️ once you correct these problems you can close this issue reach out to go github automation if you have any questions

| 1

|

966

| 3,422,948,921

|

IssuesEvent

|

2015-12-09 02:13:19

|

MaretEngineering/MROV

|

https://api.github.com/repos/MaretEngineering/MROV

|

closed

|

XBOX button toggle

|

Processing question

|

It currently uses a debouncing method which requires a wait of 175 ms every time you push the button. I'm wondering if there is a way which doesn't require a delay.

|

1.0

|

XBOX button toggle - It currently uses a debouncing method which requires a wait of 175 ms every time you push the button. I'm wondering if there is a way which doesn't require a delay.

|

process

|

xbox button toggle it currently uses a debouncing method which requires a wait of ms every time you push the button i m wondering if there is a way which doesn t require a delay

| 1

|

179,873

| 30,316,587,109

|

IssuesEvent

|

2023-07-10 15:58:25

|

zeitgeistpm/ui

|

https://api.github.com/repos/zeitgeistpm/ui

|

closed

|

Review and remove notifications where no long applicable.

|

design

|

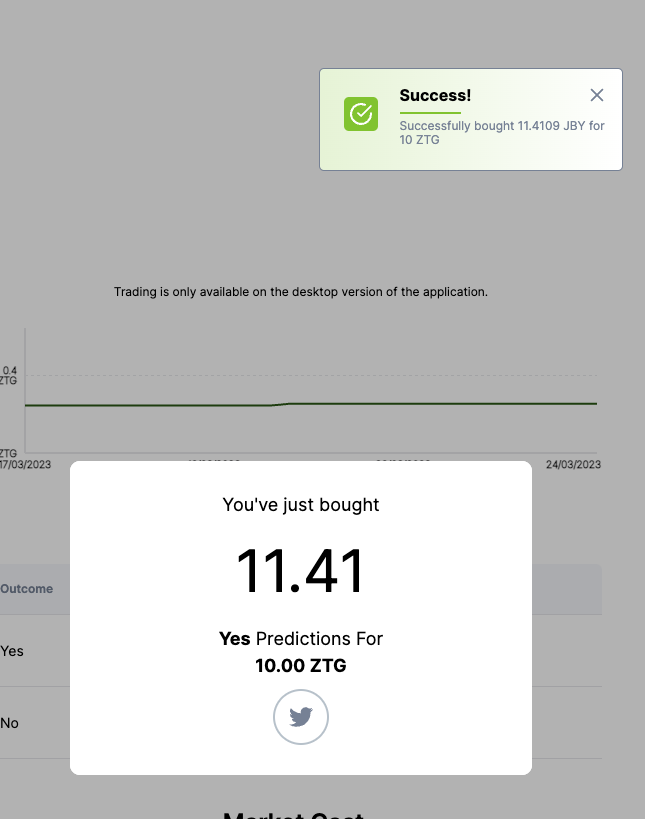

The notifications appearing in the top right corner doesn't seem to be needed(at least in most cases) in the new designs.

**Task: review and remove notifications where they aren't needed.**

_Implement new designs for them when and if they are needed as the current design doesnt really fit imho._

For example when buying the modal shows a success step so they can be removed:

|

1.0

|

Review and remove notifications where no long applicable. - The notifications appearing in the top right corner doesn't seem to be needed(at least in most cases) in the new designs.

**Task: review and remove notifications where they aren't needed.**

_Implement new designs for them when and if they are needed as the current design doesnt really fit imho._

For example when buying the modal shows a success step so they can be removed:

|

non_process

|

review and remove notifications where no long applicable the notifications appearing in the top right corner doesn t seem to be needed at least in most cases in the new designs task review and remove notifications where they aren t needed implement new designs for them when and if they are needed as the current design doesnt really fit imho for example when buying the modal shows a success step so they can be removed

| 0

|

16,756

| 21,925,277,782

|

IssuesEvent

|

2022-05-23 02:59:10

|

quark-engine/quark-engine

|

https://api.github.com/repos/quark-engine/quark-engine

|

closed

|

Support loading rules recursively from the rule path

|

issue-processing-state-06

|

**Is your feature request related to a problem? Please describe.**

In quark-engine/quark-rules#26, we plan to increase the visibility of the README.md by moving all rules into a folder. However, since Quark currently only searches rules right in the given path, this change will make it fail to find the default ruleset.

**Describe the solution you'd like**

I suggest adding the ability to load rules recursively from a path. In this way, Quark is compatible with both the old and new versions of the default rulesets.

|

1.0

|

Support loading rules recursively from the rule path - **Is your feature request related to a problem? Please describe.**

In quark-engine/quark-rules#26, we plan to increase the visibility of the README.md by moving all rules into a folder. However, since Quark currently only searches rules right in the given path, this change will make it fail to find the default ruleset.

**Describe the solution you'd like**

I suggest adding the ability to load rules recursively from a path. In this way, Quark is compatible with both the old and new versions of the default rulesets.

|

process

|

support loading rules recursively from the rule path is your feature request related to a problem please describe in quark engine quark rules we plan to increase the visibility of the readme md by moving all rules into a folder however since quark currently only searches rules right in the given path this change will make it fail to find the default ruleset describe the solution you d like i suggest adding the ability to load rules recursively from a path in this way quark is compatible with both the old and new versions of the default rulesets

| 1

|

139,690

| 20,926,173,926

|

IssuesEvent

|

2022-03-24 23:19:13

|

adobe/design-website

|

https://api.github.com/repos/adobe/design-website

|

opened

|

Review jobs posting formating for policy and About Adobe Design sections

|

polish design-question

|

Review and confirm that the sizing for the policy and About Adobe Design section have good readability. They currently feel a bit wide and difficult to take in.

Work with Jos on spacing.

<img width="838" alt="Screen Shot 2022-03-24 at 4 17 37 PM" src="https://user-images.githubusercontent.com/100241986/160025320-8cc774c1-79d4-416a-bf2e-554a58a51115.png">

|

1.0

|

Review jobs posting formating for policy and About Adobe Design sections - Review and confirm that the sizing for the policy and About Adobe Design section have good readability. They currently feel a bit wide and difficult to take in.

Work with Jos on spacing.

<img width="838" alt="Screen Shot 2022-03-24 at 4 17 37 PM" src="https://user-images.githubusercontent.com/100241986/160025320-8cc774c1-79d4-416a-bf2e-554a58a51115.png">

|

non_process

|

review jobs posting formating for policy and about adobe design sections review and confirm that the sizing for the policy and about adobe design section have good readability they currently feel a bit wide and difficult to take in work with jos on spacing img width alt screen shot at pm src

| 0

|

20,253

| 26,871,598,250

|

IssuesEvent

|

2023-02-04 14:36:41

|

ankidroid/Anki-Android

|

https://api.github.com/repos/ankidroid/Anki-Android

|

closed

|

Introduce `assertEquals` for `int`

|

Priority-Low Good First Issue! Stale Test process

|

Currently `assertEquals` only consider Long and Object. In Java, Int were converted to Long, but in Kotlin they are converted to Object. Which force conversion to explicitly write `toLong` to get the proper overloaded method to be invoked.

I suggest adding in AnkiDroid/src/test/java/com/ichi2/testutils/AnkiAssert.java the following methods

```java

public static void assertEquals(int left, int right) {

org.junit.Assert.assertEquals((long) left, (long) right);

}

public static void assertEquals(String message, int left, int right) {

org.junit.Assert.assertEquals(message, (long) left, (long) right);

}

```

and going through the 121 results of the search of `assertEquals\(.*long` in the codebase to uses this new method instead of the previous, and simplify the equality assertion slightly

|

1.0

|

Introduce `assertEquals` for `int` - Currently `assertEquals` only consider Long and Object. In Java, Int were converted to Long, but in Kotlin they are converted to Object. Which force conversion to explicitly write `toLong` to get the proper overloaded method to be invoked.

I suggest adding in AnkiDroid/src/test/java/com/ichi2/testutils/AnkiAssert.java the following methods

```java

public static void assertEquals(int left, int right) {

org.junit.Assert.assertEquals((long) left, (long) right);

}

public static void assertEquals(String message, int left, int right) {

org.junit.Assert.assertEquals(message, (long) left, (long) right);

}

```

and going through the 121 results of the search of `assertEquals\(.*long` in the codebase to uses this new method instead of the previous, and simplify the equality assertion slightly

|

process

|

introduce assertequals for int currently assertequals only consider long and object in java int were converted to long but in kotlin they are converted to object which force conversion to explicitly write tolong to get the proper overloaded method to be invoked i suggest adding in ankidroid src test java com testutils ankiassert java the following methods java public static void assertequals int left int right org junit assert assertequals long left long right public static void assertequals string message int left int right org junit assert assertequals message long left long right and going through the results of the search of assertequals long in the codebase to uses this new method instead of the previous and simplify the equality assertion slightly

| 1

|

84,539

| 10,544,983,200

|

IssuesEvent

|

2019-10-02 18:07:54

|

aragon/aragon-apps

|

https://api.github.com/repos/aragon/aragon-apps

|

closed

|

Determine what notifications we want

|

app: finance app: token manager app: vault app: voting component: frontend design: request enhancement

|

For the notifications feature to be implemented, we should decide on what notifications we want from each app.

Furthermore, there are technically two types of notifications: "global" and "direct", where global notifications are for all users of a DAO and direct notification is for a specific user or a specific set of users.

We should think about signal/noise ratio when deciding.

|

1.0

|

Determine what notifications we want - For the notifications feature to be implemented, we should decide on what notifications we want from each app.

Furthermore, there are technically two types of notifications: "global" and "direct", where global notifications are for all users of a DAO and direct notification is for a specific user or a specific set of users.

We should think about signal/noise ratio when deciding.

|

non_process

|

determine what notifications we want for the notifications feature to be implemented we should decide on what notifications we want from each app furthermore there are technically two types of notifications global and direct where global notifications are for all users of a dao and direct notification is for a specific user or a specific set of users we should think about signal noise ratio when deciding

| 0

|

22,733

| 32,054,791,764

|

IssuesEvent

|

2023-09-24 00:44:17

|

h4sh5/pypi-auto-scanner

|

https://api.github.com/repos/h4sh5/pypi-auto-scanner

|

opened

|

hpcflow-new2 0.2.0a108 has 2 GuardDog issues

|

guarddog exec-base64 silent-process-execution

|

https://pypi.org/project/hpcflow-new2

https://inspector.pypi.io/project/hpcflow-new2

```{

"dependency": "hpcflow-new2",

"version": "0.2.0a108",

"result": {

"issues": 2,

"errors": {},

"results": {

"exec-base64": [

{

"location": "hpcflow_new2-0.2.0a108/hpcflow/sdk/submission/jobscript.py:990",

"code": " init_proc = subprocess.Popen(\n args=args,\n cwd=str(self.workflow.path),\n creationflags=subprocess.CREATE_NO_WINDOW,\n )",

"message": "This package contains a call to the `eval` function with a `base64` encoded string as argument.\nThis is a common method used to hide a malicious payload in a module as static analysis will not decode the\nstring.\n"

}

],

"silent-process-execution": [

{

"location": "hpcflow_new2-0.2.0a108/hpcflow/sdk/helper/helper.py:111",

"code": " proc = subprocess.Popen(\n args=args,\n stdin=subprocess.DEVNULL,\n stdout=subprocess.DEVNULL,\n stderr=subprocess.DEVNULL,\n **kwargs,\n )",

"message": "This package is silently executing an external binary, redirecting stdout, stderr and stdin to /dev/null"

}

]

},

"path": "/tmp/tmp7f_yw727/hpcflow-new2"

}

}```

|

1.0

|

hpcflow-new2 0.2.0a108 has 2 GuardDog issues - https://pypi.org/project/hpcflow-new2

https://inspector.pypi.io/project/hpcflow-new2

```{

"dependency": "hpcflow-new2",

"version": "0.2.0a108",

"result": {

"issues": 2,

"errors": {},

"results": {

"exec-base64": [

{

"location": "hpcflow_new2-0.2.0a108/hpcflow/sdk/submission/jobscript.py:990",

"code": " init_proc = subprocess.Popen(\n args=args,\n cwd=str(self.workflow.path),\n creationflags=subprocess.CREATE_NO_WINDOW,\n )",

"message": "This package contains a call to the `eval` function with a `base64` encoded string as argument.\nThis is a common method used to hide a malicious payload in a module as static analysis will not decode the\nstring.\n"

}

],

"silent-process-execution": [

{

"location": "hpcflow_new2-0.2.0a108/hpcflow/sdk/helper/helper.py:111",

"code": " proc = subprocess.Popen(\n args=args,\n stdin=subprocess.DEVNULL,\n stdout=subprocess.DEVNULL,\n stderr=subprocess.DEVNULL,\n **kwargs,\n )",

"message": "This package is silently executing an external binary, redirecting stdout, stderr and stdin to /dev/null"

}

]

},

"path": "/tmp/tmp7f_yw727/hpcflow-new2"

}

}```

|

process

|

hpcflow has guarddog issues dependency hpcflow version result issues errors results exec location hpcflow hpcflow sdk submission jobscript py code init proc subprocess popen n args args n cwd str self workflow path n creationflags subprocess create no window n message this package contains a call to the eval function with a encoded string as argument nthis is a common method used to hide a malicious payload in a module as static analysis will not decode the nstring n silent process execution location hpcflow hpcflow sdk helper helper py code proc subprocess popen n args args n stdin subprocess devnull n stdout subprocess devnull n stderr subprocess devnull n kwargs n message this package is silently executing an external binary redirecting stdout stderr and stdin to dev null path tmp hpcflow

| 1

|

294,805

| 25,406,757,221

|

IssuesEvent

|

2022-11-22 15:49:18

|

TDAmeritrade/stumpy

|

https://api.github.com/repos/TDAmeritrade/stumpy

|

closed

|

Split Coverage Tests for Github Actions

|

testing

|

As our test suite gets longer, the `coverage` tests, which are executed in pure Python, will continue to need more time to complete. Given that GitHub Actions has a job time limit, it may be beneficial to split the `coverage` testing into two separate jobs (instead of running it all in one). However, this will require storing the `.coverage` file between jobs. This can be accomplished by using "Uploading/Downloading Artifacts" (see [this article](https://levelup.gitconnected.com/github-actions-how-to-share-data-between-jobs-fc1547defc3e)).

To upload a file:

```
steps:
  - uses: actions/checkout@v2
  - run: mkdir -p path/to/artifact
  - run: echo hello > path/to/artifact/world.txt
  - uses: actions/upload-artifact@v2
    with:
      name: my-artifact
      path: path/to/artifact/world.txt
```

In our case, it might look something like:

```
coverage-testing:
  runs-on: ${{ matrix.os }}
  strategy:
    matrix:
      os: [ubuntu-latest, macos-latest, windows-latest]
      python-version: ['3.7', '3.8', '3.9', '3.10']
  steps:
    - uses: actions/checkout@v2
    - name: Set Up Python
      uses: actions/setup-python@v2
      with:
        python-version: ${{ matrix.python-version }}
    - name: Display Python Version
      run: python -c "import sys; print(sys.version)"
      shell: bash
    - name: Install STUMPY And Other Dependencies
      run: pip install --editable .[ci]
      shell: bash
    - name: Run Black
      run: black --check --diff ./
      shell: bash
    - name: Run Flake8
      run: flake8 ./
      shell: bash
    - name: Run Coverage Tests
      run: ./test.sh coverage
      shell: bash
    - uses: actions/upload-artifact@v2
      with:
        name: coverage_results
        path: ./.coverage
```

Then, for the second half of the job you can retrieve the file:

```
steps:
  - uses: actions/checkout@v2
  - uses: actions/download-artifact@v2
    with:
      name: my-artifact
```

or in our case:

```
steps:
  - uses: actions/checkout@v2
  - uses: actions/download-artifact@v2
    with:
      name: coverage_results
```

|

1.0

|

Split Coverage Tests for Github Actions - As our test suite gets longer, the `coverage` tests, which are executed in pure Python, will continue to need more time to complete. Given that GitHub Actions has a job time limit, it may be beneficial to split the `coverage` testing into two separate jobs (instead of running it all in one). However, this will require storing the `.coverage` file between jobs. This can be accomplished by using "Uploading/Downloading Artifacts" (see [this article](https://levelup.gitconnected.com/github-actions-how-to-share-data-between-jobs-fc1547defc3e)).

To upload a file:

```
steps:
  - uses: actions/checkout@v2
  - run: mkdir -p path/to/artifact
  - run: echo hello > path/to/artifact/world.txt
  - uses: actions/upload-artifact@v2
    with:
      name: my-artifact
      path: path/to/artifact/world.txt
```

In our case, it might look something like:

```
coverage-testing:
  runs-on: ${{ matrix.os }}
  strategy:
    matrix:
      os: [ubuntu-latest, macos-latest, windows-latest]
      python-version: ['3.7', '3.8', '3.9', '3.10']
  steps:
    - uses: actions/checkout@v2
    - name: Set Up Python
      uses: actions/setup-python@v2
      with:
        python-version: ${{ matrix.python-version }}
    - name: Display Python Version
      run: python -c "import sys; print(sys.version)"
      shell: bash
    - name: Install STUMPY And Other Dependencies
      run: pip install --editable .[ci]
      shell: bash
    - name: Run Black
      run: black --check --diff ./
      shell: bash
    - name: Run Flake8
      run: flake8 ./
      shell: bash
    - name: Run Coverage Tests
      run: ./test.sh coverage
      shell: bash
    - uses: actions/upload-artifact@v2
      with:
        name: coverage_results
        path: ./.coverage
```

Then, for the second half of the job you can retrieve the file:

```
steps:
  - uses: actions/checkout@v2
  - uses: actions/download-artifact@v2
    with:
      name: my-artifact
```

or in our case:

```
steps:
  - uses: actions/checkout@v2
  - uses: actions/download-artifact@v2
    with:
      name: coverage_results
```

|

non_process

|

split coverage tests for github actions as our test suite gets longer the coverage tests which are executed in pure python will continue to need more time to complete given that github actions has a job time limit it may be beneficial to further split up the coverage testing time into two separate jobs instead of all in one however this will require storing the coverage file between jobs this can be accomplished by using uploading downloading artifacts see to upload a file steps uses actions checkout run mkdir p path to artifact run echo hello path to artifact world txt uses actions upload artifact with name my artifact path path to artifact world txt in our case it might look something like coverage testing runs on matrix os strategy matrix os python version steps uses actions checkout name set up python uses actions setup python with python version matrix python version name display python version run python c import sys print sys version shell bash name install stumpy and other dependencies run pip install editable shell bash name run black run black check diff shell bash name run run shell bash name run coverage tests run test sh coverage shell bash uses actions upload artifact with name coverage results path coverage then for the second half of the job you can retrieve the file steps uses actions checkout uses actions download artifact with name my artifact or in our case steps uses actions checkout uses actions download artifact with name coverage results

| 0

|

13,466

| 15,951,447,774

|

IssuesEvent

|

2021-04-15 09:51:22

|

zammad/zammad

|

https://api.github.com/repos/zammad/zammad

|

closed

|

Attached msg-Files break filenames in Zammad (combination Exchange and Outlook)

|

bug mail processing prioritised by payment verified

|

<!--

Hi there - thanks for filing an issue. Please ensure the following things before creating an issue - thank you! 🤓

Since november 15th we handle all requests, except real bugs, at our community board.

Full explanation: https://community.zammad.org/t/major-change-regarding-github-issues-community-board/21

Please post:

- Feature requests

- Development questions

- Technical questions

on the board -> https://community.zammad.org !

If you think you hit a bug, please continue:

- Search existing issues and the CHANGELOG.md for your issue - there might be a solution already

- Make sure to use the latest version of Zammad if possible

- Add the `log/production.log` file from your system. Attention: Make sure no confidential data is in it!

- Please write the issue in english

- Don't remove the template - otherwise we will close the issue without further comments

- Ask questions about Zammad configuration and usage at our mailinglist. See: https://zammad.org/participate

Note: We always do our best. Unfortunately, sometimes there are too many requests and we can't handle everything at once. If you want to prioritize/escalate your issue, you can do so by means of a support contract (see https://zammad.com/pricing#selfhosted).

* The upper textblock will be removed automatically when you submit your issue *

-->

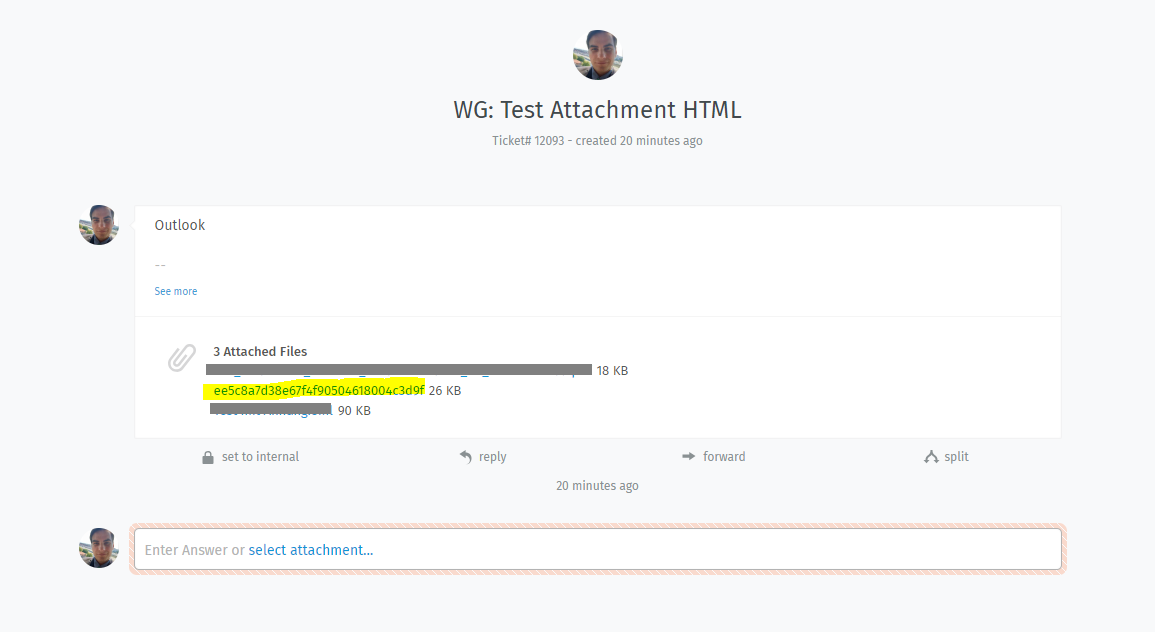

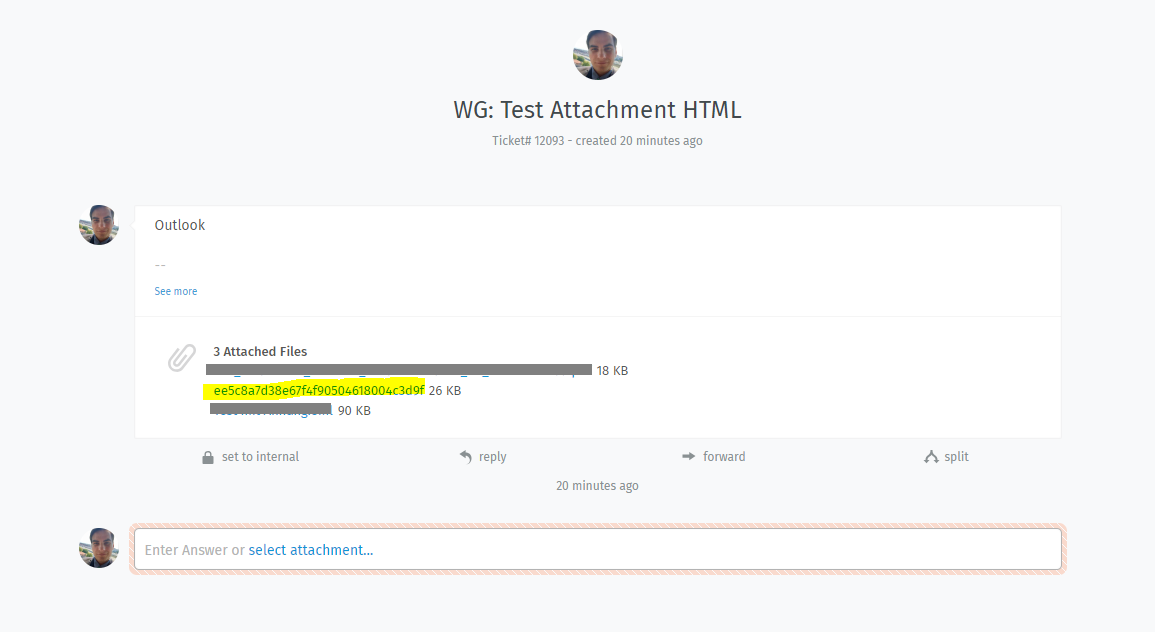

### Infos:

* Used Zammad version: 2.8 & develop

* Installation method (source, package, ..): rpm & Source

* Operating system: any

* Database + version: any

* Elasticsearch version: any

* Browser + version: any

* Outlook-Versions being affected for sure: Outlook 2013 & 2016 connected with Exchange

* Ticket-ID: #1034168, #1077162

### Expected behavior:

* When forwarding mails with attachments to Zammad, Zammad imports each attachment exactly as it was sent in the original, meaning it keeps the file name and file extension, if applicable.

### Actual behavior:

* In some special cases (Outlook + Exchange), the mail with the MSG attachment contains a unique ID (Content-ID). This causes Zammad to show the Content-ID instead of the file name, and without a file extension.

### Steps to reproduce the behavior:

* Create an e-mail with an attached .eml file

* Add a line with `Content-ID: <ee5c8a7d38e67f4f90504618004c3d9f@some.tld>` within the part where the message attachment is referenced

* Import this file into Zammad

For reference, here's what the attachment part needs to look like:

```

------=_NextPart_000_0067_01D4A43B.1C2130F0

Content-Type: message/rfc822

Content-Transfer-Encoding: 7bit

Content-Disposition: attachment

Content-ID: <ee5c8a7d38e67f4f90504618004c3d9f@some.tld>

Received: from mx2.zammad.com

by mx2.zammad.com with LMTP

id yOHIAN1gL1y4UwAAABwAvw

(envelope-from <alias@some.tld>)

for <mh@zammad.com>; Fri, 04 Jan 2019 13:34:21 +0000

Received: from mikasa.some.tld (mikasa.some.tld [x.x.x.x])

by mx2.zammad.com (Postfix) with ESMTPS id B89E9A02C6

for <mh@zammad.com>; Fri, 4 Jan 2019 13:34:20 +0000 (UTC)

Received: from EHLO mikasa.some.tld (mikasa.some.tld [x.x.x.x])

by mikasa.some.tld with ESMTPSA

(version=TLSv1.2 cipher=ECDHE-RSA-AES128-GCM-SHA256 bits=128)

; Fri, 4 Jan 2019 14:34:17 +0100

From: "Marcel Herrguth" <alias@some.tld>

To: <alias@another.tld>

Subject: Test Attachment Text

Date: Fri, 4 Jan 2019 14:34:17 +0100

Organization: The home of Anime

Message-ID: <ee5c8a7d38e67f4f90504618004c3d9f@some.tld>

MIME-Version: 1.0

Content-Type: multipart/mixed;

boundary="----=_NextPart_000_005E_01D4A43B.1C1DD590"

X-Mailer: Microsoft Outlook 16.0

Thread-Index: AQFpQ8vnu4DrfNDtB+p/XwBv/6/XNg==

This is a multipart message in MIME format.

------=_NextPart_000_005E_01D4A43B.1C1DD590

Content-Type: text/plain;

boundary="=_d1b5b47c2bb88af990b40cf2152b3d67";

charset="us-ascii"

Content-Transfer-Encoding: 7bit

680gh4r6s8t

04 h

640trs

684 h06

8rs4t0

640

--

```

Look in Zammad:

Yes I'm sure this is a bug and no feature request or a general question.
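The reproduction steps above can be sketched with Python's standard `email` package. This is only an illustration of the MIME situation, not Zammad's code (Zammad's mail parser is a separate implementation): a `message/rfc822` part that carries a `Content-ID` but no `filename` parameter gives a parser no file name to display. The shortened sample message, the boundary `outer`, and the helper `attachment_name` with its subject-based fallback are all assumptions made for this sketch.

```python
from email import message_from_string, policy

# Shortened, hypothetical stand-in for the mail from the issue: the
# message/rfc822 part has a Content-ID but no filename parameter.
RAW = """\
From: sender@some.tld
To: support@another.tld
Subject: Fwd: Test Attachment Text
MIME-Version: 1.0
Content-Type: multipart/mixed; boundary="outer"

--outer
Content-Type: text/plain; charset="us-ascii"

see attached

--outer
Content-Type: message/rfc822
Content-Disposition: attachment
Content-ID: <ee5c8a7d38e67f4f90504618004c3d9f@some.tld>

From: alias@some.tld
To: alias@another.tld
Subject: Test Attachment Text
MIME-Version: 1.0
Content-Type: text/plain; charset="us-ascii"

body of the attached mail

--outer--
"""


def attachment_name(part):
    """Hypothetical fallback: declared filename first, else the embedded subject."""
    name = part.get_filename()
    if name:
        return name
    if part.get_content_type() == "message/rfc822":
        inner = part.get_payload(0)  # the embedded message object
        subject = inner["Subject"]
        if subject:
            return f"{subject}.eml"
    return "unnamed"


msg = message_from_string(RAW, policy=policy.default)
part = next(p for p in msg.iter_parts() if p.get_content_type() == "message/rfc822")

print(part.get_filename())    # no filename was declared for this part
print(part["Content-ID"])     # the only identifier the part carries
print(attachment_name(part))  # name derived from the embedded subject
```

Deriving the name from the embedded subject is one possible design choice for such parts; whether Zammad should do this is a question for its maintainers.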

|

1.0

|

Attached msg-Files break filenames in Zammad (combination Exchange and Outlook) - <!--

Hi there - thanks for filing an issue. Please ensure the following things before creating an issue - thank you! 🤓

Since november 15th we handle all requests, except real bugs, at our community board.

Full explanation: https://community.zammad.org/t/major-change-regarding-github-issues-community-board/21

Please post:

- Feature requests

- Development questions

- Technical questions

on the board -> https://community.zammad.org !

If you think you hit a bug, please continue:

- Search existing issues and the CHANGELOG.md for your issue - there might be a solution already

- Make sure to use the latest version of Zammad if possible

- Add the `log/production.log` file from your system. Attention: Make sure no confidential data is in it!

- Please write the issue in english

- Don't remove the template - otherwise we will close the issue without further comments

- Ask questions about Zammad configuration and usage at our mailinglist. See: https://zammad.org/participate

Note: We always do our best. Unfortunately, sometimes there are too many requests and we can't handle everything at once. If you want to prioritize/escalate your issue, you can do so by means of a support contract (see https://zammad.com/pricing#selfhosted).

* The upper textblock will be removed automatically when you submit your issue *

-->

### Infos:

* Used Zammad version: 2.8 & develop

* Installation method (source, package, ..): rpm & Source

* Operating system: any

* Database + version: any

* Elasticsearch version: any

* Browser + version: any

* Outlook-Versions being affected for sure: Outlook 2013 & 2016 connected with Exchange

* Ticket-ID: #1034168, #1077162

### Expected behavior:

* When forwarding mails with attachments to Zammad, Zammad imports each attachment exactly as it was sent in the original, meaning it keeps the file name and file extension, if applicable.

### Actual behavior:

* In some special cases (Outlook + Exchange), the mail with the MSG attachment contains a unique ID (Content-ID). This causes Zammad to show the Content-ID instead of the file name, and without a file extension.

### Steps to reproduce the behavior:

* Create an e-mail with an attached .eml file

* Add a line with `Content-ID: <ee5c8a7d38e67f4f90504618004c3d9f@some.tld>` within the part where the message attachment is referenced

* Import this file into Zammad

For reference, here's what the attachment part needs to look like:

```

------=_NextPart_000_0067_01D4A43B.1C2130F0

Content-Type: message/rfc822

Content-Transfer-Encoding: 7bit

Content-Disposition: attachment

Content-ID: <ee5c8a7d38e67f4f90504618004c3d9f@some.tld>

Received: from mx2.zammad.com

by mx2.zammad.com with LMTP

id yOHIAN1gL1y4UwAAABwAvw

(envelope-from <alias@some.tld>)

for <mh@zammad.com>; Fri, 04 Jan 2019 13:34:21 +0000

Received: from mikasa.some.tld (mikasa.some.tld [x.x.x.x])

by mx2.zammad.com (Postfix) with ESMTPS id B89E9A02C6

for <mh@zammad.com>; Fri, 4 Jan 2019 13:34:20 +0000 (UTC)

Received: from EHLO mikasa.some.tld (mikasa.some.tld [x.x.x.x])

by mikasa.some.tld with ESMTPSA

(version=TLSv1.2 cipher=ECDHE-RSA-AES128-GCM-SHA256 bits=128)

; Fri, 4 Jan 2019 14:34:17 +0100

From: "Marcel Herrguth" <alias@some.tld>

To: <alias@another.tld>

Subject: Test Attachment Text

Date: Fri, 4 Jan 2019 14:34:17 +0100

Organization: The home of Anime

Message-ID: <ee5c8a7d38e67f4f90504618004c3d9f@some.tld>

MIME-Version: 1.0

Content-Type: multipart/mixed;

boundary="----=_NextPart_000_005E_01D4A43B.1C1DD590"

X-Mailer: Microsoft Outlook 16.0

Thread-Index: AQFpQ8vnu4DrfNDtB+p/XwBv/6/XNg==

This is a multipart message in MIME format.

------=_NextPart_000_005E_01D4A43B.1C1DD590

Content-Type: text/plain;

boundary="=_d1b5b47c2bb88af990b40cf2152b3d67";

charset="us-ascii"

Content-Transfer-Encoding: 7bit

680gh4r6s8t

04 h

640trs

684 h06

8rs4t0

640

--

```

Look in Zammad:

Yes I'm sure this is a bug and no feature request or a general question.

|

process

|

attached msg files break filenames in zammad combination exchange and outlook hi there thanks for filing an issue please ensure the following things before creating an issue thank you 🤓 since november we handle all requests except real bugs at our community board full explanation please post feature requests development questions technical questions on the board if you think you hit a bug please continue search existing issues and the changelog md for your issue there might be a solution already make sure to use the latest version of zammad if possible add the log production log file from your system attention make sure no confidential data is in it please write the issue in english don t remove the template otherwise we will close the issue without further comments ask questions about zammad configuration and usage at our mailinglist see note we always do our best unfortunately sometimes there are too many requests and we can t handle everything at once if you want to prioritize escalate your issue you can do so by means of a support contract see the upper textblock will be removed automatically when you submit your issue infos used zammad version develop installation method source package rpm source operating system any database version any elasticsearch version any browser version any outlook versions being affected for sure outlook connected with exchange ticket id expected behavior when forwarding mails with attachments to zammad zammad will import the attachment exact like the original been send meaning it will keep file extension and file name if applicable actual behavior in some special cases outlook exchange the mail with msg attachment will contain a unique id content id this causes zammad to show the content id instead of the file name and without file extension steps to reproduce the behavior create a e mail with an attached eml file add a line with content id within the part where the message attachment is referenced import this file into zammad for 
reference here s what the attachment part needs to look like nextpart content type message content transfer encoding content disposition attachment content id received from zammad com by zammad com with lmtp id envelope from for fri jan received from mikasa some tld mikasa some tld by zammad com postfix with esmtps id for fri jan utc received from ehlo mikasa some tld mikasa some tld by mikasa some tld with esmtpsa version cipher ecdhe rsa gcm bits fri jan from marcel herrguth to subject test attachment text date fri jan organization the home of anime message id mime version content type multipart mixed boundary nextpart x mailer microsoft outlook thread index p xwbv xng this is a multipart message in mime format nextpart content type text plain boundary charset us ascii content transfer encoding h look in zammad yes i m sure this is a bug and no feature request or a general question

| 1

|

2,182

| 5,032,103,696

|

IssuesEvent

|

2016-12-16 10:02:29

|

DevExpress/testcafe-hammerhead

|

https://api.github.com/repos/DevExpress/testcafe-hammerhead

|

closed

|

Html processor incorrectly processes invalid html

|

SYSTEM: resource processing TYPE: bug

|

```html

<table border='0' class='EditTable' id='TblGrid_domainRecordTablesARecordsGrid_2'>

<tbody>

<tr id='Act_Buttons'>

<td class='navButton ui-widget-content'>

<a href='javascript:void(0)' id='pData' class='fm-button ui-state-default ui-corner-left'>

<span class='ui-icon ui-icon-triangle-1-w'></span></div>

<a href='javascript:void(0)' id='nData' class='fm-button ui-state-default ui-corner-right'>

<span class='ui-icon ui-icon-triangle-1-e'></span></div>

</td>

<td class='EditButton ui-widget-content'>

<a href='javascript:void(0)' id='sData' class='fm-button ui-state-default ui-corner-all'>Submit</a>

<a href='javascript:void(0)' id='cData' class='fm-button ui-state-default ui-corner-all'>Close</a>

</td>

</tr>

<tr style='display:none' class='binfo'>

<td class='bottominfo' colspan='2'></td>

</tr>

</tbody>

</table>

```

|

1.0

|

Html processor incorrectly processes invalid html - ```html

<table border='0' class='EditTable' id='TblGrid_domainRecordTablesARecordsGrid_2'>

<tbody>

<tr id='Act_Buttons'>

<td class='navButton ui-widget-content'>

<a href='javascript:void(0)' id='pData' class='fm-button ui-state-default ui-corner-left'>

<span class='ui-icon ui-icon-triangle-1-w'></span></div>

<a href='javascript:void(0)' id='nData' class='fm-button ui-state-default ui-corner-right'>

<span class='ui-icon ui-icon-triangle-1-e'></span></div>

</td>

<td class='EditButton ui-widget-content'>

<a href='javascript:void(0)' id='sData' class='fm-button ui-state-default ui-corner-all'>Submit</a>

<a href='javascript:void(0)' id='cData' class='fm-button ui-state-default ui-corner-all'>Close</a>

</td>

</tr>

<tr style='display:none' class='binfo'>

<td class='bottominfo' colspan='2'></td>

</tr>

</tbody>

</table>

```

|

process

|

html processor incorrectly processes invalid html html submit close

| 1

|

13,684

| 16,442,174,779

|

IssuesEvent

|

2021-05-20 15:27:02

|

encode/uvicorn

|

https://api.github.com/repos/encode/uvicorn

|

closed

|

uvicorn.run() makes a shell program not killable by CTRL+C

|

multiprocessing

|

I'm developing a command line tool that hosts a uvicorn server, and it would be very convenient to terminate the process with CTRL+C.

For some reason, launching a server causes the program to not terminate properly on CTRL+C.

I need to kill it by sending a "kill -9" to the process in order to free the terminal.

=======================

I'm using uvicorn as an embedded server within a command line tool, and this is the log output:

```

INFO: Started server process [2120330]

INFO: Waiting for application startup.

INFO: ASGI 'lifespan' protocol appears unsupported.

INFO: Application startup complete.

INFO: Uvicorn running on http://127.0.0.1:5000 (Press CTRL+C to quit)

^CINFO: Shutting down

INFO: Finished server process [2120330]

```

=======================

Although the log says the server process is terminated, the program is still stuck and needs a kill -9 to terminate.

|

1.0

|

uvicorn.run() makes a shell program not killable by CTRL+C - I'm developing a command line tool that hosts a uvicorn server, and it would be very convenient to terminate the process with CTRL+C.

For some reason, launching a server causes the program to not terminate properly on CTRL+C.

I need to kill it by sending a "kill -9" to the process in order to free the terminal.

=======================

I'm using uvicorn as an embedded server within a command line tool, and this is the log output:

```

INFO: Started server process [2120330]

INFO: Waiting for application startup.

INFO: ASGI 'lifespan' protocol appears unsupported.

INFO: Application startup complete.

INFO: Uvicorn running on http://127.0.0.1:5000 (Press CTRL+C to quit)

^CINFO: Shutting down

INFO: Finished server process [2120330]

```

=======================

Although the log says the server process is terminated, the program is still stuck and needs a kill -9 to terminate.

|

process

|

uvicorn run makes a shell program not killable by ctrl c i m developing a command line tool that hosts a uvicorn server and it would be very convenient to terminate the process with ctrl c for some reason launching a sevter causes the program to not properly terminate on ctrl x i need to kill it by sending a kill to the process in order to free the terminal i m using uvicorn as an embeded server within a command line tool and info started server process info waiting for application startup info asgi lifespan protocol appears unsupported info application startup complete info uvicorn running on press ctrl c to quit cinfo shutting down info finished server process although the log says the server process is terminated the programm is still stuck and needs a kill to terminate

| 1

|

1,884

| 4,712,357,522

|

IssuesEvent

|

2016-10-14 16:29:59

|

material-motion/material-motion-family-pop-swift

|

https://api.github.com/repos/material-motion/material-motion-family-pop-swift

|

closed

|

Cut the v1.0.0 release

|

Process

|

This must be run by a @material-motion/core-team member.

`mdm release cut`

|

1.0

|

Cut the v1.0.0 release - This must be run by a @material-motion/core-team member.

`mdm release cut`

|

process

|

cut the release this must be run by a material motion core team member mdm release cut

| 1

|

20,951

| 27,812,769,330

|

IssuesEvent

|

2023-03-18 10:21:46

|

nextflow-io/nextflow

|

https://api.github.com/repos/nextflow-io/nextflow

|

closed

|

Textual input wildcard.

|

stale lang/processes

|

## New Feature & Justifying Scenario

Scenarios arise where an arbitrary number of same-named files get generated by some process which are then passed as an input to a subsequent process. The present wildcards `*` and `?` solve the problem of uniquely naming the links to or copies of these files in the downstream process, however, I am unaware of the capability of leaving the full name pattern of the files underspecified with a text wildcard.

This is technically possible with `*` when it appears alone in the file string of an input declaration, and I would love it if this could be made more well-defined, either as a separate character or as an extension of that property of `*` to `seq*`-form inputs (for backward-compatibility purposes, I imagine the former is preferable).

Motivation for this, instead of fully specifying the filename with a numeric wildcard only for multiple instances of that filename, is two-fold: firstly, it can be very strongly preferable to retain some unique string in the filename that is only discovered at run-time (e.g. sample ID) for purposes of analysis outside of Nextflow or by subsequent workflows. Secondly, it allows for improved robustness of the wildcard-based input method in the case of using modules you are perhaps not in control of in a context where you might want to use one or other that use slightly different naming conventions - right now this has to be resolved with extra boilerplate for each different naming convention.

## Implementation

Let's say the feature were to use a new character and let's choose to represent this capability with `@`. Consider the following patterns used in an input declaration:

1. `"@.report"`

2. `"*.report"`

3. `"@?.report"`

4. `"@*.report"`

5. `"@???.report"`

6. `"@*"`

7. `"@?"`

8. `"@???"`

The first and second would behave the same for a single file, while the first could either throw the "input file name collision" error for multiple files or continue to behave the same as `*` (I prefer the former, as it provides a more consistent behaviour).

For a single filename, the third and fourth would behave identically and for some number of files named "X.report" would either give as input "X.report" in the case of one file or "X1.report", "X2.report", "X3.report", etc in the case of multiple files. The fifth would do the same but with the usual padding zeroes of '?': "X001.report", "X002.report", "X003.report" etc.

The final three would be for consistency to the limiting case: it shouldn't, in the limit, be necessary to specify anything about the filename, but simply take all filenames passed to the input and simply append the integer as needed. This would, for the case of files with the name "X.report" yield "X.report1", "X.report2", "X.report3", and so on.

This behaviour of `@` would also allow multiple filenames to be passed to the input, say some number of files either named "X.report" or "Y.report". The third and fourth would either give as input "X.report" and "Y.report" in the case of one file of each, or "X1.report", "X2.report", "X3.report", "Y1.report", "Y2.report", etc in the case of multiple files of each. The fifth would do the same but with the usual padding zeroes of '?': "X001.report", "X002.report", "X003.report", "Y001.report", "Y002.report", etc. The final three would look similar but with the incrementing integer placed at the end.
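The proposed naming rules can be sketched in a few lines of Python. This is a minimal illustration of the behaviour described above, not Nextflow code: the function `stage_names` is hypothetical, it only handles patterns that start with `@`, and it assumes that a name occurring only once is left unnumbered for every counter form (the text states this for `?` and `*`; extending it to `???` is an assumption).

```python
import re
from collections import defaultdict


def stage_names(pattern, filenames):
    """Hypothetical sketch: staged names for a proposed '@' pattern."""
    m = re.fullmatch(r"@(\*|\?+)?(.*)", pattern)
    if m is None:
        raise ValueError("pattern must start with '@'")
    counter, suffix = m.group(1), m.group(2)

    # '@' captures everything in front of the literal suffix.
    stems = []
    totals = defaultdict(int)
    for name in filenames:
        if not name.endswith(suffix):
            raise ValueError(f"{name!r} does not match {pattern!r}")
        stem = name[: len(name) - len(suffix)]
        stems.append(stem)
        totals[stem] += 1

    seen = defaultdict(int)
    staged = []
    for stem in stems:
        if totals[stem] == 1:
            # A unique name is kept unchanged (assumption for '???').
            staged.append(stem + suffix)
            continue
        if counter is None:
            # Bare '@' with repeated names: the collision error preferred above.
            raise ValueError("input file name collision")
        seen[stem] += 1
        # '?' runs are zero-padded to their length; '*' is not padded.
        width = len(counter) if counter.startswith("?") else 1
        staged.append(f"{stem}{seen[stem]:0{width}d}{suffix}")
    return staged


print(stage_names("@?.report", ["X.report"] * 3))
print(stage_names("@???.report", ["X.report", "X.report", "Y.report", "Y.report"]))
print(stage_names("@*", ["X.report", "X.report"]))
```

Numbering each captured name separately is what lets "X.report" and "Y.report" files share a single input declaration in this sketch.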

|

1.0

|

Textual input wildcard. - ## New Feature & Justifying Scenario

Scenarios arise where an arbitrary number of same-named files get generated by some process which are then passed as an input to a subsequent process. The present wildcards `*` and `?` solve the problem of uniquely naming the links to or copies of these files in the downstream process, however, I am unaware of the capability of leaving the full name pattern of the files underspecified with a text wildcard.

This is technically possible with `*` when it appears alone in the file string of an input declaration, and I would love it if this could be made more well-defined, either as a separate character or as an extension of that property of `*` to `seq*`-form inputs (for backward-compatibility purposes, I imagine the former is preferable).

Motivation for this, instead of fully specifying the filename with a numeric wildcard only for multiple instances of that filename, is two-fold: firstly, it can be very strongly preferable to retain some unique string in the filename that is only discovered at run-time (e.g. sample ID) for purposes of analysis outside of Nextflow or by subsequent workflows. Secondly, it allows for improved robustness of the wildcard-based input method in the case of using modules you are perhaps not in control of in a context where you might want to use one or other that use slightly different naming conventions - right now this has to be resolved with extra boilerplate for each different naming convention.

## Implementation

Let's say the feature were to use a new character and let's choose to represent this capability with `@`. Consider the following patterns used in an input declaration:

1. `"@.report"`

2. `"*.report"`

3. `"@?.report"`

4. `"@*.report"`

5. `"@???.report"`

6. `"@*"`

7. `"@?"`

8. `"@???"`

The first and second would behave the same for a single file, while the first could either throw the "input file name collision" error for multiple files or continue to behave the same as `*` (I prefer the former, as it provides a more consistent behaviour).

For a single filename, the third and fourth would behave identically and for some number of files named "X.report" would either give as input "X.report" in the case of one file or "X1.report", "X2.report", "X3.report", etc in the case of multiple files. The fifth would do the same but with the usual padding zeroes of '?': "X001.report", "X002.report", "X003.report" etc.

The final three would be for consistency to the limiting case: it shouldn't, in the limit, be necessary to specify anything about the filename, but simply take all filenames passed to the input and simply append the integer as needed. This would, for the case of files with the name "X.report" yield "X.report1", "X.report2", "X.report3", and so on.

This behaviour of `@` would also allow multiple filenames to be passed to the input, say some number of files either named "X.report" or "Y.report". The third and fourth would either give as input "X.report" and "Y.report" in the case of one file of each, or "X1.report", "X2.report", "X3.report", "Y1.report", "Y2.report", etc in the case of multiple files of each. The fifth would do the same but with the usual padding zeroes of '?': "X001.report", "X002.report", "X003.report", "Y001.report", "Y002.report", etc. The final three would look similar but with the incrementing integer placed at the end.

|

process

|

textual input wildcard new feature justifying scenario scenarios arise where an arbitrary number of same named files get generated by some process which are then passed as an input to a subsequent process the present wildcards and solve the problem of uniquely naming the links to or copies of these files in the downstream process however i am unaware of the capability of leaving the full name pattern of the files underspecified with a text wildcard this is technically possible in the case of for the case of it appearing alone in the file string in an input declaration and i would love it if this could be made more well defined either as a separate character or as an extension of that property of to seq form inputs for bc purposes i imagine the former is preferable motivation for this instead of fully specifying the filename with a numeric wildcard only for multiple instances of that filename is two fold firstly it can be very strongly preferable to retain some unique string in the filename that is only discovered at run time e g sample id for purposes of analysis outside of nextflow or by subsequent workflows secondly it allows for improved robustness of the wildcard based input method in the case of using modules you are perhaps not in control of in a context where you might want to use one or other that use slightly different naming conventions right now this has to be resolved with extra boilerplate for each different naming convention implementation let s say the feature were to use a new character and let s choose to represent this capability with consider the following patterns used in an input declaration report report report report report the first and second would behave the same for a single file while the first could either throw the input file name collision error for multiple files or continue to behave the same as i prefer the former as it provides a more consistent behaviour for a single filename the third and fourth would behave identically and for 
some number of files named x report would either give as input x report in the case of one file or report report report etc in the case of multiple files the fifth would do the same but with the usual padding zeroes of report report report etc the final three would be for consistency to the limiting case it shouldn t in the limit be necessary to specify anything about the filename but simply take all filenames passed to the input and simply append the integer as needed this would for the case of files with the name x report yield x x x and so on this behaviour of would also allow multiple filenames to be passed to the input say some number of files either named x report or y report the third and fourth would either give as input x report and y report in the case of one file of each or report report report report report etc in the case of multiple files of each the fifth would do the same but with the usual padding zeroes of report report report report report etc the final three would look similar but with the incrementing integer placed at the end

| 1

|

172,368

| 13,303,156,232

|

IssuesEvent

|

2020-08-25 15:09:11

|

vgstation-coders/vgstation13

|

https://api.github.com/repos/vgstation-coders/vgstation13

|

closed

|

Hitting a blob with a fireball causes infinite explosions and lags the game to death

|

Bug / Fix Needs Moar Testing ⚠️ OH GOD IT'S LOOSE ⚠️

|

Good thing blob and wizard will never fire together, r-right?

|

1.0

|

Hitting a blob with a fireball causes infinite explosions and lags the game to death - Good thing blob and wizard will never fire together, r-right?

|

non_process

|

hitting a blob with a fireball causes infinite explosions and lags the game to death good thing blob and wizard will never fire together r right

| 0

|

19,868

| 26,280,295,673

|

IssuesEvent

|

2023-01-07 08:01:48

|

nodejs/node

|

https://api.github.com/repos/nodejs/node

|

closed

|

AbortController/AbortSignal Triggering 'Error' Event in Child Process

|

child_process good first issue

|

### Version

v18.9.0

### Platform

Linux Mint 20.1

### Subsystem

child_process

### What steps will reproduce the bug?

1. Create a child process with an AbortSignal attached.

2. Abort the child process via AbortSignal before the child process emits its `exit` event.

3. This will trigger the child process's `error` event.

The following code will generate the error for `spawn`, `execFile`, and `exec`:

```js
const { spawn, exec, execFile } = require("child_process");

const tests = [
  { commandName: "spawn", command: spawn },
  { commandName: "execFile", command: execFile },
  { commandName: "exec", command: exec }
];

(async () => {
  for ( let { commandName, command } of tests ) {
    const abortController = new AbortController();
    await new Promise( resolve => {
      // I used 'ls' but the command does not seem to affect the result
      const child = command( "ls", { signal: abortController.signal } )
        .on( "error", ( err ) =>
          console.log({ event: `${commandName}.error`, killed: child.killed, err }) )
        .on( "exit", ( code, signal ) => {
          console.log({ event: `${commandName}.exit`, code, signal, killed: child.killed });
        } )
        .on( "close", ( code, signal ) => {
          console.log({ event: `${commandName}.close`, code, signal, killed: child.killed });
          resolve();
        } )
        .on( "spawn", () => {
          console.log({ event: `${commandName}.spawn`, killed: child.killed });
          // child.kill( "SIGTERM" ); // child will NOT cause an 'error' event
          abortController.abort(); // child will cause an 'error' event
        } );
    } );
  }
})();
```

### How often does it reproduce? Is there a required condition?

This occurs every time a child process is aborted via AbortSignal before it has emitted its `exit` event.

### What is the expected behavior?

```console
{ event: 'spawn.spawn', killed: false }
{ event: 'spawn.exit', code: null, signal: 'SIGTERM', killed: true }
{ event: 'spawn.close', code: null, signal: 'SIGTERM', killed: true }
...
Repeated for execFile and exec
```

### What do you see instead?

```console
{ event: 'spawn.spawn', killed: false }
{
  event: 'spawn.error',
  killed: true,
  err: AbortError: The operation was aborted
      at abortChildProcess (node:child_process:706:27)
      at AbortSignal.onAbortListener (node:child_process:776:7)
      at [nodejs.internal.kHybridDispatch] (node:internal/event_target:731:20)
      at AbortSignal.dispatchEvent (node:internal/event_target:673:26)
      at abortSignal (node:internal/abort_controller:292:10)
      at AbortController.abort (node:internal/abort_controller:322:5)
      at ChildProcess.<anonymous> (/index.js:30:29)
      at ChildProcess.emit (node:events:513:28)
      at onSpawnNT (node:internal/child_process:481:8)
      at process.processTicksAndRejections (node:internal/process/task_queues:81:21) {
    code: 'ABORT_ERR'
  }
}
{ event: 'spawn.exit', code: 0, signal: null, killed: true }
{ event: 'spawn.close', code: 0, signal: null, killed: true }
...
Repeated for execFile and exec
```

### Additional information

According to the documentation a child process `error` event occurs only on:

> * The process could not be spawned, or

> * The process could not be killed, or

> * Sending a message to the child process failed.

>

> [https://nodejs.org/api/child_process.html#event-error](https://nodejs.org/api/child_process.html#event-error)

None of these conditions are true when the process is aborted via AbortSignal. If the child process is terminated via a `child.kill( "SIGTERM" )` it does not trigger the `error` event.

I am assuming that `AbortController.abort()` is supposed to act like `child.kill( "SIGTERM" )` and not that the documentation is out-of-date.
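In the meantime, a minimal sketch of how a caller might tell a deliberate abort apart from a genuine failure inside the `error` listener. It assumes the error carries `name: 'AbortError'` and `code: 'ABORT_ERR'`, as in the output above. `isAbortError` and `spawnAbortable` are hypothetical helper names, not part of Node's API.

```js
const { spawn } = require("child_process");

// Hypothetical helper: true when the error looks like the AbortError shown above.
function isAbortError(err) {
  return Boolean(err) && (err.name === "AbortError" || err.code === "ABORT_ERR");
}

// Hypothetical wrapper: spawns a child and forwards only non-abort errors to onFailure.
function spawnAbortable(file, args, signal, onFailure) {
  const child = spawn(file, args, { signal });
  child.on("error", (err) => {
    if (isAbortError(err)) return; // deliberate abort, treated like child.kill()
    onFailure(err);
  });
  return child;
}
```

This only filters the event on the caller's side; it does not change the exit code or signal reported by `exit` and `close`.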

|

1.0

|

AbortController/AbortSignal Triggering 'Error' Event in Child Process - ### Version

v18.9.0

### Platform

Linux Mint 20.1

### Subsystem

child_process

### What steps will reproduce the bug?

1. Create a child process with an AbortSignal attached.