| Unnamed: 0 (int64, 0–832k) | id (float64, 2.49B–32.1B) | type (stringclasses, 1 value) | created_at (stringlengths 19–19) | repo (stringlengths 7–112) | repo_url (stringlengths 36–141) | action (stringclasses, 3 values) | title (stringlengths 1–744) | labels (stringlengths 4–574) | body (stringlengths 9–211k) | index (stringclasses, 10 values) | text_combine (stringlengths 96–211k) | label (stringclasses, 2 values) | text (stringlengths 96–188k) | binary_label (int64, 0–1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

386,558 | 26,689,588,351 | IssuesEvent | 2023-01-27 02:37:08 | RalphHightower/RalphHightower | https://api.github.com/repos/RalphHightower/RalphHightower | opened | MurdaughAlex_TimeLine: P&C Podcasts | documentation | **What page should this be added to?**<br>

MurdaughAlex_TimeLine.md

**What section/heading should this be added to?**<br>

Understanding Murdaugh Podcasts

**Include the Markdown text that is to be added below:**<br>

| JANUARY 25TH, 2023 | 18 | [Murder trial officially begins with opening statements](https://u... | 1.0 | MurdaughAlex_TimeLine: P&C Podcasts - **What page should this be added to?**<br>

MurdaughAlex_TimeLine.md

**What section/heading should this be added to?**<br>

Understanding Murdaugh Podcasts

**Include the Markdown text that is to be added below:**<br>

| JANUARY 25TH, 2023 | 18 | [Murder trial officially be... | non_process | murdaughalex timeline p c podcasts what page should this be added to murdaughalex timeline md what section heading should this be added to understanding murdaugh podcasts include the markdown text that is to be added below january january descri... | 0 |

7,574 | 10,685,851,408 | IssuesEvent | 2019-10-22 13:27:11 | linnovate/root | https://api.github.com/repos/linnovate/root | closed | creating a task in my tasks doesnt assign the user as the assignee automatically | 2.0.7 Fixed Process bug | go to my tasks

create a task

the user that created the task isnt assigned to it automatically | 1.0 | creating a task in my tasks doesnt assign the user as the assignee automatically - go to my tasks

create a task

the user that created the task isnt assigned to it automatically | process | creating a task in my tasks doesnt assign the user as the assignee automatically go to my tasks create a task the user that created the task isnt assigned to it automatically | 1 |

6,774 | 9,913,991,491 | IssuesEvent | 2019-06-28 13:21:33 | googleapis/google-cloud-java | https://api.github.com/repos/googleapis/google-cloud-java | closed | mvn versions:set warns duplicate dependencies declaration | dependencies type: process | (Because usually I don't need to run "mvn versions:set", this is not blocking me)

#### Environment details

Git HEAD

#### Steps to reproduce

Run `mvn versions:set -DnextSnapshot=true -B` at root directory

#### Code example

N/A

#### Stack trace

```

suztomo@suxtomo24:~/google-cloud-java/google-clo... | 1.0 | mvn versions:set warns duplicate dependencies declaration - (Because usually I don't need to run "mvn versions:set", this is not blocking me)

#### Environment details

Git HEAD

#### Steps to reproduce

Run `mvn versions:set -DnextSnapshot=true -B` at root directory

#### Code example

N/A

#### Stack ... | process | mvn versions set warns duplicate dependencies declaration because usually i don t need to run mvn versions set this is not blocking me environment details git head steps to reproduce run mvn versions set dnextsnapshot true b at root directory code example n a stack ... | 1 |

12,009 | 5,132,709,456 | IssuesEvent | 2017-01-11 00:03:19 | Draccoz/twc | https://api.github.com/repos/Draccoz/twc | closed | Preserve documentation | bug builder parser | twc should preserve element and property documentation. For elements, [Polymer documentation](https://www.polymer-project.org/1.0/docs/tools/documentation#element-summaries) gives two possible ways:

1. as HTML comment above `<dom-module>`

1. if absent, as JS comment before `Polymer()` call

With twc, there is no... | 1.0 | Preserve documentation - twc should preserve element and property documentation. For elements, [Polymer documentation](https://www.polymer-project.org/1.0/docs/tools/documentation#element-summaries) gives two possible ways:

1. as HTML comment above `<dom-module>`

1. if absent, as JS comment before `Polymer()` call... | non_process | preserve documentation twc should preserve element and property documentation for elements gives two possible ways as html comment above if absent as js comment before polymer call with twc there is no just the template it may work to always have just the js comment even when ... | 0 |

11,261 | 14,046,812,648 | IssuesEvent | 2020-11-02 05:46:37 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | Standardize the errors relate to coprocessor | sig/coprocessor type/enhancement | ## Development Task

Standardize the errors relate to coprocessor | 1.0 | Standardize the errors relate to coprocessor - ## Development Task

Standardize the errors relate to coprocessor | process | standardize the errors relate to coprocessor development task standardize the errors relate to coprocessor | 1 |

17,507 | 23,317,687,431 | IssuesEvent | 2022-08-08 13:51:31 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | referentialIntegrity=prisma: Delete parent relation failing when using composite id | bug/2-confirmed kind/bug process/candidate tech/engines team/client topic: database-provider/planetscale topic: referential actions topic: referentialIntegrity | ### Bug description

I am trying to move to referential actions when deleting but get failures. If i don't add anything to `onDelete` the event gets deleted but not the sessions (whats the default action when using `referentialIntegrity = "prisma"`?), adding `onDelete: Cascade` I get an error `FailedPrecondition desc... | 1.0 | referentialIntegrity=prisma: Delete parent relation failing when using composite id - ### Bug description

I am trying to move to referential actions when deleting but get failures. If i don't add anything to `onDelete` the event gets deleted but not the sessions (whats the default action when using `referentialInteg... | process | referentialintegrity prisma delete parent relation failing when using composite id bug description i am trying to move to referential actions when deleting but get failures if i don t add anything to ondelete the event gets deleted but not the sessions whats the default action when using referentialinteg... | 1 |

210,237 | 7,187,164,758 | IssuesEvent | 2018-02-02 03:20:28 | xcat2/xcat-core | https://api.github.com/repos/xcat2/xcat-core | closed | [OpenBMC] openbmc in python framework refactor | priority:high sprint1 type:feature | * [ ] redefine the interface, framework for openbmc in python

design and coding

* [ ] move rpower with new framework | 1.0 | [OpenBMC] openbmc in python framework refactor - * [ ] redefine the interface, framework for openbmc in python

design and coding

* [ ] move rpower with new framework | non_process | openbmc in python framework refactor redefine the interface framework for openbmc in python design and coding move rpower with new framework | 0 |

17,885 | 23,846,678,553 | IssuesEvent | 2022-09-06 14:30:39 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | [processor/tailsampling] SpanCount sampler makes incorrect decision on high volume of spans | bug processor/tailsampling | **Describe the bug**

Sometimes, the SpanCount sampler makes the incorrect decision even though there's many spans coming in for the same trace id. This is more likely to happen when there's a high volume of spans and traces coming in to the processor.

After looking at the code, it seems this might be caused by the ... | 1.0 | [processor/tailsampling] SpanCount sampler makes incorrect decision on high volume of spans - **Describe the bug**

Sometimes, the SpanCount sampler makes the incorrect decision even though there's many spans coming in for the same trace id. This is more likely to happen when there's a high volume of spans and traces c... | process | spancount sampler makes incorrect decision on high volume of spans describe the bug sometimes the spancount sampler makes the incorrect decision even though there s many spans coming in for the same trace id this is more likely to happen when there s a high volume of spans and traces coming in to the process... | 1 |

326,655 | 9,958,975,804 | IssuesEvent | 2019-07-06 01:14:06 | default51400/familynet | https://api.github.com/repos/default51400/familynet | opened | Create database using EF | Database high priority question task | Need to write all models with their data annotation (for example, reqiured, etc.). Create a database with the entire context, use the template repository (you need to clarify what Slava meant) | 1.0 | Create database using EF - Need to write all models with their data annotation (for example, reqiured, etc.). Create a database with the entire context, use the template repository (you need to clarify what Slava meant) | non_process | create database using ef need to write all models with their data annotation for example reqiured etc create a database with the entire context use the template repository you need to clarify what slava meant | 0 |

602,362 | 18,467,561,315 | IssuesEvent | 2021-10-17 06:27:10 | AY2122S1-CS2113-T16-3/tp | https://api.github.com/repos/AY2122S1-CS2113-T16-3/tp | opened | List possible recipes I can cook based on ingredients I have | type.Story priority.High | As a user, I can check which recipes I can cook based on the ingredients I currently have, so that I can save time on manually checking the ingredients and deciding on the recipe. | 1.0 | List possible recipes I can cook based on ingredients I have - As a user, I can check which recipes I can cook based on the ingredients I currently have, so that I can save time on manually checking the ingredients and deciding on the recipe. | non_process | list possible recipes i can cook based on ingredients i have as a user i can check which recipes i can cook based on the ingredients i currently have so that i can save time on manually checking the ingredients and deciding on the recipe | 0 |

17,544 | 23,356,530,047 | IssuesEvent | 2022-08-10 07:57:13 | inmanta/inmanta-dashboard | https://api.github.com/repos/inmanta/inmanta-dashboard | opened | implement package-cleanup script | process | This script in `package.json` should clean up dev builds older than 30 days. This can probably just be copied over from the web-console, with perhaps a few name changes. | 1.0 | implement package-cleanup script - This script in `package.json` should clean up dev builds older than 30 days. This can probably just be copied over from the web-console, with perhaps a few name changes. | process | implement package cleanup script this script in package json should clean up dev builds older than days this can probably just be copied over from the web console with perhaps a few name changes | 1 |

2,041 | 4,848,468,534 | IssuesEvent | 2016-11-10 17:35:29 | Alfresco/alfresco-ng2-components | https://api.github.com/repos/Alfresco/alfresco-ng2-components | opened | After cancelling a process list is not refreshed | browser: all bug comp: activiti-processList | 1. Cancel a process

2. List is not refreshed

3. Navigate away from page and back

4. Process cancelled is now not in list | 1.0 | After cancelling a process list is not refreshed - 1. Cancel a process

2. List is not refreshed

3. Navigate away from page and back

4. Process cancelled is now not in list | process | after cancelling a process list is not refreshed cancel a process list is not refreshed navigate away from page and back process cancelled is now not in list | 1 |

43,310 | 11,202,134,943 | IssuesEvent | 2020-01-04 09:56:39 | helix-toolkit/helix-toolkit | https://api.github.com/repos/helix-toolkit/helix-toolkit | closed | MeshGeometryHelper.FindSharpEdges() does not work | MeshBuilder bug you take it | This test fails:

var meshBuilder = new MeshBuilder();

meshBuilder.AddCube();

var meshGeometry3D = meshBuilder.ToMeshGeometry3D();

IntCollection edges = meshGeometry3D.FindSharpEdges(60);

// A cube has 12 edges, 2 points for one edge, there should be 24 indices in the collection.

Assert.T... | 1.0 | MeshGeometryHelper.FindSharpEdges() does not work - This test fails:

var meshBuilder = new MeshBuilder();

meshBuilder.AddCube();

var meshGeometry3D = meshBuilder.ToMeshGeometry3D();

IntCollection edges = meshGeometry3D.FindSharpEdges(60);

// A cube has 12 edges, 2 points for one edge, there s... | non_process | meshgeometryhelper findsharpedges does not work this test fails var meshbuilder new meshbuilder meshbuilder addcube var meshbuilder intcollection edges findsharpedges a cube has edges points for one edge there should be indices in the collection ... | 0 |

11,017 | 13,804,135,002 | IssuesEvent | 2020-10-11 07:24:25 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Mono.Linker.LinkerFatalErrorException: "Error processing method" at GetValueNodeFromGenericArgument | area-System.Diagnostics.Process untriaged | Hello,

Can't publish Blazor WebAssembly application with some specific code.

### Steps To Reproduce

0. Install .NET 5 RC1

1. Create some project:

```

dotnet new blazorwasm

```

2. Add some new *.cs file with following C# code:

```c#

using System.Text.Json;

namespace SomeNamespace

{

publ... | 1.0 | Mono.Linker.LinkerFatalErrorException: "Error processing method" at GetValueNodeFromGenericArgument - Hello,

Can't publish Blazor WebAssembly application with some specific code.

### Steps To Reproduce

0. Install .NET 5 RC1

1. Create some project:

```

dotnet new blazorwasm

```

2. Add some new *.cs ... | process | mono linker linkerfatalerrorexception error processing method at getvaluenodefromgenericargument hello can t publish blazor webassembly application with some specific code steps to reproduce install net create some project dotnet new blazorwasm add some new cs fi... | 1 |

316,453 | 23,632,648,043 | IssuesEvent | 2022-08-25 10:39:08 | input-output-hk/cardano-db-sync | https://api.github.com/repos/input-output-hk/cardano-db-sync | opened | Possible breaking changes in config files for node path | documentation enhancement | From this Slack thread: https://input-output-rnd.slack.com/archives/CA919E6Q6/p1661278131542139

it looks like `cardano-node` configuration files under https://github.com/input-output-hk/cardano-node/tree/master/configuration

may be removed in the near future. Some of our `db-sync` configs rely on those files, like `m... | 1.0 | Possible breaking changes in config files for node path - From this Slack thread: https://input-output-rnd.slack.com/archives/CA919E6Q6/p1661278131542139

it looks like `cardano-node` configuration files under https://github.com/input-output-hk/cardano-node/tree/master/configuration

may be removed in the near future. ... | non_process | possible breaking changes in config files for node path from this slack thread it looks like cardano node configuration files under may be removed in the near future some of our db sync configs rely on those files like mainnet the current source of truth for config files is | 0 |

117,176 | 9,915,419,535 | IssuesEvent | 2019-06-28 16:49:27 | jahshaka/Studio | https://api.github.com/repos/jahshaka/Studio | closed | open and save scene object selection | fixed and waiting to be tested | when you open a scene for the first time in the editor , can the world item be selected by default ? we have a empty right column otherwise not a bg deal...

when you save a scene can you also save the last selected object so when you open it you open with that object selected ? | 1.0 | open and save scene object selection - when you open a scene for the first time in the editor , can the world item be selected by default ? we have a empty right column otherwise not a bg deal...

when you save a scene can you also save the last selected object so when you open it you open with that object selected ? | non_process | open and save scene object selection when you open a scene for the first time in the editor can the world item be selected by default we have a empty right column otherwise not a bg deal when you save a scene can you also save the last selected object so when you open it you open with that object selected | 0 |

702,255 | 24,120,801,517 | IssuesEvent | 2022-09-20 18:31:18 | googleapis/gax-nodejs | https://api.github.com/repos/googleapis/gax-nodejs | closed | Run system tests for some libraries kms: should pass samples tests failed | priority: p1 type: bug samples flakybot: issue flakybot: flaky | This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: fc7e03f7a3d3f805d0e9b132c1b34b814d3ac598

b... | 1.0 | Run system tests for some libraries kms: should pass samples tests failed - This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I wi... | non_process | run system tests for some libraries kms should pass samples tests failed this test failed to configure my behavior see if i m commenting on this issue too often add the flakybot quiet label and i will stop commenting commit buildurl status failed test output command failed with ex... | 0 |

8,493 | 2,873,418,481 | IssuesEvent | 2015-06-08 16:55:57 | IBM-Watson/design-library | https://api.github.com/repos/IBM-Watson/design-library | closed | Remove Vagrant Dependency | design guide enhancement | One of the biggest pieces of feedback we had in the retrospective was that getting set up was hard. Back when we needed Ruby and Node to work across different platforms, Vagrant made a lot of sense, but having dropped the Ruby dependency and needing Node already installed for Bower, having the overhead of Vagrant is mo... | 1.0 | Remove Vagrant Dependency - One of the biggest pieces of feedback we had in the retrospective was that getting set up was hard. Back when we needed Ruby and Node to work across different platforms, Vagrant made a lot of sense, but having dropped the Ruby dependency and needing Node already installed for Bower, having t... | non_process | remove vagrant dependency one of the biggest pieces of feedback we had in the retrospective was that getting set up was hard back when we needed ruby and node to work across different platforms vagrant made a lot of sense but having dropped the ruby dependency and needing node already installed for bower having t... | 0 |

15,749 | 9,049,666,855 | IssuesEvent | 2019-02-12 05:49:44 | nearprotocol/nearcore | https://api.github.com/repos/nearprotocol/nearcore | opened | Benchmark TestNet | enhancement performance | Create benchmark for testnet:

Testing modes:

- run locally with N nodes

- run on AWS in 1 locale

- run on AWS in N locales

Testing strategy:

- Send money tx

- Execute simple WASM

Collect stats:

- transaction per second

- blocks per second

| True | Benchmark TestNet - Create benchmark for testnet:

Testing modes:

- run locally with N nodes

- run on AWS in 1 locale

- run on AWS in N locales

Testing strategy:

- Send money tx

- Execute simple WASM

Collect stats:

- transaction per second

- blocks per second

| non_process | benchmark testnet create benchmark for testnet testing modes run locally with n nodes run on aws in locale run on aws in n locales testing strategy send money tx execute simple wasm collect stats transaction per second blocks per second | 0 |

869 | 3,329,640,442 | IssuesEvent | 2015-11-11 03:46:32 | t3kt/vjzual2 | https://api.github.com/repos/t3kt/vjzual2 | opened | spatial distortion of grid with mapped input texture | enhancement geometry video processing | base the distortion in 3d on a video source selector | 1.0 | spatial distortion of grid with mapped input texture - base the distortion in 3d on a video source selector | process | spatial distortion of grid with mapped input texture base the distortion in on a video source selector | 1 |

18,500 | 24,551,163,011 | IssuesEvent | 2022-10-12 12:43:15 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [iOS] [Offline indicator] Error message is not displayed in dashboard screen when participant is offline | Bug P1 iOS Process: Fixed Process: Tested dev | **Steps:**

1. Install the app

2. Sign in/signup

3. Enroll into any study

4. Navigated to study activities

5. Switch off the internet

6. Navigate to dashboard

7. Observe

**Actual:** Error message is not displayed in dashboard screen when participant is offline

**Expected:** Toast message should display in... | 2.0 | [iOS] [Offline indicator] Error message is not displayed in dashboard screen when participant is offline - **Steps:**

1. Install the app

2. Sign in/signup

3. Enroll into any study

4. Navigated to study activities

5. Switch off the internet

6. Navigate to dashboard

7. Observe

**Actual:** Error message is not... | process | error message is not displayed in dashboard screen when participant is offline steps install the app sign in signup enroll into any study navigated to study activities switch off the internet navigate to dashboard observe actual error message is not displayed in dashboar... | 1 |

18,198 | 24,253,518,448 | IssuesEvent | 2022-09-27 15:50:18 | deepset-ai/haystack | https://api.github.com/repos/deepset-ai/haystack | opened | Preprocessing: Warning when max doc length significantly exceeds preprocessor split length | type:feature topic:preprocessing | **Is your feature request related to a problem? Please describe.**

When running a retriever-reader pipeline, we noticed that certain inference times were much longer than others, and were even running into timeout problems. We thought this was odd, since we had a preprocessor splitting files into 250-word docs in orde... | 1.0 | Preprocessing: Warning when max doc length significantly exceeds preprocessor split length - **Is your feature request related to a problem? Please describe.**

When running a retriever-reader pipeline, we noticed that certain inference times were much longer than others, and were even running into timeout problems. We... | process | preprocessing warning when max doc length significantly exceeds preprocessor split length is your feature request related to a problem please describe when running a retriever reader pipeline we noticed that certain inference times were much longer than others and were even running into timeout problems we... | 1 |

52,461 | 10,865,153,004 | IssuesEvent | 2019-11-14 18:22:12 | twilio/twilio-node | https://api.github.com/repos/twilio/twilio-node | closed | Sync Map Mutate | code-generation difficulty: medium status: help wanted type: community enhancement | There should be a mutate method for Sync Maps items that is similar to the update method, but pass a mutator function instead of a new value.

| 1.0 | Sync Map Mutate - There should be a mutate method for Sync Maps items that is similar to the update method, but pass a mutator function instead of a new value.

| non_process | sync map mutate there should be a mutate method for sync maps items that is similar to the update method but pass a mutator function instead of a new value | 0 |

5,996 | 8,805,571,047 | IssuesEvent | 2018-12-26 20:32:49 | GoogleCloudPlatform/google-cloud-cpp | https://api.github.com/repos/GoogleCloudPlatform/google-cloud-cpp | closed | Compilation fails on Ubuntu 18.04 with undefined reference to libldap with "external" libcurl | type: bug type: process | I spent some time trying to fix it, but I haven't figured it out yet - I'll need more time.

Workaround: supply `-DGOOGLE_CLOUD_CPP_CURL_PROVIDER=package` to cmake.

```

$ cat /etc/issue

Ubuntu 18.04.1 LTS \n \l

$ cmake -H. -Bbuild-output

$ cd build-output

$ make

[...]

../../../../external/lib/libcurl.a(ldap... | 1.0 | Compilation fails on Ubuntu 18.04 with undefined reference to libldap with "external" libcurl - I spent some time trying to fix it, but I haven't figured it out yet - I'll need more time.

Workaround: supply `-DGOOGLE_CLOUD_CPP_CURL_PROVIDER=package` to cmake.

```

$ cat /etc/issue

Ubuntu 18.04.1 LTS \n \l

$ cma... | process | compilation fails on ubuntu with undefined reference to libldap with external libcurl i spent some time trying to fix it but i haven t figured it out yet i ll need more time workaround supply dgoogle cloud cpp curl provider package to cmake cat etc issue ubuntu lts n l cmake ... | 1 |

1,984 | 2,581,279,317 | IssuesEvent | 2015-02-13 23:47:58 | chwangaa/Charlie | https://api.github.com/repos/chwangaa/Charlie | opened | Mal Positioning of Cloudmap | enhancement Graphic Design | The current display is too left, needs to be centered, and with a legible space between the heading and the map | 1.0 | Mal Positioning of Cloudmap - The current display is too left, needs to be centered, and with a legible space between the heading and the map | non_process | mal positioning of cloudmap the current display is too left needs to be centered and with a legible space between the heading and the map | 0 |

8,229 | 11,415,575,158 | IssuesEvent | 2020-02-02 12:02:09 | parcel-bundler/parcel | https://api.github.com/repos/parcel-bundler/parcel | closed | Font url import fails to resolve at runtime | :bug: Bug CSS Preprocessing Stale | **This a 🐛 bug report**

### 🎛 Configuration (.babelrc, package.json, cli command)

**tsconfig.json**

```js

{

"compilerOptions": {

"strict": true,

"target": "es2015",

"jsx": "react"

}

}

```

**package.json**

```

{

"name": "wtfloat",

"version": "0.1.0",

"description": "A... | 1.0 | Font url import fails to resolve at runtime - **This a 🐛 bug report**

### 🎛 Configuration (.babelrc, package.json, cli command)

**tsconfig.json**

```js

{

"compilerOptions": {

"strict": true,

"target": "es2015",

"jsx": "react"

}

}

```

**package.json**

```

{

"name": "wtfloat... | process | font url import fails to resolve at runtime this a 🐛 bug report 🎛 configuration babelrc package json cli command tsconfig json js compileroptions strict true target jsx react package json name wtfloat ... | 1 |

30,410 | 13,240,968,704 | IssuesEvent | 2020-08-19 07:24:27 | Azure/azure-powershell | https://api.github.com/repos/Azure/azure-powershell | closed | $Resource.Properties.remoteDebuggingEnabled command changing value of previous execution | App Services Service Attention customer-reported question | <!--

- Make sure you are able to reproduce this issue on the latest released version of Az

- https://www.powershellgallery.com/packages/Az

- Please search the existing issues to see if there has been a similar issue filed

- For issue related to importing a module, please refer to our troubleshooting guide:

... | 2.0 | $Resource.Properties.remoteDebuggingEnabled command changing value of previous execution - <!--

- Make sure you are able to reproduce this issue on the latest released version of Az

- https://www.powershellgallery.com/packages/Az

- Please search the existing issues to see if there has been a similar issue file... | non_process | resource properties remotedebuggingenabled command changing value of previous execution make sure you are able to reproduce this issue on the latest released version of az please search the existing issues to see if there has been a similar issue filed for issue related to importing a module... | 0 |

1,697 | 4,346,240,036 | IssuesEvent | 2016-07-29 15:21:57 | opentrials/opentrials | https://api.github.com/repos/opentrials/opentrials | reopened | Make OSF integration | 3. In Development Processors | # Goal

Export data to OSF.

# Analysis

JamDB is a schema-less, immutable database that can optionally enforce a schema and stores provenance. It supports efficient full-text search, filtering by nested keys, and is accessible a REST API.

http://jamdb.readthedocs.io/en/latest/

We need to export our trials ... | 1.0 | Make OSF integration - # Goal

Export data to OSF.

# Analysis

JamDB is a schema-less, immutable database that can optionally enforce a schema and stores provenance. It supports efficient full-text search, filtering by nested keys, and is accessible a REST API.

http://jamdb.readthedocs.io/en/latest/

We nee... | process | make osf integration goal export data to osf analysis jamdb is a schema less immutable database that can optionally enforce a schema and stores provenance it supports efficient full text search filtering by nested keys and is accessible a rest api we need to export our trials data to osf us... | 1 |

4,143 | 4,932,035,949 | IssuesEvent | 2016-11-28 12:17:37 | idno/Known | https://api.github.com/repos/idno/Known | closed | .htaccess should block everything by default and only allow through requests to "the right things" | New feature Security | should I be able to request arbitrary .php files under Idno*, external or templates?

## <bountysource-plugin>

Want to back this issue? **[Post a bounty on it!](https://www.bountysource.com/issues/1246844-htaccess-should-block-everything-by-default-and-only-allow-through-requests-to-the-right-things?utm_campaign=plugin... | True | .htaccess should block everything by default and only allow through requests to "the right things" - should I be able to request arbitrary .php files under Idno*, external or templates?

## <bountysource-plugin>

Want to back this issue? **[Post a bounty on it!](https://www.bountysource.com/issues/1246844-htaccess-shoul... | non_process | htaccess should block everything by default and only allow through requests to the right things should i be able to request arbitrary php files under idno external or templates want to back this issue we accept bounties via | 0 |

131,762 | 12,489,821,444 | IssuesEvent | 2020-05-31 20:41:41 | edwardtheharris/machines-wat-learn-good | https://api.github.com/repos/edwardtheharris/machines-wat-learn-good | closed | Regression - Intro and Data | documentation | Welcome to the introduction to the regression section of the Machine Learning with Python tutorial series. By this point, you should have Scikit-Learn already installed. If not, get it, along with Pandas and matplotlib!

If you have a pre-compiled scientific distribution of Python like ActivePython from our sponsor, ... | 1.0 | Regression - Intro and Data - Welcome to the introduction to the regression section of the Machine Learning with Python tutorial series. By this point, you should have Scikit-Learn already installed. If not, get it, along with Pandas and matplotlib!

If you have a pre-compiled scientific distribution of Python like A... | non_process | regression intro and data welcome to the introduction to the regression section of the machine learning with python tutorial series by this point you should have scikit learn already installed if not get it along with pandas and matplotlib if you have a pre compiled scientific distribution of python like a... | 0 |

7,878 | 19,761,013,736 | IssuesEvent | 2022-01-16 12:17:30 | graphhopper/graphhopper | https://api.github.com/repos/graphhopper/graphhopper | closed | Check if moving TestAlgoCollector into test package of osm module is possible | improvement architecture | Then we could also use the TranslationMapTest.SINGLETON instead of the newly created instance. | 1.0 | Check if moving TestAlgoCollector into test package of osm module is possible - Then we could also use the TranslationMapTest.SINGLETON instead of the newly created instance. | non_process | check if moving testalgocollector into test package of osm module is possible then we could also use the translationmaptest singleton instead of the newly created instance | 0 |

10,588 | 13,397,737,001 | IssuesEvent | 2020-09-03 12:06:14 | panther-labs/panther | https://api.github.com/repos/panther-labs/panther | opened | Classifier fails to distinguish between Gitlab.API logs and Gitlab.Production logs | bug p1 team:data processing | ### Describe the bug

Depending on the state of the classifier queue Gitlab.API logs can end up into the Gitlab.Production table

### Steps to reproduce

Not easy to reproduce with certainty because of the randomness in the classifier queue

### Expected behavior

There should be a distinction between the... | 1.0 | Classifier fails to distinguish between Gitlab.API logs and Gitlab.Production logs - ### Describe the bug

Depending on the state of the classifier queue Gitlab.API logs can end up into the Gitlab.Production table

### Steps to reproduce

Not easy to reproduce with certainty because of the randomness in the cla... | process | classifier fails to distinguish between gitlab api logs and gitlab production logs describe the bug depending on the state of the classifier queue gitlab api logs can end up into the gitlab production table steps to reproduce not easy to reproduce with certainty because of the randomness in the cla... | 1 |

10,523 | 13,305,329,519 | IssuesEvent | 2020-08-25 18:21:49 | knative/serving | https://api.github.com/repos/knative/serving | closed | Implement an oncall rotation for Knative | area/productivity area/test-and-release epic kind/process | <!-- If you need to report a security issue with Knative, send an email to knative-security@googlegroups.com. -->

<!--

## In what area(s)?

Remove the '> ' to select

/area API

> /area autoscale

> /area build

> /area monitoring

> /area networking

> /area test-and-release

Other classifications:

> /kind goo... | 1.0 | Implement an oncall rotation for Knative - <!-- If you need to report a security issue with Knative, send an email to knative-security@googlegroups.com. -->

<!--

## In what area(s)?

Remove the '> ' to select

/area API

> /area autoscale

> /area build

> /area monitoring

> /area networking

> /area test-and-rel... | process | implement an oncall rotation for knative in what area s remove the to select area api area autoscale area build area monitoring area networking area test and release other classifications kind good first issue kind process kind spec describe the fe... | 1 |

34,554 | 7,453,901,911 | IssuesEvent | 2018-03-29 13:32:23 | kerdokullamae/test_koik_issued | https://api.github.com/repos/kerdokullamae/test_koik_issued | closed | Importeri vead | P: highest R: fixed T: defect | **Reported by sven syld on 9 Sep 2013 06:55 UTC**

1) admin_unit/manor/manor/1 juures tekib viga:

''

[ SQLSTATE[23505](PDOException]

): Unique violation: 7 ERROR: duplicate key value violates unique constraint "x_admin_unit_pkey"

DETAIL: Key (id)=(11136502) already exists.

''

Tabel on enne import tühjaks t... | 1.0 | Importeri vead - **Reported by sven syld on 9 Sep 2013 06:55 UTC**

1) admin_unit/manor/manor/1 juures tekib viga:

''

[ SQLSTATE[23505](PDOException]

): Unique violation: 7 ERROR: duplicate key value violates unique constraint "x_admin_unit_pkey"

DETAIL: Key (id)=(11136502) already exists.

''

Tabel on enne... | non_process | importeri vead reported by sven syld on sep utc admin unit manor manor juures tekib viga pdoexception unique violation error duplicate key value violates unique constraint x admin unit pkey detail key id already exists tabel on enne import tühjaks tehtud au i... | 0 |

51,187 | 13,624,574,014 | IssuesEvent | 2020-09-24 08:16:01 | Techini/juice-shop | https://api.github.com/repos/Techini/juice-shop | opened | CVE-2020-24977 (Medium) detected in gettextv0.20 | security vulnerability | ## CVE-2020-24977 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>gettextv0.20</b></p></summary>

<p>

<p>git://git.savannah.gnu.org/gettext.git </p>

<p>Library home page: <a href=http... | True | CVE-2020-24977 (Medium) detected in gettextv0.20 - ## CVE-2020-24977 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>gettextv0.20</b></p></summary>

<p>

<p>git://git.savannah.gnu.org/... | non_process | cve medium detected in cve medium severity vulnerability vulnerable library git git savannah gnu org gettext git library home page a href found in head commit a href vulnerable source files juice shop node modules vendor libxml xmlschema... | 0 |

243,875 | 7,868,156,147 | IssuesEvent | 2018-06-23 17:51:23 | cdgco/VestaWebInterface | https://api.github.com/repos/cdgco/VestaWebInterface | closed | Edit Backup Exclusions | Backend Priority: Medium Status: Accepted Type: Enhancement | Support for editing of backup exclusions. Data must be uploaded from frontend to backend, added to temp file, then directory linked to user.

Frontend framework in place. | 1.0 | Edit Backup Exclusions - Support for editing of backup exclusions. Data must be uploaded from frontend to backend, added to temp file, then directory linked to user.

Frontend framework in place. | non_process | edit backup exclusions support for editing of backup exclusions data must be uploaded from frontend to backend added to temp file then directory linked to user frontend framework in place | 0 |

325,982 | 27,972,288,007 | IssuesEvent | 2023-03-25 06:21:48 | qutebrowser/qutebrowser | https://api.github.com/repos/qutebrowser/qutebrowser | closed | iframe BDD tests flaky | component: tests priority: 1 - middle | See https://github.com/The-Compiler/qutebrowser/pull/1433#issuecomment-220830022

I'll mark them as skipped for now to avoid failing unrelated builds, but this should be fixed properly. Probably `quteproc.click_element` could be refactored to take any xpath, and that could be used to focus the iframe.

cc @lahwaacz

| 1.0 | iframe BDD tests flaky - See https://github.com/The-Compiler/qutebrowser/pull/1433#issuecomment-220830022

I'll mark them as skipped for now to avoid failing unrelated builds, but this should be fixed properly. Probably `quteproc.click_element` could be refactored to take any xpath, and that could be used to focus the ... | non_process | iframe bdd tests flaky see i ll mark them as skipped for now to avoid failing unrelated builds but this should be fixed properly probably quteproc click element could be refactored to take any xpath and that could be used to focus the iframe cc lahwaacz | 0 |

3,009 | 6,010,107,508 | IssuesEvent | 2017-06-06 12:24:31 | openvstorage/arakoon | https://api.github.com/repos/openvstorage/arakoon | closed | Arakoon cluster status | process_wontfix type_feature | Arakoon should expose call to request the status of the Arakoon cluster (f.e 2/3 nodes are working fine so the healthcheck can query this status. (see https://github.com/openvstorage/openvstorage-health-check/issues/324)

| 1.0 | Arakoon cluster status - Arakoon should expose call to request the status of the Arakoon cluster (f.e 2/3 nodes are working fine so the healthcheck can query this status. (see https://github.com/openvstorage/openvstorage-health-check/issues/324)

| process | arakoon cluster status arakoon should expose call to request the status of the arakoon cluster f e nodes are working fine so the healthcheck can query this status see | 1 |

144,084 | 13,095,375,014 | IssuesEvent | 2020-08-03 14:01:23 | pvcraven/arcade | https://api.github.com/repos/pvcraven/arcade | closed | arcade/examples/frametime_plotter.py has no corresponding rst in doc/examples | documentation | Examples in /examples need rst's to link provide documentation. | 1.0 | arcade/examples/frametime_plotter.py has no corresponding rst in doc/examples - Examples in /examples need rst's to link provide documentation. | non_process | arcade examples frametime plotter py has no corresponding rst in doc examples examples in examples need rst s to link provide documentation | 0 |

6,527 | 9,621,469,409 | IssuesEvent | 2019-05-14 10:42:46 | kangarko/Boss | https://api.github.com/repos/kangarko/Boss | closed | ********* SUPPORT DELAYED ANNOUNCEMENT ********* | Improvement Issue: cannot reproduce Issue: complaint Issue: confirmed Issue: won't fix Question Status: in progress Status: invalid Status: not started Status: processed Status: queued | Hello everyone,

I sincerely apology for the delay in support. I had some tough times getting all plugins up for Minecraft 1.14 but the good news is that we now fully support that version!

I will slowly get back to all of you as soon as possible, the most critical issues tomorrow morning and the rest starting Mond... | 1.0 | ********* SUPPORT DELAYED ANNOUNCEMENT ********* - Hello everyone,

I sincerely apology for the delay in support. I had some tough times getting all plugins up for Minecraft 1.14 but the good news is that we now fully support that version!

I will slowly get back to all of you as soon as possible, the most critical... | process | support delayed announcement hello everyone i sincerely apology for the delay in support i had some tough times getting all plugins up for minecraft but the good news is that we now fully support that version i will slowly get back to all of you as soon as possible the most critical ... | 1 |

816,379 | 30,597,510,524 | IssuesEvent | 2023-07-22 01:17:05 | dhruvkb/pls | https://api.github.com/repos/dhruvkb/pls | closed | Exception throw when listing non readable files | 🟨 priority: medium 🛠 goal: fix 🐛 versioning: patch 🖥️ os: linux | ## Description

<!-- Concisely describe the bug. -->

Exception is throw when trying to list directories that the user has not access to view.

## Reproduction

<!-- Provide detailed steps to reproduce the bug. -->

Create the temporary files

```sh

mkdir /tmp/test

touch /tmp/test/test

sudo chown -R root:root ... | 1.0 | Exception throw when listing non readable files - ## Description

<!-- Concisely describe the bug. -->

Exception is throw when trying to list directories that the user has not access to view.

## Reproduction

<!-- Provide detailed steps to reproduce the bug. -->

Create the temporary files

```sh

mkdir /tmp/test... | non_process | exception throw when listing non readable files description exception is throw when trying to list directories that the user has not access to view reproduction create the temporary files sh mkdir tmp test touch tmp test test sudo chown r root root tmp test sudo chmod r tmp test ... | 0 |

3,425 | 6,526,077,451 | IssuesEvent | 2017-08-29 18:15:32 | ncbo/bioportal-project | https://api.github.com/repos/ncbo/bioportal-project | closed | SSN: latest submission failed to parse | ontology processing problem ready | Submission upload date: April, 17th.

Submission ID: 2

Error from parsing log file:

```

E, [2017-07-11T13:07:14.292054 #17044] ERROR -- : ["Exception: Rapper cannot parse rdfxml file at /srv/ncbo/repository/SSN/2/owlapi.xrdf:

rapper: Parsing URI file:///srv/ncbo/repository/SSN/2/owlapi.xrdf with parser rdfxml

... | 1.0 | SSN: latest submission failed to parse - Submission upload date: April, 17th.

Submission ID: 2

Error from parsing log file:

```

E, [2017-07-11T13:07:14.292054 #17044] ERROR -- : ["Exception: Rapper cannot parse rdfxml file at /srv/ncbo/repository/SSN/2/owlapi.xrdf:

rapper: Parsing URI file:///srv/ncbo/reposit... | process | ssn latest submission failed to parse submission upload date april submission id error from parsing log file e error exception rapper cannot parse rdfxml file at srv ncbo repository ssn owlapi xrdf rapper parsing uri file srv ncbo repository ssn owlapi xrdf with parser rdf... | 1 |

11,850 | 14,662,942,372 | IssuesEvent | 2020-12-29 08:33:18 | encode/uvicorn | https://api.github.com/repos/encode/uvicorn | closed | Subprocess returncode is not detected when running Gunicorn with Uvicorn (with fix PR companion) | bug multiprocessing | ### Checklist

<!-- Please make sure you check all these items before submitting your bug report. -->

- [x] The bug is reproducible against the latest release and/or `master`.

- [x] There are no similar issues or pull requests to fix it yet.

### Describe the bug

<!-- A clear and concise description of what ... | 1.0 | Subprocess returncode is not detected when running Gunicorn with Uvicorn (with fix PR companion) - ### Checklist

<!-- Please make sure you check all these items before submitting your bug report. -->

- [x] The bug is reproducible against the latest release and/or `master`.

- [x] There are no similar issues or pu... | process | subprocess returncode is not detected when running gunicorn with uvicorn with fix pr companion checklist the bug is reproducible against the latest release and or master there are no similar issues or pull requests to fix it yet describe the bug when starting gunicorn with uv... | 1 |

6,806 | 9,954,480,731 | IssuesEvent | 2019-07-05 08:31:32 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | Windows: Bazel client is slow | P3 platform: windows team-Windows type: process | ### Description of the problem / feature request:

This is an umbrella bug for Bazel client performance tuning on Windows.

### Bugs: what's the simplest, easiest way to reproduce this bug? Please provide a minimal example if possible.

Bazel on Windows feels a lot slower than on other platforms. Let's find out w... | 1.0 | Windows: Bazel client is slow - ### Description of the problem / feature request:

This is an umbrella bug for Bazel client performance tuning on Windows.

### Bugs: what's the simplest, easiest way to reproduce this bug? Please provide a minimal example if possible.

Bazel on Windows feels a lot slower than on o... | process | windows bazel client is slow description of the problem feature request this is an umbrella bug for bazel client performance tuning on windows bugs what s the simplest easiest way to reproduce this bug please provide a minimal example if possible bazel on windows feels a lot slower than on o... | 1 |

36,494 | 12,414,418,507 | IssuesEvent | 2020-05-22 14:30:39 | trellis-ldp/trellis-extensions | https://api.github.com/repos/trellis-ldp/trellis-extensions | closed | CVE-2017-15708 (High) detected in commons-collections-3.2.2.jar | security vulnerability | ## CVE-2017-15708 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>commons-collections-3.2.2.jar</b></p></summary>

<p>Types that extend and augment the Java Collections Framework.</p>

<... | True | CVE-2017-15708 (High) detected in commons-collections-3.2.2.jar - ## CVE-2017-15708 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>commons-collections-3.2.2.jar</b></p></summary>

<p>T... | non_process | cve high detected in commons collections jar cve high severity vulnerability vulnerable library commons collections jar types that extend and augment the java collections framework path to vulnerable library root gradle caches modules files commons collections common... | 0 |

27,864 | 8,048,437,439 | IssuesEvent | 2018-08-01 06:44:13 | libevent/libevent | https://api.github.com/repos/libevent/libevent | closed | release-2.1.8-stable MINGW64 automake vs cmake | build:cmake os:win32 | I am use msys2 and tried compiling release-2.1.8-stable.

Seems that compiling is working with automake but not with cmake.

(with MSYS Makefiles and MinGW Generator)

[cmake.log](https://github.com/libevent/libevent/files/1789427/cmake.log)

[automake.log](https://github.com/libevent/libevent/files/1789428/auto... | 1.0 | release-2.1.8-stable MINGW64 automake vs cmake - I am use msys2 and tried compiling release-2.1.8-stable.

Seems that compiling is working with automake but not with cmake.

(with MSYS Makefiles and MinGW Generator)

[cmake.log](https://github.com/libevent/libevent/files/1789427/cmake.log)

[automake.log](https... | non_process | release stable automake vs cmake i am use and tried compiling release stable seems that compiling is working with automake but not with cmake with msys makefiles and mingw generator | 0 |

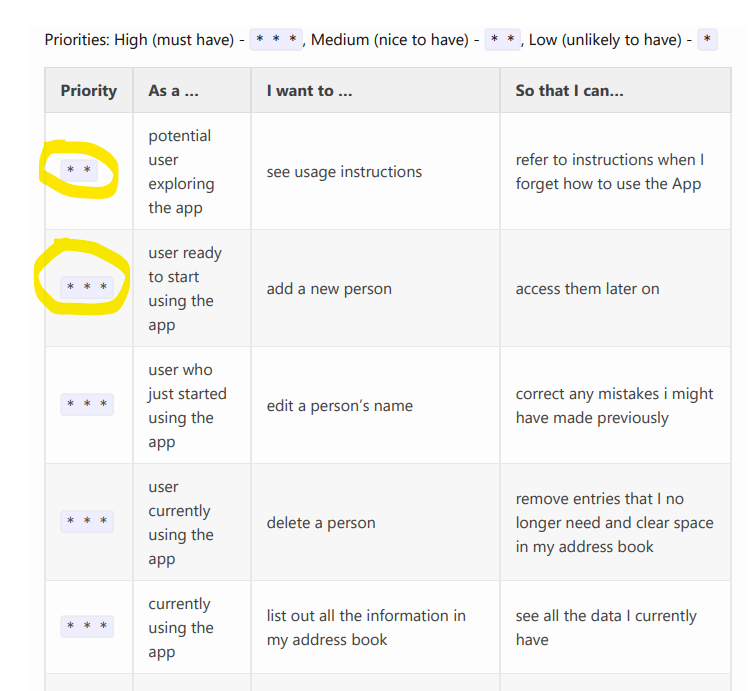

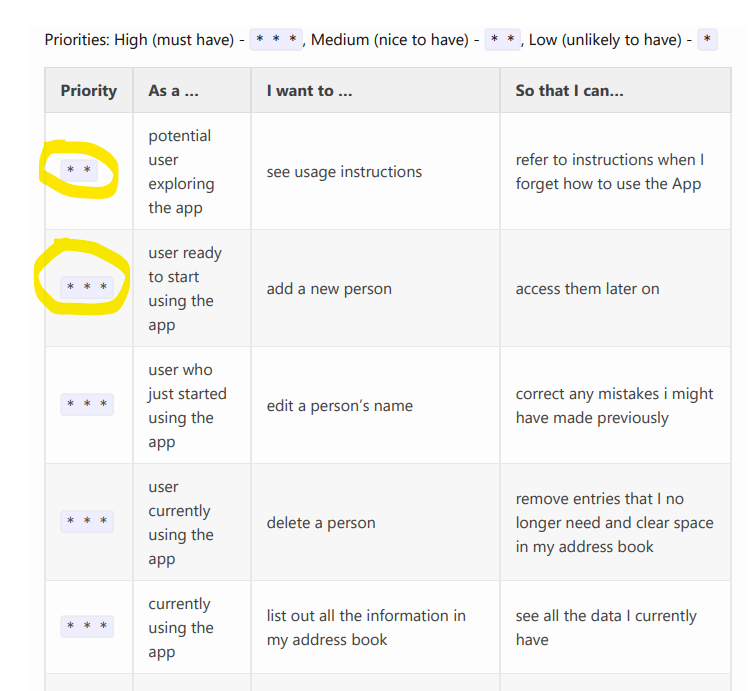

356,917 | 25,176,289,152 | IssuesEvent | 2022-11-11 09:33:10 | cadencjk/pe | https://api.github.com/repos/cadencjk/pe | opened | User stories not sorted according to priority in DG | type.DocumentationBug severity.VeryLow |

Some of the 2 stars are sorted at different positions in the table.

<!--session: 1668153883859-067a3b5a-f3a6-4e30-ab2a-24007eccd024-->

<!--Version: Web v3.4.4--> | 1.0 | User stories not sorted according to priority in DG -

Some of the 2 stars are sorted at different positions in the table.

<!--session: 1668153883859-067a3b5a-f3a6-4e30-ab2a-24007eccd024-->

<!--Version: Web... | non_process | user stories not sorted according to priority in dg some of the stars are sorted at different positions in the table | 0 |

25,287 | 4,282,457,836 | IssuesEvent | 2016-07-15 09:14:14 | Cockatrice/Cockatrice | https://api.github.com/repos/Cockatrice/Cockatrice | closed | Layout bug when pasting deck | App - Cockatrice Defect - Basic UI / UX | Running the latest master branch (81006d5342c95e3aa25e6bfce790875e9aebf9c7).

When opening the program, have a decklist in your clipboard and press the paste key combination (ctrl/cmd + V) without clicking on anything else.

<img width="1680" alt="screen shot 2016-07-15 at 10 42 33" src="https://cloud.githubusercon... | 1.0 | Layout bug when pasting deck - Running the latest master branch (81006d5342c95e3aa25e6bfce790875e9aebf9c7).

When opening the program, have a decklist in your clipboard and press the paste key combination (ctrl/cmd + V) without clicking on anything else.

<img width="1680" alt="screen shot 2016-07-15 at 10 42 33" s... | non_process | layout bug when pasting deck running the latest master branch when opening the program have a decklist in your clipboard and press the paste key combination ctrl cmd v without clicking on anything else img width alt screen shot at src here was the deck i used looks like al... | 0 |

13,910 | 16,668,318,139 | IssuesEvent | 2021-06-07 07:49:55 | DevExpress/testcafe-hammerhead | https://api.github.com/repos/DevExpress/testcafe-hammerhead | opened | Rewritten document of an iframe with the srcdoc attribute remains unproxied | SYSTEM: iframe processing TYPE: bug | After an iframe with the `srcdoc` attribute created from script and its content dynamically written (using the document's write method), the document remains unproxied and it leads to many errors like the following:

> SyntaxError: Unexpected token = in JSON at position`

It happens when the unproxied document tries... | 1.0 | Rewritten document of an iframe with the srcdoc attribute remains unproxied - After an iframe with the `srcdoc` attribute created from script and its content dynamically written (using the document's write method), the document remains unproxied and it leads to many errors like the following:

> SyntaxError: Unexpecte... | process | rewritten document of an iframe with the srcdoc attribute remains unproxied after an iframe with the srcdoc attribute created from script and its content dynamically written using the document s write method the document remains unproxied and it leads to many errors like the following syntaxerror unexpecte... | 1 |

17,668 | 23,491,339,091 | IssuesEvent | 2022-08-17 19:03:54 | openxla/stablehlo | https://api.github.com/repos/openxla/stablehlo | opened | Create a Bazel build | Process | As part of #13, we'll create a Blaze build for StableHLO (internal to Google3), and we can use that to bootstrap the Bazel build. | 1.0 | Create a Bazel build - As part of #13, we'll create a Blaze build for StableHLO (internal to Google3), and we can use that to bootstrap the Bazel build. | process | create a bazel build as part of we ll create a blaze build for stablehlo internal to and we can use that to bootstrap the bazel build | 1 |

194,826 | 6,899,579,011 | IssuesEvent | 2017-11-24 14:23:40 | rucio/rucio | https://api.github.com/repos/rucio/rucio | closed | Fix current flake8 errors on master and next | bug patch PRIORITY Release management | Motivation

----------

There are several flake8 errors both on master and next which need to be fixed.

`flake8 --ignore=E501 --exclude="*.cfg" bin/* lib/ tools/*.py tools/probes/common/*`

| 1.0 | Fix current flake8 errors on master and next - Motivation

----------

There are several flake8 errors both on master and next which need to be fixed.

`flake8 --ignore=E501 --exclude="*.cfg" bin/* lib/ tools/*.py tools/probes/common/*`

| non_process | fix current errors on master and next motivation there are several errors both on master and next which need to be fixed ignore exclude cfg bin lib tools py tools probes common | 0 |

21,900 | 30,346,907,063 | IssuesEvent | 2023-07-11 16:00:33 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | opened | [Mirror] rules_go 0.41.0 and Gazelle 0.32.0 | P2 type: process team-OSS mirror request | ### Please list the URLs of the archives you'd like to mirror:

https://github.com/bazelbuild/bazel-gazelle/releases/download/v0.32.0/bazel-gazelle-v0.32.0.tar.gz

https://github.com/bazelbuild/rules_go/releases/download/v0.41.0/rules_go-v0.41.0.zip | 1.0 | [Mirror] rules_go 0.41.0 and Gazelle 0.32.0 - ### Please list the URLs of the archives you'd like to mirror:

https://github.com/bazelbuild/bazel-gazelle/releases/download/v0.32.0/bazel-gazelle-v0.32.0.tar.gz

https://github.com/bazelbuild/rules_go/releases/download/v0.41.0/rules_go-v0.41.0.zip | process | rules go and gazelle please list the urls of the archives you d like to mirror | 1 |

9,119 | 12,196,918,574 | IssuesEvent | 2020-04-29 19:51:07 | jsamr/bootiso | https://api.github.com/repos/jsamr/bootiso | closed | Windows 10 missing drivers during install, possibly because of MBR + UEFI instead of GPT | bug installation-process | I think a GPT option is needed for Windows 10 ISOs. Currently mbr+rsync is used, but this causes some kind of weird missing driver error in the installation setup. I tried [windows2usb](https://github.com/ValdikSS/windows2usb) with mbr and it showed the same issue, but with gpt it worked. | 1.0 | Windows 10 missing drivers during install, possibly because of MBR + UEFI instead of GPT - I think a GPT option is needed for Windows 10 ISOs. Currently mbr+rsync is used, but this causes some kind of weird missing driver error in the installation setup. I tried [windows2usb](https://github.com/ValdikSS/windows2usb) w... | process | windows missing drivers during install possibly because of mbr uefi instead of gpt i think a gpt option is needed for windows isos currently mbr rsync is used but this causes some kind of weird missing driver error in the installation setup i tried with mbr and it showed the same issue but with gpt it... | 1 |

2,819 | 5,766,999,365 | IssuesEvent | 2017-04-27 08:51:34 | USC-CSSL/TACIT | https://api.github.com/repos/USC-CSSL/TACIT | closed | preprocessing: correct spelling option | Preprocessing | In preprocessing, can we provide an option to automatically correct misspellings?

| 1.0 | preprocessing: correct spelling option - In preprocessing, can we provide an option to automatically correct misspellings?

| process | preprocessing correct spelling option in preprocessing can we provide an option to automatically correct misspellings | 1 |

2,254 | 5,088,655,375 | IssuesEvent | 2017-01-01 00:07:46 | sw4j-org/tool-jpa-processor | https://api.github.com/repos/sw4j-org/tool-jpa-processor | opened | Handle @SecondaryTables Annotation | annotation processor task | Handle the `@SecondaryTables` annotation for an entity.

See [JSR 338: Java Persistence API, Version 2.1](http://download.oracle.com/otn-pub/jcp/persistence-2_1-fr-eval-spec/JavaPersistence.pdf)

- 11.1.47 SecondaryTables Annotation

| 1.0 | Handle @SecondaryTables Annotation - Handle the `@SecondaryTables` annotation for an entity.

See [JSR 338: Java Persistence API, Version 2.1](http://download.oracle.com/otn-pub/jcp/persistence-2_1-fr-eval-spec/JavaPersistence.pdf)

- 11.1.47 SecondaryTables Annotation

| process | handle secondarytables annotation handle the secondarytables annotation for an entity see secondarytables annotation | 1 |

14,988 | 18,531,966,753 | IssuesEvent | 2021-10-21 07:18:09 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PM] [Permission] App status is not getting displayed in the below mentioned screens | Bug P1 Participant manager Process: Fixed Process: Tested dev | **Login as Site admin**

1. Site > Enrollment registry.

2. Studies > Enrollment registry.

3. Studies > Study participant registry.

**Note:**

1. Issue is observed only in above three screens for the admins who has other than super admin permission.

2. Issue is observed only for open studies in Site > Enroll... | 2.0 | [PM] [Permission] App status is not getting displayed in the below mentioned screens - **Login as Site admin**

1. Site > Enrollment registry.

2. Studies > Enrollment registry.

3. Studies > Study participant registry.

**Note:**

1. Issue is observed only in above three screens for the admins who has other t... | process | app status is not getting displayed in the below mentioned screens login as site admin site enrollment registry studies enrollment registry studies study participant registry note issue is observed only in above three screens for the admins who has other than super admi... | 1 |

14,978 | 18,504,529,783 | IssuesEvent | 2021-10-19 16:59:00 | googleapis/python-api-core | https://api.github.com/repos/googleapis/python-api-core | closed | Tests should pass or be skipped when 'grcp' is not installed | type: process | From [this discussion](https://github.com/googleapis/python-api-core/pull/286#discussion_r725302077).

`grpcio` and related files are [optional dependencies](https://github.com/googleapis/python-api-core/blob/e6a3489a79da00a5de919a4a41782cc3b1d7f583/setup.py#L38-L42), but we don't have any CI which ensures that the "... | 1.0 | Tests should pass or be skipped when 'grcp' is not installed - From [this discussion](https://github.com/googleapis/python-api-core/pull/286#discussion_r725302077).

`grpcio` and related files are [optional dependencies](https://github.com/googleapis/python-api-core/blob/e6a3489a79da00a5de919a4a41782cc3b1d7f583/setup... | process | tests should pass or be skipped when grcp is not installed from grpcio and related files are but we don t have any ci which ensures that the optional part is really true we should test at least minimally without grpcio installed and ensure that tests or whole test modules which depend on it ar... | 1 |

17,788 | 23,715,346,821 | IssuesEvent | 2022-08-30 11:18:06 | wp-media/wp-rocket | https://api.github.com/repos/wp-media/wp-rocket | closed | Preload counter not incrementing | type: bug module: preload tool: background process priority: high status: blocked future severity: minor | **Describe the bug**

In specific cases, the preload counter is not incrementing. There are two separate things that are happening to the customer and they're causing the preload to stuck.

The first one is related to this `If` statement:

https://github.com/wp-media/wp-rocket/blob/8b2a567efcab3925dba6fff72c5a622ec2d... | 1.0 | Preload counter not incrementing - **Describe the bug**

In specific cases, the preload counter is not incrementing. There are two separate things that are happening to the customer and they're causing the preload to stuck.

The first one is related to this `If` statement:

https://github.com/wp-media/wp-rocket/blob/... | process | preload counter not incrementing describe the bug in specific cases the preload counter is not incrementing there are two separate things that are happening to the customer and they re causing the preload to stuck the first one is related to this if statement if item is a string we re not passin... | 1 |

315,776 | 27,106,112,499 | IssuesEvent | 2023-02-15 12:14:26 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | pkg/sql/logictest/tests/local-vec-off/local-vec-off_test: TestLogic_crdb_internal failed | C-test-failure O-robot branch-release-22.2 | pkg/sql/logictest/tests/local-vec-off/local-vec-off_test.TestLogic_crdb_internal [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/8714671?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/8714671?buildTab=artifa... | 1.0 | pkg/sql/logictest/tests/local-vec-off/local-vec-off_test: TestLogic_crdb_internal failed - pkg/sql/logictest/tests/local-vec-off/local-vec-off_test.TestLogic_crdb_internal [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/8714671?buildTab=log) with [artifacts](https://teamcity... | non_process | pkg sql logictest tests local vec off local vec off test testlogic crdb internal failed pkg sql logictest tests local vec off local vec off test testlogic crdb internal with on release run testlogic crdb internal test log scope go test logs captured to artifacts tmp tmp logt... | 0 |

46,365 | 5,798,620,342 | IssuesEvent | 2017-05-03 02:47:00 | crkellen/bands | https://api.github.com/repos/crkellen/bands | closed | invisible blocks | bug critical needs testing | Some blocks get placed but don't show that they've been placed until you rejoin | 1.0 | invisible blocks - Some blocks get placed but don't show that they've been placed until you rejoin | non_process | invisible blocks some blocks get placed but don t show that they ve been placed until you rejoin | 0 |

168,361 | 20,754,721,821 | IssuesEvent | 2022-03-15 11:03:58 | arngrimur/computersaysno | https://api.github.com/repos/arngrimur/computersaysno | closed | CVE-2021-41103 (High) detected in github.com/docker/docker-v20.10.9 - autoclosed | security vulnerability | ## CVE-2021-41103 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>github.com/docker/docker-v20.10.9</b></p></summary>

<p>Moby Project - a collaborative project for the container ecosys... | True | CVE-2021-41103 (High) detected in github.com/docker/docker-v20.10.9 - autoclosed - ## CVE-2021-41103 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>github.com/docker/docker-v20.10.9</b... | non_process | cve high detected in github com docker docker autoclosed cve high severity vulnerability vulnerable library github com docker docker moby project a collaborative project for the container ecosystem to assemble container based systems dependency hierarchy github com ... | 0 |

786,679 | 27,661,993,029 | IssuesEvent | 2023-03-12 16:25:10 | kubernetes/release | https://api.github.com/repos/kubernetes/release | closed | Dependency update - Golang 1.20.2/1.19.7 | kind/feature sig/release area/release-eng area/dependency needs-priority | ### Tracking info

<!-- Search query: https://github.com/kubernetes/release/issues?q=is%3Aissue+Dependency+update+-+Golang -->

<!-- Example: https://github.com/kubernetes/release/issues/2694 -->

Link to any previous tracking issue: https://github.com/kubernetes/release/issues/2909

<!-- golang-announce mailing li... | 1.0 | Dependency update - Golang 1.20.2/1.19.7 - ### Tracking info

<!-- Search query: https://github.com/kubernetes/release/issues?q=is%3Aissue+Dependency+update+-+Golang -->

<!-- Example: https://github.com/kubernetes/release/issues/2694 -->

Link to any previous tracking issue: https://github.com/kubernetes/release/iss... | non_process | dependency update golang tracking info link to any previous tracking issue golang mailing list announcement sig release slack thread work items kube cross image update image promotion go runner image update ... | 0 |

8,340 | 11,497,801,404 | IssuesEvent | 2020-02-12 10:42:31 | 18F/tts-tech-portfolio | https://api.github.com/repos/18F/tts-tech-portfolio | closed | TTS Tech Portfolio agile approach -- update documentation to match approach | Jan2020-inperson epic: internal workflow/procedures needs grooming workflow: process | ## Background information

<!-- description, links, etc. -->

## User stories

<!-- one or more -->

- As a ..., I want ... so that ...

- As a ..., I want ... so that ...

## Implementation

- [ ] grooming sections in doc

- [ ] around assignment

- [ ] when labeling happens

- [ ] epics ... | 1.0 | TTS Tech Portfolio agile approach -- update documentation to match approach - ## Background information

<!-- description, links, etc. -->

## User stories

<!-- one or more -->

- As a ..., I want ... so that ...

- As a ..., I want ... so that ...

## Implementation

- [ ] grooming sections in doc

... | process | tts tech portfolio agile approach update documentation to match approach background information user stories as a i want so that as a i want so that implementation grooming sections in doc around assignment when labeling hap... | 1 |

220,946 | 16,991,013,043 | IssuesEvent | 2021-06-30 20:28:46 | zephyrj/sim-racing-tools | https://api.github.com/repos/zephyrj/sim-racing-tools | opened | Update documentation for downloading latest stable release from pypi | documentation | Currently the docs only reference the git repo as a source for installing sim-racing-tools; add in the option to download the latest stable version from pypi with a standard `pip install` | 1.0 | Update documentation for downloading latest stable release from pypi - Currently the docs only reference the git repo as a source for installing sim-racing-tools; add in the option to download the latest stable version from pypi with a standard `pip install` | non_process | update documentation for downloading latest stable release from pypi currently the docs only reference the git repo as a source for installing sim racing tools add in the option to download the latest stable version from pypi with a standard pip install | 0 |

12,800 | 15,180,854,777 | IssuesEvent | 2021-02-15 01:30:39 | Geonovum/disgeo-arch | https://api.github.com/repos/Geonovum/disgeo-arch | closed | 1. Inleiding | In behandeling - voorstel processen e.d. In behandeling - voorstel scope doel Processen Functies Componenten Scope Doel | In de inleiding staat: ´ Een samenhangende objectenregistratie is een uniforme registratie met daarin basisgegevens over objecten in de fysieke werkelijkheid die zich voor gebruikers als één registratie gedraagt.´

Dit is eigenlijk al een hele vreemde definitie, met een samenraapsel van ongedefinieerde begrippen. - U... | 2.0 | 1. Inleiding - In de inleiding staat: ´ Een samenhangende objectenregistratie is een uniforme registratie met daarin basisgegevens over objecten in de fysieke werkelijkheid die zich voor gebruikers als één registratie gedraagt.´

Dit is eigenlijk al een hele vreemde definitie, met een samenraapsel van ongedefinieerde... | process | inleiding in de inleiding staat ´ een samenhangende objectenregistratie is een uniforme registratie met daarin basisgegevens over objecten in de fysieke werkelijkheid die zich voor gebruikers als één registratie gedraagt ´ dit is eigenlijk al een hele vreemde definitie met een samenraapsel van ongedefinieerde... | 1 |

237,826 | 7,767,106,454 | IssuesEvent | 2018-06-03 00:40:24 | openshift/origin | https://api.github.com/repos/openshift/origin | closed | Top allocator in 3.7 controller in large cluster | area/performance component/kubernetes component/storage lifecycle/rotten priority/P2 sig/master sig/scalability sig/storage | Object allocation hotspots in the controller manager in a very large AWS cluster:

```

(pprof) top30

Showing nodes accounting for 55791681178, 89.00% of 62687568655 total

Dropped 3608 nodes (cum <= 313437843)

Showing top 30 nodes out of 255

flat flat% sum% cum cum%

24313857977 38.79% 38.79% 24... | 1.0 | Top allocator in 3.7 controller in large cluster - Object allocation hotspots in the controller manager in a very large AWS cluster:

```

(pprof) top30

Showing nodes accounting for 55791681178, 89.00% of 62687568655 total

Dropped 3608 nodes (cum <= 313437843)

Showing top 30 nodes out of 255

flat flat% s... | non_process | top allocator in controller in large cluster object allocation hotspots in the controller manager in a very large aws cluster pprof showing nodes accounting for of total dropped nodes cum showing top nodes out of flat flat sum cum cum ... | 0 |

37,280 | 12,477,438,562 | IssuesEvent | 2020-05-29 14:57:43 | LibrIT/passhport | https://api.github.com/repos/LibrIT/passhport | closed | CVE-2020-11022 (Medium) detected in multiple libraries | security vulnerability | ## CVE-2020-11022 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jquery-1.9.0.min.js</b>, <b>jquery-3.3.1.min.js</b>, <b>jquery-2.1.4.min.js</b>, <b>jquery-1.11.0.min.js</b>, <b>jq... | True | CVE-2020-11022 (Medium) detected in multiple libraries - ## CVE-2020-11022 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jquery-1.9.0.min.js</b>, <b>jquery-3.3.1.min.js</b>, <b>jq... | non_process | cve medium detected in multiple libraries cve medium severity vulnerability vulnerable libraries jquery min js jquery min js jquery min js jquery min js jquery min js jquery js jquery min js jquery min js jquery min js... | 0 |

15,394 | 19,579,449,243 | IssuesEvent | 2022-01-04 19:12:59 | opensearch-project/data-prepper | https://api.github.com/repos/opensearch-project/data-prepper | closed | Processor to filter out (remove/drop) entire events | plugin - processor proposal | Data Prepper should provide a processor which removes entire events. This processor need not take any special input, but does require conditional support from #522. (Otherwise every event would be dropped).

**Outstanding Questions**

What name should this processor have? | 1.0 | Processor to filter out (remove/drop) entire events - Data Prepper should provide a processor which removes entire events. This processor need not take any special input, but does require conditional support from #522. (Otherwise every event would be dropped).

**Outstanding Questions**

What name should this proce... | process | processor to filter out remove drop entire events data prepper should provide a processor which removes entire events this processor need not take any special input but does require conditional support from otherwise every event would be dropped outstanding questions what name should this process... | 1 |

592 | 3,067,152,961 | IssuesEvent | 2015-08-18 08:39:13 | maraujop/django-crispy-forms | https://api.github.com/repos/maraujop/django-crispy-forms | closed | Remove `tests_require` block from setup.py | Cleanup Testing/Process | Historically the Make file was using this to set up the test environment but we're now favouring the pip requirements file. It can go. | 1.0 | Remove `tests_require` block from setup.py - Historically the Make file was using this to set up the test environment but we're now favouring the pip requirements file. It can go. | process | remove tests require block from setup py historically the make file was using this to set up the test environment but we re now favouring the pip requirements file it can go | 1 |

12,790 | 15,169,204,366 | IssuesEvent | 2021-02-12 20:43:43 | esmero/strawberryfield | https://api.github.com/repos/esmero/strawberryfield | closed | Big bad bad boy bug: "as:classification" using class level constants failed my sanity check! | Digital Preservation Events and Subscriber JSON Postprocessors bug | # What?

previously we have double as:structure checking (as:image, etc) Once per webform submit and once per Object Ingest via Event Subscriber. Once I removed the webform one (double processing, made little sense) my bug got exposed.

I have a constant in the JSON Helper class here

https://github.com/esmero/st... | 1.0 | Big bad bad boy bug: "as:classification" using class level constants failed my sanity check! - # What?

previously we have double as:structure checking (as:image, etc) Once per webform submit and once per Object Ingest via Event Subscriber. Once I removed the webform one (double processing, made little sense) my bug ... | process | big bad bad boy bug as classification using class level constants failed my sanity check what previously we have double as structure checking as image etc once per webform submit and once per object ingest via event subscriber once i removed the webform one double processing made little sense my bug ... | 1 |

96,228 | 3,966,389,177 | IssuesEvent | 2016-05-03 12:49:33 | daronco/test-issue-migrate2 | https://api.github.com/repos/daronco/test-issue-migrate2 | closed | Allow room keys to be changed while a meeting is running | Priority: High Status: Resolved Type: Feature | ---

Author Name: **Leonardo Daronco** (@daronco)

Original Redmine Issue: 828, http://dev.mconf.org/redmine/issues/828

Original Date: 2013-06-07

Original Assignee: Gustavo Miotto

---

If the keys (passwords) of a room are changed while a meeting is running, any further API call to that meeting is gonna fail, since th... | 1.0 | Allow room keys to be changed while a meeting is running - ---

Author Name: **Leonardo Daronco** (@daronco)

Original Redmine Issue: 828, http://dev.mconf.org/redmine/issues/828

Original Date: 2013-06-07

Original Assignee: Gustavo Miotto

---

If the keys (passwords) of a room are changed while a meeting is running, a... | non_process | allow room keys to be changed while a meeting is running author name leonardo daronco daronco original redmine issue original date original assignee gustavo miotto if the keys passwords of a room are changed while a meeting is running any further api call to that meeting is gonna ... | 0 |

16,849 | 22,100,710,314 | IssuesEvent | 2022-06-01 13:34:16 | green-coder/girouette | https://api.github.com/repos/green-coder/girouette | opened | Add a recent version of Preflight | enhancement contribution welcome good first issue tailwind-compat processor-tool | We need [to port this file](https://unpkg.com/tailwindcss@3.0.24/src/css/preflight.css) to the garden format, similarly of what was done for [this file](https://unpkg.com/tailwindcss@2.0.3/dist/base.css) in https://github.com/green-coder/girouette/blob/ab419782c932889d9e9ddeb5bbb92941063c593e/lib/girouette/src/girouett... | 1.0 | Add a recent version of Preflight - We need [to port this file](https://unpkg.com/tailwindcss@3.0.24/src/css/preflight.css) to the garden format, similarly of what was done for [this file](https://unpkg.com/tailwindcss@2.0.3/dist/base.css) in https://github.com/green-coder/girouette/blob/ab419782c932889d9e9ddeb5bbb9294... | process | add a recent version of preflight we need to the garden format similarly of what was done for in the comments presents in the source file must be preserved the preprocessor is affected it must accept a parameter saying which version of preflight to use | 1 |

42,916 | 5,487,257,105 | IssuesEvent | 2017-03-14 03:37:02 | yarnpkg/yarn | https://api.github.com/repos/yarnpkg/yarn | closed | yarn-e2e/label=docker,os=ubuntu-12.04 #129 failed | failure test | Build 'yarn-e2e/label=docker,os=ubuntu-12.04' is failing!

Last 50 lines of build output:

```

[...truncated 7.53 KB...]

Selecting previously unselected package openssl.

(Reading database ... 7578 files and directories currently installed.)

Unpacking openssl (from .../openssl_1.0.1-4ubuntu5.39_amd64.deb) ...

Selecting ... | 1.0 | yarn-e2e/label=docker,os=ubuntu-12.04 #129 failed - Build 'yarn-e2e/label=docker,os=ubuntu-12.04' is failing!

Last 50 lines of build output:

```

[...truncated 7.53 KB...]

Selecting previously unselected package openssl.

(Reading database ... 7578 files and directories currently installed.)

Unpacking openssl (from ...... | non_process | yarn label docker os ubuntu failed build yarn label docker os ubuntu is failing last lines of build output selecting previously unselected package openssl reading database files and directories currently installed unpacking openssl from openssl deb selectin... | 0 |

13,077 | 15,419,420,404 | IssuesEvent | 2021-03-05 10:08:02 | ooi-data/RS01SBPD-DP01A-04-FLNTUA102-recovered_wfp-dpc_flnturtd_instrument_recovered | https://api.github.com/repos/ooi-data/RS01SBPD-DP01A-04-FLNTUA102-recovered_wfp-dpc_flnturtd_instrument_recovered | opened | 🛑 Processing failed: PermissionError | process | ## Overview