Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

18,300 | 24,412,162,130 | IssuesEvent | 2022-10-05 13:16:10 | Project60/org.project60.sepa | https://api.github.com/repos/Project60/org.project60.sepa | closed | Recurring contributions payment processor needs certain configuration | wontfix needs funding CiviCRM 4.5 CiviCRM 4.6 payment processor | If you want to use the SDD payment processor to collect recurring contributions, your contribution page needs to have a certain setup:

- 'Recurring Contributions' needs to be enabled (obviously)

- 'Supported recurring units' needs to be `month` and `year`

- 'Support recurring intervals' needs to be activated

- The SDD ... | 1.0 | Recurring contributions payment processor needs certain configuration - If you want to use the SDD payment processor to collect recurring contributions, your contribution page needs to have a certain setup:

- 'Recurring Contributions' needs to be enabled (obviously)

- 'Supported recurring units' needs to be `month` and... | process | recurring contributions payment processor needs certain configuration if you want to use the sdd payment processor to collect recurring contributions your contribution page needs to have a certain setup recurring contributions needs to be enabled obviously supported recurring units needs to be month and... | 1 |

16,089 | 20,256,227,003 | IssuesEvent | 2022-02-14 23:39:51 | varabyte/kobweb | https://api.github.com/repos/varabyte/kobweb | opened | Use Gradle @Nested annotation? | good first issue process | Kobweb's Gradle blocks are nested, e.g.

```

kobweb {

index { ... }

}

kobwebx {

markdown { ... }

}

```

We're doing this manually right now using nested `ExtensionAware.create` calls but I just learned about the `@Nested` annotation. Maybe that can clean up the code a bit? | 1.0 | Use Gradle @Nested annotation? - Kobweb's Gradle blocks are nested, e.g.

```

kobweb {

index { ... }

}

kobwebx {

markdown { ... }

}

```

We're doing this manually right now using nested `ExtensionAware.create` calls but I just learned about the `@Nested` annotation. Maybe that can clean up the code a... | process | use gradle nested annotation kobweb s gradle blocks are nested e g kobweb index kobwebx markdown we re doing this manually right now using nested extensionaware create calls but i just learned about the nested annotation maybe that can clean up the code a... | 1 |

210,436 | 23,754,745,007 | IssuesEvent | 2022-09-01 01:12:33 | benlazarine/cas-overlay | https://api.github.com/repos/benlazarine/cas-overlay | opened | CVE-2021-37714 (High) detected in jsoup-1.10.1.jar | security vulnerability | ## CVE-2021-37714 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jsoup-1.10.1.jar</b></p></summary>

<p>jsoup HTML parser</p>

<p>Path to dependency file: /pom.xml</p>

<p>Path to vulner... | True | CVE-2021-37714 (High) detected in jsoup-1.10.1.jar - ## CVE-2021-37714 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jsoup-1.10.1.jar</b></p></summary>

<p>jsoup HTML parser</p>

<p>Pa... | non_process | cve high detected in jsoup jar cve high severity vulnerability vulnerable library jsoup jar jsoup html parser path to dependency file pom xml path to vulnerable library root repository org jsoup jsoup jsoup jar dependency hierarchy cas server supp... | 0 |

87,726 | 3,757,348,067 | IssuesEvent | 2016-03-13 22:42:39 | squiggle-lang/squiggle-lang | https://api.github.com/repos/squiggle-lang/squiggle-lang | closed | Stop using `$ref`, add `declare` | enhancement high priority | Squiggle needs a way to "declare" an ambient variable, such as Node's `__dirname`, or `exports` when using Browserify, which are not global, and currently impossible to use in Squiggle without linter warnings.

We could use these declarations also to take care of mutual recursion, and switch back to just reporting va... | 1.0 | Stop using `$ref`, add `declare` - Squiggle needs a way to "declare" an ambient variable, such as Node's `__dirname`, or `exports` when using Browserify, which are not global, and currently impossible to use in Squiggle without linter warnings.

We could use these declarations also to take care of mutual recursion, a... | non_process | stop using ref add declare squiggle needs a way to declare an ambient variable such as node s dirname or exports when using browserify which are not global and currently impossible to use in squiggle without linter warnings we could use these declarations also to take care of mutual recursion a... | 0 |

84,953 | 10,422,693,224 | IssuesEvent | 2019-09-16 09:36:48 | reactor/reactor-core | https://api.github.com/repos/reactor/reactor-core | closed | Mention importing Java9Stubs / Java8Stubs in CONTRIBUTING.md | area/java9 type/chores type/documentation | ### Expected behavior

Project should be easily imported and built in any IDE.

### Actual behavior

Pre-checked out project can suddenly fail to build in IntelliJ notably after the `StackWalker` stub is introduced.

One needs to make sure that:

- Gradle JVM matches Modules Settings JDK (preferably 1.8)

- Intel... | 1.0 | Mention importing Java9Stubs / Java8Stubs in CONTRIBUTING.md - ### Expected behavior

Project should be easily imported and built in any IDE.

### Actual behavior

Pre-checked out project can suddenly fail to build in IntelliJ notably after the `StackWalker` stub is introduced.

One needs to make sure that:

- Gra... | non_process | mention importing in contributing md expected behavior project should be easily imported and built in any ide actual behavior pre checked out project can suddenly fail to build in intellij notably after the stackwalker stub is introduced one needs to make sure that gradle jvm matches mo... | 0 |

9,936 | 12,972,700,677 | IssuesEvent | 2020-07-21 12:57:18 | keep-network/keep-core | https://api.github.com/repos/keep-network/keep-core | closed | Balance reported on new overview page | :old_key: token dashboard process & client team | ### Background

I believe this was introduced in [this](https://github.com/keep-network/keep-core/pull/1847) PR. Last week we deployed an updated token-dashboard against Ropsten, from master. Last fully functional version of the token dashboard deployed was https://github.com/keep-network/keep-core/releases/tag... | 1.0 | Balance reported on new overview page - ### Background

I believe this was introduced in [this](https://github.com/keep-network/keep-core/pull/1847) PR. Last week we deployed an updated token-dashboard against Ropsten, from master. Last fully functional version of the token dashboard deployed was https://github... | process | balance reported on new overview page background i believe this was introduced in pr last week we deployed an updated token dashboard against ropsten from master last fully functional version of the token dashboard deployed was which did not include the the overview page the token overview ... | 1 |

8,115 | 11,302,386,402 | IssuesEvent | 2020-01-17 17:32:38 | NationalSecurityAgency/ghidra | https://api.github.com/repos/NationalSecurityAgency/ghidra | closed | x86: size of a pushed segment register should be extended | Feature: Processor/x86 Type: Bug | **Describe the bug**

Size of segment registers (`GS`, `FS`, `ES`, etc) on the stack should be zero-extended to the operand size.

**To Reproduce**

Steps to reproduce the behavior:

1. Open x86 program (32 or 64 bit).

1. Patch the listing: add `PUSH ES`, for example.

1. Stack depth changes by 16 bits (see Fig. 1),... | 1.0 | x86: size of a pushed segment register should be extended - **Describe the bug**

Size of segment registers (`GS`, `FS`, `ES`, etc) on the stack should be zero-extended to the operand size.

**To Reproduce**

Steps to reproduce the behavior:

1. Open x86 program (32 or 64 bit).

1. Patch the listing: add `PUSH ES`, f... | process | size of a pushed segment register should be extended describe the bug size of segment registers gs fs es etc on the stack should be zero extended to the operand size to reproduce steps to reproduce the behavior open program or bit patch the listing add push es for exa... | 1 |

49,119 | 12,289,821,225 | IssuesEvent | 2020-05-09 23:43:52 | nunit/nunit | https://api.github.com/repos/nunit/nunit | closed | Azure DevOps does not build release branch | is:build pri:high | We need to update the configuration so that the release branch builds automatically along with any PRs into the release branch. For future releases, I would like to start building and releasing from there. For 7.12 I am testing the process by preparing a pull request into the release branch and I will release the artif... | 1.0 | Azure DevOps does not build release branch - We need to update the configuration so that the release branch builds automatically along with any PRs into the release branch. For future releases, I would like to start building and releasing from there. For 7.12 I am testing the process by preparing a pull request into th... | non_process | azure devops does not build release branch we need to update the configuration so that the release branch builds automatically along with any prs into the release branch for future releases i would like to start building and releasing from there for i am testing the process by preparing a pull request into the... | 0 |

18,130 | 24,168,254,787 | IssuesEvent | 2022-09-22 16:47:13 | GoogleCloudPlatform/terraform-mean-cloudrun-mongodb | https://api.github.com/repos/GoogleCloudPlatform/terraform-mean-cloudrun-mongodb | closed | Migrate this repo to final destination when ready | process | - [ ] Need to determine ultimate home for the project.

- [ ] Migrate.

Considerations:

- #11

- Or just keep this repo | 1.0 | Migrate this repo to final destination when ready - - [ ] Need to determine ultimate home for the project.

- [ ] Migrate.

Considerations:

- #11

- Or just keep this repo | process | migrate this repo to final destination when ready need to determine ultimate home for the project migrate considerations or just keep this repo | 1 |

43,083 | 5,575,204,343 | IssuesEvent | 2017-03-28 00:55:33 | 18F/fec-cms | https://api.github.com/repos/18F/fec-cms | closed | Implement and test site-wide search prototype | Work: Design Work: Front-end | This is an exploratory issue to help us better understand the user needs (and potential ways to address them) around a site-wide search. To do this, we will use a combination of combing through external resources and doing lightweight prototyping using the out-of-the-box DigitalGov search tools.

**Questions to consi... | 1.0 | Implement and test site-wide search prototype - This is an exploratory issue to help us better understand the user needs (and potential ways to address them) around a site-wide search. To do this, we will use a combination of combing through external resources and doing lightweight prototyping using the out-of-the-box ... | non_process | implement and test site wide search prototype this is an exploratory issue to help us better understand the user needs and potential ways to address them around a site wide search to do this we will use a combination of combing through external resources and doing lightweight prototyping using the out of the box ... | 0 |

9,557 | 12,517,389,605 | IssuesEvent | 2020-06-03 11:03:38 | lishu/vscode-svg2 | https://api.github.com/repos/lishu/vscode-svg2 | closed | [Enhancement idea] Option to open the preview only by right-clicking | In process | Hello,

What do you think about adding the option to open the preview by right-clicking?

I would like to right click and open the preview (**without the source code of the file**)

It will be very very very useful to preview SVG files when you have many files that you want to open

This is the native way viewing... | 1.0 | [Enhancement idea] Option to open the preview only by right-clicking - Hello,

What do you think about adding the option to open the preview by right-clicking?

I would like to right click and open the preview (**without the source code of the file**)

It will be very very very useful to preview SVG files when you ... | process | option to open the preview only by right clicking hello what do you think about adding the option to open the preview by right clicking i would like to right click and open the preview without the source code of the file it will be very very very useful to preview svg files when you have many files t... | 1 |

7,706 | 10,800,079,802 | IssuesEvent | 2019-11-06 13:34:01 | prisma-labs/issues | https://api.github.com/repos/prisma-labs/issues | opened | Public notion content/process? | type/process | We have an internal notion page where a lot of valuable planning and process content lives. Some of that might sometimes be of interest to the current/future community.

It also makes our lives easier when we can share/link people to strong content that addresses their questions e.g. "why is this inactive" "what are ... | 1.0 | Public notion content/process? - We have an internal notion page where a lot of valuable planning and process content lives. Some of that might sometimes be of interest to the current/future community.

It also makes our lives easier when we can share/link people to strong content that addresses their questions e.g. ... | process | public notion content process we have an internal notion page where a lot of valuable planning and process content lives some of that might sometimes be of interest to the current future community it also makes our lives easier when we can share link people to strong content that addresses their questions e g ... | 1 |

63,146 | 17,394,015,205 | IssuesEvent | 2021-08-02 11:07:53 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | Video doesn't resume one way after hold | A-VoIP T-Defect | Looks like when we resume a video call from being held, the video only resumes one way. When I tested, it stayed black on the side that un-held. | 1.0 | Video doesn't resume one way after hold - Looks like when we resume a video call from being held, the video only resumes one way. When I tested, it stayed black on the side that un-held. | non_process | video doesn t resume one way after hold looks like when we resume a video call from being held the video only resumes one way when i tested it stayed black on the side that un held | 0 |

3,762 | 6,734,899,697 | IssuesEvent | 2017-10-18 19:43:39 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | closed | Jazz up the Readme | process: contributing | The `README.md` for this repo is kind of sad. 😢 I would like to see the readme more focused on explaining what cypress is, why you would use cypress, **simple install instructions**, etc - for our users and have contributing the secondary focus.

**Ideas to jazz up**

- Add header from old readme branch.

- Embed... | 1.0 | Jazz up the Readme - The `README.md` for this repo is kind of sad. 😢 I would like to see the readme more focused on explaining what cypress is, why you would use cypress, **simple install instructions**, etc - for our users and have contributing the secondary focus.

**Ideas to jazz up**

- Add header from old rea... | process | jazz up the readme the readme md for this repo is kind of sad 😢 i would like to see the readme more focused on explaining what cypress is why you would use cypress simple install instructions etc for our users and have contributing the secondary focus ideas to jazz up add header from old rea... | 1 |

9,450 | 12,429,227,436 | IssuesEvent | 2020-05-25 08:03:15 | ESMValGroup/ESMValCore | https://api.github.com/repos/ESMValGroup/ESMValCore | closed | ssp534-over question? | preprocessor question | I've encountered a new experiment that I've never encountered before. The ScenarioMIP experiment `ssp534-over` branches from the run `ssp585` in 2040.

If I put this in a recipe like this:

```

exp: [historical, ssp585, ssp534-over]

```

Will ESMValTool be able to figure out that we don't want any data from `ssp58... | 1.0 | ssp534-over question? - I've encountered a new experiment that I've never encountered before. The ScenarioMIP experiment `ssp534-over` branches from the run `ssp585` in 2040.

If I put this in a recipe like this:

```

exp: [historical, ssp585, ssp534-over]

```

Will ESMValTool be able to figure out that we don't w... | process | over question i ve encountered a new experiment that i ve never encountered before the scenariomip experiment over branches from the run in if i put this in a recipe like this exp will esmvaltool be able to figure out that we don t want any data from after | 1 |

20,501 | 27,166,344,126 | IssuesEvent | 2023-02-17 15:37:46 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Native query fails with commented-out parameter | Type:Bug Priority:P2 Querying/Processor Querying/Parameters & Variables Querying/Native .Backend | *Environment*:

- Your browser and the version: 66.0.3359.139

- Your operating system: OSX 10.13.3

- Your databases: Sample dataset

- Metabase version: v0.28.5

- Metabase hosting environment: Unknown

- Metabase internal database: Redshift

*Repeatable steps to reproduce the issue*:

1. Create a new native SQL qu... | 1.0 | Native query fails with commented-out parameter - *Environment*:

- Your browser and the version: 66.0.3359.139

- Your operating system: OSX 10.13.3

- Your databases: Sample dataset

- Metabase version: v0.28.5

- Metabase hosting environment: Unknown

- Metabase internal database: Redshift

*Repeatable steps to re... | process | native query fails with commented out parameter environment your browser and the version your operating system osx your databases sample dataset metabase version metabase hosting environment unknown metabase internal database redshift repeatable steps to reproduce th... | 1 |

15,799 | 19,987,126,090 | IssuesEvent | 2022-01-30 20:40:38 | maticnetwork/miden | https://api.github.com/repos/maticnetwork/miden | closed | Add fmp register to the processor with associated operations | processor | As discussed in #73, to better support memory-based locals, we need to add an `fmp` register to the processor. Operations needed to manipulate this register have been stubbed out in #83 - but we also need to implement them. | 1.0 | Add fmp register to the processor with associated operations - As discussed in #73, to better support memory-based locals, we need to add an `fmp` register to the processor. Operations needed to manipulate this register have been stubbed out in #83 - but we also need to implement them. | process | add fmp register to the processor with associated operations as discussed in to better support memory based locals we need to add an fmp register to the processor operations needed to manipulate this register have been stubbed out in but we also need to implement them | 1 |

306,516 | 23,163,694,709 | IssuesEvent | 2022-07-29 20:59:50 | gravitational/teleport | https://api.github.com/repos/gravitational/teleport | closed | [v.10.0] /docs/pages/enterprise/workflow/resource-requests.mdx | Clarify that Resource Access Request is in preview | documentation access-requests | ## Details

Make it clear in the docs that Resource Access Request is in preview.

### Category

- Improve Existing

| 1.0 | [v.10.0] /docs/pages/enterprise/workflow/resource-requests.mdx | Clarify that Resource Access Request is in preview - ## Details

Make it clear in the docs that Resource Access Request is in preview.

### Category

- Improve Existing

| non_process | docs pages enterprise workflow resource requests mdx clarify that resource access request is in preview details make it clear in the docs that resource access request is in preview category improve existing | 0 |

245,329 | 26,541,516,887 | IssuesEvent | 2023-01-19 19:41:07 | gms-ws-sandbox/Java-Demo | https://api.github.com/repos/gms-ws-sandbox/Java-Demo | closed | CVE-2021-40690 (High) detected in xmlsec-2.1.4.jar - autoclosed | security vulnerability | ## CVE-2021-40690 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xmlsec-2.1.4.jar</b></p></summary>

<p>Apache XML Security for Java supports XML-Signature Syntax and Processing,

... | True | CVE-2021-40690 (High) detected in xmlsec-2.1.4.jar - autoclosed - ## CVE-2021-40690 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xmlsec-2.1.4.jar</b></p></summary>

<p>Apache XML Sec... | non_process | cve high detected in xmlsec jar autoclosed cve high severity vulnerability vulnerable library xmlsec jar apache xml security for java supports xml signature syntax and processing recommendation february and xml encryption syntax and processing recomm... | 0 |

380,485 | 26,421,308,687 | IssuesEvent | 2023-01-13 20:48:33 | ufelgen/capstone-project | https://api.github.com/repos/ufelgen/capstone-project | closed | Checklist for QA Database Connection | documentation | Following [this US](https://github.com/ufelgen/capstone-project/issues/35) I replaced the local storage by a database connection. Therefore I had to change all the `create` and `update` functions, so they need to be quality tested again.

As these are quite a lot of functions, I made a checklist of functions for QA.... | 1.0 | Checklist for QA Database Connection - Following [this US](https://github.com/ufelgen/capstone-project/issues/35) I replaced the local storage by a database connection. Therefore I had to change all the `create` and `update` functions, so they need to be quality tested again.

As these are quite a lot of functions, ... | non_process | checklist for qa database connection following i replaced the local storage by a database connection therefore i had to change all the create and update functions so they need to be quality tested again as these are quite a lot of functions i made a checklist of functions for qa main page im... | 0 |

2,336 | 5,142,799,307 | IssuesEvent | 2017-01-12 14:23:45 | Graylog2/graylog2-server | https://api.github.com/repos/Graylog2/graylog2-server | closed | Slow syslog processing speed with force_rdns enabled | feature improvement performance processing won't fix | There seems to be a problem when the `force_rdns` option for Syslog inputs is enabled. Users report high CPU usage and reduced message throughput.

See: https://groups.google.com/forum/?hl=en#!topic/graylog2/bW2glCdBIUI

| 1.0 | Slow syslog processing speed with force_rdns enabled - There seems to be a problem when the `force_rdns` option for Syslog inputs is enabled. Users report high CPU usage and reduced message throughput.

See: https://groups.google.com/forum/?hl=en#!topic/graylog2/bW2glCdBIUI

| process | slow syslog processing speed with force rdns enabled there seems to be a problem when the force rdns option for syslog inputs is enabled users report high cpu usage and reduced message throughput see | 1 |

16,828 | 22,061,610,807 | IssuesEvent | 2022-05-30 18:43:49 | bitPogo/kmock | https://api.github.com/repos/bitPogo/kmock | closed | Prohibit deceiving kspy for generics | kmock-processor | Currently `kspy` is generated for generics even if they are not enabled. Using them will simply fail.

Acceptance criteria:

* do not generate the deceiving factories | 1.0 | Prohibit deceiving kspy for generics - Currently `kspy` is generated for generics even if they are not enabled. Using them will simply fail.

Acceptance criteria:

* do not generate the deceiving factories | process | prohibit deceiving kspy for generics currently kspy is generated for generics even if they are not enabled using them will simply fail acceptance criteria do not generate the deceiving factories | 1 |

3,853 | 2,708,302,946 | IssuesEvent | 2015-04-08 07:56:45 | AprilFool/AprilFool | https://api.github.com/repos/AprilFool/AprilFool | opened | Which web framework should we use? | design | Roughly the following:

1. beego: full-feature, MVC, actively used in China?

2. revel: almost full-feature

3. martini

4. gorilla: web tool kit

5. gocraft/web: web tool kit

6. net/http: golang built-in

Using beego or revel, or gorilla+net/http or gocraft+net/http, seems to be the common approach.

Since we are not developing an unusual web service, a full-feature framework seems best for rapid development.

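... | 1.0 | Which web framework should we use? - Roughly the following:

1. beego: full-feature, MVC, actively used in China?

2. revel: almost full-feature

3. martini

4. gorilla: web tool kit

5. gocraft/web: web tool kit

6. net/http: golang built-in

Using beego or revel, or gorilla+net/http or gocraft+net/http, seems to be the common approach.

Since we are not developing an unusual web service, for rapid development a full-...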

특이한 웹서비스를 개발할 것이 아니므로 빠른 개발을 위해서 full-... | non_process | 어떤 web framework을 사용할 것인가 대략 아래 beego full feature mvc 중국에서 활발히 사용 revel almost full feature martini gorilla web tool kit gocraft web web tool kit net http golang built in beego나 revel을 사용하거나 gorilla net http gocraft net http가 일반적인 방법인 것 같음 특이한 웹서비스를 개발할 것이 아니므로 빠른 개발을 위해서 full ... | 0 |

11,128 | 13,957,688,116 | IssuesEvent | 2020-10-24 08:09:26 | alexanderkotsev/geoportal | https://api.github.com/repos/alexanderkotsev/geoportal | opened | BE: download link wfs | BE - Belgium Geoportal Harvesting process | Dear

Some datasets that are downloadable through a WFS service get a download link in the form of getfeature/typename.

Other datasets get a download link in the form of getfeature/storedqueryid.

How does the harvester or the resource linkage checker decide what kind of download link to create?

For our harmonized data/services... | 1.0 | BE: download link wfs - Dear

Some datasets that are downloadable through a WFS service get a download link in the form of getfeature/typename.

Other datasets get a download link in the form of getfeature/storedqueryid.

How does the harvester or the resource linkage checker decide what kind of download link to create?

For our ... | process | be download link wfs dear some datasets that are downloadable through a wfs service get a download link in the form of getfeature typename other datasets get a download link in the form of getfeature storedqueryid how does the harvester or the resource linkage checker decide what kind of download link to create for our ... | 1 |

19,360 | 25,491,460,837 | IssuesEvent | 2022-11-27 05:16:31 | hsmusic/hsmusic-wiki | https://api.github.com/repos/hsmusic/hsmusic-wiki | closed | "Artists - by Contributions" should divide between music and artworks | scope: data processing scope: page generation - content thing: listings | This would make it match with "Artists - by Latest Contribution".

Thanks for the suggestion, Niklink! | 1.0 | "Artists - by Contributions" should divide between music and artworks - This would make it match with "Artists - by Latest Contribution".

Thanks for the suggestion, Niklink! | process | artists by contributions should divide between music and artworks this would make it match with artists by latest contribution thanks for the suggestion niklink | 1 |

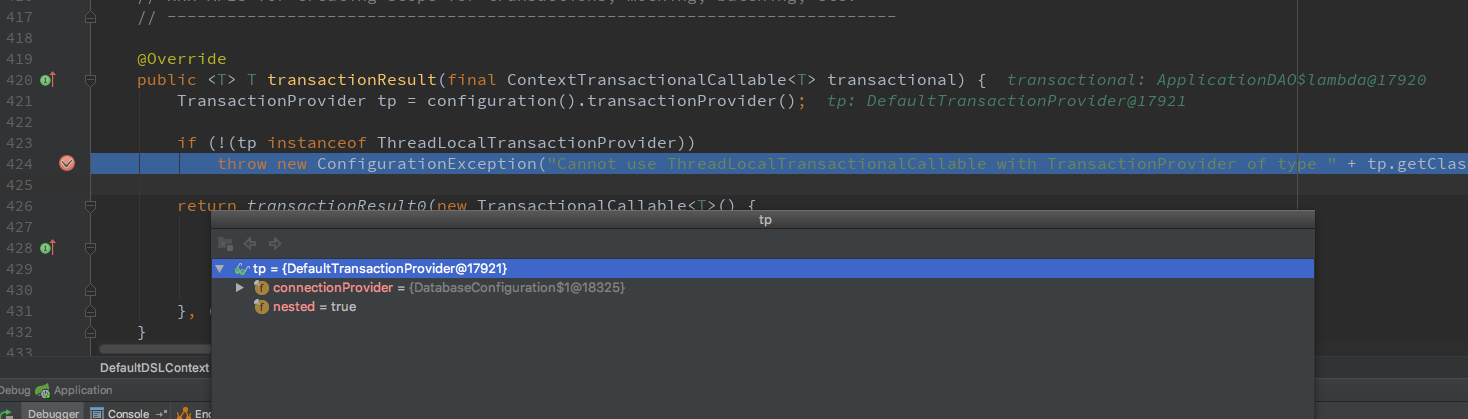

32,755 | 6,917,539,373 | IssuesEvent | 2017-11-29 08:57:04 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | closed | Cannot use ThreadLocalTransactionalCallable with TransactionProvider of type class org.jooq.impl.DefaultTransactionProvider | C: Functionality P: Medium R: Duplicate T: Defect | ### Expected behavior and actual behavior:

### Steps to reproduce the problem:

I built the `DefaultDSLContext` directly from a `DataSource`, but when I use dslContext.TransactionalResult(...), it gives me this.

... | 1.0 | Cannot use ThreadLocalTransactionalCallable with TransactionProvider of type class org.jooq.impl.DefaultTransactionProvider - ### Expected behavior and actual behavior:

### Steps to reproduce the problem:

I built the DefaultDSLContext directly from `DataSource`, but when I use dslContext.TransactionalResult(...),it... | non_process | cannot use threadlocaltransactionalcallable with transactionprovider of type class org jooq impl defaulttransactionprovider expected behavior and actual behavior steps to reproduce the problem i built the defaultdslcontext directly from datasource but when i use dslcontext transactionalresult it... | 0 |

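3,411 | 2,610,062,162 | IssuesEvent | 2015-02-26 18:18:17 | chrsmith/jsjsj122 | https://api.github.com/repos/chrsmith/jsjsj122 | opened | Luqiao infertility examination items and costs | auto-migrated Priority-Medium Type-Defect | ```

Luqiao infertility examination items and costs [Taizhou Wuzhou Reproductive Hospital] 24-hour health

consultation hotline: 0576-88066933 (QQ 800080609) (WeChat ID tzwzszyy). Hospital address:

No. 229 Fengnan Road, Jiaojiang District, Taizhou (next to the Fengnan roundabout). Bus routes: take bus 104,

108, 118, 198 or the Jiaojiang-Jinqing bus directly to Fengnan Residential Area, or take bus 107, 105,

109, 112, 901 or 902 to Xingxing Square and walk from there to the hospital.

Services: impotence, premature ejaculation, prostatitis, prostatic hyperplasia, balanitis,

[illegible], azoospermia, phimosis, varicocele, gonorrhea, etc.

Taizhou Wuzhou Reproductive Hospital is the largest andrology hospital in Taizhou, with authoritative experts offering free online

consultation, professional and complete andrology examination and treatment equipment, and fees charged strictly according to national

standards. Cutting-edge medical equipment, in step with the... | 1.0 | Luqiao infertility examination items and costs - ```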

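Luqiao infertility examination items and costs [Taizhou Wuzhou Reproductive Hospital] 24-hour health

consultation hotline: 0576-88066933 (QQ 800080609) (WeChat ID tzwzszyy). Hospital address:

No. 229 Fengnan Road, Jiaojiang District, Taizhou (next to the Fengnan roundabout). Bus routes: take bus 104,

108, 118, 198 or the Jiaojiang-Jinqing bus directly to Fengnan Residential Area, or take bus 107, 105,

109, 112, 901 or 902 to Xingxing Square and walk from there to the hospital.

Services: impotence, premature ejaculation, prostatitis, prostatic hyperplasia, balanitis,

[illegible], azoospermia, phimosis, varicocele, gonorrhea, etc.

Taizhou Wuzhou Reproductive Hospital is the largest andrology hospital in Taizhou, with authoritative experts offering free online

consultation, professional and complete andrology examination and treatment equipment, and fees charged strictly according to national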

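... | non_process | luqiao infertility examination items and costs luqiao infertility examination items and costs [taizhou wuzhou reproductive hospital] consultation hotline wechat id tzwzszyy hospital address (next to the fengnan roundabout) bus routes , then walk to the hospital. services: impotence, premature ejaculation, prostatitis, prostatic hyperplasia, balanitis, [illegible], azoospermia. phimosis, varicocele, gonorrhea, etc. taizhou wuzhou reproductive hospital is the largest andrology hospital in taizhou, with authoritative experts offering free online consultation, professional and complete andrology examination and treatment equipment, and fees charged strictly according to national standards. cutting-edge medical equipment, in step with the world. authoritative experts, a model of professionalism. humane service, everything centered on the patient. for andrology, choose taizhou wuzhou reproductive hospital, professional andrology for men. ori... | 0 |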

12,751 | 15,109,749,852 | IssuesEvent | 2021-02-08 18:15:58 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | multi-job output variable explanation misses that you need to depend on the source job | Pri2 devops-cicd-process/tech devops/prod doc-enhancement help wanted ready-to-doc | There is something that is not documented here. A multi-job output variable can only be used if the Job depends on the Source Job. So the example provided in the documentation works as B depends on A. But if you create a C that depends on B and B depends on A you will see that the output variables cannot be retrieved by depen... | 1.0 | multi-job output variable explanation misses that you need to depend on the source job - There is something that is not documented here. A multi-job output variable can only be used if the Job depends on the Source Job. So the example provided in the documentation works as B depends on A. But if you create a C depends o... | process | multi job output variable explanation misses that you need to depend on the source job there is something that is not documented here multi job output variable can only be used if the job depends on the source job so the example provided in the documentation works as b depends on a but if you create a c depends o... | 1 |

54,500 | 7,889,960,650 | IssuesEvent | 2018-06-28 07:10:00 | symfony/symfony-docs | https://api.github.com/repos/symfony/symfony-docs | closed | Document that console.command tag doesn’t support command aliases | Console Missing Documentation hasPR | I just noticed that using the `console.command` tag command aliases set via `setAliases` in `configure` are not taken into account. This should be documented on the `console/commands_as_services.rst` / http://symfony.com/doc/current/console/commands_as_services.html page. Or do you think that’s a bug of Symfony? If so ... | 1.0 | Document that console.command tag doesn’t support command aliases - I just noticed that using the `console.command` tag command aliases set via `setAliases` in `configure` are not taken into account. This should be documented on the `console/commands_as_services.rst` / http://symfony.com/doc/current/console/commands_as... | non_process | document that console command tag doesn’t support command aliases i just noticed that using the console command tag command aliases set via setaliases in configure are not taken into account this should be documented on the console commands as services rst page or do you think that’s a bug of symfony i... | 0 |

5,606 | 8,468,548,158 | IssuesEvent | 2018-10-23 20:04:12 | GoogleCloudPlatform/golang-samples | https://api.github.com/repos/GoogleCloudPlatform/golang-samples | opened | testing: don't set github status for child jobs | type: process | Kokoro's github status should only be set for the parent job (i.e., whether all child jobs succeeded). Currently, each child job sets a github status, and then after the last one completes, the parent job sets the status again.

This shows the status as green in the following situation:

Job 1: green

Job 2: green

... | 1.0 | testing: don't set github status for child jobs - Kokoro's github status should only be set for the parent job (i.e., whether all child jobs succeeded). Currently, each child job sets a github status, and then after the last one completes, the parent job sets the status again.

This shows the status as green in the f... | process | testing don t set github status for child jobs kokoro s github status should only be set for the parent job i e whether all child jobs succeeded currently each child job sets a github status and then after the last one completes the parent job sets the status again this shows the status as green in the f... | 1 |

17,987 | 24,008,840,715 | IssuesEvent | 2022-09-14 16:55:03 | OpenDataScotland/the_od_bods | https://api.github.com/repos/OpenDataScotland/the_od_bods | closed | Fix: CKAN PageURL to use /dataset instead of /package | bug data processing back end | Thanks to Will and Dom for picking this up on slack ([extracted 14 Sep](https://opendatascotland.slack.com/archives/C02HEHDL8AY/p1662916320398039)):

> Will: This looks great. One observation though is that all the urls to our CKAN resource are incorrect and don't work currently. The urls should be https://data.spati... | 1.0 | Fix: CKAN PageURL to use /dataset instead of /package - Thanks to Will and Dom for picking this up on slack ([extracted 14 Sep](https://opendatascotland.slack.com/archives/C02HEHDL8AY/p1662916320398039)):

> Will: This looks great. One observation though is that all the urls to our CKAN resource are incorrect and don... | process | fix ckan pageurl to use dataset instead of package thanks to will and dom for picking this up on slack will this looks great one observation though is that all the urls to our ckan resource are incorrect and don t work currently the urls should be and not | 1 |

5,054 | 7,860,887,261 | IssuesEvent | 2018-06-21 21:34:08 | StrikeNP/trac_test | https://api.github.com/repos/StrikeNP/trac_test | closed | Add a function to convert.m to change a pressure profile into altitude (Trac #4) | Migrated from Trac enhancement fasching@uwm.edu post_processing | Add a function to convert.m to change a pressure profile into altitude. This would be useful for cases that do not specify things in terms of altitude.

Attachments:

[plot_explicit_ta_configs.maff](https://github.com/larson-group/trac_attachment_archive/blob/master/trac_test/822/plot_explicit_ta_configs.maff)

[plot_n... | 1.0 | Add a function to convert.m to change a pressure profile into altitude (Trac #4) - Add a function to convert.m to change a pressure profile into altitude. This would be useful for cases that do not specify things in terms of altitude.

Attachments:

[plot_explicit_ta_configs.maff](https://github.com/larson-group/trac... | process | add a function to convert m to change a pressure profile into altitude trac add a function to convert m to change a pressure profile into altitude this would be useful for cases that do not specify things in terms of altitude attachments migrated from json ... | 1 |

16,731 | 21,893,071,230 | IssuesEvent | 2022-05-20 05:20:25 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Algorithm output node is placed half outside model canvas | Feedback Processing Regression Bug | ### What is the bug or the crash?

When adding a "final" Model Output to an algorithm, a new green node will be placed on the model canvas. This node gets placed in the top left corner, half outside the visible model canvas.

I use "asd" as name here:

documentation with new.

The content of documentation must be:

* Global presentation of Hapic (advantage/inconve... | 1.0 | Begin user documentation - Require: https://github.com/algoo/hapic/issues/209

Hapic must have a basic documentation for users. The second step is to write it. Feed the current [basic](https://github.com/algoo/hapic/issues/209) documentation with new.

The content of documentation must be:

* Global presentation ... | non_process | begin user documentation require hapic must have a basic documentation for users the second step is to write it feed the current documentation with new the content of documentation must be global presentation of hapic advantage inconvenient how to use it which web framework are supported... | 0 |

12,631 | 15,016,279,165 | IssuesEvent | 2021-02-01 09:23:15 | panther-labs/panther | https://api.github.com/repos/panther-labs/panther | closed | Failed to classify CrowdStrike `aid_master` event | p1 team:data processing | ### Describe the bug

The CrowdStrike `aid_master` can be sent through FDR and contains info to relate the `aid` (sensor id) field to `ComputerName`.

The schema of the event doesn't match our `CrowdStrike.Unknown` schema, so we need a separate parser for it (or update the `CrowdStrike.Unknown` schema).

### Steps to re... | 1.0 | Failed to classify CrowdStrike `aid_master` event - ### Describe the bug

The CrowdStrike `aid_master` can be sent through FDR and contains info to relate the `aid` (sensor id) field to `ComputerName`.

The schema of the event doesn't match our `CrowdStrike.Unknown` schema, so we need a separate parser for it (or update... | process | failed to classify crowdstrike aid master event describe the bug the crowdstrike aid master can be sent through fdr and contains info to relate the aid sensor id field to computername the schema of the event doesn t match our crowdstrike unknown schema so we need a separate parser for it or update... | 1 |

535,712 | 15,697,012,070 | IssuesEvent | 2021-03-26 03:27:32 | dietterc/SEO-ker | https://api.github.com/repos/dietterc/SEO-ker | closed | [1.4] As a user, I want the game to differentiate me from other players in the game, so I know who is who. | feature 1 high priority user story | Acceptance criteria: I am able to differentiate all the players within a game.

Priority: High

Estimated length: Short | 1.0 | [1.4] As a user, I want the game to differentiate me from other players in the game, so I know who is who. - Acceptance criteria: I am able to differentiate all the players within a game.

Priority: High

Estimated length: Short | non_process | as a user i want the game to differentiate me from other players in the game so i know who is who acceptance criteria i am able to differentiate all the players within a game priority high estimated length short | 0 |

298,687 | 9,200,670,102 | IssuesEvent | 2019-03-07 17:37:02 | qissue-bot/QGIS | https://api.github.com/repos/qissue-bot/QGIS | closed | Crash when closing attribute table on OS X | Category: Vectors Component: Affected QGIS version Component: Crashes QGIS or corrupts data Component: Easy fix? Component: Operating System Component: Pull Request or Patch supplied Component: Regression? Component: Resolution Priority: Low Project: QGIS Application Status: Closed Tracker: Bug report | ---

Author Name: **Gary Sherman** (Gary Sherman)

Original Redmine Issue: 250, https://issues.qgis.org/issues/250

Original Assignee: Gary Sherman

---

Load a shapefile, open the attribute table, and then click the Close button in the attribute dialog. OS X crashes after getting the SBOD (spinning beachball of death)... | 1.0 | Crash when closing attribute table on OS X - ---

Author Name: **Gary Sherman** (Gary Sherman)

Original Redmine Issue: 250, https://issues.qgis.org/issues/250

Original Assignee: Gary Sherman

---

Load a shapefile, open the attribute table, and then click the Close button in the attribute dialog. OS X crashes after g... | non_process | crash when closing attribute table on os x author name gary sherman gary sherman original redmine issue original assignee gary sherman load a shapefile open the attribute table and then click the close button in the attribute dialog os x crashes after getting the sbod spinning beachball... | 0 |

13,547 | 16,089,670,627 | IssuesEvent | 2021-04-26 15:14:42 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | How can I setup stage condition in the devops release pipeline UI portal? | Pri2 cba devops-cicd-process/tech devops/prod support-request |

How can I setup stage condition in the devops release pipeline UI portal?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: d322215c-8025-4f21-0700-7dfa7dc5c46e

* Version Independent ID: 141fcdbb-8394-525b-bb29-eff9a693a9c4

* Cont... | 1.0 | How can I setup stage condition in the devops release pipeline UI portal? -

How can I setup stage condition in the devops release pipeline UI portal?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: d322215c-8025-4f21-0700-7dfa7dc5... | process | how can i setup stage condition in the devops release pipeline ui portal how can i setup stage condition in the devops release pipeline ui portal document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent... | 1 |

343,925 | 30,700,493,269 | IssuesEvent | 2023-07-26 22:42:04 | rancher-sandbox/rancher-desktop | https://api.github.com/repos/rancher-sandbox/rancher-desktop | closed | `yarn test:e2e e2e/FILE ...` is broken on Windows | kind/bug priority/0 component/unit-test | ### Actual Behavior

When I run `yarn test:e2e`, it runs the tests, but when I specify one or more files it starts running, but then shuts down saying 'Error: No tests found'

### Steps to Reproduce

```

yarn test:e2e .\e2e\main.e2e.spec.ts

```

(or any number of command-line files > 0)

### Rancher Desktop ... | 1.0 | `yarn test:e2e e2e/FILE ...` is broken on Windows - ### Actual Behavior

When I run `yarn test:e2e`, it runs the tests, but when I specify one or more files it starts running, but then shuts down saying 'Error: No tests found'

### Steps to Reproduce

```

yarn test:e2e .\e2e\main.e2e.spec.ts

```

(or any numb... | non_process | yarn test file is broken on windows actual behavior when i run yarn test it runs the tests but when i specify one or more files it starts running but then shuts down saying error no tests found steps to reproduce yarn test main spec ts or any number of comman... | 0 |

240,097 | 26,254,320,464 | IssuesEvent | 2023-01-05 22:32:39 | MValle21/conduktor | https://api.github.com/repos/MValle21/conduktor | opened | CVE-2021-23424 (High) detected in ansi-html-0.0.7.tgz | security vulnerability | ## CVE-2021-23424 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ansi-html-0.0.7.tgz</b></p></summary>

<p>An elegant lib that converts the chalked (ANSI) text to HTML.</p>

<p>Library ... | True | CVE-2021-23424 (High) detected in ansi-html-0.0.7.tgz - ## CVE-2021-23424 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ansi-html-0.0.7.tgz</b></p></summary>

<p>An elegant lib that c... | non_process | cve high detected in ansi html tgz cve high severity vulnerability vulnerable library ansi html tgz an elegant lib that converts the chalked ansi text to html library home page a href path to dependency file client package json path to vulnerable library client ... | 0 |

10,174 | 13,044,162,759 | IssuesEvent | 2020-07-29 03:47:35 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `RowSig` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `RowSig` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @andylokandy

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rpn_expr... | 2.0 | UCP: Migrate scalar function `RowSig` from TiDB -

## Description

Port the scalar function `RowSig` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @andylokandy

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/t... | process | ucp migrate scalar function rowsig from tidb description port the scalar function rowsig from tidb to coprocessor score mentor s andylokandy recommended skills rust programming learning materials already implemented expressions ported from tidb | 1 |

129,394 | 27,456,780,939 | IssuesEvent | 2023-03-02 22:06:55 | boredzo/impluse-hfs | https://api.github.com/repos/boredzo/impluse-hfs | opened | Warnings cleanup | code-hygiene | There are, as of [7f49687], seven warnings. These should be fixed up by whatever means so that the project builds with zero warnings. | 1.0 | Warnings cleanup - There are, as of [7f49687], seven warnings. These should be fixed up by whatever means so that the project builds with zero warnings. | non_process | warnings cleanup there are as of seven warnings these should be fixed up by whatever means so that the project builds with zero warnings | 0 |

7,930 | 11,104,927,380 | IssuesEvent | 2019-12-17 08:47:37 | zammad/zammad | https://api.github.com/repos/zammad/zammad | closed | Error while fetching email from inbox will block processing of other mails in inbox | bug mail processing prioritized by payment verified | * Used Zammad version: 3.1.x

* Installation method (source, package, ..): any

* Operating system: any

* Database + version: any

* Elasticsearch version: any

* Browser + version: any

*Ticket #: 1053593

### Expected behavior:

* Fetch mails via IMAP/POP3 channel backend

* Error occurs while fetching email

* L... | 1.0 | Error while fetching email from inbox will block processing of other mails in inbox - * Used Zammad version: 3.1.x

* Installation method (source, package, ..): any

* Operating system: any

* Database + version: any

* Elasticsearch version: any

* Browser + version: any

*Ticket #: 1053593

### Expected behavior:

... | process | error while fetching email from inbox will block processing of other mails in inbox used zammad version x installation method source package any operating system any database version any elasticsearch version any browser version any ticket expected behavior fet... | 1 |

8,412 | 11,578,331,934 | IssuesEvent | 2020-02-21 15:45:46 | material-components/material-components-ios | https://api.github.com/repos/material-components/material-components-ios | closed | [TextControls] Internal issue: b/148450171 | [TextControls] type:Process | This was filed as an internal issue. If you are a Googler, please visit [b/148450171](http://b/148450171) for more details.

<!-- Auto-generated content below, do not modify -->

---

#### Internal data

- Associated internal bug: [b/148450171](http://b/148450171) | 1.0 | [TextControls] Internal issue: b/148450171 - This was filed as an internal issue. If you are a Googler, please visit [b/148450171](http://b/148450171) for more details.

<!-- Auto-generated content below, do not modify -->

---

#### Internal data

- Associated internal bug: [b/148450171](http://b/148450171) | process | internal issue b this was filed as an internal issue if you are a googler please visit for more details internal data associated internal bug | 1 |

2,312 | 5,132,979,713 | IssuesEvent | 2017-01-11 01:14:26 | mapbox/mapbox-gl-js | https://api.github.com/repos/mapbox/mapbox-gl-js | opened | Remove plugin build artifacts from this repository | release process | Including the plugin build artifacts in this repository isn't consistent with our general policies around checking in build artifacts or very convenient for plugin authors. We should experiment with other workflows such as

- requiring plugins be published to https://unpkg.com/

- requiring plugins handle their own... | 1.0 | Remove plugin build artifacts from this repository - Including the plugin build artifacts in this repository isn't consistent with our general policies around checking in build artifacts or very convenient for plugin authors. We should experiment with other workflows such as

- requiring plugins be published to http... | process | remove plugin build artifacts from this repository including the plugin build artifacts in this repository isn t consistent with our general policies around checking in build artifacts or very convenient for plugin authors we should experiment with other workflows such as requiring plugins be published to ... | 1 |

180,490 | 14,768,292,822 | IssuesEvent | 2021-01-10 11:21:37 | jgroth/kompendium | https://api.github.com/repos/jgroth/kompendium | closed | Add guide about how to use remark-admonitions syntax | documentation | for users of Kompendium,

it's good if they know we're using https://github.com/elviswolcott/remark-admonitions and how they can improve the documentations using their syntax | 1.0 | Add guide about how to use remark-admonitions syntax - for users of Kompendium,

it's good if they know we're using https://github.com/elviswolcott/remark-admonitions and how they can improve the documentations using their syntax | non_process | add guide about how to use remark admonitions syntax for users of kompendium it s good if they know we re using and how they can improve the documentations using their syntax | 0 |

167,502 | 26,510,258,289 | IssuesEvent | 2023-01-18 16:34:42 | microsoft/PowerToys | https://api.github.com/repos/microsoft/PowerToys | closed | Windows Task Bar | Product-Tweak UI Design Needs-Triage | ### Description of the new feature / enhancement

Can the team please add feature that centralizes the apps on the taskbar, but not the start menu. I'm not a fan of how Microsoft centralized the start menu with apps because it is much easier and faster to directly move your mouse to the bottom left. Plus the start menu... | 1.0 | Windows Task Bar - ### Description of the new feature / enhancement

Can the team please add feature that centralizes the apps on the taskbar, but not the start menu. I'm not a fan of how Microsoft centralized the start menu with apps because it is much easier and faster to directly move your mouse to the bottom left. ... | non_process | windows task bar description of the new feature enhancement can the team please add feature that centralizes the apps on the taskbar but not the start menu i m not a fan of how microsoft centralized the start menu with apps because it is much easier and faster to directly move your mouse to the bottom left ... | 0 |

1,985 | 4,816,785,087 | IssuesEvent | 2016-11-04 11:19:18 | woesterduolf/Mission-reisbureau | https://api.github.com/repos/woesterduolf/Mission-reisbureau | opened | Hotel kiezen pagina | Boekingsprocess priority: highest Type:Feature | **See mockup file**

On the left there are all the options a customer could want to choose from regarding hotel preferences. In this example I have added the amount of stars, the rating and the distance to the nearest land mark. There are however a lot more and they are accessible by scrolling down by using the verti... | 1.0 | Hotel kiezen pagina - **See mockup file**

On the left there are all the options a customer could want to choose from regarding hotel preferences. In this example I have added the amount of stars, the rating and the distance to the nearest land mark. There are however a lot more and they are accessible by scrolling d... | process | hotel kiezen pagina see mockup file on the left there are all the options a customer could want to choose from regarding hotel preferences in this example i have added the amount of stars the rating and the distance to the nearest land mark there are however a lot more and they are accessible by scrolling d... | 1 |

9,264 | 12,294,715,172 | IssuesEvent | 2020-05-11 01:15:59 | allinurl/goaccess | https://api.github.com/repos/allinurl/goaccess | closed | malloc(): smallbin double linked list corrupted | bug duplicate log-processing on-disk | I'm getting this strange error.

```

*** Error in `goaccess': malloc(): smallbin double linked list corrupted: 0x0000000034cea660 ***

======= Backtrace: =========

/lib/x86_64-linux-gnu/libc.so.6(+0x777e5)[0x7fac750997e5]

/lib/x86_64-linux-gnu/libc.so.6(+0x82651)[0x7fac750a4651]

/lib/x86_64-linux-gnu/libc.so.6(__li... | 1.0 | malloc(): smallbin double linked list corrupted - I'm getting this strange error.

```

*** Error in `goaccess': malloc(): smallbin double linked list corrupted: 0x0000000034cea660 ***

======= Backtrace: =========

/lib/x86_64-linux-gnu/libc.so.6(+0x777e5)[0x7fac750997e5]

/lib/x86_64-linux-gnu/libc.so.6(+0x82651)[0x7... | process | malloc smallbin double linked list corrupted i m getting this strange error error in goaccess malloc smallbin double linked list corrupted backtrace lib linux gnu libc so lib linux gnu libc so lib linux gnu libc so libc malloc us... | 1 |

15,407 | 19,597,959,210 | IssuesEvent | 2022-01-05 20:22:38 | googleapis/google-cloud-dotnet | https://api.github.com/repos/googleapis/google-cloud-dotnet | opened | Your .repo-metadata.json files have a problem 🤒 | type: process repo-metadata: lint | You have a problem with your .repo-metadata.json files:

Result of scan 📈:

```

release_level must be equal to one of the allowed values in apis/Google.Analytics.Admin.V1Alpha/.repo-metadata.json

api_shortname field missing from apis/Google.Analytics.Admin.V1Alpha/.repo-metadata.jsonrelease_level must be equal to one ... | 1.0 | Your .repo-metadata.json files have a problem 🤒 - You have a problem with your .repo-metadata.json files:

Result of scan 📈:

```

release_level must be equal to one of the allowed values in apis/Google.Analytics.Admin.V1Alpha/.repo-metadata.json

api_shortname field missing from apis/Google.Analytics.Admin.V1Alpha/.re... | process | your repo metadata json files have a problem 🤒 you have a problem with your repo metadata json files result of scan 📈 release level must be equal to one of the allowed values in apis google analytics admin repo metadata json api shortname field missing from apis google analytics admin repo metadata ... | 1 |

18,361 | 24,492,213,005 | IssuesEvent | 2022-10-10 04:09:47 | phamtanduongtk29/html-css-training | https://api.github.com/repos/phamtanduongtk29/html-css-training | opened | Create and Responsive template section | not yet processing | - Estimates: 2 hours

- Create heading

- Create image

- Create description | 1.0 | Create and Responsive template section - - Estimates: 2 hours

- Create heading

- Create image

- Create description | process | create and responsive template section estimates hours create heading create image create description | 1 |

245,677 | 7,889,424,515 | IssuesEvent | 2018-06-28 04:03:43 | ubclaunchpad/inertia | https://api.github.com/repos/ubclaunchpad/inertia | opened | inertia [remote] up cookie not found | todo: priority :fire: type: bug :bug: | wtf?

```

bobbook:inertia robertlin$ inertia --config inertia.bumper.toml provision-test-5 up

[WARNING] Configuration version 'latest' does not match your Inertia CLI version 'test'

Cookie not found

``` | 1.0 | inertia [remote] up cookie not found - wtf?

```

bobbook:inertia robertlin$ inertia --config inertia.bumper.toml provision-test-5 up

[WARNING] Configuration version 'latest' does not match your Inertia CLI version 'test'

Cookie not found

``` | non_process | inertia up cookie not found wtf bobbook inertia robertlin inertia config inertia bumper toml provision test up configuration version latest does not match your inertia cli version test cookie not found | 0 |

133,448 | 10,822,163,469 | IssuesEvent | 2019-11-08 20:31:02 | rancher/rke | https://api.github.com/repos/rancher/rke | closed | Error message when pulling new images should be removed | [zube]: To Test kind/enhancement team/ca | **RKE version:**

```

$ rke -v

rke version v0.3.0

```

**Docker version: (`docker version`,`docker info` preferred)**

```

$ docker version

Client: Docker Engine - Community

Version: 18.09.8

API version: 1.39

Go version: go1.10.8

Git commit: 0dd43dd87f

Built: Wed... | 1.0 | Error message when pulling new images should be removed - **RKE version:**

```

$ rke -v

rke version v0.3.0

```

**Docker version: (`docker version`,`docker info` preferred)**

```

$ docker version

Client: Docker Engine - Community

Version: 18.09.8

API version: 1.39

Go version: go1.10.... | non_process | error message when pulling new images should be removed rke version rke v rke version docker version docker version docker info preferred docker version client docker engine community version api version go version git... | 0 |

19,694 | 26,047,573,451 | IssuesEvent | 2022-12-22 15:37:48 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Stages, jobs and pipeline agents | doc-enhancement devops/prod Pri2 devops-cicd-process/tech |

When stages execute in parallel, are they free to run on any free pipeline agent in the pool?

When stages execute in series, are they free to change to some other free agent in the pool?

Can jobs run in parallel and on different free agents?

---

#### Document Details

⚠ *Do not edit this section. It is requ... | 1.0 | Stages, jobs and pipeline agents -

When stages execute in parallel, are they free to run on any free pipeline agent in the pool?

When stages execute in series, are they free to change to some other free agent in the pool?

Can jobs run in parallel and on different free agents?

---

#### Document Details

⚠ *D... | process | stages jobs and pipeline agents when stages execute in parallel are they free to run on any free pipeline agent in the pool when stages execute in series are they free to change to some other free agent in the pool can jobs run in parallel and on different free agents document details ⚠ d... | 1 |

136,121 | 11,038,098,344 | IssuesEvent | 2019-12-08 11:39:26 | ably/ably-cocoa | https://api.github.com/repos/ably/ably-cocoa | opened | Flaky test: RTN5 (basic operations should work | test suite | Pretty often, in Travis, we get a timeout:

```

[10:42:01]: ▸ ✗ Connection__basic_operations_should_work_simultaneously, Waited more than 10.0 seconds

```

This doesn't happen on my machine, and given this is a timeout on stressful test, we're probably just asking for too much. But we should double-check this... | 1.0 | Flaky test: RTN5 (basic operations should work - Pretty often, in Travis, we get a timeout:

```

[10:42:01]: ▸ ✗ Connection__basic_operations_should_work_simultaneously, Waited more than 10.0 seconds

```

This doesn't happen on my machine, and given this is a timeout on stressful test, we're probably just as... | non_process | flaky test basic operations should work pretty often in travis we get a timeout ▸ ✗ connection basic operations should work simultaneously waited more than seconds this doesn t happen on my machine and given this is a timeout on stressful test we re probably just asking for too ... | 0 |

27,953 | 11,579,337,315 | IssuesEvent | 2020-02-21 17:42:03 | mwilliams7197/node | https://api.github.com/repos/mwilliams7197/node | opened | CVE-2011-4969 (Medium) detected in jquery-1.6.2.js, jquery-1.4.4.min.js | security vulnerability | ## CVE-2011-4969 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jquery-1.6.2.js</b>, <b>jquery-1.4.4.min.js</b></p></summary>

<p>

<details><summary><b>jquery-1.6.2.js</b></p></sum... | True | CVE-2011-4969 (Medium) detected in jquery-1.6.2.js, jquery-1.4.4.min.js - ## CVE-2011-4969 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jquery-1.6.2.js</b>, <b>jquery-1.4.4.min.j... | non_process | cve medium detected in jquery js jquery min js cve medium severity vulnerability vulnerable libraries jquery js jquery min js jquery js javascript library for dom operations library home page a href path to dependency file tmp ws scm node... | 0 |

122,113 | 26,088,552,630 | IssuesEvent | 2022-12-26 07:51:00 | sebastianbergmann/phpunit | https://api.github.com/repos/sebastianbergmann/phpunit | closed | Fatal error instead of PHPUnit warning when no code coverage driver is available | feature/test-runner feature/code-coverage | ```

An error occurred inside PHPUnit.

Message: No code coverage driver available

Location: /usr/local/src/php-code-coverage/src/Driver/Selector.php:41

#0 /usr/local/src/php-code-coverage/vendor/phpunit/phpunit/src/Runner/CodeCoverage.php(51): SebastianBergmann\CodeCoverage\Driver\Selector->forLineCoverage()

#... | 1.0 | Fatal error instead of PHPUnit warning when no code coverage driver is available - ```

An error occurred inside PHPUnit.

Message: No code coverage driver available

Location: /usr/local/src/php-code-coverage/src/Driver/Selector.php:41

#0 /usr/local/src/php-code-coverage/vendor/phpunit/phpunit/src/Runner/CodeCov... | non_process | fatal error instead of phpunit warning when no code coverage driver is available an error occurred inside phpunit message no code coverage driver available location usr local src php code coverage src driver selector php usr local src php code coverage vendor phpunit phpunit src runner codecove... | 0 |

89,562 | 25,836,899,316 | IssuesEvent | 2022-12-12 20:24:42 | xamarin/xamarin-android | https://api.github.com/repos/xamarin/xamarin-android | closed | Xamarin.Android project build fails after upgrading to Visual Studio 17.3.5 (from 17.2.5) due to missing .dll in AOT folder and aapt_rules.txt | Area: App+Library Build | ### Android application type

Classic Xamarin.Android (MonoAndroid12.0, etc.)

### Affected platform version

VS 17.3.5

### Description

Not a single change in our project or CI script, just VS got update to the currently latest one and we experience 2 strange and blocker side-effect during AOT apk build, th... | 1.0 | Xamarin.Android project build fails after upgrading to Visual Studio 17.3.5 (from 17.2.5) due to missing .dll in AOT folder and aapt_rules.txt - ### Android application type

Classic Xamarin.Android (MonoAndroid12.0, etc.)

### Affected platform version

VS 17.3.5

### Description

Not a single change in our ... | non_process | xamarin android project build fails after upgrading to visual studio from due to missing dll in aot folder and aapt rules txt android application type classic xamarin android etc affected platform version vs description not a single change in our project or ci s... | 0 |

5,132 | 2,775,069,613 | IssuesEvent | 2015-05-04 14:03:42 | azavea/nyc-trees | https://api.github.com/repos/azavea/nyc-trees | opened | Shorten width of species autocomplete list | design | From Parks:

It is also hard to click away from the list, especially on the mobile screen - there is just not enough inactive white space to click on, to make the list go away. Perhaps this could be addressed by making the field slightly less wide than the screen, so that when the user scrolls down the screen to the... | 1.0 | Shorten width of species autocomplete list - From Parks:

It is also hard to click away from the list, especially on the mobile screen - there is just not enough inactive white space to click on, to make the list go away. Perhaps this could be addressed by making the field slightly less wide than the screen, so that... | non_process | shorten width of species autocomplete list from parks it is also hard to click away from the list especially on the mobile screen there is just not enough inactive white space to click on to make the list go away perhaps this could be addressed by making the field slightly less wide than the screen so that... | 0 |

16,871 | 22,151,690,069 | IssuesEvent | 2022-06-03 17:33:35 | hashgraph/hedera-mirror-node | https://api.github.com/repos/hashgraph/hedera-mirror-node | opened | Release checklist 0.58 | enhancement P1 process | ### Problem

We need a checklist to verify the release is rolled out successfully.

### Solution

## Preparation

- [ ] Milestone field populated on [relevant issues](https://github.com/hashgraph/hedera-mirror-node/issues?q=is%3Aclosed+no%3Amilestone+sort%3Aupdated-desc)

- [x] Nothing [open](https://github.com/hashgra... | 1.0 | Release checklist 0.58 - ### Problem

We need a checklist to verify the release is rolled out successfully.

### Solution

## Preparation

- [ ] Milestone field populated on [relevant issues](https://github.com/hashgraph/hedera-mirror-node/issues?q=is%3Aclosed+no%3Amilestone+sort%3Aupdated-desc)

- [x] Nothing [open](h... | process | release checklist problem we need a checklist to verify the release is rolled out successfully solution preparation milestone field populated on nothing for milestone github checks for branch are passing automated kubernetes deployment successful tag release up... | 1 |

94,918 | 11,940,832,225 | IssuesEvent | 2020-04-02 17:22:46 | US-CBP/cbp-theme | https://api.github.com/repos/US-CBP/cbp-theme | closed | Label text color overridden when in a list group | design | Labels Text Color is overridden when in an anchor tag by:

.list-group .list-group-item a {

color: #333;

}

Where it should have the color from:

.label {

display: inline;

padding: .2em .6em .3em;

font-size: 75%;

font-weight: 700;

line-height: 1;

**color: #fff;**

text-alig... | 1.0 | Label text color overridden when in a list group - Labels Text Color is overridden when in an anchor tag by:

.list-group .list-group-item a {

color: #333;

}

Where it should have the color from:

.label {

display: inline;

padding: .2em .6em .3em;

font-size: 75%;

font-weight: 700;

... | non_process | label text color overridden when in an list group labels text color is overridden when in an anchor tag by list group list group item a color where it should have the color from label display inline padding font size font weight line height... | 0 |
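
The override described in the cbp-theme issue above is consistent with ordinary CSS specificity rules. The sketch below is only an illustration, not the project's fix: `specificity` is a hypothetical helper that handles simple selectors (no pseudo-classes, attributes, or combinator symbols), and it assumes the label is itself the `<a>` element matched by the list-group rule, which the truncated issue text does not confirm.

```python
import re


def specificity(selector):
    """Return (ids, classes, types) for a simple CSS selector.

    Simplified on purpose: pseudo-classes, attribute selectors and
    combinators such as '>' are not handled.
    """
    ids = len(re.findall(r"#[\w-]+", selector))
    classes = len(re.findall(r"\.[\w-]+", selector))
    # Remove id and class tokens, then count the remaining element names.
    stripped = re.sub(r"[#.][\w-]+", " ", selector)
    types = len(re.findall(r"[A-Za-z][\w-]*", stripped))
    return (ids, classes, types)


list_group_rule = specificity(".list-group .list-group-item a")
label_rule = specificity(".label")

# Tuples compare left to right, which mirrors how specificity is ranked.
print(list_group_rule, label_rule, list_group_rule > label_rule)
```

Under that assumption the list-group rule wins, so a fix would need a selector of at least equal specificity for labels inside list groups.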

1,638 | 4,259,131,322 | IssuesEvent | 2016-07-11 09:52:38 | e-government-ua/iBP | https://api.github.com/repos/e-government-ua/iBP | closed | Korosten: correction of attributes | In process of testing in work test | In the details of the Korosten city CNAP (administrative services center):

1) the phone number needs to be changed (the district CNAP's number is currently listed there by mistake). Correct number: 0 (4142) 50146;

2) add the office number: office No. 11 | 1.0 | Korosten: correction of attributes - In the details of the Korosten city CNAP (administrative services center):

1) the phone number needs to be changed (the district CNAP's number is currently listed there by mistake). Correct number: 0 (4142) 50146;

2) add the office number: office No. 11 | process | korosten correction of attributes in the details of the korosten city cnap the phone number needs to be changed the district cnap s number is currently listed there by mistake correct number add the office number office no | 1 |

22,021 | 30,533,899,894 | IssuesEvent | 2023-07-19 15:55:29 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | Merge and rename 'autophagy involved in symbiotic interaction' and children | multi-species process | Hello,

I think this 'autophagy involved in symbiotic interaction' triad should be cleaned:

- GO:0075071 autophagy involved in symbiotic interaction

-- GO:0075044 autophagy of host cells involved in interaction with symbiont

--- GO:0075072 autophagy of symbiont cells involved in interaction with host

... | 1.0 | Merge and rename 'autophagy involved in symbiotic interaction' and children - Hello,

I think this 'autophagy involved in symbiotic interaction' triad should be cleaned:

- GO:0075071 autophagy involved in symbiotic interaction

-- GO:0075044 autophagy of host cells involved in interaction with symbiont

---... | process | merge and rename autophagy involved in symbiotic interaction and children hello i think this autophagy involved in symbiotic interaction triad should be cleaned go autophagy involved in symbiotic interaction go autophagy of host cells involved in interaction with symbiont go autop... | 1 |

13,825 | 5,468,080,436 | IssuesEvent | 2017-03-10 04:06:58 | docker/docker | https://api.github.com/repos/docker/docker | closed | Exporting volume from container running in windows 10 insider preview doesn't work | area/builder area/bundles platform/windows version/1.13 | **Description**

I am trying the latest windows 10 insider preview build with docker overlay driver support.

I have an image that's built with the `Dockerfile` below

```

FROM microsoft/windowsservercore

COPY . C:/temp

SHELL ["powershell", "-Command"]

RUN New-Item c:\Qumram -Type directory; \

New-It... | 1.0 | Exporting volume from container running in windows 10 insider preview doesn't work - **Description**

I am trying the latest windows 10 insider preview build with docker overlay driver support.

I have an image that's built with the `Dockerfile` below

```

FROM microsoft/windowsservercore

COPY . C:/temp

SHEL... | non_process | exporting volume from container running in windows insider preview doesn t work description i am trying the latest windows insider preview build with docker overlay driver support i have a image thats build with the below dockerfile from microsoft windowsservercore copy c temp shell ... | 0 |

14,743 | 8,679,685,436 | IssuesEvent | 2018-12-01 01:32:23 | vim/vim | https://api.github.com/repos/vim/vim | closed | Feature Request: cache syntax highlighting to improve 'relativenumber' scroll performance | performance | Hello Vim team,

Spawning a new feature request from the existing [Ruby high CPU usage #282 issue](https://github.com/vim/vim/issues/282). Note, the [vim-ruby #243 issue](https://github.com/vim-ruby/vim-ruby/issues/243) is fundamentally the same issue as well.

Basically the issue boils down to syntax highlighting ... | True | Feature Request: cache syntax highlighting to improve 'relativenumber' scroll performance - Hello Vim team,

Spawning a new feature request from the existing [Ruby high CPU usage #282 issue](https://github.com/vim/vim/issues/282). Note, the [vim-ruby #243 issue](https://github.com/vim-ruby/vim-ruby/issues/243) is fun... | non_process | feature request cache syntax highlighting to improve relativenumber scroll performance hello vim team spawning a new feature request from the existing note the is fundamentally the same issue as well basically the issue boils down to syntax highlighting needing to be calculated for every visible l... | 0 |

422,851 | 12,287,489,099 | IssuesEvent | 2020-05-09 12:26:48 | googleapis/elixir-google-api | https://api.github.com/repos/googleapis/elixir-google-api | opened | Synthesis failed for Slides | api: slides autosynth failure priority: p1 type: bug | Hello! Autosynth couldn't regenerate Slides. :broken_heart:

Here's the output from running `synth.py`:

```

2020-05-09 05:19:53 [INFO] logs will be written to: /tmpfs/src/github/synthtool/logs/googleapis/elixir-google-api

2020-05-09 05:19:53,834 autosynth > logs will be written to: /tmpfs/src/github/synthtool/logs/goo... | 1.0 | Synthesis failed for Slides - Hello! Autosynth couldn't regenerate Slides. :broken_heart:

Here's the output from running `synth.py`:

```

2020-05-09 05:19:53 [INFO] logs will be written to: /tmpfs/src/github/synthtool/logs/googleapis/elixir-google-api

2020-05-09 05:19:53,834 autosynth > logs will be written to: /tmpfs... | non_process | synthesis failed for slides hello autosynth couldn t regenerate slides broken heart here s the output from running synth py logs will be written to tmpfs src github synthtool logs googleapis elixir google api autosynth logs will be written to tmpfs src github synthtool l... | 0 |

10,548 | 13,327,743,107 | IssuesEvent | 2020-08-27 13:36:09 | prisma/prisma-client-js | https://api.github.com/repos/prisma/prisma-client-js | closed | Unmigrated database leads to minimal error message for missing table | kind/improvement process/candidate size/XS topic: error | When e.g. running Prisma Client in a Netlify function, this is what the error output looks like when you have not migrated your database yet (and all tables are missing):

```

◈ Error during invocation: {

errorMessage: 'Error occurred during query execution:\n' +

'ConnectorError(ConnectorError { user_facing_error:... | 1.0 | Unmigrated database leads to minimal error message for missing table - When e.g. running Prisma Client in a Netlify function, this is what the error output looks when you did not migrate your database yet (and all tables are missing):

```

◈ Error during invocation: {

errorMessage: 'Error occurred during query e... | process | unmigrated database leads to minimal error message for missing table when e g running prisma client in a netlify function this is what the error output looks when you did not migrate your database yet and all tables are missing ◈ error during invocation errormessage error occurred during query e... | 1 |

19,703 | 26,052,917,679 | IssuesEvent | 2022-12-22 20:48:50 | aolabNeuro/analyze | https://api.github.com/repos/aolabNeuro/analyze | closed | eye samplerate | help wanted preprocessing | The sampling rate of eye data from rig1 is ~240 Hz, synced with OptiTrack, and yet we are saving the full 25 kHz sampled version from the eCube into *_eye.hdf, which makes for really big files and wasted space. I think we can safely downsample to 240 Hz without losing any resolution. | 1.0 | eye samplerate - The sampling rate of eye data from rig1 is ~240 Hz, synced with OptiTrack, and yet we are saving the full 25 kHz sampled version from the eCube into *_eye.hdf, which makes for really big files and wasted space. I think we can safely downsample to 240 Hz without losing any resolution. | process | eye samplerate the sampling rate of eye data from is hz synced with optitrack and yet we are saving the full sampled version from the ecube into eye hdf which makes for really big files and wasted space i think we can safely downsample to without losing any resolution | 1 |
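
The downsampling proposed in the eye samplerate issue above could look roughly like the sketch below. It is not the repository's implementation: `downsample_by_binning` is a hypothetical name, the 25,000 Hz and 240 Hz defaults are taken from the issue text as nominal values, and averaging within each output period is just one simple choice that also handles the non-integer ratio (25000 / 240 = 625 / 6).

```python
from fractions import Fraction


def downsample_by_binning(samples, fs_in=25_000, fs_out=240):
    """Average the input samples that fall into each output-sample period.

    fs_in and fs_out are nominal rates in Hz (assumed values); the ratio
    does not have to be an integer.
    """
    if fs_out > fs_in:
        raise ValueError("fs_out must not exceed fs_in")
    step = Fraction(fs_in, fs_out)    # input samples per output sample
    n_out = int(len(samples) / step)  # number of complete output bins
    out = []
    for k in range(n_out):
        start = int(k * step)         # first input index of bin k
        stop = int((k + 1) * step)    # one past the last input index
        chunk = samples[start:stop]
        out.append(sum(chunk) / len(chunk))
    return out


# One second of a constant signal recorded at the higher rate.
one_second = [1.0] * 25_000
reduced = downsample_by_binning(one_second)
print(len(reduced))
```

If the eye tracker really only updates at about 240 Hz, the 25 kHz recording is close to a sample-and-hold of those values, so bin averaging should lose little; a polyphase resampler from a signal-processing library would be the more careful option.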

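728,127 | 25,067,212,962 | IssuesEvent | 2022-11-07 09:18:15 | TencentBlueKing/bk-nodeman | https://api.github.com/repos/TencentBlueKing/bk-nodeman | closed | [BUG] 2.0 Agent registration and health check reports are missing error information | kind/bug version/V2.2.X priority/middle module/script | **Problem description**

2.0 Agent registration and health check reports are missing error information

**Steps to reproduce**

1. Install Agent or Proxy

2. Make register / healthz fail

**Expected behavior**

Report the error message to the backend, for example: xxx failed: xxxx

**Screenshots**

... | 1.0 | [BUG] 2.0 Agent registration and health check reports are missing error information - **Problem description**

2.0 Agent registration and health check reports are missing error information

**Steps to reproduce**

1. Install Agent or Proxy

2. Make register / healthz fail

**Expected behavior**

Report the error message to the backend, for example: xxx failed: xxxx

**Screenshots**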

detected in y18n-4.0.0.tgz - autoclosed | security vulnerability | ## CVE-2020-7774 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>y18n-4.0.0.tgz</b></p></summary>

<p>the bare-bones internationalization library used by yargs</p>

<p>Library home page:... | True | CVE-2020-7774 (High) detected in y18n-4.0.0.tgz - autoclosed - ## CVE-2020-7774 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>y18n-4.0.0.tgz</b></p></summary>

<p>the bare-bones inter... | non_process | cve high detected in tgz autoclosed cve high severity vulnerability vulnerable library tgz the bare bones internationalization library used by yargs library home page a href path to dependency file worldguard adapters package json path to vulnerable library wor... | 0 |