Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 1 744 | labels stringlengths 4 574 | body stringlengths 9 211k | index stringclasses 10 values | text_combine stringlengths 96 211k | label stringclasses 2 values | text stringlengths 96 188k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

44,535 | 5,843,584,760 | IssuesEvent | 2017-05-10 09:32:58 | canonical-websites/www.ubuntu.com | https://api.github.com/repos/canonical-websites/www.ubuntu.com | closed | Top and bottom padding of footer should be the same (L screen) | Review: Design +1 Status: Review | Top seems bigger now. Reviewing /about section | 1.0 | Top and bottom padding of footer should be the same (L screen) - Top seems bigger now. Reviewing /about section | non_process | top and bottom padding of footer should be the same l screen top seems bigger now reviewing about section | 0 |

4,626 | 7,472,697,351 | IssuesEvent | 2018-04-03 13:26:43 | ODiogoSilva/assemblerflow | https://api.github.com/repos/ODiogoSilva/assemblerflow | closed | Add dependency for spades and trimmomatic processes | process | The `integrity_coverage` module should be specified as a dependency of `spades` (because of the read max length) and `trimmomatic` and `fastqc_trimmomatic` (because of the phred). | 1.0 | Add dependency for spades and trimmomatic processes - The `integrity_coverage` module should be specified as a dependency of `spades` (because of the read max length) and `trimmomatic` and `fastqc_trimmomatic` (because of the phred). | process | add dependency for spades and trimmomatic processes the integrity coverage module should be specified as a dependency of spades because of the read max length and trimmomatic and fastqc trimmomatic because of the phred | 1 |

12,361 | 14,888,833,850 | IssuesEvent | 2021-01-20 20:27:51 | cypress-io/cypress-documentation | https://api.github.com/repos/cypress-io/cypress-documentation | closed | Look into speeding up parallel builds via RAM disk | process: ci | When we install and run tests, we get widely different times for attaching the workspace. CircleCI has suggested we use RAM disk instead of the workspace

https://discuss.circleci.com/t/attaching-workspace-has-an-unpredicably-different-timing/38521 | 1.0 | Look into speeding up parallel builds via RAM disk - When we install and run tests, we get widely different times for attaching the workspace. CircleCI has suggested we use RAM disk instead of the workspace

https://discuss.circleci.com/t/attaching-workspace-has-an-unpredicably-different-timing/38521 | process | look into speeding up parallel builds via ram disk when we install and run tests we get widely different times for attaching the workspace circleci has suggested we use ram disk instead of the workspace | 1 |

5,622 | 8,477,035,089 | IssuesEvent | 2018-10-25 00:48:43 | ncbo/bioportal-project | https://api.github.com/repos/ncbo/bioportal-project | closed | FASTO: Initial submission failed to parse | in progress ontology processing problem | User reached out to us on the support list to indicate that their initial upload of the [FASTO ontology](http://bioportal.bioontology.org/ontologies/FASTO) didn't parse.

There were two issues that I asked the user to address:

1). The ontology was submitted as a ZIP file, but didn't follow our required convention ... | 1.0 | FASTO: Initial submission failed to parse - User reached out to us on the support list to indicate that their initial upload of the [FASTO ontology](http://bioportal.bioontology.org/ontologies/FASTO) didn't parse.

There were two issues that I asked the user to address:

1). The ontology was submitted as a ZIP file... | process | fasto initial submission failed to parse user reached out to us on the support list to indicate that their initial upload of the didn t parse there were two issues that i asked the user to address the ontology was submitted as a zip file but didn t follow our required convention where the name of th... | 1 |

736 | 3,214,317,146 | IssuesEvent | 2015-10-07 00:46:24 | broadinstitute/hellbender | https://api.github.com/repos/broadinstitute/hellbender | closed | Choose approach to fix scaling of ReadBAMTransform, and implement fix | Dataflow DataflowPreprocessingPipeline profiling | From an analysis by @jean-philippe-martin:

**The Problem**

Doing a preliminary performance analysis of Hellbender, I found that ReadBAM did not scale with the number of workers.

Logs indicated it was... | 1.0 | Choose approach to fix scaling of ReadBAMTransform, and implement fix - From an analysis by @jean-philippe-martin:

**The Problem**

Doing a preliminary performance analysis of Hellbender, I found that ReadBAM did not scale with the number of workers.

' has invalid rel... | 1.0 | No character can join society after game reloading - **Mod Version**

136c94f4

**What expansions do you have installed?**

All

**Please explain your issue in as much detail as possible:**

Sometimes "No character" with "Rebels" name joins society.

This things probably can be related:

> [character.cpp:2744... | non_process | no character can join society after game reloading mod version what expansions do you have installed all please explain your issue in as much detail as possible sometimes no character with rebels name joins society this things probably can be related character fitch of ... | 0 |

260,471 | 22,623,631,808 | IssuesEvent | 2022-06-30 08:45:41 | gra-m/DBServer | https://api.github.com/repos/gra-m/DBServer | closed | Investigate testing Private Methods/seek & write | testing | I was curious of how seek/write works (seek to length of record/file write writes FROM there, obviously meaning the next available byte AFTER that point).

I was curious of how to test private methods, and despite the general opinion seeming to be that this means code-smell I took it as an opportunity to try Reflectio... | 1.0 | Investigate testing Private Methods/seek & write - I was curious of how seek/write works (seek to length of record/file write writes FROM there, obviously meaning the next available byte AFTER that point).

I was curious of how to test private methods, and despite the general opinion seeming to be that this means code... | non_process | investigate testing private methods seek write i was curious of how seek write works seek to length of record file write writes from there obviously meaning the next available byte after that point i was curious of how to test private methods and despite the general opinion seeming to be that this means code... | 0 |

230,315 | 25,463,880,800 | IssuesEvent | 2022-11-25 00:27:03 | neinteractiveliterature/intercode | https://api.github.com/repos/neinteractiveliterature/intercode | closed | babel-loader-8.3.0.tgz: 2 vulnerabilities (highest severity is: 7.5) - autoclosed | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>babel-loader-8.3.0.tgz</b></p></summary>

<p></p>

<p>

<p>Found in HEAD commit: <a href="https://github.com/neinteractiveliterature/intercode/commit/da0c9c84fdbc82b3b... | True | babel-loader-8.3.0.tgz: 2 vulnerabilities (highest severity is: 7.5) - autoclosed - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>babel-loader-8.3.0.tgz</b></p></summary>

<p></p>

<p>

<p>Found in HEAD commit: <a... | non_process | babel loader tgz vulnerabilities highest severity is autoclosed vulnerable library babel loader tgz found in head commit a href vulnerabilities cve severity cvss dependency type fixed in babel loader version remediation available ... | 0 |

499,314 | 14,444,785,395 | IssuesEvent | 2020-12-07 21:48:46 | ansible/awx | https://api.github.com/repos/ansible/awx | closed | ansible.tower.tower_group module is having an erratic behavior. | component:awx_collection priority:medium state:needs_info | ##### ISSUE TYPE

- Bug Report

##### SUMMARY

<!-- Briefly describe the problem. -->

Running the tower_group module, it is erratically removing the existing hosts from the existing groups instead of preserve them there. As I can see, the group IDs are preserved (it is not removing them)

##### ENVIRONMENT

* AWX... | 1.0 | ansible.tower.tower_group module is having an erratic behavior. - ##### ISSUE TYPE

- Bug Report

##### SUMMARY

<!-- Briefly describe the problem. -->

Running the tower_group module, it is erratically removing the existing hosts from the existing groups instead of preserve them there. As I can see, the group IDs a... | non_process | ansible tower tower group module is having an erratic behavior issue type bug report summary running the tower group module it is erratically removing the existing hosts from the existing groups instead of preserve them there as i can see the group ids are preserved it is not removing them... | 0 |

12,829 | 15,211,953,375 | IssuesEvent | 2021-02-17 09:46:45 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | Using supported types in `Unsupported` fields yields unnecessary migrations | engines/migration engine process/candidate team/migrations topic: migrate | Reproduction on postgres/mysql.

```prisma

model Cat {

id Int @id

data Unsupported("TEXT")

}

```

We have to make type diffing specifically aware of this scenario to avoid migrating, in that case. | 1.0 | Using supported types in `Unsupported` fields yields unnecessary migrations - Reproduction on postgres/mysql.

```prisma

model Cat {

id Int @id

data Unsupported("TEXT")

}

```

We have to make type diffing specifically aware of this scenario to avoid migrating, in that case. | process | using supported types in unsupported fields yields unnecessary migrations reproduction on postgres mysql prisma model cat id int id data unsupported text we have to make type diffing specifically aware of this scenario to avoid migrating in that case | 1 |

59,373 | 14,379,615,717 | IssuesEvent | 2020-12-02 00:46:48 | gate5/react-16.0.0 | https://api.github.com/repos/gate5/react-16.0.0 | opened | CVE-2018-0734 (Medium) detected in io.jsv5.11.1 | security vulnerability | ## CVE-2018-0734 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>io.jsv5.11.1</b></p></summary>

<p>

<p>Node.js JavaScript runtime :sparkles::turtle::rocket::sparkles:</p>

<p>Library ... | True | CVE-2018-0734 (Medium) detected in io.jsv5.11.1 - ## CVE-2018-0734 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>io.jsv5.11.1</b></p></summary>

<p>

<p>Node.js JavaScript runtime :s... | non_process | cve medium detected in io cve medium severity vulnerability vulnerable library io node js javascript runtime sparkles turtle rocket sparkles library home page a href found in head commit a href vulnerable source files react scr... | 0 |

19,468 | 25,763,307,908 | IssuesEvent | 2022-12-08 22:40:23 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | closed | Terminal killed | info-needed terminal-process new release |

Type: <b>Bug</b>

If I open a new terminal is auto killed and closed

VS Code version: Code 1.74.0 (Universal) (5235c6bb189b60b01b1f49062f4ffa42384f8c91, 2022-12-05T16:43:37.594Z)

OS version: Darwin x64 22.1.0

Modes:

Sandboxed: No

<details>

<summary>System Info</summary>

|Item|Value|

|---|---|

|CPUs|Intel(R) Core(TM... | 1.0 | Terminal killed -

Type: <b>Bug</b>

If I open a new terminal is auto killed and closed

VS Code version: Code 1.74.0 (Universal) (5235c6bb189b60b01b1f49062f4ffa42384f8c91, 2022-12-05T16:43:37.594Z)

OS version: Darwin x64 22.1.0

Modes:

Sandboxed: No

<details>

<summary>System Info</summary>

|Item|Value|

|---|---|

|CPU... | process | terminal killed type bug if i open a new terminal is auto killed and closed vs code version code universal os version darwin modes sandboxed no system info item value cpus intel r core tm cpu x gpu status canvas enabled canvas oop ra... | 1 |

127,393 | 12,321,882,204 | IssuesEvent | 2020-05-13 09:25:47 | Chocobozzz/PeerTube | https://api.github.com/repos/Chocobozzz/PeerTube | closed | Documentation contains broken links | Component: Documentation :books: Type: Bug :bug: good first issue :beginner: | <!-- If you have a question, please read the FAQ.md first -->

<!-- If you report a security issue, please refrain from filling an issue and refer to SECURITY.md for the disclosure procedure. -->

<!-- If you report a bug, please fill the form -->

**What happened?**

When clicking some links (e.g. `/support/doc/api`... | 1.0 | Documentation contains broken links - <!-- If you have a question, please read the FAQ.md first -->

<!-- If you report a security issue, please refrain from filling an issue and refer to SECURITY.md for the disclosure procedure. -->

<!-- If you report a bug, please fill the form -->

**What happened?**

When clicki... | non_process | documentation contains broken links what happened when clicking some links e g support doc api it leads to a page it seems like these were not intended to be actual links what do you expect to happen instead i expect links i click on to lead somewhere preferably somew... | 0 |

22,037 | 30,553,933,099 | IssuesEvent | 2023-07-20 10:21:11 | brucemiller/LaTeXML | https://api.github.com/repos/brucemiller/LaTeXML | closed | MakeBibliography.pm typo? | bug postprocessing bibliography | https://github.com/brucemiller/LaTeXML/blob/master/lib/LaTeXML/Post/MakeBibliography.pm, line 785 says

`ltx:title`

while the other bibliographic types use `ltx:bib-title` .

Please check if this is by design or a typo. | 1.0 | MakeBibliography.pm typo? - https://github.com/brucemiller/LaTeXML/blob/master/lib/LaTeXML/Post/MakeBibliography.pm, line 785 says

`ltx:title`

while the other bibliographic types use `ltx:bib-title` .

Please check if this is by design or a typo. | process | makebibliography pm typo line says ltx title while the other bibliographic types use ltx bib title please check if this is by design or a typo | 1 |

218,083 | 7,330,384,065 | IssuesEvent | 2018-03-05 09:45:10 | NCEAS/metacat | https://api.github.com/repos/NCEAS/metacat | closed | Install of data-registry requires cvs checkout | Category: metacat Component: Bugzilla-Id Priority: Normal Status: Resolved Tracker: Bug | ---

Author Name: **Saurabh Garg** (Saurabh Garg)

Original Redmine Issue: 1755, https://projects.ecoinformatics.org/ecoinfo/issues/1755

Original Date: 2004-11-03

Original Assignee: Saurabh Garg

---

Install of data-registry does a cvs checkout from cvs.nceas.ucsb.edu. For

someone outside NCEAS, this requires gettin... | 1.0 | Install of data-registry requires cvs checkout - ---

Author Name: **Saurabh Garg** (Saurabh Garg)

Original Redmine Issue: 1755, https://projects.ecoinformatics.org/ecoinfo/issues/1755

Original Date: 2004-11-03

Original Assignee: Saurabh Garg

---

Install of data-registry does a cvs checkout from cvs.nceas.ucsb.edu. ... | non_process | install of data registry requires cvs checkout author name saurabh garg saurabh garg original redmine issue original date original assignee saurabh garg install of data registry does a cvs checkout from cvs nceas ucsb edu for someone outside nceas this requires getting a cvs usern... | 0 |

336,776 | 30,221,140,980 | IssuesEvent | 2023-07-05 19:30:37 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | ccl/changefeedccl: TestWebhookSink failed | C-test-failure O-robot A-cdc T-cdc branch-release-23.1 | ccl/changefeedccl.TestWebhookSink [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/9599673?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/9599673?buildTab=artifacts#/) on release-23.1 @ [ad16885ca3b4567ed5eb3... | 1.0 | ccl/changefeedccl: TestWebhookSink failed - ccl/changefeedccl.TestWebhookSink [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/9599673?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/9599673?buildTab=artifacts... | non_process | ccl changefeedccl testwebhooksink failed ccl changefeedccl testwebhooksink with on release run testwebhooksink gostd server go http tls handshake error from remote error tls bad certificate gostd server go http tls handshake err... | 0 |

21,064 | 28,012,482,563 | IssuesEvent | 2023-03-27 19:44:12 | NationalSecurityAgency/ghidra | https://api.github.com/repos/NationalSecurityAgency/ghidra | closed | PowerPC comparisons returned or stored in variables decompile poorly | Type: Bug Feature: Processor/PowerPC | **Describe the bug**

PowerPC comparisons returned or stored in variables decompile poorly, as they use `countLeadingZeros(v) >> 5` as a way to check if `v` is 0 (as if so, `v` has 32 bits set to 0, and `32 >> 5 == 1`).

**To Reproduce**

Decompile the attached binaries, using `PowerPC:BE:32:default:default` as the l... | 1.0 | PowerPC comparisons returned or stored in variables decompile poorly - **Describe the bug**

PowerPC comparisons returned or stored in variables decompile poorly, as they use `countLeadingZeros(v) >> 5` as a way to check if `v` is 0 (as if so, `v` has 32 bits set to 0, and `32 >> 5 == 1`).

**To Reproduce**

Decompil... | process | powerpc comparisons returned or stored in variables decompile poorly describe the bug powerpc comparisons returned or stored in variables decompile poorly as they use countleadingzeros v as a way to check if v is as if so v has bits set to and to reproduce decompile ... | 1 |

17,425 | 23,246,365,495 | IssuesEvent | 2022-08-03 20:36:25 | Ultimate-Hosts-Blacklist/whitelist | https://api.github.com/repos/Ultimate-Hosts-Blacklist/whitelist | closed | [FALSE-POSITIVE?] breitbart.com | whitelisting process | **Domains or links**

www.breitbart.com

**More Information**

How did you discover your web site or domain was listed here?

didn't load in browser

**Have you requested removal from other sources?**

no, no other blocklists I use contain this domain.

**Additional context**

This is a news website. Even though... | 1.0 | [FALSE-POSITIVE?] breitbart.com - **Domains or links**

www.breitbart.com

**More Information**

How did you discover your web site or domain was listed here?

didn't load in browser

**Have you requested removal from other sources?**

no, no other blocklists I use contain this domain.

**Additional context**

T... | process | breitbart com domains or links more information how did you discover your web site or domain was listed here didn t load in browser have you requested removal from other sources no no other blocklists i use contain this domain additional context this is a news website even thou... | 1 |

245,371 | 7,885,550,695 | IssuesEvent | 2018-06-27 12:49:47 | linterhub/schema | https://api.github.com/repos/linterhub/schema | closed | Support multiple urls | Priority: Medium Status: In Progress Type: Feature | There are a lot of cases when packages described more than one url: homepage, issues, repository, etc. Add ability to specify **one or more** urls for `package` (backward compatible) and it's type. Default is `homepage`. | 1.0 | Support multiple urls - There are a lot of cases when packages described more than one url: homepage, issues, repository, etc. Add ability to specify **one or more** urls for `package` (backward compatible) and it's type. Default is `homepage`. | non_process | support multiple urls there are a lot of cases when packages described more than one url homepage issues repository etc add ability to specify one or more urls for package backward compatible and it s type default is homepage | 0 |

66,678 | 16,674,184,975 | IssuesEvent | 2021-06-07 14:23:31 | adventuregamestudio/ags | https://api.github.com/repos/adventuregamestudio/ags | closed | Tool: translation compiler | ags3 context: game building type: enhancement | As a step of decoupling game compilation procedure from the Editor, we need a stand-alone tool that is run from the command-line, parses a translation source (TRS) file and writes a compiled translation (TRA) file in binary format.

Should be written in the similar line with the existing tools, in C++ (#1262, #1264, #1... | 1.0 | Tool: translation compiler - As a step of decoupling game compilation procedure from the Editor, we need a stand-alone tool that is run from the command-line, parses a translation source (TRS) file and writes a compiled translation (TRA) file in binary format.

Should be written in the similar line with the existing to... | non_process | tool translation compiler as a step of decoupling game compilation procedure from the editor we need a stand alone tool that is run from the command line parses a translation source trs file and writes a compiled translation tra file in binary format should be written in the similar line with the existing to... | 0 |

67,262 | 14,860,779,924 | IssuesEvent | 2021-01-18 21:15:30 | kadirselcuk/electron-webpack-quick-start | https://api.github.com/repos/kadirselcuk/electron-webpack-quick-start | opened | CVE-2020-4075 (High) detected in electron-8.2.0.tgz | security vulnerability | ## CVE-2020-4075 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>electron-8.2.0.tgz</b></p></summary>

<p>Build cross platform desktop apps with JavaScript, HTML, and CSS</p>

<p>Library... | True | CVE-2020-4075 (High) detected in electron-8.2.0.tgz - ## CVE-2020-4075 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>electron-8.2.0.tgz</b></p></summary>

<p>Build cross platform desk... | non_process | cve high detected in electron tgz cve high severity vulnerability vulnerable library electron tgz build cross platform desktop apps with javascript html and css library home page a href path to dependency file electron webpack quick start package json path to vulner... | 0 |

5,737 | 8,391,993,949 | IssuesEvent | 2018-10-09 16:21:10 | bull313/Musika | https://api.github.com/repos/bull313/Musika | opened | Requirement 8: Music Dynamics | compiler requirement | The Musika language shall support different dynamic levels (at least the basics: ppp, pp, p, mp, mf, f, ff, fff). | 1.0 | Requirement 8: Music Dynamics - The Musika language shall support different dynamic levels (at least the basics: ppp, pp, p, mp, mf, f, ff, fff). | non_process | requirement music dynamics the musika language shall support different dynamic levels at least the basics ppp pp p mp mf f ff fff | 0 |

16,637 | 21,707,261,437 | IssuesEvent | 2022-05-10 10:45:59 | sjmog/smartflix | https://api.github.com/repos/sjmog/smartflix | opened | Enriching show data synchronously | 04-background-processing Ruby/HTTP Ruby/JSON | In the previous ticket, we started enriching shows with data from the OMDb API. The enriched data is fetched when the user views a single show.

But right now, we're just dumping this rich data into the view as JSON. Let's store some of this useful data in the database and display it in a more useful way.

In this tick... | 1.0 | Enriching show data synchronously - In the previous ticket, we started enriching shows with data from the OMDb API. The enriched data is fetched when the user views a single show.

But right now, we're just dumping this rich data into the view as JSON. Let's store some of this useful data in the database and display it... | process | enriching show data synchronously in the previous ticket we started enriching shows with data from the omdb api the enriched data is fetched when the user views a single show but right now we re just dumping this rich data into the view as json let s store some of this useful data in the database and display it... | 1 |

7,846 | 11,015,563,872 | IssuesEvent | 2019-12-05 02:04:52 | GoogleCloudPlatform/python-docs-samples | https://api.github.com/repos/GoogleCloudPlatform/python-docs-samples | closed | Run all tests not just a subset | testing type: process | I noticed on a few of my PRs, it was only running a subset of the tests and not all of them.

While I've run them all locally there could be something I have set correctly here but not for everyone else that would be good to catch. | 1.0 | Run all tests not just a subset - I noticed on a few of my PRs, it was only running a subset of the tests and not all of them.

While I've run them all locally there could be something I have set correctly here but not for everyone else that would be good to catch. | process | run all tests not just a subset i noticed on a few of my prs it was only running a subset of the tests and not all of them while i ve run them all locally there could be something i have set correctly here but not for everyone else that would be good to catch | 1 |

626 | 3,091,957,920 | IssuesEvent | 2015-08-26 15:32:21 | e-government-ua/iBP | https://api.github.com/repos/e-government-ua/iBP | opened | Тернополь ОДА - Надання містобудівних умов та обмежень забудови земельної ділянки | in process of creating | Описание

https://drive.google.com/file/d/0B-TXzbaEvbw9dWFIbnVBb0ZYblU/view?usp=sharing

Заказчик: Ирина Кельнер | 1.0 | Тернополь ОДА - Надання містобудівних умов та обмежень забудови земельної ділянки - Описание

https://drive.google.com/file/d/0B-TXzbaEvbw9dWFIbnVBb0ZYblU/view?usp=sharing

Заказчик: Ирина Кельнер | process | тернополь ода надання містобудівних умов та обмежень забудови земельної ділянки описание заказчик ирина кельнер | 1 |

20,088 | 26,599,153,278 | IssuesEvent | 2023-01-23 14:37:59 | NixOS/nix | https://api.github.com/repos/NixOS/nix | opened | Release notes automation | developer-experience process | **Is your feature request related to a problem? Please describe.**

Goals

- Automate more of the release process

- Avoid merge conflicts in the release notes

- Improve the release notes of patch releases

By automating more of the release process, we move towards a situation where a release can be triggered b... | 1.0 | Release notes automation - **Is your feature request related to a problem? Please describe.**

Goals

- Automate more of the release process

- Avoid merge conflicts in the release notes

- Improve the release notes of patch releases

By automating more of the release process, we move towards a situation where a... | process | release notes automation is your feature request related to a problem please describe goals automate more of the release process avoid merge conflicts in the release notes improve the release notes of patch releases by automating more of the release process we move towards a situation where a... | 1 |

17,072 | 22,550,313,293 | IssuesEvent | 2022-06-27 04:23:07 | camunda/zeebe | https://api.github.com/repos/camunda/zeebe | closed | Feature Request: On Timer events add scheduling at specific time | kind/feature scope/broker blocker/stakeholder team/process-automation area/bpmn-support | Hi,

we are looking at automating all our user workflows using Zeebe-IO, We are a e-commerce analytics company and we are supposed to deliver reports at US time . we would like to have the capability of configuring and running the report analytic workflows at UTC 12:30 AM. Kindly consider adding timer-events on speci... | 1.0 | Feature Request: On Timer events add scheduling at specific time - Hi,

we are looking at automating all our user workflows using Zeebe-IO, We are a e-commerce analytics company and we are supposed to deliver reports at US time . we would like to have the capability of configuring and running the report analytic work... | process | feature request on timer events add scheduling at specific time hi we are looking at automating all our user workflows using zeebe io we are a e commerce analytics company and we are supposed to deliver reports at us time we would like to have the capability of configuring and running the report analytic work... | 1 |

95,374 | 3,946,683,300 | IssuesEvent | 2016-04-28 06:20:03 | Captianrock/android_PV | https://api.github.com/repos/Captianrock/android_PV | closed | Need GUI to ask user to enter source path if not src/main/java | High Priority New Feature | Some source code, such as AlarmClock, and most decompiled APKs don't have src/main/java defined. We need the true source path defined to we don't analyze extra classes (such as Test classes) that will ultimately break the module. | 1.0 | Need GUI to ask user to enter source path if not src/main/java - Some source code, such as AlarmClock, and most decompiled APKs don't have src/main/java defined. We need the true source path defined to we don't analyze extra classes (such as Test classes) that will ultimately break the module. | non_process | need gui to ask user to enter source path if not src main java some source code such as alarmclock and most decompiled apks don t have src main java defined we need the true source path defined to we don t analyze extra classes such as test classes that will ultimately break the module | 0 |

221,778 | 17,026,917,471 | IssuesEvent | 2021-07-03 18:21:11 | MysteryBlokHed/databind | https://api.github.com/repos/MysteryBlokHed/databind | closed | Use tabs (or 4 spaces) in functions/loops | documentation enhancement | Right now, a function might look like this:

```databind

func example

say Hello, World!

end

```

It would be easier to tell what's where if tabbing in lines inside functions was recommended, like this:

```databind

func example

say Hello, World!

end

```

Or this:

```databind

func example

va... | 1.0 | Use tabs (or 4 spaces) in functions/loops - Right now, a function might look like this:

```databind

func example

say Hello, World!

end

```

It would be easier to tell what's where if tabbing in lines inside functions was recommended, like this:

```databind

func example

say Hello, World!

end

```

O... | non_process | use tabs or spaces in functions loops right now a function might look like this databind func example say hello world end it would be easier to tell what s where if tabbing in lines inside functions was recommended like this databind func example say hello world end o... | 0 |

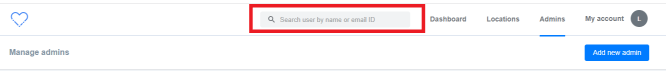

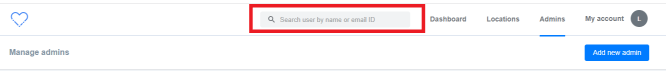

18,692 | 24,595,215,613 | IssuesEvent | 2022-10-14 07:43:36 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PM] Admins > Search bar > Placeholder text >Text change | Bug P2 Participant manager Process: Fixed Process: Tested dev | Admins > Search bar > Placeholder text >Text change > 'user' should be changed to 'admin'

| 2.0 | [PM] Admins > Search bar > Placeholder text >Text change - Admins > Search bar > Placeholder text >Text change > 'user' should be changed to 'admin'

| process | admins search bar placeholder text text change admins search bar placeholder text text change user should be changed to admin | 1 |

317,624 | 9,667,051,936 | IssuesEvent | 2019-05-21 12:23:53 | telerik/kendo-ui-core | https://api.github.com/repos/telerik/kendo-ui-core | closed | Decimal values are not displayed when negative percentage value is filled in a cell | Bug C: Spreadsheet FP: Completed Kendo2 Next LIB Priority 2 SEV: Low | ### Bug report

Decimal values are not displayed when negative percentage value is filled in a cell

Related to #2963

### Reproduction of the problem

Use the Kendo Spreadsheet to fill "-0.046%" and set its format to %. Sample here: [https://dojo.telerik.com/ACAGeTEL/2](https://dojo.telerik.com/ACAGeTEL/2)

##... | 1.0 | Decimal values are not displayed when negative percentage value is filled in a cell - ### Bug report

Decimal values are not displayed when negative percentage value is filled in a cell

Related to #2963

### Reproduction of the problem

Use the Kendo Spreadsheet to fill "-0.046%" and set its format to %. Sample ... | non_process | decimal values are not displayed when negative percentage value is filled in a cell bug report decimal values are not displayed when negative percentage value is filled in a cell related to reproduction of the problem use the kendo spreadsheet to fill and set its format to sample here ... | 0 |

634,506 | 20,363,690,967 | IssuesEvent | 2022-02-21 01:16:35 | NCC-CNC/whattemplatemaker | https://api.github.com/repos/NCC-CNC/whattemplatemaker | closed | Action IDs must be unique - should we provide guidance? | bug high priority | e.g., if they have two separate types of invasive species management? I think people can get around this by manually entering invasive spp management for second instance. Should we make that clearer or is that something for the manual? | 1.0 | Action IDs must be unique - should we provide guidance? - e.g., if they have two separate types of invasive species management? I think people can get around this by manually entering invasive spp management for second instance. Should we make that clearer or is that something for the manual? | non_process | action ids must be unique should we provide guidance e g if they have two separate types of invasive species management i think people can get around this by manually entering invasive spp management for second instance should we make that clearer or is that something for the manual | 0 |

109,354 | 16,843,681,014 | IssuesEvent | 2021-06-19 02:49:25 | bharathirajatut/fitbit-api-example-java2 | https://api.github.com/repos/bharathirajatut/fitbit-api-example-java2 | opened | CVE-2019-11358 (Medium) detected in jquery-2.1.1.jar | security vulnerability | ## CVE-2019-11358 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-2.1.1.jar</b></p></summary>

<p>WebJar for jQuery</p>

<p>Library home page: <a href="http://webjars.org">http:... | True | CVE-2019-11358 (Medium) detected in jquery-2.1.1.jar - ## CVE-2019-11358 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-2.1.1.jar</b></p></summary>

<p>WebJar for jQuery</p>

<... | non_process | cve medium detected in jquery jar cve medium severity vulnerability vulnerable library jquery jar webjar for jquery library home page a href path to dependency file fitbit api example pom xml path to vulnerable library canner repository org webjars jquery ... | 0 |

395,530 | 27,073,466,242 | IssuesEvent | 2023-02-14 08:57:04 | microsoftgraph/msgraph-developer-proxy | https://api.github.com/repos/microsoftgraph/msgraph-developer-proxy | closed | [How-to guide]: Update my application code to use Microsoft Graph JavaScript SDK | documentation | We should move the [Move to the Graph JS SDK](https://github.com/microsoftgraph/msgraph-developer-proxy/blob/main/msgraph-developer-proxy/Move-to-JS-SDK.md) guidance to our wiki so that it's in the same place as the rest of our docs and we can update it more easily. | 1.0 | [How-to guide]: Update my application code to use Microsoft Graph JavaScript SDK - We should move the [Move to the Graph JS SDK](https://github.com/microsoftgraph/msgraph-developer-proxy/blob/main/msgraph-developer-proxy/Move-to-JS-SDK.md) guidance to our wiki so that it's in the same place as the rest of our docs and ... | non_process | update my application code to use microsoft graph javascript sdk we should move the guidance to our wiki so that it s in the same place as the rest of our docs and we can update it more easily | 0 |

299,529 | 25,909,683,114 | IssuesEvent | 2022-12-15 13:03:28 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | roachtest: sqlsmith/setup=seed-multi-region/setting=multi-region failed | C-test-failure O-robot O-roachtest branch-release-22.1 | roachtest.sqlsmith/setup=seed-multi-region/setting=multi-region [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=7969273&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=7969273&tab=artifacts#/sqlsmith/setup=seed-multi-region/setting=multi-region) on release-22.1 @ [7ce... | 2.0 | roachtest: sqlsmith/setup=seed-multi-region/setting=multi-region failed - roachtest.sqlsmith/setup=seed-multi-region/setting=multi-region [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=7969273&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=7969273&tab=artifacts#/sql... | non_process | roachtest sqlsmith setup seed multi region setting multi region failed roachtest sqlsmith setup seed multi region setting multi region with on release the test failed on branch release cloud gce test artifacts and logs in artifacts sqlsmith setup seed multi region setting multi region... | 0 |

11,518 | 2,653,053,394 | IssuesEvent | 2015-03-16 20:51:16 | portah/biowardrobe | https://api.github.com/repos/portah/biowardrobe | closed | For some genes RPKMs don't correlate with log change | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. DEseq

2. Filter on significant genes with either removing or not non coding

What is the expected output? What do you see instead?

NM_001198868,NM_001198869,NM_005186,NR_040008 CAPN1 chr11 64949304 64979477 + 16

8.8881362 168.8581147 -0.585938255 0.004820842 0.026719021

Wh... | 1.0 | For some genes RPKMs don't correlate with log change - ```

What steps will reproduce the problem?

1. DEseq

2. Filter on significant genes with either removing or not non coding

What is the expected output? What do you see instead?

NM_001198868,NM_001198869,NM_005186,NR_040008 CAPN1 chr11 64949304 64979477 + 16

8.8881... | non_process | for some genes rpkms don t correlate with log change what steps will reproduce the problem deseq filter on significant genes with either removing or not non coding what is the expected output what do you see instead nm nm nm nr what version of the product are y... | 0 |

13,144 | 15,569,437,663 | IssuesEvent | 2021-03-17 00:09:33 | tokio-rs/tokio | https://api.github.com/repos/tokio-rs/tokio | closed | Memory leak in tokio runtime. | A-tokio C-bug M-process M-signal | **Version**

└── tokio v1.2.0

└── tokio-macros v1.1.0 (proc-macro)

**Platform**

Linux TukuZaZa-D1 5.11.1-zen1-1-zen-uksm #1 ZEN SMP PREEMPT Wed, 24 Feb 2021 07:02:49 +0000 x86_64 GNU/Linux

**Description**

Tokio always leak memory after drop a `tokio::runtime::Runtime`.

````rust

fn main() {

for _ in ... | 1.0 | Memory leak in tokio runtime. - **Version**

└── tokio v1.2.0

└── tokio-macros v1.1.0 (proc-macro)

**Platform**

Linux TukuZaZa-D1 5.11.1-zen1-1-zen-uksm #1 ZEN SMP PREEMPT Wed, 24 Feb 2021 07:02:49 +0000 x86_64 GNU/Linux

**Description**

Tokio always leak memory after drop a `tokio::runtime::Runtime`.

``... | process | memory leak in tokio runtime version └── tokio └── tokio macros proc macro platform linux tukuzaza zen uksm zen smp preempt wed feb gnu linux description tokio always leak memory after drop a tokio runtime runtime rust fn main ... | 1 |

17,017 | 3,353,840,346 | IssuesEvent | 2015-11-18 09:02:35 | hollyjoke/33HU6POQKFJUS6K4M6BXQISV | https://api.github.com/repos/hollyjoke/33HU6POQKFJUS6K4M6BXQISV | closed | fdPfWf9+736SSTOcIIL4M2tW4rWlSVeS5zWvfVgXL8vRQKhSZnYCPFO04kP/YlI3MM8sAvHEEZ21HxOlsaoQKWI5XigQyYuvO3+YIv63EGm9SIYQvW9OuIl+yRUfr7zhoV/ZdX/UFge2acmPiuDIJc2NRS6csKiMuQ7pESyHMR0= | design | DPlZI5wK0CgLi4uTSHdfeIvFy8T9ENf3sDMt4KemT/szs9c7i+hfPAuX31gO4qy9nvMxGLC2Zef401uTsEjdlU1zfVinmjkxqP/Rvi4W3YdqsYQ3z/n4ehwkFy8JXa3gXyWerg90nGAQneU2mmi+62wIfYVQDPT/vMYFml3Bl/HsPWN+f7xYtK9ikn2DuAvqux/ECYkXdzMM82yWK9PNWhocBgHLkaGWpNppMHLdWG1HhK8CoOT1w9NzoPrZIEqMnyzgzODjbyMQj8OMSzvuJU7VXIaocAa4TzCDxT3YUJzDwlb4Xt13tZXyWwR6n87K... | 1.0 | fdPfWf9+736SSTOcIIL4M2tW4rWlSVeS5zWvfVgXL8vRQKhSZnYCPFO04kP/YlI3MM8sAvHEEZ21HxOlsaoQKWI5XigQyYuvO3+YIv63EGm9SIYQvW9OuIl+yRUfr7zhoV/ZdX/UFge2acmPiuDIJc2NRS6csKiMuQ7pESyHMR0= - DPlZI5wK0CgLi4uTSHdfeIvFy8T9ENf3sDMt4KemT/szs9c7i+hfPAuX31gO4qy9nvMxGLC2Zef401uTsEjdlU1zfVinmjkxqP/Rvi4W3YdqsYQ3z/n4ehwkFy8JXa3gXyWerg90nGAQneU2m... | non_process | zdx hspwn vopztg vfgq vfgq mjqmcbyvs coz oxknqgjiubynhyj | 0 |

55,611 | 6,910,506,038 | IssuesEvent | 2017-11-28 02:42:25 | cs340tabyu/cs340Fall2017 | https://api.github.com/repos/cs340tabyu/cs340Fall2017 | closed | Pressing the back button in the lobby places user in blank activity | P4: Aesthetic or Design Flaw Team 7 | When the user is in the game lobby, pressing the back button sends them to a blank activity, of which they cannot leave and have to restart the app. | 1.0 | Pressing the back button in the lobby places user in blank activity - When the user is in the game lobby, pressing the back button sends them to a blank activity, of which they cannot leave and have to restart the app. | non_process | pressing the back button in the lobby places user in blank activity when the user is in the game lobby pressing the back button sends them to a blank activity of which they cannot leave and have to restart the app | 0 |

1,124 | 3,603,634,967 | IssuesEvent | 2016-02-03 19:47:27 | mkdocs/mkdocs | https://api.github.com/repos/mkdocs/mkdocs | opened | Collecting a list of MkDocs themes | Process | With 0.15 out the door, I am starting to hear about new themes - much faster than I expected. I know of three already.

- [Cinder](https://github.com/chrissimpkins/cinder)

- [Alabaster](https://github.com/iamale/mkdocs-alabaster)

- http://protobluff.org/getting-started/ (I don't think this has a name and isn't on P... | 1.0 | Collecting a list of MkDocs themes - With 0.15 out the door, I am starting to hear about new themes - much faster than I expected. I know of three already.

- [Cinder](https://github.com/chrissimpkins/cinder)

- [Alabaster](https://github.com/iamale/mkdocs-alabaster)

- http://protobluff.org/getting-started/ (I don't... | process | collecting a list of mkdocs themes with out the door i am starting to hear about new themes much faster than i expected i know of three already i don t think this has a name and isn t on pypi yet but this is an example usage to aid discovery and help promote the great work done by ... | 1 |

8,778 | 11,900,468,755 | IssuesEvent | 2020-03-30 10:42:49 | MHRA/products | https://api.github.com/repos/MHRA/products | closed | Job status endpoint isn't returning XML response | BUG :bug: EPIC - Auto Batch Process :oncoming_automobile: | **Describe the bug**

Job status endpoint isn't returning XML response - only JSON. Reported by Accenture team whilst performing SIT.

**To Reproduce**

Make XML request to job status endpoint.

```

curl "http://localhost:8000/jobs/cd14ea09-7fa5-4df1-a051-9cdd2aef2770" \

-H 'Accept: application/xml' \

... | 1.0 | Job status endpoint isn't returning XML response - **Describe the bug**

Job status endpoint isn't returning XML response - only JSON. Reported by Accenture team whilst performing SIT.

**To Reproduce**

Make XML request to job status endpoint.

```

curl "http://localhost:8000/jobs/cd14ea09-7fa5-4df1-a051-9cdd2aef... | process | job status endpoint isn t returning xml response describe the bug job status endpoint isn t returning xml response only json reported by accenture team whilst performing sit to reproduce make xml request to job status endpoint curl h accept application xml h content ... | 1 |

541,928 | 15,836,312,408 | IssuesEvent | 2021-04-06 19:09:45 | GoogleChrome/lighthouse | https://api.github.com/repos/GoogleChrome/lighthouse | closed | Pagespeed Insights results differ from Chrome to Firefox | needs-priority pending-close | <!-- We would love to hear anything on your mind about Lighthouse -->

**Summary**

When I run the check on Firefox everything is Groovy. 92% The same test with Chrome is 77%

What in my site is making Chrome hang?

Our site is: https://habitatgtr.org/ | 1.0 | Pagespeed Insights results differ from Chrome to Firefox - <!-- We would love to hear anything on your mind about Lighthouse -->

**Summary**

When I run the check on Firefox everything is Groovy. 92% The same test with Chrome is 77%

What in my site is making Chrome hang?

Our site is: https://habitatgtr.org/ | non_process | pagespeed insights results differ from chrome to firefox summary when i run the check on firefox everything is groovy the same test with chrome is what in my site is making chrome hang our site is | 0 |

2,152 | 4,999,057,955 | IssuesEvent | 2016-12-09 21:56:37 | codeforamerica/jail-dashboard | https://api.github.com/repos/codeforamerica/jail-dashboard | closed | User can see bar chart for population status | in progress processing_status | _From @hartsick on November 30, 2016 21:53_

Derived from `status` field in bookings, bounded by booking date & release date

Use existing Louisville site for reference

**N.B.**: Mob on this to set up example for future bar charts.

_Copied from original issue: codeforamerica/jail-dashboard-project#130_ | 1.0 | User can see bar chart for population status - _From @hartsick on November 30, 2016 21:53_

Derived from `status` field in bookings, bounded by booking date & release date

Use existing Louisville site for reference

**N.B.**: Mob on this to set up example for future bar charts.

_Copied from original issue: codeforame... | process | user can see bar chart for population status from hartsick on november derived from status field in bookings bounded by booking date release date use existing louisville site for reference n b mob on this to set up example for future bar charts copied from original issue codeforamerica j... | 1 |

95,550 | 3,953,524,712 | IssuesEvent | 2016-04-29 13:49:29 | opencaching/opencaching-pl | https://api.github.com/repos/opencaching/opencaching-pl | opened | Improve password strength in registration and password change forms | Component_Core General_Discussion Priority_High Type_Enhancement | When I tested my new solution for activating account by link I noticed problem about password strength in OC web-service.

At this moment:

- my password have to be longer then **two** characters !

- I can't use any special characters in my password !!!

Now I can create account with those passwords:

- qaz

- 123

... | 1.0 | Improve password strength in registration and password change forms - When I tested my new solution for activating account by link I noticed problem about password strength in OC web-service.

At this moment:

- my password have to be longer then **two** characters !

- I can't use any special characters in my password... | non_process | improve password strength in registration and password change forms when i tested my new solution for activating account by link i noticed problem about password strength in oc web service at this moment my password have to be longer then two characters i can t use any special characters in my password... | 0 |

128 | 2,564,632,410 | IssuesEvent | 2015-02-06 21:14:29 | MozillaFoundation/plan | https://api.github.com/repos/MozillaFoundation/plan | opened | Improve demo calls | process | People should feel engaged and inspired by seeing each other's work at demos. It should be fun! Let's come up with some ways to make it even more so than it already is.

Basic improvement ideas on the table:

* Time limit for each demo, circa 5min

* Rotate facilitation

* keep longform discussion/ Q&A to other forum... | 1.0 | Improve demo calls - People should feel engaged and inspired by seeing each other's work at demos. It should be fun! Let's come up with some ways to make it even more so than it already is.

Basic improvement ideas on the table:

* Time limit for each demo, circa 5min

* Rotate facilitation

* keep longform discussio... | process | improve demo calls people should feel engaged and inspired by seeing each other s work at demos it should be fun let s come up with some ways to make it even more so than it already is basic improvement ideas on the table time limit for each demo circa rotate facilitation keep longform discussion ... | 1 |

16,353 | 21,012,714,132 | IssuesEvent | 2022-03-30 08:14:00 | threefoldfoundation/tft | https://api.github.com/repos/threefoldfoundation/tft | closed | No suitable peers | priority_critical process_duplicate | ```

ERROR[03-30|07:42:48.054] Error occured while minting: context deadline exceeded

INFO [03-30|07:42:49.043] Imported new block headers count=1 elapsed=902.003µs number=16503891 hash=67e57a…2e91aa

INFO [03-30|07:42:51.964] Imported new block headers count=1 elapsed=929.935µs numb... | 1.0 | No suitable peers - ```

ERROR[03-30|07:42:48.054] Error occured while minting: context deadline exceeded

INFO [03-30|07:42:49.043] Imported new block headers count=1 elapsed=902.003µs number=16503891 hash=67e57a…2e91aa

INFO [03-30|07:42:51.964] Imported new block headers count=1 el... | process | no suitable peers error error occured while minting context deadline exceeded info imported new block headers count elapsed number hash … info imported new block headers count elapsed number hash … info imported new block headers cou... | 1 |

18,838 | 24,744,337,319 | IssuesEvent | 2022-10-21 08:28:25 | fadeoutsoftware/WASDI | https://api.github.com/repos/fadeoutsoftware/WASDI | closed | Docker > Jupyter / Traefik > Implement a native Jinja engine / stop to use the sysadmin tool | bug P2 app / processor | For the Jupyter containers and for Traefik, we need to render a Jinja template.

For the need of my presentation meeting, I wrote a script myself. This tool was more:

- a proof of concept

- a tool for sysadmin needs

To use this tool is dangerous:

- the tool uses my Toolbox engine: if I have to update the ... | 1.0 | Docker > Jupyter / Traefik > Implement a native Jinja engine / stop to use the sysadmin tool - For the Jupyter containers and for Traefik, we need to render a Jinja template.

For the need of my presentation meeting, I wrote a script myself. This tool was more:

- a proof of concept

- a tool for sysadmin needs

... | process | docker jupyter traefik implement a native jinja engine stop to use the sysadmin tool for the jupyter containers and for traefik we need to render a jinja template for the need of my presentation meeting i wrote a script myself this tool was more a proof of concept a tool for sysadmin needs ... | 1 |

19,551 | 25,870,440,506 | IssuesEvent | 2022-12-14 02:00:07 | lizhihao6/get-daily-arxiv-noti | https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti | opened | New submissions for Wed, 14 Dec 22 | event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB | ## Keyword: events

There is no result

## Keyword: event camera

There is no result

## Keyword: events camera

There is no result

## Keyword: white balance

There is no result

## Keyword: color contrast

There is no result

## Keyword: AWB

### Towards Deeper and Better Multi-view Feature Fusion for 3D Semantic Segmenta... | 2.0 | New submissions for Wed, 14 Dec 22 - ## Keyword: events

There is no result

## Keyword: event camera

There is no result

## Keyword: events camera

There is no result

## Keyword: white balance

There is no result

## Keyword: color contrast

There is no result

## Keyword: AWB

### Towards Deeper and Better Multi-view Fea... | process | new submissions for wed dec keyword events there is no result keyword event camera there is no result keyword events camera there is no result keyword white balance there is no result keyword color contrast there is no result keyword awb towards deeper and better multi view featu... | 1 |

1,609 | 3,808,042,474 | IssuesEvent | 2016-03-25 12:52:14 | ngageoint/hootenanny | https://api.github.com/repos/ngageoint/hootenanny | opened | "memory leak" messages seen sometimes when starting tomcat with hoot deployed to it | Category: Services Priority: Medium Status: Defined Type: Bug | I see this in certain situations when starting up tomcat after hoot has been deployed to it.

"SEVERE: The web application [/hoot-services] appears to have started a thread named [Thread-4] but has failed to stop it. This is very likely to create a memory leak."

* Reproduce the error message

* Determine if its le... | 1.0 | "memory leak" messages seen sometimes when starting tomcat with hoot deployed to it - I see this in certain situations when starting up tomcat after hoot has been deployed to it.

"SEVERE: The web application [/hoot-services] appears to have started a thread named [Thread-4] but has failed to stop it. This is very li... | non_process | memory leak messages seen sometimes when starting tomcat with hoot deployed to it i see this in certain situations when starting up tomcat after hoot has been deployed to it severe the web application appears to have started a thread named but has failed to stop it this is very likely to create a memory ... | 0 |

22,649 | 31,895,827,418 | IssuesEvent | 2023-09-18 01:31:57 | tdwg/dwc | https://api.github.com/repos/tdwg/dwc | closed | Change term - group | Term - change Class - GeologicalContext normative Task Group - Material Sample Process - complete | ## Term change

* Submitter: [Material Sample Task Group](https://www.tdwg.org/community/osr/material-sample/)

* Efficacy Justification (why is this change necessary?): Create consistency of terms for material in Darwin Core.

* Demand Justification (if the change is semantic in nature, name at least two organizatio... | 1.0 | Change term - group - ## Term change

* Submitter: [Material Sample Task Group](https://www.tdwg.org/community/osr/material-sample/)

* Efficacy Justification (why is this change necessary?): Create consistency of terms for material in Darwin Core.

* Demand Justification (if the change is semantic in nature, name at... | process | change term group term change submitter efficacy justification why is this change necessary create consistency of terms for material in darwin core demand justification if the change is semantic in nature name at least two organizations that independently need this term which include... | 1 |

1,286 | 3,822,801,783 | IssuesEvent | 2016-03-30 03:46:58 | mapbox/mapbox-gl-js | https://api.github.com/repos/mapbox/mapbox-gl-js | opened | Speeding up render tests | testing & release process | Render tests take a big portion of our test time (~10 min), especially after we switched on test coverage with `istanbul`. We could try to significantly speed this up:

1. Run it in two stages — the first generates all actual images, the second runs pixelmatch-powered diffing on all generated images. This allows us t... | 1.0 | Speeding up render tests - Render tests take a big portion of our test time (~10 min), especially after we switched on test coverage with `istanbul`. We could try to significantly speed this up:

1. Run it in two stages — the first generates all actual images, the second runs pixelmatch-powered diffing on all generat... | process | speeding up render tests render tests take a big portion of our test time min especially after we switched on test coverage with istanbul we could try to significantly speed this up run it in two stages — the first generates all actual images the second runs pixelmatch powered diffing on all generate... | 1 |

21,129 | 28,101,560,216 | IssuesEvent | 2023-03-30 19:57:15 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Random Extract duplicates features | Processing Bug | ### What is the bug or the crash?

I've got a vector layer with 2705 features from which I want to randonmly extract 1000 of them. Using the `Random Extract` tool, it returns a set of 1000 features but some of them are duplicated (up to four times). I've checked the `id` attribute and it is correctly set.

For being o... | 1.0 | Random Extract duplicates features - ### What is the bug or the crash?

I've got a vector layer with 2705 features from which I want to randonmly extract 1000 of them. Using the `Random Extract` tool, it returns a set of 1000 features but some of them are duplicated (up to four times). I've checked the `id` attribute a... | process | random extract duplicates features what is the bug or the crash i ve got a vector layer with features from which i want to randonmly extract of them using the random extract tool it returns a set of features but some of them are duplicated up to four times i ve checked the id attribute and it is ... | 1 |

492,973 | 14,223,687,088 | IssuesEvent | 2020-11-17 18:33:40 | longhorn/longhorn | https://api.github.com/repos/longhorn/longhorn | opened | [BUG] Longhorn didn't choose to rebuild on an existing replica if there is a scheduling failed replica after | area/manager enhancement priority/3 | **Describe the bug**

Longhorn didn't choose to rebuild on an existing replica if there is a scheduling failed replica.

**To Reproduce**

Steps to reproduce the behavior:

1. Create a volume with 3 replicas on a three-node cluster.

2. Shutdown one of the replica node.

3. Wait for more than `Replica Replenishment W... | 1.0 | [BUG] Longhorn didn't choose to rebuild on an existing replica if there is a scheduling failed replica after - **Describe the bug**

Longhorn didn't choose to rebuild on an existing replica if there is a scheduling failed replica.

**To Reproduce**

Steps to reproduce the behavior:

1. Create a volume with 3 replica... | non_process | longhorn didn t choose to rebuild on an existing replica if there is a scheduling failed replica after describe the bug longhorn didn t choose to rebuild on an existing replica if there is a scheduling failed replica to reproduce steps to reproduce the behavior create a volume with replicas on... | 0 |

5,028 | 7,850,468,099 | IssuesEvent | 2018-06-20 08:39:42 | rivine/recordchain | https://api.github.com/repos/rivine/recordchain | closed | Implement keep allive | process_wontfix | each node will send messages ( http calls with jwt and ip of node ) to the orderbook.

When the orderbook doesn’t receive these messages from the node, the orders will be deleted.

A node will always send his orders when a connection with the orderbook is created.

| 1.0 | Implement keep allive - each node will send messages ( http calls with jwt and ip of node ) to the orderbook.

When the orderbook doesn’t receive these messages from the node, the orders will be deleted.

A node will always send his orders when a connection with the orderbook is created.

| process | implement keep allive each node will send messages http calls with jwt and ip of node to the orderbook when the orderbook doesn’t receive these messages from the node the orders will be deleted a node will always send his orders when a connection with the orderbook is created | 1 |

571,512 | 17,023,315,745 | IssuesEvent | 2021-07-03 01:23:29 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Tag:service=parking aisle needs rendered | Component: mapnik Priority: major Resolution: duplicate Type: enhancement | **[Submitted to the original trac issue database at 11.10pm, Friday, 24th October 2008]**

http://wiki.openstreetmap.org/index.php/Tag:service%3Dparking_aisle

service=parking has yet to be rendered corectly. | 1.0 | Tag:service=parking aisle needs rendered - **[Submitted to the original trac issue database at 11.10pm, Friday, 24th October 2008]**

http://wiki.openstreetmap.org/index.php/Tag:service%3Dparking_aisle

service=parking has yet to be rendered corectly. | non_process | tag service parking aisle needs rendered service parking has yet to be rendered corectly | 0 |

14,692 | 17,850,765,845 | IssuesEvent | 2021-09-04 02:39:21 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Coding problems when running algorithms | Feedback stale Processing Bug | <!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS developers alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS developer or support organisation and financially sponsoring a fix

Checklist before submitting

... | 1.0 | Coding problems when running algorithms - <!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS developers alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS developer or support organisation and financially sponsor... | process | coding problems when running algorithms bug fixing and feature development is a community responsibility and not the responsibility of the qgis developers alone if this bug report or feature request is high priority for you we suggest engaging a qgis developer or support organisation and financially sponsor... | 1 |

8,492 | 10,516,234,434 | IssuesEvent | 2019-09-28 16:03:33 | OpenXRay/xray-16 | https://api.github.com/repos/OpenXRay/xray-16 | opened | Teach renderer to work with and without HQ Geometry fix | Compatibility Modmaker Experience Player Experience Render | Check out this commit: https://github.com/OpenXRay/xray-16/commit/2a91825eeb30b3cf8af6b91201a25bd4a90cd01e

This introduced support for high quality models along with the loss of compatibility with the original `skin.h` shader.

Renderer should dynamically detect if shaders has installed HQ geometry fix, and work c... | True | Teach renderer to work with and without HQ Geometry fix - Check out this commit: https://github.com/OpenXRay/xray-16/commit/2a91825eeb30b3cf8af6b91201a25bd4a90cd01e

This introduced support for high quality models along with the loss of compatibility with the original `skin.h` shader.

Renderer should dynamically d... | non_process | teach renderer to work with and without hq geometry fix check out this commit this introduced support for high quality models along with the loss of compatibility with the original skin h shader renderer should dynamically detect if shaders has installed hq geometry fix and work correctly no matter if it... | 0 |

4,639 | 7,482,346,906 | IssuesEvent | 2018-04-05 00:46:48 | UnbFeelings/unb-feelings-GQA | https://api.github.com/repos/UnbFeelings/unb-feelings-GQA | closed | Reorganização da parte da wiki de processo | process wiki | Foi colocado pela professora: difícil distinção entre a definição do processo de trabalho(GQA, o processo geral) e o processo do auditor, existem subprocessos que não cabem em todas as auditorias

Logo, ao documentar na wiki explicitar que o subprocesso "Programar auditoria" ocorrerá apenas uma vez, isto é, as outras... | 1.0 | Reorganização da parte da wiki de processo - Foi colocado pela professora: difícil distinção entre a definição do processo de trabalho(GQA, o processo geral) e o processo do auditor, existem subprocessos que não cabem em todas as auditorias

Logo, ao documentar na wiki explicitar que o subprocesso "Programar auditori... | process | reorganização da parte da wiki de processo foi colocado pela professora difícil distinção entre a definição do processo de trabalho gqa o processo geral e o processo do auditor existem subprocessos que não cabem em todas as auditorias logo ao documentar na wiki explicitar que o subprocesso programar auditori... | 1 |

19,227 | 25,376,834,477 | IssuesEvent | 2022-11-21 14:42:18 | ResqDiver1317/ThirdPeril_PerilousSkies | https://api.github.com/repos/ResqDiver1317/ThirdPeril_PerilousSkies | closed | Figure out why the damn CF launcher won't connect to the freaking server...... | bug COMPLETE/RESOLVED In Process | Self Explanatory in the title. | 1.0 | Figure out why the damn CF launcher won't connect to the freaking server...... - Self Explanatory in the title. | process | figure out why the damn cf launcher won t connect to the freaking server self explanatory in the title | 1 |

6,767 | 9,905,579,756 | IssuesEvent | 2019-06-27 11:58:09 | ESMValGroup/ESMValCore | https://api.github.com/repos/ESMValGroup/ESMValCore | closed | Preprocessor feature request: Common mask for multiple datasets | enhancement preprocessor | For multiple datasets it is necessary to produce a common mask and apply it to all of them if you want to compare respective results.

As far as I know, there is currently no preprocessor for producing and applying such a common mask.

Is there a work-around? Is this a needed/wanted feature? If I'm the only one, I... | 1.0 | Preprocessor feature request: Common mask for multiple datasets - For multiple datasets it is necessary to produce a common mask and apply it to all of them if you want to compare respective results.

As far as I know, there is currently no preprocessor for producing and applying such a common mask.

Is there a wo... | process | preprocessor feature request common mask for multiple datasets for multiple datasets it is necessary to produce a common mask and apply it to all of them if you want to compare respective results as far as i know there is currently no preprocessor for producing and applying such a common mask is there a wo... | 1 |

21,355 | 29,188,351,583 | IssuesEvent | 2023-05-19 17:26:42 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Mongodb won’t work if version does fall into the “semantic-version-gte” pattern (Percona) | Type:Bug Priority:P1 Database/Mongo .Regression .Team/QueryProcessor :hammer_and_wrench: .Escalation | **Describe the bug**

After upgrading to metabase version 0.46, I can't access to any mongodb databases.

From what I've seen, in metabase database, table `metabase_database`, the dbms_version is wrong, because mongodb doest not follow semantic version.

What i get from the `dbms_version` => {"version":"5.0.14-12","s... | 1.0 | Mongodb won’t work if version does fall into the “semantic-version-gte” pattern (Percona) - **Describe the bug**

After upgrading to metabase version 0.46, I can't access to any mongodb databases.

From what I've seen, in metabase database, table `metabase_database`, the dbms_version is wrong, because mongodb doest not... | process | mongodb won’t work if version does fall into the “semantic version gte” pattern percona describe the bug after upgrading to metabase version i can t access to any mongodb databases from what i ve seen in metabase database table metabase database the dbms version is wrong because mongodb doest not ... | 1 |

1,341 | 3,900,990,741 | IssuesEvent | 2016-04-18 09:00:15 | e-government-ua/iBP | https://api.github.com/repos/e-government-ua/iBP | closed | Богодухов (Харьковская обл.) - раскрыть "Звернення до міського голови" | In process of testing in work | инфо от координатора:

Чуть больше недели назад мы проводили презентацию для Богодухова, там согласились на внедрение услуги "Звернення до голови" для трех голов.

Вот контакты исполнителей этих услуг для Богодухова:

ИВАХ Алина (Представник районної державної адміністрації)

093-962-27-29

a.ivakh@bogodukhivrda.gov.... | 1.0 | Богодухов (Харьковская обл.) - раскрыть "Звернення до міського голови" - инфо от координатора:

Чуть больше недели назад мы проводили презентацию для Богодухова, там согласились на внедрение услуги "Звернення до голови" для трех голов.

Вот контакты исполнителей этих услуг для Богодухова:

ИВАХ Алина (Представник рай... | process | богодухов харьковская обл раскрыть звернення до міського голови инфо от координатора чуть больше недели назад мы проводили презентацию для богодухова там согласились на внедрение услуги звернення до голови для трех голов вот контакты исполнителей этих услуг для богодухова ивах алина представник рай... | 1 |

308,521 | 23,252,073,373 | IssuesEvent | 2022-08-04 05:27:41 | UoaWDCC/NZCSA-Frontend | https://api.github.com/repos/UoaWDCC/NZCSA-Frontend | opened | [Documentation] SponsorsLogoLayout and SponsorGrid | Type: Documentation | **Describe the task that needs to be done.**

Document the SponsorsLogoLayout and SponsorGrid using jsdoc and comment any methods.

In the js doc, must include the use of the file, and what is in the input props if its applicable.

**Describe how a solution to your proposed task might look like (and any alternativ... | 1.0 | [Documentation] SponsorsLogoLayout and SponsorGrid - **Describe the task that needs to be done.**

Document the SponsorsLogoLayout and SponsorGrid using jsdoc and comment any methods.

In the js doc, must include the use of the file, and what is in the input props if its applicable.

**Describe how a solution to y... | non_process | sponsorslogolayout and sponsorgrid describe the task that needs to be done document the sponsorslogolayout and sponsorgrid using jsdoc and comment any methods in the js doc must include the use of the file and what is in the input props if its applicable describe how a solution to your proposed t... | 0 |

270,027 | 28,960,382,432 | IssuesEvent | 2023-05-10 01:37:20 | Nivaskumark/kernel_v4.19.72_old | https://api.github.com/repos/Nivaskumark/kernel_v4.19.72_old | reopened | CVE-2020-25645 (High) detected in linuxlinux-4.19.83 | Mend: dependency security vulnerability | ## CVE-2020-25645 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.83</b></p></summary>

<p>

<p>Apache Software Foundation (ASF)</p>

<p>Library home page: <a href=https:/... | True | CVE-2020-25645 (High) detected in linuxlinux-4.19.83 - ## CVE-2020-25645 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.83</b></p></summary>

<p>

<p>Apache Software Fou... | non_process | cve high detected in linuxlinux cve high severity vulnerability vulnerable library linuxlinux apache software foundation asf library home page a href found in head commit a href found in base branch master vulnerable source files ... | 0 |

489,757 | 14,111,992,725 | IssuesEvent | 2020-11-07 02:41:32 | chingu-voyages/v25-geckos-team-01 | https://api.github.com/repos/chingu-voyages/v25-geckos-team-01 | opened | Create application mockup | UserStory priority:must_have | **User Story Description**

As a Developer

I want to have a mockup of the app screens

So I can implement a UI & UX that ties functions to screens to meet the needs of all users.

**Steps to Follow (optional)**

- [ ] Create a paper or digital mockup of app screens, the elements in them, actions, and navigation

- [... | 1.0 | Create application mockup - **User Story Description**

As a Developer

I want to have a mockup of the app screens

So I can implement a UI & UX that ties functions to screens to meet the needs of all users.

**Steps to Follow (optional)**

- [ ] Create a paper or digital mockup of app screens, the elements in them, ... | non_process | create application mockup user story description as a developer i want to have a mockup of the app screens so i can implement a ui ux that ties functions to screens to meet the needs of all users steps to follow optional create a paper or digital mockup of app screens the elements in them ac... | 0 |

22,716 | 32,039,639,073 | IssuesEvent | 2023-09-22 18:10:04 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | procpath 1.8.1 has 2 GuardDog issues | guarddog exec-base64 silent-process-execution | https://pypi.org/project/procpath

https://inspector.pypi.io/project/procpath

```{

"dependency": "procpath",

"version": "1.8.1",

"result": {

"issues": 2,

"errors": {},

"results": {

"silent-process-execution": [

{

"location": "Procpath-1.8.1/procpath/test.py:2386",

"cod... | 1.0 | procpath 1.8.1 has 2 GuardDog issues - https://pypi.org/project/procpath

https://inspector.pypi.io/project/procpath

```{

"dependency": "procpath",

"version": "1.8.1",

"result": {

"issues": 2,

"errors": {},

"results": {

"silent-process-execution": [

{

"location": "Procpath-1.8.1... | process | procpath has guarddog issues dependency procpath version result issues errors results silent process execution location procpath procpath test py code p subprocess popen... | 1 |

577,279 | 17,107,111,899 | IssuesEvent | 2021-07-09 19:43:51 | adirh3/Fluent-Search | https://api.github.com/repos/adirh3/Fluent-Search | closed | The Unpin action doesn't highlight | Low Priority bug | **Describe the bug**

The Unpin in the home screen doesn't high like the other shortcuts.

**To Reproduce**

Steps to reproduce the behavior:

1. Pin a result to the home screen

2. Right-click on the result

3. Hover mouse over the Unpin action

4. See error

**Expected behavior**

Unpin should highlight like the... | 1.0 | The Unpin action doesn't highlight - **Describe the bug**

The Unpin in the home screen doesn't high like the other shortcuts.

**To Reproduce**

Steps to reproduce the behavior:

1. Pin a result to the home screen

2. Right-click on the result

3. Hover mouse over the Unpin action

4. See error

**Expected behav... | non_process | the unpin action doesn t highlight describe the bug the unpin in the home screen doesn t high like the other shortcuts to reproduce steps to reproduce the behavior pin a result to the home screen right click on the result hover mouse over the unpin action see error expected behav... | 0 |

279,252 | 30,702,485,040 | IssuesEvent | 2023-07-27 01:34:09 | maddyCode23/linux-4.1.15 | https://api.github.com/repos/maddyCode23/linux-4.1.15 | closed | CVE-2019-15292 (Medium) detected in multiple libraries - autoclosed | Mend: dependency security vulnerability | ## CVE-2019-15292 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>linux-stable-rtv4.1.33</b>, <b>linux-stable-rtv4.1.33</b>, <b>linux-stable-rtv4.1.33</b>, <b>linux-stable-rtv4.1.33... | True | CVE-2019-15292 (Medium) detected in multiple libraries - autoclosed - ## CVE-2019-15292 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>linux-stable-rtv4.1.33</b>, <b>linux-stable-r... | non_process | cve medium detected in multiple libraries autoclosed cve medium severity vulnerability vulnerable libraries linux stable linux stable linux stable linux stable vulnerability details an issue was discovered in the linux kernel before ... | 0 |

3,113 | 6,143,199,432 | IssuesEvent | 2017-06-27 04:21:46 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | Not all attributes defined on a DITA Composite are copied in the preprocessing stage | preprocess | The callback `org.dita.dost.reader.MergeTopicParser.startElement(String, String, String, Attributes)` by default copies all attributes defined on the element in the string buffer. But for a `<dita>` element it makes a fast return and does not copy the attributes defined on the <dita> element to the string buffer.

Why ... | 1.0 | Not all attributes defined on a DITA Composite are copied in the preprocessing stage - The callback `org.dita.dost.reader.MergeTopicParser.startElement(String, String, String, Attributes)` by default copies all attributes defined on the element in the string buffer. But for a `<dita>` element it makes a fast return and... | process | not all attributes defined on a dita composite are copied in the preprocessing stage the callback org dita dost reader mergetopicparser startelement string string string attributes by default copies all attributes defined on the element in the string buffer but for a element it makes a fast return and does... | 1 |

8,853 | 11,955,294,299 | IssuesEvent | 2020-04-04 03:47:44 | kubernetes/minikube | https://api.github.com/repos/kubernetes/minikube | closed | discuss: minikube wait for default service accont ? | kind/process priority/backlog triage/discuss | as part of this PR https://github.com/kubernetes/minikube/pull/6999 to close https://github.com/kubernetes/minikube/issues/6997

I added wait for default service account to be created before the integeration tests apply yaml files.

at first I wanted to add that to minikube itself but that added 30 seconds to minikub... | 1.0 | discuss: minikube wait for default service accont ? - as part of this PR https://github.com/kubernetes/minikube/pull/6999 to close https://github.com/kubernetes/minikube/issues/6997

I added wait for default service account to be created before the integeration tests apply yaml files.