| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

18,009 | 24,025,354,905 | IssuesEvent | 2022-09-15 11:02:19 | COS301-SE-2022/Pure-LoRa-Tracking | https://api.github.com/repos/COS301-SE-2022/Pure-LoRa-Tracking | closed | (processing): message queue CRON service | (system) Server (bus) processing | Check message queue for data and store in db

Consider the case of what time the data came in.

It may be required to be matched with previous data to complete a row in the database | 1.0 | (processing): message queue CRON service - Check message queue for data and store in db

Consider the case of what time the data came in.

It may be required to be matched with previous data to complete a row in the database | process | processing message queue cron service check message queue for data and store in db consider the case of what time the data came in it may be required to be matched with previous data to complete a row in the database | 1 |

17,456 | 23,277,703,575 | IssuesEvent | 2022-08-05 08:53:23 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | Missing breaking change upgrade to 4.0 client - null values in seed files | bug/0-unknown kind/bug process/candidate tech/typescript team/client topic: seeding 4.0.0 | ### Bug description

In the client 3.x.x i was able to generate seed files with mockaroo that contained null values.

e..g.

```

[

{ "title": "Melursus ursinus", "parent_id": null, "parent_index": 1 },

{ "title": "Choriotis kori", "parent_id": 1, "parent_index": 2 },

{ "title": "Oryx gazella", "parent_i... | 1.0 | Missing breaking change upgrade to 4.0 client - null values in seed files - ### Bug description

In the client 3.x.x i was able to generate seed files with mockaroo that contained null values.

e..g.

```

[

{ "title": "Melursus ursinus", "parent_id": null, "parent_index": 1 },

{ "title": "Choriotis kori", ... | process | missing breaking change upgrade to client null values in seed files bug description in the client x x i was able to generate seed files with mockaroo that contained null values e g title melursus ursinus parent id null parent index title choriotis kori ... | 1 |

18,226 | 24,290,566,619 | IssuesEvent | 2022-09-29 05:23:16 | altillimity/SatDump | https://api.github.com/repos/altillimity/SatDump | closed | Raspberry Pi 4 not seeing GOES 16/17 | bug Processing | Howdy

For the last month, I have been trying to set up a 24/7 Satdump setup on a Raspberry Pi 4 model B but it never receives the GOES 16 and 17 signals. I have a RTL-SDR V3, a NooElec GOES SAWbird, and NooElec Goes dish. I have downloaded the new beta and is using:

"./satdump live goes_hrit /media/regs1/REGS11/G... | 1.0 | Raspberry Pi 4 not seeing GOES 16/17 - Howdy

For the last month, I have been trying to set up a 24/7 Satdump setup on a Raspberry Pi 4 model B but it never receives the GOES 16 and 17 signals. I have a RTL-SDR V3, a NooElec GOES SAWbird, and NooElec Goes dish. I have downloaded the new beta and is using:

"./satdu... | process | raspberry pi not seeing goes howdy for the last month i have been trying to set up a satdump setup on a raspberry pi model b but it never receives the goes and signals i have a rtl sdr a nooelec goes sawbird and nooelec goes dish i have downloaded the new beta and is using satdump liv... | 1 |

818,673 | 30,699,345,896 | IssuesEvent | 2023-07-26 21:27:10 | Rexo99/MoneyMate | https://api.github.com/repos/Rexo99/MoneyMate | closed | Ausgaben Foto Attribut (Geschäftslogik) | Almost finished low priority | * Datenmodell anpassen

* Informieren wie und wo das Foto abgespeichert wird | 1.0 | Ausgaben Foto Attribut (Geschäftslogik) - * Datenmodell anpassen

* Informieren wie und wo das Foto abgespeichert wird | non_process | ausgaben foto attribut geschäftslogik datenmodell anpassen informieren wie und wo das foto abgespeichert wird | 0 |

10,935 | 13,750,327,954 | IssuesEvent | 2020-10-06 11:53:18 | Arch666Angel/mods | https://api.github.com/repos/Arch666Angel/mods | closed | Colored working_visualisations on the bio processor | Angels Bio Processing Impact: Enhancement | **Describe the bug**

Similar to how the ingots are colored on the induction furnace, angel wants to have colors working on the bio processor.

**Additional context**

The graphic files are already present inside the mod, however this was not possible at that time: https://forums.factorio.com/viewtopic.php?f=65&t=526... | 1.0 | Colored working_visualisations on the bio processor - **Describe the bug**

Similar to how the ingots are colored on the induction furnace, angel wants to have colors working on the bio processor.

**Additional context**

The graphic files are already present inside the mod, however this was not possible at that time... | process | colored working visualisations on the bio processor describe the bug similar to how the ingots are colored on the induction furnace angel wants to have colors working on the bio processor additional context the graphic files are already present inside the mod however this was not possible at that time... | 1 |

21,473 | 29,506,628,547 | IssuesEvent | 2023-06-03 11:52:55 | firebase/firebase-cpp-sdk | https://api.github.com/repos/firebase/firebase-cpp-sdk | closed | [C++] Nightly Integration Testing Report for Firestore | type: process nightly-testing |

<hidden value="integration-test-status-comment"></hidden>

### [build against repo] Integration test with FLAKINESS (succeeded after retry)

Requested by @sunmou99 on commit 974fb0704b555906be19c3a892fbbabf34f112d1

Last updated: Fri Jun 2 04:51 PDT 2023

**[View integration test log & download artifacts](https://gith... | 1.0 | [C++] Nightly Integration Testing Report for Firestore -

<hidden value="integration-test-status-comment"></hidden>

### [build against repo] Integration test with FLAKINESS (succeeded after retry)

Requested by @sunmou99 on commit 974fb0704b555906be19c3a892fbbabf34f112d1

Last updated: Fri Jun 2 04:51 PDT 2023

**[Vie... | process | nightly integration testing report for firestore integration test with flakiness succeeded after retry requested by on commit last updated fri jun pdt failures configs firestore failed tests nbsp nbsp crash timeout add flaky t... | 1 |

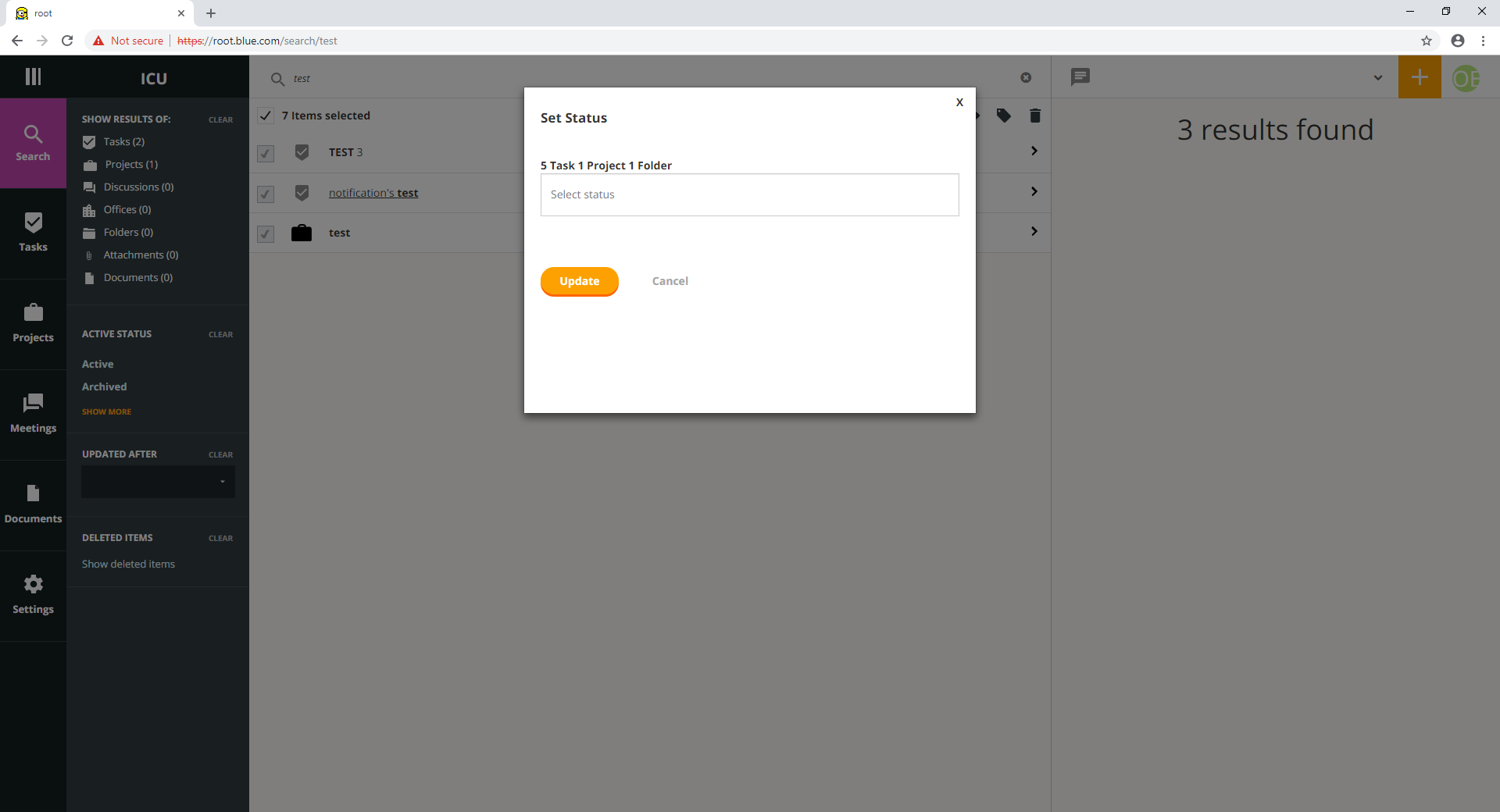

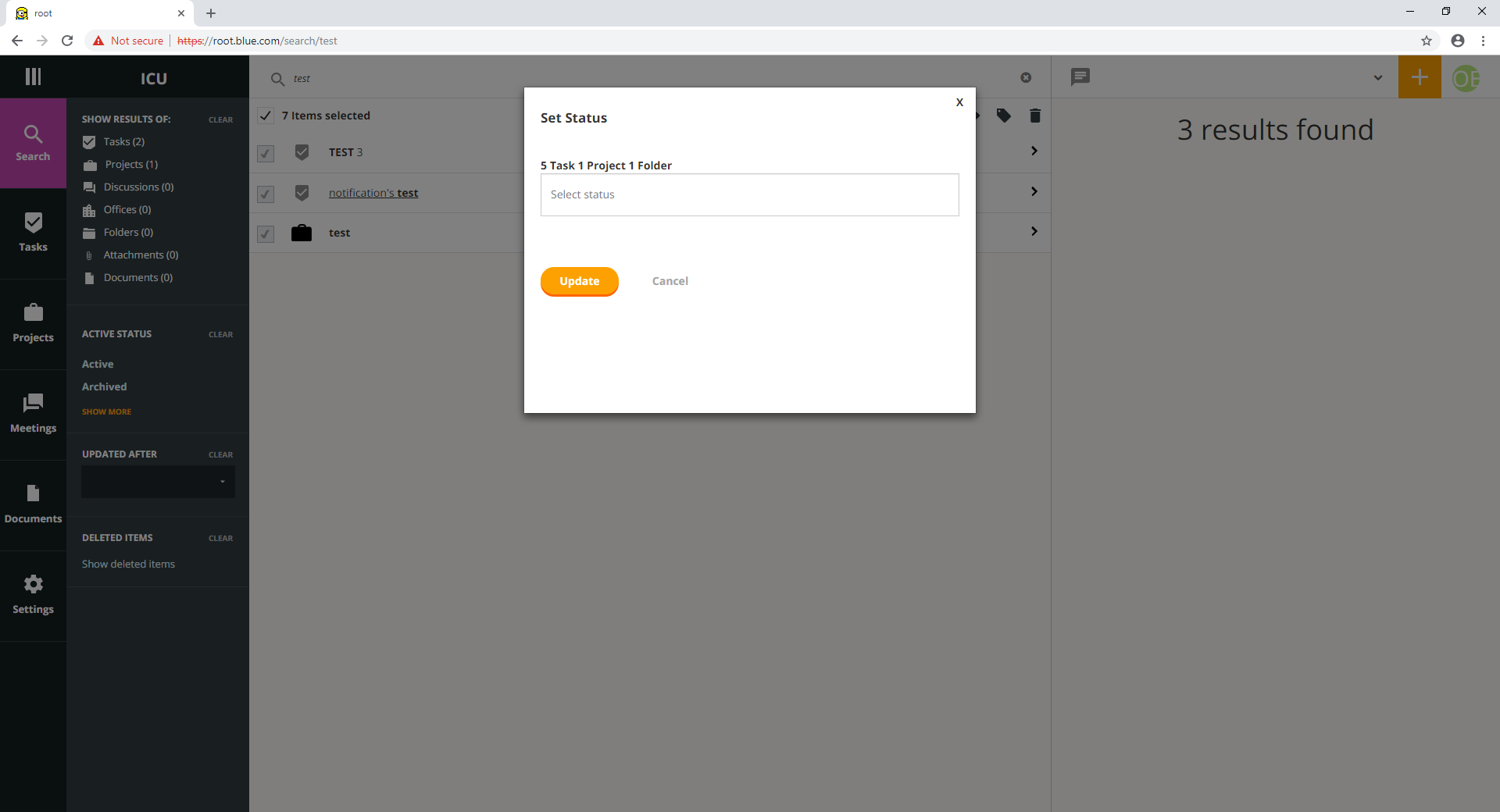

6,018 | 8,822,871,941 | IssuesEvent | 2019-01-02 11:12:30 | linnovate/root | https://api.github.com/repos/linnovate/root | opened | select all in search shows and selects deleted items | 2.0.6 Process bug | in search

enter multiple selection mode

select everything

it selects even the deleted items that have the same name

| 1.0 | select all in search shows and selects deleted items - in search

enter multiple selection mode

select everything

it selects even the deleted items that have the same name

| process | select all in search shows and selects deleted items in search enter multiple selection mode select everything it selects even the deleted items that have the same name | 1 |

6,252 | 9,213,798,611 | IssuesEvent | 2019-03-10 15:00:54 | chuminh712/BookStorage---Group-2 | https://api.github.com/repos/chuminh712/BookStorage---Group-2 | closed | Architecture Design | In Process | Design sequence diagram for Use Case Manage Book

Design sequence diagram for Use Case Manage Book Category | 1.0 | Architecture Design - Design sequence diagram for Use Case Manage Book

Design sequence diagram for Use Case Manage Book Category | process | architecture design design sequence diagram for use case manage book design sequence diagram for use case manage book category | 1 |

19,657 | 26,017,047,843 | IssuesEvent | 2022-12-21 09:23:27 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | Multi-schema support for `db pull` for MySQL | kind/feature process/candidate topic: introspection topic: re-introspection tech/engines tech/engines/introspection engine topic: mysql team/schema topic: prisma db pull topic: multiSchema | Within the `multiSchema` preview feature, `db pull` should by default introspect all schemas in the database. | 1.0 | Multi-schema support for `db pull` for MySQL - Within the `multiSchema` preview feature, `db pull` should by default introspect all schemas in the database. | process | multi schema support for db pull for mysql within the multischema preview feature db pull should by default introspect all schemas in the database | 1 |

15,088 | 18,941,873,325 | IssuesEvent | 2021-11-18 04:34:33 | ClickHouse/ClickHouse | https://api.github.com/repos/ClickHouse/ClickHouse | closed | Why a non nullable field can be with null value? | question sql-compatibility question-answered | > Make sure to check documentation https://clickhouse.yandex/docs/en/ first. If the question is concise and probably has a short answer, asking it in Telegram chat https://telegram.me/clickhouse_en is probably the fastest way to find the answer. For more complicated questions, consider asking them on StackOverflow with... | True | Why a non nullable field can be with null value? - > Make sure to check documentation https://clickhouse.yandex/docs/en/ first. If the question is concise and probably has a short answer, asking it in Telegram chat https://telegram.me/clickhouse_en is probably the fastest way to find the answer. For more complicated qu... | non_process | why a non nullable field can be with null value make sure to check documentation first if the question is concise and probably has a short answer asking it in telegram chat is probably the fastest way to find the answer for more complicated questions consider asking them on stackoverflow with clickhouse ... | 0 |

61,034 | 8,483,891,058 | IssuesEvent | 2018-10-25 23:29:01 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | Out of date comments in System.Numerics.Vectors | area-System.Numerics documentation up-for-grabs | There is a comment at the beginning of Vectors.cs [https://github.com/dotnet/corefx/blob/master/src/System.Numerics.Vectors/src/System/Numerics/Vector.cs#L22-L32](url)

> PATTERN:

* if (Vector.IsHardwareAccelerated) { ... }

* else { ... }

* EXPLANATION

* This pattern solves two problems... | 1.0 | Out of date comments in System.Numerics.Vectors - There is a comment at the beginning of Vectors.cs [https://github.com/dotnet/corefx/blob/master/src/System.Numerics.Vectors/src/System/Numerics/Vector.cs#L22-L32](url)

> PATTERN:

* if (Vector.IsHardwareAccelerated) { ... }

* else { ... }

* EXPL... | non_process | out of date comments in system numerics vectors there is a comment at the beginning of vectors cs url pattern if vector ishardwareaccelerated else explanation this pattern solves two problems allows us to unroll loops when we know the... | 0 |

19,728 | 26,074,242,756 | IssuesEvent | 2022-12-24 08:37:59 | bitfocus/companion-module-requests | https://api.github.com/repos/bitfocus/companion-module-requests | opened | Roland 4k V\ideo scaler VC-100UHD Region of Interest | NOT YET PROCESSED | The name of the device, hardware, or software you would like to control:

What you would like to be able to make it do from Companion:

create controls to zoom in, zoom out (digital zoom), and modify the positions of the ROI boxes.

Direct links or attachments to the ethernet control protocol or API:

"- I am not... | 1.0 | Roland 4k V\ideo scaler VC-100UHD Region of Interest - The name of the device, hardware, or software you would like to control:

What you would like to be able to make it do from Companion:

create controls to zoom in, zoom out (digital zoom), and modify the positions of the ROI boxes.

Direct links or attachment... | process | roland v ideo scaler vc region of interest the name of the device hardware or software you would like to control what you would like to be able to make it do from companion create controls to zoom in zoom out digital zoom and modify the positions of the roi boxes direct links or attachments to t... | 1 |

1,785 | 10,755,967,299 | IssuesEvent | 2019-10-31 10:13:44 | elastic/apm-integration-testing | https://api.github.com/repos/elastic/apm-integration-testing | closed | Opbeans node is broken after Docker images changes | [zube]: In Review automation bug ci | After lates changes on Opbeans-node Docker image, the Docker image in the integration testing is broken

`./scripts/compose.py start 7.4.0 --release --with-opbeans-node`

```

2019-10-29T11:04:59: PM2 log: Launching in no daemon mode

2019-10-29T11:04:59: PM2 log: App [server:0] starting in -cluster mode-

2019-10-... | 1.0 | Opbeans node is broken after Docker images changes - After lates changes on Opbeans-node Docker image, the Docker image in the integration testing is broken

`./scripts/compose.py start 7.4.0 --release --with-opbeans-node`

```

2019-10-29T11:04:59: PM2 log: Launching in no daemon mode

2019-10-29T11:04:59: PM2 log... | non_process | opbeans node is broken after docker images changes after lates changes on opbeans node docker image the docker image in the integration testing is broken scripts compose py start release with opbeans node log launching in no daemon mode log app starting in clu... | 0 |

174,227 | 27,598,214,553 | IssuesEvent | 2023-03-09 08:15:13 | saving-satoshi/saving-satoshi | https://api.github.com/repos/saving-satoshi/saving-satoshi | closed | Revise the about page copy | copy design | Don't think the information is really accurate anymore. Things to consider:

- Is the story intro still accurate?

- Let's describe the demo state, what's included and what to expect going forward

- Let's expand on "How to contribute". Just opening an issue is fine for small bugs, but not helpful if someone wants to... | 1.0 | Revise the about page copy - Don't think the information is really accurate anymore. Things to consider:

- Is the story intro still accurate?

- Let's describe the demo state, what's included and what to expect going forward

- Let's expand on "How to contribute". Just opening an issue is fine for small bugs, but no... | non_process | revise the about page copy don t think the information is really accurate anymore things to consider is the story intro still accurate let s describe the demo state what s included and what to expect going forward let s expand on how to contribute just opening an issue is fine for small bugs but no... | 0 |

86,256 | 3,704,312,576 | IssuesEvent | 2016-02-29 23:35:35 | BlinkUX/ISB-LSDF | https://api.github.com/repos/BlinkUX/ISB-LSDF | closed | Plotted user feature axis render terrible axis labels | Awaiting Confirmation bug Priority 1 | <img width="828" alt="screen shot 2016-02-27 at 12 12 50 pm" src="https://cloud.githubusercontent.com/assets/4040084/13375094/89f60a24-dd4b-11e5-989e-7456090e1c28.png">

| 1.0 | Plotted user feature axis render terrible axis labels - <img width="828" alt="screen shot 2016-02-27 at 12 12 50 pm" src="https://cloud.githubusercontent.com/assets/4040084/13375094/89f60a24-dd4b-11e5-989e-7456090e1c28.png">

| non_process | plotted user feature axis render terrible axis labels img width alt screen shot at pm src | 0 |

49 | 2,513,878,256 | IssuesEvent | 2015-01-15 04:33:35 | GsDevKit/zinc | https://api.github.com/repos/GsDevKit/zinc | closed | socket error while snapping off continuation | inprocess | See the discussion and attached stack in http://forum.world.st/GS-SS-Beta-Setup-a-new-copy-of-Glass-tp4757105p4757522.html for details of the error ...

To reproduce generate an error using the WAExceptionFunctionalTest test: http://localhost:8383/tests/functional/WAExceptionFunctionalTest

GemStone3.1.0.5 and Sea... | 1.0 | socket error while snapping off continuation - See the discussion and attached stack in http://forum.world.st/GS-SS-Beta-Setup-a-new-copy-of-Glass-tp4757105p4757522.html for details of the error ...

To reproduce generate an error using the WAExceptionFunctionalTest test: http://localhost:8383/tests/functional/WAExce... | process | socket error while snapping off continuation see the discussion and attached stack in for details of the error to reproduce generate an error using the waexceptionfunctionaltest test and seaside loaded using | 1 |

17,440 | 23,265,882,762 | IssuesEvent | 2022-08-04 17:19:42 | MPMG-DCC-UFMG/C01 | https://api.github.com/repos/MPMG-DCC-UFMG/C01 | opened | Transparência - Detalhes do coletor/Filtragem URLs e RegEx | [1] Requisito [0] Desenvolvimento [2] Média Prioridade [3] Processamento Dinâmico | ## Comportamento Esperado

Espera-se que as configurações de filtrar links por URLs e RegEx se apliquem também aos passos do processamento dinâmico.

## Comportamento Atual

Ao configurar um coletor dinâmico com essa ferramenta, os links filtrados são, basicamente, o que podem ser filtrados através do processamento d... | 1.0 | Transparência - Detalhes do coletor/Filtragem URLs e RegEx - ## Comportamento Esperado

Espera-se que as configurações de filtrar links por URLs e RegEx se apliquem também aos passos do processamento dinâmico.

## Comportamento Atual

Ao configurar um coletor dinâmico com essa ferramenta, os links filtrados são, basi... | process | transparência detalhes do coletor filtragem urls e regex comportamento esperado espera se que as configurações de filtrar links por urls e regex se apliquem também aos passos do processamento dinâmico comportamento atual ao configurar um coletor dinâmico com essa ferramenta os links filtrados são basi... | 1 |

288,578 | 24,917,374,802 | IssuesEvent | 2022-10-30 15:08:08 | FuelLabs/fuel-indexer | https://api.github.com/repos/FuelLabs/fuel-indexer | closed | Add tests for new Receipt types | testing | - Now that we have support for several receipt types, it would be nice to have this functionality tested

- We initially punted on testing in the relevant PRs because we wanted to make sure we were on track to complete the milestone

- Not only this, but we also don't really know how to trigger some of these events i... | 1.0 | Add tests for new Receipt types - - Now that we have support for several receipt types, it would be nice to have this functionality tested

- We initially punted on testing in the relevant PRs because we wanted to make sure we were on track to complete the milestone

- Not only this, but we also don't really know how... | non_process | add tests for new receipt types now that we have support for several receipt types it would be nice to have this functionality tested we initially punted on testing in the relevant prs because we wanted to make sure we were on track to complete the milestone not only this but we also don t really know how... | 0 |

42,521 | 6,987,545,613 | IssuesEvent | 2017-12-14 09:38:25 | raiden-network/microraiden | https://api.github.com/repos/raiden-network/microraiden | opened | Update documentation | channel manager documentation m2m client sprint candidate | Quite a lot has changed (and will change during this sprint) concerning usage of the proxy and python client/lib. We should consolidate README-like information into a single top-level README.md file and update usage instructions on basic examples.

We should also document all classes and methods (using docstrings) th... | 1.0 | Update documentation - Quite a lot has changed (and will change during this sprint) concerning usage of the proxy and python client/lib. We should consolidate README-like information into a single top-level README.md file and update usage instructions on basic examples.

We should also document all classes and method... | non_process | update documentation quite a lot has changed and will change during this sprint concerning usage of the proxy and python client lib we should consolidate readme like information into a single top level readme md file and update usage instructions on basic examples we should also document all classes and method... | 0 |

11,833 | 14,655,443,642 | IssuesEvent | 2020-12-28 11:01:58 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PM[ [Dev] Participant details page >Withdrawal date is displaying | Bug P2 Participant manager Process: Fixed Process: Tested QA Process: Tested dev | Participant details page >Withdrawal date is displaying

Steps

1. Join a study and withdrawn from the study

2. Enable invitation for the same participant

3. Navigate to Participant details page and Observe the Withdrawal date

AR : Withdrawal date is displaying

ER : Withdrawal date should be displayed as 'NA'... | 3.0 | [PM[ [Dev] Participant details page >Withdrawal date is displaying - Participant details page >Withdrawal date is displaying

Steps

1. Join a study and withdrawn from the study

2. Enable invitation for the same participant

3. Navigate to Participant details page and Observe the Withdrawal date

AR : Withdrawal... | process | participant details page withdrawal date is displaying participant details page withdrawal date is displaying steps join a study and withdrawn from the study enable invitation for the same participant navigate to participant details page and observe the withdrawal date ar withdrawal date is ... | 1 |

35,765 | 5,006,624,221 | IssuesEvent | 2016-12-12 14:41:44 | d3athrow/vgstation13 | https://api.github.com/repos/d3athrow/vgstation13 | closed | Parapen disappearing | Bug / Fix Needs Moar Testing | (WEB REPORT BY: brolaire REMOTE: 158.69.55.2:7777)

> Revision (Should be above if you're viewing this from ingame!)

> ce5a82256bbcd88f97bf112624142b6f9e608981

>

> General description of the issue

> Parapen put into left pocket, disappeared from the HUD, later on during search, HoS pulled it OUT OF MY EYES-slot.

>

> ... | 1.0 | Parapen disappearing - (WEB REPORT BY: brolaire REMOTE: 158.69.55.2:7777)

> Revision (Should be above if you're viewing this from ingame!)

> ce5a82256bbcd88f97bf112624142b6f9e608981

>

> General description of the issue

> Parapen put into left pocket, disappeared from the HUD, later on during search, HoS pulled it OUT... | non_process | parapen disappearing web report by brolaire remote revision should be above if you re viewing this from ingame general description of the issue parapen put into left pocket disappeared from the hud later on during search hos pulled it out of my eyes slot what you expected to ha... | 0 |

240,234 | 18,296,196,373 | IssuesEvent | 2021-10-05 20:42:42 | tc39/proposal-pipeline-operator | https://api.github.com/repos/tc39/proposal-pipeline-operator | closed | Readme indents change | question documentation | There are no indents all around the whole readme, there should be indents, i.e. 2 spaces.

This is a general js tendency, for example here:

```js

context

.call1()

.call2()

.call3()

```

So in the readme it should be:

```js

return xf

|> bind(^['@@transducer/step'], ^)

|> obj[methodName](^, ACC)

... | 1.0 | Readme indents change - There are no indents all around the whole readme, there should be indents, i.e. 2 spaces.

This is a general js tendency, for example here:

```js

context

.call1()

.call2()

.call3()

```

So in the readme it should be:

```js

return xf

|> bind(^['@@transducer/step'], ^)

|> obj... | non_process | readme indents change there are no indents all around the whole readme there should be indents i e spaces this is a general js tendency for example here js context so in the readme it should be js return xf bind obj acc xf ... | 0 |

38,689 | 19,503,378,118 | IssuesEvent | 2021-12-28 08:38:30 | ARK-Builders/ARK-Navigator | https://api.github.com/repos/ARK-Builders/ARK-Navigator | opened | Asynchronous writing to tags storage | performance | At the moment, after tags are changed for a resource, we wait till new state of tags storage is written into filesystem.

We can update the storage file in background allowing user to continue working. Right now there is slight delay less than 0.5 sec after applying new tags which can be removed. We must ensure that su... | True | Asynchronous writing to tags storage - At the moment, after tags are changed for a resource, we wait till new state of tags storage is written into filesystem.

We can update the storage file in background allowing user to continue working. Right now there is slight delay less than 0.5 sec after applying new tags which... | non_process | asynchronous writing to tags storage at the moment after tags are changed for a resource we wait till new state of tags storage is written into filesystem we can update the storage file in background allowing user to continue working right now there is slight delay less than sec after applying new tags which... | 0 |

20,120 | 26,658,397,179 | IssuesEvent | 2023-01-25 18:47:25 | NationalSecurityAgency/ghidra | https://api.github.com/repos/NationalSecurityAgency/ghidra | closed | Ghidra cannot disassemble a LDS instruction in real mode | Type: Bug Feature: Processor/x86 Status: Internal | **Describe the bug**

C5B70D03 is supposed to disassemble to LDS SI,DWORD PTR [BX+030D]

However in ghidra I get an error:

Error | Bad Instruction | Unable to resolve constructor at f000:0026 | f000:0026 | | ?? C5h

**To Reproduce**

Create a project in real mode and import hex C5B70D03 in the bytes view, and tr... | 1.0 | Ghidra cannot disassemble a LDS instruction in real mode - **Describe the bug**

C5B70D03 is supposed to disassemble to LDS SI,DWORD PTR [BX+030D]

However in ghidra I get an error:

Error | Bad Instruction | Unable to resolve constructor at f000:0026 | f000:0026 | | ?? C5h

**To Reproduce**

Create a project in ... | process | ghidra cannot disassemble a lds instruction in real mode describe the bug is supposed to disassemble to lds si dword ptr however in ghidra i get an error error bad instruction unable to resolve constructor at to reproduce create a project in real mode and import hex in... | 1 |

77,683 | 3,507,212,594 | IssuesEvent | 2016-01-08 11:56:25 | OregonCore/OregonCore | https://api.github.com/repos/OregonCore/OregonCore | closed | [Bug] Out of range use the melee ability (BB #663) | migrated Priority: Medium Type: Bug | This issue was migrated from bitbucket.

**Original Reporter:** Alex_Step

**Original Date:** 22.08.2014 05:08:15 GMT+0000

**Original Priority:** major

**Original Type:** bug

**Original State:** closed

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/663

<hr>

Attack ability are only possible if you stand c... | 1.0 | [Bug] Out of range use the melee ability (BB #663) - This issue was migrated from bitbucket.

**Original Reporter:** Alex_Step

**Original Date:** 22.08.2014 05:08:15 GMT+0000

**Original Priority:** major

**Original Type:** bug

**Original State:** closed

**Direct Link:** https://bitbucket.org/oregon/oregoncore/issues/663... | non_process | out of range use the melee ability bb this issue was migrated from bitbucket original reporter alex step original date gmt original priority major original type bug original state closed direct link attack ability are only possible if you stand close to the enem... | 0 |

320,680 | 27,450,085,042 | IssuesEvent | 2023-03-02 16:45:46 | allure-framework/allure-python | https://api.github.com/repos/allure-framework/allure-python | reopened | pytest-bdd 5.0.0: error on generating report with failed tests with scenario outlines | type:bug theme:pytest-bdd | [//]: # (

. Note: for support questions, please use Stackoverflow or Gitter**.

. This repository's issues are reserved for feature requests and bug reports.

.

. In case of any problems with Allure Jenkins plugin** please use the following repository

. to create an issue: https://github.com/jenkinsci/allure-plugi... | 1.0 | pytest-bdd 5.0.0: error on generating report with failed tests with scenario outlines - [//]: # (

. Note: for support questions, please use Stackoverflow or Gitter**.

. This repository's issues are reserved for feature requests and bug reports.

.

. In case of any problems with Allure Jenkins plugin** please use t... | non_process | pytest bdd error on generating report with failed tests with scenario outlines note for support questions please use stackoverflow or gitter this repository s issues are reserved for feature requests and bug reports in case of any problems with allure jenkins plugin please use the ... | 0 |

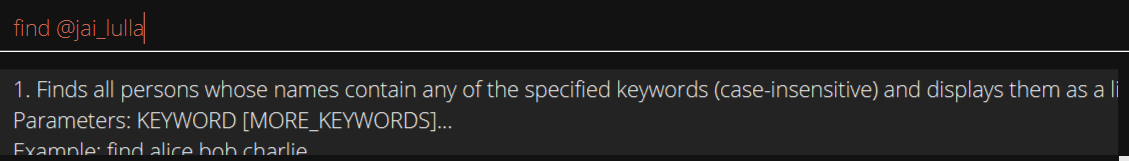

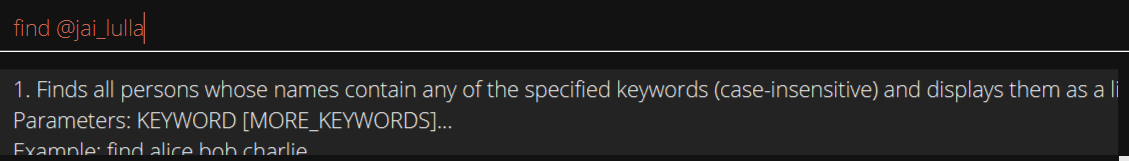

243,143 | 18,677,078,989 | IssuesEvent | 2021-10-31 18:42:47 | AY2122S1-CS2103T-T10-1/tp | https://api.github.com/repos/AY2122S1-CS2103T-T10-1/tp | closed | [PE-D] inconsisten prefix for tele | type.Documentation priority.High mustfix |

many of the tele prefix in the UG uses @.

However, the app error messages range from using @ and... | 1.0 | [PE-D] inconsisten prefix for tele -

many of the tele prefix in the UG uses @.

However, the app ... | non_process | inconsisten prefix for tele many of the tele prefix in the ug uses however the app error messages range from using and te labels type documentationbug severity medium original hpkoh ped | 0 |

43,162 | 5,579,933,182 | IssuesEvent | 2017-03-28 15:33:55 | open-horizon/anax | https://api.github.com/repos/open-horizon/anax | opened | Add resource checking / mgmt. support to anax that can be used before registration, agreement, and container start | bug design | This has been ignored for a long time in the platform and causes instability and other ugly problems. | 1.0 | Add resource checking / mgmt. support to anax that can be used before registration, agreement, and container start - This has been ignored for a long time in the platform and causes instability and other ugly problems. | non_process | add resource checking mgmt support to anax that can be used before registration agreement and container start this has been ignored for a long time in the platform and causes instability and other ugly problems | 0 |

15,621 | 19,767,644,328 | IssuesEvent | 2022-01-17 05:54:35 | pkgjs/parseargs | https://api.github.com/repos/pkgjs/parseargs | closed | Transfer iansu/eslint-plugin-node-core to pkgjs org | process | I extracted the ESLint config used by Node core into a standalone package here: https://github.com/iansu/eslint-plugin-node-core. The idea is that we can use this config in projects like parseargs to make the eventual porting of the code into Node core as easy as possible.

We would like to transfer this repo from my... | 1.0 | Transfer iansu/eslint-plugin-node-core to pkgjs org - I extracted the ESLint config used by Node core into a standalone package here: https://github.com/iansu/eslint-plugin-node-core. The idea is that we can use this config in projects like parseargs to make the eventual porting of the code into Node core as easy as po... | process | transfer iansu eslint plugin node core to pkgjs org i extracted the eslint config used by node core into a standalone package here the idea is that we can use this config in projects like parseargs to make the eventual porting of the code into node core as easy as possible we would like to transfer this repo f... | 1 |

20,679 | 27,350,548,366 | IssuesEvent | 2023-02-27 09:16:02 | haddocking/haddock3 | https://api.github.com/repos/haddocking/haddock3 | opened | create detailed documentation for haddock3-analyse | analysis/postprocessing | haddock3-analyse should now have an adequate, detailed documentation | 1.0 | create detailed documentation for haddock3-analyse - haddock3-analyse should now have an adequate, detailed documentation | process | create detailed documentation for analyse analyse should now have an adequate detailed documentation | 1 |

127,925 | 12,343,269,587 | IssuesEvent | 2020-05-15 03:26:57 | COVID-19-electronic-health-system/Corona-tracker | https://api.github.com/repos/COVID-19-electronic-health-system/Corona-tracker | opened | [DOCS] README for translations python script | documentation | # ⚠️ IMPORTANT: Please fill out this template to give us as much information as possible to consider/implement this update.

### Summary

<!-- One paragraph explanation of the feature. -->

In reference to #702, and to have a modular issue, this issue is to provide an improved README that more precisely details t... | 1.0 | [DOCS] README for translations python script - # ⚠️ IMPORTANT: Please fill out this template to give us as much information as possible to consider/implement this update.

### Summary

<!-- One paragraph explanation of the feature. -->

In reference to #702, and to have a modular issue, this issue is to provide a... | non_process | readme for translations python script ⚠️ important please fill out this template to give us as much information as possible to consider implement this update summary in reference to and to have a modular issue this issue is to provide an improved readme that more precisely details the script... | 0 |

5,975 | 8,794,017,079 | IssuesEvent | 2018-12-21 22:41:38 | babel/babel | https://api.github.com/repos/babel/babel | closed | @babel/polyfill was published yesterday without pushing browser.js | area: publishing process i: bug i: regression pkg: polyfill | ## Bug Report

**Current Behavior**

A clear and concise description of the behavior.

In version 7.0.0 there was a file, browser.js, which was distributed when published.

**Input Code**

- REPL or Repo link if applicable:

https://github.com/babel/babel/blob/master/packages/babel-polyfill/scripts/postpublish.js... | 1.0 | @babel/polyfill was published yesterday without pushing browser.js - ## Bug Report

**Current Behavior**

A clear and concise description of the behavior.

In version 7.0.0 there was a file, browser.js, which was distributed when published.

**Input Code**

- REPL or Repo link if applicable:

https://github.com/b... | process | babel polyfill was published yesterday without pushing browser js bug report current behavior a clear and concise description of the behavior in version there was a file browser js which was distributed when published input code repl or repo link if applicable js var your ... | 1 |

96,681 | 16,163,106,432 | IssuesEvent | 2021-05-01 02:09:27 | Heavencraft/heavencraft-legacy-201404-201603 | https://api.github.com/repos/Heavencraft/heavencraft-legacy-201404-201603 | closed | CVE-2011-1498 Medium Severity Vulnerability detected by WhiteSource | security vulnerability | ## CVE-2011-1498 - Medium Severity Vulnerability

<details><summary><img src='https://www.whitesourcesoftware.com/wp-content/uploads/2018/10/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>httpclient-4.0.1.jar</b></p></summary>

<p>HttpComponents Client (base module)</p>

<p>path: /root/.m2/reposit... | True | CVE-2011-1498 Medium Severity Vulnerability detected by WhiteSource - ## CVE-2011-1498 - Medium Severity Vulnerability

<details><summary><img src='https://www.whitesourcesoftware.com/wp-content/uploads/2018/10/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>httpclient-4.0.1.jar</b></p></summary>

... | non_process | cve medium severity vulnerability detected by whitesource cve medium severity vulnerability vulnerable library httpclient jar httpcomponents client base module path root repository org apache httpcomponents httpclient httpclient jar library home page a href ... | 0 |

227,929 | 18,110,911,529 | IssuesEvent | 2021-09-23 03:43:31 | apache/shardingsphere | https://api.github.com/repos/apache/shardingsphere | closed | Remove useless assertions in unit test | in: test | DistSQL should be able to be executed in any mode, there are some unit tests need to be cleared,

Search the code `No Registry center to execute` in all unit test classes, remove and refactor them. | 1.0 | Remove useless assertions in unit test - DistSQL should be able to be executed in any mode, there are some unit tests need to be cleared,

Search the code `No Registry center to execute` in all unit test classes, remove and refactor them. | non_process | remove useless assertions in unit test distsql should be able to be executed in any mode there are some unit tests need to be cleared search the code no registry center to execute in all unit test classes remove and refactor them | 0 |

22,173 | 30,723,908,679 | IssuesEvent | 2023-07-27 18:01:59 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | ServiceController's API got wrong service status | question area-System.ServiceProcess no-recent-activity needs-author-action | My env is Windows 11/.net 6 sdk/VS 2022.

I am trying to start my service if found the service is stopped.

What I manually to make the service to stop is stop the service and delete a critical file(a DLL file), so then the service cannot be started.

And then code will be go into the if statement. but after I call s... | 1.0 | ServiceController's API got wrong service status - My env is Windows 11/.net 6 sdk/VS 2022.

I am trying to start my service if found the service is stopped.

What I manually to make the service to stop is stop the service and delete a critical file(a DLL file), so then the service cannot be started.

And then code w... | process | servicecontroller s api got wrong service status my env is windows net sdk vs i am trying to start my service if found the service is stopped what i manually to make the service to stop is stop the service and delete a critical file a dll file so then the service cannot be started and then code will ... | 1 |

16,730 | 21,891,887,936 | IssuesEvent | 2022-05-20 03:13:09 | sgaxun/sgaxun.github.io | https://api.github.com/repos/sgaxun/sgaxun.github.io | closed | Spring Boot startup process | sgaxun's blog | Gitalk /post/spring-boot-start-process/ | https://blog.sgaxun.me/post/spring-boot-start-process/

Starting a Spring Boot application is simple; the code is as follows:

@SpringBootApplication

public class Application implements CommandLineRunner {

public stat... | 1.0 | Spring Boot startup process | sgaxun's blog - https://blog.sgaxun.me/post/spring-boot-start-process/

Starting a Spring Boot application is simple; the code is as follows:

@SpringBootApplication

public class Application implements CommandLineRunner {

public stat... | process | spring boot startup process sgaxun s blog starting a spring boot application is simple the code is as follows springbootapplication public class application implements commandlinerunner public stat | 1 |

7,803 | 19,426,430,219 | IssuesEvent | 2021-12-21 06:26:12 | MicrosoftDocs/architecture-center | https://api.github.com/repos/MicrosoftDocs/architecture-center | closed | Enterprise integration using message broker and events | doc-enhancement cxp triaged architecture-center/svc reference-architecture/subsvc Pri2 | Hi,

First of all, thank you. These pages are a very useful reference, and I'm really pleased they exist!

In regards to this page:

https://docs.microsoft.com/en-us/azure/architecture/reference-architectures/enterprise-integration/queues-events

I see that a Service Bus Queue feeds into Event Grid. I am curio... | 2.0 | Enterprise integration using message broker and events - Hi,

First of all, thank you. These pages are a very useful reference, and I'm really pleased they exist!

In regards to this page:

https://docs.microsoft.com/en-us/azure/architecture/reference-architectures/enterprise-integration/queues-events

I see t... | non_process | enterprise integration using message broker and events hi first of all thank you these pages are a very useful reference and i m really pleased they exist in regards to this page i see that a service bus queue feeds into event grid i am curious if this is still the best advice i assume that ... | 0 |

5,130 | 7,917,091,894 | IssuesEvent | 2018-07-04 08:46:09 | Open-EO/openeo-api | https://api.github.com/repos/Open-EO/openeo-api | closed | Uniform process_graph_id, and job_id generation across different backends | in discussion job management process graph management | As addressed during the last biweekly development prints it would be a useful feature for the handling of processes if the ID’s, in particular the process_graph_id and eventually also the job_id would be uniformly generated across the different backends. This would make the handling of distributed processes somehow sim... | 1.0 | Uniform process_graph_id, and job_id generation across different backends - As addressed during the last biweekly development prints it would be a useful feature for the handling of processes if the ID’s, in particular the process_graph_id and eventually also the job_id would be uniformly generated across the different... | process | uniform process graph id and job id generation across different backends as addressed during the last biweekly development prints it would be a useful feature for the handling of processes if the id’s in particular the process graph id and eventually also the job id would be uniformly generated across the different... | 1 |

18,367 | 24,495,644,165 | IssuesEvent | 2022-10-10 08:26:14 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | reopened | TPV regulation of mitotic metaphase/anaphase transition | cell cycle and DNA processes parent relationship query | remove the parent

GO:0010965 regulation of mitotic sister chromatid separation

from

GO:0030071 regulation of mitotic metaphase/anaphase transition

they are related but the relationship is odd. | 1.0 | TPV regulation of mitotic metaphase/anaphase transition - remove the parent

GO:0010965 regulation of mitotic sister chromatid separation

from

GO:0030071 regulation of mitotic metaphase/anaphase transition

they are related but the relationship is odd. | process | tpv regulation of mitotic metaphase anaphase transition remove the parent go regulation of mitotic sister chromatid separation from go regulation of mitotic metaphase anaphase transition they are related but the relationship is odd | 1 |

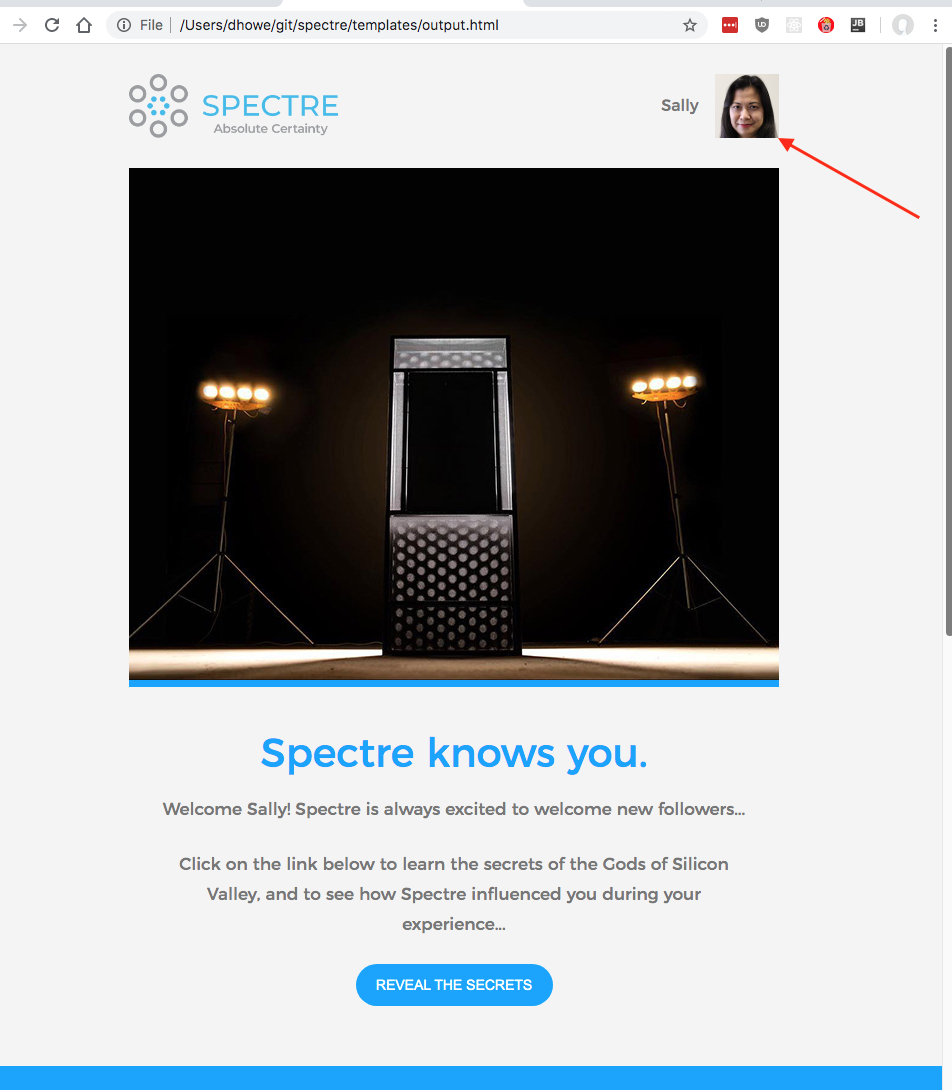

393,573 | 11,621,713,097 | IssuesEvent | 2020-02-27 03:57:00 | dhowe/spectre | https://api.github.com/repos/dhowe/spectre | opened | Dark-ad 'share' button has broken text display | high-priority needs-quick-fix | Please make sure this is fixed for ALL buttons

| 1.0 | Dark-ad 'share' button has broken text display - Please make sure this is fixed for ALL buttons

| non_process | dark ad share button has broken text display please make sure this is fixed for all buttons | 0 |

3,582 | 6,620,700,826 | IssuesEvent | 2017-09-21 16:23:35 | cptechinc/soft-6-ecomm | https://api.github.com/repos/cptechinc/soft-6-ecomm | closed | Fix Includes for head and foot | PHP Processwire | ```

<?php

include('./_head.php');

?>

<?php

include('./_foot.php');

?>

```

Should look like

```

<?php include('./_head.php'); ?>

<?php include('./_foot.php'); ?>

```

| 1.0 | Fix Includes for head and foot - ```

<?php

include('./_head.php');

?>

<?php

include('./_foot.php');

?>

```

Should look like

```

<?php include('./_head.php'); ?>

<?php include('./_foot.php'); ?>

```

| process | fix includes for head and foot php include head php php include foot php should look like | 1 |

657,231 | 21,789,220,100 | IssuesEvent | 2022-05-14 16:16:12 | misskey-dev/misskey | https://api.github.com/repos/misskey-dev/misskey | closed | Crash when processing certain PNGs in ARM64 | 🐛Bug ⚙️Server 🔥high priority | <!--

Thanks for reporting!

First, in order to avoid duplicate Issues, please search to see if the problem you found has already been reported.

-->

## 💡 Summary

Instances running on ARM64 crash when they try to process certain PNGs.

```

malloc(): corrupted top size

ERR * [core cluster] [1] died :(

```

As an example of such a PNG, Pi... | 1.0 | Crash when processing certain PNGs in ARM64 - <!--

Thanks for reporting!

First, in order to avoid duplicate Issues, please search to see if the problem you found has already been reported.

-->

## 💡 Summary

Instances running on ARM64 crash when they try to process certain PNGs.

```

malloc(): corrupted top size

ERR * [core cl... | non_process | crash when processing certain pngs in thanks for reporting first in order to avoid duplicate issues please search to see if the problem you found has already been reported 💡 summary malloc corrupted top size err died as an example of such a png pixelfed s default ... | 0 |

8,747 | 11,872,768,428 | IssuesEvent | 2020-03-26 16:17:38 | w3c/webauthn | https://api.github.com/repos/w3c/webauthn | closed | Upgrade Bikeshed to Python 3 | type:process | Bikeshed is about to port to Python 3 soon, so it's likely we'll need to update our build scripts to match. | 1.0 | Upgrade Bikeshed to Python 3 - Bikeshed is about to port to Python 3 soon, so it's likely we'll need to update our build scripts to match. | process | upgrade bikeshed to python bikeshed is about to port to python soon so it s likely we ll need to update our build scripts to match | 1 |

512 | 2,982,201,076 | IssuesEvent | 2015-07-17 09:25:30 | e-government-ua/i | https://api.github.com/repos/e-government-ua/i | closed | On the main portal, in the documents section, fix the dialog for granting access to a document. | hi priority In process of testing test version | It was still working a few days ago.

It works on beta:

It does not work on alpha:

| 1.0 | On the main portal, in the documents section, fix the dialog for granting access to a document. - It was still working a few days ago.

It works on beta:

It does not work on alpha:

`.

### What should it be?

Allow adding multiple tags with a single line using `WithTags(my, tags, are, cool)`, either as a param list or IEnumerable | 1.0 | Add `DamageInfo.WithTags` method - ### What it is?

You have to add tags to damage info one at a time with `WithTag(sometag)`.

### What should it be?

Allow adding multiple tags with a single line using `WithTags(my, tags, are, cool)`, either as a param list or IEnumerable | non_process | add damageinfo withtags method what it is you have to add tags to damage info one at a time with withtag sometag what should it be allow adding multiple tags with a single line using withtags my tags are cool either as a param list or ienumerable | 0 |

417,741 | 28,110,900,965 | IssuesEvent | 2023-03-31 07:05:21 | Sheemo/ped | https://api.github.com/repos/Sheemo/ped | opened | Inconsistencies in User Guide for T/ tag | severity.VeryLow type.DocumentationBug | Sometimes it reads TEAM_NAME (under show), sometimes it reads TEAMNAME (under remove), sometimes it reads TEAMTAG (under edit)

<!--session: 1680242662877-68f1f0b8-49bf-45a2-b0d6-9c11a4d5249c-->

<!--Version: Web v3.4.7--> | 1.0 | Inconsistencies in User Guide for T/ tag - Sometimes it reads TEAM_NAME (under show), sometimes it reads TEAMNAME (under remove), sometimes it reads TEAMTAG (under edit)

<!--session: 1680242662877-68f1f0b8-49bf-45a2-b0d6-9c11a4d5249c-->

<!--Version: Web v3.4.7--> | non_process | inconsistencies in user guide for t tag sometimes it reads team name under show sometimes it reads teamname under remove sometimes it reads teamtag under edit | 0 |

20,778 | 27,515,563,304 | IssuesEvent | 2023-03-06 11:43:28 | HPSCTerrSys/TSMP | https://api.github.com/repos/HPSCTerrSys/TSMP | closed | ToDo for TSMP on github: see what we do with the standard pre and postpro tools from Shrestha that are still on git in bonn (Bugzilla Bug 54) | pre/post processing | This issue was created automatically with bugzilla2github

# Bugzilla Bug 54

Date: 2020-02-04 14:14:19 +0100

From: Klaus Goergen <<k.goergen@fz-juelich.de>>

To: Abouzar Ghasemi, <<a.ghasemi@fz-juelich.de>>

CC: a.ghasemi@fz-juelich.de, n.wagner@fz-juelich.de

Last updated: 2020-02-04 14:14:19 +0100

## C... | 1.0 | ToDo for TSMP on github: see what we do with the standard pre and postpro tools from Shrestha that are still on git in bonn (Bugzilla Bug 54) - This issue was created automatically with bugzilla2github

# Bugzilla Bug 54

Date: 2020-02-04 14:14:19 +0100

From: Klaus Goergen <<k.goergen@fz-juelich.de>>

To: Abouzar ... | process | todo for tsmp on github see what we do with the standard pre and postpro tools from shrestha that are still on git in bonn bugzilla bug this issue was created automatically with bugzilla bug date from klaus goergen lt gt to abouzar ghasemi lt gt cc a ghasemi fz juelich de n... | 1 |

14,981 | 18,511,961,387 | IssuesEvent | 2021-10-20 05:08:04 | juzi5201314/maop | https://api.github.com/repos/juzi5201314/maop | closed | 改进password的配置 | http processing | 为了不储存密码明文,将密码hash后放到data path中。

1. 在终端与用户交互获取密码

2. 通过`--no-password`启动,禁用身份验证(禁用需要验证的操作

3. 通过`password`子命令手动设置密码 | 1.0 | 改进password的配置 - 为了不储存密码明文,将密码hash后放到data path中。

1. 在终端与用户交互获取密码

2. 通过`--no-password`启动,禁用身份验证(禁用需要验证的操作

3. 通过`password`子命令手动设置密码 | process | 改进password的配置 为了不储存密码明文,将密码hash后放到data path中。 在终端与用户交互获取密码 通过 no password 启动,禁用身份验证 禁用需要验证的操作 通过 password 子命令手动设置密码 | 1 |

19,128 | 25,183,502,195 | IssuesEvent | 2022-11-11 15:43:27 | opensearch-project/data-prepper | https://api.github.com/repos/opensearch-project/data-prepper | opened | Provide a type conversion / cast processor | enhancement plugin - processor | **Is your feature request related to a problem? Please describe.**

Some pipelines have Event values in one type (e.g. string), but want to convert them to another type (e.g. int).

**Describe the solution you'd like**

Provide a new convert processor along with the other Mutate Event Processors.

```

processo... | 1.0 | Provide a type conversion / cast processor - **Is your feature request related to a problem? Please describe.**

Some pipelines have Event values in one type (e.g. string), but want to convert them to another type (e.g. int).

**Describe the solution you'd like**

Provide a new convert processor along with the ot... | process | provide a type conversion cast processor is your feature request related to a problem please describe some pipelines have event values in one type e g string but want to convert them to another type e g int describe the solution you d like provide a new convert processor along with the ot... | 1 |

6,606 | 9,692,946,092 | IssuesEvent | 2019-05-24 14:55:35 | googleapis/google-cloud-python | https://api.github.com/repos/googleapis/google-cloud-python | closed | Storage: 'tearDownModule' flakes with 409 | api: storage flaky testing type: process | From: https://source.cloud.google.com/results/invocations/b09e6e84-ca6e-427f-8014-887b811578bc/targets/cloud-devrel%2Fclient-libraries%2Fgoogle-cloud-python%2Fpresubmit%2Fstorage/log

```python

def tearDownModule():

errors = (exceptions.Conflict, exceptions.TooManyRequests)

retry = RetryErrors(... | 1.0 | Storage: 'tearDownModule' flakes with 409 - From: https://source.cloud.google.com/results/invocations/b09e6e84-ca6e-427f-8014-887b811578bc/targets/cloud-devrel%2Fclient-libraries%2Fgoogle-cloud-python%2Fpresubmit%2Fstorage/log

```python

def tearDownModule():

errors = (exceptions.Conflict, exceptions.To... | process | storage teardownmodule flakes with from python def teardownmodule errors exceptions conflict exceptions toomanyrequests retry retryerrors errors max tries retry config test bucket delete force true e conflict delete the bucket you tried to delet... | 1 |

13,986 | 16,761,219,546 | IssuesEvent | 2021-06-13 20:36:07 | darktable-org/darktable | https://api.github.com/repos/darktable-org/darktable | closed | color balance rgb gamut clipping | scope: image processing | Hi

I am not sure if this is a bug, or if I am just too stupid to get my head around the color science. For some learning exercise I've loaded some artificial test images and played with the color balance rgb module.

According to the manual:

> At its output, color balance RGB checks that the graded colors fit ins... | 1.0 | color balance rgb gamut clipping - Hi

I am not sure if this is a bug, or if I am just too stupid to get my head around the color science. For some learning exercise I've loaded some artificial test images and played with the color balance rgb module.

According to the manual:

> At its output, color balance RGB ch... | process | color balance rgb gamut clipping hi i am not sure if this is a bug or if i am just too stupid to get my head around the color science for some learning exercise i ve loaded some artificial test images and played with the color balance rgb module according to the manual at its output color balance rgb ch... | 1 |

17,087 | 22,595,997,155 | IssuesEvent | 2022-06-29 03:06:29 | pyanodon/pybugreports | https://api.github.com/repos/pyanodon/pybugreports | closed | 248k mod error | postprocess-fail | ### Mod source

PyAE Beta

### Which mod are you having an issue with?

- [ ] pyalienlife

- [ ] pyalternativeenergy

- [ ] pycoalprocessing

- [ ] pyfusionenergy

- [ ] pyhightech

- [ ] pyindustry

- [ ] pypetroleumhandling

- [X] pypostprocessing

- [ ] pyrawores

### Operating system

>=Windows 10

### What kind of issue i... | 1.0 | 248k mod error - ### Mod source

PyAE Beta

### Which mod are you having an issue with?

- [ ] pyalienlife

- [ ] pyalternativeenergy

- [ ] pycoalprocessing

- [ ] pyfusionenergy

- [ ] pyhightech

- [ ] pyindustry

- [ ] pypetroleumhandling

- [X] pypostprocessing

- [ ] pyrawores

### Operating system

>=Windows 10

### Wha... | process | mod error mod source pyae beta which mod are you having an issue with pyalienlife pyalternativeenergy pycoalprocessing pyfusionenergy pyhightech pyindustry pypetroleumhandling pypostprocessing pyrawores operating system windows what kind of issue is thi... | 1 |

769,318 | 27,001,470,122 | IssuesEvent | 2023-02-10 08:11:47 | WavesHQ/bridge | https://api.github.com/repos/WavesHQ/bridge | closed | chore(EvmTransactionConfirmer): Follow up tasks from PR #256 | needs/area needs/triage kind/feature needs/priority server | <!-- Please only use this template for submitting enhancement/feature requests -->

#### What would you like to be added:

- [x] Keeping test code DRY

- [x] Shift `TestingExampleModule` into its own file

- [x] Shift test/app fixture logic into the `BridgeServerTestingApp` constructor (or wherever appropriate)

... | 1.0 | chore(EvmTransactionConfirmer): Follow up tasks from PR #256 - <!-- Please only use this template for submitting enhancement/feature requests -->

#### What would you like to be added:

- [x] Keeping test code DRY

- [x] Shift `TestingExampleModule` into its own file

- [x] Shift test/app fixture logic into the `... | non_process | chore evmtransactionconfirmer follow up tasks from pr what would you like to be added keeping test code dry shift testingexamplemodule into its own file shift test app fixture logic into the bridgeservertestingapp constructor or wherever appropriate persistence of last b... | 0 |

239,282 | 7,788,324,319 | IssuesEvent | 2018-06-07 03:54:07 | fossasia/open-event-server | https://api.github.com/repos/fossasia/open-event-server | closed | Travis build is failing | Priority: URGENT help-wanted | **Describe the bug**

<!-- A clear and concise description of what the bug is. -->

Since the last merge, travis has been failing, it has to do with the SQL being offline or being unable to connect with the database, it'd be a nice idea to add exception handling to such cases, and maybe figure out how to make it work a... | 1.0 | Travis build is failing - **Describe the bug**

<!-- A clear and concise description of what the bug is. -->

Since the last merge, travis has been failing, it has to do with the SQL being offline or being unable to connect with the database, it'd be a nice idea to add exception handling to such cases, and maybe figure... | non_process | travis build is failing describe the bug since the last merge travis has been failing it has to do with the sql being offline or being unable to connect with the database it d be a nice idea to add exception handling to such cases and maybe figure out how to make it work again | 0 |

8,107 | 11,300,637,598 | IssuesEvent | 2020-01-17 14:02:44 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | penetration peg and penetration hyphae | multi-species process | GO:0075201 formation of symbiont penetration hypha for entry into host

has the definition

The assembly by the symbiont of a threadlike, tubular structure, which may contain multiple nuclei and may or may not be divided internally by septa or cross-walls, for the purpose of penetration into its host organism. In t... | 1.0 | penetration peg and penetration hyphae - GO:0075201 formation of symbiont penetration hypha for entry into host

has the definition

The assembly by the symbiont of a threadlike, tubular structure, which may contain multiple nuclei and may or may not be divided internally by septa or cross-walls, for the purpose of... | process | penetration peg and penetration hyphae go formation of symbiont penetration hypha for entry into host has the definition the assembly by the symbiont of a threadlike tubular structure which may contain multiple nuclei and may or may not be divided internally by septa or cross walls for the purpose of penet... | 1 |

237,032 | 26,078,753,689 | IssuesEvent | 2022-12-25 01:05:09 | gabriel-milan/denoising-autoencoder | https://api.github.com/repos/gabriel-milan/denoising-autoencoder | opened | CVE-2022-40898 (Medium) detected in wheel-0.36.2-py2.py3-none-any.whl | security vulnerability | ## CVE-2022-40898 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>wheel-0.36.2-py2.py3-none-any.whl</b></p></summary>

<p>A built-package format for Python</p>

<p>Library home page: <... | True | CVE-2022-40898 (Medium) detected in wheel-0.36.2-py2.py3-none-any.whl - ## CVE-2022-40898 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>wheel-0.36.2-py2.py3-none-any.whl</b></p></su... | non_process | cve medium detected in wheel none any whl cve medium severity vulnerability vulnerable library wheel none any whl a built package format for python library home page a href path to dependency file training requirements txt path to vulnerable library trainin... | 0 |

7,642 | 10,737,536,146 | IssuesEvent | 2019-10-29 13:17:34 | dotnet/coreclr | https://api.github.com/repos/dotnet/coreclr | opened | Getting process information is few times slower on Linux | area-System.Diagnostics.Process os-linux tenet-performance | Getting process information is few times slower on Linux:

| Slower | Lin/Win | Win Median (ns) | Lin Median (ns) | Modality|

| -------------------------------------------------- | -------:| ---------------:| ---------------:| --------:|

| System.Diagnostics.Perf_Process.... | 1.0 | Getting process information is few times slower on Linux - Getting process information is few times slower on Linux:

| Slower | Lin/Win | Win Median (ns) | Lin Median (ns) | Modality|

| -------------------------------------------------- | -------:| ---------------:| -----... | process | getting process information is few times slower on linux getting process information is few times slower on linux slower lin win win median ns lin median ns modality ... | 1 |

275,001 | 23,887,646,194 | IssuesEvent | 2022-09-08 08:57:47 | opencurve/curve | https://api.github.com/repos/opencurve/curve | closed | The length of the file written by the mount point is not updated | bug need test | **Describe the bug (描述bug)**

The length of the file written by the mount point is not updated

**To Reproduce (复现方法)**

1、mount fs1 -> test1 fs1 -> test2

2、echo a > test1/a

3、cat test2/a -> a

4、echo b >> test1/a

sleep 5mins

5、cat test2/a -> a

6、umount test5 test6 / mount test5 test6

**Expected behavior (... | 1.0 | The length of the file written by the mount point is not updated - **Describe the bug (描述bug)**

The length of the file written by the mount point is not updated

**To Reproduce (复现方法)**

1、mount fs1 -> test1 fs1 -> test2

2、echo a > test1/a

3、cat test2/a -> a

4、echo b >> test1/a

sleep 5mins

5、cat test2/a -> a

... | non_process | the length of the file written by the mount point is not updated describe the bug 描述bug the length of the file written by the mount point is not updated to reproduce 复现方法 、mount 、echo a a 、cat a a 、echo b a sleep 、cat a a 、umount mount ... | 0 |

10,123 | 13,044,162,280 | IssuesEvent | 2020-07-29 03:47:31 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `StringTimeTimeDiff` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `StringTimeTimeDiff` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @sticnarf

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src... | 2.0 | UCP: Migrate scalar function `StringTimeTimeDiff` from TiDB -

## Description

Port the scalar function `StringTimeTimeDiff` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @sticnarf

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- http... | process | ucp migrate scalar function stringtimetimediff from tidb description port the scalar function stringtimetimediff from tidb to coprocessor score mentor s sticnarf recommended skills rust programming learning materials already implemented expressions ported from tidb ... | 1 |

41,766 | 5,396,446,890 | IssuesEvent | 2017-02-27 11:44:38 | RestComm/jain-slee.sip | https://api.github.com/repos/RestComm/jain-slee.sip | closed | Make the parameter of port listerning configurable | 2. Enhancement Testing | Now the parameter of SIP RA listerning port is hardcored now. Let's do it easier configurable.

| 1.0 | Make the parameter of port listerning configurable - Now the parameter of SIP RA listerning port is hardcored now. Let's do it easier configurable.

| non_process | make the parameter of port listerning configurable now the parameter of sip ra listerning port is hardcored now let s do it easier configurable | 0 |

612,948 | 19,060,158,625 | IssuesEvent | 2021-11-26 06:12:46 | oilshell/oil | https://api.github.com/repos/oilshell/oil | closed | shopt -s parse_paren could allow function call syntax | oil-language low-priority maybe-new-syntax | Right now this is OK in `bin/oil`:

```

f() { echo hi }

```

but it should be

```

proc f { echo hi }

```

And that frees up `f()` for a function call.

We can get rid of `parse_do` as well and add the "modeless" `call`:

So

```

call print(x + 42) # bin/osh

print(x + 42) # bin/oil wi... | 1.0 | shopt -s parse_paren could allow function call syntax - Right now this is OK in `bin/oil`:

```

f() { echo hi }

```

but it should be

```

proc f { echo hi }

```

And that frees up `f()` for a function call.

We can get rid of `parse_do` as well and add the "modeless" `call`:

So

```

call print(x + ... | non_process | shopt s parse paren could allow function call syntax right now this is ok in bin oil f echo hi but it should be proc f echo hi and that frees up f for a function call we can get rid of parse do as well and add the modeless call so call print x ... | 0 |

8,045 | 11,218,512,324 | IssuesEvent | 2020-01-07 11:41:09 | Starcounter/Home | https://api.github.com/repos/Starcounter/Home | closed | Database instances limit | hosting hosting-single-process question windows | Starcounter version: `<2.3.1.8415>`.

### Issue type

- [x] Bug

- [ ] Feature request

- [ ] Suggestion

- [x] Question

- [ ] Cannot reproduce

- [ ] Urgent

### Issue description

Is there a limit in how many Starcounter databases that can be run simultaneously on a server?

When I start the 16th database, ... | 1.0 | Database instances limit - Starcounter version: `<2.3.1.8415>`.

### Issue type

- [x] Bug

- [ ] Feature request

- [ ] Suggestion

- [x] Question

- [ ] Cannot reproduce

- [ ] Urgent

### Issue description

Is there a limit in how many Starcounter databases that can be run simultaneously on a server?

When ... | process | database instances limit starcounter version issue type bug feature request suggestion question cannot reproduce urgent issue description is there a limit in how many starcounter databases that can be run simultaneously on a server when i start the database ... | 1 |

22,070 | 30,593,969,596 | IssuesEvent | 2023-07-21 19:49:43 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | [MLv2] [Bug] Error when using `replace-clause` with joins | .metabase-lib .Team/QueryProcessor :hammer_and_wrench: | Probably related to [#32026](https://github.com/metabase/metabase/issues/32026)

Calling `replace-clause` to replace a join, can fail with the following error:

```js

Cannot read properties of null (reading 'lastIndexOf')

at goog.string.internal.startsWith (cljs_env.js:3208:1)

at Object.clojure$string$st... | 1.0 | [MLv2] [Bug] Error when using `replace-clause` with joins - Probably related to [#32026](https://github.com/metabase/metabase/issues/32026)

Calling `replace-clause` to replace a join, can fail with the following error:

```js

Cannot read properties of null (reading 'lastIndexOf')

at goog.string.internal.star... | process | error when using replace clause with joins probably related to calling replace clause to replace a join can fail with the following error js cannot read properties of null reading lastindexof at goog string internal startswith cljs env js at object clojure string starts wit... | 1 |

44 | 2,512,262,030 | IssuesEvent | 2015-01-14 15:18:27 | tinkerpop/tinkerpop3 | https://api.github.com/repos/tinkerpop/tinkerpop3 | opened | Consider going full Optional semantics on TraversalSideEffects | enhancement process | Right now if you do `TraversalSideEffects.get(key)` and the value doesn't exist, you get an exception. The reason we did this was that we don't want `null` and we don't want `Optional` because users will interact with the sideEffects via `Traverser`. For example:

```java

g.V().out().map{it.sideEffects(it.get().valu... | 1.0 | Consider going full Optional semantics on TraversalSideEffects - Right now if you do `TraversalSideEffects.get(key)` and the value doesn't exist, you get an exception. The reason we did this was that we don't want `null` and we don't want `Optional` because users will interact with the sideEffects via `Traverser`. For ... | process | consider going full optional semantics on traversalsideeffects right now if you do traversalsideeffects get key and the value doesn t exist you get an exception the reason we did this was that we don t want null and we don t want optional because users will interact with the sideeffects via traverser for ... | 1 |

470,692 | 13,542,866,220 | IssuesEvent | 2020-09-16 18:01:58 | GEOSX/GEOSX | https://api.github.com/repos/GEOSX/GEOSX | closed | VTK output error | priority: low type: bug | **Describe the bug**

I got this error running hi24l case using 64 cores on Quartz. This occurs at 1.6e6 seconds. The previous 15 vtk output writes seem fine.

Received signal 8: Floating point exception

Floating point exception:

** StackTrace of 24 frames **

Frame 1: LvArray::stackTraceHandler(int, bool)

Frame... | 1.0 | VTK output error - **Describe the bug**

I got this error running hi24l case using 64 cores on Quartz. This occurs at 1.6e6 seconds. The previous 15 vtk output writes seem fine.

Received signal 8: Floating point exception

Floating point exception:

** StackTrace of 24 frames **

Frame 1: LvArray::stackTraceHandle... | non_process | vtk output error describe the bug i got this error running case using cores on quartz this occurs at seconds the previous vtk output writes seem fine received signal floating point exception floating point exception stacktrace of frames frame lvarray stacktracehandler int bo... | 0 |

5,494 | 8,362,788,316 | IssuesEvent | 2018-10-03 17:51:40 | facebook/graphql | https://api.github.com/repos/facebook/graphql | closed | Enforce HTTPS for github.io pages? | 🐝 Process | At the moment HTTPS is not forced for http://graphql.github.io/ and http://facebook.github.io/graphql/ the GraphQL readme even links to the "unsecure" http://facebook.github.io/graphql/.

Would it not be cleaner if http://graphql.github.io/ and http://facebook.github.io/graphql/ were forced over [HTTPS](https://help.... | 1.0 | Enforce HTTPS for github.io pages? - At the moment HTTPS is not forced for http://graphql.github.io/ and http://facebook.github.io/graphql/ the GraphQL readme even links to the "unsecure" http://facebook.github.io/graphql/.

Would it not be cleaner if http://graphql.github.io/ and http://facebook.github.io/graphql/ w... | process | enforce https for github io pages at the moment https is not forced for and the graphql readme even links to the unsecure would it not be cleaner if and were forced over | 1 |

17,952 | 3,013,817,468 | IssuesEvent | 2015-07-29 11:26:59 | yawlfoundation/yawl | https://api.github.com/repos/yawlfoundation/yawl | closed | YAWL looses Workflow after Database Foreign Key Violation | auto-migrated Priority-Medium Type-Defect | ```

To make it short, the engine looses cases, every time on different points. The

case is still visible in the case management window, but is away from all other

views.

-------------------------------------

1) The database constraint violation:

-------------------------------------

ERROR - FEHLER: Aktualisieren oder... | 1.0 | YAWL looses Workflow after Database Foreign Key Violation - ```

To make it short, the engine looses cases, every time on different points. The

case is still visible in the case management window, but is away from all other

views.

-------------------------------------

1) The database constraint violation:

------------... | non_process | yawl looses workflow after database foreign key violation to make it short the engine looses cases every time on different points the case is still visible in the case management window but is away from all other views the database constraint violation ... | 0 |

14,673 | 17,790,743,536 | IssuesEvent | 2021-08-31 15:54:01 | dotnet/csharpstandard | https://api.github.com/repos/dotnet/csharpstandard | opened | Plan for integrating C# 7.x - C# 10.0 feature specifications into the ECMA standard | type: process | Representatives from the ECMA committee will attend an upcoming C# LDM (language design meeting) to create a plan and process to integrate the latest features in the shipped C# compiler into the ECMA standard text.

The purpose of the discussion is to develop a plan and processes the have the committee and the LDM co... | 1.0 | Plan for integrating C# 7.x - C# 10.0 feature specifications into the ECMA standard - Representatives from the ECMA committee will attend an upcoming C# LDM (language design meeting) to create a plan and process to integrate the latest features in the shipped C# compiler into the ECMA standard text.

The purpose of t... | process | plan for integrating c x c feature specifications into the ecma standard representatives from the ecma committee will attend an upcoming c ldm language design meeting to create a plan and process to integrate the latest features in the shipped c compiler into the ecma standard text the purpose of th... | 1 |

21,775 | 30,289,582,016 | IssuesEvent | 2023-07-09 05:19:55 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | [exporter/prometheus] Expired metrics were not be deleted | bug Stale processor/spanmetrics connector/spanmetrics closed as inactive | ### Component(s)

exporter/prometheus

### What happened?

## Description

Hi, I am trying to use spanmetrics processor and prometheus exporter to transform spans to metrics. But I found some expired metrics seems to be appeared repeatedly when new metrics received. And also the memory usage continued to rise. So is th... | 1.0 | [exporter/prometheus] Expired metrics were not be deleted - ### Component(s)

exporter/prometheus

### What happened?

## Description

Hi, I am trying to use spanmetrics processor and prometheus exporter to transform spans to metrics. But I found some expired metrics seems to be appeared repeatedly when new metrics rec... | process | expired metrics were not be deleted component s exporter prometheus what happened description hi i am trying to use spanmetrics processor and prometheus exporter to transform spans to metrics but i found some expired metrics seems to be appeared repeatedly when new metrics received and also the ... | 1 |

14,556 | 17,685,709,392 | IssuesEvent | 2021-08-24 01:01:36 | googleapis/java-websecurityscanner | https://api.github.com/repos/googleapis/java-websecurityscanner | opened | Warning: a recent release failed | type: process | The following release PRs may have failed:

* #155

* #168

* #190

* #155

* #168

* #190 | 1.0 | Warning: a recent release failed - The following release PRs may have failed:

* #155

* #168

* #190

* #155

* #168

* #190 | process | warning a recent release failed the following release prs may have failed | 1 |

9,204 | 12,238,802,507 | IssuesEvent | 2020-05-04 20:27:54 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Do environment deployments respect the ##vso[build.updatebuildnumber] command? | Pri1 devops-cicd-process/tech devops/prod | If you use:

echo ##vso[build.updatebuildnumber]1.2.3.4

Either in the same or previous stage as a deployment job in some pipeline, it appears the environment still uses the 'original' build number of something like 20200501.1 rather than a custom one of something like 1.2.3.4 when showing the little "label" in the dep... | 1.0 | Do environment deployments respect the ##vso[build.updatebuildnumber] command? - If you use:

echo ##vso[build.updatebuildnumber]1.2.3.4

Either in the same or previous stage as a deployment job in some pipeline, it appears the environment still uses the 'original' build number of something like 20200501.1 rather than ... | process | do environment deployments respect the vso command if you use echo vso either in the same or previous stage as a deployment job in some pipeline it appears the environment still uses the original build number of something like rather than a custom one of something like when showing the... | 1 |

8,199 | 11,394,992,237 | IssuesEvent | 2020-01-30 10:28:43 | prisma/prisma2 | https://api.github.com/repos/prisma/prisma2 | opened | Make using DATABASE_URL as default DB connection URL a best practice | kind/docs process/candidate | - `DATABASE_URL` should be our new default connection URL env var name (→ works out of the box with Heroku)

- Docs + examples should point out that we strongly recommend to not check in the `.env` file to their VCS

- We need to do an extra good job of explaining how env vars are handled so developers can 100% underst... | 1.0 | Make using DATABASE_URL as default DB connection URL a best practice - - `DATABASE_URL` should be our new default connection URL env var name (→ works out of the box with Heroku)

- Docs + examples should point out that we strongly recommend to not check in the `.env` file to their VCS

- We need to do an extra good jo... | process | make using database url as default db connection url a best practice database url should be our new default connection url env var name → works out of the box with heroku docs examples should point out that we strongly recommend to not check in the env file to their vcs we need to do an extra good jo... | 1 |

9,694 | 12,699,496,748 | IssuesEvent | 2020-06-22 14:56:37 | prisma/vscode | https://api.github.com/repos/prisma/vscode | closed | Run integration tests on multiple platforms | kind/improvement process/candidate topic: automation topic: tests | Using the GH action matrix feature, we should run the integration tests os windows, Linux and Mac.

Currently, we only test Linux in the integration tests. | 1.0 | Run integration tests on multiple platforms - Using the GH action matrix feature, we should run the integration tests os windows, Linux and Mac.

Currently, we only test Linux in the integration tests. | process | run integration tests on multiple platforms using the gh action matrix feature we should run the integration tests os windows linux and mac currently we only test linux in the integration tests | 1 |