Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (string, length 19) | repo (string, lengths 7 to 112) | repo_url (string, lengths 36 to 141) | action (stringclasses, 3 values) | title (string, lengths 1 to 744) | labels (string, lengths 4 to 574) | body (string, lengths 9 to 211k) | index (stringclasses, 10 values) | text_combine (string, lengths 96 to 211k) | label (stringclasses, 2 values) | text (string, lengths 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

449,014 | 31,822,420,068 | IssuesEvent | 2023-09-14 04:13:06 | CABLE-LSM/benchcab | https://api.github.com/repos/CABLE-LSM/benchcab | closed | Documentation errors | documentation | Some documentation errors that need to be fixed:

- [ ] In user_guide.md - 'Directory structure and files', `tasks` should be `runs/fluxsite/tasks`.

- [ ] In config_options.md - "NRI Land testing" should be "benchcab-evaluation"

- [ ] In internal.py - "NRI Land testing" should be "benchcab-evaluation" | 1.0 | Documentation errors - Some documentation errors that need to be fixed:

- [ ] In user_guide.md - 'Directory structure and files', `tasks` should be `runs/fluxsite/tasks`.

- [ ] In config_options.md - "NRI Land testing" should be "benchcab-evaluation"

- [ ] In internal.py - "NRI Land testing" should be "benchcab-eval... | non_process | documentation errors some documentation errors that need to be fixed in user guide md directory structure and files tasks should be runs fluxsite tasks in config options md nri land testing should be benchcab evaluation in internal py nri land testing should be benchcab evaluation... | 0 |

21,566 | 29,923,515,295 | IssuesEvent | 2023-06-22 02:00:08 | lizhihao6/get-daily-arxiv-noti | https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti | opened | New submissions for Thu, 22 Jun 23 | event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB | ## Keyword: events

### Self-Distilled Masked Auto-Encoders are Efficient Video Anomaly Detectors

- **Authors:** Nicolae-Catalin Ristea, Florinel-Alin Croitoru, Radu Tudor Ionescu, Marius Popescu, Fahad Shahbaz Khan, Mubarak Shah

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Machine Learning (cs.LG... | 2.0 | New submissions for Thu, 22 Jun 23 - ## Keyword: events

### Self-Distilled Masked Auto-Encoders are Efficient Video Anomaly Detectors

- **Authors:** Nicolae-Catalin Ristea, Florinel-Alin Croitoru, Radu Tudor Ionescu, Marius Popescu, Fahad Shahbaz Khan, Mubarak Shah

- **Subjects:** Computer Vision and Pattern Recogni... | process | new submissions for thu jun keyword events self distilled masked auto encoders are efficient video anomaly detectors authors nicolae catalin ristea florinel alin croitoru radu tudor ionescu marius popescu fahad shahbaz khan mubarak shah subjects computer vision and pattern recogniti... | 1 |

20,866 | 27,645,774,705 | IssuesEvent | 2023-03-10 22:50:24 | cse442-at-ub/project_s23-iweatherify | https://api.github.com/repos/cse442-at-ub/project_s23-iweatherify | closed | Create tables for database | Processing Task Sprint 2 | **Task Tests**

*Test 1*

1) Go to https://www-student.cse.buffalo.edu/tools/db/phpmyadmin

2) For the username, type in vwong27

3) For the password, type in 50342607

3) Ensure that the server name host is oceanus

4) Click "Go"

5) On the left hand panel click on "cse442_2023_spring_team_a_db"

6) Verify that there exists ... | 1.0 | Create tables for database - **Task Tests**

*Test 1*

1) Go to https://www-student.cse.buffalo.edu/tools/db/phpmyadmin

2) For the username, type in vwong27

3) For the password, type in 50342607

3) Ensure that the server name host is oceanus

4) Click "Go"

5) On the left hand panel click on "cse442_2023_spring_team_a_db"... | process | create tables for database task tests test go to for the username type in for the password type in ensure that the server name host is oceanus click go on the left hand panel click on spring team a db verify that there exists at least one table in the database | 1 |

34,564 | 30,180,770,963 | IssuesEvent | 2023-07-04 08:44:46 | Chaste/Chaste | https://api.github.com/repos/Chaste/Chaste | closed | #3053 - Improve performance of Chaste webserver | component: infrastructure priority: high | This issue continues the discussion for legacy trac ticket 3053:

---

[jmpf](https://github.com/orgs/Chaste/people/jmpf) created the following ticket on 2020-10-22 at 11:40:57, it is owned by [jmpf](https://github.com/orgs/Chaste/people/jmpf)

Improve performance of Chaste web-server.

Most nights it's running o... | 1.0 | #3053 - Improve performance of Chaste webserver - This issue continues the discussion for legacy trac ticket 3053:

---

[jmpf](https://github.com/orgs/Chaste/people/jmpf) created the following ticket on 2020-10-22 at 11:40:57, it is owned by [jmpf](https://github.com/orgs/Chaste/people/jmpf)

Improve performance o... | non_process | improve performance of chaste webserver this issue continues the discussion for legacy trac ticket created the following ticket on at it is owned by improve performance of chaste web server most nights it s running out of memory and swapping this is disruptive to people w... | 0 |

21,364 | 29,194,080,011 | IssuesEvent | 2023-05-20 00:31:44 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [Hybrid / Caieiras, São Paulo, Brazil] Front-end Developer (Junior) (Hybrid) at Coodesh | SALVADOR FRONT-END PJ BANCO DE DADOS JAVASCRIPT FULL-STACK HTML SQL ANGULAR REACT VUE REQUISITOS PROCESSOS GITHUB UMA AUTOMAÇÃO DE PROCESSOS HIBRIDO ALOCADO Stale | ## Job description:

This is an opening from a partner of the Coodesh platform; by applying, you will get access to the complete information about the company and its benefits.

Watch for the redirect that will take you to a URL [https://coodesh.com](https://coodesh.com/vagas/fullstack-developer-netangular-hibrid... | 2.0 | [Hybrid / Caieiras, São Paulo, Brazil] Front-end Developer (Junior) (Hybrid) at Coodesh - ## Job description:

This is an opening from a partner of the Coodesh platform; by applying, you will get access to the complete information about the company and its benefits.

Watch for the redirect that will take you to a... | process | front end developer junior hybrid at coodesh job description this is an opening from a partner of the coodesh platform by applying you will get access to the complete information about the company and its benefits watch for the redirect that will take you to a url with the personalized pop up ... | 1 |

2,906 | 2,533,941,786 | IssuesEvent | 2015-01-24 11:52:31 | zendframework/modules.zendframework.com | https://api.github.com/repos/zendframework/modules.zendframework.com | closed | Performance Improvement | high priority | Currently parts of the page are facing huge loading times due to several repeated requests to the github api.

Locate performance bottlenecks and implement caching or other improvements. | 1.0 | Performance Improvement - Currently parts of the page are facing huge loading times due to several repeated requests to the github api.

Locate performance bottlenecks and implement caching or other improvements. | non_process | performance improvement currently parts of the page are facing huge loading times due to several repeated requests to the github api locate performance bottlenecks and implement caching or other improvements | 0 |

17,074 | 22,575,021,268 | IssuesEvent | 2022-06-28 06:25:03 | weiquany/KTVAnywhere | https://api.github.com/repos/weiquany/KTVAnywhere | closed | Lyrics retrieval | feature: song preprocessing | Automatically retrieve lyrics file for a song online if possible.

## User story

As a user,

* I want to have lyrics for KTV, and I want them to be provided for me.

### Acceptance criteria

The application should:

- [x] Be able to retrieve lyrics if they can be found

- [x] Allow users to choose to let the applic... | 1.0 | Lyrics retrieval - Automatically retrieve lyrics file for a song online if possible.

## User story

As a user,

* I want to have lyrics for KTV, and I want them to be provided for me.

### Acceptance criteria

The application should:

- [x] Be able to retrieve lyrics if they can be found

- [x] Allow users to choos... | process | lyrics retrieval automatically retrieve lyrics file for a song online if possible user story as a user i want to have lyrics for ktv and i want them to be provided for me acceptance criteria the application should be able to retrieve lyrics if they can be found allow users to choose to... | 1 |

21,037 | 27,979,263,476 | IssuesEvent | 2023-03-26 00:20:10 | darktable-org/darktable | https://api.github.com/repos/darktable-org/darktable | closed | Enhanced dehazing algorithm (with new code) | feature: enhancement wip scope: image processing no-issue-activity | First I'll pre-apologize for not being GIT trained enough to do this myself.

I have a little write up of an enhanced version of the haze removal tool (attached).

I simply overwrote the previous src/iop/hazeremoval.c with my changes, which is attached. But I think you'd want a whole new module name if you think i... | 1.0 | Enhanced dehazing algorithm (with new code) - First I'll pre-apologize for not being GIT trained enough to do this myself.

I have a little write up of an enhanced version of the haze removal tool (attached).

I simply overwrote the previous src/iop/hazeremoval.c with my changes, which is attached. But I think you... | process | enhanced dehazing algorithm with new code first i ll pre apologize for not being git trained enough to do this myself i have a little write up of an enhanced version of the haze removal tool attached i simply overwrote the previous src iop hazeremoval c with my changes which is attached but i think you... | 1 |

201,092 | 7,022,393,617 | IssuesEvent | 2017-12-22 10:21:47 | Mandiklopper/People-Connect | https://api.github.com/repos/Mandiklopper/People-Connect | closed | Leave Balance Request rejection | High Priority |

Days "Taken" should be reversed /returned back to the staff and the leave balance records should be updated once request is rejected | 1.0 | Leave Balance Request rejection -

Days "Taken" should be reversed /returned back to the staff and the leave balance records should be updated once request is rejected | non_process | leave balance request rejection days taken should be reversed returned back to the staff and the leave balance records should be updated once request is rejected | 0 |

95,528 | 27,533,620,434 | IssuesEvent | 2023-03-07 00:46:37 | CasparCG/server | https://api.github.com/repos/CasparCG/server | closed | Make build-scripts checkout themselves when a build is triggered | type/enhancement build | ### Expected Behaviour

When a build is triggered automatically, the build-server should checkout the build-scripts itself from a repository. By going this approach, it would be enough with a pull request against the build-scripts repository to update the scripts when needed.

### Current Behaviour

When changes are ... | 1.0 | Make build-scripts checkout themselves when a build is triggered - ### Expected Behaviour

When a build is triggered automatically, the build-server should checkout the build-scripts itself from a repository. By going this approach, it would be enough with a pull request against the build-scripts repository to update t... | non_process | make build scripts checkout themselves when a build is triggered expected behaviour when a build is triggered automatically the build server should checkout the build scripts itself from a repository by going this approach it would be enough with a pull request against the build scripts repository to update t... | 0 |

17,707 | 23,596,256,774 | IssuesEvent | 2022-08-23 19:33:01 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | [processor/transform] Add ability to concat strings | good first issue priority:p2 processor/transform | **Is your feature request related to a problem? Please describe.**

There are situations where a user might need to combine existing fields to be used as an argument in an Invocation. [Here is an example.](https://cloud-native.slack.com/archives/C01N6P7KR6W/p1657799193277499). At the moment the TQL has no build-in ca... | 1.0 | [processor/transform] Add ability to concat strings - **Is your feature request related to a problem? Please describe.**

There are situations where a user might need to combine existing fields to be used as an argument in an Invocation. [Here is an example.](https://cloud-native.slack.com/archives/C01N6P7KR6W/p165779... | process | add ability to concat strings is your feature request related to a problem please describe there are situations where a user might need to combine existing fields to be used as an argument in an invocation at the moment the tql has no build in capability to do this and the transform processor has not ... | 1 |

511,830 | 14,882,712,438 | IssuesEvent | 2021-01-20 12:18:18 | Hypothesize/standard.js | https://api.github.com/repos/Hypothesize/standard.js | closed | Reimplement the outlier filter | high priority | Currently the data table filter function doesn't filter values when the operator is `outlier`.

It should be reimplemented. | 1.0 | Reimplement the outlier filter - Currently the data table filter function doesn't filter values when the operator is `outlier`.

It should be reimplemented. | non_process | reimplement the outlier filter currently the data table filter function doesn t filter values when the operator is outlier it should be reimplemented | 0 |

211,409 | 23,818,045,127 | IssuesEvent | 2022-09-05 08:41:24 | sast-automation-dev/BenchmarkJava-333 | https://api.github.com/repos/sast-automation-dev/BenchmarkJava-333 | opened | bootstrap-3.3.4.js: 6 vulnerabilities (highest severity is: 6.1) | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bootstrap-3.3.4.js</b></p></summary>

<p>The most popular front-end framework for developing responsive, mobile first projects on the web.</p>

<p>Library home page: <a... | True | bootstrap-3.3.4.js: 6 vulnerabilities (highest severity is: 6.1) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bootstrap-3.3.4.js</b></p></summary>

<p>The most popular front-end framework for developing respons... | non_process | bootstrap js vulnerabilities highest severity is vulnerable library bootstrap js the most popular front end framework for developing responsive mobile first projects on the web library home page a href path to vulnerable library scorecard content js bootstrap js fou... | 0 |

7,769 | 10,889,738,104 | IssuesEvent | 2019-11-18 18:54:07 | ORNL-AMO/AMO-Tools-Desktop | https://api.github.com/repos/ORNL-AMO/AMO-Tools-Desktop | closed | O2 enrichment metric bug | Calculator Process Heating bug | Using the example:

Imperial -

Metric -

Not sure what the screwy unit conversion is but some... | 1.0 | O2 enrichment metric bug - Using the example:

Imperial -

Metric -

Not sure what the screwy ... | process | enrichment metric bug using the example imperial metric not sure what the screwy unit conversion is but something is causing fuel consumption to be way to high yet available heat and efficiency are okay | 1 |

18,481 | 24,550,742,761 | IssuesEvent | 2022-10-12 12:25:29 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [iOS] Unable to enroll to the study | Bug Blocker P0 iOS Android Process: Fixed Process: Tested dev | Participants are not able to enroll to the studies > Getting continuous loading after the consent flow

Note: Issue observed in the latest build 3.0(49)

App ID: GCPMOB001

| 2.0 | [iOS] Unable to enroll to the study - Participants are not able to enroll to the studies > Getting continuous loading after the consent flow

Note: Issue observed in the latest build 3.0(49)

App ID: GCPMOB001

| process | unable to enroll to the study participants are not able to enroll to the studies getting continuous loading after the consent flow note issue observed in the latest build app id | 1 |

614,846 | 19,190,796,829 | IssuesEvent | 2021-12-05 23:35:50 | ondryaso/kachna-online | https://api.github.com/repos/ondryaso/kachna-online | opened | Accept externally provided KIS refresh tokens to log in | enhancement frontend low-priority | ### Proposal

Add a frontend route that accepts a KIS refresh token and uses it to log in. Use this _frontend endpoint_ to enable users to open the app directly from KIS Administration or Operator without having to log in again. | 1.0 | Accept externally provided KIS refresh tokens to log in - ### Proposal

Add a frontend route that accepts a KIS refresh token and uses it to log in. Use this _frontend endpoint_ to enable users to open the app directly from KIS Administration or Operator without having to log in again. | non_process | accept externally provided kis refresh tokens to log in proposal add a frontend route that accepts a kis refresh token and uses it to log in use this frontend endpoint to enable users to open the app directly from kis administration or operator without having to log in again | 0 |

47,469 | 7,328,358,002 | IssuesEvent | 2018-03-04 19:58:15 | liballeg/allegro5 | https://api.github.com/repos/liballeg/allegro5 | opened | Display options inconsistently set on platforms | Core Library Documentation | This affects pretty much every platform, and unfortunately likely needs to be fixed in the platform specific code. The idea is that you should get meaningful values for `al_get_display_option`, even if that option does nothing for that platform.

See https://github.com/liballeg/allegro5/issues/887 for an example issu... | 1.0 | Display options inconsistently set on platforms - This affects pretty much every platform, and unfortunately likely needs to be fixed in the platform specific code. The idea is that you should get meaningful values for `al_get_display_option`, even if that option does nothing for that platform.

See https://github.co... | non_process | display options inconsistently set on platforms this affects pretty much every platform and unfortunately likely needs to be fixed in the platform specific code the idea is that you should get meaningful values for al get display option even if that option does nothing for that platform see for an example ... | 0 |

288,052 | 31,856,946,666 | IssuesEvent | 2023-09-15 08:10:30 | nidhi7598/linux-4.19.72_CVE-2022-3564 | https://api.github.com/repos/nidhi7598/linux-4.19.72_CVE-2022-3564 | closed | CVE-2020-29370 (High) detected in linuxlinux-4.19.294 - autoclosed | Mend: dependency security vulnerability | ## CVE-2020-29370 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.294</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.k... | True | CVE-2020-29370 (High) detected in linuxlinux-4.19.294 - autoclosed - ## CVE-2020-29370 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.294</b></p></summary>

<p>

<p>The ... | non_process | cve high detected in linuxlinux autoclosed cve high severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch main vulnerable source files mm sl... | 0 |

21,411 | 29,351,206,386 | IssuesEvent | 2023-05-27 00:34:48 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [Remote] C#/Xamarin Developer (Senior) at Coodesh | SALVADOR BACK-END PJ XAMARIN SCRUM SENIOR AGILE STARTUP MOBILE REQUISITOS IOS REMOTO ANDROID PROCESSOS GITHUB INGLÊS UMA C Stale | ## Job description:

This is an opening from a partner of the Coodesh platform; by applying, you will get access to the complete information about the company and its benefits.

Watch for the redirect that will take you to a URL [https://coodesh.com](https://coodesh.com/vagas/desenvolvedor-cxamarin-senior-1957503... | 1.0 | [Remote] C#/Xamarin Developer (Senior) at Coodesh - ## Job description:

This is an opening from a partner of the Coodesh platform; by applying, you will get access to the complete information about the company and its benefits.

Watch for the redirect that will take you to a URL [https://coodesh.com](https://coo... | process | c xamarin developer senior at coodesh job description this is an opening from a partner of the coodesh platform by applying you will get access to the complete information about the company and its benefits watch for the redirect that will take you to a url with the personalized pop up for candid... | 1 |

24,710 | 5,098,043,512 | IssuesEvent | 2017-01-03 23:41:54 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | hack/verify-gendocs.sh is slow | kind/documentation priority/backlog team/ux (deprecated - do not use) | The slowest part of every commit is waiting for hooks. I added some printouts to find the culprit - see $SUBJECT. Can we not run this test on every commit and rebase?

If we switched to using pre-commit instead of prepare-commit-msg we could force-override it (--no-verify) if needed.

@lavalamp

| 1.0 | hack/verify-gendocs.sh is slow - The slowest part of every commit is waiting for hooks. I added some printouts to find the culprit - see $SUBJECT. Can we not run this test on every commit and rebase?

If we switched to using pre-commit instead of prepare-commit-msg we could force-override it (--no-verify) if needed.

... | non_process | hack verify gendocs sh is slow the slowest part of every commit is waiting for hooks i added some printouts to find the culprit see subject can we not run this test on every commit and rebase if we switched to using pre commit instead of prepare commit msg we could force override it no verify if needed ... | 0 |

407,702 | 11,936,030,788 | IssuesEvent | 2020-04-02 09:36:42 | project-koku/koku | https://api.github.com/repos/project-koku/koku | closed | Sources: editing Azure billing_source can result in failure | bug priority - medium | **Describe the bug**

Updating a single billing-source field (resource-group or storage-account) results in validation failure. The error message says that the field which was not updated is missing.

**To Reproduce**

Steps to reproduce the behavior:

1. Create Azure source

2. Edit the source. Change resource-group... | 1.0 | Sources: editing Azure billing_source can result in failure - **Describe the bug**

Updating a single billing-source field (resource-group or storage-account) results in validation failure. The error message says that the field which was not updated is missing.

**To Reproduce**

Steps to reproduce the behavior:

1. ... | non_process | sources editing azure billing source can result in failure describe the bug updating a single billing source field resource group or storage account results in validation failure the error message says that the field which was not updated is missing to reproduce steps to reproduce the behavior ... | 0 |

7,010 | 10,151,609,760 | IssuesEvent | 2019-08-05 20:47:50 | jtablesaw/tablesaw | https://api.github.com/repos/jtablesaw/tablesaw | closed | Code format standards | process | Having thought more on the google format option, I would propose this:

- We switch over to using the google format tool, including going back to 2 spaces. Staying with 4 spaces would mean people having to configure the tool in various ways depending on whether or not they're using a plugin.

- We publish it as the s... | 1.0 | Code format standards - Having thought more on the google format option, I would propose this:

- We switch over to using the google format tool, including going back to 2 spaces. Staying with 4 spaces would mean people having to configure the tool in various ways depending on whether or not they're using a plugin.

... | process | code format standards having thought more on the google format option i would propose this we switch over to using the google format tool including going back to spaces staying with spaces would mean people having to configure the tool in various ways depending on whether or not they re using a plugin ... | 1 |

11,736 | 13,808,060,476 | IssuesEvent | 2020-10-12 01:03:32 | storybookjs/storybook | https://api.github.com/repos/storybookjs/storybook | closed | "babel-plugin-react-docgen" breaks Storybook v6 builds when using the Flow spread operator | bug compatibility with other tools has workaround inactive | **Observed behavior**

Storybook does not build successfully when it parses components using the Flow spread operator.

**Expected behavior**

I expect Storybook to build successfully.

**Details**

After upgrading to Storybook v6 and installing the Create React App preset, Storybook fails to build due to an ... | True | "babel-plugin-react-docgen" breaks Storybook v6 builds when using the Flow spread operator - **Observed behavior**

Storybook does not build successfully when it parses components using the Flow spread operator.

**Expected behavior**

I expect Storybook to build successfully.

**Details**

After upgrading to... | non_process | babel plugin react docgen breaks storybook builds when using the flow spread operator observed behavior storybook does not build successfully when it parses components using the flow spread operator expected behavior i expect storybook to build successfully details after upgrading to ... | 0 |

155,604 | 5,957,542,699 | IssuesEvent | 2017-05-29 02:53:48 | wordpress-mobile/AztecEditor-Android | https://api.github.com/repos/wordpress-mobile/AztecEditor-Android | closed | Image-loading scrolls the editor to the cursor | bug high priority | When an image is being uploaded and a user scrolls away from the cursor, the editor automatically scrolls back to the cursor on every image-loading progress bar update. | 1.0 | Image-loading scrolls the editor to the cursor - When an image is being uploaded and a user scrolls away from the cursor, the editor automatically scrolls back to the cursor on every image-loading progress bar update. | non_process | image loading scrolls the editor to the cursor when an image is being uploaded and a user scrolls away from the cursor the editor automatically scrolls back to the cursor on every image loading progress bar update | 0 |

211,675 | 23,835,724,781 | IssuesEvent | 2022-09-06 05:34:46 | ioana-nicolae/keycloak | https://api.github.com/repos/ioana-nicolae/keycloak | opened | CVE-2022-38749 (Medium) detected in multiple libraries | security vulnerability | ## CVE-2022-38749 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>snakeyaml-1.27.jar</b>, <b>snakeyaml-1.19.jar</b>, <b>snakeyaml-1.17.jar</b>, <b>snakeyaml-1.14.jar</b></p></summar... | True | CVE-2022-38749 (Medium) detected in multiple libraries - ## CVE-2022-38749 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>snakeyaml-1.27.jar</b>, <b>snakeyaml-1.19.jar</b>, <b>snak... | non_process | cve medium detected in multiple libraries cve medium severity vulnerability vulnerable libraries snakeyaml jar snakeyaml jar snakeyaml jar snakeyaml jar snakeyaml jar yaml parser and emitter for java library home page a href path to dependen... | 0 |

42,878 | 11,349,483,947 | IssuesEvent | 2020-01-24 05:09:06 | idaholab/raven | https://api.github.com/repos/idaholab/raven | closed | If illegal XML named variables are present, the code crashes | defect devel priority_critical | --------

Issue Description

--------

##### What did you expect to see happen?

Ability to load CSV files with invalid XML variables (e.g. containing "/", etc)

##### What did you see instead?

If a variable has an invalid XML character (e.g. "/", "$", etc.), the XML utilis methods (used to write out the metadata) i... | 1.0 | If illegal XML named variables are present, the code crashes - --------

Issue Description

--------

##### What did you expect to see happen?

Ability to load CSV files with invalid XML variables (e.g. containing "/", etc)

##### What did you see instead?

If a variable has an invalid XML character (e.g. "/", "$", e... | non_process | if illegal xml named variables are present the code crashes issue description what did you expect to see happen ability to load csv files with invalid xml variables e g containing etc what did you see instead if a variable has an invalid xml character e g e... | 0 |

349,744 | 10,472,662,125 | IssuesEvent | 2019-09-23 10:44:25 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | opened | [master-preview] Hammer filled type glitch | Low Priority QA | Steps:

1) Take material

2) Take iron hammer

3) Take point fill and some form

4) Build

5) Press ctrl

6) Build

It should still build with point, but after Ctrl was pressed fill type became rectangle without any changes in the UI (Ctrl is for Crouch in the Controls).

https://drive.google.com/file/d/1HFtc-phduv... | 1.0 | [master-preview] Hammer filled type glitch - Steps:

1) Take material

2) Take iron hammer

3) Take point fill and some form

4) Build

5) Press ctrl

6) Build

It should still build with point, but after Ctrl was pressed fill type became rectangle without any changes in the UI (Ctrl is for Crouch in the Controls).

... | non_process | hammer filled type glitch steps take material take iron hammer take point fill and some form build press ctrl build it should still build with point but after ctrl was pressed fill type became rectangle without any changes in the ui ctrl is for crouch in the controls | 0 |

326,180 | 9,948,440,899 | IssuesEvent | 2019-07-04 08:56:18 | kubermatic/machine-controller | https://api.github.com/repos/kubermatic/machine-controller | closed | Add support for OpenStack TENANT_ID | priority/important-soon team/lifecycle | In some environments the tenant name is not unique and will lead to errors.

To avoid this, the tenant ID must be used.

**Acceptance criteria**:

- [ ] The machine-controller can create instances when using the tenant id instead of the name | 1.0 | Add support for OpenStack TENANT_ID - In some environments the tenant name is not unique and will lead to errors.

To avoid this, the tenant ID must be used.

**Acceptance criteria**:

- [ ] The machine-controller can create instances when using the tenant id instead of the name | non_process | add support for openstack tenant id in some environments the tenant name is not unique and will lead to errors to avoid this the tenant id must be used acceptance criteria the machine controller can create instances when using the tenant id instead of the name | 0 |

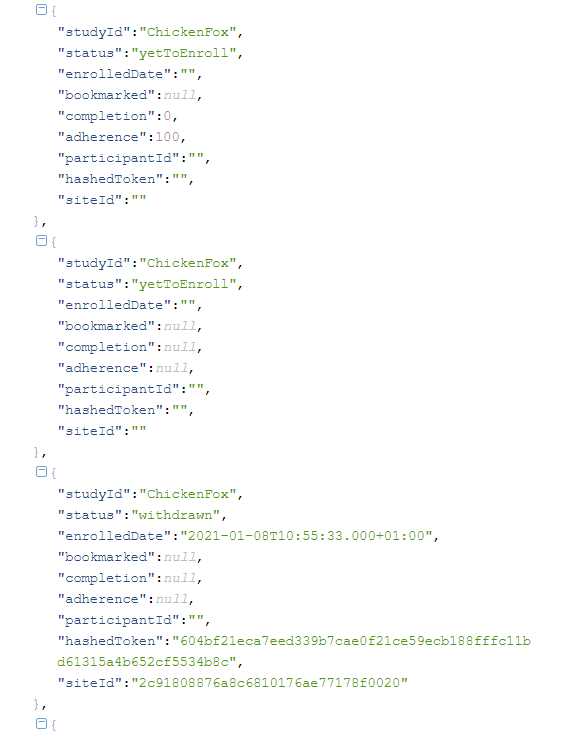

12,611 | 15,013,584,895 | IssuesEvent | 2021-02-01 04:49:29 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | Multiple record for same study in studyState endpoint | Bug P1 Process: Fixed | The studyState needs to send only the latest state of the study in the studyState endpoint. The front end application has no way to determine which among those should be used if multiple records are coming

... | 1.0 | Multiple record for same study in studyState endpoint - The studyState needs to send only the latest state of the study in the studyState endpoint. The front end application has no way to determine which among those should be used if multiple records are coming

3. For the same study, fail the eligibility test

4. Click on Apps tab >Navigate to App participant registry and observe the number of count

AR : Number of Studies count is reducing

ER : Count ... | 2.0 | App participant registry > Number of count is reducing in Studies column - Steps

1. Join an open study

2. Withdraw from the study (observe number of studies count in App participant registry)

3. For the same study, fail the eligibility test

4. Click on Apps tab >Navigate to App participant registry and observe t... | process | app participant registry number of count is reducing in studies column steps join an open study withdraw from the study observe number of studies count in app participant registry for the same study fail the eligibility test click on apps tab navigate to app participant registry and observe t... | 1 |

11,539 | 14,427,290,493 | IssuesEvent | 2020-12-06 03:05:40 | cggos/cggos.github.io | https://api.github.com/repos/cggos/cggos.github.io | opened | Image Analysis: Gaussian Filtering - Gavin Gao's Blog | Gitalk image-process-gauss-filter | https://cggos.github.io/image-process-gauss-filter.html

[TOC] Gaussian function. One-dimensional Gaussian function \[f(x) = A \cdot e^{-\frac{(x-\mu)^2}{2{\sigma}^2}}\] Multidimensional Gaussian function \[f_{X}\left(x_{1}, x_{2}, \cdots, x_{k}\right) =A \cdot \exp \left(-\frac{1}{2}(X-... | 1.0 | Image Analysis: Gaussian Filtering - Gavin Gao's Blog - https://cggos.github.io/image-process-gauss-filter.html

[TOC] Gaussian function. One-dimensional Gaussian function \[f(x) = A \cdot e^{-\frac{(x-\mu)^2}{2{\sigma}^2}}\] Multidimensional Gaussian function \[f_{X}\left(x_{1}, x_{2}, \cdots, x_{k}\right) =A \cdot \exp \left(-\frac{1}{2}(X-... | process | image analysis gaussian filtering gavin gao s blog gaussian function one dimensional gaussian function multidimensional gaussian function f x left x x cdots x k right a cdot exp left frac x | 1 |

63,217 | 8,665,603,204 | IssuesEvent | 2018-11-29 00:03:51 | fga-eps-mds/2018.2-Roles | https://api.github.com/repos/fga-eps-mds/2018.2-Roles | opened | Update methodology documentation | Documentation Eps Mds | **Description**

The methodology plan document should be updated.

***Requirements:***

Spelling errors should be reviewed.

| 1.0 | Update methodology documentation - **Description**

The methodology plan document should be updated.

***Requirements:***

Spelling errors should be reviewed.

| non_process | update methodology documentation description the methodology plan document should be updated requirements spelling errors should be reviewed | 0 |

20,306 | 26,947,013,727 | IssuesEvent | 2023-02-08 08:55:44 | prisma/prisma-engines | https://api.github.com/repos/prisma/prisma-engines | opened | Fix Postgres introspection of partition tables false positives on inherited tables | process/candidate kind/improvement tech/engines/introspection engine team/schema | I kept the PG10+ SQL as if we were also using it for CockroachDb, which no longer is the case. I can now verify if the table is a partition table correctly, rather than rely on whether it uses inheritance.

See https://github.com/prisma/prisma-engines/blob/main/libs/sql-schema-describer/src/postgres/tables_query.sql ... | 1.0 | Fix Postgres introspection of partition tables false positives on inherited tables - I kept the PG10+ SQL as if we were also using it for CockroachDb, which no longer is the case. I can now verify if the table is a partition table correctly, rather than rely on whether it uses inheritance.

See https://github.com/pri... | process | fix postgres introspection of partition tables false positives on inherited tables i kept the sql as if we were also using it for cockroachdb which no longer is the case i can now verify if the table is a partition table correctly rather than rely on whether it uses inheritance see specifically i think ... | 1 |

9,674 | 6,412,310,255 | IssuesEvent | 2017-08-08 02:38:40 | FReBOmusic/FReBO | https://api.github.com/repos/FReBOmusic/FReBO | opened | Borrowing Listitem | Usability | In the event that the user navigates to the Borrowing Listview, on the Inventory Screen.

**Expected Response**: The Borrowing Listview should be populated with records of the active borrowing transactions made by the user. | True | Borrowing Listitem - In the event that the user navigates to the Borrowing Listview, on the Inventory Screen.

**Expected Response**: The Borrowing Listview should be populated with records of the active borrowing transactions made by the user. | non_process | borrowing listitem in the event that the user navigates to the borrowing listview on the inventory screen expected response the borrowing listview should be populated with records of the active borrowing transactions made by the user | 0 |

176,009 | 14,547,987,763 | IssuesEvent | 2020-12-16 00:10:23 | pi-top/pi-top-Python-SDK | https://api.github.com/repos/pi-top/pi-top-Python-SDK | closed | Reorganize example sections | documentation good first issue | - [x] Rename sections: use recipes instead of examples

- [x] Move component basic examples to the respective class documentation

- [ ] Add low-hanging fruit examples where they are obviously not there | 1.0 | Reorganize example sections - - [x] Rename sections: use recipes instead of examples

- [x] Move component basic examples to the respective class documentation

- [ ] Add low-hanging fruit examples where they are obviously not there | non_process | reorganize example sections rename sections use recipes instead of examples move component basic examples to the respective class documentation add low hanging fruit examples where they are obviously not there | 0 |

69,259 | 17,613,102,378 | IssuesEvent | 2021-08-18 06:00:38 | ballerina-platform/ballerina-release | https://api.github.com/repos/ballerina-platform/ballerina-release | opened | Create a GitHub pages website to show dependencies graph | Type/Task Team/BuildPipeline | **Description:**

Currently dependency graph is represented in a dot file. We need to create a GitHub pages website to show dependencies graph | 1.0 | Create a GitHub pages website to show dependencies graph - **Description:**

Currently dependency graph is represented in a dot file. We need to create a GitHub pages website to show dependencies graph | non_process | create a github pages website to show dependencies graph description currently dependency graph is represented in a dot file we need to create a github pages website to show dependencies graph | 0 |

19,772 | 26,146,482,188 | IssuesEvent | 2022-12-30 06:08:58 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | [Mirror] jdk 19 archives | P2 type: process team-OSS mirror request | ### Please list the URLs of the archives you'd like to mirror:

https://cdn.azul.com/zulu/bin/zulu19.30.11-ca-jdk19.0.1-linux_aarch64.tar.gz

https://cdn.azul.com/zulu/bin/zulu19.30.11-ca-jdk19.0.1-linux_x64.tar.gz

https://cdn.azul.com/zulu/bin/zulu19.30.11-ca-jdk19.0.1-win_x64.zip

https://cdn.azul.com/zulu/bin/zulu... | 1.0 | [Mirror] jdk 19 archives - ### Please list the URLs of the archives you'd like to mirror:

https://cdn.azul.com/zulu/bin/zulu19.30.11-ca-jdk19.0.1-linux_aarch64.tar.gz

https://cdn.azul.com/zulu/bin/zulu19.30.11-ca-jdk19.0.1-linux_x64.tar.gz

https://cdn.azul.com/zulu/bin/zulu19.30.11-ca-jdk19.0.1-win_x64.zip

https:/... | process | jdk archives please list the urls of the archives you d like to mirror | 1 |

234,351 | 25,842,054,964 | IssuesEvent | 2022-12-13 01:39:36 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | System.Security.Cryptography.Xml 7.0.0 package doesn't work on .NET Framework | area-System.Security untriaged | ### Description

When compiling against System.Security.Cryptography.Xml 7.0.0 for netstandard2.0 and running on .NET Framework, you get a TypeLoadException. This is because the net462 version of the assembly in the package is missing the type forwarding attributes to the .NET Framework assembly System.Security.dll. It... | True | System.Security.Cryptography.Xml 7.0.0 package doesn't work on .NET Framework - ### Description

When compiling against System.Security.Cryptography.Xml 7.0.0 for netstandard2.0 and running on .NET Framework, you get a TypeLoadException. This is because the net462 version of the assembly in the package is missing the t... | non_process | system security cryptography xml package doesn t work on net framework description when compiling against system security cryptography xml for and running on net framework you get a typeloadexception this is because the version of the assembly in the package is missing the type forwarding at... | 0 |

41,423 | 5,356,124,311 | IssuesEvent | 2017-02-20 14:53:35 | mozilla/fxa-content-server | https://api.github.com/repos/mozilla/fxa-content-server | closed | test - sign in with incorrect email case before normalization fix, on second attempt canonical form is used | tests waffle:review | The following test is unstable on CI:

```

× firefox on any platform - sign_in cached - sign in with incorrect email case before normalization fix, on second attempt canonical form is used (3.373s)

```

https://circleci.com/gh/mozilla/fxa-content-server/6113 | 1.0 | test - sign in with incorrect email case before normalization fix, on second attempt canonical form is used - The following test is unstable on CI:

```

× firefox on any platform - sign_in cached - sign in with incorrect email case before normalization fix, on second attempt canonical form is used (3.373s)

```

ht... | non_process | test sign in with incorrect email case before normalization fix on second attempt canonical form is used the following test is unstable on ci × firefox on any platform sign in cached sign in with incorrect email case before normalization fix on second attempt canonical form is used | 0 |

13,287 | 15,764,977,166 | IssuesEvent | 2021-03-31 13:44:21 | dhh1128/ctwg | https://api.github.com/repos/dhh1128/ctwg | opened | [PROCESS] Hyperlink transformation | process | ## Need

The current [hyperlinks-document](https://github.com/dhh1128/ctwg/blob/master/docs/hyperlinks.md) not only tries to inventory the various kinds of hyperlinks that need to be considered, but also how they change in the various transformations that we already have defined, and of which I expect others will follo... | 1.0 | [PROCESS] Hyperlink transformation - ## Need

The current [hyperlinks-document](https://github.com/dhh1128/ctwg/blob/master/docs/hyperlinks.md) not only tries to inventory the various kinds of hyperlinks that need to be considered, but also how they change in the various transformations that we already have defined, an... | process | hyperlink transformation need the current not only tries to inventory the various kinds of hyperlinks that need to be considered but also how they change in the various transformations that we already have defined and of which i expect others will follow proposed solution define the set of hyperli... | 1 |

689 | 3,175,950,934 | IssuesEvent | 2015-09-24 04:54:30 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | DITA-OT 2.0 - Build Error (Windows) - Illegal character - keyref target | bug P2 platform-dependent preprocess/keyref | Attempting to produce output using DITA-OT 2.0 on Windows results in build failure with the following message:

> C:\ditaot\plugins\org.dita.base\build_preprocess.xml:285: java.lang.IllegalArgumentException: Illegal character in path at index 3: bcc\concepts\notification_events.dita

The map file is the following:

... | 1.0 | DITA-OT 2.0 - Build Error (Windows) - Illegal character - keyref target - Attempting to produce output using DITA-OT 2.0 on Windows results in build failure with the following message:

> C:\ditaot\plugins\org.dita.base\build_preprocess.xml:285: java.lang.IllegalArgumentException: Illegal character in path at index 3... | process | dita ot build error windows illegal character keyref target attempting to produce output using dita ot on windows results in build failure with the following message c ditaot plugins org dita base build preprocess xml java lang illegalargumentexception illegal character in path at index ... | 1 |

12,653 | 15,024,756,308 | IssuesEvent | 2021-02-01 20:05:42 | elastic/beats | https://api.github.com/repos/elastic/beats | opened | Improve handling of missing field in dissect processor | :Processors Functionbeat | 7.9.3

```

- dissect:

tokenizer: "Init Duration: %{init_duration} ms"

field: "lambda.extra"

target_prefix: "lambda"

ignore_failure: true

```

When there is a dissect processor referencing a field but the event does not contain the field, we can only tell that something is wrong if we increase ... | 1.0 | Improve handling of missing field in dissect processor - 7.9.3

```

- dissect:

tokenizer: "Init Duration: %{init_duration} ms"

field: "lambda.extra"

target_prefix: "lambda"

ignore_failure: true

```

When there is a dissect processor referencing a field but the event does not contain the field,... | process | improve handling of missing field in dissect processor dissect tokenizer init duration init duration ms field lambda extra target prefix lambda ignore failure true when there is a dissect processor referencing a field but the event does not contain the field ... | 1 |

1,362 | 3,921,626,255 | IssuesEvent | 2016-04-22 00:14:54 | 18F/FEC | https://api.github.com/repos/18F/FEC | closed | Fonts are varying size on "Tax ID and bank account" page | bug processed |

## General feedback?

https://beta.fec.gov/registration-and-reporting/get-tax-id-and-bank-account/","https://beta.fec.gov/registration-and-reporting/get-tax-id-and-bank-account/

"You'll list the name and address of the bank..." is a smaller font than the rest of the page. Verified in Chrome and I... | 1.0 | Fonts are varying size on "Tax ID and bank account" page -

## General feedback?

https://beta.fec.gov/registration-and-reporting/get-tax-id-and-bank-account/","https://beta.fec.gov/registration-and-reporting/get-tax-id-and-bank-account/

"You'll list the name and address of the bank..." is a small... | process | fonts are varying size on tax id and bank account page general feedback you ll list the name and address of the bank is a smaller font than the rest of the page verified in chrome and ie details url user agent mozilla windows nt rv gecko firefox | 1 |

283,980 | 30,913,577,277 | IssuesEvent | 2023-08-05 02:17:36 | Satheesh575555/linux-4.1.15_CVE-2022-45934 | https://api.github.com/repos/Satheesh575555/linux-4.1.15_CVE-2022-45934 | reopened | CVE-2022-1943 (High) detected in linuxlinux-4.6 | Mend: dependency security vulnerability | ## CVE-2022-1943 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.6</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kernel.... | True | CVE-2022-1943 (High) detected in linuxlinux-4.6 - ## CVE-2022-1943 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.6</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Libra... | non_process | cve high detected in linuxlinux cve high severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch master vulnerable source files vulnerab... | 0 |

578,713 | 17,150,517,826 | IssuesEvent | 2021-07-13 19:55:37 | cagov/cannabis.ca.gov | https://api.github.com/repos/cagov/cannabis.ca.gov | opened | Design: Announcements page - Show only top 5 and paginate the rest + styling considerations | 1 pt Low Priority - Non-critical | Feedback on styling: Maybe color code differences between Announcements, Press Releases, etc. | 1.0 | Design: Announcements page - Show only top 5 and paginate the rest + styling considerations - Feedback on styling: Maybe color code differences between Announcements, Press Releases, etc. | non_process | design announcements page show only top and paginate the rest styling considerations feedback on styling maybe color code differences between announcements press releases etc | 0 |

587,842 | 17,632,673,994 | IssuesEvent | 2021-08-19 09:56:02 | massenergize/api | https://api.github.com/repos/massenergize/api | closed | Enable Teams to span multiple communities | db model change priority 2 | This was part of API #145 but not done at that time.

An example is a religious congregation team with members from neighboring towns.

Easiest part of this is to change the single ForeignKey field "Community" to a ManyToMany field "Communities" and updating the data so the list of Communities includes the original... | 1.0 | Enable Teams to span multiple communities - This was part of API #145 but not done at that time.

An example is a religious congregation team with members from neighboring towns.

Easiest part of this is to change the single ForeignKey field "Community" to a ManyToMany field "Communities" and updating the data so t... | non_process | enable teams to span multiple communities this was part of api but not done at that time an example is a religious congregation team with members from neighboring towns easiest part of this is to change the single foreignkey field community to a manytomany field communities and updating the data so the... | 0 |

14,755 | 18,025,388,642 | IssuesEvent | 2021-09-17 03:19:33 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Build Virtual Raster tool creates an additional layer when selecting and reordering layers | Processing Bug | The Build Virtual Raster can create one additional, duplicate layer when reordering input files, leading to unexpected behaviour. It is almost certainly a bug in the Input Layers selection window itself.

This seems to only happen when selecting (any/all) layers **first** and then reordering with drag-and-drop. Re-or... | 1.0 | Build Virtual Raster tool creates an additional layer when selecting and reordering layers - The Build Virtual Raster can create one additional, duplicate layer when reordering input files, leading to unexpected behaviour. It is almost certainly a bug in the Input Layers selection window itself.

This seems to only h... | process | build virtual raster tool creates an additional layer when selecting and reordering layers the build virtual raster can create one additional duplicate layer when reordering input files leading to unexpected behaviour it is almost certainly a bug in the input layers selection window itself this seems to only h... | 1 |

20,959 | 27,817,509,513 | IssuesEvent | 2023-03-18 21:19:03 | cse442-at-ub/project_s23-iweatherify | https://api.github.com/repos/cse442-at-ub/project_s23-iweatherify | closed | Load unit and temperature setting from the database to the respective page when visited | Processing Task Sprint 2 | **Task Tests**

*Test 1*

1. Go to the following URL: https://github.com/cse442-at-ub/project_s23-iweatherify/tree/dev

2. Click on the green `<> Code` button and download the ZIP file.

3. Unzip the downloaded... | 1.0 | Load unit and temperature setting from the database to the respective page when visited - **Task Tests**

*Test 1*

1. Go to the following URL: https://github.com/cse442-at-ub/project_s23-iweatherify/tree/dev

2. Click on the green `<> Code` button and download the ZIP file.

| 1.0 | We may be able to stop skipping UploadObject_BucketHasDefaultKmsKey_UploadWithCsek - (Will investigate when I have time.) | process | we may be able to stop skipping uploadobject buckethasdefaultkmskey uploadwithcsek will investigate when i have time | 1 |

13,140 | 15,558,728,279 | IssuesEvent | 2021-03-16 10:38:39 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | `prisma migrate deploy` sometimes errors with `Database error: Error querying the database: db error: ERROR: prepared statement "s0" does not exist` with pgbouncer | bug/0-needs-info kind/bug process/candidate team/migrations topic: pgbouncer | <!--

Thanks for helping us improve Prisma! 🙏 Please follow the sections in the template and provide as much information as possible about your problem, e.g. by setting the `DEBUG="*"` environment variable and enabling additional logging output in Prisma Client.

Learn more about writing proper bug reports here: htt... | 1.0 | `prisma migrate deploy` sometimes errors with `Database error: Error querying the database: db error: ERROR: prepared statement "s0" does not exist` with pgbouncer - <!--

Thanks for helping us improve Prisma! 🙏 Please follow the sections in the template and provide as much information as possible about your problem, ... | process | prisma migrate deploy sometimes errors with database error error querying the database db error error prepared statement does not exist with pgbouncer thanks for helping us improve prisma 🙏 please follow the sections in the template and provide as much information as possible about your problem e... | 1 |

5,740 | 8,580,836,332 | IssuesEvent | 2018-11-13 13:10:50 | easy-software-ufal/annotations_repos | https://api.github.com/repos/easy-software-ufal/annotations_repos | opened | NakedObjectsGroup/NakedObjectsFramework Table view not rendering properly if field in a column is not visible | C# RMA test wrong processing | Issue: `https://github.com/NakedObjectsGroup/NakedObjectsFramework/issues/41`

PR: `https://github.com/NakedObjectsGroup/NakedObjectsFramework/commit/5630bcfca67216b4871ecf53b0a984057af6b077`

Multiple pull requests. | 1.0 | NakedObjectsGroup/NakedObjectsFramework Table view not rendering properly if field in a column is not visible - Issue: `https://github.com/NakedObjectsGroup/NakedObjectsFramework/issues/41`

PR: `https://github.com/NakedObjectsGroup/NakedObjectsFramework/commit/5630bcfca67216b4871ecf53b0a984057af6b077`

Multiple pull r... | process | nakedobjectsgroup nakedobjectsframework table view not rendering properly if field in a column is not visible issue pr multiple pull requests | 1 |

769,899 | 27,021,609,035 | IssuesEvent | 2023-02-11 03:54:57 | okTurtles/group-income | https://api.github.com/repos/okTurtles/group-income | closed | Archive payments in indexed storage using pagination | Kind:Enhancement App:Frontend Priority:High | ### Problem

We have `gi.contracts/group/archivePayments` method in group contract which archives historical payments in Indexed Storage of the browser.

What this function does is to clone the state of historical `payments` and `paymentsByPeriod` to the indexed storage.

We need the payments in pagination, so we sho... | 1.0 | Archive payments in indexed storage using pagination - ### Problem

We have `gi.contracts/group/archivePayments` method in group contract which archives historical payments in Indexed Storage of the browser.

What this function does is to clone the state of historical `payments` and `paymentsByPeriod` to the indexed ... | non_process | archive payments in indexed storage using pagination problem we have gi contracts group archivepayments method in group contract which archives historical payments in indexed storage of the browser what this function does is to clone the state of historical payments and paymentsbyperiod to the indexed ... | 0 |

14,647 | 17,774,412,730 | IssuesEvent | 2021-08-30 17:17:07 | julioPlaceres/A_Helping_Hand | https://api.github.com/repos/julioPlaceres/A_Helping_Hand | opened | Home Page | in process | Create Home Page (Initial page that will be seen, with options to log in and sign up) | 1.0 | Home Page - Create Home Page (Initial page that will be seen, with options to log in and sign up) | process | home page create home page initial page that will be seen with options to log in and sign up | 1 |

560,024 | 16,583,248,263 | IssuesEvent | 2021-05-31 14:39:20 | asteca/ASteCA | https://api.github.com/repos/asteca/ASteCA | closed | Explore chaospy as a possible addition to the best fit process | priority:low type:best-fit type:packages |

* Article: [Chaospy: An open source tool for designing methods of uncertainty quantification](https://www.sciencedirect.com/science/article/pii/S1877750315300119?via%3Dihub)

* Repo: https://github.com/jonathf/chaospy

* Docs: https://chaospy.readthedocs.io/en/master/index.html

> Chaospy is a numerical tool for perf... | 1.0 | Explore chaospy as a possible addition to the best fit process -

* Article: [Chaospy: An open source tool for designing methods of uncertainty quantification](https://www.sciencedirect.com/science/article/pii/S1877750315300119?via%3Dihub)

* Repo: https://github.com/jonathf/chaospy

* Docs: https://chaospy.readthedoc... | non_process | explore chaospy as a possible addition to the best fit process article repo docs chaospy is a numerical tool for performing uncertainty quantification using polynomial chaos expansions and monte carlo methods | 0 |

8,886 | 11,984,094,180 | IssuesEvent | 2020-04-07 15:21:52 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | NTR: effector-mediated suppression of effector-triggered immunity | multi-species process |

A process mediated by a molecule secreted by a symbiont that results in the suppression of host effector-triggered immune response. The host is defined as the larger of the organisms involved in a symbiotic interaction.

descendant of

GO:0140403 effector-mediated suppression of host innate immune response by s... | 1.0 | NTR: effector-mediated suppression of effector-triggered immunity -

A process mediated by a molecule secreted by a symbiont that results in the suppression of host effector-triggered immune response. The host is defined as the larger of the organisms involved in a symbiotic interaction.

descendant of

GO:0140403... | process | ntr effector mediated suppression of effector triggered immunity a process mediated by a molecule secreted by a symbiont that results in the suppression of host effector triggered immune response the host is defined as the larger of the organisms involved in a symbiotic interaction descendant of go ef... | 1 |

546 | 3,005,947,173 | IssuesEvent | 2015-07-27 06:41:56 | HotCore-Studio/hot-project | https://api.github.com/repos/HotCore-Studio/hot-project | closed | Main 3D renderer | In process | Initialization, operation, rendering, setting up viewports, swap chains, context, etc.

Assigned to [ToxikCoder](https://github.com/ToxikCoder) | 1.0 | Main 3D renderer - Initialization, operation, rendering, setting up viewports, swap chains, context, etc.

Assigned to [ToxikCoder](https://github.com/ToxikCoder) | process | main renderer initialization operation rendering setting up viewports swap chains context etc assigned to | 1 |

469,553 | 13,520,692,757 | IssuesEvent | 2020-09-15 05:29:53 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.facebook.com - site is not usable | browser-firefox engine-gecko ml-needsdiagnosis-false ml-probability-high priority-critical | <!-- @browser: Firefox 81.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.1; Win64; x64; rv:81.0) Gecko/20100101 Firefox/81.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/58248 -->

**URL**: https://www.facebook.com/rsrc.php/v3/yx/r/pyNVUg5EM0j.png

**Bro... | 1.0 | www.facebook.com - site is not usable - <!-- @browser: Firefox 81.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.1; Win64; x64; rv:81.0) Gecko/20100101 Firefox/81.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/58248 -->

**URL**: https://www.facebook.com... | non_process | site is not usable url browser version firefox operating system windows tested another browser yes internet explorer problem type site is not usable description buttons or links not working steps to reproduce view the screenshot img alt ... | 0 |

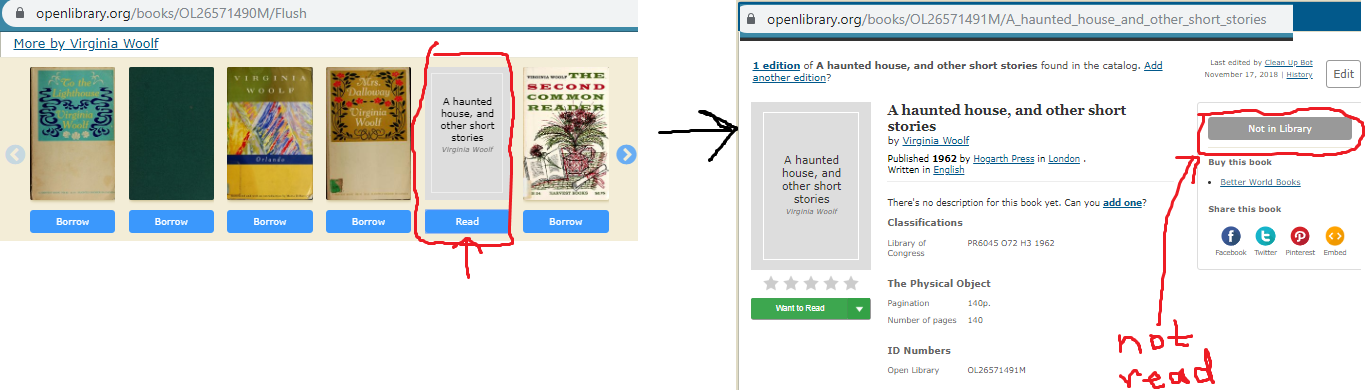

465,018 | 13,349,998,046 | IssuesEvent | 2020-08-30 05:04:37 | internetarchive/openlibrary | https://api.github.com/repos/internetarchive/openlibrary | closed | There is a mismatch of availability of a book in a carousel to its true status | Good First Issue Lead: @mekarpeles Priority: 3 Type: Bug | ### Example:

https://openlibrary.org/books/OL26571490M/Flush

https://openlibrary.org/books/OL26571491M/A_haunted_house_and_other_short_stories

### Problem:

Works in carousel say 'read', but when clicked... | 1.0 | There is a mismatch of availability of a book in a carousel to its true status - ### Example:

https://openlibrary.org/books/OL26571490M/Flush

https://openlibrary.org/books/OL26571491M/A_haunted_house_and_other_short_stories

(Hybrid - São Paulo) at Coodesh | SALVADOR BACK-END FRONT-END JAVA FULL-STACK ANGULAR REACT REQUISITOS PROCESSOS BACKEND GITHUB UMA QUALIDADE R NEGÓCIOS ALOCADO Stale | ## Job description:

This is a position from a partner of the Coodesh platform; by applying you will get access to the complete information about the company and its benefits.

Watch for the redirect that will take you to a URL [https://coodesh.com](https://coodesh.com/jobs/consultant-java-developer-hibrida-sao-... | 1.0 | [Hybrid / São Paulo, São Paulo, Brazil] Fullstack Developer (Java) (Hybrid - São Paulo) at Coodesh - ## Job description:

This is a position from a partner of the Coodesh platform; by applying you will get access to the complete information about the company and its benefits.

Watch for the redirect that will take you... | process | fullstack developer java hybrid são paulo at coodesh job description this is a position from a partner of the coodesh platform by applying you will get access to the complete information about the company and its benefits watch for the redirect that will take you to a url with the pop up pers... | 1 |

467,184 | 13,442,977,733 | IssuesEvent | 2020-09-08 07:39:16 | teamforus/general | https://api.github.com/repos/teamforus/general | closed | Implement feedback Noordoostpolder webshop | Approval: Granted Priority: Must have Scope: Small Status: In progress Type: Change request project-89 | Learn more about change requests here: https://bit.ly/39CWeEE

### Requested by:

-

### Change description

https://www.figma.com/file/pUWeZwelwsJK8W58ZGip9r/Webshop-Noordoostpolder?node-id=658%3A88

| 1.0 | Implement feedback Noordoostpolder webshop - Learn more about change requests here: https://bit.ly/39CWeEE

### Requested by:

-

### Change description

https://www.figma.com/file/pUWeZwelwsJK8W58ZGip9r/Webshop-Noordoostpolder?node-id=658%3A88

| non_process | implement feedback noordoostpolder webshop learn more about change requests here requested by change description | 0 |

405,912 | 11,884,275,403 | IssuesEvent | 2020-03-27 17:21:24 | RobotLocomotion/drake | https://api.github.com/repos/RobotLocomotion/drake | closed | Some energy and power related calculation functions are missing in MBP | priority: low team: dynamics type: bug | Functions that are missing in MBP include

1. CalcKineticEnergy()

2. CalcNonConservativePower() | 1.0 | Some energy and power related calculation functions are missing in MBP - Functions that are missing in MBP include

1. CalcKineticEnergy()

2. CalcNonConservativePower() | non_process | some energy and power related calculation functions are missing in mbp functions that are missing in mbp include calckineticenergy calcnonconservativepower | 0 |

1,639 | 4,259,886,123 | IssuesEvent | 2016-07-11 12:44:09 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | child_process.spawn execution is significantly slower than running command directly from shell | child_process performance unconfirmed | This issue came up in Glavin001/atom-beautify#893.

I have made a minimal test case for the issue: [phpcbf_spawn_test.zip](https://github.com/nodejs/node/files/356751/phpcbf_spawn_test.zip), but it requires you to have `phpcbf` from [PHP-Code-Sniffer](https://github.com/squizlabs/PHP_CodeSniffer) installed on your sys... | 1.0 | child_process.spawn execution is significantly slower than running command directly from shell - This issue came up in Glavin001/atom-beautify#893.

I have made a minimal test case for the issue: [phpcbf_spawn_test.zip](https://github.com/nodejs/node/files/356751/phpcbf_spawn_test.zip), but it requires you to have `ph... | process | child process spawn execution is significantly slower than running command directly from shell this issue came up in atom beautify i have made a minimal test case for the issue but it requires you to have phpcbf from installed on your system the problem is i want to execute phpcbf command wit... | 1 |

47,412 | 12,031,500,958 | IssuesEvent | 2020-04-13 09:49:27 | tensorflow/tensorflow | https://api.github.com/repos/tensorflow/tensorflow | opened | ERROR: Config value download_clang is not defined in any .rc file | type:build/install | tensorflow:r2.2

bazel :2.0.0

ERROR: Config value download_clang is not defined in any .rc file

print info:

`bazel build -c opt //tensorflow/contrib/android:libtensorflow_inference.so --crosstool_top=//external:android/crosstool --host_crosstool_top=@bazel_tools//tools/cpp:toolchain --cpu=armeabi-v7a

... | 1.0 | ERROR: Config value download_clang is not defined in any .rc file - tensorflow:r2.2

bazel :2.0.0

ERROR: Config value download_clang is not defined in any .rc file

print info:

`bazel build -c opt //tensorflow/contrib/android:libtensorflow_inference.so --crosstool_top=//external:android/crosstool --host... | non_process | error config value download clang is not defined in any rc file tensorflow: bazel : error config value download clang is not defined in any rc file print info: bazel build c opt tensorflow contrib android libtensorflow inference so crosstool top external android crosstool host ... | 0 |

5,596 | 8,453,014,344 | IssuesEvent | 2018-10-20 11:13:40 | SerialLain3170/GAN-papers | https://api.github.com/repos/SerialLain3170/GAN-papers | opened | Parallel-Data-Free Voice Conversion Using Cycle-Consistent Adversarial Networks | Speech Processing | # Paper

[Parallel-Data-Free Voice Conversion Using Cycle-Consistent Adversarial Networks](https://arxiv.org/pdf/1711.11293.pdf)

# Summary

- Applies CycleGAN to voice conversion; in addition to the adversarial loss and the cycle-consistency loss, an identity-mapping loss is also considered.

- Converts the 24-dimensional mel-cepstrum; the fundamental frequency is converted linearly.

- Applies a gated CNN as the network architecture

# Summary

- Applies CycleGAN to voice conversion; in addition to the adversarial loss and the cycle-consistency loss, an identity-mapping loss... | process | parallel data free voice conversion using cycle consistent adversarial networks paper summary applies cyclegan to voice conversion in addition to the adversarial loss and the cycle consistency loss an identity mapping loss is also considered the fundamental frequency is converted linearly applies a gated cnn as the network architecture date | 1 |

14,053 | 16,857,301,031 | IssuesEvent | 2021-06-21 08:30:15 | aiidateam/aiida-core | https://api.github.com/repos/aiidateam/aiida-core | closed | `ProcessBuilder` loses `AttributeDict` properties of nested namespaces when `_update` is used with nested dictionary | priority/important topic/processes type/bug | When a process builder is updated with a nested dictionary using the `_update` method, the nested namespaces are overwritten by plain dictionaries instead of `ProcessBuilderNamespace` instances, causing the nested namespaces to lose their functionality, such as validation and tab-completion. The solution is to properl... | 1.0 | `ProcessBuilder` loses `AttributeDict` properties of nested namespaces when `_update` is used with nested dictionary - When a process builder is updated with a nested dictionary using the `_update` method, the nested namespaces are overwritten by plain dictionaries instead of `ProcessBuilderNamespace` instances, causi... | process | processbuilder loses attributedict properties of nested namespaces when update is used with nested dictionary when a process builder is updated with a nested dictionary using the update method the nested namespaces are overwritten by plain dictionaries instead of processbuildernamespace instances causi... | 1 |
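
The failure mode described in this record can be illustrated with a small sketch. It does not use AiiDA: the `Namespace` class and its `update_recursive` method are hypothetical stand-ins, assumed only for illustration, and the actual fix in aiida-core may look different. The point is that a recursive merge keeps nested namespace objects in place instead of overwriting them with plain dictionaries.

```python
from collections.abc import Mapping


class Namespace(dict):
    """Hypothetical stand-in for a builder namespace (not AiiDA's class)."""

    def __getattr__(self, name):
        # Attribute-style access to keys, e.g. builder.metadata.options
        try:
            return self[name]
        except KeyError as exc:
            raise AttributeError(name) from exc

    def update_recursive(self, values):
        """Merge `values` in place, keeping nested Namespace instances."""
        for key, value in values.items():
            current = self.get(key)
            if isinstance(current, Namespace) and isinstance(value, Mapping):
                # Descend instead of replacing the namespace with a plain dict
                current.update_recursive(value)
            else:
                self[key] = value


builder = Namespace(metadata=Namespace(options=Namespace(resources=1)))
builder.update_recursive({"metadata": {"options": {"resources": 2}}})
```

With a plain `dict.update`, `builder["metadata"]` would have become an ordinary dictionary and attribute access on it would fail; here it stays a `Namespace`.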

16,148 | 20,507,379,119 | IssuesEvent | 2022-03-01 00:16:39 | newrelic/docs-website | https://api.github.com/repos/newrelic/docs-website | closed | Process council: PM handbook updates | process | # Overview

We are going to roll out a process council in the next week or so. In the spirit of such, we should follow our proposed council process for these changes.

# Proposal

The process for a change will be:

1. Someone has an idea for a process change or experiment. This can also just be identifying the issue... | 1.0 | Process council: PM handbook updates - # Overview

We are going to roll out a process council in the next week or so. In the spirit of such, we should follow our proposed council process for these changes.

# Proposal

The process for a change will be:

1. Someone has an idea for a process change or experiment. This... | process | process council pm handbook updates overview we are going to roll out a process council in the next week or so in the spirit of such we should follow our proposed council process for these changes proposal the process for a change will be someone has an idea for a process change or experiment this... | 1 |

1,852 | 4,651,283,230 | IssuesEvent | 2016-10-03 09:30:37 | openvstorage/volumedriver | https://api.github.com/repos/openvstorage/volumedriver | closed | Quickly removing and recreating a volume with the same name triggers a fault | process_wontfix | Disks of routeros virtual machines in OVC are being created with a specific naming convention. It happens that these routeros virtual machines are quickly recycled, and this triggers a race condition in the volumedriver.

The result is that the "recreated" volume is left in a zombie state, that can only be solved by ... | 1.0 | Quickly removing and recreating a volume with the same name triggers a fault - Disks of routeros virtual machines in OVC are being created with a specific naming convention. It happens that these routeros virtual machines are quickly recycled, and this triggers a race condition in the volumedriver.

The result is tha... | process | quickly removing and recreating a volume with the same name triggers a fault disks of routeros virtual machines in ovc are being created with a specific naming convention it happens that these routeros virtual machines are quickly recycled and this triggers a race condition in the volumedriver the result is tha... | 1 |

22,578 | 31,805,302,396 | IssuesEvent | 2023-09-13 13:37:41 | GSA/EDX | https://api.github.com/repos/GSA/EDX | opened | Update personal access token for GitHub Workflow (December 2023) | process | For the EDXPROJECT_TOKEN to automate the issue workflow (adding it to EDX's Inbox in its Kanban board)

Instructions:

Click on your user icon at the top right

Click settings

Scroll to bottom, click "Developer Settings"

Under personal access tokens, click tokens classic

You want to update the EDXPROJECT_TOKEN one... | 1.0 | Update personal access token for GitHub Workflow (December 2023) - For the EDXPROJECT_TOKEN to automate the issue workflow (adding it to EDX's Inbox in its Kanban board)

Instructions:

Click on your user icon at the top right

Click settings

Scroll to bottom, click "Developer Settings"

Under personal access tokens... | process | update personal access token for github workflow december for the edxproject token to automate the issue workflow adding it to edx s inbox in its kanban board instructions click on your user icon at the top right click settings scroll to bottom click developer settings under personal access tokens c... | 1 |

269,267 | 8,433,952,195 | IssuesEvent | 2018-10-17 08:51:54 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | m2.wyylde.com - see bug description | browser-firefox-mobile browser-focus-geckoview priority-normal | <!-- @browser: Firefox Mobile 62.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 8.1.0; Mobile; rv:62.0) Gecko/62.0 Firefox/62.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: https://m2.wyylde.com/

**Browser / Version**: Firefox Mobile 62.0

**Operating System**: Android 8.1.0

**Te... | 1.0 | m2.wyylde.com - see bug description - <!-- @browser: Firefox Mobile 62.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 8.1.0; Mobile; rv:62.0) Gecko/62.0 Firefox/62.0 -->

<!-- @reported_with: -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: https://m2.wyylde.com/

**Browser / Version**: Firefox Mobile 62.0

**... | non_process | wyylde com see bug description url browser version firefox mobile operating system android tested another browser yes problem type something else description the site won t showing up although i don t need it steps to reproduce from with ❤️ | 0 |

805,108 | 29,508,052,751 | IssuesEvent | 2023-06-03 15:08:22 | jrsteensen/OpenHornet | https://api.github.com/repos/jrsteensen/OpenHornet | closed | [Bug]: UIP parts with same part number: OH1A1-28 | Type: Bug/Obsolesce Category: MCAD Priority: Normal | ### Discord Username

Arribe

### Bug Summary

On the UIP part number OH1A1-28 is used for both the UPPER UIP FACE MOUNT, RIGHT, and UIP RIGHT GLARESHIELD.

### Expected Results

Parts should have their own part numbers. If possible please keep UPPER UIP FACE MOUNT, RIGHT associated with OH1A1-28 as that was used to g... | 1.0 | [Bug]: UIP parts with same part number: OH1A1-28 - ### Discord Username

Arribe

### Bug Summary

On the UIP part number OH1A1-28 is used for both the UPPER UIP FACE MOUNT, RIGHT, and UIP RIGHT GLARESHIELD.

### Expected Results

Parts should have their own part numbers. If possible please keep UPPER UIP FACE MOUNT, R... | non_process | uip parts with same part number discord username arribe bug summary on the uip part number is used for both the upper uip face mount right and uip right glareshield expected results parts should have their own part numbers if possible please keep upper uip face mount right associate... | 0 |

16,484 | 21,443,566,330 | IssuesEvent | 2022-04-25 02:07:19 | huutho77/CNPMNC_ThayAi | https://api.github.com/repos/huutho77/CNPMNC_ThayAi | opened | [Browser UI] Feature Filter Products based on Category | processing dev/quocky2211 dev/haichao784 dev/phamtan | - Show category list

- Get categoryID or categoryName

- Filter related products based on Category

- Show the products list from the result | 1.0 | [Browser UI] Feature Filter Products based on Category - - Show category list

- Get categoryID or categoryName

- Filter related products based on Category

- Show the products list from the result | process | feature filter products based on category show category list get categoryid or categoryname filter related products based on category show the products list from the result | 1 |
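
The four steps listed in this record could be sketched roughly as follows. The `Product` type, its fields, and the sample data are assumptions made for illustration; the project's real data model is not shown in the record.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Product:
    # Hypothetical product record; field names are assumed
    name: str
    category_id: int


def filter_by_category(products, category_id):
    """Return the products whose category matches `category_id`, in order."""
    return [p for p in products if p.category_id == category_id]


products = [
    Product("Keyboard", 1),
    Product("Mouse", 1),
    Product("Desk lamp", 2),
]
selected = filter_by_category(products, 1)
```

The same function works with a category name instead of an ID if the field compared against is changed accordingly.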

12,471 | 14,940,246,660 | IssuesEvent | 2021-01-25 18:00:36 | eddieantonio/predictive-text-studio | https://api.github.com/repos/eddieantonio/predictive-text-studio | closed | Remove headers from uploaded wordlists via the Google Sheets API | data-backing data-processing good first issue 🔥 High priority | An extension of issue #199

> Remove headers such as "word" and "count" headers in the first row of all uploaded wordlists (either Google Sheets or Excel).

>

> This should be done automatically.

Add functionality from #217 to the Google Sheets API. Code reuse is ideal.

| 1.0 | Remove headers from uploaded wordlists via the Google Sheets API - An extension of issue #199

> Remove headers such as "word" and "count" headers in the first row of all uploaded wordlists (either Google Sheets or Excel).

>

> This should be done automatically.

Add functionality from #217 to the Google Sheets API... | process | remove headers from uploaded wordlists via the google sheets api an extension of issue remove headers such as word and count headers in the first row of all uploaded wordlists either google sheets or excel this should be done automatically add functionality from to the google sheets api co... | 1 |
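
A minimal sketch of the header removal requested in this record, assuming the wordlist has already been read into a list of rows (from Google Sheets or Excel). The `strip_header` function and the set of recognized header labels are hypothetical and are not taken from the project.

```python
# Labels treated as header cells; an assumed set, extend as needed
WORD_LABELS = {"word", "words"}
COUNT_LABELS = {"count", "counts"}


def strip_header(rows):
    """Drop the first row if it looks like a word/count header row."""
    if not rows:
        return rows
    first = [str(cell).strip().lower() for cell in rows[0]]
    has_word_label = len(first) >= 1 and first[0] in WORD_LABELS
    has_count_label = len(first) >= 2 and first[1] in COUNT_LABELS
    if has_word_label or has_count_label:
        return rows[1:]
    return rows


with_header = [["Word", "Count"], ["hello", 12], ["world", 7]]
without_header = [["hello", 12], ["world", 7]]
cleaned = strip_header(with_header)
```

Checking the column position, rather than matching any cell against the labels, avoids dropping a legitimate entry such as the word "count" appearing in the first column.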

50,204 | 3,006,246,791 | IssuesEvent | 2015-07-27 09:08:52 | Itseez/opencv | https://api.github.com/repos/Itseez/opencv | opened | Add new create() method for Feature2D | auto-transferred category: features2d feature priority: normal | Transferred from http://code.opencv.org/issues/2333

```

|| Maria Dimashova on 2012-09-05 09:58

|| Priority: Normal

|| Affected: None

|| Category: features2d

|| Tracker: Feature

|| Difficulty: None

|| PR: None

|| Platform: None / None

```

Add new create() method for Feature2D

-----------

```

with arguments Ptr<Feature... | 1.0 | Add new create() method for Feature2D - Transferred from http://code.opencv.org/issues/2333

```

|| Maria Dimashova on 2012-09-05 09:58

|| Priority: Normal

|| Affected: None

|| Category: features2d

|| Tracker: Feature

|| Difficulty: None

|| PR: None

|| Platform: None / None

```

Add new create() method for Feature2D

--... | non_process | add new create method for transferred from maria dimashova on priority normal affected none category tracker feature difficulty none pr none platform none none add new create method for with arguments ptr and ptr history ... | 0 |