Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

140,355 | 21,083,592,797 | IssuesEvent | 2022-04-03 09:16:11 | bounswe/bounswe2022group3 | https://api.github.com/repos/bounswe/bounswe2022group3 | opened | Creating a Issue Template | documentation design | ### Task:

A template which is needed to be comprehensive about issue like what an issue is, and why we do care about it to handle, what things are used, and where the result of it is put, and if necessary what additional notes are.

### Format (make a table, list, etc.):

A template

### Required tools (if app... | 1.0 | Creating a Issue Template - ### Task:

A template which is needed to be comprehensive about issue like what an issue is, and why we do care about it to handle, what things are used, and where the result of it is put, and if necessary what additional notes are.

### Format (make a table, list, etc.):

A template

... | non_process | creating a issue template task a template which is needed to be comprehensive about issue like what an issue is and why we do care about it to handle what things are used and where the result of it is put and if necessary what additional notes are format make a table list etc a template ... | 0 |

6,644 | 9,761,606,899 | IssuesEvent | 2019-06-05 09:11:05 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Problem with ParameterFile in batch mode | Bug Processing | Hello,

I use QGis 3.6.3 in windows

I've a script that use a ParameterFile to select a folder (QgsProcessingParameterFile.Folder option in the self.addParameter function)

It works well in normal mode, but crashed in batch mode.

Here is the error message displayed

AttributeError: 'NoneType' object has no attribu... | 1.0 | Problem with ParameterFile in batch mode - Hello,

I use QGis 3.6.3 in windows

I've a script that use a ParameterFile to select a folder (QgsProcessingParameterFile.Folder option in the self.addParameter function)

It works well in normal mode, but crashed in batch mode.

Here is the error message displayed

Attri... | process | problem with parameterfile in batch mode hello i use qgis in windows i ve a script that use a parameterfile to select a folder qgsprocessingparameterfile folder option in the self addparameter function it works well in normal mode but crashed in batch mode here is the error message displayed attri... | 1 |

239,849 | 26,232,286,799 | IssuesEvent | 2023-01-05 02:04:00 | TheKingTermux/alice | https://api.github.com/repos/TheKingTermux/alice | opened | Prototype Pollution in JSON5 via Parse Method | Security Auto Create Issues | ### Description

Dependabot cannot update to the required version as there is already an existing pull request for the latest version

There is already an existing pull request for the latest version: 2.2.3

Prototype Pollution in JSON5 via Parse Method #95

Open Opened 2 minutes ago on json5 (npm) · package-lock.js... | True | Prototype Pollution in JSON5 via Parse Method - ### Description

Dependabot cannot update to the required version as there is already an existing pull request for the latest version

There is already an existing pull request for the latest version: 2.2.3

Prototype Pollution in JSON5 via Parse Method #95

Open Opene... | non_process | prototype pollution in via parse method description dependabot cannot update to the required version as there is already an existing pull request for the latest version there is already an existing pull request for the latest version prototype pollution in via parse method open opened minut... | 0 |

21,639 | 30,055,256,313 | IssuesEvent | 2023-06-28 06:09:43 | h4sh5/npm-auto-scanner | https://api.github.com/repos/h4sh5/npm-auto-scanner | opened | @emivespa/iih 0.1.0 has 1 guarddog issues | npm-silent-process-execution | ```{"npm-silent-process-execution":[{"code":" const iiProcess = spawn('ii', ['-s', `${cli.flags.host}`, '-n', `${cli.flags.nick}`], {\n detached: true,\n stdio: 'ignore',\n });","location":"package/dist/cli.js:58","message":"This package is silently executing another executable"}]}``` | 1.0 | @emivespa/iih 0.1.0 has 1 guarddog issues - ```{"npm-silent-process-execution":[{"code":" const iiProcess = spawn('ii', ['-s', `${cli.flags.host}`, '-n', `${cli.flags.nick}`], {\n detached: true,\n stdio: 'ignore',\n });","location":"package/dist/cli.js:58","message":"This package is silently execut... | process | emivespa iih has guarddog issues npm silent process execution n detached true n stdio ignore n location package dist cli js message this package is silently executing another executable | 1 |

12,205 | 14,742,715,012 | IssuesEvent | 2021-01-07 12:46:34 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Site 068 Portland: Unable to process Credit Card Transactions in SA Billing | anc-process anp-important ant-bug | In GitLab by @kdjstudios on May 17, 2019, 13:00

**Submitted by:** <jeffrey.casey@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2019-05-17-88178/conversation

**Server:** Internal

**Client/Site:** Portland

**Account:**

**Issue:**

When attempting to process and save Credit Card... | 1.0 | Site 068 Portland: Unable to process Credit Card Transactions in SA Billing - In GitLab by @kdjstudios on May 17, 2019, 13:00

**Submitted by:** <jeffrey.casey@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2019-05-17-88178/conversation

**Server:** Internal

**Client/Site:** Portlan... | process | site portland unable to process credit card transactions in sa billing in gitlab by kdjstudios on may submitted by helpdesk server internal client site portland account issue when attempting to process and save credit card transactions sa billing returns “somet... | 1 |

15,341 | 19,488,412,338 | IssuesEvent | 2021-12-26 21:23:20 | MasterPlayer/adxl345-sv | https://api.github.com/repos/MasterPlayer/adxl345-sv | opened | SingleTap Interrupt processing in hw | enhancement hardware process | add ability of component for processing in hardware SingleTap interrupts | 1.0 | SingleTap Interrupt processing in hw - add ability of component for processing in hardware SingleTap interrupts | process | singletap interrupt processing in hw add ability of component for processing in hardware singletap interrupts | 1 |

20,346 | 27,002,727,003 | IssuesEvent | 2023-02-10 09:13:32 | bitfocus/companion-module-requests | https://api.github.com/repos/bitfocus/companion-module-requests | opened | Audac - M2 - zonematrix processor | NOT YET PROCESSED | - [x] **I have researched the list of existing Companion modules and requests and have determined this has not yet been requested**

The name of the device, hardware, or software you would like to control: Audac - M2 - zonematrix processor

What you would like to be able to make it do from Companion:

Would be gr... | 1.0 | Audac - M2 - zonematrix processor - - [x] **I have researched the list of existing Companion modules and requests and have determined this has not yet been requested**

The name of the device, hardware, or software you would like to control: Audac - M2 - zonematrix processor

What you would like to be able to mak... | process | audac zonematrix processor i have researched the list of existing companion modules and requests and have determined this has not yet been requested the name of the device hardware or software you would like to control audac zonematrix processor what you would like to be able to make it... | 1 |

24,146 | 16,945,108,565 | IssuesEvent | 2021-06-28 05:12:31 | Unidata/MetPy | https://api.github.com/repos/Unidata/MetPy | closed | Upload image test results on AppVeyor | Area: Infrastructure Type: Enhancement | Looks like AppVeyor supports uploading build artifacts, so we should do so. Matplotlib's config:

```yaml

artifacts:
  - path: dist\*
    name: packages
  - path: result_images\*
    name: result_images
    type: zip

on_finish:

on_failure:
  - python tools/visualize_tests.py --no-browser
  - echo zippin... | 1.0 | Upload image test results on AppVeyor - Looks like AppVeyor supports uploading build artifacts, so we should do so. Matplotlib's config:

```yaml

artifacts:
  - path: dist\*
    name: packages
  - path: result_images\*
    name: result_images
    type: zip

on_finish:

on_failure:

- python tools/visualiz... | non_process | upload image test results on appveyor looks like appveyor supports uploading build artifacts so we should do so matplotlib s config yaml artifacts path dist name packages path result images name result images type zip on finish on failure python tools visualiz... | 0 |

82,950 | 16,065,172,718 | IssuesEvent | 2021-04-23 17:54:02 | gleam-lang/gleam | https://api.github.com/repos/gleam-lang/gleam | closed | Basic JS code generation | area:codegen help wanted | - [x] Expressions

- [x] Ints (with bitwise operator hints to make then ints rather than floats)

- [x] Floats

- [x] Strings

- [x] Function calls

- [x] Constants

- [x] Ints

- [x] Floats

- [x] Strings

- [x] Module level functions | 1.0 | Basic JS code generation - - [x] Expressions

- [x] Ints (with bitwise operator hints to make then ints rather than floats)

- [x] Floats

- [x] Strings

- [x] Function calls

- [x] Constants

- [x] Ints

- [x] Floats

- [x] Strings

- [x] Module level functions | non_process | basic js code generation expressions ints with bitwise operator hints to make then ints rather than floats floats strings function calls constants ints floats strings module level functions | 0 |

19,784 | 14,543,744,205 | IssuesEvent | 2020-12-15 17:15:12 | coreos/zincati | https://api.github.com/repos/coreos/zincati | closed | metrics: values from runtime config could be set earlier | area/observability area/usability jira kind/friction | From a race condition observed in https://github.com/coreos/fedora-coreos-tracker/issues/686.

Most metrics are initially set in an ad-hoc fashion as soon as execution reaches the relevant codepath. In particular, this is the case for `zincati_update_agent_updates_enabled` which is first set when the actor [is starte... | True | metrics: values from runtime config could be set earlier - From a race condition observed in https://github.com/coreos/fedora-coreos-tracker/issues/686.

Most metrics are initially set in an ad-hoc fashion as soon as execution reaches the relevant codepath. In particular, this is the case for `zincati_update_agent_up... | non_process | metrics values from runtime config could be set earlier from a race condition observed in most metrics are initially set in an ad hoc fashion as soon as execution reaches the relevant codepath in particular this is the case for zincati update agent updates enabled which is first set when the actor this ... | 0 |

16,440 | 21,317,069,446 | IssuesEvent | 2022-04-16 13:16:19 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | DOMException when using two "ditavalref"'s on first level in map | bug priority/medium preprocess preprocess/branch-filtering stale | Reported here:

https://groups.yahoo.com/neo/groups/dita-users/conversations/messages/43072

I'm attaching a sample project:

[two-ditavalref-map.zip](https://github.com/dita-ot/dita-ot/files/1566943/two-ditavalref-map.zip)

There are two ditaval files referenced on the first DITA Map level like:

<map>

... | 2.0 | DOMException when using two "ditavalref"'s on first level in map - Reported here:

https://groups.yahoo.com/neo/groups/dita-users/conversations/messages/43072

I'm attaching a sample project:

[two-ditavalref-map.zip](https://github.com/dita-ot/dita-ot/files/1566943/two-ditavalref-map.zip)

There are two ditav... | process | domexception when using two ditavalref s on first level in map reported here i m attaching a sample project there are two ditaval files referenced on the first dita map level like i can reproduce problem with both dita ot x and x build failed ... | 1 |

792,208 | 27,950,583,601 | IssuesEvent | 2023-03-24 08:31:19 | ahmedkaludi/accelerated-mobile-pages | https://api.github.com/repos/ahmedkaludi/accelerated-mobile-pages | closed | While using the Yuki Blogger theme, when we edit the AMP customization, it shows a fatal error. | bug [Priority: HIGH] Ready for Review | While using the Yuki Blogger theme, when we edit the AMP customization, it shows a fatal error.

Ref video: https://monosnap.com/direct/JisIlAW3qq7OarIcluBmCXv8ONyokO

Ref ticket:https://magazine3.in/conversation/110482?folder_id=29 | 1.0 | While using the Yuki Blogger theme, when we edit the AMP customization, it shows a fatal error. - While using the Yuki Blogger theme, when we edit the AMP customization, it shows a fatal error.

Ref video: https://monosnap.com/direct/JisIlAW3qq7OarIcluBmCXv8ONyokO

Ref ticket:https://magazine3.in/conversation/11048... | non_process | while using the yuki blogger theme when we edit the amp customization it shows a fatal error while using the yuki blogger theme when we edit the amp customization it shows a fatal error ref video ref ticket | 0 |

5,942 | 8,766,817,440 | IssuesEvent | 2018-12-17 17:52:01 | knative/serving | https://api.github.com/repos/knative/serving | closed | Enable object versioning for our release artifact GCR buckets | area/test-and-release kind/cleanup kind/process | <!--

Pro-tip: You can leave this block commented, and it still works!

Select the appropriate areas for your issue:

/area test-and-release

Classify what kind of issue this is:

/kind cleanup

/kind process

/assign @jonjohnsonjr

-->

## Expected Behavior

We have object versioning for our GCR bucket, to ... | 1.0 | Enable object versioning for our release artifact GCR buckets - <!--

Pro-tip: You can leave this block commented, and it still works!

Select the appropriate areas for your issue:

/area test-and-release

Classify what kind of issue this is:

/kind cleanup

/kind process

/assign @jonjohnsonjr

-->

## Exp... | process | enable object versioning for our release artifact gcr buckets pro tip you can leave this block commented and it still works select the appropriate areas for your issue area test and release classify what kind of issue this is kind cleanup kind process assign jonjohnsonjr exp... | 1 |

106,039 | 16,664,152,758 | IssuesEvent | 2021-06-06 21:39:42 | AlexRogalskiy/github-action-open-jscharts | https://api.github.com/repos/AlexRogalskiy/github-action-open-jscharts | opened | CVE-2020-28469 (Medium) detected in glob-parent-5.1.1.tgz | security vulnerability | ## CVE-2020-28469 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>glob-parent-5.1.1.tgz</b></p></summary>

<p>Extract the non-magic parent path from a glob string.</p>

<p>Library home... | True | CVE-2020-28469 (Medium) detected in glob-parent-5.1.1.tgz - ## CVE-2020-28469 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>glob-parent-5.1.1.tgz</b></p></summary>

<p>Extract the n... | non_process | cve medium detected in glob parent tgz cve medium severity vulnerability vulnerable library glob parent tgz extract the non magic parent path from a glob string library home page a href path to dependency file github action open jscharts package json path to vulnerab... | 0 |

287,899 | 24,872,440,430 | IssuesEvent | 2022-10-27 16:11:26 | datafuselabs/databend | https://api.github.com/repos/datafuselabs/databend | closed | Track: Sql logic test experience improvement | C-testing | ### Summary

This is a tracking issue for sql logic experience, anything about logic test can put here.

### tasks

- [x] https://github.com/datafuselabs/databend/issues/6696

- [x] https://github.com/datafuselabs/databend/issues/6694

- [x] https://github.com/datafuselabs/databend/issues/6697

- [x] https://g... | 1.0 | Track: Sql logic test experience improvement - ### Summary

This is a tracking issue for sql logic experience, anything about logic test can put here.

### tasks

- [x] https://github.com/datafuselabs/databend/issues/6696

- [x] https://github.com/datafuselabs/databend/issues/6694

- [x] https://github.com/dat... | non_process | track sql logic test experience improvement summary this is a tracking issue for sql logic experience anything about logic test can put here tasks | 0 |

387,010 | 11,454,628,879 | IssuesEvent | 2020-02-06 17:25:58 | Activiti/Activiti | https://api.github.com/repos/Activiti/Activiti | closed | [Core] Versioning Support Release concept by Deploying a set of related artefacts | core priority1 release-notes-required risk | When a new version of the process definition is deployed a new record is put in the database. Process instances for old and new versions can be live in parallel.

But we also have json files that define the variables expected for the connectors in the process definition. Could a breaking change be made to one of thes... | 1.0 | [Core] Versioning Support Release concept by Deploying a set of related artefacts - When a new version of the process definition is deployed a new record is put in the database. Process instances for old and new versions can be live in parallel.

But we also have json files that define the variables expected for the ... | non_process | versioning support release concept by deploying a set of related artefacts when a new version of the process definition is deployed a new record is put in the database process instances for old and new versions can be live in parallel but we also have json files that define the variables expected for the conne... | 0 |

406,531 | 27,570,081,467 | IssuesEvent | 2023-03-08 08:34:06 | GenericMappingTools/pygmt | https://api.github.com/repos/GenericMappingTools/pygmt | closed | Add a gallery example for the Figure.timestamp method | documentation | The `Figure.timestamp()` method was added in https://github.com/GenericMappingTools/pygmt/pull/2208, and a gallery example is needed (xref https://github.com/GenericMappingTools/pygmt/pull/2208#issuecomment-1451522619). | 1.0 | Add a gallery example for the Figure.timestamp method - The `Figure.timestamp()` method was added in https://github.com/GenericMappingTools/pygmt/pull/2208, and a gallery example is needed (xref https://github.com/GenericMappingTools/pygmt/pull/2208#issuecomment-1451522619). | non_process | add a gallery example for the figure timestamp method the figure timestamp method was added in and a gallery example is needed xref | 0 |

115,721 | 24,803,497,662 | IssuesEvent | 2022-10-25 00:55:34 | alefragnani/vscode-bookmarks | https://api.github.com/repos/alefragnani/vscode-bookmarks | closed | [BUG] - Repeated gutter icon on line wrap | bug caused by vscode | This doesn't seem to be intended, at least I can't see the point of having that many bookmark icons in the gutter.

<!-- Please search existing issues to avoid creating duplicates. -->

<!-- Use Help > Report Issue to prefill some of these. -->

**Environment/version**

- Extension version: v13.3.1

- VSCode vers... | 1.0 | [BUG] - Repeated gutter icon on line wrap - This doesn't seem to be intended, at least I can't see the point of having that many bookmark icons in the gutter.

<!-- Please search existing issues to avoid creating duplicates. -->

<!-- Use Help > Report Issue to prefill some of these. -->

**Environment/version**

... | non_process | repeated gutter icon on line wrap this doesn t seem to be intended at least i can t see the point of having that many bookmark icons in the gutter report issue to prefill some of these environment version extension version vscode version os version macos monterey ... | 0 |

16,845 | 22,096,552,001 | IssuesEvent | 2022-06-01 10:35:56 | camunda/zeebe | https://api.github.com/repos/camunda/zeebe | closed | IllegalStateException when writing decision evaluation event | kind/bug severity/mid area/reliability team/process-automation | **Describe the bug**

<!-- A clear and concise description of what the bug is. -->

When trying to write the decision evaluation event an `IllegalArgumentException` is thrown. This is because when searching for decision by decision requirements key multiple results with the same decision id are returned:

```java

... | 1.0 | IllegalStateException when writing decision evaluation event - **Describe the bug**

<!-- A clear and concise description of what the bug is. -->

When trying to write the decision evaluation event an `IllegalArgumentException` is thrown. This is because when searching for decision by decision requirements key multip... | process | illegalstateexception when writing decision evaluation event describe the bug when trying to write the decision evaluation event an illegalargumentexception is thrown this is because when searching for decision by decision requirements key multiple results with the same decision id are returned ja... | 1 |

243,628 | 26,286,969,558 | IssuesEvent | 2023-01-07 23:46:05 | MValle21/riposte | https://api.github.com/repos/MValle21/riposte | opened | CVE-2021-37137 (High) detected in netty-codec-4.1.49.Final.jar | security vulnerability | ## CVE-2021-37137 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>netty-codec-4.1.49.Final.jar</b></p></summary>

<p>Netty is an asynchronous event-driven network application framework ... | True | CVE-2021-37137 (High) detected in netty-codec-4.1.49.Final.jar - ## CVE-2021-37137 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>netty-codec-4.1.49.Final.jar</b></p></summary>

<p>Net... | non_process | cve high detected in netty codec final jar cve high severity vulnerability vulnerable library netty codec final jar netty is an asynchronous event driven network application framework for rapid development of maintainable high performance protocol servers and clients ... | 0 |

80,312 | 3,560,834,504 | IssuesEvent | 2016-01-23 10:39:10 | jadjoubran/laravel5-angular-material-starter | https://api.github.com/repos/jadjoubran/laravel5-angular-material-starter | closed | Update angular folder structure/naming & fix generators accordingly | enhancement Priority: High | - [x] `angular/app` should be renamed to `angular/app/pages/`

- [x] `angular/directives` should be renamed to `angular/app/components`

- [x] `.directive.js` should become `.component.js`

- [x] page_name`.page.html` (for page views)

- [x] finalize other breaking changes

- [x] update documentation

- [x] upgrade gui... | 1.0 | Update angular folder structure/naming & fix generators accordingly - - [x] `angular/app` should be renamed to `angular/app/pages/`

- [x] `angular/directives` should be renamed to `angular/app/components`

- [x] `.directive.js` should become `.component.js`

- [x] page_name`.page.html` (for page views)

- [x] finalize... | non_process | update angular folder structure naming fix generators accordingly angular app should be renamed to angular app pages angular directives should be renamed to angular app components directive js should become component js page name page html for page views finalize other bre... | 0 |

241,929 | 20,173,542,184 | IssuesEvent | 2022-02-10 12:36:57 | woocommerce/woocommerce-gutenberg-products-block | https://api.github.com/repos/woocommerce/woocommerce-gutenberg-products-block | closed | Critical flows: Merchant → Cart → Can add inner blocks | type: enhancement ◼️ block: cart category: tests | ### Note

Create e2e tests to verify that a merchant can add inner blocks.

### File

`tests/e2e/specs/merchant/cart-inner-blocks.test` | 1.0 | Critical flows: Merchant → Cart → Can add inner blocks - ### Note

Create e2e tests to verify that a merchant can add inner blocks.

### File

`tests/e2e/specs/merchant/cart-inner-blocks.test` | non_process | critical flows merchant → cart → can add inner blocks note create tests to verify that a merchant can add inner blocks file tests specs merchant cart inner blocks test | 0 |

103,607 | 16,602,936,223 | IssuesEvent | 2021-06-01 22:17:46 | gms-ws-sandbox/nibrs | https://api.github.com/repos/gms-ws-sandbox/nibrs | opened | CVE-2018-11040 (Medium) detected in spring-webmvc-4.3.11.RELEASE.jar, spring-web-4.3.11.RELEASE.jar | security vulnerability | ## CVE-2018-11040 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>spring-webmvc-4.3.11.RELEASE.jar</b>, <b>spring-web-4.3.11.RELEASE.jar</b></p></summary>

<p>

<details><summary><b>... | True | CVE-2018-11040 (Medium) detected in spring-webmvc-4.3.11.RELEASE.jar, spring-web-4.3.11.RELEASE.jar - ## CVE-2018-11040 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>spring-webmvc... | non_process | cve medium detected in spring webmvc release jar spring web release jar cve medium severity vulnerability vulnerable libraries spring webmvc release jar spring web release jar spring webmvc release jar spring web mvc library home page a href ... | 0 |

21,642 | 30,056,517,079 | IssuesEvent | 2023-06-28 07:15:40 | 0xPolygonMiden/miden-vm | https://api.github.com/repos/0xPolygonMiden/miden-vm | closed | Question: Why is Fmp (free memory pointer) required? | processor | The fmp takes up one column in the execution trace and has two associated vm operations. It looks like its used for generating memory addresses for procedure locals? I'm trying to understand why we need the fmp. Couldn't we just delegate calculation of the memory addresses for procedure locals to the compiler? This w... | 1.0 | Question: Why is Fmp (free memory pointer) required? - The fmp takes up one column in the execution trace and has two associated vm operations. It looks like its used for generating memory addresses for procedure locals? I'm trying to understand why we need the fmp. Couldn't we just delegate calculation of the memory ... | process | question why is fmp free memory pointer required the fmp takes up one column in the execution trace and has two associated vm operations it looks like its used for generating memory addresses for procedure locals i m trying to understand why we need the fmp couldn t we just delegate calculation of the memory ... | 1 |

36,399 | 2,799,326,196 | IssuesEvent | 2015-05-12 23:43:22 | wordpress-mobile/WordPress-Android | https://api.github.com/repos/wordpress-mobile/WordPress-Android | closed | Navigation Drawer: Notifications tap highlight shows over border | core notifications priority-low | When you tap on Notifications, the highlight shows over the border at the bottom of the header:

Sony Z3 compact, Android 4.4.4 | 1.0 | Navigation Drawer: Notifications tap highlight shows over border - When you tap on Notifications, the highlight shows over the border at the bottom of the header:

Sony Z3 co... | non_process | navigation drawer notifications tap highlight shows over border when you tap on notifications the highlight shows over the border at the bottom of the header sony compact android | 0 |

10,018 | 13,043,914,557 | IssuesEvent | 2020-07-29 03:02:15 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `Compress` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `Compress` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @breeswish

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rpn_expr... | 2.0 | UCP: Migrate scalar function `Compress` from TiDB -

## Description

Port the scalar function `Compress` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @breeswish

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv... | process | ucp migrate scalar function compress from tidb description port the scalar function compress from tidb to coprocessor score mentor s breeswish recommended skills rust programming learning materials already implemented expressions ported from tidb | 1 |

145,841 | 11,710,120,744 | IssuesEvent | 2020-03-08 22:47:37 | JuliaDocs/Documenter.jl | https://api.github.com/repos/JuliaDocs/Documenter.jl | closed | PDF/LaTeX test phase failing on tags | Type: Tests | https://travis-ci.org/JuliaDocs/Documenter.jl/builds/644089901

https://travis-ci.org/JuliaDocs/Documenter.jl/builds/638646797

...

https://travis-ci.org/JuliaDocs/Documenter.jl/builds/615449662

It blocks the documentation deployment phase, so that phase has to be restarted manually for tags as long as this is not ... | 1.0 | PDF/LaTeX test phase failing on tags - https://travis-ci.org/JuliaDocs/Documenter.jl/builds/644089901

https://travis-ci.org/JuliaDocs/Documenter.jl/builds/638646797

...

https://travis-ci.org/JuliaDocs/Documenter.jl/builds/615449662

It blocks the documentation deployment phase, so that phase has to be restarted ma... | non_process | pdf latex test phase failing on tags it blocks the documentation deployment phase so that phase has to be restarted manually for tags as long as this is not fixed | 0 |

17,732 | 23,641,606,097 | IssuesEvent | 2022-08-25 17:36:56 | apache/arrow-rs | https://api.github.com/repos/apache/arrow-rs | closed | Potential OOB Access in Unreleased Comparison Kernels | bug development-process | **Describe the bug**

<!--

A clear and concise description of what the bug is.

-->

https://github.com/apache/arrow-rs/pull/2533 introduced a potential OOB access as described [here](https://github.com/apache/arrow-rs/pull/2533#discussion_r953949061). This should be fixed prior to the next release

**To Reproduce... | 1.0 | Potential OOB Access in Unreleased Comparison Kernels - **Describe the bug**

<!--

A clear and concise description of what the bug is.

-->

https://github.com/apache/arrow-rs/pull/2533 introduced a potential OOB access as described [here](https://github.com/apache/arrow-rs/pull/2533#discussion_r953949061). This sho... | process | potential oob access in unreleased comparison kernels describe the bug a clear and concise description of what the bug is introduced a potential oob access as described this should be fixed prior to the next release to reproduce steps to reproduce the behavior expect... | 1 |

258,450 | 22,320,328,552 | IssuesEvent | 2022-06-14 05:28:30 | NebulaMC-GG/Support | https://api.github.com/repos/NebulaMC-GG/Support | closed | /evs and /ivs commands not working in spawn | bug needs testing spigot | Self-explanatory in the title. The commands work fine in the wilderness world, they just don't work in spawn. | 1.0 | /evs and /ivs commands not working in spawn - Self-explanatory in the title. The commands work fine in the wilderness world, they just don't work in spawn. | non_process | evs and ivs commands not working in spawn self explanatory in the title the commands work fine in the wilderness world they just don t work in spawn | 0 |

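18,365 | 24,492,474,476 | IssuesEvent | 2022-10-10 04:35:43 | f-lab-edu/MarketFlea | https://api.github.com/repos/f-lab-edu/MarketFlea | opened | Account withdrawal | in process | - [ ] After the password is entered and matched, withdraw the currently logged-in member

- [ ] Log out automatically when the withdrawal succeeds

- [ ] Return a notice message when the password does not match | 1.0 | Account withdrawal - - [ ] After the password is entered and matched, withdraw the currently logged-in member

- [ ] Log out automatically when the withdrawal succeeds

- [ ] Return a notice message when the password does not match | process | account withdrawal after the password is entered and matched withdraw the currently logged in member log out automatically when the withdrawal succeeds return a notice message when the password does not match | 1 |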

127,466 | 12,323,600,524 | IssuesEvent | 2020-05-13 12:27:10 | gatsbyjs/gatsby | https://api.github.com/repos/gatsbyjs/gatsby | closed | new pages dont update: Tutorial can't be followed | stale? status: needs reproduction type: bug type: documentation | <!--

Please fill out each section below, otherwise, your issue will be closed. This info allows Gatsby maintainers to diagnose (and fix!) your issue as quickly as possible.

Useful Links:

- Documentation: https://www.gatsbyjs.org/docs/

- How to File an Issue: https://www.gatsbyjs.org/contributing/how-to-fi... | 1.0 | new pages dont update: Tutorial can't be followed - <!--

Please fill out each section below, otherwise, your issue will be closed. This info allows Gatsby maintainers to diagnose (and fix!) your issue as quickly as possible.

Useful Links:

- Documentation: https://www.gatsbyjs.org/docs/

- How to File an Is... | non_process | new pages dont update tutorial can t be followed please fill out each section below otherwise your issue will be closed this info allows gatsby maintainers to diagnose and fix your issue as quickly as possible useful links documentation how to file an issue before opening a ... | 0 |

9,415 | 12,411,234,670 | IssuesEvent | 2020-05-22 08:11:00 | ESMValGroup/ESMValCore | https://api.github.com/repos/ESMValGroup/ESMValCore | closed | Feature request: Preprocessor to extract amplitude of cycles | enhancement preprocessor | We do not have a preprocessor yet which is able to extract the amplitude of cycles (annual cycle, diurnal cycle, etc.).

I will open a PR for that. | 1.0 | Feature request: Preprocessor to extract amplitude of cycles - We do not have a preprocessor yet which is able to extract the amplitude of cycles (annual cycle, diurnal cycle, etc.).

I will open a PR for that. | process | feature request preprocessor to extract amplitude of cycles we do not have a preprocessor yet which is able to extract the amplitude of cycles annual cycle diurnal cycle etc i will open a pr for that | 1 |

8,015 | 11,205,217,469 | IssuesEvent | 2020-01-05 12:45:13 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | QGIS 3.10: Broken clustering method selection in GRASS v.cluster processing algorithm? | Bug Feedback Processing | 1. QGIS DBSCAN works properly.

2. GRASS v.cluster methods do not.

3. When the user selects the Clustering method it would be helpful if the dialog entry displayed the entry. DBSCAN for example, currently it displays how many options are selected. ( 1 options selected). If the goal is to run all algorithms than pe... | 1.0 | QGIS 3.10: Broken clustering method selection in GRASS v.cluster processing algorithm? - 1. QGIS DBSCAN works properly.

2. GRASS v.cluster methods do not.

3. When the user selects the Clustering method it would be helpful if the dialog entry displayed the entry. DBSCAN for example, currently it displays how many ... | process | qgis broken clustering method selection in grass v cluster processing algorithm qgis dbscan works properly grass v cluster methods do not when the user selects the clustering method it would be helpful if the dialog entry displayed the entry dbscan for example currently it displays how many o... | 1 |

15,687 | 19,847,968,519 | IssuesEvent | 2022-01-21 09:04:29 | googleapis/java-eventarc-publishing | https://api.github.com/repos/googleapis/java-eventarc-publishing | opened | Your .repo-metadata.json file has a problem 🤒 | type: process repo-metadata: lint | You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* api_shortname 'eventarc-publishing' invalid in .repo-metadata.json

☝️ Once you address these problems, you can close this issue.

### Need help?

* [Schema definition](https://github.com/googleapis/repo-automation-bots/blob/main/packages/rep... | 1.0 | Your .repo-metadata.json file has a problem 🤒 - You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* api_shortname 'eventarc-publishing' invalid in .repo-metadata.json

☝️ Once you address these problems, you can close this issue.

### Need help?

* [Schema definition](https://github.com/googl... | process | your repo metadata json file has a problem 🤒 you have a problem with your repo metadata json file result of scan 📈 api shortname eventarc publishing invalid in repo metadata json ☝️ once you address these problems you can close this issue need help lists valid options for each field ... | 1 |

628,407 | 19,985,421,202 | IssuesEvent | 2022-01-30 15:36:04 | WasmEdge/WasmEdge | https://api.github.com/repos/WasmEdge/WasmEdge | closed | [Install] Add Linux aarch64 support for extensions | enhancement priority:medium platform:linux | The current installer does not attempt to install TF and image extensions on Linux aarch64 since those extensions were not available on that platform.

However, we have recently released those extensions on Linux aarch64. The installer should support that. | 1.0 | [Install] Add Linux aarch64 support for extensions - The current installer does not attempt to install TF and image extensions on Linux aarch64 since those extensions were not available on that platform.

However, we have recently released those extensions on Linux aarch64. The installer should support that. | non_process | add linux support for extensions the current installer does not attempt to install tf and image extensions on linux since those extensions were not available on that platform however we have recently released those extensions on linux the installer should support that | 0 |

92,157 | 26,597,845,895 | IssuesEvent | 2023-01-23 13:48:15 | appsmithorg/appsmith | https://api.github.com/repos/appsmithorg/appsmith | closed | [Bug]: The fields that cause errors in widgets do not get a red highlight without clicking on them | Bug Property Pane App Viewers Pod Low Production Needs Triaging UI Builders Pod All Widgets | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Description

We do not get to see the red highlight over the fields in the property pane that cause any errors in any widgets.

https://www.loom.com/share/a7e8ba9dd42545f1beecff592cacb8c0

### Steps To Reproduce

1. Go to any appli... | 1.0 | [Bug]: The fields that cause errors in widgets do not get a red highlight without clicking on them - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Description

We do not get to see the red highlight over the fields in the property pane that cause any errors in any widgets.

ht... | non_process | the fields that cause errors in widgets do not get a red highlight without clicking on them is there an existing issue for this i have searched the existing issues description we do not get to see the red highlight over the fields in the property pane that cause any errors in any widgets s... | 0 |

10,734 | 13,533,723,991 | IssuesEvent | 2020-09-16 03:46:33 | timberio/vector | https://api.github.com/repos/timberio/vector | opened | Process metrics - Remap tags | Epic domain: metrics domain: processing type: feature | Like logs, metrics can be messy and Vector should provide the necessary tools to clean metric data up. Vector currently offers very weak tools for this job (add, rename, and remove tags). This epic is focused on improving this area of Vector so that it at least has parity with other leading metrics collectors (Telegraf... | 1.0 | Process metrics - Remap tags - Like logs, metrics can be messy and Vector should provide the necessary tools to clean metric data up. Vector currently offers very weak tools for this job (add, rename, and remove tags). This epic is focused on improving this area of Vector so that it at least has parity with other leadi... | process | process metrics remap tags like logs metrics can be messy and vector should provide the necessary tools to clean metric data up vector currently offers very weak tools for this job add rename and remove tags this epic is focused on improving this area of vector so that it at least has parity with other leadi... | 1 |

18,832 | 24,736,505,684 | IssuesEvent | 2022-10-20 22:35:53 | varabyte/kotter | https://api.github.com/repos/varabyte/kotter | closed | Get code coverage to 90+% for all non-UI classes | good first issue process | Create a test executor and test terminal so we can verify behaviors in unit tests. | 1.0 | Get code coverage to 90+% for all non-UI classes - Create a test executor and test terminal so we can verify behaviors in unit tests. | process | get code coverage to for all non ui classes create a test executor and test terminal so we can verify behaviors in unit tests | 1 |

83,486 | 24,064,063,439 | IssuesEvent | 2022-09-17 08:03:45 | pyodide/pyodide | https://api.github.com/repos/pyodide/pyodide | closed | micropip import error | bug build | After building pyodide, I tried the following code :

```

pyodide.loadPackage("micropip").then(() => {

pyodide.runPythonAsync(`

import micropip

`);

});

```

```

pyodide.asm.js:14 Uncaught (in promise) PythonError: Traceback (most recent... | 1.0 | micropip import error - After building pyodide, I tried the following code :

```

pyodide.loadPackage("micropip").then(() => {

pyodide.runPythonAsync(`

import micropip

`);

});

```

```

pyodide.asm.js:14 Uncaught (in promise) PythonError... | non_process | micropip import error after building pyodide i tried the following code pyodide loadpackage micropip then pyodide runpythonasync import micropip pyodide asm js uncaught in promise pythonerror ... | 0 |

255,107 | 27,484,745,677 | IssuesEvent | 2023-03-04 01:14:40 | panasalap/linux-4.1.15 | https://api.github.com/repos/panasalap/linux-4.1.15 | opened | CVE-2020-29371 (Low) detected in linux-yocto-4.1v4.1.17 | security vulnerability | ## CVE-2020-29371 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yocto-4.1v4.1.17</b></p></summary>

<p>

<p>[no description]</p>

<p>Library home page: <a href=https://git.yoctopro... | True | CVE-2020-29371 (Low) detected in linux-yocto-4.1v4.1.17 - ## CVE-2020-29371 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yocto-4.1v4.1.17</b></p></summary>

<p>

<p>[no descripti... | non_process | cve low detected in linux yocto cve low severity vulnerability vulnerable library linux yocto library home page a href found in base branch master vulnerable source files fs romfs storage c fs romfs storage c fs romfs ... | 0 |

81 | 2,532,040,874 | IssuesEvent | 2015-01-23 13:11:23 | schoffelen/MOUS | https://api.github.com/repos/schoffelen/MOUS | reopened | do a new iteration of parcellation of mne-erf results (visual) | data processing meg | As discussed in the 2014 fall workshop, in order to pinpoint more the spatial location of the effects we should re-attempt the statistics based on a parcellation. For consistency, we will use the conte69 subparcellated Broadmann area atlas.

To do:

-revisit the parcellation step: this is an explicit step that needs... | 1.0 | do a new iteration of parcellation of mne-erf results (visual) - As discussed in the 2014 fall workshop, in order to pinpoint more the spatial location of the effects we should re-attempt the statistics based on a parcellation. For consistency, we will use the conte69 subparcellated Broadmann area atlas.

To do:

-r... | process | do a new iteration of parcellation of mne erf results visual as discussed in the fall workshop in order to pinpoint more the spatial location of the effects we should re attempt the statistics based on a parcellation for consistency we will use the subparcellated broadmann area atlas to do revisit th... | 1 |

1,920 | 3,427,813,527 | IssuesEvent | 2015-12-10 04:49:27 | ForNeVeR/kaiwa | https://api.github.com/repos/ForNeVeR/kaiwa | closed | Typescript integration | enhancement infrastructure | All the new code should be fully type checked by the TypeScript compiler. There are too many problems due to JavaScript's dynamic nature; it's time to overcome this. We'll need to find a way to integrate TypeScript compiler into Kaiwa build and serving process. | 1.0 | Typescript integration - All the new code should be fully type checked by the TypeScript compiler. There are too many problems due to JavaScript's dynamic nature; it's time to overcome this. We'll need to find a way to integrate TypeScript compiler into Kaiwa build and serving process. | non_process | typescript integration all the new code should be fully type checked by the typescript compiler there are too many problems due to javascript s dynamic nature it s time to overcome this we ll need to find a way to integrate typescript compiler into kaiwa build and serving process | 0 |

61,697 | 12,194,867,381 | IssuesEvent | 2020-04-29 16:26:41 | kwk/test-llvm-bz-import-5 | https://api.github.com/repos/kwk/test-llvm-bz-import-5 | closed | scheduler crash on CodeGen/Generic/add-with-overflow-128.ll when running on x86-32 | BZ-BUG-STATUS: RESOLVED BZ-RESOLUTION: FIXED dummy import from bugzilla libraries/Common Code Generator Code | This issue was imported from Bugzilla https://bugs.llvm.org/show_bug.cgi?id=8823. | 2.0 | scheduler crash on CodeGen/Generic/add-with-overflow-128.ll when running on x86-32 - This issue was imported from Bugzilla https://bugs.llvm.org/show_bug.cgi?id=8823. | non_process | scheduler crash on codegen generic add with overflow ll when running on this issue was imported from bugzilla | 0 |

10,801 | 13,609,287,696 | IssuesEvent | 2020-09-23 04:50:07 | googleapis/java-datacatalog | https://api.github.com/repos/googleapis/java-datacatalog | closed | Dependency Dashboard | api: datacatalog type: process | This issue contains a list of Renovate updates and their statuses.

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/com.google.cloud-google-cloud-datacatalog-1.x -->chore(deps): update dependency com.google.cloud:google-clo... | 1.0 | Dependency Dashboard - This issue contains a list of Renovate updates and their statuses.

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/com.google.cloud-google-cloud-datacatalog-1.x -->chore(deps): update dependency com.... | process | dependency dashboard this issue contains a list of renovate updates and their statuses open these updates have all been created already click a checkbox below to force a retry rebase of any chore deps update dependency com google cloud google cloud datacatalog to chore deps update depen... | 1 |

7,934 | 11,115,060,504 | IssuesEvent | 2019-12-18 09:58:42 | Open-EO/openeo-api | https://api.github.com/repos/Open-EO/openeo-api | closed | Process graph: Complex validation of array and objects that contain variables and other references | process graphs | This issue is a follow-up on #160 to keep track of issues we are facing with the new process graphs.

**1. Validation of array and objects that contain variables and other references**

An issue I am currently facing is that I can't easily validate arrays and objects that contain variables, references to results or... | 1.0 | Process graph: Complex validation of array and objects that contain variables and other references - This issue is a follow-up on #160 to keep track of issues we are facing with the new process graphs.

**1. Validation of array and objects that contain variables and other references**

An issue I am currently facin... | process | process graph complex validation of array and objects that contain variables and other references this issue is a follow up on to keep track of issues we are facing with the new process graphs validation of array and objects that contain variables and other references an issue i am currently facing ... | 1 |

229,807 | 25,378,458,991 | IssuesEvent | 2022-11-21 15:44:07 | elastic/integrations | https://api.github.com/repos/elastic/integrations | closed | AWS Security Hub #3589: SecurityHub Dashboard Overview and other dashboard improvements | Team:Security-External Integrations Integration:AWS | As we discussed in the demo call please consider adding the following resources to the AWS Security Hub overview dashboard:

- Findings by resource type

- Findings by Types

- Top 10 security hub findings

- Top 10 critical findings by severity

- Critical findings count comparison in last week

- Counts records by ... | True | AWS Security Hub #3589: SecurityHub Dashboard Overview and other dashboard improvements - As we discussed in the demo call please consider adding the following resources to the AWS Security Hub overview dashboard:

- Findings by resource type

- Findings by Types

- Top 10 security hub findings

- Top 10 critical fin... | non_process | aws security hub securityhub dashboard overview and other dashboard improvements as we discussed in the demo call please consider adding the following resources to the aws security hub overview dashboard findings by resource type findings by types top security hub findings top critical findings... | 0 |

54,756 | 6,402,926,497 | IssuesEvent | 2017-08-06 14:21:15 | pixelhumain/co2 | https://api.github.com/repos/pixelhumain/co2 | closed | Edit profile | to test | In social networks: updating the link to the github page does not work, it loops endlessly

In general information, the date of birth does not work. You can enter ... | 1.0 | Edit profile - In social networks: updating the link to the github page does not work, it loops endlessly

In general information, the date of birth does not work. ... | non_process | edit profile in social networks updating the link to the github page does not work it loops endlessly in general information the date of birth does not work you can enter one and save but when you reload the page it is different | 0 |

605,139 | 18,725,420,231 | IssuesEvent | 2021-11-03 15:49:39 | GoogleCloudPlatform/nodejs-getting-started | https://api.github.com/repos/GoogleCloudPlatform/nodejs-getting-started | closed | background: "after all" hook for "should get the correct response" failed | type: bug priority: p1 flakybot: issue flakybot: flaky | Note: #478 was also for this test, but it is locked

----

commit: 5bccb4d294a17e64b3f561644595b688713ac13c

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/d3b26d36-dd78-459e-a4e8-5f7e7843ebbe), [Sponge](http://sponge2/d3b26d36-dd78-459e-a4e8-5f7e7843ebbe)

status: failed

<details><summary>T... | 1.0 | background: "after all" hook for "should get the correct response" failed - Note: #478 was also for this test, but it is locked

----

commit: 5bccb4d294a17e64b3f561644595b688713ac13c

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/d3b26d36-dd78-459e-a4e8-5f7e7843ebbe), [Sponge](http://spon... | non_process | background after all hook for should get the correct response failed note was also for this test but it is locked commit buildurl status failed test output unauthenticated request had invalid authentication credentials expected oauth access token login cookie or other valid au... | 0 |

16,722 | 21,885,291,079 | IssuesEvent | 2022-05-19 17:59:48 | metabase/metabase | https://api.github.com/repos/metabase/metabase | opened | [Actions] Disallow executing `is_readwrite` Saved Questions from normal QP API endpoints | Querying/Processor Misc/API .Backend Actions/ | Part of #22542

Attempting to execute an `is_readwrite` query thru the usual readonly query endpoints such as `POST /api/card/:id/query` or the DashCard execution endpoint should result in an error. Readwrite queries should only be executable via the Actions pathway for the time being. | 1.0 | [Actions] Disallow executing `is_readwrite` Saved Questions from normal QP API endpoints - Part of #22542

Attempting to execute an `is_readwrite` query thru the usual readonly query endpoints such as `POST /api/card/:id/query` or the DashCard execution endpoint should result in an error. Readwrite queries should onl... | process | disallow executing is readwrite saved questions from normal qp api endpoints part of attempting to execute an is readwrite query thru the usual readonly query endpoints such as post api card id query or the dashcard execution endpoint should result in an error readwrite queries should only be executa... | 1 |

16,236 | 20,782,550,754 | IssuesEvent | 2022-03-16 15:58:42 | opensearch-project/data-prepper | https://api.github.com/repos/opensearch-project/data-prepper | closed | String Manipulation Processor | plugin - processor proposal | Data Prepper should have a Processor for string manipulation.

Some candidate operations:

* `uppercase` - Make a string all uppercase

* `lowercase` - Make a string all lowercase

* `trim` - Trim whitespace from the start and end of a string

* `split` - Split a string on a delimiter into an array of strings

* `j... | 1.0 | String Manipulation Processor - Data Prepper should have a Processor for string manipulation.

Some candidate operations:

* `uppercase` - Make a string all uppercase

* `lowercase` - Make a string all lowercase

* `trim` - Trim whitespace from the start and end of a string

* `split` - Split a string on a delimite... | process | string manipulation processor data prepper should have a processor for string manipulation some candidate operations uppercase make a string all uppercase lowercase make a string all lowercase trim trim whitespace from the start and end of a string split split a string on a delimite... | 1 |

19,755 | 2,622,166,781 | IssuesEvent | 2015-03-04 00:12:43 | byzhang/rapidjson | https://api.github.com/repos/byzhang/rapidjson | closed | demo request | auto-migrated Priority-Medium Type-Task | ```

Hi folks:

I am trying to use rapidjson to get two value pairs unknown from a server. I

mean sort like { "x":"y" } both x and y unknown (several of this pairs) and I

couldn't find a demo to do that in rapidjson. I need to get/print both values.

I think it would be great to have one around in the examples. All I ... | 1.0 | demo request - ```

Hi folks:

I am trying to use rapidjson to get two value pairs unknown from a server. I

mean sort like { "x":"y" } both x and y unknown (several of this pairs) and I

couldn't find a demo to do that in rapidjson. I need to get/print both values.

I think it would be great to have one around in the e... | non_process | demo request hi folks i am trying to use rapidjson to get two value pairs unknown from a server i mean sort like x y both x and y unknown several of this pairs and i couldn t find a demo to do that in rapidjson i need to get print both values i think it would be great to have one around in the e... | 0 |

193,469 | 6,885,179,046 | IssuesEvent | 2017-11-21 15:22:59 | tsgrp/OpenAnnotate | https://api.github.com/repos/tsgrp/OpenAnnotate | closed | Duplicate Replace-Text Lines Created on Document Load | High Priority Issue | When loading a document with replace-text annotations, duplicate svg elements are created on the document. So when you attempt to delete the annotation, another copy of the lines remains. This only occurs with text selects, and is most likely occurring due to the "groupedReply" relationship with the Caret.

GO:0044055 modulation by symbiont of host system process

has these descendants

GO:0044061 modulation by symbiont of host excretion | is_a

-- | --

GO:0044064 modulation by symbiont of host res... | 1.0 | MP: fix in pathogen host branch missing parent -

GO:0044055 modulation by symbiont of host system process

has these descendants

GO:0044061 modulation by symbiont of host excretion | is_a

-- | --

... | process | mp fix in pathogen host branch missing parent go modulation by symbiont of host system process has these descendants go modulation by symbiont of host excretion is a go modulation by symbiont of host respiratory system process is a go modulation by symbiont of host ne... | 1 |

9,629 | 8,057,429,625 | IssuesEvent | 2018-08-02 15:22:14 | privacyidea/privacyidea | https://api.github.com/repos/privacyidea/privacyidea | closed | Provide python-croniter in Ubuntu Repo | infrastructure and config | We need to provide python-croniter >= 0.3.8 at least in our ubuntu 14.04 repos.

| 1.0 | Provide python-croniter in Ubuntu Repo - We need to provide python-croniter >= 0.3.8 at least in our ubuntu 14.04 repos.

| non_process | provide python croniter in ubuntu repo we need to provide python croniter at least in our ubuntu repos | 0 |

16,581 | 21,625,440,220 | IssuesEvent | 2022-05-05 01:02:17 | googleapis/repo-automation-bots | https://api.github.com/repos/googleapis/repo-automation-bots | closed | owlbot: make OwlBot a required check for Python repositories | type: process bot: owl-bot | If OwlBot doesn't run on a pull request, it can result in a bad merge to the main branch, e.g., merging the staging directory.

OwlBot should be made a required check for languages that are using OwlBot. If OwlBot ever fails to run:

* we would be grateful if you'd notify the GitHub automation chat immediately _(if... | 1.0 | owlbot: make OwlBot a required check for Python repositories - If OwlBot doesn't run on a pull request, it can result in a bad merge to the main branch, e.g., merging the staging directory.

OwlBot should be made a required check for languages that are using OwlBot. If OwlBot ever fails to run:

* we would be grate... | process | owlbot make owlbot a required check for python repositories if owlbot doesn t run on a pull request it can result in a bad merge to the main branch e g merging the staging directory owlbot should be made a required check for languages that are using owlbot if owlbot ever fails to run we would be grate... | 1 |

436,696 | 12,551,719,992 | IssuesEvent | 2020-06-06 15:42:37 | googleapis/nodejs-web-risk | https://api.github.com/repos/googleapis/nodejs-web-risk | closed | Synthesis failed for nodejs-web-risk | api: webrisk autosynth failure priority: p1 type: bug | Hello! Autosynth couldn't regenerate nodejs-web-risk. :broken_heart:

Here's the output from running `synth.py`:

```

b'gleapis-16\nnothing to commit, working tree clean\n2020-06-05 04:43:33,489 synthtool [DEBUG] > Ensuring dependencies.\nDEBUG:synthtool:Ensuring dependencies.\n2020-06-05 04:43:33,494 synthtool [DEBUG]... | 1.0 | Synthesis failed for nodejs-web-risk - Hello! Autosynth couldn't regenerate nodejs-web-risk. :broken_heart:

Here's the output from running `synth.py`:

```

b'gleapis-16\nnothing to commit, working tree clean\n2020-06-05 04:43:33,489 synthtool [DEBUG] > Ensuring dependencies.\nDEBUG:synthtool:Ensuring dependencies.\n20... | non_process | synthesis failed for nodejs web risk hello autosynth couldn t regenerate nodejs web risk broken heart here s the output from running synth py b gleapis nnothing to commit working tree clean synthtool ensuring dependencies ndebug synthtool ensuring dependencies synthto... | 0 |

597,663 | 18,168,412,987 | IssuesEvent | 2021-09-27 17:00:16 | googleapis/google-cloud-go | https://api.github.com/repos/googleapis/google-cloud-go | closed | bigquery: TestIntegration_RemoveTimePartitioning failed | type: bug api: bigquery priority: p1 flakybot: issue | This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: 05fe61c5aa4860bdebbbe3e91a9afaba16aa6184

b... | 1.0 | bigquery: TestIntegration_RemoveTimePartitioning failed - This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting... | non_process | bigquery testintegration removetimepartitioning failed this test failed to configure my behavior see if i m commenting on this issue too often add the flakybot quiet label and i will stop commenting commit buildurl status failed test output integration test go googleapi er... | 0 |

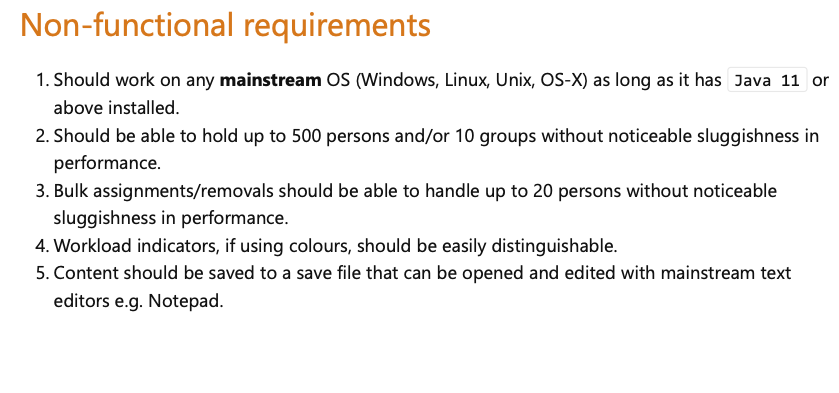

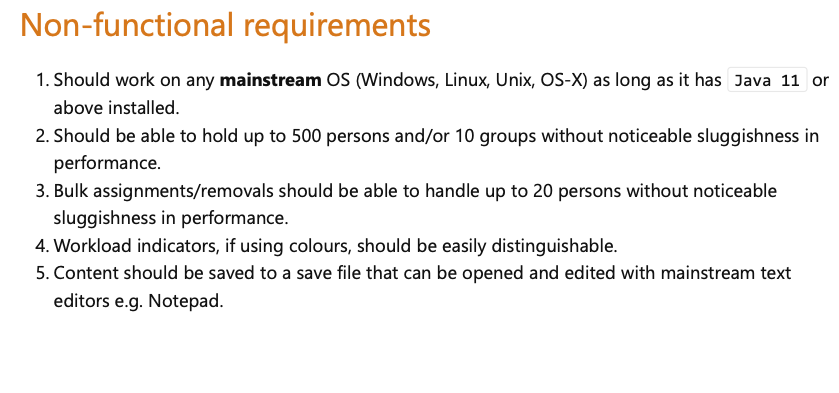

357,248 | 25,176,352,130 | IssuesEvent | 2022-11-11 09:36:21 | arnav-ag/pe | https://api.github.com/repos/arnav-ag/pe | opened | [DG] NFR Scope | severity.Low type.DocumentationBug | For point 4:

It is hard to know when it is achieved. How do we know if the workload indicators are easily distinguishable? On the first run of using the application, I did not notice the indicators, so do I... | 1.0 | [DG] NFR Scope - For point 4:

It is hard to know when it is achieved. How do we know if the workload indicators are easily distinguishable? On the first run of using the application, I did not notice the in... | non_process | nfr scope for point it is hard to know when it is achieved how do we know if the workload indicators are easily distinguishable on the first run of using the application i did not notice the indicators so do i consider that being indistinguishable | 0 |

75,829 | 26,082,594,755 | IssuesEvent | 2022-12-25 15:57:53 | vector-im/element-ios | https://api.github.com/repos/vector-im/element-ios | opened | Loudspeaker not automatically activated when giving a video call - Loudspeaker's icon not explicit | T-Defect | ### Steps to reproduce

Where are you starting? What can you see?

One of my relative uses an iphone 8 with Element 1.9.14

Problem 1. When she calls me by video, the loudspeaker is not automatically activated. Also the loudspeaker's icon is greyed (see screenshot), so the loudspeaker's status is not very clear as ... | 1.0 | Loudspeaker not automatically activated when giving a video call - Loudspeaker's icon not explicit - ### Steps to reproduce

Where are you starting? What can you see?

One of my relative uses an iphone 8 with Element 1.9.14

Problem 1. When she calls me by video, the loudspeaker is not automatically activated. Also... | non_process | loudspeaker not automatically activated when giving a video call loudspeaker s icon not explicit steps to reproduce where are you starting what can you see one of my relative uses an iphone with element problem when she calls me by video the loudspeaker is not automatically activated also ... | 0 |

9,491 | 12,484,206,101 | IssuesEvent | 2020-05-30 13:36:35 | underdocs/underdocs | https://api.github.com/repos/underdocs/underdocs | opened | Preprocessor Conditional as Entity | area: parser area: renderer epic: preprocessor type: epic :100: | ## Brief

Promote preprocessor conditionals to separate, documentable entities.

## Background

There are multiple reasons why this change would be beneficial:

* complex conditionals deserve their own documentation, so that clients would know how different values of macros would affect compilation,

* we w... | 1.0 | Preprocessor Conditional as Entity - ## Brief

Promote preprocessor conditionals to separate, documentable entities.

## Background

There are multiple reasons why this change would be beneficial:

* complex conditionals deserve their own documentation, so that clients would know how different values of macro... | process | preprocessor conditional as entity brief promote preprocessor conditionals to separate documentable entities background there are multiple reasons why this change would be beneficial complex conditionals deserve their own documentation so that clients would know how different values of macro... | 1 |

21,625 | 30,024,821,553 | IssuesEvent | 2023-06-27 04:48:33 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | pih 1.45 has 2 GuardDog issues | guarddog typosquatting silent-process-execution | https://pypi.org/project/pih

https://inspector.pypi.io/project/pih

```{

"dependency": "pih",

"version": "1.45",

"result": {

"issues": 2,

"errors": {},

"results": {

"typosquatting": "This package closely ressembles the following package names, and might be a typosquatting attempt: pip, pid",

... | 1.0 | pih 1.45 has 2 GuardDog issues - https://pypi.org/project/pih

https://inspector.pypi.io/project/pih

```{

"dependency": "pih",

"version": "1.45",

"result": {

"issues": 2,

"errors": {},

"results": {

"typosquatting": "This package closely ressembles the following package names, and might be a typos... | process | pih has guarddog issues dependency pih version result issues errors results typosquatting this package closely ressembles the following package names and might be a typosquatting attempt pip pid silent process execution ... | 1 |

139,953 | 20,986,804,777 | IssuesEvent | 2022-03-29 04:41:40 | batch-dart/batch.dart | https://api.github.com/repos/batch-dart/batch.dart | opened | Support logging features in parallel processing | Feedback: improvement Type: design | <!-- When reporting improvement, please read this complete template and fill all the questions in order to get a better response -->

# 1. What could be improved

<!-- What part of the code/functionality could be improved? -->

Support logging features in parallel processing.

# 2. Why should this be improved

... | 1.0 | Support logging features in parallel processing - <!-- When reporting improvement, please read this complete template and fill all the questions in order to get a better response -->

# 1. What could be improved

<!-- What part of the code/functionality could be improved? -->

Support logging features in parallel... | non_process | support logging features in parallel processing what could be improved support logging features in parallel processing why should this be improved design a process that enables logging during parallel processing since logger instances created by the main thread cannot be shared dur... | 0 |

612,090 | 18,990,616,869 | IssuesEvent | 2021-11-22 06:39:35 | rafinkanisa/ngm-reportDesk | https://api.github.com/repos/rafinkanisa/ngm-reportDesk | closed | RH AF Health Updates Activity Name | priority | As requested by the Health cluster

Kindly update the following activity into a new name

Risk Community and Community Engagement

to :

Risk Communication and Community Engagement

Required todo :

Update the database | 1.0 | RH AF Health Updates Activity Name - As requested by the Health cluster

Kindly update the following activity into a new name

Risk Community and Community Engagement

to :

Risk Communication and Community Engagement

Required todo :

Update the database | non_process | rh af health updates activity name as requested by the health cluster kindly update the following activity into a new name risk community and community engagement to risk communication and community engagement required todo update the database | 0 |

5,378 | 5,672,304,296 | IssuesEvent | 2017-04-12 00:50:00 | LiskHQ/lisk | https://api.github.com/repos/LiskHQ/lisk | closed | SQL performance & flexibility | enhancement performance security | - [x] SQL performance & flexibility

- [x] Review queries and test their performance, find slow queries/views

- [x] Improve performance for existing queries/views (if needed)

- [x] Review data types performance & consistency

From: #449 | True | SQL performance & flexibility - - [x] SQL performance & flexibility

- [x] Review queries and test their performance, find slow queries/views

- [x] Improve performance for existing queries/views (if needed)

- [x] Review data types performance & consistency

From: #449 | non_process | sql performance flexibility sql performance flexibility review queries and test their performance find slow queries views improve performance for existing queries view if needed review data types performance consistency from | 0 |

345,491 | 24,862,202,710 | IssuesEvent | 2022-10-27 09:08:23 | woocommerce/woocommerce-blocks | https://api.github.com/repos/woocommerce/woocommerce-blocks | opened | Document how to use @woocommerce/shared-hocs | type: documentation type: cooldown | In #7454, @Tropicalista asked how to use `@woocommerce/shared-hocs` as this was not clear in our docs. This issue aims to improve our docs by explaining how to use `@woocommerce/shared-hocs` and showing an example, e.g. https://github.com/woocommerce/woocommerce-blocks/issues/7454#issuecomment-1289519939. | 1.0 | Document how to use @woocommerce/shared-hocs - In #7454, @Tropicalista asked how to use `@woocommerce/shared-hocs` as this was not clear in our docs. This issue aims to improve our docs by explaining how to use `@woocommerce/shared-hocs` and showing an example, e.g. https://github.com/woocommerce/woocommerce-blocks/iss... | non_process | document how to use woocommerce shared hocs in tropicalista asked how to use woocommerce shared hocs as this was not clear in our docs this issue aims to improve our docs by explaining how to use woocommerce shared hocs and showing an example e g | 0 |

661,707 | 22,066,191,533 | IssuesEvent | 2022-05-31 03:41:01 | status-im/status-desktop | https://api.github.com/repos/status-im/status-desktop | opened | The date is shown when trying to copy Profile URL on the Profile | bug priority 3: low Settings | # Bug Report

## Description

<!-- Provide a short description describing the problem you are experiencing. -->

## Steps to reproduce

1. Open a public chat and click on any name of the members

2. Click 'View Profile' - click on the icon to copy the Profile URL

#### Expected behavior

The date is not shown w... | 1.0 | The date is shown when trying to copy Profile URL on the Profile - # Bug Report

## Description

<!-- Provide a short description describing the problem you are experiencing. -->

## Steps to reproduce

1. Open a public chat and click on any name of the members

2. Click 'View Profile' - click on the icon to copy... | non_process | the date is shown when trying to copy profile url on the profile bug report description steps to reproduce open a public chat and click on any name of the members click view profile click on the icon to copy the profile url expected behavior the date is not shown while using on... | 0 |

10,058 | 13,044,161,774 | IssuesEvent | 2020-07-29 03:47:26 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `AddDateDatetimeString` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `AddDateDatetimeString` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @breeswish

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query... | 2.0 | UCP: Migrate scalar function `AddDateDatetimeString` from TiDB -

## Description

Port the scalar function `AddDateDatetimeString` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @breeswish

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

... | process | ucp migrate scalar function adddatedatetimestring from tidb description port the scalar function adddatedatetimestring from tidb to coprocessor score mentor s breeswish recommended skills rust programming learning materials already implemented expressions ported from tidb ... | 1 |

206,312 | 23,374,924,098 | IssuesEvent | 2022-08-11 01:08:14 | Galaxy-Software-Service/WebGoat | https://api.github.com/repos/Galaxy-Software-Service/WebGoat | opened | CVE-2022-31160 (Medium) detected in jquery-ui-1.12.1.min.js | security vulnerability | ## CVE-2022-31160 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-ui-1.12.1.min.js</b></p></summary>

<p>A curated set of user interface interactions, effects, widgets, and the... | True | CVE-2022-31160 (Medium) detected in jquery-ui-1.12.1.min.js - ## CVE-2022-31160 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-ui-1.12.1.min.js</b></p></summary>

<p>A curated... | non_process | cve medium detected in jquery ui min js cve medium severity vulnerability vulnerable library jquery ui min js a curated set of user interface interactions effects widgets and themes built on top of the jquery javascript library library home page a href path to vulner... | 0 |

218,298 | 16,758,603,678 | IssuesEvent | 2021-06-13 10:12:54 | bounswe/2021SpringGroup9 | https://api.github.com/repos/bounswe/2021SpringGroup9 | opened | Write Evaluation of Tools and Processes for Milestone Report-2 | difficulty: medium documentation practice-app priority: critical | I will write the evaluation for some tools and processes. @mecolak will also work on evaluations for other tools: #189

My tools are:

- [ ] AWS

- [ ] Docker

- [ ] Git

- [ ] GitHub

- [ ] VSCode | 1.0 | Write Evaluation of Tools and Processes for Milestone Report-2 - I will write the evaluation for some tools and processes. @mecolak will also work on evaluations for other tools: #189

My tools are:

- [ ] AWS

- [ ] Docker

- [ ] Git

- [ ] GitHub

- [ ] VSCode | non_process | write evaluation of tools and processes for milestone report i will write the evaluation for some tools and processes mecolak will also work on evaluations for other tools my tools are aws docker git github vscode | 0 |

19,668 | 26,027,875,141 | IssuesEvent | 2022-12-21 17:59:59 | hashgraph/hedera-mirror-node | https://api.github.com/repos/hashgraph/hedera-mirror-node | closed | Automated release workflow fails at step Install JDK | bug regression process | ### Description

The automated release, which works with the deprecated Maven build system, fails at step `Install JDK`:

```

Run actions/setup-java@v3

Installed distributions

Creating settings.xml with server-id: github

Writing to /home/runner/.m2/settings.xml

Error: No file in /home/runner/work/hedera-mirror-nod... | 1.0 | Automated release workflow fails at step Install JDK - ### Description

The automated release, which works with the deprecated Maven build system, fails at step `Install JDK`:

```

Run actions/setup-java@v3

Installed distributions

Creating settings.xml with server-id: github

Writing to /home/runner/.m2/settings.xml... | process | automated release workflow fails at step install jdk description the automated release which works with the deprecated maven build system fails at step install sdk run actions setup java installed distributions creating settings xml with server id github writing to home runner settings xml ... | 1 |

21,929 | 30,446,559,208 | IssuesEvent | 2023-07-15 18:48:30 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | pyutils 0.0.1b10 has 2 GuardDog issues | guarddog typosquatting silent-process-execution | https://pypi.org/project/pyutils

https://inspector.pypi.io/project/pyutils

```{

"dependency": "pyutils",

"version": "0.0.1b10",

"result": {

"issues": 2,

"errors": {},

"results": {

"typosquatting": "This package closely ressembles the following package names, and might be a typosquatting attempt:... | 1.0 | pyutils 0.0.1b10 has 2 GuardDog issues - https://pypi.org/project/pyutils

https://inspector.pypi.io/project/pyutils

```{

"dependency": "pyutils",

"version": "0.0.1b10",

"result": {

"issues": 2,

"errors": {},

"results": {

"typosquatting": "This package closely ressembles the following package nam... | process | pyutils has guarddog issues dependency pyutils version result issues errors results typosquatting this package closely ressembles the following package names and might be a typosquatting attempt pytils python utils silen... | 1 |

89 | 2,534,632,073 | IssuesEvent | 2015-01-25 05:23:23 | rg3/youtube-dl | https://api.github.com/repos/rg3/youtube-dl | closed | Write a wiki page on how to use axel | postprocessors request | Or another external downloader.

We should even implement bindings for some common external downloaders... Like "have axel or curl or wget in your path and use this option and we will call 'em" | 1.0 | Write a wiki page on how to use axel - Or another external downloader.