Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

823,948 | 31,074,738,891 | IssuesEvent | 2023-08-12 10:39:43 | ArkScript-lang/Ark | https://api.github.com/repos/ArkScript-lang/Ark | opened | Consider a smaller stack size | enhancement virtual machine compiler optimization priority/medium | ## The problem

Creating a VM requires creating an `ExecutionContext`, which holds a few important values, such as the stack for the VM, defined as a `std::array<Value, VmStackSize>`. As of today, `sizeof(Value)` returns 40B and the stack size is set to 8192; creating a VM thus requires 327kB.

## Some tidbits abo... | 1.0 | Consider a smaller stack size - ## The problem

Creating a VM requires creating an `ExecutionContext`, that holds a few important values, such as the stack for the VM, defined as a `std::array<Value, VmStackSize>`. As of today, `sizeof(Value)` returns 40B, and the stack size is set to 8192 ; creating a VM thus requir... | non_process | consider a smaller stack size the problem creating a vm requires creating an executioncontext that holds a few important values such as the stack for the vm defined as a std array as of today sizeof value returns and the stack size is set to creating a vm thus requires some tidbits... | 0 |

127,000 | 12,303,858,978 | IssuesEvent | 2020-05-11 19:27:05 | 7h3Rabbit/StaticWebEpiserverPlugin | https://api.github.com/repos/7h3Rabbit/StaticWebEpiserverPlugin | closed | Showcasing usage of Events | documentation enhancement | We need more examples of our functionality, which is why we need to create a project showcasing how our events can be used.

For this we want to create the following:

- For every <link> element including stylesheet by pages, make them inline in markup for every page.

- Don't include CSS rules that we know would not ... | 1.0 | Showcasing usage of Events - We need more examples on our functionality, this is why we need to create a project showcasing how our events can be used.

For this we want to create the following:

- For every <link> element including stylesheet by pages, make them inline in markup for every page.

- Don't include CSS ... | non_process | showcasing usage of events we need more examples on our functionality this is why we need to create a project showcasing how our events can be used for this we want to create the following for every element including stylesheet by pages make them inline in markup for every page don t include css rules... | 0 |

20,154 | 26,702,976,265 | IssuesEvent | 2023-01-27 15:47:30 | bazelbuild/bazel-skylib | https://api.github.com/repos/bazelbuild/bazel-skylib | closed | Add gazelle plugin to BCR | P2 type: process | With #400 the gazelle plugin has now been added to the distribution, and it should be possible to ship it to BCR. | 1.0 | Add gazelle plugin to BCR - With #400 the gazelle plugin has now been added to the distribution, and it should be possible to ship it to BCR. | process | add gazelle plugin to bcr with the gazelle plugin has now been added to the distribution and it should be possible to ship it to bcr | 1 |

4,140 | 7,094,787,141 | IssuesEvent | 2018-01-13 08:34:41 | triplea-game/triplea | https://api.github.com/repos/triplea-game/triplea | closed | Server Ops - documentation and DR checklists - let's build an index of ops docs! | category: admin task category: dev & admin process | An opportunity to review ops. For everything we run: lobby, bots, forum, github.io website, dice server, warclub website and forum; do we have:

### documentation:

- how to install

- how to check if running

- how to restart

### Disaster Recovery

- are we taking data backups?

- are data backups replicated to a... | 1.0 | Server Ops - documentation and DR checklists - let's build an index of ops docs! - An opportunity to review ops. For everything we run: lobby, bots, forum, github.io website, dice server, warclub website and forum; do we have:

### documentation:

- how to install

- how to check if running

- how to restart

### D... | process | server ops documentation and dr checklists let s build an index of ops docs an opportunity to review ops for everything we run lobby bots forum github io website dice server warclub website and forum do we have documentation how to install how to check if running how to restart d... | 1 |

10,073 | 13,044,161,929 | IssuesEvent | 2020-07-29 03:47:27 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `TimestampAdd` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `TimestampAdd` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @mapleFU

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rpn_ex... | 2.0 | UCP: Migrate scalar function `TimestampAdd` from TiDB -

## Description

Port the scalar function `TimestampAdd` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @mapleFU

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.co... | process | ucp migrate scalar function timestampadd from tidb description port the scalar function timestampadd from tidb to coprocessor score mentor s maplefu recommended skills rust programming learning materials already implemented expressions ported from tidb | 1 |

8,657 | 11,796,880,601 | IssuesEvent | 2020-03-18 11:38:32 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Custom Mapping causes "No expression named ___" error when used in table view with joined fields | Administration/Data Model Priority:P3 Querying/Processor Type:Bug | Your databases: default custom dataset (but also MySQL 5.0)

Metabase version: 0.30.1

Metabase hosting environment: windows, jar (but also CentOS 7, jar)

Metabase internal database: default (but also Postgres)

Custom Mapping throws an error when you use it together with visible fields from a joined table.

Using the... | 1.0 | Custom Mapping causes "No expression named ___" error when used in table view with joined fields - Your databases: default custom dataset (but also MySQL 5.0)

Metabase version: 0.30.1

Metabase hosting environment: windows, jar (but also CentOS 7, jar)

Metabase internal database: default (but also Postgres)

Custom... | process | custom mapping causes no expression named error when used in table view with joined fields your databases default custom dataset but also mysql metabase version metabase hosting environment windows jar but also centos jar metabase internal database default but also postgres custom ... | 1 |

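15,036 | 18,757,061,463 | IssuesEvent | 2021-11-05 12:13:37 | tndd/alpaca_v2 | https://api.github.com/repos/tndd/alpaca_v2 | closed | Transform the bars table for decision tree analysis | data_processing | # Overview

It does not seem appropriate to pass raw values such as "high" and "low" directly as explanatory variables for the decision tree.

Rather, what matters is how much the "high" and "low" moved **relative** to the open.

For "volume", the absolute value is fine.

# Table definition

## Explanatory variables

Column name | Type | Description

-- | -- | --

high_bp | float | Rise from open to high, in basis points

low_bp | float | Drop from open to low, in basis points

close_bp | float | Change from open to close, in basis points

volume | int | Trading volume

## Target variable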

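Column... | 1.0 | Transform the bars table for decision tree analysis - # Overview

It does not seem appropriate to pass raw values such as "high" and "low" directly as explanatory variables for the decision tree.

Rather, what matters is how much the "high" and "low" moved **relative** to the open.

For "volume", the absolute value is fine.

# Table definition

## Explanatory variables

Column name | Type | Description

-- | -- | --

high_bp | float | Rise from open to high, in basis points

low_bp | float | Drop from open to low, in basis points

close_bp | float | Change from open to close, in basis points

volume | in... | process | transform the bars table for decision tree analysis overview it does not seem appropriate to pass raw values such as high and low directly as explanatory variables for the decision tree rather what matters is how much the high and low moved relative to the open for volume the absolute value is fine table definition explanatory variables column name type description high bp float rise from open to high in basis points low bp float drop from open to low in basis points close bp float change from open to close in basis points volume in... | 1 |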

8,452 | 11,624,081,807 | IssuesEvent | 2020-02-27 10:07:26 | atilaneves/dpp | https://api.github.com/repos/atilaneves/dpp | closed | avro: /macro_.d(396): Range violation | bug preprocessor | I have this dockerfile

``` dockerfile

FROM dlang2/ldc-ubuntu:1.19.0 as ldc

RUN apt-get install -y unzip cmake curl clang-9 libclang-9-dev libavro-dev

RUN ln -s /usr/bin/clang-9 /usr/bin/clang

COPY avro.dpp /tmp/

RUN DFLAGS="-L=-L/usr/lib/llvm-9/lib/" dub run dpp -- /tmp/avro.dpp \

--include-path /usr... | 1.0 | avro: /macro_.d(396): Range violation - I have this dockerfile

``` dockerfile

FROM dlang2/ldc-ubuntu:1.19.0 as ldc

RUN apt-get install -y unzip cmake curl clang-9 libclang-9-dev libavro-dev

RUN ln -s /usr/bin/clang-9 /usr/bin/clang

COPY avro.dpp /tmp/

RUN DFLAGS="-L=-L/usr/lib/llvm-9/lib/" dub run dpp -- ... | process | avro macro d range violation i have this dockerfile dockerfile from ldc ubuntu as ldc run apt get install y unzip cmake curl clang libclang dev libavro dev run ln s usr bin clang usr bin clang copy avro dpp tmp run dflags l l usr lib llvm lib dub run dpp tmp avr... | 1 |

3,429 | 6,529,630,524 | IssuesEvent | 2017-08-30 12:28:14 | w3c/w3process | https://api.github.com/repos/w3c/w3process | closed | Proposed changes to TAG makeup | Process2018Candidate | A proposed set of changes to the makeup of the TAG, as part of #4

* Be explicit that the chair(s) does not need to be a member of the TAG

* Suggest that the Director nominate appointees **after** the TAG election, rather than before

* Add one elected member to the TAG

This suggestion will be reviewed by the TA... | 1.0 | Proposed changes to TAG makeup - A proposed set of changes to the makeup of the TAG, as part of #4

* Be explicit that the chair(s) does not need to be a member of the TAG

* Suggest that the Director nominate appointees **after** the TAG election, rather than before

* Add one elected member to the TAG

This sugg... | process | proposed changes to tag makeup a proposed set of changes to the makeup of the tag as part of be explicit that the chair s does not need to be a member of the tag suggest that the director nominate appointees after the tag election rather than before add one elected member to the tag this sugg... | 1 |

129,716 | 18,109,436,881 | IssuesEvent | 2021-09-23 00:21:26 | Tim-Demo/JS-Demo | https://api.github.com/repos/Tim-Demo/JS-Demo | closed | CVE-2016-1000232 (Medium) detected in tough-cookie-2.2.2.tgz - autoclosed | security vulnerability | ## CVE-2016-1000232 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tough-cookie-2.2.2.tgz</b></p></summary>

<p>RFC6265 Cookies and Cookie Jar for node.js</p>

<p>Library home page: <... | True | CVE-2016-1000232 (Medium) detected in tough-cookie-2.2.2.tgz - autoclosed - ## CVE-2016-1000232 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tough-cookie-2.2.2.tgz</b></p></summary... | non_process | cve medium detected in tough cookie tgz autoclosed cve medium severity vulnerability vulnerable library tough cookie tgz cookies and cookie jar for node js library home page a href path to dependency file js demo package json path to vulnerable library js demo no... | 0 |

13,096 | 15,444,785,917 | IssuesEvent | 2021-03-08 10:52:04 | Ultimate-Hosts-Blacklist/whitelist | https://api.github.com/repos/Ultimate-Hosts-Blacklist/whitelist | closed | [FALSE-POSITIVE?] verizon.com | whitelisting process | It's an American multinational telecommunications conglomerate. There is no reason to block this. | 1.0 | [FALSE-POSITIVE?] verizon.com - It's an American multinational telecommunications conglomerate. There is no reason to block this. | process | verizon com it s an american multinational telecommunications conglomerate there is no reason to block this | 1 |

2,895 | 5,877,561,252 | IssuesEvent | 2017-05-16 00:17:36 | inasafe/inasafe-realtime | https://api.github.com/repos/inasafe/inasafe-realtime | closed | Realtime EQ contour smoothing | bug enhancement feature request realtime processor | problem

InaSAFE EQ realtime needs to have its contours smoothed for display. However, the number of people exposed to different shaking levels should be estimated from the raw (not smoothed) data.

proposed solution

For smoothing, Hadi used spatial convolution of intensity matrix with a smoothing kernel to filt... | 1.0 | Realtime EQ contour smoothing - problem

InaSAFE EQ realtime needs to have its contours smoothed for display. However, the number of people exposed to different shaking levels should be estimated from the raw (not smoothed) data.

proposed solution

For smoothing, Hadi used spatial convolution of intensity matrix... | process | realtime eq contour smoothing problem inasafe eq realtime needs to have its contours smoothed for display however the number of people exposed to different shaking levels should be estimated from the raw not smoothed data proposed solution for smoothing hadi used spatial convolution of intensity matrix... | 1 |

22,515 | 11,642,500,565 | IssuesEvent | 2020-02-29 07:31:20 | JuliaReach/LazySets.jl | https://api.github.com/repos/JuliaReach/LazySets.jl | closed | Special case concrete Minkowski sum for intervals | performance | Currently, the concrete minkowski sum between a pair of intervals falls back to the [AbstractHyperrectangle](https://github.com/JuliaReach/LazySets.jl/blob/a4c2db2cb07f7b66f441074303cbfdba4bc276e8/src/ConcreteOperations/minkowski_sum.jl#L216). It should rather use the concrete `+`. | True | Special case concrete Minkowski sum for intervals - Currently, the concrete minkowski sum between a pair of intervals falls back to the [AbstractHyperrectangle](https://github.com/JuliaReach/LazySets.jl/blob/a4c2db2cb07f7b66f441074303cbfdba4bc276e8/src/ConcreteOperations/minkowski_sum.jl#L216). It should rather use the... | non_process | special case concrete minkowski sum for intervals currently the concrete minkowski sum between a pair of intervals falls back to the it should rather use the concrete | 0 |

669 | 3,143,373,074 | IssuesEvent | 2015-09-14 06:18:10 | e-government-ua/i | https://api.github.com/repos/e-government-ua/i | closed | Documents downloaded on the main portal are always empty | bug In process of testing test | Steps to reproduce:

1. Create a text (.txt) file on your computer

2. In the "Документи" (Documents) section, upload the newly created file

3. Download the document you just uploaded

Expected result: the document is downloaded and its content is correct

Actual result: the document is empty, the document size is 0 b... | 1.0 | Documents downloaded on the main portal are always empty - Steps to reproduce:

1. Create a text (.txt) file on your computer

2. In the "Документи" (Documents) section, upload the newly created file

3. Download the document you just uploaded

Expected result: the document is downloaded and its content is correct

Actual... | process | documents downloaded on the main portal are always empty steps to reproduce create a text txt file on your computer in the документи section upload the newly created file download the document you just uploaded expected result the document is downloaded and its content is correct actual... | 1 |

11,658 | 14,522,416,667 | IssuesEvent | 2020-12-14 08:47:30 | plazi/arcadia-project | https://api.github.com/repos/plazi/arcadia-project | opened | treatment metadata: zookeys example: error in scientific name authorship, link to article deposit in BLR | processing input quality control treatment@zenodo | @teodorgeorgiev I just looked at this treatment https://doi.org/10.5281/zenodo.4056468 in this article https://zenodo.org/record/3555661 with 136 treatments

1. The article deposit in BLR does not list the treatments in the metadata

2. The article BLR deposit is not listed in the treatment deposits

3. The figur... | 1.0 | treatment metadata: zookeys example: error in scientific name authorship, link to article deposit in BLR - @teodorgeorgiev I just looked at this treatment https://doi.org/10.5281/zenodo.4056468 in this article https://zenodo.org/record/3555661 with 136 treatments

1. The article deposit in BLR does not list the t... | process | treatment metadata zookeys example error in scientific name authorship link to article deposit in blr teodorgeorgiev i just looked at this treatment in this article with treatments the article deposit in blr does not list the treatments in the metadata the article blr deposit is not listed in ... | 1 |

13,924 | 16,681,321,775 | IssuesEvent | 2021-06-08 00:24:57 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Allow cancelling gdal algorithms | Feature Request Processing | Author Name: **Lene Fischer** (@LeneFischer)

Original Redmine Issue: [20060](https://issues.qgis.org/issues/20060)

Redmine category:processing/core

---

Trying to clip a raster with a mask layer. When I decide to stop the process, the "Executing" indicator in the status bar does not respond to a click on the red cross

---

Related ... | 1.0 | Allow cancelling gdal algorithms - Author Name: **Lene Fischer** (@LeneFischer)

Original Redmine Issue: [20060](https://issues.qgis.org/issues/20060)

Redmine category:processing/core

---

Trying to clip a raster with a mask layer. Deciding to stop the process - but Executing in the statusbar does not respond to clik ... | process | allow cancelling gdal algorithms author name lene fischer lenefischer original redmine issue redmine category processing core trying to clip a raster with a mask layer deciding to stop the process but executing in the statusbar does not respond to clik at the red cross related issue s... | 1 |

17,161 | 22,719,045,154 | IssuesEvent | 2022-07-06 06:31:07 | gradle/gradle | https://api.github.com/repos/gradle/gradle | closed | Incremental annotation processing does not find dependent classes with `proc:only` | a:bug @execution affects-version:7.0 in:annotation-processing closed:invalid | When doing incremental annotation processing with `proc:only`, compilation may fail because of some missing classes.

For example when you have a class `MyImmutable` which depends on `SupportType`, and then you only change `MyImmutable`, then `SupportType` is not passed to the Java compiler by incremental annotation ... | 1.0 | Incremental annotation processing does not find dependent classes with `proc:only` - When doing incremental annotation processing with `proc:only`, compilation may fail because of some missing classes.

For example when you have a class `MyImmutable` which depends on `SupportType`, and then you only change `MyImmutab... | process | incremental annotation processing does not find dependent classes with proc only when doing incremental annotation processing with proc only compilation may fail because of some missing classes for example when you have a class myimmutable which depends on supporttype and then you only change myimmutab... | 1 |

56,125 | 13,759,157,808 | IssuesEvent | 2020-10-07 02:11:39 | tensorflow/tensorflow | https://api.github.com/repos/tensorflow/tensorflow | closed | ModuleNotFoundError: No module named 'tensorflow' | stalled stat:awaiting response subtype:windows type:build/install | -using anaconda python v3.8

-working with jupyter notebook

-tried running `conda install tensorflow` from the command prompt, but it installs an old version of TensorFlow and then gives me this error:

```

from tensorflow.keras.models import Sequential

from tensorflow.keras.layers import Dense

```

---------------------------... | 1.0 | ModuleNotFoundError: No module named 'tensorflow' - -using anaconda python v3.8

-working with jupyter notebook

-tried running `conda install tensorflow` from the command prompt, but it installs an old version of TensorFlow and then gives me this error:

```

from tensorflow.keras.models import Sequential

from tensorflow.keras.l... | non_process | modulenotfounderror no module named tensorflow using anaconda python working with jupyter notebook try to add on cmd system conda install tensorflow but loading old version tensorflow and than give to me this error code from tensorflow keras models import sequential from tensorflow keras la... | 0 |

36,200 | 14,949,406,432 | IssuesEvent | 2021-01-26 11:29:16 | terraform-providers/terraform-provider-azurerm | https://api.github.com/repos/terraform-providers/terraform-provider-azurerm | closed | Support for base64 output in azurerm_key_vault_certificate | enhancement good first issue service/keyvault | <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not l... | 1.0 | Support for base64 output in azurerm_key_vault_certificate - <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the communi... | non_process | support for output in azurerm key vault certificate community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request please do not leave or me too comments they generate extra noise for issue followers and do not... | 0 |

3,095 | 6,108,454,615 | IssuesEvent | 2017-06-21 10:32:57 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | System.Diagnostics.Tests.ProcessStartInfoTests.StartInfo_TextFile_ShellExecute [FAIL] | area-System.Diagnostics.Process bug | Found in https://ci.dot.net/job/dotnet_corefx/job/master/job/outerloop_netcoreapp_windows_nt_debug_prtest/122/consoleFull#-4760281082d31e50d-1517-49fc-92b3-2ca637122019

Ran part of #21239.

```

System.Diagnostics.Tests.ProcessStartInfoTests.StartInfo_TextFile_ShellExecute [FAIL]

15:48:45 Could not sta... | 1.0 | System.Diagnostics.Tests.ProcessStartInfoTests.StartInfo_TextFile_ShellExecute [FAIL] - Found in https://ci.dot.net/job/dotnet_corefx/job/master/job/outerloop_netcoreapp_windows_nt_debug_prtest/122/consoleFull#-4760281082d31e50d-1517-49fc-92b3-2ca637122019

Ran part of #21239.

```

System.Diagnostics.Tests.Proc... | process | system diagnostics tests processstartinfotests startinfo textfile shellexecute found in ran part of system diagnostics tests processstartinfotests startinfo textfile shellexecute could not start c users dotnet bot appdata local temp processstartinfotests startinfo textfile ... | 1 |

5,577 | 8,414,995,093 | IssuesEvent | 2018-10-13 09:42:04 | bitshares/bitshares-community-ui | https://api.github.com/repos/bitshares/bitshares-community-ui | closed | Signup component UI | Signup feature process ui | Use Login.vue for examples

- Should have two forms (password/private key) (see zeplin) with tabs switch

- Should be able to copy generated password from the input field to clipboard

- Should have validations: all fields are required, passwords/pins should match, account name shouldn't be used on bitshares

| 1.0 | Signup component UI - Use Login.vue for examples

- Should have two forms (password/private key) (see zeplin) with tabs switch

- Should be able to copy generated password from the input field to clipboard

- Should have validations: all fields are required, passwords/pins should match, account name shouldn't be used... | process | signup component ui use login vue for examples should have two forms password private key see zeplin with tabs switch should be able to copy generated password from the input field to clipboard should have validations all fields are required passwords pins should match account name shouldn t be used... | 1 |

12,106 | 14,740,400,716 | IssuesEvent | 2021-01-07 09:01:47 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Admin - Configure EMail to send Deactivation msg section label | anc-ui anp-2.5 ant-enhancement grt-ui processes | In GitLab by @kdjstudios on Nov 5, 2018, 07:40

Hello Team,

I believe over the last year the functionality of this section has expanded and the label of "Configure EMail to send Deactivation msg" no longer accurately represents it. If we could please simplify this label to be "Email Notifications" I feel that would be... | 1.0 | Admin - Configure EMail to send Deactivation msg section label - In GitLab by @kdjstudios on Nov 5, 2018, 07:40

Hello Team,

I believe over the last year the functionality of this section has expanded and the label of "Configure EMail to send Deactivation msg" no longer accurately represents it. If we could please sim... | process | admin configure email to send deactivation msg section label in gitlab by kdjstudios on nov hello team i believe over the last year the functionality of this section has expanded and the label of configure email to send deactivation msg no longer accurately represents it if we could please simplify... | 1 |

19,488 | 25,798,831,979 | IssuesEvent | 2022-12-10 20:41:01 | bbrewington/dbt-bigquery-information-schema | https://api.github.com/repos/bbrewington/dbt-bigquery-information-schema | closed | [FEATURE] Get a dbt "hello world" project started | status/in_process | Acceptance Criteria:

- Connects to BigQuery (a.k.a. GBQ)

- User-specific settings in profiles.yml (with instructions in README prompting user to update)

- dbt_project.yml is project-specific and not user-specific

- at least one GBQ information schema view added in models/ folder | 1.0 | [FEATURE] Get a dbt "hello world" project started - Acceptance Criteria:

- Connects to BigQuery (a.k.a. GBQ)

- User-specific settings in profiles.yml (with instructions in README prompting user to update)

- dbt_project.yml is project-specific and not user-specific

- at least one GBQ information schema view added ... | process | get a dbt hello world project started acceptance criteria connects to bigquery a k a gbq user specific settings in profiles yml with instructions in readme prompting user to update dbt project yml is project specific and not user specific at least one gbq information schema view added in model... | 1 |

154,428 | 19,724,715,879 | IssuesEvent | 2022-01-13 18:45:55 | Techini/vulnado | https://api.github.com/repos/Techini/vulnado | opened | CVE-2021-42550 (Medium) detected in logback-classic-1.2.3.jar | security vulnerability | ## CVE-2021-42550 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>logback-classic-1.2.3.jar</b></p></summary>

<p>logback-classic module</p>

<p>Library home page: <a href="http://logb... | True | CVE-2021-42550 (Medium) detected in logback-classic-1.2.3.jar - ## CVE-2021-42550 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>logback-classic-1.2.3.jar</b></p></summary>

<p>logba... | non_process | cve medium detected in logback classic jar cve medium severity vulnerability vulnerable library logback classic jar logback classic module library home page a href path to dependency file pom xml path to vulnerable library home wss scanner repository ch qos logb... | 0 |

13,122 | 15,505,261,847 | IssuesEvent | 2021-03-11 15:10:01 | dluiscosta/weather_api | https://api.github.com/repos/dluiscosta/weather_api | closed | Use Flask's Blueprints | development process enhancement intrinsic motivation | Refactor endpoint definitions at ```app/api.py``` to make use of Flask's Blueprints. | 1.0 | Use Flask's Blueprints - Refactor endpoint definitions at ```app/api.py``` to make use of Flask's Blueprints. | process | use flask s blueprints refactor endpoint definitions at app api py to make use of flask s blueprints | 1 |

306,596 | 26,483,052,575 | IssuesEvent | 2023-01-17 15:58:32 | cosmos/cosmos-sdk | https://api.github.com/repos/cosmos/cosmos-sdk | closed | WaitForHeight test util doesn't check the node's "local" height | T: Tests T:Sprint | We've often seen tests that do things like "1. send tx, 2. check output, 3. query something related to the tx/get tx with hash", which should be fine, but they would sometimes fail in CI or even locally. The errors would always be things like "tx not found", "x was expected to be equal to y", etc. Like the tx never h... | 1.0 | WaitForHeight test util doesn't check the node's "local" height - We've often seen tests that do things like "1. send tx, 2. check output, 3. query something related to the tx/get tx with hash", which should be fine, but they would sometimes fail in CI or even locally. The errors would always be things like "tx not ... | non_process | waitforheight test util doesn t check the node s local height we ve seen many times tests that do stuff like send tx check output query something related to the tx get tx with hash which should fine but they would fail sometimes in the ci or even locally the errors would always be stuff like tx not ... | 0 |

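16,750 | 21,918,524,753 | IssuesEvent | 2022-05-22 07:46:49 | q191201771/lal | https://api.github.com/repos/q191201771/lal | closed | After starting via Docker, pushing an RTMP stream reports ERROR [lal: buffer too short(avc.go:532)] | #Question *In process | Following README.md, I started lal via Docker and pushed an RTMP stream; the errors below are logged repeatedly (pushing an RTSP stream produces no errors):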

```

2022/05/18 06:51:03.701809 INFO initial log succ. - config.go:235

2022/05/18 06:51:03.701894 INFO

__ ___ __

/ / / | / /

/ / / /| | / /

/ /___/ ___ |/ /___

/_____/_/ |_/_____/

- config.go:238

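2022/05/18 06:51:03.702392 INFO lo... | 1.0 | After starting via Docker, pushing an RTMP stream reports ERROR [lal: buffer too short(avc.go:532)] - Following README.md, I started lal via Docker and pushed an RTMP stream; the errors below are logged repeatedly (pushing an RTSP stream produces no errors):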

```

2022/05/18 06:51:03.701809 INFO initial log succ. - config.go:235

2022/05/18 06:51:03.701894 INFO

__ ___ __

/ / / | / /

/ / / /| | / /

/ /___/ ___ |/ /___

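/_____/_/ ... | process | after starting via docker pushing an rtmp stream reports error following readme md i started lal via docker and pushed an rtmp stream the errors below are logged repeatedly pushing an rtsp stream produces no errors info initial log succ config go info config go info load conf file... | 1 |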

808,848 | 30,113,561,606 | IssuesEvent | 2023-06-30 09:38:50 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Adding filter of summarized field grouped by latitude and longitude on a map causes table visualization error | Type:Bug Priority:P2 Visualization/Maps .Reproduced .Team/42 :milky_way: | **Describe the bug**

- Filtering on aggregate column after binning on a map breaks the map and causes unexpected UI Table visualization

**Logs**

<img width="741" alt="image" src="https://user-images.githubusercontent.com/8808703/231587277-a9817d6b-9527-4ba2-b2c9-6c291e385fba.png">

**To Reproduce**

Steps to r... | 1.0 | Adding filter of summarized field grouped by latitude and longitude on a map causes table visualization error - **Describe the bug**

- Filtering on aggregate column after binning on a map breaks the map and causes unexpected UI Table visualization

**Logs**

<img width="741" alt="image" src="https://user-images.gith... | non_process | adding filter of summarized field grouped by latitude and longitude on a map causes table visualization error describe the bug filtering on aggregate column after binning on a map breaks the map and causes unexpected ui table visualization logs img width alt image src to reproduce s... | 0 |

1,400 | 3,967,585,376 | IssuesEvent | 2016-05-03 16:42:26 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | Empty map causes odd mapref error | bug P2 preprocess | I have an empty map that is pulled into my primary map. The map is generated, and in this case, happened to have no content, just

```xml

<map title="sample title">

</map>

```

I've added a reference to this in hierarchy.ditamap:

```xml

<topicref href="copy.ditamap" format="ditamap"/>

```

With the map as sho... | 1.0 | Empty map causes odd mapref error - I have an empty map that is pulled into my primary map. The map is generated, and in this case, happened to have no content, just

```xml

<map title="sample title">

</map>

```

I've added a reference to this in hierarchy.ditamap:

```xml

<topicref href="copy.ditamap" format="di... | process | empty map causes odd mapref error i have an empty map that is pulled into my primary map the map is generated and in this case happened to have no content just xml i ve added a reference to this in hierarchy ditamap xml with the map as shown above i get this error the... | 1 |

232,383 | 25,577,299,559 | IssuesEvent | 2022-11-30 23:38:14 | pactflow/example-bi-directional-consumer-wiremock | https://api.github.com/repos/pactflow/example-bi-directional-consumer-wiremock | opened | CVE-2022-42003 (High) detected in jackson-databind-2.10.2.jar | security vulnerability | ## CVE-2022-42003 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.10.2.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streami... | True | CVE-2022-42003 (High) detected in jackson-databind-2.10.2.jar - ## CVE-2022-42003 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.10.2.jar</b></p></summary>

<p>Gener... | non_process | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file build gradle path to vulnera... | 0 |

19,858 | 26,269,138,176 | IssuesEvent | 2023-01-06 15:20:56 | apache/arrow-datafusion | https://api.github.com/repos/apache/arrow-datafusion | closed | Release DataFusion 15.0.0 | enhancement development-process | **Is your feature request related to a problem or challenge? Please describe what you are trying to do.**

I plan on creating DataFusion 15.0.0-rc1 on Friday 2nd December (4 weeks since the previous release).

**Describe the solution you'd like**

- [ ] [Update version & generate changelog](https://github.com/apach... | 1.0 | Release DataFusion 15.0.0 - **Is your feature request related to a problem or challenge? Please describe what you are trying to do.**

I plan on creating DataFusion 15.0.0-rc1 on Friday 2nd December (4 weeks since the previous release).

**Describe the solution you'd like**

- [ ] [Update version & generate changel... | process | release datafusion is your feature request related to a problem or challenge please describe what you are trying to do i plan on creating datafusion on friday december weeks since the previous release describe the solution you d like cut rc and start vote vote p... | 1 |

7,246 | 10,412,869,857 | IssuesEvent | 2019-09-13 17:03:06 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | Merge terms - 'response to phytoalexin production by other organism involved in symbiotic interaction' and children (was:obsolete...) | Other term-related request multi-species process obsoletion | While working on #17441

I came across this branch:

- GO:0052549 response to phytoalexin production by other organism involved in symbiotic interaction

- - GO:0052566 response to host phytoalexin production

- - - GO:0052378 evasion or tolerance by organism of phytoalexins produced by other organism involved i... | 1.0 | Merge terms - 'response to phytoalexin production by other organism involved in symbiotic interaction' and children (was:obsolete...) - While working on #17441

I came across this branch:

- GO:0052549 response to phytoalexin production by other organism involved in symbiotic interaction

- - GO:0052566 respon... | process | merge terms response to phytoalexin production by other organism involved in symbiotic interaction and children was obsolete while working on i came across this branch go response to phytoalexin production by other organism involved in symbiotic interaction go response to host phyto... | 1 |

365,521 | 25,540,171,875 | IssuesEvent | 2022-11-29 14:48:33 | qiboteam/qibo | https://api.github.com/repos/qiboteam/qibo | closed | Remove qibo logo from doc when site is online | documentation | For now we are leaving the logo in the documentation compiled with `sphinx`. When the site is available the logo will have to be removed because it will already be in the navbar. | 1.0 | Remove qibo logo from doc when site is online - For now we are leaving the logo in the documentation compiled with `sphinx`. When the site is available the logo will have to be removed because it will already be in the navbar. | non_process | remove qibo logo from doc when site is online for now we are leaving the logo in the documentation compiled with sphinx when the site is available the logo will have to be removed because it will already be in the navbar | 0 |

250,911 | 21,388,607,594 | IssuesEvent | 2022-04-21 03:24:09 | Nithin-Kamineni/peekNshop | https://api.github.com/repos/Nithin-Kamineni/peekNshop | closed | Backend testing of other user functionalities | back-end Sprint-4 testing | Backend testing of other user functionalities like the favourite stores of a particular user, change Address, change User details and so on. | 1.0 | Backend testing of other user functionalities - Backend testing of other user functionalities like the favourite stores of a particular user, change Address, change User details and so on. | non_process | backend testing of other user functionalities backend testing of other user functionalities like the favourite stores of a particular user change address change user details and so on | 0 |

16,100 | 5,214,884,511 | IssuesEvent | 2017-01-26 01:45:00 | serde-rs/serde | https://api.github.com/repos/serde-rs/serde | opened | Struct fields and variant tags both go through deserialize_struct_field | bug codegen | In `#[derive(Deserialize)]` we are generating effectively the same Deserialize implementation for deciding which struct field is next vs which variant we are looking at. As a result, both implementations go through Deserializer::deserialize_struct_field which is unexpected for Deserializer authors trying to implement `... | 1.0 | Struct fields and variant tags both go through deserialize_struct_field - In `#[derive(Deserialize)]` we are generating effectively the same Deserialize implementation for deciding which struct field is next vs which variant we are looking at. As a result, both implementations go through Deserializer::deserialize_struc... | non_process | struct fields and variant tags both go through deserialize struct field in we are generating effectively the same deserialize implementation for deciding which struct field is next vs which variant we are looking at as a result both implementations go through deserializer deserialize struct field which is une... | 0 |

77,398 | 7,573,453,036 | IssuesEvent | 2018-04-23 17:49:29 | apache/incubator-openwhisk-wskdeploy | https://api.github.com/repos/apache/incubator-openwhisk-wskdeploy | closed | manifest_basic_tar_grammar.yaml also tests feeds and api grammar; split them out | priority: low tests: unit | Originally, this test file was supposed to test basic **Trigger**-**Action**-**Rule** (TAR) grammars. Over time, **feeds** and now **api** grammars were added to the same test file.

As we add more "entities" like **feeds** and **apis** that build on more basic ones like **triggers**, etc. we should look to test t... | 1.0 | manifest_basic_tar_grammar.yaml also tests feeds and api grammar; split them out - Originally, this test file was supposed to test basic **Trigger**-**Action**-**Rule** (TAR) grammars. Over time, **feeds** and now **api** grammars were added to the same test file.

As we add more "entities" like **feeds** and **ap... | non_process | manifest basic tar grammar yaml also tests feeds and api grammar split them out originally this test file was supposed to test basic trigger action rule tar grammars over time feeds and now api grammars were added to the same test file as we add more entities like feeds and ap... | 0 |

117,475 | 11,947,744,420 | IssuesEvent | 2020-04-03 10:29:15 | thoughtbot/administrate | https://api.github.com/repos/thoughtbot/administrate | opened | We should bring community plugins into the docs | documentation | In #1535, @sedubois writes:

> - people can create their own plugins and share them on the [list of plugins wiki page](https://github.com/thoughtbot/administrate/wiki/List-of-Plugins), but that information tends to be outdated or not verified and so the quality information gets diluted;

> - external plugins might no... | 1.0 | We should bring community plugins into the docs - In #1535, @sedubois writes:

> - people can create their own plugins and share them on the [list of plugins wiki page](https://github.com/thoughtbot/administrate/wiki/List-of-Plugins), but that information tends to be outdated or not verified and so the quality inform... | non_process | we should bring community plugins into the docs in sedubois writes people can create their own plugins and share them on the but that information tends to be outdated or not verified and so the quality information gets diluted external plugins might not be as stable as administrate itself beca... | 0 |

5,585 | 8,442,070,399 | IssuesEvent | 2018-10-18 12:14:21 | kiwicom/orbit-components | https://api.github.com/repos/kiwicom/orbit-components | closed | Radio - value covering info on smaller width | bug processing | As the title says. Here's a screenshot: https://monosnap.com/file/nueREAGeNt3Wj4SCy3I9blT1ddGRsJ

## Expected Behavior

The label span should wrap the text

## Current Behavior

It doesn't wrap it; the text gets pushed down and the label consumes the same space in one block

## Possible Solution

Add styles below ... | 1.0 | Radio - value covering info on smaller width - As the title says. Here's a screenshot: https://monosnap.com/file/nueREAGeNt3Wj4SCy3I9blT1ddGRsJ

## Expected Behavior

The label span should wrap the text

## Current Behavior

It doesn't wrap it; the text gets pushed down and the label consumes the same space in one b... | process | radio value covering info on smaller width as the title says here s a screenshot expected behavior the label span should wrap the text current behavior it doesnt wrap it the text gets pushed down and the label consumes the same space in one block possible solution add styles below to the r... | 1 |

351,087 | 31,934,099,402 | IssuesEvent | 2023-09-19 09:20:09 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | reopened | Fix Array API linalg.test_cholesky | Array API Sub Task Failing Test ToDo_internal | | | |

|---|---|

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/5132836219/jobs/9234645598"><img src=https://img.shields.io/badge/-failure-red></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/5132836219/jobs/9234645598"><img src=https://img.shields.io/badge/-failure-red></a>

|numpy|<a ... | 1.0 | Fix Array API linalg.test_cholesky - | | |

|---|---|

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/5132836219/jobs/9234645598"><img src=https://img.shields.io/badge/-failure-red></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/5132836219/jobs/9234645598"><img src=https://img.shields.i... | non_process | fix array api linalg test cholesky torch a href src jax a href src numpy a href src tensorflow a href src | 0 |

50,261 | 13,187,407,143 | IssuesEvent | 2020-08-13 03:19:03 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | closed | Update operator docs for pnf system (Trac #405) | Migrated from Trac defect jeb + pnf | PnF operator docs need to be updated:

- New location for DB on fpslave01

- New location for "start/stop" scripts and that these should auto-start on boot

- 1 writer -> 2 writers

- Expanded client pool.

<details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/405

, reported by blaufuss and owned by blauf... | 1.0 | Update operator docs for pnf system (Trac #405) - PnF operator docs need to be updated:

- New location for DB on fpslave01

- New location for "start/stop" scripts and that these should auto-start on boot

- 1 writer -> 2 writers

- Expanded client pool.

<details>

<summary>_Migrated from https://code.icecube.wisc.edu/ti... | non_process | update operator docs for pnf system trac pnf operator docs need to be updated new location for db on new location for start stop scripts and that these should auto start on boot writer writers expanded client pool migrated from reported by blaufuss and owned by blaufuss jso... | 0 |

774 | 3,257,730,206 | IssuesEvent | 2015-10-20 19:07:05 | symfony/symfony | https://api.github.com/repos/symfony/symfony | closed | Broken test SigchildEnabledProcessTest::testPTYCommand | Process Unconfirmed | ```

There was 1 failure:

1) Symfony\Component\Process\Tests\SigchildEnabledProcessTest::testPTYCommand

Failed asserting that two strings are equal.

--- Expected

+++ Actual

@@ @@

'foo

+sh: 1: 3: Bad file descriptor

'

```

```

cat /etc/lsb-release

DISTRIB_ID=Ubuntu

DISTRIB_RELEASE=14.04

DISTRIB_CODEN... | 1.0 | Broken test SigchildEnabledProcessTest::testPTYCommand - ```

There was 1 failure:

1) Symfony\Component\Process\Tests\SigchildEnabledProcessTest::testPTYCommand

Failed asserting that two strings are equal.

--- Expected

+++ Actual

@@ @@

'foo

+sh: 1: 3: Bad file descriptor

'

```

```

cat /etc/lsb-release ... | process | broken test sigchildenabledprocesstest testptycommand there was failure symfony component process tests sigchildenabledprocesstest testptycommand failed asserting that two strings are equal expected actual foo sh bad file descriptor cat etc lsb release ... | 1 |

24,582 | 2,669,238,972 | IssuesEvent | 2015-03-23 14:31:51 | Connexions/webview | https://api.github.com/repos/Connexions/webview | closed | Changing text format in editor is not triggering save button | bug High Priority | 1. Create a page.

2. Add some text and save.

3. Highlight the text and choose a format from the dropdown. Save is not triggered. | 1.0 | Changing text format in editor is not triggering save button - 1. Create a page.

2. Add some text and save.

3. Highlight the text and choose a format from the dropdown. Save is not triggered. | non_process | changing text format in editor is not triggering save button create a page add some text and save highlight the text and choose a format from the dropdown save is not triggered | 0 |

358,792 | 10,640,963,303 | IssuesEvent | 2019-10-16 08:30:03 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.audible.co.uk - site is not usable | ML Correct ML ON browser-firefox engine-gecko priority-normal | <!-- @browser: Firefox 70.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:70.0) Gecko/20100101 Firefox/70.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://www.audible.co.uk/ep/acx-redemption?bp_o=true

**Browser / Version**: Firefox 70.0

**Operating System**: Windows 10

**Tested Ano... | 1.0 | www.audible.co.uk - site is not usable - <!-- @browser: Firefox 70.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:70.0) Gecko/20100101 Firefox/70.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://www.audible.co.uk/ep/acx-redemption?bp_o=true

**Browser / Version**: Firefox 70.0

**Op... | non_process | site is not usable url browser version firefox operating system windows tested another browser yes problem type site is not usable description sometimes it showing this steps to reproduce browser configuration gfx webrender all false gfx webr... | 0 |

25,236 | 18,292,930,621 | IssuesEvent | 2021-10-05 17:10:39 | crystal-lang/crystal | https://api.github.com/repos/crystal-lang/crystal | opened | `test_macos` is broken | kind:bug topic:infrastructure | Just as #11275 has been fixed, there's another CI issue on macos: https://github.com/crystal-lang/crystal/runs/3805485097

```

nix-shell --pure --run 'TZ=America/New_York make std_spec clean threads=1 junit_output=.junit/std_spec.xml'

warning: file 'nixpkgs' was not found in the Nix search path (add it using $NIX... | 1.0 | `test_macos` is broken - Just as #11275 has been fixed, there's another CI issue on macos: https://github.com/crystal-lang/crystal/runs/3805485097

```

nix-shell --pure --run 'TZ=America/New_York make std_spec clean threads=1 junit_output=.junit/std_spec.xml'

warning: file 'nixpkgs' was not found in the Nix searc... | non_process | test macos is broken just as has been fixed there s another ci issue on macos nix shell pure run tz america new york make std spec clean threads junit output junit std spec xml warning file nixpkgs was not found in the nix search path add it using nix path or i at string wi... | 0 |

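173,674 | 27,511,175,756 | IssuesEvent | 2023-03-06 08:56:45 | starplanter93/The_Garden_of_Musicsheet | https://api.github.com/repos/starplanter93/The_Garden_of_Musicsheet | closed | Design: Implement responsive layout for My Page and the post-writing page | Design | ## Description

Implement responsive layout for My Page and the post-writing page

## Todo

- [x] My Page responsive layout

- [x] Post-writing page responsive layout

## ETC

Other notes

| 1.0 | Design: Implement responsive layout for My Page and the post-writing page - ## Description

Implement responsive layout for My Page and the post-writing page

## Todo

- [x] My Page responsive layout

- [x] Post-writing page responsive layout

## ETC

Other notes

| non_process | design implement responsive layout for my page and the post writing page description implement responsive layout for my page and the post writing page todo my page responsive layout post writing page responsive layout etc other notes | 0 |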

120,270 | 25,771,160,212 | IssuesEvent | 2022-12-09 08:06:02 | pulumi/pulumi-yaml | https://api.github.com/repos/pulumi/pulumi-yaml | closed | Invalid generated yaml for example | kind/bug impact/usability area/docs language/yaml area/codegen | ### What happened?

This doc page:

https://www.pulumi.com/registry/packages/azure-native/api-docs/containerservice/managedcluster/

Includes an example with this snippet:

```

- availabilityZones:

- 1

- 2

- 3

```

### Steps to reproduce

https://www.pulumi.com/reg... | 1.0 | Invalid generated yaml for example - ### What happened?

This doc page:

https://www.pulumi.com/registry/packages/azure-native/api-docs/containerservice/managedcluster/

Includes an example with this snippet:

```

- availabilityZones:

- 1

- 2

- 3

```

### Steps to re... | non_process | invalid generated yaml for example what happened this doc page includes an example with this snippet availabilityzones steps to reproduce expected behavior availabilityzones ... | 0 |

5,932 | 8,755,260,880 | IssuesEvent | 2018-12-14 14:22:46 | u-root/u-bmc | https://api.github.com/repos/u-root/u-bmc | closed | Remove u-boot and use Linux as bootloader | process | Seeing as we don't need u-boot, and the philosophy we try to follow is the lesser amount of attack surfaces - having Linux as the bootloader makes sense.

This would make #6 (possibly, it would make it easier to solve anyhow), #75, #76 obsolete and would allow us to insert early boot code (like LPC disable) on platfo... | 1.0 | Remove u-boot and use Linux as bootloader - Seeing as we don't need u-boot, and the philosophy we try to follow is the lesser amount of attack surfaces - having Linux as the bootloader makes sense.

This would make #6 (possibly, it would make it easier to solve anyhow), #75, #76 obsolete and would allow us to insert ... | process | remove u boot and use linux as bootloader seeing as we don t need u boot and the philosophy we try to follow is the lesser amount of attack surfaces having linux as the bootloader makes sense this would make possibly it would make it easier to solve anyhow obsolete and would allow us to insert ea... | 1 |

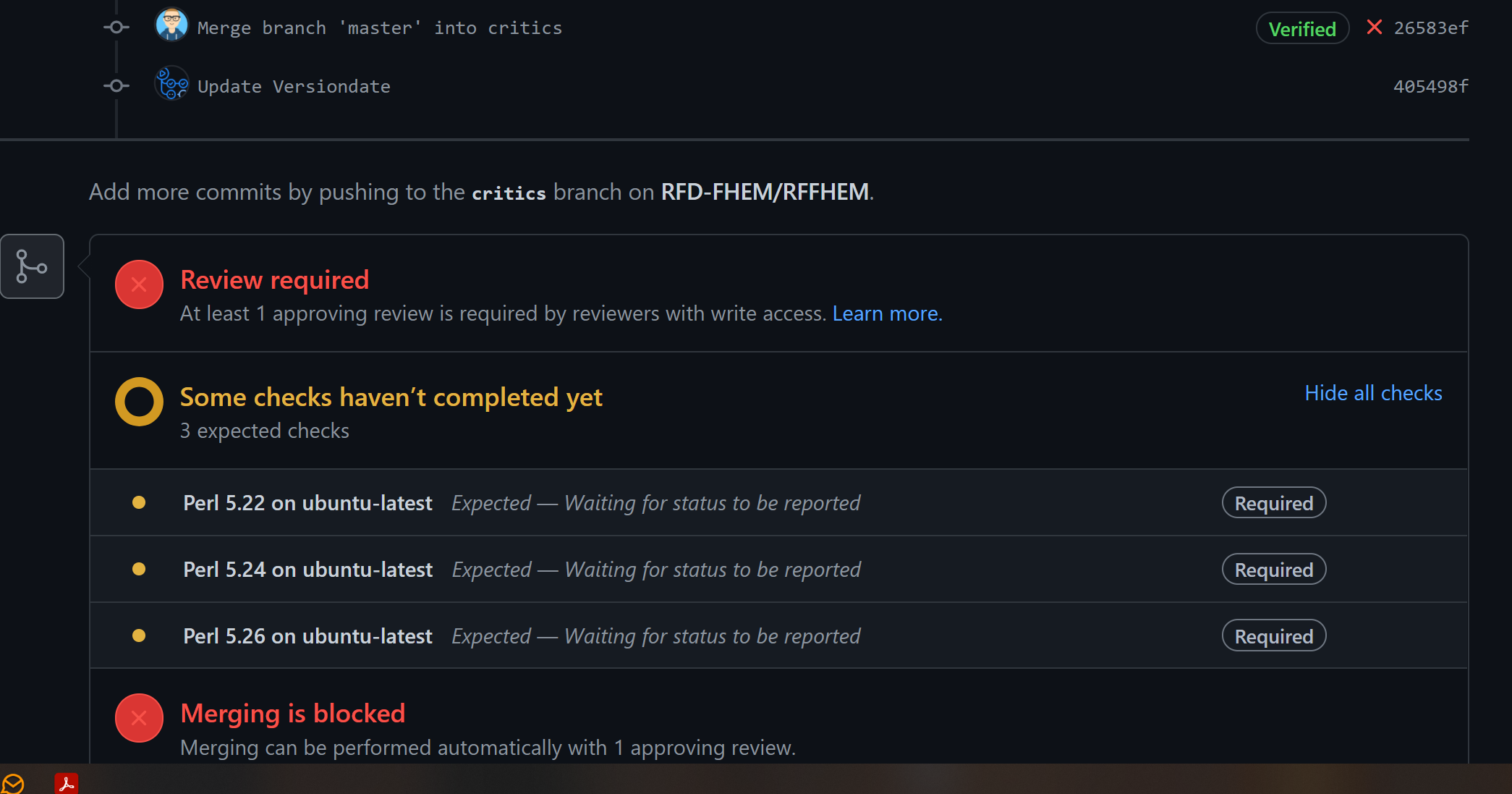

222,249 | 17,401,459,409 | IssuesEvent | 2021-08-02 20:20:07 | RFD-FHEM/RFFHEM | https://api.github.com/repos/RFD-FHEM/RFFHEM | closed | Github checks are failing if job is skipped | fixed unittest | ## Expected Behavior

Status checks are taken from the last successful commit if there is a skipped one

## Actual Behavior

Required checks are failing, if the last commit job is skipped:

| 1.0 | Github checks are failing if job is skipped - ## Expected Behavior

Status checks are taken from the last successful commit if there is a skipped one

## Actual Behavior

Required checks are failing, if the last commit job is skipped:

- Have a LIMIT to the query for visualisation update, and choose to remove this LIMIT when exporting

=> this is possible today (but not so user-... | 1.0 | Option for downloading CSV with no rows limit - It would be great to facilitate data extraction through Metabase.

A few potential enhancements to do so:

- Choose columns to display/export (already covered by #1445)

- Have a LIMIT to the query for visualisation update, and choose to remove this LIMIT when exporting... | process | option for downloading csv with no rows limit it would be great to facilitate data extraction through metabase a few potential enhancements to do so choose columns to display export already covered by have a limit to the query for visualisation update and choose to remove this limit when exporting ... | 1 |

135,143 | 12,675,959,591 | IssuesEvent | 2020-06-19 03:28:28 | cashapp/sqldelight | https://api.github.com/repos/cashapp/sqldelight | closed | Comprehension questions: initial table creation and migration | component: sqlite-migrations documentation | Hi,

I've read through the documentation but there are still some open questions regarding initial table creation and migration. I'm working on the JVM, no Android or iOS.

The `.sq` files should both create the tables and define queries. Is it recommended to use `IF NOT EXISTS` here? Should `Database.Schema.create... | 1.0 | Comprehension questions: initial table creation and migration - Hi,

I've read through the documentation but there are still some open questions regarding initial table creation and migration. I'm working on the JVM, no Android or iOS.

The `.sq` files should both create the tables and define queries. Is it recomme... | non_process | comprehension questions initial table creation and migration hi i ve read through the documentation but there are still some open questions regarding initial table creation and migration i m working on the jvm no android or ios the sq files should both create the tables and define queries is it recomme... | 0 |

5,025 | 7,846,657,773 | IssuesEvent | 2018-06-19 16:03:23 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | any automatic process to address processing doc? | Processing | I note that there are some processing tools that have help in doc but not in desktop:

- `Point Displacement`, `Execute SQL`...

- `symmetrical difference` has help when opened from vector menu but not from toolbox (need an issue report?)

Is there any process to automatically fill help (or list of algs) between doc and ... | 1.0 | any automatic process to address processing doc? - I note that there are some processing tools that have help in doc but not in desktop:

- `Point Displacement`, `Execute SQL`...

- `symmetrical difference` has help when opened from vector menu but not from toolbox (need an issue report?)

Is there any process to automat... | process | any automatic process to address processing doc i note that there are some processing tools that have help in doc but not in desktop point displacement execute sql symmetrical difference has help when opened from vector menu but not from toolbox need an issue report is there any process to automat... | 1 |

76,765 | 21,568,419,469 | IssuesEvent | 2022-05-02 03:56:03 | seek-oss/vanilla-extract | https://api.github.com/repos/seek-oss/vanilla-extract | closed | url( ) local imports does not works with esbuild and esbuild-plugin plugin | bug/integration bug/esbuild | ### Describe the bug

Can not setup local url( ) import in css.ts files

### Link to reproduction

https://github.com/dmytro-shpak/esbuild-vanilla-extract-bug/

```

npm install

npm test

```

> Could not resolve "./x.svg" (the plugin "vanilla-extract" didn't set a resolve directory)`

### System Info

Output of... | 1.0 | url( ) local imports does not works with esbuild and esbuild-plugin plugin - ### Describe the bug

Can not setup local url( ) import in css.ts files

### Link to reproduction

https://github.com/dmytro-shpak/esbuild-vanilla-extract-bug/

```

npm install

npm test

```

> Could not resolve "./x.svg" (the plugin "va... | non_process | url local imports does not works with esbuild and esbuild plugin plugin describe the bug can not setup local url import in css ts files link to reproduction npm install npm test could not resolve x svg the plugin vanilla extract didn t set a resolve directory system... | 0 |

153,019 | 13,491,096,031 | IssuesEvent | 2020-09-11 16:00:39 | COSC481W-2020Fall/cosc481w-581-2020-fall-convoluted-classifiers | https://api.github.com/repos/COSC481W-2020Fall/cosc481w-581-2020-fall-convoluted-classifiers | closed | Research Python Modules | documentation | We need to research and create a list of Python modules that can be used for our project. | 1.0 | Research Python Modules - We need to research and create a list of Python modules that can be used for our project. | non_process | research python modules we need to research and create a list of python modules that can be used for our project | 0 |

12,220 | 8,643,752,555 | IssuesEvent | 2018-11-25 20:53:06 | patrickfav/armadillo | https://api.github.com/repos/patrickfav/armadillo | closed | Password is not being used for derivating encryption key | bug security | The user-provided password is supposed to be used to derive the encryption key. However, it seems that it is currently not being used.

How to reproduce:

1. Instantiate Armadillo with password A.

2. Save some data

3. Instantiate Armadillo with password B (without deleting data).

4. Try to retrieve data stored wit... | True | Password is not being used for derivating encryption key - The user-provided password is supposed to be used to derive the encryption key. However, it seems that it is currently not being used.

How to reproduce:

1. Instantiate Armadillo with password A.

2. Save some data

3. Instantiate Armadillo with password B (w... | non_process | password is not being used for derivating encryption key the user provided password is supposed to be used to derivate the encryption key however it seems that currently is not being used how to reproduce instantiate armadillo with password a save some data instantiate armadillo with password b w... | 0 |
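
The behaviour this report expects (data written under password A should not open under password B) can be illustrated with a minimal standard-library sketch. This is not Armadillo's code or API: `derive_key`, `seal`, and `open_sealed` are hypothetical names, PBKDF2-HMAC-SHA256 is an assumed choice of key derivation function, and the payload is only authenticated here, not encrypted.

```python
import hashlib
import hmac


def derive_key(password: str, salt: bytes, iterations: int = 100_000) -> bytes:
    """Derive a 32-byte key from a password (hypothetical helper, PBKDF2 assumed)."""
    return hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), salt, iterations, dklen=32
    )


def seal(key: bytes, payload: bytes) -> bytes:
    """Prefix the payload with an HMAC-SHA256 tag computed under the derived key."""
    tag = hmac.new(key, payload, hashlib.sha256).digest()
    return tag + payload


def open_sealed(key: bytes, blob: bytes) -> bytes:
    """Return the payload only if the tag verifies under the given key."""
    tag, payload = blob[:32], blob[32:]
    expected = hmac.new(key, payload, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("wrong key or corrupted data")
    return payload
```

If the password really feeds the key derivation, a blob sealed with the key for password A fails verification under the key for password B, which is the check the reproduction steps are effectively performing.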

6,403 | 5,411,211,678 | IssuesEvent | 2017-03-01 10:59:02 | devtools-html/debugger.html | https://api.github.com/repos/devtools-html/debugger.html | reopened | Lots of "Not responding" / Spinning wheels with large js file | bug bug: P1 performance Release: Commitment | I know this isn't the most useful bug report, but I am getting lots of "Not responding" issues on Windows 10. That is, the Firefox window regularly hangs for about 5-10secs. Happens:

- When I simply Ctrl+F in a JS File which is 2MB

- When I have breakpoints in this file and reload

My system isn't "weak" either:

... | True | Lots of "Not responding" / Spinning wheels with large js file - I know this isn't the most useful bug report, but I am getting lots of "Not responding" issues on Windows 10. That is, the Firefox window regularly hangs for about 5-10secs. Happens:

- When I simply Ctrl+F in a JS File which is 2MB

- When I have breakpoi... | non_process | lots of not responding spinning wheels with large js file i know this isn t the most useful bug report but i am getting lots of not responding issues on windows that is the firefox window regularly hangs for about happens when i simply ctrl f in a js file which is when i have breakpoints in t... | 0 |

579,239 | 17,186,253,182 | IssuesEvent | 2021-07-16 02:43:09 | TeamDooRiBon/DooRi-iOS | https://api.github.com/repos/TeamDooRiBon/DooRi-iOS | closed | [FEAT] Connect the Explore view API | Feat Minjae 🐻❄️ Network P1 / Priority High Sangjin 🐨 | # 👀 Issue

Connect the API for the Explore view.

<img width="250" alt="Screenshot 2021-07-16 AM 2 01 58" src="https://user-images.githubusercontent.com/61109660/125828324-6c2d2d0f-471c-420d-85d9-3b5bcf653b55.png">

# 🚀 to-do

<!-- Describe the work to be done -->

- [ ] Postman test

- [ ] Create data model

- [ ] Implement data service

- [ ] API ... | 1.0 | [FEAT] Connect the Explore view API - # 👀 Issue

Connect the API for the Explore view.

<img width="250" alt="Screenshot 2021-07-16 AM 2 01 58" src="https://user-images.githubusercontent.com/61109660/125828324-6c2d2d0f-471c-420d-85d9-3b5bcf653b55.png">

# 🚀 to-do

<!-- Describe the work to be done -->

- [ ] Postman test

- [ ] Create data model

- [ ] ... | non_process | connect the explore view api 👀 issue connect the api for the explore view img width alt screenshot am src 🚀 to do postman test create data model implement data service connect api | 0 |

8,446 | 11,614,671,832 | IssuesEvent | 2020-02-26 13:00:24 | scikit-learn/scikit-learn | https://api.github.com/repos/scikit-learn/scikit-learn | closed | sklearn.preprocessing.StandardScaler gets NaN variance when partial_fit with sparse data | Bug module:preprocessing | #### Describe the bug

When I feed a specific dataset (which is sparse) to sklearn.preprocessing.StandardScaler.partial_fit in a specific order, I get variance which is NaN although data does **NOT** contains any NaNs and is very small.

When I convert the sparse arrays to dense, it works. When I change the order to fe... | 1.0 | sklearn.preprocessing.StandardScaler gets NaN variance when partial_fit with sparse data - #### Describe the bug

When I feed a specific dataset (which is sparse) to sklearn.preprocessing.StandardScaler.partial_fit in a specific order, I get variance which is NaN although data does **NOT** contains any NaNs and is very... | process | sklearn preprocessing standardscaler gets nan variance when partial fit with sparse data describe the bug when i feed a specific dataset which is sparse to sklearn preprocessing standardscaler partial fit in a specific order i get variance which is nan although data does not contains any nans and is very... | 1 |
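
For context on what a `partial_fit`-style scaler has to compute, the following is a pure-Python sketch of merging per-batch mean and variance statistics with the pairwise update of Chan et al. It is not scikit-learn's implementation and does not reproduce the reported bug; the function names are hypothetical, and it only shows that the merged variance should stay finite and match a single-pass result when the inputs contain no NaNs.

```python
import math


def batch_stats(values):
    """Return (count, mean, M2) for one batch; M2 is the sum of squared deviations."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((x - mean) ** 2 for x in values)
    return n, mean, m2


def merge_stats(a, b):
    """Combine two (count, mean, M2) triples without revisiting the raw data."""
    n_a, mean_a, m2_a = a
    n_b, mean_b, m2_b = b
    n = n_a + n_b
    delta = mean_b - mean_a
    mean = mean_a + delta * n_b / n
    m2 = m2_a + m2_b + delta * delta * n_a * n_b / n
    return n, mean, m2


def incremental_mean_variance(batches):
    """Fold batches one at a time and return (mean, population variance)."""
    stats = None
    for batch in batches:
        if not batch:
            continue  # an empty batch carries no information
        current = batch_stats(batch)
        stats = current if stats is None else merge_stats(stats, current)
    if stats is None:
        raise ValueError("no data in any batch")
    n, mean, m2 = stats
    return mean, m2 / n
```

Feeding mostly-zero batches of very small values, similar in spirit to the sparse data described above, in any order should give the same finite variance as computing it over all values at once.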

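17,352 | 23,174,902,473 | IssuesEvent | 2022-07-31 09:14:02 | droidyuecom/comments_droidyuecom | https://api.github.com/repos/droidyuecom/comments_droidyuecom | opened | Using flutter attach to associate code with the app process - 技术小黑屋 | Gitalk 2022/07/31/flutter-attach-process/ | https://droidyue.com/blog/2022/07/31/flutter-attach-process/

Using Flutter Attach to associate code with the app process Jul 31st, 2022 When we debug an app with flutter run, if the data cable has a poor connection or gets disconnected, we may need to execute flutter run again when we want to continue debugging. But in fact, there is another command called flutter … | 1.0 | Using flutter attach to associate code with the app process - 技术小黑屋 - https://droidyue.com/blog/2022/07/31/flutter-attach-process/

Using Flutter Attach to associate code with the app process Jul 31st, 2022 When we debug an app with flutter run, if the data cable has a poor connection or gets disconnected, we may need to execute flutter run again when we want to continue debugging. But in fact, there is another command called flutter … | process | using flutter attach to associate code with the app process 技术小黑屋 using flutter attach to associate code with the app process jul when we debug an app with flutter run if the data cable has a poor connection or gets disconnected we may need to execute flutter run again when we want to continue debugging but in fact there is another command called flutter … | 1 |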

319,313 | 23,765,120,749 | IssuesEvent | 2022-09-01 12:11:55 | actions/cache | https://api.github.com/repos/actions/cache | closed | using cache on tags does not work | documentation area:tags | hi!

I have a cache created on a non-default branch, all works fine except when the workflow is run on **a tag**, the cache isn't hit

when it's not a tagged commit, and my github.ref is "...heads" it starts working again.

I guess it's related to https://github.com/actions/cache#cache-scopes

Essentially, it... | 1.0 | using cache on tags does not work - hi!

I have a cache created on a non-default branch, all works fine except when the workflow is run on **a tag**, the cache isn't hit

when it's not a tagged commit, and my github.ref is "...heads" it starts working again.

I guess it's related to https://github.com/actions/c... | non_process | using cache on tags does not work hi i have a cache created on a non default branch all works fine except when the workflow is run on a tag the cache isn t hit when it s not a tagged commit and my github ref is heads it starts working again i guess it s related to essentially it looks l... | 0 |

13,944 | 16,720,307,525 | IssuesEvent | 2021-06-10 06:19:23 | aodn/imos-toolbox | https://api.github.com/repos/aodn/imos-toolbox | closed | WorkhorseParser - wrong assignment of velocity components | Type:Reprocessing Type:bug Unit:Instrument Reader Unit:TimeSeries | This bug affects v2.6.11 & v2.6.12

When reading ENU datasets with the newly refactored workhorse Parser, the variable mappings are reversed, so the velocity components are assigned to the wrong variables. The current bug lies in assigning `velocity1->VCUR` and `velocity2->UCUR`, while the correct mapping is the reverse.

This only occurs for ENU d... | 1.0 | WorkhorseParser - wrong assignment of velocity components - This bug affects v2.6.11 & v2.6.12

When reading ENU datasets with the newly refactored workhorse Parser, the variable mappings are reversed and a wrong assignment is being done. The current bug lies in assigning `velocity1->VCUR` and `velocity2->UCUR`, whi... | process | workhorseparser wrong assignment of velocity components this bug affects when reading enu datasets with the newly refactored workhorse parser the variable mappings are reversed and a wrong assignment is being done the current bug lies in assigning vcur and ucur while the correct is th... | 1 |
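
The corrected assignment described in the issue above can be sketched as follows. This is a hypothetical Python illustration, not the toolbox's own code: the function name `map_enu_velocities` and the sample values are assumptions, and only the variable names `velocity1`, `velocity2`, `UCUR` and `VCUR` come from the issue text.

```python
def map_enu_velocities(velocity1, velocity2):
    """Map the first two ENU velocity components to named variables.

    Per the issue, the buggy mapping was velocity1 -> VCUR and
    velocity2 -> UCUR; the intended mapping is the reverse.
    """
    return {"UCUR": velocity1, "VCUR": velocity2}


# Hypothetical sample values, used only to show the call shape.
mapped = map_enu_velocities([0.10, 0.12], [-0.03, -0.05])
print(mapped["UCUR"], mapped["VCUR"])
```

A regression test that pins this mapping down with distinct values for the two components would catch a swap of this kind.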

31,686 | 26,005,889,061 | IssuesEvent | 2022-12-20 19:19:06 | FuelLabs/infrastructure | https://api.github.com/repos/FuelLabs/infrastructure | closed | Upgrade fuel-dev & fuel-prod to v1.24 | infrastructure | Update terraform code to deploy v1.24 for fuel-dev and fuel-prod:

- fuel-dev

v1.21 -> 1.22 -> 1.23 -> 1.24

- fuel-prod

v1.23 -> 1.24 | 1.0 | Upgrade fuel-dev & fuel-prod to v1.24 - Update terraform code to deploy v1.24 for fuel-dev and fuel-prod:

- fuel-dev

v1.21 -> 1.22 -> 1.23 -> 1.24

- fuel-prod

v1.23 -> 1.24 | non_process | upgrade fuel dev fuel prod to update terraform code to deploy for fuel dev and fuel prod fuel dev fuel prod | 0 |

115,079 | 4,651,652,172 | IssuesEvent | 2016-10-03 11:01:08 | TheScienceMuseum/collectionsonline | https://api.github.com/repos/TheScienceMuseum/collectionsonline | closed | Use the right property (name) of the category object for the facet | enhancement priority-4 | Use the right property (name) of the category object for the facet (category.name) to just show the category name (without the museum name).

~~This update should ideally be made to the index, which I will chase up.~~

~~But in the interim would it be possible to strip the Museum name from the front of Category fiel... | 1.0 | Use the right property (name) of the category object for the facet - Use the right property (name) of the category object for the facet (category.name) to just show the category name (without the museum name).

~~This update should ideally be made to the index, which I will chase up.~~

~~But in the interim would it... | non_process | use the right property name of the category object for the facet use the right property name of the category object for the facet category name to just show the category name without the museum name this update should ideally be made to the index which i will chase up but in the interim would it... | 0 |

3,268 | 6,344,377,706 | IssuesEvent | 2017-07-27 19:46:34 | rubberduck-vba/Rubberduck | https://api.github.com/repos/rubberduck-vba/Rubberduck | closed | Resolver Error when method call has 0 or >1 args in parentheses on continued line | bug parse-tree-processing | This would appear to be a regression, and/or not covered by tests.

```vb

Sub test _

() 'These are fine

Debug.Print Now _

() 'These are not

End Sub

```

Refer #2888 | 1.0 | Resolver Error when method call has 0 or >1 args in parentheses on continued line - This would appear to be a regression, and/or not covered by tests.

```vb

Sub test _

() 'These are fine

Debug.Print Now _

() 'These are not

End Sub

```

Refer #2888 | process | resolver error when method call has or args in parentheses on continued line this would appear to be a regression and or not covered by tests vb sub test these are fine debug print now these are not end sub refer | 1 |

5,579 | 8,432,465,562 | IssuesEvent | 2018-10-17 02:12:41 | googleapis/google-cloud-python | https://api.github.com/repos/googleapis/google-cloud-python | opened | Kokoro skipping jobs inappropriately | priority: p1 testing type: process | E.g., for PR #6202, which scribbles all over `bigquery/`, the [Kokoro - BigQuery job](https://source.cloud.google.com/results/invocations/de8b3c7d-1952-4e13-acf2-d0491c739608/log) logs (line 95)

> bigquery was not modified, returning.

And returns successfully without running any tests. | 1.0 | Kokoro skipping jobs inappropriately - E.g., for PR #6202, which scribbles all over `bigquery/`, the [Kokoro - BigQuery job](https://source.cloud.google.com/results/invocations/de8b3c7d-1952-4e13-acf2-d0491c739608/log) logs (line 95)

> bigquery was not modified, returning.

And returns successfully without running... | process | kokoro skipping jobs inappropriately e g for pr which scribbles all over bigquery the logs line bigquery was not modified returning and returns successfully without running any tests | 1 |

102,935 | 11,310,267,803 | IssuesEvent | 2020-01-19 18:27:19 | simensrostad/TTK4235 | https://api.github.com/repos/simensrostad/TTK4235 | opened | Construct and generate documentation | documentation | Make description and update header files. Use doxygen to generate documentation. | 1.0 | Construct and generate documentation - Make description and update header files. Use doxygen to generate documentation. | non_process | construct and generate documentation make description and update header files use doxygen to generate documentation | 0 |

283,502 | 30,913,322,493 | IssuesEvent | 2023-08-05 01:39:50 | hshivhare67/kernel_v4.19.72_CVE-2022-42896_new | https://api.github.com/repos/hshivhare67/kernel_v4.19.72_CVE-2022-42896_new | reopened | CVE-2021-28952 (High) detected in linuxlinux-4.19.279 | Mend: dependency security vulnerability | ## CVE-2021-28952 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.279</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.k... | True | CVE-2021-28952 (High) detected in linuxlinux-4.19.279 - ## CVE-2021-28952 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.279</b></p></summary>

<p>

<p>The Linux Kernel<... | non_process | cve high detected in linuxlinux cve high severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in base branch master vulnerable source files sound soc qcom c sound soc qcom c ... | 0 |

20,508 | 27,167,377,885 | IssuesEvent | 2023-02-17 16:21:38 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Example in "Use a template parameter as part of a condition" is not clear | devops/prod doc-bug Pri1 devops-cicd-process/tech | The example in the *Use a template parameter as part of a condition* section is not clear. It uses two code snippets, both with default value `true` for the parameter, then clarifies that the expected result is `true` - the lack of differentiation here makes the user liable to miss the point.

<details>

<summary>code ... | 1.0 | Example in "Use a template parameter as part of a condition" is not clear - The example in the *Use a template parameter as part of a condition* section is not clear. It uses two code snippets, both with default value `true` for the parameter, then clarifies that the expected result is `true` - the lack of differentiat... | process | example in use a template parameter as part of a condition is not clear the example in the use a template parameter as part of a condition section is not clear it uses two code snippets both with default value true for the parameter then clarifies that the expected result is true the lack of differentiat... | 1 |

161,693 | 25,384,202,834 | IssuesEvent | 2022-11-21 20:18:35 | MozillaFoundation/Design | https://api.github.com/repos/MozillaFoundation/Design | opened | Design recap 2022 | design DesignOps | Add to the existing deck [here](https://docs.google.com/presentation/d/1MGbETMOGXuUCVAufeafS99fcXmHwBWpTC-m7njOedNk/edit#slide=id.g139fb3a49ae_0_0).

### Goals & Audience

- Show the impact the design team has had

- Celebrate and reminisce about all the work we've done this year

- Learn from our previous work and... | 2.0 | Design recap 2022 - Add to the existing deck [here](https://docs.google.com/presentation/d/1MGbETMOGXuUCVAufeafS99fcXmHwBWpTC-m7njOedNk/edit#slide=id.g139fb3a49ae_0_0).

### Goals & Audience

- Show the impact the design team has had

- Celebrate and reminisce about all the work we've done this year

- Learn from o... | non_process | design recap add to the existing deck goals audience show the impact the design team has had celebrate and remissness about all the work we ve done this year learn from our previous work and use it to guide planning what types of work do we do what did we enjoy working on the most where ca... | 0 |

414,067 | 12,098,351,384 | IssuesEvent | 2020-04-20 10:09:36 | teamforus/forus | https://api.github.com/repos/teamforus/forus | closed | Provider is accepted two times for the same fund. | Difficulty: Medium Priority: Must have Scope: Small Topic: Backend bug | @GerbenBosschieter commented on [Thu Feb 20 2020](https://github.com/teamforus/development/issues/403)

# Description

We found a provider that is accepted for the same funds two times.

Couldn't find any reason why this happened.

The provider didn't accept the invitation and applied manually for the new fund.

##... | 1.0 | Provider is accepted two times for the same fund. - @GerbenBosschieter commented on [Thu Feb 20 2020](https://github.com/teamforus/development/issues/403)

# Description

We found a provider that is accepted for the same funds two times.

Couldn't find any reason why this happened.

The provider didn't accept the inv... | non_process | provider is accepted two times for the same fund gerbenbosschieter commented on description we found a provider that is accepted for the same funds two times couldn t find any reason why this happend the provider didn t accept the invitation and applied manually for the new fund task pleas ... | 0 |

18,579 | 24,562,623,672 | IssuesEvent | 2022-10-12 21:58:00 | NEARWEEK/NEWS | https://api.github.com/repos/NEARWEEK/NEWS | closed | Get input, finalize, drive & evaluate OKRs for Q4 | Process | ## 🎉 Subtasks

- [x] Get input from all of team

- [x] Provide first version, get feedback

- [x] Finalize OKRs

- [x] Make sure all team members translate their OKRs into Github milestones & issues

- [x] Setup a monthly evaluation call to evaluate progress on OKRs and all things process

## 🤼♂️ Reviewer

... | 1.0 | Get input, finalize, drive & evaluate OKRs for Q4 - ## 🎉 Subtasks

- [x] Get input from all of team

- [x] Provide first version, get feedback

- [x] Finalize OKRs

- [x] Make sure all team members translate their OKRs into Github milestones & issues

- [x] Setup a monthly evaluation call to evaluate progress on O... | process | get input finalize drive evaluate okrs for 🎉 subtasks get input from all of team provide first version get feedback finalize okrs make sure all team members translate their okrs into github milestones issues setup a monthly evaluation call to evaluate progress on okrs and all... | 1 |

67,636 | 17,024,410,878 | IssuesEvent | 2021-07-03 07:06:40 | apache/shardingsphere | https://api.github.com/repos/apache/shardingsphere | closed | Calcite always can not download when mvn install in windows env | status: volunteer wanted type: build | Calcite lib always can not download when mvn install in windows env, please investigate the reason.

error log:

```