Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (stringclasses, 3 values) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (stringclasses, 10 values) | text_combine (string, length 96 to 211k) | label (stringclasses, 2 values) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

407,008 | 11,905,170,184 | IssuesEvent | 2020-03-30 18:05:52 | INN/Google-Analytics-Popular-Posts | https://api.github.com/repos/INN/Google-Analytics-Popular-Posts | closed | Make sure that the posts returned by AnayticBridgePopularPosts are cached | priority: low type: improvement | If they aren't, then the cache should be in the class and the cache should be reset by the cron job.

| 1.0 | Make sure that the posts returned by AnayticBridgePopularPosts are cached - If they aren't, then the cache should be in the class and the cache should be reset by the cron job.

| non_process | make sure that the posts returned by anayticbridgepopularposts are cached if they aren t then the cache should be in the class and the cache should be reset by the cron job | 0 |
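
Judging only from the rows shown here, `text_combine` looks like the title and body joined with ` - `, `text` looks like a lowercased copy with ASCII punctuation replaced by spaces and digit-bearing tokens dropped, and `binary_label` looks like a 0/1 encoding of `label`. The following is a minimal Python sketch under those assumptions; the function names are hypothetical, and the exact preprocessing used to build the dataset is not stated in this preview.

```python
import re
import string

# Matches any single ASCII punctuation character (non-ASCII symbols are left alone).
_PUNCT = re.compile(f"[{re.escape(string.punctuation)}]")


def combine(title: str, body: str) -> str:
    """Assumed construction of the text_combine column."""
    return f"{title} - {body}"


def normalize(text: str) -> str:
    """Assumed construction of the text column: lowercase, replace ASCII
    punctuation with spaces, drop tokens that contain a digit, collapse spaces."""
    spaced = _PUNCT.sub(" ", text.lower())
    tokens = [t for t in spaced.split() if not any(c.isdigit() for c in t)]
    return " ".join(tokens)


def to_binary(label: str) -> int:
    """Assumed mapping: 'process' -> 1, anything else ('non_process') -> 0."""
    return 1 if label == "process" else 0
```

Applied to the first row above, `normalize(combine(title, body))` reproduces the `text` value shown, but other rows may involve steps this sketch does not cover (for example URL handling).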

67,988 | 14,894,532,746 | IssuesEvent | 2021-01-21 07:42:51 | alexcloudstar/hacker-news-search | https://api.github.com/repos/alexcloudstar/hacker-news-search | opened | CVE-2019-11358 (Medium) detected in jquery-2.1.4.min.js | security vulnerability | ## CVE-2019-11358 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-2.1.4.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="ht... | True | CVE-2019-11358 (Medium) detected in jquery-2.1.4.min.js - ## CVE-2019-11358 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-2.1.4.min.js</b></p></summary>

<p>JavaScript librar... | non_process | cve medium detected in jquery min js cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to dependency file hacker news search node modules js attic test moment index html path to vu... | 0 |

4,160 | 7,105,590,995 | IssuesEvent | 2018-01-16 14:11:22 | zotero/zotero | https://api.github.com/repos/zotero/zotero | closed | Option to delay updating citations in documents | Word Processor Integration | Moved from zotero/zotero-word-for-windows-integration#35

See discussion https://forums.zotero.org/discussion/64960/feature-suggestion-delay-updating-citations-in-documents | 1.0 | Option to delay updating citations in documents - Moved from zotero/zotero-word-for-windows-integration#35

See discussion https://forums.zotero.org/discussion/64960/feature-suggestion-delay-updating-citations-in-documents | process | option to delay updating citations in documents moved from zotero zotero word for windows integration see discussion | 1 |

12,727 | 15,096,185,226 | IssuesEvent | 2021-02-07 14:08:59 | Ghost-chu/QuickShop-Reremake | https://api.github.com/repos/Ghost-chu/QuickShop-Reremake | closed | [BUG] Holograms won't go away after removing shop | Bug Cannot Reproduce Help Wanted In Process Priority:Major | **Describe the bug**

When you remove a shop, the item/hologram comes back after a second or two

**To Reproduce**

Steps to reproduce the behavior:

1. Create a random shop

2. Remove the shop

3. The hologram comes back after a second or two

**Expected behavior**

The hologram shouldn't come back because the sho... | 1.0 | [BUG] Holograms won't go away after removing shop - **Describe the bug**

When you remove a shop, the item/hologram comes back after a second or two

**To Reproduce**

Steps to reproduce the behavior:

1. Create a random shop

2. Remove the shop

3. The hologram comes back after a second or two

**Expected behavior... | process | holograms won t go away after removing shop describe the bug when you remove a shop the item hologram comes back after a second or two to reproduce steps to reproduce the behavior create a random shop remove the shop the hologram comes back after a second or two expected behavior ... | 1 |

21,300 | 28,496,630,939 | IssuesEvent | 2023-04-18 14:39:06 | inmanta/inmanta-core | https://api.github.com/repos/inmanta/inmanta-core | opened | install pyadr through Makefile | process | `pyadr` is currently part of `requirements.dev.txt` but it is never used by the CI and it doesn't seem to be actively maintained. To prevent it holding back other dependencies it was decided to drop it from the requirement file and install it from the Makefile directly instead. Other repos should be checked for `pyadr`... | 1.0 | install pyadr through Makefile - `pyadr` is currently part of `requirements.dev.txt` but it is never used by the CI and it doesn't seem to be actively maintained. To prevent it holding back other dependencies it was decided to drop it from the requirement file and install it from the Makefile directly instead. Other re... | process | install pyadr through makefile pyadr is currently part of requirements dev txt but it is never used by the ci and it doesn t seem to be actively maintained to prevent it holding back other dependencies it was decided to drop it from the requirement file and install it from the makefile directly instead other re... | 1 |

10,844 | 13,624,221,958 | IssuesEvent | 2020-09-24 07:43:38 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | r.rgb layers are not correctly generated | Bug Feedback Processing | I am trying to use r.rgb to split my raster into thee separate rasters (R,G,B bands).

Each time I try r.rgb I get the red error massage:

> The following layers were not correctly generated.

> • C:/Users/Felix/AppData/Local/Temp/processing_hQOjSy/a3a562fa8e1a423ca4c8698e923ca01a/red.tif

> • C:/Users/Felix/AppDat... | 1.0 | r.rgb layers are not correctly generated - I am trying to use r.rgb to split my raster into thee separate rasters (R,G,B bands).

Each time I try r.rgb I get the red error massage:

> The following layers were not correctly generated.

> • C:/Users/Felix/AppData/Local/Temp/processing_hQOjSy/a3a562fa8e1a423ca4c8698e... | process | r rgb layers are not correctly generated i am trying to use r rgb to split my raster into thee separate rasters r g b bands each time i try r rgb i get the red error massage the following layers were not correctly generated • c users felix appdata local temp processing hqojsy red tif • c users ... | 1 |

401,681 | 11,795,865,512 | IssuesEvent | 2020-03-18 09:45:43 | pravega/pravega | https://api.github.com/repos/pravega/pravega | opened | Failed transactions metric incorrectly reported | area/controller area/metrics area/transaction kind/bug priority/P2 version/0.7.1 version/0.8.0 | **Problem description**

When the Controller is committing transactions against a segment that is scaling, the commit transaction attempt may fail with a `OperationNotAllowedException` and therefore the Controller will retry the commit again. This is an expected situation and it does not mean that the transaction commi... | 1.0 | Failed transactions metric incorrectly reported - **Problem description**

When the Controller is committing transactions against a segment that is scaling, the commit transaction attempt may fail with a `OperationNotAllowedException` and therefore the Controller will retry the commit again. This is an expected situati... | non_process | failed transactions metric incorrectly reported problem description when the controller is committing transactions against a segment that is scaling the commit transaction attempt may fail with a operationnotallowedexception and therefore the controller will retry the commit again this is an expected situati... | 0 |

24,214 | 17,014,179,061 | IssuesEvent | 2021-07-02 09:36:18 | kaitai-io/kaitai_struct | https://api.github.com/repos/kaitai-io/kaitai_struct | closed | Add link to xref.html in the format gallery | infrastructure | While digging in the generating code for the [format gallery](https://formats.kaitai.io/), I noticed that every time it generates page https://formats.kaitai.io/xref.html, which is quite a neat summary of all formats included with their licenses and cross-references. It's a pity that there isn't any link leading to it,... | 1.0 | Add link to xref.html in the format gallery - While digging in the generating code for the [format gallery](https://formats.kaitai.io/), I noticed that every time it generates page https://formats.kaitai.io/xref.html, which is quite a neat summary of all formats included with their licenses and cross-references. It's ... | non_process | add link to xref html in the format gallery while digging in the generating code for the i noticed that every time it generates page which is quite a neat summary of all formats included with their licenses and cross references it s a pity that there isn t any link leading to it so nobody can actually get th... | 0 |

6,687 | 9,808,684,309 | IssuesEvent | 2019-06-12 16:07:44 | EthVM/EthVM | https://api.github.com/repos/EthVM/EthVM | closed | Value too long on contract | bug project:processing | * **I'm submitting a ...**

- [ ] feature request

- [x] bug report

* **Bug Report**

Current trace:

```

org.apache.kafka.connect.errors.ConnectException: Exiting WorkerSinkTask due to unrecoverable exception.\n\tat org.apache.kafka.connect.runtime.WorkerSinkTask.deliverMessages(WorkerSinkTask.java:560... | 1.0 | Value too long on contract - * **I'm submitting a ...**

- [ ] feature request

- [x] bug report

* **Bug Report**

Current trace:

```

org.apache.kafka.connect.errors.ConnectException: Exiting WorkerSinkTask due to unrecoverable exception.\n\tat org.apache.kafka.connect.runtime.WorkerSinkTask.deliverMes... | process | value too long on contract i m submitting a feature request bug report bug report current trace org apache kafka connect errors connectexception exiting workersinktask due to unrecoverable exception n tat org apache kafka connect runtime workersinktask delivermessage... | 1 |

98,634 | 11,091,661,919 | IssuesEvent | 2019-12-15 13:56:25 | Kokan/toowtrsywen | https://api.github.com/repos/Kokan/toowtrsywen | closed | provide a five-star user experience for the reader of the requirement documentation | documentation | Attila Kovacs, teacher at lecture: a requirement documentation of good quality means using cross-references (clickable in-pdf links, e.g. "Related requirement(s): ...")

Someone to investigate Google Doc's built-in possibilities (in the Add-ons section maybe?).

Converting each (major) doc version into some word pr... | 1.0 | provide a five-star user experience for the reader of the requirement documentation - Attila Kovacs, teacher at lecture: a requirement documentation of good quality means using cross-references (clickable in-pdf links, e.g. "Related requirement(s): ...")

Someone to investigate Google Doc's built-in possibilities (in... | non_process | provide a five star user experience for the reader of the requirement documentation attila kovacs teacher at lecture a requirement documentation of good quality means using cross references clickable in pdf links e g related requirement s someone to investigate google doc s built in possibilities in... | 0 |

721,039 | 24,815,959,511 | IssuesEvent | 2022-10-25 13:11:13 | zitadel/zitadel | https://api.github.com/repos/zitadel/zitadel | closed | Organization pagination does not work | type: bug category: frontend state: ready priority: low | **Describe the bug**

If I show all the organizations of an instance, the first page works fine. As soon as I navigate to the second page, All the organizations are shown.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to instance organizations

2. Click on next page

3. All organizations are shown

**... | 1.0 | Organization pagination does not work - **Describe the bug**

If I show all the organizations of an instance, the first page works fine. As soon as I navigate to the second page, All the organizations are shown.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to instance organizations

2. Click on next pa... | non_process | organization pagination does not work describe the bug if i show all the organizations of an instance the first page works fine as soon as i navigate to the second page all the organizations are shown to reproduce steps to reproduce the behavior go to instance organizations click on next pa... | 0 |

76,124 | 21,182,579,344 | IssuesEvent | 2022-04-08 09:24:35 | appsmithorg/appsmith | https://api.github.com/repos/appsmithorg/appsmith | opened | [Bug]: Widgets overlap when multiple widgets collide with the edge of canvas | Bug Production Needs Triaging UI Builders Pod Drag & Drop Reflow & Resize | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Description

Uploading Reflow Overlapping Bug.mp4…

### Steps To Reproduce

1. Create a layout similar to the one in the video

2. Move the bottom most widget to the top till the top most widget completely resizes

3. See tha... | 1.0 | [Bug]: Widgets overlap when multiple widgets collide with the edge of canvas - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Description

Uploading Reflow Overlapping Bug.mp4…

### Steps To Reproduce

1. Create a layout similar to the one in the video

2. Move the bottom... | non_process | widgets overlap when multiple widgets collide with the edge of canvas is there an existing issue for this i have searched the existing issues description uploading reflow overlapping bug … steps to reproduce create a layout similar to the one in the video move the bottom most wi... | 0 |

20,644 | 27,322,738,702 | IssuesEvent | 2023-02-24 21:34:07 | googleapis/google-api-java-client | https://api.github.com/repos/googleapis/google-api-java-client | closed | Update clirr check to ignore OOB deprecation | priority: p2 type: process | Since v2.2.0, clirr check on PRs will fail on `OOB_REDIRECT_URI` as part of the deprecated and removed OAuth OOB flow ([discussion](https://github.com/googleapis/google-api-java-client/pull/2242#discussion_r1086200169)). This is currently blocking PRs for automerge.

```

Error: 6011: com.google.api.client.googleapis... | 1.0 | Update clirr check to ignore OOB deprecation - Since v2.2.0, clirr check on PRs will fail on `OOB_REDIRECT_URI` as part of the deprecated and removed OAuth OOB flow ([discussion](https://github.com/googleapis/google-api-java-client/pull/2242#discussion_r1086200169)). This is currently blocking PRs for automerge.

```

... | process | update clirr check to ignore oob deprecation since clirr check on prs will fail on oob redirect uri as part of the deprecated and removed oauth oob flow this is currently blocking prs for automerge error com google api client googleapis auth googleoauthconstants field oob redirect uri ha... | 1 |

629,337 | 20,029,606,645 | IssuesEvent | 2022-02-02 02:56:16 | ocaml-bench/sandmark-nightly | https://api.github.com/repos/ocaml-bench/sandmark-nightly | closed | Parallel benchmarks should be run on ocaml/ocaml#trunk | high-priority | The parallel benchmarks are only being run on the 5.00+domains variant which points to ocaml-multicore/ocaml-multicore#5.00. This branch is no longer in development since multicore is merged with upstream OCaml. The parallel benchmarks should now be run on ocaml/ocaml#trunk. No need to run the benchmarks on ocaml-multi... | 1.0 | Parallel benchmarks should be run on ocaml/ocaml#trunk - The parallel benchmarks are only being run on the 5.00+domains variant which points to ocaml-multicore/ocaml-multicore#5.00. This branch is no longer in development since multicore is merged with upstream OCaml. The parallel benchmarks should now be run on ocaml/... | non_process | parallel benchmarks should be run on ocaml ocaml trunk the parallel benchmarks are only being run on the domains variant which points to ocaml multicore ocaml multicore this branch is no longer in development since multicore is merged with upstream ocaml the parallel benchmarks should now be run on ocaml oc... | 0 |

25,762 | 25,837,969,906 | IssuesEvent | 2022-12-12 21:18:51 | pulumi/registry | https://api.github.com/repos/pulumi/registry | closed | Change H1s to match selected tab | resolution/fixed kind/enhancement area/registry impact/usability | ## overview

we would like to change the h1s in our package packages to reflect the selected tab. this is a change intended to improve accessibility and seo. #1702

## example

currently we have an h1 in the heading of the package page that is only the name of the package. eg. `Datadog` or `Azure Native`. whenev... | True | Change H1s to match selected tab - ## overview

we would like to change the h1s in our package packages to reflect the selected tab. this is a change intended to improve accessibility and seo. #1702

## example

currently we have an h1 in the heading of the package page that is only the name of the package. eg. ... | non_process | change to match selected tab overview we would like to change the in our package packages to reflect the selected tab this is a change intended to improve accessibility and seo example currently we have an in the heading of the package page that is only the name of the package eg datadog... | 0 |

275,360 | 23,909,885,020 | IssuesEvent | 2022-09-09 07:06:25 | enonic/app-contentstudio | https://api.github.com/repos/enonic/app-contentstudio | opened | Add ui-tests for clearing properrties in option-set | Test | Verify issue Unchecking an option in an option-set should clear its underlying property set #5096 | 1.0 | Add ui-tests for clearing properrties in option-set - Verify issue Unchecking an option in an option-set should clear its underlying property set #5096 | non_process | add ui tests for clearing properrties in option set verify issue unchecking an option in an option set should clear its underlying property set | 0 |

177,589 | 21,479,403,454 | IssuesEvent | 2022-04-26 16:14:40 | HughC-GH-Demo/Java-Demo | https://api.github.com/repos/HughC-GH-Demo/Java-Demo | closed | CVE-2019-10086 (High) detected in commons-beanutils-core-1.8.3.jar - autoclosed | security vulnerability | ## CVE-2019-10086 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>commons-beanutils-core-1.8.3.jar</b></p></summary>

<p>The Apache Software Foundation provides support for the Apache c... | True | CVE-2019-10086 (High) detected in commons-beanutils-core-1.8.3.jar - autoclosed - ## CVE-2019-10086 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>commons-beanutils-core-1.8.3.jar</b><... | non_process | cve high detected in commons beanutils core jar autoclosed cve high severity vulnerability vulnerable library commons beanutils core jar the apache software foundation provides support for the apache community of open source software projects the apache projects are chara... | 0 |

9,364 | 12,371,827,308 | IssuesEvent | 2020-05-18 19:15:54 | googleapis/python-firestore | https://api.github.com/repos/googleapis/python-firestore | closed | lint check fails on master | api: firestore type: process | A recent change of flake8 or its config causes the code style check to fail on the master branch - it complains about a ambiguous variable name in few files:

tests/unit/v1/test_order.py:210:16: E741 ambiguous variable name 'l'

tests/unit/v1beta1/test_order.py:210:16: E741 ambiguous variable name 'l'

/cc @crwilco... | 1.0 | lint check fails on master - A recent change of flake8 or its config causes the code style check to fail on the master branch - it complains about a ambiguous variable name in few files:

tests/unit/v1/test_order.py:210:16: E741 ambiguous variable name 'l'

tests/unit/v1beta1/test_order.py:210:16: E741 ambiguous var... | process | lint check fails on master a recent change of or its config causes the code style check to fail on the master branch it complains about a ambiguous variable name in few files tests unit test order py ambiguous variable name l tests unit test order py ambiguous variable name l cc c... | 1 |
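
For context on the row above: E741 is the pycodestyle check (surfaced through flake8) that rejects the variable names `l`, `O`, and `I` because they are easy to confuse with digits. A minimal sketch of the kind of rename that clears it follows; the function is hypothetical and is not taken from `test_order.py`.

```python
def total_length(lists):
    """Sum the lengths of several lists.

    A version written as `sum(len(l) for l in lists)` would be flagged with
    E741; using a descriptive loop variable avoids the warning.
    """
    return sum(len(item) for item in lists)
```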

258,894 | 22,356,350,548 | IssuesEvent | 2022-06-15 15:58:08 | VirtusLab/git-machete | https://api.github.com/repos/VirtusLab/git-machete | closed | Add tests for resolving location of machete file from worktrees | testing underlying git | Follow-up to #361. See #360 on what's the problem (and hence, what needs to be tested).

Would be good to resolve #362 first. | 1.0 | Add tests for resolving location of machete file from worktrees - Follow-up to #361. See #360 on what's the problem (and hence, what needs to be tested).

Would be good to resolve #362 first. | non_process | add tests for resolving location of machete file from worktrees follow up to see on what s the problem and hence what needs to be tested would be good to resolve first | 0 |

160,765 | 20,118,447,277 | IssuesEvent | 2022-02-07 22:19:02 | sureng-ws-ibm/t1 | https://api.github.com/repos/sureng-ws-ibm/t1 | opened | selenium-webdriver-2.53.3.tgz: 1 vulnerabilities (highest severity is: 5.5) | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>selenium-webdriver-2.53.3.tgz</b></p></summary>

<p></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/adm-zip/package.jso... | True | selenium-webdriver-2.53.3.tgz: 1 vulnerabilities (highest severity is: 5.5) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>selenium-webdriver-2.53.3.tgz</b></p></summary>

<p></p>

<p>Path to dependency file: /pac... | non_process | selenium webdriver tgz vulnerabilities highest severity is vulnerable library selenium webdriver tgz path to dependency file package json path to vulnerable library node modules adm zip package json found in head commit a href vulnerabilities cve severity... | 0 |

14,373 | 17,397,277,253 | IssuesEvent | 2021-08-02 14:52:28 | allinurl/goaccess | https://api.github.com/repos/allinurl/goaccess | closed | Huge Spike in unkown user agents | log-processing question |

For a couple of days, I can see a huge spike in unknown user agents.

I have done some tests and android and windows devices seem still to be logged correctly.

I can't verify apple devices but I assume they aren't logged correctly anymore. Idk how I reached a spike of 17% of Unknown user agents. | 1.0 | Huge Spike in unkown user agents -

For a couple of days, I can see a huge spike in unknown user agents.

I have done some tests and android and windows devices seem still to be logged correctly.

I can't verify apple devices but I assume they aren't logged correctly anymore. Idk how I reached a spike of 17% of Unknow... | process | huge spike in unkown user agents for a couple of days i can see a huge spike in unknown user agents i have done some tests and android and windows devices seem still to be logged correctly i can t verify apple devices but i assume they aren t logged correctly anymore idk how i reached a spike of of unknown... | 1 |

20,883 | 27,708,103,521 | IssuesEvent | 2023-03-14 12:36:43 | toggl/track-windows-feedback | https://api.github.com/repos/toggl/track-windows-feedback | closed | Suggestion: Please add release date to your changelog | processed | The new Windows native app has been wonderful so far! Thank you for the great work. I just noticed we can now see a changelog in the About > Whats New menu. This is great. But could you add dates to each version entry so I can get an idea of when something was updated? I never know if there were recent updates to look ... | 1.0 | Suggestion: Please add release date to your changelog - The new Windows native app has been wonderful so far! Thank you for the great work. I just noticed we can now see a changelog in the About > Whats New menu. This is great. But could you add dates to each version entry so I can get an idea of when something was upd... | process | suggestion please add release date to your changelog the new windows native app has been wonderful so far thank you for the great work i just noticed we can now see a changelog in the about whats new menu this is great but could you add dates to each version entry so i can get an idea of when something was upd... | 1 |

157,486 | 19,957,420,918 | IssuesEvent | 2022-01-28 02:02:33 | Aivolt1/u-i-u-x-volt-ai | https://api.github.com/repos/Aivolt1/u-i-u-x-volt-ai | opened | CVE-2022-0355 (High) detected in simple-get-3.1.0.tgz | security vulnerability | ## CVE-2022-0355 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>simple-get-3.1.0.tgz</b></p></summary>

<p>Simplest way to make http get requests. Supports HTTPS, redirects, gzip/defla... | True | CVE-2022-0355 (High) detected in simple-get-3.1.0.tgz - ## CVE-2022-0355 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>simple-get-3.1.0.tgz</b></p></summary>

<p>Simplest way to make ... | non_process | cve high detected in simple get tgz cve high severity vulnerability vulnerable library simple get tgz simplest way to make http get requests supports https redirects gzip deflate streams in library home page a href path to dependency file package json path to vu... | 0 |

430,279 | 12,450,632,181 | IssuesEvent | 2020-05-27 09:07:12 | wso2/carbon-apimgt | https://api.github.com/repos/wso2/carbon-apimgt | opened | [Publisher] Can not scroll Open API editor using mouse wheel | Priority/Normal Type/Bug | ### Description:

Can not scroll Open API defintion using mouse wheel when focusing Open API editor

### Steps to reproduce:

- Download WSO2 APIM 3.1.0

- Start server

- Go to publisher

- Create an API with an Open API Definition

- Save

- Edit API

- Go to API Definition > Edit

- Clic on left part and try to us... | 1.0 | [Publisher] Can not scroll Open API editor using mouse wheel - ### Description:

Can not scroll Open API defintion using mouse wheel when focusing Open API editor

### Steps to reproduce:

- Download WSO2 APIM 3.1.0

- Start server

- Go to publisher

- Create an API with an Open API Definition

- Save

- Edit API

-... | non_process | can not scroll open api editor using mouse wheel description can not scroll open api defintion using mouse wheel when focusing open api editor steps to reproduce download apim start server go to publisher create an api with an open api definition save edit api go to api de... | 0 |

81,459 | 3,591,211,907 | IssuesEvent | 2016-02-01 10:39:10 | ow2-proactive/scheduling | https://api.github.com/repos/ow2-proactive/scheduling | closed | Replicate job with 1000 tasks fails to execute | priority:minor resolution:fixed type:bug | <a href="https://jira.activeeon.com/browse/SCHEDULING-2218" title="SCHEDULING-2218">Original issue</a> created by <a href="mailto:youri.bonnaffe_AT_activeeon.com">Youri Bonnaffe</a> on 14, Jan 2015 at 10:51 AM - SCHEDULING-2218

<hr />

When submitting a replicate job (split/process/merge) with 1000 replicated task... | 1.0 | Replicate job with 1000 tasks fails to execute - <a href="https://jira.activeeon.com/browse/SCHEDULING-2218" title="SCHEDULING-2218">Original issue</a> created by <a href="mailto:youri.bonnaffe_AT_activeeon.com">Youri Bonnaffe</a> on 14, Jan 2015 at 10:51 AM - SCHEDULING-2218

<hr />

When submitting a replicate jo... | non_process | replicate job with tasks fails to execute original issue created by youri bonnaffe on jan at am scheduling when submitting a replicate job split process merge with replicated tasks the merge task will fail because of a database error see below it looks like it could be related to... | 0 |

3,305 | 6,401,515,927 | IssuesEvent | 2017-08-05 21:43:22 | pwittchen/ReactiveNetwork | https://api.github.com/repos/pwittchen/ReactiveNetwork | closed | Release 0.11.0 | release process | **Initial release notes**:

- `RxJava1.x` branch:

- added `WalledGardenInternetObservingStrategy` - fixes #116

- made `WalledGardenInternetObservingStrategy` a default strategy for checking Internet connectivity

- added documentation for NetworkObservingStrategy - solves #197

- added documentation for In... | 1.0 | Release 0.11.0 - **Initial release notes**:

- `RxJava1.x` branch:

- added `WalledGardenInternetObservingStrategy` - fixes #116

- made `WalledGardenInternetObservingStrategy` a default strategy for checking Internet connectivity

- added documentation for NetworkObservingStrategy - solves #197

- added doc... | process | release initial release notes x branch added walledgardeninternetobservingstrategy fixes made walledgardeninternetobservingstrategy a default strategy for checking internet connectivity added documentation for networkobservingstrategy solves added documentation ... | 1 |

7,802 | 10,959,865,741 | IssuesEvent | 2019-11-27 12:22:26 | prisma/prisma2 | https://api.github.com/repos/prisma/prisma2 | closed | Preview017 error on "prisma2 dev" or "generate" - 'C:/Program' not a windows command | bug/2-confirmed kind/regression process/candidate | Hi! I got this error while running Preview017 on windows.

```

C:\projetob\quoro\backend>prisma2 generate

'C:\Program' is not recognized as an internal or external command,

operable program or batch file.

Error:

Error: Generator at C:/projetob/quoro/backend/node_modules/@prisma/photon/generator-build/index.j... | 1.0 | Preview017 error on "prisma2 dev" or "generate" - 'C:/Program' not a windows command - Hi! I got this error while running Preview017 on windows.

```

C:\projetob\quoro\backend>prisma2 generate

'C:\Program' is not recognized as an internal or external command,

operable program or batch file.

Error:

Error: Gen... | process | error on dev or generate c program not a windows command hi i got this error while running on windows c projetob quoro backend generate c program is not recognized as an internal or external command operable program or batch file error error generator at c projetob quoro ba... | 1 |
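
The `'C:\Program' is not recognized` message in the row above is what a Windows shell typically prints when a path containing a space reaches it unquoted, although the truncated report does not confirm the root cause. The sketch below only illustrates that general failure mode in Python; the executable path is hypothetical and nothing here reflects how Prisma itself builds its command.

```python
import subprocess

# Hypothetical install location containing a space.
exe = r"C:\Program Files\nodejs\node.exe"
args = [exe, "index.js"]

# Naive joining leaves the space unprotected, so a shell would treat
# "C:\Program" as the command and the rest as its arguments.
naive = " ".join(args)
first_word = naive.split(" ")[0]

# list2cmdline applies the Windows quoting rules to each argument,
# wrapping the path in double quotes because it contains a space.
quoted = subprocess.list2cmdline(args)
```

Passing the argument list directly to `subprocess.run(args)` without `shell=True` sidesteps the problem, since no shell re-parses the command line.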

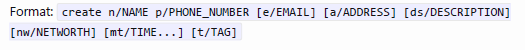

357,269 | 25,176,355,684 | IssuesEvent | 2022-11-11 09:36:32 | WR3nd3/pe | https://api.github.com/repos/WR3nd3/pe | opened | Inconsistent command formatting for Create | type.DocumentationBug severity.VeryLow | When comparing the 2 images below, one taken from the Creation section, and the other from the Command Summary, we see a difference in parameter format for the mt/ prefix with TIME... and TIME .

There is a huge gap between the images and the thumbnails line of smaller images. If there is no thumbnail images, the gap is before the next element.

This gap is not happening on the desktop version.

My s... | 1.0 | Huge gap between under the caroussel on iphone - ### Describe the bug:

I am getting an issue on the iphone mobile version however . (Firefox on IOS 12)

There is a huge gap between the images and the thumbnails line of smaller images. If there is no thumbnail images, the gap is before the next element.

This gap... | non_process | huge gap between under the caroussel on iphone describe the bug i am getting an issue on the iphone mobile version however firefox on ios there is a huge gap between the images and the thumbnails line of smaller images if there is no thumbnail images the gap is before the next element this gap ... | 0 |

355,696 | 10,583,545,347 | IssuesEvent | 2019-10-08 13:54:46 | openshift/installer | https://api.github.com/repos/openshift/installer | closed | libvirt: should support multiple clusters on a single host | platform/libvirt priority/backlog | This is a tracker for a feature request, and the feature might already be supported — at least, I know CIDRs can be configured in the manifests, and clusters with different names can be defined in parallel and will result in different libvirt resources.

Feel free to close this if it’s known to work; otherwise I’ll c... | 1.0 | libvirt: should support multiple clusters on a single host - This is a tracker for a feature request, and the feature might already be supported — at least, I know CIDRs can be configured in the manifests, and clusters with different names can be defined in parallel and will result in different libvirt resources.

Fe... | non_process | libvirt should support multiple clusters on a single host this is a tracker for a feature request and the feature might already be supported — at least i know cidrs can be configured in the manifests and clusters with different names can be defined in parallel and will result in different libvirt resources fe... | 0 |

44,227 | 9,553,528,698 | IssuesEvent | 2019-05-02 19:30:12 | redhat-developer/vscode-java | https://api.github.com/repos/redhat-developer/vscode-java | closed | The quick fix label for generating getter and setter has an unnecessary ellipsis | bug code action | So if you have 2 fields without accessors, the quickfix to generate them has an ellipsis, yet no wizard is shown as you'd expect from the ellipsis.

<img width="378" alt="Screen Shot 2019-04-29 at 7 05 30 PM" src="https://user-images.githubusercontent.com/148698/56932428-c9dd7400-6ab1-11e9-86c2-5c2e5714ea88.png">

| 1.0 | The quick fix label for generating getter and setter has an unnecessary ellipsis - So if you have 2 fields without accessors, the quickfix to generate them has an ellipsis, yet no wizard is shown as you'd expect from the ellipsis.

<img width="378" alt="Screen Shot 2019-04-29 at 7 05 30 PM" src="https://user-images.git... | non_process | the quick fix label for generating getter and setter has an unnecessary ellipsis so if you have fields without accessors the quickfix to generate them has an ellipsis yet no wizard is shown as you d expect from the ellipsis img width alt screen shot at pm src | 0 |

46,698 | 19,412,971,744 | IssuesEvent | 2021-12-20 11:39:39 | hashicorp/terraform-provider-azurerm | https://api.github.com/repos/hashicorp/terraform-provider-azurerm | closed | Consider splitting `azurerm_application_gateway` resource into several smaller ones | enhancement service/application-gateway upstream-microsoft | <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not l... | 1.0 | Consider splitting `azurerm_application_gateway` resource into several smaller ones - <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original ... | non_process | consider splitting azurerm application gateway resource into several smaller ones community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request please do not leave or me too comments they generate extra noise ... | 0 |

295 | 2,732,224,003 | IssuesEvent | 2015-04-17 03:04:22 | mitchellh/packer | https://api.github.com/repos/mitchellh/packer | closed | Packer 0.7.2 is failing on response from vagrantcloud post-processor | bug post-processor/vagrant | When trying to create a new Virtualbox and push it to vagrant-cloud (note: this worked 20 days ago), I am getting the following:

```

[...]

==> virtualbox-iso: Unregistering and deleting virtual machine...

==> virtualbox-iso: Running post-processor: vagrant

==> virtualbox-iso (vagrant): Creating Vagrant box for '... | 1.0 | Packer 0.7.2 is failing on response from vagrantcloud post-processor - When trying to create a new Virtualbox and push it to vagrant-cloud (note: this worked 20 days ago), I am getting the following:

```

[...]

==> virtualbox-iso: Unregistering and deleting virtual machine...

==> virtualbox-iso: Running post-proce... | process | packer is failing on response from vagrantcloud post processor when trying to create a new virtualbox and push it to vagrant cloud note this worked days ago i am getting the following virtualbox iso unregistering and deleting virtual machine virtualbox iso running post processor ... | 1 |

181,775 | 14,074,560,087 | IssuesEvent | 2020-11-04 07:33:30 | OpenMined/PySyft | https://api.github.com/repos/OpenMined/PySyft | closed | Add torch.Tensor.q_per_channel_axis to allowlist and test suite | Priority: 2 - High :cold_sweat: Severity: 3 - Medium :unamused: Status: Available :wave: Type: New Feature :heavy_plus_sign: Type: Testing :test_tube: |

# Description

This issue is a part of Syft 0.3.0 Epic 2: https://github.com/OpenMined/PySyft/issues/3696

In this issue, you will be adding support for remote execution of the torch.Tensor.q_per_channel_axis

method or property. This might be a really small project (literally a one-liner) or

it might require adding si... | 2.0 | Add torch.Tensor.q_per_channel_axis to allowlist and test suite -

# Description

This issue is a part of Syft 0.3.0 Epic 2: https://github.com/OpenMined/PySyft/issues/3696

In this issue, you will be adding support for remote execution of the torch.Tensor.q_per_channel_axis

method or property. This might be a really s... | non_process | add torch tensor q per channel axis to allowlist and test suite description this issue is a part of syft epic in this issue you will be adding support for remote execution of the torch tensor q per channel axis method or property this might be a really small project literally a one liner or it mig... | 0 |

350,234 | 24,974,323,562 | IssuesEvent | 2022-11-02 06:02:13 | cse110-fa22-group28/cse110-fa22-group28 | https://api.github.com/repos/cse110-fa22-group28/cse110-fa22-group28 | closed | Roadmap and Pitch Update | documentation | # Administrative or Organizational Tasks

What is the purpose of this task?

To plan out the project deadlines and compile all discussions into the finalized pitch document.

Steps to complete the task:

- [x] Statement of Purpose for Pitch

- [x] Goals for the product in the pitch document

- [x] Create a milest... | 1.0 | Roadmap and Pitch Update - # Administrative or Organizational Tasks

What is the purpose of this task?

To plan out the project deadlines and compile all discussions into the finalized pitch document.

Steps to complete the task:

- [x] Statement of Purpose for Pitch

- [x] Goals for the product in the pitch docu... | non_process | roadmap and pitch update administrative or organizational tasks what is the purpose of this task to plan out the project deadlines and compile all discussions into the finalized pitch document steps to complete the task statement of purpose for pitch goals for the product in the pitch document... | 0 |

180,256 | 6,647,688,088 | IssuesEvent | 2017-09-28 05:59:18 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | services.gst.gov.in - site is not usable | browser-firefox-mobile priority-normal status-needstriage | <!-- @browser: Firefox Mobile 58.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 7.0; Mobile; rv:58.0) Gecko/58.0 Firefox/58.0 -->

<!-- @reported_with: mobile-reporter -->

**URL**: https://services.gst.gov.in/services/login

**Browser / Version**: Firefox Mobile 58.0

**Operating System**: Android 7.0

**Tested Another Brow... | 1.0 | services.gst.gov.in - site is not usable - <!-- @browser: Firefox Mobile 58.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 7.0; Mobile; rv:58.0) Gecko/58.0 Firefox/58.0 -->

<!-- @reported_with: mobile-reporter -->

**URL**: https://services.gst.gov.in/services/login

**Browser / Version**: Firefox Mobile 58.0

**Operating ... | non_process | services gst gov in site is not usable url browser version firefox mobile operating system android tested another browser yes problem type site is not usable description the website does not let you login on desktop or mobile steps to reproduce gst gov in t... | 0 |

20,158 | 26,713,313,499 | IssuesEvent | 2023-01-28 06:28:22 | rusefi/rusefi_documentation | https://api.github.com/repos/rusefi/rusefi_documentation | closed | Proposal: to avoid "back and forth" "close issue" should be done only by person that opened issue | wiki location & process change feedback requested | There is the old saying:

**Only the customer defines a job well done**

I've noticed on several occasions that issues had been only partially fixed but the related issue was closed.

In line with the quoted old saying,

I'm suggesting that we make this the rule:

**"close issue" should be done only by person that opened iss... | 1.0 | Proposal: to avoid "back and forth" "close issue" should be done only by person that opened issue - There is the old saying:

**Only the customer defines a job well done**

I've noticed on several occasions that issues had been only partially fixed but the related issue was closed.

In line with quoted old sayin... | process | proposal to avoid back and forth close issue should be done only by person that opened issue there is the old saying only the customer defines a job well done i ve noticed on several occasions that issues had been only partially fixes but the related issued was closed in line with quoted old sayin... | 1 |

180,477 | 30,508,492,139 | IssuesEvent | 2023-07-18 18:48:42 | GCTC-NTGC/gc-digital-talent | https://api.github.com/repos/GCTC-NTGC/gc-digital-talent | closed | Increase table scroll affordance when table width exceeds screen real estate | feature blocked: design | <sup>ℹ️ [Figma (root)][figma] | [Sitemap][sitemap]</sup>

<sup>_This initial comment is collaborative and open to modification by all._</sup>

## Description

Certain tables have a tendency to exceed the available width of the viewport, and this creates a horizontal scrollbar that provides access to the rest of the t... | 1.0 | Increase table scroll affordance when table width exceeds screen real estate - <sup>ℹ️ [Figma (root)][figma] | [Sitemap][sitemap]</sup>

<sup>_This initial comment is collaborative and open to modification by all._</sup>

## Description

Certain tables have a tendency to exceed the available width of the viewport, an... | non_process | increase table scroll affordance when table width exceeds screen real estate ℹ️ this initial comment is collaborative and open to modification by all description certain tables have a tendency to exceed the available width of the viewport and this creates a horizontal scrollbar that provides a... | 0 |

7,828 | 11,008,133,644 | IssuesEvent | 2019-12-04 09:56:10 | spring-projects/spring-hateoas | https://api.github.com/repos/spring-projects/spring-hateoas | closed | Spring hateoas show title ="" even the property title of Link is null | in: mediatypes process: waiting for feedback | Hi,

I am using spring-hateoas 1.0.1.RELEASE and spring boot 2.2.1.RELEASE.

I have a simple Person class:

```@AllArgsConstructor

@Getter

public class Person {

private String id;

private String name;

}

```

Assembler:

```

@Component

public class PersonAssembler implements SimpleRepresentationModel... | 1.0 | Spring hateoas show title ="" even the property title of Link is null - Hi,

I am using spring-hateoas 1.0.1.RELEASE and spring boot 2.2.1.RELEASE.

I have a simple Person class:

```@AllArgsConstructor

@Getter

public class Person {

private String id;

private String name;

}

```

Assembler:

```

@Compo... | process | spring hateoas show title even the property title of link is null hi i am using spring hateoas release and spring boot release i have a simple person class allargsconstructor getter public class person private string id private string name assembler compo... | 1 |

8,456 | 11,631,060,157 | IssuesEvent | 2020-02-28 00:08:58 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | Autograd fails if used before multiprocessing Pool | actionable fixathon module: autograd module: multiprocessing topic: deadlock triaged | ## 🐛 Bug

Just like [#3966](https://github.com/pytorch/pytorch/issues/3966), autograd fails when it is used in the main process before a child process is started. Here, however, the failure occurs with process pools rather than with normally started processes.

## To Reproduce

```python

import torch

from torch import multiprocessing as mp

FAIL = True

def f(a=... | 1.0 | Autograd fails if used before multiprocessing Pool - ## 🐛 Bug

Just like [#3966](https://github.com/pytorch/pytorch/issues/3966), autograd fails when it is used in the main process before a child process is started. Here, however, the failure occurs with process pools rather than with normally started processes.

## To Reproduce

```python

import torch

from torch im... | process | autograd fails if used before multiprocessing pool 🐛 bug just like autograd fails when using it on main process before a child process but not for normally started processes but for process pools to reproduce python import torch from torch import multiprocessing as mp fail true def f a... | 1 |

6,766 | 9,905,540,818 | IssuesEvent | 2019-06-27 11:52:12 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Batch processing a graphical modeller model results in AttributeError: 'NoneType' object has no attribute 'parameterValue' | Bug Processing | When a self-made model created in the graphical modeller is run in batch processing mode, it throws "Python error : An error has occurred while executing Python code: See message log (Python Error) for more details". The message log, nor the batch processing window log show anything, but when Stack Trace is clicked, t... | 1.0 | Batch processing a graphical modeller model results in AttributeError: 'NoneType' object has no attribute 'parameterValue' - When a self-made model created in the graphical modeller is run in batch processing mode, it throws "Python error : An error has occurred while executing Python code: See message log (Python Err... | process | batch processing a graphical modeller model results in attributeerror nonetype object has no attribute parametervalue when a self made model created in the graphical modeller is run in batch processing mode it throws python error an error has occurred while executing python code see message log python err... | 1 |

37,870 | 2,831,695,944 | IssuesEvent | 2015-05-24 21:12:27 | HellscreamWoW/Tracker | https://api.github.com/repos/HellscreamWoW/Tracker | closed | NPC: Monty | Priority-Normal Type-Creature | There is an issue with this npc, it's duplicated, it's like two of the same npc sitting into each other. Monty is in deeprun tram.

| 1.0 | NPC: Monty - There is an issue with this npc, it's duplicated, it's like two of the same npc sitting into each other. Monty is in deeprun tram.

| non_process | npc monty there is an issue with this npc it s duplicated it s like two of the same npc sitting into each other monty is in deeprun tram | 0 |

6,218 | 9,150,867,002 | IssuesEvent | 2019-02-28 00:03:55 | ArctosDB/new-collections | https://api.github.com/repos/ArctosDB/new-collections | closed | UNR Draft MOU | MOU draft in process | Work with new collection to complete Draft MOU, answer any questions about migration, Arctos operating procedures, and costs; (download sample template include collection contact info). | 1.0 | UNR Draft MOU - Work with new collection to complete Draft MOU, answer any questions about migration, Arctos operating procedures, and costs; (download sample template include collection contact info). | process | unr draft mou work with new collection to complete draft mou answer any questions about migration arctos operating procedures and costs download sample template include collection contact info | 1 |

204,340 | 15,438,926,134 | IssuesEvent | 2021-03-07 22:12:45 | trevorNgo/Measure2.0 | https://api.github.com/repos/trevorNgo/Measure2.0 | opened | CS4ZP6 Tester Feedback: Clicking "View Past Jobs" under the "Start New Year Term" section does not do anything | tester | **Description:** Clicking the **View Past Jobs** button under the **Start New Year Term** on the homepage when logged in as an **Admin** does not do anything.

**OS:** Windows 10 Enterprise

**Browser:** Chrome Version 89.0.4389.82

**Reproduction steps:**

* Sign in as an **Admin**

* Click on **View Past Jobs*... | 1.0 | CS4ZP6 Tester Feedback: Clicking "View Past Jobs" under the "Start New Year Term" section does not do anything - **Description:** Clicking the **View Past Jobs** button under the **Start New Year Term** on the homepage when logged in as an **Admin** does not do anything.

**OS:** Windows 10 Enterprise

**Browser:** ... | non_process | tester feedback clicking view past jobs under the start new year term section does not do anything description clicking the view past jobs button under the start new year term on the homepage when logged in as an admin does not do anything os windows enterprise browser chrome... | 0 |

221,684 | 17,364,895,119 | IssuesEvent | 2021-07-30 05:22:51 | bitpodio/bitpodjs | https://api.github.com/repos/bitpodio/bitpodjs | closed | My Registration=>navigates to old "Reg not found" page if reload is done after email is edited | Bug Minor My Registration New Resolved-Accepted in Test Env rls_01-07-21 | -Go to "My Registrations" page

-Enter valid details for Reg no & Email

-In another tab, edit the email address of the registration

-Reload the My Registration page=>Navigates to old "Registration not found" page

-Expected behavior as discussed with lokesh=> Default Parent page (My Registration) should be displayed ... | 1.0 | My Registration=>navigates to old "Reg not found" page if reload is done after email is edited - -Go to "My Registrations" page

-Enter valid details for Reg no & Email

-In another tab, edit the email address of the registration

-Reload the My Registration page=>Navigates to old "Registration not found" page

-Expect... | non_process | my registration navigates to old reg not found page if reload is done after email is edited go to my registrations page enter valid details for reg no email in another tab edit the email address of the registration reload the my registration page navigates to old registration not found page expect... | 0 |

113,290 | 9,635,156,563 | IssuesEvent | 2019-05-15 23:48:50 | SpongePowered/Ore | https://api.github.com/repos/SpongePowered/Ore | closed | Manual (web-interface) and automatic (Gradle, API) deploy have different minimum character limit for Changelog/Release Bulletin. | status: needs testing type: bug report | **Describe the bug**

Manual (web interface) and automatic (Gradle, API) deploys have different minimum character limits for the Changelog/Release Bulletin. The web interface accepts text of any length, but an automatic deploy through Gradle errors out with a "Content too short" message.

**To Repro... | 1.0 | Manual (web-interface) and automatic (Gradle, API) deploy have different minimum character limit for Changelog/Release Bulletin. - **Describe the bug**

Manual (web-interface) and automatic (Gradle, API) deploy have different minimum character limit for Changelog/Release Bulletin. Web-interface allows to have any minim... | non_process | manual web interface and automatic gradle api deploy have different minimum character limit for changelog release bulletin describe the bug manual web interface and automatic gradle api deploy have different minimum character limit for changelog release bulletin web interface allows to have any minim... | 0 |

2,144 | 4,996,193,420 | IssuesEvent | 2016-12-09 13:00:52 | jlm2017/jlm-video-subtitles | https://api.github.com/repos/jlm2017/jlm-video-subtitles | opened | [subtitles] [eng] Réunion publique à Bordeaux | Language: English Process: [2] Ready for review (1) | # Video title

Réunion publique à Bordeaux

# URL

https://www.youtube.com/watch?v=boAJ1tTpW0s

# Youtube subtitle language

English

# Duration

1:48:52

# URL subtitles

https://www.youtube.com/timedtext_editor?lang=en&ui=hd&v=boAJ1tTpW0s&ref=player&action_mde_edit_form=1&tab=captions&bl=vmp | 1.0 | [subtitles] [eng] Réunion publique à Bordeaux - # Video title

Réunion publique à Bordeaux

# URL

https://www.youtube.com/watch?v=boAJ1tTpW0s

# Youtube subtitle language

English

# Duration

1:48:52

# URL subtitles

https://www.youtube.com/timedtext_editor?lang=en&ui=hd&v=boAJ1tTpW0s&ref=player&a... | process | réunion publique à bordeaux video title réunion publique à bordeaux url youtube subtitle language english duration url subtitles | 1 |

10,959 | 13,764,378,319 | IssuesEvent | 2020-10-07 11:59:33 | googleapis/java-bigtable-hbase | https://api.github.com/repos/googleapis/java-bigtable-hbase | closed | Add kokoro job for sequencefile importer | api: bigtable type: process | To prevent #2366 from re-occurring, we need to have a kokoro test for the sequencefileIntegrationTest profile

something like:

mvn verify \

-pl bigtable-dataflow-parent/bigtable-beam-import \

-Dgoogle.bigtable.instance.id="int-tst" \

-Dgoogle.bigtable.project.id="PROJECT_ID" \

-Dgoogle.dataflow.staging... | 1.0 | Add kokoro job for sequencefile importer - To prevent #2366 from re-occurring, we need to have a kokoro test for the sequencefileIntegrationTest profile

something like:

mvn verify \

-pl bigtable-dataflow-parent/bigtable-beam-import \

-Dgoogle.bigtable.instance.id="int-tst" \

-Dgoogle.bigtable.project.id=... | process | add kokoro job for sequencefile importer to prevent from re occurring we need to have a kokoro test for the sequencefileintegrationtest profile something like mvn verify pl bigtable dataflow parent bigtable beam import dgoogle bigtable instance id int tst dgoogle bigtable project id pr... | 1 |

5,712 | 2,610,213,919 | IssuesEvent | 2015-02-26 19:08:17 | chrsmith/somefinders | https://api.github.com/repos/chrsmith/somefinders | opened | попки иписьки | auto-migrated Priority-Medium Type-Defect | ```

'''Ананий Фёдоров'''

Hello everyone, could you tell me where I can find

.попки иписьки. it was posted here at some point already

'''Воин Воронов'''

Download it here http://bit.ly/HrOecI

'''Ванадий Афанасьев'''

It asks me to enter a mobile number! Isn't that dangerous?

'''Адам Белоусов'''

Nah, everything is OK, nothing was charged to me

'''Арнольд Крюков'''

Nah, everything is OK ... | 1.0 | попки иписьки - ```

'''Ананий Фёдоров'''

Hello everyone, could you tell me where I can find

.попки иписьки. it was posted here at some point already

'''Воин Воронов'''

Download it here http://bit.ly/HrOecI

'''Ванадий Афанасьев'''

It asks me to enter a mobile number! Isn't that dangerous?

'''Адам Белоусов'''

Nah, everything is OK, nothing was charged to me

'''Арнольд Крюков'... | non_process | попки иписьки ананий фёдоров hello everyone could you tell me where i can find попки иписьки it was posted here at some point already воин воронов download it here ванадий афанасьев it asks me to enter a mobile number isn t that dangerous адам белоусов nah everything is ok nothing was charged to me арнольд крюков nah everything is ok ... | 0 |

1,723 | 4,380,975,994 | IssuesEvent | 2016-08-06 00:36:27 | bpython/bpython | https://api.github.com/repos/bpython/bpython | closed | bpython run greenlet bug | requires-separate-process | ```

from greenlet import getcurrent

getcurrent()

getcurrent()

getcurrent()

```

run the code above, even I call `getcurrent` for 3(or more) times, it will get the same obj in `python` shell and `ipython` shell, but it failed in `bpython`, it'll give more than one different greenlet, so it'll cause bugs like [fla... | 1.0 | bpython run greenlet bug - ```

from greenlet import getcurrent

getcurrent()

getcurrent()

getcurrent()

```

run the code above, even I call `getcurrent` for 3(or more) times, it will get the same obj in `python` shell and `ipython` shell, but it failed in `bpython`, it'll give more than one different greenlet, so... | process | bpython run greenlet bug from greenlet import getcurrent getcurrent getcurrent getcurrent run the code above even i call getcurrent for or more times it will get the same obj in python shell and ipython shell but it failed in bpython it ll give more than one different greenlet so... | 1 |

17,661 | 23,480,753,139 | IssuesEvent | 2022-08-17 10:19:42 | q191201771/lal | https://api.github.com/repos/q191201771/lal | closed | Deprecation of package ioutil in Go 1.16 | *In process #Opt | the `ioutil` functions need to be changed to `io` packages

`

"io/ioutil" has been deprecated since Go 1.16: As of Go 1.16, the same functionality is now provided by package io or package os, and those implementations should be preferred in new code. See the specific function documentation for details. (SA1019)

`

... | 1.0 | Deprecation of package ioutil in Go 1.16 - the `ioutil` functions need to be changed to `io` packages

`

"io/ioutil" has been deprecated since Go 1.16: As of Go 1.16, the same functionality is now provided by package io or package os, and those implementations should be preferred in new code. See the specific functi... | process | deprecation of package ioutil in go the ioutil functions need to be changed to io packages io ioutil has been deprecated since go as of go the same functionality is now provided by package io or package os and those implementations should be preferred in new code see the specific function ... | 1 |

2,099 | 4,932,132,162 | IssuesEvent | 2016-11-28 12:38:49 | elastic/beats | https://api.github.com/repos/elastic/beats | closed | Document add_cloud_metadata processor | :Processors docs v5.1.0 | We need to add documentation for the `add_cloud_metadata` (#2728) processor. I can write up the initial draft for this.

In support of new processors, we should probably look at reorganizing the [documentation](https://www.elastic.co/guide/en/beats/winlogbeat/5.0/configuration-processors.html) for processors. Other pr... | 1.0 | Document add_cloud_metadata processor - We need to add documentation for the `add_cloud_metadata` (#2728) processor. I can write up the initial draft for this.

In support of new processors, we should probably look at reorganizing the [documentation](https://www.elastic.co/guide/en/beats/winlogbeat/5.0/configuration-p... | process | document add cloud metadata processor we need to add documentation for the add cloud metadata processor i can write up the initial draft for this in support of new processors we should probably look at reorganizing the for processors other products have a page per processor | 1 |

17,146 | 22,692,895,183 | IssuesEvent | 2022-07-05 00:15:47 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | k8sprocessor: enrich metadata if only pod name and pod namespace are available | comp: k8sprocessor | **Is your feature request related to a problem? Please describe.**

k8sprocessor enriches metadata only for `podUid` or `pod ip`. I have a case when only pod name and pod namespace are obtained by the receiver. In that case I'm unable to enrich metadata using this processor

**Describe the solution you'd like**

I wo... | 1.0 | k8sprocessor: enrich metadata if only pod name and pod namespace are available - **Is your feature request related to a problem? Please describe.**

k8sprocessor enriches metadata only for `podUid` or `pod ip`. I have a case when only pod name and pod namespace are obtained by the receiver. In that case I'm unable to e... | process | enrich metadata if only pod name and pod namespace are available is your feature request related to a problem please describe enriches metadata only for poduid or pod ip i have a case when only pod name and pod namespace are obtained by the receiver in that case i m unable to enrich metadata using t... | 1 |
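
The request in the row above amounts to a fallback chain for associating telemetry with a pod: try the pod UID, then the pod IP, then the (namespace, pod name) pair. The following is only a Python sketch of that idea, not the processor's actual implementation. The attribute keys follow OpenTelemetry-style naming as an assumption, and the lookup tables and the `find_pod_metadata` and `enrich` helpers are hypothetical:

```python
from typing import Optional

# Hypothetical in-memory pod metadata, indexed three different ways.
PODS_BY_UID = {"uid-1": {"k8s.deployment.name": "web"}}
PODS_BY_IP = {"10.0.0.5": {"k8s.deployment.name": "web"}}
PODS_BY_NAME = {("default", "web-abc"): {"k8s.deployment.name": "web"}}


def find_pod_metadata(attrs: dict) -> Optional[dict]:
    """Look up pod metadata by UID, then IP, then (namespace, name)."""
    uid = attrs.get("k8s.pod.uid")
    if uid in PODS_BY_UID:
        return PODS_BY_UID[uid]
    ip = attrs.get("k8s.pod.ip")
    if ip in PODS_BY_IP:
        return PODS_BY_IP[ip]
    # Fallback requested in the issue: only name and namespace are known.
    key = (attrs.get("k8s.namespace.name"), attrs.get("k8s.pod.name"))
    return PODS_BY_NAME.get(key)


def enrich(attrs: dict) -> dict:
    """Return a copy of attrs with pod metadata merged in when a pod is found."""
    meta = find_pod_metadata(attrs)
    return {**attrs, **meta} if meta else dict(attrs)


print(enrich({"k8s.namespace.name": "default", "k8s.pod.name": "web-abc"}))
```

The pod name alone is not used as a key in this sketch, because the same name can exist in several namespaces.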

62,140 | 12,198,029,927 | IssuesEvent | 2020-04-29 21:56:57 | firebase/firebase-ios-sdk | https://api.github.com/repos/firebase/firebase-ios-sdk | closed | iOS 9 crashes after Xcode 11.4 | Xcode 11.4 - 32 bit api: analytics | <!-- DO NOT DELETE

validate_template=true

template_path=.github/ISSUE_TEMPLATE/bug_report.md

-->

### [REQUIRED] Step 1: Describe your environment

* Xcode version: 11.4

* Firebase SDK version: 6.9.0

* Firebase Component: Analytics

* Component version: 6.1.2

* Installation method: `CocoaPods`

##... | 1.0 | iOS 9 crashes after Xcode 11.4 - <!-- DO NOT DELETE

validate_template=true

template_path=.github/ISSUE_TEMPLATE/bug_report.md

-->

### [REQUIRED] Step 1: Describe your environment

* Xcode version: 11.4

* Firebase SDK version: 6.9.0

* Firebase Component: Analytics

* Component version: 6.1.2

* Insta... | non_process | ios crashes after xcode do not delete validate template true template path github issue template bug report md step describe your environment xcode version firebase sdk version firebase component analytics component version installation met... | 0 |

3,159 | 6,216,856,594 | IssuesEvent | 2017-07-08 08:43:48 | bpython/bpython | https://api.github.com/repos/bpython/bpython | closed | Let bpython be run from not main thread | frontend-limitations requires-separate-process | I think this is just a problem with signal handlers. I'm trying playing with this now.

| 1.0 | Let bpython be run from not main thread - I think this is just a problem with signal handlers. I'm trying playing with this now.

| process | let bpython be run from not main thread i think this is just a problem with signal handlers i m trying playing with this now | 1 |

132,334 | 18,268,455,004 | IssuesEvent | 2021-10-04 11:14:38 | AnyVisionltd/anv-ui-components | https://api.github.com/repos/AnyVisionltd/anv-ui-components | closed | CVE-2021-23343 (High) detected in path-parse-1.0.6.tgz | security vulnerability | ## CVE-2021-23343 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>path-parse-1.0.6.tgz</b></p></summary>

<p>Node.js path.parse() ponyfill</p>

<p>Library home page: <a href="https://reg... | True | CVE-2021-23343 (High) detected in path-parse-1.0.6.tgz - ## CVE-2021-23343 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>path-parse-1.0.6.tgz</b></p></summary>

<p>Node.js path.parse(... | non_process | cve high detected in path parse tgz cve high severity vulnerability vulnerable library path parse tgz node js path parse ponyfill library home page a href dependency hierarchy node sass tgz root library meow tgz normalize package data... | 0 |

1,378 | 3,941,734,835 | IssuesEvent | 2016-04-27 09:02:48 | DevExpress/testcafe-hammerhead | https://api.github.com/repos/DevExpress/testcafe-hammerhead | closed | Implement proper encoding name normalizaton | !IMPORTANT! AREA: server COMPLEXITY: easy SYSTEM: resource processing TYPE: enhancement | Currently we have pretty trivial encoding name normalization: https://github.com/DevExpress/testcafe-hammerhead/blob/master/src/processing/encoding/charset.js#L50

However, the possible encoding aliases (aka labels) are defined in the WHATWG Encoding Standard: https://encoding.spec.whatwg.org/#names-and-labels

We need use this labels table ins... | 1.0 | Implement proper encoding name normalizaton - Currently we have pretty trivial encoding name normalization: https://github.com/DevExpress/testcafe-hammerhead/blob/master/src/processing/encoding/charset.js#L50

But possible encoding aliases (aka labels) are defined in HTML spec: https://encoding.spec.whatwg.org/#names... | process | implement proper encoding name normalizaton currently we have pretty trivial encoding name normalization but possible encoding aliases aka labels are defined in html spec we need use this labels table instead | 1 |
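
The label-table approach proposed in the row above can be sketched roughly as follows: strip ASCII whitespace, lowercase ASCII letters, then look the result up in a table of labels. This is an illustrative Python sketch and not the project's code. The table holds only a small subset of the labels listed in the Encoding Standard, and the `normalize_encoding_label` name is hypothetical:

```python
import string

# Characters to strip from both ends of a label before lookup.
ASCII_WHITESPACE = "\t\n\f\r "

# Lowercase only A-Z, leaving every non-ASCII character untouched.
_ASCII_LOWER = str.maketrans(string.ascii_uppercase, string.ascii_lowercase)

# Small, incomplete subset of the label table, for illustration only.
LABELS = {
    "utf-8": "utf-8",
    "utf8": "utf-8",
    "unicode-1-1-utf-8": "utf-8",
    "windows-1252": "windows-1252",
    "cp1252": "windows-1252",
    "x-cp1252": "windows-1252",
    "iso-8859-1": "windows-1252",
    "latin1": "windows-1252",
    "l1": "windows-1252",
    "ascii": "windows-1252",
    "us-ascii": "windows-1252",
}


def normalize_encoding_label(label: str):
    """Return the canonical encoding name for a label, or None if unknown."""
    key = label.strip(ASCII_WHITESPACE).translate(_ASCII_LOWER)
    return LABELS.get(key)


print(normalize_encoding_label("  UTF8 "))
print(normalize_encoding_label("Latin1"))
print(normalize_encoding_label("no-such-encoding"))
```

Returning `None` for an unknown label leaves the choice of fallback encoding to the caller.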

19,810 | 3,784,909,223 | IssuesEvent | 2016-03-20 04:55:37 | HubTurbo/HubTurbo | https://api.github.com/repos/HubTurbo/HubTurbo | closed | Cache dependencies on Travis to make building faster | aspect-testing priority.medium type.enhancement | Every build on Travis is downloading all the dependencies, which takes quite an amount of time.

We should try caching the dependencies to reduce the waiting time.

The Travis Doc mentioned a way to do this:

https://docs.travis-ci.com/user/languages/java#Caching | 1.0 | Cache dependencies on Travis to make building faster - Every build on Travis is downloading all the dependencies, which takes quite an amount of time.

We should try caching the dependencies to reduce the waiting time.

The Travis Doc mentioned a way to do this:

https://docs.travis-ci.com/user/languages/java#Cachi... | non_process | cache dependencies on travis to make building faster every build on travis is downloading all the dependencies which takes quite an amount of time we should try caching the dependencies to reduce the waiting time the travis doc mentioned a way to do this | 0 |

54,863 | 3,071,454,741 | IssuesEvent | 2015-08-19 12:14:29 | pavel-pimenov/flylinkdc-r5xx | https://api.github.com/repos/pavel-pimenov/flylinkdc-r5xx | closed | White background appears in the user list (r500 beta28) | bug Component-UI imported Priority-Medium | _From [dimitrij...@gmail.com](https://code.google.com/u/117085084104156933070/) on October 01, 2010 18:17:14_

When the mouse cursor hovers over a user's description, a white background appears under the description. The white background does not appear if the whole description does not fit within the column width (in that case a tooltip with the description appears... | 1.0 | White background appears in the user list (r500 beta28) - _From [dimitrij...@gmail.com](https://code.google.com/u/117085084104156933070/) on October 01, 2010 18:17:14_

When the mouse cursor hovers over a user's description, a white background appears under the description. The white background does not appear if the whole description does not fit within the width... | non_process | white background appears in the user list from on october when the mouse cursor hovers over a user description a white background appears under the description the white background does not appear if the whole description does not fit within the column width in that case a tooltip with the description appears without truncation after ... | 0 |

6,524 | 9,612,280,912 | IssuesEvent | 2019-05-13 08:32:51 | DevExpress/testcafe-hammerhead | https://api.github.com/repos/DevExpress/testcafe-hammerhead | closed | in IE11, my content="IE=edge" not working. | AREA: server SYSTEM: resource processing TYPE: bug | <!--

If you have all reproduction steps with a complete sample app, please share as many details as possible in the sections below.

Make sure that you tried using the latest TestCafe version (https://github.com/DevExpress/testcafe/releases), where this behavior might have been already addressed.

Before submittin... | 1.0 | in IE11, my content="IE=edge" not working. - <!--

If you have all reproduction steps with a complete sample app, please share as many details as possible in the sections below.

Make sure that you tried using the latest TestCafe version (https://github.com/DevExpress/testcafe/releases), where this behavior might hav... | process | in my content ie edge not working if you have all reproduction steps with a complete sample app please share as many details as possible in the sections below make sure that you tried using the latest testcafe version where this behavior might have been already addressed before submitting an i... | 1 |

155,134 | 12,239,511,118 | IssuesEvent | 2020-05-04 21:50:49 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | Panic after provisioning azure cluster with cloud provider set | [zube]: To Test | Version: Master

K8s: v1.13.5

Steps:

1. Provision a Azure cluster with cloud provider setup.

Observed a Panic few minutes after provisioning the cluster

```

2019/06/10 23:35:53 [INFO] cluster [c-8j7n7] provisioning: [network] Setting up network plugin: canal

2019/06/10 23:35:53 [INFO] cluster [c-8j7n7] p... | 1.0 | Panic after provisioning azure cluster with cloud provider set - Version: Master

K8s: v1.13.5

Steps:

1. Provision a Azure cluster with cloud provider setup.

Observed a Panic few minutes after provisioning the cluster

```

2019/06/10 23:35:53 [INFO] cluster [c-8j7n7] provisioning: [network] Setting up netw... | non_process | panic after provisioning azure cluster with cloud provider set version master steps provision a azure cluster with cloud provider setup observed a panic few minutes after provisioning the cluster cluster provisioning setting up network plugin canal ... | 0 |

21,701 | 30,195,876,494 | IssuesEvent | 2023-07-04 20:57:22 | xpsi-group/xpsi | https://api.github.com/repos/xpsi-group/xpsi | opened | Tight layout not working with corner plots | postprocessing | The tight layout is not working with large corner plots for the python3 version of X-PSI. The reason for that is not clear to me. This means that for large corner plots vertical white spaces appear between the subplots unless rotating the x-axis tick labels by 90 degrees using `axis_tick_x_rotation` option. An example ... | 1.0 | Tight layout not working with corner plots - The tight layout is not working with large corner plots for the python3 version of X-PSI. The reason for that is not clear to me. This means that for large corner plots vertical white spaces appear between the subplots unless rotating the x-axis tick labels by 90 degrees usi... | process | tight layout not working with corner plots the tight layout is not working with large corner plots for the version of x psi the reason for that is not clear to me this means that for large corner plots vertical white spaces appear between the subplots unless rotating the x axis tick labels by degrees using axi... | 1 |

9,629 | 12,576,526,124 | IssuesEvent | 2020-06-09 08:02:49 | kubeflow/manifests | https://api.github.com/repos/kubeflow/manifests | closed | Remove Ambassador annotations from Jupyter web app | area/jupyter kind/feature kind/process lifecycle/stale priority/p1 | We can get rid of the Ambassador annotations on the jupyter web app

https://github.com/kubeflow/manifests/blob/67eabbfd907dbb212b2fa39baeddb3a4b7e9e743/jupyter/jupyter-web-app/base/service.yaml#L5

because we no longer use Ambassador to route traffic.

Ref: kubeflow/kubeflow#3833 | 1.0 | Remove Ambassador annotations from Jupyter web app - We can get rid of the Ambassador annotations on the jupyter web app

https://github.com/kubeflow/manifests/blob/67eabbfd907dbb212b2fa39baeddb3a4b7e9e743/jupyter/jupyter-web-app/base/service.yaml#L5

because we no longer use Ambassador to route traffic.

Ref: kube... | process | remove ambassador annotations from jupyter web app we can get rid of the ambassador annotations on the jupyter web app because we no longer use ambassador to route traffic ref kubeflow kubeflow | 1 |

775,551 | 27,234,814,780 | IssuesEvent | 2023-02-21 15:36:52 | ascheid/itsg33-pbmm-issue-gen | https://api.github.com/repos/ascheid/itsg33-pbmm-issue-gen | closed | CM-6 CONFIGURATION SETTINGS | Priority: P1 | (A) The organization establishes and documents configuration settings for information technology products employed within the information system using [Assignment: organization-defined security configuration checklists] that reflect the most restrictive mode consistent with operational requirements.

(B) The organizatio... | 1.0 | CM-6 CONFIGURATION SETTINGS - (A) The organization establishes and documents configuration settings for information technology products employed within the information system using [Assignment: organization-defined security configuration checklists] that reflect the most restrictive mode consistent with operational re... | non_process | cm configuration settings a the organization establishes and documents configuration settings for information technology products employed within the information system using that reflect the most restrictive mode consistent with operational requirements b the organization implements the configuration setti... | 0 |

13,561 | 16,103,845,961 | IssuesEvent | 2021-04-27 12:50:26 | thesofproject/sof | https://api.github.com/repos/thesofproject/sof | opened | [BUG] Post Processing XRUN when playing multiple times on same topology | Post Processing TGL bug | **Describe the bug**

In any Post Processing test when trying to play 2 times or more on one topology (new streams are created, topology stays intact) SteamStall caused by XRUN occurs.

**Topology**

```

+------------------------------------+

+------+ | +---+ +-------+ +---+ +-------+ |

... | 1.0 | [BUG] Post Processing XRUN when playing multiple times on same topology - **Describe the bug**

In any Post Processing test when trying to play 2 times or more on one topology (new streams are created, topology stays intact) SteamStall caused by XRUN occurs.

**Topology**

```

+---------------------... | process | post processing xrun when playing multiple times on same topology describe the bug in any post processing test when trying to play times or more on one topology new streams are created topology stays intact steamstall caused by xrun occurs topology ... | 1 |

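205,885 | 16,012,852,668 | IssuesEvent | 2021-04-20 12:52:03 | fluid-cloudnative/fluid | https://api.github.com/repos/fluid-cloudnative/fluid | closed | Can the SDK provide an easy-to-use interface for querying resource status? | documentation features | **What feature you'd like to add:**

The SDK could provide an easy-to-use interface through which the relevant attributes of existing resources of different kinds can be queried, for example the information returned by kubectl get ${resource}.

**Why is this feature needed:**

After creating a resource with the Go SDK, I need to know whether the resource is in a usable state. For example, after creating a dataset, I need to confirm that its phase is bound before I can use it. However, the existing Get interface is rather abstract; I hope easy-to-use Get methods can be provided for the different resource types. | 1.0 | Can the SDK provide an easy-to-use interface for querying resource status? - **What feature you'd like to add:**

The SDK could provide an easy-to-use interface through which the relevant attributes of existing resources of different kinds can be queried, for example the information returned by kubectl get ${resource}.

**Why is this feature needed:**

After creating a resource with the Go SDK, I need to know whether the resource is in a usable state. For example, after creating a dataset, I need to confirm that its phase is bound before I can use it. However, the existing Get interface is rather abstract; I hope easy-to-use Get methods can be provided for the different resource types. | non_process | can the sdk provide an easy to use interface for querying resource status what feature you d like to add the sdk could provide an easy to use interface through which the relevant attributes of existing resources of different kinds can be queried for example the information returned by kubectl get resource why is this feature needed after creating a resource with the go sdk i need to know whether the resource is in a usable state for example after creating a dataset i need to confirm that its phase is bound before i can use it however the existing get interface is rather abstract i hope easy to use get methods can be provided for the different resource types | 0 |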

131,277 | 18,263,529,785 | IssuesEvent | 2021-10-04 04:40:02 | phetsims/fourier-making-waves | https://api.github.com/repos/phetsims/fourier-making-waves | closed | Waveform changes to 'custom' when you open any amplitude keypad. | design:general priority:2-high | For phetsims/qa#711, and related to https://github.com/phetsims/fourier-making-waves/issues/200 ...

As soon as you open the keypad for any amplitude, the Waveform combo box switches to 'custom'. You don't actually have to change the value.

For example:

1. Go to the Discrete screen

2. Select 'wave packet' from... | 1.0 | Waveform changes to 'custom' when you open any amplitude keypad. - For phetsims/qa#711, and related to https://github.com/phetsims/fourier-making-waves/issues/200 ...

As soon as you open the keypad for any amplitude, the Waveform combo box switches to 'custom'. You don't actually have to change the value.

For e... | non_process | waveform changes to custom when you open any amplitude keypad for phetsims qa and related to as soon as you open the keypad for any amplitude the waveform combo box switches to custom you don t actually have to change the value for example go to the discrete screen select wave packe... | 0 |

7,480 | 10,571,981,776 | IssuesEvent | 2019-10-07 08:33:50 | Hurence/logisland | https://api.github.com/repos/Hurence/logisland | opened | add computer vision module support with OpenCV | processor | the computer vision module provide users with an API for image records manipulation

and computer vision algorithm :

https://www.learnopencv.com/histogram-of-oriented-gradients/ | 1.0 | add computer vision module support with OpenCV - the computer vision module provide users with an API for image records manipulation

and computer vision algorithm :

https://www.learnopencv.com/histogram-of-oriented-gradients/ | process | add computer vision module support with opencv the computer vision module provide users with an api for image records manipulation and computer vision algorithm | 1 |

17,334 | 12,302,821,320 | IssuesEvent | 2020-05-11 17:37:27 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | reopened | TestWrappers get built to wrong location | area-Infrastructure-coreclr untriaged | After a coreclr test build, the following directories exist (Windows, x64, Checked):

```

f:\gh\runtime2\artifacts\tests>dir /b f:\gh\runtime2\artifacts\tests\coreclr\Windows_NT.x64.Checked\TestWrappers\JIT.SIMD

JIT.SIMD.XUnitWrapper.cs

JIT.SIMD.XUnitWrapper.csproj

f:\gh\runtime2\artifacts\tests>dir /b f:\gh\ru... | 1.0 | TestWrappers get built to wrong location - After a coreclr test build, the following directories exist (Windows, x64, Checked):

```