Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 1 744 | labels stringlengths 4 574 | body stringlengths 9 211k | index stringclasses 10 values | text_combine stringlengths 96 211k | label stringclasses 2 values | text stringlengths 96 188k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

167,036 | 14,099,697,038 | IssuesEvent | 2020-11-06 02:06:03 | matplotlib/matplotlib | https://api.github.com/repos/matplotlib/matplotlib | opened | Current `tight_layout` example raises UserWarning | API Changes Documentation | <!--To help us understand and resolve your issue, please fill out the form to the best of your ability.-->

<!--You can feel free to delete the sections that do not apply.-->

### Problem

As can be seen in the [tight layout docs](https://matplotlib.org/tutorials/intermediate/tight_layout_guide.html) (Ctrl-F for "O... | 1.0 | Current `tight_layout` example raises UserWarning - <!--To help us understand and resolve your issue, please fill out the form to the best of your ability.-->

<!--You can feel free to delete the sections that do not apply.-->

### Problem

As can be seen in the [tight layout docs](https://matplotlib.org/tutorials/... | non_process | current tight layout example raises userwarning problem as can be seen in the ctrl f for out gridspec tight layout raises a userwarning whenever there are axes in a figure that come from other gridspec besides self is this the intended api if so is the use case being demo... | 0 |

39,926 | 10,421,705,655 | IssuesEvent | 2019-09-16 07:06:03 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | closed | Fix Javadoc warnings | C: Build C: Documentation E: All Editions P: Low T: Defect | The build currently complains about the following Javadoc warnings:

```

[WARNING] Javadoc Warnings

[WARNING] C:\Users\lukas\workspace-jooq-pro\jOOQ\jOOQ\src\main\java\org\jooq\BatchBindStep.java:60: warning - invalid usage of tag >

[WARNING] C:\Users\lukas\workspace-jooq-pro\jOOQ\jOOQ\src\main\java\org\jooq\Batch... | 1.0 | Fix Javadoc warnings - The build currently complains about the following Javadoc warnings:

```

[WARNING] Javadoc Warnings

[WARNING] C:\Users\lukas\workspace-jooq-pro\jOOQ\jOOQ\src\main\java\org\jooq\BatchBindStep.java:60: warning - invalid usage of tag >

[WARNING] C:\Users\lukas\workspace-jooq-pro\jOOQ\jOOQ\src\m... | non_process | fix javadoc warnings the build currently complains about the following javadoc warnings javadoc warnings c users lukas workspace jooq pro jooq jooq src main java org jooq batchbindstep java warning invalid usage of tag c users lukas workspace jooq pro jooq jooq src main java org jooq batchbi... | 0 |

9,109 | 12,192,306,098 | IssuesEvent | 2020-04-29 12:44:07 | naoki-shigehisa/paper | https://api.github.com/repos/naoki-shigehisa/paper | opened | Gaussian Process Latent Variable Model Factorization for Context-aware Recommender Systems | 2019 Gaussian Process recommendation | ## 0. Paper

Title: [Gaussian Process Latent Variable Model Factorization for Context-aware Recommender Systems](https://arxiv.org/abs/1912.09593)

Authors:

arXiv submission date: 2019/12/19

Conference/Journal:... | 1.0 | Gaussian Process Latent Variable Model Factorization for Context-aware Recommender Systems - ## 0. Paper

Title: [Gaussian Process Latent Variable Model Factorization for Context-aware Recommender Systems](https://arxiv.org/abs/1912.09593)

Authors:

--- | :---

Nosy | @pitrou, @mgorny, @Julian, @wimglenn, @applio, @itamarst

<sup>*Note: these values reflect the state of the issue at the time it was migrated and might not reflect the current state.*</sup>

<details><summary>Show more details</summary><p>

GitHub fiel... | 1.0 | multiprocessing's default posix start method of `'fork'` is broken: change to `'spawn'` - BPO | [40379](https://bugs.python.org/issue40379)

--- | :---

Nosy | @pitrou, @mgorny, @Julian, @wimglenn, @applio, @itamarst

<sup>*Note: these values reflect the state of the issue at the time it was migrated and might not reflec... | process | multiprocessing s default posix start method of fork is broken change to spawn bpo nosy pitrou mgorny julian wimglenn applio itamarst note these values reflect the state of the issue at the time it was migrated and might not reflect the current state show more details ... | 1 |
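
The issue title in this row proposes moving the POSIX default start method from `'fork'` to `'spawn'`. As a minimal sketch of what opting in to `'spawn'` explicitly looks like with the standard library today, the following uses a context object rather than changing the process-wide default. The helper names `spawn_context` and `sqrt_all`, and the choice of `math.sqrt` as the worker function, are assumptions made for illustration and are not taken from the issue.

```python
import math
import multiprocessing as mp


def spawn_context():
    """Return a context that always uses the 'spawn' start method.

    Hypothetical helper for illustration; it leaves the process-wide
    default start method untouched.
    """
    return mp.get_context("spawn")


def sqrt_all(values):
    """Compute square roots in a small pool of spawned worker processes."""
    ctx = spawn_context()
    with ctx.Pool(processes=2) as pool:
        return pool.map(math.sqrt, values)


if __name__ == "__main__":
    # The guard matters with 'spawn': child processes re-import the main module.
    print(sqrt_all([1.0, 4.0, 9.0]))
```

Using a context keeps the choice local to the calling code, which is usually gentler on libraries than calling `multiprocessing.set_start_method` globally.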

497,319 | 14,367,968,311 | IssuesEvent | 2020-12-01 07:38:16 | teamforus/forus | https://api.github.com/repos/teamforus/forus | closed | CR: Webshop - remove "Hoe het werkt" below header description | Priority: Must have | @maxvisser commented on [Tue Sep 15 2020](https://github.com/teamforus/general/issues/445)

Learn more about change requests here: https://bit.ly/39CWeEE

### Requested by:

- Geertruidenberg

### Change description

I don't think such a button really fits there if there is a menu at the top of the page.

The bu... | 1.0 | CR: Webshop - remove "Hoe het werkt" below header description - @maxvisser commented on [Tue Sep 15 2020](https://github.com/teamforus/general/issues/445)

Learn more about change requests here: https://bit.ly/39CWeEE

### Requested by:

- Geertruidenberg

### Change description

I don't think such a button reall... | non_process | cr webshop remove hoe het werkt below header description maxvisser commented on learn more about change requests here requested by geertruidenberg change description i don t think such a button really fits there if there is a menu at the top of the page the button how it works ... | 0 |

22,568 | 31,790,020,810 | IssuesEvent | 2023-09-13 02:00:10 | lizhihao6/get-daily-arxiv-noti | https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti | opened | New submissions for Wed, 13 Sep 23 | event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB | ## Keyword: events

### SoccerNet 2023 Challenges Results

- **Authors:** Anthony Cioppa, Silvio Giancola, Vladimir Somers, Floriane Magera, Xin Zhou, Hassan Mkhallati, Adrien Deliège, Jan Held, Carlos Hinojosa, Amir M. Mansourian, Pierre Miralles, Olivier Barnich, Christophe De Vleeschouwer, Alexandre Alahi, Bernard Gh... | 2.0 | New submissions for Wed, 13 Sep 23 - ## Keyword: events

### SoccerNet 2023 Challenges Results

- **Authors:** Anthony Cioppa, Silvio Giancola, Vladimir Somers, Floriane Magera, Xin Zhou, Hassan Mkhallati, Adrien Deliège, Jan Held, Carlos Hinojosa, Amir M. Mansourian, Pierre Miralles, Olivier Barnich, Christophe De Vlee... | process | new submissions for wed sep keyword events soccernet challenges results authors anthony cioppa silvio giancola vladimir somers floriane magera xin zhou hassan mkhallati adrien deliège jan held carlos hinojosa amir m mansourian pierre miralles olivier barnich christophe de vleeschou... | 1 |

14,645 | 17,773,567,497 | IssuesEvent | 2021-08-30 16:16:47 | googleapis/python-storage | https://api.github.com/repos/googleapis/python-storage | closed | Dependency Dashboard | api: storage type: process | This issue provides visibility into Renovate updates and their statuses. [Learn more](https://docs.renovatebot.com/key-concepts/dashboard/)

## Ignored or Blocked

These are blocked by an existing closed PR and will not be recreated unless you click a checkbox below.

- [ ] <!-- recreate-branch=renovate/googleapis-com... | 1.0 | Dependency Dashboard - This issue provides visibility into Renovate updates and their statuses. [Learn more](https://docs.renovatebot.com/key-concepts/dashboard/)

## Ignored or Blocked

These are blocked by an existing closed PR and will not be recreated unless you click a checkbox below.

- [ ] <!-- recreate-branch=... | process | dependency dashboard this issue provides visibility into renovate updates and their statuses ignored or blocked these are blocked by an existing closed pr and will not be recreated unless you click a checkbox below pull pull pull pull chec... | 1 |

119,972 | 17,644,003,757 | IssuesEvent | 2021-08-20 01:26:19 | AkshayMukkavilli/Analyzing-the-Significance-of-Structure-in-Amazon-Review-Data-Using-Machine-Learning-Approaches | https://api.github.com/repos/AkshayMukkavilli/Analyzing-the-Significance-of-Structure-in-Amazon-Review-Data-Using-Machine-Learning-Approaches | opened | CVE-2021-29529 (High) detected in tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl | security vulnerability | ## CVE-2021-29529 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learning... | True | CVE-2021-29529 (High) detected in tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl - ## CVE-2021-29529 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.13.1-cp27-cp27mu-m... | non_process | cve high detected in tensorflow whl cve high severity vulnerability vulnerable library tensorflow whl tensorflow is an open source machine learning framework for everyone library home page a href path to dependency file finalproject requirements tx... | 0 |

110,025 | 23,854,240,817 | IssuesEvent | 2022-09-06 21:08:48 | WordPress/openverse-catalog | https://api.github.com/repos/WordPress/openverse-catalog | opened | Consider setting category to 'illustration' for all svgs | 🟩 priority: low ✨ goal: improvement 💻 aspect: code | ## Current Situation

<!-- Describe the part of the code you think should improve -->

Related #614

Wikimedia sets the `category` for all records of filetype `svg` to "illustration". We should consider whether it's acceptable to do this for *all* providers, and if so we can move that logic to the `ImageStore` class... | 1.0 | Consider setting category to 'illustration' for all svgs - ## Current Situation

<!-- Describe the part of the code you think should improve -->

Related #614

Wikimedia sets the `category` for all records of filetype `svg` to "illustration". We should consider whether it's acceptable to do this for *all* providers,... | non_process | consider setting category to illustration for all svgs current situation related wikimedia sets the category for all records of filetype svg to illustration we should consider whether it s acceptable to do this for all providers and if so we can move that logic to the imagestore class ... | 0 |
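
The proposal in this row, defaulting `category` to "illustration" for every `svg` record regardless of provider, could be sketched roughly as below. This is only an illustration under stated assumptions: `resolve_category` is a hypothetical helper, not the real `ImageStore` API, and the sketch assumes an explicitly supplied category should win over the default.

```python
from typing import Optional


def resolve_category(
    filetype: Optional[str], category: Optional[str] = None
) -> Optional[str]:
    """Default the category to 'illustration' for SVG files.

    Hypothetical helper: the name and signature are assumptions,
    not the real ImageStore API.
    """
    # Assumption: a category supplied by the provider is never overwritten.
    if category is None and filetype is not None and filetype.lower() == "svg":
        return "illustration"
    return category
```

Whether a provider-supplied category should really take precedence is exactly the kind of question the issue leaves open.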

253,725 | 27,300,814,243 | IssuesEvent | 2023-02-24 01:40:18 | panasalap/linux-4.19.72_1 | https://api.github.com/repos/panasalap/linux-4.19.72_1 | closed | WS-2021-0462 (Medium) detected in linux-yoctov5.4.51 - autoclosed | security vulnerability | ## WS-2021-0462 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yoctov5.4.51</b></p></summary>

<p>

<p>Yocto Linux Embedded kernel</p>

<p>Library home page: <a href=https://git.... | True | WS-2021-0462 (Medium) detected in linux-yoctov5.4.51 - autoclosed - ## WS-2021-0462 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yoctov5.4.51</b></p></summary>

<p>

<p>Yocto ... | non_process | ws medium detected in linux autoclosed ws medium severity vulnerability vulnerable library linux yocto linux embedded kernel library home page a href found in head commit a href found in base branch master vulnerable source files ... | 0 |

121,237 | 25,941,646,322 | IssuesEvent | 2022-12-16 19:02:51 | xygeni/xygeni-goat | https://api.github.com/repos/xygeni/xygeni-goat | opened | Add initial curated list of bad code | bad code | The goal is to add sources with intentionally flawed code, configurations, dependencies graph, IaC templates, etc. for learning about Software Supply Chain Security, and for running security tools.

### Location of files

The initial set of bad code will be added under source/KIND, with KIND in { `secrets`, `miscon... | 1.0 | Add initial curated list of bad code - The goal is to add sources with intentionally flawed code, configurations, dependencies graph, IaC templates, etc. for learning about Software Supply Chain Security, and for running security tools.

### Location of files

The initial set of bad code will be added under source/... | non_process | add initial curated list of bad code the goal is to add sources with intentionally flawed code configurations dependencies graph iac templates etc for learning about software supply chain security and for running security tools location of files the initial set of bad code will be added under source ... | 0 |

17,973 | 23,984,561,074 | IssuesEvent | 2022-09-13 17:51:54 | googleapis/google-cloud-node | https://api.github.com/repos/googleapis/google-cloud-node | opened | Configure blunderbuss and CODEOWNERS on this repository | type: process | This repo will contain hundreds of client libraries and will want some help assigning issues and PRs to the correct reviewers. | 1.0 | Configure blunderbuss and CODEOWNERS on this repository - This repo will contain hundreds of client libraries and will want some help assigning issues and PRs to the correct reviewers. | process | configure blunderbuss and codeowners on this repository this repo will contain hundreds of client libraries and will want some help assigning issues and prs to the correct reviewers | 1 |

18,770 | 24,674,394,274 | IssuesEvent | 2022-10-18 15:51:04 | deepset-ai/haystack | https://api.github.com/repos/deepset-ai/haystack | closed | Page number in document meta data not correct | type:bug topic:file_converter topic:preprocessing | **Describe the bug**

The new "add page number" features, implemented in #2932, seem to have a bug in combination with the `PDFToTextConverter`

**Error message**

Wrong page number in metadata

**To Reproduce**

Use the following haystack components:

```python

elastic_search_document_store = ElasticsearchDo... | 1.0 | Page number in document meta data not correct - **Describe the bug**

The new "add page number" features, implemented in #2932, seem to have a bug in combination with the `PDFToTextConverter`

**Error message**

Wrong page number in metadata

**To Reproduce**

Use the following haystack components:

```python

... | process | page number in document meta data not correct describe the bug the new add page number features implemented in seem to have a bug in combination with the pdftotextconverter error message wrong page number in metadata to reproduce use the following haystack components python el... | 1 |

19,079 | 25,119,464,349 | IssuesEvent | 2022-11-09 06:39:39 | streamnative/flink | https://api.github.com/repos/streamnative/flink | opened | [Connector] Do not fail job when PulsarAdmin API fails when getting the data. | compute/data-processing | Currently, the Pulsar connector periodically queries the admin API to detect partition changes. However, this can cause the pipeline to fail if the admin API fails.

@weixiangchen reported an admin 502 when running the pipeline for longer periods (30 minutes).

We should discuss whether we want to

1) fail the job

2) restart the... | 1.0 | [Connector] Do not fail job when PulsarAdmin API fails when getting the data. - Currently, the Pulsar connector periodically queries the admin API to detect partition changes. However, this can cause the pipeline to fail if the admin API fails.

@weixiangchen reported an admin 502 when running the pipeline for longer periods (30... | process | do not fail job when pulsaradmin api fails when getting the data currently pulsar connector periodically queries the admin api to detect partition changes however this can cause the pipeline to fail if the admin api fail weixiangchen reported a admin when running pipeline for longer periods minutes w... | 1 |
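
One way to read the title of this row ("do not fail job") is to tolerate a failed partition query and keep the last known partition list. The sketch below illustrates that idea in Python rather than in the connector's own language, and it is not the connector's actual behavior: `discover_partitions`, `fetch`, `last_known`, and `max_attempts` are all hypothetical names introduced here.

```python
from typing import Callable, List


def discover_partitions(
    fetch: Callable[[], List[str]],
    last_known: List[str],
    max_attempts: int = 3,
) -> List[str]:
    """Query partitions, falling back to the last known list on failure.

    Hypothetical sketch: `fetch` stands in for the periodic admin query.
    """
    for _ in range(max_attempts):
        try:
            return fetch()
        except Exception:
            # Transient admin error (for example an HTTP 502): try again.
            continue
    # Every attempt failed: keep the previous view instead of raising.
    return last_known
```

The trade-off is that a persistent admin outage is hidden from the job, so a real implementation would likely also log or count the failures.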

12,914 | 15,287,552,000 | IssuesEvent | 2021-02-23 15:53:25 | threefoldtech/js-sdk | https://api.github.com/repos/threefoldtech/js-sdk | closed | vdc user to be able to set autoscale limit | process_wontfix type_feature | the user may need to specify an autoscale limit so they don't grow indefinitely

| 1.0 | vdc user to be able to set autoscale limit - the user may need to specify an autoscale limit so they don't grow indefinitely

| process | vdc user to be able to set autoscale limit the user may need to specify an autoscale limit so they don t grow indefinitely | 1 |

107,836 | 9,231,248,308 | IssuesEvent | 2019-03-13 01:29:26 | rust-lang/rust | https://api.github.com/repos/rust-lang/rust | closed | ICE Type parameter out of range when substituting | A-typesystem E-needstest I-ICE T-compiler | Both nightly and stable ICE on this code:

```rust

use once_cell::sync::OnceCell;

use std::collections::HashMap;

type Cache<K, T> = OnceCell<HashMap<K, T>>;

trait Provider<K, T> {

fn new_cache() -> Cache<K, T> {

OnceCell::INIT

}

}

struct Fib;

impl Provider<u32, u32> for Fib {}

fn ... | 1.0 | ICE Type parameter out of range when substituting - Both nightly and stable ICE on this code:

```rust

use once_cell::sync::OnceCell;

use std::collections::HashMap;

type Cache<K, T> = OnceCell<HashMap<K, T>>;

trait Provider<K, T> {

fn new_cache() -> Cache<K, T> {

OnceCell::INIT

}

}

stru... | non_process | ice type parameter out of range when substituting both nightly and stable ice on this code rust use once cell sync oncecell use std collections hashmap type cache oncecell trait provider fn new cache cache oncecell init struct fib impl provider for... | 0 |

621,590 | 19,592,255,948 | IssuesEvent | 2022-01-05 14:13:53 | WordPress/openverse-frontend | https://api.github.com/repos/WordPress/openverse-frontend | closed | Implement redesign and new components | 🟧 priority: high ✨ goal: improvement 🕹 aspect: interface | This is a meta ticket to track the ongoing redesign efforts in Openverse. This ticket is very much a work in progress and is currently being used to track missing, ongoing issues with the redesign.

## Design Todos

### General

- [ ] #256

- [x] #273

- [ ] #349

- [ ] Simplified homepage (no featured collec... | 1.0 | Implement redesign and new components - This is a meta ticket to track the ongoing redesign efforts in Openverse. This ticket is very much a work in progress and is currently being used to track missing, ongoing issues with the redesign.

## Design Todos

### General

- [ ] #256

- [x] #273

- [ ] #349

- [ ]... | non_process | implement redesign and new components this is a meta ticket to track the ongoing redesign efforts in openverse this ticket is very much a work in progress and is currently being used to track missing ongoing issues with the redesign design todos general simplified ho... | 0 |

11,553 | 14,435,280,100 | IssuesEvent | 2020-12-07 08:30:19 | linuxdeepin/developer-center | https://api.github.com/repos/linuxdeepin/developer-center | closed | Control panel crash when click icons | bug | Ports bug | functional behavior other | delay processing suggest | functional behavior | Hi, I'm using the Deepin DE on Arch Linux. After the last upgrade, when I switched from deepin-control-center-5.2.0.1 to deepin-control-center-5.2.0.3, I ran into a problem: the program crashes when I select the account icon.

When I downgrade to the older version it works, so I think this is a problem with the newest version. | 1.0 | Control panel crash when click icons - Hi, I'm using the Deepin DE on Arch Linux. After the last upgrade, when I switched from deepin-control-center-5.2.0.1 to deepin-control-center-5.2.0.3, I ran into a problem: the program crashes when I select the account icon.

When I downgrade to the older version it works, so I think this is a probl... | process | control panel crash when click icons hi i m using deepin de on arch linux at the last upgrade when i switch from deepin control center to deepin control center i had a problem the program crash when i select the account icon when i downgrade to the older version it works i think that is a probl... | 1 |

23,709 | 12,086,734,667 | IssuesEvent | 2020-04-18 11:28:20 | flutter/flutter | https://api.github.com/repos/flutter/flutter | closed | Dart high memory when open VS Code (macos) | dependency: dart framework platform-mac severe: performance tool | Hi, VS Code gets slow when I open it with the Flutter plugins installed.

I checked the memory used by Dart: it consumed 1,05 GB

Details :

```

cwd

/

txt

/Users/macosx/flutter-1.10.4/bin/cache/dart-sdk/bin/dart

txt

/Users/macosx/flutter-1.10.4/bin/cache/dart-sdk/bin/snapshots/analysis_server.dart.snapshot

txt

/U... | True | Dart high memory when open VS Code (macos) - Hi, VS Code gets slow when I open it with the Flutter plugins installed.

I checked the memory used by Dart: it consumed 1,05 GB

Details :

```

cwd

/

txt

/Users/macosx/flutter-1.10.4/bin/cache/dart-sdk/bin/dart

txt

/Users/macosx/flutter-1.10.4/bin/cache/dart-sdk/bin/sna... | non_process | dart high memory when open vs code macos hi i m getting slow when i open vs code that has installed flutter plugins i look memory size of dart consumed gb details cwd txt users macosx flutter bin cache dart sdk bin dart txt users macosx flutter bin cache dart sdk bin snapsh... | 0 |

164,423 | 13,942,219,347 | IssuesEvent | 2020-10-22 20:40:04 | TheRedDaemon/LittleCrusaderAsi | https://api.github.com/repos/TheRedDaemon/LittleCrusaderAsi | opened | Readme for implementation concepts still needs to be written. | documentation | It is already linked and should contain something.

Otherwise it is not helpful... | 1.0 | Readme for implementation concepts still needs to be written. - It is already linked and should contain something.

Otherwise it is not helpful... | non_process | readme for implementation concepts still needs to be written it is already linked and should contain something otherwise it is not helpful | 0 |

31,722 | 5,989,019,130 | IssuesEvent | 2017-06-02 07:18:11 | icebob/vue-form-generator | https://api.github.com/repos/icebob/vue-form-generator | closed | update docs | difficulty: easy documentation | There are some recent changes what we need to update in documentation too.

- [x] `change` property in schema of file input #173

- [x] supported async validators #171

- [x] custom validation message for fields #169

- [x] supported string-based validators #167

- [x] vue-multiselect fixed #30 but in beta15, but the... | 1.0 | update docs - There are some recent changes what we need to update in documentation too.

- [x] `change` property in schema of file input #173

- [x] supported async validators #171

- [x] custom validation message for fields #169

- [x] supported string-based validators #167

- [x] vue-multiselect fixed #30 but in b... | non_process | update docs there are some recent changes what we need to update in documentation too change property in schema of file input supported async validators custom validation message for fields supported string based validators vue multiselect fixed but in but they expanded... | 0 |

279,712 | 24,249,318,430 | IssuesEvent | 2022-09-27 13:08:16 | hazelcast/hazelcast-python-client | https://api.github.com/repos/hazelcast/hazelcast-python-client | closed | test_translate_is_used | Type: Test-Failure Source: Internal | Failed on Windows against Python 2.7

https://github.com/hazelcast/hazelcast-python-client/runs/3889839024?check_suite_focus=true

```

======================================================================

FAIL: test_translate_is_used (tests.integration.connection_manager_translate_test.ConnectionManagerTranslateTe... | 1.0 | test_translate_is_used - Failed on Windows against Python 2.7

https://github.com/hazelcast/hazelcast-python-client/runs/3889839024?check_suite_focus=true

```

======================================================================

FAIL: test_translate_is_used (tests.integration.connection_manager_translate_test.Con... | non_process | test translate is used failed on windows against python fail test translate is used tests integration connection manager translate test connectionmanagertranslatetest ... | 0 |

14,684 | 18,019,713,125 | IssuesEvent | 2021-09-16 17:47:08 | lgblgblgb/xemu | https://api.github.com/repos/lgblgblgb/xemu | opened | MEGA65: V400 problem with non-bitplane modes? | target:MEGA65 compatibility-example VIC-IV | OpenROMs display only the top half of the screen, but not the bottom half, if 80x50 mode is used (it's OK 25 lines mode). Just trying to cursor down beyond the half of the screen, type something, and cursor up again: the cursor is again visible, the text is not, though in memory dump, the screen content clearly shows t... | True | MEGA65: V400 problem with non-bitplane modes? - OpenROMs display only the top half of the screen, but not the bottom half, if 80x50 mode is used (it's OK 25 lines mode). Just trying to cursor down beyond the half of the screen, type something, and cursor up again: the cursor is again visible, the text is not, though in... | non_process | problem with non bitplane modes openroms display only the top half of the screen but not the bottom half if mode is used it s ok lines mode just trying to cursor down beyond the half of the screen type something and cursor up again the cursor is again visible the text is not though in memory dump ... | 0 |

21,515 | 29,801,061,569 | IssuesEvent | 2023-06-16 08:08:43 | openfoodfacts/openfoodfacts-server | https://api.github.com/repos/openfoodfacts/openfoodfacts-server | closed | The includes and their translations have not been deployed for Nova Groups pages | bug P1 static content nova processing Stale :star: top bug | ### Describe the bug

The Nova Group pages seem to not be loading right, regardless of the amount of time I've tried, they aren't loading especially the fresh one, lots of information is missing.

### To Reproduce

https://fr.openfoodfacts.org/nova

https://world.openfoodfacts.org/nova

### Expected behavior

... | 1.0 | The includes and their translations have not been deployed for Nova Groups pages - ### Describe the bug

The Nova Group pages seem to not be loading right, regardless of the amount of time I've tried, they aren't loading especially the fresh one, lots of information is missing.

### To Reproduce

https://fr.open... | process | the includes and their translations have not been deployed for nova groups pages describe the bug the nova group pages seem to not be loading right regardless of the amount of time i ve tried they aren t loading especially the fresh one lots of information is missing to reproduce exp... | 1 |

21,825 | 30,316,774,062 | IssuesEvent | 2023-07-10 16:05:41 | tdwg/dwc | https://api.github.com/repos/tdwg/dwc | closed | New Term - eventType | Term - add Class - Event normative Process - complete | ## New term

* Submitter: John Wieczorek

* Efficacy Justification (why is this term necessary?): The hierarchical Event structure is not currently capable of distinguishing what different Events are for.

* Demand Justification (name at least two organizations that independently need this term): The need for an eve... | 1.0 | New Term - eventType - ## New term

* Submitter: John Wieczorek

* Efficacy Justification (why is this term necessary?): The hierarchical Event structure is not currently capable of distinguishing what different Events are for.

* Demand Justification (name at least two organizations that independently need this ter... | process | new term eventtype new term submitter john wieczorek efficacy justification why is this term necessary the hierarchical event structure is not currently capable of distinguishing what different events are for demand justification name at least two organizations that independently need this ter... | 1 |

11,241 | 9,285,929,371 | IssuesEvent | 2019-03-21 08:58:14 | astrolabsoftware/fink-broker | https://api.github.com/repos/astrolabsoftware/fink-broker | closed | Define common Apache Spark initialization step for services | apache kafka apache spark services | all services start with the same spark initialization steps (including setting the same Kafka parameters, etc). This is unnecessary redundancy, and prone to mistakes. The idea would be to factor this part, and include it into Fink core. | 1.0 | Define common Apache Spark initialization step for services - all services start with the same spark initialization steps (including setting the same Kafka parameters, etc). This is unnecessary redundancy, and prone to mistakes. The idea would be to factor this part, and include it into Fink core. | non_process | define common apache spark initialization step for services all services start with the same spark initialization steps including setting the same kafka parameters etc this is unnecessary redundancy and prone to mistakes the idea would be to factor this part and include it into fink core | 0 |

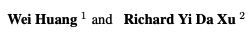

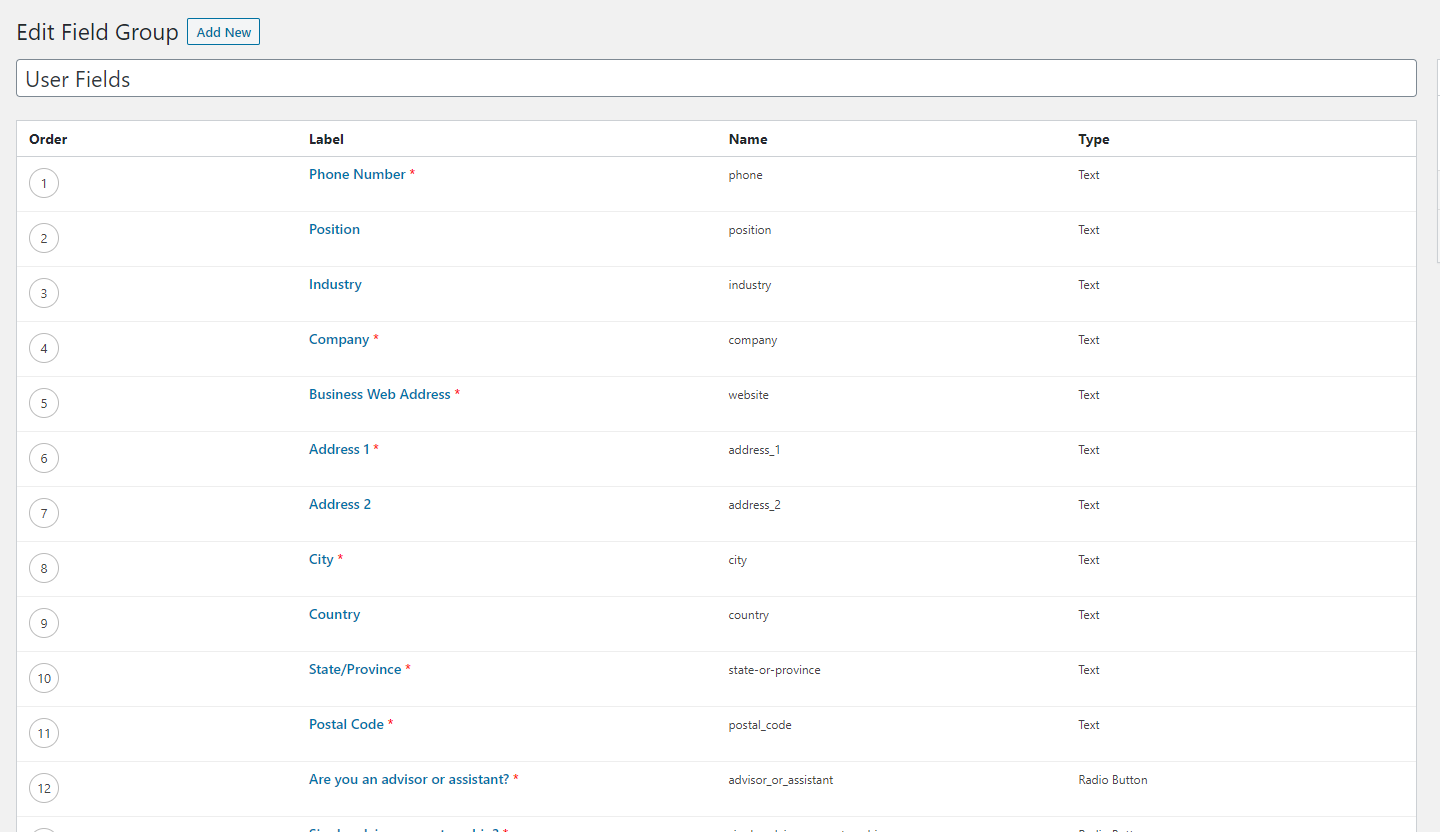

166,064 | 14,018,951,902 | IssuesEvent | 2020-10-29 17:30:22 | actions-pack/feedbacks | https://api.github.com/repos/actions-pack/feedbacks | closed | Compatibility with Advanced Custom Field Plugin? | documentation |

You need to map your ACF field name in Registration Action's user meta fields. E.g one of the user meta field names is **phone** as shown in the image. So you just need to map your Elementor Form's Phone Fi... | 1.0 | Compatibility with Advanced Custom Field Plugin? -

You need to map your ACF field name in Registration Action's user meta fields. E.g one of the user meta field names is **phone** as shown in the image. So ... | non_process | compatibility with advanced custom field plugin you need to map your acf field name in registration action s user meta fields e g one of the user meta field names is phone as shown in the image so you just need to map your elementor form s phone field id to phone | 0 |

12,186 | 9,594,825,905 | IssuesEvent | 2019-05-09 14:46:31 | opencb/opencga | https://api.github.com/repos/opencb/opencga | opened | Upload web service fails to upload to root folder | bug web services | files/upload web service fails when the user attempts to upload files to the root folder of the path. | 1.0 | Upload web service fails to upload to root folder - files/upload web service fails when the user attempts to upload files to the root folder of the path. | non_process | upload web service fails to upload to root folder files upload web service fails when the user attempts to upload files to the root folder of the path | 0 |

36,750 | 6,548,340,108 | IssuesEvent | 2017-09-04 20:58:09 | ekeih/OmNomNom | https://api.github.com/repos/ekeih/OmNomNom | opened | Improve documentation | documentation | Currently the bot is missing mostly everything you would expect from a well documented project.

If you are thinking about contributing code or just want to run the bot yourself, please let me know, so I can prioritize this issue ;-) | 1.0 | Improve documentation - Currently the bot is missing mostly everything you would expect from a well documented project.

If you are thinking about contributing code or just want to run the bot yourself, please let me know, so I can prioritize this issue ;-) | non_process | improve documentation currently the bot is missing mostly everything you would expect from a well documented project if you are thinking about contributing code or just want to run the bot yourself please let me know so i can prioritize this issue | 0 |

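17,338 | 23,157,876,125 | IssuesEvent | 2022-07-29 14:38:52 | Tencent/tdesign-miniprogram | https://api.github.com/repos/Tencent/tdesign-miniprogram | closed | [tabs] Support changing the color of the selected tab indicator | good first issue in process | ### What problem does this feature solve

Currently, every color change has to be made by hand, which is extremely inconvenient. I would like two modes to be supported: a plain color change and a slot-based one.

### What is your proposed solution

Expose the setting as a separate component property | 1.0 | [tabs] Support changing the color of the selected tab indicator - ### What problem does this feature solve

Currently, every color change has to be made by hand, which is extremely inconvenient. I would like two modes to be supported: a plain color change and a slot-based one.

### What is your proposed solution

Expose the setting as a separate component property | process | support changing the color of the selected tab indicator what problem does this feature solve currently every color change has to be made by hand which is extremely inconvenient i would like two modes to be supported a plain color change and a slot based one what is your proposed solution expose the setting as a separate component property | 1 |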

5,335 | 8,154,440,234 | IssuesEvent | 2018-08-23 03:17:18 | HumanCellAtlas/dcp-community | https://api.github.com/repos/HumanCellAtlas/dcp-community | closed | Objective or Objectives | charter-process | I think in the context of our charters Objective as found in https://github.com/HumanCellAtlas/dcp-community/blob/master/charters/charter-template.md tends to be used in the plural rather than singular

Can this be updated to the plural Objectives? | 1.0 | Objective or Objectives - I think in the context of our charters Objective as found in https://github.com/HumanCellAtlas/dcp-community/blob/master/charters/charter-template.md tends to be used in the plural rather than singular

Can this be updated to the plural Objectives? | process | objective or objectives i think in the context of our charters objective as found in tends to be used in the plural rather than singular can this be updated to the plural objectives | 1 |

18,525 | 24,552,095,119 | IssuesEvent | 2022-10-12 13:21:37 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [iOS] [Offline indicator] Offline feature is not working in the following scenario | Bug P1 iOS Process: Fixed Process: Tested dev | **Description**

**Pre_condition:** The testing device should connect to WiFi

**Steps:**

1. Install the mobile app on the testing device

2. Turn off the main data (Don't turn off the WI-FI of the testing device)

3. Observe

**AR:** Offline indicator feature is not working

**ER:** Offline indicator feature sh... | 2.0 | [iOS] [Offline indicator] Offline feature is not working in the following scenario - **Description**

**Pre_condition:** The testing device should connect to WiFi

**Steps:**

1. Install the mobile app on the testing device

2. Turn off the main data (Don't turn off the WI-FI of the testing device)

3. Observe

*... | process | offline feature is not working in the following scenario description pre condition the testing device should connect to wifi steps install the mobile app on the testing device turn off the main data don t turn off the wi fi of the testing device observe ar offline indicat... | 1 |

1,703 | 4,349,928,413 | IssuesEvent | 2016-07-30 22:27:31 | pwittchen/ReactiveSensors | https://api.github.com/repos/pwittchen/ReactiveSensors | closed | Release 0.1.2 | release process | **Initial release notes**:

- bumped RxJava dependency to v. 1.1.8

- bumped RxAndroid dependency to v. 1.2.1

- bumped Google Truth test dependency to v. 0.28

- bumped Compile SDK version to v. 23

- bumped Kotlin to v. 1.0.0

- updated sample apps

**Things to do**:

- [x] bump library version

- [x] upload arch... | 1.0 | Release 0.1.2 - **Initial release notes**:

- bumped RxJava dependency to v. 1.1.8

- bumped RxAndroid dependency to v. 1.2.1

- bumped Google Truth test dependency to v. 0.28

- bumped Compile SDK version to v. 23

- bumped Kotlin to v. 1.0.0

- updated sample apps

**Things to do**:

- [x] bump library version

-... | process | release initial release notes bumped rxjava dependency to v bumped rxandroid dependency to v bumped google truth test dependency to v bumped compile sdk version to v bumped kotlin to v updated sample apps things to do bump library version u... | 1 |

427,697 | 12,397,948,738 | IssuesEvent | 2020-05-21 00:15:28 | eclipse-ee4j/glassfish | https://api.github.com/repos/eclipse-ee4j/glassfish | closed | [osgi-cdi]OSGi service automatic publishing with @Publish-liking annotation | Component: OSGi-JavaEE ERR: Assignee Priority: Critical Stale Type: New Feature | Liking Weld-OSGi:

allows developers to automatically publish service implementation.There is nothing to do, just put the

annotation. OSGi framework is completely hidden. Then the service is accessible through CDI-OSGi service injection

and OSGi classic mechanisms.

In addition, on OSGi RFP-0146 Draft,

CDI002 - The sp... | 1.0 | [osgi-cdi]OSGi service automatic publishing with @Publish-liking annotation - Liking Weld-OSGi:

allows developers to automatically publish service implementation.There is nothing to do, just put the

annotation. OSGi framework is completely hidden. Then the service is accessible through CDI-OSGi service injection

and O... | non_process | osgi service automatic publishing with publish liking annotation liking weld osgi allows developers to automatically publish service implementation there is nothing to do just put the annotation osgi framework is completely hidden then the service is accessible through cdi osgi service injection and osgi class... | 0 |

19,070 | 25,098,729,614 | IssuesEvent | 2022-11-08 12:07:55 | hoprnet/hoprnet | https://api.github.com/repos/hoprnet/hoprnet | closed | Add staging branches for all supported releases | devops epic processes | We want to be able to merge PRs into release staging branches and only when we consider the sum of changes release-worthy, that branch is merged into the release branch. This process should be started with #4275

# Example

Release branch: `release/bogota`

Staging branch: `release-staging/bogota`

1. PRs are me... | 1.0 | Add staging branches for all supported releases - We want to be able to merge PRs into release staging branches and only when we consider the sum of changes release-worthy, that branch is merged into the release branch. This process should be started with #4275

# Example

Release branch: `release/bogota`

Staging... | process | add staging branches for all supported releases we want to be able to merge prs into release staging branches and only when we consider the sum of changes release worthy that branch is merged into the release branch this process should be started with example release branch release bogota staging br... | 1 |
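The branch naming in the example above (`release/bogota` paired with `release-staging/bogota`) can be sketched as a small helper. This is only an illustration in Python: `staging_branch_for` is a hypothetical name, and it assumes every release branch follows the `release/<name>` pattern shown in the issue.

```python
def staging_branch_for(release_branch: str) -> str:
    """Return the staging branch paired with a release branch.

    Assumption: release branches are named "release/<name>" and their
    staging counterparts "release-staging/<name>", as in the issue's example.
    """
    prefix = "release/"
    if not release_branch.startswith(prefix):
        raise ValueError(f"not a release branch: {release_branch!r}")
    name = release_branch[len(prefix):]
    if not name:
        raise ValueError("release branch has no name after 'release/'")
    return "release-staging/" + name
```

PRs would then target the value returned by `staging_branch_for("release/bogota")`, and only the staging branch itself gets merged into the release branch.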

2,598 | 5,356,200,627 | IssuesEvent | 2017-02-20 15:04:35 | AllenFang/react-bootstrap-table | https://api.github.com/repos/AllenFang/react-bootstrap-table | closed | Bootstrap Table Warnings | enhancement inprocess | Hi Allen,

Thanks again for taking your time everyday to read through these issues. It is much appreciated. I'm getting the following warnings when I render your tables:

This doesn't break the tables as ... | 1.0 | Bootstrap Table Warnings - Hi Allen,

Thanks again for taking your time everyday to read through these issues. It is much appreciated. I'm getting the following warnings when I render your tables:

This d... | process | bootstrap table warnings hi allen thanks again for taking your time everyday to read through these issues it is much appreciated i m getting the following warnings when i render your tables this doesn t break the tables as of now but is obviously a concern for the future currently using version ... | 1 |

57,767 | 24,223,083,146 | IssuesEvent | 2022-09-26 12:30:34 | adi-H/top-picks | https://api.github.com/repos/adi-H/top-picks | closed | add change review data to product page | enhancement ui list-microservice ratings-microservice to be continued backend | continuation of TBD

**updated 27/8**

ratings

- [x] edit / update rating route + tests

lists

- [ ] find product in user lists

ui

- [ ] add ur own review

- [ ] change ur review | 2.0 | add change review data to product page - continuation of TBD

**updated 27/8**

ratings

- [x] edit / update rating route + tests

lists

- [ ] find product in user lists

ui

- [ ] add ur own review

- [ ] change ur review | non_process | add change review data to product page continuation of tbd updated ratings edit update rating route tests lists find product in user lists ui add ur own review change ur review | 0 |

2,670 | 5,468,638,854 | IssuesEvent | 2017-03-10 07:04:26 | openslide/openslide | https://api.github.com/repos/openslide/openslide | closed | Audit security options pages | development-process enhancement | There are lots of new options for teams and collaboration now. We should go through and decide if any changes are needed.

https://github.com/organizations/openslide/settings/member_privileges

https://github.com/organizations/openslide/settings/oauth_application_policy

https://github.com/openslide/openslide/setting... | 1.0 | Audit security options pages - There are lots of new options for teams and collaboration now. We should go through and decide if any changes are needed.

https://github.com/organizations/openslide/settings/member_privileges

https://github.com/organizations/openslide/settings/oauth_application_policy

https://github.... | process | audit security options pages there are lots of new options for teams and collaboration now we should go through and decide if any changes are needed | 1 |

337,745 | 30,259,979,870 | IssuesEvent | 2023-07-07 07:26:32 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: failover/non-system/pause failed | C-test-failure O-robot O-roachtest branch-master release-blocker T-kv | roachtest.failover/non-system/pause [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/10797165?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/10797165?buildTab=artifacts#/failover/non... | 2.0 | roachtest: failover/non-system/pause failed - roachtest.failover/non-system/pause [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/10797165?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceB... | non_process | roachtest failover non system pause failed roachtest failover non system pause with on master test runner go runtest test timed out cluster go run output in run cockroach workload i cockroach workload init kv splits pgurl returned command problem exit status tes... | 0 |

473,818 | 13,648,059,297 | IssuesEvent | 2020-09-26 07:03:44 | TerryCavanagh/diceydungeonsbeta | https://api.github.com/repos/TerryCavanagh/diceydungeonsbeta | closed | Precious Egg and similar should be immune to fury/have unique behavior | High Priority release candidate v0.1 | When furied, precious egg duplicates, and this can make your skillcard inaccessible which is potentially run-breaking. Remix robot getting fury is really common so this can be a problem! Maybe it should just do nothing when used with fury, or skip a step? (Ex. precious egg 6 skips to 4, precious egg 2 just gives you th... | 1.0 | Precious Egg and similar should be immune to fury/have unique behavior - When furied, precious egg duplicates, and this can make your skillcard inaccessible which is potentially run-breaking. Remix robot getting fury is really common so this can be a problem! Maybe it should just do nothing when used with fury, or skip... | non_process | precious egg and similar should be immune to fury have unique behavior when furied precious egg duplicates and this can make your skillcard inaccessible which is potentially run breaking remix robot getting fury is really common so this can be a problem maybe it should just do nothing when used with fury or skip... | 0 |

2,007 | 4,827,340,176 | IssuesEvent | 2016-11-07 13:19:24 | opentrials/opentrials | https://api.github.com/repos/opentrials/opentrials | closed | Fix identifiers extraction in EUCTR | 3. In Development bug Processors | Currently we just [prepend "EUCTR" to `eudract_number`](https://github.com/opentrials/processors/blob/master/processors/euctr/extractors.py#L27) but there are [records whose `eudract_number` looks like `EUCTR2014-001259-22-3rd`](https://query.opentrials.net/queries/140). They will end up [having no identifiers](https:/... | 1.0 | Fix identifiers extraction in EUCTR - Currently we just [prepend "EUCTR" to `eudract_number`](https://github.com/opentrials/processors/blob/master/processors/euctr/extractors.py#L27) but there are [records whose `eudract_number` looks like `EUCTR2014-001259-22-3rd`](https://query.opentrials.net/queries/140). They will ... | process | fix identifiers extraction in euctr currently we just but there are they will end up we should prepend smartly to avoid turning identifiers like the one above into invalid ones euctr processor is the one that has been observed but there may be others with this issue so they should all... | 1 |
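One way to "prepend smartly" is to extract the core number first and only then add the prefix. The Python sketch below is an illustration, not the processor's actual code: `to_euctr_identifier` is a hypothetical helper, and it assumes the core EudraCT number has the shape `YYYY-NNNNNN-CC`, so that a value such as `EUCTR2014-001259-22-3rd` reduces to `EUCTR2014-001259-22`.

```python
import re

# Assumed shape of the core EudraCT number: 4 digits, 6 digits, 2 digits.
_EUDRACT_CORE = re.compile(r"(\d{4}-\d{6}-\d{2})")


def to_euctr_identifier(eudract_number):
    """Build an "EUCTR..." identifier without doubling the prefix.

    Returns None when no core number can be found, so the caller can
    decide how to handle records that carry no usable identifier.
    """
    if not eudract_number:
        return None
    match = _EUDRACT_CORE.search(eudract_number)
    if match is None:
        return None
    # Any existing "EUCTR" prefix and trailing suffix (e.g. "-3rd") are dropped.
    return "EUCTR" + match.group(1)
```

Searching for the core pattern instead of string-concatenating handles both the plain and the already-prefixed form with the same code path.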

8,540 | 11,714,073,216 | IssuesEvent | 2020-03-09 11:34:44 | kazuwjnlab/cvpaper | https://api.github.com/repos/kazuwjnlab/cvpaper | opened | [cvpaper] CVPR2019 #686 Libra R-CNN: Towards Balanced Learning for Object Detection | Object Detection imbalance training process | ## \#686 [Libra R-CNN: Towards Balanced Learning for Object Detection](http://openaccess.thecvf.com/content_CVPR_2019/papers/Pang_Libra_R-CNN_Towards_Balanced_Learning_for_Object_Detection_CVPR_2019_paper.pdf)

Jiangmiao Pang, Kai Chen, Jianping Shi, Huajun Feng, Wanli Ouyang, Dahua Lin

### What is this paper about?

This work re-examines the standard training practice for object detection... | 1.0 | [cvpaper] CVPR2019 #686 Libra R-CNN: Towards Balanced Learning for Object Detection - ## \#686 [Libra R-CNN: Towards Balanced Learning for Object Detection](http://openaccess.thecvf.com/content_CVPR_2019/papers/Pang_Libra_R-CNN_Towards_Balanced_Learning_for_Object_Detection_CVPR_2019_paper.pdf)

Jiangmiao Pang, Kai Chen... | process | libra r cnn towards balanced learning for object detection jiangmiao pang kai chen jianping shi huajun feng wanli ouyang dahua lin what is this paper about? this work re-examines the standard training practice for object detection. in this paper, the discussion of detection performance is, . ) sample level, ) feature level, ) objective level. as a result of this observation, the paper proposes libra r cnn, a simple but effective framework towards balanced learning for object detection. ... | 1 |

21,780 | 30,294,577,725 | IssuesEvent | 2023-07-09 17:44:29 | The-Data-Alchemists-Manipal/MindWave | https://api.github.com/repos/The-Data-Alchemists-Manipal/MindWave | closed | Eye Detection Using opencv | image-processing | ## 💥 Proposal

In this project, I am going to use open cv to detect eyes in the image/video.

| 1.0 | Eye Detection Using opencv - ## 💥 Proposal

In this project, I am going to use open cv to detect eyes in the image/video.

| process | eye detection using opencv 💥 proposal in this project i am going to use open cv to detect eyes in the image video | 1 |

57,139 | 8,142,320,778 | IssuesEvent | 2018-08-21 07:10:16 | github/orchestrator | https://api.github.com/repos/github/orchestrator | closed | Differentiating GracefulTakeover from MasterFailure in Hook | documentation | I noticed that the Recovery Hooks do not seem to differentiate between planned failovers (Graceful-Master-Takeover) and actual failures.

I had been having an issue where I was triggering a STONITH process in my PreFailoverProcesses and noticed that this was making my GracefulTakeovers fail. What was happening was I... | 1.0 | Differentiating GracefulTakeover from MasterFailure in Hook - I noticed that the Recovery Hooks do not seem to differentiate between planned failovers (Graceful-Master-Takeover) and actual failures.

I had been having an issue where I was triggering a STONITH process in my PreFailoverProcesses and noticed that this ... | non_process | differentiating gracefultakeover from masterfailure in hook i noticed that the recovery hooks do not seem to differentiate between planned failovers graceful master takeover and actual failures i had been having an issue where i was triggering a stonith process in my prefailoverprocesses and noticed that this ... | 0 |

7,229 | 10,368,257,304 | IssuesEvent | 2019-09-07 15:29:55 | banctilrobitaille/kerosene | https://api.github.com/repos/banctilrobitaille/kerosene | closed | [FEATURE] Implement a standard api for custom variable ploting | EventPreprocessor ploting | **Is your feature request related to a problem? Please describe.**

No.

**Describe the solution you'd like**

Instead of having different EventPreprocessor for every visdom plot type we should have a single event preprocessor for custom variables that accepts the type plot, frequency and opts as args.

**Describe ... | 1.0 | [FEATURE] Implement a standard api for custom variable ploting - **Is your feature request related to a problem? Please describe.**

No.

**Describe the solution you'd like**

Instead of having different EventPreprocessor for every visdom plot type we should have a single event preprocessor for custom variables that ... | process | implement a standard api for custom variable ploting is your feature request related to a problem please describe no describe the solution you d like instead of having different eventpreprocessor for every visdom plot type we should have a single event preprocessor for custom variables that accepts ... | 1 |

69,926 | 30,505,008,875 | IssuesEvent | 2023-07-18 16:13:59 | hashicorp/terraform-provider-azurerm | https://api.github.com/repos/hashicorp/terraform-provider-azurerm | closed | Error: could not find DNS zone | bug service/dns | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Community Note

<!--- Please keep this note for the community --->

* Please vote on this issue by adding a :thumbsup: [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to th... | 1.0 | Error: could not find DNS zone - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Community Note

<!--- Please keep this note for the community --->

* Please vote on this issue by adding a :thumbsup: [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-req... | non_process | error could not find dns zone is there an existing issue for this i have searched the existing issues community note please vote on this issue by adding a thumbsup to the original issue to help the community and maintainers prioritize this request please do not leave or me... | 0 |

9,944 | 11,948,421,548 | IssuesEvent | 2020-04-03 11:49:57 | icatproject/python-icat | https://api.github.com/repos/icatproject/python-icat | opened | Consider to switch to suds-community | compatibility | The original version of `suds` has been dead for a very long time. Jurko Gospodnetić took over and created the `suds-jurko` fork. But development seems to have stalled again since 2015. Many new forks have been created since then, most of them rather short-lived. Now, there is one that lasted a little bit longer an... | True | Consider to switch to suds-community - The original version of `suds` has been dead for a very long time. Jurko Gospodnetić took over and created the `suds-jurko` fork. But development seems to have stalled again since 2015. Many new forks have been created since then, most of them rather short-lived. Now, there is... | non_process | consider to switch to suds community the original version of suds has been dead for a very long time jurko gospodnetić took over and created the suds jurko fork but development seems to have stalled again since many new forks have been created since then most of them rather short lived now there is on... | 0 |

729,081 | 25,108,544,443 | IssuesEvent | 2022-11-08 18:30:10 | cse-sim/cse | https://api.github.com/repos/cse-sim/cse | opened | Review and consider localizing environment-specific code | 1 - low priority | The original idea of envpak was to isolate OS-specific and/or compiler-specific code -- provide a uniform internal API for the associated features.

There are OS and compiler dependencies cropping up in other files. Notably rmkerr, but others also.

Those dependencies could be reviewed and some capabilities moved ... | 1.0 | Review and consider localizing environment-specific code - The original idea of envpak was to isolate OS-specific and/or compiler-specific code -- provide a uniform internal API for the associated features.

There are OS and compiler dependencies cropping up in other files. Notably rmkerr, but others also.

Those ... | non_process | review and consider localizing environment specific code the original idea of envpak was to isolate os specific and or compiler specific code provide a uniform internal api for the associated features there are os and compiler dependencies cropping up in other files notably rmkerr but others also those ... | 0 |

611,590 | 18,959,053,651 | IssuesEvent | 2021-11-19 00:51:38 | GoogleChrome/lighthouse | https://api.github.com/repos/GoogleChrome/lighthouse | closed | Lighthouse test feature in Chrome just stopped working | bug needs-priority | ### FAQ

- [X] Yes, my issue is not about [variability](https://github.com/GoogleChrome/lighthouse/blob/master/docs/variability.md) or [throttling](https://github.com/GoogleChrome/lighthouse/blob/master/docs/throttling.md).

- [X] Yes, my issue is not about a specific accessibility audit (file with [axe-core](https://gi... | 1.0 | Lighthouse test feature in Chrome just stopped working - ### FAQ

- [X] Yes, my issue is not about [variability](https://github.com/GoogleChrome/lighthouse/blob/master/docs/variability.md) or [throttling](https://github.com/GoogleChrome/lighthouse/blob/master/docs/throttling.md).

- [X] Yes, my issue is not about a spe... | non_process | lighthouse test feature in chrome just stopped working faq yes my issue is not about or yes my issue is not about a specific accessibility audit file with instead url what happened i cannot perform lighthouse test on for a few days now there wasn t any problems befor... | 0 |

10,147 | 13,044,162,542 | IssuesEvent | 2020-07-29 03:47:33 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `Sleep` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `Sleep` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @breeswish

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rpn_expr)

... | 2.0 | UCP: Migrate scalar function `Sleep` from TiDB -

## Description

Port the scalar function `Sleep` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @breeswish

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/... | process | ucp migrate scalar function sleep from tidb description port the scalar function sleep from tidb to coprocessor score mentor s breeswish recommended skills rust programming learning materials already implemented expressions ported from tidb | 1 |

372,775 | 26,019,138,222 | IssuesEvent | 2022-12-21 11:02:37 | boostercloud/booster | https://api.github.com/repos/boostercloud/booster | opened | Write documentation on how to extend existing provider implementations or create your own from scratch | documentation provider:multicloud | It would be extremely helpful to have documentation on extending existing provider implementations or creating new ones from scratch. This would allow developers to customize the infrastructure to meet their specific requirements more easily, and it would also make Booster easier to adopt in specific environments with ... | 1.0 | Write documentation on how to extend existing provider implementations or create your own from scratch - It would be extremely helpful to have documentation on extending existing provider implementations or creating new ones from scratch. This would allow developers to customize the infrastructure to meet their specifi... | non_process | write documentation on how to extend existing provider implementations or create your own from scratch it would be extremely helpful to have documentation on extending existing provider implementations or creating new ones from scratch this would allow developers to customize the infrastructure to meet their specifi... | 0 |

7,271 | 10,425,352,520 | IssuesEvent | 2019-09-16 15:15:50 | SpongePowered/Mixin | https://api.github.com/repos/SpongePowered/Mixin | closed | Add support for SuppressWarnings annotation in Mixin AP to allow silencing nuisance warnings | annotation processor enhancement | I want to have zero warnings when compiling my code, but currently mixin makes this impossible. This is basically the same issue as #290 but since `@Dynamic` not helping is intentional, it was closed. But this still leaves no solution to silence the warnings.

Consider there is a class `X` (in my case `RenderChunk`) ... | 1.0 | Add support for SuppressWarnings annotation in Mixin AP to allow silencing nuisance warnings - I want to have zero warnings when compiling my code, but currently mixin makes this impossible. This is basically the same issue as #290 but since `@Dynamic` not helping is intentional, it was closed. But this still leaves no... | process | add support for suppresswarnings annotation in mixin ap to allow silencing nuisance warnings i want to have zero warnings when compiling my code but currently mixin makes this impossible this is basically the same issue as but since dynamic not helping is intentional it was closed but this still leaves no s... | 1 |

767,487 | 26,927,623,433 | IssuesEvent | 2023-02-07 14:48:17 | daisy/ebraille | https://api.github.com/repos/daisy/ebraille | opened | Automatic, converted, and human prepared files will have different levels of quality and expectations | use case High Priority content spec metadata | I am a braille user and my expectations for the quality of file I am receiving will vary based on whether the file was prepared by a transcriber, an automatic process, or converted from a braille file in an older format.

*Detail*

The best braille files will be those prepared by a human and within that subset they w... | 1.0 | Automatic, converted, and human prepared files will have different levels of quality and expectations - I am a braille user and my expectations for the quality of file I am receiving will vary based on whether the file was prepared by a transcriber, an automatic process, or converted from a braille file in an older for... | non_process | automatic converted and human prepared files will have different levels of quality and expectations i am a braille user and my expectations for the quality of file i am receiving will vary based on whether the file was prepared by a transcriber an automatic process or converted from a braille file in an older for... | 0 |

340,408 | 10,272,042,724 | IssuesEvent | 2019-08-23 15:27:18 | KSP-SpaceDock/SpaceDock | https://api.github.com/repos/KSP-SpaceDock/SpaceDock | opened | On the Game page the banner "Browse N more Mods" counts unpublished mods | Priority: Low Type: Backend Type: Bug | Description says it all

| 1.0 | On the Game page the banner "Browse N more Mods" counts unpublished mods - Description says it all

| non_process | on the game page the banner browse n more mods counts unpublished mods description says it all | 0 |

6,944 | 10,112,666,444 | IssuesEvent | 2019-07-30 15:07:08 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | PowerShell Steps for ML Anomaly Detection | Pri2 assigned-to-author machine-learning/svc product-question team-data-science-process/subsvc triaged | Will the 'Invoke-MLAnomalyapi', as shown in the below video, be added to the Az.MachineLearning module in future?

https://www.youtube.com/embed/1EVHChiqZOw?start=1399&end=1443

[](https://www.youtube.com/embed/1EVHChiqZOw?start=1399&end=1443)

---

... | 1.0 | PowerShell Steps for ML Anomaly Detection - Will the 'Invoke-MLAnomalyapi', as shown in the below video, be added to the Az.MachineLearning module in future?

https://www.youtube.com/embed/1EVHChiqZOw?start=1399&end=1443

[](https://www.youtube.com/em... | process | powershell steps for ml anomaly detection will the invoke mlanomalyapi as shown in the below video be added to the az machinelearning module in future document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id ver... | 1 |

11,824 | 14,648,348,865 | IssuesEvent | 2020-12-27 02:13:47 | YDongY/Coding | https://api.github.com/repos/YDongY/Coding | opened | 02 Process Control | Coding | /docs/Shell/ShellScript/02-ProcessControl/ Gitalk | https://coding.ydongy.cn/docs/Shell/ShellScript/02-ProcessControl/

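Flow control # Conditionals # if-then # if condition/command; then commands fi The bash shell's if statement runs the command that follows if. If that command's exit status is 0 (the command ran successfully), the commands in the then section are executed. If the exit status is any other value, the commands in the then section are not executed. The fi statement marks the end of the if-then statement

#!/bin/bash if pwd ; then echo $(pwd) fi if-then-else # if condition ; the... | 1.0 | 02 Process Control | Coding - https://coding.ydongy.cn/docs/Shell/ShellScript/02-ProcessControl/

Flow control # Conditionals # if-then # if condition/command; then commands fi The bash shell's if statement runs the command that follows if. If that command's exit status is 0 (the command ran successfully), the commands in the then section are executed. If the exit status is any other value, the commands in the then section are not executed. The fi statement marks the end of the if-then statement

#!/bin/bash if pwd ; then echo $(pwd) ... | process | process control coding flow control conditionals if then if condition command then commands fi the bash shell s if statement runs the command that follows if. if that command s exit status is (the command ran successfully), the commands in the then section are executed. if the exit status is any other value, the commands in the then section are not executed. the fi statement marks the end of the if then statement bin bash if pwd then echo pwd fi if then else if condition then else fi if pwd then echo su... | 1 |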
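The exit-status rule behind `if command; then ... fi` can also be observed from outside the shell. The Python sketch below is an illustration only: `if_then` is a hypothetical helper, and the two child commands are made-up stand-ins that exit with status 0 and 3 respectively.

```python
import subprocess
import sys


def if_then(command, then_action):
    """Mimic `if command; then ...; fi`.

    The command is run first; then_action is called only when the
    command's exit status is 0. Any other status skips it.
    """
    status = subprocess.run(command).returncode
    if status == 0:
        return then_action()
    return None


# Stand-in commands (assumptions for the demo): one succeeds, one fails.
succeeds = [sys.executable, "-c", "raise SystemExit(0)"]
fails = [sys.executable, "-c", "raise SystemExit(3)"]

ran_after_success = if_then(succeeds, lambda: "then-branch ran")
ran_after_failure = if_then(fails, lambda: "then-branch ran")
```

Only the first call reaches its action; the second returns `None` because the command's status was non-zero, which matches the behaviour the text describes for the then section.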

678,133 | 23,189,733,062 | IssuesEvent | 2022-08-01 11:34:29 | ita-social-projects/TeachUA | https://api.github.com/repos/ita-social-projects/TeachUA | opened | [News in administration tab] Admin can not change information in any news item without changing the date of the news to the current date or future date | bug Backend Priority: Medium | **Environment:** Windows 10 Professional, Chrome 96.0.4664.110

**Reproducible:** always

**Build found:** https://speak-ukrainian.org.ua/dev/

**Preconditions**

1. Log in as an administrator on https://speak-ukrainian.org.ua/dev/ (login: [admin@gmail.com](mailto:admin@gmail.com); password: admin)

2. Go to the admi... | 1.0 | [News in administration tab] Admin can not change information in any news item without changing the date of the news to the current date or future date - **Environment:** Windows 10 Professional, Chrome 96.0.4664.110

**Reproducible:** always

**Build found:** https://speak-ukrainian.org.ua/dev/

**Preconditions**

1... | non_process | admin can not change information in any news item without changing the date of the news to the current date or future date environment windows professional chrome reproducible always build found preconditions log in as an administrator on login mailto admin gmail com ... | 0 |

5,435 | 8,299,549,511 | IssuesEvent | 2018-09-21 03:35:31 | flutterchina/dio | https://api.github.com/repos/flutterchina/dio | closed | Content size exceeds specified contentLength | processing | ## Steps to Reproduce

- Request method

```java

Map<String, dynamic> data

dio.post(path, data: data);

```

- Request failure

```java

requestPost host:http://api-shuangshi.test.tengyue360.com/ path:/backend/student/stuApp/evaluate data:{content: 测试测试测试, courseId: 110, score: 3, anonymous: 1, evaluateObject: 2, timestamp: 15374... | 1.0 | Content size exceeds specified contentLength - ## Steps to Reproduce

- Request method

```java

Map<String, dynamic> data

dio.post(path, data: data);

```

- Request failure

```java

requestPost host:http://api-shuangshi.test.tengyue360.com/ path:/backend/student/stuApp/evaluate data:{content: 测试测试测试, courseId: 110, score: 3, an... | process | content size exceeds specified contentlength steps to reproduce request method java map data dio post path data data request failure java requestpost host path backend student stuapp evaluate data content 测试测试测试 courseid score anonymous evaluateobject timestamp 如果我把con... | 1 |
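The issue text does not state the cause, so the following is only one plausible explanation to check: a `Content-Length` header counts bytes, while a string's length counts characters, and the two differ as soon as the body contains non-ASCII text such as the `content` value above. The Python sketch below illustrates that gap in general terms; it is not dio's code, and `body_lengths` is a hypothetical helper.

```python
import json


def body_lengths(payload):
    """Return (character count, UTF-8 byte count) of the JSON body.

    A Content-Length header must carry the byte count; using the
    character count under-reports bodies that contain non-ASCII text.
    """
    body = json.dumps(payload, ensure_ascii=False)
    return len(body), len(body.encode("utf-8"))


chars, utf8_bytes = body_lengths({"content": "测试测试测试", "courseId": 110})
ascii_chars, ascii_bytes = body_lengths({"content": "test", "courseId": 110})
```

For the ASCII-only payload both numbers agree; for the Chinese payload the byte count is larger, because each of the six characters takes three bytes in UTF-8.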

9,992 | 13,039,523,833 | IssuesEvent | 2020-07-28 16:54:01 | GoogleCloudPlatform/python-docs-samples | https://api.github.com/repos/GoogleCloudPlatform/python-docs-samples | closed | [tests] Tests in tables/automl/dataset_test.py expect to have access to gs://python-docs-samples-tests-automl-tables-test | api: automl priority: p2 type: process | ## In which file did you encounter the issue?

tables/automl/dataset_test.py

## Describe the issue

All tests are failing with error:

AccessDeniedException: 403 xxx@xxx.com does not have storage.objects.list access to the Google Cloud Storage bucket.

It's not clear which account I should use for the tests. All that... | 1.0 | [tests] Tests in tables/automl/dataset_test.py expect to have access to gs://python-docs-samples-tests-automl-tables-test - ## In which file did you encounter the issue?

tables/automl/dataset_test.py

## Describe the issue

All tests are failing with error:

AccessDeniedException: 403 xxx@xxx.com does not have st... | process | tests in tables automl dataset test py expect to have access to gs python docs samples tests automl tables test in which file did you encounter the issue tables automl dataset test py describe the issue all tests are failing with error accessdeniedexception xxx xxx com does not have storage ob... | 1 |

205,136 | 23,299,717,965 | IssuesEvent | 2022-08-07 05:57:10 | TheFloAnd/eventlisting-laravel | https://api.github.com/repos/TheFloAnd/eventlisting-laravel | closed | laravel-mix-6.0.43.tgz: 2 vulnerabilities (highest severity is: 7.5) - autoclosed | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>laravel-mix-6.0.43.tgz</b></p></summary>

<p></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/terser/package.json</p>

<p... | True | laravel-mix-6.0.43.tgz: 2 vulnerabilities (highest severity is: 7.5) - autoclosed - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>laravel-mix-6.0.43.tgz</b></p></summary>

<p></p>

<p>Path to dependency file: /pack... | non_process | laravel mix tgz vulnerabilities highest severity is autoclosed vulnerable library laravel mix tgz path to dependency file package json path to vulnerable library node modules terser package json found in head commit a href vulnerabilities cve severity ... | 0 |

279,751 | 24,252,594,201 | IssuesEvent | 2022-09-27 15:12:20 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | closed | Test badge on TreeView | testplan-item | Refs: https://github.com/microsoft/vscode/issues/62783

- [x] anyOS @meganrogge

- [x] anyOS @bpasero

Complexity: 4

Roles: Developer

[Create Issue](https://github.com/microsoft/vscode/issues/new?body=Testing+%23161780%0A%0A&assignees=alexr00)

---

We have newly finalized API for badges on TreeViews: https:... | 1.0 | Test badge on TreeView - Refs: https://github.com/microsoft/vscode/issues/62783

- [x] anyOS @meganrogge

- [x] anyOS @bpasero

Complexity: 4

Roles: Developer

[Create Issue](https://github.com/microsoft/vscode/issues/new?body=Testing+%23161780%0A%0A&assignees=alexr00)

---

We have newly finalized API for ba... | non_process | test badge on treeview refs anyos meganrogge anyos bpasero complexity roles developer we have newly finalized api for badges on treeviews this badge shows as a circle with a number on the view s view container to test read the inline documentation for the api and v... | 0 |

16,093 | 20,262,151,763 | IssuesEvent | 2022-02-15 08:39:47 | sillsdev/silnlp | https://api.github.com/repos/sillsdev/silnlp | opened | The source vocab for a multilingual parent model is not correctly transferred to the child model | bug pipeline 3: preprocess | The source vocab for a multilingual model will contain special target language tags. When a child model is finetuned from a multilingual model, it will need to add a new target language tag to the vocab. Because of this, the preprocess script will build a new sentencepiece model, because the child vocab contains tokens... | 1.0 | The source vocab for a multilingual parent model is not correctly transferred to the child model - The source vocab for a multilingual model will contain special target language tags. When a child model is finetuned from a multilingual model, it will need to add a new target language tag to the vocab. Because of this, ... | process | the source vocab for a multilingual parent model is not correctly transferred to the child model the source vocab for a multilingual model will contain special target language tags when a child model is finetuned from a multilingual model it will need to add a new target language tag to the vocab because of this ... | 1 |

160,794 | 13,797,237,431 | IssuesEvent | 2020-10-09 21:22:51 | rrousselGit/river_pod | https://api.github.com/repos/rrousselGit/river_pod | closed | Usage of watch. Documentation shows 2 different usages of watch | documentation | The documentation shows two different usages of watch:

`watch(xxxProvider.state)` and `watch(xxxProvider).state`. Is there any difference? Please clarify when to use which. | 1.0 | Usage of watch. Documentation shows 2 different usages of watch - The documentation shows two different usages of watch:

`watch(xxxProvider.state)` and `watch(xxxProvider).state`. Is there any difference? Please clarify when to use which. | non_process | usage of watch documentation shows different usage of watch documentation shows different usage of watch watch xxxprovider state and watch xxxprovider state is there any difference clarify when to use which | 0 |

7,739 | 10,862,521,073 | IssuesEvent | 2019-11-14 13:28:38 | bisq-network/bisq | https://api.github.com/repos/bisq-network/bisq | closed | Buying/selling grin not possible through bisq? | in:altcoins in:trade-process was:dropped | Grin was added to bisq in https://github.com/bisq-network/bisq/pull/2217 but as far as I can tell it's not possible to (reliably, or maybe at all) buy/sell grin through bisq for two reasons:

1. grin cannot be transferred without off-chain interaction between accounts in question (see e.g. https://blog.blockcypher.c... | 1.0 | Buying/selling grin not possible through bisq? - Grin was added to bisq in https://github.com/bisq-network/bisq/pull/2217 but as far as I can tell it's not possible to (reliably, or maybe at all) buy/sell grin through bisq for two reasons:

1. grin cannot be transferred without off-chain interaction between accounts... | process | buying selling grin not possible through bisq grin was added to bisq in but as far as i can tell it s not possible to reliably or maybe at all buy sell grin through bisq for two reasons grin cannot be transferred without off chain interaction between accounts in question see e g easiest way with bis... | 1 |

291,606 | 21,931,781,228 | IssuesEvent | 2022-05-23 10:22:00 | typeorm/typeorm | https://api.github.com/repos/typeorm/typeorm | closed | connection-api falsely imports getEntityManager instead of getManager | documentation requires triage | <!--

Please follow the template. If you don't, your issue may be closed.

Have a question? This is the TypeORM issue tracker - and not the right place

for general support or questions. Instead, check the "Support" Documentation

on the best places to ask questions!

https://github.com/typeorm/typeorm... | 1.0 | connection-api falsely imports getEntityManager instead of getManager - <!--

Please follow the template. If you don't, your issue may be closed.

Have a question? This is the TypeORM issue tracker - and not the right place

for general support or questions. Instead, check the "Support" Documentation

on t... | non_process | connection api falsely imports getentitymanager instead of getmanager please follow the template if you don t your issue may be closed have a question this is the typeorm issue tracker and not the right place for general support or questions instead check the support documentation on t... | 0 |

90,661 | 26,162,176,809 | IssuesEvent | 2022-12-31 18:33:58 | sandboxie-plus/Sandboxie | https://api.github.com/repos/sandboxie-plus/Sandboxie | closed | SandMan: Explorer context menu does not work directly after a clean installation on Windows 11 | fixed in next build Issue reproduced Win 11 SbieDll | ### Describe what you noticed and did

With a clean reinstallation, the **Explorer context menu** (shell integration) usually only works after a restart of Windows.

### Clean VM (running **Windows 11**, 21H2, 64-bit):

1.) Download and install the latest version of Sandboxie-Plus (v1.3.4, 64-bit).

2.) Click thr... | 1.0 | SandMan: Explorer context menu does not work directly after a clean installation on Windows 11 - ### Describe what you noticed and did

With a clean reinstallation, the **Explorer context menu** (shell integration) usually only works after a restart of Windows.

### Clean VM (running **Windows 11**, 21H2, 64-bit):

... | non_process | sandman explorer context menu does not work directly after a clean installation on windows describe what you noticed and did with a clean reinstallation the explorer context menu shell integration usually only works after a restart of windows clean vm running windows bit ... | 0 |

15,158 | 18,909,998,106 | IssuesEvent | 2021-11-16 13:12:51 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | Better error message if using `TEXT` or `BLOB` in MySQL @id/@index/@unique | process/candidate topic: indexes team/migrations topic: extendedIndexes | We now say this is not allowed. We should point to the correct preview feature and mention about using the `length` argument for indexes to work with TEXT or BLOB fields. | 1.0 | Better error message if using `TEXT` or `BLOB` in MySQL @id/@index/@unique - We now say this is not allowed. We should point to the correct preview feature and mention about using the `length` argument for indexes to work with TEXT or BLOB fields. | process | better error message if using text or blob in mysql id index unique we now say this is not allowed we should point to the correct preview feature and mention about using the length argument for indexes to work with text or blob fields | 1 |

52,493 | 27,592,009,460 | IssuesEvent | 2023-03-09 01:33:07 | keras-team/keras | https://api.github.com/repos/keras-team/keras | closed | Can't execute ConvNeXt with input_tensor | type:bug/performance stat:awaiting response from contributor stale | Calling keras.applications.ConvNeXt with input_tensor fails.

The cause seems to be that the input is converted to the list form '[input_tensor]' when it is passed through utils.layer_utils.get_source_inputs.

(When using input_shape, there is no problem.)

This problem was identified in versions tens... | True | Can't execute ConvNeXt with input_tensor - Calling keras.applications.ConvNeXt with input_tensor fails.

The cause seems to be that the input is converted to the list form '[input_tensor]' when it is passed through utils.layer_utils.get_source_inputs.

(When using input_shape, there is no problem.)

Th... | non_process | can t excute convnext with input tensor when keras applications convnext is executed with input tensor it is not executed the cause seems to be a problem that occurs as it changes to the form of when passing utils layer utils get source inputs when using input shape there is no problem this problem wa... | 0 |

21,413 | 29,359,588,912 | IssuesEvent | 2023-05-28 00:36:15 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [Remote] Node.js Developer at Coodesh | SALVADOR BACK-END INFRAESTRUTURA FULL-STACK SCRUM BDD GIT TYPESCRIPT NODE.JS DOCKER DEVOPS REACT AWS REMOTO PROCESSOS INOVAÇÃO BACKEND GITHUB KANBAN CI CD SEGURANÇA GITFLOW UMA C QUALIDADE CLEAN XP TESTES AUTOMATIZADOS MICROSERVICES METODOLOGIAS ÁGEIS EXPRESS NEGÓCIOS MONITORAMENTO SRE PAAS Stale | ## Job description:

This is a job opening from a partner of the Coodesh platform; by applying, you will get access to the full information about the company and its benefits.

Watch for the redirect that will take you to a URL [https://coodesh.com](https://coodesh.com/vagas/nodejs-developer-plsr-131641269?utm_s... | 1.0 | [Remote] Node.js Developer at Coodesh - ## Job description:

This is a job opening from a partner of the Coodesh platform; by applying, you will get access to the full information about the company and its benefits.

Watch for the redirect that will take you to a URL [https://coodesh.com](https://coodesh.com/vag... | process | node js developer at coodesh job description this is a job opening from a partner of the coodesh platform by applying you will get access to the full information about the company and its benefits watch for the redirect that will take you to a url with the personalized application pop up 👋 ... | 1 |

11,876 | 14,675,535,217 | IssuesEvent | 2020-12-30 17:50:40 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | gdal polygonize fails with "No module named '_gdal'. message | Bug Feedback Processing | <!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS project alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS developer or support organisation and financially sponsoring a fix

Checklist before submitting

-... | 1.0 | gdal polygonize fails with "No module named '_gdal'. message - <!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS project alone.